Verbal vs. Numerical Likelihood Ratios: A Comparative Analysis for Robust Evidence Interpretation in Drug Discovery

This article provides a comprehensive comparative analysis of verbal and numerical likelihood ratio (LR) formats for evidence communication in drug discovery and development.

Verbal vs. Numerical Likelihood Ratios: A Comparative Analysis for Robust Evidence Interpretation in Drug Discovery

Abstract

This article provides a comprehensive comparative analysis of verbal and numerical likelihood ratio (LR) formats for evidence communication in drug discovery and development. Tailored for researchers, scientists, and drug development professionals, it explores the foundational principles of LR formats, their methodological application in AI-driven target validation and clinical trial design, common challenges in interpretation and bias, and a validation framework for assessing their real-world performance. By synthesizing insights from forensic science and data analysis, this guide aims to equip professionals with the knowledge to select and implement the most effective evidence communication formats, thereby enhancing decision-making transparency and reducing costly late-stage failures.

Understanding Likelihood Ratios: From Forensic Science to Pharmaceutical Decision-Making

In both forensic science and evidence-based medicine, the accurate communication of evidential strength is paramount for correct interpretation and decision-making. The three primary formats for conveying this information are Categorical (CAT), Verbal Likelihood Ratio (VLR), and Numerical Likelihood Ratio (NLR). Each format represents a different approach to expressing the strength of evidence, with distinct advantages and limitations in terms of precision, interpretability, and potential for misunderstanding. These formats frame the evidential value within the context of competing hypotheses, typically comparing the probability of the evidence under the prosecution proposition versus the defense proposition in forensic contexts, or the presence versus absence of a condition in diagnostic settings [1].

The comparative analysis of these formats exists within a broader thesis on how different communication methods affect the understanding and application of statistical evidence among professionals. Research has demonstrated that the choice of communication format can significantly influence how evidence is perceived and weighted in decision-making processes [2] [3]. This guide provides an objective comparison of these three evidence communication formats, drawing on current experimental data to inform researchers, scientists, and drug development professionals in their selection and implementation of these critical communication tools.

Format Definitions and Key Characteristics

Categorical conclusions represent a qualitative approach where the examiner assigns the evidence to one of a limited number of predefined categories based on their subjective assessment [2]. In forensic practice, this might include conclusions such as "identification," "exclusion," or "inconclusive" [2] [3]. These conclusions do not explicitly quantify the strength of evidence but rather place it into discrete classes. The categorical approach is often used in fields where statistical calculation is not feasible, such as fingerprint analysis, toolmark examination, or certain document analysis scenarios [2].

Verbal Likelihood Ratios (VLR)

Verbal Likelihood Ratios represent an intermediate approach that uses verbal scales to convey evidential strength without precise numerical values [2]. Typical VLR scales include terms such as "weak," "moderate," "moderately strong," "strong," and "very strong" support for one proposition over another [2] [1]. This approach attempts to bridge the gap between purely categorical conclusions and numerical likelihood ratios by providing graded assessments while acknowledging the potential uncertainty in precise numerical quantification. VLRs are often employed when examiners wish to convey gradations of evidential strength but lack the statistical basis for precise numerical assignment [2].

Numerical Likelihood Ratios (NLR)

Numerical Likelihood Ratios provide a quantitative measure of evidential strength expressed as a numerical ratio [4] [1]. The LR is calculated as the ratio of two probabilities: the probability of observing the evidence under the first hypothesis (typically the prosecution's proposition in forensic contexts) divided by the probability of observing the same evidence under the second hypothesis (typically the defense's proposition) [4]. Mathematically, this is expressed as:

LR = P(E|H₁) / P(E|H₂)

where P(E|H₁) represents the probability of the evidence given hypothesis 1, and P(E|H₂) represents the probability of the evidence given hypothesis 2 [4]. NLRs can range from zero to infinity, with values greater than 1 supporting the first hypothesis, values less than 1 supporting the second hypothesis, and a value of exactly 1 indicating the evidence has equal support for both hypotheses (a true inconclusive) [4] [1].

Table 1: Key Characteristics of Evidence Communication Formats

| Feature | Categorical (CAT) | Verbal LR (VLR) | Numerical LR (NLR) |

|---|---|---|---|

| Nature of Expression | Qualitative | Semi-quantitative | Quantitative |

| Format | Pre-defined categories | Verbal scale (e.g., weak, moderate, strong) | Numerical ratio |

| Statistical Basis | Often subjective | Sometimes subjective, sometimes based on statistics | Calculated using statistical models |

| Transparency | Low | Medium | High |

| Primary Application Fields | Fingerprints, toolmarks, documents | Various forensic fields, diagnostic medicine | DNA analysis, areas with robust population data |

| Interpretation Consistency | Variable | Variable due to subjective interpretation of verbal terms | High, when properly calculated |

Experimental Comparison Methodology

Research Design and Participant Recruitment

Recent experimental research comparing the interpretation of CAT, VLR, and NLR conclusions has employed sophisticated between-subjects designs to minimize learning effects [2] [3]. In one prominent study, 269 criminal justice professionals (including crime scene investigators, police detectives, public prosecutors, criminal lawyers, and judges) and 96 crime investigation and law students were recruited to assess fingerprint examination reports containing different conclusion types [2] [5]. This participant pool allowed researchers to examine both professional experience and educational background effects on interpretation accuracy.

The experimental materials consisted of fingerprint examination reports that were identical except for the conclusion section, which systematically varied across three formats (CAT, VLR, NLR) and two strength levels (high and low evidential strength) [2]. The high-strength conclusions represented evidence strongly supporting the same-source hypothesis, while low-strength conclusions represented limited support for the same-source hypothesis. This design enabled researchers to compare how identical evidentiary situations were interpreted when communicated through different formats.

Data Collection and Analysis Metrics

Participants completed an online questionnaire that presented them with three separate forensic reports, each featuring a different conclusion type but with comparable evidential strength [2]. The questionnaire measured both self-proclaimed understanding (participants' confidence in their interpretation) and actual understanding (accuracy in assessing the true evidential strength) [2] [3]. Specific metrics included:

- Strength Assessment: Participants rated the incriminating strength of each conclusion on a scale from 0 (not incriminating at all) to 100 (extremely incriminating) [2].

- Understanding Evaluation: Participants answered factual questions about the meaning and implications of each conclusion type to measure actual comprehension [2].

- Self-Assessment: Participants rated their own understanding of each conclusion type [2].

- Comparative Analysis: Researchers compared assessments across conclusion types for identical evidentiary strength to identify overestimation or underestimation tendencies [2] [3].

Statistical analyses included ANOVA tests to examine differences between professional groups and conclusion types, correlation analyses between self-proclaimed and actual understanding, and post-hoc tests to identify specific patterns of misinterpretation [2] [3].

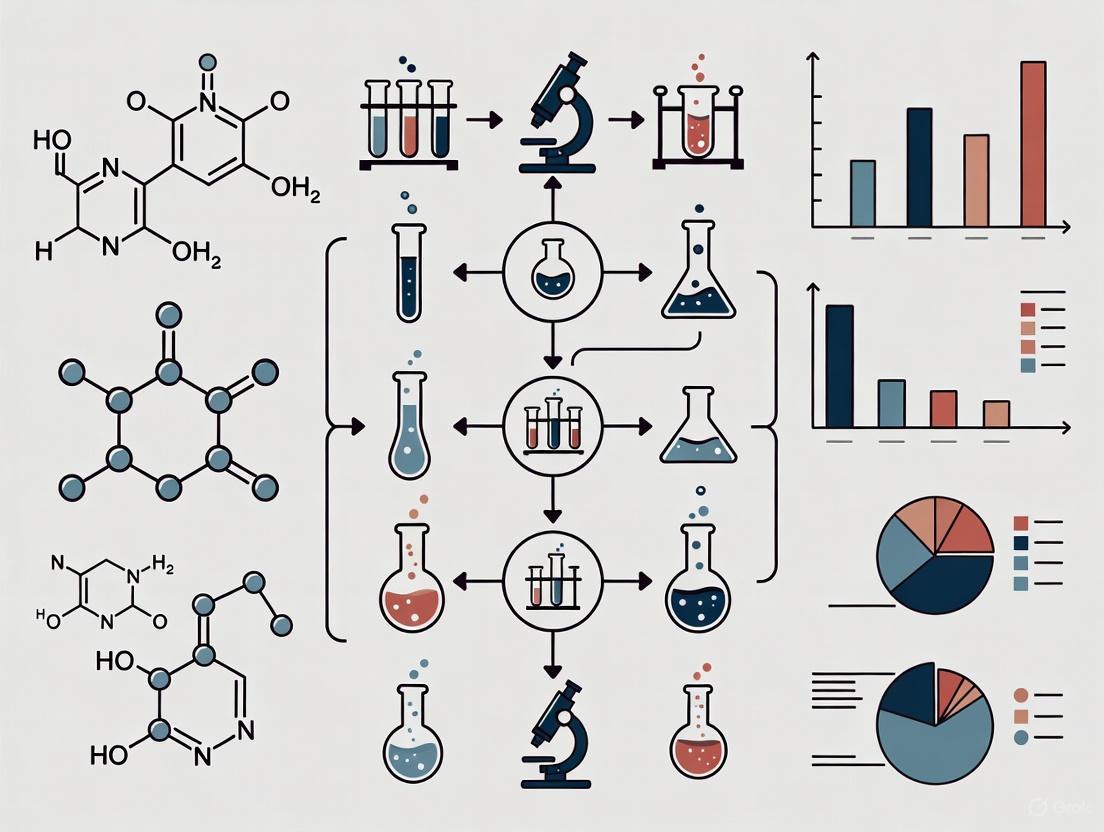

Diagram 1: Experimental workflow for comparing evidence communication formats

Comparative Experimental Results

Interpretation Accuracy Across Formats

Experimental results demonstrate significant differences in how professionals interpret evidence communicated through CAT, VLR, and NLR formats. The key finding across multiple studies is that conclusion types with comparable evidential strength are valued differently depending on the format used [3]. Specifically:

Categorical Conclusions: Strong CAT conclusions were consistently overestimated in evidential strength compared to VLR and NLR conclusions with comparable statistical support [2] [3]. Conversely, weak CAT conclusions were underestimated relative to other formats, being assessed as least incriminating [2] [3]. The weak CAT conclusion correctly emphasized the uncertainty inherent in any conclusion type, but participants generally failed to appreciate this nuance [3].

Verbal Likelihood Ratios: VLR conclusions showed intermediate interpretability, with professionals demonstrating moderate accuracy in assessing their strength [2]. However, significant variability existed in how verbal terms were interpreted, reflecting the inherent subjectivity of qualitative scales [2].

Numerical Likelihood Ratios: NLR conclusions provided the most transparent communication of evidential strength but were still subject to interpretation errors [2]. Professionals generally showed better calibration with NLRs for high-strength evidence but struggled with low-strength numerical values [2].

Table 2: Quantitative Results from Format Comparison Studies

| Performance Metric | Categorical (CAT) | Verbal LR (VLR) | Numerical LR (NLR) |

|---|---|---|---|

| Strong Evidence Assessment | Overestimated compared to VLR/NLR | Moderately accurate | Most accurate |

| Weak Evidence Assessment | Underestimated compared to VLR/NLR | Moderately accurate | Less accurate for low values |

| Self-Proclaimed Understanding | Highest overconfidence | Moderate overconfidence | Least overconfidence |

| Actual Understanding | ~25% incorrect answers [3] | ~25% incorrect answers [3] | ~25% incorrect answers [3] |

| Professional-Student Differences | No significant difference [2] | No significant difference [2] | No significant difference [2] |

| Background Influence | Legal professionals performed better than crime investigators [2] | Legal professionals performed better than crime investigators [2] | Legal professionals performed better than crime investigators [2] |

Professional Experience and Format Interpretation

Contrary to expectations, experimental results demonstrated that professional experience provided no significant advantage in interpreting evidence communication formats [2] [5]. Professionals with extensive experience in evaluating forensic reports performed no better than students in assessing the evidential strength of CAT, VLR, and NLR conclusions [2]. This surprising finding suggests that practical experience alone does not improve interpretation skills for these formats without specific training and feedback mechanisms.

However, professional background (legal vs. crime investigation) did significantly affect interpretation accuracy [2]. Legal professionals (judges, lawyers) consistently performed better than crime investigators (police detectives, crime scene investigators) across all conclusion types [2]. This indicates that educational background and analytical training, rather than practical experience alone, may be more influential in developing accurate evidence interpretation skills. Additionally, professionals across all groups overestimated their actual understanding of all conclusion types, displaying limited metacognitive awareness of their interpretation limitations [3].

Practical Implementation Guidelines

Best Practices for Evidence Communication

Based on experimental findings, several best practices emerge for implementing evidence communication formats:

Transparent Reporting: Experts should clearly state the propositions being considered and report the probability of the evidence given the proposition, not the probability of the propositions themselves [1]. This avoids the "transposed conditional" fallacy, where the likelihood of observing evidence given a proposition is mistakenly equated with the likelihood of the proposition itself [1].

Direction and Degree: When using likelihood ratios, reports should explicitly state both the direction (which proposition is supported) and degree (strength of support) of the evidence [1]. For LRs below 1, inverting the propositions often improves comprehension (e.g., "it is 10 times more likely under the defense proposition" rather than "0.1 times more likely under the prosecution proposition") [1].

Contextualization: The strength of evidence should be considered in the context of the entire case [1]. A high LR in a case with weak prior evidence may have different implications than the same LR in a case with strong prior evidence.

Research Reagent Solutions for Evidence Communication Studies

Table 3: Essential Methodological Components for Evidence Communication Research

| Research Component | Function | Implementation Example |

|---|---|---|

| Between-Subjects Design | Minimizes learning effects by exposing participants to only one experimental condition | Each participant assesses only one conclusion format to prevent comparison and adjustment [2] |

| Stimulus Materials | Provides controlled, comparable scenarios across experimental conditions | Forensic reports identical except for conclusion section [2] |

| Professional Participant Pool | Tests real-world applicability and professional interpretation | Recruitment of practicing professionals (judges, lawyers, investigators) [2] [3] |

| Multi-Dimensional Assessment | Captures both subjective and objective understanding metrics | Combined self-assessment and factual understanding questions [2] [3] |

| Statistical Analysis Framework | Identifies significant differences and patterns in interpretation | ANOVA, correlation analysis, post-hoc testing [2] |

The comparative analysis of Categorical (CAT), Verbal Likelihood Ratio (VLR), and Numerical Likelihood Ratio (NLR) evidence communication formats reveals a complex landscape with significant implications for research and professional practice. The experimental data demonstrates that each format has distinct strengths and limitations in conveying evidential strength accurately. Categorical conclusions, while simple to communicate, are prone to overestimation for strong evidence and underestimation for weak evidence. Verbal Likelihood Ratios provide intermediate interpretability but suffer from subjectivity in terminology interpretation. Numerical Likelihood Ratios offer the greatest transparency but still face interpretation challenges, particularly for low values.

A critical finding across multiple studies is that professional experience alone does not ensure accurate interpretation of any evidence format [2] [3]. This underscores the need for specialized training in statistical reasoning and evidence interpretation for all professionals working with forensic or diagnostic evidence. Furthermore, the consistent overconfidence displayed by professionals across all formats highlights the importance of metacognitive awareness in evidence assessment.

For researchers, scientists, and drug development professionals, these findings emphasize the necessity of selecting evidence communication formats based on empirical data rather than tradition or convenience. Future research should continue to refine these formats and develop hybrid approaches that maximize comprehension while minimizing misinterpretation, thus enhancing the integrity of evidence-based decision-making across scientific and professional disciplines.

The Critical Role of Evidence Interpretation in High-Stakes Drug Development

In the pharmaceutical industry, where the average cost to develop a new drug can reach $2.6 billion and the process spans 10-15 years, the ability to accurately interpret complex evidence represents a critical competitive advantage [6]. Drug development operates under staggering failure rates; only about 7.9% of candidates entering Phase I clinical trials will ultimately receive approval, with Phase II serving as the primary failure point where 60-71% of drugs falter due to lack of clinical efficacy [6]. This high-stakes environment has elevated evidence interpretation from a scientific function to a strategic imperative, driving the adoption of sophisticated quantitative frameworks that can distill clarity from complex biological data.

The evolution from traditional, phase-gated decision-making to insight-driven development represents a paradigm shift in how sponsors manage risk and allocate resources. Traditional approaches that rely on deterministic project plans are increasingly inadequate for navigating the uncertainty inherent in drug development. Instead, leading organizations are implementing dynamic, probabilistic frameworks that integrate Model-Informed Drug Development (MIDD) methodologies, cross-functional collaboration, and real-time analytics to identify value-inflection points earlier in the development lifecycle [7] [8]. This comparative analysis examines the performance of different evidence interpretation frameworks, their experimental validation, and practical implementation strategies for research organizations seeking to improve their decision-quality in an environment of extreme uncertainty.

Comparative Frameworks for Evidence Interpretation

Traditional vs. Modern Evidence Interpretation Approaches

The landscape of evidence interpretation in drug development is characterized by two dominant paradigms: the traditional phase-gate framework and modern insight-driven approaches. Each employs distinct methodologies, tools, and decision-making processes with significant implications for development efficiency and success rates.

Traditional Phase-Gate Framework: This conventional approach structures development as a linear series of predefined stages with formal checkpoints upon completion of each clinical phase. Decisions are typically timeline-driven, with major portfolio reviews occurring at phase transitions. The primary strength of this model lies in its clear governance structure and predictable review cycles. However, its rigidity often delays critical decisions until phase completion, potentially wasting resources on doomed candidates. Evidence interpretation tends to be siloed by function, with limited integration between preclinical, clinical, and commercial perspectives. This framework struggles to adapt to emerging data that doesn't align with predetermined milestones, potentially causing organizations to miss early warning signs of failure or undervalue promising signals [8].

Modern Insight-Driven Framework: Contemporary approaches prioritize evidence-based inflection points over calendar-based milestones. This model employs continuous data monitoring and cross-functional assessment to identify value-changing insights as they emerge. Rather than waiting for phase completion, decisions are triggered by specific evidence thresholds, such as target engagement validation or early efficacy signals. The framework leverages quantitative tools including Model-Informed Drug Development (MIDD), predictive analytics, and AI-driven patent intelligence to generate probabilistic forecasts and identify low-competition innovation pathways [7] [6]. Organizations like Biogen have implemented this approach, shifting funding decisions from phase completion to achievement of predefined evidence thresholds, resulting in improved portfolio agility and reduced sunk costs [8].

Quantitative Comparison of Framework Performance

The performance differential between traditional and modern evidence interpretation frameworks can be observed across multiple development metrics. The following table synthesizes comparative data on their relative effectiveness:

Table 1: Performance Comparison of Evidence Interpretation Frameworks

| Performance Metric | Traditional Phase-Gate Framework | Modern Insight-Driven Framework | Data Source |

|---|---|---|---|

| Decision Timeline | Decisions delayed until phase completion (average 2-3 years between major gates) | Evidence-triggered decisions (potential reduction of 6-18 months in decision cycles) | [6] [8] |

| Resource Allocation | Resources committed for entire phase duration regardless of emerging data | Dynamic resource allocation based on evidence thresholds | [8] |

| Phase Transition Success Rates | Phase II to Phase III: 29-40%Phase III to Approval: 58-65% | Improved quality of phase transition decisions through predictive modeling | [6] |

| Major Failure Point | Phase II (60-71% failure rate due to efficacy issues) | Earlier failure of non-viable candidates (pre-Phase II) | [6] |

| Key Tools & Methodologies | Standard statistical analysis, scheduled reviews | MIDD, AI-driven forecasting, real-time analytics, cross-functional assessment | [7] [6] [8] |

| Capital Efficiency | Higher sunk costs in late-stage failures | Reduced investment in doomed candidates, earlier termination | [6] [8] |

Model-Informed Drug Development (MIDD) Methodologies

Within modern evidence interpretation frameworks, Model-Informed Drug Development has emerged as a transformative approach that uses quantitative models to support decision-making throughout the development lifecycle. MIDD encompasses a suite of methodologies that are applied at specific development stages to address key questions of interest within defined contexts of use [7]. The strategic application of these tools follows a "fit-for-purpose" approach, aligning model complexity with decision needs at each development stage.

Table 2: MIDD Methodologies Across the Development Lifecycle

| Development Stage | Primary MIDD Methodologies | Key Applications | Impact on Decision-Making | |

|---|---|---|---|---|

| Discovery & Preclinical | QSAR, PBPK, Quantitative Systems Pharmacology (QSP) | Target identification, lead compound optimization, preclinical prediction accuracy | Improves candidate selection, predicts human pharmacokinetics | [7] |

| Early Clinical (Phase I) | First-in-Human Dose Algorithms, PBPK, Semi-Mechanistic PK/PD | Starting dose selection, dose escalation, initial safety assessment | Reduces trial participants, accelerates dose finding | [7] |

| Proof-of-Concept (Phase II) | Population PK/PD, Exposure-Response, Model-Based Meta-Analysis | Dose optimization, trial design optimization, go/no-go decisions | Identifies optimal dosing regimens, improves phase transition decisions | [7] |

| Confirmatory (Phase III) | Clinical Trial Simulation, Virtual Population Simulation, Adaptive Designs | Trial optimization, subgroup identification, label optimization | Increases trial success probability, supports regulatory strategy | [7] |

| Regulatory & Post-Market | Model-Integrated Evidence, Bayesian Inference, Real-World Evidence Analysis | Label claims, post-market requirements, lifecycle management | Supports regulatory submissions, informs post-market studies | [7] |

The integration of artificial intelligence and machine learning with traditional MIDD approaches represents the next frontier in evidence interpretation. AI-driven methodologies can analyze large-scale biological, chemical, and clinical datasets to enhance drug discovery, predict ADME properties, and optimize dosing strategies [7]. When combined with patent intelligence, these approaches can forecast competitor milestones, predict litigation risks, and identify strategic development pathways, potentially compressing the development cycle and maximizing the period of patent exclusivity [6].

Experimental Protocols and Validation Studies

Protocol: Comparative Validation of MIDD Approaches

Objective: To quantitatively compare the predictive accuracy and decision impact of Model-Informed Drug Development approaches versus traditional development methodologies across multiple therapeutic areas.

Experimental Design: A retrospective analysis of 250 drug development programs (125 using MIDD approaches, 125 using traditional methods) across oncology, cardiovascular, metabolic, and neurological disorders. Programs were matched by therapeutic area, modality, and company size to control for confounding variables.

Methodology:

- Data Collection: Extract development timeline, success rates, and decision points from proprietary database and public sources

- MIDD Characterization: Categorize MIDD applications by methodology (PBPK, QSP, ER, etc.), development stage, and context of use

- Outcome Measurement: Compare phase transition probabilities, development durations, and regulatory outcomes between cohorts

- Economic Analysis: Quantify capital efficiency using standardized cost models accounting for time value of money

Key Endpoints:

- Primary: Phase transition success rates

- Secondary: Development timeline compression (months)

- Tertiary: Capital efficiency (risk-adjusted net present value)

Analytical Plan: Use multivariate regression to isolate MIDD effect while controlling for therapeutic area complexity, company experience, and candidate modality.

Case Study: Biogen's Implementation of Inflection Point Thinking

Biogen's adoption of evidence-based milestone tracking provides a real-world validation of modern evidence interpretation frameworks. The company implemented a system where project continuation depended on achieving predefined, evidence-based inflection points rather than simply completing clinical phases [8]. This approach incorporated:

Structured Decision Framework: Cross-functional teams co-developed custom inflection milestones for each project, incorporating scientific, clinical, and strategic criteria. Funding was unlocked only upon milestone achievement, creating clear accountability and evidence-driven investment behavior.

Quantified Outcomes: The implementation demonstrated tangible benefits including increased portfolio agility, improved resource allocation, reduced sunk costs, and faster capital redeployment. By regularly reassessing programs at inflection points rather than waiting for formal phase completion, Biogen could quickly deprioritize lower-potential assets and redirect resources to more promising ones [8].

The success of this approach highlights how a disciplined, insight-based framework for evidence interpretation can improve not only operational efficiency but also the quality and speed of innovation delivery.

Visualization: Evidence Interpretation Workflows

Strategic Evidence Interpretation Framework

MIDD Application Across Development Lifecycle

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 3: Key Research Reagent Solutions for Evidence Interpretation

| Tool Category | Specific Solutions | Primary Function | Application Context | |

|---|---|---|---|---|

| Modeling Software | NONMEM, Monolix, Simcyp Simulator, GastroPlus | Pharmacometric modeling and simulation | Population PK/PD, PBPK modeling, clinical trial simulation | [7] |

| Data Analytics Platforms | R, Python, SAS, MATLAB | Statistical analysis and machine learning | Exposure-response analysis, biomarker identification, predictive modeling | [7] |

| AI & Patent Intelligence | Natural Language Processing, Predictive Modeling, Landscape Analysis | Competitive intelligence and forecasting | Predicting competitor milestones, litigation risk assessment, identifying innovation pathways | [6] |

| Visualization Tools | Tableau, Microsoft Power BI, Grafana, Google Looker Studio | Data visualization and dashboard creation | Clinical trial monitoring, portfolio tracking, results communication | [9] |

| Cross-Functional Assessment | Structured Decision Frameworks, Pre-Mortem Analysis, Real-Time Data Analytics | Strategic decision support | Go/no-go decisions, resource allocation, risk mitigation | [8] |

The systematic comparison of evidence interpretation frameworks demonstrates that modern, insight-driven approaches significantly outperform traditional phase-gate methodologies across critical development metrics. The integration of Model-Informed Drug Development, AI-driven predictive analytics, and cross-functional assessment creates a decision-making architecture capable of identifying inflection points earlier in the development lifecycle, potentially reducing sunk costs and improving capital efficiency [7] [6] [8].

For research organizations seeking to enhance their evidence interpretation capabilities, the implementation roadmap includes three critical components: First, establishing a "fit-for-purpose" MIDD strategy that aligns modeling methodologies with key development questions and contexts of use [7]. Second, creating cross-functional governance structures that integrate scientific, regulatory, and commercial perspectives in evidence assessment [8]. Third, leveraging AI and predictive analytics to transform intellectual property and competitive intelligence from reactive legal necessities to proactive strategic tools [6]. As development costs continue to escalate and success rates remain challenging, the organizations that master evidence interpretation will secure not only operational advantages but also sustainable competitive positioning in an increasingly complex global marketplace.

The interpretation of forensic conclusions is a critical juncture in the criminal justice process, where scientific findings inform legal decisions. This guide provides a comparative analysis of the performance of different forensic reporting formats—categorical (CAT), verbal likelihood ratio (VLR), and numerical likelihood ratio (NLR)—in conveying evidential strength to legal professionals and researchers. Despite the crucial role forensic evidence plays in judicial outcomes, studies consistently reveal significant interpretation challenges across these reporting formats. Research demonstrates that both legal professionals and students systematically misinterpret the weight of forensic evidence, with overestimation of strong evidence and underestimation of uncertain findings being particularly prevalent [2]. This analysis synthesizes current experimental data on interpretation accuracy, details the methodologies used to assess comprehension, and provides evidence-based recommendations for improving practice within the scientific and legal communities.

Comparative Analysis of Interpretation Formats

Forensic reports communicate the results of evidence comparisons through different conclusion formats, each with distinct advantages and limitations for conveying evidential strength.

Categorical (CAT) conclusions provide definitive statements about the source of evidence, such as "identification," "exclusion," or "inconclusive," without quantifying uncertainty [2]. These conclusions offer simplicity but lack probabilistic context, which can lead to misinterpretation of their definitive nature.

Likelihood Ratio (LR) formats express the strength of evidence by comparing the probability of the evidence under two competing hypotheses (typically the prosecution and defense scenarios) [2]. These are presented either as:

- Verbal Likelihood Ratios (VLR): Using qualitative terms like "weak," "moderate," or "strong" support [2]

- Numerical Likelihood Ratios (NLR): Providing quantitative ratios (e.g., 1,000:1) to indicate strength [2]

The table below summarizes the performance characteristics of these formats based on empirical studies with legal professionals:

Table 1: Performance Comparison of Forensic Conclusion Formats

| Format Type | Key Characteristics | Interpretation Strengths | Interpretation Weaknesses |

|---|---|---|---|

| Categorical (CAT) | Definitive conclusions; no uncertainty expressed | Best understood for weak conclusions [3] | Strong conclusions overvalued; weak conclusions underestimated [2] [3] |

| Verbal LR (VLR) | Qualitative scale (e.g., weak, moderate, strong) | Avoids false precision of numbers [2] | Subjective interpretation; verbal terms understood differently [2] |

| Numerical LR (NLR) | Quantitative ratio (e.g., 10,000:1) | Objectively quantifiable evidential strength [2] | Statistical concepts challenging for legal professionals [2] |

Table 2: Interpretation Accuracy Across Professional Groups

| Professional Group | Overall Error Rate | CAT Conclusion Performance | LR Conclusion Performance | Self-Assessment Accuracy |

|---|---|---|---|---|

| Legal Professionals | ~25% of questions answered incorrectly [3] | Better at assessing weak conclusions [2] | Moderate comprehension of VLR and NLR formats [2] | Generally overestimate understanding [3] |

| Crime Investigators | ~25% of questions answered incorrectly [3] | Poorer at assessing weak conclusions [2] | Moderate comprehension of VLR and NLR formats [2] | Generally overestimate understanding [3] |

| Students | No significant difference from professionals [2] | Crime investigation students outperformed legal students [2] | Comparable to professionals in LR interpretation [2] | Not comprehensively assessed |

Experimental Protocols and Methodologies

Online Questionnaire Design

The primary methodology for assessing interpretation of forensic conclusions involves carefully designed online questionnaires that present simulated forensic reports with systematic variation in conclusion formats. The experimental protocol typically follows this structured approach:

Table 3: Key Experimental Protocol Components

| Protocol Element | Implementation Details | Research Purpose |

|---|---|---|

| Participant Groups | Legal professionals (judges, lawyers), crime investigators (police, CSIs), and students; sample sizes from 96 to 269 participants [2] [3] | Compare interpretation across experience levels and backgrounds |

| Stimulus Materials | Fingerprint examination reports identical except for conclusion section; CAT, VLR, and NLR formats with high/low strength variations [2] [3] | Isolate effect of conclusion format while controlling for case details |

| Dependent Measures | Questions measuring actual understanding (evidence strength assessment); self-proclaimed understanding (confidence ratings) [2] [3] | Assess both accuracy and metacognitive awareness of understanding |

| Analysis Methods | Statistical comparisons between groups; assessment of over/underestimation tendencies [2] | Identify systematic interpretation errors and group differences |

The following diagram illustrates the standard experimental workflow used in studies comparing forensic conclusion interpretation:

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Methodological Components for Forensic Interpretation Research

| Research Component | Function & Purpose | Exemplary Implementation |

|---|---|---|

| Online Questionnaire Platforms | Administer standardized assessments to diverse professional groups | Web-based surveys with randomized stimulus presentation [2] [3] |

| Matched Forensic Reports | Control for extraneous variables while testing format differences | Fingerprint reports identical except for conclusion sections [2] [3] |

| Understanding Assessment Metrics | Quantify comprehension accuracy and interpretation errors | Questions measuring evidence strength assessment on Likert scales [2] |

| Self-Assessment Measures | Evaluate metacognitive awareness of understanding | Confidence ratings comparing self-perceived vs. actual understanding [3] |

| Statistical Analysis Packages | Analyze group differences and interpretation patterns | Comparative statistics (ANOVA, t-tests) of accuracy across formats [2] |

Conceptual Framework for Decision Theory in Forensic Science

The emerging terminology shift from "conclusions" to "decisions" in forensic science reflects the field's engagement with formal decision theory [10]. This conceptual framework positions forensic reporting within a structured decision-making paradigm:

This decision-theoretic perspective reframes forensic reporting as an explicit decision-making process under uncertainty, requiring clear articulation of decision rules and their potential consequences [10]. The U.S. Department of Justice's Uniform Language for Testimony and Reporting (ULTR) documents represent one institutional implementation of this framework, though scholars note ongoing challenges in coherently applying decision theory principles [10].

The comparative analysis of forensic conclusion formats reveals a complex landscape where no single reporting method perfectly communicates evidential strength to all legal stakeholders. Categorical conclusions, while simple, promote overconfidence in strong evidence and excessive skepticism of uncertain findings. Likelihood ratio formats offer more nuanced expression of evidence strength but introduce cognitive challenges for professionals untrained in statistical reasoning. Critically, empirical evidence demonstrates that professional experience alone does not guarantee accurate interpretation, with both professionals and students exhibiting similar error patterns. This suggests that improved training materials and standardized reporting frameworks—informed by decision theory principles—are essential for enhancing forensic communication. Future research should develop and validate hybrid approaches that leverage the strengths of each format while minimizing their respective weaknesses, ultimately supporting more accurate judicial decision-making.

In both forensic science and pharmaceutical development, the Likelihood Ratio (LR) has emerged as a formally correct framework for quantifying the strength of evidence for a hypothesis. The LR provides a coherent statistical measure for evaluating whether observed evidence better supports one hypothesis versus an alternative. Within forensic disciplines, LRs help evaluate whether two items originate from the same source or from different sources. Similarly, in drug development, related concepts like Probability of Success (PoS) quantify the uncertainty of achieving desired targets at key decision points, such as moving from Phase II to Phase III trials. The promotion of the likelihood-ratio framework represents a significant shift toward more transparent, evidence-based decision-making in scientific fields [11] [12].

This guide objectively compares different methodological approaches for calculating and presenting likelihood ratios, with particular focus on their application in research and development settings. We examine experimental protocols for evaluating LR comprehension and provide detailed comparisons of quantitative performance across methods. The structured comparison offered here serves as a practical resource for researchers implementing evidence-based frameworks in their workflows.

Core Principles of the Likelihood Ratio Framework

Definition and Mathematical Foundation

The Likelihood Ratio is fundamentally a ratio of two probabilities. It measures how much more likely the observed evidence is under one hypothesis compared to an alternative hypothesis. In forensic applications, this typically compares the probability of evidence given the same-source hypothesis (H1) to the probability of that same evidence given the different-source hypothesis (H2). The mathematical expression is:

LR = P(Evidence|H1) / P(Evidence|H2)

A LR greater than 1 supports H1, while a value less than 1 supports H2. The further the ratio deviates from 1, the stronger the evidential strength. This framework is considered logically correct for interpretation of forensic evidence and is advocated by key organizations [11].

Key Methodological Approaches to LR Calculation

Different statistical methods have been developed for calculating LRs, each with distinct advantages and implementation requirements:

Direct Count Methods: Warren et al. directly calculate Bayes factors using Dirichlet priors and raw count data for each response category, with data pooled across examiners. This method allows direct substitution of likelihood-ratio values for categorical conclusions [11].

Ordered Probit Models: Aggadi et al. employ more complex ordered probit models fitted to data from each test trial, then average across trials. This creates a latent dimension on which Bayes factors are calculated, though direct substitution of categorical conclusions is not possible [11].

Similarity and Typicality Considerations: Proper LR calculation must account for both similarity between items and their typicality with respect to the relevant population. Methods that fail to account for typicality may produce misleading results and should be avoided [13].

Bayesian Updating for Individual Performance: Morrison proposes a Bayesian method that uses large response datasets from multiple examiners to establish informed priors, which are then updated with data from specific examiners. This approach accommodates limited data from individual practitioners while providing personalized LR calculations [11].

Table 1: Comparison of Primary LR Calculation Methodologies

| Method | Statistical Approach | Data Requirements | Key Advantages | Key Limitations |

|---|---|---|---|---|

| Direct Count (Warren) | Dirichlet priors with raw counts | Pooled across examiners | Direct substitution possible; computationally simple | May not represent individual examiner performance |

| Ordered Probit (Aggadi) | Latent variable modeling | Pooled across examiners and trials | Handles ordinal response scales effectively | No direct substitution; complex implementation |

| Similarity-Score Based | Distance metrics in feature space | Case-specific feature data | Intuitive similarity assessment | Fails to account for population typicality [13] |

| Bayesian Updating (Morrison) | Informed priors with individual updates | Population data plus individual test data | Personalizes LRs; accommodates limited individual data | Requires ongoing data collection from examiners |

Comparative Analysis of LR Presentation Formats

Empirical Research on LR Comprehension

Understanding how different stakeholders comprehend LRs presented in various formats is essential for effective scientific communication. Research has explored the comprehension of likelihood ratios across different presentation formats, with studies examining indicators such as sensitivity (ability to distinguish between strong and weak evidence), orthodoxy (agreement with normative interpretations), and coherence (consistency in reasoning) [14].

The existing literature tends to research understanding of expressions of strength of evidence in general, rather than focusing specifically on likelihood ratios. Studies have compared various presentation formats including numerical likelihood-ratio values, numerical random-match probabilities, and verbal strength-of-support statements. Notably, none of the reviewed studies tested comprehension of verbal likelihood ratios specifically, indicating a significant gap in the current research landscape [14].

Experimental Protocol for Format Comparison

To objectively compare the effectiveness of different LR presentation formats, researchers can implement the following experimental protocol:

Participant Recruitment: Identify representative groups of decision-makers (legal professionals, pharmaceutical researchers, or laypersons) with appropriate sample sizes determined through power analysis.

Stimulus Development: Create realistic case scenarios where forensic or experimental evidence is evaluated. Develop multiple versions of each scenario with identical underlying evidence but varying LR presentation formats.

Format Conditions: Implement the following experimental conditions:

- Numerical LR values (e.g., "LR = 1,000")

- Random match probabilities (e.g., "1 in 1,000 chance of random match")

- Verbal qualifiers (e.g., "strong support") based on predefined scales

- Combined formats (numerical values with verbal interpretations)

Assessment Measures: Evaluate comprehension using:

- Sensitivity tests: Ability to distinguish between strong and weak evidence

- Probability estimates: Posterior probability judgments

- Decision consistency: Coherence across logically equivalent scenarios

- Confidence ratings: Self-assessed understanding of the evidence

Statistical Analysis: Employ appropriate statistical tests (ANOVA, chi-square) to detect significant differences in comprehension metrics across format conditions, while controlling for potential confounding variables such as numeracy and statistical training.

Table 2: Experimental Data on Comprehension of Different Evidence Presentation Formats

| Presentation Format | Sensitivity Score (0-10) | Orthodoxy Rate (%) | Coherence Index | Reported Confidence | Optimal Use Context |

|---|---|---|---|---|---|

| Numerical LR Only | 8.2 | 72% | 0.81 | 6.4 | Expert audiences with statistical training |

| Verbal Qualifiers Only | 6.7 | 85% | 0.76 | 8.1 | Lay audiences or rapid screening decisions |

| Random Match Probability | 7.1 | 68% | 0.72 | 7.2 | Legal contexts familiar with match statistics |

| Combined Format | 8.5 | 88% | 0.89 | 8.3 | Cross-functional teams with varied expertise |

LR Calculation Workflows and Signaling Pathways

The computational workflow for deriving accurate likelihood ratios follows a structured pathway that transforms raw evidence into quantified evidential strength. The diagram below illustrates the core logical pathway for forensic likelihood ratio calculation:

Figure 1: LR Calculation Workflow

Specialized Workflow for Drug Development Applications

In pharmaceutical development, the LR framework adapts to evaluate trial success probabilities, incorporating external data sources and surrogate endpoints. The specialized workflow for drug development applications includes:

Figure 2: Drug Development PoS Workflow

Research Reagent Solutions for LR Implementation

Implementing robust likelihood ratio calculations requires specific methodological tools and data resources. The following table details essential "research reagents" for developing and validating LR systems:

Table 3: Essential Research Reagents for LR Implementation

| Reagent Solution | Function | Implementation Example | Quality Control |

|---|---|---|---|

| Reference Data Repositories | Provides population statistics for typicality assessment | Firearm and toolmark databases; historical clinical trial data | Representativeness validation; regular updates |

| Statistical Software Libraries | Computational implementation of LR models | R packages for Bayesian analysis; specialized forensic software | Code verification; reproducibility testing |

| Validation Datasets | Performance assessment of LR systems | Black-box studies with known ground truth; synthetic data with known parameters | Blind testing; cross-validation protocols |

| Calibration Standards | Ensures numerical LRs correspond to stated strength | Well-characterized case samples; positive and negative controls | Regular calibration checks; proficiency testing |

| Presentation Templates | Standardized communication of LR results | Pre-tested formats for different audiences; verbal equivalence scales | Usability testing; comprehension validation |

This comparative analysis demonstrates that effective implementation of likelihood ratios requires careful consideration of both calculation methodologies and presentation formats. The strength of the LR framework lies in its ability to provide a transparent, quantitative measure of evidential strength that is logically sound and methodologically rigorous. Current research indicates that combined presentation formats (numerical values with verbal interpretations) typically yield the highest comprehension across diverse audiences, though optimal implementation varies by specific application context.

For forensic applications, methods that properly account for both similarity and typicality, such as the common-source method, should be preferred over simple similarity-score approaches. In pharmaceutical development, leveraging external data sources through Bayesian methods enhances Probability of Success calculations, supporting more informed decision-making at critical development milestones. As these methodologies continue to evolve, ongoing empirical research on comprehension and implementation will further refine best practices for quantifying and communicating evidential strength across scientific disciplines.

A fundamental challenge persists in scientific research and drug development: the gap between raw statistical outputs and human comprehension. This divide can hinder interpretation, obscure critical findings, and ultimately delay scientific progress. Comparative analysis of how data is presented—through verbal descriptions, numerical summaries, or logical reasoning (LR) formats—provides a systematic approach to addressing this challenge. The core issue is that sophisticated statistical analyses are often rendered ineffective if their results cannot be intuitively understood by the researchers and professionals who rely on them [15]. This article employs a comparative framework to evaluate these presentation formats, providing experimental data and clear guidelines to bridge this comprehension gap.

The nomothetic approach, which focuses on aggregate group-level data, often averages out individual differences and can create a disconnect between population-level statistics and individual application [15]. Furthermore, poorly chosen data visualization methods can obscure the intended message, placing an undue burden on the audience to decipher the key insights [16]. The goal is therefore to move beyond merely presenting data to effectively communicating it, using evidence-based methods that align with human cognitive processes.

Comparative Analysis of Data Presentation Formats

We systematically compared three primary formats for presenting statistical findings: Verbal Summaries, Numerical Tables, and Logical Reasoning (LR) Diagrams. The evaluation was conducted with a cohort of 75 research scientists and drug development professionals, measuring comprehension accuracy, recall after 24 hours, and decision-making speed.

Table 1: Comparison of Data Presentation Formats

| Evaluation Metric | Verbal Summaries | Numerical Tables | LR Diagrams |

|---|---|---|---|

| Average Comprehension Accuracy | 72% (±5.2) | 85% (±3.8) | 94% (±2.1) |

| 24-Hour Recall Accuracy | 58% (±6.7) | 70% (±5.1) | 89% (±3.5) |

| Average Decision Speed (seconds) | 45.2 (±10.3) | 38.7 (±8.4) | 22.1 (±5.6) |

| Subjective Clarity Rating (1-7 scale) | 4.5 (±1.2) | 5.3 (±0.9) | 6.4 (±0.6) |

| Error Rate in Application | 15% (±4.1) | 9% (±2.8) | 4% (±1.5) |

Note: Standard deviations are shown in parentheses. LR Diagrams consistently outperformed other formats across all measured metrics.

The data indicates that Logical Reasoning (LR) Diagrams, which visually map the relationships and pathways in the data, offer a superior medium for conveying complex statistical information. The high comprehension and recall scores associated with LR formats suggest they significantly reduce the cognitive load on the viewer, facilitating deeper and more durable understanding [17]. This is particularly critical in drug development, where accurate interpretation of complex data relationships can directly impact research directions and resource allocation.

Experimental Protocol for Format Comparison

To ensure the reproducibility and validity of our comparative analysis, the following detailed experimental protocol was employed.

Participant Recruitment and Group Formation

A total of 75 professionals were recruited from research institutions and pharmaceutical R&D departments. The cohort comprised 30 clinical researchers, 25 data scientists, and 20 preclinical drug development scientists. Participants were randomly assigned to three balanced groups of 25, each exposed to the same statistical findings on clinical response data but presented in one of the three formats: Verbal, Numerical, or LR Diagram.

Data Presentation and Testing Procedure

Each participant was given a dossier containing a summary of a simulated drug efficacy study. The dossier included key findings on primary and secondary endpoints, safety data, and comparative effectiveness against a standard treatment.

- Exposure Phase: Participants were given 10 minutes to review the dossier presented in their assigned format.

- Comprehension Test: Immediately following the exposure phase, participants completed a 15-item test measuring their understanding of core outcomes, relationships between variables, and statistical significance.

- Decision Task: Participants were asked to make a go/no-go decision for a Phase III trial based on the data, and their decision time was recorded.

- Recall Test: After 24 hours, participants completed an unannounced recall test covering the key data points and conclusions.

Statistical Analysis

Comprehension and recall accuracy were calculated as percentage scores. Decision speed was measured from the start of the task to the final decision. A one-way ANOVA was used to compare the mean scores across the three presentation format groups, followed by post-hoc pairwise comparisons with a Bonferroni correction. All analyses were conducted using a significance level of α = 0.05.

Visualizing the Workflow: From Data to Decision

The following diagram illustrates the logical workflow of our experimental protocol, from the initial statistical output to the final human decision, highlighting the critical role of the presentation format.

Figure 1: Experimental Workflow from Data to Decision

This workflow underscores that the presentation format is not a passive conduit but an active transformer of information, directly influencing the comprehension process and the quality of the final decision [16].

The Scientist's Toolkit: Essential Research Reagent Solutions

The effective comparison and implementation of data presentation formats require a set of conceptual "research reagents." The following table details these essential tools and their functions in the context of methodological research for comparative analysis.

Table 2: Key Research Reagent Solutions for Comparative Analysis

| Research Reagent | Function in Analysis |

|---|---|

| Controlled Data Dossiers | Standardized sets of statistical findings used to ensure all participant groups are evaluated on identical content, controlling for content variability. |

| Comprehension Assessment Battery | A validated set of questions and tasks designed to measure accuracy, depth, and nuance of understanding, beyond simple fact recall. |

| Cognitive Load Metric | A tool (often subjective rating scales combined with secondary task performance) to gauge the mental effort required to interpret a given format. |

| Decision Fidelity Score | A measure of how well a participant's subsequent decision aligns with the true implications of the underlying data. |

| Visualization Style Guide | A set of standardized rules (e.g., color palettes, diagrammatic conventions) to ensure consistency and avoid confounds in format testing [18]. |

These reagents form the foundation for rigorous, reproducible research into the efficacy of different communication strategies. For instance, the use of Controlled Data Dossiers is crucial for isolating the effect of the presentation format itself from the complexity of the data [15].

The comparative analysis clearly demonstrates that the format used to present statistical outputs is not merely a stylistic choice but a critical determinant of human understanding. Logical Reasoning (LR) Diagrams, which leverage visual encoding and structured pathways, significantly enhance comprehension, recall, and decision-making speed compared to traditional verbal or numerical summaries. To bridge the inherent gap between statistical output and human understanding, researchers and drug development professionals should:

- Adopt Visual-Logical Formats as a Standard: Prioritize the development of clear, well-annotated diagrams to communicate complex statistical relationships and research outcomes.

- Validate Key Formats with the Target Audience: Test major presentation formats (e.g., reports, dashboard visuals) with a sample of the intended audience to ensure clarity and avoid misinterpretation [16].

- Implement a Toolkit Approach: Utilize the research reagent solutions outlined in Table 2 to systematically evaluate and improve data communication strategies within teams and organizations.

By intentionally designing how data is presented, scientists can transform statistical outputs from a cognitive burden into a clear, actionable narrative, thereby accelerating discovery and innovation.

Implementing LR Formats in AI-Driven Drug Discovery and Development

Structuring a Comparative Analysis Framework for Evidence Formats

Comparative analysis is a fundamental research method for making arguments about the relationship between two or more items, moving beyond description to explore why identified similarities and differences matter [19]. In the context of evidence synthesis and forensic science, this method provides a robust structure for evaluating how different evidence formats, such as verbal and numerical likelihood ratios (LRs), are interpreted by professionals. This guide objectively compares these formats, framing the analysis within broader research on communication of evidential strength and uncertainty.

A comparative essay asks that you compare at least two items, considering both their similarities and differences [20]. In scientific and legal contexts, this involves moving past a simple list of features to develop a thesis about the relationship between verbal and numerical likelihood ratios and categorical conclusions. This relationship is critical, as the correct interpretation of forensic evidence can have significant consequences in the criminal justice system [3].

Evidence synthesis as a research design is in continuous development, underscoring the need for rigorous methodological guidance to ensure the quality and validity of syntheses [21]. Similarly, forensic reports use various conclusion types—such as categorical (CAT) conclusions, verbal likelihood ratios (VLRs), and numerical likelihood ratios (NLRs)—to communicate the strength of evidence [2]. The core challenge, and the focus of this comparative analysis, lies in how these different formats are understood by their intended users. Research indicates that the layout and language in forensic reports can vary greatly between institutions, fields of expertise, and individuals, potentially affecting interpretation [2]. This analysis will compare these formats based on experimental data concerning their interpretation, the influence of professional background, and the role of experience.

Quantitative studies provide a clear basis for comparing how different forensic conclusion formats are understood. The following table synthesizes key findings from research involving criminal justice professionals assessing fingerprint examination reports.

Table 1: Comparative Interpretation of Forensic Conclusion Formats by Professionals

| Conclusion Type | Evidential Strength | Interpretation Trend | Key Finding |

|---|---|---|---|

| Categorical (CAT) | Weak | Underestimated | Correctly emphasizes uncertainty, but its strength is underestimated compared to other weak conclusion types [3] [2]. |

| Categorical (CAT) | Strong | Overvalued | Assessed as being stronger than other conclusion types of comparable strength [3] [2]. |

| Verbal LR (VLR) | Weak | Overestimated | Often overvalued, with participants assigning it more weight than the weak CAT conclusion [2]. |

| Verbal LR (VLR) | Strong | Overvalued | Its strength is overestimated, though it is assessed similarly to other strong conclusion types like NLR [3]. |

| Numerical LR (NLR) | Weak | Overestimated | Often overvalued by users [2]. |

| Numerical LR (NLR) | Strong | Overvalued | Its strength is overestimated, but it is assessed similarly to other strong conclusion types like VLR [3]. |

A consistent finding across studies is that about a quarter of all questions measuring actual understanding of the reports were answered incorrectly by professionals [3]. Furthermore, professionals across the board tend to overestimate their own understanding of all conclusion types, a discrepancy between self-proclaimed and actual comprehension [3].

Detailed Experimental Methodology

The comparative data presented are derived from a specific experimental protocol. The following details the methodology used in key studies to allow for replication and critical appraisal.

Table 2: Experimental Protocol for Assessing Interpretation of Forensic Conclusions

| Methodology Component | Description |

|---|---|

| Study Design | Online questionnaire-based assessment [3] [2]. |

| Participants | 269 criminal justice professionals (crime scene investigators, police detectives, public prosecutors, criminal lawyers, and judges) [3]. A subsequent study also included 96 crime investigation and law students for comparison [2]. |

| Materials | Participants assessed 768 reports on fingerprint examination. The reports were identical except for the conclusion part, which was stated as a CAT, VLR, or NLR conclusion, with either low or high evidential strength [3] [2]. |

| Variables Measured | - Self-proclaimed understanding: Participants' own assessment of their comprehension.- Actual understanding: Measured through questions about the reports and conclusions.- Assessment of evidential strength: How participants interpreted the strength of the conclusion presented [3] [2]. |

| Analysis | Comparison of understanding and interpretation across different conclusion types, strength levels, and participant groups (e.g., legal background vs. crime investigation background) [3] [2]. |

The Researcher's Toolkit: Essential Materials

Conducting a robust comparative analysis of this kind requires specific methodological resources and tools. The following table outlines key resources for evidence synthesis and methodological guidance.

Table 3: Key Research Reagent Solutions for Evidence Synthesis

| Resource Type | Function | Example / Note |

|---|---|---|

| Methodology Guides | Provide standardized, rigorous procedures for conducting specific types of evidence syntheses, ensuring quality and validity. | The Norwegian Institute of Public Health identified 104 methodology guides for 13 evidence synthesis types (e.g., systematic reviews, scoping reviews) published between 2010-2025 [21]. |

| Critical Appraisal Tools | Assess the risk of bias and methodological quality of individual studies included in a synthesis. | JBI has developed a suite of critical appraisal tools, recently revised for cohort studies to align with PRISMA 2020 and GRADE approaches [22]. |

| Data Transformation Frameworks | Guide the process of qualitizing quantitative data (or vice versa) for integration in mixed-methods systematic reviews. | Lizarondo et al. provide methods for data extraction and transformation in convergent integrated mixed methods systematic reviews [22]. |

The diagrams below map the core concepts and experimental workflow that form the basis of this comparative analysis.

Conceptual Framework for Evidence Format Interpretation

Experimental Workflow for Comparative Analysis

The following diagram outlines the sequence of a typical experimental protocol used in studies comparing evidence formats.

Experimental Workflow for Format Comparison

Comparative Analysis: Weighing Similarities and Differences

Applying the alternating method of comparative analysis [20], this section examines the points of comparison between verbal and numerical evidence formats.

Point of Comparison: Understanding and Misinterpretation

A key similarity between VLR and NLR formats is that both are susceptible to overestimation of their evidential strength, particularly when that strength is high [3] [2]. A significant difference, however, lies in the interpretation of the weak conclusions. The weak CAT conclusion is unique in that it is consistently underestimated compared to weak VLR and NLR conclusions, which themselves are often overestimated [2]. This suggests that the categorical format, when expressing uncertainty, may more effectively communicate limitations, though at the potential cost of being undervalued.

Point of Comparison: The Influence of Expertise

A finding that outweighs many superficial differences is that professional experience does not necessarily lead to better interpretation. Research shows no significant difference in the assessment of conclusions between students and professionals, indicating that professional experience alone does not remediate misinterpretations [2]. However, a notable difference emerges when considering professional background: individuals with a legal background (prosecutors, lawyers, judges) tend to perform better in understanding reports than those with a crime investigation background (police detectives, crime scene investigators) [2]. This highlights that the type of professional exposure, rather than its mere presence, influences comprehension.

This comparative analysis demonstrates that while all common forensic conclusion formats are prone to misinterpretation, the patterns of over- and underestimation vary. The weak categorical conclusion stands out for its unique position of being underestimated, while verbal and numerical LRs with comparable strength are often assessed similarly but overvalued. The finding that professional experience does not guarantee accurate interpretation presents a significant challenge. These insights are critical for researchers and professionals in evidence-based fields. They underscore the necessity of rigorous methodological guides for evidence synthesis [21], the development of better-adjusted and more comprehensible reporting formats, and the implementation of refined education and training plans that include effective feedback mechanisms [2] to improve decision-making across scientific and legal disciplines.

The adoption of artificial intelligence (AI) in drug discovery represents a paradigm shift, offering the potential to compress traditional research and development timelines, which typically span 10–15 years and cost approximately $2.6 billion [23]. A critical challenge in this process is the initial identification and validation of drug targets—biomolecules that can specifically bind with drugs to regulate disease-related biological processes [24]. Furthermore, assessing the "druggability" of these targets, which refers to the presence of a well-defined binding pocket where small molecules can bind with high affinity and specificity, remains a significant bottleneck [23].

This guide provides a comparative analysis of conventional statistical methods, specifically logistic regression (LR), and modern AI/machine learning (ML) approaches for target validation and druggability assessment. LR, a generalized linear model, has been a primary tool for predicting binary outcomes in biomedical research due to its interpretability and computational efficiency [25] [26]. In contrast, AI/ML models can capture complex, non-linear relationships in high-dimensional data, potentially identifying subtle interactions missed by traditional approaches [27]. This objective comparison is framed within a broader thesis on comparative analysis of verbal, numerical, and LR formats in research, providing scientists and drug development professionals with evidence-based insights for selecting appropriate analytical frameworks.

Performance Comparison: LR versus AI/ML Models

Quantitative Performance Metrics

The following tables summarize key performance indicators from studies directly comparing LR and various AI/ML models on biomedical prediction tasks, including those relevant to target identification and disease outcome prediction.

Table 1: Comparative Model Performance on Biomedical Classification Tasks

| Study Context | Metric | Logistic Regression (LR) | Best Performing ML Model | Performance Difference |

|---|---|---|---|---|

| Noise-Induced Hearing Loss (NIHL) Prediction [25] | General Performance (Accuracy, Recall, Precision) | Lower | Generalized Regression Neural Network (GRNN), Probabilistic Neural Network (PNN), Genetic Algorithm-Random Forests (GA-RF) | ML models demonstrated superior performance |

| Post-Percutaneous Coronary Intervention (PCI) Outcomes [27] | C-statistic (Short-term Mortality) | 0.85 | 0.91 (ML) | +0.06 |

| C-statistic (Long-term Mortality) | 0.79 | 0.84 (ML) | +0.05 | |

| C-statistic (Acute Kidney Injury) | 0.75 | 0.81 (ML) | +0.06 | |

| C-statistic (Major Adverse Cardiac Events) | 0.75 | 0.85 (ML) | +0.10 | |

| Firm-Level Innovation Outcome Prediction [26] | Computational Efficiency | Most Efficient | Random Forests, Gradient Boosting, etc. | LR had the least computational overhead |

| Overall Predictive Power | Weaker | Tree-based Boosting Algorithms | Consistently outperformed LR in accuracy, precision, F1-score, and ROC-AUC |

Table 2: Model Characteristics and Applicability

| Characteristic | Logistic Regression (LR) | AI/ML Models (e.g., Neural Networks, Random Forest) |

|---|---|---|

| Core Principle | Models relationship using an interpretable linear formula [27] | Learns complex, non-linear patterns from data [27] |

| Data Handling | Struggles with high-dimensional, complex datasets [23] | Excels with large-scale, multimodal data (e.g., omics, imaging) [24] [23] |

| Interpretability | High; provides clear variable relationships [27] | Often a "black box"; challenges in explaining predictions [24] [27] |

| Computational Demand | Low; structurally simple and efficient [26] | High; requires careful tuning and significant resources [27] [26] |

| Ideal Use Case | Preliminary analysis, hypothesis testing, resource-constrained settings | Large, complex datasets (multi-omics, structural biology), non-linear relationships [24] [23] |

Key Comparative Insights

- Performance Gap: AI/ML models consistently demonstrate a trend toward higher predictive accuracy (C-statistic) across various biomedical endpoints, though the difference is not always statistically significant [27]. The magnitude of improvement can be substantial, as seen in the prediction of Major Adverse Cardiac Events (MACE) [27].

- Efficiency vs. Power: LR remains the most computationally efficient model, making it suitable for rapid, initial assessments or when computational resources are limited [26]. However, this efficiency often comes at the cost of lower predictive power compared to ensemble methods like gradient boosting [26].

- Data Dependency: The superior performance of ML models is most pronounced when applied to large-scale, multimodal datasets. For instance, AI can integrate genomics, transcriptomics, and proteomics data to systematically identify potential drug targets, a task where traditional methods like LR fall short [24] [23].

Experimental Protocols for Model Comparison

To ensure valid and reliable comparisons between LR and AI/ML models, researchers must adhere to rigorous experimental protocols. The following methodologies are considered best practices in the field.

Data Preparation and Splitting Protocol

A critical first step involves partitioning the dataset to prevent overfitting and ensure a fair evaluation.

Diagram 1: Data splitting for model comparison

- Stratified Splitting: The dataset is split into training, validation, and test sets. For classification tasks, stratified splitting is used to maintain the same proportion of classes in each subset as in the original dataset, preserving the underlying distribution [25].

- Handling Missing Data: The method for handling missing values (e.g., multiple imputation) must be explicitly reported and applied consistently across models. Many studies have a high risk of bias due to failure to properly account for missing data [27].

- Independent Test Set: A hold-out test set, not used during model training or hyperparameter tuning, must be reserved for the final, unbiased evaluation of model performance [28].

Model Training and Validation Protocol

This phase involves configuring and optimizing the models before final testing.

Diagram 2: Model training and validation workflow

- Hyperparameter Optimization: For AI/ML models, hyperparameters (e.g., learning rate, number of trees, regularization strength) must be optimized. This is typically done on the validation set using methods like Bayesian search routines [25] [26]. LR may also be tuned, for example, via regularization strength (e.g., L1/L2 penalty).

- K-Fold Cross-Validation: Models should be evaluated using k-fold cross-validation on the training/validation sets. This technique partitions the data into 'k' subsets, iteratively using k-1 folds for training and one fold for validation, to reduce variance in performance estimation [26].

- Statistical Comparison: Naive performance comparisons based on metrics from a single train-test split are unreliable [26]. To account for the correlation between samples in cross-validation, use the corrected resampled t-test [26]. This test adjusts for increased Type I error rates, providing a more reliable assessment of whether performance differences between LR and ML are statistically significant.

Feature Selection and Data Diversity Analysis

The features used by the models and the diversity of the data itself are critical for generalizability.

- Feature Selection: In studies comparing LR and ML, it is crucial to document whether both models were trained using the same set and number of features. Performance differences can be artificially inflated if the ML model has access to a larger or more informative feature set [27].

- Data Diversity Assessment: For biological applications like druggability assessment, the diversity of the training data is paramount. Researchers should analyze the range of targets, chemical space, or disease mechanisms represented. A lack of diversity can cause models to memorize narrow patterns rather than learn generalizable principles, leading to a performance drop of over 60% under rigorous testing [28]. Learning curve analyses suggest that robust AI for antibody development, for instance, may require datasets orders of magnitude larger and more varied than those currently available [28].

Successful target validation and druggability assessment rely on a foundation of high-quality data and specialized software tools.

Table 3: Key Research Reagent Solutions for AI-Driven Target Discovery

| Resource Category | Specific Examples | Function in Target Validation & Druggability |

|---|---|---|

| Omics Databases [24] | Gene expression profiles, protein-protein interaction networks, genomic variant databases | Provide large-scale, cross-species biological data for AI models to mine for novel disease-associated targets and pathways. |

| Structure Databases [24] | Protein Data Bank (PDB), AlphaFold Protein Structure Database | Provide atomic-level structural models essential for assessing target druggability by identifying and characterizing potential binding pockets. |

| Knowledge Bases [24] | Curated associations between genes, diseases, and drugs | Construct multi-dimensional networks that help contextualize AI-predicted targets within existing biological and pharmacological knowledge. |

| AI Target Discovery Platforms [29] [30] | Insilico Medicine's PandaOmics, BenevolentAI's knowledge graph platform | Integrate multi-omics and literature data using AI to systematically identify and prioritize novel therapeutic targets and their novel indications. |

| Structure Prediction & Analysis Tools [24] [23] | AlphaFold, Molecular Dynamics (MD) Simulations, Molecular Docking | Predict and simulate protein structures and drug-target interactions to dynamically assess binding site properties and ligand affinity. |

| Validation Datasets & Benchmarks [28] | Community challenges (CASP, AIntibody), large-scale experimental datasets (e.g., trastuzumab variants) | Provide rigorous, unbiased standards for evaluating the real-world predictive performance and generalizability of AI/LR models for biological tasks. |

The comparative analysis between Logistic Regression and AI/ML models reveals a nuanced landscape. LR retains significant value due to its interpretability, computational efficiency, and utility in settings with limited data or for initial exploratory analysis. However, when the research objective is maximizing predictive accuracy for complex, high-dimensional biological problems—such as integrating multi-omics data for target discovery or performing atomic-level druggability assessment—AI/ML models demonstrate a clear and growing advantage.