Validation of Forensic Evaluation Methods: A Comprehensive Guide to Cllr, EER, and Tippett Plots


Abstract

This article provides a comprehensive framework for researchers and forensic professionals on the validation of Likelihood Ratio (LR) methods used in forensic evidence evaluation. It covers the foundational principles of performance metrics, including the Likelihood Ratio Cost (Cllr), Equal Error Rate (EER), and Tippett plots, detailing their calculation and interpretation. A methodological guide for implementing a validation matrix is presented, alongside strategies for troubleshooting common issues and optimizing system performance. Finally, the article establishes robust validation criteria and comparative analysis techniques to ensure the reliability and admissibility of forensic evaluation methods in scientific and legal contexts, with direct implications for the validation of tools in digital and biometric forensics.

Core Principles of Forensic Validation: Understanding Cllr, EER, and Tippett Plots

Theoretical Foundations of the Likelihood Ratio

The Likelihood Ratio (LR) framework is a quantitative method increasingly used by forensic experts to convey the weight of evidence in criminal and civil cases [1]. Rooted in Bayesian reasoning, the LR provides a mechanism for updating beliefs about competing propositions based on new evidence. The core formula expresses how prior odds are updated to posterior odds through the evidence: Posterior Odds = Likelihood Ratio × Prior Odds [1]. In the forensic context, this typically translates to a ratio of two probabilities: the probability of observing the evidence if the prosecution's proposition (Hp) is true, divided by the probability of the same evidence if the defense's proposition (Hd) is true [2].
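The following minimal sketch shows the arithmetic of the odds form of Bayes' theorem. The prior odds and LR are hypothetical numbers chosen only for illustration. In casework the expert reports only the LR; the prior odds belong to the decision maker.

```python
def posterior_odds(prior_odds: float, lr: float) -> float:
    """Odds form of Bayes' theorem: posterior odds = LR x prior odds."""
    if prior_odds < 0 or lr < 0:
        raise ValueError("odds and likelihood ratios must be non-negative")
    return lr * prior_odds


def odds_to_probability(odds: float) -> float:
    """Convert odds in favour of Hp into a probability that Hp is true."""
    return odds / (1.0 + odds)


# Hypothetical values: prior odds of 1 to 1000 in favour of Hp, evidence with LR = 10,000.
prior = 1 / 1000
lr = 10_000
post = posterior_odds(prior, lr)
print(f"posterior odds = {post:.1f}")                  # posterior odds = 10.0
print(f"P(Hp | E) = {odds_to_probability(post):.3f}")  # P(Hp | E) = 0.909
```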

Forensic scientists follow three fundamental principles when applying this framework. Principle #1 mandates always considering at least one alternative hypothesis, ensuring a balanced comparison. Principle #2 emphasizes calculating the probability of the evidence given the proposition, not the probability of the proposition given the evidence, thus avoiding the prosecutor's fallacy. Principle #3 requires always considering the framework of circumstances, incorporating case context into the interpretation [2]. Used correctly, this approach helps minimize bias in forensic investigation. However, some authors argue that because the LR is personal and subjective, transferring it from an expert to a separate decision maker lacks a firm foundation in Bayesian decision theory [1].

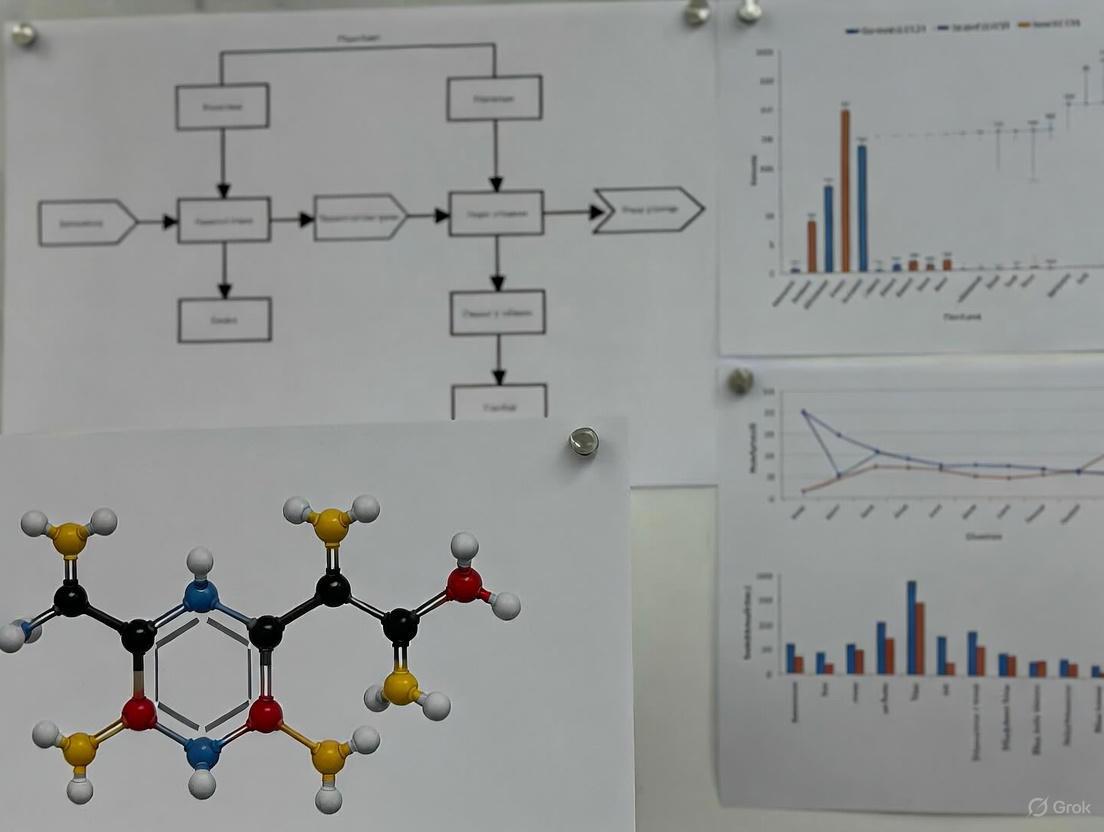

The following diagram illustrates the logical workflow and key decision points in the LR framework:

LR Validation Framework and Performance Metrics

Core Performance Characteristics

Validation of LR methods requires assessing multiple performance characteristics to ensure reliable, accurate, and forensically sound results. The validation matrix organizes these characteristics, their metrics, graphical representations, and validation criteria [3]. Six key characteristics form the foundation of LR method validation, with specific metrics and graphical tools available for each.

Table 1: Performance Characteristics for LR Validation

| Performance Characteristic | Performance Metrics | Graphical Representations | Validation Purpose |
|---|---|---|---|
| Accuracy | Cllr | ECE Plot | Measures how close LRs are to their ideal values; reflects both discrimination and calibration |
| Discriminating Power | EER, Cllrmin | ECEmin Plot, DET Plot | Assesses ability to distinguish between same-source and different-source evidence |
| Calibration | Cllrcal | ECE Plot, Tippett Plot | Evaluates whether LRs are properly scaled to represent true evidential strength |
| Robustness | Cllr, EER, Range of LR | ECE Plot, DET Plot, Tippett Plot | Tests method stability under varying conditions or data perturbations |
| Coherence | Cllr, EER | ECE Plot, DET Plot, Tippett Plot | Ensures internal consistency of results across related evidence types |
| Generalization | Cllr, EER | ECE Plot, DET Plot, Tippett Plot | Validates performance on new, unseen data beyond development datasets |

Understanding Key Metrics and Visualizations

The Cllr (log-likelihood-ratio cost) is the primary metric for accuracy: it penalizes both poor discrimination and poor calibration, and can be read as the average cost of using the LRs in a decision-making process, with lower values indicating better performance [3]. Its discrimination-only counterpart is Cllrmin. The Equal Error Rate (EER) is the error rate at the decision threshold where the false positive and false negative rates are equal, providing a single-value summary of discrimination performance [3].

Tippett plots graphically display the distribution of LRs for both same-source and different-source comparisons, showing the cumulative proportion of cases that exceed particular LR thresholds [3]. These plots allow visual assessment of how well the LR method separates evidence types and whether LRs are properly calibrated.

Experimental Protocols for LR Validation

Validation Study Design

Proper validation of LR methods requires carefully designed experiments using appropriate datasets and protocols. A fundamental principle is using different datasets for development (training) and validation (testing) stages to ensure realistic performance assessment [3]. For fingerprint evidence validation, researchers have used real forensic fingermarks with 5-12 minutiae compared against reference fingerprints, with LRs computed using AFIS (Automated Fingerprint Identification System) scores [3] [4].

The experimental protocol involves several critical steps. First, propositions must be clearly defined at the appropriate level (typically source level). For fingerprint analysis, this involves: Hp (Same-Source): The fingermark and fingerprint originate from the same finger of the same donor; Hd (Different-Source): The fingermark originates from a random finger of another donor from the relevant population [3]. Similarity scores are then generated using specialized comparison algorithms, such as the Motorola BIS 9.1 algorithm for fingerprints or PCE (Peak-to-Correlation Energy) calculations for PRNU (Photo Response Non-Uniformity) in digital image analysis [3] [5].

LR Calculation Methods

Two primary approaches exist for calculating LRs from forensic data. The plug-in scoring method post-processes similarity scores with a statistical model to compute LRs [5]. This approach is more straightforward to implement and facilitates evaluation and comparison between models. The direct method outputs LR values instead of similarity scores, but it is more complex to implement because uncertainties must be integrated out when feature vectors are compared under either proposition [5].
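As a minimal sketch of the plug-in idea, the code below fits one normal distribution per proposition to development-set scores and converts a new score into a log10 LR. The Gaussian score model, the function names, and the example scores are assumptions for illustration only; kernel density estimation or logistic-regression calibration are common alternatives, and this is not the specific model used in [3] or [5].

```python
import math
from statistics import mean, stdev


def _log_normal_pdf(x: float, mu: float, sigma: float) -> float:
    """Natural log of the normal density, computed directly so tails do not underflow."""
    z = (x - mu) / sigma
    return -0.5 * z * z - math.log(sigma) - 0.5 * math.log(2 * math.pi)


def fit_score_to_log10_lr(ss_scores, ds_scores):
    """Return a function mapping a similarity score to log10(LR).

    Assumption: scores are roughly Gaussian under Hp (same source) and Hd (different source).
    """
    mu_ss, sd_ss = mean(ss_scores), stdev(ss_scores)
    mu_ds, sd_ds = mean(ds_scores), stdev(ds_scores)

    def score_to_log10_lr(score: float) -> float:
        # log LR = log p(score | Hp) - log p(score | Hd), converted from ln to log10
        log_lr = _log_normal_pdf(score, mu_ss, sd_ss) - _log_normal_pdf(score, mu_ds, sd_ds)
        return log_lr / math.log(10)

    return score_to_log10_lr


# Hypothetical development scores (higher = more similar).
dev_ss = [7.1, 8.4, 6.9, 9.2, 7.8]
dev_ds = [1.2, 2.5, 0.8, 1.9, 2.2, 1.5]
to_log10_lr = fit_score_to_log10_lr(dev_ss, dev_ds)
print(to_log10_lr(8.0) > 0, to_log10_lr(1.5) < 0)  # True True
```

Working in the log domain avoids division by zero for scores far in the tails, but Gaussian tails can still produce implausibly extreme LRs, which is one reason the range of LR values is itself a validation metric.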

For source camera attribution using PRNU, researchers have successfully implemented score-based plug-in methods that convert PCE similarity scores into LRs using Bayesian evidence evaluation frameworks [5]. The performance of these resulting LR values is then measured using the standard methodology and metrics described in the validation framework.

Comparative Experimental Data Across Forensic Disciplines

LR Performance in Various Applications

Table 2: LR Method Performance Across Forensic Disciplines

| Forensic Discipline | Data Type | LR Calculation Method | Key Performance Results | Validation Approach |
|---|---|---|---|---|
| Fingerprint Analysis | 5-12 minutiae fingermarks vs. fingerprints | AFIS score conversion using plug-in method | Accuracy (Cllr), Discriminating Power (EER, Cllrmin), Calibration (Cllrcal) | Validation matrix with six performance characteristics [3] |
| Digital Image/Video Source Attribution | PRNU-based similarity scores (PCE) | Plug-in scoring method with Bayesian framework | Cllr values for different PRNU creation strategies (RT1, RT2) | Performance measured following standardized methodology for forensic LR validation [5] |
| DNA Mixture Interpretation | STR profiles from 2-5 person mixtures | Probabilistic genotyping (STRmix) | Conditional LRs show higher true donor differentiation than simple propositions | Comparative study of proposition types (simple, conditional, compound) [6] |
| Digital Video with Motion Stabilization | PRNU from stabilized video frames | Highest Frame Score (HFS) method | Enhanced performance for content affected by digital motion stabilization (DMS) compared to baseline | Comparison of multiple matching strategies for challenging video data [5] |

Impact of Proposition Formulation in DNA Evidence

Research on DNA mixture interpretation shows that the way propositions are formulated significantly affects both the LR values and their interpretative value. Studies comparing simple, conditional, and compound propositions using STRmix software demonstrate that conditional propositions have a much higher ability to differentiate true from false donors than simple propositions [6]. Compound propositions, by contrast, can strongly misstate the weight of evidence in either direction; in particular, they can overstate the evidence against an individual whose LR, considered alone, is only weakly inclusionary or uninformative [6].

For a two-person DNA mixture with two persons of interest (POIs), the different proposition types yield meaningfully different LRs:

- Simple Proposition: $LR_{1u/uu} = L_{1,u}/L_{u,u}$ (evaluates POI1 with one unknown)

- Compound Proposition: $LR_{12/uu} = L_{1,2}/L_{u,u}$ (evaluates POI1 and POI2 together)

- Conditional Proposition: Isolates evidence for each POI while accounting for known contributors [6]
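The sketch below uses hypothetical likelihood values (not STRmix output) to show why a compound LR can overstate the evidence against one POI. Here $L_{a,b}$ denotes the probability of the mixture profile given contributors a and b (u = unknown). It assumes that the conditional proposition for POI1 takes the usual form $LR_{12/u2} = L_{1,2}/L_{u,2}$. Because the order of contributors does not matter ($L_{u,2} = L_{2,u}$), the compound LR factorizes as $LR_{12/uu} = LR_{12/u2} \times LR_{2u/uu}$. A strong result for POI2 alone can therefore make the compound LR large even when POI1's own contribution is weak.

```python
import math

# Hypothetical likelihoods of the mixture profile given the contributors
# ("u" = unknown person); values chosen only to illustrate the arithmetic.
L = {
    ("u", "u"): 1e-20,    # two unknown contributors
    ("1", "u"): 2e-20,    # POI1 + one unknown
    ("2", "u"): 1e-12,    # POI2 + one unknown (POI2 explains the profile well)
    ("1", "2"): 1.5e-12,  # POI1 + POI2
}

simple_poi1 = L[("1", "u")] / L[("u", "u")]       # LR_{1u/uu}
simple_poi2 = L[("2", "u")] / L[("u", "u")]       # LR_{2u/uu}
compound = L[("1", "2")] / L[("u", "u")]          # LR_{12/uu}
conditional_poi1 = L[("1", "2")] / L[("2", "u")]  # LR_{12/u2}, using L_{u,2} = L_{2,u}

for name, value in [("simple POI1", simple_poi1), ("conditional POI1", conditional_poi1),
                    ("simple POI2", simple_poi2), ("compound POI1+POI2", compound)]:
    print(f"{name:>20}: {value:.3g}")

# The compound LR is the product of POI1's conditional LR and POI2's simple LR.
assert math.isclose(compound, conditional_poi1 * simple_poi2)
```

In this example, POI1's own LR is about 2 (simple) or 1.5 (conditional). The compound LR, however, is about 1.5 × 10^8, and almost all of that comes from POI2.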

Research Reagent Solutions for LR Validation

Table 3: Essential Research Tools for LR Method Development and Validation

| Tool/Resource | Function in LR Research | Example Applications | Key Features |
|---|---|---|---|
| AFIS Comparison Algorithms | Generates similarity scores from fingerprint data | Motorola BIS/Printrak 9.1 for fingerprint LRs | Converts minutiae patterns into comparable scores for LR calculation [3] |
| Probabilistic Genotyping Software | Computes DNA LRs from complex mixture data | STRmix for DNA mixture interpretation | Handles complex multi-contributor profiles with different proposition types [6] |
| PRNU Extraction & Analysis Tools | Creates camera-specific digital fingerprints | Source camera attribution for images/videos | Extracts sensor-based noise patterns for media authentication [5] |
| Validation Datasets | Provides ground-truthed data for method testing | Real forensic fingermarks with known sources | Enables performance testing with forensically relevant material [3] [4] |
| Performance Evaluation Software | Calculates validation metrics and creates plots | Cllr, EER computation; Tippett plot generation | Standardized assessment of LR method performance [3] |

The following diagram illustrates the relationship between these research tools in a typical LR validation workflow:

Challenges and Implementation Considerations

The implementation of LR frameworks faces several significant challenges that require careful consideration. A primary concern is the uncertainty characterization in reported LR values, which depends on personal choices made during assessment [1]. There is no objectively authoritative model for translating data into probabilities, necessitating transparent documentation of assumptions and methodologies.

The communication of LRs to legal decision-makers presents another challenge, with ongoing research investigating the most effective presentation formats—whether numerical LR values, numerical random-match probabilities, or verbal strength-of-support statements [7]. Current empirical literature does not definitively answer which format maximizes understandability, indicating a need for further methodological research [7].

Furthermore, the assumptions lattice and uncertainty pyramid concept provides a framework for assessing uncertainty in LR evaluations, exploring the range of LR values attainable by models that satisfy stated reasonableness criteria [1]. This approach helps experts and consumers of forensic evidence understand the relationships among interpretation, data, and assumptions, ultimately supporting more informed decisions about the weight of forensic evidence.

The empirical evaluation of forensic evidence systems demands robust and interpretable performance metrics. Within the likelihood-ratio (LR) framework, which is increasingly supported for reporting evidential strength, the Log-Likelihood Ratio Cost (Cllr) and the Equal Error Rate (EER) serve as fundamental benchmarks for validating the reliability of (semi-)automated systems [8] [9]. These metrics provide distinct yet complementary insights into system performance. The EER offers an intuitive measure of a system's discriminating power at a specific operational threshold, while the Cllr provides a more comprehensive assessment by evaluating the validity of the LR values themselves across all possible thresholds, penalizing both poor discrimination and poor calibration [8] [10]. The adoption of these metrics is critical for advancing a paradigm shift in forensic science towards methods that are transparent, reproducible, empirically validated, and resistant to cognitive bias [11].

This guide provides a structured comparison of Cllr and EER, detailing their theoretical foundations, calculation methodologies, and practical application. We present summarized experimental data from forensic voice comparison studies to illustrate their use and offer protocols for their implementation within a validation framework that includes Tippett plots.

Metric Definitions and Comparative Analysis

The following table provides a structured comparison of the core characteristics of EER and Cllr.

Table 1: Fundamental comparison between EER and Cllr metrics.

| Feature | Equal Error Rate (EER) | Log-Likelihood Ratio Cost (Cllr) |
|---|---|---|
| Definition | The error rate at the threshold where the False Acceptance Rate (FAR) equals the False Rejection Rate (FRR) [10]. | A scalar metric that measures the overall performance of a likelihood-ratio system, penalizing misleading LRs more heavily the further they are from 1 [8] [9]. |
| Primary Focus | Discriminating power (Same-Source vs. Different-Source separation) at a specific threshold [12]. | Overall performance, incorporating both discrimination and calibration [8]. |
| Interpretation | Lower values indicate better discrimination. The value is a rate (e.g., 0.1 for 10% error) [10] [13]. | Lower values indicate better performance. Cllr=0 is perfect, Cllr=1 is uninformative (equivalent to always reporting LR=1) [8] [9]. |
| Strengths | Intuitive, easy to understand, provides a single operating point for system comparison [10]. | A "strictly proper scoring rule" with sound information-theoretic interpretation; provides a global performance measure [8]. |
| Limitations | Does not assess the validity (calibration) of the LR values themselves; only measures discrimination [8]. | Interpretation of non-extreme values (e.g., 0.3) is not intuitive and is highly domain-dependent [8] [9]. |

Visualizing the Logical Relationship Between Metrics

The diagram below illustrates the logical relationship between EER, Cllr, and the broader validation process for forensic evaluation systems.

Experimental Data and Performance Benchmarking

Empirical Data from Forensic Speaker Comparison

Experimental data from acoustic-phonetic studies provides a practical context for comparing EER and Cllr. The table below summarizes findings from research involving 20 male Brazilian Portuguese speakers, comparing performance across different acoustic parameters and speaking styles [14].

Table 2: Comparative performance of acoustic-phonetic parameters in speaker discrimination, measured by EER and Cllr [14].

| Acoustic-Phonetic Parameter Class | Example Parameters | Relative EER Performance | Relative Cllr Performance | Remarks |
|---|---|---|---|---|
| Spectral | High formant frequencies (F3, F4) | Best (Lowest) | Best (Lowest) | Most discriminatory individually. |
| Melodic | Fundamental frequency (f0) estimates (baseline, central tendency) | Good | Good | f0 baseline found most reliable in twin studies [15]. |
| Temporal | Duration-related parameters | Worst (Highest) | Worst (Highest) | Weakest speaker contrasting power. |

Key Experimental Findings:

- Speaking Style Impact: A significant performance asymmetry was observed between spontaneous dialogues and interviews. A mismatch in speaking style between compared samples considerably undermined discriminatory performance for all parameters [14].

- Parameter Combination: A statistical model combining different acoustic-phonetic estimates outperformed any single parameter, demonstrating the value of multivariate approaches [14].

- Twin Studies: Research on identical twins found that while f0 patterns were very similar, some pairs could be differentiated acoustically in connected speech, but not based on lengthened vowels. This reinforces the relevance of long-term f0 metrics like f0 baseline for speaker comparison [15].

Interpreting Metric Values in Practice

Understanding what constitutes a "good" value for these metrics is context-dependent.

- EER: A lower EER is always better. The value is a proportion, often reported as a percentage; for example, an EER of 0.1 (10%) means that at the threshold where FAR equals FRR, both error rates are 10%. The "acceptability" of an EER depends on the security and usability requirements of the application [10].

- Cllr: While Cllr = 0 is perfect and Cllr = 1 is uninformative, interpreting values between them is less straightforward. Values above 1 are also possible and indicate a system that performs worse than always reporting LR = 1. A review of 136 publications found that Cllr values "lack clear patterns and depend on the area, analysis and dataset" [8] [9]. Therefore, a Cllr value should not be judged in isolation but compared with results obtained on relevant benchmark datasets within the same forensic discipline [8].

Experimental Protocols for Metric Validation

Workflow for System Validation

A robust validation protocol for a forensic evaluation system involves a sequence of steps to calculate and interpret these metrics, as shown in the workflow below.

Detailed Methodological Steps

Step 1: Input Data Collection

The foundation of any validation is a dataset with known ground truth. This requires a collection of item pairs where it is definitively known whether they are from the same source (H1) or different sources (H2). The dataset should be representative of casework conditions in terms of sample quality, variability, and complexity [8] [14]. For example, the speaker comparison study used 20 male Brazilian Portuguese speakers of the same dialect, with speech material consisting of both spontaneous telephone conversations and interviews [14].

Step 2: Feature Extraction

From each item in the dataset, forensically relevant features are extracted. The choice of features is discipline-specific. In the cited speech studies, these included [15] [14]:

- Spectral parameters: High formant frequencies (F3, F4).

- Melodic parameters: Fundamental frequency (f0) estimates like baseline and central tendency.

- Temporal parameters: Duration-related features.

Step 3: LR System Processing

The core analysis method (e.g., a statistical model or an automated algorithm) processes pairs of feature sets and outputs a likelihood ratio (LR). This LR quantifies the strength of evidence for the same-source (H1) hypothesis relative to the different-source (H2) hypothesis.

Step 4a: Calculate EER

- Vary the Decision Threshold: System outputs (scores) are compared against a range of decision thresholds.

- Calculate FAR and FRR: For each threshold, compute the False Acceptance Rate (FAR) – the proportion of different-source pairs incorrectly accepted as same-source – and the False Rejection Rate (FRR) – the proportion of same-source pairs incorrectly rejected [10].

- Plot DET/ROC Curve: Plot FAR against FRR (a Detection Error Tradeoff or DET curve) or plot the True Acceptance Rate against the FAR (a Receiver Operating Characteristic or ROC curve) [12].

- Find the Crossover: The EER is the error rate at the point on the curve where FAR equals FRR [10] [12]. The system with the lowest EER generally has the best discriminating power [12].
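The threshold sweep described above can be sketched as follows. This is a simple empirical approximation. It treats a comparison as accepted (same-source) when its score is at or above the threshold, and it takes the EER at the observed threshold where FAR and FRR are closest. The function name and example scores are hypothetical; interpolated or ROC-convex-hull estimates are common refinements. Because the EER depends only on how the outputs are ranked, it is unchanged whether it is computed on raw scores, LRs, or log LRs.

```python
def equal_error_rate(ss_scores, ds_scores):
    """Empirical EER from same-source (SS) and different-source (DS) scores."""
    # Candidate thresholds: every observed score, plus one above all scores.
    thresholds = sorted(set(ss_scores) | set(ds_scores)) + [float("inf")]
    best_gap, best_eer = float("inf"), None
    for t in thresholds:
        frr = sum(s < t for s in ss_scores) / len(ss_scores)   # SS pairs wrongly rejected
        far = sum(s >= t for s in ds_scores) / len(ds_scores)  # DS pairs wrongly accepted
        if abs(far - frr) < best_gap:
            best_gap, best_eer = abs(far - frr), (far + frr) / 2
    return best_eer


# Hypothetical scores with some overlap between the two classes.
ss = [0.9, 2.1, 3.5, 4.0, 5.2]
ds = [-1.0, 0.2, 1.0, 2.5, -0.5]
print(equal_error_rate(ss, ds))  # 0.2
```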

Step 4b: Calculate Cllr

The Cllr is computed directly from the output LRs and the ground truth labels using the formula [8]:

$$C_{llr} = \frac{1}{2N_{H1}} \sum_{i=1}^{N_{H1}} \log_2\left(1 + \frac{1}{LR_{H1,i}}\right) + \frac{1}{2N_{H2}} \sum_{j=1}^{N_{H2}} \log_2\left(1 + LR_{H2,j}\right)$$

Where:

- $N_{H1}$ and $N_{H2}$ are the numbers of same-source and different-source trials.

- $LR_{H1,i}$ are the LR values for same-source trials.

- $LR_{H2,j}$ are the LR values for different-source trials.
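The Cllr formula translates directly into code. The following minimal sketch uses a hypothetical function name and assumes every LR is strictly positive, since an H1 trial with LR = 0 would have infinite cost. The usage lines confirm that a system that always reports LR = 1 has Cllr = 1.

```python
import math


def cllr(lrs_h1, lrs_h2):
    """Log-likelihood-ratio cost.

    lrs_h1: LRs for same-source (H1) trials; lrs_h2: LRs for different-source (H2) trials.
    The two sets get equal weight, matching the 1/(2N) factors in the formula.
    """
    cost_h1 = sum(math.log2(1 + 1 / lr) for lr in lrs_h1) / len(lrs_h1)  # penalises small LRs under H1
    cost_h2 = sum(math.log2(1 + lr) for lr in lrs_h2) / len(lrs_h2)      # penalises large LRs under H2
    return 0.5 * (cost_h1 + cost_h2)


print(cllr([1, 1, 1], [1, 1]))         # 1.0 (uninformative: always LR = 1)
print(cllr([1e6, 1e4], [1e-4, 1e-6]))  # close to 0 (strong, correct LRs)
print(cllr([0.01], [100]))             # above 1 (strongly misleading LRs)
```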

Cllr can be decomposed into two components:

- Cllrmin: The discrimination cost, i.e. the Cllr obtained after the LRs have been optimally recalibrated with the Pool Adjacent Violators (PAV) algorithm. It is the lowest Cllr attainable given the system's inherent ability to separate the two classes.

- Cllrcal = Cllr - Cllrmin: The calibration cost, representing the error due to the LRs being inaccurately scaled (over- or understating the evidence) [8].
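The following minimal sketch implements the decomposition. It assumes the common approach of running PAV on the trials sorted by LR, with identical LR values tied together, and then converting the resulting posterior probabilities back to LRs by dividing out the evaluation set's own prior odds ($N_{H1}/N_{H2}$). Function names and data are hypothetical. Because the recalibration uses the ground truth of the evaluation set itself, Cllrmin is an optimistic lower bound, not a figure a deployed system could be expected to reach.

```python
import math


def cllr(lrs_h1, lrs_h2):
    """Log-likelihood-ratio cost (same formula as above)."""
    c1 = sum(math.log2(1 + 1 / lr) for lr in lrs_h1) / len(lrs_h1)
    c2 = sum(math.log2(1 + lr) for lr in lrs_h2) / len(lrs_h2)
    return 0.5 * (c1 + c2)


def cllr_min(lrs_h1, lrs_h2):
    """Cllr after optimal monotone (PAV) recalibration on this same evaluation set."""
    # Group trials by LR value so that tied LRs always receive the same recalibrated LR.
    counts = {}  # LR value -> [number of H1 trials, total trials]
    for lr, is_h1 in [(x, 1) for x in lrs_h1] + [(x, 0) for x in lrs_h2]:
        entry = counts.setdefault(lr, [0, 0])
        entry[0] += is_h1
        entry[1] += 1

    # Pool Adjacent Violators: merge neighbouring blocks until the fraction of
    # H1 trials is non-decreasing in the LR.
    blocks = []  # each block: [H1 count, total count, LR values in block]
    for lr in sorted(counts):
        h1, total = counts[lr]
        blocks.append([h1, total, [lr]])
        while len(blocks) > 1 and blocks[-2][0] * blocks[-1][1] > blocks[-1][0] * blocks[-2][1]:
            h1_last, total_last, lrs_last = blocks.pop()
            blocks[-1][0] += h1_last
            blocks[-1][1] += total_last
            blocks[-1][2].extend(lrs_last)

    # Convert each block's posterior P(H1) to an LR by dividing out the set's prior odds.
    prior_odds = len(lrs_h1) / len(lrs_h2)
    recalibrated = {}
    for h1, total, members in blocks:
        if h1 == total:
            new_lr = math.inf  # block contains only H1 trials
        else:
            p = h1 / total
            new_lr = (p / (1 - p)) / prior_odds
        for lr in members:
            recalibrated[lr] = new_lr

    return cllr([recalibrated[x] for x in lrs_h1], [recalibrated[x] for x in lrs_h2])


# Hypothetical LRs with overlapping classes.
h1_lrs = [0.5, 4.0, 8.0]
h2_lrs = [0.2, 0.6, 5.0]
total, minimum = cllr(h1_lrs, h2_lrs), cllr_min(h1_lrs, h2_lrs)
print(f"Cllr = {total:.3f}, Cllrmin = {minimum:.3f}, Cllrcal = {total - minimum:.3f}")
# Cllr = 0.934, Cllrmin = 0.667, Cllrcal = 0.267
```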

Step 5: Generate Tippett Plot

A Tippett plot is a crucial visualization tool. It shows the cumulative distribution of the LR values for both the same-source (H1) and different-source (H2) conditions [8]. A well-performing system will show LRs greater than 1 for most H1 trials and LRs less than 1 for most H2 trials. The plot directly shows the rates of misleading evidence: the proportion of H1 trials with LR < 1 and the proportion of H2 trials with LR > 1.
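The quantities behind a Tippett plot can be computed without any plotting library, as in the sketch below. It follows one common convention: for each log10(LR) value x, it plots the proportion of trials whose log10(LR) is at least x. The function names, the grid, and the example LRs are hypothetical. The two curves can then be drawn with any plotting tool, for example as step plots.

```python
import math


def tippett_curves(lrs_h1, lrs_h2, grid):
    """Proportion of H1 and of H2 trials with log10(LR) >= x, for each x in grid."""
    log_h1 = [math.log10(lr) for lr in lrs_h1]  # LRs must be strictly positive
    log_h2 = [math.log10(lr) for lr in lrs_h2]
    h1_curve = [sum(v >= x for v in log_h1) / len(log_h1) for x in grid]
    h2_curve = [sum(v >= x for v in log_h2) / len(log_h2) for x in grid]
    return h1_curve, h2_curve


def misleading_evidence_rates(lrs_h1, lrs_h2):
    """Rate of LR < 1 among H1 trials and of LR > 1 among H2 trials."""
    rme_h1 = sum(lr < 1 for lr in lrs_h1) / len(lrs_h1)
    rme_h2 = sum(lr > 1 for lr in lrs_h2) / len(lrs_h2)
    return rme_h1, rme_h2


# Hypothetical validation-set LRs.
h1_lrs = [0.5, 4.0, 8.0, 120.0]
h2_lrs = [0.02, 0.2, 0.6, 5.0]
grid = [-1.5, -0.5, 0.0, 0.5, 1.5]
print(tippett_curves(h1_lrs, h2_lrs, grid))
print(misleading_evidence_rates(h1_lrs, h2_lrs))  # (0.25, 0.25)
```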

Step 6: Interpret and Validate

The final step is a holistic interpretation:

- Use EER and Cllrmin to assess discrimination.

- A high Cllrcal indicates a need for better calibration of the LR output.

- Use the Tippett plot to understand the real-world implications of the metrics.

- Validation requires that performance is assessed on data not used to build the system (e.g., a separate test set).

Essential Research Reagents and Tools

The following table details key solutions and materials essential for conducting research and validation in this field.

Table 3: Essential research reagents and tools for forensic metric validation.

| Tool / Solution | Function / Description | Example / Reference |
|---|---|---|
| Benchmark Datasets | Publicly available datasets with known ground truth, crucial for comparable and reproducible validation of LR systems across different studies and labs. | The research community advocates for their use to advance the field [8]. |
| Scripting & Analysis Environments | Flexible software for implementing custom feature extraction, LR models, and performance metric calculations. | Praat [14], R, Python. |
| Likelihood Ratio Framework Software | Specialized software for building and validating LR systems using established statistical models and calibration methods. | --- |
| Performance Metric Libraries | Pre-written code modules for calculating Cllr, EER, and generating validation plots like Tippett and ECE plots. | evaluate library for EER [13]. |
| Bi-Gaussian Calibration Method | A proposed method for calibrating likelihood ratios to achieve a perfectly-calibrated bi-Gaussian system, improving the reliability of the LR output [11]. | A method involving mapping empirical LR distributions to target bi-Gaussian distributions [11]. |

The evaluation of forensic evidence often hinges on quantifying its strength, typically expressed through a Likelihood Ratio (LR). The LR is a metric that assesses the probability of the evidence under two competing propositions, usually the prosecution's hypothesis (H1) and the defense's hypothesis (H2) [3]. For a forensic method that computes LRs to be considered scientifically sound and admissible, it must undergo a rigorous validation procedure to demonstrate its performance and reliability [3]. This validation process relies on specific performance characteristics, metrics, and graphical tools, among which the Tippett plot is a fundamental instrument for visualizing the method's validity and discriminating power.

This guide objectively compares the core components of LR validation research, focusing on the Cllr, EER, and Tippett plots, and provides the experimental protocols and reagents needed to implement this validation framework.

Core Concepts and Performance Metrics

Validation of an LR method requires assessing multiple performance characteristics. The table below summarizes the key characteristics, their definitions, and corresponding metrics as outlined in validation frameworks [3].

Table 1: Key Performance Characteristics for LR Validation

| Performance Characteristic | Description | Primary Performance Metric(s) |
|---|---|---|
| Accuracy | Measures how well the computed LRs agree with the true state of affairs; reflects the reliability of the LR values. | Cllr (Cost of log likelihood ratio) |
| Discriminating Power | The ability of the method to distinguish between comparisons under H1 and comparisons under H2. | EER (Equal Error Rate), Cllrmin |
| Calibration | Assesses whether the LRs are correctly scaled. For example, an LR of 100 should mean that the evidence is 100 times more likely under H1 than under H2. | Cllrcal |
| Robustness | The performance stability of the method when conditions deviate from those used during its development. | Cllr, EER, Range of the LR |
| Coherence | Ensures the method's performance is consistent across different subsets of data or population strata. | Cllr, EER |
| Generalization | The ability of the method to perform well on new, unseen data that was not used in its development. | Cllr, EER |

Explanation of Key Metrics

- Cllr (Cost of log likelihood ratio): This is a single scalar metric that summarizes the overall accuracy of the LR system. A lower Cllr value indicates better performance. It penalizes both misleadingly strong LRs (e.g., a large LR for a comparison that actually comes from H2) and misleadingly weak LRs (e.g., an LR close to 1 for a comparison that comes from H1) [3].

- EER (Equal Error Rate): This metric directly measures discriminating power. It is the error rate at the point on a Detection Error Tradeoff (DET) plot where the false positive rate (the proportion of H2 comparisons whose LR exceeds the decision threshold) equals the false negative rate (the proportion of H1 comparisons whose LR falls below it). With a threshold of LR = 1, for example, a false positive is an LR > 1 for an H2 comparison. A lower EER signifies better discrimination [3].

- Tippett Plot: A graphical tool that provides a visual assessment of both discrimination and calibration. It displays the cumulative distribution of LRs for both H1 (Same-Source) and H2 (Different-Source) comparisons, allowing for an intuitive evaluation of the method's performance [3].

The Validation Matrix and Experimental Protocol

A structured approach to validation is often encapsulated in a Validation Matrix, which organizes the entire process from performance characteristics to a final pass/fail decision [3].

Table 2: The Validation Matrix for an LR Method [3]

| Performance Characteristic | Performance Metric | Graphical Representation | Validation Criteria | Validation Decision |
|---|---|---|---|---|
| Accuracy | Cllr | ECE plot | Cllr < 0.2 (Example) | Pass/Fail |
| Discriminating Power | EER, Cllrmin | ECEmin plot, DET plot | EER < X% (Lab-defined) | Pass/Fail |
| Calibration | Cllrcal | ECE plot, Tippett plot | Cllrcal within Y% of baseline | Pass/Fail |
| Robustness | Cllr, EER, Range of LR | ECE plot, DET plot, Tippett plot | Performance degradation < Z% | Pass/Fail |
| Coherence | Cllr, EER | ECE plot, DET plot, Tippett plot | Consistent performance across strata | Pass/Fail |
| Generalization | Cllr, EER | ECE plot, DET plot, Tippett plot | Performance on unseen data meets criteria | Pass/Fail |
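One way to make the matrix operational is to encode each row's criterion as a rule and apply it to the analytical results, as sketched below. The class and function names are hypothetical. The Cllr < 0.2 rule is the example value from the matrix, while the 5% EER limit is an assumed stand-in for the laboratory-defined X.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Criterion:
    characteristic: str              # row of the validation matrix
    metric: str                      # key into the results dictionary
    passes: Callable[[float], bool]  # laboratory-defined validation criterion


def validation_decisions(results: Dict[str, float], criteria: List[Criterion]) -> Dict[str, str]:
    """Map each performance characteristic to 'Pass' or 'Fail'."""
    return {c.characteristic: "Pass" if c.passes(results[c.metric]) else "Fail" for c in criteria}


criteria = [
    Criterion("Accuracy", "Cllr", lambda v: v < 0.2),              # example criterion from the matrix
    Criterion("Discriminating Power", "EER", lambda v: v < 0.05),  # assumed value for X = 5%
]
results = {"Cllr": 0.15, "EER": 0.035}  # hypothetical analytical results
decisions = validation_decisions(results, criteria)
print(decisions)
print("Method validated:", all(d == "Pass" for d in decisions.values()))
```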

Detailed Experimental Protocol for Validation

The following workflow outlines the key steps for generating and validating likelihood ratios, culminating in the creation of a Tippett plot. This protocol is adapted from forensic fingerprint validation studies [3].

Protocol Steps:

- Data Collection and Score Generation: A dataset of known origin is required. For fingerprint validation, this involves using an Automated Fingerprint Identification System (AFIS) "black box" algorithm (e.g., Motorola BIS Printrak 9.1) to generate comparison scores. These scores are labeled as Same-Source (SS) if the fingermark and fingerprint originate from the same finger, or Different-Source (DS) if they originate from different, unrelated donors [3].

- Data Splitting: The dataset of SS and DS scores is split into two independent sets: a development set used to train or build the LR model, and a validation set used exclusively for testing the final model's performance. This separation is critical for assessing generalization [3].

- Model Training: An LR method is applied to the development set. This involves modeling the probability distributions of the AFIS scores under both the SS (H1) and DS (H2) propositions. The output is a function that can convert a raw AFIS score into a calibrated LR [3].

- LR Computation: The trained LR model is applied to the held-out validation set. For every comparison in this set, an LR value is computed [3].

- Performance Evaluation: The computed LRs are used to calculate performance metrics.

  - Cllr is computed from all the LRs and their ground truth labels.

  - EER is derived by plotting a DET curve based on the LRs.

- Tippett Plot Generation: The LRs from the validation set are separated into two groups based on their true origin (SS or DS). For each group, the LRs are sorted. The cumulative proportion of comparisons is then plotted against the log10(LR) value. A well-validated method will show the SS curve rising steeply at high LR values and the DS curve rising steeply at low LR values, with a clear separation between the two curves [3].

- Validation Decision: The analytical results (metrics and plots) are compared against pre-defined validation criteria (e.g., Cllr < 0.2). The method is validated only if it passes all criteria for the essential performance characteristics [3].
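The data-splitting step can be sketched as a stratified random split of labelled comparisons, so that both sets contain SS and DS scores. The function name, the (score, label) representation, and the 50/50 split are assumptions. A stricter variant would also keep donors disjoint between the two sets; this sketch does not attempt that.

```python
import random
from typing import List, Tuple

Trial = Tuple[float, str]  # (comparison score, "SS" or "DS")


def split_dev_validation(trials: List[Trial], validation_fraction: float = 0.5,
                         seed: int = 0) -> Tuple[List[Trial], List[Trial]]:
    """Stratified split into non-overlapping development and validation sets."""
    rng = random.Random(seed)  # fixed seed keeps the split reproducible
    dev, val = [], []
    for label in ("SS", "DS"):
        subset = [t for t in trials if t[1] == label]
        rng.shuffle(subset)
        n_val = round(len(subset) * validation_fraction)
        val.extend(subset[:n_val])
        dev.extend(subset[n_val:])
    return dev, val


# Hypothetical scores: 10 same-source and 20 different-source comparisons.
trials = [(float(i), "SS") for i in range(10)] + [(float(i), "DS") for i in range(10, 30)]
dev, val = split_dev_validation(trials)
print(len(dev), len(val))  # 15 15
```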

The Scientist's Toolkit: Research Reagents and Materials

The following tools and materials are essential for conducting LR validation research, particularly in the context of forensic fingerprint analysis.

Table 3: Essential Research Reagents and Materials for LR Validation

| Item Name | Type / Category | Function in Validation |
|---|---|---|
| AFIS Algorithm (e.g., Motorola BIS Printrak 9.1) | Software / Core Technology | Acts as a "black box" to generate the primary comparison scores from fingerprint and fingermark pairs. These scores are the raw data for LR computation [3]. |
| Forensic Fingerprint Dataset | Data | A collection of real forensic fingermarks and fingerprints with known ground truth (SS or DS). This is used for the development and validation stages of the LR method [3]. |
| LR Computation Method | Software / Statistical Model | A model (e.g., a kernel density function or a machine learning classifier) that transforms raw AFIS scores into calibrated likelihood ratios [3]. |
| Validation Framework Software (e.g., R, Python with custom scripts) | Software / Analysis Environment | Provides the computational backbone for calculating performance metrics (Cllr, EER), generating plots (Tippett, DET), and executing the validation protocol [3]. |
| Statistical Plots (Tippett, DET, ECE) | Diagnostic Tool | Graphical representations used to visually assess the performance characteristics of the LR method, including its discriminating power, calibration, and accuracy [3]. |

Comparative Performance Data

The quantitative performance of an LR method is summarized by its metrics. The following table presents example data from a validation study, comparing a proposed method against a baseline.

Table 4: Example Comparative Performance Data of LR Methods [3]

| LR Method | Performance Characteristic | Performance Metric | Analytical Result | Validation Decision |
|---|---|---|---|---|
| Baseline Method | Accuracy | Cllr | 0.20 | Pass |
| Proposed Method | Accuracy | Cllr | 0.15 (-25%) | Pass |
| Baseline Method | Discriminating Power | EER | 5.0% | Pass |
| Proposed Method | Discriminating Power | EER | 3.5% (-30%) | Pass |
| Baseline Method | Calibration | Cllrcal | 0.21 | Pass |
| Proposed Method | Calibration | Cllrcal | 0.16 (-24%) | Pass |

Interpreting Comparative Data

- Relative Improvement: Relative to the baseline, the proposed method reduces Cllr by 25% (better accuracy) and EER by 30% (better discriminating power). This indicates superior overall performance [3].

- Validation Outcome: Both methods meet the example validation criteria (e.g., Cllr ≤ 0.2), so both would receive a "Pass" decision for these characteristics. However, the proposed method performs better on every reported metric [3].

- Role of the Tippett Plot: The numerical superiority of the proposed method would be visually confirmed in a Tippett plot. Its SS curve would shift further to the right (higher LRs for true SS comparisons) and its DS curve would shift further to the left (lower LRs for true DS comparisons) compared to the baseline, indicating clearer separation and fewer misleading LRs [3].
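The percentages in Table 4 are relative changes with respect to the baseline. The short Python check below (the helper name is ours, for illustration only) recomputes them from the tabulated values.

```python
def relative_change_percent(new, baseline):
    """Signed relative change of a metric, as a percentage of the baseline value."""
    return 100.0 * (new - baseline) / baseline

# (baseline, proposed) values taken from Table 4
table4 = {"Cllr": (0.20, 0.15), "EER (%)": (5.0, 3.5), "Cllrcal": (0.21, 0.16)}
for metric, (base, proposed) in table4.items():
    print(f"{metric}: {relative_change_percent(proposed, base):+.1f}%")
```

This prints -25.0%, -30.0% and -23.8%; the last value rounds to the -24% shown in the table.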

Advanced Visualization: Interpreting a Tippett Plot

A Tippett plot is one of the most informative visual tools for assessing the practical utility of an LR method. The following diagram breaks down its components and interpretation.

Key Interpretation Guidelines:

- An Ideal Plot: In a perfect system, the SS (H1) curve would be a vertical line at an infinitely high LR, and the DS (H2) curve would be a vertical line at zero LR. Real-world systems approximate this ideal.

- Good Performance: A well-validated method shows curves that are steep and widely separated. The SS curve should climb rapidly at the high end of the LR scale, indicating that most true SS cases are assigned strongly supportive LRs. Conversely, the DS curve should climb rapidly at the low end [3].

- Poor Performance: If the curves are shallow and overlap significantly around LR=1, the method has poor discriminating power and produces many misleading LRs. The greater the overlap, the higher the error rates (e.g., the EER) [3].

- Calibration Assessment: The plot can also reveal calibration issues. If, for example, the SS curve lies too far to the left, the method may be systematically understating the strength of evidence for true SS comparisons.
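The misleading LRs that a Tippett plot exposes can also be counted directly. A minimal NumPy sketch, assuming the validation LRs are held in arrays: the rate of misleading evidence for SS comparisons is the proportion of SS LRs below 1, and for DS comparisons the proportion of DS LRs above 1. The function name and the "strongly misleading" cut-off of 100 are illustrative choices, not part of [3].

```python
import numpy as np

def misleading_evidence_rates(lr_ss, lr_ds, strong=100.0):
    """Proportions of misleading LRs, overall and beyond a 'strong' cut-off."""
    lr_ss = np.asarray(lr_ss, dtype=float)
    lr_ds = np.asarray(lr_ds, dtype=float)
    return {
        "ss": float(np.mean(lr_ss < 1)),                   # SS comparisons supporting DS
        "ds": float(np.mean(lr_ds > 1)),                   # DS comparisons supporting SS
        "ss_strong": float(np.mean(lr_ss < 1 / strong)),   # strongly misleading SS LRs
        "ds_strong": float(np.mean(lr_ds > strong)),       # strongly misleading DS LRs
    }

# Hypothetical LRs from a validation set
rates = misleading_evidence_rates([0.005, 0.5, 2, 10], [0.01, 0.1, 3, 500])
```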

In the rigorous fields of forensic science and drug development, the validation of analytical methods is paramount for ensuring the reliability and admissibility of scientific evidence. A Validation Matrix serves as a critical organizational tool, systematically mapping the relationship between performance characteristics, the metrics used to quantify them, and the pre-defined acceptance criteria that define success. This framework is essential for demonstrating that a method is fit for its intended purpose, providing a clear, auditable trail for regulatory compliance. Within the specific context of Cllr (log-likelihood-ratio cost), EER (Equal Error Rate), and Tippett plot validation research, this structured approach becomes indispensable for evaluating the performance of likelihood ratio (LR) systems in forensic evidence evaluation, such as source camera attribution [5].

The core challenge in validation is selecting metrics and criteria that accurately reflect the domain of interest. Flawed metric use can waste resources and obscure true scientific progress, hindering the translation of methods into practice [16]. This guide compares validation approaches, focusing on the quantitative data and experimental protocols that underpin robust method evaluation for researchers and scientists.

Validation relies on quantifying a set of core performance characteristics. The choice of metrics depends on the analytical task, whether it is a classification problem, a regression-based assay, or a forensic likelihood ratio system.

Core Characteristics for Classification and Quantitative Assays

For classification models and quantitative methods, a standard set of characteristics is used to measure performance from different angles. The table below summarizes these key characteristics and their associated metrics.

Table 1: Key Performance Characteristics and Metrics for Classification and Quantitative Assays

| Performance Characteristic | Description | Common Metrics & Formulae |
|---|---|---|
| Accuracy/Trueness | Closeness of agreement between test results and an accepted reference value [17]. | Accuracy: $(TP+TN)/(TP+TN+FP+FN)$; Mean Absolute Error (MAE): $\frac{1}{N} \sum_{j=1}^{N} \lvert y_j - \hat{y}_j \rvert$ [18] [19] |
| Precision/Reliability | Closeness of agreement between independent measurements under specified conditions [17]. | Analytical assays: relative standard deviation (RSD%) of replicate results [17]. Classifiers (a different sense of "precision"): Precision $TP/(TP+FP)$; Recall/Sensitivity $TP/(TP+FN)$; F1-Score $2 \times \frac{\text{Precision} \times \text{Recall}}{\text{Precision} + \text{Recall}}$ [18] [20] |
| Linearity | Ability of the method to obtain results directly proportional to analyte concentration [17]. | $R^2 = 1 - \frac{\sum_j (y_j - \hat{y}_j)^2}{\sum_j (y_j - \bar{y})^2}$; slope and y-intercept of the regression line [18] [17] |
| Range | The interval between upper and lower concentration where linearity, accuracy, and precision are demonstrated [17]. | Verified by acceptable performance at minimum and maximum concentration levels. |
| Specificity/Selectivity | Ability to assess the analyte unequivocally in the presence of other components [17]. | Demonstrated by no interference from blank samples and spiked matrices. |
| Sensitivity (Detection and Quantitation Limits) | The lowest amount of analyte that can be detected (DL) or quantified (QL). | $DL = 3.3\sigma/S$ and $QL = 10\sigma/S$, where $\sigma$ is the standard deviation of the response (e.g., of blank measurements or the residual standard deviation of a regression line) and $S$ is the slope of the calibration curve [17]. |
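The classification formulas in Table 1 translate directly into code. A plain-Python sketch with illustrative function names: the classification metrics take confusion-matrix counts, MAE takes paired reference and predicted values, and no guard is included against zero denominators.

```python
def classification_metrics(tp, tn, fp, fn):
    """Accuracy, precision, recall (sensitivity) and F1 from confusion-matrix counts."""
    accuracy = (tp + tn) / (tp + tn + fp + fn)
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    f1 = 2 * precision * recall / (precision + recall)
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}

def mean_absolute_error(y_true, y_pred):
    """Average absolute difference between reference and predicted values."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)

# Hypothetical counts: 40 TP, 45 TN, 5 FP, 10 FN
metrics = classification_metrics(40, 45, 5, 10)
```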

Specialized Metrics for Forensic LR Validation: Cllr, EER, and Tippett Plots

For systems outputting Likelihood Ratios (LRs)—a preferred method in forensic evidence evaluation—a distinct set of metrics is used to assess validity and reliability [5].

- Equal Error Rate (EER): This is a scalar performance metric derived from Detection Error Trade-off (DET) or Receiver Operating Characteristic (ROC) curves. It is the error rate at the decision threshold where the false positive rate (FPR) and false negative rate (FNR) are equal. A lower EER indicates a better overall ability of the system to distinguish between two classes (e.g., same source vs. different source) [5].

- Cllr (log-likelihood-ratio cost): This metric evaluates the validity of the LR values themselves. It measures their overall quality by penalizing both misleading LRs (values that support the wrong proposition) and poorly calibrated LRs (values that are over- or under-confident), with larger penalties for more strongly misleading values. A lower Cllr indicates better performance. Cllr is calculated as $\text{Cllr} = \frac{1}{2} \left( \frac{1}{N_{\text{same}}} \sum_{i=1}^{N_{\text{same}}} \log_2\left(1 + \frac{1}{\mathrm{LR}_i}\right) + \frac{1}{N_{\text{diff}}} \sum_{j=1}^{N_{\text{diff}}} \log_2\left(1 + \mathrm{LR}_j\right) \right)$, where $N_{\text{same}}$ and $N_{\text{diff}}$ are the numbers of same-source and different-source comparisons, and $\mathrm{LR}_i$ and $\mathrm{LR}_j$ are the LRs computed for those comparisons, respectively [5].

- Tippett Plots: These are graphical tools for visualizing the performance of an LR system. A Tippett plot shows the cumulative distributions of the LRs for comparisons in which the same-source hypothesis is true and for those in which the different-source hypothesis is true. The plot allows for a visual assessment of the discrimination and calibration of the system. The curves can also be related to the EER: if the same-source curve shows the proportion of LRs below a threshold and the different-source curve the proportion above it, the two curves cross at the EER. The spread of the curves indicates the confidence and reliability of the LRs [5].
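To make the EER definition concrete, here is a minimal NumPy sketch (the function name is hypothetical). It sweeps every observed score as a threshold, counts SS comparisons scoring below the threshold as false negatives and DS comparisons at or above it as false positives, and returns the error rate where the two are closest. With finite data the two rates rarely match exactly, so the midpoint at the closest threshold is reported; this is an approximation, and ROC convex-hull based estimates are a more refined alternative. It works equally on log-LRs or raw similarity scores.

```python
import numpy as np

def equal_error_rate(ss_scores, ds_scores):
    """Approximate EER: the error rate at the threshold where FNR and FPR are closest."""
    ss = np.sort(np.asarray(ss_scores, dtype=float))
    ds = np.sort(np.asarray(ds_scores, dtype=float))
    thresholds = np.concatenate([ss, ds])
    # FNR: SS comparisons scoring below the threshold; FPR: DS comparisons at or above it
    fnr = np.searchsorted(ss, thresholds, side="left") / ss.size
    fpr = 1.0 - np.searchsorted(ds, thresholds, side="left") / ds.size
    i = np.argmin(np.abs(fnr - fpr))
    return (fnr[i] + fpr[i]) / 2

rng = np.random.default_rng(0)
ss_llr = rng.normal(1.0, 1.0, 20000)   # hypothetical SS scores
ds_llr = rng.normal(-1.0, 1.0, 20000)  # hypothetical DS scores
eer = equal_error_rate(ss_llr, ds_llr)
```

For these two unit-variance Gaussians the theoretical EER is about 0.159, so the estimate should land close to that value.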

Table 2: Comparative Analysis of Forensic LR Validation Metrics

| Metric | Measures | Interpretation | Comparative Advantage |
|---|---|---|---|
| Equal Error Rate (EER) | Discrimination power at a specific threshold. | Lower value = better discrimination. | Simple, single-value summary of classifier performance. |
| Cllr | Overall validity and calibration of LR values. | Lower value = better overall quality and calibration of LRs. | Penalizes misleading LRs even if they lead to the correct decision; measures "goodness" of the LR itself. |
| Tippett Plot | Empirical distribution of LRs for both hypotheses. | Visual assessment of discrimination, calibration, and reliability. | Provides a comprehensive view of system performance across all possible decision thresholds. |

Experimental Protocols for Validation

A robust validation is built on carefully designed experiments. The following protocols outline the methodologies for general analytical method validation and specific forensic LR validation.

Protocol for Analytical Method Validation (e.g., Drug Assay)

This protocol is aligned with ICH Q2(R1/R2) guidelines and is critical for drug development [17].

Linearity and Range:

- Method: Prepare a minimum of 5 standard solutions at concentrations spanning the intended range (e.g., 80-120% of test concentration for assay). Analyze each solution in triplicate.

- Data Analysis: Plot mean response against concentration. Calculate the regression line (y = mx + b) using the least-squares method. The coefficient of determination (R²) should typically be >0.95 [17].
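The linearity analysis can be sketched with NumPy's least-squares polynomial fit. The function name and the data below are hypothetical. The sketch also applies the detection and quantitation limit formulas from Table 1 using the residual standard deviation of the fit as σ, which ICH Q2 allows; for an actual DL/QL study that regression line would be built from standards near the expected limit rather than the 80-120% assay range, so here the DL/QL values only illustrate the arithmetic.

```python
import numpy as np

def linearity_summary(conc, replicate_responses):
    """Least-squares fit of mean response vs concentration, with R^2 and DL/QL estimates."""
    x = np.asarray(conc, dtype=float)
    y = np.asarray(replicate_responses, dtype=float).mean(axis=1)  # mean of replicates
    slope, intercept = np.polyfit(x, y, 1)
    y_hat = slope * x + intercept
    ss_res = np.sum((y - y_hat) ** 2)
    ss_tot = np.sum((y - y.mean()) ** 2)
    sigma = np.sqrt(ss_res / (x.size - 2))  # residual standard deviation of the fit
    return {"slope": slope, "intercept": intercept, "r2": 1 - ss_res / ss_tot,
            "DL": 3.3 * sigma / slope, "QL": 10 * sigma / slope}

# Hypothetical data: 5 levels (% of test concentration), each analyzed in triplicate
conc = [80, 90, 100, 110, 120]
resp = [[801, 799, 803], [902, 897, 899], [1001, 998, 1003],
        [1099, 1104, 1100], [1197, 1202, 1199]]
summary = linearity_summary(conc, resp)
```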

Accuracy:

- Method: For a drug product, spike a known amount of analyte (reference material) into a synthetic matrix (placebo) lacking the analyte. Prepare at least 3 concentration levels across the range (e.g., 80%, 100%, 120%), with 3 replicates per level.

- Data Analysis: Calculate the recovery (%) for each sample: (Measured Concentration / Theoretical Concentration) × 100. The mean recovery at each level should be within predefined limits (e.g., 98-102%) [17].
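The recovery calculation is simple arithmetic; the plain-Python sketch below (hypothetical function name and measurements) computes the mean recovery at each spiking level and checks it against the example limits of 98-102%.

```python
from statistics import mean

def recovery_check(measured_by_level, theoretical_by_level, limits=(98.0, 102.0)):
    """Mean % recovery per spiking level and whether it lies within the limits."""
    results = {}
    for level, measured in measured_by_level.items():
        theoretical = theoretical_by_level[level]
        recoveries = [100.0 * m / theoretical for m in measured]
        mean_rec = mean(recoveries)
        results[level] = (mean_rec, limits[0] <= mean_rec <= limits[1])
    return results

# Hypothetical measured concentrations, 3 replicates per level
measured = {"80%": [79.6, 80.3, 80.1], "100%": [99.2, 100.4, 100.1], "120%": [121.0, 119.5, 120.3]}
theoretical = {"80%": 80.0, "100%": 100.0, "120%": 120.0}
recovery = recovery_check(measured, theoretical)
```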

Precision:

- Repeatability: Analyze a homogeneous sample at 100% concentration at least 6 times under the same operating conditions. Calculate the relative standard deviation (RSD%) of the results.

- Intermediate Precision (Ruggedness): Repeat the analysis on a different day, with a different analyst, or using different equipment. The RSD% from the combined repeatability and intermediate precision experiments should meet acceptance criteria [17].
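RSD% is the sample standard deviation divided by the mean. A plain-Python sketch using the standard library `statistics` module; the data and labels are hypothetical.

```python
from statistics import mean, stdev

def rsd_percent(values):
    """Relative standard deviation (%), using the sample standard deviation (n - 1)."""
    return 100.0 * stdev(values) / mean(values)

# Hypothetical results: 6 repeatability determinations (analyst A, day 1)
# and 6 further determinations (analyst B, day 2) for intermediate precision
day1 = [99.8, 100.2, 100.1, 99.7, 100.0, 100.3]
day2 = [100.4, 99.9, 100.6, 100.2, 99.8, 100.5]
repeatability = rsd_percent(day1)
intermediate = rsd_percent(day1 + day2)
```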

Protocol for Forensic LR System Validation (e.g., Source Camera Attribution)

This protocol details the process for validating an LR-based system, using source camera attribution via Photo Response Non-Uniformity (PRNU) as an example [5].

Reference Database Creation:

- Method: Acquire a set of flat-field images or videos from a known set of cameras under controlled conditions. Extract the PRNU noise pattern for each camera, which serves as its digital fingerprint [5].

Similarity Score Generation:

- Method: For a questioned image, extract its PRNU pattern. Compare this pattern to the reference PRNU patterns from the database using a similarity measure like Peak-to-Correlation Energy (PCE). This generates a set of similarity scores [5].

Likelihood Ratio Calculation:

- Method: Using a "plug-in" score-based approach, model the distribution of PCE scores for both same-source (H1) and different-source (H0) comparisons. Convert the raw PCE similarity scores into Likelihood Ratios (LRs) using the ratio of the two probability densities: $LR = \frac{p(\text{score} \mid H_1)}{p(\text{score} \mid H_0)}$ [5].
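The plug-in conversion can be sketched with a Gaussian kernel density estimate for each class. This NumPy sketch rests on assumptions not taken from [5]: Gaussian kernels, Silverman's rule-of-thumb bandwidth, and scores on a scale where a Gaussian kernel is reasonable (heavy-tailed PCE values would typically need a transformation such as a logarithm first, which is an assumption here). Function names and data are hypothetical. Density estimates become unreliable in the tails where training scores are sparse, which is one reason LRs from such models are often bounded in practice.

```python
import numpy as np

def kde_pdf(train, x, bandwidth=None):
    """Gaussian kernel density estimate of `train`, evaluated at points `x`."""
    train = np.asarray(train, dtype=float)
    x = np.atleast_1d(np.asarray(x, dtype=float))
    if bandwidth is None:
        # Silverman's rule of thumb for a 1-D Gaussian kernel
        bandwidth = 1.06 * train.std(ddof=1) * train.size ** (-1 / 5)
    z = (x[:, None] - train[None, :]) / bandwidth
    return np.exp(-0.5 * z ** 2).sum(axis=1) / (train.size * bandwidth * np.sqrt(2 * np.pi))

def score_to_lr(score, ss_scores, ds_scores):
    """LR = p(score | H1) / p(score | H0), each density estimated by KDE."""
    return kde_pdf(ss_scores, score) / kde_pdf(ds_scores, score)

rng = np.random.default_rng(0)
ss_scores = rng.normal(2.0, 1.0, 2000)   # hypothetical same-source training scores
ds_scores = rng.normal(-2.0, 1.0, 2000)  # hypothetical different-source training scores
lrs = score_to_lr([-3.0, 0.0, 3.0], ss_scores, ds_scores)
```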

Performance Assessment with Cllr, EER, and Tippett Plots:

- Method: Using a separate, labeled test set of comparisons, calculate the Cllr to assess the overall quality of the LRs. Generate the Tippett plot to visualize the cumulative distributions of LRs for same-source and different-source comparisons. From the underlying distributions, calculate the Equal Error Rate (EER) [5].

Visualization of the Validation Workflow

The following diagram illustrates the logical workflow for setting up a validation matrix and executing a validation study, integrating both general analytical and specific forensic LR principles.

Figure: Validation workflow

The Scientist's Toolkit: Key Research Reagent Solutions

The following reagents and materials are essential for conducting the experiments cited in the validation protocols, particularly in pharmaceutical and forensic analytical contexts.

Table 3: Essential Research Reagents and Materials for Validation Studies

| Reagent/Material | Function in Validation | Application Example |
|---|---|---|
| Certified Reference Material (CRM) | Serves as the primary standard with known purity and concentration to establish accuracy and calibration [17]. | Used in drug assay accuracy experiments to spike placebo mixtures and calculate recovery percentages. |
| Placebo/Blank Matrix | A synthetic mixture containing all components of the sample except the analyte of interest. Critical for demonstrating specificity and accuracy [17]. | Used in drug product validation to ensure the analytical method does not produce a false positive signal from excipients. |
| Standard Stock Solutions | Solutions of the analyte at known, high concentration. Used to prepare calibration standards for linearity, range, and accuracy studies [17]. | Serially diluted to create the calibration curve in an HPLC-UV method for impurity testing. |
| Flat-Field Image/Video Sets | A set of images or videos of a uniform, bright scene acquired under controlled conditions. Serves as the reference for extracting a camera's PRNU fingerprint [5]. | Used in source camera attribution validation to build the reference database for calculating similarity scores and LRs. |
| Spiked Impurity Samples | Samples (drug substance/product) spiked with known amounts of known or potential impurities. Used to validate the accuracy and quantitation limit of impurity methods [17]. | Essential for demonstrating that an analytical procedure can accurately detect and quantify low levels of degradation products. |

The Validation Matrix is more than a documentation tool; it is the blueprint for scientific rigor in method development. By systematically organizing performance characteristics, metrics, and criteria, it provides an objective framework for comparing method performance and ensuring regulatory compliance. The comparative data and detailed protocols presented here highlight that while core principles of accuracy, precision, and linearity are universal, the specific metrics and visual tools like Cllr and Tippett plots are tailored to the domain of interest, whether that is quantifying a drug substance or evaluating the weight of forensic evidence. For researchers in drug development and forensic science, adopting this structured matrix approach is fundamental to demonstrating that their methods are not only operational but also reliable, valid, and fit for purpose.

The rigorous comparison of Same-Source (SS) and Different-Source (DS) hypotheses forms the cornerstone of modern forensic evidence evaluation. This framework provides a logically sound structure for quantifying the strength of forensic evidence, moving beyond subjective judgment to data-driven decision-making. The SS proposition asserts that two specimens originate from a common source, while the DS proposition contends that they come from different sources. A forensic evaluation system assesses the evidence under these competing propositions, typically outputting a Likelihood Ratio (LR) that numerically expresses how much more likely the evidence is under one proposition than under the other [4] [5].

The shift toward this probabilistic paradigm represents a fundamental transformation in forensic science, replacing human-perception-based analysis and subjective interpretation with methods grounded in relevant data, quantitative measurements, and statistical models [11]. This paradigm shift emphasizes the need for transparent, reproducible, and empirically validated methods that are intrinsically resistant to cognitive bias. Central to this validation are specific metrics and tools, including Cllr (log-likelihood-ratio cost), Equal Error Rate (EER), and Tippett plots, which provide standardized means of assessing system performance and reliability under both SS and DS conditions [11] [5].

Methodological Frameworks for SS/DS Evaluation

The Likelihood Ratio Framework

The Likelihood Ratio framework provides a coherent logical structure for comparing SS and DS propositions. The LR is calculated as the ratio of the probability of observing the evidence (E) under the SS proposition to its probability under the DS proposition:

LR = P(E|SS) / P(E|DS)

An LR value greater than 1 supports the SS proposition, while a value less than 1 supports the DS proposition. The magnitude of the LR indicates the strength of the evidence [5]. This framework enables forensic scientists to present evidence strength in a balanced manner that separately considers the prosecution and defense propositions.
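As a worked illustration with hypothetical probabilities (not drawn from any cited study): if the observed evidence has probability 0.9 under SS and 0.0009 under DS, then

$$\mathrm{LR} = \frac{P(E \mid SS)}{P(E \mid DS)} = \frac{0.9}{0.0009} = 1000, \qquad \log_{10}\mathrm{LR} = 3,$$

so the evidence is 1000 times more probable under SS than under DS. Swapping the two probabilities gives $\mathrm{LR} = 0.001$ ($\log_{10}\mathrm{LR} = -3$), which supports DS with the same strength.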

Computational Approaches for LR Calculation

Different computational strategies have been developed to calculate LRs from forensic evidence:

Plug-in scoring methods: These approaches involve post-processing of similarity scores using statistical modeling to compute LRs. They typically use continuous similarity score outputs and convert them to probabilistically meaningful LRs through calibration [5].

Direct methods: These more complex approaches output LR values directly instead of similarity scores. They require integrating out uncertainties when feature vectors are compared under competing propositions but produce probabilistically sound LRs without intermediate conversion steps [5].

Bi-Gaussian calibration method: A recently developed approach that maps empirical score distributions onto a perfectly calibrated bi-Gaussian system, in which the same-source and different-source log(LR) distributions are Gaussian with equal variance [11].
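For plug-in methods, one widely used calibration model is logistic regression on the similarity score (it also appears among the calibration algorithms in Table 3 of this section). The NumPy sketch below is illustrative only: it fits ln(LR) = a·score + b by Newton-Raphson, weighting the two classes equally so that the fitted log-odds can be read as a natural-log LR regardless of how many SS and DS training pairs there are. Names and data are hypothetical, and there is no regularization.

```python
import numpy as np

def fit_logistic_calibration(ss_scores, ds_scores, max_iter=50, tol=1e-10):
    """Return (a, b) such that ln(LR) = a * score + b (class-balanced logistic regression)."""
    ss = np.asarray(ss_scores, dtype=float)
    ds = np.asarray(ds_scores, dtype=float)
    s = np.concatenate([ss, ds])
    y = np.concatenate([np.ones(ss.size), np.zeros(ds.size)])
    # Equal total weight per class gives effective prior odds of 1, so the logit is ln(LR)
    w = np.where(y == 1, 0.5 / ss.size, 0.5 / ds.size)
    X = np.column_stack([s, np.ones_like(s)])
    theta = np.zeros(2)
    for _ in range(max_iter):
        p = 1.0 / (1.0 + np.exp(-(X @ theta)))
        grad = X.T @ (w * (p - y))                       # gradient of weighted log-loss
        hess = X.T @ (X * (w * p * (1 - p))[:, None])    # Hessian of weighted log-loss
        step = np.linalg.solve(hess, grad)
        theta -= step
        if np.max(np.abs(step)) < tol:
            break
    return theta[0], theta[1]

rng = np.random.default_rng(1)
ss_train = rng.normal(1.0, 1.0, 5000)   # hypothetical SS scores
ds_train = rng.normal(-1.0, 1.0, 5000)  # hypothetical DS scores
a, b = fit_logistic_calibration(ss_train, ds_train)
lr_new = np.exp(a * 0.5 + b)  # calibrated LR for a new score of 0.5
```

For these simulated Gaussian scores the true ln(LR) is 2·score, so `a` should come out close to 2 and `b` close to 0.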

Experimental Protocols for Method Validation

Forensic Evidence Validation Protocol

Comprehensive validation of SS/DS evaluation methods requires carefully designed experimental protocols that assess performance across varied forensic evidence types. The following workflow illustrates the standard validation process for forensic evidence evaluation methods:

This validation framework applies across multiple forensic disciplines, including fingerprint analysis, digital media authentication, and speaker recognition. The protocol begins with collecting known SS and DS sample pairs, followed by feature extraction, similarity computation, LR calculation, and comprehensive performance assessment using standardized metrics [4] [5].

Source Camera Attribution Protocol

For digital image and video source attribution, a specific experimental protocol based on Photo Response Non-Uniformity (PRNU) analysis has been developed:

PRNU Extraction Phase:

- Reference PRNU creation: Acquire multiple flat-field images from the camera sensor and extract PRNU using maximum likelihood estimation [5].

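- Sensor pattern estimation: Calculate the reference PRNU pattern with the maximum likelihood estimator

$$\hat{K}(x,y) = \frac{\sum_{l=1}^{L} W_l(x,y)\, I_l(x,y)}{\sum_{l=1}^{L} I_l^2(x,y)}$$

where $I_l$ is the $l$-th of $L$ flat-field images and $W_l$ is its noise residual (the image minus a denoised version of itself) [5].

- Video-specific processing: For videos with Digital Motion Stabilization, employ frame alignment strategies or combine flat-field images and videos to create robust reference patterns [5].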
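The estimator can be sketched in NumPy as follows. This is an illustration under loudly stated assumptions: published PRNU pipelines use wavelet-based denoising to obtain the residuals $W_l$, whereas `box_denoise` here is a crude 3 x 3 mean filter standing in for it, and both function names and the simulated sensor are hypothetical.

```python
import numpy as np

def box_denoise(img, k=3):
    """k x k mean filter: a crude stand-in for the wavelet denoisers used in PRNU work."""
    pad = k // 2
    padded = np.pad(img, pad, mode="reflect")
    out = np.zeros(img.shape, dtype=float)
    for dy in range(k):
        for dx in range(k):
            out += padded[dy:dy + img.shape[0], dx:dx + img.shape[1]]
    return out / (k * k)

def estimate_prnu(flat_fields):
    """K_hat = sum(W_l * I_l) / sum(I_l ** 2) over a stack of flat-field images."""
    I = np.asarray(flat_fields, dtype=float)
    W = np.stack([img - box_denoise(img) for img in I])  # noise residuals W_l
    return (W * I).sum(axis=0) / (I ** 2).sum(axis=0)

# Hypothetical sensor: multiplicative PRNU K plus additive noise, 20 flat fields
rng = np.random.default_rng(2)
K = rng.normal(0, 0.01, (64, 64))
flats = [c * (1 + K) + rng.normal(0, 0.005, K.shape) for c in rng.uniform(0.4, 0.6, 20)]
K_hat = estimate_prnu(flats)
```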

Comparison Phase:

- Questioned content analysis: Extract noise pattern from the questioned image or video.

- Similarity scoring: Calculate Peak-to-Correlation Energy (PCE) between questioned sample and reference PRNUs.

- LR calculation: Convert PCE scores to likelihood ratios using statistical modeling.

- Performance evaluation: Assess system using Cllr, EER, and Tippett plots following validation guidelines [5].
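The similarity scoring step can be sketched with a simplified PCE. This NumPy version is illustrative, not the procedure of [5]: it computes the circular cross-correlation between a questioned noise residual and a reference pattern over all spatial shifts via the FFT, takes the highest correlation value as the peak, and divides its squared value by the mean squared correlation outside a neighborhood around the peak (11 x 11 here, a commonly used size). Published procedures typically correlate against the reference pattern multiplied by the questioned image content and handle cropping or scaling, which this sketch omits. All names and data are hypothetical.

```python
import numpy as np

def pce(residual, reference, exclude=5):
    """Simplified Peak-to-Correlation Energy between a noise residual and a reference pattern."""
    a = residual - residual.mean()
    b = reference - reference.mean()
    # Circular cross-correlation over all spatial shifts, computed via the FFT
    xc = np.real(np.fft.ifft2(np.fft.fft2(a) * np.conj(np.fft.fft2(b))))
    r, c = np.unravel_index(np.argmax(xc), xc.shape)
    peak = xc[r, c]
    # Exclude a (2*exclude+1) x (2*exclude+1) neighborhood of the peak from the energy term
    mask = np.ones(xc.shape, dtype=bool)
    rows = np.arange(r - exclude, r + exclude + 1) % xc.shape[0]
    cols = np.arange(c - exclude, c + exclude + 1) % xc.shape[1]
    mask[np.ix_(rows, cols)] = False
    energy = np.mean(xc[mask] ** 2)
    return np.sign(peak) * peak ** 2 / energy

rng = np.random.default_rng(3)
K_cam = rng.normal(0, 0.01, (64, 64))    # hypothetical reference PRNU of the source camera
K_other = rng.normal(0, 0.01, (64, 64))  # hypothetical PRNU of an unrelated camera
query = 0.5 * K_cam + rng.normal(0, 0.005, (64, 64))  # residual from a questioned image
pce_same, pce_diff = pce(query, K_cam), pce(query, K_other)
```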

Performance Metrics and Quantitative Assessment

Key Performance Metrics for SS/DS Validation

The performance of forensic evaluation systems comparing SS and DS propositions is quantified using several standardized metrics:

Cllr (Log-Likelihood-Ratio Cost): Measures the overall quality of LR values, with lower values indicating better calibration and discrimination ability. For a perfectly calibrated bi-Gaussian system, there is a one-to-one mapping between the system's variance parameter and its Cllr value [11].

Equal Error Rate (EER): Represents the error rate where false positive and false negative rates are equal. Lower EER values indicate better discrimination performance between SS and DS conditions [5].

Tippett Plots: Graphical representations that show the cumulative distribution of LRs for both SS and DS conditions, allowing visual assessment of system performance across the entire range of evidentiary strength [5].

Experimental Data and Performance Comparison

Validation studies across multiple forensic domains have applied LR-based evaluation to SS/DS proposition testing. The table below summarizes the cited studies; "Not Reported" marks metrics for which no value is given here.

Table 1: Performance Metrics for SS/DS Evaluation Methods Across Forensic Domains

| Forensic Domain | Evaluation Method | Cllr | EER | Discrimination & Calibration Notes |
|---|---|---|---|---|
| Fingerprint Analysis | Likelihood Ratio (5-12 minutiae) | Not Reported | Not Reported | Adequate validation for casework; performance varies with feature extraction algorithms and AFIS systems [4] |
| Source Camera Attribution (Images) | PRNU-based PCE to LR conversion | Not Reported | Not Reported | Enables probabilistic interpretation; follows LR validation guidelines [5] |
| Source Camera Attribution (Videos) | PRNU with DMS compensation | Not Reported | Not Reported | Improved robustness to motion stabilization; better than baseline approaches [5] |
| Forensic Voice Comparison | Bi-Gaussian calibration | Direct mapping to σ² | Not Reported | Perfectly calibrated when the same-source and different-source log(LR) distributions are Gaussian with equal variance σ² and means of +σ²/2 and -σ²/2, respectively [11] |

The studies summarized above show that LR methods provide a common, mathematically rigorous framework for evaluating evidence under SS and DS propositions across diverse forensic disciplines. However, performance depends on the specific algorithm, evidence type, and implementation details (for example, fingerprint performance varies with the feature extraction algorithm and AFIS system [4]).

Table 2: Comparison of LR Calculation Methodologies

| LR Method | Implementation Complexity | Probabilistic Soundness | Required Data | Best Application Context |
|---|---|---|---|---|
| Plug-in Score-Based | Moderate | Calibration-dependent | Similarity scores from known SS/DS pairs | Continuous similarity scores; PRNU comparisons [5] |
| Direct Methods | High | High | Raw feature vectors from known sources | Maximum probabilistic rigor; Speaker recognition [5] |
| Bi-Gaussian Calibration | Moderate | High with proper fitting | Calibrated score distributions | Voice comparison; General forensic evaluation systems [11] |

The Scientist's Toolkit: Research Reagent Solutions

Implementing robust SS/DS evaluation systems requires specific technical components and methodological solutions. The following table outlines essential "research reagents" for developing and validating forensic evaluation methods:

Table 3: Essential Research Reagents for SS/DS Hypothesis Testing

| Research Reagent | Function | Implementation Examples |
|---|---|---|
| Reference Datasets | Provide known SS/DS pairs for validation | Fingerprint datasets with 5-12 minutiae; Paired image/PRNU data; Voice recording databases [4] [5] |
| Similarity Metrics | Quantify correspondence between specimens | Peak-to-Correlation Energy (PCE) for PRNU; Minutiae correspondence in fingerprints; Acoustic feature similarity [5] |
| Calibration Algorithms | Transform similarity scores to valid LRs | Logistic regression; Bi-Gaussian calibration; Pool-adjacent-violators algorithm [11] [5] |
| Validation Metrics | Assess system performance and reliability | Cllr, EER, Tippett plots; Discriminability analysis; Calibration diagnostics [11] [5] |
| Feature Extraction Tools | Extract discriminative features from raw data | PRNU estimation algorithms; Minutiae detectors; Acoustic feature extractors [5] |

Advanced Calibration: The Bi-Gaussian Method

The bi-Gaussian calibration method represents a significant advancement in producing well-calibrated LRs for SS/DS hypothesis testing. This approach ensures that output LRs have proper probabilistic interpretation, which is essential for forensic applications. The following diagram illustrates the calibration workflow:

A system is considered perfectly calibrated when the distributions of the natural logarithm of the LR, ln(LR), for SS and DS conditions are Gaussian with equal variance (σ²) and means of +σ²/2 for SS and -σ²/2 for DS [11]. In this state, for any LR value, the ratio of the SS and DS probability densities evaluated at the corresponding ln(LR) value equals the LR value itself, ensuring perfect calibration across the entire range of evidentiary strength.
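The calibration property can be checked numerically. The NumPy sketch below (function names hypothetical) compares the SS/DS density ratio with exp(ln LR) for the bi-Gaussian model just described, then estimates Cllr by simulation for a few values of σ², illustrating that Cllr falls steadily as σ² grows.

```python
import numpy as np

def normal_pdf(x, mean, var):
    return np.exp(-(x - mean) ** 2 / (2 * var)) / np.sqrt(2 * np.pi * var)

def density_ratio(ln_lr, sigma2):
    """f_SS(ln LR) / f_DS(ln LR) for the perfectly calibrated bi-Gaussian model."""
    return normal_pdf(ln_lr, sigma2 / 2, sigma2) / normal_pdf(ln_lr, -sigma2 / 2, sigma2)

def bigaussian_cllr(sigma2, n=200_000, seed=0):
    """Monte Carlo Cllr of a perfectly calibrated bi-Gaussian system with variance sigma2."""
    rng = np.random.default_rng(seed)
    sd = np.sqrt(sigma2)
    ln_lr_ss = rng.normal(sigma2 / 2, sd, n)
    ln_lr_ds = rng.normal(-sigma2 / 2, sd, n)
    # log2(1 + 1/LR) = logaddexp(0, -ln LR) / ln 2, evaluated stably in the log domain
    return 0.5 * (np.mean(np.logaddexp(0, -ln_lr_ss)) + np.mean(np.logaddexp(0, ln_lr_ds))) / np.log(2)

x = np.linspace(-3, 3, 7)
ratio_matches_lr = np.allclose(density_ratio(x, 2.0), np.exp(x))
cllr_by_sigma2 = [bigaussian_cllr(s2) for s2 in (1.0, 4.0, 16.0)]
```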

The establishment and validation of SS versus DS propositions through likelihood ratio frameworks represents a fundamental advancement in forensic science. The rigorous application of validation metrics including Cllr, EER, and Tippett plots ensures that forensic evaluation systems meet required standards of reliability and accuracy. As the field continues its paradigm shift from subjective judgment to data-driven methodologies, continued refinement of calibration techniques and validation protocols will further strengthen the scientific foundation of forensic evidence evaluation.

The experimental data and methodologies reviewed demonstrate that while approaches may differ across forensic domains, the core principles of transparent, empirically validated, and probabilistically sound evidence evaluation remain constant. This consistency enables more meaningful communication of evidentiary strength and facilitates more informed decision-making in legal contexts.

Implementing the Validation Framework: From Theory to Practical Application

Step-by-Step Guide to Computing Cllr for Accuracy and Calibration Assessment

This guide provides a detailed methodology for using the Log-Likelihood Ratio Cost (Cllr) to assess the performance of forensic evaluation systems. Cllr is a key metric for validating Likelihood Ratio (LR) systems, measuring both their discriminatory power and calibration [8].

Understanding Cllr and Its Role in Validation

The Cllr is a strictly proper scoring rule that offers a probabilistic interpretation of a forensic system's performance. It penalizes LRs that are misleading, with heavier penalties for more egregious errors [8]. A perfect system achieves a Cllr of 0, while an uninformative system that always returns LR=1 scores 1 [8].

Cllr can be decomposed into two components, providing deeper diagnostic insight:

- Cllrmin: Measures the intrinsic discrimination error of a system. It is the Cllr that remains after the LRs have been optimally recalibrated with the Pool Adjacent Violators (PAV) algorithm, so it represents the best Cllr achievable with the system's discrimination [8].

- Cllrcal: Measures the calibration error, calculated as Cllrcal = Cllr - Cllrmin. A large value indicates the system consistently overstates or understates the strength of the evidence [8].
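The decomposition can be sketched in NumPy as follows. This is an illustrative implementation, not the code used in [8]: `pav` is a textbook pool-adjacent-violators isotonic regression, and its output (posterior probabilities under the empirical proportion of H1 samples) is converted back to LRs by dividing the posterior odds by that empirical prior odds. Ties between scores are not pooled specially, and input LRs are assumed strictly positive and finite. Function names and data are hypothetical.

```python
import numpy as np

def cllr(lr_h1, lr_h2):
    lr_h1, lr_h2 = np.asarray(lr_h1, float), np.asarray(lr_h2, float)
    return 0.5 * (np.mean(np.log2(1 + 1 / lr_h1)) + np.mean(np.log2(1 + lr_h2)))

def pav(y):
    """Non-decreasing isotonic regression of the sequence y (pool adjacent violators)."""
    means, weights = [], []
    for v in y:
        means.append(float(v))
        weights.append(1)
        # Merge blocks while the previous block mean exceeds the last one
        while len(means) > 1 and means[-2] > means[-1]:
            w = weights[-2] + weights[-1]
            m = (means[-2] * weights[-2] + means[-1] * weights[-1]) / w
            means[-2:] = [m]
            weights[-2:] = [w]
    return np.repeat(means, weights)

def cllr_decomposition(lr_h1, lr_h2):
    lr_h1, lr_h2 = np.asarray(lr_h1, float), np.asarray(lr_h2, float)
    scores = np.log(np.concatenate([lr_h1, lr_h2]))
    labels = np.concatenate([np.ones(lr_h1.size), np.zeros(lr_h2.size)])
    order = np.argsort(scores, kind="mergesort")
    posterior = np.empty_like(labels)
    posterior[order] = pav(labels[order])
    prior_odds = lr_h1.size / lr_h2.size
    with np.errstate(divide="ignore"):
        lr_pav = posterior / (1 - posterior) / prior_odds  # may contain 0 or inf
    c = cllr(lr_h1, lr_h2)
    c_min = cllr(lr_pav[labels == 1], lr_pav[labels == 0])
    return {"Cllr": c, "Cllrmin": c_min, "Cllrcal": c - c_min}

rng = np.random.default_rng(4)
lr1 = np.exp(rng.normal(2, 2, 500))   # hypothetical H1-true LRs
lr2 = np.exp(rng.normal(-2, 2, 700))  # hypothetical H2-true LRs
parts = cllr_decomposition(lr1, lr2)
shifted = cllr_decomposition(lr1 * 50, lr2 * 50)  # same ranking, deliberately miscalibrated
```

Because PAV depends only on the ranking of the LRs, multiplying every LR by 50 leaves Cllrmin unchanged while Cllr (and hence Cllrcal) grows.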

Computational Workflow for Cllr

The following diagram illustrates the complete process for computing and interpreting Cllr, from data preparation to final performance assessment.

The Core Cllr Formula

The Cllr is calculated using the following formula [8]:

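$$\text{Cllr} = \frac{1}{2}\left[\frac{1}{N_{H_1}}\sum_{i=1}^{N_{H_1}}\log_2\left(1 + \frac{1}{\mathrm{LR}_{H_1,i}}\right) + \frac{1}{N_{H_2}}\sum_{j=1}^{N_{H_2}}\log_2\left(1 + \mathrm{LR}_{H_2,j}\right)\right]$$

Where:

- $N_{H_1}$: Number of samples where hypothesis H1 is true.

- $N_{H_2}$: Number of samples where hypothesis H2 is true.

- $\mathrm{LR}_{H_1,i}$: LR values predicted by the system for samples where H1 is true.

- $\mathrm{LR}_{H_2,j}$: LR values predicted by the system for samples where H2 is true.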
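A direct NumPy translation of the formula is sketched below (the function name is illustrative). The example calls reproduce the reference points given earlier: an uninformative system returning LR = 1 scores exactly 1, strong correct LRs score close to 0, and strongly misleading LRs push Cllr above 1. For extremely large or small LRs it is safer to work with log-LRs and `np.logaddexp`, which this sketch does not do.

```python
import numpy as np

def cllr(lr_h1, lr_h2):
    """Log-likelihood-ratio cost for LRs from H1-true and H2-true validation samples."""
    lr_h1 = np.asarray(lr_h1, dtype=float)
    lr_h2 = np.asarray(lr_h2, dtype=float)
    penalty_h1 = np.log2(1 + 1 / lr_h1)  # large when an H1-true LR is small (misleading)
    penalty_h2 = np.log2(1 + lr_h2)      # large when an H2-true LR is large (misleading)
    return 0.5 * (penalty_h1.mean() + penalty_h2.mean())

uninformative = cllr([1, 1, 1], [1, 1])   # system that always returns LR = 1
strong_correct = cllr([1e6], [1e-6])      # strong LRs supporting the true hypothesis
misleading = cllr([0.01], [100])          # strong LRs supporting the wrong hypothesis
```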

Experimental Protocol for Validation

To ensure reliable validation, a structured experimental design is critical. The following protocol is adapted from a fingerprint validation case study [3] [4].

Data Preparation and Propositions

- Datasets: Use separate datasets for development (training the LR model) and validation (testing its performance). The validation set should consist of forensically relevant data, such as real case fingermarks [3] [4].

- Propositions: Clearly define the competing hypotheses for the LR calculation. In a source attribution context, these are typically:

  - H1 (Same-Source): The questioned piece of evidence and the known reference originate from the same source.

  - H2 (Different-Source): The questioned piece of evidence originates from a different, unrelated source within a relevant population [3].
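One simple way to keep the development and validation sets separate is to split at the level of sources, so that no source contributes specimens to both. The plain-Python sketch below assumes each specimen is labeled with a source identifier; the function name and the 50/50 default are hypothetical choices, and real studies may instead use entirely independent collections (e.g., real case fingermarks for validation, as described above).

```python
import random

def split_by_source(specimens, dev_fraction=0.5, seed=0):
    """Split (source_id, specimen) records into source-disjoint development/validation sets."""
    sources = sorted({source for source, _ in specimens})
    random.Random(seed).shuffle(sources)
    dev_sources = set(sources[:round(len(sources) * dev_fraction)])
    dev = [rec for rec in specimens if rec[0] in dev_sources]
    val = [rec for rec in specimens if rec[0] not in dev_sources]
    return dev, val

records = [("A", "mark1"), ("A", "print1"), ("B", "mark2"), ("B", "print2"),
           ("C", "mark3"), ("D", "print4")]
dev, val = split_by_source(records)
```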

Generating Likelihood Ratios

The process for generating LRs varies by forensic discipline. The table below outlines methods from different fields.

Table 1: LR Generation Methods in Different Forensic Disciplines

| Forensic Discipline | Data Type | Similarity Score/Method | LR Computation Method |
|---|---|---|---|
| Fingerprint Analysis [3] | AFIS comparison scores | Scores from commercial AFIS algorithms (e.g., Motorola BIS) | Plug-in score-based LR method using distributions of Same-Source and Different-Source scores. |
| Source Camera Attribution [5] | Sensor Pattern Noise (PRNU) | Peak-to-Correlation Energy (PCE) | Score-based LR method using statistical modeling to convert PCE scores to LRs. |
| DNA Mixture Interpretation [21] | DNA profiles | Probabilistic Genotyping (PG) software (e.g., DNAStatistX, EuroForMix) | Direct LR calculation based on probability distributions of DNA profiles under H1 and H2. |

Performance Metrics and Visualization

A comprehensive validation requires multiple metrics and visualizations to assess different performance characteristics [3].

The Validation Matrix

A validation matrix should be established before testing to define the criteria for success. The following matrix serves as a template [3].

Table 2: Example Validation Matrix for LR System Performance

| Performance Characteristic | Performance Metric | Graphical Representation | Example Validation Criterion |
|---|---|---|---|
| Accuracy | Cllr | Empirical Cross-Entropy (ECE) Plot | Cllr < 0.3 [3] |
| Discriminating Power | Cllrmin, EER | ECEmin Plot, DET Plot | Improvement over a baseline method [3] |
| Calibration | Cllrcal | ECE Plot, Tippett Plot | Cllrcal |
| Robustness | Cllr, EER | ECE Plot, DET Plot, Tippett Plot | Performance degradation < 20% under varied conditions [3] |
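Applying such a matrix amounts to a set of pass/fail checks followed by an "all criteria must pass" rule. The plain-Python sketch below encodes the example criteria from Table 2 under stated assumptions: "improvement over a baseline" is interpreted as lower Cllrmin and lower EER than the baseline, robustness degradation is measured as the relative increase in Cllr, and the calibration row is left out because its criterion is not specified in the table. The function name and all metric values are hypothetical.

```python
def apply_validation_matrix(candidate, baseline, degraded):
    """Pass/fail per characteristic for metric dicts with keys 'Cllr', 'Cllrmin', 'EER'."""
    decisions = {
        # Accuracy: example criterion Cllr < 0.3
        "Accuracy": candidate["Cllr"] < 0.3,
        # Discriminating power: interpreted as lower Cllrmin and EER than the baseline
        "Discriminating power": (candidate["Cllrmin"] < baseline["Cllrmin"]
                                 and candidate["EER"] < baseline["EER"]),
        # Robustness: relative Cllr degradation under varied conditions below 20%
        "Robustness": (degraded["Cllr"] - candidate["Cllr"]) / candidate["Cllr"] < 0.20,
    }
    # The method is validated only if every characteristic passes
    return decisions, all(decisions.values())

candidate = {"Cllr": 0.15, "Cllrmin": 0.10, "EER": 0.035}
baseline = {"Cllr": 0.20, "Cllrmin": 0.14, "EER": 0.050}
degraded = {"Cllr": 0.17, "Cllrmin": 0.12, "EER": 0.041}  # e.g. under varied conditions
decisions, validated = apply_validation_matrix(candidate, baseline, degraded)
```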

Key Visualization Tools

- Empirical Cross-Entropy (ECE) Plots: These plots show the empirical cross-entropy as a function of the prior log-odds, allowing for a comprehensive view of system performance; at prior odds of 1 the ECE equals Cllr. The ECE plot typically includes three curves [8] [21]:

  - The ECE of the validated system.

  - The ECE after optimal (PAV) calibration, whose value at prior odds of 1 is Cllrmin; it shows the best possible calibration for that system's discrimination.

  - The ECE of a neutral reference system that always outputs LR=1.

- Tippett Plots: These plots show the cumulative distribution of log10(LR) for both H1-true and H2-true scenarios. A well-performing system will show log10(LR) values above 0 for H1-true comparisons and below 0 for H2-true comparisons, with minimal overlap [21].

- Fiducial Calibration Discrepancy Plots: These plots indicate whether LRs are overstated or understated for specific ranges of LR values. Perfect calibration is shown by a horizontal line at zero discrepancy [21].
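The ECE curve can be computed directly from the validation LRs. A minimal NumPy sketch with hypothetical names and data: for each prior log10-odds value it averages -log2 of the posterior probability assigned to the true hypothesis, weighting the H1-true and H2-true terms by the prior probabilities. At prior odds of 1 it reduces to Cllr, and for the neutral LR=1 system it equals the entropy of the prior. The PAV-calibrated curve can be drawn by passing PAV-transformed LRs to the same function.

```python
import numpy as np

def ece(lr_h1, lr_h2, prior_log10_odds):
    """Empirical cross-entropy (bits) at each prior log10-odds value."""
    lr_h1 = np.asarray(lr_h1, dtype=float)
    lr_h2 = np.asarray(lr_h2, dtype=float)
    values = []
    for log_odds in np.atleast_1d(prior_log10_odds):
        odds = 10.0 ** log_odds
        p_h1 = odds / (1 + odds)
        # Posterior odds = LR x prior odds; each term is -log2 P(true hypothesis | E)
        term_h1 = np.mean(np.log2(1 + 1 / (lr_h1 * odds)))
        term_h2 = np.mean(np.log2(1 + lr_h2 * odds))
        values.append(p_h1 * term_h1 + (1 - p_h1) * term_h2)
    return np.array(values)

prior_grid = np.linspace(-2, 2, 41)
lr_h1 = np.array([30.0, 5.0, 0.8, 120.0])  # hypothetical H1-true LRs
lr_h2 = np.array([0.02, 0.3, 1.5, 0.001])  # hypothetical H2-true LRs
system_curve = ece(lr_h1, lr_h2, prior_grid)
neutral_curve = ece(np.ones(1), np.ones(1), prior_grid)  # reference system with LR = 1
```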

The Scientist's Toolkit

Table 3: Essential Research Reagents and Tools for Cllr Validation

| Item / Tool | Function / Description | Relevance to Cllr Experiment |
|---|---|---|
| Validation Dataset | A set of evidence samples with known ground truth (e.g., fingermarks from known sources) [3]. | Essential for computing empirical LRs and ground truth labels (H1-true, H2-true) required for the Cllr formula. |
| LR System / Software | The automated or semi-automated system under validation (e.g., Probabilistic Genotyping software, AFIS with LR calculation) [8] [21]. | Generates the set of LRs from the validation dataset, which are the primary inputs for the Cllr calculation. |
| Pool Adjacent Violators (PAV) Algorithm | A non-parametric algorithm for isotonic regression [8]. | Applied to the raw LRs to compute Cllrmin, separating discrimination and calibration performance. |
| ECE Plot Software | Software routines (e.g., in R or MATLAB) to generate Empirical Cross-Entropy plots [21]. | Critical for visualizing overall accuracy (Cllr), discrimination (Cllrmin), and calibration. |

Interpreting Results and Benchmarking

Interpreting whether a Cllr value is "good" is highly context-dependent. A review of 136 publications found that Cllr values vary substantially between different forensic analyses and datasets, with no clear universal patterns [8]. Therefore, benchmarking against a known baseline or performance criteria defined in your validation matrix is essential.

When evaluating performance, pay close attention to the decomposition of Cllr. A high Cllrcal suggests the system's calibration can be improved via a transformation like the PAV algorithm, while a high Cllrmin indicates a fundamental limitation in the system's ability to distinguish between the hypotheses [8]. For advanced calibration diagnostics, fiducial calibration discrepancy plots can pinpoint exactly which ranges of LR values are overstated or understated [21].
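The decomposition can be sketched in a few lines. This is a minimal illustration, assuming log10(LR) inputs and a plain pool-adjacent-violators routine written for this example (tied scores are not pooled, which dedicated toolkits handle more carefully); all function names are hypothetical. Cllr follows the usual definition, Cllr = ½[mean over H1-true of log2(1 + 1/LR) + mean over H2-true of log2(1 + LR)], Cllrmin is the Cllr of the PAV-recalibrated LRs, and Cllrcal is taken as Cllr − Cllrmin.

```python
import numpy as np

LN2, LN10 = np.log(2.0), np.log(10.0)


def cllr(h1_log10lr, h2_log10lr):
    """Log-likelihood-ratio cost in bits from log10(LR) values."""
    h1 = np.asarray(h1_log10lr, dtype=float) * LN10  # natural-log LR
    h2 = np.asarray(h2_log10lr, dtype=float) * LN10
    # log2(1 + 1/LR) and log2(1 + LR), computed stably via logaddexp
    return 0.5 * (np.mean(np.logaddexp(0.0, -h1)) + np.mean(np.logaddexp(0.0, h2))) / LN2


def pav(y):
    """Non-decreasing least-squares (isotonic) fit of y by pooling adjacent violators."""
    means, weights, sizes = [], [], []
    for v in y:
        means.append(float(v)); weights.append(1.0); sizes.append(1)
        # merge the last two blocks while they violate monotonicity
        while len(means) > 1 and means[-2] > means[-1]:
            m2, w2, s2 = means.pop(), weights.pop(), sizes.pop()
            m1, w1, s1 = means.pop(), weights.pop(), sizes.pop()
            means.append((m1 * w1 + m2 * w2) / (w1 + w2))
            weights.append(w1 + w2); sizes.append(s1 + s2)
    return np.repeat(means, sizes)


def cllr_min(h1_log10lr, h2_log10lr):
    """Cllr after the optimal monotonic (PAV) recalibration of the same LRs."""
    h1 = np.asarray(h1_log10lr, dtype=float)
    h2 = np.asarray(h2_log10lr, dtype=float)
    scores = np.concatenate([h1, h2])
    labels = np.concatenate([np.ones(h1.size), np.zeros(h2.size)])
    order = np.argsort(scores, kind="mergesort")
    post = np.empty_like(scores)
    post[order] = pav(labels[order])  # P(H1 | score) at the empirical prior
    with np.errstate(divide="ignore"):
        # posterior log-odds minus empirical prior log-odds = ln(LR); may be +/-inf
        ln_lr = np.log(post) - np.log1p(-post) - np.log(h1.size / h2.size)
    return cllr(ln_lr[: h1.size] / LN10, ln_lr[h1.size:] / LN10)


# Usage: synthetic scores whose magnitudes are deliberately overstated
rng = np.random.default_rng(1)
h1 = rng.normal(1.0, 1.0, 2000)   # synthetic log10(LR), H1-true
h2 = rng.normal(-1.0, 1.0, 2000)  # synthetic log10(LR), H2-true
c, cmin = cllr(3 * h1, 3 * h2), cllr_min(3 * h1, 3 * h2)
print(c, cmin, c - cmin)  # Cllr, Cllrmin, Cllrcal
```

Because PAV depends only on the ranking of the scores, multiplying every log10(LR) by 3 leaves Cllrmin unchanged while inflating Cllr, so the whole increase shows up in Cllrcal.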

Experimental Design for Generating Same-Source and Different-Source Scores

The evaluation of forensic evidence, particularly in source attribution tasks, relies fundamentally on the robust generation and interpretation of same-source and different-source scores. These scores form the empirical foundation for calculating the Likelihood Ratio (LR), which represents a logically correct framework for expressing the strength of evidence [5]. The transition from simple similarity scores to properly calibrated LRs marks a significant paradigm shift in forensic science, moving from subjective judgment toward transparent, quantitative, and empirically validated methods [11].

This paradigm shift requires rigorous experimental designs that properly generate comparison scores under controlled conditions. The design must account for the specific challenges of different forensic disciplines while maintaining statistical validity. Proper score generation enables not only more accurate evidence evaluation but also the validation of forensic systems through performance metrics like the log-likelihood-ratio cost (Cllr) and Equal Error Rate (EER) [11] [5].

Foundational Principles of Score Generation

The Likelihood Ratio Framework

The LR framework provides a coherent structure for evidence evaluation, combining the same-source and different-source scores into a single measure of evidentiary strength. The LR is calculated as the ratio of the probability of the evidence under the same-source proposition to the probability of the evidence under the different-source proposition [5]. This Bayesian approach allows for logical updating of prior beliefs based on forensic evidence.

Role of Scores in Forensic Inference

Similarity scores serve as the initial quantitative measure of correspondence between two samples. However, these scores lack inherent probabilistic meaning and must be transformed through proper statistical modeling to become forensically meaningful. The experimental design for generating these scores must therefore ensure they accurately represent the underlying distributions of same-source and different-source comparisons [5].
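One simple way to turn scores into LRs, shown here only as an illustration and not as the method of [5], is to fit a normal density to the same-source training scores and another to the different-source training scores, then take the ratio of the two densities at the questioned score. The function name below is hypothetical. With unequal fitted variances the resulting log-LR is quadratic in the score and can become non-monotonic in the tails, which is one reason logistic-regression calibration is a common alternative.

```python
import numpy as np


def gaussian_score_to_log10lr(ss_scores, ds_scores):
    """Fit one normal density per class and return a score -> log10(LR) function."""
    mu_s, sd_s = np.mean(ss_scores), np.std(ss_scores, ddof=1)
    mu_d, sd_d = np.mean(ds_scores), np.std(ds_scores, ddof=1)

    def log_pdf(x, mu, sd):
        # log of the normal density, computed directly to avoid underflow
        return -0.5 * ((x - mu) / sd) ** 2 - np.log(sd) - 0.5 * np.log(2 * np.pi)

    def log10_lr(score):
        x = np.asarray(score, dtype=float)
        return (log_pdf(x, mu_s, sd_s) - log_pdf(x, mu_d, sd_d)) / np.log(10.0)

    return log10_lr


# Usage with made-up training scores
to_llr = gaussian_score_to_log10lr([1.0, 2.0, 3.0], [-3.0, -2.0, -1.0])
print(to_llr(0.0), to_llr(2.0))
```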

Experimental Design Methodology

Core Experimental Protocol

A properly designed experiment for generating same-source and different-source scores requires careful consideration of data collection, comparison strategy, and statistical modeling. The following workflow outlines the key components:

Figure 1: Experimental workflow for generating and validating forensic comparison scores.

Data Collection and Reference Database Creation

The foundation of any score-generation experiment is a comprehensive reference database that adequately represents the population of interest. For source camera attribution, this involves collecting flat-field images and videos from multiple devices under controlled conditions [5]. The database should include sufficient samples to capture both within-source variability (multiple samples from the same source) and between-source variability (samples from different sources).

In PRNU-based camera attribution, reference PRNU patterns are estimated using the Maximum Likelihood criterion from multiple flat-field images [5]. The estimation formula is expressed as:

$$\hat{K}(x,y) = \frac{\sum_{l=1}^{L} K_l(x,y)\, I_l(x,y)}{\sum_{l=1}^{L} I_l(x,y)^2}$$

where I_l(x,y) represents the l-th of L flat-field images and K_l(x,y) its associated PRNU estimate.
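The Maximum Likelihood estimate divides the pixel-wise sum of K_l(x,y)·I_l(x,y) over the L images by the pixel-wise sum of I_l(x,y)², and this is straightforward to express in NumPy. The sketch assumes the images and their per-image estimates K_l(x,y) are already available as equally sized arrays (in practice each K_l comes from a denoising filter, which is not shown), and that each K_l behaves like I_l·K plus noise, as in the usual sensor-noise model. The name `estimate_prnu` and the synthetic data are hypothetical.

```python
import numpy as np


def estimate_prnu(images, per_image_estimates):
    """Pixel-wise ML estimate of the reference PRNU factor K(x, y).

    images:              array (L, H, W) of flat-field images I_l(x, y)
    per_image_estimates: array (L, H, W) of per-image estimates K_l(x, y)
    """
    images = np.asarray(images, dtype=float)
    k_l = np.asarray(per_image_estimates, dtype=float)
    numerator = np.sum(k_l * images, axis=0)  # sum over l of K_l * I_l
    denominator = np.sum(images ** 2, axis=0)  # sum over l of I_l^2
    return numerator / np.maximum(denominator, 1e-12)  # avoid division by zero


# Usage with synthetic data: K_l = I_l * K_true + noise
rng = np.random.default_rng(0)
L, H, W = 20, 64, 64
k_true = 0.01 * rng.standard_normal((H, W))
imgs = rng.uniform(100, 200, size=(L, H, W))
k_l = imgs * k_true + 0.5 * rng.standard_normal((L, H, W))
k_hat = estimate_prnu(imgs, k_l)
print(np.corrcoef(k_hat.ravel(), k_true.ravel())[0, 1])
```

Weighting each image by its intensity gives brighter pixels, where the multiplicative PRNU signal is stronger, more influence on the estimate.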

Comparison Strategies and Score Generation

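The experimental design must implement systematic comparison strategies that generate both same-source scores (comparing samples from the same origin) and different-source scores (comparing samples from different origins). For PRNU-based methods, the Peak-to-Correlation Energy (PCE) serves as the similarity measure, calculated as [5]:

$$\mathrm{PCE} = \frac{\varrho(u_0,v_0)^2}{\frac{1}{MN - |\mathcal{N}|}\sum_{(u,v)\notin\mathcal{N}} \varrho(u,v)^2}$$

where ϱ(u,v) is the normalized cross-correlation between the two PRNU patterns at shift (u,v), ϱ(u₀,v₀) is its value at the peak (so the numerator is the correlation peak energy), M×N is the size of the correlation surface, and the denominator calculates the average energy of the correlations outside a neighborhood 𝒩 surrounding the peak.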
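PCE divides the squared correlation peak by the mean squared correlation outside a small neighborhood of the peak. A minimal sketch, assuming the two PRNU patterns are already aligned and cropped to the same size, using circular FFT-based cross-correlation and a square exclusion neighborhood whose radius (5 pixels here) is an illustrative choice; the function name `pce` is hypothetical.

```python
import numpy as np


def pce(k1, k2, radius=5):
    """Peak-to-Correlation Energy between two equally sized PRNU patterns."""
    a = np.asarray(k1, dtype=float) - np.mean(k1)
    b = np.asarray(k2, dtype=float) - np.mean(k2)
    # circular normalized cross-correlation over all 2-D shifts, via the FFT
    xc = np.real(np.fft.ifft2(np.fft.fft2(a) * np.conj(np.fft.fft2(b))))
    xc /= np.linalg.norm(a) * np.linalg.norm(b)
    peak = np.unravel_index(np.argmax(xc), xc.shape)
    # exclude a (2*radius+1) x (2*radius+1) neighborhood around the peak (with wrap-around)
    rows = (peak[0] + np.arange(-radius, radius + 1)) % xc.shape[0]
    cols = (peak[1] + np.arange(-radius, radius + 1)) % xc.shape[1]
    mask = np.ones(xc.shape, dtype=bool)
    mask[np.ix_(rows, cols)] = False
    # peak energy divided by the mean energy outside the neighborhood
    return xc[peak] ** 2 / np.mean(xc[mask] ** 2)


# Usage with synthetic patterns: a shared component simulates the same sensor
rng = np.random.default_rng(0)
k = rng.standard_normal((64, 64))
same_source = pce(k + rng.standard_normal((64, 64)), k + rng.standard_normal((64, 64)))
diff_source = pce(rng.standard_normal((64, 64)), rng.standard_normal((64, 64)))
print(same_source, diff_source)
```

Same-source pairs produce a sharp peak and a large PCE, while different-source pairs produce a peak that is only the largest of many noise-level correlations.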

Specialized Considerations for Different Media Types

The experimental design must adapt to the specific challenges of different digital media. For video source attribution, additional complexities arise from Digital Motion Stabilization (DMS) techniques that alter geometric alignment between frames [5]. The experimental protocol should include specific strategies for handling these challenges:

Table 1: Comparison Strategies for Video Source Attribution

| Strategy | Description | Application Context |
|---|---|---|
| Baseline | PRNU obtained by cumulating noise patterns from multiple frames with PCE computation | Basic video comparison without DMS |
| Highest Frame Score (HFS) | Frame-by-frame PRNU extraction and comparison | Videos with moderate DMS effects |
| Reference Type 1 (RT1) | Flat-field video recordings to extract key-frame sensor noise | Standardized video reference creation |
| Reference Type 2 (RT2) | Combined use of flat-field images and videos | Comprehensive reference database minimizing DMS impact |

Performance Metrics and Validation

Essential Metrics for Score Validation

The performance of forensic evaluation systems must be rigorously validated using standardized metrics that assess both discrimination capability and calibration quality. The relationship between these metrics provides a comprehensive validation framework:

Figure 2: Relationship between validation metrics for forensic evaluation systems.

Equal Error Rate (EER) and Discrimination

The EER is the error rate at the decision threshold where the false positive and false negative rates are equal, providing a scalar measure of discrimination performance [5]. Lower EER values indicate better discrimination between same-source and different-source distributions.
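The EER can be approximated by sweeping a threshold over the observed scores and finding where the two error rates cross. A minimal sketch, assuming higher scores indicate greater similarity; because the empirical rates move in discrete steps, it returns the average of the two rates at the threshold where they are closest. The function name is hypothetical.

```python
import numpy as np


def equal_error_rate(ss_scores, ds_scores):
    """Approximate EER from same-source and different-source similarity scores."""
    ss = np.sort(np.asarray(ss_scores, dtype=float))
    ds = np.sort(np.asarray(ds_scores, dtype=float))
    thresholds = np.unique(np.concatenate([ss, ds]))
    # same-source scores below the threshold are false negatives
    fnr = np.searchsorted(ss, thresholds, side="left") / ss.size
    # different-source scores at or above the threshold are false positives
    fpr = 1.0 - np.searchsorted(ds, thresholds, side="left") / ds.size
    i = np.argmin(np.abs(fnr - fpr))
    return 0.5 * (fnr[i] + fpr[i])


# Usage: two unit-variance normal score distributions two units apart
rng = np.random.default_rng(3)
eer_gauss = equal_error_rate(rng.normal(1, 1, 20000), rng.normal(-1, 1, 20000))
eer_separated = equal_error_rate([2, 3, 4], [-1, 0, 1])
print(eer_gauss, eer_separated)
```

For two equal-variance normal score distributions with means at +1 and −1, the theoretical EER is Φ(−1) ≈ 0.159, which the empirical estimate should approach as the sample grows.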

Log-Likelihood-Ratio Cost (Cllr) and Calibration

The Cllr metric assesses the overall quality of the LR values, penalizing both poor discrimination and poor calibration [11]. If the natural-log LRs of a perfectly calibrated system are normally distributed, the same-source and different-source distributions must have equal variance σ² and means of +σ²/2 and −σ²/2 respectively; this bi-Gaussian pattern serves as the calibration target [11].

Calibration Methods for LR Generation

The transformation from similarity scores to forensically valid LRs requires careful calibration. The bi-Gaussian calibration method represents an advanced approach [11]:

- Initial Processing: Calculate uncalibrated LRs using a traditional method

- Preliminary Calibration: Apply monotonic calibration (e.g., logistic regression)

- Performance Assessment: Calculate Cllr from the calibrated outputs

- Variance Determination: Derive the σ² value for the perfectly-calibrated bi-Gaussian system

- Mapping Function: Determine the cumulative distribution mapping to the theoretical bi-Gaussian system

- Final Calibration: Map uncalibrated outputs to calibrated LRs using the derived function
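The performance-assessment, variance-determination, mapping, and final-calibration steps above can be sketched as follows, assuming the preliminary calibration has already produced natural-log LRs for a set of same-source and different-source training comparisons. The Cllr of a perfectly calibrated bi-Gaussian system is computed by Gauss-Hermite quadrature and inverted by bisection to find σ. The exact form of the cumulative-distribution mapping (equal-weighted pooled empirical CDF matched to the equal-weighted mixture of the two target Gaussians) is an assumption made for illustration and may differ in detail from the method described in [11]. All function names are hypothetical.

```python
import numpy as np
from statistics import NormalDist

LN2 = np.log(2.0)


def cllr_ln(ss_lnlr, ds_lnlr):
    """Cllr in bits from natural-log LRs."""
    ss, ds = np.asarray(ss_lnlr, dtype=float), np.asarray(ds_lnlr, dtype=float)
    return 0.5 * (np.mean(np.logaddexp(0.0, -ss)) + np.mean(np.logaddexp(0.0, ds))) / LN2


def bigauss_cllr(sigma, n_nodes=80):
    """Cllr of a perfectly calibrated system with ln(LR) ~ N(+/-sigma^2/2, sigma^2)."""
    z, w = np.polynomial.hermite_e.hermegauss(n_nodes)  # nodes/weights for exp(-z^2/2)
    # by symmetry the different-source term equals the same-source term
    vals = np.logaddexp(0.0, -(sigma ** 2 / 2 + sigma * z)) / LN2
    return float(np.sum(w * vals) / np.sqrt(2 * np.pi))


def sigma_from_cllr(target, lo=1e-6, hi=20.0, iters=100):
    """Bisection for the sigma whose bi-Gaussian Cllr equals the target Cllr."""
    if target >= 1.0:
        return 0.0  # no better than an uninformative system
    for _ in range(iters):
        mid = 0.5 * (lo + hi)
        if bigauss_cllr(mid) > target:
            lo = mid  # Cllr decreases as sigma grows
        else:
            hi = mid
    return 0.5 * (lo + hi)


def bigauss_mapping(ss_out, ds_out, sigma, n_grid=4001):
    """Monotonic map from system outputs to ln(LR) of the target system (sigma > 0)."""
    ss, ds = np.sort(np.asarray(ss_out, float)), np.sort(np.asarray(ds_out, float))
    mu, nd = sigma ** 2 / 2, NormalDist()
    y = np.linspace(-mu - 8 * sigma, mu + 8 * sigma, n_grid)
    f_th = np.array([0.5 * nd.cdf((v - mu) / sigma) + 0.5 * nd.cdf((v + mu) / sigma) for v in y])
    f_th, keep = np.unique(f_th, return_index=True)  # strictly increasing for np.interp
    y = y[keep]

    def ecdf(sorted_vals, x):
        # mid-rank empirical CDF, so observed points never map to exactly 0 or 1
        left = np.searchsorted(sorted_vals, x, side="left")
        right = np.searchsorted(sorted_vals, x, side="right")
        return 0.5 * (left + right) / sorted_vals.size

    def mapping(x):
        x = np.asarray(x, dtype=float)
        return np.interp(0.5 * ecdf(ss, x) + 0.5 * ecdf(ds, x), f_th, y)

    return mapping


# Usage with synthetic, already well-calibrated outputs (sigma = 2)
rng = np.random.default_rng(2)
ss_out, ds_out = rng.normal(2.0, 2.0, 5000), rng.normal(-2.0, 2.0, 5000)
s = sigma_from_cllr(cllr_ln(ss_out, ds_out))
to_lnlr = bigauss_mapping(ss_out, ds_out, s)
print(s, np.mean(to_lnlr(ss_out)), np.mean(to_lnlr(ds_out)))
```

Because the mapping depends only on ranks, fitting and applying it on the raw outputs gives the same result as on the preliminarily calibrated outputs when the preliminary calibration is strictly monotonic; σ itself must still come from the Cllr of the calibrated outputs.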

Research Reagent Solutions

The implementation of robust experimental designs for score generation requires specific methodological components that function as essential "research reagents" in forensic science.

Table 2: Essential Research Reagents for Forensic Score Generation Experiments

| Reagent Category | Specific Implementation | Function in Experimental Design |
|---|---|---|
| Reference Database | Flat-field images and videos from multiple devices | Provides controlled samples for known-source comparisons |
| Similarity Metric | Peak-to-Correlation Energy (PCE) | Quantifies correspondence between two PRNU patterns |
| Statistical Model | Bi-Gaussian calibration model | Transforms similarity scores to probabilistically meaningful LRs |
| Performance Metrics | Cllr and EER | Validates discrimination and calibration performance |
| Validation Tools | Tippett plots | Visualizes distributions of LRs for same-source and different-source comparisons |

Advanced Experimental Considerations

Addressing Domain-Specific Challenges

Different forensic domains present unique challenges for score generation. In speaker recognition, for example, the experimental design must account for linguistic familiarity effects, with listeners from different language backgrounds showing varied performance [11]. For digital media, the experimental protocol must address technical factors like video compression levels and Digital Motion Stabilization impacts on PRNU extraction [5].

Validation Under Casework Conditions

The ultimate test of any experimental design is its performance under casework conditions. Validation should include [11]:

- Transparency and Reproducibility: Methods must be fully documented and repeatable

- Cognitive Bias Resistance: Automated score generation minimizes human judgment biases

- Empirical Validation: Systems must be tested using data reflecting real casework conditions

- Logical Consistency: The LR framework must be properly implemented without logical flaws

The experimental design for generating same-source and different-source scores represents a critical component of modern forensic science validation. Through proper implementation of systematic comparison strategies, rigorous statistical calibration, and comprehensive performance validation using Cllr, EER, and Tippett plots, forensic practitioners can advance the paradigm shift toward more transparent, quantitative, and scientifically valid evidence evaluation methods. The methodologies outlined provide a framework for developing forensically sound score generation protocols across multiple disciplines, from digital evidence to traditional forensic domains.

Constructing Tippett Plots and DET Curves for Discriminating Power Analysis

In the validation of forensic evidence evaluation and biometric recognition systems, discriminating power analysis provides the quantitative foundation for assessing system performance. The core of this analysis relies on two graphical tools: Detection Error Trade-off (DET) curves and Tippett plots. These visualization methods enable researchers to compare system performance objectively under different conditions and against alternative systems. Within the broader context of Cllr (log-likelihood-ratio cost) and Equal Error Rate (EER) validation research, these tools form an essential framework for establishing the reliability of forensic evidence reporting, particularly in domains where likelihood ratios (LRs) are used to convey the strength of evidence [5].

The transition from simple similarity scores to probabilistically sound LRs represents a critical advancement in forensic disciplines. Whereas similarity scores often lack a probabilistic interpretation, LRs provide a mathematically rigorous framework that can be directly incorporated into forensic casework and combined with other case-related evidence [5]. This section examines the construction, interpretation, and application of Tippett plots and DET curves within this evolving paradigm, providing researchers with practical methodologies for implementing these analytical techniques in validation studies.

Theoretical Foundations and Performance Metrics

The Likelihood Ratio Framework

The likelihood ratio framework derives from Bayes' theorem and represents the preferred method for presenting findings from criminal investigations across forensic disciplines [5]. An LR quantitatively expresses the strength of evidence by comparing the probability of the evidence under two competing propositions: the same-source proposition (Hp) and the different-source proposition (Hd). The formula for calculating the LR is:

LR = P(E|Hp) / P(E|Hd)

Where E represents the observed evidence, P(E|Hp) is the probability of observing the evidence if Hp is true, and P(E|Hd) is the probability of observing the evidence if Hd is true. LR values greater than 1 support Hp, while values less than 1 support Hd. The log-LR (log-likelihood ratio) is often used to create a symmetric scale centered at zero [5].

Key Performance Metrics

| Metric | Formula/Calculation | Interpretation |
|---|---|---|
| Equal Error Rate (EER) | Error rate at the threshold where FAR = FRR | Lower values indicate better performance |
| False Acceptance Rate (FAR) | Number of false acceptances / Total impostor attempts | Probability of incorrect match |
| False Rejection Rate (FRR) | Number of false rejections / Total genuine attempts | Probability of incorrect non-match |
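FAR and FRR are obtained by sweeping a decision threshold over the similarity scores, and a DET curve plots the two rates against each other after transforming both with the standard normal quantile (probit) function. A minimal sketch, assuming higher scores mean greater similarity and using the standard-library `statistics.NormalDist` for the probit; the function name `det_points` is hypothetical. Points where either rate is exactly 0 or 1 are dropped from the probit coordinates because the probit is infinite there.

```python
import numpy as np
from statistics import NormalDist


def det_points(genuine_scores, impostor_scores):
    """FAR/FRR at every observed threshold, plus probit-scaled DET coordinates."""
    g = np.sort(np.asarray(genuine_scores, dtype=float))
    i = np.sort(np.asarray(impostor_scores, dtype=float))
    thresholds = np.unique(np.concatenate([g, i]))
    # genuine scores below the threshold are falsely rejected
    frr = np.searchsorted(g, thresholds, side="left") / g.size
    # impostor scores at or above the threshold are falsely accepted
    far = 1.0 - np.searchsorted(i, thresholds, side="left") / i.size
    keep = (far > 0) & (far < 1) & (frr > 0) & (frr < 1)
    nd = NormalDist()
    det_x = np.array([nd.inv_cdf(v) for v in far[keep]])  # probit(FAR)
    det_y = np.array([nd.inv_cdf(v) for v in frr[keep]])  # probit(FRR)
    return far, frr, det_x, det_y


# Usage with made-up scores
far, frr, det_x, det_y = det_points([1, 2, 3, 4], [0, 1.5, 2.5])
print(far, frr, det_x, det_y)
```

On probit axes, normally distributed genuine and impostor scores produce a straight DET line, which makes differences between systems easier to see than on a linear ROC plot.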