Validation Frameworks in Forensics: A Comparative Analysis of Traditional and Digital Methodologies

Abstract

This article provides a comprehensive analysis of validation frameworks across traditional and digital forensic disciplines. It explores the foundational principles of forensic validation, examines methodological applications in evolving crime labs, addresses critical troubleshooting and optimization challenges, and delivers a rigorous comparative assessment. Designed for forensic researchers, scientists, and developers, the content synthesizes current standards, tool-specific considerations, and emerging trends to equip professionals with the knowledge to ensure evidentiary reliability and legal admissibility in a rapidly changing technological landscape.

Core Principles and Definitions: Building the Foundation of Forensic Validation

Forensic validation is a fundamental testing and confirmation practice implemented across all forensic disciplines to ensure the tools and methods used to analyze evidence are accurate, reliable, and legally admissible [1]. It functions as a critical safeguard against error, bias, and misinterpretation, forming the bedrock of scientific credibility in judicial proceedings. The rapid evolution of technology, particularly in digital forensics where new operating systems, encrypted applications, and cloud storage continuously emerge, demands constant revalidation of forensic tools and practices [1]. Within this context, validation is systematically broken down into three core components: Tool Validation, which ensures forensic software or hardware performs as intended; Method Validation, which confirms procedures produce consistent outcomes; and Analysis Validation, which evaluates whether interpreted data accurately reflects its true meaning and context [1]. This framework ensures that forensic conclusions are supported by scientific integrity, are reproducible under scrutiny, and are robust enough to withstand legal challenges.

Core Components of Forensic Validation

Tool Validation

Tool validation focuses on the forensic software and hardware used to extract and report data. It verifies that these tools function correctly without altering the original source evidence. In digital forensics, tools like Cellebrite UFED, Oxygen Forensic Detective (OFD), and OpenText EnCase Forensic are frequently updated, and each update necessitates re-validation to ensure parsing capabilities and data extraction remain accurate [2] [1]. Without this step, tools may introduce errors or omit critical data. For instance, two different tools extracting data from the same mobile phone may yield divergent results based on their individual parsing algorithms and support for specific device models [1].

Key practices in tool validation include:

- Using cryptographic hash values (e.g., SHA-256) to confirm data integrity before and after creating a forensic image [1].

- Comparing tool outputs against known datasets or test cases with pre-defined content [1].

- Cross-validating results across multiple, independent tools to identify inconsistencies or omissions [1].
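The hash-based integrity check in the first practice can be sketched as follows. This is a minimal illustration, assuming the source and its image are ordinary files readable from disk; the function names `sha256_of` and `verify_image` are hypothetical and do not belong to any forensic tool, and the throwaway files stand in for real evidence.

```python
import hashlib
import os
import shutil
import tempfile


def sha256_of(path, chunk_size=1024 * 1024):
    """Return the SHA-256 hex digest of a file, read in chunks to bound memory use."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_image(source_path, image_path):
    """Hash both files and return (match, source_hash, image_hash)."""
    source_hash = sha256_of(source_path)
    image_hash = sha256_of(image_path)
    return source_hash == image_hash, source_hash, image_hash


# Demonstration with throwaway files standing in for a source and its image.
with tempfile.TemporaryDirectory() as workdir:
    source = os.path.join(workdir, "source.bin")
    image = os.path.join(workdir, "image.bin")
    with open(source, "wb") as handle:
        handle.write(b"known control data")
    shutil.copyfile(source, image)

    match, source_hash, image_hash = verify_image(source, image)
    print("match:", match)

    # Overwrite the first byte of the copy: the digests no longer agree.
    with open(image, "r+b") as handle:
        handle.write(b"K")
    tampered_match, _, _ = verify_image(source, image)
    print("match after modification:", tampered_match)
```

Recording both digests, rather than only the boolean result, lets a later reviewer repeat the comparison independently.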

Method Validation

Method validation confirms that the specific procedures and techniques followed by forensic analysts produce consistent and reliable outcomes across different cases, devices, and practitioners. This component addresses the "how" of the investigative process, ensuring that the methodology is sound, documented, and repeatable by other qualified professionals. This is especially crucial with advanced or destructive extraction techniques, such as those used for NAND flash memories in damaged or locked devices [2].

The levels of data acquisition methods, ranked from least to most destructive, are [2]:

- Level 1: Manual Extraction: A low-complexity, non-destructive search through the device's own interface. Its primary limitation is that it cannot be used on a locked device.

- Level 2: Logical Extraction: Data extraction via a connection to a forensic workstation using tools like OFD or EnCase. Not all data may be accessible.

- Level 3: JTAG and HEX Dump: A semi-destructive method using Test Access Ports (TAPs) on device motherboards to bypass OS restrictions.

- Level 4: Chip-Off: A complex, destructive technique involving the physical extraction of the memory chip for reading.

- Level 5: Microreading: A highly resource-intensive method involving the physical observation of chips using scanning electron microscopy.

Analysis Validation

Analysis validation is the process of evaluating whether an analyst's interpretation of the data accurately reflects its true meaning and context. It ensures that the software presents a valid representation of the underlying evidence and that the conclusions drawn are forensically sound [1]. This is particularly important for complex data artifacts, such as mobile device operating system logs where timestamps can be misleading without proper context [1]. The rise of artificial intelligence (AI) in forensic tools introduces new complexities for analysis validation, as algorithms may produce "black box" results that experts cannot easily explain, necessitating rigorous interpretation and validation of AI-generated findings [1] [3].
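To illustrate why a timestamp needs context, the sketch below decodes the same raw integer under two different epochs: the Unix epoch (1970-01-01 UTC) and the Apple Cocoa epoch (2001-01-01 UTC) used by many iOS and macOS data stores. The raw value and the function name `decode_seconds` are invented for illustration; which epoch and unit a given artifact actually uses has to be established by controlled testing, not assumed.

```python
from datetime import datetime, timedelta, timezone

UNIX_EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)
COCOA_EPOCH = datetime(2001, 1, 1, tzinfo=timezone.utc)


def decode_seconds(raw_seconds, epoch):
    """Interpret raw_seconds as a count of seconds elapsed since the given epoch."""
    return epoch + timedelta(seconds=raw_seconds)


raw = 700_000_000  # hypothetical value read from a log or database column

as_unix = decode_seconds(raw, UNIX_EPOCH)
as_cocoa = decode_seconds(raw, COCOA_EPOCH)

print("read as Unix time: ", as_unix.isoformat())
print("read as Cocoa time:", as_cocoa.isoformat())
print("difference in days:", (as_cocoa - as_unix).days)  # 11323 days, i.e. 31 years
```

Both readings are plausible-looking dates, which is exactly why an unverified interpretation can mislead; comparing the decoded value against a known event on a test device is one way to confirm the right reading.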

Experimental Protocols for Validation

Protocol for Validating a Digital Forensics Tool

This protocol outlines the steps to validate a tool like Cellebrite UFED or Oxygen Forensic Detective for a specific task, such as extracting data from a mobile device.

Objective: To verify that the tool accurately extracts and reports all accessible data from a designated mobile device model without alteration.

Methodology:

- Preparation: Use a control mobile device that has been forensically wiped and loaded with a known dataset. This dataset should include a variety of file types (e.g., contacts, SMS, images, application data) whose locations and hashes are documented.

- Acquisition: Use the tool to perform a logical and/or physical extraction of the control device's data.

- Integrity Check: Compare the hash value of the extracted forensic image against the expected hash to ensure the data was not modified during acquisition.

- Data Verification: Analyze the extraction output using the tool's reporting function. Verify the presence and integrity of all known data items from the control dataset.

- Cross-Validation: Perform the same extraction on the control device using a different, previously validated tool. Compare the outputs from both tools for consistency.

Table 1: Sample Results from a Mobile Tool Validation Experiment

| Data Type | Known Data Value (Control) | Extracted by Tool A | Extracted by Tool B | Validation Result |
|---|---|---|---|---|
| SMS Text | "Test Message 123" | "Test Message 123" | "Test Message 123" | Pass |
| Contact Name | "John Doe" | "John Doe" | "John Doe" | Pass |
| Image File Hash | a1b2c3... | a1b2c3... | a1b2c3... | Pass |
| Deleted File Hash | d4e5f6... | Not Recovered | d4e5f6... | Fail for Tool A |
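The pass/fail logic behind the sample results above can be sketched as a comparison of each tool's output against the control dataset. The dictionaries mirror the sample table, with the truncated hash strings kept exactly as they appear there; the function name `validate` and the use of `None` for "Not Recovered" are assumptions made for this illustration.

```python
# Control values and per-tool extractions, mirroring the sample table.
# The hash strings are the truncated placeholders from the table, not real digests.
CONTROL = {
    "SMS Text": "Test Message 123",
    "Contact Name": "John Doe",
    "Image File Hash": "a1b2c3...",
    "Deleted File Hash": "d4e5f6...",
}

EXTRACTIONS = {
    "Tool A": {
        "SMS Text": "Test Message 123",
        "Contact Name": "John Doe",
        "Image File Hash": "a1b2c3...",
        "Deleted File Hash": None,  # not recovered
    },
    "Tool B": {
        "SMS Text": "Test Message 123",
        "Contact Name": "John Doe",
        "Image File Hash": "a1b2c3...",
        "Deleted File Hash": "d4e5f6...",
    },
}


def validate(control, extractions):
    """Return {item: verdict}; a tool fails an item when its value differs from the control."""
    results = {}
    for item, expected in control.items():
        failed = [tool for tool, data in extractions.items() if data.get(item) != expected]
        results[item] = "Pass" if not failed else "Fail for " + ", ".join(failed)
    return results


results = validate(CONTROL, EXTRACTIONS)
for item, verdict in results.items():
    print(f"{item}: {verdict}")
```

Comparing every tool against the control, rather than only against each other, avoids treating two tools that share the same parsing error as agreement.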

Protocol for Validating a Data Extraction Method

This protocol is for validating a specific forensic method, such as the Chip-Off technique for NAND flash memory.

Objective: To confirm that the chip-off procedure reliably recovers data from a specific memory chip type without data loss or corruption.

Methodology:

- Sample Preparation: Select several identical, non-critical memory chips (e.g., from decommissioned devices) programmed with a known data set.

- Procedure Execution: Perform the chip-off extraction process, which involves:

  - Desoldering: Physically removing the memory chip from the circuit board using a hot air rework station.

  - Cleaning: Preparing the chip for reading.

  - Reading: Placing the chip in a specialized adapter and reading its contents with a chip programmer (e.g., RT809H).

- Data Analysis: Compare the checksum of the data read from the chip against the checksum of the original known data.

- Repeatability: The procedure should be repeated multiple times by different trained technicians to establish an error rate and ensure consistency.
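The repeatability step can be made concrete by pooling the checksum results of repeated trials into an observed error rate with an uncertainty range. The sketch below uses the Wilson score interval, which is one common choice for small sample sizes and is not something the source protocol prescribes; the trial records and technician labels are hypothetical.

```python
import math


def wilson_interval(failures, trials, z=1.96):
    """Approximate 95% Wilson score interval for a failure proportion."""
    if trials <= 0:
        raise ValueError("trials must be positive")
    p = failures / trials
    denom = 1 + z * z / trials
    center = (p + z * z / (2 * trials)) / denom
    half_width = z * math.sqrt(p * (1 - p) / trials + z * z / (4 * trials * trials)) / denom
    return max(0.0, center - half_width), min(1.0, center + half_width)


# Hypothetical trial log: (technician, checksum matched the known data?)
trials = [
    ("tech_a", True), ("tech_a", True),
    ("tech_b", True), ("tech_b", False),
    ("tech_c", True), ("tech_c", True),
]

failures = sum(1 for _, matched in trials if not matched)
rate = failures / len(trials)
low, high = wilson_interval(failures, len(trials))

print(f"observed error rate: {rate:.3f}")
print(f"approximate 95% interval: {low:.3f} to {high:.3f}")
```

With only a handful of trials the interval is wide, which is a useful reminder that a small validation study supports only a loose statement about the true error rate.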

Table 2: Comparison of Data Acquisition Methods [2]

| Method Level | Method Name | Destructiveness | Key Tools | Primary Use Case |
|---|---|---|---|---|
| 1 | Manual Extraction | Non-destructive | ZRT Screen Capture | Functional, unlocked devices |
| 2 | Logical Extraction | Non-destructive | Oxygen Forensic Detective, EnCase | Standard data extraction |
| 3 | JTAG | Semi-destructive | RIFF/Medusa Box, JTAG adapter | Bypassing OS restrictions on damaged devices |
| 4 | Chip-Off | Destructive | Hot air station, RT809H programmer | Data recovery from physically damaged devices |
| 5 | Microreading | Highly Destructive | Scanning Electron Microscope | Extreme cases in high-priority investigations |
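The ordering in the table above can be captured in a small data structure so that a lab's tooling can prefer the least destructive option. This is a sketch only: the enum and the helper `least_destructive` are hypothetical, and deciding which levels are actually feasible for a given device (its condition, lock state, available equipment, legal authority) is outside what the code models.

```python
from enum import IntEnum


class AcquisitionLevel(IntEnum):
    """Acquisition levels, numbered from least to most destructive."""
    MANUAL = 1
    LOGICAL = 2
    JTAG_HEX_DUMP = 3
    CHIP_OFF = 4
    MICROREADING = 5


def least_destructive(feasible_levels):
    """Return the lowest feasible level, or None when nothing is feasible."""
    return min(feasible_levels, default=None)


# Example: a locked, damaged device for which only levels 3 and 4 are judged feasible.
choice = least_destructive({AcquisitionLevel.CHIP_OFF, AcquisitionLevel.JTAG_HEX_DUMP})
print(choice.name)
```

Using an `IntEnum` keeps the level numbers from the table as the comparison key, so the preference order cannot drift from the documented ranking.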

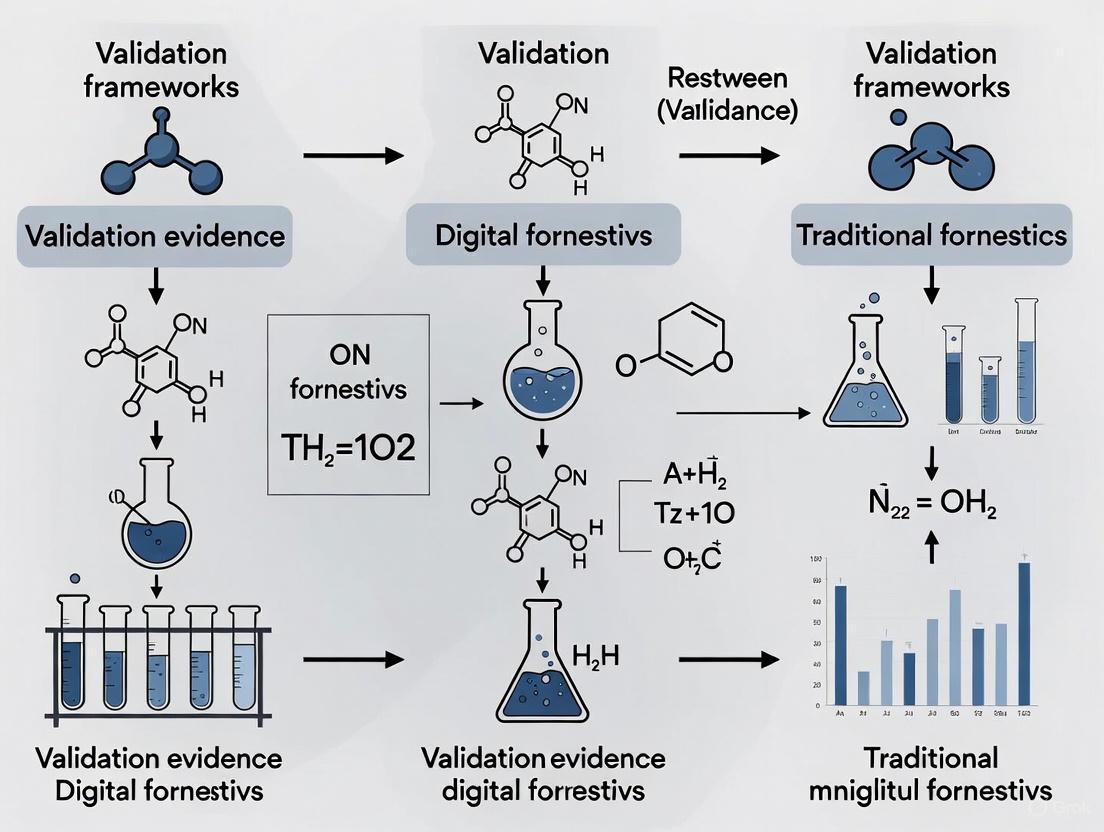

Visualization: Forensic Validation Workflow

The following diagram illustrates the logical relationship and workflow between the three core components of forensic validation, showing how they build upon one another to ensure overall reliability.

The Scientist's Toolkit: Essential Research Reagents & Materials

Forensic validation relies on a suite of specialized tools and materials to execute experiments and verify results. The following table details key solutions and their functions in a validation context.

Table 3: Essential Research Reagents & Materials for Forensic Validation

| Tool/Solution | Primary Function in Validation | Example in Use |
|---|---|---|
| Control Data Sets | Pre-defined, known data used to verify tool accuracy and method reliability. | A smartphone loaded with a specific set of SMS, contacts, and images for tool output verification [1]. |
| Forensic Write-Blockers | Hardware devices that prevent any write operations to the source evidence during acquisition. | Used during the disk imaging process to ensure the integrity of the original evidence for tool and method validation. |
| Hex Editors & Viewers | Software that allows for the bit-level inspection of data, independent of forensic tools. | Used for analysis validation to manually verify the raw data behind a tool's interpretation or report [2]. |
| Cryptographic Hash Calculators | Algorithms (e.g., SHA-256, MD5) that generate a unique digital fingerprint for a file or dataset. | The cornerstone of integrity checks; used to confirm that evidence is unaltered before and after any forensic process [1]. |
| Reference Devices | Known, functional devices (phones, hard drives) used as standardized test platforms. | Allow for the repeatable testing of tools and methods across different labs and by different practitioners. |
| JTAG/Chip-Off Equipment | Specialized hardware for advanced data extraction from damaged or locked devices. | Used to validate methods for Level 3 and 4 acquisitions, establishing their success rate and potential for data loss [2]. |

Comparative Analysis: Digital vs. Traditional Forensic Validation

While the core principles of validation—reproducibility, transparency, and error rate awareness—apply across all forensic disciplines, their implementation differs significantly between digital and traditional fields like DNA or chemistry.

Shared Foundations: Both domains require rigorous validation to meet legal admissibility standards, such as the Daubert Standard, under which courts assess the reliability of scientific evidence using factors like testability, peer review, and known error rates [1]. The principle of continuous validation is also universal, since methods in both domains evolve, though the pace of change is far faster in digital forensics.

Key Differences:

- Evidence Nature: Digital evidence is inherently volatile and easily manipulated, whereas traditional physical evidence (e.g., DNA, fibers) is more stable, though susceptible to contamination [1].

- Tool Evolution: Digital forensic tools are updated frequently (sometimes monthly) to handle new devices and apps, demanding near-constant re-validation [1]. Traditional lab equipment and chemical reagents are more stable, with validation cycles typically tied to new research or technology refreshes.

- Automation and AI: Digital forensics is rapidly integrating AI for tasks like media analysis and data sifting, creating new "black box" challenges for analysis validation [4] [3]. The use of AI in traditional fields like DNA phenotyping is emerging but is not yet as widespread in routine analysis [5] [6].

Case Studies in Validation Failure and Success

Case Study: FL vs. Casey Anthony (2011) - A Validation Failure

In this case, the prosecution's digital forensic expert initially testified that 84 searches for "chloroform" had been made on the family computer. However, through forensic validation conducted by the defense, expert Larry Daniel demonstrated that the software used had grossly overstated the results. His analysis confirmed that only a single instance of the search term had occurred, directly contradicting the earlier claims. This case underscores the critical consequence of inadequate tool validation: the potential for misinterpreted evidence to wrongly sway a jury [1].

Case Study: MA vs. Karen Read (2025) - Validation in Practice

Cellebrite Senior Digital Intelligence Expert Ian Whiffin emphasized the importance of rigorous validation when interpreting complex data artifacts from mobile devices. He explained that timestamps and operating system logs can be misleading without proper context. To ensure accuracy, he conducted tests across multiple devices to validate his conclusions before testifying. This demonstrates a core principle of method and analysis validation: verifying interpretations through controlled testing to ensure they are reliable and contextually accurate [1].

Forensic validation—spanning tool, method, and analysis—is not an optional step but an ethical and professional imperative. It is the linchpin that ensures forensic conclusions are rooted in scientific integrity and are robust enough to support the weight of legal proceedings. As forensic science continues to evolve, particularly with the integration of AI and the growing complexity of digital evidence, the commitment to transparent, repeatable, and scientifically sound validation practices becomes ever more critical. By adhering to these principles, forensic professionals uphold the trust placed in them by the justice system and ensure the accurate and accountable pursuit of truth.

Forensic science is undergoing a fundamental transition, moving from craft-based practices toward a rigorous scientific discipline grounded in objectivity, statistical reasoning, and quality assurance [7]. This evolution centers on three interdependent pillars that form the foundation of reliable forensic evidence: reproducibility, transparency, and error rate awareness. These principles apply across both traditional forensic disciplines (like fingerprints and DNA analysis) and digital forensics, though their implementation varies significantly based on the nature of evidence and technological considerations. Where traditional forensics often deals with physical evidence, digital forensics confronts volatile, easily manipulated data in rapidly evolving technological environments [1]. This comparison guide examines how these distinct forensic domains implement validation frameworks, objectively comparing their approaches to achieving scientific reliability.

Comparative Analysis: Three Pillars Across Forensic Disciplines

The table below summarizes how traditional and digital forensics implement the three core pillars of reliability.

Table 1: Implementation of Reliability Pillars in Traditional vs. Digital Forensics

| Reliability Pillar | Traditional Forensics | Digital Forensics |
|---|---|---|
| Reproducibility | Focus on procedural standardization and empirical foundation for pattern-matching disciplines [8] [7]. | Relies on tool verification, hash-based data integrity checks, and cross-validation across multiple tools [1] [9]. |
| Transparency | Movement toward disclosing limitations, methodologies, and uncertainties in expert reports [10] [8]. | Requires detailed documentation of tools, procedures, and chain of custody; mandates disclosure of unvalidated results [1] [11]. |
| Error Rate Awareness | Growing acknowledgment of false positives; research to establish foundational validity and quantify error rates [8] [7]. | Emphasis on tool testing, known error rates for specific functions, and acknowledgment of parsing inaccuracies [1] [9]. |

Experimental Validation and Performance Data

Tool Reliability Testing

Experimental studies directly compare the performance of digital forensic tools to establish reliability metrics. The following table summarizes results from controlled tests evaluating commercial versus open-source tools in key forensic functions.

Table 2: Digital Forensic Tool Performance Comparison (Based on Controlled Experiments) [9]

| Tool Type | Tool Name | Data Preservation Integrity | Deleted File Recovery Rate | Targeted Search Accuracy | Legal Admissibility Support |
|---|---|---|---|---|---|
| Commercial | FTK | Consistent hash verification | High | High | Established |
| Commercial | Forensic MagiCube | Consistent hash verification | High | High | Established |
| Open-Source | Autopsy | Consistent hash verification | Comparable to Commercial | High | Satisfies Daubert when validated |
| Open-Source | ProDiscover Basic | Consistent hash verification | Comparable to Commercial | High | Satisfies Daubert when validated |

Methodological Foundation

The experimental protocol for generating the comparative data in Table 2 involved:

- Controlled Testing Environment: Two Windows-based workstations configured with identical evidence samples [9].

- Test Scenarios: Three distinct forensic tasks: (1) preservation and collection of original data; (2) recovery of deleted files via data carving; (3) targeted artifact searching in case-specific scenarios [9].

- Validation Metrics: Each experiment conducted in triplicate to establish repeatability; error rates calculated by comparing acquired artifacts against control references; data integrity verified via hash value comparison [9].

- Legal Standard Compliance: Testing framework designed to satisfy Daubert criteria, including testability, error rate assessment, and peer review potential [9].
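The validation metrics described above (triplicate runs, comparison of acquired artifacts against control references) can be sketched with plain set arithmetic. The artifact names, the three run results, and the helper names `score_run` and `is_repeatable` are all hypothetical; the cited study's actual scoring procedure is not given in the text, so this is only one reasonable way to compute such figures.

```python
def score_run(recovered, control):
    """Compare one run's recovered artifacts against the control reference set."""
    recovered, control = set(recovered), set(control)
    if not control:
        raise ValueError("control set must not be empty")
    return {
        "recovery_rate": len(recovered & control) / len(control),
        "missed": sorted(control - recovered),        # in control, not recovered
        "unexpected": sorted(recovered - control),    # recovered, not in control
    }


def is_repeatable(runs):
    """True when every run recovered exactly the same set of artifacts."""
    as_sets = [frozenset(run) for run in runs]
    return all(current == as_sets[0] for current in as_sets)


# Hypothetical control reference and three repeated carving runs.
control = {"report.docx", "photo.jpg", "invoice.pdf", "notes.txt"}
runs = [
    {"report.docx", "photo.jpg", "invoice.pdf"},
    {"report.docx", "photo.jpg", "invoice.pdf"},
    {"report.docx", "photo.jpg", "invoice.pdf", "notes.txt"},
]

scores = [score_run(run, control) for run in runs]
for number, score in enumerate(scores, start=1):
    print(f"run {number}: recovery rate {score['recovery_rate']:.2f}, missed {score['missed']}")
print("repeatable:", is_repeatable(runs))
```

Reporting missed and unexpected artifacts separately keeps omissions distinct from spurious results, which matter differently when an error rate is explained in court.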

Visualization of Forensic Validation Workflows

Digital Forensic Validation Logic

Digital forensic validation employs a sequential, tool-dependent workflow where each stage requires specific technical validation checks.

Traditional Forensic Validation Logic

Traditional forensic validation follows a more iterative, human-centric workflow focused on comparative analysis and probabilistic assessment.

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 3: Essential Digital Forensic Research Toolkit [1] [12] [9]

| Tool/Category | Function | Examples |
|---|---|---|
| Commercial Forensic Suites | Comprehensive evidence processing and analysis | Cellebrite UFED, FTK, EnCase, Magnet AXIOM |
| Open-Source Tools | Cost-effective alternatives; method transparency | Autopsy, The Sleuth Kit, ProDiscover Basic |
| Validation Utilities | Integrity verification and tool testing | Hash calculators (MD5, SHA-1), write blockers |
| Reference Standards | Standardized procedures for evidence handling | ISO/IEC 27037, NIST Computer Forensics Tool Testing |
| Specialized Modules | Domain-specific forensic analysis | Mobile (XRY, Oxygen), Network (Wireshark), IoT |

The pillars of reproducibility, transparency, and error rate awareness provide a unified framework for validating forensic evidence across traditional and digital domains. While digital forensics relies heavily on technical tool validation and data integrity verification, traditional forensics emphasizes human expertise and probabilistic assessment. Both disciplines face the ongoing challenge of establishing foundational validity while maintaining practical applicability. The experimental data demonstrates that when properly validated using rigorous methodologies, both commercial and open-source solutions can produce forensically sound results that meet legal admissibility standards. As forensic science continues its transition toward greater scientific rigor, these three pillars will remain essential for ensuring reliable outcomes in both investigative and judicial contexts.

The admissibility of expert testimony, a cornerstone of modern litigation, is governed by distinct legal standards that act as validation frameworks for scientific evidence. In the realm of forensics—both digital and traditional—these standards determine which methodologies, principles, and expert opinions can be presented to a trier of fact. The Daubert Standard and the Frye Standard are the two primary frameworks performing this gatekeeping function [13] [14]. Their application ensures that expert testimony is not only relevant but also derived from reliable scientific methods, thereby safeguarding the integrity of the judicial process.

Understanding the differences between these standards is critical for researchers and forensic professionals who must validate their techniques and present their findings in court. This guide provides a comparative analysis of the Daubert and Frye standards, examining their core criteria, procedural applications, and implications for the validation of novel forensic methods.

The Frye Standard: "General Acceptance" Test

The Frye Standard originates from the 1923 case Frye v. United States, which dealt with the admissibility of polygraph (systolic blood pressure deception test) evidence [15] [16]. The court established a "general acceptance" test, ruling that for an expert's scientific testimony to be admissible, the methodology underlying it must be "sufficiently established to have gained general acceptance in the particular field in which it belongs" [16].

Core Principle and Application

- Primary Test: General acceptance within the relevant scientific community [15] [17].

- Judicial Role: The court's role is limited to determining whether the principle or discovery has crossed the line from experimental to demonstrable stages based on its acceptance by specialists in the field [15] [18].

- Focus: The Frye standard focuses narrowly on the consensus within the scientific community regarding the methodology, not on the ultimate conclusions drawn by the expert [17].

Limitations and Critique

The Frye Standard has been criticized for being conservative and potentially excluding novel but reliable scientific techniques simply because they are new and have not yet gained widespread acceptance [15] [14]. This can be a significant hurdle for emerging fields like digital forensics, where technologies and methods evolve rapidly.

The Daubert Standard: A "Reliability and Relevance" Test

The Daubert Standard emerged from the 1993 U.S. Supreme Court case Daubert v. Merrell Dow Pharmaceuticals, Inc. [13]. This decision held that the Federal Rules of Evidence, particularly Rule 702, had superseded the Frye Standard in federal courts. The Daubert Standard assigns trial judges a "gatekeeping" role, requiring them to ensure that all expert testimony is not only relevant but also based on a reliable foundation [13] [19].

Core Principles and Factors

Under Daubert, judges evaluate the admissibility of expert testimony using a non-exhaustive list of factors [13] [19]:

- Testing and Falsifiability: Whether the expert's theory or technique can be (and has been) tested.

- Peer Review: Whether the method has been subjected to peer review and publication.

- Error Rate: The known or potential error rate of the technique.

- Standards: The existence and maintenance of standards controlling the technique's operation.

- General Acceptance: Whether the method has attracted widespread acceptance within a relevant scientific community (incorporating the Frye test as one factor among several).

Subsequent cases, General Electric Co. v. Joiner (1997) and Kumho Tire Co. v. Carmichael (1999), solidified this standard. The Kumho Tire decision extended the judge's gatekeeping function to all expert testimony, including non-scientific, technical, and other specialized knowledge [13] [14] [19]. This trilogy of cases is collectively known as the "Daubert Trilogy."

Comparative Analysis: Daubert vs. Frye

The following table summarizes the key differences between the two standards.

Table 1: Core Criteria Comparison of Daubert and Frye Standards

| Feature | Daubert Standard | Frye Standard |
|---|---|---|
| Originating Case | Daubert v. Merrell Dow Pharmaceuticals, Inc. (1993) [13] | Frye v. United States (1923) [15] |
| Primary Test | Relevance and reliability of the methodology [13] [19] | "General acceptance" in the relevant scientific community [15] [17] |
| Judge's Role | Active "gatekeeper" who assesses scientific validity [13] | Arbiter of consensus within the scientific field [18] |
| Scope of Application | Applies to all expert testimony (scientific, technical, specialized) [13] [19] | Primarily applied to novel scientific evidence [18] |
| Flexibility | More flexible; allows for newer methods that are reliable but not yet widely accepted [14] | More rigid; can exclude novel science until it gains broad acceptance [15] [20] |
| Key Factors | Testability, peer review, error rate, standards, general acceptance [13] | Solely general acceptance [15] |

Practical Implications for Forensic Research and Testing

The choice of standard has profound implications for how forensic researchers validate and present their methodologies in court.

Table 2: Practical Implications for Forensic Evidence

| Aspect | Under Daubert | Under Frye |
|---|---|---|
| Novel Methodologies | More likely to be admitted if proponent can demonstrate reliability through testing, low error rates, etc., even without widespread acceptance [15] [14]. | Likely to be excluded until the technique achieves "general acceptance" in its field [15] [20]. |
| Judicial Scrutiny | High; judges actively evaluate the scientific rigor of the methodology itself [13] [19]. | Limited; judges primarily determine the level of acceptance by the scientific community [17] [18]. |
| Burden on Expert | Must be prepared to defend the reliability and application of their method in detail [19]. | Must be prepared to demonstrate that the method is generally accepted [18]. |
| Impact on Digital Forensics | Allows for the admission of newer digital forensic techniques if they can be shown to be reliable and applied rigorously [14]. | May pose a higher barrier for new digital tools and techniques that have not yet become industry standards. |

The logical progression of a court's analysis under each standard is distinct, as illustrated below.

Experimental Protocols for Legal Validation

For a forensic researcher, preparing for a Daubert or Frye hearing is akin to designing a rigorous experiment. The "experimental protocol" involves building a comprehensive record that validates the methodology against the relevant legal standard.

Validation Protocol for the Daubert Standard

To satisfy Daubert, the proponent of the evidence must demonstrate reliability by a preponderance of the evidence [21]. The required "materials and methods" are extensive:

- Testing and Validation Studies: Conduct and document controlled experiments that test the methodology's reliability. The focus should be on whether the principle can be and has been tested [13] [19].

- Peer-Reviewed Publication: Submit studies and explanations of the methodology to peer-reviewed journals. Document the peer review process to show scrutiny by the scientific community [19].

- Error Rate Calculation: Quantify the technique's known or potential error rate through validation studies. Be prepared to explain the methodology's accuracy and limitations [13] [19].

- Standard Operating Procedures (SOPs): Develop, document, and maintain strict standards and controls that govern the technique's application to ensure consistency and reliability [19].

- Survey of the Field: Gather literature, textbooks, and statements from other experts to demonstrate the technique's growing or established acceptance, though this is not solely dispositive under Daubert [13].
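A lab preparing such a record might track which of the listed items already have supporting material. The sketch below is a bookkeeping aid only, not a legal test: the Daubert factors are non-exhaustive and are weighed by the judge, so a complete checklist does not guarantee admissibility. The factor keys, exhibit names, and the helper `undocumented_factors` are hypothetical.

```python
# Keys correspond to the five protocol items listed above.
DAUBERT_FACTORS = (
    "testing",
    "peer_review",
    "error_rate",
    "standards",
    "general_acceptance",
)


def undocumented_factors(record):
    """Return the factors for which the record lists no supporting exhibits."""
    return [factor for factor in DAUBERT_FACTORS if not record.get(factor)]


# Hypothetical validation record: factor -> list of supporting exhibits.
record = {
    "testing": ["control-device validation study"],
    "peer_review": [],
    "error_rate": ["triplicate recovery results"],
    "standards": ["lab SOP for logical extraction"],
}

gaps = undocumented_factors(record)
print("factors still lacking documentation:", gaps)
```

Listing exhibits per factor, instead of storing a yes/no flag, keeps the record tied to material that can actually be produced at a hearing.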

Validation Protocol for the Frye Standard

The protocol under Frye is more narrowly focused:

- Literature Review for Consensus: Compile a comprehensive body of scholarly publications, judicial decisions from other jurisdictions, and practical applications that attest to the technique's acceptance [18].

- Expert Affidavits: Secure declarations from recognized, independent experts in the field who can attest that the methodology is generally accepted as reliable for its intended purpose [16] [18].

- Focus on Methodology, Not Conclusions: Ensure that all evidence is directed at the general acceptance of the underlying principles and methods, not the correctness of the expert's specific conclusions in the case [18].

Essential Research Reagents for Admissibility Testing

Navigating an admissibility hearing requires a toolkit of "research reagents"—conceptual tools and materials needed to build a valid and convincing case for the court.

Table 3: Research Reagent Solutions for Legal Admissibility

| Research Reagent | Function in Validation | Primary Applicable Standard |
|---|---|---|
| Peer-Reviewed Studies | Provides objective evidence of scientific scrutiny and validation of the underlying principles [13] [19]. | Daubert (Critical), Frye (Supportive) |
| Error Rate Analysis | Quantifies the reliability and limitations of the method; essential for a scientific assessment of validity [13] [19]. | Daubert |
| Standard Operating Procedures (SOPs) | Demonstrates that the method is applied in a consistent, controlled manner, reducing variability and arbitrariness [19]. | Daubert |
| Scholarly Treatises & Textbooks | Establishes that the method is recognized and taught as valid within the field, showing integration into the body of scientific knowledge [18]. | Frye (Critical), Daubert (Supportive) |
| Expert Witness Credentials | Establishes the qualifications of the individual applying the method, though the focus remains on the methodology itself [19] [18]. | Both |
| Survey of Jurisdictional Precedent | Shows how other courts have ruled on the admissibility of the same or similar methods, providing persuasive legal authority. | Both |

The choice between Daubert and Frye fundamentally shapes the validation strategy for forensic evidence. The Daubert Standard, with its multi-factor, flexible approach, is more suited to rapidly evolving fields like digital forensics, as it allows novel but rigorously tested methods to be presented in court. In contrast, the Frye Standard's singular focus on general acceptance provides predictability but may slow the integration of innovative techniques.

For researchers and legal professionals, the jurisdiction dictates the standard. However, a robust validation protocol that includes testing, peer review, error rate analysis, and standardized procedures will not only satisfy the more demanding Daubert standard but also strongly support an argument for general acceptance under Frye. As forensic science continues to advance, understanding and applying these legal frameworks remains essential for bridging the gap between scientific innovation and the rules of evidence.

Forensic validation is the fundamental process of testing and confirming that forensic techniques and tools yield accurate, reliable, and repeatable results [1]. It functions as a critical safeguard against error, bias, and misinterpretation across all forensic disciplines, from traditional DNA analysis to modern digital forensics [1]. Without rigorous validation, the credibility of forensic findings and the outcomes of investigations and legal proceedings can be severely undermined, potentially leading to miscarriages of justice [1]. The legal system itself requires the use of scientifically validated methods, applying standards such as Daubert and Frye to ensure that evidence presented in court is derived from reliable principles [22].

This article explores the critical consequences of inadequate validation through case studies that highlight failures in both traditional and digital forensic contexts. We examine how validation frameworks are evolving to address challenges posed by new technologies, particularly the rise of artificial intelligence and complex digital evidence. By comparing validation methodologies across forensic domains and presenting standardized experimental protocols, this analysis provides researchers with frameworks for ensuring the scientific integrity of their forensic analyses.

The Fundamentals of Forensic Validation

Core Principles and Components

Forensic validation encompasses three distinct but interrelated components [1]:

- Tool Validation: Ensures that forensic software or hardware performs as intended, extracting and reporting data correctly without altering the source evidence.

- Method Validation: Confirms that the procedures followed by forensic analysts produce consistent outcomes across different cases, devices, and practitioners.

- Analysis Validation: Evaluates whether the interpreted data accurately reflects its true meaning and context, ensuring that software presents a valid representation of underlying evidence.

These components rest upon foundational principles that include reproducibility, transparency, error rate awareness, peer review, and continuous validation [1]. In digital forensics, specific validation practices include using hash values to confirm data integrity, comparing tool outputs against known datasets, cross-validating results across multiple tools, and ensuring all procedures are thoroughly documented [1].
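The hash-based integrity check mentioned above can be illustrated with a short sketch. It uses only Python's standard `hashlib`; the helper names (`file_digest`, `verify_unchanged`) and the two toy "tools" are hypothetical stand-ins, not part of any forensic product.

```python
import hashlib
import os
import tempfile

def file_digest(path, algorithm="sha256", chunk_size=1 << 20):
    """Return the hex digest of a file, reading in chunks so large images are not loaded at once."""
    h = hashlib.new(algorithm)
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            h.update(chunk)
    return h.hexdigest()

def verify_unchanged(path, process):
    """Hash the file, run `process` on it, hash again, and report whether the digests match."""
    before = file_digest(path)
    process(path)
    after = file_digest(path)
    return before == after, before, after

# A throwaway file stands in for an evidence image (assumption for the demo).
with tempfile.NamedTemporaryFile(delete=False) as tmp:
    tmp.write(b"simulated evidence image")
    evidence_path = tmp.name

def read_only_tool(path):
    # Hypothetical well-behaved tool: only reads the evidence.
    with open(path, "rb") as f:
        f.read()

def faulty_tool(path):
    # Hypothetical misbehaving tool: appends a byte to the evidence.
    with open(path, "ab") as f:
        f.write(b"\x00")

ok_read_only, _, _ = verify_unchanged(evidence_path, read_only_tool)
ok_faulty, _, _ = verify_unchanged(evidence_path, faulty_tool)
os.remove(evidence_path)
print(ok_read_only, ok_faulty)
```

A matching digest before and after processing supports the claim that the tool did not alter the source; a mismatch, as with the faulty tool here, is a validation failure that must be documented.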

Legal and Scientific Standards

Validation requirements are codified in accreditation standards such as ISO/IEC 17025, which forensic service providers must meet to maintain accreditation [22]. The Daubert Standard, which governs the admissibility of expert testimony in federal courts, directs judges to consider whether a forensic method has been tested, has been subjected to peer review, has a known or potential error rate, and is generally accepted in the relevant scientific community [1]. These legal frameworks make proper validation not merely a scientific best practice but a legal necessity for evidence to be admissible in judicial proceedings.

Case Studies: Justice Compromised by Validation Failures

Digital Forensics: Florida v. Casey Anthony (2011)

The prosecution's digital forensic expert initially testified that searches for the word "chloroform" had been conducted on the Anthony family computer 84 times, suggesting high interest and intent [1]. This number was repeatedly cited by the prosecution as strong circumstantial evidence of planning in the death of Caylee Anthony.

However, through rigorous forensic validation conducted by the defense team with assistance from Envista Forensics, this critical piece of evidence was revealed to be grossly inaccurate. Re-examination and validation of the forensic software's output confirmed that only a single instance of the search term had occurred, directly contradicting earlier claims of extensive search activity [1].

This case exemplifies a critical failure in tool validation: the forensic software either misinterpreted data or presented it in a misleading manner, and the initial examiner failed to validate the tool's output. The consequences were profound: what appeared to be compelling evidence of premeditation was actually an artifact of flawed forensic processing.

Digital Forensics: Massachusetts v. Karen Read (2025)

In this more recent case, Cellebrite Senior Digital Intelligence Expert Ian Whiffin underscored the importance of rigorous validation in digital forensics [1]. He explained that timestamps and data artifacts require careful interpretation, as mobile device operating system logs can be misleading without proper context.

The investigation demonstrated proper validation methodology through cross-device testing: Whiffin conducted tests across multiple devices to ensure the accuracy of his conclusions about timestamp interpretations [1]. This approach highlights how proper validation practices help ensure that digital evidence is interpreted correctly and reliably, preventing misinterpretations that could lead to unjust outcomes.

Comparative Analysis: Traditional vs. Digital Forensic Validation

The challenges of validation differ significantly between traditional and digital forensic domains, as illustrated in the table below:

Table 1: Validation Challenges in Traditional vs. Digital Forensics

| Aspect | Traditional Forensics | Digital Forensics |

|---|---|---|

| Evidence Nature | Relatively stable physical evidence | Volatile, easily manipulated digital evidence [1] |

| Tool Evolution | Methodologies evolve slowly | Rapid tool updates requiring constant revalidation [1] |

| Standardization | Established protocols (e.g., NIJ standards) | Lack of standardized datasets and formal testing procedures [23] |

| Error Rate Quantification | Generally established through repeated testing | Often unknown or poorly documented [23] |

| Primary Validation Focus | Technique reliability and reproducibility | Tool output accuracy and interpretation validity [1] |

Validation Frameworks: Traditional and Digital Paradigms

Established Traditional Validation Models

Traditional forensic sciences have long employed structured validation approaches. The collaborative method validation model proposed for crime laboratories emphasizes efficiency through standardization and shared methodology [22]. In this model, an originating Forensic Science Service Provider (FSSP) publishes comprehensive validation data in peer-reviewed journals, enabling other FSSPs to conduct abbreviated verifications rather than full validations, provided they adhere strictly to the published parameters [22].

This approach offers significant advantages: it reduces redundant validation efforts across laboratories, promotes standardization of methods, establishes benchmarks for comparison, and increases overall efficiency in implementing new technologies [22]. The model acknowledges that while forensic service providers may operate in different jurisdictions, they examine common evidence types using similar technologies and methods, making collaborative validation feasible and beneficial [22].

Emerging Digital Forensic Validation Standards

In digital forensics, the National Institute of Standards and Technology (NIST) has established the Computer Forensic Tool Testing (CFTT) Program to address validation needs [23] [24]. The CFTT aims to establish a methodology for testing computer forensic tools through development of general tool specifications, test procedures, test criteria, test sets, and test hardware [24].

Inspired by the CFTT program, researchers have proposed standardized methodologies for evaluating emerging technologies like Large Language Models (LLMs) in digital forensic tasks [25] [23]. These methodologies include quantitative evaluation using metrics such as BLEU and ROUGE, originally developed for machine translation but now adapted for assessing forensic timeline analysis [25] [23]. The development of Computer Forensic Reference Data Sets (CFReDS) by NIST provides documented sets of simulated digital evidence that examiners can use for validation and proficiency testing [24].

Table 2: Digital Forensic Tool Validation Framework

| Validation Component | Methodology | Output Metrics |

|---|---|---|

| Tool Functionality | Testing against CFReDS reference data sets [24] | Accuracy, error rates, missed evidence |

| Performance | Processing standardized evidence volumes | Processing speed, resource utilization |

| Reliability | Repeated testing across multiple environments | Consistency, reproducibility measures |

| Legal Compliance | Verification of hash values, evidence preservation [1] | Chain-of-custody documentation, data integrity |

Experimental Protocols for Forensic Validation

Protocol 1: Digital Forensic Tool Validation

Based on the NIST CFTT methodology, this protocol provides a framework for validating digital forensic tools [24]:

Test Preparation: Acquire standardized hardware test fixtures and reference data sets from CFReDS that represent typical case scenarios [24].

Tool Specification: Define clear specifications for the tool's intended functions, including supported file systems, data types, and output formats.

Test Execution:

- Process reference data sets using the tool under validation

- Verify hash values before and after processing to confirm data integrity [1]

- Execute identical procedures across multiple hardware platforms and operating environments

Result Analysis:

- Compare tool outputs against known ground truth in reference data sets

- Calculate accuracy metrics for data extraction, parsing, and interpretation

- Identify any false positives, false negatives, or data alterations

Cross-Validation: Process the same data sets using multiple tools and compare results to identify inconsistencies or tool-specific artifacts [1].

This experimental protocol emphasizes transparency, with thorough documentation of all procedures, software versions, system configurations, and results to ensure reproducibility and facilitate peer review [1].
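The result-analysis and cross-validation steps of this protocol can be sketched as simple set comparisons. The artifact names and the two tool outputs below are invented for illustration; a real test would take its ground truth from the documentation of the reference data set in use.

```python
def score_against_ground_truth(reported, ground_truth):
    """Compare a tool's reported artifacts with the known contents of a reference data set."""
    reported, ground_truth = set(reported), set(ground_truth)
    true_pos = reported & ground_truth
    false_pos = reported - ground_truth   # reported but not actually present
    false_neg = ground_truth - reported   # present but missed by the tool
    return {
        "precision": len(true_pos) / len(reported) if reported else 0.0,
        "recall": len(true_pos) / len(ground_truth) if ground_truth else 0.0,
        "false_positives": sorted(false_pos),
        "false_negatives": sorted(false_neg),
    }

def cross_tool_disagreements(output_a, output_b):
    """Artifacts reported by exactly one of two tools; each needs manual review."""
    return sorted(set(output_a) ^ set(output_b))

# Hypothetical ground truth and tool outputs (assumptions for the demo).
ground_truth = {"a.doc", "b.jpg", "c.db", "d.txt"}
tool_a = {"a.doc", "b.jpg", "c.db", "x.tmp"}
tool_b = {"a.doc", "b.jpg", "d.txt"}

report_a = score_against_ground_truth(tool_a, ground_truth)
disagreements = cross_tool_disagreements(tool_a, tool_b)
print(report_a)
print(disagreements)
```

Treating artifacts as set members keeps the sketch short; comparing parsed field values (timestamps, paths, content hashes) would be needed to catch interpretation errors rather than only missing or spurious items.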

Protocol 2: LLM Validation for Forensic Timeline Analysis

The adoption of Artificial Intelligence, particularly Large Language Models (LLMs), in digital forensics necessitates novel validation approaches [25] [23]. The following protocol provides a standardized methodology for evaluating LLM performance in forensic timeline analysis:

Dataset Development: Create forensic timeline datasets from controlled environments (e.g., Windows 11 systems) using tools like Plaso, ensuring comprehensive ground truth documentation [23].

Task Definition: Define specific timeline analysis tasks, such as event summarization, anomaly detection, or pattern identification.

Experimental Execution:

- Process timeline data through the LLM under evaluation

- Generate outputs for defined tasks using standardized prompts

- Conduct multiple trials to ensure consistency

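Quantitative Assessment:

- Score LLM outputs against the documented ground truth using metrics such as BLEU and ROUGE [25] [23]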

Qualitative Assessment:

- Subject matter expert evaluation of output relevance and accuracy

- Assessment of potential biases in model outputs

- Determination of practical utility in real-world investigative contexts

This protocol addresses the unique challenges of validating "black box" AI systems, where the internal decision-making processes may not be transparent or easily interpretable [1].
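BLEU and ROUGE both rest on counting n-gram overlap between a generated text and a reference. The sketch below shows only the unigram case, which corresponds roughly to ROUGE-1 recall and to unigram precision without BLEU's brevity penalty or higher-order n-grams. It is a simplified illustration, not a full implementation of either metric, and the two example sentences are invented.

```python
from collections import Counter

def unigram_overlap(candidate, reference):
    """Return (precision, recall, f1) from clipped unigram overlap of whitespace tokens."""
    cand = Counter(candidate.lower().split())
    ref = Counter(reference.lower().split())
    # Counter intersection keeps the minimum count per token (clipping).
    overlap = sum((cand & ref).values())
    precision = overlap / sum(cand.values()) if cand else 0.0
    recall = overlap / sum(ref.values()) if ref else 0.0
    f1 = 2 * precision * recall / (precision + recall) if overlap else 0.0
    return precision, recall, f1

# Hypothetical ground-truth summary and LLM output (assumptions for the demo).
reference = "user logged in at 09:14 and deleted three files"
candidate = "user logged in and deleted files"

precision, recall, f1 = unigram_overlap(candidate, reference)
print(round(precision, 3), round(recall, 3), round(f1, 3))
```

Here every word of the candidate appears in the reference, so precision is perfect, while recall drops because the time and the file count were omitted. Those are exactly the details an investigator would care about, which is one reason overlap metrics are paired with expert review.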

Diagram 1: Forensic Tool Validation Workflow. This diagram illustrates the sequential phases of a comprehensive validation process for forensic tools, from initial preparation through testing to final analysis and reporting.

Reference Data Sets and Standards

Table 3: Essential Resources for Forensic Validation Research

| Resource | Function | Source/Availability |

|---|---|---|

| CFReDS (Computer Forensic Reference Data Sets) | Provides simulated digital evidence for testing and validation [24] | NIST [24] |

| NSRL (National Software Reference Library) | Reference database of software profiles for file identification [24] | NIST [24] |

| Standardized Forensic Timelines | Datasets for evaluating timeline analysis tools and LLMs [23] | Research publications [23] |

| CFTT Test Specifications | Standardized methodologies for testing computer forensic tools [24] | NIST [24] |

| Plaso | Open-source tool for timeline generation used in creating validation datasets [23] | Open source [23] |

Validation Metrics and Analytical Tools

- BLEU (Bilingual Evaluation Understudy): Algorithm for evaluating the quality of text which has been machine-translated; adapted for assessing LLM outputs in forensic contexts [25] [23]

- ROUGE (Recall-Oriented Understudy for Gisting Evaluation): Set of metrics for evaluating automatic summarization and translation of texts; used for quantitative assessment of LLM-generated forensic summaries [25] [23]

- Hash Value Algorithms (MD5, SHA-1, SHA-256): Cryptographic hash functions used to verify data integrity throughout forensic processes [1]

- YARA Rules: Pattern-matching tool for identifying and classifying malware and other suspicious files during forensic analysis

The case studies and frameworks presented demonstrate that inadequate validation poses serious threats to justice systems, regardless of whether evidence is derived from traditional or digital sources. The Casey Anthony case illustrates how unvalidated digital evidence can dramatically misrepresent facts, while emerging challenges with AI and LLMs highlight the need for novel validation approaches tailored to complex, non-transparent systems [1] [25].

Moving forward, the field must address several critical needs: developing standardized datasets for benchmarking [23], establishing collaborative validation models to reduce redundancy [22], creating AI-specific validation protocols that address explainability and hallucination risks [25] [23], and promoting transparent reporting of validation methodologies and results [1]. As forensic technologies continue to evolve, maintaining scientific rigor through comprehensive validation remains essential for ensuring that forensic evidence serves rather than subverts justice.

Diagram 2: Validation Framework Integration. This diagram shows how validation approaches from different forensic domains contribute to shared objectives of standardization, reliability, and legal robustness.

Applied Validation Methodologies in Modern Forensic Practice

In digital forensics, the principle of tool validation is paramount for ensuring the integrity and admissibility of evidence. Unlike traditional forensics, where physical evidence can be directly observed, digital evidence is often interpreted and presented through software tools. This creates a fundamental reliance on the accuracy and completeness of these tools. A robust validation framework requires that findings from one tool be verified by an independent tool to mitigate the risk of inherent biases, parsing errors, or overlooked data. This process is not merely a best practice but a scientific necessity to uphold the standards of evidence in judicial proceedings.

The transition from an acquisition tool like Cellebrite UFED to an analysis platform like Magnet AXIOM provides a canonical use case for such validation. This guide objectively compares the performance of these two industry-leading solutions within a validation framework, providing researchers and forensic professionals with experimental data and methodologies to support rigorous, defensible investigations.

Tool Comparison: Capabilities and Features

A side-by-side comparison of core capabilities provides the foundation for understanding how these tools can be used complementarily in a validation workflow.

Table 1: Digital Forensics Tool Capability Comparison

| Feature | Cellebrite UFED | Magnet AXIOM |

|---|---|---|

| Primary Function | Data extraction from mobile devices [26] | Data analysis from multiple sources (mobile, computer, cloud) [27] [26] |

| Key Strength | Broad device support & physical extraction [26] [28] | Unified case analysis & artifact recovery [27] [26] |

| Supported Platforms | iOS, Android, Windows Mobile [26] | Windows, macOS, Linux, iOS, Android [26] |

| Cloud Forensics | Supported [26] | Supported [27] [26] |

| Key Differentiating Features | Advanced decryption for encrypted apps [26] | Magnet.AI for categorization; Timeline and Connections analysis [27] [26] |

This divergence in primary function is precisely what makes their sequential use so powerful. UFED excels at the preservation phase, reliably acquiring data from a wide array of mobile devices. AXIOM, in contrast, shines in the examination and analysis phases, cross-correlating data from mobiles, computers, and cloud sources to build a holistic view of user activity [27]. Internal testing by Magnet Forensics suggests that this approach allows AXIOM to find up to 25% more evidence than other tools when analyzing the same extraction, a critical metric for validation [27].

Experimental Data for Validation

Performance metrics are essential for validating not just evidence, but the efficiency of the investigative process itself. The following data, drawn from comparative testing, highlights operational differences.

Table 2: Digital Forensics Tool Performance Metrics

| Performance Metric | Cellebrite UFED (via Physical Analyzer) | Magnet AXIOM |

|---|---|---|

| Processing Time | Not reported | 2 hours, 31 minutes, 49 seconds (for a 500GB HDD) [29] |

| Keyword Search Speed | 6 minutes, 12 seconds (for "guest") [29] | 9 seconds (for "guest") [29] |

| Timeline Analysis Load Time | 6 minutes, 53 seconds (~509k records) [29] | 40 seconds (~509k records) [29] |

| Artifact Support | Strong for mobile apps and file systems [26] | Extensive, with community-driven "Custom Artifacts" for unsupported apps [27] |

The most striking performance differentiator lies in analytical speed. On identical hardware, AXIOM completed a keyword search for the term "guest" (~50k results) in 9 seconds, a task that took another tool 6 minutes and 12 seconds (372 seconds), making AXIOM roughly 41 times faster in this specific operation [29]. This performance advantage extends to complex filtering: applying a date filter to all data from a specific year (~119k results) was reported to be near instantaneous in AXIOM, compared to 18 minutes and 42 seconds in another tool [29]. This directly impacts an examiner's ability to rapidly test hypotheses and validate findings during an investigation.
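The speed ratios follow directly from the timings in Table 2. The short calculation below reproduces them; the variable names are illustrative only.

```python
def to_seconds(minutes=0, seconds=0):
    """Convert a minutes/seconds timing to seconds."""
    return minutes * 60 + seconds

# Timings as reported in Table 2 [29].
keyword_other = to_seconds(6, 12)   # 372 s
keyword_axiom = to_seconds(0, 9)    # 9 s
timeline_other = to_seconds(6, 53)  # 413 s
timeline_axiom = to_seconds(0, 40)  # 40 s

keyword_speedup = keyword_other / keyword_axiom
timeline_speedup = timeline_other / timeline_axiom
print(round(keyword_speedup, 1), round(timeline_speedup, 1))
```

The keyword search ratio is about 41 and the timeline load ratio about 10, so the advantage varies considerably by operation.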

Experimental Protocol for Performance Benchmarking

The quantitative data presented in Table 2 was derived from a controlled performance test. The methodology is outlined below for transparency and potential replication.

- Testing Machine Specifications: The tests were conducted on a professional forensic workstation with the following specs: Dual Intel Xeon E5-2640 v4 CPUs (20 cores, 40 logical processors), 128 GB DDR4 RAM, an 8TB RAID10 array for the source image, and a 2TB SSD for the active case [29].

- The Dataset: A single 500GB hard drive image was used for consistency. The drive had 89.9GB of allocated data, an NTFS file system, and contained a Windows 7 Home Premium operating system that had been in use for approximately four years. The E01 image was 82.2GB in size and contained approximately 723,000 records and artifacts [29].

- Processing Settings: For both tools, the processing phase was configured with all artifacts selected and a "Full Search" conducted on all partitions. No additional processing options were enabled, ensuring a like-for-like comparison of the core processing engines [29].

A Validation Workflow: From UFED to AXIOM

A practical validation protocol leverages the strengths of both tools, beginning with Cellebrite UFED for acquisition and culminating with Magnet AXIOM for deep analysis and verification. The following diagram maps this multi-tool workflow.

(Core Validation Workflow: This diagram illustrates the sequential and iterative process of using Cellebrite UFED for data acquisition and Magnet AXIOM for independent analysis and validation.)

The Researcher's Toolkit: Essential File Formats & Functions

Navigating this workflow requires an understanding of the key "research reagents"—the file formats and components that facilitate the exchange and validation of data between tools.

Table 3: Essential Digital Forensics File Formats and Functions

| Item | Function in Validation |

|---|---|

| .UFD/.UFDX File | A configuration file from Cellebrite UFED containing metadata about the extraction. It can be ingested directly by AXIOM to locate the actual image files [30]. |

| CLBX File | A container format from Cellebrite for full file system extractions. It is a ZIP archive that AXIOM can process, often including valuable iOS keychain data for decryption [30]. |

| Physical Image (e.g., .BIN) | A bit-for-bit copy of a storage device. Segmented .BIN files from Android physical extractions can be loaded into AXIOM for analysis [30]. |

| File System Image (e.g., .TAR, .ZIP) | A logical extraction containing a device's file system. Common in iOS and Android file system extractions, these can be loaded into AXIOM as "Images" [30]. |

| Custom Artifacts | Community-created scripts (XML/Python) that allow AXIOM to parse artifacts from new or unsupported apps, extending its validation capabilities [27]. |

Within a modern digital forensics validation framework, reliance on a single tool is a methodological vulnerability. The practice of using Cellebrite UFED for robust data acquisition and Magnet AXIOM for independent, multi-source analysis constitutes a defensible validation protocol. The reported data suggests that AXIOM can not only confirm UFED findings but also uncover additional evidence (up to 25% more in the vendor's internal tests) while completing the benchmarked search and timeline operations roughly 10 to 40 times faster [27] [29]. For researchers and professionals building a scientifically sound, court-defensible process, this multi-tool "toolbox" approach is not just recommended; it is essential.

Validating Methods for Cloud Forensics and Distributed Data

The exponential growth of cloud computing and distributed data environments has fundamentally transformed the digital forensics landscape. Unlike traditional digital forensics, which focuses on physical storage media under the investigator's direct control, cloud forensics must navigate a complex ecosystem of virtualized, multi-tenant, and geographically dispersed data [31] [32]. This paradigm shift necessitates the development and validation of new forensic methods that can ensure evidence meets the stringent requirements for legal admissibility. The core challenge lies in establishing scientific validity and reliability for forensic techniques applied in environments where direct physical access to evidence is often impossible [3] [33].

This article frames the comparison of cloud and traditional forensic methods within the broader context of validation frameworks for digital forensics research. For evidence to be admissible in legal proceedings, particularly under standards like the Daubert Standard, the methods used to collect and analyze it must be tested, peer-reviewed, have known error rates, and be widely accepted in the scientific community [33] [34]. We objectively compare the performance of forensic approaches, providing a structured analysis of their characteristics, challenges, and the experimental protocols required to validate them in a court-of-law context.

Comparative Analysis: Traditional vs. Cloud Forensics

The following table summarizes the core distinctions between traditional and cloud forensics, which form the basis for their validation requirements.

Table 1: Comparative Analysis of Traditional Digital Forensics and Cloud Forensics

| Characteristic | Traditional Digital Forensics | Cloud Forensics |

|---|---|---|

| Data Location & Control | Physical media (e.g., hard drives, phones) within the investigator's jurisdiction [31]. | Virtualized data distributed across multi-tenant, geographically diverse servers and data centers [31] [32]. |

| Primary Challenges | Data encryption, device diversity, data volume [33]. | Jurisdictional issues, data volatility (ephemeral resources), multi-tenancy, and complex data acquisition from CSPs [31] [35]. |

| Chain of Custody | Managed directly by the investigator; easier to document a linear history [35]. | Extremely complex; requires automated tracking of access across multiple cloud providers and third parties to be legally defensible [35]. |

| Investigation Scope | Well-defined physical artifact [33]. | Dynamic and boundary-less; often requires cross-cloud correlation [32] [35]. |

| Legal & Regulatory Focus | Primarily domestic laws on search and seizure [33]. | Must navigate conflicting international data privacy laws (e.g., GDPR, cross-border data transfer restrictions) [31] [32]. |

| Tool Validation | Focused on tool accuracy for data recovery and analysis from static images [33]. | Requires validation for API-based collection, integration with cloud-native services, and automated evidence handling [3] [35]. |

Experimental Protocols for Validating Forensic Methods

To satisfy the requirements of a validation framework, any forensic method, whether for traditional or cloud environments, must be subjected to rigorous, repeatable testing. The following protocols outline core experiments for validating key forensic capabilities.

Protocol 1: Evidence Integrity and Chain of Custody Validation

1. Objective: To verify that a cloud forensics platform can automatically create and maintain a tamper-evident log of all actions performed on digital evidence, preserving its integrity for legal admissibility [35].

2. Methodology: A controlled environment is established using a cloud account (e.g., AWS or Azure). A series of simulated investigative actions is performed, including data acquisition from a cloud storage bucket, memory capture of a virtual machine, and isolation of a compromised resource. The platform's automated logging capabilities are stressed by introducing multiple concurrent users and actions.

3. Data Collection & Metrics: The experiment measures the platform's ability to generate immutable, time-stamped logs for every action. Key metrics include the completeness of the audit trail (%), the granularity of logged details (e.g., user, timestamp, action, target resource), and the ability to detect and alert on any unauthorized attempt to alter the logs [35].
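One common way to make an audit log tamper-evident is to chain entries by hash, so that altering any earlier entry invalidates every later one. The sketch below illustrates that idea with the logged fields named in this protocol (user, timestamp, action, target resource). It is an assumption about how such a log could be built for a test harness, not a description of any platform's actual mechanism.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry
FIELDS = ("user", "timestamp", "action", "target")

def entry_hash(fields, prev_hash):
    """SHA-256 over the canonical JSON of the entry fields plus the previous entry's hash."""
    payload = json.dumps(fields, sort_keys=True) + prev_hash
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()

def append_entry(log, user, timestamp, action, target):
    """Append an entry linked to the hash of the entry before it."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    fields = {"user": user, "timestamp": timestamp, "action": action, "target": target}
    log.append({**fields, "prev_hash": prev_hash, "hash": entry_hash(fields, prev_hash)})

def verify_log(log):
    """Return the index of the first inconsistent entry, or None if the chain is intact."""
    prev_hash = GENESIS
    for i, entry in enumerate(log):
        fields = {k: entry[k] for k in FIELDS}
        if entry["prev_hash"] != prev_hash or entry["hash"] != entry_hash(fields, prev_hash):
            return i
        prev_hash = entry["hash"]
    return None

# Hypothetical investigative actions (assumptions for the demo).
log = []
append_entry(log, "analyst1", "2025-01-01T10:00:00Z", "acquire", "bucket/evidence")
append_entry(log, "analyst2", "2025-01-01T10:05:00Z", "memory_capture", "vm-042")
append_entry(log, "analyst1", "2025-01-01T10:09:00Z", "isolate", "vm-042")

intact_result = verify_log(log)
log[1]["action"] = "delete"      # simulate unauthorized alteration
tampered_result = verify_log(log)
print(intact_result, tampered_result)
```

A hash chain detects alteration but does not prevent it, and an attacker who can rewrite the whole log can rebuild the chain. Anchoring the latest hash in a separate, write-once location is one way to close that gap in a test design.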

Protocol 2: Data Acquisition and Volatile Data Recovery

1. Objective: To evaluate the effectiveness of forensic tools in acquiring data from ephemeral cloud resources (e.g., containers, serverless functions) before they are terminated, and to compare the recovery rates of open-source versus commercial tools [33] [35].

2. Methodology: This experiment involves deploying short-lived cloud resources programmed to execute a predefined set of activities and then self-terminate after a random interval. Investigators use both commercial (e.g., FTK, Forensic MagiCube) and open-source or freely available (e.g., Autopsy, ProDiscover Basic) tools, triggering automated evidence collection the moment malicious activity is detected by a monitoring system.

3. Data Collection & Metrics: The primary quantitative metric is the Data Recovery Rate (%), calculated by comparing the artifacts acquired by the tool against a known control set of actions performed on the ephemeral resource. Furthermore, the Mean Time to Response (MTTR) is critical, measuring the time from detection to successful evidence capture [35]. Each experiment should be performed in triplicate to establish repeatability and calculate error rates [33].
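The two metrics in this protocol reduce to simple arithmetic over the triplicate runs. The sketch below assumes each run records the set of artifacts acquired plus detection and capture times in seconds; the artifact names and timings are invented for illustration.

```python
from statistics import mean

def recovery_rate(acquired, control):
    """Percentage of control-set artifacts that the tool actually acquired."""
    control = set(control)
    return 100.0 * len(set(acquired) & control) / len(control)

# Hypothetical control set of actions performed on the ephemeral resource.
CONTROL = {"proc_list", "net_conns", "env_vars", "tmp_file", "shell_history"}

# Hypothetical triplicate runs: acquired artifacts and timestamps in seconds.
runs = [
    {"acquired": {"proc_list", "net_conns", "env_vars", "tmp_file"},
     "detected_at": 10.0, "captured_at": 14.5},
    {"acquired": {"proc_list", "net_conns", "env_vars", "tmp_file", "shell_history"},
     "detected_at": 20.0, "captured_at": 23.0},
    {"acquired": {"proc_list", "net_conns", "env_vars", "shell_history"},
     "detected_at": 30.0, "captured_at": 34.5},
]

rates = [recovery_rate(r["acquired"], CONTROL) for r in runs]
response_times = [r["captured_at"] - r["detected_at"] for r in runs]
mean_rate = mean(rates)
mean_response = mean(response_times)
print(rates, round(mean_rate, 1), mean_response)
```

Reporting the per-run values alongside the means makes run-to-run variation visible, which is the purpose of performing the experiment in triplicate.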

Protocol 3: Tool Reliability and Repeatability Testing

1. Objective: To determine the reliability and repeatability of digital forensic tools, a requirement for admissibility under the Daubert Standard [33] [34].

2. Methodology: Following methodologies from NIST Computer Forensics Tool Testing standards, a controlled testing environment is set up. Tools are tasked with three distinct scenarios: preservation of original data, recovery of deleted files via data carving, and targeted artifact searching. The same set of experiments is performed using both commercial and open-source tools.

3. Data Collection & Metrics: The key metric is the Tool Error Rate, quantified by comparing the acquired artifacts with control references. Repeatability is established by conducting each experiment in triplicate and ensuring consistent results across all runs [33].
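A minimal way to operationalize these two measures is sketched below. The error-rate definition used here (missed plus spurious artifacts, divided by the size of the control reference) is an assumption chosen for illustration, as the protocol does not fix a formula, and the scenario data is invented.

```python
def error_rate(acquired, control):
    """(missed + spurious artifacts) / size of control reference; an assumed definition."""
    acquired, control = set(acquired), set(control)
    missed = control - acquired
    spurious = acquired - control
    return (len(missed) + len(spurious)) / len(control)

def is_repeatable(runs):
    """True when every run produced exactly the same artifact set as the first."""
    first = set(runs[0])
    return all(set(run) == first for run in runs[1:])

# Hypothetical triplicate results for two of the scenarios.
scenarios = {
    "preservation": {
        "control": {"image.E01"},
        "runs": [{"image.E01"}, {"image.E01"}, {"image.E01"}],
    },
    "carving": {
        "control": {"f1.jpg", "f2.jpg", "f3.pdf", "f4.doc"},
        "runs": [{"f1.jpg", "f2.jpg", "f3.pdf"},
                 {"f1.jpg", "f2.jpg", "f3.pdf"},
                 {"f1.jpg", "f2.jpg"}],
    },
}

summary = {
    name: {
        "error_rates": [error_rate(run, s["control"]) for run in s["runs"]],
        "repeatable": is_repeatable(s["runs"]),
    }
    for name, s in scenarios.items()
}
print(summary)
```

In this toy data the carving scenario fails the repeatability check because the third run recovered fewer files, even though each individual run might look acceptable on its own.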

Visualization of Forensic Validation Workflows

The following diagram illustrates the logical workflow for validating a digital forensic method, from evidence collection to legal admission, highlighting critical decision points.

Diagram 1: Forensic Method Validation Workflow. This chart outlines the pathway from evidence collection to legal admissibility, showing the critical validation checkpoints based on the Daubert Standard [33].

The Researcher's Toolkit: Essential Solutions for Forensic Validation

The table below details key reagents, tools, and platforms that constitute the essential toolkit for conducting research in forensic method validation.

Table 2: Research Reagent Solutions for Digital Forensics Validation

| Tool / Solution | Type / Category | Primary Function in Validation |

|---|---|---|

| FTK (Forensic Toolkit) | Commercial Forensic Suite | Serves as a benchmark commercial tool for comparative studies on evidence collection, data carving, and artifact analysis [33]. |

| Autopsy | Open-Source Forensic Suite | Provides a cost-effective, transparent alternative for validating forensic processes; allows for peer review of methodologies [33]. |

| OPC UA with Kafka | Data Integration Framework | Enables standardized collection and real-time processing of heterogeneous data in industrial cloud environments, useful for building testbeds [36]. |

| Darktrace/CLOUD w/ Cado | Cloud Forensics & Incident Response Platform | Used to test and validate automated evidence collection, chain of custody tracking, and analysis in multi-cloud environments [35]. |

| DataSHIELD | Federated Analysis Platform | Provides a platform with built-in privacy-preserving technologies (e.g., differential privacy) for validating analytical methods on distributed data without centralization [37]. |

| NIST CFTT Standards | Testing Standards & Protocols | Provides the methodological foundation for designing rigorous, repeatable experiments to establish tool reliability and error rates [33]. |

The validation of methods for cloud and distributed data forensics is not merely a technical exercise but a foundational requirement for the integrity of modern judicial processes. As this comparison demonstrates, cloud forensics introduces a layer of complexity that traditional methods are not designed to address, necessitating new validation frameworks and experimental protocols. The core differentiator is the shift from validating tools for static data analysis to validating processes for dynamic, remote, and automated evidence handling in a legally compliant manner.

The future of validation research lies in the development of standardized, practitioner-driven frameworks that incorporate explainable AI (XAI) to mitigate the "black-box" nature of advanced analytics [3]. Furthermore, the empirical demonstration that properly validated open-source tools can produce reliable and repeatable results promises to democratize access to high-quality forensic capabilities [33]. For researchers and professionals, the priority must be on generating robust, empirical data on method performance—including error rates and reliability under controlled conditions—to build the scientific foundation that will support the next generation of digital forensics.

In both digital and traditional forensics, the validity and reliability of analytical methods are paramount. The core principle of forensic science hinges on the ability to demonstrate that a technique produces consistent, accurate, and reproducible results that are admissible as evidence. Within this context, cross-validation emerges as a critical statistical methodology for evaluating the performance and generalizability of predictive models [38]. This guide objectively compares prevalent cross-validation procedures and their implementation tools, framing them within the broader need for robust validation frameworks in forensic research. As digital evidence becomes increasingly complex, leveraging standardized cross-validation with known datasets is not just a best practice but a foundational requirement for scientific and legal acceptance [34] [3].

Core Concepts of Cross-Validation

Cross-validation is a model assessment technique used to estimate how the results of a statistical analysis will generalize to an independent dataset [38]. Its primary purpose is to test a model's ability to predict new data that was not used in its training, thereby flagging critical issues like overfitting or selection bias [39] [38]. In overfitting, a model memorizes the noise and specific details of the training data to an extent that it negatively impacts its performance on new, unseen data. Cross-validation helps detect this by revealing a significant gap between performance on training data and validation data [40].

The fundamental process involves partitioning a sample of data into complementary subsets, performing the analysis on one subset (called the training set), and validating the analysis on the other subset (called the validation set or testing set) [38]. To reduce variability, most cross-validation methods perform multiple rounds of this partitioning with different splits and then combine (e.g., average) the results over the rounds [39] [38]. This process provides a more accurate and reliable estimate of a model's predictive performance than a single train-test split [39].

Cross-Validation Types and Methodologies

Several cross-validation techniques exist, each with specific strengths, weaknesses, and ideal use cases. Understanding these is crucial for selecting the appropriate method for a given forensic analysis scenario.

K-Fold Cross-Validation

K-Fold Cross-Validation is one of the most widely used and robust methods [40]. In this procedure, the original dataset is randomly partitioned into k equal-sized subsamples, or "folds" [39] [38]. Of the k folds, a single fold is retained as the validation data for testing the model, and the remaining k-1 folds are used as training data. The cross-validation process is then repeated k times, with each of the k folds used exactly once as the validation data. The k results are then averaged to produce a single performance estimate [39] [38]. The choice of k involves a bias-variance tradeoff; common choices are k=5 or k=10, which provide a good balance between computational cost and reliable estimation [39] [40]. A lower value of k is computationally cheaper but can lead to higher bias, while a very high k (approaching the number of data points) leads to the Leave-One-Out Cross-Validation (LOOCV) method, which has low bias but high variance and computational cost [39] [40].
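The partitioning step can be sketched without any library support. The helper name `k_fold_indices` is hypothetical, and the sketch assumes that when the sample count is not divisible by k, the remainder is spread so that fold sizes differ by at most one.

```python
import random


def k_fold_indices(n_samples, k, seed=0):
    """Yield (train_indices, val_indices) once for each of the k folds."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)  # random partition, reproducible via seed

    # Spread the remainder over the first folds so sizes differ by at most one.
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]

    start = 0
    for size in fold_sizes:
        val = indices[start:start + size]                    # current fold
        train = indices[:start] + indices[start + size:]     # remaining k-1 folds
        yield train, val
        start += size


folds = list(k_fold_indices(n_samples=23, k=5))
for i, (train, val) in enumerate(folds):
    print(f"fold {i}: {len(train)} training / {len(val)} validation samples")
```

Every sample lands in exactly one validation fold, which is what allows the k scores to be averaged into a single estimate.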

Stratified K-Fold Cross-Validation

Stratified K-Fold Cross-Validation is a variation of the standard k-fold method that preserves the percentage of samples for each class in every fold [39] [41]. This is particularly useful for imbalanced datasets where one or more classes are underrepresented [39]. By ensuring that each fold is a good representative of the overall class distribution, stratified cross-validation provides a more reliable performance estimate for classification models on such data and helps the model generalize better [39]. Recent comparative studies on both balanced and imbalanced datasets have reaffirmed that traditional stratified cross-validation consistently performs better on imbalanced data, showing lower bias, variance, and computational cost, making it a safe and recommended choice [42].
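The effect of stratification is easiest to see by counting minority-class samples in each validation fold. The 90/10 label split below is a made-up example, not data from the cited studies.

```python
import numpy as np
from sklearn.model_selection import KFold, StratifiedKFold

# Hypothetical imbalanced labels: 90 samples of class 0, 10 of class 1.
y = np.array([0] * 90 + [1] * 10)
X = np.zeros((len(y), 1))  # feature values do not influence how the folds are cut

plain = KFold(n_splits=5, shuffle=True, random_state=0)
stratified = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)

# Count how many minority-class samples fall into each validation fold.
plain_counts = [int(y[val].sum()) for _, val in plain.split(X)]
strat_counts = [int(y[val].sum()) for _, val in stratified.split(X, y)]

print("minority samples per fold, KFold:          ", plain_counts)
print("minority samples per fold, StratifiedKFold:", strat_counts)
```

With stratification each fold receives two of the ten minority samples, whereas the plain shuffled split gives no such guarantee.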

Leave-One-Out Cross-Validation (LOOCV)

LOOCV is an exhaustive cross-validation method where the number of folds k is set equal to the number of data points (n) in the dataset [38]. This means that for each iteration, the model is trained on all data points except one, which is left out as the validation set [39] [41]. This process is repeated n times until each data point has been used once as the test set. The advantage of LOOCV is that it utilizes nearly all data for training, resulting in a low-bias estimate [39] [41]. However, a significant drawback is that it can be computationally expensive for large datasets, as the model must be trained n times. Furthermore, testing on a single data point can cause high variance in the performance estimate, particularly if that point is an outlier [39].
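A minimal LOOCV sketch using scikit-learn's `LeaveOneOut` splitter follows. The subsampling of Iris to 30 points and the choice of logistic regression are assumptions made only to keep the run short.

```python
from sklearn.datasets import load_iris
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import LeaveOneOut, cross_val_score

X, y = load_iris(return_X_y=True)

# LOOCV fits the model once per sample, so keep the example small:
# every fifth row gives 30 samples, 10 per class.
X_small, y_small = X[::5], y[::5]

loo = LeaveOneOut()
model = LogisticRegression(max_iter=1000)
scores = cross_val_score(model, X_small, y_small, cv=loo)

print("number of model fits:", loo.get_n_splits(X_small))
print("distinct per-fold scores:", sorted(set(scores.tolist())))
print(f"LOOCV accuracy: {scores.mean():.3f}")
```

Because each validation set holds a single point, every per-fold accuracy is either 0 or 1; only the average over all n folds is informative.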

Holdout Validation

The Holdout Method is the simplest form of validation. It involves randomly splitting the dataset into two parts: a training set and a testing (or holdout) set [39] [38]. A typical split is 70-80% of data for training and the remaining 20-30% for testing [41]. While this method is simple and fast to execute, its major drawback is its high dependence on a single random split [39]. If the split is not representative of the overall data distribution, the performance estimate can be unreliable and have high variance. It also may not utilize data efficiently for training, especially in smaller datasets, potentially leading to a model with high bias if it misses important patterns in the held-out data [39].
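The dependence on a single random split can be illustrated by repeating a holdout evaluation with different seeds. The 75/25 split, the seeds, and the classifier are illustrative assumptions.

```python
from sklearn.datasets import load_iris
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import train_test_split

X, y = load_iris(return_X_y=True)

holdout_scores = []
for seed in range(5):
    # Same data, same model; only the random split changes.
    X_train, X_test, y_train, y_test = train_test_split(
        X, y, test_size=0.25, random_state=seed
    )
    model = LogisticRegression(max_iter=1000).fit(X_train, y_train)
    holdout_scores.append(model.score(X_test, y_test))

print("holdout accuracy per seed:", [round(s, 3) for s in holdout_scores])
print(f"spread (max - min): {max(holdout_scores) - min(holdout_scores):.3f}")
```

Any spread between seeds reflects the split alone, which is the variability that multi-round cross-validation is meant to average out.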

Table 1: Comparison of Common Cross-Validation Techniques

| Feature | K-Fold Cross-Validation | Stratified K-Fold | Leave-One-Out (LOOCV) | Holdout Method |

|---|---|---|---|---|

| Data Split | Divided into k equal folds [39] | Divided into k folds, preserving class distribution [39] | n folds; each fold is a single data point [39] | Single split into training and testing sets [39] |

| Training & Testing | Model is trained and tested k times [39] | Model is trained and tested k times [39] | Model is trained n times and tested n times [39] | Model is trained once and tested once [39] |

| Bias & Variance | Lower bias; variance depends on k [39] [40] | Lower bias; better for imbalanced data [39] [42] | Low bias, but can result in high variance [39] | Higher bias if split is not representative [39] |

| Execution Time | Slower, as model is trained k times [39] | Slower, similar to K-Fold [42] | Very slow for large datasets [39] | Fast, only one training cycle [39] |

| Best Use Case | Small to medium datasets for accurate estimation [39] | Classification problems with imbalanced datasets [39] [42] | Very small datasets where data is limited [39] | Very large datasets or for quick evaluation [39] |

Experimental Protocols for Cross-Validation

A standardized experimental protocol is essential for obtaining credible and reproducible cross-validation results. The following workflow details the key steps, from data preparation to final evaluation, which can be applied in forensic research contexts.

Diagram 1: Cross-validation workflow

Data Preparation and Preprocessing

The initial step involves loading and preparing the dataset for analysis. This includes handling missing values, encoding categorical variables if necessary, and potentially scaling features. For the Iris dataset, a common benchmark, the data is readily available and structured. It is crucial that any preprocessing steps, such as standardization, are learned from the training set and applied to the held-out validation set to prevent data leakage [43]. Using a Pipeline from scikit-learn is a best practice as it ensures that all transformations are correctly contained within the cross-validation loop [43].
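As a sketch of the leakage-safe setup described above, the scaler can be wrapped together with the model so that it is refit on the training portion of every fold. The specific estimator (logistic regression) is an assumption chosen for brevity.

```python
from sklearn.datasets import load_iris
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

X, y = load_iris(return_X_y=True)

# The scaler's mean and variance are learned inside each training fold only;
# the held-out fold is transformed with those training statistics.
pipeline = make_pipeline(StandardScaler(), LogisticRegression(max_iter=1000))

scores = cross_val_score(pipeline, X, y, cv=5)
print("per-fold accuracy:", scores.round(3))
```

Scaling the full dataset before splitting would let statistics from the validation folds influence training, which is the leakage the pipeline avoids.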

Defining the Cross-Validation Strategy

The researcher must select and define the cross-validation strategy based on the dataset's characteristics and the experiment's goals. For a standard k-fold approach, this involves instantiating a KFold object from scikit-learn and specifying the number of splits (n_splits or k). It is good practice to set shuffle=True to randomize the data before splitting and to use a fixed random_state to ensure the results are reproducible [39] [40]. For imbalanced datasets, a StratifiedKFold object should be used instead [39] [43].
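The role of a fixed random_state can be checked directly: two splitters built with the same seed should yield identical folds. The helper `validation_folds` and the toy array are hypothetical.

```python
import numpy as np
from sklearn.model_selection import KFold

X = np.arange(20).reshape(10, 2)  # toy data: 10 samples, 2 features


def validation_folds(random_state):
    """Return the validation indices of each fold for a given seed."""
    kfold = KFold(n_splits=5, shuffle=True, random_state=random_state)
    return [val.tolist() for _, val in kfold.split(X)]


same_seed_a = validation_folds(42)
same_seed_b = validation_folds(42)
other_seed = validation_folds(7)

print("same seed gives identical folds:", same_seed_a == same_seed_b)
print("different seed gives identical folds:", same_seed_a == other_seed)
```

Recording the seed alongside the results lets another examiner regenerate exactly the same partitions.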

Model Training and Evaluation Loop

The core of the experiment is the cross-validation loop. For each split generated by the chosen k-fold object, the following steps are executed:

- Split Data: The data indices are split into training and validation sets for the current fold [39].

- Train Model: The model is trained (fit) on the training subset of the data [39] [40].

- Validate Model: The trained model is used to generate predictions on the validation subset [40].

- Record Metrics: A performance metric (e.g., accuracy, mean squared error) is calculated by comparing the predictions to the true values of the validation set [39] [40].

This process is repeated for each of the k folds.
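The four steps above can be sketched as an explicit loop. The dataset, classifier, and metric are assumptions; `clone` is used so that each fold starts from an unfitted copy of the model.

```python
from sklearn.base import clone
from sklearn.datasets import load_iris
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import accuracy_score
from sklearn.model_selection import StratifiedKFold

X, y = load_iris(return_X_y=True)
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=42)
base_model = LogisticRegression(max_iter=1000)

fold_scores = []
for fold, (train_idx, val_idx) in enumerate(cv.split(X, y)):
    # 1. Split data: index into features and labels for this fold.
    X_train, X_val = X[train_idx], X[val_idx]
    y_train, y_val = y[train_idx], y[val_idx]

    # 2. Train model: fit a fresh, unfitted copy on the training subset.
    model = clone(base_model).fit(X_train, y_train)

    # 3. Validate model: predict on the held-out subset.
    y_pred = model.predict(X_val)

    # 4. Record metrics: compare predictions with the true labels.
    score = accuracy_score(y_val, y_pred)
    fold_scores.append(score)
    print(f"fold {fold}: accuracy = {score:.3f}")
```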

Performance Analysis and Reporting

After all k folds have been processed, the k performance scores are combined for a final evaluation. The mean of these scores is reported as the overall performance estimate of the model, providing a more reliable measure than a single train-test split [39] [43]. The standard deviation of the scores is also calculated, as it indicates the variance of the model's performance across different data subsets—a high standard deviation suggests the model's performance is sensitive to the specific training data [43] [40]. Finally, the results from multiple models can be compared to select the best-performing algorithm or set of hyperparameters [40].
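A reporting step along these lines might look as follows. The two candidate models are arbitrary choices for illustration, and selecting by mean score alone is a simplification; in practice the standard deviation should be weighed as well.

```python
from sklearn.datasets import load_iris
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.neighbors import KNeighborsClassifier

X, y = load_iris(return_X_y=True)

# Reuse one splitter so every candidate is scored on identical folds.
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=42)

candidates = {
    "logistic_regression": LogisticRegression(max_iter=1000),
    "knn_k5": KNeighborsClassifier(n_neighbors=5),
}

summary = {}
for name, model in candidates.items():
    scores = cross_val_score(model, X, y, cv=cv)
    summary[name] = (scores.mean(), scores.std())
    print(f"{name}: mean = {scores.mean():.3f}, std = {scores.std():.3f}")

# Simplified selection rule: highest mean validation accuracy.
best = max(summary, key=lambda name: summary[name][0])
print("selected model:", best)
```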

Implementation with Multiple Tools

The theoretical protocols are implemented using programming tools and libraries. The following section provides a comparative analysis of implementation methods using Python's scikit-learn library, which is a standard tool for machine learning tasks.

Table 2: Comparison of scikit-learn Implementation Tools

| Tool | Primary Function | Key Features | Sample Code Snippet | Output |

|---|---|---|---|---|

| KFold Class | Provides indices to split data into k folds [40]. | Full manual control over the splitting, training, and evaluation process [40]. | `kfold = KFold(n_splits=5, shuffle=True, random_state=42)`<br>`for train_idx, val_idx in kfold.split(X):`<br>`    X_train, X_val = X[train_idx], X[val_idx]`<br>`    y_train, y_val = y[train_idx], y[val_idx]`<br>`    model.fit(X_train, y_train)`<br>`    y_pred = model.predict(X_val)`<br>`    # Calculate metrics manually` | Provides the training/validation indices for manual loop implementation [40]. |

| cross_val_score Function | Evaluates a score by cross-validation [43]. | Simple and quick for evaluating a single metric [43] [40]. | `scores = cross_val_score(model, X, y, cv=5, scoring='accuracy')`<br>`print("Average Accuracy: %0.2f" % scores.mean())` | Returns an array of scores, one per fold [43]. |

| cross_validate Function | Evaluates one or multiple metrics by cross-validation [43]. | Allows multiple scoring metrics; returns fit/score times and optional training scores [43]. | `scoring = ['accuracy', 'f1_macro']`<br>`scores = cross_validate(model, X, y, scoring=scoring, cv=5, return_train_score=True)`<br>`print(scores['test_accuracy'])` | Returns a dict with test/train scores and times [43]. |

Tool Comparison and Use Cases

- Manual KFold for Maximum Flexibility: Using the KFold class in a manual loop is ideal for complex scenarios where custom operations are needed during each fold, such as specialized logging, intermediate saving of models, or complex data manipulations that are not supported by higher-level functions [40].

- cross_val_score for Quick Model Assessment: The cross_val_score function is the most straightforward tool for a quick and efficient evaluation of a model's performance using a single primary metric [43] [40]. It automates the looping and scoring process, making the code concise.

- cross_validate for Comprehensive Model Diagnostics: The cross_validate function is the best choice for a thorough evaluation. Its ability to handle multiple metrics simultaneously and return additional data like computation times and training scores makes it invaluable for robust model selection and for diagnosing issues like overfitting by comparing training and validation performance [43].
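As a rough check on how the two higher-level functions relate, the sketch below passes the same splitter to both and compares their accuracy scores. The model and seed are assumptions; the expectation that the scores match relies on the fit being deterministic for this solver.

```python
import numpy as np
from sklearn.datasets import load_iris
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import KFold, cross_val_score, cross_validate

X, y = load_iris(return_X_y=True)
model = LogisticRegression(max_iter=1000)
cv = KFold(n_splits=5, shuffle=True, random_state=42)  # shared by both calls

# Single metric: returns a plain array with one score per fold.
single = cross_val_score(model, X, y, cv=cv, scoring="accuracy")

# Multiple metrics: returns a dict keyed by "test_<metric>", "train_<metric>",
# plus "fit_time" and "score_time".
multi = cross_validate(
    model, X, y, cv=cv, scoring=["accuracy", "f1_macro"], return_train_score=True
)

print("keys returned by cross_validate:", sorted(multi.keys()))
print("accuracy scores agree:", np.allclose(single, multi["test_accuracy"]))
```

When only one number is needed, cross_val_score is sufficient; cross_validate adds the training scores and timings used for diagnostics.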

The Scientist's Toolkit: Research Reagent Solutions

In the context of computational forensics and model validation, "research reagents" refer to the essential software tools, libraries, and benchmark datasets that form the foundation for reproducible experiments.

Table 3: Essential Research Reagents for Cross-Validation Experiments

| Tool / Dataset | Type | Primary Function in Validation | Application Context |

|---|---|---|---|

| scikit-learn | Python Library | Provides implementations of KFold, cross_val_score, cross_validate, and various ML models [39] [43]. | Standard tool for building and evaluating machine learning models in Python. |

| Iris Dataset | Benchmark Data | A classic, multi-class dataset used as a known benchmark for evaluating classification models [39] [43]. | Serves as a controlled "known dataset" for initial method validation and teaching. |

| California Housing Dataset | Benchmark Data | A real-world regression dataset used to evaluate model performance on continuous value prediction [40]. | Used for testing models in a regression context with multiple numerical features. |

| StratifiedKFold | Algorithm | A cross-validation object that ensures relative class frequencies are preserved in each fold [39] [43]. | Crucial for validating models on imbalanced datasets, common in forensic scenarios. |

| Pipeline | Software Construct | Ensures that preprocessing steps are correctly fitted on the training data and applied to the validation data within the CV loop [43]. | Prevents data leakage, ensuring a purer and more reliable performance estimate. |

Application in Digital Forensics Validation Frameworks