Validating Likelihood Ratio Systems in Forensic Text Comparison: Methodologies, Challenges, and Best Practices

Abstract

This article provides a comprehensive examination of the validation frameworks for Likelihood Ratio (LR) systems in Forensic Text Comparison (FTC). Aimed at researchers and forensic practitioners, it explores the foundational LR framework for evaluating evidence, details methodological approaches from score-based to feature-based models, and addresses critical challenges like topic mismatch and data requirements. The content emphasizes the necessity of rigorous empirical validation that replicates real casework conditions to ensure the reliability and admissibility of forensic text evidence in legal proceedings. Future directions for establishing scientifically defensible FTC practices are also discussed.

The Likelihood Ratio Framework: Foundations for Forensic Text Evidence

Theoretical Foundation of the Likelihood Ratio

The Likelihood Ratio (LR) has become a cornerstone of forensic evidence evaluation, providing a logical and quantitative framework for expressing the strength of evidence. Rooted in Bayesian decision theory, the LR offers a coherent method for updating beliefs about competing propositions based on new evidence [1]. This framework separates the role of the forensic expert, who assesses the evidence, from that of the legal decision-maker, who considers prior case circumstances.

The fundamental Bayesian equation underlying this approach can be expressed in its odds form as:

Posterior Odds = Prior Odds × Likelihood Ratio [1]

This formula demonstrates how a decision-maker's initial beliefs (prior odds) are updated by considering the forensic evidence (as quantified by the LR) to form revised beliefs (posterior odds). The LR itself evaluates two competing propositions typically used in forensic contexts: the prosecution hypothesis (Hp) that the evidence originates from a specific known source, and the defense hypothesis (Hd) that the evidence originates from an alternative source within a relevant population [2]. The LR is calculated as the ratio of the probability of observing the evidence under Hp versus under Hd.
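As a minimal worked sketch of the odds-form update, the snippet below multiplies prior odds by an LR and converts the result to a probability. The function names and the numbers (prior odds of 1 to 100, LR of 50) are hypothetical illustrations and are not drawn from any cited study.

```python
def posterior_odds(prior_odds: float, likelihood_ratio: float) -> float:
    """Odds form of Bayes' theorem: posterior odds = prior odds x LR."""
    if prior_odds < 0 or likelihood_ratio < 0:
        raise ValueError("odds and likelihood ratios must be non-negative")
    return prior_odds * likelihood_ratio


def odds_to_probability(odds: float) -> float:
    """Convert odds in favour of Hp into a probability of Hp."""
    return odds / (1.0 + odds)


# Hypothetical values: the decision-maker holds prior odds of 1 to 100
# and the expert reports an LR of 50.
prior = 1 / 100
lr = 50.0
post = posterior_odds(prior, lr)
print(f"posterior odds = {post:.2f}")
print(f"posterior probability of Hp = {odds_to_probability(post):.3f}")
```

The expert supplies only `lr`; the prior odds, and therefore the posterior odds, remain with the decision-maker.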

Despite its theoretical appeal, the application of this framework requires careful consideration. The LR value provided by an expert represents their subjective evaluation, and Bayesian decision theory does not inherently support the direct transfer of a personal LR from an expert to a separate decision-maker [1]. This theoretical limitation underscores the importance of comprehensive uncertainty characterization to assess the fitness for purpose of any reported LR value [1].

LR Computational Methodologies and Score-Based Systems

Forensic science employs various methodologies for calculating LRs, with score-based systems being particularly prominent across multiple disciplines. These systems typically operate in two stages: first, a function processes measured features from known-source and questioned-source items to produce comparison scores; second, a model converts these scores into interpretable LRs [3].

Research demonstrates that not all score types perform equally. Effective scores must account for both similarity (the degree of agreement between the known-source and questioned-source specimens) and typicality (how common or rare the observed features are within the relevant population) [3]. Studies comparing different scoring approaches through Monte Carlo simulations have revealed that scores considering only similarity produce forensically inadequate LRs, whereas those incorporating both similarity and typicality yield more valid and interpretable results [3].

Table 1: Comparison of Score-Based LR Calculation Approaches

| Score Type | Components Considered | LR Validity | Key Characteristics |
|---|---|---|---|
| Non-anchored Similarity-Only | Similarity | Poor | Measures only feature agreement; ignores population distribution |
| Non-anchored Similarity and Typicality | Similarity + Typicality | Good | Considers both feature agreement and population rarity |
| Known-Source Anchored | Same-origin and different-origin scores | Better | Uses anchored comparisons for enhanced discrimination |

The process of converting raw comparison data into a calibrated LR often employs automated systems, such as Automated Fingerprint Identification System (AFIS) algorithms, which generate comparison scores that are subsequently transformed into LRs using statistical models [2]. The performance of these systems depends heavily on the quality and quantity of data used to train the conversion models, with larger datasets generally leading to more reliable LR values [2].
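The second stage of a score-based system, converting a comparison score into an LR, can be sketched as a ratio of two score densities. The example below is a simplified illustration only: it assumes Gaussian score distributions fitted to a handful of invented training scores, whereas the text mentions kernel density estimation and logistic regression as typical choices. All names and values are hypothetical.

```python
from statistics import NormalDist, mean, stdev


def fit_score_model(scores):
    """Fit a Gaussian to training scores (an assumed, simplistic score model)."""
    return NormalDist(mean(scores), stdev(scores))


def score_to_lr(score, same_model, diff_model):
    """LR = density of the score under same-source / density under different-source."""
    return same_model.pdf(score) / diff_model.pdf(score)


# Hypothetical training scores from comparisons with known ground truth.
same_source_scores = [0.82, 0.90, 0.75, 0.88, 0.79, 0.93]
diff_source_scores = [0.30, 0.45, 0.25, 0.52, 0.38, 0.41]

same_model = fit_score_model(same_source_scores)
diff_model = fit_score_model(diff_source_scores)

for s in (0.35, 0.60, 0.85):
    print(f"score={s:.2f}  LR={score_to_lr(s, same_model, diff_model):.3g}")
```

Whether the resulting LRs are forensically adequate depends on what the score itself encodes: as noted above, a score that reflects similarity alone is not rescued by this conversion step.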

Validation Frameworks for LR Systems

Validating LR systems requires rigorous assessment against multiple performance characteristics to ensure their forensic reliability. A comprehensive validation matrix should specify these characteristics, along with corresponding metrics, graphical representations, and validation criteria [2].

Table 2: Essential Performance Characteristics for LR System Validation

| Performance Characteristic | Performance Metrics | Graphical Representations | Validation Purpose |
|---|---|---|---|
| Accuracy | Cllr | ECE Plot | Measures how well calculated LRs reflect actual evidence strength |
| Discriminating Power | EER, Cllr-min | ECE-min Plot, DET Plot | Assesses system's ability to distinguish between same-source and different-source evidence |
| Calibration | Cllr-cal | Tippett Plot | Evaluates whether LR values are properly scaled (e.g., LR>1 when Hp is true) |
| Robustness | Cllr, EER | ECE Plot, DET Plot, Tippett Plot | Tests system stability under varying conditions or with different data inputs |
| Coherence | Cllr, EER | ECE Plot, DET Plot, Tippett Plot | Ensures internal consistency across different system components or methodologies |
| Generalization | Cllr, EER | ECE Plot, DET Plot, Tippett Plot | Determines how well the system performs on new, unseen data |

The validation process requires different datasets for development and validation stages to prevent overfitting and ensure realistic performance assessment [2]. For forensic applications, using case-relevant data is crucial, as system performance can vary significantly across different evidence types and population characteristics.
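For readers unfamiliar with the accuracy metric in Table 2, the following sketch computes the log-likelihood-ratio cost (Cllr) from validation LRs with known ground truth, using its standard definition: the average of the mean of log2(1 + 1/LR) over same-source comparisons and the mean of log2(1 + LR) over different-source comparisons. The function name and the sample LR values are illustrative assumptions.

```python
import math


def cllr(same_source_lrs, diff_source_lrs):
    """Log-likelihood-ratio cost; 0 is ideal, 1 matches a system that always reports LR = 1."""
    if not same_source_lrs or not diff_source_lrs:
        raise ValueError("both sets of validation LRs must be non-empty")
    # Penalty for same-source comparisons grows as the LR falls below 1.
    ss_cost = sum(math.log2(1 + 1 / lr) for lr in same_source_lrs) / len(same_source_lrs)
    # Penalty for different-source comparisons grows as the LR rises above 1.
    ds_cost = sum(math.log2(1 + lr) for lr in diff_source_lrs) / len(diff_source_lrs)
    return 0.5 * (ss_cost + ds_cost)


# Hypothetical validation LRs computed on data held out from development.
same_lrs = [120.0, 35.0, 8.0, 0.6]
diff_lrs = [0.02, 0.1, 0.4, 3.0]
print(f"Cllr = {cllr(same_lrs, diff_lrs):.3f}")
```

Because the penalty is unbounded for strongly misleading LRs, a few confident errors can dominate the value, which is one reason the validation set must be separate from the development set.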

Recent research has investigated how the reliability of LR-based systems is affected by sampling variability, particularly regarding author numbers in text comparison databases. Findings indicate that systems can achieve stable performance with sufficient samples (e.g., 30-40 authors contributing multiple documents), with variability mostly attributable to calibration processes rather than discrimination capability [4].

Experimental Comparisons and Performance Data

Experimental comparisons provide critical insights into the real-world performance of different LR approaches. Monte Carlo simulation studies offer particularly valuable evidence by enabling comparison of calculated LR values against reference values derived from fully specified probability distributions [3].

In one such simulation comparing three score-based procedures, researchers established that:

- Procedures using similarity-only scores produced poorly calibrated LRs that failed to accurately reflect evidence strength

- Procedures incorporating similarity and typicality demonstrated significantly better performance, with LR values closer to reference values

- The superiority of similarity-typicality scores held across various experimental conditions and evidence types [3]

Performance data from forensic fingerprint evaluation further illustrates these principles. When using AFIS comparison scores to compute LRs, researchers established specific validation criteria including accuracy thresholds (Cllr < 0.2) to determine whether LR methods met required standards for casework implementation [2].

Table 3: Example Experimental Results from Fingerprint LR Validation

| Performance Aspect | Baseline Method Result | Improved Method Result | Relative Change | Validation Decision |
|---|---|---|---|---|
| Accuracy (Cllr) | 0.25 | 0.18 | -28% | Pass |
| Discriminating Power (EER) | 8.5% | 6.2% | -27% | Pass |
| Calibration (Cllr-cal) | 0.30 | 0.20 | -33% | Pass |

These experimental protocols typically involve comparing evidence items under controlled conditions where ground truth is known, enabling precise measurement of how well LR systems discriminate between same-source and different-source specimens while properly calibrating the strength of evidence [2].
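The arithmetic behind Table 3 can be reproduced with a few lines. The sketch below recomputes the relative changes from the tabulated baseline and improved values and checks the accuracy result against the Cllr < 0.2 criterion mentioned above; the function and variable names are hypothetical, and no thresholds beyond that one are assumed.

```python
def relative_change(baseline: float, improved: float) -> float:
    """Percentage change from the baseline value to the improved value."""
    return (improved - baseline) / baseline * 100.0


# (baseline, improved) pairs copied from Table 3.
results = {
    "Accuracy (Cllr)": (0.25, 0.18),
    "Discriminating Power (EER, %)": (8.5, 6.2),
    "Calibration (Cllr-cal)": (0.30, 0.20),
}

for name, (baseline, improved) in results.items():
    print(f"{name}: {relative_change(baseline, improved):+.0f}%")

# Accuracy criterion stated in the text: Cllr must be below 0.2.
CLLR_THRESHOLD = 0.2
accuracy_passes = results["Accuracy (Cllr)"][1] < CLLR_THRESHOLD
print("accuracy criterion met:", accuracy_passes)
```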

Research Reagents and Essential Materials

Implementing and validating LR systems requires specific research reagents and computational materials that form the essential toolkit for forensic researchers:

Reference Databases: Curated collections of known-source specimens with verified provenance, essential for establishing relevant population distributions and calculating typicality [3] [2]. These databases must be representative of casework materials and sufficiently large to ensure stable system performance.

Validation Datasets: Separate collections of known-source and questioned-source specimens with established ground truth, used exclusively for testing system performance without influencing development [2]. These datasets should reflect realistic casework conditions.

AFIS Algorithms: Automated comparison systems (e.g., Motorola BIS/Printrak) that generate similarity scores from pattern evidence such as fingerprints [2]. These algorithms function as "black boxes" to produce comparison metrics without revealing internal methodologies.

Statistical Modeling Software: Computational tools for converting comparison scores into calibrated LRs, typically implementing methods such as kernel density estimation or logistic regression [3] [2]. These models transform raw scores into forensically interpretable LRs.

Performance Evaluation Metrics: Quantitative measures including Cllr, EER, and related statistics that provide standardized assessment of system validity [2]. These metrics enable objective comparison across different LR methodologies.

Monte Carlo Simulation Environments: Computational frameworks for generating synthetic data from fully specified probability distributions, allowing comparison of LR methods against known reference values [3]. These controlled environments enable rigorous testing of methodological assumptions.

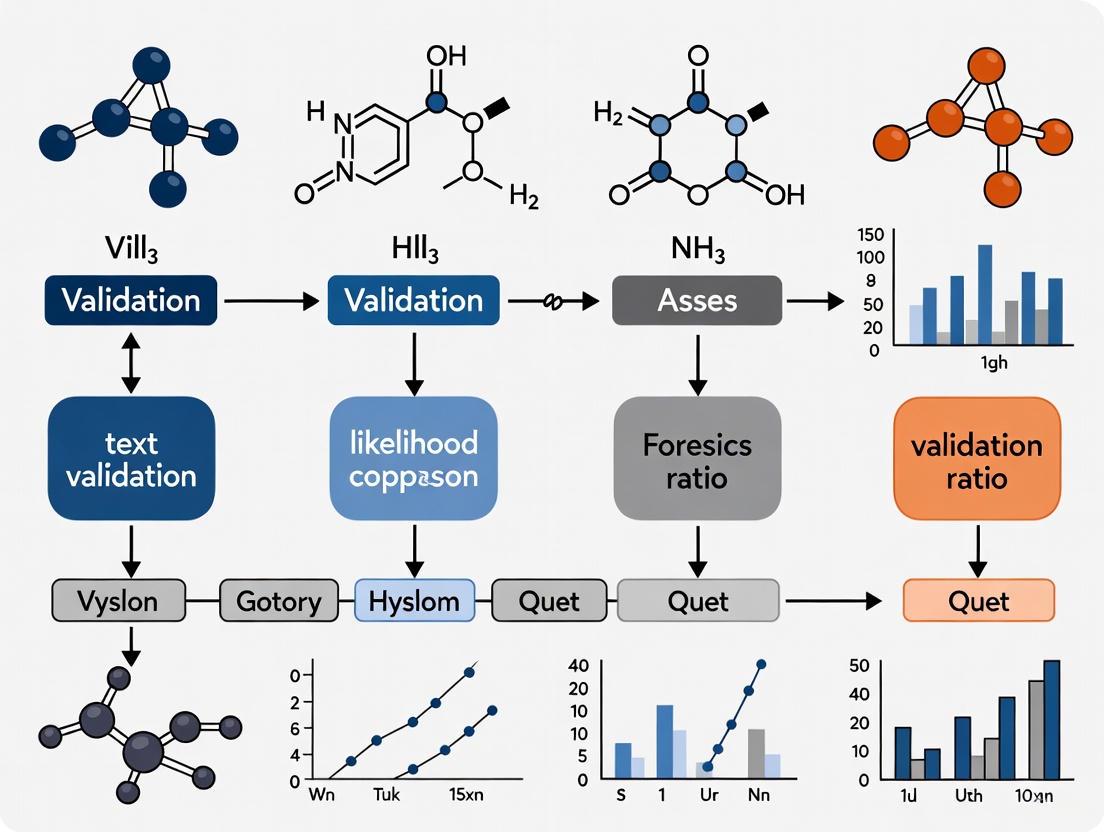

Logical Framework and Validation Workflow

The following diagrams illustrate the logical framework of LR evidence evaluation and the comprehensive validation workflow for LR systems.

[Figure: Logical Framework of LR Evidence Evaluation]

[Figure: LR System Validation Workflow]

The uncertainty assessment phase represents a critical component of LR system validation, addressing the potential variability in LR values resulting from different modeling assumptions and methodological choices [1]. This process acknowledges that even with optimal scoring approaches, LR values may vary based on subjective decisions made during system development and application.

In the realm of statistical reasoning and evidence-based disciplines, Bayes' theorem provides a formal mechanism for updating beliefs in light of new evidence. While often expressed in its probability form, the odds form of Bayes' theorem offers distinct advantages for computational efficiency and interpretive clarity, particularly in specialized fields such as forensic text comparison [5] [6]. This formulation transforms the traditional Bayesian update into a more streamlined mathematical relationship that separates prior beliefs from the strength of new evidence.

The theorem fundamentally bridges prior beliefs with new evidence through a simple multiplicative operation: posterior odds = prior odds × likelihood ratio [6]. This elegant relationship allows researchers to quantify how much new evidence should shift their initial beliefs about competing hypotheses. The odds form is especially valuable in forensic science where experts must communicate the strength of evidence without encroaching on the domain of the trier of fact, who maintains responsibility for prior odds assessments [1] [7].

Mathematical Formulation and Comparison

Fundamental Equations

The odds form of Bayes' theorem provides a direct mathematical relationship between competing hypotheses. For two mutually exclusive and exhaustive hypotheses A and B, the formula can be expressed as [6]:

$$o(A \mid D) = o(A) \times \frac{P(D \mid A)}{P(D \mid B)}$$

Where:

- o(A|D) represents the posterior odds of hypothesis A given data D
- o(A) represents the prior odds of hypothesis A
- P(D|A)/P(D|B) represents the likelihood ratio (Bayes factor)

This formulation reveals a critical insight: the normalizing constant required in the probability form of Bayes' theorem cancels out, significantly simplifying calculations [6].
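The cancellation can be made explicit by writing the probability form once for each hypothesis and dividing. The short derivation below uses only the symbols already defined, with o(A) = P(A)/P(B) because A and B are mutually exclusive and exhaustive.

```latex
\begin{align*}
P(A \mid D) &= \frac{P(D \mid A)\,P(A)}{P(D)}, \qquad
P(B \mid D) = \frac{P(D \mid B)\,P(B)}{P(D)} \\[4pt]
o(A \mid D) &= \frac{P(A \mid D)}{P(B \mid D)}
  = \frac{P(D \mid A)\,P(A)}{P(D \mid B)\,P(B)}
  = o(A) \times \frac{P(D \mid A)}{P(D \mid B)}
\end{align*}
```

The term P(D) appears in both numerator and denominator of the ratio and therefore never needs to be evaluated.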

Comparison of Bayesian Forms

Table 1: Comparison of Bayes' Theorem Formulations

| Feature | Probability Form | Odds Form |
|---|---|---|
| Mathematical Expression | P(A \| D) = [P(D \| A) P(A)] / P(D) | o(A \| D) = o(A) × [P(D \| A) / P(D \| B)] |
| Normalizing Constant | Requires P(D) | Cancels out in calculation |
| Computational Efficiency | More computationally intensive | Simplified computation |
| Hypothesis Comparison | Indirect comparison of single hypothesis | Direct comparison of competing hypotheses |
| Interpretive Clarity | Less intuitive for evidence strength | Clearly separates evidence strength from prior beliefs |

The probability form computes updated belief in a hypothesis given evidence through a comprehensive probability calculation, while the odds form focuses specifically on comparing competing hypotheses by leveraging the likelihood ratio [5] [6]. This makes the odds form particularly valuable in forensic applications where the evidence must be evaluated in the context of prosecution and defense hypotheses [7].

Application in Forensic Text Comparison

The Likelihood Ratio Framework

In forensic text comparison, the odds form of Bayes' theorem provides the mathematical foundation for the likelihood ratio framework, which has been described as "the logically and legally correct approach for evaluating forensic evidence" [7]. The standard formulation for the likelihood ratio in this context is:

$$LR = \frac{p(E \mid H_p)}{p(E \mid H_d)}$$

Where:

- p(E|Hp) is the probability of the evidence given the prosecution hypothesis (that the suspect is the author)
- p(E|Hd) is the probability of the evidence given the defense hypothesis (that someone else is the author) [7]

This LR quantitatively expresses the strength of the textual evidence, indicating how much more likely the evidence is under one hypothesis compared to the other.

Casework Application

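The complete Bayesian updating process in forensic text comparison follows the odds form [7]:

$$\frac{p(H_p \mid E)}{p(H_d \mid E)} = \frac{p(H_p)}{p(H_d)} \times \frac{p(E \mid H_p)}{p(E \mid H_d)}$$

Here the left-hand side is the posterior odds, the first factor on the right is the prior odds, and the second factor is the LR.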

This formulation properly separates the roles of the forensic scientist (who provides the LR) from the trier of fact (who assesses the prior odds) [1] [7]. This separation is crucial both logically and legally, as it prevents forensic experts from encroaching on the ultimate issue of guilt or innocence [7].

Experimental Validation Protocols

Core Validation Requirements

Empirical validation of likelihood ratio systems in forensic text comparison must fulfill two critical requirements [7]:

Reflecting casework conditions: Experiments must replicate the specific conditions of the case under investigation, including potential mismatches in topics, genres, or communicative situations between compared documents.

Using relevant data: Validation must employ data appropriate to the case circumstances, as the performance of text comparison methods can vary significantly with different types of textual evidence.

These requirements ensure that validation studies accurately represent real-world forensic scenarios, providing meaningful estimates of system performance when applied to actual casework.

Experimental Workflow

The standard experimental protocol for validating likelihood ratio systems in forensic text comparison is a structured, multi-stage process: database construction, LR calculation, performance assessment, and calibration.

Validation Metrics and Performance Assessment

Table 2: Key Metrics for Validating Likelihood Ratio Systems

| Metric | Calculation | Interpretation | Application in Text Comparison |
|---|---|---|---|
| Log-Likelihood-Ratio Cost (Cllr) | Average of the mean of log2(1 + 1/LR) over same-author comparisons and the mean of log2(1 + LR) over different-author comparisons | Overall system performance; lower is better, and a system that always reports LR = 1 scores 1 | Primary metric recommended by forensic regulators [7] |
| Tippett Plots | Cumulative proportions of same-author and different-author LRs plotted against the LR value | Visual assessment of calibration | Shows proportion of LRs supporting true vs. false hypotheses [7] |
| False Positive Rate | Proportion of different-author comparisons with LR > 1 (support for Hp when Hd is true) | Rate of errors favoring prosecution | Essential for understanding system limitations |
| False Negative Rate | Proportion of same-author comparisons with LR < 1 (support for Hd when Hp is true) | Rate of errors favoring defense | Balanced assessment of system performance |

These metrics provide comprehensive assessment of both the discrimination ability (how well the system distinguishes between same-author and different-author texts) and calibration (how accurately the LRs represent the actual strength of evidence) of forensic text comparison systems.
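The error rates and the data behind a Tippett plot can be derived directly from validation LRs. The sketch below is a minimal illustration with invented LR values; it treats an LR above 1 for a different-author pair as a false positive and an LR below 1 for a same-author pair as a false negative, and it tabulates the proportion of LRs at or above each log10 threshold, which is one common way to draw a Tippett curve. Function names are hypothetical.

```python
import math


def rates_of_misleading_evidence(same_author_lrs, diff_author_lrs):
    """Return (false_positive_rate, false_negative_rate) using LR = 1 as the neutral point."""
    false_negative = sum(lr < 1 for lr in same_author_lrs) / len(same_author_lrs)
    false_positive = sum(lr > 1 for lr in diff_author_lrs) / len(diff_author_lrs)
    return false_positive, false_negative


def tippett_points(lrs, log10_thresholds):
    """Proportion of LRs whose log10 value is at or above each threshold."""
    return [
        (t, sum(math.log10(lr) >= t for lr in lrs) / len(lrs))
        for t in log10_thresholds
    ]


# Hypothetical validation results with known ground truth.
same_author = [20.0, 5.0, 0.8, 150.0]
diff_author = [0.01, 0.3, 2.0, 0.05]

fpr, fnr = rates_of_misleading_evidence(same_author, diff_author)
print(f"false positive rate = {fpr:.2f}, false negative rate = {fnr:.2f}")

thresholds = [-2, -1, 0, 1, 2]
print("same-author curve:     ", tippett_points(same_author, thresholds))
print("different-author curve:", tippett_points(diff_author, thresholds))
```

Plotting the two curves on shared axes gives the Tippett plot; well-separated curves that cross near log10 LR = 0 suggest good discrimination and calibration.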

Bayesian Reasoning Process Visualization

The fundamental process of Bayesian updating through the odds form systematically integrates prior beliefs with new evidence: the prior odds are multiplied by the likelihood ratio to yield the posterior odds.

The odds form thus cleanly separates the contribution of prior beliefs (typically within the domain of the trier of fact) from the strength of new evidence (typically within the domain of the forensic expert).

Research Reagent Solutions for Forensic Text Comparison

Table 3: Essential Research Materials for Likelihood Ratio Validation

| Research Component | Function | Implementation Example |
|---|---|---|
| Text Corpora | Provide relevant data for validation | Domain-specific collections reflecting casework topics [7] |
| Statistical Models | Calculate probabilities under competing hypotheses | Dirichlet-multinomial models for text [7] |
| Calibration Methods | Adjust raw scores to meaningful LRs | Logistic regression calibration [7] |
| Validation Metrics | Assess system performance and reliability | Cllr, Tippett plots, error rates [7] |
| Experimental Protocols | Ensure scientifically defensible validation | Black-box studies with known ground truth [1] |
| Computational Frameworks | Implement LR calculation and validation | Custom software packages for forensic text analysis |

These components form the essential toolkit for developing, implementing, and validating likelihood ratio systems in forensic text comparison research. The selection of appropriate text corpora is particularly critical, as the performance of authorship analysis methods can vary significantly across different types of texts, topics, and genres [7]. Similarly, proper calibration methods are necessary to ensure that the numerical values of LRs accurately represent the strength of evidence, enabling meaningful interpretation by legal decision-makers.
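To make the calibration step concrete, the sketch below fits a one-dimensional logistic regression to uncalibrated scores from comparisons with known ground truth and converts a new score to a calibrated log10 LR. It is an illustration under stated assumptions rather than the implementation used in any cited study: the scores are invented, the fit uses plain gradient descent instead of a library optimizer, and the log prior odds of the training set are subtracted so that the output is an LR rather than posterior odds.

```python
import math


def fit_logistic_calibration(same_scores, diff_scores, steps=5000, rate=0.1):
    """Fit log-odds = a * score + b by gradient descent, then remove the training prior odds."""
    data = [(s, 1.0) for s in same_scores] + [(s, 0.0) for s in diff_scores]
    a, b = 0.0, 0.0
    n = len(data)
    for _ in range(steps):
        grad_a = grad_b = 0.0
        for s, y in data:
            p = 1.0 / (1.0 + math.exp(-(a * s + b)))
            grad_a += (p - y) * s
            grad_b += p - y
        a -= rate * grad_a / n
        b -= rate * grad_b / n
    # The fitted intercept absorbs the same/different proportions of the training set;
    # subtracting their log odds leaves a log LR.
    log_prior_odds = math.log(len(same_scores) / len(diff_scores))
    return a, b - log_prior_odds


def calibrated_log10_lr(score, a, b):
    """Map an uncalibrated score to a calibrated log10 likelihood ratio."""
    return (a * score + b) / math.log(10)


# Hypothetical uncalibrated scores (overlapping, as real scores usually are).
same_scores = [1.2, 2.0, 0.4, 2.8, 1.6, -0.2]
diff_scores = [-1.5, -0.3, 0.6, -2.2, -0.9, 0.1]

a, b = fit_logistic_calibration(same_scores, diff_scores)
for s in (-2.0, 0.3, 2.5):
    print(f"score={s:+.1f}  calibrated log10 LR={calibrated_log10_lr(s, a, b):+.2f}")
```

The mapping is affine in the score, so it rescales and shifts the evidence strength without changing the rank order of comparisons; discrimination is unaffected, only calibration.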

Sensitivity, Typicality, and the Probative Value of LRs

The likelihood ratio (LR) serves as a fundamental framework for evaluating forensic evidence, providing a logically and legally correct approach to quantify the strength of evidence in forensic text comparison (FTC) [7]. The LR framework enables forensic practitioners to move beyond subjective opinions toward transparent, reproducible, and quantitatively validated methodologies [7] [8]. This formal approach is increasingly mandated by international standards, including ISO 21043, which provides requirements and recommendations to ensure quality throughout the forensic process [8].

Within forensic text comparison, the LR quantitatively expresses the ratio of two probabilities under competing hypotheses concerning the source of a questioned document. The LR equals the probability of the evidence assuming the prosecution hypothesis (Hp) is true, divided by the probability of the same evidence assuming the defense hypothesis (Hd) is true, that is, LR = p(E|Hp) / p(E|Hd) [7]. In typical FTC casework, Hp posits that the questioned and known documents originate from the same author, while Hd proposes they originate from different authors [7]. The further the LR value deviates from 1, the stronger the support for either Hp (LR > 1) or Hd (LR < 1).

Table 1: Core Components of the Likelihood Ratio Framework

| Component | Formula Notation | Interpretation in Forensic Text Comparison |
|---|---|---|
| Evidence | $E$ | The textual data under examination (e.g., writing style features) |
| Prosecution Hypothesis | $H_p$ | "The questioned and known documents were produced by the same author" |
| Defense Hypothesis | $H_d$ | "The questioned and known documents were produced by different authors" |
| Similarity | $p(E \mid H_p)$ | Probability of observing the evidence given the same author wrote both documents |
| Typicality | $p(E \mid H_d)$ | Probability of observing the evidence given a different author wrote the documents |
| Likelihood Ratio | $LR = \frac{p(E \mid H_p)}{p(E \mid H_d)}$ | Quantitative measure of the strength of the evidence |

Conceptual Foundations: Sensitivity and Typicality

The probabilistic foundation of the LR framework rests upon two interconnected concepts: sensitivity and typicality. These concepts provide the conceptual underpinnings for the two probabilities that form the LR.

Sensitivity: The Similarity Component

Sensitivity refers to the probability of the evidence given the prosecution hypothesis, p(E|Hp) [7]. This component assesses how similar the textual features are between the questioned document and known documents from a suspected author. In practical terms, a high degree of sensitivity indicates that the writing styles across the documents are consistent with originating from the same author. Forensic text comparison systems evaluate sensitivity by measuring the alignment between documents across various linguistic features, such as lexical patterns, syntactic structures, or character n-grams [9].

Typicality: The Distinctiveness Component

Typicality refers to the probability of the evidence given the defense hypothesis, p(E|Hd) [7]. This component evaluates how distinctive the observed similarities are by assessing whether the writing style in the questioned document commonly appears in the broader population of potential authors. A low typicality value (making the LR higher) indicates that the shared features are unusual and not widely distributed across other authors, thus strengthening the evidence against a coincidental match. Typicality is measured by comparing the questioned document's features against a relevant background population [7] [10].
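A small numerical sketch shows why both components matter. It assumes a single continuous stylistic feature (a hypothetical "mean sentence length"), a Gaussian within-author model for the numerator, and a Gaussian background-population model for the denominator; the parameter values are invented. In both scenarios the questioned value sits equally close to the suspect's mean, so the numerator is the same, yet the LR differs sharply because the denominator does.

```python
from statistics import NormalDist

# Assumed background population of authors for the hypothetical feature.
POPULATION = NormalDist(mu=20.0, sigma=4.0)
WITHIN_AUTHOR_SD = 1.0  # assumed within-author variability


def feature_lr(questioned_value: float, suspect_mean: float) -> float:
    """LR = p(E|Hp) / p(E|Hd) for one feature under the assumed Gaussian models."""
    numerator = NormalDist(suspect_mean, WITHIN_AUTHOR_SD).pdf(questioned_value)
    denominator = POPULATION.pdf(questioned_value)
    return numerator / denominator


# Scenario A: suspect's style is typical of the population (mean 20).
lr_typical = feature_lr(questioned_value=20.5, suspect_mean=20.0)
# Scenario B: suspect's style is rare in the population (mean 30).
lr_rare = feature_lr(questioned_value=30.5, suspect_mean=30.0)

print(f"typical style: LR = {lr_typical:.1f}")
print(f"rare style:    LR = {lr_rare:.1f}")
```

The same degree of agreement yields much stronger support for Hp when the shared style is rare among other potential authors.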

Experimental Protocols for LR System Validation

The Consensus Validation Framework

Empirical validation under casework conditions represents a critical requirement for forensically valid LR systems [7] [11]. The consensus in the forensic science community mandates that validation experiments must fulfill two primary requirements: (1) reflecting the conditions of the case under investigation, and (2) using data relevant to the case [7]. This approach ensures that performance metrics accurately represent real-world applicability rather than ideal laboratory conditions.

For forensic text comparison specifically, researchers must carefully determine specific casework conditions requiring validation, identify what constitutes relevant data, and establish the necessary quality and quantity of data for robust validation [7]. This is particularly crucial given the complexity of textual evidence, where authors' idiolects interact with numerous contextual factors including topic, genre, formality, and emotional state [7].

Addressing Mismatched Conditions

Validation protocols must specifically test system performance under mismatched conditions that reflect real-world forensic challenges. The experimental protocol for testing topic mismatch involves:

- Database Construction: Compiling document collections with controlled topic variations, including matched-topic and cross-topic comparisons [7].

- LR Calculation: Computing likelihood ratios using appropriate statistical models such as the Dirichlet-multinomial model for textual data [7].

- Performance Assessment: Evaluating system outputs using the log-likelihood-ratio cost (Cllr) metric and visualizing results with Tippett plots [7] [12].

- Calibration: Applying logistic-regression calibration to improve the alignment of LR values with their intended meaning [7].
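The LR calculation step can be illustrated with a deliberately simplified Dirichlet-multinomial sketch. It is an assumption-laden toy, not the model specification from the cited work: documents are reduced to counts over three hypothetical word categories, the background population is summarized by fixed Dirichlet parameters `alpha`, the numerator is the predictive probability of the questioned counts after updating `alpha` with the known document, and the denominator is their probability under `alpha` alone. The multinomial coefficient is omitted because it cancels in the ratio.

```python
from math import exp, lgamma


def log_dm_kernel(counts, alpha):
    """Log Dirichlet-multinomial probability of counts, without the multinomial coefficient."""
    total_alpha = sum(alpha)
    total_count = sum(counts)
    value = lgamma(total_alpha) - lgamma(total_alpha + total_count)
    for x, a in zip(counts, alpha):
        value += lgamma(a + x) - lgamma(a)
    return value


def log_lr(known_counts, questioned_counts, alpha):
    """Natural-log LR: same-author predictive probability vs. background probability."""
    updated_alpha = [a + k for a, k in zip(alpha, known_counts)]
    return log_dm_kernel(questioned_counts, updated_alpha) - log_dm_kernel(questioned_counts, alpha)


# Hypothetical counts over three word categories; alpha stands in for the background model.
alpha = [1.0, 1.0, 1.0]
known = [8, 1, 1]

print(f"similar usage:    LR = {exp(log_lr(known, [4, 0, 0], alpha)):.2f}")
print(f"dissimilar usage: LR = {exp(log_lr(known, [0, 4, 0], alpha)):.3f}")
```

In a real system `alpha` would be estimated from a case-relevant background corpus, which is where the topic-matched versus cross-topic database design enters the calculation.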

Similar protocols apply to other mismatched conditions, such as variations in within-speaker sample sizes, where researchers systematically manipulate token numbers between test/development databases and background databases to assess performance degradation [12].

Figure 1: LR System Validation Workflow. This diagram illustrates the sequential protocol for validating likelihood ratio systems under forensically relevant conditions.

Quantitative Performance Comparison of LR Methodologies

The literature describes several methodological approaches for implementing LR systems in forensic text comparison, each with distinct performance characteristics. The table below summarizes key experimental findings from validation studies.

Table 2: Performance Comparison of LR Methodologies in Textual Evidence

| Methodology | Application Context | Performance Metrics | Key Findings |
|---|---|---|---|
| Dirichlet-Multinomial Model [7] | Forensic Text Comparison (Topic Mismatch) | Cllr, Tippett Plots | Proper validation with relevant data and case conditions produces more reliable LRs than non-validated approaches |
| Authorship Verification Methods [9] | Forensic Voice Comparison (Speech Data) | Cllr < 1 threshold | N-gram tracing exploiting typicality & similarity performed best; Cllr below 1 for most experiments |
| Multivariate Kernel Density [12] | Forensic Voice Comparison (Sample Size) | Cllr | Performance improved with more tokens in background database; 6+ tokens showed marginal improvement |
| Cosine Delta, Impostors Method [9] | Authorship Verification (Speech Data) | Cllr | Demonstrated speaker discriminatory power in word frequency information from speech transcripts |

The Uncertainty Pyramid: Assessing LR Reliability

A critical yet often overlooked aspect of LR systems involves comprehensive uncertainty characterization. The uncertainty pyramid framework provides a structured approach to assess the range of LR values attainable under different reasonable modeling assumptions [1]. This is essential because even career statisticians cannot objectively identify a single authoritative model for translating data into probabilities [1].

The uncertainty pyramid operates through a lattice of assumptions, where each level represents different criteria for model reasonableness. Exploring multiple ranges of LR values corresponding to different criteria enables researchers and legal decision-makers to better understand the relationships between interpretation, data, and assumptions [1]. This approach acknowledges that sampling variability, measurement errors, and variability in choice of assumptions and models all contribute to uncertainty in final LR values.
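One way to picture the idea of LR ranges under nested sets of assumptions is to recompute the same LR across every model in a candidate set and report the extremes. The sketch below is only an illustration of that idea, not the procedure of [1]: it assumes a single Gaussian feature, and the "assumptions" are alternative values for the within-author and population standard deviations. All names and numbers are hypothetical.

```python
from itertools import product
from statistics import NormalDist


def lr_range(x, suspect_mean, pop_mean, within_sds, pop_sds):
    """Smallest and largest LR over every combination of the assumed standard deviations."""
    lrs = [
        NormalDist(suspect_mean, w).pdf(x) / NormalDist(pop_mean, p).pdf(x)
        for w, p in product(within_sds, pop_sds)
    ]
    return min(lrs), max(lrs)


x, suspect_mean, pop_mean = 26.0, 25.0, 20.0

# Stricter criterion for what counts as a reasonable model: a single model.
narrow = lr_range(x, suspect_mean, pop_mean, within_sds=[1.0], pop_sds=[4.0])
# Looser criterion: a wider set of models that contains the single model above.
wide = lr_range(x, suspect_mean, pop_mean,
                within_sds=[0.8, 1.0, 1.5], pop_sds=[3.0, 4.0, 5.0])

print(f"single model:     LR from {narrow[0]:.2f} to {narrow[1]:.2f}")
print(f"wider assumptions: LR from {wide[0]:.2f} to {wide[1]:.2f}")
```

Because the wider set contains the narrower one, its range can only be equal or larger, which mirrors how relaxing the reasonableness criteria broadens the interval of attainable LR values.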

Essential Research Reagents for Experimental LR Research

Table 3: Research Reagent Solutions for LR System Validation

| Research Reagent | Function in LR Validation | Application Examples |
|---|---|---|
| Relevant Text Corpora | Provides population data for estimating typicality | Topic-controlled documents, representative genre samples [7] |
| Statistical Software Platforms | Implements LR calculation models | Dirichlet-multinomial modeling, kernel density estimation [7] [12] |
| Performance Metrics | Quantifies system validity and reliability | Cllr (log-likelihood-ratio cost), Tippett plots [7] [12] |
| Calibration Algorithms | Adjusts raw LR outputs to improve accuracy | Logistic regression calibration [7] [12] |
| Validation Databases | Tests system performance under casework conditions | Databases with known ground truth and controlled variables [11] |

The probative value of likelihood ratios in forensic text comparison fundamentally depends on the rigorous validation of both sensitivity (p(E|Hp)) and typicality (p(E|Hd)) components under casework conditions. The experimental data demonstrate that properly validated systems employing relevant data and appropriate statistical models can provide scientifically defensible evidence for legal decision-makers [7] [11]. The international movement toward standardized frameworks, including ISO 21043 and the LR framework mandate in the United Kingdom by October 2026, underscores the growing consensus on these methodological requirements [7] [8].

Future research must continue to address the complex interplay of linguistic variables affecting writing style while developing more sophisticated approaches to uncertainty quantification. Only through transparent, empirically validated, and forensically grounded LR systems can the field of forensic text comparison fulfill its scientific obligations to the justice system.

The Current State of LR Comprehension and Presentation for Legal Decision-Makers

Forensic text comparison (FTC) plays a crucial role in the justice system by providing scientific evidence regarding the authorship of questioned documents. The likelihood ratio (LR) framework has emerged as the logically and legally correct approach for evaluating and presenting the strength of such forensic evidence [7]. This framework quantitatively expresses how much more likely the evidence is under the prosecution's hypothesis (e.g., that the defendant authored the questioned text) compared to the defense's hypothesis (e.g., that someone else authored it) [7]. Proper comprehension of LRs is therefore critical for legal decision-makers, including judges and jurors, who must update their beliefs about case hypotheses based on forensic testimony.

Despite its scientific superiority, the translation of this statistical framework into practical legal understanding faces significant challenges. Recent research highlights that legal decision-makers often struggle with probabilistic reasoning, creating a substantial gap between statistical presentation and legal comprehension [13]. Simultaneously, the validation of LR systems used in forensic text comparison has emerged as a critical scientific issue, with researchers emphasizing that validation studies must replicate actual case conditions to produce meaningful results [7] [14]. This article examines the current state of LR comprehension and presentation, focusing specifically on recent advances in forensic text comparison research and the critical validation methodologies required to ensure reliable evidence presentation in legal contexts.

The Comprehension Challenge: Bridging Statistical Science and Legal Decision-Making

Current Understanding of Likelihood Ratios

The comprehension of likelihood ratios by legal decision-makers remains an area of significant concern and active research. A comprehensive review of existing literature reveals that the empirical research specifically focusing on LR comprehension is surprisingly limited [13]. Most studies have investigated the understanding of "strength of evidence" in general terms rather than focusing specifically on the LR framework that forensic scientists increasingly advocate as the gold standard.

Legal decision-makers, including judges and jurors, often lack the statistical literacy required to properly interpret LRs in isolation. The challenge is compounded by the fact that LRs are part of a Bayesian framework, where the prior odds (based on other case evidence) must be combined with the LR to obtain posterior odds [7]. This process involves probabilistic reasoning that does not come naturally to most laypeople and many legal professionals. The communication challenge is further exacerbated by the fact that forensic scientists cannot legally present posterior odds, as this would encroach on the ultimate issue of guilt or innocence that is reserved for the trier-of-fact [7].

Presentation Formats and Their Limitations

Several presentation formats for LRs have been explored in the literature, each with distinct advantages and limitations:

- Numerical LR values: The purest form of presentation, but difficult for laypersons to interpret accurately

- Numerical random-match probabilities: An alternative formulation that may be more intuitive but changes the focus from evidence strength to match probability

- Verbal strength-of-support statements: Qualitative descriptions (e.g., "moderate support") that are more accessible but lack precision and standardization [13]

Critically, none of the existing studies have specifically tested comprehension of verbal likelihood ratios, creating a significant gap in our understanding of how to best communicate LR values to legal decision-makers [13]. The existing research body does not currently provide a definitive answer regarding the optimal presentation format, though it does offer methodological recommendations for future studies aiming to address this critical question.

Validation in Forensic Text Comparison: A Critical Foundation

The Validation Imperative

In forensic text comparison, as in all forensic disciplines, proper validation of methods is fundamental to producing reliable evidence. There is growing consensus that scientific validation of forensic inference systems must include four key elements: (1) quantitative measurements, (2) statistical models, (3) the LR framework, and (4) empirical validation [7]. The validation process must meet two critical requirements: replicating the conditions of the case under investigation (Requirement 1), and using data relevant to the case (Requirement 2) [7] [14].

The importance of proper validation was highlighted in landmark reports by the National Research Council (2009) and the President's Council of Advisors on Science and Technology (2016), which revealed that many forensic methods, including some used in textual analysis, lacked proper scientific validation [15]. These reports fundamentally challenged the judiciary's historical reliance on the "myth of accuracy" in forensic science and emphasized the need for rigorous validation based on empirical testing rather than mere expert testimony [15].

Topic Mismatch: A Case Study in Validation

Recent research has demonstrated the critical importance of proper validation through experiments examining topic mismatch in forensic text comparison. Ishihara et al. (2024) performed simulated experiments comparing validation approaches that properly replicated case conditions versus those that overlooked this requirement [7] [14]. Their study used a Dirichlet-multinomial model to calculate LRs, followed by logistic-regression calibration, with results assessed using the log-likelihood-ratio cost (Cllr) and visualized using Tippett plots [7].

The experiments revealed that when validation fails to account for topic mismatch between questioned and known documents—a common scenario in real cases—the resulting LRs can be highly misleading. This occurs because writing style varies substantially across topics, genres, and communicative situations [7]. Without properly accounting for these variables in validation studies, the performance metrics of an FTC system may not reflect its actual casework performance, potentially leading to incorrect legal decisions.

Table 1: Key Experimental Findings in FTC Validation

| Research Focus | Methodology | Key Finding | Practical Implication |
|---|---|---|---|
| Topic Mismatch Effects | Dirichlet-multinomial model + logistic regression calibration | LRs can be misleading when validation doesn't replicate case conditions | Validation must account for specific mismatch types present in casework |
| Background Data Size | Cosine distance + Monte Carlo simulation | System stabilizes with 40-60 authors; poor performance with limited data due to calibration issues | Minimum background data requirements exist for reliable FTC [16] |
| Score-based vs. Feature-based | Comparative analysis using Cllr metric | Score-based approach more robust to data scarcity than feature-based approach | Methodology choice impacts performance with limited data [16] |

Experimental Protocols in Forensic Text Comparison Research

Core Methodological Framework

The experimental protocols used in FTC validation studies follow a systematic process to ensure reliable and reproducible results:

Data Collection and Preparation: Researchers gather text corpora that represent the relevant population, ensuring appropriate metadata for author profiling and topic classification.

Feature Extraction: Documents are typically represented using a bag-of-words model or more sophisticated linguistic features, transforming qualitative textual characteristics into quantitative measurements [7].

Score Generation: Using similarity measures such as Cosine distance, the system generates scores representing the similarity between questioned and known documents [16].

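LR Calculation: Likelihood ratios are calculated either by modeling the distributions of same-author and different-author similarity scores against background data (the score-based approach [16]) or by applying a statistical model such as the Dirichlet-multinomial directly to the textual features (the feature-based approach [7]).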

Calibration: Methods like logistic regression calibrate the raw scores to produce well-calibrated LRs that accurately represent the strength of evidence [7].

Performance Assessment: The Cllr metric evaluates system performance, measuring the cost of the LRs in terms of their discriminative ability and calibration [16] [7].

This methodological framework ensures that FTC systems undergo rigorous testing under conditions that mirror real casework, providing meaningful information about their reliability and limitations.
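The feature-extraction and score-generation steps can be illustrated with a minimal sketch. It assumes a deliberately simple regular-expression tokenizer, relative word frequencies as features, and cosine distance defined as one minus cosine similarity; the function names and the two toy documents are hypothetical and are not taken from the cited studies.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase and extract alphabetic tokens (a deliberately simple tokenizer)."""
    return re.findall(r"[a-z']+", text.lower())


def most_frequent_words(documents, n):
    """Return the n most frequent word types across all documents."""
    counts = Counter()
    for doc in documents:
        counts.update(tokenize(doc))
    return [word for word, _ in counts.most_common(n)]


def relative_frequencies(text, vocabulary):
    """Represent a document as relative frequencies of the vocabulary words."""
    tokens = tokenize(text)
    counts = Counter(tokens)
    total = len(tokens) or 1  # avoid division by zero for empty documents
    return [counts[word] / total for word in vocabulary]


def cosine_distance(u, v):
    """One minus cosine similarity; 0 means the vectors point the same way."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0 or norm_v == 0:
        return 1.0  # convention chosen here for all-zero vectors
    return 1.0 - dot / (norm_u * norm_v)


# Toy documents standing in for a known and a questioned text (illustrative only).
known = "the cat sat on the mat and the dog sat too"
questioned = "the dog sat on the rug and the cat ran"
vocab = most_frequent_words([known, questioned], n=5)
score = cosine_distance(
    relative_frequencies(known, vocab),
    relative_frequencies(questioned, vocab),
)
```

In a real system the vocabulary would be drawn from background data rather than from the two documents under comparison, and the resulting score would still need to be converted into an LR and calibrated.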

Background Data Considerations

The size and composition of background data significantly impact FTC system performance. Research has demonstrated that score-based LR systems exhibit robust performance even with relatively small background datasets, stabilizing with data from approximately 40-60 authors [16]. This finding is particularly important for practical applications where comprehensive background data may be difficult to obtain.

Performance issues with limited background data are primarily attributed to poor calibration rather than problems with discriminative ability [16]. This suggests that calibration methods should be carefully selected and validated, especially when working with smaller reference populations. The robustness of score-based approaches appears superior to feature-based methods in data-scarce environments, though further research is needed to confirm this finding [16].
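Because calibration is singled out as the weak point with small background datasets, it may help to see what logistic-regression calibration does in its simplest form. The sketch below is an assumption-laden illustration, not the procedure of the cited studies: it fits a one-dimensional logistic regression by plain gradient descent, weights the two classes equally so that the fitted log-odds can be read as a natural-log LR, and uses made-up uncalibrated scores.

```python
import math


def fit_logistic_calibration(same_scores, diff_scores, steps=5000, lr=0.1):
    """Fit log-odds = a * score + b by gradient descent on the logistic loss.

    Each class contributes half of the total weight, so the fitted log-odds
    correspond to equal training priors and can be read as a natural-log LR.
    """
    a, b = 0.0, 0.0
    data = [(s, 1.0, 0.5 / len(same_scores)) for s in same_scores]
    data += [(s, 0.0, 0.5 / len(diff_scores)) for s in diff_scores]
    for _ in range(steps):
        grad_a = grad_b = 0.0
        for s, y, w in data:
            p = 1.0 / (1.0 + math.exp(-(a * s + b)))
            grad_a += w * (p - y) * s
            grad_b += w * (p - y)
        a -= lr * grad_a
        b -= lr * grad_b
    return a, b


def calibrated_lr(score, a, b):
    """Map an uncalibrated score to a calibrated LR using the fitted line."""
    return math.exp(a * score + b)


# Made-up uncalibrated scores (higher = more same-author-like); illustrative only.
same_scores = [2.0, 1.5, 0.5, -0.2]
diff_scores = [-1.8, -1.0, 0.3, -2.5]
a, b = fit_logistic_calibration(same_scores, diff_scores)
```

With only a handful of training scores, as here, the fitted slope and intercept are unstable, which is one way to see why small background datasets can harm calibration even when discrimination is adequate.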

Quantitative Performance Data in Forensic Text Comparison

System Performance Metrics

The performance of LR systems in forensic text comparison is quantitatively evaluated using specific metrics, with the log-likelihood-ratio cost (Cllr) serving as a primary measure. Cllr assesses both the discrimination and calibration of a system, with lower values indicating better performance [16] [7]. Research has demonstrated that properly validated systems can achieve stable performance with manageable background data sizes, making FTC practically feasible even with limited reference populations.
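For readers unfamiliar with the metric, the following sketch computes Cllr from two lists of LRs using its standard definition: the average of log2(1 + 1/LR) over same-author comparisons and log2(1 + LR) over different-author comparisons, halved. The function name and the example values are illustrative assumptions.

```python
import math


def cllr(same_author_lrs, diff_author_lrs):
    """Log-likelihood-ratio cost: 0 is ideal; 1 matches a system that always outputs LR = 1."""
    penalty_same = sum(math.log2(1 + 1 / lr) for lr in same_author_lrs)
    penalty_diff = sum(math.log2(1 + lr) for lr in diff_author_lrs)
    return 0.5 * (penalty_same / len(same_author_lrs)
                  + penalty_diff / len(diff_author_lrs))


# An uninformative system (every LR equals 1) scores exactly 1.
uninformative = cllr([1.0, 1.0], [1.0, 1.0])

# Strong LRs pointing the right way give a value close to 0.
good = cllr([100.0], [0.01])

# Strong LRs pointing the wrong way are penalized heavily (value above 1).
misleading = cllr([0.01], [100.0])
```

A value above 1 therefore signals a system that is worse than providing no evidence at all, which is why Cllr is sensitive to badly calibrated LRs.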

Table 2: Performance Data for Forensic Comparison Systems Across Disciplines

| Forensic Discipline | Methodology | Performance Metric | Key Finding | Reference |
|---|---|---|---|---|
| Forensic Text Comparison | Score-based LR with Cosine distance | Cllr | System stabilizes with 40-60 authors in background data | [16] |
| Forensic Voice Comparison | GMM-UBM vs. MVKD | Cllr and 95% credible interval | GMM-UBM outperformed MVKD in accuracy and precision | [17] |
| Fingerprint Comparison | Score-based LR with AFIS | Rates of misleading evidence | Substantial evidential strength even for comparisons not meeting 12-point standard | [18] |

Implications for Legal Proceedings

The quantitative performance data from validation studies has profound implications for legal proceedings. Understanding the error rates and limitations of forensic methods is essential for judges exercising their gatekeeping function regarding the admissibility of evidence [15]. The Daubert standard, followed by federal courts and many state courts, requires judges to assess whether forensic methodology has been properly tested, its error rate established, and whether it has been subject to peer review and publication [15].

Recent research suggests that courts must transition from "trusting the examiner" to "trusting the scientific method" [15]. This shift necessitates that legal professionals understand the validation metrics used in forensic science, including the meaning of Cllr values and their implications for the reliability of evidence. Furthermore, the finding that poor performance in limited data situations stems primarily from calibration issues rather than discriminative ability [16] provides important guidance for both forensic developers and legal professionals evaluating the robustness of forensic evidence.

The Scientist's Toolkit: Essential Research Reagents in FTC

Table 3: Essential Research Reagents for Forensic Text Comparison

| Research Reagent | Function | Application in FTC |
|---|---|---|
| Text Corpora | Provides background data for reference populations | Represents relevant population for casework validation [7] |
| Bag-of-Words Model | Transforms textual data into quantitative representations | Feature extraction for authorship analysis [16] [7] |
| Cosine Distance | Measures similarity between document representations | Score generation in score-based LR systems [16] |
| Dirichlet-Multinomial Model | Statistical model for text data | Calculates likelihood ratios from textual features [7] |
| Logistic Regression Calibration | Adjusts raw scores to produce well-calibrated LRs | Ensures LRs accurately represent evidence strength [7] |
| Monte Carlo Simulation | Technique for synthesizing population data | Tests system robustness against background data size [16] |

The current state of LR comprehension and presentation for legal decision-makers reveals a field in transition. While the scientific foundation for likelihood ratios in forensic text comparison has advanced significantly—with robust validation methodologies and quantitative performance metrics—the translation of this scientific progress into legal comprehension remains challenging. The critical gap between statistical presentation and legal understanding must be addressed through targeted research on comprehension and improved presentation formats.

For researchers and practitioners in forensic text comparison, the imperative is clear: validation studies must rigorously replicate casework conditions, including challenging scenarios like topic mismatch, and must use relevant background data. The experimental protocols and quantitative metrics discussed provide a framework for such validation. As courts increasingly demand scientific rigor in forensic evidence, driven by the findings of the NRC and PCAST reports [15], the continued refinement of both LR systems and their communication to legal decision-makers will be essential for the proper administration of justice.

The concept of idiolect represents a foundational principle in forensic linguistics, referring to the distinctive, individuating way of speaking and writing that characterizes each individual [7]. This linguistic fingerprint is fully compatible with modern theories of language processing in cognitive psychology and cognitive linguistics, forming a scientifically-grounded basis for authorship analysis [7]. In forensic text comparison (FTC), the idiolect is understood as a complex manifestation of authorship that encodes not only identity but also information about the author's social group, community affiliations, and the communicative situations under which texts were composed [7].

The scientific validation of authorship analysis methods has become increasingly crucial in forensic science, with emerging consensus that robust approaches must incorporate quantitative measurements, statistical models, and the likelihood-ratio (LR) framework for interpreting evidence [7]. This article examines the scientific basis of stylometry through the lens of idiolect, comparing leading methodological approaches and their validation within the rigorous requirements of forensic evidence evaluation. As the field moves toward more empirically defensible practices—with jurisdictions like the United Kingdom mandating the LR framework across forensic science disciplines by October 2026—understanding the technical protocols and performance characteristics of different stylometric methods becomes essential for researchers, scientists, and legal professionals [7].

Theoretical Framework and Key Concepts

Stylometry operates on the premise that every author exhibits consistent, quantifiable patterns in their use of language, which can be discriminated from other authors through appropriate statistical analysis. The theoretical underpinnings of this field bridge computational linguistics, forensic science, and cognitive psychology, with the idiolect serving as the central object of study.

The likelihood ratio framework provides the logical and legal foundation for evaluating forensic text evidence, expressed mathematically as:

\[ LR = \frac{p(E \mid H_p)}{p(E \mid H_d)} \]

where \(E\) represents the linguistic evidence, \(H_p\) typically denotes the prosecution hypothesis that the suspect authored the questioned document, and \(H_d\) represents the defense hypothesis that someone else authored it [7]. The LR quantitatively expresses how much more likely the evidence is under one hypothesis versus the other, providing a transparent and statistically sound measure of evidential strength [7]. This framework logically updates the prior beliefs of the trier-of-fact through Bayes' Theorem, formally expressed as:

\[ \frac{p(H_p)}{p(H_d)} \times \frac{p(E \mid H_p)}{p(E \mid H_d)} = \frac{p(H_p \mid E)}{p(H_d \mid E)} \]

where the prior odds multiplied by the LR equal the posterior odds [7]. This mathematical formalization ensures logical consistency in evidence interpretation while maintaining the appropriate separation of roles between forensic experts (who provide LRs) and legal decision-makers (who assess prior and posterior odds).
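A short worked example may make the odds form concrete. The numbers below are purely illustrative assumptions: a trier-of-fact holding prior odds of 1 to 100 who hears an LR of 1000 arrives at posterior odds of 10 to 1. The function names are hypothetical.

```python
def posterior_odds(prior_odds, likelihood_ratio):
    """Odds form of Bayes' Theorem: posterior odds = prior odds x LR."""
    return prior_odds * likelihood_ratio


def odds_to_probability(odds):
    """Convert odds in favor of Hp into a probability of Hp."""
    return odds / (1 + odds)


# Illustrative values only: the prior odds belong to the trier-of-fact,
# while the forensic expert reports only the LR.
prior = 1 / 100
lr = 1000
posterior = posterior_odds(prior, lr)          # about 10 (i.e. 10 to 1)
probability = odds_to_probability(posterior)   # about 0.909
```

The same LR of 1000 combined with prior odds of 1 to 100,000 would leave the posterior odds at 1 to 100, which illustrates why the expert's LR alone cannot settle the question of authorship.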

Stylometric Approaches and Methodological Comparisons

Taxonomy of Authorship Analysis Methods

Authorship attribution methods encompass several distinct tasks with different operational objectives [19]. Authorship Attribution (AA) identifies the author of an unknown document from a set of candidate authors; Authorship Verification (AV) determines whether two texts were written by the same author; Authorship Characterization detects sociolinguistic attributes like gender, age, or educational level; Authorship Discrimination checks if two different texts share authorship; and Plagiarism Detection identifies reproduced text segments [19]. The methodological approaches to these tasks can be broadly categorized into five paradigms: stylistic, statistical, language modeling, machine learning, and deep learning approaches [19].

Table 1: Classification of Authorship Analysis Methods

| Model Category | Key Features | Representative Techniques |
|---|---|---|
| Stylistic Models | Analyze authorial fingerprints through writing-style markers | Stylometric analysis, punctuation patterns, semantic frames [19] |
| Statistical Models | Quantify linguistic features using statistical distributions | Burrows' Delta, Cosine Delta, Z-scores [20] [21] |
| Language Models | Model probability distributions of linguistic units | N-gram models, character-level language modeling [19] |
| Machine Learning | Apply classification algorithms to feature sets | Ensemble methods, SVM, Random Forests [22] |
| Deep Learning | Utilize neural networks for feature learning | DistilBERT, transformer-based architectures [22] |

Experimental Protocols in Stylometric Analysis

Burrows' Delta Methodology for Stylistic Comparison

Burrows' Delta stands as a foundational method in computational stylistics, particularly prominent in authorship attribution studies [20]. The protocol involves several methodical steps:

Corpus Preparation: Assemble a collection of texts with known authorship, ensuring balance in text length and genre where possible. The test texts (those of unknown authorship) should be comparable in domain and register.

Feature Selection: Identify the Most Frequent Words (MFW) in the corpus—typically ranging from 100 to 1000 words, with function words being particularly discriminative. The exact number is determined through empirical testing.

Frequency Calculation: Compute the relative frequency of each MFW in each text, creating a document-term matrix where rows represent texts and columns represent word frequencies.

Standardization: Convert raw frequencies to Z-scores by subtracting the corpus mean and dividing by the corpus standard deviation for each word. This normalization accounts for different baselines in word usage across the corpus.

Delta Calculation: For each pair of texts, compute the mean absolute difference between their Z-scores across all MFW. The formula for Burrows' Delta between text A and text B is:

\[ \Delta_{AB} = \frac{1}{N}\sum_{i=1}^{N}\left|Z_{iA} - Z_{iB}\right| \]

where \(N\) is the number of MFW, and \(Z_{iA}\) and \(Z_{iB}\) are the Z-scores for word \(i\) in texts A and B respectively [20].

Visualization and Interpretation: Apply clustering techniques (hierarchical clustering, multidimensional scaling) to visualize relationships between texts and identify groupings by authorship [20].

This methodology has demonstrated particular effectiveness in discriminating human from AI-generated texts, with studies revealing clear stylistic distinctions—human-authored texts form broader, more heterogeneous clusters reflecting individual expression diversity, while LLM outputs display higher stylistic uniformity, clustering tightly by model [20].
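The standardization and Delta calculation steps of the protocol can be sketched as follows. This is a minimal illustration under stated assumptions: the relative frequencies are invented, the population standard deviation is used for the Z-scores (implementations differ on this choice), and the function names are hypothetical.

```python
import statistics


def z_scores(freq_matrix):
    """Column-wise Z-scores for a list of per-text relative-frequency rows."""
    columns = list(zip(*freq_matrix))
    means = [statistics.mean(col) for col in columns]
    stdevs = [statistics.pstdev(col) for col in columns]
    return [
        [(value - m) / s if s > 0 else 0.0
         for value, m, s in zip(row, means, stdevs)]
        for row in freq_matrix
    ]


def burrows_delta(z_a, z_b):
    """Mean absolute difference between two texts' Z-score vectors."""
    return sum(abs(a - b) for a, b in zip(z_a, z_b)) / len(z_a)


# Invented relative frequencies of four frequent words in three texts.
# Texts A and B are built to be stylistically close; text C differs.
frequencies = [
    [0.060, 0.030, 0.020, 0.010],  # text A
    [0.058, 0.031, 0.021, 0.011],  # text B
    [0.040, 0.045, 0.010, 0.020],  # text C
]
z_a, z_b, z_c = z_scores(frequencies)
delta_ab = burrows_delta(z_a, z_b)
delta_ac = burrows_delta(z_a, z_c)
```

In this toy corpus `delta_ab` is smaller than `delta_ac`, which is the pattern the clustering step would then visualize as A and B grouping together.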

Score-Based Likelihood Ratio Framework

The score-based likelihood ratio approach represents a more forensically-oriented methodology for authorship analysis [21]. The experimental protocol involves:

Text Representation: Convert text data into a numerical representation using a bag-of-words model with Z-score normalized relative frequencies of selected most-frequent words.

Score Generation: Calculate similarity scores between questioned and known documents using distance measures such as Euclidean, Manhattan, or Cosine distance as score-generating functions [21].

Model Building: Construct score-to-likelihood-ratio conversion models using a common source method, fitting parametric models (Normal, Log-normal, Gamma, Weibull distributions) to same-author and different-author score distributions.

Validation: Assess system validity using the log-likelihood-ratio cost (Cllr) and visualize strength and calibration of derived LRs using Tippett plots [21].

Performance Optimization: Experiment with different feature vector lengths (N) and document lengths to optimize system performance, with research indicating the Cosine measure consistently outperforms other distance functions, particularly with N = 260 regardless of document length [21].

This methodology has demonstrated robust performance across different document lengths, with Cllr values of 0.70640, 0.45314, and 0.30692 for 700, 1400, and 2100-word documents respectively, showing that overall performance (discrimination and calibration combined) improves with longer texts [21].
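The model-building step can be illustrated with the simplest of the parametric options listed above, the Normal distribution. The sketch below is an assumption, not the configuration of the cited study: it fits one Normal distribution to same-author scores and one to different-author scores using Python's standard `statistics.NormalDist`, then takes the ratio of the two densities at the observed score. The training scores are invented.

```python
from statistics import NormalDist


def fit_score_models(same_author_scores, diff_author_scores):
    """Fit a Normal distribution to each set of background comparison scores."""
    same_model = NormalDist.from_samples(same_author_scores)
    diff_model = NormalDist.from_samples(diff_author_scores)
    return same_model, diff_model


def score_to_lr(score, same_model, diff_model):
    """LR = density of the score under same-author / density under different-author."""
    return same_model.pdf(score) / diff_model.pdf(score)


# Invented cosine distances from background comparisons (smaller = more similar).
same_author_scores = [0.10, 0.15, 0.12, 0.20, 0.18]
diff_author_scores = [0.45, 0.50, 0.38, 0.60, 0.55]
same_model, diff_model = fit_score_models(same_author_scores, diff_author_scores)

lr_small_distance = score_to_lr(0.14, same_model, diff_model)  # supports same author
lr_large_distance = score_to_lr(0.50, same_model, diff_model)  # supports different authors
```

Distances are bounded and often skewed, which is one reason distributions such as the Log-normal, Gamma, or Weibull are also considered; the Normal is used here only to keep the example short.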

Diagram 1: Score-based LR workflow

Comparative Performance Analysis

Quantitative Performance Metrics

Empirical evaluations of different stylometric approaches reveal distinct performance characteristics across methodologies and application contexts. The table below summarizes key performance metrics from recent studies:

Table 2: Performance Comparison of Authorship Attribution Methods

| Method | Dataset | Accuracy/Performance | Key Findings |
|---|---|---|---|
| Ensemble Learning + DistilBERT [22] | "All the news" (10 authors) | 3.14% accuracy gain over baseline | Combined count vectorizer and bi-gram TF-IDF features enhanced performance |
| Ensemble Learning [22] | "All the news" (20 authors) | 5.25% accuracy gain over baseline | Effective for larger author sets |
| DistilBERT [22] | "All the news" (20 authors) | 7.17% accuracy gain over baseline | Superior performance with larger author sets |
| Score-Based LR (Cosine) [21] | Amazon Product Data (700 words) | Cllr: 0.70640 | Cosine measure consistently outperformed other distance functions |
| Score-Based LR (Cosine) [21] | Amazon Product Data (1400 words) | Cllr: 0.45314 | Performance improved with longer documents |
| Score-Based LR (Cosine) [21] | Amazon Product Data (2100 words) | Cllr: 0.30692 | Logistic regression fusion achieved Cllr of 0.23494 |
| Burrows' Delta [20] | Beguš Corpus (Human vs AI) | Clear stylistic separation | Human texts: heterogeneous clusters; AI: uniform, model-specific clusters |

Methodological Strengths and Limitations

Each major approach to authorship analysis exhibits distinctive strengths and limitations in forensic applications:

Burrows' Delta and Variants demonstrate particular effectiveness in literary and creative texts, with advantages including simplicity, interpretability, and minimal requirement for linguistic annotation [20]. Limitations include sensitivity to topic variation and potentially reduced performance with very short texts. The method has proven highly effective in discriminating human from AI-generated creative writing, revealing that while GPT-4 shows greater internal consistency than GPT-3.5, both remain distinguishable from human writing [20].

Score-Based Likelihood Ratio Approaches offer the key advantage of providing mathematically rigorous, forensically-valid evidence evaluation within the likelihood ratio framework [21]. These methods produce well-calibrated LRs that properly weigh evidence and maintain robustness with limited background data. Challenges include computational complexity and the need for sufficient reference data for model building.

Machine Learning and Deep Learning Methods achieve state-of-the-art performance in many authorship attribution tasks, particularly with large author sets [22]. Ensemble methods and transformer-based architectures like DistilBERT demonstrate significant accuracy gains, but may face challenges in interpretability and adherence to forensic validation standards.

Validation in Forensic Text Comparison

Empirical Validation Requirements

The validation of forensic inference systems demands strict adherence to two key requirements: (1) reflecting the conditions of the case under investigation, and (2) using data relevant to the case [7]. These requirements are particularly critical in forensic text comparison, where factors such as topic mismatch between questioned and known documents can significantly impact system performance [7]. Research demonstrates that validation experiments overlooking these requirements—for instance, using same-topic training data when casework involves cross-topic comparisons—can substantially mislead the trier-of-fact regarding actual method capabilities [7].

The complex nature of textual evidence necessitates careful consideration of validation protocols. Beyond topic influences, authorship analysis must account for numerous potential confounding factors including genre, register, modality, document length, time between compositions, and the author's emotional state [7]. Each factor represents a dimension along which realistic validation must test system robustness, particularly because these conditions are highly variable and case-specific in real forensic contexts [7].
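One practical consequence of the first requirement is that validation trials should be constructed to exhibit the same mismatch as the case. The sketch below shows one way this could be done for topic mismatch. The document schema (dictionaries with `author`, `topic`, and `text` keys) and the function name are hypothetical, chosen only for illustration.

```python
from itertools import combinations


def build_trials(documents, require_topic_mismatch=True):
    """Pair documents into same-author and different-author validation trials.

    When require_topic_mismatch is True, pairs sharing a topic are skipped so
    that every trial mirrors a cross-topic casework comparison.
    """
    same_author, diff_author = [], []
    for doc_a, doc_b in combinations(documents, 2):
        if require_topic_mismatch and doc_a["topic"] == doc_b["topic"]:
            continue
        pair = (doc_a["text"], doc_b["text"])
        if doc_a["author"] == doc_b["author"]:
            same_author.append(pair)
        else:
            diff_author.append(pair)
    return same_author, diff_author


# Toy corpus: two authors, each writing on two topics (illustrative only).
corpus = [
    {"author": "A", "topic": "sports", "text": "text A1"},
    {"author": "A", "topic": "politics", "text": "text A2"},
    {"author": "B", "topic": "sports", "text": "text B1"},
    {"author": "B", "topic": "politics", "text": "text B2"},
]
cross_same, cross_diff = build_trials(corpus, require_topic_mismatch=True)
any_same, any_diff = build_trials(corpus, require_topic_mismatch=False)
```

Running the same system on both trial sets and comparing the resulting Cllr values is one way to expose how much a same-topic validation would overstate cross-topic performance. Other mismatch types, such as genre or document length, could be handled by filtering on the corresponding metadata field.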

Diagram 2: FTC validation framework

Research Gaps and Future Directions

Despite advances in authorship analysis methodologies, significant research gaps remain in forensic text comparison validation. Three crucial issues require further investigation: (1) determining specific casework conditions and mismatch types that require validation; (2) establishing what constitutes relevant data for different forensic contexts; and (3) defining the quality and quantity of data required for robust validation [7]. Additionally, the field must address challenges including the lack of universal feature extraction techniques applicable across domains, language dependencies in methodology, and limitations in existing datasets [19].

Future research directions should prioritize developing validation frameworks that systematically test method robustness across the full range of forensically-relevant conditions, establishing standardized protocols for data relevance assessment, and creating shared evaluation resources that enable proper comparison of different approaches [7] [19]. Furthermore, as AI-generated text becomes more prevalent, research must explore whether human and machine writing styles are converging or remaining distinguishable through advanced stylometric analysis [20].

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Materials for Stylometric Analysis

| Research Reagent | Function | Application Context |
|---|---|---|
| Burrows' Delta Algorithm | Measures stylistic similarity using most frequent word z-scores | Authorship attribution, historical text analysis, AI vs human discrimination [20] |
| Score-Based LR System | Converts stylistic distances to likelihood ratios | Forensic text comparison, evidence evaluation in legal contexts [21] |
| Bag-of-Words Model with Z-score Normalization | Represents texts for quantitative comparison | Feature extraction for authorship verification [21] |
| Cosine Distance Metric | Calculates stylistic similarity between vectorized texts | Distance measurement in high-dimensional feature spaces [21] |
| Hierarchical Clustering | Visualizes relationships between texts based on stylistic similarity | Exploratory data analysis, validation of authorship groups [20] |
| Multidimensional Scaling (MDS) | Projects high-dimensional stylistic relationships into 2D/3D space | Visual assessment of authorship clusters [20] |
| Cllr (Log-Likelihood-Ratio Cost) | Evaluates the validity and discrimination of LR systems | Validation of forensic evidence evaluation systems [21] |
| Tippett Plots | Visualizes the distribution of LRs for same-source and different-source comparisons | Performance assessment of forensic inference systems [21] |

Stylometric analysis grounded in the concept of idiolect provides a scientifically defensible framework for authorship analysis when implemented with rigorous methodological protocols and empirical validation. The comparison of leading approaches—from established methods like Burrows' Delta to emerging machine learning techniques and forensically-validated likelihood ratio systems—reveals distinct performance characteristics and application contexts for each methodology. As the field advances, the integration of quantitative measurements, statistical modeling, and proper validation within the likelihood ratio framework offers the most promising path toward reliable, transparent, and scientifically-grounded forensic text comparison that meets evolving legal and scientific standards. Future progress will depend on addressing key research gaps in validation methodologies, particularly regarding realistic casework conditions and relevant data requirements, to ensure that forensic text analysis delivers robust, demonstrably reliable results in legal proceedings.

Building Robust FTC Systems: From Poisson Models to Feature Selection

Within the domain of forensic text comparison (FTC), the likelihood ratio (LR) framework has emerged as a fundamental methodology for quantifying the strength of evidence. This framework formally assesses the probability of the evidence under two competing propositions: that a suspect and a questioned document share the same origin (prosecution hypothesis) versus that they originate from different sources (defense hypothesis) [23]. The successful application of this framework hinges on the choice of method for calculating the LR. Score-based methods represent a prominent class of approaches for this task, wherein the high-dimensional data of a text is reduced to a single, scalar distance metric. This guide provides a comparative analysis of two principal score-based methods—one employing cosine distance and the other utilizing Burrows's Delta—situating their performance and operational characteristics within the critical context of validating LR systems for forensic textual evidence [23] [14].

Experimental Comparisons of Score-Based Methods

Empirical evaluations consistently reveal a performance gap between score-based and feature-based methods in LR estimation, underscoring the importance of method selection in system validation.

The following table summarizes key findings from a large-scale empirical study that compared score-based and feature-based methods for LR estimation on the same dataset [24] [23].

| Method Category | Specific Method | Key Performance Metric (Cllr) | Relative Performance |
|---|---|---|---|
| Score-Based | Cosine Distance | Not explicitly stated (Baseline) | Outperformed by feature-based methods |
| Feature-Based | One-Level Poisson Model | Cllr improvement of 0.14-0.2 | Best Performance |
| Feature-Based | One-Level Zero-Inflated Poisson Model | Cllr improvement of 0.14-0.2 | Best Performance |
| Feature-Based | Two-Level Poisson-Gamma Model | Cllr improvement of 0.14-0.2 | Best Performance |

Critical Performance Characteristics for Forensic Validation

The core finding is that feature-based methods demonstrably outperform the cosine distance score-based method, with a Cllr improvement of 0.14 to 0.2 when comparing their best results [24] [23]. The log-likelihood ratio cost (Cllr) is a primary metric for assessing the validity of an LR system; it can be split into two components, one reflecting discriminatory power (Cllr^min) and one reflecting calibration loss (Cllr^cal). Furthermore, research indicates that score-based methods can produce LRs that are conservative in magnitude and may be prone to instability, particularly when the dimensionality of the feature vector is high [23] [4]. This instability directly impacts the reliability of the system, a critical factor in forensic validation.

Detailed Methodologies and Protocols

Understanding the experimental protocols is essential for critically evaluating the performance data and ensuring the validity of a forensic text comparison system.

Common Experimental Protocol for Comparison

The comparative study of cosine distance and feature-based methods adhered to a rigorous, standardized protocol [23]:

- Data: Documents from 2,157 authors were used.

- Feature Set: A bag-of-words model was constructed for each document, using the N-most frequent words across all documents (with N ranging from 5 to 400). This creates a high-dimensional feature space where each document is represented by a vector of word counts.

- Model Training & Evaluation: The derived LRs were assessed using the Cllr metric and visualized using Tippett plots, which show the cumulative distribution of LRs for same-author and different-author cases. Performance was also evaluated under varying conditions of document length and feature vector size.
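The quantities behind a Tippett plot can be computed without any plotting library. The sketch below assumes one common convention, in which each curve gives the proportion of comparisons whose log10 LR is greater than or equal to a threshold; plotting conventions vary between studies, and the function names and LR values here are illustrative assumptions.

```python
import math


def tippett_curve(lrs, thresholds):
    """Proportion of comparisons with log10(LR) >= each threshold."""
    log_lrs = [math.log10(lr) for lr in lrs]
    return [sum(value >= t for value in log_lrs) / len(log_lrs)
            for t in thresholds]


def misleading_rates(same_author_lrs, diff_author_lrs):
    """Share of same-author LRs below 1 and of different-author LRs above 1."""
    same_misleading = sum(lr < 1 for lr in same_author_lrs) / len(same_author_lrs)
    diff_misleading = sum(lr > 1 for lr in diff_author_lrs) / len(diff_author_lrs)
    return same_misleading, diff_misleading


# Invented LRs from validation trials (illustrative only).
same_author_lrs = [10.0, 100.0, 0.1]
diff_author_lrs = [0.01, 0.5, 2.0, 0.001]
thresholds = [-2, 0, 3]

same_curve = tippett_curve(same_author_lrs, thresholds)
diff_curve = tippett_curve(diff_author_lrs, thresholds)
rates = misleading_rates(same_author_lrs, diff_author_lrs)
```

Plotting `same_curve` and `diff_curve` against the thresholds gives the two lines of a Tippett plot; the further apart they sit around a log10 LR of 0, the fewer misleading LRs the system produces.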

Cosine Distance Methodology

The score-based method using cosine distance operates as follows [23]:

- Feature Vector Creation: Each document is converted into a feature vector, typically using the relative frequencies of the most common words (e.g., the 400 most frequent words).

- Score Calculation: The similarity between a known document (from a suspect) and a questioned document is calculated using cosine distance. This measures the angle between the two document vectors in the high-dimensional space.

- LR Estimation: The calculated cosine distance score is then used to compute a likelihood ratio. This involves modeling the distribution of scores for same-author comparisons and different-author comparisons, often using continuous probability distributions.
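
The three steps above can be sketched as follows. The choice of a normal density for each score distribution is an assumption made for brevity (the source says only that continuous distributions are often used), and all scores and vectors are made-up values.

```python
import math
from statistics import NormalDist

def cosine_distance(u, v):
    """1 minus the cosine of the angle between two feature vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return 1.0 - dot / (norm_u * norm_v)

def score_based_lr(score, same_author_scores, diff_author_scores):
    # Assumption: each training score distribution is summarized by a fitted normal.
    same_model = NormalDist.from_samples(same_author_scores)
    diff_model = NormalDist.from_samples(diff_author_scores)
    # LR = density of the score under same-author / density under different-author.
    return same_model.pdf(score) / diff_model.pdf(score)

# Made-up training scores and relative-frequency vectors, for illustration only.
same_scores = [0.05, 0.08, 0.10, 0.12, 0.07]
diff_scores = [0.30, 0.42, 0.35, 0.50, 0.38]
known = [0.06, 0.03, 0.02, 0.01]
questioned = [0.05, 0.03, 0.03, 0.01]

score = cosine_distance(known, questioned)
lr = score_based_lr(score, same_scores, diff_scores)
```

Because the whole feature vector is reduced to `score` before any probability model is applied, the LR depends only on how the two documents compare with each other, not on how unusual their word frequencies are in the population.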

Burrows's Delta Methodology

While not the primary subject of the main comparative study, Burrows's Delta is a foundational score-based method in stylometry, and its properties are highly relevant [23]:

- Feature Vector Creation: Similar to the cosine approach, it uses a vector of word frequencies, typically of very frequent words like function words.

- Score Calculation: The Delta statistic is calculated as the mean of the absolute differences between the z-scores of the word frequencies in the two documents being compared.

- Underlying Assumption: A key distinction is that Burrows's Delta implicitly assumes the feature data follows a Laplace (double exponential) distribution [23]. This contrasts with cosine distance, which assumes a normal distribution, and highlights a significant methodological difference.
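
A minimal sketch of the Delta calculation described above, assuming relative frequencies have already been extracted and that the z-scores are standardized against a small reference corpus. The function name `burrows_delta` and all values are illustrative.

```python
from statistics import mean, stdev

def burrows_delta(freqs_a, freqs_b, corpus_freqs):
    """Mean absolute difference of z-scored word frequencies.

    freqs_a, freqs_b: relative frequency of each feature word in two documents.
    corpus_freqs: one frequency vector per reference document, used to
    estimate each word's mean and standard deviation.
    """
    diffs = []
    for w in range(len(freqs_a)):
        column = [doc[w] for doc in corpus_freqs]
        mu, sigma = mean(column), stdev(column)
        z_a = (freqs_a[w] - mu) / sigma
        z_b = (freqs_b[w] - mu) / sigma
        diffs.append(abs(z_a - z_b))
    return mean(diffs)

# Toy reference corpus of three documents and two feature words.
corpus = [[0.06, 0.03], [0.05, 0.02], [0.07, 0.04]]
delta = burrows_delta(corpus[0], corpus[1], corpus)
delta_identical = burrows_delta(corpus[0], corpus[0], corpus)
```

The sum of absolute differences is the Manhattan (L1) distance between the z-score vectors, which is the link to the Laplace assumption noted above.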

The diagram below illustrates the shared initial workflow and the point of divergence for these two score-based methods.

The Scientist's Toolkit: Key Research Reagents

The experimental application and validation of score-based methods rely on a set of core "research reagents." The following table details these essential components and their functions in the context of FTC research.

| Research Reagent | Function & Role in Experimental Protocol |

|---|---|

| Reference & Calibration Databases | A collection of texts from a large number of authors (e.g., 2,157) used to model population statistics, calibrate systems, and evaluate performance stability [23] [4]. |

| Bag-of-Words Feature Vector | A text representation model that simplifies a document to a multiset of word counts, typically focusing on the most frequent words (e.g., 400). This is the primary input for the models [23]. |

| Poisson-Based Models | A class of feature-based statistical models that directly model the discrete, count-based nature of textual data (e.g., word frequencies) and are used as a performance benchmark [23]. |

| Log-Likelihood Ratio Cost (Cllr) | The primary validation metric for assessing the overall performance, discrimination, and calibration of an LR system [24] [23]. |

| Tippett Plot | A graphical tool for visualizing the empirical performance and calibration of a forensic evidence evaluation system, showing the cumulative proportion of LRs for same-source and different-source cases [14]. |
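
The data behind a Tippett plot can be computed in a few lines. The sketch below assumes one common convention, plotting for each threshold the proportion of LRs whose log10 value is at or above it; conventions differ between publications, and the LR values are made up.

```python
import math

def tippett_curve(lrs, thresholds):
    """Proportion of LRs whose log10 value is >= each threshold."""
    log_lrs = [math.log10(lr) for lr in lrs]
    return [sum(v >= t for v in log_lrs) / len(log_lrs) for t in thresholds]

# Made-up LRs, for illustration only.
thresholds = [-1, 0, 1]
same_curve = tippett_curve([100.0, 50.0, 0.5, 20.0], thresholds)
diff_curve = tippett_curve([0.02, 0.2, 3.0, 0.05], thresholds)
```

In this toy example, one quarter of the same-author LRs fall below log10 LR = 0 and one quarter of the different-author LRs fall above it; these are the misleading-evidence rates a Tippett plot makes visible.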

Critical Analysis for Forensic Validation

When validating an LR system for forensic text comparison, several critical issues specific to score-based methods must be considered [23] [14]:

- Information Loss: The reduction of a multivariate feature vector to a single score necessarily discards information, which can limit the strength of the evidence.

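- Typicality Assessment: A key criticism of score-based methods is that they primarily evaluate the similarity between documents but do not directly incorporate the typicality of the features in the broader population. An LR, by contrast, should reflect both: its numerator expresses how similar the compared documents are under the same-author hypothesis, and its denominator expresses how typical that evidence is in the relevant population.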

- Distributional Assumptions: Methods like cosine distance and Burrows's Delta rely on assumptions about the underlying data distribution (e.g., normal or Laplace) that may not hold for real-world, discrete textual data, which often follows a positively skewed distribution better modeled by Poisson-based models [23].

- Validation with Relevant Data: It is imperative that validation experiments replicate casework conditions. Performance can degrade significantly with a mismatch in topics between known and questioned documents, or if the reference database is not representative [14].

In forensic text comparison (FTC), the core task is to quantify the strength of evidence for authorship by comparing documents of known and unknown origin. The likelihood ratio (LR) framework provides a rigorous statistical foundation for this process, requiring models that can effectively handle the discrete, non-negative, and often sparse nature of textual data [23]. Feature-based methods that operate directly on multivariate feature counts—such as word frequencies—have emerged as a powerful approach. Among these, models based on the Poisson distribution and its extension, the Zero-Inflated Poisson (ZIP) model, are theoretically well-suited for this task as they naturally model count data and can account for the excess zeros common in text representations like the bag-of-words model [23]. This guide provides an objective comparison of these two models, detailing their implementation, performance, and applicability within a forensic validation framework.

Model Fundamentals: Theoretical Foundations and Data Generation

The Poisson Model

The standard Poisson regression model is a starting point for count data analysis. It assumes that the dependent variable ( Y ), conditional on independent variables ( X ) and parameters ( \boldsymbol{\alpha} ), follows a Poisson distribution. The probability mass function is given by: [ P(Y_i = y_i) = \frac{e^{-\mu_i} \mu_i^{y_i}}{y_i!} ] where ( \mu_i ) is the mean of the distribution for the ( i )-th observation [25]. In the context of FTC, ( Y ) could represent the frequency of a specific word in a document, and ( \mu_i ) is modeled as a log-linear function of the covariates: ( \log(\mu_i) = \boldsymbol{x}_i^T\boldsymbol{\alpha} ).

The Zero-Inflated Poisson (ZIP) Model

The ZIP model addresses a common issue in real-world count data: an excess of zero observations beyond what the standard Poisson distribution can accommodate. It is a two-component mixture model that combines a point mass at zero with a Poisson count distribution [26]. Its probability mass function is: [ P(Y_i = y_i) = \begin{cases} \pi_i + (1-\pi_i)e^{-\mu_i} & \text{if } y_i = 0 \\ (1-\pi_i)\frac{e^{-\mu_i}\mu_i^{y_i}}{y_i!} & \text{if } y_i > 0 \end{cases} ] Here, ( \pi_i ) is the probability of a structural zero (a zero that occurs deterministically, for instance, because a word is not part of an author's vocabulary), and ( \mu_i ) is the mean of the Poisson component (which accounts for counts, including sampling zeros that occur by chance) [25] [26]. Both parameters can be modeled as functions of covariates using, for example, a logit link for ( \pi_i ) and a log link for ( \mu_i ): ( \text{logit}(\pi_i) = \boldsymbol{z}_i^T\boldsymbol{\beta} ), ( \log(\mu_i) = \boldsymbol{x}_i^T\boldsymbol{\alpha} ) [26].
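
The two probability mass functions can be compared directly in code. This is a minimal sketch without covariates; the parameter values `mu = 0.8` and `pi = 0.3` are arbitrary illustrations.

```python
import math

def poisson_pmf(y, mu):
    """P(Y = y) for a Poisson distribution with mean mu."""
    return math.exp(-mu) * mu ** y / math.factorial(y)

def zip_pmf(y, pi, mu):
    """P(Y = y) for a zero-inflated Poisson with structural-zero probability pi."""
    if y == 0:
        # Structural zeros plus sampling zeros from the Poisson component.
        return pi + (1 - pi) * math.exp(-mu)
    return (1 - pi) * poisson_pmf(y, mu)

mu, pi = 0.8, 0.3                      # arbitrary illustrative values
p0_poisson = poisson_pmf(0, mu)        # about 0.45
p0_zip = zip_pmf(0, pi, mu)            # about 0.61
total = sum(zip_pmf(y, pi, mu) for y in range(50))  # should be close to 1
```

With the same Poisson mean, the ZIP model assigns noticeably more probability to a zero count, which is the behavior needed for words that many authors never use.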

Table 1: Core Components of Poisson and Zero-Inflated Poisson (ZIP) Models

| Model Aspect | Poisson Model | Zero-Inflated Poisson (ZIP) Model |

|---|---|---|

| Data Generation | Single process: All zeros are "sampling zeros" from the Poisson distribution. | Two processes: A binary process for "structural zeros" & a Poisson process for counts [26]. |

| Handling of Zeros | Models zeros only via the Poisson component (( e^{-\mu_i} )). Can underestimate zeros if they are excessive. | Explicitly models excess zeros via a mixture of a degenerate distribution at zero and a Poisson distribution [25] [26]. |

| Variance Assumption | Mean = Variance (( E(y_i) = Var(y_i) = \mu_i )). Can be violated by overdispersion. | Variance > Mean (( Var(y_i) = (1-\pi_i)\mu_i(1 + \mu_i\pi_i) )) [26]. |

| Key Parameters | ( \mu_i ) (mean of the Poisson distribution) [25]. | ( \pi_i ) (probability of a structural zero), ( \mu_i ) (mean of the Poisson distribution) [26]. |

| Covariate Modeling | A single set of parameters (( \alpha )) models the effect on the mean ( \mu_i ) [25]. | Two sets of parameters: ( \beta ) for the zero-inflation probability ( \pi_i ) and ( \alpha ) for the Poisson mean ( \mu_i ) [25] [26]. |
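
The ZIP variance in Table 1 follows from the first two moments of the mixture. A short derivation, dropping the subscript i for readability and using the fact that a Poisson variable with mean μ has second moment μ + μ²:

```latex
\begin{aligned}
E[Y]   &= (1-\pi)\,\mu, \\
E[Y^2] &= (1-\pi)\,(\mu + \mu^2), \\
\operatorname{Var}(Y)
       &= (1-\pi)(\mu + \mu^2) - (1-\pi)^2\mu^2 \\
       &= (1-\pi)\,\mu\,\bigl[\,1 + \mu - (1-\pi)\mu\,\bigr] \\
       &= (1-\pi)\,\mu\,(1 + \pi\mu).
\end{aligned}
```

The ratio of variance to mean is therefore 1 + πμ, which exceeds 1 whenever both π and μ are positive. This is why the ZIP model can absorb some of the overdispersion that violates the plain Poisson assumption.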

Experimental Comparison: Performance in Forensic Text Analysis

To objectively compare the performance of Poisson and ZIP models, we draw on empirical studies from forensic text comparison and other fields dealing with zero-inflated count data.

Experimental Protocol

A representative study by Carne & Ishihara (2020) provides a direct comparison within the FTC context [23]. The experimental setup involved:

- Data: Documents from 2,157 authors.

- Feature Extraction: A bag-of-words model was constructed for each document by counting the N most common words (with N ranging from 5 to 400).

- Model Implementation:

  - Feature-based Poisson: A one-level Poisson model where word counts are modeled directly.

  - Feature-based ZIP: A one-level Zero-Inflated Poisson model that accounts for excess zeros in word counts.

- Evaluation Metric: The performance of the models in estimating LRs was assessed using the log-likelihood ratio cost (Cllr). This metric evaluates the overall performance, decomposable into discrimination (Cllrmin) and calibration (Cllrcal) costs [23]. Lower Cllr values indicate better performance.

Quantitative Results and Analysis

The following table summarizes key performance data from the comparative experiments.

Table 2: Experimental Performance Comparison of Poisson and ZIP Models

| Study Context | Model | Performance Metric | Result | Interpretation |

|---|---|---|---|---|

| Forensic Text Comparison [23] | One-Level Poisson Model | Log-Likelihood Ratio Cost (Cllr) | Baseline | Found to be less effective for text evidence with sparse word counts. |

| Forensic Text Comparison [23] | One-Level Zero-Inflated Poisson (ZIP) Model | Log-Likelihood Ratio Cost (Cllr) | As one of the feature-based models, outperformed the cosine distance score-based method by a Cllr margin of 0.14-0.2 in best cases [23]. | Accounts for the zero-inflated nature of text data, which is expected to support more accurate LR estimation. |

| Crowd Counting (Computer Vision) [27] | Mean Squared Error (MSE) Baseline | Mean Absolute Error (MAE) / RMSE | Baseline (Higher error) | MSE corresponds to a Gaussian error model, a poor match for discrete count data. |

| Crowd Counting (Computer Vision) [27] | Zero-Inflated Poisson (ZIP) Framework | Mean Absolute Error (MAE) / RMSE | Outperformed MSE-based method; on UCF-QNRF, outperformed mPrompt by ~3 MAE and 12 RMSE [27]. | ZIP's explicit modeling of structural vs. sampling zeros improves count estimation accuracy. |

| Dark Spots in Sheep Fleece (Biology) [28] | Poisson Model with Residual | Deviance Information Criterion (DIC) | Favored by DIC | Both models performed reasonably, but their relative performance can depend on the data. |

| Dark Spots in Sheep Fleece (Biology) [28] | ZIP Model with Residual | Parameter Estimate Proximity to True Values | Closer to true values across simulation scenarios [28]. | The ZIP model can provide more accurate parameter estimates in the presence of true zero-inflation. |

Across these studies, the ZIP model tends to perform well when the data exhibit a significant excess of zeros [23] [27], although the sheep-fleece study shows that the ranking of Poisson and ZIP models can depend on the data and on the criterion used [28]. In FTC, the appeal of the ZIP model lies in its ability to represent the data generation process for word counts more realistically: many zeros are "structural" (a word is not in an author's lexicon) rather than "sampling" zeros (a word from the author's lexicon happened to appear zero times in a given document) [23].

Implementation Guide: Methodologies for Model Deployment

Workflow for Model Selection and Application

The following diagram outlines a logical workflow for implementing and validating Poisson and ZIP models in a forensic text comparison context.

Detailed Experimental Protocols

For researchers seeking to replicate or adapt these models, the following protocols are essential.

Protocol 1: Feature-Based Poisson Regression for FTC

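- Data Preparation: Compile a corpus of text documents from known authors. Preprocess the text (tokenization, lowercasing, stop-word removal, stemming) [29]. Note that stop-word removal discards the very frequent function words that typically make up an N-most-frequent-word feature set, so this step should be skipped when the features rely on those words.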

- Feature Vector Construction: Create a document-term matrix using the N-most frequent words across the corpus (e.g., N=400) [23]. Each cell contains the count of a specific word in a specific document.

- Model Fitting: For a given document pair (known vs. questioned), model the word counts using the Poisson log-linear regression. The model parameters are estimated by maximizing the log-likelihood, potentially with L2 regularization to prevent overfitting [25].

- Likelihood Ratio Calculation: Compute the LR by taking the ratio of the probabilities of the observed word counts under the prosecution (same author) and defense (different authors) hypotheses [23].
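
The steps of Protocol 1 can be sketched as follows. This is a deliberately simplified one-level illustration, not the implementation used in the cited study: it assumes word counts are independent across words, that the expected count scales with document length, and that rates are estimated by pooled counts with a small additive smoothing constant. All function names and numbers are hypothetical.

```python
import math

def poisson_log_pmf(y, mu):
    """Natural log of the Poisson probability of count y given mean mu."""
    return -mu + y * math.log(mu) - math.lgamma(y + 1)

def rates_per_token(count_vectors, lengths, smoothing=0.5):
    """Pooled per-token rate of each word; smoothing avoids zero rates (assumed)."""
    total_len = sum(lengths)
    n_words = len(count_vectors[0])
    return [
        (sum(vec[w] for vec in count_vectors) + smoothing) / total_len
        for w in range(n_words)
    ]

def poisson_log10_lr(questioned, q_len, known_rates, population_rates):
    """log10 LR for the questioned counts, treating words as independent."""
    log_lr = 0.0
    for y, r_known, r_pop in zip(questioned, known_rates, population_rates):
        log_lr += poisson_log_pmf(y, r_known * q_len)  # numerator: same author
        log_lr -= poisson_log_pmf(y, r_pop * q_len)    # denominator: different authors
    return log_lr / math.log(10)

# Made-up counts for three feature words; every document has 500 tokens.
known_rates = rates_per_token([[30, 5, 12]], [500])
population_rates = rates_per_token(
    [[20, 15, 10], [22, 14, 9], [18, 16, 11]], [500, 500, 500]
)
lr_similar = poisson_log10_lr([31, 4, 13], 500, known_rates, population_rates)
lr_typical = poisson_log10_lr([20, 15, 10], 500, known_rates, population_rates)
```

A questioned document whose counts resemble the known author's yields a positive log10 LR, while one that resembles the population average yields a negative value. Unlike a score-based method, both similarity and typicality enter the calculation word by word.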

Protocol 2: Feature-Based Zero-Inflated Poisson (ZIP) Regression for FTC

- Data & Feature Preparation: Follow the Data Preparation and Feature Vector Construction steps of Protocol 1.

- Model Specification: The ZIP model requires defining two separate model components:

  - The Poisson component for the count data: ( \log(\mu_i) = \boldsymbol{x}_i^T\boldsymbol{\alpha} ).

  - The zero-inflation component (Bernoulli) for the probability of a structural zero: ( \text{logit}(\pi_i) = \boldsymbol{z}_i^T\boldsymbol{\beta} ) [26].

  - The sets of covariates ( \boldsymbol{x}_i ) and ( \boldsymbol{z}_i ) can be the same or different.

- Parameter Estimation: Optimize the combined log-likelihood function for the ZIP model. This can be implemented directly without the Expectation-Maximization (EM) algorithm by using numerical optimization techniques on the marginal likelihood [25].

- Validation and LR Calculation: Calculate LRs based on the fitted ZIP model. Performance must be rigorously validated using metrics like Cllr on a separate test set [23].
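
Direct maximization of the ZIP log-likelihood, without EM, can be illustrated on an intercept-only model (no covariates, a single π and μ). The coarse grid search below is a stand-in for a proper numerical optimizer and is an assumption made to keep the sketch dependency-free; the counts are made up.

```python
import math

def zip_log_likelihood(counts, pi, mu):
    """Log-likelihood of counts under an intercept-only ZIP model."""
    total = 0.0
    for y in counts:
        if y == 0:
            total += math.log(pi + (1 - pi) * math.exp(-mu))
        else:
            total += math.log(1 - pi) - mu + y * math.log(mu) - math.lgamma(y + 1)
    return total

def fit_zip_grid(counts, steps=200):
    """Coarse grid search over (pi, mu); a stand-in for a real optimizer."""
    max_mu = max(counts) + 1.0
    best_ll, best_pi, best_mu = -math.inf, None, None
    for i in range(steps):
        pi = i / steps                   # pi in [0, 1)
        for j in range(1, steps + 1):
            mu = max_mu * j / steps      # mu in (0, max_mu]
            ll = zip_log_likelihood(counts, pi, mu)
            if ll > best_ll:
                best_ll, best_pi, best_mu = ll, pi, mu
    return best_pi, best_mu

# Made-up counts of one word across 20 documents: many zeros, a few larger counts.
counts = [0] * 12 + [1, 2, 2, 3, 3, 4, 2, 3]
pi_hat, mu_hat = fit_zip_grid(counts)
zip_ll = zip_log_likelihood(counts, pi_hat, mu_hat)
poisson_ll = zip_log_likelihood(counts, 0.0, sum(counts) / len(counts))
```

Setting `pi = 0` recovers the plain Poisson model, so comparing `zip_ll` with `poisson_ll` gives a quick check of whether zero inflation is worth modeling for a given feature. In practice the grid would be replaced by a gradient-based optimizer and the parameters linked to covariates as specified above.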

The Scientist's Toolkit: Essential Research Reagents