Validating GC×GC for Legal Evidence: A Roadmap from Method Development to Courtroom Admissibility



Abstract

This article provides a comprehensive framework for researchers and drug development professionals seeking to validate Comprehensive Two-Dimensional Gas Chromatography (GC×GC) for legal evidence. It explores the foundational principles and separation power of GC×GC, details methodological approaches for forensic and clinical applications, offers troubleshooting and optimization strategies for robust method development, and critically addresses the validation protocols and legal standards required for courtroom admissibility. By synthesizing current research and legal criteria, this guide serves as an essential resource for transitioning GC×GC from a powerful research tool to a validated technique for legal proceedings.

The Power of GC×GC: Unlocking Superior Separation for Complex Evidence

Comprehensive two-dimensional gas chromatography (GC×GC) has emerged as a powerful analytical technique that provides superior separation for complex mixtures compared to traditional one-dimensional GC (1D-GC). This guide explores the core principles underlying GC×GC's enhanced performance, particularly its unmatched peak capacity and sensitivity, with special consideration to its growing application in forensic science where method validation is paramount for legal admissibility. We examine the technological foundations, present experimental data comparing GC×GC to 1D-GC alternatives, and detail protocols that demonstrate its superior capabilities for analyzing complex forensic samples such as illicit drugs, arson debris, and explosive residues.

Comprehensive two-dimensional gas chromatography (GC×GC) represents a significant advancement in separation science, addressing fundamental limitations of conventional one-dimensional gas chromatography (1D-GC). While 1D-GC provides adequate separation for many applications, its limited peak capacity often results in co-elution of compounds in complex mixtures, which is particularly problematic in forensic analysis where complete characterization of samples is essential for evidentiary purposes [1] [2].

GC×GC overcomes these limitations through a novel instrumental configuration where two separate chromatography columns of different selectivity are connected in series via a special interface called a modulator. This setup creates an orthogonal separation system that dramatically increases resolving power [3]. The first dimension typically employs a conventional non-polar column that separates compounds primarily based on volatility, while the second dimension utilizes a shorter polar column that provides separation based on polarity. The modulator periodically traps, focuses, and reinjects effluent from the first dimension onto the second dimension, creating a highly structured chromatogram with peaks spread across a two-dimensional retention plane [2] [4].

The relevance of GC×GC in forensic science continues to grow, though it has not yet been fully established in routine forensic practice due to challenges in standardized methodology and data interpretation consistency required for legal proceedings. Nevertheless, its ability to perform target analysis, compound class analysis, and chemical fingerprinting makes it increasingly valuable for forensic applications including human scent analysis, arson investigations, security-relevant substances, and environmental forensics [1].

Fundamental Principles of GC×GC Separation

Enhanced Peak Capacity Through Orthogonal Separation

Peak capacity refers to the maximum number of peaks that can be separated with unit resolution in a chromatographic separation. In GC×GC, the overall peak capacity becomes approximately the product of the peak capacities of each dimension, theoretically providing a dramatic increase over 1D-GC [2].
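As a rough illustration of the product rule described above, the sketch below multiplies two per-dimension peak capacities. The function name and the example values (400 and 20) are assumptions chosen for illustration, not figures from the cited studies. Real systems generally fall short of this ideal product because of first-dimension undersampling and imperfect orthogonality.

```python
def ideal_peak_capacity(n1: float, n2: float) -> float:
    """Ideal GC×GC peak capacity: the product of the per-dimension capacities."""
    if n1 <= 0 or n2 <= 0:
        raise ValueError("peak capacities must be positive")
    return n1 * n2


# Hypothetical values, chosen only for illustration.
first_dim = 400   # peak capacity of the first-dimension separation
second_dim = 20   # peak capacity of a single second-dimension separation
total = ideal_peak_capacity(first_dim, second_dim)
print(f"ideal 2D peak capacity: {total:.0f}")
```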

The Orthogonal Separation Principle: True orthogonality in GC×GC requires that the separation mechanisms in the two dimensions are independent. The first dimension (1D) typically utilizes a non-polar stationary phase (e.g., 5% phenyl polysilphenylene-siloxane) that separates compounds primarily by volatility. The second dimension (2D) employs a polar phase (e.g., polyethylene glycol) that separates compounds based on polarity. This orthogonality ensures that compounds co-eluting from the first dimension have a high probability of being separated in the second dimension [3].

Modulation Process: The modulator serves as the heart of the GC×GC system, positioned between the two columns. It operates by periodically collecting small effluent fractions from the first dimension (typically 2-8 second intervals) and focusing them into narrow bands through thermal or flow-based processes before reinjecting them onto the second dimension. This focusing effect not only maintains the separation achieved in the first dimension but also creates very narrow peaks (100-200 ms) in the second dimension, contributing to the exceptional resolution of the technique [5] [6].
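To make the link between modulation period and first-dimension resolution concrete, the following sketch estimates how many times a first-dimension peak is sampled by the modulator. The function name and the 12-second peak width are hypothetical. The threshold of about three slices per peak is a commonly cited rule of thumb, not a value taken from the studies referenced here.

```python
def slices_per_peak(peak_width_s: float, modulation_period_s: float) -> float:
    """Number of modulation slices taken across one first-dimension peak."""
    if peak_width_s <= 0 or modulation_period_s <= 0:
        raise ValueError("widths and periods must be positive")
    return peak_width_s / modulation_period_s


# Hypothetical first-dimension peak, 12 s wide at the base.
for period in (2.0, 4.0, 8.0):
    n = slices_per_peak(12.0, period)
    # Assumed rule of thumb: about 3 or more slices preserve the 1D separation.
    status = "ok" if n >= 3 else "undersampled"
    print(f"period {period:.0f} s -> {n:.1f} slices ({status})")
```

Under these assumed numbers, an 8-second period would sample the peak fewer than three times, which is why the period has to be matched to the first-dimension peak widths.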

Table 1: Comparison of Key Separation Parameters Between 1D-GC and GC×GC

| Parameter | 1D-GC | GC×GC | Advantage Factor |
|---|---|---|---|
| Theoretical Peak Capacity | 100-500 | 1,000-10,000 | 10-20× |
| Peak Width | 2-10 seconds | 100-200 ms (2D) | 10-50× narrower |
| Separation Mechanism | Single (volatility) | Orthogonal (volatility + polarity) | Dual mechanism |
| Probability of Complete Separation | Low for complex mixtures | High for complex mixtures | Significant improvement |
| Chemical Fingerprinting | Limited | Highly structured chromatograms | Enhanced pattern recognition |

Sensitivity Enhancement Through Peak Focusing

The modulation process in GC×GC provides not only improved separation but also significant sensitivity enhancement through two primary mechanisms: band compression and increased signal-to-noise ratio [5].

Band Compression: As the modulator focuses the first dimension effluent into narrow bands before injection onto the second dimension, the resulting peaks have significantly higher peak heights compared to the original broad peaks from the first dimension. Since detection limits are influenced by peak height rather than peak area, this compression directly improves sensitivity. The focusing process typically reduces peak widths by a factor of 10-20, resulting in proportional increases in peak height [5].
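The proportionality between width reduction and height gain follows from the shape of a Gaussian peak, whose height is its area divided by σ√(2π). The sketch below checks this numerically. It assumes, as a simplification, that the whole analyte mass ends up in a single modulated peak; in practice the mass is split across several slices, so the gain per slice is smaller. The function name and the σ values are illustrative.

```python
import math


def gaussian_height(area: float, sigma: float) -> float:
    """Apex height of a Gaussian peak with the given area and standard deviation."""
    return area / (sigma * math.sqrt(2.0 * math.pi))


# Illustrative values: same area, standard deviation compressed tenfold.
broad = gaussian_height(area=1.0, sigma=2.0)    # unmodulated peak, sigma in seconds
narrow = gaussian_height(area=1.0, sigma=0.2)   # peak after refocusing
gain = narrow / broad
print(f"height gain for 10x narrower peak: {gain:.1f}x")
```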

Signal-to-Noise Enhancement: The increased peak height achieved through modulation improves the signal-to-noise ratio (S/N) by increasing the mass flow rate of analytes into the detector. Studies have demonstrated S/N enhancement factors of 10-27× through modulation compared to conventional 1D-GC separations [5]. This improvement makes GC×GC particularly valuable for detecting trace-level compounds in complex forensic matrices, where target analytes may be present at low concentrations amidst overwhelming matrix interferences.

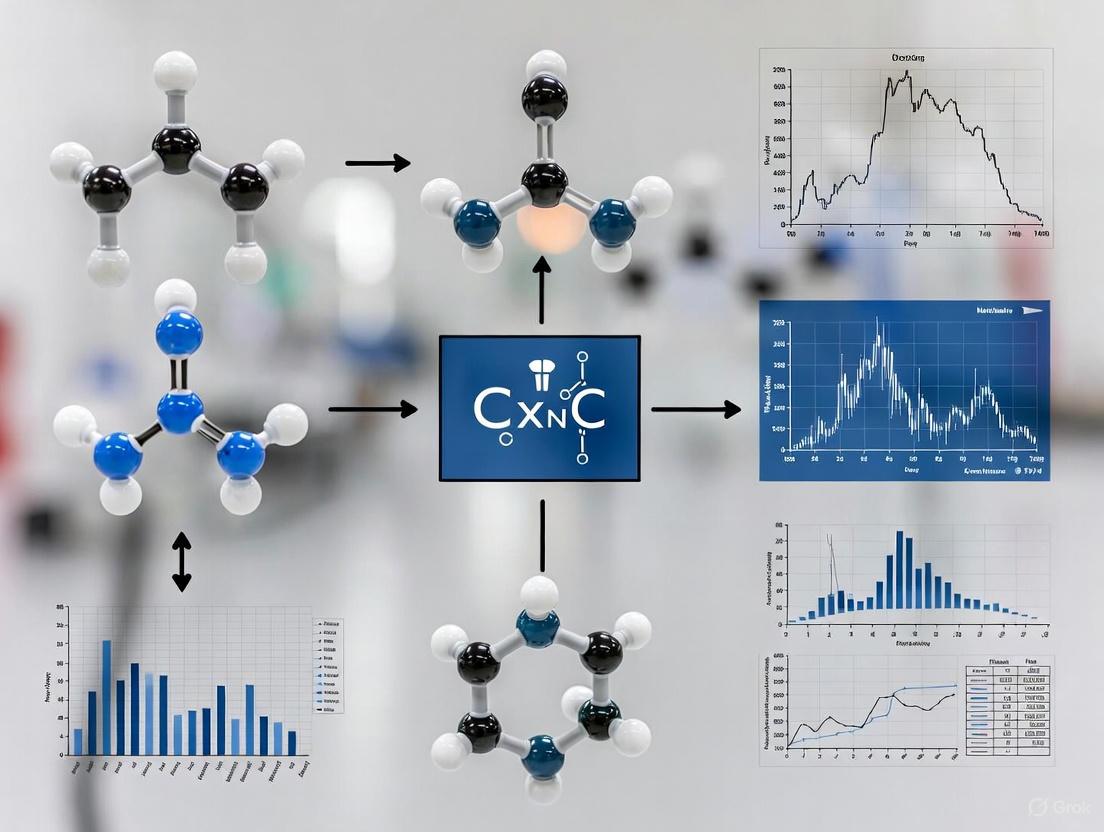

The following diagram illustrates the GC×GC operational workflow and how the modulation process enhances separation and sensitivity:

Diagram 1: GC×GC System Workflow. The modulation process focuses broad first-dimension peaks into narrow second-dimension peaks, enhancing both separation and sensitivity.

Experimental Comparisons: GC×GC vs. 1D-GC

Sensitivity and Detection Limit Studies

A critical study directly compared method detection limits (MDLs) between GC×GC and 1D-GC systems using both time-of-flight mass spectrometry (TOF-MS) and flame ionization detection (FID). The research followed U.S. Environmental Protection Agency (EPA) methodology for MDL determination, providing statistically robust comparisons [5].

Table 2: Experimental Method Detection Limit (MDL) Comparison Between 1D-GC and GC×GC

| Analyte | Detection System | 1D-GC MDL | GC×GC MDL | Improvement Factor |
|---|---|---|---|---|
| n-Nonane | GC-TOF-MS | 5.2 pg/μL | 0.8 pg/μL | 6.5× |
| n-Decane | GC-TOF-MS | 4.8 pg/μL | 0.7 pg/μL | 6.9× |
| n-Dodecane | GC-TOF-MS | 5.1 pg/μL | 0.6 pg/μL | 8.5× |
| 3-Octanol | GC-TOF-MS | 6.2 pg/μL | 0.9 pg/μL | 6.9× |
| n-Eicosane | GC-FID | 4.5 pg/μL | 0.5 pg/μL | 9.0× |
| n-Tetracosane | GC-FID | 5.3 pg/μL | 0.6 pg/μL | 8.8× |
| Pyrene | GC-FID | 7.1 pg/μL | 0.8 pg/μL | 8.9× |

The experimental data demonstrate consistent improvement in detection limits across compounds of varying polarity and molecular weight, with GC×GC providing 6.5-9× lower MDLs compared to 1D-GC. This enhancement is attributed to the peak focusing effect of modulation, which increases analyte mass flow rate to the detector, thereby improving signal intensity [5].
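The improvement factors in Table 2 are simply the ratio of the two MDLs for each analyte. The short sketch below recomputes them from the tabulated values as a consistency check; the dictionary layout and variable names are illustrative choices.

```python
# (1D-GC MDL, GC×GC MDL) in pg/μL, copied from Table 2.
mdl_pg_per_ul = {
    "n-Nonane": (5.2, 0.8),
    "n-Decane": (4.8, 0.7),
    "n-Dodecane": (5.1, 0.6),
    "3-Octanol": (6.2, 0.9),
    "n-Eicosane": (4.5, 0.5),
    "n-Tetracosane": (5.3, 0.6),
    "Pyrene": (7.1, 0.8),
}

# Improvement factor = 1D-GC MDL divided by GC×GC MDL, rounded to one decimal.
factors = {
    name: round(one_d / two_d, 1)
    for name, (one_d, two_d) in mdl_pg_per_ul.items()
}

for name, factor in factors.items():
    print(f"{name}: {factor}x")
```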

Peak Capacity and Resolution in Complex Mixtures

The superior separation power of GC×GC becomes particularly evident when analyzing complex mixtures. In a study analyzing exhaled breath volatile organic compounds (VOCs), GC×GC-TOF-MS detected approximately 260 compounds compared to only about 40 compounds detected by 1D-GC-MS using the same column and sampling protocol, a roughly 6.5-fold increase in detected components [7].

This dramatic improvement in detection capability stems from GC×GC's ability to resolve co-eluting compounds that would appear as a single peak in 1D-GC. For example, in forensic analysis of illicit drugs and explosives, GC×GC has been shown to resolve isomeric compounds and structurally similar analytes that cannot be separated by 1D-GC, providing more definitive identification for legal evidence [1] [8].

Methodologies and Experimental Protocols

Standard GC×GC Protocol for Complex Mixture Analysis

Instrumentation: A typical GC×GC system consists of a gas chromatograph equipped with a modulator, two columns of different stationary phases, and an appropriate detector (most commonly TOF-MS or FID) [5] [3].

Column Selection:

- First Dimension: Mid-polarity or non-polar column (e.g., 30 m × 0.25 mm ID × 0.25 μm df VF-1MS or DB-5MS)

- Second Dimension: Polar column (e.g., 1-5 m × 0.25 mm ID × 0.25 μm df SolGel-Wax or SLB-IL60 ionic liquid phase) [5] [6]

Modulation Conditions:

- Period: 2-8 seconds depending on first dimension peak widths

- Type: Cryogenic (liquid N₂) or flow modulation

- Hot Pulse Time: 0.1-0.5 seconds for cryogenic modulators [5] [6]

Temperature Programming:

- Initial Temperature: 40-50°C held for 0.5-2 minutes

- Ramp Rate: 4-10°C/min depending on complexity

- Final Temperature: 280-300°C held for 5-10 minutes [5] [7]

Detection Parameters:

- TOF-MS Acquisition Rate: 50-200 spectra/second

- Mass Range: m/z 35-500

- FID Data Rate: 50-200 Hz [5]

Protocol for Method Detection Limit (MDL) Determination

The EPA-recommended procedure for MDL determination provides a standardized approach for sensitivity comparison [5]:

- Estimate Detection Limit (EDL): Determine a concentration that produces a signal 2.5-5× higher than noise

- Prepare Samples: Prepare 8 aliquots at 1-5× the EDL concentration

- Analyze Replicates: Analyze all 8 aliquots using the optimized method

- Calculate Standard Deviation: Determine standard deviation (S) of peak heights/areas

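- Compute MDL: Apply the formula $\mathrm{MDL} = t_{(n-1,\,1-\alpha=0.99)} \times S$, where $t$ is the one-sided Student's t-value at the 99% confidence level with $n-1$ degrees of freedom (2.998 for the 8 replicates used here)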

This protocol ensures statistically valid comparison of detection limits between different analytical methods and has been successfully applied to demonstrate GC×GC's sensitivity advantages [5].
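The calculation in the final step can be sketched in a few lines. This is a minimal illustration, not the study's own code: the function name is hypothetical, the replicate values are invented for the example, and the small lookup table holds standard one-sided 99% Student's t-values so that no external statistics package is required.

```python
import statistics

# One-sided Student's t-values at 99% confidence, keyed by degrees of freedom.
T_99 = {6: 3.143, 7: 2.998, 8: 2.896, 9: 2.821}


def method_detection_limit(replicates: list[float]) -> float:
    """MDL = t(n-1, 0.99) * S, with S the sample standard deviation."""
    dof = len(replicates) - 1
    if dof not in T_99:
        raise ValueError("this sketch supports 7 to 10 replicates only")
    s = statistics.stdev(replicates)  # sample standard deviation (n-1 denominator)
    return T_99[dof] * s


# Hypothetical results for 8 spiked aliquots, in pg/μL (not data from [5]).
replicates = [1.9, 2.1, 2.0, 2.2, 1.8, 2.0, 2.1, 1.9]
mdl = method_detection_limit(replicates)
print(f"MDL = {mdl:.2f} pg/μL")
```

Running the same function on 1D-GC and GC×GC replicate sets prepared at comparable spike levels gives the paired MDLs needed for a table such as Table 2.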

Advanced GC×GC Technologies and Forensic Applications

Modulator Technologies: Cryogenic vs. Flow Modulation

Two primary modulation technologies dominate modern GC×GC systems:

Cryogenic Modulators: Use liquid nitrogen or carbon dioxide to thermally focus analytes at the interface between dimensions. These provide excellent focusing efficiency and are considered high-performance modulators, but they incur ongoing consumable costs [5] [3].

Flow Modulators: Use valve-based systems to redirect carrier gas flow for focusing and reinjection. These offer reduced operating costs and easier automation as they don't require cryogenic gases, making them increasingly popular for routine analysis [3] [6].

Recent advancements in flow modulator design, particularly reverse flow fill/flush (RFF) modulators, have improved performance by focusing analytes in the opposite direction of the first dimension flow, enhancing peak capacity and sensitivity, especially for samples with wide concentration ranges [6].

Detection Systems for Forensic Applications

Time-of-Flight Mass Spectrometry (TOF-MS): The high acquisition speed (50-200 spectra/second) of TOF-MS makes it ideally suited for GC×GC, where very narrow (100-200 ms) peaks require fast detection. TOF-MS provides full-spectrum data acquisition, essential for non-targeted analysis and retrospective data mining [1] [2].
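The need for fast acquisition can be checked with simple arithmetic: the number of data points across a peak is the peak width multiplied by the acquisition rate. The sketch below applies this to the peak widths and rates quoted above. The function name is hypothetical, and the target of roughly ten points per peak is a general chromatographic rule of thumb rather than a requirement stated in the cited sources.

```python
def points_across_peak(peak_width_ms: float, acquisition_rate_hz: float) -> float:
    """Number of spectra (data points) acquired across one chromatographic peak."""
    return peak_width_ms / 1000.0 * acquisition_rate_hz


for width_ms in (100, 200):
    for rate_hz in (50, 200):
        n = points_across_peak(width_ms, rate_hz)
        print(f"{width_ms} ms peak at {rate_hz} Hz -> {n:.0f} points")
```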

High-Resolution MS with Soft Ionization: Coupling GC×GC with high-resolution mass spectrometry (HRMS) using soft ionization techniques like tube plasma ionization (TPI) improves confidence in compound identification by preserving molecular ion information. This is particularly valuable in forensic applications where definitive identification is required for legal admissibility [6].

Data Analysis and Chemometric Approaches

The complex data sets generated by GC×GC require advanced chemometric tools for effective interpretation, particularly in forensic applications where statistical certainty is essential for evidence admissibility [1] [2].

- Pixel-Based Analysis: Examines raw data points without peak matching, preserving all chemical information

- Peak Table-Based Analysis: Utilizes detected peaks with associated spectral and retention time data

- Tile-Based Approaches: Compress data by dividing the 2D separation space into tiles for efficient pattern recognition [2]

These computational approaches are increasingly important for comparing complex chemical profiles in arson investigations, illicit drug analysis, and environmental forensics, where likelihood ratio approaches and statistical validation of results are required for legal proceedings [1].
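To show what dividing the separation space into tiles means in practice, the sketch below sums the signal inside non-overlapping rectangular tiles of a small 2D intensity grid. It is a deliberately simplified illustration: published tile-based methods add statistical ranking and tile-offset schemes that are not reproduced here, and the function name, grid values, and tile size are all assumptions.

```python
def tile_sums(chromatogram: list[list[float]], tile_rows: int, tile_cols: int) -> list[list[float]]:
    """Sum intensities within non-overlapping tiles of a 2D chromatogram."""
    n_rows = len(chromatogram)
    n_cols = len(chromatogram[0])
    tiles = []
    for r0 in range(0, n_rows, tile_rows):
        row_of_tiles = []
        for c0 in range(0, n_cols, tile_cols):
            # Edge tiles are clipped to the grid boundary.
            total = sum(
                chromatogram[r][c]
                for r in range(r0, min(r0 + tile_rows, n_rows))
                for c in range(c0, min(c0 + tile_cols, n_cols))
            )
            row_of_tiles.append(total)
        tiles.append(row_of_tiles)
    return tiles


# Toy 4×4 intensity grid (rows and columns are retention-time bins).
signal = [
    [0, 1, 0, 0],
    [2, 9, 1, 0],
    [0, 1, 0, 3],
    [0, 0, 4, 8],
]
tiles = tile_sums(signal, tile_rows=2, tile_cols=2)
print(tiles)
```

Comparing tile sums between samples, rather than individual pixels, makes the comparison less sensitive to small retention-time shifts between runs.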

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Essential Materials and Reagents for GC×GC Analysis

| Item | Function/Purpose | Example Specifications |
|---|---|---|
| Standard Mixtures | Method development and calibration | n-Alkane series (C₈-C₄₀) in hexane or CS₂ |
| Derivatization Reagents | Enhance volatility of polar compounds | N-Methyl-N-(trimethylsilyl)trifluoroacetamide (MSTFA), N,O-Bis(trimethylsilyl)trifluoroacetamide (BSTFA) |
| Internal Standards | Quantification and quality control | Deuterated analogs of target analytes, 1,4-Dichlorobenzene-d₄ |
| Solid-Phase Microextraction (SPME) Fibers | Sample preparation and concentration | DVB/CAR/PDMS (50/30 μm), PDMS (100 μm) |
| GC×GC Columns | Orthogonal separation | 1D: DB-5MS (30 m × 0.25 mm × 0.25 μm); 2D: SLB-IL60 (5 m × 0.25 mm × 0.20 μm) |
| Modulator Consumables | System operation | Liquid N₂ (cryogenic modulators), discharge gases (plasma-based detectors) |
| Quality Control Standards | Method validation | Certified reference materials (CRMs) matrix-matched to samples |

GC×GC provides unmatched peak capacity and sensitivity compared to conventional 1D-GC, primarily through orthogonal separation mechanisms and peak focusing via modulation. Experimental data demonstrate a 6.5-9× improvement in method detection limits and a roughly 6.5× increase in detected compounds in complex mixtures. These advantages make GC×GC particularly valuable for forensic applications where complete characterization of complex samples and detection of trace-level analytes are essential. While standardization and validation challenges remain for routine implementation in forensic laboratories, ongoing advancements in modulator technology, detection systems, and data processing methods continue to strengthen the case for GC×GC as a powerful analytical tool for legal evidence research.

Complex samples present significant analytical challenges for conventional one-dimensional gas chromatography (1D GC), primarily due to co-elution and matrix effects that compromise quantitative accuracy. This guide objectively compares the performance of comprehensive two-dimensional gas chromatography (GC×GC) with 1D GC alternatives, focusing on applications requiring legal defensibility. Experimental data demonstrate GC×GC's superior peak capacity, enhanced sensitivity, and robust handling of matrix interferences—critical factors for evidentiary research where analytical precision is paramount. The systematic evaluation presented herein provides a foundation for validating GC×GC methodologies in forensic and pharmaceutical applications.

Conventional one-dimensional gas chromatography (1D GC) faces fundamental limitations when analyzing complex samples such as biological extracts, environmental contaminants, and forensic evidence. Co-elution occurs when multiple compounds share similar retention times, preventing accurate identification and quantification. Simultaneously, matrix effects can cause signal suppression or enhancement, leading to inaccurate quantification [9]. Biological samples contain substances across multiple chemical classes (sugars, organic acids, amino acids, fatty acids) at concentrations differing by several orders of magnitude, creating ideal conditions for these analytical challenges [9]. In legal evidence research, where results must withstand rigorous scrutiny, these limitations pose significant risks to analytical validity.

Matrix-induced enhancement effects represent a particular challenge, causing unexpectedly high recoveries in GC analysis [10]. This phenomenon occurs when matrix components partially deactivate active sites in the injection port or compete with analytes for these sites, improving analyte transfer to the detector compared to pure solvent standards [10]. While sometimes viewed as problematic, this effect can potentially enhance sensitivity when properly controlled through matrix-matched calibration [10].

Fundamental Principles: How GC×GC Addresses 1D GC Limitations

Core Technology of Comprehensive Two-Dimensional Chromatography

GC×GC involves coupling two columns with different stationary phases through a special interface called a modulator. The first dimension (1D) typically uses a longer nonpolar column (20-30 m), while the second dimension (2D) employs a shorter polar column (1-5 m) [11]. The modulator collects portions of the first dimension effluent and injects them as narrow pulses into the second dimension at regular intervals [5]. This process provides separation based on two distinct chemical properties—typically volatility in the first dimension and polarity in the second [12].

Two primary modulation types exist:

- Thermal modulators use temperature differentials (hot and cold jets) to trap and desorb analytes [11]

- Flow modulators use precise control of carrier gas flows to fill and flush sampling loops [11]

The modulation process focuses primary column eluate into narrow injection bands, increasing secondary column resolution and providing a theoretical 10-20-fold increase in peak capacity compared to 1D GC [12] [11].

Structured Separation and Visualization

GC×GC data are visualized using two-dimensional color plots where the x-axis represents first-dimension retention time (¹tR), the y-axis represents second-dimension retention time (²tR), and color intensity represents signal strength [11]. A key advantage is the structured ordering or "roof-tiling" effect, where compounds from the same chemical class elute in characteristic bands [11]. This structured pattern enables tentative identification of chemical families based on position, greatly facilitating the interpretation of complex mixtures [11].

GC×GC Instrumental Workflow

Performance Comparison: Experimental Data

Resolution and Peak Capacity

GC×GC provides dramatically increased separation power compared to 1D GC. Where 1D GC might separate dozens to hundreds of compounds in complex mixtures, GC×GC can resolve thousands of individual constituents [13]. This enhanced resolution directly addresses co-elution problems prevalent in 1D GC analysis of complex samples.

Table 1: Quantitative Comparison of Separation Performance

| Parameter | 1D GC | GC×GC | Measurement Conditions |
|---|---|---|---|
| Theoretical Peak Capacity | 100-400 | 1,000-10,000 | Based on 30 m 1D column coupled with 1-5 m 2D column [12] [11] |
| Modulation Period | N/A | 2-8 seconds | Cryogenic trap cooled to -196°C using liquid nitrogen [5] |
| Typical Peak Width (2D) | N/A | < 100 ms | Narrow bands from modulation process [11] |
| Structured Chromatograms | No | Yes | Chemical class-based "roof-tiling" patterns [11] |

Sensitivity and Detection Limits

Sensitivity comparisons between 1D GC and GC×GC have yielded conflicting reports in the literature. The modulation process theoretically increases signal-to-noise ratios through band compression. Experimental studies have demonstrated 10-27× increase in S/N ratio through modulation [5]. However, the overall method detection limits (MDLs) depend on multiple factors including detector type and noise contributors.

Table 2: Sensitivity Comparison Using Method Detection Limits (MDLs)

| Analyte | 1D GC-TOF-MS MDL | GC×GC-TOF-MS MDL | Enhancement Factor | Experimental Conditions |
|---|---|---|---|---|
| n-Nonane | 5 pg/μL | 0.5 pg/μL | 10× | 4 s modulation, m/z = 71, liquid nitrogen cryogenic trap [5] |
| n-Decane | 7 pg/μL | 0.7 pg/μL | 10× | Same conditions as above [5] |
| 3-Octanol | 10 pg/μL | 1.5 pg/μL | 6.7× | Same conditions as above [5] |

When using flame ionization detection (FID), GC×GC shows particular advantages due to the detector's consistent response factor across hydrocarbon classes and excellent signal-to-noise ratio [13]. The structured chromatograms also facilitate group-type quantification for complex mixtures like petroleum substances [13].

Matrix Effect Tolerance

Matrix effects present significant challenges in 1D GC analysis, particularly for biological and environmental samples. In 1D GC, matrix components can cause signal suppression or enhancement of up to a factor of 2 for carbohydrates and organic acids, with amino acids potentially more affected [9]. These effects primarily stem from incomplete transfer of derivatives during injection and from compound interactions at the start of the separation [9].

GC×GC mitigates matrix effects through orthogonal separation, spreading matrix interferences across two dimensions rather than allowing them to concentrate in a single retention time region. This reduces the likelihood of a matrix component directly co-eluting with and interfering with a target analyte [14]. The enhanced separation power is particularly valuable for non-targeted analysis where unknown matrix components may be present [14].

Experimental Protocols for Method Validation

Method Detection Limit Determination

The U.S. Environmental Protection Agency (EPA) approach for determining Method Detection Limits (MDLs) provides a standardized protocol for comparing 1D GC and GC×GC sensitivity [5]:

- Estimate Detection Limit (EDL): Determine a concentration value producing instrument S/N ratio of 2.5-5

- Prepare Standards: Prepare 8 aliquots at 1-5× the EDL concentration

- Analyze Replicates: Analyze all 8 aliquots using identical chromatographic conditions

- Calculate MDL: $\mathrm{MDL} = t_{(n-1,\,1-\alpha)} \times S$, where $S$ is the standard deviation of the replicate measurements and $t_{(n-1,\,1-\alpha)}$ is the Student's t-value for 99% confidence with $n-1$ degrees of freedom

For GC×GC analyses, MDL estimation should use the tallest second-dimension peak for a given analyte, as this peak would be most visible near the detection limit [5].

Assessing Matrix Effects Protocol

Systematic evaluation of matrix effects follows this experimental design [9]:

- Prepare Model Mixtures: Create compound mixtures of different compositions to simulate biological samples

- Derivatization: Apply standard derivatization protocols (e.g., trimethylsilylation for GC-MS analysis)

- Comparative Analysis: Analyze both neat standards and matrix-matched standards across concentration ranges

- Quantify Effects: Calculate signal suppression/enhancement as recovery percentage compared to neat standards

- Parameter Optimization: Test different injection-liner geometries and temperatures to minimize effects

This protocol revealed that matrix effects from biological samples typically cause signal variations not exceeding a factor of ~2 for most compounds, though certain amino acids experience greater effects [9].
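The quantification step of this protocol reduces to a ratio of responses. The sketch below expresses the matrix effect as a recovery percentage relative to the neat standard, so that values above 100% indicate enhancement and values below 100% indicate suppression. The function name and the peak areas are hypothetical and are not data from [9].

```python
def matrix_effect_percent(matrix_response: float, neat_response: float) -> float:
    """Response in matrix as a percentage of the response of the neat standard."""
    if neat_response <= 0:
        raise ValueError("neat-standard response must be positive")
    return matrix_response / neat_response * 100.0


# Hypothetical peak areas for one analyte at the same nominal concentration.
neat_area = 1000.0
enhanced = matrix_effect_percent(1800.0, neat_area)    # signal enhancement
suppressed = matrix_effect_percent(600.0, neat_area)   # signal suppression
print(f"enhanced: {enhanced:.0f}%, suppressed: {suppressed:.0f}%")
```

On this scale, the factor-of-2 variation reported above corresponds to recoveries between roughly 50% and 200%.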

GC×GC Method Optimization Parameters

Critical parameters requiring optimization for GC×GC analysis include [11] [5]:

- Modulation Period: Typically 2-8 seconds, must be optimized to preserve 1D separation

- Temperature Program: Should balance separation efficiency with analysis time

- Column Selection: Normal-phase (nonpolar→polar) vs. reversed-phase (polar→nonpolar) configurations

- Detector Acquisition Rate: 30-200 Hz for TOF-MS, ~100 Hz for FID to capture narrow 2D peaks

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagents and Materials for GC×GC Method Development

| Item | Function/Purpose | Example Specifications |
|---|---|---|
| Reference Standards | Retention time calibration in both dimensions | n-Alkane series (C8-C40) for retention index calculation [15] |
| Derivatization Reagents | Render polar compounds amenable to GC analysis | Trimethylsilylation agents (e.g., MSTFA) for metabolite profiling [9] |
| Internal Standards | Correction for injection volume and matrix effects | Stable isotope-labeled analogs of target compounds [9] |
| Column Sets | Orthogonal separation mechanisms | 30 m × 0.25 mm × 1.00 μm VF-1MS (1D) + 1.5 m × 0.25 mm × 0.25 μm SolGel-Wax (2D) [5] |
| Modulation Consumables | Cryogenic trapping or flow control | Liquid nitrogen for cryogenic modulators [5] |
| Matrix-Matched Calibrators | Compensation for matrix enhancement effects | Control matrix extracts from blank samples [10] |

Data Processing and Software Considerations

GC×GC generates complex, information-rich datasets requiring specialized software for processing. Commercial software platforms like GC Image GC×GC provide comprehensive tools for visualization, baseline correction, peak detection, and integration [16]. These tools are essential for transforming raw data into actionable information, particularly for legal evidence applications.

Critical data processing steps include:

- Data "Folding": Converting linear detector output into 2D chromatograms based on modulation time

- Peak/Blob Detection: Identifying resolved compounds in 2D space

- Deconvolution: Separating co-eluting compounds using mathematical algorithms

- Alignment: Correcting retention time variations across sample batches

- Compound Identification: Library searching against mass spectral databases [15]

Advanced processing may incorporate multivariate analysis and custom scripting through command-line interfaces to automate workflows and ensure reproducibility [15]. For legal applications, maintaining complete data processing audit trails is essential.
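The "folding" step listed above can be sketched as follows: the detector delivers one long signal trace, and each block of points acquired during one modulation period becomes one column of the 2D chromatogram. This is a minimal illustration with hypothetical names and toy values; commercial packages additionally handle phase shifts, wrap-around, and baseline correction.

```python
def fold_chromatogram(signal: list[float], acquisition_rate_hz: float,
                      modulation_period_s: float) -> list[list[float]]:
    """Split a linear detector trace into one second-dimension slice per modulation."""
    points_per_modulation = round(acquisition_rate_hz * modulation_period_s)
    if points_per_modulation < 1:
        raise ValueError("modulation period is shorter than one acquisition interval")
    n_modulations = len(signal) // points_per_modulation  # drop any incomplete slice
    return [
        signal[i * points_per_modulation:(i + 1) * points_per_modulation]
        for i in range(n_modulations)
    ]


# Toy trace: 12 points acquired at 2 Hz with a 3 s modulation period.
trace = [float(i) for i in range(12)]
folded = fold_chromatogram(trace, acquisition_rate_hz=2.0, modulation_period_s=3.0)
print(len(folded), "slices of", len(folded[0]), "points")
```

Keeping a step like this in a script, with its parameters recorded, is one way to support the audit trail mentioned above.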

Applications in Legal Evidence Research

GC×GC provides particular advantages for forensic applications requiring the highest level of confidence:

Forensic Toxicology

Complex biological matrices like blood, urine, and tissues present significant analytical challenges. GC×GC-TOF-MS can simultaneously screen for hundreds of drugs and metabolites while minimizing false positives from matrix interferences [14]. The structured chromatograms facilitate identification of metabolite patterns consistent with specific substances.

Arson Investigation

Fire debris analysis benefits tremendously from GC×GC's separation power. Traditional 1D GC struggles to distinguish ignitable liquid residues from pyrolysis products, while GC×GC provides clear chemical class separation [17]. This enables more confident identification of accelerants and fire origin determination.

Environmental Forensics

Petroleum hydrocarbon fingerprinting for contamination source identification represents an ideal application for GC×GC [13]. The technique can separate thousands of hydrocarbon constituents into distinct chemical classes, enabling precise pattern matching between environmental samples and potential sources.

GC×GC represents a significant advancement over 1D GC for analyzing complex samples in legal evidence research. Experimental data demonstrate clear advantages in peak capacity, sensitivity, and matrix tolerance—all critical factors for generating defensible results. While method development requires careful optimization of multiple parameters and specialized data processing tools, the analytical benefits justify this investment for applications requiring the highest level of confidence. As the technique continues to mature and become more accessible, GC×GC is poised to become the gold standard for complex mixture analysis in forensic and pharmaceutical laboratories.

The transition from traditional one-dimensional gas chromatography (1D-GC) to comprehensive two-dimensional gas chromatography (GC×GC) represents a paradigm shift in the separation sciences, offering unprecedented resolving power for complex mixtures. Within the context of legal evidence research, where the unequivocal identification and quantification of trace-level compounds can determine the outcome of a case, the validation of analytical methods is paramount. GC×GC meets this demand through its core components: orthogonal columns that maximize compound separation, modulators that preserve first-dimension resolution, and time-of-flight mass spectrometry (TOF-MS) that provides rapid, sensitive detection. This instrumentation deep dive objectively compares the performance of these components with 1D-GC alternatives, supported by experimental data. The ultimate goal is to frame their technical capabilities within the rigorous requirements of forensic validation, establishing a foundation for legally defensible chemical analysis where the integrity of evidence is non-negotiable.

The Heart of Separation: Orthogonal Column Selection and Configuration

The primary column ensemble in GC×GC is responsible for the vast increase in peak capacity, which is the multiplicative product of the peak capacities of each individual dimension. Proper selection and configuration of these columns is the first critical step in method development.

The Principle of Orthogonality and Forensic Utility

Orthogonality refers to the use of two separation mechanisms that are as independent as possible. A common and highly effective configuration pairs a non-polar first dimension (1D) column (e.g., 5% phenyl polysilphenylene-siloxane) with a mid- or high-polarity second dimension (2D) column (e.g., polyethylene glycol or a cyanopropyl-phenyl phase) [18]. This arrangement separates compounds in the first dimension primarily by their boiling point, and in the second dimension by their polarity. The forensic utility of this orthogonality is profound. For example, in the analysis of synthetic cannabinoids or complex drug mixtures, isomers that are chromatographically indistinguishable in 1D-GC are often fully resolved in the 2D space, preventing false identifications and providing a more confident foundation for expert testimony [19].

Practical Rules for Column Matching and Performance

Successful implementation requires more than just orthogonal phases; the column dimensions must be harmonized to maintain optimal flow conditions and detection sensitivity.

- Maximize First Dimension Resolution: The foundation of a good GC×GC separation is a well-resolved first dimension. A good starting point is a 30 m × 0.25 mm ID × 0.25 µm df column. For exceptionally complex samples, a 60 m column can be used to further increase the peak capacity of the first dimension [20].

- Match Column Dimensions for Efficiency: To ensure optimal sample transfer and minimize band broadening, the second dimension column should be a short, narrow-bore column (e.g., 1-2 m × 0.10-0.25 mm ID). It is also recommended to match the phase thickness (df) of the second dimension to the first; for instance, a 0.25 µm df 1D column should be paired with a 0.25 µm df 2D column. This configuration maintains consistent sample loading capacity and carrier gas velocity [20].

- Achieve Structural Ordering: A major benefit of a well-chosen column set is the creation of highly structured 2D chromatograms. Compounds elute in the 2D space in patterns based on their chemical class. For instance, in a non-polar x polar setup, alkanes will have shorter 2D retention times than esters of a similar 1D retention, which in turn will elute before alcohols. This provides an immediate visual diagnostic tool for confirming compound identity and spotting novel or unexpected compounds in a forensic sample [18].

Table 1: Common Orthogonal Column Setups for Forensic and Chemical Analysis

| Application Focus | 1D Column | 2D Column | Separation Rationale | Key Forensic Application |
|---|---|---|---|---|
| General Volatiles | 5% Phenyl Polysilphenylene-siloxane (e.g., RTX-5, HP-5) | Polyethylene Glycol (e.g., SolGel-Wax) | Boiling point → Polarity | Arson debris (accelerants), illicit spirits |
| Targeted Isomer Separation | 5% Phenyl Polysilphenylene-siloxane | 50% Phenyl Polysilphenylene-siloxane | Boiling point → Polarizability | Synthetic cannabinoid, drug isomer distinction [19] |
| Fatty Acids & Metabolites | Polyethylene Glycol (e.g., DB-WAX) | Trifluoropropylpolysiloxane (e.g., RTX-200) | Polarity → Specific dipole interactions | Food fraud (oil adulteration), metabolomics [21] |

Critical Interface: The Role and Types of Modulators

The modulator is the operational centerpiece of a GC×GC system, serving as the interface between the two columns. Its function is to periodically collect, refocus, and reinject effluent from the end of the first dimension column onto the head of the second dimension column.

Modulation Fundamentals and Sensitivity Enhancement

Modulation transforms a broad first-dimension peak, typically 5-10 seconds wide, into a series of sharp, narrow pulses (typically 50-200 ms wide) for the second dimension. This band compression is a primary source of the sensitivity enhancement observed in GC×GC compared to 1D-GC: concentrating the analyte into a narrower band increases the peak height and therefore the signal-to-noise (S/N) ratio. Studies have reported a 5- to 10-fold sensitivity enhancement over 1D-GC when using flame ionization detection (FID) or time-of-flight mass spectrometry (TOF-MS) [5].

Comparing Thermal and Flow Modulator Technologies

Two primary classes of modulators are used today: thermal and flow modulators. The choice between them has significant implications for method performance and applicability.

- Thermal Modulators: These use a cryogenic fluid (typically liquid nitrogen or CO₂) to trap and focus analytes at the junction between the two columns. A subsequent hot pulse rapidly releases the trapped band into the second dimension. Thermal modulators are renowned for producing very narrow (~100 ms) second dimension peaks, which is ideal for achieving high peak capacity. A key parameter is the modulation period (Pₘ), which is the total time between successive injections to the second dimension. As a rule of thumb, a first-dimension peak should be sliced 3 to 4 times to preserve the resolution earned in the first dimension. Therefore, for a typical first-dimension peak width of 6-8 seconds, a Pₘ of 1.5 to 3 seconds is appropriate [20] [21].

- Flow Modulators: These devices use valve-based systems and auxiliary gas flows to direct and trap the column effluent in a loop or a tee-union before flushing it onto the second column. Flow modulators operate at higher temperatures than cryogenic modulators, making them suitable for very high-boiling compounds. A significant advancement is direct flow modulation, which can achieve long secondary separation times (up to 120 s in one study) and produce secondary peak widths at base (2Wb) as narrow as 65 ms, all with 100% transfer of analyte from the first to the second dimension [22].
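The rule of thumb for choosing a modulation period (3 to 4 slices per first-dimension peak) reduces to a simple division. The sketch below is illustrative only; the function name and default arguments are assumptions introduced here, not part of any vendor software.

```python
def modulation_period_range(peak_width_s: float,
                            min_slices: int = 3,
                            max_slices: int = 4) -> tuple[float, float]:
    """Return (shortest, longest) modulation period in seconds that
    slices a first-dimension peak between min_slices and max_slices times."""
    if peak_width_s <= 0 or min_slices <= 0 or max_slices < min_slices:
        raise ValueError("invalid peak width or slice counts")
    return peak_width_s / max_slices, peak_width_s / min_slices


# First-dimension peak widths of 6 s and 8 s, as discussed in the text.
for width in (6.0, 8.0):
    shortest, longest = modulation_period_range(width)
    print(f"{width:.0f} s peak: Pm between {shortest:.2f} s and {longest:.2f} s")
```

For 6-8 s peaks this gives periods from 1.5 s up to about 2.7 s, in line with the 1.5 to 3 s range quoted for thermal modulators.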

Table 2: Performance Comparison of GC×GC Modulator Types

| Characteristic | Thermal Modulator (Cryogenic) | Flow Modulator (Direct Flow) |
|---|---|---|
| Principle | Trapping via cooling, release via heating | Flow switching and trapping using gas pressure |
| Typical 2D Peak Width | ~100 ms or less | Can be <100 ms (e.g., 65 ms) [22] |
| Modulation Period (Pₘ) | Short (1-6 s) | Can be very short or very long (e.g., up to 120 s) [22] |
| Operational Temperature | Limited by cryogen requirement | Up to maximum GC oven/column temperature |
| Primary Advantage | Excellent peak capacity, high sensitivity | Robustness, no cryogens, high temp. capability |
| Key Forensic Consideration | Ideal for complex volatiles (e.g., arson, explosives) | Ideal for less volatile compounds (e.g., heavy oils, cannabinoids) |

Detection and Identification: The TOF-MS Imperative

The rapid, narrow peaks produced by the second dimension separation demand a detector with equally fast acquisition capabilities. This makes time-of-flight mass spectrometry (TOF-MS) the detector of choice for GC×GC.

High-Speed Acquisition and Deconvolution

While 1D-GC can often use slower-scanning quadrupole MS detectors, the second-dimension peaks in GC×GC (often about 100 ms wide) are generally too narrow for such scanning instruments to sample adequately. TOF-MS operates by accelerating ions into a flight tube, where their mass-to-charge ratio (m/z) is determined by their time of arrival. This process allows for full-range mass spectra acquisition at very high speeds (50-500 Hz), making it well suited to capturing even the narrowest peaks from a GC×GC run without skewing the data [23] [18]. Furthermore, the ability to collect full, non-skewed mass spectra enables powerful deconvolution algorithms. These algorithms can mathematically resolve the mass spectra of coeluting compounds, a common occurrence in complex samples. For example, in a study of edible oils, GC×GC-TOF-MS chromatographically resolved and identified hexanal and octane, which coeluted in 1D-GC-HRMS analysis [23].
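The link between acquisition rate and peak width can be made concrete by counting the spectra collected across one second-dimension peak. This is a minimal sketch; the function name is hypothetical, and the commonly quoted target of roughly 10 points per peak for reliable quantification is a general rule of thumb assumed here rather than a figure from the cited studies.

```python
def points_per_peak(acquisition_rate_hz: float, peak_width_ms: float) -> float:
    """Number of spectra acquired across a peak of the given base width."""
    if acquisition_rate_hz <= 0 or peak_width_ms <= 0:
        raise ValueError("rate and width must be positive")
    return acquisition_rate_hz * peak_width_ms / 1000.0


# A 100 ms second-dimension peak sampled at rates within the 50-500 Hz range.
for rate in (50, 100, 200, 500):
    print(f"{rate} Hz -> {points_per_peak(rate, 100):.0f} points across the peak")
```

At 50 Hz a 100 ms peak is described by only 5 spectra, whereas 200 Hz yields 20, which is why the higher end of the range is typically chosen for the narrowest peaks.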

Sensitivity and Quantitative Performance Data

The sensitivity of the overall GC×GC system is a product of both the modulator's focusing effect and the detector's performance. A direct comparison study measured Method Detection Limits (MDLs) for a series of compounds using both 1D-GC and GC×GC, each coupled to TOF-MS and FID. The results demonstrated that GC×GC-TOF-MS provided superior sensitivity (lower MDLs) than 1D-GC-TOF-MS for the tested compounds. This sensitivity enhancement is attributed to the band compression in the modulator, which increases the peak height and improves the signal-to-noise ratio in the mass spectrometer [5].

Experimental Protocols for Forensic Validation

To ensure the data generated is fit for legal purposes, rigorous experimental protocols must be followed. The following outlines a general workflow for a non-targeted analysis, pertinent to forensic casework involving unknown substances.

Diagram 1: Experimental workflow for non-targeted analysis, highlighting the core instrumentation.

Sample Preparation and Instrumental Analysis

- Sample Collection & Preparation: Forensic samples (e.g., latent fingerprints [19], plant material, wastewater [14]) require strict chain-of-custody protocols. Preparation depends on the matrix: headspace solid-phase microextraction (HS-SPME) is ideal for volatiles from solids or liquids [18], while liquid-liquid extraction may be used for wastewater [14]. For polar metabolites, a standard protocol involves methoximation followed by trimethylsilylation to increase volatility and thermal stability [21].

- GC×GC-TOF-MS Instrumental Parameters: Based on cited methodologies [21] [18], a typical method is as follows:

  - Columns: 1D: 20-30 m, 0.25 mm id, 0.25 µm df, polar (e.g., SolGel-Wax) or non-polar (e.g., RTX-5MS). 2D: 1-2 m, 0.10-0.25 mm id, 0.10-0.25 µm df, orthogonal phase (e.g., OV1701, RTX-200MS).

  - Modulation: Thermal modulator with a period (Pₘ) of 2-4 seconds.

  - Oven Program: Initial temp 40-60°C, held briefly, then ramped at 3-8°C/min to 240-280°C.

  - TOF-MS: Acquisition rate: 50-200 Hz; Mass range: m/z 40-500; Ionization: Electron Ionization (EI) at 70 eV.

Data Processing and Chemometric Analysis

The raw data file is a complex three-dimensional data cube (1D retention time × 2D retention time × m/z). Processing involves:

- Peak Finding and Deconvolution: Software (e.g., LECO ChromaTOF, GC Image) is used to find peaks, deconvolute coelutions, and extract pure mass spectra [21] [18].

- Compound Identification: Tentative identification is achieved by comparing deconvoluted spectra to commercial EI libraries (e.g., NIST). Confidence is greatly increased by matching experimentally determined linear retention indices (LRI) in both dimensions against literature values [18] [14].

- Chemometric Analysis for Objectivity: To objectively find patterns and differences between sample classes (e.g., authentic vs. adulterated food [18], or repressed vs. derepressed yeast cells [21]), multivariate statistics are applied. Principal Component Analysis (PCA) is used to reduce data dimensionality and highlight the most significant variables (compounds) responsible for variance. For quantitative analysis of specific targets, Parallel Factor Analysis (PARAFAC) can be used to resolve pure concentration profiles from the complex data, providing robust quantification even in the presence of coelution [21].
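The linear retention index used in the identification step can be computed from the retention times of the bracketing n-alkanes. The sketch below uses the standard temperature-programmed formula, LRI = 100 × [n + (t_x − t_n) / (t_n+1 − t_n)], for the first dimension; the function name and the alkane retention times are hypothetical illustration values, and second-dimension indices need a separate calibration scheme that is not shown here.

```python
from bisect import bisect_right


def linear_retention_index(t_x: float, alkane_rts: dict[int, float]) -> float:
    """Linear retention index of an analyte eluting at t_x.

    alkane_rts maps n-alkane carbon number -> retention time (same units
    as t_x), measured under the same temperature program.
    """
    carbons = sorted(alkane_rts)
    times = [alkane_rts[c] for c in carbons]
    i = bisect_right(times, t_x) - 1          # alkane eluting at or before t_x
    if i < 0 or i >= len(times) - 1:
        raise ValueError("analyte is not bracketed by two alkanes")
    n, t_n, t_next = carbons[i], times[i], times[i + 1]
    step = carbons[i + 1] - n                 # 1 for a consecutive alkane series
    return 100.0 * (n + step * (t_x - t_n) / (t_next - t_n))


# Hypothetical first-dimension retention times (seconds) for C9-C11.
alkanes = {9: 600.0, 10: 720.0, 11: 840.0}
print(linear_retention_index(750.0, alkanes))  # 1025.0
```

The computed value would then be compared against literature indices for the same stationary phase, within a tolerance window chosen by the laboratory.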

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Reagents and Materials for GC×GC-TOF-MS Analysis

| Item | Function/Application | Example from Literature |
|---|---|---|
| Orthogonal Column Set | Core separation unit; provides peak capacity and structured chromatograms. | 30 m RTX-5MS (1D) + 2 m RTX-200MS (2D) [21] |
| SPME Fiber (DVB/CAR/PDMS) | Extracts and pre-concentrates volatile analytes from headspace for enhanced sensitivity. | Used for profiling volatiles in cocoa and olive oil [18] |
| Derivatization Reagents | Increases volatility of polar, non-GC-amenable analytes (e.g., metabolites). | Methoxyamine + BSTFA (with TMCS) [21] |
| n-Alkane Standard Solution | Used for empirical calculation of Linear Retention Indices (LRI) for compound identification. | C9-C25 alkane mix in cyclohexane [18] |
| Internal Standard (IS) | Corrects for instrumental variability and injection volume inaccuracies for quantification. | α-Thujone [18] or stable isotope-labeled analogs |
| Retention Index Markers | Chemically inert compounds spaced throughout the chromatogram to monitor system stability. | Not specified in the cited sources, but common in practice. |
| Quality Control (QC) Sample | A pooled sample used to monitor instrument performance and data reproducibility over time. | Not specified in the cited sources, but critical for validation. |

The synergy of orthogonal columns, high-performance modulators, and rapid TOF-MS detection establishes GC×GC as a superior analytical platform compared to 1D-GC for the analysis of complex mixtures. The data reviewed here show enhancements in resolution, sensitivity, and chemical specificity. For the forensic scientist, this translates to a greater ability to separate critical isomers, detect trace-level contaminants, and generate chemically comprehensive profiles from limited evidence. When coupled with rigorous experimental protocols and validated chemometric data processing, GC×GC-TOF-MS moves beyond a mere analytical tool to become a robust and defensible platform for generating evidence that meets the exacting standards of the legal system.

Chromatographic fingerprinting is an analytical methodology that uses instrumental signals to obtain information about a material's identity or quality based on its chemical composition [24]. In forensic science, this approach has become indispensable for analyzing complex evidentiary materials, where the chemical fingerprint provides a characteristic profile that can be used for source attribution, age estimation, and comparative analysis. Unlike targeted analysis that focuses on specific known compounds, fingerprinting utilizes non-specific instrumental signals that contain hidden information about the sample's complete chemical makeup, requiring specialized chemometric tools for interpretation [24]. This comprehensive perspective is particularly valuable in forensic intelligence, where subtle chemical patterns can reveal connections between evidence items, estimate time since deposition, and identify the origin of unknown materials.

The theoretical foundation of chromatographic fingerprinting rests on the principle that complex mixtures from a common source exhibit reproducible and characteristic chemical distributions. In legal contexts, the validity of these chemical fingerprints depends on demonstrating that the methodology is reproducible, reliable, and capable of discriminating between different sources with statistical confidence [1]. For comprehensive two-dimensional gas chromatography (GC×GC), this has required addressing standardization challenges and establishing rigorous validation protocols that meet forensic admissibility standards such as the Daubert criteria [1]. The transition of GC×GC from a research technique to a forensically validated methodology represents a significant advancement in the field's ability to deconvolute complex mixtures encountered in evidence such as ignitable liquid residues, illicit drugs, and explosive materials.

Technical Comparison of Chromatographic Methods

Fundamental Separation Principles

Forensic applications require chromatographic methods that can resolve complex mixtures into their individual chemical components to generate characteristic fingerprints. One-dimensional gas chromatography (1D GC) provides separation based on a single chemical property, typically using a single column with a specific stationary phase where compounds separate based on their differential partitioning between the mobile and stationary phases [25]. While effective for simpler mixtures, 1D GC often proves inadequate for forensic samples containing hundreds or thousands of chemical components, resulting in unresolved complex mixtures where critical forensic markers remain co-eluted and undetectable [26].

Comprehensive two-dimensional gas chromatography (GC×GC) fundamentally enhances separation power by employing two separate columns with different stationary phases connected in series through a special interface called a modulator [25]. The modulator periodically collects effluent from the first column and injects it as narrow chemical pulses into the second column, achieving orthogonal separation based on two different chemical properties [27]. In the most common configuration, the first dimension separates primarily by volatility (boiling point) using a non-polar column, while the second dimension separates by polarity using a polar column, though reversed column sets (polar first dimension, non-polar second dimension) are also used for specific applications [25]. This two-dimensional separation creates a structured chromatographic space where chemically related compounds elute in characteristic patterns, significantly enhancing the informational content of the chemical fingerprint.

Heart-cut two-dimensional GC (GC-GC) represents an alternative approach where specific regions of interest from the first dimension separation are selectively transferred to a second column for further separation [28]. Unlike GC×GC, which comprehensively analyzes the entire sample across both dimensions, GC-GC targets only predetermined chromatographic regions, making it particularly valuable when coupled with olfactometry detection for identifying odor-active compounds in arson and contraband investigations [28]. Each approach offers distinct advantages for forensic fingerprinting, with selection dependent on the specific analytical requirements of the case.

Comparative Performance Metrics

Table 1: Technical Comparison of Chromatographic Methods for Forensic Fingerprinting

| Parameter | 1D GC | GC×GC | GC-GC |
|---|---|---|---|
| Peak Capacity | ~400 | ~1,000-2,000 | Variable (targeted) |
| Separation Mechanism | Single chemical property | Two orthogonal chemical properties | Two orthogonal properties for selected regions |
| Sensitivity | Standard | 5-10x increase due to modulation | Similar to 1D GC for targeted compounds |
| Chemical Class Separation | Limited | Structured chromatograms with compound grouping | Targeted for pre-selected regions |
| Data Dimensionality | 1D (retention time) | 2D (retention time × retention time) | 1D with enhanced regions |
| Olfactometry Compatibility | Full run | Not practical (rapid second dimension) | Full compatibility |
| Forensic Applications | Simple mixtures, targeted analysis | Complex mixtures (ILR, drugs, explosives), untargeted screening | Odor-active compounds, targeted complex regions |

The enhanced performance of GC×GC directly addresses critical challenges in forensic chemical analysis. The dramatically increased peak capacity enables resolution of thousands of individual compounds within a single analysis, revealing minor components that would be obscured by major constituents in 1D GC separations [27]. This is particularly valuable for forensic samples where trace-level diagnostic compounds provide crucial intelligence, such as ignitable liquid residue markers in arson investigations that are masked by substrate interference [26]. The structured separation achieved through orthogonal mechanisms causes chemically related compounds (e.g., alkanes, aromatics, polar compounds) to elute in organized bands or clusters within the two-dimensional separation space, facilitating compound identification and classification even without complete mass spectral characterization [27] [26].

The modulation process in GC×GC provides not only separation enhancement but also significant sensitivity improvements through peak focusing effects. As the modulator traps and concentrates narrow bands of first dimension effluent before reinjection into the second dimension, the resulting peaks are sharper and more intense, with signal-to-noise ratio improvements of 5-10 times compared to 1D GC [27]. This enhanced sensitivity enables detection of trace-level compounds that would otherwise fall below detection limits, expanding the discriminatory power of chemical fingerprints in forensic comparisons where sample amounts may be limited.

Experimental Protocols for Forensic Applications

GC×GC Method Development for Ignitable Liquid Residues

The analysis of ignitable liquid residues (ILRs) from fire debris represents one of the most forensically established applications of GC×GC fingerprinting. The following protocol has been validated for the identification and classification of petroleum-based accelerants in complex matrix samples [26]:

Sample Preparation: Fire debris samples are collected in sealed nylon pouches or metal cans. Headspace concentration is performed using activated charcoal strips exposed to the heated debris sample (65°C for 16 hours) to adsorb volatile compounds. The strips are subsequently extracted with 100-500 µL of carbon disulfide (GC-MS grade) to desorb the concentrated analytes. For samples with significant interference, an additional clean-up step using silica gel column chromatography may be employed to remove polar matrix components.

Instrumental Parameters:

- GC×GC System: Agilent 7890B GC with dual-stage thermal modulator (LN2 cryogenic cooling)

- First Dimension Column: Rxi-1MS, 30 m × 0.25 mm × 0.25 µm (non-polar)

- Second Dimension Column: Rxi-17SilMS, 1.5 m × 0.25 mm × 0.25 µm (mid-polarity)

- Temperature Program: 40°C (2 min hold) to 300°C at 3°C/min

- Modulation Period: 6 s (3 s hot pulse, 3 s cold pulse)

- Carrier Gas: Helium, constant flow 1.2 mL/min

- Injection: 1 µL splitless (250°C injector temperature)

- Detection: TOF-MS with acquisition rate 200 spectra/s, mass range 40-450 m/z

Data Processing: Raw data is processed to generate two-dimensional contour plots with first dimension retention time on the x-axis and second dimension retention time on the y-axis. Peak finding and alignment are performed using dedicated GC×GC software (ChromaTOF or equivalent). Pattern recognition for ILR classification employs principal component analysis (PCA) on the entire peak table, with cross-validation to ensure model robustness.
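Generating the two-dimensional plot starts from the fact that the detector records one continuous trace, which is cut into segments of one modulation period each and stacked. The following is a minimal pure-Python sketch of that folding step under simplifying assumptions (a single intensity channel such as the total ion current, and a modulation period that is an exact multiple of the sampling interval); the function name is hypothetical and this is not how any particular vendor package implements it.

```python
def fold_trace(signal: list[float],
               acquisition_rate_hz: float,
               modulation_period_s: float) -> list[list[float]]:
    """Fold a 1D detector trace into rows of one modulation period each.

    Row index    -> first-dimension retention (modulation cycle number)
    Column index -> second-dimension retention (sample within the cycle)
    Trailing samples that do not fill a whole cycle are discarded.
    """
    points_per_cycle = round(acquisition_rate_hz * modulation_period_s)
    if points_per_cycle < 1:
        raise ValueError("modulation period shorter than one sample")
    n_cycles = len(signal) // points_per_cycle
    return [signal[i * points_per_cycle:(i + 1) * points_per_cycle]
            for i in range(n_cycles)]


# Toy trace: 12 samples at 2 Hz with a 2 s modulation period -> 3 cycles of 4.
trace = [float(v) for v in range(12)]
image = fold_trace(trace, acquisition_rate_hz=2, modulation_period_s=2)
for row in image:
    print(row)
```

With the parameters listed above (200 spectra/s and a 6 s modulation period), each row would hold 1200 points; transposing the matrix puts first-dimension time on the x-axis for contour plotting.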

This method has demonstrated significantly reduced false negatives in wildfire arson investigations compared to standard 1D GC methods due to enhanced separation of ILR markers from natural substrate interferences [26].

Fingerprint Aging Studies Using GC×GC–TOF-MS

Determining the time since deposition of forensic evidence such as fingerprints represents an emerging application of chemical fingerprinting. The following protocol, adapted from Vozka's research, enables monitoring of time-dependent chemical changes in latent fingerprint residues [19]:

Sample Collection: Fingerprints are deposited on clean glass or aluminum substrates by volunteer donors following hand washing and air drying. Donors refrain from product application for at least 2 hours prior to deposition. Samples are aged under controlled conditions (22°C, 45% RH) with analysis at predetermined time points (0, 1, 3, 7, 14, 21, 28 days).

Sample Preparation: Aged fingerprint residues are recovered by solvent extraction with 200 µL of 1:1 (v/v) dichloromethane:methanol, with 30 s vortex mixing followed by 5 min ultrasonication. The extract is transferred to a GC vial with internal standards (tetracosane-d50 and cholesteryl heptadecanoate at 10 µg/mL).

Instrumental Parameters:

- GC×GC System: LECO Pegasus 4D with dual-jet thermal modulator

- First Dimension Column: DB-5MS, 30 m × 0.25 mm × 0.25 µm

- Second Dimension Column: DB-17MS, 1 m × 0.25 mm × 0.25 µm

- Temperature Program: 60°C (1 min hold) to 320°C at 10°C/min

- Modulation Period: 4 s

- Detection: TOF-MS at 100 Hz acquisition rate, mass range 50-650 m/z

Chemometric Modeling: Time-dependent changes are modeled using ratio-based approaches (squalene/cholesterol, fatty acid ratios) to minimize individual variability. Multivariate models employing partial least squares regression are built using the entire chemical profile to predict sample age, with model validation through cross-validation and independent test sets.

This approach has demonstrated the ability to track the evaporation of volatiles followed by oxidative degradation of lipids, creating predictive models that can estimate fingerprint age with potential error margins of ±2-3 days within the first week of deposition [19].

Diagram: GC×GC analytical workflow for forensic fingerprinting.

Validation Framework for Legal Admissibility

Meeting Daubert Criteria for Forensic Evidence

The admissibility of scientific evidence in legal proceedings, particularly in the United States under the Daubert standard, requires demonstration of several key attributes. For GC×GC-based chemical fingerprinting to transition from research to courtroom application, specific validation studies must address each criterion [1]:

Empirical Testing and Error Rates: Establish standardized operating procedures with documented performance characteristics including precision, accuracy, and detection limits. Determine false positive and false negative rates through interlaboratory studies and proficiency testing. For example, in ILR analysis, GC×GC methods have demonstrated false negative rates below 5% compared to 15-20% for 1D GC methods when analyzing weathered gasoline in complex matrices [26].

Peer Review and Publication: The foundational principles and specific forensic applications of GC×GC have been documented in peer-reviewed literature across multiple disciplines, including dedicated reviews of forensic applications [1] and method validation studies [26]. This established body of literature provides the scientific foundation for expert testimony.

Standards and Controls: Implementation of quality assurance protocols including calibration verification, continuing calibration checks, system suitability tests, and control charts for key performance metrics. Use of certified reference materials for retention index calibration and response factor determination ensures methodological consistency.

General Acceptance: While GC×GC is not yet universally implemented in forensic laboratories, it has gained acceptance in specific application areas including environmental forensics, where the first accredited GC×GC method was established by the Canadian Ministry of the Environment for persistent organic pollutants analysis [1]. The technical capabilities are recognized as superior to 1D GC for complex mixture analysis.
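The error rates called for under the empirical testing criterion are simple proportions derived from ground-truth validation samples. The sketch below shows one way to compute them; the function name and the counts are hypothetical and are not data from the cited studies.

```python
def error_rates(tp: int, fp: int, tn: int, fn: int) -> dict[str, float]:
    """False negative and false positive rates from validation counts.

    tp/fn: known-positive samples reported positive/negative
    tn/fp: known-negative samples reported negative/positive
    """
    if tp + fn == 0 or tn + fp == 0:
        raise ValueError("need at least one known positive and one known negative")
    return {
        "false_negative_rate": fn / (tp + fn),
        "false_positive_rate": fp / (tn + fp),
    }


# Hypothetical validation set: 100 spiked and 50 blank debris samples.
rates = error_rates(tp=97, fp=1, tn=49, fn=3)
print(rates)
```

In a real validation study these point estimates would normally be reported with confidence intervals, since small sample sets give wide uncertainty on the true rates.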

Standardization Challenges and Solutions

Table 2: Validation Parameters for GC×GC Forensic Methods

| Validation Parameter | GC×GC Specific Considerations | Recommended Acceptance Criteria |
|---|---|---|
| Precision (Retention Time) | First dimension: RSD < 1%; Second dimension: RSD < 5% | Modulation period consistency > 98% |
| Accuracy | Use of certified reference materials with structurally similar compounds | Mean accuracy 80-120% across calibration range |
| Detection Limits | Matrix-specific due to enhanced separation | 3-10x improvement over 1D GC demonstrated |
| Linearity | Verified for each analyte across calibrated range | R² > 0.990 for 5-point calibration |
| Robustness | Column choice, modulation parameters, temperature programs | Method performs within specifications after deliberate variations |
| Specificity | Two-dimensional retention coordinates + mass spectrum | Co-elution < 5% of target peaks in representative matrix |
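Two of the criteria in the table, retention time precision and calibration linearity, are straightforward to check numerically. The sketch below uses only the Python standard library; the function names and all replicate and calibration values are hypothetical, and the thresholds are simply those listed in the table.

```python
from statistics import mean, stdev


def rsd_percent(values: list[float]) -> float:
    """Relative standard deviation (sample SD / mean) in percent."""
    return 100.0 * stdev(values) / mean(values)


def r_squared(x: list[float], y: list[float]) -> float:
    """Coefficient of determination for a simple linear least-squares fit."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy ** 2 / (sxx * syy)


# Hypothetical replicate retention times (seconds) for one target peak.
first_dim_rt = [1203.1, 1203.4, 1202.9, 1203.2, 1203.0]
second_dim_rt = [2.31, 2.35, 2.29, 2.33, 2.30]
# Hypothetical 5-point calibration (concentration vs. peak response).
conc = [1.0, 2.0, 5.0, 10.0, 20.0]
resp = [2.0, 4.1, 9.9, 20.2, 39.8]

print("1D RT precision ok:", rsd_percent(first_dim_rt) < 1.0)
print("2D RT precision ok:", rsd_percent(second_dim_rt) < 5.0)
print("Linearity ok:", r_squared(conc, resp) > 0.990)
```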

The implementation of GC×GC in forensic laboratories faces specific challenges related to method standardization. Unlike 1D GC methods with established protocols, GC×GC methods require additional validation parameters including modulator performance, second dimension retention stability, and data processing reproducibility [1]. Successful adoption requires development of instrument-agnostic databases that can transfer chemical fingerprints between different instrumental platforms, a current limitation in the field [24].

To address admissibility challenges, forensic laboratories implementing GC×GC should conduct comparative studies demonstrating equivalence or superiority to established methods, maintain comprehensive documentation of validation data, and implement rigorous proficiency testing programs. The visual nature of GC×GC contour plots provides compelling demonstrative evidence that can be effectively communicated to judges and juries, though this must be supported by statistical validation of pattern recognition algorithms and discrimination power [26].

Diagram: Validation pathway for legal admissibility.

Essential Research Reagents and Materials

The implementation of robust GC×GC methods for forensic fingerprinting requires specific reagents, reference materials, and specialized instrumentation. The following table details essential components for establishing this capability in a forensic laboratory.

Table 3: Essential Research Reagents and Materials for GC×GC Forensic Analysis

| Category | Specific Items | Forensic Application |
|---|---|---|
| Chromatography Columns | Primary: DB-5MS, Rxi-1MS (30 m, 0.25 mm, 0.25 µm); Secondary: Rxi-17SilMS, DB-17MS (1-2 m, 0.25 mm, 0.25 µm) | Orthogonal separation for petroleum, ignitable liquids, drugs |
| Reference Standards | n-Alkane series (C8-C40); Ignitable liquid mixtures; Drug isomer mixtures; Deuterated internal standards | Retention index calibration, method validation, quantitation |
| Sample Preparation | Activated charcoal strips; Carbon disulfide (GC-MS grade); Solid-phase microextraction fibers; Silica gel cleanup cartridges | Fire debris analysis, trace evidence concentration, matrix cleanup |
| Quality Control | Certified reference materials (NIST); System suitability mixtures; Column performance test mixes | Method validation, ongoing quality assurance, proficiency testing |
| Data Processing | ChromaTOF, GC Image, or equivalent; Multivariate statistics software; Custom spectral libraries | Data visualization, chemometric analysis, pattern recognition |

The selection of appropriate column combinations represents a critical methodological decision, with the conventional non-polar → polar arrangement providing optimal separation for most petroleum-based forensic samples, while the reversed arrangement (polar → non-polar) may be preferred for specific applications involving oxygenated compounds or certain drug mixtures [25] [27]. The modulator technology represents another essential consideration, with thermal modulators using liquid nitrogen cryogen providing superior sensitivity for trace-level forensic markers, while flow modulators offer operational convenience without cryogen requirements [25].

The development of customized spectral libraries enhanced with second-dimension retention indices represents an ongoing need in the field. While standard EI-MS libraries are directly applicable to GC×GC-TOF-MS data, the additional retention dimension provides orthogonal confirmation of compound identity when coupled with mass spectral matching [26]. Implementation of internal standard mixtures containing stable isotopically labeled analogs of target compounds is essential for controlling analytical variability and ensuring quantitative reliability in legal proceedings.

The integration of comprehensive two-dimensional gas chromatography into forensic practice represents a significant advancement in chemical fingerprinting capabilities. The enhanced separation power of GC×GC provides forensic scientists with unprecedented ability to resolve complex mixtures encountered in evidence items, revealing chemical patterns and trace-level markers that remain hidden to conventional one-dimensional chromatography [27] [26]. When coupled with appropriate chemometric tools and validation frameworks, these structured chromatograms deliver robust chemical intelligence that meets the rigorous standards of legal admissibility.

The ongoing transition of GC×GC from research technique to forensically validated methodology requires addressing standardization challenges through interlaboratory collaboration and reference material development [1]. As these foundational elements are established across application domains—from ignitable liquid identification to fingerprint aging studies—the legal acceptance of this powerful analytical approach will continue to grow. The compelling visual nature of two-dimensional separations, combined with statistical validation of pattern recognition algorithms, provides both scientific rigor and communicative power in legal proceedings [26].

For forensic intelligence applications, the rich chemical information embedded in GC×GC fingerprints enables not only comparative analyses but also temporal and source attribution modeling that extends traditional forensic capabilities. As the field advances, the implementation of instrument-agnostic databases and standardized reporting frameworks will further strengthen the legal standing of this powerful analytical methodology, establishing GC×GC as an indispensable tool in the forensic chemist's arsenal.

GC×GC in Practice: Method Development for Forensic and Clinical Analysis

Forensic toxicology faces unprecedented challenges due to the rapid emergence of new psychoactive substances (NPS) and sophisticated attempts to circumvent drug testing [29]. Within this landscape, analytical chemists must strategically select between targeted and non-targeted approaches to detect illicit drugs, their metabolites, and associated biomarkers. This guide provides an objective comparison of these methodologies, with particular focus on validating comprehensive two-dimensional gas chromatography (GC×GC) for legal evidence research. The distinction between these approaches is fundamental: targeted methods provide precise quantification of known compounds, while non-targeted strategies offer a broader discovery capability for unknown substances and metabolic patterns [29] [1]. As forensic evidence must withstand legal scrutiny, understanding the performance characteristics, applications, and validation requirements of each approach is paramount for researchers and drug development professionals.

Core Principles and Analytical Strategies

Targeted Analysis

Targeted analysis refers to hypothesis-driven approaches focused on detecting and quantifying specific predefined analytes. These methods are typically optimized for maximum sensitivity and precision for a predetermined list of compounds, such as specific drugs, their known metabolites, or adulterants [29] [30].

Key Characteristics:

- Utilizes predefined compound libraries and reference standards

- Optimized for specific analytes of interest

- Employs selective detection methods (e.g., multiple reaction monitoring, MRM)

- Provides precise quantification with established detection limits

- Requires prior knowledge of target compounds [29] [30]

Non-Targeted Analysis

Non-targeted analysis adopts a discovery-oriented approach, aiming to comprehensively characterize samples without predetermined analytical targets. This strategy is particularly valuable for detecting novel compounds, identifying unknown metabolites, and discovering metabolic patterns indicative of drug exposure [29] [1].

Key Characteristics:

- Does not require predefined compound lists

- Captures global chemical profiles or "fingerprints"

- Ideal for discovering novel compounds and metabolic patterns

- Relies on high-resolution separation and detection

- Requires advanced chemometric tools for data interpretation [29] [1] [8]

Comparative Performance in Forensic Applications

Table 1: Performance Comparison of Targeted vs. Non-Targeted Approaches

| Parameter | Targeted Analysis | Non-Targeted Analysis |
|---|---|---|
| Primary Objective | Confirm and quantify known compounds | Discover unknown compounds and patterns |
| Throughput | High for routine targets | Lower due to complex data processing |
| Sensitivity | Higher (optimized for specific analytes) | Variable (not optimized for specific compounds) |
| Specificity | High with reference standards | Depends on separation power and detection |
| Compound Identification | Confirmed with standards | Tentative without standards, requires confirmation |
| Best Applications | Routine drug testing, confirmation, quantification | NPS detection, biomarker discovery, adulteration screening |
| Legal Defensibility | Well-established | Requires rigorous validation [29] [1] [30] |

Table 2: Capabilities for Specific Forensic Challenges

| Analytical Challenge | Targeted Approach | Non-Targeted Approach |
|---|---|---|
| New Psychoactive Substances (NPS) | Limited; requires reference standards | Excellent; can detect unknown compounds without standards |
| Metabolite Identification | Targeted metabolite profiling | Comprehensive metabolic pathway mapping |
| Urine Adulteration | Targeted detection of known adulterants | Pattern recognition of chemical changes |
| Metabolomic Biomarkers | Limited to predefined biomarkers | Discovery of novel biomarker patterns |
| Differentiating Drug Sources | Specific metabolite ratios [30] | Comprehensive metabolic signatures [29] |

Experimental Protocols and Methodologies

Protocol for Targeted Opioid Metabolite Analysis

This protocol exemplifies a targeted approach for differentiating therapeutic from illicit opioid use [30]:

Sample Preparation:

- Matrix: Urine (primary), plasma, or hair for long-term use assessment

- Hydrolysis: Enzymatic (β-glucuronidase) or chemical hydrolysis to liberate conjugated metabolites

- Extraction: Solid-phase extraction (SPE) or liquid-liquid extraction (LLE)

- Derivatization: Silylation for GC-based methods

Instrumental Analysis:

- Platform: LC-MS/MS or GC-MS

- Separation: C18 column (LC) or mid-polarity column (GC)

- Detection: Multiple reaction monitoring (MRM) for specific opioid metabolites

- Target Analytes: Morphine, codeine, 6-monoacetylmorphine (6-MAM), oxycodone, norfentanyl

- Quantification: Isotope-labeled internal standards for each analyte

Data Interpretation:

- Metabolite Ratios: Morphine-to-codeine ratio, presence of 6-MAM as heroin marker

- Concentration Thresholds: Established cutoffs for therapeutic vs. illicit use

- Chiral Analysis: For amphetamine-type stimulants to differentiate sources [30]
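The data interpretation step above can be sketched in a few lines of Python. This is a minimal illustration, not the cited protocol's implementation: the `OpioidResult` fields, the `interpret` function, and every numeric value are hypothetical placeholders, and real cutoffs must come from the laboratory's validated method.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class OpioidResult:
    """Quantified urine concentrations in ng/mL (hypothetical field names)."""
    morphine: float
    codeine: float
    six_mam: float


def morphine_codeine_ratio(r: OpioidResult) -> Optional[float]:
    """Return morphine/codeine, or None when codeine was not detected."""
    if r.codeine <= 0:
        return None
    return r.morphine / r.codeine


def interpret(r: OpioidResult, six_mam_cutoff: float, ratio_cutoff: float) -> dict:
    """Apply laboratory-defined cutoffs, passed in explicitly (no defaults)."""
    ratio = morphine_codeine_ratio(r)
    return {
        "morphine_codeine_ratio": ratio,
        # 6-MAM at or above the cutoff is reported as a heroin-use marker.
        "six_mam_detected": r.six_mam >= six_mam_cutoff,
        "ratio_above_cutoff": ratio is not None and ratio > ratio_cutoff,
    }


# Placeholder numbers chosen only to exercise the code.
sample = OpioidResult(morphine=1200.0, codeine=150.0, six_mam=12.0)
report = interpret(sample, six_mam_cutoff=10.0, ratio_cutoff=2.0)
print(report)
```

Passing the cutoffs as arguments rather than hard-coding them keeps the decision thresholds visible and auditable, which matters when the interpretation may be examined in court.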

Protocol for Non-Targeted Metabolomic Biomarker Discovery

This protocol outlines a non-targeted approach for discovering endogenous biomarkers of drug use [29] [8]:

Sample Preparation:

- Matrix: Urine, blood, or tissues with consideration for perfusion effects in organs

- Quenching: Rapid freezing in liquid nitrogen to preserve metabolic profile

- Extraction: Dual extraction protocols (e.g., chloroform/methanol/water) for comprehensive metabolite coverage

- Derivatization: Methoximation and silylation for GC-based platforms

Instrumental Analysis:

- Platform: GC×GC-TOFMS provides enhanced separation power

- Primary Dimension: Non-polar or mid-polarity column (e.g., DB-5)

- Secondary Dimension: Polar column (e.g., PEG-type)

- Modulation: Cryogenic modulator with 4-8 second period

- Detection: TOFMS with acquisition rate ≥100 Hz

- Mass Range: m/z 40-600

Data Processing:

- Peak Detection: Automated peak finding with deconvolution

- Alignment: Retention time alignment in both dimensions

- Normalization: Internal standard and probabilistic quotient normalization

- Statistical Analysis: Multivariate analysis (PCA, PLS-DA) to identify significant features [29] [8] [31]
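As an illustration of the normalization step, the sketch below implements probabilistic quotient normalization (PQN) on a small feature table using only the Python standard library. It assumes the peak table is already aligned (one row per sample, one column per feature) and uses the per-feature median across samples as the reference profile; the function name and the toy intensities are illustrative, not taken from the cited studies.

```python
from statistics import median


def pqn_normalize(samples):
    """Probabilistic quotient normalization.

    samples: list of equal-length lists of positive feature intensities.
    Returns (normalized_samples, dilution_factors).
    """
    n_features = len(samples[0])
    # Reference profile: median intensity of each feature across all samples.
    reference = [median(s[j] for s in samples) for j in range(n_features)]
    normalized, factors = [], []
    for s in samples:
        # Quotient of each feature against the reference profile.
        quotients = [s[j] / reference[j] for j in range(n_features) if reference[j] > 0]
        # The median quotient estimates the sample's overall dilution factor.
        factor = median(quotients)
        factors.append(factor)
        normalized.append([x / factor for x in s])
    return normalized, factors


# Toy data: the same profile at three dilutions (placeholder intensities).
base = [100.0, 50.0, 20.0, 10.0]
table = [base, [2 * x for x in base], [0.5 * x for x in base]]
normalized, factors = pqn_normalize(table)
print(factors)
print(normalized)
```

Because the three toy samples differ only by dilution, PQN maps them onto the same profile; in real data the residual differences after normalization are what the multivariate models (PCA, PLS-DA) then examine.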

GC×GC Method for Comprehensive Volatile Profiling

This specialized protocol demonstrates GC×GC application for complex forensic samples [31]:

Sample Collection:

- Headspace SPME: Carboxen/PDMS/DVB fiber for broad volatility range

- Sampling Temperature: 60°C for 30 minutes

- Standards: Deuterated internal standards for quality control

GC×GC-TOFMS Conditions:

- Primary Column: DB-5MS (30 m × 0.25 mm × 0.25 μm)

- Secondary Column: DB-17MS (1.5 m × 0.1 mm × 0.1 μm)

- Modulation: LN2 cryogenic modulator with 6 s period

- Temperature Program: 40°C (2 min hold), then 5°C/min to 260°C

- Mass Spectrometer: TOFMS with 100 Hz acquisition rate

- Mass Range: m/z 35-550
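The conditions listed above imply some simple arithmetic that is useful when planning data volumes. The sketch below works it through, assuming the run ends when the oven reaches 260°C (no final hold is stated in the protocol); the function names are illustrative.

```python
def run_time_min(start_c, end_c, ramp_c_per_min, initial_hold_min):
    """Oven program duration: initial hold plus a single linear ramp."""
    return initial_hold_min + (end_c - start_c) / ramp_c_per_min


def modulation_stats(run_min, period_s, rate_hz):
    """Return (number of complete modulations, spectra per modulation)."""
    n_modulations = int(run_min * 60 // period_s)
    spectra_per_modulation = int(period_s * rate_hz)
    return n_modulations, spectra_per_modulation


# Values from the protocol: 40°C (2 min) to 260°C at 5°C/min, 6 s period, 100 Hz.
run_min = run_time_min(40, 260, 5, 2)
n_mod, per_mod = modulation_stats(run_min, 6, 100)
total_spectra = n_mod * per_mod

print(run_min)        # 46.0 minutes
print(n_mod)          # 460 second-dimension chromatograms
print(per_mod)        # 600 spectra in each modulation
print(total_spectra)  # 276000 spectra per run
```

Each modulation yields one second-dimension chromatogram, so this run produces 460 of them, each sampled by 600 mass spectra.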

Data Analysis:

- Software: Commercial GC×GC data processing platforms

- Identification: NIST library matching with retention index validation

- Quantification: Relative abundance with internal standard normalization [31]
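Retention index validation can be illustrated with the linear (temperature-programmed) retention index, which interpolates an analyte's first-dimension retention time between the two bracketing n-alkanes. The following is a minimal sketch: the alkane retention times, the library value, and the acceptance window are hypothetical placeholders, and the cited method may use a different index definition or tolerance.

```python
from bisect import bisect_right


def linear_retention_index(t_x, alkanes):
    """Linear retention index from bracketing n-alkanes.

    t_x: analyte first-dimension retention time (s).
    alkanes: dict mapping carbon number -> retention time (s).
    """
    ladder = sorted(alkanes.items(), key=lambda kv: kv[1])
    times = [t for _, t in ladder]
    i = bisect_right(times, t_x) - 1
    # The analyte must elute at or after the first alkane and before the last.
    if i < 0 or i >= len(ladder) - 1:
        raise ValueError("analyte is not bracketed by the alkane ladder")
    (n, t_n), (n_next, t_next) = ladder[i], ladder[i + 1]
    return 100.0 * (n + (n_next - n) * (t_x - t_n) / (t_next - t_n))


def ri_matches(measured_ri, library_ri, window):
    """True when the measured index lies within +/- window of the library value."""
    return abs(measured_ri - library_ri) <= window


# Placeholder alkane ladder (C10 to C12) and analyte retention time.
alkane_times = {10: 600.0, 11: 720.0, 12: 840.0}
ri = linear_retention_index(660.0, alkane_times)
print(ri)                                              # 1050.0
print(ri_matches(ri, library_ri=1055.0, window=10.0))  # True
```

A library hit whose spectrum matches but whose retention index falls outside the window is a candidate for rejection, which is how the index adds an orthogonal check to spectral matching.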

Experimental Workflows and Signaling Pathways

The following diagram illustrates the typical workflow for integrating targeted and non-targeted approaches in forensic drug analysis:

[Figure: Forensic Analysis Workflow]

In this workflow, targeted and non-targeted approaches diverge after data acquisition but converge during data interpretation to produce legally admissible evidence.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Research Reagents and Materials for Forensic Drug Analysis

| Reagent/Material | Function | Application Examples |
|---|---|---|
| Deuterated Internal Standards | Quantification control, recovery correction | d3-Morphine, d5-Amphetamine for targeted quantification [30] |
| Derivatization Reagents | Volatility and stability enhancement for GC analysis | MSTFA, BSTFA for silylation; methoxyamine for oxime formation [8] |
| SPME Fibers | Headspace sampling of volatile compounds | Carboxen/PDMS/DVB for broad-range VOC collection [31] |
| Reference Standards | Compound identification and method calibration | Certified drug standards, metabolite references [29] [30] |
| Solid-Phase Extraction Cartridges | Sample clean-up and analyte concentration | Mixed-mode cation exchange for basic drugs [30] |
| GC Stationary Phases | Compound separation | DB-5 (non-polar), DB-17 (mid-polar) for GC×GC [1] [31] |
| Quality Control Materials | Method validation and performance monitoring | Certified reference materials, pooled quality control samples [29] |

Validation for Legal Evidence

The admissibility of scientific evidence in court depends on demonstrated reliability, robustness, and significance [1]. For comprehensive two-dimensional GC methods to meet legal standards, several key validation parameters must be addressed:

Method Validation Parameters:

- Accuracy and Precision: Established using quality control materials at multiple concentrations

- Specificity: Demonstrated through separation from potentially interfering compounds

- Sensitivity: Limit of detection/quantification established for targeted analyses

- Reproducibility: Inter-laboratory studies for standardized methods

- Robustness: Testing under varying operational conditions [1]
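Several of these parameters reduce to short calculations on replicate data. The sketch below shows one common way to express accuracy (percent bias), precision (percent relative standard deviation), and detection limits; the 3.3σ/S and 10σ/S formulas follow the widely used calibration-based convention, which is an assumption here rather than something the cited source specifies. All numeric inputs are placeholders.

```python
from statistics import mean, stdev


def bias_percent(measured, nominal):
    """Accuracy as percent bias of the replicate mean from the nominal value."""
    return 100.0 * (mean(measured) - nominal) / nominal


def rsd_percent(measured):
    """Precision as percent relative standard deviation of replicates."""
    return 100.0 * stdev(measured) / mean(measured)


def lod_loq(sd_response, slope):
    """Detection and quantification limits from calibration data.

    sd_response: standard deviation of the blank or low-level response.
    slope: calibration slope (response per concentration unit).
    """
    return 3.3 * sd_response / slope, 10.0 * sd_response / slope


# Placeholder QC replicates at a nominal concentration of 100 ng/mL.
qc = [98.0, 100.0, 102.0]
print(bias_percent(qc, 100.0))   # 0.0
print(rsd_percent(qc))           # 2.0
print(lod_loq(sd_response=0.5, slope=2.0))
```

In practice these figures would be computed at each QC concentration level and compared against the acceptance criteria fixed in the validation plan before any casework data are reported.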

Legal Standards:

- Daubert Criteria: Empirical testability, peer review and publication, known or potential error rate, existence of standards and controls, and general acceptance in the relevant scientific community [1]

- Standardized Methods: Accredited methods following established protocols (e.g., ISO standards)

- Data Processing Consistency: Validated algorithms for non-targeted fingerprinting and chemometric analysis [1]

The first accredited GC×GC method for routine application was developed by the Ontario Ministry of the Environment and Climate Change (Canada) for the analysis of persistent organic pollutants, establishing a precedent for forensic applications [1].

Targeted and non-targeted analytical strategies offer complementary approaches for the analysis of illicit drugs, metabolites, and biomarkers in forensic research. Targeted methods provide the precision, sensitivity, and defensibility required for routine drug testing and confirmation, while non-targeted strategies offer powerful discovery capabilities for novel substances and metabolic patterns. Comprehensive two-dimensional gas chromatography, particularly when coupled with time-of-flight mass spectrometry, significantly enhances both approaches through increased peak capacity, structured chromatograms, and improved sensitivity.

Validation of these methodologies for legal evidence requires rigorous attention to standardized protocols, demonstration of reproducibility, and adherence to legal standards for scientific evidence. As the field continues to evolve with increasing chemical complexity, the strategic integration of targeted and non-targeted approaches will be essential for advancing forensic science and providing defensible evidence in legal contexts.

Sample Preparation and Solid-Phase Extraction (SPE) for Complex Matrices

Sample preparation is a critical step in analytical workflows, especially when dealing with complex matrices such as biological fluids, environmental samples, and food products [32]. In the context of legal evidence research, where the validity and defensibility of analytical data are paramount, robust sample preparation becomes indispensable. Traditional methods like liquid-liquid extraction (LLE) have been widely used but present significant limitations, including extensive organic solvent consumption, lengthy operation times, and potential for emulsion formation [33]. These shortcomings are particularly problematic in forensic applications where reproducibility and minimal sample manipulation are essential.

Solid-phase extraction (SPE) has emerged as a powerful alternative to traditional techniques, offering reduced solvent consumption, simplified workflows, and improved compatibility with modern analytical platforms [32]. The growing need for greener and more sustainable analytical approaches has further accelerated the adoption of SPE methodologies [32]. When coupled with comprehensive two-dimensional gas chromatography (GC×GC), SPE provides an unparalleled foundation for the analysis of complex mixtures, enabling the resolution of hundreds of components that would otherwise remain unresolved by conventional one-dimensional chromatography [11] [34]. This technical synergy is particularly valuable in legal evidence research, where comprehensive characterization of complex samples and confident identification of trace components can be crucial to case outcomes.

Fundamentals of Solid-Phase Extraction

Theoretical Principles and Mechanisms

SPE operates on principles similar to liquid-liquid extraction but replaces one liquid phase with a solid sorbent [33]. The process involves the distribution of analytes between a liquid sample matrix and a solid sorbent phase to which the analytes have greater affinity. A liquid sample is passed through adsorbent particles, allowing target compounds to be selectively retained while interfering matrix components are washed away. Subsequently, the purified analytes are recovered using an appropriate elution solvent [33]. This extraction mechanism simplifies subsequent analysis by removing much of the sample matrix and potentially concentrating the analytes of interest.
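The clean-up and concentration effects described above are usually summarized by two numbers: extraction recovery and the concentration (enrichment) factor. The sketch below computes both under simple assumptions; the function names and all values are illustrative placeholders, not data from the cited work.

```python
def recovery_percent(amount_spiked, amount_recovered):
    """Extraction recovery: fraction of spiked analyte found in the eluate."""
    return 100.0 * amount_recovered / amount_spiked


def concentration_factor(load_volume_ml, elution_volume_ml, recovery_fraction):
    """Eluate concentration relative to the original sample.

    The volume ratio is the ideal enrichment; recovery losses scale it down.
    """
    return (load_volume_ml / elution_volume_ml) * recovery_fraction


# Placeholder example: 100 ng spiked, 90 ng recovered, 10 mL loaded, 1 mL eluted.
rec = recovery_percent(amount_spiked=100.0, amount_recovered=90.0)
cf = concentration_factor(load_volume_ml=10.0, elution_volume_ml=1.0,
                          recovery_fraction=rec / 100.0)
print(rec)  # 90.0
print(cf)   # 9.0
```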