Validating Forensic Genealogy Tools: A Scientific Framework for Investigative Genetic Genealogy

Abstract

This article provides a comprehensive framework for the validation of forensic genealogy tools, addressing the critical needs of researchers and forensic scientists. It explores the foundational principles of Investigative Genetic Genealogy (IGG), details methodological workflows for applying forensic-grade genome sequencing, identifies key challenges in troubleshooting and optimization, and establishes rigorous protocols for technical and bioethical validation. By synthesizing current standards, technological advancements, and ethical considerations, this resource aims to guide the responsible and effective implementation of IGG in both forensic and biomedical contexts.

The Genomic Revolution in Forensics: From STRs to SNP Profiling and IGG

The field of forensic genetics is in the midst of a significant transition, moving from traditional methods based on short tandem repeats (STRs) to new approaches leveraging dense single nucleotide polymorphism (SNP) testing. This shift is primarily driven by the growing application of Forensic Investigative Genetic Genealogy (FIGG), which requires genetic markers capable of identifying familial relationships beyond the immediate family. For researchers and forensic science service providers, understanding the technical capabilities, limitations, and appropriate applications of each marker type is fundamental to advancing investigative genetic genealogy research. This guide provides an objective, data-driven comparison of these two technologies, contextualized within the framework of validating tools for forensic genealogy.

Fundamental Marker Characteristics

Short Tandem Repeats (STRs) are regions of the genome consisting of short, repeating sequences of DNA (typically 2-6 base pairs in length). The highly polymorphic nature of these repeats, combined with a relatively high mutation rate (approximately 1 in 1,000 per locus per generation), makes them excellent for distinguishing between individuals [1]. For decades, they have been the gold standard in forensic science for direct matching and paternity testing, with standard kits analyzing between 16 and 27 loci [2].

Single Nucleotide Polymorphisms (SNPs), in contrast, are variations at a single base position in the DNA sequence. They are bi-allelic (typically only two possible alleles), have a very low mutation rate (approximately 1 in 100 million per site per generation), and are abundant across the entire genome [1]. While individually less informative than an STR locus, their power comes from their density; testing panels can include from hundreds of thousands to over a million markers [3] [2].
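To make the difference in mutation rates concrete, the short sketch below computes the chance that a marker changes along the chain of transmissions separating two relatives. It assumes independent markers and constant rates, uses the approximate rates quoted above, and picks 8 transmissions and the panel sizes purely for illustration.

```python
def prob_any_mutation(rate: float, n_markers: int, n_meioses: int) -> float:
    """Probability of at least one mutation across all markers and transmissions.

    Assumes markers mutate independently at a constant per-generation rate.
    """
    return 1.0 - (1.0 - rate) ** (n_markers * n_meioses)


STR_RATE = 1e-3  # approximate per-locus rate quoted in the text
SNP_RATE = 1e-8  # approximate per-site rate quoted in the text
MEIOSES = 8      # illustrative number of transmissions separating two relatives

# Chance that a single marker has changed along the path between two relatives.
print(f"one STR locus: {prob_any_mutation(STR_RATE, 1, MEIOSES):.4%}")
print(f"one SNP site:  {prob_any_mutation(SNP_RATE, 1, MEIOSES):.6%}")

# Chance that at least one marker in a whole panel has changed.
print(f"20 STR loci:   {prob_any_mutation(STR_RATE, 20, MEIOSES):.2%}")
print(f"600,000 SNPs:  {prob_any_mutation(SNP_RATE, 600_000, MEIOSES):.2%}")
```

Under these assumptions, a mutation somewhere in a small STR panel becomes fairly likely as the number of transmissions grows, whereas any individual SNP is very unlikely to have changed.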

Table 1: Core Characteristics of STRs and SNPs in Forensic Applications

| Characteristic | Short Tandem Repeats (STRs) | Dense Single Nucleotide Polymorphisms (SNPs) |
|---|---|---|
| Molecular Nature | Repetitive DNA sequences | Single base pair variations |
| Mutation Rate | High (~1 in 1,000) [1] | Low (~1 in 100 million) [1] |
| Typical Markers Analyzed | 16 - 27 loci [2] | 600,000 - 1,000,000+ loci [2] [4] |
| Primary Forensic Application | Direct matching, CODIS database searches, paternity testing | Forensic Genetic Genealogy, distant kinship, ancestry inference |
| Database | National (criminal) DNA databases (e.g., CODIS) [2] | Genetic Genealogy databases (e.g., GEDmatch, FamilyTreeDNA) [2] |

Performance Comparison in Key Forensic Applications

Kinship Analysis and Investigative Genetic Genealogy

The capability to identify familial relationships is where the most significant performance divergence occurs.

- STR Performance: STR profiling is highly reliable for identifying first-degree relationships, such as parent-child or full-sibling relationships [3]. However, the high mutation rate and limited number of loci make it ineffective for identifying relatives beyond this close circle [3] [1]. Familial DNA Searching (FDS) in criminal databases using STRs is therefore limited in scope.

- SNP Performance: Dense SNP testing is the foundation of FIGG because it can detect identity by descent (IBD) segments shared between distant relatives. With hundreds of thousands of markers, it can reliably identify distant relatives, with reported detection of third to fourth cousins and of seventh-degree relationships or beyond [5] [3] [1]. This allows investigators to build family trees and generate investigative leads for cold cases and unidentified human remains (UHRs) where the person of interest is not in a criminal database [3] [2].
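As a rough illustration of how shared segments can be screened from unphased SNP data, the sketch below scans two genotype vectors for runs with no opposite homozygotes (sometimes called half-identical regions). The 0/1/2 encoding, the `min_cm` and `min_snps` defaults, and the function name are assumptions made for this example, not parameters of any specific tool; production software also has to tolerate genotyping errors and typically uses phasing.

```python
from typing import List, Sequence, Tuple

Segment = Tuple[float, float, int]  # (start_cM, end_cM, number_of_SNPs)


def half_identical_segments(
    g1: Sequence[int],
    g2: Sequence[int],
    pos_cm: Sequence[float],
    min_cm: float = 7.0,   # hypothetical reporting threshold
    min_snps: int = 3,     # hypothetical minimum marker count
) -> List[Segment]:
    """Find runs of SNPs where the two samples could share an allele.

    Genotypes are alternate-allele counts (0, 1, 2) on one chromosome,
    ordered by genetic position. A run ends at an opposite-homozygote
    site (0 vs 2), where no allele can be shared.
    """
    segments: List[Segment] = []

    def close(first: int, last: int) -> None:
        length = pos_cm[last] - pos_cm[first]
        n_snps = last - first + 1
        if length >= min_cm and n_snps >= min_snps:
            segments.append((pos_cm[first], pos_cm[last], n_snps))

    start = None
    for i, (a, b) in enumerate(zip(g1, g2)):
        compatible = abs(a - b) != 2
        if compatible and start is None:
            start = i                      # open a new run
        elif not compatible and start is not None:
            close(start, i - 1)            # run ended at the previous SNP
            start = None
    if start is not None:
        close(start, i)                    # run extends to the last SNP
    return segments


sample_1 = [0, 0, 1, 2, 2, 0]
sample_2 = [0, 1, 1, 2, 0, 0]
positions = [0.0, 3.0, 6.0, 9.0, 12.0, 15.0]
print(half_identical_segments(sample_1, sample_2, positions))
```

With only a handful of markers, such runs arise by chance, which is one reason dense panels and minimum-length thresholds matter for distant relatives.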

Analysis of Challenged Forensic Samples

Forensic evidence is often degraded, fragmented, or of low quantity.

- STR Limitations: The successful PCR amplification of STRs requires relatively long, intact DNA fragments. With degraded DNA, allele drop-out and incomplete profiles are common, leading to no viable investigative leads [1].

- SNP Advantages: SNPs can be detected in much smaller DNA fragments than STRs, making them particularly advantageous for analyzing highly degraded samples [1]. Furthermore, whole genome sequencing (WGS) methods can be applied to low-quality samples. For instance, one study successfully sequenced degraded femur DNA from a 16-year-old case, identifying over 1 million SNPs, which led to potential kinship matches [4].

Mixture Deconvolution

Forensic samples often contain DNA from multiple contributors, which complicates analysis.

- STR Challenges: The presence of stutter peaks in STR analysis can mask alleles from a minor contributor, making the interpretation of complex mixtures difficult [6].

- Emerging SNP-based Solutions: Alternative markers like microhaplotypes (MHs)—which are sets of closely linked SNPs—are being explored. One study directly compared an MH panel to a standard STR kit and found the MH panel provided a higher recovery of minor contributor alleles and yielded higher Likelihood Ratio (LR) values for mixture detection [6].

Table 2: Performance Comparison in Operational Forensic Scenarios

| Application | STR Performance & Characteristics | Dense SNP Performance & Characteristics |
|---|---|---|
| Direct Matching | Excellent; the established standard for CODIS. | Theoretically higher discrimination with sufficient markers; not used in CODIS. |
| Kinship Analysis | Accurate for 1st-degree relatives; ineffective for distant relatives [3]. | Capable of identifying 7th-degree relatives and beyond; essential for FIGG [1]. |
| Degraded DNA | Poor; requires long, intact DNA templates for amplification. | Superior; works with short, fragmented DNA [1]. |
| Mixture Deconvolution | Challenged by stutter artifacts that obscure minor contributors [6]. | Microhaplotype panels show better minor allele recovery and higher LRs than STRs [6]. |
| Primary Database | CODIS (government, criminal) | GEDmatch PRO, FamilyTreeDNA, DNASolves (consumer, public) [2] [7] |

Experimental Validation and Methodologies

Validating these technologies for research and casework requires robust experimental protocols and performance metrics.

Genotype Imputation for Augmenting Forensic Data

A key methodological advancement is genotype imputation, a computational technique that predicts missing genotypes using reference panels of known haplotypes.

- Protocol: A 2024 study performed a simulation-based assessment using Beagle software (v5.2) [8]. Test samples with known genotypes were pruned to create partial datasets (e.g., 4,000 to 300,000 SNPs). These were then imputed against a reference panel from the 1000 Genomes Project. Parameters included: burn-in=6, iterations=12, and a genotype probability threshold (Qgp) of 0.5-0.99 [8].

- Key Findings: The study demonstrated that imputation can significantly increase SNP count, but its accuracy is dependent on the quality and density of the input data and the genetic similarity between the test sample and the reference population [8]. This is critical for FIGG, where attempting to impute data from very low-quality or low-quantity samples may lead to inaccurate profiles and false leads.
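The effect of the genotype probability threshold can be sketched as a simple post-imputation filter. The example below is not Beagle itself; it assumes imputed output has already been parsed into per-SNP probability triplets, and the SNP identifiers, data, and function names are hypothetical.

```python
from typing import Dict, Tuple

GenotypeProbs = Tuple[float, float, float]  # P(0), P(1), P(2) alternate alleles


def filter_imputed(gp_by_snp: Dict[str, GenotypeProbs], threshold: float) -> Dict[str, int]:
    """Keep the most probable genotype only when its probability meets the threshold."""
    calls = {}
    for snp, gp in gp_by_snp.items():
        best = max(range(3), key=lambda g: gp[g])
        if gp[best] >= threshold:
            calls[snp] = best
    return calls


def concordance(calls: Dict[str, int], truth: Dict[str, int]) -> float:
    """Fraction of retained calls that agree with the known genotypes."""
    shared = [snp for snp in calls if snp in truth]
    if not shared:
        return float("nan")
    return sum(calls[snp] == truth[snp] for snp in shared) / len(shared)


# Hypothetical imputed probabilities and known (pre-pruning) genotypes.
imputed = {
    "snp_a": (0.98, 0.02, 0.00),
    "snp_b": (0.40, 0.55, 0.05),
    "snp_c": (0.00, 0.05, 0.95),
}
known = {"snp_a": 0, "snp_b": 0, "snp_c": 2}

for threshold in (0.5, 0.9, 0.99):
    kept = filter_imputed(imputed, threshold)
    print(threshold, len(kept), concordance(kept, known))
```

A stricter threshold retains fewer SNPs but tends to raise concordance among those kept, which is the trade-off a validation study needs to quantify for its own sample types.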

Evaluating Kinship Inference Methods

Different statistical approaches are used to infer relationships from dense SNP data.

- Protocol: A study compared three core methods for kinship inference using simulated data from the 1000 Genomes Project [9]:

  - Likelihood Ratio (LR): Compares the probability of the genetic data under competing kinship hypotheses.

  - Identity by State (IBS): Measures how much of the genome matches by state, for example as the total length of matching segments, regardless of whether the match reflects shared ancestry.

  - Identity by Descent (IBD): Estimates the proportion of the genome shared due to a recent common ancestor.

- Key Findings: The traditional LR approach was as good as, and in some cases better than, the alternative methods, particularly for classifying distant relationships. However, the LR method is computationally intensive. Combining different approaches did not generally increase classification accuracy [9].
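The likelihood ratio idea can be illustrated for bi-allelic SNPs using the standard IBD-sharing coefficients (k0, k1, k2) of a hypothesized relationship. The sketch below assumes Hardy-Weinberg proportions, known allele frequencies, no genotyping error, and independent (unlinked) SNPs; these are simplifications for illustration and not the cited study's implementation.

```python
from itertools import product
from typing import Sequence, Tuple

PARENT_CHILD = (0.0, 1.0, 0.0)
FULL_SIBLINGS = (0.25, 0.5, 0.25)
UNRELATED = (1.0, 0.0, 0.0)


def hwe(g: int, p: float) -> float:
    """Genotype probability for g copies of an allele with frequency p."""
    q = 1.0 - p
    return (q * q, 2.0 * p * q, p * p)[g]


def joint_given_ibd1(g1: int, g2: int, p: float) -> float:
    """P(g1, g2) when the pair shares exactly one allele identical by descent."""
    freq = {1: p, 0: 1.0 - p}
    total = 0.0
    # s = shared allele, a and b = each person's other allele (1 = counted allele)
    for s, a, b in product((0, 1), repeat=3):
        if s + a == g1 and s + b == g2:
            total += freq[s] * freq[a] * freq[b]
    return total


def joint(g1: int, g2: int, p: float, k: Tuple[float, float, float]) -> float:
    """P(g1, g2) under a relationship described by IBD coefficients k."""
    k0, k1, k2 = k
    ibd0 = hwe(g1, p) * hwe(g2, p)
    ibd2 = hwe(g1, p) if g1 == g2 else 0.0
    return k0 * ibd0 + k1 * joint_given_ibd1(g1, g2, p) + k2 * ibd2


def likelihood_ratio(g1s: Sequence[int], g2s: Sequence[int], freqs: Sequence[float],
                     k_rel: Tuple[float, float, float],
                     k_alt: Tuple[float, float, float] = UNRELATED) -> float:
    """Product of per-SNP ratios; assumes SNPs are independent."""
    lr = 1.0
    for g1, g2, p in zip(g1s, g2s, freqs):
        lr *= joint(g1, g2, p, k_rel) / joint(g1, g2, p, k_alt)
    return lr


print(likelihood_ratio([2, 1, 0], [2, 1, 1], [0.1, 0.5, 0.3], FULL_SIBLINGS))
```

For panels with hundreds of thousands of SNPs, summing log-ratios avoids numerical underflow, and linkage between neighboring SNPs has to be modeled explicitly, which is part of what makes the LR approach computationally intensive.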

Determining Optimal Sequencing Depth for SNP Data

For WGS, a critical practical consideration is the balance between data quality, accuracy, and cost.

- Protocol: Researchers performed WGS on the MGISEQ-200RS platform at varying depths of coverage (e.g., 2x, 5x, 10x, 30x) [4]. They then extracted a panel of 645,199 autosomal SNPs (matching a commercial SNP chip) and systematically evaluated both genotyping accuracy and efficacy in pedigree inference.

- Key Findings: While high-depth sequencing (e.g., 30x) provided the highest genotype accuracy, low-depth sequencing data (e.g., 2x) could achieve comparable pedigree inference accuracy after stringent quality control [4]. This is vital for cost-effective scaling of FIGG for cold case investigations.
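One way to see why genotype accuracy falls at low depth is a simple coverage model. The sketch below assumes read depth at a site follows a Poisson distribution, that each read samples either allele of a heterozygous site with equal probability, and that there are no sequencing errors; it is an idealized illustration, not the cited study's method.

```python
import math


def prob_both_alleles_seen(mean_depth: float, min_reads_per_allele: int = 1) -> float:
    """Probability that both alleles of a heterozygous site are observed.

    Under the assumptions above, reads from each allele are independent
    Poisson counts with mean equal to half the mean depth.
    """
    lam = mean_depth / 2.0
    p_too_few = sum(
        math.exp(-lam) * lam ** k / math.factorial(k)
        for k in range(min_reads_per_allele)
    )
    return (1.0 - p_too_few) ** 2


for depth in (2, 5, 10, 30):
    print(f"{depth:>2}x: {prob_both_alleles_seen(depth):.3f}")
```

Under this model, many heterozygous sites at 2x show only one allele, which is consistent with the need for stringent quality control (or probabilistic genotype handling) before low-depth data are used for pedigree inference.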

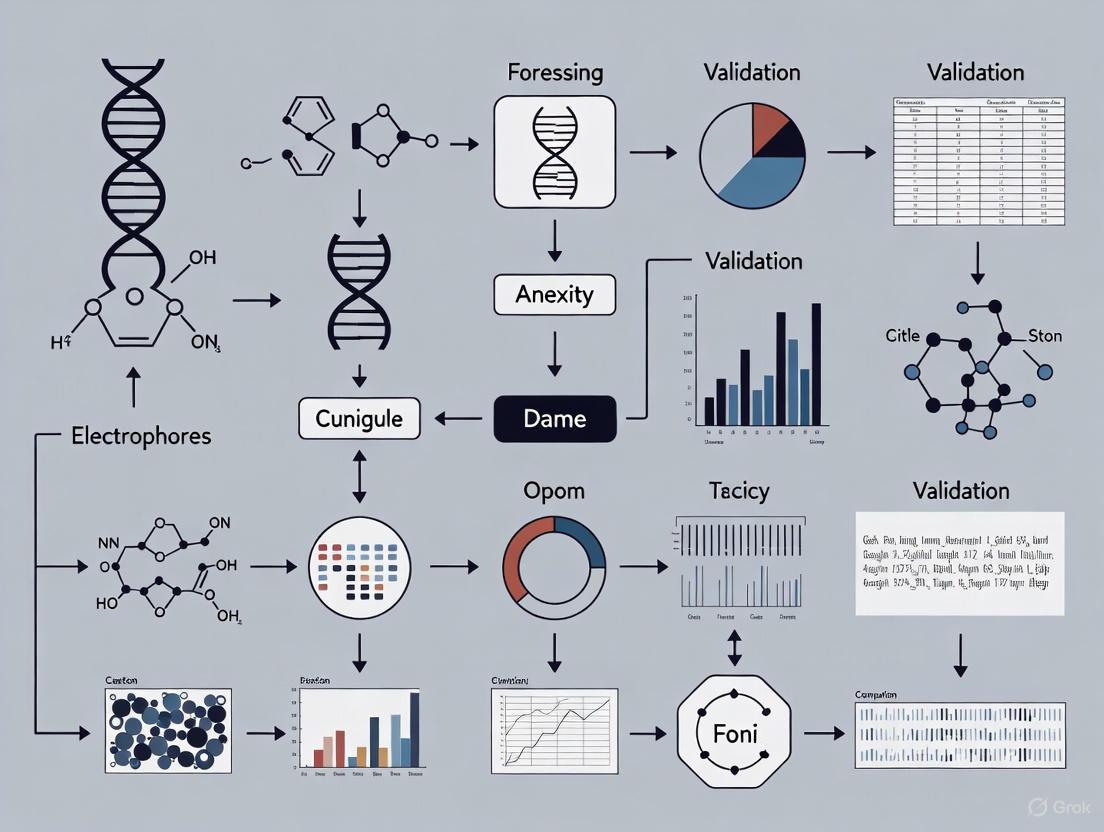

The following diagram illustrates a generalized workflow for validating and applying dense SNP data in a forensic genealogy context, integrating the experimental methods described above.

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful implementation of forensic genomic testing relies on a suite of specialized reagents, software, and reference materials.

Table 3: Essential Reagents and Resources for Forensic Genomic Research

| Tool / Reagent | Function / Application | Example Products / Databases |
|---|---|---|
| STR Amplification Kits | Multiplex PCR amplification of core STR loci for capillary electrophoresis. | GlobalFiler, PowerPlex Fusion |
| SNP Microarrays | Genotyping hundreds of thousands to millions of SNPs simultaneously from high-quality DNA. | Illumina Infinium GSA, OmniExpress [2] [4] |
| Next-Generation Sequencers | Enabling whole genome sequencing and targeted sequencing for SNP discovery and genotyping. | MGISEQ-200RS, Illumina platforms [4] |
| Imputation Software | Statistical prediction of missing genotypes to augment sparse genetic datasets. | Beagle [8] |
| Kinship Inference Tools | Statistical classification of familial relationships using LR, IBD, and IBS algorithms. | EuroForMix, Custom Pipelines [6] [9] |
| Reference Panels | Curated genomic datasets used for imputation, ancestry inference, and algorithm training. | 1000 Genomes Project [8] [9] |
| Genetic Genealogy Databases | Databases of consumer genetic data, searched to find relatives of an unknown sample. | GEDmatch PRO, FamilyTreeDNA, DNASolves [2] [7] |

STR and dense SNP testing are complementary technologies with distinct strengths in the forensic genomics landscape. STR profiling remains the undisputed method for direct matching and database searches within the established CODIS framework. However, for the transformative application of Investigative Genetic Genealogy, dense SNP testing is indispensable. Its ability to detect distant kinship through the analysis of hundreds of thousands of markers, coupled with its superior performance on degraded DNA, has fundamentally expanded the capabilities of forensic science. Validation studies emphasize that factors such as input data quality, reference panel selection, and sequencing depth are critical for generating reliable, actionable investigative leads. As the field continues to evolve, the rigorous, objective comparison of these tools will ensure that forensic genealogy research is built on a solid, scientifically valid foundation.

Core Principles of Investigative Genetic Genealogy (IGG) and Forensic DNA Phenotyping

Forensic science has been revolutionized by two powerful DNA-based tools that serve distinct but complementary roles in criminal investigations and human identification: Investigative Genetic Genealogy (IGG) and Forensic DNA Phenotyping (FDP). IGG is a groundbreaking investigative technique that combines traditional genealogy with advanced DNA analysis to identify suspects or human remains by tracing familial connections [10] [2]. In contrast, FDP is a DNA typing method that predicts externally visible physical characteristics and biogeographic ancestry from genetic material to provide investigative leads when no suspect is known [11] [12]. While both techniques analyze human DNA, they differ fundamentally in their underlying principles, applications, and technological requirements.

These tools have transformed forensic investigations, particularly in cold cases where traditional methods have been exhausted. IGG gained international recognition after its successful application in the 2018 Golden State Killer case, leading to hundreds of additional solved cases [10] [2]. FDP has proven valuable in generating investigative leads for unknown perpetrators and identifying human remains by predicting physical characteristics that can be combined with facial reconstruction [12]. This article provides a comprehensive comparison of these methodologies, their experimental protocols, validation data, and implementation requirements for researchers and forensic professionals.

Fundamental Principles and Technical Foundations

Investigative Genetic Genealogy (IGG)

IGG operates on the fundamental genetic principle that individuals inherit specific DNA segments from their ancestors, creating identifiable shared segments between relatives [2]. The technique examines hundreds of thousands to over a million Single Nucleotide Polymorphisms (SNPs) across the human genome [10] [2]. These SNPs are scattered throughout both coding and non-coding regions and provide the dense genomic coverage necessary to detect shared segments between distant relatives who may be separated by several generations [2].

The statistical power of IGG comes from the analysis of Identical-by-Descent (IBD) segments: stretches of DNA that two individuals share because both inherited them, unbroken by recombination, from a common ancestor. The length and quantity of these shared segments indicate the degree of relatedness, with closer relatives sharing longer and more numerous segments than distant relatives [2]. Genealogists then use this genetic data alongside traditional documentary research (birth, marriage, death records) to build family trees backward in time to identify common ancestors, then forward to identify potential candidates who match the unknown sample's characteristics [10] [2].
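The backward-then-forward tree search can be sketched as a small graph problem. The example below assumes a hypothetical `parents` mapping assembled from documentary research; all names and the data structure are illustrative, and real casework involves incomplete records and multiple competing hypotheses.

```python
from collections import deque
from typing import Dict, List, Set


def ancestors_with_depth(person: str, parents: Dict[str, List[str]]) -> Dict[str, int]:
    """Map each known ancestor to the number of generations above `person`."""
    depth: Dict[str, int] = {}
    queue = deque([(person, 0)])
    while queue:
        current, d = queue.popleft()
        for parent in parents.get(current, ()):
            if parent not in depth:
                depth[parent] = d + 1
                queue.append((parent, d + 1))
    return depth


def most_recent_common_ancestors(a: str, b: str, parents: Dict[str, List[str]]) -> List[str]:
    """Backward step: shared ancestors reached with the fewest total generations."""
    da, db = ancestors_with_depth(a, parents), ancestors_with_depth(b, parents)
    shared = set(da) & set(db)
    if not shared:
        return []
    best = min(da[x] + db[x] for x in shared)
    return sorted(x for x in shared if da[x] + db[x] == best)


def descendants(ancestor: str, parents: Dict[str, List[str]]) -> Set[str]:
    """Forward step: everyone in the tree descended from `ancestor`."""
    children: Dict[str, List[str]] = {}
    for child, pars in parents.items():
        for parent in pars:
            children.setdefault(parent, []).append(child)
    found: Set[str] = set()
    stack = [ancestor]
    while stack:
        for child in children.get(stack.pop(), ()):
            if child not in found:
                found.add(child)
                stack.append(child)
    return found


# Hypothetical tree: two database matches whose parents are siblings.
tree = {
    "match_a": ["p1"],
    "match_b": ["p3"],
    "candidate": ["p1"],
    "p1": ["gp1", "gp2"],
    "p3": ["gp1", "gp2"],
}
print(most_recent_common_ancestors("match_a", "match_b", tree))
print(sorted(descendants("gp1", tree)))
```

The descendants of the shared ancestors form the pool from which candidates are then narrowed using the amount of shared DNA and case details such as age and location.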

Forensic DNA Phenotyping (FDP)

FDP operates on fundamentally different principles, focusing on predicting physical appearance and ancestry rather than familial relationships. This technique identifies variations in specific genes known to influence physical traits, focusing primarily on Single Nucleotide Polymorphisms (SNPs) in coding regions associated with pigmentation, morphology, and other visible characteristics [12].

The prediction models used in FDP are developed through large-scale genome-wide association studies (GWAS) that correlate specific genetic variants with observable physical traits across diverse populations [12]. These models employ either statistical approaches or machine learning algorithms trained on reference populations with known genotypes and phenotypes [12]. For example, the HIrisPlex-S system analyzes 41 carefully selected SNPs to predict eye, hair, and skin color with reported accuracies exceeding 90% for some traits in validation studies [12].

Unlike IGG, which focuses on neutrally inherited genomic regions for kinship analysis, FDP specifically targets functional genetic variants that directly influence physical appearance through biological pathways such as melanin production and distribution [12].

Key Technical Distinctions

Table 1: Fundamental Comparison of IGG and FDP

| Parameter | Investigative Genetic Genealogy (IGG) | Forensic DNA Phenotyping (FDP) |
|---|---|---|
| Primary Goal | Identify specific individuals through familial relationships | Predict physical characteristics and ancestry |
| Genetic Markers | 600,000-1,000,000 SNPs (genome-wide) | 22-41 SNPs (targeted, trait-associated) [12] |
| Genomic Regions | Neutral regions across entire genome | Functional, trait-associated coding regions |
| Core Principle | Segregation of genetic material through inheritance | Genotype-phenotype associations |
| Data Output | List of genetic relatives, family trees | Physical trait predictions (probabilistic) |
| Reference Data | Genetic genealogy databases (GEDmatch, FamilyTreeDNA) [2] | Curated trait-associated SNP databases |

Methodologies and Experimental Protocols

IGG Workflow and Experimental Protocol

The IGG process follows a meticulous, multi-stage protocol that integrates laboratory analysis, genetic matching, and genealogical research:

Step 1: Evidence Screening and DNA Extraction - Biological evidence from crime scenes (e.g., semen, blood, saliva) or unidentified remains is subjected to DNA extraction using standard forensic methods. The quantity and quality of DNA are assessed via quantification methods [10].

Step 2: SNP Genotyping - Unlike traditional forensic DNA analysis that uses Short Tandem Repeats (STRs), IGG requires SNP data. When DNA is degraded or in low quantity, SNPs provide an advantage due to their smaller amplicon size [10]. After extraction, the sample is genotyped using SNP microarrays or Next-Generation Sequencing (NGS) technologies that simultaneously genotype hundreds of thousands of SNPs across the genome [2]. With NGS, the raw output is typically a FASTQ file of sequence reads [10], which is then processed into a SNP genotype file for the unknown sample.

Step 3: Database Upload and Genetic Matching - The SNP data is uploaded to genetic genealogy databases that permit law enforcement usage (GEDmatch PRO, FamilyTreeDNA, DNASolves) [2]. These databases compare the unknown profile against their existing datasets, generating a list of individuals who share significant DNA segments, with match lists typically ranking relatives from closest to most distant [10] [2].

Step 4: Genetic Genealogy Analysis - Using the shared DNA segments and their sizes, analysts estimate the possible biological relationships between the unknown sample and each genetic match. The amount of shared DNA, measured in centimorgans (cM), is used to calculate probabilities for possible relationships [2].

Step 5: Genealogical Research and Tree Building - Genealogists then build family trees for the genetic matches using public records (census, birth, marriage, death certificates) to identify common ancestors. By working backward through generations to find these ancestors, then building trees forward through time, investigators identify potential candidates who fit the timeline, location, and other case details [10] [2].

Step 6: Investigative Follow-up and Confirmation - Traditional investigation is used to assess identified candidates, followed by collection of reference samples for standard forensic STR testing to confirm or exclude the individual through direct DNA comparison [10].
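The relationship estimation in Step 4 can be sketched by comparing an observed shared-cM total against the average expected for each relationship. The example below assumes a parent-child pair shares roughly 3,400 cM and scales other relationships by their average fraction of shared autosomal DNA; that scaling constant, the relationship list, and the log-distance ranking are illustrative assumptions. Operational tools rely on empirical distributions and report probabilities rather than a simple ranking.

```python
import math
from typing import List, Tuple

PARENT_CHILD_CM = 3400.0  # assumed total shared by a parent-child pair

# Average fraction of autosomal DNA shared (full siblings omitted for simplicity).
EXPECTED_FRACTION = {
    "parent/child": 0.5,
    "grandparent, half sibling, or aunt/uncle": 0.25,
    "first cousin": 0.125,
    "second cousin": 0.03125,
    "third cousin": 0.0078125,
}


def expected_cm(fraction: float) -> float:
    """Scale a shared fraction to centimorgans using the assumed parent-child total."""
    return PARENT_CHILD_CM * fraction / 0.5


def rank_relationships(observed_cm: float) -> List[Tuple[str, float]]:
    """Rank candidate relationships by how close their expected cM is (log scale).

    `observed_cm` must be positive.
    """
    scored = [
        (name, expected_cm(fraction))
        for name, fraction in EXPECTED_FRACTION.items()
    ]
    return sorted(scored, key=lambda item: abs(math.log(observed_cm / item[1])))


for shared in (850.0, 60.0):
    best_name, best_expected = rank_relationships(shared)[0]
    print(f"{shared} cM: closest to {best_name} (expected about {best_expected:.0f} cM)")
```

Because observed sharing varies widely around these averages, especially for distant cousins, several relationships usually remain plausible for a single match and are resolved through tree building in Step 5.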

IGG Workflow: From Evidence to Identification

FDP Workflow and Experimental Protocol

The FDP process follows a targeted, trait-specific analytical protocol:

Step 1: DNA Extraction and Quantification - Biological evidence undergoes standard forensic DNA extraction. The DNA quantity and quality are assessed, with special consideration for potential degradation which may affect downstream analyses [12].

Step 2: Targeted SNP Analysis - Unlike the genome-wide approach of IGG, FDP uses targeted analysis of specific SNPs known to correlate with physical traits. Systems like HIrisPlex-S employ multiplex PCR assays targeting a specific panel of SNPs (e.g., 24 for hair and eye color, 17 for skin color) [12]. The SNaPshot method, a multiplex SNP genotyping technique based on primer extension, is commonly used for this targeted analysis [12].

Step 3: Genotype Interpretation - The resulting SNP genotypes are interpreted using established statistical models and prediction algorithms. For example, the HIrisPlex system uses web-based tools that calculate prediction probabilities for specific trait categories based on the genotype data [12].

Step 4: Trait Prediction and Statistical Weighting - Each physical trait is assigned a predictive value with an associated statistical confidence. For instance, the system may predict "brown eyes" with 97% probability or "black hair" with 99% probability [12]. These predictions are typically presented as probabilities rather than certainties, reflecting the complex interplay between genetics and environmental factors in determining physical appearance.

Step 5: Composite Profile Generation - The collective trait predictions are integrated to create a composite biological profile of the unknown individual. This profile may include ancestry estimation, eye color, hair color, skin pigmentation, and other physical characteristics [12]. In some applications, this data is combined with forensic artistry to create facial reconstructions, particularly in unidentified remains cases [12].
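The genotype-to-probability conversion in Steps 3 and 4 can be illustrated with a multinomial logistic model, a common choice for categorical trait prediction. The SNP names, intercepts, and coefficients below are hypothetical placeholders, not published HIrisPlex-S parameters, and the function is a sketch rather than the webtool's implementation.

```python
import math
from typing import Dict


def predict_trait(
    genotype: Dict[str, int],
    intercepts: Dict[str, float],
    betas: Dict[str, Dict[str, float]],
    reference: str,
) -> Dict[str, float]:
    """Return category probabilities from a multinomial logistic model.

    genotype:   SNP -> minor-allele count (0, 1, or 2)
    intercepts: non-reference category -> intercept
    betas:      non-reference category -> {SNP -> coefficient}
    reference:  category whose linear score is fixed at 0
    """
    scores = {reference: 0.0}
    for category, intercept in intercepts.items():
        scores[category] = intercept + sum(
            coef * genotype[snp] for snp, coef in betas[category].items()
        )
    top = max(scores.values())  # subtract the maximum for numerical stability
    exp_scores = {c: math.exp(s - top) for c, s in scores.items()}
    total = sum(exp_scores.values())
    return {c: v / total for c, v in exp_scores.items()}


# Hypothetical two-SNP eye color model with "brown" as the reference category.
genotype = {"snp_1": 2, "snp_2": 0}
intercepts = {"blue": -1.0, "intermediate": -0.5}
betas = {
    "blue": {"snp_1": 1.5, "snp_2": -0.4},
    "intermediate": {"snp_1": 0.3, "snp_2": 0.1},
}
probabilities = predict_trait(genotype, intercepts, betas, reference="brown")
for category, prob in sorted(probabilities.items(), key=lambda kv: -kv[1]):
    print(f"{category}: {prob:.2f}")
```

Reporting the full probability vector, rather than only the top category, keeps the probabilistic nature of the prediction visible to investigators.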

FDP Workflow: From DNA to Physical Trait Predictions

Comparative Performance Data and Validation

Validation Studies and Performance Metrics

Both IGG and FDP have undergone extensive validation studies to assess their reliability and limitations for forensic applications. The performance characteristics differ significantly due to their distinct objectives and methodologies.

Table 2: Performance Validation Data for IGG and FDP

| Performance Metric | Investigative Genetic Genealogy (IGG) | Forensic DNA Phenotyping (FDP) |
|---|---|---|
| Reported Case Success | ~1,000+ cases solved since 2018 [10] [2] | Not reported as case counts; see trait prediction accuracy |
| Trait Prediction Accuracy | Not applicable | 91.6% for eye color, 90.4% for hair color, 91.2% for skin color (HIrisPlex-S) [12] |
| Database Effectiveness | 60%+ match rates in established systems [13] | Not applicable |
| Success in Degraded DNA | Effective with SNP analysis of challenging samples [10] | Validated on highly decomposed remains [12] |
| Required DNA Quantity | Varies; lower quantities sufficient with advanced sequencing | Validated with low quantity DNA samples [12] |
| Statistical Foundation | Kinship probabilities based on shared DNA segments | Trait probabilities based on genotype-phenotype associations |

Technical Requirements and Resource Considerations

Implementation of IGG and FDP requires distinct technical infrastructures, analytical expertise, and financial resources. These practical considerations significantly influence their adoption in different forensic settings.

Table 3: Technical and Resource Requirements Comparison

| Parameter | Investigative Genetic Genealogy (IGG) | Forensic DNA Phenotyping (FDP) |
|---|---|---|
| Technology Platform | Next-Generation Sequencing, SNP microarrays [2] | SNaPshot, Capillary Electrophoresis, PCR [12] |
| Analytical Expertise | Advanced genealogy, genetic analysis | Forensic genetics, statistical interpretation |
| Turnaround Time | Weeks to months (complex genealogical research) [10] | Weeks (targeted analysis) [12] |
| Cost Considerations | High (reagent costs, specialized expertise) | Moderate (targeted assays) |
| Database Access | Dependent on public genetic genealogy databases [2] | No external database requirements |
| Regulatory Compliance | Complex (privacy, consent, jurisdictional policies) [14] | Standard forensic validation protocols |

Research Reagents and Essential Materials

Successful implementation of both IGG and FDP requires specific research reagents and specialized materials. The following table details key solutions and their applications in experimental protocols for both techniques.

Table 4: Essential Research Reagents for IGG and FDP Protocols

| Reagent/Material | Application | Function | Technique |
|---|---|---|---|
| SNP Microarrays (Illumina Infinium GSA) | Genome-wide SNP genotyping | Simultaneous analysis of 600,000+ SNPs [2] | IGG |
| HIrisPlex-S System | Targeted trait SNP analysis | Multiplex assay for 41 eye, hair, and skin color SNPs [12] | FDP |
| SNaPshot Reagents | Multiplex SNP genotyping | Primer extension for targeted SNP analysis [12] | FDP |
| NGS Library Prep Kits | Whole genome sequencing | Preparation of DNA libraries for sequencing [2] | IGG |
| DNA Quantitation Kits (qPCR-based) | DNA quantity/quality assessment | Measures human DNA content and degradation state [10] | Both |
| Genetic Genealogy Databases (GEDmatch, FamilyTreeDNA) | Genetic matching | Identification of genetic relatives [10] [2] | IGG |
| Prediction Algorithms (HIrisPlex webtool) | Trait prediction | Converts genotype data to trait probabilities [12] | FDP |

Ethical and Legal Considerations

The implementation of both IGG and FDP raises significant ethical and legal considerations that must be addressed through robust frameworks and safeguards. IGG has generated particular controversy regarding privacy implications, as it involves searching genetic databases populated by individuals who typically uploaded their DNA for recreational genealogy purposes rather than law enforcement use [14]. This has been characterized by some as "function creep," where data is used beyond its original intended purpose, potentially undermining reasonable expectations of privacy [14].

The U.S. Department of Justice has established an Interim Policy for Forensic Genetic Genealogical DNA Analysis and Searching that imposes important limitations on IGG use. The policy restricts IGG to violent crimes (murder, attempted murder, sexual assaults) and identification of human remains, requires exhaustion of traditional investigative methods, mandates prosecutor concurrence before proceeding, and stipulates that IGG results serve as investigative leads only rather than grounds for arrest [10].

In Europe, the legal landscape is evolving rapidly, with countries including Sweden, Denmark, Norway, France, and the Netherlands implementing or considering specific legal frameworks for IGG [14]. The European Data Protection framework, particularly the Law Enforcement Directive, presents challenges for IGG implementation, with debates centering on whether data from genetic genealogy databases can be considered "manifestly made public by the data subject" [14].

FDP raises different ethical concerns, primarily related to the potential for reinforcing racial biases and the accuracy of phenotypic predictions, particularly for individuals of mixed ancestry [11]. Surveys of police officers have revealed that expectations of FDP capabilities may not align with current technological realities, with officers ranking predictions of ethnicity, age, and height as most useful, despite current limitations in accurately predicting some of these traits [11].

IGG and FDP represent distinct but complementary approaches in the modern forensic toolkit. IGG excels at identifying specific individuals through familial connections, while FDP provides investigative leads by predicting physical characteristics. The choice between these techniques depends on case specifics, available resources, and legal frameworks.

Future developments in both fields will likely focus on enhanced precision, expanded applications, and reduced costs. Advances in Next-Generation Sequencing technologies are expected to benefit both techniques through increased throughput and sensitivity [15] [13]. Machine learning and AI applications are being explored to improve prediction models in FDP and enhance kinship matching algorithms in IGG [16]. The rapidly growing consumer genetic testing market, which now includes over 41 million individuals in major databases, will continue to enhance the power of IGG [2]. Meanwhile, ongoing research into the genetic architecture of physical traits will expand the capabilities and accuracy of FDP systems.

For researchers and forensic professionals, understanding the distinct principles, methodologies, and applications of these powerful tools is essential for their appropriate implementation in both current casework and future scientific advancements. As both technologies continue to evolve, they will undoubtedly play increasingly important roles in forensic investigations while necessitating ongoing critical evaluation of their ethical implications and regulatory frameworks.

Forensic Genetic Genealogy (FGG) has emerged as a powerful investigative method that combines traditional genetic analysis with genealogical research to identify unknown individuals. This field relies on a specialized ecosystem of genetic databases and laboratory workflows to generate leads in both cold cases and unidentified remains investigations. The integration of these tools has fundamentally expanded the capabilities of forensic science, moving beyond conventional DNA analysis to provide actionable investigative leads where few other options exist.

The efficacy of FGG hinges on the interplay between two distinct categories of resources: public genetic genealogy databases, which enable the identification of relatives through DNA matching, and validated laboratory workflows, which produce the high-quality genetic data required for these comparisons. This article provides a scientific comparison of two key database players—GEDmatch and FamilyTreeDNA—and details the experimental protocols for whole genome sequencing, a laboratory method increasingly validated for forensic applications.

Database Comparison: GEDmatch and FamilyTreeDNA

Genetic genealogy databases form the cornerstone of FGG by providing the extensive kinship networks necessary to triangulate unknown subjects. The two platforms most utilized by the forensic community are GEDmatch and FamilyTreeDNA. The table below provides a quantitative comparison of their key characteristics from a forensic research perspective.

Table 1: Comparative Analysis of GEDmatch and FamilyTreeDNA for Forensic Genetic Genealogy

| Feature | GEDmatch | FamilyTreeDNA (FTDNA) |
|---|---|---|
| Primary Function | Cross-platform DNA comparison and analysis toolkit [17] [18] | Integrated DNA testing and matching services [19] |
| Database Access | Open; accepts uploaded DNA data from all major testing companies [20] [18] | Closed; primarily contains data from tests processed by its own lab [19] |
| Core Forensic Tools | One-to-Many, One-to-One DNA Comparison, Admixture (Heritage) Analysis [17] [20] | Family Finder (autosomal), Y-DNA, mtDNA, and combined matching tools [19] [21] |
| Key Distinguishing Capabilities | Tier 1 tools (e.g., Lazarus, Phasing), Segment Search, AutoClusters [17] | Specialized, deep-lineage Y-DNA and mtDNA tests (e.g., Big Y-700, mtFull Sequence) [19] [21] |
| Law Enforcement Access Policy | Opt-in for law enforcement matching; specific kits can be flagged for forensic use [20] | Voluntary cooperation; users can opt-out of law enforcement matching [22] |
| Reported Database Size | Over 2 million profiles [17] [18] | One of the world's largest Y-DNA databases [19] |
| Data Compatibility | Universal compatibility with data from AncestryDNA, 23andMe, MyHeritage, FTDNA, and others [20] [18] | Optimized for its own tests; accepts uploads from other companies for limited features [19] |
| Typical Data Processing Time | A few hours after upload for basic tools [20] | Family Finder: 3-4 weeks; Big Y-700: 11-14 weeks (as of 2025) [23] |

Analytical Workflow in Forensic Genetic Genealogy

The application of these databases in a forensic investigation follows a structured pathway. The diagram below outlines the generalized FGG workflow, from laboratory processing to genealogical research.

Forensic Genetic Genealogy Workflow

GEDmatch serves as a central hub for data integration, allowing forensic laboratories to compare a single unknown sample against a consolidated database of users who tested with different services. Its One-to-Many DNA Comparison tool is often the starting point, generating a list of genetic relatives ranked by shared centimorgans (cM), a unit of genetic linkage [24] [20]. For closer analysis, the One-to-One Autosomal DNA Comparison provides a chromosome browser to visualize specific shared segments, which is critical for validating biological relationships [24].
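The ranking step described above can be sketched in a few lines. This is a minimal illustration, not GEDmatch's implementation: the `Match` record, its field names, the kit identifiers, and the 20 cM cut-off are all hypothetical, and the numbers are made up.

```python
from dataclasses import dataclass
from typing import Iterable, List


@dataclass(frozen=True)
class Match:
    kit_id: str                # hypothetical kit identifier
    total_cm: float            # total shared DNA, in centimorgans
    largest_segment_cm: float  # longest single shared segment, in cM


def rank_matches(matches: Iterable[Match], min_total_cm: float = 0.0) -> List[Match]:
    """Drop matches below min_total_cm, then sort by total cM (descending).

    Ties on total cM are broken by the largest shared segment.
    """
    kept = [m for m in matches if m.total_cm >= min_total_cm]
    return sorted(kept, key=lambda m: (m.total_cm, m.largest_segment_cm), reverse=True)


# Made-up values for illustration only.
demo = [
    Match("KIT_A", 45.2, 18.0),
    Match("KIT_B", 212.7, 41.5),
    Match("KIT_C", 9.8, 9.8),
    Match("KIT_D", 45.2, 22.3),
]

for m in rank_matches(demo, min_total_cm=20.0):
    print(f"{m.kit_id}: {m.total_cm:.1f} cM total, largest segment {m.largest_segment_cm:.1f} cM")
```

Sorting on total shared cM first mirrors how a one-to-many list is usually read, from the closest predicted relatives downward. The segment-level review that follows would use the one-to-one comparison.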

FamilyTreeDNA offers a different value proposition through its specialized lineage tests. While its Family Finder (autosomal) test is comparable to others, its Y-DNA and mtDNA tests provide crucial supplementary data for tracing the direct paternal and maternal lines, respectively [19] [21]. This is particularly valuable in FGG for confirming suspected relationships or breaking through genealogical "brick walls." The Big Y-700 test, which reports roughly 700 Y-STR markers alongside Y-chromosome SNPs, provides high-resolution haplogroup data that can place a male subject within a specific branch of the human family tree [19].

Laboratory Workflows: Whole Genome Sequencing

The generation of reliable genetic data from forensic samples is a prerequisite for successful database research. Whole Genome Sequencing (WGS) is at the forefront of forensic genomics, providing a comprehensive method for generating single nucleotide variant (SNV) profiles suitable for FGG.

Experimental Protocol and Validation

A developmental validation study for a WGS workflow, as documented in Forensic Science International: Genetics, outlines a standardized protocol and performance metrics [25]. The following table details the key reagent solutions and their functions within this workflow.

Table 2: Research Reagent Solutions for Whole Genome Sequencing Workflow

| Component / Reagent | Function in the Experimental Protocol |
|---|---|
| KAPA HyperPrep Kit | Library preparation; fragments DNA, adds adapters, and performs PCR amplification to create sequencing-ready libraries [25]. |
| NovaSeq 6000 System | Sequencing platform; performs high-throughput, massively parallel sequencing of the prepared DNA libraries [25]. |
| Tapir Bioinformatic Workflow | End-to-end data processing; transitions raw data (BCL) from Illumina instruments to sample genotypes in a GEDmatch-compatible format [25]. |
| DNA Input (10 ng - 50 pg) | Sample; used for sensitivity studies to determine the dynamic range and limit of detection of the workflow [25]. |
| Mock Casework Samples | Validation samples; include mixtures at ratios from 1:1 to 1:49 to assess performance with challenging, forensically relevant samples [25]. |

The experimental workflow involves several stages, from sample preparation to data analysis, each critical for ensuring the quality and reliability of the final genetic data.

WGS Wet-Lab and Bioinformatics Workflow

Key Validation Data and Performance Metrics

The validation of the WGS workflow involved rigorous testing to establish its forensic reliability. The following quantitative data summarizes its performance characteristics as reported in the developmental validation study [25]:

- Sensitivity and Dynamic Range: The workflow demonstrated robust performance across a wide range of DNA inputs, from 10 nanograms (ng) down to 50 picograms (pg), establishing its limit of detection and utility with low-quantity forensic samples.

- Reproducibility: Libraries generated by multiple individuals showed consistent results, confirming that the workflow is operator-independent and produces reproducible genetic data.

- Specificity and Contamination Control: Evaluation of negative controls showed no evidence of exogenous DNA, confirming the workflow's specificity and low risk of contamination.

- Performance with Complex Samples: The workflow successfully handled mock casework samples and mixtures at ratios ranging from 1:1 to 1:49, demonstrating its potential for analyzing challenging samples typical in forensic investigations.

- Bioinformatic Output: The Tapir pipeline provides comprehensive quality metrics, including data on coverage (breadth and depth), duplication rates, and call rates, ensuring the resulting genotypes meet quality thresholds for database uploads.

This validated WGS protocol provides a standardized method for generating the extensive SNV profiles required for FGG, ensuring that data uploaded to databases like GEDmatch and FamilyTreeDNA is of high quality and suitable for generating investigative leads.
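To make the idea of quality thresholds concrete, the following sketch computes a call rate and checks a set of run metrics against limits. It is an assumption-laden illustration: the no-call encodings, the metric names, and every threshold value are placeholders chosen for the example. They are not taken from the validation study [25] or from Tapir's actual output.

```python
from typing import Dict, List, Sequence

# Assumed encodings for a missing genotype (no-call); real files may differ.
MISSING = {"--", "NN", ""}


def call_rate(genotypes: Sequence[str]) -> float:
    """Fraction of sites with a non-missing genotype call."""
    if not genotypes:
        raise ValueError("no genotypes supplied")
    called = sum(1 for g in genotypes if g not in MISSING)
    return called / len(genotypes)


def qc_failures(metrics: Dict[str, float], limits: Dict[str, float]) -> List[str]:
    """Return the names of metrics that fall outside the supplied limits."""
    failures = []
    if metrics["call_rate"] < limits["min_call_rate"]:
        failures.append("call_rate")
    if metrics["mean_depth"] < limits["min_mean_depth"]:
        failures.append("mean_depth")
    if metrics["duplication_rate"] > limits["max_duplication_rate"]:
        failures.append("duplication_rate")
    return failures


# Placeholder limits: each laboratory would set these from its own validation data.
limits = {"min_call_rate": 0.90, "min_mean_depth": 5.0, "max_duplication_rate": 0.30}

sample = {
    "call_rate": call_rate(["AA", "AG", "--", "GG"]),  # 3 of 4 sites called
    "mean_depth": 12.0,
    "duplication_rate": 0.08,
}
print(qc_failures(sample, limits))
```

Returning the list of failed metrics, rather than a single pass/fail flag, keeps the reason for a rejected upload visible in the case record.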

The Evolution of Legal and Ethical Standards in Forensic Genetic Genealogy

Forensic Genetic Genealogy (FGG), also known as Investigative Genetic Genealogy (IGG), represents a paradigm shift in forensic science, merging advanced DNA analysis with traditional genealogical research to solve violent crimes and identify human remains [2]. This novel investigatory tool emerged prominently in 2018 with the identification of the Golden State Killer, demonstrating how DNA from crime scenes could be matched against publicly available genetic genealogy databases to identify suspects through their relatives [26] [2]. The technique has since grown rapidly and is estimated to have contributed to more than five hundred cases in the United States alone, though exact figures remain uncertain because reporting is not mandatory [2].

The evolution of FGG has necessitated parallel development of legal and ethical frameworks to govern its application. As the field has progressed from pioneering technique to established tool, standards have matured through iterative guideline updates that incorporate practical experience, ethical considerations, and international perspectives [27]. This review examines the technical foundations, comparative methodologies, legal landscape, and ethical considerations that define modern FGG practice, providing researchers and practitioners with a comprehensive analysis of standards governing this transformative forensic discipline.

Technical Foundations: Comparing Genetic Marker Systems

Methodological Differences Between STR and SNP Analysis

Forensic Genetic Genealogy differs fundamentally from traditional forensic DNA profiling in multiple technical aspects, including the types of DNA markers analyzed, technology employed, data generated, and databases searched [2]. These methodological differences underlie the distinctive capabilities and applications of each approach.

Table 1: Comparative Analysis of STR Profiling versus SNP-Based FGG

| Parameter | Traditional Forensic DNA Profiling | Forensic Genetic Genealogy |
|---|---|---|
| DNA Markers | Short Tandem Repeats (STRs) [2] | Single Nucleotide Polymorphisms (SNPs) [2] |
| Genomic Region | Non-coding regions [2] | Coding and non-coding regions [2] |
| Number of Markers | 16-27 markers [2] | >600,000 markers [2] |
| Technology | PCR Amplification and Capillary Electrophoresis [2] | Next Generation Sequencing, Whole Genome Sequencing [2] |
| Data Output | Electropherogram [2] | FASTQ file [2] |
| Primary Database | CODIS (Convicted Offenders, Arrestees) [2] [10] | Genetic Genealogy Databases (GEDmatch, FamilyTreeDNA) [2] |
| Degraded Sample Performance | Limited with highly degraded DNA [10] | Superior due to smaller target regions [3] |
| Familial Searching Capability | Limited to close relatives (parent/child) [2] [3] | Capable of identifying distant relatives (3rd cousins and beyond) [2] [3] |

Analytical Workflow for Forensic Genetic Genealogy

The FGG process follows a structured pathway that integrates forensic science with genealogical research methods. This workflow ensures systematic processing from evidence to identification while maintaining ethical and legal standards.

The FGG process begins with confirming case eligibility, typically involving violent crimes where traditional DNA searches have been exhausted [10] [28]. Following DNA extraction from biological evidence, laboratories generate a Single Nucleotide Polymorphism (SNP) profile containing over 600,000 markers [2]. This SNP profile is uploaded to genetic genealogy databases that permit law enforcement use, such as GEDmatch PRO or FamilyTreeDNA [2]. The database algorithms identify genetic matches (individuals who share segments of DNA with the unknown sample) and predict relationship distances based on shared centimorgans (cM) [29]. Genetic genealogists then construct family trees using public records and other documentary evidence to identify most recent common ancestors and trace lineages forward to potential candidates [2]. The process concludes with traditional STR DNA analysis to confirm the identity of the suspected individual before any arrest is made [10].
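As a rough guide to why shared cM falls off so quickly with relationship distance, the expected autosomal sharing between full n-th cousins halves with every additional meiosis, giving 2^-(2n+1) of the genome. The sketch below encodes that textbook expectation only. It is not how any database predicts relationships, and the genome length passed in is a round illustrative placeholder, since platforms report different totals.

```python
def expected_shared_fraction(cousin_degree: int) -> float:
    """Expected autosomal sharing between full n-th cousins: 2 ** -(2n + 1).

    n = 1 gives 1/8 (first cousins), n = 2 gives 1/32, n = 3 gives 1/128.
    """
    if cousin_degree < 1:
        raise ValueError("cousin_degree must be 1 or greater")
    return 2.0 ** -(2 * cousin_degree + 1)


def expected_shared_cm(cousin_degree: int, genome_cm: float) -> float:
    """Scale the expected fraction by a caller-supplied total map length in cM."""
    return expected_shared_fraction(cousin_degree) * genome_cm


# 7000.0 cM is an illustrative placeholder, not a value from any platform.
ILLUSTRATIVE_GENOME_CM = 7000.0

for n in (1, 2, 3, 4):
    frac = expected_shared_fraction(n)
    cm = expected_shared_cm(n, ILLUSTRATIVE_GENOME_CM)
    print(f"cousin degree {n}: {frac:.4%} expected, about {cm:.1f} cM")
```

These are averages. Actual sharing varies widely around them, which is why some distant cousins share no detectable DNA at all and why relationship predictions are given as ranges rather than single answers.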

Key Reagents and Research Materials

Successful implementation of FGG requires specific reagents and technological resources that enable the generation of high-quality SNP profiles from forensic evidence.

Table 2: Essential Research Reagents and Platforms for FGG

| Reagent/Platform | Function | Specifications |
|---|---|---|
| Illumina Infinium Global Screening Array | Genotyping microarray for SNP analysis [2] | Customizable SNP chips; currently most widely used platform [2] |
| Whole Genome Sequencing | Alternative to targeted SNP chips for comprehensive analysis [3] | Enables recovery of genetic information from highly degraded samples [3] |
| Reference DNA Materials | Quality control and standardization [3] | Critical for benchmarking analytical performance [3] |
| GEDmatch PRO | Law enforcement genetic genealogy database [2] [28] | Secure database with explicit law enforcement access policies [28] |
| FamilyTreeDNA | Consumer genetic database allowing law enforcement use [2] | Population: ~1.77 million users (as of July 2022) [2] |

Legal Frameworks and Policy Guidelines

Evolution of Regulatory Standards

The legal landscape for FGG has evolved significantly since its emergence, progressing from minimal oversight to structured frameworks. The U.S. Department of Justice Interim Policy for Forensic Genetic Genealogical DNA Analysis and Searching establishes critical guardrails, requiring that IGG be reserved primarily for violent crimes (homicide, sexual assault) and identification of human remains [10]. The policy mandates prosecutor concurrence before initiating FGG testing and requires that traditional investigative methods be exhausted first, including a CODIS search of the STR profile that returned no matches [10].

The NTVIC Policy and Practice Committee guidelines represent the third iteration of an evolving framework shaped by practitioner experience, bioethicists, and international collaboration [27]. These guidelines now include mechanisms for individuals to challenge FGG practices and call for public consultation and education efforts [27]. Recent survey data indicates strong public support for responsible FGG use, with 91% of respondents supporting its application to violent crimes and 95% supporting identification of human remains and exoneration cases [27].

International Variations in Legal Standards

International approaches to FGG regulation reflect significant jurisdictional variations. While the United States has developed the most extensive framework, other countries are establishing their own standards:

- Canada: Has increased focus on individual rights and privacy under its Charter of Rights and Freedoms, influencing FGG policy development with heightened privacy requirements [27].

- Sweden: Has reported successful use of FGG in forensic casework [2].

- United Kingdom and Australia: Are actively considering FGG for potential future use while developing appropriate legal frameworks [2].

These international differences necessitate flexible yet principled guidelines that can accommodate varying legal traditions while maintaining core ethical standards [27].

Ethical Considerations and Validation Metrics

Privacy and Informed Consent Frameworks

Ethical implementation of FGG requires balancing investigative efficacy with privacy protections for individuals whose genetic data populates genealogy databases. Primary concerns include:

- Informed Consent: While some databases require explicit user consent for law enforcement access (opt-in/opt-out features), historical variations in policies have raised concerns about whether individuals understand investigative uses of their genetic data [26] [30].

- Third-Party Implications: FGG inevitably involves genetic information of relatives who never directly consented to law enforcement use, creating potential privacy implications across entire family networks [26] [29].

- Database Representation: Current genetic genealogy databases predominantly represent individuals of European descent, creating potential justice disparities as FGG may be less effective for cases involving underrepresented populations [30].

The 2025 NTVIC guidelines address these concerns through enhanced transparency requirements and specific provisions for third-party DNA collection, emphasizing that "third parties have autonomy over their DNA" and requiring informed consent with accurate information about investigative participation [27].

Performance Validation and Quality Assurance

Robust validation of FGG methodologies requires assessment across multiple performance dimensions. Key metrics include:

Table 3: FGG Performance Metrics and Validation Standards

| Validation Parameter | Current Standard | Limitations |
|---|---|---|
| Database Effectiveness | 60% of white Americans identifiable from GEDmatch's 1.45M users [29] | Underrepresentation of diverse populations [30] |
| Relationship Detection | Capable of identifying 90-95% of people to 3rd cousin or closer [29] | 10% of 3rd cousins and 50% of 4th cousins share no detectable DNA [29] |
| Degraded Sample Performance | Superior to STR profiling due to smaller target regions [3] | Highly degraded samples still present challenges [30] |
| Laboratory Accreditation | ISO/IEC 17025:2017 for testing laboratories [28] | Not all service providers are accredited [27] |
| Practitioner Certification | IGG Accreditation Board developing professional standards [27] | Gaps in proficiency testing and technical review exist [27] |

Forensic Genetic Genealogy has evolved from a novel technique to a sophisticated forensic discipline with established technical standards and ethical frameworks. The maturation of guidelines reflects increasing emphasis on privacy protections, international harmonization, and quality assurance. Current implementation challenges include addressing database diversity gaps, standardizing practitioner credentials, and developing appropriate funding mechanisms for casework.

Future development will likely focus on enhanced automation through graph-based models of genealogical records and AI-assisted family tree construction, improving both efficiency and objectivity [3]. The creation of SNP crime scene profile databases represents another emerging frontier, though this vision faces significant policy and legal hurdles across jurisdictions [27]. As technology advances, balancing investigative potential against ethical safeguards will remain essential to preserving public trust and realizing the full potential of forensic genetic genealogy.

For researchers and practitioners, continued attention to both technical validation and ethical implementation will be essential. The evolving standards described in this review provide a framework for responsible application while highlighting areas requiring further development, including standardized validation protocols, diverse reference materials, and international regulatory alignment.

Investigative Genetic Genealogy (IGG) has emerged as a revolutionary forensic technique, capable of solving cold cases and identifying perpetrators by combining DNA analysis with traditional genealogical research. Validation in this context refers to the comprehensive process of establishing, through rigorous and repeated testing, that a specific laboratory workflow, from sample processing to data analysis, is reliable, reproducible, and fit for its intended purpose. This foundation of scientific rigor is not merely an academic exercise; it is the critical link that enables the legal admissibility of IGG findings in a court of law. As IGG evolves from a novel investigative tool into a more established forensic discipline, the demand for standardized and transparent validation protocols has become paramount for both the scientific and legal communities [25] [31].

The validation process ensures that the complex methodologies of IGG can withstand scrutiny under legal standards such as Daubert, which evaluates the validity of the underlying reasoning or methodology, and its potential for error [32]. This article provides a comparative analysis of validation approaches for key IGG workflows, detailing experimental protocols, performance data, and the essential reagents that constitute the scientist's toolkit for this cutting-edge field.

Comparative Analysis of IGG Workflows and Validation Data

The efficacy of IGG hinges on the successful generation of dense single nucleotide polymorphism (SNP) profiles from forensic samples. Laboratories can choose from several technological approaches, each with distinct advantages and validated performance characteristics. The table below summarizes key validation metrics for two primary workflows as established in recent developmental studies.

Table 1: Comparative Validation Data for IGG Workflows

| Workflow Parameter | Whole Genome Sequencing (WGS) Workflow [25] | Multiplex DIP Panel (60-plex) [33] |
|---|---|---|
| Technology | Massively Parallel Sequencing (KAPA HyperPrep, NovaSeq 6000) | Capillary Electrophoresis (SeqStudio) |
| Primary Marker Type | Genome-wide Single Nucleotide Variants (SNVs) | Deletion/Insertion Polymorphisms (DIPs) |
| Dynamic Range / Sensitivity | 50 pg - 10 ng DNA | Consistent detection down to 0.05 ng/µL |
| Key Sensitivity Finding | Robust performance across the dynamic range; limit of detection established at 50 picograms. | Significant allele dropout observed below 0.01 ng/µL. |
| Mixture Analysis Performance | Processed mixtures at ratios from 1:1 to 1:49. | Not explicitly specified in the provided results. |
| Reproducibility | Assessed through libraries generated by multiple individuals; high reproducibility demonstrated. | Demonstrated clean electropherograms and high peak intensities; consistent dropout of one marker (MID-17). |
| Performance on Degraded DNA | Implied capability with damaged/old samples through validation of a bioinformatic workflow (Tapir). | Superior performance of small amplicons (<65 bp) with 67% partial amplification success under degradation. |
| Primary Application in IGG | Broad-scale analysis for highest resolution familial matching. | Ancestry inference and personal identification, particularly in East Asian populations. |

Experimental Protocols for IGG Workflow Validation

To ensure reliability, validation studies follow structured experimental protocols designed to stress-test every stage of the IGG process.

Developmental Validation of a Whole Genome Sequencing Workflow

A comprehensive developmental validation for a WGS-based IGG workflow, as detailed in Forensic Science International: Genetics, involves multiple interconnected studies to confirm the system's robustness [25].

- Library Preparation and Sequencing: The validated protocol uses the KAPA HyperPrep Kit for library construction and sequencing on an Illumina NovaSeq 6000 platform. The accompanying bioinformatic workflow, Tapir, provides an end-to-end solution for converting raw data (BCL files) into sample genotypes compatible with genealogy databases like GEDmatch [25].

- Sensitivity and Dynamic Range: Libraries are generated from a series of DNA inputs ranging from 10 nanograms down to 50 picograms. This study establishes the lower limit of detection while demonstrating performance across the dynamic range expected from variable-quality forensic samples [25].

- Reproducibility and Contamination Assessment: Multiple analysts generate libraries from the same sample source to assess inter-user reproducibility. The potential for contamination is rigorously monitored through the evaluation of negative controls for any evidence of exogenous DNA [25].

- Mock Casework and Mixture Analysis: The workflow's performance is evaluated using mock casework samples, including artificially degraded DNA and mixtures at ratios from 1:1 to 1:49. This tests the bioinformatic pipeline's ability to handle the complexities inherent in real-world evidence [25].
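The arithmetic behind the sensitivity and mixture studies above is worth making explicit, because a 1:49 mixture leaves very little minor-contributor DNA. The sketch below is illustrative only. The 10 ng and 50 pg endpoints and the 1:1 and 1:49 ratios come from the text; the 1 ng total input used in the mixture example is an assumption, as the total input per mixture is not stated here.

```python
def minor_fraction(minor_parts: float, major_parts: float) -> float:
    """Share of total DNA contributed by the minor donor in a minor:major mixture."""
    if minor_parts <= 0 or major_parts <= 0:
        raise ValueError("mixture parts must be positive")
    return minor_parts / (minor_parts + major_parts)


def minor_mass_pg(total_ng: float, minor_parts: float, major_parts: float) -> float:
    """Mass of minor-contributor DNA in picograms for a given total input in ng."""
    if minor_parts <= 0 or major_parts <= 0:
        raise ValueError("mixture parts must be positive")
    return total_ng * 1000.0 * minor_parts / (minor_parts + major_parts)


def fold_range(high_ng: float, low_pg: float) -> float:
    """Fold difference between the highest (ng) and lowest (pg) DNA inputs."""
    return high_ng * 1000.0 / low_pg


print(f"1:1 minor fraction:  {minor_fraction(1, 1):.0%}")
print(f"1:49 minor fraction: {minor_fraction(1, 49):.0%}")
# Assumed 1 ng total input, for illustration only.
print(f"minor DNA at 1:49 in 1 ng: {minor_mass_pg(1.0, 1, 49):.0f} pg")
print(f"input range 10 ng to 50 pg: {fold_range(10.0, 50.0):.0f}-fold")
```

Under that assumed input, the minor contributor at 1:49 would fall below the 50 pg lower bound of the single-source sensitivity series, which shows why extreme mixtures stress a workflow.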

Validation of a DIP Panel for Ancestry Inference

Validation of targeted panels, such as a 60-plex DIP panel, follows similar principles but is tailored to the technology and intended application.

- Panel Design and Population Genetics: A panel of 56 autosomal DIPs, 3 Y-chromosomal DIPs, and Amelogenin is selected. Its forensic efficacy is evaluated by calculating population genetic parameters, including the combined power of discrimination and the cumulative probability of exclusion in paternity testing, which were reported as 0.999999999999 and 0.9937, respectively [33].

- Ancestry Inference Capacity: The panel's ability to infer biogeographical ancestry is assessed using Principal Component Analysis (PCA), STRUCTURE analysis, and phylogenetic tree construction, which must show consistency with known population genetic structures [33].

- Forensic Conditions Testing: The panel undergoes developmental validation per SWGDAM guidelines. This includes testing PCR conditions, sensitivity, species specificity, stability, mixture analysis, reproducibility, and performance with case-type and degraded samples [33].

- Platform Transferability: As laboratories update instrumentation, validation must be repeated. For example, a 46 AIMs INDEL multiplex panel was re-validated on the SeqStudio Genetic Analyzer, demonstrating a 97.8% call rate and cleaner electropherograms compared to an older ABI 3130 platform, though with some sensitivity differences at lower detection thresholds [34].
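The combined statistics quoted for the panel design are conventionally built from per-locus values as one minus the product of the per-locus complements, which assumes the loci are independent. The sketch below shows that standard combination rule. The per-locus inputs are invented for illustration and are not the values from the cited study [33].

```python
from math import prod
from typing import Iterable


def combined_power(per_locus: Iterable[float]) -> float:
    """Combine per-locus probabilities as 1 - prod(1 - p_i).

    Applies to both power of discrimination and probability of exclusion,
    under the assumption that loci are independent.
    """
    values = list(per_locus)
    for p in values:
        if not 0.0 <= p <= 1.0:
            raise ValueError(f"probability out of range: {p}")
    return 1.0 - prod(1.0 - p for p in values)


# Two loci with 0.5 each leave a 0.25 chance that neither discriminates.
print(combined_power([0.5, 0.5]))

# Hypothetical panel: 56 autosomal loci with an invented per-locus value of 0.6.
hypothetical_pd = [0.6] * 56
print(f"{combined_power(hypothetical_pd):.15f}")
```

Because each factor is a small complement, the combined value approaches 1 rapidly as loci are added. That is why even biallelic markers with modest individual power can reach a very high combined power of discrimination.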

Workflow Visualization: IGG Validation and Application

The following diagram illustrates the logical progression from sample intake through to investigative lead, highlighting the critical stages where validation provides scientific foundation for the entire IGG process.

The Scientist's Toolkit: Essential Research Reagents and Materials

The validation and application of IGG rely on a suite of specialized reagents, kits, and bioinformatic tools. The following table catalogs the key components of a functional IGG research toolkit.

Table 2: Key Research Reagent Solutions for IGG Validation and Analysis

| Item Name | Function / Application | Validation Context |
|---|---|---|
| KAPA HyperPrep Kit | Library preparation for Whole Genome Sequencing. | Used in the developmental validation of a WGS workflow for FGG analysis [25]. |
| Illumina NovaSeq 6000 | Massively Parallel Sequencing platform for generating high-density SNP data. | Platform validated for forensic WGS to create profiles compatible with GEDmatch [25]. |
| Tapir Bioinformatic Workflow | End-to-end pipeline for converting raw sequencing data (BCL) into formatted genotypes. | Provides a validated, portable tool for seamless data processing in FGG [25]. |
| 60-Plex DIP Panel | Multiplex assay for DIPs (Deletion/Insertion Polymorphisms). | Validated for forensic ancestry inference and personal identification in East Asian populations [33]. |
| 46 AIMs INDEL Panel | Multiplex assay of Ancestry-Informative Marker INDELs. | Re-validated on the SeqStudio platform for performance under various forensic conditions [34]. |
| SeqStudio Genetic Analyzer | Capillary Electrophoresis instrument for genetic analysis. | Platform validated for running INDEL panels, showing high call rates and clean data [34]. |

The rigorous validation of IGG workflows is the cornerstone that supports their transition from a powerful investigative tool to a scientifically and legally robust forensic discipline. As the data and protocols outlined herein demonstrate, validation requires a multifaceted approach, assessing everything from analytical sensitivity and mixture interpretation to bioinformatic reliability. The resulting performance metrics provide the transparency and foundational data necessary for the courtroom. Looking forward, the field must continue to standardize these validation protocols across laboratories, address database diversity gaps to ensure equitable application, and navigate the evolving legal landscape surrounding genetic privacy [14] [30]. By anchoring IGG in uncompromising scientific rigor, the forensic community can fully harness its potential to deliver justice while maintaining public trust.

Methodological Workflows: From Sample to Investigative Lead

The success of investigative genetic genealogy (IGG) hinges on the ability to generate high-quality genetic data from biological evidence that is often degraded, contaminated, or limited in quantity. Such challenging samples—including ancient skeletal remains, historical artifacts, and crime scene evidence exposed to environmental insults—have traditionally resisted analysis with conventional forensic DNA typing methods [3]. The limitations of traditional short tandem repeat (STR) profiling, particularly for degraded samples, are well-documented; its relatively large amplicon sizes often lead to incomplete or null profiles when DNA is fragmented [35]. The field has therefore undergone a significant paradigm shift, embracing advanced genomic techniques and next-generation sequencing (NGS) to recover information from previously intractable samples.

This guide objectively compares the performance of established and emerging sample processing methods, focusing on their application within a rigorous framework for validating forensic genealogy tools. For researchers and scientists, selecting the appropriate technique is not merely a technical choice but a foundational step that determines the viability of downstream IGG analysis and the ultimate success of an investigation.

Performance Comparison of Genomic Analysis Methods

The evolution from capillary electrophoresis (CE)-based STR analysis to next-generation sequencing (NGS) of single nucleotide polymorphisms (SNPs) represents the most significant advancement in analyzing degraded DNA. The following table summarizes a systematic, empirical comparison of these methodologies.

Table 1: Performance comparison of STR/CE and SNP/NGS methods on aged skeletal remains

| Feature | STR / Capillary Electrophoresis | SNP / Next-Generation Sequencing (ForenSeq Kintelligence) |
|---|---|---|
| Typed Markers | ~20-30 STRs [35] | 10,230 SNPs for kinship, bioancestry, and phenotype [35] |
| Typical Amplicon Size | Larger, can exceed 300 bp [35] | Mostly short; 9,673 of 9,867 kinship SNPs are <150 bp [35] |
| Mutation Rate | Relatively high [35] | Low [35] |
| Success Rate on 83-Year-Old Remains | 17/20 samples met QC for analysis; 0 yielded a complete profile [35] | 18/20 samples generated genetic information; 16 had sufficient SNPs for investigative leads [35] |
| Kinship Resolution | Typically limited to 1st-degree relationships [35] | Can extend to approximately 5th-degree relatives [35] |
| Investigative Leads Generated | 0 from the analyzed set [35] | 5 samples generated a possible kinship association [35] |

The data demonstrates the clear advantage of the NGS/SNP approach for compromised samples. Its success in generating viable genetic information from 90% of the aged skeletal samples, compared to 85% for STR/CE (with none being complete), underscores its superior resilience to DNA degradation. The key technical differentiator is the smaller amplicon size, which allows for the amplification of highly fragmented DNA templates that fail to yield results with conventional STR kits [35].
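The percentages quoted above follow directly from the counts in Table 1, and the same arithmetic shows how dominant short amplicons are in the kinship SNP set. The short sketch below recomputes them. All counts are taken from the table; the helper name `percent` is just for illustration.

```python
def percent(numerator: int, denominator: int) -> float:
    """Return numerator / denominator as a percentage."""
    if denominator <= 0:
        raise ValueError("denominator must be positive")
    return 100.0 * numerator / denominator


TOTAL_SAMPLES = 20

rows = [
    ("SNP/NGS samples yielding genetic information", 18, TOTAL_SAMPLES),
    ("SNP/NGS samples with sufficient SNPs for leads", 16, TOTAL_SAMPLES),
    ("SNP/NGS samples with a possible kinship association", 5, TOTAL_SAMPLES),
    ("STR/CE samples meeting QC", 17, TOTAL_SAMPLES),
    ("STR/CE complete profiles", 0, TOTAL_SAMPLES),
    ("Kinship SNPs with amplicons under 150 bp", 9673, 9867),
]

for label, num, den in rows:
    print(f"{label}: {num}/{den} = {percent(num, den):.1f}%")
```

Reading the rows together makes the contrast sharper than the headline 90% versus 85%: most STR/CE samples passed QC but none produced a complete profile, while 16 of the 20 SNP/NGS samples had enough SNPs to support investigative leads.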

Experimental Protocols for Challenging Samples

DNA Extraction from Difficult Matrices

Effective DNA extraction is the critical first step. For highly recalcitrant tissues like bone, a combination of chemical and mechanical lysis is required.

- Protocol for Mineralized Tissue (Bone):

- Demineralization: Incubate powdered bone material in a buffer containing EDTA (e.g., 0.5 M, pH 8.0) to chelate calcium and dissolve the inorganic matrix. The incubation time must be optimized, as excess EDTA can inhibit downstream PCR [36].

- Lysis and Digestion: Following demineralization, add a lysis buffer containing Proteinase K and a detergent (e.g., SDS) to digest proteins and disrupt cellular membranes. Incubate at 56°C with agitation for several hours or overnight [36].

- Mechanical Homogenization: Employ a bead-based homogenizer, such as the Bead Ruptor Elite, to provide mechanical disruption. Specialized beads (e.g., ceramic or stainless steel) add mechanical force to the chemical lysis and enhance lysis efficiency. Parameters like speed, cycle duration, and temperature must be optimized to balance effective disruption with minimizing DNA shearing [36].

- Purification: Purify the lysate using silica-coated magnetic beads or columns, often with a binding buffer optimized for degraded DNA (e.g., "Buffer D") [37]. This method is scalable and avoids large-volume centrifugation steps, facilitating high-throughput processing [37].

Library Preparation for Low-Input and Degraded DNA

The construction of sequencing libraries from compromised DNA requires methods that are efficient, uracil-tolerant, and capable of handling short fragments.

Protocol: Santa Cruz Reaction (SCR) Library Build

The SCR method is a low-cost, DIY protocol highly effective for fragmented DNA from museum specimens and is applicable to forensic samples [37].

- DNA Repair and End-Preparation: The fragmented DNA is treated with enzymes to repair damaged ends and prepare them for adapter ligation.

- Adapter Ligation: Unlike enzymatic tagmentation, SCR uses a direct ligation method to attach sequencing adapters. This approach is less biased and more efficient for short fragments.

- Indexing PCR: Amplify the adapter-ligated library using a uracil-tolerant polymerase like AmpliTaq Gold. The cycle number is determined by input DNA quantity to minimize amplification bias [37]:

  - 2–4.9 ng DNA → 10 cycles
  - 5–19.9 ng DNA → 8 cycles
  - 20–29.9 ng DNA → 6 cycles
  - 30–41 ng DNA → 4 cycles

- Clean-up: Purify the final library with SPRI beads at a 1.2x bead-to-sample ratio to best retain small fragments [37].
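The cycle-number rule and the 1.2x clean-up ratio in the steps above translate naturally into a small lookup. The sketch below is one way to encode them, under two stated assumptions: inputs that fall in the gaps between the published bins (for example 4.95 ng) are assigned to the lower bin, and inputs outside 2–41 ng raise an error, since no cycle number is given for them. The function names are hypothetical.

```python
def indexing_pcr_cycles(dna_ng: float) -> int:
    """Map library input DNA (ng) to indexing PCR cycles per the bins in the text.

    Assumption: values between published bins belong to the lower bin.
    """
    if 2.0 <= dna_ng < 5.0:
        return 10
    if 5.0 <= dna_ng < 20.0:
        return 8
    if 20.0 <= dna_ng < 30.0:
        return 6
    if 30.0 <= dna_ng <= 41.0:
        return 4
    raise ValueError(f"no cycle number defined for {dna_ng} ng")


def spri_bead_volume_ul(sample_ul: float, ratio: float = 1.2) -> float:
    """Bead volume for a clean-up at the given bead-to-sample ratio."""
    if sample_ul <= 0 or ratio <= 0:
        raise ValueError("volume and ratio must be positive")
    return sample_ul * ratio


for ng in (2.0, 12.5, 25.0, 41.0):
    print(f"{ng} ng -> {indexing_pcr_cycles(ng)} cycles")

# 50 µL is an arbitrary example volume, not a value from the protocol.
print(f"beads for a 50 µL library at 1.2x: {spri_bead_volume_ul(50.0):.1f} µL")
```

Raising an error for out-of-range inputs, rather than extrapolating, keeps the helper from silently inventing a cycle number the protocol does not specify.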

Protocol: Ultra-Mild Bisulfite (UMBS) Sequencing

For methylation analysis from precious samples, the harsh conditions of traditional bisulfite treatment cause severe DNA degradation. The UMBS method mitigates this.

- Ultra-Mild Bisulfite Conversion: Incubate the DNA library in a re-engineered chemical conversion buffer. This formulation, with precisely controlled conditions and stabilizing components, achieves high cytosine-to-uracil conversion while preserving DNA integrity [38].

- Post-Conversion Clean-up and Amplification: Purify the converted DNA and amplify it for sequencing. The UMBS method demonstrates dramatically higher DNA recovery rates and more comprehensive CpG coverage than conventional methods [38].

Diagram 1: Degraded DNA analysis workflow decision tree.

The Scientist's Toolkit: Essential Research Reagent Solutions

The following reagents and kits are fundamental for implementing the protocols discussed in this guide.

Table 2: Key research reagents and materials for degraded DNA analysis

| Research Reagent / Kit | Primary Function | Key Characteristic / Application Note |
|---|---|---|
| EDTA (Ethylenediaminetetraacetic acid) [36] | Chemical demineralization and nuclease inhibition. | Chelates metal ions; critical for processing bone samples. Concentration must be balanced to avoid PCR inhibition. |
| Proteinase K [36] | Enzymatic digestion of proteins. | Breaks down cellular structures and inactivates nucleases during lysis. |
| Bead Ruptor Elite Homogenizer [36] | Mechanical disruption of tough tissues. | Provides precise control over homogenization parameters (speed, time, temperature) to minimize DNA shearing. |
| Silica-coated Magnetic Beads [37] | DNA purification and clean-up. | Enable scalable, high-throughput DNA extraction and library clean-up without centrifugation. |
| Santa Cruz Reaction (SCR) [37] | DIY NGS library construction. | Low-cost, efficient method for building libraries from fragmented DNA; ideal for high-throughput projects. |
| ForenSeq Kintelligence Kit [35] | Targeted SNP sequencing for IGG. | Simultaneously amplifies 10,230 SNPs for extended kinship, bioancestry, and phenotype prediction from challenging samples. |
| HIrisPlex-S System [12] | Forensic DNA Phenotyping. | A validated SNaPshot-based multiplex assay predicting eye, hair, and skin color from degraded/low-quantity DNA. |
| Ultra-Mild Bisulfite (UMBS) Chemistry [38] | Gentler DNA methylation analysis. | Enables high-conversion efficiency with minimal DNA damage, advancing epigenetic research on precious samples. |
| AmpliTaq Gold Mastermix [37] | PCR amplification of libraries. | A uracil-tolerant polymerase essential for amplifying bisulfite-converted or damaged DNA libraries. |

Diagram 2: Strategy synergy for challenging samples.

The comparative data and protocols presented herein establish that the strategic adoption of NGS-based SNP analysis, coupled with robust, preservation-focused extraction and library construction methods, is fundamental to validating and operationalizing forensic genealogy tools. While traditional STR/CE retains its role in routine evidence analysis, it is the advanced techniques specifically designed for degraded and low-input DNA—exemplified by the ForenSeq Kintelligence kit and the Santa Cruz Reaction library build—that are reshaping the boundaries of IGG. By enabling reliable genetic analysis from the most challenging samples, these methods provide the scientific foundation required to deliver long-awaited answers and justice, thereby fulfilling the transformative promise of investigative genetic genealogy.

Forensic genetics has undergone a paradigm shift with the emergence of Forensic Genetic Genealogy (FGG), moving from traditional targeted analysis to comprehensive genome sequencing. This evolution began gaining significant traction in 2018 and has since revolutionized criminal investigations and unidentified human remains cases [2]. While traditional forensic DNA profiling relies on analyzing 16-27 Short Tandem Repeat (STR) markers using capillary electrophoresis, forensic-grade genome sequencing leverages hundreds of thousands of Single Nucleotide Polymorphisms (SNPs) through next-generation sequencing (NGS) technologies [2]. This fundamental methodological shift enables forensic scientists to overcome the limitations of degraded DNA evidence and generate investigative leads even when no reference profile exists in criminal databases [3].

The robustness of SNP profiles in forensic applications stems from their dense genome-wide distribution, stability across generations, and detectability in highly fragmented DNA [3]. These properties make SNPs particularly valuable for analyzing challenging forensic samples that would yield incomplete or no STR data. Furthermore, the abundance of SNPs throughout the genome enables kinship inference well beyond first-degree relationships, unlocking the potential to identify unknown individuals through distant familial matches in genetic genealogy databases [3]. As the field continues to mature, establishing validated protocols and performance standards for forensic-grade genome sequencing becomes paramount for ensuring the reliability and admissibility of SNP-based evidence in judicial proceedings.

Methodological Foundations: From STRs to Comprehensive SNP Profiling

Fundamental Differences Between Traditional and Genomic Forensic Approaches

The transition from traditional forensic DNA analysis to genomic approaches represents more than merely increasing the number of markers—it constitutes a fundamental transformation in technology, data output, and application. The table below summarizes the core distinctions between these methodologies:

Table 1: Comparison of Traditional Forensic DNA Profiling versus Forensic-Grade Genome Sequencing

| Parameter | Forensic DNA Profiling | Forensic-Grade Genome Sequencing |
|---|---|---|
| DNA Markers | Short Tandem Repeats (STRs) | Single Nucleotide Polymorphisms (SNPs) |
| Genomic Region | Non-coding | Coding and non-coding |
| Number of Markers | 16-27 | >600,000 |
| Technology | PCR amplification and capillary electrophoresis | Next-generation sequencing, whole genome sequencing |
| Data File Generated | Electropherogram | FASTQ |
| Databases Searched | National criminal DNA databases (e.g., CODIS) | Genetic genealogy databases (e.g., GEDmatch, FamilyTreeDNA) |
| Kinship Resolution | Typically limited to first-degree relatives | Can identify relationships beyond first-degree relatives |

Traditional forensic DNA profiling targets specific non-coding regions containing repetitive sequences, generating DNA fingerprints that are excellent for direct matching but limited in genealogical applications [2]. In contrast, forensic-grade genome sequencing captures variation across both coding and non-coding regions, providing a comprehensive genetic snapshot that enables both identity confirmation and ancestral reconstruction [3]. The technological divergence is equally significant—while STR analysis uses targeted amplification followed by size separation, SNP profiling employs massively parallel sequencing to simultaneously read millions of DNA fragments [2].

Workflow Comparison: Traditional Forensic Analysis vs. Forensic Genetic Genealogy

The following diagram illustrates the key procedural differences between traditional forensic analysis and forensic genetic genealogy:

The traditional forensic workflow is designed for efficiency in direct matching against offender databases, while the FGG workflow embraces complexity to generate investigative leads through distant kinship matching and genealogical research [2]. The two workflows converge at the final validation step, where FGG returns to traditional STR analysis to confirm the identity of candidates developed through genealogical research [2]. This complementary relationship highlights how both methodologies remain valuable in the forensic toolkit.

Sequencing Platform Comparison for Forensic Applications

Technical Specifications of Relevant Sequencing Platforms

Selecting appropriate sequencing technology is crucial for generating robust SNP profiles in forensic contexts. The table below compares key performance metrics of sequencing platforms relevant to forensic applications:

Table 2: Sequencing Platform Comparison for Forensic Applications

| Platform | Output Range | Run Time | Reads per Run | Maximum Read Length | Relative Price per Sample |
|---|---|---|---|---|---|
| MiSeq FGx | 0.3-15 Gb | 4-55 hours | 1-25 million | 2 × 300 bp | Mid Cost |
| NextSeq 550Dx | ≥ 90 Gb | < 35 hours | > 300 million | 2 × 150 bp | Mid Cost |
| NovaSeq 6000 | 134-6000 Gb | 24-44 hours | Up to 20 billion | 2 × 150 bp | Higher Cost |
| iSeq 100 | 1.2 Gb | 9-19 hours | 4 million | 2 × 150 bp | Highest Cost |
| PacBio Sequel | Varies | Varies | Varies | >10,000 bp | Higher Cost |
| ONT MinION | Varies | Varies | Varies | >10,000 bp | Mid Cost |

The MiSeq FGx is the first fully validated sequencing system designed specifically for forensic genomics, offering a complete sample-to-answer workflow with dedicated library preparation kits and analytical software [39]. While platforms like NovaSeq 6000 offer substantially higher throughput, their utility in forensic contexts must be balanced against the specific requirements of casework, including sample quality, batch size, and turnaround time. For typical forensic casework involving limited sample numbers, mid-range platforms like MiSeq FGx and NextSeq 550Dx often provide the optimal balance of data quality, throughput, and cost efficiency [39].
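To make the throughput trade-off concrete, a back-of-envelope calculation can relate a platform's output to whole-genome depth. The sketch below is an illustration under stated assumptions, not a sizing tool: the function names are hypothetical, the 3.1 Gb genome size is an approximation, the outputs are the upper figures from the table above, and losses to duplicates, adapters, and non-human DNA are ignored. Targeted SNP panels need far less data per sample, so these figures apply only to whole-genome sequencing.

```python
import math

HUMAN_GENOME_GB = 3.1  # assumption: approximate human genome size in gigabases


def mean_depth(output_gb: float, n_samples: int,
               genome_gb: float = HUMAN_GENOME_GB) -> float:
    """Idealized mean whole-genome depth per sample for one run."""
    return output_gb / (genome_gb * n_samples)


def max_samples(output_gb: float, target_depth: float,
                genome_gb: float = HUMAN_GENOME_GB) -> int:
    """Upper bound on samples per run at a target whole-genome depth."""
    return math.floor(output_gb / (genome_gb * target_depth))


# Upper output figures taken from the platform comparison table.
platform_output_gb = {"MiSeq FGx": 15, "NextSeq 550Dx": 90, "NovaSeq 6000": 6000}

for name, output_gb in platform_output_gb.items():
    print(f"{name}: up to {max_samples(output_gb, target_depth=30)} genomes at 30x, "
          f"{mean_depth(output_gb, n_samples=1):.1f}x if one sample uses the whole run")
```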

Third-generation sequencing (TGS) platforms from PacBio and Oxford Nanopore Technologies offer longer reads, which can help resolve complex genomic regions, but their currently higher error rates (5-20%, compared with approximately 1% for second-generation sequencing, SGS) may present challenges for forensic applications requiring maximum accuracy [40]. However, these platforms continue to improve and may offer compelling alternatives as error rates decrease and validation studies accumulate.
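The practical effect of a per-read error rate depends on sequencing depth. The sketch below uses a deliberately simple model to show this: it assumes errors are independent between reads and, as a worst case, that every erroneous read reports the same wrong base, so a site is miscalled when more than half the reads are wrong. Real platform errors are partly systematic, so this understates the difficulty; the function name is hypothetical.

```python
from math import comb


def consensus_error(per_base_error: float, depth: int) -> float:
    """Probability that a strict majority of `depth` reads is wrong at a site.

    Binomial tail: P(X >= depth // 2 + 1) with X ~ Binomial(depth, per_base_error).
    """
    k_min = depth // 2 + 1
    return sum(
        comb(depth, k) * per_base_error**k * (1 - per_base_error) ** (depth - k)
        for k in range(k_min, depth + 1)
    )


# Error rates spanning the range quoted in the text; odd depths avoid ties.
for rate in (0.01, 0.05, 0.20):
    for depth in (1, 11, 31):
        print(f"error rate {rate:.2f}, depth {depth:>2}: "
              f"consensus error {consensus_error(rate, depth):.3e}")
```

Under this model, depth compensates for random error at any of the quoted rates, which is why validation studies of long-read platforms typically report accuracy at a stated coverage rather than per read.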

Platform Selection Considerations for Forensic SNP Genotyping

Forensic applications present unique considerations for sequencing platform selection that differ from research or clinical settings. The optimal platform must demonstrate robust performance with degraded and low-input DNA samples, compatibility with rigorous quality assurance standards, and efficiency in processing typical forensic batch sizes. Dense SNP microarray analysis, which genotypes hundreds of thousands of markers using hybridization rather than sequencing, remains a popular alternative for FGG due to established protocols and lower per-sample costs [2]. However, sequencing-based approaches offer advantages in detecting novel variants, analyzing mixed samples, and providing phased haplotypes.

For forensic-grade sequencing, the Illumina platform currently dominates due to its high accuracy, established forensic validations, and compatibility with degraded DNA [3]. The platform's sequencing-by-synthesis chemistry provides the base-level precision required for reliable SNP calling in legal contexts. Emerging platforms from MGI offer competitive cost structures and improving data quality, with studies showing that DNBSEQ-T7 provides "cheap and accurate" reads suitable for polishing assemblies [40]. As the sequencing landscape evolves, forensic laboratories must balance innovation with the rigorous validation requirements necessary for courtroom admissibility.

Experimental Protocols for Forensic SNP Panel Development

Minimal SNP Panel Design for Backward Compatibility

A critical challenge in implementing forensic SNP profiling is maintaining backward compatibility with existing STR databases. Research has explored developing minimal SNP panels that enable "record-matching" between SNP profiles and traditional STR profiles through linkage disequilibrium between SNPs and physically proximate STRs [41]. The following experimental protocol outlines the methodology for establishing such panels:

Table 3: Experimental Protocol for Developing Minimal SNP Panels for STR Record-Matching

| Step | Procedure | Parameters | Output |
|---|---|---|---|
| 1. Reference Data Collection | Obtain phased SNP-STR haplotypes from diverse populations | 1000 Genomes Project data; 18 CODIS STRs with 1-Mb flanking regions | Phased reference panel |
| 2. Training-Test Partition | Split data into training (75%) and test (25%) sets | 10 replicate partitions | Balanced datasets for validation |
| 3. STR Imputation | Use BEAGLE to impute STR genotypes from SNP profiles | Reference panel from training set | Imputed STR probabilities |
| 4. Match Score Calculation | Compute log-likelihood ratios for profile pairs | Needle-in-haystack matching scenario | Match-score matrix |
| 5. SNP Selection | Apply selection strategies (MAF, physical distance) | Minor Allele Frequency (MAF) thresholds; proximity to STRs | Optimized SNP panels |
| 6. Accuracy Assessment | Measure record-matching accuracy | Proportion of correctly matched profiles | Performance metrics |

Applying this protocol showed that deliberately selected panels of 900-1,800 SNPs could achieve record-matching accuracy comparable to randomly selected panels of 8,000-16,000 SNPs, substantially reducing the number of markers required for backward compatibility with existing STR databases [41]. SNP selection based on minor allele frequency thresholds and physical proximity to target STRs proved particularly efficient, highlighting the importance of strategic marker selection rather than simply expanding panel size.
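Steps 2, 5, and 6 of the protocol can be sketched in a few lines. This is an illustration under stated assumptions rather than the published implementation: all function names, the MAF threshold, and the panel size are hypothetical, and accuracy is simplified to the fraction of SNP profiles whose highest-scoring STR profile is the true match, which may differ from how the source scores the needle-in-haystack scenario. Imputation (step 3) and the log-likelihood match scores (step 4) are assumed to come from external tools.

```python
import random


def train_test_partitions(sample_ids, train_fraction=0.75, replicates=10, seed=0):
    """Step 2: yield (train, test) splits for each replicate partition."""
    rng = random.Random(seed)
    n_train = round(len(sample_ids) * train_fraction)
    for _ in range(replicates):
        shuffled = list(sample_ids)
        rng.shuffle(shuffled)
        yield shuffled[:n_train], shuffled[n_train:]


def select_snps(snps, str_pos, min_maf=0.05, max_distance=1_000_000, panel_size=50):
    """Step 5: keep common SNPs within the flanking window, nearest first.

    `snps` is a list of (snp_id, position, maf) tuples on the STR's chromosome.
    min_maf and panel_size are hypothetical; 1 Mb matches the flanking region
    listed in the protocol table.
    """
    candidates = [
        (abs(pos - str_pos), snp_id)
        for snp_id, pos, maf in snps
        if maf >= min_maf and abs(pos - str_pos) <= max_distance
    ]
    return [snp_id for _, snp_id in sorted(candidates)[:panel_size]]


def record_matching_accuracy(scores):
    """Step 6 (simplified): scores[i][j] is the match score between SNP
    profile i and STR profile j, and the true match for row i is column i."""
    correct = 0
    for i, row in enumerate(scores):
        best_j = max(range(len(row)), key=row.__getitem__)
        correct += best_j == i
    return correct / len(scores)


splits = list(train_test_partitions(list(range(100))))
print(len(splits), len(splits[0][0]), len(splits[0][1]))

toy_scores = [[5.0, 1.0, 0.0], [2.0, 3.0, 9.0], [0.0, 1.0, 4.0]]
print(record_matching_accuracy(toy_scores))
```

In practice the accuracy function would be evaluated on each replicate's test set and averaged, so that a panel's reported performance does not depend on one particular split.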

Validation Framework for Forensic Sequencing Panels

Comprehensive validation of forensic sequencing panels requires assessing multiple performance metrics under conditions mimicking forensic casework. The following diagram illustrates a rigorous validation workflow adapted from established frameworks for diagnostic sequencing panels: