Validating Discriminatory Power in Analytical Methods: An ANOVA Guide for Pharmaceutical Researchers

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on using Analysis of Variance (ANOVA) to validate the discriminatory power of analytical techniques. It covers foundational statistical principles, practical application methodologies in various experimental designs, strategies for troubleshooting common issues and optimizing analysis, and a framework for validating results against alternative statistical approaches. By integrating ANOVA within the model-informed drug development paradigm, this guide aims to enhance the robustness and interpretability of data in biomedical and clinical research, ensuring reliable analytical method validation.

ANOVA and Discriminatory Power: Core Concepts for Robust Analytical Method Validation

What is ANOVA and Why is It Essential for Demonstrating Discriminatory Power?

Analysis of Variance (ANOVA) serves as a fundamental statistical tool for demonstrating discriminatory power across various scientific domains, from analytical method development to biomedical research. This guide objectively examines ANOVA's performance against alternative statistical methods, detailing its theoretical basis, practical applications, and limitations. By providing structured comparisons of experimental data and methodologies, we illustrate how ANOVA enables researchers to validate the ability of their methods to distinguish between different treatments, conditions, or populations. Within the context of analytical techniques research, proper implementation of ANOVA provides robust evidence of discriminatory power, which is essential for method validation, quality control, and regulatory compliance in drug development and other scientific fields.

Analysis of Variance (ANOVA) is a family of statistical methods used to compare the means of two or more groups by analyzing variance components [1]. Developed by statistician Ronald Fisher in the early 20th century, ANOVA determines whether observed differences between group means are statistically significant by comparing the amount of variation between groups to the amount of variation within groups [1] [2]. The method uses the F-statistic, which calculates the ratio of between-group variance to within-group variance [3]. A higher F-value indicates that between-group variation substantially exceeds within-group variation, suggesting that the group means are likely different [1].

Discriminatory power refers to the ability of an analytical method to reliably detect differences between test groups, conditions, or treatments. In scientific research and method validation, demonstrating strong discriminatory power is essential for establishing that a technique can meaningfully distinguish between different states, compounds, or populations. ANOVA provides a statistical framework for quantifying and validating this discriminatory power by testing whether the factor being studied (e.g., different drugs, experimental conditions, or analytical methods) creates systematic differences that exceed random variation in the data.

The fundamental principle behind ANOVA is the partitioning of total variance into components attributable to different sources [1]. In its simplest form, ANOVA decomposes the total variability in a dataset into:

- Between-group variance: Variability due to the experimental treatments or factors

- Within-group variance: Variability due to random error or individual differences

This decomposition allows researchers to determine whether their experimental manipulation has produced effects that are substantially larger than what would be expected by chance alone, thereby demonstrating discriminatory power.

Theoretical Framework: How ANOVA Quantifies Discriminatory Power

The ANOVA Model and F-Statistic

ANOVA quantifies discriminatory power through its mathematical framework centered on the F-statistic. The core equation for the F-statistic in one-way ANOVA is [2]:

$$F = \frac{\text{Between-group variance}}{\text{Within-group variance}}$$

This can be expressed mathematically as [2]:

$$F = \frac{\sum_{i=1}^{K} n_i \left(\bar{Y}_i - \bar{Y}\right)^2 / (K-1)}{\sum_{i=1}^{K} \sum_{j=1}^{n_i} \left(Y_{ij} - \bar{Y}_i\right)^2 / (N-K)}$$

Where:

- $\bar{Y}_i$ is the mean of group $i$

- $n_i$ is the number of observations in group $i$

- $\bar{Y}$ is the overall mean

- $K$ is the number of groups

- $Y_{ij}$ is the $j$th observation in group $i$

- $N$ is the total number of observations [2]

The between-group variance (numerator) measures how different the group means are from each other, while the within-group variance (denominator) measures how much variability exists within each group. When the between-group variance is substantially larger than the within-group variance, the F-ratio increases, indicating that the grouping factor has strong discriminatory power [2].
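As a minimal sketch of the formula above, the following plain-Python function (standard library only) partitions the sums of squares and forms the F-ratio. The function name `one_way_anova` and the toy data are illustrative assumptions, not taken from the cited sources; the p-value would come from an F distribution with (K - 1, N - K) degrees of freedom, which statistical packages provide.

```python
from statistics import fmean


def one_way_anova(groups):
    """Partition the variance of a one-way layout and return the F-statistic.

    `groups` is a list of lists of numeric observations, one list per group.
    """
    all_obs = [y for g in groups for y in g]
    N, K = len(all_obs), len(groups)
    grand_mean = fmean(all_obs)
    group_means = [fmean(g) for g in groups]

    # Between-group SS: sum over groups of n_i * (Ybar_i - Ybar)^2
    ss_between = sum(len(g) * (m - grand_mean) ** 2
                     for g, m in zip(groups, group_means))
    # Within-group SS: sum over all observations of (Y_ij - Ybar_i)^2
    ss_within = sum((y - m) ** 2
                    for g, m in zip(groups, group_means) for y in g)
    ss_total = sum((y - grand_mean) ** 2 for y in all_obs)

    df_between, df_within = K - 1, N - K
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return {"ss_between": ss_between, "ss_within": ss_within,
            "ss_total": ss_total, "df": (df_between, df_within), "F": f_stat}


result = one_way_anova([[1, 2, 3], [4, 5, 6], [7, 8, 9]])
print(result["F"], result["df"])  # 27.0 (2, 6)
```

For these three well-separated toy groups, SS_between = 54 and SS_within = 6, so F = (54/2)/(6/6) = 27, and the two components add up to the total sum of squares (60).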

Conceptual Basis for Discrimination

The discriminatory power of ANOVA can be visualized conceptually. Imagine three different scenarios for grouping data points:

- Weak discrimination: Group means are similar and within-group variance is high

- Moderate discrimination: Group means differ but distributions overlap substantially

- Strong discrimination: Group means are distinct with minimal distribution overlap

ANOVA quantifies this intuitive understanding by providing a statistical test for whether any observed separation between groups exceeds what would be expected by random chance. The method essentially determines whether knowing which group a data point belongs to helps predict its value better than simply using the overall mean [1].

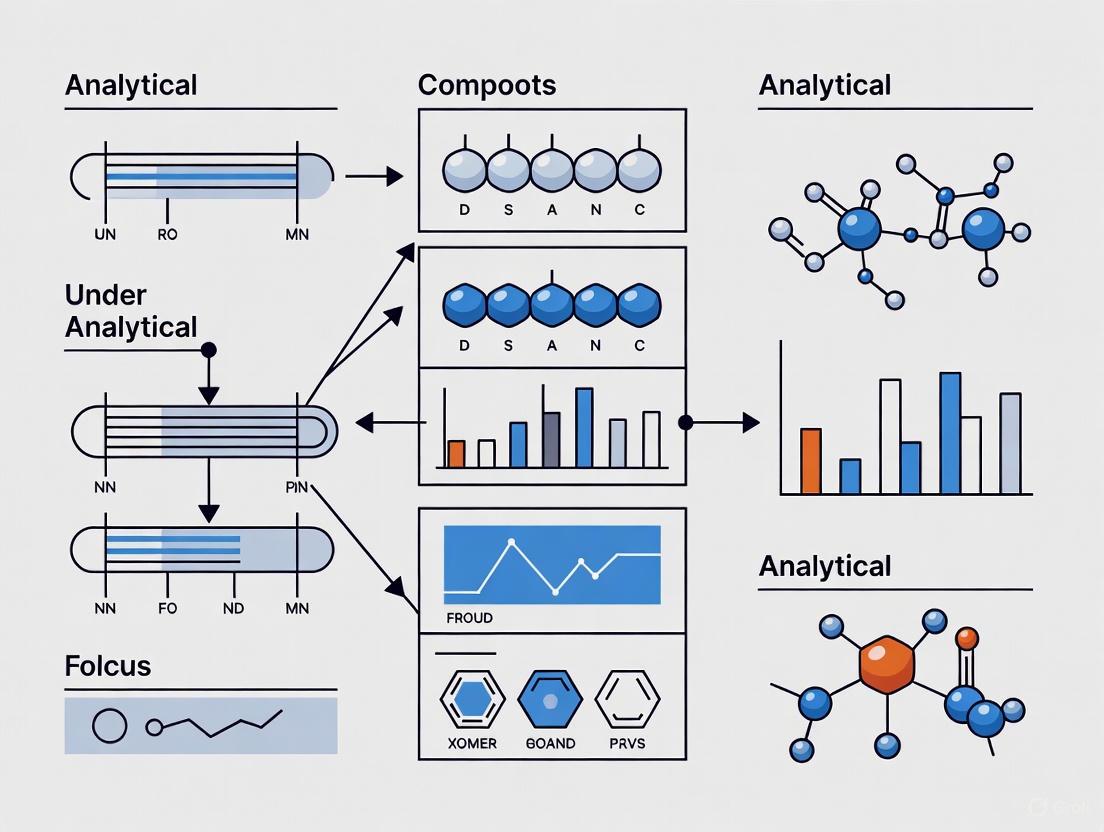

Figure 1: ANOVA conceptual framework showing how total variance is partitioned into between-group and within-group components, which form the F-statistic used to quantify discriminatory power.

Experimental Protocols and Methodologies

Standard ANOVA Protocol for Method Discrimination Studies

Implementing ANOVA to demonstrate discriminatory power requires careful experimental design and execution. The following protocol outlines the key steps:

Experimental Design

- Define the factor(s) of interest with distinct levels/groups

- Determine appropriate sample size for adequate statistical power

- Randomize assignment of experimental units to treatment groups

- Consider blocking factors if needed to control for known sources of variation

Data Collection

- Collect data according to the experimental design

- Ensure consistent measurement conditions across all groups

- Record potential covariates that might influence results

Assumption Checking

- Test for normality of residuals using graphical methods (Q-Q plots) or statistical tests (Shapiro-Wilk)

- Verify homogeneity of variances using Levene's test or Bartlett's test

- Confirm independence of observations based on experimental design
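Levene's test can be sketched as a one-way ANOVA F computed on absolute deviations from each group's center; using the median as the center gives the Brown-Forsythe variant. The function below is a hypothetical, standard-library illustration that returns only the statistic W, which is compared with an F distribution on (K - 1, N - K) degrees of freedom. Library implementations (for example SciPy's `scipy.stats.levene`, which centers on the median by default) also return the p-value.

```python
from statistics import fmean, median


def levene_w(groups, center="mean"):
    """Levene's W: a one-way ANOVA F-statistic computed on |Y_ij - center_i|.

    center="median" gives the Brown-Forsythe variant.
    """
    if center not in ("mean", "median"):
        raise ValueError("center must be 'mean' or 'median'")
    center_fn = fmean if center == "mean" else median
    centers = [center_fn(g) for g in groups]
    # Absolute deviations of each observation from its own group's center
    z = [[abs(y - c) for y in g] for g, c in zip(groups, centers)]

    all_z = [v for g in z for v in g]
    N, K = len(all_z), len(z)
    grand = fmean(all_z)
    z_means = [fmean(g) for g in z]
    ss_between = sum(len(g) * (m - grand) ** 2 for g, m in zip(z, z_means))
    ss_within = sum((v - m) ** 2 for g, m in zip(z, z_means) for v in g)
    return (ss_between / (K - 1)) / (ss_within / (N - K))


equal_spread = [[1, 2, 3], [4, 5, 6], [7, 8, 9]]
unequal_spread = [[9, 10, 11], [0, 10, 20]]
print(levene_w(equal_spread), round(levene_w(unequal_spread), 3))  # 0.0 3.208
```

Groups with identical spread give W = 0, while the second example, whose groups share a center but differ sharply in spread, gives a clearly positive W.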

ANOVA Implementation

- Select appropriate ANOVA model (one-way, factorial, repeated measures)

- Compute sum of squares for all model components

- Calculate degrees of freedom for each variance component

- Compute mean squares and F-statistics

- Determine statistical significance using F-distribution

Post-hoc Analysis (if significant)

- Conduct multiple comparisons tests (Tukey HSD, Bonferroni, Scheffé)

- Calculate confidence intervals for mean differences

- Interpret effect sizes (e.g., η²) for practical significance
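For the effect-size step, a minimal sketch (standard library only; the function name is hypothetical) computes eta-squared (SS_between / SS_total) and omega-squared, a less biased alternative for small samples:

```python
from statistics import fmean


def anova_effect_sizes(groups):
    """Return (eta-squared, omega-squared) for a one-way layout."""
    all_obs = [y for g in groups for y in g]
    N, K = len(all_obs), len(groups)
    grand = fmean(all_obs)
    means = [fmean(g) for g in groups]
    ss_between = sum(len(g) * (m - grand) ** 2 for g, m in zip(groups, means))
    ss_total = sum((y - grand) ** 2 for y in all_obs)
    ms_within = (ss_total - ss_between) / (N - K)

    eta_sq = ss_between / ss_total
    # Omega-squared corrects eta-squared's upward bias
    omega_sq = (ss_between - (K - 1) * ms_within) / (ss_total + ms_within)
    return eta_sq, omega_sq


eta_sq, omega_sq = anova_effect_sizes([[1, 2, 3], [4, 5, 6], [7, 8, 9]])
print(round(eta_sq, 3), round(omega_sq, 3))  # 0.9 0.852
```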

Specialized ANOVA Extensions for Enhanced Discrimination

For complex experimental designs or specific data types, several ANOVA extensions have been developed to improve discriminatory power:

Multivariate ANOVA (MANOVA): Used when multiple correlated dependent variables are measured simultaneously [4]. MANOVA can provide greater discriminatory power than multiple ANOVAs by accounting for interrelationships between variables.

ANOVA Simultaneous Component Analysis (ASCA): Combines variance factorization of ANOVA with exploratory power of Principal Component Analysis (PCA) [4]. This method is particularly useful for multivariate data in omics sciences, where it models structured data resulting from experimental designs.

Variable-selection ASCA (VASCA): A recent enhancement to ASCA that incorporates variable selection in multivariate permutation testing [4]. This method improves statistical power for detecting factors associated with only a subset of variables, thereby enhancing discriminatory capability in high-dimensional data.

Mixed-effects Models: Used when experiments contain both fixed and random effects, common in longitudinal studies or hierarchical data structures.
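To make the ASCA idea concrete, here is a minimal one-factor sketch assuming NumPy is available. It splits the column-centered data matrix into a factor effect matrix (each row replaced by its level mean) and residuals, applies PCA via SVD to the effect matrix, and assesses the factor with a permutation test on the effect sum of squares. The function name and the simulated data are hypothetical; full ASCA implementations handle multiple factors and interactions, and VASCA adds variable selection [4], none of which is shown here.

```python
import numpy as np


def asca_one_factor(X, labels, n_perm=999, seed=0):
    """One-factor ASCA: ANOVA-style split of a data matrix, PCA of the effect
    matrix, and a permutation test on the effect sum of squares."""
    X = np.asarray(X, dtype=float)
    labels = np.asarray(labels)
    Xc = X - X.mean(axis=0)  # column-center the (samples x variables) matrix

    def effect_matrix(lab):
        # Replace every row by the mean row of its factor level
        XA = np.zeros_like(Xc)
        for level in np.unique(lab):
            rows = lab == level
            XA[rows] = Xc[rows].mean(axis=0)
        return XA

    XA = effect_matrix(labels)
    ss_effect = np.sum(XA ** 2)
    # PCA (via SVD) of the effect matrix gives scores and loadings for the factor
    U, s, Vt = np.linalg.svd(XA, full_matrices=False)

    # Permutation test: how often does a random relabelling explain as much?
    rng = np.random.default_rng(seed)
    exceed = sum(
        np.sum(effect_matrix(rng.permutation(labels)) ** 2) >= ss_effect
        for _ in range(n_perm)
    )
    return {
        "effect_fraction": ss_effect / np.sum(Xc ** 2),
        "residuals": Xc - XA,
        "scores": U * s,
        "loadings": Vt.T,
        "p_value": (exceed + 1) / (n_perm + 1),
    }


rng = np.random.default_rng(42)
labels = np.repeat(["A", "B", "C"], 10)
X = rng.normal(size=(30, 8))
X[labels == "C", :3] += 2.0  # level C shifts only the first three variables
res = asca_one_factor(X, labels)
print(round(res["effect_fraction"], 3), res["p_value"])
```

The loadings of the first component should concentrate on the three shifted variables, which is the kind of structured multivariate signal ASCA is designed to expose.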

Comparative Performance Data

Discrimination Power Across Statistical Methods

The following table qualitatively summarizes the discriminatory performance of ANOVA relative to alternative statistical methods, drawing on the cited studies:

Table 1: Comparison of Statistical Methods for Demonstrating Discriminatory Power

| Method | Optimal Application Context | Discriminatory Power | Type I Error Control | Key Limitations |
|---|---|---|---|---|
| One-way ANOVA | Single factor with 3+ groups | Moderate to High (when assumptions met) [2] | Strong (when assumptions met) | Assumes normality, homogeneity of variance, independence [1] |
| t-test (multiple) | Single factor with 2 groups | Moderate for individual comparisons | Poor (inflated Type I error with multiple comparisons) [2] | Family-wise error rate increases with number of comparisons [2] |
| MANOVA | Multiple correlated dependent variables | High for multivariate patterns [4] | Moderate | Sensitive to violations of multivariate normality, large variable-to-sample ratio [4] |
| Kruskal-Wallis | Ordinal data or violated normality | Moderate (less powerful than parametric ANOVA) | Strong with large samples | Less powerful than ANOVA when its assumptions are met [5] |
| ASCA | Multivariate designed experiments | High for structured multivariate data [4] | Strong with permutation testing | Limited power for factors affecting few variables [4] |
| VASCA | High-dimensional multivariate data | Very High (enhanced power with variable selection) [4] | Strong with proper variable selection | Computational intensity, implementation complexity [4] |

Experimental Performance in Specific Applications

Table 2: ANOVA Performance in Specific Research Applications

| Application Domain | Experimental Context | Key Findings on Discriminatory Power | Reference |
|---|---|---|---|
| Sensory Science | Comparison of three affective methods for food preference | ANOVA detected significant differences between products, but assumptions of normality and homoscedasticity were frequently violated with hedonic scale data [6] | Villanueva et al., 2000 |
| Biomedical Research | T cell receptor affinity discrimination | Traditional ANOVA would be inappropriate for clustered data; specialized methods required to avoid false positives [5] | Simulation Study |
| Drug Combination Studies | Analysis of interaction effects in factorial experiments | ANOVA can be misleading for drug combination studies due to nonlinear dose-response patterns not captured by linear models [7] | Ashton, 2015 |
| Omics Sciences | Multivariate analysis in designed experiments | ASCA (ANOVA extension) provided enhanced discriminatory power for structured multivariate data compared to univariate approaches [4] | Camacho et al., 2022 |

Research Reagent Solutions

Table 3: Essential Research Reagents and Materials for ANOVA-Based Discrimination Studies

| Reagent/Material | Function in Experimental Design | Specific Application Examples |
|---|---|---|
| Standardized Reference Materials | Provide consistent baseline for method comparison | Certified reference materials in analytical method validation |
| Cell-Based Assay Systems | Biological platform for treatment comparison | T-cell activation studies [8] |
| Surface Plasmon Resonance (SPR) | Measurement of molecular interactions | Ultra-low affinity TCR/pMHC binding studies [8] |
| W6/32 Antibody | Conformation-sensitive detection of correctly folded pMHC | Standard curve generation in SPR studies [8] |
| Multiple Factor Levels | Experimental conditions for ANOVA grouping | Drug doses, temperature levels, pH conditions [4] |

Figure 2: Decision workflow for implementing ANOVA in discriminatory power studies, including assumption checking and alternative methods when ANOVA assumptions are violated.

Critical Considerations for Valid Discrimination Testing

Assumptions and Data Integrity

For ANOVA to provide valid evidence of discriminatory power, several statistical assumptions must be verified:

- Independence of observations: Experimental units must be measured independently [1]

- Normality: The distribution of residuals should approximate a normal distribution [1]

- Homogeneity of variances: Groups should have approximately equal variances [1]

Violations of these assumptions can compromise discriminatory power assessments. When assumptions are not met, researchers should consider:

- Data transformations to achieve normality and homoscedasticity

- Non-parametric alternatives like Kruskal-Wallis test [5]

- Generalized Linear Models (GLMs) or Generalized Least Squares (GLS) for specific data types [9]

- Robust ANOVA methods that are less sensitive to assumption violations
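As an illustration of the non-parametric route, the Kruskal-Wallis H statistic can be computed from pooled ranks. This standard-library sketch (the function name is hypothetical) uses average ranks for ties and the usual tie correction; H is referred to a chi-square distribution with K - 1 degrees of freedom, an approximation that is rough for very small groups.

```python
def kruskal_wallis_h(groups):
    """Kruskal-Wallis H using average ranks for ties, with the tie correction."""
    pooled = sorted((y, gi) for gi, g in enumerate(groups) for y in g)
    N = len(pooled)
    ranks = [0.0] * N
    tie_term = 0  # sum of (t^3 - t) over blocks of tied values
    start = 0
    while start < N:
        end = start
        while end + 1 < N and pooled[end + 1][0] == pooled[start][0]:
            end += 1
        avg_rank = (start + end + 2) / 2  # mean of 1-based ranks start+1 .. end+1
        for pos in range(start, end + 1):
            ranks[pos] = avg_rank
        t = end - start + 1
        tie_term += t ** 3 - t
        start = end + 1
    if tie_term == N ** 3 - N:
        raise ValueError("all observations are tied")

    # Sum the ranks belonging to each group
    rank_sums = [0.0] * len(groups)
    for (_, gi), r in zip(pooled, ranks):
        rank_sums[gi] += r
    h = 12 / (N * (N + 1)) * sum(R ** 2 / len(g) for R, g in zip(rank_sums, groups))
    h -= 3 * (N + 1)
    return h / (1 - tie_term / (N ** 3 - N))


h = kruskal_wallis_h([[1, 2, 3], [4, 5, 6], [7, 8, 9]])
print(round(h, 3))  # 7.2
```

For K = 3 the chi-square upper-tail probability has the closed form exp(-H/2), about 0.027 here, although with only three observations per group an exact permutation p-value may be preferable.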

Appropriate Experimental Design

The discriminatory power of ANOVA is highly dependent on proper experimental design:

- Balanced designs (equal sample sizes across groups) provide optimal power and robustness

- Randomization helps ensure independence and controls for confounding factors

- Blocking can increase discriminatory power by accounting for known sources of variation

- Replication provides more reliable variance estimates and enhances power

Multiple Testing Considerations

When using ANOVA followed by post-hoc tests, researchers must account for multiple comparisons to maintain appropriate Type I error rates. Common approaches include:

- Tukey's Honest Significant Difference (HSD) for all pairwise comparisons

- Bonferroni correction for a specified family of comparisons

- False Discovery Rate (FDR) control in high-dimensional settings [4]
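The inflation that motivates these corrections, and two of the corrections themselves, can be sketched in a few lines of standard-library Python (function names hypothetical). The family-wise error formula assumes independent tests, so for correlated pairwise comparisons from one experiment it is only an approximation; Tukey's HSD needs the studentized range distribution and is best left to a statistics package.

```python
def familywise_error_rate(alpha, m):
    """P(at least one false positive) across m independent tests at level alpha."""
    return 1 - (1 - alpha) ** m


def bonferroni_reject(p_values, alpha=0.05):
    """Reject H0_i when p_i <= alpha / m (controls the family-wise error rate)."""
    m = len(p_values)
    return [i for i, p in enumerate(p_values) if p <= alpha / m]


def benjamini_hochberg_reject(p_values, q=0.05):
    """Benjamini-Hochberg step-up procedure (controls the false discovery rate)."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    # Largest 1-based rank k with p_(k) <= k * q / m; reject the k smallest p-values
    k_max = 0
    for rank, idx in enumerate(order, start=1):
        if p_values[idx] <= rank * q / m:
            k_max = rank
    return sorted(order[:k_max])


fwer = familywise_error_rate(0.05, m=3)  # three pairwise tests among three groups
p_values = [0.001, 0.008, 0.039, 0.041, 0.042, 0.06, 0.074, 0.205]
print(round(fwer, 3), bonferroni_reject(p_values), benjamini_hochberg_reject(p_values))
# 0.143 [0] [0, 1]
```

With three groups at α = 0.05, the family-wise rate is already about 0.14. For the eight illustrative p-values, Bonferroni rejects only the smallest, whereas Benjamini-Hochberg rejects the two smallest.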

ANOVA remains an essential statistical method for demonstrating discriminatory power in analytical techniques research and drug development. Its ability to partition variance into meaningful components provides a robust framework for determining whether experimental factors create systematic differences that exceed random variation. While traditional ANOVA offers strong discriminatory power when its assumptions are met, specialized extensions like MANOVA, ASCA, and VASCA have expanded its applicability to complex, multivariate experimental designs.

The comparison summarized in this guide indicates that ANOVA generally provides better Type I error control than multiple t-tests while maintaining good statistical power for detecting meaningful differences. However, researchers must remain vigilant about assumption validation and consider alternative methods when data characteristics violate core ANOVA assumptions. When properly implemented within a rigorous experimental design, ANOVA serves as a powerful tool for validating the discriminatory power of analytical methods across scientific disciplines.

Null Hypothesis Significance Testing (NHST) is a fundamental statistical method used across scientific disciplines, particularly in clinical trials and analytical techniques research, to determine whether observed data provide sufficient evidence to reject a default position. This framework begins by formulating two competing hypotheses: the null hypothesis (H₀), which typically states that no effect, difference, or relationship exists, and the alternative hypothesis (H₁), which states that a non-random effect or difference is present. For example, in research validating a new analytical method, the null hypothesis might state that the discriminatory power of the new method does not differ from that of a standard reference method.

The process involves calculating a test statistic from experimental data and determining the probability (p-value) of obtaining results at least as extreme as those observed, assuming the null hypothesis is true. A p-value less than a predetermined significance level (α), commonly set at 0.05, leads to rejecting the null hypothesis. This decision framework inherently carries the risk of two types of errors: Type I errors (false positives) and Type II errors (false negatives), which researchers must carefully balance through strategic experimental design and analysis. The NHST framework provides a structured approach for making inferences from data, playing a crucial role in validating the discriminatory power of analytical techniques.

Type I and Type II Errors: Definitions and Consequences

In the NHST framework, decision errors are categorized based on whether the null hypothesis is true or false in reality and what the statistical test concludes. Understanding these errors is crucial for interpreting research results accurately.

Type I Errors (False Positives)

A Type I error occurs when the null hypothesis is incorrectly rejected when it is actually true. This is equivalent to a false positive—claiming an effect or difference exists when there is none. The probability of making a Type I error is denoted by α (alpha), which is the significance level set for the test. In most scientific research, α is conventionally set at 0.05, indicating a 5% risk of rejecting a true null hypothesis.

The consequences of Type I errors can be severe, particularly in fields like drug development and healthcare. For example, if a Type I error occurs in a clinical trial evaluating a new drug, researchers might incorrectly conclude the drug is effective when it actually provides no therapeutic benefit. This could lead to pursuing ineffective treatments, raising healthcare costs, and potentially causing patient harm without clinical benefit. A real-world analogy is convicting an innocent person in the courtroom—the system incorrectly rejects the default assumption of innocence.

Type II Errors (False Negatives)

A Type II error occurs when the null hypothesis is not rejected when it is actually false. This represents a false negative—failing to detect a genuine effect or difference that truly exists. The probability of making a Type II error is denoted by β (beta). The complement of this probability (1-β) is known as the statistical power of a test, representing its ability to correctly reject a false null hypothesis.

Type II errors also carry significant consequences in research. For instance, in analytical method development, a Type II error might lead researchers to conclude a new technique lacks sufficient discriminatory power when it actually represents a meaningful improvement over existing methods. This could cause potentially valuable innovations to be abandoned prematurely. In medical testing, a Type II error corresponds to failing to identify a disease when it is actually present, delaying necessary treatment. In the courtroom analogy, this would be equivalent to acquitting a guilty defendant.

Table 1: Decision Matrix for Type I and Type II Errors in NHST

| Decision | Null Hypothesis (H₀) is TRUE | Null Hypothesis (H₀) is FALSE |
|---|---|---|
| Fail to reject H₀ | Correct decision (True negative) | Type II Error (False negative) |
| Reject H₀ | Type I Error (False positive) | Correct decision (True positive) |
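The decision matrix can be checked empirically with a small Monte Carlo experiment. This sketch (standard library only; the function name, sample size, and effect size are illustrative assumptions) repeatedly applies a two-sided one-sample z-test with known standard deviation: when H₀ is true, the rejection rate estimates α, and when H₀ is false it estimates power (1 - β).

```python
import random
from statistics import NormalDist, fmean


def rejection_rate(true_mean, n=25, sigma=1.0, alpha=0.05, n_sims=4000, seed=2024):
    """Monte Carlo rejection rate of a two-sided one-sample z-test of H0: mu = 0."""
    rng = random.Random(seed)
    z_crit = NormalDist().inv_cdf(1 - alpha / 2)
    rejections = 0
    for _ in range(n_sims):
        sample = [rng.gauss(true_mean, sigma) for _ in range(n)]
        z = fmean(sample) / (sigma / n ** 0.5)
        rejections += abs(z) > z_crit  # True counts as 1
    return rejections / n_sims


type_i = rejection_rate(true_mean=0.0)  # H0 true: estimates alpha
power = rejection_rate(true_mean=0.5)   # H0 false: estimates 1 - beta
print(f"Type I ~ {type_i:.3f}, power ~ {power:.3f}, Type II ~ {1 - power:.3f}")
```

With n = 25 and a true shift of 0.5 standard deviations, the simulated Type I rate should land near 0.05 and the power near 0.70, so roughly 30% of such studies would commit a Type II error.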

Statistical Power: Concept and Importance

Statistical power is a fundamental concept in research design that represents the probability that a test will correctly reject a false null hypothesis. In practical terms, power indicates the likelihood that a study will detect an effect when one truly exists. Power is mathematically defined as 1-β, where β is the probability of a Type II error. Researchers generally consider a power of 80% (β=0.20) as an acceptable minimum standard for well-designed studies, though this target may vary based on field-specific conventions and the consequences of potential errors.

The importance of statistical power extends throughout the research process. During the planning and design phase, power analysis helps researchers determine the appropriate sample size needed to detect a meaningful effect, ensuring efficient resource allocation. When interpreting non-significant results, understanding power helps researchers distinguish between truly absent effects and inadequately powered studies. In the context of research validity, high-powered studies produce more reliable and reproducible results, contributing to the cumulative advancement of scientific knowledge. Underpowered studies not only risk missing true effects but also represent questionable research ethics when human or animal subjects are exposed to risk with little chance of obtaining meaningful results.

Factors Affecting Statistical Power

Several key factors influence the statistical power of an experiment, and understanding their interplay is essential for optimal study design.

Primary Factors Influencing Power

Significance Level (α): The chosen threshold for statistical significance directly affects power. A more lenient α (e.g., 0.10 instead of 0.05) increases power by widening the rejection region but simultaneously raises the risk of Type I errors. For a fixed sample size and effect size, this inverse relationship between α and β creates a fundamental trade-off that researchers must balance based on the relative consequences of each error type in their specific context.

Sample Size (n): Increasing sample size typically enhances power by reducing the standard error of the test statistic. Larger samples provide more precise estimates of population parameters and increase the likelihood of detecting true effects. However, the relationship between sample size and power follows a diminishing returns pattern, where initial increases provide substantial power gains that gradually level off.

Effect Size: The magnitude of the actual effect being studied significantly impacts power. Larger effects are more easily detectable than smaller ones with the same sample size. Researchers must define the minimum clinically or practically important effect size during study design to conduct appropriate power calculations.

Measurement Variability: Reduced variability in the response variable increases power by making it easier to distinguish true effects from random noise. Researchers can minimize variability through careful experimental control, precise measurement instruments, and specialized study designs such as matched pairs or repeated measures.

Table 2: Relationship Between Key Factors and Statistical Power

| Factor | Direction of Change | Impact on Statistical Power |
|---|---|---|
| Significance Level (α) | Increase | Increases |
| Sample Size (n) | Increase | Increases |
| Effect Size | Increase | Increases |
| Measurement Variability | Increase | Decreases |
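The directions in Table 2 can be reproduced with the closed-form power of a two-sided one-sample z-test with known standard deviation, a deliberately simple stand-in for more complex designs. The function below is a hypothetical standard-library sketch; each call changes one factor relative to the baseline.

```python
from statistics import NormalDist

_STD_NORMAL = NormalDist()


def z_test_power(effect, sigma, n, alpha=0.05):
    """Power of a two-sided one-sample z-test when the true mean differs from
    the null value by `effect` (known standard deviation `sigma`)."""
    z_crit = _STD_NORMAL.inv_cdf(1 - alpha / 2)
    shift = effect * n ** 0.5 / sigma  # standardized distance of the true mean from H0
    # Probability that the test statistic lands in either rejection tail
    return _STD_NORMAL.cdf(shift - z_crit) + _STD_NORMAL.cdf(-shift - z_crit)


base = dict(effect=0.5, sigma=1.0, n=25, alpha=0.05)
print("baseline        ", round(z_test_power(**base), 3))
print("alpha = 0.10    ", round(z_test_power(**{**base, "alpha": 0.10}), 3))
print("n = 50          ", round(z_test_power(**{**base, "n": 50}), 3))
print("effect = 0.8    ", round(z_test_power(**{**base, "effect": 0.8}), 3))
print("sigma = 2.0     ", round(z_test_power(**{**base, "sigma": 2.0}), 3))
```

Raising α, n, or the effect size increases power, while a larger σ decreases it; with an effect of zero the function returns exactly α, the Type I error rate.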

Visualizing the Relationship Between Factors and Power

The following diagram illustrates how the primary factors of sample size, effect size, and significance level interact to influence statistical power:

Power Analysis in Practice

Power analysis provides a formal framework for quantifying the relationship between power, sample size, effect size, and significance level. This analytical approach can be conducted at different stages of research with distinct objectives.

Types of Power Analysis

A Priori Power Analysis: Conducted during the planning stages of research before data collection begins. This prospective approach helps researchers determine the necessary sample size to achieve adequate power (typically 80%) for detecting a specified effect size at a predetermined significance level.

Post Hoc Power Analysis: Performed after completing a study and obtaining results. This retrospective approach calculates so-called observed power by plugging the observed effect size, sample size, and significance level into the power formula. The practice has drawn criticism because observed power is a direct function of the p-value and therefore provides little additional information beyond it.

Sensitivity Analysis: Determines the minimum effect size that could be detected with a given sample size and power, helping researchers interpret the practical significance of their findings.
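For the simplest case of two groups compared on a standardized mean difference d, the a priori and sensitivity calculations reduce to a normal-approximation formula, n per group = 2(z_{1-α/2} + z_{1-β})² / d². The sketch below (standard library only; function names hypothetical) implements both directions. Exact t-based calculations, such as those in G*Power, give a slightly larger n.

```python
import math
from statistics import NormalDist

_Z = NormalDist().inv_cdf  # standard normal quantile function


def n_per_group(d, alpha=0.05, power=0.80):
    """A priori: per-group n for a two-sided, two-sample comparison of means
    with standardized effect d (normal approximation)."""
    return math.ceil(2 * ((_Z(1 - alpha / 2) + _Z(power)) / d) ** 2)


def min_detectable_d(n, alpha=0.05, power=0.80):
    """Sensitivity: smallest standardized effect detectable with n per group."""
    return (_Z(1 - alpha / 2) + _Z(power)) * math.sqrt(2 / n)


print(n_per_group(0.5))                # 63
print(round(min_detectable_d(63), 3))  # 0.499
```

For d = 0.5, α = 0.05, and 80% power this gives 63 per group, and 63 per group can in turn detect d ≈ 0.50, showing that the two analyses are inverses of each other.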

Power Analysis in ANOVA for Discriminatory Power Validation

In research validating analytical techniques, ANOVA is commonly used to compare means across multiple groups or conditions. The power of an ANOVA test depends on several parameters:

- Number of groups (k): The number of comparison groups in the design

- Sample size per group (n): The number of observations in each group

- Effect size (f): The spread of the group means, standardized by the within-group standard deviation

- Significance level (α): The probability threshold for statistical significance

- Within-group variance: The variability of observations within each group

Cohen's f is a commonly used effect size measure for ANOVA, calculated as the standard deviation of the group means divided by the common within-group standard deviation. Larger values indicate greater differences between groups relative to within-group variability.
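A minimal sketch of Cohen's f from hypothesized group means and a common within-group standard deviation (equal group sizes assumed; the function name and values are illustrative). Cohen's conventional benchmarks are f = 0.10, 0.25, and 0.40 for small, medium, and large effects, and f relates to eta-squared through f² = η² / (1 - η²).

```python
import math
from statistics import fmean


def cohens_f(group_means, sigma_within):
    """Cohen's f: population SD of the group means divided by the common
    within-group SD (equal group sizes assumed)."""
    mu = fmean(group_means)
    sd_means = math.sqrt(fmean([(m - mu) ** 2 for m in group_means]))
    return sd_means / sigma_within


f = cohens_f([10.0, 12.0, 14.0], sigma_within=4.0)
eta_sq = f ** 2 / (1 + f ** 2)  # equivalent proportion of variance explained
print(round(f, 3), round(eta_sq, 3))  # 0.408 0.143
```

Means of 10, 12, and 14 with a within-group SD of 4 give f ≈ 0.41, a large effect by Cohen's benchmarks, equivalent to η² ≈ 0.14.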

Table 3: Comparison of Statistical Approaches for Proof-of-Concept Trials

| Statistical Method | Therapeutic Area | Sample Size for 80% Power | Relative Efficiency |
|---|---|---|---|
| Conventional t-test | Acute Stroke | 388 total patients | Reference |
| Pharmacometric Model | Acute Stroke | 90 total patients | 4.3-fold fewer patients |
| Conventional t-test | Type 2 Diabetes | 84 total patients | Reference |
| Pharmacometric Model | Type 2 Diabetes | 10 total patients | 8.4-fold fewer patients |

Note: Adapted from comparisons of analysis methods for proof-of-concept trials [10].

Practical Implementation of Power Analysis

Statistical software packages provide practical tools for conducting power analyses:

G*Power is a freely available tool that enables power analysis for a wide range of statistical tests, including various ANOVA designs. Researchers can perform a priori power analyses by specifying the test type, effect size, α level, and desired power to determine the necessary sample size.

SAS PROC POWER provides similar functionality in SAS, with separate statements for different analyses. For one-way ANOVA (the ONEWAYANOVA statement), researchers can specify group means, a common standard deviation, and the per-group sample size to calculate achieved power, or supply a target power to solve for sample size.

The R statistical environment offers the built-in power.anova.test() function (stats package) and contributed packages such as pwr (e.g., pwr.anova.test()). These tools solve for whichever one of power, sample size, effect size, or significance level is left unspecified, given values for the others.

Experimental Protocols for Power Optimization

Protocol for A Priori Power Analysis in ANOVA

Objective: To determine the appropriate sample size for an experiment comparing multiple groups using ANOVA while maintaining adequate statistical power.

Materials and Software: Statistical software (e.g., G*Power, SAS, R), preliminary effect size estimate from pilot data or literature.

Procedure:

- Define the minimum effect size of practical or clinical importance based on literature review or pilot data.

- Set the desired power level (typically 80%) and significance level (typically α=0.05).

- Specify the number of groups in the experimental design.

- Input these parameters into statistical software to calculate the required sample size per group.

- If the calculated sample size is impractical due to resource constraints, consider increasing the minimum detectable effect size or exploring design modifications to reduce variability.

- Document the power analysis parameters and rationale for the final sample size selection.
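The procedure above can also be carried out by simulation when a closed-form power function is not at hand. The sketch below (standard library only; all function names and defaults are hypothetical) estimates one-way ANOVA power by Monte Carlo, taking the critical F value from simulations under H₀ rather than from the F distribution, and then searches for the smallest per-group n that reaches the target power. Dedicated tools such as G*Power or pwr.anova.test() use the noncentral F distribution instead and give exact answers; the simulated result carries Monte Carlo error.

```python
import random
from statistics import fmean


def anova_f(groups):
    """One-way ANOVA F-statistic: between-group MS / within-group MS."""
    all_obs = [y for g in groups for y in g]
    grand = fmean(all_obs)
    means = [fmean(g) for g in groups]
    ssb = sum(len(g) * (m - grand) ** 2 for g, m in zip(groups, means))
    ssw = sum((y - m) ** 2 for g, m in zip(groups, means) for y in g)
    k, n_total = len(groups), len(all_obs)
    return (ssb / (k - 1)) / (ssw / (n_total - k))


def simulated_power(group_means, sigma, n, alpha=0.05, n_sims=2000, seed=7):
    """Monte Carlo power of one-way ANOVA with n normal observations per group."""
    rng = random.Random(seed)

    def draw(means):
        return [[rng.gauss(mu, sigma) for _ in range(n)] for mu in means]

    # Empirical (1 - alpha) quantile of F under H0 (all group means equal)
    null_f = sorted(anova_f(draw([0.0] * len(group_means))) for _ in range(n_sims))
    f_crit = null_f[int((1 - alpha) * n_sims)]
    # Fraction of simulated experiments under the hypothesized means that reject H0
    hits = sum(anova_f(draw(group_means)) > f_crit for _ in range(n_sims))
    return hits / n_sims


def smallest_n(group_means, sigma, target=0.80, alpha=0.05, n_max=200):
    """A priori search: smallest per-group n whose simulated power reaches target."""
    for n in range(2, n_max + 1):
        if simulated_power(group_means, sigma, n, alpha) >= target:
            return n
    return None  # target not reached within n_max
```

For example, `smallest_n([0.0, 0.5, 1.0], sigma=1.0)` searches for the per-group n when the hypothesized means are 0.5 SD apart (Cohen's f ≈ 0.41); because every candidate n is simulated, the search takes a few seconds.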

Protocol for Pharmacometric Model-Based Analysis

Objective: To enhance statistical power in proof-of-concept trials through model-based analysis of longitudinal data.

Materials and Software: Pharmacometric modeling software (e.g., NONMEM, Monolix), longitudinal clinical data.

Procedure:

- Develop a structural model describing the time course of the response variable.

- Incorporate covariate relationships to explain between-subject variability.

- Define the drug effect model component relating exposure to response.

- Estimate model parameters using maximum likelihood or Bayesian methods.

- Conduct clinical trial simulations to evaluate operating characteristics under different design scenarios.

- Compare the power of the model-based approach (e.g., a likelihood ratio test for the drug effect) with that of conventional statistical tests across the simulated trials.

- Implement the model-based analysis for the primary study endpoint, utilizing all available longitudinal data rather than single timepoint comparisons.

Research Reagent Solutions for Analytical Techniques

Table 4: Essential Research Reagents and Tools for NHST Validation Studies

| Reagent/Tool | Function | Application in NHST Studies |
|---|---|---|
| G*Power Software | Statistical power analysis | Calculating required sample sizes for ANOVA designs during study planning |
| R Statistical Package | Data analysis and visualization | Conducting ANOVA, calculating effect sizes, and creating power curves |
| SAS PROC POWER | Power analysis in SAS environment | Determining sample size requirements for complex experimental designs |
| Pharmacometric Modeling Software | Longitudinal data analysis | Enhancing power through model-based analysis in proof-of-concept trials |
| Pilot Data | Preliminary effect size estimation | Informing realistic power calculations before conducting definitive studies |

Advanced Considerations in NHST

Contrast Analysis in Factorial Designs

In complex factorial designs, contrast analysis can provide a more powerful way to test specific hypotheses than omnibus F-tests. Contrast analysis allows researchers to combine several means into focused comparisons that address central research questions directly. This approach produces unstandardized effect sizes expressed in original measurement units, making interpretation more intuitive for researchers familiar with their specific measurement scales. For example, in analytical method validation, contrast analysis could directly test whether a new method differs from established references while controlling for multiple comparisons.
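A planned contrast can be sketched directly from the group data. In this hypothetical example (standard library only; names and data are illustrative), a new method is compared with the average of two reference methods using weights (1, -0.5, -0.5); the estimate ψ is in the original measurement units, and its t statistic uses the pooled within-group mean square with N - K degrees of freedom.

```python
import math
from statistics import fmean


def planned_contrast(groups, weights):
    """Estimate psi = sum(c_i * mean_i) and its t statistic, using the pooled
    within-group mean square from the one-way ANOVA."""
    if abs(sum(weights)) > 1e-12:
        raise ValueError("contrast weights must sum to zero")
    means = [fmean(g) for g in groups]
    ss_within = sum((y - m) ** 2 for g, m in zip(groups, means) for y in g)
    df_within = sum(len(g) for g in groups) - len(groups)
    ms_within = ss_within / df_within
    psi = sum(c * m for c, m in zip(weights, means))
    # Standard error of psi: sqrt(MS_within * sum(c_i^2 / n_i))
    se = math.sqrt(ms_within * sum(c ** 2 / len(g) for c, g in zip(weights, groups)))
    return psi, psi / se, df_within


new_method = [10, 11, 12]
reference_a = [8, 9, 10]
reference_b = [6, 7, 8]
psi, t, df = planned_contrast([new_method, reference_a, reference_b], [1, -0.5, -0.5])
print(psi, round(t, 3), df)  # 3.0 4.243 6
```

Here the new method scores 3 units above the average of the two references, and t ≈ 4.24 on 6 degrees of freedom would be compared with a t distribution.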

Limitations and Criticisms of NHST

The NHST framework has faced substantial criticism regarding its implementation and interpretation. Key limitations include:

Misinterpretation of p-values: P-values are often mistakenly interpreted as the probability that the null hypothesis is true, rather than the probability of observing data at least as extreme as those obtained, assuming the null hypothesis is true.

Dichotomous thinking: The "significant vs. non-significant" dichotomy encourages simplistic interpretations that ignore effect sizes and practical importance.

Publication bias: The focus on statistical significance contributes to the file drawer problem, where studies with non-significant results remain unpublished.

Neglect of assumptions: NHST outcomes depend on various statistical assumptions (normality, independence, homoscedasticity) that are often not properly verified.

Alternative approaches such as confidence intervals, effect sizes, and Bayesian methods provide complementary information that addresses some NHST limitations. Current publication guidelines strongly recommend reporting effect sizes and confidence intervals alongside traditional significance tests.

The NHST framework, with its inherent concepts of Type I/II errors and statistical power, provides a structured approach for making inferences from experimental data. In analytical techniques research, understanding these concepts is essential for designing informative studies, interpreting results appropriately, and validating the discriminatory power of new methodologies. While NHST has limitations that researchers must acknowledge, proper application of power analysis, careful consideration of effect sizes, and appropriate use of statistical methods like ANOVA and contrast analysis can significantly enhance research quality and reproducibility. As statistical practice evolves, the integration of NHST with complementary approaches such as confidence intervals and Bayesian methods will continue to strengthen the scientific research enterprise.

Analysis of Variance (ANOVA) is a powerful statistical method that revolutionized experimental design by allowing researchers to compare means across three or more groups simultaneously. Developed by statistician Ronald A. Fisher, ANOVA addresses a critical limitation of t-tests, which are limited to comparing only two groups and inflate Type I error rates when used for multiple comparisons [11] [1]. The fundamental principle of ANOVA lies in partitioning the total observed variance in a dataset into components attributable to different sources, primarily the variation between groups and the variation within groups [11].

In analytical sciences and pharmaceutical research, this variance partitioning provides a robust framework for making inferences about whether observed differences among group means are statistically significant or merely result from random variation. The core logic of ANOVA involves comparing the between-group variance (differences among group means) to the within-group variance (natural variation within each group) [12]. When between-group variation substantially exceeds within-group variation, we have evidence that the grouping factor (e.g., different formulations, manufacturing processes, or experimental treatments) systematically affects the outcome variable [13] [14].

The concept of variance partitioning is particularly valuable in method validation and discriminatory testing, where researchers must determine whether an analytical technique can reliably detect meaningful differences between products or processes. By quantifying and comparing these two sources of variation, ANOVA provides an objective, statistical basis for decision-making in research and quality control [15].

Theoretical Foundations: Between-Group vs. Within-Group Variation

Defining the Variance Components

In ANOVA, the total variance in a dataset is partitioned into two main components:

Between-Group Variation: This represents the variation of each group's mean from the overall grand mean. It measures how much the group means differ from one another and is attributable to the factor being studied [13]. In experimental contexts, this is often considered the "signal" or "explained variation" because it potentially reflects the effect of the treatment or intervention being investigated [14].

Within-Group Variation: Also called residual, unexplained, or error variation, this measures the variation of individual observations within each group from their respective group mean [13] [14]. This represents the natural variability that occurs even among subjects or samples receiving the same treatment and is often considered "noise" in the data [16].

The relationship between these components is expressed through the sum of squares partition, in which the Total Sum of Squares (SS_Total) equals the Sum of Squares Between groups (SS_Between) plus the Sum of Squares Within groups (SS_Within): SS_Total = SS_Between + SS_Within [11].
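As a quick numerical check of this partition, the sketch below uses NumPy and, purely for illustration, the simulated purity values (%) for three sample preparation methods that appear in the t-test versus ANOVA comparison later in this guide. The variable names are illustrative and not taken from any cited study.

```python
import numpy as np

# Illustrative data: simulated purity values (%) for three sample preparation methods
groups = [
    np.array([98.5, 99.2, 98.8, 99.0, 98.7]),  # Method A
    np.array([99.1, 99.5, 98.9, 99.3, 99.0]),  # Method B
    np.array([97.8, 98.2, 97.5, 98.0, 97.9]),  # Method C
]

all_values = np.concatenate(groups)
grand_mean = all_values.mean()

# Total variation: every observation around the grand mean
ss_total = np.sum((all_values - grand_mean) ** 2)
# Between-group variation: group means around the grand mean, weighted by group size n_j
ss_between = sum(len(g) * (g.mean() - grand_mean) ** 2 for g in groups)
# Within-group variation: each observation around its own group mean
ss_within = sum(np.sum((g - g.mean()) ** 2) for g in groups)

print(f"SS_Total   = {ss_total:.4f}")
print(f"SS_Between = {ss_between:.4f}")
print(f"SS_Within  = {ss_within:.4f}")
print("Partition holds:", np.isclose(ss_total, ss_between + ss_within))
```

For these data most of the total variation (about 4.44 of 5.23) is between methods, which is what drives the large F-statistic reported for them.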

The F-Statistic: Ratio of Variances

The central test statistic in ANOVA is the F-statistic, calculated as the ratio of between-group variance to within-group variance:

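F = Between-Group Variance / Within-Group Variance = MS_Between / MS_Within = [SS_Between / (k-1)] / [SS_Within / (N-k)] [13] [12]

where MS denotes a mean square (a sum of squares divided by its degrees of freedom), k is the number of groups, and N is the total number of observations.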

This ratio follows a known probability distribution (the F-distribution) under the null hypothesis that all group means are equal. The larger the between-group variance is relative to the within-group variance, the larger the F-statistic and the smaller the corresponding p-value [13]. If this p-value falls below a predetermined significance level (typically 0.05), we reject the null hypothesis and conclude that at least one group mean differs significantly from the others [12].

Table 1: Key Components of ANOVA Variance Partitioning

| Component | Description | Interpretation | Mathematical Representation |
|---|---|---|---|
| Between-Group Variation | Differences among group means | Variation explained by the factor/treatment | Σnⱼ(X̄ⱼ - X̄..)² where nⱼ is sample size of group j, X̄ⱼ is mean of group j, X̄.. is overall mean [13] |
| Within-Group Variation | Differences within each group | Unexplained or random variation | Σ(Xᵢⱼ - X̄ⱼ)² where Xᵢⱼ is the ith observation in group j, X̄ⱼ is the mean of group j [13] |
| F-Statistic | Ratio of variances | Measure of signal-to-noise | F = (Between-Group Variance) / (Within-Group Variance) [12] |

Visualizing Variance Partitioning in ANOVA

The following diagram illustrates the logical relationship between variance components in ANOVA and how they contribute to the F-statistic:

Figure 1: Logical Flow of Variance Partitioning in ANOVA

ANOVA in Analytical Science: Experimental Applications

Pharmaceutical Dissolution Testing

In pharmaceutical sciences, ANOVA-based variance partitioning plays a crucial role in developing and validating discriminative dissolution methods. For example, researchers developing dissolution tests for carvedilol tablets (a poorly soluble BCS Class II drug) used ANOVA to compare dissolution profiles across different products [15]. The discriminatory power of a dissolution method—its ability to detect meaningful differences between formulations—depends on effectively partitioning variance to distinguish between genuine product differences and random variability [15].

In one study, researchers evaluated carvedilol tablet dissolution using Apparatus II (paddle) at 50 rpm with 900 ml of pH 6.8 phosphate buffer as the dissolution medium [15]. Across three different products, the between-group sum of squares was 207.2 and the within-group sum of squares was 363.5 [13]. Although the between-group sum of squares is the smaller of the two, the F-statistic compares mean squares (each sum of squares divided by its degrees of freedom), and the resulting F-statistic of 7.6952 had a p-value of .0023 [13]. This statistically significant result confirmed that the dissolution method could discriminate between different formulations, making it suitable for quality control purposes [13] [15].
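The reported F-statistic and p-value can be reproduced from the two sums of squares. The sketch below assumes 10 units per product (N = 30, giving 2 and 27 degrees of freedom); the group size is not stated in the text, but it is the value that makes the reported F come out at 7.6952. `scipy.stats.f.sf` supplies the upper-tail probability of the F-distribution.

```python
from scipy import stats

# Reported sums of squares for the three carvedilol products
ss_between = 207.2
ss_within = 363.5
k = 3            # number of products (groups)
n_total = 30     # ASSUMPTION: 10 units per product; not stated in the source text

df_between = k - 1          # 2
df_within = n_total - k     # 27

ms_between = ss_between / df_between   # mean square between groups
ms_within = ss_within / df_within      # mean square within groups
f_stat = ms_between / ms_within
p_value = stats.f.sf(f_stat, df_between, df_within)  # P(F >= f_stat) under H0

print(f"F({df_between}, {df_within}) = {f_stat:.4f}, p = {p_value:.4f}")
```

Dividing by the degrees of freedom is what turns a between-group sum of squares smaller than the within-group one into an F-statistic well above 1.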

Formulation Optimization Studies

Similar ANOVA principles apply to formulation development studies. Research on fast-dispersible tablets (FDTs) of domperidone (another BCS Class II drug) used ANOVA to compare dissolution profiles across different formulations and establish the discriminatory power of the dissolution method [17]. The researchers optimized dissolution conditions by testing various media including sodium lauryl sulfate (SLS) solutions at different concentrations, simulated intestinal fluid (pH 6.8), simulated gastric fluid (pH 1.2), and 0.1N hydrochloric acid [17].

Based on these ANOVA comparisons, the researchers selected 0.5% SLS in distilled water as the medium with the best discriminatory power, because in that medium the between-group variation exceeded the within-group variation sufficiently to detect meaningful formulation differences [17]. This application demonstrates how variance partitioning helps researchers select appropriate analytical conditions that can distinguish critical quality attributes during formulation development.

Table 2: Experimental Conditions from Pharmaceutical ANOVA Studies

| Study | Objective | Experimental Conditions | Key ANOVA Results |
|---|---|---|---|
| Carvedilol Tablets [15] | Develop discriminative dissolution method | Apparatus II (paddle), 50 rpm, 900 ml pH 6.8 phosphate buffer | Between-group sum of squares: 207.2; within-group sum of squares: 363.5; F-statistic: 7.6952; p-value: .0023 |
| Domperidone FDTs [17] | Validate discriminatory dissolution method | Various media including 0.5% SLS, SIF pH 6.8, SGF pH 1.2; Apparatus II, 50-75 rpm | 0.5% SLS in distilled water showed optimal discriminatory power with significant ANOVA results (p < 0.05) |

Research Reagent Solutions for Discriminatory Dissolution Testing

Table 3: Essential Materials for Discriminatory Dissolution Studies

| Reagent/Equipment | Function in Experiment | Application Example |
|---|---|---|
| USP Apparatus II (Paddle) | Provides standardized agitation during dissolution testing | Used in both carvedilol and domperidone studies with rotation speeds of 50-75 rpm [15] [17] |
| pH 6.8 Phosphate Buffer | Simulates intestinal environment for dissolution | Optimal medium for carvedilol tablet dissolution testing [15] |
| Sodium Lauryl Sulfate (SLS) | Surfactant that enhances solubility of poorly soluble drugs | Used at 0.5% concentration in distilled water for domperidone FDTs to achieve discriminatory power [17] |
| Simulated Gastric Fluid (SGF) | Simulates stomach environment without enzymes | Tested for domperidone FDT dissolution at pH 1.2 [17] |
| Simulated Intestinal Fluid (SIF) | Simulates intestinal environment without enzymes | Evaluated for dissolution testing at pH 6.8 [17] |
| 0.1N Hydrochloric Acid | Simulates highly acidic gastric conditions | Official dissolution medium for domperidone but lacked discriminatory power [17] |
| UV Spectrophotometer/HPLC | Quantifies drug concentration in dissolution samples | HPLC used for carvedilol analysis; UV spectrophotometry for domperidone [15] [17] |

Methodological Protocols for Variance Partitioning Studies

Experimental Design Considerations

Proper experimental design is essential for valid variance partitioning in analytical studies. The fundamental assumptions of ANOVA must be verified to ensure reliable results:

- Independence: Observations must be independent of each other [12].

- Normality: The dependent variable should be approximately normally distributed within each group [14] [12].

- Homogeneity of Variance: Groups should have similar variances (homoscedasticity) [12].

In pharmaceutical dissolution testing, these assumptions are typically checked in preliminary experiments. For example, the carvedilol study used consistent experimental conditions across all test groups to support homogeneity of variance and checked normality through residual analysis [15]. Consistent conditions alone do not guarantee equal variances, so a formal check such as Levene's test remains advisable.

Step-by-Step ANOVA Implementation

The general protocol for implementing ANOVA in analytical studies involves:

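Define Hypotheses: State the null hypothesis that all group means are equal (H₀: μ₁ = μ₂ = ... = μₖ) and the alternative hypothesis (H₁) that at least one group mean differs from the others.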

Collect Data: Gather data for the dependent variable across three or more groups [12]. For dissolution testing, this means measuring percentage dissolved at multiple time points for different formulations [15] [17].

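Check Assumptions: Confirm independence through the experimental design, and check approximate normality of the residuals within each group and homogeneity of variance across groups before interpreting the F-test.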

Calculate Variance Components:

- Compute overall mean (X̄..) and group means (X̄ⱼ) [13]

- Calculate Between-Group Sum of Squares: Σnⱼ(X̄ⱼ - X̄..)² [13]

- Calculate Within-Group Sum of Squares: Σ(Xᵢⱼ - X̄ⱼ)² [13]

- Determine degrees of freedom (between groups: k-1; within groups: N-k) where k is number of groups, N is total sample size [14]

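Compute F-Statistic: Divide each sum of squares by its degrees of freedom to obtain the mean squares (MS_Between = SS_Between / (k-1); MS_Within = SS_Within / (N-k)), then calculate F = MS_Between / MS_Within.

Interpret Results: Compare the p-value obtained from the F-distribution with the chosen significance level (typically 0.05). If p < α, reject H₀ and conclude that at least one group mean differs, then use post-hoc tests to identify which groups differ.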
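The protocol above can be sketched as a small Python function that computes each quantity explicitly and then cross-checks the result against `scipy.stats.f_oneway`. The function name `one_way_anova` and the dissolution values are hypothetical, invented for illustration; they do not come from the carvedilol or domperidone studies.

```python
import numpy as np
from scipy import stats


def one_way_anova(*groups, alpha=0.05):
    """Illustrative one-way ANOVA that mirrors the step-by-step protocol."""
    groups = [np.asarray(g, dtype=float) for g in groups]
    k = len(groups)                              # number of groups
    n_total = sum(len(g) for g in groups)        # total sample size N
    grand_mean = np.concatenate(groups).mean()   # overall mean

    # Variance components (sums of squares)
    ss_between = sum(len(g) * (g.mean() - grand_mean) ** 2 for g in groups)
    ss_within = sum(np.sum((g - g.mean()) ** 2) for g in groups)

    # Degrees of freedom and mean squares
    df_between, df_within = k - 1, n_total - k
    ms_between = ss_between / df_between
    ms_within = ss_within / df_within

    # F-statistic and its upper-tail p-value from the F-distribution
    f_stat = ms_between / ms_within
    p_value = stats.f.sf(f_stat, df_between, df_within)
    return {
        "F": f_stat,
        "df": (df_between, df_within),
        "p": p_value,
        "reject_H0": bool(p_value < alpha),
    }


# Hypothetical % dissolved at a single time point for three formulations
form_1 = [85.2, 87.1, 84.6, 86.3, 85.9, 86.8]
form_2 = [78.4, 80.2, 79.1, 77.9, 79.6, 80.5]
form_3 = [84.9, 86.0, 85.4, 83.8, 86.5, 85.1]

result = one_way_anova(form_1, form_2, form_3)
print(result)

# Cross-check against SciPy's built-in one-way ANOVA
check = stats.f_oneway(form_1, form_2, form_3)
print(np.isclose(result["F"], check.statistic), np.isclose(result["p"], check.pvalue))
```

In practice `f_oneway` alone is enough; writing the steps out simply makes each quantity in the protocol visible.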

Comparative Analysis of Variance Partitioning Approaches

Advantages of ANOVA in Analytical Method Validation

ANOVA-based variance partitioning offers several advantages for analytical researchers:

- Controlled Error Rates: Unlike multiple t-tests which inflate Type I error rates, ANOVA maintains the experiment-wise error rate at the chosen significance level [12].

- Comprehensive Assessment: By considering both between-group and within-group variation, ANOVA provides a more complete picture of product differences than single-point comparisons [15].

- Objective Decision-Making: The F-statistic provides an objective, quantitative measure of discriminatory power for analytical methods [15] [17].

- Flexibility: ANOVA can be extended to more complex experimental designs (e.g., two-way ANOVA, repeated measures) to account for multiple factors simultaneously [14].

Limitations and Alternative Approaches

While powerful, ANOVA has limitations that researchers should consider:

- Assumption Sensitivity: Violations of normality or homogeneity of variance can affect results, though ANOVA is relatively robust to mild violations [14] [12].

- Non-Specific Results: A significant F-statistic only indicates that not all groups are equal, not which specific groups differ [12]. Post-hoc tests are needed for specific comparisons [14].

- Single-Factor Focus: Standard one-way ANOVA only handles one independent variable [12]. For multiple factors, more complex designs (e.g., factorial ANOVA) are required [14].

Alternative approaches for comparing dissolution profiles include:

- Model-Dependent Methods: Fitting mathematical models to dissolution data and comparing model parameters [15].

- Model-Independent Methods: Using similarity factors (f₂) and difference factors (f₁) to compare profiles [15] [17].
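A minimal sketch of the model-independent factors, assuming the standard definitions f₁ = Σ|Rₜ - Tₜ| / ΣRₜ × 100 and f₂ = 50·log10(100 / √(1 + Σ(Rₜ - Tₜ)²/n)), where Rₜ and Tₜ are the mean percentages dissolved by the reference and test products at each of n time points. Regulatory guidance commonly treats f₁ up to 15 and f₂ of 50 to 100 as indicating similar profiles. The function name and profile values are hypothetical.

```python
import numpy as np


def f1_f2(reference, test):
    """Difference factor f1 and similarity factor f2 for two mean dissolution profiles.

    Both inputs are mean % dissolved at the same n time points.
    """
    r = np.asarray(reference, dtype=float)
    t = np.asarray(test, dtype=float)
    n = r.size
    f1 = np.sum(np.abs(r - t)) / np.sum(r) * 100
    f2 = 50 * np.log10(100 / np.sqrt(1 + np.sum((r - t) ** 2) / n))
    return f1, f2


# Hypothetical profiles: the test product runs 10 percentage points below the reference
reference_profile = [35, 55, 72, 85, 92]
test_profile = [25, 45, 62, 75, 82]

f1, f2 = f1_f2(reference_profile, test_profile)
print(f"f1 = {f1:.1f}, f2 = {f2:.1f}")
```

Here f₂ falls just below 50 (about 49.9), so the profiles would not usually be considered similar; identical profiles give f₁ = 0 and f₂ = 100. Unlike ANOVA, these factors summarize a pair of mean profiles and carry no p-value.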

However, ANOVA remains particularly valuable for establishing initial discriminatory power during method development because it directly addresses the core question of whether formulations produce systematically different dissolution behavior [15].

Partitioning variance into between-group and within-group components using ANOVA provides a statistically rigorous framework for validating the discriminatory power of analytical methods. In pharmaceutical research, this approach enables scientists to distinguish meaningful product differences from random variability, supporting robust method development and quality control. The fundamental principle of comparing between-group variation (potentially explained by formulation factors) to within-group variation (inherent randomness) through the F-statistic creates an objective basis for assessing method capability. As demonstrated in dissolution testing for carvedilol and domperidone products, proper application of ANOVA with appropriate experimental designs and validation of assumptions provides critical insights into product performance and method suitability. This variance partitioning approach continues to be indispensable for establishing reliable analytical methods that can detect clinically or quality-relevant differences in pharmaceutical products.

In analytical techniques research and drug development, validating the discriminatory power of a method is paramount. This often involves comparing measurements across multiple groups, such as different sample types, treatment conditions, or analyte concentrations. While the Student's t-test is a well-established tool for comparing two groups, its erroneous application to multi-group comparisons inflates Type I errors, potentially leading to false scientific conclusions and compromised drug quality. This guide explores the statistical rationale for transitioning from multiple t-tests to Analysis of Variance (ANOVA) as the appropriate global test for comparing more than two means, detailing its application within a framework for validating analytical techniques.

The Multiple Comparisons Problem: Why T-Tests Fail for Multiple Groups

When researchers need to compare more than two groups, a common misconception is that performing multiple pairwise t-tests is an acceptable practice. However, this approach introduces a substantial statistical flaw known as the family-wise error rate or multiple comparisons problem.

Error Rate Inflation: Each individual t-test performed at a significance level (α) of 0.05 carries a 5% risk of a Type I error (falsely rejecting a true null hypothesis). When these tests are repeated across multiple pairs, these risks accumulate. For k groups, the number of possible pairwise comparisons is k(k-1)/2. For three groups (A, B, C), this results in three comparisons (A vs. B, A vs. C, B vs. C). The overall chance of committing at least one Type I error across all tests becomes 1-(0.95)³, or approximately 14%, far exceeding the intended 5% threshold [18]. With more groups, this inflated error rate grows rapidly, undermining the reliability of any findings [18] [19].

The Global Null Hypothesis: ANOVA addresses this by reframing the research question. Instead of asking, "Which specific pairs are different?" it first asks a more general, protective question: "Are there any differences among all these groups at all?" [19]. This establishes a single, overall hypothesis test, controlling the Type I error rate at the designated α level for the entire experiment.
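The error inflation described above can be tabulated with a few lines of Python. The sketch assumes the pairwise tests are independent, which is only approximately true when comparisons share groups, so the figures are an approximation rather than an exact rate.

```python
from math import comb


def familywise_error_rate(k_groups, alpha=0.05):
    """Chance of at least one Type I error across all pairwise tests.

    Assumes independent tests, which is only approximately true
    for pairwise comparisons that share groups.
    """
    m = comb(k_groups, 2)              # k(k-1)/2 pairwise comparisons
    return m, 1 - (1 - alpha) ** m


for k in (3, 4, 5, 6):
    m, fwer = familywise_error_rate(k)
    print(f"{k} groups: {m} comparisons, FWER ≈ {fwer:.1%}")
```

For three groups this reproduces the roughly 14% figure; with six groups (15 comparisons) it exceeds 50%.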

ANOVA as the Global Test: Principles and Assumptions

Analysis of Variance (ANOVA) is a parametric statistical technique designed to compare the means of two or more groups simultaneously [18] [20] [19]. Its core logic involves partitioning the total variability observed in the data into two components [20]:

- Variance Between Groups (SSB): Variability due to the different experimental treatments or group factors.

- Variance Within Groups (SSW): Natural variability among subjects or samples treated alike, often considered random error.

The F-statistic, the key output of an ANOVA, is the ratio of the between-group variance (Mean Square Between, MSB) to the within-group variance (Mean Square Within, MSW): F = MSB / MSW [18] [21]. A larger F-value indicates that the differences between group means are large relative to the background noise, suggesting that not all group means are equal [18] [21].

For valid ANOVA results, several key assumptions must be met [20] [19]:

- Normality: The data within each group should be approximately normally distributed.

- Homogeneity of Variances: The variances within each group should be roughly equal.

- Independence: Observations must be independent of each other.

- Randomness: The sample data should be a random sample from the population.

Experimental Protocol: Implementing ANOVA in Analytical Research

The following workflow provides a structured methodology for applying one-way ANOVA in a validation study, such as testing the discriminatory power of an assay across multiple analyte concentrations.

Pre-Experimental Planning and Sample Preparation

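- Hypothesis Formulation: State the null hypothesis (H₀: all group means are equal, μ₁ = μ₂ = ... = μₖ) and the alternative hypothesis (H₁: at least one group mean differs from the others).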

- Experimental Design: For a one-way ANOVA, define one categorical independent variable (e.g., 'Formulation Type') with three or more levels (e.g., 'Reference', 'Test 1', 'Test 2'). The dependent variable is the continuous measurement from your analytical technique (e.g., 'Potency' or 'Dissolution Rate') [19]. Ensure a sufficient sample size; a commonly cited minimum is 3 observations per group for each independent variable [22], although an a priori power analysis will often show that more are needed.

- Data Collection: Perform analytical measurements in a randomized order to minimize bias from instrument drift or environmental factors.

Data Analysis and Interpretation

- Compute the F-Statistic: Using statistical software, calculate the sums of squares, mean squares, and the final F-value. The associated p-value is the probability of obtaining an F-value at least as large as the one observed if the null hypothesis were true.

- Decision Rule: Compare the p-value to the significance level (typically α = 0.05). If the p-value is less than α, reject the null hypothesis, concluding that there is a statistically significant difference among the group means [21] [20].

- Post-Hoc Analysis: A significant ANOVA result only indicates that not all means are equal. To identify which specific pairs differ, post-hoc tests are required [18] [19]. Common choices include:

- Tukey's HSD: Controls the family-wise error rate and is excellent for comparing all possible pairs of means.

- Bonferroni Correction: A more conservative method that adjusts the significance level for each test by dividing α by the number of comparisons.

Table 1: Key Post-Hoc Tests for Following a Significant ANOVA Result

| Test | Best Use Case | Key Characteristic |
|---|---|---|
| Tukey's HSD | Comparing all possible pairs of means. | Controls the family-wise error rate; widely used and recommended. |
| Bonferroni | When a pre-planned, limited number of comparisons are made. | Very conservative; can substantially reduce statistical power. |
| Scheffé | When making complex comparisons beyond simple pairwise (e.g., comparing a control to the average of others). | The most conservative method; protects against all possible linear combinations. |
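The Bonferroni rule is simple enough to write out directly. The sketch below multiplies each raw p-value by the number of tests (capped at 1), which is equivalent to comparing each raw p-value with α divided by the number of tests. The function name and the raw p-values are hypothetical; statsmodels also provides this adjustment through `multipletests(..., method="bonferroni")`.

```python
def bonferroni(p_values, alpha=0.05):
    """Bonferroni adjustment: multiply each raw p-value by the number of tests (capped at 1)."""
    m = len(p_values)
    adjusted = [min(1.0, p * m) for p in p_values]
    # Equivalent to comparing each raw p-value with alpha / m
    reject = [p_adj < alpha for p_adj in adjusted]
    return adjusted, reject


# Hypothetical raw p-values from three pre-planned comparisons
raw = [0.010, 0.020, 0.040]
adjusted, reject = bonferroni(raw)
print(adjusted, reject)
```

Only the first comparison survives the correction, which illustrates why Bonferroni is described as conservative.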

Comparative Experimental Data: T-Tests vs. ANOVA

To illustrate the perils of multiple t-tests, consider simulated data from an analytical method validation study. The goal is to determine if three different sample preparation methods yield significantly different purity results.

Table 2: Simulated Purity Data (%) for Three Sample Preparation Methods

| Observation | Method A | Method B | Method C |
|---|---|---|---|
| 1 | 98.5 | 99.1 | 97.8 |
| 2 | 99.2 | 99.5 | 98.2 |
| 3 | 98.8 | 98.9 | 97.5 |
| 4 | 99.0 | 99.3 | 98.0 |
| 5 | 98.7 | 99.0 | 97.9 |
| Mean | 98.84 | 99.16 | 97.88 |

Incorrect Approach: Multiple Pairwise T-Tests

If we perform three independent-samples (Student's) t-tests (α = 0.05 for each):

- Method A vs. Method B: p-value ≈ 0.08 (Not Significant)

- Method A vs. Method C: p-value < 0.001 (Significant)

- Method B vs. Method C: p-value < 0.001 (Significant)

While two comparisons appear significant, the overall risk of at least one Type I error across this "family" of three tests is inflated to about 14%, nearly three times the nominal 5%.

Correct Approach: One-Way ANOVA

A single one-way ANOVA on the same data yields an F-statistic of approximately 33.6 (df = 2, 12) with a p-value < 0.001. This significant global test (at α = 0.05) shows that not all method means are equal while holding the experiment-wise Type I error at 5%. A subsequent Tukey's HSD test identifies that Methods A and B are not significantly different from each other, but both differ significantly from Method C.
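Both approaches can be reproduced on the simulated purity data with SciPy. The sketch uses `scipy.stats.ttest_ind` (equal variances by default, i.e. Student's test), `scipy.stats.f_oneway`, and `scipy.stats.tukey_hsd`, which requires SciPy 1.8 or later.

```python
from itertools import combinations

from scipy import stats

# Simulated purity data (%) for three sample preparation methods
data = {
    "A": [98.5, 99.2, 98.8, 99.0, 98.7],
    "B": [99.1, 99.5, 98.9, 99.3, 99.0],
    "C": [97.8, 98.2, 97.5, 98.0, 97.9],
}

# Incorrect approach: unadjusted pairwise Student's t-tests
for a, b in combinations(data, 2):
    res = stats.ttest_ind(data[a], data[b])
    print(f"t-test {a} vs {b}: t = {res.statistic:.2f}, p = {res.pvalue:.4f}")

# Correct approach: one global one-way ANOVA
anova = stats.f_oneway(*data.values())
print(f"ANOVA: F = {anova.statistic:.2f}, p = {anova.pvalue:.2e}")

# Follow-up: Tukey's HSD for all pairwise comparisons (SciPy >= 1.8)
names = list(data)
tukey = stats.tukey_hsd(*data.values())
for i, j in combinations(range(len(names)), 2):
    print(f"Tukey {names[i]} vs {names[j]}: p = {tukey.pvalue[i, j]:.4f}")
```

The Tukey p-values are adjusted for the three comparisons, so the A versus B difference remains non-significant while both comparisons with Method C stay significant.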

The Researcher's Toolkit for ANOVA

Table 3: Essential Research Reagent Solutions for Analytical Validation

| Item / Solution | Function in Experimental Protocol |
|---|---|
| Statistical Software (R, SPSS, Python) | Performs complex ANOVA calculations, generates F-statistics, p-values, and post-hoc tests accurately and efficiently. |
| Standard Reference Material | Serves as a calibrated control to ensure the analytical instrument is producing accurate and precise measurements across all test groups. |
| Buffers & Mobile Phases (HPLC-grade) | Provide a consistent and contamination-free chemical environment for separations, critical for minimizing within-group variance. |
| Internal Standard | Accounts for sample preparation and instrument variability, improving the precision of measurements and strengthening the assumption of homogeneity of variances. |
| Post-Hoc Test Protocol | A pre-defined statistical plan (e.g., to use Tukey's HSD) that is implemented only upon a significant ANOVA result to identify specific group differences. |

Workflow and Logical Pathway for Validating Discriminatory Power

The following diagram outlines the logical decision process for selecting the correct statistical test when comparing group means in analytical research, culminating in the use of ANOVA and post-hoc analysis.

Statistical Test Selection Workflow

The journey from t-tests to ANOVA is a critical one for scientists and researchers dedicated to rigorous data analysis. While the t-test is powerful for comparing two groups, its misuse in multi-group scenarios leads to an unacceptably high probability of false discoveries. ANOVA provides a robust solution through a global test that controls the experiment-wise error rate, thereby validating the overall discriminatory power of an analytical method. By adopting the ANOVA framework—including careful attention to its assumptions and the proper use of post-hoc tests—researchers in drug development and analytical science can draw more reliable and statistically sound conclusions, ultimately strengthening the validity of their research and the quality of their products.

In the validation of analytical techniques, demonstrating that a method can reliably distinguish between different conditions or treatments is paramount. The Analysis of Variance (ANOVA) F-statistic serves as a fundamental objective metric for quantifying this discriminatory power [23]. It moves beyond mere visual assessment of data, providing a rigorous statistical framework to test whether observed differences among group means are genuine or attributable to random noise [24]. This is crucial in fields like drug development, where decisions to advance a compound to clinical trials hinge on robust preclinical evidence of its effectiveness [25]. The F-statistic formalizes this assessment by quantifying the ratio of systematic variation between groups to the unsystematic variation within groups [23] [26]. A high F-value indicates that the differences between group means are substantial relative to the background variability, providing statistical evidence that the analytical method or treatment possesses the discriminatory power to detect a true effect [27] [24].

Conceptual Foundation: The Signal (Between-Group Variance) vs. Noise (Within-Group Variance)

Understanding the F-statistic requires dissecting its two fundamental components: the variance between groups and the variance within groups.

- Between-Group Variance (The Signal): This numerator of the F-ratio quantifies the dispersion of the group means around the grand mean [24] [26]. It captures the treatment effect or the signal that the experiment is designed to detect. A larger spread between the group means indicates a stronger potential effect of the independent variable, leading to a greater between-group variance [23].

- Within-Group Variance (The Noise): This denominator of the F-ratio measures the variability of individual data points around their respective group means [24] [26]. It represents the random error or background noise present in the data, stemming from measurement error, individual differences, or uncontrolled variables [23]. A smaller within-group variance indicates more precise and homogeneous groups, making it easier to detect a true signal should one exist [23].

The following conceptual diagram illustrates how these variances combine to determine the F-statistic and the resulting discriminatory power.

Interpreting the F-Statistic in Practice

The Decision Framework: F-Value, P-Value, and Critical Value

A calculated F-statistic alone is not sufficient for drawing conclusions; it must be interpreted within a statistical decision framework. This involves comparing the F-value to a critical value from the F-distribution or, more commonly, examining its associated p-value [23] [24]. The F-distribution is a probability distribution that describes the behavior of the F-statistic under the assumption that the null hypothesis (all group means are equal) is true [23]. The p-value represents the probability of observing an F-value as extreme as, or more extreme than, the one calculated from your data, assuming the null hypothesis is true [24]. The following workflow outlines the standard decision-making process for interpreting an ANOVA result.
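Both routes to a decision, comparing F with the critical value or comparing the p-value with α, can be sketched with SciPy's F-distribution. The degrees of freedom (2 and 12, i.e. three groups of five observations) and the observed F-values are illustrative assumptions.

```python
from scipy import stats

alpha = 0.05
df_between, df_within = 2, 12   # illustrative: 3 groups x 5 observations

# Critical value: the F that cuts off the upper alpha tail under H0
f_critical = stats.f.ppf(1 - alpha, df_between, df_within)


def decide(f_observed):
    """Return the p-value and whether H0 is rejected (both criteria agree)."""
    p_value = stats.f.sf(f_observed, df_between, df_within)
    return p_value, bool(f_observed > f_critical)


print(f"F-critical({df_between}, {df_within}) = {f_critical:.3f}")
for f_obs in (1.0, 3.0, 8.5):
    p, reject = decide(f_obs)
    print(f"F = {f_obs}: p = {p:.4f}, reject H0: {reject}")
```

With 2 and 12 degrees of freedom the critical value is about 3.885, so an F of 3.0 (p ≈ 0.088) does not reach significance while an F of 8.5 (p ≈ 0.005) does.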

Quantitative Guidelines for F-Value Interpretation

The table below summarizes the relationship between the F-value, p-value, and the statistical conclusion. Note that a "large" F-value is always context-dependent, determined by the degrees of freedom and the chosen significance level (α). A common threshold for statistical significance is α = 0.05 [28].

Table 1: Interpretation Framework for the F-Statistic

| F-Value Relative to Critical Value | P-Value Interpretation | Statistical Conclusion | Implication for Discriminatory Power |
|---|---|---|---|
| F > F-critical | P-value < α (e.g., < 0.05) | Reject the null hypothesis [23] [24]. | Statistically Significant: The data provides sufficient evidence that the method can distinguish between groups. The factor being tested has a significant effect [27]. |
| F ≤ F-critical (values near 1 are typical when H₀ is true) | P-value ≥ α (e.g., ≥ 0.05) | Fail to reject the null hypothesis [23] [26]. | Not Statistically Significant: The observed differences between group means are not large enough to conclude they are real. The method may lack power or the factor may have no effect [24]. |

Experimental Design for Robust Discriminatory Power

Key Assumptions of ANOVA

The validity of the F-test's p-value depends on several statistical assumptions. Violations of these assumptions can increase the probability of false positives (Type I errors) or false negatives (Type II errors), compromising the integrity of the conclusions [23].

Table 2: ANOVA Assumptions and Validation Methods

| Assumption | Description | Impact of Violation | Common Verification Tests |
|---|---|---|---|
| Normality | The residuals (errors) within each group should be approximately normally distributed [1]. | The F-test is generally robust to mild deviations from normality, especially with large sample sizes. | Shapiro-Wilk test, Normal Q-Q plot [23]. |
| Homogeneity of Variances | The variances within each group should be roughly equal (homoscedasticity) [1]. | Increased susceptibility to Type I or Type II errors, particularly with unbalanced sample sizes. | Levene's test, Bartlett's test [23]. |
| Independence of Observations | Data points are not influenced by or correlated with other data points [1]. | Can severely inflate Type I error rates and invalidate the test. | Ensured through proper experimental design and randomization [25]. |
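The verification tests listed above are available in `scipy.stats`. The sketch below runs them on hypothetical data (three groups of six measurements, invented for illustration). Note that `scipy.stats.levene` centres on the median by default (the Brown-Forsythe variant); pass `center="mean"` for the original Levene statistic. A non-significant result does not prove an assumption holds, so these tests are best paired with a Q-Q plot of the residuals.

```python
import numpy as np
from scipy import stats

# Hypothetical measurements for three groups (illustration only)
groups = [
    np.array([85.2, 87.1, 84.6, 86.3, 85.9, 86.8]),
    np.array([78.4, 80.2, 79.1, 77.9, 79.6, 80.5]),
    np.array([84.9, 86.0, 85.4, 83.8, 86.5, 85.1]),
]

# Normality: Shapiro-Wilk on pooled residuals (each observation minus its group mean)
residuals = np.concatenate([g - g.mean() for g in groups])
shapiro = stats.shapiro(residuals)

# Homogeneity of variances: Levene's test (median-centred by default) and Bartlett's test
levene = stats.levene(*groups)
bartlett = stats.bartlett(*groups)

print(f"Shapiro-Wilk: W = {shapiro.statistic:.3f}, p = {shapiro.pvalue:.3f}")
print(f"Levene:       W = {levene.statistic:.3f}, p = {levene.pvalue:.3f}")
print(f"Bartlett:     T = {bartlett.statistic:.3f}, p = {bartlett.pvalue:.3f}")
```

Bartlett's test is more sensitive to non-normality than Levene's, which is one reason Levene's test is often preferred as the default homogeneity check.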

Power Analysis and Sample Size Determination

A study with low statistical power is unethical and wasteful, because it has a low probability of detecting a true effect of meaningful size [25] [29]. Power analysis ensures that a study is designed with a sufficient sample size to achieve adequate discriminatory power.

- The Interrelated Components of Power Analysis: Power analysis links four quantities: the significance level (α), the sample size (N), the effect size, and the statistical power (1-β); fixing any three determines the fourth [28] [29]. To perform an a priori power analysis, researchers specify α (typically 0.05), the desired power (typically 0.80 or 80%), and the estimated effect size, and then solve for the required sample size [29].

- Effect Size in ANOVA: The effect size quantifies the magnitude of the treatment effect. Common measures for ANOVA are Cohen's f and the Root Mean Square Standardized Effect (RMSSE) [30]; for k equal-sized groups they are related by RMSSE = f·√(k/(k-1)). Cohen provided tentative guidelines for interpreting these values, with f = 0.1, 0.25, and 0.4 representing small, medium, and large effects, respectively [30]. Detecting a smaller effect size with high power requires a larger sample size.

Table 3: Sample Size Per Group for a One-Way ANOVA (4 Groups, α=0.05, Power=0.80)

| Effect Size (Cohen's f) | RMSSE | Required N per Group | Interpretation |
|---|---|---|---|
| Small (0.10) | ~0.12 | ~ 274 | A very large sample is needed to detect a subtle effect. |
| Medium (0.25) | ~0.29 | ~ 45 | A feasible sample size for a clinically meaningful effect [30]. |
| Large (0.40) | ~0.46 | ~ 20 | A smaller sample can detect a strong, obvious effect [30]. |
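The sample sizes above can be approximated with the noncentral F-distribution. The sketch below assumes the convention used by G*Power for a fixed-effects one-way ANOVA, with noncentrality λ = f²·N, and searches for the smallest equal group size that reaches 80% power. The function names are hypothetical, and tables built on other approximations may differ by a unit or so.

```python
from scipy import stats


def anova_power(f_effect, k, n_per_group, alpha=0.05):
    """Power of a one-way ANOVA with k equal groups (noncentral F, lambda = f^2 * N)."""
    n_total = k * n_per_group
    df_between, df_within = k - 1, n_total - k
    noncentrality = f_effect ** 2 * n_total
    f_critical = stats.f.ppf(1 - alpha, df_between, df_within)
    # Probability that F exceeds the critical value when the effect is real
    return stats.ncf.sf(f_critical, df_between, df_within, noncentrality)


def n_per_group_for_power(f_effect, k, target=0.80, alpha=0.05):
    """Smallest equal group size whose power reaches the target."""
    n = 2
    while anova_power(f_effect, k, n, alpha) < target:
        n += 1
    return n


for f_effect in (0.10, 0.25, 0.40):
    n = n_per_group_for_power(f_effect, k=4)
    print(f"f = {f_effect:.2f}: n per group = {n}, power = {anova_power(f_effect, 4, n):.3f}")
```

Because power rises steeply with N near the target, a pilot-based effect size estimate is usually the largest source of uncertainty in the result, not the calculation itself.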

Practical Application: Experimental Protocol and Analysis

Sample Experimental Workflow for Method Comparison

This protocol outlines a typical experiment comparing the performance of multiple analytical methods or treatments.

- Define Hypothesis:

- H₀ (Null): There is no difference in the mean measurement outcomes between the analytical methods (μ₁ = μ₂ = ... = μₖ).

- H₁ (Alternative): At least one analytical method yields a mean measurement outcome that is different from the others [23].

- Determine Sample Size: Conduct an a priori power analysis using software like G*Power [29]. Based on a pilot study or literature, estimate the expected effect size (e.g., Cohen's f). For a medium effect (f=0.25) with 4 groups, α=0.05, and power=0.80, the analysis would indicate a requirement of approximately 45 samples per group [30].

- Execute Experiment: Randomly assign samples or subjects to the different analytical methods or treatment groups. Collect data according to a pre-defined and standardized operating procedure to maintain consistency and minimize introduced variability [25].

- Check Assumptions: Before running ANOVA, test the data for homogeneity of variances using Levene's test and for normality of residuals using the Shapiro-Wilk test or graphical methods like a Q-Q plot [23].

- Perform ANOVA and Interpret F-Statistic: Run a one-way ANOVA, obtain the F-statistic and its associated p-value, and interpret them using the interpretation framework for the F-statistic (Table 1) described above.

- Run Post-Hoc Analysis (if needed): If the ANOVA result is significant (p < 0.05), conduct post-hoc tests (e.g., Tukey's HSD) to determine which specific group means differ from each other, while controlling for the family-wise error rate [23].

Table 4: Key Research Reagent Solutions and Statistical Tools

| Item | Function in ANOVA and Discriminatory Power Validation |
|---|---|
| G*Power Software | A free, user-friendly tool for performing a priori power analysis and sample size calculation for F-tests and other statistical methods [29]. |
| Statistical Software (R, Python, SPSS, Minitab) | Platforms used to perform the ANOVA calculation, assumption checks, and post-hoc tests. They generate the F-statistic, p-value, and summary tables [27] [26]. |
| Positive Control | A treatment or sample with a known, expected effect. Used to validate that the experimental system and analytical method are functioning with sufficient sensitivity to detect an effect. |
| Standardized Protocols | Detailed, written procedures for sample preparation, data acquisition, and analysis. Critical for minimizing within-group variance (noise) and ensuring the reproducibility of results [25]. |

The ANOVA F-statistic is a useful tool for moving beyond subjective comparison to a quantitative, statistically sound assessment of an analytical method's discriminatory power. A significant F-value indicates that, in the experiment performed, the technique detected a difference between at least some of the groups, which is a cornerstone of rigorous scientific research. However, a valid interpretation hinges on a well-designed experiment that satisfies the underlying assumptions of ANOVA and is adequately powered to detect a meaningful effect size. By integrating careful planning, power analysis, and diligent interpretation of the F-statistic in its proper context, researchers in drug development and beyond can make confident, data-driven decisions about the discriminatory power of their methods.

Implementing ANOVA for Analytical Method Validation: A Step-by-Step Protocol

Analysis of Variance (ANOVA) is a fundamental statistical technique for determining whether there are statistically significant differences between the means of three or more groups. In analytical and pharmaceutical research, it serves as a critical tool for validating the discriminatory power of methods, that is, the ability of an analytical procedure to detect meaningful differences between samples, which is a requirement for ensuring product quality and consistency. R.A. Fisher introduced the term in the 1920s to separate variance attributable to assignable causes from variance due to chance [31]. Whereas a t-test compares only two groups, ANOVA compares multiple treatments, formulations, or conditions in a single omnibus test. This avoids the inflation of the family-wise Type I error rate that occurs when many pairwise tests are run [31] [32].
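The Type I error inflation mentioned above can be quantified with a short calculation. The sketch assumes every pair of k groups is compared with its own test at level α, and it treats those tests as independent. That is a simplification, because pairwise tests on shared data are correlated, so the result is an approximation rather than an exact rate. With four groups there are six comparisons, and the chance of at least one false positive rises to about 26% rather than 5%.

```python
from math import comb


def familywise_error(k: int, alpha: float = 0.05) -> float:
    """Approximate probability of at least one false positive across all pairwise tests.

    Assumes the m = k(k-1)/2 pairwise tests are independent (a simplification).
    """
    m = comb(k, 2)               # number of pairwise comparisons among k groups
    return 1 - (1 - alpha) ** m  # P(at least one Type I error)


for k in (3, 4, 5):
    print(k, comb(k, 2), round(familywise_error(k), 3))
```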

The core principle of ANOVA is to partition the total variability in a dataset into components attributable to different sources. It compares the variance between groups (treatment effects) with the variance within groups (random error) [31] [33]. The F-statistic is the ratio of these two quantities, namely the between-group mean square divided by the within-group mean square. A large F-statistic, with a correspondingly small p-value, indicates that the group means differ by more than within-group noise alone would explain, and therefore provides evidence that they are not all equal. This makes ANOVA particularly valuable for experiments designed to show that an analytical method can reliably distinguish between different product formulations, manufacturing batches, or storage conditions, a cornerstone of method validation [17].
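The partition of variance described above can be computed directly. The following is a minimal sketch that assumes NumPy and SciPy are available. The data are hypothetical illustrative values and do not come from any cited study. As a cross-check, the result is compared with SciPy's `scipy.stats.f_oneway`.

```python
import numpy as np
from scipy import stats


def one_way_anova(*groups):
    """Partition total variability and return (F, p) for a one-way ANOVA."""
    groups = [np.asarray(g, dtype=float) for g in groups]
    all_data = np.concatenate(groups)
    grand_mean = all_data.mean()
    k, n_total = len(groups), all_data.size

    # Between-group sum of squares: spread of the group means around the grand mean
    ss_between = sum(g.size * (g.mean() - grand_mean) ** 2 for g in groups)
    # Within-group sum of squares: spread of observations around their own group mean
    ss_within = sum(((g - g.mean()) ** 2).sum() for g in groups)

    ms_between = ss_between / (k - 1)        # between-group mean square
    ms_within = ss_within / (n_total - k)    # within-group (error) mean square
    f_stat = ms_between / ms_within
    p_value = stats.f.sf(f_stat, k - 1, n_total - k)  # upper-tail probability
    return f_stat, p_value


# Hypothetical measurements for three groups (illustrative only)
a = [98.1, 97.6, 98.4, 97.9]
b = [95.2, 95.8, 94.9, 95.5]
c = [98.0, 98.3, 97.7, 98.2]

print(one_way_anova(a, b, c))
print(stats.f_oneway(a, b, c))  # should agree with the manual calculation
```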

Types of ANOVA and Their Theoretical Frameworks

One-Way ANOVA

Purpose and Design: One-way ANOVA is used to assess the effect of a single independent variable (factor) with three or more levels on a continuous dependent variable [31] [33]. For example, it could be used to compare the dissolution rates of a drug across three different formulation types (e.g., Formulation A, B, and C) [31]. Its primary function is to test the null hypothesis (H₀) that all group means are equal against the alternative hypothesis (H₁) that at least one group mean is different [31].

Key Assumptions: The validity of the one-way ANOVA result depends on three key assumptions:

- Independence: Observations must be independent of each other [31] [33].

- Normality: The data within each group should be approximately normally distributed [33].

- Homogeneity of Variance: The variances within each group should be roughly equal [33].
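The normality and equal-variance checks can be scripted. This sketch assumes SciPy is available. It applies Levene's test to the raw groups, using SciPy's default median-centred (Brown-Forsythe) form, and applies the Shapiro-Wilk test to the pooled residuals. The helper name and the 0.05 cut-off are illustrative choices. Independence cannot be verified this way, because it has to come from the design itself, for example through randomization and genuinely separate samples. A non-significant test also does not prove that an assumption holds.

```python
import numpy as np
from scipy import stats


def check_anova_assumptions(*groups, alpha=0.05):
    """Hypothetical helper: Levene (equal variances) and Shapiro-Wilk (normal residuals)."""
    groups = [np.asarray(g, dtype=float) for g in groups]
    # Residuals: each observation minus its own group mean
    residuals = np.concatenate([g - g.mean() for g in groups])
    levene = stats.levene(*groups, center="median")  # SciPy's default centring
    shapiro = stats.shapiro(residuals)
    return {
        "levene_p": float(levene.pvalue),
        "shapiro_p": float(shapiro.pvalue),
        "equal_variances_plausible": bool(levene.pvalue > alpha),
        "normal_residuals_plausible": bool(shapiro.pvalue > alpha),
    }


# Simulated data: three groups of 20 drawn from the same normal distribution
rng = np.random.default_rng(seed=1)
groups = [rng.normal(loc=100.0, scale=2.0, size=20) for _ in range(3)]
print(check_anova_assumptions(*groups))
```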

Two-Way ANOVA

Purpose and Design: Two-way ANOVA extends the analysis to include two independent variables (factors). This allows researchers to examine not only the main effect of each factor but also their interaction effect [31]. An interaction effect occurs when the effect of one factor depends on the level of the other factor [34]. For instance, in a plant-growth experiment, the two factors could be "Fertilizer Type" (A, B, C) and "Planting Time" (Early, Late). A two-way ANOVA can then determine whether the effect of fertilizer on plant growth depends on the planting time [31].

Hypotheses Tested: A two-way ANOVA simultaneously tests three sets of hypotheses:

- H₀: There is no difference in the means of factor A.

- H₀: There is no difference in the means of factor B.

- H₀: There is no interaction between factors A and B.
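The three null hypotheses can be stated precisely in the usual fixed-effects notation. This notation is ours and is not taken from the cited sources. Factor A has levels i = 1, ..., a, factor B has levels j = 1, ..., b, and there are n replicates per cell, indexed by l. The model is written under the common sum-to-zero constraints:

$$
y_{ijl} = \mu + \alpha_i + \beta_j + (\alpha\beta)_{ij} + \varepsilon_{ijl},
\qquad \varepsilon_{ijl} \sim \mathcal{N}(0, \sigma^2),
\qquad \sum_i \alpha_i = \sum_j \beta_j = \sum_i (\alpha\beta)_{ij} = \sum_j (\alpha\beta)_{ij} = 0
$$

$$
H_0^{A}: \alpha_1 = \dots = \alpha_a = 0, \qquad
H_0^{B}: \beta_1 = \dots = \beta_b = 0, \qquad
H_0^{AB}: (\alpha\beta)_{ij} = 0 \ \text{for all } i, j
$$

In a balanced design, each hypothesis is tested with its own F-ratio: the effect's mean square divided by the error mean square. The numerator degrees of freedom are (a − 1), (b − 1) and (a − 1)(b − 1) respectively, and the error degrees of freedom are ab(n − 1).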

Factorial Designs

Beyond Two Factors: Factorial designs involve manipulating two or more independent variables, each with multiple levels, to study their independent and interactive effects on a dependent variable [34]. A design with two factors, each at two levels, is a 2x2 factorial design; one with three factors at two levels each is a 2x2x2 factorial design, and so on [34].
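The full set of treatment combinations in a factorial design is the Cartesian product of the factor levels. The short sketch below uses only the Python standard library. The three factors and their levels are a hypothetical dissolution robustness study, loosely modelled on the case study later in this guide, and are used for illustration only. It enumerates the 2x2x2 = 8 runs and randomizes their order.

```python
import random
from itertools import product


def full_factorial(levels: dict) -> list[dict]:
    """Enumerate every combination of factor levels (one dict per run)."""
    names = list(levels)
    return [dict(zip(names, combo)) for combo in product(*levels.values())]


# Hypothetical factors (illustrative only)
design = full_factorial({
    "paddle_speed_rpm": [50, 75],
    "sls_percent": [0.5, 1.0],
    "medium": ["water", "pH 6.8 buffer"],
})
print(len(design))  # 2 x 2 x 2 = 8 treatment combinations

# Randomize run order (fixed seed for reproducibility) to help protect independence
run_order = design[:]
random.Random(42).shuffle(run_order)
for run in run_order:
    print(run)
```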

Key Advantages: The popularity of factorial designs stems from several key advantages:

- Efficiency: They allow for the study of multiple factors in a single, integrated experiment, which is more efficient than studying one factor at a time [34].

- Detection of Interactions: They make it possible to estimate and formally test interactions between factors, which one-factor-at-a-time experiments cannot do [34].

- Increased Power and External Validity: Including relevant factors in the design can reduce residual variance, increasing the power to detect effects. They also better mirror real-world systems where multiple influences co-occur, enhancing external validity [34].

Diagram 1: A workflow to guide researchers in selecting the appropriate ANOVA design based on the number of experimental factors and the objective of assessing interactions.

Comparative Analysis of ANOVA Designs

The table below summarizes the core characteristics, applications, and outputs of the three main ANOVA designs to aid in selection.

Table 1: A comparative overview of One-Way, Two-Way, and Factorial ANOVA designs for analytical research.

| Feature | One-Way ANOVA | Two-Way ANOVA | Factorial ANOVA |
|---|---|---|---|
| Independent Variables | One factor with ≥3 levels [31] | Two factors [31] | Two or more factors [34] |
| Primary Use Case | Comparing group means for a single factor; initial screening [31] [33] | Assessing main effects of two factors and their interaction [31] | Complex experiments analyzing multiple main effects and interactions [34] |
| Hypotheses Tested | H₀: μ₁=μ₂=...=μₖ [31] | (1) H₀ for Factor A main effect; (2) H₀ for Factor B main effect; (3) H₀ for A×B interaction [31] | H₀ for each main effect and all possible interaction effects [34] |
| Key Outputs | F-statistic, p-value for the single factor [31] | F-statistic and p-value for Factor A, Factor B, and their Interaction [31] | F-statistics and p-values for all factors and their interactions [34] |
| Discriminatory Power | Tests power to differentiate across levels of one critical factor [17] | Tests power and can determine if discrimination depends on a second factor [31] | Most comprehensive test of power across a multi-factorial experimental space [34] |

Experimental Protocols for Discriminatory Power Validation

Case Study: Validating a Discriminatory Dissolution Method

A prime example of using ANOVA to demonstrate discriminatory power comes from research on fast-dispersible tablets (FDTs) of domperidone, a poorly soluble drug [17]. The official dissolution medium (0.1N HCl) was unable to distinguish between different formulations, necessitating the development and validation of a new discriminatory method.

Objective: To develop and validate a dissolution method capable of detecting differences in the dissolution profiles of various domperidone FDT formulations [17].

Materials and Methods:

- Formulations: Several domperidone FDTs (e.g., DOM-1, DOM-2) were prepared, likely with variations in disintegrants or other excipients to alter dissolution rates [17].

- Dissolution Media: Multiple media were screened, including 0.1N HCl, phosphate buffer (pH 6.8), and distilled water with varying concentrations (0.5%, 1.0%, 1.5%) of sodium lauryl sulfate (SLS) [17].

- Apparatus: USP Apparatus II (paddle), with agitation speeds of 50 and 75 rpm [17].

- Analysis: Samples were analyzed by UV spectrophotometry. Dissolution profiles were compared using one-way ANOVA to determine if the observed differences in drug release between formulations were statistically significant [17].

Results and Conclusion: The study found that 0.5% SLS in distilled water provided the optimal discriminatory power. The percentage of drug release differed significantly between the tested formulations (DOM-1 vs. DOM-2) in this medium, as confirmed by a significant ANOVA result (p < 0.05). This finding validated the method's ability to detect changes in product quality and support formulation development [17].
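The cited study's exact computational procedure is not detailed here. One common way to apply one-way ANOVA to dissolution profiles is to compare the formulations separately at each sampling time. The sketch below assumes SciPy is available. The release values and formulation labels are invented for illustration and are not the data reported in [17]. Testing several time points also raises the multiple-comparison issue discussed under post-hoc analysis.

```python
import numpy as np
from scipy import stats

# Hypothetical % drug released: rows = replicate vessels, columns = sampling times.
# These numbers are invented for illustration and are NOT the data reported in [17].
times_min = [5, 10, 15]
release = {
    "Formulation A": np.array([[42, 68, 85], [45, 70, 87], [40, 66, 84]], dtype=float),
    "Formulation B": np.array([[30, 55, 78], [33, 57, 80], [29, 53, 77]], dtype=float),
    "Formulation C": np.array([[36, 62, 82], [38, 63, 83], [35, 60, 80]], dtype=float),
}

pvalues = []
for j, t in enumerate(times_min):
    # One sample per formulation: its replicate values at time point j
    samples = [profile[:, j] for profile in release.values()]
    result = stats.f_oneway(*samples)
    pvalues.append(result.pvalue)
    print(f"{t:>3} min: F = {result.statistic:.1f}, p = {result.pvalue:.4f}")
```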

Protocol for a Two-Way ANOVA Experiment

Objective: To investigate the effects of Drug Type (A, B, C) and Dosage Level (Low, High) on blood pressure reduction, and to determine if the effect of Drug Type depends on the Dosage Level (interaction).

Experimental Design:

- Factors and Levels:

- Factor 1: Drug Type (3 levels: A, B, C)

- Factor 2: Dosage Level (2 levels: Low, High)

- This is a 3x2 factorial design [34].

- Subjects: Randomly assign subjects to one of the 3x2 = 6 experimental groups to ensure independence of observations [33].

- Data Collection: Measure blood pressure reduction after the treatment period.

- Statistical Analysis:

- Perform a Two-Way ANOVA.

- Examine the p-values for the main effects of "Drug Type" and "Dosage Level."

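- Critically examine the p-value for the "Drug Type × Dosage Level" interaction. A significant interaction (p < 0.05) indicates that the differences between drugs are not the same at the low and high dosage. For example, one drug may benefit more from the higher dose than the others, possibly changing their ranking. In that case, the main effects should be interpreted with caution.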
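The analysis step of this protocol can be sketched for a balanced design. Here factor A is Drug Type and factor B is Dosage Level. The sketch assumes NumPy and SciPy are available and equal numbers of subjects per cell. The simulated data and cell means are hypothetical and were chosen so that Drug B responds more strongly to the high dose. In practice, statistical packages such as R's `aov` compute the same table and also handle unbalanced data.

```python
import numpy as np
from scipy import stats


def two_way_anova(y):
    """Balanced two-way ANOVA with interaction.

    y has shape (a, b, n): a levels of factor A, b levels of factor B and
    n replicates per cell (equal n in every cell is assumed).
    Returns {effect: (F, p)} for A, B and the A:B interaction.
    """
    y = np.asarray(y, dtype=float)
    a, b, n = y.shape
    grand = y.mean()
    mean_a = y.mean(axis=(1, 2))   # marginal means of factor A
    mean_b = y.mean(axis=(0, 2))   # marginal means of factor B
    mean_ab = y.mean(axis=2)       # cell means, shape (a, b)

    ss_a = b * n * ((mean_a - grand) ** 2).sum()
    ss_b = a * n * ((mean_b - grand) ** 2).sum()
    # Interaction: what remains of the cell means after removing both main effects
    ss_ab = n * ((mean_ab - mean_a[:, None] - mean_b[None, :] + grand) ** 2).sum()
    # Error: spread of replicates around their own cell mean
    ss_err = ((y - mean_ab[:, :, None]) ** 2).sum()

    df_err = a * b * (n - 1)
    ms_err = ss_err / df_err
    effects = {"A": (ss_a, a - 1), "B": (ss_b, b - 1), "A:B": (ss_ab, (a - 1) * (b - 1))}
    table = {}
    for name, (ss, df) in effects.items():
        f_stat = (ss / df) / ms_err
        table[name] = (f_stat, stats.f.sf(f_stat, df, df_err))
    return table


# Simulated 3 x 2 design: Drug (A, B, C) x Dose (Low, High), 10 subjects per cell.
rng = np.random.default_rng(seed=0)
cell_means = np.array([[5.0, 10.0],   # Drug A: Low, High (hypothetical)
                       [6.0, 15.0],   # Drug B
                       [5.0, 6.0]])   # Drug C
y = cell_means[:, :, None] + rng.normal(0.0, 2.0, size=(3, 2, 10))

for effect, (f_stat, p) in two_way_anova(y).items():
    print(f"{effect:>4}: F = {f_stat:6.2f}, p = {p:.4g}")
```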

Table 2: Key reagents, materials, and software solutions for conducting ANOVA in pharmaceutical research.

| Item Category | Specific Examples | Function in Experiment |
|---|---|---|
| Dissolution Apparatus | USP Apparatus II (Paddle), Electrolab TDT-08L [17] | Provides standardized hydrodynamic conditions for in vitro drug release testing. |
| Analytical Instruments | UV Spectrophotometer (e.g., Shimadzu UV-1800) [17] | Quantifies the concentration of drug released in dissolution media at specific time points. |
| Chemicals & Reagents | Sodium Lauryl Sulfate (SLS), Phosphate Buffers, Simulated Gastric/Intestinal Fluids [17] | Create dissolution media with varying pH and solubilizing properties to challenge formulation discrimination. |
| Statistical Software | SPSS, R (aov, anova functions), SAS (PROC ANOVA), Minitab, Python (scipy.stats.f_oneway) [31] [33] [35] | Performs ANOVA calculations, generates F-statistics and p-values, and conducts post-hoc tests. |

Critical Considerations and Best Practices

Limitations and Cautions

While powerful, ANOVA is not a universal solution and has limitations. A key caution is that it is based on linear modeling. When analyzing drug combinations, which often follow nonlinear dose-response patterns, ANOVA may fail to detect a true interaction unless the dose levels are carefully chosen to be within the linear-response range [7]. Furthermore, a significant ANOVA result only indicates that not all group means are equal; it does not specify which means are different.

The Role of Post-Hoc Analysis

When a One-Way ANOVA yields a significant result (p < 0.05), post-hoc tests are required to identify which specific groups differ. Common post-hoc tests include:

- Tukey's Honestly Significant Difference (HSD): Controls the family-wise error rate and is ideal for comparing all possible pairs of means [31] [32].

- Bonferroni Correction: A more conservative method that adjusts the significance level by dividing it by the number of comparisons [32].

These tests control the probability of making a Type I error (false positive) across multiple comparisons, ensuring the reliability of the discriminatory findings [31] [32].
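Both post-hoc approaches can be run in a few lines. This sketch assumes SciPy 1.8 or later, which provides `scipy.stats.tukey_hsd`, and uses hypothetical illustrative data. For the Bonferroni approach, each pair is compared with an ordinary two-sample t-test, which uses the pooled variance of those two groups only. Each resulting p-value is then judged against α divided by the number of comparisons.

```python
import itertools

import numpy as np
from scipy import stats

# Hypothetical data for three groups (illustrative only)
groups = {
    "A": [98.1, 97.6, 98.4, 97.9],
    "B": [95.2, 95.8, 94.9, 95.5],
    "C": [98.0, 98.3, 97.7, 98.2],
}
names = list(groups)
samples = [np.asarray(v, dtype=float) for v in groups.values()]

# Tukey's HSD: all pairwise comparisons with family-wise error control
tukey = stats.tukey_hsd(*samples)

# Bonferroni: each pairwise t-test is judged against alpha / m
alpha = 0.05
pairs = list(itertools.combinations(range(len(samples)), 2))
alpha_bonf = alpha / len(pairs)

for i, j in pairs:
    t_p = stats.ttest_ind(samples[i], samples[j]).pvalue
    print(f"{names[i]} vs {names[j]}: Tukey p = {tukey.pvalue[i, j]:.4f}, "
          f"t-test p = {t_p:.4f} (Bonferroni threshold {alpha_bonf:.4f})")
```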

Reporting and Interpretation