Understanding CASOC Indicators: A Framework for Evaluating Comprehension of Forensic Statistics in Legal and Biomedical Contexts

Abstract

This article provides a comprehensive analysis of the CASOC (Comprehension and Application of Statistical and Objective Concepts) indicators—sensitivity, orthodoxy, and coherence—as a framework for evaluating how legal decision-makers and biomedical professionals understand statistical forensic evidence, particularly likelihood ratios. It explores the foundational principles of these indicators, reviews methodological approaches for their assessment in research and practice, addresses key challenges in optimizing comprehension, and examines validation strategies and comparative effectiveness of different evidence presentation formats. Aimed at researchers, scientists, and drug development professionals, this review synthesizes current empirical literature to offer insights and recommendations for improving the communication and interpretation of complex statistical data in high-stakes decision-making environments.

Defining CASOC Indicators: The Core Framework for Measuring Comprehension of Forensic Statistics

The CASOC framework provides a structured approach for evaluating how well laypersons, such as jurors and other legal decision-makers, understand statistical forensic evidence. In forensic statistics, the strength of evidence must be communicated intelligibly if legal outcomes are to be just. The three CASOC indicators (sensitivity, orthodoxy, and coherence) serve as core metrics for assessing this comprehension empirically, replacing informal evaluation with a standardized, measurable process [1]. The framework matters most when presenting complex statistical information such as likelihood ratios (LRs), which quantify the strength of forensic evidence but are frequently misunderstood.

The overarching goal of research utilizing CASOC is to determine the most effective methods for forensic practitioners to present LRs to maximize understandability for non-experts [1]. The existing body of literature has historically investigated the understanding of "strength of evidence" in a broad sense, rather than focusing specifically on the comprehension of LRs themselves. The CASOC framework allows researchers to dissect and measure comprehension in a nuanced way, paving the path for evidence-based communication strategies that can mitigate misinterpretations, such as the prosecutor's fallacy [2].

Defining the Core CASOC Indicators

The three core CASOC indicators—Sensitivity, Orthodoxy, and Coherence—each measure a distinct dimension of comprehension. A detailed breakdown of these metrics is provided in the table below.

Table 1: Core CASOC Indicators of Comprehension

| Indicator | Definition | What It Measures | Research Context |
|---|---|---|---|
| Sensitivity | The ability of an individual's interpretation of evidence to change appropriately in response to variations in the strength of the evidence (e.g., different LR values) [1]. | Whether a layperson's perception of evidence strength shifts as the actual statistical strength changes. | For example, if a presented LR increases from 10 to 1000, does the user's assigned posterior probability also increase significantly? |
| Orthodoxy | The alignment between an individual's interpretation of the evidence and the prescribed Bayesian interpretation [1]. | How closely a layperson's quantitative understanding matches the normative benchmark for updating beliefs based on new evidence. | It assesses if the posterior odds derived from a participant equal their prior odds multiplied by the presented LR. |
| Coherence | The internal consistency of an individual's probabilistic judgments across different presentations of the same or related evidence [1]. | Whether an individual's judgments are logically consistent and not self-contradictory. | For instance, if Evidence A is stronger than Evidence B, a coherent individual should not rank B as stronger than A. |
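
As a rough illustration of the coherence indicator, the sketch below checks whether a participant's ratings of several pieces of evidence ever reverse the ordering of the presented LRs. The function name `is_coherent`, the pairwise-inversion criterion, and all numbers are assumptions made for illustration; the studies cited here may operationalize coherence differently.

```python
from itertools import combinations


def is_coherent(presented_lrs, perceived_strengths):
    """Return True if no pair of evidence items is ranked in reverse order.

    presented_lrs: LR values stated by the expert, one per evidence item.
    perceived_strengths: the participant's ratings for the same items.
    Ties in either list are not counted as contradictions (an assumption).
    """
    if len(presented_lrs) != len(perceived_strengths):
        raise ValueError("both lists must describe the same evidence items")
    items = zip(presented_lrs, perceived_strengths)
    for (lr_a, rating_a), (lr_b, rating_b) in combinations(items, 2):
        # Opposite signs mean the stronger item was rated as the weaker one.
        if (lr_a - lr_b) * (rating_a - rating_b) < 0:
            return False
    return True


# Made-up ratings for three items presented with LRs of 10, 100 and 1000.
consistent = is_coherent([10, 100, 1000], [20, 45, 80])
reversed_pair = is_coherent([10, 100, 1000], [20, 80, 45])
print(consistent, reversed_pair)
```

The second call returns `False` because the item with an LR of 1000 was rated below the item with an LR of 100.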

Experimental Protocols for Assessing CASOC Metrics

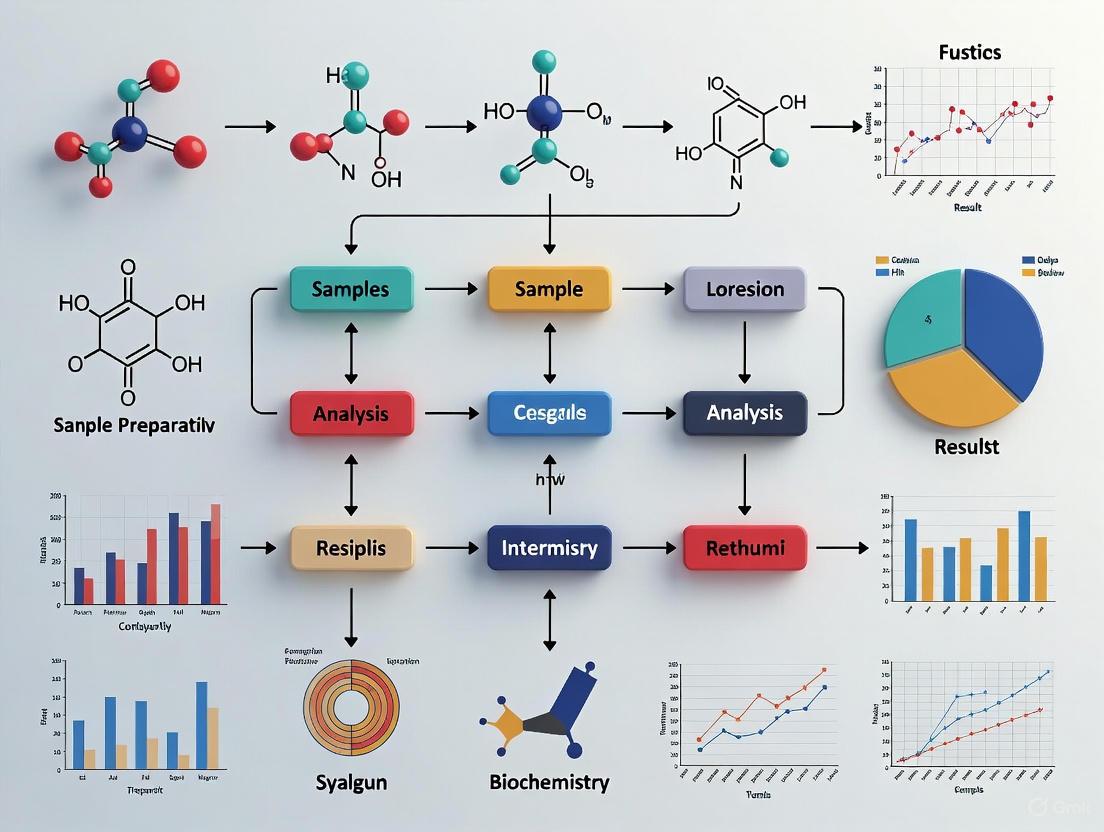

Research into CASOC indicators employs rigorous experimental methodologies, often involving laypersons participating in simulated legal decision-making tasks. A generalized workflow for such studies is illustrated in the following diagram.

Diagram Title: CASOC Comprehension Assessment Workflow

Detailed Methodology

A typical study protocol can be broken down into the following key phases, with the specific example of a 2025 study that used video testimony to test the effect of explaining the meaning of LRs [2]:

- Participant Recruitment and Group Allocation: A sample of laypersons, representative of a jury pool, is recruited. Participants may be randomly assigned to different experimental conditions (e.g., with or without an explanation of the LR, or with different formats of LR presentation) [2].

- Prior Odds Elicitation: Before being presented with the statistical evidence, participants are asked to state their initial belief about the case (e.g., the probability that the suspect is the source of the evidence). This is typically measured on a scale and later converted into prior odds [2].

- Presentation of Forensic Evidence: Participants are presented with the forensic evidence. In modern studies, this is increasingly done via videoed expert witness testimony to enhance ecological validity compared to written formats [2]. The expert presents a Likelihood Ratio, and the experimental manipulation (e.g., the explanation) is embedded in this testimony.

- Explanatory Manipulation: In the 2025 study, the explanation provided to the treatment group defined the LR as "the probability of the evidence if the suspect is the source divided by the probability of the evidence if the suspect is not the source" [2].

- Posterior Odds Elicitation: After exposure to the evidence and the LR, participants are again asked to state their updated belief about the case (e.g., the new probability that the suspect is the source). This is converted into posterior odds.

- Data Calculation and Analysis:

  - Effective LR Calculation: For each participant, an Effective Likelihood Ratio is calculated as Effective LR = (Elicited Posterior Odds) / (Elicited Prior Odds) [2].

  - CASOC Metric Assessment:

    - Orthodoxy: The researcher compares the participant's Effective LR to the LR that was presented by the expert. Orthodoxy is high when these values are equal or very close [1] [2].

    - Sensitivity: This is assessed by comparing results across groups that were presented with different LR values (e.g., LR=10 vs. LR=1000). If comprehension is sensitive, the group with the higher LR should show a significantly greater increase in their posterior odds [1].

    - Coherence: Analyzed by examining the logical consistency of a participant's responses across multiple related questions or scenarios to ensure judgments are not self-contradictory [1].

  - Fallacy Identification: The study also analyzed the percentage of participants whose posterior odds were consistent with having committed the prosecutor's fallacy (misinterpreting the probability of the evidence given the suspect is the source as the probability the suspect is the source given the evidence) [2].
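
The analysis steps above can be sketched in a few lines of Python. This is a minimal illustration, not the cited study's script: the half-order-of-magnitude tolerance used for orthodoxy, the use of the median log10 Effective LR to compare groups, the function names, and all response values are assumptions.

```python
import math
from statistics import median


def odds(probability):
    """Convert a probability strictly between 0 and 1 to odds."""
    if not 0.0 < probability < 1.0:
        raise ValueError("probability must be strictly between 0 and 1")
    return probability / (1.0 - probability)


def effective_lr(prior_probability, posterior_probability):
    """Effective LR = elicited posterior odds / elicited prior odds."""
    return odds(posterior_probability) / odds(prior_probability)


def is_orthodox(effective, presented, tolerance=0.5):
    """Hypothetical criterion: within `tolerance` orders of magnitude."""
    return abs(math.log10(effective) - math.log10(presented)) <= tolerance


def median_log10_effective_lr(responses):
    """responses: iterable of (prior_probability, posterior_probability)."""
    return median(math.log10(effective_lr(prior, post)) for prior, post in responses)


# One made-up participant: prior 10%, posterior 50%, presented LR of 100.
participant_lr = effective_lr(0.10, 0.50)
print(f"effective LR: {participant_lr:.1f}")
print("orthodox:", is_orthodox(participant_lr, presented=100))

# Made-up groups shown LR = 10 and LR = 1000, for a crude sensitivity check.
group_lr_10 = [(0.10, 0.30), (0.20, 0.50), (0.05, 0.20)]
group_lr_1000 = [(0.10, 0.80), (0.20, 0.90), (0.05, 0.60)]
sensitive = median_log10_effective_lr(group_lr_1000) > median_log10_effective_lr(group_lr_10)
print("higher LR produced larger updates:", sensitive)
```

Working on the log10 scale treats an Effective LR ten times too small and one ten times too large as equally far from the presented value. Responses of exactly 0% or 100% have undefined odds and are rejected here; a real analysis would need an explicit rule for them.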

Quantitative Data and Research Findings

The application of the CASOC framework has yielded key quantitative insights into the comprehension of likelihood ratios. The findings from a 2025 study are summarized in the table below.

Table 2: Key Findings from a 2025 Study on LR Comprehension and CASOC Metrics

| Research Variable | Condition with LR Explanation | Condition without LR Explanation | Interpretation of Finding |
|---|---|---|---|
| Percentage of participants with Orthodox Effective LRs | Higher Percentage [2] | Lower Percentage [2] | Providing an explanation yielded a small, but statistically detectable, improvement in orthodoxy. |
| Magnitude of Improvement in Orthodoxy | Small Difference [2] | - | The effect of the explanation, while positive, was not large, suggesting other factors are at play. |
| Prevalence of Prosecutor's Fallacy | Not Lower [2] | - | The explanation of the LR's meaning did not reduce the rate of this common logical misinterpretation. |

The overarching conclusion from the review of existing literature is that the empirical research to date does not conclusively answer the question of the single best way to present LRs [1] [2]. The 2025 study concluded that the full set of results did not constitute convincing evidence that presenting a standard explanation of the LR's meaning resulted in better overall understanding, highlighting the complexity of improving comprehension [2].

Essential Research Reagents and Materials

To conduct empirical studies on CASOC indicators, researchers utilize a suite of "research reagents" – standardized materials and tools to ensure validity and reproducibility.

Table 3: Essential Research Materials for CASOC Comprehension Studies

| Research Reagent / Material | Function in the Experiment |
|---|---|
| Likelihood Ratio Stimuli | Prepared numerical (e.g., 100, 1000) or verbal (e.g., "moderate support") statements of evidence strength used as the key independent variable [1]. |
| Video-Recorded Expert Testimony | Standardized, ecologically valid medium for presenting forensic evidence and LRs to participants, controlling for delivery and demeanor [2]. |
| Prior and Posterior Probability Elicitation Tool | A calibrated scale (e.g., 0-100% probability slider) or questionnaire used to quantitatively measure participant beliefs before and after evidence presentation [2]. |
| Demographic and Numeracy Questionnaire | A pre-experiment survey to characterize the participant sample and control for covariates like statistical numeracy, which can influence comprehension. |
| Effective LR Calculation Script | A pre-programmed data analysis script (e.g., in R or MATLAB) to compute each participant's Effective LR and compare it to the presented LR [2]. |

The Critical Role of Comprehension in Evaluating Forensic Evidence

The ongoing paradigm shift in forensic science emphasizes replacing subjective judgment with transparent, quantitative methods based on statistical models, primarily the likelihood ratio (LR) framework [3]. However, the ultimate utility of this evidence hinges on the ability of legal decision-makers to understand it. This section reviews empirical literature on the comprehension of forensic evidence, analyzing findings through the CASOC indicators of comprehension: sensitivity, orthodoxy, and coherence [1]. It summarizes quantitative data on juror understanding, details experimental methodologies from key studies, and outlines the logical framework for evidence evaluation. The conclusion underscores that without comprehension, even the most mathematically rigorous evidence fails to serve justice.

Forensic science is undergoing a fundamental transformation, moving from analytical methods based on human perception and subjective judgement towards a framework built on relevant data, quantitative measurements, and statistical models [3]. This shift centers on the likelihood ratio, which provides a logically correct framework for interpreting evidence [3]. Yet amid the scientific debate over accuracy and logical correctness, a critical component is often overlooked: the comprehension of the fact-finder, typically a jury. A large body of research from cognitive psychology reveals a significant gap between the intended meaning of expert testimony and what jurors actually understand [4]. This gap renders even the most precise evidence moot if it is misunderstood or misapplied. This section synthesizes the current state of research on this comprehension challenge, framing the discussion in terms of the CASOC indicators and providing a methodological toolkit for researchers working in this field.

The CASOC Framework and Evidence Presentation Formats

Core Comprehension Indicators

The CASOC framework provides structured indicators to gauge comprehension of statistical evidence, particularly likelihood ratios [1]. These indicators are:

- Sensitivity: The ability of the fact-finder to perceive how the evidence changes based on the strength of the findings.

- Orthodoxy: The alignment of the fact-finder's interpretation with the intended, logically correct meaning of the evidence.

- Coherence: The consistency and logical soundness of the fact-finder's reasoning when incorporating the evidence.

Analysis of Common Presentation Formats

Forensic evidence is presented to juries in various formats, each with distinct advantages and documented comprehension issues.

Table 1: Comprehension Profile of Evidence Presentation Formats

| Presentation Format | Key Characteristics | Documented Comprehension Issues |
|---|---|---|
| Numerical (e.g., Likelihood Ratio, RMP) | Measurable, provides veneer of objectivity [4]. | Often misinterpreted as the chance the defendant is innocent (source probability error) [4]. Laypeople struggle with required mathematical computations [4]. |
| Verbal Scales | Avoids confusing math, feels more accessible [4]. | Highly subjective; the same words hold different meanings for different people [4]. Lacks a standardized, calibrated scale. |
| Natural Frequencies | Uses frequency statements (e.g., "1 in 100,000") within a relevant reference class [4]. | Requires known feature prevalence in a population, not yet possible for many disciplines [4]. Requires educational context for full effectiveness. |

A critical finding is that jurors frequently underweight statistical evidence, updating their beliefs in the correct direction but at a magnitude hundreds of thousands of times smaller than intended by the expert [4]. Furthermore, the context of the number presentation significantly influences perception; a 15% probability may be considered low risk in one context but high in another [4].

Experimental Protocols in Comprehension Research

Research in this field relies on rigorous experimental designs using laypeople as proxies for jurors. The following summarizes a generalized methodology from key studies.

Protocol for Testing Comprehension of Quantitative Evidence

Objective: To evaluate layperson understanding of statistical measures like Random Match Probability (RMP) and Likelihood Ratios.

- Participant Recruitment: A sample of eligible laypersons is recruited, typically mirroring jury service demographics.

- Stimulus Material Development: A simulated trial transcript or video is created. The key manipulation is the form of the forensic expert's testimony (e.g., RMP vs. natural frequency vs. verbal conclusion).

- Experimental Procedure:

  - Participants are randomly assigned to one of the testimony format conditions.

  - They review the stimulus material.

  - They complete a questionnaire measuring comprehension outcomes.

- Outcome Measures:

  - Sensitivity: Participants rate the probability of the suspect's guilt before and after the testimony to measure belief updating.

  - Orthodoxy: Direct questions test understanding of the testimony's meaning (e.g., "What does a 1-in-100,000 RMP mean?"). This identifies the source probability error.

  - Coherence: Participants perform mathematical calculations (e.g., "How many people in a city of 2 million would be expected to match?") or explain their reasoning [4].

- Data Analysis: Comparisons are made between experimental conditions to determine which presentation format leads to more sensitive, orthodox, and coherent comprehension.
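
The two example questions in the outcome measures can be worked through numerically. The sketch below computes the expected number of coincidental matches for a 1-in-100,000 RMP in a city of 2 million, and contrasts the RMP with the probability that a matching suspect is the source. The second function rests on strong assumptions that are not in the source text: exactly one true source in the city, every resident equally likely a priori, and a true source who always matches.

```python
def expected_matches(rmp, population_size):
    """Expected number of people who would match by coincidence."""
    return rmp * population_size


def source_probability(rmp, population_size):
    """P(suspect is the source | match) under a uniform prior (assumption).

    Prior odds are 1 to (population_size - 1), and the LR is taken as
    1 / rmp, which assumes the true source always matches.
    """
    prior_odds = 1.0 / (population_size - 1)
    likelihood_ratio = 1.0 / rmp
    posterior_odds = prior_odds * likelihood_ratio
    return posterior_odds / (1.0 + posterior_odds)


rmp = 1 / 100_000
city = 2_000_000
print(f"expected coincidental matches: {expected_matches(rmp, city):.1f}")
print(f"P(source | match), uniform prior: {source_probability(rmp, city):.3f}")
```

Under these assumptions about 20 residents are expected to match and the matching suspect is the source with a probability of roughly 0.048, far from the "1 in 100,000 chance of innocence" reading that the source probability error would give.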

Protocol for Testing Verbal Scale Interpretability

Objective: To assess the variability in interpretation of verbal expressions of evidential strength.

- Participant Recruitment: A similar sample of laypersons is recruited.

- Stimulus Material Development: A list of verbal expressions (e.g., "strong support," "reasonable degree of scientific certainty") is compiled.

- Experimental Procedure: Participants are asked to assign a numerical probability or frequency to each verbal phrase.

- Outcome Measures: The central tendency and, more importantly, the variability (standard deviation) of the numerical assignments for each phrase are calculated.

- Data Analysis: Phrases with lower variability are considered more reliable and less ambiguous for communication.
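
A minimal sketch of the outcome measure and analysis step, assuming each participant assigns a probability from 0 to 100 to each phrase. The function name `summarize_phrases`, the second phrase, and all response values are invented for illustration only.

```python
from statistics import mean, stdev


def summarize_phrases(responses):
    """Return (phrase, mean, standard deviation), least variable first.

    responses: dict mapping each verbal phrase to the list of numerical
    values participants assigned to it (at least two values per phrase).
    """
    summary = [(phrase, mean(values), stdev(values)) for phrase, values in responses.items()]
    # A lower standard deviation means participants agree more on the meaning.
    return sorted(summary, key=lambda row: row[2])


# Made-up responses on a 0-100 probability scale.
responses = {
    "strong support": [80, 85, 90],
    "moderate support": [30, 60, 90],
}
ranking = summarize_phrases(responses)
for phrase, center, spread in ranking:
    print(f"{phrase}: mean={center:.1f}, sd={spread:.1f}")
```

In this invented data set "strong support" comes first because its assigned values cluster tightly, while "moderate support" spans most of the scale.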

Visualizing the Logical Framework of Forensic Evidence Evaluation

The following diagram illustrates the logical pathway from evidence to interpretation, highlighting the critical points where comprehension can break down.

The Researcher's Toolkit: Essential Methodologies and Reagents

This section outlines key methodological "reagents" for conducting research into the comprehension of forensic evidence.

Table 2: Essential Research Reagents for Comprehension Studies

| Research Reagent | Function/Description | Application in Comprehension Research |
|---|---|---|
| Simulated Trial Stimuli | Video or written transcripts of mock trials where the expert testimony is systematically varied. | Serves as the primary experimental manipulation to test different presentation formats (e.g., LR vs. RMP vs. verbal) [4]. |
| CASOC Assessment Battery | A standardized questionnaire designed to measure Sensitivity, Orthodoxy, and Coherence. | The core dependent variable measure to quantitatively assess comprehension levels across experimental conditions [1]. |
| Bayesian Inference Framework | A mathematical model for updating the probability of a hypothesis (e.g., guilt) given new evidence. | Provides a normative benchmark, P(H \| E), against which participants' belief updating (orthodoxy) can be compared [5]. |
| Natural Frequency Training Module | A brief educational intervention that teaches statistical reasoning using natural frequencies and visual aids. | Used as an experimental intervention to test if comprehension of quantitative testimony can be improved [4]. |
| Demographic & Numeracy Scales | Questionnaires capturing participant background, including measures of statistical numeracy. | Used as covariates to understand which participant factors (e.g., education, numeracy) predict comprehension levels [4]. |

The paradigm shift towards a more quantitative and statistically sound forensic science is a necessary and welcome evolution [3]. However, the success of this shift is contingent on effectively bridging the comprehension gap between experts and legal decision-makers. Current research, while incomplete, clearly demonstrates that laypeople struggle with both quantitative and qualitative presentations of evidence, often misinterpreting their meaning or underweighting their value [1] [4]. The CASOC framework provides a robust structure for evaluating comprehension, but the existing literature does not definitively identify the single best way to present likelihood ratios [1]. Future research must prioritize interdisciplinary collaboration, employing rigorous experimental protocols to identify communication strategies that maximize sensitivity, orthodoxy, and coherence. Only then can the full value of forensic science's quantitative transformation be realized in the pursuit of justice.

Likelihood Ratios as a Primary Focus in Statistical Communication

Likelihood ratios (LRs) represent a fundamental statistical framework for evaluating the strength of evidence across diverse scientific disciplines, from forensic science to clinical diagnostics and drug development. At its core, a likelihood ratio is a measure of diagnostic accuracy that compares the probability of observing a particular test result in individuals with a target condition to the probability of observing that same result in individuals without the condition [6]. This approach provides a unified methodology for evidence interpretation that transcends disciplinary boundaries and offers significant advantages over traditional statistical measures. The LR framework is particularly valuable within forensic statistics research where it provides a mathematically rigorous structure for communicating the weight of evidence in legal proceedings [7].

The mathematical formulation of a likelihood ratio depends on the context of application. In diagnostic medicine, the LR for a positive test result (LR+) is calculated as sensitivity/(1-specificity), while the LR for a negative test result (LR-) is calculated as (1-sensitivity)/specificity [8]. In forensic applications, the general form is LR = P(E|H₁)/P(E|H₂), where E represents the observed evidence, H₁ is the prosecution hypothesis, and H₂ is the defense hypothesis [9]. This formulation explicitly addresses the conditional probabilities that are essential for proper evidence interpretation and aligns with the logical approach required for legal decision-making.
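
The formulas in this paragraph translate directly into code. The sketch below is illustrative only: the function names and the example values (sensitivity 0.90, specificity 0.80, and the two conditional probabilities) are assumptions rather than figures from any cited study.

```python
def positive_lr(sensitivity, specificity):
    """LR+ = sensitivity / (1 - specificity)."""
    if specificity >= 1.0:
        raise ValueError("LR+ is undefined when specificity equals 1")
    return sensitivity / (1.0 - specificity)


def negative_lr(sensitivity, specificity):
    """LR- = (1 - sensitivity) / specificity."""
    if specificity <= 0.0:
        raise ValueError("LR- is undefined when specificity equals 0")
    return (1.0 - sensitivity) / specificity


def forensic_lr(p_evidence_given_h1, p_evidence_given_h2):
    """LR = P(E|H1) / P(E|H2) for prosecution (H1) and defense (H2) hypotheses."""
    if p_evidence_given_h2 <= 0.0:
        raise ValueError("P(E|H2) must be greater than 0")
    return p_evidence_given_h1 / p_evidence_given_h2


lr_pos = positive_lr(0.90, 0.80)
lr_neg = negative_lr(0.90, 0.80)
lr_forensic = forensic_lr(0.99, 0.001)
print(f"LR+ = {lr_pos:.2f}, LR- = {lr_neg:.3f}, forensic LR = {lr_forensic:.0f}")
```

Both the diagnostic and the forensic versions are ratios of the probability of the same observation under two competing states, which is why a single updating rule applies to each.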

The theoretical underpinnings of likelihood ratios are deeply rooted in Bayesian inference, which provides a coherent framework for updating prior beliefs in light of new evidence. According to Bayes' theorem, the post-test odds of a condition are equal to the pre-test odds multiplied by the likelihood ratio [6]. This mathematical relationship elegantly separates the objective strength of the evidence (LR) from the subjective prior probability, creating a transparent mechanism for evidence interpretation that is particularly valuable in both forensic and clinical contexts where prior probabilities may vary considerably between cases.

Likelihood Ratios in Forensic Statistics and CASOC Framework

Within forensic science, likelihood ratios have emerged as the preferred methodology for conveying the weight of evidence, particularly in Europe where this approach has gained significant traction [7]. The forensic application involves comparing the probability of the evidence under two competing propositions: typically, that the evidence came from a particular source (such as a defendant) versus that it came from a random member of the relevant population [9]. This framework allows forensic experts to communicate the strength of evidence without directly addressing the ultimate issue of guilt or innocence, thereby maintaining appropriate boundaries between statistical evidence and legal decision-making.

The CASOC indicators (sensitivity, orthodoxy, and coherence) provide a framework for evaluating how effectively statistical information is understood by legal decision-makers [1]. Research on the understandability of likelihood ratios has investigated how different presentation formats, including numerical values, random match probabilities, and verbal statements of support, affect comprehension among laypersons acting as legal decision-makers. These studies have revealed significant challenges in communicating statistical concepts in legal contexts, highlighting the need for careful consideration of how likelihood ratios are presented and explained [1].

Table 1: Interpretation Guidelines for Likelihood Ratios in Forensic Contexts

| LR Value Range | Verbal Equivalent | Strength of Evidence |
|---|---|---|
| 1-10 | Limited evidence | Weak support for hypothesis |
| 10-100 | Moderate evidence | Moderate support for hypothesis |
| 100-1000 | Moderately strong evidence | Substantial support for hypothesis |
| 1000-10000 | Strong evidence | Strong support for hypothesis |
| >10000 | Very strong evidence | Very strong support for hypothesis |

The transformation of numerical LR values into verbal equivalents represents an important communication strategy in forensic contexts [9]. However, this approach has limitations, as verbal expressions cannot be mathematically multiplied by prior odds to obtain posterior odds, potentially introducing ambiguity in the interpretation process [7]. Nevertheless, such verbal scales provide valuable guidance for legal decision-makers who may lack statistical expertise, bridging the gap between quantitative evidence and qualitative decision-making.
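
A small lookup can make the verbal scale above concrete. The table's ranges share their end points (10 appears in both "1-10" and "10-100"), so the sketch assumes that a value exactly on a boundary belongs to the lower band; this matches the ">10000" row but is otherwise an assumption, as are the names `UPPER_BOUNDS`, `LABELS` and `verbal_equivalent`.

```python
from bisect import bisect_left

# Upper end of each band except the last, open-ended one.
UPPER_BOUNDS = [10, 100, 1000, 10000]
LABELS = [
    "Limited evidence",
    "Moderate evidence",
    "Moderately strong evidence",
    "Strong evidence",
    "Very strong evidence",
]


def verbal_equivalent(lr):
    """Map a numerical LR of 1 or more to the verbal label in the table."""
    if lr < 1:
        raise ValueError("the table only covers LR values of 1 or more")
    # bisect_left places a boundary value (e.g. exactly 10) in the lower band.
    return LABELS[bisect_left(UPPER_BOUNDS, lr)]


for value in (5, 10, 50, 10000, 25000):
    print(value, "->", verbal_equivalent(value))
```

The mapping runs in one direction only. As the paragraph notes, a verbal label cannot be multiplied by prior odds, so the numerical LR should be retained alongside any label derived from it.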

Clinical and Diagnostic Applications

In clinical medicine and diagnostic test evaluation, likelihood ratios are used to quantify the diagnostic utility of tests, symptoms, or clinical findings. Sensitivity and specificity describe how a test behaves in people whose disease status is already known; LRs combine the two into a quantity that clinicians can use directly to update the probability of disease from a test result [10]. This approach is particularly valuable in drug development and clinical trial design, where understanding the discriminatory power of diagnostic biomarkers is essential for patient stratification and outcome assessment.

The application of LRs in clinical practice follows a systematic process beginning with estimation of the pre-test probability (often based on clinical experience and population prevalence), followed by test selection and interpretation using the appropriate LR, and culminating in calculation of post-test probability to guide clinical decision-making [8]. This process explicitly acknowledges the contextual nature of diagnostic testing, recognizing that the same test result may have different implications depending on the clinical scenario and population characteristics.

Table 2: Likelihood Ratio Ranges and Their Clinical Impact

| LR Value | Clinical Impact | Effect on Post-Test Probability |
|---|---|---|
| >10 | Large increase | Substantially increases likelihood of disease |
| 5-10 | Moderate increase | Moderately increases likelihood of disease |
| 2-5 | Small increase | Slightly increases likelihood of disease |
| 1-2 | Minimal increase | Minimal change in disease likelihood |
| 0.5-1 | Minimal decrease | Minimal change in disease likelihood |
| 0.2-0.5 | Small decrease | Slightly decreases likelihood of disease |
| 0.1-0.2 | Moderate decrease | Moderately decreases likelihood of disease |
| <0.1 | Large decrease | Substantially decreases likelihood of disease |

The versatility of likelihood ratios extends beyond simple dichotomous test results to encompass multicategory and continuous measures [10]. By calculating LRs for specific test result intervals or even individual values, clinicians can extract more nuanced diagnostic information than would be possible with traditional sensitivity and specificity measures alone. This approach is particularly valuable for laboratory tests that yield continuous results, such as many biomarkers used in drug development and clinical research [11].

Quantitative Interpretation and Calculation Methods

The mathematical interpretation of likelihood ratios follows consistent principles across applications. An LR of 1.0 indicates that the test result provides no diagnostic information, as it is equally likely in both affected and unaffected individuals. As LR values increase above 1.0, they provide increasing support for the presence of the target condition, while values below 1.0 provide increasing support for its absence [10]. The magnitude of change from pre-test to post-test probability depends on both the LR value and the pre-test probability, following the mathematical relationship of Bayes' theorem.

The calculation of post-test probability using likelihood ratios involves a conversion between probabilities and odds. The process follows these steps:

- Convert pre-test probability to pre-test odds: Pre-test odds = Pre-test probability / (1 - Pre-test probability)

- Multiply pre-test odds by the LR: Post-test odds = Pre-test odds × LR

- Convert post-test odds to post-test probability: Post-test probability = Post-test odds / (1 + Post-test odds) [6]
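
The three steps above can be written as one short function. The pre-test probability of 20% and the LR of 4.5 are illustrative values only, and the function name `post_test_probability` is a hypothetical label.

```python
def post_test_probability(pre_test_probability, likelihood_ratio):
    """Apply the odds form of Bayes' theorem in three steps."""
    if not 0.0 < pre_test_probability < 1.0:
        raise ValueError("pre-test probability must be strictly between 0 and 1")
    if likelihood_ratio < 0.0:
        raise ValueError("a likelihood ratio cannot be negative")
    # Step 1: probability -> odds.
    pre_test_odds = pre_test_probability / (1.0 - pre_test_probability)
    # Step 2: multiply the pre-test odds by the likelihood ratio.
    post_test_odds = pre_test_odds * likelihood_ratio
    # Step 3: odds -> probability.
    return post_test_odds / (1.0 + post_test_odds)


updated = post_test_probability(0.20, 4.5)
unchanged = post_test_probability(0.20, 1.0)
print(f"post-test probability: {updated:.3f}")
print(f"with LR = 1: {unchanged:.3f}")
```

With a pre-test probability of 0.20 the pre-test odds are 0.25, the post-test odds are 1.125, and the post-test probability is about 0.529. An LR of 1 leaves the probability where it started, which is what "no diagnostic information" means in practice.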

For clinical and research applications, this calculation can be simplified through the use of a Fagan nomogram, which provides a graphical method for determining post-test probability without mathematical computation [8] [10]. The nomogram consists of three vertical lines representing pre-test probability, likelihood ratio, and post-test probability, with a straight line connecting the first two values intersecting the third at the appropriate post-test probability.

Table 3: Likelihood Ratio Calculation Methods by Test Type

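| Test Result Type | LR+ Calculation | LR- Calculation |
|---|---|---|
| Dichotomous | Sensitivity / (1 - Specificity) | (1 - Sensitivity) / Specificity |
| Multicategory | One LR per category: proportion of diseased subjects with a result in the category / proportion of non-diseased subjects with a result in that category | Not separately defined; categories with an LR below 1 play the role of a negative result |
| Continuous | Slope of the tangent to the ROC curve at the point for the observed value | Same value-specific LR; no separate LR- is defined |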

For continuous tests, the likelihood ratio for a specific value can be determined from the Receiver Operating Characteristic (ROC) curve as the slope of the tangent at the point corresponding to that test result [11]. This approach allows for the full utilization of quantitative test information without the information loss that occurs when continuous measures are dichotomized at arbitrary cut-points. The development of test-specific LRs for continuous biomarkers represents a significant advancement in personalized medicine and precision drug development.
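
For a continuous marker that has been binned into result intervals, the interval-specific LR can be estimated from counts. This is a minimal sketch with invented counts; the function name `interval_likelihood_ratios` and the three categories are assumptions, and a real study would also report the uncertainty of each estimate.

```python
def interval_likelihood_ratios(diseased_counts, non_diseased_counts):
    """LR per category = P(category | disease) / P(category | no disease).

    Both arguments map category names to subject counts and must share
    the same categories.
    """
    if diseased_counts.keys() != non_diseased_counts.keys():
        raise ValueError("both groups must use the same result categories")
    total_diseased = sum(diseased_counts.values())
    total_non_diseased = sum(non_diseased_counts.values())
    ratios = {}
    for category, count in diseased_counts.items():
        p_diseased = count / total_diseased
        p_non_diseased = non_diseased_counts[category] / total_non_diseased
        if p_non_diseased == 0:
            raise ValueError(f"no non-diseased subjects in category {category!r}")
        ratios[category] = p_diseased / p_non_diseased
    return ratios


# Made-up counts for 100 diseased and 100 non-diseased subjects.
diseased = {"low": 10, "medium": 30, "high": 60}
non_diseased = {"low": 70, "medium": 20, "high": 10}
ratios = interval_likelihood_ratios(diseased, non_diseased)
for category, value in ratios.items():
    print(f"{category}: LR = {value:.2f}")
```

Keeping three intervals preserves the difference between a "medium" and a "high" result, which a single positive/negative cut-point would discard.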

Experimental Protocols and Research Methodologies

The determination of likelihood ratios for diagnostic tests requires rigorous experimental designs and methodological approaches. For novel biomarkers or diagnostic tests, this typically involves a cross-sectional study comparing the test results in a well-defined population of individuals with confirmed disease (typically through a gold standard reference test) and without the disease [11]. The study population must be representative of the intended use population to ensure that the calculated LRs are generalizable to clinical practice.

The fundamental experimental workflow for establishing likelihood ratios begins with subject recruitment and classification based on a reference standard, followed by blinded index test measurement, data collection for all subjects, and calculation of test performance characteristics including LRs for various test result ranges [10]. This process requires careful attention to methodological quality, including blinded assessment, appropriate spectrum of patients, and avoidance of verification bias.

In forensic applications, likelihood ratios are derived differently than in clinical diagnostics. The forensic LR typically compares the probability of the evidence under two competing hypotheses: the prosecution hypothesis (that the evidence came from the suspect) and the defense hypothesis (that the evidence came from a random individual in the population) [9]. For DNA evidence, estimating the probability of the evidence under each hypothesis requires detailed knowledge of population genetics and statistical modeling, often including complex mixture interpretation and adjustment for population substructure.

For quantitative genetic studies and heritability estimation, restricted maximum likelihood (REML) methods are employed to estimate genetic variance components [12]. The likelihood function and its derivatives provide insight into the quality of parameter estimates and can be used to validate experimental designs before data collection. Profile likelihood methods offer more appropriate estimates of confidence intervals than large sample approximations, particularly for variance component estimation near parameter space boundaries [12].

Research Reagents and Essential Materials

Table 4: Essential Research Materials for Likelihood Ratio Studies

| Research Reagent | Function/Application | Specific Use Cases |
|---|---|---|
| Reference Standard Materials | Establish ground truth for disease status | Clinical LR studies requiring definitive diagnosis |
| DNA Profiling Kits | Forensic identification and comparison | STR analysis for forensic LRs [9] |
| Automated Immunoassay Systems | Quantitative antibody measurement | Autoantibody testing for autoimmune disease diagnosis [11] |
| ROC Curve Analysis Software | Determine test discrimination performance | Calculating LRs for continuous test results [11] |
| Population Genetic Databases | Estimate allele frequencies | Forensic LRs for DNA evidence [9] |

The quality and appropriateness of research reagents directly impact the validity of calculated likelihood ratios. In clinical diagnostics, the reference standard materials used to establish disease status must represent the best available method for diagnosis, as errors in classification will distort the calculated LRs [10]. Similarly, in forensic applications, the quality of DNA profiling kits and population genetic databases directly affects the reliability of forensic LRs [9].

For autoimmunity testing and other specialized diagnostic areas, standardized reagents and automated test systems are essential for generating reproducible results that can be translated into valid likelihood ratios [11]. The increasing use of automated platforms for antinuclear antibody testing, for example, has enabled the definition of fluorescence intensity units that correspond to specific LR values, facilitating test interpretation and harmonization across testing platforms [11].

Uncertainty Characterization and Limitations

Despite their mathematical elegance, likelihood ratios are subject to multiple sources of uncertainty that must be characterized for proper interpretation. In forensic science, this uncertainty arises from sampling variability, measurement error, model selection, and assumptions about population genetics [7]. The concept of an "assumptions lattice" leading to an "uncertainty pyramid" provides a framework for assessing how different assumptions and methodological choices affect the calculated LR value, enabling decision-makers to evaluate its fitness for purpose [7].

In clinical medicine, the major limitations of LRs include their dependence on the quality of the underlying sensitivity and specificity estimates, the challenge of accurately estimating pre-test probability, and the lack of validation for sequential application of multiple LRs [8]. Clinicians often apply one LR to generate a post-test probability, then use this as a new pre-test probability for a subsequent test, despite the absence of evidence supporting this sequential application [8]. This practice may lead to inaccurate probability estimates, particularly when tests are not conditionally independent.

The computation of likelihood ratios does not eliminate the need for clinical judgment or forensic expertise. Rather, it provides a structured framework for incorporating objective data into decision-making processes while acknowledging the role of subjective interpretation [7]. Even with perfect statistical methodology, the communication and interpretation of LRs require careful consideration of the audience's statistical literacy and the context in which the information will be used [1] [13].

Visualization and Communication Strategies

Effective communication of likelihood ratios requires specialized visualization strategies tailored to the target audience. For forensic applications directed toward legal decision-makers with varying statistical literacy, the transformation of numerical LRs into verbal equivalents provides a bridge between quantitative evidence and qualitative decision-making [9]. However, this approach risks information loss and must be implemented with careful attention to the established verbal equivalence scales.
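The information loss mentioned above can be made concrete with a small sketch. The band cut-offs and labels below are illustrative assumptions chosen for demonstration, not the scale of any particular guideline, and the function name is hypothetical.

```python
# Illustrative bands only: the cut-offs and labels are assumptions for
# demonstration and are not taken from any specific published scale.
VERBAL_BANDS = [
    (10, "weak support"),
    (100, "moderate support"),
    (1_000, "moderately strong support"),
    (10_000, "strong support"),
    (1_000_000, "very strong support"),
]


def verbal_equivalent(lr):
    """Map an LR greater than 1 to a verbal label using the illustrative bands."""
    if lr <= 1:
        raise ValueError("this sketch only covers LRs greater than 1")
    for upper_bound, label in VERBAL_BANDS:
        if lr < upper_bound:
            return label
    return "extremely strong support"


# Two numerically different LRs collapse into the same verbal label.
print(verbal_equivalent(150))
print(verbal_equivalent(900))
```

Because every value inside a band receives the same label, a sixfold difference in evidential strength becomes invisible to the recipient, which is one reason the choice of scale deserves care.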

In clinical practice, the Fagan nomogram remains the most widely used visualization tool for applying LRs to individual patients [10]. This nomogram enables clinicians to quickly determine post-test probability by drawing a straight line from the pre-test probability through the appropriate LR value to the corresponding post-test probability, without requiring mathematical calculations. This visual approach facilitates the integration of quantitative evidence into time-constrained clinical decision-making.
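The arithmetic that the nomogram replaces is the odds form of Bayes' theorem: convert the pre-test probability to odds, multiply by the LR, and convert back. A minimal sketch follows; the function name and the example values are hypothetical.

```python
def post_test_probability(pre_test_probability, likelihood_ratio):
    """Apply an LR to a pre-test probability using the odds form of Bayes' theorem."""
    pre_test_odds = pre_test_probability / (1 - pre_test_probability)
    post_test_odds = pre_test_odds * likelihood_ratio
    return post_test_odds / (1 + post_test_odds)


# Hypothetical patient: 20% pre-test probability, positive test with LR+ = 9.
p = post_test_probability(0.20, 9.0)
print(round(p, 3))
```

The nomogram draws this relationship as a straight line because its axes are scaled logarithmically in odds, so multiplication by the LR becomes addition along the line.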

For research applications and communication among scientific professionals, detailed reporting of likelihood ratios with confidence intervals provides the necessary information for evaluating the precision of estimates [10]. The presentation of LRs for multiple test result intervals or as a continuous function of test values offers a more comprehensive understanding of test performance than single summary measures [11]. This approach is particularly valuable in drug development and biomarker research, where understanding the relationship between test values and disease probability is essential for establishing clinical decision points.
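As one way of reporting an LR with its precision, the sketch below computes a positive LR with an approximate 95% confidence interval using a common large-sample approximation on the log scale. It is an illustration under stated assumptions: the counts are hypothetical, all four cells are assumed non-zero, and other interval methods may be preferable for small samples.

```python
import math


def lr_positive_with_ci(tp, fp, fn, tn, z=1.96):
    """Return (LR+, lower, upper) using a log-scale large-sample approximation.

    Assumes tp and fp are non-zero; z=1.96 gives an approximate 95% interval.
    """
    sensitivity = tp / (tp + fn)
    false_positive_rate = fp / (fp + tn)
    lr = sensitivity / false_positive_rate
    # Standard error of ln(LR+), treating LR+ as a ratio of two proportions.
    se_log = math.sqrt(1 / tp - 1 / (tp + fn) + 1 / fp - 1 / (fp + tn))
    lower = math.exp(math.log(lr) - z * se_log)
    upper = math.exp(math.log(lr) + z * se_log)
    return lr, lower, upper


# Hypothetical counts: 100 diseased and 200 non-diseased subjects.
lr, lower, upper = lr_positive_with_ci(tp=90, fp=20, fn=10, tn=180)
print(f"LR+ = {lr:.2f} (95% CI {lower:.2f} to {upper:.2f})")
```

The interval is symmetric on the log scale and therefore asymmetric around the point estimate, which is typical for ratio measures.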

The harmonization of test results through likelihood ratios represents a powerful strategy for overcoming the challenges posed by different measurement units, scales, and assay systems [11]. By converting diverse test results to a common LR metric, clinicians and researchers can compare the diagnostic utility of different tests and establish consistent interpretation guidelines across testing platforms. This approach is particularly valuable in multicenter clinical trials and systematic reviews where test standardization may be challenging.

Likelihood ratios provide a unified framework for evaluating and communicating statistical evidence across diverse domains including forensic science, clinical diagnostics, and drug development. Their foundation in Bayesian inference offers a mathematically coherent approach to updating probability estimates based on new evidence, while their flexibility accommodates everything from simple dichotomous tests to complex continuous measures. The CASOC framework provides valuable guidance for optimizing the comprehension of statistical information, particularly in forensic contexts where lay decision-makers must interpret complex evidence.

Despite their advantages, likelihood ratios require appropriate uncertainty characterization and careful communication to avoid misinterpretation. The ongoing research on likelihood ratio presentation formats and understanding indicators will continue to refine best practices for statistical communication. As quantitative methods become increasingly important in evidence-based practice, the thoughtful application of likelihood ratios will play a crucial role in ensuring that statistical evidence is accurately communicated and appropriately interpreted across scientific disciplines and practical applications.

Current Gaps in Empirical Research on Layperson Understanding

A paradigm shift is underway in forensic science, moving from subjective judgement towards evidence evaluation based on quantitative data and statistical models [14]. Central to this shift is the increasing use of the likelihood ratio (LR) framework and other statistical statements to express the strength of forensic evidence. Consequently, effective communication of these concepts to legal decision-makers, particularly laypersons serving as jurors, has become a critical area of study. This whitepaper examines the current state of empirical research on layperson comprehension, framed within the context of CASOC indicators (Comprehension, Acceptability, Satisfaction, Opinion Change), and identifies persistent gaps that hinder the development of optimal communication strategies.

Despite more than fifteen years of research and commentary since the seminal 2009 National Academy of Sciences report, fundamental questions remain unanswered. The scientific rigor of forensic evidence is ultimately compromised if its meaning cannot be accurately conveyed to those who determine its weight in legal proceedings. This analysis synthesizes findings from recent empirical studies to delineate the specific methodological and conceptual limitations that future research must address to bridge this critical gap in forensic science practice.

The Comprehension Challenge: Statistical Evidence in Legal Contexts

The Current State of Understanding

Empirical research consistently demonstrates that laypersons struggle with the statistical concepts fundamental to modern forensic evidence. A 2025 review of existing literature concluded that the current body of research does not definitively identify the best way for forensic practitioners to present likelihood ratios to maximize understandability for legal decision-makers [2]. This foundational limitation persists despite general recognition of the problem.

Recent experimental data reveal the depth of this challenge. A 2025 study on the effects of explaining the meaning of likelihood ratios found only a small improvement in lay understanding when such explanations were provided [2]. More concerning, the percentage of participants whose posterior odds were consistent with committing the prosecutor's fallacy, a fundamental reasoning error, was not reduced by the explanation. This suggests that current explanatory techniques may be insufficient to counteract deep-seated cognitive biases.

CASOC Indicators Framework

The CASOC framework provides a structured approach for evaluating layperson comprehension:

- Comprehension: The ability to correctly interpret the meaning of statistical evidence, particularly its proper weight and the uncertainty it contains.

- Acceptability: The willingness of jurors to rely on statistical evidence presented in various formats when reaching verdicts.

- Satisfaction: Juror confidence in their understanding of the evidence and its presentation.

- Opinion Change: The degree to which statistical evidence alters pre-existing beliefs or interpretations of case facts.

Current research has largely focused on comprehension, with insufficient attention to the interrelated nature of these indicators and their collective impact on decision-making.

Table 1: Key Empirical Findings on Layperson Understanding of Forensic Statistics

| Study Focus | Key Finding | Implication for CASOC Indicators |
|---|---|---|
| Explanation Efficacy [2] | Providing explanations of LRs yields only small comprehension improvements | Challenges Comprehension and Satisfaction indicators |
| Conclusion Format [15] | Format (LR, probability, verbal) shows no significant impact on evidence weight or verdict | Questions link between Comprehension and Opinion Change |
| Report Context [15] | Participants evaluate expert reports as a whole rather than focusing on conclusion formats | Highlights contextual factors affecting Acceptability |
| Individual Differences [15] | Substantial variation in comprehension across participants based on reasoning skills | Suggests Comprehension not uniform across juror population |

Critical Research Gaps

Methodological Limitations in Experimental Design

A fundamental gap concerns the ecological validity of existing research. Most studies have examined forensic conclusions in isolation rather than embedded within complete expert reports [15]. This artificial presentation increases the salience of the conclusion format while neglecting how laypeople naturally process information in legal contexts. Research indicates that when mock jurors evaluate complete expert reports, the conclusion format (likelihood ratio, random-match probability, verbal label, or categorical statement) shows no significant impact on their evaluations of evidence weight or verdict decisions [15]. This suggests that study designs using isolated statements may artificially inflate format effects.

The field also suffers from inconsistent outcome measures across studies. Research has employed varying dependent variables, including evidence weight, verdict decisions, understanding scores, and susceptibility to fallacious reasoning. Without standardization, cross-study comparisons become problematic, and the development of evidence-based best practices is hampered. The 2025 review by Morrison et al. specifically noted this methodological inconsistency and recommended more uniform approaches [2].

Unexplored Dimensions of Comprehension

Beyond basic understanding, crucial aspects of how laypersons engage with statistical evidence remain unexamined:

- Cognitive Biases: While the prosecutor's fallacy is well-documented, research has insufficiently explored how to effectively counteract this and other reasoning errors through presentation format or explanatory techniques [2].

- Individual Differences: Emerging evidence suggests substantial variation in comprehension across participants, potentially linked to factors like numeracy, scientific reasoning, and cognitive style [15]. Current research has not systematically investigated how to tailor communication to diverse juror capabilities.

- Complex Evidence Interactions: Real-world trials present multiple pieces of evidence, yet minimal research examines how presentation formats for statistical evidence affect its integration with other case information in juror decision-making.

The Verbal Alternatives Gap

Substantial research has compared numerical formats (likelihood ratios, random-match probabilities), but few studies have empirically tested the comprehension of verbal expressions of likelihood ratios, despite their potential use in courtrooms [2]. The existing literature has tended to research "expressions of strength of evidence in general, rather than focusing specifically on likelihood ratios" [2]. This represents a significant practical gap, as verbal expressions may offer a more accessible alternative if properly calibrated.

Experimental Protocols for Addressing Research Gaps

Comprehensive Report Evaluation Protocol

To address the ecological validity gap, researchers should employ experimental designs that present statistical evidence within realistic expert reports.

Table 2: Essential Research Reagents and Materials for Comprehension Studies

| Research Reagent | Function in Experimental Protocol | Implementation Example |
|---|---|---|
| Multi-Page Expert Reports | Provides ecological context for statistical conclusions | Embed different conclusion formats within identical case details [15] |
| Control Flawed Reports | Benchmarks participant sensitivity to evidence quality | Include fundamental methodological errors to assess critical evaluation [15] |
| Video Testimony | Tests comprehension in more realistic presentation format | Present expert evidence orally with visual aids versus written reports [2] |
| Cognitive Assessment Batteries | Measures individual differences affecting comprehension | Include numeracy, scientific reasoning, and cognitive style measures [15] |

Methodology:

- Participant Recruitment: Jury-eligible adults representing diverse educational and demographic backgrounds.

- Case Materials Development: Create realistic criminal case scenarios with forensic evidence (e.g., shoeprint, DNA, or fingerprint evidence).

- Expert Report Construction: Develop multi-page expert reports varying only in the conclusion format (numerical LR, verbal LR, random-match probability, categorical statement).

- Dependent Measures: Assess evidence weight (0-100 scale), verdict decisions, comprehension checks, and cognitive bias susceptibility.

- Analysis: Examine main effects of format, interaction with individual differences, and relationship between comprehension measures.

Longitudinal Learning Protocol

Current research predominantly uses single-exposure designs, failing to capture how comprehension might evolve with repeated exposure or judicial instruction.

Methodology:

- Pre-Test Assessment: Measure baseline statistical literacy and reasoning tendencies.

- Structured Training Intervention: Implement brief educational modules on interpreting statistical evidence.

- Multiple Case Exposure: Present participants with a series of case scenarios containing statistical evidence.

- Feedback Incorporation: Provide correct interpretations after each case to reinforce learning.

- Post-Test Assessment: Measure comprehension improvement and retention over time.

Future Research Directions

Priority Investigations

To advance the field, research should prioritize:

- Verbal Equivalents Standardization: Systematic experimentation to establish verbal expressions that accurately convey specific likelihood ratio values without distorting their statistical meaning.

- Multimodal Presentation Development: Create and test integrated presentation approaches combining visual aids, simplified numerical formats, and verbal explanations to enhance comprehension across diverse juror populations.

- Individual Difference Mapping: Comprehensive studies to identify which cognitive factors (numeracy, need for cognition, scientific reasoning) most strongly predict statistical evidence comprehension and how presentation formats can be tailored to different capability levels.

- Real-World Context Studies: Research examining how presentation formats influence evidence interpretation in the context of full trials, including cross-examination, judicial instructions, and group deliberation effects.

Methodological Recommendations

Future studies should implement several key methodological improvements:

- Standardized Outcome Measures: Develop and validate consistent dependent variables across studies to enable meaningful meta-analyses.

- Diverse Participant Sampling: Ensure research includes participants representing the full spectrum of educational backgrounds and cognitive capabilities found in actual jury pools.

- Mixed-Methods Approaches: Combine quantitative measures of comprehension with qualitative explorations of reasoning processes to identify not just whether formats work, but why they succeed or fail.

Significant gaps persist in empirical research on layperson understanding of forensic statistics, particularly within the CASOC indicators framework. Current research provides insufficient guidance on how to optimally present statistical evidence to maximize comprehension, minimize cognitive biases, and support appropriate weight in legal decision-making. The most pressing needs include developing methodologies with greater ecological validity, systematically exploring verbal expression alternatives, and accounting for individual differences in juror capabilities.

Addressing these gaps requires coordinated research efforts employing rigorous experimental designs, standardized measures, and diverse participant populations. By prioritizing these investigations, the forensic science community can develop evidence-based communication strategies that preserve the scientific integrity of forensic evidence throughout the legal process, ultimately strengthening the foundation of justice systems worldwide.

The Interdisciplinary Importance of CASOC Beyond Legal Contexts

The Comprehension Assessment Standards for Observable Competencies (CASOC) indicators represent a rigorous methodological framework initially developed to evaluate how laypersons comprehend complex statistical information, such as forensic likelihood ratios, within legal settings [2]. The core tripartite structure of CASOC—comprising sensitivity, orthodoxy, and coherence—provides a validated means to assess the quality of understanding. Sensitivity measures how an individual's perception of evidence strength changes in response to variations in the underlying statistical value; orthodoxy evaluates whether the interpretation aligns with normative statistical reasoning principles, such as Bayes' theorem; and coherence assesses the internal consistency of related judgments [2]. Originally applied to problems of evidence interpretation in courts, this framework's utility extends far beyond its legal origins.

The interdisciplinary relevance of CASOC stems from its capacity to objectively quantify comprehension of probabilistic and statistical data across diverse domains. In fields such as drug development, clinical trial design, diagnostic test evaluation, and public health communication, professionals must consistently interpret and act upon complex statistical information. The CASOC framework offers a structured, empirical approach to evaluating and improving this interpretative process. By ensuring that key decision-makers demonstrate sensitivity to data changes, orthodox application of statistical principles, and coherent reasoning across related scenarios, CASOC indicators provide a mechanism to enhance scientific rigor and decision quality throughout the research and development pipeline.

Core CASOC Indicators and Their Methodological Foundations

The three primary CASOC indicators form a composite picture of statistical comprehension, each targeting a distinct aspect of reasoning.

Sensitivity

Sensitivity measures the responsiveness of an individual's perceived strength of evidence to changes in the actual statistical value presented. In practical terms, it assesses whether a professional can correctly distinguish between different magnitudes of statistical evidence. For example, in a forensic context, a sensitive evaluator would perceive a likelihood ratio (LR) of 10,000 as providing stronger support for a proposition than an LR of 10 [2]. This indicator is crucial in research and development settings where professionals must calibrate confidence based on varying strength of evidence, such as interpreting p-values, confidence intervals, or diagnostic test results. Poor sensitivity can lead to over- or under-reaction to statistical findings, potentially misdirecting research resources or clinical decisions.

Orthodoxy

Orthodoxy evaluates whether interpretations adhere to normative statistical frameworks, most notably Bayesian reasoning. In the legal context, this specifically involves assessing whether individuals update their beliefs in a manner consistent with Bayes' theorem when presented with new evidence [2]. A common violation of orthodoxy is the prosecutor's fallacy, where the probability of the evidence given a proposition is mistakenly interpreted as the probability of the proposition given the evidence. In scientific domains, analogous reasoning fallacies can undermine research validity. For drug development professionals, orthodox thinking ensures proper interpretation of clinical trial outcomes, adverse event data, and biomarker associations, preventing costly misinterpretations that could derail development programs or lead to incorrect therapeutic assessments.

Coherence

Coherence assesses the internal consistency of related judgments, ensuring that an individual's interpretations do not contain logical contradictions across different presentations of the same underlying evidence [2]. A coherent reasoner would provide logically compatible interpretations of statistical evidence regardless of whether it's presented numerically, verbally, or visually. This indicator is particularly relevant when communicating complex statistical concepts to diverse audiences, such as when regulatory officials interpret sponsor submissions, investigators explain trial outcomes to participants, or scientists convey findings to interdisciplinary teams. Incoherent reasoning can signal poor comprehension and lead to inconsistent decision-making throughout the research and development lifecycle.

Table 1: Core CASOC Indicators and Their Scientific Interpretation

| CASOC Indicator | Definition | Measurement Approach | Interdisciplinary Relevance |
|---|---|---|---|
| Sensitivity | Responsiveness to changes in statistical evidence strength | Track how perceived evidence strength scales with actual statistical values (e.g., LRs, p-values, effect sizes) | Critical for dose-response interpretation, diagnostic test evaluation, clinical significance assessments |
| Orthodoxy | Adherence to normative statistical frameworks (e.g., Bayes' theorem) | Compare belief updates to Bayesian benchmarks; identify reasoning fallacies | Prevents misinterpretation of clinical trial data, biomarker associations, safety signals |
| Coherence | Internal consistency across related judgments | Evaluate logical compatibility of interpretations across different evidence formats | Ensures consistent communication to regulators, healthcare professionals, and patients |

Interdisciplinary Applications Beyond Legal Contexts

Drug Development and Clinical Research

The drug development pipeline generates enormous volumes of complex statistical data that must be accurately interpreted under significant uncertainty and time pressure. CASOC indicators provide a framework for evaluating and improving how research teams comprehend this information. For instance, when assessing phase 3 trial results for novel therapies like the PI3K-alpha inhibitor inavolisib for PIK3CA-mutated advanced breast cancer, professionals must sensitively distinguish between varying levels of evidence strength regarding overall survival (34 vs. 27 months, HR=0.67) and progression-free survival (17.2 vs. 7.3 months, HR=0.42) [16]. Orthodoxy ensures proper interpretation of these hazard ratios without committing reasoning fallacies, while coherence guarantees consistent understanding across different presentations of the same clinical evidence.

Furthermore, CASOC-compliant comprehension is essential when evaluating predictive biomarkers that guide targeted therapy development. For example, in assessing treatments like vepdegestrant for ESR1-mutated ER+/HER2- advanced breast cancer, researchers must correctly interpret the differential treatment effect observed in biomarker-defined subgroups (median PFS of 5 months vs. 2.1 months for vepdegestrant versus fulvestrant in ESR1-mutated patients) [16]. Misinterpretation of such biomarker-stratified results could lead to incorrect patient selection strategies or misguided development decisions. Applying CASOC frameworks to team training and decision processes helps safeguard against these errors, potentially accelerating the development of precision medicines.

Diagnostic Test Evaluation and Biomarker Validation

The validation of diagnostic tests and disease biomarkers represents another domain where CASOC indicators provide critical methodological rigor. Whether evaluating next-generation sequencing assays for mutation detection or companion diagnostics for targeted therapies, professionals must demonstrate sensitivity to test performance metrics (sensitivity, specificity, predictive values), orthodox interpretation of likelihood ratios in diagnostic contexts, and coherent application of these concepts across different clinical scenarios. The methodological parallels between forensic evidence evaluation and diagnostic test interpretation make CASOC particularly relevant, as both domains involve updating prior beliefs (pre-test probabilities) based on new evidence (test results) using Bayesian reasoning.

Public Health Communication and Health Literacy

CASOC indicators offer valuable insights for designing public health communications about complex statistical concepts, such as vaccine efficacy, treatment risks and benefits, and screening recommendations. By assessing how different populations comprehend statistical information using sensitivity, orthodoxy, and coherence metrics, public health officials can tailor communications to minimize misinterpretation. Research inspired by CASOC methodologies has already demonstrated that explaining the meaning of likelihood ratios produces only modest improvements in lay comprehension [2], suggesting that simply providing statistical information is insufficient for ensuring accurate understanding. These findings have direct implications for how drug development professionals communicate clinical trial results to patients, ethics committees, and the broader medical community.

Table 2: CASOC Applications in Drug Development and Healthcare

| Domain | Specific Application | CASOC Benefits | Representative Research Context |
|---|---|---|---|
| Clinical Trial Interpretation | Overall survival, progression-free survival, hazard ratio comprehension | Prevents overestimation/underestimation of treatment effects; ensures proper statistical reasoning | Inavolisib phase 3 trial (INAVO120): OS 34 vs. 27 months, HR=0.67 [16] |
| Biomarker-Driven Development | Patient stratification, companion diagnostic integration | Improves accuracy in subgroup effect interpretation; reduces biomarker misinterpretation | Vepdegestrant in ESR1-mutated breast cancer: PFS 5 vs. 2.1 months, HR=0.57 [16] |
| Regulatory Decision-Making | Benefit-risk assessment, label comprehension | Enhances consistency in evidence synthesis across multiple studies | FDA approval of novel drugs (2025): 38 novel drug approvals as of November 2025 [17] |
| Healthcare Communication | Patient consent, medical education, public health messaging | Facilitates accurate understanding of statistical concepts across diverse literacy levels | Research on LR explanations showing limited comprehension improvement [2] |

Experimental Protocols for CASOC Assessment

General Methodological Framework

The experimental assessment of CASOC indicators typically employs controlled studies that present participants with statistical evidence in various formats and measure their interpretations using standardized instruments. The core methodology involves several key components that can be adapted across disciplinary contexts:

Participant Selection and Sampling: Studies typically employ stratified sampling to ensure representation of relevant professional groups (e.g., clinical researchers, regulatory affairs specialists, medical affairs professionals). Sample sizes generally range from 100 to 300 participants to ensure adequate statistical power for detecting comprehension differences [18]. Inclusion criteria typically specify minimum professional experience or statistical training to ensure ecological validity.

Experimental Materials and Design: The core materials present realistic scenarios involving statistical evidence relevant to the target domain. For drug development applications, this might include clinical trial summaries, biomarker performance data, or benefit-risk profiles. The evidence is systematically varied across conditions, particularly in terms of statistical strength (e.g., different likelihood ratios, confidence intervals, or effect sizes) and presentation format (numerical, verbal, visual) [2] [19].

Data Collection Instruments: Standardized questionnaires collect multiple measures, including prior probability assessments, posterior probability assessments, perceived evidence strength (typically on Likert scales), and qualitative reasoning explanations. These measures enable the calculation of all three CASOC indicators through specific analytical procedures.

Specific Protocol for Sensitivity Assessment

Objective: To measure professionals' sensitivity to variations in evidence strength when interpreting clinical trial results or diagnostic test data.

Procedure:

- Participants review a series of evidence scenarios (e.g., different clinical trial outcomes for the same intervention).

- For each scenario, participants provide ratings of perceived evidence strength on a standardized scale (e.g., 0-100).

- The statistical strength of evidence systematically varies across scenarios (e.g., different hazard ratios, p-values, or confidence intervals).

- Participants may also provide categorical interpretations (e.g., "strong," "moderate," or "weak" evidence).

Analysis:

- Calculate correlation coefficients between actual statistical values and perceived evidence strength.

- Perform regression analysis with perceived strength as the dependent variable and actual strength as the independent variable.

- Assess whether participants can correctly order scenarios by statistical strength.

- Compute sensitivity metrics based on the slope of the relationship between objective and subjective evidence strength.
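The analysis steps above can be illustrated with a minimal sketch. It assumes that objective strength is expressed as log10 of the presented likelihood ratio and that ratings are on a 0-100 scale; the function names and the four-scenario data set are hypothetical and are not drawn from any cited study.

```python
import math

def pearson_r(xs, ys):
    """Pearson correlation between two equal-length numeric sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    return sxy / math.sqrt(sxx * syy)

def ols_slope(xs, ys):
    """Least-squares slope of ys regressed on xs (the sensitivity slope)."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    return sxy / sxx

def orders_correctly(xs, ys):
    """True if every objectively stronger scenario also received a higher rating."""
    pairs = list(zip(xs, ys))
    return all(y1 < y2 for x1, y1 in pairs for x2, y2 in pairs if x1 < x2)

# Hypothetical data for one participant: presented LRs and 0-100 strength ratings.
presented_lrs = [10, 100, 1000, 10000]
ratings = [20, 35, 60, 70]

# Assumption: objective strength is measured on a log10 scale.
log_lrs = [math.log10(lr) for lr in presented_lrs]

sensitivity_r = pearson_r(log_lrs, ratings)
sensitivity_slope = ols_slope(log_lrs, ratings)
correct_order = orders_correctly(log_lrs, ratings)

print(f"r = {sensitivity_r:.3f}, slope = {sensitivity_slope:.2f} points per log10 unit, "
      f"ordered correctly: {correct_order}")
```

A slope near zero would indicate that ratings barely move as evidence strength changes. What counts as an adequate slope depends on the rating scale and has to be justified by the study design.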

Specific Protocol for Orthodoxy Assessment

Objective: To evaluate whether professionals update their beliefs in accordance with Bayesian principles when presented with new statistical evidence.

Procedure:

- Participants provide initial probability estimates (prior probabilities) for a specific hypothesis (e.g., "This treatment provides clinically meaningful benefit").

- Participants receive statistical evidence relevant to the hypothesis (e.g., clinical trial results presented as likelihood ratios).

- Participants provide updated probability estimates (posterior probabilities) for the same hypothesis.

- The process may repeat with multiple pieces of sequential evidence.

Analysis:

- Compare participants' posterior probability assessments with Bayesian benchmarks calculated from their priors and the presented evidence.

- Identify specific reasoning fallacies, such as confusions between conditional probabilities.

- Calculate orthodoxy scores based on the deviation from normative Bayesian updating.

- Use measures like the "effective likelihood ratio" (posterior odds divided by prior odds) to quantify reasoning quality [2].
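As a worked illustration of the Bayesian benchmark and the effective likelihood ratio, the sketch below applies the odds form of Bayes' rule (posterior odds = prior odds × LR). The log-scale deviation score is one possible way to express orthodoxy and is an assumption here, not a metric prescribed by the cited literature; all function names are hypothetical.

```python
import math

def odds(p):
    """Convert a probability strictly between 0 and 1 to odds."""
    if not 0.0 < p < 1.0:
        raise ValueError("probability must be strictly between 0 and 1")
    return p / (1.0 - p)

def bayesian_posterior(prior, likelihood_ratio):
    """Normative posterior probability: posterior odds = prior odds * LR."""
    posterior_odds = odds(prior) * likelihood_ratio
    return posterior_odds / (1.0 + posterior_odds)

def effective_lr(prior, posterior):
    """Effective LR implied by a participant's own prior and posterior."""
    return odds(posterior) / odds(prior)

def log_deviation(prior, posterior, presented_lr):
    """Assumed orthodoxy score: log10(effective LR / presented LR).
    0 means normative updating; negative values mean under-updating."""
    return math.log10(effective_lr(prior, posterior) / presented_lr)

# Hypothetical participant: prior 0.20, shown LR = 10, reports posterior 0.50.
prior, presented_lr, reported_posterior = 0.20, 10.0, 0.50

benchmark = bayesian_posterior(prior, presented_lr)   # prior odds 0.25 -> posterior odds 2.5
eff_lr = effective_lr(prior, reported_posterior)      # odds 1.0 / odds 0.25
deviation = log_deviation(prior, reported_posterior, presented_lr)

print(f"benchmark posterior = {benchmark:.3f}, effective LR = {eff_lr:.1f}, "
      f"log10 deviation = {deviation:.3f}")
```

In this example the participant behaves as if the evidence had an LR of 4 rather than 10, which is conservative updating relative to the benchmark.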

Specific Protocol for Coherence Assessment

Objective: To assess the internal consistency of statistical interpretations across different evidence presentations and related scenarios.

Procedure:

- Participants evaluate multiple forms of logically equivalent evidence presented in different formats (numerical, verbal, visual).

- Participants respond to related statistical scenarios where normative reasoning requires consistent interpretations.

- Evidence pairs are constructed to test specific coherence principles (e.g., extension, complementarity, transitivity).

Analysis:

- Identify logical contradictions between responses to related scenarios.

- Calculate coherence metrics based on the proportion of logically consistent response patterns.

- Assess format independence by comparing interpretations of statistically equivalent information presented differently.

- Evaluate stability of interpretations across time through test-retest procedures.
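A coherence metric based on the proportion of consistent response patterns could be sketched as follows. The two checks shown (complementarity and format independence) and their tolerance values are illustrative assumptions; a real study would need to define and justify its own checks and thresholds.

```python
def complementarity_ok(p_h, p_not_h, tol=0.05):
    """Complementarity: P(H) and P(not H) should sum to 1 (within an assumed tolerance)."""
    return abs(p_h + p_not_h - 1.0) <= tol

def format_independent(ratings_by_format, tol=10.0):
    """Format independence: ratings of statistically equivalent evidence shown in
    different formats should agree within an assumed tolerance (0-100 scale)."""
    return max(ratings_by_format) - min(ratings_by_format) <= tol

def coherence_score(check_results):
    """Proportion of coherence checks that a participant passes."""
    return sum(check_results) / len(check_results)

# Hypothetical responses from one participant.
checks = [
    complementarity_ok(0.70, 0.30),      # sums to 1.0: consistent
    complementarity_ok(0.60, 0.60),      # sums to 1.2: inconsistent
    format_independent([60, 65, 58]),    # numerical, verbal, visual agree
    format_independent([40, 70, 55]),    # formats diverge by 30 points
]

score = coherence_score(checks)
print(f"coherence score = {score:.2f}")
```

Reporting the checks separately, as well as the aggregate proportion, shows which coherence principle a participant violates.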

Diagram 1: CASOC Assessment Workflow

Research Reagent Solutions for CASOC Studies

Conducting rigorous CASOC assessment requires specific methodological tools and analytical approaches. The following table details essential "research reagents" – standardized instruments, scenarios, and analytical methods – that enable valid and reliable measurement of comprehension indicators across disciplinary contexts.

Table 3: Essential Research Reagents for CASOC Studies

| Reagent Category | Specific Tools | Primary Function | Implementation Examples |
|---|---|---|---|
| Evidence Scenarios | Clinical trial summaries; Diagnostic test results; Biomarker data; Safety profiles | Present realistic statistical evidence in systematically varied formats | INAVO120 trial results [16]; VERITAC-2 outcomes [16]; Diagnostic test LRs |
| Response Instruments | Prior/posterior probability scales; Evidence strength ratings; Qualitative reasoning prompts | Capture quantitative and qualitative aspects of statistical interpretation | 0-100 probability scales; 7-point evidence strength Likert items; Open-ended reasoning questions |
| Analytical Metrics | Effective likelihood ratio calculation; Bayesian deviation scores; Logical consistency indices | Quantify CASOC indicators from response data | Effective LR = Posterior Odds / Prior Odds [2]; Orthodoxy deviation scores |
| Statistical Software | R packages (brms, tidyverse); MATLAB scripts; Python (SciPy, NumPy) | Perform Bayesian analyses and calculate comprehension metrics | Custom scripts for bi-Gaussian calibration [14]; Logistic regression models |

Implications for Forensic Statistics and Drug Development

The methodological rigor embodied by CASOC indicators is driving a paradigm shift in forensic statistics toward greater transparency, empirical validation, and logical robustness [14]. This shift emphasizes replacing subjective judgment with methods grounded in relevant data, quantitative measurements, and statistical models. The parallel applications in drug development are evident, particularly in the movement toward more transparent benefit-risk assessment, standardized clinical outcome interpretation, and validated diagnostic algorithm development. The cross-disciplinary exchange of methodological insights between forensic statistics and pharmaceutical development promises to enhance evidentiary standards in both fields.

Research on CASOC indicators has demonstrated that merely presenting statistical information, even with explanatory guidance, produces only modest improvements in comprehension [2]. This finding has profound implications for how statistical evidence should be communicated in high-stakes domains like drug development and regulatory review. Rather than relying on simplistic explanations, effective communication requires structured approaches that actively address common reasoning fallacies and promote normative statistical thinking. The development of CASOC-aligned communication tools – such as standardized visualizations, interactive calculators, and decision aids – represents a promising direction for improving how complex statistical evidence is understood and utilized across the drug development ecosystem.

Diagram 2: CASOC Interdisciplinary Connections

The CASOC framework transcends its origins in legal evidence evaluation to offer robust methodologies for assessing statistical comprehension across multiple domains, particularly drug development and healthcare. The triad of sensitivity, orthodoxy, and coherence provides a comprehensive approach to evaluating how professionals interpret complex statistical evidence, with direct applications to clinical trial assessment, biomarker validation, regulatory decision-making, and medical communication. As research continues to refine CASOC measurement approaches and applications, this framework promises to enhance the quality of evidentiary reasoning in all domains where complex statistical information informs high-stakes decisions. The ongoing paradigm shift toward more transparent, quantitative, and validated assessment of statistical evidence in both forensic science and drug development underscores the growing importance of CASOC-based approaches for ensuring both scientific rigor and practical impact.

Assessing Comprehension: Methodologies for Applying CASOC Indicators in Research and Practice

Experimental Designs for Testing CASCO Indicator Performance

Cancer cachexia is a complex metabolic syndrome characterized by loss of muscle with or without loss of fat mass, prominently featuring weight loss and frequently associated with anorexia, inflammation, insulin resistance, and increased muscle protein breakdown [20]. The CAchexia SCOre (CASCO) was developed to overcome the significant challenge of patient stratification in cancer cachexia, enabling a quantitative staging approach that facilitates more adequate therapy [20]. Within forensic statistics research and drug development, validating assessment tools like CASCO requires meticulously planned experimental designs that generate statistically robust, reproducible, and clinically relevant evidence. This technical guide outlines comprehensive experimental methodologies for evaluating CASCO indicator performance, framed within the rigorous requirements of forensic statistical analysis and pharmaceutical development.

The validation of CASCO represents a critical advancement over previous qualitative classifications of cachexia. Prior to its development, cachexia staging systems primarily categorized patients qualitatively into stages such as pre-cachexia, cachexia, and refractory cachexia, or incorporated prognostic factors like BMI and weight loss without integrating the multidimensional nature of the syndrome [21]. CASCO addresses this gap through a quantitative scoring system spanning 0-100, classifying patients into mild (15-28), moderate (29-46), and severe (47-100) cachexia based on five essential components: body weight loss and composition, inflammation/metabolic disturbances/immunosuppression, physical performance, anorexia, and quality of life [21]. This multidimensional approach enables more precise patient stratification for clinical trials and therapeutic interventions.

CASCO Component Analysis and Metric Validation

Core Components and Their Weighting in Cachexia Assessment

Table 1: CASCO Components and Their Relative Contributions to the Total Score

| Component | Abbreviation | Weight in CASCO | Key Measured Parameters |
|---|---|---|---|
| Body Weight Loss and Composition | BWC | 40% | Body weight loss, Lean body mass |
| Inflammation/Metabolic Disturbances/Immunosuppression | IMD | 20% | CRP, IL-6, Albumin, Pre-albumin, Lactate, Triglycerides, Urea, Anemia, ROS, Glucose tolerance/HOMA index, Lymphocyte count |
| Physical Performance | PHP | 15% | 5-question physical activity questionnaire |
| Anorexia | ANO | 15% | 4-question questionnaire from SNAQ (St. Louis VA Medical Centre) |
| Quality of Life | QoL | 10% | 25 questions from QLQ-C30 |
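To make the weighting in Table 1 concrete, the following sketch combines component scores into a 0-100 total and maps it onto the severity bands given earlier (mild 15-28, moderate 29-46, severe 47-100). It assumes that each component has already been scored as a fraction of its maximum (0.0 to 1.0); how each component is scored from its raw parameters is not specified in this section, and the function names and example values are hypothetical.

```python
# Component weights (percent of the total score), taken from Table 1.
CASCO_WEIGHTS = {"BWC": 40, "IMD": 20, "PHP": 15, "ANO": 15, "QoL": 10}

def casco_total(component_fractions):
    """Weighted total on a 0-100 scale.
    Assumption: each value is the fraction (0.0-1.0) of that component's maximum."""
    total = 0.0
    for name, weight in CASCO_WEIGHTS.items():
        fraction = component_fractions[name]
        if not 0.0 <= fraction <= 1.0:
            raise ValueError(f"{name} fraction must be between 0 and 1")
        total += weight * fraction
    return total

def casco_severity(score):
    """Map a total score onto the severity bands stated in the text.
    Assumption: non-integer scores fall into the band of the lower boundary
    they have reached, and scores below 15 are reported as unclassified."""
    if score >= 47:
        return "severe"
    if score >= 29:
        return "moderate"
    if score >= 15:
        return "mild"
    return "below mild range"

# Hypothetical patient: 20 + 5 + 6 + 3 + 5 points across the five components.
patient = {"BWC": 0.5, "IMD": 0.25, "PHP": 0.4, "ANO": 0.2, "QoL": 0.5}
total = casco_total(patient)
print(f"CASCO total = {total:.1f} ({casco_severity(total)})")
```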

The CASCO validation study prospectively enrolled 186 cancer patients and 95 age-matched controls, with patients presenting various carcinoma types (lung, breast, head and neck, colon) at different disease stages (20% Stage I-IIIA, 80% Stage IIIB-IV) [21]. This participant distribution enables comprehensive validation across diverse cancer populations. The metric properties of CASCO were established through statistical analysis, defining three distinct cachexia severity groups with significant correlations found between CASCO scores and other validated indexes such as the Eastern Cooperative Oncology Group (ECOG) performance status [21].

Diagram 1: CASCO Assessment Workflow and Component Weighting

Experimental Design Framework for CASCO Validation

Core Validation Study Design

The foundational validation of CASCO employed an observational prospective case-control design, incorporating both cancer patients and age-matched controls to establish normative comparisons [21]. This design enables researchers to:

- Compare CASCO distributions across well-defined patient subgroups based on cancer type, stage, and treatment status

- Establish discriminant validity by comparing scores between cachectic and non-cachectic populations

- Correlate CASCO components with established clinical markers and outcomes

- Assess reliability through test-retest and inter-rater reliability measurements

For forensic statistical applications, this design must incorporate blinding procedures, pre-specified statistical analysis plans, and appropriate handling of missing data to minimize bias and ensure robust evidence generation.

Longitudinal Study Designs for Predictive Validation

Beyond cross-sectional validation, longitudinal designs are essential for establishing CASCO's predictive validity for clinical outcomes:

- Progressive disease cohorts to evaluate CASCO score trajectories in relation to disease progression

- Interventional trials to assess CASCO responsiveness to nutritional, pharmacological, and supportive care interventions

- Survival studies to correlate baseline and changing CASCO scores with overall survival and time to treatment failure

These designs should implement staggered enrollment, predefined assessment timepoints, and statistical adjustments for potential confounders such as age, cancer type, and concomitant treatments.

Methodological Protocols for CASCO Component Assessment

Body Composition Measurement Protocol

Objective: Quantify lean body mass and fat mass changes using standardized methodologies.

Equipment: Dual-energy X-ray absorptiometry (DXA, also written DEXA) is preferred [20]; bioelectrical impedance analysis (BIA) may be used when DEXA is unavailable.

Procedure:

- Perform baseline assessment prior to cancer treatment initiation

- Conduct follow-up measurements at 3-month intervals

- Standardize measurement conditions (time of day, patient hydration status, clothing)

- Calculate lean body mass index (LBMI) as lean mass (kg) divided by height squared (m²)

- Document weight change from pre-illness stable weight

Statistical Analysis: Calculate absolute and percentage change in lean mass; establish thresholds for significant depletion (>10% loss) [20]
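The calculations in this protocol are simple enough to sketch directly. The example below computes LBMI, the percentage change in lean mass between two assessments, and a flag for loss exceeding the 10% threshold mentioned above; the function names and patient values are hypothetical.

```python
def lean_body_mass_index(lean_mass_kg, height_m):
    """LBMI = lean mass (kg) / height squared (m^2)."""
    return lean_mass_kg / height_m ** 2

def percent_change(baseline, follow_up):
    """Percentage change relative to baseline (negative values indicate loss)."""
    return (follow_up - baseline) / baseline * 100.0

def significant_depletion(baseline_kg, follow_up_kg, threshold_pct=10.0):
    """True if lean mass loss exceeds the threshold (>10% loss, per the protocol)."""
    return -percent_change(baseline_kg, follow_up_kg) > threshold_pct

# Hypothetical patient: 1.70 m tall, lean mass 50 kg at baseline and 44 kg at follow-up.
baseline_lbmi = lean_body_mass_index(50.0, 1.70)
change_pct = percent_change(50.0, 44.0)
depleted = significant_depletion(50.0, 44.0)

print(f"baseline LBMI = {baseline_lbmi:.1f} kg/m^2, "
      f"lean mass change = {change_pct:.1f}%, significant depletion: {depleted}")
```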

Inflammatory and Metabolic Biomarker Assessment

Objective: Systematically evaluate inflammatory, metabolic, and immunosuppression parameters.

Table 2: Biomarker Measurement Specifications for CASCO IMD Component

| Biomarker Category | Specific Markers | Measurement Method | Clinical Thresholds |
|---|---|---|---|
| Inflammation | CRP | Immunoturbidimetry | >5.0 mg/L [20] |
| Inflammation | IL-6 | ELISA | >4.0 pg/mL [20] |
| Metabolic | Albumin | Bromocresol green | <3.2 g/dL [20] |
| Metabolic | Pre-albumin | Immunoturbidimetry | <15 mg/dL |
| Metabolic | Hemoglobin | Automated analyzer | <12 g/dL [20] |
| Metabolic | Lactate, Triglycerides, Urea | Standard clinical chemistry | Laboratory reference ranges |
| Immunosuppression | Absolute lymphocyte count | Automated flow cytometry | <1.0 × 10⁹/L |

Procedure:

- Collect fasting blood samples in appropriate anticoagulants

- Process samples within 2 hours of collection

- Analyze using standardized, validated assays

- Batch samples to minimize inter-assay variability

- Document any acute conditions that may transiently affect biomarkers
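The fixed thresholds in Table 2 can be applied programmatically, as in the sketch below. It only flags values that cross a threshold; how flagged markers are converted into IMD component points is not described in this section and is deliberately left out. Markers judged against laboratory reference ranges (lactate, triglycerides, urea) are omitted because no fixed cut-off is given. The dictionary keys and the example panel are hypothetical.

```python
# (direction, cut-off, unit) for each marker with a fixed threshold in Table 2.
THRESHOLDS = {
    "crp":         ("above", 5.0,  "mg/L"),
    "il6":         ("above", 4.0,  "pg/mL"),
    "albumin":     ("below", 3.2,  "g/dL"),
    "pre_albumin": ("below", 15.0, "mg/dL"),
    "hemoglobin":  ("below", 12.0, "g/dL"),
    "lymphocytes": ("below", 1.0,  "10^9/L"),
}

def flag_biomarkers(results):
    """Return {marker: True/False} for each measured marker with a known threshold.
    True means the value crosses the Table 2 threshold."""
    flags = {}
    for marker, value in results.items():
        if marker not in THRESHOLDS:
            continue  # no fixed threshold available for this marker
        direction, cutoff, _unit = THRESHOLDS[marker]
        flags[marker] = value > cutoff if direction == "above" else value < cutoff
    return flags

# Hypothetical fasting panel for one patient (units as in THRESHOLDS).
panel = {"crp": 12.4, "il6": 3.1, "albumin": 3.0, "pre_albumin": 18.0,
         "hemoglobin": 11.2, "lymphocytes": 1.4}

flags = flag_biomarkers(panel)
abnormal = sorted(name for name, crossed in flags.items() if crossed)
print("markers crossing threshold:", abnormal)
```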

Physical Performance and Patient-Reported Outcome Measures

Physical Performance Assessment:

- Implement validated 5-item physical performance questionnaire

- Consider supplementary objective measures (handgrip strength, 6-minute walk test) when feasible