Uncertainty Characterization in Likelihood Ratio Values: Foundations and Applications in Biomedical Research

This article provides a comprehensive exploration of uncertainty characterization for likelihood ratio (LR) values, tailored for researchers and professionals in drug development.

Abstract

This article provides a comprehensive exploration of uncertainty characterization for likelihood ratio (LR) values, tailored for researchers and professionals in drug development. It covers the foundational principles of LR and its role in quantifying diagnostic evidence under uncertainty, details methodological advances including uncertainty-aware estimation and application in safety signal detection, addresses key challenges in model miscalibration and small-sample inference, and validates approaches through comparative analysis with Bayesian methods. The synthesis offers a crucial framework for enhancing the reliability of statistical evidence in clinical research and decision-making.

Core Principles: Demystifying Likelihood Ratios and Uncertainty in Diagnostic Evidence

Frequently Asked Questions (FAQs) and Troubleshooting Guides

FAQ 1: What is a Likelihood Ratio, and how is it calculated from sensitivity and specificity?

The Likelihood Ratio (LR) is a measure of the strength of evidence provided by a diagnostic test. It compares the likelihood of a specific test result in patients with the target disorder to the likelihood of the same result in patients without the disorder [1]. It is derived directly from a test's sensitivity and specificity.

- Positive Likelihood Ratio (LR+) indicates how much the probability of disease increases when a test is positive. It is calculated as [2] [1]:

LR+ = Sensitivity / (1 - Specificity)

- Negative Likelihood Ratio (LR-) indicates how much the probability of disease decreases when a test is negative. It is calculated as [2] [1]:

LR- = (1 - Sensitivity) / Specificity

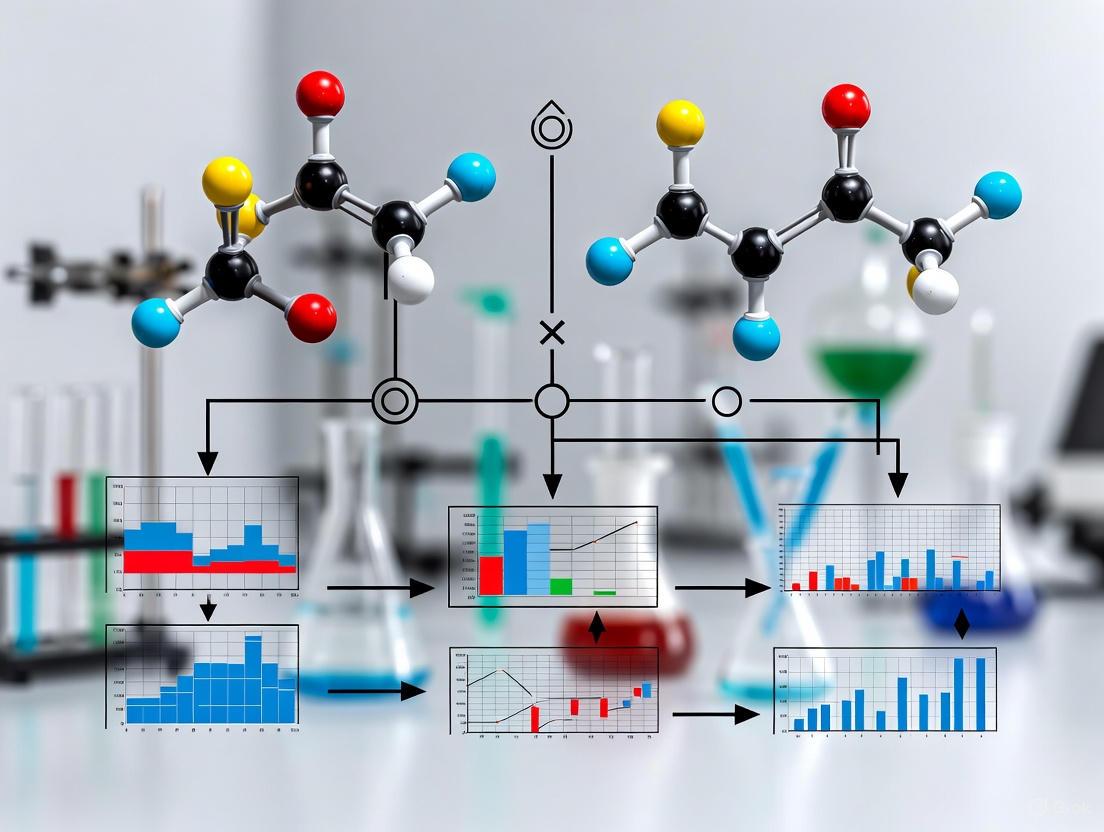

The following workflow illustrates the pathway from fundamental test metrics to the final evidence strength indicator:

FAQ 2: How do I interpret Likelihood Ratio values to update the probability of disease?

Likelihood Ratios are used to update the pre-test probability of disease to a post-test probability. This is done by converting probability to odds, multiplying by the LR, and converting back to probability [1].

- Post-test odds = Pre-test odds × Likelihood Ratio

- Post-test probability = Post-test odds / (Post-test odds + 1)
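
As a worked illustration of these steps, assume a pre-test probability of 20% and an LR+ of 10.22 (both values are chosen here purely for illustration). The pre-test probability p is first converted to odds, the odds are multiplied by the LR, and the result is converted back to a probability:

$$
\text{Pre-test odds} = \frac{p}{1 - p} = \frac{0.20}{0.80} = 0.25
$$

$$
\text{Post-test odds} = 0.25 \times 10.22 = 2.555
$$

$$
\text{Post-test probability} = \frac{2.555}{2.555 + 1} \approx 0.72
$$

Under these assumed inputs, a positive result raises the probability of disease from 20% to roughly 72%.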

Interpretation Guide [1]:

- LR+ > 10: A positive test result is a strong predictor for ruling in the disease.

- LR- < 0.1: A negative test result is a strong predictor for ruling out the disease.

- LR values closer to 1: The test result provides little meaningful change to the pre-test probability.

The following chart illustrates the relationship between the pre-test probability, the LR value, and the resulting post-test probability.

FAQ 3: What are the best practices for presenting Likelihood Ratios in research to ensure clarity?

The accurate communication of LRs is critical for scientific and legal decision-makers. Research indicates that simply presenting an LR value without explanation may not be sufficient for full comprehension [3].

Recommendations:

- Present Numerical Values Clearly: Report the calculated LR+ and LR- values together with their confidence intervals whenever the underlying counts allow them to be computed.

- Include a Verbal Scale: Supplement numerical LRs with standardized verbal descriptions of the evidence strength (e.g., "moderately strong," "strong") to aid interpretation.

- Explain the Meaning: Contextualize what the LR means in plain language. For example, "An LR+ of 6 means a positive test result is 6 times more likely to be seen in a patient with the disease than without it" [1] [3].

- Show the Calculation: For transparency, consider presenting the underlying 2x2 contingency table or the sensitivity and specificity values used in the calculation [2].
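
One common way to attach a confidence interval to an LR is the log method for a ratio of two independent proportions. The sketch below is a minimal illustration of that method, not a procedure taken from the cited sources. The function name `lr_confidence_interval` and its parameter names are hypothetical, and the counts are the 2x2 values used in this article's example calculations. The formula breaks down if any cell count is zero.

```python
import math


def lr_confidence_interval(num_events, num_total, den_events, den_total, z=1.96):
    """Return (ratio, lower, upper) for a ratio of two proportions.

    Uses the log method: the standard error is computed on the log scale
    and the interval is exponentiated back. Assumes all counts are > 0.
    """
    ratio = (num_events / num_total) / (den_events / den_total)
    se_log = math.sqrt(
        1 / num_events - 1 / num_total + 1 / den_events - 1 / den_total
    )
    lower = ratio * math.exp(-z * se_log)
    upper = ratio * math.exp(z * se_log)
    return ratio, lower, upper


# 2x2 counts: true positives, false negatives, true negatives, false positives
tp, fn, tn, fp = 369, 15, 558, 58

# LR+ = P(test+ | diseased) / P(test+ | non-diseased)
lr_pos, lr_pos_lo, lr_pos_hi = lr_confidence_interval(tp, tp + fn, fp, fp + tn)

# LR- = P(test- | diseased) / P(test- | non-diseased)
lr_neg, lr_neg_lo, lr_neg_hi = lr_confidence_interval(fn, tp + fn, tn, fp + tn)

print(f"LR+ = {lr_pos:.2f} (95% CI {lr_pos_lo:.2f} to {lr_pos_hi:.2f})")
print(f"LR- = {lr_neg:.3f} (95% CI {lr_neg_lo:.3f} to {lr_neg_hi:.3f})")
```

Reporting the interval alongside the point estimate shows readers how much of the apparent evidence strength could be due to sampling variability alone.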

FAQ 4: How can I perform a meta-analysis of Likelihood Ratios for drug safety signal detection across multiple studies?

The Likelihood Ratio Test (LRT) method can be adapted for safety signal detection from multiple observational databases or clinical trials. This is a two-step approach designed to control for heterogeneity across studies [4].

Experimental Protocol: LRT for Meta-Analysis [4]

Study-Level LRT Calculation:

- Apply the regular LRT to the safety data (e.g., drug-adverse event pairs) within each individual study.

- This generates a study-specific LRT statistic for each drug-event combination of interest.

Global Test Statistic Combination:

- Combine the LRT statistics from all studies into a single global test statistic. Common methods include:

- Simple Pooled LRT: Summing the LRT statistics across studies.

- Weighted LRT: Incorporating total drug exposure information or study size as weights to account for study precision.

- Compare the global statistic with the critical value corresponding to a pre-specified significance level to test the null hypothesis that there is no safety signal across all studies.
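
The two-step structure can be sketched as follows. This is a simplified illustration under stated assumptions, not the exact statistic of [4]: it uses a one-sided Poisson log-likelihood ratio with a known expected count per study, and weights proportional to each study's share of total exposure. The study records, field names, and function names are all hypothetical. Obtaining the critical value (for example by simulation under the null hypothesis) is not shown.

```python
import math


def poisson_log_lr(observed, expected):
    """One-sided Poisson log-likelihood ratio for an elevated event rate.

    Compares the maximized likelihood (rate = observed) with the null
    (rate = expected). Returns 0 when the observed count is not elevated.
    """
    if observed <= expected:
        return 0.0
    return observed * math.log(observed / expected) - (observed - expected)


def pooled_log_lr(studies):
    """Step 2, simple pooling: sum the study-level statistics."""
    return sum(poisson_log_lr(s["observed"], s["expected"]) for s in studies)


def weighted_log_lr(studies):
    """Step 2, weighted pooling: weight each study by its exposure share."""
    total_exposure = sum(s["exposure"] for s in studies)
    return sum(
        (s["exposure"] / total_exposure)
        * poisson_log_lr(s["observed"], s["expected"])
        for s in studies
    )


# Hypothetical data for one drug-event pair in three studies
studies = [
    {"observed": 20, "expected": 10.0, "exposure": 1200.0},
    {"observed": 7, "expected": 6.5, "exposure": 800.0},
    {"observed": 3, "expected": 4.0, "exposure": 500.0},
]

# Step 1: study-level statistics
study_stats = [poisson_log_lr(s["observed"], s["expected"]) for s in studies]

# Step 2: global statistics
pooled = pooled_log_lr(studies)
weighted = weighted_log_lr(studies)
print(study_stats, pooled, weighted)
```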

Troubleshooting:

- Challenge: Inconsistent drug exposure definitions across studies.

- Solution: Ensure exposure definitions (e.g., total dose, exposure time) are standardized and comparable before pooling data. Imputation may be necessary with clear assumptions [4].

FAQ 5: How does uncertainty quantification impact the interpretation of Likelihood Ratios in model-based research?

In computational models, such as those of biochemical pathways, parameters are estimated from data and are subject to uncertainty. This uncertainty, both aleatoric (inherent randomness) and epistemic (due to limited knowledge), propagates to model predictions, including calculated LRs [5] [6]. Proper uncertainty analysis is therefore essential.

Methodology: Integrated Uncertainty Analysis [6]

A robust strategy involves a multi-step process:

- Parameter Estimation: Find the maximum likelihood estimate (MLE) of model parameters.

- Identifiability Analysis: Use the profile likelihood method to establish confidence intervals for each parameter and identify non-identifiable parameters that cannot be constrained by the available data.

- Uncertainty Propagation: Employ Markov Chain Monte Carlo (MCMC) sampling to generate the posterior distribution of the parameters, which reflects their uncertainty given the data and prior knowledge.

- Prediction Uncertainty: Propagate the sampled parameter sets through the model to generate a distribution of the output (e.g., the LR), allowing you to quantify the confidence in your final evidence strength metric.
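
The final propagation step can be illustrated with a much simpler model than a biochemical pathway. The sketch below assumes a diagnostic test whose sensitivity and specificity each have a Beta posterior under uniform Beta(1, 1) priors. Because those posteriors are available in closed form, it draws from them directly rather than running MCMC. That is a stand-in for the sampling step described above, not the method of [6]. The function name and counts are illustrative.

```python
import random
import statistics


def lr_plus_posterior_draws(tp, fn, tn, fp, n_draws=10_000, seed=0):
    """Draw LR+ values by sampling sensitivity and specificity posteriors.

    Assumes independent Beta(1, 1) priors, so the posteriors are
    Beta(tp + 1, fn + 1) and Beta(tn + 1, fp + 1).
    """
    rng = random.Random(seed)
    draws = []
    for _ in range(n_draws):
        sensitivity = rng.betavariate(tp + 1, fn + 1)
        specificity = rng.betavariate(tn + 1, fp + 1)
        # Each sampled parameter set is pushed through the "model" (the LR formula)
        draws.append(sensitivity / (1.0 - specificity))
    return draws


draws = sorted(lr_plus_posterior_draws(tp=369, fn=15, tn=558, fp=58))
median = statistics.median(draws)
lower = draws[int(0.025 * len(draws))]       # 2.5th percentile
upper = draws[int(0.975 * len(draws)) - 1]   # 97.5th percentile
print(f"LR+ median {median:.2f}, 95% interval {lower:.2f} to {upper:.2f}")
```

The width of the resulting interval is the quantity of interest: it expresses how confident one can be in the evidence strength metric given the data behind it.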

The table below summarizes example calculations of diagnostic test metrics, providing a clear reference for interpreting study results.

Table 1: Example Calculations of Diagnostic Test Accuracy Metrics from Sample Data

| Metric | Formula | Example Calculation | Result | Interpretation |
|---|---|---|---|---|
| Sensitivity | True Positives / (True Positives + False Negatives) | 369 / (369 + 15) [2] | 96.1% | Test correctly identifies 96.1% of diseased individuals. |
| Specificity | True Negatives / (True Negatives + False Positives) | 558 / (558 + 58) [2] | 90.6% | Test correctly identifies 90.6% of non-diseased individuals. |
| Positive Likelihood Ratio (LR+) | Sensitivity / (1 - Specificity) | 0.961 / (1 - 0.906) [2] | 10.22 | A positive test is ~10x more likely in a diseased person than in a non-diseased person. |
| Negative Likelihood Ratio (LR-) | (1 - Sensitivity) / Specificity | (1 - 0.961) / 0.906 [2] | 0.043 | A negative test is ~0.04x as likely in a diseased person as in a non-diseased person. |
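
The arithmetic in the table can be reproduced directly from the 2x2 counts. The sketch below is illustrative, and the class name `TwoByTwo` and its field names are hypothetical. Because it works from unrounded counts, LR+ comes out slightly lower (about 10.21) than the 10.22 obtained in the table from the rounded sensitivity and specificity.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TwoByTwo:
    """Counts from a diagnostic accuracy study (hypothetical helper)."""

    tp: int  # diseased, test positive
    fn: int  # diseased, test negative
    tn: int  # non-diseased, test negative
    fp: int  # non-diseased, test positive

    @property
    def sensitivity(self) -> float:
        return self.tp / (self.tp + self.fn)

    @property
    def specificity(self) -> float:
        return self.tn / (self.tn + self.fp)

    @property
    def lr_positive(self) -> float:
        return self.sensitivity / (1.0 - self.specificity)

    @property
    def lr_negative(self) -> float:
        return (1.0 - self.sensitivity) / self.specificity


table = TwoByTwo(tp=369, fn=15, tn=558, fp=58)
print(f"Sensitivity: {table.sensitivity:.1%}")
print(f"Specificity: {table.specificity:.1%}")
print(f"LR+: {table.lr_positive:.2f}")
print(f"LR-: {table.lr_negative:.3f}")
```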

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials and Tools for Likelihood Ratio Research

| Item | Function / Application |
|---|---|
| Statistical Software (R, Python, SAS) | For calculating sensitivity, specificity, LRs, performing meta-analyses, and running MCMC simulations for uncertainty quantification [4] [6]. |
| 2x2 Contingency Table | The fundamental data structure for organizing counts of true positives, false positives, false negatives, and true negatives for diagnostic test evaluation [2]. |
| Meta-Analysis Software | Tools that support fixed-effect and random-effects models, and the implementation of weighted Likelihood Ratio Tests for combining data from multiple studies [4]. |
| Markov Chain Monte Carlo (MCMC) Samplers | Computational algorithms used in Bayesian analysis to sample from posterior parameter distributions, crucial for understanding prediction uncertainty [6]. |
| Profile Likelihood Analysis | A computational method used to assess parameter identifiability and establish confidence bounds in model-based research [6]. |

The Critical Role of Pre-Test Probability and Bayes' Theorem in Contextualizing Results

Troubleshooting Guides

Guide 1: Troubleshooting Common Issues in Bayesian Analysis and Pre-Test Probability Estimation

Problem 1: Inaccurate Post-Test Probability Due to Poor Prior Selection

- Symptoms: The final probability of disease or treatment effect seems clinically implausible. Results are overly sensitive to minor changes in the prior.

- Solution: Systematically evaluate the prior. Use a meta-analytic-predictive (MAP) approach to formally incorporate high-quality historical data from previous trials, ensuring the assumption of exchangeability with the current data is met [7]. If such data is unavailable, use a non-informative or skeptical prior to minimize influence, and always conduct a sensitivity analysis to see how different priors affect the posterior results [8] [7].

Problem 2: Diagnostic Test Results Seem Contradictory to Clinical Presentation

- Symptoms: A positive test result occurs in a patient with a very low likelihood of disease (or a negative result in a high-risk patient), leading to confusion over whether to trust the test or clinical judgment.

- Solution: Re-assess the pre-test probability. In a patient with a very low pre-test probability, even a positive result from a good test may yield a post-test probability that is still not high enough to confirm disease [9] [10]. Use Fagan's Nomogram to quantitatively reconcile the test result with the clinical context. If the post-test probability remains indeterminate, consider further testing with a different method [9] [11].

Problem 3: Difficulty in Communicating Uncertainty of a Likelihood Ratio (LR)

- Symptoms: Stakeholders or regulators question the robustness of a single LR value, especially when different statistical models can produce different LRs for the same evidence [12] [13].

- Solution: Move beyond a single LR value. Conduct an extensive uncertainty analysis using a lattice of assumptions and an "uncertainty pyramid" framework [13]. Explore the range of LR values produced by different but reasonable models and assumptions. Use sensitivity analyses to demonstrate how the LR changes under different conditions and to show that your conclusions are robust [12].

Guide 2: Troubleshooting Clinical Trial Design in Rare Diseases

Problem 4: Infeasible Sample Sizes in Rare Disease Trials

- Symptoms: Conventional power calculations require more patients than are available globally, making a traditional randomized controlled trial impossible [7].

- Solution: Implement a Bayesian design that incorporates relevant external control data. This can be done via a meta-analytic-predictive (MAP) prior for the control arm, which effectively reduces the required number of concurrent control patients [7]. Alternatively, consider Bayesian hierarchical models to borrow strength across subgroups or related diseases [14] [7].

Problem 5: Regulatory Scrutiny on the Use of External Information

- Symptoms: Concerns from regulatory agencies about the validity of using historical data or prior information in a new trial.

- Solution: Proactively engage with regulators. Utilize programs like the FDA's Complex Innovative Design (CID) Paired Meeting Program to discuss proposed Bayesian designs [14]. Provide robust evidence for the exchangeability of the external data, and present comprehensive simulation studies showing the operating characteristics (e.g., type I error control, power) of the proposed design under various scenarios [8] [7].

Frequently Asked Questions (FAQs)

FAQ 1: What are Pre-Test and Post-Test Probabilities, and why are they critical?

- The pre-test probability is the estimated chance that a patient has a disease before a diagnostic test result is known. It is based on disease prevalence, patient history, symptoms, and risk factors [9] [10]. The post-test probability is the updated chance of having the disease after the test result is incorporated [11] [10]. They are critical because no test is perfect; the post-test probability provides a clinically actionable probability on which to base treatment decisions or further testing [9].

FAQ 2: How does Bayes' Theorem integrate with this process?

- Bayes' Theorem provides the mathematical framework for updating the pre-test probability to a post-test probability using the likelihood ratio (LR) of the diagnostic test [9] [11]. The formula is applied in terms of odds: Post-test Odds = Pre-test Odds × Likelihood Ratio [11]. This formally recognizes that the context (pre-test probability) is an essential factor in correctly interpreting any test result [9].

FAQ 3: What is a Likelihood Ratio (LR), and how is it interpreted?

- A likelihood ratio (LR) quantifies how much a given test result will raise or lower the probability of disease [9] [11]. The LR for a positive test (LR+) is Sensitivity / (1 - Specificity). The LR for a negative test (LR-) is (1 - Sensitivity) / Specificity [9] [11].

- Interpretation:

- LR = 1: The test result provides no new information.

- LR > 1: The result increases the probability of disease. The further above 1, the greater the increase.

- LR < 1: The result decreases the probability of disease. The closer to 0, the greater the decrease [11].

FAQ 4: How can Bayesian methods accelerate drug development?

- Bayesian statistics allow for the formal incorporation of existing knowledge (prior information) into the design and analysis of clinical trials [8] [14]. This can lead to more efficient trials by:

- Reducing required sample sizes, especially crucial in rare diseases [7].

- Enabling more flexible and adaptive trial designs that can stop early for success or futility [14].

- Providing more intuitive probabilistic statements about treatment effects (e.g., "There is an 85% probability that the treatment is superior to control") [7].

FAQ 5: What are the key challenges in characterizing uncertainty for Likelihood Ratios?

- The primary challenge is that an LR is not an objective, fixed value. Its calculation depends on subjective choices, including [12] [13]:

- The statistical models and software used.

- The assumptions made about the data (e.g., handling of noise, thresholds).

- The features extracted from complex data as valid evidence.

- There is no single "gold standard" test to determine the one correct LR. Therefore, characterizing uncertainty requires exploring a range of reasonable models and assumptions rather than calculating a simple confidence interval [13].

Data Presentation

Table 1: Interpretation of Likelihood Ratios (LRs) and Their Impact on Diagnostic Certainty

| LR Value | Interpretation | Approximate Change in Probability | Typical Use in Decision-Making |
|---|---|---|---|
| > 10 | Large increase in disease probability | +45% | Strong evidence to rule in disease |
| 5 - 10 | Moderate increase in disease probability | +30% | Useful to rule in disease |
| 2 - 5 | Small increase in disease probability | +15% | Slightly increases disease probability |
| 1 - 2 | Minimal increase in disease probability | +5% | Little practical significance |
| 1 | No change | 0% | Test result is uninformative |
| 0.5 - 1.0 | Minimal decrease in disease probability | -5% | Little practical significance |
| 0.2 - 0.5 | Small decrease in disease probability | -15% | Slightly decreases disease probability |
| 0.1 - 0.2 | Moderate decrease in disease probability | -30% | Useful to rule out disease |
| < 0.1 | Large decrease in disease probability | -45% | Strong evidence to rule out disease |

Source: Adapted from [11]. Note: The approximate change is indicative and depends on the pre-test probability.

Table 2: Advantages of Bayesian Methods in Clinical Drug Development

| Application Area | Bayesian Advantage | Practical Implication |
|---|---|---|
| Rare Disease Trials | Incorporates external data (e.g., historical controls) via priors [7]. | Reduces required sample size; makes trials feasible where they otherwise wouldn't be. |
| Dose-Finding Trials | Provides flexible, model-based estimation of toxicity and efficacy [14]. | Identifies the maximum tolerated dose (MTD) more accurately and efficiently. |
| Pediatric Drug Development | Allows borrowing strength from adult efficacy/safety data where appropriate [14]. | Reduces the number of pediatric patients who need to be enrolled in clinical trials. |
| Subgroup Analysis | Uses hierarchical models to share information across subgroups [14]. | Yields more accurate and reliable estimates of drug effects in specific patient groups. |
| Complex Adaptive Designs | Naturally accommodates interim analyses and adaptations [14]. | Allows trials to be modified based on accumulating data, saving time and resources. |

Experimental Protocols

Protocol 1: Calculating and Applying Post-Test Probability using Fagan's Nomogram

Objective: To accurately determine the post-test probability of a disease given a pre-test probability and a test's likelihood ratio.

Materials: Fagan's Nomogram [11] [10], ruler, test sensitivity and specificity data.

Methodology:

- Estimate Pre-Test Probability: Based on clinical findings, disease prevalence, and patient risk factors, estimate the pre-test probability (as a percentage) [9] [10].

- Calculate the Likelihood Ratio: Using the known sensitivity and specificity of the diagnostic test, calculate either LR+ (for a positive test) or LR- (for a negative test) [9] [11].

- LR+ = Sensitivity / (1 - Specificity)

- LR- = (1 - Sensitivity) / Specificity

- Use the Nomogram:

- Locate the pre-test probability on the leftmost line of the nomogram.

- Locate the calculated LR on the central line.

- Place a ruler to connect these two points.

- Read the post-test probability where the ruler intersects the rightmost line [10].
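
The nomogram is a graphical shortcut for the odds form of Bayes' Theorem, so the same result can be computed numerically. The sketch below is a minimal illustration; the function name `post_test_probability` is hypothetical, and the example inputs (a 1% pre-test probability and an LR+ of 10.22) are chosen only to show how a low pre-test probability limits what a positive result can establish.

```python
def post_test_probability(pre_test_probability, likelihood_ratio):
    """Update a pre-test probability with a likelihood ratio via odds."""
    if not 0.0 < pre_test_probability < 1.0:
        raise ValueError("pre_test_probability must be strictly between 0 and 1")
    if likelihood_ratio < 0.0:
        raise ValueError("likelihood_ratio must be non-negative")
    pre_test_odds = pre_test_probability / (1.0 - pre_test_probability)
    post_test_odds = pre_test_odds * likelihood_ratio
    return post_test_odds / (1.0 + post_test_odds)


# Low pre-test probability: a positive result from a good test
# still leaves the post-test probability below 10%.
low_risk = post_test_probability(0.01, 10.22)
print(f"Post-test probability: {low_risk:.1%}")
```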

Protocol 2: Implementing a Bayesian Meta-Analytic-Predictive (MAP) Prior for Control Arms

Objective: To leverage historical control data to reduce the number of concurrent control patients in a rare disease trial.

Materials: Individual patient data or aggregate statistics from previous, exchangeable, randomized control arms [7].

Methodology:

- Assess Exchangeability: Critically evaluate whether the patients in the historical trials are sufficiently similar (exchangeable) to the control patients expected in the new trial. Differences in inclusion criteria, standard of care, or trial conduct can violate this assumption [7].

- Perform Meta-Analysis: Perform a meta-analysis of the historical control data to estimate the mean effect and the between-trial heterogeneity.

- Form the MAP Prior: Use the results of the meta-analysis to construct an informative prior distribution for the control arm parameter in the new trial. This prior will have a mean based on the historical data and a variance that accounts for both within-trial and between-trial uncertainty [7].

- Design and Analysis: Proceed with the trial design, which may use unequal randomization (e.g., 2:1 in favor of the treatment arm). In the final analysis, the posterior distribution of the treatment effect will be derived by combining the MAP prior with the data from the new trial's control and treatment arms [7].

- Validate with Simulation: Before finalizing the design, conduct extensive simulations to understand the trial's operating characteristics (e.g., power, type I error) under various scenarios [7].
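
The meta-analysis and prior-forming steps can be sketched under strong simplifying assumptions: each historical control arm is summarized by a normally distributed estimate (for example a log-odds of response) with a known standard error, and the between-trial standard deviation `tau` is treated as fixed. A full MAP analysis would place a prior on `tau` and typically use MCMC, so this is an approximation for illustration only. The function name and all numbers are hypothetical.

```python
import math


def map_prior_normal(estimates, std_errors, tau):
    """Approximate MAP prior (mean, sd) for the new trial's control parameter.

    Normal-normal hierarchical model with a flat prior on the overall mean
    and a fixed between-trial standard deviation tau.
    """
    # Each trial is weighted by the inverse of its total variance
    weights = [1.0 / (se ** 2 + tau ** 2) for se in std_errors]
    total_weight = sum(weights)
    pooled_mean = sum(w * y for w, y in zip(weights, estimates)) / total_weight
    pooled_mean_variance = 1.0 / total_weight
    # The prediction for a new trial adds between-trial variance
    # to the uncertainty about the overall mean
    prior_sd = math.sqrt(pooled_mean_variance + tau ** 2)
    return pooled_mean, prior_sd


# Hypothetical historical control arms (log-odds of response)
historical_estimates = [-1.2, -0.9, -1.1]
historical_std_errors = [0.30, 0.25, 0.35]

prior_mean, prior_sd = map_prior_normal(
    historical_estimates, historical_std_errors, tau=0.2
)
print(f"MAP prior: mean {prior_mean:.3f}, sd {prior_sd:.3f}")
```

Because the prior standard deviation can never fall below `tau`, greater between-trial heterogeneity automatically limits how much the historical data can contribute.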

Visualization

Diagram 1: Bayesian Probability Update Process

Diagram 2: Uncertainty Characterization for Likelihood Ratios

The Scientist's Toolkit: Research Reagent Solutions

| Tool / Reagent | Function in Research |
|---|---|
| Fagan's Nomogram | A graphical calculator that eliminates the need for manual computation when converting pre-test probability to post-test probability using a likelihood ratio [11] [10]. |
| Meta-Analytic-Predictive (MAP) Prior | A statistical method to synthesize historical control data into a formal prior distribution for Bayesian analysis, crucial for robust trial design with external information [7]. |
| Sensitivity Analysis Software | Software (e.g., R, Python with PyMC, Stan) used to test how robust study conclusions are to changes in priors, models, or assumptions, which is essential for uncertainty characterization [12] [13]. |
| Likelihood Ratio Calculator | A simple tool (spreadsheet or web-based) to compute LR+ and LR- from the counts in a 2x2 contingency table or from sensitivity and specificity values [9] [11]. |
| Bayesian Hierarchical Model | A multi-level statistical model that allows borrowing of information across related subgroups (e.g., different patient cohorts), providing more accurate and stable estimates for each group [14]. |

FAQs: Understanding and Troubleshooting Uncertainty in Your Models

What is the core difference between aleatoric and epistemic uncertainty?

The core difference lies in reducibility. Epistemic uncertainty stems from a lack of knowledge and is, in principle, reducible by collecting more data or improving your models [15] [16]. In contrast, aleatoric uncertainty arises from the intrinsic randomness or variability of a system and cannot be reduced, only better characterized, with more data [15] [16].

Imagine predicting a coin toss. Not knowing the coin's exact weight distribution is epistemic uncertainty; you could reduce it by carefully measuring the coin. The inherent randomness of which side lands up, however, is aleatoric uncertainty.

How can I qualitatively distinguish between these uncertainties in my drug development project?

You can distinguish them by considering the source and potential for resolution [17].

- Epistemic Uncertainty often manifests as:

- Limitations in Clinical Trials: Uncertainty arising from the trial's time-limited nature, restricted patient populations due to inclusion/exclusion criteria, or small sample sizes that make it hard to distinguish signal from noise [17].

- Unknown Unknowns: Gaps in knowledge about important "domains of harm" or long-term effects that were not studied [17].

- Aleatoric Uncertainty often manifests as:

- Human Variability: The inherent biological variation across a real-world population, which means a drug will not have the exact same effect in every individual [17].

- Stochastic Processes: The intrinsic randomness in biological systems, such as unpredictable individual patient responses even under identical conditions.

A practical method is to estimate the total and the aleatoric uncertainty and treat the epistemic uncertainty as the difference. In a deep learning context, this can be done with an ensemble of probabilistic models.

- Measure Total Uncertainty: Use a method like Deep Ensembles or Monte Carlo Dropout to obtain multiple models (or stochastic forward passes), each of which outputs a predictive distribution. The variance of the pooled predictions, which combines the spread between models with the spread each model predicts, reflects the total uncertainty.

- Measure Aleatoric Uncertainty: Have each model output a probability distribution (e.g., mean and standard deviation for regression). The predicted variance for a single input, averaged over the models, captures the aleatoric uncertainty.

- Calculate Epistemic Uncertainty: The difference between the total uncertainty and the aleatoric uncertainty (average predicted variance) provides an estimate of the epistemic uncertainty [16]. By the law of total variance, this difference equals the variance of the models' mean predictions.

If the variance across different models is high, epistemic uncertainty dominates. If the model consistently predicts high variance for individual data points, aleatoric uncertainty dominates.
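
The decomposition can be written down in a few lines once each ensemble member has produced a predicted mean and variance for the same input. The sketch below assumes those per-member outputs are already available; the function name and the numbers are hypothetical and not taken from [16].

```python
import statistics


def decompose_uncertainty(member_means, member_variances):
    """Split predictive variance for one input into its two components.

    aleatoric: average of the variances predicted by each member
    epistemic: variance of the members' predicted means
    total:     their sum (law of total variance)
    """
    aleatoric = statistics.fmean(member_variances)
    epistemic = statistics.pvariance(member_means)
    total = aleatoric + epistemic
    return total, aleatoric, epistemic


# Hypothetical outputs of a five-member ensemble for a single input
member_means = [2.0, 2.4, 1.8, 2.2, 2.1]
member_variances = [0.30, 0.28, 0.35, 0.31, 0.29]

total, aleatoric, epistemic = decompose_uncertainty(member_means, member_variances)
print(f"total={total:.3f} aleatoric={aleatoric:.3f} epistemic={epistemic:.3f}")
```

In this made-up example the members largely agree on the mean but each predicts substantial noise, so aleatoric uncertainty dominates.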

Why is characterizing this distinction critical for regulatory and HTA submissions?

Effectively characterizing and communicating these uncertainties is a key part of regulatory decision-making. Regulators and Health Technology Assessment (HTA) bodies need to understand the sources of uncertainty to evaluate the robustness of a drug's benefit-risk profile [17] [18].

- Epistemic uncertainty informs what is not yet known but could be, potentially guiding post-market surveillance requirements or risk management plans [17].

- Aleatoric uncertainty defines the inherent variability in the treatment effect, which is crucial for understanding the drug's performance in heterogeneous real-world populations [17].

Clearly distinguishing between them allows for a more transparent discussion about which uncertainties can be mitigated and which are intrinsic and must be managed.

A likelihood ratio is a key output in our forensic evidence evaluation. How does uncertainty affect its interpretation?

The likelihood ratio (LR) itself is subject to significant uncertainty, which must be characterized to assess its fitness for purpose. Presenting a single LR value without acknowledging this uncertainty can be misleading [19].

The uncertainty in an LR stems from both aleatoric and epistemic sources. For example, in a fingerprint comparison:

- Aleatoric: The natural variation in fingerprint patterns across the population.

- Epistemic: The limitations of the automated comparison algorithm, the quality of the fingerprint images, and the specific dataset used to calibrate the scores.

A robust framework involves building an "uncertainty pyramid" by evaluating the LR under a lattice of different assumptions and models. This helps quantify the uncertainty in the LR value and ensures it is communicated alongside the result [19].

Diagnostic Tables for Uncertainty Characterization

| Feature | Aleatoric Uncertainty | Epistemic Uncertainty |
|---|---|---|
| Also Known As | Statistical, stochastic, or intrinsic uncertainty | Systematic, subjective, or model uncertainty |
| Origin | Inherent randomness, natural variability | Lack of knowledge, incomplete information |
| Reducible? | No | Yes |
| Common in | Real-world populations, measurement error | Small data, model misspecification |
| Data Relationship | Irreducible with more data | Decreases with more data |
| Modeling Approach | Probabilistic outputs (e.g., predictive variance) | Bayesian inference, model ensembles |

Troubleshooting Guide: Symptoms and Solutions for High Uncertainty

| Symptom | Likely Dominant Uncertainty | Potential Mitigation Strategies |
|---|---|---|
| Model performance improves significantly with more training data. | Epistemic | Collect more data, use a more robust model architecture, perform feature engineering. |
| Model performance plateaus despite more data; predictions are consistently "fuzzy". | Aleatoric | Reframe the problem to predict distributions, incorporate measurement error models, set realistic performance expectations. |
| Model predictions are overconfident and wrong on novel data types. | Epistemic | Use Bayesian methods, ensemble models, or out-of-distribution detection techniques. |
| High variance in model outputs when retrained with different initializations. | Epistemic | Increase model stability with regularization, use ensemble averages as the final prediction. |

Experimental Protocols for Characterizing Uncertainty

Protocol 1: Quantifying Aleatoric Uncertainty with a Probabilistic Output Model

This protocol uses a neural network designed to learn and output the inherent noise (aleatoric uncertainty) in the data.

- Hypothesis: A neural network with a probabilistic output layer can robustly quantify the aleatoric uncertainty in a regression task, and this uncertainty will not decrease with a larger dataset.

- Materials:

- TensorFlow Probability library [16].

- Training dataset (full and a small subset).

- Methodology:

- Model Architecture: Construct a model where the final layer is a `DistributionLambda` layer. The preceding layer should have two neurons, representing the mean and standard deviation of a normal distribution.

- Loss Function: Use the negative log-likelihood as the loss function. This allows the model to learn to predict the mean and variance by maximizing the likelihood of the training data.

- Training: Train the model separately on both the full dataset and a small subset of the data.

- Expected Outcome: The predicted standard deviation (aleatoric uncertainty) from models trained on both datasets will be similar, demonstrating that this uncertainty is a property of the data itself and not reducible by adding more samples [16].
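
To make the loss function concrete, the sketch below implements the Gaussian negative log-likelihood in plain Python. It is a stand-in for what the probabilistic output layer optimizes, not TensorFlow Probability code. The function name and the toy data are hypothetical. The example shows that, for a fixed mean, the loss is lowest when the predicted standard deviation matches the actual spread of the targets, which is why minimizing it teaches the model the noise level.

```python
import math


def gaussian_nll(targets, means, stds):
    """Average negative log-likelihood of targets under Normal(mean, std)."""
    total = 0.0
    for y, mu, sigma in zip(targets, means, stds):
        total += 0.5 * math.log(2.0 * math.pi * sigma ** 2)
        total += (y - mu) ** 2 / (2.0 * sigma ** 2)
    return total / len(targets)


# Toy data: two targets scattered one unit either side of the predicted mean
targets = [1.0, 3.0]
means = [2.0, 2.0]

nll_too_narrow = gaussian_nll(targets, means, [0.5, 0.5])  # overconfident
nll_matched = gaussian_nll(targets, means, [1.0, 1.0])     # matches the spread
nll_too_wide = gaussian_nll(targets, means, [2.0, 2.0])    # underconfident

print(nll_too_narrow, nll_matched, nll_too_wide)
```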

Protocol 2: Quantifying Epistemic Uncertainty with Bayesian Neural Networks

This protocol uses variational inference to approximate the posterior distribution over model weights, thereby quantifying epistemic uncertainty.

- Hypothesis: The epistemic uncertainty in a model's predictions, represented by the variation in predictions from a Bayesian neural network, will decrease as the size of the training dataset increases.

- Materials:

- TensorFlow Probability library [16].

- Training dataset (full and a small subset).

- Methodology:

- Model Architecture: Construct a model using `DenseVariational` layers. These layers place prior distributions over their weights and use variational inference to learn the posterior distributions.

- Training: Train the model on both the full dataset and a small subset.

- Inference: For a given input, perform multiple forward passes (sampling from the posterior weight distributions each time) to generate a distribution of predictions.

- Expected Outcome: The standard deviation of the predictions (epistemic uncertainty) will be significantly larger for the model trained on the small dataset compared to the model trained on the full dataset. This demonstrates that more data reduces epistemic uncertainty [16].
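
The expected outcome can be illustrated without a neural network. The sketch below swaps the Bayesian neural network for a bootstrap ensemble of straight-line fits, which is a different and much simpler technique, and compares the spread of predictions at one input for a small and a large simulated dataset. The data-generating process, sample sizes, and function names are all assumptions made for this example.

```python
import random
import statistics


def simulate(n, seed):
    """Simulate noisy linear data: y = 2x + 1 + Normal(0, 3) noise."""
    rng = random.Random(seed)
    xs = [rng.uniform(0.0, 10.0) for _ in range(n)]
    ys = [2.0 * x + 1.0 + rng.gauss(0.0, 3.0) for x in xs]
    return xs, ys


def fit_line(xs, ys):
    """Ordinary least squares for a single predictor; None if x has no spread."""
    mean_x, mean_y = statistics.fmean(xs), statistics.fmean(ys)
    sxx = sum((x - mean_x) ** 2 for x in xs)
    if sxx == 0.0:
        return None
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def bootstrap_prediction_sd(xs, ys, x_new, n_models=200, seed=0):
    """Spread of predictions at x_new across models fit to bootstrap resamples."""
    rng = random.Random(seed)
    n = len(xs)
    predictions = []
    for _ in range(n_models):
        idx = [rng.randrange(n) for _ in range(n)]
        fit = fit_line([xs[i] for i in idx], [ys[i] for i in idx])
        if fit is None:
            continue  # degenerate resample, skip it
        slope, intercept = fit
        predictions.append(slope * x_new + intercept)
    return statistics.stdev(predictions)


sd_small = bootstrap_prediction_sd(*simulate(15, seed=1), x_new=5.0)
sd_large = bootstrap_prediction_sd(*simulate(1500, seed=1), x_new=5.0)
print(f"prediction sd, small dataset: {sd_small:.3f}")
print(f"prediction sd, large dataset: {sd_large:.3f}")
```

The noise level in the simulated data is the same in both cases, so the narrower spread for the large dataset reflects reduced epistemic uncertainty only.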

Workflow and Conceptual Diagrams

Aleatoric vs Epistemic Uncertainty Workflow

Likelihood Ratio Uncertainty Assessment

The Scientist's Toolkit: Key Research Reagents & Solutions

Essential Computational Tools for Uncertainty Quantification

| Tool / Solution | Function in Uncertainty Characterization |
|---|---|
| TensorFlow Probability (TFP) | A Python library for probabilistic modeling and Bayesian neural networks. Essential for implementing models that separate aleatoric and epistemic uncertainty [16]. |
| Bayesian Neural Network | A neural network with prior distributions on its weights. The primary tool for quantifying epistemic uncertainty in deep learning [16]. |
| Monte Carlo Dropout | A technique to approximate Bayesian inference by using dropout at test time. Multiple forward passes generate a predictive distribution for estimating uncertainty. |
| Markov Chain Monte Carlo (MCMC) | A class of algorithms for sampling from complex probability distributions, often used for fitting Bayesian models and estimating posterior distributions. |
| Likelihood Ratio Framework | A formal framework for evaluating evidence, requiring careful characterization of its own uncertainty through sensitivity analysis and an "uncertainty pyramid" [19]. |

A Likelihood Ratio (LR) quantifies how much a specific test result will change the odds of having a disease. It is the likelihood that a given test result would occur in a patient with the target disorder compared to the likelihood that the same result would occur in a patient without the disorder [1]. Because LRs are derived from sensitivity and specificity, they are less likely to change with the prevalence of a disorder than metrics such as predictive values, which makes them particularly valuable for evidence-based assessment [1].

Interpreting LR Values: Strength-of-Evidence Scale

The power of an LR to change your pre-test suspicion into a post-test probability can be categorized on a standard strength-of-evidence scale. The following table provides a consensus framework for interpreting LR values.

Table 1: Strength-of-Evidence Scale for Likelihood Ratios

| LR Value | Interpretive Strength | Effect on Post-Test Probability |

|---|---|---|

| > 10 | Large (and often conclusive) increase | Significantly increases the likelihood of disease |

| 5 - 10 | Moderate increase | Moderate increase in the likelihood of disease |

| 2 - 5 | Small (but sometimes important) increase | Small increase in the likelihood of disease |

| 1 - 2 | Minimal increase | Alters probability to a minimal (and rarely important) degree |

| 1 | No change | No change in probability |

| 0.5 - 1.0 | Minimal decrease | Alters probability to a minimal (and rarely important) degree |

| 0.2 - 0.5 | Small decrease | Small decrease in the likelihood of disease |

| 0.1 - 0.2 | Moderate decrease | Moderate decrease in the likelihood of disease |

| < 0.1 | Large (and often conclusive) decrease | Significantly decreases the likelihood of disease [1] |

Practical Application of the Scale

- LRs greater than 1 increase the probability that the target disorder is present. The higher the LR, the greater the increase.

- LRs less than 1 decrease the probability of the disorder. The closer the LR is to zero, the greater the decrease.

- An LR of 1 does not change the probability at all, making the test result diagnostically useless [1].
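
The bands in Table 1 can be turned into a small lookup. The sketch below is illustrative only: the function name `interpret_lr` is hypothetical, and because adjacent bands in the table share their endpoints (for example 5 appears in both "2 - 5" and "5 - 10"), it assumes that a shared endpoint belongs to the stronger band.

```python
def interpret_lr(lr: float) -> str:
    """Map a likelihood ratio onto the strength-of-evidence bands of Table 1.

    Assumption: a value on a boundary shared by two bands (5, 2, 0.5, 0.2)
    is assigned to the stronger band; the table itself leaves this open.
    """
    if lr < 0:
        raise ValueError("a likelihood ratio cannot be negative")
    if lr > 10:
        return "large increase"
    if lr >= 5:
        return "moderate increase"
    if lr >= 2:
        return "small increase"
    if lr > 1:
        return "minimal increase"
    if lr == 1:
        return "no change"
    if lr > 0.5:
        return "minimal decrease"
    if lr > 0.2:
        return "small decrease"
    if lr >= 0.1:
        return "moderate decrease"
    return "large decrease"


print(interpret_lr(6))     # moderate increase
print(interpret_lr(0.05))  # large decrease
```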

Experimental Protocol: Calculating and Applying LRs

This section provides a detailed methodology for calculating LRs and applying them to update disease probability.

Step 1: Organize Data into a 2x2 Contingency Table

The first step involves classifying all patients into one of four groups based on their disease status (as determined by a "gold standard" test) and their result on the new diagnostic test.

Table 2: Diagnostic Test Results 2x2 Table

| | Disease Present (Gold Standard +) | Disease Absent (Gold Standard -) |

|---|---|---|

| Test Positive | True Positives (a) | False Positives (b) |

| Test Negative | False Negatives (c) | True Negatives (d) |

Step 2: Calculate Sensitivity and Specificity

- Sensitivity = a / (a + c)

- Specificity = d / (b + d) [2]

Step 3: Calculate the Likelihood Ratios

- Positive Likelihood Ratio (LR+) = Sensitivity / (1 - Specificity)

- Negative Likelihood Ratio (LR-) = (1 - Sensitivity) / Specificity [2] [1]
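
Steps 1 to 3 can be sketched in a few lines of Python. The function name `diagnostic_metrics` and the cell counts in the usage line are hypothetical and chosen only for illustration; the cell labels a, b, c, d follow Table 2.

```python
def diagnostic_metrics(a: int, b: int, c: int, d: int) -> dict:
    """Sensitivity, specificity, LR+ and LR- from a 2x2 table.

    a = true positives, b = false positives,
    c = false negatives, d = true negatives (labels as in Table 2).
    """
    sensitivity = a / (a + c)
    specificity = d / (b + d)
    # Guard the two degenerate cases where a denominator would be zero.
    lr_pos = sensitivity / (1 - specificity) if specificity < 1 else float("inf")
    lr_neg = (1 - sensitivity) / specificity if specificity > 0 else float("inf")
    return {
        "sensitivity": sensitivity,
        "specificity": specificity,
        "lr_pos": lr_pos,
        "lr_neg": lr_neg,
    }


# Hypothetical counts, not taken from any study.
metrics = diagnostic_metrics(a=90, b=20, c=10, d=80)
print(metrics)
```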

Step 4: Apply the LR to Calculate Post-Test Probability

To use an LR, you must first estimate the pre-test probability (your initial suspicion of disease based on history, prevalence, etc.) and then convert it to pre-test odds.

Convert Pre-test Probability to Pre-test Odds: Pre-test Odds = Pre-test Probability / (1 - Pre-test Probability) [1]

Calculate Post-test Odds: Post-test Odds = Pre-test Odds × Likelihood Ratio [1]

Convert Post-test Odds to Post-test Probability: Post-test Probability = Post-test Odds / (Post-test Odds + 1) [1]

Example Calculation: A patient has a pre-test probability of 50% for iron deficiency anemia. A serum ferritin test returns a positive result with an LR+ of 6.

- Pre-test Odds = 0.50 / (1 - 0.50) = 1

- Post-test Odds = 1 × 6 = 6

- Post-test Probability = 6 / (6 + 1) = 86% [1]
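
The three conversions in Step 4 can be written as one helper. This is a minimal sketch with a hypothetical function name; it reproduces the iron deficiency example above.

```python
def post_test_probability(pre_test_probability: float, likelihood_ratio: float) -> float:
    """Update a pre-test probability with a likelihood ratio via odds."""
    if not 0 < pre_test_probability < 1:
        raise ValueError("pre-test probability must lie strictly between 0 and 1")
    pre_test_odds = pre_test_probability / (1 - pre_test_probability)
    post_test_odds = pre_test_odds * likelihood_ratio
    return post_test_odds / (post_test_odds + 1)


# Pre-test probability 50%, LR+ of 6, as in the worked example.
print(round(post_test_probability(0.50, 6), 2))  # 0.86
```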

The diagram below illustrates this workflow for applying LRs in diagnostic decision-making.

Research Reagent Solutions: Key Materials for Diagnostic Test Evaluation

Table 3: Essential Materials for Diagnostic Test Assessment

| Research Reagent / Material | Primary Function |

|---|---|

| Gold Standard Test | Provides the definitive diagnosis against which the new diagnostic test is validated. Essential for classifying patients into true disease states [2]. |

| New Diagnostic Test / Assay | The test or instrument under investigation. Its results are compared to the gold standard to populate the 2x2 table. |

| Statistical Analysis Software | Used to perform calculations for sensitivity, specificity, predictive values, and likelihood ratios accurately and efficiently [2]. |

| Validated Patient Population | A well-characterized cohort of subjects with and without the target disorder. Crucial for generating reliable and generalizable test metrics [2]. |

Frequently Asked Questions (FAQs)

Q1: Why use LRs instead of sensitivity and specificity? LRs have several advantages: they are less likely to change with disease prevalence, can be calculated for multiple levels of a test (not just positive/negative), can be used to combine multiple test results, and directly enable the calculation of post-test probability [1].

Q2: How do I know if an LR is "good enough" for clinical use? Refer to the Strength-of-Evidence Scale in Table 1. As a general rule, LRs greater than 10 or less than 0.1 generate large and often conclusive shifts in probability. LRs between 5-10 or 0.1-0.2 generate moderate shifts. Results with LRs closer to 1 have minimal diagnostic value [1].

Q3: Can LRs be used for tests with continuous results? Yes. A powerful application of LRs is creating multilevel LRs for different intervals of a continuous test result (e.g., serum ferritin levels of <15, 15-60, >60 mmol/L). This provides a much more nuanced and useful interpretation than a single positive/negative cutoff [1].

Q4: How does this relate to uncertainty characterization in drug development? In drug development, decisions are made under significant uncertainty. Quantitative frameworks like the Probability of Success (PoS) are used to inform key milestones [20] [21]. The rigorous, probabilistic interpretation of evidence via LRs in diagnostics is methodologically aligned with these approaches, emphasizing the need to quantify and manage uncertainty in all stages of biomedical research.
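
As an illustration of the multilevel LRs mentioned in Q3, the sketch below computes one LR per result interval as the proportion of diseased patients falling in that interval divided by the proportion of non-diseased patients falling in it. The function name and all counts are hypothetical; the interval labels simply mirror the example cut-offs.

```python
def multilevel_lrs(diseased: dict, non_diseased: dict) -> dict:
    """LR for each result level: P(level | disease) / P(level | no disease)."""
    total_diseased = sum(diseased.values())
    total_non_diseased = sum(non_diseased.values())
    lrs = {}
    for level in diseased:
        p_level_diseased = diseased[level] / total_diseased
        p_level_non_diseased = non_diseased[level] / total_non_diseased
        lrs[level] = (
            p_level_diseased / p_level_non_diseased
            if p_level_non_diseased > 0
            else float("inf")
        )
    return lrs


# Hypothetical patient counts per test-result interval.
diseased_counts = {"<15": 60, "15-60": 30, ">60": 10}
non_diseased_counts = {"<15": 5, "15-60": 25, ">60": 70}
level_lrs = multilevel_lrs(diseased_counts, non_diseased_counts)
print(level_lrs)
```

Each interval gets its own LR, so a strongly abnormal result can carry far more evidential weight than a borderline one that would share the same "positive" label under a single cut-off.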

Advanced Methods and Real-World Applications in Clinical Research and Drug Safety

Uncertainty-Aware Likelihood Ratio Estimation for Robust Out-of-Distribution Detection

Frequently Asked Questions (FAQs)

Q1: What is the core principle behind using likelihood ratios for Out-of-Distribution (OoD) detection?

The core principle treats OoD detection not as simple density estimation but as a model selection problem between two hypotheses: the input comes either from the in-distribution (e.g., known classes in your training set) or from an out-of-distribution source [22]. A likelihood ratio test provides a principled statistical framework for this comparison. Instead of relying solely on the likelihood under the in-distribution model, the method calculates the ratio of the likelihood under the in-distribution model to the likelihood under a proxy out-of-distribution model. A low score indicates the data is more likely under the OoD model, thus flagging it as anomalous [23] [22]. Incorporating uncertainty awareness allows the model to account for areas where the in-distribution data itself is uncertain, preventing overconfidence on rare or ambiguous inputs [24].

Q2: My OoD detection model is confidently misclassifying unknown objects. What could be wrong?

This is a common pathology of many standard OoD detection methods. As critically examined in [25], if your model is a standard supervised classifier trained only on in-distribution classes, it is fundamentally answering the wrong question. It learns features to distinguish between known classes (e.g., cats vs. dogs) but has no inherent reason to identify something fundamentally different (e.g., an airplane). Such a model can produce high-confidence (low uncertainty) predictions for OoD inputs if they possess features that help discriminate between the known classes. A shift towards uncertainty-aware likelihood ratio methods is recommended, as they explicitly model a distribution for outliers and incorporate epistemic uncertainty, which helps in identifying these "confidently wrong" cases [24] [25].

Q3: How can I improve my model's OoD detection without compromising its performance on known classes?

Retraining a core model on outlier data can disrupt its carefully learned feature representations, harming in-distribution performance. This is especially critical when using large foundational models where retraining is computationally expensive. The solution is to use a lightweight Unknown Estimation Module (UEM). The UEM is a small add-on network that is trained on top of the frozen, pre-trained core model. It learns to model a generic in-distribution and a proxy OoD distribution from data, allowing the calculation of a likelihood ratio score. Because the core model's parameters are fixed, its strong performance on known classes is preserved while OoD detection capabilities are significantly enhanced [23] [22].

Q4: What are the practical computational requirements for implementing these methods?

The uncertainty-aware likelihood ratio method is designed for efficiency. As reported in [24], it achieves state-of-the-art performance with only a negligible computational overhead. The use of an Unknown Estimation Module (UEM) also aligns with this goal, as it is an adaptive, lightweight component that avoids the need for retraining large models [23] [22]. The primary computational cost for methods using foundational models like DINOv2 is the initial feature extraction, but the OoD-specific enhancements themselves are efficient.

Troubleshooting Guides

Problem: High False Positive Rate in Near-OoD Scenarios

The model is incorrectly flagging rare or difficult examples from known classes as out-of-distribution.

| Potential Cause | Recommended Solution |

|---|---|

| In-distribution uncertainty is not accounted for. | Implement an evidential classifier to model epistemic uncertainty. This allows the likelihood ratio test to distinguish between true outliers and hard in-distribution examples [24]. |

| The feature representation is not robust enough. | Leverage a large-scale foundational model (e.g., DINOv2) as a feature backbone. Their rich and generalizable representations improve separation between known and unknown classes [22]. |

| The OoD model is too simplistic. | Replace simple distance-based metrics with a learned likelihood ratio. Train a small module to explicitly model a proxy OoD distribution for more robust comparison [23] [22]. |

Problem: Poor OoD Generalization Despite Outlier Exposure

The model does not generalize well to real-world unknowns despite being trained with proxy outlier data.

| Potential Cause | Recommended Solution |

|---|---|

| Proxy outliers are not representative. | Use a nuisance-aware diffusion model to generate diverse and challenging semantic outliers, which provides better supervision for the OoD detector [26]. |

| Training disrupts the feature space. | Freeze the core feature extractor and only train a lightweight adaptive UEM on top. This utilizes outlier data without corrupting the original feature space [23] [22]. |

| The scoring function is not directly optimized for OoD. | Employ a loss function that directly optimizes the likelihood ratio score, ensuring the training objective is aligned with the OoD detection goal [23]. |

Experimental Protocols & Data

Protocol 1: Implementing an Unknown Estimation Module (UEM)

This protocol outlines the steps to add a UEM to a pre-trained segmentation model for OoD detection [23] [22].

- Feature Extraction: Use a frozen pre-trained model (e.g., a foundational model like DINOv2 or a standard segmentation network) to extract pixel-wise feature vectors for each input image.

- Module Architecture: Define a small, trainable neural network (the UEM) that takes these feature vectors as input.

- Distribution Learning: Train the UEM with two objectives:

- Learn a single, class-agnostic probability distribution that models the features of all in-distribution classes.

- Learn a separate probability distribution using proxy outlier data (e.g., from a different dataset or generated synthetically) to model the OoD features.

- Likelihood Ratio Calculation: For a new test sample's feature ( z ), compute the likelihood ratio ( \Lambda(z) = \frac{p_{in}(z)}{p_{out}(z)} ), where ( p_{in} ) is the likelihood from the in-distribution model and ( p_{out} ) is the likelihood from the outlier model.

- Score Fusion: The final anomaly score can be a fusion of the likelihood ratio and the original model's softmax confidence for robust decision-making.
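
The likelihood ratio step can be illustrated with a deliberately simple stand-in. In the sketch below, two diagonal Gaussians replace the learned UEM distributions; this is an assumption made for illustration and not the architecture of [23] [22]. The feature vectors are hypothetical 2-D points rather than real backbone features, and the score is computed in log space for numerical stability.

```python
import math
from statistics import mean, pvariance


def fit_diag_gaussian(features):
    """Per-dimension mean and variance of a list of feature vectors."""
    dims = list(zip(*features))
    mu = [mean(d) for d in dims]
    var = [pvariance(d) + 1e-6 for d in dims]  # small floor avoids zero variance
    return mu, var


def log_density(z, params):
    """Log-density of z under a diagonal Gaussian (mu, var)."""
    mu, var = params
    return sum(
        -0.5 * (math.log(2 * math.pi * v) + (x - m) ** 2 / v)
        for x, m, v in zip(z, mu, var)
    )


def log_likelihood_ratio(z, p_in, p_out):
    """log p_in(z) - log p_out(z); low values suggest an OoD input."""
    return log_density(z, p_in) - log_density(z, p_out)


# Hypothetical features: inliers near the origin, proxy outliers near (3, 3).
inlier_feats = [(0.1, 0.0), (-0.2, 0.3), (0.0, -0.1), (0.2, 0.1)]
outlier_feats = [(3.0, 3.2), (2.8, 2.9), (3.3, 3.1), (3.1, 2.7)]
p_in = fit_diag_gaussian(inlier_feats)
p_out = fit_diag_gaussian(outlier_feats)

score_known = log_likelihood_ratio((0.05, 0.05), p_in, p_out)
score_unknown = log_likelihood_ratio((3.0, 3.0), p_in, p_out)
print(score_known > 0, score_unknown < 0)
```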

Protocol 2: Training with Uncertainty-Aware Likelihood Ratio

This protocol describes the core method from [24] for pixel-wise OoD detection.

- Model Setup: Employ an evidential classifier (e.g., using Dirichlet distributions) at the output of your segmentation network to capture predictive uncertainty.

- Uncertainty Propagation: Instead of using point estimates for features, propagate the uncertainty through the likelihood ratio test. This means the likelihoods ( p_{in}(z) ) and ( p_{out}(z) ) are themselves distributions that account for uncertainty in the feature space.

- Synthetic Outlier Exposure: During training, expose the model to synthetically generated outliers. The evidential framework allows the model to explicitly account for the imperfection of these synthetic outliers.

- Testing: At inference, the calculated uncertainty-aware likelihood ratio provides the OoD score. A low score indicates an OoD pixel.
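
To make the idea of a Dirichlet-based evidential output concrete, the sketch below uses the subjective-logic parameterization that is common in evidential deep learning: non-negative evidence per class is turned into Dirichlet concentration parameters, and the total concentration determines how much uncertainty remains. This is an assumed, generic formulation and may differ from the exact model in [24]; the function name and evidence values are hypothetical.

```python
def dirichlet_summary(evidence):
    """Expected class probabilities and vacuity from per-class evidence.

    Assumed parameterization: alpha_k = evidence_k + 1, so zero evidence
    yields a uniform Dirichlet and a vacuity (uncertainty mass) of 1.
    """
    alpha = [e + 1.0 for e in evidence]
    strength = sum(alpha)
    probabilities = [a / strength for a in alpha]
    vacuity = len(alpha) / strength
    return probabilities, vacuity


# Hypothetical evidence vectors for a 3-class problem.
probs_confident, vacuity_confident = dirichlet_summary([50.0, 1.0, 1.0])
probs_unsure, vacuity_unsure = dirichlet_summary([0.0, 0.0, 0.0])
print(vacuity_confident, vacuity_unsure)
```

A pixel with little evidence for any known class ends up with high vacuity, which is the kind of signal an uncertainty-aware score can use to separate hard inliers from genuine outliers.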

The following table summarizes quantitative results from the cited works, demonstrating the effectiveness of these approaches on standard benchmarks.

Table 1: Performance comparison of likelihood ratio-based OoD detection methods.

| Method | Key Innovation | Average Precision (↑) | False Positive Rate (at 95% TP) (↓) | Key Metric Achievement |

|---|---|---|---|---|

| Uncertainty-Aware Likelihood Ratio [24] | Evidential classifier + likelihood ratio test with uncertainty propagation. | 90.91% (Avg. across 5 benchmarks) | 2.5% (Lowest avg. across 5 benchmarks) | State-of-the-art FPR. |

| Likelihood Ratio with UEM [23] [22] | Lightweight Unknown Estimation Module (UEM) on a foundational model. | Outperformed previous best by +5.74% (Avg. AP) | Lower than previous best | New state-of-the-art AP without affecting inlier performance. |

The Scientist's Toolkit

Table 2: Essential research reagents and computational tools for OoD detection research.

| Research Reagent / Tool | Function in Experiment |

|---|---|

| Foundational Model (DINOv2) | Provides a robust, general-purpose visual feature backbone. Used to extract high-quality features without the need for task-specific retraining [22]. |

| Proxy Outlier Datasets | Datasets (e.g., ImageNet-1K, OpenImages) used as surrogate OoD examples during training to teach the model the concept of "unknown" [23] [22]. |

| Synthetic Outlier Generator | A generative model (e.g., a diffusion model) used to create artificial OoD data, offering greater control and diversity over the outliers seen during training [26]. |

| Evidential Deep Learning Library | Software (e.g., PyTorch or TensorFlow implementations) to model epistemic uncertainty using Dirichlet distributions or other belief functions [24]. |

| Benchmark Datasets | Standardized OoD benchmarks (e.g., Fishyscapes, Segment-Me-If-You-Can) for evaluating and comparing the performance of different OoD detection methods [24] [23]. |

Methodological Workflows

Diagram: Uncertainty-Aware Likelihood Ratio Estimation System

Diagram: Experimental Workflow for UEM Integration

Applying Likelihood Ratio Tests for Drug Safety Signal Detection in Meta-Analyses

Core Concepts and Methodology

What is the fundamental principle behind using Likelihood Ratio Tests (LRT) for drug safety signal detection in multiple studies?

The LRT method for drug safety signal detection uses a two-step approach to identify signals of adverse events (AEs) across multiple studies. In the first step, the regular LRT is applied to safety data from each individual study. In the second step, the LRT test statistics from different studies are combined to derive an overall test statistic for conducting a global test at a prespecified significance level. If the global null hypothesis is rejected, the data provides evidence of a safety signal overall [4].

This approach addresses a key limitation of traditional meta-analysis methods, which don't adequately account for heterogeneity across studies in signal detection. The method works by estimating the log-likelihood ratio function from each study, then summing these functions to obtain a combined function used to derive the total effect estimate [27].

How does the LRT method handle drug exposure information across different studies?

When drug exposure information is available and consistent across studies, the LRT formulation can incorporate this data by replacing simple cell counts with actual exposure measures. However, the drug exposure definition must be consistent and comparable across different studies included in a single meta-analysis. When precise drug exposure information is unavailable, as often occurs in passive surveillance systems, the method uses reported AE counts as an approximation [4].

The log-likelihood ratio statistic with exposure information is calculated as [4]:

logLRij = nij × [log(nij) - log(Eij)] + (n.j - nij) × [log(n.j - nij) - log(n.j - Eij)]

Where Eij = (Pi × n.j)/P. is the expected count, Pi is the drug exposure for drug i, and P. is the total drug exposure across all drugs.
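
A minimal sketch of this statistic, written as the logarithm of (nij/Eij)^nij × ((n.j - nij)/(n.j - Eij))^(n.j - nij) with Eij = (Pi × n.j)/P. The function names are hypothetical, the counts and exposures in the usage lines are made up, and the convention 0 × log(0) = 0 is assumed for empty cells. The second function expresses the same quantity directly in terms of Pi and P. as a cross-check.

```python
import math


def _xlogy(x: float, y: float) -> float:
    """x * log(y) with the convention that the term is 0 when x is 0."""
    return 0.0 if x == 0 else x * math.log(y)


def log_lr(n_ij: float, n_dot_j: float, p_i: float, p_dot: float) -> float:
    """Log-likelihood ratio using the expected count E_ij = P_i * n_.j / P_."""
    if not 0 < p_i < p_dot:
        raise ValueError("drug exposure must satisfy 0 < P_i < P_.")
    e_ij = p_i * n_dot_j / p_dot
    rest = n_dot_j - n_ij
    return _xlogy(n_ij, n_ij / e_ij) + _xlogy(rest, rest / (n_dot_j - e_ij))


def log_lr_exposure_form(n_ij: float, n_dot_j: float, p_i: float, p_dot: float) -> float:
    """Same statistic written with exposures instead of E_ij."""
    rest = n_dot_j - n_ij
    return (
        _xlogy(n_ij, n_ij / p_i)
        + _xlogy(rest, rest / (p_dot - p_i))
        - _xlogy(n_dot_j, n_dot_j / p_dot)
    )


# Hypothetical data: 30 of 100 reports of an AE on a drug with 10% of exposure.
observed = log_lr(30, 100, 1000.0, 10000.0)
cross_check = log_lr_exposure_form(30, 100, 1000.0, 10000.0)
print(observed, cross_check)
```

When the observed count equals the expected count the statistic is zero, and it grows as the observed count departs from expectation.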

Implementation Guide

What are the specific LRT methods available for meta-analysis of safety data?

Researchers can employ several LRT-based approaches for drug safety signal detection from large observational databases with multiple studies:

Table 1: LRT Methods for Drug Safety Signal Detection in Meta-Analyses

| Method Name | Description | Key Features | Best Use Cases |

|---|---|---|---|

| Simple Pooled LRT | Combines likelihood ratio statistics across studies without weighting | Simple implementation; assumes homogeneity | Preliminary analysis; homogeneous study designs |

| Weighted LRT | Incorporates total drug exposure information by study | Accounts for varying exposure levels; more precise | When reliable drug exposure data is available |

| Likelihood Ratio Meta-Analysis (LRMA) | Uses intrinsic confidence intervals based on combined likelihood functions | Avoids limitations of traditional 95% CIs in updates | Updated meta-analyses; when avoiding type-I error inflation is critical |

What are the computational steps to implement the weighted LRT method?

The implementation follows a structured workflow:

Step-by-Step Protocol:

- Data Preparation: Organize data into 2×2 contingency tables for each drug-AE pair across all studies

- Study-Level LRT Calculation: Compute regular LRT statistics for each study using the formula [4]:

LRij = (nij/Eij)^nij × ((n.j - nij)/(n.j - Eij))^(n.j - nij)

- Exposure Weighting: Calculate weights based on drug exposure metrics (e.g., total patient-time, dose units)

- Statistical Combination: Combine weighted LRT statistics across studies using appropriate meta-analytic models

- Global Testing: Evaluate the combined statistic against appropriate critical values or compute p-values

- Multiple Testing Adjustment: Apply false discovery rate controls to account for many drug-AE pairs tested
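
For the multiple testing adjustment, one widely used false discovery rate procedure is Benjamini-Hochberg. The sketch below is an assumption about which procedure to use, since the protocol does not name one; it also assumes independent or positively dependent tests, which may not hold for correlated drug-AE pairs. The p-values are hypothetical.

```python
def benjamini_hochberg(p_values, q=0.05):
    """Return a list of booleans: True where the hypothesis is rejected.

    Step-up rule: find the largest rank k with p_(k) <= q * k / m and
    reject the k hypotheses with the smallest p-values.
    """
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    cutoff_rank = 0
    for rank, idx in enumerate(order, start=1):
        if p_values[idx] <= q * rank / m:
            cutoff_rank = rank
    rejected = [False] * m
    for rank, idx in enumerate(order, start=1):
        if rank <= cutoff_rank:
            rejected[idx] = True
    return rejected


# Hypothetical p-values for five drug-AE pairs.
signals = benjamini_hochberg([0.6, 0.001, 0.041, 0.008, 0.039], q=0.05)
print(signals)  # [False, True, False, True, False]
```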

Troubleshooting Common Issues

Why should I not use standard likelihood-ratio tests after ML estimation with clustering or sampling weights?

The "likelihood" for clustered or sampling-weighted (pweighted) maximum likelihood estimates is not a true likelihood because it doesn't represent the actual distribution of the sample. When clustering exists, individual observations are no longer independent, and the pseudolikelihood doesn't reflect this dependency. With sampling weights, the likelihood doesn't fully account for the randomness of the weighted sampling process [28].

Solution: Instead of likelihood-ratio tests, use Wald tests after estimating clustered or weighted MLEs. For complex survey data, the svy commands with adjusted Wald tests are recommended, particularly when the total number of clusters is small (<100). The Bonferroni adjustment can also be applied when testing multiple hypotheses, though it may be conservative if hypotheses are highly collinear [28].

How should I handle heterogeneity across studies when applying LRT methods?

Significant heterogeneity across studies can lead to misleading signal detection results. The LRMA framework provides specific approaches to address this:

Fixed Effect vs. Random Effects Considerations:

- Fixed effect LRT assumes each study estimates the same common effect

- Random effects LRT should be used when studies may be measuring different effects

- The choice depends on whether studies share a common analytical protocol and patient populations [27]

Diagnostic Steps:

- Test for heterogeneity using standard measures (I², Q-statistic) before applying LRT methods

- If significant heterogeneity exists, consider random effects LRT extensions

- Evaluate whether heterogeneity stems from study design, population differences, or outcome definitions
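
The first diagnostic step can be sketched with the standard inverse-variance definitions of Cochran's Q and I². The function name is hypothetical, and the study-level effect estimates and variances below are made up for illustration.

```python
def cochran_q_i2(effects, variances):
    """Cochran's Q and the I-squared statistic from study-level estimates.

    effects: per-study effect estimates (e.g., log relative risks)
    variances: their sampling variances
    """
    weights = [1.0 / v for v in variances]
    pooled = sum(w * y for w, y in zip(weights, effects)) / sum(weights)
    q = sum(w * (y - pooled) ** 2 for w, y in zip(weights, effects))
    df = len(effects) - 1
    # I-squared is the share of total variation beyond what chance would give.
    i2 = max(0.0, (q - df) / q) if q > 0 else 0.0
    return q, i2


# Hypothetical log effect estimates from three studies with equal variance.
q_stat, i_squared = cochran_q_i2([0.1, 0.5, 0.9], [0.01, 0.01, 0.01])
print(q_stat, i_squared)
```

Q is compared against a chi-square distribution with (number of studies - 1) degrees of freedom, while I² expresses heterogeneity as a proportion and is easier to compare across meta-analyses of different sizes.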

What are the common data quality issues affecting LRT implementation?

Table 2: Data Challenges and Solutions in LRT Meta-Analysis

| Data Challenge | Impact on LRT | Recommended Solution |

|---|---|---|

| Inconsistent exposure metrics | Invalid weighting across studies | Standardize exposure definitions; use sensitivity analysis |

| Sparse data (zero cells) | Computational instability in log-LR | Apply continuity corrections; use exact methods |

| Dependent tests across drug-AE pairs | Inflated false discovery rates | Implement hierarchical FDR control procedures |

| Missing studies or outcomes | Selection bias in combined estimates | Conduct systematic literature search; assess publication bias |

Performance Evaluation

How do I evaluate the performance of LRT methods for signal detection?

Simulation studies evaluating LRT methods typically assess both power (ability to detect true signals) and type-I error (false positive rate) under varying heterogeneity across studies. Performance metrics should include [4]:

Key Performance Indicators:

- Type-I Error Rate: Proportion of false signals when no true association exists

- Statistical Power: Proportion of true signals correctly detected

- False Discovery Rate: Proportion of identified signals that are false positives

- Precision-Recall Tradeoffs: Balance between sensitivity and positive predictive value

What are the advantages of LRMA over traditional 95% confidence intervals?

Likelihood ratio meta-analysis provides several key advantages [27]:

- Update Integrity: Unlike traditional 95% CIs, LRMA remains valid when meta-analyses are updated with additional data

- Interpretation Accuracy: LRMA correctly represents relative likelihoods (e.g., CI limits are typically 1/7th as likely as point estimates, not 1/20th)

- Evidence Measurement: Focuses on observed data rather than hypothetical extreme results

- Familiar Output: Produces point estimates and "intrinsic" confidence intervals similar to traditional methods
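
The "1/7th, not 1/20th" statement can be checked numerically under a normal approximation to the likelihood, where the likelihood at a point z standard errors from the estimate, relative to the maximum, is exp(-z²/2). The sketch below only illustrates that arithmetic; it is not the LRMA procedure of [27], and the function names are hypothetical.

```python
import math


def relative_likelihood_at(z: float) -> float:
    """Likelihood at z standard errors from the estimate, relative to the peak."""
    return math.exp(-0.5 * z * z)


def z_for_support(k: float) -> float:
    """Half-width (in standard errors) of the interval whose limits are 1/k as likely."""
    return math.sqrt(2.0 * math.log(k))


rl = relative_likelihood_at(1.96)
print(round(rl, 3), round(1 / rl, 1))   # about 0.147, i.e. roughly 1/6.8
print(round(z_for_support(20), 2))      # about 2.45 standard errors for a 1/20 interval
```

Under this approximation, the limits of a conventional 95% interval are about one seventh as likely as the point estimate, and limits that are one twentieth as likely sit noticeably further out.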

The Scientist's Toolkit

What are the essential research reagents and computational tools for implementing LRT meta-analysis?

Table 3: Essential Research Reagents and Tools for LRT Implementation

| Tool Category | Specific Solutions | Function/Purpose |

|---|---|---|

| Statistical Software | R metafor package, Stata, Python statsmodels | Core computational infrastructure for meta-analysis |

| Specialized LRT Implementations | Custom R/Python scripts for LRMA | Implements intrinsic confidence intervals and likelihood combination |

| Data Management Tools | SQL databases, CSV standard formats | Organizes multiple study data with consistent structure |

| Visualization Packages | Graphviz (DOT language), ggplot2, matplotlib | Creates workflow diagrams and result visualizations |

| Safety Databases | FDA FAERS, Clinical trial databases | Sources of drug safety data for analysis |

How can I visualize the relationship between different components of the LRT framework?

Likelihood Ratio-Based Frameworks for Non-Inferiority Trial Analysis with Variable Margins

This technical support guide provides troubleshooting and methodological support for researchers implementing likelihood ratio (LR)-based frameworks in non-inferiority (NI) trials. These frameworks are particularly valuable when analyzing trials with complex, variable margins that require robust uncertainty characterization. In NI trials, the fundamental goal is to demonstrate that a new treatment is not unacceptably worse than an active control, often to establish ancillary benefits like reduced toxicity, lower cost, or easier administration [29] [30]. The LR framework offers a statistically sound approach to quantify evidence for non-inferiority while managing the uncertainty inherent in variable margin definitions and complex trial designs.

The integration of LR methods addresses key challenges in modern NI trials, including the need for interpretable evidence measures, handling of time-to-event outcomes, and adjustment for practical complexities like treatment switching. This guide outlines common experimental challenges, provides targeted solutions, and details essential research reagents to support your work in uncertainty characterization for NI trial analysis.

Troubleshooting Guides & FAQs

FAQ 1: How do I handle margin variability and uncertainty in likelihood ratio calculations?

Challenge: A researcher encounters inconsistent NI conclusions when margins vary due to uncertainty in historical data or clinical judgement.

Solution: Implement a likelihood ratio framework that explicitly incorporates margin uncertainty into the evidential strength calculation.

- Root Cause Analysis: Traditional fixed-margin approaches treat the non-inferiority margin (Δ) as a constant, ignoring its statistical variability. This can lead to overconfident conclusions when margins are estimated from historical data with substantial uncertainty [29] [30].

- Step-by-Step Protocol:

- Quantify Margin Uncertainty: Instead of a single Δ value, define a probability distribution for the margin based on the variability of the historical control effect used to set it. For example, if Δ is derived from a preserved fraction of the active control's effect, propagate the uncertainty of that effect estimate [29] [31].

- Integrate over Margin Distribution: Calculate the likelihood ratio for the NI hypothesis by integrating the likelihood function over the joint distribution of your trial data and the possible margin values. This can be done through Bayesian methods or simulation-based approaches [31].

- Interpret the Evidence: Use the computed LR to evaluate the strength of evidence for non-inferiority. A high LR value indicates strong evidence that the true treatment effect lies within the range of clinically acceptable non-inferiority, even accounting for margin uncertainty.

Preventive Measures: Pre-specify the method for handling margin uncertainty in the statistical analysis plan. Use sensitivity analyses to assess how conclusions change with different assumptions about margin variability [30].
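
One way to picture "integrating over the margin distribution" is a simulation that averages the likelihood over plausible margin values. The sketch below is a simplified illustration under strong assumptions, not the method of [31]: the estimated treatment difference is treated as normally distributed, the alternative is fixed at a true difference of 0, the null sits exactly at the margin (true difference = -δ), and δ itself is drawn from a normal distribution. All names and numbers are hypothetical. Under these assumptions a closed form exists, which is used as a cross-check.

```python
import math
import random


def normal_pdf(x: float, mean: float, sd: float) -> float:
    return math.exp(-0.5 * ((x - mean) / sd) ** 2) / (sd * math.sqrt(2 * math.pi))


def lr_with_margin_uncertainty(d_hat, se, margin_mean, margin_sd,
                               n_draws=20000, seed=0):
    """Monte Carlo LR of 'no difference' versus 'at the (uncertain) margin'."""
    rng = random.Random(seed)
    like_alt = normal_pdf(d_hat, 0.0, se)
    # Average the null likelihood over draws of the margin delta.
    like_null = sum(
        normal_pdf(d_hat, -rng.gauss(margin_mean, margin_sd), se)
        for _ in range(n_draws)
    ) / n_draws
    return like_alt / like_null


def lr_closed_form(d_hat, se, margin_mean, margin_sd):
    """Same LR using the normal-normal marginal for the null likelihood."""
    null_sd = math.sqrt(se ** 2 + margin_sd ** 2)
    return normal_pdf(d_hat, 0.0, se) / normal_pdf(d_hat, -margin_mean, null_sd)


# Hypothetical trial: estimated difference -0.01 (SE 0.03), margin 0.10 (SD 0.02).
lr_mc = lr_with_margin_uncertainty(-0.01, 0.03, 0.10, 0.02)
lr_exact = lr_closed_form(-0.01, 0.03, 0.10, 0.02)
print(lr_mc, lr_exact)
```

Widening the margin distribution (a larger `margin_sd`) generally pulls the LR toward 1, which is how margin uncertainty tempers the apparent strength of evidence in this toy setup.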

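FAQ 2: Which summary measure should I use for a time-to-event endpoint when the hazard ratio gives low power?

Challenge: A research team observes low statistical power in their NI trial with a time-to-event endpoint when using the Hazard Ratio (HR), potentially missing the NI conclusion.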

Solution: Use the Difference in Restricted Mean Survival Time (DRMST) as the summary measure, as it provides greater power and more straightforward clinical interpretation.

- Root Cause Analysis: The hazard ratio is a relative measure that relies on the often-violated proportional hazards assumption. When this assumption does not hold, power is lost, and interpretation becomes difficult [32] [31].

- Step-by-Step Protocol:

- Choose Time Horizon (τ): Select a clinically relevant time point τ that restricts the analysis. This should be pre-specified in the protocol [32].

- Calculate RMST: For each treatment group, compute the Restricted Mean Survival Time (RMST) as the area under the survival curve up to time τ: ( R(\tau) = \int_0^\tau S(t)\,dt ) [32] [31].

- Estimate DRMST: Calculate the difference: ( \Delta(\tau) = R_{new}(\tau) - R_{control}(\tau) ) [32].

- Construct Likelihood/Test: Model the sampling distribution of the DRMST. The likelihood ratio can then be constructed to test ( H_0: \Delta(\tau) \leq -\delta ) versus ( H_1: \Delta(\tau) > -\delta ), where δ is the NI margin [32] [31].
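
The RMST and DRMST steps can be sketched with a plain Kaplan-Meier estimate and the area under its step function up to τ. This is a minimal, non-parametric illustration with hypothetical function names and made-up follow-up data; it does not compute a variance or confidence interval, which a real analysis would need.

```python
def kaplan_meier(times, events):
    """Kaplan-Meier curve as [(t, S(t))] at each distinct event time.

    events: 1 for an observed event, 0 for a censored observation.
    """
    data = sorted(zip(times, events))
    n_at_risk = len(data)
    survival = 1.0
    curve = []
    i = 0
    while i < len(data):
        t = data[i][0]
        deaths = 0
        removed = 0
        while i < len(data) and data[i][0] == t:
            deaths += data[i][1]
            removed += 1
            i += 1
        if deaths > 0:
            survival *= 1.0 - deaths / n_at_risk
            curve.append((t, survival))
        n_at_risk -= removed
    return curve


def rmst(times, events, tau):
    """Area under the Kaplan-Meier step function from 0 to tau."""
    area, last_t, last_s = 0.0, 0.0, 1.0
    for t, s in kaplan_meier(times, events):
        if t >= tau:
            break
        area += last_s * (t - last_t)
        last_t, last_s = t, s
    return area + last_s * (tau - last_t)


# Hypothetical follow-up times (all events observed) for two small arms.
control_rmst = rmst([1, 2, 3, 4], [1, 1, 1, 1], tau=4)
new_rmst = rmst([2, 3, 4, 5], [1, 1, 1, 1], tau=4)
drmst = new_rmst - control_rmst
print(control_rmst, new_rmst, drmst)  # 2.5 3.25 0.75
```

Non-inferiority would then be assessed by comparing an interval for the DRMST against -δ, using whichever variance estimator the analysis plan specifies.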

Validation: Empirical studies have shown that using DRMST can provide a power advantage of approximately 7.7 percentage points compared to the hazard ratio in NI trials [32]. The table below summarizes a comparison of key summary measures.

Table 1: Comparison of Summary Measures for Time-to-Event Outcomes in NI Trials

| Feature | Hazard Ratio (HR) | Difference in Survival (DS) | Difference in RMST (DRMST) |

|---|---|---|---|

| Interpretation | Relative, unit-less | Absolute risk difference at time τ | Absolute difference in mean event-free time until τ |

| PH Assumption | Required | Not required | Not required |

| Power (Empirical) | Reference | More powerful than HR | Most powerful (about 7.7 percentage points higher than HR) |

| Data Used | Entire curve, weighted by events | Single point (at τ) | Entire curve up to τ |

| Recommended Use | Avoid if PH is suspect | Good for a single time point of interest | Preferred for power and interpretability |

FAQ 3: How should I adjust the analysis when treatment switching (crossover) occurs?

Challenge: Treatment switching from the control arm to the experimental arm confounds the intention-to-treat (ITT) analysis, biasing results toward no difference and risking an underpowered study or false non-inferiority conclusion.

Solution: Employ a simulation-based approach (e.g., the nifts method) to adjust the non-inferiority margin and power calculations to account for the impact of treatment switching.

- Root Cause Analysis: In an ITT analysis, patients who switch treatments are analyzed according to their original randomization. If switching is common, it dilutes the observed difference between groups, reducing the power to correctly establish non-inferiority [31].

- Step-by-Step Protocol:

- Pre-specify Switching Parameters: Before analysis, define models for the switching process based on prior knowledge: the probability of switching, the distribution of switching times (e.g., uniform, exponential), and patient characteristics that predict switching [31].

- Simulate Trial Outcomes: Use a tool like nifts to simulate thousands of trial outcomes under the null and alternative hypotheses, incorporating the planned design (accrual, follow-up) and the pre-specified switching process [31].

- Adjust the NI Margin: Based on the simulation results, calculate an adjusted non-inferiority margin (δ_adj) that maintains the desired type I error rate despite the presence of treatment switching.

- Calculate Power/Sample Size: Use the simulations and the adjusted margin to estimate the achieved power or to determine the required sample size [31].

Key Consideration: The nifts approach allows for various entry patterns, survival distributions, and switching rules, making it adaptable to complex real-world scenarios [31].

Experimental Protocols

This protocol is used to compare the empirical power of the Hazard Ratio (HR), Difference in Survival (DS), and Difference in Restricted Mean Survival Time (DRMST) using reconstructed data from published trials [32].

Literature Search & Data Reconstruction:

- Search: Identify published NI trials with time-to-event outcomes from major clinical journals (e.g., NEJM, The Lancet). The search should be conducted on databases like PubMed [32].

- Inclusion Criteria: Select trials that provide Kaplan-Meier (KM) curves, risk tables, and the reported NI margin [32].

- Digitize & Reconstruct: Use software such as WebPlotDigitizer to extract coordinates from the KM curves, then reconstruct individual patient data with an algorithm such as that of Guyot et al. [32].

Data Analysis:

- For each reconstructed dataset, estimate the three summary measures:

- HR: Using a Cox proportional hazards model.

- DS and DRMST (under proportional hazards, PH): Using a flexible parametric survival model (e.g., with two internal knots).

- DS and DRMST (non-parametric): Using the Kaplan-Meier estimator [32].

- Convert Margins: Convert the original trial's NI margin to a margin for each summary measure using the observed data in the control arm to ensure a fair comparison [32].
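
The non-parametric DRMST estimate can be illustrated with a short sketch. The code below is not taken from [32]; it is a minimal pure-Python illustration that integrates the Kaplan-Meier step function up to a truncation time `tau`. The function names (`km_rmst`, `drmst`) and the argument layout are assumptions made for this example.

```python
def km_rmst(times, events, tau):
    """Restricted mean survival time: area under the Kaplan-Meier curve on [0, tau].

    times  : follow-up time per patient
    events : 1 if the event was observed, 0 if the patient was censored
    """
    data = sorted(zip(times, events))
    at_risk = len(data)
    surv, last_t, area = 1.0, 0.0, 0.0
    i = 0
    while i < len(data) and data[i][0] <= tau:
        t = data[i][0]
        # Add the rectangle under the current step of the survival curve.
        area += surv * (t - last_t)
        last_t = t
        # Count events and total removals at this distinct time.
        deaths = removed = 0
        while i < len(data) and data[i][0] == t:
            deaths += data[i][1]
            removed += 1
            i += 1
        if deaths:
            surv *= 1.0 - deaths / at_risk
        at_risk -= removed
    # Extend the last step to the truncation time.
    area += surv * (tau - last_t)
    return area


def drmst(exp_times, exp_events, ctrl_times, ctrl_events, tau):
    """Difference in RMST, experimental minus control."""
    return km_rmst(exp_times, exp_events, tau) - km_rmst(ctrl_times, ctrl_events, tau)
```

With four patients who all have events at times 1, 2, 3, and 4 and `tau = 4`, the curve steps through 1, 0.75, 0.5, and 0.25, so the RMST is 2.5. A confidence interval would still be needed for the non-inferiority test; this sketch only gives the point estimate.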


Power Calculation:

- For each method and dataset, test the non-inferiority hypothesis using the appropriate confidence interval and significance level (e.g., α = 0.025).

- Empirical Power: Calculate the proportion of the 65 reconstructed trials in which the null hypothesis was correctly rejected for each summary measure and estimation method [32].
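
The power calculation above reduces to a simple counting rule, sketched below. This is an illustration rather than the code used in [32]. It assumes a difference-type measure (DS or DRMST, experimental minus control) where larger values favour the experimental arm, a normal-approximation confidence interval, and a positive margin on the same scale; `non_inferior` and `empirical_power` are hypothetical helper names.

```python
def non_inferior(estimate, se, margin, z=1.959964):
    """Reject the NI null when the lower 95% confidence bound exceeds -margin.

    A two-sided 95% interval corresponds to a one-sided alpha of 0.025.
    """
    return estimate - z * se > -margin


def empirical_power(results):
    """Proportion of trials declared non-inferior.

    results: iterable of (estimate, standard_error, margin) tuples, one per trial.
    """
    decisions = [non_inferior(est, se, margin) for est, se, margin in results]
    return sum(decisions) / len(decisions)
```

For the hazard ratio the direction flips: non-inferiority is declared when the upper confidence bound falls below the HR margin, so a separate rule would be needed for that measure.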

Table 2: Key Reagents for Empirical Power Analysis

| Research Reagent | Function/Description | Application Note |

|---|---|---|

| WebPlotDigitizer | Tool to digitize and extract numerical data from published Kaplan-Meier curve images. | Essential for reconstructing the coordinates needed for the Guyot et al. algorithm. |

| Guyot et al. Algorithm | A computational method to reconstruct time-to-event (individual patient) data from digitized KM curves and risk table data. | The foundation for creating analyzable datasets from published literature. |

| Flexible Parametric Survival Model (e.g., in R) | A model that uses restricted cubic splines to model the baseline hazard, providing a smooth estimate of the survival function. | Used for estimating DS and DRMST under the proportional hazards assumption. |

| dani R Package | A specialized R package for the design and analysis of non-inferiority trials. | Can be used for calculating confidence intervals for DS and DRMST using the delta method. |

Protocol: Sample Size and Power Calculation for Trials with Treatment Switching

This protocol uses the simulation-based nifts method to determine power and sample size for NI trials with DRMST where treatment switching is anticipated [31].

Define Trial Design Parameters:

- Specify accrual time (Ta), total trial duration (Te), and patient entry pattern (decreasing, uniform, or increasing) [31].

- Choose the desired power (e.g., 80% or 90%) and one-sided significance level (α, typically 0.025).

Specify Survival and Switching Scenarios:

- Survival Distributions: Define the generalized gamma distribution parameters for event times in the control and experimental groups [31].

- Switching Parameters: Define the proportion of patients expected to switch, the distribution of switching times (e.g., uniform, exponential), and any prognostic factors linked to switching [31].

- Dropout Censoring: Specify the distribution for loss-to-follow-up censoring [31].

Run Simulations and Adjust Margin:

- Use the nifts tool to simulate the trial thousands of times under the null hypothesis (true treatment effect equals -δ).

- The tool will output an adjusted non-inferiority margin (δ_adj) that controls the Type I error rate at the desired α level in the presence of the specified switching.

- With δ_adj, run further simulations under the alternative hypothesis (true treatment effect > -δ_adj) to estimate the achieved power for a given sample size, or to find the sample size needed for the desired power [31].
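
The idea of calibrating the margin by simulation can be illustrated with a deliberately simplified sketch. This is not the nifts implementation and does not use its interface. It assumes exponential event times instead of generalized gamma, no accrual or dropout, follow-up of every patient to the truncation time `tau` (so RMST is the mean of the restricted times), and switching only from the experimental to the control regimen at a uniformly distributed time. All function names are hypothetical.

```python
import math
import random
import statistics


def exp_rmst(rate, tau):
    """True RMST on [0, tau] for an exponential hazard."""
    return (1.0 - math.exp(-rate * tau)) / rate


def simulate_arm(n, rate, tau, rng, p_switch=0.0, rate_after=None):
    """Restricted times min(T, tau); switchers change hazard at a uniform time."""
    out = []
    for _ in range(n):
        t = rng.expovariate(rate)
        if rng.random() < p_switch:
            s = rng.uniform(0.0, tau)
            if t > s:  # still event-free at the switch, so the hazard changes
                t = s + rng.expovariate(rate_after)
        out.append(min(t, tau))
    return out


def lower_bound(exp_arm, ctrl_arm, z=1.959964):
    """Lower 95% confidence bound for DRMST (experimental minus control)."""
    diff = statistics.fmean(exp_arm) - statistics.fmean(ctrl_arm)
    se = math.sqrt(statistics.variance(exp_arm) / len(exp_arm)
                   + statistics.variance(ctrl_arm) / len(ctrl_arm))
    return diff - z * se


def adjusted_margin(n, rate_ctrl, rate_exp_null, tau, p_switch,
                    alpha=0.025, n_sim=2000, seed=1):
    """Margin for which about alpha of the simulated null trials reject."""
    rng = random.Random(seed)
    bounds = sorted(
        lower_bound(simulate_arm(n, rate_exp_null, tau, rng, p_switch, rate_ctrl),
                    simulate_arm(n, rate_ctrl, tau, rng))
        for _ in range(n_sim)
    )
    # Rejection rule: lower bound > -margin, so take the (1 - alpha) quantile.
    k = min(n_sim - 1, math.ceil((1.0 - alpha) * n_sim) - 1)
    return -bounds[k]
```

Here the unadjusted margin is `exp_rmst(rate_ctrl, tau) - exp_rmst(rate_exp_null, tau)`. Without switching the calibrated value should land close to it; with substantial switching the arms look more alike, so the calibrated margin shrinks. Power under the alternative could then be estimated by rerunning the simulation with the alternative hazard and counting how often the lower bound exceeds the negative adjusted margin.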

Conceptual Framework & Workflows

The following diagram illustrates the core logical workflow for implementing a likelihood ratio-based analysis in a non-inferiority trial, integrating the key concepts of margin definition, uncertainty characterization, and analysis in the presence of complexities like treatment switching.

Integrating Likelihood Ratios into Foundational Models for Open-World Segmentation

Frequently Asked Questions (FAQs)

Q1: What is the core advantage of using a likelihood ratio over a standard softmax classifier for open-world segmentation? A standard softmax classifier is trained only to discriminate between known classes, often leading to overconfident predictions for unknown objects. The likelihood ratio directly compares the probability that a pixel belongs to a known in-distribution versus an out-of-distribution (unknown) class. This provides a principled statistical framework for detecting unknowns without severely disrupting the feature representation of the foundational model [22].

Q2: Why should I use a lightweight Unknown Estimation Module (UEM) instead of fine-tuning the entire model? Fine-tuning large foundational models (e.g., DINOv2) on proxy outlier data can be computationally expensive and risks "catastrophic forgetting," where the model's performance on known classes degrades. The UEM is a small, adaptive module trained on top of the frozen foundational model. This approach enhances Out-of-Distribution (OoD) segmentation performance without compromising the model's original robust representation space [22].

Q3: My model struggles to distinguish unknown objects from background. How can this be improved? This is a common challenge due to ambiguous boundaries. One effective method is to augment your training pipeline with pseudo-labels for unknown objects generated by a large vision model like the Segment Anything Model (SAM). These pseudo-labels, after filtering with criteria like Intersection over Union (IoU) and aspect ratio, provide auxiliary supervisory signals that improve the model's recall for unknown targets [33].

Q4: How can I quantify the uncertainty of my segmentation model's predictions? Several uncertainty estimation methods can be integrated into segmentation models. Common techniques include:

- Monte Carlo Dropout (MCD): Performing multiple stochastic forward passes during inference.

- Ensemble Methods: Using multiple models with different initializations.

- Test Time Augmentation (TTA): Evaluating the model on multiple augmented versions of the input.

These methods produce uncertainty measures such as confidence maps, entropy, mutual information, and expected pairwise Kullback–Leibler divergence, which correlate with segmentation quality [34].
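
As a rough illustration of how such measures are derived, the sketch below computes confidence, predictive entropy, and mutual information for a single pixel from several stochastic predictions. It is not the framework of [34]; the input format (a list of class-probability vectors, one per forward pass, ensemble member, or augmentation) and the function names are assumptions.

```python
import math


def entropy(probs):
    """Shannon entropy in nats; zero-probability classes contribute nothing."""
    return -sum(p * math.log(p) for p in probs if p > 0.0)


def uncertainty_from_samples(samples):
    """Summarize T sampled class-probability vectors for one pixel."""
    t = len(samples)
    mean = [sum(column) / t for column in zip(*samples)]
    predictive_entropy = entropy(mean)
    expected_entropy = sum(entropy(s) for s in samples) / t
    return {
        "confidence": max(mean),
        "entropy": predictive_entropy,
        # Disagreement between samples: total minus average per-sample entropy.
        "mutual_information": predictive_entropy - expected_entropy,
    }
```

If every sample returns the same vector, the mutual information is zero even when the entropy is high, which separates inherent ambiguity from disagreement between passes. Applying the function per pixel yields the uncertainty maps mentioned above.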

Q5: What are the best practices for selecting prompts when using promptable models like SAM for hypothesis generation? To generate a robust distribution of segmentation hypotheses, employ an active prompting strategy. This involves issuing multiple, random point prompts within a region of the image. The consistency (or lack thereof) of the returned masks is a powerful indicator of segmentation uncertainty for that region [35].
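
One simple way to turn mask consistency into a number is the mean pairwise IoU across the masks returned for different prompts. The sketch below assumes each mask is already available as a set of `(row, col)` pixel coordinates; it does not call SAM, and `prompt_disagreement` is a hypothetical helper, not a measure defined in [35].

```python
from itertools import combinations


def iou(mask_a, mask_b):
    """Intersection over Union of two masks given as sets of pixels."""
    union = len(mask_a | mask_b)
    return len(mask_a & mask_b) / union if union else 1.0


def prompt_disagreement(masks):
    """1 - mean pairwise IoU; 0 means all prompts returned the same mask."""
    pairs = list(combinations(masks, 2))
    if not pairs:
        return 0.0
    return 1.0 - sum(iou(a, b) for a, b in pairs) / len(pairs)
```

A region where random point prompts keep returning the same mask scores near 0, while a region whose masks barely overlap scores near 1 and can be flagged as uncertain.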

Troubleshooting Guides

Issue 1: High False Positive Rate for Unknown Object Detection

Symptoms: The model incorrectly labels background or known objects as unknown.

| Possible Cause | Solution | Relevant Metrics to Check |

|---|---|---|

| Poor quality proxy outlier data. | Curate a more representative proxy dataset. Use cut-and-paste methods or leverage large models like SAM to generate higher-quality pseudo-labels for outliers [22] [33]. | Average Precision (AP) for unknown classes, False Positive Rate (FPR). |

| Incorrectly calibrated likelihood ratio threshold. | Re-calibrate the decision threshold on a held-out validation set containing known and unknown objects. | Precision-Recall curve, FPR vs. True Positive Rate. |

| Bias towards background in source domain training. | Ensure a balanced ratio of foreground to background samples during the initial training phase. A 1:1 ratio is often optimal [33]. | Foreground/Background classification accuracy. |
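
The threshold re-calibration suggested in the table can be sketched as picking the cut-off that keeps the false positive rate on known pixels at or below a target. The code assumes higher scores mean "more likely unknown" and that per-pixel scores from a held-out validation set are available as plain lists; the function names are illustrative.

```python
def calibrate_threshold(known_scores, target_fpr):
    """Threshold t such that at most target_fpr of known pixels score above t."""
    ranked = sorted(known_scores, reverse=True)
    allowed = int(target_fpr * len(ranked))  # false positives we can tolerate
    return ranked[allowed] if allowed < len(ranked) else float("-inf")


def rate_above(scores, threshold):
    """Fraction of scores strictly above the threshold (flagged as unknown)."""
    return sum(s > threshold for s in scores) / len(scores)
```

Calling `rate_above` on the known scores gives the achieved FPR, and calling it on the unknown scores gives the true positive rate at the same threshold, which are the two quantities to read off the FPR vs. TPR curve.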

Issue 2: Poor Performance on Known Classes After Incorporating UEM

Symptoms: A drop in standard metrics (e.g., mIoU) for the original known classes.

| Possible Cause | Solution | Relevant Metrics to Check |

|---|---|---|

| Feature representation of the foundational model is being altered. | Verify that the foundational model (e.g., DINOv2) is completely frozen during UEM training. Only the parameters of the UEM should be updated [22]. | mIoU on known classes, accuracy per class. |

| Leakage of known classes into the proxy outlier dataset. | Audit your proxy outlier dataset to ensure it does not contain any instances from your known classes. | Confusion matrix, known class accuracy. |

Issue 3: Low Recall for Genuine Unknown Objects

Symptoms: The model fails to segment unknown objects, misclassifying them as background or known classes.

| Possible Cause | Solution | Relevant Metrics to Check |

|---|---|---|

| The model is over-regularized on known classes. | Introduce a Self-adaptive Fairness Regularization (SFR) module during UEM training. This encourages diverse predictions and reduces bias toward dominant known classes, especially in early training [33]. | Recall for unknown classes, per-class accuracy. |

| Fixed, overly conservative thresholds for pseudo-labels. | Implement a dual-level dynamic thresholding strategy (SLUDA). Use a global threshold based on Exponential Moving Average (EMA) of confidence scores and class-specific local thresholds that adapt to the learning difficulty of each category [33]. | Pseudo-label quality, recall over training epochs. |
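
The dual-level thresholding idea can be sketched as follows. The exact update rules of [33] are not given here, so this example assumes one common formulation: a global threshold tracked as an EMA of the mean top-class confidence, scaled per class by an EMA of the mean predicted probability for that class. The class name, the momentum value, and the scaling rule are all assumptions.

```python
class DynamicThreshold:
    """Global EMA threshold with class-specific scaling (illustrative only)."""

    def __init__(self, num_classes, momentum=0.9):
        self.m = momentum
        self.global_t = 1.0 / num_classes
        self.class_p = [1.0 / num_classes] * num_classes

    def update(self, batch_probs):
        """batch_probs: list of class-probability vectors for unlabeled samples."""
        n = len(batch_probs)
        mean_conf = sum(max(p) for p in batch_probs) / n
        self.global_t = self.m * self.global_t + (1 - self.m) * mean_conf
        for c in range(len(self.class_p)):
            mean_c = sum(p[c] for p in batch_probs) / n
            self.class_p[c] = self.m * self.class_p[c] + (1 - self.m) * mean_c

    def thresholds(self):
        """Lower the threshold for classes the model predicts less confidently."""
        top = max(self.class_p)
        return [self.global_t * p / top for p in self.class_p]

    def select(self, probs):
        """Return the pseudo-label if it clears its class threshold, else None."""
        c = max(range(len(probs)), key=probs.__getitem__)
        return c if probs[c] >= self.thresholds()[c] else None
```

Under this rule, a class that is still hard to learn receives a lower local threshold, so more of its pseudo-labels are accepted early in training, while well-learned classes must clear the higher global threshold.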

Experimental Protocols & Data Presentation

Protocol 1: Implementing the Unknown Estimation Module (UEM)

This protocol outlines the steps to implement the likelihood-ratio-based UEM on a pre-trained segmentation model [22].

- Leverage a Foundational Model: Start with a robust, pre-trained model like DINOv2 to extract general-purpose features.

- Generate Proxy Outlier Data: Create a dataset of "unknown" objects using techniques like cut-and-paste from an auxiliary dataset not containing the known classes.

- Build the UEM: Attach a small, trainable module to the frozen foundational model. This module should learn two distributions:

- A generic, class-agnostic distribution for all known (inlier) classes.

- A distribution for the proxy outlier data.

- Train the UEM: Train only the UEM parameters using an objective function designed to optimize the likelihood ratio score. The loss function should maximize the likelihood ratio for inliers and minimize it for outliers.

- Fuse Scores for Inference: During inference, calculate the final outlier score for each pixel by fusing the UEM's likelihood ratio with the original segmentation network's confidence.
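
The scoring logic of the protocol can be illustrated with a toy sketch. It does not reproduce the UEM of [22]: the diagonal Gaussian densities, the logistic training loss, and the additive fusion rule are assumptions chosen to keep the example small, and every function name is hypothetical. In practice the features would come from the frozen foundational model and the two distributions would be learned.

```python
import math


def diag_gaussian_logpdf(x, mean, var):
    """Log density of a diagonal Gaussian at feature vector x."""
    return sum(-0.5 * (math.log(2 * math.pi * v) + (xi - m) ** 2 / v)
               for xi, m, v in zip(x, mean, var))


def log_likelihood_ratio(feature, inlier, outlier):
    """log p_in(f) - log p_out(f); each distribution is a (mean, var) pair."""
    return (diag_gaussian_logpdf(feature, *inlier)
            - diag_gaussian_logpdf(feature, *outlier))


def lr_loss(log_lr, is_inlier):
    """Logistic loss: low when log_lr is high for inliers and low for outliers."""
    signed = log_lr if is_inlier else -log_lr
    # Numerically stable softplus(-signed).
    return max(-signed, 0.0) + math.log1p(math.exp(-abs(signed)))


def fused_outlier_score(log_lr, max_softmax):
    """Assumed fusion: low likelihood ratio and low confidence both raise the score."""
    return -log_lr - math.log(max_softmax)
```

Minimizing `lr_loss` over inlier and proxy-outlier pixels pushes the ratio up for the former and down for the latter, matching the training objective described above. The fused score is then thresholded per pixel to decide what counts as unknown.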

Protocol 2: Generating and Refining Pseudo-Labels with SAM

This protocol describes how to use large models to create training data for unknown objects [33].

- Initial Mask Generation: Feed your images into the Segment Anything Model (SAM) using a prompting strategy (e.g., a grid of points) to generate a large set of candidate segmentation masks.

- Initial Filtering: Apply basic filters to remove very small or impossibly shaped masks.

- Pseudo-Label Refinement: Refine the candidate masks using criteria such as:

- Intersection over Union (IoU): Merge or remove highly overlapping masks.

- Aspect Ratio: Filter out masks with unrealistic dimensions for objects.

- Validation: Manually inspect a subset of the refined pseudo-labels to ensure quality before using them for training.
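
The filtering and refinement steps can be sketched without any model dependency. The example assumes candidate masks have already been produced (for instance by SAM) and converted to sets of `(row, col)` pixels. The thresholds for area, aspect ratio, and duplicate IoU are placeholder values, not those used in [33], and near-duplicates are removed rather than merged.

```python
def rect(row, col, height, width):
    """Helper for the example: a filled rectangular mask as a set of pixels."""
    return {(r, c) for r in range(row, row + height) for c in range(col, col + width)}


def mask_iou(a, b):
    """Intersection over Union of two pixel-set masks."""
    union = len(a | b)
    return len(a & b) / union if union else 1.0


def aspect_ratio(mask):
    """Long side over short side of the mask's bounding box."""
    rows = [r for r, _ in mask]
    cols = [c for _, c in mask]
    height = max(rows) - min(rows) + 1
    width = max(cols) - min(cols) + 1
    return max(height, width) / min(height, width)


def refine_pseudo_labels(masks, min_area=4, max_aspect=5.0, iou_dup=0.8):
    """Drop tiny, elongated, and near-duplicate masks; larger masks win ties."""
    kept = []
    for mask in sorted(masks, key=len, reverse=True):
        if len(mask) < min_area or aspect_ratio(mask) > max_aspect:
            continue
        if any(mask_iou(mask, other) >= iou_dup for other in kept):
            continue
        kept.append(mask)
    return kept
```

Processing masks from largest to smallest means that when two candidates overlap heavily, the larger one is retained. The surviving masks are the ones worth sampling for manual inspection.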

Quantitative Data from Key Studies

Table 1: OoD Segmentation Performance Comparison (Average Precision)

| Model / Method | SMIYC Benchmark | PASCAL VOC | MS COCO | Reference |

|---|---|---|---|---|

| UEM (Likelihood Ratio) | State-of-the-Art | State-of-the-Art | State-of-the-Art | [22] |

| Previous Best Method | ~5.74% lower AP | - | - | [22] |

| PixOOD (DINOv2, no training) | Significantly lower | - | - | [22] |

Table 2: Uncertainty Estimation Methods for Segmentation Quality Prediction

| Method | Description | R² Score (HAM10000) | Pearson Correlation (HAM10000) | Reference |

|---|---|---|---|---|

| Proposed Framework (SwinUNet & FPN) | Leverages uncertainty maps & input image | 93.25 | 96.58 | [34] |

| Monte Carlo Dropout (MCD) | Approximates Bayesian inference with dropout at test time | - | - | [34] |

| Ensemble | Combines predictions from multiple models | - | - | [34] |

| Test Time Augmentation (TTA) | Averages predictions on augmented inputs | 85.03 (3D Liver) | 65.02 (3D Liver) | [34] |


The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Open-World Segmentation Research

| Item | Function in Research | Example / Specification |

|---|---|---|

| Large Foundational Model | Provides a robust, general-purpose feature representation that is crucial for generalizing to unknown objects. | DINOv2 [22] |