The Multiple Comparisons Problem in Forensic Text Examination: Risks, Mitigation, and Validation Strategies

Abstract

This article provides a comprehensive analysis of the multiple comparisons problem as it applies to forensic text examination. It explores the foundational statistical concept and its specific implications for forensic linguistics, including the inflation of false discovery rates. The content details methodological approaches for applying the likelihood-ratio framework, troubleshooting common pitfalls like topic mismatch, and outlines rigorous validation protocols. Designed for researchers and forensic professionals, this guide synthesizes current scientific guidelines to enhance the reliability and admissibility of forensic text evidence.

Understanding the Multiple Comparisons Problem: A Foundational Risk in Forensic Science

Defining the Multiple Comparisons Problem and Family-Wise Error Rate (FWER)

Frequently Asked Questions (FAQs)

What is the Multiple Comparisons Problem?

The multiple comparisons problem refers to the inflation of Type I errors that occurs when a set of statistical inferences is performed simultaneously [1].

- The Core Issue: When you perform a single hypothesis test at a significance level of α=0.05, you accept a 5% chance of a false positive (incorrectly rejecting a true null hypothesis) [2]. However, as you conduct more tests, the probability of committing at least one Type I error across the entire "family" of tests increases substantially [1] [2].

- Practical Implication: Without proper correction, a researcher can easily identify numerous "significant" results purely by chance, leading to false discoveries and invalid scientific conclusions [3].

What is the Family-Wise Error Rate (FWER)?

The Family-Wise Error Rate is the probability of making one or more false discoveries (Type I errors) when performing multiple hypothesis tests [4].

- Formal Definition: FWER = Pr(V ≥ 1), where V is the number of false positives [4].

- Analogy: Imagine rolling a six-sided die. The chance of rolling a "6" on a single roll is 1/6. However, if you roll the die four times, the probability of getting at least one "6" rises to about 52% [2]. Similarly, with multiple tests, the chance of at least one false positive grows rapidly.

- Relationship to Multiple Comparisons: FWER is a stringent metric that quantifies the risk introduced by the multiple comparisons problem, aiming to control the overall error rate for a defined family of tests [4] [5].
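As a worked version of the die analogy, assuming the rolls (or tests) are independent and, for the tests, that every null hypothesis is true, the "at least one" probability follows from the complement rule. The symbol m for the number of tests matches the notation used for the correction methods in this section.

$$
\Pr(\text{at least one 6 in 4 rolls}) = 1 - \left(\tfrac{5}{6}\right)^{4} = 1 - \tfrac{625}{1296} \approx 0.518
$$

$$
\mathrm{FWER} = \Pr(V \ge 1) = 1 - (1 - \alpha)^{m}, \qquad \text{e.g. } \alpha = 0.05,\ m = 4:\ 1 - 0.95^{4} \approx 0.185
$$

When tests are dependent the exact value differs, but the Bonferroni bound FWER ≤ mα still holds.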

Why is Controlling the FWER Important in My Research?

Controlling the FWER is crucial for maintaining the integrity and reliability of your research findings, especially in fields where conclusions have significant consequences.

- Preventing False Positives: It ensures that the probability of reporting any false positive finding across your entire experiment remains below a predefined threshold (e.g., 5%) [4] [3].

- Regulatory and Validation Contexts: In drug development and forensic analysis, false claims can have serious ethical, financial, and safety implications. FWER control provides a strong, conservative standard that is often required in regulatory submissions and rigorous scientific validation [1].

What is the Difference Between FWER and FDR?

FWER and the False Discovery Rate (FDR) are two different measures for handling Type I errors in multiple testing, with varying levels of stringency [1] [5].

- FWER: Controls the probability of at least one false positive. It is a stricter standard [4] [5].

- FDR: Controls the expected proportion of false positives among all discoveries (rejected hypotheses). It is less strict and offers more power, making it suitable for exploratory studies where some false discoveries are acceptable [1] [3].

The table below provides a clear comparison:

| Feature | Family-Wise Error Rate (FWER) | False Discovery Rate (FDR) |
|---|---|---|
| Definition | Probability of at least one Type I error | Expected proportion of Type I errors among all significant findings |
| Control Focus | Per-family (strong control) | Per-discovery (proportional control) |
| Stringency | High (Conservative) | Lower (Less Conservative) |
| Best Use Cases | Confirmatory research, clinical trials, safety-critical fields | Exploratory research, hypothesis generation, high-throughput screening |

When Should I Adjust for Multiple Comparisons?

You should adjust for multiple comparisons whenever you are testing multiple hypotheses that are part of a related family of inferences intended to answer a single broader research question [4] [1].

- Defining a "Family": A family is the smallest set of inferences from which results are selected for action, presentation, or highlighting [4]. In forensic text examination, this could be all the linguistic features compared between two documents.

- Key Principle: If you are making a joint interpretation from several tests or would be concerned about the chance of any false positive in the set, then FWER control is appropriate [2].

What Are Common Methods to Control FWER?

Several procedures exist to control the FWER. The following table summarizes the most common ones:

| Method | Procedure | Key Characteristics |
|---|---|---|
| Bonferroni | Reject a hypothesis if its p-value ≤ α/m (where m is the total number of tests) [4] [1]. | Very simple and guarantees FWER control but is overly conservative, leading to low power [6]. |
| Holm (Step-Down) | Order p-values from smallest to largest (p₁...pₘ). For the i-th p-value, reject if pᵢ ≤ α/(m - i + 1). Stop at the first non-significant test [4] [1]. | More powerful than Bonferroni while still controlling FWER. A closed testing procedure [4]. |
| Hochberg (Step-Up) | Order p-values from smallest to largest. Start from the largest p-value and find the first pᵢ ≤ α/(m - i + 1), then reject all hypotheses with p-values smaller than or equal to pᵢ [4] [1]. | Generally more powerful than Holm but requires an assumption of independent or positively correlated test statistics [4]. |
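The three procedures in the table can be sketched in a few lines of plain Python. This is a minimal illustration under stated assumptions, not a reference implementation: the function names and the example p-values are hypothetical, and each function returns one reject/keep decision per hypothesis in the original input order.

```python
def bonferroni_reject(p_values, alpha=0.05):
    """Single-step rule: reject every hypothesis with p <= alpha / m."""
    m = len(p_values)
    return [p <= alpha / m for p in p_values]


def holm_reject(p_values, alpha=0.05):
    """Step-down rule: walk from the smallest p-value, stop at the first failure."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])  # indices, smallest p first
    reject = [False] * m
    for rank, idx in enumerate(order, start=1):
        if p_values[idx] <= alpha / (m - rank + 1):
            reject[idx] = True
        else:
            break  # all larger p-values are retained as well
    return reject


def hochberg_reject(p_values, alpha=0.05):
    """Step-up rule: walk from the largest p-value, reject everything at or below the first pass."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    reject = [False] * m
    for rank in range(m, 0, -1):
        if p_values[order[rank - 1]] <= alpha / (m - rank + 1):
            for idx in order[:rank]:
                reject[idx] = True
            break
    return reject


# Hypothetical p-values from four feature comparisons.
p_values = [0.010, 0.015, 0.030, 0.040]
print("Bonferroni:", bonferroni_reject(p_values))
print("Holm:      ", holm_reject(p_values))
print("Hochberg:  ", hochberg_reject(p_values))
```

On this example the three rules give progressively more rejections, which matches the power ordering described in the table. Hochberg's extra rejections are only justified when its dependence assumption holds.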

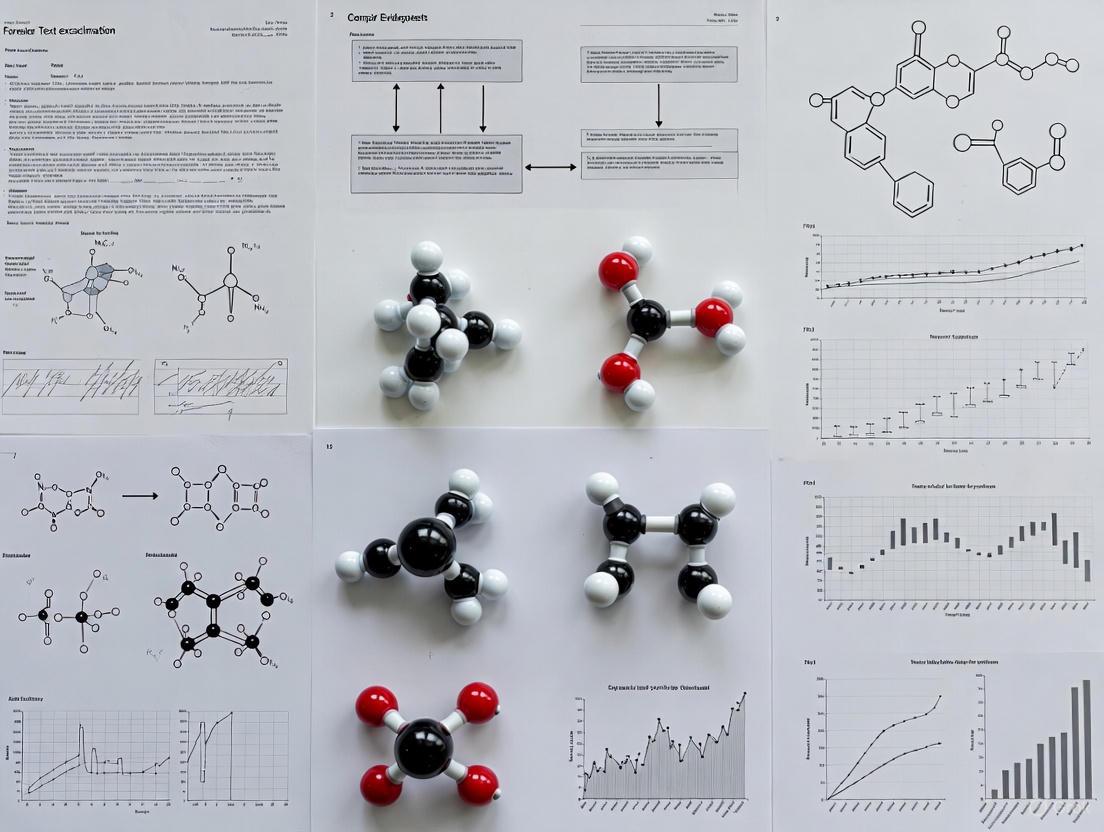

The logical workflow for these stepwise methods can be visualized as follows:

What Are the Trade-Offs of FWER Control?

The primary trade-off in controlling the FWER is between Type I and Type II errors [1] [2].

- Reduced Power: Stringent FWER control methods like Bonferroni reduce the chance of false positives but increase the chance of false negatives (Type II errors), meaning you might miss real effects [6].

- Balancing Act: The choice of whether and how to control for multiple comparisons depends on the costs of each type of error in your specific research context [2]. In confirmatory phases of drug development, avoiding false positives is paramount. In early exploratory research, you might prioritize avoiding false negatives.

How Do I Implement These Corrections in Practice?

Most standard statistical software packages (e.g., R, SAS, Python with statsmodels) include built-in functions for multiple testing corrections.

- Adjusted P-values: Many procedures output "adjusted p-values." You can compare these directly to your original significance level (α): if an adjusted p-value is less than or equal to α, the test is significant after correction [1].

- Example: In R, you can use `p.adjust(p_values, method = "bonferroni")` or `p.adjust(p_values, method = "holm")` to get a vector of adjusted p-values.
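For readers working in Python, the same two adjustments can be sketched without any third-party package. The function name `adjust_p_values` and the example values are hypothetical. The arithmetic follows the standard definitions: Bonferroni multiplies each p-value by m, and Holm multiplies the i-th smallest by (m - i + 1) and then enforces monotonicity, with both capped at 1.

```python
def adjust_p_values(p_values, method="holm"):
    """Return adjusted p-values in the same order as the input."""
    m = len(p_values)
    if method == "bonferroni":
        return [min(1.0, m * p) for p in p_values]
    if method == "holm":
        order = sorted(range(m), key=lambda i: p_values[i])  # smallest p first
        adjusted = [0.0] * m
        running_max = 0.0
        for rank, idx in enumerate(order, start=1):
            candidate = min(1.0, (m - rank + 1) * p_values[idx])
            running_max = max(running_max, candidate)  # keep adjusted values non-decreasing
            adjusted[idx] = running_max
        return adjusted
    raise ValueError(f"unsupported method: {method}")


# Hypothetical raw p-values.
raw = [0.010, 0.015, 0.030, 0.040]
bonferroni_adjusted = adjust_p_values(raw, method="bonferroni")
holm_adjusted = adjust_p_values(raw, method="holm")
print(bonferroni_adjusted)
print(holm_adjusted)
```

Comparing each adjusted value to α = 0.05 gives the same decisions as comparing raw p-values to the adjusted thresholds. In production work, a maintained routine such as `multipletests` in `statsmodels.stats.multitest` is usually preferable to hand-rolled code.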

The Scientist's Toolkit: Key Reagents for Multiple Comparisons Analysis

| Item | Function in Analysis |
|---|---|
| Raw P-values | The original, unadjusted significance probabilities resulting from individual hypothesis tests. The primary input for all correction methods [1]. |
| Significance Level (α) | The pre-specified threshold for significance (e.g., 0.05). After correction, the adjusted threshold (α/m for Bonferroni) is compared to raw p-values, or adjusted p-values are compared to α [4] [1]. |
| Contrasts | In the context of ANOVA or linear models, these are weighted linear combinations of parameters (e.g., group means) used to test specific hypotheses. Some multiple comparison procedures (MCPs), like Dunnett's test, use optimized contrasts [7] [5]. |
| Test Statistic Matrix | A collection of the observed test statistics (e.g., t-values, F-values) for all comparisons. Used in resampling-based methods to model the dependence structure between tests [4]. |
| Covariance Matrix | Represents the estimated correlations between test statistics. Advanced methods (e.g., generalized MCP-Mod) use this to account for dependence, improving power over methods that assume independence [7]. |

The Inevitability of Multiple Tests in Forensic Text Comparison

Frequently Asked Questions

Q1: Why can't I just use a single, definitive test for forensic text comparison?

Using a single test is risky because textual evidence is complex and influenced by many factors beyond authorship, such as topic, genre, and the author's emotional state [8]. A single test might be biased by one of these specific conditions. Conducting multiple tests under varied, case-relevant conditions is necessary to validate that your method is robust and to avoid misleading results [8]. Furthermore, relying on a single methodology fails to account for the need to measure both false positive and false negative rates to fully understand a method's accuracy [9].

Q2: What is the Likelihood Ratio (LR) framework and why is it important?

The Likelihood Ratio (LR) framework is the logically and legally correct approach for evaluating the strength of forensic evidence [8] [10]. It provides a transparent, quantitative measure that helps the trier-of-fact update their beliefs about the hypotheses in a case [8]. An LR is calculated as follows [8]:

LR = p(E|Hp) / p(E|Hd)

- E: The observed evidence (e.g., the linguistic features in the text).

- Hp: The prosecution hypothesis (e.g., the suspect is the author).

- Hd: The defense hypothesis (e.g., someone else is the author).

An LR > 1 supports the prosecution's hypothesis, while an LR < 1 supports the defense's hypothesis. The further the value is from 1, the stronger the support.
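The ratio and its interpretation can be illustrated with a short sketch. The two probabilities below are hypothetical placeholders; in practice they come from a validated statistical model, not from hand-picked numbers. The function names are likewise illustrative.

```python
import math


def likelihood_ratio(p_e_given_hp, p_e_given_hd):
    """Return LR = p(E|Hp) / p(E|Hd)."""
    if p_e_given_hd <= 0:
        raise ValueError("p(E|Hd) must be positive")
    return p_e_given_hp / p_e_given_hd


def direction_of_support(lr):
    """Report which hypothesis the evidence supports."""
    if lr > 1:
        return "Hp"
    if lr < 1:
        return "Hd"
    return "neither"


# Hypothetical probabilities of the observed features under each hypothesis.
lr = likelihood_ratio(0.08, 0.002)
log10_lr = math.log10(lr)  # log scale: 0 is neutral, sign gives direction
print(f"LR = {lr:.1f}, log10(LR) = {log10_lr:.2f}, supports {direction_of_support(lr)}")
```

Reporting the logarithm is a common convention because equal distances above and below zero then represent equally strong support for Hp and Hd.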

Q3: What are the key requirements for a validation experiment in forensic text comparison?

For a validation experiment to be scientifically defensible, it must meet two key requirements [8]:

- Reflect Case Conditions: The experiment must replicate the specific conditions of the case under investigation (e.g., mismatches in topic or genre between documents).

- Use Relevant Data: The data used for testing must be relevant to the specific case. Using irrelevant data can lead to misleading performance metrics and incorrect conclusions in casework.

Q4: My tests are producing inconsistent results. What could be the cause?

Inconsistency can arise from several sources related to the multiple testing problem:

- Variable Writing Style: An author's style can change based on topic, formality, or recipient [8]. If your known and questioned documents have different topics, a single model may not be sufficient.

- Unvalidated Conditions: The tests might not be calibrated for the specific conditions (e.g., document type, text length) of your case [10]. Performance can vary significantly between different sets of challenging conditions.

- Data Representativeness: The background data used to calculate typicality may not be representative of the population relevant to your case [8].

Q5: How do I report results from multiple tests in a legally sound way?

It is legally inappropriate to present a posterior probability (the probability that a hypothesis is true) [8]. Your report should focus on the strength of the evidence itself. When multiple tests are used, the report should:

- Clearly state that results are based on a validated system using the LR framework.

- Specify the conditions under which the validation was performed.

- Acknowledge the potential for error and report relevant error rates where possible [9].

Experimental Protocols

Protocol 1: Validating a System for Topic Mismatch

This protocol outlines how to validate a forensic text comparison system for a scenario where the questioned and known documents differ in topic [8].

- Define Hypotheses: Formulate your prosecution (Hp) and defense (Hd) hypotheses.

- Select and Prepare Data:

  - Known Documents: Collect a set of texts from a known author.

  - Questioned Documents: Collect texts of unknown authorship.

  - Background Data: Compile a large, representative corpus of texts from many different authors to model typicality under Hd.

- Induce Mismatch: Ensure the known and questioned documents from the same author cover different topics to simulate the case condition.

- Feature Extraction: Quantitatively measure the linguistic features of interest (e.g., word frequencies, syntactic markers) from all documents.

- Calculate Likelihood Ratios: Use a statistical model (e.g., a Dirichlet-multinomial model) to calculate LRs based on the extracted features [8].

- Calibration: Apply a post-hoc calibration method, such as logistic regression, to the output LRs to improve their reliability [8].

- Performance Assessment: Evaluate the calibrated LRs using metrics like the log-likelihood-ratio cost (Cllr) and visualize the results with Tippett plots [8] [10].
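The performance-assessment step can be illustrated with the log-likelihood-ratio cost as it is commonly defined: the average of log2(1 + 1/LR) over same-author comparisons and log2(1 + LR) over different-author comparisons, halved. The function name and the LR values below are hypothetical; real values would come from the validation comparisons.

```python
import math


def cllr(same_author_lrs, different_author_lrs):
    """Log-likelihood-ratio cost; lower is better, 1.0 matches an uninformative system."""
    same_term = sum(math.log2(1 + 1 / lr) for lr in same_author_lrs) / len(same_author_lrs)
    diff_term = sum(math.log2(1 + lr) for lr in different_author_lrs) / len(different_author_lrs)
    return 0.5 * (same_term + diff_term)


# Hypothetical calibrated LRs from a validation run.
same_author_lrs = [120.0, 35.0, 8.0, 0.6]       # ideally all well above 1
different_author_lrs = [0.01, 0.2, 0.05, 1.8]   # ideally all well below 1

print(f"Cllr = {cllr(same_author_lrs, different_author_lrs):.3f}")
print(f"Uninformative baseline = {cllr([1.0], [1.0]):.1f}")
```

A system that always outputs LR = 1 scores exactly 1.0, so values near or above 1 indicate that the LRs carry little or misleading information under the tested conditions. Same-author LRs must be strictly positive for the first term to be defined.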

Protocol 2: Deriving Likelihood Ratios from Categorical Conclusions

This protocol describes a method for deriving LRs from examiners' traditional categorical conclusions (e.g., Identification, Inconclusive, Elimination) [10].

- Black-Box Studies: Conduct studies where examiners evaluate pairs of items (same-source and different-source) and assign a categorical conclusion for each trial.

- Data Pooling: Collect response data from a large number of test trials and multiple examiners.

- Model Training: Train a statistical model (e.g., using Dirichlet priors or an ordered probit model) on the pooled response data [10].

- LR Calculation: For each categorical conclusion, calculate the corresponding LR as follows [10]:

LR = p(Category|Same-Source) / p(Category|Different-Source)

- Case Application: In casework, when an examiner provides a categorical conclusion, the corresponding LR value can be substituted or provided alongside it.

Note: A key limitation of this method is that it relies on data pooled from multiple examiners. For the LR to be meaningful for a specific case, it should ideally be based on data from the particular examiner involved and under conditions that reflect the case details [10].

Data Presentation

This table illustrates how categorical conclusions from a black-box study can be converted into quantitative Likelihood Ratios [10].

| Categorical Conclusion | Probability under Hp (Same-Source) | Probability under Hd (Different-Source) | Likelihood Ratio (LR) |
|---|---|---|---|
| Identification | 0.85 | 0.02 | 42.5 |
| Inconclusive A | 0.10 | 0.08 | 1.25 |
| Inconclusive B | 0.04 | 0.15 | 0.27 |
| Inconclusive C | 0.01 | 0.25 | 0.04 |
| Elimination | 0.00 | 0.50 | 0.00 |
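The LR column can be recomputed from the two probability columns, which is a useful sanity check when building such a table from study data. The sketch below uses the illustrative probabilities from the table; the dictionary layout and function name are assumptions made for the example.

```python
# (probability under same-source, probability under different-source) per conclusion,
# copied from the illustrative table.
conclusion_probs = {
    "Identification": (0.85, 0.02),
    "Inconclusive A": (0.10, 0.08),
    "Inconclusive B": (0.04, 0.15),
    "Inconclusive C": (0.01, 0.25),
    "Elimination": (0.00, 0.50),
}


def categorical_lrs(probs):
    """Return LR = p(Category|Same-Source) / p(Category|Different-Source) per category."""
    return {name: p_same / p_diff for name, (p_same, p_diff) in probs.items()}


lrs = categorical_lrs(conclusion_probs)
for name, value in lrs.items():
    print(f"{name}: LR = {value:.2f}")

# Each column should be a probability distribution over the conclusions.
total_same = sum(p_same for p_same, _ in conclusion_probs.values())
total_diff = sum(p_diff for _, p_diff in conclusion_probs.values())
```

An estimated probability of exactly zero yields an LR of zero, which claims infinitely strong support for Hd. Models that use priors, such as the Dirichlet approach mentioned in the protocol, avoid such extreme values when trial counts are finite.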

Table 2: Essential Research Reagents and Materials for Forensic Text Comparison

This table lists key components for building and testing a forensic text comparison system [8] [10] [11].

| Item | Function in Research |
|---|---|
| Reference Text Corpus | A large, relevant collection of texts from many authors; provides background data for modeling typicality and estimating the probability of evidence under Hd [8]. |
| Validation Dataset | A dedicated set of text samples with known authorship, used to test system performance under controlled, case-like conditions; should be separate from data used to develop the system [8]. |
| Statistical Software (R/Python) | Platform for implementing statistical models (e.g., Dirichlet-multinomial, logistic regression) and calculating performance metrics like Cllr [8]. |
| Categorical Response Data | Data collected from examiner black-box studies, used to train models that convert traditional conclusions into likelihood ratios [10]. |
| Video-Spectral Comparator | Forensic device used for non-destructive examination of physical documents; employs different light sources to detect alterations in handwriting or ink [11]. |

Workflow Visualization

[Figure: Forensic Text Comparison Workflow]

[Figure: Multiple Testing & Validation Logic]

Frequently Asked Questions

1. What is the multiple comparisons problem in the context of forensic text examination? The multiple comparisons problem occurs when a large number of statistical tests are performed on a dataset, increasing the probability that at least one test will show a statistically significant difference purely by chance (a false positive or Type I error) [12] [13]. It is the statistical mechanism that practices such as "p-hacking" and "data dredging" exploit. In forensic text examination, this can happen when an analyst conducts numerous database searches or compares a questioned text against a vast number of potential authors. Each additional comparison increases the overall risk of incorrectly linking a text to an author [14].

2. How does the error rate inflate with multiple tests? The family-wise error rate (FWER), or the chance of at least one false positive, increases dramatically with the number of comparisons made. For N independent tests, the inflated error rate is α_inflated = 1 − (1 − α)^N, where N is the number of hypotheses tested and α is the significance level for a single test (typically 0.05) [15]. The table below shows how the error rate grows:

| Number of Comparisons (N) | Family-Wise Error Rate (α = 0.05) |
|---|---|
| 1 | 5.0% |
| 2 | 9.8% |
| 3 | 14.3% |
| 4 | 18.5% |
| 5 | 22.6% |
| 6 | 26.5% |

Table 1: Inflation of Type I error rate with an increasing number of statistical comparisons. Adapted from [15].
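The table values follow directly from the formula, which assumes independent tests. A minimal sketch (the function name is illustrative) reproduces them up to rounding and shows how quickly the rate grows for larger N:

```python
def family_wise_error_rate(n_tests, alpha=0.05):
    """Chance of at least one false positive across n independent tests at level alpha."""
    return 1 - (1 - alpha) ** n_tests


for n in range(1, 7):
    print(f"N = {n}: {family_wise_error_rate(n) * 100:.2f}%")

# Smallest number of independent tests at which a false positive is more likely than not.
n = 1
while family_wise_error_rate(n) <= 0.5:
    n += 1
tests_until_even_odds = n
print("FWER first exceeds 50% at N =", tests_until_even_odds)
```

For a database search against hundreds of candidate authors, the same function shows an uncorrected FWER very close to 1, which is why the corrections discussed here matter in casework.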

3. What is the difference between controlling the Family-Wise Error Rate (FWER) and the False Discovery Rate (FDR)? Choosing between FWER and FDR control involves a trade-off between statistical power and error control [12] [15].

- FWER Control (e.g., Bonferroni correction): Methods that control the FWER are more conservative. They strictly limit the probability of making any false discoveries. This is crucial in fields like forensic science, where even one false positive can have severe consequences. However, this strictness reduces statistical power, increasing the chance of missing a true positive (Type II error) [12] [15].

- FDR Control (e.g., Benjamini-Hochberg procedure): Methods that control the FDR are less strict. They limit the proportion of false positives among all declared significant results, which helps retain greater statistical power. This can be more suitable for exploratory research, where the goal is to identify candidate positives for future validation [12] [13].

Troubleshooting Guides

Issue: High Risk of False Positives in Database Searches

- Symptoms: Seemingly significant author-text matches are found, but they fail to validate in follow-up studies or lack linguistic plausibility.

- Root Cause: Performing a large number of statistical comparisons without adjusting significance thresholds, leading to alpha inflation [12] [13].

- Solution: Apply multiple testing corrections.

  - Bonferroni Correction: A simple single-step procedure. Divide your desired significance level (α) by the number of comparisons (N) to get a new, stricter threshold. For example, for 10 comparisons at α=0.05, the new threshold is 0.005 [12] [15]. This method strongly controls the FWER but can be overly conservative when N is very large.

  - Benjamini-Hochberg Procedure: A step-up procedure that controls the False Discovery Rate (FDR).

    1. Conduct your N tests and order the resulting p-values from smallest to largest: P(1) ≤ P(2) ≤ ... ≤ P(N).

    2. Find the largest k such that P(k) ≤ (k / N) * α, where α is your desired FDR level.

    3. Reject the null hypothesis (declare significant) for all tests with p-values less than or equal to P(k) [12] [13].
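The Benjamini-Hochberg steps above can be sketched as follows. The function name and the p-values are hypothetical; the example is chosen so that one p-value fails its own threshold but is still rejected because a larger one passes, which is the defining feature of a step-up procedure.

```python
def benjamini_hochberg(p_values, alpha=0.05):
    """Return one reject/keep decision per hypothesis, in input order."""
    n = len(p_values)
    order = sorted(range(n), key=lambda i: p_values[i])  # step 1: sort, smallest first
    k = 0
    for rank, idx in enumerate(order, start=1):
        if p_values[idx] <= rank / n * alpha:  # step 2: track the largest passing rank
            k = rank
    reject = [False] * n
    for idx in order[:k]:  # step 3: reject everything up to rank k
        reject[idx] = True
    return reject


# Hypothetical p-values for five candidate authors, in database order.
p_values = [0.50, 0.004, 0.035, 0.025, 0.028]
decisions = benjamini_hochberg(p_values)
print(decisions)
```

Here 0.025 exceeds its own threshold of 0.02 at rank 2 but is rejected because ranks 3 and 4 pass. A Bonferroni threshold of 0.01 would reject only the 0.004 result on the same data.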

Issue: Inconsistent or Non-Replicable Findings

- Symptoms: Results from one analysis cannot be replicated in a new, independent dataset.

- Root Cause: Premature stopping of analyses, "p-hacking" (trying different analytical approaches until a significant result is found), or using an inadequate sample size that is underpowered and susceptible to random fluctuations [13].

- Solution: Adopt pre-registered experimental protocols.

  - Pre-registration: Before analyzing the data, publicly document your hypotheses, primary outcome measures, and the exact statistical analysis plan. This prevents the unconscious manipulation of analyses to obtain desired results [13].

  - Sample Size and Power Analysis: Before data collection, conduct a power analysis to determine the sample size required to detect a meaningful effect with a high probability (e.g., 80% power), while controlling for Type I and Type II errors [13].
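A rough power calculation can be sketched with the standard library alone. The sketch assumes a two-sided comparison of two group means, a standardized effect size (Cohen's d), and the normal approximation n ≈ 2((z₁₋α/₂ + z_power) / d)² per group. The function name and the `n_tests` parameter, which applies a Bonferroni-adjusted α, are illustrative assumptions.

```python
import math
from statistics import NormalDist


def sample_size_per_group(effect_size, alpha=0.05, power=0.80, n_tests=1):
    """Approximate n per group for a two-sided two-sample mean comparison."""
    alpha_adjusted = alpha / n_tests  # Bonferroni-adjusted per-test level
    z_alpha = NormalDist().inv_cdf(1 - alpha_adjusted / 2)
    z_power = NormalDist().inv_cdf(power)
    return math.ceil(2 * ((z_alpha + z_power) / effect_size) ** 2)


single_test_n = sample_size_per_group(0.5)
ten_tests_n = sample_size_per_group(0.5, n_tests=10)
print("One test:", single_test_n, "per group")
print("Ten Bonferroni-corrected tests:", ten_tests_n, "per group")
```

The comparison makes the cost of correction concrete: holding power fixed while testing ten hypotheses requires noticeably more data per group than a single test. Exact t-based calculations give slightly larger numbers than this approximation.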

Experimental Protocols

Protocol 1: Controlling Error in a Multi-Hypothesis Text Alignment Experiment

Objective: To identify the true author of a questioned text from a database of N potential authors while controlling the Family-Wise Error Rate.

Methodology:

- Feature Extraction: Convert the questioned text and all N reference texts in the database into a quantitative feature set (e.g., word frequency vectors, syntactic markers).

- Similarity Scoring: For each of the N reference authors, perform a statistical test (e.g., a t-test or a similarity metric with a known distribution) to compare their text features against the questioned text. This generates N p-values.

- Multiple Testing Correction: Apply the Bonferroni correction to control the FWER.

- Validation: Any significant match should be subjected to further, confirmatory analysis by a separate team or using a hold-out dataset to establish linguistic validity and robustness.

The workflow for this protocol, including the critical step of error correction, is outlined below.

Workflow for a controlled text alignment experiment.

The Scientist's Toolkit: Research Reagent Solutions

The following table details key methodological "reagents" essential for conducting robust multiple comparisons in research.

| Research Reagent | Function & Explanation |
|---|---|
| Bonferroni Correction | A single-step procedure that controls the Family-Wise Error Rate (FWER). It provides a strict adjustment, ideal for confirmatory studies where any false positive is costly [12] [15]. |
| Benjamini-Hochberg Procedure | A step-up procedure that controls the False Discovery Rate (FDR). It offers a better balance between discovering true effects and limiting false positives, making it suitable for exploratory analysis [12] [13]. |
| Tukey's Honestly Significant Difference (HSD) | A single-step multiple comparison test (MCT) used specifically after ANOVA to compare all possible pairs of group means. It is best suited for balanced data and controls the FWER for all pairwise comparisons [15]. |
| Dunnett's Test | A specialized MCT used when comparing several treatment groups against a single control group. It is more powerful than Bonferroni for this specific scenario while still controlling the FWER [15]. |
| Pre-registration Protocol | A plan documented before data analysis begins. It specifies hypotheses, primary outcomes, and analysis methods, safeguarding against "p-hacking" and ensuring the integrity of findings [13]. |

Visualizing the Multiple Comparisons Problem

The relationship between the number of tests and the inflation of error is fundamental. The following diagram illustrates this core concept and the two primary statistical pathways for controlling it.

Core problem and correction pathways.

Troubleshooting Guides

Guide 1: Resolving Unexpected High Numbers of Significant Findings

Problem: After running multiple statistical tests and applying False Discovery Rate (FDR) correction, an unexpectedly high number of features (e.g., genes, metabolites) show statistically significant differences, raising concerns about false positives.

Explanation: In high-dimensional data common in omics research and forensic text examination, strong dependencies between features can lead to counter-intuitive results. Even when all null hypotheses are true, FDR correction methods like Benjamini-Hochberg (BH) can sometimes report very high numbers of false positives in datasets with correlated features [16].

Solution:

- Assess Feature Dependencies: Check for correlations between features in your dataset. High correlation structures, similar to linkage disequilibrium in genetics or patterned language in texts, increase the risk.

- Use Negative Controls: Employ synthetic null data where no true effects exist to benchmark your analysis pipeline and identify inherent false positive rates [16].

- Consider Alternative Methods: For data with known strong dependencies, explore methods beyond global FDR correction, such as permutation testing or hierarchical procedures that account for local correlation structures [16].
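As one illustration of the permutation idea, the sketch below tests a difference in group means by repeatedly shuffling group labels. It is a single-feature example with hypothetical data (relative frequencies of one linguistic feature in two document sets) and a hypothetical function name. A full analysis would permute labels once per iteration and recompute every feature, so that the dependence between features is preserved.

```python
import random


def permutation_p_value(group_a, group_b, n_permutations=2000, seed=0):
    """Two-sided permutation p-value for a difference in means."""
    rng = random.Random(seed)  # fixed seed so the sketch is reproducible
    n_a = len(group_a)
    pooled = list(group_a) + list(group_b)
    observed = abs(sum(group_a) / n_a - sum(group_b) / len(group_b))
    extreme = 0
    for _ in range(n_permutations):
        rng.shuffle(pooled)  # reassign group labels at random
        diff = abs(sum(pooled[:n_a]) / n_a - sum(pooled[n_a:]) / (len(pooled) - n_a))
        if diff >= observed - 1e-12:  # tolerance guards against float round-off
            extreme += 1
    # Add-one correction keeps the estimate strictly positive.
    return (extreme + 1) / (n_permutations + 1)


# Hypothetical per-document feature rates for two sets of texts.
set_a = [1.0, 2.0, 3.0, 4.0, 5.0]
set_b = [11.0, 12.0, 13.0, 14.0, 15.0]
p_separated = permutation_p_value(set_a, set_b)
p_similar = permutation_p_value([1.0, 3.0, 5.0, 7.0], [2.0, 4.0, 6.0, 8.0])
print(p_separated, p_similar)
```

Because the null distribution is built from the data themselves, no distributional assumption about the feature is needed, only exchangeability of the labels under the null hypothesis.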

Guide 2: Addressing Concerns About False Negatives in Forensic Comparisons

Problem: A forensic comparison using elimination based on class characteristics excludes a potential source, but there is a risk of a false negative error, especially when dealing with a closed suspect pool.

Explanation: In forensic science, recent reforms have focused heavily on reducing false positives. However, eliminations—decisions to exclude a potential source—can function as de facto identifications in a closed suspect pool and carry a risk of false negatives that is often overlooked [9].

Solution:

- Demand Balanced Error Reporting: Ensure validation studies and reports provide both false positive rates (FPR) and false negative rates (FNR) to give a complete picture of method accuracy [9].

- Validate Intuitive Judgments: "Common sense" eliminations must be supported by empirical data. Use rigorous testing to validate the criteria used for elimination [9].

- Mitigate Contextual Bias: Be aware that knowing the investigative constraints (e.g., a small suspect pool) can introduce bias. Implement procedures to blind examiners to such contextual information where possible [9].
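Balanced error reporting amounts to computing both rates from the same validation counts. The sketch below uses hypothetical counts, treats "positive" as a same-source conclusion, and ignores inconclusive responses, which a real study would have to account for explicitly.

```python
def error_rates(true_pos, false_pos, true_neg, false_neg):
    """Return (false positive rate, false negative rate) from validation counts."""
    fpr = false_pos / (false_pos + true_neg)  # share of different-source pairs wrongly matched
    fnr = false_neg / (false_neg + true_pos)  # share of same-source pairs wrongly excluded
    return fpr, fnr


# Hypothetical counts from a validation study with 200 pairs of each kind.
fpr, fnr = error_rates(true_pos=180, false_pos=2, true_neg=198, false_neg=20)
print(f"FPR = {fpr:.1%}, FNR = {fnr:.1%}")
```

Reporting only the first number would hide the fact that, in this hypothetical study, one in ten true sources was excluded.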

Frequently Asked Questions (FAQs)

Q1: We control the FDR at 5%. Does this mean only 5% of our reported discoveries are false? A: Not necessarily. The FDR is the expected proportion of false discoveries. In practice, the actual False Discovery Proportion (FDP) in your specific dataset can vary. In datasets with highly correlated features, there is a chance that the FDP is much higher than the nominal FDR level, even when the procedure's long-run average is controlled [16].

Q2: Why is our permutation-based FDR estimate different from the BH-corrected FDR? A: The Benjamini-Hochberg procedure operates under certain theoretical assumptions and can be influenced by the dependency structure between tests. Permutation methods, which empirically estimate the null distribution from your specific data, can often account for these dependencies more accurately, providing a more realistic FDR estimate, particularly for data with complex correlations like those found in genomics or forensic linguistics [16].

Q3: How can we convert a forensic examiner's categorical conclusion (e.g., "Identification," "Elimination") into a likelihood ratio? A: Statistical models can be trained to perform this conversion using data from "black-box" studies. However, for the resulting likelihood ratio (LR) to be meaningful for a specific case, two conditions are critical [10]:

- Examiner-Specific Performance: The model should be trained on, or calibrated with, data from the specific examiner who performed the analysis, as performance can vary substantially between individuals.

- Case-Relevant Conditions: The data used to train the model must reflect the specific conditions of the case evidence (e.g., quality of the sample, number of features).

Q4: Our negative control experiments show a high false positive rate. What should we do? A: A high false positive rate in negative controls is a major red flag. You should [16]:

- Re-examine your data preprocessing steps (normalization, batch effect correction).

- Check the integrity of your negative controls.

- Investigate the dependency structure in your data and consider using a multiple testing correction strategy that is robust to such dependencies.

Data Summaries

Table 1: Key Statistical Error Rates in Multiple Testing

| Error Rate | Definition | Controlled By | Interpretation in Forensic Context |
|---|---|---|---|
| Family-Wise Error Rate (FWER) | The probability of making at least one false discovery (Type I error) among all hypotheses. | Bonferroni, Holm | Highly conservative; suitable when even one false positive is unacceptable. |
| False Discovery Rate (FDR) | The expected proportion of false discoveries among all rejected hypotheses. | Benjamini-Hochberg (BH) | Less conservative; allows a fraction of findings to be false, but this proportion can be volatile with correlated tests [16]. |
| False Discovery Proportion (FDP) | The actual proportion of false discoveries in a specific set of results. | Not directly controlled | A random variable. FDR is the expectation of FDP. In practice, FDP can exceed the nominal FDR, especially with dependencies [16]. |
| False Negative Rate (FNR) | The probability of incorrectly failing to reject a false null hypothesis (Type II error). | Power analysis, sample size | Critical in forensic eliminations, as a false negative can lead to excluding the true source [9]. |

Table 2: Essential Research Reagent Solutions

| Reagent / Material | Function in Analysis |
|---|---|
| Synthetic Null Data | Generated data where no true effects exist. Used as a negative control to empirically estimate and benchmark the false positive rate of an entire analysis pipeline [16]. |
| Permutation Framework | A computational method that randomly shuffles labels (e.g., case/control) to empirically construct the null distribution of test statistics. Crucial for validating FDR in dependent data [16]. |
| Likelihood Ratio Model | A statistical model (e.g., using Dirichlet priors or ordered probit) designed to convert subjective, categorical conclusions into a quantitative Likelihood Ratio, providing a clearer weight of evidence [10]. |
| Blinded Test Trials | Proficiency tests inserted into an examiner's regular workflow without their knowledge. Essential for collecting unbiased data to estimate examiner-specific error rates and calibrate LR models [10]. |

Experimental Protocols

Protocol 1: Validating FDR Control in Correlated High-Dimensional Data

Objective: To empirically assess the performance of FDR control procedures in the presence of correlated features.

Methodology:

- Data Simulation: Generate multiple datasets (e.g., ~610,000 unique datasets as in cited research) under a global null hypothesis (no true effects). Introduce correlation structures mimicking real-world data (e.g., from DNA methylation arrays, RNA-seq).

- Label Shuffling: For real datasets, randomly assign samples to groups (e.g., "case" and "control") to simulate the null hypothesis.

- Statistical Testing: Perform systematic multiple testing (e.g., differential expression with DESeq2, two-group mean comparisons with t-tests).

- Multiple Testing Correction: Apply Benjamini-Hochberg (BH) procedure at a standard nominal FDR level (e.g., 5%, 10%).

- Evaluation: Record the proportion of simulations where any finding is reported and, crucially, the distribution of the number of false findings in those simulations. Compare the results between independent and correlated feature scenarios [16].
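A heavily scaled-down version of this protocol can be sketched with synthetic z-scores. Everything here is an illustrative assumption and not the cited study's setup: the feature count, the number of simulations, the equicorrelation model (one shared latent factor with correlation `rho`), and the function names. Because every null hypothesis is true by construction, every rejection is a false finding.

```python
import math
import random
from statistics import NormalDist


def bh_rejection_count(p_values, alpha):
    """Number of hypotheses rejected by the Benjamini-Hochberg procedure."""
    m = len(p_values)
    k = 0
    for rank, p in enumerate(sorted(p_values), start=1):
        if p <= rank / m * alpha:
            k = rank
    return k


def simulate_global_null(n_features=100, n_sims=500, rho=0.0, alpha=0.05, seed=0):
    """Return (share of simulations with any rejection, largest rejection count)."""
    rng = random.Random(seed)
    norm = NormalDist()
    counts = []
    for _ in range(n_sims):
        shared = rng.gauss(0, 1)  # latent factor inducing correlation between features
        p_values = []
        for _ in range(n_features):
            z = math.sqrt(rho) * shared + math.sqrt(1 - rho) * rng.gauss(0, 1)
            p_values.append(2 * (1 - norm.cdf(abs(z))))  # two-sided p-value
        counts.append(bh_rejection_count(p_values, alpha))
    any_rate = sum(c > 0 for c in counts) / n_sims
    return any_rate, max(counts)


independent_rate, independent_max = simulate_global_null(rho=0.0)
correlated_rate, correlated_max = simulate_global_null(rho=0.8)
print("independent:", independent_rate, independent_max)
print("correlated: ", correlated_rate, correlated_max)
```

The two summaries map onto the evaluation step: how often any finding is reported, and how many false findings appear when one is. Swapping the synthetic generator for label-shuffled real data turns the sketch into the negative-control check described in the protocol.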

Protocol 2: Estimating Examiner-Specific Likelihood Ratios from Categorical Data

Objective: To calculate a meaningful likelihood ratio for a specific forensic examiner's categorical conclusion.

Methodology:

- Data Collection: Conduct black-box studies where the examiner evaluates many test trials, each involving a questioned-source item and one or more known-source items. For each trial, the examiner selects a categorical response from an ordinal scale (e.g., "Identification," "Inconclusive A," "Inconclusive B," "Inconclusive C," "Elimination").

- Data Pooling (Initial Prior): Pool response data from multiple examiners to establish initial prior models for same-source and different-source probabilities for each categorical conclusion.

- Bayesian Updating: Use a Bayesian framework (e.g., with beta-binomial models) to update these prior models with the specific response data from the individual examiner in question. This yields posterior models reflective of that examiner's personal performance.

- Likelihood Ratio Calculation: For a given conclusion (e.g., "Identification"), the LR is calculated as the probability of that conclusion under the same-source posterior model divided by the probability under the different-source posterior model. This process is repeated as more data from the specific examiner becomes available, refining the LR [10].
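
The updating and LR steps can be illustrated with a small beta-binomial sketch for a single conclusion category. All counts and prior parameters below are hypothetical, and using the posterior mean as the probability of the conclusion is one simple choice rather than the specific model of the cited work.

```python
def posterior_mean(prior_a, prior_b, hits, trials):
    """Mean of the Beta(prior_a + hits, prior_b + trials - hits) posterior."""
    return (prior_a + hits) / (prior_a + prior_b + trials)

def examiner_lr(prior_same, prior_diff, same_counts, diff_counts):
    """LR for one categorical conclusion, from examiner-specific posteriors.

    prior_same / prior_diff: (a, b) Beta parameters from pooled examiner data.
    same_counts / diff_counts: (times the conclusion was given, number of trials)
    for this examiner on same-source and different-source test trials.
    """
    p_same = posterior_mean(*prior_same, *same_counts)
    p_diff = posterior_mean(*prior_diff, *diff_counts)
    return p_same / p_diff

# Hypothetical pooled priors for the conclusion "Identification".
prior_same = (60.0, 40.0)   # pooled examiners: about 60% of same-source trials
prior_diff = (1.0, 99.0)    # pooled examiners: about 1% of different-source trials

# Hypothetical data for the individual examiner: 45 of 50 and 0 of 50.
lr = examiner_lr(prior_same, prior_diff, same_counts=(45, 50), diff_counts=(0, 50))
print(f"examiner-specific LR for 'Identification': {lr:.1f}")
```

Because the prior carries the pooled data, the LR stays finite even when the examiner has made no false identifications in the available trials, and it shifts toward the examiner's own record as more trials accumulate.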

Diagrams

DOT Script: FDR Control Workflow

DOT Script: Forensic LR Calculation

FAQs: Forensic Science Validity and Error Management

Q1: What is foundational validity in forensic science and why is it important? Foundational validity means a forensic method has sufficient empirical evidence showing it reliably produces accurate and consistent results. It is crucial for court admissibility under standards like Daubert, which require knowing a method's error rates and scientific validity [17].

Q2: How does the "multiple comparisons problem" affect forensic examination? The multiple comparisons problem occurs when many statistical tests are performed simultaneously. Each test has its own chance of a false positive, so the overall probability of at least one false discovery increases with the number of comparisons. In forensics, this is akin to an examiner comparing one latent print against many candidates in a large database, which elevates the risk of a close non-match being misidentified [18] [12].

Q3: What were the key findings of the major black-box study on latent fingerprint examination? A pivotal 2011 FBI-Noblis black-box study reported a 0.1% false positive rate (wrongly matching two prints from different sources) and a 7.5% false negative rate (failing to match two prints from the same source). This study tested 169 examiners on 744 print pairs, totaling 17,121 decisions [19].

Q4: What procedural weaknesses can lead to forensic misidentifications? High-profile errors have been linked to several key issues:

- Lack of Blind Verification: Verification by a second examiner who knows the first examiner's conclusion can introduce bias and prevent challenges [18].

- Poor Training and Protocols: Inadequate training and low performance standards can lead to errors in complex analyses [18].

- Contextual Bias: An examiner's judgment can be influenced by knowing other evidence or features of a suspect's known prints [18].

Troubleshooting Guides: Mitigating Common Research and Casework Pitfalls

Guide 1: Addressing the Multiple Comparisons Problem in Research Design

- Problem: Uncorrected multiple testing inflates the false discovery rate.

- Solution: Implement statistical corrections.

- Step 1: Define your error rate metric (e.g., Family-Wise Error Rate or False Discovery Rate).

- Step 2: Apply a multiple testing correction like the Bonferroni method, which adjusts the significance threshold by dividing it by the number of comparisons (α/m) [12].

- Step 3: For large-scale exploratory studies, control the False Discovery Rate to identify a set of "candidate positives" for follow-up validation [12].
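
To make Steps 1 and 2 concrete, the sketch below computes the family-wise error rate for m tests and the Bonferroni-adjusted threshold. It assumes the tests are independent, which is a simplification; the function names are illustrative.

```python
def family_wise_error_rate(alpha, m):
    """Probability of at least one false positive across m independent tests."""
    return 1.0 - (1.0 - alpha) ** m

def bonferroni_threshold(alpha, m):
    """Per-test significance threshold that keeps the FWER at or below alpha."""
    return alpha / m

alpha = 0.05
for m in (1, 10, 100):
    uncorrected = family_wise_error_rate(alpha, m)
    per_test = bonferroni_threshold(alpha, m)
    corrected = family_wise_error_rate(per_test, m)
    print(f"m={m:>3}  uncorrected FWER={uncorrected:.3f}  "
          f"Bonferroni threshold={per_test:.5f}  corrected FWER={corrected:.3f}")
```

With ten independent tests at α = 0.05, the chance of at least one false positive is already about 40%, which is why the per-test threshold has to shrink as the number of comparisons grows.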

Guide 2: Validating a Subjective Forensic Method

- Problem: A new forensic feature-comparison method lacks empirical evidence of its accuracy.

- Solution: Conduct a black-box validation study.

- Step 1 - Design: Create a double-blind, open-set test where participants analyze samples with known ground truth. Ensure materials represent the full spectrum of real-case quality and complexity [19].

- Step 2 - Execution: Recruit a substantial number of practicing experts. Each should analyze a randomized set of samples, including both mated (same source) and non-mated (different source) pairs [19] [20].

- Step 3 - Analysis: Calculate the method's false positive and false negative rates based on the examiners' conclusions. Report these rates with confidence intervals [19] [20].
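
One way to attach a confidence interval to an observed error rate in Step 3 is the Wilson score interval, sketched below. The choice of interval and the counts in the example are assumptions for illustration; the cited studies may have used other methods.

```python
import math

def wilson_interval(errors, trials, z=1.96):
    """Wilson score interval for a binomial proportion (z=1.96 gives about 95%)."""
    if trials <= 0:
        raise ValueError("trials must be positive")
    p = errors / trials
    denom = 1.0 + z * z / trials
    center = (p + z * z / (2 * trials)) / denom
    half = z * math.sqrt(p * (1 - p) / trials + z * z / (4 * trials * trials)) / denom
    return max(0.0, center - half), min(1.0, center + half)

# Hypothetical study: 6 false positives among 4,000 non-mated comparisons.
low, high = wilson_interval(6, 4000)
print(f"false positive rate = {6 / 4000:.4f}, 95% CI = ({low:.4f}, {high:.4f})")
```

The Wilson interval behaves sensibly when the observed rate is close to zero, which is the usual situation for false positive rates in black-box studies.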

Quantitative Data: Error Rates in Forensic Pattern Evidence

Table 1: False Positive and False Negative Rates from Forensic Black-Box Studies

| Forensic Discipline | False Positive Rate | False Negative Rate | Key Study Details |

|---|---|---|---|

| Latent Fingerprints | 0.1% | 7.5% | 169 examiners, 17,121 decisions [19] |

| Handwriting (General) | 3.1% | 1.1% | 86 examiners, 7,196 conclusions [20] |

| Handwriting (Twins) | 8.7% | Not Specified | Higher complexity due to genetic similarity [20] |

Table 2: Essential Research Reagents for Empirical Validation Studies

| Research "Reagent" | Function in Validation |

|---|---|

| Representative Sample Set | Provides a range of quality and complexity to test method performance under realistic conditions [19]. |

| Ground-Truthed Data | Samples with known source relationships; the essential input for measuring accuracy and error rates [19] [20]. |

| Blinded Experimental Protocol | Prevents bias by ensuring participants and researchers are unaware of expected outcomes during testing [19]. |

| Standardized Conclusion Scale | Enables consistent measurement and comparison of examiner decisions (e.g., "Identification," "Exclusion," "Inconclusive") [20]. |

| Statistical Power Analysis | Determines the necessary sample size of examiners and test materials to achieve reliable and meaningful results [21]. |

Experimental Protocol: The Black-Box Study Workflow

This diagram outlines the core methodology for a black-box study as used in latent print and handwriting research [19] [20].

Case Studies: Anatomy of Forensic Misidentifications

Case 1: The Brandon Mayfield Fingerprint Misidentification

- Incident: In 2004, the FBI mistakenly identified Oregon attorney Brandon Mayfield as the source of a fingerprint linked to the Madrid train bombings [18].

- Lessons for Research & Practice:

- Database Size: Large databases increase the risk of finding a confusingly similar non-match [18].

- Confirmation Bias: Examiners' interpretations were influenced by reasoning backward from Mayfield's known prints [18].

- Bias in Verification: The verification process was not blind, allowing the initial conclusion to bias the subsequent review [18].

Case 2: The Stephan Cowans Fingerprint Misidentification

- Incident: Stephan Cowans was convicted of murder based on fingerprint evidence but was later exonerated by DNA testing. The fingerprint identification was revealed to be erroneous [18].

- Lessons for Research & Practice:

- Training is Critical: An investigation blamed the error on poor training and a lack of standardized protocols in the fingerprint unit [18].

- Need for Robust Evidence: This case demonstrates the extreme difficulty of overturning a wrongful conviction based on forensic error without definitive exonerating evidence like DNA [18].

Implementing Robust Methodologies: The Likelihood-Ratio Framework for Textual Evidence

Adopting the Likelihood-Ratio (LR) Framework for Evidence Evaluation

Troubleshooting Guides and FAQs

Conceptual Foundations & Interpretation

Q1: What does a Likelihood Ratio (LR) actually mean, and how should I interpret it in the context of my evidence?

- A: A Likelihood Ratio is a measure of the strength of your forensic evidence. It quantifies how much more likely the evidence is under one proposition (e.g., the specimen and reference originated from the same source) compared to an alternative proposition (e.g., they originated from different sources) [22]. An LR greater than 1 supports the first proposition, while an LR less than 1 supports the alternative. An LR of 1 means the evidence does not help distinguish between the propositions.
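
In symbols, writing E for the evidence and H1, H2 for the two competing propositions (notation assumed here for illustration), the definition above and its role in updating odds can be stated as:

```latex
\mathrm{LR} = \frac{P(E \mid H_1)}{P(E \mid H_2)},
\qquad
\underbrace{\frac{P(H_1 \mid E)}{P(H_2 \mid E)}}_{\text{posterior odds}}
= \mathrm{LR} \times
\underbrace{\frac{P(H_1)}{P(H_2)}}_{\text{prior odds}}
```

The second identity is the odds form of Bayes' theorem. It shows why an LR of 1 leaves the odds unchanged, and why the LR on its own describes the strength of the evidence rather than the probability of either proposition.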

Q2: Our team understands the LR value, but legal decision-makers find it confusing. What is the best way to present LRs to maximize understandability?

- A: Research indicates there is no single best method, and the existing literature does not provide a definitive answer [23]. Studies have explored different formats, including:

- Numerical LR values.

- Numerical random-match probabilities.

- Verbal statements of the strength of support.

Comprehensibility cannot be assumed for any of these formats; notably, none of the reviewed studies tested comprehension of verbal LRs [23]. When presenting results, consider your audience and be prepared to explain the meaning of the LR clearly and accurately.

Q3: Why is it critical to report both false positive and false negative rates when validating an LR method?

- A: Reporting both error rates provides a complete picture of a method's accuracy [9]. Focusing only on false positives (e.g., incorrectly associating evidence with a source) overlooks the risk of false negatives (e.g., incorrectly eliminating a true source). This is especially critical in cases with a closed suspect pool, where an elimination can function as a de facto identification. A balanced reporting of both error rates is essential for a scientifically rigorous and transparent assessment of method performance [9].

Validation & Performance

Q4: What are the essential performance characteristics we need to validate for our new LR method?

- A: A comprehensive validation should assess several key performance characteristics. The table below outlines the core set as defined by established guidelines [24].

Table 1: Essential Performance Characteristics for LR Method Validation

| Performance Characteristic | Description | Example Performance Metric |

|---|---|---|

| Accuracy | How close the LRs are to their ideal, well-calibrated values. | Cllr (Log Likelihood Ratio Cost) [24] |

| Discriminating Power | The ability of the method to distinguish between same-source and different-source evidence. | EER (Equal Error Rate), Cllr^min [24] |

| Calibration | The property that LRs correctly represent the strength of the evidence; for example, evidence assigned an LR of 100 should in fact be 100 times more probable under one proposition than under the other. | Cllr^cal [24] |

| Robustness | The reliability of the method when faced with variations in input data or conditions. | Variation in Cllr and EER [24] |

| Coherence | The internal consistency of the method's results. | Cllr, EER [24] |

| Generalization | The method's performance when applied to new, unseen data that differs from the development data. | Cllr, EER [24] |
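
As an illustration of the accuracy metric in the table, the sketch below computes Cllr from two lists of validation LRs using its standard definition. The function name and the example LR values are hypothetical.

```python
import math

def cllr(same_source_lrs, diff_source_lrs):
    """Log likelihood ratio cost.

    Penalizes same-source comparisons with small LRs and different-source
    comparisons with large LRs; lower is better, and a system that always
    reports LR = 1 scores exactly 1.
    """
    if not same_source_lrs or not diff_source_lrs:
        raise ValueError("both LR lists must be non-empty")
    penalty_same = sum(math.log2(1 + 1 / lr) for lr in same_source_lrs) / len(same_source_lrs)
    penalty_diff = sum(math.log2(1 + lr) for lr in diff_source_lrs) / len(diff_source_lrs)
    return 0.5 * (penalty_same + penalty_diff)

# Hypothetical validation LRs.
print(f"uninformative system: {cllr([1.0, 1.0], [1.0, 1.0]):.3f}")
print(f"informative system:   {cllr([100.0, 1000.0], [0.01, 0.001]):.3f}")
```

A Cllr below 1 indicates that the LRs carry useful information on average, while strongly misleading LRs (for example, a large LR for a different-source pair) are penalized heavily.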

Q5: We have pooled data from multiple examiners to train our model. Can we use this to report an LR for a specific examiner's casework conclusion?

- A: No, this is not appropriate. A model trained on pooled data from multiple examiners reflects average performance and may not be representative of a specific examiner's skill [10]. An individual examiner may perform substantially better or worse. To report a meaningful LR for a specific case, the statistical model should be representative of both the particular examiner and the specific conditions of the case [10]. A proposed solution is to use a Bayesian framework that leverages data from multiple examiners as an informed prior, which is then updated with the specific examiner's own proficiency test data as it becomes available [10].

Implementation & Technical Challenges

Q6: How can we model complex, real-world scenarios like multiple DNA transfer events in an LR framework?

- A: Complex activity-level propositions can be analyzed using specialized Bayesian networks. For example, one framework uses Bayesian logistic regression to model the probability of DNA recovery after direct and secondary transfer and persistence over time [25]. This model can account for multiple contacts and background DNA. Such analyses can be automated using open-source tools like ALTRaP (Activity Level Transfer, Recovery and Persistence), which is written in R and can be modified for different datasets and variables [25].

Q7: Our research involves inferring kinship from dense SNP data. How can we implement an LR framework for relationship testing?

- A: For kinship analysis, such as with whole genome sequencing data, you can use an LR-based method that dynamically selects highly informative SNPs. The KinSNP-LR method, for example, curates a panel of SNPs based on high minor allele frequency (MAF) and ensures they are not linked by selecting them a certain genetic distance apart (e.g., 30-50 centimorgans) [26]. The cumulative LR is calculated by multiplying the individual LR values for each selected SNP, assuming independence [26]. This approach provides the statistical rigor required for forensic acceptance.
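
The multiplication of per-SNP LRs is usually carried out on a log scale to avoid numerical overflow or underflow across many markers. The sketch below shows only that combination step under the independence assumption; the per-SNP values are hypothetical and this is not the KinSNP-LR implementation.

```python
import math

def cumulative_log10_lr(per_snp_lrs):
    """Sum of log10 LRs, equal to log10 of the product of the individual LRs.

    Valid only if the SNPs are treated as independent (unlinked).
    """
    if any(lr <= 0 for lr in per_snp_lrs):
        raise ValueError("each LR must be positive")
    return sum(math.log10(lr) for lr in per_snp_lrs)

# Hypothetical per-SNP LRs for one pair of individuals.
per_snp = [2.0, 0.5, 4.0, 1.5]
log10_total = cumulative_log10_lr(per_snp)
print(f"log10(LR) = {log10_total:.3f}, LR = {10 ** log10_total:.1f}")
```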

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials and Tools for LR-Based Research

| Item / Tool | Function in LR Framework Research |

|---|---|

| ALTRaP (Activity Level, Transfer, Recovery and Persistence) | An open-source program written in R that automates the analysis of complex multiple transfer propositions for DNA evidence at the activity level [25]. |

| KinSNP-LR | A statistical method for computing LRs from whole genome sequencing SNP data for kinship analysis, focusing on close relationships [26]. |

| Validation Dataset (Forensic) | A dataset consisting of real casework material (e.g., fingermarks) used exclusively for the validation stage of an LR method to ensure forensically relevant performance assessment [24]. |

| Development Dataset (Simulated) | A dataset, which may include simulated data, used to build and train the LR method before final validation with real forensic data [24]. |

| Bayesian Network Software | Software used to build probabilistic models that can analyze complex, activity-level propositions by incorporating variables like transfer probabilities and background presence [25]. |

| gnomAD v4 SNP Panel | A large, preselected panel of single nucleotide polymorphisms (SNPs) used as a foundation for allele frequencies and genetic distances in kinship LR calculations [26]. |

Experimental Protocols

Protocol 1: Validation of an LR Method for Source Level Evidence

This protocol is based on the guideline for validating LR methods used for forensic evidence evaluation [22] [24].

- Define Scope and Propositions: Clearly state that the validation is for source-level inference and define the specific prosecution (H1) and defense (H2) propositions.

- Establish Validation Matrix: Create a matrix specifying the performance characteristics to be assessed (see Table 1), the corresponding metrics, graphical representations, and predefined validation criteria for each [24].

- Prepare Datasets: Secure separate datasets for development and validation. The validation dataset should be as forensically relevant as possible (e.g., real fingermarks from cases) [24].

- Run Experiments and Generate LRs: Compute LR values for all comparisons in the validation dataset using the developed method.

- Measure Performance Characteristics: For the generated LRs, calculate the performance metrics outlined in your validation matrix.

- Make Validation Decision: For each performance characteristic, compare the analytical result against the validation criterion. The method is considered validated for a characteristic if the result passes the criterion [24].

Protocol 2: Examiner-Specific LRs from Categorical Conclusions

This protocol addresses methods for fields like firearms and toolmarks, where examiners traditionally use categorical conclusions [10].

- Collect Data: Conduct black-box studies where examiners compare specimens and provide categorical conclusions (e.g., Identification, Inconclusive, Elimination) for both same-source and different-source test trials.

- Model Examiner-Specific Performance (Critical Step): Avoid pooling data across all examiners. For a meaningful casework LR, the model must be tailored to the individual examiner. Use a Bayesian approach:

- Use data from multiple examiners to create informed prior models for same-source and different-source responses.

- Update these priors with the specific examiner's own (even if limited) proficiency data to create posterior models [10].

- Calculate Examiner-Specific LRs: The LR for a given conclusion (e.g., "Identification") is the probability of that conclusion under the same-source proposition divided by the probability under the different-source proposition, derived from that examiner's posterior models [10].

- Account for Case Conditions: Ensure the test trials used for modeling reflect the specific conditions (e.g., quality of evidence) of the case in question [10].

Workflow Diagrams

LR Method Validation Workflow

The diagram below outlines the key stages in the validation of a Likelihood Ratio method, from defining propositions to the final validation decision [22] [24].

Kinship Analysis LR Framework

This diagram illustrates the process of using dynamically selected SNPs for Likelihood Ratio-based kinship analysis, as used in methods like KinSNP-LR [26].

Quantitative Measurement of Textual Features for Comparison

Frequently Asked Questions

What is the core challenge of multiple comparisons in forensic text examination? The primary challenge is the overlooked risk of false negative errors. While recent reforms have focused on reducing false positives, eliminations based on class characteristics or intuitive judgments often escape empirical scrutiny. In cases with a closed pool of suspects, an elimination can act as a de facto identification, introducing significant error risk if not properly validated [9].

How can likelihood ratios address quantification in forensic comparisons? The likelihood-ratio framework is the logically correct method for interpreting forensic evidence [10]. It provides a transparent and reproducible statistical measure. For meaningful case context, the statistical model must be trained on data representative of the specific examiner's performance and the specific case conditions, rather than on data pooled from multiple examiners and varied conditions [10].

What is the difference between qualitative and quantitative text analysis methods?

- Quantitative text analysis emphasizes numerical data and statistical validity, identifying trends and patterns across large datasets for broader generalizations. It uses techniques like word frequency analysis, cluster analysis, and topic modeling [27] [28].

- Qualitative text analysis focuses on understanding context, themes, and underlying meanings through subjective interpretation, providing a nuanced understanding of complex phenomena [27] [28].

Why are both false positive and false negative rates important? Many existing validity studies and professional guidelines only report false positive rates, providing an incomplete accuracy assessment. A complete evaluation requires balanced reporting of both false positive and false negative rates to ensure proper validation of forensic methods [9].

Troubleshooting Guides

Issue: Inconsistent Text Comparison Results

Problem: Findings from textual feature comparisons are not reproducible across different examinations or examiners.

Solution:

- Implement Rigorous Validation: Conduct validation studies under conditions that closely mimic casework. Test the method with known samples covering a range of complexities and challenges [10].

- Quantify Error Rates: Calculate and report both false positive and false negative rates for the specific methodology and the conditions under which it is applied [9].

- Control Contextual Bias: Minimize the examiner's exposure to extraneous case information that could influence their judgment during the comparison process [9].

- Use Data Representative of the Case: Ensure the data used to train any statistical model reflects the performance of the specific examiner and the specific conditions of the case items, rather than relying solely on pooled data from multiple sources [10].

Issue: Selecting Between Qualitative and Quantitative Methods

Problem: Uncertainty about whether to use qualitative or quantitative methods for a text analysis project.

Solution: Consider the following comparison to guide your selection:

| Aspect | Quantitative Text Analysis | Qualitative Text Analysis |

|---|---|---|

| Core Focus | Measuring trends, patterns, and frequencies at scale [27] | Exploring underlying themes, context, and nuanced meanings [27] |

| Data Type | Numerical, structured data [28] | Non-numerical, discursive data [28] |

| Typical Output | Statistical metrics, generalizable trends [27] | Rich, narrative insights and in-depth understanding [27] |

| Best Used For | Answering "what" and "how much" questions; identifying prevalence [29] | Answering "why" and "how" questions; understanding complex phenomena [29] |

For a comprehensive understanding, a mixed-methods approach that combines both quantitative and qualitative analysis is often most effective [28].

Experimental Protocols

Protocol for a Quantitative Text Feature Comparison Study

This protocol outlines a method for comparing textual features using a quantitative approach, incorporating principles for robust forensic measurement.

1. Define Research Question and Hypothesis

- Formulate a clear hypothesis. For example: "The use of a specific set of lexical features can distinguish between authors from two different predefined groups with high accuracy."

2. Data Collection and Preparation

- Gather Text Corpora: Collect a comprehensive set of text samples. These should be divided into a training set (for model development) and a test set (for validation).

- Preprocessing: Clean and standardize the text. This may include:

- Tokenization (splitting text into words or phrases)

- Lowercasing

- Removing punctuation and special characters

- Handling stop words (common words like "the," "is")
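
The preprocessing steps listed above can be sketched with the standard library alone. The stop-word list is a small illustrative set, not a standard resource, and removal is left optional because function words can themselves be informative in authorship analysis.

```python
import re

# Illustrative stop-word list (an assumption for this example).
STOP_WORDS = frozenset({"the", "is", "a", "an", "and", "of", "to", "in"})

def preprocess(text, remove_stop_words=True):
    """Lowercase, strip punctuation, tokenize, and optionally drop stop words."""
    # Keep runs of letters, digits, and apostrophes; everything else separates tokens.
    tokens = re.findall(r"[a-z0-9']+", text.lower())
    if remove_stop_words:
        tokens = [t for t in tokens if t not in STOP_WORDS]
    return tokens

print(preprocess("The cat is on the mat, isn't it?"))
```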

3. Feature Extraction

- Transform the raw text into quantifiable features. Common textual features include:

- Lexical Features: Word n-grams, character n-grams, vocabulary richness.

- Syntactic Features: Part-of-speech tags, sentence length, grammar patterns.

- Semantic Features: Topic model distributions, word embeddings.
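
As a minimal sketch of the lexical features mentioned above, the functions below count character n-grams and word n-grams and compute a simple type-token ratio as one possible measure of vocabulary richness. The function names are illustrative; syntactic and semantic features would need additional tooling and are not shown.

```python
from collections import Counter

def char_ngrams(text, n=3):
    """Counts of overlapping character n-grams."""
    return Counter(text[i:i + n] for i in range(len(text) - n + 1))

def word_ngrams(tokens, n=2):
    """Counts of overlapping word n-grams, each stored as a tuple of tokens."""
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))

def type_token_ratio(tokens):
    """Distinct tokens divided by total tokens (0.0 for an empty text)."""
    return len(set(tokens)) / len(tokens) if tokens else 0.0

tokens = ["we", "met", "and", "we", "met", "again"]
print(char_ngrams("banana").most_common(1))
print(word_ngrams(tokens).most_common(1))
print(round(type_token_ratio(tokens), 3))
```

The type-token ratio depends on text length, so comparisons between samples of very different sizes should be treated with caution.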

4. Statistical Modeling and Analysis

- Model Training: Use the training set to build a statistical model (e.g., a classifier) that learns the relationship between the extracted features and the target categories (e.g., author groups).

- Cross-Validation: Employ techniques like k-fold cross-validation to optimize the model and prevent overfitting.
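
The k-fold splitting step can be sketched without any external library. The function below is an illustrative helper that yields train and test index lists; it shuffles individual samples, so in an authorship setting it would need adapting if all texts by one author must stay in the same fold.

```python
import random

def k_fold_indices(n_samples, k=5, seed=0):
    """Yield (train_indices, test_indices) for each of k folds."""
    if not 2 <= k <= n_samples:
        raise ValueError("k must be between 2 and n_samples")
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    # Deal the shuffled indices into k folds of near-equal size.
    folds = [indices[i::k] for i in range(k)]
    for i in range(k):
        test = folds[i]
        train = [j for f_i, fold in enumerate(folds) if f_i != i for j in fold]
        yield train, test

for train, test in k_fold_indices(10, k=5):
    print(f"train on {len(train)} samples, test on {sorted(test)}")
```

Each sample appears in exactly one test fold, so every observation contributes once to the performance estimate.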

5. Validation and Error Rate Calculation

- Blind Testing: Apply the finalized model to the held-out test set. This test must be performed blind—without the examiner knowing the ground truth—to avoid bias [10].

- Calculate Performance Metrics: Generate a confusion matrix and calculate key metrics to quantify the method's accuracy. The table below outlines these essential metrics:

| Metric | Definition | Formula (Conceptual) |

|---|---|---|

| True Positive (TP) | The model correctly identifies a positive case. | - |

| True Negative (TN) | The model correctly identifies a negative case. | - |

| False Positive (FP) | The model incorrectly identifies a negative case as positive (Type I error). | - |

| False Negative (FN) | The model incorrectly identifies a positive case as negative (Type II error). | - |

| False Positive Rate (FPR) | The proportion of actual negative cases that are incorrectly identified as positives. | FP / (FP + TN) |

| False Negative Rate (FNR) | The proportion of actual positive cases that are incorrectly identified as negatives. | FN / (TP + FN) |

| Likelihood Ratio (LR) | How much more likely the evidence is under one hypothesis compared to another [10]. | Probability of evidence given Hypothesis 1 / Probability of evidence given Hypothesis 2 |

- Report Both Error Rates: To give a complete picture of the method's performance, explicitly report both the False Positive Rate and the False Negative Rate [9].
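
The confusion-matrix counts and both error rates from the table above can be computed as follows. This is a minimal sketch in which "positive" stands for whichever outcome the study defines as positive (for example, same-author); the labels in the example are hypothetical.

```python
def error_rates(truth, predicted):
    """Confusion-matrix counts plus FPR and FNR from boolean labels."""
    tp = fp = tn = fn = 0
    for actual, guess in zip(truth, predicted):
        if actual and guess:
            tp += 1
        elif actual and not guess:
            fn += 1
        elif not actual and guess:
            fp += 1
        else:
            tn += 1
    fpr = fp / (fp + tn) if (fp + tn) else float("nan")
    fnr = fn / (tp + fn) if (tp + fn) else float("nan")
    return {"TP": tp, "FP": fp, "TN": tn, "FN": fn, "FPR": fpr, "FNR": fnr}

# Hypothetical held-out test set: 3 positive and 5 negative cases.
truth     = [True, True, True, False, False, False, False, False]
predicted = [True, True, False, False, False, False, True, False]
print(error_rates(truth, predicted))
```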

6. Interpretation and Reporting

- Contextualize the findings within the limitations of the study.

- Clearly state the conditions under which the method was validated and report all relevant error rates.

The Scientist's Toolkit: Research Reagent Solutions

| Item or Concept | Function in Textual Feature Analysis |

|---|---|

| Natural Language Processing (NLP) | A field of computer science that gives machines the ability to read, understand, and derive meaning from human language [28]. |

| Topic Modeling | A quantitative text mining technique used to discover abstract themes (topics) that occur in a collection of documents [28]. |

| Likelihood Ratio | A statistical framework that quantifies the strength of evidence by comparing the probability of the evidence under two competing hypotheses (e.g., same source vs. different sources) [10]. |

| Error Rate Validation | The process of empirically measuring a method's false positive and false negative rates through controlled, blind testing to establish its reliability [9]. |

| Deep Learning Models | Advanced neural networks capable of automatically learning complex patterns and feature hierarchies from raw text data for tasks like classification [30]. |

Experimental Workflow and Logic Diagrams

Quantitative Text Analysis Workflow

Multiple Comparisons Problem in Forensics

Frequently Asked Questions

Q1: What are the minimum color contrast ratios for text in research visualizations, and why are they critical for forensic text examination?

A1: Adhering to minimum color contrast ratios is essential for ensuring that research visualizations are readable by all team members, reducing interpretive errors in collaborative forensic analysis. The requirements are as follows [31] [32] [33]:

| Text Type | Minimum Contrast Ratio | Example Use Case in Research |

|---|---|---|

| Normal Text | 4.5:1 | Labels on charts, methodology descriptions, data table text. |

| Large Text | 3:1 | Section headings, titles on presentation slides, large-scale dashboards. (Large text is defined as at least 18pt (about 24px), or at least 14pt (about 19px) when bold [32] [34]) |

| Non-Text Elements | 3:1 | Graphs, charts, icons, and user interface components [34]. |
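
The ratios in the table can be checked programmatically. The sketch below follows the WCAG 2 definitions of relative luminance and contrast ratio for sRGB colors; the function names and the example colors are illustrative.

```python
def _linearize(channel_8bit):
    """Convert an 8-bit sRGB channel to linear light (WCAG 2 definition)."""
    c = channel_8bit / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4

def relative_luminance(rgb):
    """WCAG relative luminance of an (R, G, B) tuple with values 0 to 255."""
    r, g, b = (_linearize(c) for c in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b

def contrast_ratio(foreground, background):
    """Contrast ratio between two colors, from 1:1 up to 21:1."""
    l1, l2 = relative_luminance(foreground), relative_luminance(background)
    lighter, darker = max(l1, l2), min(l1, l2)
    return (lighter + 0.05) / (darker + 0.05)

ratio = contrast_ratio((0, 0, 0), (255, 255, 255))
print(f"black on white: {ratio:.1f}:1, passes normal text: {ratio >= 4.5}")
```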

Q2: A workflow diagram I generated has poor text legibility on a colored background. How can I programmatically determine the correct text color?

A2: You can use a luminance-based algorithm to automatically choose black or white text for a given background color. This ensures high contrast without manual calculation. Below is a methodology using the W3C-recommended formula [35]:

Experimental Protocol: Automated Text Color Selection

- Input: Acquire the background color's RGB values (e.g., from your diagramming tool's output).

- Processing: Calculate the perceived brightness of the background (the "luminance" value used in this protocol) from its 8-bit RGB channels using the formula luminance = (R * 299 + G * 587 + B * 114) / 1000, which is equivalent to 0.299 R + 0.587 G + 0.114 B [35].

- Decision: Apply a threshold to determine the text color.

- If the calculated luminance value is greater than 125, use dark text (#202124).

- If the luminance value is 125 or less, use white text (#FFFFFF) [35].
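
The protocol can be sketched as a small function. It uses the brightness formula (R * 299 + G * 587 + B * 114) / 1000 on 8-bit channel values with the threshold of 125; the function names and the hex-string input format are assumptions for the example.

```python
def perceived_brightness(r, g, b):
    """Weighted brightness on a 0 to 255 scale for 8-bit RGB channels."""
    return (r * 299 + g * 587 + b * 114) / 1000

def pick_text_color(background_hex, dark="#202124", light="#FFFFFF", threshold=125):
    """Return the dark text color for bright backgrounds, the light one otherwise."""
    digits = background_hex.lstrip("#")
    if len(digits) != 6:
        raise ValueError("expected a color like '#RRGGBB'")
    r, g, b = (int(digits[i:i + 2], 16) for i in (0, 2, 4))
    return dark if perceived_brightness(r, g, b) > threshold else light

for background in ("#FFFFFF", "#FBBC05", "#EA4335", "#000000"):
    print(background, "->", pick_text_color(background))
```

This threshold rule is a quick heuristic; it does not by itself guarantee a specific WCAG contrast ratio, so results are worth verifying with a contrast checker.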

Q3: Our team uses a variety of tools for creating diagrams. What is a fundamental rule for setting colors to maintain accessibility?

A3: The fundamental rule is to never rely solely on color to convey meaning. Always use color in combination with other indicators such as patterns, shapes, or direct labels. Furthermore, you must explicitly set the fontcolor property for any text-containing node to ensure it contrasts sufficiently with the node's fillcolor; do not rely on automatic defaults.

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Research |

|---|---|

| Color Contrast Analyzer | Software or browser extensions used to measure the contrast ratio between foreground and background colors, validating compliance with WCAG guidelines [32] [36]. |

| Scripting Environment (e.g., Python, R) | Used to implement and run the automated text color selection algorithm, ensuring consistency across a large batch of generated visualizations. |

| Documented Color Palette | A pre-defined, restricted set of colors that guarantees visual consistency and accessibility across all research materials. |

| Accessibility Linter/Framework | A programming library or tool that can be integrated into a build process to automatically check visualization code for color contrast violations before publication [37]. |

Experimental Protocols for Color Application

Protocol 1: Validating Contrast in Existing Visualizations

- Objective: To audit and identify contrast errors in a corpus of pre-existing research diagrams.

- Methodology:

- Output: A quantitative report detailing the conformance level of the research corpus.

Protocol 2: Implementing an Automated High-Contrast Workflow

- Objective: To integrate an automatic text color function into a diagram generation script.

- Methodology:

- Within your script, define the background color for a node.

- Implement the luminance calculation and decision logic from Q2 as a function.

- Use this function's output to set the fontcolor property dynamically.

- Generate the diagram and manually spot-check for legibility.

- Output: A programmatically verified diagram where all node text has high contrast.

Mandatory Visualizations

Diagram 1: Forensic text analysis workflow.

Diagram 2: Automated text color selection logic.

Frequently Asked Questions

Q1: When I use HTML-like labels in Graphviz to color parts of my node text, the entire node label disappears and I get a warning about "Table formatting not available." What is wrong?

A: This error occurs when your Graphviz installation lacks the necessary libexpat library for processing HTML-like labels [38]. To resolve this:

- Re-install Graphviz: Download a current version of Graphviz that includes libexpat support [38].

- Use a Compatible Web Tool: Switch to a Graphviz visualization tool that supports HTML-like labels, such as the Graphviz Visual Editor or tools based on @hpcc-js/wasm [38].

Q2: How can I make only a few words inside a node label bold, instead of the entire label?

A: Use HTML-like labels with the <B> tag. Enclose your entire label within <...> and wrap the text you want to emphasize with <B> and </B> [39].

Q3: What is the difference between the color and fontcolor attributes?

A: The color attribute sets the color for the node's border or the edge's line [40]. The fontcolor attribute specifically controls the color of the text [40]. To change text color, always use fontcolor.

Q4: My HTML-like labels are not sizing correctly; the node is much larger than the text. How can I fix this?

A: Use shape=plain for nodes with HTML-like labels. This setting ensures the node's size is determined entirely by the label's content, with no extra margin or padding [41].

Troubleshooting Common Graphviz Workflow Issues

Problem: Diagram Generation Fails on Web Platforms

Issue: Your DOT code works on a local machine but fails in an online Graphviz tool.

Solution: Online tools may use older Graphviz engines. For complex diagrams with HTML-like labels, use the Graphviz Visual Editor or a local installation [38].

Problem: Poor Color Contrast in Rendered Diagrams

Issue: Text is difficult to read against the node's background color.

Solution: Set fontcolor explicitly for every node that has a fillcolor, choosing a text color that contrasts strongly with the fill rather than relying on the default. Note that fillcolor only takes effect when the node also has style=filled.

Experimental Protocols for Diagram Generation

Protocol 1: Creating Multi-Color Node Labels

Objective: To highlight specific parts of a node's text, such as a p-value or hypothesis identifier, using different colors.

Methodology:

- Format the node's label using HTML-like syntax, enclosed in <...>.

- Use the <FONT> element with its COLOR attribute to specify colors for specific text segments. Color can be specified by name (e.g., red) or hex code (e.g., #EA4335) [42].

- Ensure the node uses shape=plain for optimal sizing [41].
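
The methodology above can be sketched as a small Python helper that emits DOT source text. This is an illustrative sketch only: the function names (colored_label, node_statement), the node id h1, and the example p-value are assumptions made for the example, not part of any Graphviz API.

```python
from html import escape


def colored_label(segments):
    """Build a Graphviz HTML-like label from (text, color) pairs.

    color may be a name such as "red", a hex code such as "#EA4335",
    or None for text that keeps the node's default font color.
    """
    parts = []
    for text, color in segments:
        safe = escape(text, quote=False)  # escape &, < and > inside the label
        if color is None:
            parts.append(safe)
        else:
            parts.append(f'<FONT COLOR="{color}">{safe}</FONT>')
    # HTML-like labels are delimited by <...> instead of double quotes.
    return "<" + "".join(parts) + ">"


def node_statement(node_id, segments):
    """Return one DOT node statement; shape=plain sizes the node to its label."""
    return f"{node_id} [shape=plain, label={colored_label(segments)}];"


# Hypothetical node: a hypothesis identifier followed by a highlighted p-value.
dot_source = "\n".join([
    "digraph G {",
    "  " + node_statement("h1", [("H1: ", None), ("p = 0.003", "#EA4335")]),
    "}",
])
print(dot_source)
```

The printed text can be saved to a .gv file and rendered with a local Graphviz installation or a tool that supports HTML-like labels.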

Protocol 2: Visualizing Multiple Comparison Workflows

Objective: To diagram a sequential statistical testing procedure, clearly distinguishing different stages and outcomes.

Methodology:

- Use distinct node colors (fillcolor) and explicit text colors (fontcolor) to represent different stages (e.g., input, process, output).

- Use HTML-like labels to create rich, multi-line node content.

- Apply consistent edge styles to connect the workflow stages.
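
As a rough illustration of these three points, the following Python sketch generates DOT source for a four-stage testing workflow. The stage names, node ids, and hex colors are hypothetical choices made for the example; the light fills paired with dark text are simply one way to keep contrast high.

```python
# Hypothetical stages: (node id, title, detail line, fill color).
STAGES = [
    ("features", "Input", "Extract linguistic features", "#D2E3FC"),
    ("tests", "Process", "Run m hypothesis tests", "#FEEFC3"),
    ("adjust", "Process", "Adjust for multiple comparisons", "#FEEFC3"),
    ("report", "Output", "Report surviving features", "#CEEAD6"),
]
TEXT_COLOR = "#202124"  # dark text, set explicitly for every node
EDGE_COLOR = "#5F6368"  # one edge style for the whole workflow


def workflow_dot(stages):
    """Return DOT source for a left-to-right chain of filled nodes."""
    lines = [
        "digraph workflow {",
        "  rankdir=LR;",
        "  node [shape=box, style=filled];",
        f'  edge [color="{EDGE_COLOR}"];',
    ]
    for node_id, title, detail, fill in stages:
        # HTML-like label: bold title, line break, then the detail text.
        label = f"<<B>{title}</B><BR/>{detail}>"
        lines.append(
            f'  {node_id} [label={label}, fillcolor="{fill}", '
            f'fontcolor="{TEXT_COLOR}"];'
        )
    # Connect each stage to the one that follows it.
    for current, following in zip(stages, stages[1:]):
        lines.append(f"  {current[0]} -> {following[0]};")
    lines.append("}")
    return "\n".join(lines)


dot = workflow_dot(STAGES)
print(dot)
```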

Quantitative Data Presentation

Table 1: Graphviz Color Attributes for Statistical Workflow Diagramming

| Attribute | Applies To | Default Value | Description | Use Case in Statistical Diagramming |

|---|---|---|---|---|

| color | Nodes, Edges, Clusters | black [40] | Sets the color of a node's border or an edge's line. | Outlining nodes, drawing connections between hypotheses. |

| fontcolor | Nodes, Edges, Graphs, Clusters | black [43] | Sets the color of text. | Displaying p-values, hypothesis labels, and significance annotations. |

| fillcolor | Nodes, Edges, Clusters | lightgrey (nodes) [44] | Sets the background fill color. Must be used with style=filled. | Color-coding different types of nodes (e.g., input=data, process=test, output=result). |

| fontname | Nodes, Edges, Graphs, Clusters | "Times-Roman" [43] | Specifies the font family for text. | Differentiating between primary and secondary labels. |

| fontsize | Nodes, Edges, Graphs, Clusters | 14.0 [43] | Specifies the font size in points. | Emphasizing key findings or main hypotheses. |

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Multiple Comparisons Research

| Item | Function | Application in Forensic Text Examination |

|---|---|---|

| Statistical Software (R, Python) | Provides libraries for advanced statistical correction procedures. | Executing algorithms for False Discovery Rate (FDR) control and Bonferroni correction on sets of authorship attribution tests. |

| Graphviz Software | Generates clear, reproducible diagrams of complex analytical workflows. | Visualizing the decision pathways in a forensic text analysis, showing how evidence is evaluated against multiple hypotheses. |

| Reference Text Corpus | A curated collection of authentic text samples. | Serving as a baseline for establishing normative linguistic patterns and testing the specificity of proposed authorship markers. |

| Hypothesis Tracking Framework | A structured log for documenting all tested hypotheses. | Maintaining an auditable record of all comparisons made during an analysis, which is critical for establishing the total number of tests (m) used in any correction and for reporting results transparently. |

Troubleshooting Guides and FAQs

Frequently Asked Questions

Q1: What is the multiple comparisons problem, and why is it critical in forensic text examination?

A1: The multiple comparisons problem occurs when numerous statistical tests are performed simultaneously. In such cases, the probability of at least one false positive (a Type I error), such as treating a chance similarity as a meaningful match, increases substantially. In forensic text comparison, if you test thousands of linguistic features, some will appear to discriminate between authors purely by chance. This can lead to unsupported and erroneous conclusions, potentially misleading the trier-of-fact. Controlling for this problem is a fundamental requirement for scientifically defensible research [12] [45] [1].
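
To give a sense of scale, the short Python sketch below computes the chance of at least one false positive across m tests, using the standard formula 1 - (1 - α)^m. It assumes the tests are independent and all null hypotheses are true, which is a simplification: linguistic features are often correlated, so the figures are indicative only.

```python
def family_wise_error_rate(alpha, m):
    """P(at least one false positive) over m independent tests at level alpha."""
    return 1.0 - (1.0 - alpha) ** m


for m in (1, 10, 100, 1000):
    rate = family_wise_error_rate(0.05, m)
    print(f"m = {m:>4}: P(at least one false positive) = {rate:.3f}")
```

Under these assumptions the risk already exceeds 40% at ten tests and is close to certainty at a thousand.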

Q2: How can I control the risk of false positives when analyzing a large set of linguistic features?

A2: You can apply statistical adjustment methods to control the error rate. The choice of method depends on your study's goal:

- To Control Family-Wise Error Rate (FWER): Use the Bonferroni correction. This stringent method controls the probability of making even one false discovery. The adjusted significance level is calculated as α' = α / m, where 'm' is the total number of tests performed [45] [1].

- To Control False Discovery Rate (FDR): Use the Benjamini-Hochberg procedure. This less conservative method controls the expected proportion of false discoveries among all features declared significant. It offers greater statistical power when screening a large number of features, as in exploratory analysis [45] [1].
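
The two options can be compared side by side with a minimal pure-Python sketch. The p-values are invented for illustration, and the function names are assumptions of this example; in practice an established statistics library would normally be used instead.

```python
def bonferroni_reject(p_values, alpha=0.05):
    """Reject H0 for every test with p <= alpha / m (controls FWER)."""
    m = len(p_values)
    return [p <= alpha / m for p in p_values]


def benjamini_hochberg_reject(p_values, alpha=0.05):
    """Benjamini-Hochberg step-up procedure (controls FDR)."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    # Find the largest rank k such that p_(k) <= (k / m) * alpha.
    cutoff = 0
    for rank, idx in enumerate(order, start=1):
        if p_values[idx] <= rank / m * alpha:
            cutoff = rank
    # Reject the hypotheses holding the k smallest p-values.
    reject = [False] * m
    for idx in order[:cutoff]:
        reject[idx] = True
    return reject


# Hypothetical p-values for six candidate linguistic features.
p_values = [0.001, 0.008, 0.012, 0.041, 0.20, 0.74]
bonf = bonferroni_reject(p_values)
bh = benjamini_hochberg_reject(p_values)
print("Bonferroni rejections:", sum(bonf))
print("Benjamini-Hochberg rejections:", sum(bh))
```

On this toy input Benjamini-Hochberg retains one more feature than Bonferroni, which reflects the power difference described above.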

Q3: What are the key requirements for empirically validating a forensic text comparison system?

A3: Empirical validation must replicate the conditions of the case under investigation using relevant data. The two main requirements are:

- Reflect Case Conditions: The validation experiment must mimic the specific challenges of the case, such as mismatches in topic, genre, or register between the known and questioned texts.

- Use Relevant Data: The background data used to estimate the typicality of features must be appropriate for the case context. Using irrelevant data (e.g., data from the wrong topic domain) can invalidate the strength of the evidence and mislead the fact-finder [8].

Q4: What is the role of the Likelihood Ratio (LR) in interpreting textual evidence?

A4: The Likelihood Ratio (LR) is the logically and legally correct framework for evaluating forensic evidence, including text. It quantifies the strength of the evidence by comparing two probabilities [8]:

- p(E|Hp): The probability of observing the evidence (the linguistic features) if the prosecution hypothesis is true (e.g., the suspect is the author).

- p(E|Hd): The probability of observing the same evidence if the defense hypothesis is true (e.g., someone else is the author).

An LR greater than 1 supports the prosecution hypothesis, while an LR less than 1 supports the defense hypothesis. The LR should be presented to the trier-of-fact to update their prior beliefs, without the expert opining on the ultimate issue of guilt or innocence [8].
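
A small numerical sketch may help show how the LR relates to prior and posterior odds through the odds form of Bayes' theorem. The probabilities and prior odds below are invented for illustration, and the function names are assumptions of this example. The prior belongs to the trier-of-fact; the expert's contribution is the LR alone.

```python
import math


def likelihood_ratio(p_e_given_hp, p_e_given_hd):
    """LR = p(E|Hp) / p(E|Hd)."""
    if p_e_given_hd <= 0:
        raise ValueError("p(E|Hd) must be positive")
    return p_e_given_hp / p_e_given_hd


def posterior_odds(prior_odds, lr):
    """Odds form of Bayes' theorem: posterior odds = prior odds * LR."""
    return prior_odds * lr


# Hypothetical values: the evidence is 40 times more probable under Hp.
lr = likelihood_ratio(0.02, 0.0005)
print(f"LR = {lr:.1f}, log10(LR) = {math.log10(lr):.2f}")

# A trier-of-fact holding prior odds of 1 to 100 would update as follows.
print(f"posterior odds = {posterior_odds(1 / 100, lr):.2f}")
```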

Troubleshooting Common Experimental Issues

Problem: My analysis yields significant results that fail to replicate in follow-up studies.

- Potential Cause: This is a classic symptom of the multiple comparisons problem. Without proper statistical adjustment, some "significant" findings are likely false positives.

- Solution: Apply an FDR correction such as the Benjamini-Hochberg procedure during your initial exploratory analysis. This controls the expected proportion of false discoveries and provides a more reliable list of candidate features for validation [1].

Problem: The Likelihood Ratios my system produces are misleading or non-discriminative.

- Potential Cause: The model may be trained or validated on data that is not relevant to the case conditions (e.g., training on news articles but analyzing text messages).

- Solution: Ensure your validation experiments satisfy the two key requirements: reflecting case conditions and using relevant data. Re-run your experiments with a background corpus that matches the topic, genre, and style of the questioned text [8].

Problem: My statistical power is too low after applying a Bonferroni correction.

- Potential Cause: The Bonferroni correction is highly conservative, especially when the number of tests (m) is very large. It can dramatically increase the rate of false negatives (Type II errors).

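- Solution: Consider a less stringent approach, such as an FDR-controlling procedure like Benjamini-Hochberg, which is more appropriate for high-dimensional data. Alternatively, a preliminary feature selection step can reduce the number of tests before formal analysis, provided the selection is made independently of the data used for the formal tests [1].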

Quantitative Data and Experimental Protocols

The table below summarizes common methods for adjusting statistical significance to account for the multiple comparisons problem.

| Method | Controlled Error Rate | Brief Description | Use Case |

|---|---|---|---|

| Bonferroni | Family-Wise Error Rate (FWER) | Divides the significance level (α) by the total number of tests (m). A very stringent correction. | Ideal when a single false positive would be very costly; for a small number of tests. |

| Holm | Family-Wise Error Rate (FWER) | A stepwise procedure that is less conservative than Bonferroni while still controlling FWER. | A robust default choice for controlling FWER in most situations. |

| Benjamini-Hochberg | False Discovery Rate (FDR) | Controls the expected proportion of false discoveries among all rejected hypotheses. Less conservative. | Preferred for exploratory studies with a large number of tests (e.g., genomic or text feature analysis) [45] [1]. |
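
The Holm row can be illustrated with a short step-down sketch in Python. The p-values are hypothetical and the function name is an assumption of this example; with these values a plain Bonferroni threshold of 0.05 / 6 would reject only the two smallest p-values, while Holm rejects three.

```python
def holm_reject(p_values, alpha=0.05):
    """Holm step-down procedure (controls FWER)."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    reject = [False] * m
    for step, idx in enumerate(order):
        # Compare the (step + 1)-th smallest p-value with alpha / (m - step).
        if p_values[idx] <= alpha / (m - step):
            reject[idx] = True
        else:
            break  # stop at the first failure; all larger p-values are retained
    return reject


# Hypothetical p-values for six candidate linguistic features.
p_values = [0.001, 0.008, 0.012, 0.041, 0.20, 0.74]
print(holm_reject(p_values))
```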

WCAG Color Contrast Standards for Visualization

When creating diagrams and visualizations, ensuring sufficient color contrast is essential for accessibility and clarity. The following table outlines the Web Content Accessibility Guidelines (WCAG) for contrast ratios.

| Element Type | Level AA Minimum Ratio | Level AAA Enhanced Ratio | Notes |

|---|---|---|---|

| Normal Text | 4.5:1 | 7:1 | Applies to most text. Text that is purely decorative has no requirement [31] [46]. |

| Large Text | 3:1 | 4.5:1 | Large text is defined as 18pt+ or 14pt+ and bold [31] [46] [47]. |

| User Interface Components & Graphical Objects | 3:1 | - | Applies to visual information required to identify UI states and parts of graphics essential to understanding [46]. |
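
A contrast ratio for a given text and background pair can be checked with the WCAG relative-luminance formula. The Python sketch below is a minimal version that assumes six-digit hex sRGB colors; the helper names are assumptions of this example, and the 0.04045 threshold is the sRGB value (the WCAG 2.x text quotes 0.03928, which gives the same result for 8-bit colors).

```python
def _linearize(channel_8bit):
    """Convert an 8-bit sRGB channel to linear light."""
    c = channel_8bit / 255.0
    return c / 12.92 if c <= 0.04045 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_color):
    """Relative luminance of a color given as '#RRGGBB'."""
    h = hex_color.lstrip("#")
    r, g, b = (int(h[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linearize(r) + 0.7152 * _linearize(g) + 0.0722 * _linearize(b)


def contrast_ratio(color_a, color_b):
    """Contrast ratio (L_lighter + 0.05) / (L_darker + 0.05), from 1 to 21."""
    l_a, l_b = relative_luminance(color_a), relative_luminance(color_b)
    lighter, darker = max(l_a, l_b), min(l_a, l_b)
    return (lighter + 0.05) / (darker + 0.05)


def meets_aa_normal_text(fontcolor, fillcolor):
    """True if the pair reaches the 4.5:1 Level AA minimum for normal text."""
    return contrast_ratio(fontcolor, fillcolor) >= 4.5


print(f"{contrast_ratio('#000000', '#FFFFFF'):.1f}")
print(meets_aa_normal_text("#FFFFFF", "#EA4335"))
```

A check like this can be run over every fontcolor and fillcolor pair in a diagram before it is published.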

Detailed Experimental Protocol: A Likelihood Ratio-Based Forensic Text Comparison

This protocol outlines the key steps for a forensically sound text comparison, integrating the principles of validation and the LR framework as discussed by Ishihara et al. (2024) [8].

1. Hypothesis Formulation:

- Define the prosecution hypothesis (Hp): "The known and questioned texts were written by the same author."

- Define the defense hypothesis (Hd): "The known and questioned texts were written by different authors."

2. Feature Extraction & Quantification:

- Select and extract a set of quantifiable linguistic features (e.g., lexical, syntactic, or character-based features) from both the known and questioned texts.

- Address Multiple Comparisons: If testing a large number of features for discriminative power, apply an appropriate multiple testing correction (e.g., Benjamini-Hochberg FDR control) to select a robust feature set.

3. Model Training & Likelihood Ratio Calculation:

- Use a relevant background corpus to model the population distribution of the selected features.

- Calculate the Likelihood Ratio using a statistical model (e.g., a Dirichlet-multinomial model followed by logistic regression calibration). The LR is: LR = p(E|Hp) / p(E|Hd) [8].

4. System Validation:

- Crucially, the validation must replicate case conditions. If the case involves cross-topic comparisons, the validation must be performed using data with similar topic mismatches.

- Assess the validity of the computed LRs using metrics like the log-likelihood-ratio cost (Cllr) and visualize the results with Tippett plots [8].
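
For orientation, the log-likelihood-ratio cost can be computed from two sets of validation LRs using its standard definition, Cllr = 0.5 * [mean over same-author pairs of log2(1 + 1/LR) + mean over different-author pairs of log2(1 + LR)]. The Python sketch below uses invented LR values, and the function name is an assumption of this example rather than the implementation used in [8].

```python
import math


def cllr(same_author_lrs, different_author_lrs):
    """Log-likelihood-ratio cost; lower is better, 1.0 matches an uninformative system."""
    # Penalty for same-author comparisons grows as their LRs fall towards 0.
    same_term = sum(math.log2(1 + 1 / lr) for lr in same_author_lrs)
    same_term /= len(same_author_lrs)
    # Penalty for different-author comparisons grows as their LRs rise.
    diff_term = sum(math.log2(1 + lr) for lr in different_author_lrs)
    diff_term /= len(different_author_lrs)
    return 0.5 * (same_term + diff_term)


# Hypothetical validation LRs.
print(f"{cllr([100.0, 50.0], [0.01, 0.02]):.3f}")  # strong, correctly directed LRs
print(f"{cllr([1.0, 1.0], [1.0, 1.0]):.3f}")       # a system that always reports LR = 1
```

A system that always reports LR = 1 scores exactly 1.0, so values well below 1 indicate that the LRs carry useful and reasonably calibrated information.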

5. Interpretation & Reporting:

- Report the LR as a measure of the strength of the evidence.

- Clearly state the conditions and data used for validation to provide context for the reported LR.

Visualizations and Workflows

Diagram: Forensic Text Comparison Workflow

The diagram below illustrates the end-to-end process for a validated forensic text comparison.

Diagram: Multiple Comparisons Problem & Solutions

This diagram outlines the problem of multiple testing and pathways to its solution.

The Scientist's Toolkit: Research Reagent Solutions

The following table details key methodological components and their functions in forensic text comparison research.

| Research 'Reagent' | Function & Explanation |

|---|---|

| Likelihood Ratio (LR) Framework | The core logical structure for evaluating evidence. It quantitatively compares the probability of the evidence under two competing hypotheses, providing a transparent measure of evidential strength [8]. |

| Relevant Background Corpus | A collection of texts used to model the population distribution of linguistic features. It must be relevant to the case (e.g., matching in topic, genre, and medium) to accurately estimate the typicality of features and ensure valid LRs [8]. |

| Multiple Comparison Adjustment | A statistical procedure (e.g., Bonferroni, FDR) applied to control the inflation of false positive rates when testing many linguistic features simultaneously. It is a critical reagent for ensuring the reliability of feature selection [12] [1]. |

| Validation Dataset with Mismatches | A controlled dataset designed to test the system's performance under specific adverse conditions, such as topic mismatches. This 'reagent' is essential for demonstrating the method's robustness and applicability to real-world case conditions [8]. |