The Likelihood Ratio Framework in Forensic Linguistics: A Scientific Foundation for Authorship Analysis


Abstract

This article provides a comprehensive introduction to the Likelihood Ratio (LR) framework as a scientifically rigorous method for evaluating forensic linguistic evidence. It explores the foundational Bayesian principles that underpin the LR, detailing its application in practical casework such as authorship verification and forensic text comparison. The content addresses key methodological challenges, including topic mismatch and model uncertainty, and outlines established approaches for characterizing uncertainty, such as the lattice of assumptions and the uncertainty pyramid. Furthermore, it emphasizes the critical role of empirical validation under casework conditions, reviewing consensus guidelines and performance metrics essential for ensuring the reliability and admissibility of evidence in legal proceedings. This resource is designed for researchers and practitioners seeking to implement or critically evaluate statistically sound practices in forensic science.

Bayesian Foundations: Understanding the Likelihood Ratio and its Role in Evidence Interpretation

The likelihood ratio (LR) is a fundamental statistical measure for evaluating the strength of forensic evidence. Within the field of forensic linguistics, this framework provides a logically sound and transparent method for experts to communicate how strongly evidence supports one hypothesis over another. The LR represents a paradigm shift in forensic science, moving away from subjective assertions toward quantitative, empirically testable methods [1]. This approach is increasingly recognized as the logically correct framework for forensic evidence evaluation, as it forces the explicit consideration of competing hypotheses and requires validation through performance testing [1].

The core principle underlying the LR framework is that forensic scientists should not ultimately decide whether a suspect is the source of evidence; rather, they should present a quantitative measure of how much the evidence supports one proposition over another. This distinction is crucial for maintaining the scientific integrity of forensic testimony while respecting the role of legal decision-makers. The LR framework has been applied across various forensic disciplines, including DNA analysis, fingerprint comparison, forensic voice analysis, and authorship identification [1] [2].

Defining the Likelihood Ratio

Conceptual Foundation and Mathematical Formulation

The likelihood ratio is defined as the ratio of two probabilities of observing the same evidence under two competing hypotheses. In forensic contexts, these are typically the prosecution hypothesis (Hp) and the defense hypothesis (Hd) [3]. The mathematical expression of the LR is:

$$ LR = \frac{P(E|Hp)}{P(E|Hd)} $$

Where:

- P(E|Hp) is the probability of observing the evidence (E) if the prosecution's hypothesis is true

- P(E|Hd) is the probability of observing the evidence (E) if the defense's hypothesis is true [3]

This formula calculates how much more likely the evidence is under one hypothesis compared to the other. The numerator typically represents the probability of the evidence if the identified person is the source, while the denominator represents the probability of the evidence if an unidentified person from a relevant population is the source [3].
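As a minimal sketch of the formula, assume a single continuous feature (for instance, a mean fundamental frequency in Hz) that is normally distributed both within the suspect's speech (the model under Hp) and across the relevant population (the model under Hd). The feature choice, the normal models, and all numbers below are hypothetical illustrations, not values from any case.

```python
import math

def normal_pdf(x: float, mean: float, sd: float) -> float:
    """Density of a normal distribution N(mean, sd^2) evaluated at x."""
    z = (x - mean) / sd
    return math.exp(-0.5 * z * z) / (sd * math.sqrt(2.0 * math.pi))

def likelihood_ratio(e: float, hp_mean: float, hp_sd: float,
                     hd_mean: float, hd_sd: float) -> float:
    """LR = P(E|Hp) / P(E|Hd) for one continuous feature.

    Hp: the suspect is the source (suspect-specific model).
    Hd: someone else from the relevant population is the source
        (population model).
    """
    return normal_pdf(e, hp_mean, hp_sd) / normal_pdf(e, hd_mean, hd_sd)

# Hypothetical values: questioned-sample feature = 118 Hz,
# suspect model N(120, 5^2), population model N(130, 20^2).
lr = likelihood_ratio(118.0, 120.0, 5.0, 130.0, 20.0)
print(f"LR = {lr:.2f}")
```

Here the questioned value lies closer to the suspect's mean than to the population mean, so the LR exceeds 1; with these particular numbers it simplifies to 4 × e^0.1, about 4.42.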

Interpreting Likelihood Ratio Values

The numerical value of the LR indicates the direction and strength of the evidence in supporting one hypothesis over the other:

- LR > 1: The evidence supports the numerator hypothesis (Hp)

- LR < 1: The evidence supports the denominator hypothesis (Hd)

- LR = 1: The evidence supports neither hypothesis over the other [3]

To facilitate interpretation, numerical LR values are often translated into verbal equivalents, though these should be considered guides rather than absolute categories [3].

Table 1: Verbal Equivalents for Likelihood Ratio Values

| Strength of Evidence | Likelihood Ratio Range |
|---|---|
| Limited evidence to support | LR 1-10 |
| Moderate evidence to support | LR 10-100 |
| Moderately strong evidence to support | LR 100-1000 |
| Strong evidence to support | LR 1000-10000 |
| Very strong evidence to support | LR > 10000 |

The LR Framework in Forensic Linguistics Research

Application to Forensic Voice Comparison

Forensic voice comparison represents a significant application of the LR framework in linguistic analysis. The traditional aural-spectrographic approach has been criticized for its subjective judgment and lack of empirical testing [1]. The LR framework addresses these concerns through quantitative measurements and statistical models.

In a landmark Chinese case involving voice comparison between two sisters, researchers implemented the LR framework by:

- Defining competing hypotheses: whether an unknown speaker was speaker A or speaker B

- Collecting relevant data: telephone recordings of known speakers using the same device type as the evidence recording

- Making quantitative measurements: analyzing acoustic properties of speech signals

- Developing statistical models: using Gaussian Mixture Models and Multivariate Kernel Density Functions to calculate LRs [1]

This approach demonstrated how the LR framework could be successfully applied in real forensic linguistic casework, providing a transparent and replicable methodology superior to subjective assessment approaches [1].

Application to Authorship Identification

The LR framework has also transformed forensic authorship analysis. Research has demonstrated its application to real-life authorship identification cases involving text messages using the General Impostors method with writeprints [2]. This approach uses a manually curated static feature set similar to a writeprint (stylometric features) to achieve excellent performance while limiting the capture of confounding information like topic and register variation [2].

The adoption of the LR framework for forensic authorship identification represents a significant advancement, moving the field toward more objective and defensible conclusions. As Ishihara (2021) demonstrated, score-based likelihood ratios can be effectively applied to linguistic text evidence using a bag-of-words model, providing a statistically robust method for authorship analysis [2].
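The general shape of a score-based LR can be sketched as follows, under stated assumptions: each comparison between two texts yields a single similarity score (cosine similarity between bag-of-words vectors is one possible choice), and the LR is the ratio of that score's density among known same-author pairs to its density among known different-author pairs. The score lists are hypothetical, and the Gaussian kernel density estimate with Silverman's rule-of-thumb bandwidth is a swapped-in modelling choice, not necessarily the one used in the cited work.

```python
import math
from statistics import stdev

def kde_density(samples, bandwidth=None):
    """Return a 1-D Gaussian kernel density estimate as a callable.

    If no bandwidth is given, Silverman's rule of thumb is used.
    """
    n = len(samples)
    if bandwidth is None:
        bandwidth = 1.06 * stdev(samples) * n ** (-1 / 5)
    norm = 1.0 / (n * bandwidth * math.sqrt(2.0 * math.pi))

    def density(x):
        return norm * sum(math.exp(-0.5 * ((x - s) / bandwidth) ** 2)
                          for s in samples)
    return density

# Hypothetical similarity scores from validation pairs of texts:
# pairs known to share an author vs. pairs known not to.
same_author_scores = [0.81, 0.77, 0.85, 0.72, 0.79, 0.88, 0.74, 0.83]
diff_author_scores = [0.41, 0.55, 0.38, 0.62, 0.47, 0.51, 0.44, 0.58]

f_same = kde_density(same_author_scores)
f_diff = kde_density(diff_author_scores)

def score_based_lr(score):
    """Density of the score if same author / density if different authors."""
    return f_same(score) / f_diff(score)

# Hypothetical score between the questioned text and the suspect's known texts.
questioned_score = 0.70
print(f"score-based LR = {score_based_lr(questioned_score):.2f}")
```

Note that the LR is computed from the score, not from the texts directly; any information the score discards (for example, which features drove the similarity) is lost to the LR as well.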

Experimental Protocols and Methodologies

General Workflow for Forensic Linguistic Analysis

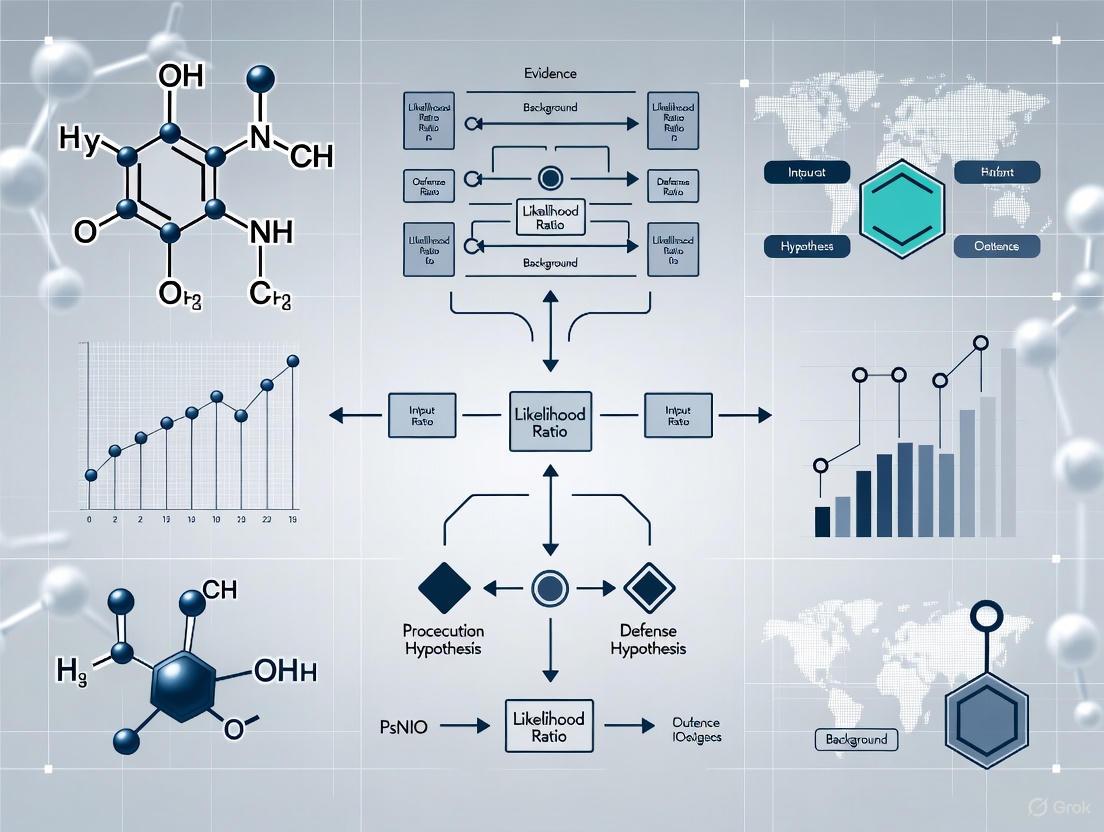

The implementation of the LR framework in forensic linguistics follows a systematic workflow that can be visualized as follows:

Detailed Methodological Components

Data Collection Protocol

In the Chinese voice comparison case, researchers collected data using case-matched conditions:

- Recordings were made of 5 separate telephone conversations with each known speaker

- The same recording device type (OPPO Electronics Corp. model R809T smartphone, running Android OS4.2) was used as in the evidence recording

- Recordings were made over the same telephone network (China Mobile's GSM/TD-SCDMA network)

- This approach controlled for channel effects and other contextual variables [1]

Feature Extraction and Measurement

For voice comparison, the analysis included:

- Acoustic-phonetic measurements: including formants (F1, F2, F3) and fundamental frequency (f0)

- Spectral measurements: using Mel-frequency cepstral coefficients (MFCCs)

- Duration measurements: of specific speech segments

- All measurements were performed using automated algorithms to ensure objectivity and replicability [1]

For authorship analysis, the approaches cited above use:

- Writeprint features: manually curated static feature sets for stylistic analysis, as in the General Impostors method

- Bag-of-words models: for text representation, as in score-based LR systems

- The writeprint-style features are designed to limit the capture of confounding information related to topic and register variation [2]

Statistical Modeling Approaches

Different statistical models can be applied depending on the data structure and forensic question:

Table 2: Statistical Models for LR Calculation in Forensic Linguistics

| Model Type | Application | Key Features |
|---|---|---|
| Gaussian Mixture Models (GMM) | Forensic voice comparison | Effective for modeling acoustic feature distributions; robust with limited training data [1] |
| Multivariate Kernel Density Functions (MVKD) | Forensic voice comparison | Non-parametric approach; flexible for various feature distributions [1] |
| General Impostors Method | Authorship identification | State-of-the-art for authorship verification; uses reference populations [2] |
| Score-based Likelihood Ratios | Linguistic text evidence | Applied with bag-of-words models for textual analysis [2] |
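To illustrate how a GMM-based system turns feature frames into an LR, here is a minimal one-dimensional sketch. It assumes the suspect and background (population) mixtures have already been trained elsewhere; the parameters and measurements are hypothetical. Frames are treated as independent, a simplification that tends to make raw outputs overconfident, which is one reason practical systems usually add a calibration step.

```python
import math

def log_normal_pdf(x, mean, sd):
    """Natural log of the N(mean, sd^2) density at x."""
    return -0.5 * ((x - mean) / sd) ** 2 - math.log(sd * math.sqrt(2.0 * math.pi))

def gmm_log_density(x, weights, means, sds):
    """ln p(x) under a 1-D Gaussian mixture, computed with log-sum-exp."""
    terms = [math.log(w) + log_normal_pdf(x, m, s)
             for w, m, s in zip(weights, means, sds)]
    peak = max(terms)
    return peak + math.log(sum(math.exp(t - peak) for t in terms))

def gmm_log10_lr(frames, suspect_gmm, background_gmm):
    """log10 LR over a sequence of frames, assuming frames are independent."""
    ln_lr = sum(gmm_log_density(x, *suspect_gmm)
                - gmm_log_density(x, *background_gmm)
                for x in frames)
    return ln_lr / math.log(10)

# Hypothetical, already-trained models: (weights, means, standard deviations).
suspect_gmm = ([0.6, 0.4], [118.0, 126.0], [4.0, 5.0])
background_gmm = ([0.5, 0.3, 0.2], [110.0, 135.0, 160.0], [15.0, 20.0, 25.0])

# Hypothetical f0 values (Hz) measured from the questioned recording.
frames = [119.5, 121.0, 117.8, 124.2, 120.3]
print(f"log10 LR = {gmm_log10_lr(frames, suspect_gmm, background_gmm):+.2f}")
```

Working in the log domain keeps the product of many small per-frame densities from underflowing; the log-sum-exp step does the same within each mixture.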

Validation and Performance Testing

A critical component of the LR framework is empirical validation of the system's performance:

- Test validity is assessed using metrics such as the log-likelihood-ratio cost (Cllr); in the voice comparison case, Cllr was 0.003, a very low value indicating very good performance [1]

- System reliability is evaluated through metrics like 95% coverage intervals (2.35 to +2.85 orders of magnitude in the voice case) [1]

- Black-box studies where practitioners assess control cases with known ground truth are recommended to establish error rates [4]

The Scientist's Toolkit: Essential Materials and Resources

Implementing the LR framework in forensic linguistics requires specific technical resources and methodological components:

Table 3: Tools and Resources for Forensic Linguistic Analysis

| Tool/Component | Function | Application Example |
|---|---|---|
| Digital Audio Recording Equipment | Evidence preservation and control sample collection | OPPO R809T smartphone used in Chinese voice case to match evidentiary recording conditions [1] |
| Acoustic Analysis Software | Feature extraction and measurement | Programs for formant tracking, fundamental frequency analysis, and MFCC calculation [1] |
| Statistical Modeling Platforms | LR calculation and system validation | R, Python, or specialized forensic software for implementing GMM, MVKD, and other models [1] [5] |
| Reference Population Databases | Providing relevant background distributions | Databases of voice recordings or writing samples for estimating feature variability in relevant populations [2] |
| Validation Frameworks | Testing system performance and error rates | Protocols for black-box studies and calculation of performance metrics like Cllr [1] [4] |

Uncertainty Assessment and Implementation Considerations

The Uncertainty Pyramid Framework

A critical aspect of implementing the LR framework is proper uncertainty characterization. The concept of a "lattice of assumptions leading to an uncertainty pyramid" provides a framework for assessing uncertainty in LR evaluations [4]. This approach explores the range of LR values attainable by models satisfying different criteria for reasonableness, helping experts and legal decision-makers understand how personal choices during assessment affect the reported LR.

The uncertainty pyramid consists of multiple levels:

- Base level: Different statistical models that satisfy basic criteria for reasonableness

- Middle levels: Different assumptions about data relevance and feature selection

- Apex: The specific LR value reported in a case

This framework acknowledges that even career statisticians cannot objectively identify one model as authoritatively appropriate; rather, they can suggest criteria for assessing whether a given model is reasonable [4].
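A toy version of this exploration might look like the sketch below: the same questioned measurement is evaluated under several alternative models, each assumed to pass some criterion of reasonableness, and the spread of the resulting LRs is reported alongside any single value. The candidate models, populations, and numbers are hypothetical, and this is only a crude stand-in for the lattice-of-assumptions analysis described in [4].

```python
import math

def normal_pdf(x, mean, sd):
    """Density of N(mean, sd^2) at x."""
    z = (x - mean) / sd
    return math.exp(-0.5 * z * z) / (sd * math.sqrt(2.0 * math.pi))

evidence = 118.0  # hypothetical questioned measurement

# Hypothetical alternative modelling choices, each assumed "reasonable":
# (label, suspect mean, suspect sd, population mean, population sd)
candidate_models = [
    ("narrow suspect sd, population A", 120.0, 4.0, 130.0, 20.0),
    ("wider suspect sd, population A", 120.0, 6.0, 130.0, 20.0),
    ("narrow suspect sd, population B", 120.0, 4.0, 125.0, 15.0),
    ("wider suspect sd, population B", 120.0, 6.0, 125.0, 15.0),
]

lrs = {}
for label, s_mean, s_sd, p_mean, p_sd in candidate_models:
    lrs[label] = normal_pdf(evidence, s_mean, s_sd) / normal_pdf(evidence, p_mean, p_sd)

for label, value in lrs.items():
    print(f"{label:35s} LR = {value:6.2f}  log10 LR = {math.log10(value):+.2f}")

low, high = min(lrs.values()), max(lrs.values())
print(f"range of LRs across candidate models: {low:.2f} to {high:.2f}")
```

With these numbers every model yields an LR between roughly 2.6 and 5.3: all point the same way but differ by about a factor of two. A much wider spread would signal that the reported value depends heavily on modelling choices.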

Practical Implementation Challenges

Several challenges emerge when implementing the LR framework in forensic linguistics:

- Data requirements: Collecting sufficient relevant data can be time-consuming and costly, particularly for specific-source models [5]

- Model selection: The choice between specific-source and common-source models depends on feasibility and case circumstances [5]

- Communicating results: Research indicates that explaining the meaning of LRs to legal decision-makers produces only slight improvements in understanding [6]

Simulation studies have compared the performance of different LR systems, finding that common-source feature-based methods perform best when dimensionality is not too high and sources are equally variable [5]. For score-based methods, using a percentile-rank preprocessor can improve performance for large sample sizes by considering the rarity of measurements [5].

The likelihood ratio represents the core conceptual framework for evaluating the strength of forensic evidence in linguistics and other forensic disciplines. Its mathematical formulation as the ratio of probabilities of evidence under competing hypotheses provides a logically rigorous approach that forces explicit consideration of assumptions and alternatives. The implementation of the LR framework in forensic linguistics through quantitative measurements, statistical models, and empirical validation represents a significant advancement over subjective assessment methods.

Future developments in forensic linguistics will likely focus on refining statistical models, expanding reference databases, and improving communication of LR values and their associated uncertainties to legal decision-makers. As the field continues to mature, the LR framework provides the necessary foundation for scientifically defensible forensic linguistic analysis.

Bayesian reasoning provides a formal probabilistic framework for updating beliefs in the presence of uncertainty, a capability of paramount importance in forensic science where evidence must be evaluated systematically and transparently. This approach, increasingly recognized as normative for reasoning under uncertainty, separates the weight of evidence from prior assumptions about a case, allowing forensic experts to present their findings in a logically rigorous manner [4]. The mathematical backbone of this framework—Bayes' Theorem—describes the fundamental constraints that probability theory places on how rational individuals should update their uncertainties when encountering new information [7]. Within forensic linguistics specifically, this framework offers a structured methodology for evaluating authorship attribution, stylistic analysis, and other linguistic evidence, moving the discipline toward more quantitative and empirically grounded practices.

The core of this approach lies in the likelihood ratio (LR), which quantitatively expresses the strength of evidence by comparing how likely the evidence is under two competing propositions—typically those advanced by prosecution and defense perspectives [4]. Forensic science communities, particularly in Europe, have increasingly advocated for this paradigm, with support growing for its adoption in the United States as well [4]. The framework's appeal stems from its ability to provide clear separation between the expert's evaluation of evidence and the fact-finder's prior beliefs about a case, thus maintaining appropriate boundaries between scientific testimony and juridical decision-making [4].

Theoretical Foundations: Bayes' Theorem and the Likelihood Ratio

The Odds Form of Bayes' Theorem

The application of Bayesian reasoning to forensic evidence evaluation centers on the odds form of Bayes' Theorem, which provides a mathematical structure for updating beliefs in light of new evidence. This formulation can be expressed as:

Posterior Odds = Prior Odds × Likelihood Ratio

Or, more formally:

$$ \frac{P(Hp|E)}{P(Hd|E)} = \frac{P(Hp)}{P(Hd)} \times \frac{P(E|Hp)}{P(E|Hd)} $$

Where:

- Prior Odds = $P(Hp)/P(Hd)$ represent the fact-finder's belief about the competing hypotheses before considering the forensic evidence

- Likelihood Ratio = $P(E|Hp)/P(E|Hd)$ quantifies the strength of the evidence under the two competing propositions

- Posterior Odds = $P(Hp|E)/P(Hd|E)$ represent the updated beliefs after considering the evidence [4]
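The arithmetic of the odds form is simple enough to show directly. In this minimal sketch the prior odds and the LR are hypothetical; note that the expert's contribution is only the `lr` argument, while the prior odds belong to the fact-finder.

```python
def posterior_odds(prior_odds: float, lr: float) -> float:
    """Odds form of Bayes' theorem: posterior odds = prior odds x LR."""
    return prior_odds * lr

def odds_to_probability(odds: float) -> float:
    """Convert odds in favour of Hp into the probability P(Hp | E)."""
    return odds / (1.0 + odds)

# Hypothetical inputs: the fact-finder's prior odds of 1 to 100,
# and an LR of 1,000 reported by the forensic expert.
post = posterior_odds(1 / 100, 1000.0)
print(f"posterior odds = {post:g}, P(Hp|E) = {odds_to_probability(post):.3f}")
```

With prior odds of 1 to 100 and an LR of 1,000, the posterior odds are 10 to 1, which corresponds to a probability for Hp of about 0.91.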

This formulation "separates the ultimate degree of doubt a DM [decision maker] feels regarding the guilt of a defendant, as expressed via posterior odds, into degree of doubt felt before consideration of the evidence at hand (prior odds) and the influence or weight of the newly considered evidence expressed as a likelihood ratio" [4]. This separation is crucial in forensic contexts as it delineates the respective roles of the fact-finder (who brings prior case knowledge) and the forensic expert (who assesses the strength of specific evidence).

The Likelihood Ratio as the Weight of Evidence

The likelihood ratio serves as a quantitative measure of evidence strength, providing a balanced framework for comparing prosecution and defense perspectives. When the LR exceeds 1, the evidence supports the prosecution's proposition; when it falls below 1, it supports the defense's proposition; and when it equals 1, the evidence has no probative value [4]. The magnitude of the LR indicates the strength of support, with values further from 1 representing stronger evidence.

Table 1: Interpreting Likelihood Ratio Values

| LR Value | Strength of Evidence | Direction of Support |
|---|---|---|
| >10,000 | Very strong | Supports Hp |
| 1,000-10,000 | Strong | Supports Hp |
| 100-1,000 | Moderately strong | Supports Hp |
| 10-100 | Moderate | Supports Hp |
| 1-10 | Limited | Weak support for Hp |
| 1 | No value | Neither proposition |
| 0.1-1 | Limited | Weak support for Hd |
| 0.01-0.1 | Moderate | Supports Hd |
| 0.001-0.01 | Moderately strong | Supports Hd |
| 0.0001-0.001 | Strong | Supports Hd |
| <0.0001 | Very strong | Supports Hd |
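Mapping a computed LR onto the verbal scale can be automated, assuming the scale is symmetric on the log10 axis so that each band supporting Hd is the reciprocal of the corresponding band supporting Hp. The function below is a hypothetical helper, not part of any standard; how exact boundary values such as 10 or 0.1 are labelled is also an assumption, since adjacent ranges share their endpoints.

```python
import math

# Upper bounds on |log10 LR| for each verbal label (symmetric scale assumed).
# Assumption: an LR exactly on a boundary gets the weaker adjacent label.
VERBAL_SCALE = [
    (1.0, "Limited"),
    (2.0, "Moderate"),
    (3.0, "Moderately strong"),
    (4.0, "Strong"),
]

def verbal_equivalent(lr: float) -> str:
    """Map a positive LR to a verbal strength-of-evidence label and direction."""
    if lr <= 0:
        raise ValueError("a likelihood ratio must be positive")
    log_lr = math.log10(lr)
    if log_lr == 0.0:
        return "No value: supports neither proposition"
    direction = "Hp" if log_lr > 0 else "Hd"
    magnitude = abs(log_lr)
    for upper, label in VERBAL_SCALE:
        if magnitude <= upper:
            return f"{label} support for {direction}"
    return f"Very strong support for {direction}"

for value in (3.0, 250.0, 0.05, 1e-5):
    print(value, "->", verbal_equivalent(value))
```

Working with log10 LR makes the symmetry explicit: an LR of 0.05 is as far from 1 as an LR of 20, just in the opposite direction.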

This framework is particularly valuable in forensic linguistics, where evidence is often complex and multidimensional. For instance, in authorship analysis, the LR can assess how specific linguistic features—lexical choices, syntactic patterns, or discourse markers—support either the proposition that a suspect authored a questioned text or that they did not [8].

Diagram 1: Bayesian updating of hypotheses through evidence.

The Likelihood Ratio Framework in Forensic Linguistics

Theoretical Basis for Linguistic Individuality

The application of the likelihood ratio framework in forensic linguistics is grounded in the Theory of Linguistic Individuality, which posits that "each individual possesses a unique repertoire of linguistic units, defined following Langacker (1987) as structures that a person can produce automatically and that are stored as traces of procedural memory" [8]. This theoretical foundation provides the justification for treating linguistic features as distinctive patterns that can provide evidence of authorship.

Recent methodological advances have developed set-theory methods that generalize n-gram tracing approaches and reportedly "outperform traditional computational methods based on frequency of features" while remaining "compatible with the likelihood ratio framework" [8]. These techniques have been tested across diverse corpora simulating various forensic scenarios, "from emails to academic papers, including cross-domain problems" [8]. The results demonstrate that these methods not only outperform state-of-the-art approaches in authorship verification but also offer the advantage of being "more explorable by a human analyst"—a crucial consideration in legal contexts where interpretability is essential.
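The set-based intuition behind n-gram tracing can be illustrated with a simplified sketch: for each candidate, count what proportion of the questioned text's distinct word n-grams also occur in that candidate's known writing. This shows only the basic n-gram tracing idea on toy, hypothetical texts. It is not the generalized set-theory method of [8] or the interface of the idiolect package, and turning such an overlap score into an LR would require a further step, such as a score-based model.

```python
def ngrams(text: str, n: int) -> set:
    """Distinct word n-grams of a lower-cased, whitespace-tokenized text."""
    tokens = text.lower().split()
    return {tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)}

def ngram_overlap(questioned: str, known: str, n: int = 2) -> float:
    """Share of the questioned text's distinct n-grams found in the known text."""
    q = ngrams(questioned, n)
    if not q:
        return 0.0
    return len(q & ngrams(known, n)) / len(q)

# Hypothetical questioned and known texts.
questioned = "i will be there in a bit so wait for me"
known = {
    "candidate_a": "wait for me i will be there soon",
    "candidate_b": "please be patient and i shall arrive shortly",
}
for name, text in known.items():
    print(name, round(ngram_overlap(questioned, text), 2))
```

Candidate A shares half of the questioned text's bigrams (5 of 10), while candidate B shares none. Because the comparison works on explicit sets, an analyst can list exactly which n-grams were shared, which is part of what makes set-based methods explorable.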

Machine Learning Enhancements in Linguistic Analysis

The field of forensic linguistics has undergone a significant transformation "from manual textual analysis to machine learning (ML)-driven methodologies" [9]. Research synthesizing 77 studies reveals that "ML algorithms—notably deep learning and computational stylometry—outperform manual methods in processing large datasets rapidly and identifying subtle linguistic patterns" with one study reporting that "authorship attribution accuracy increased by 34% in ML models" [9].

Table 2: Comparison of Methodological Approaches in Forensic Linguistics

| Method | Strengths | Limitations | Accuracy in Authorship Attribution |
|---|---|---|---|
| Manual Analysis | Superior interpretation of cultural nuances and contextual subtleties | Time-consuming, subjective, difficult to scale | Limited by human cognitive capacity |
| Traditional Computational Methods | Faster than manual analysis, systematic | Limited to frequency-based features, less explorable | Lower than ML approaches |
| Machine Learning (Deep Learning, Computational Stylometry) | Processes large datasets rapidly, identifies subtle patterns, 34% accuracy increase | Algorithmic bias, opaque decision-making, requires large datasets | Highest reported accuracy |
| Hybrid Frameworks | Merges human expertise with computational scalability, addresses nuances | Requires careful implementation, more complex | Potentially optimal balance |

However, despite these technological advances, "manual analysis retains superiority in interpreting cultural nuances and contextual subtleties, underscoring the need for hybrid frameworks that merge human expertise with computational scalability" [9]. This balance is particularly important in forensic applications, where understanding register, dialect, idiolect, and pragmatic features often requires human linguistic expertise.

Methodological Implementation and Experimental Protocols

Workflow for Forensic Linguistic Analysis

Implementing the likelihood ratio framework in forensic linguistics requires a systematic workflow that ensures methodological rigor while maintaining transparency and interpretability. The process begins with the definition of competing propositions based on the specific facts of the case, followed by careful selection and analysis of relevant linguistic features.

Diagram 2: Methodological workflow for forensic linguistic analysis.

Performance Validation Using Cllr

The log likelihood ratio cost (Cllr) serves as a crucial validation metric for assessing the performance of likelihood ratio systems in forensic applications. This metric is defined as:

$$ C_{llr} = \frac{1}{2} \left( \frac{1}{N_{H_1}} \sum_{i=1}^{N_{H_1}} \log_2 \left(1 + \frac{1}{LR_{H_1}^{i}}\right) + \frac{1}{N_{H_2}} \sum_{j=1}^{N_{H_2}} \log_2 \left(1 + LR_{H_2}^{j}\right) \right) $$

Where $N_{H_1}$ and $N_{H_2}$ represent the number of samples for which propositions H₁ and H₂ are true, respectively, and $LR_{H_1}^{i}$ and $LR_{H_2}^{j}$ are the likelihood ratio values predicted by the system for these samples [10].

The Cllr metric offers several advantages for forensic validation: it is a strictly proper scoring rule with favorable mathematical properties, provides indications of both calibration and discriminating power, imposes strong penalties for highly misleading LRs, and enables comparability between different systems and methods [10]. A Cllr value of 0 indicates perfect performance, while a value of 1 represents an uninformative system equivalent to always reporting LR=1 [10].
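The formula translates directly into code. In this minimal sketch, `lrs_h1_true` and `lrs_h2_true` (hypothetical names) hold the LRs a system produced for validation comparisons in which H₁ and H₂, respectively, are known to be true; the example values are invented for illustration.

```python
import math

def cllr(lrs_h1_true, lrs_h2_true):
    """Log-likelihood-ratio cost (Cllr).

    lrs_h1_true: LRs from test comparisons where H1 is known to be true.
    lrs_h2_true: LRs from test comparisons where H2 is known to be true.
    """
    # Penalize small LRs when H1 is true and large LRs when H2 is true.
    penalty_h1 = sum(math.log2(1 + 1 / lr) for lr in lrs_h1_true) / len(lrs_h1_true)
    penalty_h2 = sum(math.log2(1 + lr) for lr in lrs_h2_true) / len(lrs_h2_true)
    return 0.5 * (penalty_h1 + penalty_h2)

# Uninformative system that always reports LR = 1.
print(cllr([1.0, 1.0], [1.0, 1.0]))  # prints 1.0

# Hypothetical validation LRs, all pointing in the correct direction.
print(cllr([100, 50, 2000, 8], [0.01, 0.2, 0.005, 0.05]))
```

The first call reproduces the uninformative benchmark of 1. The second, where every LR points in the correct direction, gives a value well below 1. A single strongly misleading LR (for example, LR = 0.001 when H₁ is true) contributes a term of log₂(1 + 1000), about 10, which is how Cllr imposes strong penalties on highly misleading LRs.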

Table 3: Cllr Performance Benchmarking Across Forensic Domains (Based on 136 Publications)

| Forensic Domain | Typical Cllr Range | Reporting Frequency | Notes |
|---|---|---|---|
| Forensic Speaker Recognition | 0.1-0.5 | High | Most established domain for Cllr use |
| Authorship Analysis | 0.2-0.6 | Moderate | Varies by text type and feature set |
| Digital Forensics | 0.3-0.7 | Low | Emerging application area |
| Document Examination | 0.4-0.8 | Low to Moderate | Depends on feature stability |
| DNA Analysis | Not typically reported | Absent | Uses different validation approaches |

Research examining 136 publications on automated LR systems reveals that Cllr values "lack clear patterns and depend on the area, analysis and dataset," highlighting the importance of domain-specific validation and the use of appropriate benchmark datasets [10]. Despite increasing publications on automated LR systems over time, "the proportion reporting Cllr remains stable" [10].

The Researcher's Toolkit: Essential Materials and Methods

Computational Tools for Forensic Linguistic Analysis

Implementing the Bayesian backbone in forensic linguistics research requires specialized computational tools and frameworks. The following essential resources form the core of the modern forensic linguist's toolkit:

Table 4: Essential Tools and Resources for Forensic Linguistic Analysis

| Tool/Resource | Function | Application in Likelihood Ratio Framework |
|---|---|---|
| R Package "idiolect" | Implements set-theory methods for authorship analysis | Enables calculation of likelihood ratios based on Theory of Linguistic Individuality [8] |
| Computational Stylometry Platforms | Identifies subtle linguistic patterns across large datasets | Provides feature extraction for LR calculation; ML models show 34% accuracy improvement [9] |
| Bayesian Network Software | Constructs narrative Bayesian networks for evidence evaluation | Supports activity-level proposition evaluation in complex cases [11] |
| Validation Databases | Benchmark datasets with known ground truth | Enables calculation of performance metrics (Cllr) for method validation [10] |
| Deep Learning Architectures | Processes complex linguistic features automatically | Enhances discrimination between authorship styles for more informative LRs [9] |

Uncertainty Assessment and Sensitivity Analysis

A critical but often overlooked component of the likelihood ratio framework is the comprehensive uncertainty assessment. As noted in research from the National Institute of Standards and Technology, "if a likelihood ratio is reported, experts should also provide information to enable triers of fact to assess its fitness for the intended purpose" [4]. This is particularly important given that "even career statisticians cannot objectively identify one model as authoritatively appropriate for translating data into probabilities, nor can they state what modeling assumptions one should accept" [4].

The lattice of assumptions and uncertainty pyramid framework provides a structured approach for evaluating how different modeling choices and assumptions affect LR values [4]. This involves exploring "the range of likelihood ratio values attainable by models that satisfy stated criteria for reasonableness," which helps understand "the relationships among interpretation, data, and assumptions" [4]. In forensic linguistics, this might involve testing how different feature sets, statistical models, or reference populations affect the calculated LR.

Critical Perspectives and Methodological Considerations

Challenges in Bayesian Implementation

While the Bayesian framework offers a mathematically rigorous approach to evidence evaluation, its implementation faces significant challenges. One fundamental issue concerns the subjectivity of the likelihood ratio itself. As noted by critics, "the likelihood ratio is subjective and personal," which creates tension when "a forensic expert provides a likelihood ratio for others to use in Bayes' equation" [4]. This approach is "unsupported by Bayesian decision theory, which applies only to personal decision making and not to the transfer of information from an expert to a separate decision maker" [4].

Bayesian methods have also been observed to "subvert the authoritative instrumentality of science and technology as applied to western law" by exposing "a series of intractable lacunae, which were alternately revealed to forensic analysts or rendered silent in technical black boxes" [12]. The implementation of forensic Bayesianism "created messy entanglements between evidence, place and subjectivity" and "destabilised practices of material witnessing by disruptively reconfiguring the relationship between seeing and testifying" [12].

Ethical and Interpretative Considerations

The increasing integration of machine learning approaches in forensic linguistics introduces additional ethical and interpretative challenges. ML algorithms can exhibit algorithmic bias based on their training data and may involve "opaque algorithmic decision-making," creating "unresolved barriers to courtroom admissibility" [9]. These challenges "highlight the potential to consider Bayesianism more as a social phenomenon rather than simply a quantification of individual subjective belief" [12].

Research indicates that effective implementation requires "standardized validation protocols and interdisciplinary collaboration to advance forensic linguistics into an era of ethically grounded, AI-augmented justice" [9]. This "dual emphasis on technological innovation and critical oversight positions the field to address evolving demands for precision and interpretability in legal evidence analysis" [9].

The Bayesian backbone provides a rigorous mathematical framework for evaluating forensic evidence, offering a structured approach to address the complex challenges of evidence interpretation in legal contexts. The likelihood ratio paradigm serves as a crucial bridge between mathematical theory and practical application, enabling forensic linguists to quantify the strength of linguistic evidence while maintaining appropriate boundaries between scientific testimony and juridical decision-making.

As the field continues to evolve, the integration of machine learning methodologies with human expertise through hybrid frameworks offers promising pathways for enhancing both the accuracy and interpretability of forensic linguistic analysis. However, the successful implementation of these approaches requires ongoing attention to validation, uncertainty assessment, and ethical considerations to ensure that quantitative methods enhance rather than obscure the search for justice.

The continued development and refinement of the Bayesian backbone in forensic linguistics will depend on interdisciplinary collaboration, transparent methodology, and critical engagement with both the strengths and limitations of this powerful analytical framework.

In forensic linguistics and related disciplines, a fundamental principle governs the presentation of evidence: the expert provides a Likelihood Ratio (LR), not the Posterior Odds. This division of labor is not arbitrary but is rooted in the mathematical framework of Bayes' theorem, legal norms, and scientific best practices. The LR quantitatively expresses the support the evidence provides for one hypothesis over another, while the Posterior Odds incorporate the prior beliefs about the hypotheses, which fall outside the expert's remit. This paper explores the theoretical, practical, and legal rationale for this separation, providing a technical guide for researchers and practitioners implementing the Likelihood Ratio framework in forensic science.

The evaluation of forensic evidence, whether linguistic, genetic, or otherwise, operates within a probabilistic framework to quantify the strength of evidence. The core of this framework is Bayes' theorem, which describes how prior beliefs are updated in the face of new evidence.

The theorem, in its odds form, is expressed as:

Posterior Odds = Prior Odds × Likelihood Ratio [13] [4]

Or, more formally:

$$ \frac{P(Hp|E)}{P(Hd|E)} = \frac{P(Hp)}{P(Hd)} \times \frac{P(E|Hp)}{P(E|Hd)} $$

Here:

- Posterior Odds: The updated odds in favor of the prosecution's hypothesis (Hp) against the defense's hypothesis (Hd) after considering the evidence (E).

- Prior Odds: The odds in favor of Hp against Hd before considering the evidence E.

- Likelihood Ratio (LR): The ratio of the probability of observing the evidence E under the prosecution's hypothesis Hp to the probability of observing E under the defense's hypothesis Hd.

The following diagram illustrates the logical relationship and the distinct roles within this Bayesian updating process.

The Critical Distinction: Likelihood Ratio vs. Posterior Odds

Understanding the conceptual difference between the Likelihood Ratio and the Posterior Odds is paramount.

The Likelihood Ratio is a measure of the evidence's strength. It addresses the question: "How much more likely is the observed evidence if the prosecution's hypothesis is true compared to if the defense's hypothesis is true?" It is a property of the evidence itself and the competing hypotheses. The LR is not a probability distribution over the hypotheses and is not normalized [14] [15].

The Posterior Odds are a measure of the updated belief about the hypotheses. They address the question: "After considering the evidence, what are the relative odds that the prosecution's hypothesis is true compared to the defense's hypothesis?" The Posterior Odds incorporate both the strength of the evidence (via the LR) and the initial, context-dependent beliefs about the hypotheses (via the Prior Odds) [13].

The table below summarizes the key differences.

Table 1: Conceptual and Practical Differences between Likelihood Ratio and Posterior Odds

| Aspect | Likelihood Ratio (LR) | Posterior Odds |
|---|---|---|
| Core Question | How well does the evidence support Hp vs. Hd? | What are the updated odds of Hp vs. Hd? |
| Based On | The properties of the evidence under given hypotheses. | The evidence (LR) AND prior beliefs (Prior Odds). |
| Role of Expert | To calculate and provide the LR. | Outside the expert's scope. |
| Role of Trier-of-Fact | To use the LR in their reasoning. | To determine (implicitly or explicitly). |
| Dependence | Ideally, independent of prior beliefs about the hypotheses. | Heavily dependent on prior beliefs about the hypotheses. |

The Rationale for the Separation of Roles

The strict separation of the forensic expert's role (providing the LR) from the juror's or judge's role (assessing the Posterior Odds) is upheld for several compelling reasons.

Adherence to Bayesian Decision Theory

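Bayesian decision theory is fundamentally personal and subjective: each decision-maker updates their own beliefs, so the posterior odds necessarily belong to the trier-of-fact, not to the expert. Critics press this point further, arguing that the LR used in Bayes' rule must strictly be the personal LR of the decision-maker (e.g., the juror), because its calculation involves subjective judgments about which scenarios to consider and how to model the evidence, and that an expert presenting their own LR for others to use in a Bayesian update is a "hybrid adaptation" with no basis in Bayesian decision theory [4]. On this view the theory applies to personal decision-making, not to the transfer of information from an expert to a separate decision-maker. Whatever one makes of that critique, it does not license the expert to report posterior odds; rather, it motivates reporting the LR together with an assessment of how sensitive it is to the expert's modeling choices, as in the lattice of assumptions and uncertainty pyramid [4].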

The Domain of the Court vs. The Domain of the Expert

The Prior Odds are solely within the domain of the judge or jury [15]. These priors are based on all the other evidence presented in the case (witness testimony, alibis, motives, etc.), which the forensic expert is not privy to and is not qualified to evaluate. For an expert to present posterior odds, they would have to assume a value for the prior odds, thereby usurping the court's responsibility [4]. The consensus, therefore, is that "likelihoods and the LR should constitute the only case-relevant outcome of their experimental work" [15].

Preserving Scientific Objectivity and Avoiding Bias

Providing an LR allows the forensic scientist to remain objective and report on the scientific value of their evidence without venturing into legal judgments. The LR measures the strength of the evidence alone; it is distinct from the overall assessment of the case, which combines that strength with the prior circumstances. This separation helps prevent the expert from appearing as an advocate for either side and maintains the scientific integrity of their testimony [4].

Methodological Protocol for LR Calculation in Forensic Linguistics

Implementing the LR framework in forensic linguistics involves a structured process. The following workflow outlines the key methodological stages for a robust LR calculation.

Step 1: Define Competing Hypotheses

The expert must work with legal professionals to define two mutually exclusive hypotheses.

- Prosecution Hypothesis (Hp): Typically posits that the suspect is the author of the questioned text.

- Defense Hypothesis (Hd): Typically posits that the questioned text was authored by someone else from a relevant population [4].

Step 2: Feature Selection

The linguist identifies and operationalizes the linguistic features that will be analyzed. These can include:

- Lexical Features: Vocabulary richness, keyword usage, function word frequency.

- Syntactic Features: Sentence length distribution, phrase structures, punctuation habits.

- Stylistic Features: Use of metaphors, repetition, discourse markers.

Step 3: Data Collection & Model Building

This step involves gathering data to model the probability of observing the evidence under each hypothesis.

- For P(E|Hp): The analyst examines a known reference corpus from the suspect to see how likely the observed features (E) are, given the suspect's writing style.

- For P(E|Hd): The analyst examines a reference corpus from a relevant population to determine how likely the features (E) are to occur in the general population or a specific alternative group [9]. The choice of this population is a critical and often challenging modeling decision [4].
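As a minimal sketch of Step 3, assume a single continuous feature (for example, a hypothetical rate of one function word per 1,000 words). A normal distribution fitted to the suspect's known texts supplies the numerator, and one fitted to a reference-population sample supplies the denominator. The feature, the Gaussian assumption, the function names, and the numbers are all illustrative; casework models are usually multivariate and must be validated.

```python
import math
from statistics import mean, stdev


def normal_pdf(x: float, mu: float, sd: float) -> float:
    """Density of N(mu, sd^2) evaluated at x."""
    z = (x - mu) / sd
    return math.exp(-0.5 * z * z) / (sd * math.sqrt(2.0 * math.pi))


def gaussian_feature_lr(questioned: float, suspect_known: list[float], population: list[float]) -> float:
    """LR = P(E|Hp) / P(E|Hd) for one feature value, assuming Gaussian models.

    Numerator: density under a normal fitted to the suspect's known texts.
    Denominator: density under a normal fitted to the reference population.
    """
    num = normal_pdf(questioned, mean(suspect_known), stdev(suspect_known))
    den = normal_pdf(questioned, mean(population), stdev(population))
    return num / den


# Hypothetical feature values (e.g., rate of one function word per 1,000 words).
suspect = [12.1, 11.8, 12.6, 12.3, 11.9]
population = [8.0, 10.5, 9.2, 13.1, 7.4, 11.0, 9.9, 12.2, 8.8, 10.1]
print(gaussian_feature_lr(12.2, suspect, population))  # value close to the suspect's habit
print(gaussian_feature_lr(8.0, suspect, population))   # value typical of the population
```

A questioned value near the suspect's typical rate gives an LR above 1, and a value typical of the population but unusual for the suspect gives an LR below 1. A normal fitted to only five known texts is very narrow, which illustrates why the choice of model and reference population needs sensitivity checks.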

Steps 4 and 5: Probability Calculation and LR Computation

Using the models developed in Step 3, the probabilities are calculated and the LR is computed. The interpretation follows established scales, such as the one proposed by Jeffreys [13].

Table 2: Quantitative LR Interpretation Guide (Jeffreys' Scale)

| LR Value | Verbal Equivalent | Strength of Evidence |
|---|---|---|
| > 100 | Extreme support for Hp | Very Strong |
| 32 - 100 | Very strong support for Hp | Strong |
| 10 - 32 | Strong support for Hp | Moderate |
| 3.2 - 10 | Moderate support for Hp | Limited |
| 1 - 3.2 | Anecdotal support for Hp | Weak |
| 1 | No support for either hypothesis | None |
| Reciprocals of above | Support for Hd | Inverse of above |
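The table's last row (reciprocals) is easy to misapply, so a small lookup function may help. This is a sketch of one reading of Table 2: the function name is hypothetical, and assigning shared endpoints such as 32 and 100 to the lower category is an assumption, since the table's ranges overlap at their boundaries.

```python
def verbal_equivalent(lr: float) -> str:
    """Map an LR to the verbal labels of Table 2.

    Assumption: shared endpoints (e.g., 32 and 100) go to the lower category.
    LRs below 1 are handled through their reciprocal and reported as support for Hd.
    """
    if lr <= 0:
        raise ValueError("an LR must be positive")
    if lr == 1:
        return "No support for either hypothesis"
    favored = "Hp" if lr > 1 else "Hd"
    r = lr if lr > 1 else 1.0 / lr  # magnitude of support, always > 1
    if r > 100:
        label = "Extreme"
    elif r > 32:
        label = "Very strong"
    elif r > 10:
        label = "Strong"
    elif r > 3.2:
        label = "Moderate"
    else:
        label = "Anecdotal"
    return f"{label} support for {favored}"


print(verbal_equivalent(150))    # Extreme support for Hp
print(verbal_equivalent(0.005))  # Extreme support for Hd (reciprocal is 200)
```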

The Scientist's Toolkit: Essential Reagents for Forensic LR Analysis

In forensic linguistics, the "research reagents" are not chemical but methodological and data-driven. The following table details the essential components for conducting a valid LR analysis.

Table 3: Essential Methodological Components for LR Analysis in Forensic Linguistics

| Tool / Component | Function & Explanation |
|---|---|
| Specialized Text Corpora | Large, contextually relevant collections of text used to model the language of a relevant population, for estimating the probability of the evidence under Hd. |
| Computational Stylometry Software | ML-driven tools (e.g., deep learning models) to identify and quantify subtle stylistic patterns beyond manual analysis, improving authorship attribution accuracy [9]. |
| Statistical Modeling Platform | Software (e.g., R, Python with scikit-learn) used to build probabilistic models of language use and calculate the underlying probabilities for the LR. |
| Validated Feature Set | A standardized set of linguistic features (lexical, syntactic, discursive) whose behavior and discriminative power have been empirically established. |
| Uncertainty Assessment Framework | A methodology (e.g., the "lattice of assumptions" and "uncertainty pyramid" [4]) to evaluate how sensitive the LR is to choices in models, features, and reference populations. |

The principle that a forensic expert provides the Likelihood Ratio and not the Posterior Odds is a cornerstone of scientifically rigorous and legally sound evidence evaluation. This separation is not a mere technicality but a fundamental demarcation of roles: the expert qua expert speaks to the objective strength of the scientific evidence, while the trier-of-fact retains the responsibility of integrating this information with all other aspects of the case. Adhering to this principle, supported by the Bayesian framework and robust methodological protocols, ensures that fields like forensic linguistics continue to evolve as reliable, transparent, and indispensable tools in the pursuit of justice.

The Likelihood Ratio (LR) framework represents a fundamental paradigm shift in the evaluation of forensic evidence, away from subjective judgment and toward transparent, reproducible, and logically valid expressions of evidential strength [16]. The framework is particularly important in forensic linguistics, where language evidence, whether written or spoken, must be evaluated scientifically to assist legal decision-makers. The broader shift in forensic science replaces methods based on human perception and judgment with methods based on relevant data, quantitative measurements, and statistical models; such methods are transparent and reproducible, are intrinsically resistant to cognitive bias, and use the LR framework as the logically correct framework for interpreting evidence [16]. Adopting them requires a whole constellation of new methods and new ways of thinking, which is especially demanding in forensic linguistics, where language evidence poses particular challenges for quantitative analysis.

Theoretical Foundation of the Likelihood Ratio

Basic Logical Form and Interpretation

The Likelihood Ratio is a statistical measure that compares the probability of observing the evidence under two competing hypotheses [17]. In forensic linguistics, this typically involves:

- Prosecution Hypothesis (Hp): The known and questioned linguistic materials originate from the same source.

- Defense Hypothesis (Hd): The known and questioned linguistic materials originate from different sources.

The LR is calculated as: LR = P(E|Hp) / P(E|Hd), where E represents the observed evidence [17]. The value of the LR indicates how much more likely the evidence is under one hypothesis compared to the other. An LR greater than 1 supports the prosecution hypothesis, while an LR less than 1 supports the defense hypothesis. The magnitude indicates the strength of this support [16].

The Paradigm Shift in Forensic Evidence Evaluation

The adoption of the LR framework represents a true Kuhnian paradigm shift that requires rejection of existing methods and the ways of thinking that underpin them [16]. This shift encompasses four critical elements:

- Transition from subjective judgment to transparent, reproducible methods

- Replacement of human perception with instrumental measurement where possible

- Adoption of the logically correct LR framework for evidence interpretation

- Implementation of empirical validation under casework conditions [16]

This paradigm is particularly relevant for forensic linguistics, where traditional approaches have relied heavily on human expertise and subjective interpretation [18].

Application in Forensic Linguistics

Core Linguistic Applications

Forensic linguistics applies the LR framework across multiple domains where language serves as evidence [18]:

- Authorship Attribution: Determining whether a specific individual authored a particular text by comparing the questioned (incriminating) texts with samples from a suspect [18]

- Voice Identification: Using quantitative methods to compare speech samples, moving beyond perceptual judgments [16]

- Linguistic Profiling: Analyzing language to draw inferences about author characteristics when no specific suspect is identified [18]

- Document Analysis: Examining threatening communications, forged documents, or disputed utterances [18]

The Idiolect Concept in Forensic Linguistics

A central concept enabling the application of the LR framework in linguistics is the idiolect—an individual's unique linguistic variety [18]. This encompasses:

- Phonological Patterns: Pronunciation of specific sounds and sound combinations

- Lexical Preferences: Characteristic vocabulary and stable idioms

- Grammatical Structures: Consistent syntactic patterns

- Sociolectal Influences: Language variations shaped by social factors [18]

The idiolect is shaped by multiple factors including regional dialect, exposure to foreign languages, educational background, professional jargon, and familial language patterns [18]. The core assumption is that no two people use language in exactly the same way, providing the theoretical foundation for discrimination between sources [18].

Quantitative Methodologies and Experimental Protocols

Research Design for Forensic Linguistics

Quantitative methods in forensic linguistics research require careful preplanning to isolate variables and design procedures that yield meaningful findings [19]. Key considerations include:

- Sample Selection: Determining appropriate sample sizes and sources for both known and questioned materials

- Variable Isolation: Identifying and controlling for confounding variables in linguistic data

- Instrument Development: Creating reliable methods for data collection and analysis [19]

Experimental designs typically involve comparison of same-source and different-source pairs to establish the performance of the methodology [16].
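Same-source and different-source pairs are often generated exhaustively from a corpus with known ground truth. A minimal sketch, assuming each document carries a hypothetical author identifier; in practice the pairs should also reflect casework conditions such as genre and length.

```python
from itertools import combinations


def make_pairs(docs: list[tuple[str, str]]) -> tuple[list[tuple[str, str]], list[tuple[str, str]]]:
    """Split all document pairs into same-source and different-source sets.

    `docs` is a list of (author_id, document) tuples with known ground truth.
    """
    same, different = [], []
    for (author1, doc1), (author2, doc2) in combinations(docs, 2):
        (same if author1 == author2 else different).append((doc1, doc2))
    return same, different


# Hypothetical labelled corpus: two authors, two documents each.
corpus = [("A", "a1"), ("A", "a2"), ("B", "b1"), ("B", "b2")]
ss_pairs, ds_pairs = make_pairs(corpus)
print(len(ss_pairs), len(ds_pairs))
```

Even in this tiny corpus, different-source pairs outnumber same-source pairs, and the imbalance grows quickly with more authors. This is one reason validation metrics such as Cllr average the two classes separately.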

LR Calculation for Categorical Count Data

In digital forensics, where evidence may consist of user-generated event data, Longjohn et al. (2022) developed a method for calculating LRs for categorical count data [17]; count-based representations of this kind are also common for linguistic features. The experimental protocol involves:

- Data Collection: Gathering event counts from unknown sources tied to a crime and event counts generated by a known source

- Model Specification: Developing a Bayesian model that can calculate the LR in closed form

- Performance Validation: Testing the method on real-world event datasets representing a variety of event types [17]

Theoretical analysis of this approach examines how the LR is affected by the amount of data observed, the number of event types considered, and the prior distributions used in the Bayesian model [17].
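A closed-form LR for categorical counts can be sketched with a Dirichlet-multinomial model. This is one common construction of that kind, not necessarily the exact model of [17]. Under Hp, the known and questioned count vectors share one vector of event-type probabilities; under Hd, each has its own, independently drawn vector. The Dirichlet prior `alpha` is an illustrative assumption, and its choice influences the LR, as the text notes.

```python
import math
from typing import Sequence


def log_dm_marginal(counts: Sequence[int], alpha: Sequence[float]) -> float:
    """Log marginal probability of an observed count sequence under a Dirichlet(alpha) prior.

    The multinomial coefficient is omitted because it cancels in the LR below.
    """
    a0, n = sum(alpha), sum(counts)
    out = math.lgamma(a0) - math.lgamma(a0 + n)
    for c, a in zip(counts, alpha):
        out += math.lgamma(a + c) - math.lgamma(a)
    return out


def count_lr(known: Sequence[int], questioned: Sequence[int], alpha: Sequence[float]) -> float:
    """LR for two categorical count vectors.

    Hp (same source): both vectors share one parameter vector, so the counts are pooled.
    Hd (different sources): each vector has its own independent parameter vector.
    """
    pooled = [k + q for k, q in zip(known, questioned)]
    log_num = log_dm_marginal(pooled, alpha)
    log_den = log_dm_marginal(known, alpha) + log_dm_marginal(questioned, alpha)
    return math.exp(log_num - log_den)


# Hypothetical counts over three event (or feature) types, with a flat prior.
flat = [1.0, 1.0, 1.0]
print(count_lr([30, 5, 1], [28, 6, 2], flat))  # similar profiles: LR > 1
print(count_lr([30, 5, 1], [1, 6, 29], flat))  # dissimilar profiles: LR < 1
```

Working in log space with `math.lgamma` keeps the computation stable for realistic counts, where the gamma functions themselves would overflow.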

Bi-Gaussian Calibration Method

Morrison (2023) proposed a bi-Gaussian calibration method for likelihood ratios to improve the reliability of forensic evaluation systems [16]. The methodology consists of six steps:

Table: Bi-Gaussian Calibration Protocol

| Step | Procedure | Output |
|---|---|---|
| 1 | Calculate uncalibrated LR using a forensic-evaluation system | Raw LR value |
| 2 | Apply traditional monotonic calibration (e.g., logistic regression) | Initially calibrated output |
| 3 | Calculate log-likelihood-ratio cost (Cllr) for the calibrated output | Performance metric |
| 4 | Determine σ² value of perfectly-calibrated bi-Gaussian system with same Cllr | Variance parameter |
| 5 | Map empirical cumulative distribution to two-Gaussian mixture | Mapping function |
| 6 | Apply mapping function to uncalibrated LR from Step 1 | Final calibrated LR |

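A perfectly-calibrated bi-Gaussian system produces natural-log LR distributions in which the same-source and different-source distributions are both Gaussian with the same variance σ², with means of +σ²/2 and -σ²/2 respectively [16]. With these means, the ratio of the same-source density to the different-source density at any log-LR value λ equals exp(λ), which is the defining property of well-calibrated log-LRs. This calibration approach enables more reliable and interpretable LR values for forensic decision-making.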
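Steps 4 to 6 of the protocol can be sketched numerically. This sketch rests on several assumptions. It uses natural-log LRs and the means of ±σ²/2 given above. The mixture is weighted by a hypothetical same-source proportion `p_ss`. The empirical CDF is computed over a hypothetical set of training scores. All function names are illustrative. The cited method [16] may differ in details, such as how the empirical CDF is formed.

```python
import math

import numpy as np
from numpy.polynomial.hermite_e import hermegauss

# Gauss-Hermite nodes and weights for the weight function exp(-x**2 / 2).
NODES, WEIGHTS = hermegauss(64)


def bigaussian_cllr(sigma: float) -> float:
    """Cllr of a perfectly calibrated bi-Gaussian system (natural-log LRs).

    Same-source log-LRs ~ N(+sigma**2/2, sigma**2); different-source ~ N(-sigma**2/2, sigma**2).
    By symmetry the two halves of Cllr are equal, so one expectation suffices.
    """
    lam = sigma**2 / 2 + sigma * NODES               # same-source log-LR quadrature points
    penalty = np.logaddexp(0.0, -lam) / math.log(2)  # log2(1 + 1/LR), computed stably
    return float(np.sum(WEIGHTS * penalty) / math.sqrt(2 * math.pi))


def sigma_for_cllr(target: float, lo: float = 1e-6, hi: float = 20.0) -> float:
    """Step 4: find the sigma whose perfectly calibrated system has the target Cllr."""
    if not 0.0 < target < 1.0:
        raise ValueError("target Cllr must lie in (0, 1)")
    for _ in range(100):  # bisection; Cllr falls monotonically as sigma grows
        mid = 0.5 * (lo + hi)
        if bigaussian_cllr(mid) > target:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)


def _phi(z: float) -> float:
    """Standard normal CDF."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def mixture_cdf(x: float, sigma: float, p_ss: float) -> float:
    """CDF of the two-Gaussian mixture, weighted by the same-source proportion p_ss."""
    m = sigma**2 / 2
    return p_ss * _phi((x - m) / sigma) + (1.0 - p_ss) * _phi((x + m) / sigma)


def bigaussianize(raw, train, sigma: float, p_ss: float) -> np.ndarray:
    """Steps 5-6: map raw scores to the mixture quantile matching their empirical CDF."""
    train = np.sort(np.asarray(train, dtype=float))
    n, m = len(train), sigma**2 / 2
    out = []
    for s in raw:
        # Empirical CDF, kept strictly inside (0, 1) so the inverse stays finite.
        u = (np.searchsorted(train, s, side="right") + 0.5) / (n + 1)
        lo, hi = -m - 12 * sigma, m + 12 * sigma
        for _ in range(100):  # bisection for the mixture quantile
            mid = 0.5 * (lo + hi)
            if mixture_cdf(mid, sigma, p_ss) < u:
                lo = mid
            else:
                hi = mid
        out.append(0.5 * (lo + hi))
    return np.array(out)
```

In use, the Cllr from Step 3 would be passed to `sigma_for_cllr`, and the resulting σ would be passed to `bigaussianize` together with the uncalibrated scores from Step 1. Because the mapping is monotonic, it preserves the ranking of the raw scores and changes only their calibration.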

Data Presentation and Performance Metrics

Quantitative Data in Forensic Linguistics Research

Table: Factors Affecting Likelihood Ratio Performance in Categorical Count Data

| Factor | Effect on LR | Research Finding |
|---|---|---|
| Amount of data observed | Increases discriminability with more data | Significant impact on LR reliability [17] |
| Number of event types | Affects specificity of model | More types provide better discrimination [17] |
| Choice of prior in Bayesian model | Influences calibration | Requires careful selection based on application [17] |
| System calibration | Determines validity of LR interpretation | Bi-Gaussian method improves reliability [16] |

Validation Metrics for Forensic Evaluation Systems

The performance of LR-based forensic evaluation systems is measured using specific metrics:

- Log-Likelihood-Ratio Cost (Cllr): A comprehensive performance measure that accounts for both discrimination and calibration [16]

- Empirical Validation: Testing under conditions reflecting casework realities [16]

- Trier-of-Fact Assistance: Assessing whether the expert testimony would assist legal decision-makers beyond unaided lay judgment [16]

Research comparing speaker identification by lay listeners versus automated systems demonstrates the critical importance of validation to ensure that forensic methods actually improve upon naive judgment [16].
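Cllr can be computed directly from validation LRs whose ground truth is known. The following is a minimal sketch of the standard formula. The function name and the example LRs are illustrative.

```python
import math
from typing import Sequence


def cllr(ss_lrs: Sequence[float], ds_lrs: Sequence[float]) -> float:
    """Log-likelihood-ratio cost for validation LRs with known ground truth.

    Same-source LRs are penalized for being small, different-source LRs for being
    large; each class is averaged separately, so class imbalance does not dominate.
    """
    ss_term = sum(math.log2(1.0 + 1.0 / lr) for lr in ss_lrs) / len(ss_lrs)
    ds_term = sum(math.log2(1.0 + lr) for lr in ds_lrs) / len(ds_lrs)
    return 0.5 * (ss_term + ds_term)


print(cllr([1.0, 1.0], [1.0]))     # uninformative system
print(cllr([1000.0], [0.001]))     # strong, correctly directed LRs
print(cllr([0.1], [10.0]))         # misleading LRs
```

A system that always outputs LR = 1 scores a Cllr of exactly 1, which serves as the uninformative baseline. Lower values indicate useful output. Values above 1 indicate LRs that are misleading on average, for example because they are poorly calibrated.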

Visualization of Workflows and Logical Relationships

[Figures not reproduced: Forensic Linguistics Analysis Workflow; Likelihood Ratio Calibration Process]

Research Reagent Solutions for Forensic Linguistics

Table: Essential Research Materials for Forensic Linguistics Studies

| Research Reagent | Function/Application | Implementation Example |
|---|---|---|
| Linguistic Corpora | Reference data for establishing population statistics | Compilation of texts/speech from relevant population [18] |
| Automated Speaker Recognition Technology | Instrumental measurement for voice comparison | ASR systems for quantitative feature extraction [16] |
| Natural Language Processing Tools | Pattern recognition in written texts | Machine learning models for authorship attribution [18] |
| Statistical Software Platforms | Quantitative analysis and LR calculation | R, Python with specialized forensic statistics packages [19] |
| Validation Datasets | Empirical testing under casework conditions | Collections of known-source materials with ground truth [16] |

These research reagents enable the implementation of the forensic data science paradigm in linguistics, facilitating the transition from subjective judgment to quantitative, validated methods [18] [16].

The Likelihood Ratio framework provides forensic linguistics with a legally and logically rational approach to evidence evaluation that promotes transparency, reproducibility, and scientific rigor. By embracing this framework alongside appropriate quantitative methodologies and validation protocols, forensic linguists can provide more meaningful and defensible evidence in legal proceedings. The ongoing paradigm shift toward forensic data science represents a fundamental transformation in how language evidence is analyzed and interpreted, with potential to significantly enhance the administration of justice.

From Theory to Text: Implementing the LR Framework in Forensic Linguistics

Within the likelihood ratio framework for forensic linguistics research, the formulation of competing propositions—typically designated as the prosecution proposition (Hp) and the defense proposition (Hd)—represents the foundational step that determines the scientific validity and legal relevance of any analysis. The likelihood ratio framework provides a coherent logical structure for evaluating evidence by quantifying the strength of forensic findings under two competing propositions about the case [20]. This framework enables researchers and forensic experts to move beyond simple source attribution (e.g., "Who wrote this document?") to addressing more complex activity-level questions (e.g., "How did this document come to be written?") that are increasingly central to modern forensic linguistics practice [20] [8]. Properly operationalizing Hp and Hd requires careful consideration of the case context, relevant scientific methodology, and the boundaries of inferential reasoning, ensuring that the resulting analysis provides transparent, robust, and actionable insights for the criminal justice system.

The shift from source-level to activity-level propositions marks a significant evolution in forensic linguistics. Where traditional approaches might ask "Did this suspect author this document?", contemporary frameworks now address more nuanced questions such as "Did the suspect author this document under the specific circumstances alleged by the prosecution?" versus "Could the document have been produced through alternative means consistent with the defense's position?" [20]. This transition reflects a growing recognition that the mere identification of a source often provides insufficient guidance to triers of fact, who must ultimately make determinations about actions and responsibilities rather than mere associations.

Theoretical Foundation: The Hierarchy of Propositions

Understanding the Proposition Spectrum

Forensic propositions exist within a hierarchical structure that ranges from source-level to activity-level to offense-level propositions. Each level represents a different type of inference requiring distinct forms of evidence and analytical approaches:

Source-level propositions concern the origin of specific trace materials and typically represent the most fundamental level of forensic analysis. In authorship verification, this corresponds to the AV_Known decision problem: given a set of documents by a known author and a document of unknown authorship, has the known author also written the unknown document? [21] At this level, the analysis focuses primarily on comparative features between known and questioned materials.

Activity-level propositions address how a particular trace arrived where it was found or was created under specific circumstances. These propositions consider not just the source but also transfer mechanisms, persistence factors, and background prevalence. In forensic linguistics, this might involve determining whether a document was created as part of a criminal conspiracy or as an innocent communication [20].

Offense-level propositions directly relate to the legal issues before the court, such as whether a crime occurred or whether the defendant possessed the necessary mental state for criminal liability. While forensic linguists rarely address offense-level propositions directly, their analyses at lower propositional levels provide crucial building blocks for addressing these ultimate issues.

The following table summarizes key characteristics of these proposition levels:

Table 1: Hierarchy of Propositions in Forensic Linguistics

| Proposition Level | Core Question | Typical Form in Authorship Analysis | Key Considerations |
|---|---|---|---|
| Source | What is the origin of this trace? | AV_Known: Has author A also written document D? [21] | Profile rarity, discriminative features, reference populations |
| Activity | How did this trace come to be here? | Was document D produced as part of criminal activity X? | Transfer mechanisms, persistence, context, background levels |
| Offense | Did the defendant commit the offense? | Does document D prove the defendant's guilt for offense O? | Legal standards, mental state, actus reus, complete elements of crime |

The Logic of Competing Propositions

The likelihood ratio framework requires the formulation of exactly two competing propositions that represent mutually exclusive explanations for the available evidence. The logical relationship between these propositions follows the structure of Bayes' theorem, where:

- Hp (Prosecution Proposition): Typically represents the explanation put forward by the prosecution, though in scientific terms it is simply one of the two competing explanations being evaluated.

- Hd (Defense Proposition): Represents an alternative explanation, typically aligning with the defense position, though it may encompass other reasonable alternatives.

The likelihood ratio (LR) then quantifies the strength of the evidence (E) by comparing the probability of observing that evidence under both propositions: LR = P(E|Hp) / P(E|Hd) [4]. An LR greater than 1 supports Hp, while an LR less than 1 supports Hd. The magnitude of the LR indicates the strength of this support, with more extreme values indicating stronger evidence.

Formulating Forensically Valid Propositions

Criteria for Effective Proposition Development

Well-constructed propositions must satisfy specific criteria to ensure they yield forensically meaningful results:

Mutual Exclusivity: Hp and Hd must represent alternative explanations that cannot simultaneously be true in the context of the case. This exclusivity ensures that evidence supporting one proposition necessarily weakens the other within the likelihood ratio framework [4].

Exhaustiveness Within Scope: The propositions should collectively cover the reasonable possibilities suggested by the case circumstances, ensuring that the LR provides a complete picture of the evidentiary strength. Unexamined alternative explanations undermine the validity of the analysis.

Clarity and Specificity: Propositions must be precisely defined to enable the identification of relevant data and appropriate analytical methodologies. Vague propositions lead to ambiguous analyses and inconclusive results [22].

Relevance to Legal Issues: While forensic scientists typically address source or activity level propositions, these must logically connect to the ultimate legal issues before the court. The forensic linguist should understand how their analysis of linguistic evidence at their proposition level informs determinations at higher levels.

Testability: Valid propositions must be empirically testable using available scientific methods and data. Propositions that cannot be operationalized or measured yield analyses that are speculative rather than scientific [23].

Practical Challenges in Proposition Formulation

Several practical challenges commonly arise when operationalizing propositions in forensic linguistics casework:

Incomplete Case Information: Forensic linguists often receive limited information about the alleged activities, creating difficulties in specifying appropriate propositions. As noted in forensic DNA research, "It is often the case that scientists will be informed about the competing propositions regarding activities alleged by the parties only at trial, if at all" [20]. The solution involves close collaboration with legal counsel and the use of sensitivity analyses to assess how different assumptions might affect the conclusions.

Uncertainty About Activities: The exact details of how a document was created are rarely known with certainty. However, "it is a common misconception that the scientist who is evaluating the observations in light of competing posited activities needs to know every aspect of what has allegedly happened" [20]. Experimental data and logical frameworks can accommodate uncertainty through weighted probabilities of different possible states.

Multiple Reasonable Alternatives: Complex cases may present more than two reasonable explanations. The solution involves either grouping alternatives into two coherent propositions or conducting sequential analyses comparing different pairs of propositions, with clear documentation of the approach.

Defense Cooperation: Limited cooperation from the defense sometimes presents obstacles to understanding the alternative proposition [20]. In such situations, forensic linguists should develop propositions based on the available information and clearly state their assumptions, potentially offering to revise their analysis if additional information becomes available.

Methodological Protocols for Authorship Verification

Experimental Framework for Authorship Analysis

The following diagram illustrates the core workflow for conducting authorship verification within the likelihood ratio framework:

Diagram 1: Authorship Verification Workflow

Technical Approaches to Likelihood Ratio Calculation

Different technical approaches to calculating likelihood ratios in authorship verification include:

Grammar Model Approach: This method calculates "the ratio between the likelihood of a document given a model of the Grammar for the candidate author and the likelihood of the same document given a model of the Grammar for a reference population" [21]. These Grammar Models are estimated using n-gram language models trained solely on grammatical features, providing a cognitively plausible approach to authorship analysis that aligns with theories of linguistic individuality [8].

Unary Methods: These approaches rely solely on documents from a known author to determine a decision criterion, accepting the candidate author as the author of the questioned document if it is sufficiently similar to the known documents [21].

Binary Methods: These approaches use documents from both the candidate author and reference authors to establish the decision criterion, potentially offering greater robustness through explicit comparison to alternative sources.
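The grammar-model idea is a log-likelihood ratio between an author model and a reference model. It can be sketched with tiny n-gram models, assuming that documents have already been converted into sequences of grammatical tokens (part-of-speech tags here). This is a simplified illustration using add-one-smoothed bigrams. It is not the LambdaG method and not the API of the idiolect package, both of which use their own models and normalization. The class, the function, and the tag sequences are hypothetical.

```python
import math
from collections import Counter
from typing import Sequence


class BigramModel:
    """Add-one smoothed bigram model over grammatical token sequences."""

    def __init__(self, sequences: Sequence[Sequence[str]], vocab: set[str]):
        self.v = len(vocab)          # number of possible next tokens
        self.histories = Counter()   # counts of each token used as a history
        self.bigrams = Counter()
        for seq in sequences:
            padded = ["<s>"] + list(seq)
            self.histories.update(padded[:-1])
            self.bigrams.update(zip(padded[:-1], padded[1:]))

    def log_prob(self, seq: Sequence[str]) -> float:
        padded = ["<s>"] + list(seq)
        return sum(
            math.log((self.bigrams[(a, b)] + 1) / (self.histories[a] + self.v))
            for a, b in zip(padded[:-1], padded[1:])
        )


def grammar_log_lr(questioned, author_docs, reference_docs) -> float:
    """Natural-log LR: questioned text under the author model vs. the reference model."""
    vocab = {t for group in (author_docs, reference_docs, [questioned]) for doc in group for t in doc}
    author = BigramModel(author_docs, vocab)
    reference = BigramModel(reference_docs, vocab)
    return author.log_prob(questioned) - reference.log_prob(questioned)


# Hypothetical POS-tag sequences.
author_docs = [["PRON", "VERB", "DET", "NOUN"], ["PRON", "VERB", "DET", "ADJ", "NOUN"]]
reference_docs = [["DET", "NOUN", "VERB", "ADV"], ["NOUN", "VERB", "ADP", "NOUN"], ["DET", "ADJ", "NOUN", "VERB"]]
print(grammar_log_lr(["PRON", "VERB", "DET", "NOUN"], author_docs, reference_docs))
```

A positive value means that the questioned sequence is more probable under the author's grammar model than under the reference model. A real system would also need validation and calibration before its output could be reported as an LR.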

The following table compares quantitative results from different authorship verification methods across multiple datasets, demonstrating the performance advantages of the grammar model approach (LambdaG):

Table 2: Performance Comparison of Authorship Verification Methods (Accuracy %)

| Dataset | Grammar Model (LambdaG) | Unary Method | Binary-Intrinsic Method | Binary-Extrinsic Method |
|---|---|---|---|---|
| Email Corpus | 94.2 | 87.5 | 89.8 | 85.3 |
| Academic Papers | 91.7 | 84.1 | 86.9 | 82.6 |
| Social Media | 88.9 | 81.3 | 83.7 | 79.4 |
| Cross-Genre | 85.4 | 72.8 | 76.1 | 70.5 |
| Historical Documents | 90.1 | 83.6 | 85.2 | 81.9 |
| Average | 90.1 | 81.9 | 84.3 | 79.9 |

Adapted from an empirical evaluation on twelve datasets, in which LambdaG outperformed other established methods in eleven cases [21].

The Scientist's Toolkit: Essential Research Reagents

Table 3: Essential Analytical Tools for Forensic Authorship Research

| Tool Category | Specific Examples | Function in Proposition Testing |
|---|---|---|
| Grammar Modeling | n-gram language models, Idiolect R package [8] | Captures individual grammatical patterns to distinguish between authors |
| Reference Corpora | Genre-matched text collections, demographic samples | Provides population data for estimating expected feature frequencies under Hd |
| Statistical Software | R packages (e.g., "idiolect"), Python libraries | Implements likelihood ratio calculations and statistical validation |
| Feature Extraction | Syntactic parsers, lexical diversity measures, character n-gram algorithms | Identifies and quantifies discriminative linguistic features |
| Validation Frameworks | Black-box testing protocols, case simulation databases | Assesses method reliability and error rates under controlled conditions |

Uncertainty Assessment and Sensitivity Analysis

The Uncertainty Pyramid Framework

Even with properly formulated propositions and robust methodologies, forensic linguists must acknowledge and quantify uncertainty in their likelihood ratio calculations. The "uncertainty pyramid" framework provides a structured approach to this essential task [4]. This framework explores the range of likelihood ratio values attainable by models that satisfy stated criteria for reasonableness, with each level of the pyramid representing different assumptions about the data, features, or population parameters.

The assumptions lattice underlying the uncertainty pyramid should include variations in:

- Feature Selection: Testing how different linguistic features (lexical, syntactic, structural) affect the LR value.

- Reference Population: Assessing the impact of using different reference populations when estimating the denominator P(E|Hd).

- Model Parameters: Exploring how sensitive the LR is to changes in algorithmic parameters or statistical assumptions.

- Data Quality: Evaluating how missing data, document length, or genre variations might influence the results.

Sensitivity analyses determine how much effect any unknown factors of the activities have on the value of the findings [20]. If the strength of the observations is particularly sensitive to some aspects, then efforts should be made to find additional information about those aspects rather than every aspect of the activity.
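A simple form of this idea is a grid of modeling choices evaluated one at a time, with the spread of the resulting log10 LRs reported instead of a single value. This minimal sketch assumes a single Gaussian-modeled feature, two hypothetical reference populations, and a hypothetical "minimum standard deviation" parameter standing in for a model choice. It illustrates range reporting only. The full uncertainty pyramid of [4] also applies explicit criteria for which models count as reasonable.

```python
import math
from itertools import product
from statistics import mean, stdev


def normal_pdf(x: float, mu: float, sd: float) -> float:
    z = (x - mu) / sd
    return math.exp(-0.5 * z * z) / (sd * math.sqrt(2.0 * math.pi))


def lr_under(questioned: float, suspect: list[float], population: list[float], sd_floor: float) -> float:
    """LR at one node of the lattice; sd_floor is a hypothetical modeling choice."""
    num = normal_pdf(questioned, mean(suspect), max(stdev(suspect), sd_floor))
    den = normal_pdf(questioned, mean(population), max(stdev(population), sd_floor))
    return num / den


def lattice_range(questioned, suspect, populations, sd_floors):
    """Evaluate log10 LR at every combination of assumptions and return its range."""
    results = {
        (name, floor): math.log10(lr_under(questioned, suspect, pop, floor))
        for (name, pop), floor in product(populations.items(), sd_floors)
    }
    return min(results.values()), max(results.values()), results


# Hypothetical data: one suspect and two candidate reference populations.
suspect = [12.1, 11.8, 12.6, 12.3, 11.9]
populations = {
    "same-genre sample": [8.0, 10.5, 9.2, 13.1, 7.4, 11.0, 9.9, 12.2, 8.8, 10.1],
    "general sample": [6.5, 7.9, 9.0, 8.2, 10.4, 7.1, 11.3, 8.6, 9.5, 7.7],
}
lo, hi, results = lattice_range(12.2, suspect, populations, sd_floors=(0.1, 0.5, 1.0))
print(f"log10 LR ranges from {lo:.2f} to {hi:.2f} across {len(results)} assumption sets")
```

If the range is wide, the dictionary of results shows which assumption drives the spread. That identifies where additional information would be most valuable, in line with the sensitivity analyses described above.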

Communicating Uncertainty and Limitations

Transparent communication of uncertainty is essential for the proper interpretation of forensic linguistic evidence. This includes:

- Clear Documentation: Explicitly stating all assumptions, data limitations, and methodological choices that could affect the LR value.

- Range Reporting: Where appropriate, providing a range of plausible LR values rather than a single point estimate to reflect uncertainty.

- Verbal Equivalents: If using verbal scales of conclusion, ensuring they are properly mapped to numerical ranges and that their limitations are explained.

- Alternative Explanations: Acknowledging and evaluating alternative explanations that might not be fully captured by Hd.

Operationalizing prosecution and defense propositions represents both a scientific and practical foundation for implementing the likelihood ratio framework in forensic linguistics research. Properly formulated Hp and Hd propositions enable researchers to move beyond mere descriptive analysis to providing quantitative, logically coherent assessments of evidence strength that directly address the issues relevant to judicial decision-makers. The grammar model approach to authorship verification exemplifies how modern computational linguistics can be integrated with forensic reasoning to create robust, cognitively plausible methods for addressing questions of authorship.

As forensic linguistics continues to develop more sophisticated analytical techniques, the fundamental importance of carefully operationalized propositions remains constant. By adhering to the principles of mutual exclusivity, exhaustiveness, clarity, relevance, and testability, researchers can ensure their work provides maximum value to the justice system while maintaining scientific integrity. Future research should focus on expanding reference databases, validating methods across diverse linguistic contexts, and developing more nuanced approaches to quantifying and communicating the uncertainty inherent in all forensic linguistic analyses.

Within the domain of forensic linguistics, the likelihood ratio (LR) framework provides a formal method for evaluating the strength of evidence, offering a coherent structure for comparing competing hypotheses regarding authorship. A core challenge in its application lies in the objective and quantifiable analysis of linguistic style. This technical guide details the process of selecting and measuring linguistic features for robust style comparison, contextualized within the broader thesis of introducing the LR framework to forensic linguistics research. It provides a systematic approach for researchers and forensic professionals, focusing on the operationalization of style through computational and statistical means.

The LR framework is increasingly advocated for communicating the weight of forensic evidence, including in textual analysis [4]. It is fundamentally a measure of the strength of evidence, quantifying how much more likely the evidence is under one hypothesis (e.g., the questioned document was written by a specific author) compared to an alternative hypothesis (e.g., it was written by someone else). The accurate computation of an LR depends critically on the ability to quantify the defining characteristics of an author's style in a reproducible and empirically grounded manner [4] [21].

Core Linguistic Feature Clusters for Analysis

The selection of linguistic features is a critical first step in building a reliable authorship analysis system. The features must be discriminative—capable of distinguishing between authors—yet sufficiently frequent in text to allow for stable statistical modeling. The following table summarizes key feature clusters amenable to quantitative measurement.

Table 1: Core Linguistic Feature Clusters for Authorship Analysis

| Feature Cluster | Specific Features | Quantification Method | Forensic Utility |
|---|---|---|---|
| Grammar & Syntax | N-gram profiles, part-of-speech tags, syntactic production rules [21] | Relative frequency, language model likelihood [21] | Captures subconscious, habitual patterns of language construction; highly discriminative [21]. |
| Lexical Choice | Word unigrams, character n-grams, vocabulary richness, function word frequency | Frequency analysis, type-token ratio | Measures overall vocabulary and preference for common, often unconscious, words [24]. |
| Semantic & Pragmatic | Emotional polarity, topic models, semantic vector representations | Sentiment analysis (e.g., LIWC), topic model inference (e.g., LDA) | Infers underlying psychological state or communicative intent [25]. |
| Structural | Average sentence length, paragraph length, punctuation frequency | Descriptive statistics (mean, variance) | Captures macro-level organizational preferences. |
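Several of the lexical and structural measures in Table 1 can be computed with the standard library alone. The Python sketch below assumes a simple regular-expression tokenizer and a short, hypothetical function-word list; `style_features` is an illustrative name, and the output is not a validated feature set.

```python
import re
from collections import Counter
from typing import Dict

# Hypothetical, deliberately short function-word list; real studies use
# much longer, language-specific lists.
FUNCTION_WORDS = ("the", "of", "and", "a", "to", "in", "that", "it", "is", "was")


def style_features(text: str) -> Dict[str, float]:
    """Compute a few lexical and structural features from Table 1.

    Tokenization is a simple regex (an assumption); production systems would
    use a proper tokenizer such as those in NLTK or spaCy.
    """
    words = re.findall(r"[a-z']+", text.lower())
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    counts = Counter(words)
    n_words = len(words)
    features = {
        # Distinct word types divided by total word tokens.
        "type_token_ratio": len(counts) / n_words if n_words else 0.0,
        "mean_sentence_length": n_words / len(sentences) if sentences else 0.0,
        "punctuation_per_word": (
            len(re.findall(r"[,;:.!?]", text)) / n_words if n_words else 0.0
        ),
    }
    # Relative frequency of each function word.
    for word in FUNCTION_WORDS:
        features[f"fw_{word}"] = counts[word] / n_words if n_words else 0.0
    return features


sample = "The cat sat on the mat. It was happy, and it purred."
feats = style_features(sample)
print(feats)
```

The type-token ratio falls as texts get longer, so comparisons should use texts of similar length or a length-normalized measure of vocabulary richness.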

The Likelihood Ratio Framework in Forensic Linguistics

The likelihood ratio is the central metric for evaluating evidence within a Bayesian framework for forensic science [4]. For authorship verification, it is calculated as the ratio of the probability of observing the linguistic evidence given the prosecution hypothesis (e.g., the known and questioned texts share an author) to the probability of the same evidence given the defense hypothesis (e.g., the texts originate from different authors).

The fundamental formula is:

\[ LR = \frac{P(E \mid H_p)}{P(E \mid H_d)} \]

Where:

- \( E \) represents the linguistic evidence (the quantified features from the questioned document).

- \( H_p \) is the prosecution hypothesis (same author).

- \( H_d \) is the defense hypothesis (different authors).
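As a worked illustration of the formula, suppose the features observed in the questioned document have probability 0.02 under Hp and 0.0005 under Hd (invented values). The LR is then 0.02 / 0.0005 = 40, and log10 LR is about 1.6. The Python sketch below also shows where the LR enters Bayes' theorem in odds form: the fact-finder's prior odds are multiplied by the LR to give posterior odds, which is one reason the expert reports the LR rather than a probability of authorship. The function names and the 1:100 prior odds are hypothetical.

```python
from math import log10


def likelihood_ratio(p_e_given_hp: float, p_e_given_hd: float) -> float:
    """LR = P(E|Hp) / P(E|Hd); values above 1 support Hp, below 1 support Hd."""
    if p_e_given_hd <= 0:
        raise ValueError("P(E|Hd) must be positive")
    return p_e_given_hp / p_e_given_hd


def posterior_odds(prior_odds: float, lr: float) -> float:
    """Odds form of Bayes' theorem: posterior odds = LR * prior odds."""
    return lr * prior_odds


# Illustrative probabilities only, not taken from any case or study.
lr = likelihood_ratio(p_e_given_hp=0.02, p_e_given_hd=0.0005)
print(f"LR = {lr:.1f}, log10 LR = {log10(lr):.2f}")
# The prior odds belong to the fact-finder, not the linguist; 1:100 is hypothetical.
print(f"posterior odds = {posterior_odds(prior_odds=0.01, lr=lr):.2f}")
```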

A critical aspect of applying this framework is acknowledging and quantifying uncertainty. A single LR value reported by an expert does not, on its own, characterize how reliable that value is [4]. It is therefore necessary to use a framework such as the assumptions lattice and uncertainty pyramid to explore the range of plausible LR values produced by different reasonable modeling choices and data sources [4]. This process helps the fact-finder understand the potential variability of the reported LR and whether it is fit for purpose.

Table 2: Interpreting Likelihood Ratio Values

| LR Value Range | Verbal Equivalent | Strength of Support for \( H_p \) |
|---|---|---|
| > 10,000 | Very strong | Extremely strong support |
| 1,000 - 10,000 | Strong | Strong support |
| 100 - 1,000 | Moderately strong | Moderate support |
| 10 - 100 | Weak | Limited support |
| 1 - 10 | Very weak | Negligible support |
| 1 | No value | No support for either hypothesis |
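Table 2 can be encoded as a lookup. Two choices in this Python sketch are assumptions rather than part of the table. First, adjacent ranges in Table 2 share their endpoints (100 appears in two rows), so a value exactly on a boundary is assigned to the lower band. Second, an LR below 1 is graded by its reciprocal and reported as support for Hd, a common convention that Table 2 does not state. `verbal_equivalent` and `BANDS` are hypothetical names.

```python
from typing import Tuple

# Lower bounds of the Table 2 bands, strongest first. Assumption: a value
# exactly on a boundary (e.g. 100) falls into the lower band.
BANDS = (
    (10_000, "Very strong"),
    (1_000, "Strong"),
    (100, "Moderately strong"),
    (10, "Weak"),
    (1, "Very weak"),
)


def verbal_equivalent(lr: float) -> Tuple[str, str]:
    """Return (verbal equivalent, favoured hypothesis) for a positive LR."""
    if lr <= 0:
        raise ValueError("LR must be positive")
    if lr == 1:
        return "No value", "neither"
    favoured = "Hp" if lr > 1 else "Hd"
    # Assumption: an LR below 1 is graded by its reciprocal, in favour of Hd.
    magnitude = lr if lr > 1 else 1 / lr
    for lower, label in BANDS:
        if magnitude > lower:
            return label, favoured
    raise AssertionError("unreachable: magnitude > 1 always matches a band")


for value in (40, 0.02, 25_000):
    print(value, verbal_equivalent(value))
```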

Experimental Protocol for Authorship Verification

The following workflow details a standardized experimental protocol for conducting an authorship verification study based on the LambdaG method, which uses the likelihood ratio of grammar models [21]. This protocol can be adapted for other feature sets.

Workflow Diagram

Protocol Steps

Problem Formulation (AV_Core, AV_Known, AV_Batch): Define the authorship verification problem according to one of the standard decision problems [21]. For AV_Known, this involves a set of documents from a known author and a questioned document of unknown authorship.

Data Curation and Preprocessing:

- Source: Gather the known author documents and a relevant reference corpus representing the appropriate population of potential authors (e.g., same genre, topic, language) [21].

- Processing: Clean and tokenize all text data. For the LambdaG method, the focus is on extracting grammatical features, which may involve part-of-speech tagging or using character n-grams that capture morphological and syntactic patterns [21].

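Model Training:

- Train an n-gram language model on the grammatical features of the known author's documents (the author model, \( M_A \)) and another on the reference corpus (the population model, \( M_P \)) [21].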

Likelihood Calculation & LR Computation:

- Extract the same grammatical features from the questioned document.

- Calculate the likelihood of these features given the author model, \( P(E \mid M_A) \), and given the population model, \( P(E \mid M_P) \).

- Compute the likelihood ratio: \( \lambda_G = \frac{P(E \mid M_A)}{P(E \mid M_P)} \) [21].

Decision and Uncertainty Quantification:

- Compare the computed LR to a pre-defined threshold (\( \theta \)) to make a same-author/different-author decision.

- Conduct a sensitivity analysis by varying the reference corpus, model parameters (e.g., n-gram size), or feature sets. Present the range of resulting LRs to communicate the uncertainty in the findings, aligning with the concept of an uncertainty pyramid [4].
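The model-training, likelihood and decision steps of the protocol can be illustrated with a deliberately small Python sketch: add-one-smoothed bigram models over part-of-speech tag sequences, one trained on the known author and one on the reference corpus, with log10 λG summed over the questioned sentences. This is a simplified stand-in, not the published LambdaG implementation [21], whose model order, smoothing and reference sampling may differ. The class and function names, the tag sequences and the threshold θ = 1 are all hypothetical.

```python
from collections import Counter
from math import log10
from typing import Iterable, Sequence


class BigramModel:
    """Add-one (Laplace) smoothed bigram model over grammatical tokens such as
    part-of-speech tags. A simplified stand-in for LambdaG's grammar models."""

    def __init__(self, sentences: Iterable[Sequence[str]], vocab: Iterable[str]):
        # Possible next tokens: every tag in the shared vocabulary plus "</s>".
        self.vocab_size = len(set(vocab)) + 1
        self.bigrams: Counter = Counter()
        self.contexts: Counter = Counter()
        for sentence in sentences:
            padded = ["<s>", *sentence, "</s>"]
            for prev, cur in zip(padded, padded[1:]):
                self.bigrams[(prev, cur)] += 1
                self.contexts[prev] += 1

    def log10_prob(self, sentence: Sequence[str]) -> float:
        """log10 P(sentence | model), including the end-of-sentence token."""
        padded = ["<s>", *sentence, "</s>"]
        return sum(
            log10((self.bigrams[(prev, cur)] + 1) / (self.contexts[prev] + self.vocab_size))
            for prev, cur in zip(padded, padded[1:])
        )


def log10_lambda_g(questioned: Sequence[Sequence[str]],
                   author_model: BigramModel,
                   population_model: BigramModel) -> float:
    """log10 of P(E | M_A) / P(E | M_P), summed over the questioned sentences."""
    return sum(author_model.log10_prob(s) - population_model.log10_prob(s)
               for s in questioned)


# Hypothetical POS-tag sequences standing in for tagged texts.
known = [["PRON", "VERB", "DET", "NOUN"], ["PRON", "VERB", "ADV"]]
reference = [["DET", "NOUN", "VERB"], ["DET", "ADJ", "NOUN", "VERB", "ADV"]]
questioned = [["PRON", "VERB", "DET", "NOUN"]]
vocab = {tag for sent in known + reference + questioned for tag in sent}

score = log10_lambda_g(questioned, BigramModel(known, vocab), BigramModel(reference, vocab))
log10_theta = 0.0  # hypothetical threshold theta = 1
decision = "same author" if score > log10_theta else "different author"
print(f"log10 lambda_G = {score:.2f} -> {decision}")
```

Rerunning the same code with a different reference corpus or a different n-gram order is the simplest form of the sensitivity analysis described in the final step.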

The Researcher's Toolkit: Essential Materials and Reagents

Table 3: Key Research Reagents and Solutions for Computational Stylistics

| Reagent / Tool | Function / Purpose | Example / Notes |
|---|---|---|
| Reference Corpus | Provides a representative sample of language for building the population model under \( H_d \) [21]. | Must be matched for genre, topic, and time period to be valid [21]. |
| N-gram Language Model | A probabilistic model used to estimate the likelihood of a sequence of linguistic tokens (words, characters) [21]. | Core component of the LambdaG method; can be trained on grammatical features [21]. |
| Feature Extraction Library | Software to automatically extract and count linguistic features from raw text. | Tools like NLTK, spaCy, or the Linguistic Inquiry and Word Count (LIWC) dictionary [25]. |
| Assumptions Lattice | A conceptual framework for mapping and testing the impact of different analytical choices on the final LR [4]. | Used to structure uncertainty analysis by varying models, corpora, and features [4]. |
| Validation Dataset | A collection of texts with known authorship used to calibrate model parameters and decision thresholds. | Critical for establishing empirical error rates and validating the entire methodology [4]. |
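The validation dataset in Table 3 is what makes empirical error measurement possible. A minimal Python sketch, assuming LRs have already been computed for validation pairs of known same-author and different-author status, reports the rates of misleading evidence (same-author LRs below 1, different-author LRs above 1) and the log-likelihood-ratio cost, Cllr, a widely used performance metric that is not named in the table. A system that always outputs LR = 1 scores Cllr = 1, so an informative system should score below that. `validation_metrics` and the LR values are hypothetical.

```python
from math import log2
from typing import Dict, Sequence


def validation_metrics(same_author_lrs: Sequence[float],
                       diff_author_lrs: Sequence[float]) -> Dict[str, float]:
    """Rates of misleading evidence and Cllr for a validation run.

    same_author_lrs: LRs for validation pairs known to share an author.
    diff_author_lrs: LRs for validation pairs known to have different authors.
    """
    n_ss, n_ds = len(same_author_lrs), len(diff_author_lrs)
    return {
        # Proportion of same-author pairs whose LR wrongly favours Hd.
        "misleading_same_author": sum(lr < 1 for lr in same_author_lrs) / n_ss,
        # Proportion of different-author pairs whose LR wrongly favours Hp.
        "misleading_diff_author": sum(lr > 1 for lr in diff_author_lrs) / n_ds,
        # Cllr penalizes LRs pointing the wrong way, more heavily the stronger they are.
        "cllr": 0.5 * (sum(log2(1 + 1 / lr) for lr in same_author_lrs) / n_ss
                       + sum(log2(1 + lr) for lr in diff_author_lrs) / n_ds),
    }


# Invented LRs for illustration; a real validation set would be far larger.
metrics = validation_metrics([10, 100, 0.5], [0.1, 0.01, 2])
print(metrics)
```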

The following diagram illustrates the logical sequence and dependencies involved in the quantitative style analysis process, from raw text to a forensically valid conclusion.

Authorship Verification (AV) is a core discipline within forensic linguistics concerned with determining whether a specific individual authored a given questioned document [21]. In its simplest form, AV addresses the problem: given a document of known authorship and a document of questioned authorship, did the same author write both? [21]. This task is forensically critical, arising in contexts ranging from analyzing ransom notes and blackmail letters to investigating social media posts, emails, and other digital communications [21] [26]. The proliferation of digital text has amplified the need for robust, scientifically defensible AV methods.

The Likelihood Ratio (LR) framework has emerged as the dominant paradigm for formally evaluating the strength of forensic evidence, including textual evidence [2] [27]. This framework provides a standardized method for quantifying how much more likely the evidence is under one hypothesis (typically the prosecution hypothesis, Hp: "The suspect authored the questioned document") than under an alternative hypothesis (typically the defense hypothesis, Hd: "Some other person authored the questioned document") [27]. An LR greater than 1 supports Hp, while an LR less than 1 supports Hd. This framework is well suited to expert testimony because it reflects the expert's duty to express the strength of evidence clearly and transparently [2].

Core Methodologies in Authorship Verification

AV methods can be broadly categorized by their operational approach and their implementation within the LR framework. The table below summarizes the principal methodological categories and their characteristics.

Table 1: Categories and Methodologies of Authorship Verification

| Category | Description | Key Features | LR Implementation |
|---|---|---|---|
| Unary Methods [21] | Relies solely on documents from a known author. Accepts authorship if the questioned document is sufficiently similar. | Does not require external reference data; can be sensitive to topic-specific language. | Less common, as typicality is hard to assess without a population reference. |
| Binary-Intrinsic Methods [21] | Compares the questioned document directly against the known author documents. | A direct comparison; does not explicitly use a population model. | Can be used with score-based LR approaches. |
| Binary-Extrinsic Methods [21] | Compares the questioned document to both the known author documents and a reference population. | Assesses both similarity (to the suspect) and typicality (within a population). | Highly compatible; the LR naturally incorporates the reference population. |
| Feature-Based LR Methods [27] | Computes LRs by directly modeling the multivariate distribution of linguistic features. | Uses discrete statistical models (e.g., Poisson); preserves more information but requires more data. | Direct; the model outputs a probability for the observed feature set under each hypothesis. |
| Score-Based LR Methods [27] | Reduces the multivariate data to a univariate similarity/distance score (e.g., cosine distance), then models the score distributions. | Simpler modeling; robust with limited data; suffers from information loss due to dimensionality reduction. | Indirect; LRs are estimated based on the probability density of the calculated score under Hp and Hd. |
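The contrast between the feature-based and score-based rows can be sketched in a few lines of Python. The feature-based function treats a single feature count as Poisson-distributed under each hypothesis. The score-based function reduces two feature vectors to a cosine similarity and compares normal densities fitted to calibration scores from same-author and different-author pairs. Both are simplified illustrations with hypothetical names and invented data; real systems model many features jointly and often use kernel density estimates or logistic-regression calibration instead of normal fits.

```python
from math import exp, lgamma, log, sqrt
from statistics import NormalDist
from typing import Sequence


def poisson_feature_lr(count: int, suspect_rate: float, population_rate: float) -> float:
    """Feature-based LR for one count: Poisson(count | suspect rate) divided by
    Poisson(count | population rate). Rates are expected counts for a text of
    the questioned document's length."""
    def log_pmf(k: int, lam: float) -> float:
        return k * log(lam) - lam - lgamma(k + 1)
    return exp(log_pmf(count, suspect_rate) - log_pmf(count, population_rate))


def cosine_similarity(u: Sequence[float], v: Sequence[float]) -> float:
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (sqrt(sum(a * a for a in u)) * sqrt(sum(b * b for b in v)))


def score_based_lr(score: float, same_scores: Sequence[float],
                   diff_scores: Sequence[float]) -> float:
    """f(score | Hp) / f(score | Hd), with a normal density fitted to each set
    of calibration scores (an assumption made for brevity)."""
    same = NormalDist.from_samples(same_scores)
    diff = NormalDist.from_samples(diff_scores)
    return same.pdf(score) / diff.pdf(score)


# Feature-based: a feature seen 5 times, where the suspect's rate predicts 5
# and the population rate predicts 2 (hypothetical rates).
feature_lr = poisson_feature_lr(count=5, suspect_rate=5.0, population_rate=2.0)

# Score-based: invented calibration scores and feature vectors.
same_pairs = [0.82, 0.88, 0.91, 0.85, 0.79]
diff_pairs = [0.55, 0.62, 0.48, 0.66, 0.58]
score = cosine_similarity([3, 1, 0, 2], [2, 1, 1, 2])
print(f"feature-based LR = {feature_lr:.2f}; score = {score:.3f}, "
      f"score-based LR = {score_based_lr(score, same_pairs, diff_pairs):.3g}")
```

Normal fits can yield very large LRs for scores that fall in the tail of the different-author distribution, which is one reason empirical calibration and validation remain essential for score-based systems.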

The LambdaG Method: A Cognitive Linguistic Approach

A recent innovation in AV is the LambdaG (λG) method, which is based on the likelihood ratio of grammar models [21]. This method calculates the ratio between the likelihood of a questioned document given a grammar model of the candidate author and the likelihood of the same document given a grammar model of a reference population. The Grammar Models are estimated using n-gram language models trained exclusively on grammatical features, such as part-of-speech tags [21].