The Human Factor: Understanding and Mitigating Reasoning Challenges in Forensic Science Decisions

Abstract

This article provides a comprehensive analysis of human reasoning challenges in forensic science decision-making. It explores the foundational cognitive limitations, from systemic biases to workplace stress, that can compromise forensic analysis. The content details proven methodological frameworks and procedural safeguards, such as Linear Sequential Unmasking, for mitigating error. It further examines troubleshooting via error typologies from wrongful conviction data and offers a comparative evaluation of human versus AI performance. Synthesizing key insights, the article concludes with strategic recommendations for embedding high-reliability principles to enhance accuracy and fairness in forensic practice and related biomedical fields.

The Inherent Flaws: How Human Cognition Shapes Forensic Judgment

The success of forensic science depends heavily on human reasoning abilities, yet decades of psychological science research reveal that human reasoning is not always rational [1]. Dual-process theory, a fundamental framework in cognitive psychology, provides a critical lens for understanding the cognitive mechanisms underlying forensic decision-making. This theory posits two distinct modes of thinking: System 1 (fast, automatic, intuitive) and System 2 (slow, deliberate, analytical) [2] [3]. In forensic science contexts, where decisions carry substantial consequences, the interplay between these systems significantly influences analytical outcomes. System 1 operates effortlessly and automatically, drawing on patterns and experiences to enable quick judgments, while System 2 requires intentional effort for complex problem-solving and analytical tasks [2].

Forensic science often demands that practitioners reason in "non-natural ways," countering the brain's inherent tendency to automatically integrate information from multiple sources to create coherent narratives [1]. This automatic integration, while typically advantageous for navigating daily life, introduces vulnerability to cognitive biases when forensic analysts must evaluate pieces of evidence independently of contextual information about a case. The tension between these natural cognitive processes and forensic science requirements creates critical challenges for analytical accuracy. This technical guide examines the manifestations of dual-process theory in forensic decision-making, explores specific cognitive challenges, and presents evidence-based protocols to mitigate bias through structured analytical procedures.

Theoretical Foundations of Dual-Process Theory

Defining System 1 and System 2 Characteristics

Dual-process theories in psychology describe how thought arises through two qualitatively distinct processes, often characterized as an implicit (automatic), unconscious process and an explicit (controlled), conscious process [3]. The table below summarizes the core characteristics of these two systems based on extensive psychological research:

Table 1: Core Characteristics of System 1 and System 2 Thinking

| Feature | System 1 (Intuitive) | System 2 (Deliberative) |
|---|---|---|
| Speed | Fast, immediate | Slow, sequential |
| Processing | Parallel, associative | Serial, analytical |
| Cognitive Demand | Low effort, automatic | High effort, controlled |
| Conscious Awareness | Unconscious, intuitive | Conscious, reflective |
| Evolutionary History | Older, shared with animals | Recent, predominantly human |
| Learning Mechanism | Associative conditioning | Logical inference, explicit instruction |
| Error Proneness | Vulnerable to cognitive biases | More reliable but not infallible |
| Dependency | Independent of working memory | Dependent on working memory capacity |

System 1 thinking is grounded in preconscious, automatic processing where information is processed rapidly and in parallel through associative networks [4]. This system operates effortlessly and opaquely, placing minimal demands on cognitive resources and acting upon schemas derived from concrete, emotionally significant, or repetitive experiences. In contrast, System 2 employs slow, deliberate information processing in a controlled and self-aware fashion, utilizing deductive reasoning that is effortful and cognitively demanding [4]. This system acquires knowledge through conscious learning from explicit sources rather than automatically established associations.

Neuropsychological Evidence and Theoretical Evolution

Neuropsychological research provides evidence for the neural differentiation of intuitive and deliberate reasoning. Functional MRI studies reveal that deliberate reasoning activates the right inferior prefrontal cortex, while intuitive, belief-based responses are associated with activation of the ventral medial prefrontal cortex [4]. These findings corroborate the behavioral distinction between the two systems and suggest that System 2 processes can intervene in or inhibit System 1 processes.

The theoretical framework continues to evolve, with recent research challenging traditional classifications that associate intuitive processes solely with noncompensatory models and deliberate processes exclusively with compensatory ones [4]. Instead, a more nuanced framework suggests intuitive and deliberate characteristics coexist within both compensatory and noncompensatory processes, indicating greater complexity in the interaction between cognitive systems than previously theorized.

Cognitive Architecture of Forensic Decision-Making

Dual-Process Interactions in Analytical Contexts

In forensic decision-making environments, System 1 and System 2 do not operate in isolation but rather engage in dynamic interaction. The default-interventionist model suggests System 1 processes generate initial intuitive responses automatically, while System 2 monitors these outputs and may intervene when errors are detected or when cognitive conflict arises [5]. During forensic analysis, this interaction manifests when an examiner's initial impression of evidence similarity (System 1) is subsequently verified through deliberate point-by-point comparison (System 2).

Parallel-competitive accounts, by contrast, hold that the two systems operate in parallel and compete to determine the final response [4]. On this view, when forensic examiners face analytical tasks, System 1 processes the most accessible information and immediately proposes an intuitive answer, while System 2 simultaneously monitors the quality of this response, potentially approving, altering, or overriding it. The relative contribution of each system depends on situational factors (time pressure, complexity, contextual information) and decision-maker characteristics (expertise, cognitive capacity, training) [4].

Cognitive Flow in Forensic Analysis

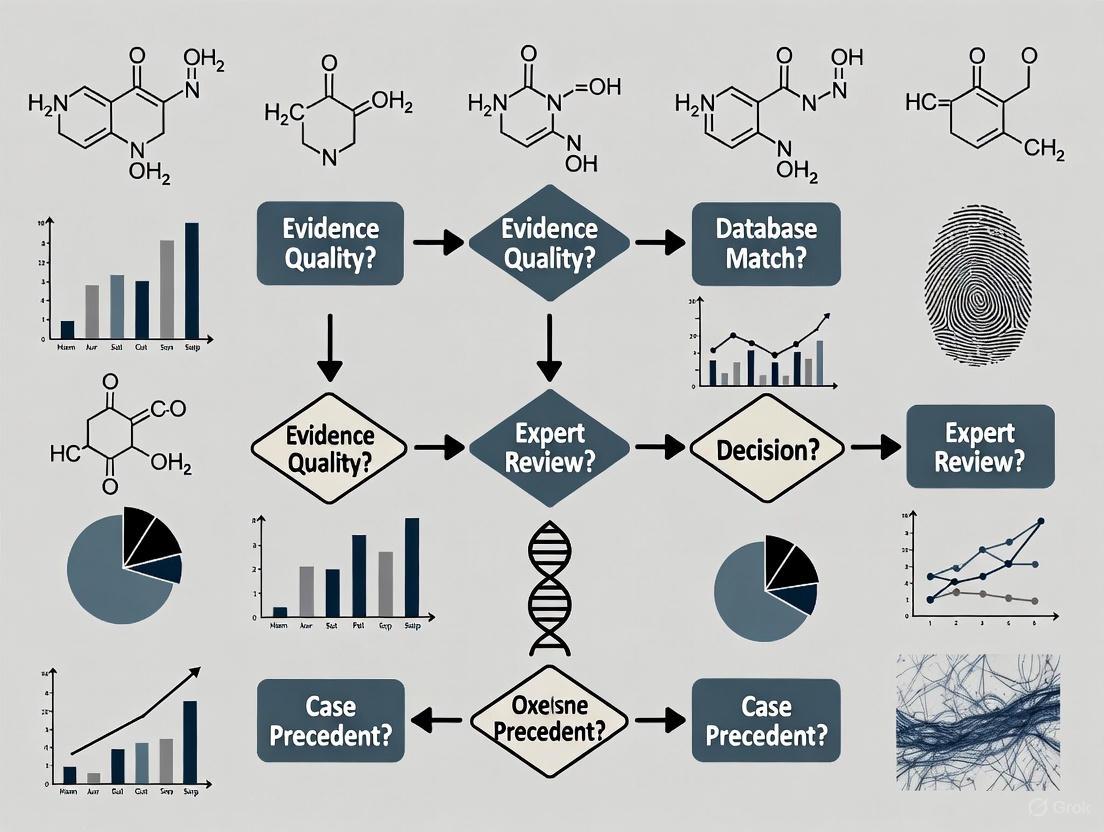

The following diagram illustrates the interaction between System 1 and System 2 during typical forensic evidence analysis:

This cognitive architecture creates inherent vulnerabilities when System 2 monitoring fails to engage adequately, potentially allowing automatic System 1 judgments to proceed without sufficient scrutiny—particularly under conditions of time pressure, fatigue, or high cognitive load [1] [6].

System 1 Heuristics and Cognitive Biases in Forensic Analysis

Mechanisms of Heuristic Thinking in Forensic Contexts

System 1 thinking relies on cognitive heuristics—mental shortcuts that enable efficient decision-making but introduce predictable errors in forensic contexts. These heuristics operate outside conscious awareness, making them particularly challenging to recognize and control [7]. The human brain develops numerous heuristics that support reasonably good decisions quickly, but this efficiency comes at the cost of occasional inaccurate conclusions based on insufficient analysis [8].

The automatic nature of System 1 processing creates specific vulnerabilities in forensic science, where practitioners must often resist natural cognitive tendencies toward coherence and pattern completion. Forensic examiners automatically combine information from multiple sources, create coherent narratives from potentially unrelated events, and construct interpretations through bottom-up (data-driven) and top-down (knowledge-driven) processing [1]. While this information integration generally serves us well, in forensic contexts it can lead to seeing "what we expect to see" and interpreting information in ways that confirm pre-existing beliefs [8].

Critical Biases in Forensic Decision-Making

Table 2: System 1 Heuristics and Corresponding Biases in Forensic Science

| Heuristic | Cognitive Mechanism | Forensic Manifestation | Impact on Analysis |
|---|---|---|---|
| Confirmation Bias | Seeking information that confirms existing beliefs | Selectively attending to features that match initial hypothesis | Premature closure on suspect identity; ignoring contradictory evidence |
| Anchoring Effect | Relying too heavily on initial information | Initial exposure to contextual information influences subsequent judgments | Initial suspect information "anchors" interpretation of ambiguous evidence |
| Representativeness | Judging probability by similarity to prototypes | Overemphasizing typical features while ignoring base rates | Assuming evidence matches suspect based on superficial similarity |
| Availability | Estimating likelihood based on ease of recall | Recent or memorable cases disproportionately influencing current analysis | Overestimating frequency of rare pattern matches based on memorable case |
| Affect Heuristic | Emotional responses influencing judgments | Emotional reaction to crime details affecting evidence interpretation | Gruesome crime scenes increasing perceived strength of ambiguous evidence |

These automatic System 1 processes demonstrate "cognitive impenetrability"—even when analysts know certain perceptions are false, they cannot always make themselves perceive the information differently [1]. This phenomenon explains why forensic examiners may continue to perceive a match between non-matching fingerprints even after learning they are from different sources, as System 1 processing continues to influence perception despite contradictory knowledge.

Experimental Studies and Empirical Evidence

Key Experimental Paradigms in Forensic Decision Research

Research examining dual-process theory in forensic contexts employs rigorous experimental designs to isolate the effects of System 1 and System 2 thinking on analytical accuracy. These studies typically utilize between-subjects designs where different groups of examiners receive varying levels of contextual information while analyzing identical evidence samples.

One foundational experimental protocol examined fingerprint analysis under different contextual conditions [6]. Participants were randomly assigned to either a biasing context group (exposed to emotional case details and suggestions of suspect guilt) or a blind group (no extraneous context). The biasing context group received a case narrative describing a violent crime with emotional victim impact statements, while the control group received only the prints without contextual information. All participants then analyzed ambiguous fingerprint pairs where ground truth had been established. Results demonstrated significantly higher match declarations in the biasing context group, particularly for ambiguous prints, revealing how System 1 processing integrates emotionally charged contextual information into analytical judgments.
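The logic of this between-groups comparison can be sketched in a few lines. What follows is a minimal illustration, not the cited study's analysis: the function names, the group sizes, and the counts are all hypothetical, and the pooled two-proportion z-test is assumed here as one reasonable way to compare match-declaration rates between the two groups.

```python
import math
import random


def assign_conditions(examiner_ids, seed=0):
    """Randomly split examiners into 'biasing' and 'blind' groups of near-equal size."""
    rng = random.Random(seed)
    shuffled = list(examiner_ids)
    rng.shuffle(shuffled)
    half = len(shuffled) // 2
    return {"biasing": shuffled[:half], "blind": shuffled[half:]}


def two_proportion_z(matches_a, n_a, matches_b, n_b):
    """Pooled two-proportion z statistic and two-sided p-value (normal approximation)."""
    p_a, p_b = matches_a / n_a, matches_b / n_b
    pooled = (matches_a + matches_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_a - p_b) / se
    p_value = math.erfc(abs(z) / math.sqrt(2))  # equals 2 * (1 - Phi(|z|))
    return z, p_value


# Hypothetical pool of 40 examiner IDs.
groups = assign_conditions(range(40), seed=1)

# Hypothetical counts of "match" declarations on ambiguous pairs;
# these are illustrative numbers, NOT data from the cited study.
z, p = two_proportion_z(matches_a=30, n_a=100, matches_b=15, n_b=100)
print(f"z = {z:.2f}, two-sided p = {p:.4f}")
```

A positive z here means the biasing-context group declared matches more often than the blind group. A real analysis would also need to account for repeated judgments by the same examiner, which this sketch ignores.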

Another experimental approach utilizes evidence "line-ups" to reduce comparative bias [6]. In this protocol, rather than comparing a single suspect sample to crime scene evidence, examiners evaluate multiple reference materials (including known innocent samples) presented simultaneously. This method counters the System 1 assumption that the provided suspect is the source, instead engaging System 2 to deliberately compare the evidence against multiple possibilities. Implementation of this protocol has demonstrated reduced false positive rates in firearms and toolmark identification.
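A line-up of this kind can be assembled so that the examiner sees only neutral labels while the answer key stays with someone else. The sketch below is one hypothetical way to do that; `build_lineup`, the sample names, and the letter labels are assumptions for illustration and are not taken from the cited protocol.

```python
import random


def build_lineup(suspect_sample, filler_samples, seed=None):
    """Shuffle the suspect sample among known-innocent fillers.

    Returns (presented, key): `presented` maps neutral labels to samples and is
    what the examiner sees; `key` is the suspect's label, held back from the examiner.
    """
    rng = random.Random(seed)
    items = [suspect_sample] + list(filler_samples)
    rng.shuffle(items)
    labels = [chr(ord("A") + i) for i in range(len(items))]
    presented = dict(zip(labels, items))
    key = next(label for label, item in presented.items() if item == suspect_sample)
    return presented, key


# Hypothetical sample identifiers.
presented, key = build_lineup(
    "suspect_cartridge",
    ["filler_1", "filler_2", "filler_3", "filler_4"],
    seed=7,
)
print(sorted(presented))  # the examiner sees labels only, not which item is the suspect's
```

Because the suspect's position is random and unlabeled, an examiner cannot simply confirm the item they were handed; they have to discriminate among all of the candidates.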

Quantitative Findings on Cognitive Bias in Forensic Analysis

Table 3: Empirical Evidence of System 1 Vulnerabilities in Forensic Decisions

| Forensic Discipline | Experimental Manipulation | Effect on Decision Accuracy | Research Findings |
|---|---|---|---|
| Fingerprint Analysis | Contextual information about case | Increased false positives with biasing context | 52% of examiners changed conclusions when exposed to biasing context [6] |
| DNA Mixture | Base rate expectations | Altered threshold for declaring match | 23% variance in inclusion probabilities with different contextual cues [6] |
| Forensic Pathology | Order of information presentation | Premature closure on cause of death | 38% of pathologists ignored contradictory evidence after forming initial hypothesis [1] |
| Firearms Identification | Evidence line-ups vs. single suspect | Reduced confirmation bias | False positives decreased by 46% with multiple reference samples [6] |
| Handwriting Analysis | Emotional content of writing | Increased match declarations with disturbing content | Examiners 3.2x more likely to declare match when content was violent [6] |

These experimental findings consistently demonstrate that System 1 processing automatically incorporates task-irrelevant information into forensic judgments, even among highly trained and experienced examiners. The magnitude of these effects varies by discipline, with pattern recognition fields (fingerprints, firearms, handwriting) particularly vulnerable to contextual influences, while disciplines relying on instrumental analysis show somewhat less susceptibility.

Methodological Framework for System 2 Engagement

Procedural Safeguards Against Automatic Biases

Since cognitive biases operate largely outside conscious awareness, simply warning analysts about bias or encouraging them to "be objective" proves ineffective [6] [8]. Instead, structured procedural frameworks that systematically engage System 2 thinking provide the most reliable defense against automatic System 1 errors. These methodologies explicitly design analytical workflows to minimize exposure to potentially biasing information and create decision points that require deliberate, reflective thinking.

Linear Sequential Unmasking (LSU) and its expanded version LSU-E represent comprehensive approaches to managing the sequence of information exposure during forensic analysis [6]. This protocol emphasizes controlling the flow of task-relevant information to practitioners at times that minimize biasing influence while maintaining transparency about what information was received and when. The LSU-E framework utilizes three evaluation parameters—biasing power (information's perceived strength of influence), objectivity (variability of interpretation across individuals), and relevance (perceived relevance to analysis)—to determine optimal information sequencing.
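The three LSU-E parameters can be treated as ratings attached to each piece of information, which then drive the order of exposure. The sketch below is only an illustration under stated assumptions: the 1-to-5 scales, the example ratings, and the ordering rule (most relevant first, then most objective, then least biasing) are hypothetical choices, since the text above does not prescribe a scoring formula.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InfoItem:
    name: str
    biasing_power: int  # assumed scale: 1 = weak influence, 5 = strong influence
    objectivity: int    # assumed scale: 1 = highly subjective, 5 = highly objective
    relevance: int      # assumed scale: 1 = marginal, 5 = essential to the analysis


def sequence_information(items):
    """Order items for exposure: most relevant, then most objective, then least biasing."""
    return sorted(items, key=lambda i: (-i.relevance, -i.objectivity, i.biasing_power))


# Hypothetical ratings for a fingerprint comparison.
items = [
    InfoItem("suspect confession", biasing_power=5, objectivity=2, relevance=1),
    InfoItem("reference print", biasing_power=3, objectivity=4, relevance=5),
    InfoItem("questioned print image", biasing_power=1, objectivity=5, relevance=5),
]
order = [item.name for item in sequence_information(items)]
print(order)
```

In practice a laboratory would have to agree on how the ratings are assigned and documented; the point of the sketch is only that the sequencing decision becomes explicit and reviewable once the parameters are written down.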

Blind verification procedures constitute another essential methodological safeguard, providing true independence in technical review [6] [8]. In this protocol, a second examiner reviews the evidence with no knowledge of the first examiner's conclusions or any potentially biasing contextual information. This approach creates genuine System 2 engagement by preventing automatic alignment with the initial examiner's judgment and requiring independent analytical reasoning.
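One way to picture the independence requirement is a record that seals each examiner's conclusion and refuses to compare them until both are in. The class below is a hypothetical sketch (the name `BlindVerification`, its methods, and the conclusion labels are assumptions), not a description of any laboratory's system.

```python
from dataclasses import dataclass, field


@dataclass
class BlindVerification:
    case_id: str
    sealed: dict = field(default_factory=dict, repr=False)

    def submit(self, examiner, conclusion):
        """Seal one examiner's conclusion; it cannot be changed afterwards."""
        if examiner in self.sealed:
            raise ValueError(f"{examiner} has already submitted a conclusion")
        self.sealed[examiner] = conclusion

    def agree(self):
        """Compare conclusions only after at least two examiners have submitted."""
        if len(self.sealed) < 2:
            raise RuntimeError("both examiners must submit before results are compared")
        return len(set(self.sealed.values())) == 1


# Hypothetical case and conclusions.
review = BlindVerification("case-001")
review.submit("examiner_1", "identification")
review.submit("examiner_2", "inconclusive")
concordant = review.agree()
print("concordant" if concordant else "disagreement: escalate for conflict resolution")
```

The design choice worth noting is that nothing in the interface lets the second examiner read the first examiner's entry, so agreement, when it occurs, reflects two independent analyses.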

Experimental Workflow for Minimizing Cognitive Bias

The following diagram illustrates a structured experimental workflow designed to engage System 2 thinking and minimize System 1 biases in forensic analysis:

This methodological framework systematically engages System 2 at critical decision points throughout the analytical process, creating multiple opportunities for deliberate reasoning to override automatic intuitive judgments. The protocol emphasizes documentation at each stage to maintain transparency and create an audit trail of the decision-making process.

Research Reagents and Methodological Tools

Essential Materials for Dual-Process Research in Forensic Science

Research investigating dual-process theory in forensic contexts utilizes specific methodological tools and experimental materials designed to isolate cognitive mechanisms and measure their effects on decision quality. These "research reagents" enable standardized investigation across laboratories and facilitate direct comparison of findings.

Table 4: Essential Research Materials for Studying Dual-Process Theory in Forensic Contexts

| Research Tool | Composition/Configuration | Experimental Function | Application in Forensic Domains |
|---|---|---|---|
| Ambiguous Evidence Samples | Pre-validated evidence with known ground truth but ambiguous features | Measures susceptibility to contextual influences | Fingerprints, firearms, handwriting with borderline characteristics |
| Contextual Manipulation Stimuli | Case narratives with varying emotional content and suggestive elements | System 1 priming for confirmation bias studies | Emotional victim statements, suggestions of suspect guilt or innocence |
| Evidence Line-Up Sets | Multiple known samples including innocent sources alongside suspect | Counters presumption of guilt in single-suspect comparisons | Firearm cartridges, fingerprints, shoeprints with distractor items |
| Process-Tracing Software | Eye-tracking, mouselab, or verbal protocol analysis tools | Tracks information acquisition and processing strategies | Identifies heuristic versus systematic processing during evidence comparison |
| Cognitive Load Tasks | Simultaneous working memory tasks (e.g., digit retention) | Depletes cognitive resources available for System 2 monitoring | Measures expertise degradation under high cognitive demand |
| Blinded Verification Protocols | Standardized procedures for independent technical review | Tests effectiveness of System 2 engagement strategies | Validation of sequential unmasking in various forensic disciplines |

These research tools enable precise experimental manipulation of factors that influence the balance between System 1 and System 2 processing in forensic decision-making. By systematically employing these materials across studies, researchers can identify domain-specific vulnerabilities and develop targeted interventions to promote analytical reasoning.

Conclusion

Dual-process theory provides a powerful explanatory framework for understanding human reasoning challenges in forensic science decisions. The automatic, intuitive operations of System 1 thinking—while efficient for everyday decisions—create systematic vulnerabilities in forensic contexts where objectivity is paramount. Conversely, the deliberate, analytical processes of System 2 offer protection against these biases but require cognitive resources and structured implementation to function effectively.

The experimental evidence and methodological frameworks presented in this technical guide demonstrate that effective bias mitigation requires more than awareness or intention—it demands systematic procedural safeguards that explicitly manage information flow, engage analytical reasoning at critical decision points, and create accountability through documentation and verification. As forensic science continues to evolve its scientific foundations, integrating these cognitive principles into standard practice represents an essential step toward enhancing the reliability and validity of forensic evidence analysis.

Future research should continue to refine our understanding of the complex interactions between System 1 and System 2 across different forensic disciplines, develop more effective protocols for engaging analytical reasoning under operational constraints, and explore individual differences in cognitive style that might predict bias susceptibility. Through such efforts, the forensic science community can transform theoretical insights from dual-process research into practical advances that strengthen the foundation of justice systems worldwide.

The success of forensic science depends heavily on human reasoning abilities. Although we typically navigate our lives well using those abilities, decades of psychological science research show that human reasoning is not always rational [9] [10]. Cognitive contamination refers to the process by which task-irrelevant information—such as investigative context, suspect background, or other extraneous knowledge—inappropriately influences the collection, perception, or interpretation of forensic evidence [11] [12]. This phenomenon represents a critical challenge to the validity and reliability of forensic science, particularly in disciplines that rely on human judgment for pattern matching and evidence interpretation.

The forensic community has undergone a significant transformation in recognizing these challenges since the 2009 National Academy of Sciences (NAS) report, which highlighted that pattern-matching disciplines are susceptible to cognitive bias effects due to their reliance on people to make judgments about evidence without sufficient scientific safeguards [11]. This technical guide examines the mechanisms of cognitive contamination, its impact on forensic decision-making, and evidence-based mitigation strategies, framed within the broader context of human reasoning challenges in forensic science decisions.

Theoretical Foundations: The Cognitive Science of Forensic Reasoning

Defining Cognitive Contamination in Forensic Contexts

Cognitive contamination occurs when forensic examiners are exposed to information that should not logically influence their analytical decisions, yet unconsciously affects their judgments [13] [12]. Unlike physical contamination of evidence, cognitive contamination operates through psychological mechanisms that can alter perception and interpretation without the examiner's awareness. Cognitive biases are technically defined as decision patterns that occur when people's "preexisting beliefs, expectations, motives, and the situational context may influence their collection, perception, or interpretation of information, or their resulting judgments, decisions, or confidence" [11].

Research has identified multiple sources of bias that can contribute to cognitive contamination in forensic practice. Dror (2020) summarized eight distinct sources of bias that have unique and compounding effects on expert decisions [11]:

- The Data: The evidence obtained in connection with the crime event can contain biasing elements and evoke emotions that can influence decisions.

- Reference Materials: The materials gathered to compare to the data and infer something about its source can affect a forensic examiner's conclusions.

- Contextual Information: Extraneous details about the case, suspect, or investigation can unconsciously shape interpretation.

- Base-Rate Expectations: Prior expectations about the likelihood of certain events or matches can influence current judgments.

- Organizational Factors: Laboratory policies, productivity pressures, and hierarchical structures can introduce systematic biases.

- Motivational Factors: Career ambitions, desire to please investigators, or avoidance of cognitive dissonance can affect decision-making.

- Human Factors: General cognitive limitations in attention, memory, and perception underlie all forensic judgments.

- Educational and Training Factors: The way examiners are trained creates foundational assumptions and approaches to evidence.

The table below summarizes the key bias types, their mechanisms, and representative examples from forensic practice:

Table 1: Cognitive Bias Types in Forensic Evidence Interpretation

| Bias Type | Technical Definition | Mechanism of Influence | Forensic Examples |
|---|---|---|---|
| Contextual Bias | Extraneous case information inappropriately influencing perceptual judgment | Prior knowledge shapes expectation, which directs attention toward confirming information | Fingerprint examiners changing judgments when told suspect confessed or had verified alibi [12] |
| Confirmation Bias | Seeking or interpreting evidence in ways that confirm pre-existing beliefs | Selective attention to confirming features while discounting disconfirming evidence | Emphasizing similarities between evidence and reference materials while minimizing differences [11] |
| Automation Bias | Over-reliance on automated systems or algorithmic outputs | Technology usurps rather than supplements expert judgment | Examiners favoring candidate images presented at top of AFIS/FRT list regardless of actual match quality [12] |
| Memory Bias | Systematic errors in encoding, storage, or retrieval of forensic data | Prior experiences and cases influence perception of current evidence | Analysts overlooking critical details in current case due to similarity with previous case [13] |

Experimental Evidence: Quantifying Cognitive Contamination Effects

Foundational Studies in Pattern Evidence Disciplines

Seminal research by Dror and Charlton (2006) demonstrated that contextual information could cause fingerprint examiners to change 17% of their own prior judgments when presented with the same prints but different contextual information [12]. In this protocol, five experienced fingerprint examiners were presented with pairs of fingerprints they had previously evaluated and found to be matches. The experimental manipulation involved providing extraneous contextual information suggesting the prints should not match (verified alibi) or should match (suspect confession). The results demonstrated that even highly trained experts were vulnerable to cognitive contamination from task-irrelevant information.

A similar study with DNA analysts found they formed different opinions of the same DNA mixture when they knew that one of the suspects had accepted a plea bargain, demonstrating that cognitive contamination affects even disciplines considered more objective [12].

Recent Experimental Protocols: Facial Recognition Technology

A 2025 study examined cognitive bias in simulated facial recognition searches using a rigorous experimental protocol [12]. The methodology was designed to test both contextual and automation bias effects:

Participants: N = 149 participants acting as mock forensic facial examiners.

Materials: Two simulated FRT tasks, each containing a probe image of a perpetrator's face and three candidate faces that FRT allegedly identified as possible matches.

Experimental Conditions:

- Automation bias condition: Each candidate randomly paired with either a high, medium, or low numerical confidence score.

- Contextual bias condition: Each candidate randomly paired with extraneous biographical information (e.g., prior criminal record).

Dependent Variables: Perceived similarity ratings and identification decisions.

Results: Participants rated whichever candidate's face was paired with guilt-suggestive information or a high confidence score as looking most like the perpetrator's face, even though those details were assigned at random. Furthermore, candidates randomly paired with guilt-suggestive information were most often misidentified as the perpetrator [12].

This experimental protocol demonstrates that cognitive contamination can systematically distort face matching judgments, with significant implications for the use of FRT in criminal investigations.
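The key design feature of this protocol is that the confidence scores are assigned at random, so any tendency to pick the high-scored candidate cannot come from the faces themselves. The sketch below illustrates that logic with simulated, score-blind participants; the function names, candidate labels, and number of simulated trials are hypothetical, and no data from the study are reproduced.

```python
import random

LEVELS = ("high", "medium", "low")


def assign_scores(candidates, rng):
    """Randomly pair each candidate with one confidence level (a random permutation)."""
    levels = list(LEVELS)
    rng.shuffle(levels)
    return dict(zip(candidates, levels))


def share_choosing_high(trials):
    """Fraction of trials in which the chosen candidate carried the 'high' score."""
    hits = sum(1 for assignment, choice in trials if assignment[choice] == "high")
    return hits / len(trials)


rng = random.Random(42)
candidates = ["candidate_1", "candidate_2", "candidate_3"]

# Simulated participants who ignore the scores and pick a face at random.
trials = []
for _ in range(3000):
    assignment = assign_scores(candidates, rng)
    trials.append((assignment, rng.choice(candidates)))

baseline = share_choosing_high(trials)
print(f"share choosing the high-score candidate with no bias: {baseline:.3f}")
```

With three candidates, score-blind choices should land on the high-scored face about one third of the time. An observed share well above that baseline in real data is what would indicate automation bias.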

Historical Case Studies: Dreyfus and Mayfield

The Dreyfus Affair (late 19th century) and the Brandon Mayfield case (2004) provide historical examples of cognitive contamination with profound consequences [14]. In the Dreyfus case, handwriting analysis was distorted by antisemitic prejudice, while in the Mayfield case, multiple fingerprint examiners misidentified an innocent man in connection with the 2004 Madrid train bombings, partly due to knowledge that other examiners had already made the identification [14]. These cases highlight how cognitive contamination can occur even with experienced examiners and can propagate through verification processes.

Mitigation Strategies: Technical Protocols for Reducing Cognitive Contamination

Linear Sequential Unmasking-Expanded (LSU-E)

Linear Sequential Unmasking-Expanded is a procedural safeguard designed to manage the flow of information to forensic examiners [11]. The protocol involves:

- Documenting all relevant information available to the examiner before the analysis begins.

- Conducting the initial examination using only the essential information needed for the analysis.

- Recording conclusions from the initial examination before additional information is revealed.

- Systematically revealing additional information only when necessary, with documentation of how each new piece of information affects the conclusions.
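The steps above can be sketched as a small audit log that enforces the order of operations: a conclusion must be recorded before anything further is revealed, and every reveal carries a justification. This is a hypothetical illustration; the class name `UnmaskingLog`, its fields, and the example items are assumptions and do not describe the pilot program's actual tooling.

```python
from dataclasses import dataclass, field


@dataclass
class UnmaskingLog:
    essential: list                      # information used for the initial examination
    withheld: list                       # documented up front, but not yet shown
    entries: list = field(default_factory=list)

    def record_conclusion(self, conclusion):
        """Log a conclusion together with everything the examiner had seen so far."""
        revealed = [e["item"] for e in self.entries if e["type"] == "reveal"]
        self.entries.append(
            {"type": "conclusion", "seen": list(self.essential) + revealed, "conclusion": conclusion}
        )

    def reveal(self, item, reason):
        """Release one withheld item, but only after an initial conclusion is on record."""
        if not any(e["type"] == "conclusion" for e in self.entries):
            raise RuntimeError("record the initial conclusion before revealing more information")
        if item not in self.withheld:
            raise ValueError(f"{item!r} is not in the withheld list")
        self.withheld.remove(item)
        self.entries.append({"type": "reveal", "item": item, "reason": reason})


# Hypothetical questioned-documents example.
log = UnmaskingLog(
    essential=["questioned signature"],
    withheld=["reference signatures", "investigator notes"],
)
log.record_conclusion("features documented; no comparison yet")
log.reveal("reference signatures", "needed for the comparison stage")
log.record_conclusion("inconclusive")
```

Comparing consecutive conclusion entries shows how each newly revealed item changed the examiner's position, which is the documentation the final step of the protocol calls for.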

The Costa Rican Department of Forensic Sciences implemented LSU-E in a pilot program within their Questioned Documents Section, demonstrating its practical feasibility and effectiveness in reducing cognitive contamination [11].

Blind Verification and Case Management

Blind verification prevents one examiner's conclusions from influencing another by ensuring that verifying examiners do not know the initial examiner's results or have access to potentially biasing contextual information [14]. This approach is particularly important for difficult or ambiguous evidence where cognitive contamination risk is highest.

The case manager model separates the forensic examiner from direct communication with investigators, controlling the information flow to ensure examiners receive only task-relevant information [11]. This system has been successfully implemented in the Costa Rican pilot program, providing a practical model for other laboratories.
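The case manager's filtering role can be illustrated as a simple split of the case file into what the examiner receives and what is held back. The field names and the whitelist below are hypothetical; which fields count as task-relevant is a decision each laboratory and discipline would have to make for itself.

```python
# Hypothetical whitelist of task-relevant fields for one type of comparison.
TASK_RELEVANT_FIELDS = frozenset({"exhibit_id", "evidence_type", "requested_comparison"})


def split_case_file(case_file, allowed=TASK_RELEVANT_FIELDS):
    """Return (examiner_view, withheld_fields) for a case file given as a dict."""
    examiner_view = {k: v for k, v in case_file.items() if k in allowed}
    withheld_fields = sorted(k for k in case_file if k not in allowed)
    return examiner_view, withheld_fields


# Hypothetical case file as received from investigators.
case_file = {
    "exhibit_id": "QD-17",
    "evidence_type": "handwritten note",
    "requested_comparison": "compare with reference samples R1-R4",
    "suspect_prior_record": "two prior convictions",
    "investigator_theory": "suspect wrote the note",
}
examiner_view, withheld_fields = split_case_file(case_file)
print(examiner_view)
print("withheld:", withheld_fields)
```

Keeping the list of withheld field names, without their contents, lets the laboratory document what the examiner did not see without exposing it.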

Analytical Flowcharts for Cognitive Bias Mitigation

The following diagram illustrates a standardized workflow for implementing cognitive bias mitigation strategies in forensic analysis:

Diagram 1: Cognitive bias mitigation workflow

Laboratory Implementation Framework

Successful implementation of cognitive bias mitigation strategies requires addressing common misconceptions within the forensic community. The table below identifies six fallacies about cognitive bias and provides evidence-based corrections:

Table 2: Correcting Misconceptions About Cognitive Bias in Forensic Science

| Fallacy Name | Common Misconception | Evidence-Based Correction |
|---|---|---|
| Ethical Issues | "Only bad people are biased" | Cognitive bias is not an ethical issue but a normal decision-making process with limitations [11] |
| Bad Apples | "Only incompetent people are biased" | Bias does not result from lack of skill; even highly competent experts are vulnerable [11] |
| Expert Immunity | "Experience makes me immune to bias" | Expertise may increase reliance on automatic decision processes, potentially increasing bias [11] |
| Technological Protection | "Technology will eliminate subjectivity" | AI and algorithms are built, programmed, and interpreted by humans, so cannot eliminate bias [11] |
| Blind Spot | "I know bias exists, but I'm not vulnerable" | People consistently show a "bias blind spot," underestimating their own susceptibility [11] |
| Illusion of Control | "Awareness alone prevents bias" | Willpower cannot overcome automatic processes; systematic safeguards are necessary [11] |

Specialized Research Reagents and Materials for Cognitive Contamination Studies

The experimental study of cognitive contamination requires specific materials and methodological approaches. The following table details key resources for designing rigorous experiments in this domain:

Table 3: Research Reagent Solutions for Cognitive Contamination Experiments

| Reagent/Material | Technical Specification | Research Application | Example Use Case |

|---|---|---|---|

| Forensic Comparison Stimuli | Matched sets of pattern evidence (fingerprints, faces, handwriting) with ground truth established | Creating experimental tasks with known correct answers | Testing bias effects using fingerprints previously evaluated by same examiners [12] |

| Contextual Manipulation Protocols | Standardized textual case information (e.g., suspect confessions, prior records) | Experimental manipulation of contextual variables | Providing false contextual information to test confirmation bias [12] |

| Automation Bias Probes | Simulated algorithm confidence scores (high/medium/low) for pattern matches | Testing over-reliance on technological outputs | Assigning random confidence scores to facial recognition candidates [12] |

| Blind Analysis Software | Information management systems controlling revelation of case details | Implementing sequential unmasking in laboratory settings | Limiting examiner access to non-essential information during initial analysis [11] |

| Eye-Tracking Equipment | Gaze pattern and fixation duration measurement systems | Quantifying attentional allocation during evidence examination | Identifying how contextual information directs attention to confirming features [13] |

Emerging Challenges: Artificial Intelligence and Cognitive Contamination

The integration of artificial intelligence into forensic practice introduces new dimensions to cognitive contamination. Research indicates that AI systems can both reduce and amplify biases depending on their design and implementation [14]. Dror and Mnookin (2010) proposed a taxonomy of human-technology interaction that helps analyze these effects:

- Offloading: Experts delegate routine tasks to machines while retaining ultimate judgment.

- Collaborative Partnership: Humans and algorithms jointly negotiate interpretation.

- Subservient Use: Humans defer to machine outputs and suspend critical scrutiny.

Each interaction mode produces distinct epistemic vulnerabilities at the human-AI interface [14]. A 2025 study found that participants using facial recognition technology were biased by both extraneous biographical information and algorithmic confidence scores, demonstrating that automation bias represents a significant form of cognitive contamination in modern forensic systems [12].

The following diagram illustrates the bidirectional relationship between human cognition and AI systems in forensic contexts:

Diagram 2: Human-AI interaction in forensic decision-making

Cognitive contamination represents a fundamental challenge to the validity and reliability of forensic science. The research evidence demonstrates that contextual information and extraneous knowledge can systematically distort forensic decision-making across multiple disciplines, from traditional pattern evidence fields to emerging technologies like facial recognition. Mitigating these effects requires implementing evidence-based procedural safeguards such as Linear Sequential Unmasking-Expanded, blind verification, and case management systems.

The future of forensic science depends on building a culture that acknowledges the inherent limitations of human cognition while implementing systematic protections against cognitive contamination. This approach requires moving beyond the fallacy of expert immunity and recognizing that cognitive bias is not an ethical failing and that mitigating it is a scientific necessity. As forensic science continues to evolve with new technologies, maintaining focus on the human factors underlying evidence interpretation will be essential for ensuring both the accuracy and integrity of forensic practice.

The integrity of forensic science and forensic mental health assessment is foundational to the administration of justice. Despite advanced training and professional credentials, forensic experts remain vulnerable to systematic cognitive errors that can compromise objectivity and accuracy. Groundbreaking work by cognitive neuroscientist Itiel Dror has demonstrated that even highly competent professionals are susceptible to biases influenced by cognitive processes and external pressures [15]. This technical analysis examines the core expert fallacies that perpetuate what is termed the "bias blind spot": the pervasive tendency to recognize biases in others while denying their influence on one's own judgments [16]. Within the broader study of human reasoning challenges in forensic science decisions, we explore the psychological mechanisms underlying these fallacies, present empirical evidence of their effects across forensic disciplines, and propose structured mitigation protocols grounded in cognitive science.

The challenge is particularly acute in forensic mental health assessments, where evaluators often operate in feedback vacuums, cut off from corrective feedback, peer review, and consultation [15]. This isolation allows fallacies and biasing influences to threaten objectivity and fairness in evaluations, ultimately undermining the validity of findings and potentially compromising justice [15]. Understanding these cognitive vulnerabilities is essential for developing effective countermeasures and advancing toward more aspirational forensic practice [17].

Theoretical Framework: Dual-Process Theory and Expert Cognition

Systems of Reasoning in Forensic Decision-Making

Dual-process accounts hold that human reasoning operates through two distinct cognitive systems, a framework that Daniel Kahneman brought into psychological research on judgment and decision-making under uncertainty [18]. The application of this framework to forensic science reveals fundamental tensions between natural reasoning patterns and the demands of rigorous forensic analysis.

Table 1: Cognitive Systems in Forensic Decision-Making

| Attribute | System 1 Thinking | System 2 Thinking |

|---|---|---|

| Process | Fast, intuitive, reflexive | Slow, analytical, deliberate |

| Cognitive Effort | Low effort, automatic | High effort, controlled |

| Awareness | Subconscious | Conscious and intentional |

| Basis | Innate predispositions, learned patterns | Logic, rule application, deliberate memory search |

| Vulnerability | Highly susceptible to cognitive biases | Less susceptible but requires cognitive resources |

| Role in Expertise | Enables pattern recognition through experience | Facilitates careful evidence weighing and hypothesis testing |

The interplay between these systems explains how experienced experts can demonstrate remarkable pattern recognition abilities while remaining vulnerable to elementary cognitive errors. System 1 thinking enables efficient processing of complex information through learned patterns but introduces vulnerabilities through what Kahneman terms "fast thinking" or snap judgments based on minimal data [15]. Forensic practice demands System 2 thinking (slow, effortful, and intentional reasoning executed through logic and conscious rule application), yet the cognitive economy of System 1 creates persistent vulnerabilities [15] [18].

Dror's Pyramidal Model of Biasing Elements

Itiel Dror's cognitive framework conceptualizes how biases infiltrate expert decisions through a pyramidal structure demonstrating how cognitive processes interact with case-specific and baseline biases to influence outcomes [15]. This model provides a systematic architecture for understanding how ostensibly objective forensic analyses can be compromised through multiple pathways.

Figure 1: Dror's Pyramidal Model of Biasing Elements in Forensic Decision-Making

The pyramidal model illustrates how baseline biases rooted in professional socialization, education, training, worldview, and experience create foundational vulnerabilities [15]. These baseline influences shape how case-specific biases, including contextual information, motivational factors, and organizational pressures, are processed [15]. Together, these biasing elements affect cognitive processes through data selection (what information is collected), data weighting (what importance is assigned), and data interpretation (how information is understood), ultimately influencing the final forensic decision [15].

The Six Expert Fallacies: Manifestations and Mechanisms

Dror identified six expert fallacies that increase risk for bias by creating false security about vulnerability to cognitive contamination [15]. These fallacies represent fundamental misunderstandings about the nature and operation of bias in expert judgment.

Fallacy 1: Only Unethical Practitioners Are Biased

The belief that cognitive bias primarily affects unscrupulous peers driven by greed or ideology represents a fundamental misunderstanding of cognitive science [15]. In reality, vulnerability to cognitive bias is a human attribute unrelated to character or ethical commitment [15]. Forensic psychiatrists and psychologists may correctly view themselves as ethical practitioners who adhere to ethics mandates, yet as humans in a complex world, even the most ethical practitioners experience cognitive biases [15]. This fallacy stems from confusion between cognitive biases (unconscious processing errors) and intentional discriminatory biases.

Fallacy 2: Bias Stems from Incompetence

This fallacy presumes that only incompetent evaluators fall prey to biasing influences, and that technical competence provides immunity [15]. In reality, an evaluation can be well-written, logical, and employ widely accepted assessment instruments yet conceal biased data gathering through selective attention to certain data types or failure to consider contextual factors [15]. For example, an evaluator might overrely on criminal history while omitting discussion of how risk instruments may be racially biased or inapplicable to specific populations [15]. Technical competence does not obviate the crucial need for bias-mitigating actions.

Fallacy 3: Expert Immunity Through Experience

Paradoxically, the mantle of "expert" may itself enhance bias risk through the development of cognitive efficiencies or shortcuts [15]. Experience may lead experts to selectively attend to data that comports with preconceived notions while neglecting novel, potentially salient data points [15]. The cognitive mechanisms that enable pattern recognition and predictive expectations can simultaneously create blind spots. For example, a forensic evaluator specializing in malingering assessments might automatically dismiss certain symptom presentations based on past experience, failing to consider alternative explanations [15].

Fallacy 4: Technological Protection

Forensic experts may believe that technological methods - including instrumentation, machine learning, artificial intelligence, or actuarial risk tools - eliminate bias [15]. This technological protection fallacy overlooks how algorithms and statistical values can foster false empiricism [15]. Risk assessment tools may incorporate inadequate normative representation of racial groups, potentially overestimating risk in minority populations [15] [14]. The assumption that statistical data represents "good psychological science" ignores how risk factors reflect researcher values and dominant cultural norms about maladaptive behavior [15] [16].

Fallacy 5: The Bias Blind Spot

The bias blind spot represents the well-documented phenomenon where experts perceive others as vulnerable to bias but not themselves [15] [16]. Because cognitive biases operate beyond awareness, experts frequently fail to recognize their own susceptibility [15]. Survey research with forensic mental health professionals demonstrates this blind spot clearly: while 86% acknowledge bias impacts forensic sciences generally and 79% recognize its influence on forensic evaluation specifically, only 52% acknowledge its effect on their own evaluations [16]. This self-other asymmetry creates significant barriers to effective bias mitigation.

Fallacy 6: Willpower and Introspection Are Sufficient

Most evaluators express concern about cognitive bias but hold the incorrect view that mere willpower or conscious effort can reduce it [16]. Survey data indicate that 87% of forensic evaluators believe that consciously trying to set aside preexisting beliefs reduces their influence [16]. This perspective misunderstands the nature of cognitive biases: decades of research indicate that they operate automatically and unconsciously, so they cannot be eliminated through introspection or willpower alone [16].

Experimental Evidence: Quantifying Bias Effects

Contextual Bias in Forensic Pattern Matching

Empirical studies across multiple forensic disciplines demonstrate how extraneous contextual information systematically distorts expert judgment. In a seminal study, Dror and Charlton found that fingerprint examiners changed 17% of their own prior judgments of the same prints when presented with contextual information suggesting the suspect had confessed or provided a verified alibi [19] [12]. Similar effects have been documented in DNA analysis, where analysts formed different opinions of the same DNA mixture when aware a suspect had accepted a plea bargain [19] [12]. Contextual bias effects are particularly pronounced in ambiguous or difficult judgments, where cognitive uncertainty creates greater reliance on contextual cues [19] [12].

Automation Bias in Technological Systems

Automation bias occurs when examiners become overly reliant on metrics generated by technology, allowing the technology to usurp rather than supplement their judgment [19] [12]. In fingerprint analysis, when examiners were presented with AFIS search results in randomized order, they spent more time analyzing whichever print appeared at the top of the list and more frequently identified that print as a match - regardless of its actual validity [19] [12]. This automation bias demonstrates how human experts may defer to algorithmic outputs rather than maintaining independent critical assessment.

Experimental Protocol: Facial Recognition Technology Study

Objective: To test whether contextual and automation biases distort judgments in facial recognition technology (FRT) searches [19] [12].

Participants: 149 participants acting as mock forensic facial examiners [19] [12].

Design: Participants completed two simulated FRT tasks, each comparing a probe image of a perpetrator against three candidate faces that FRT allegedly identified as potential matches [19] [12].

- Automation Bias Condition: Candidates were randomly paired with high, medium, or low numerical confidence scores [19] [12].

- Contextual Bias Condition: Candidates were randomly paired with extraneous biographical information (prior similar crimes, already incarcerated, or military service) [19] [12].

Measures: Participants rated each candidate's similarity to the probe and indicated which candidate they believed was the perpetrator [19] [12].

Results: Participants consistently rated candidates paired with guilt-suggestive information or high confidence scores as looking most similar to the perpetrator, despite random assignment [19] [12]. Those randomly paired with guilt-suggestive information were most frequently misidentified as the perpetrator [19] [12].

Table 2: Quantitative Findings from FRT Bias Study

| Bias Type | Experimental Manipulation | Effect on Similarity Ratings | Misidentification Rate |

|---|---|---|---|

| Contextual Bias | Biographical information (prior crimes, incarceration, military service) | Significant increase for guilt-suggestive candidates | Highest for candidates with criminal history |

| Automation Bias | Confidence scores (high, medium, low) | Significant increase for high-confidence candidates | Elevated for high-confidence candidates |

| Combined Effects | Interaction of contextual and automation cues | Potentially additive biasing effects | Requires further investigation |

This experimental protocol demonstrates that even when using technologically advanced identification systems, human cognitive biases significantly influence outcomes, supporting the need for structured safeguards in forensic procedures [19] [12].
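The random pairing at the heart of this design can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the study's actual materials: the function name `assign_cues`, the candidate labels, and the seed are all hypothetical. The point it shows is that each cue is attached to a candidate independently of how similar that candidate looks to the probe, so any systematic shift in ratings toward a cue can be attributed to the cue itself.

```python
import random
from typing import Dict, List


def assign_cues(candidates: List[str], cues: List[str], rng: random.Random) -> Dict[str, str]:
    """Randomly pair each candidate with exactly one cue (hypothetical helper)."""
    if len(candidates) != len(cues):
        raise ValueError("need exactly one cue per candidate")
    shuffled = cues[:]          # copy so the caller's list is left untouched
    rng.shuffle(shuffled)       # random order means random candidate-cue pairing
    return dict(zip(candidates, shuffled))


# Hypothetical labels and seed, chosen only for illustration.
rng = random.Random(2025)  # fixed seed so the assignment is reproducible
candidates = ["candidate_A", "candidate_B", "candidate_C"]
confidence_cues = ["high", "medium", "low"]
biography_cues = ["prior similar crimes", "already incarcerated", "military service"]

automation_trial = assign_cues(candidates, confidence_cues, rng)
contextual_trial = assign_cues(candidates, biography_cues, rng)
```

In a real experiment the assignment would be drawn separately for every participant, so that across the sample each face appears with each cue about equally often.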

Bias Mitigation: Structured Approaches

Linear Sequential Unmasking-Expanded (LSU-E)

Linear Sequential Unmasking-Expanded represents a procedural approach to minimizing cognitive contamination by controlling the sequence and exposure of information during forensic analysis [15] [20]. This method extends basic linear sequential unmasking by incorporating additional safeguards against contextual influences.

The core principles of LSU-E include:

- Information Sequencing: Ensuring that base analysis of evidence is conducted before exposure to potentially biasing contextual information [15] [20].

- Documentation of Initial Impressions: Recording preliminary conclusions before accessing domain-irrelevant information [15] [20].

- Transparent Reporting: Clearly documenting all information considered at each decision point in the analytical process [15] [20].

Implementation of LSU-E and related procedural safeguards in forensic laboratories has demonstrated feasibility and effectiveness in reducing subjectivity and enhancing reliability [20]. The Department of Forensic Sciences in Costa Rica successfully piloted a program incorporating LSU-E, blind verification, and case managers to mitigate bias in questioned document analysis [20].
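The three principles above can be made concrete with a small sketch of a case record that enforces them in software. This is an illustrative toy under stated assumptions, not a description of any laboratory's system: the class `SequentialUnmaskingRecord`, its method names, and the example case details are all hypothetical. It withholds contextual items until an initial impression has been recorded, and it keeps a log of what was documented and unmasked, in order.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Tuple


@dataclass
class SequentialUnmaskingRecord:
    """Hypothetical case record that releases context only after an initial opinion is logged."""
    evidence_id: str
    masked_context: Dict[str, str]  # contextual items held back, e.g. by a case manager
    initial_impression: Optional[str] = None
    log: List[Tuple[str, str]] = field(default_factory=list)  # ordered (event, detail) pairs

    def record_initial_impression(self, conclusion: str) -> None:
        # Documentation of initial impressions: written once, before any unmasking.
        if self.initial_impression is not None:
            raise RuntimeError("initial impression already recorded")
        self.initial_impression = conclusion
        self.log.append(("initial_impression", conclusion))

    def unmask(self, key: str) -> str:
        # Information sequencing: context stays hidden until the base analysis is documented.
        if self.initial_impression is None:
            raise PermissionError("record an initial impression before unmasking context")
        value = self.masked_context[key]
        # Transparent reporting: every released item is logged in the order it was seen.
        self.log.append(("unmasked", key))
        return value


# Hypothetical questioned-document case, for illustration only.
record = SequentialUnmaskingRecord("Q1", {"suspect_statement": "denies authorship"})
blocked = False
try:
    record.unmask("suspect_statement")
except PermissionError:
    blocked = True  # context is refused before an initial impression exists

record.record_initial_impression("inconclusive")
detail = record.unmask("suspect_statement")
```

The log gives a reviewer the sequence in which information reached the examiner, which is the part of the process that introspection alone cannot reconstruct afterward.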

The Scientist's Toolkit: Research Reagents for Bias Mitigation

Table 3: Essential Methodological Components for Bias Research and Mitigation

| Tool/Component | Function | Application Context |

|---|---|---|

| Linear Sequential Unmasking-Expanded (LSU-E) | Controls information flow to prevent contextual bias | Forensic pattern comparison, document analysis |

| Blind Verification | Prevents one examiner's conclusions from influencing another | All forensic disciplines requiring verification |

| Context Management Protocols | Limit exposure to irrelevant potentially biasing information | Crime scene analysis, forensic evaluations |

| Alternative Hypothesis Testing | Requires explicit consideration of competing explanations | Forensic mental health, autopsy decisions |

| Cognitive Bias Training | Increases awareness of inherent vulnerabilities | Foundational for all forensic practitioners |

| Decision Documentation Tools | Creates record of analytical process and timing | Quality assurance, procedural transparency |

Bayesian Frameworks for Evaluative Reporting

The European Network of Forensic Science Institutes and other standards bodies have developed protocols requiring reporting of evidence probability under multiple hypotheses using likelihood ratios [18]. This approach requires forensic scientists to consider the probability of evidence under both prosecution and defense hypotheses, providing a more balanced and transparent framework [18].

The likelihood ratio is expressed as:

LR = p(E|Hp) / p(E|Hd)

Where:

- p(E|Hp) = probability of evidence given prosecution hypothesis

- p(E|Hd) = probability of evidence given defense hypothesis

This methodological approach directly addresses cognitive vulnerabilities in human reasoning, particularly the tendency to neglect alternative explanations and baseline probabilities [18]. Proper application requires training in elementary probability theory to avoid common reasoning errors such as transposing conditional probabilities (the prosecutor's fallacy) [18].
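A short worked example may help show why transposing the conditional matters. The sketch below computes the likelihood ratio as defined above and then applies the odds form of Bayes' rule (posterior odds = prior odds × LR). All numbers are hypothetical and chosen only for illustration, and the function names are not taken from any standard or library.

```python
def likelihood_ratio(p_e_given_hp: float, p_e_given_hd: float) -> float:
    """LR = p(E|Hp) / p(E|Hd)."""
    if not (0.0 <= p_e_given_hp <= 1.0 and 0.0 < p_e_given_hd <= 1.0):
        raise ValueError("probabilities must lie in [0, 1], with p(E|Hd) > 0")
    return p_e_given_hp / p_e_given_hd


def posterior_probability(prior_hp: float, lr: float) -> float:
    """Update a prior probability of Hp with an LR using the odds form of Bayes' rule."""
    if not 0.0 < prior_hp < 1.0:
        raise ValueError("prior must lie strictly between 0 and 1")
    prior_odds = prior_hp / (1.0 - prior_hp)
    posterior_odds = prior_odds * lr
    return posterior_odds / (1.0 + posterior_odds)


# Hypothetical values, for illustration only.
p_e_hp = 0.99    # assumed p(E|Hp)
p_e_hd = 0.001   # assumed p(E|Hd)
lr = likelihood_ratio(p_e_hp, p_e_hd)  # about 990

# Prosecutor's fallacy: reading p(E|Hd) as if it were p(Hd|E),
# which would imply p(Hp|E) = 1 - 0.001 = 0.999.
fallacious_posterior = 1.0 - p_e_hd

# Correct update with an assumed prior of 1 in 10,000 for Hp.
posterior = posterior_probability(prior_hp=1e-4, lr=lr)  # about 0.09
```

Under these assumed numbers the evidence is strong (an LR near 990), yet the posterior probability of the prosecution hypothesis is roughly 9%, far from the 99.9% implied by the transposed conditional. The gap comes entirely from the prior, which is exactly the baseline probability that the fallacy ignores.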

The research evidence unequivocally demonstrates that expertise and ethical commitment provide insufficient protection against cognitive biases that systematically influence forensic decision-making. The six expert fallacies identified in Dror's framework create dangerous misconceptions about vulnerability to these influences, while the bias blind spot prevents professionals from recognizing their own susceptibility [15] [16].

Advancing beyond competent to exceptional forensic practice requires acknowledging these inherent vulnerabilities and implementing structured safeguards rather than relying on introspection or willpower [16] [17]. Procedural approaches like Linear Sequential Unmasking-Expanded, blind verification, Bayesian frameworks, and cognitive bias training represent evidence-based strategies for mitigating these universal human reasoning challenges [15] [20] [18].

Future directions should emphasize cross-domain research integrating insights from cognitive psychology, forensic science, and decision theory. Additionally, forensic training programs must incorporate comprehensive education about cognitive vulnerabilities alongside technical skill development. By embracing these approaches, forensic professionals can progressively narrow the gap between actual practice and aspirational standards, enhancing both the accuracy and fairness of forensic science within the justice system.

Within the rigorous domain of forensic science, the accuracy of expert decision-making is a cornerstone of justice. Traditional research has rightly focused on methodological precision and technological advancements. However, a critical human factor often remains overlooked: the pervasive impact of workplace stress and well-being on forensic experts' decision quality and error rates. A growing body of evidence suggests that stress is not merely an individual comfort issue but a significant variable that can systematically influence forensic judgments [21]. This whitepaper synthesizes current research to argue that workplace stress acts as a pivotal, yet frequently unaccounted for, factor in forensic decision-making. By exploring its mechanisms, impacts, and potential mitigations within the context of human reasoning challenges, this document aims to provide forensic researchers, scientists, and drug development professionals with a scientific framework for understanding and integrating this variable into their quality control and research paradigms.

Theoretical Framework: Stress as a Human Factor in Forensic Decisions

The Challenge-Hindrance Stressor Framework in Forensic Science

The impact of stress on professional performance is not monolithic. The Challenge-Hindrance Stressor Framework provides a useful lens for understanding its dual nature in forensic contexts. Within this model, stressors can be categorized as either challenge stressors or hindrance stressors [21].

- Challenge Stressors: These are work demands perceived as potential opportunities for growth and mastery, such as a complex, novel case that stretches an expert's analytical skills. When managed effectively, these stressors can potentially contribute to improved performance and engagement.

- Hindrance Stressors: These are work demands perceived as impediments to personal growth and goal achievement, such as unrealistic administrative demands, lack of organizational support, or unfair treatment. These stressors are consistently associated with negative outcomes, including reduced job satisfaction and increased error rates [21] [22].

The net effect of stress on a forensic expert's decision-making is posited to depend on the type, level, and context of the stress experienced, creating a complex relationship that requires context-specific understanding [21].

Decision Fatigue in High-Stakes Environments

A specific manifestation of cognitive resource depletion highly relevant to forensic work is decision fatigue. This psychological phenomenon refers to the deterioration in decision quality after a long sequence of choice-making [23]. Rooted in the concept of "ego depletion," it suggests that the mental energy required for self-control and deliberate decision-making is a finite resource that can be exhausted [23]. In fields like emergency medicine—a useful analogue for the high-stakes, rapid-turnaround environment of some forensic labs—physicians face a relentless stream of complex decisions. Evidence indicates that as cognitive resources diminish, professionals are more likely to resort to impulsive, less-considered decisions or even avoid making decisions altogether [23]. For a forensic expert examining fingerprint after fingerprint or complex DNA mixtures, decision fatigue could manifest as a tendency toward default "inconclusive" judgments or an increased likelihood of overlooking critical details as a shift progresses.

Quantitative Evidence: Linking Stress to Decision-Making Outcomes

Empirical studies across various high-stakes professions provide quantitative data on the correlation between workplace stress, decision-making processes, and outcomes. The table below summarizes key findings from relevant fields.

Table 1: Quantitative Evidence of Stress and Fatigue Impacts on Professional Decision-Making

| Profession / Context | Key Stressor | Impact on Decision-Making | Measured Outcome | Citation |

|---|---|---|---|---|

| Forensic Fingerprint Experts | Induced stress (experimental) | Performance: improved accuracy for same-source evidence. Risk-aversion: increased reports of "inconclusive" on difficult same-source prints. Confidence: minimal impact on expert confidence. | Performance metrics; conclusion rates; confidence ratings | [24] |

| Forensic Fingerprint Novices | Induced stress (experimental) | Performance: mixed impacts. Response time: significant impact. Confidence: significant impact on overall confidence levels. | Performance metrics; response time; confidence ratings | [24] |

| General Workforce | Adverse working conditions & management practices | Causes of stress: unrealistic demands, lack of support, unfair treatment, low decision latitude, effort-reward imbalance. Reported prevalence: working conditions cited as a main stress source by 42 of 51 interviewees. | Qualitative interview data; frequency analysis | [22] |

| Emergency Medicine Physicians | Decision fatigue from prolonged, high-stakes shifts | Error rates: correlated with increased diagnostic errors and medication errors. Decision quality: decline in appropriateness and effectiveness of clinical judgments. | Observed error rates; quality assessment of decisions | [23] |

The data reveal a nuanced picture. In controlled studies, stress can sometimes coincide with improved performance on specific tasks, as seen with fingerprint experts [24]. However, it also alters decision-making patterns, promoting risk-aversion. In real-world settings, stressors like poor management and high workloads are consistently linked to negative perceptual and health outcomes, which are known precursors to performance degradation [22]. The correlation between fatigue and error rates in emergency medicine further supports the link between resource depletion and diminished decision quality [23].

Experimental Protocols for Investigating Stress in Forensic Decision-Making

To rigorously study this variable, controlled experimental protocols are essential. The following methodology, adapted from a seminal study on forensic decision-making, provides a template for investigating the impact of stress on expert judgment.

Protocol: Fingerprint Decision-Making Under Induced Stress

1. Objective: To examine the effect of acute psychosocial stress on the accuracy, conclusion types, and confidence of fingerprint experts and novices.

2. Participants:

- Expert Group: Practicing fingerprint experts with significant casework experience (e.g., N=34).

- Novice Group: Individuals with no professional fingerprint identification experience (e.g., N=115).

- Design: Randomized controlled trial, typically with a between-subjects design (Stress Group vs. Control Group).

3. Stress Induction Manipulation:

- Stress Group: Participants are subjected to a standardized psychosocial stress protocol, such as the Trier Social Stress Test (TSST), which involves public speaking and mental arithmetic tasks in front of an evaluative panel. Salivary cortisol or subjective stress scales (e.g., State-Trait Anxiety Inventory) are collected to physiologically and psychologically validate the stress induction.

- Control Group: Participants complete a non-stressful control task of similar duration.

4. Decision-Making Task:

- Following the stress/control manipulation, participants complete a computerized fingerprint matching task.

- Stimuli: The task contains a series of fingerprint pairs. The set should include:

- Same-Source Pairs (SS): Prints from the same finger, varying in quality and clarity.

- Different-Source Pairs (DS): Prints from different fingers that may share some similar features.

- The difficulty level (easy vs. difficult) should be controlled and pre-tested.

5. Data Collection Measures:

- Primary Outcome 1 - Decision Accuracy: The proportion of correct identifications and exclusions across SS and DS pairs.

- Primary Outcome 2 - Decision Type: The rate of each possible conclusion ("Identification," "Exclusion," "Inconclusive").

- Primary Outcome 3 - Response Time: The time taken to reach each decision.

- Primary Outcome 4 - Confidence: Self-reported confidence in each decision on a Likert scale (e.g., 1-7).

6. Data Analysis:

- Employ statistical tests (e.g., ANOVA, t-tests) to compare the Stress and Control groups on all outcome measures.

- Analyze interactions between Group (Expert/Novice), Condition (Stress/Control), and Print Difficulty.

- Mediation analyses can explore whether the effect of stress on accuracy is mediated by changes in risk-taking (e.g., inconclusive rates) or confidence [24].
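Before any inferential test is run, the trial-level responses have to be reduced to the outcome measures listed in step 5. The sketch below shows one way to compute decision accuracy and conclusion-type rates per condition using only the Python standard library. The `Trial` structure, the `summarize` function, and the example trials are hypothetical, and the trials are made up for illustration rather than drawn from any study. It also assumes that an "inconclusive" response counts as not correct, which is a scoring choice that a real study would need to justify.

```python
from collections import Counter, defaultdict
from typing import Dict, List, NamedTuple


class Trial(NamedTuple):
    condition: str      # "stress" or "control"
    ground_truth: str   # "SS" (same source) or "DS" (different source)
    conclusion: str     # "identification", "exclusion", or "inconclusive"


# The correct conclusion for each ground-truth category.
CORRECT = {"SS": "identification", "DS": "exclusion"}


def summarize(trials: List[Trial]) -> Dict[str, Dict[str, float]]:
    """Return accuracy and conclusion-type rates for each condition."""
    by_condition = defaultdict(list)
    for t in trials:
        by_condition[t.condition].append(t)

    summary = {}
    for condition, group in by_condition.items():
        n = len(group)
        counts = Counter(t.conclusion for t in group)
        # Assumption: "inconclusive" is scored as not correct.
        n_correct = sum(t.conclusion == CORRECT[t.ground_truth] for t in group)
        summary[condition] = {
            "accuracy": n_correct / n,
            "identification_rate": counts["identification"] / n,
            "exclusion_rate": counts["exclusion"] / n,
            "inconclusive_rate": counts["inconclusive"] / n,
        }
    return summary


# Made-up trials for illustration only; they are not data from any study.
trials = [
    Trial("stress", "SS", "identification"),
    Trial("stress", "SS", "inconclusive"),
    Trial("stress", "DS", "exclusion"),
    Trial("stress", "DS", "exclusion"),
    Trial("control", "SS", "identification"),
    Trial("control", "SS", "identification"),
    Trial("control", "DS", "identification"),
    Trial("control", "DS", "exclusion"),
]
summary = summarize(trials)
```

In this toy data both conditions have the same accuracy (0.75) but reach it differently: the stress condition loses a trial to an inconclusive response, while the control condition loses one to an erroneous identification. Reporting conclusion-type rates alongside accuracy is what makes that kind of shift toward risk-aversion visible.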

Pathways and Workflows: Visualizing the Stress-Decision Relationship

The following diagrams, generated using Graphviz, illustrate the conceptual and experimental relationships between workplace stress and forensic decision quality.

Conceptual Model of Stress Impact on Forensic Decisions

Experimental Workflow for Stress Testing

The Scientist's Toolkit: Research Reagents and Key Materials

To conduct rigorous research into workplace stress and decision-making, specific tools and methodologies are required. The following table details essential "research reagents" for this field.

Table 2: Key Research Reagents and Tools for Studying Stress and Decision-Making

| Tool or Material | Function/Description | Application in Research |

|---|---|---|

| Trier Social Stress Test (TSST) | A standardized protocol for reliably inducing moderate psychosocial stress in laboratory settings, involving public speaking and mental arithmetic. | Used as the primary independent variable (stress manipulation) to study its causal effect on subsequent decision-making tasks [24]. |

| Salivary Cortisol Assay Kits | Biochemical kits for measuring cortisol levels in saliva. Cortisol is a key hormonal biomarker of the body's physiological stress response. | Objective verification of the effectiveness of the stress induction manipulation (e.g., TSST). Samples are typically taken pre- and post-manipulation. |

| Standardized Decision Tasks | Curated sets of forensic stimuli (e.g., fingerprint pairs, DNA profiles) with ground truth established. These include both "same-source" and "different-source" samples of varying difficulty. | Serves as the dependent variable task to measure decision outcomes—accuracy, conclusion type, and response time—in a controlled and ecologically valid manner [24]. |

| Psychometric Scales | Validated self-report questionnaires. Key examples include the Decisional Regret Scale (DRS; measures distress after a decision), CollaboRATE (measures shared decision-making), PHQ-2/9 (measures depressive symptoms), and subjective well-being scales (e.g., ICECAP-A). | Quantifies psychological states such as regret, perceived collaboration, mental health, and well-being, which may mediate or moderate the stress-decision relationship [25]. |

| Statistical Analysis Software (R, Python, SPSS) | Software platforms capable of running advanced statistical analyses, including Analysis of Variance (ANOVA), mediation analysis, and structural equation modeling (SEM). | Used to analyze complex datasets, test for significant group differences, and model the direct and indirect pathways through which stress impacts decision outcomes [25]. |

The evidence is compelling: workplace stress and well-being are not peripheral concerns but central variables that can fundamentally shape the quality and nature of forensic decision-making. The relationship is complex, with stress sometimes sharpening focus on specific tasks but at the potential cost of increased risk-aversion and, under conditions of fatigue or hindrance, a clear pathway to heightened error rates. For a field built on the pillars of objectivity and reliability, integrating the science of human factors is no longer optional but essential. Future research must move beyond correlation to causation, employing the rigorous experimental protocols and tools outlined herein. Furthermore, the development and validation of evidence-based interventions—from structured decision breaks and cognitive debiasing techniques to organizational reforms that reduce hindrance stressors—are critical next steps. By acknowledging and systematically studying the overlooked variable of workplace well-being, the forensic science community can safeguard not only the health of its professionals but also the integrity of the justice system it serves.

This whitepaper examines the automatic integration of information through top-down processing and preexisting schemas, a fundamental characteristic of human reasoning that enables efficiency at the cost of potential systematic error. Framed within forensic science decision-making research, we explore how these cognitive processes contribute to the formation of coherent yet potentially flawed narratives. The mechanisms underlying these reasoning challenges are detailed through quantitative data synthesis, experimental protocols from cognitive neuroscience, and visualizations of signaling pathways. Finally, we present a scientist's toolkit of research reagents and methodologies for investigating and mitigating these biases in forensic practice, providing researchers with practical resources for advancing the field's accuracy and reliability.

Human reasoning demonstrates a paradoxical duality: it is both remarkably efficient and systematically fallible. This dichotomy stems from core cognitive architectures that automatically integrate information from multiple sources to construct coherent interpretations of the world. Top-down processing leverages preexisting knowledge, expectations, and experience to interpret incoming sensory information, while bottom-up processing builds perceptions purely from external stimuli [1]. In most daily functions, the integration of these processes serves us well; however, in specialized domains like forensic science, this automatic integration can introduce significant vulnerabilities into decision-making [1] [26].

The success of forensic science depends heavily on human reasoning abilities, yet the field often demands that practitioners reason in "non-natural ways" – evaluating pieces of evidence independently of contextual information that their brains automatically strive to incorporate [1]. This conflict between natural cognitive tendencies and forensic ideals creates a critical challenge: preexisting schemas (organized knowledge structures about events, situations, or concepts) automatically influence the interpretation of new information, potentially leading to coherent but forensically inaccurate narratives [1]. Understanding these mechanisms is essential for developing procedures that decrease errors and improve analytical accuracy in forensic contexts ranging from feature comparison to causal attribution.

Quantitative Synthesis: Key Experimental Findings on Top-Down Influences

Research across cognitive psychology and neuroscience has quantified how top-down processes influence perception and judgment. The table below synthesizes key experimental findings relevant to forensic decision-making.

Table 1: Quantitative Data on Top-Down Processing Effects in Perception and Judgment

| Experimental Paradigm | Key Finding | Effect Size/Magnitude | Implication for Forensic Science |

|---|---|---|---|

| Müller-Lyer Illusion [1] | Participants perceive equal lines as different lengths due to contextual cues | Illusion strength varies by environment; stronger in industrialized urban areas | Context can distort basic visual perception, potentially affecting evidence measurement |

| Bank Robbery Schema Memory Test [1] | Participants falsely recalled schema-consistent elements not present in original stimulus | Not specified; significant injection of non-present elements | Preexisting event schemas can corrupt memory of case details over time |

| Dot Perspective Task (dPT) with Forensic Cases [27] | Borderline personality disorder patients with court-ordered measures (BDL-COM) showed altered neural activation during perspective-taking | Significantly lower beta oscillation power (400-1300ms post-stimulus) in Avatar-Other condition | Population-specific neural processing differences may affect perspective-taking in legal contexts |

| Visual Processing Pathways [28] | Magnocellular (M) pathway processes information faster (80-120 ms) than parvocellular (P) pathway (~150-200 ms) | M pathway: 5-15% contrast sensitivity; P pathway: color-sensitive, ineffective at contrasts below 8% | Fast, coarse processing may initiate top-down predictions before detailed analysis completes |

These quantitative findings demonstrate that top-down influences are not merely theoretical concepts but measurable phenomena with significant effects on perception, memory, and judgment. The neural evidence indicates that these processes occur rapidly and automatically, often outside conscious awareness, making them particularly challenging to mitigate in forensic contexts where objective analysis is paramount.

Neurocognitive Mechanisms: The Signaling Pathways of Top-Down Processing

The neural basis of top-down processing involves complex interactions between brain regions responsible for prior knowledge, sensory processing, and prediction. The following diagram illustrates the primary signaling pathways involved in top-down visual processing, which serves as a model system for understanding these mechanisms more broadly.

[Figure: Visual Pathways of Top-Down Processing]

This neural architecture demonstrates how higher-order cognitive regions (prefrontal cortex, temporoparietal junction) generate predictions that influence sensory processing regions (visual cortex, ventral and dorsal streams) through top-down signaling pathways [28]. The magnocellular pathway provides rapid, coarse information that initiates preliminary interpretations, while the slower parvocellular pathway provides detailed information that refines these interpretations [28]. In forensic contexts, this means initial impressions based on limited evidence can persistently influence subsequent analysis, creating a coherence that may not align with ground truth.

Experimental Protocols: Investigating Top-Down Influences in Forensic Reasoning

Dot Perspective Task (dPT) with EEG Recording

The dPT has emerged as a key experimental paradigm for investigating neural correlates of perspective-taking, with particular relevance to forensic populations [27].

Objective: To dissect differences in neural generator activation between forensic cases with court-ordered measures and healthy controls during visual perspective taking, specifically examining the distinction between mentalizing (Avatar) and non-mentalizing (Arrow) stimuli.

Participants:

- 15 borderline personality disorder patients with court-ordered measures (BDL-COM)

- 54 age-matched healthy controls without history of convictions

- All participants were male, to control for gender effects

Stimuli and Task Design:

- Participants view displays containing an Avatar or Arrow positioned in a room with dots on the walls

- Two conditions: Self-perspective ("How many dots do you see?") and Other-perspective ("How many dots does the Avatar/Arrow see?")

- Trials are classified as consistent (Self and Other see same number) or inconsistent (conflict between Self and Other perspective)

- Analysis focuses on inconsistent trials to maximize cognitive conflict
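
The trial classification described above can be sketched in a few lines. This is an illustrative example only: the field names (`dots_self`, `dots_other`, `rt_ms`, `correct`) and the trial data are hypothetical, and the behavioral "consistency effect" computed here (mean response time on inconsistent minus consistent trials, correct responses only) is one common summary rather than the specific analysis reported in [27].

```python
from statistics import mean


def classify_trial(dots_self, dots_other):
    """Label a trial by whether both perspectives see the same number of dots."""
    return "consistent" if dots_self == dots_other else "inconsistent"


def consistency_effect(trials):
    """Mean RT (ms) on inconsistent minus consistent trials, correct responses only."""
    rts = {"consistent": [], "inconsistent": []}
    for trial in trials:
        if trial["correct"]:
            label = classify_trial(trial["dots_self"], trial["dots_other"])
            rts[label].append(trial["rt_ms"])
    if not rts["consistent"] or not rts["inconsistent"]:
        raise ValueError("need at least one correct trial of each type")
    return mean(rts["inconsistent"]) - mean(rts["consistent"])


# Hypothetical trials, invented for illustration only.
trials = [
    {"dots_self": 2, "dots_other": 2, "rt_ms": 520, "correct": True},
    {"dots_self": 2, "dots_other": 2, "rt_ms": 540, "correct": True},
    {"dots_self": 3, "dots_other": 1, "rt_ms": 640, "correct": True},
    {"dots_self": 2, "dots_other": 1, "rt_ms": 660, "correct": True},
    {"dots_self": 3, "dots_other": 2, "rt_ms": 900, "correct": False},  # excluded
]

effect_ms = consistency_effect(trials)
print(f"consistency effect: {effect_ms} ms")
```

A positive value indicates slower responses when the two perspectives conflict, which is why the protocol concentrates its analysis on inconsistent trials.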

EEG Recording and Analysis:

- High-density EEG recording with 128 electrodes

- Analysis of event-related potentials (ERPs) at multiple time windows: <80ms, 80-400ms, and 400-1300ms post-stimulus

- Time-frequency decomposition to examine beta oscillation power (13-30Hz)

- Source localization to identify neural generators of electrical activity
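
To make the beta-power measure concrete, the sketch below estimates power in the 13-30 Hz band for a single channel and a single time window using a plain discrete Fourier transform. It is a deliberate simplification: the protocol above refers to time-frequency decomposition, which typically uses wavelets or short-time transforms in a dedicated EEG toolbox. The function name `band_power`, the 256 Hz sampling rate, and the synthetic sine-wave signals are all assumptions made for illustration.

```python
import cmath
import math


def band_power(signal, sample_rate_hz, low_hz, high_hz):
    """Mean squared DFT amplitude over bins with frequency in [low_hz, high_hz]."""
    n = len(signal)
    mean_value = sum(signal) / n
    centered = [x - mean_value for x in signal]  # remove the DC offset
    powers = []
    for k in range(1, n // 2 + 1):
        freq = k * sample_rate_hz / n
        if low_hz <= freq <= high_hz:
            coeff = sum(
                x * cmath.exp(-2j * math.pi * k * i / n)
                for i, x in enumerate(centered)
            )
            powers.append((abs(coeff) / n) ** 2)
    if not powers:
        raise ValueError("no frequency bins fall inside the requested band")
    return sum(powers) / len(powers)


SAMPLE_RATE_HZ = 256  # assumed sampling rate for the synthetic example
N_SAMPLES = 256       # one-second analysis window

# Synthetic signals: a 20 Hz oscillation (inside beta) and a 6 Hz one (outside).
beta_signal = [math.sin(2 * math.pi * 20 * i / SAMPLE_RATE_HZ) for i in range(N_SAMPLES)]
theta_signal = [math.sin(2 * math.pi * 6 * i / SAMPLE_RATE_HZ) for i in range(N_SAMPLES)]

beta_power_beta_signal = band_power(beta_signal, SAMPLE_RATE_HZ, 13, 30)
beta_power_theta_signal = band_power(theta_signal, SAMPLE_RATE_HZ, 13, 30)
print(beta_power_beta_signal, beta_power_theta_signal)
```

Only the 20 Hz signal contributes appreciable power to the beta band; comparing such band-power values between groups and conditions is the kind of contrast the outcome measures below describe.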

Key Outcome Measures:

- Activation patterns in mentalizing network (temporoparietal junction, inferior frontal gyrus)

- Beta oscillation power differences between groups and conditions

- Timing differences in neural processing between forensic cases and controls

This protocol revealed that BDL-COM patients showed an altered topography of EEG activation patterns and a reduced ability to mobilize beta oscillations during the processing of mentalistic stimuli, indicating neural correlates of their perspective-taking deficits [27].

Schema Intrusion Memory Paradigm

This behavioral protocol examines how preexisting schemas influence memory reconstruction in forensically relevant contexts.

Objective: To quantify how preexisting event schemas distort memory for case-relevant details.

Stimuli and Procedure:

- Participants listen to an audio recording of trial testimony about a bank robbery

- Testimony contains some schema-consistent and some schema-inconsistent details

- Control condition contains neutral testimony without strong schema associations

- After distraction task, participants complete free recall and recognition tests

Measurement:

- Number of schema-consistent intrusions (details not in original but consistent with bank robbery schema)

- Accuracy for schema-consistent versus schema-inconsistent details

- Confidence ratings for correct and incorrect memories
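
The first two measures above can be scored with simple set operations once recalled details have been coded. The sketch below is a hypothetical scoring scheme, not the procedure used in [1]: the function name `score_recall`, the coding of details as short strings, and every example detail are invented for illustration.

```python
def score_recall(recalled, presented, schema_typical):
    """Score free recall against presented details and a list of schema-typical details."""
    recalled, presented, schema_typical = set(recalled), set(presented), set(schema_typical)
    if not presented:
        raise ValueError("presented details must not be empty")
    hits = recalled & presented
    intrusions = recalled - presented  # anything recalled that was never presented
    return {
        "hit_rate": len(hits) / len(presented),
        "schema_consistent_intrusions": sorted(intrusions & schema_typical),
        "other_intrusions": sorted(intrusions - schema_typical),
    }


# Hypothetical coded details, invented for illustration only.
presented = [
    "teller counted cash",
    "robber wore a red scarf",
    "alarm was silent",
    "robber left by bus",
]
schema_typical = ["robber wore a mask", "robber had a gun", "getaway car waited outside"]
recalled = ["teller counted cash", "robber had a gun", "robber left by bus", "it was raining"]

scores = score_recall(recalled, presented, schema_typical)
print(scores)
```

Separating schema-consistent intrusions from other intrusions matters because only the former indicate that a preexisting event schema, rather than general memory noise, shaped what was reported.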

This paradigm demonstrates cognitive impenetrability – even when participants know about the potential for bias, they cannot completely prevent schema-based intrusions into their memories [1].

The Scientist's Toolkit: Research Reagents for Studying Forensic Reasoning

Table 2: Essential Methodologies and Assessment Tools for Forensic Reasoning Research

| Research Tool | Primary Function | Application in Forensic Reasoning Research | Key Metrics |

|---|---|---|---|

| High-Density EEG | Records electrical brain activity with high temporal resolution | Measures neural correlates of perspective-taking and decision-making in real-time | Event-related potentials (ERPs), beta oscillation power, neural source localization |

| fMRI | Measures brain activity through blood oxygen level-dependent (BOLD) signal | Identifies brain networks involved in top-down control and schema activation | Activation in mentalizing network (TPJ, medial PFC), attentional control regions |

| Psychopathy Checklist-Revised (PCL-R) [27] | Assesses psychopathic traits in clinical and forensic populations | Evaluates relationship between personality traits and perspective-taking abilities | Two-factor structure: interpersonal-affective traits and social deviance traits |

| HCR-20 [27] | Assesses historical, clinical, and risk management factors for violence | Examines how risk assessment correlates with cognitive processing patterns | 20 items across historical, clinical, and risk management domains |

| Mini-Social cognition and Emotional Assessment (SEA) [27] | Brief clinical assessment of Theory of Mind and emotion recognition | Quantifies social cognitive deficits in forensic populations | Theory of Mind score, emotion recognition score, composite social cognition score |

| Wechsler Adult Intelligence Scale (WAIS) [27] | Measures cognitive abilities and intelligence quotient (IQ) | Controls for general cognitive ability in forensic cognition studies | Verbal Comprehension, Perceptual Reasoning, Working Memory, Processing Speed indices |

| Dot Perspective Task (dPT) [27] | Assesses implicit and explicit perspective-taking abilities | Differentiates mentalizing from attention-orienting processes in forensic groups | Response times, accuracy rates, self-other consistency effects |

Discussion: Implications for Forensic Science Practice

The automatic integration of information through top-down processing presents fundamental challenges for forensic science. In feature comparison judgments (e.g., fingerprints, firearms, bitemarks), the primary challenge is avoiding biases from extraneous knowledge or from the comparison method itself [1] [26]. The cognitive tendency to create coherent narratives can lead analysts to prematurely converge on matches despite ambiguous evidence. In causal and process judgments (e.g., fire scenes, pathology), the main challenge is maintaining multiple potential hypotheses as investigations continue, resisting the brain's natural inclination to settle on a single coherent story [1].

The experimental protocols and tools detailed in this whitepaper provide pathways for both researching these phenomena and developing evidence-based mitigations. For instance, the temporal dynamics revealed by EEG studies suggest specific time windows during which cognitive interventions might be most effective. The individual differences observed in dPT performance indicate that certain forensic populations may require tailored approaches to minimize reasoning biases.