Technology Readiness Levels (TRL) in Forensic Chemistry: A Roadmap from Research to Courtroom Adoption

This article provides a comprehensive guide to Technology Readiness Levels (TRLs) and their critical role in translating forensic chemistry research from basic concepts into legally admissible, routine casework methods.

Abstract

This article provides a comprehensive guide to Technology Readiness Levels (TRLs) and their critical role in translating forensic chemistry research from basic concepts into legally admissible, routine casework methods. Tailored for researchers, scientists, and drug development professionals, it explores the foundational principles of TRLs, their specific application to forensic techniques like comprehensive two-dimensional gas chromatography (GC×GC) and chemometrics, and the path to overcoming validation and optimization challenges. By synthesizing current research and legal standards, including the Daubert Standard and Frye Standard, this review offers a practical framework for developing forensically sound technologies that meet the rigorous demands of the justice system.

From Concept to Court: Understanding TRLs and the Forensic Science Landscape

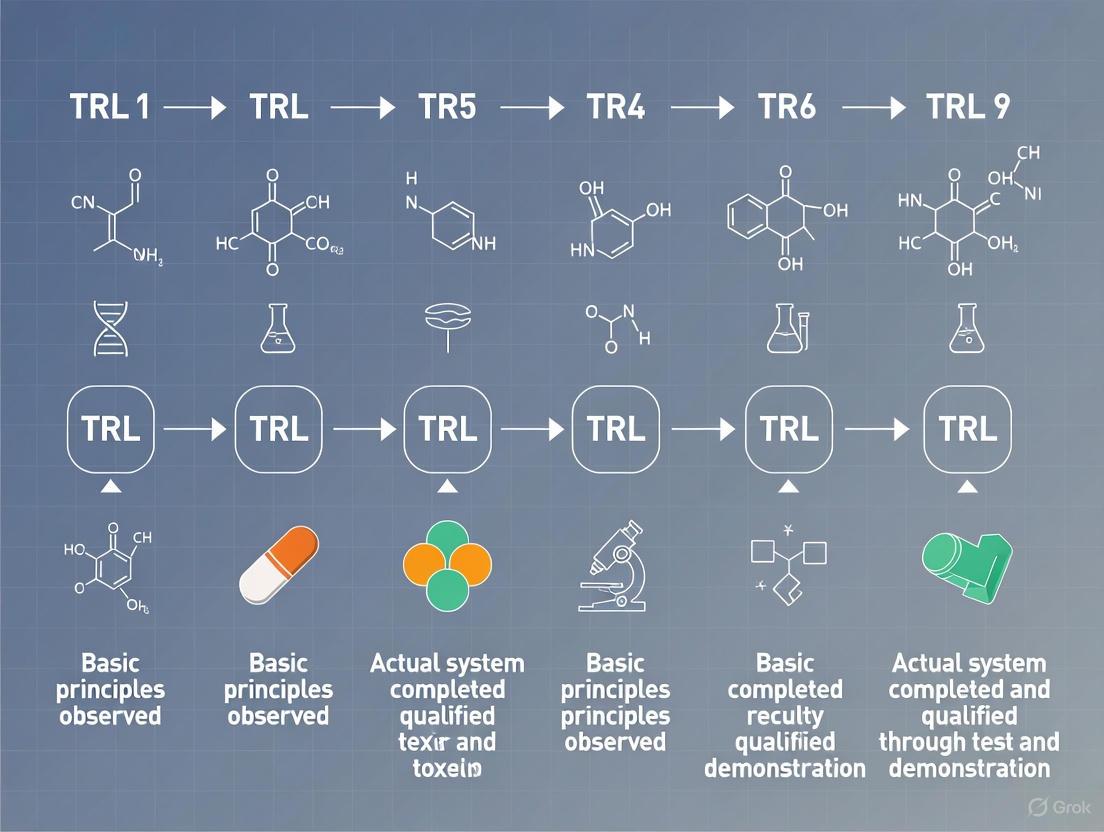

Technology Readiness Levels (TRLs) are a systematic metric used to assess the maturity of a particular technology. The framework consists of a scale from 1 to 9, where TRL 1 is the lowest level of maturity (basic principles observed) and TRL 9 is the highest (actual system proven in operational environment) [1]. This measurement system enables consistent, uniform discussions of technical maturity across different types of technology, allowing engineers, managers, and investors to quantify progress and evaluate risk throughout the development lifecycle [2] [3].

Originally developed by NASA in the 1970s, the TRL methodology has since been adopted far beyond its aerospace origins. By 2008, the European Space Agency (ESA) had implemented the scale, and the European Commission began advising EU-funded research to adopt TRLs in 2010 [2]. Today, TRLs are utilized across diverse fields including defense, energy, healthcare, and increasingly, forensic science, where they provide a structured approach for evaluating the maturity of novel analytical methods and technologies before their implementation in casework and courtroom proceedings [4] [5].

The NASA Origin of TRLs

Historical Development at NASA

The Technology Readiness Level methodology was conceived in 1974 by Stan Sadin at NASA Headquarters [2]. It was developed to provide a disciplined way to differentiate between the maturity levels of technologies being considered for space missions. It was first applied when Ray Chase, then the JPL Propulsion Division representative on the Jupiter Orbiter design team, used Sadin's methodology to assess the technology readiness of the proposed spacecraft design [2].

The first formal TRL definitions included seven levels, which were standardized by NASA in 1989 [2]. These original definitions were:

- Level 1 – Basic Principles Observed and Reported

- Level 2 – Potential Application Validated

- Level 3 – Proof-of-Concept Demonstrated, Analytically and/or Experimentally

- Level 4 – Component and/or Breadboard Laboratory Validated

- Level 5 – Component and/or Breadboard Validated in Simulated or Realspace Environment

- Level 6 – System Adequacy Validated in Simulated Environment

- Level 7 – System Adequacy Validated in Space [2]

In the 1990s, NASA expanded this original seven-level scale to the current nine-level version, which subsequently gained widespread acceptance across government, industry, and research sectors [2].

The Nine TRL Levels: NASA Definitions

Table 1: The Nine Technology Readiness Levels According to NASA

| TRL | Description | Key Activities and Milestones |
|---|---|---|
| 1 | Basic principles observed and reported | Scientific research begins; results translated into future R&D [1] |
| 2 | Technology concept and/or application formulated | Practical application identified but speculative; no experimental proof [1] |
| 3 | Analytical and experimental critical function proof-of-concept | Active R&D begins; analytical studies and laboratory demonstrations validate predictions [1] |
| 4 | Component validation in laboratory environment | Low-fidelity breadboard built and operated to demonstrate basic functionality [1] |
| 5 | Component validation in relevant environment | Medium-fidelity brassboard tested in simulated operational environment [1] |
| 6 | System/subsystem model demonstration in relevant environment | High-fidelity prototype demonstrated in relevant environment [1] |
| 7 | System prototype demonstration in operational environment | High-fidelity engineering unit demonstrated in actual operational environment [1] |
| 8 | Actual system completed and "flight qualified" | Final product demonstrated through test and analysis for intended environment [1] |
| 9 | Actual system proven through successful mission operations | Final product successfully operated in actual mission [1] |

Figure 1: TRL Progression from Basic Research to Mission Operations

Global Adoption and Evolution

The TRL framework progressively expanded beyond NASA throughout the 1990s and 2000s. The United States Air Force adopted TRLs in the 1990s, followed by the Department of Defense (DOD) which began using the scale for procurement in the early 2000s [2]. A pivotal 1999 report by the United States General Accounting Office examined technology transition differences between the DOD and private industry, concluding that the DOD took greater risks with less mature technologies and recommending wider use of TRLs to assess maturity prior to transition [2].

Internationally, the European Space Agency adopted the TRL scale in the mid-2000s, and the European Commission formally implemented TRLs in the Horizon 2020 research program in 2014 [2]. The scale was also codified by the International Organization for Standardization (ISO) with the publication of the ISO 16290:2013 standard in 2013 [2].

TRLs in Forensic Chemistry Research

The Need for TRL Assessment in Forensic Science

Forensic science faces unique challenges in technology development and implementation. Analytical methods must not only demonstrate technical efficacy but also meet rigorous legal standards for admissibility as evidence in court proceedings [4]. The convergence of increasing demands for forensic services with diminishing resources creates a critical need for structured assessment of technology maturity before implementation [6].

Traditional forensic methods based on human perception and subjective judgment are increasingly recognized as susceptible to cognitive bias and logical flaws [7]. A paradigm shift is underway toward methods based on relevant data, quantitative measurements, and statistical models that are transparent, reproducible, and resistant to bias [7]. Within this context, TRLs provide a framework for systematically developing and validating new forensic technologies from basic research through courtroom implementation.

Current State of Forensic Technology Maturity

The application of TRLs in forensic science is still emerging. A 2025 review of comprehensive two-dimensional gas chromatography (GC×GC) in forensic applications used a simplified technology readiness scale (levels 1-4) to characterize advancements across seven forensic chemistry applications [4]. The review found that most GC×GC applications remain at lower readiness levels, with only a few nearing the maturity needed for courtroom implementation.

Table 2: Technology Readiness Levels in Forensic Applications: GC×GC Case Study

| Forensic Application | Current TRL Range (simplified 1-4 scale) | Key Development Needs |
|---|---|---|
| Illicit Drug Analysis | 2-3 | Standardization, validation studies, error rate analysis [4] |
| Forensic Toxicology | 2-3 | Method validation, inter-laboratory studies [4] |
| Fingermark Chemistry | 2-3 | Reproducibility studies, validation against casework samples [4] |
| Decomposition Odor | 3-4 | Database development, standardization [4] |
| CBRN Forensics | 2-3 | Sensitivity and specificity validation [4] |
| Ignitable Liquid Residue | 3-4 | Inter-laboratory validation, standardization [4] |
| Oil Spill Tracing | 3-4 | Database development, validation studies [4] |

Legal Standards and Technology Readiness

For forensic technologies to reach the highest TRLs (8-9), they must satisfy legal admissibility standards. In the United States, the Daubert Standard (from Daubert v. Merrell Dow Pharmaceuticals, Inc., 1993) directs courts to consider whether the underlying method: (1) can be and has been tested; (2) has been subjected to peer review and publication; (3) has a known or potential error rate; and (4) is generally accepted in the relevant scientific community [4]. Similarly, Canada's Mohan Criteria require expert evidence to be relevant, necessary to assist the trier of fact, not barred by any exclusionary rule, and presented by a properly qualified expert [4].

These legal standards directly influence TRL progression in forensic chemistry. Technologies at TRL 7-9 must demonstrate not only technical functionality but also compliance with these legal frameworks, including defined error rates, extensive validation, and general acceptance within the forensic science community [4].

Adapting TRLs for Forensic Chemistry

Methodologies for TRL Advancement in Forensic Science

Advancing forensic technologies through TRL levels requires systematic validation and implementation strategies. The National Institute of Justice (NIJ) Forensic Science Strategic Research Plan, 2022-2026 outlines priority objectives specifically designed to mature forensic technologies [6]:

Applied Research and Development (TRL 2-4)

- Development of methods that increase sensitivity and specificity of forensic analysis

- Machine learning methods for forensic classification

- Nondestructive or minimally destructive methods that maintain evidence integrity

- Novel differentiation techniques for biological evidence

Technology Demonstration and Validation (TRL 5-7)

- Tools and workflows to enhance investigative processes

- Automated tools to support examiners' conclusions

- Standard criteria for analysis and interpretation

- Evaluation of expanded conclusion scales and likelihood ratios

System Implementation (TRL 8-9)

- Development of reference materials and databases

- Interlaboratory validation studies

- Implementation of new technologies with cost-benefit analyses

- Proficiency tests that reflect complexity and workflows

Experimental Protocols for TRL Validation

Protocol 1: Method Validation for Novel Analytical Techniques

This protocol supports advancement from TRL 3-4 to TRL 5-6 for novel forensic analytical methods:

Analytical Sensitivity and Specificity Assessment: Determine limits of detection (LOD) and quantification (LOQ) using serial dilutions of reference standards. Evaluate specificity against commonly interfering substances found in forensic evidence [6] [4].

Reproducibility and Repeatability Testing: Conduct intra-day and inter-day precision studies with multiple operators. Perform tests across different environmental conditions relevant to forensic laboratory settings [6].

Reference Material and Quality Control Development: Establish certified reference materials and quality control protocols suitable for routine implementation in forensic laboratories [6].

Comparison with Established Methods: Perform parallel analysis of casework-type samples using both the novel method and currently accepted standard methods to demonstrate comparative performance [4].
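The sensitivity assessment in Protocol 1 can be illustrated with a short calculation. The sketch below assumes the common calibration-curve convention LOD = 3.3σ/S and LOQ = 10σ/S, where σ is the residual standard deviation of a linear fit and S is its slope. That convention, the function name `estimate_lod_loq`, and the dilution data are illustrative assumptions, not values or methods taken from the cited sources.

```python
import math

def estimate_lod_loq(concentrations, responses):
    """Fit y = a + b*x by ordinary least squares and return (slope, lod, loq).

    Assumes LOD = 3.3*sigma/S and LOQ = 10*sigma/S, where sigma is the
    residual standard deviation and S is the slope (an illustrative choice).
    """
    n = len(concentrations)
    if n < 3 or n != len(responses):
        raise ValueError("need at least three paired calibration points")
    mean_x = sum(concentrations) / n
    mean_y = sum(responses) / n
    sxx = sum((x - mean_x) ** 2 for x in concentrations)
    sxy = sum((x - mean_x) * (y - mean_y)
              for x, y in zip(concentrations, responses))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    # Residual standard deviation with n - 2 degrees of freedom
    ss_res = sum((y - (intercept + slope * x)) ** 2
                 for x, y in zip(concentrations, responses))
    sigma = math.sqrt(ss_res / (n - 2))
    return slope, 3.3 * sigma / slope, 10.0 * sigma / slope

# Hypothetical serial dilution (ng/mL) and detector responses (arbitrary units)
conc = [1.0, 2.0, 5.0, 10.0, 20.0, 50.0]
resp = [10.4, 19.7, 50.9, 99.2, 201.5, 499.1]
slope, lod, loq = estimate_lod_loq(conc, resp)
print(f"slope={slope:.3f}, LOD={lod:.2f} ng/mL, LOQ={loq:.2f} ng/mL")
```

Other conventions exist (for example, signal-to-noise based estimates); whichever is chosen should be stated in the validation report so that results remain comparable across laboratories.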

Protocol 2: Legal Admissibility Preparation (TRL 7-8)

This protocol supports the transition from demonstrated technology to court-admissible methodology:

Error Rate Determination: Conduct comprehensive validation studies to establish known error rates using blinded samples that represent casework complexity [4].

Inter-laboratory Validation: Coordinate multi-laboratory studies to demonstrate reproducibility across different instruments, operators, and environments [6] [4].

Standard Operating Procedure Development: Create detailed, standardized protocols suitable for implementation across diverse forensic laboratory settings [6].

Proficiency Testing: Develop and administer proficiency tests that reflect real-world casework conditions and complexities [6].
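An error rate reported from a blinded study is more defensible when it carries an uncertainty estimate. The following is a minimal sketch that assumes a Wilson score interval is an acceptable way to express that uncertainty. The function name `wilson_interval` and the study counts are hypothetical, and other interval methods could equally be used.

```python
import math

def wilson_interval(errors, trials, z=1.96):
    """Wilson score interval for an observed error proportion.

    z = 1.96 corresponds to an approximate 95% confidence level.
    """
    if trials <= 0 or not 0 <= errors <= trials:
        raise ValueError("require 0 <= errors <= trials and trials > 0")
    p = errors / trials
    denom = 1.0 + z ** 2 / trials
    centre = (p + z ** 2 / (2 * trials)) / denom
    half = z * math.sqrt(p * (1 - p) / trials
                         + z ** 2 / (4 * trials ** 2)) / denom
    return max(0.0, centre - half), min(1.0, centre + half)

# Hypothetical blinded study: 3 false positives among 200 known negatives
low, high = wilson_interval(errors=3, trials=200)
print(f"observed rate = {3 / 200:.3f}, 95% interval = ({low:.3f}, {high:.3f})")
```

Note that zero observed errors does not give a zero upper bound: the interval makes explicit how much the sample size limits the claim.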

Figure 2: Forensic Technology Development Workflow with Legal Requirements

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagent Solutions for Forensic Chemistry Development

| Reagent/Material | Function in Forensic Technology Development | TRL Application Range |
|---|---|---|
| Certified Reference Materials | Provide traceable standards for method validation and quality control; essential for establishing method accuracy and precision [6] | TRL 3-9 |
| Quality Control Materials | Monitor analytical process performance; detect systematic errors and ensure ongoing method reliability [6] | TRL 4-9 |
| Proficiency Test Samples | Assess analyst competency and method performance using blinded samples that simulate casework evidence [6] | TRL 6-9 |
| Complex Matrix Simulants | Evaluate method specificity and robustness against common forensic evidence matrices (blood, soil, fabric, etc.) [6] [4] | TRL 3-7 |
| Data Analysis Software | Provide statistical interpretation tools, chemometric analysis, and likelihood ratio calculations for evidence evaluation [7] [6] | TRL 2-9 |
| Standard Operating Procedure Templates | Ensure consistent application of methods across different laboratories and analysts [6] | TRL 5-9 |

The Technology Readiness Level framework, born from NASA's need to manage technological risk in space missions, provides an invaluable structured approach for advancing forensic chemistry technologies from basic research to court-admissible applications. The systematic progression through TRL stages enables researchers, laboratory directors, and funding agencies to make evidence-based decisions about technology development, resource allocation, and implementation timelines.

For forensic chemistry, successful TRL progression requires not only technical validation but also careful attention to legal admissibility standards such as the Daubert criteria. The ongoing paradigm shift toward quantitative, statistically-grounded forensic methods creates unprecedented opportunities for TRL-guided development. By adopting and adapting the NASA-born TRL framework, the forensic science community can more effectively bridge the notorious "Valley of Death" between promising prototypes and operational implementation, ultimately strengthening the scientific foundation of forensic evidence in the courtroom.

The integration of new technologies into forensic chemistry laboratories is constrained by stringent legal and operational requirements, necessitating a robust framework to assess their maturity prior to courtroom adoption. This technical guide proposes a specialized four-level Technology Readiness Level (TRL) scale tailored for forensic chemistry applications. The framework provides a structured pathway from initial analytical research (Level 1) to legal recognition and routine casework application (Level 4). It incorporates established legal standards—including the Daubert Standard and Federal Rule of Evidence 702 in the United States and the Mohan Criteria in Canada—as critical milestones for admission as scientific evidence in legal proceedings [4]. This guide details the experimental protocols, validation requirements, and essential research tools necessary for forensic technologies to achieve practical implementation, offering a clear roadmap for researchers, scientists, and drug development professionals in the field.

Forensic science exists at the complex intersection of analytical chemistry, law enforcement, and the judicial system. The successful transition of a novel analytical technique from the research laboratory to the courtroom requires more than just demonstrated analytical performance; it must also meet rigorous legal standards for the admissibility of expert testimony [4]. General TRL scales, such as the well-known 9-level system from NASA, provide a foundational concept for technological maturity but lack the specific legal and validation benchmarks unique to forensic science [1] [2].

The development of this four-level framework is a direct response to identified crises in the field, including a documented lack of funding for forensic science research and the pressing need for objective, quantifiable interpretation of results to replace subjective conclusions [8] [9]. Emerging technologies, such as comprehensive two-dimensional gas chromatography (GC×GC), rapid DNA analysis, and Artificial Intelligence (AI)-assisted pattern recognition, show immense potential but face significant barriers to adoption without a clear, standardized path to demonstrate their reliability and validity for casework [4] [10] [11]. This guide bridges that gap by defining a forensic-specific pathway that synchronizes analytical validation with legal readiness.

The Four-Level Forensic Chemistry TRL Framework

The proposed framework consolidates traditional technology development phases into four critical levels for forensic application. Each level is defined by specific analytical and legal milestones that must be achieved before progression.

Table 1: The Four-Level Forensic Chemistry TRL Framework

| TRL Level | Designation | Analytical Milestone | Legal & Validation Milestone |
|---|---|---|---|
| Level 1 | Foundational Research & Proof of Concept | Basic principles observed; initial proof-of-concept demonstrated in a controlled laboratory environment [12]. | Research is peer-reviewed and published, establishing scientific validity for the core theory/technique [4]. |
| Level 2 | Method Development & Laboratory Validation | Technology validated in a laboratory environment; standard operating procedure (SOP) developed; initial reference materials established [13]. | Known or potential error rates are characterized through controlled studies; method is tested and has been subjected to some peer review [4]. |
| Level 3 | Real-World Demonstration & Inter-laboratory Validation | Prototype system demonstrated in an operational (casework-like) environment across multiple laboratories [1]. | Intra- and inter-laboratory validation studies completed; method demonstrates robustness and reproducibility across relevant environments [4]. |
| Level 4 | Legal Adoption & Routine Casework | Actual system proven through successful deployment in routine casework under a full range of conditions [12]. | Technology is "generally accepted" in the relevant forensic scientific community and meets legal admissibility standards (e.g., Daubert, Mohan) [4]. |
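Because each level in the table pairs an analytical milestone with a legal and validation milestone, the framework can be read as a sequence of gates. The sketch below is one possible encoding, assuming that a level counts as reached only when both of its milestones and all lower levels are satisfied. The class and field names are hypothetical shorthand for the table entries.

```python
from dataclasses import dataclass

@dataclass
class MilestoneRecord:
    # Level 1
    proof_of_concept: bool = False
    peer_reviewed_publication: bool = False
    # Level 2
    lab_validation_with_sop: bool = False
    error_rates_characterized: bool = False
    # Level 3
    operational_demonstration: bool = False
    interlab_validation: bool = False
    # Level 4
    routine_casework: bool = False
    general_acceptance: bool = False

def forensic_trl(record: MilestoneRecord) -> int:
    """Return the highest level (0-4) whose gates, and all earlier gates, are met."""
    gates = [
        (record.proof_of_concept, record.peer_reviewed_publication),
        (record.lab_validation_with_sop, record.error_rates_characterized),
        (record.operational_demonstration, record.interlab_validation),
        (record.routine_casework, record.general_acceptance),
    ]
    level = 0
    for analytical_ok, legal_ok in gates:
        if not (analytical_ok and legal_ok):
            break  # a missing milestone blocks all higher levels
        level += 1
    return level

# Hypothetical method with Level 1 and Level 2 milestones documented
method = MilestoneRecord(
    proof_of_concept=True,
    peer_reviewed_publication=True,
    lab_validation_with_sop=True,
    error_rates_characterized=True,
)
print(forensic_trl(method))  # 2
```

The strict gating is a design choice: a method with an inter-laboratory study but no characterized error rates would still be reported at Level 1, which highlights the gap to be closed.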

Level 1: Foundational Research & Proof of Concept

The primary goal of Level 1 is to translate basic scientific research into a practical application concept for a forensic problem.

- Core Activities: Identify basic principles and undertake analytical and experimental studies to achieve proof-of-concept [12]. For a technique like GC×GC, this involves demonstrating increased peak capacity and signal-to-noise ratio for a simple, defined mixture compared to 1D-GC [4].

- Experimental Protocol:

  - Hypothesis Formulation: Define the specific forensic problem (e.g., "GC×GC-TOFMS can separate co-eluting peaks in complex illicit drug mixtures that 1D-GC-MS cannot").

  - Sample Preparation: Acquire or synthesize a well-characterized control mixture of known analytes.

  - Instrumental Analysis: Analyze the control mixture using both the traditional method (e.g., 1D-GC-MS) and the novel method (e.g., GC×GC-TOFMS).

  - Data Analysis: Compare chromatographic data, focusing on metrics like peak resolution, number of detected analytes, and signal-to-noise ratio.

- Output: Peer-reviewed publication demonstrating the analytical proof-of-concept and its potential forensic relevance.
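The comparison metrics named in the Level 1 protocol can be computed directly from peak tables. The sketch below assumes the standard resolution definition Rs = 2(t2 − t1)/(w1 + w2) with baseline peak widths, and one common signal-to-noise convention (peak height divided by the standard deviation of a signal-free baseline). The retention times, widths, and baseline values are invented for illustration.

```python
import statistics

def resolution(t1, t2, w1, w2):
    """Chromatographic resolution from retention times and baseline peak widths."""
    return 2.0 * abs(t2 - t1) / (w1 + w2)

def signal_to_noise(peak_height, baseline_samples):
    """Peak height divided by the standard deviation of a signal-free baseline."""
    return peak_height / statistics.stdev(baseline_samples)

# Hypothetical pair of analytes that nearly co-elute in 1D-GC (times in minutes)
rs_1d = resolution(t1=10.00, t2=10.05, w1=0.10, w2=0.10)

# The same pair along the second dimension of GC×GC (times in seconds)
rs_2d = resolution(t1=1.20, t2=1.80, w1=0.20, w2=0.20)

print(f"1D resolution: {rs_1d:.2f}, second-dimension resolution: {rs_2d:.2f}")

# Hypothetical baseline segment (detector counts) and peak height
baseline = [101.0, 99.0, 100.5, 98.5, 101.5, 99.5]
print(f"S/N: {signal_to_noise(450.0, baseline):.0f}")
```

A resolution of roughly 1.5 or more is conventionally treated as baseline separation, so this invented pair would count as unresolved in one dimension and well resolved in the second.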

Level 2: Method Development & Laboratory Validation

At Level 2, the focus shifts from concept to a validated laboratory method, with an emphasis on characterizing the method's performance and limitations.

- Core Activities: Integration of basic technological components in a laboratory environment; development of a draft SOP; initial determination of error rates and validation parameters such as specificity, sensitivity, and reproducibility [4] [13].

- Experimental Protocol:

  - SOP Development: Document a detailed, repeatable procedure for sample preparation, instrumental analysis, and data processing.

  - Determination of Figures of Merit: Conduct experiments to establish:

    - Specificity/Sensitivity: Analyze known positive and negative samples to determine false positive/negative rates.

    - Linear Dynamic Range & LOD/LOQ: Analyze a series of standard concentrations.

    - Precision: Perform repeatability (short-term) and intermediate precision (different days, analysts) studies.

  - Error Rate Analysis: Systematically challenge the method with blank samples, closely related compounds, and complex matrices to characterize potential sources of error [4].

- Output: A validated laboratory method with a draft SOP, a detailed report on method performance characteristics, and a preliminary estimate of error rates.
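The figures of merit listed for Level 2 reduce to a few simple ratios. As a minimal sketch, the functions below derive sensitivity, specificity, and the corresponding false negative and false positive rates from counts of known positive and negative samples, and express precision as a percent relative standard deviation. The function names, counts, and replicate values are hypothetical.

```python
import statistics

def classification_rates(tp, fn, tn, fp):
    """Sensitivity, specificity, and error rates from known-sample outcomes."""
    sensitivity = tp / (tp + fn)   # fraction of true positives detected
    specificity = tn / (tn + fp)   # fraction of true negatives cleared
    return {
        "sensitivity": sensitivity,
        "specificity": specificity,
        "false_negative_rate": 1.0 - sensitivity,
        "false_positive_rate": 1.0 - specificity,
    }

def percent_rsd(values):
    """Relative standard deviation (%) of replicate measurements."""
    return 100.0 * statistics.stdev(values) / statistics.mean(values)

# Hypothetical challenge set: 50 known positives, 100 known negatives
rates = classification_rates(tp=48, fn=2, tn=97, fp=3)
for name, value in rates.items():
    print(f"{name}: {value:.3f}")

# Hypothetical replicate results (ng/mL): same day vs. across days and analysts
repeatability = percent_rsd([10.1, 9.9, 10.0, 10.2, 9.8])
intermediate = percent_rsd([10.1, 9.6, 10.4, 10.3, 9.5])
print(f"repeatability RSD = {repeatability:.1f}%, "
      f"intermediate precision RSD = {intermediate:.1f}%")
```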

Level 3: Real-World Demonstration & Inter-laboratory Validation

Level 3 assesses the method's performance in a realistic, operational environment and its transferability between laboratories, which is critical for establishing general acceptance.

- Core Activities: A model or prototype is demonstrated at pilot scale in a simulated or relevant environment, leading to inter-laboratory validation [12]. This is a critical step for assessing robustness.

- Experimental Protocol:

  - Pilot Demonstration: Apply the method to authentic, casework-type samples provided by a collaborating forensic laboratory. Maintain a chain of custody.

  - Blinded Study: Conduct a single-laboratory study using blinded samples with a known ground truth to assess performance under realistic conditions.

  - Inter-laboratory Validation: Coordinate a multi-laboratory study using the same SOP, reference materials, and sample sets. The participating laboratories should represent a range of expected operational environments.

  - Data Analysis: Statistically analyze the results from all laboratories to determine reproducibility, concordance rates, and sources of inter-laboratory variation.

- Output: A comprehensive inter-laboratory validation study report published in a peer-reviewed journal, providing strong evidence for the method's robustness and reliability.
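One way to carry out the statistical analysis of an inter-laboratory study is a one-way analysis of variance that separates within-laboratory from between-laboratory variation. The sketch below assumes a balanced design (every laboratory reports the same number of replicates on the same sample) and follows the usual repeatability/reproducibility decomposition. The function name and data are hypothetical, and a real study would also screen for outliers first.

```python
import math
import statistics

def precision_components(lab_results):
    """Return (s_r, s_R): repeatability and reproducibility standard deviations.

    lab_results is a list of equal-length lists, one per laboratory.
    """
    p = len(lab_results)
    n = len(lab_results[0]) if p else 0
    if p < 2 or n < 2 or any(len(r) != n for r in lab_results):
        raise ValueError("need a balanced design: >= 2 labs, >= 2 replicates each")
    lab_means = [statistics.mean(r) for r in lab_results]
    grand_mean = statistics.mean(lab_means)
    # Within-laboratory mean square: pooled replicate variance
    ms_within = sum(statistics.variance(r) for r in lab_results) / p
    # Between-laboratory mean square
    ms_between = n * sum((m - grand_mean) ** 2 for m in lab_means) / (p - 1)
    # Between-laboratory variance component, floored at zero
    var_labs = max(0.0, (ms_between - ms_within) / n)
    s_r = math.sqrt(ms_within)
    s_R = math.sqrt(ms_within + var_labs)
    return s_r, s_R

# Hypothetical results (ng/mL) from three laboratories, three replicates each
results = [
    [10.0, 10.2, 9.8],
    [11.0, 11.2, 10.8],
    [12.0, 12.2, 11.8],
]
s_r, s_R = precision_components(results)
print(f"repeatability s_r = {s_r:.3f}, reproducibility s_R = {s_R:.3f}")
```

A reproducibility value much larger than the repeatability value, as in this invented data set, points to laboratory-specific bias as the dominant source of variation.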

Level 4: Legal Adoption & Routine Casework

The final level is achieved when the technology is fully integrated into forensic laboratory workflows and its results are deemed admissible in court.

- Core Activities: The technology is used in its final form under a full range of operational conditions in routine casework [12]. Its underlying principles and methodology are generally accepted by the forensic science community [4].

- Implementation Milestones:

  - Implementation into Casework: The method is fully adopted by one or more operational forensic laboratories for use in actual casework.

  - Legal Precedent: The methodology and its conclusions have been successfully defended under cross-examination in court, setting a precedent for admissibility.

  - Standardization: The method is incorporated into official guidelines or standards by recognized bodies (e.g., ASTM International, OSAC).

- Output: Widespread adoption of the technology by forensic laboratories, successful court testimony, and formal standardization.

Experimental Workflow and Legal Pathway

The journey of a technology through the TRL framework involves parallel progress along both experimental and legal tracks. The following diagram visualizes this integrated pathway, highlighting key decision points and milestones.

Figure 1: Integrated experimental and legal readiness pathway. The horizontal flow represents the progression through experimental TRL levels, while the vertical connections show the specific legal and validation milestones required at each stage to satisfy admissibility criteria [4].

The Scientist's Toolkit: Essential Research Reagent Solutions

The development and validation of forensic chemical methods require a suite of reliable reference materials and reagents. The following table details key components essential for conducting experiments across the TRL scale.

Table 2: Key Research Reagent Solutions for Forensic Chemistry Development

| Reagent/Material | Function & Purpose | TRL Application Level |
|---|---|---|
| Certified Reference Materials (CRMs) | Provide the ground truth for method development, calibration, and specificity testing. Essential for determining error rates and validating identifications [9]. | Level 1 - Level 4 |
| Internal Standards (Isotope-Labeled) | Correct for analytical variability in sample preparation and instrument response; critical for achieving precise and quantitative results [9]. | Level 2 - Level 4 |
| Quality Control (QC) Check Samples | Used to monitor the ongoing performance and stability of an analytical method; a cornerstone of laboratory accreditation and method validation. | Level 2 - Level 4 |
| Complex Matrix Simulants | Mimic the composition of real-world evidence (e.g., street drug mixtures, biological fluids, fire debris) to test method robustness, selectivity, and sample cleanup protocols. | Level 2 - Level 3 |
| Characterized Proficiency Test Samples | Provide a blinded, external assessment of laboratory performance; crucial for inter-laboratory studies (Level 3) and demonstrating competency for court (Level 4). | Level 3 - Level 4 |

The Forensic Chemistry TRL Scale provides a structured, four-level framework to guide the maturation of novel technologies from foundational research to court-admissible evidence. By explicitly integrating legal admissibility criteria with established analytical validation milestones, this framework addresses a critical gap in the forensic science innovation ecosystem. It offers a clear and practical roadmap for researchers, funding agencies, and laboratory managers to prioritize resources, assess progress, and ultimately accelerate the adoption of reliable and robust scientific methods into the criminal justice system. The adoption of this scale will bolster the scientific robustness of forensic chemistry, enhance the comparability of research maturity, and help fulfill the urgent need for objective, quantifiable evidence in the courtroom.

Forensic science currently faces a dual crisis: severe funding constraints that impede operational capacity coexist with pressing innovation needs required to meet evolving judicial standards. This paradoxical state demands a systematic evaluation of technology readiness levels (TRLs) across emerging forensic methodologies, particularly in forensic chemistry. The American Academy of Forensic Sciences 2025 conference highlighted these issues, with experts like Heidi Eldridge noting that federal funding uncertainties have left "agencies trying to do more with less," unable to purchase new equipment or conduct research with the latest technologies [14]. Simultaneously, novel analytical techniques such as comprehensive two-dimensional gas chromatography (GC×GC) must navigate rigorous legal admissibility standards, including the Daubert Standard and Federal Rule of Evidence 702, which require demonstrated reliability, peer review, known error rates, and general scientific acceptance [4]. This whitepaper examines this critical juncture through the lens of TRLs, providing a technical roadmap for researchers and drug development professionals working at the intersection of analytical chemistry and judicial admissibility.

The Funding Landscape: Quantitative Analysis of Resource Constraints

The forensic science funding ecosystem relies heavily on federal grant programs that face steep proposed cuts or remain funded below their authorized levels, creating substantial operational challenges for laboratories nationwide. The figures below point to sustained underinvestment in critical infrastructure.

Table 1: Federal Forensic Grant Program Funding Trends (2024-2026)

| Grant Program | Primary Focus | FY 2024-2025 Funding | FY 2026 Proposed | Change | Impact |
|---|---|---|---|---|---|
| Paul Coverdell Forensic Science Improvement Grants | Multi-disciplinary forensic capacity | $35 million | $10 million | -70% | Affects all forensic disciplines, including DNA, toxicology, and trace evidence [15] |
| Capacity Enhancement for Backlog Reduction (CEBR) | DNA-specific casework backlog | $94-95 million | ~$95 million (est.) | -37% from authorized level | Remains below the $151 million authorized by Congress under the Debbie Smith Act [15] |

Resource constraints are already measurable in laboratory performance. Between 2017 and 2023, turnaround times increased by 88% for DNA casework, 246% for post-mortem toxicology, and 232% for controlled substances analysis [15]. The National Institute of Justice's 2019 Needs Assessment identified a $640 million annual shortfall merely to meet current demand, with another $270 million needed to address the opioid crisis [15]. As Scott Hummel, president of the American Society of Crime Laboratory Directors, warned, limiting these resources "would have dire consequences on a lot of crime laboratories who depend on those funds for maintaining operations" [15].
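The percentage changes in the funding table follow from the dollar amounts by simple arithmetic. The short check below makes the two different baselines explicit: the Coverdell change is measured against the prior funding level, while the CEBR change is measured against the authorized level rather than the prior year. The helper name `percent_change` is illustrative.

```python
def percent_change(baseline, value):
    """Signed percentage change of value relative to baseline."""
    return 100.0 * (value - baseline) / baseline

# Coverdell: $35 million (FY 2024-2025) vs. $10 million (FY 2026 proposed)
coverdell = percent_change(35.0, 10.0)

# CEBR: ~$95 million proposed vs. the $151 million authorized by Congress
cebr_vs_authorized = percent_change(151.0, 95.0)

print(f"Coverdell: {coverdell:.1f}%")                    # Coverdell: -71.4%
print(f"CEBR vs. authorized: {cebr_vs_authorized:.1f}%")  # CEBR vs. authorized: -37.1%
```

The Coverdell result is consistent with the roughly 70% reduction reported in the table, and the CEBR result reproduces the 37% gap to the authorized level.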

Technology Readiness Levels in Forensic Chemistry

TRL Framework for Forensic Applications

Technology Readiness Levels provide a systematic metric for assessing the maturity of evolving technologies prior to incorporating them into operational forensic workflows. For forensic applications, this framework must integrate both analytical validation and legal admissibility requirements. We propose a modified TRL scale specific to forensic chemistry:

- TRL 1-2 (Basic Research): Initial proof-of-concept studies establishing fundamental separation science principles.

- TRL 3-4 (Applied Research): Laboratory-based validation of forensic applications using controlled samples.

- TRL 5-6 (Technology Demonstration): Intra-laboratory validation using casework-like samples and initial error rate estimation.

- TRL 7-8 (System Validation): Inter-laboratory validation, standard operating procedure development, and peer-reviewed publication.

- TRL 9 (Operational Deployment): Routine casework implementation with established proficiency testing and legal acceptance.
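For bookkeeping across many candidate methods, the five bands above can be expressed as a simple lookup. This is a minimal sketch: the function name `forensic_phase` is hypothetical, and the band labels are copied from the list.

```python
def forensic_phase(trl: int) -> str:
    """Map a 1-9 TRL to the forensic development phase proposed above."""
    if not isinstance(trl, int) or not 1 <= trl <= 9:
        raise ValueError("TRL must be an integer from 1 to 9")
    if trl <= 2:
        return "Basic Research"
    if trl <= 4:
        return "Applied Research"
    if trl <= 6:
        return "Technology Demonstration"
    if trl <= 8:
        return "System Validation"
    return "Operational Deployment"

for level in (1, 4, 6, 7, 9):
    print(level, forensic_phase(level))
```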

Current TRL Assessment of GC×GC in Forensic Applications

Comprehensive two-dimensional gas chromatography is one of the most promising advanced separation techniques for analyzing complex forensic evidence. The technique extends traditional 1D GC by connecting two columns with different stationary phases in series through a modulator, dramatically increasing peak capacity and signal-to-noise ratio for trace compound analysis [4]. Current research applications have achieved varying levels of technological maturity.

Table 2: Technology Readiness Levels of GC×GC in Forensic Applications

| Forensic Application | Current TRL | Key Research Developments | Legal Admissibility Status |
|---|---|---|---|
| Illicit Drug Analysis | TRL 6-7 | Non-targeted screening for novel psychoactive substances; impurity profiling [4] | Methods peer-reviewed; error rates being established |
| Toxicology | TRL 5-6 | Simultaneous screening of pharmaceuticals, metabolites, and drugs of abuse in complex matrices [4] | Limited validation for specific analyte classes |
| Fingermark Chemistry | TRL 4-5 | Analysis of endogenous compounds and exogenous contaminants for chemical fingerprinting [4] | Primarily research phase; admissibility not established |
| Decomposition Odor | TRL 5-6 | Volatile organic compound profiling for postmortem interval estimation [4] | Validation studies ongoing; error rates not well characterized |
| Ignitable Liquid Analysis | TRL 6-7 | Improved chemical fingerprinting for arson evidence through enhanced separation of complex mixtures [4] | Some laboratory adoption; moving toward general acceptance |
| Oil Spill Tracing | TRL 7 | Environmental forensic applications with established biomarker analysis protocols [4] | Higher maturity due to environmental (non-criminal) applications |

The workflow for GC×GC analysis demonstrates the increased separation capability of this technique, which is particularly valuable for non-targeted forensic applications where a wide range of analytes must be analyzed simultaneously [4].

Diagram: GC×GC Analytical Workflow. The modulator serves as the "heart" of the system, preserving separation from the first dimension and reinjecting focused bands into the second dimension for orthogonal separation.

Experimental Protocols: Methodologies for Advancing TRLs

GC×GC-MS Method for Illicit Drug Analysis

Objective: Develop and validate a non-targeted screening method for novel psychoactive substances in complex mixtures using GC×GC-Time-of-Flight Mass Spectrometry.

Materials and Reagents:

- GC×GC System: Configured with thermal modulator

- Primary Column: Rxi-5Sil MS (30m × 0.25mm ID × 0.25μm df)

- Secondary Column: Rxi-17Sil MS (1m × 0.15mm ID × 0.15μm df)

- Mass Spectrometer: Time-of-Flight (TOF) detector with ≥50 Hz acquisition rate

- Reference Standards: Certified drug standards and internal deuterated analogs

- Solvents: HPLC-grade methanol, dichloromethane, and ethyl acetate

Sample Preparation Protocol:

- Liquid-Liquid Extraction: Add 1mL sample to 3mL ethyl acetate:dichloromethane (2:1 v/v) in a glass centrifuge tube.

- Vortex and Centrifuge: Mix vigorously for 60 seconds, then centrifuge at 3500 rpm for 5 minutes.

- Concentration: Transfer organic layer to a clean vial and evaporate under nitrogen stream at 40°C to near dryness.

- Reconstitution: Reconstitute in 100μL ethyl acetate with 10ppm internal standard (tetracosane-d50).

- Injection: Transfer to GC vial with micro-insert for 1μL splitless injection.

Instrumental Parameters:

- Injector: PTV in solvent vent mode (50°C for 0.1min, then 14.5°C/s to 300°C)

- Carrier Gas: Helium, constant flow 1.2mL/min

- Primary Oven: 40°C (2min hold), then 10°C/min to 320°C (5min hold)

- Secondary Oven: Offset +5°C relative to primary oven

- Modulator: 2.5s modulation period, 0.7s hot pulse time

- Transfer Line: 280°C

- MS Source: 230°C, electron energy 70eV, acquisition range m/z 40-600
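
As a quick sanity check on these settings, the oven program and modulation period fix how many second-dimension chromatograms one run produces, and the detector rate fixes how many spectra sample each of them. The arithmetic below is a sketch that uses only the values listed above; it ignores oven cool-down and any solvent delay, and the variable names are illustrative.

```python
# Values copied from the instrumental parameters above.
START_C, END_C = 40.0, 320.0
RAMP_C_PER_MIN = 10.0
INITIAL_HOLD_MIN, FINAL_HOLD_MIN = 2.0, 5.0
MODULATION_PERIOD_S = 2.5
ACQUISITION_HZ = 50.0  # the stated minimum TOF acquisition rate

ramp_min = (END_C - START_C) / RAMP_C_PER_MIN            # 28 min ramp
run_min = INITIAL_HOLD_MIN + ramp_min + FINAL_HOLD_MIN   # 35 min total program
modulations = run_min * 60.0 / MODULATION_PERIOD_S       # 840 second-dimension slices
spectra_per_modulation = ACQUISITION_HZ * MODULATION_PERIOD_S  # 125 spectra per slice
total_spectra = modulations * spectra_per_modulation     # 105,000 spectra per run

print(run_min, modulations, spectra_per_modulation, total_spectra)
```

At the minimum 50 Hz rate, a second-dimension peak only a few hundred milliseconds wide is covered by a limited number of spectra, which is one reason faster acquisition is often preferred for GC×GC.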

Data Processing:

- Peak Finding: Automated peak detection with minimum S/N=100

- Deconvolution: Spectral deconvolution for coeluting compounds

- Library Matching: Forward search against NIST and custom drug libraries (minimum match factor 800/1000)

- Quantitation: Internal standard method with 5-point calibration curves
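
The quantitation step can be sketched as an ordinary least-squares fit of the analyte-to-internal-standard response ratio against concentration, followed by back-calculation for an unknown. The calibration values below are invented placeholders, the function names are hypothetical, and an unweighted linear model is an assumption; a validated method might require weighting or a different model.

```python
def fit_line(x, y):
    """Ordinary least-squares slope and intercept for y = slope * x + intercept."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def quantify(analyte_area, istd_area, slope, intercept):
    """Back-calculate concentration from the analyte / internal-standard area ratio."""
    ratio = analyte_area / istd_area
    return (ratio - intercept) / slope


# Placeholder five-point calibration: concentration vs. response ratio.
conc = [1.0, 2.0, 5.0, 10.0, 20.0]
ratios = [0.10, 0.20, 0.50, 1.00, 2.00]
slope, intercept = fit_line(conc, ratios)

# Unknown sample: peak areas for the analyte and the internal standard.
print(quantify(7500.0, 10000.0, slope, intercept))
```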

Validation Protocol for TRL Advancement

To advance from TRL 5 to TRL 7, laboratories must implement comprehensive validation studies addressing legal admissibility requirements:

Accuracy and Precision: Analyze six replicates at three concentration levels (low, medium, high) on five separate days. Calculate intra-day and inter-day precision as %RSD, with acceptance criteria of ≤15% for the medium and high concentrations and ≤20% for the low concentration.

Specificity: Analyze 20 different blank matrix samples to demonstrate absence of interference at retention times of target analytes.

Robustness: Deliberately vary instrumental parameters (oven temperature ±2°C, flow rate ±0.1mL/min) to determine critical method parameters.

Limit of Detection/Quantitation: Serial dilution to determine LOD (S/N≥3) and LOQ (S/N≥10, precision ≤20%, accuracy 80-120%).

Carryover: Injection of blank solvent after highest calibration standard to demonstrate ≤20% of LOD response.

Stability: Bench-top, processed sample, and freeze-thaw stability assessments under various storage conditions.
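
The precision criterion above reduces to a %RSD calculation per day and across days. The sketch below assumes one simple convention, where inter-day precision is the %RSD of all results pooled across days; an ANOVA-based estimate is a common alternative. The data are invented and the function names are hypothetical.

```python
from statistics import mean, stdev

# Acceptance limits (%RSD) taken from the precision criterion above.
RSD_LIMITS = {"low": 20.0, "medium": 15.0, "high": 15.0}


def percent_rsd(values):
    """Relative standard deviation in percent, using the sample standard deviation."""
    return 100.0 * stdev(values) / mean(values)


def precision_summary(days, level):
    """days: one list of replicate results per day, all at one concentration level."""
    intra_day = [percent_rsd(day) for day in days]
    # Assumption: inter-day precision is the %RSD of all pooled results.
    inter_day = percent_rsd([v for day in days for v in day])
    limit = RSD_LIMITS[level]
    return {
        "intra_day": intra_day,
        "inter_day": inter_day,
        "passes": max(intra_day) <= limit and inter_day <= limit,
    }


# Invented results: six replicates on each of five days at the medium level.
medium_level = [
    [98.2, 101.5, 99.7, 100.9, 97.8, 102.3],
    [99.1, 100.4, 98.6, 101.8, 100.2, 99.5],
    [101.0, 102.2, 99.9, 100.6, 98.7, 101.4],
    [97.9, 99.3, 100.8, 98.5, 100.1, 99.0],
    [100.5, 101.1, 99.2, 102.0, 98.9, 100.7],
]
summary = precision_summary(medium_level, "medium")
print(summary["inter_day"], summary["passes"])
```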

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful implementation of advanced forensic methodologies requires specific reagents and materials designed to meet the rigorous demands of forensic analysis while maintaining chain-of-custody integrity.

Table 3: Essential Research Reagents and Materials for Advanced Forensic Chemistry

| Reagent/Material | Function | Technical Specifications | Forensic Application |
|---|---|---|---|
| Certified Reference Materials | Quantitative calibration and method validation | Certified purity ≥98.5%, with expiration date and stability data | All quantitative analyses; essential for courtroom testimony |
| Deuterated Internal Standards | Compensation for matrix effects and recovery variations | Isotopic purity ≥99%, chemical purity ≥95% | Mass spectrometric quantification; improves accuracy and precision |
| SPME Fibers | Solventless extraction of volatile compounds | Various coatings (PDMS, CAR/PDMS, DVB/CAR/PDMS) optimized for analyte polarity | Arson analysis, decomposition odor, drug detection |
| Molecularly Imprinted Polymers | Selective solid-phase extraction | Custom synthesized for target analyte classes | Sample clean-up for complex matrices; novel psychoactive substance isolation |
| Derivatization Reagents | Enhancement of volatility and detection | MSTFA, BSTFA, PFPA for specific functional groups | Steroids, acids, polar compounds not amenable to direct GC analysis |
| Stable Isotope Labeled Compounds | Distinguish exogenous from endogenous compounds | 13C, 15N labeled versions of target analytes | Doping control, testosterone/epitestosterone ratio determination |

Innovation Under Constraints: Strategic Implementation Pathways

Despite funding challenges, several laboratories have successfully implemented innovative workflows through strategic approaches. The following decision framework illustrates pathways for laboratories to advance forensic methodologies despite resource constraints:

Diagram: Strategic Implementation Pathway for Forensic Methods. Laboratories can navigate funding constraints by aligning method selection with current TRL status and available resources.

Specific success stories demonstrate this framework in action:

Michigan State Police: Utilized competitive CEBR grants to validate low-input and degraded DNA extraction methods, resulting in a 17% increase in interpretable DNA profiles from complex evidence [15].

Louisiana State Police Crime Laboratory: Implemented Lean Six Sigma principles through a $600,000 NIJ Efficiency Grant, reducing average DNA turnaround time from 291 days to just 31 days and tripling monthly case throughput [15].

Connecticut Forensic Laboratory: Addressed a backlog of over 12,000 cases through workflow redesign supported by Coverdell grants, achieving reduction to under 1,700 cases and average DNA turnaround under 60 days [15].

The current state of forensic research represents a critical inflection point. While advanced analytical techniques like GC×GC offer unprecedented capability for complex evidence analysis, their progression to court-admissible methodologies (TRL 9) requires both strategic funding investment and systematic validation approaches. The proposed framework integrates technical advancement with practical implementation strategies, enabling laboratories to navigate the dual challenges of funding constraints and innovation demands. As forensic science continues to evolve within the judicial ecosystem, the collaboration between analytical chemists, forensic practitioners, and legal stakeholders becomes increasingly essential to ensure that scientific innovation translates to just outcomes.

The integration of novel scientific techniques into the legal system presents a significant challenge for researchers and practitioners in forensic chemistry. The admissibility of scientific evidence in a court of law serves as the ultimate benchmark for technology readiness, determining whether a method transitions from a research tool to accepted forensic practice. This transition is governed by distinct legal standards that act as gatekeepers, ensuring the reliability and relevance of expert testimony [4]. For forensic researchers, understanding these frameworks is not merely an academic exercise but a critical component of method development and validation.

In the United States, the Frye Standard and Daubert Standard provide the foundational criteria for admitting scientific evidence, while in Canada, the Mohan criteria serve a similar gatekeeping function [4] [16]. These legal precedents establish the procedural requirements that scientific evidence must meet before it can be presented to a trier of fact, whether judge or jury. For forensic chemistry research, particularly in emerging areas like comprehensive two-dimensional gas chromatography (GC×GC), meeting these criteria represents the final stage of technology readiness, signifying that a method has sufficient scientific rigor for use in legal proceedings [4].

This whitepaper provides an in-depth technical analysis of these admissibility standards, examining their historical development, core principles, and practical implications for forensic chemistry research and method validation. By framing legal admissibility as the end goal, we establish a framework for evaluating technology readiness levels in forensic science.

The Frye Standard: General Acceptance Test

Historical Development and Core Principle

The Frye Standard originated from the 1923 case Frye v. United States, decided by the Court of Appeals of the District of Columbia [17] [18]. The case involved James Alphonzo Frye, who was tried for murder and sought to introduce expert testimony based on a systolic blood pressure deception test, a precursor to the modern polygraph [17]. The court rejected this evidence, establishing what would become known as the "general acceptance" test.

The court's ruling articulated a fundamental principle for scientific evidence admissibility: "Just when a scientific principle or discovery crosses the line between the experimental and demonstrable stages is difficult to define. Somewhere in this twilight zone the evidential force of the principle must be recognized, and while courts will go a long way in admitting expert testimony deduced from a well-recognized scientific principle or discovery, the thing from which the deduction is made must be sufficiently established to have gained general acceptance in the particular field in which it belongs" [17] [18].

Application in Forensic Science

Under the Frye standard, the proponent of scientific evidence must demonstrate that the methodology, technique, or principle underlying the expert's opinion has gained widespread acceptance within the relevant scientific community [17] [19]. This requirement imposes a unique hurdle beyond having a qualified expert testify – the technique itself must be generally accepted [17].

The Frye standard has been applied to numerous forensic science techniques throughout its history, including:

- Traditional forensic methods: DNA analysis, fingerprint analysis, hair analysis, and bite-mark comparison [17]

- Instrumental analysis: Voiceprint analysis, breath tests for blood alcohol, and neutron activation blood analysis [17]

- Scientific testimony: Expert testimony on rape trauma syndrome, battered woman syndrome, eyewitness reliability, and drug trafficking practices [17]

For nearly 70 years, Frye served as the dominant standard for admitting scientific evidence in U.S. courts until it was superseded in federal courts by the Daubert standard in 1993 [17]. However, Frye remains the standard in several state jurisdictions, highlighting its enduring influence [17] [20].

The Daubert Standard: A Flexible Reliability Framework

Evolution from Frye

In 1993, the U.S. Supreme Court decision in Daubert v. Merrell Dow Pharmaceuticals, Inc. established a new standard for admitting expert testimony in federal courts [21] [22]. The Court held that the Frye standard had been superseded by the Federal Rules of Evidence, specifically Rule 702, which governs expert testimony [22] [20]. This decision transformed the role of trial judges, making them "gatekeepers" responsible for ensuring that expert testimony rests on a reliable foundation and is relevant to the case [21] [22].

The Daubert standard emerged from a product liability case involving allegations that the drug Bendectin caused birth defects [22] [16]. The petitioners offered expert testimony based on chemical structure analyses, animal studies, and reanalysis of previously published studies, but the lower court dismissed the case, finding that this evidence did not meet Frye's "general acceptance" requirement [22]. The Supreme Court's ruling fundamentally changed the approach to scientific evidence by emphasizing flexibility and judicial discretion over Frye's rigid general acceptance test [22].

The Five Daubert Factors

The Daubert decision provided a non-exhaustive list of factors that trial judges may consider when evaluating the admissibility of expert testimony [21] [22]:

Whether the theory or technique can be (and has been) tested: The scientific validity of a technique is assessed by its falsifiability, refutability, and testability [22] [20].

Whether the theory or technique has been subjected to peer review and publication: Peer review and publication help identify methodological flaws and ensure that the technique meets disciplinary standards [22] [20].

The known or potential error rate: The court should consider how often the technique produces incorrect results, whether that rate has been measured or can only be estimated [21] [22].

The existence and maintenance of standards controlling the technique's operation: The court examines whether there are standards and controls for the application of the technique [22] [19].

General acceptance in the relevant scientific community: While Frye's general acceptance test is no longer the sole determinant, it remains a relevant factor under Daubert [22] [19].

Table 1: The Five Daubert Factors for Evaluating Expert Testimony

| Factor | Description | Application in Forensic Chemistry |
|---|---|---|
| Testability | Whether the method can be and has been empirically tested | Method validation studies, reproducibility experiments |
| Peer Review | Whether the method has been subjected to peer review | Publication in reputable scientific journals |
| Error Rate | The known or potential rate of error | Determination of accuracy, precision, and uncertainty measurements |
| Standards | Existence of standards and controls | Use of standard operating procedures (SOPs) and quality control measures |
| General Acceptance | Acceptance in the relevant scientific community | Adoption by professional organizations, use in multiple laboratories |

The Daubert Trilogy and Expansion

The Daubert standard was clarified and expanded through two subsequent Supreme Court cases; together with Daubert itself, the three decisions are known as the "Daubert Trilogy" [21] [22]:

General Electric Co. v. Joiner (1997): Established that appellate courts should review a trial court's decision to admit or exclude expert testimony under an "abuse of discretion" standard. The Court also emphasized that there must be a valid connection between the expert's methodology and their conclusions – an analytical gap between data and opinion cannot be bridged by the ipse dixit (unsupported assertion) of the expert [22].

Kumho Tire Co. v. Carmichael (1999): Expanded the Daubert standard to include all expert testimony, not just scientific evidence. The Court held that Daubert's factors for relevance and reliability apply to "technical, or other specialized knowledge" specified in Rule 702, including engineering and other non-scientific expertise [21] [22].

These decisions collectively strengthened the trial judge's gatekeeping role and established a more comprehensive framework for evaluating all types of expert testimony.

The Mohan Criteria: The Canadian Approach

Origins and Framework

In Canada, the admissibility of expert testimony is governed by the criteria established in R. v. Mohan [1994] 2 S.C.R. 9 [23] [4]. This case involved a pediatrician charged with sexual assault who sought to introduce expert psychiatric testimony suggesting that he did not fit the profile of someone who would commit such crimes [16]. The Supreme Court of Canada outlined a four-factor test for admitting expert evidence:

- Relevance: The evidence must be relevant to the facts at issue in the case [23] [4].

- Necessity in assisting the trier of fact: The evidence must provide information that is likely outside the ordinary knowledge and experience of the trier of fact [23] [4].

- Absence of any exclusionary rule: The evidence must not be subject to any other exclusionary rule of evidence [23] [4].

- A properly qualified expert: The witness must have sufficient specialized knowledge, skill, or training to provide the proposed evidence [23] [4].

Application and Refinement

The Mohan test employs a two-stage analytical approach for determining admissibility [23]:

First Stage – Threshold Requirements: The proponent of the evidence must establish the preconditions to admissibility, including logical relevance, necessity, absence of exclusionary rules, a properly qualified expert, and for novel science, reliability of the underlying methodology [23].

Second Stage – Gatekeeper Analysis: The judge conducts a cost-benefit analysis, weighing the potential risks and benefits of admitting the evidence. This includes considering factors such as legal relevance, necessity, reliability, and the expert's impartiality, independence, and absence of bias [23].

The Mohan criteria emphasize that expert evidence should not be admitted if its potential for prejudice outweighs its probative value, or if it would distort the fact-finding process [16]. Canadian courts have also recognized the influence of Daubert in their evolving approach to expert evidence, particularly regarding the requirement for threshold reliability [16].

Comparative Analysis of Admissibility Standards

Key Differences and Similarities

While the Frye, Daubert, and Mohan standards share the common goal of ensuring reliable expert testimony, they differ in their approaches and emphasis:

Table 2: Comparison of Legal Admissibility Standards

| Criterion | Frye Standard | Daubert Standard | Mohan Criteria |
|---|---|---|---|
| Jurisdiction | Some U.S. state courts | U.S. federal courts and most states | Canadian courts |
| Primary Focus | General acceptance in relevant scientific community | Reliability and relevance of methodology | Relevance, necessity, and reliability |
| Judicial Role | Limited gatekeeping | Active gatekeeper assessing scientific validity | Gatekeeper with discretionary balancing |
| Key Test | "General acceptance" test | Flexible five-factor reliability test | Four-factor threshold test with cost-benefit analysis |
| Novel Science | High barrier until generally accepted | More flexible approach using multiple factors | Additional reliability requirement for novel science |
| Expert Qualifications | Implicit in general acceptance | Explicit requirement under Rule 702 | Explicit threshold requirement |

Legal Admissibility and Technology Readiness in Forensic Chemistry

The progression of a forensic analytical technique from basic research to legally admissible evidence can be conceptualized through a technology readiness framework, with legal admissibility representing the highest level of maturity [4]. For techniques like comprehensive two-dimensional gas chromatography (GC×GC), meeting admissibility standards requires systematic validation and acceptance within both scientific and legal communities [4].

Diagram 1: Technology Readiness Levels for Forensic Methods

As shown in Diagram 1, legal admissibility represents the pinnacle of technology readiness for forensic methods. Current research on GC×GC applications in forensic chemistry demonstrates varying levels of technology readiness, with most applications requiring further validation before achieving legal admissibility under these standards [4].

Experimental Protocols for Meeting Admissibility Standards

Validation Framework for Novel Forensic Methods

For forensic chemistry researchers developing new analytical methods, designing validation studies that address legal admissibility criteria is essential. The following experimental protocols provide a framework for establishing reliability under Daubert, Frye, and Mohan:

Protocol 1: Method Validation and Error Rate Determination

- Objective: Establish known error rates and operational characteristics of the analytical method

- Procedure: Conduct repeated analyses (n≥30) of certified reference materials across multiple concentration levels by different analysts on different days

- Data Analysis: Calculate accuracy (percent recovery), precision (relative standard deviation), limits of detection and quantification, and uncertainty measurements

- Legal Significance: Provides known error rates required under Daubert and demonstrates reliability under Mohan [4] [22]
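
The accuracy part of Protocol 1 can be sketched as percent recovery against the certified value, with a confidence interval on the mean. The 1.96 multiplier is a normal approximation that is only reasonable because the protocol calls for n≥30; the function name and the data are hypothetical.

```python
from math import sqrt
from statistics import mean, stdev


def recovery_summary(measured, certified_value, z=1.96):
    """Mean percent recovery with an approximate 95% confidence interval."""
    recoveries = [100.0 * m / certified_value for m in measured]
    avg = mean(recoveries)
    sd = stdev(recoveries)
    half_width = z * sd / sqrt(len(recoveries))  # normal approximation, assumes n >= 30
    return {
        "n": len(recoveries),
        "mean_recovery": avg,
        "rsd": 100.0 * sd / avg,
        "ci_low": avg - half_width,
        "ci_high": avg + half_width,
    }


# Invented data: 30 measurements of a reference material certified at 10.0 units.
measured = [9.0, 11.0] * 15
result = recovery_summary(measured, certified_value=10.0)
print(result["mean_recovery"], result["ci_low"], result["ci_high"])
```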

Protocol 2: Interlaboratory Comparison and Standardization

- Objective: Demonstrate general acceptance and transferability of the method

- Procedure: Develop standardized operating procedure and distribute to minimum of 8 independent laboratories for blinded analysis of standardized sample sets

- Data Analysis: Apply statistical analysis of variance (ANOVA) to determine interlaboratory reproducibility and consistency of results

- Legal Significance: Addresses "general acceptance" under Frye and standardization factors under Daubert [17] [4]
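
For the data analysis step of Protocol 2, a one-way ANOVA separates within-laboratory from between-laboratory variance. The sketch below assumes a balanced design (the same number of replicates in every laboratory) and derives repeatability and reproducibility standard deviations from the mean squares in the usual way; the laboratory names and results are invented.

```python
from statistics import mean


def interlab_anova(results):
    """results: dict mapping laboratory name to a list of replicate values."""
    groups = list(results.values())
    p = len(groups)       # number of laboratories
    n = len(groups[0])    # replicates per laboratory
    if any(len(g) != n for g in groups):
        raise ValueError("this sketch assumes a balanced design")
    grand = mean([v for g in groups for v in g])
    ss_between = n * sum((mean(g) - grand) ** 2 for g in groups)
    ss_within = sum((v - mean(g)) ** 2 for g in groups for v in g)
    ms_between = ss_between / (p - 1)
    ms_within = ss_within / (p * (n - 1))
    # Between-laboratory variance component, floored at zero.
    var_lab = max(0.0, (ms_between - ms_within) / n)
    return {
        "F": ms_between / ms_within,  # undefined if all replicates agree exactly
        "s_r": ms_within ** 0.5,                # repeatability SD
        "s_R": (ms_within + var_lab) ** 0.5,    # reproducibility SD
    }


# Invented results from eight laboratories, three replicates each.
labs = {
    "Lab1": [10.1, 9.9, 10.2], "Lab2": [10.4, 10.6, 10.3],
    "Lab3": [9.7, 9.8, 9.6], "Lab4": [10.0, 10.2, 10.1],
    "Lab5": [10.3, 10.1, 10.4], "Lab6": [9.9, 10.0, 9.8],
    "Lab7": [10.5, 10.4, 10.6], "Lab8": [10.2, 10.0, 10.1],
}
print(interlab_anova(labs))
```

The F statistic would still need to be compared against a critical value for (p-1, p(n-1)) degrees of freedom, which this sketch leaves to a statistics package.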

Protocol 3: Case-type Sample Analysis

- Objective: Establish relevance and reliability for specific forensic applications

- Procedure: Apply method to authentic case-type samples with demonstrated provenance and compare results to established reference methods

- Data Analysis: Calculate sensitivity, specificity, and likelihood ratios for method performance in realistic conditions

- Legal Significance: Demonstrates practical relevance and necessity under Mohan and relevance under Daubert [23] [22]
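
The performance figures named in Protocol 3 follow directly from a comparison against ground truth. This sketch computes sensitivity, specificity, and the positive and negative likelihood ratios in their diagnostic-test sense from counts of true and false results; the counts are invented and the function name is hypothetical.

```python
def diagnostic_metrics(tp, fp, tn, fn):
    """Sensitivity, specificity, and diagnostic likelihood ratios from a 2x2 table."""
    sensitivity = tp / (tp + fn)
    specificity = tn / (tn + fp)
    # LR+ = sensitivity / (1 - specificity); infinite when there are no false positives.
    lr_pos = float("inf") if fp == 0 else sensitivity / (1.0 - specificity)
    # LR- = (1 - sensitivity) / specificity; infinite when there are no true negatives.
    lr_neg = float("inf") if tn == 0 else (1.0 - sensitivity) / specificity
    return {
        "sensitivity": sensitivity,
        "specificity": specificity,
        "lr_positive": lr_pos,
        "lr_negative": lr_neg,
    }


# Invented counts: 100 samples containing the analyte, 100 without it.
metrics = diagnostic_metrics(tp=90, fp=5, tn=95, fn=10)
print(metrics)
```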

The Scientist's Toolkit: Essential Materials for Forensic Method Validation

Table 3: Essential Research Reagents and Materials for Forensic Method Validation

| Item | Specification | Function in Validation |
|---|---|---|
| Certified Reference Materials | NIST-traceable with documented uncertainty | Establishing accuracy and calibration traceability |
| Quality Control Materials | Independent source with predetermined acceptance criteria | Monitoring method performance and stability |
| Blinded Sample Sets | Authentic or simulated case samples with known ground truth | Assessing real-world applicability and error rates |
| Internal Standards | Stable isotope-labeled analogs of target analytes | Correcting for matrix effects and instrumental variation |
| System Suitability Test Mix | Compounds verifying instrumental performance | Ensuring proper system operation before analysis |

Legal admissibility represents the ultimate end goal for forensic chemistry research, serving as the benchmark for technology readiness and methodological maturity. The Frye, Daubert, and Mohan criteria, while jurisdiction-specific, share the common objective of ensuring that scientific evidence presented in legal proceedings meets threshold standards of reliability and relevance.

For researchers developing novel forensic methods, understanding these legal frameworks is not merely an ancillary consideration but a fundamental aspect of experimental design and validation strategy. By incorporating admissibility requirements early in the research lifecycle – through rigorous error rate determination, peer-reviewed publication, interlaboratory validation, and standardization – forensic chemists can bridge the gap between innovative research and legally admissible evidence.

As analytical technologies continue to advance, particularly in separation science and instrumentation, the interplay between scientific innovation and legal admissibility will remain critical. Future research directions should emphasize comprehensive validation studies, error rate quantification, and standardization efforts to facilitate the transition of promising techniques from experimental methods to forensically validated tools capable of withstanding judicial scrutiny under the relevant admissibility standards.

The Role of Basic Research and Proof-of-Concept Studies (TRL 1-2) in Addressing Forensic Backlogs

Forensic laboratories worldwide are grappling with persistent casework backlogs, an issue that undermines criminal justice by causing investigative delays and impeding timely resolutions for victims and the accused [24] [25]. These backlogs, particularly in areas like DNA and seized drug analysis, are often perceived as a volume-based warehousing problem, leading to a cycle of short-term funding and linear solutions that have proven ineffective [24]. A shift in perspective is required: backlogs are a dynamic system, influenced by factors such as increasing case complexity, the rapid emergence of new psychoactive substances (NPS), unfunded legislative mandates, and resource constraints [24] [25] [9].

This whitepaper posits that a sustainable solution lies in strategically strengthening the earliest stages of the forensic research and development pipeline—specifically, Technology Readiness Levels (TRL) 1 and 2. The TRL framework, a systematic metric for assessing technology maturity, provides a crucial scaffold for this approach [1] [2]. TRL 1 involves basic principles observed through foundational scientific research, while TRL 2 focuses on formulating technology concepts and practical applications based on those initial findings [1]. At this stage, technologies are still speculative, with no experimental proof of concept [1]. By targeting research at these foundational levels, the forensic community can seed the development of next-generation tools and methodologies that are inherently more efficient, rapid, and robust, thereby addressing the root causes of backlog accumulation rather than just its symptoms.

Understanding the Framework: Technology Readiness Levels (TRLs) in a Forensic Context

The TRL scale, originally developed by NASA, provides a standardized framework for assessing the maturity of a given technology, from basic principles to proven operational use [1] [2]. For forensic research and development, this framework is indispensable for managing risk, guiding funding decisions, and ensuring new methods are sufficiently validated before implementation in casework.

Table: Technology Readiness Levels (TRLs) 1-4: From Basic Research to Proof-of-Concept

| TRL | Title | Description | Forensic Chemistry Example |
|---|---|---|---|
| 1 | Basic Principles Observed and Reported | Lowest level of technology readiness. Scientific research begins to be translated into applied research and development [1]. | Study of the fundamental fluorescence properties of carbon quantum dots (CQDs) or the decomposition kinetics of Tetrahydrocannabinol (THC) to Cannabinol (CBN) [25] [26]. |
| 2 | Technology Concept Formulated | Invention begins. Once basic principles are observed, practical applications can be invented. Application is speculative, and there is no proof or detailed analysis to support the concept [1] [2]. | Formulating a concept for using CQDs as a fluorescent sensor for a specific new psychoactive substance (NPS) or proposing a new GC×GC-MS data processing algorithm for ignitable liquid analysis [4] [26]. |
| 3 | Experimental Proof of Concept | Active research and development is initiated. This includes analytical studies and laboratory studies to physically validate predictions of separate elements of the technology [1]. | Constructing and testing a proof-of-concept CQD-based assay in a controlled laboratory setting to detect fentanyl analogues, yielding initial positive results [26]. |
| 4 | Technology Validated in Lab | Basic technological components are integrated to establish that they will work together. This is relatively low-fidelity compared to the eventual system [1]. | Validating a prototype CQD sensor and portable reader with multiple drug targets in a laboratory environment, demonstrating component integration [1] [26]. |

The progression from TRL 1 to TRL 4 is a critical "valley of death" for many forensic technologies. Research at TRL 1-2 is characterized by high uncertainty and is often considered too risky for laboratory operational budgets. However, this is precisely where the greatest potential for transformative efficiency gains exists. The transition to higher TRLs, where technologies are validated in relevant environments (TRL 5-6) and eventually proven in operational casework (TRL 7-9), is impossible without a robust pipeline of ideas emerging from foundational research [1] [4].

The Forensic Backlog as a System: Why Traditional Approaches Fail

A systems thinking approach reveals that forensic backlogs are not simple linear problems but are complex systems with feedback loops, interdependencies, and emergent behaviors [24]. Viewing a forensic laboratory as a dynamic system helps diagnose the true leverage points for intervention.

Table: Key Contributors to Forensic Casework Backlogs

| Contributing Factor | Impact on Backlog | Supporting Evidence |
|---|---|---|
| Emergence of NPS | Increases analysis time, requires specialized expertise and reference materials, complicates identification [25] [9]. | "Analytical identification of these compounds is complex as properly certified reference materials... are not readily available and are expensive" [25]. |
| Increased Case Complexity & Volume | Overwhelms existing laboratory capacity; more evidence submissions and complex analyses strain resources [24] [25]. | "New legislation regarding sexual assault kits resulted in a 150% increase in submission of kits for one laboratory" [24]. |
| Inadequate Resources & Funding | Limits hiring capacity, restricts acquisition of new instrumentation, and prevents investment in research and development [14] [25]. | "Agencies are trying to do more with less... There’s always new technology coming out... but those things are very expensive" [14]. |
| Slow Adoption of New Technology | Laboratories lack time and resources for validation and training on new, more efficient methods, perpetuating use of slower legacy techniques [4] [9]. | "Laboratories are often eager to adopt new technology, but they lack the time and resources to go through the validation, training and method development processes" [9]. |
| Evidence Degradation | Delay in analysis can lead to evidence degradation (e.g., THC loss in marijuana), causing inconclusive results and wasted resources [25]. | "As THC and CBN content significantly alters based on the storage time... the delay in examining some marijuana samples... [can cause] inconclusive results" [25]. |

The mechanistic response of simply providing more funding for backlog reduction, without addressing these systemic drivers, has proven unsuccessful [24]. A more holistic strategy involves using basic research (TRL 1-2) to reconfigure the system itself, creating technologies that reduce analysis time, simplify identification, and automate interpretation.

Core Methodologies and Experimental Protocols for TRL 1-2 Research

Strategic basic research at TRL 1-2 is the cornerstone for generating the disruptive concepts needed to overcome systemic backlog challenges. The following protocols outline foundational investigations with high potential for creating future efficiency gains.

Protocol 1: Investigating Carbon Quantum Dots (CQDs) as Fluorescent Sensors for NPS

Objective: To formulate a technology concept (TRL 2) for rapid, presumptive testing of NPS using the tunable optical properties of CQDs, potentially reducing confirmatory analysis time.

Background: CQDs are nanoscale carbon materials with exceptional optical properties, including tunable fluorescence, high biocompatibility, and ease of surface functionalization [26]. Their potential for chemical sensing and trace evidence detection makes them a compelling candidate for novel assay development.

Detailed Methodology:

- CQD Synthesis (Bottom-Up Hydrothermal Method):

- Precursor Preparation: Dissolve a carbon source (e.g., 2 g citric acid) in 100 mL deionized water. For nitrogen-doping, add a nitrogen source (e.g., 1 g urea) to the solution.

- Hydrothermal Reaction: Transfer the solution to a Teflon-lined stainless-steel autoclave. Heat at 180 °C for 6-12 hours in a laboratory oven.

- Purification: Cool the autoclave to room temperature. The resulting brownish-yellow solution contains CQDs. Purify via filtration (0.22 μm membrane) and dialysis (500-1000 Da MWCO) against deionized water for 24 hours to remove unreacted precursors.

- Characterization: Use UV-Vis spectroscopy to confirm optical absorption and fluorescence spectroscopy to determine emission profiles. Transmission Electron Microscopy (TEM) can be used to determine particle size and morphology [26].

- Surface Functionalization for NPS Targeting:

- Concept: Functionalize CQD surfaces with molecular receptors (e.g., molecularly imprinted polymers or host-guest complexes) specific to a target NPS scaffold, such as synthetic cathinones.

- Experimental Procedure: Activate carboxyl groups on CQDs using EDC/NHS chemistry. Incubate with the selected amine-functionalized receptor molecule in buffer (e.g., 0.1 M PBS, pH 7.4) for 12 hours under gentle stirring. Purify the functionalized CQDs via dialysis or centrifugation [26].

- Proof-of-Concept Sensing Assay (TRL 2):

- Prepare a series of solutions containing the target NPS at various concentrations in a suitable solvent.

- Add a fixed volume of the functionalized CQD solution to each.

- Measure the fluorescence intensity (e.g., at 450 nm emission with 360 nm excitation) of each solution.

- Expected Outcome (Concept): A measurable, concentration-dependent change in fluorescence (quenching or enhancement) upon binding of the target NPS, forming the basis for a future rapid sensor.

Diagram: CQD Sensor Development Workflow. This workflow outlines the key stages for developing a carbon quantum dot-based sensor, from synthesis to proof-of-concept validation at TRL 2.
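One common way to quantify a concentration-dependent quenching response like the one expected above is the Stern-Volmer relation, F0/F = 1 + K_SV·[Q], where F0 is the blank intensity and [Q] the analyte concentration. The sketch below estimates K_SV by least squares with the intercept fixed at 1. It is illustrative only: the function name is hypothetical, the data are synthetic numbers generated inside the script (not measurements), and it assumes simple quenching. An enhancement ("turn-on") response would need a different model.

```python
def stern_volmer_constant(concentrations, intensities, blank_intensity):
    """Estimate K_SV from F0/F = 1 + K_SV * [Q] by least squares.

    The fitted line is forced through the theoretical intercept of 1, so
    K_SV = sum(c * (F0/F - 1)) / sum(c^2).
    """
    if len(concentrations) != len(intensities):
        raise ValueError("need one intensity per concentration")
    numerator = 0.0
    denominator = 0.0
    for c, f in zip(concentrations, intensities):
        if f <= 0:
            raise ValueError("intensities must be positive")
        numerator += c * (blank_intensity / f - 1.0)
        denominator += c * c
    if denominator == 0:
        raise ValueError("need at least one non-zero concentration")
    return numerator / denominator


# Synthetic example (assumption, NOT real data): analyte concentrations in
# micromolar and intensities generated from a known K_SV of 0.01 per micromolar.
conc_uM = [10.0, 20.0, 40.0, 80.0]
f0 = 1000.0
intensity = [f0 / (1.0 + 0.01 * c) for c in conc_uM]

k_sv = stern_volmer_constant(conc_uM, intensity, f0)
print(f"Estimated K_SV: {k_sv:.4f} per micromolar")
```

With real measurements, curvature in a plot of F0/F against concentration would suggest that this single-constant model is too simple for the system.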

Protocol 2: Developing a Comprehensive Two-Dimensional Gas Chromatography (GC×GC) Method for Complex Mixtures

Objective: To establish the basic principles (TRL 1) and formulate a concept (TRL 2) for applying GC×GC with high-resolution mass spectrometry to improve the separation of complex forensic samples such as fire debris or NPS mixtures, with the aim of reducing re-analysis and inconclusive results.

Background: GC×GC offers a significant increase in peak capacity over traditional 1D GC by using two separate separation columns connected via a modulator, resolving co-eluting compounds that would otherwise be unidentifiable [4].

Detailed Methodology:

- System Configuration and Principle Observation (TRL 1):

- Instrumentation: A GC×GC system equipped with a dual-stage thermal modulator, a primary column (e.g., non-polar 30 m Rxi-5Sil MS), and a secondary column (e.g., polar 2 m Rxi-17Sil MS). Detection is performed with a time-of-flight mass spectrometer (TOF-MS).

- Modulation Principle: The modulator traps effluent from the first column and re-injects it onto the second column at short, regular intervals (a modulation period of roughly 2-8 seconds). This creates a continuous series of fast, high-resolution second-dimension separations [4].

- Data Output: The result is a two-dimensional chromatogram where each analyte has a unique coordinate (1D retention time, 2D retention time).

- Method Formulation for Ignitable Liquid Analysis (TRL 2):

- Sample Preparation: Extract ignitable liquid residues from fire debris using headspace solid-phase microextraction (HS-SPME).

- Conceptual Separation Design: Propose an analytical method where the first column separates compounds primarily by boiling point, and the second column separates by polarity.

- Data Analysis Concept: Propose the use of structured chromatographic patterns in the 2D space for more reliable and objective identification of ignitable liquid classes compared to 1D GC, reducing reliance on subjective pattern matching [4].
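The two-dimensional coordinates described under "Data Output" come from folding the single continuous detector trace at the modulation period. The following is a minimal sketch of that bookkeeping, assuming a constant acquisition rate and ignoring modulator phase offsets and wrap-around (peaks that elute later than one modulation period). The function names and the toy numbers are hypothetical and are not taken from any vendor software.

```python
def fold_chromatogram(signal, acquisition_rate_hz, modulation_period_s):
    """Split a 1D detector trace into one row per modulation cycle."""
    points_per_cycle = round(acquisition_rate_hz * modulation_period_s)
    if points_per_cycle < 1:
        raise ValueError("modulation period is shorter than one data point")
    n_cycles = len(signal) // points_per_cycle  # drop an incomplete final cycle
    return [
        signal[i * points_per_cycle:(i + 1) * points_per_cycle]
        for i in range(n_cycles)
    ]


def retention_coordinates(raw_time_s, modulation_period_s):
    """Map a raw elution time to (1D retention time, 2D retention time)."""
    cycle = int(raw_time_s // modulation_period_s)
    first_dim = cycle * modulation_period_s   # start of the modulation cycle
    second_dim = raw_time_s - first_dim       # time elapsed within the cycle
    return first_dim, second_dim


# Toy trace (assumption): 20 points at 2 Hz with a 4 s modulation period.
# Real TOF-MS acquisition rates are far higher; small numbers keep this readable.
trace = list(range(20))
rows = fold_chromatogram(trace, acquisition_rate_hz=2, modulation_period_s=4)
print(len(rows), len(rows[0]))             # 2 cycles of 8 points each
print(retention_coordinates(613.5, 4.0))   # (612.0, 1.5)
```

Each row of the folded matrix is one second-dimension separation, and stacking the rows gives the 2D plane in which structured patterns can be examined.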

The Scientist's Toolkit: Essential Reagents and Materials for TRL 1-2 Research

Table: Key Research Reagent Solutions for Foundational Forensic Chemistry Studies

| Research Reagent / Material | Function in TRL 1-2 Research | Application Example |
|---|---|---|
| Carbon Precursors (e.g., Citric Acid) | Serves as the fundamental starting material for the bottom-up synthesis of Carbon Quantum Dots (CQDs) [26]. | Synthesizing CQDs with intrinsic fluorescence properties via hydrothermal methods. |
| Heteroatom Dopants (e.g., Urea) | Modifies the electronic and optical properties of CQDs during synthesis, enhancing fluorescence and enabling selective analyte interactions [26]. | Creating nitrogen-doped CQDs (N-CQDs) with improved sensor performance for NPS. |
| Cross-linking Agents (e.g., EDC/NHS) | Activates surface carboxyl groups on nanomaterials to facilitate covalent attachment of targeting ligands or receptors [26]. | Functionalizing CQD surfaces with molecular receptors for specific drug detection. |
| GC×GC Modulator & Column Set | The heart of the GC×GC system; enables the transfer and focusing of analyte bands from the first to the second dimension, creating comprehensive 2D separation [4]. | Developing high-resolution separation methods for complex forensic mixtures like fire debris. |
| Certified Reference Materials (CRMs) | Provides the ground truth for method development and validation; essential for identifying unknowns and quantifying analytes [9]. | Confirming the identity of NPS and establishing retention indices in chromatographic methods. |

Navigating the Path to Implementation: From Concept to Courtroom

Translating a successful TRL 2 concept into a validated, court-ready methodology (TRL 9) requires early and continuous attention to legal and standardization frameworks. The admissibility of scientific evidence in the United States is governed by standards such as Daubert, under which courts consider whether a technique has been tested, has been subjected to peer review, has a known error rate, and is generally accepted in the relevant scientific community [4]. Research at TRL 1-2 should therefore be designed with these end goals in mind. Future work must place "a focus on increased intra- and inter-laboratory validation, error rate analysis, and standardization" to ensure eventual adoption [4]. This foresight during foundational research phases smooths the otherwise difficult transition of new technologies from the research bench to the forensic laboratory.
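To make the "known error rate" criterion concrete, the sketch below computes false positive and false negative rates from the outcome counts of a validation study. It is a minimal illustration only: the class and function names are hypothetical, the counts are invented, and a real validation would also report uncertainty (for example, confidence intervals) and follow the laboratory's accreditation requirements.

```python
from dataclasses import dataclass


@dataclass
class ValidationCounts:
    """Outcome counts from testing samples whose ground truth is known."""
    true_positives: int
    false_positives: int
    true_negatives: int
    false_negatives: int


def error_rates(counts):
    """Return the false positive and false negative rates as fractions."""
    positives = counts.true_positives + counts.false_negatives
    negatives = counts.true_negatives + counts.false_positives
    if positives == 0 or negatives == 0:
        raise ValueError("need at least one known positive and one known negative")
    return {
        "false_positive_rate": counts.false_positives / negatives,
        "false_negative_rate": counts.false_negatives / positives,
    }


# Invented counts for illustration (NOT from any study):
# 100 known-positive and 200 known-negative samples.
study = ValidationCounts(true_positives=95, false_positives=2,
                         true_negatives=198, false_negatives=5)
rates = error_rates(study)
print(rates)  # {'false_positive_rate': 0.01, 'false_negative_rate': 0.05}
```

Recording counts in this form from the earliest proof-of-concept experiments makes later intra- and inter-laboratory comparisons easier to assemble.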

The pervasive challenge of forensic backlogs cannot be solved by linear thinking or simply working harder within the constraints of existing technologies. A paradigm shift is necessary, one that recognizes backlogs as a dynamic system and invests strategically in the foundational research that can reshape that system. Basic research and proof-of-concept studies at TRL 1 and 2 are not academic indulgences; they are critical, high-leverage investments in the future efficiency and effectiveness of forensic science. By fostering innovation at these earliest stages—developing novel sensors like CQDs, leveraging powerful separation science like GC×GC, and designing for objectivity and speed from the outset—the forensic community can build a pipeline of disruptive technologies. These technologies will be key to creating a more agile, robust, and timely forensic service, ultimately strengthening the administration of justice.

Bridging the Gap: Applying TRLs to Cutting-Edge Forensic Chemistry Techniques

Comprehensive two-dimensional gas chromatography (GC×GC) represents a significant advancement in analytical chemistry, offering superior separation power for complex mixtures compared to traditional one-dimensional GC. Since its first successful demonstration in 1991, GC×GC has developed into a powerful technique with growing applications across multiple scientific fields, including forensic chemistry [4]. The technique extends traditional separation by joining two columns with different stationary phases in series through a modulator, which preserves the first-column separation by sending short retention-time windows to the secondary column for further separation [4]. The modulator, often called the heart of GC×GC, allows analytes' different affinities for each column to dictate their separation, dramatically increasing overall peak capacity and the signal-to-noise ratio [4]. This case study examines the development pathway of GC×GC within the specific context of forensic chemistry research, evaluating its progress using the Technology Readiness Level (TRL) framework and analyzing the specialized requirements for adoption in legal settings where evidence must meet rigorous scientific and judicial standards.

Technical Fundamentals of GC×GC

Core Principles and Mechanism

The fundamental principle of GC×GC involves sequential separation of volatile and semi-volatile compounds through two independent separation mechanisms. A sample is first injected onto a primary column (1D column) where analytes elute according to their affinity for its stationary phase [4]. The critical differentiator from conventional GC is the modulator, which collects eluate from the primary column for set time periods (typically 1–5 seconds) and then passes these collected fractions onto the secondary column (2D column) at repeated intervals known as the modulation period [4]. The secondary column, typically shorter and with a different stationary phase, performs a rapid second separation based on a different retention mechanism. Each modulation cycle produces a high-resolution chromatographic slice, and together these slices form a comprehensive two-dimensional data set [4].

Comparative Advantages Over Traditional GC

GC×GC offers several distinct advantages that make it particularly valuable for forensic applications involving complex mixtures:

- Enhanced Peak Capacity: The combined peak capacity ideally approaches the product of each dimension's peak capacity, far exceeding conventional GC [4]

- Improved Sensitivity and Signal-to-Noise Ratio: The focusing effect of the modulator and separation of analytes from chemical background noise significantly lowers detection limits [4]

- Structured Chromatograms: Compounds with similar chemical properties form recognizable patterns in the 2D separation space, aiding in compound identification and class separation [4]

- Increased Resolution: Compounds that co-elute in the first dimension are often resolved in the second dimension [4]
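The peak-capacity advantage listed above can be shown with a short calculation. This sketch applies the ideal product rule; the function name and the capacities used are hypothetical round numbers, and the result should be read as a theoretical ceiling, since it assumes fully independent (orthogonal) separation mechanisms and no loss of first-dimension resolution during modulation.

```python
def ideal_2d_peak_capacity(first_dim_capacity, second_dim_capacity):
    """Ideal GC×GC peak capacity: the product of the two dimensions' capacities."""
    if first_dim_capacity <= 0 or second_dim_capacity <= 0:
        raise ValueError("peak capacities must be positive")
    return first_dim_capacity * second_dim_capacity


# Hypothetical capacities chosen only for illustration.
n_first = 400    # first-dimension (long column) peak capacity
n_second = 15    # second-dimension (short, fast column) peak capacity

n_total = ideal_2d_peak_capacity(n_first, n_second)
print(n_total)             # 6000
print(n_total / n_first)   # 15.0, the ideal gain over the first dimension alone
```

In practice the achievable capacity is lower than this product, which is one reason method development at this stage focuses on column pairing and modulation settings.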

Table 1: Evolution of GC×GC Detection Systems

| Detection Method | Time Period | Key Capabilities | Common Forensic Applications |
|---|---|---|---|
| Flame Ionization (FID) | Early development | Robust, quantitative analysis | Petroleum products, ignitable liquids |
| Mass Spectrometry (MS) | 1990s-present | Compound identification | Drug analysis, toxicology |
| High-Resolution MS | Recent advances | Improved specificity for complex mixtures | Chemical warfare agents, trace evidence |
| Time-of-Flight MS | Recent advances | Fast acquisition rates | Decomposition odor, non-targeted analysis |
| Dual Detection (e.g., TOFMS/FID) | Cutting-edge | Simultaneous identification and quantification | Comprehensive forensic screening |

Technology Readiness Level Assessment in Forensic Chemistry

TRL Framework for Forensic Analytical Techniques

Technology Readiness Levels provide a systematic framework for assessing the maturity of developing technologies, originally developed by NASA and since adapted to various fields including analytical chemistry [27] [12] [28]. For forensic applications, the TRL scale must be considered alongside legal admissibility standards, creating a dual requirement for both technical and judicial readiness [4]. The following experimental workflow illustrates the progression of GC×GC technology through research, development, and validation stages:

GC×GC Forensic Development Workflow

Current TRL Assessment for GC×GC Forensic Applications

The technology readiness of GC×GC varies significantly across different forensic applications, reflecting diverse stages of development and validation. The following table synthesizes the current status based on published research as of 2024:

Table 2: TRL Assessment of GC×GC in Forensic Applications (as of 2024)

| Forensic Application | Current TRL | Key Demonstrations | Remaining Development Needs |

|---|---|---|---|

| Illicit Drug Analysis | TRL 4-5 | Characterization of complex drug mixtures, novel psychoactive substances [4] | Standardized methods, inter-laboratory validation, established error rates |

| Forensic Toxicology | TRL 4 | Screening for drugs and metabolites in biological samples [4] | Reference databases, quantitative validation |

| Ignitable Liquid Analysis (Arson) | TRL 5-6 | Extensive research base (30+ works), improved classification of petroleum products [4] | Transition from research to standardized casework methods |