Technology Readiness Levels in Forensic Science: A Researcher's Guide to Development and Courtroom Adoption

This guide provides forensic researchers and scientists with a comprehensive framework for navigating the Technology Readiness Level (TRL) pathway, from fundamental research to court-admissible methods. It details the TRL scale specific to forensic chemistry, explores methodological applications like Comprehensive Two-Dimensional Gas Chromatography (GC×GC), and addresses critical troubleshooting and optimization challenges. The article culminates with a thorough analysis of the inter-laboratory validation, error rate analysis, and legal admissibility standards required under the Daubert Standard and Federal Rule of Evidence 702 to ensure new technologies meet the rigorous demands of the justice system.

Understanding Technology Readiness Levels: The Forensic Science Framework

The Critical Role of TRLs in Forensic Method Development and Funding Acquisition

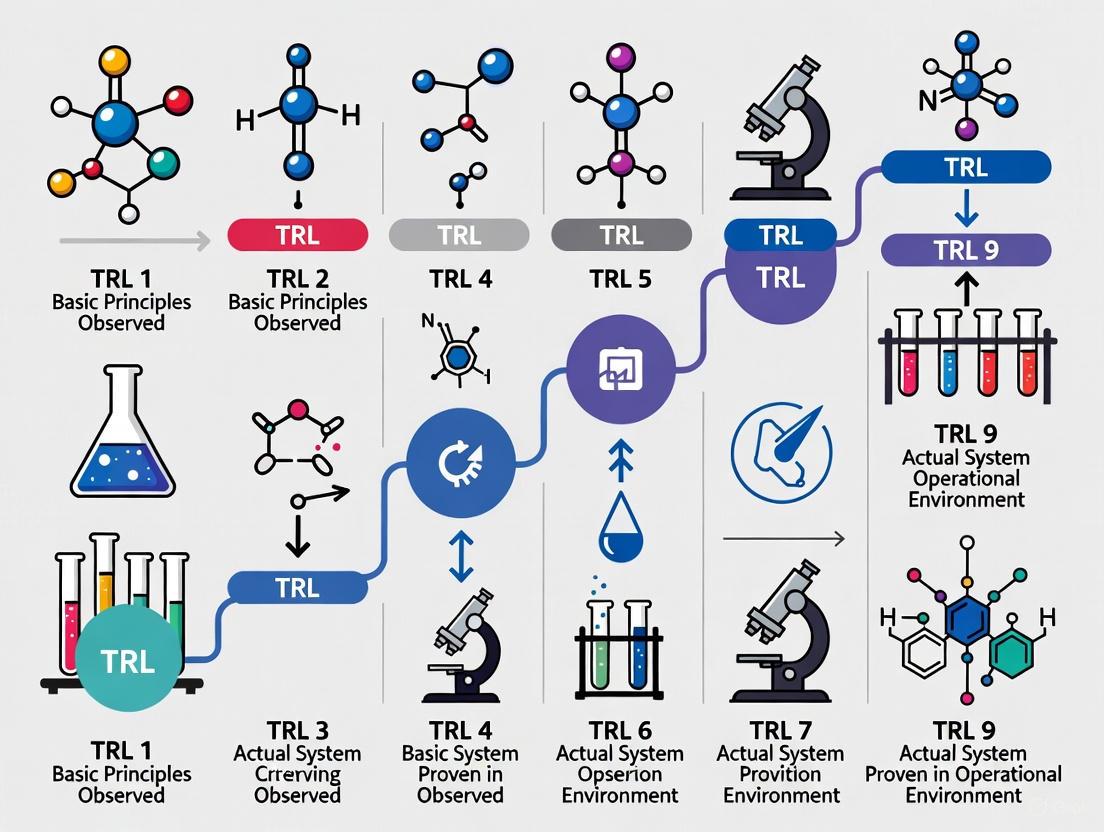

Technology Readiness Levels (TRLs) are a systematic metric used to assess the maturity of a particular technology. The scale typically ranges from 1 (basic principles observed) to 9 (system proven in operational environment). For forensic researchers, understanding and using this framework matters for both method development and funding acquisition. TRLs provide a standardized language for communicating project status to stakeholders, including funding agencies, laboratory directors, and legal professionals. In a field where new analytical techniques must withstand intense legal scrutiny, the structured pathway offered by the TRL framework helps ensure that forensic methods meet the rigorous standards required for courtroom admissibility [1].

The forensic science community currently faces significant challenges in adopting advanced technologies. A recent National Institute of Standards and Technology (NIST) report has highlighted four "grand challenges," including the need to quantify accuracy and reliability of complex methods, develop new analytical techniques, establish science-based standards, and promote the adoption of these advances [2] [3]. Within this context, the TRL framework serves as a crucial tool for systematically addressing these challenges by providing a clear pathway from basic research to implemented practice. For forensic researchers, strategically applying this framework can dramatically enhance both the development of robust methods and the success of funding proposals aimed at advancing forensic capabilities.

TRLs and Legal Admissibility Standards

The Interplay Between Technical and Legal Readiness

For any forensic methodology, technical maturity must be paralleled by legal acceptability. Court systems maintain specific standards for admitting scientific evidence and expert testimony. In the United States, the Daubert Standard directs courts to consider whether a technique can be (and has been) tested, has been peer-reviewed, has a known or potential error rate, and is generally accepted in the relevant scientific community [1]. Similarly, Canada's Mohan Criteria emphasize relevance, necessity, absence of exclusionary rules, and properly qualified experts [1]. The TRL framework provides forensic researchers with a structured approach to meeting these legal requirements by systematically addressing validation, error rate determination, and community acceptance at specific maturity levels.

Forensic Science Grand Challenges and TRLs

The National Institute of Standards and Technology (NIST) has identified four grand challenges facing forensic science, each directly connected to technology maturation through the TRL framework [2] [3]:

Table: Alignment Between NIST Grand Challenges and TRL Development Stages

| NIST Grand Challenge | Relevant TRL Stages | TRL Development Focus |
|---|---|---|
| Accuracy and reliability of complex methods | TRL 3-6 | Establish statistically rigorous measures of accuracy and validity across evidence of varying quality |
| New methods and techniques | TRL 1-4 | Develop novel analytical methods leveraging AI, advanced instrumentation, and algorithms |
| Science-based standards and guidelines | TRL 6-8 | Develop rigorous standards and conformity assessment schemes across disciplines |
| Adoption of advanced methods | TRL 7-9 | Promote implementation of validated methods into routine casework |

TRL Framework for Forensic Method Development

Defining TRL Stages for Forensic Applications

The following table outlines a customized TRL framework specifically designed for forensic method development, incorporating both technical and legal readiness considerations:

Table: Technology Readiness Levels (TRLs) for Forensic Science Applications

| TRL | Stage Definition | Forensic Application Requirements | Legal Readiness Considerations |
|---|---|---|---|
| 1-2: Basic Research | Basic principles observed and formulated | Concept development for novel forensic techniques | Research idea with potential forensic relevance |
| 3-4: Proof of Concept | Experimental validation in laboratory environment | Analytical proof of concept using control samples | Initial testing of scientific foundation for Daubert considerations |
| 5-6: Technology Development | Validation in relevant environment | Testing with simulated case samples, comparison with established methods | Begin establishing error rates, peer-reviewed publications |
| 7-8: System Demonstration | Demonstration in operational environment | Validation in multiple forensic laboratories, standard operating procedure development | Meeting Daubert criteria, demonstrating general acceptance in research community |
| 9: System Proof | Actual system proven in operational environment | Successful implementation in casework, proficiency testing | Admissibility established in court, general acceptance in forensic community |

Case Study: GC×GC-MS Implementation in Forensic Chemistry

Comprehensive two-dimensional gas chromatography coupled with mass spectrometry (GC×GC-MS) provides an illustrative example of TRL progression in forensic science. This technique offers enhanced separation capabilities for complex mixtures encountered in forensic evidence, including illicit drugs, fingerprint residues, and fire debris [1].

Current TRL Status: As of 2024, GC×GC-MS applications in forensic chemistry span different readiness levels [1]:

- TRL 3-4: Forensic toxicology and chemical, biological, nuclear, radioactive (CBNR) substance analysis

- TRL 5-6: Illicit drug analysis and fingerprint residue characterization

- TRL 7-8: Petroleum analysis for arson investigations and oil spill tracing

Experimental Protocols for TRL Advancement

Protocol for TRL 3-4: Proof of Concept Validation

Objective: Establish analytical proof of concept for a novel forensic method using control samples.

Materials and Methods:

- Instrumentation: Comprehensive two-dimensional gas chromatography system with modulator, secondary column, and appropriate detector (MS, FID, or TOF-MS)

- Reference Materials: Certified reference standards of target analytes, negative controls

- Sample Preparation: Appropriate extraction and concentration techniques for target analytes

- Data Analysis: Multivariate statistical software for pattern recognition and analyte identification

Experimental Workflow:

- Optimize separation conditions using standard mixtures

- Establish analytical figures of merit (precision, accuracy, detection limits)

- Compare performance with established 1D-GC methods

- Conduct initial validation with fortified samples

Protocol for TRL 5-6: Technology Development and Validation

Objective: Validate the method with simulated case samples and establish performance metrics for admissibility.

Materials and Methods:

- Sample Types: Simulated case samples mimicking real evidence (e.g., contaminated substrates, mixed samples)

- Comparison Methods: Established reference methods for forensic analysis

- Statistical Tools: Software for calculating error rates, confidence intervals, and uncertainty measurements

- Validation Parameters: Specificity, sensitivity, reproducibility, robustness, and stability

Experimental Workflow:

- Conduct method validation following SWGDRUG or other relevant guidelines

- Determine false positive and false negative rates using blinded samples

- Establish standard operating procedures for the method

- Perform intra-laboratory reproducibility studies
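The error rate step in the workflow above can be sketched in a few lines. The example below is a minimal illustration, not a prescribed procedure: it assumes each blinded sample is recorded as a pair (analyte truly present, analyte reported), and it uses the Wilson score interval as one common choice of confidence interval for a proportion. The function names and the study counts are hypothetical.

```python
import math


def wilson_interval(errors: int, trials: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a binomial proportion (z = 1.96 gives ~95%)."""
    if trials <= 0:
        raise ValueError("trials must be positive")
    p = errors / trials
    denom = 1 + z**2 / trials
    centre = (p + z**2 / (2 * trials)) / denom
    half = z * math.sqrt(p * (1 - p) / trials + z**2 / (4 * trials**2)) / denom
    return max(0.0, centre - half), min(1.0, centre + half)


def error_rates(results: list[tuple[bool, bool]]) -> dict[str, object]:
    """Each result is (analyte_truly_present, analyte_reported) for one blinded sample.

    Assumes the study contains at least one true positive and one true negative.
    """
    positives = [call for truth, call in results if truth]
    negatives = [call for truth, call in results if not truth]
    false_neg = positives.count(False)  # present but not reported
    false_pos = negatives.count(True)   # absent but reported
    return {
        "false_negative_rate": false_neg / len(positives),
        "false_negative_ci": wilson_interval(false_neg, len(positives)),
        "false_positive_rate": false_pos / len(negatives),
        "false_positive_ci": wilson_interval(false_pos, len(negatives)),
    }


# Hypothetical blinded study: 100 true positives (2 missed), 100 blanks (no false alarms).
study = [(True, True)] * 98 + [(True, False)] * 2 + [(False, False)] * 100
for name, value in error_rates(study).items():
    print(name, value)
```

Reporting the interval alongside the point estimate matters for the legal readiness column: with zero false positives in 100 blanks, the upper bound of the interval is still above zero, so the study supports "low" rather than "no" error.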

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful advancement through TRL stages requires specific materials and reagents tailored to forensic applications. The following table details essential components for developing and validating novel forensic methods:

Table: Essential Research Reagent Solutions for Forensic Method Development

| Reagent/Material | Function in Development | TRL Application Stage |
|---|---|---|
| Certified Reference Standards | Quantitation, method calibration, and quality control | TRL 3-9 |
| Simulated Case Samples | Method validation using forensically relevant matrices | TRL 5-7 |
| Quality Control Materials | Monitoring analytical performance and reproducibility | TRL 4-9 |
| Sample Preparation Kits | Extraction, purification, and concentration of target analytes | TRL 3-9 |
| Internal Standards | Correction for analytical variability and matrix effects | TRL 3-9 |
| Proficiency Test Materials | Inter-laboratory comparison and competency assessment | TRL 7-9 |
| Data Analysis Software | Multivariate statistics, pattern recognition, and chemometrics | TRL 2-9 |

Funding Acquisition Strategies Aligned with TRLs

Aligning Proposals with TRL Stages

Funding acquisition requires precise alignment between project scope and TRL positioning. Different funding mechanisms target specific readiness levels:

- TRL 1-3 (Basic Research): Basic science grants (e.g., NSF, NIH R01), academic research programs

- TRL 4-6 (Technology Development): Applied research grants (e.g., NIJ, FDA), public-private partnerships

- TRL 7-9 (Implementation): Implementation science funding, technology transfer programs, commercialization grants

Addressing Legal Standards in Funding Proposals

Successful forensic science funding proposals must explicitly address legal admissibility requirements. The following diagram illustrates how TRL advancement corresponds with meeting legal standards:

The integration of Technology Readiness Levels into forensic method development provides a crucial framework for advancing novel techniques from basic research to courtroom implementation. By systematically addressing both technical maturity and legal admissibility requirements at each TRL stage, forensic researchers can significantly enhance their methodological rigor and funding acquisition success. The current NIST-identified grand challenges in forensic science underscore the urgent need for this structured approach to technology development and implementation. As the field continues to evolve with emerging technologies like artificial intelligence, advanced separation techniques, and rapid analysis methods, the TRL framework offers a standardized pathway for ensuring these innovations meet the exacting standards required for forensic evidence in the judicial system.

Technology Readiness Levels (TRL) are a systematic metric used to assess the maturity level of a particular technology. The framework consists of nine levels, with TRL 1 being the lowest (basic principles observed) and TRL 9 being the highest (actual system proven in operational environment) [4]. This classification system provides a common understanding of technology status and helps in research planning, funding decisions, and technology transition strategies. In forensic chemistry, applying the TRL scale enables researchers, laboratory directors, and funding agencies to objectively evaluate the maturity and implementation readiness of new analytical methods, instruments, and techniques.

The adoption of TRL assessments in forensic science is particularly crucial due to the field's direct impact on the criminal justice system. Novel forensic technologies must not only demonstrate analytical validity but also meet stringent legal standards for admissibility as evidence. In the United States, the Daubert Standard guides the admission of expert testimony and requires assessment of whether the theory or technique has been tested, has a known error rate, has been peer-reviewed, and is generally accepted in the relevant scientific community [1]. Similarly, Canada's Mohan criteria emphasize relevance, necessity, the absence of any exclusionary rule, and a properly qualified expert [1]. The TRL framework provides a structured pathway for forensic researchers to systematically advance their technologies from basic research to court-admissible methods.

The TRL Scale: Definitions and Forensic Science Interpretation

Detailed TRL Definitions

Table 1: Technology Readiness Levels (TRL) and Corresponding Definitions

| TRL | Stage | Definition | Description in Forensic Context |
|---|---|---|---|
| TRL 1 | Fundamental Research | Basic principles observed and reported | Scientific research begins with observation of properties of potential forensic techniques. |
| TRL 2 | Fundamental Research | Technology concept formulated | Practical application of basic scientific principles to forensic challenges is identified. |
| TRL 3 | Research & Development | Experimental proof of concept | Active R&D begins with analytical and laboratory studies to validate forensic feasibility. |
| TRL 4 | Research & Development | Validation in laboratory environment | Basic technology components are integrated and tested in a laboratory environment. |
| TRL 5 | Research & Development | Validation in simulated environment | Component validation occurs in a simulated forensic laboratory environment. |
| TRL 6 | Pilot & Demonstration | Prototype demonstration in simulated environment | A model or prototype representing near-desired configuration undergoes pilot-scale testing. |
| TRL 7 | Pilot & Demonstration | Prototype demonstration in operational environment | A full-scale prototype is demonstrated in an operational forensic laboratory under limited conditions. |
| TRL 8 | Pilot & Demonstration | System complete and qualified | Technology is proven to work in its final form under expected conditions in forensic laboratories. |
| TRL 9 | Early Adoption | Actual system proven through successful deployment | The technology is successfully used in casework and has been admitted as evidence in court. |

TRL Assessment Process for Forensic Chemistry

The process of determining the TRL for a forensic chemistry technology requires careful evaluation against specific criteria at each level. When conducting TRL assessments, it is essential to: start with the broader technology development stage; err on the conservative side when uncertainties exist; ensure the operating environment is well understood; and recognize that a TRL is only valid for the specific operational environment for which the technology was tested [5].

For forensic technologies, the "operational environment" extends beyond the laboratory to include court admissibility requirements. A technology is considered to have achieved a specific TRL only if it has met the requirements for that level and all prior levels [5].
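The cumulative rule described above (a level counts only if it and every lower level are satisfied, with uncertainty resolved conservatively) can be written as a short check. This is an illustrative sketch; the function name and the dictionary layout are assumptions, and the per-level judgments themselves still come from the assessor.

```python
def assess_trl(criteria_met: dict[int, bool]) -> int:
    """Return the highest TRL n for which levels 1..n are all met.

    Levels missing from the dictionary are treated as unmet, which errs on
    the conservative side. A result of 0 means TRL 1 is not yet satisfied.
    """
    level = 0
    for n in range(1, 10):
        if not criteria_met.get(n, False):
            break  # a gap at level n caps the assessment at n - 1
        level = n
    return level


# Hypothetical assessment for one technology in one operational environment:
# a pilot demonstration exists (level 6), but validation in a simulated
# forensic laboratory environment (level 5) has not been completed.
evidence = {1: True, 2: True, 3: True, 4: True, 5: False, 6: True}
print(assess_trl(evidence))
```

Because a TRL is only valid for the environment in which the technology was tested, one such record would be kept per technology and environment pair rather than one per technology.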

Forensic Chemistry Case Study: Comprehensive Two-Dimensional Gas Chromatography (GC×GC)

Comprehensive two-dimensional gas chromatography (GC×GC) represents a significant advancement in separation science for forensic chemistry. This technique expands upon traditional 1D GC by adjoining two columns of different stationary phases in series with a modulator, dramatically increasing peak capacity and separation power [1]. The modulator, often called the "heart of GC×GC," preserves separation from the first column by sending short retention time windows to be separated on the secondary column, leveraging different affinities of analytes for each stationary phase [1].

GC×GC has been explored in multiple forensic applications, including:

- Illicit drug analysis for improved separation of complex mixtures and novel psychoactive substances [1]

- Toxicology for detecting and quantifying drugs and metabolites in biological samples [1]

- Fire debris analysis for identifying ignitable liquid residues in arson investigations [1]

- Explosives and chemical threat detection for Chemical, Biological, Nuclear, and Radioactive (CBNR) forensics [1]

- Fingermark residue analysis for chemical profiling of latent print residues [1]

- Decomposition odor analysis for volatile organic compound profiling in death investigations [1]

TRL Assessment of GC×GC in Forensic Applications

Table 2: TRL Assessment of GC×GC Across Forensic Chemistry Applications

| Forensic Application | Current TRL | Key Evidence | Legal Readiness |
|---|---|---|---|
| Illicit Drug Analysis | TRL 4-5 | Proof-of-concept studies demonstrating separation of complex drug mixtures; validation in laboratory environments [1] | Limited peer-reviewed publications; no known error rates established for court |
| Toxicology | TRL 4 | Experimental data showing detectability of drugs and metabolites; limited integration with existing workflows [1] | Meets some Daubert criteria (peer review) but lacks error rate documentation |
| Fire Debris Analysis | TRL 6-7 | Prototype methods demonstrated in simulated and operational environments; 30+ publications [1] | Closer to general acceptance; inter-laboratory validation ongoing |
| Oil Spill Tracing | TRL 6-7 | Extensive research (30+ works); demonstrated in relevant environments [1] | Well-characterized methods; approaching general acceptance |
| Fingermark Chemistry | TRL 3-4 | Early proof-of-concept studies; laboratory validation of component processes [1] | Primarily research phase; not yet ready for court |
| CBNR Forensics | TRL 3-4 | Component validation in laboratory settings; limited system integration [1] | Early research phase; significant development needed |

Experimental Protocols for GC×GC Method Development

Protocol for GC×GC Method Validation in Illicit Drug Analysis

Objective: To establish a validated GC×GC-MS method for the separation and identification of complex synthetic drug mixtures.

Materials and Equipment:

- Comprehensive two-dimensional gas chromatograph with liquid nitrogen or quad-jet thermal modulator

- Mass spectrometer detector (preferably time-of-flight for non-targeted analysis)

- Primary column: non-polar stationary phase (e.g., Rxi-5Sil MS, 30m × 0.25mm i.d. × 0.25μm df)

- Secondary column: mid-polar stationary phase (e.g., Rxi-17Sil MS, 1.5m × 0.15mm i.d. × 0.15μm df)

- Standard reference materials of target analytes

- Internal standards (e.g., deuterated analogs)

- Data processing software with GC×GC capabilities

Methodology:

- Modulator Optimization: Establish modulation period (typically 2-8 seconds) based on primary column flow rate and secondary column separation characteristics.

- Temperature Program Development: Optimize the primary oven temperature ramp rate so that analytes elute from the secondary column within one modulation period (avoiding wrap-around), and set the secondary oven offset (+5-15°C).

- Flow Rate Calibration: Adjust carrier gas flow rates to achieve optimal secondary column separation within modulation period.

- Mass Spectrometer Parameters: Set transfer line temperature (typically 250-300°C), ion source temperature, acquisition rate (≥100 Hz), and mass range (e.g., 40-550 m/z).

- Method Validation:

  - Precision and Accuracy: Analyze quality control samples at low, medium, and high concentrations (n=6 each) across three separate days.

  - Linearity: Prepare eight-point calibration curve with internal standard correction.

  - Limit of Detection (LOD) and Quantitation (LOQ): Determine via signal-to-noise ratio of 3:1 and 10:1 respectively.

  - Specificity: Assess separation of 37 common synthetic cannabinoids or other target analytes in complex mixtures.
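The validation figures of merit listed above reduce to a few standard calculations. The sketch below assumes the calibration response is the ratio of analyte peak area to internal standard peak area, and that LOD and LOQ are extrapolated from the signal-to-noise ratio of a single low standard under a linear response through the origin; in practice these limits are usually confirmed by analyzing standards near the estimated concentrations. All function names and data values are hypothetical.

```python
import statistics


def linear_fit(x: list[float], y: list[float]) -> tuple[float, float, float]:
    """Ordinary least-squares line; returns (slope, intercept, r_squared)."""
    mx, my = statistics.fmean(x), statistics.fmean(y)
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = my - slope * mx
    ss_res = sum((yi - (slope * xi + intercept)) ** 2 for xi, yi in zip(x, y))
    ss_tot = sum((yi - my) ** 2 for yi in y)
    return slope, intercept, 1 - ss_res / ss_tot


def percent_rsd(values: list[float]) -> float:
    """Precision as relative standard deviation (sample SD / mean), in percent."""
    return 100 * statistics.stdev(values) / statistics.fmean(values)


def percent_bias(values: list[float], nominal: float) -> float:
    """Accuracy as mean deviation from the nominal concentration, in percent."""
    return 100 * (statistics.fmean(values) - nominal) / nominal


def lod_loq_from_sn(conc: float, signal: float, noise: float) -> tuple[float, float]:
    """Concentrations expected to give S/N = 3 (LOD) and S/N = 10 (LOQ).

    Assumes S/N scales linearly with concentration below the measured standard.
    """
    sn = signal / noise
    return 3 * conc / sn, 10 * conc / sn


# Hypothetical eight-point calibration: concentration (ng/mL) vs. area ratio.
conc = [1, 2, 5, 10, 20, 50, 100, 200]
ratio = [0.021, 0.041, 0.102, 0.199, 0.405, 0.998, 2.01, 3.99]
slope, intercept, r2 = linear_fit(conc, ratio)
print(f"slope={slope:.5f} intercept={intercept:.5f} r2={r2:.5f}")

# Hypothetical QC replicates (n=6) at a nominal 10 ng/mL.
qc = [9.8, 10.1, 10.3, 9.9, 10.0, 10.2]
print(f"RSD={percent_rsd(qc):.2f}% bias={percent_bias(qc, 10.0):.2f}%")
print(lod_loq_from_sn(conc=1.0, signal=50.0, noise=5.0))
```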

Data Analysis:

- Process raw data using GC×GC software for peak finding, integration, and identification.

- Generate contour plots for visualization of two-dimensional separation.

- Calculate the theoretical peak capacity as the product of the first-dimension and second-dimension peak capacities (¹D × ²D) and compare it to 1D-GC methods.

- Apply statistical analysis (ANOVA) for precision assessment.
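The peak capacity comparison in the data analysis steps can be estimated from peak widths. The sketch below uses the simple approximation n ≈ 1 + (separation window / average base peak width) for each dimension, taking the modulation period as the second-dimension window; the product is a theoretical upper bound, and the capacity achieved in practice is typically lower. The function names and numeric values are illustrative assumptions.

```python
def peak_capacity(window_s: float, mean_base_width_s: float) -> float:
    """Approximate peak capacity at unit resolution: n = 1 + window / peak width."""
    if window_s <= 0 or mean_base_width_s <= 0:
        raise ValueError("window and peak width must be positive")
    return 1.0 + window_s / mean_base_width_s


def gcxgc_peak_capacity(
    run_time_s: float,
    width_1d_s: float,
    modulation_period_s: float,
    width_2d_s: float,
) -> tuple[float, float, float]:
    """Return (first-dimension, second-dimension, combined) peak capacities.

    The second-dimension separation window is the modulation period, so a
    longer period raises the second-dimension capacity but samples each
    first-dimension peak less often.
    """
    n1 = peak_capacity(run_time_s, width_1d_s)
    n2 = peak_capacity(modulation_period_s, width_2d_s)
    return n1, n2, n1 * n2


# Hypothetical run: 30 min separation, 12 s first-dimension peaks,
# 4 s modulation period, 0.2 s second-dimension peaks.
n1, n2, total = gcxgc_peak_capacity(1800, 12, 4, 0.2)
print(f"1D-GC capacity ~{n1:.0f}; GCxGC upper bound ~{total:.0f} ({n1:.0f} x {n2:.0f})")
```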

Protocol for Inter-laboratory Validation Study

Objective: To assess reproducibility and transferability of GC×GC methods across multiple forensic laboratories (advancing from TRL 5 to TRL 7).

Study Design:

- Participant Recruitment: Enroll 8-10 forensic laboratories with GC×GC capabilities.

- Reference Material Preparation: Distribute identical sets of blinded samples including:

  - Simple mixture standards for retention index alignment

  - Complex case-type samples (e.g., seized drug material, fire debris extract)

  - Quality control samples with known concentrations

- Standardized Protocol: Provide detailed operating procedures including:

  - Instrument parameters (flow rates, temperature programs)

  - Data acquisition settings

  - Quality control criteria

- Data Submission: Collect raw data files, processed results, and method deviations.

Assessment Metrics:

- Intra-laboratory precision (repeatability)

- Inter-laboratory reproducibility

- Quantitative accuracy

- Correct identification rates

- Robustness to minor methodological variations
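The first two assessment metrics can be separated with a one-way analysis of variance across laboratories. The sketch below assumes a balanced design (every laboratory reports the same number of replicates for the same sample) and follows the usual variance-component decomposition into a within-laboratory term (repeatability) and a between-laboratory term. The function name and the data are hypothetical, and three laboratories are shown only to keep the example short.

```python
import math
import statistics


def precision_components(labs: dict[str, list[float]]) -> dict[str, float]:
    """Repeatability and reproducibility SDs from a balanced one-way ANOVA."""
    replicate_counts = {len(values) for values in labs.values()}
    if len(labs) < 2 or len(replicate_counts) != 1:
        raise ValueError("need at least two labs with equal replicate counts")
    n = replicate_counts.pop()  # replicates per laboratory
    if n < 2:
        raise ValueError("need at least two replicates per lab")
    p = len(labs)  # number of laboratories

    lab_means = [statistics.fmean(values) for values in labs.values()]
    grand_mean = statistics.fmean(lab_means)
    ss_within = sum(
        (x - mean) ** 2 for values, mean in zip(labs.values(), lab_means) for x in values
    )
    ms_within = ss_within / (p * (n - 1))
    ms_between = n * sum((mean - grand_mean) ** 2 for mean in lab_means) / (p - 1)

    var_repeat = ms_within
    var_between = max(0.0, (ms_between - ms_within) / n)  # clamp negative estimates
    return {
        "repeatability_sd": math.sqrt(var_repeat),
        "between_lab_sd": math.sqrt(var_between),
        "reproducibility_sd": math.sqrt(var_repeat + var_between),
        "f_statistic": ms_between / ms_within if ms_within > 0 else math.inf,
    }


# Hypothetical results (ng/mL) for one QC sample measured in triplicate by three labs.
results = {
    "lab_a": [10.0, 10.2, 9.8],
    "lab_b": [10.5, 10.7, 10.3],
    "lab_c": [9.5, 9.7, 9.3],
}
for name, value in precision_components(results).items():
    print(f"{name}: {value:.3f}")
```

A reproducibility SD much larger than the repeatability SD, as in this made-up data set, points to laboratory-specific effects (instrument setup, modulator type, data processing) rather than random measurement noise.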

Advancing TRL in Forensic Chemistry: Strategic Approaches

Bridging the Valley of Death: From Research to Practice

The transition from promising research (TRL 3-4) to court-admissible methodology (TRL 8-9) represents the most significant challenge in forensic chemistry. This "valley of death" can be bridged through strategic approaches:

Collaborative Validation Networks: Establishing multi-laboratory validation consortia accelerates TRL advancement by generating the necessary data for legal acceptance. The National Institute of Justice (NIJ) facilitates such partnerships through its Forensic Science Research and Development Technology Working Group, which identifies operational needs and prioritizes research directions [6].

Standardization and Quality Assurance: Development of standardized protocols, reference materials, and proficiency tests is essential for TRL progression beyond level 6. The NIJ's research priorities specifically emphasize "standard criteria for analysis and interpretation" and "practices and protocols" that support technology implementation [7].

Error Rate Characterization: A fundamental requirement for court admissibility under Daubert is establishing known error rates [1]. Forensic chemistry research must incorporate comprehensive error rate studies through:

- Black box studies measuring accuracy and reliability of forensic examinations

- White box studies identifying specific sources of error

- Interlaboratory studies assessing reproducibility across different practitioners and laboratories [7]

Table 3: Research Reagent Solutions for Forensic Chemistry Technology Development

| Tool/Resource | Function in TRL Advancement | Representative Examples |
|---|---|---|
| Reference Materials | Method validation and quality control at TRL 4-7 | Certified synthetic drug standards, matrix-matched quality controls, internal standards |
| Data Processing Algorithms | Feature detection, peak deconvolution, and pattern recognition at TRL 3-6 | GC×GC data processing software, chemometric packages, machine learning classifiers |
| Quality Control Frameworks | Establishing reproducibility and error rates for TRL 6-8 | Standardized operating procedures, proficiency test programs, statistical quality control charts |
| Database Systems | Supporting statistical interpretation and evidence weighting at TRL 7-9 | Mass spectral libraries, retention index databases, population frequency data |
| Legal Admissibility Resources | Transitioning from TRL 8 to TRL 9 | Frye/Daubert/Mohan criteria checklists, validation documentation templates, expert testimony frameworks |

The application of the TRL scale to forensic chemistry publications provides a structured framework for assessing methodological maturity and implementation readiness. As demonstrated in the GC×GC case study, most forensic applications of advanced separation techniques currently reside at mid-TRL levels (4-7), indicating significant progress in analytical development but ongoing challenges in legal adoption. The progression from promising research to court-admissible methodology requires systematic attention to inter-laboratory validation, error rate characterization, and standardization – components that are often underrepresented in early-stage research.

Future directions for TRL advancement in forensic chemistry should emphasize increased intra- and inter-laboratory validation, explicit error rate analysis, and standardization to meet legal admissibility standards [1]. The forensic science research community must prioritize these components through collaborative networks, shared databases, and practitioner-researcher partnerships. By systematically addressing TRL progression criteria, forensic chemistry researchers can accelerate the translation of innovative technologies from the laboratory to the courtroom, ultimately enhancing the scientific foundation of forensic evidence.

For researchers, scientists, and drug development professionals, the ultimate validation of a new forensic or analytical technique extends beyond publication in scientific journals to its acceptance in a court of law. The admissibility of expert testimony based on novel scientific methods is governed by specific legal standards that act as critical gatekeepers. In the United States, the Frye and Daubert standards form the foundation for admitting expert evidence, while in Canada, the Mohan criteria serve a parallel function [1]. These legal frameworks demand that scientific evidence is not only relevant but also reliable, creating a direct bridge between the rigor of scientific research and the requirements of the justice system.

For forensic research, understanding these standards is not merely an academic exercise but a fundamental aspect of methodological development. The legal system subjects new analytical methods to special scrutiny to determine whether they meet a basic threshold of reliability before they can inform legal decisions [1] [8]. This guide provides an in-depth technical overview of these legal standards and connects them to a research and development lifecycle, providing a roadmap for forensic researchers to navigate the path from experimental concept to courtroom-ready evidence.

Core Legal Standards for Expert Evidence

The Frye Standard: General Acceptance

The Frye Standard, originating from the 1923 case Frye v. United States, established the earliest formal test for the admissibility of expert testimony in the United States [9]. This standard focuses on the "general acceptance" test, which dictates that an expert opinion is admissible only if the scientific technique on which the opinion is based is "generally accepted" as reliable in the relevant scientific community [9] [10]. The court in Frye affirmed the exclusion of testimony concerning a systolic blood pressure polygraph test, reasoning that the technique had not yet gained standing and scientific recognition among physiological and psychological authorities [9].

Application and Scope: The Frye inquiry is narrow, focusing primarily on whether the methodology, when properly performed, generates results generally accepted as reliable in the scientific community [11]. A Frye hearing is typically required only for novel scientific evidence, and universal acceptance is not a prerequisite [9]. The standard provides a clear framework for admissibility that emphasizes reliability through community consensus, though it can exclude emerging scientific techniques that have not yet gained widespread acceptance [10].

The Daubert Standard and Federal Rule of Evidence 702

In the 1993 case Daubert v. Merrell Dow Pharmaceuticals, Inc., the U.S. Supreme Court held that the Frye standard had been superseded by the Federal Rules of Evidence, establishing a new framework for federal courts [11]. The Daubert Standard assigns trial judges the role of "gatekeepers" and shifts the inquiry from "general acceptance" to a broader assessment of reliability and relevance [11] [9].

The Daubert Court provided a non-exhaustive list of factors for judges to consider:

- Whether the method or theory can be or has been tested

- Whether it has been subjected to peer review and publication

- The known or potential rate of error

- The existence and maintenance of standards controlling the technique's operation

- Whether the method has gained general acceptance in the scientific community [11] [1]

This standard was subsequently codified in Federal Rule of Evidence 702, which was amended in 2023 to clarify and emphasize courts' gatekeeping responsibilities [12] [13]. The current rule states that an expert witness may testify if the proponent demonstrates that:

- The expert's scientific, technical, or other specialized knowledge will help the trier of fact

- The testimony is based on sufficient facts or data

- The testimony is the product of reliable principles and methods

- The expert's opinion reflects a reliable application of the principles and methods to the facts of the case [12]

The Mohan Criteria: The Canadian Framework

In Canada, the admissibility of expert evidence is governed by the criteria established in R. v. Mohan [14] [15]. This Supreme Court of Canada case set a precedent indicating that expert evidence should be excluded if it does not pass four key tests:

- Relevance: The evidence must be logically relevant to the matter at hand

- Necessity: The evidence must be necessary to assist the trier of fact

- Absence of any exclusionary rule: The evidence must not be barred by other exclusionary rules

- A properly qualified expert: The witness must have special knowledge through study or experience [15] [8]

The Mohan test comprises two steps. The first step involves determining whether the evidence passes these threshold requirements of admissibility. The second step, described as a "discretionary gatekeeping step," requires the trial judge to establish whether the benefits stemming from the admission of the expert evidence outweigh the potential harms that may result from its admission [14]. The Supreme Court of Canada has confirmed that special scrutiny is needed when determining the admissibility of novel scientific evidence, adopting the Daubert criteria for this purpose [8].
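The two-step structure of the Mohan test can be laid out as a simple decision sequence, which some researchers may find useful as a documentation checklist when preparing validation packages. This is only a schematic sketch: each boolean stands in for a determination made by the trial judge, and the class and function names are hypothetical.

```python
from dataclasses import dataclass, fields


@dataclass
class MohanThreshold:
    """Step 1: the four threshold requirements of admissibility."""
    relevance: bool
    necessity: bool
    absence_of_exclusionary_rule: bool
    properly_qualified_expert: bool


def mohan_outcome(threshold: MohanThreshold, benefits_outweigh_harms: bool) -> tuple[bool, str]:
    """Apply step 1 (threshold criteria), then step 2 (discretionary gatekeeping)."""
    failed = [f.name for f in fields(threshold) if not getattr(threshold, f.name)]
    if failed:
        return False, "excluded at step 1: " + ", ".join(failed)
    if not benefits_outweigh_harms:
        return False, "excluded at step 2: potential harms outweigh benefits"
    return True, "admissible"


# Hypothetical example: all threshold criteria met, but the judge finds that
# the potential harms of admitting the evidence outweigh its benefits.
print(mohan_outcome(MohanThreshold(True, True, True, True), benefits_outweigh_harms=False))
```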

Comparative Analysis of Legal Standards

The following table provides a detailed comparison of the three primary legal standards for expert evidence admissibility:

Table 1: Comprehensive Comparison of Expert Evidence Admissibility Standards

| Criterion | Frye Standard | Daubert Standard & FRE 702 | Mohan Criteria |

|---|---|---|---|

| Origin | Frye v. United States (1923) [9] | Daubert v. Merrell Dow (1993); Federal Rules of Evidence [11] | R. v. Mohan (1994) [15] |

| Primary Focus | "General acceptance" in the relevant scientific community [10] | Relevance and reliability of methodology [11] | Relevance, necessity, and proper qualification [15] |

| Key Factors | Acceptance within scientific field [9] | Testability; Peer review; Error rates; Standards & controls; General acceptance [11] [1] | Logical relevance; Necessity to trier of fact; Absence of exclusionary rules; Properly qualified expert [14] |

| Judicial Role | Limited determination of general acceptance [10] | Active gatekeeping role assessing reliability [11] [12] | Discretionary gatekeeping with cost-benefit analysis [14] |

| Scope | Primarily novel scientific evidence [9] | All expert testimony (scientific, technical, specialized) [12] | All expert witness evidence [15] |

| Burden of Proof | Not explicitly defined in original standard | Preponderance of evidence (explicit in amended FRE 702) [12] [13] | Balance of probabilities [14] |

| Current Jurisdiction | Some state courts (CA, NY, IL) [10] | U.S. federal courts and many state courts [11] [10] | Canadian courts [1] |

Visualizing the Admissibility Pathways

The following diagram illustrates the decision pathways and key criteria for each major admissibility standard:

Technology Readiness and Legal Admissibility: An Integrated Framework

For forensic researchers, the path from methodological development to courtroom application can be conceptualized through a technology readiness framework that aligns with legal admissibility requirements. The following diagram illustrates this integrated pathway:

Research Progression Through Technology Readiness Levels

Table 2: Research Maturity Alignment with Legal Admissibility Requirements

| Technology Readiness Level | Research Activities | Legal Standard Alignment | Validation Requirements |

|---|---|---|---|

| TRL 1-3 (Basic Research) | Observation of basic principles; Initial experimental studies | Potential relevance established; Initial peer review possible | Formulation of hypotheses; Laboratory-scale testing |

| TRL 4-5 (Technology Development) | Experimental proof of concept; Laboratory validation | Daubert factors: testing and peer review initiation; Error rate estimation begins | Component validation in laboratory environment; Initial method specification |

| TRL 6-7 (Technology Demonstration) | Prototype system in relevant environment; Independent validation | Daubert: Known error rates; Standards development; Mohan: Necessity assessment | System validation in relevant environment; Inter-laboratory reproducibility testing |

| TRL 8-9 (System Validation) | System complete and qualified in operational environment; Multiple validations | Frye: General acceptance building; Daubert: All factors satisfied; Mohan: Benefits outweigh costs | Actual system proven in operational environment; Widespread adoption in relevant scientific community |

Methodological Protocols for Admissible Research

Comprehensive Validation Framework

To meet legal admissibility standards, forensic research methodologies must undergo rigorous validation. The protocol below outlines critical validation components:

Table 3: Essential Methodological Validation Components for Legal Admissibility

| Validation Component | Experimental Protocol | Documentation Requirements | Legal Standard Addressed |

|---|---|---|---|

| Method Testing | Design experiments to test hypotheses under controlled conditions; Vary parameters to establish operating boundaries | Detailed experimental procedures; Raw data; Statistical analysis; Positive and negative controls | Daubert: Testability factor; Mohan: Relevance |

| Peer Review | Submit complete methodology, data, and conclusions to independent scientific journals | Manuscripts; Reviewers' comments; Revisions; Publication in reputable journals | Daubert: Peer review factor; Frye: General acceptance evidence |

| Error Rate Determination | Conduct repeated measurements on reference materials; Inter-laboratory comparisons; Proficiency testing | Quantitative error analysis; Uncertainty measurements; Statistical confidence intervals | Daubert: Known error rate factor; Mohan: Reliability assessment |

| Standards & Controls | Implement standard operating procedures (SOPs); Positive and negative controls; Calibration protocols | SOP documentation; Quality control records; Training documentation; Audit trails | Daubert: Standards & controls factor; All standards: Reliability |

| General Acceptance | Present at scientific conferences; Encourage independent verification; Publish application studies | Citations in literature; Independent validation studies; Adoption by other laboratories | Frye: General acceptance test; Daubert: General acceptance factor |
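The "Error Rate Determination" row calls for quantitative error analysis with statistical confidence intervals. As a minimal sketch of what that can look like, the snippet below computes a Wilson score interval for an observed error proportion. The counts (3 erroneous conclusions in 200 blind trials) are hypothetical, and the Wilson interval is one common choice for small proportions rather than a method prescribed by any of the legal standards.

```python
import math


def wilson_interval(errors, trials, z=1.96):
    """Wilson score interval for an observed error proportion.

    z = 1.96 corresponds to an approximate 95% confidence level.
    """
    if trials <= 0:
        raise ValueError("trials must be positive")
    if not 0 <= errors <= trials:
        raise ValueError("errors must lie between 0 and trials")
    p = errors / trials
    denom = 1 + z ** 2 / trials
    centre = (p + z ** 2 / (2 * trials)) / denom
    half_width = z * math.sqrt(p * (1 - p) / trials + z ** 2 / (4 * trials ** 2)) / denom
    # Clamp to [0, 1] to absorb floating-point rounding at the boundaries.
    return max(0.0, centre - half_width), min(1.0, centre + half_width)


# Hypothetical proficiency-test outcome (illustrative numbers only).
errors, trials = 3, 200
low, high = wilson_interval(errors, trials)
print(f"observed error rate: {errors / trials:.2%}")
print(f"approx. 95% interval: {low:.2%} to {high:.2%}")
```

Reporting the interval alongside the point estimate makes clear how much the stated error rate depends on the number of trials behind it.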

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 4: Key Research Reagents and Materials for Forensic Method Development

| Research Reagent / Material | Technical Function | Application in Legal Readiness |

|---|---|---|

| Certified Reference Materials | Provides traceable standards with known properties for method calibration and validation | Establishes method accuracy and reliability for Daubert standards compliance |

| Proficiency Test Samples | Blind samples for inter-laboratory comparison and ongoing quality assurance | Demonstrates laboratory competency and method reproducibility for error rate determination |

| Quality Control Materials | Stable, well-characterized materials for routine monitoring of analytical performance | Supports maintenance of standards and controls required by all legal frameworks |

| Data Processing Software | Algorithms for data analysis, peak integration, and statistical evaluation | Must be validated and transparent to address Daubert's reliable principles requirement |

| Documentation Systems | Electronic lab notebooks, chain of custody forms, and audit trails | Creates sufficient facts/data record required by FRE 702 and Mohan criteria |

The integration of technology readiness levels with legal admissibility standards provides a strategic framework for forensic researchers to systematically advance their methodologies from basic research to courtroom application. By understanding the specific requirements of the Frye, Daubert, and Mohan standards early in the research process, scientists can design validation studies that simultaneously address scientific rigor and legal expectations.

The 2023 amendment to FRE 702, effective December 1, 2023, emphasizes that the proponent of expert testimony must demonstrate admissibility by a preponderance of the evidence, that is, that each requirement is more likely than not satisfied. This reinforces the need for thorough methodological validation before courtroom presentation [12] [13]. Similarly, Canadian courts continue to apply the Mohan criteria with careful attention to whether expert evidence is necessary and whether the potential benefits outweigh the costs to the trial process [14].

For forensic researchers, this integrated approach represents more than procedural compliance—it embodies the essential connection between scientific validity and justice. By building legal readiness into the research lifecycle, scientists can ensure that their work not only advances their field but also serves the equitable administration of justice.

From Theory to Practice: Implementing TRLs in Forensic Research and Development

Comprehensive two-dimensional gas chromatography (GC×GC) represents a revolutionary advancement in analytical separations, offering unparalleled resolution for complex mixtures encountered in forensic evidence analysis, from fire debris to controlled substances. The deployment of such sophisticated analytical techniques within the rigorous and legally defensible context of forensic science requires a systematic approach to technology development and validation. The Technology Readiness Level (TRL) scale provides this essential framework. Originally developed by NASA, TRLs are a measurement system used to assess the maturity level of a particular technology, with levels ranging from TRL 1 (basic principles observed) to TRL 9 (actual system proven through successful mission operations) [4]. For forensic researchers, this framework ensures that GC×GC methods transition from promising research concepts to robust, legally admissible analytical tools with defined performance characteristics at each stage of development.

Technology Readiness Levels: A Framework for Forensic Method Development

The Technology Readiness Level framework consists of nine distinct levels that provide a common set of definitions for determining the progress of research and development programs [4] [16]. When applied to GC×GC method development for forensic applications, each TRL represents a specific stage of methodological maturity:

TRL 1-2 (Basic Research): At these initial levels, fundamental studies of GC×GC separation mechanisms for forensically relevant compounds are conducted. At TRL 1, scientific research begins and its results are translated into future research directions. At TRL 2, practical applications are proposed for these initial findings, although there is still little to no experimental proof of concept [4].

TRL 3-4 (Proof of Concept): Active research and design begin, with both analytical and laboratory studies conducted to determine GC×GC viability for specific evidence types. Technologies advance to TRL 4 once proof-of-concept is established and multiple component pieces (column combinations, modulation schemes, detection systems) are tested together [4].

TRL 5-6 (Technology Validation): At TRL 5, the GC×GC system undergoes rigorous testing in environments that simulate forensic casework conditions. TRL 6 is achieved when a fully functional prototype or representational model is established that can handle authentic forensic samples [4].

TRL 7-9 (Operational Deployment): TRL 7 requires demonstration of the working model in an operational forensic laboratory environment. TRL 8 indicates the method has been fully validated and "qualified" for routine use, while TRL 9 signifies the technique has been "proven" through successful application to actual case evidence, establishing legal precedent [4].

Table 1: Technology Readiness Levels for GC×GC Method Development in Forensic Science

| TRL | Stage of Development | Key Activities for GC×GC Methods | Forensic Validation Requirements |

|---|---|---|---|

| TRL 1-2 | Basic principles observed and formulated | Literature review of separation mechanisms, preliminary feasibility studies | Theoretical basis for application to evidence types |

| TRL 3-4 | Experimental proof-of-concept established | Testing column combinations, modulator performance with standards | Basic separation metrics for target compounds |

| TRL 5-6 | Component/system validation in relevant environment | Method optimization with authentic matrices, comparison to standard methods | Precision, accuracy, robustness studies with controls |

| TRL 7-8 | System demonstrated in operational environment | Mock casework samples, collaborative exercises, standard operating procedure development | Full validation per SWGTOX/SWGDRUG guidelines, uncertainty measurements |

| TRL 9 | Actual system proven through successful mission operations | Routine casework application, testimony acceptance, proficiency testing | Ongoing proficiency testing, continuous method monitoring |

Core Principles and Instrumentation of GC×GC

Fundamental Separation Mechanism

GC×GC employs two separation mechanisms with orthogonal selectivity, implemented as two columns that are typically connected through a thermal or flow modulator. The entire effluent from the first dimension column is sequentially focused and injected into the second dimension column as narrow pulses, one per modulation period (typically 2-8 seconds), creating a continuous series of high-speed second-dimension separations. This modulation process produces a comprehensive two-dimensional chromatogram where compounds are characterized by two independent retention times, significantly increasing peak capacity and resolution compared to conventional GC.

Instrumentation Components

A complete GC×GC system consists of several integrated components that must be carefully selected and optimized based on the specific forensic application:

GC Oven and Injector: Standard GC hardware is utilized but requires optimization for the specific column set and modulation scheme. Inlet conditions, carrier gas selection, and injection techniques must be compatible with the thermal requirements of the modulator.

First Dimension Column: Typically a conventional capillary column (20-30 m × 0.25-0.32 mm i.d.) with a non-polar stationary phase (e.g., 100% dimethylpolysiloxane, 5% phenyl polysilphenylene-siloxane) that provides the primary separation based on volatility.

Modulator: The heart of the GC×GC system, responsible for trapping, focusing, and reinjecting effluent from the first dimension to the second dimension. Modern systems primarily use thermal modulators with either liquid nitrogen or carbon dioxide for cooling and electrical heating for rapid desorption.

Second Dimension Column: A short, narrow-bore capillary column (1-2 m × 0.1-0.18 mm i.d.) with a polar stationary phase that provides very fast secondary separation based on polarity, typically completed in 2-8 seconds.

Detector: Requires high data acquisition rates (50-200 Hz) to properly define the very narrow peaks (100-200 ms baseline width) produced in the second dimension. Time-of-flight mass spectrometry (TOFMS) is particularly compatible due to its inherent fast acquisition capabilities.

Table 2: GC×GC Component Specifications for Forensic Applications

| System Component | Technical Specifications | Performance Requirements | Common Configurations for Forensic Analysis |

|---|---|---|---|

| First Dimension Column | 20-30 m length, 0.25-0.32 mm i.d., 0.25-1.0 μm film thickness | Non-polar phase (100% PDMS, 5% phenyl) | High thermal stability for temperature programming with complex mixtures |

| Second Dimension Column | 1-2 m length, 0.1-0.18 mm i.d., 0.05-0.18 μm film thickness | Polar phase (polyethylene glycol, 50% phenyl polysilphenylene-siloxane) | Ultra-fast separations (2-8 second cycles) with minimal bleed |

| Modulator | Thermal modulation with cryogenic cooling (LN₂ or CO₂) | 2-8 second modulation periods, narrow injection bands (<100 ms) | Capable of handling high boiling point compounds encountered in forensic samples |

| Detector | Time-of-flight mass spectrometer (TOFMS) | Acquisition rate 50-200 Hz, mass range 40-550 m/z | Deconvolution algorithms for coeluting peaks, library search capabilities |

| Data System | Specialty software for GC×GC data handling | Capable of processing 3D data (time1 × time2 × intensity), peak finding algorithms | Color-coded contour plots, structured chromatogram visualization, statistical comparison |

Experimental Protocol: GC×GC Method Development for Forensic Applications

Initial Method Setup and Parameter Optimization

The development of a robust GC×GC method requires systematic optimization of multiple interdependent parameters. Begin by establishing the first dimension separation, adapting a validated one-dimensional GC method for the target analytes. The temperature program should be optimized to balance separation and analysis time, typically utilizing a moderate ramp rate (1.5-3.0°C/min) to provide sufficient first-dimension peak widths (10-20 seconds) for effective modulation. The modulation period must then be carefully selected to provide 3-4 modulations across the first-dimension peak width. In practice this places the modulation period between 2 and 8 seconds, depending on the complexity of the sample and the speed of the second-dimension separation.

The second dimension separation operates under nearly isothermal conditions, with the temperature offset typically 5-20°C above the first dimension oven temperature at the time of modulation. This thermal gradient across the two dimensions enhances the orthogonality of the separation. Carrier gas linear velocity must be optimized for both dimensions, with the second dimension operating at higher linear velocity to achieve the required fast separations. Finally, detector parameters must be established to ensure sufficient data acquisition rate (50-200 Hz) to accurately capture the narrow (50-200 ms) peaks eluting from the second dimension.
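The relationships between first-dimension peak width, modulation period, and detector acquisition rate described above reduce to simple arithmetic. The following sketch works through them with the ranges quoted in the text. The function names are illustrative, not taken from any instrument software.

```python
def modulation_period(first_dim_peak_width_s, modulations_per_peak):
    """Modulation period (s) that slices a first-dimension peak the requested number of times."""
    if first_dim_peak_width_s <= 0 or modulations_per_peak <= 0:
        raise ValueError("peak width and modulations per peak must be positive")
    return first_dim_peak_width_s / modulations_per_peak


def points_across_peak(acquisition_rate_hz, second_dim_peak_width_s):
    """Number of detector data points recorded across one second-dimension peak."""
    if acquisition_rate_hz <= 0 or second_dim_peak_width_s <= 0:
        raise ValueError("rate and peak width must be positive")
    return acquisition_rate_hz * second_dim_peak_width_s


# Extremes of the ranges quoted in the text: 10-20 s peaks, 3-4 modulations per peak.
shortest = modulation_period(10.0, 4)
longest = modulation_period(20.0, 3)
print(f"modulation period range: {shortest:.1f} s to {longest:.1f} s")

# A 100 ms second-dimension peak sampled at the low and high ends of 50-200 Hz.
for rate_hz in (50.0, 200.0):
    print(f"{rate_hz:.0f} Hz -> {points_across_peak(rate_hz, 0.100):.0f} points per peak")
```

Running the numbers this way during method setup shows quickly whether a chosen ramp rate, modulation period, and acquisition rate are mutually compatible.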

Comprehensive Method Validation Protocol

For forensic applications, GC×GC methods require rigorous validation to meet evidentiary standards. The validation protocol should include the following experiments performed over at least five independent runs on different days:

Linearity and Range: Analyze a minimum of five calibration standards across the expected concentration range, including concentrations near the limit of quantification, using internal standard calibration. Acceptance criterion: r² ≥ 0.995.

Accuracy and Precision: Analyze QC samples at three concentration levels (low, medium, high) in quintuplicate over three separate days. Calculate intra-day and inter-day precision (%RSD) and accuracy (%bias). Acceptance criteria: Precision ≤ 15% RSD (≤20% at LLOQ), accuracy 85-115% (80-120% at LLOQ).

Limit of Detection (LOD) and Quantification (LOQ): Establish based on signal-to-noise ratios of 3:1 and 10:1, respectively, with verification by analysis of samples at these concentrations meeting precision and accuracy requirements.

Selectivity: Demonstrate absence of interference from blank matrix samples (minimum n=6 from different sources) at the retention times of target analytes.

Robustness: Deliberately vary critical method parameters (modulation period, temperature ramp rate, carrier gas velocity) within small ranges and measure impact on key performance metrics.

Carryover: Inject blank solvent samples following the highest calibration standard and verify absence of peaks >20% of LLOQ.
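The linearity, precision, and accuracy criteria listed above can be checked with a few lines of code. The sketch below is illustrative only. It assumes an unweighted least-squares calibration line and expresses accuracy as mean measured concentration relative to nominal. The data values are hypothetical, and a laboratory's own validation plan may specify weighted regression or different statistics.

```python
from statistics import mean, stdev


def r_squared(x, y):
    """Coefficient of determination for an unweighted least-squares line y = a + b*x."""
    mx, my = mean(x), mean(y)
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = my - slope * mx
    ss_res = sum((yi - (intercept + slope * xi)) ** 2 for xi, yi in zip(x, y))
    ss_tot = sum((yi - my) ** 2 for yi in y)
    return 1 - ss_res / ss_tot


def percent_rsd(values):
    """Relative standard deviation (sample standard deviation / mean) in percent."""
    return 100 * stdev(values) / mean(values)


def percent_accuracy(values, nominal):
    """Mean measured concentration as a percentage of the nominal concentration."""
    return 100 * mean(values) / nominal


def qc_level_passes(values, nominal, at_lloq=False):
    """Apply the acceptance limits quoted in the text (wider limits at the LLOQ)."""
    rsd_limit = 20.0 if at_lloq else 15.0
    acc_low, acc_high = (80.0, 120.0) if at_lloq else (85.0, 115.0)
    accuracy = percent_accuracy(values, nominal)
    return percent_rsd(values) <= rsd_limit and acc_low <= accuracy <= acc_high


# Hypothetical five-point calibration (concentration vs. analyte/internal-standard response ratio).
conc = [1.0, 2.0, 5.0, 10.0, 20.0]
ratio = [0.11, 0.19, 0.52, 1.01, 1.98]
print(f"r^2 = {r_squared(conc, ratio):.4f} (criterion: >= 0.995)")

# Hypothetical QC replicates at a nominal concentration of 10.0.
qc = [9.8, 10.1, 10.0, 10.3, 9.9]
print(f"%RSD = {percent_rsd(qc):.1f}, accuracy = {percent_accuracy(qc, 10.0):.1f}%")
print("QC level passes:", qc_level_passes(qc, 10.0))
```

Keeping these calculations in a script rather than a spreadsheet also gives an auditable record of how each acceptance decision was reached.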

Data Analysis and Interpretation in GC×GC

Data Visualization Techniques

GC×GC generates complex three-dimensional data sets (¹tR × ²tR × intensity) that require specialized visualization and processing. The most common representation is the two-dimensional contour plot, where first-dimension retention time is plotted on the x-axis, second-dimension retention time on the y-axis, and peak intensity is represented by color gradients. Structured patterns emerge in these plots, with compounds of similar chemical characteristics forming ordered clusters that aid in compound identification, even for unknown components.

Data processing involves peak detection, integration, and identification across both dimensions. Modern GC×GC software utilizes advanced algorithms for peak finding that account for the unique shape and distribution of peaks in the two-dimensional separation space. For mass spectrometric detection, spectral deconvolution algorithms are essential for resolving coeluting compounds that may be incompletely separated even in the two-dimensional space.
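The contour plot described above is built by folding the detector's single continuous signal into a matrix with one row per modulation. The sketch below shows that folding step under simplifying assumptions: the first data point coincides with the start of a modulation cycle, the modulation period is an exact multiple of the sampling interval, and wraparound is ignored. The function names are hypothetical and do not correspond to any vendor software.

```python
def fold_signal(signal, acquisition_rate_hz, modulation_period_s):
    """Fold a 1D detector trace into rows, one row per modulation cycle.

    Row index maps to first-dimension retention time,
    column index maps to second-dimension retention time.
    """
    points_per_modulation = round(acquisition_rate_hz * modulation_period_s)
    if points_per_modulation < 1:
        raise ValueError("modulation period is shorter than one sampling interval")
    n_modulations = len(signal) // points_per_modulation  # drop any incomplete final cycle
    return [
        signal[i * points_per_modulation:(i + 1) * points_per_modulation]
        for i in range(n_modulations)
    ]


def retention_times(row, col, acquisition_rate_hz, modulation_period_s):
    """Convert matrix indices to (first-dimension, second-dimension) retention times in seconds."""
    return row * modulation_period_s, col / acquisition_rate_hz


# Toy trace: 12 points at 2 Hz with a 2 s modulation period gives 3 rows of 4 points.
trace = list(range(12))
matrix = fold_signal(trace, acquisition_rate_hz=2.0, modulation_period_s=2.0)
for row in matrix:
    print(row)
print(retention_times(1, 3, 2.0, 2.0))
```

The resulting matrix is what plotting libraries render as a contour or heat map, with rows along the first-dimension axis and columns along the second.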

Quantitative Analysis Approaches

While GC×GC provides exceptional separation power, quantitative analysis requires careful method design to address the unique characteristics of the technique. Internal standardization is essential, preferably using multiple internal standards that cover different regions of the separation space. The choice of quantification approach depends on the analysis requirements:

Target Compound Analysis: For known analytes, peak volume integration in the 2D space provides the highest precision, with integration regions defined based on first- and second-dimension retention time windows.

Group-Type Analysis: For characterizing complex mixtures by chemical class, template-based integration regions can be applied to group compounds based on their position in the 2D separation space.

Non-Target Analysis: For comprehensive sample characterization, pixel-based approaches that consider the entire data set without prior peak detection enable advanced statistical analysis, including principal component analysis (PCA) for sample classification.
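For target compound analysis, the peak "volume" can be approximated by summing the baseline-corrected intensities of every data point that falls inside the analyte's first- and second-dimension retention windows. The sketch below illustrates this on a toy matrix with one row per modulation. The constant baseline, the window limits, and the function name are all assumptions made for illustration. Production software typically uses more elaborate peak models and baseline estimation.

```python
def peak_volume(matrix, acquisition_rate_hz, modulation_period_s,
                t1_window, t2_window, baseline=0.0):
    """Sum baseline-corrected intensities inside a rectangular 2D retention window.

    matrix: list of rows, one row per modulation (row -> first dimension,
            column -> second dimension).
    t1_window, t2_window: (start, end) tuples in seconds, inclusive.
    """
    total = 0.0
    for row, second_dim_trace in enumerate(matrix):
        t1 = row * modulation_period_s
        if not t1_window[0] <= t1 <= t1_window[1]:
            continue
        for col, intensity in enumerate(second_dim_trace):
            t2 = col / acquisition_rate_hz
            if t2_window[0] <= t2 <= t2_window[1]:
                total += intensity - baseline
    return total


# Toy data: 3 modulations of 4 points each (2 Hz acquisition, 4 s modulation period).
toy = [
    [0, 0, 0, 0],
    [0, 5, 3, 0],
    [0, 2, 1, 0],
]
analyte = peak_volume(toy, 2.0, 4.0, t1_window=(4.0, 8.0), t2_window=(0.5, 1.0))
print("summed analyte intensity:", analyte)
```

Dividing the analyte volume by the volume of its internal standard, integrated the same way, gives the response ratio used for calibration.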

Table 3: Advanced Data Processing Techniques for GC×GC in Forensic Analysis

| Data Processing Technique | Methodology | Application in Forensic Science | Implementation Considerations |

|---|---|---|---|

| Structured Chromatogram Analysis | Identification of ordered patterns (homologous series, chemical classes) | Chemical profiling of complex mixtures (drug impurities, ignitable liquids) | Requires validated retention index systems in both dimensions |

| Pixel-Based Data Analysis | Statistical analysis of raw data points without peak finding | Non-targeted screening for unknown compounds, sample comparison and classification | Computationally intensive, requires specialized software |

| Multivariate Statistical Analysis | Principal component analysis (PCA), linear discriminant analysis (LDA) | Objective comparison of complex evidence samples, source attribution | Large sample sets required for statistical significance |

| Peak Capacity Calculations | Measurement of theoretical separation power under specific conditions | Method optimization, comparison with alternative techniques | Actual utilization typically 20-40% of theoretical maximum |

| Contour Plot Visualization | Color-coded intensity representation with optimized color gradients | Data interpretation, presentation in legal proceedings | Color schemes must be accessible (color blindness compatible) |
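The peak capacity row in the table can be made concrete with a short calculation. For a comprehensive two-dimensional separation, the theoretical peak capacity is commonly taken as the product of the peak capacities of the two dimensions, and the table notes that only about 20-40% of that figure is typically usable. The per-dimension capacities below (400 and 15) are hypothetical values chosen for illustration.

```python
def theoretical_peak_capacity(first_dim_capacity, second_dim_capacity):
    """Product rule: theoretical 2D peak capacity = n1 * n2."""
    return first_dim_capacity * second_dim_capacity


def usable_peak_capacity_range(first_dim_capacity, second_dim_capacity,
                               low_fraction=0.20, high_fraction=0.40):
    """Apply the 20-40% utilization range quoted in the table."""
    theoretical = theoretical_peak_capacity(first_dim_capacity, second_dim_capacity)
    return theoretical * low_fraction, theoretical * high_fraction


# Hypothetical per-dimension peak capacities.
n1, n2 = 400, 15
low, high = usable_peak_capacity_range(n1, n2)
print(f"theoretical peak capacity: {theoretical_peak_capacity(n1, n2)}")
print(f"typically usable: about {low:.0f} to {high:.0f}")
```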

The Scientist's Toolkit: Essential Research Reagent Solutions

Successful implementation of GC×GC in forensic research requires carefully selected reagents, reference materials, and consumables. The following table details key research reagent solutions essential for developing and validating GC×GC methods for complex evidence analysis.

Table 4: Essential Research Reagent Solutions for GC×GC Method Development

| Reagent/Consumable | Technical Specifications | Function in GC×GC Analysis | Quality Control Requirements |

|---|---|---|---|

| Certified Reference Materials | Purity ≥98%, certificate of analysis with uncertainty measurements | Quantitative calibration, method validation, quality control | Traceability to national standards, stability documentation |

| Internal Standards | Stable isotope-labeled analogs of target analytes (²H, ¹³C, ¹⁵N) | Correction for matrix effects, injection volume variations, recovery calculations | Minimal isotopic interference, different retention from native compounds |

| Quality Control Materials | Characterized matrix-matched materials with assigned values | Method performance verification, ongoing quality assurance | Commutability with authentic samples, sufficient volume for long-term use |

| Derivatization Reagents | High purity silylation, acylation, or esterification reagents | Enhancement of volatility, stability, or detection of polar compounds | Reaction efficiency verification, stability under storage conditions |

| Column Qualification Test Mixes | Compounds with varying functional groups and polarities | Performance verification of column combinations, monitoring of column degradation | Coverage of relevant chemical space, stability at elevated temperatures |

| Matrix-Matched Calibrators | Prepared in extracted negative matrix with known additions | Compensation for matrix-induced enhancement or suppression | Consistent matrix source, demonstration of absence of interference |

The systematic development of comprehensive two-dimensional gas chromatography methods through the Technology Readiness Level framework provides forensic researchers with a structured pathway from fundamental investigation to court-admissible analytical capability. By progressing through defined maturity stages with specific deliverables and validation milestones at each level, laboratories can efficiently allocate resources while building the necessary documentation for legal defensibility. The exceptional separation power of GC×GC addresses fundamental challenges in forensic science, particularly for complex mixture analysis where conventional techniques prove inadequate. As this advanced analytical technique continues to mature within the forensic community, its application to evidentiary materials promises enhanced discrimination power, improved confidence in identification, and ultimately, stronger scientific evidence for the legal system.

Forensic science laboratories are continually advancing their analytical capabilities to handle complex evidence. Comprehensive Two-Dimensional Gas Chromatography (GC×GC) represents a significant evolution beyond traditional one-dimensional GC, offering superior separation power for forensic applications including illicit drug analysis, toxicology, and arson investigations [1]. This technique connects two columns of different stationary phases in series via a modulator, creating two independent separation mechanisms that dramatically increase peak capacity and improve detection of trace compounds in complex mixtures [1]. The modulator, often described as the heart of GC×GC, preserves separation from the first column by transferring narrow retention time windows to the secondary column for further separation [1]. This review examines the current state of GC×GC applications across key forensic disciplines, evaluating both analytical methodologies and technology readiness levels (TRL) for implementation in routine casework.

Core Principles and Technical Advancements of GC×GC

Fundamental Operational Mechanism

The GC×GC analytical process begins with sample injection onto the primary column (1D column), where separation occurs based on analyte affinity for its stationary phase [1]. As compounds elute from this column, the modulator collects effluent for brief periods (typically 1-5 seconds) and injects these concentrated plugs onto the secondary column (2D column) at repeated intervals known as the modulation period [1]. The secondary column employs a different retention mechanism, providing orthogonal separation that distributes compounds across a two-dimensional retention plane rather than a linear timeline [1]. This configuration produces significantly higher peak capacity compared to conventional GC, enabling resolution of co-eluting compounds that would be indistinguishable in one-dimensional analysis [1].

Detection and Data Analysis Evolution

Detection systems for GC×GC have advanced substantially from early implementations using flame ionization detection (FID) and mass spectrometry (MS). Current platforms frequently incorporate high-resolution (HR) MS and time-of-flight (TOF) MS detectors, with dual detection methods such as TOFMS/FID gaining traction for their complementary data streams [1]. These technological improvements have enhanced both sensitivity and compound identification capabilities, particularly valuable for non-targeted forensic applications where a wide range of unknown analytes must be characterized simultaneously [1]. The increased signal-to-noise ratio inherent to GC×GC modulation techniques further improves detectability of minor components in complex forensic samples [1].

Forensic Applications and Technology Readiness Assessment

Technology Readiness Levels Framework

For adoption in forensic laboratories, analytical methods must meet rigorous standards and adhere to legal admissibility criteria including the Frye Standard, Daubert Standard, Federal Rule of Evidence 702 in the United States, and the Mohan Criteria in Canada [1]. These legal frameworks emphasize reliable principles and methods, known error rates, peer review, and general acceptance in the scientific community [1]. A technology readiness scale (TRL 1-4) categorizes the advancement of GC×GC research across forensic applications as of 2024, with future directions focusing on intra- and inter-laboratory validation, error rate analysis, and standardization [1].

Illicit Drug Analysis

GC×GC-MS demonstrates particular utility for characterizing complex drug mixtures, including emerging psychoactive substances and cutting agents [1]. The technique's enhanced separation power helps resolve isomeric compounds and trace components that may be forensically significant but undetectable with traditional GC-MS [1]. Current research focuses on method development for specific drug classes, with technology readiness assessed at Level 2-3, indicating established proof-of-concept but requiring further validation for routine implementation [1].

Methodology for Drug Analysis: Sample preparation typically involves solid-phase extraction or liquid-liquid extraction from seized materials or biological matrices [17]. Following derivatization if necessary, samples are injected into the GC×GC system with a primary non-polar column (e.g., 5% phenyl polysilphenylene-siloxane) and secondary mid-polarity column (e.g., 50% phenyl polysilphenylene-siloxane) [1]. Modulation is achieved using thermal or flow-based modulators, with detection via TOF-MS for untargeted analysis or tandem MS for targeted compounds [1]. Data analysis employs specialized software to deconvolute complex two-dimensional chromatograms and compare mass spectra against spectral libraries [1].
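The final step above, comparing mass spectra against spectral libraries, can be illustrated with a plain cosine similarity between two centroided spectra. This is a deliberately simplified stand-in: commercial library searches generally apply intensity and mass weighting and other refinements that are not reproduced here. The spectra, compound labels, and function names below are hypothetical.

```python
import math


def cosine_similarity(spectrum_a, spectrum_b):
    """Cosine similarity between two spectra given as {m/z: intensity} dictionaries."""
    shared_axis = set(spectrum_a) | set(spectrum_b)
    dot = sum(spectrum_a.get(mz, 0.0) * spectrum_b.get(mz, 0.0) for mz in shared_axis)
    norm_a = math.sqrt(sum(v * v for v in spectrum_a.values()))
    norm_b = math.sqrt(sum(v * v for v in spectrum_b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def best_library_match(query, library):
    """Return (name, score) of the library entry most similar to the query spectrum."""
    scored = ((name, cosine_similarity(query, ref)) for name, ref in library.items())
    return max(scored, key=lambda item: item[1])


# Hypothetical nominal-mass spectra (m/z: relative intensity).
library = {
    "compound A": {58: 100.0, 91: 20.0, 134: 5.0},
    "compound B": {44: 100.0, 91: 35.0, 65: 10.0},
}
query = {58: 95.0, 91: 25.0, 134: 4.0}
name, score = best_library_match(query, library)
print(f"best match: {name} (cosine similarity {score:.3f})")
```

A similarity score on its own is not an identification. In casework it would be combined with retention data and evaluated against validated match thresholds.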

Forensic Toxicology

In systematic toxicological analysis (STA), GC×GC-HRMS provides broad screening capabilities for drugs, metabolites, and other toxicologically relevant compounds in biological samples [17]. The technique addresses a major challenge in forensic toxicology: the simultaneous detection of numerous substances with diverse physicochemical properties, including new psychoactive substances (NPS) [17]. GC×GC complements LC-MS methods by better detecting volatile compounds like propofol, chloral hydrate, and pregabalin [1] [17]. Technology readiness for toxicological applications is currently at Level 2, with active research but limited standardization for casework [1].

Toxicology Analysis Protocol: Biological samples (blood, urine, tissues) undergo protein precipitation, enzymatic hydrolysis of conjugates, and solid-phase extraction [17]. Extracts are concentrated and derivatized if necessary before GC×GC-TOFMS analysis [17]. Data interpretation combines retention index matching in both dimensions with high-resolution mass spectral comparison against databases such as the Maurer/Pfleger/Weber mass spectral library [17]. Quality control includes analysis of positive controls, blanks, and internal standards to monitor extraction efficiency and instrument performance [17].
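Retention index matching relies on converting a measured retention time into an index relative to a series of n-alkanes. The sketch below computes a linear (temperature-programmed) retention index for the first dimension using the standard interpolation between the two bracketing alkanes, I = 100 × [n + (t_x − t_n) / (t_{n+1} − t_n)]. The alkane retention times and the ±10 index-unit tolerance are hypothetical. Second-dimension indices generally need a separate calibration approach and are not covered here.

```python
from bisect import bisect_right


def linear_retention_index(t_x, alkane_times):
    """Linear retention index of a peak eluting at t_x.

    alkane_times: {carbon_number: retention_time} for the n-alkane ladder,
                  measured under the same temperature program.
    """
    ladder = sorted(alkane_times.items())  # sort by carbon number
    times = [t for _, t in ladder]
    if len(ladder) < 2 or times != sorted(times):
        raise ValueError("need at least two alkanes with increasing retention times")
    if not times[0] <= t_x <= times[-1]:
        raise ValueError("retention time is outside the alkane bracket")
    # Index of the alkane eluting at or just before the analyte.
    i = min(bisect_right(times, t_x) - 1, len(ladder) - 2)
    (n, t_n), (n_next, t_next) = ladder[i], ladder[i + 1]
    return 100 * (n + (n_next - n) * (t_x - t_n) / (t_next - t_n))


def index_matches(observed, reference, tolerance=10.0):
    """True if the observed index lies within the (hypothetical) tolerance of the reference."""
    return abs(observed - reference) <= tolerance


# Hypothetical first-dimension alkane retention times in seconds.
alkanes = {10: 600.0, 11: 700.0, 12: 820.0}
ri = linear_retention_index(650.0, alkanes)
print(f"retention index: {ri:.0f}")
print("matches reference 1045:", index_matches(ri, 1045.0))
```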

Arson Investigations

GC×GC applications in arson investigation focus on identifying ignitable liquid residues (ILR) from fire debris, a complex analytical challenge due to background interference from pyrolysis products [1]. The technique's enhanced separation capacity better distinguishes petroleum-based accelerants from substrate decomposition compounds compared to standard GC-MS [1]. Additionally, GC×GC is employed in environmental forensics for oil spill tracing, which shares analytical approaches with fire investigation [1]. This application area has reached Technology Readiness Level 3-4, indicating more mature methodology with some laboratories implementing routine analysis [1].

Arson Analysis Procedure: Fire debris samples are collected in airtight containers and subjected to passive headspace concentration using activated charcoal strips or solid-phase microextraction (SPME) [1]. Extracted compounds are analyzed by GC×GC-FID or GC×GC-TOFMS with column selection optimized for hydrocarbon separation [1]. Data interpretation employs pattern recognition algorithms to classify ignitable liquids based on two-dimensional chromatographic profiles and differentiate them from background interferences [1].

Table 1: Technology Readiness Levels for Forensic Applications of GC×GC

| Application Area | Technology Readiness Level | Key Advantages | Validation Needs |
|---|---|---|---|
| Illicit Drug Analysis | TRL 2-3 | Enhanced separation of complex mixtures and isomers | Standardized protocols, error rate studies |
| Forensic Toxicology | TRL 2 | Broad screening capability, detection of novel psychoactive substances | Reference databases, inter-laboratory validation |
| Arson Investigations (ILR) | TRL 3-4 | Better discrimination of ignitable liquids from background | Quantitative criteria, standardized data interpretation |
| Oil Spill Tracing | TRL 3-4 | Chemical fingerprinting of complex petroleum mixtures | Source correlation databases, standardized reporting |
| Odor Decomposition | TRL 2-3 | Comprehensive volatile organic compound profiling | Temporal studies, compound identification validation |

Experimental Workflows and Signaling Pathways

Generalized GC×GC Analytical Workflow

[Figure: GC×GC Analytical Workflow]

Forensic Substance Identification Pathway

[Figure: Substance Identification Pathway]

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 2: Key Research Reagent Solutions for GC×GC Forensic Analysis

| Reagent/Material | Function | Application Specifics |
|---|---|---|
| Derivatization Reagents (e.g., MSTFA, BSTFA) | Enhances volatility and thermal stability of polar compounds | Critical for drug metabolites, steroids, and acidic compounds in toxicology |
| Solid-Phase Extraction (SPE) Cartridges | Extracts and concentrates analytes from complex matrices | Used for biological samples (blood, urine) and fire debris extraction |
| Headspace Vials and SPME Fibers | Extracts volatile compounds for analysis | Essential for arson investigations (ILR) and decomposition odor studies |
| Color Test Reagents (Marquis, Scott's, Duquenois) | Presumptive identification of drug classes | Initial screening tool; requires confirmatory analysis by GC×GC-MS [18] |
| Stationary Phase Columns (varied polarities) | Provides orthogonal separation mechanisms | Combination of non-polar (1D) and mid-polar (2D) columns most common |
| Quality Control Standards | Verifies instrument performance and method validity | Includes internal standards, continuing calibration verification |
| Reference Spectral Libraries | Compound identification through mass spectral matching | NIST, Maurer/Pfleger/Weber libraries; custom databases for novel compounds |

Analytical Considerations and Legal Admissibility

Method Validation Requirements

For GC×GC methods to transition from research to routine forensic application, comprehensive validation must address specificity, sensitivity, accuracy, precision, and robustness [1]. Key parameters include establishing limits of detection and quantification, linear dynamic range, recovery efficiency, and reproducibility across multiple instruments and operators [1]. Method robustness testing should evaluate impacts of minor variations in operational parameters such as modulation period, temperature programming, and carrier gas flow rates [1].

Legal Adherence Framework

Forensic methods must satisfy legal standards for the admission of expert testimony, including the Daubert Standard's requirements that techniques be tested, be peer-reviewed, have known error rates, and enjoy general acceptance in the relevant scientific community [1]. For GC×GC, this necessitates establishing standardized protocols, conducting inter-laboratory studies to determine reproducibility and error rates, and publishing validation data in peer-reviewed literature [1]. The technology's foundation in generally accepted GC and MS principles provides a pathway for legal recognition, but application-specific validation remains essential [1].
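As one illustration of what a "known error rate" can look like in practice, the sketch below turns classification counts from a validation study into false-positive and false-negative rates with approximate 95% confidence intervals. The counts, the function names, and the choice of the Wilson score interval are assumptions made for illustration; none of them come from the cited sources.

```python
import math


def wilson_interval(errors, trials, z=1.96):
    """Wilson score interval for a binomial proportion (z = 1.96 ~ 95%)."""
    if trials <= 0:
        raise ValueError("trials must be positive")
    p = errors / trials
    denom = 1 + z ** 2 / trials
    centre = (p + z ** 2 / (2 * trials)) / denom
    half = z * math.sqrt(p * (1 - p) / trials + z ** 2 / (4 * trials ** 2)) / denom
    return max(0.0, centre - half), min(1.0, centre + half)


def error_rates(false_pos, true_neg, false_neg, true_pos):
    """Return (rate, (lower, upper)) for false positives and false negatives."""
    fp_trials = false_pos + true_neg   # samples that truly lack the analyte
    fn_trials = false_neg + true_pos   # samples that truly contain the analyte
    return {
        "false_positive": (false_pos / fp_trials,
                           wilson_interval(false_pos, fp_trials)),
        "false_negative": (false_neg / fn_trials,
                           wilson_interval(false_neg, fn_trials)),
    }


if __name__ == "__main__":
    # Hypothetical counts pooled across laboratories (illustrative only).
    rates = error_rates(false_pos=2, true_neg=198, false_neg=5, true_pos=195)
    for name, (rate, (low, high)) in rates.items():
        print(f"{name}: {rate:.3f} (95% CI {low:.3f} to {high:.3f})")
```

Reporting the interval alongside the point estimate makes clear how much the estimated error rate depends on the size of the study, which matters when zero errors are observed in a small sample.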

GC×GC represents a powerful separation platform with demonstrated potential across multiple forensic disciplines, particularly for complex evidence analysis where conventional techniques prove inadequate. While applications in illicit drug analysis, toxicology, and arson investigations show varying technology readiness levels, ongoing research focuses on method validation, error rate determination, and standardization necessary for adoption in forensic laboratories. The technique's enhanced separation power and detection sensitivity position it as a valuable tool for addressing evolving forensic challenges, including emerging drugs and complex mixture analysis, though further work is required to establish legal admissibility across all application domains.

Within the framework of Technology Readiness Levels (TRLs) for forensic research, the systematic validation of analytical methods is a critical gateway for any technology to progress from foundational research (TRL 1-3) to routine laboratory implementation (TRL 7-9) [19] [20]. Method robustness is formally defined as "a measure of an analytical procedure's capacity to remain unaffected by small but deliberate variations in procedural parameters listed in the documentation, providing an indication of the method's suitability and reliability during normal use" [21]. In practical terms, it evaluates how well a method withstands minor, inevitable fluctuations in laboratory conditions—such as mobile phase pH or instrument temperature—that occur between analysts, instruments, and days. For forensic applications, establishing robustness is not merely a scientific formality; it is a prerequisite for legal admissibility, ensuring methods meet standards such as the Daubert Standard and Federal Rule of Evidence 702 by demonstrating reliable performance under realistic operational conditions [19].

This technical guide provides forensic researchers and drug development professionals with a structured approach to building method robustness. It details the core figures of merit for quantification, protocols for uncertainty measurement, and strategies for intra-laboratory validation, all framed within the technology maturation pathway essential for successful courtroom adoption.

Core Concepts: Robustness, Ruggedness, and Figures of Merit

A clear understanding of terminology is essential for proper experimental design. The terms robustness and ruggedness, often used interchangeably, refer to distinct concepts in method validation [21].

- Robustness assesses the impact of internal parameters specified within the method (e.g., mobile phase composition, flow rate, column temperature, pH). It is a measure of the method's inherent stability.

- Ruggedness, increasingly referred to as intermediate precision, assesses the impact of external factors not specified in the method (e.g., different analysts, laboratories, instruments, or days). It measures the method's reproducibility under normal, expected operational variations [21].
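To make the ruggedness side of this distinction concrete, the following sketch estimates repeatability and intermediate precision from replicate results grouped by an external factor such as day, analyst, or instrument. It uses a balanced one-way variance-components calculation; the function name and the example values are hypothetical and serve only as an illustration.

```python
import math
from statistics import mean


def precision_components(groups):
    """Estimate repeatability and intermediate precision.

    groups: list of equal-length lists of replicate results, one list per
    day, analyst, or instrument (balanced one-way layout).
    """
    p = len(groups)
    n = len(groups[0])
    if p < 2 or n < 2 or any(len(g) != n for g in groups):
        raise ValueError("need >= 2 balanced groups with >= 2 replicates each")

    group_means = [mean(g) for g in groups]
    grand_mean = mean(group_means)

    # Within-group mean square: repeatability variance.
    ms_within = sum((x - m) ** 2
                    for g, m in zip(groups, group_means)
                    for x in g) / (p * (n - 1))
    # Between-group mean square.
    ms_between = n * sum((m - grand_mean) ** 2 for m in group_means) / (p - 1)
    # Between-group variance component (truncated at zero).
    var_between = max(0.0, (ms_between - ms_within) / n)

    s_r = math.sqrt(ms_within)
    s_ip = math.sqrt(ms_within + var_between)
    return {
        "repeatability_sd": s_r,
        "intermediate_precision_sd": s_ip,
        "repeatability_rsd_pct": 100 * s_r / grand_mean,
        "intermediate_precision_rsd_pct": 100 * s_ip / grand_mean,
    }


if __name__ == "__main__":
    # Hypothetical concentrations (ng/mL): three days, three replicates each.
    days = [[10.1, 9.9, 10.0], [10.4, 10.3, 10.5], [9.8, 9.7, 9.9]]
    for key, value in precision_components(days).items():
        print(f"{key}: {value:.3f}")
```

An intermediate precision that is much larger than the repeatability suggests that the external factor, not the measurement itself, dominates the variability.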

The reliability of a method is quantified using specific Figures of Merit (FMs). These metrics provide the quantitative foundation for assessing both method performance and the impact of parameter variations during robustness testing. The following table summarizes the key figures of merit, their definitions, and their role in uncertainty measurement.

Table 1: Key Figures of Merit for Quantifying Method Performance and Uncertainty

| Figure of Merit | Definition | Role in Uncertainty Measurement |
|---|---|---|
| Accuracy | The closeness of agreement between a measured value and a true reference value. | Quantifies systematic error (bias). |
| Precision | The closeness of agreement between independent measurement results obtained under stipulated conditions. | Quantifies random error; often measured as repeatability and intermediate precision. |
| Selectivity/Specificity | The ability to measure the analyte unequivocally in the presence of other components. | Ensures the uncertainty budget is not inflated by interferences. |
| Linearity & Range | The ability to obtain results directly proportional to analyte concentration within a given range. | Defines the operational bounds where uncertainty is characterized. |
| Limit of Detection (LOD) | The lowest concentration of an analyte that can be detected. | Contributes to uncertainty at trace levels. |
| Limit of Quantification (LOQ) | The lowest concentration of an analyte that can be quantified with acceptable precision and accuracy. | Defines the lower limit for reliable quantitative uncertainty estimation. |
| Sensitivity | The slope of the analytical calibration curve. | Describes how the response changes with concentration. |
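Several of these figures of merit can be computed directly from calibration and replicate data. The sketch below is a minimal illustration, assuming an unweighted linear calibration and the common convention of estimating LOD and LOQ as 3.3 and 10 times the residual standard deviation divided by the slope (the calibration-curve approach described in ICH Q2). The function names and all data values are hypothetical.

```python
import math
from statistics import mean, stdev


def calibration_figures(conc, resp):
    """Sensitivity (slope), LOD and LOQ from an unweighted linear fit."""
    n = len(conc)
    if n < 3 or n != len(resp):
        raise ValueError("need >= 3 paired calibration points")
    x_bar, y_bar = mean(conc), mean(resp)
    sxx = sum((x - x_bar) ** 2 for x in conc)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(conc, resp))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    # Residual standard deviation with n - 2 degrees of freedom.
    ss_res = sum((y - (intercept + slope * x)) ** 2
                 for x, y in zip(conc, resp))
    s_res = math.sqrt(ss_res / (n - 2))
    return {
        "sensitivity": slope,
        "intercept": intercept,
        "residual_sd": s_res,
        "lod": 3.3 * s_res / slope,
        "loq": 10 * s_res / slope,
    }


def bias_percent(measured, reference):
    """Accuracy expressed as relative bias (%) against a reference value."""
    return 100 * (mean(measured) - reference) / reference


def rsd_percent(measured):
    """Precision expressed as relative standard deviation (%)."""
    return 100 * stdev(measured) / mean(measured)


if __name__ == "__main__":
    # Hypothetical calibration: concentration (ng/mL) vs. peak area.
    conc = [1, 2, 5, 10, 20]
    area = [10.4, 19.7, 50.9, 99.2, 201.1]
    print(calibration_figures(conc, area))

    # Hypothetical replicate measurements of a 10 ng/mL control.
    qc = [9.8, 10.1, 10.3, 9.9, 10.0]
    print(f"bias: {bias_percent(qc, 10.0):.2f} %, RSD: {rsd_percent(qc):.2f} %")
```

Other LOD and LOQ conventions exist (for example, signal-to-noise based estimates), so the method documentation should state which one was used.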

Experimental Design for Robustness Testing

A robust method is one that has been systematically challenged. Rather than relying on inefficient univariate approaches (changing one factor at a time), modern robustness testing employs multivariate screening designs to study the effects of multiple variables simultaneously. Depending on the design chosen, this approach can also reveal interactions between factors that would otherwise remain undetected [21].

Screening Design Selection

The choice of experimental design depends on the number of factors (parameters) to be investigated. The three most common screening designs are:

- Full Factorial Designs: Investigate all possible combinations of factors at their high and low levels. For k factors, this requires 2^k runs (for example, 32 runs for five factors). This design is comprehensive but becomes impractical for more than five factors due to the high number of runs [21].

- Fractional Factorial Designs: A carefully chosen subset (e.g., 1/2, 1/4) of the full factorial design runs. This is a highly efficient approach for investigating a larger number of factors, based on the principle that most processes are dominated by main effects and low-order interactions. The trade-off is that some effects may be confounded (aliased) [21].

- Plackett-Burman Designs: Extremely economical designs for screening a large number of factors (e.g., up to 11 factors in 12 runs) where the primary goal is to identify the most critical factors affecting the method. They are ideal for determining whether a method is robust to many changes rather than precisely quantifying each individual effect [21].
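The run counts behind these trade-offs are easy to check. The sketch below generates a two-level full factorial design and a 16-run fractional factorial for six factors in coded units (-1 = low, +1 = high). The generators E = ABC and F = BCD are one commonly tabulated choice and are an assumption here, as are the function names.

```python
from itertools import product


def full_factorial(k):
    """All 2**k combinations of k two-level factors, coded -1/+1."""
    return [list(run) for run in product((-1, 1), repeat=k)]


def fractional_factorial_6_2():
    """16-run 2^(6-2) design for six factors.

    Factors A-D form a full factorial; E and F are generated as
    E = A*B*C and F = B*C*D (assumed generator choice), so E and F are
    aliased with those interactions.
    """
    runs = []
    for a, b, c, d in product((-1, 1), repeat=4):
        runs.append([a, b, c, d, a * b * c, b * c * d])
    return runs


def is_orthogonal(design):
    """True if every pair of columns has a zero dot product."""
    cols = list(zip(*design))
    return all(
        sum(x * y for x, y in zip(cols[i], cols[j])) == 0
        for i in range(len(cols))
        for j in range(i + 1, len(cols))
    )


if __name__ == "__main__":
    for k in range(2, 8):
        print(f"{k} factors: {len(full_factorial(k))} full factorial runs")
    design = fractional_factorial_6_2()
    print(len(design), "runs in the fractional design;",
          "orthogonal" if is_orthogonal(design) else "not orthogonal")
```

The fractional design keeps the main-effect columns mutually orthogonal while using a quarter of the 64 runs a full factorial would need for six factors, at the cost of the aliasing noted above.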

Protocol for Executing a Robustness Study

The following workflow provides a detailed methodology for planning, executing, and analyzing a robustness study.

Step 1: Define Scope and Parameters. The first step involves a critical review of the analytical method to list all procedural parameters that could plausibly vary during routine use. For a liquid chromatography method, this typically includes: mobile phase pH, buffer concentration, percent organic solvent, flow rate, column temperature, detection wavelength, and gradient conditions [21]. The selection of factors and their ranges should be based on chromatographic knowledge gained during method development.

Step 2: Select Factors and Ranges. For each parameter, define a nominal value (the value specified in the method) as well as a high and low value representing a small, deliberate variation. The ranges should reflect the expected variations in a routine laboratory environment. The table below provides an example for an isocratic HPLC method.

Table 2: Example Robustness Factor Selection and Limits for an Isocratic Method

| Factor | Nominal Value | Low Value (-) | High Value (+) |
|---|---|---|---|
| Mobile Phase pH | 3.10 | 3.00 | 3.20 |
| Buffer Concentration (mM) | 25 | 23 | 27 |
| % Organic Solvent | 45% | 43% | 47% |
| Flow Rate (mL/min) | 1.0 | 0.9 | 1.1 |
| Column Temperature (°C) | 30 | 28 | 32 |
| Detection Wavelength (nm) | 254 | 252 | 256 |

Source: Adapted from [21]

Step 3: Choose an Experimental Design. Select an appropriate screening design based on the number of factors. For the six factors listed in Table 2, a 12-run Plackett-Burman design or a 16-run fractional factorial design would be highly efficient choices [21].

Step 4: Execute Runs and Collect Data. Perform the experiments in a randomized order to minimize the impact of uncontrolled variables. For each run, record the responses for the key figures of merit, such as retention time, peak area, resolution from the closest peak, and tailing factor.
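Steps 2 to 4 can be sketched as a small run-sheet generator. The example below builds a 12-run Plackett-Burman design from the commonly tabulated generator row, maps the coded levels onto the low and high values from the example factor table, and shuffles the execution order. The function names, the dictionary layout, and the seed are assumptions for illustration.

```python
import random

# Low and high levels copied from the example factor table above.
FACTORS = {
    "Mobile phase pH": (3.00, 3.20),
    "Buffer concentration (mM)": (23, 27),
    "% organic solvent": (43, 47),
    "Flow rate (mL/min)": (0.9, 1.1),
    "Column temperature (degC)": (28, 32),
    "Detection wavelength (nm)": (252, 256),
}

# Generator row commonly tabulated for the 12-run Plackett-Burman design.
PB12_GENERATOR = [1, 1, -1, 1, 1, 1, -1, -1, -1, 1, -1]


def plackett_burman_12():
    """Return 12 runs x 11 coded columns (-1 = low, +1 = high)."""
    g = PB12_GENERATOR
    rows = [g[11 - i:] + g[:11 - i] for i in range(11)]  # cyclic shifts
    rows.append([-1] * 11)                               # final all-low run
    return rows


def run_sheet(seed=None):
    """Map coded levels to real settings and shuffle the run order."""
    names = list(FACTORS)
    sheet = []
    for coded in plackett_burman_12():
        # Only the first six columns carry real factors; the remaining
        # five are unassigned "dummy" columns and are ignored here.
        sheet.append({
            name: FACTORS[name][1] if level > 0 else FACTORS[name][0]
            for name, level in zip(names, coded)
        })
    random.Random(seed).shuffle(sheet)  # randomized execution order
    return sheet


if __name__ == "__main__":
    for run_no, settings in enumerate(run_sheet(seed=42), start=1):
        print(run_no, settings)
```

Fixing the seed makes the randomized order reproducible, so the run sheet can be archived with the validation record.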

Step 5: Analyze Data and Identify Critical Control Factors (CCFs). Analyze the data using statistical software to perform analysis of variance (ANOVA). The goal is to identify which parameters have a statistically significant effect on each response. Parameters that exert a significant and undesirable effect on critical FMs are deemed Critical Control Factors (CCFs). These parameters may require tighter control limits in the method documentation or may trigger a method optimization step.
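Before a formal ANOVA, it is often useful to look at the raw main effects: for each factor, the mean response at its high level minus the mean response at its low level. The sketch below computes and ranks these effects for a small two-level design. It is not a substitute for ANOVA, and the factor names, the response model, and the data are synthetic and invented purely for illustration.

```python
from itertools import product
from statistics import mean


def main_effects(design, responses, names):
    """Main effect of each factor: mean(response at +1) - mean(response at -1)."""
    effects = {}
    for j, name in enumerate(names):
        high = [y for run, y in zip(design, responses) if run[j] > 0]
        low = [y for run, y in zip(design, responses) if run[j] < 0]
        effects[name] = mean(high) - mean(low)
    return effects


def rank_effects(effects):
    """Sort factors by the absolute size of their effect, largest first."""
    return sorted(effects.items(), key=lambda item: abs(item[1]), reverse=True)


if __name__ == "__main__":
    names = ["pH", "flow rate", "column temperature"]
    design = [list(run) for run in product((-1, 1), repeat=3)]

    # Synthetic chromatographic resolution values from an invented model in
    # which pH matters most; real studies would use measured responses.
    resolution = [2.0 - 0.25 * ph + 0.05 * flow + 0.0 * temp
                  for ph, flow, temp in design]

    for name, effect in rank_effects(main_effects(design, resolution, names)):
        print(f"{name}: effect on resolution = {effect:+.2f}")
```

Factors at the top of the ranking are the candidates to examine for statistical significance and, if confirmed, to treat as Critical Control Factors.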

Step 6: Establish System Suitability Parameters. Based on the results, define system suitability test (SST) limits that will ensure the validity of the analytical system throughout its use. For example, if the study shows that resolution is highly sensitive to pH, the SST must include a stringent resolution requirement [21].
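Once SST limits are defined, checking each analytical run against them is mechanical. The following sketch shows one possible shape for such a check. The parameter names and the numeric limits are hypothetical placeholders; actual limits should come from the robustness study itself.

```python
# Hypothetical SST limits: name -> (minimum or None, maximum or None).
HYPOTHETICAL_LIMITS = {
    "resolution": (2.0, None),
    "tailing_factor": (None, 2.0),
    "retention_time_rsd_pct": (None, 1.0),
}


def check_system_suitability(results, limits=HYPOTHETICAL_LIMITS):
    """Return a list of failure messages; an empty list means the SST passed."""
    failures = []
    for name, (minimum, maximum) in limits.items():
        value = results.get(name)
        if value is None:
            failures.append(f"{name}: no result reported")
        elif minimum is not None and value < minimum:
            failures.append(f"{name}: {value} is below the minimum {minimum}")
        elif maximum is not None and value > maximum:
            failures.append(f"{name}: {value} is above the maximum {maximum}")
    return failures


if __name__ == "__main__":
    # Illustrative results from a single system suitability injection set.
    run = {"resolution": 1.8, "tailing_factor": 1.3,
           "retention_time_rsd_pct": 0.4}
    problems = check_system_suitability(run)
    print("SST passed" if not problems else "SST failed: " + "; ".join(problems))
```

Returning the list of failures, not just a pass/fail flag, leaves a record of which criterion was missed for the batch documentation.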

Intra-laboratory Validation and the Path to Technology Readiness

Intra-laboratory validation, encompassing both robustness and intermediate precision (ruggedness), is a cornerstone of technology maturation for forensic methods. It provides the necessary data to advance from lower TRLs, focused on proof-of-concept, to higher TRLs, where methods are refined and prepared for inter-laboratory transfer [20].

The following diagram illustrates how robustness testing and validation activities integrate into the broader Technology Readiness Level framework for forensic science.

As shown, robustness evaluation begins early (TRL 4-5) and becomes more formalized through TRL 6-7, culminating in a complete intra-laboratory validation package. This package is essential for meeting the "intra- and inter-laboratory validation" and "error rate analysis" requirements outlined by legal standards for forensic evidence [19]. Successfully demonstrating method robustness directly contributes to a technology's readiness for routine forensic analysis and courtroom admissibility.

The Scientist's Toolkit: Essential Research Reagent Solutions