Synthetic Pathways and Analytical Characterization in Modern Drug Development: AI, Methods, and Validation

Abstract

This article provides a comprehensive analysis of the current landscape and future directions in pharmaceutical synthetic pathway development and analytical characterization. Written for researchers, scientists, and drug development professionals, it covers the foundational principles of new drug modalities and their regulatory drivers, methodological advances in AI-driven retrosynthesis and Quality-by-Design, troubleshooting of complex-molecule synthesizability, and validation paradigms for regulatory compliance. By synthesizing insights across these four areas, it aims to equip practitioners with the knowledge to accelerate the development of safe, effective, and manufacturable therapies.

The Evolving Landscape of Drug Modalities and Regulatory Frameworks

The landscape of pharmaceutical development has been fundamentally transformed by the advent of sophisticated biological therapeutics. These novel modalities, which include monoclonal antibodies (mAbs), antibody-drug conjugates (ADCs), and cell and gene therapies, represent a paradigm shift from traditional small-molecule drugs toward targeted, mechanism-based treatments [1]. By leveraging the body's own biological systems, these therapeutics offer unprecedented precision in treating complex diseases, particularly in oncology, autoimmune disorders, and rare genetic conditions. The integration of advanced technologies, including artificial intelligence, CRISPR gene editing, and sophisticated characterization methods, has accelerated the development and optimization of these therapies, creating new possibilities for personalized medicine and addressing previously untreatable conditions [1] [2] [3]. This section provides an in-depth technical examination of these therapeutic classes, their mechanisms of action, analytical characterization requirements, and future directions within the context of modern drug development pathways.

Monoclonal Antibodies (mAbs)

Evolution and Technical Specifications

Monoclonal antibodies have evolved from murine origins to fully human constructs, significantly reducing immunogenicity while improving therapeutic efficacy. The technological progression has been marked by several key platforms:

Hybridoma Technology: The initial method developed by Köhler and Milstein in 1975 enabled mass production of identical monoclonal antibodies but yielded murine antibodies with high immunogenicity [1].

Chimeric and Humanized Antibodies: Chimeric antibodies (e.g., rituximab) fuse murine variable regions with human constant regions, reducing immunogenicity. Humanized antibodies (e.g., trastuzumab) further refine this approach by grafting complementarity-determining regions (CDRs) onto human framework regions [1].

Fully Human Antibodies: Developed through phage display technology (e.g., adalimumab) or transgenic mouse platforms (e.g., panitumumab), these antibodies eliminate murine components, dramatically reducing immunogenic potential [1].

Bispecific Antibodies: Engineered to bind two different epitopes simultaneously, bispecific antibodies (e.g., blinatumomab) can redirect immune cells to tumor cells or engage multiple signaling pathways [1].

Table 1: Key Technological Platforms for Therapeutic Antibody Development

| Platform | Mechanism | Representative Drug | Advantages | Limitations |
|---|---|---|---|---|
| Hybridoma | Fusion of immune B-cells with myeloma cells | Muromonab-CD3 | Well-established, high affinity | Murine origin, high immunogenicity |
| Phage Display | Selection from human antibody gene libraries | Adalimumab | Fully human, in vitro selection | Limited natural immune context |
| Transgenic Mice | Human Ig genes in mouse genome | Panitumumab | Fully human, in vivo affinity maturation | Complex intellectual property |
| Single B-Cell Sorting | Isolation and cloning of individual B-cells | Multiple anti-viral mAbs | Preserves natural pairs, rapid discovery | Technically challenging |

Mechanism of Action and Therapeutic Applications

mAbs exert therapeutic effects through multiple mechanisms tailored to specific disease pathways:

Target Neutralization: Binding and inactivation of soluble ligands or cell-surface receptors (e.g., TNF-α inhibition by adalimumab in autoimmune diseases) [1].

Immune Effector Function: Engagement of Fcγ receptors on immune cells leading to antibody-dependent cellular cytotoxicity (ADCC), antibody-dependent cellular phagocytosis (ADCP), and complement-dependent cytotoxicity (CDC) [4]. IgG1 subtypes are particularly effective at initiating these responses due to their high binding affinity for Fc receptors [4].

Receptor Internalization and Downregulation: Antibody binding induces receptor internalization and degradation, reducing surface expression (e.g., HER2 downregulation by trastuzumab) [4].

Immunomodulation: Checkpoint inhibitors (e.g., pembrolizumab) block inhibitory receptors on T cells, restoring anti-tumor immunity [1].

The global market for therapeutic antibodies has grown rapidly, reaching USD 267 billion in annual sales by 2024, with 144 FDA-approved antibody drugs and over 1,500 candidates in clinical development as of August 2025 [1].

Antibody-Drug Conjugates (ADCs)

Core Components and Design Principles

ADCs represent a novel class of biopharmaceuticals that combine the specificity of monoclonal antibodies with the potent cytotoxicity of small-molecule drugs [5]. These sophisticated "biological missiles" consist of three core components:

Monoclonal Antibody: Serves as the targeting moiety, designed to recognize antigens preferentially expressed on target cells. Ideal target antigens should have high tumor-specific expression, non-secreted nature, and efficient internalization capability [4]. Key targets in approved ADCs include HER2, TROP2, CD19, CD22, and BCMA [4] [6].

Linker: Determines ADC stability in circulation and payload release efficiency intracellularly. Cleavable linkers (e.g., peptide linkers susceptible to cathepsin B, acid-labile hydrazone) enable specific release in target cells, while non-cleavable linkers require antibody degradation for payload release [7].

Payload: Highly potent cytotoxic agents (typically IC50 values in picomolar to nanomolar range) that kill target cells upon internalization and release. Common payload classes include microtubule inhibitors (e.g., auristatins, maytansinoids), DNA damaging agents (e.g., calicheamicin, duocarmycins), and topoisomerase inhibitors (e.g., deruxtecan, govitecan) [5] [4].

Table 2: Approved HER2-Targeted ADCs and Technical Specifications

| ADC Drug (Generation) | Payload Mechanism | Linker Type | DAR | Key Indications |
|---|---|---|---|---|
| Trastuzumab Emtansine (T-DM1, 2nd) | Microtubule inhibition (DM1) | Non-cleavable | 3.5 | HER2+ metastatic breast cancer, adjuvant therapy |
| Trastuzumab Deruxtecan (T-DXd, 4th) | Topoisomerase I inhibition (DXd) | Cleavable tetrapeptide | 8 | HER2+ breast cancer, HER2-low BC, gastric cancer, NSCLC |
| Disitamab Vedotin (RC48) | Microtubule inhibition (MMAE) | Cleavable | 4 | HER2+ gastric cancer, urothelial carcinoma |
| Trastuzumab Rezetecan (SHR-A1811) | Topoisomerase I inhibition (rezetecan) | Not specified | 6 | HER2-mutant NSCLC |

Mechanism of Action and Bystander Effect

The therapeutic activity of ADCs follows a multi-step process:

- Antibody-Antigen Binding: The antibody component specifically binds to target antigens on the cell surface [5].

- Internalization: The ADC-antigen complex undergoes receptor-mediated endocytosis, trafficking through endosomes to lysosomes [5].

- Payload Release: Lysosomal enzymes and acidic environment cleave the linker, releasing the active cytotoxic payload [5].

- Target Cell Death: The payload binds its intracellular target (DNA or microtubules), triggering apoptosis [5].

A critical advancement in ADC technology is the "bystander effect" exhibited by certain ADCs (particularly those with membrane-permeable payloads like deruxtecan). This effect allows the cytotoxic payload to diffuse into neighboring cells, including those with heterogeneous or low target antigen expression, significantly enhancing antitumor efficacy in mixed cell populations [5].

Generational Evolution of ADC Technology

ADC development has progressed through four distinct generations, each addressing limitations of its predecessors:

First-Generation ADCs: Utilized murine antibodies and unstable linkers, leading to immunogenicity and premature payload release (e.g., gemtuzumab ozogamicin) [5].

Second-Generation ADCs: Incorporated humanized antibodies, more stable linkers, and improved payloads (e.g., brentuximab vedotin, trastuzumab emtansine) with better therapeutic indices [5].

Third-Generation ADCs: Employed site-specific conjugation techniques for homogeneous drug-to-antibody ratio (DAR), fully human antibodies, and hydrophilic linkers to improve pharmacokinetics (e.g., enfortumab vedotin) [5].

Fourth-Generation ADCs: Further optimized DAR values (~8) and incorporated novel payload classes with enhanced bystander effects (e.g., trastuzumab deruxtecan, sacituzumab govitecan) [5].

Cell and Gene Therapies

CAR-T Cell Therapy: Engineering Immune Cells

Chimeric antigen receptor (CAR)-T cell therapy represents a groundbreaking approach in cancer treatment by genetically engineering patients' own T cells to recognize and eliminate tumor cells [8]. CAR constructs have evolved through multiple generations:

First-Generation CARs: Comprised of single-chain variable fragment (scFv) extracellular domain, transmembrane domain, and intracellular CD3ζ signaling domain. Limited persistence and efficacy [8].

Second-Generation CARs: Incorporated one costimulatory domain (CD28 or 4-1BB) alongside CD3ζ, significantly enhancing T-cell activation, proliferation, and persistence [8].

Third-Generation CARs: Combined multiple costimulatory domains (e.g., CD28 and 4-1BB) for further enhanced antitumor activity and persistence [8].

Fourth-Generation CARs ("TRUCKs"): Engineered to express cytokine genes (e.g., IL-12) upon CAR signaling, modifying the tumor microenvironment and enhancing efficacy against solid tumors [8].

Fifth-Generation CARs: Utilize an intermediate system separating scFv from signaling domains or incorporate cytokine receptor domains (e.g., IL-2Rβ) to activate JAK-STAT pathways, promoting enhanced proliferation [8].

CRISPR/Cas9 Gene Editing in Cell Therapy

CRISPR/Cas9 technology has revolutionized CAR-T cell engineering by enabling precise genomic modifications that enhance efficacy, safety, and manufacturing [8] [3]. Key applications include:

Immune Checkpoint Disruption: Knockout of inhibitory receptors (PD-1, CTLA-4, TIGIT) to enhance CAR-T cell persistence and antitumor activity [8].

Universal CAR-T Cells: Disruption of the endogenous T-cell receptor (TCR) to minimize graft-versus-host disease, combined with disruption of HLA class I genes to reduce rejection by the host, to create allogeneic, off-the-shelf CAR-T products [8] [3].

Enhanced Trafficking and Function: Genetic modifications to improve tumor homing, resistance to exhaustion, and proliferation capacity [8].

Safety Switches: Incorporation of controllable suicide genes or safety switches to mitigate toxicity concerns [3].

The CRISPR/Cas9 system offers multiple platforms for these applications, including standard Cas9 for gene knockout, base editors for precise nucleotide changes, and CRISPRi/a for transcriptional regulation without DNA cleavage [3].

Analytical Characterization and Synthetic Pathways

Critical Quality Attributes and Analytical Methods

Comprehensive characterization of novel therapeutic modalities requires sophisticated analytical approaches to monitor critical quality attributes (CQAs):

Drug-to-Antibody Ratio (DAR): The average number of payload molecules conjugated per antibody, typically characterized by hydrophobic interaction chromatography (HIC) and mass spectrometry [5].

Aggregation and Stability: Assessed by size-exclusion chromatography (SEC), dynamic light scattering (DLS), and differential scanning calorimetry (DSC) [5].

Payload Distribution and Conjugation Sites: Analyzed by peptide mapping with LC-MS/MS, particularly important for site-specific ADCs [5].

Potency and Biological Activity: Cell-based cytotoxicity assays, internalization assays, and binding affinity measurements (SPR, ELISA) [5].

Vector Copy Number and Transgene Expression: For cell and gene therapies, qPCR/ddPCR for vector copy number, and flow cytometry for CAR expression [8].
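
As an illustration of the DAR attribute above, the average DAR is commonly computed from HIC as the peak-area-weighted mean of the drug load of each resolved species. The sketch below assumes that each HIC peak has already been assigned a drug load; the function name and the peak areas are hypothetical and are not taken from the cited sources.

```python
def average_dar(peak_areas):
    """Peak-area-weighted average DAR.

    peak_areas maps drug load (payloads per antibody) to the HIC peak area
    of that species, in any consistent unit.
    """
    total_area = sum(peak_areas.values())
    if total_area <= 0:
        raise ValueError("total peak area must be positive")
    weighted = sum(load * area for load, area in peak_areas.items())
    return weighted / total_area


# Hypothetical relative peak areas (%) for species carrying 0, 2, 4, 6, 8 payloads.
example_areas = {0: 5.0, 2: 20.0, 4: 40.0, 6: 25.0, 8: 10.0}
dar = average_dar(example_areas)
print(f"Average DAR: {dar:.2f}")
```

The same weighted mean applies to deconvoluted mass spectrometry intensities, provided the species respond comparably.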

AI-Driven Optimization in Drug Development

Artificial intelligence has emerged as a transformative tool in optimizing the development of novel therapeutics:

Retrosynthetic Analysis: AI-powered tools predict feasible synthetic routes for complex payload molecules by learning from chemical reaction databases [2].

Reaction Prediction and Optimization: Machine learning models analyze reaction parameters (temperature, solvent, catalysts) to optimize yield and selectivity while minimizing byproducts [2].

High-Throughput Screening: AI-directed robotic systems perform rapid experimentation, accelerating ADC candidate screening and optimization [2].

Protein Engineering: AI models predict antibody-antigen interactions and optimize binding affinity, stability, and developability profiles [1].
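
To make the retrosynthetic analysis item above more concrete, the following toy sketch shows the search skeleton that such tools share: recursively disconnect a target until every leaf is a purchasable starting material. Everything here is hypothetical. In a real AI-driven tool the hand-written `RULES` table would be replaced by a model trained on reaction databases, and the molecule names would be actual structures.

```python
# Hypothetical one-step disconnections: product -> alternative precursor sets.
RULES = {
    "payload": [["intermediate_1", "intermediate_2"]],
    "intermediate_1": [["building_block_a", "building_block_b"]],
    "intermediate_2": [["unavailable_x"], ["building_block_c"]],
}

# Hypothetical catalogue of purchasable starting materials.
PURCHASABLE = {"building_block_a", "building_block_b", "building_block_c"}


def find_route(target, depth=5):
    """Depth-first search for a route to `target`.

    Returns a list of (product, precursors) steps, an empty list if the
    target is purchasable, or None if no route exists within `depth` steps.
    """
    if target in PURCHASABLE:
        return []
    if depth == 0:
        return None
    for precursors in RULES.get(target, []):
        steps = [(target, precursors)]
        for precursor in precursors:
            sub_route = find_route(precursor, depth - 1)
            if sub_route is None:
                break  # this disconnection fails, try the next alternative
            steps.extend(sub_route)
        else:
            return steps  # every precursor could be resolved
    return None


route = find_route("payload")
for product, precursors in route:
    print(f"{product} <= {' + '.join(precursors)}")
```

The search skips the first disconnection of `intermediate_2` because `unavailable_x` is neither purchasable nor covered by a rule, then succeeds with the second alternative.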

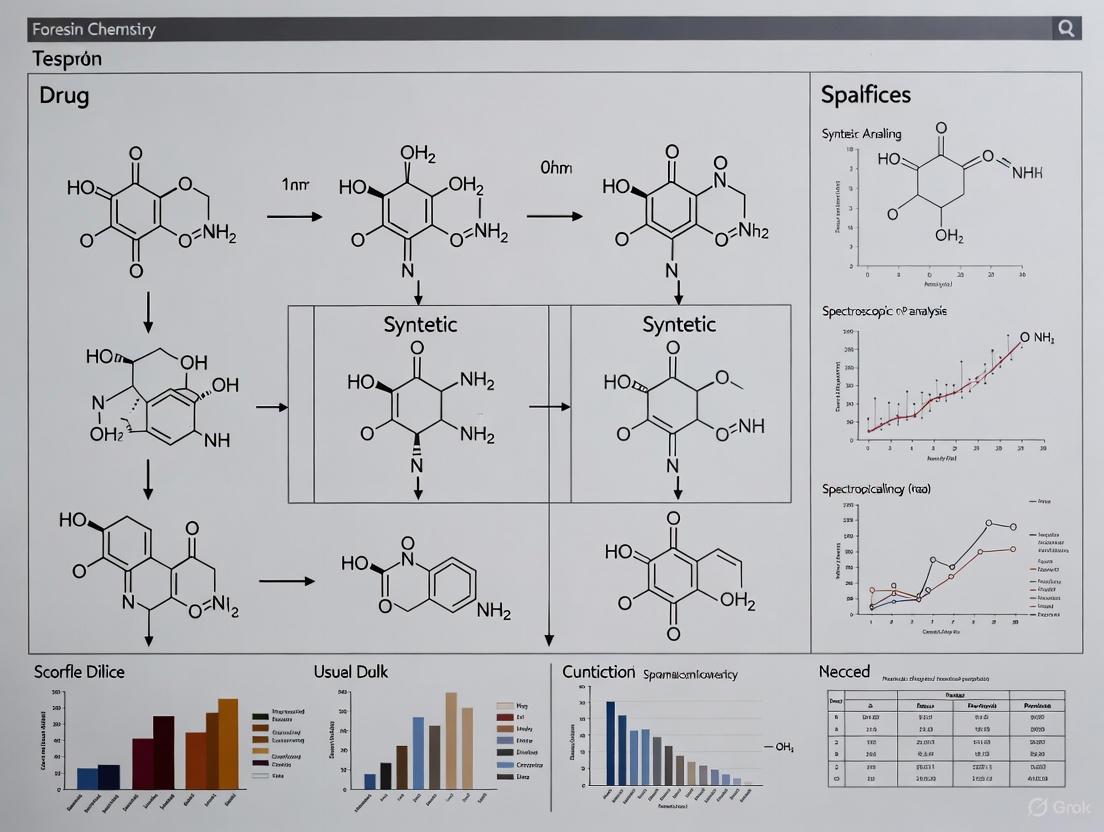

Diagram 1: ADC Mechanism of Action with Bystander Effect

Experimental Protocols

ADC In Vitro Potency Assay

Objective: Quantify ADC-mediated cytotoxicity against target-positive and target-negative cell lines to establish potency and evaluate bystander effect.

Materials:

- Target antigen-positive and isogenic antigen-negative cell lines

- ADC test articles and appropriate controls (naked antibody, free payload)

- Cell culture medium and supplements

- 96-well tissue culture plates

- CellTiter-Glo Luminescent Cell Viability Assay

- Microplate reader capable of luminescence detection

Procedure:

- Seed target-positive and target-negative cells in separate 96-well plates at optimal density (typically 5,000-10,000 cells/well in 100 μL medium) and incubate overnight at 37°C, 5% CO₂.

- Prepare 8-point 1:3 serial dilutions of ADC test articles in complete medium. Starting from a top concentration of 100 nM, eight 1:3 points reach about 0.046 nM (a roughly 2,000-fold span); covering the full 0.001 nM to 100 nM range requires a larger dilution factor (about 1:5) or additional points (about 12 at 1:3).

- Replace medium in assay plates with 100 μL of diluted ADC solutions or controls (n=3 replicates per concentration).

- Incubate plates for 96-120 hours at 37°C, 5% CO₂.

- Equilibrate plates and CellTiter-Glo reagent to room temperature for 30 minutes.

- Add 50 μL CellTiter-Glo reagent to each well, mix for 2 minutes on an orbital shaker, and incubate for 10 minutes to stabilize luminescent signal.

- Record luminescence using a microplate reader with integration time of 0.5-1 second/well.

- Calculate percent viability relative to untreated controls and generate dose-response curves using four-parameter logistic regression to determine IC₅₀ values.
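
The dilution and normalization steps in the procedure above can be sketched as follows. The helper names and the luminescence readings are hypothetical; the point is only to show the arithmetic of a 1:3 series and of percent viability relative to untreated wells.

```python
def serial_dilution(top_nm, factor, points):
    """Concentrations (nM) of a serial dilution, highest first."""
    return [top_nm / factor ** i for i in range(points)]


def percent_viability(signal, untreated_mean, background=0.0):
    """Luminescence as a percentage of the untreated control mean."""
    return 100.0 * (signal - background) / (untreated_mean - background)


concs = serial_dilution(top_nm=100.0, factor=3.0, points=8)
print([round(c, 4) for c in concs])
print(f"Fold range covered: {concs[0] / concs[-1]:.0f}")

# Hypothetical readings in relative light units (RLU).
untreated = [98000.0, 102000.0, 100000.0]
untreated_mean = sum(untreated) / len(untreated)
print(f"Viability: {percent_viability(25000.0, untreated_mean):.1f}%")
```

An 8-point 1:3 series spans 3⁷ = 2,187-fold, which is worth checking against the concentration range the assay needs before plating.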

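Data Analysis: Compare IC₅₀ values between target-positive and target-negative cells to establish target-dependent potency. Cytotoxicity against target-negative cells cultured alone points to target-independent toxicity (for example, from prematurely released payload). A bystander effect is indicated when target-negative cells are killed in co-culture with target-positive cells by an ADC carrying a membrane-permeable payload.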
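
A minimal sketch of the four-parameter logistic (4PL) model used for the dose-response curves is given below. It assumes the top and bottom plateaus are fixed at 100% and 0% and uses a coarse grid search as a dependency-free stand-in for the nonlinear least-squares routine a real analysis package would use. The data are synthetic, generated from the model itself with a known IC₅₀ of 1 nM, so the example only demonstrates that the fit recovers the parameters.

```python
import math


def four_pl(conc, bottom, top, ic50, hill):
    """4PL response: equals (top + bottom) / 2 when conc == ic50."""
    return bottom + (top - bottom) / (1.0 + (conc / ic50) ** hill)


def fit_ic50_grid(concs, viabilities, bottom=0.0, top=100.0):
    """Coarse least-squares grid search over IC50 (log-spaced) and Hill slope."""
    log_lo, log_hi = math.log10(min(concs)), math.log10(max(concs))
    best = None
    for i in range(201):
        ic50 = 10 ** (log_lo + (log_hi - log_lo) * i / 200)
        for hill in (0.5 + 0.1 * k for k in range(26)):  # 0.5 to 3.0
            sse = sum(
                (four_pl(c, bottom, top, ic50, hill) - v) ** 2
                for c, v in zip(concs, viabilities)
            )
            if best is None or sse < best[0]:
                best = (sse, ic50, hill)
    return best[1], best[2]


# Synthetic example: 8-point 1:3 series from 100 nM, true IC50 = 1 nM, Hill = 1.
doses = [100.0 / 3.0 ** i for i in range(8)]
responses = [four_pl(d, 0.0, 100.0, 1.0, 1.0) for d in doses]

ic50_fit, hill_fit = fit_ic50_grid(doses, responses)
print(f"Fitted IC50 ~ {ic50_fit:.2f} nM, Hill slope ~ {hill_fit:.1f}")
```

The ratio of the IC₅₀ on target-negative cells to the IC₅₀ on target-positive cells then gives a simple selectivity index for the comparison described above.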

CRISPR-Mediated PD-1 Knockout in CAR-T Cells

Objective: Generate PD-1 knockout CAR-T cells to enhance antitumor persistence and activity.

Materials:

- Human T-cells from healthy donor or patient

- PD-1-specific sgRNA and Cas9 protein (RNP complex)

- CAR transgene construct (lentiviral or retroviral vector)

- T-cell culture medium (TexMACS or similar with IL-7/IL-15)

- Electroporation system (e.g., Lonza 4D-Nucleofector)

- Flow cytometry antibodies (anti-CD3, anti-CD4, anti-CD8, anti-PD-1)

- PD-1/B7-H1 Blockade Binding ELISA

Procedure:

- Isolate PBMCs from whole blood using Ficoll density gradient centrifugation and activate T-cells with anti-CD3/CD28 beads for 48 hours.

- Prepare sgRNA:Cas9 ribonucleoprotein (RNP) complex by incubating 60 pmol sgRNA with 40 pmol Cas9 protein for 10 minutes at room temperature.

- Harvest activated T-cells and resuspend in electroporation buffer at 10-20×10⁶ cells/mL.

- Mix 20 μL cell suspension with RNP complex and transfer to electroporation cuvette.

- Electroporate using appropriate program (e.g., EH-115 for human T-cells).

- Immediately transfer cells to pre-warmed culture medium with cytokines.

- Transduce with CAR-encoding lentivirus 24 hours post-electroporation (MOI 3-5).

- Expand CAR-T cells for 7-14 days with medium changes every 2-3 days.

- Confirm PD-1 knockout efficiency by flow cytometry and functional assays.
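
The quantities in the procedure above imply a few simple calculations, sketched here. The function names and the viral titer (1×10⁸ transducing units per mL) are hypothetical; the cell density, volumes, RNP amounts, and MOI come from the protocol. Because cells may divide or die between electroporation and transduction, a real workflow would recount cells before calculating the virus volume.

```python
def cells_per_reaction(density_per_ml, volume_ul):
    """Number of cells in one electroporation reaction."""
    return density_per_ml * volume_ul / 1000.0


def molar_ratio(sgrna_pmol, cas9_pmol):
    """sgRNA:Cas9 molar ratio of the RNP complex."""
    return sgrna_pmol / cas9_pmol


def virus_volume_ul(moi, n_cells, titer_tu_per_ml):
    """Viral stock volume (µL) that delivers `moi` transducing units per cell."""
    return moi * n_cells / titer_tu_per_ml * 1000.0


n_cells = cells_per_reaction(density_per_ml=10e6, volume_ul=20.0)
ratio = molar_ratio(sgrna_pmol=60.0, cas9_pmol=40.0)
# Hypothetical lentiviral titer; replace with the measured functional titer.
volume = virus_volume_ul(moi=3, n_cells=n_cells, titer_tu_per_ml=1e8)

print(f"Cells per reaction: {n_cells:.0f}")
print(f"sgRNA:Cas9 molar ratio: {ratio:.1f}:1")
print(f"Virus volume at MOI 3: {volume:.1f} µL")
```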

Quality Controls:

- Assess editing efficiency via T7E1 assay or next-generation sequencing

- Measure CAR expression percentage by flow cytometry

- Evaluate off-target editing potential using computational prediction tools

- Verify functional enhancement in repeated antigen stimulation assays

Table 3: Research Reagent Solutions for Novel Therapeutic Development

| Reagent/Category | Specific Examples | Function/Application |
|---|---|---|
| Cell Culture Media | TexMACS, X-VIVO15, AIM-V | T-cell expansion and maintenance for cell therapy |
| Cytokines/Growth Factors | IL-2, IL-7, IL-15, IL-21 | T-cell differentiation, expansion, and persistence |
| Gene Editing Tools | CRISPR-Cas9 RNP, Cas12a, Base editors | Precise genomic modifications in cell therapies |
| Conjugation Reagents | Maleimide-based linkers, Peptide linkers, Site-specific conjugating enzymes | ADC construction and optimization |
| Analytical Standards | NIST mAb Reference Material, Characterized ADC standards | System suitability and method qualification |
| Detection Reagents | CellTiter-Glo, Annexin V apoptosis detection, CFSE cell proliferation kit | Potency and mechanism-of-action studies |

The convergence of monoclonal antibodies, ADCs, and cell/gene therapies represents a new era in precision medicine. Future development will focus on several key areas:

Next-Generation ADC Platforms: Development of conditionally active antibodies, dual-payload ADCs, and immune-stimulating antibody conjugates (ISACs) that combine targeted cytotoxicity with immune activation [9] [5].

Expansion Beyond Oncology: Application of ADC technology to autoimmune diseases, persistent bacterial infections, and other non-oncological indications through targeted depletion of pathogenic immune cells [9] [5].

Enhanced Gene Editing Tools: Advancement of base editing, prime editing, and CRISPR-associated transposase systems for more precise genetic modifications with reduced off-target effects [8] [3].

Automation and AI Integration: Implementation of fully automated screening platforms and AI-driven design algorithms to accelerate candidate optimization and reduce development timelines [2] [1].

Novel Delivery Platforms: Development of in vivo delivery systems including mRNA-LNP platforms for direct expression of therapeutic antibodies and CARs, bypassing complex manufacturing processes [1].

Diagram 2: Evolution of CAR-T Cell Generations

The integration of these advanced therapeutic modalities with cutting-edge analytical techniques and AI-driven optimization represents a fundamental shift in drug development. As characterization methods continue to advance alongside biological understanding, these targeted therapies will increasingly offer personalized treatment options for complex diseases, ultimately improving patient outcomes across diverse therapeutic areas. The ongoing challenge for researchers and drug development professionals will be to balance innovation with rigorous safety assessment as these powerful technologies continue to evolve.

The landscape of drug development is undergoing a significant transformation, driven by advances in synthetic pathway technologies and the corresponding evolution of global regulatory standards. The introduction of ICH Q2(R2) on analytical procedure validation, ICH Q14 on analytical procedure development, and the enduring ALCOA+ framework for data integrity represents a fundamental shift toward a more holistic, risk-based, and scientifically rigorous approach to pharmaceutical analysis [10] [11] [12]. These guidelines are particularly crucial in the context of modern drug synthesis, which increasingly employs AI-driven optimization and complex synthetic pathways that demand robust analytical control strategies [2].

The integration of these frameworks establishes a comprehensive lifecycle management system for analytical procedures, from initial development through post-approval changes. This harmonized approach ensures that analytical methods remain fit-for-purpose despite evolving manufacturing processes, technological advancements, and the increasing molecular complexity of new therapeutic agents [13]. For researchers engaged in cutting-edge synthetic pathway development and characterization, understanding these regulatory drivers is essential for ensuring both innovation and compliance throughout the drug development lifecycle.

ICH Q2(R2) - Validation of Analytical Procedures

ICH Q2(R2) provides an updated framework for the validation of analytical procedures, expanding on the original Q2(R1) to address more complex techniques and modern analytical challenges [12]. The guideline emphasizes a science-based approach to validation, detailing validation characteristics and methodologies appropriate for different types of analytical procedures, including traditional small molecules and complex biological compounds [11].

ICH Q14 - Analytical Procedure Development

ICH Q14 outlines a structured approach to analytical procedure development and lifecycle management, introducing the key concepts of the Analytical Target Profile (ATP) and the enhanced approach to development [10] [11]. The ATP forms the cornerstone of this framework, explicitly defining the required quality of the analytical measurement based on the intended purpose of the procedure [11] [13]. ICH Q14 describes two complementary approaches:

- Minimal Approach: A traditional, direct development path suitable for straightforward procedures.

- Enhanced Approach: A systematic, risk-based development process that provides greater product and procedure understanding, potentially facilitating post-approval changes [11] [13].
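
One way to picture an ATP is as a small, explicit record of what the measurement must achieve, against which observed performance can be checked. The sketch below is an assumption about how such a record might be represented in code; the field names are hypothetical and not prescribed by ICH Q14, and the limits simply mirror the drug substance assay criteria quoted elsewhere in this article.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AnalyticalTargetProfile:
    """Hypothetical, simplified ATP record for a quantitative assay."""

    analyte: str
    intended_purpose: str
    range_percent: tuple  # (low, high) as % of target concentration
    max_bias_percent: float  # allowed deviation of mean recovery from 100%
    max_rsd_percent: float  # allowed repeatability RSD

    def is_met(self, mean_recovery_percent, rsd_percent):
        """True if observed accuracy and precision satisfy the ATP."""
        bias = abs(mean_recovery_percent - 100.0)
        return bias <= self.max_bias_percent and rsd_percent <= self.max_rsd_percent


atp = AnalyticalTargetProfile(
    analyte="drug substance",
    intended_purpose="release assay",
    range_percent=(50.0, 150.0),
    max_bias_percent=2.0,
    max_rsd_percent=2.0,
)

print(atp.is_met(mean_recovery_percent=99.4, rsd_percent=0.8))
print(atp.is_met(mean_recovery_percent=97.1, rsd_percent=0.8))
```

Keeping the ATP independent of any particular technique is what allows a later change of technology to be judged against the same criteria.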

ALCOA+ - Data Integrity Framework

The ALCOA+ framework provides the foundational principles for ensuring data integrity throughout the analytical procedure lifecycle. Originally encompassing Attributable, Legible, Contemporaneous, Original, and Accurate principles, it was expanded to include Complete, Consistent, Enduring, and Available [14] [15]. This framework is critical for maintaining trust in analytical data generated under ICH Q2(R2) and Q14, particularly as laboratories increasingly adopt digital systems and automated workflows [14].

Table 1: Core Principles of the ALCOA+ Framework for Data Integrity

| Principle | Core Requirement | Practical Application in Drug Analysis |
|---|---|---|
| Attributable | Data clearly linked to source and creator | Electronic signatures, detailed audit trails [14] [15] |
| Legible | Data permanently readable | Permanent ink, validated electronic records [15] |
| Contemporaneous | Documented at time of activity | Real-time recording, direct instrument integration [14] |
| Original | Original record or certified copy preserved | Secure storage, access controls [14] |
| Accurate | Error-free, truthful representation | Instrument calibration, procedure validation [14] [15] |
| Complete | All data including repeats/revisions | Comprehensive audit trails, no deletion [14] [15] |
| Consistent | Chronological, standardized sequencing | Sequential dating, standardized formats [15] |
| Enduring | Lasting and durable over required period | Archival-quality media, robust storage systems [15] |
| Available | Accessible for review and reference | Searchable databases, organized archives [14] [15] |
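
Several of these principles (Attributable, Contemporaneous, Original, Complete, Consistent) can be illustrated with an append-only audit trail in which each entry records who did what and when, and is linked to the previous entry by a hash. This is one illustrative design under stated assumptions, not a mechanism required by ALCOA+ or by the cited sources; the record fields and user names are hypothetical, and a validated electronic records system involves far more than this.

```python
import hashlib
import json
from datetime import datetime, timezone


def make_record(user, action, value, previous_hash):
    """Create an audit entry linked to the previous entry's hash."""
    record = {
        "user": user,  # Attributable
        "timestamp": datetime.now(timezone.utc).isoformat(),  # Contemporaneous
        "action": action,
        "value": value,  # Original values are kept, corrections are appended
        "previous_hash": previous_hash,  # Consistent ordering
    }
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    record["hash"] = hashlib.sha256(payload).hexdigest()
    return record


def verify_chain(records):
    """Return True if no entry was altered, removed, or reordered."""
    previous_hash = ""
    for record in records:
        body = {k: v for k, v in record.items() if k != "hash"}
        payload = json.dumps(body, sort_keys=True).encode("utf-8")
        if record["previous_hash"] != previous_hash:
            return False
        if hashlib.sha256(payload).hexdigest() != record["hash"]:
            return False
        previous_hash = record["hash"]
    return True


trail = []
trail.append(make_record("analyst_a", "record_result", 99.6, ""))
trail.append(make_record("analyst_a", "correct_result", 99.8, trail[-1]["hash"]))
print(verify_chain(trail))

# Editing an earlier entry in place breaks verification.
tampered = [dict(r) for r in trail]
tampered[0]["value"] = 101.0
print(verify_chain(tampered))
```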

Interrelationship Between Guidelines

These three frameworks function as an integrated system rather than separate requirements. ICH Q14 provides the front-end development principles, ICH Q2(R2) establishes the validation requirements, and ALCOA+ ensures ongoing data integrity throughout the procedure lifecycle [11] [14] [12]. This interconnected relationship creates a continuum of quality from initial procedure conception through retirement, which is visually represented in the following workflow:

Diagram 1: Analytical procedure lifecycle management

Implementation in Drug Synthesis & Characterization Research

Application to AI-Optimized Synthesis Pathways

The pharmaceutical industry is increasingly adopting AI-driven approaches to optimize drug synthesis pathways, including retrosynthetic analysis, reaction prediction, and route optimization [2]. These advanced approaches generate complex synthetic pathways that require equally sophisticated analytical control strategies. The ICH Q14 enhanced approach, with its emphasis on method robustness and parameter ranges, provides the necessary framework to ensure analytical methods can effectively characterize compounds synthesized through these novel pathways [11] [13].

For example, AI tools like EZSpecificity, which predicts enzyme-substrate interactions for biocatalysis with a reported accuracy of 91.7%, can support novel synthetic routes that may produce unexpected impurities or complex molecular structures [16]. Implementing an ATP for these analyses keeps the analytical method focused on its intended purpose, while the knowledge management elements of ICH Q14 facilitate continuous improvement as more data are gathered on method performance with these novel compounds [11].

Analytical Procedure Development and Validation Workflow

The following diagram illustrates the integrated workflow for analytical procedure development and validation according to ICH Q14 and Q2(R2), particularly as applied to characterizing compounds from novel synthetic pathways:

Diagram 2: Analytical procedure development workflow

Experimental Protocols for Method Validation

Protocol for Specificity Assessment for Novel Synthetic Compounds

Purpose: To demonstrate that the analytical procedure can unequivocally assess the analyte in the presence of potential impurities, degradants, or matrix components that are expected to be present in AI-optimized synthetic pathways [11].

Materials:

- Reference standards of target compound

- Synthesized potential impurities and degradants

- Forced degradation samples (acid, base, oxidative, thermal, photolytic stress)

- Appropriate chromatography system (HPLC/UPLC) with diode array or mass spectrometric detection

Procedure:

- Inject individual solutions of the target compound and each potential impurity to determine retention times and detector response factors.

- Inject a solution containing all components to demonstrate resolution between all peaks.

- Perform forced degradation studies on the drug substance to demonstrate stability-indicating properties and resolution from degradation products.

- For impurity methods, establish the limit of detection and limit of quantitation for each known impurity.

- Verify peak homogeneity using photodiode array detection or mass spectrometry.

Acceptance Criteria: Resolution between critical pair of peaks should be ≥2.0; Peak purity index should be ≥990 for the main analyte; All impurities should be adequately resolved from the main peak [11].
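
The resolution criterion can be checked with the standard baseline-width formula, Rs = 2(t₂ − t₁)/(w₁ + w₂), applied to each pair of adjacent peaks; the critical pair is the adjacent pair with the lowest resolution. The sketch below assumes retention times and baseline peak widths in minutes. The peak names and values are hypothetical.

```python
def resolution(t1, t2, w1, w2):
    """Resolution from retention times and baseline peak widths (same units)."""
    return 2.0 * (t2 - t1) / (w1 + w2)


def critical_pair(peaks):
    """Return (name_a, name_b, Rs) for the least-resolved adjacent pair.

    peaks is a list of (name, retention_time, baseline_width) tuples.
    """
    ordered = sorted(peaks, key=lambda p: p[1])
    pairs = [
        (a[0], b[0], resolution(a[1], b[1], a[2], b[2]))
        for a, b in zip(ordered, ordered[1:])
    ]
    return min(pairs, key=lambda pair: pair[2])


# Hypothetical chromatogram: (name, retention time in min, baseline width in min).
peaks = [
    ("impurity A", 4.10, 0.22),
    ("analyte", 5.20, 0.25),
    ("impurity B", 5.80, 0.27),
]

name_a, name_b, rs = critical_pair(peaks)
print(f"Critical pair: {name_a} / {name_b}, Rs = {rs:.2f}")
print("Meets Rs >= 2.0:", rs >= 2.0)
```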

Protocol for Accuracy and Precision Evaluation

Purpose: To demonstrate that the analytical procedure provides results that are both accurate (close to the true value) and precise (consistent on repeated measurement).

Materials:

- Certified reference standard with known purity

- Placebo matrix (if evaluating drug product method)

- Appropriate solvents and reagents

Procedure:

- Prepare a minimum of nine determinations over a minimum of three concentration levels covering the specified range (e.g., 50%, 100%, 150% of target concentration).

- For each concentration level, prepare three independent samples.

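- Analyze all samples following the procedure under validation.

- Calculate accuracy as percentage recovery for each concentration.

- Calculate precision as relative standard deviation (RSD): repeatability from replicate determinations made under the same operating conditions over a short interval, and intermediate precision from determinations repeated within the laboratory by a different analyst, on a different instrument, or on a different day.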

Acceptance Criteria: Mean recovery should be 98.0-102.0% for drug substance assays; RSD for repeatability should be ≤2.0% for drug substance assays [11] [12].
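
The recovery and RSD calculations above can be sketched as follows, using the nine-determination design (three levels, three preparations each). The measured values are hypothetical and chosen only to exercise the arithmetic; RSD is computed with the sample standard deviation.

```python
from statistics import mean, stdev


def percent_recovery(measured, nominal):
    """Recovery of a single determination as a percentage of nominal."""
    return 100.0 * measured / nominal


def percent_rsd(values):
    """Relative standard deviation (sample SD / mean) in percent."""
    return 100.0 * stdev(values) / mean(values)


# Hypothetical results: nominal level (% of target) -> measured values.
results = {
    50.0: [49.8, 50.3, 49.9],
    100.0: [99.5, 100.4, 100.1],
    150.0: [149.2, 150.9, 150.3],
}

recoveries = {
    level: [percent_recovery(m, level) for m in measured]
    for level, measured in results.items()
}
all_recoveries = [r for values in recoveries.values() for r in values]
mean_recovery = mean(all_recoveries)
rsd_by_level = {level: percent_rsd(values) for level, values in recoveries.items()}

print(f"Mean recovery: {mean_recovery:.2f}%")
for level, rsd in rsd_by_level.items():
    print(f"Level {level:.0f}%: RSD = {rsd:.2f}%")
print("Accuracy criterion met:", 98.0 <= mean_recovery <= 102.0)
print("Precision criterion met:", all(r <= 2.0 for r in rsd_by_level.values()))
```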

Table 2: Key Validation Parameters and Criteria for Drug Substance Assay

| Validation Characteristic | Experimental Design | Acceptance Criteria |
|---|---|---|
| Accuracy | 9 determinations at 3 concentration levels | Recovery: 98.0-102.0% |
| Precision | | |
| - Repeatability | 6 determinations at 100% concentration | RSD ≤ 2.0% |
| - Intermediate Precision | Different analyst, instrument, day | RSD ≤ 2.0% overall |
| Specificity | Resolution from impurities/degradants | Resolution ≥ 2.0; Peak purity pass |
| Linearity | Minimum 5 concentration levels | Correlation coefficient ≥ 0.999 |
| Range | From LOQ to 150% of test concentration | Meets accuracy, precision, linearity |
| Robustness | Deliberate variations of parameters | System suitability criteria met |
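
For the linearity row, the correlation coefficient comes from an ordinary least-squares fit of response against concentration over at least five levels. The sketch below implements the fit directly so that it has no dependencies; the concentration levels and detector responses are hypothetical.

```python
import math


def linear_fit(x, y):
    """Ordinary least-squares slope, intercept, and correlation coefficient r."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    syy = sum((yi - mean_y) ** 2 for yi in y)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    r = sxy / math.sqrt(sxx * syy)
    return slope, intercept, r


# Hypothetical five-level calibration: % of target concentration vs. peak area.
levels = [50.0, 75.0, 100.0, 125.0, 150.0]
areas = [1002.0, 1498.0, 2005.0, 2497.0, 3001.0]

slope, intercept, r = linear_fit(levels, areas)
print(f"slope = {slope:.3f}, intercept = {intercept:.2f}, r = {r:.5f}")
print("Linearity criterion met:", r >= 0.999)
```

A high correlation coefficient alone can hide curvature, so residual plots are usually reviewed alongside it.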

Change Management in the Analytical Procedure Lifecycle

Post-Approval Changes Under ICH Q14

A significant advancement introduced by ICH Q14 is the structured approach to analytical procedure changes throughout the product lifecycle [13]. This framework is particularly valuable for drug synthesis research, where synthetic pathways may be optimized post-approval, potentially requiring corresponding analytical method adjustments.

The change management process involves:

- Risk Assessment: Evaluating the potential impact of the proposed change on the procedure's ability to meet the ATP [13].

- Bridging Studies: Comparative testing using both the current and modified procedures to demonstrate equivalent performance [13].

- Regulatory Reporting: Determining the appropriate reporting category based on the risk assessment and established conditions [13].

Practical Application Example: Technology Update

A common scenario in modern laboratories involves updating analytical technology to replace obsolete instrumentation, for example transitioning from HPLC to UPLC for dissolution testing endpoint analysis [13]. Under the ICH Q14 framework, this change can be efficiently managed through:

- Demonstrating through the ATP that the performance characteristics (accuracy, precision, specificity) are maintained or improved with the new technology.

- Conducting bridging studies comparing results from both methods across multiple batches.

- Leveraging enhanced knowledge management to justify that the change in technology does not impact the fundamental measurement principles defined in the ATP [13].
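
A bridging comparison of this kind can be screened with a paired summary of results from both methods on the same batches. The sketch below is a simple screen and not a formal equivalence test; the batch results and the ±2.0% acceptance margin are hypothetical, and a real study would predefine its statistical approach and margin from the ATP.

```python
from statistics import mean


def bridging_summary(current, modified, margin):
    """Paired comparison of two methods run on the same batches.

    Returns the mean difference, the largest absolute difference, and
    whether every paired difference falls within the acceptance margin.
    """
    if len(current) != len(modified):
        raise ValueError("both methods must be run on the same batches")
    diffs = [m - c for c, m in zip(current, modified)]
    max_abs_diff = max(abs(d) for d in diffs)
    return {
        "mean_difference": mean(diffs),
        "max_abs_difference": max_abs_diff,
        "within_margin": max_abs_diff <= margin,
    }


# Hypothetical assay results (% label claim) for five batches.
hplc_results = [99.1, 100.4, 98.7, 101.2, 99.9]
uplc_results = [99.4, 100.1, 98.9, 101.0, 100.2]

summary = bridging_summary(hplc_results, uplc_results, margin=2.0)
print(f"Mean difference: {summary['mean_difference']:+.2f}%")
print(f"Largest absolute difference: {summary['max_abs_difference']:.2f}%")
print("Within margin:", summary["within_margin"])
```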

This systematic approach facilitates continuous improvement of analytical procedures while maintaining regulatory compliance, ensuring that control methods can evolve alongside synthetic pathway optimizations [13].

The Scientist's Toolkit: Essential Research Reagent Solutions

The implementation of robust analytical procedures requires specific reagents and materials that ensure reliability and reproducibility. The following table details essential solutions for analytical development and validation in drug synthesis research:

Table 3: Essential Research Reagent Solutions for Analytical Development

| Reagent/Material | Function in Analytical Development | Application Examples |

|---|---|---|

| Certified Reference Standards | Provides exact known quantity of analyte for method calibration and validation | Quantification of drug substance, impurity method validation [11] |

| System Suitability Solutions | Verifies chromatographic system performance before analysis | Resolution mixtures, tailing factor measurements [13] |

| Forced Degradation Materials | Generates degradation products for specificity validation | Acid/base, oxidative, thermal stress conditions [11] |

| High-Purity Mobile Phase Components | Ensures reproducible chromatographic separation and detection | HPLC/UPLC grade solvents, ultrapure water [11] |

| Column Qualification Kits | Characterizes and validates chromatographic column performance | USP column efficiency test mixtures [13] |
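
The system suitability and column qualification entries above rest on a few standard chromatographic calculations. The sketch below uses the widely used baseline-width forms of resolution, tailing factor, and plate count; the peak values are hypothetical, and the applicable acceptance limits would come from the method or pharmacopoeial chapter, not from this example.

```python
def resolution(t1, t2, w1, w2):
    """Resolution between two peaks from retention times and baseline peak widths."""
    return 2.0 * (t2 - t1) / (w1 + w2)

def tailing_factor(w005, f):
    """Tailing factor: peak width at 5% height divided by twice the front half-width."""
    return w005 / (2.0 * f)

def plate_count(t_r, w):
    """Column efficiency (theoretical plates) from retention time and baseline width."""
    return 16.0 * (t_r / w) ** 2

# Hypothetical peak measurements, all in minutes.
rs = resolution(t1=4.0, t2=5.0, w1=0.4, w2=0.4)
tf = tailing_factor(w005=0.30, f=0.12)
n_plates = plate_count(t_r=5.0, w=0.4)
print(rs, tf, n_plates)
```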

The harmonized implementation of ICH Q2(R2), ICH Q14, and the ALCOA+ framework represents a significant advancement in pharmaceutical analytical science. For researchers focused on drug synthesis pathways and characterization, these guidelines provide a structured foundation for developing robust, reliable analytical methods that can keep pace with innovation in synthetic chemistry [2] [11].

The lifecycle approach embodied in these guidelines facilitates continuous improvement and adaptation of analytical procedures, ensuring they remain fit-for-purpose even as synthetic routes are optimized and technologies evolve [13]. Furthermore, the emphasis on science- and risk-based principles encourages greater scientific rigor while potentially streamlining post-approval changes [10] [13].

As drug development continues to embrace AI-driven synthesis optimization and more complex molecular entities [2] [16], these regulatory frameworks provide the necessary flexibility and robustness to ensure product quality while fostering innovation. For pharmaceutical scientists, mastering these guidelines is no longer merely a regulatory requirement but an essential component of modern analytical practice in drug development.

The global market for technologies delivering proteins, antibodies, and nucleic acids represents a critical frontier in biomedical advancement, positioned at the intersection of biotechnology innovation and therapeutic development. This sector has evolved from a niche research area into a cornerstone of modern precision medicine, driven by unprecedented capabilities in targeting previously undruggable pathways. The market, estimated at $9.75 billion in 2025, is anticipated to grow at a compound annual growth rate (CAGR) of 12.86% through 2033, reaching approximately $20.15 billion [17]. This expansion is fundamentally fueled by the convergence of several paradigm shifts: the clinical success of biologics and nucleic acid-based therapies, breakthroughs in delivery technologies such as lipid nanoparticles, and the integration of artificial intelligence throughout the drug development pipeline [17] [2]. Within the broader context of drug analysis synthetic pathways and characterization research, these biomolecules are not merely therapeutic agents but complex engineering challenges whose synthesis, delivery, and functional characterization are redefining pharmaceutical development.

This analysis provides a comprehensive technical examination of the market dynamics, pipeline composition, and experimental frameworks shaping the development of antibodies, proteins, and nucleic acids as therapeutic modalities. It is structured to offer researchers, scientists, and drug development professionals a detailed guide to the current landscape, including quantitative market data, key technological innovations, and standardized experimental protocols that underpin cutting-edge research and development in this field.

The global market for antibody, protein, and nucleic acid technologies demonstrates robust growth and diversification across therapeutic areas, delivery platforms, and geographic regions. Market expansion is primarily driven by the increasing prevalence of chronic diseases, rising demand for personalized medicine, and continuous technological innovations that enhance the efficacy and specificity of therapeutic agents [17] [18].

Market Size and Growth Projections

Table 1: Global Market Overview for Biomolecule Technologies

| Metric | 2025 (Estimate) | 2033 (Projection) | CAGR (2026-2033) |

|---|---|---|---|

| Overall Market Size | $9.75 Billion [17] | $20.15 Billion [17] | 12.86% [17] |

| Antibody Drug Market Size | >$200 Billion (2023 base) [19] | Sustained Growth | ~10-12% (5-year CAGR) [19] |

| Biotechnology Market (Broader Context) | $1.55 Billion (2024 base) [18] | $4.48 Billion by 2032 [18] | 13.4% (2024-2032) [18] |

Market Segmentation and Key Indicators

The market can be segmented by type, application, and end-user, each revealing distinct trends and opportunities.

Table 2: Market Segmentation and Key Application Areas

| Segment | Sub-category | Key Characteristics & Trends |

|---|---|---|

| By Type [20] [18] | Antibody | Dominated by monoclonal antibodies (mAbs); over 120 approved drugs globally [19]. Key innovations: ADCs, bispecific antibodies, Fc engineering. |

| | Nucleic Acid | Includes DNA, RNA, and oligonucleotide therapies (e.g., mRNA vaccines, aptamers). Rapid growth segment [18]. |

| | Protein | Involves therapeutic proteins and enzymes (e.g., insulin). Critical for replacing deficient proteins and enzymatic functions. |

| By Application [17] [18] | Biopharmaceutical Production | Primary application area. Focus on manufacturing proteins, vaccines, and monoclonal antibodies for chronic diseases. |

| | Gene Therapy | Emerging as a revolutionary segment, aiming to correct genetic defects via gene editing (e.g., CRISPR) and gene delivery [18]. |

| | Pharmacogenomics & Genetic Testing | Enables personalized medicine by tailoring treatments based on individual genetic profiles [18]. |

| By End-user [17] | Pharmaceutical & Biotech Companies | Lead R&D and commercialization efforts. Driven by extensive R&D investments and pipeline expansion. |

| | Research & Academic Institutes | Focus on basic research, target discovery, and early-stage translational development. |

| | CROs & CDMOs | Provide specialized outsourcing for research, development, and manufacturing. |

The competitive landscape is characterized by the dominance of large multinational pharmaceutical companies such as Johnson & Johnson, Roche, Merck, and Bristol-Myers Squibb, alongside rapidly emerging biotechnology firms specializing in innovative immunotherapies, bispecific antibodies, and antibody-drug conjugates (ADCs) [19]. The Chinese antibody drug market has shown remarkable growth, expected to increase from 9.8 billion yuan in 2016 to 181 billion yuan by 2025 [19].

Technology Innovation and Pipeline Analysis

Antibody Engineering and Design Innovations

The antibody therapeutic pipeline has evolved significantly from murine to fully human antibodies, reducing immunogenicity and improving safety profiles [19]. Current innovation focuses on structural engineering to enhance functionality.

Table 3: Evolution of Antibody Drug Modalities

| Antibody Modality | Key Feature | First Approval/Discovery | Example (Brand Name) |

|---|---|---|---|

| Murine mAb | Mouse-derived; high immunogenicity | 1986 (Muromonab-CD3) [19] | Orthoclone OKT3 |

| Chimeric mAb | Constant region humanized | 1997 (Rituximab) [19] | Rituxan |

| Humanized mAb | Complementarity-determining regions (CDRs) from mouse | 1998 (Trastuzumab) [19] | Herceptin |

| Fully Human mAb | Fully human sequence | 2002 (Adalimumab) [19] | Humira |

| Antibody-Drug Conjugate (ADC) | Antibody linked to cytotoxic drug | 2000 (Gemtuzumab ozogamicin) [19] | Mylotarg |

| Bispecific Antibody (BsAb) | Binds two different antigens | 2014 (Blinatumomab) [19] | Blincyto |

| Fc-Engineered Antibody | Modified Fc region for enhanced effector function | 2013 (Obinutuzumab) [19] | Gazyva |

| Nanobody | Single-domain antibodies from camelids | 2018 (Caplacizumab) [19] | Cablivi |

Artificial intelligence (AI) is now revolutionizing antibody discovery. AI and computer-aided drug design (CADD) accelerate key processes including antibody screening, affinity optimization, and stability prediction. Tools like DeepMind's AlphaFold2 predict 3D antibody structures with high accuracy, dramatically improving the efficiency of modeling antibody-antigen interactions and optimizing antibody drug-like properties [19].

Nucleic Acid Therapeutics and Delivery Platforms

Nucleic acid therapeutics, including mRNA, siRNA, and aptamers, represent a rapidly growing segment. The global market for nucleic acid aptamers alone was projected to grow from $340.5 million in 2014 to approximately $5.4 billion in 2019, reflecting a remarkable CAGR of 73.5% [21]. Critical to this growth has been the development of advanced delivery systems, notably lipid nanoparticles (LNPs), which gained prominence through the success of mRNA vaccines. LNPs protect nucleic acids from degradation and enable efficient cellular delivery and endosomal escape [17]. Other innovations include biodegradable polymers and dendrimers for controlled release and targeted delivery, reducing systemic toxicity [17].
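
The growth rate quoted for aptamers follows from the standard compound annual growth rate formula, CAGR = (end / start)^(1 / years) - 1. The short sketch below applies it to the endpoint figures from the paragraph above; the function name `cagr` is illustrative, and small differences from a cited rate can arise from rounding of the endpoints.

```python
def cagr(start_value, end_value, years):
    """Compound annual growth rate over a whole number of compounding years."""
    return (end_value / start_value) ** (1.0 / years) - 1.0

# Aptamer market endpoints from the text, in millions of USD (2014 to 2019).
aptamer_cagr = cagr(340.5, 5400.0, 5)
print(f"{aptamer_cagr:.1%}")
```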

AI-Driven Synthesis and Pathway Optimization

AI is transforming the optimization of synthesis pathways for drugs and biologics, leveraging machine learning (ML), reinforcement learning, and generative models to predict optimal reaction conditions, streamline multi-step synthesis, and identify novel synthetic routes [2]. Key applications include:

- Retrosynthetic Analysis: AI-powered tools (e.g., Molecular Transformer, Graph Neural Networks) learn from vast chemical reaction databases to predict plausible retrosynthetic routes, significantly reducing the time required for synthesis planning [2].

- Reaction Prediction and Optimization: Machine learning models analyze chemical reaction data to predict reaction feasibility, yield, and side-product formation. Bayesian optimization and AI-controlled robotic labs iteratively refine reaction parameters (e.g., temperature, solvent, catalyst) to achieve optimal conditions with minimal experiments [2].

- Route Optimization: AI methods like genetic algorithms and reinforcement learning evaluate multiple synthetic pathways based on cost, yield, scalability, and environmental impact, enhancing the sustainability and cost-effectiveness of drug manufacturing [2].
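
To make the route-optimization criteria concrete, the sketch below ranks candidate routes with a plain weighted score. It is a deliberately simple stand-in, not a genetic algorithm or reinforcement learning method, and the routes, cost and waste figures, and weights are all hypothetical. The one fixed relationship it relies on is that the overall yield of a linear sequence is the product of its step yields.

```python
from math import prod

def overall_yield(step_yields):
    """Overall yield of a linear multi-step route (product of fractional step yields)."""
    return prod(step_yields)

def rank_routes(routes, weights):
    """Rank routes by weighted yield minus weighted, max-normalized cost and waste."""
    max_cost = max(r["cost"] for r in routes.values())
    max_waste = max(r["waste"] for r in routes.values())
    scores = {}
    for name, r in routes.items():
        scores[name] = (
            weights["yield"] * overall_yield(r["step_yields"])
            - weights["cost"] * r["cost"] / max_cost
            - weights["waste"] * r["waste"] / max_waste
        )
    # Highest score first.
    return sorted(scores, key=scores.get, reverse=True), scores

# Hypothetical candidate routes: fractional step yields, cost per kg, waste per kg product.
routes = {
    "route_A": {"step_yields": [0.90, 0.85, 0.80], "cost": 120.0, "waste": 35.0},
    "route_B": {"step_yields": [0.95, 0.92], "cost": 150.0, "waste": 20.0},
    "route_C": {"step_yields": [0.80, 0.80, 0.80, 0.80], "cost": 90.0, "waste": 50.0},
}
ranked, scores = rank_routes(routes, {"yield": 0.5, "cost": 0.3, "waste": 0.2})
print(ranked)
```

The weights encode a project-specific trade-off, so a different weighting can legitimately reorder the routes.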

A specific example of an AI-powered tool is EZSpecificity, developed by researchers at the University. This model predicts which chemicals can serve as substrates for a particular enzyme and achieved 91.7% accuracy in identifying the single potential reactive substrate in experimental validation. The tool supports drug development and synthetic biology by clarifying metabolism and enzyme-substrate relationships [16].

Experimental Protocols for Discovery and Characterization

This section outlines critical experimental methodologies for the discovery, optimization, and characterization of antibodies, proteins, and nucleic acids, with an emphasis on standardized, automatable protocols.

Protocol: AI-Enhanced Enzyme-Substrate Specificity Screening

Objective: To rapidly identify and validate specific enzyme-substrate pairs for biocatalysis or drug target discovery using an AI-prediction-guided workflow [16].

Materials:

- EZSpecificity AI model or equivalent in-house platform [16]

- PDBind+ and ESIBank datasets for model training/validation [16]

- Target enzymes and candidate substrate libraries

- Robotic liquid handling system (e.g., Tecan Veya, SPT Labtech firefly+) [22]

- Analytical instrumentation (e.g., LC-MS, HPLC)

Methodology:

Data Preparation and Model Training:

- Curate a dataset of known enzyme-substrate complexes. Utilize public databases like PDBind+ and ESIBank [16].

- Train a cross-attention-based algorithm on the structural data. The source sequence is the enzyme-substrate complex, and the model learns to predict interactions between specific substrate chemical groups and enzyme amino acid residues [16].

In Silico Prediction:

- Input the amino acid sequence or 3D structure of the target enzyme and a virtual library of candidate substrates into the trained model.

- The AI model will output a ranked list of predicted substrates with a binding affinity or reactivity score.

Experimental Validation:

- Automated High-Throughput Screening: Using a robotic liquid handler, set up reactions with the top AI-predicted substrates against the target enzyme in a 96- or 384-well plate format [22].

- Reaction Monitoring: Employ a coupled assay (e.g., spectrophotometric, fluorometric) or direct analytical methods (e.g., LC-MS) to monitor product formation in real-time.

- Kinetic Analysis: For confirmed hits, determine kinetic parameters (Km, kcat) under optimized conditions.

Model Refinement:

- Feed the experimental results (validated hits and misses) back into the AI model to retrain and improve its prediction accuracy for future screens [16].
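
For the kinetic analysis step, Km and kcat can be estimated from initial-rate data under the Michaelis-Menten model, v = Vmax[S] / (Km + [S]). The sketch below uses the Hanes-Woolf linearization ([S]/v against [S]) with an ordinary least-squares fit so that it needs no external libraries; nonlinear regression is generally preferred for real, noisy data. The concentrations, rates, and enzyme concentration are synthetic values chosen for illustration.

```python
def linear_fit(xs, ys):
    """Ordinary least-squares slope and intercept."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, my - slope * mx

def hanes_woolf(substrate, rates, enzyme_conc):
    """Estimate Km, Vmax and kcat from [S]/v = [S]/Vmax + Km/Vmax."""
    ratios = [s / v for s, v in zip(substrate, rates)]
    slope, intercept = linear_fit(substrate, ratios)
    vmax = 1.0 / slope
    km = intercept * vmax
    return km, vmax, vmax / enzyme_conc

# Synthetic, noise-free data generated with Km = 50 uM and Vmax = 2.0 uM/s.
substrate = [5.0, 10.0, 25.0, 50.0, 100.0, 200.0]       # uM
rates = [2.0 * s / (50.0 + s) for s in substrate]        # uM/s
km, vmax, kcat = hanes_woolf(substrate, rates, enzyme_conc=0.01)  # enzyme in uM
print(km, vmax, kcat)
```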

Visualization of Workflow: The following diagram illustrates the integrated computational and experimental workflow for AI-enhanced enzyme-substrate screening.

Protocol: High-Throughput Characterization of Synthetic Lethal Genetic Interactions

Objective: To systematically identify and characterize synthetic lethal interactions for cancer drug discovery using a combination of CRISPR-based screening and multi-omic validation [23].

Materials:

- CRISPR/Cas9 knockout or base-editing libraries [23]

- Isogenic cell line pairs (e.g., BRCA1 wild-type vs. mutant)

- Automated cell culture system (e.g., mo:re MO:BOT for 3D cultures) [22]

- High-content imaging system

- Multi-omics analysis platform (e.g., Sonrai Discovery platform) [22]

Methodology:

Genetic Screen Setup:

- Transduce a lentiviral CRISPR library into isogenic cell line pairs. A common application is in a BRCA1 mutant background to find genes synthetically lethal with BRCA1 loss, mimicking PARP inhibitor mechanisms [23].

- Culture cells in a robust, reproducible manner. For enhanced biological relevance, use automated 3D cell culture systems (e.g., MO:BOT) to grow organoids [22].

Phenotypic Readout:

- Cell Fitness: Use sequencing to track guide RNA abundance over time and identify dropouts (guides whose target knockouts cause cell death or reduced fitness) [23].

- High-Content Imaging: Employ multiplexed immunofluorescence and automated imaging to capture phenotypic changes beyond fitness, such as morphological alterations and DNA damage markers (e.g., γH2AX) [23].

Data Integration and Validation:

- Integrate Multi-Modal Data: Use an analytical platform (e.g., Sonrai) to layer CRISPR screen data with imaging data, proteomics, and transcriptomics to build a comprehensive network of interactions [22].

- Hit Validation: Validate top candidate synthetic lethal genes using secondary assays with individual guide RNAs and small-molecule inhibitors in relevant in vivo models.
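
The cell-fitness readout can be sketched as a log2 fold-change comparison of guide RNA counts between the two isogenic lines. The guide names, read counts, pseudocount, and the threshold of -1 are hypothetical; dedicated screen-analysis tools additionally model replicate variance and combine multiple guides per gene.

```python
from math import log2

def counts_per_million(counts, pseudocount=1.0):
    """Scale raw guide counts to counts per million, adding a pseudocount to each guide."""
    total = sum(counts.values())
    return {guide: (n + pseudocount) / total * 1e6 for guide, n in counts.items()}

def log2_fold_change(initial, final):
    """Log2 change in normalized guide abundance between two time points."""
    start, end = counts_per_million(initial), counts_per_million(final)
    return {guide: log2(end[guide] / start[guide]) for guide in initial}

def differential_dropouts(wt_lfc, mut_lfc, threshold=-1.0):
    """Guides depleted in the mutant line relative to wild type by at least the threshold."""
    return sorted(g for g in wt_lfc if mut_lfc[g] - wt_lfc[g] <= threshold)

# Hypothetical read counts at the start (t0) and end (t1) of the screen.
t0 = {"sgPARP1_1": 500, "sgCTRL_1": 500, "sgGENEX_1": 500}
wt_t1 = {"sgPARP1_1": 480, "sgCTRL_1": 510, "sgGENEX_1": 510}
mut_t1 = {"sgPARP1_1": 60, "sgCTRL_1": 720, "sgGENEX_1": 720}

wt_lfc = log2_fold_change(t0, wt_t1)
mut_lfc = log2_fold_change(t0, mut_t1)
flagged = differential_dropouts(wt_lfc, mut_lfc)
print(flagged)
```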

Visualization of Workflow: The following diagram outlines the key steps in a synthetic lethality screening workflow.

The Scientist's Toolkit: Essential Research Reagent Solutions

The following table details key reagents, platforms, and technologies that are fundamental to research and development in the biomolecule sector.

Table 4: Essential Research Reagent Solutions and Platforms

| Tool Category | Specific Technology/Reagent | Function & Application |

|---|---|---|

| AI & Data Analytics | EZSpecificity Model [16] | Predicts enzyme-substrate interactions to advance synthetic biology and drug discovery. |

| | Sonrai Discovery Platform [22] | Integrates complex imaging, multi-omic, and clinical data to generate biological insights. |

| | Cenevo (Titian Mosaic/Labguru) [22] | Provides sample management and R&D digital platforms to connect data, instruments, and processes for effective AI application. |

| Automation & Robotics | Tecan Veya Liquid Handler [22] | Offers walk-up automation for consistent, reliable liquid handling in assays. |

| | SPT Labtech firefly+ [22] | A compact unit that combines pipetting, dispensing, mixing, and thermocycling for genomic workflows. |

| | mo:re MO:BOT [22] | Automates 3D cell culture (seeding, media exchange) to produce reproducible, human-relevant tissue models for screening. |

| Delivery Technologies | Lipid Nanoparticles (LNPs) [17] | Enable efficient cellular delivery of nucleic acids (e.g., mRNA), protecting them from degradation. |

| | Biodegradable Polymers [17] | Used for controlled release and targeted delivery of proteins and nucleic acids, reducing systemic toxicity. |

| Protein Production | Nuclera eProtein Discovery System [22] | Automates protein expression and purification from DNA to soluble, active protein in under 48 hours. |

| Critical Reagents | Agilent SureSelect Kits [22] | Target enrichment kits for genomic sequencing, automated on platforms like firefly+. |

| | CRISPR/Cas9 Libraries [23] | Enable genome-wide knockout screens to identify genetic dependencies and synthetic lethal interactions. |

The market and pipeline for antibodies, proteins, and nucleic acids are in a period of exceptional growth and technological transformation. Driven by the clinical and commercial success of targeted biologics and nucleic acid therapies, this sector is poised to maintain a strong growth trajectory, with the underlying technologies market expected to expand at a CAGR of 12.86% to surpass $20 billion by 2033 [17]. The future of this field will be shaped by the deepening integration of AI and machine learning into every stage of drug discovery, from target identification and antibody engineering to the optimization of synthetic pathways [2] [19]. Concurrently, the rise of automated, high-throughput, and biologically relevant screening platforms is enhancing the reproducibility and predictive power of preclinical research [22]. For researchers and drug development professionals, mastering the converging disciplines of computational biology, automation engineering, and advanced delivery system design will be paramount to leveraging these trends and delivering the next generation of transformative biomolecule-based therapeutics.

The Impact of Project Optimus and Modernized Dosage Optimization Paradigms

The development of oncology therapeutics has undergone a fundamental transformation in its approach to dose selection, moving from a historical maximum tolerated dose (MTD) paradigm toward optimized dosing strategies that better align with the mechanisms of modern targeted therapies and immunotherapies. Project Optimus, an initiative launched in 2021 by the FDA's Oncology Center of Excellence, represents a systematic effort to reform the dose optimization and dose selection paradigm in oncology drug development [24]. This shift responds to the recognized limitations of traditional approaches: the "more is better" philosophy suited cytotoxic chemotherapeutics, which exhibit linear dose-response and dose-toxicity relationships, but has proven inadequate for molecularly targeted agents that may achieve maximum biological effect before reaching the MTD [25]. The initiative aims to ensure that patients receive doses that maximize efficacy while minimizing toxicity, which is particularly important because newer therapies are often administered over longer periods [24].

This whitepaper examines the technical framework of Project Optimus within the broader context of drug analysis synthetic pathways and characterization research. We provide a comprehensive analysis of the quantitative evidence, methodological approaches, and implementation strategies that define modern dose optimization, specifically designed for researchers, scientists, and drug development professionals engaged in oncology therapeutic development.

The Limitations of Traditional Oncology Dose Finding

The MTD Paradigm and Its Shortcomings

Traditional oncology dose-finding has relied predominantly on the 3+3 trial design, introduced in the 1940s and formalized in the 1980s [26]. This approach was developed for cytotoxic chemotherapeutics and follows a simple escalation strategy: small patient cohorts receive increasing doses until dose-limiting toxicities (DLTs) are observed in two or more patients at a dose level (in a cohort of three, or of six after expansion), and the MTD is then taken as the highest dose below that level [26]. This MTD then typically becomes the recommended dose for subsequent trials and eventual clinical use.
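
The escalation logic of the 3+3 design can be written as a small decision function. This is a sketch of the conventional rule set as it is usually described (escalate on 0 of 3 or at most 1 of 6 DLTs, expand on 1 of 3, stop on 2 or more); individual protocols vary in the details, and the function name and return labels are illustrative.

```python
def three_plus_three(n_dlt, n_treated):
    """Decision at the current dose level under the conventional 3+3 rules."""
    if n_treated == 3:
        if n_dlt == 0:
            return "escalate"   # no DLTs: move to the next dose level
        if n_dlt == 1:
            return "expand"     # one DLT: treat three more patients at the same dose
        return "stop"           # two or more DLTs: dose exceeds the MTD
    if n_treated == 6:
        # After expansion, at most one DLT in six patients allows escalation.
        return "escalate" if n_dlt <= 1 else "stop"
    raise ValueError("3+3 decisions are made after 3 or 6 patients")

for observed in [(0, 3), (1, 3), (2, 3), (1, 6), (2, 6)]:
    print(observed, three_plus_three(*observed))
```

Note that every branch depends only on short-term toxicity counts, which is the feature of the design that the following sections criticize.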

Quantitative Evidence of Traditional Approach Limitations

Recent analyses demonstrate significant limitations in this traditional paradigm:

Table 1: Documented Limitations of Traditional MTD-Based Dose Finding

| Metric | Finding | Implication |

|---|---|---|

| Dose Modification Rate | 48% of patients in late-stage trials of molecularly targeted agents required dose reductions [26] | High rates of post-approval dose adjustments indicate poor initial dose selection |

| Post-Marketing Requirements | FDA required additional dose optimization studies for >50% of recently approved cancer drugs [26] | Inadequate dose characterization during development |

| Dose Interruption/Discontinuation | Registration trials showed median dose reduction (28%), interruption (55%), and discontinuation (10%) rates [27] | Poor tolerability at approved doses limits treatment continuity |

| Post-Marketing Dose Changes | Approximately 15% of oncology drugs (2010-2022) required post-marketing dose-optimization trials [28] | Delayed optimization impacts patient care and treatment benefit |

The fundamental issue lies in the mismatch between trial design and drug mechanism. The 3+3 design does not assess whether a drug is effective at treating cancer, fails to represent longer treatment courses typical with modern therapeutics, and correlates poorly with how newer drug classes function mechanistically [26]. Furthermore, these trials typically assess safety over short durations that may not reflect long-term treatment tolerability, particularly problematic for chronic administration schedules [27].

The Project Optimus Framework: Principles and Regulatory Context

Core Principles and Goals

Project Optimus aims to "educate, innovate, and collaborate with companies, academia, professional societies, international regulatory authorities, and patients to move forward with a dose-finding and dose optimization paradigm across oncology that emphasizes selection of a dose or doses that maximizes not only the efficacy of a drug but the safety and tolerability as well" [24]. Specific goals include:

- Communicating expectations for dose-finding through guidance, workshops, and public meetings

- Encouraging early engagement with FDA Oncology Review Divisions before registration trials

- Developing strategies that leverage nonclinical and clinical data, including randomized dose evaluations [24]

The initiative shifts focus from identifying the maximum tolerated dose to determining the optimal biological dose (OBD)—the dose that offers the best efficacy-tolerability balance [29].

Regulatory Guidance and Implementation Timeline

The FDA has codified Project Optimus principles through finalized guidance titled "Optimizing the Dosage of Human Prescription Drugs and Biological Products for the Treatment of Oncologic Diseases" [25]. This guidance recommends that sponsors select two doses for Phase II trials—typically the MTD and a dose below it—then determine through randomized evaluation which provides the superior benefit-risk profile [25]. The guidance does not specifically address starting doses for first-in-human trials, radiopharmaceuticals, cellular and gene therapies, or pediatric development, though some recommendations may apply to these areas [25].

Technical Methodologies and Experimental Approaches

Model-Informed Drug Development (MIDD) Strategies

The foundation of Project Optimus implementation rests on model-informed drug development approaches that integrate diverse data sources to build quantitative evidence for dose selection [27]. MIDD employs pharmacological modeling and simulation to improve dose optimization practices through adaptive study designs, preclinical insight integration, real-time assimilation of pharmacokinetic (PK) and pharmacodynamic (PD) data, and comprehensive data utilization [27].

Table 2: Core Components of Model-Informed Drug Development for Dose Optimization

| Component | Function | Application in Dose Optimization |

|---|---|---|

| Population PK/PD Modeling | Characterizes drug exposure and biological effects across patient populations | Identifies optimal dosage from larger clinical datasets; combines safety and efficacy evaluation [26] |

| Exposure-Response (E-R) Modeling | Quantifies relationship between drug exposure, efficacy, and toxicity | Extrapolates effects of doses and schedules not clinically tested; addresses confounding factors [26] [30] |

| Quantitative Systems Pharmacology (QSP) | Uses computational modeling to represent drug mechanisms in biological systems | Predicts first-in-human dosing; optimizes trial design; evaluates drug formulations [26] [31] |

| Bayesian Adaptive Designs | Statistical approaches that update probability estimates as data accumulate | Enables more nuanced dose escalation/de-escalation; responds to efficacy and late-onset toxicities [26] [32] |
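
The exposure-response component can be illustrated with the Emax model, E = Emax * C^h / (EC50^h + C^h), applied separately to efficacy and toxicity. In the sketch below all parameter values are hypothetical and dose is used as a stand-in for exposure; it only illustrates how efficacy can approach a plateau while toxicity continues to rise.

```python
def emax(exposure, e_max, ec50, hill=1.0):
    """Sigmoid Emax model: effect as a saturating function of exposure."""
    return e_max * exposure ** hill / (ec50 ** hill + exposure ** hill)

# Hypothetical parameters: efficacy saturates early, toxicity much later.
doses = [50, 100, 200, 400, 800]  # mg, used here as a proxy for exposure
efficacy = [emax(d, e_max=0.6, ec50=60.0) for d in doses]
toxicity = [emax(d, e_max=0.8, ec50=600.0) for d in doses]

for d, eff, tox in zip(doses, efficacy, toxicity):
    print(f"{d:>4} mg  response={eff:.3f}  toxicity={tox:.3f}")

# Incremental change when doubling the dose from 400 mg to 800 mg.
efficacy_gain = efficacy[-1] - efficacy[-2]
toxicity_gain = toxicity[-1] - toxicity[-2]
```

With these assumed parameters, doubling the highest dose adds far more toxicity than response, which is the kind of pattern exposure-response analysis is meant to expose.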

First-in-Human (FIH) Trial Design Innovations

Selecting appropriate dose ranges for FIH trials requires moving beyond traditional animal-to-human dose scaling based solely on weight. Modern approaches incorporate mathematical models that account for receptor occupancy differences between humans and animal models, a critical factor for targeted therapies [26]. These models consider a wider variety of factors to determine starting doses and have demonstrated success in recommending higher starting doses that could provide more patient benefit [26].

Novel FIH dose-escalation designs utilizing mathematical modeling instead of the traditional algorithmic 3+3 approach include:

- Bayesian Optimal Interval (BOIN) Design: A model-assisted approach that provides simple decision rules for dose escalation/de-escalation while having desirable statistical properties [25]

- Backfill BOIN Design: Enables enrollment of additional patients at doses below the current dose level to collect more pharmacodynamic and efficacy data [25]

- Bayesian Latent-Subgroup Platform Design: Allows simultaneous identification of optimal biological doses across multiple indications and combination partners using a master-protocol framework [32]

These designs respond not only to immediate toxicity but also to efficacy measures and late-onset toxicities, providing more comprehensive dose evaluation [26].
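
The "simple decision rules" of the BOIN design come from two precomputed boundaries on the observed DLT rate. The sketch below uses the commonly published form of those boundaries with the usual defaults of 0.6 and 1.4 times the target rate; it omits the overdose-control (dose elimination) rule and any efficacy or backfill logic, so it is an illustration, not a trial-ready implementation.

```python
from math import log

def boin_boundaries(target, phi1=None, phi2=None):
    """Escalation and de-escalation boundaries for a target DLT rate."""
    phi1 = 0.6 * target if phi1 is None else phi1   # highest rate deemed sub-therapeutic
    phi2 = 1.4 * target if phi2 is None else phi2   # lowest rate deemed overly toxic
    lambda_e = log((1 - phi1) / (1 - target)) / log(
        target * (1 - phi1) / (phi1 * (1 - target)))
    lambda_d = log((1 - target) / (1 - phi2)) / log(
        phi2 * (1 - target) / (target * (1 - phi2)))
    return lambda_e, lambda_d

def boin_decision(n_dlt, n_treated, target=0.3):
    """Compare the observed DLT rate at the current dose with the two boundaries."""
    lambda_e, lambda_d = boin_boundaries(target)
    observed = n_dlt / n_treated
    if observed <= lambda_e:
        return "escalate"
    if observed >= lambda_d:
        return "de-escalate"
    return "stay"

lam_e, lam_d = boin_boundaries(0.3)
print(round(lam_e, 3), round(lam_d, 3))
print(boin_decision(1, 6), boin_decision(1, 3), boin_decision(2, 3))
```

Because the boundaries depend only on the target rate, they can be tabulated before the trial starts, which is what keeps the design operationally simple.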

Dose Selection and Optimization Methodologies

After initial dose exploration, Project Optimus emphasizes rigorous dose selection through randomized comparisons. The FDA recommends sponsors select two doses to advance into Phase II trials—typically the MTD and a lower dose—then determine which provides the superior benefit-risk profile [25]. Methodologies to support this selection include:

- Backfill and Expansion Cohorts: Increasing patient numbers at specific dose levels of interest within early-stage trials to strengthen understanding of benefit-risk ratios [26]

- Biomarker Integration: Measuring changes in circulating tumor DNA (ctDNA) levels or other biomarkers to identify responses not detected due to short follow-up [26]

- Clinical Utility Indices (CUI): Quantitative frameworks that provide collaborative mechanisms to integrate diverse data types and determine concrete doses of interest [26]

- Seamless Clinical Trial Designs: Adaptive trials that combine traditionally distinct development phases, allowing more rapid enrollment and accumulation of long-term safety and efficacy data [26]
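
A clinical utility index is often built as a weighted combination of normalized attributes. The sketch below assumes each attribute has already been scaled to the range 0 to 1 with higher values being better; the dose labels, attribute values, and weights are hypothetical and would in practice be agreed by the development team before unblinding.

```python
def clinical_utility_index(attributes, weights):
    """Weighted average of attributes already scaled to 0-1 (higher is better)."""
    total_weight = sum(weights.values())
    return sum(weights[name] * attributes[name] for name in weights) / total_weight

# Hypothetical weights and per-dose attribute summaries.
weights = {"response": 0.5, "tolerability": 0.3, "pd_effect": 0.2}
candidate_doses = {
    "low_dose": {"response": 0.40, "tolerability": 0.85, "pd_effect": 0.70},
    "high_dose": {"response": 0.45, "tolerability": 0.55, "pd_effect": 0.80},
}

cui = {dose: clinical_utility_index(attrs, weights)
       for dose, attrs in candidate_doses.items()}
best_dose = max(cui, key=cui.get)
print(cui, best_dose)
```

The ranking is sensitive to the chosen weights, so a sensitivity analysis over plausible weightings is a natural companion to any single index value.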

The Scientist's Toolkit: Essential Research Reagents and Solutions

Implementation of Project Optimus principles requires specific methodological tools and approaches throughout the drug development pipeline.

Table 3: Essential Research Reagents and Methodological Solutions for Dose Optimization

| Tool Category | Specific Solution | Function in Dose Optimization |

|---|---|---|

| Bioanalytical Assays | Circulating tumor DNA (ctDNA) analysis | Measures molecular responses to treatment; identifies efficacy signals not detected by imaging alone [26] |

| Pharmacodynamic Biomarkers | Target occupancy assays | Verifies engagement of drug with intended biological target; confirms mechanism of action [26] |

| Computational Modeling Platforms | Quantitative Systems Pharmacology (QSP) platforms | Predicts first-in-human dosing; optimizes trial design through simulation of different scenarios [31] |

| Statistical Software | Bayesian adaptive design applications | Implements complex dose-finding algorithms; enables real-time dose decision-making [26] [32] |

| Patient-Reported Outcome (PRO) Tools | Quality of life and symptom burden instruments | Captures treatment tolerability from patient perspective; informs risk-benefit assessment [29] |

| Population PK/PD Software | Nonlinear mixed-effects modeling programs | Characterizes drug exposure-response relationships; identifies patient factors influencing dosing [27] |

Implementation Framework and Operational Considerations

Clinical Trial Design Modifications

Implementing Project Optimus principles necessitates significant modifications to traditional oncology trial designs:

- Larger Early-Phase Trials: Phase I studies now typically include more patients, multiple arms for dose evaluation, and broader patient groups including older adults and those with additional health conditions [28]

- Randomized Dose Evaluation: Sponsors are expected to directly compare multiple doses in trials designed to assess antitumor activity, safety, and tolerability [26]

- Extended Observation Periods: Trials incorporate longer follow-up to characterize later-cycle dose adjustments, discontinuations, and milder adverse events experienced over extended durations [27]

- Adaptive Designs: Protocols include simulations for multiple scenarios and allow modification based on interim results, improving efficiency in dose identification [26]

Quantitative Impact on Development Programs

The adoption of Project Optimus frameworks has measurable impacts on development programs:

Table 4: Quantitative Comparison of Traditional vs. Optimus-Informed Development

| Development Parameter | Traditional Approach | Optimus-Informed Approach | Impact |

|---|---|---|---|

| Phase I Trial Duration | 6-12 months | 12-18 months [25] | Increased initial timeline |

| Patient Numbers in Early Development | Limited cohorts (e.g., 20-50 patients) | Expanded cohorts (e.g., 100+ patients) [28] | Higher initial resource investment |

| Doses Evaluated in Registrational Trials | Typically single dose (MTD) | Multiple doses (typically 2+) [25] | Enhanced dose characterization |

| Post-Marketing Dose Changes | 15% of drugs (2010-2022) [28] | Expected significant reduction | Reduced post-approval modifications |

| Patient Dose Modifications in Practice | 48% requiring reduction [26] | Expected significant reduction | Improved real-world tolerability |

Regulatory Strategy and Engagement

Successful implementation requires proactive regulatory planning:

- Early FDA Engagement: Sponsors are strongly encouraged to discuss dose optimization plans during pre-IND meetings and other formal interactions to align on expectations [25]

- Integrated Evidence Generation: Development programs should generate and utilize comprehensive data—including PK, PD, biomarkers, and patient-reported outcomes—rather than relying primarily on short-term toxicity [26]

- Fit-for-Purpose Approach: Each drug development program should be tailored to the specific drug mechanism and target population, with justification for selected dose optimization strategies [26]

Challenges and Future Directions

Implementation Challenges

Despite its benefits, Project Optimus implementation presents several challenges:

- Increased Complexity and Cost: Phase I trials designed around Project Optimus principles are typically longer, more expensive, and more operationally complex because they require additional patients and data [25]

- Patient Enrollment Considerations: Larger patient requirements in early-phase trials may present challenges for rare diseases and pediatric studies [25]

- Analytical Capability Requirements: Implementing sophisticated modeling approaches requires specialized expertise in clinical pharmacology, statistics, and computational biology [27]

- Combination Therapy Considerations: Most dose optimization approaches were designed for single agents, creating challenges for combination regimens where dose optimization becomes multidimensional [26]

Emerging Innovations and Future Applications

The dose optimization landscape continues to evolve with several promising developments:

- Master-Protocol Platform Designs: Approaches that enable simultaneous evaluation of multiple doses across different indications and combination partners using Bayesian latent-subgroup models [32]

- Digital Twin Technologies: Creating virtual patient representations to simulate outcomes across different dosing strategies [27]

- Real-World Evidence Integration: Using real-world data to inform dose optimization and validate trial findings in broader populations [27]

- Patient-Focused Endpoint Development: Advancing quality-of-life metrics and patient-reported outcomes as critical components of dose optimization [29]

Project Optimus represents a fundamental paradigm shift in oncology drug development, moving from historical maximum tolerated dose approaches toward optimized dosing strategies that balance efficacy and tolerability based on comprehensive quantitative assessment. This transformation requires implementation of model-informed drug development strategies, innovative clinical trial designs, and early regulatory engagement throughout the development process.

For researchers and drug development professionals, successful navigation of this new landscape requires multidisciplinary expertise integrating clinical pharmacology, statistical modeling, biomarker science, and patient-focused endpoints. While implementation presents challenges including increased complexity in early development, the long-term benefits include improved patient outcomes, reduced post-marketing dose changes, and more efficient drug development pathways.

As oncology therapeutics continue to evolve toward more targeted mechanisms and personalized approaches, the principles embodied by Project Optimus will become essential for maximizing therapeutic benefit while minimizing treatment-related toxicity, ultimately advancing the quality and effectiveness of cancer care.

AI-Driven Retrosynthesis and Advanced Analytical Method Development

AI and Machine Learning in Retrosynthetic Planning and Route Prediction

The optimization of drug synthesis pathways is a critical challenge in pharmaceutical research, requiring efficient strategies to enhance yield, reduce costs, and minimize environmental impact [33]. Retrosynthetic analysis, a problem-solving technique formalized by E.J. Corey, involves systematically deconstructing a target molecule into simpler precursor structures to identify feasible synthetic routes from commercially available starting materials [33] [34]. Traditionally, this process relied heavily on expert knowledge, experimental trial-and-error, and heuristic-based planning, which often led to prolonged development timelines, limited scalability, and unpredictable reaction outcomes [33].

Artificial Intelligence (AI) has emerged as a transformative force in chemical and pharmaceutical research, offering data-driven solutions to accelerate drug synthesis [33]. By leveraging machine learning (ML), deep learning, reinforcement learning, and cheminformatics, AI-powered models can predict reaction outcomes, suggest optimal synthetic routes, and refine reaction conditions with greater precision and speed than traditional methods [33]. The integration of AI into retrosynthetic planning is particularly timely, addressing the growing need for innovative methods that can optimize synthetic pathways while reducing resource consumption and environmental impact, ultimately making drug production more sustainable, cost-effective, and scalable [33].

This technical guide explores the core AI methodologies reshaping retrosynthetic planning and route prediction, framed within the broader context of synthetic pathway development and analytical characterization research. It is intended for researchers, scientists, and drug development professionals seeking to understand and implement these computational techniques.

Core AI Methodologies in Retrosynthesis

Template-Based and Template-Free Approaches

AI-driven retrosynthetic planning strategies can be broadly categorized into template-based and template-free methods.

Template-Based Approaches: These methods rely on reaction templates—encoded transformation rules derived from known chemical reactions—to deconstruct target molecules into precursors [34]. They often use molecular fingerprints combined with neural networks to recommend plausible templates [34]. A key limitation is that constructing reaction templates typically requires manual encoding or complex subgraph isomorphism, making it difficult to explore potential reaction templates in vast chemical space [34].

Template-Free and Semi-Template Methods: These emerging alternatives avoid the constraints of pre-defined templates and are generally categorized into sequence-based and graph-based approaches [34]. Sequence-based approaches represent molecules using linearized strings like SMILES (Simplified Molecular-Input Line-Entry System) and employ sequence-to-sequence models, such as Transformers, for retrosynthetic "translation" [34]. However, they often suffer from loss of molecular structural information and can generate invalid syntaxes [34]. Graph-based approaches represent molecules as graph structures and typically employ a two-stage paradigm involving Reaction Center Prediction (RCP) and Synthon Completion (SC) using Graph Neural Networks (GNNs) [34].
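To make the sequence-based representation concrete, the sketch below splits a SMILES string into the tokens a sequence-to-sequence model would consume and applies a cheap syntactic sanity check. The regular expression follows a pattern commonly used for SMILES tokenization, but it is an assumption for illustration and not a full SMILES grammar; the function names `tokenize_smiles` and `looks_well_formed` are hypothetical. The check is necessary but not sufficient for validity, which in practice would be confirmed with a cheminformatics toolkit such as RDKit.

```python
import re

# Assumption: a regex in the style commonly used to tokenize SMILES for
# sequence-to-sequence models. Illustrative only, not a full SMILES grammar.
SMILES_TOKEN_PATTERN = re.compile(
    r"(\[[^\]]+\]|Br?|Cl?|N|O|S|P|F|I|b|c|n|o|s|p|\(|\)|\.|=|#|-|\+|\\|/|:|~|@|\?|>|\*|\$|%[0-9]{2}|[0-9])"
)


def tokenize_smiles(smiles: str) -> list[str]:
    """Split a SMILES string into tokens; fail if any character is not covered."""
    tokens = SMILES_TOKEN_PATTERN.findall(smiles)
    if "".join(tokens) != smiles:
        raise ValueError(f"Unrecognized characters in SMILES: {smiles!r}")
    return tokens


def looks_well_formed(tokens: list[str]) -> bool:
    """Cheap syntactic check: balanced branches and paired ring-closure labels.

    Passing this check does not guarantee a chemically valid molecule.
    """
    depth = 0
    ring_counts: dict[str, int] = {}
    for tok in tokens:
        if tok == "(":
            depth += 1
        elif tok == ")":
            depth -= 1
            if depth < 0:  # a branch was closed before it was opened
                return False
        elif tok.isdigit() or tok.startswith("%"):
            # Each ring-closure label must open and later close a ring bond.
            ring_counts[tok] = ring_counts.get(tok, 0) + 1
    return depth == 0 and all(n % 2 == 0 for n in ring_counts.values())


if __name__ == "__main__":
    print(tokenize_smiles("CC(=O)Cl"))                      # acetyl chloride
    print(looks_well_formed(tokenize_smiles("c1ccccc1")))   # benzene, ring closed
    print(looks_well_formed(tokenize_smiles("c1ccccc")))    # ring never closed
```

A decoded string that fails a check like this is the kind of invalid syntax a sequence-based model can emit, which is one reason generated candidates are usually filtered before ranking.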

Key Machine Learning Techniques

Several specialized AI methodologies play crucial roles in enhancing retrosynthetic planning:

Graph Neural Networks (GNNs): Since molecules are inherently graph-structured, GNNs are particularly suited for molecular representation learning. Models such as Graph Convolutional Networks (GCNs), Graph Attention Networks (GATs), and Message Passing Neural Networks (MPNNs) can directly model molecular structures and predict reactivity patterns by capturing atomic relationships and bond structures [33] [34].

Transformer Architectures: Adapted from natural language processing, Transformer models process linearized molecular representations (e.g., SMILES) for retrosynthetic prediction. With self-attention mechanisms, they effectively capture long-range dependencies in molecular data [34].

Reinforcement Learning (RL): RL agents learn optimal synthesis pathways through trial-and-error in simulated environments, refining strategies based on rewards for successful outcomes. This approach is valuable for adaptive synthesis planning and multi-step route optimization [33].

Generative Models: Variational Autoencoders (VAEs) and Generative Adversarial Networks (GANs) design novel synthesis routes and propose new molecular structures with desirable properties, enabling de novo molecular design [33].

Energy-Based Models (EBMs): These models define probabilities for synthesis tasks using an energy function, allowing assessment of the likelihood of synthetic routes being successful. Conditional Residual Energy-Based Models (CREBMs) have been proposed to evaluate entire synthetic routes based on specific criteria like cost, yield, and feasibility [35].
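As a rough illustration of how an energy function induces probabilities over candidate routes, the sketch below applies the generic energy-based normalization, p(route) proportional to exp(-E(route)), to a handful of scored routes. The energies, route names, and the `temperature` parameter are hypothetical placeholders; in a CREBM the energy would come from a learned model conditioned on criteria such as cost, yield, and feasibility [35], which is not reproduced here.

```python
import math


def route_probabilities(
    energies: dict[str, float], temperature: float = 1.0
) -> dict[str, float]:
    """Convert route energies into probabilities via p proportional to exp(-E / T).

    Lower energy means a more favorable route. The minimum energy is
    subtracted before exponentiation for numerical stability; this does
    not change the normalized result.
    """
    if not energies:
        raise ValueError("At least one route is required.")
    min_energy = min(energies.values())
    weights = {
        name: math.exp(-(energy - min_energy) / temperature)
        for name, energy in energies.items()
    }
    total = sum(weights.values())
    return {name: weight / total for name, weight in weights.items()}


if __name__ == "__main__":
    # Hypothetical energies for three candidate routes (illustrative values).
    candidate_energies = {"route_a": 1.2, "route_b": 0.4, "route_c": 2.5}
    probs = route_probabilities(candidate_energies)
    for name, p in sorted(probs.items(), key=lambda item: item[1], reverse=True):
        print(f"{name}: {p:.3f}")
```

Ranking whole routes by a single scalar energy is what lets route-level criteria influence the choice, instead of scoring each single-step prediction in isolation.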

Neurosymbolic Programming for Group Retrosynthesis

A recent innovation inspired by human learning is neurosymbolic programming, which abstracts common synthesis patterns from known routes and reuses them for new, similar molecules [36]. This approach is particularly valuable for AI-generated small molecules, which often share structural similarities [36].

The system operates through three alternating phases:

- Wake Phase: The system attempts to solve retrosynthetic planning tasks, constructing an AND-OR search graph while recording successful routes and failures [36].

- Abstraction Phase: The system extracts reusable multi-step reaction strategies ("cascade chains" for consecutive transformations and "complementary chains" for interacting reactions) from recorded experiences and adds them as abstract reaction templates to the library [36].

- Dreaming Phase: Neural models are refined using generated "fantasies" (simulated retrosynthesis data) to improve their ability to apply the expanded template library effectively in subsequent cycles [36].

This learning-evolution cycle allows the system to progressively decrease marginal inference time as it processes more molecules, significantly improving efficiency for groups of similar compounds [36].
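The AND-OR search graph built during the wake phase can be sketched with two node types: molecule nodes are OR nodes (any one reaction that produces the molecule suffices), and reaction nodes are AND nodes (every precursor must itself be obtainable). The minimal example below is a tree-shaped sketch under those assumptions; the class names, the `purchasable` flag, and the toy acetanilide example are illustrative and are not taken from the system described in [36], which additionally uses neural networks to guide expansion.

```python
from dataclasses import dataclass, field


@dataclass
class ReactionNode:
    """AND node: usable only if all of its precursors are solved."""
    template: str
    precursors: list["MoleculeNode"] = field(default_factory=list)


@dataclass
class MoleculeNode:
    """OR node: solved if purchasable or if any one reaction is solved."""
    smiles: str
    purchasable: bool = False  # hypothetical flag for a building-block lookup
    reactions: list[ReactionNode] = field(default_factory=list)


def is_solved(mol: MoleculeNode) -> bool:
    """Recursively check whether a molecule has a complete route.

    Assumes a tree (no cycles); a real search graph would need a visited set.
    """
    if mol.purchasable:
        return True
    return any(
        bool(rxn.precursors) and all(is_solved(p) for p in rxn.precursors)
        for rxn in mol.reactions
    )


if __name__ == "__main__":
    # Toy example: acetanilide from aniline and acetyl chloride.
    aniline = MoleculeNode("Nc1ccccc1", purchasable=True)
    acetyl_chloride = MoleculeNode("CC(=O)Cl", purchasable=True)
    target = MoleculeNode(
        "CC(=O)Nc1ccccc1",
        reactions=[ReactionNode("amide formation", [aniline, acetyl_chloride])],
    )
    print(is_solved(target))
```

Recording which molecule nodes end up solved or unsolved is the kind of experience the abstraction phase can later mine for reusable multi-step patterns.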

Quantitative Performance Comparison

Benchmarking studies have evaluated a range of AI-driven retrosynthesis models. The table below summarizes key performance metrics for several approaches on the USPTO-50K dataset, focusing on top-k exact match accuracy, which measures whether any of the k highest-ranked predicted reactant sets exactly matches the ground truth.

Table 1: Performance Comparison of Retrosynthesis Models on USPTO-50K Dataset

| Model | Type | Top-1 Accuracy (Known Class) | Top-3 Accuracy (Known Class) | Top-1 Accuracy (Unknown Class) | Top-3 Accuracy (Unknown Class) |
|---|---|---|---|---|---|
| RetroExplainer [34] | Molecular Assembly | 55.2% | 74.6% | 53.9% | 72.8% |
| LocalRetro [34] | Graph-based | 54.1% | - | 52.5% | - |
| R-SMILES [34] | Sequence-based | - | - | 52.4% | - |
| G2G [34] | Graph-based | 48.1% | 66.8% | 48.9% | 67.2% |
| GraphRetro [34] | Graph-based | 50.9% | - | 46.2% | - |
| Neurosymbolic Model [36] | Neurosymbolic | ~61% (Success rate) | - | - | - |

Note: Performance metrics can vary based on data splitting methods and evaluation criteria. "-" indicates data not provided in the source material.

Additional performance insights include:

The RetroExplainer model, which formulates retrosynthesis as a molecular assembly process, achieved the best performance on five of nine metrics on the USPTO-50K dataset and performed strongly on the USPTO-FULL and USPTO-MIT benchmarks [34].

When extended to multi-step retrosynthesis planning, RetroExplainer identified 101 pathways, with 86.9% of the single-step reactions corresponding to literature-reported reactions, demonstrating high practical validity [34].

The neurosymbolic programming approach demonstrated superior performance in success rate and reduced inference time for single-molecule retrosynthesis, particularly showing a significant reduction in marginal inference time when planning synthesis for groups of similar molecules [36].

CREBM frameworks have been shown to consistently boost performance across various synthesis strategies, outperforming previous state-of-the-art top-1 accuracy by a margin of 2.5% [35].

Experimental Protocols and Methodologies

Benchmarking Retrosynthesis Models

Objective: To evaluate the performance of AI-driven retrosynthesis models using standardized datasets and metrics.

Materials and Reagents:

- Hardware: High-performance computing cluster with GPU acceleration

- Software: Python with deep learning frameworks (PyTorch/TensorFlow), RDKit for cheminformatics

- Datasets: USPTO-50K, USPTO-FULL, USPTO-MIT, or custom datasets of reaction data

Methodology:

- Data Preprocessing:
  - Extract and clean reaction data from source datasets
  - Apply canonicalization and standardization to molecular representations (SMILES)
  - Split data into training, validation, and test sets using random splitting, or similarity-based splitting where scaffold bias must be avoided [34]

- Model Training:
  - Initialize model with appropriate architecture (e.g., GNN, Transformer)
  - Train using maximum likelihood estimation or reinforcement learning objectives
  - Evaluate on the validation set and adjust hyperparameters accordingly

- Evaluation:
  - Calculate top-k exact match accuracy by comparing predicted reactants with ground truth
  - Assess route feasibility through expert validation or literature comparison
  - Measure computational efficiency (inference time, memory usage)

- Validation:
  - For promising routes, conduct laboratory validation through experimental synthesis
  - Compare predicted yields and byproducts with experimental results
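The top-k exact match metric from the evaluation step can be sketched as follows. This is a minimal illustration that assumes all SMILES strings have already been canonicalized upstream (for example with RDKit, which is not called here), so that string equality is meaningful; the function names and the toy predictions are hypothetical. Reactant sets are compared independently of the order in which reactants are written.

```python
def normalize_reactants(reactants: str) -> tuple[str, ...]:
    """Order-independent key for a dot-separated reactant SMILES string.

    Assumes each component SMILES is already canonical.
    """
    return tuple(sorted(reactants.split(".")))


def top_k_exact_match(
    predictions: list[list[str]], ground_truth: list[str], k: int
) -> float:
    """Fraction of targets whose true reactants appear in the top-k predictions.

    predictions[i] is the ranked candidate list for target i (best first).
    """
    if len(predictions) != len(ground_truth):
        raise ValueError("predictions and ground_truth must have equal length.")
    if not ground_truth:
        raise ValueError("At least one example is required.")
    hits = 0
    for ranked, truth in zip(predictions, ground_truth):
        truth_key = normalize_reactants(truth)
        if any(normalize_reactants(c) == truth_key for c in ranked[:k]):
            hits += 1
    return hits / len(ground_truth)


if __name__ == "__main__":
    # Toy ranked predictions for two targets (illustrative data only).
    predictions = [
        ["CC(=O)Cl.Nc1ccccc1", "CC(=O)O.Nc1ccccc1"],
        ["CCO.CC(=O)O", "CCBr.CC(=O)O"],
    ]
    ground_truth = ["Nc1ccccc1.CC(=O)Cl", "CC(=O)O.CCBr"]
    print(top_k_exact_match(predictions, ground_truth, k=1))  # 0.5
    print(top_k_exact_match(predictions, ground_truth, k=2))  # 1.0
```

Because exact match counts only the recorded reactants as correct, a chemically valid alternative disconnection is scored as a miss, which is one reason the protocol pairs this metric with expert or literature-based feasibility checks.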

Implementing Neurosymbolic Retrosynthesis

Objective: To apply the wake-abstraction-dreaming cycle for retrosynthetic planning of molecule groups.

Methodology:

- Wake Phase Implementation:
  - Construct AND-OR search graph starting from target molecule
  - Utilize two neural networks: one for selecting graph expansion points, another for guiding expansion method
  - Record successful synthesis routes and failed molecules

Abstraction Phase Implementation: