Synthesizing the Science: Emerging Trends in Environmental Degradation Evidence for Research and Development

Abstract

This article provides a comprehensive analysis of the rapidly evolving field of environmental degradation evidence synthesis. Tailored for researchers, scientists, and drug development professionals, it explores the foundational drivers necessitating robust evidence compilation, from the 'triple planetary crisis' to regulatory pressures. It delves into cutting-edge methodological advancements, including AI-driven systematic reviews and rapid evidence synthesis, and addresses critical challenges in data integration and interdisciplinary collaboration. By presenting validation frameworks and comparative analyses of synthesis approaches, this resource aims to equip scientific professionals with the knowledge to enhance the rigor, efficiency, and applicability of environmental evidence in research and development contexts, ultimately fostering more sustainable and resilient scientific practices.

The Urgent Drive: Why Evidence Synthesis is Critical for Modern Environmental Science

The triple planetary crisis, comprising climate change, biodiversity loss, and pollution, represents an existential threat to global ecosystem stability and human wellbeing. These three challenges are not isolated phenomena; they are tightly coupled and mutually reinforcing, creating a feedback loop that accelerates environmental degradation. According to the United Nations, this interlinked crisis constitutes the central environmental challenge of our time, requiring integrated solutions rather than siloed approaches [1]. The scientific community has reached consensus that human activities are the dominant cause of contemporary changes in Earth's climate system and biodiversity patterns, with unprecedented rates of change observed across multiple indicators [2] [3].

The framework of interconnected global risks highlights how environmental crises dominate the long-term threat landscape. The Global Risks Report 2025 identifies environmental risks as the most severe threats over a ten-year horizon, with extreme weather events, biodiversity loss and ecosystem collapse, critical changes to Earth systems, and natural resource shortages comprising the top four global risks [4] [5]. This positioning of environmental threats ahead of geopolitical, societal, and technological risks underscores the fundamental nature of the triple planetary crisis to global stability and security. The persistence of these interconnected risks despite decades of scientific warnings suggests the need for deeper structural changes rather than incremental solutions [6].

Quantitative Assessment of Crisis Dimensions

Current State and Trends Across Crisis Components

Table 1: Key Quantitative Indicators of the Triple Planetary Crisis

| Indicator Category | Specific Metric | Current Value/Status | Trend & Timeline | Primary Source |
|---|---|---|---|---|
| Climate Change | Human-induced warming | 1.22°C [1.0 to 1.5] above 1850-1900 | 0.27°C/decade (2015-2024) | [3] |
| | GHG emissions | 53.6 ± 5.2 Gt CO₂e/yr | At all-time high | [3] |
| | Remaining carbon budget (1.5°C) | 200 Gt CO₂ (as of 2024) | Decreasing by ~40 Gt/yr | [3] |
| Biodiversity Loss | Species population decline | Average 73% decline | Since 1970 (50 years) | [4] |
| | Species at extinction risk | ~1 million species | Many within decades | [1] |
| | Local species reduction | 20% lower at impacted sites | Compared to unaffected sites | [2] |
| Pollution | Air pollution deaths | 7.9 million annually | 86% from NCDs | [7] [8] |
| | PM2.5 exposure | 36% of global population >35 μg/m³ | Above WHO interim target | [7] [8] |
| | Plastic ocean input | 14 million tons/year | Projected 29M tons by 2040 | [9] |

The quantitative assessment reveals the accelerating nature of all three crisis dimensions. The climate indicators show that human-induced warming is now progressing at a rate unprecedented in the instrumental record [3]. Observed global surface temperature in 2024 reached 1.52°C above pre-industrial levels, exceeding the best estimate of human-induced warming for that year (1.36°C) because internal variability associated with El Niño added to the human forcing [3]. This acceleration occurs even though CO₂ emissions are growing more slowly than in the 2000s, highlighting the complex dynamics of Earth's climate system.

The biodiversity metrics paint a picture of catastrophic decline, with the WWF's Living Planet Report 2024 documenting a 73% average decline in monitored wildlife populations over just 50 years [4]. A comprehensive synthesis of 2,000 global studies confirms that human activities have resulted in "unprecedented effects on biodiversity" across all species groups and ecosystems, with particularly severe losses among reptiles, amphibians, and mammals [2]. The analysis, covering nearly 100,000 sites across all continents, found that the number of species at human-impacted sites was almost 20% lower than at sites unaffected by humans, demonstrating the pervasive nature of anthropogenic impact.

Pollution indicators reveal a substantial health burden, with the State of Global Air 2025 report attributing 7.9 million deaths annually to air pollution exposure, 86% of which are from noncommunicable diseases (NCDs) [7] [8]. For the first time, the 2025 report linked air pollution to dementia, attributing more than 625,000 deaths and nearly 12 million healthy years of life lost globally in 2023 to this exposure [7]. The pollution crisis extends beyond air quality to plastic contamination, with research indicating that, without action, the flow of plastic into the ocean will grow to 29 million metric tons per year by 2040 [9].

Economic and Social Impact Metrics

Table 2: Socioeconomic Consequences of Environmental Degradation

| Impact Category | Economic/Social Metric | Scale/Value | Affected Systems |
|---|---|---|---|
| Economic Dependencies | GDP dependent on nature | >50% of global GDP | All economic sectors |
| | Livelihood reliance on forests | >1 billion people | Forest-dependent communities |
| | Agricultural output from pollinators | $235-577 billion/year | Global food production |
| Health Impacts | Air pollution healthcare burden | 161 million healthy years lost (2023) | Global healthcare systems |
| | Wetlands loss since 1970 | 35% of global coverage | Freshwater security |
| | Zoonotic disease emergence | >75% of emerging diseases | Pandemic risk management |
| Ecosystem Service Loss | Coral reef loss (2009-2018) | 14% of global reefs | Coastal protection, fisheries |
| | Wetland carbon storage | 2x forests (per unit area) | Climate regulation |
| | Soil fertility maintenance | 75% global food crops | Agricultural sustainability |

The socioeconomic dimensions of the triple planetary crisis highlight the profound dependencies of human systems on functioning ecosystems. The economic valuation of ecosystem services reveals that over half of global GDP is dependent on nature, with more than 1 billion people relying directly on forests for their livelihoods [1]. The agricultural sector demonstrates particularly critical dependencies, with more than 75% of global food crops relying on animal pollinators, contributing $235-577 billion annually to global agricultural output [10]. These dependencies create significant vulnerability to ecosystem degradation, with the global economic impact of biodiversity loss estimated at $10 trillion annually [10].

The health implications extend far beyond direct pollution effects, encompassing nutritional security, disease regulation, and medicinal resources. Medical and pharmacological discoveries continue to emerge from biological diversity: over 50% of modern medicines are derived from natural sources, and 60% of the world's population uses traditional medicines based primarily on natural products [10]. Disruption of disease-regulating ecosystem services has significant consequences, with over 75% of emerging infectious diseases being zoonotic and often arising in areas where ecosystems have been disrupted by deforestation or land-use change [10].

Mechanisms of Interconnection and Feedback Loops

The triple planetary crisis exhibits strong interconnections and feedback mechanisms that amplify individual effects. Understanding these coupling dynamics is essential for developing effective intervention strategies.

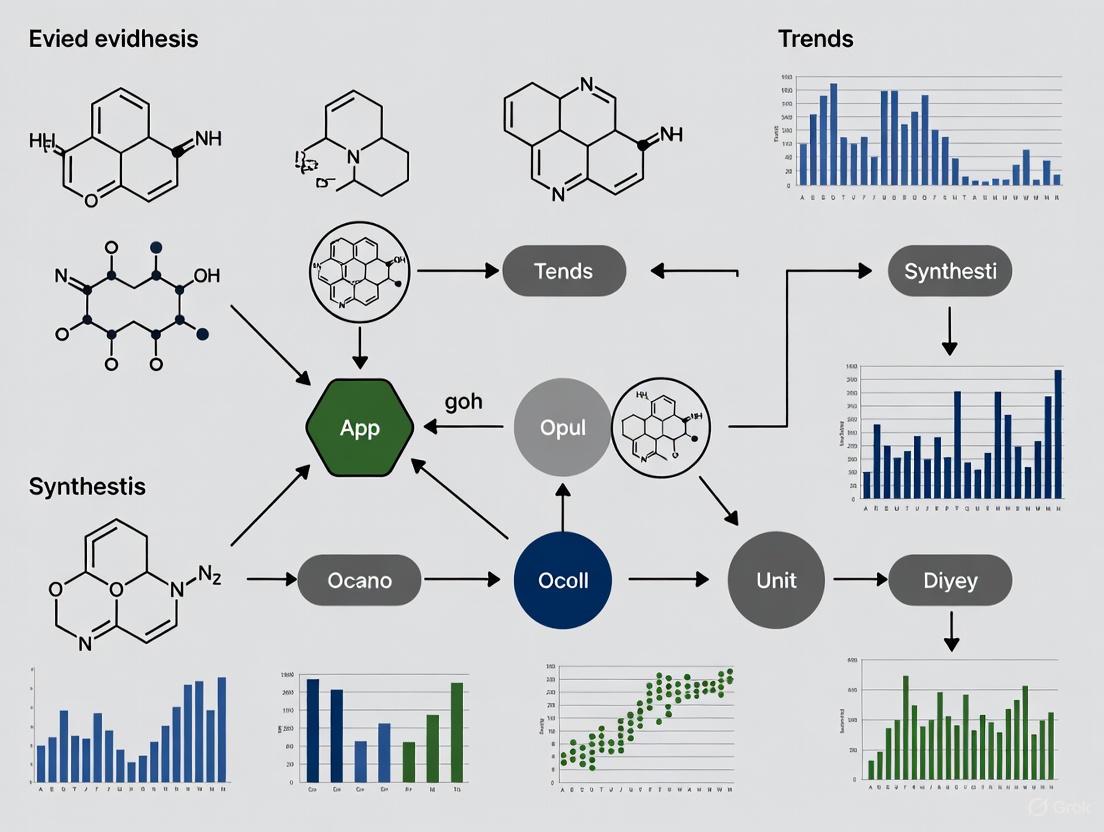

Diagram 1: Interconnection of planetary crises. This systems map illustrates the primary drivers (top) and the reinforcing feedback loops (center) between the three components of the planetary crisis.

Climate-Biodiversity Feedback Mechanisms

The climate-biodiversity nexus represents one of the most critical interconnections in the planetary crisis. Climate change has altered marine, terrestrial, and freshwater ecosystems worldwide, causing local species loss, increased disease, and mass mortality of plants and animals [1]. On land, higher temperatures have forced animals and plants to move to higher elevations or toward the poles, with far-reaching consequences for ecosystem functioning [1]. The risk of species extinction increases with every degree of warming, creating a direct relationship between climate forcing and biodiversity loss.

Conversely, biodiversity loss diminishes ecosystems' capacity to function as carbon sinks, accelerating climate change. When human activities produce greenhouse gases, approximately half of the emissions remain in the atmosphere, while the other half is absorbed by the land and ocean [1]. These ecosystems—and the biodiversity they contain—are natural carbon sinks, and their degradation reduces this vital service. For example, irreplaceable ecosystems like parts of the Amazon rainforest are turning from carbon sinks into carbon sources due to deforestation [1]. This represents a critical tipping point where previously stabilizing systems become amplifiers of climate change.

Pollution-Climate-Biodiversity Cross-Interactions

The pollution dimension interacts with both climate and biodiversity through multiple pathways. Air pollution from particulate matter (PM2.5) and other aerosols exerts complex forcing effects on the climate system while directly damaging ecosystems through acid deposition and toxicity [7] [8]. Plastic pollution is another significant cross-cutting threat, with approximately 14 million tons of plastic entering the oceans annually, harming wildlife and their habitats [9]. Because 91% of all plastic ever made has not been recycled and plastic can take 400 years to decompose, this pollution creates persistent stressors on ecosystems already threatened by other factors [9].

The interconnected nature of these challenges means they cannot be effectively addressed in isolation. As noted in the Interconnected Disaster Risks 2025 report, many current solutions represent superficial fixes that often impede real change because they fail to address the systemic couplings between these crises [6]. Effective intervention requires understanding and targeting these interconnection points, particularly the shared drivers that simultaneously affect multiple crisis dimensions.

Research Methodologies for Studying Interconnected Systems

Large-Scale Biodiversity Assessment Protocols

The comprehensive understanding of biodiversity decline emerges from rigorous, standardised assessment methodologies. The recent synthesis of 2,000 global studies, covering nearly 100,000 sites across all continents, exemplifies the scale of evidence required to draw robust conclusions about global biodiversity trends [2]. This methodology incorporated:

- Standardised sampling protocols across terrestrial, freshwater and marine habitats

- Inclusion of all organism groups from microbes and fungi to plants, invertebrates, fish, birds, and mammals

- Quantification of five key drivers of decline: habitat change, direct exploitation of resources, climate change, invasive species, and pollution

- Multi-dimensional biodiversity metrics including species richness, population abundance, and community composition

- Statistical analysis of human impact gradients comparing affected and unaffected sites

This protocol revealed not just declines in species numbers (with approximately 20% lower species richness at human-impacted sites) but also significant shifts in community composition, a phenomenon known as biotic homogenization [2]. In mountainous areas, for example, specialised plants are being replaced by those that typically grow at lower altitudes—a process termed the "elevator to extinction" as high-altitude plants have nowhere else to go [2]. This methodological approach provides the evidentiary foundation for global biodiversity assessments and conservation priority-setting.
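One common way to pool impacted-versus-reference comparisons of this kind in a meta-analysis is the log response ratio with inverse-variance weighting. The sketch below is illustrative only: the study values are hypothetical, chosen so that each study shows richness 20% lower at impacted sites, and the fixed-effect pooling is an assumption rather than the method used in the cited synthesis [2].

```python
import math

def log_response_ratio(mean_impacted, mean_reference):
    """Effect size ln(impacted / reference); negative means lower richness at impacted sites."""
    return math.log(mean_impacted / mean_reference)

def pooled_effect(effects, variances):
    """Fixed-effect inverse-variance weighted mean and its standard error."""
    weights = [1.0 / v for v in variances]
    total_weight = sum(weights)
    mean = sum(w * e for w, e in zip(weights, effects)) / total_weight
    return mean, math.sqrt(1.0 / total_weight)

# Hypothetical studies: (mean richness at impacted sites,
#                        mean richness at reference sites,
#                        sampling variance of the log response ratio)
studies = [
    (16.0, 20.0, 0.010),
    (24.0, 30.0, 0.020),
    (8.0, 10.0, 0.040),
]

effects = [log_response_ratio(imp, ref) for imp, ref, _ in studies]
variances = [var for _, _, var in studies]
pooled, standard_error = pooled_effect(effects, variances)

# Back-transform the pooled log ratio into a percentage change in richness.
percent_change = (math.exp(pooled) - 1.0) * 100.0
print(f"Pooled change in species richness: {percent_change:.1f}%")
```

Working on the log scale keeps ratios symmetric around zero, and the inverse-variance weights let more precise studies count for more. A real synthesis would typically use a random-effects model to allow for between-study heterogeneity.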

Climate Attribution and Carbon Budget Methodologies

The precise quantification of human influence on climate systems relies on sophisticated attribution methodologies aligned with IPCC assessment protocols. The Indicators of Global Climate Change (IGCC) initiative provides annual updates using methods consistent with the Working Group I contribution to the IPCC Sixth Assessment Report (AR6) [3]. The key methodological components include:

- Greenhouse gas emissions accounting combining multiple datasets (Global Carbon Budget, EDGAR, PRIMAP-hist) to address coverage limitations

- Radiative forcing calculations incorporating both well-mixed greenhouse gases and short-lived climate forcers

- Earth energy imbalance estimation using satellite observations and ocean heat content measurements

- Attribution of observed warming to human and natural influences using detection and attribution techniques

- Carbon budget calculations integrating updated understanding of climate sensitivity and historical warming

This methodology revealed that human-induced warming has been increasing at a rate unprecedented in the instrumental record, reaching 0.27°C per decade over the 2015-2024 period [3]. This high rate of warming results from a combination of greenhouse gas emissions being at an all-time high (53.6±5.2 Gt CO₂e yr⁻¹ over the last decade) coupled with reductions in the strength of aerosol cooling [3]. The robustness of these findings depends critically on the transparent, reproducible methodologies employed.
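As a rough worked example of the carbon budget arithmetic, the sketch below uses the Table 1 values (about 200 Gt CO₂ remaining for 1.5°C, drawn down by roughly 40 Gt per year). The constant-emissions and linear phase-out scenarios, and all function names, are simplifying assumptions for illustration and are not part of the IGCC methodology.

```python
def years_until_exhausted(remaining_gt, annual_emissions_gt):
    """Years until the budget is used up if emissions stay constant."""
    if annual_emissions_gt <= 0:
        raise ValueError("annual emissions must be positive")
    return remaining_gt / annual_emissions_gt

def remaining_after(remaining_gt, annual_emissions_gt, years):
    """Budget left after some years of constant emissions, floored at zero."""
    return max(0.0, remaining_gt - annual_emissions_gt * years)

def linear_phaseout_years(remaining_gt, annual_emissions_gt):
    """Longest linear decline to zero that stays within the budget.

    Emissions falling linearly from E0 to zero over T years add up to
    E0 * T / 2, so the budget B is respected when T <= 2 * B / E0.
    """
    if annual_emissions_gt <= 0:
        raise ValueError("annual emissions must be positive")
    return 2.0 * remaining_gt / annual_emissions_gt

REMAINING_BUDGET_GT = 200.0  # Gt CO2, Table 1 (as of 2024)
ANNUAL_DRAWDOWN_GT = 40.0    # Gt CO2 per year, Table 1 (approximate)

constant_years = years_until_exhausted(REMAINING_BUDGET_GT, ANNUAL_DRAWDOWN_GT)
phaseout_years = linear_phaseout_years(REMAINING_BUDGET_GT, ANNUAL_DRAWDOWN_GT)
print(f"Constant emissions: budget lasts {constant_years:.0f} years")
print(f"Linear phase-out: emissions must reach zero within {phaseout_years:.0f} years")
```

Under these assumptions the budget lasts five years at constant emissions, or ten years if emissions start falling linearly at once. Both figures ignore the uncertainty range around the budget itself.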

Health Impact Assessment Frameworks

The quantification of pollution health impacts employs standardized burden of disease assessment frameworks, as exemplified by the State of Global Air 2025 report [7] [8]. The methodological approach includes:

- Integrated exposure-response functions derived from epidemiological cohort studies

- Global exposure modeling combining satellite data, chemical transport models, and ground monitoring stations

- Cause-specific mortality and morbidity analysis for cardiorespiratory diseases, cancers, diabetes, and dementia

- Years of life lost and disability-adjusted life year calculations to quantify population health impact

- Uncertainty propagation through Monte Carlo simulation techniques

This methodology enables the attribution of specific health outcomes to air pollution exposures, revealing that 95% of air pollution-attributable deaths in adults over 60 are due to noncommunicable diseases [7]. The incorporation of new health endpoints like dementia-related outcomes demonstrates the evolving understanding of pollution health impacts, with the 2025 report finding that dementia related to air pollution resulted in more than 625,000 deaths and nearly 12 million healthy years of life lost globally in 2023 [7].
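The attribution and uncertainty-propagation steps listed above can be sketched in a few lines. The example below uses Levin's population attributable fraction and draws the relative risk from a lognormal distribution fitted to a 95% confidence interval. All input numbers are hypothetical placeholders, and the single-exposure-level formula is a deliberate simplification of the integrated exposure-response functions used in burden of disease studies.

```python
import math
import random
import statistics

def attributable_fraction(relative_risk, exposed_share):
    """Levin's population attributable fraction for one exposure level."""
    excess = exposed_share * (relative_risk - 1.0)
    return excess / (1.0 + excess)

def simulate_attributable_deaths(total_deaths, rr, rr_low, rr_high,
                                 exposed_share, draws=10_000, seed=0):
    """Monte Carlo median and 95% interval for attributable deaths.

    The relative risk is sampled on the log scale, with the standard
    deviation recovered from the width of its 95% confidence interval.
    """
    rng = random.Random(seed)
    mu = math.log(rr)
    sigma = (math.log(rr_high) - math.log(rr_low)) / (2 * 1.96)
    samples = sorted(
        total_deaths * attributable_fraction(rng.lognormvariate(mu, sigma),
                                             exposed_share)
        for _ in range(draws)
    )
    lower = samples[int(0.025 * draws)]
    upper = samples[int(0.975 * draws) - 1]
    return statistics.median(samples), lower, upper

# Hypothetical inputs: 100,000 deaths from one cause, relative risk 1.2
# (95% CI 1.1 to 1.3), and 36% of the population exposed.
median, low, high = simulate_attributable_deaths(100_000, 1.2, 1.1, 1.3, 0.36)
print(f"Attributable deaths: {median:.0f} (95% interval {low:.0f} to {high:.0f})")
```

Fixing the seed makes the interval reproducible. A fuller analysis would also sample the exposure estimate and the baseline mortality, not only the relative risk.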

Research Reagents and Analytical Tools

Table 3: Essential Research Tools for Environmental Crisis Investigation

| Tool Category | Specific Technology/Platform | Research Application | Key Function |
|---|---|---|---|
| Remote Sensing Platforms | Satellite-based atmospheric spectrometers | GHG concentration monitoring | Quantifying CO₂, CH₄ sources and sinks |
| | MODIS/Landsat imagery | Deforestation and land use change tracking | Habitat loss quantification |
| | Sentinel series satellites | Air pollution dispersion modeling | PM2.5 exposure assessment |
| Biodiversity Assessment Tools | eDNA (environmental DNA) sampling | Aquatic and terrestrial biodiversity surveys | Non-invasive species detection |
| | Acoustic monitoring networks | Ecosystem health assessment | Bioacoustics diversity indices |
| | Camera trapping grids | Wildlife population dynamics | Species abundance estimation |
| Climate Analytics | Earth System Models (ESMs) | Climate projection and scenario analysis | Attribution of climate extremes |
| | Carbon budget accounting tools | Emissions pathway assessment | Paris Agreement compatibility |
| | Paleoclimate proxies | Historical climate reconstruction | Pre-industrial baseline establishment |
| Pollution Measurement | Aerosol mass spectrometers | Particulate matter composition | Source apportionment analysis |
| | Passive sampling devices | Persistent organic pollutant monitoring | Bioaccumulation potential assessment |
| | Microplastic identification tools | Environmental plastic contamination | Polymer typing and quantification |

The investigation of interconnected environmental crises requires sophisticated research infrastructure and standardized analytical frameworks. Remote sensing technologies have revolutionized our ability to monitor environmental changes at global scales, with satellite-based atmospheric spectrometers providing critical data on greenhouse gas concentrations and sources [3]. Similarly, satellite imagery enables consistent tracking of deforestation and land use change, with analysis revealing that human activity has altered over 70% of all ice-free land, primarily for food production [1]. These observational technologies provide the foundational data for understanding large-scale environmental trends.

The emerging field of environmental DNA (eDNA) represents a transformative approach to biodiversity monitoring, enabling non-invasive species detection across aquatic and terrestrial ecosystems. This methodology was incorporated into the large-scale synthesis that found human pressures distinctly shift community composition and decrease local diversity across all major ecosystems [2]. Combined with traditional biodiversity assessment methods including acoustic monitoring and camera trapping, eDNA technologies enhance the spatial and temporal resolution of biodiversity tracking, essential for detecting early warning signs of ecosystem degradation.

Analytical frameworks for integrating diverse data streams are equally critical. The WWF has developed tools that help companies understand their nature-related risks; more than 17,000 users have applied them to assess over 2 million sites [4]. By clarifying what a company's risks are and where they occur, these tools outline a pathway for addressing them and show how research methodologies carry over into practical decision-making.

Integrated Solution Frameworks

Addressing the triple planetary crisis requires transformative approaches that target the root causes rather than symptoms of environmental degradation. The Interconnected Disaster Risks 2025 report proposes a theory of "Deep Change" that examines global challenges by tracing them to their root causes, revealing the underlying structures and societal assumptions that allow these problems to persist [6]. This framework identifies five essential shifts needed to address the interconnected crises:

- Rethink waste through circular economy principles that eliminate pollution at source

- Realign with nature by recognizing ecosystem services as fundamental to human wellbeing

- Reconsider responsibility across entire supply chains and product lifecycles

- Reimagine the future beyond growth-based paradigms

- Redefine value to incorporate natural capital and wellbeing metrics

The implementation of these shifts requires leveraging existing international agreements in a synergistic manner. The Kunming-Montreal Global Biodiversity Framework and the Paris Agreement on climate change represent complementary governance frameworks that must be implemented in coordination [1]. As expressed by Inger Andersen, head of the UN Environment Programme: "Delivering on the framework will contribute to the climate agenda, while full delivery of the Paris Agreement is needed to allow the framework to succeed. We can't work in isolation if we are to end the triple planetary crises" [1].

Nature-based solutions represent particularly promising integrated approaches that simultaneously address multiple crisis dimensions. Protecting, managing, and restoring forests, for example, offers roughly two-thirds of the total mitigation potential of all nature-based solutions [1]. Similarly, ocean habitats such as seagrasses and mangroves can sequester carbon dioxide at rates up to four times higher than terrestrial forests while providing critical biodiversity habitat and coastal protection services [1]. About one-third of the greenhouse gas emissions reductions needed in the next decade could be achieved by improving nature's ability to absorb emissions, highlighting the potential of these integrated approaches [1].

Diagram 2: Deep change intervention framework. This diagram illustrates the pathway from root cause analysis through systemic interventions to simultaneous benefits across all three crisis domains.

The critical role of Indigenous knowledge and leadership in implementing effective solutions is increasingly recognized. The UN Secretary-General has emphasized that "Indigenous Peoples, people of African descent, and local communities are guardians of our nature. Their traditional knowledge is a living library of biodiversity conservation" [1]. With Indigenous Peoples managing over 38 million square kilometers of land globally—including nearly 40% of all protected areas—their inclusion in environmental governance is essential for effective conservation outcomes [10].

Despite the compelling evidence documenting the triple planetary crisis, significant research gaps remain. The complex feedback mechanisms between climate change, biodiversity loss, and pollution require further elucidation, particularly regarding non-linear responses and potential tipping points [6] [3]. The full extent of climate change impacts on species and ecosystems is not entirely understood, necessitating continued monitoring and model refinement [2]. Similarly, the health implications of emerging pollutants and interactive effects between multiple stressors represent priority research areas.

The methodological challenges of integrated assessment remain substantial. As noted in climate indicator research, "despite extensive literature on GHG emissions, there remains important differences in reporting conventions and system boundaries between assessments" [3]. Harmonizing methodologies across disciplines is essential for producing coherent policy recommendations. The development of multi-dimensional indicators that simultaneously capture climate, biodiversity, and pollution dimensions would represent a significant advance in monitoring capabilities.

The accelerating pace of change underscores the urgency of response. With the past decade (2015-2024) being the warmest on record and greenhouse gas concentrations reaching new highs, the window for preventing irreversible tipping points is rapidly closing [9] [4]. The next five years are critical for establishing pathways for transformative action, with system-wide changes needed in how food and energy are produced and consumed, and in how finance is mobilized [4]. By 2030, increased conservation and restoration efforts will be vital in ensuring the decline in nature is reversed, making this decade decisive for the future of planetary systems [4].

Global environmental policy is undergoing a transformative shift, moving from voluntary commitments toward integrated, legally binding frameworks that demand unprecedented scientific rigor. The Kunming-Montreal Global Biodiversity Framework (GBF) and the European Green Deal (EGD) represent the vanguard of this change, establishing ambitious 2030 targets that require sophisticated monitoring, predictive modeling, and standardized evidence synthesis [11] [12]. These frameworks are not merely political declarations but are fundamentally reshaping what constitutes valid evidence in environmental science, creating new demands for data interoperability, predictive validation, and interdisciplinary methodologies that bridge ecological, social, and economic domains.

The core challenge identified across recent assessments is the transition from retrospective monitoring to forward-looking predictive capabilities [13]. Where previous biodiversity strategies relied on tracking past performance through indicators like the Red List Index, the current policy imperative requires forecasting outcomes under alternative scenarios, a methodological shift comparable to the evolution of climate modeling decades ago. This section examines how these evidence needs manifest across regulatory requirements, research methodologies, and practical implementation challenges, providing researchers with a comprehensive toolkit for navigating this new landscape.

Policy Framework Analysis: Evidence Requirements and Monitoring Mechanisms

The Global Biodiversity Framework's Evidence Architecture

The Kunming-Montreal GBF establishes 23 action-oriented targets for 2030, organized around reducing threats to biodiversity, meeting human needs through sustainable use and benefit-sharing, and implementing tools and solutions for mainstreaming and integration [11] [14]. The framework's monitoring approach combines mandatory headline indicators with optional component and complementary indicators, creating a layered evidence system that demands both standardized data collection and contextual interpretation [15].
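To make the headline-indicator idea concrete, the sketch below computes a simplified Red List Index from a list of IUCN categories, using the standard category weights (Least Concern = 0 up to Extinct = 5) and excluding Data Deficient species. The species lists are hypothetical, and the sketch omits the rule that only genuine status changes between assessments are counted.

```python
# IUCN category weights used by the Red List Index.
CATEGORY_WEIGHTS = {"LC": 0, "NT": 1, "VU": 2, "EN": 3, "CR": 4, "EW": 5, "EX": 5}

def red_list_index(categories):
    """Return 1 - sum(weights) / (max weight * number of assessed species).

    A value of 1.0 means every species is Least Concern; 0.0 means every
    species is extinct. Data Deficient ("DD") entries are left out.
    """
    assessed = [c for c in categories if c != "DD"]
    if not assessed:
        raise ValueError("no assessed species")
    total_weight = sum(CATEGORY_WEIGHTS[c] for c in assessed)
    return 1.0 - total_weight / (CATEGORY_WEIGHTS["EX"] * len(assessed))

# Hypothetical assessments of the same six species at two points in time.
earlier = ["LC", "LC", "NT", "VU", "EN", "DD"]
later = ["LC", "NT", "VU", "EN", "CR", "DD"]

rli_earlier = red_list_index(earlier)
rli_later = red_list_index(later)
print(f"RLI moved from {rli_earlier:.2f} to {rli_later:.2f}")
```

A falling index means species are, on average, moving toward extinction. Because it summarises past assessments, the index is retrospective by construction.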

Critical evidence gaps have emerged in the GBF's implementation, particularly regarding predictive modeling capacity. As noted in a 2025 scientific assessment, the GBF "lacks forward-looking, predictive tools to evaluate whether current actions or new commitments can deliver desired outcomes" and surprisingly does not mention "model" or "prediction" anywhere in its text [13]. This creates a fundamental tension between the framework's ambition and its current methodological foundations, requiring researchers to develop new approaches that connect specific conservation actions to projected outcomes across multiple spatial and temporal scales.

The European Green Deal's Integrated Evidence Framework

The European Green Deal, with its Biodiversity Strategy for 2030 as a core component, establishes an even more prescriptive evidence regime, characterized by legally binding targets and cross-compliance mechanisms that link biodiversity evidence to economic decision-making [12]. Key regulatory instruments include the Corporate Sustainability Reporting Directive (CSRD), the Carbon Border Adjustment Mechanism (CBAM), and the EU Regulation on Deforestation-free Products (EUDR), each creating distinct evidence requirements for researchers and regulated entities [16].

According to the 2025 European Green Deal Barometer, sustainability experts identify several evidence-related implementation challenges, including policy coherence (72% of experts note misalignment between EU external policies and Green Deal objectives) and monitoring capacity (89% recognize significant challenges for countries outside the EU) [16]. The Barometer also indicates that nearly two-thirds of experts believe CBAM revenues should be recycled toward climate-vulnerable countries, highlighting the equity dimensions of evidence-based policy mechanisms.

Table 1: Key Policy Frameworks and Their Evidence Requirements

| Policy Framework | Primary Evidence Mechanisms | Critical Knowledge Gaps | Implementation Timeline |
|---|---|---|---|
| Kunming-Montreal GBF [11] [14] | Headline indicators (e.g., Red List Index), National Biodiversity Strategies and Action Plans, participatory monitoring | Predictive modeling capacity, ecosystem integrity metrics, biodiversity-economic tradeoff analysis | National targets submitted 2023-2024, reporting every 5 years |
| EU Biodiversity Strategy 2030 [12] | EU Nature Restoration Law, Strict protection zones, Corporate sustainability reporting | Nature-based Solutions effectiveness, soil health indicators, cross-compliance mechanisms | Legal adoption 2022-2024, implementation through 2030 |
| European Green Deal [16] | Carbon Border Adjustment Mechanism, Deforestation-free supply chain tracing, Green Capital Allocation | Policy coherence metrics, spillover effects assessment, just transition indicators | Phased implementation 2021-2030, with review mechanisms |

Methodological Innovations: Addressing Evidence Gaps Through Predictive Modeling and Standardization

The Predictive Modeling Imperative

The most significant methodological shift demanded by current policy frameworks is the move from descriptive to predictive biodiversity models that can forecast outcomes under alternative policy scenarios and intervention strategies [13]. These models use quantitative tools and simulations to project changes in key biodiversity components (genetic diversity, species distributions, ecosystem services) in response to various human activities and conservation interventions.

The technical architecture of these models ranges from correlative species distribution models to mechanistic models that incorporate biological processes such as physiology, demography, dispersal, and interspecific interactions [13]. For drug development professionals and researchers, these models offer critical insights into how ecosystem changes might impact natural product discovery, disease vector distributions, and ecosystem services relevant to human health.
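At the correlative end of that range, a species distribution model can be as simple as a logistic regression of presence against one environmental variable. The sketch below fits such a model by gradient descent and compares expected occupancy under a uniform +2°C shift. The survey data, the single temperature predictor, and the warming scenario are all hypothetical, and the example leaves out the dispersal, demography, and species interactions that mechanistic models represent.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def fit_logistic(xs, ys, learning_rate=0.1, epochs=5000):
    """Fit P(presence) = sigmoid(b0 + b1 * x) by batch gradient descent on log-loss."""
    b0, b1 = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        grad0 = grad1 = 0.0
        for x, y in zip(xs, ys):
            error = sigmoid(b0 + b1 * x) - y
            grad0 += error
            grad1 += error * x
        b0 -= learning_rate * grad0 / n
        b1 -= learning_rate * grad1 / n
    return b0, b1

# Hypothetical survey: mean site temperature (°C) and presence (1) or absence (0)
# of a cold-adapted species.
temperatures = [8, 9, 10, 11, 12, 13, 14, 15, 16, 17]
presence = [1, 1, 1, 1, 0, 1, 0, 0, 0, 0]

# Centre the predictor so gradient descent converges reliably.
mean_temp = sum(temperatures) / len(temperatures)
b0, b1 = fit_logistic([t - mean_temp for t in temperatures], presence)

def predict_presence(temp_c):
    """Modelled probability that the species occurs at a site with this temperature."""
    return sigmoid(b0 + b1 * (temp_c - mean_temp))

# Expected number of occupied sites now and under a uniform +2 °C shift.
baseline = sum(predict_presence(t) for t in temperatures)
warmed = sum(predict_presence(t + 2.0) for t in temperatures)
print(f"Expected occupied sites: {baseline:.1f} now, {warmed:.1f} under +2 °C")
```

The projection step is what separates this from retrospective monitoring: the fitted response curve is applied to conditions that have not yet been observed, which is also where correlative models are least reliable.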

Standardized Methodologies for Policy-Relevant Research

The implementation of global frameworks requires standardized, reproducible methodologies that enable cross-jurisdictional comparison while accommodating local ecological and social contexts. Based on analysis of GBF implementation guidance and European Nature-Based Solutions platforms, several core methodological approaches have emerged as essential for policy-relevant research.

Table 2: Essential Methodological Protocols for Framework Implementation

| Methodology Category | Core Technical Requirements | Policy Application | Standardization Status |
|---|---|---|---|
| Ecological Integrity Assessment [15] | Ecosystem composition/structure/function measurement, natural range of variation determination, resilience capacity evaluation | Target 1 (spatial planning), Target 2 (ecosystem restoration) | Emerging standards (Ecosystem Integrity Index) |
| Nature-based Solutions Effectiveness Monitoring [17] | Long-term socio-ecological monitoring, counterfactual scenario development, multidimensional benefit assessment | EU Nature Restoration Law, GBF Targets 2, 8, 11 | IUCN Global Standard (8 criteria, 28 indicators) |
| Corporate Biodiversity Impact Assessment [18] | Supply chain mapping, site-specific impact quantification, dependency analysis, materiality determination | Corporate Sustainability Reporting Directive, EU Taxonomy | Multiple competing standards (EFRAG, TNFD) |
| Spatial Planning Integration [15] | Participatory GIS, biodiversity-inclusive land/sea use allocation, connectivity modeling, high biodiversity importance identification | GBF Target 1, EU Biodiversity Strategy protected areas | Key Biodiversity Areas standardized identification |

The Research Reagent Toolkit: Essential Solutions for Policy-Relevant Biodiversity Research

Table 3: Key Research Reagent Solutions for Biodiversity Evidence Generation

| Research Reagent Category | Specific Examples | Primary Function in Evidence Generation | Policy Relevance |

|---|---|---|---|

| Standardized Biodiversity Indicators | Red List Index, Living Planet Index, Ecosystem Integrity Index | Track status and trends of species and ecosystems; provide headline indicators for GBF monitoring | Mandatory reporting under GBF monitoring framework [13] [15] |

| Modeling Platforms & Tools | Species Distribution Models (SDMs), Integrated Assessment Models, Systematic Conservation Planning Software | Project biodiversity outcomes under alternative scenarios; optimize conservation interventions | Required for predictive assessment of target achievement [13] |

| Genetic Sequencing Technologies | DNA barcoding libraries, environmental DNA (eDNA) metabarcoding kits, genomic reference databases | Detect species presence/absence; monitor genetic diversity; identify illegal trade | Supports GBF Target 4 (genetic diversity maintenance) [18] |

| Remote Sensing & Earth Observation | Satellite imagery analysis tools, vegetation indices, habitat fragmentation algorithms, land cover classification systems | Monitor ecosystem extent and condition; identify degradation hotspots; track restoration progress | Essential for GBF Targets 1-2 (spatial planning, restoration) [15] |

| Social-Ecological Assessment Frameworks | Nature's Contributions to People (NCP) valuation toolkit, IPBES methodological assessments, participatory monitoring protocols | Integrate diverse knowledge systems; assess equitable benefits; document traditional knowledge | Required for rights-based implementation of GBF [11] [17] |

Implementation Challenges: Bridging the Science-Policy Divide

Evidence Gaps and Methodological Limitations

Despite the sophisticated policy architecture, significant evidence gaps persist in implementing both the GBF and European Green Deal. The 2025 Science-Policy Forum on Nature-based Solutions identified critical limitations in long-term socio-ecological monitoring systems, economic valuation methodologies, and context-specific effectiveness data [17]. These limitations create fundamental challenges for researchers and policymakers attempting to design evidence-based interventions.

For the business and finance sector, implementation challenges center on metric harmonization and capacity constraints, particularly for small and medium-sized enterprises (SMEs), which account for 99% of EU enterprises but have significantly fewer resources to invest in biodiversity capacity building [18]. Recent assessments note that "companies struggle to align with biodiversity policies and require better data flows, indicators, and tools to assess impacts across scales" [18], highlighting the practical limitations of current evidence systems.

Interdisciplinary and Equity Considerations

The implementation of global frameworks demands interdisciplinary approaches that integrate ecological, social, and economic evidence while respecting diverse knowledge systems. The GBF specifically acknowledges that "successful implementation will depend on ensuring gender equality and empowerment of women and girls" and requires a "human rights-based approach" [11]. These considerations translate into specific methodological requirements for researchers, including:

- Free, prior and informed consent protocols for research involving Indigenous Peoples and Local Communities [11]

- Intergenerational equity assessments that evaluate impacts on future generations [11]

- Gender-responsive indicators that track differential impacts and benefits across gender lines [11]

- Social justice safeguards in Nature-based Solutions implementation to prevent gentrification or community disruption [17]

The technical implementation of these principles requires sophisticated methodological approaches that bridge quantitative and qualitative evidence traditions, creating new demands for researchers working at the science-policy interface.

Future Directions: Institutional Innovation and Research Priorities

Addressing the evidence needs of global frameworks requires institutional innovation alongside methodological advances. Scientific assessments have proposed establishing a World Biodiversity Research Programme (WBRP) analogous to the World Climate Research Programme, which would coordinate international research efforts, standardize modeling approaches, and ensure equitable access to technical capacity [13]. Such an institution could address critical gaps in predictive modeling capacity while strengthening the "iterative learning cycle" between monitoring and management, whose weakness currently limits framework implementation.

Priority research investments identified across frameworks include:

- Developing robust biodiversity-economic models that reconcile conservation targets with development imperatives [13] [18]

- Creating harmonized valuation metrics for Nature-based Solutions to attract private investment [17]

- Establishing long-term socio-ecological monitoring networks to assess intervention effectiveness [17]

- Building adaptive management frameworks that enable course correction based on new evidence [13]

- Enhancing data interoperability across disciplines and knowledge systems [18]

The successful implementation of the European Green Deal and Kunming-Montreal GBF depends fundamentally on closing these evidence gaps through coordinated research efforts that align scientific inquiry with policy imperatives, creating a new paradigm for evidence-based environmental governance.

The pharmaceutical industry faces a dual challenge: addressing global health needs while minimizing its environmental footprint. The European Pharmaceutical Strategy highlights the environmental implications across the entire life cycle of pharmaceuticals, from design and production to use and disposal [19]. Traditional drug discovery and development processes are resource-intensive, with E-Factors (a measure of waste generated per kilogram of product) often ranging from 25 to over 100 in pharmaceutical manufacturing, meaning 25-100 kg of waste is produced for every 1 kg of active pharmaceutical ingredient (API) manufactured [19]. Solvents alone constitute 80-90% of the total mass used in pharmaceutical manufacturing processes, presenting a significant opportunity for green chemistry innovations [19].

Evidence synthesis—the systematic collection, evaluation, and integration of research findings—enables data-driven decisions in sustainable drug development. Exponential increases in scientific publications combined with disciplinary differences in reporting make traditional literature synthesis challenging [20]. Emerging computational approaches, including machine learning (ML), natural language processing (NLP), and large language models (LLMs), now promise to accelerate cross-disciplinary evidence synthesis, providing researchers with comprehensive insights to guide sustainable protocol development [20]. This whitepaper examines how systematic evidence synthesis informs green chemistry adoption in pharmaceutical research and development.

Green Chemistry Foundations and Quantitative Metrics

Green chemistry, defined as "the design of chemical products and processes that reduce or eliminate the use and generation of hazardous substances," operates on 12 principles established by Anastas and Warner [19]. These principles provide a framework for designing chemical processes that minimize environmental impact while maintaining economic viability. In pharmaceutical contexts, several quantitative green metrics enable objective evaluation of sustainability improvements (Table 1).

Table 1: Key Green Chemistry Metrics for Pharmaceutical Development

| Metric | Calculation | Application in Pharma | Optimal Range |

|---|---|---|---|

| E-Factor | Total waste (kg) / Product (kg) | Process environmental impact assessment | Lower values preferred (ideal: 0) |

| Atom Economy | (Molecular weight of desired product / Sum of molecular weights of all reactants) × 100% | Reaction efficiency evaluation | Higher percentages preferred (ideal: 100%) |

| Process Mass Intensity (PMI) | Total mass in process (kg) / Mass of product (kg) | Resource efficiency measurement | Lower values preferred (ideal: 1) |

| Carbon Efficiency | (Carbon in product / Carbon in reactants) × 100% | Environmental impact assessment | Higher percentages preferred |

| Solvent Intensity | Mass of solvents (kg) / Mass of product (kg) | Solvent use optimization | Lower values preferred |

The transition toward sustainable pharmaceuticals requires benchmarking current processes against these metrics. Evidence synthesis enables researchers to identify the most effective green chemistry approaches by aggregating performance data across multiple studies, establishing baselines, and tracking improvement over time [19]. For instance, systematic analysis of solvent use patterns can identify high-impact substitution opportunities, while comparative synthesis of catalytic methodologies can guide investment in the most efficient technologies.
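
The mass-based metrics in Table 1 can be computed directly from a batch mass balance. The following is a minimal sketch, assuming a hypothetical batch record (`ProcessBatch` and its numbers are illustrative, not taken from any cited study) and assuming that every input that is not isolated product leaves the process as waste. The atom economy example uses the esterification of acetic acid with ethanol to give ethyl acetate.

```python
from dataclasses import dataclass


@dataclass
class ProcessBatch:
    """Mass balance for one batch, all masses in kg (hypothetical values)."""
    product_kg: float
    total_input_kg: float  # reactants + reagents + solvents + process aids
    solvent_kg: float


def process_mass_intensity(batch: ProcessBatch) -> float:
    # PMI = total mass entering the process / mass of product (ideal: 1)
    return batch.total_input_kg / batch.product_kg


def e_factor(batch: ProcessBatch) -> float:
    # Assumption: everything that is not isolated product is counted as waste,
    # so E-Factor = PMI - 1 (ideal: 0).
    return (batch.total_input_kg - batch.product_kg) / batch.product_kg


def solvent_intensity(batch: ProcessBatch) -> float:
    return batch.solvent_kg / batch.product_kg


def atom_economy(product_mw: float, reactant_mws: list[float]) -> float:
    # Molecular weight of the desired product over the sum for all reactants.
    return 100.0 * product_mw / sum(reactant_mws)


batch = ProcessBatch(product_kg=1.0, total_input_kg=60.0, solvent_kg=50.0)
print(f"PMI: {process_mass_intensity(batch):.1f}")
print(f"E-Factor: {e_factor(batch):.1f}")
print(f"Solvent intensity: {solvent_intensity(batch):.1f}")

# Acetic acid (60.05) + ethanol (46.07) -> ethyl acetate (88.11) + water
print(f"Atom economy: {atom_economy(88.11, [60.05, 46.07]):.1f}%")
```

Under these assumptions the E-Factor and PMI differ by exactly one, which is a quick consistency check when aggregating values reported across studies.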

Evidence Synthesis Methodologies for Green Chemistry

Automated Literature Processing Frameworks

Systematic evidence synthesis in green chemistry involves a structured workflow for identifying, evaluating, and integrating relevant research (Figure 1). Machine learning and natural language processing technologies significantly accelerate this process, enabling researchers to efficiently navigate the vast and dispersed chemical literature [20].

Figure 1: Evidence Synthesis Workflow for Green Chemistry. This automated process enables comprehensive literature analysis for sustainable drug development.

Specialized tools have been developed to automate various stages of evidence synthesis. The R package litsearchr uses text mining and keyword co-occurrence to identify optimal search terms, while colandr provides a semi-automated, human-in-the-loop platform for screening abstracts for relevance [20]. These tools leverage NLP to identify sentences or word clusters common among relevant articles, progressively improving accuracy as more articles are screened [20]. For large-scale synthesis projects, such as a global review of climate adaptation evidence that screened 48,000 articles, such automation is indispensable [20].
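
To make the idea of keyword co-occurrence concrete, the sketch below ranks candidate search terms by how often they appear alongside other terms in a small seed set of relevant titles. It is a simplified illustration in Python and does not reproduce the algorithm of any named tool; the seed titles, the stopword list, and the function names are all hypothetical.

```python
import re
from collections import Counter
from itertools import combinations

# Small illustrative stopword list (an assumption, not a standard resource).
STOPWORDS = {"a", "an", "and", "as", "for", "in", "of", "the", "to", "with"}


def tokenize(text: str) -> set[str]:
    """Lowercase a text and return its distinct non-stopword terms."""
    words = re.findall(r"[a-z]+", text.lower())
    return {w for w in words if w not in STOPWORDS and len(w) > 2}


def rank_terms(documents: list[str]) -> list[tuple[str, int]]:
    """Rank terms by co-occurrence strength across documents.

    Each pair of terms sharing a document adds one to the strength of both
    terms, so terms that sit at the centre of the topic score highest.
    """
    strength = Counter()
    for doc in documents:
        for a, b in combinations(sorted(tokenize(doc)), 2):
            strength[a] += 1
            strength[b] += 1
    return strength.most_common()


seed_titles = [
    "Solvent-free mechanochemical synthesis of pharmaceutical cocrystals",
    "Green solvent selection for pharmaceutical synthesis",
    "Microwave-assisted synthesis in water as a green solvent",
]

for term, score in rank_terms(seed_titles)[:4]:
    print(term, score)
```

High-strength terms are candidates for the Boolean search string; a reviewer would still inspect and group them manually before running the search.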

Experimental Protocols for Sustainable Synthesis

Evidence synthesis identifies several high-impact green chemistry approaches with validated experimental protocols for pharmaceutical applications:

Microwave-Assisted Synthesis Protocol

Principle: Microwave irradiation uses electromagnetic radiation (0.3-300 GHz) to directly transfer energy to reactants via dipole polarization and ionic conduction, reducing reaction times from hours/days to minutes [19].

Materials:

- Microwave reactor with temperature and pressure control

- Polar solvents (DMF, DMA, DMSO, ethanol, methanol) or reaction components that absorb microwave energy

- Sealed reaction vessels compatible with microwave irradiation

Methodology:

- Dissolve reactants in appropriate solvent (1-5 mL per mmol substrate)

- Transfer solution to microwave-compatible sealed vessel

- Program reactor: set temperature (typically 80-150°C), pressure limits, and irradiation time (5-30 minutes)

- After irradiation, cool reaction mixture to room temperature

- Isolate product through standard techniques (extraction, crystallization, chromatography)

Applications: Synthesis of five-membered nitrogen heterocycles (pyrroles, pyrrolidines, fused pyrazoles, indoles) with reported advantages including cleaner reaction profiles, shorter times, higher purity, and improved yields compared to conventional heating [19].

Mechanochemical Synthesis Protocol

Principle: Mechanical energy (through grinding or ball milling) drives chemical reactions without solvents, eliminating a major source of pharmaceutical waste [21].

Materials:

- Ball mill apparatus (planetary or mixer mill)

- Grinding jars and balls (various materials and sizes)

- Reactants in solid form

Methodology:

- Weigh solid reactants and any catalysts (typical total mass: 0.5-5 g)

- Add materials to grinding jar with grinding balls (ball-to-powder mass ratio 10:1 to 50:1)

- Set milling frequency (15-30 Hz) and duration (30 minutes to several hours)

- After milling, extract product with minimal solvent

- Purify through recrystallization or other appropriate methods
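
The milling parameters above lend themselves to a simple pre-run check. The sketch below computes the grinding-ball mass implied by a chosen ball-to-powder ratio and flags settings outside the typical ranges quoted in this protocol. `MillingPlan` is a hypothetical helper, and the ranges are the indicative values listed above rather than hard limits.

```python
from dataclasses import dataclass


@dataclass
class MillingPlan:
    powder_g: float        # total mass of solid reactants and catalysts
    ball_to_powder: float  # ball-to-powder mass ratio, e.g. 20 for 20:1
    frequency_hz: float
    minutes: float

    def ball_mass_g(self) -> float:
        """Mass of grinding balls needed for the chosen ratio."""
        return self.powder_g * self.ball_to_powder

    def warnings(self) -> list[str]:
        """Return notes for settings outside the typical ranges."""
        notes = []
        if not 0.5 <= self.powder_g <= 5:
            notes.append("powder mass outside typical 0.5-5 g")
        if not 10 <= self.ball_to_powder <= 50:
            notes.append("ball-to-powder ratio outside typical 10:1 to 50:1")
        if not 15 <= self.frequency_hz <= 30:
            notes.append("milling frequency outside typical 15-30 Hz")
        if self.minutes < 30:
            notes.append("duration shorter than typical 30 minutes")
        return notes


plan = MillingPlan(powder_g=2.0, ball_to_powder=20, frequency_hz=25, minutes=60)
print(plan.ball_mass_g(), plan.warnings())
```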

Applications: Solvent-free synthesis of imidazole-dicarboxylic acid salts for fuel cell applications, pharmaceutical cocrystals, and metal-organic frameworks, providing high yields with minimal solvent usage and reduced energy consumption [21].

On-Water and In-Water Reaction Protocol

Principle: Water replaces organic solvents, leveraging its unique hydrogen bonding, polarity, and surface tension to facilitate chemical transformations, even with water-insoluble reactants [21].

Materials:

- Water (deionized or distilled)

- Emulsifying agents or surfactants (if needed)

- Standard laboratory glassware

- Agitation equipment (magnetic stirrer, shaker)

Methodology:

- Add reactants to water (typical concentration 0.1-0.5 M)

- For water-insoluble reactants, add appropriate surfactant (0.1-1 mol%) to create emulsion

- Stir or agitate reaction mixture at appropriate temperature (25-80°C)

- Monitor reaction progress by TLC, HPLC, or GC

- Extract product with eco-friendly solvents (ethyl acetate, cyclopentyl methyl ether)

- Purify using standard techniques

Applications: Diels-Alder reactions, silver nanoparticle synthesis, and various organic transformations, reducing production costs and expanding access to chemical synthesis in low-resource settings [21].

AI-Enabled Synthesis for Green Chemistry Optimization

Artificial intelligence transforms green chemistry by enabling predictive modeling of reaction outcomes, catalyst performance, and environmental impacts. AI optimization tools evaluate reactions based on sustainability metrics including atom economy, energy efficiency, toxicity, and waste generation [21]. These models suggest safer synthetic pathways and optimal reaction conditions—temperature, pressure, and solvent choice—reducing trial-and-error experimentation [21].

Machine learning algorithms accelerate evidence synthesis by automatically categorizing and labeling data at scale. For example, researchers trained a relevance classifier on 2,000 abstracts to predict whether over 600,000 abstracts contained information on climate impacts [20]. Similar approaches can identify green chemistry applications across dispersed literature. AI-guided retrosynthesis tools increasingly prioritize environmental impact alongside performance, helping medicinal chemists select sustainable pathways early in drug development [21].
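
The relevance-classification step can be illustrated with a very small text classifier. The sketch below implements a multinomial naive Bayes screener with Laplace smoothing using only the Python standard library. It is not the model used in the cited study; the training abstracts and labels are hypothetical, and a real screening project would use thousands of labelled records and a held-out evaluation set.

```python
import math
import re
from collections import Counter


def tokens(text: str) -> list[str]:
    return re.findall(r"[a-z]+", text.lower())


class NaiveBayesScreener:
    """Multinomial naive Bayes with add-one (Laplace) smoothing."""

    def fit(self, texts: list[str], labels: list[bool]) -> "NaiveBayesScreener":
        self.word_counts = {True: Counter(), False: Counter()}
        self.doc_counts = Counter(labels)
        for text, label in zip(texts, labels):
            self.word_counts[label].update(tokens(text))
        self.vocab = set(self.word_counts[True]) | set(self.word_counts[False])
        return self

    def log_score(self, text: str, label: bool) -> float:
        counts = self.word_counts[label]
        denom = sum(counts.values()) + len(self.vocab)
        # Log prior from the share of training documents with this label.
        score = math.log(self.doc_counts[label] / sum(self.doc_counts.values()))
        for word in tokens(text):
            if word in self.vocab:  # words never seen in training are ignored
                score += math.log((counts[word] + 1) / denom)
        return score

    def is_relevant(self, text: str) -> bool:
        return self.log_score(text, True) > self.log_score(text, False)


# Hypothetical labelled abstracts (True = relevant to green chemistry).
train_texts = [
    "solvent-free mechanochemical synthesis of an active pharmaceutical ingredient",
    "green solvent replacement reduces waste in pharmaceutical synthesis",
    "satellite monitoring of forest cover change",
    "bat population surveys in urban habitats",
]
train_labels = [True, True, False, False]

screener = NaiveBayesScreener().fit(train_texts, train_labels)
print(screener.is_relevant("mechanochemical synthesis reduces solvent waste"))
print(screener.is_relevant("urban forest bat monitoring"))
```

In a human-in-the-loop workflow, the classifier would be retrained as reviewers label more abstracts, and records near the decision boundary would be routed to manual screening.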

Table 2: AI Applications in Green Chemistry Synthesis

| AI Technology | Application in Green Chemistry | Impact |

|---|---|---|

| Predictive Modeling | Catalyst behavior prediction without physical testing | Reduces waste, energy usage, and hazardous chemical use |

| Natural Language Processing | Automated extraction of reaction parameters from literature | Accelerates evidence synthesis and data aggregation |

| Retrosynthesis Planning | Sustainable pathway identification prioritizing green solvents & atom economy | Embeds sustainability early in drug design |

| Autonomous Optimization | High-throughput experimentation integrated with machine learning | Rapid identification of optimal green reaction conditions |

| Sustainability Scoring | Standardized environmental impact assessment of chemical processes | Enables comparative analysis of synthetic routes |

The maturation of these AI tools supports the development of standardized sustainability scoring systems for chemical reactions, providing quantitative metrics that guide pharmaceutical companies toward greener manufacturing processes [21].

Research Reagent Solutions for Green Chemistry

Implementing green chemistry in pharmaceutical development requires specialized reagents and materials that reduce environmental impact while maintaining efficiency (Table 3).

Table 3: Essential Research Reagents for Sustainable Pharmaceutical Synthesis

| Reagent/Material | Function | Green Chemistry Advantage |

|---|---|---|

| Deep Eutectic Solvents (DES) | Customizable, biodegradable solvents for extraction and synthesis | Low-toxicity, low-energy alternative to conventional solvents; align with circular economy goals [21] |

| Bio-Based Surfactants | Replace PFAS-based surfactants and etchants | Biodegradable alternatives (e.g., rhamnolipids, sophorolipids) reduce persistent environmental contaminants [21] |

| Earth-Abundant Catalysts | Replace rare-earth elements in catalytic processes | Iron nitride (FeN), tetrataenite (FeNi) avoid geopolitical and environmental costs of rare earth mining [21] |

| Water as Reaction Medium | Solvent for organic transformations | Non-toxic, non-flammable, widely available replacement for organic solvents [21] |

| Renewable Feedstocks | Starting materials for API synthesis | Reduce dependence on petrochemical resources; often biodegradable |

These reagent solutions emerge from systematic evidence synthesis that identifies high-performing, sustainable alternatives to conventional chemical materials. For example, DES (typically mixtures of choline chloride as hydrogen bond acceptor with urea, glycols, carboxylic acids, or sugars as hydrogen bond donors, in 1:2 or 1:3 molar ratios) enable metal extraction from electronic waste and bioactive compound recovery from agricultural residues [21].

Implementation Framework and Future Directions

Translating synthesized evidence into practical drug development requires a systematic implementation framework (Figure 2). This process integrates continuous literature monitoring with experimental validation and process optimization.

Figure 2: Green Chemistry Implementation Cycle. This iterative framework enables continuous improvement of pharmaceutical processes based on emerging evidence.

Future developments in green chemistry synthesis will likely focus on several key areas. The scale-up of DES-based systems for industrial metal recovery and biomass processing will support circular economy approaches in pharmaceutical manufacturing [21]. Industrial-scale mechanochemical reactors promise to bring solvent-free synthesis to commercial pharmaceutical production [21]. AI-guided discovery of novel catalysts and reactions will accelerate the development of sustainable synthetic pathways [21]. Additionally, integration of flow chemistry with continuous manufacturing systems will enhance the efficiency of water-based reactions and other green synthetic methodologies [21].

Pharmaceutical companies adopting these evidence-based green chemistry approaches position themselves for regulatory compliance, cost reduction, and leadership in sustainable manufacturing. As environmental regulations tighten and consumer preference for sustainable products grows, systematic evidence synthesis provides the critical foundation for informed decision-making in drug development.

In the context of environmental degradation evidence synthesis, tracking core global environmental indicators is essential for researchers and scientists to quantify the human impact on Earth's systems. These indicators provide the empirical foundation for assessing sustainability, evaluating intervention strategies, and informing policy development. This technical guide focuses on three critical metric categories: Ecological Footprint, which measures human demand on bioproductive areas; CO2 and Greenhouse Gas (GHG) Emissions, the primary drivers of climate change; and Biodiversity Metrics, which track the state and trends of biological diversity. The integration of data from these domains enables a comprehensive understanding of the pressures on the global environment and the effectiveness of response measures. The following sections detail the latest data, methodological frameworks, and monitoring protocols for each indicator category, providing a foundational primer for research professionals engaged in environmental evidence synthesis.

Ecological Footprint and Biocapacity

The Ecological Footprint is a comprehensive metric that quantifies human demand on nature by measuring the biologically productive areas required to produce the resources a population consumes and to absorb its waste, most notably carbon dioxide emissions [22]. It is contrasted with biocapacity, which measures the regenerative capacity of a region's ecosystems. When a population's footprint exceeds its biocapacity, the region operates in an ecological deficit, a state indicative of unsustainable resource use. At a global scale, this overshoot means humanity is consuming more resources than the planet can regenerate annually [23].

Current Global and National Data

As of 2025, humanity's ecological footprint corresponds to approximately 1.71 planet Earths, with Earth Overshoot Day falling on July 24th [22]. This indicates a global ecological overshoot of 71%. The following table summarizes the ecological footprint and biocapacity for selected countries based on 2025 data, highlighting the disparity between resource consumption and regenerative capacity across nations [24].

Table 1: Ecological Footprint and Biocapacity by Country (2025)

| Country | Total Ecological Footprint (million gha) | Footprint per Person (gha/capita) | Total Biocapacity (million gha) | Biocapacity per Person (gha/capita) | Ecological Reserve or Deficit |

|---|---|---|---|---|---|

| China | 5,300 | 3.6 | 1,100 | 0.7 | -400% |

| United States | 2,700 | 7.9 | 1,300 | 3.8 | -110% |

| India | 1,600 | 1.1 | 467 | 0.3 | -240% |

| Russia | 878 | 6.1 | 1,100 | 7.5 | +24% |

| Japan | 529 | 4.3 | 76.9 | 0.6 | -590% |

| Brazil | 520 | 2.4 | 1,800 | 8.1 | +237% |

| Germany | 384 | 4.6 | 136 | 1.6 | -180% |

| Canada | 321 | 8.4 | 556 | 14.4 | +73% |

| Australia | 191 | 7.3 | 321 | 12.3 | +68% |
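
The final column of the table can be approximately recomputed from the footprint and biocapacity columns. The sketch below assumes a convention that appears consistent with the published values: a deficit is expressed as a percentage of biocapacity, and a reserve as a percentage of the footprint. This convention is inferred from the table rather than stated by the source, and because the inputs are rounded, the results match the published percentages only approximately.

```python
def reserve_or_deficit_pct(footprint: float, biocapacity: float) -> float:
    """Signed reserve (+) or deficit (-) in percent.

    Assumed convention (inferred from the table, not confirmed by the source):
    reserves are relative to the footprint, deficits relative to biocapacity.
    """
    if biocapacity >= footprint:
        return 100.0 * (biocapacity - footprint) / footprint
    return -100.0 * (footprint - biocapacity) / biocapacity


# Total footprint and total biocapacity, in the table's units.
countries = {
    "Germany": (384, 136),
    "Canada": (321, 556),
    "Australia": (191, 321),
}

for name, (footprint, biocapacity) in countries.items():
    print(f"{name}: {reserve_or_deficit_pct(footprint, biocapacity):+.0f}%")
```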

Methodology and Calculation

The Ecological Footprint accounting methodology, standardized by the Global Footprint Network and now maintained by the Footprint Data Foundation (FoDaFo) and York University, is based on United Nations and affiliated datasets [23]. The calculation involves tracking the demand for six primary types of bioproductive areas [22]:

- Cropland

- Grazing land

- Forest land (for timber and other forest products)

- Fishing grounds

- Built-up land

- Carbon uptake land (forest area required to sequester carbon dioxide emissions from fossil fuels)

The fundamental calculation translates resource consumption and waste generation into a standardized area unit, the global hectare (gha), which represents a hectare with world-average biological productivity for a given year. A country's consumption is calculated using the formula: Consumption = Production + Imports - Exports. All results are subject to quality scoring to ensure data reliability [23].
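
The two steps described above can be sketched as follows. The conversion to global hectares assumes the commonly described form of footprint accounting, in which harvested tonnage is divided by the national yield and then scaled by a yield factor and an equivalence factor. All numeric values and function names here are hypothetical placeholders, not figures from the National Footprint and Biocapacity Accounts.

```python
def to_global_hectares(
    production_t: float,
    national_yield_t_per_ha: float,
    yield_factor: float,
    equivalence_factor: float,
) -> float:
    """Convert a harvested quantity into global hectares (gha).

    Assumed form: (production / national yield) * yield factor
    * equivalence factor. The factors are land-type specific.
    """
    area_ha = production_t / national_yield_t_per_ha
    return area_ha * yield_factor * equivalence_factor


def consumption_footprint(
    production_gha: float, imports_gha: float, exports_gha: float
) -> float:
    # Consumption = Production + Imports - Exports
    return production_gha + imports_gha - exports_gha


# Hypothetical cropland example: 1,000 t harvested at 2 t/ha.
production_gha = to_global_hectares(1000, 2.0, yield_factor=1.0, equivalence_factor=2.5)
total = consumption_footprint(production_gha, imports_gha=400.0, exports_gha=150.0)
print(production_gha, total)
```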

Diagram: Ecological Footprint Accounting Workflow

Research Reagent Solutions: Ecological Footprint Analysis

Table 2: Essential Resources for Ecological Footprint Research

| Resource / Tool | Function in Research | Source / Provider |

|---|---|---|

| National Footprint and Biocapacity Accounts (NFBA) | Core dataset for national-level time-series analysis (1961-present). | Footprint Data Foundation (FoDaFo), York University [23] |

| Ecological Footprint Explorer | Open data platform for accessing and visualizing Footprint data. | Global Footprint Network [23] |

| UN Data Sets | Primary data for production, trade, and population (e.g., FAO, UN Commodity Trade). | United Nations and affiliated agencies [23] |

| Ecological Footprint Standards 2009 | Operational standards ensuring consistent and transparent assessments. | Global Footprint Network [23] |

CO2 and Greenhouse Gas Emissions

Greenhouse gas emissions are the primary driver of anthropogenic climate change, with carbon dioxide (CO2) from fossil fuel combustion being the single largest contributor. Tracking the sources, sinks, and atmospheric concentrations of these gases is fundamental to assessing global warming trends and the efficacy of mitigation policies.

Current Global and National Data

In 2025, fossil CO2 emissions are projected to reach a record high of 38.1 billion tonnes, a 1.1% increase from 2024 [25]. Total global GHG emissions (excluding LULUCF) reached 53.2 Gt CO2eq in 2024, with fossil CO2 accounting for 74.5% of this total [26]. The remaining carbon budget for a 50% chance of limiting global warming to 1.5°C is approximately 170 billion tonnes of CO2, equivalent to just four years of emissions at the current rate, which means the budget for 1.5°C is "virtually exhausted" [25].
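
The budget arithmetic can be checked with a few lines of code. The sketch below uses only the fossil CO2 figure quoted above, so it yields roughly four and a half years; the "four years" figure presumably also counts emissions from land-use change, which are not quantified in this section. The function names are illustrative.

```python
def years_remaining(budget_gt: float, annual_emissions_gt: float) -> float:
    """Years until the budget is used up at a constant emission rate."""
    return budget_gt / annual_emissions_gt


def previous_year_emissions(current_gt: float, growth_pct: float) -> float:
    """Back out last year's emissions from this year's value and growth rate."""
    return current_gt / (1 + growth_pct / 100)


remaining_budget_gt = 170.0  # Gt CO2, 50% chance of staying below 1.5 °C
fossil_2025_gt = 38.1        # Gt CO2, projected fossil emissions in 2025

years = years_remaining(remaining_budget_gt, fossil_2025_gt)
fossil_2024_gt = previous_year_emissions(fossil_2025_gt, 1.1)

print(f"Years of budget left at the fossil-only rate: {years:.1f}")
print(f"Implied 2024 fossil emissions: {fossil_2024_gt:.1f} Gt CO2")
```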

Table 3: Greenhouse Gas Emissions of Major Emitting Countries (2024)

| Country/Region | 2024 GHG Emissions (Mt CO2eq) | % of Global Total | Key Trends and Notes |

|---|---|---|---|

| China | 14,776 | 27.8% | Projected 2025 fossil CO2 increase: +0.4% [25] |

| United States | 5,824 | 10.9% | Projected 2025 fossil CO2 increase: +1.9% [25] |

| India | 3,892 | 7.3% | Projected 2025 fossil CO2 increase: +1.4% [25] |

| European Union (EU27) | 3,165 | 5.9% | 35% lower than 1990 levels; 2024 decrease: -1.8% [26] |

| Russia | 2,516 | 4.7% | Increased emissions in 2024 [26] |

| Indonesia | 1,347 | 2.5% | Largest relative increase in 2024 among top emitters: +5.0% [26] |

| Brazil | 1,299 | 2.4% | Emissions heavily influenced by LULUCF (not included here) [26] |

| Japan | 1,215 | 2.3% | Projected 2025 fossil CO2 decrease: -2.2% [25] |
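
The share column follows from dividing each entry by the 2024 global total of 53.2 Gt CO2eq (53,200 Mt) quoted earlier. A minimal sketch that reproduces the shares from the table values:

```python
GLOBAL_TOTAL_MT = 53_200  # 53.2 Gt CO2eq in 2024, excluding LULUCF

# 2024 GHG emissions in Mt CO2eq, as listed in the table.
emissions_mt = {
    "China": 14_776,
    "United States": 5_824,
    "India": 3_892,
    "European Union (EU27)": 3_165,
    "Russia": 2_516,
    "Indonesia": 1_347,
    "Brazil": 1_299,
    "Japan": 1_215,
}


def share_pct(name: str) -> float:
    """Share of the global total, in percent."""
    return 100.0 * emissions_mt[name] / GLOBAL_TOTAL_MT


for name in sorted(emissions_mt, key=emissions_mt.get, reverse=True):
    print(f"{name}: {share_pct(name):.1f}%")

combined = 100.0 * sum(emissions_mt.values()) / GLOBAL_TOTAL_MT
print(f"Combined share of the listed emitters: {combined:.1f}%")
```

Together, the eight listed emitters account for roughly 64% of the global total.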

Methodology and Calculation

The Global Carbon Budget is a leading annual assessment that provides a comprehensive, peer-reviewed update on carbon sources and sinks. Its methodology is fully transparent and involves an international team of over 130 scientists [25]. The budget is constructed by quantifying major carbon fluxes:

- Sources: Fossil CO2 emissions and land-use change emissions (e.g., deforestation).

- Sinks: Partitioning of CO2 between the atmosphere, the terrestrial biosphere (vegetation, soils), and the ocean.

The report relies on multiple data sources, including energy statistics from the International Energy Agency (IEA), land-use change data, and observations of atmospheric CO2 concentrations and ocean uptake. National GHG inventories, such as those reported by the European Commission's EDGAR (Emissions Database for Global Atmospheric Research), use activity data (e.g., fuel consumption, industrial production) and emission factors derived from the IPCC guidelines to calculate emissions by sector and country [26].
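
The inventory approach described above reduces, in its simplest form, to multiplying activity data by an emission factor and summing across sources. The sketch below illustrates this for fuel combustion. The activity figures are hypothetical, and the emission factors are illustrative placeholders that should be replaced with the values from the applicable IPCC guidelines or national inventory.

```python
# Illustrative emission factors in t CO2 per TJ of fuel burned.
# Placeholder values for demonstration, not authoritative defaults.
EMISSION_FACTORS_T_PER_TJ = {
    "natural_gas": 56.1,
    "coal": 94.6,
}

# Hypothetical activity data: fuel consumption in TJ for one sector.
activity_tj = {
    "natural_gas": 1000.0,
    "coal": 500.0,
}


def emissions_by_fuel(activity: dict[str, float]) -> dict[str, float]:
    """Emissions = activity data * emission factor, per fuel (t CO2)."""
    return {
        fuel: amount * EMISSION_FACTORS_T_PER_TJ[fuel]
        for fuel, amount in activity.items()
    }


per_fuel = emissions_by_fuel(activity_tj)
sector_total = sum(per_fuel.values())
print(per_fuel)
print(f"Sector total: {sector_total:,.0f} t CO2")
```

More detailed inventory tiers refine the same structure with country-specific factors and technology-level activity data.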

Diagram: Global Carbon Budget Assessment Workflow

Biodiversity Metrics

Biodiversity metrics are designed to track the state of and trends in biological diversity, from genetic variation to ecosystem integrity. These indicators are critical for monitoring the health of the planet's life-support systems and for assessing progress towards international conservation goals, such as the Kunming-Montreal Global Biodiversity Framework.

Monitoring Priorities and Frameworks

For the 2025-2028 period, Biodiversa+, a European biodiversity partnership, has identified 12 refined monitoring priorities that address critical gaps and policy needs [27]. These priorities guide transnational cooperation and standardize data collection. The framework promotes the use of Essential Biodiversity Variables (EBVs) as a common, interoperable standard for data collection and reporting. This approach is scale-agnostic and spans terrestrial, freshwater, and marine environments. The Driver–Pressure–State–Impact–Response (DPSIR) framework is recognized as a complementary tool for understanding the socio-ecological dynamics behind biodiversity change [27].

The 12 biodiversity monitoring priorities for 2025-2028 are [27]:

- Bats

- Common Species

- Genetic Composition

- Habitats

- Insects

- Invasive Alien Species (IAS)

- Marine Biodiversity

- Protected Areas

- Soil Biodiversity

- Urban Biodiversity

- Wetlands

- Wildlife Diseases

These priorities are supplemented by Transversal Activities, which support monitoring through governance, standardized metrics, information systems, novel technologies, and social sciences.

Contextualizing the Biodiversity Crisis

The Living Planet Report 2022 documented a 69% average decline in global vertebrate population sizes between 1970 and 2018 [22]. This decline is largely attributed to humanity exceeding global biocapacity. A 2021 analysis further indicated that the sixth mass extinction is accelerating, with more than 500 species of land animals on the brink of extinction, a loss that would have taken thousands of years without human activity [9]. The primary direct drivers of biodiversity loss are land-use change (especially conversion to agriculture), overexploitation, climate change, pollution, and invasive alien species [27] [9].

Diagram: Biodiversity Monitoring and Assessment Framework

Research Reagent Solutions: Biodiversity Monitoring

Table 4: Key Frameworks and Tools for Biodiversity Monitoring

| Framework / Tool | Function in Research | Application Example |

|---|---|---|

| Essential Biodiversity Variables (EBVs) | Provides standardized metrics for interoperable data collection across taxa and ecosystems. | Monitoring genetic composition, species populations, or ecosystem structure [27]. |

| Driver–Pressure–State–Impact–Response (DPSIR) | A causal framework for organizing information on the interactions between society and the environment. | Analyzing the chain of events from economic drivers to conservation responses [27]. |

| Multi-taxa Standardized Approaches | Enables harmonized monitoring of multiple species groups, including common species. | Tracking insect pollinators and soil fauna simultaneously in agricultural landscapes [27]. |

Synthesis and Interconnections

The three indicator categories are deeply interconnected. CO2 emissions are a dominant component of the ecological footprint, primarily through the carbon footprint. These emissions, in turn, drive climate change, which acts as a powerful pressure on biodiversity by altering habitats, species distributions, and ecosystem functions [25] [9]. Concurrently, the conversion of natural habitats for resource production (a key factor in the ecological footprint) is a leading cause of both biodiversity loss and carbon sink reduction [9]. The 2025 Global Carbon Budget report notes that climate change and deforestation have already turned tropical forests in Southeast Asia and large parts of South America from carbon sinks into carbon sources, illustrating this critical feedback loop [25].

Understanding these synergies is paramount for effective environmental governance. The 2025 Sustainable Development Goals Report underscores that progress has been "fragile and unequal." Success stories exist, such as the elimination of at least one neglected tropical disease in 54 countries, but the current pace of change is insufficient to achieve the 2030 Agenda [28]. A holistic evidence synthesis approach that integrates footprint, emission, and biodiversity data is therefore not merely an academic exercise but a necessary tool for navigating the complex trade-offs and synergies between economic development, climate stability, and the conservation of natural capital.

Next-Generation Tools and Techniques for Accelerating Evidence Synthesis

The field of evidence synthesis, a cornerstone of scientific research and policy-making, is undergoing a profound transformation through artificial intelligence (AI) and machine learning (ML). This shift is particularly critical in addressing complex, urgent challenges like environmental degradation, where the volume of scientific literature is vast and rapidly expanding. Evidence syntheses, including systematic reviews, are research methodologies that use systematic, replicable methods to evaluate all available evidence on a specific question, built on principles of research integrity, rigour, transparency, and reproducibility [29]. Traditional systematic review methods, while methodologically robust, are notoriously time-consuming and resource-intensive, creating significant bottlenecks in translating evidence into timely policy and action.

AI and automation present a paradigm shift, with the potential to make the production of evidence syntheses significantly more efficient [29]. By automating labour-intensive tasks such as literature screening, data extraction, and bias assessment, these technologies can accelerate the synthesis process from months to weeks or even days. This is especially vital for "living reviews" that require continuous updating with emerging evidence, an approach highly relevant to fast-moving environmental topics. However, this technological promise is tempered by significant challenges, including the opaque "black-box" nature of some algorithms, potential embedded biases, and risks of fabricated outputs or "hallucinations" [29]. This section explores the current state, methodologies, and practical applications of AI and ML for automating literature reviews, data extraction, and trend analysis, with a specific focus on environmental evidence synthesis.

AI for Literature Review and Screening

The initial phases of a systematic review—searching for and screening thousands of potentially relevant studies—represent one of the most demanding tasks, traditionally requiring dozens to hundreds of hours of human effort. AI technologies are now effectively targeting this bottleneck.

Current Adoption and Performance

A recent investigation into evidence syntheses published by leading organizations such as Cochrane and the Campbell Collaboration found that explicit use of machine learning in published reviews remains limited, with only approximately 5% of studies reporting its use [30]. Where ML is employed, most applications are concentrated in the screening phase. Living reviews show higher uptake, with about 15% reporting ML use, underscoring the technology's value for ongoing, updated syntheses [30]. Despite this potential, a significant implementation gap exists, with common barriers including limited guidance, low user awareness, and concerns over reliability [30].

Technical Workflow and Protocols

The AI-assisted screening process typically follows an active learning workflow, in which a supervised classifier is retrained iteratively on the reviewer's decisions. The core protocol involves the following steps, which can be implemented in tools such as ASReview, Rayyan, or Cochrane's own systems:

- Seed Set Preparation: A human reviewer initially screens a small, random sample of references (e.g., 50-100) from the total search results, labelling them as "relevant" or "irrelevant." This becomes the initial training data for the algorithm.

- Model Training: A classification algorithm (e.g., a Naive Bayes or Support Vector Machine model) is trained on this seed set. The model learns the linguistic patterns and features (e.g., specific words in titles and abstracts) that distinguish relevant from irrelevant studies.

- Active Learning Loop: The trained model then prioritizes the remaining unlabeled references, presenting those it calculates as most likely to be relevant at the top of the list for the human reviewer. The reviewer screens these prioritized records, and their decisions are fed back into the model in near real-time, continuously refining its predictions.

- Stopping Criteria: The process continues until a pre-defined stopping criterion is met. This can be a practical criterion, such as screening a fixed number of consecutive records without finding a relevant study (e.g., 50-100), or a statistical criterion based on the model's assessed recall level [30].

This workflow can drastically reduce the screening burden, as the model rapidly surfaces the most pertinent papers, allowing reviewers to identify the majority of included studies after screening only a fraction of the total references.
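The four steps above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the implementation used by ASReview, Rayyan, or any other tool: the function names (`train_naive_bayes`, `screen`), the toy records, and the two-record seed set are all hypothetical, and the classifier is a hand-rolled multinomial Naive Bayes on whitespace tokens so that the example runs with the standard library alone.

```python
import math
from collections import Counter


def tokenize(text):
    """Lower-case whitespace tokenizer; real tools use richer features."""
    return text.lower().split()


def train_naive_bayes(labelled):
    """Fit a multinomial Naive Bayes model and return a relevance scorer.

    labelled: list of (text, is_relevant) pairs screened so far.
    """
    word_counts = {True: Counter(), False: Counter()}
    doc_counts = Counter()
    for text, label in labelled:
        doc_counts[label] += 1
        word_counts[label].update(tokenize(text))
    vocab = set(word_counts[True]) | set(word_counts[False])
    totals = {c: sum(word_counts[c].values()) for c in (True, False)}

    def log_odds(text):
        # Log prior odds plus per-word log likelihood ratios (add-one smoothing).
        score = math.log((doc_counts[True] + 1) / (doc_counts[False] + 1))
        for word in tokenize(text):
            if word in vocab:
                p_rel = (word_counts[True][word] + 1) / (totals[True] + len(vocab))
                p_irr = (word_counts[False][word] + 1) / (totals[False] + len(vocab))
                score += math.log(p_rel / p_irr)
        return score

    return log_odds


def screen(records, seed_labels, ask_reviewer, stop_after=2):
    """Active learning loop: retrain, show the top-ranked record, repeat.

    Stops after `stop_after` consecutive irrelevant decisions (the practical
    stopping criterion) or when every record has been screened.
    """
    labels = dict(seed_labels)
    consecutive_irrelevant = 0
    while consecutive_irrelevant < stop_after and len(labels) < len(records):
        score = train_naive_bayes([(records[i], y) for i, y in labels.items()])
        unlabelled = [i for i in records if i not in labels]
        best = max(unlabelled, key=lambda i: score(records[i]))
        labels[best] = ask_reviewer(best)  # human decision fed back to the model
        consecutive_irrelevant = 0 if labels[best] else consecutive_irrelevant + 1
    return labels


# Toy corpus (invented titles); `truth` simulates the human reviewer.
records = {
    1: "soil heavy metal contamination near mining sites",
    2: "stock market volatility and interest rates",
    3: "heavy metal concentration in agricultural soil",
    4: "river sediment contamination by heavy metal",
    5: "interest rates and housing market trends",
    6: "football league results and player transfers",
    7: "stock portfolio optimisation under volatility",
    8: "celebrity interviews and film reviews",
}
truth = {1: True, 2: False, 3: True, 4: True, 5: False, 6: False, 7: False, 8: False}

labels = screen(records, {1: True, 2: False}, ask_reviewer=truth.__getitem__)
found = sorted(i for i, relevant in labels.items() if relevant)
print(f"screened {len(labels)} of {len(records)} records; relevant: {found}")
```

In this toy run the relevant records are surfaced first and the loop stops before the whole corpus has been screened, which is the saving the workflow is meant to deliver. A real review would use a far larger seed set and a more conservative stopping rule.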

Research Reagent Solutions: Key Tools for AI-Assisted Screening

Table 1: Essential Tools for Automating Literature Review and Screening

| Tool Category | Example Tools | Primary Function | Key Considerations |
|---|---|---|---|
| Dedicated Screening Tools | ASReview, Rayyan, EPPI-Reviewer | Provides integrated environments for importing search results, manual screening, and AI-powered prioritization. | Assess model transparency, interoperability with reference managers, and flexibility of stopping rules. |
| Systematic Review Suites | Cochrane's RSR Tool, DistillerSR | End-to-end platforms managing the entire review process, often including AI modules for screening. | Suited for large, multi-reviewer teams; can involve higher cost and complexity. |
| General-Purpose ML Frameworks | Scikit-learn, TensorFlow, AutoML | Offers maximum flexibility for building custom prioritization models tailored to specific research domains. | Requires significant in-house ML expertise and development resources. |

Diagram 1: AI-assisted literature screening workflow.

Automated Data Extraction Techniques

Once relevant studies are identified, the next major bottleneck is data extraction—the process of systematically pulling specific data points (e.g., sample sizes, effect estimates, outcomes) from included full-text articles. This is a prime area for innovation, particularly for unstructured text.

Machine Learning Extraction Patterns

As of 2025, ML-driven data extraction, which combines Optical Character Recognition (OCR) and Natural Language Processing (NLP), is reported to reach accuracy rates of 98-99%, surpassing manual methods [31]. This approach is particularly effective for complex, unstructured documents. The technical process involves:

- Document Conversion: OCR engines first convert physical text or PDF images into machine-readable digital text. Modern ML-based OCR is robust against layout variations and poor image quality.

- Entity Recognition: NLP models, specifically pre-trained transformer models (e.g., BERT, SciBERT) fine-tuned on scientific text, then analyze the digital text to identify and classify relevant entities. This involves Named Entity Recognition (NER) to find data points like chemical names, species, locations, or quantitative values.

- Relationship Extraction: More advanced models go beyond simple identification to understand the contextual relationships between entities. For example, determining that a specific numerical value is the "mean concentration" of a "heavy metal" in a "soil sample."
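To make the target of the entity and relationship steps concrete, the sketch below shows the kind of structured record such a pipeline aims to produce from a sentence. It is deliberately not a transformer model: a regular expression stands in for the fine-tuned NER and relation-extraction components, so it only handles one fixed sentence pattern. The `Measurement` class, the pattern, and the example sentences are assumptions made for illustration.

```python
import re
from dataclasses import dataclass


@dataclass
class Measurement:
    """One extracted relation: which statistic of which analyte in which matrix."""
    statistic: str
    analyte: str
    matrix: str
    value: float
    unit: str


# Rule-based stand-in for NER + relation extraction (hypothetical pattern).
# A fine-tuned model would generalise across phrasings; this regex does not.
PATTERN = re.compile(
    r"(?P<statistic>mean|median) concentration of (?P<analyte>[a-z]+) "
    r"in (?P<matrix>[a-z]+) samples was "
    r"(?P<value>\d+(?:\.\d+)?) (?P<unit>[a-z]+/[a-z]+)",
    re.IGNORECASE,
)


def extract_measurements(text):
    """Return every measurement relation matched in the text."""
    return [
        Measurement(
            statistic=m["statistic"].lower(),
            analyte=m["analyte"].lower(),
            matrix=m["matrix"].lower(),
            value=float(m["value"]),
            unit=m["unit"],
        )
        for m in PATTERN.finditer(text)
    ]


# Invented example sentences, not taken from any study.
text = (
    "The mean concentration of lead in soil samples was 42.5 mg/kg. "
    "The median concentration of cadmium in sediment samples was 1.8 mg/kg."
)
measurements = extract_measurements(text)
for item in measurements:
    print(item)
```

The point of the example is the output schema: each numeric value is tied to its statistic, analyte, and sample matrix, which is what distinguishes relationship extraction from simply listing the entities found.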

Real-world implementations demonstrate significant efficiency gains. For instance, a leading financial institution used ML-driven extraction to cut loan application processing time by 40% [31]. Although this example comes from outside research, it suggests that comparable time savings are plausible when extracting data from primary studies.

Quantitative Data Extraction Performance

Table 2: Comparative Analysis of Data Extraction Methods (2025)

| Extraction Method | Speed | Setup Complexity | Primary Data Type | Accuracy / Key Benefit |
|---|---|---|---|---|
| Manual Extraction | Very Slow | Low | All Types | High but prone to human error & fatigue |
| Rule-Based ETL | Batch Processing | High | Structured | High for consistent, predictable sources |
| API Data Extraction | Real-time | Moderate | Structured | Direct, reliable access to structured data |
| ML Extraction (OCR+NLP) | Fast (minutes) | Variable | Unstructured | 98-99% accuracy on complex documents [31] |

Experimental Protocol for Custom ML Data Extraction

For research teams needing to extract specific, domain-related data points (e.g., pollutant levels, biodiversity metrics), a tailored approach is required:

- Corpus Creation and Annotation:

  - Data Collection: Gather a representative sample of full-text PDFs from the domain of interest (e.g., environmental science journals).

  - Annotation: Using an annotation tool like Label Studio or brat, human experts manually label the text in these PDFs, marking the spans of text that correspond to the target data points (e.g., highlighting every instance of a "sample size" or "effect size" and tagging it). This creates a "gold-standard" training dataset.

- Model Selection and Fine-Tuning:

  - Model Choice: Select a domain-specific pre-trained language model, such as SciBERT, which is trained on a massive corpus of scientific literature.

  - Fine-Tuning: Further train (fine-tune) this model on the annotated corpus. This process adapts the model's general language understanding to the specific task of identifying relevant data points in environmental science papers.

- Validation and Deployment:

  - Performance Metrics: Validate the fine-tuned model on a held-out test set of annotated documents. Key metrics include precision, recall, and F1-score.

  - Human-in-the-Loop Verification: Deploy the model to suggest extractions from new papers, but maintain a human-in-the-loop to verify and correct its outputs, especially in the early stages or for critical data points. This feedback can also be used to further refine the model.
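For the validation step, precision, recall, and F1-score can be computed by comparing predicted spans with the gold-standard annotations. The sketch below assumes exact-match scoring, where a prediction counts only if its document, character offsets, and label all agree with a gold span. The tuple layout and the example spans are hypothetical, and some evaluations use partial-overlap matching instead.

```python
def span_prf(gold, predicted):
    """Exact-match precision, recall, and F1 over annotated spans.

    Each span is a (doc_id, start, end, label) tuple.
    """
    gold, predicted = set(gold), set(predicted)
    true_positives = len(gold & predicted)
    precision = true_positives / len(predicted) if predicted else 0.0
    recall = true_positives / len(gold) if gold else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1


# Invented held-out annotations: four gold spans, three model predictions.
gold_spans = [
    ("paper_a", 120, 123, "sample_size"),
    ("paper_a", 480, 484, "effect_size"),
    ("paper_b", 95, 98, "sample_size"),
    ("paper_b", 610, 615, "effect_size"),
]
predicted_spans = [
    ("paper_a", 120, 123, "sample_size"),   # correct
    ("paper_b", 95, 98, "sample_size"),     # correct
    ("paper_b", 300, 304, "effect_size"),   # spurious
]

precision, recall, f1 = span_prf(gold_spans, predicted_spans)
print(f"precision={precision:.3f} recall={recall:.3f} f1={f1:.3f}")
```

In evidence synthesis, recall usually deserves the closest attention, because a missed data point is harder for a human verifier to notice than a spurious one.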

Trend Analysis and Automated Synthesis

Beyond extraction, AI is increasingly used to identify trends, patterns, and even synthesize findings across a body of literature, moving towards automated thematic analysis.

Advanced Analytical Techniques

- Topic Modeling: Unsupervised ML techniques like Latent Dirichlet Allocation (LDA) and more advanced methods like BERTopic can automatically discover latent thematic structures (topics) across a large collection of documents [32]. This is invaluable for mapping the evolution of research foci in environmental degradation over time.

- Sentiment and Bias Analysis: NLP can be used to assess the sentiment or tone of literature, or to automatically apply risk-of-bias assessment criteria (e.g., Cochrane's RoB 2 tool) by analyzing the methodological descriptions in study texts.

- Composite AI and Agentic Analytics: An emerging trend is the use of Composite AI, which leverages multiple AI techniques (e.g., knowledge graphs, machine learning, optimization) in combination to enhance the impact and reliability of insights [33]. Furthermore, Agentic Analytics involves AI systems that can autonomously set goals, plan tasks (e.g., "find all recent studies on ocean acidification and summarize their consensus"), and execute actions without continuous human oversight [34] [33].
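As an illustration of the topic modeling technique listed above, the following is a minimal collapsed Gibbs sampler for LDA written with the standard library only. It is a teaching sketch under stated assumptions: the function name `lda_gibbs`, the hyperparameter values, and the six tokenised "abstracts" are hypothetical, and a real analysis would use an established library on thousands of documents.

```python
import random
from collections import Counter


def lda_gibbs(docs, n_topics, n_iter=200, alpha=0.1, beta=0.01, seed=0):
    """Collapsed Gibbs sampling for LDA.

    docs: list of token lists. Returns (doc_topic, topic_word) count tables.
    """
    rng = random.Random(seed)
    vocab_size = len({w for doc in docs for w in doc})
    doc_topic = [[0] * n_topics for _ in docs]        # tokens per topic, per doc
    topic_word = [Counter() for _ in range(n_topics)]  # word counts per topic
    topic_total = [0] * n_topics
    assignments = []

    # Random initial topic assignment for every token.
    for d, doc in enumerate(docs):
        z_doc = []
        for w in doc:
            z = rng.randrange(n_topics)
            z_doc.append(z)
            doc_topic[d][z] += 1
            topic_word[z][w] += 1
            topic_total[z] += 1
        assignments.append(z_doc)

    for _ in range(n_iter):
        for d, doc in enumerate(docs):
            for i, w in enumerate(doc):
                # Remove the token's current assignment from the counts.
                z = assignments[d][i]
                doc_topic[d][z] -= 1
                topic_word[z][w] -= 1
                topic_total[z] -= 1
                # Resample its topic from the full conditional distribution.
                weights = [
                    (doc_topic[d][k] + alpha)
                    * (topic_word[k][w] + beta)
                    / (topic_total[k] + vocab_size * beta)
                    for k in range(n_topics)
                ]
                z = rng.choices(range(n_topics), weights=weights)[0]
                assignments[d][i] = z
                doc_topic[d][z] += 1
                topic_word[z][w] += 1
                topic_total[z] += 1
    return doc_topic, topic_word


# Invented, pre-tokenised abstracts covering two loose themes.
docs = [
    "ocean acidification coral reef decline".split(),
    "coral bleaching ocean warming reef".split(),
    "ocean acidification shellfish reef".split(),
    "soil erosion deforestation carbon loss".split(),
    "deforestation carbon sink soil degradation".split(),
    "soil carbon erosion land degradation".split(),
]
doc_topic, topic_word = lda_gibbs(docs, n_topics=2)
for k, counts in enumerate(topic_word):
    print(f"topic {k}:", [w for w, _ in counts.most_common(4)])
```

Because the sampler is stochastic and the corpus is tiny, the topics it prints should be read as illustrative only. Tracking how the per-document topic counts shift across publication years is one way to map the evolution of research foci described above.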

Research Reagent Solutions: Key Tools for Trend Analysis

Table 3: Essential Tools for Automated Trend Analysis and Synthesis

| Tool / Technique | Function | Application in Evidence Synthesis |
|---|---|---|
| Topic Modeling (LDA/BERTopic) | Discovers latent themes in a document corpus. | Mapping the conceptual landscape of environmental degradation research; tracking emergence of new sub-fields. |
| Knowledge Graphs | Represents relationships between entities (e.g., studies, methods, findings). | Visualizing the interconnectedness of evidence; identifying key studies or conflicting results. |
| Small Language Models (SLMs) | Compact LLMs optimized for specific domains. | Generating more accurate, contextually appropriate summaries of evidence within the environmental domain compared to general-purpose LLMs [33]. |
| Decision Intelligence Platforms | Models and automates complex decision-making processes. | Structuring the synthesis process itself, from question formulation to conclusion-drawing [33]. |

Diagram 2: AI-driven trend analysis and synthesis framework.

Responsible AI Implementation and RAISE Framework

The power of AI in evidence synthesis comes with significant responsibilities. Leading organizations, including Cochrane, the Campbell Collaboration, JBI, and the Collaboration for Environmental Evidence (CEE), have jointly established a position statement on AI use, endorsing the Responsible use of AI in evidence SynthEsis (RAISE) recommendations [29] [35].

Core Principles for Researchers

The RAISE framework outlines several non-negotiable principles:

- Ultimate Human Responsibility: Evidence synthesists are ultimately responsible for their work, including the decision to use AI and ensuring adherence to legal and ethical standards. AI should be used with human oversight, not as a replacement for critical judgment [29].

- Transparency and Reporting: Any use of AI or automation that makes or suggests judgments must be fully and transparently reported in the evidence synthesis report [29]. This includes specifying the tool, version, purpose, and how its use was validated.

- Justification and Validation: Researchers must be able to demonstrate that the use of AI will not compromise the methodological rigour or integrity of their synthesis [29]. This may involve piloting or calibrating the AI tool on a subset of data to validate its performance for the specific context.

- Acknowledgment of Limitations: A critical approach is essential. Independent evaluations have found that in complex socio-economic or environmental contexts, AI-assisted synthesis can frequently produce "superficial" results and is ill-suited to addressing multifaceted queries [36]. Building capacity to critically evaluate AI outputs is therefore paramount.
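One way a review team might operationalise the transparency principle is to keep a structured, machine-readable record of each AI tool used. The sketch below is an assumption-laden illustration, not part of the RAISE recommendations: the `AIUseRecord` class and its field names are hypothetical, chosen to mirror the items named above (tool, version, purpose, validation), and the example values are placeholders.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class AIUseRecord:
    """Hypothetical record of one AI tool used in an evidence synthesis."""
    tool: str             # name of the AI or automation tool
    version: str          # exact version, so the step can be reproduced
    purpose: str          # which review stage the tool supported
    validation: str       # how performance was checked for this review
    human_oversight: str  # who verified the tool's suggestions, and how


# Placeholder values for illustration only.
record = AIUseRecord(
    tool="ExampleScreener (hypothetical)",
    version="0.0.0",
    purpose="Prioritising titles and abstracts during screening",
    validation="Piloted on a manually screened subset before full use",
    human_oversight="All inclusion decisions confirmed by a human reviewer",
)

report_json = json.dumps(asdict(record), indent=2)
print(report_json)
```

Storing the record alongside the protocol makes it straightforward to reproduce the disclosure in the final report and to update it if the tool or its version changes during a living review.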

Reporting Template for AI Use

To ensure transparency, the joint position statement suggests a reporting template for protocols [29]: