Subjective Probability in Forensic Science: A Paradigm Shift Toward Statistical Rigor and Validation

Abstract

This article examines the critical role and ongoing evolution of subjective probability in the interpretation of forensic evidence. We explore the foundational concepts of this inferential framework, its methodological application through tools like the Likelihood Ratio, and the significant challenges it faces, including cognitive bias and the need for transparent, reproducible methods. Furthermore, the article provides a comprehensive analysis of validation requirements and compares emerging objective, data-driven methodologies against traditional subjective approaches. Designed for researchers, scientists, and legal professionals, this review synthesizes current debates and future directions, highlighting the implications for developing more reliable, statistically sound forensic practices in biomedical and clinical contexts.

The Foundations of Subjective Probability in Forensic Inference

Defining Subjective Probability and its Role in Forensic Decision-Making

Subjective probability represents a paradigm shift in forensic science, moving from abstract statistical calculations to justified, evidence-based personal judgments. This technical guide explores the theoretical underpinnings, methodological frameworks, and practical applications of subjective probability within forensic decision-making. By examining its role across various forensic disciplines—from evidence interpretation to machine learning applications—we demonstrate how justified subjective probability serves as a robust framework for reasoning under uncertainty. The integration of this approach enhances the logical foundation of expert testimony while acknowledging the inescapable role of expert judgment in forensic practice, provided appropriate constraints and safeguards are implemented to ensure objectivity and reliability.

Subjective probability refers to the probability of an event occurring based on an individual's own experience or personal judgment rather than solely on classical statistical calculations or historical frequency data [1]. In essence, it represents a quantified degree of belief held by a particular individual at a specific time, given their available information and expertise. Unlike classical probability (based on formal reasoning) or empirical probability (based on historical data), subjective probability explicitly incorporates personal beliefs while remaining grounded in available evidence [1].

Within forensic science, this approach has been refined into the concept of justified subjective probability or constrained subjective probability, which emphasizes that these probabilistic assessments are not arbitrary opinions but rather conditional assessments based on task-relevant data and information [2]. This distinction is crucial for forensic applications, where unconstrained subjective opinions would be inappropriate. The forensic interpreter develops a probability assignment that is justified by the specific data and information relevant to the case at hand, constrained by scientific principles and analytical frameworks.

Theoretical Foundations and Justification

Epistemological Basis

The theoretical foundation of subjective probability in forensics rests on the understanding that probability does not represent a physical property of evidence but rather a measure of the uncertainty in our knowledge about that evidence [2]. This epistemological view positions probability as a conditional assessment based on available information, which fits the forensic context, where evidence is always interpreted within the framework of case-specific circumstances and alternative propositions.

When experts assert that "the probability of this correspondence if the suspect is not the source is 1 in 1,000," they are expressing a justified subjective probability—a constrained assessment based on their expertise, available data, and the specific features of the case [2]. This stands in contrast to the misunderstanding of subjective probability as mere unconstrained opinion, which does not correspond to how probability assignment is understood by current evaluative guidelines such as those from the European Network of Forensic Science Institutes (ENFSI) [2].

Relationship to Bayesian Framework

Subjective probability naturally integrates with Bayesian statistical methods, which provide a mathematical framework for updating probabilities as new evidence is considered. The Bayesian approach allows forensic experts to combine prior beliefs (expressed as subjective probabilities) with case-specific evidence to form posterior probabilities that represent updated degrees of belief. This framework is particularly valuable for expressing the strength of evidence through likelihood ratios, which quantify how much more likely the evidence is under one proposition compared to an alternative proposition.
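The updating step described above is commonly written in the odds form of Bayes' theorem. The following is a notational sketch only: the symbols $H_p$, $H_d$, $E$, and $I$ are labels introduced here for the prosecution proposition, the defense proposition, the evidence, and the background information, and do not come from the cited sources.

```latex
\underbrace{\frac{P(H_p \mid E, I)}{P(H_d \mid E, I)}}_{\text{posterior odds}}
=
\underbrace{\frac{P(E \mid H_p, I)}{P(E \mid H_d, I)}}_{\text{likelihood ratio}}
\times
\underbrace{\frac{P(H_p \mid I)}{P(H_d \mid I)}}_{\text{prior odds}}
```

Read this way, a likelihood ratio greater than 1 shifts the odds toward $H_p$, a value below 1 shifts them toward $H_d$, and a value of exactly 1 leaves the prior odds unchanged.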

Application in Forensic Decision-Making

Evidence Interpretation Framework

Subjective probability provides a structured framework for interpreting forensic evidence through an inferential process. This process involves assessing evidence against at least two competing propositions—typically one proposed by the prosecution and one by the defense. The forensic expert evaluates how likely the observed evidence is under each proposition, expressing this relationship through a likelihood ratio that quantifies the strength of the evidence [3].

The justified subjective probability approach acknowledges that while experts should base their assessments on available data and scientific principles, their final probability assignments inevitably incorporate professional judgment honed through experience. This is particularly important in disciplines where complete statistical data may be lacking, but where experts have developed calibrated judgment through extensive casework and validation studies.

Case Study: DNA Evidence Evaluation

A 2025 murder case from Austin, Texas demonstrates the practical application of subjective probability in evaluating DNA evidence given activity-level propositions [4]. In this case, Bayesian networks were constructed to evaluate competing propositions about how biological material was transferred. The analysis incorporated published data alongside explicitly stated subjective probability assignments, resulting in a likelihood ratio of approximately 1300 in favor of the prosecution's proposition [4].

This case illustrates how subjective probability, when properly constrained by scientific data and explicitly stated, provides a transparent method for evaluating complex evidence scenarios where multiple explanations are possible. The use of Bayesian networks forced explicit acknowledgment of all probability assignments, allowing for logical consistency and transparency in the reasoning process.
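To make the meaning of a likelihood ratio of roughly 1300 concrete, the short Python sketch below combines it with a few prior probabilities using the odds form of Bayes' theorem. The prior values are hypothetical and chosen purely for illustration; they are not taken from the case, and the function name `posterior_probability` is an assumption of this sketch.

```python
def posterior_probability(prior_probability: float, likelihood_ratio: float) -> float:
    """Update a prior probability with a likelihood ratio (odds form of Bayes' theorem)."""
    if not 0.0 < prior_probability < 1.0:
        raise ValueError("prior_probability must lie strictly between 0 and 1")
    if likelihood_ratio <= 0.0:
        raise ValueError("likelihood_ratio must be positive")
    prior_odds = prior_probability / (1.0 - prior_probability)
    posterior_odds = likelihood_ratio * prior_odds
    return posterior_odds / (1.0 + posterior_odds)


REPORTED_LR = 1300.0  # approximate value reported for the case discussed above

# Hypothetical prior probabilities, chosen only to show the range of outcomes.
for prior in (0.001, 0.01, 0.5):
    posterior = posterior_probability(prior, REPORTED_LR)
    print(f"prior = {prior:<6} posterior = {posterior:.4f}")
```

The same likelihood ratio leads to quite different posterior probabilities depending on the prior, which is one reason evaluative reporting usually confines the expert's statement to the likelihood ratio itself.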

Machine Learning Applications

Subjective probability frameworks have advanced into computational methods through machine learning applications. Recent research has demonstrated how ensemble machine learning models can generate subjective opinions for forensic classification problems, such as fire debris analysis [5]. These computational opinions consist of three components: belief mass, disbelief mass, and uncertainty mass, which together provide a more nuanced understanding of classification confidence than traditional binary outputs.

In practice, researchers have applied multiple machine learning models—including linear discriminant analysis (LDA), random forest (RF), and support vector machines (SVM)—to classification problems in forensic chemistry [5]. For each method, multiple models were trained on bootstrapped datasets, with the distribution of posterior probabilities used to calculate subjective opinions for each validation sample. This approach allows identification of high-uncertainty predictions that require additional scrutiny.
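The bootstrapping idea described above can be illustrated with a deliberately small example. The sketch below is not the pipeline used in [5]: it assumes a single synthetic feature, a one-dimensional LDA with equal class priors, and made-up class means, and all function names are hypothetical. It shows only how refitting a model on resampled data yields a distribution of posterior probabilities for one validation sample.

```python
import math
import random
from statistics import fmean


def bootstrap_sample(data, rng):
    """Draw len(data) items from data with replacement."""
    return [rng.choice(data) for _ in data]


def fit_1d_lda(class0, class1):
    """Fit a one-feature LDA (shared variance, equal priors); return P(class 1 | x)."""
    m0, m1 = fmean(class0), fmean(class1)
    squared_dev = sum((x - m0) ** 2 for x in class0) + sum((x - m1) ** 2 for x in class1)
    pooled_var = squared_dev / (len(class0) + len(class1) - 2)

    def posterior(x):
        # Log-odds of class 1 versus class 0 for Gaussians with a shared variance.
        log_odds = ((m1 - m0) * x - 0.5 * (m1 ** 2 - m0 ** 2)) / pooled_var
        return 1.0 / (1.0 + math.exp(-log_odds))

    return posterior


def ensemble_posteriors(class0, class1, x, n_models, rng):
    """Refit the model on n_models bootstrapped datasets and score sample x each time."""
    results = []
    for _ in range(n_models):
        model = fit_1d_lda(bootstrap_sample(class0, rng), bootstrap_sample(class1, rng))
        results.append(model(x))
    return results


# Synthetic training data (illustrative only, not forensic measurements).
data_rng = random.Random(0)
negatives = [data_rng.gauss(0.0, 1.0) for _ in range(30)]
positives = [data_rng.gauss(2.0, 1.0) for _ in range(30)]

scores = ensemble_posteriors(negatives, positives, x=1.2, n_models=50, rng=random.Random(1))
print(f"min = {min(scores):.3f}, mean = {fmean(scores):.3f}, max = {max(scores):.3f}")
```

A wide spread between the minimum and maximum posterior for a given sample is the kind of signal that would flag a prediction for additional scrutiny.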

Table 1: Performance Metrics of ML Methods in Forensic Fire Debris Analysis

| Machine Learning Method | Median Uncertainty | ROC AUC | Optimal Training Set Size | Training Speed |
|---|---|---|---|---|
| Linear Discriminant Analysis (LDA) | Lowest | 0.849 (with RF) | >200 samples | Fastest |
| Random Forest (RF) | Moderate | 0.849 | 60,000 samples | Moderate |
| Support Vector Machines (SVM) | Highest | Not specified | 20,000 samples (max) | Slowest |

Methodological Protocols and Experimental Approaches

Protocol for Justified Probability Assignment

The assignment of justified subjective probabilities in forensic practice follows a structured protocol to ensure scientific rigor:

- Proposition Development: Clearly define competing propositions based on the framework of circumstances.

- Relevant Data Identification: Identify all task-relevant data and information applicable to the probability assignment.

- Reference Material Consultation: Review appropriate population data, validation studies, and relevant scientific literature.

- Expertise Calibration: Draw upon calibrated expertise developed through structured training and feedback.

- Probability Encoding: Translate the assessment into a quantitative probability statement.

- Sensitivity Analysis: Evaluate how changes in assumptions affect the probability assignment.

- Transparent Documentation: Clearly document the reasoning process, data sources, and any subjective components.
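The sensitivity analysis step in the protocol above can be sketched in a few lines of Python. The probability values below are hypothetical placeholders rather than case data, and the function names are assumptions of this sketch. It recomputes the likelihood ratio while one assigned probability is varied over a plausible range.

```python
import math


def likelihood_ratio(p_evidence_given_hp: float, p_evidence_given_hd: float) -> float:
    """Ratio of the probability of the evidence under each competing proposition."""
    for p in (p_evidence_given_hp, p_evidence_given_hd):
        if not 0.0 < p <= 1.0:
            raise ValueError("probabilities must lie in (0, 1]")
    return p_evidence_given_hp / p_evidence_given_hd


def sensitivity_to_hd(p_evidence_given_hp, hd_candidates):
    """Return (candidate, LR, log10 LR) for each candidate value of P(E | Hd)."""
    rows = []
    for p_hd in hd_candidates:
        lr = likelihood_ratio(p_evidence_given_hp, p_hd)
        rows.append((p_hd, lr, math.log10(lr)))
    return rows


# Hypothetical assignments: P(E | Hp) fixed at 0.8, P(E | Hd) varied.
for p_hd, lr, log_lr in sensitivity_to_hd(0.8, [0.0005, 0.001, 0.002, 0.005]):
    print(f"P(E|Hd) = {p_hd:<7} LR = {lr:8.1f}  log10(LR) = {log_lr:.2f}")
```

If the reported conclusion would change across the range of defensible assignments, that dependence belongs in the documentation required by the final protocol step.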

Ensemble Machine Learning Protocol

For computational applications, the generation of subjective opinions follows an experimental protocol based on ensemble machine learning [5]:

- Data Generation: Create ground truth data in silico through computational methods (e.g., linear combinations of gas chromatography-mass spectrometry data).

- Bootstrapping: Sample from the base dataset to generate multiple training datasets.

- Model Training: Train multiple copies of ensemble learners (LDA, RF, or SVM) on each bootstrapped dataset.

- Validation: Apply trained models to previously unseen validation data to obtain posterior probabilities.

- Distribution Fitting: Fit posterior probabilities to beta distributions to obtain shape parameters.

- Opinion Calculation: Calculate subjective opinions (belief, disbelief, uncertainty) from distribution parameters.

- Decision Projection: Convert opinions to decisions using projected probabilities and calculate performance metrics.
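The last three steps of the protocol above (distribution fitting, opinion calculation, decision projection) can be sketched as follows. This is an illustration under stated assumptions, not the procedure of [5]: the beta distribution is fitted by the method of moments rather than by whatever estimator the authors used, the mapping from shape parameters to opinion masses follows the standard subjective-logic convention with a base rate of 0.5 and a prior weight of 2, and the posterior probabilities are invented.

```python
from statistics import fmean, variance


def fit_beta_moments(probabilities):
    """Method-of-moments estimate of beta shape parameters (alpha, beta)."""
    mean = fmean(probabilities)
    var = variance(probabilities)
    if var <= 0.0 or var >= mean * (1.0 - mean):
        raise ValueError("sample variance is incompatible with a beta distribution")
    common = mean * (1.0 - mean) / var - 1.0
    return mean * common, (1.0 - mean) * common


def opinion_from_beta(alpha, beta, base_rate=0.5, prior_weight=2.0):
    """Map beta parameters to (belief, disbelief, uncertainty, projected probability)."""
    # Evidence counts in favour and against; clamped at zero (an assumption of this sketch).
    r = max(alpha - prior_weight * base_rate, 0.0)
    s = max(beta - prior_weight * (1.0 - base_rate), 0.0)
    total = r + s + prior_weight
    belief, disbelief, uncertainty = r / total, s / total, prior_weight / total
    projected = belief + base_rate * uncertainty
    return belief, disbelief, uncertainty, projected


# Invented posterior probabilities from eight ensemble members for one sample.
ensemble_scores = [0.80, 0.85, 0.90, 0.75, 0.95, 0.88, 0.82, 0.91]
shape_a, shape_b = fit_beta_moments(ensemble_scores)
b, d, u, p = opinion_from_beta(shape_a, shape_b)
print(f"belief = {b:.3f}, disbelief = {d:.3f}, uncertainty = {u:.3f}, projected = {p:.3f}")
```

When no clamping occurs, the three masses sum to 1 and the projected probability equals the mean of the fitted beta distribution, so the opinion adds information about spread without altering the central estimate.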

Table 2: Key Research Reagents and Computational Tools for Subjective Probability Research

| Research Component | Function | Example Implementation |
|---|---|---|
| In silico Data Generation | Creates synthetic ground truth data for training | Linear combination of GC-MS data from ignitable liquids and pyrolysis products [5] |
| Bootstrap Sampling | Generates multiple training sets from base data | Random sampling with replacement to create dataset variants [5] |
| Ensemble Machine Learning Models | Provides multiple predictions for uncertainty quantification | LDA, Random Forest, and Support Vector Machines [5] |
| Beta Distribution Fitting | Models distribution of posterior probabilities | Shape parameter estimation from ensemble model outputs [5] |
| Subjective Opinion Framework | Quantifies belief, disbelief, and uncertainty | Calculation of belief, disbelief, and uncertainty masses summing to 1 [5] |
| Likelihood Ratio Calculation | Quantifies strength of evidence | Log-likelihood ratio scores from projected probabilities [5] |

Current Research and Implementation Challenges

Cognitive Biases and Reasoning Challenges

Human reasoning presents both strengths and weaknesses for implementing subjective probability in forensic science. While humans excel at automatically integrating information from multiple sources to create coherent narratives, this very strength can introduce vulnerabilities in forensic contexts [6]. Forensic science often demands that analysts evaluate pieces of evidence independently of other case information, which requires reasoning in ways that contradict natural cognitive tendencies.

Specific challenges include:

- Automated Information Integration: The unconscious combination of contextual information with analytical decisions.

- Cognitive Impenetrability: The inability to "unsee" interpretations even after learning they are incorrect.

- Schema-Driven Reasoning: The application of generalized knowledge structures that may not fit specific cases.

- Coherence Creation: The tendency to create causal stories that explain all available information, potentially overlooking alternative explanations.

These cognitive challenges highlight the importance of structured frameworks and validation protocols to support the appropriate use of subjective probability in forensic decision-making.

Discipline-Specific Applications

The implementation of subjective probability varies across forensic disciplines, with distinct challenges for feature comparison fields versus causal analysis fields:

- Feature Comparison Disciplines (fingerprints, firearms, handwriting): These fields focus on similarity judgments and can often remove external biasing influences but face challenges during the comparison process itself [6].

- Causal Analysis Disciplines (fire debris, bloodstain pattern analysis, pathology): These fields typically search for explanatory stories of how events occurred and often require contextual information for analysis, introducing different reasoning challenges [6].

Standardization and Reporting Frameworks

Current research addresses the tension between traditional categorical reporting and probabilistic approaches. For example, the ASTM E1618-19 standard for fire debris analysis requires categorical statements about ignitable liquid residue identification, corresponding to "absolute opinions" in subjective opinion terminology with no expressed uncertainty [5]. This contrasts with the ENFSI approach that embraces evaluative reporting using likelihood ratios to convey strength of evidence [5].

The development of standardized frameworks for expressing uncertain opinions represents an active research area, particularly regarding how to communicate subjective probabilities effectively in legal contexts while maintaining scientific rigor.

Subjective probability, when properly constrained and justified, provides a robust framework for forensic decision-making that acknowledges the essential role of expert judgment while maintaining scientific rigor. The integration of this approach across forensic disciplines—from traditional evidence interpretation to advanced machine learning applications—enhances the logical foundation of forensic science and provides more transparent reasoning structures.

Future research directions include further development of computational methods for uncertainty quantification, standardization of probabilistic reporting frameworks, and enhanced training protocols to improve the calibration of expert judgment. As forensic science continues to evolve, justified subjective probability offers a pathway toward more nuanced, transparent, and scientifically sound evaluation of forensic evidence.

The objective analysis of forensic evidence is invariably mediated by human perception and subjective judgment. This technical guide examines the current paradigm of subjective probability in forensic science interpretation, framing it not as a flaw but as a structured cognitive process that can be modeled and quantified. Within forensic practice, analysts must often evaluate evidence and render conclusions under conditions of uncertainty. The concept of justified subjectivism provides a framework for understanding how expert subjective probability assessments can be constrained and validated through rigorous methodology and task-relevant data [2].

Recent experimental research from cognitive neuroscience provides a mechanistic understanding of how the human brain constructs judgments about perceptual evidence. This review integrates these findings into a forensic context, offering experimental protocols and computational models that can inform the development of more robust forensic interpretation frameworks. By understanding the fundamental processes underlying evidence analysis, researchers and practitioners can work toward standardizing subjective judgments without disregarding the essential role of expert interpretation.

Theoretical Foundation: Justified Subjectivism in Forensic Evaluation

Conceptual Framework

Subjective probability in forensic evaluation represents a constrained assessment rather than an unqualified opinion. When properly formulated, it constitutes a justified assertion grounded in task-relevant data and information [2]. This stands in contrast to misconceptions that subjective probability is inherently unconstrained or unreliable. The justified subjectivism paradigm maintains that there is no operational gap between reasonable subjective probability and other probability concepts when assessments are soundly based on available relevant information.

Reconciliation with Objective Analysis

The theoretical framework of justified subjectivism does not reject objectivity but rather establishes how subjective judgments can be structured to maintain scientific rigor:

- Conditional Assessment: All probability assessments are conditional on the available information and the framework of analysis [2]

- Empirical Constraint: Subjective probabilities must be constrained by empirical data and validated methodologies

- Transparent Reasoning: The process of forming subjective probabilities must be explicit and open to scrutiny

This approach acknowledges that while the initial perception may be subjective, the interpretive process can be systematically structured to produce reliable, defensible conclusions.

Computational Models of Perceptual Decision Making

Evidence Accumulation Framework

Research on perceptual decision-making has established that humans make decisions by accumulating sensory evidence over time until a threshold is reached [7]. In controlled experiments, participants viewed dynamic random dot displays and made judgments about the dominant color. The difficulty was controlled by color coherence (the probability pblue of a dot being blue rather than yellow), with the unsigned quantity |pblue - 0.5| determining color strength and task difficulty [7].

The standard drift diffusion model successfully explains choice and reaction time by applying a stopping bound to the accumulation of noisy color evidence [7]. This model conceptualizes decision-making as a process where sensory evidence is integrated over time until it reaches a critical threshold, triggering a decision.
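A minimal simulation can make the accumulate-to-bound idea concrete. The sketch below is a generic drift diffusion simulation, not the fitted model from [7]: the scaling constant `KAPPA` that converts color strength into drift rate, the bound height, and the time step are all hypothetical, and non-decision time is omitted.

```python
import math
import random


def simulate_ddm_trial(drift, bound, rng, dt=0.005, noise_sd=1.0, max_time=10.0):
    """Accumulate noisy evidence until it reaches +bound or -bound (or times out)."""
    evidence, elapsed = 0.0, 0.0
    step_sd = noise_sd * math.sqrt(dt)
    while abs(evidence) < bound and elapsed < max_time:
        evidence += drift * dt + rng.gauss(0.0, step_sd)
        elapsed += dt
    return evidence > 0.0, elapsed  # True means the upper bound (or positive side) won


def simulate_ddm(drift, bound, n_trials, seed):
    """Return (proportion of upper-bound choices, mean decision time in seconds)."""
    rng = random.Random(seed)
    outcomes = [simulate_ddm_trial(drift, bound, rng) for _ in range(n_trials)]
    proportion_upper = sum(choice for choice, _ in outcomes) / n_trials
    mean_time = sum(t for _, t in outcomes) / n_trials
    return proportion_upper, mean_time


KAPPA = 5.0  # hypothetical scaling from color strength to drift rate
for strength in (0.0, 0.128, 0.256, 0.384, 0.512, 0.64):
    accuracy, mean_time = simulate_ddm(KAPPA * strength, bound=1.0, n_trials=200, seed=7)
    print(f"strength = {strength:<5} accuracy = {accuracy:.2f}  mean decision time = {mean_time:.2f} s")
```

Even with arbitrary parameters, the simulation reproduces the qualitative pattern the model is meant to explain: stronger evidence produces faster and more accurate decisions.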

Difficulty Judgment Models

When evaluating which of two perceptual tasks would be easier (prospective difficulty judgment), humans employ comparative evidence accumulation. Several computational models have been proposed to explain this process:

- Race Model: Difficulty decision is determined by which of two color decisions terminates first [7]

- Absolute Evidence Comparison: Participants compare the absolute accumulated evidence from each stimulus and terminate their decision when they differ by a set amount [7]

- Confidence Comparison Model: An alternative model where participants compare the confidence one would have in making each color decision [7]

Experimental evidence favors the absolute evidence comparison model, which extends evidence accumulation frameworks to prospective judgments of difficulty [7].
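The absolute evidence comparison model can be sketched as two accumulators whose unsigned totals are compared at each time step. This is one reading of the verbal description above, not the authors' implementation in [7]; the drift rates, the threshold, and the time step are hypothetical values.

```python
import math
import random


def simulate_difficulty_trial(drift1, drift2, threshold, rng, dt=0.005, max_time=10.0):
    """Return (stimulus judged easier, decision time) for one simulated trial."""
    x1, x2, elapsed, difference = 0.0, 0.0, 0.0, 0.0
    step_sd = math.sqrt(dt)
    while elapsed < max_time:
        # Each stimulus feeds its own noisy accumulator.
        x1 += drift1 * dt + rng.gauss(0.0, step_sd)
        x2 += drift2 * dt + rng.gauss(0.0, step_sd)
        elapsed += dt
        # Compare unsigned evidence: the sign (blue vs. yellow) is irrelevant here.
        difference = abs(x1) - abs(x2)
        if abs(difference) >= threshold:
            break
    return (1 if difference > 0.0 else 2), elapsed


def proportion_choosing_first(drift1, drift2, threshold, n_trials, seed):
    """Fraction of trials on which stimulus 1 is judged the easier one."""
    rng = random.Random(seed)
    picks = [simulate_difficulty_trial(drift1, drift2, threshold, rng)[0] for _ in range(n_trials)]
    return picks.count(1) / n_trials


# Hypothetical drifts: stimulus 1 is strong, stimulus 2 carries no net color signal.
print(proportion_choosing_first(drift1=2.0, drift2=0.0, threshold=1.0, n_trials=300, seed=11))
```

Because only the unsigned evidence enters the comparison, the simulated observer can judge which stimulus is easier without committing to a color decision for either one.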

Quantitative Relationships in Perceptual Decisions

Table 1: Performance Metrics in Color Judgment Tasks [7]

| Color Strength | Accuracy (%) | Reaction Time (s) | Evidence Accumulation Rate |
|---|---|---|---|
| 0.000 | 50.0 (chance) | 2.50 | 0.00 |
| 0.128 | 65.2 | 2.15 | 0.18 |
| 0.256 | 78.7 | 1.85 | 0.41 |
| 0.384 | 88.3 | 1.60 | 0.67 |
| 0.512 | 94.1 | 1.45 | 0.89 |
| 0.640 | 97.5 | 1.35 | 1.12 |

Table 2: Reaction Times in Difficulty Judgments by Stimulus Combination [7]

| S1 Strength | S2 Strength | Mean RT (s) | Std. Deviation | Correct Choice Probability |
|---|---|---|---|---|
| 0.000 | 0.000 | 1.99 | 0.60 | 0.50 |
| 0.000 | 0.640 | 1.45 | 0.42 | 0.95 |
| 0.640 | 0.000 | 1.44 | 0.41 | 0.94 |
| 0.640 | 0.640 | 1.30 | 0.36 | 0.50 |

Experimental Protocols for Studying Evidence Analysis

Perceptual Decision Task (Experiment 1a)

Objective: To establish baseline performance in a color judgment task under varying difficulty levels [7].

Stimuli:

- Dynamic random dot displays with blue and yellow dots

- Color coherence varies across six levels: {0, 0.128, 0.256, 0.384, 0.512, 0.64}

- Stimulus duration: Until response or timeout

Procedure:

- Participants fixate on central cross

- Single patch of dynamic random dots appears

- Participants decide whether blue or yellow is dominant

- Response and reaction time recorded

- Multiple trials across all difficulty levels

Analysis:

- Calculate accuracy and reaction time for each color strength

- Fit drift diffusion model to choice and RT data

- Estimate evidence accumulation rate (drift rate) for each difficulty level

Prospective Difficulty Judgment Task (Experiment 1b)

Objective: To investigate how humans judge relative task difficulty without performing the tasks [7].

Stimuli:

- Two patches of dynamic random dots presented simultaneously

- All 12 × 12 coherence combinations presented in randomized order

- Patches positioned to left and right of central fixation

Procedure:

- Participants fixate on central cross

- Two patches appear simultaneously

- Participants select which patch would be easier for color judgment

- No color judgment is actually performed

- Response and reaction time recorded

Analysis:

- Model comparison between race, absolute evidence, and confidence models

- Analyze criss-cross pattern in reaction times

- Test prediction that when dominant color of each stimulus is known, RTs depend only on difficulty difference

Data Classification and Management Framework

Objective: To establish proper data handling procedures for forensic research data [8].

Table 3: Data Classification Framework for Forensic Research [8]

| Data Type | Subclassification | Description | Example in Forensic Analysis |
|---|---|---|---|
| Quantitative | Discrete | Distinct, separate values that can be counted but not measured | Number of ridge characteristics in fingerprint |
| Quantitative | Continuous | Values that can be measured and divided into smaller parts | Concentration of substance in toxicology |
| Quantitative | Interval | Ordered scale with defined spacing where difference between values is meaningful | Likert scale responses in proficiency tests |
| Quantitative | Ratio | Continuous measurements with true zero point | Mass of drug evidence |
| Qualitative | Nominal | Discrete units describing general attributes without order | Hair color, fabric type |
| Qualitative | Ordinal | Attributes that provide an order of scale without defined intervals | Quality ratings of evidence (poor, fair, good) |
| Qualitative | Dichotomous | Nominal data with exactly two possible outcomes | Match/no-match decisions |

FAIR Principles Implementation:

- Findable: Rich metadata with persistent identifiers

- Accessible: Standardized protocols for retrieval with authentication where necessary

- Interoperable: Use of common data standards and vocabulary

- Reusable: Detailed provenance information and usage licenses [8]

Visualizing Decision Processes

Evidence Accumulation Model

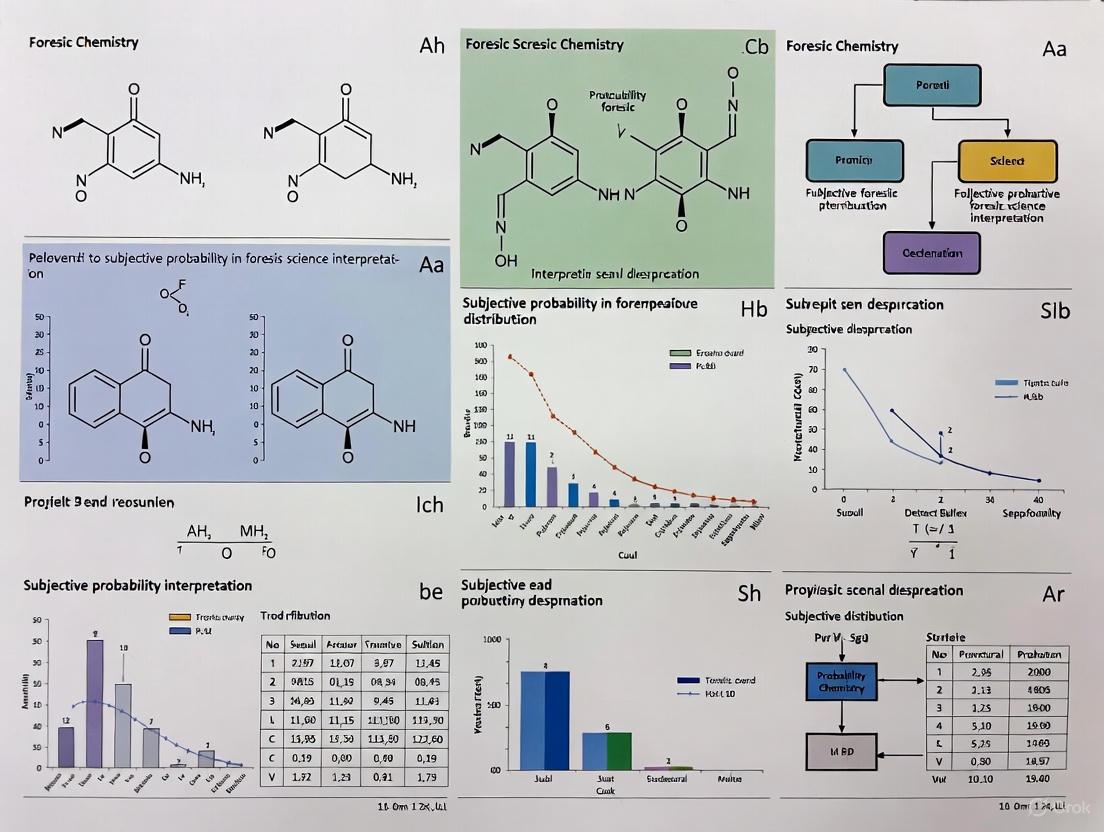

Figure 1: Sequential Sampling Model for Perceptual Decisions

Comparative Difficulty Judgment

Figure 2: Absolute Evidence Comparison Model for Difficulty Judgments

Forensic Evidence Analysis Workflow

Figure 3: Subjective Probability in Forensic Evidence Evaluation

Research Reagent Solutions

Table 4: Essential Research Materials for Perceptual Decision Studies [7]

| Reagent/Resource | Function in Research | Specifications | Forensic Analog |
|---|---|---|---|
| Dynamic Random Dot Stimuli | Visual stimuli for perceptual decisions | Color coherence control: probability pblue of dot being blue | Trace evidence patterns with variable signal-to-noise |
| Eye Tracking System | Monitor fixation and attention | Sampling rate ≥ 250 Hz, spatial accuracy < 0.5° | Documentation of visual examination sequence |
| Response Time Apparatus | Measure decision latency | Millisecond precision input device | Timestamped decision logging in forensic analysis |
| Drift Diffusion Modeling Software | Fit computational models to behavioral data | Hierarchical Bayesian estimation preferred | Quantitative models of forensic decision processes |
| Data Management Platform | Store and structure experimental data | FAIR principles compliance [8] | Forensic case management systems |
| Stimulus Presentation Software | Precise control of experimental protocols | Millisecond timing accuracy, flexible design | Standardized evidence presentation protocols |

The integration of expert opinion into legal proceedings represents a critical junction between science and law. Within forensic science, the interpretation of evidence is fundamentally an exercise in subjective probability, where examiners assess the likelihood that two samples originate from the same source. This whitepaper examines the core critiques of unvalidated expert opinion through the lens of research on subjective probability in forensic science interpretation, addressing how cognitive biases, organizational deficiencies, and unscientific practices contribute to wrongful convictions. Recent research indicates that forensic science errors constitute a significant factor in wrongful convictions, with the National Registry of Exonerations recording over 3,000 cases of wrongful convictions in the United States as of 2023 [9]. This analysis provides researchers and legal professionals with a comprehensive framework for understanding and addressing the systemic vulnerabilities in forensic evidence evaluation.

The Scope of the Problem: Quantitative Analysis of Forensic Error

Forensic science disciplines demonstrate substantial variation in their association with erroneous convictions. Analysis of 732 wrongful conviction cases from the National Registry of Exonerations reveals distinct patterns of error distribution across forensic specialties [9].

Table 1: Forensic Discipline Error Rates in Wrongful Conviction Cases

| Discipline | Number of Examinations | Percentage of Examinations Containing At Least One Case Error | Percentage of Examinations Containing Individualization/Classification Errors |
|---|---|---|---|
| Seized drug analysis | 130 | 100% | 100% |
| Bitemark | 44 | 77% | 73% |
| Shoe/foot impression | 32 | 66% | 41% |
| Fire debris investigation | 45 | 78% | 38% |
| Forensic medicine (pediatric sexual abuse) | 64 | 72% | 34% |
| Blood spatter (crime scene) | 33 | 58% | 27% |
| Serology | 204 | 68% | 26% |
| Firearms identification | 66 | 39% | 26% |
| Forensic medicine (pediatric physical abuse) | 60 | 83% | 22% |
| Hair comparison | 143 | 59% | 20% |
| Latent fingerprint | 87 | 46% | 18% |
| Fiber/trace evidence | 35 | 46% | 14% |
| DNA | 64 | 64% | 14% |
| Forensic pathology (cause and manner) | 136 | 46% | 13% |

The data show that seized drug analysis and bitemark analysis have the highest rates of individualization or classification errors among the disciplines examined, with bitemark analysis associated with a disproportionate share of incorrect identifications and wrongful convictions [9]. Notably, 100% of seized drug analysis errors resulted from field testing kit errors rather than laboratory mistakes. In approximately half of wrongful convictions analyzed, improved technology, testimony standards, or practice standards might have prevented the erroneous outcome at trial [9].

Theoretical Framework: Subjective Probability in Forensic Decision-Making

The Psychology of Probability Estimation

Forensic evidence interpretation inherently involves estimating probabilities under conditions of uncertainty. Research on subjective probability demonstrates that human cognition systematically deviates from mathematical probability theory through several mechanisms [10]:

- Conservatism: The tendency to avoid extreme probability estimates, resulting in overestimation of small probabilities and underestimation of large probabilities

- Representativeness Heuristic: Using similarity to a category as a proxy for probability, leading to conjunction errors (e.g., judging a specific combination of characteristics as more probable than a general category)

- Emotional Modulation: Recent experimental evidence indicates that emotional dominance (characterized by perceived control, autonomy, and influence) increases both conservatism and reliance on representativeness in probability judgments [10]

These cognitive patterns directly impact forensic decision-making, particularly in disciplines relying on subjective pattern matching.

Cognitive Bias in Forensic Analysis

The theoretical framework of subjective probability explains how contextual information and cognitive biases influence forensic judgments [9]:

- Contextual Bias: Forensic disciplines vary in their susceptibility to cognitive bias, with bitemark comparison, fire debris investigation, forensic medicine, and forensic pathology being particularly vulnerable

- Domain Differences: Disciplines such as seized drug analysis, latent palm print comparisons, toxicology, and DNA analysis demonstrate lower susceptibility to cognitive bias, though they are not immune

- Bayesian Interpretation: Forensic conclusions are inherently probabilistic and should be expressed as such, though practitioners often present them as categorical determinations

Structural and Methodological Deficiencies

Error Typology in Forensic Science

Research commissioned by the National Institute of Justice has developed a comprehensive taxonomy of forensic errors, categorizing factors contributing to wrongful convictions [9]:

Table 2: Forensic Error Typology

| Error Type | Description | Examples |
|---|---|---|
| Type 1 – Forensic Science Reports | Misstatement of the scientific basis of a forensic science examination | Lab error, poor communication, resource constraints |
| Type 2 – Individualization or Classification | Incorrect individualization/classification of evidence or interpretation of results | Interpretation error, fraudulent interpretation |
| Type 3 – Testimony | Erroneous presentation of forensic science results at trial | Mischaracterized statistical weight or probability |
| Type 4 – Officer of the Court | Error related to forensic evidence created by legal professionals | Excluded evidence, faulty testimony accepted over objection |
| Type 5 – Evidence Handling and Reporting | Failure to collect, examine, or report potentially probative forensic evidence | Chain of custody issues, lost evidence, police misconduct |

This typology reveals that testimony errors and evidence handling issues extend beyond laboratory analysis to encompass the entire judicial ecosystem.

The Validation Gap

Many forensic disciplines lack robust scientific validation, having developed through an "ad-hoc," non-scientific process [11]. For instance, fingerprint identification entered U.S. courts in 1911 but only began receiving scientific verification in the past two decades [11]. The President's Council of Advisors on Science and Technology (PCAST) emphasized in its 2016 report the necessity of empirical validation for forensic methods, including error rate studies [12].

Experimental Protocols for Studying Forensic Fallibility

Protocol 1: Error Rate Validation Studies

Objective: To establish base error rates for specific forensic disciplines through controlled testing.

Methodology:

- Sample Preparation: Create ground truth datasets with known matches and non-matches

- Participant Selection: Recruit practicing forensic analysts across multiple laboratories

- Blinding Procedure: Remove all contextual case information to minimize bias

- Task Administration: Present samples in randomized order with counterbalancing

- Data Collection: Record individualizations, exclusions, and inconclusive determinations

- Analysis: Calculate false positive rates, false negative rates, and reliability metrics

Validation Criteria: Studies should demonstrate repeatability (same analyst, same evidence), reproducibility (different analysts, same evidence), and measurement uncertainty quantification [12].
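As a rough illustration of the analysis step, the sketch below computes false positive and false negative rates from ground-truth decisions and attaches Wilson score intervals. The function names, the label strings, the counts, and the choice to keep inconclusive determinations in the denominators are all assumptions made for this example rather than part of the cited protocol; how inconclusives should be counted is itself a contested question.

```python
import math

def wilson_interval(errors, trials, z=1.96):
    """Wilson score interval for a binomial proportion (z=1.96 is roughly 95%)."""
    if trials <= 0:
        raise ValueError("trials must be positive")
    p = errors / trials
    denom = 1 + z**2 / trials
    centre = (p + z**2 / (2 * trials)) / denom
    half = z * math.sqrt(p * (1 - p) / trials + z**2 / (4 * trials**2)) / denom
    return max(0.0, centre - half), min(1.0, centre + half)

def error_rates(decisions):
    """decisions: (ground_truth, call) pairs.

    ground_truth is "match" or "nonmatch"; call is "identification",
    "exclusion" or "inconclusive" (hypothetical labels for this sketch).
    Inconclusive calls stay in the denominator but are never counted as errors.
    """
    decisions = list(decisions)
    n_non = sum(1 for truth, _ in decisions if truth == "nonmatch")
    n_match = sum(1 for truth, _ in decisions if truth == "match")
    fp = sum(1 for truth, call in decisions
             if truth == "nonmatch" and call == "identification")
    fn = sum(1 for truth, call in decisions
             if truth == "match" and call == "exclusion")
    return {
        "false_positive_rate": fp / n_non,
        "false_positive_ci": wilson_interval(fp, n_non),
        "false_negative_rate": fn / n_match,
        "false_negative_ci": wilson_interval(fn, n_match),
    }

# Hypothetical study outcome, invented purely for illustration.
decisions = (
    [("nonmatch", "identification")] * 2
    + [("nonmatch", "exclusion")] * 90
    + [("nonmatch", "inconclusive")] * 8
    + [("match", "identification")] * 85
    + [("match", "exclusion")] * 5
    + [("match", "inconclusive")] * 10
)
summary = error_rates(decisions)
print(summary)
```

Reporting an interval alongside each rate makes the measurement uncertainty visible, which matters when only a handful of errors are observed.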

Protocol 2: Cognitive Bias Testing

Objective: To measure the impact of contextual information on forensic decision-making.

Methodology:

- Experimental Design: Between-subjects design with contextual manipulation

- Stimulus Creation: Develop identical forensic evidence with varying contextual narratives

- Condition Assignment: Randomly assign participants to high-bias or low-bias conditions

- Dependent Measures: Record conclusion decisions and confidence levels

- Statistical Analysis: Compare conclusion rates across conditions using chi-square tests and calculate effect sizes

Although the manipulation here is contextual rather than emotional, the same design can be adapted to investigate how emotional dominance and other affective states modulate probability judgments in forensic contexts [10].
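The statistical analysis step of Protocol 2 can be sketched for the simplest case, a 2×2 table of condition by conclusion. The example below is a minimal standard-library version of the Pearson chi-square test (without continuity correction) with the phi coefficient as an effect size; the function name and the counts are hypothetical and chosen only to show the arithmetic.

```python
import math

def chi_square_2x2(a, b, c, d):
    """Pearson chi-square test (no continuity correction) for the table

        [[a, b],     e.g. high-bias condition: identification / other
         [c, d]]          low-bias condition:  identification / other

    Returns (chi2, p_value, phi), where phi is the effect size.
    """
    n = a + b + c + d
    row1, row2 = a + b, c + d
    col1, col2 = a + c, b + d
    if min(row1, row2, col1, col2) == 0:
        raise ValueError("every row and column needs at least one observation")
    chi2 = n * (a * d - b * c) ** 2 / (row1 * row2 * col1 * col2)
    # For 1 degree of freedom, P(X > chi2) = erfc(sqrt(chi2 / 2)).
    p_value = math.erfc(math.sqrt(chi2 / 2))
    phi = math.sqrt(chi2 / n)
    return chi2, p_value, phi

# Hypothetical counts: 30 of 50 analysts report an identification in the
# high-bias condition versus 18 of 50 in the low-bias condition.
chi2, p_value, phi = chi_square_2x2(30, 20, 18, 32)
print(f"chi2={chi2:.2f}, p={p_value:.4f}, phi={phi:.2f}")
```

With small expected cell counts, an exact test would be a more cautious choice than the chi-square approximation used here.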

Protocol 3: Open Science Assessment

Objective: To evaluate the transparency and replicability of forensic science research.

Methodology:

- Journal Screening: Assess forensic science publications for data sharing requirements

- Methodological Transparency: Code articles for availability of protocols, materials, and data

- Replication Analysis: Attempt direct replications of key forensic validation studies

- Barrier Identification: Document financial, cultural, and security-related obstacles to transparency

A recent study of 30 forensic science journals found that most lack requirements for open data or open materials, creating fundamental barriers to verification [11].

Visualizing the Ecosystem of Forensic Fallibility

[Diagram: Ecosystem of Forensic Fallibility]

Research Reagent Solutions for Forensic Validation

Table 3: Essential Research Materials for Forensic Science Validation

| Research Reagent | Function/Application |

|---|---|

| Ground Truth Datasets | Validated sample sets with known ground truth for proficiency testing and error rate studies |

| Cognitive Bias Task Battery | Standardized experimental protocols for measuring contextual bias effects |

| Statistical Analysis Toolkit | Software and algorithms for calculating likelihood ratios and confidence intervals |

| Open Forensic Data Repositories | Curated, anonymized case data for method validation and replication studies |

| Blind Proficiency Testing Materials | Commercially prepared evidence samples for ongoing quality assessment |

| Standardized Reporting Frameworks | Structured formats for expressing conclusions with uncertainty quantification |

These research reagents address fundamental gaps in current forensic practice, particularly the need for empirical validation and uncertainty quantification [12] [11].

The fallibility of unvalidated expert opinion represents a critical challenge at the intersection of science and law. Through the theoretical framework of subjective probability, this analysis demonstrates how cognitive biases, structural deficiencies, and methodological limitations contribute to erroneous forensic conclusions. The quantitative evidence reveals significant disparities in reliability across forensic disciplines, with particularly high error rates in fields relying on subjective pattern matching. Addressing these issues requires robust experimental protocols for error rate validation and cognitive bias measurement, together with the implementation of open science practices. For researchers and legal professionals, this article provides both a critical analysis of current deficiencies and a pathway toward more reliable, scientifically valid forensic practice. The integration of empirical validation, transparent methodology, and appropriate uncertainty quantification will strengthen the scientific foundation of forensic science and enhance the administration of justice.

The evolution of forensic science represents a fundamental shift from categorical claims to probabilistic reasoning, a transition critical for scientific rigor in legal contexts. For decades, many forensic disciplines operated under the individualization fallacy, the unsupported notion that forensic evidence could unequivocally identify a single source to the exclusion of all others in the world. This paradigm has progressively given way to probabilistic frameworks that quantify evidential strength through statistical reasoning, a development that sits within the broader body of research on subjective probability in forensic interpretation [13]. This transition mirrors developments in other scientific fields facing uncertainty, where subjective probability incorporates expert judgment alongside data, especially when information is incomplete or ambiguous [14].

The forensic community's journey toward probabilistic reporting has been met with mixed reactions. While some stakeholders champion these approaches for enhancing scientific rigor, others express concern that the opacity of algorithmic tools complicates meaningful scrutiny of evidence presented against defendants [13]. This tension has left the field without a clear consensus path forward, as each proposed methodology presents countervailing benefits and risks that must be carefully navigated by researchers, laboratory managers, and legal professionals [13]. Understanding this historical context and technical foundation is essential for forensic researchers and practitioners engaged in method development and validation.

The Rise of Probabilistic Genotyping Systems

The Technical Challenge of Complex DNA Mixtures

The adoption of probabilistic thinking emerged from necessity when traditional forensic methods proved inadequate for interpreting complex mixture samples. These challenging samples contain DNA from multiple contributors of varying proportions and clarity, resulting from increasingly sensitive collection techniques that recover genetic material from surfaces touched by numerous individuals [15]. The interpretation of these complex mixtures, known as mixture deconvolution, presents substantial difficulties for laboratory analysts due to issues like allele drop-in/drop-out and poor signal-to-noise ratios that obscure the true number of contributors and their individual DNA profiles [15].

Table 1: Technical Challenges in Traditional DNA Mixture Interpretation

| Challenge | Impact on Analysis | Consequence |

|---|---|---|

| Multiple Contributors | Ambiguous allele combinations | Multiple genotype combinations possible |

| Allele Drop-out | Missing data at genetic loci | Incomplete genetic profiles |

| Allele Drop-in | Contamination from external DNA | False positive alleles |

| Low Template DNA | Poor signal-to-noise ratios | Uncertain allele calls |

| Stochastic Effects | Unpredictable amplification | Inconsistent results |

Algorithmic Solutions and Their Functioning

To address these challenges, probabilistic genotyping systems (PGS) were developed, with STRmix and TrueAllele emerging as the most widely adopted systems in the United States [15]. At their core, these systems employ computational algorithms, typically Markov Chain Monte Carlo (MCMC) methods, a class of stochastic sampling algorithms, to examine a mixture sample's DNA profile, simulate possible genotype combinations from different contributors, and evaluate the likelihood that specific combinations could generate the observed forensic sample [15].

These systems quantify evidential strength using a likelihood ratio (LR), which compares two competing probabilities: (1) the probability of observing the DNA evidence if the person of interest (POI) was a contributor to the mixture, and (2) the probability of observing the same evidence if the POI was not a contributor [15]. The resulting likelihood ratio is not a measure of innocence or guilt but rather an estimate of the evidence strength regarding whether an individual's DNA is included in the mixture sample [15].

Diagram 1: Probabilistic Genotyping Workflow - This diagram illustrates the computational process of calculating a likelihood ratio by comparing two competing hypotheses about a complex DNA mixture.

The Likelihood Ratio Framework

Statistical Foundation and Interpretation

The likelihood ratio represents a fundamental advancement over previous categorical statements by providing a continuous measure of evidentiary strength that properly separates the statistical evidence from prior assumptions about case circumstances. The mathematical formulation follows:

LR = P(E|H₁) / P(E|H₀)

Where:

- P(E|H₁) represents the probability of the evidence given the prosecution hypothesis (that the presumed individual is the contributor)

- P(E|H₀) represents the probability of the evidence given the defense hypothesis (that the presumed individual is not the contributor) [16]

The numerical value of the likelihood ratio, which can range between zero and infinity, provides a clear metric for evidence assessment [16]. The generally accepted interpretation framework is presented in Table 2.

Table 2: Likelihood Ratio Interpretation Framework

| Likelihood Ratio Value | Verbal Equivalent | Support for H₁ |

|---|---|---|

| < 1 | Evidence favors H₀ | More support for H₀ than for H₁ |

| 1 to 10 | Limited evidence | Weak support |

| 10 to 100 | Moderate evidence | Moderate support |

| 100 to 1000 | Moderately strong evidence | Strong support |

| 1000 to 10000 | Strong evidence | Very strong support |

| > 10000 | Very strong evidence | Extremely strong support |
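The formula and the verbal bands of Table 2 can be combined in a short sketch. The function names are hypothetical, and because the table does not say which band a boundary value such as 10 or 100 belongs to, the code assumes each boundary falls into the higher band.

```python
def likelihood_ratio(p_e_given_h1, p_e_given_h0):
    """LR = P(E|H1) / P(E|H0); the denominator must be strictly positive."""
    if not (0 <= p_e_given_h1 <= 1 and 0 < p_e_given_h0 <= 1):
        raise ValueError("probabilities must lie in [0, 1], with P(E|H0) > 0")
    return p_e_given_h1 / p_e_given_h0

# Upper bounds of the bands in Table 2 (assumed half-open: lower <= LR < upper).
VERBAL_SCALE = [
    (10, "Limited evidence"),
    (100, "Moderate evidence"),
    (1000, "Moderately strong evidence"),
    (10000, "Strong evidence"),
]

def verbal_equivalent(lr):
    """Map an LR to the verbal label of Table 2."""
    if lr < 0:
        raise ValueError("a likelihood ratio cannot be negative")
    if lr < 1:
        return "More support for H0"
    # Note: LR = 1 is neutral, but it falls into the table's "1 to 10" band.
    for upper, label in VERBAL_SCALE:
        if lr < upper:
            return label
    return "Very strong evidence"

# Hypothetical probabilities, for illustration only.
lr = likelihood_ratio(0.8, 0.001)
print(round(lr), verbal_equivalent(lr))
```

Verbal scales of this kind differ between laboratories and guidelines, so the labels should be read as one convention rather than a universal standard.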

Implementation Considerations and Caveats

While probabilistic genotyping systems promise automated and objective mixture deconvolution, they require careful implementation and interpretation. The contributor-genotype combinations simulated and tested by these systems are constrained by analyst-defined initial settings, particularly the estimated number of contributors to the mixture [15]. Inaccurately specifying this parameter can significantly impact analysis results, as determining the true number of contributors proves exceptionally difficult for complex mixtures requiring probabilistic rather than manual interpretation [15].

Additionally, these systems typically assume that possible contributors are unrelated, meaning their allele profiles are expected to show little similarity. When biological relationships exist between contributors, computations must account for this fact, as genetic relatedness can mask the true number and abundance of alleles [15]. Perhaps most importantly, probabilistic genotyping software will always report a result regardless of sample quality, contributor number, or the algorithm's ability to identify likely contributor-genotype combinations, making validation and quality control essential [15].

Experimental Protocols in Probabilistic Genotyping

Validation Methodologies

Comprehensive validation represents a critical component in implementing probabilistic genotyping systems. The following experimental protocol outlines the essential validation steps:

Protocol 1: Probabilistic Genotyping System Validation

- Sample Preparation: Create reference mixtures with known contributor profiles, varying contributor ratios (1:1, 1:4, 1:19), template DNA quantities (high to low template), and degradation levels.

- Data Generation: Amplify samples using standard STR amplification kits (e.g., GlobalFiler, PowerPlex Fusion) following manufacturer protocols, with replicate amplifications to assess reproducibility.

- Data Analysis: Process electropherograms using the probabilistic genotyping software with varying parameter settings (number of contributors, stutter models, allele drop-out thresholds).

- Result Interpretation: Compare software-generated likelihood ratios to expected outcomes, calculating rates of false inclusions and exclusions across different mixture complexities and quality thresholds.

- Sensitivity Analysis: Assess impact of parameter changes on result stability, particularly regarding the number of contributors specified and stutter model selection.
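The result-interpretation step above can be sketched as a simple tally over ground-truth comparisons. This is a minimal example under stated assumptions: the function name is hypothetical, the LR values are invented, and an LR of 1 is used as the neutral dividing line, whereas a laboratory may adopt a different reporting threshold.

```python
def validation_summary(results, neutral_lr=1.0):
    """results: list of (is_true_contributor, lr) pairs from known mixtures.

    A false exclusion is a true contributor with LR below neutral_lr;
    a false inclusion is a non-contributor with LR above neutral_lr.
    """
    contributors = [lr for is_contributor, lr in results if is_contributor]
    non_contributors = [lr for is_contributor, lr in results if not is_contributor]
    if not contributors or not non_contributors:
        raise ValueError("need both contributor and non-contributor comparisons")
    false_exclusions = sum(1 for lr in contributors if lr < neutral_lr)
    false_inclusions = sum(1 for lr in non_contributors if lr > neutral_lr)
    return {
        "false_exclusion_rate": false_exclusions / len(contributors),
        "false_inclusion_rate": false_inclusions / len(non_contributors),
    }

# Hypothetical validation output: (ground truth, reported LR).
results = [
    (True, 3.2e6), (True, 450.0), (True, 0.4), (True, 18.0),
    (False, 0.002), (False, 0.3), (False, 2.5), (False, 1e-5), (False, 0.08),
]
rates = validation_summary(results)
print(rates)
```

Repeating the tally separately for each contributor ratio, template quantity, and assumed number of contributors would show where performance degrades.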

Casework Application Protocol

For applying probabilistic genotyping to forensic casework, the following standardized protocol ensures consistency and reliability:

Protocol 2: Casework Application Workflow

- Data Quality Assessment: Evaluate electropherogram quality metrics (peak height balance, signal-to-noise ratio, baseline morphology) to determine suitability for probabilistic analysis.

- Parameter Selection: Document all user-selected parameters including number of contributors, biological model assumptions, and relevant population genetic data.

- Hybrid Interpretation: Combine automated probabilistic analysis with expert review to identify potential artifacts (pull-up, stutter, off-ladder alleles) that may require data treatment.

- Result Documentation: Record likelihood ratios for all propositions tested, including appropriate alternative scenarios and sensitivity analyses.

- Reporting: Present results following established guidelines for probabilistic reporting, including clear explanation of limitations and assumptions.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Research Materials for Probabilistic Genotyping Studies

| Item | Function | Application Context |

|---|---|---|

| Reference DNA Standards | Provides known genetic profiles for validation studies | Creating controlled mixture experiments |

| STR Amplification Kits | Multi-locus amplification of forensic markers | Generating genetic data from biological samples |

| Quantification Standards | Accurate DNA concentration measurement | Ensuring input DNA within optimal range |

| Statistical Software Packages | Implementation of probabilistic algorithms | Data analysis and likelihood ratio calculation |

| Validation Datasets | Established mixture data with known ground truth | Method verification and performance assessment |

| Computational Resources | High-performance computing infrastructure | Running resource-intensive MCMC simulations |

| Quality Control Metrics | Monitoring analytical thresholds and noise levels | Ensuring data quality and reproducibility |

Subjective Probability in Forensic Interpretation

The Role of Expert Judgment

The integration of subjective probability represents a crucial dimension in forensic science interpretation, particularly when dealing with limited or ambiguous data. Subjective probability refers to likelihood assessments based on personal judgment, intuition, or expert knowledge rather than solely on mathematical calculations or historical data [14]. In forensic contexts, this approach becomes valuable when objective data is insufficient or when analysts must make decisions under uncertainty, bridging knowledge gaps to enable informed conclusions based on the best available insights [14].

Recent research demonstrates that subjective probability is systematically modulated by emotional states, a finding with significant implications for forensic decision-making. Studies have revealed that individuals experiencing higher levels of emotional dominance—characterized by perceived control, influence, and autonomy—tend toward more conservative probability estimates, avoiding extreme judgments and demonstrating increased use of the representativeness heuristic as a probability proxy [10]. This emotional influence persists even when assessing affectively neutral events, suggesting that emotions shape probabilistic cognition at a fundamental level beyond emotion-congruent memory effects [10].

Experimental Evidence from Psychological Research

The experimental protocols used to investigate subjective probability in psychological research provide methodological insights relevant to forensic science:

Protocol 3: Studying Subjective Probability Modulation

- Participant Selection: Recruit participants representing diverse demographic and professional backgrounds (N > 150 for adequate power).

- Emotional State Assessment: Measure baseline emotional characteristics using validated instruments (e.g., Emotional Dominance Scale) or induce specific states through autobiographical recall tasks.

- Probability Estimation Tasks: Present compound probability scenarios requiring likelihood assessments under varying information conditions.

- Data Collection: Record probability estimates, response times, and confidence levels for each judgment.

- Analysis: Evaluate conservatism (avoidance of extreme probabilities) and representativeness use (similarity-based judgments) relative to mathematical norms.

Diagram 2: Emotional Influence on Probability Judgments - This diagram shows how emotional states, particularly emotional dominance, systematically influence cognitive processes in probability estimation.

Current Challenges and Future Directions

Technical and Legal Considerations

Despite significant advances, probabilistic approaches in forensic science face ongoing challenges. Different probabilistic genotyping software can yield contradictory results when analyzing the same sample, as different systems employ distinct models and assumptions [15]. Even repeated analyses of the same sample using the same software may not produce identical likelihood ratio values due to the stochastic nature of MCMC processes, which generate slightly different probabilities in each simulation run [15].
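The run-to-run variability described above can be illustrated with a deliberately simple toy. The sketch below uses plain Monte Carlo averaging rather than MCMC, and the two "likelihood" functions are invented stand-ins with no connection to any real genotyping model; it only shows that a sampling-based LR estimate changes slightly with the random seed while staying close to the exact value.

```python
import random

def monte_carlo_lr(n_samples, seed):
    """Toy LR estimate obtained by averaging over a nuisance parameter.

    w ~ Uniform(0, 1) plays the role of an unknown quantity such as a
    mixture proportion. The exact ratio of the two integrals is
    (1/3) / (1/6) = 2.
    """
    rng = random.Random(seed)
    numerator = denominator = 0.0
    for _ in range(n_samples):
        w = rng.random()
        numerator += w * w           # stand-in for P(E | H1, w)
        denominator += w * (1 - w)   # stand-in for P(E | H0, w)
    return numerator / denominator

# Same "sample", same "software", five different random seeds.
estimates = [monte_carlo_lr(20_000, seed) for seed in range(5)]
for seed, estimate in enumerate(estimates):
    print(f"seed={seed}: LR estimate = {estimate:.4f}")
```

In practice this is one reason validation studies report the spread of LR values across replicate runs rather than a single number.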

Legal system integration presents additional complexities, particularly regarding transparency and scrutiny. Third-party audits of source code have identified issues with meaningful case impact in some probabilistic genotyping systems [15]. However, scrutinizing methods and software source code remains challenging when developers claim proprietary protection, though this trade secret principle has been increasingly questioned in legal contexts [15].

Research Priorities

Future research should prioritize several key areas to advance probabilistic thinking in forensic science:

- Validation Standards: Developing comprehensive validation frameworks specifically addressing complex mixtures with more than three contributors, where current validation is often limited.

- Cognitive Factors: Investigating how contextual information and cognitive biases influence probabilistic judgments in forensic casework.

- Computational Transparency: Creating methods to enhance understanding of probabilistic genotyping systems without compromising intellectual property.

- Interdisciplinary Approaches: Integrating insights from psychological research on subjective probability with forensic statistical development.

- Decision Framework Integration: Developing structured approaches for combining statistical results with case-specific information and alternative scenario testing.

The historical transition from individualization fallacies to probabilistic thinking represents an ongoing paradigm shift requiring continued collaboration between forensic scientists, statisticians, psychologists, and legal professionals to ensure both scientific rigor and just outcomes.

The Likelihood Ratio (LR) framework is a formal method for evaluating the strength of scientific evidence, providing a coherent bridge between empirical data and subjective probability assessments. This technical guide details the core principles, computational methodologies, and practical applications of the LR framework, with particular emphasis on its critical role in the objective interpretation of forensic evidence. By quantifying how much more likely evidence is under one proposition compared to an alternative, the LR offers a standardized metric for updating prior beliefs, grounded in Bayes' Theorem. This paper provides an in-depth examination of LR calculation, diagnostic utility thresholds, and experimental protocols for establishing test result-specific LRs, serving as an essential resource for researchers and practitioners engaged in evidence-based scientific disciplines.

The Likelihood Ratio (LR) is a fundamental statistical measure used to assess the strength of diagnostic test results or scientific evidence. It provides a quantitative answer to the question: "How many times more likely is this evidence to be observed if a given hypothesis is true, compared to if an alternative hypothesis is true?" [17] [18]. The LR framework is particularly valuable in fields requiring rigorous evidence evaluation, including forensic science, medical diagnostics, and pharmaceutical development, as it separates the objective strength of the evidence from the subjective prior probability of the hypothesis.

The mathematical foundation of the LR rests on the ratio of two probabilities: (1) the probability of observing the evidence if the hypothesis of interest (e.g., disease presence, guilt) is true, and (2) the probability of observing the same evidence if an alternative hypothesis (e.g., disease absence, innocence) is true [17]. This conceptual framework allows subject matter experts to communicate the probative value of their findings without directly addressing the ultimate issue, which often falls outside their expertise. In forensic science interpretation research, the LR provides a logically sound structure for reporting evaluative conclusions, ensuring transparency and robustness against cognitive biases [4].

The power of the LR framework lies in its direct integration with Bayes' Theorem, which describes how prior beliefs (prior probabilities) should be updated in light of new evidence to yield posterior beliefs (posterior probabilities) [17]. The LR serves as the modifying factor in this updating process. Formally, Bayes' Theorem can be expressed in odds form as: Post-test Odds = Pre-test Odds × Likelihood Ratio [17] [18]. This mathematical relationship ensures that the interpretation of any piece of evidence is contextual, depending explicitly on the circumstances of the case and the initial assumptions. The LR framework thus forces explicit acknowledgment of the relevant alternatives and prevents the transposition of the conditional—a common logical fallacy where the probability of the evidence given the hypothesis is confused with the probability of the hypothesis given the evidence [4].

Theoretical Foundations and Mathematical Formulation

Core Definitions and Calculations

The Likelihood Ratio is formulated as the ratio of two conditional probabilities, each representing the likelihood of the observed evidence under competing propositions. In diagnostic testing, these are typically referred to as LR+ (for positive test results) and LR- (for negative test results) [17].

- LR+ = Probability of a positive test result in diseased individuals / Probability of a positive test result in non-diseased individuals

  - Formula: LR+ = Sensitivity / (1 - Specificity) [17]

- LR- = Probability of a negative test result in diseased individuals / Probability of a negative test result in non-diseased individuals

  - Formula: LR- = (1 - Sensitivity) / Specificity [17]
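As a small worked example of the two formulas, the sketch below computes LR+ and LR- from a sensitivity and specificity. The function name and the input values are hypothetical.

```python
def diagnostic_likelihood_ratios(sensitivity, specificity):
    """Return (LR+, LR-) for a dichotomous test.

    Both inputs must lie strictly between 0 and 1 so that neither
    denominator is zero.
    """
    if not (0 < sensitivity < 1 and 0 < specificity < 1):
        raise ValueError("sensitivity and specificity must be in (0, 1)")
    lr_positive = sensitivity / (1 - specificity)
    lr_negative = (1 - sensitivity) / specificity
    return lr_positive, lr_negative

# Hypothetical test with 90% sensitivity and 80% specificity.
lr_pos, lr_neg = diagnostic_likelihood_ratios(0.90, 0.80)
print(f"LR+ = {lr_pos:.2f}, LR- = {lr_neg:.3f}")
```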

The following diagram illustrates the logical workflow of applying the Likelihood Ratio within the Bayesian framework, from defining competing propositions to updating the probability of a hypothesis.

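For two simple hypotheses, the likelihood ratio statistic is written Λ(x) = L(θ₀ | x) / L(θ₁ | x), where L(θ₀ | x) is the likelihood of the null hypothesis given the observed data x, and L(θ₁ | x) is the likelihood of the alternative hypothesis given the same data [19]. This convention places the null hypothesis in the numerator, whereas LR+ and LR- above place the hypothesis of interest (disease present) in the numerator, so the direction of the ratio should always be stated.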

Interpreting Likelihood Ratio Values

The value of the LR provides direct insight into the strength of the evidence. The further the LR is from 1, the stronger the diagnostic power of the evidence or test [17].

Table 1: Interpretation of Likelihood Ratio Values

| LR Value | Interpretation of Evidence Strength | Impact on Probability |

|---|---|---|

| > 10 | Strong evidence for the hypothesis/proposition | Large increase |

| 5 - 10 | Moderate evidence for the hypothesis/proposition | Moderate increase |

| 2 - 5 | Weak evidence for the hypothesis/proposition | Small increase |

| 1 | No diagnostic value | No change |

| 0.5 - 0.9 | Weak evidence against the hypothesis/proposition | Small decrease |

| 0.1 - 0.5 | Moderate evidence against the hypothesis/proposition | Moderate decrease |

| < 0.1 | Strong evidence against the hypothesis/proposition | Large decrease |

For example, in a forensic case report from Austin, Texas, DNA evidence was evaluated given activity-level propositions. The analysis resulted in an LR of approximately 1300 in favor of the prosecution's proposition, representing strong evidence [4].

Integration with Bayes' Theorem

The practical utility of the LR is realized through its application in Bayes' Theorem for updating prior beliefs. The process involves converting a pre-test probability to odds, multiplying by the LR, and converting the resulting post-test odds back to a probability [17].

- Pre-test Odds = Pre-test Probability / (1 - Pre-test Probability)

- Post-test Odds = Pre-test Odds × LR

- Post-test Probability = Post-test Odds / (1 + Post-test Odds)
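The three steps above translate directly into code. The following is a minimal sketch; the function name and the example inputs are assumptions chosen for illustration.

```python
def post_test_probability(pre_test_probability, lr):
    """Update a probability with a likelihood ratio via the odds form of Bayes' Theorem."""
    if not 0 < pre_test_probability < 1:
        raise ValueError("pre-test probability must be strictly between 0 and 1")
    if lr < 0:
        raise ValueError("a likelihood ratio cannot be negative")
    pre_test_odds = pre_test_probability / (1 - pre_test_probability)
    post_test_odds = pre_test_odds * lr
    return post_test_odds / (1 + post_test_odds)

# Hypothetical case: 20% pre-test probability and a positive result with LR+ = 4.5.
posterior = post_test_probability(0.20, 4.5)
print(f"post-test probability = {posterior:.3f}")
```

Here the pre-test odds are 0.25, the post-test odds are 1.125, and the post-test probability is about 0.53, which shows how a moderate LR can still leave considerable uncertainty when the prior is low.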

This calculation can be visualized using a Fagan nomogram, which provides a graphical method for deriving the post-test probability by drawing a line connecting the pre-test probability to the LR [17]. The following diagram illustrates the mathematical relationship between the ROC curve and the calculation of interval-specific LRs, which is foundational for quantitative test interpretation.

Experimental Protocols and Methodologies

Establishing LRs from Receiver Operating Characteristic (ROC) Curves

For quantitative tests, Likelihood Ratios can be established for specific test result intervals or even single values using Receiver Operating Characteristic (ROC) curves [18].

Protocol:

- Study Population Selection: Recruit a cohort of participants that accurately represents the target population, including both affected (diseased) and unaffected (non-diseased) individuals. The sample size must provide sufficient statistical power for precise LR estimates.

- Blinded Measurement: Perform the index test and the reference standard (gold standard) test on all participants under blinded conditions, ensuring the personnel interpreting the tests are unaware of the other test's results.

- ROC Curve Construction: Plot the true positive rate (Sensitivity) against the false positive rate (1 - Specificity) for all possible cut-off points of the test [18].

- LR Calculation for Intervals:

  - Divide the entire range of test results into multiple intervals or bins.

  - For each interval, calculate:

    - Sensitivityₓ = Proportion of diseased individuals with test results in that interval.

    - 1-Specificityₓ = Proportion of non-diseased individuals with test results in that interval.

    - LR for Interval = Sensitivityₓ / (1-Specificityₓ) [18].

- LR for Single Test Results: For a more granular approach, the LR of a single test result can be determined as the slope of the tangent to the ROC curve at the point corresponding to that result [18].
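The interval calculation in the protocol above can be sketched as follows. The function name, the bin convention (each cut point belongs to the interval above it), and the test values are assumptions made for this example; real studies need far larger samples for stable estimates.

```python
import math
from bisect import bisect_right

def interval_likelihood_ratios(diseased, non_diseased, cut_points):
    """Interval-specific LRs for a quantitative test.

    cut_points (sorted ascending) define the bins
    (-inf, c1), [c1, c2), ..., [ck, +inf).
    Returns one LR per bin: proportion of diseased results in the bin
    divided by the proportion of non-diseased results in the bin.
    """
    def bin_proportions(values):
        counts = [0] * (len(cut_points) + 1)
        for value in values:
            counts[bisect_right(cut_points, value)] += 1
        return [count / len(values) for count in counts]

    ratios = []
    for p_dis, p_non in zip(bin_proportions(diseased), bin_proportions(non_diseased)):
        if p_non > 0:
            ratios.append(p_dis / p_non)
        elif p_dis > 0:
            ratios.append(math.inf)   # results seen only in diseased individuals
        else:
            ratios.append(math.nan)   # empty bin: LR undefined
    return ratios

# Hypothetical test results for eight diseased and eight non-diseased individuals.
diseased = [12, 15, 22, 25, 31, 35, 38, 40]
non_diseased = [5, 8, 9, 11, 14, 16, 21, 33]
lrs = interval_likelihood_ratios(diseased, non_diseased, cut_points=[10, 20, 30])
print(lrs)
```

Empty or near-empty bins give unstable ratios, which is one practical reason to choose interval widths with the available sample size in mind.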

Application in Forensic Casework: Activity Level Propositions

The LR framework is particularly suited for evaluating evidence given activity-level propositions in forensic science, as demonstrated in a murder case from Austin, Texas [4].

Protocol:

- Define Competing Propositions: Formulate mutually exclusive propositions at the activity level. For example:

  - Hp: The suspect performed the specific activity (e.g., took the victim's bike from the scene).

  - Ha: Someone else performed the activity, and the suspect had no contact with the item [4].

- Identify Relevant Findings: List all scientific findings that require evaluation (e.g., DNA profile, fibers, gunshot residue).

- Assign Probabilities under Each Proposition: For each finding, estimate the probability of observing it if Hp is true and if Ha is true. This may involve:

  - Using published data on transfer, persistence, and background prevalence of materials.

  - Explicitly stating subjective probability assignments based on expert knowledge when empirical data is lacking [4].

- Calculate the Overall LR: Multiply the individual LRs for each finding (assuming independence) to obtain an overall LR for the set of evidence.

- Report and Interpret: Report the LR with a clear statement explaining its meaning in the context of the case, without infringing on the ultimate issue.
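The combination step in this protocol can be sketched in a few lines. The example assumes, as the protocol does, that the findings are independent given each proposition; the function name and the per-finding LR values are hypothetical. Working on the log10 scale avoids overflow when many large LRs are multiplied and yields a quantity that is convenient to report.

```python
import math

def combine_likelihood_ratios(lrs):
    """Multiply per-finding LRs, assuming independence given each proposition.

    Returns (overall_lr, log10_overall_lr).
    """
    lrs = list(lrs)
    if not lrs or any(lr <= 0 for lr in lrs):
        raise ValueError("need at least one LR, and all LRs must be positive")
    log10_total = sum(math.log10(lr) for lr in lrs)
    return 10 ** log10_total, log10_total

# Hypothetical per-finding LRs, e.g. DNA, fibres, gunshot residue.
overall_lr, log10_lr = combine_likelihood_ratios([50, 4, 0.5])
print(f"overall LR = {overall_lr:.1f} (log10 LR = {log10_lr:.2f})")
```

If the findings are not independent, the product overstates or understates the combined weight, and the dependence has to be modelled explicitly instead.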

Table 2: Key Research Reagent Solutions for LR Methodology Implementation

| Reagent / Material | Function in LR Framework Implementation |

|---|---|

| ROC Curve Dataset | Raw data required for calculating test result-specific LRs; contains paired data of test values and true disease status for a cohort [18]. |

| Statistical Software (R, Python) | Computational environment for performing complex statistical calculations, generating ROC curves, and determining secant/tangent slopes for LR derivation [18]. |

| Reference Standard Test | The gold standard method used to definitively classify study participants as "diseased" or "non-diseased," forming the basis for sensitivity and specificity calculations [18]. |

| Validated Diagnostic Assay | The index test (e.g., immunoassay, PCR test) whose diagnostic performance is being evaluated; must be precise and accurate to generate reliable LRs [18]. |

| Bayesian Computational Tool | Software or script that automates the application of Bayes' Theorem, converting pre-test probabilities to post-test probabilities using calculated LRs [17]. |

Practical Applications and Clinical Implementation

Harmonization of Diagnostic Test Results

A significant application of the LR framework is in the harmonization of diagnostic test results across different assay platforms, manufacturers, and units of measurement [18]. For example, in antinuclear antibody (ANA) testing, different manufacturers use varying scales (Units/mL, IU/mL, titers), making direct comparison challenging. By establishing the LR associated with specific test result values, clinicians can interpret the clinical meaning of a result without needing to understand the specific scale [18]. A value with an LR of 10 has the same clinical meaning—the result is 10 times more likely in diseased than non-diseased individuals—regardless of whether the original unit was 35 Units, 48.5 CU, or 8.6 IU/mL [18].

Limitations and Considerations

While powerful, the LR framework has important limitations that researchers and practitioners must consider:

- Dependence on Quality Data: The accuracy of an LR depends entirely on the relevance and quality of the studies that generated the sensitivity and specificity estimates used in its calculation [17].

- Estimation of Pre-test Probability: The pre-test probability is often a subjective estimate based on clinician experience and gestalt, which can vary between practitioners and injects an element of judgment into the process [17].

- Assumption of Independence: Multiplying LRs across several findings assumes that the findings are independent given each proposition, which may not hold in complex biological systems or forensic scenarios.

- Serial Application Not Validated: Although it may seem intuitive, chaining LRs (using the post-test probability from one test as the pre-test probability for a different test) has not been formally validated, whether the tests are applied in series or in parallel [17].

Implementing the Likelihood Ratio Framework in Forensic Practice

A Practical Guide to the Likelihood Ratio Formula and its Calculation

The Likelihood Ratio (LR) is a fundamental statistical measure used to quantify the strength of forensic evidence. It is defined as the ratio of the probability of observing a specific piece of evidence under one proposition (often the prosecution's hypothesis) to the probability of observing that same evidence under an alternative proposition (often the defense's hypothesis) [20]. Within the context of forensic science interpretation research, the LR provides a coherent framework for updating beliefs about competing propositions based on evidence, formally connecting to Bayesian inference and the concept of justified subjectivism in probability assessment [2]. This approach acknowledges that probability assignments are constrained, conditional assessments based on task-relevant data and information, rather than unconstrained subjective opinions. The LR serves as the bridge that allows prior odds (formed before considering the new evidence) to be updated into posterior odds (after considering the evidence), thereby providing a transparent and logically sound method for evidence evaluation.

The Core Likelihood Ratio Formula

Fundamental Equation

The generic form of the likelihood ratio is expressed as:

LR = P(E | H₁) / P(E | H₂)

Where:

- P(E | H₁) is the probability of observing the evidence (E) given that hypothesis H₁ is true.

- P(E | H₂) is the probability of observing the same evidence (E) given that hypothesis H₂ is true.

In forensic practice, H₁ and H₂ are mutually exclusive propositions about the source of the evidence or the activities that led to its creation. The LR numerically expresses how much more likely the evidence is under one proposition compared to the other.
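As a minimal sketch of the generic formula, the ratio can be written as a small Python function. The function name and the input values are hypothetical and chosen only for illustration; they do not come from any study cited here.

```python
def likelihood_ratio(p_e_given_h1: float, p_e_given_h2: float) -> float:
    """Return LR = P(E | H1) / P(E | H2)."""
    for p in (p_e_given_h1, p_e_given_h2):
        if not 0.0 <= p <= 1.0:
            raise ValueError("probabilities must lie in [0, 1]")
    if p_e_given_h2 == 0.0:
        # The evidence is impossible under H2, so the ratio is not finite.
        raise ZeroDivisionError("P(E | H2) is zero; the LR is not finite")
    return p_e_given_h1 / p_e_given_h2


# Hypothetical values: the evidence is four times as probable under H1.
example_lr = likelihood_ratio(0.8, 0.2)
print(f"LR = {example_lr:.1f}")
```

An LR above 1 supports H₁ over H₂, an LR below 1 supports H₂ over H₁, and an LR of exactly 1 leaves the two propositions equally supported.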

Application-Specific Formulations

The fundamental LR formula is adapted based on the nature of the evidence and the propositions being tested. The two primary contexts are source-level and activity-level propositions.

Table: Likelihood Ratio Formulations for Different Types of Evidence

| Evidence Type | Propositions (H₁ vs. H₂) | LR Formula Adaptation | Key References |
|---|---|---|---|
| Discrete Data (e.g., genetic markers) | Same Source vs. Different Sources | LR = Π_j [ f_Sj^(x_j) (1 − f_Sj)^(1 − x_j) ] / Π_j [ f_Fj^(x_j) (1 − f_Fj)^(1 − x_j) ] | [21] |
| Continuous Data (e.g., fasting blood sugar (FBS) concentration) | Disease Present vs. Disease Absent | LR(r) = f(r) / g(r), where f and g are probability density functions | [22] |
| Diagnostic Test (Dichotomous) | Target Disorder Present vs. Absent | LR+ = Sensitivity / (1 − Specificity); LR− = (1 − Sensitivity) / Specificity | [23] [20] |
| Activity Level (e.g., bloodstain pattern analysis (BPA)) | Specific Activity vs. Alternative Activity | Complex, physics-based models; depends on the specific activity and evidence transferred. | [24] |

For discrete data, such as the presence or absence of genetic alleles, the overall LR is the product of the likelihood ratios for each independent marker [21]. For continuous data, such as a fasting blood sugar concentration, the probability of observing an exact value is zero, so the LR is calculated using probability density functions, f(r) and g(r), for the diseased and non-diseased populations, respectively [22].
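The dichotomous-test row of the table can be sketched in Python as follows. The sensitivity and specificity values are hypothetical and serve only to show the arithmetic; the function name is likewise an assumption.

```python
def diagnostic_lrs(sensitivity: float, specificity: float) -> tuple:
    """Return (LR+, LR-) for a dichotomous test.

    LR+ = sensitivity / (1 - specificity)
    LR- = (1 - sensitivity) / specificity
    """
    if not (0.0 <= sensitivity <= 1.0 and 0.0 < specificity < 1.0):
        raise ValueError("need 0 <= sensitivity <= 1 and 0 < specificity < 1")
    lr_pos = sensitivity / (1.0 - specificity)
    lr_neg = (1.0 - sensitivity) / specificity
    return lr_pos, lr_neg


# Hypothetical test characteristics, for illustration only.
lr_pos, lr_neg = diagnostic_lrs(sensitivity=0.90, specificity=0.80)
print(f"LR+ = {lr_pos:.2f}, LR- = {lr_neg:.3f}")  # LR+ = 4.50, LR- = 0.125
```

A specificity of exactly 0 or 1 is rejected here because it would make one of the two ratios undefined; how to handle such edge cases in practice is a design choice not addressed by the sources.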

Calculating Likelihood Ratios: Methodologies and Protocols

Calculation for Discrete Data - A Genetic Example

Experimental Protocol: Elephant Tusk DNA Analysis

Background: Interpol aims to determine whether a seized elephant tusk originated from a savanna elephant (model M_S) or a forest elephant (model M_F) using genetic data [21].

Workflow:

- Data Collection: DNA from the tusk is measured at multiple genetic markers. At each marker, the allele is recorded as either 0 or 1.

- Reference Data Compilation: The frequency of the "1" allele (f_Sj for savanna, f_Fj for forest) is obtained from population databases for each marker j.

- Probability Calculation: The probability of the observed genetic profile under each model is calculated. The models assume independence between markers.

- LR Computation: The LR is computed as the ratio of these two probabilities.

Sample Data and Calculation:

Table: Genetic Marker Data for Elephant Tusk Analysis

| Marker (j) | Tusk Allele (x_j) | Savanna Freq (f_Sj) | Forest Freq (f_Fj) | P(x_j \| M_S) | P(x_j \| M_F) |
|---|---|---|---|---|---|
| 1 | 1 | 0.40 | 0.80 | 0.40 | 0.80 |
| 2 | 0 | 0.12 | 0.20 | 1 − 0.12 = 0.88 | 1 − 0.20 = 0.80 |
| 3 | 1 | 0.21 | 0.11 | 0.21 | 0.11 |
| 4 | 0 | 0.12 | 0.17 | 1 − 0.12 = 0.88 | 1 − 0.17 = 0.83 |
| 5 | 0 | 0.02 | 0.23 | 1 − 0.02 = 0.98 | 1 − 0.23 = 0.77 |
| 6 | 1 | 0.32 | 0.25 | 0.32 | 0.25 |

The overall likelihood for each model is the product of the probabilities across all markers:

- P(X | M_S) = 0.40 × 0.88 × 0.21 × 0.88 × 0.98 × 0.32 ≈ 0.0204

- P(X | M_F) = 0.80 × 0.80 × 0.11 × 0.83 × 0.77 × 0.25 ≈ 0.0112

The Likelihood Ratio is: LR = P(X | M_S) / P(X | M_F) ≈ 1.81 (computed from the unrounded products)

This result means the observed genetic data are about 1.8 times more likely if the tusk came from a savanna elephant than if it came from a forest elephant [21].

Figure 1: Workflow for calculating a Likelihood Ratio from discrete genetic data.
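The tusk calculation can be reproduced with a short Python sketch that takes the allele calls and reference frequencies from the table above. The variable and function names are illustrative assumptions; the independence of markers is the same assumption the protocol states.

```python
import math

# Values copied from the genetic marker table.
alleles      = [1, 0, 1, 0, 0, 1]                    # x_j observed in the tusk
freq_savanna = [0.40, 0.12, 0.21, 0.12, 0.02, 0.32]  # f_Sj
freq_forest  = [0.80, 0.20, 0.11, 0.17, 0.23, 0.25]  # f_Fj


def profile_likelihood(x, freqs):
    """P(X | M) = prod_j f_j^x_j * (1 - f_j)^(1 - x_j), markers independent."""
    return math.prod(f if a == 1 else 1.0 - f for a, f in zip(x, freqs))


def log_likelihood_ratio(x, freqs_1, freqs_2):
    """Same ratio on the log scale; sums of logs stay stable with many markers."""
    total = 0.0
    for a, f1, f2 in zip(x, freqs_1, freqs_2):
        p1 = f1 if a == 1 else 1.0 - f1
        p2 = f2 if a == 1 else 1.0 - f2
        total += math.log(p1) - math.log(p2)
    return total


p_savanna = profile_likelihood(alleles, freq_savanna)
p_forest = profile_likelihood(alleles, freq_forest)
lr = p_savanna / p_forest

print(f"P(X | M_S) = {p_savanna:.4f}")
print(f"P(X | M_F) = {p_forest:.4f}")
print(f"LR = {lr:.2f}")
print(f"log LR = {log_likelihood_ratio(alleles, freq_savanna, freq_forest):.4f}")
```

Running it prints both likelihoods and their ratio, so the hand calculation can be checked directly. With six markers the plain product is adequate; the log-scale version is included because products of many small probabilities can underflow.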

Calculation for Continuous Data - A Medical Diagnostic Example

Experimental Protocol: Interpreting a Fasting Blood Sugar (FBS) Test

Background: A diagnostic test with continuous results, like FBS concentration, requires a different approach because the probability of any exact value is zero. The LR is instead calculated using probability density functions (PDFs) [22].

Workflow:

- Define Distributions: Establish the PDFs for the test result in the diseased (f(x)) and non-diseased (g(x)) populations. These are often modeled using known distributions (e.g., normal, binormal).

- Obtain Test Result: Measure the analyte (e.g., FBS) for the patient, yielding a specific value r.

- Evaluate PDFs: Calculate the height of the density function at r in both the diseased and non-diseased distributions.

- LR Computation: The LR for the specific test result r is the ratio of these two density values.

Sample Data and Calculation:

Assume FBS is normally distributed:

- Diabetic population (D+): mean = 99.7 mg/dL, SD = 7.2 mg/dL

- Healthy population (D-): mean = 89.7 mg/dL, SD = 5.0 mg/dL

- Patient's FBS result (r): 98 mg/dL

Using the PDF of the normal distribution:

- f(r) = PDF of N(mean 99.7, SD 7.2) evaluated at 98 mg/dL ≈ 0.0539

- g(r) = PDF of N(mean 89.7, SD 5.0) evaluated at 98 mg/dL ≈ 0.0201

The Likelihood Ratio for the specific result r=98 mg/dL is: LR(r) = f(r) / g(r) ≈ 0.0539 / 0.0201 ≈ 2.68

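An FBS of 98 mg/dL is therefore about 2.68 times more likely to be observed in a member of the diabetic population than in a member of the healthy population [22]. This is a statement about the test result, not about the patient: the probability that the patient is diabetic also depends on the pre-test probability.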
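The FBS example can be checked with Python's standard library, which provides a normal distribution class with a `pdf` method. This is a minimal sketch under the normality assumption stated above; the function name `lr_at` is hypothetical.

```python
from statistics import NormalDist

# Population parameters from the sample data (mg/dL).
diabetic = NormalDist(mu=99.7, sigma=7.2)  # D+
healthy = NormalDist(mu=89.7, sigma=5.0)   # D-


def lr_at(result_mg_dl: float) -> float:
    """LR(r) = f(r) / g(r): ratio of the two density heights at r."""
    return diabetic.pdf(result_mg_dl) / healthy.pdf(result_mg_dl)


r = 98.0
print(f"f(r) = {diabetic.pdf(r):.4f}")  # f(r) = 0.0539
print(f"g(r) = {healthy.pdf(r):.4f}")   # g(r) = 0.0201
print(f"LR(r) = {lr_at(r):.2f}")        # LR(r) = 2.68
```

Because the two populations have different standard deviations, LR(r) is not monotonic in r, so each result value should be evaluated individually rather than inferred from neighboring values.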

The Critical Role of Typicality

A crucial consideration in forensic LR calculation, particularly for source-level propositions, is accounting for both similarity and typicality [25]. Similarity measures how closely two pieces of evidence match each other. Typicality measures how common or rare those characteristics are in the relevant population. A method that considers only similarity but not typicality can substantially overstate the strength of the evidence. Research demonstrates that specific-source and common-source methods inherently account for both factors, while simple similarity-score methods do not [25]. For example, a DNA profile match is powerful not just because the suspect's profile and the crime scene profile are similar, but also because the profile is highly unusual (low typicality) in the general population. Therefore, the recommended practice is to use common-source or specific-source methods that properly incorporate typicality, rather than relying on similarity scores alone [25].

Interpreting the Likelihood Ratio

Quantitative Interpretation

The magnitude of the LR indicates the strength of the evidence.

Table: Interpretation Guide for Likelihood Ratio Values

| LR Value | Interpretation | Strength of Evidence |
|---|---|---|
| > 10 | Strong evidence to support H₁ over H₂ | Strong / Convincing |
| 2 to 10 | Moderate evidence to support H₁ over H₂ | Moderate |
| 1 to 2 | Minimal evidence to support H₁ over H₂ | Weak / Limited |
| 1 | No evidence; the evidence is equally likely under both propositions | Non-informative |
| 0.5 to 1 | Minimal evidence to support H₂ over H₁ | Weak / Limited |
| 0.1 to 0.5 | Moderate evidence to support H₂ over H₁ | Moderate |
| < 0.1 | Strong evidence to support H₂ over H₁ | Strong / Convincing |

In diagnostic medicine, an LR+ above 10 or an LR- below 0.1 is often considered to provide strong, and frequently conclusive, evidence [23] [20].
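The interpretation guide can be expressed as a lookup function, sketched below. The table does not say which category the boundary values 2, 10, 0.5, and 0.1 belong to, so this sketch assumes they fall in the "Moderate" band; that choice, like the function name, is an assumption rather than part of the cited guidance.

```python
def interpret_lr(lr: float) -> str:
    """Map an LR to the verbal categories of the interpretation guide."""
    if lr <= 0:
        raise ValueError("an LR must be positive")
    if lr == 1:
        return "No evidence; equally likely under both propositions"
    # Evidence for H2 is scored on the reciprocal scale, so that
    # 0.5 and 0.1 mirror 2 and 10.
    favored = "H1 over H2" if lr > 1 else "H2 over H1"
    strength = lr if lr > 1 else 1.0 / lr
    if strength > 10:
        label = "Strong"
    elif strength >= 2:  # assumption: boundaries 2 and 10 count as moderate
        label = "Moderate"
    else:
        label = "Minimal"
    return f"{label} evidence to support {favored}"


for value in (25, 2.68, 1.5, 1, 0.7, 0.3, 0.05):
    print(f"LR = {value}: {interpret_lr(value)}")
```

Scoring values below 1 by their reciprocal keeps the scale symmetric, which matches the mirrored structure of the table.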

Integration with Bayesian Framework

The true power of the LR is realized when it is used within a Bayesian framework to update prior beliefs. The relationship is given by:

Posterior Odds = Prior Odds × Likelihood Ratio

Where:

- Prior Odds = P(H₁) / P(H₂) [The odds in favor of H₁ before considering the evidence]

- Posterior Odds = P(H₁ | E) / P(H₂ | E) [The odds in favor of H₁ after considering the evidence]

In diagnostic terminology, the prior and posterior odds are called the pre-test and post-test odds. Odds can be converted to a probability: Post-test Probability = Post-test Odds / (Post-test Odds + 1)

For example, if a disease has a pre-test probability of 50% (Pre-test Odds = 1:1), and a test has an LR+ of 6, the post-test odds are 1 × 6 = 6. The post-test probability is then 6 / (6 + 1) ≈ 86% [20].

Figure 2: The Bayesian framework for updating belief using a Likelihood Ratio.
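The odds-form update can be sketched in a few lines of Python. The function name is hypothetical; the worked call reproduces the example of a 50% pre-test probability and an LR+ of 6.

```python
def post_test_probability(pre_test_probability: float, lr: float) -> float:
    """Update a probability with an LR via the odds form of Bayes' theorem."""
    if not 0.0 < pre_test_probability < 1.0:
        raise ValueError("pre-test probability must lie strictly between 0 and 1")
    if lr < 0:
        raise ValueError("an LR cannot be negative")
    pre_odds = pre_test_probability / (1.0 - pre_test_probability)
    post_odds = pre_odds * lr            # Posterior Odds = Prior Odds x LR
    return post_odds / (post_odds + 1.0)  # convert odds back to a probability


print(f"{post_test_probability(0.50, 6):.0%}")  # 86%
```

The same LR moves different pre-test probabilities by different amounts, which is why the LR alone cannot be reported as the probability of a proposition.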

Application in Forensic Science and Research

The LR in a Forensic Context

The LR framework is directly applicable to evaluative reporting in forensic science. It forces the examiner to consider at least two propositions: one offered by the prosecution (e.g., "this fingerprint came from the suspect") and one by the defense (e.g., "this fingerprint came from some other person") [26]. The LR provides a standardized scale for the forensic expert to communicate the weight of the evidence to the court, without encroaching on the ultimate issue, which is the purview of the trier of fact. The ongoing research and debate around subjective probability in this context emphasize that the probabilities used in LRs are not arbitrary but are justified, constrained assessments based on data, experience, and logical reasoning [2] [3] [26].

Current Challenges and Research Directions

Despite its logical appeal, the implementation of the LR faces challenges in some forensic disciplines:

- Bloodstain Pattern Analysis (BPA): The use of LRs in BPA is complex because BPA often concerns activities (activity-level propositions) rather than source identification, and there are significant gaps in the underlying physics that informs the probabilities. Wider adoption requires more research in fluid dynamics, data sharing, and statistical training [24].

- Fingerprint Evidence: Research is focused on developing statistical models to compute LRs for fingerprint comparisons, moving away from non-probabilistic conclusions to quantifiable measures of evidential strength [26].

- Computational Methods: For continuous data, precisely determining LR(r) (the LR for a specific result r) requires knowing the exact probability density functions, which is often difficult with discrete empirical data. Therefore, likelihood ratios for positive/negative test results (LR+ and LR-) or for ranges of results are more commonly used in practice [22].
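An LR for a range of results replaces the two density heights with the probabilities of falling inside the range under each proposition. The sketch below illustrates this with the normal FBS model from the earlier sample data; the interval limits are hypothetical, and with empirical data the two interval probabilities would instead be the observed proportions of diseased and non-diseased subjects in that range.

```python
from statistics import NormalDist

# Same illustrative FBS model as in the continuous-data example (mg/dL).
diabetic = NormalDist(mu=99.7, sigma=7.2)  # D+
healthy = NormalDist(mu=89.7, sigma=5.0)   # D-


def interval_lr(low: float, high: float) -> float:
    """LR for a result in [low, high]: P(range | D+) / P(range | D-)."""
    if not low < high:
        raise ValueError("low must be smaller than high")
    p_diseased = diabetic.cdf(high) - diabetic.cdf(low)
    p_healthy = healthy.cdf(high) - healthy.cdf(low)
    return p_diseased / p_healthy


# Hypothetical reporting bands, chosen only for illustration.
for low, high in [(80, 90), (90, 95), (95, 100), (100, 110)]:
    print(f"{low}-{high} mg/dL: LR = {interval_lr(low, high):.2f}")
```

Wider bands are easier to estimate from limited data but discard information, since every result within a band receives the same LR.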

The Scientist's Toolkit: Essential Research Reagents

Table: Key Reagents and Materials for LR Research and Application

| Reagent / Material | Function in LR Calculation and Research |
|---|---|
| Reference Population Databases | Provide essential data for estimating probability distributions (e.g., f_Sj, f_Fj, f(x), g(x)) under the alternative propositions. |
| Statistical Software (R, Python, MATLAB) | Used to implement probability calculations, fit statistical models (e.g., PDFs), and compute LRs, especially for complex or high-dimensional data. |
| Probability Density Function (PDF) Models (e.g., Normal, Kernel Density Estimates) | Serve as the core models for calculating LRs with continuous data by providing the functions f(x) and g(x). |
| Validated Diagnostic Assays | Provide the standardized, reproducible test results (e.g., FBS, genetic markers) that form the evidence E in the LR formula. |
| Sensitivity and Specificity Data | Derived from validation studies, these metrics are the fundamental inputs for calculating dichotomous test LRs (LR+ and LR-). |