Structural Flexibility in Compound AI Systems: Principles and Applications for Drug Development

Abstract

This article explores the principles of Compound AI Systems (CAIS) and their inherent structural flexibility, providing a comprehensive guide for researchers and professionals in drug development. It covers the foundational architecture of CAIS, detailing how the integration of multiple specialized components—such as LLMs, retrievers, and tools—overcomes the limitations of monolithic AI. The content then delves into methodological applications in biomedical research, from automating documentation to predicting molecular interactions. Further, it addresses the critical challenges of troubleshooting, optimization, and ensuring robust validation within regulated environments. By synthesizing foundational knowledge with practical, application-oriented guidance, this article serves as a vital resource for leveraging modular, adaptable AI to accelerate and enhance drug discovery and clinical research.

Deconstructing Compound AI: From Monolithic Models to Modular Systems

The field of artificial intelligence is undergoing a fundamental architectural transformation, moving from the development of ever-larger monolithic models to the design of Compound AI Systems (CAIS). In this paradigm, better performance is sought not only by scaling model parameters but also by deliberately orchestrating multiple specialized components. Compound AI Systems are formally defined as modular frameworks that integrate large language models (LLMs) with external components, such as retrievers, tools, agents, and orchestrators, to overcome the inherent limitations of standalone models in tasks requiring memory, reasoning, real-time grounding, and multimodal understanding [1]. This approach contrasts with the traditional paradigm in which a single, self-contained model attempts to handle every aspect of a task on its own.

The limitations of monolithic LLMs have become increasingly apparent as AI applications move from research to real-world deployment. Standalone models frequently struggle with hallucination, producing fluent but factually inaccurate output that undermines trust in high-stakes domains. They suffer from staleness, lacking access to post-training knowledge, which limits their responsiveness to emerging facts. Furthermore, they exhibit bounded reasoning due to finite context windows and inference budgets, constraining multi-hop reasoning and long-horizon task decomposition [1]. These limitations impede safe and effective deployment in dynamic environments that require recency, factual reliability, and compositional reasoning—requirements particularly critical in domains like drug development and healthcare.

This technical guide examines the core principles, architectural patterns, and implementation methodologies of Compound AI Systems, with particular attention to the emerging research on structural flexibility and its implications for AI-driven drug discovery. By synthesizing formal definitions, architectural blueprints, and experimental protocols, we provide researchers and drug development professionals with a comprehensive framework for understanding, designing, and optimizing these systems for complex scientific applications.

Core Architecture and Formal Definitions

Fundamental Components and Mathematical Formalization

At its core, a Compound AI System can be mathematically represented as a function of three essential elements: Compound AI System = f(L, C, D), where L represents the set of LLMs in the system, C encompasses all external components, and D defines the system design governing their interactions [1]. This formalization highlights that neither LLMs nor components alone constitute a CAIS; rather, it is their integration through deliberate architectural choices that creates emergent capabilities beyond what any single element could achieve.

A more granular formalization models a CAIS as Φ = (G, F), where G = (V, E) is a directed graph representing the system topology, and F = {f_i} is a set of operations attached to each node v_i in the graph [2]. In this computational graph representation, each node v_i performs an operation Y_i = f_i(X_i; Θ_i), where X_i is the input, Y_i is the output, and Θ_i are the node parameters decomposable into numerical parameters (θ_i,N) and textual parameters (θ_i,T) [2]. The edges between nodes are governed by Boolean functions c_ij: Ω → {0,1} that determine whether a connection between nodes v_i and v_j is active based on the contextual state τ ∈ Ω, creating a dynamic topology that can adapt to different inputs and intermediate states [2].
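
The graph formalization above can be made concrete with a small sketch. The code below is an illustration under stated assumptions, not an implementation from [2]: the class names `Node` and `CompoundSystem`, the three stub operations, and the rule "follow the first active outgoing edge" are all hypothetical choices. Each node applies an operation f_i to the contextual state τ, and each edge carries a Boolean predicate c_ij that decides whether the connection is active.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple

State = Dict[str, object]  # contextual state tau


@dataclass
class Node:
    name: str
    op: Callable[[State], State]  # f_i: reads the state, returns updates to it


@dataclass
class CompoundSystem:
    nodes: Dict[str, Node]
    # edges[(i, j)] is c_ij, a Boolean predicate over the current state
    edges: Dict[Tuple[str, str], Callable[[State], bool]]

    def run(self, start: str, state: State, max_steps: int = 10) -> Tuple[State, List[str]]:
        current, trace = start, []
        for _ in range(max_steps):
            trace.append(current)
            state = {**state, **self.nodes[current].op(state)}
            # Assumption: take the first outgoing edge whose predicate is true.
            active = [j for (i, j), c in self.edges.items() if i == current and c(state)]
            if not active:
                break
            current = active[0]
        return state, trace


# Hypothetical stub operations standing in for an LLM router, a retriever, and a generator.
def router(s: State) -> State:
    return {"needs_retrieval": "latest" in str(s["query"])}


def retriever(s: State) -> State:
    return {"context": "retrieved passage (placeholder)"}


def generator(s: State) -> State:
    return {"answer": f"answer using {s.get('context', 'no context')}"}


system = CompoundSystem(
    nodes={n.name: n for n in (Node("router", router), Node("retriever", retriever), Node("generator", generator))},
    edges={
        ("router", "retriever"): lambda s: bool(s["needs_retrieval"]),
        ("router", "generator"): lambda s: not s["needs_retrieval"],
        ("retriever", "generator"): lambda s: True,
    },
)

_, trace_a = system.run("router", {"query": "latest trial results"})
_, trace_b = system.run("router", {"query": "define IC50"})
print(trace_a)
print(trace_b)
```

The two queries traverse different paths through the same graph, which is the sense in which the topology is dynamic: the edge set is fixed, but which edges fire depends on the state.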

Table 1: Core Components of Compound AI Systems

| Component Category | Subtypes | Primary Function | Examples |
|---|---|---|---|
| Large Language Models (L) | General-purpose, Domain-specific, Fine-tuned | Core reasoning, text generation, pattern recognition | GPT-4, Gemini, Claude, domain-specific LLMs |
| External Components (C) | Tools, Retrievers, Symbolic solvers, Multimodal encoders | Extend LLM capabilities with specialized functions | Web search, code interpreters, knowledge graphs, RAG modules |
| System Design (D) | Orchestration frameworks, Routing logic, Communication protocols | Define component interactions and workflow coordination | LangChain, LlamaIndex, AutoGen, custom orchestrators |

Architectural Visualization

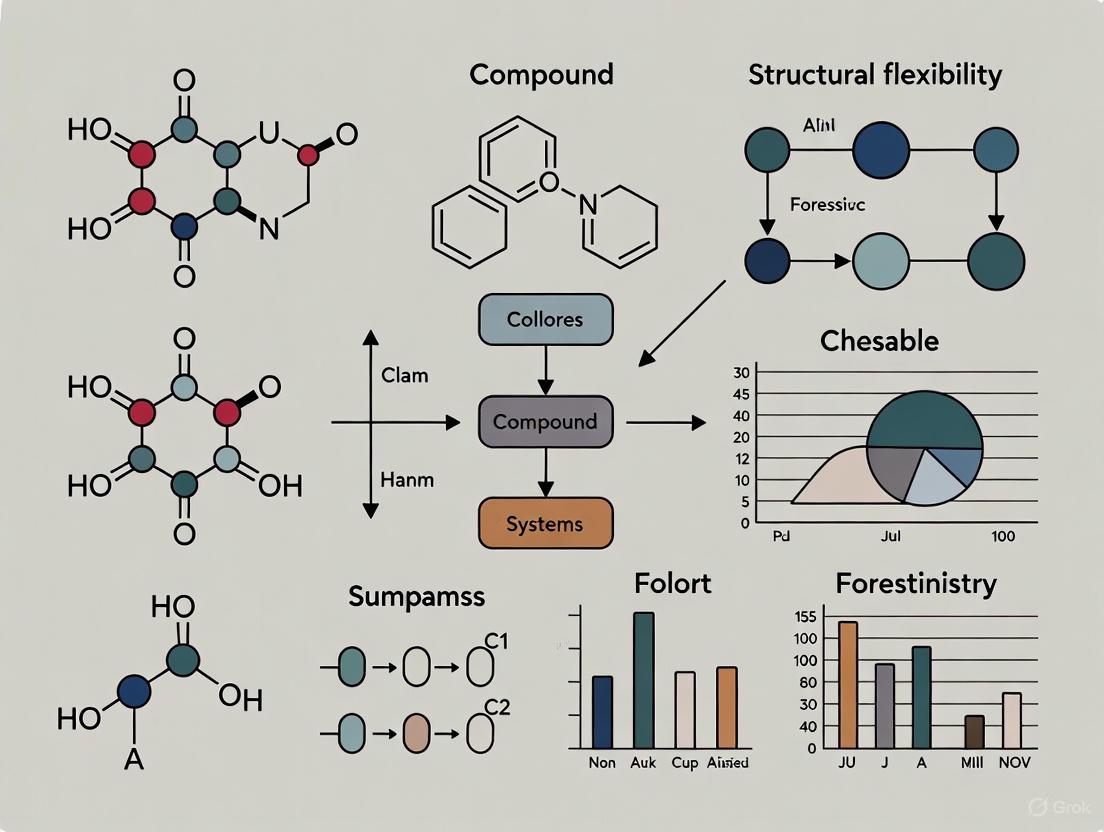

The following diagram illustrates the fundamental architecture of a Compound AI System, showing the integration of core LLMs with specialized components through a structured orchestration layer:

Dimensions of Structural Flexibility in Compound AI Systems

The Spectrum of Architectural Adaptability

Structural flexibility represents a critical dimension in Compound AI System design, referring to the degree to which an optimization method can modify the computational graph G = (V, E) of a system Φ [2]. This flexibility exists along a spectrum from fixed to dynamically evolving architectures, with significant implications for system performance, adaptability, and optimization complexity.

Fixed Structure approaches assume a predefined topology (V, E) and focus optimization efforts exclusively on node parameters {Θ_i}. This includes techniques such as prompt optimization, parameter tuning, and model fine-tuning while maintaining static connections between components. The advantage of this approach lies in its relative simplicity and stability, making it suitable for well-defined problems with predictable workflows. However, it lacks the adaptability to reconfigure system architecture in response to novel challenges or changing requirements [2].

In contrast, Flexible Structure methods acknowledge that optimal performance often requires jointly optimizing both node parameters and the graph structure itself, including edge connections E, node counts |V|, and even the types of operations in F [2]. This approach enables systems to dynamically adapt their architecture based on task requirements, input characteristics, and performance feedback. The trade-off comes in increased complexity, longer optimization cycles, and potential instability during the exploration of novel configurations.

Table 2: Optimization Methods for Structural Flexibility

| Optimization Method | Structural Flexibility | Learning Signals | Key Techniques |
|---|---|---|---|
| Parameter Optimization | Fixed | Numerical, Textual | Supervised fine-tuning, Reinforcement Learning, Prompt tuning |
| Topology Search | Flexible | Numerical, Textual | Neural Architecture Search, Evolutionary algorithms, LLM-generated proposals |
| Feedback-Based Adaptation | Flexible | Natural Language | Textual feedback loops, Self-debugging, Human-in-the-loop refinement |
| Hybrid Approaches | Variable | Mixed | Gradient-based + LLM-driven, Multi-objective optimization |

Dynamic Architecture Selection Framework

The following diagram illustrates how structural flexibility enables dynamic architecture selection based on task requirements and context:

Experimental Protocols and Evaluation Frameworks

Methodologies for Compound AI System Optimization

The optimization of Compound AI Systems requires specialized experimental protocols that account for their multi-component, often non-differentiable nature. Unlike single-model optimization that can rely on gradient-based methods, CAIS optimization must address challenges in credit assignment across components, heterogeneous learning signals, and evaluation of overall system performance.

Protocol 1: End-to-End System Optimization

- Objective: Maximize overall system performance metric μ across training set D = {(q_i, m_i)}, where q_i represents queries and m_i represents optional metadata [2].

- Procedure:

  - Define performance metric μ: A × M → ℝ that measures system output quality against ground truth or task objectives.
  - Initialize system Φ = (G, F) with either fixed or flexible structure based on task complexity.
  - Generate system responses a_i = Φ(q_i) for all training examples.
  - Compute performance gradient ∇_Φ μ or alternative optimization signal.
  - Update system parameters Θ and/or structure G based on optimization method.
  - Repeat until convergence or performance plateau.

- Variants: This protocol can incorporate numerical gradients (when differentiable), textual feedback from auxiliary LLMs, or human preferences as learning signals [2].
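
As a rough illustration of Protocol 1, the sketch below optimizes a single textual parameter over a fixed structure. It makes several simplifying assumptions that are not part of [2]: the "system" is a toy string normalizer rather than an LLM pipeline, the gradient ∇_Φ μ is replaced by plain enumeration of candidate parameter values, μ is exact match against a reference stored in the metadata m_i, and the names `build`, `evaluate`, and `optimize` are hypothetical.

```python
from typing import Callable, List, Tuple

Example = Tuple[str, str]  # (query q_i, metadata m_i); here m_i holds a reference answer


def evaluate(system: Callable[[str], str], metric: Callable[[str, str], float], data: List[Example]) -> float:
    """Average metric mu over the training set."""
    return sum(metric(system(q), m) for q, m in data) / len(data)


def optimize(build, candidates, metric, data, patience: int = 2):
    """Gradient-free loop: try each candidate theta, keep the best, stop on a plateau."""
    best_theta, best_score, stale = None, float("-inf"), 0
    for theta in candidates:
        score = evaluate(build(theta), metric, data)
        if score > best_score:
            best_theta, best_score, stale = theta, score, 0
        else:
            stale += 1
            if stale >= patience:  # performance plateau
                break
    return best_theta, best_score


def build(theta: str) -> Callable[[str], str]:
    """Toy system whose behavior is controlled by a textual parameter theta."""
    def system(q: str) -> str:
        out = q
        if "strip" in theta:
            out = out.strip()
        if "lower" in theta:
            out = out.lower()
        return out
    return system


def exact_match(answer: str, reference: str) -> float:
    return 1.0 if answer == reference else 0.0


train = [(" TP53 ", "tp53"), ("EGFR", "egfr")]
best_theta, best_score = optimize(build, ["strip", "lower", "strip+lower"], exact_match, train)
print(best_theta, best_score)
```

In a real system the candidate list would come from an optimizer (for example, an auxiliary LLM proposing prompt revisions), and `evaluate` would dominate the cost because every call runs the full pipeline.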

Protocol 2: Component-Wise Ablation Studies

- Objective: Isolate contribution of individual components to overall system performance.

- Procedure:

  - Establish baseline performance of complete system Φ on benchmark tasks.
  - For each component C_i ∈ C, create ablated system Φ_{-i} with component removed or replaced with null operation.
  - Measure performance differential Δμ_i = μ(Φ) - μ(Φ_{-i}).
  - Rank components by contribution magnitude and identify critical path dependencies.
  - Optimize resource allocation toward high-impact components.

- Applications: Particularly valuable in resource-constrained environments or when debugging system failures.
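
The ablation procedure above reduces to a short loop once a scoring function exists. The following is a minimal sketch, assuming a hypothetical `run_system` that scores a set of components on a benchmark; here it is a stand-in that counts a task as solved when every component the task needs is present, and the component names and benchmark are invented for illustration.

```python
from typing import Callable, Dict, List, Set, Tuple


def run_system(components: Dict[str, object], benchmark: List[Set[str]]) -> float:
    """Toy metric mu: fraction of tasks whose required components are all available."""
    return sum(required.issubset(components) for required in benchmark) / len(benchmark)


def ablation_study(
    components: Dict[str, object],
    score: Callable[[Dict[str, object], List[Set[str]]], float],
    benchmark: List[Set[str]],
) -> Tuple[float, Dict[str, float]]:
    baseline = score(components, benchmark)  # mu(Phi)
    deltas = {}
    for name in components:
        ablated = {k: v for k, v in components.items() if k != name}  # Phi_{-i}
        deltas[name] = baseline - score(ablated, benchmark)  # delta mu_i
    # Rank components by contribution, largest first.
    ranked = dict(sorted(deltas.items(), key=lambda kv: kv[1], reverse=True))
    return baseline, ranked


# Hypothetical components and a benchmark described by what each task requires.
components = {"retriever": object(), "calculator": object(), "reranker": object()}
benchmark = [{"retriever"}, {"retriever", "calculator"}, {"retriever"}, set()]

baseline, contributions = ablation_study(components, run_system, benchmark)
print(baseline, contributions)
```

A component with Δμ_i near zero, like the reranker in this toy benchmark, is a candidate for removal when latency or cost is constrained. Removing components one at a time does not reveal interactions between them, so pairwise ablations may be needed when components are suspected to be redundant with each other.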

Protocol 3: Structural Search and Optimization

- Objective: Identify optimal system topology G* for specific task domain.

- Procedure:

  - Define search space of possible architectures G ∈ 𝒢 with constraints on complexity, latency, or resource requirements.
  - Implement efficient search algorithm (evolutionary, Bayesian optimization, or LLM-guided) to explore architecture space.
  - Evaluate candidate architectures using parallelized or proxy evaluation strategies.
  - Select optimal architecture G* that maximizes performance μ while satisfying constraints.
  - Fine-tune component parameters Θ for selected architecture.

- Challenges: The number of candidate architectures grows exponentially with the number of optional nodes and edges, so computational expense escalates quickly and the search space and evaluation strategy must be designed carefully [2].
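
A toy version of Protocol 3 is sketched below. It is an assumption-heavy illustration rather than a method from [2]: the search space is every subset of three optional components, the search is exhaustive rather than evolutionary or Bayesian, and the proxy evaluation simply adds invented per-component accuracy gains and latencies (the numbers in `OPTIONAL` and `BASE` are hypothetical).

```python
from itertools import combinations
from typing import Dict, Tuple

# Hypothetical proxy estimates: component -> (accuracy gain, added latency in ms)
OPTIONAL: Dict[str, Tuple[float, int]] = {
    "retriever": (0.20, 120),
    "reranker": (0.05, 80),
    "verifier": (0.10, 200),
}
BASE = (0.50, 300)  # accuracy and latency of the bare LLM node


def evaluate(arch: Tuple[str, ...]) -> Tuple[float, int]:
    """Proxy evaluation: additive gains and latencies (a deliberate simplification)."""
    score = BASE[0] + sum(OPTIONAL[name][0] for name in arch)
    latency = BASE[1] + sum(OPTIONAL[name][1] for name in arch)
    return score, latency


def search(latency_budget_ms: int) -> Tuple[Tuple[str, ...], float]:
    """Exhaustive search over all subsets; feasible only because the space is tiny."""
    best_arch, best_score = (), BASE[0]
    for k in range(len(OPTIONAL) + 1):
        for arch in combinations(OPTIONAL, k):
            score, latency = evaluate(arch)
            if latency <= latency_budget_ms and score > best_score:
                best_arch, best_score = arch, score
    return best_arch, best_score


best_arch, best_score = search(latency_budget_ms=550)
print(best_arch, round(best_score, 2))
```

With n optional components there are 2^n subsets before edge choices are even considered, which is why practical structural search relies on guided exploration and cheap proxy evaluations instead of enumeration.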

The Scientist's Toolkit: Research Reagents for CAIS Experimentation

Table 3: Essential Research Tools for Compound AI System Development

| Tool Category | Specific Solutions | Function in CAIS Research | Implementation Considerations |
|---|---|---|---|
| Orchestration Frameworks | LangChain, LlamaIndex, AutoGen | Coordinate component interactions, manage workflows, handle state | Latency, error propagation, debugging visibility |
| Evaluation Benchmarks | HELM Safety, AIR-Bench, FACTS, SWE-bench | Standardized assessment of factuality, reasoning, safety | Domain relevance, difficulty calibration, cost of evaluation |
| Optimization Toolkits | PyCaret, H2O.ai AutoML, Custom RL frameworks | Automated parameter tuning, architecture search | Signal-to-noise ratio, credit assignment, training stability |
| Monitoring & Analysis | Weights & Biases, MLflow, Custom dashboards | Track experiments, visualize component interactions, debug failures | Observability granularity, performance attribution |
| Specialized Components | Symbolic solvers, Knowledge graphs, RAG systems | Extend reasoning capabilities, provide external knowledge | Integration complexity, latency budget, accuracy verification |

Applications in Drug Discovery and Development

Compound AI Systems in Pharmaceutical Research

The pharmaceutical industry has emerged as a particularly promising domain for Compound AI System applications, with demonstrated potential to address long-standing challenges in drug development timelines, costs, and success rates. By integrating specialized AI components for target identification, molecular design, and clinical trial optimization, CAIS platforms enable more efficient and effective drug discovery pipelines.

Leading AI-driven drug discovery companies exemplify the compound system approach in practice. Exscientia developed an end-to-end platform that integrates AI at every stage from target selection to lead optimization, dramatically compressing the design-make-test-learn cycle. Their platform reportedly achieved a clinical candidate after synthesizing only 136 compounds for a CDK7 inhibitor program, compared to thousands typically required in traditional approaches [3]. Insilico Medicine advanced a generative-AI-designed idiopathic pulmonary fibrosis drug from target discovery to Phase I trials in just 18 months, substantially faster than traditional timelines [3]. These examples demonstrate how carefully orchestrated AI systems can accelerate specific aspects of the drug development process.

The merger between Exscientia and Recursion Pharmaceuticals in 2024, valued at $688 million, represents a significant consolidation in the AI drug discovery landscape aimed at creating an "AI drug discovery superpower" by combining Exscientia's generative chemistry capabilities with Recursion's extensive phenomics and biological data resources [3]. This trend toward integrated platforms highlights the growing recognition that compound systems with complementary specialized components may deliver greater value than isolated AI tools.

Quantitative Impact of AI in Drug Discovery

Table 4: Performance Metrics of AI-Driven Drug Discovery Platforms

| Platform/Company | Key AI Capabilities | Reported Efficiency Gains | Clinical Pipeline Status |
|---|---|---|---|
| Exscientia | Generative chemistry, Automated design | 70% faster design cycles, 10x fewer compounds synthesized | Multiple Phase I/II candidates, None in Phase III |
| Insilico Medicine | Target discovery, Generative molecular design | Target-to-Preclinical: 18 months (vs. 5+ years traditional) | Phase I idiopathic pulmonary fibrosis candidate |
| Recursion | Phenotypic screening, Computer vision | High-content cellular imaging analysis at scale | Multiple oncology and neuroscience programs |
| BenevolentAI | Knowledge graphs, Target prioritization | AI-derived novel target identification | Several programs in clinical stages |
| Schrödinger | Physics-based simulations, ML scoring | Accelerated molecular docking and optimization | Partnered programs with major pharma |

Implementation Framework for Drug Discovery CAIS

The following diagram illustrates a typical Compound AI System architecture for drug discovery applications, integrating multiple specialized components:

Future Research Directions and Challenges

Despite significant progress in Compound AI Systems, substantial research challenges remain, particularly regarding optimization methodologies, evaluation standards, and real-world deployment in regulated environments like drug development.

A primary research direction involves developing more sophisticated optimization methods for end-to-end system improvement. Current approaches include reinforcement learning from human feedback (RLHF), process-based reward models (PRMs), and language-based feedback loops that provide learning signals for non-differentiable components [2]. However, credit assignment across multiple components remains challenging, particularly when feedback is sparse or delayed. Future research should explore multi-objective optimization techniques that balance competing goals like accuracy, latency, cost, and interpretability.
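
One simple way to reason about competing goals such as accuracy, latency, and cost is to keep only the configurations that are not dominated on every objective (the Pareto front). The sketch below assumes each candidate system configuration has already been measured; the configuration names and numbers are invented for illustration, and `dominates` and `pareto_front` are hypothetical helper names.

```python
from typing import Dict, List, Sequence

Config = Dict[str, object]


def dominates(a: Config, b: Config, maximize: Sequence[str], minimize: Sequence[str]) -> bool:
    """True if a is at least as good as b on every objective and strictly better on one."""
    no_worse = all(a[k] >= b[k] for k in maximize) and all(a[k] <= b[k] for k in minimize)
    better = any(a[k] > b[k] for k in maximize) or any(a[k] < b[k] for k in minimize)
    return no_worse and better


def pareto_front(
    configs: List[Config],
    maximize: Sequence[str] = ("accuracy",),
    minimize: Sequence[str] = ("latency_s", "cost_usd"),
) -> List[Config]:
    return [c for c in configs if not any(dominates(o, c, maximize, minimize) for o in configs)]


# Hypothetical measurements for four system configurations.
configs = [
    {"name": "A", "accuracy": 0.80, "latency_s": 1.0, "cost_usd": 0.01},
    {"name": "B", "accuracy": 0.85, "latency_s": 2.5, "cost_usd": 0.03},
    {"name": "C", "accuracy": 0.78, "latency_s": 1.2, "cost_usd": 0.02},  # worse than A everywhere
    {"name": "D", "accuracy": 0.85, "latency_s": 3.0, "cost_usd": 0.05},  # no better than B, slower and costlier
]

front = pareto_front(configs)
print([c["name"] for c in front])
```

The front does not pick a winner; it narrows the choice to configurations where improving one objective means giving up another, leaving the final trade-off to the team's priorities. Interpretability is harder to score numerically and is omitted here.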

In drug development applications, regulatory considerations present unique challenges for Compound AI Systems. The U.S. Food and Drug Administration (FDA) and European Medicines Agency (EMA) have begun establishing frameworks for AI oversight in drug development, with the FDA adopting a flexible, dialog-driven model while the EMA employs a more structured, risk-tiered approach [4]. Both agencies emphasize validation, transparency, and performance monitoring, but requirements for complex AI systems with multiple interacting components remain evolving. Regulatory uncertainty may be particularly challenging for small- and medium-sized enterprises facing compliance burdens [4].

Additional frontier challenges include developing effective evaluation frameworks that measure overall system performance rather than just component-level metrics, establishing standards for system robustness and failure mode analysis, and creating methods for continuous learning while maintaining safety and performance guarantees. As Compound AI Systems grow more complex, research into interpretability and explainability techniques will become increasingly important, particularly in high-stakes domains like healthcare where understanding system reasoning is essential for trust and adoption.

The integration of emerging AI capabilities with compound systems presents another fertile research direction. Agentic AI systems that can autonomously plan and execute multi-step workflows represent a natural evolution of current CAIS architectures [5]. Similarly, advances in multimodal reasoning and human-AI collaboration paradigms will enable more sophisticated and intuitive interactions between compound systems and human experts, potentially creating new models for scientific discovery and problem-solving across domains, including pharmaceutical research and development.

In the development of complex systems, particularly within the domain of compound artificial intelligence (AI) and structural flexibility research, three core architectural principles emerge as critical: modularity, orchestration, and component interaction. These principles provide the foundational framework for constructing systems capable of handling sophisticated, multi-step problems that exceed the capabilities of any single component working in isolation. Compound AI systems, defined as advanced frameworks where multiple AI components collaborate to perform tasks, represent a significant shift from simple, static AI models to dynamic, multi-functional systems that can handle real-world, complex problems [6]. This architectural approach breaks down complex tasks into smaller sub-tasks, with each sub-system or model contributing its specialized expertise within a unified system.

The significance of these principles extends across multiple domains, from autonomous driving platforms to drug discovery pipelines, where reliability, scalability, and adaptability are paramount. In pharmaceutical research and development, these principles enable the creation of flexible, robust computational infrastructures that can adapt to evolving research needs, integrate diverse data sources, and accelerate the discovery process through specialized, interoperable components. This technical guide examines these core principles through the lens of compound AI systems, providing researchers and drug development professionals with both theoretical foundations and practical implementation methodologies.

Core Principle 1: Modularity in System Architecture

Definition and Theoretical Foundation

Modularity represents a design principle that subdivides a system into smaller, self-contained parts called modules, which can be independently created, modified, replaced, or exchanged with other modules or between different systems [7]. This partitioning enables easier standardization and makes product variability possible through functional decomposition. A truly modular design is characterized by functional partitioning into discrete, scalable, and reusable modules, rigorous use of well-defined modular interfaces, and the application of industry standards for interfaces.

In architectural theory, modular systems exhibit higher dimensional modularity and degrees of freedom compared to simpler platform systems that utilize modular components but with limited flexibility. A modular system design has no distinct lifetime and exhibits flexibility in at least three dimensions, allowing systems to be upgraded and adapted multiple times during their operational lifespan without requiring complete system replacement [7]. This dimensional flexibility enables far greater adaptability in both form and function than systems with limited modularity.

Benefits and Implementation Challenges

The implementation of modular design principles offers significant advantages for complex computational systems, particularly in research environments:

Table 1: Benefits and Drawbacks of Modular Design in Computational Systems

| Benefits | Drawbacks |
|---|---|
| Reduced Costs: Customization limited to specific modules rather than system overhaul [7] | Design Complexity: Significantly higher than platform systems [7] |
| Enhanced Flexibility: Adapts to user needs without complete system redesign [7] | Specialized Expertise Required: Needs experts in design and product strategy [7] |
| Improved Sustainability: Extends product life via module upgrades versus full replacement [7] | Advanced Planning Necessary: Must anticipate flexibility requirements during conception [7] |
| Standardization: Fewer system parts reduce production time and simplify inventory [7] | Integration Challenges: Potential interface compatibility issues between modules |
| Non-Generational Augmentation: Adding new solutions through module integration [7] | Performance Overhead: Inter-module communication may introduce latency |

The most significant challenge in modular system design lies in the initial conception phase, which must anticipate the directions and levels of flexibility necessary to deliver modular benefits effectively. This requires a higher level of design skill and sophistication than more common platform systems [7].

Modularity in Compound AI Systems

In compound AI systems, modularity manifests through the composition of multiple specialized components—such as reasoning models, memory layers, retrieval systems, and external tools—into a unified system [6]. These systems are inherently modular, allowing different AI models, tools, agents, and databases to be combined and orchestrated to work together. The resulting architecture is more robust, adaptable, and intelligent, capable of solving complex, multi-step problems through specialized component contributions.

The Mobileye autonomous driving platform exemplifies sophisticated modular implementation, breaking autonomy into clearly defined components such as sensing, planning, and acting, each corresponding with a dedicated AI model or models [8]. This modular approach allows engineers to focus on specific driving functions, enabling flexibility and specialization while maintaining system cohesion through well-defined interfaces.

Core Principle 2: Orchestration Patterns

The Role of Orchestration in Compound Systems

Orchestration serves as the architectural pattern that controls the flow of data across multiple components in a system, with the primary purpose of simplifying communication between services and decoupling the requirement of knowing the next service in a sequence [9]. The orchestrator acts as the key component that maintains knowledge about requirements to trigger services and manages the overall workflow. This centralized control mechanism ensures that business processes and computational workflows are executed reliably and maintainably, particularly in systems with multiple conditions required to trigger service actions.

In compound AI systems, orchestration enables communication and coordination among various components, allowing different agents and tools to be plugged in and out based on task requirements [6]. This dynamic coordination is essential for adapting to complex workflows and research environments, ensuring that each system component contributes at the right time with the appropriate resources. The orchestrator manages the complexity of component interactions, allowing individual modules to focus on their specialized functions without maintaining extensive knowledge about other system components.

Classification of Orchestration Patterns

Orchestration patterns define proven approaches for coordinating multiple agents or components so that they work together to accomplish specific outcomes. Each pattern is optimized for different coordination requirements, and together they complement traditional cloud design patterns by addressing the unique challenges of coordinating autonomous components in AI-driven workloads [10].

Table 2: Orchestration Patterns for Multi-Agent AI Systems

| Pattern | Key Characteristics | Optimal Use Cases | Performance Considerations |
|---|---|---|---|
| Sequential Orchestration | Linear agent chain, predefined order, deterministic workflow [10] | Multistage processes with clear dependencies, progressive refinement workflows [10] | Potential bottlenecks from slowest agent; limited parallelization [10] |
| Concurrent Orchestration | Parallel agent execution, independent processing, result aggregation [10] | Tasks benefiting from multiple perspectives, time-sensitive scenarios, ensemble reasoning [10] | Resource-intensive; requires conflict resolution strategy [10] |
| Group Chat Orchestration | Collaborative discussion, shared conversation thread, chat manager coordination [10] | Creative brainstorming, validation workflows, quality control processes [10] | Discussion overhead; potential infinite loops without careful management [10] |
| Handoff Orchestration | Dynamic task delegation, intelligent routing, transfer of full control [10] | Scenarios where optimal agent isn't known upfront, context-dependent task requirements [10] | Routing decision latency; potential transfer overhead [10] |
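
The first two patterns in the table can be contrasted in a few lines. This is a minimal sketch under stated assumptions: agents are modeled as plain Python callables rather than LLM-backed agents, the helper names `run_sequential`, `run_concurrent`, and `majority_vote` are hypothetical, and majority voting is only one possible conflict-resolution strategy.

```python
from collections import Counter
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List, Sequence


def run_sequential(agents: Sequence[Callable[[str], str]], task: str) -> str:
    """Linear chain: each agent refines the previous agent's output."""
    result = task
    for agent in agents:
        result = agent(result)
    return result


def run_concurrent(
    agents: Sequence[Callable[[str], str]],
    task: str,
    aggregate: Callable[[List[str]], str],
) -> str:
    """Fan out the same task to all agents in parallel, then aggregate."""
    with ThreadPoolExecutor(max_workers=len(agents)) as pool:
        results = list(pool.map(lambda agent: agent(task), agents))  # order is preserved
    return aggregate(results)


def majority_vote(results: List[str]) -> str:
    return Counter(results).most_common(1)[0][0]


# Hypothetical stub agents.
draft = lambda text: text + " -> draft"
review = lambda text: text + " -> review"
assessors = [lambda t: "safe", lambda t: "toxic", lambda t: "safe"]

seq_out = run_sequential([draft, review], "protocol")
con_out = run_concurrent(assessors, "compound X", majority_vote)
print(seq_out)
print(con_out)
```

The sequential chain takes as long as the sum of its agents, while the concurrent fan-out takes roughly as long as its slowest agent but consumes resources for all of them at once, which mirrors the performance considerations listed in the table.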

Implementation Considerations for Orchestration

The implementation of effective orchestration requires careful consideration of several architectural factors. Orchestrators must maintain configurations defining service triggering requirements and store received events, particularly for services requiring multiple events for activation [9]. This typically necessitates storage technology integration for maintaining state and configuration data.

Performance is another critical consideration, because centralizing control can create single points of failure and performance bottlenecks [9]. Careful design and implementation, with attention to scalability, fault tolerance, and resilience, is essential to reduce these risks. Orchestrator workload can vary significantly, for example from 100 to 10,000 events per day, so storage technology and processing capacity must be sized accordingly [9].
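
The configuration-and-event-store behavior described in this section can be sketched as follows. The example is illustrative only: the `Orchestrator` class and its method names are hypothetical, the event names are invented, and an in-memory dictionary stands in for the storage technology a production orchestrator would need.

```python
from collections import defaultdict
from typing import Callable, Dict, Iterable, List, Set


class Orchestrator:
    def __init__(self) -> None:
        self.requirements: Dict[str, Set[str]] = {}           # service -> events needed to trigger it
        self.handlers: Dict[str, Callable[[dict], None]] = {}
        self.received: Dict[str, dict] = defaultdict(dict)     # service -> events stored so far

    def register(self, service: str, required_events: Iterable[str], handler: Callable[[dict], None]) -> None:
        self.requirements[service] = set(required_events)
        self.handlers[service] = handler

    def publish(self, event_type: str, payload: dict) -> List[str]:
        """Store the event and trigger every service whose requirements are now met."""
        triggered = []
        for service, required in self.requirements.items():
            if event_type not in required:
                continue
            self.received[service][event_type] = payload
            if required.issubset(self.received[service]):
                events = self.received.pop(service)  # reset for the next activation
                self.handlers[service](events)
                triggered.append(service)
        return triggered


reports: List[dict] = []
orchestrator = Orchestrator()
orchestrator.register("dose_report", ["assay_done", "qc_passed"], reports.append)

first = orchestrator.publish("assay_done", {"plate": 7})
second = orchestrator.publish("qc_passed", {"plate": 7})
print(first, second, reports)
```

The publishers of `assay_done` and `qc_passed` never reference `dose_report`; only the orchestrator knows which service comes next, which is the decoupling the pattern is meant to provide. The same centralization is what makes the orchestrator a potential single point of failure.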

Figure 1: AI Agent Orchestration Pattern Classification

Core Principle 3: Component Interaction Modalities

Fundamental Components of AI Systems

Effective component interaction begins with understanding the fundamental elements that constitute AI systems. While capabilities vary across different agent types, several core components consistently appear in sophisticated AI architectures:

Perception and Input Handling: Enables the agent to ingest and interpret information from various sources, including user queries, system logs, structured data from APIs, or sensor readings [11]. This module employs technologies like natural language processing (NLP) for text-based inputs or data extraction techniques for structured sources, cleaning and processing raw data into usable formats.

Planning and Task Decomposition: Unlike reactive agents that respond instinctively, planning agents map out sequences of actions before execution [11]. This component breaks complex problems into smaller, manageable tasks, sequencing actions and determining dependencies between tasks using logic, machine learning models, or predefined heuristics.

Memory: Enables the AI agent to retain and recall information, ensuring it can learn from past interactions and maintain context over time [11]. Memory is typically divided into short-term memory for session-based context and long-term memory for structured knowledge bases, vector embeddings, and historical data.

Reasoning and Decision-Making: Determines how an agent reacts to its environment by weighing different factors, evaluating probabilities, and applying logical rules or learned behaviors [11]. This can range from simple rule-based systems to advanced implementations using Bayesian inference, reinforcement learning, or neural networks.

Action and Tool Calling: Implements the agent's decisions by interacting with users, digital systems, or physical environments [11]. Tool calling enables agents to invoke external tools, APIs, or functions to extend capabilities beyond native reasoning and knowledge.

Learning and Adaptation: Enables agents to learn from past experiences and improve over time through various learning paradigms, including supervised learning, unsupervised learning, and reinforcement learning [11].
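
The Action and Tool Calling component described above can be illustrated with a small registry and dispatcher. This is a sketch under assumptions: the structured call format (`{"tool": ..., "args": ...}`) is a hypothetical stand-in for whatever a given model or framework emits, and `tool`, `dispatch`, and `molecular_weight` are illustrative names rather than any library's API.

```python
from typing import Callable, Dict

TOOLS: Dict[str, Callable[..., object]] = {}


def tool(fn: Callable[..., object]) -> Callable[..., object]:
    """Register a function so an agent can invoke it by name."""
    TOOLS[fn.__name__] = fn
    return fn


# Standard atomic masses (g/mol), rounded to three decimals.
ATOMIC_MASS = {"H": 1.008, "C": 12.011, "N": 14.007, "O": 15.999}


@tool
def molecular_weight(atom_counts: Dict[str, int]) -> float:
    """Sum atomic masses for a formula given as element -> count."""
    return sum(ATOMIC_MASS[element] * n for element, n in atom_counts.items())


def dispatch(call: dict) -> dict:
    """Validate a structured tool call and execute it, returning errors as data."""
    name = call.get("tool")
    if name not in TOOLS:
        return {"ok": False, "error": f"unknown tool: {name}"}
    try:
        return {"ok": True, "result": TOOLS[name](**call.get("args", {}))}
    except Exception as exc:  # surface tool failures to the agent instead of crashing
        return {"ok": False, "error": str(exc)}


water = dispatch({"tool": "molecular_weight", "args": {"atom_counts": {"H": 2, "O": 1}}})
missing = dispatch({"tool": "dock_ligand", "args": {}})
print(water)
print(missing)
```

Returning errors as structured results lets the reasoning component decide whether to retry, pick another tool, or ask for clarification, rather than letting a failed call terminate the workflow.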

Interaction Modalities in Multi-Component Systems

Component interaction in compound AI systems occurs through several well-defined modalities that determine how modules communicate and coordinate:

Direct Communication: Components exchange information through predefined APIs or messaging protocols, maintaining awareness of interacting services. While straightforward to implement, this approach can create tight coupling between components [9].

Orchestrator-Mediated Communication: All components communicate through a central orchestrator that manages workflows and data flow. This approach simplifies component design by eliminating the need for components to maintain knowledge about other services [9].

Shared Memory Space: Components interact through a common memory or knowledge base, reading and writing to shared storage without direct communication. This approach enables asynchronous coordination and context maintenance across interactions [11].

Blackboard Architecture: Multiple specialized components work together by examining and contributing to a shared repository of data and hypotheses, similar to experts gathered around a blackboard [12].
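
A blackboard can be sketched as a shared dictionary plus a set of knowledge sources, each with a condition for when it can contribute. The code below is a minimal illustration, not a reference design from [12]: the control loop, the source names, and the placeholder outputs are all hypothetical.

```python
from typing import Callable, Dict, List, Tuple

Board = Dict[str, object]
Source = Tuple[Callable[[Board], bool], Callable[[Board], Board]]  # (condition, action)


def run_blackboard(board: Board, sources: Dict[str, Source], max_rounds: int = 10) -> List[str]:
    """Let sources contribute whenever their condition holds; stop when nobody can add anything."""
    log = []
    for _ in range(max_rounds):
        progressed = False
        for name, (condition, action) in sources.items():
            if condition(board):
                board.update(action(board))
                log.append(name)
                progressed = True
        if not progressed:
            break
    return log


# Hypothetical knowledge sources, deliberately registered in "reverse" order.
sources: Dict[str, Source] = {
    "scorer": (
        lambda b: "candidates" in b and "ranked" not in b,
        lambda b: {"ranked": sorted(b["candidates"])},
    ),
    "designer": (
        lambda b: "target" in b and "candidates" not in b,
        lambda b: {"candidates": ["mol_2", "mol_1"]},
    ),
    "target_finder": (
        lambda b: "disease" in b and "target" not in b,
        lambda b: {"target": "placeholder_target"},
    ),
}

board: Board = {"disease": "example disease"}
log = run_blackboard(board, sources)
print(log)
print(board["ranked"])
```

No source calls another directly, and the activation order follows from what is on the board rather than from registration order. This is the property that distinguishes the blackboard and shared-memory modalities from direct communication.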

Recent research from UCLA reveals striking parallels between biological and artificial systems during social interaction, with neural activity partitioning into "shared neural subspaces" containing synchronized patterns between interacting entities and "unique neural subspaces" containing activity specific to each individual [13]. This biological analogy informs the design of more efficient component interaction patterns in artificial systems.

Experimental Protocol for Evaluating Component Interaction

To systematically evaluate component interaction effectiveness in compound AI systems, researchers can implement the following experimental protocol:

Hypothesis: System performance on complex tasks correlates with how well the component interaction pattern matches the characteristics of the task.

Materials and Reagents:

- Modular AI Components: Specialized modules for perception, reasoning, memory, and action [11]

- Orchestration Framework: Control system for managing workflow between components [9]

- Communication Bus: Message-passing infrastructure for inter-component communication

- Evaluation Metrics Suite: Quantitative measures for system performance assessment

- Task Simulation Environment: Controlled setting for reproducing experimental conditions

Methodology:

- Establish baseline performance metrics for monolithic system architecture

- Implement modular architecture with defined component interfaces

- Configure multiple orchestration patterns (sequential, concurrent, group chat, handoff)

- Execute standardized task battery across different orchestration configurations

- Measure performance indicators: task completion rate, latency, resource utilization, error frequency

- Analyze interaction efficiency through component communication patterns

Validation Metrics:

- Task success rate across complexity levels

- System adaptability to novel scenarios

- Resource efficiency during operation

- Fault tolerance and error recovery capability
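The measurement step of this protocol could be sketched as a small harness that runs the same task battery against each configuration and reports completion rate, error frequency, and latency. The two "systems" below are hypothetical stand-ins for a monolithic baseline and a sequential modular pipeline; a real study would substitute actual configurations and a richer success criterion than exact match.

```python
import time
from statistics import mean
from typing import Callable, Dict, List, Tuple

Task = Tuple[str, str]  # (task input, expected output)


def evaluate(system: Callable[[str], str], tasks: List[Task]) -> Dict[str, float]:
    """Run every task once and collect completion, error, and latency figures."""
    successes, errors, latencies = 0, 0, []
    for task_input, expected in tasks:
        start = time.perf_counter()
        try:
            successes += int(system(task_input) == expected)
        except Exception:
            errors += 1  # an unhandled failure counts toward error frequency
        latencies.append(time.perf_counter() - start)
    n = len(tasks)
    return {
        "task_completion_rate": successes / n,
        "error_frequency": errors / n,
        "mean_latency_s": mean(latencies),
    }


# Hypothetical stand-ins for two architectures under test.
def monolithic(text: str) -> str:
    return text.upper()


def sequential(text: str) -> str:
    stripped = text.strip()          # stage 1: normalize
    if not stripped:
        raise ValueError("empty input")
    return stripped.upper()          # stage 2: transform


tasks = [("abc", "ABC"), (" de ", "DE"), ("", "")]
configs = {"monolithic": monolithic, "sequential": sequential}
report = {name: evaluate(fn, tasks) for name, fn in configs.items()}
for name, metrics in report.items():
    print(name, metrics)
```

Resource utilization is omitted here because it depends on the deployment environment.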

The Scientist's Toolkit: Research Reagent Solutions

Implementing and experimenting with modular, orchestrated systems requires specific technical components and frameworks. The following toolkit details essential "research reagents" for developing and testing compound AI systems:

Table 3: Essential Research Reagents for Compound AI System Development

| Research Reagent | Function | Application Context |
|---|---|---|
| Modular AI Components | Self-contained functional units performing specialized tasks [6] | System building blocks for perception, reasoning, memory, and action [11] |
| Orchestration Engine | Central controller managing workflow and data flow between components [9] | Coordinating multi-agent systems, managing complex task execution [10] |
| Communication Protocol | Standardized message formats and APIs for component interaction | Enabling interoperability between heterogeneous system components |
| Shared Memory Repository | Centralized knowledge storage for maintaining context and state [11] | Supporting persistent context across interactions, collaborative problem-solving |
| Evaluation Framework | Standardized metrics and testing protocols for system assessment | Quantifying performance across different architectural configurations |
| Tool Calling Interface | Mechanism for invoking external tools, APIs, and functions [11] | Extending system capabilities beyond native model knowledge and reasoning |

Modularity, orchestration, and component interaction represent foundational principles for designing sophisticated compound AI systems capable of addressing complex, multi-step problems in research and development environments. These principles enable the creation of adaptable, scalable architectures that can evolve with changing research requirements and integrate diverse specialized capabilities.

For drug development professionals and researchers, these architectural principles provide a framework for building computational research systems that mirror the complexity of biological systems themselves. The integration of specialized components through thoughtful orchestration creates systems greater than the sum of their parts, accelerating discovery processes and enabling more sophisticated analysis of complex biological phenomena.

As compound AI systems continue to evolve, further research into optimal interaction patterns, standardized interfaces, and evaluation methodologies will enhance our ability to construct increasingly capable systems. The convergence of these architectural principles with domain-specific expertise in pharmaceutical research promises to create powerful platforms for addressing some of the most challenging problems in drug discovery and development.

In the evolving landscapes of both artificial intelligence and molecular science, structural flexibility has emerged as a critical principle for designing systems capable of sophisticated problem-solving. This concept transcends domains, representing the capacity of a system—whether a compound AI platform or a biological receptor—to dynamically adapt its configuration in response to changing demands or environmental conditions. Within compound AI systems, structural flexibility enables the reorganization of computational components to optimize task performance [2]. In structural biology and drug discovery, it refers to the physical conformational changes in biomolecules that govern recognition and function [14] [15]. This technical guide explores the foundational role of structural flexibility in enabling adaptable and scalable workflows, framed within a broader thesis on compound AI systems and structural flexibility research. For researchers and drug development professionals, mastering this principle is paramount for advancing discovery pipelines and developing next-generation therapeutic strategies.

Structural Flexibility in Compound AI Systems

Formal Definition and System Architecture

Compound AI systems are defined as integrated systems that tackle complex tasks using multiple interacting components, moving beyond single, monolithic models [2]. Formally, a compound AI system can be represented as Φ = (G, ℱ), where:

- G = (V, E) is a directed graph representing the system topology (nodes V and edges E).

- ℱ = {f_i} is a set of operations attached to each node (e.g., LLM inference, RAG, tool execution) [2].

In this formalism, each node v_i processes its input X_i to produce an output Y_i = f_i(X_i; Θ_i), where Θ_i = (θ_{i,N}, θ_{i,T}) represents both numerical and textual parameters [2]. The system's structural flexibility is encoded in the edge matrix E = [c_ij], where Boolean functions c_ij(τ) determine the active connections between components based on the contextual state τ [2]. This dynamic topology allows the system to adapt its workflow in response to specific query requirements and intermediate results, rather than following a fixed execution path.
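A minimal sketch of this formalism, assuming a plain dictionary as the contextual state τ, is shown below. The `CompoundSystem` class and the three toy nodes are hypothetical; the node functions stand in for operations such as LLM inference or retrieval.

```python
from typing import Callable, Dict, List, Tuple

State = Dict[str, object]            # contextual state tau
NodeFn = Callable[[State], State]    # operation f_i attached to node v_i
EdgeFn = Callable[[State], bool]     # Boolean edge condition c_ij(tau)


class CompoundSystem:
    """Directed graph whose active edges depend on the current state."""

    def __init__(self) -> None:
        self.nodes: Dict[str, NodeFn] = {}
        self.edges: Dict[str, List[Tuple[str, EdgeFn]]] = {}

    def add_node(self, name: str, fn: NodeFn) -> None:
        self.nodes[name] = fn
        self.edges.setdefault(name, [])

    def add_edge(self, src: str, dst: str, condition: EdgeFn = lambda tau: True) -> None:
        self.edges[src].append((dst, condition))

    def run(self, start: str, state: State, max_steps: int = 20) -> Tuple[State, List[str]]:
        path, current = [], start
        for _ in range(max_steps):
            path.append(current)
            state = self.nodes[current](state)
            # Follow the first outgoing edge whose condition holds for tau.
            nxt = next((dst for dst, cond in self.edges[current] if cond(state)), None)
            if nxt is None:
                break
            current = nxt
        return state, path


system = CompoundSystem()
system.add_node("draft", lambda s: {**s, "needs_context": "dose" in s["query"]})
system.add_node("retrieve", lambda s: {**s, "context": "label text"})
system.add_node("answer", lambda s: {**s, "answer": f"based on {s.get('context', 'no context')}"})
system.add_edge("draft", "retrieve", lambda s: s["needs_context"])
system.add_edge("draft", "answer", lambda s: not s["needs_context"])
system.add_edge("retrieve", "answer")

_, path_a = system.run("draft", {"query": "maximum dose?"})
_, path_b = system.run("draft", {"query": "hello"})
print(path_a, path_b)
```

The two queries traverse different paths through the same graph, which is the behavior the edge conditions are meant to capture.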

Optimization Dimensions for Flexible AI Systems

Optimizing structurally flexible compound AI systems involves addressing several key dimensions, with Structural Flexibility representing the degree to which an optimization method can modify the computational graph G = (V, E) [2]. The optimization goal is to maximize system performance metric μ over a training set 𝒟 = {(q_i, m_i)} of N examples:

\[ \max_{\Phi} \frac{1}{N}\sum_{i=1}^{N}\mu\bigl(\Phi(q_i), m_i\bigr) \]
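The objective can be evaluated directly once a metric and a set of candidate systems are available. The sketch below uses exhaustive comparison over a small, fixed candidate set as a stand-in for the optimization methods discussed in [2]; the metric, candidates, and data are hypothetical.

```python
from statistics import mean
from typing import Callable, Dict, List, Tuple

Dataset = List[Tuple[str, str]]      # pairs (q_i, m_i)
System = Callable[[str], str]        # a candidate system Phi
Metric = Callable[[str, str], float] # performance metric mu


def objective(system: System, metric: Metric, data: Dataset) -> float:
    """Average metric value (1/N) * sum_i mu(Phi(q_i), m_i)."""
    return mean(metric(system(q), m) for q, m in data)


def select_best(candidates: Dict[str, System], metric: Metric,
                data: Dataset) -> Tuple[str, Dict[str, float]]:
    """Return the candidate name with the highest objective, plus all scores."""
    scores = {name: objective(fn, metric, data) for name, fn in candidates.items()}
    return max(scores, key=scores.get), scores


def exact_match(prediction: str, reference: str) -> float:
    return 1.0 if prediction == reference else 0.0


# Hypothetical candidates: two fixed configurations of the same pipeline.
candidates = {
    "direct": lambda q: q,
    "normalize": lambda q: q.strip().lower(),
}
data = [(" Aspirin ", "aspirin"), ("ibuprofen", "ibuprofen")]

best, scores = select_best(candidates, exact_match, data)
print(best, scores)
```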

Table 1: Key Dimensions for Compound AI System Optimization

| Dimension | Description | Implementation Examples |
|---|---|---|
| Structural Flexibility | Degree to which optimization can modify graph topology (V, E) | Joint optimization of node parameters and graph structure [2] |
| Learning Signals | Type of feedback used for optimization (numerical, natural language) | Natural language feedback for non-differentiable components [2] |
| Component Options | Elements available for inclusion in the system | LLMs, retrievers, code interpreters, symbolic solvers [2] |
| System Representations | How the system is modeled for optimization | Graph-based formalisms, natural language descriptions [2] |

Structural Flexibility in Biomolecular Systems

Biomolecular Recognition Mechanisms

In structural biology and drug discovery, structural flexibility is the fundamental property of biomolecules to sample a diverse ensemble of conformations, enabling complex recognition processes. This flexibility is not merely incidental but functionally essential for biological activity and ligand binding [14]. Two primary mechanisms describe how flexibility mediates biomolecular recognition:

- Conformational Selection: The receptor exists in an equilibrium of multiple pre-existing conformations, and the ligand selectively binds to and stabilizes a specific conformational state, causing a population shift [14].

- Induced Fit: The ligand binds to the receptor in an initial conformation, inducing conformational changes that lead to a final, stabilized complex [14].

These mechanisms are not mutually exclusive; extended models often combine characteristics of both to fully describe the binding process [14]. The Monod-Wyman-Changeux (MWC) model of allostery, a specific form of conformational selection, explains how ligand binding at one site can shift the equilibrium between pre-existing conformational states to regulate activity at another distant site [14].
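The population shift at the heart of the MWC model can be illustrated with its standard two-state expression for the fraction of protein in the high-affinity R state, R̄ = (1 + α)^n / ((1 + α)^n + L(1 + cα)^n), where α = [ligand]/K_R, c = K_R/K_T, and L is the T-to-R ratio without ligand. The parameter values below are illustrative assumptions, not values from [14].

```python
def r_state_fraction(ligand: float, k_r: float, k_t: float,
                     l0: float, n: int = 1) -> float:
    """Fraction of protein in the R state under the two-state MWC model.

    ligand: free ligand concentration (same units as k_r and k_t)
    k_r, k_t: ligand dissociation constants of the R and T states
    l0: equilibrium ratio [T]/[R] in the absence of ligand
    n: number of equivalent binding sites
    """
    alpha = ligand / k_r
    c = k_r / k_t
    r_weight = (1.0 + alpha) ** n
    t_weight = l0 * (1.0 + c * alpha) ** n
    return r_weight / (r_weight + t_weight)


# Illustrative parameters: the ligand binds R 100x more tightly than T.
doses = [0.0, 0.1, 1.0, 10.0, 100.0]
fractions = [r_state_fraction(x, k_r=1.0, k_t=100.0, l0=100.0, n=2) for x in doses]
for dose, frac in zip(doses, fractions):
    print(f"ligand={dose:>6}: R-state fraction={frac:.3f}")
```

Both states exist before the ligand is added; increasing ligand concentration only changes their relative populations, which is the defining feature of conformational selection.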

Quantitative Stability/Flexibility Relationships (QSFR)

The Distance Constraint Model (DCM) provides a quantitative framework for analyzing structural flexibility in proteins. This ensemble-based biophysical model integrates thermodynamic and mechanical properties to calculate Quantitative Stability/Flexibility Relationships (QSFR) [16]. The DCM outputs multiple structural metrics, with two being particularly insightful:

- Flexibility Index (FI): Quantifies local backbone flexibility, where positive values indicate excess degrees of freedom (flexible regions) and negative values indicate redundant constraints (rigid regions) [16].

- Cooperativity Correlation (CC): An N×N matrix (where N is the number of residues) that depicts residue-to-residue couplings and allosteric networks [16].

Comparative QSFR analyses across protein families, such as metallo-β-lactamases (MBLs), reveal that while backbone flexibility is often conserved across homologs, allosteric couplings can be highly variable and sensitive to mutation [16]. For instance, the plasmid-encoded NDM-1 enzyme exhibits several regions of significantly increased rigidity and atypical intramolecular couplings compared to other MBLs, which may relate to its role in fast-spreading drug resistance [16].

Table 2: Experimental Techniques for Flexibility Analysis

| Technique | Measurement Type | Key Flexibility Application |
|---|---|---|
| Accelerometer-Based SHM | Acceleration response time histories | Identifies structural modal flexibility from vibration energy distribution [17] |
| Computer Vision-Based SHM | Displacement response via video | Dense, multi-point measurement of displacement for flexibility identification [17] |
| Molecular Dynamics (MD) | Atomic trajectories over time | Samples conformational ensemble, reveals cryptic pockets [15] |
| Accelerated MD (aMD) | Enhanced sampling of conformations | Smooths the energy landscape to cross barriers and sample distinct states [15] |
| X-ray Crystallography | Static atomic coordinates | Provides snapshots for constructing conformational ensembles [15] |
| Cryo-EM | Static 3D structures in multiple states | Reveals conformational states of large complexes and membrane proteins [15] |

Methodologies and Experimental Protocols

The Relaxed Complex Method for Drug Discovery

A prime example of a workflow leveraging structural flexibility is the Relaxed Complex Method (RCM) in structure-based drug discovery. This approach addresses the critical limitation of traditional docking, which often uses a single, rigid protein structure, by explicitly incorporating receptor flexibility [15].

Detailed Protocol:

System Preparation:

- Obtain the initial 3D structure of the target protein from the PDB or via prediction tools like AlphaFold [15].

- Prepare the protein structure using standard molecular dynamics (MD) setup: add hydrogen atoms, assign partial charges, and solvate the system in an explicit water box.

Molecular Dynamics Simulation:

- Perform extensive MD simulations (nanoseconds to microseconds) of the unliganded protein using production-grade software (e.g., AMBER, GROMACS, NAMD) [15].

- Ensure simulations are run at physiological temperature (e.g., 310 K) and pressure to mimic biological conditions.

Conformational Ensemble Generation:

- From the MD trajectory, extract a representative set of snapshots that capture the diversity of protein conformations. This can be achieved through clustering analysis based on root-mean-square deviation (RMSD) of atomic positions.

- Identify potential cryptic pockets not present in the initial crystal structure but revealed during the simulation [15].
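The RMSD-based clustering step can be sketched with NumPy alone. The example below superimposes frames with the Kabsch algorithm and then applies a simple leader-style clustering, which is one possible choice and not necessarily the scheme used in [15]. The coordinates are synthetic and the 2.0 cutoff is an arbitrary illustrative value; a real workflow would read frames from an MD trajectory.

```python
import numpy as np


def kabsch_rmsd(a: np.ndarray, b: np.ndarray) -> float:
    """RMSD between two (n_atoms, 3) arrays after optimal superposition."""
    a = a - a.mean(axis=0)
    b = b - b.mean(axis=0)
    u, _, vt = np.linalg.svd(a.T @ b)
    # Guard against an improper rotation (reflection).
    d = 1.0 if np.linalg.det(vt.T @ u.T) >= 0 else -1.0
    rot = vt.T @ np.diag([1.0, 1.0, d]) @ u.T
    diff = a @ rot.T - b
    return float(np.sqrt((diff ** 2).sum() / len(a)))


def leader_cluster(frames, cutoff: float):
    """Assign each frame to the first leader within `cutoff`, else start a cluster."""
    leaders, labels = [], []
    for i, frame in enumerate(frames):
        for cluster_id, lead in enumerate(leaders):
            if kabsch_rmsd(frame, frames[lead]) <= cutoff:
                labels.append(cluster_id)
                break
        else:
            leaders.append(i)
            labels.append(len(leaders) - 1)
    return leaders, labels


# Synthetic "snapshots": a base structure, the same structure in a new pose,
# and a variant in which half of the atoms are displaced.
rng = np.random.default_rng(0)
base = rng.normal(scale=5.0, size=(20, 3))
theta = 0.7
rot_z = np.array([[np.cos(theta), -np.sin(theta), 0.0],
                  [np.sin(theta), np.cos(theta), 0.0],
                  [0.0, 0.0, 1.0]])
moved = base @ rot_z.T + np.array([3.0, -1.0, 2.0])
opened = base.copy()
opened[10:] += np.array([12.0, 0.0, 0.0])

leaders, labels = leader_cluster([base, moved, opened], cutoff=2.0)
print("representative frames:", leaders, "labels:", labels)
```

The rigidly moved copy falls into the same cluster as the base structure because superposition removes overall rotation and translation, while the displaced variant becomes its own representative.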

Virtual Screening:

- Dock large libraries of compounds (from on-demand virtual libraries like the Enamine REAL database) into the multiple binding sites of each representative snapshot [15].

- Use standard docking software (e.g., AutoDock Vina, DOCK) and scoring functions.

Hit Identification and Validation:

- Rank compounds based on consensus scoring across the ensemble of protein conformations.

- Select top-ranking compounds for in vitro experimental testing to validate binding affinity and biological activity.
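Consensus scoring across the ensemble can take several forms. The sketch below uses mean rank across snapshots, with the best single score as a tie-breaker; this is one common convention, assumed here for illustration, and the compound names and scores are invented.

```python
from typing import Dict, List


def consensus_rank(scores: Dict[str, List[float]]) -> List[str]:
    """Order compounds by mean rank across snapshots.

    scores[compound] holds one docking score per snapshot, where a more
    negative score is better. Ties are broken by the best single score.
    """
    n_snapshots = len(next(iter(scores.values())))
    rank_sums = {compound: 0 for compound in scores}
    for s in range(n_snapshots):
        ordered = sorted(scores, key=lambda c: scores[c][s])
        for rank, compound in enumerate(ordered, start=1):
            rank_sums[compound] += rank
    return sorted(scores, key=lambda c: (rank_sums[c] / n_snapshots, min(scores[c])))


# Invented docking scores for three compounds against three snapshots.
scores = {
    "cmpd_A": [-9.1, -8.7, -9.4],
    "cmpd_B": [-10.2, -6.0, -6.5],
    "cmpd_C": [-7.0, -7.2, -6.0],
}
ranking = consensus_rank(scores)
best_on_first_snapshot = min(scores, key=lambda c: scores[c][0])
print("consensus:", ranking, "| snapshot 0 only:", best_on_first_snapshot)
```

In this toy data, docking against the first snapshot alone would favor `cmpd_B`, whereas the consensus across conformations favors `cmpd_A`, which scores well in every snapshot.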

Quantitative Comparison of Flexibility Identification Methods

A quantitative comparative study for structural flexibility identification can be conducted using vibration data, comparing traditional accelerometers with emerging computer vision-based techniques [17].

Detailed Protocol:

Experimental Setup:

- Test Structure: Use a scaled three-story frame structure on a shaking table [17].

- Sensor Deployment: Simultaneously measure the structural response using:

  - Accelerometers: Traditional contact sensors measuring acceleration time histories.

  - Vision Sensors: Non-contact cameras (e.g., high-speed video) measuring displacement response via techniques like phase-based video motion magnification [17].

Data Acquisition:

- Subject the structure to ambient or active excitation (e.g., via shaking table) [17].

- Record synchronized acceleration and displacement response data.

Modal Analysis and Flexibility Identification:

- For acceleration data: Identify structural modal parameters (frequencies, mode shapes) and assemble the modal flexibility matrix using methods like subspace-based system identification [17].

- For displacement data: Use operational modal analysis techniques on the displacement time histories to identify modal parameters and assemble the flexibility matrix [17].
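Once modal parameters are available, the modal flexibility matrix is commonly assembled as F = Σ_r φ_r φ_rᵀ / ω_r², assuming mass-normalized mode shapes φ_r and circular frequencies ω_r. The sketch below checks this on an invented three-story shear-frame model whose mass and stiffness values are illustrative only. With output-only measurements, mode shapes are generally not mass-normalized, so an additional scaling step would be needed in practice.

```python
import numpy as np


def modal_flexibility(omegas: np.ndarray, shapes: np.ndarray, n_modes=None) -> np.ndarray:
    """Assemble F = sum_r phi_r phi_r^T / omega_r^2 from mass-normalized modes.

    omegas: circular natural frequencies (rad/s), ascending
    shapes: (n_dof, n_modes) matrix whose columns are mass-normalized mode shapes
    n_modes: number of lowest modes to include (default: all)
    """
    n_modes = len(omegas) if n_modes is None else n_modes
    flex = np.zeros((shapes.shape[0], shapes.shape[0]))
    for r in range(n_modes):
        phi = shapes[:, r]
        flex += np.outer(phi, phi) / omegas[r] ** 2
    return flex


# Invented 3-DOF shear frame (lumped masses, uniform story stiffness).
m = np.diag([2.0, 2.0, 1.5])
k = 1000.0 * np.array([[2.0, -1.0, 0.0],
                       [-1.0, 2.0, -1.0],
                       [0.0, -1.0, 1.0]])

# Solve K phi = omega^2 M phi through the symmetric form M^-1/2 K M^-1/2.
m_inv_sqrt = np.diag(1.0 / np.sqrt(np.diag(m)))
eigvals, vecs = np.linalg.eigh(m_inv_sqrt @ k @ m_inv_sqrt)
omegas = np.sqrt(eigvals)
shapes = m_inv_sqrt @ vecs   # columns satisfy shapes.T @ m @ shapes = I

f_full = modal_flexibility(omegas, shapes)
f_first = modal_flexibility(omegas, shapes, n_modes=1)
print(np.round(f_full, 6))
```

With all modes included, the result equals the inverse of the stiffness matrix. Because each term is weighted by 1/ω², truncating to the lowest modes retains the largest contributions, which is why flexibility can be estimated from the few modes that vibration tests typically identify.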

Uncertainty Quantification:

- Calculate the variances (and corresponding standard deviations) of the identified modal parameters and flexibility matrices using first-order sensitivity analysis with respect to perturbations in the measured data [17].

- This step accounts for the impact of measurement noise and model uncertainty.

Comparative Analysis:

- Theoretically investigate the characteristic vibration energy distribution for both response types, noting the frequency-domain relationship X_a(ω) = -ω^2 X_d(ω) between the acceleration spectrum X_a and the displacement spectrum X_d [17].

- Compare the precision of the identified modal flexibility from both methods based on the calculated uncertainties. Studies show displacement data can yield smoother, more accurate mode shapes due to dense multi-point measurements, while accelerometers are more accurate for higher frequencies [17].
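The frequency-domain relationship between acceleration and displacement can be checked numerically. The sketch below builds a synthetic two-tone displacement signal with its analytic second derivative and compares their spectra; the sampling rate, duration, and tone frequencies are arbitrary illustrative choices.

```python
import numpy as np

fs = 200.0            # sampling rate in Hz (illustrative)
n = 800               # number of samples (4 s record)
t = np.arange(n) / fs
f1, f2 = 2.0, 10.0    # tone frequencies in Hz, both exact FFT bins

# Displacement and its analytic second time derivative (acceleration).
disp = np.sin(2 * np.pi * f1 * t) + 0.5 * np.sin(2 * np.pi * f2 * t)
acc = (-(2 * np.pi * f1) ** 2 * np.sin(2 * np.pi * f1 * t)
       - 0.5 * (2 * np.pi * f2) ** 2 * np.sin(2 * np.pi * f2 * t))

freqs = np.fft.rfftfreq(n, d=1.0 / fs)
x_d = np.fft.rfft(disp)
x_a = np.fft.rfft(acc)

ratios = {}
for f in (f1, f2):
    k = int(np.argmin(np.abs(freqs - f)))
    omega = 2 * np.pi * freqs[k]
    ratios[f] = ((x_a[k] / x_d[k]).real, -omega ** 2)
    print(f"{f:5.1f} Hz: X_a/X_d = {ratios[f][0]:.2f}, -omega^2 = {ratios[f][1]:.2f}")
```

The ω² factor means the higher-frequency tone is amplified in the acceleration record relative to the displacement record, which is consistent with accelerometers resolving higher modes more readily.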

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Research Reagent Solutions for Flexibility-Focused Experiments

| Reagent / Material | Function / Application |
|---|---|
| Accelerometers | Measures structural acceleration response for modal flexibility identification in SHM [17]. |
| High-Speed Vision Sensors | Provides non-contact, dense measurement of structural displacement response for vision-based flexibility ID [17]. |
| Molecular Dynamics Software | Simulates protein dynamics to generate conformational ensembles for the Relaxed Complex Method [15]. |
| Ultra-Large Virtual Libraries | Source of billions of drug-like compounds for virtual screening against flexible targets [15]. |
| AlphaFold Protein Structure Database | Provides predicted 3D structural models for targets lacking experimental structures, enabling SBDD [15]. |
| Structured Target Rank Approximation Algorithm | Identifies structural modal flexibility from measured acceleration response data [17]. |
| Phase-Based Video Motion Magnification | Data processing technique to improve the quality of displacement data from video, crucial for vision-based SHM [17]. |
| Distance Constraint Model | Computes Quantitative Stability/Flexibility Relationships from protein structures [16]. |

Visualizing Workflows and Structural Relationships

Diagram: Compound AI System Optimization Workflow

Diagram: Relaxed Complex Method for Drug Discovery

Diagram: Structural Flexibility Identification in SHM

The principle of structural flexibility serves as a unifying framework for advancing both computational and biological systems. In compound AI, it enables the creation of dynamically optimized workflows that transcend the capabilities of monolithic models. In drug discovery and structural health monitoring, it provides the fundamental mechanism for understanding and exploiting adaptive biomolecular recognition and structural dynamics. The methodologies detailed herein—from the Relaxed Complex Method to quantitative flexibility analysis—provide researchers with robust protocols for integrating this critical principle into their work. As AI systems grow more complex and drug targets become more challenging, the conscious design for structural flexibility will be a defining factor in developing scalable, adaptable, and successful workflows capable of addressing the multifaceted problems of modern science.

Compound AI systems (CAIS) represent a paradigm shift in artificial intelligence, moving away from reliance on single, monolithic models towards architectures that integrate multiple specialized components. Defined as systems that tackle AI tasks using multiple interacting components—including multiple calls to models, retrievers, or external tools—compound systems leverage the strengths of various AI elements to achieve performance levels unattainable by individual models alone [18]. This approach mirrors trends observed in other advanced AI fields, such as self-driving cars, where state-of-the-art implementations consistently rely on systems with multiple specialized components rather than single models [18].

The emergence of compound AI systems is driven by several fundamental limitations of large language models (LLMs) and other monolithic AI approaches. While LLMs demonstrate remarkable capabilities in understanding and generating natural language, they face constraints including high operational costs, limited domain-specific expertise, lack of real-time knowledge integration, and challenges in handling complex, multi-step tasks across different systems [19]. Compound systems address these limitations through specialized division of labor, enabling more dynamic, controllable, and cost-effective AI solutions [20].

This technical guide examines the four core components that constitute modern compound AI systems: large language models as reasoning engines, specialized tools for functional extension, AI agents for orchestration, and multimodal encoders for cross-modal understanding. Framed within the context of structural flexibility research—a concept critical to advanced fields like computational protein design—we explore how the principled integration of these components creates systems capable of solving complex real-world problems across domains, including pharmaceutical research and drug development.

Core Component 1: Large Language Models (LLMs) as Reasoning Engines

Technical Foundation of LLMs

Large language models serve as the central reasoning and language processing engines within compound AI systems. Technically, LLMs are deep learning models trained on immense datasets of text, built upon the transformer architecture introduced in 2017 [21] [22]. The transformer's self-attention mechanism represents the core innovation that enabled modern LLMs, allowing the model to "pay attention to" different tokens in a sequence and calculate relationships and dependencies between them, even over long distances [22]. This architecture processes text by first tokenizing input into smaller units, then converting these tokens into vector embeddings that capture semantic meaning [21].

During operation, LLMs function as statistical prediction machines that repeatedly predict the next token in a sequence. The model passes token embeddings through multiple transformer layers, with each layer progressively refining the contextual representation. At each layer, the self-attention mechanism projects embeddings into query, key, and value vectors, computing alignment scores that determine how much focus to place on different parts of the input sequence when generating outputs [22]. The model's predictive capability emerges from training on vast text corpora, where it learns patterns in grammar, facts, reasoning structures, and writing styles through iterative prediction and weight adjustment via backpropagation [22].
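The query, key, and value computation can be written compactly as scaled dot-product attention. The sketch below is a single-head version in NumPy with random, untrained weights and small illustrative dimensions; it omits the causal mask and multi-head structure that decoder-style LLMs add on top of this core operation.

```python
import numpy as np


def softmax(x: np.ndarray, axis: int = -1) -> np.ndarray:
    """Numerically stable softmax along the given axis."""
    shifted = x - x.max(axis=axis, keepdims=True)
    exp = np.exp(shifted)
    return exp / exp.sum(axis=axis, keepdims=True)


def self_attention(x: np.ndarray, w_q: np.ndarray, w_k: np.ndarray, w_v: np.ndarray):
    """Single-head scaled dot-product self-attention.

    x: (seq_len, d_model) token embeddings
    w_q, w_k, w_v: (d_model, d_head) projection matrices
    Returns the attended values and the attention weight matrix.
    """
    q, k, v = x @ w_q, x @ w_k, x @ w_v
    scores = q @ k.T / np.sqrt(k.shape[-1])   # alignment scores
    weights = softmax(scores, axis=-1)        # each row sums to 1
    return weights @ v, weights


rng = np.random.default_rng(0)
seq_len, d_model, d_head = 4, 8, 4            # illustrative sizes
x = rng.normal(size=(seq_len, d_model))
w_q, w_k, w_v = (rng.normal(size=(d_model, d_head)) for _ in range(3))

out, weights = self_attention(x, w_q, w_k, w_v)
print(out.shape, weights.sum(axis=-1))
```

Row i of `weights` states how much token i draws on every token in the sequence when its representation is updated.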

LLM Specialization and Reasoning Enhancement

Within compound AI systems, LLMs rarely operate in their raw, general-purpose form. Instead, they undergo specialized tuning processes to optimize them for particular roles:

- Instruction Tuning: This process specifically improves a model's ability to follow human instructions by training on datasets where inputs resemble user requests and outputs demonstrate desirable responses [22].

- Reinforcement Learning from Human Feedback (RLHF): Used for alignment, RLHF involves humans ranking model outputs, with the model trained to prefer higher-ranked responses, making outputs more useful, safe, and consistent with human values [21] [22].

- Reasoning Model Development: Advanced fine-tuning techniques, particularly using reinforcement learning, create LLMs capable of breaking complex problems into smaller steps or "reasoning traces" before generating final outputs [22]. Models like OpenAI's o1 exemplify this approach, generating long chains of thought to improve final answer quality [21].

Table 1: LLM Capabilities and Specialization Techniques in Compound AI Systems

| LLM Capability | Description | Specialization Technique | Application in CAIS |
|---|---|---|---|
| Next-Token Prediction | Statistical prediction of subsequent tokens in a sequence | Pre-training on vast text corpora | Core text generation capability |
| Instruction Following | Executing tasks based on human instructions | Instruction tuning with human feedback | Translating user requests into system actions |
| Chain-of-Thought Reasoning | Breaking problems into intermediate steps | Reinforcement learning on reasoning traces | Complex problem-solving in multi-component systems |
| Tool Interaction | Understanding and utilizing external tools | Fine-tuning with tool documentation and examples | Orchestrating calls to specialized components |

The context window, the maximum number of tokens a model can process at once, is another critical capability for LLMs in compound systems. Many modern LLMs offer context windows of hundreds of thousands of tokens, enabling them to process entire research papers, large codebases, or extended conversations, which is essential for coordinating complex multi-component systems [22].

Core Component 2: Tools and External Systems

The Tool Ecosystem in Compound AI Systems

Tools and external systems form the functional extension layer of compound AI systems, providing specialized capabilities beyond the core competencies of LLMs. These components enable CAIS to overcome fundamental limitations of pure neural approaches, including knowledge currency constraints, lack of precise computational capabilities, and inability to interact directly with external environments and data sources [19] [18].

The tool ecosystem in compound systems encompasses several categories of specialized components:

- Retrieval Systems: These components, central to retrieval-augmented generation (RAG) architectures, connect LLMs with external knowledge bases, enabling access to current, domain-specific, or proprietary information beyond their training data [22] [18]. By passing retrieved information into the model's context window, these systems enhance response accuracy and relevance without model retraining [22].

- Code Interpreters and Computational Tools: Systems like the Code Interpreter plugin in ChatGPT Plus provide capabilities for executing code, performing mathematical computations, and processing data, extending the analytical abilities of compound systems beyond statistical pattern matching [18].

- API Integrations: Connections to external services and databases enable compound systems to incorporate real-time information such as weather data, financial markets, or inventory systems, addressing the static knowledge limitation of pre-trained models [19] [18].

- Specialized Analytical Tools: Domain-specific tools for tasks such as molecular modeling, image analysis, or sensor data processing provide capabilities that would be inefficient or impossible to implement within LLM architectures alone [19].
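The retrieval pattern in the first item above can be sketched without any external services. The example below substitutes a bag-of-words cosine similarity for a learned embedding model and stops at prompt construction instead of calling an LLM; the corpus, function names, and prompt template are all hypothetical.

```python
import math
import re
from collections import Counter
from typing import List


def bag_of_words(text: str) -> Counter:
    """Lowercased token counts; a toy substitute for a learned embedding."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[token] * b[token] for token in a)
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0


def retrieve(query: str, corpus: List[str], k: int = 1) -> List[str]:
    """Return the k passages most similar to the query."""
    q_vec = bag_of_words(query)
    ranked = sorted(corpus, key=lambda doc: cosine(q_vec, bag_of_words(doc)), reverse=True)
    return ranked[:k]


def build_prompt(query: str, passages: List[str]) -> str:
    """Place retrieved passages into the context given to the model."""
    context = "\n".join(f"- {p}" for p in passages)
    return f"Context:\n{context}\n\nQuestion: {query}\nAnswer using only the context."


corpus = [
    "Molecular dynamics simulations sample protein conformations over time.",
    "Retrieval systems connect language models to external knowledge bases.",
    "Docking ranks compounds by predicted binding score.",
]
query = "How does docking rank compounds?"
top = retrieve(query, corpus, k=1)
prompt = build_prompt(query, top)
print(prompt)
```

The resulting prompt would then be passed to the language model, so the model's answer can draw on information that was never part of its training data.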

Tool Integration Patterns

The integration of tools into compound AI systems follows several architectural patterns, each with distinct advantages and implementation considerations:

- Programmatically Orchestrated Tools: In this pattern, traditional code (e.g., Python) defines control logic that calls tools and models under specific conditions, offering reliability and predictable behavior through programmatic control flow [20] [18].

- Model-Driven Tool Use: Alternatively, LLM agents can determine when and how to call tools based on contextual understanding, providing greater flexibility in interpreting and acting on complex inputs, though potentially with some sacrifice of reliability [20] [18].

- Hybrid Approaches: Many production systems combine programmatic and model-driven tool use, with fixed pipelines for well-defined operations and LLM-directed tool use for ambiguous or creative tasks.
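The difference between the first two patterns comes down to who chooses the tool. In the sketch below, `programmatic` hard-codes the choice, while `model_driven` delegates it to a callable that stands in for an LLM and validates the answer before acting on it. The tool registry and the `fake_model` function are hypothetical placeholders.

```python
from typing import Callable, Dict, List

Tool = Callable[[str], str]

# Hypothetical tool registry.
TOOLS: Dict[str, Tool] = {
    "calculator": lambda expr: str(sum(float(x) for x in expr.split("+"))),
    "lookup": lambda term: {"aspirin": "acetylsalicylic acid"}.get(term.lower(), "unknown"),
}


def programmatic(request: str) -> str:
    """Fixed control flow: ordinary code decides which tool runs."""
    tool_name = "calculator" if "+" in request else "lookup"
    return TOOLS[tool_name](request)


def model_driven(request: str, choose_tool: Callable[[str, List[str]], str]) -> str:
    """The model proposes a tool; code validates the proposal before calling it."""
    choice = choose_tool(request, sorted(TOOLS))
    if choice not in TOOLS:
        raise ValueError(f"model selected unknown tool: {choice!r}")
    return TOOLS[choice](request)


def fake_model(request: str, tool_names: List[str]) -> str:
    """Stand-in for an LLM call that returns one of the offered tool names."""
    return "calculator" if any(ch.isdigit() for ch in request) else "lookup"


print(programmatic("2+3.5"))
print(model_driven("Aspirin", fake_model))
```

Validating the model's choice against the registry is one way to recover some of the reliability that model-driven tool use gives up, and a hybrid system could route well-defined requests through `programmatic` and everything else through `model_driven`.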

Table 2: Tool Categories and Their Functions in Compound AI Systems

| Tool Category | Representative Examples | Primary Function | Benefit to CAIS |
|---|---|---|---|
| Information Retrieval | Vector databases, search APIs, SQL queriers | Accessing current or domain-specific information | Overcoming knowledge cutoffs and expanding beyond training data |
| Computational Tools | Code interpreters, mathematical solvers, symbolic engines | Performing precise calculations and logical operations | Adding deterministic capabilities to statistical approaches |
| Domain-Specialized Tools | Molecular simulators, medical imaging analyzers | Executing tasks requiring domain expertise | Extending system capability into technical domains |
| Sensor Integration | Camera systems, environmental sensors, IoT devices | Processing real-world signal data | Connecting digital intelligence with physical environments |

The effectiveness of tool integration often depends on co-optimization between the LLM and tool components. For instance, in RAG systems, an LLM may need tuning to generate search queries that work effectively with a particular retrieval system, while the retriever might be optimized to return content that aligns with the LLM's processing capabilities [18]. This co-optimization represents one of the key challenges in compound system design, as it requires coordinated adjustment of potentially non-differentiable components [18].

Core Component 3: AI Agents and Orchestration

Architectural Principles of AI Agents

AI agents represent the orchestration layer within compound AI systems, providing the decision-making framework that determines how and when to utilize various components. Architecturally, these agents move beyond single model calls to implement multi-step reasoning, tool selection, and action sequencing [19] [18]. The BAIR research blog notes that increasingly, state-of-the-art AI results are obtained by compound systems with multiple components rather than monolithic models, with 30% of enterprise LLM applications utilizing multi-step chains [18].

Advanced agent architectures incorporate several principled design approaches:

- Dual-Process Frameworks: Psychologically enhanced AI agents often implement dual-process architectures, integrating "denotative" (System 2, deliberative, symbolic) representation with "connotative" (System 1, affective, low-dimensional) representation for social interaction and decision-making [23]. This approach, exemplified by the BayesAct model, forces the agent to reason about both rational-symbolic and emotional-affective consequences, driving actions that maintain alignment within culturally learned bounds [23].

- Personality and Behavioral Conditioning: Agents can be conditioned using established psychological frameworks (MBTI, Big Five, HEXACO) to produce consistent, contextually appropriate behaviors [23]. Research indicates that agents engineered for high agreeableness achieved a 63.7% confusion rate in Turing tests, making them statistically more likely than neutral agents to be judged human [23].

- Dynamic Goal Management: Sophisticated agent frameworks enable dynamic goal evolution based on changing contexts and priorities, moving beyond static task execution to adaptive problem-solving [23].

Agent Coordination in Multi-Agent Systems

Complex compound AI systems often employ multiple specialized agents operating in coordination. These multi-agent systems distribute capabilities across specialized components that interact through structured communication patterns:

- Role-Specialized Agents: Different agents assume specialized roles (e.g., researcher, analyst, executor) with tailored capabilities and permissions, creating a division of labor that mirrors effective human organizational structures [23].

- Debate and Consensus Mechanisms: Frameworks like MoodAngels implement multi-step, debate-driven processes where personality-grounded agents with different perspectives collaborate on complex tasks such as psychiatric assessment [23].

- Hierarchical Coordination: Some systems implement nested agent architectures where a master agent decomposes problems and distributes sub-tasks to specialized sub-agents, with coordination mechanisms to integrate partial solutions [23].

Diagram: Multi-Agent Orchestration Architecture in Compound AI Systems

The orchestration of multiple agents introduces significant design complexity, including challenges around consistency management, conflict resolution, and system observability. However, when properly implemented, multi-agent compound systems demonstrate capabilities substantially exceeding those of individual models or single-agent approaches, particularly for complex, multi-domain problems [23] [18].

Core Component 4: Multimodal Encoders

Technical Architecture of Multimodal Encoding

Multimodal encoders form the sensory apparatus of compound AI systems, enabling the processing and interpretation of diverse data types including text, images, audio, video, and sensor data [24] [25]. These components implement cross-modal representation learning, creating shared semantic spaces where different data types can be related and combined [25].

The technical architecture of multimodal AI systems typically consists of three main components:

- Encoders: Convert raw data from different modalities into vector representations stored in a shared latent space. Modern systems employ specialized encoders for different data types—CNNs for images, transformers for text, audio processing networks for sound—that project diverse inputs into a unified representation space [24].

- Fusion Mechanisms: Combine information from multiple modalities to identify cross-modal relationships and patterns. Fusion may occur at various levels—early (raw data), intermediate (feature level), or late (decision level)—depending on the application requirements [24] [25].

- Decoders: Translate the fused representations into outputs understandable to humans or usable by downstream systems, effectively reversing the encoding process to generate appropriate responses or actions [24].

This architecture enables what researchers describe as a "discovery tool" capability: the AI finds connections across modalities, much as Amazon's recommendation system identified that "people who shopped for this item also bought that item," but extended to more complex patterns, such as relationships between sleep data and medical conditions [24].
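The encoder, fusion, and decoder stages can be sketched in a few lines. This is a toy illustration under stated assumptions: the hand-written feature functions stand in for learned encoders, the function names are hypothetical, and only intermediate (feature-level) and late (decision-level) fusion are shown.

```python
from typing import Dict, List


def encode_text(text: str) -> List[float]:
    # Toy encoder standing in for a transformer: [length, vowel fraction].
    vowels = sum(ch in "aeiou" for ch in text.lower())
    return [float(len(text)), vowels / max(len(text), 1)]


def encode_signal(samples: List[float]) -> List[float]:
    # Toy encoder standing in for a sensor-specific network: [mean, range].
    return [sum(samples) / len(samples), max(samples) - min(samples)]


def fuse_intermediate(features: Dict[str, List[float]]) -> List[float]:
    # Feature-level fusion: concatenate per-modality vectors in a fixed order.
    fused: List[float] = []
    for name in sorted(features):
        fused.extend(features[name])
    return fused


def fuse_late(scores: Dict[str, float]) -> float:
    # Decision-level fusion: average the per-modality decision scores.
    return sum(scores.values()) / len(scores)


def decode(score: float, threshold: float = 0.5) -> str:
    # Toy decoder: turn a fused score into a human-readable label.
    return "review" if score >= threshold else "no action"


features = {"text": encode_text("aei"), "signal": encode_signal([1.0, 3.0])}
fused_vector = fuse_intermediate(features)
late_score = fuse_late({"text": 0.25, "signal": 0.75})
print(fused_vector, late_score, decode(late_score))
```

Intermediate fusion lets a downstream model learn cross-modal interactions from the concatenated vector, whereas late fusion keeps each modality's model independent and only combines their outputs.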

Cross-Modal Representation Learning

The core capability of multimodal encoders lies in cross-modal representation learning—creating a shared semantic space where concepts can be related across different data types. This process enables:

- Cross-Modal Retrieval: Finding related content across different modalities, such as locating images relevant to a text query or generating descriptions for visual content [25].

- Multimodal Reasoning: Drawing inferences based on evidence from multiple data types simultaneously, such as combining medical images, lab results, and clinical notes for diagnostic support [24].

- Cross-Modal Generation: Creating content in one modality based on inputs from another, such as generating images from text descriptions or creating audio summaries from video content [25].
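Cross-modal retrieval, the first capability listed above, reduces to nearest-neighbor search once text and images live in the same vector space. The sketch below assumes the embeddings already exist; the three-dimensional vectors and file names are made-up placeholders, and cosine similarity is one common choice of distance rather than the only option.

```python
import math
from typing import Dict, List


def cosine(a: List[float], b: List[float]) -> float:
    # Cosine similarity between two vectors; 0.0 if either has zero norm.
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve(query_vec: List[float], index: Dict[str, List[float]], k: int = 2) -> List[str]:
    # Rank indexed items by similarity to the query and keep the top k.
    ranked = sorted(index, key=lambda item: cosine(query_vec, index[item]), reverse=True)
    return ranked[:k]


# Placeholder image embeddings in a shared 3-dimensional space.
image_index = {
    "xray_001.png": [0.9, 0.1, 0.0],
    "mri_007.png": [0.1, 0.9, 0.0],
    "histology_042.png": [0.0, 0.2, 0.9],
}
text_query_vec = [0.8, 0.2, 0.0]  # placeholder embedding of a text query

top_matches = retrieve(text_query_vec, image_index, k=2)
print(top_matches)
```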

Table 3: Multimodal Encoder Types and Their Applications in Compound AI Systems

| Modality | Encoder Type | Technical Approach | Domain Applications |
|---|---|---|---|
| Visual (Images/Video) | Convolutional Neural Networks (CNNs), Vision Transformers | Feature extraction through hierarchical pattern recognition | Medical imaging analysis, product identification, environmental monitoring |
| Textual | Transformer-based Encoders | Self-attention mechanisms for contextual understanding | Document processing, sentiment analysis, information extraction |
| Auditory | Recurrent Neural Networks, Audio Spectrogram Transformers | Spectral analysis and temporal pattern recognition | Voice interfaces, emotion detection, sound event classification |
| Sensor Data | Multilayer Perceptrons, Sensor-Specific Encoders | Time-series analysis and signal processing | Healthcare monitoring, industrial IoT, environmental sensing |

In compound AI systems, multimodal encoders enable more comprehensive understanding of complex real-world phenomena by integrating complementary information from diverse sources. For example, in healthcare applications, multimodal AI can combine medical images, clinical notes, lab results, and sensor data to provide more accurate diagnostic support than any single data type would permit [24]. Similarly, in eCommerce, multimodal systems enable users to search using images, text, or context descriptions interchangeably, significantly enhancing discovery capabilities [24].

Structural Flexibility: A Unifying Principle for CAIS Design

The Structural Flexibility Analogy

Structural flexibility represents a fundamental design principle that connects advanced compound AI systems with cutting-edge research in computational protein design. In protein engineering, structural flexibility refers to the controlled incorporation of dynamic, adaptable regions within protein subunits that enables the formation of multiple stable architectures rather than rigid, monomorphic structures [26] [27]. This principle is increasingly recognized as essential for creating functional protein assemblies that can adapt to varied cargos and environmental conditions.

The analogy to compound AI systems is direct. Just as flexible protein subunits can reconfigure to form different architectural outcomes, the components of compound AI systems maintain constrained flexibility that enables adaptive problem-solving without system instability [26] [27]. Research in computational protein design has demonstrated that introducing flexibility at specific junction points lets proteins explore a defined range of architectures rather than aggregating nonspecifically [27]. Similarly, in compound AI systems, strategic flexibility at component integration points enables adaptation to diverse problems while maintaining overall system coherence.

Flexibility-Informed CAIS Architecture

Applying structural flexibility principles to compound AI system design involves several key considerations:

- Constrained Flexibility at Interfaces: Like the hinge-like loops between domains in naturally flexible proteins [27], compound AI systems benefit from well-defined flexibility at component interfaces. This enables components to interact in varied but controlled ways, adapting to different problem types while maintaining system integrity.

- Oligomorphic Capability: Borrowing from the concept of oligomorphic protein assemblies that can adopt a limited set of distinct structures [27], effective compound AI systems can reconfigure their component interactions to form different "architectural states" optimized for particular problem categories.

- Dynamic Reconfiguration: Structural flexibility research demonstrates that natural protein assemblies involved in cargo packaging and transport adapt to target cargos by adopting a range of architectures [27]. Similarly, compound AI systems can dynamically reconfigure component relationships based on task requirements, moving beyond static pipelines to adaptive workflows.

Diagram: Structural Flexibility in CAIS vs. Rigid Architectures

The structural flexibility principle provides a powerful framework for understanding why compound AI systems increasingly outperform monolithic models—they embrace controlled adaptability at multiple levels rather than attempting to solve all problems through a single, rigid architecture. This approach mirrors the evolutionary advantage that flexible protein assemblies hold over rigid structures in biological systems [26] [27].
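One way to make the oligomorphic idea concrete is a system that can only adopt a small, predeclared set of pipeline configurations and selects among them per task. The sketch below is an assumption-laden illustration: the step functions, configuration names, and keyword-based router are all hypothetical, and a production router would more plausibly be a classifier or an LLM call.

```python
from typing import Callable, Dict, List

Step = Callable[[str], str]


def retrieve_step(x: str) -> str:
    return x + " -> retrieved"


def reason_step(x: str) -> str:
    return x + " -> reasoned"


def tool_step(x: str) -> str:
    return x + " -> tool"


# A limited set of allowed "architectural states" (constrained flexibility):
# the system may switch between these but cannot assemble arbitrary pipelines.
CONFIGURATIONS: Dict[str, List[Step]] = {
    "qa": [retrieve_step, reason_step],
    "computation": [reason_step, tool_step, reason_step],
    "direct": [reason_step],
}


def select_configuration(task: str) -> str:
    # Toy router based on surface features of the task text.
    lowered = task.lower()
    if "calculate" in lowered or "simulate" in lowered:
        return "computation"
    if lowered.endswith("?"):
        return "qa"
    return "direct"


def run(task: str) -> str:
    result = task
    for step in CONFIGURATIONS[select_configuration(task)]:
        result = step(result)
    return result


print(run("What targets bind ligand A?"))
print(run("Simulate binding of ligand A"))
```

Restricting the router to a fixed dictionary of configurations keeps every reachable workflow enumerable, which simplifies testing compared with fully free-form agent loops.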

Experimental Protocols and Methodologies

CAIS Component Evaluation Framework

Rigorous evaluation methodologies are essential for developing and optimizing compound AI systems. Unlike single-model assessment, CAIS evaluation requires measuring both end-to-end system performance and individual component effectiveness, with particular attention to component interactions [20] [18]. The experimental framework includes:

- End-to-End Task Success Metrics: Application-specific quality measures that evaluate the final output of the complete system, such as accuracy on domain-specific benchmarks or user satisfaction scores [18].

- Component-Level Isolation Testing: Individual assessment of each component (LLM, retriever, tools, agents) to establish baseline capabilities and identify performance bottlenecks [20].

- Interaction Effect Measurement: Quantitative evaluation of how component modifications affect overall system behavior, recognizing that optimizing one component in isolation may degrade system performance [18].

For example, in a RAG system, researchers might evaluate retrieval accuracy separately from generation quality, while also measuring how changes to the retrieval component affect the final output accuracy [20]. The BAIR researchers note that evaluation approaches must be application-specific, with some systems benefiting from discrete end-to-end metrics while others require component-level assessment [18].
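The RAG example can be made concrete with two standard metrics: recall@k for the retriever and exact match for the final answer. The sketch below is illustrative only; the record layout (`retrieved`, `relevant`, `answer`, `reference`) and the toy records are assumptions, and real evaluations would typically use richer generation metrics.

```python
from typing import Dict, List


def recall_at_k(retrieved: List[str], relevant: List[str], k: int) -> float:
    # Fraction of relevant documents that appear in the top-k retrieved list.
    if not relevant:
        return 0.0
    hits = len(set(retrieved[:k]) & set(relevant))
    return hits / len(relevant)


def exact_match(prediction: str, reference: str) -> float:
    # 1.0 if answers match after trimming and lowercasing, else 0.0.
    return float(prediction.strip().lower() == reference.strip().lower())


def evaluate(examples: List[Dict], k: int = 2) -> Dict[str, float]:
    # Report the component-level and end-to-end metrics side by side.
    n = len(examples)
    return {
        "recall_at_k": sum(recall_at_k(e["retrieved"], e["relevant"], k) for e in examples) / n,
        "exact_match": sum(exact_match(e["answer"], e["reference"]) for e in examples) / n,
    }


# Toy evaluation records (placeholder document IDs and answers).
examples = [
    {"retrieved": ["d1", "d4", "d2"], "relevant": ["d1", "d2"],
     "answer": "Yes ", "reference": "yes"},
    {"retrieved": ["d3", "d5"], "relevant": ["d3"],
     "answer": "no", "reference": "yes"},
]

metrics = evaluate(examples, k=2)
print(metrics)
```

Running `evaluate` before and after swapping the retriever, while holding the generator fixed, gives a simple view of the interaction effect: the change in `exact_match` attributable to a change in `recall_at_k`.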

Protein Flexibility Analysis Protocol

Drawing from structural biology research, we can adapt experimental protocols for analyzing flexibility in computational systems. The methodology for characterizing flexible protein assemblies involves:

- Heterogeneity Analysis: Using techniques like cryo-EM single-particle reconstructions to identify and classify structural variations within assembled complexes [27].

- Interface Flexibility Assessment: Computational prediction of flexibility hotspots through methods like AlphaFold2 pLDDT analysis and Rosetta-calculated solvent accessible surface area measurements [27].

- Dynamic Behavior Modeling: Molecular dynamics simulations to explore the range of motion and conformational sampling within designed assemblies [27].
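As a small illustration of the pLDDT-based step, the sketch below flags contiguous runs of low-confidence residues as candidate flexible regions. It assumes per-residue pLDDT scores (on AlphaFold2's 0 to 100 scale) have already been extracted into a list. The threshold of 70 and minimum run length of 3 are illustrative defaults rather than values taken from the cited protocol, and low pLDDT suggests, but does not prove, flexibility.

```python
from typing import List, Tuple


def low_confidence_segments(
    plddt: List[float], threshold: float = 70.0, min_length: int = 3
) -> List[Tuple[int, int]]:
    # Return (start, end) index pairs, inclusive and 0-based, for runs of
    # residues whose pLDDT falls below the threshold for at least min_length.
    segments: List[Tuple[int, int]] = []
    start = None
    for i, score in enumerate(plddt):
        if score < threshold:
            if start is None:
                start = i
        else:
            if start is not None and i - start >= min_length:
                segments.append((start, i - 1))
            start = None
    # Close a run that extends to the end of the chain.
    if start is not None and len(plddt) - start >= min_length:
        segments.append((start, len(plddt) - 1))
    return segments


# Made-up scores for a 12-residue toy chain.
scores = [92, 90, 65, 60, 58, 88, 91, 69, 95, 55, 50, 45]
flexible_candidates = low_confidence_segments(scores)
print(flexible_candidates)
```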

Table 4: Experimental Methods for Analyzing Flexibility in Biological and AI Systems

| Method Category | Biological Applications | CAIS Analog | Key Metrics |
|---|---|---|---|
| Structural Analysis | Cryo-EM, X-ray crystallography | Architecture visualization tools | Resolution, heterogeneity classification |
| Dynamic Simulation | Molecular dynamics simulations | Component interaction tracing | Conformational sampling, state transitions |
| Stability Assessment | Thermal shift assays, native mass spectrometry | System stress testing under varied loads | Resilience metrics, failure modes |
| Functional Testing | Enzyme activity assays, binding studies | Task-specific performance benchmarks | Accuracy, efficiency, robustness |

These methodologies provide a framework for quantitatively assessing the flexibility and adaptability of compound AI systems, moving beyond static performance benchmarks to dynamic capability evaluation.
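For the "component interaction tracing" analog in Table 4, one simple quantitative starting point is to count transitions between components across execution traces, loosely mirroring how state transitions are tallied in a dynamics simulation. The trace format below (an ordered list of component names per request) and the component names are assumptions made for illustration.

```python
from collections import Counter
from typing import List, Tuple


def transition_counts(traces: List[List[str]]) -> Counter:
    # Count how often control passes from one component to the next.
    counts: Counter = Counter()
    for trace in traces:
        counts.update(zip(trace, trace[1:]))
    return counts


def distinct_paths(traces: List[List[str]]) -> int:
    # Number of different end-to-end routes observed, a rough proxy for
    # how many "architectural states" the system actually visits.
    return len({tuple(trace) for trace in traces})


# Hypothetical traces: each list is the ordered components one request touched.
traces = [
    ["router", "retriever", "llm"],
    ["router", "llm"],
    ["router", "retriever", "llm", "tool", "llm"],
]

counts = transition_counts(traces)
n_paths = distinct_paths(traces)
print(counts.most_common(3), n_paths)
```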

Core Development Tools for CAIS

Building effective compound AI systems requires specialized tools and frameworks that support the development, integration, and evaluation of multiple components. Key resources include:

- Orchestration Frameworks: Platforms like IBM watsonx Orchestrate provide environments for bringing together custom-built agents, pre-built agents, and third-party frameworks in a unified experience [19]. These systems enable seamless multi-agent orchestration, essential for complex compound systems.

- Evaluation Suites: Tools like MLflow offer flexible approaches to evaluation that can accommodate different aspects of compound AI systems, including retrieval and generation components [20]. Robust evaluation infrastructure is particularly critical given the multi-component nature of CAIS.

- Model Serving Infrastructure: Platforms such as Databricks Lakehouse Monitoring provide visibility into the complex data and modeling pipelines in compound AI systems, addressing operational challenges [20].

- Optimization Tools: Frameworks like DSPy offer general optimization for pipelines of pretrained LLMs and other components, helping coordinate non-differentiable elements within compound systems [18].

Specialized Components for Drug Development Applications

For researchers in pharmaceutical and life sciences, several specialized resources enable the application of compound AI systems to drug development challenges:

- Protein Design Tools: Methods like the Geometric Algebra Flow Matching (GAFL) model enable generation of protein structures with customized flexibility patterns, supporting the design of proteins with specific functional properties [26].

- Multimodal Biomedical Encoders: Specialized models trained on diverse biological data types (chemical structures, genomic sequences, clinical notes, medical images) enable cross-modal reasoning for drug discovery and development [24] [25].

- Molecular Simulation Tools: Integration with molecular dynamics engines and docking software provides compound AI systems with domain-specific computational capabilities essential for pharmaceutical applications [26] [27].

- Biomedical Knowledge Graphs: Structured biological databases serve as retrieval components within RAG architectures, ensuring AI systems utilize current, validated biomedical knowledge [22] [18].

The strategic selection and integration of these tools enables researchers to construct compound AI systems specifically optimized for the complex, multi-faceted challenges of modern drug development, from target identification through clinical trial optimization.

Compound AI systems represent a fundamental architectural shift in artificial intelligence, moving beyond monolithic models to integrated systems of specialized components. The four core components—LLMs as reasoning engines, tools as functional extensions, agents as orchestrators, and multimodal encoders as sensory apparatus—each play distinct but complementary roles in creating systems capable of solving complex, real-world problems.

Framed within the context of structural flexibility research, we see that the most capable systems, whether computational or biological, incorporate precisely constrained flexibility at key integration points. This principle, drawn from computational protein design, explains why compound AI systems increasingly outperform even the largest monolithic models—they embrace adaptive reconfiguration rather than rigid uniformity.

For researchers and drug development professionals, compound AI systems offer a powerful framework for addressing the multifaceted challenges of modern pharmaceutical research. By strategically combining specialized components within a flexibility-informed architecture, these systems can integrate diverse data types, leverage domain-specific tools, and adapt their problem-solving approaches to the unique requirements of each research challenge. As the field advances, the principles of structural flexibility and component specialization will likely guide the development of increasingly sophisticated AI systems capable of transforming drug discovery and development.

Building Agile AI for Biomedicine: Applications in Drug Discovery and Development