Streamlining Justice: How Rapid Technologies Are Revolutionizing Forensic Chemistry Workflows

Abstract

This article explores the transformative impact of rapid technologies on forensic chemistry workflows, addressing a critical need for efficiency in laboratories facing growing evidence backlogs. Aimed at researchers, scientists, and drug development professionals, it provides a comprehensive analysis spanning from foundational principles to practical implementation. We examine cutting-edge methodologies like rapid GC-MS and direct analysis techniques, delve into optimization strategies for enhanced sensitivity and throughput, and critically evaluate validation frameworks and comparative performance against traditional methods. The synthesis of these insights offers a roadmap for integrating accelerated technologies to achieve faster, reliable, and actionable forensic results.

The Driving Force for Speed: Understanding the Need for Rapid Forensic Technologies

The convergence of rising drug-related crime and increasing operational pressures has created a critical bottleneck in forensic laboratories worldwide. The resulting backlog delays justice, compromises public safety, and hinders the effective prosecution of drug offenses. A 2025 market analysis projects that the global forensic technology market will expand from USD 6.46 billion in 2025 to USD 15.86 billion by 2035, driven significantly by these escalating challenges [1]. A primary growth driver is the surging demand for DNA testing, which provides highly reliable evidence but requires significant time and resources [1].

Forensic laboratories are further strained by legislative mandates requiring the testing of all sexual assault kits, often without additional funding, and by growing pressure to apply DNA analysis to property crimes and cold cases [2]. The core of the problem is a resource gap: the 2019 NIJ Needs Assessment identified an annual shortfall of $640 million just to meet current forensic demand, with another $270 million needed to address the opioid crisis [2]. The consequences are quantifiable: between 2017 and 2023, turnaround times for DNA casework increased by 88%, while turnaround times for controlled substances analysis increased by 232% [2]. This document outlines advanced protocols and data-driven strategies to enhance efficiency and throughput in forensic chemistry workflows, directly addressing this systemic backlog.

Quantitative Data on Forensic Backlogs and Efficiency

The following tables synthesize key quantitative data illustrating the scale of the forensic backlog and the measurable impact of implemented efficiency solutions.

Table 1: Forensic Casework Turnaround Time Increases (2017-2023). Data sourced from Project FORESIGHT and the Consortium of Forensic Science Organizations (CFSO) [2].

| Forensic Discipline | Increase in Turnaround Time |
|---|---|
| DNA Casework | 88% |
| Crime Scene Evidence | 25% |
| Post-mortem Toxicology | 246% |
| Controlled Substances | 232% |

Table 2: Impact of Efficiency Interventions on Laboratory Performance

| Laboratory / Initiative | Key Intervention | Outcome |
|---|---|---|
| Louisiana State Police | Lean Six Sigma Implementation [2] | Turnaround time dropped from 291 days to 31 days; throughput tripled to 160 cases/month. |
| Michigan State Police | CEBR-Funded Technical Innovation (Validated low-input DNA methods) [2] | 17% increase in interpretable DNA profiles from complex evidence within 12 months. |
| Global Forensic Tech Market | Adoption of advanced technologies (AI, Rapid DNA) [1] | Projected market growth from USD 6.46B (2025) to USD 15.86B (2035) at a 9.4% CAGR. |

Experimental Protocols for Enhanced Forensic Workflows

Protocol: Statistical Design of Experiments (DoE) for Method Optimization

This protocol provides a systematic approach to optimizing complex analytical methods, such as the extraction of drugs from biological specimens, by efficiently evaluating multiple variables simultaneously [3].

1. Principle

Statistical Design of Experiments (DoE) is a mathematical framework that evaluates the relationship between independent variables (factors) and dependent variables (responses). It supersedes the inefficient "one-factor-at-a-time" (OFAT) approach by allowing for the assessment of interaction effects between factors, leading to fewer experiments, lower costs, and reduced consumption of valuable samples and reagents [3].

2. Applications in Forensic Analysis

- Optimization of Sample Preparation: DoE is predominantly used to optimize parameters in extraction techniques like Liquid-Liquid Extraction (LLE), Solid-Phase Extraction (SPE), and dispersive liquid–liquid microextraction (DLLME) [3].

- Critical Variables: Common factors include solvent type and volume, pH, temperature, extraction time, and sorbent type [3].

- Chromatographic Parameters: While less common, DoE can also optimize instrument settings for LC-MS/MS or GC-MS, such as injection volume, flow rate, and column temperature [3].

3. Step-by-Step Procedure

- Step 1: Selection of Independent Variables. Identify critical factors (e.g., pH, solvent volume, temperature) through literature review, preliminary OFAT studies, or screening designs [3].

- Step 2: Screening Design Execution. When dealing with many potential factors, use a screening design (e.g., Plackett-Burman or Fractional Factorial Design) to identify the variables with the most significant impact on the response (e.g., analyte recovery, peak area) [3].

- Step 3: Response Surface Methodology (RSM). Employ a quadratic design like Box-Behnken or Central Composite Design to model the system and locate the optimal conditions. These designs generate a polynomial equation that describes the relationship between factors and responses [3].

- Step 4: Model Validation and Optimization. Statistically validate the generated model for adequacy and predictive utility. Use Response Surface Methodology (RSM) to visualize the relationship and pinpoint the factor levels that produce a maximal or minimal response, as defined by the researcher's criteria [3].
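As a minimal sketch of Step 3, the snippet below builds a Box-Behnken design in coded units and maps it onto real factor settings. The pairwise construction used here corresponds to the usual Box-Behnken layout for three to five factors. The factor names and ranges (pH, solvent volume, extraction time) are hypothetical placeholders rather than values from [3], and the number of centre points is an arbitrary choice.

```python
from itertools import combinations, product


def box_behnken(n_factors, n_center=3):
    """Return a Box-Behnken design in coded units (-1, 0, +1).

    For every pair of factors, a 2x2 factorial is run at -1/+1 while the
    remaining factors stay at their centre level (0); centre points are
    appended at the end. This pairwise layout is used for 3 to 5 factors.
    """
    if not 3 <= n_factors <= 5:
        raise ValueError("this sketch supports 3 to 5 factors only")
    runs = []
    for i, j in combinations(range(n_factors), 2):
        for a, b in product((-1, 1), repeat=2):
            row = [0] * n_factors
            row[i], row[j] = a, b
            runs.append(tuple(row))
    runs.extend([(0,) * n_factors] * n_center)
    return runs


def decode(run, ranges):
    """Map coded levels to real settings, given one (low, high) pair per factor."""
    return tuple(
        (low + high) / 2 + level * (high - low) / 2
        for level, (low, high) in zip(run, ranges)
    )


# Hypothetical ranges: pH, solvent volume (mL), extraction time (min)
FACTOR_RANGES = [(8.0, 11.0), (1.0, 3.0), (5.0, 15.0)]

design = box_behnken(3)
settings = [decode(run, FACTOR_RANGES) for run in design]

for coded, real in zip(design, settings):
    print(coded, real)
```

For three factors this gives 12 edge-midpoint runs plus 3 centre points (15 runs in total), compared with 27 runs for a full three-level factorial. In practice the run order would be randomized before execution, and the responses fitted with a quadratic model in dedicated DoE software.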

Protocol: Quantitative Analysis via Standard Addition

This protocol details the standard addition method, an alternative quantitative approach particularly valuable for analyzing emerging novel psychoactive substances (NPS) in complex biological matrices [4].

1. Principle

Standard addition is an internal calibration technique used to determine the concentration of an analyte in a sample where the matrix may cause interference. Known amounts of the analyte standard are added directly to aliquots of the sample. The concentration in the original sample is determined by extrapolating the calibration curve back to the x-axis [4].

2. Applications in Forensic Toxicology

- Emerging Novel Psychoactive Substances (NPS): Ideal for quantifying NPS like isotonitazene (opioid) or eutylone (stimulant) where traditional validated methods may not exist and their short lifespan makes full validation impractical [4].

- Complex Matrices: Effective for analyzing drugs in blood, urine, and other biological samples where matrix effects can suppress or enhance instrument signal [4].

3. Step-by-Step Procedure

- Step 1: Sample Aliquoting. Aliquot a case sample into four replicates [4].

- Step 2: Standard Fortification.

  - Leave one aliquot as an unfortified "blank".

  - Fortify the three remaining aliquots with the target drug standard at appropriate, increasing concentrations [4].

- Step 3: Sample Preparation and Analysis. Add internal standard to all four aliquots. Proceed with a standardized sample preparation (e.g., Liquid-Liquid Extraction). Analyze all aliquots using LC-MS/MS [4].

- Step 4: Data Calculation and Interpretation.

  - Plot the peak area ratio (analyte/internal standard) against the concentration of the standard added to each aliquot [4].

  - Fit a linear trendline through all data points (R² > 0.98 is recommended) [4].

  - Calculate the x-intercept of the trendline. The absolute value of the x-intercept represents the concentration of the drug in the original, unfortified sample [4].
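The calculation in Step 4 can be sketched in a few lines of Python. The added concentrations and peak area ratios below are invented for illustration only (they are not data from [4]); the fit is an ordinary least-squares line, and the R² threshold mirrors the recommendation above.

```python
def linear_fit(x, y):
    """Ordinary least-squares line through (x, y): slope, intercept, R^2."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    syy = sum((yi - mean_y) ** 2 for yi in y)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    r_squared = (sxy * sxy) / (sxx * syy)
    return slope, intercept, r_squared


def standard_addition(added_conc, area_ratios, min_r_squared=0.98):
    """Return (original concentration, R^2) from a standard addition series.

    The trendline y = slope * x + intercept crosses y = 0 at
    x = -intercept / slope; the absolute value of that x-intercept is the
    concentration in the unfortified sample.
    """
    slope, intercept, r_squared = linear_fit(added_conc, area_ratios)
    if r_squared <= min_r_squared:
        raise ValueError(f"poor linearity: R^2 = {r_squared:.4f}")
    x_intercept = -intercept / slope
    return abs(x_intercept), r_squared


# Hypothetical series: one unfortified aliquot plus three fortified aliquots
added_ng_per_ml = [0.0, 5.0, 10.0, 20.0]
peak_area_ratio = [0.40, 0.65, 0.90, 1.40]   # analyte / internal standard

concentration, r_squared = standard_addition(added_ng_per_ml, peak_area_ratio)
print(f"Estimated concentration: {concentration:.2f} ng/mL (R^2 = {r_squared:.4f})")
```

With these illustrative numbers the fitted line has a slope of 0.05 and an intercept of 0.40, so the x-intercept is -8 and the estimated concentration is 8 ng/mL.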

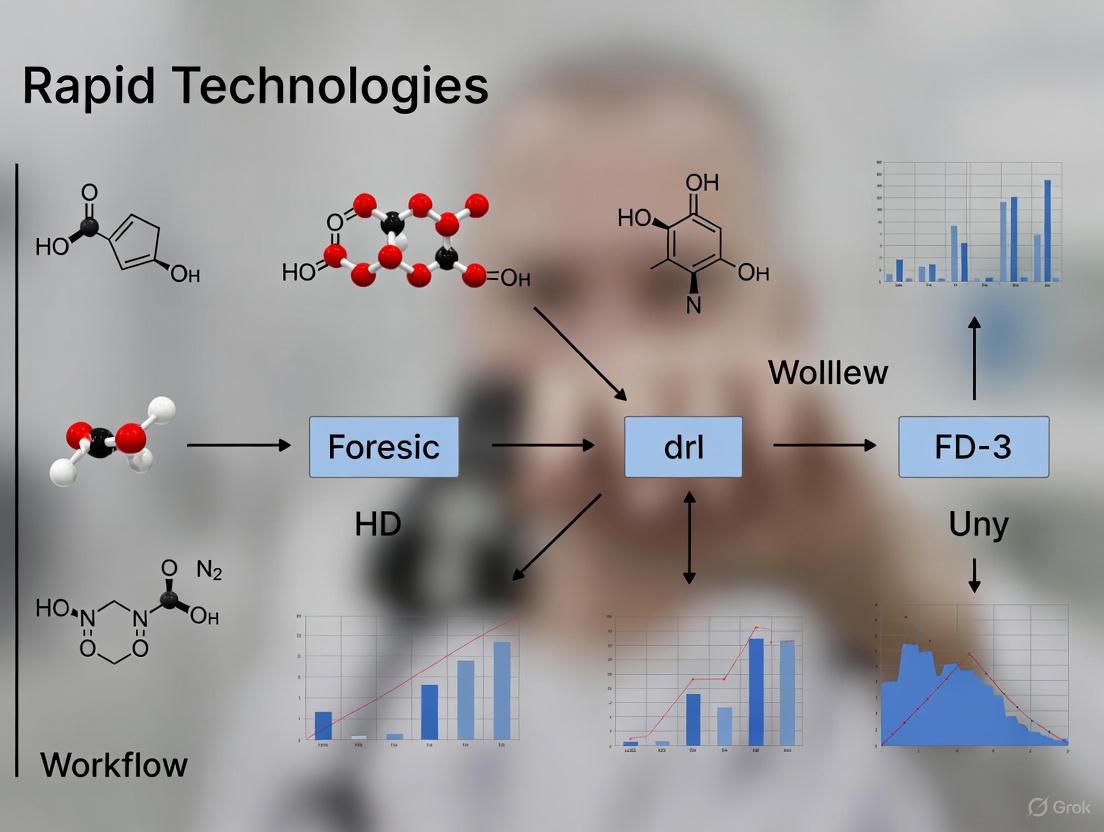

Workflow Visualization

The following diagram synthesizes the experimental protocols into a unified, efficient workflow for the analysis of controlled substances in forensic casework.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents and Materials for Advanced Forensic Toxicology

| Item | Function / Application |
|---|---|
| Liquid Chromatography Tandem Mass Spectrometry (LC-MS/MS) | High-sensitivity detection, identification, and quantification of a wide range of drugs and metabolites, including Novel Psychoactive Substances (NPS) [4]. |
| Statistical Design of Experiments (DoE) Software | Software tools to plan screening and optimization experiments, analyze results, and build predictive models for method development [3]. |
| Novel Psychoactive Substance (NPS) Standards | Certified reference materials for emerging drugs, essential for accurate identification and quantification using techniques like standard addition [4]. |
| Liquid-Liquid Extraction (LLE) Solvents | Solvent systems (e.g., N-butyl chloride and ethyl acetate) for isolating drugs from complex biological matrices like blood prior to analysis [4]. |
| Buffers (e.g., Borax Buffer, pH 10.4) | Used to adjust the pH of samples during extraction to ensure optimal recovery of specific drug classes [4]. |
| Internal Standards (Isotope-Labeled) | Compounds added to samples to correct for variability in sample preparation and instrument analysis, improving quantitative accuracy [4]. |

The integration of rapid diagnostic technologies is revolutionizing forensic chemistry workflows, dramatically increasing efficiency from sample to result. This application note details the implementation of a portable molecular diagnostic platform, leveraging power-free nucleic acid extraction and colorimetric LAMP chemistry, to achieve high-sensitivity detection in under 40 minutes. We provide a detailed protocol and quantitative performance data to guide researchers in adopting these accelerated methodologies for forensic analysis, demonstrating how they address critical bottlenecks in evidence processing and casework prioritization [5].

In the context of forensic science, "rapid" is evolving from a qualitative desire to a quantitative metric, defined by specific technological benchmarks that compress traditional multi-day laboratory processes into workflows lasting minutes. The 2025 Emanuele Russo Delphi consensus on rapid microbiological methods emphasizes the critical importance of interpretation within a specific clinical context and the clinical usefulness of turnaround times <24 hours, principles that are directly transferable to forensic evidence analysis [6]. The pressing need for such efficiency is underscored by substantial backlogs in crime labs, where advanced technologies can enable data-driven case management and evidence prioritization to accelerate justice [7]. This document outlines a sample-to-result platform and protocol that embodies this new definition of "rapid," providing forensic chemists and toxicologists with a framework to integrate speed without compromising analytical rigor.

Performance Data & Comparative Analysis

The Dragonfly platform was validated for the detection of skin-tropic viruses, a model system with direct relevance to forensic investigations of infectious agents. The platform's performance, benchmarked against gold-standard extracted qPCR, demonstrates that rapid technologies can deliver high fidelity [5].

Table 1: Analytical and Clinical Performance of the Rapid Platform

| Performance Metric | Result | Validation Method |
|---|---|---|
| Time-to-Result | < 40 minutes | Full workflow from sample input to visual readout [5] |
| Nucleic Acid Extraction Time | < 5 minutes | Power-free magnetic bead-based method [5] |
| Analytical LoD (Mpox Virus) | 100 genome copies per reaction | Colorimetric LAMP assay [5] |
| Clinical Sensitivity (OPXV) | 96.1% | Testing on 164 clinical samples (51 mpox-positive) [5] |
| Clinical Specificity (OPXV) | 100% | Testing on 164 clinical samples [5] |
| Clinical Sensitivity (MPXV) | 94.1% | Testing on 164 clinical samples [5] |
| Clinical Specificity (MPXV) | 100% | Testing on 164 clinical samples [5] |

The power-free nucleic acid extraction, a key innovation, completes in under 5 minutes, eliminating a major bottleneck in traditional lab workflows and the need for centralized instrumentation [5].
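To make the sensitivity and specificity figures concrete, the sketch below recomputes them from a confusion matrix and attaches a Wilson score interval. The individual counts are assumptions back-calculated from the reported percentages (49 of 51 positives detected for OPXV, 48 of 51 for MPXV, and 164 - 51 = 113 negatives with no false positives); the underlying counts are not stated in the text above and should be checked against [5].

```python
from math import sqrt


def wilson_interval(successes, n, z=1.96):
    """Wilson score interval for a binomial proportion (z = 1.96 for ~95%)."""
    p = successes / n
    denom = 1 + z ** 2 / n
    centre = (p + z ** 2 / (2 * n)) / denom
    half_width = z * sqrt(p * (1 - p) / n + z ** 2 / (4 * n ** 2)) / denom
    return max(0.0, centre - half_width), min(1.0, centre + half_width)


def diagnostic_metrics(tp, fn, tn, fp):
    """Sensitivity and specificity, each with a Wilson interval."""
    return {
        "sensitivity": tp / (tp + fn),
        "sensitivity_ci": wilson_interval(tp, tp + fn),
        "specificity": tn / (tn + fp),
        "specificity_ci": wilson_interval(tn, tn + fp),
    }


# Assumed counts, back-calculated from the reported percentages
opxv = diagnostic_metrics(tp=49, fn=2, tn=113, fp=0)
mpxv = diagnostic_metrics(tp=48, fn=3, tn=113, fp=0)

for name, m in (("OPXV", opxv), ("MPXV", mpxv)):
    lo, hi = m["sensitivity_ci"]
    print(f"{name}: sensitivity {m['sensitivity']:.1%} "
          f"(95% CI {lo:.1%} to {hi:.1%}), specificity {m['specificity']:.1%}")
```

Reporting an interval alongside the point estimate is a design choice worth considering for validation summaries, since 51 positive samples leave noticeable uncertainty around a sensitivity in the mid-90s.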

Experimental Protocol: Rapid Molecular Detection

Principle

This protocol utilizes a portable, sample-to-result system that integrates power-free nucleic acid extraction via a magnetic SmartLid and magnetic beads with lyophilised colourimetric Loop-Mediated Isothermal Amplification (LAMP) for the specific detection of target nucleic acids. Amplification causes a pH shift, resulting in a colour change of the reaction mix from pink (negative) to yellow (positive), enabling equipment-free visual interpretation [5].

Reagents and Equipment

- Portable Extraction Kit: Cardboard tray containing colour-coded, pre-aliquoted buffer tubes (Lysis-binding [red], Wash [yellow], Elution [green]), disposable exact-volume pipettes, and the SmartLid [5].

- Lyophilised Colourimetric LAMP Panel: Single-tube assays stored at room temperature.

- Low-Cost Isothermal Heat Block: Maintains a constant temperature of 60-65 °C.

- Sample Collection Kit: Swab and inactivating medium (e.g., COPAN eNAT).

- Timer.

Procedure

A. Sample Preparation

- Collect the specimen using a swab and place it into the inactivating medium tube.

- Vortex the sample tube briefly to ensure the specimen is well-mixed in the medium.

B. Power-Free Nucleic Acid Extraction (< 5 min)

Note: The cardboard packaging of the extraction kit functions as the workstation. Place the sample tube in the designated space.

- Lysis-Binding: Using the provided exact-volume pipette, transfer the specified volume of inactivated sample to the red-capped lysis-binding tube. Close the cap and mix by inverting 10 times. Incubate for 1 minute at room temperature.

- Bead Capture: Place the SmartLid onto the red tube to capture the magnetic beads with bound nucleic acids. Wait for 30 seconds.

- Wash Transfer: While holding the SmartLid with the captured beads, transfer it to the yellow-capped wash tube. Submerge the beads, release them from the lid by agitating, and close the cap. Mix by inverting 10 times.

- Bead Re-capture: Place the SmartLid back onto the yellow tube to re-capture the beads. Wait for 30 seconds.

- Elution: Transfer the SmartLid with the beads to the green-capped elution tube. Submerge the beads, release them, and close the cap. Mix by inverting 10 times. Incubate for 2 minutes at room temperature.

- Final Capture: Place the SmartLid onto the green tube one final time to capture the beads, leaving purified nucleic acids in the elution buffer. The eluate is now ready for amplification.

C. Colorimetric LAMP Amplification and Detection (< 35 min)

- Reconstitute the lyophilised LAMP pellet in the specified tube with the required volume of the purified nucleic acid eluate from the previous step.

- Incubate the reaction tube in the pre-heated isothermal heat block at 65 °C for 35 minutes.

- Interpret Results immediately after incubation. A colour change from pink to yellow indicates a positive result. No colour change (pink remains) indicates a negative result.

Safety and Quality Control

- Personal Protective Equipment (PPE): Wear gloves, a lab coat, and eye protection during specimen handling and testing [8].

- Prevent Cross-Contamination: Change gloves between handling different samples, especially when processing specimens in batches [8].

- Waste Disposal: Decontaminate all instruments after use and dispose of all used test components as biohazardous waste in compliance with local regulations [8].

Workflow Visualization

Rapid Sample-to-Result Workflow: This diagram illustrates the streamlined, sub-40-minute process from sample input to final readout, highlighting the power-free nucleic acid extraction steps and the colorimetric detection [5].

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Rapid Molecular Workflow

| Item | Function | Key Characteristic |
|---|---|---|
| SmartLid & Magnetic Beads | Power-free nucleic acid extraction and purification from complex samples. | Enables sub-5-minute extraction without centrifugation or electricity [5]. |
| Lyophilised Colourimetric LAMP Mix | Isothermal amplification of target DNA/sequences. | Room-temperature stable; visual (colorimetric) readout eliminates need for fluorescent detection hardware [5]. |
| Pre-aliquoted Buffer Tray | Contains all necessary reagents for the extraction process. | Color-coded (red-yellow-green) for foolproof workflow; integrated with packaging [5]. |
| Inactivating Sample Medium | Stabilizes the specimen and inactivates pathogens for safe transport and handling. | Ensures user safety and sample integrity from point-of-collection to testing [5]. |
| Low-Cost Isothermal Heat Block | Maintains constant temperature required for LAMP reaction. | Eliminates need for expensive thermocyclers; enables deployment in low-resource settings [5]. |

Advanced instrumentation is fundamentally transforming forensic chemistry workflows by integrating miniaturization, automation, and intelligent data analysis. These core principles directly address critical challenges in modern forensic laboratories, including growing case backlogs, the complexity of new psychoactive substances (NPS), and the need for rapid, on-site intelligence [9] [10]. The migration of analytical techniques from centralized laboratories to the field or production environment enables a paradigm shift from delayed, batch-processed results to immediate, data-driven decision-making.

This application note details how these principles are applied in specific technological implementations, providing validated protocols and quantitative data to illustrate the dramatic gains in analytical efficiency.

Principles and Instrumentation

The push for faster analysis is underpinned by three interconnected core principles, each enabled by specific technological advancements.

Principle 1: Miniaturization and Portability

The development of compact, portable analytical devices allows for preliminary testing and evidence triage at the point of need, such as a crime scene or border checkpoint. This eliminates the delay associated with evidence transport and chain-of-custody procedures, providing immediate investigative leads.

Exemplar Technology: Portable Voltammetric Sensor for Synthetic Cannabinoids. This system utilizes a 3D-printed electrochemical cell integrated with a commercial boron-doped diamond electrode (BDDE) and a smartphone-controlled portable potentiostat [9].

- Quantitative Performance Data:

| Parameter | Performance Metric | Impact on Efficiency |
|---|---|---|
| Analysis Time | < 1 minute per sample [9] | Enables rapid screening of multiple samples on-site. |
| Limit of Detection (LOD) | 0.28 µmol L⁻¹ [9] | Sufficiently sensitive for typical concentrations in seized materials. |
| Linear Range | 1.0 – 200.0 µmol L⁻¹ [9] | Covers a wide range of potential concentrations without dilution. |
| Accuracy (vs. GC-MS) | 83% (in seized street drug samples) [9] | Provides reliable preliminary data to prioritize lab resources. |

Principle 2: Automation and Integrated Workflows

End-to-end automation of analytical processes—from sample introduction to result interpretation and reporting—minimizes manual intervention, reduces operator-to-operator variability, and maximizes throughput.

Exemplar Technology: NMR-based Advanced Chemical Profiling (ACP). This software solution automates the entire NMR workflow, from sample loading and data acquisition to processing, identification, quantification, and report filing [11]. It is designed for use by non-expert operators in high-throughput environments like quality control and forensic narcotics testing.

- Quantitative Workflow Enhancement:

| Workflow Stage | Traditional Manual Process | Automated ACP Process | Efficiency Gain |
|---|---|---|---|
| Data Processing | Manual phase and baseline correction | Fully automated, operator-independent | Saves minutes to hours per sample; ensures consistency. |
| Identification/Quantification | Expert spectroscopist analysis | Automated database matching and calibration | Frees expert time; allows 24/7 operation. |
| Report Generation | Manual compilation | Automated, standardized report filing | Eliminates transcription errors and reporting delays. |

Principle 3: Intelligent Data Analysis and Statistical Learning

Advanced instrumentation generates complex, high-dimensional data. Chemometrics and statistical learning tools are required to extract meaningful, objective, and defensible conclusions from this data, moving beyond subjective pattern matching.

Exemplar Technology: Quantitative Fracture Surface Topography Analysis. This method uses 3D microscopy to map the topography of fractured surfaces (e.g., a broken knife tip). Spectral analysis and multivariate statistics are then employed to quantitatively classify surfaces as "match" or "non-match" with a calculable error rate [12].

- Quantitative Matching Performance:

| Data Feature | Application in Statistical Learning | Impact on Reliability |
|---|---|---|
| Surface Roughness | Height-height correlation function captures uniqueness at a transition scale of ~50-70 μm [12]. | Provides an objective, measurable fingerprint of the fracture surface. |
| Spectral Topography | Multiple topographical frequency bands are combined into a multivariate model [12]. | Improves discrimination power between matching and non-matching surfaces. |
| Model Output | Generates a likelihood ratio for classification [12]. | Provides a statistical foundation for testimony, addressing legal standards like Daubert. |

Experimental Protocols

Protocol 1: Rapid On-Site Screening of ADB-butinaca via Portable Voltammetry

Application: Preliminary identification of the synthetic cannabinoid ADB-butinaca in seized materials.

1.1 Materials and Reagents

- Portable Electrochemical Platform: 3D-printed cell with integrated Boron-Doped Diamond Electrode (BDDE), Ag/AgCl reference electrode, and Pt auxiliary electrode [9].

- Handheld Potentiostat: Smartphone-controlled device.

- Britton-Robinson (BR) Buffer: pH 2.0 – 10.0, as supporting electrolyte.

- Methanol: HPLC grade, for sample dissolution.

- Standard Solutions: ADB-butinaca certified reference standard for calibration.

1.2 Sample Preparation

- Extract a small sub-sample (e.g., 1-2 mg) from the seized material.

- Dissolve the sub-sample in 1.0 mL of methanol and vortex mix for 30 seconds.

- Dilute a 100 µL aliquot of the methanolic solution in 10.0 mL of BR buffer (pH 7.0) to achieve a suitable concentration within the sensor's linear range.
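The dilution arithmetic in this step can be checked against the sensor's reported linear range (1.0 to 200.0 µmol L⁻¹) with a short calculation. This is a rough sketch under several assumptions that do not come from [9]: the molar mass of ADB-butinaca is taken as about 330.43 g/mol (computed from the formula C18H26N4O2), the 100 µL aliquot is assumed to be added to 10.0 mL of buffer with additive volumes, and the mass fraction of drug in the seized material is a hypothetical input.

```python
MOLAR_MASS_G_PER_MOL = 330.43          # assumed value for C18H26N4O2
LINEAR_RANGE_UMOL_L = (1.0, 200.0)     # linear range reported for the sensor


def cell_concentration_umol_l(mass_mg, mass_fraction,
                              stock_ml=1.0, aliquot_ml=0.100, buffer_ml=10.0):
    """Analyte concentration in the measurement solution, in umol/L.

    mass_mg       : mass of seized material dissolved in methanol
    mass_fraction : assumed fraction of that mass that is analyte (0 to 1)
    """
    analyte_mol = mass_mg * 1e-3 * mass_fraction / MOLAR_MASS_G_PER_MOL
    stock_umol_l = analyte_mol / (stock_ml * 1e-3) * 1e6
    # Volumes are assumed additive: 0.100 mL + 10.0 mL = 10.1 mL
    return stock_umol_l * aliquot_ml / (aliquot_ml + buffer_ml)


def within_linear_range(conc_umol_l):
    low, high = LINEAR_RANGE_UMOL_L
    return low <= conc_umol_l <= high


# Hypothetical cases: pure powder versus plant material with ~5% drug content
for mass_mg, fraction in [(1.0, 1.00), (2.0, 1.00), (1.0, 0.05)]:
    conc = cell_concentration_umol_l(mass_mg, fraction)
    print(f"{mass_mg} mg at {fraction:.0%}: {conc:.1f} umol/L, "
          f"in range: {within_linear_range(conc)}")
```

Under these assumptions, 1 mg of pure material gives roughly 30 µmol L⁻¹ in the cell, comfortably inside the linear range, while low-potency material sits much closer to the lower limit.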

1.3 Instrumental Analysis

- Place 3.0 mL of the diluted sample solution into the 3D-printed electrochemical cell.

- Using the smartphone application, initiate the voltammetric method:

  - Technique: Square-Wave Voltammetry (SWV).

  - Potential Range: Optimized for ADB-butinaca oxidation (e.g., +0.8 to +1.4 V vs. Ag/AgCl).

  - Parameters: Frequency 20 Hz, amplitude 50 mV, step potential 2 mV.

- The acquisition is complete in less than 60 seconds. The software displays the voltammogram and identifies the characteristic peak of ADB-butinaca.
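The sub-60-second acquisition follows from the SWV settings listed above: in square-wave voltammetry the potential advances by one step per square-wave cycle, so the effective scan rate is the step potential multiplied by the frequency. The sketch below works this through for the example window; it covers only the potential sweep, and any equilibration or conditioning time the instrument adds is not included.

```python
def swv_scan(e_start_v, e_end_v, step_mv, frequency_hz):
    """Return (number of steps, scan rate in mV/s, sweep duration in s).

    One potential step is applied per square-wave period (1 / frequency),
    so scan rate = step potential x frequency.
    """
    span_mv = abs(e_end_v - e_start_v) * 1000
    n_steps = round(span_mv / step_mv)
    scan_rate_mv_s = step_mv * frequency_hz
    duration_s = n_steps / frequency_hz
    return n_steps, scan_rate_mv_s, duration_s


# Example settings from the method above
n_steps, scan_rate, duration = swv_scan(
    e_start_v=0.8, e_end_v=1.4, step_mv=2.0, frequency_hz=20.0
)
print(f"{n_steps} steps at {scan_rate:.0f} mV/s -> {duration:.0f} s sweep")
```

For the +0.8 to +1.4 V window this gives 300 steps at 40 mV/s, a sweep of about 15 seconds, which is consistent with the stated acquisition time of under one minute.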

1.4 Data Interpretation

- A positive screening result is indicated by the presence of an oxidation peak at the characteristic potential for ADB-butinaca.

- All positive screening results must be confirmed by a validated laboratory-based technique, such as GC-MS [9].

Protocol 2: Automated Identification and Quantification of Narcotics via NMR

Application: High-throughput, operator-independent analysis of narcotics and new psychoactive substances (NPS) in pure form or complex mixtures.

2.1 Materials and Reagents

- NMR Spectrometer: Equipped with liquid handler and Advanced Chemical Profiling (ACP) software [11].

- NMR Solvent: Deuterated solvent (e.g., DMSO-d6, CDCl3).

- Internal Standard: Certified reference standard for quantification (e.g., TMS, maleic acid).

- NMR Tubes: Standard 5 mm tubes.

2.2 Sample Preparation

- Accurately weigh ~2-5 mg of the unknown forensic sample.

- Transfer the sample to an NMR tube.

- Add 0.6 mL of deuterated solvent containing a known concentration of internal standard.

2.3 Instrumental Analysis

- Load the sample tray with prepared NMR tubes.

- In the ACP software, select the pre-validated method for "Narcotics Screening".

- Initiate the automated sequence. The system (IconNMR and TopSpin) automatically:

  - Locks, shims, and tunes the spectrometer for each sample.

  - Acquires the 1D ¹H NMR spectrum.

  - Processes the data (Fourier transform, phasing, baseline correction).

  - Compares the processed spectrum against a curated database of narcotics and cutting agents.

  - Identifies and quantifies all detectable constituents using the internal standard.

  - Generates a comprehensive report.

2.4 Data Interpretation

- The ACP report provides a list of identified compounds with their respective concentrations.

- The report flags unknown signals that do not match the database, potentially indicating a New Psychoactive Substance (NPS) [11].
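For readers unfamiliar with how an internal standard yields a concentration, the sketch below applies the general quantitative NMR relation, in which the analyte mass fraction is proportional to the integral ratio corrected for proton count, molar mass, and weighed mass. It is not a description of how the ACP software computes its results, which the text above does not specify. All numerical inputs are hypothetical; maleic acid is used as the standard with its two olefinic protons and a molar mass of 116.07 g/mol.

```python
def qnmr_mass_fraction(integral_analyte, integral_std,
                       n_protons_analyte, n_protons_std,
                       molar_mass_analyte, molar_mass_std,
                       mass_sample_mg, mass_std_mg, purity_std):
    """Mass fraction of analyte in the weighed sample by internal-standard qNMR.

    P_a = (I_a / I_s) * (N_s / N_a) * (M_a / M_s) * (m_s / m_sample) * P_s
    """
    return (
        (integral_analyte / integral_std)
        * (n_protons_std / n_protons_analyte)
        * (molar_mass_analyte / molar_mass_std)
        * (mass_std_mg / mass_sample_mg)
        * purity_std
    )


# Hypothetical measurement: a 3-proton analyte signal against maleic acid
fraction = qnmr_mass_fraction(
    integral_analyte=1.50, integral_std=1.00,
    n_protons_analyte=3, n_protons_std=2,
    molar_mass_analyte=300.0, molar_mass_std=116.07,
    mass_sample_mg=4.0, mass_std_mg=1.0,
    purity_std=0.999,
)
print(f"Analyte content: {fraction:.1%} w/w")
```

The relation only holds when the chosen analyte and standard signals are fully resolved and acquired under quantitative conditions, such as a sufficiently long relaxation delay.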

Workflow Visualizations

On-Site Drug Screening Workflow

Automated NMR Workflow

The Scientist's Toolkit: Essential Research Reagents and Materials

| Item | Function / Application |
|---|---|
| Boron-Doped Diamond Electrode (BDDE) | Robust, reusable sensor for electrochemical detection; provides a wide potential window and low background current [9]. |
| Britton-Robinson (BR) Buffer | A versatile supporting electrolyte for electroanalysis; its pH can be adjusted to optimize the electrochemical response of different analytes [9]. |
| Deuterated NMR Solvents (e.g., DMSO-d6) | Provides a signal-free environment for NMR analysis, allowing the solute's signals to be observed without interference. |
| Internal Standard (e.g., TMS) | Added in known concentration to the NMR sample; allows for precise quantification of identified compounds [11]. |
| Certified Reference Standards | Pure, authenticated analytical standards of target analytes (e.g., ADB-butinaca); essential for method development, calibration, and validation [9] [11]. |
| 3D Printing Filament (e.g., PLA, ABS) | Enables rapid, low-cost, and customizable fabrication of analytical devices and sample holders, such as the electrochemical cell [9]. |

Forensic science is undergoing a significant transformation, driven by the need for greater efficiency, reliability, and throughput in crime laboratories. The National Institute of Justice (NIJ) has established a comprehensive Forensic Science Strategic Research Plan for 2022-2026 to address these challenges through coordinated research and development [13]. This document frames the strategic priority of advancing rapid technologies within the context of this national framework, providing detailed application notes and experimental protocols to support researchers and forensic practitioners in enhancing workflow efficiency, particularly in the analysis of seized drugs and other chemical evidence.

A core objective of the NIJ's strategic plan is the "Application of Existing Technologies and Methods for Forensic Purposes," which explicitly calls for "rapid technologies to increase efficiency" [13]. This aligns with the broader community goal of meeting increasing demands for quality forensic services in the face of constrained resources. The integration of both qualitative analysis (identifying the presence or absence of substances) and quantitative analysis (determining their precise concentrations) is fundamental to this process, forming the basis for reliable and actionable forensic results [14] [15].

Strategic Priority: Rapid Technologies for Increased Efficiency

The NIJ's first strategic priority is to "Advance Applied Research and Development in Forensic Science" [13]. Within this priority, several objectives directly support the adoption and development of technologies that streamline forensic chemistry workflows.

Table 1: NIJ Strategic Objectives Supporting Workflow Efficiency

| Strategic Objective | Description | Impact on Forensic Efficiency |
|---|---|---|
| I.1. Application of Existing Technologies | Tools that increase sensitivity/specificity and rapid technologies to increase efficiency [13]. | Enables faster screening and analysis with fewer resources, reducing case backlogs. |
| I.4. Technologies Expediting Information Delivery | Expanded triaging tools and techniques to develop actionable results [13]. | Allows labs to prioritize evidence and provide investigators with timely intelligence. |
| I.6. Standard Criteria for Analysis | Evaluation of expanded conclusion scales and methods to express the weight of evidence [13]. | Streamlines interpretation and reporting, making results more consistent and understandable. |

The transition from traditional, often slower, wet-chemical techniques to advanced instrumental methods is key to this efficiency gain. While qualitative tests can confirm the presence of a substance, quantitative analysis is crucial for determining the concentration of an analyte, such as the precise amount of an illicit drug in a seized sample or the blood alcohol level in a suspect [14] [15]. Techniques like chromatography and spectroscopy, which can be adapted for both qualitative and quantitative purposes, are at the forefront of this modernization effort [14].

Analytical Techniques and Protocols for Efficient Workflows

Modern forensic laboratories employ a suite of sophisticated analytical techniques that provide both high-throughput screening and confirmatory quantitative results. The following protocols outline key methods for the analysis of seized drugs, a common and time-sensitive task in forensic chemistry.

Protocol 1: Rapid Screening of Seized Drugs Using FTIR Spectroscopy

Fourier Transform Infrared (FTIR) Spectroscopy is a powerful technique for the rapid identification of organic compounds based on their molecular bond vibrations and functional groups [16].

- Principle: A sample is bombarded with multiple wavelengths of infrared light. The resulting absorption spectrum creates a unique molecular "fingerprint" that can be compared against reference libraries for identification [16].

- Materials:

  - FTIR spectrometer

  - Attenuated Total Reflectance (ATR) accessory

  - Solid or liquid sample

  - Hydrophobic wipes and solvent (e.g., methanol) for cleaning

- Procedure:

  - Clean the ATR crystal thoroughly with solvent and allow it to dry.

  - Place a small amount of the solid sample directly onto the ATR crystal. For liquids, apply a small droplet.

  - Apply pressure to ensure good contact between the sample and the crystal.

  - Acquire the infrared spectrum (typically over a range of 4000-400 cm⁻¹).

  - Compare the acquired spectrum against a validated library of known controlled substances and cutting agents.

- Applications: FTIR is highly effective for distinguishing between explosives, solvents, plastics, and different drug classes [16]. It is primarily used for identification (qualitative analysis) but can be used for semi-quantitative analysis [16].
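The library comparison in the final procedure step can be illustrated with a simple similarity score. The sketch below ranks library entries by cosine similarity between absorbance vectors sampled on a common wavenumber grid. This is one common, generic approach and is not necessarily the algorithm used by any particular vendor's search software; the six-point "spectra", compound names, and 0.95 threshold are toy values chosen for illustration.

```python
from math import sqrt


def cosine_similarity(a, b):
    """Cosine of the angle between two equally sampled absorbance vectors."""
    if len(a) != len(b):
        raise ValueError("spectra must share the same wavenumber grid")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = sqrt(sum(x * x for x in a))
    norm_b = sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def best_match(query, library, threshold=0.95):
    """Return (best library name or None, all scores).

    A name is reported only if its score reaches the threshold; otherwise
    the sample is treated as having no library match.
    """
    scores = {name: cosine_similarity(query, ref) for name, ref in library.items()}
    top = max(scores, key=scores.get)
    return (top if scores[top] >= threshold else None), scores


# Toy library on a shared six-point grid (real spectra have thousands of points)
LIBRARY = {
    "compound_A": [0.10, 0.80, 0.20, 0.05, 0.60, 0.10],
    "compound_B": [0.70, 0.10, 0.10, 0.90, 0.05, 0.30],
}
# Query: roughly compound_A at about twice the intensity, with small deviations
query_spectrum = [0.21, 1.58, 0.41, 0.11, 1.22, 0.19]

match, scores = best_match(query_spectrum, LIBRARY)
print(match, {name: round(score, 3) for name, score in scores.items()})
```

Cosine similarity is insensitive to overall intensity, which suits ATR measurements where the amount of sample in contact with the crystal varies; it does not by itself handle baseline drift or mixtures, which would need preprocessing or a different matching strategy.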

Protocol 2: Confirmatory Analysis and Quantitation Using Gas Chromatography-Mass Spectrometry (GC-MS)

GC-MS is a gold-standard confirmatory technique that separates complex mixtures and provides definitive identification and quantitation of individual components [14] [16].

- Principle: The sample is vaporized and separated by Gas Chromatography (GC) based on the interaction of its components with a stationary phase and a gaseous mobile phase. The separated compounds are then ionized and analyzed by the Mass Spectrometer (MS), which identifies them based on their mass-to-charge ratio [16].

- Materials:

- GC-MS system

- Analytical column

- Inert carrier gas (e.g., helium)

- Certified reference standards for target analytes

- Appropriate solvents for sample preparation

- Procedure:

- Prepare a series of standard solutions of the target analyte at known concentrations spanning the expected sample range, and analyze them to build the calibration curve.

- Dissolve a weighed portion of the seized drug sample in a suitable solvent.

- Inject a small, precise volume of the sample solution into the GC inlet.

- The sample is vaporized and carried through the column by the carrier gas, separating the components based on their chemical properties.

- As each component elutes from the column, it enters the MS, is ionized, and produces a characteristic mass spectrum.

- Identify the compound by comparing its retention time and mass spectrum to the reference standard.

- Quantify the amount present by comparing the peak area or height of the analyte to the calibration curve generated from the standard.

- Applications: GC-MS is widely used for the confirmatory and quantitative analysis of drugs, alcohols in body fluids, and volatile compounds like fire accelerants [14] [16]. Liquid Chromatography-Mass Spectrometry (LC-MS) is another powerful tool for drug screening and is particularly useful for less volatile or thermally labile compounds [14].
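The quantitation step of the procedure above can be sketched as an external-standard calibration. The example below assumes a linear detector response over the calibrated range; the concentrations, peak areas, sample mass, and dilution volume are hypothetical values chosen only to show the arithmetic.

```python
import numpy as np

# Hypothetical calibration standards: concentration (µg/mL) and integrated peak area
std_conc = np.array([10.0, 25.0, 50.0, 100.0, 200.0])
std_area = np.array([1520.0, 3790.0, 7610.0, 15180.0, 30350.0])

# Least-squares line: area = slope * concentration + intercept
slope, intercept = np.polyfit(std_conc, std_area, 1)

# Coefficient of determination as a basic linearity check
predicted = slope * std_conc + intercept
ss_res = np.sum((std_area - predicted) ** 2)
ss_tot = np.sum((std_area - std_area.mean()) ** 2)
r_squared = 1.0 - ss_res / ss_tot

def quantify(sample_area, sample_mass_mg, solvent_volume_ml):
    """Return (solution concentration in µg/mL, purity in % w/w)."""
    conc = (sample_area - intercept) / slope
    analyte_mg = conc * solvent_volume_ml / 1000.0  # µg/mL * mL = µg, then to mg
    return conc, 100.0 * analyte_mg / sample_mass_mg

# Hypothetical seized sample: 1.0 mg of powder dissolved in 10 mL of solvent
conc, purity = quantify(sample_area=9100.0, sample_mass_mg=1.0, solvent_volume_ml=10.0)
print(f"r^2 = {r_squared:.5f}, conc = {conc:.1f} µg/mL, purity = {purity:.1f}% w/w")
```

The result is only meaningful when the sample's peak area falls inside the range bracketed by the standards; samples above the top standard should be diluted and reinjected rather than extrapolated.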

The logical workflow from initial suspicion to confirmed, quantitative result integrates these techniques strategically, as shown in the following diagram.

Diagram 1: Drug Analysis Workflow from Screening to Quantitation.

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful implementation of efficient forensic protocols relies on the use of specific, high-quality reagents and materials.

Table 2: Key Research Reagent Solutions for Forensic Chemistry

| Item | Function/Application |

|---|---|

| Certified Reference Standards | Pure, certified materials used for instrument calibration, method validation, and quantitative analysis of drugs and toxins [14]. |

| LC-MS Grade Solvents | High-purity solvents (e.g., methanol, acetonitrile) for mobile phase preparation and sample extraction, minimizing background interference in sensitive analyses [16]. |

| Derivatization Reagents | Chemicals that modify target analytes to improve their volatility, stability, or detectability in chromatographic systems like GC-MS [16]. |

| Solid Phase Extraction (SPE) Sorbents | Selective phases used to isolate, concentrate, and clean up analytes from complex biological or environmental matrices before analysis [14]. |

| Stable Isotope-Labeled Internal Standards | Standards used in quantitative MS to correct for sample loss and matrix effects, ensuring analytical accuracy and precision. |

| Buffers and Mobile Phase Additives | Chemicals (e.g., ammonium formate, trifluoroacetic acid) used to control pH and ionic strength, optimizing chromatographic separation and ionization efficiency. |

The strategic integration of rapid technologies and efficient workflows, as outlined in the NIJ's research plan, is critical for the future of forensic chemistry. The application of techniques like FTIR for rapid screening and GC-MS/LC-MS for definitive confirmation and quantitation directly addresses the need for increased laboratory efficiency and actionable results. By aligning research and daily practice with these national goals, forensic scientists, researchers, and drug development professionals can contribute to a more responsive, reliable, and impactful forensic science enterprise. Continued focus on foundational research, method validation, and workforce development will ensure these efficiency gains are sustainable and scientifically sound.

From Benchtop to Field: A Guide to Rapid Technologies in Action

The field of forensic chemistry is increasingly defined by its demand for rapid, definitive, and efficient analytical results. Growing caseloads, complex sample matrices, and the need for timely intelligence in investigations have driven the adoption of accelerated chromatography techniques. Among these, Rapid Gas Chromatography-Mass Spectrometry (GC-MS) and Comprehensive Two-Dimensional Gas Chromatography (GC×GC) stand out for their ability to dramatically increase throughput and analytical resolution. These technologies are transforming forensic workflows, moving labs from a backlogged, batch-processing model toward a dynamic, data-driven operation capable of providing critical insights with unprecedented speed.

This shift is underpinned by significant advancements in instrumentation. Modern benchtop gas chromatographs now prioritize ease of use, compact size, and integrated diagnostics, enabling more analysis to be performed in less time and space without sacrificing data quality [17]. Furthermore, the principles of green chemistry are being integrated into analytical methods, promoting the use of techniques like GC-MS that forgo the substantial volumes of hazardous organic solvents required by liquid chromatography, thereby reducing environmental impact and waste disposal costs [18]. This article provides detailed application notes and protocols for implementing these powerful techniques, framed within the context of enhancing efficiency in modern forensic science.

Current State of Benchtop GC Technology and Trends

The landscape of benchtop gas chromatography in 2025 is characterized by a focus on connectivity, automation, and operational simplicity. Major vendors are designing systems that integrate seamlessly into increasingly digitalized forensic laboratories.

Table 1: Key Features of Modern Mainline Benchtop Gas Chromatographs (2025)

| Vendor | Instrument Model | Key Features and Forensic Workflow Benefits |

|---|---|---|

| Agilent Technologies | 8890 GC System | Features autonomous diagnostics that check system health and provide alerts. Offers step-by-step maintenance instructions on a touch screen or remote browser interface [17]. |

| PerkinElmer | GC 2400 Platform | Includes a detachable touchscreen for remote instrument control and monitoring, enabling faster decision-making from anywhere in or out of the lab [17]. |

| Thermo Fisher Scientific | Trace 1600 Series | Designed for minimal user interaction via an advanced touchscreen with health monitoring and how-to videos. Allows for full instrument control through the chromatography data system (CDS) [17]. |

| Shimadzu | Nexis GC-2030 | Employs "Analytical Intelligence" for automated workflows and remote operation. The system features self-diagnostics to simplify maintenance [17]. |

A parallel trend is the rise of smaller-footprint benchtop GC systems. These instruments retain the core capabilities of their larger counterparts but are designed for dedicated routine applications, allowing forensic labs to maximize throughput per square foot of lab space. Examples include the Agilent 8850 GC and Intuvo 9000 GC, and the Shimadzu Brevis GC-2050, the latter of which is only 350 mm wide and designed for ease of use with minimal physical buttons [17].

Rapid GC-MS Methods for Forensic Analysis

Rapid GC-MS achieves significant reductions in analysis time through a combination of instrumental parameters: using shorter, narrower-bore capillary columns, higher carrier gas linear velocities, and faster temperature ramps. This section outlines a protocol for the rapid analysis of a common pharmaceutical combination, which is directly applicable to forensic drug analysis.

Application Note: Rapid GC-MS Analysis of Paracetamol and Metoclopramide

A green, fast, and sensitive GC-MS method has been developed for the simultaneous quantification of paracetamol (PAR) and metoclopramide (MET) in pharmaceutical formulations and human plasma, demonstrating the potential for high-throughput toxicological and counterfeit drug analysis [18].

Table 2: Performance Data for the Rapid GC-MS Assay

| Parameter | Paracetamol (PAR) | Metoclopramide (MET) |

|---|---|---|

| Analytical Range | 0.2 – 80 µg/mL | 0.3 – 90 µg/mL |

| Linearity (r²) | 0.9999 | 0.9988 |

| Precision (RSD %), tablet | 3.605% | 3.392% |

| Precision (RSD %), plasma | 1.521% | 2.153% |

| Recovery (%), tablet | 102.87% | 101.98% |

| Recovery (%), plasma | 92.79% | 91.99% |

| Detection Ion (m/z) | 109 | 86 |

| Total Runtime | < 5 minutes | < 5 minutes |

Experimental Protocol

The Scientist's Toolkit: Key Research Reagent Solutions

- Analytical Standards: High-purity Paracetamol (≥ 99.90%) and Metoclopramide HCl (≥ 99.98%) for calibration.

- Solvents: HPLC-grade ethanol for sample preparation and dilution.

- Internal Standard (Recommended for bioanalysis): A structurally similar compound or deuterated analog not found in the sample matrix.

- GC-MS System: Agilent 7890A GC coupled with a 5975C inert mass spectrometer with a Triple Axis Detector [18].

- Chromatographic Column: Agilent 19091S-433; 5% Phenyl Methyl Siloxane (30 m × 250 µm × 0.25 µm) [18].

- Consumables: Deionized water, helium carrier gas, sample vials, and vial caps.

Step-by-Step Procedure:

Instrument Setup:

- Configure the GC-MS system with the specified column.

- Set the carrier gas (Helium) to a constant flow rate of 2 mL/min [18].

- Configure the inlet temperature (e.g., 250°C) and use a split injection mode (e.g., 10:1 split ratio) with an injection volume of 1 µL.

- Set the GC oven program for rapid analysis: Initial temperature 80°C, ramp at 50°C/min to 280°C, and hold for 0.5 min. The total runtime will be under 5 minutes.

- Set the MS transfer line temperature to 280°C, ion source to 230°C, and quadrupole to 150°C. Operate the MS in Selected Ion Monitoring (SIM) mode for detection, monitoring m/z 109 for PAR and m/z 86 for MET [18].
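The runtime implied by the oven program above follows directly from the ramp arithmetic. The helper below is a small sketch for checking a proposed program against a runtime target; the function name is an assumption, and the "conventional" program used for comparison is a hypothetical example, not a method taken from the cited study.

```python
def oven_runtime_min(initial_c, ramps, initial_hold_min=0.0):
    """Total oven program time in minutes.

    ramps is a list of (rate in °C/min, target in °C, hold in min) tuples.
    """
    total = initial_hold_min
    current = initial_c
    for rate, target, hold in ramps:
        total += abs(target - current) / rate + hold
        current = target
    return total

# Rapid program from the protocol: 80°C, then 50°C/min to 280°C, hold 0.5 min
rapid = oven_runtime_min(80, [(50, 280, 0.5)])

# Hypothetical conventional program for comparison: 10°C/min to 280°C, hold 2 min
conventional = oven_runtime_min(80, [(10, 280, 2.0)])

print(rapid)         # 4.5
print(conventional)  # 22.0
```

The calculation covers the temperature program only. Oven cool-down and equilibration between injections add to the cycle time and should be included when estimating daily throughput.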

Sample Preparation:

- For pharmaceutical tablets: Accurately weigh and crush tablets. Dissolve an equivalent of 500 mg PAR and 100 mg MET in ethanol, sonicate, and centrifuge. Dilute the supernatant as needed.

- For plasma: Perform a protein precipitation extraction. Add a known volume of ethanol (e.g., 300 µL) to a plasma aliquot (e.g., 100 µL), vortex mix, and centrifuge. Use the supernatant for analysis [18].

- Prepare calibration standards in ethanol or blank plasma across the specified concentration range (e.g., 0.2-80 µg/mL for PAR and 0.3-90 µg/mL for MET).

Data Acquisition and Analysis:

- Inject calibration standards and samples in sequence.

- Use the data system (e.g., Agilent MassHunter) to integrate the peak areas for the target ions.

- Construct a calibration curve by plotting the peak area (or area ratio to an internal standard) against the known concentration of each standard.

- Use the linear regression equation from the calibration curve to calculate the concentration of PAR and MET in the unknown samples.

Diagram 1: Rapid GC-MS Forensic Analysis Workflow

Comprehensive Two-Dimensional Gas Chromatography (GC×GC)

GC×GC provides a substantial increase in separation power for complex mixtures. It connects two chromatographic columns with different stationary phases through a modulator. The modulator collects and focuses the effluent from the first column and reinjects it in narrow pulses into the second column. The result is a two-dimensional chromatogram in which compounds are separated by two different chemical properties (e.g., volatility and polarity), resolving peaks that co-elute in one-dimensional GC.
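Because the detector still records a single continuous trace, the two-dimensional chromatogram is built in software by cutting that trace into slices one modulation period long. The sketch below illustrates the idea under simplifying assumptions: a fixed sampling rate, a fixed modulation period, and a single idealized one-point peak. The 100 Hz rate and 2 s period are hypothetical values.

```python
import numpy as np

def fold_chromatogram(signal, sampling_hz, modulation_period_s):
    """Reshape a 1D detector trace into (n_modulations, points_per_modulation)."""
    points = int(round(sampling_hz * modulation_period_s))
    n_mod = len(signal) // points  # drop any incomplete final modulation
    return np.asarray(signal[: n_mod * points]).reshape(n_mod, points)

sampling_hz = 100   # hypothetical detector acquisition rate
period_s = 2.0      # hypothetical modulation period

# 60 s of signal containing one idealized peak at 20.5 s total elapsed time
signal = np.zeros(60 * sampling_hz)
signal[2050] = 1.0

plane = fold_chromatogram(signal, sampling_hz, period_s)
row, col = np.unravel_index(np.argmax(plane), plane.shape)

first_dim_s = row * period_s       # retention on column 1 (start of the modulation)
second_dim_s = col / sampling_hz   # retention on column 2 (time within the modulation)
print(plane.shape, first_dim_s, second_dim_s)
```

Each row of the folded array is one second-dimension separation, so the row index gives the first-dimension retention time and the column index gives the second-dimension retention time.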

Data Analysis in GC×GC: Fisher Ratio for Non-Targeted Discovery

In forensic science, the power of GC×GC is often harnessed for non-targeted, discovery-based analysis, where the goal is to find minute, unknown differences between complex sample classes (e.g., comparing ignitable liquid residues from arson scenes). Fisher Ratio (F-ratio) analysis is a powerful supervised method for this task [19].

The F-ratio is defined as the ratio of class-to-class variance to the sum of within-class variances. It prioritizes compounds that show consistent and significant differences between sample groups over those with just a large signal [19]. The formula is expressed as:

Fisher Ratio = σ_cl² / σ_err²

where σ_cl² is the variance between classes and σ_err² is the variance within classes [19].
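A per-feature calculation of this ratio can be sketched as follows. The example uses the one-way ANOVA form (between-class mean square divided by pooled within-class mean square), which is one common way to normalize the two variances and is an assumption here; the exact normalization used in [19] may differ. The data are synthetic, with one feature deliberately shifted between classes.

```python
import numpy as np

def fisher_ratio(class_a, class_b):
    """Per-feature F-ratio for two classes; inputs are (n_samples, n_features)."""
    groups = [np.asarray(class_a, dtype=float), np.asarray(class_b, dtype=float)]
    all_data = np.vstack(groups)
    grand_mean = all_data.mean(axis=0)
    k = len(groups)
    n_total = all_data.shape[0]
    # Between-class variance: spread of the class means around the grand mean
    between = sum(g.shape[0] * (g.mean(axis=0) - grand_mean) ** 2 for g in groups) / (k - 1)
    # Within-class variance: pooled spread of replicates around their own class mean
    within = sum(((g - g.mean(axis=0)) ** 2).sum(axis=0) for g in groups) / (n_total - k)
    return between / within

# Synthetic example: 6 replicates per class, 4 features (e.g., peak areas)
rng = np.random.default_rng(1)
class_a = rng.normal(100.0, 5.0, size=(6, 4))
class_b = rng.normal(100.0, 5.0, size=(6, 4))
class_b[:, 2] += 40.0  # feature 2 differs consistently between the classes

f = fisher_ratio(class_a, class_b)
top = int(np.argmax(f))
print(top, np.round(f, 1))
```

A feature with a large signal but poor replicate agreement receives a low ratio, which is why the method favors consistent class differences over raw intensity. Features with zero within-class variance would need special handling before this division.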

Diagram 2: GC×GC F-Ratio Analysis for Feature Discovery

Three computational approaches exist for F-ratio analysis, with a 2020 study finding the pixel-based method to be the most sensitive for discovering spiked analytes in a complex gasoline matrix, followed by tile-based and peak table-based methods [19]. A null distribution analysis should be used to establish a statistical F-ratio cutoff and minimize false positives [19].
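One way to build such a null distribution is to shuffle the class labels repeatedly, recompute the F-ratios, and take a high quantile of the pooled results as the cutoff. The sketch below follows that permutation approach as an assumption; the procedure in [19] may differ in detail. The permutation count, the 99th-percentile cutoff, and the synthetic data are all illustrative choices.

```python
import numpy as np

def f_ratio(data, labels):
    """Two-class, per-feature F-ratio; labels is a boolean array over samples."""
    a, b = data[labels], data[~labels]
    grand = data.mean(axis=0)
    between = a.shape[0] * (a.mean(0) - grand) ** 2 + b.shape[0] * (b.mean(0) - grand) ** 2
    within = (((a - a.mean(0)) ** 2).sum(0) + ((b - b.mean(0)) ** 2).sum(0)) / (data.shape[0] - 2)
    return between / within

def null_cutoff(data, labels, n_perm=500, quantile=0.99, seed=0):
    """F-ratio cutoff from a null distribution built with shuffled class labels."""
    rng = np.random.default_rng(seed)
    null = np.concatenate([f_ratio(data, rng.permutation(labels)) for _ in range(n_perm)])
    return float(np.quantile(null, quantile))

# Synthetic data: 12 samples x 50 features, with feature 7 spiked in one class
rng = np.random.default_rng(7)
labels = np.array([False] * 6 + [True] * 6)
data = rng.normal(100.0, 5.0, size=(12, 50))
data[labels, 7] += 40.0

observed = f_ratio(data, labels)
cutoff = null_cutoff(data, labels)
significant = np.flatnonzero(observed > cutoff)
print(round(cutoff, 2), significant)
```

With a 99th-percentile cutoff, roughly 1% of unchanged features are still expected to exceed the threshold by chance, so hits near the cutoff deserve manual review of the underlying chromatographic data.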

Quantitative Calibration Fundamentals

Accurate quantification is a cornerstone of forensic chemistry, whether for determining drug concentrations or quantifying impurities. The choice of calibration method is critical for achieving reliable results.

Table 3: Comparison of Common Quantitative Calibration Methods in GC

| Calibration Method | Principle | Advantages | Limitations | Best for Forensic Applications |

|---|---|---|---|---|

| Area Percent Normalization | Reports area % as concentration %. | Simple; no standards needed. | Assumes all components are detected and have equal response; highly inaccurate for quantitation [20]. | Screening for impurities relative to a main component. |

| External Standard | Calibration curve of peak area vs. standard concentration. | Mitigates variable detector response. | Does not correct for sample prep/injection variability; can be noisy [20]. | Simple "dilute-and-shoot" analyses with high reproducibility. |

| Internal Standard (IS) | Calibration curve of (analyte area/IS area) vs. concentration. | Corrects for sample prep and injection losses; improves precision [20]. | Finding a suitable IS that is not in the sample and behaves like the analyte can be challenging [20]. | Most bioanalyses and methods requiring extraction; essential for high-precision work. |

| Standard Addition | Analyte signal is measured after adding known amounts to the sample itself. | Corrects for complex matrix effects. | Time-consuming; requires more sample; best used with peak height [20]. | Analyzing samples with unique or un-mimickable matrices. |

For GC-MS analysis in complex matrices like blood or urine, the internal standard method is highly recommended. The best internal standard is a deuterated analog of the analyte, which has nearly identical chemical behavior but a different mass, allowing the MS to distinguish it [20].
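The benefit of the internal standard can be shown numerically. The simulation below assumes that injection variability scales the analyte and internal standard responses by the same factor, which is the idealized case the method relies on. The response factors, injection factors, and the unknown's true concentration are hypothetical.

```python
import numpy as np

# Hypothetical standards: analyte concentration (µg/mL), fixed IS concentration
conc = np.array([1.0, 5.0, 10.0, 50.0, 100.0])
inj = np.array([1.00, 0.92, 1.07, 0.95, 1.04])  # simulated injection-to-injection variation

analyte_area = 200.0 * conc * inj   # analyte response scales with the injected amount
is_area = 5000.0 * inj              # IS response scales by the same factor

# External standard: regress raw analyte area on concentration
slope_ext, int_ext = np.polyfit(conc, analyte_area, 1)

# Internal standard: regress the area ratio on concentration
ratio = analyte_area / is_area
slope_is, int_is = np.polyfit(conc, ratio, 1)

# Unknown with a true concentration of 40 µg/mL, injected 10% low
unk_inj = 0.90
unk_analyte = 200.0 * 40.0 * unk_inj
unk_is = 5000.0 * unk_inj

est_ext = (unk_analyte - int_ext) / slope_ext
est_is = (unk_analyte / unk_is - int_is) / slope_is
print(f"external standard: {est_ext:.1f} µg/mL, internal standard: {est_is:.1f} µg/mL")
```

In the ratio, the shared injection factor cancels, so the internal standard estimate recovers the true value while the external standard estimate carries the injection error. Real analytes and internal standards never behave identically, which is why a deuterated analog is the closest practical approximation to this ideal.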

Integration into Modern Forensic Workflows

The adoption of accelerated chromatography techniques aligns with a broader movement toward data-driven efficiency in forensic laboratories. Artificial Intelligence (AI) and machine learning are emerging as powerful tools for managing the complex data generated by these techniques and for optimizing lab operations [7].

Potential AI applications include:

- Resource Allocation and Case Prioritization: Using predictive models on past case data to estimate processing time and staffing needs, and to automatically prioritize cases or evidence types based on potential investigative value [7].

- Data Synthesis: AI can integrate results from multiple forensic disciplines (e.g., GC-MS, DNA, latent prints) to generate cohesive intelligence and suggest investigative leads [7].

A critical guardrail for any AI application in forensics is human verification. AI outputs, especially from generative systems, must be viewed as coming from "a witness with no reputation and amnesia," requiring rigorous validation and an audit trail of all inputs and outputs [7].

Rapid GC-MS and GC×GC represent the vanguard of analytical techniques that directly address the pressing needs of modern forensic chemistry for speed, resolution, and efficiency. The protocols and application notes detailed herein provide a framework for implementing these powerful technologies. When combined with robust quantitative calibration practices and emerging data science tools, they form a comprehensive strategy for transforming forensic workflows. This integration enables laboratories to not only clear backlogs but also to generate more definitive, data-rich results that can withstand legal scrutiny and provide stronger evidence for the justice system.

Direct Analysis in Real Time coupled with High-Resolution Mass Spectrometry (DART-HRMS) represents a transformative ambient ionization technique that enables rapid mass spectral analysis of samples in their native state without extensive preparation. This technology addresses critical needs in forensic chemistry workflows where case backlogs, difficult-to-analyze samples, and previously unseen materials demand new analytical tools [21] [22]. DART-HRMS operates at atmospheric pressure, allowing analysis of a wide range of analytes—including solids, liquids, and gases—directly on surfaces as varied as concrete, human skin, and currency [23].

The fundamental ionization mechanism of DART involves generating excited-state species in a heated gas stream (typically helium or nitrogen) that initiates a cascade of gas-phase reactions upon release [23] [22]. These processes create reagent ions that chemically ionize analytes present near the mass spectrometer inlet, with elevated temperature promoting sample desorption. The technique can generate both positive and negative ions depending on the analytical requirements [23]. This solvent-free approach eliminates time-consuming sample preparation, preserves sample integrity, and significantly reduces analysis time from hours to seconds while maintaining high sensitivity and specificity [22].

Technical Foundations & Ionization Mechanisms

DART Ionization Processes

The DART ion source creates a gas-phase ionization mechanism through a carefully controlled process. Inside the source, a corona discharge converts flowing inert gas into plasma containing ions, electrons, and excited-state species. Electrostatic lenses then remove ions and electrons, leaving only long-lived electronically or vibronically excited atoms or molecules [23]. When these excited species exit the source and interact with atmospheric gases and the sample, several ionization pathways can occur:

- Penning Ionization: Metastable atoms transfer their energy to analyte molecules whose ionization energy is lower than the internal energy of the metastable species, producing molecular radical cations (M⁺•) [22].

- Proton Transfer: When excited-state species ionize atmospheric water molecules, they create protonated water clusters [H₃O⁺(H₂O)n] that can donate protons to analyte molecules with higher proton affinity than water [22].

- Electron Capture: For negative ion mode, the grid electrode at the DART exit provides low-energy electrons that can be captured by analytes with high electron affinity [23].

The resulting ions are then directed into the mass spectrometer for separation and detection. The high-resolution mass spectrometer provides accurate mass measurements, enabling determination of elemental composition and facilitating confident compound identification [24].

Instrumentation Components

A complete DART-HRMS system consists of several key components:

- DART Ion Source: Generates the excited-state species required for ionization, featuring a gas heater, grid electrode, and insulator cap [23].

- Mass Spectrometer: Typically a high-resolution time-of-flight (TOF) instrument that provides accurate mass measurements.

- Sample Introduction System: Various interfaces including automated linear rails, thermal desorber units, or manual positioning devices [23] [24].

- Gas Supply: High-purity helium or nitrogen gas sources that produce the excited-state species [22].

The incorporation of a thermal desorption (TD) unit extends application possibilities by providing controlled heating of samples prior to ionization, improving reproducibility for solid samples and surface wipes [24].

Application Protocols

The following section provides detailed methodologies for implementing DART-HRMS across various forensic applications, emphasizing the minimal sample preparation required.

Protocol 1: Analysis of Recreational Cannabis Products

Objective: To rapidly identify cannabinoids and terpenes in diverse commercial cannabis products without sample pretreatment [25].

Materials & Equipment:

- DART-HRMS system with Vapur interface

- High-purity helium gas (≥99.999%)

- OpenSpot sample cards or automated rail system

- Positive and negative mode mass calibration standards

- Commercially available cannabis products (edibles, concentrates, tinctures, topicals, vaporizers, flower)

Methodology:

Instrument Setup:

- Set DART gas heater temperature to 350°C for solid samples or 250°C for liquids/topicals

- Configure helium gas flow to 2.0-3.0 L/min

- Set mass spectrometer to acquire data in the range of m/z 50-800

- Select positive ion mode for terpenes and neutral cannabinoids; negative ion mode for cannabinoid acids

Sample Analysis:

- For plant material: Position small floral clusters (~2-5 mg) directly in the DART gas stream using tweezers

- For edibles: Apply small aliquot (~1 µL) of extracted material or solid fragment to OpenSpot card

- For concentrates/topicals: Use glass dip tube to transfer trace amount to sampling card

- Analyze each sample for 30-60 seconds to ensure representative data acquisition

Data Interpretation:

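- Identify protonated molecules [M+H]+ in positive ion mode for THC (m/z 315.2324), CBD (m/z 315.2324), and CBN (m/z 311.2015). THC and CBD are isomers with the same elemental formula, so accurate mass alone cannot distinguish them

- Identify deprotonated molecules [M-H]- in negative ion mode for CBDA (m/z 357.2071) and THCA (m/z 357.2071), which are likewise isomeric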

- Detect terpenes including eucalyptol as [M+H]+ (m/z 155.1430) or [M-H]- (m/z 153.1277)

Key Advantages: This approach avoids difficulties typically encountered with traditional chromatographic methods for complex matrices, with analysis times under 2 minutes per sample compared to 20-30 minutes for LC-MS methods [25].
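The target m/z values used in data interpretation can be derived from elemental formulas. The sketch below computes singly charged [M+H]+ and [M-H]- values from monoisotopic atomic masses; the function names and the small element table are assumptions made for illustration. The calculation includes the electron-mass correction, so results may differ in the fourth decimal place from values calculated without it.

```python
import re

# Monoisotopic masses (u) of the most abundant isotopes
MONOISOTOPIC = {"C": 12.0, "H": 1.00782503, "N": 14.0030740, "O": 15.9949146, "Cl": 34.9688527}
ELECTRON = 0.00054858

def parse_formula(formula):
    """Parse a simple formula such as 'C21H30O2' into {element: count}."""
    counts = {}
    for element, number in re.findall(r"([A-Z][a-z]?)(\d*)", formula):
        counts[element] = counts.get(element, 0) + (int(number) if number else 1)
    return counts

def neutral_mass(formula):
    """Monoisotopic mass of the neutral molecule."""
    return sum(MONOISOTOPIC[el] * n for el, n in parse_formula(formula).items())

def ion_mz(formula, mode):
    """m/z of [M+H]+ for mode '+' or [M-H]- for mode '-' (singly charged)."""
    proton = MONOISOTOPIC["H"] - ELECTRON
    if mode == "+":
        return neutral_mass(formula) + proton
    if mode == "-":
        return neutral_mass(formula) - proton
    raise ValueError("mode must be '+' or '-'")

# Formulas for analytes named in the protocol
for name, formula in [("THC / CBD", "C21H30O2"), ("CBN", "C21H26O2"), ("eucalyptol", "C10H18O")]:
    print(f"{name}: [M+H]+ = {ion_mz(formula, '+'):.4f}")
```

Isomers such as THC and CBD return identical values because the calculation depends only on elemental composition.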

Protocol 2: Detection of Riot Control Agents on Fabrics

Objective: To screen clothing and surfaces for riot control agent (RCA) contamination using DART-TD-HRMS [24].

Materials & Equipment:

- DART ion source with thermal desorption unit

- Cotton fabric samples (pre-washed)

- Sample traps (glass fiber swabs, ST1318P)

- RCA reference standards (capsaicin, CS, CR, CN, PAVA)

- Acetonitrile (hyper grade for LC-MS)

Methodology:

Sample Collection:

- Wipe suspect fabric areas (approximately 10 cm²) with glass fiber sample trap

- For liquid contamination, use dry swab; for particulate matter, slightly moisten with acetonitrile

- Alternatively, directly position small fabric sections (≤1 cm²) in thermal desorption unit

Instrument Parameters:

- Set TD unit temperature gradient: 50°C to 300°C at 100°C/min

- Configure DART gas temperature to 350°C (helium)

- Mass spectrometer acquisition: m/z 100-500 in positive ion mode

- Calibrate mass spectrometer using tune mix before analysis

Analysis Procedure:

- Place sample trap in TD autosampler or manually position fabric in holder

- Initiate thermal desorption and simultaneous data acquisition

- Analyze samples in batches of 10-20 with solvent blanks between specimens

- Acquisition time: 2 minutes per sample maximum

Compound Identification:

- Monitor for [M+H]+ of capsaicin (m/z 306.2063), CS (m/z 189.0214), CR (m/z 196.0757)

- Apply mass accuracy threshold of ≤5 ppm for confident identification

- Use extracted ion chromatograms for quantification when required

Validation Parameters: The method demonstrated detection of all 16 OPCW-listed potential RCAs with linear response from 0.5-100 ng/μL for most compounds [24].
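The ≤5 ppm mass accuracy criterion in the compound identification step can be applied with a short calculation. The sketch below checks a measured m/z against a target list; the function names and the measured values are hypothetical, and the single target is the capsaicin [M+H]+ value from the protocol.

```python
def ppm_error(measured_mz, theoretical_mz):
    """Signed mass error in parts per million."""
    return (measured_mz - theoretical_mz) / theoretical_mz * 1e6

def match_targets(measured_mz, targets, tolerance_ppm=5.0):
    """Return (name, ppm error) for every target within the tolerance."""
    hits = []
    for name, theoretical in targets.items():
        err = ppm_error(measured_mz, theoretical)
        if abs(err) <= tolerance_ppm:
            hits.append((name, round(err, 2)))
    return hits

targets = {"capsaicin [M+H]+": 306.2063}

# Hypothetical measured peaks
hits = match_targets(306.2070, targets)    # about 2.3 ppm high: accepted
misses = match_targets(306.2100, targets)  # about 12 ppm high: rejected
print(hits, misses)
```

At m/z 306, a 5 ppm window corresponds to roughly ±0.0015 Da, which illustrates why the mass axis must be calibrated before each batch.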

Protocol 3: Entomotoxicological Screening of Blow Flies

Objective: To determine toxicological information from entomological evidence by screening blow flies for fentanyl-derivative accumulation [26].

Materials & Equipment:

- DART-HRMS system with high-resolution mass spectrometer

- Blow fly specimens (larvae, pupae, adults)

- Control and exposed insect colonies

- Solid-phase microextraction (SPME) fibers (optional)

- Multivariate statistical analysis software

Methodology:

Sample Preparation:

- Collect insect specimens from remains or controlled feeding studies

- Rinse with distilled water to remove external contaminants

- Blot dry and homogenize individual specimens using glass homogenizer

- For direct analysis, position intact insects or body parts in DART stream

DART-HRMS Parameters:

- Set DART gas temperature to 400°C to facilitate desorption of insect matrices

- Use helium gas at 3.5 L/min flow rate

- Mass spectrometer resolution: ≥30,000 (FWHM)

- Data acquisition: m/z 70-1000 in both positive and negative modes

Metabolomic Analysis:

- Acquire mass spectral profiles of control and exposed insects

- Collect data from multiple life stages (larvae, pupae, adults)

- Perform 5-10 technical replicates per specimen to ensure reproducibility

Data Processing:

- Export raw mass spectral data to multivariate analysis software

- Apply principal component analysis (PCA) to differentiate metabolic profiles

- Use orthogonal projections to latent structures discriminant analysis (OPLS-DA) to identify significant m/z features

- Identify biomarkers of fentanyl exposure through database searching

Key Findings: Chemometric analysis facilitated differentiation of blow flies that fed on fentanyl-derivative-laced liver from controls across various life stages, enabling toxicological inference from insects [26].
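The PCA step of the data processing can be sketched with a singular value decomposition. The example below uses synthetic intensity profiles in place of real binned mass spectra, and the group sizes, noise level, and the three "exposure-related" features are hypothetical. OPLS-DA is not shown, since it is a supervised method normally run in dedicated chemometrics software.

```python
import numpy as np

def pca_scores(X, n_components=2):
    """PCA via SVD of the mean-centered matrix X (samples x features)."""
    Xc = X - X.mean(axis=0)
    U, S, Vt = np.linalg.svd(Xc, full_matrices=False)
    scores = U[:, :n_components] * S[:n_components]
    explained = (S ** 2) / np.sum(S ** 2)
    return scores, Vt[:n_components], explained[:n_components]

# Synthetic profiles: 8 control and 8 exposed specimens, 30 binned m/z features
rng = np.random.default_rng(42)
n_per_group, n_features = 8, 30
control = rng.normal(1.0, 0.05, size=(n_per_group, n_features))
exposed = rng.normal(1.0, 0.05, size=(n_per_group, n_features))
exposed[:, [3, 11, 20]] += 0.5  # hypothetical exposure-related features

X = np.vstack([control, exposed])
scores, loadings, explained = pca_scores(X)

# Check whether the groups separate along the first principal component
pc1_control = scores[:n_per_group, 0]
pc1_exposed = scores[n_per_group:, 0]
separated = pc1_control.max() < pc1_exposed.min() or pc1_exposed.max() < pc1_control.min()

# Features with the largest absolute PC1 loadings drive the separation
top_features = np.argsort(np.abs(loadings[0]))[::-1][:3]
print(separated, sorted(top_features.tolist()), np.round(explained, 2))
```

The loadings point to the m/z features responsible for the group separation, which are the candidates to carry forward to database searching. Real spectra would typically be normalized and scaled before decomposition.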

Quantitative Performance Data

The following tables summarize key quantitative performance metrics for DART-HRMS across various forensic applications.

Table 1: Detection Capabilities for Different Compound Classes

| Compound Class | Example Analytes | Limit of Detection | Linear Range | Analysis Time | Reference |

|---|---|---|---|---|---|

| Cannabinoids | THC, CBD, CBN | 0.1-1 ng | 1-500 ng | <30 seconds | [25] |

| Riot Control Agents | Capsaicin, CS, CR | 0.1-0.5 ng | 0.5-100 ng/μL | <2 minutes | [24] |

| Pharmaceuticals | Fentanyl derivatives | Low ppb level | Not specified | <1 minute | [26] |

| Entomological Evidence | Insect metabolites | Not specified | Not specified | <2 minutes | [26] |

| Explosives & GSR | Inorganic residues | Not specified | Not specified | <30 seconds | [21] |

Table 2: Comparison of Analysis Time Between Traditional Methods and DART-HRMS

| Application | Traditional Method | Traditional Analysis Time | DART-HRMS Time | Time Reduction |

|---|---|---|---|---|

| Cannabis Analysis | GC-MS/MS | 20-30 minutes | 1-2 minutes | 85-95% |

| RCA Detection | LC-MS/MS | 15-25 minutes | 1.5-2 minutes | 90-95% |

| Entomotoxicology | HPLC with sample prep | 45-60 minutes | 2-3 minutes | 95-97% |

| Drug Screening | UPLC-QTOF | 10-15 minutes | 0.5-1 minute | 85-95% |

| Ink Differentiation | TLC & MS | 30-45 minutes | 1 minute | 95-98% |
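The time-reduction percentages follow from the two time ranges in each row. The helper below is a small sketch that derives the lower and upper bounds of the reduction; the function name is an assumption. The computed bounds may differ by a few percentage points from the rounded figures in the table.

```python
def reduction_range(traditional_min, rapid_min):
    """Return (smallest, largest) percent time reduction.

    Both arguments are (low, high) ranges in minutes.
    """
    t_lo, t_hi = traditional_min
    r_lo, r_hi = rapid_min
    smallest = 100.0 * (1 - r_hi / t_lo)  # slowest rapid run vs fastest traditional run
    largest = 100.0 * (1 - r_lo / t_hi)   # fastest rapid run vs slowest traditional run
    return round(smallest, 1), round(largest, 1)

rows = {
    "Cannabis Analysis": ((20, 30), (1, 2)),
    "RCA Detection": ((15, 25), (1.5, 2)),
    "Entomotoxicology": ((45, 60), (2, 3)),
    "Drug Screening": ((10, 15), (0.5, 1)),
    "Ink Differentiation": ((30, 45), (1, 1)),
}

results = {name: reduction_range(trad, rapid) for name, (trad, rapid) in rows.items()}
for name, (low, high) in results.items():
    print(f"{name}: {low}% to {high}%")
```

These figures compare instrument analysis time only. Sample preparation, data review, and reporting are not included, so the effect on total case turnaround will be smaller or larger depending on the workflow.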

Experimental Workflow Visualization

DART-HRMS Experimental Workflow: This diagram illustrates the streamlined workflow for non-extracted sample screening using DART-HRMS technology, highlighting key advantages including minimal sample preparation and atmospheric pressure operation.

Research Reagent Solutions

Table 3: Essential Materials for DART-HRMS Implementation

| Item | Specification | Function | Application Examples |

|---|---|---|---|

| Helium Gas | High purity (≥99.999%) | Production of excited-state metastable species | All DART-HRMS applications [23] [22] |

| Nitrogen Gas | High purity (≥99.999%) | Alternative to helium for some applications | Cost-effective analysis of compounds with low ionization potential (IP) [22] |

| OpenSpot Sample Cards | Polyester mesh or glass fiber | Sample presentation substrate | Cannabis products, powders, residues [25] |

| Sample Traps (ST1318P) | Glass fiber swabs | Surface sampling and thermal desorption | RCA detection on fabrics, surface screening [24] |

| Thermal Desorber Unit | Programmable temperature (50-400°C) | Controlled sample heating prior to ionization | Solid samples, swabs, low volatility compounds [23] [24] |

| Calibration Standards | Tune mix for positive/negative mode | Mass axis calibration | Daily instrument performance verification [25] [24] |

| SPME Fibers | Various coatings (PDMS, CAR/PDMS) | Headspace sampling for volatile compounds | Fire debris, ignitable liquids, volatile organics |

| Automated Rail System | Motorized sample positioning | High-throughput sequential analysis | 384-well plate screening, batch processing [23] |

DART-HRMS technology represents a paradigm shift in forensic chemical analysis, offering unprecedented capabilities for rapid screening of non-extracted samples across diverse matrices. The technique's minimal sample requirements, absence of extensive preparation, and rapid analysis times (typically 10 seconds to 2 minutes per sample) directly address workflow efficiency challenges in forensic laboratories [23] [22].

The applications demonstrated—from cannabis product screening and riot control agent detection to entomotoxicological assessments—highlight the versatility of this ambient ionization approach [25] [24] [26]. As forensic chemistry continues to confront emerging analytical challenges, including novel psychoactive substances and complex sample matrices, DART-HRMS stands positioned as a key enabling technology for rapid triage and comprehensive analysis. Future developments will likely focus on expanding compound libraries, validating quantitative performance, and further integrating automated sampling approaches to maximize throughput and reliability in forensic workflows.

Application Notes

The integration of portable Gas Chromatography-Mass Spectrometry (GC-MS) and Rapid DNA technologies into forensic workflows represents a significant advancement, dramatically increasing efficiency by delivering actionable intelligence from the sample site in hours instead of weeks or months. Deploying these platforms directly to the crime scene, border checkpoint, or battlefield enables investigators to make mission-critical decisions based on confirmed data, fundamentally changing the investigative tempo. The following application notes and quantitative data summarize the performance and utility of these platforms for various evidence types.

Application Note 1: Rapid DNA for Biological Evidence

Objective: To evaluate the performance of Rapid DNA systems in processing non-reference biological traces, such as blood and saliva, secured from crime scenes and compare the results to conventional laboratory DNA analysis.

Background: Rapid DNA technology has matured from processing buccal (cheek) swabs to handling a wider array of sample types encountered in casework. A primary driver for its implementation is the significant reduction in the turnaround time for DNA results, which can directly impact the speed and direction of criminal investigations [27].

Key Findings:

- Investigation Duration: A field experiment demonstrated that a decentralized Rapid DNA procedure significantly reduced the total duration of the investigative process compared to the regular laboratory procedure [27].

- Profile Success Rate: The success of generating a usable DNA profile is highly dependent on the sample type and its condition. Rapid DNA techniques are less sensitive than conventional laboratory equipment and are most suitable for traces with an expected high DNA quantity from a single donor [27].

- Sample Type Performance: One study found that the success rate for blood and saliva-based samples varied between the ANDE 6C and RapidHIT ID systems and was dependent on the specific sample type (e.g., blood on fabric, saliva on drink containers) [28]. Adherence to manufacturer instructions for sample collection was found to be critical, particularly for the ANDE system [28].

- Sensitivity: The two leading Rapid DNA systems have comparable sensitivity; both can generate full profiles from samples that typically yield 5–10 ng of DNA in a conventional workflow [28].

Table 1: Performance Summary of Rapid DNA Analysis for Crime Scene Traces

| Metric | Rapid DNA (RapidHIT) | Conventional Laboratory |
|---|---|---|
| Typical Turnaround Time | ~1.5 to 2 hours [29] [27] | Weeks to months [27] |
| Optimal Sample Types | Visible blood traces; single-donor, high-quantity saliva [27] | Wide range, including low-quantity and complex mixture samples [27] |
| Sensitivity | Lower; suitable for samples yielding ≥5–10 ng DNA [28] | Higher; capable of profiling low-template DNA [27] |
| Key Impact | Significant reduction in investigative process duration [27] | Gold standard for sensitivity and mixture deconvolution [27] |
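The suitability criteria summarized in Table 1 can be read as a simple triage rule. The sketch below is illustrative only: the `Trace` fields, the `route_trace` function, and the 5 ng cut-off (taken from the lower end of the 5–10 ng range quoted above) are assumptions made for this example, not a validated decision procedure.

```python
from dataclasses import dataclass

# Assumed cut-off: lower end of the 5-10 ng range quoted in the text.
# A laboratory would set this value from its own validation data.
MIN_EXPECTED_DNA_NG = 5.0

# Sample types the text names as best suited to Rapid DNA.
RAPID_SAMPLE_TYPES = {"blood", "saliva"}


@dataclass
class Trace:
    """Hypothetical description of a crime scene trace."""
    sample_type: str        # e.g. "blood", "saliva", "touch"
    expected_dna_ng: float  # analyst's estimate of recoverable DNA
    single_donor: bool      # True if no mixture is expected


def route_trace(trace: Trace) -> str:
    """Return 'rapid' for traces matching the Table 1 criteria, else 'laboratory'."""
    suitable_type = trace.sample_type in RAPID_SAMPLE_TYPES
    enough_dna = trace.expected_dna_ng >= MIN_EXPECTED_DNA_NG
    if suitable_type and enough_dna and trace.single_donor:
        return "rapid"
    # Low-template, mixed, or other trace types go to the conventional workflow.
    return "laboratory"
```

Under these assumptions, a visible single-donor blood stain with an estimated 8 ng of DNA is routed to the Rapid DNA instrument, while a suspected mixture or a low-quantity trace is sent to the conventional laboratory.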

Application Note 2: Portable GC-MS for Explosives and Chemical Evidence

Objective: To demonstrate the application of portable GC-MS for the confirmatory identification of explosive residues in field settings to support immediate threat assessment and intelligence gathering.

Background: Portable GC-MS instruments have been deployed for organic analysis in harsh environments for over two decades [30]. Their ability to provide separation and definitive mass spectral identification makes them indispensable for analyzing complex mixtures encountered in forensic and military scenarios.

Key Findings:

- Confirmatory Analysis: Portable GC-MS is capable of the confirmatory identification of pre- and post-detonation explosive threats, providing information on the source based on trace-level chemicals [31].

- Operational Benefits: On-scene analysis enables the development of render-safe procedures, drastically improves intelligence turnaround time, and guides optimal scene processing. It also ensures analysis reflects the scene's condition at the time of sampling, which is critical for volatile compounds [31].

- Technology Comparison: Both portable ion-trap and quadrupole GC-MS systems are available. While quadrupole systems generate spectra that are more easily comparable to standard libraries (e.g., NIST), ion-trap systems can operate with more field-friendly hardware, though their spectra may be affected by ion-chemistry events [31].

Table 2: Performance Summary of Portable GC-MS for Explosives Analysis

| Metric | Portable GC-MS | Traditional Laboratory GC-MS |
|---|---|---|
| Analysis Time | ~90 seconds to 5 minutes per sample [31] | Hours to days (including transport) |
| Primary Advantage | Real-time, confirmatory data at the sample site [31] | Ultimate resolution and sensitivity in a controlled environment |
| Key Applications | Explosives identification [31], chemical warfare agents [30], ignitable liquids [32] | Broadest range of forensic chemical analysis |
| Data Quality | Confirmatory identification possible [31] | Gold standard for definitive analysis |

Experimental Protocols

Protocol 1: Rapid DNA Analysis of Crime Scene Blood Traces Using a RapidHIT System

Principle: This protocol describes the procedure for generating a DNA ID from a visible blood stain at a crime scene or in a mobile laboratory using a RapidHIT instrument, enabling a database search in under two hours [27].

Materials:

- RapidHIT ID System (or similar Rapid DNA platform)

- Approved sample collection cartridge or swab (e.g., RapidINTEL cartridge, splitable 4N6 FLOQSwabs)

- Disposable gloves

- Personal protective equipment (PPE)

Procedure:

Sample Collection:

- Don PPE and gloves to avoid contamination.

- Using an approved swab, collect the blood stain from the surface. If using a splitable swab for validation, use a rotary motion to achieve a homogeneous distribution of the trace on the swab head [27].

- If required, air-dry the swab for a few minutes to let residual moisture evaporate.

Instrument Preparation:

- Ensure the RapidHIT system has passed its daily performance check before loading samples.

- Load the sample cartridge into the instrument according to the manufacturer's instructions.

Sample Loading and Run Initiation:

- Place the collected swab into the designated lane of the sample cartridge.

- Close the instrument and initiate the automated run sequence. The process, including extraction, amplification, separation, and analysis, is fully automated and requires approximately 1.5 to 2 hours [27].

Data Analysis and Reporting:

- The instrument software will automatically process the raw data, interpret the DNA profile, and generate a report.

- For accredited procedures, the resulting DNA profile can be directly uploaded to the CODIS database for a search if the system is approved for such use [29].

Quality Control:

Protocol 2: Analysis of Explosive Residues Using Portable GC-MS

Principle: This protocol details the use of portable GC-MS with solid-phase microextraction (SPME) for the sampling and confirmatory identification of organic explosives residues in the field [31].

Materials:

- Portable GC-MS system (e.g., Smiths Detection Guardion)

- SPME fiber assembly (e.g., 65-μm PDMS/DVB)

- Helium carrier gas cartridge

- Headspace GC-MS vials

- Microsyringe

- Performance validation mixture

Procedure:

System Performance Check:

- At the start of operations, perform a system performance test using a standard validation mixture.

- Verify that GC retention times for all chemicals are within ±2 seconds of the expected values and that MS performance (mass calibration, resolution, sensitivity) and library search functions meet acceptance criteria [31].
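The retention-time part of this check can be sketched in a few lines. The ±2 second tolerance comes from the protocol text; the function name, the dictionary layout, and the placeholder compound names in the usage note are assumptions for illustration and do not reflect any instrument vendor's software.

```python
RT_TOLERANCE_S = 2.0  # tolerance stated in the protocol (seconds)


def check_retention_times(expected, observed, tolerance_s=RT_TOLERANCE_S):
    """Compare observed retention times with expected values.

    Both arguments map compound name -> retention time in seconds.
    Returns a dict of failures: compound -> deviation in seconds,
    or None when the compound was not detected at all.
    An empty dict means every compound passed.
    """
    failures = {}
    for compound, expected_rt in expected.items():
        observed_rt = observed.get(compound)
        if observed_rt is None:
            failures[compound] = None  # peak missing from the run
            continue
        deviation = observed_rt - expected_rt
        if abs(deviation) > tolerance_s:
            failures[compound] = deviation
    return failures
```

For example, with expected times `{"compound_a": 30.0, "compound_b": 60.0}` and observed times `{"compound_a": 31.5, "compound_b": 63.0}`, only `compound_b` is reported as a failure, with a deviation of 3.0 seconds. The MS and library-search acceptance criteria are instrument-specific and are not modeled here.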

Sample Collection (SPME Headspace Sampling):

- Transfer 100–500 mg of solid explosive evidence to a headspace vial and seal it.

- Allow the vial to equilibrate at room temperature (e.g., 22 °C) for a minimum of 2 hours.

- Pierce the vial cap with the SPME holder and expose the fiber to the vial's headspace for a specified time, typically 10–40 minutes [31].

Sample Collection (Direct Deposition for Liquid Standards):

- For standard solutions, deposit 20–200 ng of the explosive standard (e.g., RDX, TNT, PETN) directly onto the coated SPME fiber using a microsyringe.

- Allow the solvent to air-dry for up to 5 minutes [31].

GC-MS Analysis:

- Introduce the SPME fiber into the GC-MS injection port for thermal desorption.

- Initiate the GC-MS method. A typical field method is fast, with a total analysis time of approximately 3 minutes and a cycle time of about 5 minutes between injections [31].

- The GC method uses a resistively heated capillary column for rapid temperature programming.
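The cycle time quoted above sets an upper bound on instrument throughput. The helper below is a minimal sketch of that arithmetic; the function name is hypothetical, and the bound ignores SPME equilibration and exposure time, which in practice dominate when samples are prepared one at a time.

```python
def max_injections(cycle_time_min: float, window_hours: float) -> int:
    """Upper bound on injections in a time window at a fixed cycle time."""
    if cycle_time_min <= 0 or window_hours < 0:
        raise ValueError("cycle time must be positive and window non-negative")
    # Whole injections only: a partially completed cycle does not count.
    return int((window_hours * 60) // cycle_time_min)
```

At a 5-minute cycle time, `max_injections(5, 1)` gives 12 injections per hour, assuming fibers are already loaded and waiting.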

Data Interpretation:

- The system software will automatically process the data and compare the acquired mass spectrum against its proprietary library and a condensed NIST library.

- Review the library search results for the confirmatory identification of explosive compounds. Be aware that ion-trap mass spectra may differ from quadrupole library entries due to ion chemistry [31].
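To make the idea of a library comparison concrete, the sketch below scores a query spectrum against reference spectra using plain cosine similarity. This is a generic textbook measure chosen for illustration; it is an assumption, not the matching algorithm used by any particular portable GC-MS software or by the NIST search program, and the function names and spectrum layout are hypothetical.

```python
import math
from typing import Mapping


def cosine_match(query: Mapping[int, float], reference: Mapping[int, float]) -> float:
    """Cosine similarity between two spectra given as {m/z: intensity}.

    Returns a value from 0.0 (no shared peaks) to 1.0 (identical pattern).
    """
    shared = set(query) & set(reference)
    dot = sum(query[mz] * reference[mz] for mz in shared)
    norm_q = math.sqrt(sum(v * v for v in query.values()))
    norm_r = math.sqrt(sum(v * v for v in reference.values()))
    if norm_q == 0 or norm_r == 0:
        return 0.0  # an empty spectrum matches nothing
    return dot / (norm_q * norm_r)


def rank_library(query, library):
    """Return (name, score) pairs sorted from best to worst match."""
    scores = [(name, cosine_match(query, spectrum)) for name, spectrum in library.items()]
    return sorted(scores, key=lambda item: item[1], reverse=True)
```

Because ion-trap spectra can show shifted relative intensities or additional ions, a score computed this way against a quadrupole-derived reference may be lower than expected even for a correct identification, which is why analyst review of the ranked hits remains part of the procedure.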

Workflow and Signaling Pathways

The following diagram illustrates the logical workflow and decision-making process for deploying portable forensic platforms at a crime scene, highlighting how these tools are integrated to increase overall investigative efficiency.

[Figure: On-Site Forensic Analysis Workflow]

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Materials for Portable Forensic Analysis

| Item | Function |
|---|---|
| Splitable 4N6 FLOQSwabs | Allows a single biological trace to be sampled once and split, enabling parallel analysis by Rapid DNA and conventional laboratory methods for validation [27]. |
| RapidINTEL / I-Chip Cartridge | Sample cartridge specific to the Rapid DNA instrument brand (RapidHIT or ANDE); holds the sample and reagents for the fully automated process [28]. |
| SPME Fiber (PDMS/DVB) | A solid-phase microextraction fiber used for sampling volatile and semi-volatile organic compounds from headspace or via direct contact; serves as the introduction method for portable GC-MS [31]. |
| Helium Cartridge | Provides the carrier gas for the portable GC system; field-friendly disposable cartridges enable untethered operation [31]. |
| Performance Validation Mixture | A standard solution containing known compounds; used to verify the proper function of the GC, MS, and library search before operational use [31]. |
| Explosive Standards | Certified reference materials (e.g., RDX, TNT, PETN) used for method development, calibration, and quality control of the GC-MS analysis [31]. |

The integration of Artificial Intelligence (AI) and automation technologies is fundamentally transforming forensic chemistry and drug development research. These tools are revolutionizing how scientists manage complex data interpretation and workflow processes, enabling unprecedented levels of efficiency, accuracy, and scalability. In environments characterized by vast datasets and stringent reproducibility requirements, AI-powered automation moves beyond simple task execution to create intelligent, self-optimizing systems that enhance human expertise and accelerate discovery [33].

This document provides detailed application notes and experimental protocols for implementing these technologies within modern research laboratories. The guidance is structured to help researchers and scientists navigate the selection, deployment, and validation of automation tools, with a specific focus on applications in forensic chemistry workflows such as sample analysis, compound identification, and toxicological reporting [34].

Foundational Concepts and Definitions

Core Terminology

- Workflow Automation: The technology-enabled automation of activities or tasks that constitute a business process. It uses defined business rules to route items through workflow steps, streamlining repetitive, manual processes to increase efficiency, reduce errors, and free up human resources for higher-value work [35].

- AI Automation: The use of computer systems powered by machine learning and other AI subfields to perform tasks that typically require human intelligence. In scientific contexts, this includes data analysis, pattern recognition, and predictive modeling [36] [37].

- Automation in Digital Forensics: The use of technology to partially or fully automate tasks in a digital forensic process, crucial for managing increasing volumes of digital evidence. This ranges from basic automation (e.g., keyword searches) to full automation (autonomous operation) [34].

Levels of Workflow Automation

Modern workflow automation operates at varying levels of sophistication, from simple task automation to fully autonomous systems. Understanding these levels is crucial for selecting the right tools for a specific laboratory need [35].

Table: Levels of Workflow Automation Maturity

| Level | Name | Key Characteristics | Example in a Research Context |
|---|---|---|---|
| 1 | Manual Workflows with Triggered Automation | Task-based automation; human-initiated actions; no orchestration across steps [35]. | A laboratory information management system (LIMS) sends an email notification upon sample registration, but a human handles all subsequent steps [35]. |
| 2 | Rule-Based Automation | Processes automated based on predefined rules and conditions (IF/THEN logic); requires human oversight for exceptions [35]. | A chromatographic data system automatically flags results that fall outside a pre-defined calibration range for analyst review [35]. |
| 3 | Orchestrated Multi-Step Automation | Multiple tasks and systems connected sequentially for end-to-end workflow automation; fewer human handoffs; workflow visualization tools [35]. | A new sample submission triggers login, preparation vial assignment, instrument sequence creation, and preliminary data processing in multiple integrated systems [35]. |
| 4 | Adaptive Automation with Intelligence | Leverages AI/ML to adapt workflows based on data patterns and past outcomes; predictive decision-making; dynamic, self-adjusting workflows [35]. | An AI system routes spectral data for interpretation to the most effective analyst based on historical resolution times and expertise with specific compound classes [35]. |
| 5 | Autonomous Workflows | Fully automated, self-optimizing systems operating with minimal human intervention; closed-loop automation; continuous improvement via feedback loops [35]. | An integrated system detects an anomaly in a high-throughput screening run, automatically re-runs quality control checks, executes calibration scripts, and updates the electronic lab notebook [35]. |
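The Level 2 example in the table (flagging results outside a calibration range) is simple enough to sketch directly. The function name, the tuple layout, and the sample identifiers below are hypothetical; a real chromatographic data system would apply such a rule through its own configuration rather than standalone code.

```python
def flag_out_of_range(results, cal_low, cal_high):
    """Rule-based (IF/THEN) review flagging.

    results: iterable of (sample_id, concentration) pairs.
    Returns the sample IDs whose concentration falls outside the
    calibration range [cal_low, cal_high], in input order, so that
    an analyst can review them.
    """
    if cal_low > cal_high:
        raise ValueError("cal_low must not exceed cal_high")
    flagged = []
    for sample_id, concentration in results:
        # IF the value lies outside the calibrated range THEN flag it.
        if not (cal_low <= concentration <= cal_high):
            flagged.append(sample_id)
    return flagged
```

With a calibration range of 1.0 to 10.0 and results `[("S1", 0.5), ("S2", 12.0), ("S3", 5.0)]`, the function returns `["S1", "S2"]`. The rule only routes exceptions to a person; deciding whether to dilute, re-inject, or report remains a human judgment at this level of automation.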

Experimental Protocols for AI Integration

Protocol: Automated Data Review for Chromatographic Results