Streamlining Justice: Advanced Strategies to Reduce Backlog in Forensic Chemistry Casework

Abstract

This article addresses the critical challenge of casework backlogs in forensic chemistry, an issue that delays justice and strains criminal justice systems globally. Aimed at researchers, scientists, and drug development professionals, it explores the foundations of the problem through current data and root cause analysis. It then examines methodological advancements, including rapid screening techniques, green analytical chemistry, and the integration of chemometrics and AI. It also covers operational optimizations through digitalization and strategic resource management. Finally, it compares emerging and traditional technologies, offering a roadmap for laboratories to enhance throughput, reduce turnaround times, and improve the reliability of forensic science.

Understanding the Bottleneck: The Scale and Impact of Forensic Casework Backlogs

Forensic science laboratories are grappling with a pervasive case backlog crisis that directly impedes the timely administration of justice. The backlog spans a wide range of evidence awaiting analysis, from DNA samples and toxicological specimens to illicit drugs and arson debris. At its core is a mismatch between the growing demand for forensic services and the analytical capacity of laboratories. Rising submissions, limited resources, and reliance on slower traditional analytical methods leave many laboratories overwhelmed by casework [1] [2]. The consequences are severe: delayed criminal investigations, prolonged waits for victims seeking justice, and potential risks to public safety when perpetrators remain unidentified [2].

Quantifying this backlog and understanding its drivers is the first critical step toward developing effective reduction strategies. This article provides a technical support framework, equipping researchers and scientists with advanced methodologies and troubleshooting guides to enhance laboratory throughput and data analysis, thereby directly addressing the backlog in forensic chemistry casework.

Quantitative Data on Processing Delays

Comprehensive, real-time public data on forensic laboratory backlogs are limited, but records from other government agencies reveal widespread processing delays and growing caseloads. The tables in this section draw on immigration and consular data rather than forensic laboratory data; they are included only to illustrate the kinds of processing delays that also affect the broader justice system.

TABLE 1: FY2025 U.S. CITIZENSHIP AND IMMIGRATION SERVICES (USCIS) PROCESSING DATA (DATA THROUGH JUNE 2025)

| Form Category | Key Trend | Quantitative Change |
|---|---|---|
| Overall Caseload | Decrease in case completions | Nearly 16% decrease in completions year over year |
| | Increase in net backlog | Rose to 5,408,000 cases |
| Asylum Cases | Increase in processed cases | 397% more affirmative asylum cases completed |
| | Increase in denials | 538% more affirmative asylum cases denied |
| H-1B Petitions | Surge in receipts | 103,211 petitions in June 2025 (highest since April 2019) |
| DACA Population | Decrease in active individuals | Drop of 9,640 individuals between Q2 and Q3 |
| Form I-90 (Green Card Replacement) | Sharp increase in median processing time | Increased by 471% between January and June 2025 [3] |

Federal judiciary statistics for the 12-month period ending March 31, 2025, provide additional context on system-wide caseload pressures, which are closely linked to the availability of forensic evidence [4].

Consular and Visa Processing Wait Times

Increased wait times are not isolated to domestic agencies. The Department of State has reported significant fluctuations in consular processing, reflecting broader systemic strains.

TABLE 2: DEPARTMENT OF STATE CONSULAR WAIT TIME INCREASES (JAN - SEP 2025)

| Visa Category | Average Increase in Wait Times | Notes |
|---|---|---|
| Student Visas | 137% | New vetting measures enacted |
| Petition-Based Visas | 77% | Includes various employment-based visas |
| Visitor Visas | 65% | Social media reviews and other vetting implemented |
| Transit/Crew Visas | 25% | Relatively smaller increase [3] |

Advanced Analytical Strategies for Backlog Reduction

Comprehensive Two-Dimensional Gas Chromatography (GC×GC)

Experimental Protocol for GC×GC in Forensic Analysis

GC×GC provides superior separation for complex mixtures compared to traditional 1D GC, making it ideal for non-targeted analysis of forensic evidence like illicit drugs, ignitable liquids, and decomposition odors [1].

- Instrument Setup: Connect a primary column (e.g., a non-polar 30 m column) to a secondary column (e.g., a mid-polar 1 to 2 m column) via a thermal or flow modulator. The different stationary phases provide two independent separation mechanisms [1].

- Sample Preparation: Prepare samples according to standard protocols for the evidence type (e.g., liquid-liquid extraction for drugs, headspace sampling for arson debris).

- Method Configuration: Set the modulation period (typically 1-5 seconds) to define the frequency at which effluent from the first column is trapped, focused, and injected onto the second column [1].

- Data Acquisition: Use a fast-detection system such as Time-of-Flight Mass Spectrometry (TOFMS) or a Flame Ionization Detector (FID) to capture the high-resolution data generated. TOFMS is preferred for its fast acquisition rates and ability to deconvolute overlapping peaks [1].

- Data Processing: Employ specialized software to process the three-dimensional data (1D retention time, 2D retention time, signal intensity). Chemometric techniques like Principal Component Analysis (PCA) are often used to interpret complex sample patterns [1] [5].
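To make the three-dimensional data structure concrete, the sketch below folds a raw, uniformly sampled detector trace into a 2D retention plane by cutting it at every modulation period. It is a minimal illustration assuming a constant sampling rate and a fixed modulation period with no phase offset; the function names (`fold_to_2d`, `retention_axes`) are hypothetical, and vendor GC×GC software performs this step internally with additional corrections.

```python
import numpy as np


def fold_to_2d(signal, sampling_rate_hz, modulation_period_s):
    """Fold a raw detector trace into a 2D GC×GC retention plane.

    Row i holds the i-th modulation cycle (first-dimension time);
    column j holds the j-th detector point within that cycle
    (second-dimension time). Assumes uniform sampling and no phase offset.
    """
    points_per_mod = int(round(sampling_rate_hz * modulation_period_s))
    if points_per_mod <= 0:
        raise ValueError("sampling rate and modulation period must be positive")
    n_mods = len(signal) // points_per_mod
    # Drop any trailing partial cycle so the reshape is exact.
    trimmed = np.asarray(signal[: n_mods * points_per_mod], dtype=float)
    return trimmed.reshape(n_mods, points_per_mod)


def retention_axes(n_mods, points_per_mod, sampling_rate_hz, modulation_period_s):
    """Return the first- and second-dimension time axes in seconds."""
    t1 = np.arange(n_mods) * modulation_period_s
    t2 = np.arange(points_per_mod) / sampling_rate_hz
    return t1, t2


# Illustrative use: 100 Hz detector, 4 s modulation period, 60 s synthetic trace.
rate_hz, period_s = 100.0, 4.0
trace = np.random.default_rng(0).random(int(60 * rate_hz))
plane = fold_to_2d(trace, rate_hz, period_s)
t1_axis, t2_axis = retention_axes(*plane.shape, rate_hz, period_s)
print(plane.shape)  # (15, 400)
```

The resulting array, together with the two time axes, is the (1D retention time, 2D retention time, intensity) structure that contour plots and PCA operate on.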

Troubleshooting Guide: GC×GC

FAQ: What causes wrapping or overcrowding of peaks in the 2D chromatogram?

- Cause & Solution: Wraparound and overcrowding usually point to a poorly optimized modulation period. If the period is too short, strongly retained compounds whose second-dimension retention time exceeds the period elute during a later modulation cycle and appear "wrapped" at the wrong position in the 2D plane. If the period is too long, first-dimension peaks are sampled by too few modulations and adjacent first-dimension peaks may be co-modulated, which crowds the chromatogram and sacrifices first-dimension resolution. Re-optimize the modulation period for the specific sample type, and verify that the temperature program for the primary column is appropriately paced [1].
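The modulation-period trade-off can be checked numerically. The sketch below, with hypothetical function names, computes where a wrapped peak will appear and flags both failure modes described above. The threshold of three modulations per first-dimension peak is an assumed rule of thumb used for illustration, not a validated acceptance criterion.

```python
def apparent_second_dimension_time(t2_true_s, modulation_period_s):
    """Return (apparent 2D retention time, number of cycles wrapped).

    A compound whose true second-dimension retention time exceeds the
    modulation period elutes during a later cycle (wraparound).
    """
    wraps = int(t2_true_s // modulation_period_s)
    return t2_true_s - wraps * modulation_period_s, wraps


def modulations_per_peak(first_dim_peak_width_s, modulation_period_s):
    """Number of modulation cycles spanning a first-dimension peak."""
    return first_dim_peak_width_s / modulation_period_s


def check_modulation_period(period_s, max_t2_s, narrowest_1d_width_s, min_mods=3.0):
    """Flag wraparound and first-dimension undersampling.

    min_mods=3 is an assumed rule of thumb, not a validated criterion.
    """
    issues = []
    if max_t2_s > period_s:
        issues.append("wraparound: latest 2D eluter exceeds the modulation period")
    if modulations_per_peak(narrowest_1d_width_s, period_s) < min_mods:
        issues.append("undersampling: too few modulations across 1D peaks")
    return issues


print(apparent_second_dimension_time(5.5, 4.0))  # (1.5, 1)
print(check_modulation_period(4.0, 5.5, 9.0))    # both issues flagged
print(check_modulation_period(6.0, 5.5, 24.0))   # []
```

Lengthening the period removes wraparound but reduces the number of modulations per first-dimension peak, which is why both checks are evaluated together.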

FAQ: How can I improve the sensitivity for trace-level analytes in a complex matrix?

- Cause & Solution: Matrix effects can suppress the signal of trace analytes. Improve sample cleanup procedures prior to injection. Additionally, ensure the modulator is effectively focusing the analyte bands, as this focusing effect enhances signal-to-noise ratios. Using a cryogenic modulator can often provide better focusing compared to flow modulators for certain applications [1].

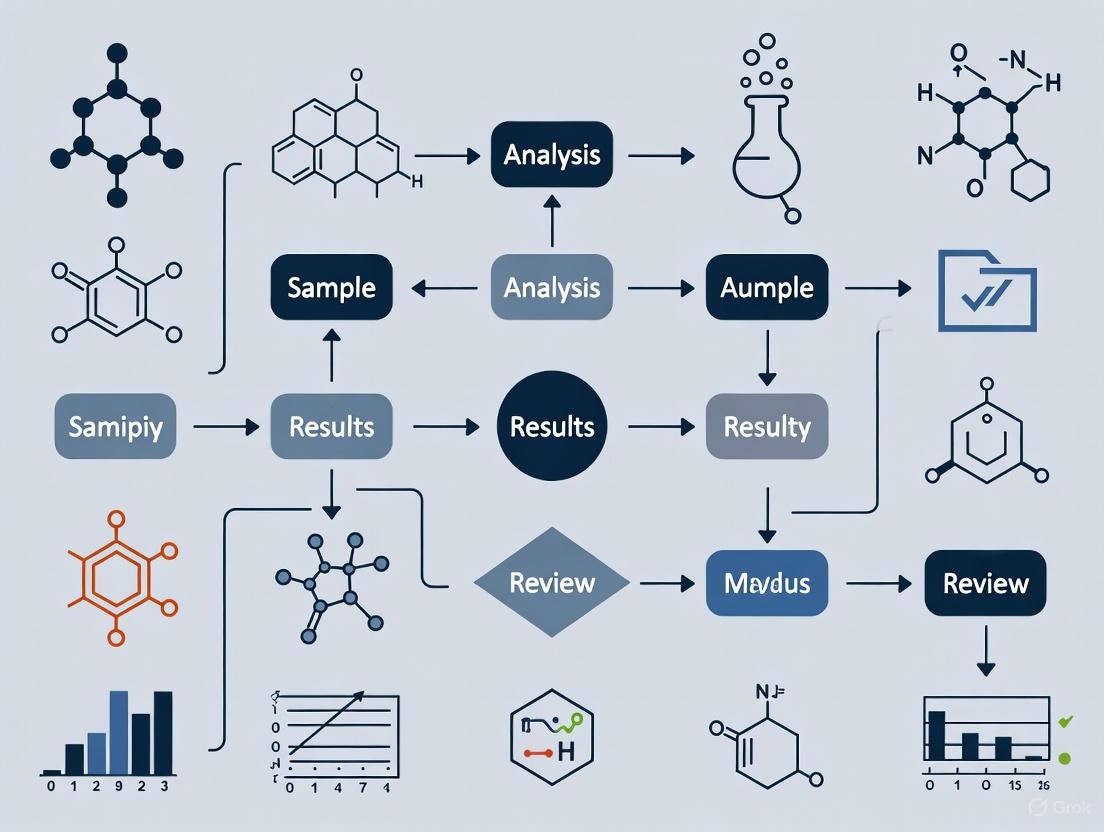

GC×GC Technology Readiness Workflow

The following diagram illustrates the pathway for developing and validating a GC×GC method for courtroom admissibility, based on criteria from the Frye, Daubert, and Mohan standards [1].

Chemometrics for Objective Evidence Analysis

Experimental Protocol for Applying Chemometrics to Spectral Data

Chemometrics uses statistical methods to extract meaningful information from chemical data, reducing human bias and improving the objectivity and throughput of evidence analysis [5].

- Data Collection: Acquire spectral data (e.g., FT-IR, Raman) or chromatographic data from a set of known reference samples and unknown questioned samples. Ensure consistent instrumental parameters across all measurements [5].

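- Data Preprocessing: Preprocess the raw data to remove unwanted variations (e.g., baseline drift, noise). Common techniques include standard normal variate (SNV) normalization and derivative transforms for spectra, and retention-time alignment for chromatograms.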

- Exploratory Data Analysis: Use an unsupervised pattern recognition technique like Principal Component Analysis (PCA) to visualize the natural clustering of samples without prior class assignment. This helps identify outliers and inherent data structure [6] [5].

- Classification Modeling: Build a supervised classification model, such as Linear Discriminant Analysis (LDA) or Partial Least Squares-Discriminant Analysis (PLS-DA), using the known reference samples. The model learns decision boundaries that separate the sample classes (e.g., glass from different manufacturers) [5].

- Model Validation: Critically test the model's predictive accuracy using a separate validation set of samples not used in model building. Perform cross-validation to estimate the model's robustness and report its error rate [5].
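The protocol steps can be chained so that PCA and the classifier are fitted only on the training portion of each cross-validation fold, which keeps the reported error rate honest. The sketch below uses scikit-learn with synthetic spectra (two hypothetical classes whose bands sit at different positions and vary in scale and baseline). LDA stands in for the classifier (PLS-DA would need a different estimator), and the choice of five principal components is an illustrative assumption.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.discriminant_analysis import LinearDiscriminantAnalysis
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import FunctionTransformer


def snv(X):
    """Standard normal variate: centre and scale each spectrum (row)."""
    X = np.asarray(X, dtype=float)
    return (X - X.mean(axis=1, keepdims=True)) / X.std(axis=1, keepdims=True)


def synthetic_spectra(n_per_class=20, n_points=200, seed=0):
    """Two hypothetical classes with bands at different positions."""
    rng = np.random.default_rng(seed)
    x = np.arange(n_points)
    X, y = [], []
    for label, centre in enumerate((80, 120)):
        band = np.exp(-0.5 * ((x - centre) / 6.0) ** 2)
        for _ in range(n_per_class):
            scale = rng.uniform(0.5, 2.0)   # multiplicative (e.g., path-length) effect
            offset = rng.uniform(0.0, 0.3)  # additive baseline offset
            X.append(scale * band + offset + rng.normal(0, 0.01, n_points))
            y.append(label)
    return np.array(X), np.array(y)


X, y = synthetic_spectra()
model = make_pipeline(
    FunctionTransformer(snv),       # per-spectrum, stateless preprocessing
    PCA(n_components=5),            # fitted on each training fold only
    LinearDiscriminantAnalysis(),   # supervised classifier
)
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
scores = cross_val_score(model, X, y, cv=cv)
print(f"cross-validated error rate: {1 - scores.mean():.3f}")
```

The same pipeline object can be refitted on all reference samples once validation is complete and then applied to questioned samples.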

Troubleshooting Guide: Chemometrics

FAQ: My PCA model shows poor separation between known sample classes. What is wrong?

- Cause & Solution: The spectral features may not be sufficiently distinct, or the preprocessing may be inadequate. First, re-examine the raw spectra for subtle, consistent differences. Try alternative preprocessing techniques (e.g., derivatives for resolving overlapping peaks). If the problem persists, the chosen analytical technique may not be discriminatory enough for your sample types, and an alternative method should be considered [5].
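Alternative preprocessing chains can be compared systematically rather than by eye. The sketch below defines SNV and Savitzky-Golay derivative transforms with SciPy's `savgol_filter`; the window length and polynomial order are illustrative defaults, and the `PREPROCESSING_OPTIONS` dictionary is a hypothetical way to feed each chain into the same validation scheme. The usage lines check the property that makes derivatives useful: a first derivative removes a constant baseline offset, and a second derivative also removes a linear baseline.

```python
import numpy as np
from scipy.signal import savgol_filter


def snv(X):
    """Standard normal variate: removes per-spectrum offset and scale."""
    X = np.atleast_2d(np.asarray(X, dtype=float))
    return (X - X.mean(axis=1, keepdims=True)) / X.std(axis=1, keepdims=True)


def sg_derivative(X, deriv=1, window_length=11, polyorder=2):
    """Savitzky-Golay derivative along each spectrum (row).

    window_length and polyorder are illustrative and must be tuned per method.
    """
    X = np.atleast_2d(np.asarray(X, dtype=float))
    return savgol_filter(X, window_length, polyorder, deriv=deriv, axis=1)


# Candidate preprocessing chains to compare under the same validation scheme.
PREPROCESSING_OPTIONS = {
    "raw": lambda X: np.atleast_2d(np.asarray(X, dtype=float)),
    "snv": snv,
    "d1": lambda X: sg_derivative(X, deriv=1),
    "snv+d1": lambda X: sg_derivative(snv(X), deriv=1),
    "d2": lambda X: sg_derivative(X, deriv=2),
}

x = np.arange(200, dtype=float)
band = np.exp(-0.5 * ((x - 100) / 5.0) ** 2)
shifted = band + 0.4              # same band on a raised baseline
sloped = band + 0.4 + 0.002 * x   # same band on a sloping baseline

print(np.allclose(sg_derivative(band), sg_derivative(shifted)))                   # True
print(np.allclose(sg_derivative(band, deriv=2), sg_derivative(sloped, deriv=2)))  # True
```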

FAQ: How do I prevent overfitting in my classification model?

- Cause & Solution: Overfitting occurs when a model is too complex and learns the noise in the training data rather than the general pattern. It is characterized by high accuracy on training data but poor performance on validation data. To prevent it, use variable selection techniques to reduce the number of input features to the most meaningful ones. Ensure you have a sufficient number of samples per class relative to the number of variables. Always use a separate test set or rigorous cross-validation to assess the model's true performance [5].
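Variable selection is itself part of model building, so it must be repeated inside each cross-validation fold; selecting variables on the full data set first lets the validation samples leak into the model. The sketch below shows one way to do this with a scikit-learn pipeline, using univariate F-test selection (`SelectKBest`) followed by LDA on hypothetical data in which only 5 of 500 variables differ between classes. The choice of selector and of `k_features=10` are assumptions for illustration.

```python
import numpy as np
from sklearn.discriminant_analysis import LinearDiscriminantAnalysis
from sklearn.feature_selection import SelectKBest, f_classif
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline


def make_model(k_features=10):
    """Variable selection followed by LDA, refitted as one unit."""
    return make_pipeline(
        SelectKBest(f_classif, k=k_features),  # refitted on each training fold only
        LinearDiscriminantAnalysis(),
    )


def honest_cv_accuracy(X, y, k_features=10, n_splits=5, seed=0):
    """Cross-validated accuracy with selection inside every fold."""
    cv = StratifiedKFold(n_splits=n_splits, shuffle=True, random_state=seed)
    return cross_val_score(make_model(k_features), X, y, cv=cv)


# Hypothetical data: 40 samples, 500 variables, 5 of which carry class information.
rng = np.random.default_rng(1)
y = np.repeat([0, 1], 20)
X = rng.normal(size=(40, 500))
X[y == 1, :5] += 1.5

scores = honest_cv_accuracy(X, y)
train_acc = make_model().fit(X, y).score(X, y)
print(f"training accuracy {train_acc:.2f} vs cross-validated {scores.mean():.2f}")
```

A large gap between the two printed figures is the overfitting signature described above.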

Data Fusion for Multi-Sensor Analysis

Experimental Protocol for Low-Level Data Fusion

Data fusion merges raw or preprocessed data from multiple analytical instruments (e.g., Raman spectroscopy and GC-MS) to create a more comprehensive chemical profile of a sample, enhancing the confidence of classification [6].

- Multi-Instrument Data Acquisition: Analyze the same set of samples using two or more complementary analytical techniques. Ensure sample integrity is maintained between analyses.

- Data Preprocessing: Preprocess the raw data from each instrument individually, as described in the chemometrics protocol (e.g., SNV for spectra, alignment for chromatograms).

- Data Concatenation: Fuse the preprocessed data matrices from the different instruments by column-wise concatenation to create a single, combined data matrix. This is known as low-level data fusion.

- Exploratory and Classification Analysis: Analyze the fused data matrix using PCA and other chemometric tools (e.g., PLS-DA). The fused model will leverage the combined chemical information for a more powerful analysis [6].
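Low-level fusion amounts to aligning rows and concatenating columns, but the scaling step matters. The sketch below is written with NumPy and scikit-learn rather than the Forensic-DataFusion-Tool listed in Table 3, whose interface is not described here. It autoscales each block and then optionally divides it by the square root of its variable count, so that a 1,000-channel spectrum does not outweigh a 50-peak chromatographic table. The block-scaling option and the random example blocks are assumptions for illustration.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.preprocessing import StandardScaler


def low_level_fusion(blocks, block_scale=True):
    """Column-wise concatenation of preprocessed data blocks.

    Each block is an (n_samples, n_variables) array for one instrument,
    with rows in the same sample order. Variables are autoscaled; with
    block_scale=True each block is then divided by sqrt(n_variables) so
    every block contributes the same total variance.
    """
    if len({b.shape[0] for b in blocks}) != 1:
        raise ValueError("all blocks must contain the same samples (rows)")
    scaled = []
    for block in blocks:
        z = StandardScaler().fit_transform(np.asarray(block, dtype=float))
        if block_scale:
            z = z / np.sqrt(z.shape[1])
        scaled.append(z)
    return np.hstack(scaled)


rng = np.random.default_rng(0)
raman = rng.normal(size=(30, 1000))     # hypothetical Raman block
gcms = rng.normal(size=(30, 50)) * 1e5  # hypothetical GC-MS peak table
fused = low_level_fusion([raman, gcms])
pca_scores = PCA(n_components=3).fit_transform(fused)
print(fused.shape, pca_scores.shape)  # (30, 1050) (30, 3)
```

For supervised models, the scalers should be fitted on training data only, for example inside a cross-validation pipeline.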

Troubleshooting Guide: Data Fusion

FAQ: The data fusion model performs worse than a model from a single instrument. Why?

- Cause & Solution: This can happen if the data blocks from different instruments are on vastly different scales or if one instrument provides largely irrelevant or noisy data for the specific classification problem. Autoscale the fused data matrix before modeling to give equal weight to all variables. Alternatively, use mid-level data fusion, where features are extracted from each instrument's data first, and only the most relevant features are fused, reducing the impact of noise [6].
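Mid-level fusion can be sketched the same way: extract a few features from each instrument's block first, then concatenate only those. In the hypothetical helper below, PCA scores serve as the extracted features and each block's scores are rescaled to unit total variance; selected peak areas or variable-selection results are common alternatives, and the number of components per block is an assumption to tune.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.preprocessing import StandardScaler


def mid_level_fusion(blocks, n_components=5):
    """Extract features per block (here, PCA scores), then concatenate them."""
    features = []
    for block in blocks:
        z = StandardScaler().fit_transform(np.asarray(block, dtype=float))
        k = min(n_components, *z.shape)
        block_scores = PCA(n_components=k).fit_transform(z)
        # Rescale each block's scores to unit total variance so no block dominates.
        features.append(block_scores / np.sqrt(block_scores.var(axis=0).sum()))
    return np.hstack(features)


rng = np.random.default_rng(0)
raman = rng.normal(size=(30, 1000))     # hypothetical Raman block
gcms = rng.normal(size=(30, 50)) * 1e5  # hypothetical GC-MS peak table
fused_features = mid_level_fusion([raman, gcms], n_components=5)
print(fused_features.shape)  # (30, 10)
```

Because each block is reduced to a handful of informative directions before fusion, noisy or irrelevant variables from one instrument carry less weight in the combined model.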

The Scientist's Toolkit: Key Research Reagent Solutions

TABLE 3: Essential Materials for Advanced Forensic Chemistry Methods

| Item | Function in Forensic Analysis |
|---|---|
| Carbon Quantum Dots (CQDs) | Fluorescent nanomaterials used for sensitive detection and fingerprint enhancement due to their tunable optical properties and high biocompatibility [7]. |
| Heteroatom-Doped CQDs (e.g., N-CQDs) | CQDs doped with nitrogen or sulfur to enhance fluorescence intensity, solubility, and chemical reactivity for improved sensor performance [7]. |
| Surface Passivation Agents (Polymers, Surfactants) | Used to coat CQDs to prevent aggregation, improve dispersion in solvents, and maintain photoluminescent stability for reliable evidence detection [7]. |
| Python-based Forensic-DataFusion-Tool | An open-source software application for merging raw data from multiple sensors, enabling low-level data fusion and exploratory analysis via PCA [6]. |
| Time-of-Flight Mass Spectrometer (TOFMS) | A detector for GC×GC that provides fast acquisition rates necessary to capture narrow peaks and allows for deconvolution of co-eluting analytes [1]. |
| Standardized Reference Materials | Certified materials used for instrument calibration, method validation, and establishing the known error rates required for courtroom admissibility [1] [5]. |

The case backlog in forensic chemistry is a multi-faceted problem demanding innovative solutions. The path forward requires a dual focus: the strategic implementation of high-throughput, definitive analytical technologies like GC×GC and the adoption of objective, data-driven interpretation tools like chemometrics and data fusion. By integrating these advanced protocols into laboratory workflows and rigorously validating them against legal standards, forensic scientists can significantly enhance capacity, reduce turnaround times, and fortify the scientific foundation of evidence presented in court.

Forensic chemistry laboratories are critical hubs for the administration of justice, providing essential data for criminal investigations and legal proceedings. However, these facilities worldwide are grappling with a persistent and growing challenge: the imbalance between rising case submissions and analytical capacity. This article explores the root causes of forensic chemistry backlogs, examining the drivers of increased demand for services alongside the constraints that limit laboratory throughput. By understanding these dynamics, stakeholders can develop targeted, effective strategies for restoring timeliness and efficiency to forensic casework.

The following table summarizes key quantitative data that illustrates the scale and impact of evidence backlogs in forensic laboratories.

Table 1: Quantitative Metrics of Forensic Backlogs and Impacts

| Metric Area | Specific Data Point | Value / Finding | Source Context |
|---|---|---|---|
| Backlog Scale | U.S. Forensic Labs' Annual Funding Shortfall (2019 estimate) | $640 Million [8] | Needs Assessment |
| Backlog Scale | Additional Funding Needed for Opioid Crisis (2019) | $270 Million [8] | Needs Assessment |
| Backlog Scale | NHLS Toxicology Backlog (South Africa) | 40,051 cases [9] | Institutional Report |
| Performance Impact | Increase in DNA Casework Turnaround Times (2017-2023) | 88% [8] | Project FORESIGHT |
| Performance Impact | Increase in Post-Mortem Toxicology Turnaround Times | 246% [8] | Project FORESIGHT |
| Performance Impact | Increase in Controlled Substances Turnaround Times | 232% [8] | Project FORESIGHT |
| Success Story | Louisiana State Police Avg. Turnaround Time Reduction | 291 days to 31 days [8] | Lean Six Sigma Implementation |
| Success Story | Michigan State Police Yield from Backlogged SAKs | 455 CODIS Hits, 127 Serial Assaults Linked [10] | Backlog Testing Initiative |
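Percent changes in this table are easy to misread. A 246% increase means turnaround times became about 3.46 times longer, not 2.46 times, and the Louisiana result corresponds to a reduction of roughly 89%. The small helpers below (hypothetical names) make that arithmetic explicit using values from the table.

```python
def percent_change(before, after):
    """Signed percent change from `before` to `after`."""
    return (after - before) / before * 100.0


def fold_change_from_percent_increase(pct):
    """Convert a percent increase to a multiplier (246% -> 3.46x)."""
    return 1.0 + pct / 100.0


print(round(percent_change(291, 31), 1))                 # -89.3 (Louisiana State Police)
print(round(fold_change_from_percent_increase(246), 2))  # 3.46 (post-mortem toxicology)
print(round(fold_change_from_percent_increase(88), 2))   # 1.88 (DNA casework)
```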

Frequently Asked Questions (FAQs)

FAQ 1: What exactly is classified as a "backlog" in a forensic chemistry context?

There is no single industry-standard definition. A backlogged case is generally considered unprocessed or non-finalized casework that has not been completed within a target timeframe [11]. However, the specific timeframe varies:

- The U.S. National Institute of Justice (NIJ) defines a DNA sample as backlogged if it remains untested 30 days after submission [11].

- Project FORESIGHT, a benchmarking project for forensic labs, defines a backlog as cases unworked for 30 calendar days or more [10].

- Individual laboratories may define backlogs based on their own operational plans, such as cases exceeding target finalization dates for different priority categories [11].

- "Artificial backlogs" also exist, where cases remain active because submitting agencies have not informed the lab that analysis is no longer needed (e.g., due to a plea deal), skewing demand perception [10].
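Because definitions differ, it helps to make the rule explicit in code or in the LIMS configuration. The sketch below flags a case as backlogged when it is still open a set number of days after receipt, with 30 days as the default to mirror the NIJ and FORESIGHT-style rules; the `Case` record and its `analysis_cancelled` flag (standing in for an agency notice that analysis is no longer needed) are hypothetical.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class Case:
    case_id: str
    received: date
    completed: Optional[date] = None
    analysis_cancelled: bool = False  # e.g., agency reported a plea deal


def is_backlogged(case, as_of, threshold_days=30):
    """True if the case is still open `threshold_days` or more after receipt.

    threshold_days=30 mirrors the NIJ/FORESIGHT-style rule; labs using
    internal targets would pass their own value.
    """
    if case.completed is not None or case.analysis_cancelled:
        return False
    return (as_of - case.received).days >= threshold_days


cases = [
    Case("A", date(2025, 1, 2)),
    Case("B", date(2025, 1, 20)),
    Case("C", date(2025, 1, 2), completed=date(2025, 1, 15)),
    Case("D", date(2025, 1, 2), analysis_cancelled=True),
]
as_of = date(2025, 2, 1)
backlog = [c.case_id for c in cases if is_backlogged(c, as_of)]
print(backlog)  # ['A']
```

Case D illustrates the artificial-backlog point: it would count as backlogged if the lab had never been told that analysis was no longer needed.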

FAQ 2: What are the primary factors driving the increase in submissions to forensic labs?

The rise in submissions is multifactorial, driven by legislative, technological, and societal changes:

- Unfunded Legislative Mandates: Many jurisdictions have passed laws requiring the testing of all sexual assault kits (SAKs), often without providing corresponding funding. One U.S. laboratory reported a 150% increase in SAK submissions due to such legislation [10].

- Expanded Applications of Forensic Science: There is growing pressure to apply forensic chemistry analysis to a broader range of cases, including property crimes and cold cases, increasing overall demand [8].

- Improved Forensic Technology: Advances in analytical sensitivity, while beneficial, can create more work. For example, switching to more sensitive Y-STR screening for SAKs yields more male-positive results, referring more cases for full DNA analysis and increasing the workload for downstream chemistry processes [10].

- Complex Data: The rise in digital data from devices and complex evidence creates secondary analysis burdens that strain resources [12].

FAQ 3: What are the key internal and external constraints limiting laboratory capacity?

Laboratories face a combination of external resource constraints and internal process inefficiencies.

- Inadequate Funding: Federal grant programs like the Paul Coverdell Forensic Science Improvement Grants face proposed cuts (e.g., a 70% reduction proposed for FY 2026), while the primary DNA-specific funding stream remains underfunded relative to congressional authorization [8].

- Human Resource Challenges: Labs face analyst burnout, attrition to better-paying private sector jobs, and the significant time required to train new employees to competency [8] [10].

- Outdated or Insufficient Infrastructure: Many labs operate with outdated instruments, lack equipment upgrades, or have insufficient laboratory space, hindering efficiency [8] [9].

- Process Inefficiencies: Legacy, paper-based workflows, lack of case triage, and administrative bottlenecks can dramatically slow down throughput [13].

FAQ 4: How do backlogs in forensic chemistry impact the criminal justice system?

The consequences are severe and far-reaching:

- Delayed Justice: Court cases are postponed, which can prolong the detention of innocent individuals and leave victims without legal redress [11].

- Public Safety Risk: Each day without a forensic lead can allow a recidivist offender to remain free and commit further crimes. One estimate (Doleac) puts the societal benefit of uploading a DNA profile to CODIS at over $20,000 per profile [10].

- Erosion of Trust: Long delays undermine public confidence in the criminal justice system and cause trauma for families awaiting results, such as in toxicology cases to determine cause of death [11] [8].

- Increased Costs: Contributors who turn to private laboratories due to public lab delays incur much higher costs [11].

Troubleshooting Guides: Strategic Approaches to Backlog Reduction

Guide 1: Implementing a Systemic Root Cause Analysis

A linear, "mechanistic" approach to backlogs (e.g., just requesting more funding) has proven insufficient. A "systems thinking" approach views the laboratory as a dynamic system within the larger criminal justice system [10].

Objective: To move from treating symptoms to understanding and addressing the root causes of backlog within the forensic laboratory system.

Methodology: The A3 Process. This structured problem-solving method documents the entire problem-solving journey on a single A3-size sheet of paper.

- Step 1: Define the Initial Problem Statement - Clearly describe the problem as it is currently understood (e.g., "Toxicology case turnaround time has increased by 246% over the past 6 years").

- Step 2: Conduct Root Cause Analysis - Use a tool like the 5 Whys to drill down past symptoms to fundamental causes.

  - Why? → Cases are waiting too long in the sample queue.

  - Why? → The number of submissions has doubled, but the number of instruments has not.

  - Why? → Capital budget requests for new instruments have been denied for three consecutive years.

  - Why? → The budget office does not have visibility into the link between instrument capacity and case throughput.

  - Root Cause → Ineffective communication of operational needs to stakeholders.

- Step 3: Develop Countermeasures - Brainstorm targeted solutions to address the root causes identified.

- Step 4: Create an Implementation Plan - Define actions, owners, and deadlines.

- Step 5: Verify Results and Follow-up - Measure outcomes and ensure the countermeasures are working.
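The systems view can be illustrated with a minimal stock-and-flow model: the backlog is a stock, submissions flow in, and completions flow out up to the laboratory's capacity. The sketch below is a deliberately simple, hypothetical model; its `demand_growth` parameter is a crude stand-in for feedback such as new mandates or success-driven submissions, not a calibrated estimate.

```python
def simulate_backlog(weeks, submissions_per_week, capacity_per_week,
                     initial_backlog=0.0, demand_growth=0.0):
    """Weekly stock-and-flow model of a laboratory backlog.

    backlog[t+1] = backlog[t] + submissions[t] - completions[t], where
    completions are capped by analytical capacity. demand_growth is a
    hypothetical weekly fractional growth in submissions.
    """
    backlog = float(initial_backlog)
    submissions = float(submissions_per_week)
    history = []
    for _ in range(weeks):
        workload = backlog + submissions
        completed = min(capacity_per_week, workload)
        backlog = workload - completed
        history.append(backlog)
        submissions *= 1.0 + demand_growth
    return history


print(simulate_backlog(10, 100, 90)[-1])   # 100.0: a 10-case weekly deficit accumulates
print(simulate_backlog(10, 100, 110)[-1])  # 0.0: spare capacity keeps the queue empty
grown = simulate_backlog(26, 100, 110, demand_growth=0.02)
print(grown[-1] > 0)                       # True: growing demand overtakes the added capacity
```

The third run shows the point of the systems view: a one-time capacity increase clears the queue only until demand catches up with it.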

The following diagram visualizes a forensic laboratory as a dynamic system, highlighting key pressure points and feedback loops that contribute to backlogs.

Guide 2: Executing a Workflow Efficiency and Triage Protocol

Inefficient workflows and a "first-in, first-out" case management approach are major contributors to backlogs. Implementing structured triage and process improvement methodologies can dramatically increase throughput.

Objective: To reduce average turnaround times and increase the number of cases processed per analyst by streamlining workflows and prioritizing casework intelligently.
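The link between backlog, throughput, and turnaround time can be expressed with Little's law from queueing theory, W = L / λ: the average time a case spends in the laboratory equals the average number of open cases divided by the completion rate. It holds for long-run averages in a stable system, so it is a planning approximation rather than a forecast. The function names and figures in the sketch below are hypothetical.

```python
def expected_turnaround_days(open_cases, completions_per_day):
    """Little's law, W = L / lambda, for long-run averages in a stable system."""
    if completions_per_day <= 0:
        raise ValueError("throughput must be positive")
    return open_cases / completions_per_day


def required_throughput(open_cases, target_days):
    """Completions per day needed to reach target_days at the same open caseload."""
    return open_cases / target_days


# Hypothetical laboratory: 1,200 open cases, 40 completions per day.
print(expected_turnaround_days(1_200, 40))  # 30.0
print(required_throughput(1_200, 10))       # 120.0
```

The same relation shows the two levers available: raise throughput, or reduce the number of cases in the system through triage.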

Methodology: Lean Six Sigma for Forensic Chemistry

Phase 1: Case Triage Implementation

- Action: Establish a multi-disciplinary committee (including lab analysts, investigators, and prosecutors) to review incoming cases.

- Protocol: Create a tiered prioritization system. For example:

  - Tier 1 (Critical): Homicide, violent sexual assault, cases with imminent court dates.

  - Tier 2 (Routine): Property crimes, non-violent felonies.

  - Tier 3 (Low Priority/Intelligence): Cases where a suspect has already pled guilty or where the analysis is for intelligence purposes only.

- Outcome: Ensures critical cases move quickly, and low-priority cases do not consume resources needed for serious crimes [12]. Some labs, like Oregon's, have paused DNA testing for property crimes entirely to focus on sexual assault kits [8].
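A tiered triage rule can be encoded as a priority queue that serves lower tier numbers first and, within a tier, the oldest submission first. The sketch below uses Python's `heapq`; the offence categories, the `TIER_RULES` mapping, and the precedence given to a guilty plea over a court date are hypothetical choices that a triage committee would need to define.

```python
import heapq
from datetime import date

# Hypothetical mapping of offence categories to the tiers above.
TIER_RULES = {
    "homicide": 1, "sexual_assault": 1,
    "property": 2, "nonviolent_felony": 2,
    "intelligence": 3,
}


def assign_tier(offence, court_date_soon=False, plea_entered=False):
    """Return 1 (critical), 2 (routine), or 3 (low priority)."""
    if plea_entered:
        return 3
    if court_date_soon:
        return 1
    return TIER_RULES.get(offence, 2)


class TriageQueue:
    """Serve lower tiers first; within a tier, oldest submission first."""

    def __init__(self):
        self._heap = []
        self._counter = 0  # tie-breaker so equal entries keep insertion order

    def add(self, case_id, offence, received, **flags):
        tier = assign_tier(offence, **flags)
        heapq.heappush(self._heap, (tier, received, self._counter, case_id))
        self._counter += 1

    def next_case(self):
        tier, _received, _, case_id = heapq.heappop(self._heap)
        return case_id, tier

    def __len__(self):
        return len(self._heap)


q = TriageQueue()
q.add("C-101", "property", date(2025, 3, 1))
q.add("C-102", "homicide", date(2025, 3, 5))
q.add("C-103", "property", date(2025, 2, 20), plea_entered=True)
q.add("C-104", "nonviolent_felony", date(2025, 3, 3), court_date_soon=True)
order = [q.next_case()[0] for _ in range(len(q))]
print(order)  # ['C-104', 'C-102', 'C-101', 'C-103']
```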

Phase 2: Process Mapping and Waste Identification

- Action: Map the current end-to-end process for a typical toxicology or controlled substances case.

- Protocol: Use value-stream mapping to identify the eight wastes (defects, overproduction, waiting, non-utilized talent, transportation, inventory, motion, extra-processing). Look for bottlenecks like manual data entry, lengthy review steps, or inefficient instrument use.

- Outcome: Visualize the process to pinpoint inefficiencies.
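Once the map records hands-on time and waiting time for each step, two standard Lean summaries follow directly: total lead time and process cycle efficiency (value-added time divided by lead time). The sketch below computes both for a hypothetical five-step controlled substances workflow and reports the step with the longest wait, which marks where waiting waste is concentrated; it is not necessarily the capacity bottleneck.

```python
from dataclasses import dataclass


@dataclass
class Step:
    name: str
    touch_hours: float  # hands-on or instrument time that adds value
    wait_hours: float   # queue, hand-off, and review waiting


def value_stream_summary(steps):
    """Lead time, process cycle efficiency, and the longest-waiting step."""
    total_touch = sum(s.touch_hours for s in steps)
    lead_time = sum(s.touch_hours + s.wait_hours for s in steps)
    largest_wait = max(steps, key=lambda s: s.wait_hours)
    return {
        "lead_time_h": lead_time,
        "process_cycle_efficiency": total_touch / lead_time,
        "largest_wait_step": largest_wait.name,
    }


# Hypothetical times for illustration only.
steps = [
    Step("evidence intake", 0.5, 24),
    Step("sample preparation", 2, 48),
    Step("instrument analysis", 1, 72),
    Step("data review", 1, 96),
    Step("technical/admin review", 0.5, 120),
]
summary = value_stream_summary(steps)
print(summary)  # lead time 365 h, PCE about 0.0137, longest wait at technical/admin review
```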

Phase 3: Workflow Redesign

- Action: Implement changes to eliminate identified wastes.

- Protocol: This may include:

  - Automation: Introducing automated sample preparation, data processing, or reporting tools [12].

  - Batching: Grouping similar analyses to improve instrument utilization.

  - Parallel Processing: Creating dedicated units for backlogged samples vs. new submissions, as done by the NHLS in South Africa [9].

  - Digital Management: Implementing a Laboratory Information Management System (LIMS) to replace paper-based tracking [13].
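The benefit of batching can be estimated from the fixed quality-control overhead each batch carries. The sketch below assumes every batch needs the same number of calibrator, blank, and control injections and that all injections take equal time; these assumptions, the function name, and the example figures are hypothetical.

```python
import math


def batch_plan(n_samples, max_batch_size, qc_injections_per_batch,
               minutes_per_injection):
    """Instrument time for a queue of like samples run in batches.

    Each batch carries a fixed QC overhead; larger batches spread that
    overhead over more casework samples.
    """
    n_batches = math.ceil(n_samples / max_batch_size)
    injections = n_samples + n_batches * qc_injections_per_batch
    return {
        "batches": n_batches,
        "instrument_hours": injections * minutes_per_injection / 60.0,
        "fraction_case_injections": n_samples / injections,
    }


small = batch_plan(60, 5, 4, 10)   # 12 batches of 5
large = batch_plan(60, 30, 4, 10)  # 2 batches of 30
print(small["instrument_hours"], round(small["fraction_case_injections"], 3))  # 18.0 0.556
print(round(large["instrument_hours"], 2), round(large["fraction_case_injections"], 3))  # 11.33 0.882
```

Larger batches also lengthen the wait for the first result in each batch, so batch size trades instrument efficiency against turnaround for urgent cases.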

The workflow below outlines the key stages in a strategic backlog reduction initiative, from initial assessment to sustained monitoring.

The Scientist's Toolkit: Essential Solutions for Backlog Reduction

Table 2: Tools and Solutions for Forensic Laboratory Efficiency

| Tool / Solution Category | Specific Example | Function & Role in Backlog Reduction |
|---|---|---|
| High-Throughput Analytical Instruments | Dedicated backlog analyzers (e.g., LC-MS/MS systems) [9] | Increases sample processing capacity; designated backlog instruments prevent new casework from being disrupted. |
| Laboratory Information Management System (LIMS) | Versaterm LIMS-plus [13] | Digitizes and streamlines case management, evidence tracking, and data organization; eliminates paper-based bottlenecks and improves workflow efficiency. |
| Process Improvement Methodologies | Lean Six Sigma [8] | A structured framework for identifying and eliminating waste in laboratory processes, leading to faster turnaround times and higher throughput. |
| Advanced Data Analysis Tools | Probabilistic Genotyping Software (e.g., STRmix) [8] | Enables complex DNA mixture interpretation, increasing the success rate and efficiency of data analysis from difficult samples. |
| Targeted Grant Funding | Capacity Enhancement and Backlog Reduction (CEBR) Competitive Grants [8] | Provides funding for technical innovation projects (e.g., validating new extraction methods) that expand lab capabilities and efficiency. |
| Workforce & Wellness Solutions | Peer support and clinical wellness resources [13] | Mitigates analyst burnout and improves staff retention by supporting mental well-being, which is crucial for maintaining long-term capacity. |

Troubleshooting Guides and FAQs

Troubleshooting Guide: Addressing Common Backlog Challenges

| Challenge | Root Cause | Recommended Solution | Key Performance Indicator |
|---|---|---|---|
| Increasing DNA Case Backlogs [10] [11] | Linear thinking; lack of a systems approach; unfunded mandates; more successful cases encouraging more submissions [10]. | Adopt systems thinking and the A3 problem-solving method. Define laboratory capacity and implement strategic triage for casework [10] [11]. | Reduction in cases exceeding 30-day processing time [11]; improved cost-per-case efficiency [10]. |
| Inconclusive Results for Marijuana Analysis [14] | Sample degradation over time in backlog (e.g., THC oxidation); improper storage conditions [14]. | Optimize storage conditions to minimize light exposure and environmental fluctuations. Implement rapid screening techniques to reduce holding times [14]. | Percentage of inconclusive results; rate of sample degradation under defined storage conditions. |
| Seized Drugs Casework Overload [15] | High volume of submissions; increasing complexity of substances (e.g., novel psychoactive substances) [15]. | Implement and communicate a clear Efficient Casework Policy (e.g., testing the 3 items for highest potential charges). Strengthen stakeholder relationships to manage expectations [15]. | Turnaround time (e.g., days); backlog size relative to total case intake. |
| Slow Seized Drug Analysis [16] | Use of time-consuming conventional methods (e.g., 30-minute GC-MS run times) [16]. | Develop and validate rapid GC-MS methods with optimized temperature programming to drastically reduce analytical run time [16]. | Analysis time per sample; method detection limits (e.g., μg/mL). |

Frequently Asked Questions (FAQs)

Q1: What qualifies as a "backlog" in a forensic context?

There is no single industry standard. Common definitions include [11] [17]:

- The 30-Day Rule: The U.S. National Institute of Justice (NIJ) defines a backlogged case as one not tested within 30 days of receipt [11] [17].

- Laboratory-Specific Targets: Some labs define backlogs based on internal targets, such as cases exceeding 90 days or missing finalisation dates [11].

- Artificial Backlogs: Cases that remain open because submitting agencies have not informed the lab that analysis is no longer needed (e.g., after a plea deal) [10].

Q2: Beyond simple delays, what is the broader impact of forensic backlogs?

Backlogs create a negative ripple effect throughout the entire criminal justice system [11]:

- Investigation Delays: Slows down investigative leads, allowing potential recidivist offenders to remain free and commit more crimes [11].

- Courtroom Delays: Causes postponements of trials, disrupting legal processes [11].

- Impact on Victims: Deprives victims, especially in sexual assault cases, of their right to legal redress and prolongs trauma [11].

- Impact on the Accused: Can prolong the detention of innocent individuals falsely accused of crimes [17].

- Financial Burden: Forces contributors (e.g., families awaiting human remains identification) to use costly private laboratories [11].

Q3: How can laboratories balance new analytical challenges with existing heavy caseloads?

Success requires a multi-faceted approach focusing on policies, people, and processes [15]:

- Establish Clear Policies: Create an "Efficient Casework Policy" that clearly defines the scope and priority of testing, such as limiting the number of items tested per case to those with the highest potential charges [15].

- Invest in People: Rely on well-trained, dedicated staff who are encouraged to think innovatively and iteratively improve processes [15].

- Enhance Communication: Maintain ongoing, proactive communication with all stakeholders (law enforcement, prosecutors) to manage demand and align expectations [15].
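An item-limiting policy like the one above reduces to ranking the items in a case and testing only the top few. The sketch below assumes each item has a numeric severity score for its potential charge, agreed in advance with prosecutors; the scoring scheme and the function name are hypothetical.

```python
def select_items_for_testing(items, max_items=3):
    """Pick the items with the highest potential-charge severity.

    items: list of (item_id, severity) pairs; severity is a hypothetical
    numeric score (higher = more serious potential charge). Python's sort
    is stable, so ties keep submission order.
    """
    ranked = sorted(items, key=lambda item: item[1], reverse=True)
    return [item_id for item_id, _ in ranked[:max_items]]


items = [("I-1", 2), ("I-2", 5), ("I-3", 1), ("I-4", 5), ("I-5", 3)]
print(select_items_for_testing(items))  # ['I-2', 'I-4', 'I-5']
```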

Q4: What is a major systemic risk when forensic labs are not independent?

Forensic labs under prosecutorial or law enforcement control face inherent risks of bias that can undermine scientific integrity [18]. Institutional pressure can lead to:

- Shaping reports to meet prosecution needs [18].

- Prioritizing cases based on prosecutorial requests over scientific triage [18].

- A lack of transparency and of equal access for defense attorneys, who need that access to challenge evidence [18].

Best practices recommend structural independence for forensic labs to mitigate these risks [18].

Experimental Protocols & Data

Quantitative Data on Backlog and Efficiency

Table 1: Backlog Definitions and Impacts

| Category | Metric / Definition | Impact / Statistic |
|---|---|---|
| Backlog Definition | U.S. NIJ Standard (30 days) [11] [17] | Provides a benchmark for federally funded labs. |
| | Project FORESIGHT (30+ calendar days) [10] | Consensus-based definition used for lab benchmarking. |
| Backlog Impact | DNA Database Hits (Michigan State Police) [10] | 1,595 processed SAKs yielded 455 CODIS hits and 127 serial sexual assault identifications. |
| | Societal Benefit (Doleac) [10] | Each DNA profile uploaded to CODIS provides a financial benefit of $20,096 to society. |
| | Unsubmitted Evidence (NIJ) [17] | 14% of unsolved homicides and 18% of unsolved rapes had evidence not submitted for analysis. |

Table 2: Efficient Capacity and Output Metrics

| Laboratory Function | Efficient Capacity / Policy | Outcome / Metric |
|---|---|---|
| Seized Drugs (Kentucky) [15] | Policy: Test 3 items for highest potential charges. | Maintained a 10- to 15-day turnaround time; handles ~30,000 submissions/year with ~30 chemists. |
| Laboratory Efficiency (FORESIGHT) [10] | Performance on or near the industry average total cost curve. | Indicates efficient performance; high Cases/FTE is a critical component of lab efficiency. |
| Rapid GC-MS Screening [16] | Method reduction from 30 min to 10 min total analysis time. | LOD for Cocaine improved from 2.5 μg/mL to 1 μg/mL; RSDs < 0.25% for stable compounds. |
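Several efficiency figures in these tables can be derived with simple arithmetic: Kentucky's roughly 1,000 submissions per chemist per year, the number of injections that fit in a shift at 30 versus 10 minutes per run, and the CODIS hits obtained per processed Michigan kit. The sketch below (hypothetical function names) assumes an 8-hour shift and ignores QC injections, oven cool-down, and maintenance, so real throughput will be lower.

```python
def cases_per_fte(annual_cases, fte):
    """Annual casework output per full-time analyst."""
    return annual_cases / fte


def runs_per_shift(run_minutes, shift_hours=8.0):
    """Whole injections that fit in one shift (no QC, cool-down, or downtime)."""
    return int(shift_hours * 60 // run_minutes)


def hits_per_kit(hits, kits_processed):
    """CODIS hits obtained per processed sexual assault kit."""
    return hits / kits_processed


print(cases_per_fte(30_000, 30))               # 1000.0 (Kentucky, approximate inputs)
print(runs_per_shift(30), runs_per_shift(10))  # 16 48
print(round(hits_per_kit(455, 1_595), 3))      # 0.285 (Michigan State Police)
```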

Detailed Methodology: Rapid GC-MS Screening for Seized Drugs

This protocol is adapted from the research by Askar et al. (2025) to create a rapid screening method for seized drugs, significantly reducing analysis time and helping to alleviate backlogs [16].

1. Instrumentation and Materials

- GC-MS System: Agilent 7890B Gas Chromatograph coupled with 5977A Single Quadrupole Mass Spectrometer [16].

- Column: Agilent J&W DB-5 ms (30 m × 0.25 mm × 0.25 μm) [16].

- Carrier Gas: Helium, 99.999% purity, constant flow rate of 2.0 mL/min [16].

- Autosampler: Agilent 7693 autosampler [16].

- Software: Agilent MassHunter and Enhanced ChemStation for data acquisition and processing. Spectral libraries (e.g., Wiley, Cayman) for compound identification [16].

- Standards and Reagents: Certified reference materials for target drugs (e.g., Cocaine, Heroin, MDMA, synthetic cannabinoids). Methanol (99.9%) for extractions [16].

2. Optimized Rapid GC-MS Method Parameters

- Injection Volume: 1 μL (split mode, split ratio 10:1) [16].

- Injector Temperature: 250°C [16].

- Oven Temperature Program:

- Total Run Time: 10 minutes [16].

- MSD Parameters:

3. Sample Preparation (Methanol Extraction)

- For Solid Samples:
  - Grind tablets/capsules to a fine powder.
  - Weigh ~0.1 g into a test tube.
  - Add 1 mL of methanol, sonicate for 5 minutes, and centrifuge.
  - Transfer the clear supernatant to a GC-MS vial for analysis [16].

- For Trace/Residue Samples:
  - Swab surfaces of interest (e.g., digital scales, syringes) with a methanol-moistened swab.
  - Immerse the swab tip in 1 mL of methanol and vortex vigorously.
  - Transfer the extract to a GC-MS vial for analysis [16].

4. Method Validation

The rapid method should be validated for the following [16]:

- Precision and Reproducibility: Calculate Relative Standard Deviations (RSDs) for retention times and peak areas. The target for stable compounds is RSD < 0.25% [16].

- Limit of Detection (LOD) and Quantification (LOQ): Determine for key substances. The optimized method achieved an LOD for cocaine of 1 μg/mL, compared with 2.5 μg/mL for the conventional method (a 60% reduction) [16].

- Carryover: Assess by running a blank solvent after a high-concentration sample [16].

- Application to Real Samples: Test the method with 20+ adjudicated case samples to confirm its utility and accuracy in a real forensic context [16].
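The precision and sensitivity checks above reduce to simple arithmetic. The sketch below (Python standard library only) computes %RSD from replicate retention times and estimates LOD and LOQ from a calibration line using the ICH Q2 convention (LOD = 3.3σ/S, LOQ = 10σ/S, where σ is the residual standard deviation and S the slope). Using the ICH convention is an assumption; Askar et al. may have determined LOD differently. The function names and all numbers are hypothetical, not data from [16].

```python
import statistics


def percent_rsd(values):
    """Relative standard deviation in percent (sample standard deviation / mean)."""
    return 100.0 * statistics.stdev(values) / statistics.mean(values)


def lod_loq_from_calibration(conc, response):
    """Estimate LOD and LOQ from a least-squares calibration line.

    Assumes the ICH Q2 convention: LOD = 3.3*sigma/S and LOQ = 10*sigma/S, where S is
    the slope and sigma is the residual standard deviation of the regression.
    """
    n = len(conc)
    x_mean = statistics.mean(conc)
    y_mean = statistics.mean(response)
    sxx = sum((x - x_mean) ** 2 for x in conc)
    slope = sum((x - x_mean) * (y - y_mean) for x, y in zip(conc, response)) / sxx
    intercept = y_mean - slope * x_mean
    residuals = [y - (intercept + slope * x) for x, y in zip(conc, response)]
    sigma = (sum(r ** 2 for r in residuals) / (n - 2)) ** 0.5  # n - 2 degrees of freedom
    return 3.3 * sigma / slope, 10.0 * sigma / slope


# Hypothetical replicate retention times (min) for one analyte
retention_times = [4.512, 4.515, 4.510, 4.514, 4.513]
rsd = percent_rsd(retention_times)
print(f"Retention time RSD: {rsd:.3f}% (meets < 0.25% target: {rsd < 0.25})")

# Hypothetical calibration data: concentration (ug/mL) vs. peak area
conc = [1.0, 2.0, 5.0, 10.0, 20.0]
area = [1050.0, 2080.0, 5150.0, 10100.0, 20300.0]
lod, loq = lod_loq_from_calibration(conc, area)
print(f"Estimated LOD: {lod:.2f} ug/mL, LOQ: {loq:.2f} ug/mL")
```

The same `percent_rsd` helper applies equally to replicate peak areas.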

Visualizations

Integrated Backlog Reduction Strategy

Rapid GC-MS Analysis Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Rapid Drug Screening

| Item | Function / Application | Specification / Note |
|---|---|---|
| GC-MS System | High-specificity separation and identification of chemical compounds in a sample. | Single quadrupole mass spectrometer; operated with helium carrier gas at constant flow [16]. |
| DB-5 ms Column | A commonly used GC column for separating a wide range of organic compounds, including drugs. | 30 m × 0.25 mm × 0.25 μm dimensions [16]. |
| Certified Reference Materials | Provide known standards for method development, calibration, and positive identification of unknown drugs. | Purity-certified standards for target analytes (e.g., Cocaine, MDMA, Fentanyl) [16]. |
| Methanol (HPLC Grade) | Extraction solvent for drugs in solid and trace evidence samples. | 99.9% purity to minimize interference [16]. |
| Spectral Libraries | Digital databases of known mass spectra used for automated preliminary identification of unknowns. | Commercial libraries (e.g., Wiley, Cayman) are essential [16]. |

Innovative Analytical Techniques to Accelerate Forensic Drug Analysis

Implementing Rapid, Non-Destructive Screening with Portable Spectroscopic Tools

Forensic chemistry laboratories face a critical challenge: overwhelming casework backlogs delay justice, prolong investigations, and strain public safety resources. The problem spans forensic disciplines; for instance, some firearms case backlogs exceed 950 requests with wait times of over 370 days [19]. Similarly, DNA evidence backlogs persist due to increasing demand, limited resources, and outdated technology [2]. Rapid, non-destructive spectroscopic tools offer a promising strategy for reducing these backlogs. Techniques such as Raman, UV-VIS, and NMR spectroscopy enable fast screening, in some cases on-site, while consuming little or no evidence. This section provides forensic scientists and researchers with troubleshooting guides, experimental protocols, and FAQs for implementing these tools, thereby accelerating casework and expanding forensic capacity.

Frequently Asked Questions (FAQs) & Troubleshooting

Q1: My portable spectrometer will not calibrate, or is providing very noisy data. What should I check?

- Confirm Power and Connection: Ensure the AC power supply is connected, the power switch is ON, and the lamp indicator LED is steady green. For Bluetooth models, connect to a USB power adapter, not a computer port [20].

- Verify Software: Use the latest version of your data-collection software (e.g., LabQuest App, Logger Pro) [20].

- Check Calibration Mode: Always calibrate in the correct mode (e.g., Absorbance or %T) using the appropriate solvent for your experiment [20].

- Inspect Windows and Lenses: Dirty windows in front of the fiber optic or direct light pipe can cause drift and poor analysis. Clean them regularly as part of maintenance [21].

Q2: Why are my quantitative results for carbon, phosphorus, or sulfur consistently below expected levels?

This often indicates a problem with the instrument's vacuum pump. The vacuum purges the optic chamber so that short-wavelength (vacuum ultraviolet) light, which is essential for measuring these elements, can pass through. A malfunctioning pump allows air into the chamber, where it absorbs this light and reduces the measured intensity for these elements [21].

- Symptoms: Watch for pump noises (gurgling, extreme loudness), smoke, heat, or oil leaks. Address these immediately [21].

Q3: The absorbance readings on my UV-VIS spectrophotometer are unstable or non-linear above 1.0. Is this normal?

Yes, this is a common limitation. For reliable, stable readings, keep measurements within the absorbance range of 0.1 to 1.0 [20]. Samples with absorbance well above 1.0 can produce unstable, non-linear data; dilute such samples and re-measure.
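Because absorbance is proportional to concentration only within the working range (Beer-Lambert law, A = εlc), an off-scale sample can be diluted so that its expected absorbance lands near the middle of the 0.1 to 1.0 window. The helper below is a hypothetical sketch that assumes linear scaling and a target absorbance of 0.5. Readings above 1.0 may already underestimate the true absorbance, so the diluted sample should always be re-measured rather than trusted from the calculation alone.

```python
import math


def dilution_factor(measured_abs, target_abs=0.5):
    """Smallest whole-number dilution that brings the expected absorbance to target_abs or below.

    Assumes Beer-Lambert linearity (absorbance proportional to concentration).
    """
    if measured_abs <= target_abs:
        return 1
    return math.ceil(measured_abs / target_abs)


def in_working_range(absorbance, lower=0.1, upper=1.0):
    """True if a reading falls within the recommended 0.1-1.0 absorbance window."""
    return lower <= absorbance <= upper


reading = 1.8  # hypothetical off-scale reading
factor = dilution_factor(reading)
expected = reading / factor
print(f"Dilute 1:{factor}; expected absorbance ~{expected:.2f}, in range: {in_working_range(expected)}")
```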

Q4: I suspect my analysis is being affected by contaminated samples. How can I prevent this?

- Sample Preparation: Always use a new grinding pad to remove plating, carbonization, or protective coatings before analysis.

- Handling: Do not touch samples with bare hands, as skin oils can contaminate them. Avoid quenching samples in water or oil [21].

Q5: How accurate are rapid, non-destructive methods compared to traditional lab techniques?

When properly calibrated and validated, techniques such as NIR spectroscopy and electronic noses can be a reliable alternative to traditional laboratory methods [22]. However, their accuracy depends on robust calibration with large datasets and careful control of variables such as operator training, sample collection, and environmental conditions [22] [23] [24].

Troubleshooting Common Spectrometer Issues

The table below summarizes common problems, their symptoms, and solutions for portable spectroscopic tools.

Table 1: Troubleshooting Guide for Common Spectrometer Issues

| Problem Area | Symptoms | Possible Causes | Corrective Actions |
|---|---|---|---|
| Vacuum Pump [21] | Low readings for C, P, S; Pump is noisy, hot, smoking, or leaking oil. | Pump failure; Air in optic chamber. | Service or replace pump immediately; Monitor pump performance indicators. |
| Dirty Optical Windows [21] | Analysis drift; Poor or inconsistent results. | Dust or debris on fiber optic or light pipe windows. | Clean windows regularly with appropriate materials as part of scheduled maintenance. |
| Poor Probe Contact [21] | Loud analysis sound; Bright light from pistol face; Inconsistent or no results. | Improper surface contact; Argon pressure too low. | Increase argon pressure to 60 psi; Use seals for convex surfaces; Consult a technician about a custom probe head. |
| Contaminated Sample [21] | Inconsistent or unstable results; White, milky-looking burn. | Skin oils, quench oils, or coatings on sample. | Re-grind sample with a new pad; Avoid touching the sample or quenching it in water/oil. |
| General Calibration/Noise [20] | Failure to calibrate; Noisy, unusable data. | Incorrect setup, outdated software, or faulty calibration. | Update software; Re-calibrate with correct solvent; Ensure stable power source. |

Detailed Experimental Protocols for Forensic Backlog Reduction

Protocol 1: Rapid Drug Screening via Quantitative NMR (qNMR)

Objective: To quickly identify and quantify unknown pharmaceutical compounds in seized materials, providing a non-destructive initial screen to triage cases for further confirmatory testing.

Principle: qNMR leverages the direct proportionality between the area under an NMR signal and the number of nuclei generating it. This allows for quantification without compound-specific reference standards [25].

Materials:

- Portable or benchtop NMR spectrometer

- Deuterated solvent (e.g., D₂O, CDCl₃)

- Internal standard (e.g., caffeine, 3-(trimethylsilyl)propionic acid)

- NMR tubes

Procedure:

- Sample Preparation: A small, accurately weighed portion of the seized material is dissolved in a deuterated solvent, and a precisely known amount of internal standard is added to the solution [25].

- Data Acquisition: The sample is placed in the NMR spectrometer, and a ¹H NMR spectrum is acquired with parameters set to ensure full relaxation of nuclei between pulses for accurate quantification (e.g., a relaxation delay of at least five times the longest T₁ of the signals being integrated) [25].

- Data Analysis:
  - Identify a unique, non-overlapping signal for the target analyte and for the internal standard.
  - Integrate the area under these peaks.
  - Calculate the amount of the unknown analyte using the formula:

$$
n_{\text{analyte}} = \frac{I_{\text{analyte}}}{I_{\text{standard}}} \times \frac{N_{\text{standard}}}{N_{\text{analyte}}} \times n_{\text{standard}}
$$

where $n$ is the number of moles, $I$ is the integrated peak area, and $N$ is the number of nuclei contributing to the signal [25].
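The qNMR relationship above can be applied directly once the two integrals are measured. The Python sketch below implements that equation and converts the result to a mass using the analyte's molar mass. The function name and all inputs are hypothetical, including the choice of a three-proton internal-standard signal and a two-proton analyte signal; the cocaine molar mass is used only as an illustrative value.

```python
def qnmr_moles(i_analyte, i_standard, n_nuclei_analyte, n_nuclei_standard, mol_standard):
    """Moles of analyte from the qNMR ratio:
    n_analyte = (I_analyte / I_standard) * (N_standard / N_analyte) * n_standard
    """
    return (i_analyte / i_standard) * (n_nuclei_standard / n_nuclei_analyte) * mol_standard


# Hypothetical inputs
mol_std = 10.0e-6   # 10.0 umol of internal standard added
i_std = 1.00        # integral of a 3-proton internal-standard singlet
i_analyte = 0.80    # integral of a 2-proton analyte signal
n_analyte = qnmr_moles(i_analyte, i_std, n_nuclei_analyte=2, n_nuclei_standard=3, mol_standard=mol_std)

molar_mass = 303.35  # g/mol, cocaine free base, used only as an example value
mass_mg = n_analyte * molar_mass * 1000.0
print(f"Analyte: {n_analyte * 1e6:.2f} umol, {mass_mg:.2f} mg")
```

Dividing the resulting mass by the weighed sample mass gives an estimate of analyte content in the seized material.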

Application in Backlog Reduction: This method rapidly provides both structural identity and quantitative data from a single, non-destructive test, allowing forensic labs to quickly screen and prioritize large volumes of drug-related evidence.

Protocol 2: On-Site Material Analysis using Portable Spectroscopy

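Objective: To perform rapid elemental analysis of evidence at a crime scene or in the lab to expedite initial investigations. Because spark OES requires an electrically conductive sample and leaves a small burn mark, it is best suited to metallic evidence; non-conductive materials such as paint chips or gunshot residue generally require other techniques.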

Principle: Optical Emission Spectrometry (OES) identifies elements by exciting a sample and measuring the characteristic light wavelengths emitted.

Materials:

- Portable OES spectrometer

- Argon gas supply (for purging)

- Cleaning materials for the probe lens

Procedure:

- System Setup: Ensure the argon supply pressure is correctly set (typically >43 psi) to create a stable environment for the spark [21]. Power on the spectrometer and allow it to initialize.

- Probe Contact: Press the probe firmly and evenly against the sample surface. Inadequate contact can lead to loud noises, bright light escape, and invalid results [21].

- Measurement: Trigger the analysis. The instrument will spark the sample and collect the emitted light spectrum.

- Data Interpretation: Review the elemental composition report generated by the instrument's software. Compare results against reference databases.

Application in Backlog Reduction: Enables immediate triage of evidence at crime scenes, helping investigators focus resources on the most probative items and reducing the number of items sent to the central lab for more time-consuming analysis.

Workflow Diagrams for Troubleshooting and Validation

The following diagram illustrates a logical workflow for diagnosing and resolving common spectrometer issues, helping to minimize instrument downtime.

Spectrometer Troubleshooting Workflow

This diagram outlines the experimental and data validation workflow crucial for implementing robust non-destructive screening methods.

Method Development & Validation Workflow

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Essential Materials for Rapid Spectroscopic Analysis

| Item | Function/Application | Key Considerations |
|---|---|---|
| Deuterated Solvents (e.g., D₂O) [25] | Solvent for NMR spectroscopy that does not produce interfering signals. | Required for qNMR protocols; Purity is critical for accurate results. |
| Internal Standards (e.g., caffeine, TSP) [25] | Reference compound with known concentration for quantitative NMR (qNMR). | Must be chemically stable and have a non-overlapping NMR signal with the analyte. |
| Quartz Cuvettes [20] | Hold liquid samples for UV-VIS spectrophotometry. | Required for UV range measurements; More transparent than plastic. |
| Argon Gas [21] | Inert gas used to purge optic chambers in OES to prevent interference from air. | Purity is essential; Contaminated argon leads to inconsistent results. |
| Certified Reference Materials (CRMs) [24] | Samples with known composition and properties for instrument calibration and method validation. | Vital for building accurate, defensible chemometric models in forensic work. |

Advancing High-Throughput Chromatography and Mass Spectrometry Methods

This section provides troubleshooting guides and FAQs to help researchers address common challenges in chromatography and mass spectrometry. The guidance is framed within strategies to enhance throughput and reduce backlogs in forensic chemistry casework.

Frequently Asked Questions (FAQs)

Q1: How can I improve the throughput of my LC-MS methods for seized drug analysis?

Higher throughput in LC-MS is achieved by optimizing the entire workflow. Advances in mass spectrometry have increased LC throughput requirements by 40-70% [26]. Key strategies include using columns with micropillar arrays for uniform flow and high reproducibility, adopting microfluidic chip-based columns for exceptional scalability, and optimizing detector settings for faster data acquisition and more sensitive readings [26].

Q2: What are the common pitfalls in HPLC and LC-MS method development, and how can I avoid them?

A common mistake is not setting clear target specifications for chromatographic parameters such as retention, resolution, and efficiency before validation [27]. To avoid this, use a systematic approach grounded in the fundamental principles of separation science. Ensure robust method performance by carefully designing mobile phases, selecting appropriate stationary phase chemistry, and using correct detector parameters [27] [28].

Q3: My GC-MS system is facing throughput bottlenecks. What solutions are available?

For GC-MS, consider implementing sustainable method development, such as evaluating hydrogen or nitrogen as alternative carrier gases to helium [29]. Also, leverage software tools and AI-developed mass spectral databases to maximize unknown compound identification confidence, which streamlines analysis [29]. Developing miniaturized sample preparation techniques can also significantly speed up the overall workflow [29].

Q4: How can our lab balance new, complex analyses with existing high caseloads?

Success relies on having well-trained staff who continually iterate and improve processes [15]. Technologically, focus on systems that offer greater efficiency and reduced consumption. This includes instruments with lower power and mobile phase usage, which cut costs and align with sustainability goals [26]. Implementing standardized, pre-configured methods can also reduce errors and speed up adoption for routine analyses [26].

Q5: What role does AI play in modern method development?

AI and machine learning (ML) are emerging tools for automating system calibration and optimizing performance [26]. However, a purely data-driven approach may require too many chromatograms to be practical for all applications. The most promising approaches are hybrids that combine ML tools with extensive separation science knowledge for tasks such as Quantitative Structure-Retention Relationship (QSRR) modeling and peak integration [28].

Troubleshooting Guides

Guide 1: Poor Chromatographic Resolution

Problem: Inadequate separation of peaks, leading to co-elution and inaccurate quantification. This is critical in forensic toxicology for distinguishing complex mixtures.

| Possible Cause | Diagnostic Steps | Corrective Action |
|---|---|---|
| Incorrect Mobile Phase | Check pH, buffer concentration, and organic solvent ratio. | Re-design eluent for better selectivity; use quality solvents and salts [27]. |
| Unsuitable Stationary Phase | Review column chemistry (e.g., C18, HILIC, phenyl). | Select a stationary phase with different selectivity for your analytes [27]. |
| Column Overload | Inject a lower sample concentration. | Optimize sample loading or use a column with higher capacity [27]. |

Experimental Protocol for Systematic Optimization:

- Define Targets: Set specifications for retention factor (k > 1.5), resolution (Rs > 2.0), and peak asymmetry [27].

- Scouting Gradient: Run a fast, broad gradient (e.g., 5-95% organic in 10 min) to estimate optimal conditions.

- Fine-Tuning: Adjust pH and organic modifier to maximize resolution of critical pairs.

- Robustness Testing: Test small variations in temperature (±5°C) and flow rate (±0.2 mL/min) to ensure method reliability [27].
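The target specifications in the first step can be checked automatically from peak data. The sketch below uses the standard definitions k = (tR - t0)/t0, Rs = 2(tR2 - tR1)/(w1 + w2) with baseline peak widths, and asymmetry As = b/a measured at 10% of peak height. The asymmetry window (0.8 to 1.5) is an illustrative assumption, since the source does not give one; the function names and peak values are hypothetical.

```python
def retention_factor(t_r, t_0):
    """k = (tR - t0) / t0, with t0 the column hold-up (dead) time."""
    return (t_r - t_0) / t_0


def resolution(t_r1, t_r2, w1, w2):
    """Rs = 2 (tR2 - tR1) / (w1 + w2), using baseline peak widths."""
    return 2.0 * (t_r2 - t_r1) / (w1 + w2)


def asymmetry(front_half_width, back_half_width):
    """As = b / a: back and front half-widths measured at 10% of peak height."""
    return back_half_width / front_half_width


def meets_targets(k, rs, a_s, k_min=1.5, rs_min=2.0, as_range=(0.8, 1.5)):
    # as_range is an illustrative assumption; set it from your own validation plan
    return k > k_min and rs > rs_min and as_range[0] <= a_s <= as_range[1]


# Hypothetical critical pair (times and widths in minutes)
t0 = 1.0
k1 = retention_factor(3.0, t0)  # first-eluting peak of the pair
rs = resolution(3.0, 3.4, w1=0.15, w2=0.16)
a_s = asymmetry(front_half_width=0.05, back_half_width=0.06)
print(f"k = {k1:.2f}, Rs = {rs:.2f}, As = {a_s:.2f}, pass: {meets_targets(k1, rs, a_s)}")
```

Running the same check on each robustness-test condition (temperature and flow variations) shows whether the method stays within its targets.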

Guide 2: MS Signal Inhibition in Complex Samples

Problem: Reduced analyte signal due to ion suppression from matrix effects, common in seized drug extracts or biological samples.

| Possible Cause | Diagnostic Steps | Corrective Action |
|---|---|---|
| Sample Matrix | Infuse the analyte post-column while injecting a blank matrix extract; a drop in signal at the analyte's retention time indicates suppression. | Improve sample cleanup; use selective solid-phase extraction (SPE) [27]. |
| Inadequate Sample Prep | Review preparation protocol for removal of salts, lipids, proteins. | Dilute and re-inject; develop more rigorous sample cleaning procedures [27]. |
| Source Contamination | Inspect cone and ion transfer tube for debris. | Clean ion source; increase collision energy to break up non-volatile salts [27]. |
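Post-column infusion shows where suppression occurs; to put a number on it, a common approach compares the response of an analyte spiked into a blank matrix extract after extraction with the same amount in neat solvent. The sketch below assumes that post-extraction-spike design and reports the matrix effect as a percentage, where negative values indicate suppression and positive values enhancement. The function name and peak areas are hypothetical.

```python
import statistics


def matrix_effect_percent(neat_areas, post_spike_areas):
    """Matrix effect (%) = (mean post-extraction-spike area / mean neat-standard area - 1) * 100.

    Negative values indicate ion suppression; positive values indicate enhancement.
    """
    return (statistics.mean(post_spike_areas) / statistics.mean(neat_areas) - 1.0) * 100.0


# Hypothetical replicate peak areas for one analyte at the same nominal concentration
neat = [100000, 101000, 99000]       # standard in neat solvent
post_spike = [72000, 71500, 72500]   # blank matrix extract spiked after extraction

me = matrix_effect_percent(neat, post_spike)
print(f"Matrix effect: {me:.1f}%")
```

Repeating the calculation after improving cleanup or diluting the extract shows whether the corrective action actually reduced suppression.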

Guide 3: Long GC-MS Analysis Cycles

Problem: Lengthy run times and slow method development hinder high-throughput screening of controlled substances.

Workflow for Faster GC-MS: The following diagram illustrates an optimized workflow to accelerate GC-MS analysis and method development.

Experimental Protocol for Fast GC-MS:

- Sample Prep: Develop miniaturized techniques to reduce processing time [29].

- Carrier Gas: Use hydrogen as a carrier gas for faster optimal linear velocities compared to helium [29].

- Method Parameters: Implement shorter, narrower-bore columns and faster temperature ramps.

- Data Analysis: Use software tools for automated deconvolution of overlapping spectra and AI-assisted compound identification to minimize manual review time [29].
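Faster temperature ramps shorten runs in a predictable way: each ramp segment lasts (final temperature - starting temperature) / rate, plus any holds. The sketch below totals an oven program so that alternative programs can be compared before testing them on the instrument. Both programs and the function name are hypothetical illustrations, not the conditions used in [16] or [29], and the total ignores oven cool-down and re-equilibration, which also count toward cycle time.

```python
def oven_program_minutes(initial_temp_c, initial_hold_min, ramps):
    """Total oven program time in minutes.

    ramps: sequence of (rate_c_per_min, final_temp_c, hold_min) tuples, applied in order.
    Cool-down and re-equilibration time are not included.
    """
    total = initial_hold_min
    current = initial_temp_c
    for rate, final_temp, hold in ramps:
        total += (final_temp - current) / rate + hold
        current = final_temp
    return total


# Hypothetical programs for comparison
conventional = oven_program_minutes(80, 1.0, [(15, 300, 5.0)])
fast = oven_program_minutes(80, 0.5, [(50, 300, 2.0)])
print(f"Conventional: {conventional:.1f} min, fast: {fast:.1f} min")
```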

Essential Materials and Reagents

The table below details key reagents and materials crucial for developing robust, high-throughput chromatographic methods.

| Item | Function & Importance |
|---|---|
| Ultra-Pure Solvents & MS-Grade Additives | Reduces background noise and ion source contamination; essential for sensitive and robust LC-MS operation [27]. |
| Modern Stationary Phases (e.g., C18, Charged Surface) | Provides improved peak shape, stability, and selectivity for "sticky" compounds like biopharmaceuticals or complex natural products [26]. |
| Alternative Carrier Gases (e.g., Hydrogen for GC) | Offers a sustainable and often more efficient alternative to helium, improving throughput and mitigating supply chain issues [29]. |
| Quality SPE Sorbents | Critical for efficient sample clean-up; removes matrix interferents that cause ion suppression/enhancement in MS detection [27]. |
| AI-Supported Spectral Databases | Increases confidence and speed in unknown compound identification by comparing against a large, curated library of spectra [29]. |

Integrating Advanced Methods to Reduce Forensic Backlogs

Forensic laboratories are dynamic systems where inputs (case submissions) must be balanced with processing capacity (analytical throughput) to prevent backlog hysteresis, where delays become self-reinforcing [10]. The high-throughput strategies detailed in this guide directly increase laboratory capacity.
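The balance between submissions and capacity can be illustrated with a simple monthly queue model: next month's backlog equals this month's backlog plus new submissions minus cases completed. This is a deliberately simplified assumption; it does not model the self-reinforcing effects behind hysteresis, and the function name and all volumes are hypothetical. It still shows why a small, persistent shortfall in capacity produces steady backlog growth.

```python
def simulate_backlog(initial_backlog, submissions, capacity):
    """Month-by-month backlog: backlog + submissions - completed, never below zero.

    submissions and capacity are per-month sequences of equal length.
    """
    backlog = initial_backlog
    history = []
    for arrivals, completed in zip(submissions, capacity):
        backlog = max(0, backlog + arrivals - completed)
        history.append(backlog)
    return history


months = 6
# Hypothetical: 2,500 submissions/month against capacity of 2,400 vs. 2,600 cases/month
growing = simulate_backlog(500, [2500] * months, [2400] * months)
shrinking = simulate_backlog(500, [2500] * months, [2600] * months)
print(growing)    # -> [600, 700, 800, 900, 1000, 1100]
print(shrinking)  # -> [400, 300, 200, 100, 0, 0]
```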

Systematic Workflow for Backlog Reduction: Implementing a structured approach from sample intake to reporting is key to managing forensic backlogs.

- Policy-Driven Sample Triage: Forensic laboratories can implement efficient casework policies, such as testing the three items that represent the highest potential charges in a case, rather than every submitted sample. This directly reduces the analytical workload without compromising judicial outcomes [15].

- Adoption of High-Throughput Technologies: As shown in the troubleshooting guides, leveraging faster GC-MS and LC-MS systems, automated software, and streamlined sample preparation cuts down the time required per analysis [29] [26].

- Continuous Improvement and Stakeholder Communication: Maintaining open communication with stakeholders (e.g., law enforcement, prosecutors) ensures laboratory services meet actual needs and helps eliminate "artificial backlogs" from unnecessary analyses [15] [10].
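A policy such as testing only the three items that support the highest potential charges can be applied consistently at intake. The sketch below ranks items by a numeric charge-severity score and selects the top three. The scoring scheme, the `EvidenceItem` structure, and the example items are all hypothetical; in practice the ranking would come from the laboratory's written policy and consultation with prosecutors.

```python
import heapq
from dataclasses import dataclass


@dataclass
class EvidenceItem:
    item_id: str
    description: str
    charge_severity: int  # hypothetical score: higher means a more serious potential charge


def select_for_testing(items, max_items=3):
    """Return up to max_items items with the highest charge-severity scores, highest first."""
    return heapq.nlargest(max_items, items, key=lambda item: item.charge_severity)


case_items = [
    EvidenceItem("A", "powder, suspected fentanyl", 9),
    EvidenceItem("B", "powder, suspected cocaine", 7),
    EvidenceItem("C", "plant material", 2),
    EvidenceItem("D", "crystals, suspected methamphetamine", 8),
    EvidenceItem("E", "residue on digital scale", 1),
]
selected = select_for_testing(case_items)
print([item.item_id for item in selected])  # -> ['A', 'D', 'B']
```

Items not selected stay in storage and can still be tested if the case requires it later.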

Leveraging Chemometrics and Machine Learning for Automated Data Interpretation

Frequently Asked Questions (FAQs)

FAQ 1: What are the most suitable machine learning algorithms for analyzing non-linear spectroscopic data from complex forensic mixtures?

For non-linear spectroscopic data (e.g., from NIR, IR, Raman), traditional linear methods like PLS may be insufficient. Instead, the following algorithms are recommended due to their ability to model complex, non-linear relationships [30]:

- Support Vector Machine (SVM) with non-linear kernels: Uses kernel functions (e.g., Radial Basis Function) to map data into higher-dimensional spaces, enabling robust classification and quantification even with noisy, overlapping spectral data [30].

- Random Forest (RF): An ensemble method that constructs multiple decision trees, offering strong generalization, reduced overfitting, and robustness against spectral noise and collinearity. It also provides feature importance rankings [30].

- Extreme Gradient Boosting (XGBoost): An advanced boosting algorithm that builds trees sequentially to correct errors, offering high computational efficiency and often state-of-the-art predictive accuracy for complex, nonlinear relationships [30].

- Deep Neural Networks (DNNs): With many hidden layers, DNNs can automatically extract hierarchical features from raw or minimally preprocessed data, excelling in pattern recognition for large spectral datasets [30].
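A practical way to choose among these algorithms is to compare cross-validated accuracy on the same preprocessed data. The scikit-learn sketch below does this on synthetic data (`make_classification`), which stands in for real forensic spectra. XGBoost is a separate package, so scikit-learn's `GradientBoostingClassifier` is swapped in as a boosted-tree stand-in; the hyperparameters are illustrative, not tuned.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import GradientBoostingClassifier, RandomForestClassifier
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

# Synthetic stand-in for preprocessed spectra: 300 samples x 200 variables, 3 classes
X, y = make_classification(
    n_samples=300, n_features=200, n_informative=20, n_redundant=40,
    n_classes=3, random_state=0,
)

models = {
    # Scaling sits inside the pipeline so it is refit on each training fold
    "SVM (RBF kernel)": make_pipeline(StandardScaler(), SVC(kernel="rbf", C=10, gamma="scale")),
    "Random Forest": RandomForestClassifier(n_estimators=200, random_state=0),
    "Gradient boosting (XGBoost stand-in)": GradientBoostingClassifier(random_state=0),
}

cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
scores = {}
for name, model in models.items():
    fold_scores = cross_val_score(model, X, y, cv=cv, scoring="accuracy")
    scores[name] = fold_scores.mean()
    print(f"{name}: {fold_scores.mean():.3f} +/- {fold_scores.std():.3f}")
```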

FAQ 2: How can we address the "black box" nature of complex AI models to ensure results are interpretable and defensible in a forensic context?

The interpretability of AI models is a critical challenge. Solutions involve using Explainable AI (XAI) frameworks [31] to make model decisions transparent [30]:

- Leverage Model-Specific Interpretability Tools: For models like Random Forest, directly use the built-in feature importance rankings to identify which wavelengths contribute most to predictions [30].

- Apply Post-Hoc Explanation Methods: Use techniques like SHAP (SHapley Additive exPlanations) or Grad-CAM to generate sensitivity maps, which help identify the specific spectral regions that most influenced a model's output for a given sample [30]. This preserves chemical interpretability, a central goal for forensic scientists [30].
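For Random Forest, the built-in importance ranking can be mapped back onto the spectral axis to show which regions drive predictions. The sketch below builds synthetic two-class "spectra" in which only class 1 carries a band near 1000 cm⁻¹, then lists the wavenumbers with the highest impurity-based importance. The data and axis are hypothetical; SHAP and Grad-CAM require separate libraries and are not shown. Impurity-based importance is shared among correlated neighboring wavelengths, so it is best read as highlighting regions rather than single channels.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier

rng = np.random.default_rng(0)
wavenumbers = np.linspace(400, 1800, 141)  # hypothetical axis, 10 cm^-1 steps

# Synthetic data: noise everywhere, plus a Gaussian band near 1000 cm^-1 in class 1 only
n_samples = 200
labels = rng.integers(0, 2, size=n_samples)
band = np.exp(-(((wavenumbers - 1000) / 15) ** 2))
spectra = rng.normal(0.0, 0.05, size=(n_samples, wavenumbers.size)) + np.outer(labels, band)

model = RandomForestClassifier(n_estimators=200, random_state=0).fit(spectra, labels)

# Rank spectral variables by impurity-based feature importance (highest first)
order = np.argsort(model.feature_importances_)[::-1]
top_regions = wavenumbers[order[:5]]
print("Most influential wavenumbers (cm^-1):", top_regions)
```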

FAQ 3: Our lab faces a significant data backlog. What funding opportunities exist to enhance capacity through automation and advanced data analysis techniques?

The DNA Capacity Enhancement for Backlog Reduction (CEBR) Program is a key federal funding source administered by the Bureau of Justice Assistance (BJA) [2].

- Purpose: It provides critical funding to publicly funded forensic laboratories to process, analyze, and interpret forensic DNA evidence more effectively [2]. This includes:
  - Reducing backlog cases by increasing testing capacity [2].
  - Supporting personnel hiring and training [2].
  - Upgrading technology and equipment, including the adoption of automation and advanced testing techniques to streamline workflows [2].
  - Enhancing database capabilities for CODIS (Combined DNA Index System) [2].

- FY2025 Funding: The application deadlines for FY2025 CEBR funding are October 22, 2025 (Grants.gov) and October 29, 2025 (JustGrants) [2].

Troubleshooting Guides

Issue 1: Poor Model Performance and Generalization on New Spectral Data

This is often caused by overfitting, where a model learns noise and specific features of the training data instead of the underlying pattern.

| Probable Cause | Diagnostic Steps | Corrective Actions |
|---|---|---|
| Insufficient Training Data | Check dataset size; perform learning curve analysis. | Use generative AI to create synthetic spectral data to balance and augment datasets [30]; collect more experimental data. |
| Inadequate Data Preprocessing | Visually inspect raw spectra for baseline drift, scatter effects, or noise. | Apply standard preprocessing: Standard Normal Variate (SNV), multiplicative scatter correction (MSC), Savitzky-Golay derivatives, or normalization [30]. |
| Suboptimal Model Hyperparameters | Use validation set performance to assess model tuning. | Perform systematic hyperparameter tuning using grid or random search [31]. |
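Grid search can be combined with cross-validation so that tuning never touches the held-out test data. The scikit-learn sketch below tunes an RBF-kernel SVM inside a pipeline, so scaling is refit within each fold and does not leak information from validation samples. The synthetic data and the grid values are illustrative assumptions, not recommended settings.

```python
from sklearn.datasets import make_classification
from sklearn.model_selection import GridSearchCV, train_test_split
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

# Synthetic stand-in data; replace with preprocessed spectra and class labels
X, y = make_classification(n_samples=240, n_features=150, n_informative=15, random_state=1)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=1, stratify=y
)

# Scaling lives inside the pipeline, so it is refit on each training fold
pipe = Pipeline([("scale", StandardScaler()), ("svm", SVC(kernel="rbf"))])
param_grid = {"svm__C": [0.1, 1, 10, 100], "svm__gamma": ["scale", 0.001, 0.01]}

search = GridSearchCV(pipe, param_grid, cv=5, scoring="accuracy")
search.fit(X_train, y_train)
test_accuracy = search.score(X_test, y_test)

print("Best parameters:", search.best_params_)
print(f"Cross-validated accuracy: {search.best_score_:.3f}")
print(f"Held-out test accuracy: {test_accuracy:.3f}")
```

A large gap between cross-validated and held-out accuracy is itself a sign of overfitting worth investigating.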

Issue 2: Failure to Integrate or Fuse Data from Multiple Analytical Techniques

Integrating data from different sources (e.g., spectroscopy and chromatography) is complex but can provide a more comprehensive chemical profile.

| Probable Cause | Diagnostic Steps | Corrective Actions |
|---|---|---|
| Data Scale and Type Mismatch | Review the scale, units, and dimensionality of each data block. | Apply data fusion methods, which advanced AI frameworks can facilitate [30]; normalize and scale each block before fusion. |
| Lack of a Unified Model Architecture | Check whether separate models are built for each data type. | Implement AI models capable of handling multi-modal data. Deep learning approaches, such as multi-input neural networks, are particularly well suited to this task [30] [31]. |
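One common form of pre-fusion scaling is to autoscale each variable and then divide each block by the square root of its number of variables, so that a 500-channel spectrum does not outweigh a 40-variable chromatographic profile simply because it is larger. The NumPy sketch below implements that low-level (concatenation) fusion on random placeholder blocks. Square-root block weighting is one convention among several, the function names are hypothetical, and in practice the scaling statistics should be computed on training data and reused for new samples.

```python
import numpy as np


def autoscale(block):
    """Center each column and scale it to unit variance (sample standard deviation)."""
    std = block.std(axis=0, ddof=1)
    std[std == 0] = 1.0  # leave constant columns unscaled
    return (block - block.mean(axis=0)) / std


def block_scale(block):
    """Divide by sqrt(number of variables) so the block's total variance equals 1."""
    return block / np.sqrt(block.shape[1])


def low_level_fusion(*blocks):
    """Autoscale and block-scale each data block, then concatenate along the variable axis."""
    return np.hstack([block_scale(autoscale(b)) for b in blocks])


rng = np.random.default_rng(0)
raman = rng.normal(size=(30, 500)) * 1000.0  # placeholder: 30 samples x 500 Raman channels
gcms = rng.normal(size=(30, 40))             # placeholder: 30 samples x 40 GC-MS peak areas
fused = low_level_fusion(raman, gcms)
print(fused.shape)  # -> (30, 540)
```

The fused matrix can then be passed to PCA, PLS, or any of the classifiers discussed above.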

Experimental Protocols for Forensic Backlog Reduction

Protocol 1: Rapid Screening of Forensic Samples Using Spectroscopy and Chemometrics

Objective: To quickly classify unknown forensic samples (e.g., drug seizures, trace evidence) using Raman spectroscopy and a pre-trained machine learning model to prioritize cases for further analysis.

Materials and Reagents:

- Raman spectrometer

- Sample slides or vials

- Standard reference materials for model calibration

- Pre-processing software (e.g., Python with scikit-learn, MATLAB)

Step-by-Step Methodology:

- Sample Preparation: Present the unknown forensic sample to the spectrometer according to standard operating procedures.

- Spectral Acquisition: Collect Raman spectra from multiple points on the sample to account for heterogeneity.

- Data Preprocessing: Process all acquired spectra:
  - Apply Savitzky-Golay smoothing to reduce high-frequency noise.
  - Perform baseline correction to remove fluorescence effects.
  - Use Standard Normal Variate (SNV) scaling to minimize light scatter effects [30].

- Feature Extraction (Optional): For traditional ML models, use Principal Component Analysis (PCA) to reduce data dimensionality and highlight major sources of variance [30]. If using Deep Learning, this step may be automated by the network.

- Classification: Input the preprocessed spectrum (or its features) into a pre-trained classifier. A Support Vector Machine (SVM) or Random Forest model is often effective for this task [30].

- Interpretation: Review the model's predicted class (e.g., "Cocaine," "Fentanyl," "Inconclusive") and, if available, the confidence score or explanation map from an XAI tool to support the finding.
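The preprocessing and classification steps above can be chained so that identical transformations are applied to every spectrum. The sketch below uses SciPy's `savgol_filter` for smoothing, a simple iterative polynomial fit as the baseline correction (one option among several; asymmetric least squares is another), SNV scaling, PCA, and an RBF-kernel SVM from scikit-learn. The helper function names, synthetic spectra, band positions, and parameter values are hypothetical stand-ins for a validated model.

```python
import numpy as np
from scipy.signal import savgol_filter
from sklearn.decomposition import PCA
from sklearn.model_selection import train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import FunctionTransformer
from sklearn.svm import SVC


def polynomial_baseline(spectrum, order=3, n_iter=50):
    """Iterative polynomial baseline: refit after clipping points that lie above the fit."""
    x = np.linspace(0.0, 1.0, spectrum.size)
    work = spectrum.copy()
    for _ in range(n_iter):
        baseline = np.polyval(np.polyfit(x, work, order), x)
        work = np.minimum(work, baseline)
    return baseline


def preprocess(spectra):
    """Savitzky-Golay smoothing, then baseline subtraction, then SNV, row by row."""
    smoothed = savgol_filter(spectra, window_length=11, polyorder=3, axis=1)
    corrected = np.array([s - polynomial_baseline(s) for s in smoothed])
    mean = corrected.mean(axis=1, keepdims=True)
    std = corrected.std(axis=1, keepdims=True)
    return (corrected - mean) / std


# Synthetic stand-in data: two classes with bands at different Raman shifts
rng = np.random.default_rng(0)
shift = np.linspace(200, 1800, 400)  # hypothetical Raman shift axis (cm^-1)


def synthetic_spectrum(band_center):
    band = np.exp(-(((shift - band_center) / 12) ** 2))
    fluorescence = rng.uniform(0.5, 2.0) * np.linspace(0.0, 1.0, shift.size) ** 2
    return band + fluorescence + rng.normal(0.0, 0.02, shift.size)


labels = np.repeat([0, 1], 60)
spectra = np.array([synthetic_spectrum(1000 if lab == 0 else 1200) for lab in labels])

X_train, X_test, y_train, y_test = train_test_split(
    spectra, labels, test_size=0.3, random_state=0, stratify=labels
)
model = make_pipeline(FunctionTransformer(preprocess), PCA(n_components=10), SVC(kernel="rbf"))
model.fit(X_train, y_train)
accuracy = model.score(X_test, y_test)
print(f"Held-out accuracy: {accuracy:.2f}")
```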

Protocol 2: Quantitative Analysis of a Target Analyte in a Complex Mixture

Objective: To accurately determine the concentration of an active pharmaceutical ingredient (API) in a seized drug sample using NIR spectroscopy and a multivariate calibration model.

Materials and Reagents:

- NIR spectrometer

- A set of calibration standards with known concentrations of the target API

- Chemometric software for model development (e.g., PLS_Toolbox, Python)

Step-by-Step Methodology:

- Calibration Set Design: Prepare a representative set of standards that cover the expected concentration range and matrix variations of casework samples.

- Reference Analysis: Determine the "true" concentration of the API in each standard using a primary reference method (e.g., GC-MS).

- Spectral Acquisition: Collect NIR spectra for all calibration standards.

- Model Training:
  - Preprocess the calibration spectra (e.g., SNV, derivatives).
  - Use the preprocessed spectra and reference concentrations to build a Partial Least Squares (PLS) regression model [30]. For highly non-linear data, consider XGBoost or a shallow Neural Network [30].
  - Validate the model using a separate test set or cross-validation to ensure robustness.

- Prediction on Unknowns: Acquire and preprocess the spectrum of the unknown casework sample. Input it into the trained PLS (or other) model to obtain a concentration prediction.

- Reporting: Report the predicted concentration along with the model's estimate of uncertainty (e.g., prediction interval).

Data Presentation

Table 1: Comparison of ML Algorithms for Spectral Data Analysis [30]

| Algorithm | Key Strengths | Typical Forensic Applications | Considerations for Backlog Reduction |
|---|---|---|---|
| PLS Regression | Robust for linear data, handles collinearity, well-understood. | Quantitative analysis of drug purity, alcohol concentration. | Fast and reliable for well-characterized, linear systems. |
| Support Vector Machine (SVM) | Effective in high-dimensional spaces, good for non-linear data with kernels. | Drug classification, fiber identification, explosive residue detection. | Performs well with limited samples; parameter tuning is key. |
| Random Forest (RF) | Reduces overfitting, provides feature importance, handles non-linearity. | Sample authentication, origin tracing, complex mixture analysis. | Robust against noise; interpretable via feature rankings. |
| XGBoost | High predictive accuracy, efficient, handles complex non-linearities. | Predicting drug properties, complex sample classification. | Often top performance; requires careful tuning and more data. |
| Deep Neural Networks (DNN) | Automates feature extraction, superior for very complex patterns and large datasets. | Hyperspectral image analysis, advanced pattern recognition. | Requires large datasets and computational resources; use XAI. |

Table 2: Common Data Issues and AI-Driven Solutions in Forensic Chemometrics [30] [31]

| Data Challenge | Impact on Casework | AI/Chemometric Solution |
|---|---|---|
| High Dimensionality (e.g., thousands of wavelengths) | Complex, slow analysis; "curse of dimensionality." | PCA for exploratory analysis and data compression. PLS for regression with correlated variables [30]. |
| Spectral Non-Linearity | Poor accuracy with linear models. | SVM with non-linear kernels, Random Forest, XGBoost, or Neural Networks [30]. |
| Small or Unbalanced Datasets | Models fail to generalize; rare classes are missed. | Generative AI to create synthetic spectra for data augmentation [30]. |
| Model Interpretability ("Black Box") | Results may be difficult to defend in court. | Explainable AI (XAI) methods like SHAP and LIME to identify decisive spectral regions [30] [31]. |

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 3: Key Research Reagent Solutions for Chemometric Analysis

| Item | Function in Chemometric Workflow |
|---|---|
| Standard Reference Materials | Certified materials used to calibrate instruments and validate machine learning models, ensuring analytical accuracy and traceability. |
| Chemometric Software Packages (e.g., PLS_Toolbox, The Unscrambler) | Specialized software providing a suite of algorithms (PCA, PLS, PCR) for multivariate calibration and classification of spectral data. |
| Programming Libraries (e.g., Scikit-learn, TensorFlow, PyTorch) | Open-source libraries in Python/R that provide tools for data preprocessing, machine learning (SVM, RF, XGBoost), and deep learning model development [30]. |
| Hyperspectral Imaging Systems | Advanced instruments that collect spatial and spectral data simultaneously, enabling detailed analysis of heterogeneous forensic samples via AI. |
| Validated Synthetic Data | Artificially generated spectral data created by Generative AI models, used to augment training datasets and improve model robustness where real data is scarce [30]. |

Workflow Visualization

Figure: Automated Data Interpretation Workflow for Forensic Backlog Reduction

Adopting Green Analytical Chemistry for Faster, Solvent-Free Sample Prep

Forensic laboratories worldwide face a critical challenge: persistent casework backlogs that delay justice, impede investigations, and allow offenders to remain at large [11] [10]. These backlogs represent a dynamic systems problem, often exacerbated by traditional analytical methods that are time-consuming, resource-intensive, and environmentally harmful [32]. Green Analytical Chemistry (GAC) offers a strategic response that aims not only to reduce environmental impact but also to increase processing efficiency. By minimizing or eliminating toxic solvents, reducing procedural steps, and adopting miniaturized, solvent-free techniques, forensic laboratories can accelerate sample preparation, shorten turnaround times, and manage their caseloads more effectively [32] [33]. This section provides practical protocols and troubleshooting guidance for implementing these approaches in forensic chemistry.

Core Principles of Green Analytical Chemistry

Green Analytical Chemistry applies the broader concepts of green chemistry specifically to analytical practices. The fundamental goal is to make the entire analytical workflow—from sample preparation to final analysis—as environmentally benign as possible while maintaining, or even enhancing, analytical performance [32] [34].

Key principles driving GAC implementation include:

- Source Reduction: Preventing waste generation by using smaller sample volumes, reducing reagents, and eliminating unnecessary procedural steps [32].

- Use of Safer Solvents: Replacing hazardous solvents like chloroform and benzene with less hazardous alternatives such as water, ethanol and other bio-based solvents, or low-volatility ionic liquids [32] [35].

- Energy Efficiency: Minimizing energy consumption through equipment optimization and ambient-temperature procedures [32].

- Miniaturization: Scaling down analyses to dramatically reduce consumption of samples and reagents [32].

- Real-time Analysis: Moving analysis to the field to eliminate transportation, storage, and complex preservation requirements [32].

The table below contrasts traditional methods with green analytical approaches:

| Principle | Traditional Method | Green Analytical Method |
|---|---|---|
| Sample Size | Milliliters or more | Microliters to Nanoliters |
| Solvent Choice | Hazardous solvents (e.g., chloroform, benzene) | Safer alternatives (e.g., water, ethanol, ionic liquids) |
| Waste Generation | High volume of hazardous waste | Minimal waste, often non-hazardous |
| Energy Use | High (e.g., heating, vacuum pumps) | Low (e.g., room temperature methods) |
| Safety Profile | High-risk due to toxic chemicals | Low-risk, improved lab safety [32] |

Green Sample Preparation Methodologies: Detailed Experimental Protocols

Solid-Phase Microextraction (SPME)

SPME is a solvent-free extraction technique that integrates sampling, extraction, concentration, and sample introduction into a single step [32] [34].

Protocol for Analyzing Volatile Compounds in Seized Drug Evidence:

Equipment Preparation:

- SPME fiber assembly (select the fiber coating based on target analytes: polydimethylsiloxane (PDMS) for non-polar and polyacrylate (PA) for polar compounds)

- Gas Chromatograph-Mass Spectrometer (GC-MS) system

- Sample vials with septa

Sample Preparation:

- Place a small, representative portion of solid evidence (≤10 mg) in a 10 mL headspace vial.

- For liquid samples, use a 1-2 mL aliquot.

- Add an internal standard if required for quantitative analysis.

- Seal the vial immediately with a PTFE/silicone septum cap.

Extraction Process:

- Condition the SPME fiber according to the manufacturer's specifications (typically 250°C for 30 minutes).

- Heat the sample vial to the appropriate temperature (60-80°C) using a heating block or oven.

- Pierce the septum with the SPME needle, extend the fiber, and expose it to the sample headspace for 10-30 minutes.

- Retract the fiber into the needle and withdraw it from the vial.

Sample Introduction:

- Insert the SPME needle into the GC injection port (220-250°C) and extend the fiber.

- Desorb analytes for 1-5 minutes in splitless mode, starting the GC run when desorption begins.

- Retract the fiber and withdraw it from the injector.
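The SPME settings above can be captured as a small method record and checked against the stated ranges before a batch is run. This is a hypothetical sketch: the class, field, and function names are illustrative, and the ranges are copied from the protocol text, so a laboratory would substitute the values from its own validated method.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple

# Acceptable ranges transcribed from the SPME protocol (illustrative, not validated limits).
SPME_RANGES: Dict[str, Tuple[float, float]] = {
    "incubation_temp_c": (60, 80),  # heating block / oven temperature
    "exposure_min": (10, 30),       # headspace exposure time
    "inlet_temp_c": (220, 250),     # GC injection port temperature
    "desorption_min": (1, 5),       # splitless desorption time
}


@dataclass
class SpmeMethod:
    analyte_polarity: str  # "polar" or "non-polar"
    incubation_temp_c: float
    exposure_min: float
    inlet_temp_c: float
    desorption_min: float


def suggested_fiber(polarity: str) -> str:
    """Coating rule from the protocol: PDMS for non-polar, PA for polar analytes."""
    coatings = {"non-polar": "PDMS", "polar": "PA"}
    if polarity not in coatings:
        raise ValueError(f"unknown polarity: {polarity!r}")
    return coatings[polarity]


def out_of_range_settings(method: SpmeMethod) -> List[str]:
    """Names of settings that fall outside the protocol's stated ranges."""
    problems = []
    for name, (low, high) in SPME_RANGES.items():
        if not low <= getattr(method, name) <= high:
            problems.append(name)
    return problems
```

For example, `SpmeMethod("non-polar", 70, 20, 270, 3)` would be flagged for `inlet_temp_c`, and `suggested_fiber("non-polar")` returns `"PDMS"`. Keeping the ranges in one table means a revised SOP changes a single place in the code.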

Method Validation:

QuEChERS (Quick, Easy, Cheap, Effective, Rugged, and Safe)

QuEChERS uses much smaller solvent volumes than traditional extraction procedures, making it well suited to forensic screening of complex matrices [33].

Protocol for Seized Drug Analysis in Complex Matrices:

Equipment and Reagents:

- Centrifuge tubes (50 mL)

- Acetonitrile (ACN) - HPLC grade

- QuEChERS extraction salts: 4 g MgSO₄, 1 g NaCl, 1 g trisodium citrate dihydrate, 0.5 g disodium hydrogen citrate sesquihydrate

- Dispersive SPE (d-SPE) kits for clean-up: 150 mg MgSO₄ and 25 mg primary secondary amine (PSA) sorbent per mL of extract

Extraction Procedure:

- Homogenize a representative sample and weigh 2 g into a 50 mL centrifuge tube.

- Add 10 mL acetonitrile and shake vigorously for 1 minute.

- Add the extraction salt mixture and shake immediately for 1 minute to prevent salt clumping.

- Centrifuge at ≥3000 RCF for 5 minutes.
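Protocol steps specify centrifugation as relative centrifugal force (RCF), whereas many benchtop centrifuges are set in rpm. The conversion depends on the rotor radius. The sketch below uses the standard relation RCF = 1.118 × 10⁻⁵ × r × rpm², with r in centimetres. The function names are hypothetical, and the radius should be taken from the rotor's documentation rather than the illustrative value used here.

```python
import math

RCF_CONSTANT = 1.118e-5  # from RCF = 1.118e-5 * r_cm * rpm**2


def rcf_at_rpm(rpm: float, rotor_radius_cm: float) -> float:
    """Relative centrifugal force (x g) produced at a given speed and radius."""
    return RCF_CONSTANT * rotor_radius_cm * rpm ** 2


def rpm_for_rcf(rcf: float, rotor_radius_cm: float) -> float:
    """Rotor speed (rpm) needed to reach a target RCF at the given radius."""
    if rcf <= 0 or rotor_radius_cm <= 0:
        raise ValueError("RCF and rotor radius must both be positive")
    return math.sqrt(rcf / (RCF_CONSTANT * rotor_radius_cm))
```

With an assumed 10 cm rotor radius, `rpm_for_rcf(3000, 10)` gives roughly 5,180 rpm, so the ≥3000 RCF steps would need at least that speed on such a rotor.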

Clean-up Process:

- Transfer 1 mL of the upper ACN layer to a d-SPE tube containing 150 mg MgSO₄ and 25 mg PSA sorbent.

- Shake for 30 seconds to disperse the sorbent through the extract.

- Centrifuge at ≥3000 RCF for 5 minutes.

- Transfer the supernatant to an autosampler vial for analysis.
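The d-SPE loadings are given per millilitre of extract, so they scale linearly if a laboratory transfers a different volume of the acetonitrile layer. A minimal sketch of that arithmetic follows. The names are hypothetical, and the per-mL ratios are taken from the reagent list.

```python
from typing import Dict

# Per-mL d-SPE loadings stated in the QuEChERS reagent list.
MGSO4_MG_PER_ML = 150
PSA_MG_PER_ML = 25


def dspe_sorbent_mg(extract_ml: float) -> Dict[str, float]:
    """Sorbent masses (mg) needed to clean up `extract_ml` of ACN extract."""
    if extract_ml <= 0:
        raise ValueError("extract volume must be positive")
    return {
        "MgSO4_mg": MGSO4_MG_PER_ML * extract_ml,
        "PSA_mg": PSA_MG_PER_ML * extract_ml,
    }
```

`dspe_sorbent_mg(1)` reproduces the 150 mg MgSO₄ and 25 mg PSA tube described above, and `dspe_sorbent_mg(6)` gives 900 mg MgSO₄ and 150 mg PSA for a 6 mL aliquot.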

Instrumental Analysis:

- Analyze using GC-MS or LC-MS/MS with appropriate calibration standards.

- Monitor for matrix effects and implement matrix-matched calibration if necessary [33].
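Matrix effects are commonly quantified by comparing the slope of a calibration curve prepared in blank matrix extract with the slope of one prepared in pure solvent, as ME% = (slope_matrix / slope_solvent - 1) × 100. Negative values indicate signal suppression, and positive values indicate enhancement. The sketch below assumes both curves share the same concentration levels. Function names are hypothetical, and the acceptance threshold that triggers matrix-matched calibration would be set during the laboratory's own validation.

```python
from typing import Sequence


def ols_slope(x: Sequence[float], y: Sequence[float]) -> float:
    """Ordinary least-squares slope of y regressed on x."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    return sxy / sxx


def matrix_effect_percent(conc: Sequence[float],
                          solvent_response: Sequence[float],
                          matrix_response: Sequence[float]) -> float:
    """ME% = (matrix slope / solvent slope - 1) * 100."""
    return (ols_slope(conc, matrix_response) / ols_slope(conc, solvent_response) - 1) * 100
```

Suppose the responses are 2, 4, 6, 8 in solvent and 1.6, 3.2, 4.8, 6.4 in matrix at concentrations 1 to 4. In that case, the function returns about -20, which corresponds to roughly 20% signal suppression.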

Supercritical Fluid Extraction (SFE)

SFE uses supercritical CO₂ as the extraction fluid. This largely replaces organic solvents, since only small volumes of modifier and rinse solvent are needed, while still providing efficient extraction [35].

Protocol for Natural Product Analysis in Forensic Botany Cases:

Equipment Setup:

- SFE system with CO₂ pump, co-solvent pump, extraction vessel, and collection chamber

- High-purity CO₂ source

- Modifier solvents (e.g., ethanol)

Extraction Parameters:

- Place 1-5 g of dried, ground plant material in the extraction vessel.

- Set the temperature to 40-60°C and the pressure to 200-400 bar.

- Set the CO₂ flow rate to 1-3 mL/min.

- For polar compounds, add 5-15% ethanol as a modifier.

- Perform dynamic extraction for 15-30 minutes.
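Modifier percentages are easier to act on once converted to a co-solvent pump flow and a total solvent volume per extraction. The following is a minimal sketch. It assumes the percentage is defined as a volume fraction of the combined CO₂ and modifier flow; instruments differ, so check how your system defines it. The function names are hypothetical.

```python
def modifier_flow_ml_min(co2_flow_ml_min: float, modifier_percent: float) -> float:
    """Co-solvent flow that makes up `modifier_percent` (v/v) of the combined flow.

    Assumes modifier / (CO2 + modifier) = modifier_percent / 100.
    """
    if co2_flow_ml_min <= 0:
        raise ValueError("CO2 flow must be positive")
    if not 0 <= modifier_percent < 100:
        raise ValueError("modifier_percent must be in [0, 100)")
    fraction = modifier_percent / 100
    return co2_flow_ml_min * fraction / (1 - fraction)


def modifier_volume_ml(co2_flow_ml_min: float, modifier_percent: float, minutes: float) -> float:
    """Total modifier consumed over a dynamic extraction lasting `minutes`."""
    return modifier_flow_ml_min(co2_flow_ml_min, modifier_percent) * minutes
```

Under this assumption, 2 mL/min CO₂ with 10% ethanol needs about 0.22 mL/min of ethanol, or roughly 6.7 mL over a 30-minute extraction. This is a useful figure when comparing solvent use against liquid-solvent extraction.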

Sample Collection:

- Depressurize the extract into a collection vessel cooled to 4°C.

- Rinse the collection vessel with an appropriate solvent and make up to volume.

- Analyze directly using chromatographic techniques [35].

The Scientist's Toolkit: Essential Reagents and Materials

| Reagent/Material | Function in Green Sample Prep | Forensic Application Examples |
|---|---|---|
| SPME Fibers | Solventless extraction and concentration of analytes | Drug analysis, fire debris, explosive residues |
| QuEChERS Kits | Rapid extraction and clean-up with minimal solvent | Seized drug screening, toxicology in complex matrices |
| Supercritical CO₂ | Non-toxic replacement for organic solvents | Cannabis analysis, herbal drug preparations |
| Ionic Liquids | Tunable, non-volatile solvents for extraction | Metal analysis, DNA extraction, explosive residues |
| Deep Eutectic Solvents | Inexpensive, often biodegradable solvent systems | Natural product extraction, pharmaceutical analysis |
| Bio-based Solvents | Renewable solvents from plant sources | General replacement for petroleum-based solvents [32] [33] [35] |

Figure: Workflow Visualization: Implementing Green Methods

Technical Support Center: Troubleshooting Guides and FAQs

Frequently Asked Questions

Q1: Are green chemistry methods as accurate and reliable as traditional techniques?

Yes, provided they are properly validated. Green analytical techniques have been shown to give results as accurate and reliable as those of traditional methods, often with added benefits such as greater speed and lower cost [32]. For forensic applications, every method must still undergo rigorous validation against established guidelines to support courtroom admissibility.

Q2: What is the easiest way to start transitioning our forensic lab to greener practices?

Begin with simple changes such as minimizing solvent use in routine procedures, exploring microscale techniques for common assays, and properly sorting and recycling lab waste [32]. Implementing QuEChERS for seized drug screening or SPME for volatile compound analysis is a good starting point that requires relatively modest equipment investment.

Q3: How do we validate new green methods to meet forensic standards?

Validation should follow established protocols (e.g., SWGDRUG recommendations) and assess parameters including precision, accuracy, limit of detection, limit of quantitation, selectivity, robustness, and linearity. Compare results from the green methods with those from validated traditional methods using certified reference materials and real case samples [36].
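Detection and quantitation limits can be estimated from a calibration line using the approach described in ICH Q2: LOD = 3.3σ/S and LOQ = 10σ/S. Here, S is the slope and σ is the residual standard deviation of the regression. This is only one accepted approach; signal-to-noise and blank-based estimates are alternatives. The sketch below uses hypothetical function names and illustrates the arithmetic alongside a simple %RSD for precision.

```python
import math
import statistics
from typing import Sequence, Tuple


def linear_fit(x: Sequence[float], y: Sequence[float]) -> Tuple[float, float]:
    """Least-squares slope and intercept."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((a - mean_x) ** 2 for a in x)
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def residual_sd(x: Sequence[float], y: Sequence[float], slope: float, intercept: float) -> float:
    """Residual standard deviation s_y/x, with n - 2 degrees of freedom."""
    ss = sum((b - (slope * a + intercept)) ** 2 for a, b in zip(x, y))
    return math.sqrt(ss / (len(x) - 2))


def lod_loq(x: Sequence[float], y: Sequence[float]) -> Tuple[float, float]:
    """(LOD, LOQ) in concentration units: 3.3*sigma/S and 10*sigma/S."""
    slope, intercept = linear_fit(x, y)
    sigma = residual_sd(x, y, slope, intercept)
    return 3.3 * sigma / slope, 10 * sigma / slope


def percent_rsd(replicates: Sequence[float]) -> float:
    """Relative standard deviation (%) of replicate results."""
    return statistics.stdev(replicates) / statistics.mean(replicates) * 100
```

For instance, a four-point calibration with responses 2.1, 3.9, 6.1, and 7.9 at concentrations 1 to 4 gives a slope of 1.96 and σ ≈ 0.126. That yields LOD ≈ 0.21 and LOQ ≈ 0.65 in the same concentration units.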

Q4: Can green methods truly help reduce our laboratory's backlog?

They can contribute. Efficiency-focused process changes have produced measurable gains elsewhere. The Palm Beach County Sheriff's Office implemented a biological screening laboratory using efficient methods and reduced its average turnaround time from 153 days to 80 days, a decrease of about 48%, while significantly reducing its backlog [37]. Comparable gains may be achievable in drug chemistry units through streamlined green approaches.

Q5: What are the cost implications of transitioning to green methodologies?

While some techniques require initial equipment investment, many green methods generate operational cost savings. These come from reduced solvent consumption, lower waste disposal and purchasing costs, and improved analyst efficiency [32] [37]. Over the long term, these savings typically outweigh initial setup costs.

Troubleshooting Common Issues

Problem: Poor Extraction Efficiency with SPME

- Possible Cause: Incorrect fiber coating selection for target analytes.

- Solution: Match fiber polarity to analyte polarity (PDMS for non-polar, PA for polar compounds). Adjust extraction time and temperature to optimize recovery.

Problem: Matrix Effects in QuEChERS

- Possible Cause: Incomplete clean-up of complex forensic samples.

- Solution: Optimize d-SPE sorbent combinations (e.g., add C18 for lipid removal or graphitized carbon black (GCB) for pigment removal). Use matrix-matched calibration standards to compensate for residual effects.

Problem: Inconsistent Recoveries with Supercritical Fluid Extraction

- Possible Cause: Inadequate modifier percentage for polar analytes.

- Solution: Systematically optimize modifier type (methanol, ethanol) and percentage (5-20%). Ensure proper moisture control in samples.

Problem: Method Validation Failures

- Possible Cause: Insufficient method optimization before validation.

- Solution: Use design of experiments (DOE) to identify critical method parameters and establish robust operating ranges before beginning formal validation.
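A full factorial design is the simplest DOE layout: every combination of the chosen factor levels is run, so main effects and interactions can be estimated before validation begins. The sketch below uses hypothetical names. It enumerates such a design for two SPME factors set at the ends of the protocol's ranges and randomizes the run order. With many factors, fractional factorial or response-surface designs from dedicated statistical software usually require fewer runs.

```python
import random
from itertools import product
from typing import Dict, List, Optional, Sequence


def full_factorial(factors: Dict[str, Sequence[float]]) -> List[Dict[str, float]]:
    """Every combination of factor levels (a full factorial design)."""
    names = list(factors)
    return [dict(zip(names, levels)) for levels in product(*(factors[n] for n in names))]


def randomized_run_order(runs: List[Dict[str, float]], seed: Optional[int] = None) -> List[Dict[str, float]]:
    """Shuffle runs so that drift in instrument response is not confounded with a factor."""
    order = list(runs)
    random.Random(seed).shuffle(order)
    return order
```

`full_factorial({"exposure_min": [10, 30], "incubation_temp_c": [60, 80]})` yields four runs, and adding a third two-level factor doubles that to eight.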

Quantitative Impact Assessment

Green chemistry methods can help address forensic backlogs by reducing processing times. The table below summarizes indicative efficiency gains from green approaches:

| Efficiency Metric | Traditional Methods | Green Methods | Improvement |
|---|---|---|---|
| Sample Preparation Time | 2-3 hours per sample | 15-30 minutes per sample | 75-92% reduction |
| Solvent Consumption | 50-250 mL per sample | 0-15 mL per sample | 70-100% reduction |
| Analyst Hands-on Time | 45-60 minutes per sample | 5-10 minutes per sample | 78-92% reduction |
| Waste Generation | 50-500 mL per sample | 0-30 mL per sample | 40-100% reduction |
| Total Turnaround Time | 153 days (average for some DNA cases) | 80 days (after implementing a biological screening laboratory) | 48% reduction [37] |
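The Improvement column follows directly from the stated ranges. The following is a minimal sketch of the arithmetic, with hypothetical function names. The worst case compares the smallest traditional value with the largest green value, and the best case does the reverse.

```python
from typing import Tuple


def percent_reduction(before: float, after: float) -> float:
    """Percentage decrease from `before` to `after`."""
    if before <= 0:
        raise ValueError("'before' must be positive")
    return (before - after) / before * 100


def reduction_range(before: Tuple[float, float], after: Tuple[float, float]) -> Tuple[float, float]:
    """(worst-case, best-case) % reduction for (low, high) ranges."""
    (before_low, before_high), (after_low, after_high) = before, after
    return percent_reduction(before_low, after_high), percent_reduction(before_high, after_low)
```

For sample preparation, expressing 2-3 hours as 120-180 minutes gives `reduction_range((120, 180), (15, 30))` of about (75.0, 91.7). The hands-on row, `reduction_range((45, 60), (5, 10))`, gives about (77.8, 91.7). For the turnaround figures, `percent_reduction(153, 80)` gives about 47.7.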

Method Selection Guide

Adopting Green Analytical Chemistry represents a paradigm shift in forensic science. Sustainability is no longer seen as an added burden but as a strategic tool for enhancing efficiency, reducing costs, and addressing persistent casework backlogs [11] [10]. The methodologies and guidance in this section indicate that green practices can be operationally advantageous as well as environmentally responsible. Forensic laboratories face increasing caseloads with limited resources, so integrating these solvent-free and minimal-waste techniques is increasingly important for meeting the demands of modern justice systems. The future of forensic chemistry lies in methods that are simultaneously analytically rigorous, forensically sound, environmentally conscious, and strategically efficient.