Strategies for Mitigating Contextual Bias in Forensic Laboratory Workflows: From Theory to Practice

Abstract

This article provides a comprehensive analysis of contextual bias in forensic science, detailing its pervasive effects across disciplines from toxicology to DNA analysis. It explores the psychological foundations of cognitive bias, including the System 1 and System 2 thinking framework, and presents empirically validated mitigation strategies such as Linear Sequential Unmasking-Expanded (LSU-E), blind verification, and case management protocols. Drawing on recent international surveys and case studies, the article addresses implementation barriers, expert fallacies, and validation frameworks through ISO/IEC 17025 accreditation. Designed for forensic researchers, scientists, and laboratory managers, this resource offers practical guidance for enhancing methodological rigor, reducing subjective error, and improving the reliability of forensic conclusions in both research and casework applications.

Understanding the Pervasiveness and Psychology of Forensic Contextual Bias

Defining Cognitive and Contextual Bias in Forensic Science

Troubleshooting Guides

Guide 1: Unexpected Influence of Contextual Information on Analytical Results

Problem: Forensic analysis conclusions appear to be swayed by knowledge of case background information (e.g., suspect confessions, eyewitness accounts, or evidence from other domains) rather than being based solely on the scientific evidence.

Diagnosis Steps:

- Audit Case Documentation: Review case notes and reports for mentions of task-irrelevant information such as a suspect's criminal history, an investigator's presumption of guilt, or results from other forensic analyses [1].

- Check Information Flow: Determine when contextual information was received. Analyses are more vulnerable to bias if examiners were exposed to potentially biasing information before reaching their own scientific conclusions [2] [3].

- Compare with Standards: Verify if the analysis deviated from standard operating procedures (SOPs), for example, by skipping certain tests or confirmatory steps based on expectations [1].

Solutions:

- Immediate Action: Document all information received, including when it was received and its potential influence. Re-analyze the evidence, if possible, using a "blinded" protocol where this contextual information is withheld [3].

- Long-Term Protocol Change: Advocate for the implementation of Linear Sequential Unmasking (LSU) or Linear Sequential Unmasking-Expanded (LSU-E). This protocol controls the sequence and timing of information release to examiners, ensuring they have the necessary data for analysis but are protected from irrelevant contextual information until after their initial assessment is complete [2] [4] [3].

Guide 2: Recurring "Bias Blind Spot" Among Laboratory Staff

Problem: Forensic examiners acknowledge that cognitive bias is a general issue but deny or are unaware of their own susceptibility to it, a phenomenon known as the "bias blind spot" [2] [5].

Diagnosis Steps:

- Conduct a Self-Assessment Survey: Use anonymized surveys to gauge staff understanding of cognitive bias concepts and their perceived personal susceptibility. Past surveys have shown that many examiners are not properly trained about cognitive bias and maintain a bias blind spot [2] [6].

- Review Error and Near-Miss Reports: Analyze casework where initial conclusions were later revised. Check if exposure to contextual information was a factor, indicating a potential bias effect that was not initially recognized [4].

Solutions:

- Immediate Action: Implement mandatory education and training that specifically addresses the six expert fallacies [4] [5]:

- The fallacy that only unethical people are biased.

- The fallacy that only incompetent people are biased.

- The "Expert Immunity" fallacy.

- The "Technological Protection" fallacy.

- The "Bias Blind Spot" fallacy.

- The "Illusion of Control" fallacy.

- Long-Term Protocol Change: Introduce blind verifications as a standard quality control procedure. A second examiner verifies the results without knowledge of the first examiner's findings or any contextual case information, ensuring an independent assessment [4] [3].

Frequently Asked Questions (FAQs)

Q1: What is the fundamental difference between cognitive bias and contextual bias in a forensic setting?

A1: Cognitive bias is an umbrella term describing the various natural, often unconscious, mental shortcuts that can lead to incorrect judgments. Contextual bias is a specific type of cognitive bias where task-irrelevant background information—such as a suspect's confession, evidence from other experts, or an investigator's beliefs—unduly influences the collection, perception, or interpretation of forensic evidence [2] [3] [1]. Essentially, contextual bias is a primary mechanism through which cognitive bias manifests in forensic science.

Q2: Aren't objective, instrument-based disciplines like toxicology or DNA analysis immune to these biases?

A2: No. While the use of instrumentation provides a layer of objectivity, human decision-making is still involved in operating the instrumentation, interpreting the results, and deciding which tests to perform. Empirical research has demonstrated that even experts in these "objective" disciplines are vulnerable to contextual bias [6] [1] [5]. For example, a toxicologist who knows a deceased individual had a history of heroin use might decide to forego a broad screening test, potentially missing relevant compounds [1].

Q3: If I am aware of cognitive bias, can't I just use willpower to avoid it in my analysis?

A3: This belief is known as the "Illusion of Control" fallacy. Cognitive biases operate subconsciously, so awareness alone is insufficient to prevent them [4] [3]. Relying on willpower is not a reliable mitigation strategy. Effective mitigation requires structural changes to the workflow, such as blinding protocols, sequential unmasking, and blind verification, which are designed to prevent exposure to biasing information in the first place [7] [3].

Q4: What is the single most effective step a laboratory can take to mitigate contextual bias?

A4: There is no single silver bullet, but a highly effective strategy is the adoption of case managers and Linear Sequential Unmasking-Expanded (LSU-E) protocols [2] [4] [3]. A case manager acts as a filter, reviewing all incoming information and providing the examiner with only that which is deemed analytically relevant at the appropriate time. LSU-E provides a structured framework for deciding what information is released and when, based on its biasing power, objectivity, and relevance [4] [3].

Quantitative Data on Contextual Bias

The table below summarizes key findings from an empirical survey of forensic toxicology practitioners in China, illustrating how contextual information affects decision-making.

Table 1: Survey Data on Contextual Bias in Forensic Toxicology (n=200) [6] [1]

| Survey Aspect | Key Finding | Implication |
|---|---|---|
| Deviation from Standard Process | Most participants made decisions deviating from standard procedures under a biasing context. | Contextual information can lead to faster, simpler, but non-standard analytical pathways. |
| Familiarity with Bias Concept | Participants showed a low level of familiarity with the concept and nature of contextual bias. | A lack of training and awareness is a significant vulnerability in laboratory practice. |
| Communication with Investigators | Close contact with police investigators was common; some had a dual role as investigator and examiner. | Organizational structure can directly facilitate the flow of potentially biasing information. |
| Perception of Task-Relevance | There was a general opinion that all available case information should be considered in analysis. | A cultural norm exists that conflates having more information with better analysis, rather than recognizing its potential to bias. |

Experimental Protocols for Bias Research

Protocol 1: Testing for Contextual Bias Using Paired Case Studies

Objective: To empirically measure the effect of task-irrelevant contextual information on forensic decision-making.

Methodology:

- Stimuli Development: Create two versions of a hypothetical forensic case (e.g., a toxicology or fingerprint case). The "context" version includes extraneous, potentially biasing information (e.g., "the suspect has confessed"). The "no-context" version is identical but omits this information [6] [1].

- Participant Recruitment: Recruit forensic practitioners from the target discipline. Participants should be randomly assigned to evaluate one version of the case.

- Task: Participants analyze the case evidence and report their conclusions and the steps they would take.

- Data Analysis: Compare the outcomes (e.g., conclusions, steps skipped, tests ordered) between the two groups. A statistically significant difference indicates the contextual information biased the results [1].
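The group comparison in the Data Analysis step can be sketched as follows. This is a minimal illustration, assuming the outcome is binary (a participant either deviated from the standard procedure or did not) and that Fisher's exact test is an acceptable choice for the resulting 2x2 table; the source does not prescribe a specific test. The function name and the counts are hypothetical.

```python
from math import comb


def fisher_exact_two_sided(a: int, b: int, c: int, d: int) -> float:
    """Two-sided Fisher's exact p-value for the 2x2 table [[a, b], [c, d]].

    Rows are the two groups (context, no-context); columns are the binary
    outcome (deviated from SOP, followed SOP).
    """
    row1, row2 = a + b, c + d
    col1 = a + c
    total_tables = comb(row1 + row2, col1)

    def prob(x: int) -> float:
        # Hypergeometric probability of x deviations in the first group,
        # holding all row and column totals fixed.
        return comb(row1, x) * comb(row2, col1 - x) / total_tables

    observed = prob(a)
    p_value = 0.0
    for x in range(max(0, col1 - row2), min(row1, col1) + 1):
        p = prob(x)
        # Count every table that is no more probable than the observed one.
        if p <= observed * (1 + 1e-9):
            p_value += p
    return min(p_value, 1.0)


# Hypothetical counts, not data from the cited survey:
# context group: 14 of 20 skipped the broad screen; no-context group: 5 of 20.
p = fisher_exact_two_sided(14, 6, 5, 15)
print(f"p = {p:.4f}")
```

A small p-value here only supports a context effect if participants were randomly assigned to the two versions, as the recruitment step requires.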

Protocol 2: Implementing and Validating a Linear Sequential Unmasking-Expanded (LSU-E) Workflow

Objective: To integrate a structured information management protocol into a laboratory workflow and assess its impact on reducing cognitive bias.

Methodology:

- Worksheet Development: Create an LSU-E worksheet to be used for each case. This worksheet should list all available information and require the examiner/case manager to rate each piece for its Relevance, Objectivity, and Biasing Power before the analysis begins [4] [3].

- Sequential Information Release: Based on the ratings, the protocol dictates the order in which information is released to the examiner. Essential, low-bias information is provided first to allow for an initial analysis. High-bias information is provided later or withheld entirely [4].

- Blind Verification: After the primary analysis, a second examiner performs a verification. This verification should be conducted blindly, without knowledge of the first examiner's results or the contextual information [3].

- Validation: Track key metrics pre- and post-implementation, such as the rate of conclusive versus inconclusive findings, inter-examiner agreement, and the frequency of contextual information being documented as influential [4].
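The worksheet and release steps above can be sketched as a small data structure. This is an illustrative sketch only: the 1-to-5 rating scale, the withholding thresholds, and the sort order (least biasing first, then most objective, then most relevant) are assumptions made for the example, not values specified by LSU-E or by the cited sources.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InfoItem:
    description: str
    relevance: int      # 1 (low) to 5 (high); hypothetical scale
    objectivity: int    # 1 (low) to 5 (high); hypothetical scale
    biasing_power: int  # 1 (low) to 5 (high); hypothetical scale


def plan_release(items, max_bias=4, min_relevance=2):
    """Split items into an ordered release list and a withheld list.

    Thresholds are illustrative assumptions; a laboratory would set its own.
    """
    released, withheld = [], []
    for item in items:
        if item.biasing_power > max_bias or item.relevance < min_relevance:
            withheld.append(item)
        else:
            released.append(item)
    # Least biasing first; ties broken by higher objectivity, then relevance.
    released.sort(key=lambda i: (i.biasing_power, -i.objectivity, -i.relevance))
    return released, withheld


worksheet = [
    InfoItem("Reference print from suspect", relevance=5, objectivity=4, biasing_power=3),
    InfoItem("Suspect confessed", relevance=1, objectivity=2, biasing_power=5),
    InfoItem("Surface the latent print was lifted from", relevance=4, objectivity=5, biasing_power=1),
    InfoItem("Questioned latent print", relevance=5, objectivity=5, biasing_power=1),
]

released, withheld = plan_release(worksheet)
for step, item in enumerate(released, start=1):
    print(step, item.description)
print("Withheld:", [i.description for i in withheld])
```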

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials and Protocols for Bias-Mitigated Forensic Research

| Tool / Protocol | Function | Application in Workflow |
|---|---|---|
| Linear Sequential Unmasking-Expanded (LSU-E) | A structured framework to control the flow of information to examiners, minimizing premature exposure to biasing context. | Used at the case intake and assignment phase to plan the sequence of analysis [4] [3]. |
| Blind Verification | A quality control procedure where a second examiner independently verifies results without knowledge of the initial findings or contextual details. | Applied after the primary analysis is complete to ensure objectivity and independence [4] [3]. |
| Case Manager | A role or system dedicated to filtering incoming case information and acting as a liaison between investigators and examiners. | Serves as a "firewall" to prevent contextual contamination before the analytical phase begins [2] [3]. |
| Evidence Line-ups | Presenting several known-innocent samples alongside the suspect sample during comparative analyses to prevent confirmation bias. | Used in pattern-matching disciplines (e.g., fingerprints, firearms) to counteract inherent assumptions of a single-suspect comparison [3]. |
| Pre-Analytical Worksheet | A documented pre-analysis plan where examiners define their evaluation criteria and sequence of operations before exposure to reference materials. | Helps commit to an objective methodology and reduces the temptation to adjust criteria to fit a desired outcome [3]. |

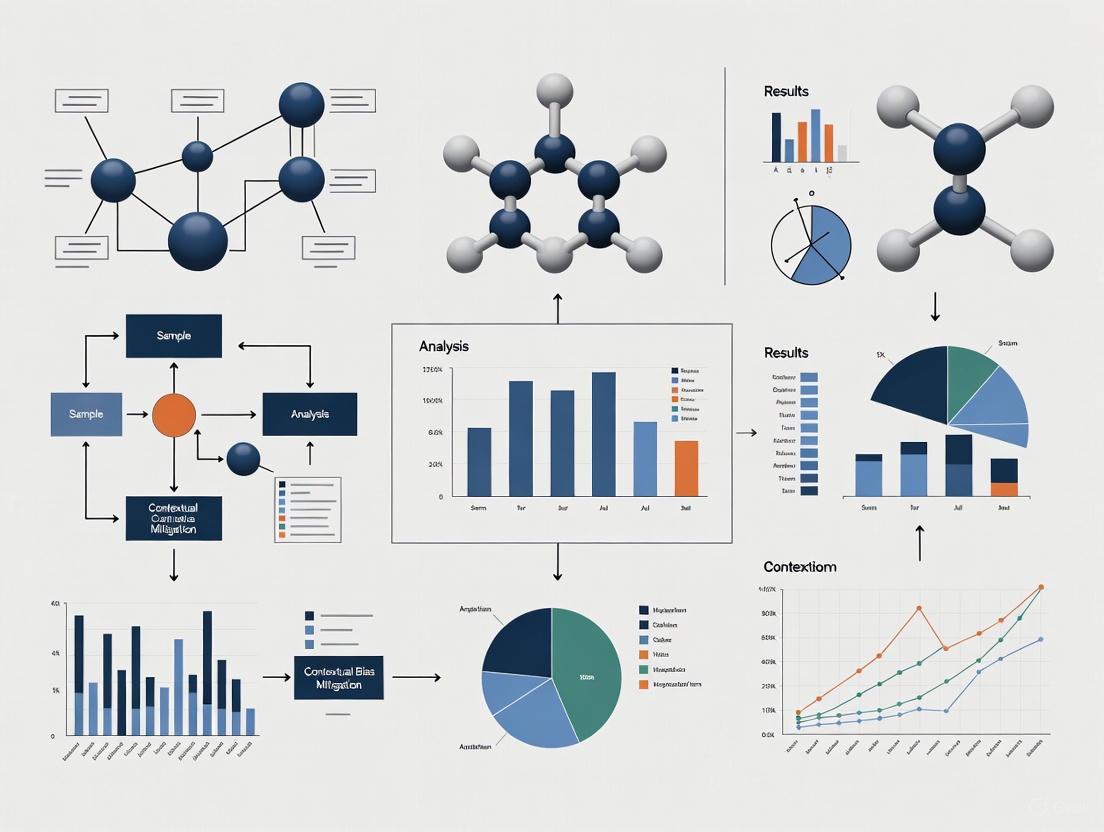

Workflow Diagrams

Cognitive Bias Mitigation Protocol

FAQ: Core Concepts and Definitions

What are System 1 and System 2 thinking? System 1 and System 2 are two distinct modes of cognitive processing popularized by Daniel Kahneman [8]. Their key characteristics are summarized below [5] [9] [8]:

| Feature | System 1 (Fast Thinking) | System 2 (Slow Thinking) |
|---|---|---|
| Speed | Fast, automatic, instantaneous | Slow, deliberate, effortful |
| Effort | Little or no effort | High effort, requires conscious attention |
| Control | Unconscious, intuitive, involuntary | Conscious, analytical, controlled |
| Process | Relies on heuristics (mental shortcuts) | Relies on logical rules and reasoning |
| Role | Gut feelings, snap judgements, pattern recognition | Complex problem-solving, critical evaluation |

Why is understanding these systems critical for forensic and drug development researchers? Expert decision-making is vulnerable to the cognitive shortcuts (heuristics) of System 1, which can introduce significant bias into analytical results [5] [10]. In forensic science, ostensibly objective data can be affected by bias driven by contextual, motivational, and organizational factors [5]. In drug development, machine learning models used to predict outcomes can be skewed by various forms of bias in historical data, affecting both financial value and patient safety [11]. Mitigating these biases requires structured, external strategies that engage the analytical power of System 2 [5].

What is the relationship between System 1 thinking and cognitive bias? System 1 thinking operates using heuristics to make efficient snap judgements [9]. While useful in daily life, these shortcuts can lead to systematic errors in scientific and clinical settings [5]. For example:

- Confirmation Bias: The tendency to seek, interpret, and favor information that confirms pre-existing beliefs [9] [10]. Once System 1 forms an initial belief, it becomes difficult to change, leading to "tunnel vision" [9].

- Representativeness Heuristic: Judging the probability that a person or item belongs to a group based only on how well it matches a stereotype, while ignoring base rate statistics [9].

FAQ: Bias Identification and Troubleshooting

What are common cognitive biases I might encounter in the laboratory? Researchers should be vigilant for the following common biases [10]:

| Bias | Description | Potential Impact in the Lab |
|---|---|---|
| Confirmation Bias | Selectively gathering or weighting evidence that supports an initial hypothesis while neglecting contradictory evidence. | Interpreting ambiguous data to support expected outcomes; dismissing anomalous results as "noise." |
| Base Rate Neglect | Ignoring or misusing the underlying prevalence of a condition or event in the population. | Over- or under-estimating the significance of a finding by failing to account for how common it truly is. |
| Hindsight Bias | Overestimating the predictability of an outcome after it is already known. | Influencing retrospective data analysis or audits, making it harder to learn from past unexpected results. |
| Allegiance Bias | A subtle form of confirmation bias where an expert's opinion is swayed by financial incentives or the side that retained them. | Compromising objectivity in settings where funding or partnership interests are present. |

I consider myself an ethical, competent expert. Am I still vulnerable to these biases? Yes. Vulnerability to cognitive bias is a human attribute and does not reflect a person's character or competence [5]. Experts often hold several "fallacies" that increase their risk, including [5]:

- The Ethical Immunity Fallacy: Believing only unethical practitioners are biased.

- The Competence Fallacy: Believing bias is only a result of incompetence.

- The Expert Immunity Fallacy: Believing expertise itself shields one from bias.

- The Bias Blind Spot: Perceiving others, but not oneself, as vulnerable to bias.

How can I tell if my data interpretation is being influenced by System 1 biases? Be alert to these warning signs in your workflow:

- Feeling Certainty Too Quickly: A strong, intuitive conclusion forms before all data is systematically analyzed [9].

- Discounting Discrepancies: Dismissing or explaining away results that don't fit the expected pattern without rigorous investigation [10].

- Seeking Only Confirmatory Evidence: Designing analyses or tests primarily to prove a hypothesis rather than to challenge it [9].

Experimental Protocols for Bias Mitigation

This section provides detailed methodologies for key experiments and procedures cited in bias mitigation research.

Protocol 1: Implementing Linear Sequential Unmasking-Expanded (LSU-E)

LSU-E is a procedural method designed to minimize contextual bias by sequencing analytical tasks and controlling information flow [12] [13].

- Objective: To ensure key analytical judgments are made before exposure to potentially biasing contextual information (e.g., suspect history, other evidence).

- Materials: Case materials, standardized reporting forms, an independent case manager.

- Procedure:

- Step 1: Initial Analysis. The examiner performs all initial analyses using only the essential, non-biasing information required (e.g., a questioned sample and a set of reference samples).

- Step 2: Documentation. The examiner documents their initial findings, conclusions, and the confidence level in a preliminary report before proceeding.

- Step 3: Controlled Information Revelation. A case manager, who is fully informed of the case context, then reveals specific, pre-determined pieces of relevant information to the examiner in a structured sequence.

- Step 4: Integrated Analysis. After each piece of new information is revealed, the examiner re-evaluates their findings and notes any changes or reaffirmations.

- Validation: This protocol has been successfully piloted in forensic document and bloodstain pattern analysis, demonstrating reduced subjectivity and enhanced reliability [12] [13].
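Steps 2 through 4 depend on a record of what the examiner concluded before and after each disclosure. The following is a minimal sketch of such a record, assuming free-text conclusions and confidence levels; the class and field names are hypothetical and not part of any published LSU-E worksheet.

```python
from dataclasses import dataclass, field


@dataclass
class Assessment:
    information_revealed: str  # what was disclosed before this assessment
    conclusion: str
    confidence: str


@dataclass
class ExaminationLog:
    entries: list = field(default_factory=list)

    def record(self, information_revealed: str, conclusion: str, confidence: str) -> None:
        """Append the examiner's documented assessment after a disclosure."""
        self.entries.append(Assessment(information_revealed, conclusion, confidence))

    def revisions(self) -> list:
        """Return (before, after) pairs where conclusion or confidence changed."""
        return [
            (prev, curr)
            for prev, curr in zip(self.entries, self.entries[1:])
            if (prev.conclusion, prev.confidence) != (curr.conclusion, curr.confidence)
        ]


log = ExaminationLog()
log.record("questioned and reference samples only", "inconclusive", "moderate")
log.record("type of writing surface", "inconclusive", "moderate")
log.record("result of a separate DNA analysis", "identification", "high")

for before, after in log.revisions():
    print(f"Changed after '{after.information_revealed}': "
          f"{before.conclusion} -> {after.conclusion}")
```

A revision that follows a high-bias disclosure is not proof of bias, but it flags the case for closer review.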

Protocol 2: The Case Manager Model

This model separates informational functions within the laboratory to insulate examiners from unnecessary contextual information [13] [14].

- Objective: To create a barrier between investigators (who possess full case context) and forensic examiners (who perform analytical tasks).

- Materials: Modified case submission forms, a designated case manager role.

- Procedure:

- Step 1: Case Intake. The case manager receives all case information from investigators or attorneys.

- Step 2: Information Filtering. The case manager reviews the information and filters out all details not strictly necessary for the analytical examination (e.g., suspect confessions, prior criminal record).

- Step 3: Task Assignment. The case manager provides the "context-stripped" evidence and a specific analytical task to the examiner.

- Step 4: Result Reporting. The examiner returns their findings to the case manager, who then integrates them back into the full case context.

- Validation: Found and Ganas (as cited in [14]) successfully implemented this system using modified forms and email notices, effectively insulating document examiners from biasing information.
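The filtering in Step 2 can be sketched as an allow-list applied to the submitted case file. This is an assumption-laden illustration: the task name, the field names, and the idea of a per-task allow-list are hypothetical and are not taken from the forms described in the cited work.

```python
# Hypothetical allow-list: fields an examiner needs for each analytical task.
TASK_RELEVANT_FIELDS = {
    "handwriting_comparison": {
        "questioned_document",
        "known_writing_samples",
        "writing_instrument",
    },
}


def strip_context(case_file: dict, task: str):
    """Return the examiner's packet and the names of the withheld fields."""
    allowed = TASK_RELEVANT_FIELDS[task]
    examiner_packet = {k: v for k, v in case_file.items() if k in allowed}
    # The case manager keeps a record of what was withheld, for later audit.
    withheld = sorted(k for k in case_file if k not in allowed)
    return examiner_packet, withheld


case_file = {
    "questioned_document": "Q1 (ransom note)",
    "known_writing_samples": ["K1", "K2"],
    "suspect_confession": "yes",
    "prior_criminal_record": "two prior convictions",
}

packet, withheld = strip_context(case_file, "handwriting_comparison")
print(packet)
print("Withheld:", withheld)
```

An allow-list is used rather than a block-list so that unanticipated fields are withheld by default.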

Protocol 3: Blind Verification

This is a quality control procedure where a second examiner independently verifies the results of the first without exposure to the first examiner's conclusions or the biasing context [13].

- Objective: To independently replicate key judgments and ensure they are robust and not influenced by the initial examiner's potential biases.

- Materials: The same evidence samples used in the initial analysis.

- Procedure:

- Step 1: Initial Examination. The first examiner completes their analysis, which may or may not be blind to context.

- Step 2: Blind Re-examination. The original evidence is submitted to a second examiner for a completely independent analysis. This second examiner is blinded to the first examiner's findings and to any potentially biasing contextual information.

- Step 3: Comparison. The conclusions of both examiners are compared. Any discrepancies are resolved through a structured process, such as a conference or review by a third senior examiner.
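The comparison in Step 3 can be summarized across many cases with an agreement statistic. The sketch below assumes categorical conclusions and uses Cohen's kappa, one common chance-corrected agreement measure; the source does not name a specific statistic, and the labels and data are hypothetical.

```python
from collections import Counter


def discrepant_cases(first, second):
    """Indices of cases where the two examiners reached different conclusions."""
    return [i for i, (a, b) in enumerate(zip(first, second)) if a != b]


def cohens_kappa(first, second):
    """Chance-corrected agreement between two examiners' categorical conclusions."""
    n = len(first)
    observed = sum(a == b for a, b in zip(first, second)) / n
    counts1, counts2 = Counter(first), Counter(second)
    # Agreement expected if each examiner assigned labels independently
    # at their own observed rates.
    expected = sum(counts1[k] * counts2[k] for k in set(counts1) | set(counts2)) / (n * n)
    if expected == 1.0:
        return 1.0
    return (observed - expected) / (1 - expected)


# Hypothetical conclusions: ID = identification, EX = exclusion, INC = inconclusive.
examiner_1 = ["ID", "ID", "EX", "INC", "ID", "EX"]
examiner_2 = ["ID", "INC", "EX", "INC", "ID", "EX"]

print("Refer for resolution:", discrepant_cases(examiner_1, examiner_2))
print("kappa =", round(cohens_kappa(examiner_1, examiner_2), 2))
```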

The Scientist's Toolkit: Key Research Reagent Solutions

Essential materials and procedural "reagents" for conducting robust, bias-aware research.

| Tool / Solution | Function in Mitigating Bias |
|---|---|
| Linear Sequential Unmasking (LSU) | A procedural "reagent" that sequences information flow to protect core analytical judgments from contamination by contextual information [12] [13]. |
| Case Manager Protocol | An organizational "buffer solution" that filters out unnecessary and potentially biasing information before it reaches the analyst [13] [14]. |
| Blind Verification | A quality control "assay" that tests the robustness of an initial finding by having it independently replicated in a blinded manner [13]. |
| Pre-Documented Findings | A methodological "fixative" that locks in initial impressions and confidence levels before subsequent information or peer pressure can influence them [12]. |
| Cognitive Bias Awareness Training | A foundational "primer" that makes individuals and teams aware of the inherent vulnerabilities of System 1 thinking and common expert fallacies [5] [10]. |
| Standardized Reporting Forms | A "scaffolding" tool that structures the documentation of results, forcing consideration of alternative hypotheses and ensuring consistent evaluation criteria across cases [12]. |

Workflow and Relationship Visualizations

Diagram 1: Integrated Bias Mitigation Workflow. This diagram illustrates a combined protocol integrating the Case Manager Model and Linear Sequential Unmasking, with an optional Blind Verification step for critical findings.

Diagram 2: System 1 and System 2 Interaction in Analysis. This diagram shows the competition between the two cognitive systems when processing data. Unmitigated, System 1 often dominates, leading to potential bias. Structured protocols are designed to force the engagement of System 2 for more reliable outcomes.

FAQs: Understanding Bias Contamination

What is contextual bias in forensic science? Contextual bias occurs when a forensic examiner's judgment is unconsciously influenced by task-irrelevant information about the case. This is a form of "cognitive contamination" where extraneous details—such as a suspect's criminal record, eyewitness identifications, or other evidence—can affect how evidence is collected and evaluated. It is not a result of unethical behavior or incompetence, but rather a natural function of human cognition where the brain uses shortcuts in ambiguous situations [4] [2].

What empirical evidence demonstrates confirmation bias in forensic disciplines? A systematic review of 29 studies across 14 forensic disciplines found robust evidence of confirmation bias effects [15]. The research shows that forensic examiners' conclusions can be influenced by:

- Knowledge of case-specific information about the suspect or crime scenario (9 of 11 studies showed this effect)

- The way reference materials are presented (4 of 4 studies)

- Knowledge of a previous examiner's decision (4 of 4 studies) [15]

What are the real-world consequences of forensic confirmation bias? Forensic errors have led to wrongful convictions and lasting injustices:

- Brandon Mayfield: Wrongfully implicated in the 2004 Madrid train bombings due to a fingerprint misidentification, despite having no connection to Spain [16] [17]. Multiple FBI examiners confirmed the erroneous match, demonstrating how cognitive bias can affect even experienced professionals [4].

- Josiah Sutton: Served 4 years in prison for rape after Houston Crime Lab analysts misidentified his DNA. The lab was later found to have systemic issues including cross-contamination and human error [16].

- Toxicology Errors: In the District of Columbia, breath alcohol analyzers were miscalibrated to read 20-40% too high for 14 years before discovery, affecting thousands of cases [18].

Can technology eliminate cognitive bias in forensic analysis? No. The "technological protection fallacy" incorrectly assumes that technology, algorithms, or AI will completely resolve subjectivity. These systems are still built, programmed, and interpreted by humans, and can even amplify existing biases if not properly designed and monitored [4] [17]. Technological tools can help reduce bias but cannot eliminate it entirely.

Troubleshooting Guides: Mitigating Bias in Laboratory Workflows

Problem: Cross-Contamination and Error in DNA Analysis

Issue: DNA evidence has been misinterpreted due to laboratory error and cross-contamination, leading to wrongful convictions.

Empirical Case Study: The Josiah Sutton case demonstrated how laboratory errors and misinterpretation can have severe consequences. The Houston Crime Lab misidentified Sutton's DNA as matching evidence from a rape case, leading to his wrongful conviction. An independent review later revealed the DNA never actually matched, exposing systemic failures in the lab's procedures [16].

Solution Protocol: Linear Sequential Unmasking-Expanded (LSU-E)

This methodology sequences analytical tasks to ensure key judgments are made before exposure to potentially biasing information:

- Document all initial observations from the evidence sample before any comparisons

- Record all relevant features and measurements objectively

- Make initial assessments without reference to suspect samples

- Only then compare with known reference samples

- Blind verification by a second examiner unaware of the first examiner's conclusions [4] [19]

Table: DNA Analysis Error Patterns and Detection

| Error Type | Case Example | Detection Method | Consequence |
|---|---|---|---|
| Sample Contamination | Houston Crime Lab | Independent audit | Wrongful conviction; lab shutdown |
| Misinterpretation of Results | Josiah Sutton case | Technical review | 4 years wrongful imprisonment |
| Systemic Quality Failures | Multiple cases | External oversight | Widespread case reviews required |

Problem: Cognitive Bias in Fingerprint Analysis

Issue: Even highly trained fingerprint examiners can erroneously match prints when exposed to contextual biasing information.

Empirical Case Study: The Brandon Mayfield case represents a classic example of contextual bias in fingerprint analysis. Despite Mayfield's fingerprint only partially resembling the one found in Madrid, multiple FBI examiners—including a highly respected supervisor—confirmed the erroneous match. Investigators, eager for a suspect, forced the evidence to fit their theory [16]. Verifiers who knew the initial conclusion made by their esteemed colleague unconsciously assumed "identification" was correct [4].

Solution Protocol: Case Manager Model with Blind Verification

This approach separates case information management from analytical functions:

- Implement a case manager who receives all case information and evidence

- Case manager prepares materials for examiners, filtering out potentially biasing task-irrelevant information

- Examiners conduct analysis using only the information needed for their technical tasks

- Blind verification by independent examiner unaware of initial findings

- Resolution process for any discrepant results between examiners [13]

Enhanced Fingerprint Analysis Workflow with Bias Mitigation

Problem: Systemic Errors in Toxicology

Issue: Toxicology errors—including calibration problems, traceability issues, and discovery violations—have persisted for years or even decades before detection, typically being discovered by external sources rather than internal quality controls [18].

Empirical Case Studies:

- District of Columbia: Breath alcohol analyzers miscalibrated to read 20-40% too high for 14 years before discovery by a new employee [18]

- Washington State: Incorrect formula in spreadsheet used to calculate reference material concentration; fraud involving false certifications about who performed testing [18]

- Maryland: Laboratory used single-point calibration curves for blood alcohol analysis from 2011 to 2021, even though a single point is scientifically inappropriate because it does not span the concentration range of interest [18]

Solution Protocol: Comprehensive Quality Assurance with Third-Party Oversight

- Multi-point calibration spanning entire concentration range of interest

- Regular independent audits of calibration protocols and reference materials

- Digital data retention with complete traceability of all adjustments and calculations

- Mandatory disclosure protocols for all exculpatory evidence

- Whistleblower protections for staff reporting quality issues [18]
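The case for multi-point calibration can be illustrated numerically. The sketch below uses synthetic calibrator data (an instrument response with a non-zero intercept) and compares an ordinary least-squares line fitted across the range with a single-point response factor that implicitly assumes the line passes through the origin. All concentrations and responses are invented for illustration and do not come from any of the cited laboratories.

```python
def fit_line(x, y):
    """Ordinary least-squares fit y = slope * x + intercept."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


# Synthetic calibrators: concentration (g/dL) and instrument response.
# The response follows 10 * concentration + 0.2, i.e. a non-zero intercept.
conc = [0.02, 0.05, 0.10, 0.20, 0.40]
resp = [0.4, 0.7, 1.2, 2.2, 4.2]

slope, intercept = fit_line(conc, resp)


def multi_point(response):
    """Concentration from the line fitted across the whole range."""
    return (response - intercept) / slope


# Single-point calibration: one calibrator at 0.10 g/dL, line forced through zero.
single_factor = resp[2] / conc[2]


def single_point(response):
    """Concentration from a single response factor."""
    return response / single_factor


for r in (0.5, 3.2):  # true concentrations 0.03 and 0.30 g/dL
    print(f"response {r}: multi-point {multi_point(r):.3f}, "
          f"single-point {single_point(r):.3f}")
```

With these synthetic numbers the single-point estimate is too high below the calibrator and too low above it, which is the kind of range-dependent error a multi-point curve is meant to expose.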

Table: Toxicology Error Patterns and Reform Strategies

| Error Category | Example Cases | Duration Before Detection | Recommended Reform |
|---|---|---|---|
| Calibration Errors | DC, Maryland, Pennsylvania | 10-14 years | Multi-point calibration, independent audits |
| Traceability Issues | Alaska, Washington State | Years to decades | Digital data retention, full transparency |
| Discovery Violations | Multiple jurisdictions | Varies | Mandatory disclosure portals, whistleblower protections |
| Reference Material | Minnesota, New Jersey | 2+ years | Proper assignment protocols, validation |

Improved Toxicology Quality Assurance Pathway

Table: Key Research Reagent Solutions for Bias-Resistant Forensic Workflows

| Tool/Technique | Primary Function | Application Context | Evidential Support |
|---|---|---|---|
| Linear Sequential Unmasking (LSU/LSU-E) | Sequences information exposure to prevent premature conclusions | All comparative forensic disciplines | Empirical studies show reduced contextual bias effects [4] [13] |
| Case Manager Model | Separates contextual information management from analytical functions | Complex multi-evidence cases | Pilot programs demonstrate improved reliability [4] [13] |
| Blind Verification | Independent confirmation without exposure to previous conclusions | All subjective interpretation tasks | Research shows 4 of 4 studies found bias from knowledge of previous decisions [15] |
| Multiple Comparison Samples | Prevents narrow focus on single suspect | Pattern evidence disciplines | 4 of 4 studies show procedure affects examiner conclusions [15] |
| Context Management Protocols | Systematically limits exposure to task-irrelevant information | Laboratory settings | Supported by PCAST (2016) and NAS (2009) recommendations [4] [18] |

Frequently Asked Questions (FAQs) on Cognitive Bias

1. What are the six common fallacies about bias that experts believe? Many experts operate under six key fallacies about bias, which can increase their vulnerability to its effects [20] [21]:

- The Ethical Fallacy: The mistaken belief that bias is an ethical issue or a sign of dishonesty. In reality, cognitive bias impacts honest and dedicated professionals due to brain architecture, not a lack of character [20] [21].

- The "Bad Apples" Fallacy: The tendency to blame errors on individuals rather than recognizing that cognitive bias is a widespread, systemic issue not linked to incompetency [20] [21].

- The Expert Immunity Fallacy: The incorrect belief that experts are impartial and immune to biases. In fact, expertise can sometimes make professionals more susceptible due to their use of mental shortcuts and expectations from past experiences [20] [21].

- The Technological Protection Fallacy: The assumption that technology, automation, or machine learning eliminates bias. However, these systems are built, programmed, and interpreted by humans, so biases can still be introduced [20] [21].

- The Bias Blind Spot: The tendency for people to believe they are less affected by cognitive biases than others [20] [21].

- The Illusion of Control: The belief that one can overcome biases through mere willpower. This can be counterproductive, as increased effort to suppress a bias may sometimes amplify its effect due to "ironic processing" [20] [21].

2. How can bias affect the work of a forensic scientist or researcher? Bias can infiltrate multiple stages of an analysis [20] [21]:

- What the data are: Biases can influence how data are sampled and collected, and what is treated as relevant versus dismissed as noise.

- The actual results: Decisions on testing strategies, how an analysis is conducted, and when to stop testing can be biased.

- The conclusions: The final interpretation of results can be skewed to align with pre-existing expectations or contextual information.

3. What are some specific cognitive biases I should be aware of in my work? Several cognitive biases are particularly relevant in scientific and analytical work [22]:

- Confirmation Bias: The tendency to seek out or favor information that confirms one's pre-existing beliefs or hypotheses.

- Anchoring Bias: Relying too heavily on the first piece of information encountered (the "anchor") when making decisions.

- Overconfidence Bias: The tendency to have excessive confidence in one's own judgments or abilities.

- Selection Bias: Systematically including or excluding certain data or samples, leading to skewed conclusions.

- Base Rate Neglect: Ignoring general statistical information (base rates) in favor of specific, case-specific information [23].

Troubleshooting Guide: Mitigating Bias in Your Workflow

Problem: Suspected contextual bias influencing analytical decisions.

Solution: Implement a structured debiasing protocol.

The following workflow, based on practices successfully implemented in forensic laboratories, outlines a systematic approach to minimize cognitive bias [24] [25].

Diagram: A sequential workflow for mitigating cognitive bias, incorporating blinding and structured evaluation.

Detailed Experimental Protocols for Bias Mitigation

1. Protocol: Linear Sequential Unmasking-Expanded (LSU-E) This methodology controls the sequence and timing of information exposure to prevent bias introduced by reference materials [25].

- Objective: To ensure that the initial evidence is evaluated without being influenced by known reference samples or task-irrelevant contextual information.

- Procedure:

- Initial Analysis: The analyst performs an initial assessment of the evidence sample (e.g., a fingerprint, DNA profile, or document) in isolation.

- Record Findings: Document all initial observations, features, and potential conclusions before any comparison is made.

- Controlled Comparison: Only after the initial analysis is complete and documented is the evidence compared to a reference sample.

- Re-evaluation: Re-examine the evidence in the context of the reference sample and document any changes in interpretation, including the rationale.
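The ordering constraint in the procedure above can be enforced in software rather than left to convention. The following is a minimal sketch, assuming a hypothetical `LsuRecord` class (it is not part of any LIMS product or published tool) that refuses to release the reference sample until the initial findings have been documented, and that requires a rationale for every later revision.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class LsuRecord:
    """Hypothetical case record that enforces evidence-before-reference order."""
    evidence_id: str
    reference_id: str
    initial_findings: Optional[str] = None
    revisions: List[str] = field(default_factory=list)

    def record_initial_findings(self, notes: str) -> None:
        # Initial Analysis / Record Findings: written once, before comparison.
        if self.initial_findings is not None:
            raise RuntimeError("Initial findings are already locked.")
        if not notes.strip():
            raise ValueError("Initial findings must not be empty.")
        self.initial_findings = notes

    def release_reference(self) -> str:
        # Controlled Comparison: the reference is released only after
        # the initial analysis has been documented.
        if self.initial_findings is None:
            raise PermissionError("Document the initial analysis first.")
        return self.reference_id

    def record_revision(self, change: str, rationale: str) -> None:
        # Re-evaluation: every change after comparison carries a rationale.
        if self.initial_findings is None:
            raise PermissionError("No initial analysis to revise.")
        if not rationale.strip():
            raise ValueError("A revision requires a documented rationale.")
        self.revisions.append(f"{change} (rationale: {rationale})")
```

Keeping the initial findings write-once means the pre-comparison record cannot be silently overwritten, so any change of interpretation stays visible in `revisions`.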

2. Protocol: Blind Verification This protocol ensures an independent review of the evidence [25].

- Objective: To obtain a second opinion that is free from the influence of the primary analyst's conclusions or contextual information.

- Procedure:

- Case Manager Role: A case manager screens the case file and provides the evidence to the verifying analyst.

- Information Control: The verifying analyst is not informed of the primary analyst's results or any potentially biasing contextual details about the case.

- Independent Analysis: The verifier conducts their own analysis following the same sequential unmasking protocol as the primary analyst.

- Comparison of Results: The results from the primary and verifying analyst are compared. Any discrepancies are resolved through a structured process before a final conclusion is reached.

Research Reagent Solutions: The Bias Mitigation Toolkit

The following table details key methodological "reagents" essential for designing robust experiments and analyses resistant to cognitive bias.

| Tool / Solution | Function & Explanation |

|---|---|

| Blinding & Masking | Prevents exposure to task-irrelevant information (e.g., suspect details, other analysts' opinions) that can skew perception and interpretation [21] [25]. |

| Linear Sequential Unmasking (LSU) | A structured protocol that controls the sequence of information exposure, ensuring evidence is evaluated before comparison to references to prevent backward reasoning [21] [25]. |

| Case Manager | An individual or system that screens and controls what information is provided to analysts and when, acting as a filter against biasing information [21] [25]. |

| Multiple Hypotheses | The practice of actively generating and considering alternative explanations or conclusions to counter confirmation bias and encourage exploratory analysis [21]. |

| Differential Diagnostic Approach | A framework where different possible conclusions are presented along with their associated probabilities, promoting transparent and balanced reasoning [21]. |

| Blind Proficiency Testing | A quality control measure where analysts are tested with samples without their knowledge, providing objective data on performance and error rates [24]. |

FAQs: Understanding Cognitive Bias in the Forensic Laboratory

This section addresses common questions about the nature, sources, and impact of cognitive bias in forensic science workflows.

Q1: What is cognitive bias, and why is it a problem in forensic science?

Cognitive bias refers to the unconscious and automatic mental shortcuts that can influence judgment, particularly in situations involving ambiguity or insufficient data [4]. In forensic science, this is problematic because disciplines that rely on human experts to make pattern-matching judgments (e.g., fingerprints, handwriting) are susceptible to these biases, which can introduce error into the criminal legal system [4]. These biases are not a result of incompetence or unethical behavior but are a normal part of human cognition that must be managed through systemic safeguards [4].

Q2: I am an experienced examiner. Aren't I immune to bias?

This belief is a common misconception known as the "Expert Immunity" fallacy [4]. Expertise does not cure bias; in fact, extensive experience may cause experts to rely more heavily on automatic decision-making processes. Another prevalent misconception is the "Bias Blind Spot," where individuals acknowledge bias as a general problem but believe they are personally less vulnerable to it [4]. Awareness of bias is crucial, but willpower alone is insufficient to prevent it, as these processes occur unconsciously [4].

Q3: What are the main sources of bias in a forensic examination?

A 2020 summary identifies eight key sources of bias that can affect expert decisions both individually and in combination [4]:

- The Data: The evidence itself can contain biasing elements or evoke emotions.

- Reference Materials: The materials used for comparison can influence conclusions.

- Contextual Information: Task-irrelevant information about the case can inappropriately influence judgment.

- Base-Rate Expectations: Prior expectations about how common a finding might be.

- The Examiner's Own Background: Personal experiences and beliefs.

- Organizational Factors: The culture and pressures within the laboratory.

- The Presentation of Results: How findings are communicated and reported.

- The Human Factors of the Examiner: The individual's cognitive state (e.g., fatigue, stress).

Q4: Can't technology and AI completely eliminate bias from our workflows?

This belief is the "Technological Protection" fallacy [4]. While artificial intelligence, advanced instruments, and automation can significantly reduce bias, they will not eliminate it. These systems are built, programmed, operated, and interpreted by humans, meaning bias can still be introduced at various stages of their development and use [4].

Troubleshooting Guides: Mitigating Bias in Your Workflows

Guide 1: Troubleshooting Contextual Bias in Forensic Examination

Problem: Forensic conclusions are being inappropriately influenced by task-irrelevant contextual information (e.g., knowing about a suspect's confession or other evidence not related to the pattern-matching task).

Application Scope: This guide is designed for forensic examiners and laboratory managers in pattern-matching disciplines such as fingerprint analysis, questioned documents, and firearms examination.

Process:

- Identify the Problem: A conclusion in a case does not align with the expected scientific objectivity. The first step is to acknowledge the potential for bias without assigning blame [4].

- List All Possible Explanations/Sources: Use the list of eight sources of bias as a checklist to identify potential biasing influences in your specific case and laboratory environment [4].

- Collect Data: Review the case workflow to identify where task-irrelevant information could have been introduced. Was the case manager protocol followed? Was a blind verification performed? [4].

- Eliminate Some Explanations: Based on the data, rule out sources that were properly controlled. For example, if a blind verification was conducted and confirmed the original result, this reduces the likelihood that contextual bias was the sole cause.

- Check with Experimentation (Implement Mitigation Strategies): If contextual bias is a likely factor, design and implement procedural changes. Key research-based strategies include [4]:

- Linear Sequential Unmasking-Expanded (LSU-E): Revealing case information to the examiner in a structured sequence, only after their initial analysis of the evidence is complete.

- Blind Verification: Having a second examiner verify the results without knowledge of the first examiner's conclusion or any contextual information.

- Case Manager Model: Using a case manager to filter information and provide examiners with only the data essential for their specific analysis.

- Identify the Cause: After implementing mitigation strategies, re-evaluate the evidence. If conclusions become more robust and less variable, it indicates that contextual bias was a likely contributing factor. Document this outcome to support ongoing use of these procedures [4].

Guide 2: Troubleshooting Bias in Machine Learning Models for Forensic Data Classification

Problem: A machine learning model used for classifying forensic data (e.g., DNA samples, chemical spectra) is producing skewed or unfair outcomes, indicating potential algorithmic bias.

Application Scope: This guide is for data scientists and researchers developing or using ML models for classification tasks in forensic science laboratories.

Process:

- Identify the Problem: The model's predictions show statistically significant disparities across different sensitive or protected groups (e.g., demographic groups) when evaluated with fairness metrics [26].

- List All Possible Explanations/Sources: Bias can originate from the training data (e.g., unrepresentative samples), the model algorithm itself, or the interpretation of the outputs [26].

- Collect Data: Use fairness metrics such as Demographic Parity (also called Statistical Parity) or Equalized Odds to quantify the bias in the model's predictions [26].

- Eliminate Some Explanations: Based on the metric results, hypothesize where in the ML pipeline the bias is most likely introduced.

- Check with Experimentation (Implement Mitigation Strategies): Apply bias mitigation techniques based on the stage of the ML pipeline [26]:

- Pre-processing: Adjust the training data before model training. Techniques include:

- Reweighing: Assigning different weights to training instances to balance the impact of protected groups.

- Sampling: Using methods like SMOTE (Synthetic Minority Over-sampling Technique) to balance dataset distribution [26].

- Feature-wise Mixing: A newer method that redistributes feature representations across datasets, which has been shown to reduce bias by 43.35% on average without needing explicit bias attribute identification [27].

- In-processing: Modify the learning algorithm during training.

- Regularization: Adding a fairness term to the algorithm's loss function to penalize discrimination.

- Adversarial Debiasing: Training a competing model to try to predict the protected attribute from the main model's predictions, thereby forcing the main model to learn features that are independent of the protected attribute [26].

- Post-processing: Adjust the model's outputs after training.

- Reject Option based Classification (ROC): Changing the predicted labels for instances where the model has low confidence, typically assigning favorable outcomes to unprivileged groups and unfavorable outcomes to privileged groups [26].

- Identify the Cause: After applying one or more mitigation techniques, re-run the fairness metrics. A significant reduction in disparity indicates that algorithmic bias was present and that the chosen mitigation strategy is effective.
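The "Collect Data" and "Identify the Cause" steps both depend on computing fairness metrics before and after mitigation. The sketch below shows one way to compute two of them in plain Python, assuming binary labels and predictions (0/1) and exactly two groups coded 0 and 1. The function names are illustrative and do not come from a specific fairness library.

```python
from typing import Sequence


def _rate(flags: Sequence[int]) -> float:
    """Mean of a 0/1 sequence; 0.0 for an empty sequence."""
    return sum(flags) / len(flags) if flags else 0.0


def demographic_parity_difference(y_pred: Sequence[int],
                                  group: Sequence[int]) -> float:
    """Absolute gap in positive-prediction rate between groups 0 and 1."""
    rate0 = _rate([p for p, g in zip(y_pred, group) if g == 0])
    rate1 = _rate([p for p, g in zip(y_pred, group) if g == 1])
    return abs(rate0 - rate1)


def equalized_odds_difference(y_true: Sequence[int],
                              y_pred: Sequence[int],
                              group: Sequence[int]) -> float:
    """Larger of the true-positive-rate gap and the false-positive-rate gap."""
    gaps = []
    for label in (1, 0):  # label 1 gives the TPR gap, label 0 the FPR gap
        r0 = _rate([p for t, p, g in zip(y_true, y_pred, group)
                    if g == 0 and t == label])
        r1 = _rate([p for t, p, g in zip(y_true, y_pred, group)
                    if g == 1 and t == label])
        gaps.append(abs(r0 - r1))
    return max(gaps)
```

A value of 0.0 means the two groups are treated identically on that metric. Re-running both functions after a mitigation step gives the before-and-after comparison the final step calls for.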

Data Presentation: Quantitative Findings on Bias Mitigation

Table 1: Performance of Machine Learning Bias Mitigation Techniques

This table summarizes the effectiveness of different categories of bias mitigation methods used in classification tasks, based on a review of available strategies [26].

| Mitigation Category | Example Methods | Key Mechanism | Relative Effectiveness & Notes |

|---|---|---|---|

| Pre-processing | Reweighing, SMOTE, Feature-wise Mixing [27] [26] | Modifies the training dataset to remove bias before model training. | Feature-wise mixing reported 43.35% average bias reduction and significant decrease in Mean Squared Error [27]. |

| In-processing | Adversarial Debiasing, Prejudice Remover [26] | Alters the learning algorithm to incorporate fairness constraints during model training. | Directly penalizes bias in the objective function; can be highly effective but may require more specialized expertise to implement. |

| Post-processing | Reject Option Classification, Calibrated Equalized Odds [26] | Adjusts the model's predictions after they have been generated. | Useful when you cannot modify the model or training data; reported less frequently in the literature than the other categories [26]. |
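As an illustration of the pre-processing row, reweighing can be sketched in a few lines. This assumes the common formulation in which each instance is weighted by the expected frequency of its (group, label) combination divided by its observed frequency. The function name is illustrative, and the cited review may describe variants.

```python
from collections import Counter
from typing import Hashable, List, Sequence


def reweighing_weights(group: Sequence[Hashable],
                       label: Sequence[Hashable]) -> List[float]:
    """Per-instance weights w = P(group) * P(label) / P(group, label)."""
    if len(group) != len(label):
        raise ValueError("group and label must have the same length")
    n = len(group)
    n_group = Counter(group)
    n_label = Counter(label)
    n_joint = Counter(zip(group, label))
    # Under-represented (group, label) cells receive weights above 1,
    # over-represented cells receive weights below 1.
    return [
        (n_group[g] * n_label[y]) / (n * n_joint[(g, y)])
        for g, y in zip(group, label)
    ]
```

The resulting list can be passed as per-sample weights to any learner that accepts them. With these weights, group membership and label are statistically independent in the weighted training set.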

Table 2: Checklist for Reporting Experimental Protocols to Enhance Reproducibility

A guideline for reporting experimental protocols proposes 17 key data elements to ensure reproducibility. The table below lists a subset of these fundamental elements [28].

| Data Element Category | Specific Item to Report | Importance for Reproducibility |

|---|---|---|

| Materials & Reagents | Unique identifiers (e.g., catalog numbers, RRIDs) [28] | Unambiguously identifies exact reagents used, as properties can vary between lots and suppliers. |

| Experimental Parameters | Precise values (e.g., temperature, time, concentration) [28] | Avoids ambiguities like "room temperature" or "store overnight," which can lead to procedural variations. |

| Sample Description | Relevant characteristics and preparation methods [28] | Provides necessary context for the experimental system and allows others to replicate the sample prep. |

| Workflow & Steps | A detailed, sequential description of the process [28] | Serves as the primary recipe for the experiment, enabling others to follow the same sequence of actions. |

Experimental Protocols

Protocol 1: Implementing a Linear Sequential Unmasking (LSU) Workflow

Objective: To minimize the influence of contextual and confirmation bias during the forensic examination of pattern evidence by controlling the sequence of information revelation [4].

Key Research Reagent Solutions & Materials:

- Case File: Contains all evidence and contextual information.

- Laboratory Information Management System (LIMS): Used to manage and restrict access to case information.

- Standard Operating Procedure (SOP) Document: Details the LSU steps and rules.

Methodology:

- Initial Analysis: The examiner is provided only with the evidence item requiring analysis (e.g., a latent fingerprint). All contextual information (e.g., suspect statements, other forensic reports) is withheld.

- Documentation: The examiner performs their analysis and documents their findings, conclusions, and confidence level based solely on the evidence.

- Controlled Revelation: The case manager or system then reveals the first piece of additional information, typically the known reference sample(s) (e.g., a suspect's fingerprint card).

- Comparison: The examiner compares their documented analysis from the Documentation step with the new information.

- Final Conclusion: The examiner integrates all information to form a final conclusion. This structured process helps isolate the examiner's objective analysis of the evidence from biasing contextual influences [4].

Protocol 2: Feature-Wise Mixing for Mitigating Contextual Bias in Predictive Models

Objective: To reduce contextual bias in supervised machine learning models by redistributing feature representations across multiple datasets, without requiring explicit identification of bias attributes [27].

Key Research Reagent Solutions & Materials:

- Source Datasets: Multiple datasets containing the predictive features and labels, presumed to contain different contextual biases.

- Computational Environment: Python/R environment with standard ML libraries (e.g., scikit-learn).

- Evaluation Metrics: Bias-sensitive loss functions (e.g., disparity metrics, Mean Squared Error).

Methodology:

- Dataset Preparation: Assemble your source datasets. The method is designed to work without pre-defined bias attributes.

- Feature-wise Mixing: Apply the feature-wise mixing framework. This involves systematically mixing or recombining feature columns from the different source datasets to create a new, blended training dataset. This process redistributes the feature representations that may be associated with contextual biases [27].

- Model Training: Train multiple ML classifiers (e.g., Logistic Regression, Decision Trees, Support Vector Machines) on the newly created mixed dataset using standard cross-validation techniques [27].

- Evaluation: Evaluate the trained models using the chosen bias-sensitive metrics and Mean Squared Error (MSE). Compare the results against models trained on the original, unmixed data or with other mitigation techniques like SMOTE [27].

- Validation: The protocol is considered successful if the models trained on the mixed dataset show a statistically significant reduction in bias metrics and MSE compared to baseline models [27].

Workflow Visualization

Forensic Bias Mitigation Strategy Map

Machine Learning Bias Mitigation Pathways

Implementing Practical Bias Mitigation Frameworks and Protocols

Troubleshooting Guides & FAQs

Common Implementation Challenges and Solutions

Q1: What is the most frequent error in applying LSU-E to non-comparative forensic domains, and how can it be resolved?

A: A common error is providing contextual information (e.g., presumed manner of death, investigative theories) to the expert before they have conducted an initial examination of the raw evidence. This violates the core LSU-E principle and introduces potential bias [29].

- Solution: Implement a strict protocol where all contextual information is withheld until after the analyst has performed and documented an initial assessment of the raw data or evidence. For example, crime scene investigators should not receive any case details until after they have initially seen the crime scene and documented their first impressions [29].

Q2: How can a laboratory manage the practical challenge of segregating information while still providing experts with the context needed to do their work?

A: This is a key implementation barrier. The solution is not to deprive experts of necessary information but to control the sequence in which it is presented [29].

- Solution: Adopt a phased information release system.

- Phase 1: Evidence-Centric Analysis. The analyst works solely with the raw data (e.g., the crime scene evidence, the digital hard drive, the biological sample).

- Phase 2: Contextual Integration. Only after documenting conclusions from Phase 1 is the analyst provided with relevant contextual information to aid in further interpretation, ensuring the initial judgment is driven by the evidence itself [29].

Q3: What are the major limitations of the original Linear Sequential Unmasking (LSU) framework that LSU-E aims to overcome?

A: The original LSU framework is limited in two significant ways [29]:

- Scope Limitation: It applies only to comparative decisions (e.g., comparing fingerprints or DNA profiles) and not to other forensic decisions like crime scene investigation or forensic pathology.

- Focus Limitation: Its function is limited to minimizing cognitive bias, rather than reducing noise and improving decision-making reliability more broadly.

LSU-E Procedural Troubleshooting

Q4: How can a laboratory objectively track the information an analyst received and when they received it?

A: Research recommends using a practical worksheet or checklist to document the information management process [30]. This tool bridges the gap between research and practice by providing a concrete mechanism to record:

- The pieces of information available in a case.

- The sequence in which they were disclosed to the analyst.

- The analyst's conclusions at each stage, thereby increasing the transparency and repeatability of the process [30].

Q5: Has LSU-E been successfully implemented in a working forensic laboratory?

A: Yes. The Department of Forensic Sciences in Costa Rica designed a pilot program that incorporated LSU-E, among other mitigation strategies. This program demonstrated that existing research recommendations can be used within laboratory systems to reduce error and bias in practice, providing a model for other laboratories [12].

Experimental Protocols & Methodologies

Core Protocol for Implementing LSU-E

The following methodology provides a step-by-step guide for integrating LSU-E into a forensic workflow, based on its foundational principles [29].

Objective: To minimize cognitive bias and reduce noise in forensic decision-making by optimizing the sequence of information processing.

Workflow:

- Information Audit: Identify all informational elements available in a case (e.g., evidence items, reference materials, witness statements, investigative hypotheses).

- Information Categorization: Classify each piece of information based on its objectivity, relevance to the specific forensic task, and potential biasing power [30].

- Sequencing: Establish a linear sequence for revealing information to the analyst, adhering to this rule: the most objective, task-relevant, and least biasing information must be presented first.

- Initial Analysis & Documentation: The analyst examines only the first piece of information (typically the raw, unknown evidence) and documents their findings and interpretations before proceeding.

- Sequential Unmasking: The next piece of information in the sequence is revealed. The analyst integrates this new information and documents any new or revised conclusions. This step repeats until all information has been considered.

- Blind Verification (Optional but Recommended): Where feasible, a second analyst should repeat the process without exposure to the first analyst's conclusions or any extraneous biasing information to verify results [12].

LSU-E Decision Workflow: This diagram visualizes the step-by-step process for implementing the LSU-E protocol, from information audit to final conclusion.
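The categorization and sequencing steps in the workflow above can be sketched as a simple sort. The 1 to 5 rating scale, the `InfoItem` and `lsu_e_sequence` names, and the tie-breaking order are all assumptions of this sketch. The LSU-E literature names the three criteria but this example does not reproduce any published scoring rule.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class InfoItem:
    """One piece of case information with hypothetical 1-5 ratings."""
    name: str
    objectivity: int     # 5 = most objective
    relevance: int       # 5 = most relevant to the forensic task
    biasing_power: int   # 5 = most likely to bias the analyst


def lsu_e_sequence(items: List[InfoItem]) -> List[InfoItem]:
    """Order items so the least biasing, most objective, most relevant come first.

    Sorting by biasing power first, then objectivity, then relevance is an
    assumption of this sketch, not a rule taken from the cited sources.
    """
    return sorted(
        items,
        key=lambda i: (i.biasing_power, -i.objectivity, -i.relevance),
    )


def unmasking_log(items: List[InfoItem],
                  conclusions: List[str]) -> List[Tuple[int, str, str]]:
    """Pair each disclosed item with the conclusion documented after it."""
    ordered = lsu_e_sequence(items)
    if len(conclusions) != len(ordered):
        raise ValueError("Document one conclusion per disclosed item.")
    return [
        (step, item.name, note)
        for step, (item, note) in enumerate(zip(ordered, conclusions), start=1)
    ]
```

The log returned by `unmasking_log` records what was disclosed, in what order, and what the analyst concluded at each stage. This is the same information a procedural worksheet is meant to capture.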

Key Experiment: Demonstrating Order Effects in Forensic Anthropology

Objective: To empirically demonstrate that the sequence of information processing can bias forensic conclusions, thereby validating the need for a framework like LSU-E.

Cited Methodology: [29]

- Independent Variable: The order in which skeletal material was analyzed (e.g., skull first vs. hip first).

- Dependent Variable: The resulting sex estimates for the skeletal remains.

- Procedure: Analysts were asked to estimate the sex of skeletal remains but were given different sequences of anatomical regions to analyze.

- Results: The study found that the order of analysis significantly biased the final sex estimates. For instance, starting with the skull led to different conclusions than starting with the hip bone, demonstrating a clear primacy effect where initial information disproportionately influences the final judgment.
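An order effect of this kind can be checked by cross-tabulating the order condition against the resulting estimate and testing for association. The source does not describe the statistical method the study used, so the sketch below uses Fisher's exact test for a 2x2 table as one conventional choice, implemented with the standard library only. Any counts passed to it here are hypothetical.

```python
from math import comb


def fisher_exact_two_sided(a: int, b: int, c: int, d: int) -> float:
    """Two-sided Fisher exact p-value for the 2x2 table [[a, b], [c, d]].

    Rows could be the order condition (e.g., skull first, hip first) and
    columns the estimate (e.g., male, female).
    """
    row1, row2, col1 = a + b, c + d, a + c
    total = row1 + row2

    def prob(x: int) -> float:
        # Hypergeometric probability of x in the top-left cell,
        # with all row and column totals held fixed.
        return comb(row1, x) * comb(row2, col1 - x) / comb(total, col1)

    observed = prob(a)
    lowest, highest = max(0, col1 - row2), min(row1, col1)
    # Sum the probabilities of every table no more likely than the observed one.
    return sum(
        p for p in (prob(x) for x in range(lowest, highest + 1))
        if p <= observed * (1 + 1e-9)
    )
```

For example, `fisher_exact_two_sided(14, 6, 7, 13)` would test hypothetical counts in which 14 of 20 analysts starting with the skull and 7 of 20 starting with the hip gave the same estimate. A small p-value would suggest the estimate depends on the order of analysis.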

Scope and Impact of Cognitive Bias in Forensic Science

The table below synthesizes evidence from the literature on the prevalence and impact of cognitive bias across forensic disciplines, underscoring the critical need for mitigation frameworks like LSU-E [29].

Table 1: Documented Reach of Cognitive Bias in Forensic Science

| Aspect of Bias | Documented Impact/Recognition | Key Sources / Domains |

|---|---|---|

| General Susceptibility | Recognized as a real and important issue impacting all domains of forensic decision-making. | National Academy of Sciences (2009); President's Council of Advisors on Science and Technology (PCAST); National Commission on Forensic Science [29]. |

| International Recognition | Guidance and concerns about bias have been issued by regulatory bodies worldwide. | United Kingdom's Forensic Science Regulator; Australian forensic authorities [29]. |

| Domain Prevalence | Effects observed and replicated across a wide range of forensic disciplines. | Fingerprinting, DNA, firearms, digital forensics, handwriting, pathology, anthropology, and crime scene investigation [29]. |

| Expert Susceptibility | Practicing forensic experts are susceptible to cognitive biases, which can operate without conscious awareness. | Documented among practicing forensic scientists; experts can be more susceptible than non-experts due to factors like escalation of commitment [29]. |

The Scientist's Toolkit: Essential Research Reagent Solutions

This table details the key components required for the implementation and study of bias mitigation strategies like LSU-E in a research or laboratory setting.

Table 2: Essential Components for Implementing LSU-E and Bias Research

| Tool / Component | Function in Research & Implementation |

|---|---|

| LSU-E Procedural Worksheet | A practical tool to guide labs and analysts in prioritizing and sequencing case information. It increases repeatability, reproducibility, and transparency [30]. |

| Blind Verification Protocol | A control procedure where a second examiner conducts an analysis without exposure to the first examiner's results or potentially biasing context, used to test and ensure reliability [12]. |

| Case Manager System | An administrative role or system responsible for controlling the flow of information to analysts, ensuring adherence to the LSU-E sequence and acting as a "case firewall" [12]. |

| Pilot Program Framework | A structured model for rolling out LSU-E in a single laboratory section first. This allows for barrier identification, protocol refinement, and demonstration of feasibility before lab-wide implementation [12]. |

Troubleshooting Guide: FAQs on Implementation and Workflow

This technical support guide addresses common challenges researchers and forensic professionals face when implementing bias mitigation protocols like Blind Verification and Case Manager systems into laboratory workflows.

Q1: What are the most common fallacies that hinder the adoption of cognitive bias mitigation procedures, and how can we counter them?

Researchers often hold misconceptions that impede implementation. The table below summarizes six common fallacies and evidence-based counterarguments [4].

| Fallacy | Reality Check |

|---|---|

| Ethical Issues: "Only bad people are biased." | Cognitive bias is not corruption or misconduct; it is a normal, automatic decision-making process with inherent limitations [4]. |

| Expert Immunity: "I am an expert, so I am not susceptible." | Expertise does not cure bias. Frequent decision-making may cause experts to rely more on automatic processes, increasing vulnerability [4]. |

| Technological Protection: "More AI and technology will solve subjectivity." | AI systems are built and interpreted by humans, so they reduce but do not eliminate bias effects [4]. |

| Blind Spot: "I know bias is an issue, but I am not vulnerable." | Most people exhibit a "bias blind spot," readily acknowledging general vulnerability but denying their own [4]. |

| Illusion of Control: "I'll just be mindful of bias during my analyses." | Willpower alone cannot overcome bias, as it occurs automatically and unconsciously. Systems must be built around examiners to catch bias [4]. |

| Bad Apples: "Only incompetent people are biased." | Bias is not a result of lack of skill or incompetence. It is a normal, efficient decision strategy [4]. |

Q2: Our laboratory is piloting a Case Manager system. What is the primary function of the Case Manager in controlling information flow?

The Case Manager acts as an information firewall. Their core function is to control the flow of task-irrelevant and potentially biasing contextual information to the examiner [4]. This includes segregating reference materials from the original evidence data during the initial examination phase to prevent confirmation bias, where an examiner might overemphasize similarities when comparing data and reference materials side-by-side [4].

Q3: During Blind Verification, the verifier reports difficulty reaching a conclusion without the original context. What is the proper procedure?

The verifier should never receive the original examiner's conclusion or the contextual details of the case. If the verifier cannot reach a conclusion based solely on the evidence presented, the result should be documented as "inconclusive" or "no conclusion." The verification must remain truly blind to be effective. Providing context or the initial result undermines the process, as seen in high-profile errors like the FBI's misidentification in the Madrid bombing case, where verifiers knew the initial conclusion from a respected colleague [4].

Q4: How can we validate that our Blind Verification and Case Manager protocols are effectively reducing cognitive bias?

Effectiveness should be measured through quantitative and qualitative metrics. Implement a pilot program and track key performance indicators over time. The table below outlines a framework for measuring protocol effectiveness [4].

| Metric Category | Specific Indicator | Goal |

|---|---|---|

| Workflow Integrity | Percentage of cases where Case Manager protocol was correctly followed. | >98% adherence to the established workflow. |

| | Rate of contextual information leaks to examiners. | Zero leaks. |

| Analytical Outcomes | Rate of inconclusive results from blind verifiers. | Stable or decreasing trend. |

| | Discordance rate between initial examination and blind verification. | Maintain a low, stable rate consistent with known error rates. |

| Operational Impact | Average time added to case completion. | Quantify and justify as a necessary cost for increased reliability. |

| | Staff feedback and acceptance scores. | Gradual improvement in acceptance and understanding. |
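The workflow-integrity and analytical-outcome indicators in the table can be computed directly from case audit records. The sketch below assumes a hypothetical `CaseRecord` structure, and its field names and the literal `"inconclusive"` label are illustrative. A real laboratory would map these fields onto whatever its LIMS actually exports.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class CaseRecord:
    """Hypothetical per-case audit record exported from a LIMS."""
    protocol_followed: bool
    context_leaked: bool
    initial_conclusion: str
    verifier_conclusion: Optional[str]  # None if no blind verification was run


def pilot_metrics(cases: List[CaseRecord]) -> Dict[str, float]:
    """Compute the workflow and outcome indicators as simple proportions."""
    if not cases:
        raise ValueError("No cases to summarise.")
    verified = [c for c in cases if c.verifier_conclusion is not None]
    # Discordance is only meaningful where the verifier reached a conclusion.
    decided = [c for c in verified if c.verifier_conclusion != "inconclusive"]
    return {
        "adherence_rate": sum(c.protocol_followed for c in cases) / len(cases),
        "leak_rate": sum(c.context_leaked for c in cases) / len(cases),
        "verifier_inconclusive_rate":
            (len(verified) - len(decided)) / len(verified) if verified else 0.0,
        "discordance_rate":
            sum(c.initial_conclusion != c.verifier_conclusion for c in decided)
            / len(decided) if decided else 0.0,
    }
```

Running `pilot_metrics` on each reporting period's cases yields a time series for each indicator, which is what the trend-based goals in the table require.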

Q5: What are the key sources of bias in forensic examinations that these systems are designed to address?

A 2020 summary identifies multiple compounding sources of bias. The Case Manager and Blind Verification systems directly target several of these, including the data itself, reference materials, and contextual information [4]. By controlling the flow of information, these systems help prevent pre-existing beliefs, expectations, and motives from inappropriately influencing the collection, perception, or interpretation of evidence [4].

Experimental Protocol: Implementing a Pilot Program for Bias Mitigation

The following protocol is adapted from a successful pilot program implemented in a questioned documents section, providing a model for systematic implementation [4].

Objective

To implement and evaluate a structured bias mitigation protocol within a forensic laboratory workflow, integrating Case Manager and Blind Verification procedures to enhance the reliability and objectivity of analytical results.

Materials and Reagent Solutions

| Item | Function in the Protocol |
|---|---|
| Laboratory Information Management System (LIMS) | An automated system for immutable record-keeping and tracking evidence movement, crucial for maintaining the chain-of-custody [31]. |
| Case Manager | A designated individual or role responsible for acting as an information firewall. They control the flow of all information to the examiner, ensuring only task-relevant data is provided [4]. |
| Blind Verifier | A second, independent examiner who performs the analysis without any knowledge of the initial examiner's findings, the case context, or any other potentially biasing information [4]. |
| Linear Sequential Unmasking-Expanded (LSU-E) Framework | A research-based tool that structures the examination process to reveal information to the examiner in a controlled, sequential manner, minimizing the risk of confirmation bias [4]. |
| Standardized Report Templates | Documentation that explains scientific conclusions using precise, defensible terminology and properly conveys statistical probabilities, avoiding overstatement [31]. |

Methodology

Pre-Implementation Phase

- Stakeholder Engagement: Conduct educational sessions to address common fallacies (see FAQ #1) and build consensus on the need for bias mitigation.

- Role Definition: Clearly define the responsibilities and authority of the Case Manager and Blind Verifiers.

- Workflow Mapping: Document the current evidence flow and identify all points where contextual information is introduced.

Case Manager Workflow Implementation

- Intake: All case materials and information are received by the Case Manager.

- Triage: The Case Manager assesses the case and segregates the evidence into two streams:

  - Examiner Stream: Contains only the data necessary for the initial analysis (e.g., the latent print).

  - Contextual Stream: Contains all other information (e.g., suspect information, reference materials, reports from other examiners).

- Assignment: The Case Manager assigns the decontextualized evidence from the Examiner Stream to an examiner for analysis.
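The intake, triage, and assignment steps above can be sketched as a simple partition of case items. This is a minimal illustration under stated assumptions: the category labels, the `TASK_RELEVANT` set, and the `triage` function are hypothetical, and deciding what counts as task-relevant remains a policy judgment for each laboratory and discipline.

```python
from dataclasses import dataclass, field

# Hypothetical category label for material the examiner needs at the outset.
TASK_RELEVANT = frozenset({"questioned_item"})


@dataclass
class CaseItem:
    item_id: str
    category: str  # e.g. "questioned_item", "suspect_info", "reference_material"


@dataclass
class TriagedCase:
    examiner_stream: list = field(default_factory=list)
    contextual_stream: list = field(default_factory=list)


def triage(items, task_relevant=TASK_RELEVANT):
    """Split incoming items into the Examiner Stream and the Contextual Stream."""
    case = TriagedCase()
    for item in items:
        if item.category in task_relevant:
            case.examiner_stream.append(item)
        else:
            # Everything else stays with the Case Manager until it is needed.
            case.contextual_stream.append(item)
    return case
```

Defaulting unknown categories to the Contextual Stream is a deliberate choice in this sketch: an unclassified item is withheld rather than released by accident.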

Blind Verification Workflow Implementation

- Initial Examination: The first examiner completes their analysis and documents their conclusion in a report submitted to the Case Manager.

- Verification Assignment: The Case Manager prepares a new case file for the verifier, containing only the original evidence. The initial examiner's report is excluded.

- Independent Verification: The blind verifier conducts a full, independent analysis without access to the initial findings or contextual data.

- Conclusion Comparison: The Case Manager compares the two results. Any discordance is managed according to predefined laboratory policy (e.g., escalation to a third expert or panel).
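The verification assignment and conclusion comparison steps might look like the following sketch. The dictionary keys (`case_id`, `evidence`) and the function names are assumptions made for illustration; the escalation outcome simply stands in for whatever predefined policy the laboratory has adopted.

```python
def build_verification_file(case_file):
    """Return a new file holding only the original evidence.

    The initial examiner's report and any contextual notes are left out
    by construction, because only whitelisted keys are copied.
    """
    return {
        "case_id": case_file["case_id"],
        "evidence": list(case_file["evidence"]),
    }


def compare_conclusions(initial, verifier):
    """Case Manager's comparison: concordant results pass, others escalate."""
    if initial == verifier:
        return "concordant"
    # Placeholder for laboratory policy, e.g. referral to a third expert or panel.
    return "escalate"
```

Copying an explicit whitelist of fields, rather than deleting known sensitive fields, means that a newly added field in the case file is excluded from the verifier's packet unless someone decides to include it.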

Data Collection and Analysis

- Track the metrics outlined in FAQ #4 for a predetermined pilot period (e.g., 6-12 months).

- Use the data to refine the protocols, demonstrate the value to stakeholders, and justify permanent implementation.

Visualization of Workflow

The following diagram illustrates the controlled information flow, highlighting how the Case Manager acts as a critical firewall.

This technical support center provides practical guidance for researchers and forensic professionals implementing Linear Sequential Unmasking-Expanded (LSU-E) worksheets to mitigate contextual bias in laboratory workflows.

Troubleshooting Guides and FAQs

Data Collection and Preparation Phase

Q: What is the most common source of bias in forensic data collection? A: Human biases are the dominant origin of the biases observed in analytical workflows. These include implicit bias (subconscious attitudes or stereotypes) and systemic bias (broader institutional norms, practices, or policies that can lead to societal harm or inequities). Such biases are rarely introduced deliberately; they reflect historic or prevalent human perceptions that can manifest across various stages of analytical development [32].

Q: How can we minimize exposure to irrelevant contextual information during evidence examination? A: Implement the case manager model, which separates functions in the laboratory between case managers and examiners. Case managers can be fully informed about context, while forensic examiners receive only the information needed for their specific analytical tasks. This prevents exposure to potentially biasing information that doesn't contribute to the scientific examination [13].

Q: What practical steps can individual practitioners take to reduce cognitive bias in their work? A: Practitioners can adopt several specific actions: ground work in evidence, create structures that encourage scrutiny, implement blind verification procedures, and maintain a questioning mindset that critically assesses evidence. These approaches allow practitioners to take ownership of minimizing cognitive bias [7].

Analysis and Interpretation Phase

Q: Our team often experiences confirmation bias. How can LSU-E worksheets help? A: LSU-E worksheets directly address confirmation bias (the tendency to seek, interpret, and remember information that confirms pre-existing beliefs) by structuring the analytical process. The worksheets enforce documentation of initial observations before exposure to reference materials, preventing "tunnel vision." Teams should also conduct blind re-examination, where key judgments are replicated by a second examiner not exposed to potentially biasing information [33] [13].

Q: What should we do when different analysts reach conflicting conclusions using the same worksheet? A: This may indicate anchoring bias (relying too heavily on first impressions) or the Dunning-Kruger effect (overestimating competence). Implement a structured consensus process where each analyst presents their documented observations from the worksheet. Focus discussion on the evidence rather than opinions, and consider bringing in a neutral third party with relevant expertise [33].

Q: How can we maintain worksheet consistency when dealing with complex, multi-part evidence? A: Break down complex evidence into discrete analytical units, with separate worksheet sections for each. Maintain a clear chain of documentation that shows how each piece was evaluated individually before integrated conclusions were drawn. This approach manages complexity while preserving analytical rigor [34] [13].

Implementation and Verification Phase

Q: What metrics should we track to evaluate the effectiveness of our LSU-E implementation? A: Monitor both process and outcome metrics. Process metrics include documentation completeness rates and adherence to sequencing protocols. Outcome metrics should track inter-rater reliability, reduction in contradictory findings, and quantitative bias assessments using established fairness metrics where applicable [32] [35].

Q: How can we adapt LSU-E worksheets for different types of forensic analysis? A: While maintaining core principles, customize worksheet templates to specific analytical domains. The key is preserving the sequential revelation of information, not standardizing every detail. Create domain-specific versions that address unique aspects of different evidence types while maintaining the unbiased examination sequence [34] [13].

Q: What is the most common implementation error when first adopting structured worksheets? A: The planning fallacy: underestimating the time, cost, and risks involved in completing a task, despite experience suggesting otherwise. Teams often create overly optimistic timelines for worksheet completion. Mitigate this by tracking actual time requirements during the initial implementation phase and adjusting expectations accordingly [33].

Experimental Protocols and Methodologies

Protocol 1: Bias Assessment in Existing Workflows

Purpose: Establish baseline bias measurements before implementing LSU-E worksheets [35].

Materials: Historical case data, assessment worksheets, statistical analysis software

Procedure:

- Select a representative sample of completed cases (minimum n=30 recommended)

- Document all available contextual information originally present during analysis

- Measure subgroup performance variations using appropriate fairness metrics

- Calculate Equal Opportunity Difference (EOD) values comparing false negative rates

- Identify specific bias patterns across different contextual factors

Table 1: Bias Assessment Metrics and Interpretation

| Metric | Calculation | Acceptance Threshold | Purpose |
|---|---|---|---|
| Equal Opportunity Difference (EOD) | Difference in false negative rates between subgroups | <5 percentage points [35] | Measures fairness across demographic groups |
| Inter-rater Reliability | Percentage agreement between independent examiners | >90% for major conclusions | Assesses analytical consistency |
| Contextual Influence Index | Rate of conclusion changes when context is modified | <5% variation | Quantifies susceptibility to contextual bias |
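The three metrics in Table 1 reduce to short calculations once the data are tabulated. The sketch below is one possible reading, not a reference implementation: the function names are hypothetical, the sign convention for EOD (group `a` minus group `b`) is an assumption, and the false negative rate requires ground-truth labels, which are typically available only for proficiency tests or other known-source samples.

```python
def false_negative_rate(y_true, y_pred):
    """Share of true positives (label 1) that were called negative (0)."""
    positives = [p for t, p in zip(y_true, y_pred) if t == 1]
    if not positives:
        raise ValueError("no positive ground-truth cases")
    return sum(p == 0 for p in positives) / len(positives)


def equal_opportunity_difference(y_true, y_pred, groups, a, b):
    """FNR of subgroup a minus FNR of subgroup b, in percentage points."""
    def fnr_for(g):
        idx = [i for i, x in enumerate(groups) if x == g]
        return false_negative_rate(
            [y_true[i] for i in idx], [y_pred[i] for i in idx]
        )
    return 100 * (fnr_for(a) - fnr_for(b))


def percent_agreement(first, second):
    """Inter-rater reliability as simple percentage agreement."""
    if len(first) != len(second) or not first:
        raise ValueError("conclusion lists must be non-empty and equal length")
    return 100 * sum(x == y for x, y in zip(first, second)) / len(first)


def contextual_influence_index(without_context, with_context):
    """Percentage of cases whose conclusion changed when context was varied."""
    return 100 - percent_agreement(without_context, with_context)
```

Comparing the absolute value of the EOD against the 5-percentage-point threshold avoids depending on which subgroup is listed first.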

Protocol 2: LSU-E Worksheet Implementation

Purpose: Integrate sequential unmasking into daily laboratory practice [13].

Materials: LSU-E worksheets, case management system, blinding protocols

Procedure:

- Case Intake: Case manager receives all contextual information

- Evidence Preparation: Remove all identifying and contextual details not essential for analysis

- Initial Examination: Analyst completes sections 1-3 of worksheet documenting:

  - Physical characteristics of evidence

  - Class characteristics

  - Initial observations and measurements

- Sequential Revelation: Case manager provides specific reference materials requested by analyst

- Comparative Analysis: Analyst completes sections 4-6 documenting comparison process

- Verification: Second analyst performs blind re-examination of key findings

- Contextual Integration: Case manager and analyst jointly review contextual information and document its influence (if any) on conclusions
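One way to make the sequence in this procedure enforceable in software is to model the worksheet as a small state machine that refuses out-of-order entries. The class below is a hypothetical sketch: the stage names paraphrase the procedure steps that follow intake and evidence preparation, and `LSUEWorksheet` is not an existing tool or a published LSU-E template.

```python
class LSUEWorksheet:
    """Worksheet that only accepts entries for the current stage."""

    # Stage names are illustrative labels for the procedure steps.
    STAGES = (
        "initial_examination",
        "sequential_revelation",
        "comparative_analysis",
        "verification",
        "contextual_integration",
    )

    def __init__(self, case_id):
        self.case_id = case_id
        self._index = 0
        self.entries = []  # (stage, note) tuples in the order recorded

    @property
    def stage(self):
        return self.STAGES[self._index]

    def record(self, stage, note):
        if stage != self.stage:
            raise RuntimeError(f"cannot record '{stage}' during '{self.stage}'")
        self.entries.append((stage, note))

    def advance(self):
        # Require documentation of the current stage before moving on.
        if not any(s == self.stage for s, _ in self.entries):
            raise RuntimeError(f"stage '{self.stage}' has no documented entry")
        if self._index == len(self.STAGES) - 1:
            raise RuntimeError("worksheet is already at the final stage")
        self._index += 1

    def release_reference(self, material):
        # Reference material is released only after the initial examination.
        if self.stage != "sequential_revelation":
            raise PermissionError("initial examination must be documented first")
        self.record(self.stage, f"reference released: {material}")
```

Because entries are appended in order and never edited, the resulting list doubles as a record of what the analyst knew at each point in the examination.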

LSU-E Worksheet Implementation Workflow

Protocol 3: Threshold Adjustment for Algorithmic Bias Mitigation

Purpose: Address performance disparities across subgroups in analytical algorithms [36] [35].

Materials: Classification algorithms, performance data across subgroups, threshold adjustment tools

Procedure:

- Establish baseline performance metrics for all relevant subgroups

- Identify subgroups with meaningful performance disparities (EOD >5 percentage points)

- Calculate optimal thresholds for each subgroup to minimize EOD

- Implement adjusted thresholds in analytical workflows

- Validate that accuracy reduction remains <10% and alert rate change <20%

- Document mitigation effectiveness for ongoing improvement
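The procedure above can be sketched for the two-subgroup case as a grid search over candidate thresholds. This is a simplified illustration, not the method used in the cited studies: the function names are hypothetical, the reference subgroup keeps the baseline threshold, and the 10% and 20% limits are interpreted here, as an assumption, as absolute changes in proportion for the adjusted subgroup.

```python
def fnr(scores, labels, thr):
    """False negative rate: true positives scored below the threshold."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    return sum(s < thr for s in pos) / len(pos)


def accuracy(scores, labels, thr):
    return sum((s >= thr) == (y == 1) for s, y in zip(scores, labels)) / len(labels)


def adjust_threshold(ref, other, base_thr=0.5, candidates=None):
    """Pick the threshold for `other` whose FNR best matches the reference FNR.

    `ref` and `other` are (scores, labels) tuples. Ties are broken in favour
    of the candidate closest to the baseline threshold.
    """
    candidates = candidates or [i / 20 for i in range(1, 20)]
    target = fnr(*ref, base_thr)
    return min(
        candidates,
        key=lambda t: (abs(fnr(*other, t) - target), abs(t - base_thr)),
    )


def validate(scores, labels, old_thr, new_thr,
             max_acc_drop=0.10, max_alert_change=0.20):
    """Check the accuracy and alert-rate limits for one subgroup."""
    def alert_rate(t):
        return sum(s >= t for s in scores) / len(scores)
    acc_drop = accuracy(scores, labels, old_thr) - accuracy(scores, labels, new_thr)
    alert_change = abs(alert_rate(new_thr) - alert_rate(old_thr))
    return {
        "accuracy_ok": acc_drop < max_acc_drop,
        "alert_rate_ok": alert_change < max_alert_change,
    }
```

If `validate` reports a failed limit, the adjusted threshold should be treated as a candidate for review rather than deployed automatically, since equalizing error rates can shift workload or accuracy in ways the laboratory needs to justify.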

Table 2: Post-Processing Bias Mitigation Methods Comparison

| Method | Effectiveness | Accuracy Impact | Implementation Complexity | Best Use Cases |
|---|---|---|---|---|
| Threshold Adjustment | High (8/9 trials showed bias reduction) [36] | Low loss | Low | Binary classification models |
| Reject Option Classification | Moderate (5/8 trials showed bias reduction) [36] | Low loss | Medium | High-stakes decisions with uncertainty |
| Calibration | Moderate (4/8 trials showed bias reduction) [36] | No loss | Medium | Probabilistic predictions |
| Feature-Wise Mixing | High (43.35% average bias reduction) [27] | Statistically significant improvement | High | Complex predictive models |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Bias-Aware Forensic Research

| Item | Function | Implementation Example |
|---|---|---|
| LSU-E Worksheets | Structured documentation for sequential unmasking | Customizable templates for different evidence types [13] |
| Case Management System | Controls information flow to examiners | Implements case manager model [13] |
| Blinding Protocols | Prevents exposure to biasing information | Standard operating procedures for evidence preparation [34] |
| Bias Assessment Metrics | Quantifies fairness and performance disparities | Equal Opportunity Difference, demographic parity [32] [35] |
| Threshold Adjustment Tools | Implements post-processing bias mitigation | Aequitas, custom Python scripts [36] [35] |
| Adversarial Validation Sets | Tests system robustness to contextual bias | Artificially created datasets with controlled contextual variables [37] |
| Statistical Analysis Package | Measures inter-rater reliability and bias metrics | R, Python with fairness libraries [36] [35] |

Bias Mitigation Framework Overview

ISO/IEC 17025 Accreditation as a Foundation for Impartiality and Quality Management

Troubleshooting Guides & FAQs