Strategic Sample Preparation: Optimizing Protocols for Diverse Evidence Types in Biomedical Research

This article provides a comprehensive guide for researchers and drug development professionals on optimizing sample preparation, a critical yet often rate-limiting step in analytical workflows.

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on optimizing sample preparation, a critical yet often rate-limiting step in analytical workflows. Covering foundational principles to advanced applications, it explores high-performance strategies for techniques including mass spectrometry, NGS, ELISA, and Western blotting. The content delivers practical methodologies, targeted troubleshooting for common pitfalls, and validation frameworks to enhance accuracy, reproducibility, and sensitivity across diverse sample types, from proteins and nucleic acids to complex biological matrices.

The Critical Role of Sample Preparation: Foundations for Reproducible Science

Why Sample Preparation is the Rate-Limiting Step in Analytical Workflows

In modern analytical science, sample preparation is frequently the rate-limiting step, consuming over 60% of total analysis time in chromatographic methods and accounting for approximately one-third of all analytical errors [1]. Its purpose is to isolate target analytes from complex matrices. Because this separation does not occur spontaneously, it often requires auxiliary phases or external energy, which makes it a significant bottleneck in developing robust and reliable analytical methods [1].

This technical support center provides troubleshooting guides and FAQs to help researchers overcome common sample preparation challenges, enhance reproducibility, and streamline their analytical workflows.

The Sample Preparation Bottleneck: Core Concepts

Quantitative Impact on Analytical Workflows

The following table summarizes key data points that illustrate why sample preparation is often the slowest part of an analytical process [1].

| Performance Metric | Impact of Sample Preparation |
|---|---|
| Time Consumption | Consumes >60% of total analysis time in chromatographic analyses [1]. |
| Error Contribution | Responsible for approximately one-third of all analytical errors [1]. |
| Reproducibility Impact | Protocol missteps account for over 10% of experimental reproducibility failures [2]. |
| Automation Benefit | Automated screening reduced manual screening time by an estimated 382 hours over 3 years in one implementation [3]. |

Fundamental Reasons for the Bottleneck

Sample preparation becomes rate-limiting due to several inherent challenges:

- Complex Matrices: Real-world samples contain numerous interfering substances that must be removed to isolate target analytes [1].

- Ultra-Trace Analysis: Target analytes often exist at very low concentrations alongside much more abundant matrix components [1].

- Manually Intensive Processes: Traditional methods are often labor-intensive and prone to human error [4] [2].

- Equilibrium Requirements: Processes like extraction require time to reach equilibrium and cannot be rushed without sacrificing efficiency [1].

Troubleshooting Guides and FAQs

Common Sample Preparation Errors and Solutions

| Common Error | Impact | Prevention & Solution |
|---|---|---|
| Measurement Inaccuracies [2] | Small inaccuracies amplify into invalid results; affects reproducibility. | Use calibrated pipettes and balances; verify technique; use the appropriate tool for the volume (e.g., micropipette for µL volumes). |
| Cross-Contamination [2] | False positives/negatives; compromised data integrity. | Always use fresh pipette tips; clean surfaces and equipment properly. |
| Incomplete Solubilization/Extraction [5] | Low analyte recovery; inaccurate concentration measurements. | Follow validated methods for sonication/shaking time and diluent composition; visually inspect for undissolved particles. |
| Improper Filtration [5] | Clogged columns/instruments; particle introduction in U/HPLC. | Use correct filter size (e.g., 0.45 µm or 0.2 µm); discard first 0.5 mL of filtrate; select compatible membrane material. |
| Poor Documentation [2] | Irreproducible results; inability to trace error sources. | Maintain detailed, real-time lab notebook recording all deviations and observations. |

Frequently Asked Questions

Q1: Our sample prep is our biggest bottleneck. What are the main strategic approaches to improve it? The four principal high-performance strategies are [1]:

- Functional Materials: Using advanced sorbents (e.g., MOFs, COFs, MIPs) to enhance selectivity and sensitivity during extraction and enrichment [1].

- Chemical/Biological Reactions: Employing derivatization or enzymatic reactions to convert analytes into more detectable forms [1].

- External Energy Fields: Applying ultrasound, microwave, or thermal energy to accelerate mass transfer and kinetics [1].

- Specialized Devices: Implementing automated, miniaturized, or online devices to improve precision, accuracy, and throughput [1].

Q2: How can I improve the recovery of intact proteins from a complex biological matrix like plasma? Intact protein analysis is challenging due to nonspecific binding and matrix interference. Immunoaffinity methods are selective but expensive, and simpler alternatives are emerging [6]. Micro-elution solid-phase extraction (μSPE) is one promising technique. Key considerations [6]:

- Format: Use a μSPE microplate format to handle small sample volumes.

- Elution: Elute into small volumes (as low as 25 µL) to avoid drying and reconstitution steps, which can cause significant protein loss.

- Recovery: Under optimized conditions, recoveries of >50% in serum and plasma for most lower molecular weight intact proteins (<30 kDa) are achievable.
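A recovery figure such as the >50% quoted above is commonly obtained by comparing a sample spiked before extraction with a blank extract spiked after extraction. The sketch below illustrates that arithmetic only; the function name and the peak areas are hypothetical, and the pre-/post-spike convention is an assumption rather than the procedure documented in [6].

```python
def extraction_recovery_pct(pre_spike_response: float,
                            post_spike_response: float) -> float:
    """Percent recovery: analyte spiked BEFORE extraction relative to
    analyte spiked into a blank extract AFTER extraction."""
    if post_spike_response <= 0:
        raise ValueError("post-extraction response must be positive")
    return 100.0 * pre_spike_response / post_spike_response


# Hypothetical peak areas for a small intact protein in plasma.
recovery = extraction_recovery_pct(pre_spike_response=6.2e5,
                                   post_spike_response=1.0e6)
meets_target = recovery > 50.0  # the >50% figure cited in the text
print(f"Recovery: {recovery:.1f}% (meets >50% target: {meets_target})")
```

Because both measurements are made in extracted matrix, this ratio isolates extraction losses from ionization effects.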

Q3: What are the critical steps for preparing a simple drug substance (API) powder for a potency assay? The "dilute and shoot" approach for a Drug Substance (DS) requires extreme precision [5]:

- Weighing: Accurately weigh 25-50 mg of DS (to ±0.1 mg) using a five-place analytical balance. Use a folded weighing paper or boat to facilitate transfer. For hygroscopic materials, allow refrigerated samples to reach room temperature before opening, and handle them quickly [5].

- Transfer & Solubilization: Quantitatively transfer all powder to a Class A volumetric flask. Use the validated diluent (often acidified water or buffer with organic solvent for low-solubility APIs) and solubilize via sonication or shaking for the specified time [5].

- Precaution: Filtration of the final DS solution is generally discouraged, as regulatory agencies do not expect particulates in a pure substance [5].
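The weigh-and-dilute steps above reduce to simple arithmetic: mass divided by flask volume gives the nominal concentration, and the assay result is expressed relative to it. The following is a minimal sketch with hypothetical names and values (including the 50 mL flask and the measured concentration); it is not taken from [5].

```python
def nominal_concentration_mg_per_ml(mass_mg: float,
                                    flask_volume_ml: float,
                                    purity_fraction: float = 1.0) -> float:
    """Nominal analyte concentration after quantitative transfer and
    dilution to volume in a volumetric flask."""
    if mass_mg <= 0 or flask_volume_ml <= 0:
        raise ValueError("mass and volume must be positive")
    if not 0.0 < purity_fraction <= 1.0:
        raise ValueError("purity_fraction must be in (0, 1]")
    return mass_mg * purity_fraction / flask_volume_ml


def potency_pct(measured_mg_per_ml: float, nominal_mg_per_ml: float) -> float:
    """Assay result as a percentage of the nominal concentration."""
    return 100.0 * measured_mg_per_ml / nominal_mg_per_ml


# Hypothetical example: 25.0 mg of DS diluted to volume in a 50 mL flask.
nominal = nominal_concentration_mg_per_ml(mass_mg=25.0, flask_volume_ml=50.0)
potency = potency_pct(measured_mg_per_ml=0.495, nominal_mg_per_ml=nominal)
print(f"Nominal: {nominal:.3f} mg/mL, potency: {potency:.1f}%")
```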

Q4: How can automation specifically help reduce errors in my sample prep workflow? Automation addresses several key sources of manual error [4]:

- Consistency: An automated liquid handler (e.g., Hamilton Microlab STAR) performs all liquid transfers with high precision, eliminating inconsistencies between analysts and runs [4].

- Complex Protocols: It reliably executes multi-step workflows (e.g., adding internal standard, mixing, loading, washing, and eluting for SPE), reducing errors in complex methods [4].

- Traceability: Automated systems log all actions, improving documentation and compliance.

Essential Workflows and Visual Guides

Logical Troubleshooting Pathway for Failed Sample Prep

When encountering a problem, follow a systematic approach to identify the root cause.

[Diagram not reproduced: logical decision-making pathway for troubleshooting failed sample preparation.]

Standardized Workflow for Solid Oral Drug Product Preparation

A typical "grind, extract, and filter" workflow for tablets or capsules is essential for obtaining accurate and reproducible results in pharmaceutical analysis [5].

The Scientist's Toolkit: Key Research Reagent Solutions

Selecting the appropriate materials and reagents is fundamental to successful sample preparation. The following table details essential items and their functions in a typical lab.

| Tool/Reagent | Primary Function | Key Application Notes |
|---|---|---|
| Analytical Balance | High-precision weighing of samples and standards. | A 5-place balance is standard for DS weighing (to ±0.1 mg); requires regular calibration [5]. |
| Volumetric Flask (Class A) | Precise preparation of standard and sample solutions. | Ensures accurate final volume; verify flask size is correct before use [5]. |
| Diluent | Liquid medium to dissolve and stabilize the analyte. | Composition is critical (e.g., acidified water for weak bases); must be compatible with HPLC mobile phase [5]. |
| Syringe Filter (0.45 µm) | Removes insoluble particulates from sample solutions. | Essential for drug products; nylon or PTFE membranes are common; discard first 0.5 mL filtrate [5]. |
| Solid-Phase Extraction (SPE) Sorbent | Selectively isolates and concentrates analytes from a liquid sample. | Functionalized materials (e.g., Oasis MCX for cations) enhance selectivity; μElution plates allow for small elution volumes [4] [6]. |
| Ultrasonic Bath or Shaker | Facilitates dissolution and extraction of the analyte from the matrix. | Provides consistent energy input; extraction time must be optimized and validated [5]. |
| Automated Liquid Handler | Precisely dispenses and transfers liquids without manual intervention. | Reduces human error and improves reproducibility in complex protocols (e.g., SPE) [4]. |

Core Concepts and Troubleshooting Guides

What are the fundamental challenges when analyzing complex samples?

Analyzing complex samples such as biological fluids, tissues, or environmental extracts presents three interconnected fundamental challenges: selectivity, sensitivity, and matrix effects. Understanding these concepts is crucial for developing reliable analytical methods.

Selectivity is the ability of an analytical method to distinguish and quantify the target analyte in the presence of other components in the sample. In complex matrices, numerous interfering substances may co-elute with the analyte, leading to inaccurate results. Liquid chromatography-tandem mass spectrometry (LC/MS/MS) provides high specificity by monitoring selected mass ions, but chromatographic separation remains critical because co-eluting substances can significantly affect the ionization process [7].

Sensitivity refers to the ability of a method to detect and quantify trace levels of analytes. It is often defined by limits of detection (LOD) and quantification (LOQ). Proper sample preparation, such as preconcentration techniques, can enhance sensitivity by isolating and concentrating target analytes while removing interfering substances [8] [9].
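One widely used convention for estimating LOD and LOQ is the calibration-curve approach described in ICH Q2, where LOD = 3.3σ/S and LOQ = 10σ/S (σ is the standard deviation of the response and S is the calibration slope). The sketch below applies that convention; the function name and input values are hypothetical, and other definitions (e.g., signal-to-noise based) may be more appropriate for a given method.

```python
from typing import Tuple


def detection_limits(sigma: float, slope: float) -> Tuple[float, float]:
    """Return (LOD, LOQ) in concentration units using the
    calibration-curve convention: LOD = 3.3*sigma/S, LOQ = 10*sigma/S."""
    if sigma < 0:
        raise ValueError("sigma must be non-negative")
    if slope <= 0:
        raise ValueError("slope must be positive")
    return 3.3 * sigma / slope, 10.0 * sigma / slope


# Hypothetical values: response SD of 2.0 area units and a slope of
# 100 area units per ng/mL.
lod, loq = detection_limits(sigma=2.0, slope=100.0)
print(f"LOD = {lod:.3f} ng/mL, LOQ = {loq:.3f} ng/mL")
```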

Matrix Effects are the combined impact of all sample components other than the analyte on its measurement. These effects can cause signal suppression or enhancement, particularly in mass spectrometry-based methods. Matrix effects occur when co-eluting endogenous substances compete with the analyte for charge during the ionization process in the mass spectrometer, leading to unreliable quantitative results [7] [10]. Electrospray ionization (ESI) is known to be more prone to ion suppression than atmospheric pressure chemical ionization (APCI) [7].

Troubleshooting Common Problems

Problem: Inconsistent or inaccurate quantification results.

- Potential Cause: Matrix effects from co-eluting compounds, such as phospholipids in plasma samples, causing ion suppression or enhancement [7] [10].

- Solution:

- Improve sample clean-up by moving from simple protein precipitation to more selective techniques like solid-phase extraction (SPE). SPE can provide a ten-fold reduction in phospholipid interference [10].

- Optimize the LC method to shift the retention time of the analyte away from the region where matrix components elute. This can involve testing different gradient conditions [7].

- Use a stable isotopically labeled internal standard (IS), which experiences the same ionization effects as the analyte and can effectively correct the analyte response [8] [7].
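To illustrate why a co-eluting stable isotope labeled internal standard corrects for ion suppression, the sketch below applies the same hypothetical suppression factor to both analyte and IS and shows that their response ratio is unchanged. All names and numbers are illustrative assumptions; real suppression is only approximately equal for analyte and IS.

```python
def response_ratio(analyte_area: float, is_area: float) -> float:
    """Analyte-to-internal-standard peak area ratio used for quantification."""
    if is_area <= 0:
        raise ValueError("internal standard area must be positive")
    return analyte_area / is_area


# Hypothetical peak areas in a clean (neat) solution.
analyte_neat, is_neat = 1.0e6, 5.0e5

# Assume the matrix suppresses ionization of both species by 40%.
suppression_factor = 0.6
analyte_matrix = analyte_neat * suppression_factor
is_matrix = is_neat * suppression_factor

ratio_neat = response_ratio(analyte_neat, is_neat)
ratio_matrix = response_ratio(analyte_matrix, is_matrix)

# The raw analyte signal drops by 40%, but the ratio is preserved.
print(f"Raw signal change: {100 * (analyte_matrix / analyte_neat - 1):.0f}%")
print(f"Ratio neat = {ratio_neat:.3f}, ratio in matrix = {ratio_matrix:.3f}")
```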

Problem: Poor sensitivity, failing to achieve low detection limits.

- Potential Cause: Inefficient extraction or excessive dilution during sample preparation, or high background noise from the sample matrix [8] [9].

- Solution:

- Re-evaluate the sample preparation method. Techniques like liquid-liquid extraction (LLE) or solid-phase extraction (SPE) can preconcentrate the analytes and remove interfering matrix components [8].

- For liquid chromatography, consider comprehensive two-dimensional liquid chromatography (LC × LC). This technique offers higher separation power and peak capacity, which can reduce matrix effects and improve sensitivity for complex samples [11].

- Ensure the use of appropriate internal standards. Nitrogen-15 (15N) and carbon-13 (13C) labeled internal standards are often preferred over deuterated standards to eliminate chromatographic deuterium isotope effects that can impact precision [8].
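The sensitivity gain from preconcentration can be estimated from the volume ratio and the extraction recovery. The following sketch is a simplified model with hypothetical names and values; it ignores losses during evaporation and reconstitution.

```python
def enrichment_factor(sample_volume_ml: float,
                      final_volume_ml: float,
                      recovery_fraction: float = 1.0) -> float:
    """Approximate concentration gain from an extraction step:
    (sample volume / final extract volume) * fractional recovery."""
    if sample_volume_ml <= 0 or final_volume_ml <= 0:
        raise ValueError("volumes must be positive")
    if not 0.0 <= recovery_fraction <= 1.0:
        raise ValueError("recovery_fraction must be between 0 and 1")
    return (sample_volume_ml / final_volume_ml) * recovery_fraction


# Hypothetical SPE step: load 1.0 mL, reconstitute in 0.1 mL, 80% recovery.
ef = enrichment_factor(sample_volume_ml=1.0, final_volume_ml=0.1,
                       recovery_fraction=0.8)

# Hypothetical dilute-and-shoot step: 0.1 mL sample diluted to 1.0 mL.
dilution = enrichment_factor(sample_volume_ml=0.1, final_volume_ml=1.0)

print(f"SPE enrichment: {ef:.1f}x, dilution: {dilution:.1f}x")
```

A factor below 1 indicates net dilution, which is one way excessive dilution can push an analyte below the detection limit.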

Problem: Analytical column degradation or system clogging.

- Potential Cause: Inadequate removal of particulate matter or damaging matrix components (e.g., proteins) from the sample prior to injection [12] [10].

- Solution:

- Implement or improve filtration. Use a syringe filter with a membrane material compatible with your solvent and sample. For samples heavy in particulates, use a multilayer syringe filter with a prefilter to prevent clogging [12].

- For biological samples, ensure effective protein precipitation or removal. While protein precipitation is simple, it may not provide a very clean final extract, leading to downstream issues [7].

Detailed Experimental Protocols

Protocol for Evaluating and Mitigating Matrix Effect in LC-MS/MS

Objective: To identify and correct for matrix-mediated ion suppression/enhancement in a quantitative LC-MS/MS method for biological samples.

Materials and Reagents:

- Samples: Blank matrix (e.g., human plasma), quality control samples, and study samples [7].

- Chemicals: Acetonitrile, methanol, water, and formic acid (all LC/MS grade) [7].

- Equipment: LC-MS/MS system with electrospray ionization (e.g., Shimadzu UFLC system coupled to an API4000 triple quadrupole mass spectrometer) [7].

- Consumables: LC column (e.g., Phenomenex Synergi C18, 150 × 2.0 mm, 4 μm), guard cartridge, SPE cartridges for clean-up (e.g., Strata-X PRO) [7] [10].

Procedure:

- Sample Preparation:

- Option A (Minimal Clean-up): Precipitate proteins by adding a volume of organic solvent (e.g., acetonitrile) to the plasma sample, vortex mix, and centrifuge. Transfer the supernatant for analysis [7] [10].

- Option B (Enhanced Clean-up): Use solid-phase extraction. Condition an SPE cartridge with methanol and water. Load the sample, wash with appropriate solvents to remove impurities, and elute the analyte with a strong solvent. Evaporate the eluate and reconstitute it for analysis [10].

- LC-MS/MS Analysis:

- Chromatography: Utilize a gradient elution. For example, use a mobile phase of water with 0.1% formic acid (Solvent A) and acetonitrile with 0.1% formic acid (Solvent B). A sample gradient could be: 5% B (0–0.5 min), 5–35% B (0.5–1.5 min), 35–55% B (2.5–4.5 min), then re-equilibrate to 5% B [7].

- Mass Spectrometry: Operate in multiple reaction monitoring (MRM) mode. Optimize compound-specific parameters like declustering potential (DP) and collision energy (CE) for each analyte [7].

- Matrix Effect Assessment:

- Compare the instrument response for the analyte in a neat solution to the response for the analyte spiked into an extracted blank matrix.

- A significant difference in response indicates a matrix effect. The matrix effect (ME) can be calculated as:

ME (%) = (Response in matrix / Response in neat solution) × 100%. A value of 100% indicates no effect, <100% indicates suppression, and >100% indicates enhancement [10].
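The matrix effect formula above can be sketched in a few lines. The function names, the example peak areas, and in particular the ±15% band used to decide what counts as "significant" are assumptions for illustration; acceptance limits should come from your own validation criteria.

```python
def matrix_effect_pct(response_in_matrix: float,
                      response_in_neat: float) -> float:
    """ME (%) = (response in matrix / response in neat solution) * 100."""
    if response_in_neat <= 0:
        raise ValueError("neat-solution response must be positive")
    return 100.0 * response_in_matrix / response_in_neat


def classify_matrix_effect(me_pct: float, tolerance_pct: float = 15.0) -> str:
    """Label the result; tolerance_pct is a hypothetical acceptance band."""
    if me_pct < 100.0 - tolerance_pct:
        return "suppression"
    if me_pct > 100.0 + tolerance_pct:
        return "enhancement"
    return "no significant effect"


# Hypothetical peak areas: post-extraction spiked matrix vs. neat solution.
me = matrix_effect_pct(response_in_matrix=7.5e5, response_in_neat=1.0e6)
label = classify_matrix_effect(me)
print(f"ME = {me:.1f}% ({label})")
```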

Troubleshooting: If a significant matrix effect is observed, consider the following adjustments to the LC method, as demonstrated in a case study analyzing antibiotics [7]:

- Alter the Gradient: Modify the gradient profile and flow rate to change analyte retention times and separate them from interfering compounds.

- Extend Runtime: A longer run time (e.g., from 6.0 to 7.5 minutes) can allow for better separation of analytes from each other and from matrix interferences [7].

Protocol for Improving Selectivity via Comprehensive Two-Dimensional Liquid Chromatography (LC × LC)

Objective: To achieve superior separation of analytes from matrix components in a complex sample, thereby enhancing selectivity and reducing matrix effects.

Materials and Reagents:

- Samples: Complex mixture, such as pesticide residues in water [11].

- Chemicals: Milli-Q water, acetonitrile (ACN), ammonium formate, formic acid.

- Equipment: Comprehensive two-dimensional LC system (LC × LC) coupled to a high-resolution mass spectrometer (HRMS), two LC columns with different selectivities.

Procedure:

- System Configuration:

- First Dimension (1D): Utilize a per-aqueous liquid chromatography (PALC) column for the first separation [11].

- Second Dimension (2D): Utilize a reversed-phase (RPLC) column for the second separation. Using a 2D column with a smaller internal diameter (e.g., 1.5 mm) can help maximize sensitivity [11].

- Method Development:

- Optimize the mobile phases for each dimension to be compatible. The use of a water-based mobile phase in the 1D (PALC) allows for on-column refocusing in the 2D (RPLC), preventing peak broadening and sensitivity loss [11].

- Set the modulation time (the time the effluent from the 1D is collected and transferred to the 2D) to capture multiple slices across each 1D peak.

- Analysis:

- The sample is injected into the 1D column. As components elute from the 1D, they are sequentially captured and transferred to the 2D column for further separation.

- The effluent from the 2D column is then directed to the HRMS for detection.

Advantages: This setup provides a significant boost in peak capacity and selectivity compared to one-dimensional LC. It effectively reduces matrix effects by physically separating analytes from a greater number of potential interferents before they reach the mass spectrometer [11].
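Two quick calculations help when planning an LC × LC method: the idealized peak capacity and the number of fractions taken across each first-dimension peak. The sketch below uses the theoretical product rule, which is an upper bound that assumes fully orthogonal dimensions and no undersampling, and a commonly cited guideline of roughly three to four fractions per 1D peak. The function names and example values are hypothetical and are not taken from [11].

```python
def ideal_2d_peak_capacity(n_first: float, n_second: float) -> float:
    """Theoretical upper bound (product rule): n_2D = n_1D * n_2D_dim.
    Real systems fall short due to undersampling and correlated retention."""
    if n_first <= 0 or n_second <= 0:
        raise ValueError("peak capacities must be positive")
    return n_first * n_second


def fractions_per_first_dimension_peak(peak_width_s: float,
                                       modulation_time_s: float) -> float:
    """How many modulation cycles (slices) cover one 1D peak."""
    if peak_width_s <= 0 or modulation_time_s <= 0:
        raise ValueError("times must be positive")
    return peak_width_s / modulation_time_s


# Hypothetical method: 1D peak capacity 50, 2D peak capacity 20,
# 60 s wide 1D peaks sampled with a 20 s modulation time.
n_total = ideal_2d_peak_capacity(50, 20)
slices = fractions_per_first_dimension_peak(peak_width_s=60.0,
                                            modulation_time_s=20.0)
adequately_sampled = slices >= 3.0  # guideline threshold, an assumption

print(f"Ideal peak capacity: {n_total:.0f}")
print(f"Fractions per 1D peak: {slices:.1f} (adequate: {adequately_sampled})")
```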

The Scientist's Toolkit: Research Reagent Solutions

Table 1: Key reagents and materials for handling complex samples.

| Item | Function | Example Application |
|---|---|---|
| Strata-X PRO Sorbent | A polymeric solid-phase extraction sorbent designed for enhanced matrix removal. | Effectively removes phospholipids from biological samples like serum, reducing matrix effects and improving reproducibility [10]. |
| Stable Isotopically Labeled Internal Standards | An internal standard physicochemically similar to the target analyte but distinguishable by mass (e.g., 13C or 15N labeled). | Corrects for fluctuations during sample preparation and ionization suppression/enhancement in mass spectrometry, ensuring accurate quantification [8] [7]. |
| Phospholipid Monitoring Kits | Tools to detect and quantify phospholipids in sample extracts. | Used during method development to identify the elution region of phospholipids and adjust the LC method to move analytes away from this region [7]. |
| HILIC/PALC Columns | Columns for hydrophilic interaction liquid chromatography or per-aqueous liquid chromatography. | Provide orthogonal separation mechanisms to reversed-phase LC. Useful as the first dimension in 2D-LC setups to increase overall separation power for complex samples [11]. |
| Syringe Filters (PVDF/PES) | Filtration devices to remove particulate matter from samples prior to injection. | Prevents clogging of LC systems and columns. Hydrophilic PVDF and PES membranes are recommended for low nonspecific binding of proteins and lower molecular weight analytes [12]. |

Frequently Asked Questions (FAQs)

Q: What is the simplest way to check for matrix effects in my LC-MS/MS method? A: The most straightforward test is a post-extraction addition experiment. Prepare two sets of samples: 1) analyte spiked into a neat solution, and 2) analyte spiked into an extracted blank matrix. Compare the peak responses. If the response in the matrix is significantly lower or higher, a matrix effect is present. Using a stable isotope internal standard that co-elutes with the analyte is also a key strategy to monitor and correct for these effects during routine analysis [8] [10].

Q: My method has adequate sensitivity with standards but fails with real samples. What should I do? A: This is a classic symptom of matrix-induced signal suppression. First, enhance your sample clean-up protocol. Moving from a simple protein precipitation to a selective technique like solid-phase extraction (SPE) can dramatically reduce interfering compounds [10]. Second, re-optimize your chromatographic method to achieve better separation of the analyte from the region where matrix interferences elute. This might involve testing different gradient conditions or a different type of LC column [7].

Q: Are there any alternatives to extensive blood sampling for pharmacokinetic studies in vulnerable populations? A: Yes, several strategies are employed, especially in pediatric studies. These include:

- Dried Blood Spot (DBS) Sampling: Requires only a very small volume of blood (5-10 µL) collected from a finger or heel prick.

- Sparse Sampling: In conjunction with population PK (popPK) modeling, where each patient contributes only a few samples taken at different times, and the data are pooled to build a robust model.

- Opportunistic Sampling: Aligning PK sample collection with routine clinical blood draws to minimize additional venipuncture [13].

Q: How does comprehensive 2D-LC (LC × LC) help with complex samples? A: Comprehensive 2D-LC significantly increases the separation power, or "peak capacity," of the chromatographic system. By combining two independent separation mechanisms (e.g., PALC and RPLC), it can resolve many more compounds in a single run than one-dimensional LC. This greatly reduces the likelihood of co-elution between an analyte and matrix interferents, thereby minimizing matrix effects and improving the accuracy of quantification [11].

Workflow and Relationship Diagrams

[Diagrams not reproduced: Analytical Challenge-Solution Workflow; Sample Preparation Technique Hierarchy.]

Sample preparation is the critical first step in the analytical process, designed to isolate target analytes from complex matrices [1]. In modern analytical workflows, this step frequently becomes the rate-limiting factor, consuming over 60% of total analysis time in chromatographic analyses and being responsible for approximately one-third of all analytical errors [1]. The performance of subsequent analysis is fundamentally dependent on the effectiveness of these initial preparation steps.

The growing complexity of analytical challenges—from environmental monitoring to pharmaceutical development—has driven the development of high-performance strategies that enhance selectivity, sensitivity, speed, stability, accuracy, automation, application, and sustainability [1]. This article examines four principal strategies that have emerged as transformative approaches: employing functional materials, utilizing chemical or biological reactions, applying external energy fields, and integrating specialized devices [1].

High-Performance Strategy 1: Functional Materials

Mechanism and Implementation

Functional materials serve as additional phases that disrupt the equilibrium of sample preparation systems, enabling efficient enrichment and selective separation of target analytes [1]. These materials enhance both sensitivity and selectivity by concentrating analytes within their specialized structures. The development of these materials has been significantly shaped by interdisciplinary demands from life sciences, environmental monitoring, medical diagnostics, and food safety [1].

Key Material Types and Applications:

- Porous Materials (e.g., MOFs, COFs): Feature designable pore structures and functional surfaces for efficient extraction of organic pollutants [1]. A melamine foam@COF composite has been successfully fabricated with hierarchically porous structures for food safety analysis [1].

- Molecularly Imprinted Polymers (MIPs): Create specific recognition cavities for target molecules, significantly improving selectivity [1]. A space-confined growth strategy has been used to develop molecularly imprinted membrane SERS substrates for rapid food safety analysis [1].

- Magnetic Materials: Enable rapid separation through magnetic fields, simplifying extraction procedures [1]. Magnetic graphene oxide nanocomposites have been developed for efficient extraction of pyrrolizidine alkaloids from tea beverages [1].

- Advanced Carbon Materials: Include graphene, carbon nanotubes, and their derivatives with high surface areas and tunable surface chemistry [1].

- Ionic Liquids and Deep Eutectic Solvents: Offer unique solvation properties and environmental benefits compared to traditional organic solvents [1]. A pH-controlled reversible deep-eutectic solvent system has been developed for simultaneous extraction and in-situ separation of isoflavones from Pueraria lobata [1].

Troubleshooting Guide: Functional Materials

| Problem | Possible Causes | Solutions |
|---|---|---|
| Low extraction efficiency | Material saturation, incorrect pH, insufficient contact time | Regenerate material; adjust sample pH; optimize incubation time |
| Poor selectivity | Non-specific binding, matrix interference | Use more specific MIPs; implement clean-up steps; adjust loading conditions |
| Material loss | Physical degradation, improper handling | Use magnetic composites; follow manufacturer handling protocols |
| Inconsistent results | Batch-to-batch variability, improper storage | Source from reliable suppliers; maintain strict storage conditions |
| High background noise | Incomplete washing, material leaching | Increase wash steps; use stable cross-linking; pre-wash materials |

Frequently Asked Questions (FAQs)

Q: How do I select the appropriate functional material for my specific analytes? A: Consider the chemical nature of your target analytes (polarity, charge, size) and the sample matrix. Hydrophobic analytes pair well with carbon-based materials, while ionic compounds may require ion-exchange materials. For complex matrices, magnetic composites with specific surface functionalities often provide the best balance of selectivity and practicality [1].

Q: What is the typical lifespan and regeneration protocol for these materials? A: Most functional materials can withstand 10-50 cycles depending on matrix complexity. Magnetic materials can be regenerated with appropriate solvent washes (e.g., methanol for reversed-phase materials), while MIPs may require specific elution protocols matching their imprinting conditions [1].

High-Performance Strategy 2: Chemical and Biological Reactions

Mechanism and Implementation

Reaction-based sample preparation addresses limitations of traditional separation techniques by transforming analytes into more detectable forms or leveraging biological recognition mechanisms [1]. This strategy significantly enhances detection sensitivity and selectivity, particularly for challenging applications where target analytes exist at ultra-trace levels or coexist with structurally similar compounds in complex matrices [1].

Key Reaction-Based Techniques:

- Derivatization: Chemically modifies analytes to improve volatility, detectability, or chromatographic behavior [1] [9]. This process is particularly valuable for gas chromatography, where it improves the volatility of compounds, and for enhancing spectroscopic detection of compounds with poor native response [1] [9].

- Enzyme-Mediated Digestion: Uses specific enzymes to break down complex matrices and release target analytes [9]. Enzyme digestion is especially valuable in biological studies for breaking down proteins or other macromolecules that might interfere with analysis [9].

- Acid Digestion: Employed for decomposing organic materials and preparing inorganic samples for analysis [9]. This method is commonly used for metal analysis and for samples requiring complete matrix decomposition.

- Biological Recognition: Utilizes antibodies, aptamers, or molecularly imprinted polymers for highly specific target capture [1]. These mechanisms greatly increase selectivity through lock-and-key binding principles.

Experimental Protocol: Enzyme-Assisted Extraction

Materials Required:

- Appropriate hydrolytic enzyme (e.g., protease, lipase, cellulase)

- Buffer solution optimized for enzyme activity

- Temperature-controlled incubation system

- Precipitation reagents (e.g., organic solvents, acids)

- Centrifuge and filtration apparatus

Step-by-Step Procedure:

- Sample Homogenization: Prepare a homogeneous sample suspension in appropriate buffer.

- pH Adjustment: Adjust to optimal pH for enzyme activity using dilute acid/base.

- Enzyme Addition: Add enzyme at recommended concentration (typically 1-5% w/w).

- Incubation: Incubate at optimal temperature with continuous mixing for 2-24 hours.

- Enzyme Inactivation: Heat to 85°C for 10 minutes or use solvent precipitation.

- Clarification: Centrifuge and filter to remove precipitated proteins and debris.

- Extract Concentration: Evaporate solvent under nitrogen stream if necessary.

- Reconstitution: Reconstitute in compatible solvent for analysis.
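The dosing and incubation ranges in the procedure above can be captured in a small planning helper. This is a sketch only: the class and field names are hypothetical, and it assumes the 1-5% w/w enzyme dose is expressed relative to sample mass, which the procedure does not state explicitly.

```python
from dataclasses import dataclass


@dataclass
class EnzymeDigestionPlan:
    sample_mass_g: float
    enzyme_pct_w_w: float   # assumed to be relative to sample mass
    incubation_h: float

    def validate(self) -> None:
        """Check inputs against the typical ranges quoted in the procedure."""
        if self.sample_mass_g <= 0:
            raise ValueError("sample mass must be positive")
        if not 1.0 <= self.enzyme_pct_w_w <= 5.0:
            raise ValueError("enzyme dose outside typical 1-5% w/w range")
        if not 2.0 <= self.incubation_h <= 24.0:
            raise ValueError("incubation outside typical 2-24 h range")

    def enzyme_mass_g(self) -> float:
        """Mass of enzyme to add for the planned dose."""
        self.validate()
        return self.sample_mass_g * self.enzyme_pct_w_w / 100.0


# Hypothetical plan: 2.0 g of homogenized sample, 2% w/w enzyme, 4 h.
plan = EnzymeDigestionPlan(sample_mass_g=2.0, enzyme_pct_w_w=2.0,
                           incubation_h=4.0)
enzyme_g = plan.enzyme_mass_g()
print(f"Add {enzyme_g * 1000:.0f} mg enzyme; incubate {plan.incubation_h:.0f} h")
```

Values outside the typical ranges raise an error rather than being silently accepted; a validated method with different conditions would need these limits adjusted.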

High-Performance Strategy 3: External Energy Fields

Mechanism and Implementation

External energy fields enhance sample preparation by significantly accelerating mass transfer and reducing the duration of phase separation processes [1]. Various energy fields—including thermal, ultrasonic, microwave, electric, and magnetic—improve extraction efficiency and separation performance through physical mechanisms that disrupt sample matrices and enhance analyte transfer [1].

Energy Field Applications:

- Ultrasonic Energy: Creates cavitation bubbles that disrupt cells and enhance solvent penetration [1]. This method is extensively applied for extracting organic and inorganic analytes from solid and semi-solid samples, often reducing extraction time from hours to minutes [1].

- Microwave Energy: Generates rapid internal heating through molecular rotation and ionic conduction, efficiently extracting analytes from various matrices [1].

- Electric Fields: Enable electrokinetic extraction and separation based on charge differences, particularly useful for biological molecules [1].

- Thermal Energy: Accelerates kinetic processes and improves diffusion rates, with advanced systems offering precise temperature control [1].

- Magnetic Fields: Facilitate rapid separation of magnetic particles functionalized with specific capture agents [1].

Research Reagent Solutions

| Reagent/Material | Function | Application Notes |
|---|---|---|
| Functionalized Magnetic Beads | Target capture & separation | Surface chemistry must match analyte properties; optimize binding buffer |
| Ionic Liquids | Green extraction solvents | Tunable properties; excellent for hydrophobic compounds |
| Molecularly Imprinted Polymers | Selective recognition | Custom synthesis for target analyte; validate cross-reactivity |
| Enzyme Cocktails | Matrix digestion | Select based on matrix composition; optimize pH and temperature |
| Derivatization Reagents | Analyte modification | Improve detection; must not interfere with analysis |

High-Performance Strategy 4: Specialized Devices

Mechanism and Implementation

Device-based strategies represent an innovative approach to overcoming limitations of traditional methods, such as operational complexity and insufficient automation [1]. Miniaturization, particularly through microfluidic technology, enables significant improvements in analytical performance while reducing reagent consumption and analysis time [1]. These systems enhance precision through automated fluid handling and integrated control systems.

Key Device Configurations:

- Microfluidic Chips: Enable precise manipulation of small fluid volumes (nL-pL) with integrated functional elements for rapid, high-efficiency separations [1].

- Online Sample Preparation Systems: Automate and hyphenate sample preparation with analytical instruments, significantly reducing total analysis time and improving reproducibility [1].

- Arrayed and High-Throughput Platforms: Allow parallel processing of multiple samples, dramatically increasing throughput while maintaining consistency [1].

- Miniaturized Extraction Devices: Incorporate functional materials in compact formats that reduce solvent consumption while maintaining extraction efficiency [1].

- Lab-on-a-Chip Systems: Integrate multiple sample preparation steps into single devices, minimizing sample loss and contamination risks [1].

Performance Comparison of Sample Preparation Strategies

| Strategy | Key Strengths | Common Limitations | Optimal Applications |
|---|---|---|---|
| Functional Materials | High selectivity & sensitivity; analyte concentration | Operational complexity; extended analysis time | Trace analysis; complex matrices |
| Chemical/Biological Reactions | Enhanced detectability; high specificity | Additional steps; reagent consumption | Targeted compound analysis; structural analogs |
| External Energy Fields | Rapid processing; improved kinetics | Specialized instrumentation; method optimization | Time-sensitive analysis; solid samples |
| Specialized Devices | Automation; precision; miniaturization | Initial cost; design complexity | High-throughput labs; integrated analysis |

Integrated Workflows and Future Perspectives

The strategic integration of multiple high-performance approaches often yields superior results compared to individual methods. Material-enhanced strategies can be effectively combined with energy field assistance to simultaneously improve selectivity and processing speed [1]. Similarly, reaction-based methods integrated into specialized devices enable automated, highly specific sample preparation workflows [1].

Future developments will likely focus on creating more intelligent, adaptive systems that automatically optimize preparation parameters based on sample characteristics [1]. Sustainable chemistry principles will continue to influence the field, driving the development of greener materials and methods that reduce environmental impact while maintaining analytical performance [1]. The integration of artificial intelligence for method selection and optimization represents another promising direction for advancing sample preparation capabilities [1].

Frequently Asked Questions (FAQs)

Q: How do I approach optimizing a sample preparation method for a completely new analyte? A: Begin with a thorough literature review of similar compounds, then systematically evaluate the four high-performance strategies: start with functional materials matching your analyte's properties, explore derivatization options if detection sensitivity is low, consider energy-assisted extraction for difficult matrices, and evaluate device-based approaches if throughput is a priority. A factorial experimental design is recommended for optimizing multiple parameters efficiently [1].
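As a minimal sketch of the factorial design mentioned above, the following Python snippet enumerates every combination of factor levels for a two-level, three-factor screen. The factor names and levels (`sorbent_mg`, `extraction_min`, `ph`) are hypothetical placeholders, not values taken from the cited source.

```python
from itertools import product

# Hypothetical factors and levels; replace with those relevant to your method.
factors = {
    "sorbent_mg": [10, 50],
    "extraction_min": [5, 15],
    "ph": [3.0, 7.0],
}


def full_factorial(factors):
    """Return every combination of factor levels as a list of dicts."""
    names = list(factors)
    return [dict(zip(names, combo)) for combo in product(*factors.values())]


runs = full_factorial(factors)
for run_number, run in enumerate(runs, start=1):
    print(run_number, run)
```

A full factorial grows quickly (levels multiplied across factors), so with many parameters a fractional design may be more practical; randomizing the run order before execution is also common practice.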

Q: What strategy is most suitable for high-throughput laboratory environments? A: Device-based strategies, particularly automated online systems and arrayed platforms, offer the greatest advantages for high-throughput settings. These systems minimize manual intervention, improve reproducibility, and can process large sample batches with minimal operator attention. The initial investment is offset by significant time savings and reduced error rates [1].

In research that draws on diverse evidence types, the steps taken long before data analysis, namely sample and data preparation, fundamentally determine the validity of experimental conclusions. Poor preparation introduces errors, biases, and artifacts that compromise data quality at its source, leading to unreliable analytics and flawed decision-making. This technical support center provides targeted troubleshooting guides and FAQs to help researchers identify, resolve, and prevent the most common preparation-related issues, thereby safeguarding the integrity of their scientific outcomes.

Technical Troubleshooting Guides

Troubleshooting High-Performance Liquid Chromatography (HPLC)

HPLC analysis is susceptible to a range of issues stemming from poor preparation of samples, mobile phases, or system setup. The table below summarizes common symptoms, their root causes in preparation, and corrective actions.

| Symptom | Root Cause (Preparation-Related) | Solution |
|---|---|---|
| Peak Tailing [14] [15] | (1) Basic compounds interacting with silanol groups. (2) Incorrect mobile phase pH. [14] | (1) Use high-purity silica columns. [15] (2) Prepare fresh mobile phase with correct pH. [14] |
| Broad Peaks [14] [15] | (1) Sample solvent stronger than mobile phase. (2) Column contamination from previous samples. | (1) Dissolve or dilute sample in the mobile phase. [15] (2) Flush column with strong solvent; use a guard column. [14] |
| Extra Peaks / Ghost Peaks [14] | (1) Sample contamination. (2) Carryover from previous injections. | (1) Filter sample and use clean solvents. [14] (2) Increase wash/run time; flush system with strong solvent. [14] |
| Low Pressure [14] | Leaks in the system. | Check and tighten all fittings; replace damaged parts. [14] |
| High Pressure [14] | (1) Column blockage. (2) Mobile phase precipitation. | (1) Backflush or replace the column. [14] (2) Prepare fresh mobile phase and flush the system. [14] |
| Baseline Noise & Drift [14] | (1) Air bubbles in the system. (2) Contaminated mobile phase or detector cell. | (1) Degas the mobile phase thoroughly. [14] (2) Prepare fresh mobile phase and clean the detector flow cell. [14] |

Troubleshooting RNA Isolation

Successful downstream applications like sequencing depend on high-quality RNA, which can be compromised during isolation. The following workflow outlines a diagnostic path for common RNA preparation problems.

Troubleshooting Next-Generation Sequencing (NGS) Library Preparation

Library preparation is a critical stage where small errors can lead to sequencing failure. The table below catalogs common problem categories and their preparatory root causes.

| Problem Category | Typical Failure Signals | Common Root Causes in Preparation |
|---|---|---|
| Sample Input & Quality [16] | Low yield; smear in electropherogram; low complexity. | Degraded DNA/RNA; sample contaminants (phenol, salts); inaccurate quantification. [16] |
| Fragmentation & Ligation [16] | Unexpected fragment size; inefficient ligation; adapter-dimer peaks. | Over- or under-shearing; improper buffer conditions; suboptimal adapter-to-insert ratio. [16] |
| Amplification & PCR [16] | Over-amplification artifacts; high duplicate rate; bias. | Too many PCR cycles; carryover of enzyme inhibitors. [16] |
| Purification & Cleanup [16] | Incomplete removal of adapter dimers; high sample loss. | Incorrect bead-to-sample ratio; over-drying beads; pipetting errors. [16] |

Diagnostic Strategy for Low NGS Library Yield [16]:

- Check the Electropherogram: Look for sharp peaks at ~70-90 bp, indicating adapter dimers, or broad peaks indicating size heterogeneity.

- Cross-Validate Quantification: Compare fluorometric methods (Qubit) with qPCR and absorbance to confirm the concentration of amplifiable molecules.

- Trace Backward: If ligation fails, check the fragmentation quality and input DNA integrity.

- Review Protocols and Reagents: Confirm kit lot numbers, enzyme expiry dates, buffer freshness, and pipette calibration.
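The first two diagnostic checks above can be sketched in a few lines of Python. This is an illustrative helper only: the function names, the example peak sizes, and the example concentrations are assumptions, and the 70-90 bp window is simply the range quoted in the list.

```python
def flag_adapter_dimers(peaks_bp, low=70, high=90):
    """Return the peak sizes (in bp) that fall inside the adapter-dimer window."""
    return [p for p in peaks_bp if low <= p <= high]


def quant_discrepancy(fluorometric_ng_ul, absorbance_ng_ul):
    """Ratio of absorbance-based to fluorometric concentration.

    A ratio well above 1 suggests the absorbance reading includes material
    that the dye-based assay does not count, so the two methods disagree.
    """
    if fluorometric_ng_ul <= 0:
        raise ValueError("fluorometric concentration must be positive")
    return absorbance_ng_ul / fluorometric_ng_ul


# Hypothetical electropherogram peaks and concentration readings.
print(flag_adapter_dimers([85, 320, 410]))
print(quant_discrepancy(10.0, 25.0))
```

What counts as an unacceptable discrepancy is not specified in the source; any threshold should be set from your own kit documentation and historical runs.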

Frequently Asked Questions (FAQs)

General Data Quality

Q1: What are the broader business impacts of poor data quality in research? Poor data quality has cascading consequences beyond the lab, including significant financial loss—averaging $15 million annually for businesses according to a Gartner survey [17] [18]. It leads to inaccurate analytics, wasted resources on futile campaigns, reputational damage, and non-compliance fines, ultimately undermining strategic decision-making and competitive standing [17].

Q2: What are the most common data quality issues that arise from poor preparation? The most frequent issues are:

- Duplicate Data: Skews analytical results and machine learning models [18].

- Inaccurate Data: Often traced to human entry errors, data decay, or data drift [18].

- Inconsistent Data: Mismatches in formats, units, or spellings across different data sources [17] [18].

- Incomplete Data: Blank fields or missing information render data useless for analysis [17].

Q3: What human factors drive poor data quality in research? Key human factors include [19]:

- Human Error: Typos, misinterpretation of instructions, and inaccuracies in data recording.

- Bias and Subjectivity: Researchers' preconceptions can unconsciously influence data collection and analysis.

- Lack of Standardization: Inconsistent definitions and formats across projects impede data comparability.

- Publication Pressure: The urgency to publish can lead to rushed data collection and analysis, overlooking errors.

Experimental Scenarios

Q4: My HPLC peaks are fronting. What is the most likely cause related to my sample? Peak fronting is often caused by sample overload or incompatible solvent strength [14] [15]. To fix this, reduce your injection volume or dilute your sample. Ensure the sample is dissolved in the mobile phase or a solvent weaker than the mobile phase [15].

Q5: I see genomic DNA contamination in my RNA sample. How can I prevent this? DNA contamination occurs when genomic DNA is not sufficiently sheared or removed [20]. Ensure your homogenization method (e.g., using a bead beater) is vigorous enough to break down the DNA. The most effective solution is to include a dedicated DNase treatment step during or after the isolation process [20].

Q6: My NGS library has a very high level of adapter dimers. What went wrong in the prep? A high adapter-dimer peak typically indicates a suboptimal adapter-to-insert molar ratio during the ligation step, often from too much adapter or too little starting DNA [16]. To resolve this, accurately quantify your fragmented DNA using a sensitive method like fluorometry and titrate the adapter concentration. Improving the efficiency of post-ligation cleanup using size-selective beads can also help remove these dimers [16].
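To make the adapter-to-insert molar ratio concrete, the sketch below converts a mass of fragmented double-stranded DNA into picomoles using the common approximation of about 660 g/mol per base pair, then computes the adapter volume for a chosen ratio. The ratios, the 15 µM adapter stock, and the function names are hypothetical; use the values recommended by your kit.

```python
def dsdna_pmol(mass_ng, length_bp, da_per_bp=660.0):
    """Approximate picomoles of dsDNA from mass (ng) and mean fragment length (bp)."""
    if length_bp <= 0:
        raise ValueError("length_bp must be positive")
    return mass_ng * 1000.0 / (length_bp * da_per_bp)


def adapter_volume_ul(insert_ng, insert_bp, adapter_uM, molar_ratio):
    """Adapter stock volume (µl) giving the requested adapter:insert molar ratio."""
    adapter_pmol = molar_ratio * dsdna_pmol(insert_ng, insert_bp)
    return adapter_pmol / adapter_uM  # 1 µM equals 1 pmol/µl


# Hypothetical titration: 100 ng of ~300 bp fragments, 15 µM adapter stock.
for ratio in (5, 10, 20):
    print(ratio, round(adapter_volume_ul(100, 300, 15, ratio), 3))
```

Because the calculation depends directly on the measured input mass, an overestimated DNA concentration leads to an effective excess of adapter, which is consistent with the root cause described above.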

The Scientist's Toolkit: Essential Research Reagent Solutions

| Item | Function in Preparation |
|---|---|
| DNase Treatment Kit | Enzymatically degrades contaminating genomic DNA during RNA isolation to ensure pure RNA for downstream applications. [20] |
| Beta-Mercaptoethanol (BME) | Added to lysis buffers to inactivate RNases and stabilize RNA samples during extraction, preventing degradation. [20] |
| Size-Selection Beads | Used in NGS library prep to selectively bind and remove unwanted short fragments like adapter dimers and to isolate the desired fragment size range. [16] |
| HPLC Guard Column | A small, disposable column placed before the main analytical column to trap particulate matter and contaminants, protecting the more expensive analytical column and extending its life. [14] [15] |
| Silica Spin Filters | A core component of many nucleic acid extraction kits, using a silica membrane to bind DNA or RNA in the presence of specific salts, allowing impurities to be washed away. [20] |

Proactive Prevention: Building a Culture of Data Quality

Preventing data quality issues is more efficient than fixing them. The following diagram illustrates how a cascade of small preparation errors leads to invalid conclusions, and how to build a robust defense at each stage.

To foster a proactive culture of data quality, implement these three foundational methods [17]:

- Develop a Supportive Workplace Culture: Establish and enforce standardized guidelines for data handling, including consistent naming conventions, formats, and clearly defined data ownership.

- Conduct Regular Audits and Cleaning: Perform routine, real-time data quality checks to identify and correct issues before they propagate, preventing the use of decayed or stagnant data.

- Apply Core Data Principles: Integrate the five principles of data quality into all workflows: Accuracy, Completeness, Consistency, Uniqueness, and Timeliness.
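A routine audit of the kind described above can start very simply. The following sketch checks a small list of sample records for three of the principles (uniqueness, completeness, and consistency of units). The record layout and field names are hypothetical and would need to match your own sample-tracking format.

```python
# Hypothetical sample records; field names are illustrative only.
records = [
    {"sample_id": "S1", "conc": 12.5, "unit": "ng/uL"},
    {"sample_id": "S2", "conc": None, "unit": "ng/uL"},
    {"sample_id": "S2", "conc": 8.1, "unit": "ug/mL"},
]


def audit(records, key="sample_id", required=("sample_id", "conc", "unit")):
    """Report duplicate keys, rows with missing fields, and the units in use."""
    seen, duplicates, incomplete = set(), set(), []
    for index, rec in enumerate(records):
        identifier = rec.get(key)
        if identifier in seen:
            duplicates.add(identifier)  # uniqueness violation
        seen.add(identifier)
        if any(rec.get(field) in (None, "") for field in required):
            incomplete.append(index)  # completeness violation
    units = sorted({rec["unit"] for rec in records if rec.get("unit")})
    return {
        "duplicate_ids": sorted(duplicates),
        "incomplete_rows": incomplete,
        "units_seen": units,  # more than one unit hints at inconsistency
    }


report = audit(records)
print(report)
```

Accuracy and timeliness usually cannot be checked from the records alone; they require comparison against a reference measurement or a timestamp policy.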

FAQs and Troubleshooting Guides

This technical support center provides targeted troubleshooting for researchers working with proteins, nucleic acids, and metabolites. The following FAQs address common experimental pitfalls and their solutions.

Protein Analysis

1. My Bradford assay results are inconsistent or show high background. What should I do?

The Bradford assay is susceptible to interference from substances commonly found in sample buffers.

- Cause & Solution: Inconsistent results often stem from inaccurate pipetting or old, improperly stored dye reagents. Ensure your Bradford reagent is stored at 4°C and has not expired. Use consistent pipetting techniques and bring the reagent to room temperature before use [21].

- Cause & Solution: High background can be caused by contaminants on glassware or incompatible substances in your sample buffer. Use clean cuvettes and ensure your sample does not contain high concentrations of interfering substances [21].

- Cause & Solution: If you suspect a specific interfering substance, dilute your sample several-fold in a compatible buffer. If the protein concentration is sufficient, this can reduce interferents to a non-problematic level. Alternatively, dialyze or desalt the sample into a compatible buffer [22]. Precipitation methods (using acetone or TCA) can also be used to remove interfering substances and isolate the protein pellet [22].

Table: Common Compatible Substances in Bradford Assays

| Substance | Maximum Compatible Concentration |
|---|---|
| Sucrose | 10 mM |
| Ammonium Sulfate | 10 mM |
| EDTA | 1 mM |
| Sodium Chloride (NaCl) | 100 mM |
| Triton X-100 | 0.01% |

Source: Adapted from ZAGENO [21].
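The dilution approach described above can be estimated from the table: the minimum dilution factor is set by whichever substance exceeds its limit by the largest margin. The sketch below encodes the table limits; the dictionary keys and the example buffer composition are hypothetical.

```python
# Limits copied from the table above (mM, except Triton X-100 in percent).
LIMITS = {
    "sucrose_mM": 10,
    "ammonium_sulfate_mM": 10,
    "edta_mM": 1,
    "nacl_mM": 100,
    "triton_x100_pct": 0.01,
}


def min_dilution_factor(buffer):
    """Smallest fold-dilution that brings every listed substance within its limit."""
    factor = 1.0
    for name, concentration in buffer.items():
        if name not in LIMITS:
            raise KeyError(f"no compatibility limit recorded for {name}")
        factor = max(factor, concentration / LIMITS[name])
    return factor


# Hypothetical sample buffer: 150 mM NaCl and 5 mM EDTA.
example_buffer = {"nacl_mM": 150, "edta_mM": 5}
print(min_dilution_factor(example_buffer))
```

After diluting, confirm that the expected protein concentration still falls within the working range of the assay; otherwise dialysis, desalting, or precipitation may be the better option.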

2. My fluorescence-based protein assay (e.g., Qubit) is giving a "Standards Incorrect" error.

This error indicates a problem with the calibration of the assay.

- Cause & Solution: The kit may have expired or been stored incorrectly. Check the expiration date and ensure components are stored as specified (e.g., protect dyes from light) [22].

- Cause & Solution: The calibration standard may be degraded. Substantial degradation of the BSA standard decreases the signal and triggers this error. Replace the kit [22].

- Cause & Solution: Detergents in the sample buffer can interfere. Fluorescence-based protein assays are themselves detergent-based and tolerate only very low concentrations of additional detergents. Check the manual for a compatibility table [22].

- Cause & Solution: Inaccurate pipetting of small volumes (1-2 µL) can cause errors. If possible, pipette at least 5 µL of sample for more consistent results [22].

3. My recombinant protein is not expressing in my bacterial system.

Protein expression depends on the interplay of vector, host strain, and growth conditions [23].

- Cause & Solution: Verify your plasmid construct. After cloning, ensure your protein of interest is still in-frame and the sequence is correct by sequencing the plasmid. Also, check the sequence for long stretches of rare codons, which can cause truncation; use online tools to analyze this and consider using an expression host engineered with genes for the necessary tRNAs [23].

- Cause & Solution: Choose the appropriate bacterial host. If you have a toxic protein or issues with "leaky" expression (expression before induction), use a host strain designed for tight control, such as one containing the pLysS plasmid for T7 polymerase systems [23].

- Cause & Solution: Optimize growth conditions. Perform an expression time course, taking samples every hour after induction to determine the optimal production window. Test different induction temperatures (e.g., 30°C vs. 37°C) and inducer concentrations, as some inducers like IPTG can be toxic to cells at high levels [23].

Nucleic Acid Analysis

1. I see faint or no bands on my nucleic acid gel.

This issue is commonly related to sample preparation, loading, or detection.

- Cause & Solution: Low quantity of loaded nucleic acid. For clear visualization, load a minimum of 0.1–0.2 μg of DNA or RNA per millimeter of gel well width [24].

- Cause & Solution: Sample degradation. Use molecular biology grade reagents and nuclease-free labware. Always wear gloves and use areas designated for nucleic acid work [24].

- Cause & Solution: The gel was over-run, causing small fragments to migrate off the gel. Monitor the run time and the migration of the loading dyes [24].

- Cause & Solution: Low sensitivity of the stain. For thick or high-percentage gels, allow a longer staining time for the dye to penetrate. Consider using stains with higher affinity for your target (e.g., special stains for single-stranded nucleic acids) [24].
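The loading guideline above translates directly into a mass range per well and, given a sample concentration, a pipetting volume. This is a small sketch of that arithmetic; the 5 mm well width and 100 ng/µl concentration are hypothetical example values.

```python
def load_mass_range_ug(well_width_mm, low_ug_per_mm=0.1, high_ug_per_mm=0.2):
    """Recommended nucleic acid mass range (µg) for a well of the given width."""
    return (low_ug_per_mm * well_width_mm, high_ug_per_mm * well_width_mm)


def volume_for_mass_ul(mass_ug, conc_ng_per_ul):
    """Sample volume (µl) that contains the requested mass."""
    if conc_ng_per_ul <= 0:
        raise ValueError("concentration must be positive")
    return mass_ug * 1000.0 / conc_ng_per_ul


# Hypothetical example: 5 mm wide well, sample at 100 ng/µl.
low_ug, high_ug = load_mass_range_ug(5)
print(low_ug, high_ug)
print(volume_for_mass_ul(low_ug, 100), volume_for_mass_ul(high_ug, 100))
```

If the computed volume exceeds the well capacity, the sample needs to be concentrated before loading rather than split across lanes.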

2. My nucleic acid gel shows smeared bands.

Smearing often indicates degradation or suboptimal electrophoresis conditions.

- Cause & Solution: Sample overloading. Do not exceed the recommended 0.1–0.2 μg of sample per millimeter of gel well width. Overloaded gels show trailing smears and warped bands [24].

- Cause & Solution: Sample degradation. This is a very common cause of smearing. Ensure all reagents and labware are nuclease-free [24].

- Cause & Solution: The presence of excess protein or salt in the sample. Remove proteins by purification or by denaturing them in a loading dye with SDS and heating. For high-salt buffers, dilute the sample in nuclease-free water or precipitate and resuspend the nucleic acid [24].

- Cause & Solution: Using the incorrect gel type. For single-stranded nucleic acids like RNA, always use a denaturing gel to prevent secondary structure formation. For double-stranded DNA, avoid denaturing conditions [24].

3. The bands on my gel are poorly separated.

Poor resolution is typically addressed by optimizing the gel matrix and run conditions.

- Cause & Solution: Incorrect gel percentage. Use a higher percentage gel to better resolve smaller molecular fragments [24].

- Cause & Solution: Suboptimal gel choice. For nucleic acids smaller than 1,000 bp, polyacrylamide gels provide much better resolution than agarose gels [24].

- Cause & Solution: Sample overloading. As with smearing, overloading leads to poorly resolved, dense bands. Follow the loading guidelines [24].

- Cause & Solution: Incorrect voltage or run time. Apply voltage as recommended for the nucleic acid size and buffer system. A very low voltage leads to poor separation, while a very high voltage can generate excessive heat and cause band diffusion [24].

Metabolite Analysis (LC-MS/MS Based)

1. Why does my LC-MS/MS data show multiple signals for what I think is a single metabolite?

A single metabolite can generate multiple signals due to its chemical properties and the ionization process [25].

- Cause & Solution: Formation of multiple adducts. Besides the common [M+H]+ and [M-H]- ions, metabolites can form adducts with sodium [M+Na]+, potassium [M+K]+, or ammonium [M+NH4]+ (in positive mode). The extent of adduct formation depends on the mobile phase composition and metabolite structure. Check solvent quality, as water stored in glass can lead to higher Na+ and K+ adducts [25].

- Cause & Solution: In-source fragmentation. Metabolites can fragment before reaching the mass analyzer due to high ionization voltages or temperature, leading to signals like [M-H2O+H]+. This is metabolite-dependent and not consistent across all compounds [25].

- Cause & Solution: Presence of isotopic peaks. Naturally occurring isotopes like 13C (1.1% abundance) will cause an M+1 peak. The pattern of these peaks can be a key identifier [25].
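To illustrate how one metabolite produces several signals, the sketch below computes expected m/z values for the singly charged ions named above from a neutral monoisotopic mass. The mass shifts are standard monoisotopic values rounded to six decimals; the function name and the use of glucose as the example are assumptions made for illustration.

```python
# Mass shifts (Da) for singly charged ions, relative to the neutral mass M.
ADDUCT_SHIFTS = {
    "[M+H]+": 1.007276,
    "[M+NH4]+": 18.033823,
    "[M+Na]+": 22.989218,
    "[M+K]+": 38.963158,
    "[M-H2O+H]+": 1.007276 - 18.010565,  # in-source water loss
    "[M-H]-": -1.007276,
}
C13_SHIFT = 1.003355  # spacing between M and M+1 isotopic peaks (z = 1)


def expected_mz(neutral_mass):
    """Map each ion type to its expected m/z for a singly charged species."""
    return {name: neutral_mass + shift for name, shift in ADDUCT_SHIFTS.items()}


# Example: glucose, C6H12O6, monoisotopic mass 180.06339 Da.
mz = expected_mz(180.06339)
for name, value in mz.items():
    print(f"{name:12s} {value:.4f}  (M+1 at {value + C13_SHIFT:.4f})")
```

Features that co-elute and differ by these shifts are candidates for grouping under a single metabolite rather than being counted as separate compounds.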

2. My metabolite identification pipeline is unreliable. What are common pitfalls?

Metabolite identification is a major challenge in non-targeted metabolomics.

- Cause & Solution: Over-reliance on m/z alone. An m/z value can match thousands of compounds. Always use tandem MS/MS fragmentation data to confirm structural details and narrow down candidate identities [25].

- Cause & Solution: Ignoring retention time and adduct information. The unique pair of m/z and retention time (RT) defines a feature. Annotating all detected adducts and in-source fragments for a single metabolite is crucial for accurate quantification and to avoid misinterpreting them as unique metabolites [25].

- Cause & Solution: Not using a standardized data format. Peak tables from different processing software (e.g., MZmine, XCMS) can look very different. Using a standardized format such as mzTab helps ensure consistency and reproducibility in data analysis [25].

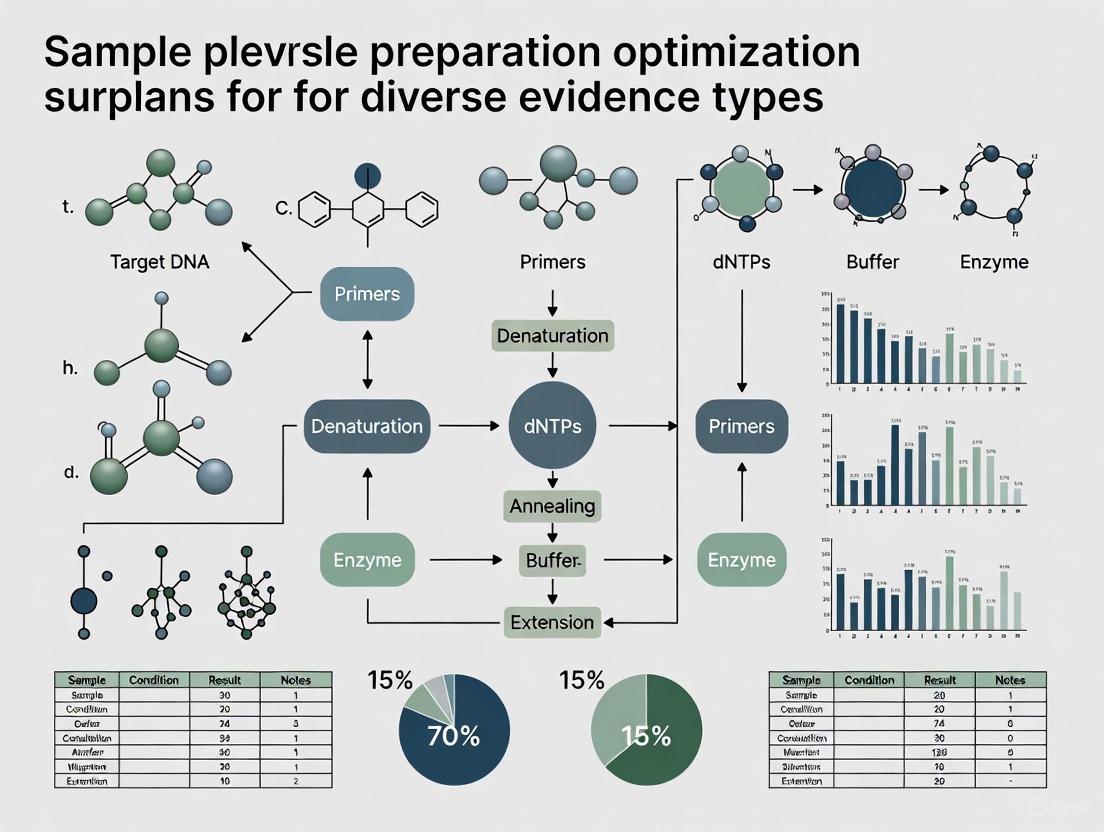

Sample Preparation Optimization Workflow

Efficient sample preparation is the critical first step in any analytical workflow. The following diagram illustrates a strategic framework for optimizing this process, highlighting four key high-performance strategies.

Metabolite Signal Complexity in LC-MS/MS

A single metabolite can generate multiple signals in a mass spectrometer, complicating data interpretation. The following diagram outlines the primary sources of this complexity.

Experimental Troubleshooting Flowchart

This flowchart provides a systematic approach to diagnosing and resolving common issues across different experimental types.

Research Reagent Solutions

The following table details key reagents and materials essential for successful experiments in protein, nucleic acid, and metabolite research.

Table: Essential Research Reagents and Materials

| Item | Function / Application | Key Considerations |
|---|---|---|
| Functional Materials (e.g., MOFs, MIPs) | High-performance sample preparation; selective enrichment of target analytes from complex matrices [1]. | Enhances sensitivity and selectivity; may increase operational complexity [1]. |
| Deep Eutectic Solvents (DES) | Green and efficient extraction solvents for various analytes, including proteins [1]. | Offer low toxicity and tunable physicochemical properties; used in liquid-phase microextraction [1]. |
| Coomassie Brilliant Blue Dye | The active component in Bradford assays; binds to basic/aromatic amino acids for protein quantification [21]. | Susceptible to interference from detergents and alkaline conditions; use at room temperature in plastic/glass cuvettes [21]. |
| Fluorescent Protein Assay Dyes | Quantify proteins selectively using fluorescence (e.g., Qubit assays); more tolerant of some contaminants than colorimetric assays [22]. | Highly sensitive to detergents; requires accurate pipetting; use specific assay tubes for optimal performance [22]. |
| Agarose & Polyacrylamide | Matrix for nucleic acid gel electrophoresis. Agarose for larger fragments, polyacrylamide for higher resolution of small fragments (<1,000 bp) [24]. | Gel percentage must be appropriate for fragment size; use denaturing gels (e.g., with urea) for RNA or single-stranded DNA [24]. |
| Fluorescent Nucleic Acid Stains | Detect nucleic acids in gels; high sensitivity and safety compared to traditional ethidium bromide. | Sensitivity varies; single-stranded nucleic acids may require specific stains or longer staining times [24]. |
| T7 Polymerase & Expression Hosts | Drive high-level expression of recombinant proteins in bacterial systems (e.g., BL21 strains). | For toxic proteins, use hosts with pLysS for tighter control and reduced "leaky" expression [23]. |
| tRNA Supplemented Strains | Bacterial hosts engineered to encode rare tRNAs. | Facilitate correct translation and full-length expression of proteins containing codons that are rare in E. coli [23]. |

Protocols in Practice: Tailored Sample Preparation for Core Analytical Techniques

The success of any mass spectrometry (MS)-based proteomics experiment is critically dependent on the quality of sample preparation. Inconsistent or suboptimal protocols for lysis, digestion, and clean-up are major sources of irreproducibility, potentially leading to false negatives, false positives, and significant data variability [26]. Careful planning at this initial stage is foundational to obtaining reliable and meaningful results, enabling researchers to accurately explore the proteome and answer complex biological questions [27].

Pre-Experiment Considerations

Before beginning wet lab work, address these key questions to define your experimental strategy [27]:

- Biological Question: What specific hypothesis is the experiment designed to test?

- Sample Type: What is the nature of the sample (e.g., cells, tissue, biofluid)?

- Protein Abundance: How abundant is the target protein? Low-abundance proteins may require enrichment.

- Modification Stability: Are the protein modifications of interest (e.g., phosphorylation) stable under your planned conditions?

- Contamination Control: What measures will be implemented to prevent contamination from keratins or polymers?

- Experimental Controls: What controls are necessary to validate the results?

- Digestion Strategy: Which enzyme and digestion protocol will yield optimally sized peptides?

- Data Analysis Software: Which software will be used for data analysis, and what are its requirements?

Detailed Experimental Protocols

Protein Extraction and Digestion from Limited Tissue

This protocol is optimized for small-scale samples, such as neuronal tissues, where protein yield is a primary concern [28].

Materials:

- Lysis Buffer: 5% SDS

- Pierce BCA Protein Assay Kit

- S-Trap micro columns

- Dithiothreitol (DTT), 0.5M

- Iodoacetamide (IAA), 0.55M

- Trypsin Gold, Mass Spectrometry Grade

- Phosphoric acid, 12%

- Formic acid, 0.2% in water

- 1.5 ml Protein LoBind tubes

- Tissue homogenizer

- Thermomixer or incubator

- Refrigerated centrifuge

- SpeedVac concentrator

Procedure:

- Homogenization: Transfer frozen tissue to a homogenizer. Add 100 µl of 5% SDS lysis buffer and homogenize thoroughly at room temperature. Note: SDS may precipitate on ice. [28]

- Clarification: Transfer the homogenate to a 1.5 ml LoBind tube, boil for 2 minutes, and centrifuge at 14,000 × g for 10 minutes. Collect the supernatant. [28]

- Protein Quantification: Determine the protein concentration using a BCA assay.

- Reduction: Take a 100 µg protein aliquot. Add DTT to a final concentration of 2 mM and incubate at 56°C for 30 minutes. [28]

- Alkylation: Add IAA to a final concentration of 5 mM. Incubate at room temperature for 45 minutes in the dark. [28]

- Acidification and Binding: Add a 1/10 volume of 12% phosphoric acid. Then add 165 µl of binding/wash buffer for every 27.5 µl of acidified sample. Vortex until the solution turns opaque. [28]

- S-Trap Digestion:

  - Load the mixture onto the S-Trap column and centrifuge at 4,000 × g until all liquid passes through.

  - Wash the column with the recommended binding/wash buffer.

  - Add 20 µl of trypsin solution (1 µg/µl in 50 mM TEAB) to the column, ensuring the enzyme soaks into the matrix.

  - Incubate at 37°C for at least 1 hour.

  - Sequentially elute peptides with 0.2% formic acid, followed by 50% acetonitrile with 0.2% formic acid.

  - Combine eluents and concentrate in a SpeedVac. [28]
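The reagent additions in the procedure above reduce to simple dilution arithmetic. The sketch below computes the stock volumes for reduction, alkylation, acidification, and binding from a starting sample volume, using the stock and final concentrations listed in the protocol. The 25 µl starting volume and the function names are assumptions for illustration; the protocol itself specifies only a 100 µg aliquot.

```python
def spike_volume_ul(stock_mM, final_mM, sample_ul):
    """Stock volume to add so the mixture reaches final_mM, accounting for the added volume."""
    if stock_mM <= final_mM:
        raise ValueError("stock must be more concentrated than the target")
    return final_mM * sample_ul / (stock_mM - final_mM)


def s_trap_plan(sample_ul):
    """Volumes (µl) for each addition, applied in protocol order."""
    dtt = spike_volume_ul(500.0, 2.0, sample_ul)        # 0.5 M DTT to 2 mM
    after_dtt = sample_ul + dtt
    iaa = spike_volume_ul(550.0, 5.0, after_dtt)        # 0.55 M IAA to 5 mM
    after_iaa = after_dtt + iaa
    acid = after_iaa / 10.0                             # 1/10 volume of 12% phosphoric acid
    acidified = after_iaa + acid
    binding = acidified * 165.0 / 27.5                  # 165 µl buffer per 27.5 µl acidified sample
    return {
        "dtt_ul": dtt,
        "iaa_ul": iaa,
        "phosphoric_acid_ul": acid,
        "acidified_ul": acidified,
        "binding_buffer_ul": binding,
    }


plan = s_trap_plan(25.0)  # hypothetical 25 µl aliquot containing 100 µg protein
for step, volume in plan.items():
    print(f"{step}: {volume:.2f}")
```

For small aliquots the computed DTT and IAA volumes fall well below 1 µl, which is difficult to pipette accurately; preparing an intermediate dilution of each stock is one way to work around this.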

Diagram 1: Protein Extraction and Digestion Workflow

Phosphopeptide Enrichment Workflow

For phosphoproteomics, a dual-enrichment strategy significantly improves yield from limited samples [28].

Materials:

- Fe-NTA Magnetic Beads

- TiO2 Beads and Kit

- Loading buffer (e.g., 80% Acetonitrile / 2% Lactic Acid)

- Wash buffers (e.g., 80% Acetonitrile / 1% TFA)

Procedure:

- First Enrichment (Fe-NTA): Reconstitute digested peptides in loading buffer. Incubate with Fe-NTA magnetic beads to capture phosphopeptides. Wash beads thoroughly to remove non-specific binders. [28]

- Elution: Elute phosphopeptides from the Fe-NTA beads.

- Second Enrichment (TiO2): Take the eluate (or a separate aliquot of the digest) and incubate with TiO2 beads. Wash and elute again. [28]

- Combine and Concentrate: Combine eluents from both enrichment steps, or analyze separately for broader coverage. Concentrate samples prior to LC-MS/MS. [28]

Diagram 2: Dual-Strategy Phosphopeptide Enrichment

Troubleshooting Guide: Common Issues and Solutions

| Problem Scenario | Question to Ask | Recommended Solution |
|---|---|---|
| No Protein Detection | Was the protein expressed in my sample? | Verify input sample by Western Blot. [27] |
| Sample Loss | Was the protein lost during processing? | Monitor each step (e.g., Western Blot, Coomassie). Scale up or use fractionation/IP for low-abundance proteins. [27] |
| Unexpected Results | Was the protein degraded? | Add a broad-spectrum, EDTA-free protease inhibitor cocktail, or individual inhibitors such as PMSF, to all preparation buffers. [27] |
| Poor Peptide Detection | Do my peptides "escape detection"? | Optimize digestion time or change protease type (e.g., trypsin/Lys-C mix). Consider double digestion. [27] |
| System Performance | Is the issue from sample prep or the LC-MS? | Check system performance with a HeLa Protein Digest Standard. Run it directly and as a control co-treated with your sample. [29] |
| Inconsistent Data | Are my results suffering from poor quantification? | Use stable isotope-labeled internal standards to mitigate matrix effects. Ensure consistent dilution and mixing. [30] |

The Scientist's Toolkit: Essential Reagents and Materials

| Item | Function | Example |
|---|---|---|
| S-Trap Micro Columns | Efficient digestion and cleanup of protein samples, especially in high-SDS conditions. [28] | Protifi, cat. no. C02-MICRO-10 |
| Pierce HeLa Digest Standard | Control standard to verify LC-MS system performance and troubleshoot sample prep workflows. [29] | Thermo Fisher, cat. no. 88328 |
| Pierce Calibration Solutions | Calibrate the mass spectrometer to ensure mass accuracy and reliable data. [29] | Thermo Fisher |
| Trypsin, MS-Grade | High-purity protease for specific digestion of proteins into peptides for MS analysis. [28] | Promega, cat. no. V5280 |
| Fe-NTA Magnetic Beads | High-specificity enrichment of phosphopeptides prior to MS analysis. [28] | - |
| TiO2 Enrichment Kit | Broad-spectrum enrichment of phosphopeptides; often used in tandem with Fe-NTA. [28] | - |
| Nitrogen Blowdown Evaporator | Gentle concentration of samples by using a stream of dry nitrogen gas, minimizing sample loss. [30] | Organomation N-EVAP |

FAQs: Addressing Specific Experimental Challenges

Q: How can I prevent sample contamination that interferes with MS detection? A: Use filter tips and single-use pipettes whenever possible. Prepare solutions with HPLC-grade water and avoid autoclaving plastics and solutions, as this can leach polymers. Do not use standard washing detergents for glassware dedicated to MS sample prep. [27]

Q: What are the common mistakes in sample cleanup for chromatography? A: The most frequent errors are inadequate sample cleanup leading to ion suppression, and contamination from plasticware. Employ appropriate cleanup techniques like Solid-Phase Extraction (SPE) and use high-quality, MS-grade solvents and labware to minimize interference. [30]

Q: My protein coverage is low. What does this mean and how can I improve it? A: Low coverage means a small proportion of the protein's sequence was detected by peptides. This can result from low protein abundance or suboptimal peptide sizing. To improve it, consider increasing digestion time, using a different protease, or performing a double digestion with two different enzymes. [27]

Q: How should I store my protein samples to maintain stability? A: Keep all protein samples at a low temperature during preparation (4°C) and for storage (-20°C to -80°C). Always avoid repeated freeze-thaw cycles, as this can degrade proteins. [27] [30]

Q: Why is my data irreproducible even with a controlled sample? A: A multi-laboratory study revealed that irreproducibility often stems from missed identifications (false negatives) and errors in database matching and curation, not necessarily a failure to detect the peptides. [26] Ensure you are using updated search engines and databases, and carefully validate your search parameters. [29] [26]

Key Parameters for Data Analysis

When interpreting your MS data, these four parameters are essential for assessing protein identification confidence [27]:

| Parameter | Description | Ideal Range/Value |
|---|---|---|
| Intensity | Measure of peptide abundance; influenced by protein abundance and ionization efficiency. | Varies by sample. |
| Peptide Count | Number of unique peptides detected for a given protein. | Higher counts increase confidence. |
| Coverage | Percentage of the total protein sequence covered by the detected peptides. | >40% for purified proteins; 1-10% in complex proteomes. [27] |
| P-value / Q-value / Score | Statistical significance of peptide identification. | P-value/Q-value < 0.05. [27] |
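As a rough illustration of how the coverage figure in the table above can be derived, the following Python sketch marks every residue matched by at least one detected peptide and reports the covered percentage. The function name and the toy sequence are hypothetical. Search engines compute coverage from their own peptide-to-protein mappings, so this is best treated as a sanity check rather than a replacement for the reported value.

```python
def sequence_coverage(protein: str, peptides: list[str]) -> float:
    """Percentage of residues in `protein` covered by at least one peptide."""
    if not protein:
        return 0.0
    covered = [False] * len(protein)
    for peptide in peptides:
        if not peptide:
            continue  # ignore empty entries
        start = protein.find(peptide)
        while start != -1:  # mark every occurrence, including overlapping ones
            for i in range(start, start + len(peptide)):
                covered[i] = True
            start = protein.find(peptide, start + 1)
    return 100.0 * sum(covered) / len(protein)


# Toy example (hypothetical 10-residue sequence, not real data)
protein = "ACDEFGHIKL"
peptides = ["CDE", "DEF", "IK"]
print(f"{sequence_coverage(protein, peptides):.1f}% coverage")
```

Overlapping peptides are counted once per residue, so adding a second protease only raises coverage when it uncovers new stretches of sequence.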

Nucleic Acid Isolation and Library Construction for Next-Generation Sequencing (NGS)

Nucleic Acid Isolation: Troubleshooting Common Challenges

The initial phase of nucleic acid isolation is critical, as the quality and quantity of the extracted DNA or RNA directly determine the success of all subsequent NGS steps. This section addresses frequent obstacles and provides targeted solutions.

Frequently Asked Questions

What are the primary causes of DNA degradation, and how can I prevent it? DNA degradation can occur through several mechanisms, and prevention requires a multi-faceted approach [31].

- Oxidation: Caused by exposure to heat or UV radiation. Use antioxidants and store samples at -80°C in oxygen-free environments to slow this process [31].

- Hydrolysis: Results from water molecules breaking DNA bonds. Use buffered solutions and store samples in dry or frozen conditions to minimize damage [31].

- Enzymatic Breakdown: Caused by nucleases present in biological samples. Inactivate nucleases with heat treatment, chelating agents (e.g., EDTA), or nuclease inhibitors during extraction and storage [31].

- Excessive Mechanical Shearing: Overly aggressive homogenization can fragment DNA. Use instruments that allow for precise control over homogenization speed and duration, and employ specialized bead tubes to minimize mechanical stress [31].

My DNA yield from a tissue sample is low. What could be the reason? Low yield from tissues is often a result of suboptimal handling or protocol selection [32].

- Cause: Tissue pieces are too large, preventing efficient lysis. Alternatively, the silica membrane in the spin column may be clogged with indigestible tissue fibers [32].

- Solution: Cut the starting material into the smallest possible pieces or grind it with liquid nitrogen. For fibrous tissues, after Proteinase K digestion, centrifuge the lysate to pellet and remove these fibers before loading it onto the column [32].

My DNA sample appears contaminated. How can I improve purity? Contamination is often revealed by poor absorbance ratios (A260/A230 and A260/A280) and can stem from various sources [16] [32].

- Protein Contamination: Ensure complete tissue digestion by extending lysis time and cutting tissue into small pieces. For blood samples with high hemoglobin, optimize lysis time [32].

- Salt Contamination: This is frequently caused by the binding buffer contacting the upper area of the spin column. Avoid touching the upper column with the pipette tip, do not transfer foam, and ensure all wash steps are performed thoroughly [32].
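The absorbance-ratio check described above can be sketched in a few lines of Python. The function name is hypothetical, and the default cutoffs (about 1.8 for A260/A280 and about 2.0 for A260/A230) are commonly quoted rules of thumb for DNA, not values taken from the cited sources; adjust them to your instrument and kit guidance.

```python
def assess_dna_purity(a260, a280, a230, min_260_280=1.8, min_260_230=2.0):
    """Return (A260/A280, A260/A230, list of warnings) for one DNA sample.

    The default cutoffs are assumed rules of thumb, not validated limits.
    """
    if a280 <= 0 or a230 <= 0:
        raise ValueError("A280 and A230 readings must be positive")
    ratio_280 = a260 / a280
    ratio_230 = a260 / a230
    warnings = []
    if ratio_280 < min_260_280:
        warnings.append("low A260/A280: possible protein carryover")
    if ratio_230 < min_260_230:
        warnings.append("low A260/A230: possible salt or buffer carryover")
    return ratio_280, ratio_230, warnings


# Hypothetical readings for illustration only
r280, r230, notes = assess_dna_purity(a260=1.00, a280=0.80, a230=1.00)
print(round(r280, 2), round(r230, 2), notes)
```

A low A260/A280 ratio points toward the protein-related fixes above, while a low A260/A230 ratio points toward the wash and pipetting fixes for salt carryover.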

Table 1: Troubleshooting Nucleic Acid Isolation

| Problem | Common Causes | Recommended Solutions |
|---|---|---|
| Low DNA Yield [16] [32] | Degraded input sample; clogged column; inaccurate quantification. | Flash-freeze tissues in liquid nitrogen; minimize tissue input size; use fluorometric quantification (e.g., Qubit) instead of spectrophotometry [32] [33]. |
| DNA Degradation [31] [32] | Improper storage; high nuclease activity in tissues (e.g., liver, pancreas); slow thawing of cell pellets. | Store samples at -80°C; use stabilizers like RNAlater; keep samples on ice during prep; add enzymes to frozen samples and let them thaw during lysis [32]. |
| Protein Contamination [32] | Incomplete tissue digestion; high hemoglobin in blood. | Extend lysis time; cut tissue into small pieces; for blood, adjust Proteinase K digestion time [32]. |
| Salt Contamination [32] | Carryover of binding buffer (e.g., guanidine salts) into the eluate. | Pipette carefully onto the center of the silica membrane; avoid transferring foam; ensure complete washing [32]. |

NGS Library Construction: FAQs and Troubleshooting

Library construction converts purified nucleic acids into a format compatible with NGS platforms. Errors in this process are a common source of sequencing failure.

Frequently Asked Questions

What are the key steps in NGS library preparation? A conventional library construction protocol consists of four main steps [34]:

- Fragmentation: DNA is sheared to a desired length by enzymatic digestion, sonication, or other physical methods.

- End Repair: The fragmented DNA is converted into blunt-ended, 5'-phosphorylated fragments.

- Adapter Ligation: Platform-specific adapters are ligated to the fragments, enabling sequencing and sample multiplexing.

- Library Amplification (Optional): The adapter-ligated library is amplified using PCR to generate sufficient material for sequencing.

My final library yield is low. Where should I look for the problem? Low library yield can originate from several points in the preparation workflow [16].

- Root Causes: Poor input DNA quality, contaminants inhibiting enzymes, inefficient fragmentation or ligation, and suboptimal purification or size selection that leads to sample loss [16].

- Diagnostic Strategy: Check the electropherogram for a broad size distribution or adapter dimer peaks. Use fluorometric quantification (Qubit) and qPCR to cross-validate concentration. Ensure reagents are fresh and enzymes are not expired [16].
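Cross-validating fluorometric and qPCR results is easier when both are expressed in the same molar units. The sketch below converts a mass concentration to nanomolar using the widely used approximation of about 660 g/mol per base pair of double-stranded DNA. The function names and example values are hypothetical, and the mean fragment length is assumed to come from your electropherogram.

```python
AVG_BP_MASS = 660.0  # g/mol per base pair of dsDNA (approximation)


def ng_per_ul_to_nM(conc_ng_per_ul, mean_fragment_bp):
    """Convert a dsDNA mass concentration (ng/uL) to molarity (nM)."""
    if mean_fragment_bp <= 0:
        raise ValueError("mean fragment length must be positive")
    return conc_ng_per_ul * 1e6 / (AVG_BP_MASS * mean_fragment_bp)


def fold_difference(fluorometric_nM, qpcr_nM):
    """Ratio of the fluorometric estimate to the qPCR estimate."""
    if qpcr_nM <= 0:
        raise ValueError("qPCR concentration must be positive")
    return fluorometric_nM / qpcr_nM


# Hypothetical library: 2.0 ng/uL by fluorometry, 400 bp mean size, 5.0 nM by qPCR
fluor_nM = ng_per_ul_to_nM(2.0, 400)
print(f"fluorometric: {fluor_nM:.2f} nM, fold vs qPCR: {fold_difference(fluor_nM, 5.0):.2f}")
```

Because qPCR quantifies only molecules that carry amplifiable adapters, a fluorometric value well above the qPCR value may indicate that part of the measured DNA is not sequenceable library.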

I see a sharp peak at ~70-90 bp in my library's Bioanalyzer trace. What is it? This is a classic sign of adapter dimer formation, where adapters ligate to themselves instead of your target DNA fragments [16] [34].

- Causes: Using an excessive adapter-to-insert molar ratio or inefficient ligation of adapters to the target DNA [16].

- Solutions: Precisely titrate the adapter concentration. Include a rigorous size selection or purification step (e.g., using magnetic beads) to remove these small fragments before sequencing [16] [34].
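Titrating the adapter starts with knowing how many picomoles of insert are in the reaction. The following sketch, assuming about 660 g/mol per base pair of double-stranded DNA, estimates insert picomoles from mass and mean length and then the adapter volume needed for a chosen adapter:insert molar ratio. The function names, the 10:1 ratio, and the 15 µM stock are illustrative assumptions; the appropriate ratio depends on the kit and input amount.

```python
def insert_pmol(mass_ng, mean_length_bp):
    """Picomoles of dsDNA fragments, assuming ~660 g/mol per base pair."""
    if mean_length_bp <= 0:
        raise ValueError("mean length must be positive")
    return mass_ng * 1000.0 / (mean_length_bp * 660.0)


def adapter_volume_ul(mass_ng, mean_length_bp, adapter_stock_uM, molar_ratio):
    """Volume of adapter stock (uL) giving `molar_ratio` adapters per insert.

    1 uM equals 1 pmol/uL, so volume = required pmol / stock concentration.
    """
    if adapter_stock_uM <= 0:
        raise ValueError("adapter stock concentration must be positive")
    return molar_ratio * insert_pmol(mass_ng, mean_length_bp) / adapter_stock_uM


# Hypothetical reaction: 100 ng of 300 bp inserts, 15 uM adapter stock, 10:1 ratio
pmol = insert_pmol(100, 300)
vol = adapter_volume_ul(100, 300, adapter_stock_uM=15.0, molar_ratio=10)
print(f"{pmol:.3f} pmol insert, {vol:.2f} uL adapter")
```

If the calculated volume is too small to pipette accurately, diluting the adapter stock before use is generally safer than rounding up, since excess adapter favors dimer formation.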

How can I reduce bias in my library during PCR amplification? Amplification bias is a common challenge that reduces library complexity [35].

- Cause: Performing too many PCR cycles can lead to over-amplification artifacts and a high duplicate rate [16].

- Solutions: Use the minimum number of PCR cycles necessary. Employ high-fidelity DNA polymerases known to minimize amplification bias. If duplicates are still detected in the data, bioinformatics tools such as Picard MarkDuplicates or SAMtools can be used to remove PCR duplicates [35].
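One way to reason about the "minimum number of cycles" is to estimate it from the adapter-ligated input and the amount of library required. The sketch below uses an idealized exponential model in which each cycle multiplies the product by (1 + efficiency). The function name and example masses are hypothetical, and the model ignores cleanup losses and plateau effects, so treat the result as a starting point for empirical optimization.

```python
import math


def min_pcr_cycles(input_ng, target_ng, efficiency=1.0):
    """Smallest whole number of cycles that reaches `target_ng`.

    Idealized model: yield = input * (1 + efficiency) ** cycles,
    where efficiency is between 0 (no amplification) and 1 (perfect doubling).
    """
    if input_ng <= 0 or target_ng <= 0:
        raise ValueError("masses must be positive")
    if not 0 < efficiency <= 1:
        raise ValueError("efficiency must be in (0, 1]")
    if target_ng <= input_ng:
        return 0  # no amplification needed
    cycles = math.log(target_ng / input_ng) / math.log(1.0 + efficiency)
    # Subtract a tiny tolerance so exact fold changes are not rounded up by float error
    return math.ceil(cycles - 1e-9)


# Hypothetical example: 10 ng of adapter-ligated DNA, 500 ng of library wanted
print(min_pcr_cycles(10, 500))        # perfect doubling
print(min_pcr_cycles(10, 500, 0.8))   # 80% per-cycle efficiency
```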

Table 2: Troubleshooting Library Construction

| Problem | Common Causes | Recommended Solutions |
|---|---|---|
| Low Library Yield [16] | Inhibitors in input DNA; inefficient ligation; over-aggressive size selection. | Re-purify input DNA; titrate adapter:insert ratio; optimize bead-based cleanup ratios [16]. |
| Adapter Dimer Formation [16] [34] | Excess adapters; inefficient ligation of insert DNA. | Use precise adapter:insert ratios; include a size selection step to remove dimers [16]. |
| High Duplicate Rate / PCR Bias [35] [16] | Too many amplification cycles; low input material. | Minimize PCR cycles; use high-fidelity polymerases; remove duplicates bioinformatically [35]. |
| Inconsistent Fragment Size [36] | Variation in fragmentation conditions; issues during size selection. | Carefully control fragmentation time/energy; validate and optimize the size selection method [36]. |

Workflow and Process Diagrams

The following diagrams illustrate the core workflows for nucleic acid isolation and library construction, highlighting key decision points and potential failure points addressed in the troubleshooting guides.

Nucleic Acid Isolation Workflow

Library Construction Workflow

The Scientist's Toolkit: Essential Research Reagent Solutions

Successful NGS sample preparation relies on a suite of specialized reagents and kits. The table below details key solutions for critical steps in the workflow.

Table 3: Essential Research Reagent Solutions

| Reagent / Kit | Primary Function | Key Considerations |
|---|---|---|
| Mechanical Homogenizer (e.g., Bead Ruptor) [31] | Efficiently disrupts tough or fibrous samples (tissue, bone, bacteria) for nucleic acid release. | Allows precise control over speed and time to balance yield against DNA shearing. Cryo-cooling accessories can minimize heat-induced degradation [31]. |
| Magnetic Beads [35] [16] | Purify and size-select nucleic acids after enzymatic reactions (e.g., ligation, PCR). | The bead-to-sample ratio is critical. Incorrect ratios can lead to inefficient removal of adapter dimers or loss of desired fragments [16]. |
| Monarch Spin gDNA Extraction Kit [32] | Purifies genomic DNA from cells, tissue, and blood. | Protocol is optimized for specific input amounts. Overloading columns, especially with DNA-rich tissues like spleen, can drastically reduce yield [32]. |
| Ion Plus Fragment Library Kit [37] | Prepares fragment libraries from mechanically sheared DNA. | Designed specifically for physically fragmented DNA and is not compatible with enzymatic shearing methods [37]. |
| Ion Universal Library Quantitation Kit [37] | Accurately quantifies sequencing libraries via qPCR. | This kit is compatible with U-containing amplicons (e.g., from Ion 16S Metagenomics Kit), unlike other quantification kits, ensuring accurate results for specialized libraries [37]. |
| T4 DNA Polymerase & T4 PNK [34] | Performs end repair during library construction, creating blunt-ended, 5'-phosphorylated fragments. | Essential for generating the correct ends for subsequent adapter ligation. Inefficient repair directly reduces ligation efficiency [34]. |
| High-Fidelity DNA Polymerase [34] | Amplifies the adapter-ligated library with minimal errors and bias. | Using a polymerase with high fidelity is crucial to minimize the introduction of mutations during the PCR amplification step of library prep [34]. |

ELISA Fundamentals: Core Concepts and Workflow

What are the basic principles behind ELISA?

The Enzyme-Linked Immunosorbent Assay (ELISA) is an immunoassay that detects antigen-antibody interactions using enzyme-labelled conjugates and substrates that generate measurable color changes [38]. Because the enzymatic activity is linked to the antibodies, the color signal reports on antigen-antibody binding [38].

The key components essential for any ELISA protocol include:

- Solid phase: Typically 96-well microplates where analytes are attached

- Conjugate: Enzyme-labelled antibodies specific to the target molecule

- Substrate: Reacts with the enzyme to produce detectable color

- Wash buffer: Removes unbound components between steps

- Stop solution: Halts the enzyme-substrate reaction at the desired time [38]

The most common ELISA formats are direct, indirect, sandwich, and competitive ELISA, each with specific advantages for different applications [38] [39].

What is the typical workflow for a sandwich ELISA?

The following diagram illustrates the generalized workflow for a sandwich ELISA, which is considered the most robust format:

In sandwich ELISA, the antigen is captured between two primary antibodies (capture and detection), providing high specificity [40]. This format is particularly valuable for detecting complex antigens in biological samples [40].

ELISA Development: Strategic Planning

How do I select the right ELISA format for my research?

Choosing the appropriate ELISA format depends on your specific research needs, target analyte, and available reagents:

Table: Comparison of Major ELISA Formats

| Format | Principle | Advantages | Limitations | Best For |

|---|---|---|---|---|

| Direct ELISA | Antigen immobilized directly; detected with labeled primary antibody | Fast procedure; minimal steps | Lower sensitivity; potential high background | High-abundance targets; screening |

| Indirect ELISA | Antigen immobilized; detected with unlabeled primary and labeled secondary antibody | High sensitivity; signal amplification | Cross-reactivity potential | Antibody detection; titer determination |

| Sandwich ELISA | Antigen captured between two antibodies | High specificity and sensitivity | Requires matched antibody pairs | Complex samples; low-abundance targets |