Spectroscopic Methods Decoded: A Comparative Analysis of Advantages, Limitations, and Applications in Drug Development

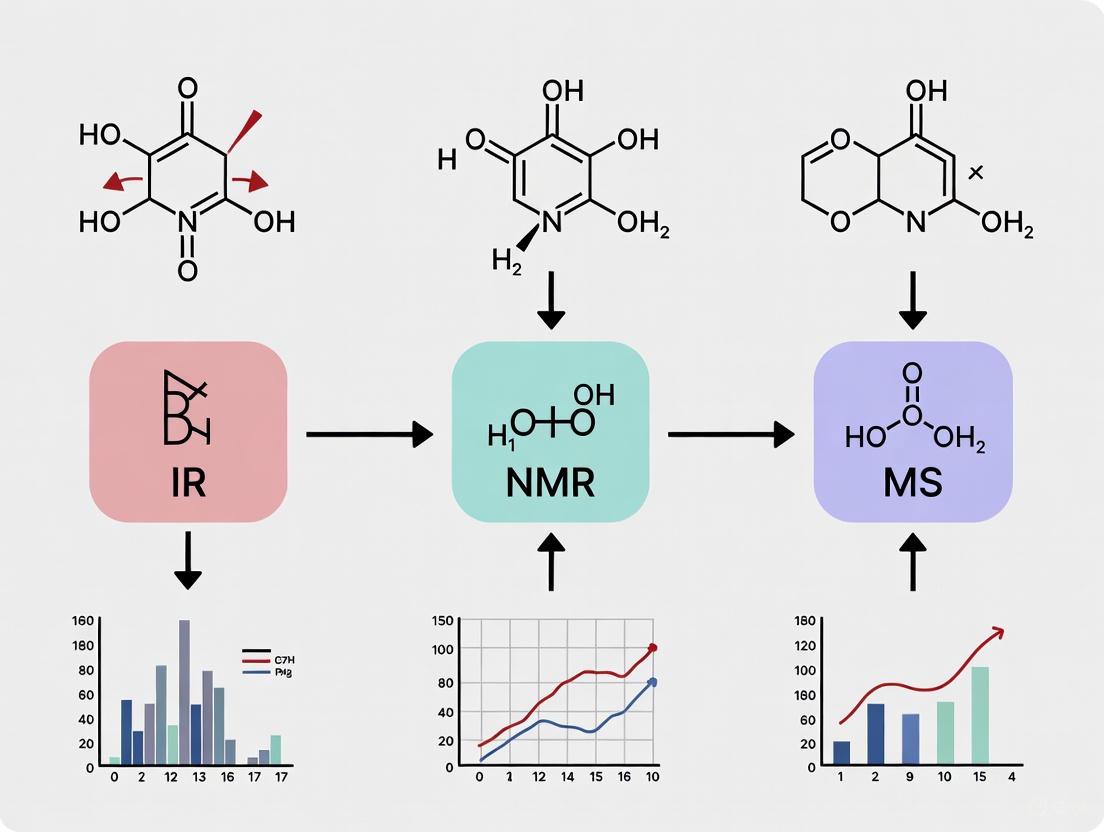



Abstract

This article provides a comprehensive comparative analysis of major spectroscopic techniques, including NMR, MS, UV-Vis, NIR, and IR spectroscopy, tailored for researchers and drug development professionals. It explores the fundamental principles, operational methodologies, and specific applications of each technique, addressing common troubleshooting scenarios and offering optimization strategies. A direct comparative evaluation equips scientists with the knowledge to select the most appropriate method for their specific analytical challenges, from routine quality control to complex structural elucidation in biomedical research.

Core Principles of Spectroscopic Techniques: Building Your Analytical Foundation

Spectroscopy is the scientific discipline concerned with the measurement and interpretation of spectra resulting from the interaction of electromagnetic radiation with matter [1]. As a cornerstone of analytical chemistry, it provides invaluable insights into molecular composition, structure, and dynamics by analyzing how materials absorb, emit, or scatter light across the electromagnetic spectrum [2]. The fundamental process involves illuminating a sample with electromagnetic energy and measuring its response across various wavelengths, generating a unique spectral fingerprint for each material [3] [4]. These light-matter interactions are governed by quantum mechanical principles, where energy is transferred in discrete packets called photons, and molecules undergo transitions between discrete energy states [3]. The resulting data, often characterized as "big data" due to the large number of wavelengths measured, enables both qualitative identification and quantitative determination of substances across diverse fields including pharmaceutical development, materials science, and clinical diagnostics [2] [4].

The Electromagnetic Spectrum and Light-Matter Interactions

The electromagnetic spectrum encompasses multiple regions defined by wavelength, frequency, and photon energy, each probing distinct molecular phenomena through specific light-matter interactions [3] [2]. Figure 1 illustrates the fundamental relationship between spectroscopic techniques and their corresponding electromagnetic regions.

The photon energy (E=hν) varies significantly across the spectrum, determining which molecular phenomena can be probed by each technique [2]. In the X-ray regime (0.1-100 nm), the high photon energy causes excitation of core electrons and can ionize atoms, making it suitable for elemental analysis [2]. The ultraviolet and visible (UV-Vis) regime (100 nm-1 μm) is dominated by electronic transitions in molecules, particularly affecting chromophores and molecules with aromatic and conjugated pi-electron systems [3] [2]. The infrared regime (1-30 μm) is commonly subdivided into near-infrared (NIR, overtone and combination vibrations) and mid-infrared (MIR, fundamental vibrations) regions [3] [2]. The terahertz regime (30-3000 μm) probes low-frequency vibrations of intermolecular bonds such as hydrogen bonds and dipole-dipole interactions, while the microwave regime (3-300 mm) utilizes even lower energies to study molecular rotations [2].
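As a rough illustration of how strongly photon energy varies across these regions, the sketch below evaluates E = hν = hc/λ for one representative wavelength per regime. The example wavelengths and the function name `photon_energy_ev` are illustrative choices, not values from the cited sources.

```python
# Physical constants (exact SI values)
PLANCK_H = 6.62607015e-34        # J*s
LIGHT_C = 2.99792458e8           # m/s
ELECTRON_VOLT = 1.602176634e-19  # J per eV


def photon_energy_ev(wavelength_m: float) -> float:
    """Return the photon energy in eV for a wavelength in metres (E = h*c/lambda)."""
    if wavelength_m <= 0:
        raise ValueError("wavelength must be positive")
    return PLANCK_H * LIGHT_C / wavelength_m / ELECTRON_VOLT


# Representative wavelengths, chosen only for illustration
examples = {
    "X-ray (1 nm)": 1e-9,
    "UV (250 nm)": 250e-9,
    "NIR (1500 nm)": 1500e-9,
    "MIR (10 um)": 10e-6,
    "Terahertz (300 um)": 300e-6,
    "Microwave (30 mm)": 30e-3,
}

for region, wavelength in examples.items():
    print(f"{region:>20}: {photon_energy_ev(wavelength):.3e} eV")
```

The energies span roughly eight orders of magnitude, which is why each regime couples to a different kind of molecular transition.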

Fundamental Interaction Mechanisms

Absorption Processes

Absorption occurs when incident photon energy matches the energy difference between two molecular quantum states, promoting the molecule to a higher energy level [3]. The resulting absorption spectrum represents a plot of absorbed radiation versus wavelength or frequency, providing characteristic molecular fingerprints [2]. The Beer-Lambert law quantifies this relationship, establishing that absorbance is proportional to concentration, path length, and a molecular absorption coefficient, forming the basis for quantitative analysis [2].
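The Beer-Lambert relationship can be written as A = ε·l·c. The minimal sketch below applies it in both directions; the molar absorption coefficient and absorbance used in the example are hypothetical numbers chosen for illustration.

```python
def absorbance(epsilon: float, path_cm: float, conc_molar: float) -> float:
    """Beer-Lambert law: A = epsilon * l * c (epsilon in L mol^-1 cm^-1)."""
    return epsilon * path_cm * conc_molar


def concentration_from_absorbance(a: float, epsilon: float, path_cm: float = 1.0) -> float:
    """Invert Beer-Lambert to obtain the molar concentration from a measured absorbance."""
    if epsilon <= 0 or path_cm <= 0:
        raise ValueError("epsilon and path length must be positive")
    return a / (epsilon * path_cm)


# Hypothetical analyte: epsilon = 15000 L mol^-1 cm^-1, 1 cm cuvette, A = 0.45
conc = concentration_from_absorbance(0.45, epsilon=15000.0)
print(f"Estimated concentration: {conc:.2e} mol/L")
```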

In UV-Vis spectroscopy, measurements focus on electronic transitions between molecular orbitals in the 190-800 nm range [3] [5]. Specific chromophores absorb at characteristic wavelengths: nitriles (~160 nm), acetylenes (~170 nm), alkenes (~175 nm), ketones (180 nm & 280 nm), and aldehydes (190 nm & 290 nm) [3]. Infrared absorption involves vibrational transitions where molecules absorb specific frequencies corresponding to natural vibrational energies of their chemical bonds [3]. Different functional groups demonstrate characteristic fundamental vibrations: C-H stretching (methyl, methylene, aromatic), O-H stretching, N-H stretching, C=O stretching (carbonyl), and C-F stretching [3].

Scattering Phenomena

Scattering techniques involve irradiating a sample and analyzing the elastically or inelastically scattered light [2]. Rayleigh scattering represents elastic scattering where incident and scattered photons have the same energy, while Raman scattering is an inelastic process where energy transfer occurs between photons and molecules [2]. It is crucial to note that scattering is a virtually instantaneous process (femtosecond timescale), distinct from absorption-emission processes like fluorescence which occur over pico- to microsecond timescales [2].

Raman spectroscopy provides complementary information to IR spectroscopy, particularly advantageous for aqueous samples because water exhibits weak scattering [3] [2]. Dominant Raman spectral features include acetylenic -C≡C- stretching, olefinic C=C stretching (1680-1630 cm⁻¹), N=N (azo-) stretching, S-H (thio-) stretching, C=S stretching, and S-S stretching bands [3]. Raman instrumentation typically offers high signal-to-noise ratio, compatibility with fiber optics, and requires minimal sample preparation [3].

Emission Processes

Emission occurs when excited molecules return to lower energy states, releasing energy as photons [2]. Fluorescence spectroscopy involves photon absorption promoting electrons to excited singlet states, followed by emission of lower-energy photons during relaxation [6]. Modern instrumentation like the Edinburgh Instruments FS5 v2 spectrofluorometer and Horiba's Veloci A-TEEM Biopharma Analyzer simultaneously collect absorbance, transmittance, and fluorescence excitation-emission matrix (A-TEEM) data, providing powerful alternatives to traditional separation methods for biopharmaceutical applications including monoclonal antibody analysis, vaccine characterization, and protein stability studies [6].

Comparative Analysis of Spectroscopic Techniques

Table 1: Quantitative Comparison of Major Spectroscopic Methods

| Technique | Spectral Range | Primary Interactions | Key Applications | Detection Limits | Sample Requirements |
|---|---|---|---|---|---|
| UV-Vis [3] [5] | 190-800 nm | Electronic transitions | Concentration determination, dissolution testing, impurity monitoring | ppm range | Optically clear solutions, minimal particulates |
| NIR [3] [2] | 780-2500 nm | Overtone & combination vibrations | Process monitoring, moisture analysis, raw material identification | 0.1% range | Minimal preparation, compatible with fibers |
| MIR [3] [5] | 2.5-30 μm | Fundamental vibrations | Structural elucidation, functional group identification, polymorph screening | <1% range | Solids (KBr pellets), liquids (ATR), limited by water interference |
| Raman [3] [2] | 1800-1000 cm⁻¹ | Molecular vibrations | Aqueous samples, polymorph identification, material characterization | 1-5% range | Minimal preparation, non-destructive, avoids fluorescence |
| Fluorescence [6] | UV-Vis range | Emission from excited states | Trace analysis, protein folding, binding studies | ppb-ppt range | Requires fluorophores, sensitive to environment |
| Microwave [6] [2] | 3-300 mm | Molecular rotations | Gas-phase structure determination, conformational analysis | High purity required | Gas phase, small molecules |

Table 2: Performance Characteristics for Pharmaceutical Analysis

| Technique | Specificity | Sensitivity | Analysis Speed | Cost Considerations | Regulatory Acceptance |
|---|---|---|---|---|---|
| UV-Vis [5] | Moderate | High for chromophores | Very fast (<1 min) | Low instrument cost | Well-established in pharmacopeias |
| NIR [2] [1] | Low to moderate (requires chemometrics) | Moderate | Fast (seconds) | Moderate cost | PAT applications, requires validation |
| MIR [5] | High (specific fingerprints) | High | Moderate (minutes) | Moderate to high cost | Standard for identity testing |
| Raman [2] | High (sharp peaks) | Moderate to high | Fast (seconds-minutes) | High initial cost | Growing in PAT applications |
| Fluorescence [6] | Very high | Very high | Fast (seconds) | High cost for advanced systems | Specialized applications |
| NMR [5] | Very high (atomic resolution) | Moderate | Slow (minutes-hours) | Very high cost | Gold standard for structure elucidation |

Experimental Methodologies and Protocols

UV-Vis Spectroscopy Protocol

Sample Preparation: Prepare optically clear solutions free from particulate matter to avoid scattering effects [5]. Select solvents transparent in the spectral region of interest (e.g., acetonitrile for UV below 210 nm) [5]. For solid samples, use appropriate dissolution techniques with filtering if necessary [5].

Instrument Calibration: Perform wavelength accuracy verification using holmium oxide or didymium filters [5]. Validate photometric accuracy with potassium dichromate standards [5]. Establish baseline correction with blank solvent in matched quartz cuvettes [5].

Data Collection: Measure absorbance within the optimal range of 0.1-1.0 AU for linear Beer-Lambert behavior [5]. For quantitative analysis, develop calibration curves using minimum five standard concentrations covering the expected sample range [5]. Collect spectra with appropriate resolution (typically 1-2 nm) and scan speed based on application requirements [5].
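The calibration step can be sketched as an ordinary least-squares fit through five standards, followed by back-calculation of an unknown. The standard concentrations and absorbances below are made-up illustration data, and the helper names `fit_line` and `predict_concentration` are hypothetical.

```python
def fit_line(x, y):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("need at least two paired points")
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def predict_concentration(a, slope, intercept, linear_range=(0.1, 1.0)):
    """Back-calculate concentration, rejecting readings outside the linear range."""
    low, high = linear_range
    if not low <= a <= high:
        raise ValueError("absorbance outside the 0.1-1.0 AU working range")
    return (a - intercept) / slope


# Five hypothetical standards (ug/mL) and their measured absorbances (AU)
standards_conc = [2.0, 4.0, 6.0, 8.0, 10.0]
standards_abs = [0.101, 0.198, 0.305, 0.402, 0.499]

slope, intercept = fit_line(standards_conc, standards_abs)
unknown = predict_concentration(0.250, slope, intercept)
print(f"slope={slope:.4f} AU per ug/mL, intercept={intercept:.4f} AU")
print(f"unknown sample: {unknown:.2f} ug/mL")
```

A validated method would also assess residuals and the coefficient of determination; those checks are left out here to keep the sketch short.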

FT-IR Spectroscopy Protocol

Sample Preparation Techniques: For ATR-FTIR, ensure good contact between sample and crystal (diamond, ZnSe, or Ge) with consistent pressure [5]. For transmission measurements, prepare KBr pellets using 1-2 mg sample in 200 mg dried KBr, pressed under vacuum [5]. For liquid samples, use sealed liquid cells with defined pathlengths (0.1-1.0 mm) [5].

Spectral Acquisition: Acquire background spectrum under identical conditions before sample measurement [6]. Collect minimum 32 scans at 4 cm⁻¹ resolution for acceptable signal-to-noise ratio [5]. Maintain consistent atmospheric conditions (purge with dry air or nitrogen) to minimize water vapor and CO₂ interference [5].
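Co-adding scans improves signal-to-noise because uncorrelated noise averages down with the square root of the number of scans. The sketch below expresses that scaling; it assumes purely random noise and ignores instrument drift, and the function names are illustrative.

```python
import math


def snr_gain(n_scans: int, reference_scans: int = 1) -> float:
    """Relative SNR improvement from co-adding n_scans instead of reference_scans."""
    if n_scans < 1 or reference_scans < 1:
        raise ValueError("scan counts must be at least 1")
    return math.sqrt(n_scans / reference_scans)


def scans_for_gain(target_gain: float, current_scans: int) -> int:
    """Scans needed to improve SNR by target_gain relative to current_scans."""
    return math.ceil(current_scans * target_gain ** 2)


print(f"32 scans vs 1 scan: {snr_gain(32):.2f}x SNR")
print(f"Scans needed to double the SNR of a 32-scan spectrum: {scans_for_gain(2, 32)}")
```

Doubling the signal-to-noise ratio costs four times the acquisition time, which is why scan counts are usually set by the minimum acceptable spectrum quality.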

Data Processing: Apply atmospheric suppression algorithms to remove residual water vapor and CO₂ contributions [5]. For quantitative analysis, select absorption bands with high specificity and establish univariate or multivariate calibration models [5]. For the Bruker Vertex NEO platform, utilize the vacuum ATR accessory to eliminate atmospheric interferences throughout the optical path [6].

Raman Spectroscopy Protocol

Sample Considerations: Minimal preparation required; samples can be analyzed in glass containers or through transparent packaging [3]. Avoid fluorescent containers that may interfere with measurements [3]. For solid dosage forms, ensure consistent positioning and laser focus on the sample surface [2].

Instrument Parameters: Select appropriate laser wavelength (785 nm for reducing fluorescence, 1064 nm for fluorescence avoidance) [6]. Optimize laser power to prevent sample degradation while maintaining adequate signal intensity [2]. Set integration time and number of accumulations based on sample properties and desired signal-to-noise ratio [2].
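Because Raman bands are reported as shifts in cm⁻¹ relative to the laser line, the absolute wavelength at which a band reaches the detector depends on the excitation wavelength. The following sketch converts between the two for Stokes scattering; the function names are illustrative.

```python
def scattered_wavelength_nm(laser_nm: float, shift_cm1: float) -> float:
    """Absolute wavelength (nm) of Stokes-scattered light for a given Raman shift."""
    scattered_wavenumber = 1e7 / laser_nm - shift_cm1  # cm^-1
    if scattered_wavenumber <= 0:
        raise ValueError("shift exceeds the laser photon energy")
    return 1e7 / scattered_wavenumber


def raman_shift_cm1(laser_nm: float, scattered_nm: float) -> float:
    """Raman shift (cm^-1) from the laser and scattered wavelengths in nm."""
    return 1e7 / laser_nm - 1e7 / scattered_nm


for laser in (785.0, 1064.0):
    wavelength = scattered_wavelength_nm(laser, 1000.0)
    print(f"{laser:.0f} nm laser, 1000 cm^-1 band appears at {wavelength:.1f} nm")
```

The same 1000 cm⁻¹ band falls at a much longer absolute wavelength under 1064 nm excitation, which is one reason the two laser choices are typically paired with different detector types.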

Spectral Validation: Perform wavelength calibration using silicon or neon emission standards [2]. Verify intensity response with NIST-traceable standards [2]. For quantitative applications, develop multivariate calibration models using partial least-squares regression (PLSR) with appropriate validation [2].

Advanced Instrumentation and Emerging Technologies

The field of spectroscopic instrumentation continues to evolve with significant advancements in 2024-2025. Atomic spectrometry has seen innovations like multi-collector ICP-MS systems designed with flexibility and versatility, featuring unique designs that enable users to customize each analysis with high-resolution multi-collector capability to resolve isotopes of interest from their interferences [6].

In molecular spectroscopy, the division between laboratory and field instruments has become more pronounced. For fluorescence applications, the Edinburgh Instruments FS5 v2 spectrofluorometer offers increased performance targeting photochemistry and photophysics communities, while Horiba's Veloci A-TEEM Biopharma Analyzer provides simultaneous collection of absorbance, transmittance, and fluorescence excitation emission matrix data specifically for biopharmaceutical applications [6].

Microspectroscopy has gained importance with instruments addressing increasingly smaller samples. The Jasco and PerkinElmer microscope accessories for FT-IR systems incorporate features like auto-focus, multiple detector capabilities, and guided workflows to simplify contaminant analysis [6]. Quantum Cascade Laser (QCL) based microscopy systems like the Bruker LUMOS II ILIM and Protein Mentor from Protein Dynamic Solutions offer enhanced imaging capabilities, with the latter specifically designed for protein-containing samples in the biopharmaceutical industry [6].

Emerging technologies include microwave spectroscopy with BrightSpec's commercial broadband chirped pulse microwave spectrometer, based on a technique developed in 2006 but only recently available as commercial instrumentation [6]. This platform measures microwave rotational spectra of small molecules to unambiguously determine structure and configuration in the gas phase, with applications in academia and in the pharmaceutical and chemical industries [6].

Data Analysis and Preprocessing Techniques

Spectroscopic data analysis encompasses various approaches from simple univariate calibration to complex multivariate techniques. Qualitative analysis typically involves cross-correlation of measured spectra with reference spectral databases [2]. Quantitative analysis may utilize univariate approaches when specific spectral signatures can be assigned to parameters of interest, or multivariate techniques like partial least-squares regression (PLSR), support vector machines (SVM), and artificial neural networks (ANN) for complex samples with overlapping spectral features [2].

Data preprocessing is essential for handling spectroscopic "big data" recorded across numerous wavelengths, typically 350-2500 nm in 1 nm increments [4]. Raw data often requires mathematical transformation to correct for instrumental artifacts, noise, and scattering effects [4]. Common preprocessing methods include:

- Mean centering: Subtracting the average spectrum to enhance spectral differences [4]

- Standard Normal Variate (SNV): Transforming each spectrum to zero mean and unit variance to remove scatter effects [4]

- Derivative techniques: Enhancing resolution of overlapping bands (Savitzky-Golay filtering) [4]

- Multiplicative Scatter Correction (MSC): Compensating for light scattering variations [4]

Statistical preprocessing functions, particularly affine transformation (min-max normalization) and standardization (zero mean, unit variance), have demonstrated superior performance in preserving original data relationships while accentuating spectral features like peaks, valleys, and trends [4]. These approaches maintain local maxima, minima, and underlying trends while enhancing pattern recognition in subsequent multivariate analysis [4].
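Three of the transformations described above can be sketched with the standard library alone. This is a minimal illustration, assuming spectra are stored as lists of intensities on a common wavelength grid; SNV here uses the sample standard deviation, which is one common convention.

```python
import statistics


def mean_center(spectra):
    """Subtract the mean spectrum (per-wavelength average) from every spectrum."""
    n = len(spectra)
    mean_spectrum = [sum(column) / n for column in zip(*spectra)]
    return [[v - m for v, m in zip(s, mean_spectrum)] for s in spectra]


def snv(spectrum):
    """Standard Normal Variate: scale one spectrum to zero mean and unit variance."""
    mu = statistics.mean(spectrum)
    sd = statistics.stdev(spectrum)
    if sd == 0:
        raise ValueError("flat spectrum cannot be SNV-transformed")
    return [(v - mu) / sd for v in spectrum]


def min_max(spectrum):
    """Affine (min-max) normalization of one spectrum onto the interval [0, 1]."""
    lo, hi = min(spectrum), max(spectrum)
    if hi == lo:
        raise ValueError("flat spectrum cannot be min-max normalized")
    return [(v - lo) / (hi - lo) for v in spectrum]


# Two toy spectra with four wavelength channels each (illustration only)
spectra = [[0.10, 0.40, 0.90, 0.30], [0.20, 0.60, 1.30, 0.50]]
print(mean_center(spectra))
print([round(v, 3) for v in snv(spectra[0])])
print([round(v, 3) for v in min_max(spectra[0])])
```

Both SNV and min-max are affine per-spectrum transformations, so the positions of peaks and valleys are unchanged; only offset and scale differ.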

Essential Research Reagent Solutions

Table 3: Key Reagents and Materials for Spectroscopic Analysis

| Reagent/Material | Technical Specification | Primary Function | Application Context |
|---|---|---|---|
| Deuterated Solvents [5] | D₂O, CDCl₃, DMSO-d₆ (99.8% deuterium) | NMR solvent without proton interference | Structural elucidation, quantitative NMR |
| KBr (Potassium Bromide) [5] | FT-IR grade, spectroscopic purity | Pellet formation for solid samples | Transmission FT-IR measurements |
| ATR Crystals [5] | Diamond, ZnSe, Ge elements | Internal reflection element | ATR-FTIR sampling with durability |
| Spectrophotometric Solvents [5] | HPLC grade, low UV cutpoint | Sample dissolution medium | UV-Vis spectroscopy |
| NMR Reference Standards [5] | TMS (tetramethylsilane), DSS | Chemical shift calibration | Quantitative chemical shift measurement |
| Calibration Standards [5] | NIST-traceable materials | Instrument performance verification | Wavelength and photometric accuracy |
| Ultrapure Water [6] | 18.2 MΩ·cm resistivity | Sample preparation, dilution | Minimize background interference |

Decision Framework for Technique Selection

Selecting appropriate spectroscopic methods requires systematic evaluation of multiple factors. Figure 2 outlines a logical decision workflow for technique selection based on analytical requirements and sample characteristics.

Key selection criteria include:

- Nature of the analyte: Organic/inorganic composition, molecular size, physical state, and degradation susceptibility under measurement conditions [2]

- Analysis type: Qualitative identification, quantitative determination, structural elucidation, or purity assessment [2] [5]

- Sensitivity requirements: Detection limits ranging from percent levels for NIR to parts-per-billion for fluorescence techniques [2]

- Sample matrix effects: Compatibility with aqueous environments (favors Raman), presence of interferents, and need for sample preparation [2]

- Throughput needs: Analysis speed ranging from seconds for UV-Vis to hours for sophisticated NMR experiments [2] [5]

- Regulatory compliance: Validation requirements according to ICH Q2(R1) guidelines and pharmacopeial standards [5]

No single spectroscopic method universally addresses all analytical needs, and strategic combination of complementary techniques often provides the most comprehensive solution for complex pharmaceutical analysis [2].
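One of the selection criteria, sensitivity, lends itself to a simple screening step. The sketch below shortlists techniques whose approximate detection limit, taken as an order-of-magnitude reading of the "Detection Limits" column in Table 1 (Quantitative Comparison of Major Spectroscopic Methods), is at or below the analyte level. The numeric mass fractions are rough assumptions for illustration, not validated limits, and a real decision would weigh the other criteria as well.

```python
# Approximate detection limits as mass fractions (order-of-magnitude assumptions
# derived from the detection-limit column of the comparison table)
APPROX_DETECTION_LIMIT = {
    "UV-Vis": 1e-6,        # ppm range
    "NIR": 1e-3,           # 0.1% range
    "MIR": 1e-2,           # <1% range
    "Raman": 1e-2,         # 1-5% range (lower bound)
    "Fluorescence": 1e-9,  # ppb-ppt range (ppb used here)
}


def candidate_techniques(analyte_mass_fraction: float) -> list:
    """Return techniques able to detect the analyte level, most sensitive first."""
    if analyte_mass_fraction <= 0:
        raise ValueError("mass fraction must be positive")
    suitable = [
        name
        for name, limit in APPROX_DETECTION_LIMIT.items()
        if limit <= analyte_mass_fraction
    ]
    return sorted(suitable, key=APPROX_DETECTION_LIMIT.get)


print(candidate_techniques(5e-7))  # trace impurity level
print(candidate_techniques(1e-2))  # major component level
```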

Nuclear Magnetic Resonance (NMR) spectroscopy is a powerful analytical technique that exploits the magnetic properties of atomic nuclei to determine the structure, dynamics, reaction state, and chemical environment of molecules. This method provides detailed information at the atomic level, making it indispensable across chemistry, biochemistry, pharmaceuticals, and materials science. NMR spectroscopy is particularly valuable for studying biological molecules in their natural state, offering insights into molecular conformation and interactions that are difficult to obtain with other techniques [7].

The fundamental principle of NMR involves observing local magnetic fields around atomic nuclei. Nuclei with an odd number of protons or neutrons (such as ¹H, ¹³C, ¹⁵N, ¹⁹F, and ³¹P) possess a property called nuclear spin, which gives rise to a magnetic moment. When placed in a strong external magnetic field, these nuclei can absorb electromagnetic radiation at specific radio frequencies, resonating between different energy states. The resonance frequency is highly dependent on the atom's chemical environment, providing a detailed fingerprint of the molecular structure [7].

Theoretical Foundations and Instrumentation

Fundamental Principles

NMR spectroscopy is based on the interaction between atomic nuclei and an external magnetic field. Key principles include:

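- Nuclear Spin: Nuclei with an odd mass number (such as ¹H or ¹³C) possess intrinsic spin angular momentum, resulting in a magnetic moment that enables NMR observation [7]. Nuclei with an even mass number but odd numbers of both protons and neutrons (such as ²H and ¹⁴N) are also NMR-active.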

- Zeeman Effect: When placed in a strong external magnetic field (B₀), these magnetic moments align with or against the field, creating distinct energy levels. The energy difference between these levels corresponds to radiofrequency radiation [7].

- Resonance: At characteristic frequencies dependent on the magnetic field strength and nuclear environment, nuclei absorb energy and transition between energy states. This resonance condition forms the basis for NMR spectroscopy [7].

- Chemical Shift: The resonant frequency of a nucleus is slightly shifted by its local electronic environment, providing crucial information about molecular structure and functional groups. This is measured in parts per million (ppm) relative to a standard reference compound [8].
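The resonance and chemical shift principles above can be made concrete with two short calculations: the Larmor frequency ν = (γ/2π)·B₀, and the chemical shift in ppm as the frequency offset from the reference divided by the spectrometer frequency. The gyromagnetic ratios below are rounded literature values, and the example offset is hypothetical.

```python
# Reduced gyromagnetic ratios gamma / (2*pi) in MHz per tesla (rounded values)
GAMMA_BAR_MHZ_PER_T = {"1H": 42.577, "13C": 10.708}


def larmor_frequency_mhz(b0_tesla: float, nucleus: str = "1H") -> float:
    """Resonance (Larmor) frequency in MHz for a nucleus in a field B0."""
    return GAMMA_BAR_MHZ_PER_T[nucleus] * b0_tesla


def chemical_shift_ppm(offset_hz: float, spectrometer_mhz: float) -> float:
    """Chemical shift in ppm: offset from the reference (Hz) over spectrometer frequency (MHz)."""
    if spectrometer_mhz <= 0:
        raise ValueError("spectrometer frequency must be positive")
    return offset_hz / spectrometer_mhz


print(f"1H at 9.4 T:  {larmor_frequency_mhz(9.4, '1H'):.1f} MHz")
print(f"13C at 9.4 T: {larmor_frequency_mhz(9.4, '13C'):.1f} MHz")
# A signal 2900 Hz downfield of the reference on a 400 MHz instrument
print(f"Chemical shift: {chemical_shift_ppm(2900.0, 400.0):.2f} ppm")
```

Expressing shifts in ppm removes the dependence on field strength, so the same compound gives the same shift values on instruments of different field strength.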

Instrument Components

Modern NMR spectrometers consist of several essential components [7]:

- Magnet and Sample Holder: The magnet generates a strong, stable, and homogeneous magnetic field. Traditional superconducting magnets require cryogenic cooling, while newer benchtop systems use permanent magnets. Samples are typically held in glass tubes.

- Radiofrequency (RF) Transmitter: Produces short, powerful pulses of radio waves to excite the nuclei.

- Probe and Coil: Positioned surrounding the sample, the coil serves both to transmit RF pulses and to detect the NMR signal.

- Receiver: Detects the radio frequencies emitted as excited nuclei relax to their lower energy state.

- Computer System: Processes the detected signal (Free Induction Decay) through Fourier transformation to generate the interpretable frequency-domain spectrum, and controls the instrument operation.
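The conversion from a time-domain Free Induction Decay to a frequency-domain spectrum can be illustrated with a synthetic signal. The sketch below simulates a single exponentially decaying resonance and applies a plain discrete Fourier transform; real spectrometer software uses the fast Fourier transform plus apodization and phasing, and all acquisition parameters here are illustrative.

```python
import cmath
import math


def simulate_fid(freq_hz, t2_s, n_points, dwell_s):
    """Complex (quadrature) FID of one resonance decaying with time constant T2."""
    return [
        cmath.exp(2j * math.pi * freq_hz * n * dwell_s) * math.exp(-n * dwell_s / t2_s)
        for n in range(n_points)
    ]


def dft(signal):
    """Direct O(N^2) discrete Fourier transform, adequate for a short demo."""
    n = len(signal)
    return [
        sum(signal[m] * cmath.exp(-2j * math.pi * k * m / n) for m in range(n))
        for k in range(n)
    ]


def peak_frequency_hz(fid, dwell_s):
    """Frequency offset (Hz) of the strongest line in the transformed FID."""
    n = len(fid)
    spectrum = dft(fid)
    k_max = max(range(n), key=lambda k: abs(spectrum[k]))
    if k_max >= n // 2:  # upper half of the DFT holds negative frequencies
        k_max -= n
    return k_max / (n * dwell_s)


# 256 points sampled every 1 ms (1000 Hz spectral width), resonance at 125 Hz
fid = simulate_fid(freq_hz=125.0, t2_s=0.05, n_points=256, dwell_s=0.001)
peak = peak_frequency_hz(fid, dwell_s=0.001)
print(f"Peak found at {peak:.1f} Hz")
```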

Experimental Methodologies and Protocols

Standard NMR Experiment Workflow

The following diagram illustrates the generalized workflow for a protein-ligand interaction study using NMR spectroscopy, a common application in drug discovery:

Sample Preparation Protocols

Proper sample preparation is critical for obtaining high-quality NMR data. The specific protocol varies depending on the sample type and experiment goal.

For small organic molecules (< 1000 Da):

- Solvent Selection: Dissolve 2-10 mg of sample in 0.6-0.7 mL of deuterated solvent (CDCl₃, DMSO-d₆, D₂O, or CD₃OD). The deuterated solvent provides a signal for the field frequency lock [9].

- Reference Standard: Add 0.1% tetramethylsilane (TMS) as an internal chemical shift reference, or use the solvent's residual proton peak as a secondary reference [9].

- Sample Filtration: Filter the solution through a 0.45 μm filter to remove particulate matter that could degrade spectral resolution.

- Tube Loading: Transfer the solution to a clean, dry NMR tube (standard 5 mm or 3 mm for limited sample), avoiding bubbles.

For protein-ligand interaction studies:

- Protein Preparation: Concentrate the isotopically labeled (¹⁵N, ¹³C) protein to 0.1-0.5 mM in an appropriate buffer (e.g., 20 mM phosphate, 50 mM NaCl, pH 6.5-7.5) using centrifugal filtration devices [10].

- Solvent Exchange: Exchange into deuterated buffer using gel filtration or dialysis to minimize the strong water signal. Alternatively, use water suppression techniques during data acquisition.

- Ligand Titration: Add small aliquots of ligand stock solution directly to the NMR tube, mixing gently after each addition. Typical ligand:protein ratios range from 0.5:1 to 10:1 for binding affinity measurements.

- Temperature Control: Maintain constant temperature during data acquisition, typically 25-30°C for proteins, unless studying temperature-dependent phenomena.
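Planning the ligand titration amounts to simple bookkeeping: how much stock to add to reach each ligand:protein ratio, and how far the protein is diluted along the way. The sketch below does this for hypothetical starting conditions (0.2 mM protein in 500 µL, 10 mM ligand stock); the function name and dictionary keys are illustrative.

```python
def titration_plan(protein_mm, volume_ul, stock_mm, ratios):
    """Aliquots of ligand stock needed to reach each ligand:protein molar ratio.

    Concentrations are in mM and volumes in uL, so mM * uL gives nmol.
    """
    protein_nmol = protein_mm * volume_ul
    plan = []
    previous_ul = 0.0
    for ratio in sorted(ratios):
        cumulative_ul = ratio * protein_nmol / stock_mm
        total_ul = volume_ul + cumulative_ul
        plan.append({
            "ratio": ratio,
            "aliquot_ul": cumulative_ul - previous_ul,
            "cumulative_ul": cumulative_ul,
            "protein_mm": protein_nmol / total_ul,  # protein after dilution
        })
        previous_ul = cumulative_ul
    return plan


plan = titration_plan(protein_mm=0.2, volume_ul=500.0, stock_mm=10.0,
                      ratios=[0.5, 1, 2, 5, 10])
for step in plan:
    print(f"{step['ratio']:>4}:1  add {step['aliquot_ul']:5.1f} uL "
          f"(total {step['cumulative_ul']:5.1f} uL), "
          f"protein now {step['protein_mm']:.3f} mM")
```

In this example the final point dilutes the protein by about 17%, which illustrates why a more concentrated ligand stock is often preferred when high ratios are needed.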

Key NMR Experiments and Methodologies

NMR spectroscopy encompasses a diverse set of experiments, each providing specific structural information.

One-Dimensional (1D) Experiments:

- ¹H NMR: The most basic experiment revealing hydrogen environments, electronic surroundings, and relative proton counts through integration [8].

- ¹³C NMR: Provides information about carbon frameworks in molecules, though the low natural abundance of ¹³C (1.1%) requires longer acquisition times. DEPT editing distinguishes CH₃, CH₂, and CH carbons; quaternary carbons give no DEPT signal and are identified by comparison with the standard ¹³C spectrum [8].

- Pulsed Gradient Spin-Echo (PGSE): Measures diffusion coefficients to study molecular size, aggregation, and binding.

Two-Dimensional (2D) Experiments:

- COSY (Correlation Spectroscopy): Identifies scalar-coupled protons (typically through 2-3 bonds) within a molecule, establishing proton-proton connectivity [8].

- HSQC (Heteronuclear Single Quantum Coherence): Correlates directly bonded ¹H and ¹³C/¹⁵N nuclei, providing a fingerprint of molecular structure. Particularly valuable for protein studies with ¹⁵N-labeled samples to monitor ligand binding through chemical shift perturbations [8] [10].

- HMBC (Heteronuclear Multiple Bond Correlation): Detects long-range ¹H-¹³C couplings (typically 2-3 bonds), crucial for establishing connectivity between molecular fragments [8].

- NOESY/ROESY (Nuclear Overhauser Effect Spectroscopy): Measures through-space dipolar couplings between nuclei (<5 Å), providing critical distance restraints for 3D structure determination and studying molecular conformation [8].
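Chemical shift perturbations from ¹H-¹⁵N HSQC spectra are often condensed into a single number per residue by combining the ¹H and ¹⁵N shift changes with a weighting factor. The sketch below uses one widely used form, sqrt(ΔδH² + (α·ΔδN)²); the default α = 0.14 is an assumed, commonly quoted scaling and not a value from the cited sources, and the residue data are invented for illustration.

```python
import math


def combined_csp(delta_h_ppm: float, delta_n_ppm: float, alpha: float = 0.14) -> float:
    """Combined 1H/15N chemical shift perturbation in ppm.

    alpha down-weights the 15N change to account for its larger shift range;
    0.14 is an assumed default, other values are also used in practice.
    """
    return math.sqrt(delta_h_ppm ** 2 + (alpha * delta_n_ppm) ** 2)


# Hypothetical per-residue shift changes (ppm) between free and bound spectra
residues = {"G45": (0.01, 0.05), "L78": (0.08, 0.60), "K102": (0.03, 0.10)}

for name, (d_h, d_n) in residues.items():
    print(f"{name}: CSP = {combined_csp(d_h, d_n):.3f} ppm")
```

Residues with the largest combined values are the usual starting point for mapping a binding site onto the protein structure.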

Specialized Advanced Experiments:

- Saturation Transfer Difference (STD): Identifies ligand atoms in close contact with protein surfaces, useful for mapping binding epitopes [9].

- TROSY (Transverse Relaxation-Optimized Spectroscopy): Reduces relaxation effects, enabling NMR studies of larger proteins and complexes (>50 kDa) [10].

- In-Cell NMR: Uses isotopically labeled molecules in living cells to study structures and interactions in native environments.

Research Reagent Solutions

The following table details essential reagents and materials required for NMR spectroscopy experiments:

Table 1: Essential Research Reagents for NMR Spectroscopy

| Reagent/Material | Function and Importance | Application Notes |
|---|---|---|
| Deuterated Solvents (CDCl₃, DMSO-d₆, D₂O) | Provides field frequency lock; minimizes strong solvent proton signals that would otherwise overwhelm sample signals [9]. | Choice depends on sample solubility; residual solvent peaks serve as secondary chemical shift references [9]. |
| Internal Standards (TMS, DSS) | Provides reference point (0 ppm) for chemical shift calibration [9]. | TMS for organic solvents; DSS for aqueous solutions. Critical for accurate chemical shift reporting [9]. |
| Isotope-Labeled Precursors (¹³C-glucose, ¹⁵N-NH₄Cl) | Incorporates NMR-active isotopes into proteins for structural studies; enables detection of low-abundance nuclei [10]. | Essential for protein NMR; specific labeling strategies (e.g., side-chain selective) simplify spectra [10]. |
| NMR Tubes | Holds sample in magnetic field; quality affects spectral resolution. | Standard 5 mm outer diameter; higher quality tubes provide better resolution for demanding applications. |
| Shift Reagents (Eu(fod)₃) | Induces predictable chemical shifts to determine stereochemistry or resolve overlapping signals. | Chiral shift reagents distinguish enantiomers; paramagnetic reagents enhance relaxation. |
| Buffer Components (deuterated salts, DTT) | Maintains pH and protein stability/activity during analysis. | Phosphate buffer common; avoid amines; use deuterated or reductant forms for compatibility. |

Comparative Analysis with Other Structural Techniques

NMR vs. X-ray Crystallography and Mass Spectrometry

The selection of structural elucidation technique depends on the specific research question, sample properties, and required information. The following table provides a quantitative comparison of NMR spectroscopy with other major analytical techniques:

Table 2: Comparative Analysis of Structural Elucidation Techniques

| Parameter | NMR Spectroscopy | X-ray Crystallography | Mass Spectrometry (MS) |
|---|---|---|---|
| Structural Detail | Full molecular framework, stereochemistry, and dynamics [8] | High-resolution 3D atomic coordinates | Molecular weight, fragmentation pattern |
| Stereochemistry Resolution | Excellent (chiral centers, conformers via NOESY) [8] | Limited to crystal conformation | Limited |
| Quantification Ability | Accurate without external standards [8] | Limited | Requires standards or internal calibrants |
| Sample State | Solution or solid (natural state) [11] [12] | Single crystal required | Gas phase (vaporized) |
| Sample Integrity | Non-destructive (sample recovery) [12] | Destructive (crystal disruption) | Destructive (sample consumed) |
| Hydrogen Atom Detection | Direct observation | Inferred, not directly observed [10] | Indirect (via fragmentation) |
| Molecular Weight Range | < 50 kDa routinely (up to ~1 MDa with TROSY) [10] | No strict upper limit | Virtually unlimited |
| Dynamic Information | Real-time kinetics, molecular motions [11] | Static snapshot only [11] | Limited |
| Key Limitations | Low sensitivity; expensive equipment; complex data interpretation [12] | Requires crystallization; no dynamics [11] | No 3D structure; ionization dependencies |

Instrument Type Comparison

The NMR spectroscopy market offers various instrument types tailored to different applications and budgets:

Table 3: NMR Spectrometer Types and Characteristics

| Instrument Type | Field Strength | Key Features | Applications | Market Share (2024) |
|---|---|---|---|---|
| High-Field NMR | 400-1200 MHz | Highest resolution and sensitivity; requires cryogenic cooling | Protein structure, complex natural products, metabolomics | 54.33% [13] |
| Benchtop NMR | 60-100 MHz | Compact, cryogen-free, lower cost and maintenance [13] | Teaching labs, quality control, reaction monitoring | Fastest growing segment (8.37% CAGR) [13] |
| Solid-State NMR | 200-1000 MHz | Specialized for insoluble materials; magic angle spinning | Polymers, membrane proteins, pharmaceuticals | Niche but essential segment |

Advantages and Limitations of NMR Spectroscopy

Key Advantages

NMR spectroscopy offers several compelling advantages that explain its widespread adoption:

- Non-Destructive Analysis: Samples can be recovered after analysis, which is particularly valuable for precious synthetic compounds or biological samples [12]. This also allows for longitudinal studies of the same sample over time.

- Atomic-Level Resolution: Provides detailed information about molecular structure, including bond connectivity, stereochemistry, and conformation at atomic resolution [8].

- Solution-State Studies: Enables analysis of molecules in near-physiological conditions, preserving native conformations and dynamics that might be lost in crystallization [11] [10].

- Dynamic Information: Unique ability to probe molecular motions and interactions across various timescales (ps-s), providing insights into binding kinetics, conformational exchange, and protein folding [11].

- Versatile Nuclei Observation: Can study multiple NMR-active nuclei (¹H, ¹³C, ¹⁵N, ¹⁹F, ³¹P) within the same molecule, providing complementary structural information [12].

- Quantitative Capabilities: Enables precise concentration measurements without external calibration standards, useful for purity assessment and reaction monitoring [8].
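The quantitative capability rests on the fact that, under fully relaxed acquisition conditions, the integral of an NMR signal is proportional to the number of nuclei contributing to it. A minimal sketch of the resulting relative quantification follows; the integrals and proton counts are hypothetical, and the function names are illustrative.

```python
def molar_ratio(integral_a: float, protons_a: int,
                integral_b: float, protons_b: int) -> float:
    """Molar ratio n_A / n_B from integrals normalized by contributing protons."""
    if protons_a <= 0 or protons_b <= 0 or integral_b <= 0:
        raise ValueError("proton counts and reference integral must be positive")
    return (integral_a / protons_a) / (integral_b / protons_b)


def mole_fraction(integral_a: float, protons_a: int,
                  integral_b: float, protons_b: int) -> float:
    """Mole fraction of A in a two-component mixture of A and B."""
    ratio = molar_ratio(integral_a, protons_a, integral_b, protons_b)
    return ratio / (1.0 + ratio)


# Hypothetical mixture: a CH3 singlet of A (3 H) integrates to 3.0,
# a CH2 signal of B (2 H) integrates to 4.0
print(f"n_A / n_B = {molar_ratio(3.0, 3, 4.0, 2):.2f}")
print(f"mole fraction of A = {mole_fraction(3.0, 3, 4.0, 2):.3f}")
```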

Current Limitations and Challenges

Despite its powerful capabilities, NMR spectroscopy faces several limitations:

- Low Sensitivity: Relatively weak interaction energies result in poor sensitivity compared to techniques like mass spectrometry, often requiring concentrated samples (0.1-5 mM for proteins) and longer acquisition times [12].

- High Instrument Cost: High-field NMR spectrometers represent significant capital investments ($500,000-$5+ million), with substantial maintenance costs for cryogenic systems [12].

- Spectral Complexity: Interpretation requires significant expertise, particularly for larger molecules where signal overlap becomes problematic [11] [12].

- Molecular Size Limitations: Conventional solution NMR becomes challenging for proteins >50 kDa due to increased signal overlap and faster relaxation, though TROSY and other advanced methods extend this limit [10].

- Deuterated Solvents Requirement: Necessity for deuterated solvents adds to experimental costs and may affect solubility for some samples [9].

Applications in Pharmaceutical Research and Drug Discovery

NMR spectroscopy plays an increasingly crucial role in modern drug discovery, with several key applications:

Structure-Based Drug Design (SBDD)

NMR-driven structure-based drug design (NMR-SBDD) has emerged as a powerful alternative to purely X-ray crystallography-driven approaches. This methodology combines ^13C-labeled amino acid precursors, selective side-chain labeling strategies, and straightforward NMR experiments with advanced computational tools to generate protein-ligand structural ensembles [10]. The result is reliable and accurate structural information about protein-ligand complexes that closely resembles the native state distribution in solution [10].

A significant advantage of NMR in SBDD is its ability to directly detect hydrogen atoms and their interactions, which are typically invisible to X-ray crystallography. Protons with large downfield chemical shift values typically act as hydrogen bond donors in classical H-bond interactions, while those with large upfield chemical shift values typically act as donors toward aromatic ring systems in CH-π and methyl-π interactions [10]. This information is crucial for rational drug design aimed at optimizing binding interactions.

Fragment-Based Drug Discovery (FBDD)

NMR-based fragment screening has become a powerful strategy for identifying small molecules that bind to target proteins. This approach involves screening libraries of low-molecular-weight compounds (typically 150-300 Da) to identify fragments that interact with the protein of interest [14]. The hits identified through this method can then be optimized into potent and selective drug candidates. NMR's ability to provide detailed information on binding interactions at the atomic level makes it ideal for this purpose, even for weak binders (K_d in μM-mM range) [14].

Protein-Ligand Interaction Studies

NMR provides multiple approaches for studying protein-ligand interactions:

- Chemical Shift Perturbation (CSP): Monitoring changes in the chemical shifts of protein signals upon ligand binding identifies binding sites and, when followed across a ligand titration, can be used to estimate binding affinity.

- Saturation Transfer Difference (STD): Identifies ligand moieties in close proximity to the protein surface, enabling epitope mapping [9].

- INPHARMA NMR: Uses inter-ligand NOEs to investigate binding modes and competition between ligands, even in proteins with multiple binding sites [9].

These approaches provide critical information about binding affinity, kinetics, and stoichiometry that guides medicinal chemistry optimization.
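The CSP approach described above can be sketched numerically. The following is a minimal illustration, not a method taken from the cited sources: it assumes a combined ^1H/^15N perturbation of the form sqrt(Δδ_H² + (α·Δδ_N)²) with a scaling factor α = 0.14 (a commonly used but not universal choice), a simple 1:1 binding model, and entirely hypothetical residue names, shift values, and cutoff.

```python
import math


def combined_csp(delta_h_ppm, delta_n_ppm, alpha=0.14):
    """Combined 1H/15N chemical shift perturbation in ppm.

    alpha down-weights the 15N shift change; 0.14 is an assumed,
    commonly used value and is not taken from this article.
    """
    return math.sqrt(delta_h_ppm ** 2 + (alpha * delta_n_ppm) ** 2)


def fraction_bound(protein_total, ligand_total, kd):
    """Fraction of protein bound for 1:1 binding (all concentrations in the same unit)."""
    s = protein_total + ligand_total + kd
    return (s - math.sqrt(s * s - 4.0 * protein_total * ligand_total)) / (2.0 * protein_total)


# Hypothetical (1H, 15N) shift changes in ppm for three residues after adding ligand.
shifts = {"G45": (0.08, 0.60), "L72": (0.01, 0.05), "K90": (0.04, 0.35)}
csp = {res: combined_csp(dh, dn) for res, (dh, dn) in shifts.items()}

threshold = 0.05  # assumed cutoff in ppm; in practice often derived from the data set
perturbed = sorted(res for res, value in csp.items() if value > threshold)
print(perturbed)
```

Under fast exchange, the observed perturbation at each titration point scales with `fraction_bound`, so fitting CSP against ligand concentration gives an estimate of K_d. The residues flagged as perturbed are candidates for the binding site, not proof of direct contact.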

Emerging Trends and Future Directions

The field of NMR spectroscopy continues to evolve with several exciting developments:

- AI and Machine Learning Integration: Deep-learning models such as DeepSAT can extract atom-level structures from 2D spectra faster than manual analysis, addressing the spectroscopist shortage and improving throughput [13]. AI is being applied to automated spectral analysis, predictive modeling, and structure elucidation [15].

- Benchtop NMR Revolution: Compact, cryogen-free benchtop NMR systems (60-100 MHz) are increasing accessibility, with the segment growing at an 8.37% CAGR to 2030 [13]. These systems bring NMR capabilities to quality control laboratories, teaching facilities, and smaller research groups.

- Hyperpolarization Techniques: Methods like Dynamic Nuclear Polarization (DNP) can enhance NMR sensitivity by several orders of magnitude, potentially revolutionizing the study of low-abundance species and real-time metabolic tracking [10].

- Integrated Structural Biology: Combining NMR with cryo-electron microscopy (cryo-EM) and X-ray crystallography provides complementary structural information, overcoming limitations of individual methods [10].

- Operando and Inline Applications: Flow-chemistry integration is turning NMR into an inline process-control sensor that verifies reaction conversions in real time, shrinking batch times and waste [13].

- Helium-Free Systems: Growing focus on sustainable magnet technologies addresses concerns about helium scarcity and operational costs [13] [15].

The global NMR spectroscopy market reflects these trends, projected to grow from USD 1.68 billion in 2025 to approximately USD 2.73 billion by 2034, at a CAGR of 5.54% [15]. This growth is driven by expanding applications in pharmaceuticals, metabolomics, materials science, and the development of more accessible and automated systems.

Nuclear Magnetic Resonance spectroscopy remains a cornerstone technique for molecular structure determination across scientific disciplines. Its unique capabilities for probing atomic-level structure and dynamics in solution, combined with its non-destructive nature, make it particularly valuable for studying biological systems and guiding drug discovery efforts. While challenges remain in sensitivity, cost, and data interpretation, ongoing technological advancements in instrumentation, computational methods, and AI integration continue to expand its applications and accessibility.

As part of the broader spectroscopic toolkit, NMR provides complementary information to techniques like X-ray crystallography and mass spectrometry, often revealing molecular insights unavailable through other methods. The continued evolution of NMR technology promises to further solidify its role in addressing complex scientific questions in structural biology, medicinal chemistry, and materials science.

Mass Spectrometry (MS) is a powerful analytical technique that identifies and quantifies molecules by measuring the mass-to-charge ratio (m/z) of gas-phase ions. It has become a cornerstone in modern laboratories, enabling precise analysis across fields like pharmaceuticals, environmental testing, proteomics, and clinical diagnostics [16] [17]. The core principle of MS involves converting sample molecules into ions, separating these ions based on their m/z, and detecting them to generate a mass spectrum that serves as a molecular fingerprint [18]. This technical guide delves into the core components, methodologies, and applications of mass spectrometry, providing a detailed comparison of its techniques within the broader context of spectroscopic research.

The fundamental process of mass spectrometry can be broken down into three key stages: ionization, where neutral molecules are converted into ions; mass analysis, where ions are separated based on their m/z; and detection, where the separated ions are detected and the data are transformed into an interpretable mass spectrum [18] [19]. The following diagram illustrates this core workflow and the essential components of a mass spectrometer.

Ionization Techniques

Ionization, the process of converting neutral molecules into charged ions, is the critical first step in mass spectrometry. The choice of ionization method depends on the sample's physical properties, volatility, and molecular weight. These techniques are broadly categorized as "hard" or "soft" based on the amount of energy transferred to the analyte during ionization.

Hard Ionization techniques, such as Electron Ionization (EI), impart high energy to molecules, resulting in extensive fragmentation. This provides valuable structural information but may obscure the molecular ion peak. Soft Ionization techniques, such as Electrospray Ionization (ESI) and Matrix-Assisted Laser Desorption/Ionization (MALDI), impart lower energy, resulting in little fragmentation and a clear molecular ion peak, making them suitable for large, labile molecules like proteins and peptides [20] [19] [21].

The following table summarizes the key characteristics of prevalent ionization methods.

| Ionization Method | Type | Typical Sample Form | Mass Range | Key Applications | Advantages | Disadvantages |
|---|---|---|---|---|---|---|
| Electron Ionization (EI) [20] [19] | Hard | Gas, volatile | < 600 Da | GC-MS, environmental analysis, forensic analysis [20] [19] | High fragmentation for structural info; robust and reproducible [19] | Extensive fragmentation can obscure the molecular ion; requires volatile samples [20] |
| Electrospray Ionization (ESI) [16] [20] [19] | Soft | Liquid, polar | Broad (small to large molecules) | LC-MS, proteomics, pharmaceuticals [16] [20] [19] | Produces multiply charged ions for large molecules; compatible with LC [20] [19] | Sensitive to salts and impurities; complex spectra for mixtures [19] |
| Matrix-Assisted Laser Desorption/Ionization (MALDI) [16] [20] [19] | Soft | Solid, co-crystallized with matrix | Broad, up to millions of Da | Large biomolecules (proteins, peptides), imaging MS [16] [20] [19] | Minimal fragmentation; high mass range; singly charged ions simplify spectra [19] | Requires suitable matrix; spot-to-spot variability; quantitative challenges [19] |
| Atmospheric Pressure Chemical Ionization (APCI) [20] [19] | Soft | Liquid, less polar than ESI | < 1500 Da | LC-MS, less polar molecules, lipids [19] | Handles less polar compounds than ESI; good for thermostable molecules [19] | Less effective for large, thermally labile biomolecules [19] |
| Inductively Coupled Plasma (ICP) [19] [21] | Hard | Liquid (aqueous) | Elements | Trace metal analysis, elemental speciation [19] | Excellent for trace element and isotope analysis; high temperature plasma [19] | Primarily for elemental analysis, not molecular |
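The multiply charged ions noted for ESI in the table above follow simple arithmetic: a molecule of neutral mass M carrying z protons appears at m/z = (M + z·m_p)/z. The sketch below illustrates this relationship and the reverse step of recovering M from two adjacent charge-state peaks. It assumes positive-mode protonation only (no sodium or other adducts), and the 16,951.5 Da mass is a hypothetical example value.

```python
PROTON_MASS = 1.007276  # Da, mass of a proton


def mz_positive(neutral_mass, charge):
    """m/z of an [M + zH]^z+ ion, assuming protonation is the only charging mechanism."""
    return (neutral_mass + charge * PROTON_MASS) / charge


def deconvolute_adjacent(mz_lower_charge, mz_higher_charge):
    """Recover (z, M) from two adjacent charge-state peaks.

    mz_lower_charge is the peak with charge z (higher m/z);
    mz_higher_charge is the neighbouring peak with charge z + 1 (lower m/z).
    """
    z = round((mz_higher_charge - PROTON_MASS) / (mz_lower_charge - mz_higher_charge))
    neutral_mass = z * (mz_lower_charge - PROTON_MASS)
    return z, neutral_mass


# Hypothetical protein of 16,951.5 Da observed in the 10+ and 11+ charge states.
peak_10 = mz_positive(16951.5, 10)
peak_11 = mz_positive(16951.5, 11)
z, mass = deconvolute_adjacent(peak_10, peak_11)
print(z, round(mass, 2))
```

This is why a protein far heavier than the nominal m/z range of an analyzer can still be measured with ESI: each added charge moves the signal to a lower m/z.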

The workflows for two of the most common soft ionization techniques, ESI and MALDI, are detailed below.

Mass Analyzers

Following ionization, the mass analyzer separates the generated ions based on their mass-to-charge ratio (m/z). Different types of analyzers offer varying trade-offs between resolution, mass accuracy, speed, and cost. Resolution is a key parameter, defined as the ability of the mass spectrometer to distinguish between ions with small differences in m/z [16] [18] [17].
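Resolution can be made concrete with the widely used definition R = m/Δm, where Δm is the smallest m/z difference that can still be separated at mass m. The sketch below estimates the resolving power needed to distinguish two nominally isobaric species. The definition and the monoisotopic masses for CO and N₂ are standard values supplied here for illustration; they are not taken from the cited sources.

```python
def required_resolving_power(mz_a, mz_b):
    """Approximate resolving power (m / delta-m) needed to separate two peaks."""
    delta = abs(mz_a - mz_b)
    if delta == 0:
        raise ValueError("Identical m/z values cannot be separated by mass alone.")
    return ((mz_a + mz_b) / 2.0) / delta


def can_resolve(mz_a, mz_b, analyzer_resolving_power):
    """True if the analyzer's resolving power meets the requirement for this pair."""
    return analyzer_resolving_power >= required_resolving_power(mz_a, mz_b)


# Monoisotopic masses (Da) of two species with the same nominal mass of 28.
CO = 27.994915
N2 = 28.006148

needed = required_resolving_power(CO, N2)
print(round(needed))
print(can_resolve(CO, N2, 2000), can_resolve(CO, N2, 100000))
```

The same calculation at higher m/z shows why high-resolution analyzers matter for large molecules: a fixed mass difference demands proportionally more resolving power as m increases.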

The selection of a mass analyzer is dictated by the analytical requirements, such as the need for high mass accuracy, high throughput, or detailed structural information via tandem MS (MS/MS). The following table provides a comparative overview of the most common mass analyzers.

| Mass Analyzer | Resolution | Mass Accuracy | m/z Range | Key Features | Best For | Limitations |
|---|---|---|---|---|---|---|
| Quadrupole (Q) [16] [18] | Low to Medium (~2000) [16] | Low | Up to 3000 m/z [16] | Robust, cost-effective, good for quantification [16] | Routine targeted quantification (e.g., clinical labs, QA/QC) [16] | Limited resolution; less suited to complex mixtures [16] |
| Time-of-Flight (TOF) [16] [18] [17] | High | High | Essentially unlimited [16] | Rapid analysis; high mass accuracy [16] [18] | Untargeted analysis, proteomics, polymer analysis [16] [17] | Requires pulsed ion introduction (e.g., MALDI or orthogonal acceleration); can have low resolution (~350) in some configurations [16] |
| Ion Trap (IT) [16] [18] [17] | Medium (~1500) [16] | Medium | ~2000 m/z [16] | Can perform MSⁿ in a single device; compact [16] | Structural elucidation, forensic analysis, trace detection [16] | Lower resolution than TOF or Orbitrap [16] |
| Orbitrap [16] [18] [17] | Very High (>100,000) [17] | Very High | Excellent | Exceptional resolution and accuracy; no superconducting magnet [16] [17] | High-resolution analysis (proteomics, metabolomics) [16] | Expensive; requires significant space and expertise [16] |
| FT-ICR [17] | Ultra-High | Ultra-High | High | Unparalleled resolution and mass accuracy [17] | Ultra-complex mixture analysis (e.g., petroleomics, metabolomics) [17] | Very expensive; requires superconducting magnet; complex operation [17] |

Hybrid Mass Spectrometers

To leverage the strengths of different analyzers, hybrid instruments have been developed. These systems combine multiple analyzers in tandem, enhancing capabilities for specific applications.

- Quadrupole-Time-of-Flight (Q-TOF): Combines the mass-filtering capability of a quadrupole with the high resolution and mass accuracy of a TOF analyzer. Ideal for accurate mass measurement of precursor and product ions, facilitating unknown compound identification and metabolomics [18] [17].

- Quadrupole-Orbitrap: Combines a quadrupole mass filter with the high-resolution Orbitrap analyzer. Excellent for targeted and untargeted screening with high mass accuracy, widely used in proteomics and metabolomics [18] [17].

- Tribrid Systems: Incorporate three independent analyzers, such as a quadrupole, an Orbitrap, and a linear ion trap, offering unparalleled flexibility for complex experimental designs in top-down proteomics and PTM analysis [18].

Experimental Protocol: Metabolomics Profiling

Metabolomics, the comprehensive study of small molecules (metabolites) in a biological system, heavily relies on LC-MS platforms. The following protocol outlines a typical workflow for a global (untargeted) metabolomics study using liquid chromatography coupled to a high-resolution mass spectrometer (e.g., Q-TOF or Quadrupole-Orbitrap) [22].

Sample Preparation and Metabolite Extraction

- Sample Collection and Quenching: Collect biological samples (e.g., cells, tissue, plasma) rapidly. To immediately halt metabolic activity, use rapid quenching methods such as flash-freezing in liquid nitrogen or submerging in cold methanol (-80 °C). This step is critical for capturing an accurate metabolic snapshot [22].

- Metabolite Extraction:

  - Add a pre-chilled extraction solvent (e.g., methanol:chloroform, 2:1 v/v) to the quenched sample. The use of a biphasic solvent system allows for the simultaneous extraction of polar metabolites (methanol/water phase) and non-polar lipids (chloroform phase) [22].

  - Vortex vigorously and incubate on ice or at -20 °C for a set time (e.g., 20 minutes).

  - Centrifuge at high speed (e.g., 14,000 × g, 15 min, 4 °C) to separate phases and precipitate protein.

  - Carefully collect the supernatant containing the metabolites.

- Quality Control (QC) and Standardization:

  - Internal Standards: Add a mixture of stable isotope-labeled internal standards to the extraction solvent before processing. This corrects for variability during extraction and analysis and aids in quantification [22].

  - Pooled QC: Create a pooled QC sample by combining a small aliquot of every experimental sample. This pooled QC is injected repeatedly throughout the analytical sequence to monitor instrument stability and to support data normalization.
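The two QC steps above translate into simple calculations once peak areas are available. The sketch below shows one way they might be used: dividing an analyte area by its internal-standard area, and keeping only features whose relative standard deviation (RSD) across repeated pooled-QC injections is acceptably low. The 30% RSD cutoff is an assumed convention, not a value from the cited protocol, and all feature names and areas are hypothetical.

```python
import statistics


def normalize_to_internal_standard(analyte_area, internal_standard_area):
    """Response ratio that compensates for extraction and ionization variability."""
    return analyte_area / internal_standard_area


def rsd_percent(values):
    """Relative standard deviation (sample SD / mean) as a percentage."""
    return 100.0 * statistics.stdev(values) / statistics.fmean(values)


def stable_features(qc_areas, max_rsd=30.0):
    """Names of features whose RSD across pooled-QC injections is within max_rsd."""
    return [name for name, areas in qc_areas.items() if rsd_percent(areas) <= max_rsd]


# Hypothetical peak areas for two features across three pooled-QC injections.
qc_areas = {
    "feature_A": [100.0, 102.0, 98.0],   # reproducible
    "feature_B": [100.0, 300.0, 20.0],   # unstable, likely unreliable
}
print(stable_features(qc_areas))
print(normalize_to_internal_standard(5000.0, 2500.0))
```

Features that fail the QC filter are usually dropped before statistics, since their variation across identical injections cannot be distinguished from biological differences.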

LC-MS Analysis and Data Processing

- Chromatographic Separation: Separate the extracted metabolites using reversed-phase or hydrophilic interaction liquid chromatography (HILIC) coupled online to the mass spectrometer. This separation reduces ion suppression and complexity at the ion source [22].

- Mass Spectrometry Detection:

  - Acquire data in data-dependent acquisition (DDA) or data-independent acquisition (DIA) mode.

  - For broad coverage, operate the mass spectrometer in both positive and negative electrospray ionization (ESI) modes with polarity switching.

  - Use the pooled QC samples to condition the system and then run them periodically (e.g., every 6-10 injections) throughout the batch.

- Data Processing:

  - Use software (e.g., XCMS, MS-DIAL, Compound Discoverer) for peak picking, alignment, and integration to generate a feature table with metabolite intensities across all samples.

  - Perform statistical analysis (e.g., PCA, t-tests) to identify metabolites that are significantly altered between experimental groups.

- Metabolite Annotation: Putatively identify metabolites by matching the accurate mass (often within 5 ppm error) and MS/MS fragmentation spectra against databases such as HMDB, METLIN, or mzCloud [22].
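The accurate-mass matching step in the annotation bullet above reduces to a parts-per-million error calculation. The sketch below is an illustrative helper, not the procedure of any particular database or software package: the candidate list is a small hypothetical lookup table, and the [M+H]⁺ values are computed from standard monoisotopic masses.

```python
def ppm_error(measured_mz, theoretical_mz):
    """Signed mass error in parts per million."""
    return (measured_mz - theoretical_mz) / theoretical_mz * 1e6


def match_candidates(measured_mz, candidates, tolerance_ppm=5.0):
    """Return (name, ppm error) pairs within tolerance, best match first."""
    hits = []
    for name, theoretical_mz in candidates.items():
        error = ppm_error(measured_mz, theoretical_mz)
        if abs(error) <= tolerance_ppm:
            hits.append((name, error))
    return sorted(hits, key=lambda hit: abs(hit[1]))


# Hypothetical candidate list of [M+H]+ ions (theoretical m/z values).
candidates = {
    "glucose": 181.070664,
    "leucine": 132.101905,
    "isoleucine": 132.101905,
}

print(match_candidates(181.0712, candidates))
print([name for name, _ in match_candidates(132.1021, candidates)])
```

The second query returns both leucine and isoleucine, which is why accurate mass alone gives only a putative identification: isomers share the same m/z, and MS/MS spectra or retention time are needed to tell them apart.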

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful mass spectrometry analysis, particularly in complex fields like metabolomics and proteomics, requires careful selection of reagents and materials. The following table details key solutions used in the featured metabolomics protocol and beyond.

| Item | Function / Role in MS Analysis |
|---|---|
| Stable Isotope-Labeled Internal Standards (e.g., ¹³C, ¹⁵N) [22] | Correct for analyte loss during sample preparation and ion suppression during ionization; enable absolute quantification. |
| LC-MS Grade Solvents (e.g., Methanol, Acetonitrile, Water) [22] | Minimize background chemical noise and ion suppression; ensure chromatographic performance and reproducibility. |
| Metabolite Extraction Solvents (e.g., Methanol/Chloroform, MTBE) [22] | Precipitate proteins and efficiently extract a broad range of metabolites of varying polarity from the biological matrix. |
| MALDI Matrices (e.g., CHCA, SA, DHB) [19] [21] | Absorb laser energy and facilitate soft desorption and ionization of the analyte with minimal fragmentation. |
| Ammonium Formate/Acetate | Common volatile buffers used in LC-MS mobile phases to improve chromatographic separation and aid in protonation/deprotonation in ESI. |
| Trypsin (Proteomics Grade) | A specific protease used in proteomics to digest proteins into predictable peptides, which are more amenable to MS analysis. |

Mass spectrometry stands as a pivotal analytical technique, offering unparalleled capabilities for the identification and quantification of chemical entities. Its versatility stems from the synergistic combination of various ionization sources and mass analyzers, each with distinct advantages and limitations that make them suitable for specific analytical challenges. When evaluated against other spectroscopic methods, MS consistently demonstrates superior sensitivity, specificity, and dynamic range, particularly for analyzing complex mixtures in biological matrices.

The continuous innovation in ionization methods, mass analyzer technology, and hybrid instrument design is pushing the boundaries of MS applications. Emerging areas such as single-cell analysis, spatial metabolomics, and clinical diagnostics are increasingly reliant on MS technology [17] [23]. As protocols become more standardized and instruments more sensitive and accessible, mass spectrometry is poised to deepen its impact as an indispensable tool for researchers and drug development professionals, solidifying its role in advancing scientific discovery and improving human health.

Ultraviolet-Visible (UV-Vis) spectroscopy is a foundational analytical technique in modern scientific research and industrial applications. This method measures the absorption of ultraviolet and visible light by a sample, providing critical insights into electronic structure, composition, and concentration [24] [25]. The technique operates on the principle that molecules undergo electronic transitions when they absorb specific wavelengths of light in the UV (typically 190-400 nm) and visible (400-700 nm) regions of the electromagnetic spectrum [26].

The significance of UV-Vis spectroscopy extends across multiple disciplines due to its versatility, relative simplicity, and cost-effectiveness [25]. In pharmaceutical development, it facilitates drug discovery and quality control [26]. In biochemistry, it enables the quantification of biomolecules like proteins and nucleic acids [27] [28]. Environmental scientists employ it for contaminant detection, while materials researchers use it to characterize compounds with conjugated systems [26]. This technique occupies a unique position in the spectroscopic toolkit, offering particular strengths for quantitative analysis while presenting certain limitations in structural elucidation compared to other spectroscopic methods.

Fundamental Principles: Electronic Transitions

At its core, UV-Vis spectroscopy probes the energy required to promote electrons from ground state orbitals to higher energy, excited state orbitals [29]. When a photon of light possesses energy precisely matching the energy gap (ΔE) between a molecular orbital containing electrons and an empty higher-energy orbital, that photon may be absorbed [30]. This event, termed an electronic transition, reduces the intensity of the transmitted light at that specific wavelength, creating a measurable absorption signal [24].

The energy of the absorbed photon is inversely proportional to its wavelength, described by the equation E = hc/λ, where h is Planck's constant, c is the speed of light, and λ is the wavelength [30]. Shorter wavelengths (UV region) carry more energy and can induce more demanding electronic transitions, while longer wavelengths (visible region) carry less energy and correspond to smaller energy gaps [24].
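The relationship E = hc/λ is easy to evaluate for wavelengths of practical interest. The sketch below converts a wavelength in nanometres into photon energy in joules, electronvolts, and kJ/mol. The constants are the exact SI-defined values; the chosen wavelengths are only examples.

```python
PLANCK = 6.62607015e-34        # J*s
LIGHT_SPEED = 2.99792458e8     # m/s
ELECTRON_VOLT = 1.602176634e-19  # J per eV
AVOGADRO = 6.02214076e23       # 1/mol


def photon_energy_joules(wavelength_nm):
    """Energy of a single photon, E = h*c/lambda."""
    return PLANCK * LIGHT_SPEED / (wavelength_nm * 1e-9)


def photon_energy_ev(wavelength_nm):
    """Photon energy in electronvolts."""
    return photon_energy_joules(wavelength_nm) / ELECTRON_VOLT


def photon_energy_kj_per_mol(wavelength_nm):
    """Energy of one mole of photons in kJ/mol."""
    return photon_energy_joules(wavelength_nm) * AVOGADRO / 1000.0


for wavelength in (190, 280, 400, 700):
    print(wavelength, round(photon_energy_ev(wavelength), 2),
          round(photon_energy_kj_per_mol(wavelength)))
```

The output makes the inverse relationship visible: moving from 700 nm to 190 nm more than triples the energy per photon, which is why far-UV light can drive transitions that visible light cannot.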

Types of Electronic Transitions

Molecules contain various types of electrons, each with different energy requirements for excitation. The primary electronic transitions observed in UV-Vis spectroscopy are summarized in Table 1.

Table 1: Characteristics of Major Electronic Transitions in UV-Vis Spectroscopy

| Transition Type | Electrons Involved | Typical Energy/Wavelength Range | Molar Absorptivity (ε) [L·mol⁻¹·cm⁻¹] | Example Compounds |
|---|---|---|---|---|
| σ → σ* | σ-bonding electrons | High Energy / <150 nm (Far UV) | Very High | H₂, CH₄ [31] [30] |
| n → σ* | Non-bonding electrons (e.g., in O, N, S, halogens) | 150-250 nm | Low to Moderate | H₂O, CH₃OH, CH₃Cl [31] |
| π → π* | π-bonding electrons (in double/triple bonds) | 170-300 nm (longer if conjugated) | High (1,000 - 10,000) | Ethene (170 nm), Conjugated Dienes [31] [30] |
| n → π* | Non-bonding electrons adjacent to π-bonds (e.g., in C=O) | 270-350 nm | Low (10 - 100) | Acetone, Aldehydes [31] |

Chromophores are molecular functional groups containing valence electrons of relatively low excitation energy, responsible for absorbing UV or visible light [29] [31]. Extended conjugation in a molecule, such as in β-carotene which possesses 11 conjugated double bonds, significantly reduces the HOMO-LUMO energy gap, shifting the absorption to longer wavelengths (lower energies) and often into the visible region, thereby imparting color [29] [30].

Figure 1: Basic workflow of a UV-Vis spectrophotometer, showing the key components and the path of light and signal processing.

Instrumentation and Methodology

A UV-Vis spectrophotometer is designed to execute a fundamental process: generate light across a spectrum of wavelengths, direct it through a sample, and measure how much light is absorbed at each wavelength [24] [25]. The instrument's design directly impacts its accuracy, sensitivity, and applicability.

Core Components

The essential components of a typical UV-Vis spectrophotometer, as illustrated in Figure 1, include:

- Light Source: Provides broad-spectrum radiation covering UV and visible ranges. Common sources include xenon lamps (for both UV and visible), deuterium lamps (for UV), and tungsten or halogen lamps (for visible light) [24] [26]. The lamp must offer stable and continuous output across the wavelength range of interest.

- Wavelength Selector (Monochromator): This component isolates a narrow band of wavelengths from the broad output of the light source. Diffraction gratings are most common, where rotating the grating selects specific wavelengths. Filters (absorption, interference, bandpass) may also be used, often in conjunction with monochromators, to enhance precision [24]. The groove frequency of the grating (e.g., 1200 grooves/mm) determines the balance between optical resolution and usable wavelength range [24].

- Sample Compartment: Holds the sample, typically contained in a cuvette. The material of the cuvette is critical: quartz is required for UV measurements below 350 nm as it is transparent to most UV light, while glass or plastic cuvettes, which absorb UV light, can be used for visible wavelengths only [24]. Modern systems also include cuvette-free setups for very small sample volumes (e.g., 2 µL), using microfluidic capillaries [24] [28].

- Detector: Converts the transmitted light intensity into an electrical signal. Photomultiplier tubes (PMTs) are highly sensitive detectors that amplify the signal from weak light, making them ideal for low-light applications [24]. Semiconductor-based detectors, such as photodiodes and charge-coupled devices (CCDs), are also widely used for their compactness and multi-wavelength detection capabilities [24] [26].

Quantitative Analysis: The Beer-Lambert Law

UV-Vis spectroscopy is a powerful quantitative tool, primarily governed by the Beer-Lambert Law [24] [25] [28]. This law states that the absorbance (A) of a solution is directly proportional to the concentration (c) of the absorbing species and the path length (l) of the light through the solution:

A = εlc

Where:

- A is the measured absorbance (unitless).

- ε is the molar absorptivity or extinction coefficient (L·mol⁻¹·cm⁻¹), a substance-specific constant at a given wavelength.

- l is the path length of the cuvette (cm).

- c is the concentration of the solution (mol·L⁻¹).

Absorbance is defined as A = log₁₀(I₀/I), where I₀ is the intensity of the incident light and I is the intensity of the transmitted light [24] [25]. For accurate quantification, absorbance values should generally be kept below 1 to remain within the instrument's linear dynamic range [24].
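The two relationships above, A = log₁₀(I₀/I) and A = εlc, can be combined in a few lines. The sketch below is a minimal illustration with hypothetical intensity and ε values. It also shows why readings are usually kept below about 1: the fraction of light reaching the detector falls tenfold for every absorbance unit.

```python
import math


def absorbance(incident_intensity, transmitted_intensity):
    """A = log10(I0 / I); unitless."""
    return math.log10(incident_intensity / transmitted_intensity)


def transmittance_percent(absorbance_value):
    """Percentage of incident light that reaches the detector."""
    return 100.0 * 10.0 ** (-absorbance_value)


def concentration_molar(absorbance_value, molar_absorptivity, path_length_cm=1.0):
    """c = A / (epsilon * l), in mol/L."""
    return absorbance_value / (molar_absorptivity * path_length_cm)


# Hypothetical reading: detector signal drops from 1000 to 316 counts.
a = absorbance(1000.0, 316.0)
print(round(a, 3))
print(concentration_molar(0.5, 5000.0))   # hypothetical epsilon of 5000 L/(mol*cm)
for value in (0.5, 1.0, 2.0):
    print(value, round(transmittance_percent(value), 1))
```

At A = 2 only 1% of the light is transmitted, so small amounts of stray light or detector noise become a large fraction of the measured signal.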

Experimental Protocol: Protein Quantification at 280 nm

A standard application of UV-Vis spectroscopy is determining the concentration of proteins in solution [28]. The following protocol outlines a typical procedure using a conventional cuvette-based spectrophotometer.

Table 2: Key Reagents and Materials for Protein Quantification via UV-Vis

| Item | Function/Description | Critical Notes |
|---|---|---|
| Purified Protein Sample | The analyte of interest. | Must contain aromatic residues (Trp, Tyr) or disulfide bonds to absorb at 280 nm. |
| Reference Buffer | The solvent used to dissolve or dialyze the protein. | Serves as the "blank"; must be identical to the protein solvent to correct for background absorption. |
| Quartz Cuvette | Container for sample and reference during measurement. | Quartz is essential for UV transmission. Pathlength is typically 1 cm. |
| UV-Vis Spectrophotometer | Instrument for measuring light absorption. | Must be calibrated and capable of measurements at 280 nm. |

Step-by-Step Procedure:

- Instrument Warm-up and Initialization: Turn on the spectrophotometer and allow the lamp and electronics to stabilize for at least 15-30 minutes. Initialize the instrument software and select the absorbance mode.

- Sample and Blank Preparation: Prepare the protein sample in an appropriate buffer. Centrifuge if necessary to remove any particulate matter. Pipette the reference buffer into a clean quartz cuvette, ensuring the meniscus is above the light path, so the beam passes only through liquid, and that no bubbles are on the optical surfaces.

- Blank Measurement: Place the cuvette containing the reference buffer into the sample compartment. Close the lid and execute a "blank" or "zero" measurement. This sets the baseline for 100% transmittance (A=0), correcting for any minor absorption from the solvent and cuvette.

- Sample Measurement: Carefully replace the reference cuvette with a cuvette containing the protein solution. Ensure the cuvette is oriented consistently. Measure the absorbance at 280 nm.

- Data Analysis and Concentration Calculation: Record the absorbance value (A₂₈₀). Calculate the protein concentration using the Beer-Lambert law: c = A₂₈₀ / (ε × l), where ε is the theoretical or known molar absorptivity of the specific protein at 280 nm and l is the path length in cm.

Troubleshooting and Best Practices:

- High Absorbance: If A₂₈₀ > 1, dilute the sample and remeasure. The calculated concentration must then be multiplied by the dilution factor [24].

- Buffer Compatibility: Ensure the buffer components do not significantly absorb at 280 nm. Common interfering substances include EDTA and some detergents.

- Cuvette Handling: Always handle cuvettes by the opaque sides; never touch the transparent optical faces.
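The calculation in the final protocol step, including the dilution correction from the troubleshooting notes, can be sketched as follows. The "theoretical" molar absorptivity is estimated here from residue counts using the widely used coefficients of 5500 (Trp), 1490 (Tyr), and 125 (cystine) M⁻¹·cm⁻¹; these coefficients are an assumption brought in for illustration and do not come from the cited sources. The protein composition, molecular weight, and reading are hypothetical.

```python
def estimate_epsilon_280(n_trp, n_tyr, n_cystine=0):
    """Approximate molar absorptivity at 280 nm (L/(mol*cm)) from residue counts."""
    return 5500 * n_trp + 1490 * n_tyr + 125 * n_cystine


def protein_concentration(a280, epsilon, path_length_cm=1.0,
                          dilution_factor=1.0, molecular_weight=None):
    """Return (mol/L, mg/mL or None) for the undiluted sample.

    dilution_factor is final volume / sample volume, e.g. 5.0 for a 1:5 dilution.
    """
    molar = a280 / (epsilon * path_length_cm) * dilution_factor
    # mol/L * g/mol = g/L, which is numerically equal to mg/mL.
    mg_per_ml = molar * molecular_weight if molecular_weight is not None else None
    return molar, mg_per_ml


# Hypothetical 25 kDa protein with 4 Trp, 10 Tyr, and 2 disulfide bonds,
# measured at A280 = 0.743 after a 5-fold dilution.
epsilon = estimate_epsilon_280(n_trp=4, n_tyr=10, n_cystine=2)
molar, mg_per_ml = protein_concentration(0.743, epsilon, dilution_factor=5.0,
                                         molecular_weight=25000.0)
print(epsilon, molar, mg_per_ml)
```

An experimentally determined ε for the specific protein should be preferred when available, since the composition-based estimate ignores the local environment of the aromatic residues.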

Applications in Research and Industry

The utility of UV-Vis spectroscopy spans qualitative identification, quantitative analysis, and dynamic monitoring across diverse fields. Its role in the comparative analysis of spectroscopic methods is defined by its specific strengths and limitations.

Key Application Areas

Quantitative Analysis of Biomolecules: This is one of the most prevalent applications.

- Nucleic Acid Quantification: DNA and RNA are quantified by measuring absorbance at 260 nm. The ratio A₂₆₀/A₂₈₀ is a standard metric for assessing purity (a ratio of ~1.8 is indicative of pure DNA) [28].

- Protein Quantification: As detailed in the protocol above, proteins are quantified based on absorbance from aromatic amino acids at 280 nm [27] [28].

- Hemoglobin Analysis: Specific assays like the SLS-Hemoglobin method are employed for precise Hb quantification in the development of blood substitutes, valued for their specificity and safety over cyanmethemoglobin-based methods [27].
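The nucleic acid quantification described in the first bullet above can be illustrated with a short calculation. The conversion factors used here (an A₂₆₀ of 1.0 in a 1 cm path corresponding to roughly 50 µg/mL for double-stranded DNA, 40 µg/mL for RNA, and 33 µg/mL for single-stranded DNA) are standard approximations supplied as assumptions; they are not stated in the cited sources. The readings are hypothetical.

```python
# Approximate concentration (ug/mL) per absorbance unit at 260 nm, 1 cm path.
CONVERSION_UG_PER_ML = {"dsDNA": 50.0, "RNA": 40.0, "ssDNA": 33.0}


def nucleic_acid_concentration(a260, kind="dsDNA", path_length_cm=1.0,
                               dilution_factor=1.0):
    """Concentration of the undiluted sample in ug/mL."""
    return a260 / path_length_cm * CONVERSION_UG_PER_ML[kind] * dilution_factor


def purity_ratio(a260, a280):
    """A260/A280 ratio; about 1.8 is typical of pure DNA."""
    return a260 / a280


# Hypothetical readings for a DNA preparation measured after a 10-fold dilution.
a260, a280 = 0.90, 0.50
print(nucleic_acid_concentration(a260, "dsDNA", dilution_factor=10.0))
print(round(purity_ratio(a260, a280), 2))
```

A ratio well below 1.8 usually points to protein or other contaminants absorbing near 280 nm, in which case the concentration estimate should be treated with caution.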

Pharmaceutical Analysis: UV-Vis is used extensively in drug development and quality control for identifying active pharmaceutical ingredients (APIs), quantifying impurities, and assessing dissolution profiles [26].

Chemical Reaction Kinetics: By monitoring absorbance changes at a specific wavelength over time, researchers can track the concentration of a reactant or product, enabling the study of reaction rates and mechanisms [25] [28].
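As a sketch of how such kinetic data might be analyzed, the example below extracts a first-order rate constant from an absorbance-versus-time trace by a linear least-squares fit of ln(A − A∞) against time. It assumes that the monitored species is a reactant decaying by first-order kinetics, that the Beer-Lambert law holds over the whole trace, and that the final absorbance A∞ is known; the data are synthetic.

```python
import math


def first_order_rate_constant(times_s, absorbances, a_infinity=0.0):
    """Rate constant k (1/s) from the slope of ln(A - A_inf) versus time."""
    ys = [math.log(a - a_infinity) for a in absorbances]
    n = len(times_s)
    mean_t = sum(times_s) / n
    mean_y = sum(ys) / n
    sxy = sum((t - mean_t) * (y - mean_y) for t, y in zip(times_s, ys))
    sxx = sum((t - mean_t) ** 2 for t in times_s)
    return -sxy / sxx  # slope of the line is -k


def half_life_s(rate_constant):
    """Half-life of a first-order process."""
    return math.log(2.0) / rate_constant


# Synthetic trace: A(t) = 0.8 * exp(-0.01 * t), sampled every 60 s.
times = [0.0, 60.0, 120.0, 180.0, 240.0, 300.0]
trace = [0.8 * math.exp(-0.01 * t) for t in times]

k = first_order_rate_constant(times, trace)
print(round(k, 4), round(half_life_s(k), 1))
```

With real data, curvature in the ln(A − A∞) plot is a sign that the reaction is not first order or that A∞ has been chosen incorrectly.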

Quality Control in Food and Beverage: The technique is used to quantify concentrations of specific ingredients, such as caffeine in beverages, or to detect contaminants, ensuring compliance with labeling and safety regulations [26].

Strengths and Limitations in Comparison to Other Techniques

When framed within a broader thesis on spectroscopic methods, the position of UV-Vis spectroscopy becomes clear.

Strengths:

- High Quantitative Accuracy: When used appropriately, it provides highly precise and accurate concentration data [28].

- Simplicity and Speed: Experiments are typically straightforward to set up and execute, with measurements taking seconds to minutes [26] [25].

- Cost-Effectiveness: Instrumentation and operational costs are generally lower than for techniques like NMR, MS, or HPLC [25].

- Non-Destructive: Samples can often be recovered after analysis [28].

Limitations:

- Limited Structural Information: UV-Vis spectra are typically broad and provide less detailed structural information compared to NMR or IR spectroscopy. They are best for identifying the presence of chromophores rather than full molecular structure [25].

- Spectral Overlap: Mixtures of chromophores can have overlapping absorptions, making it difficult to resolve individual components without separation techniques prior to analysis [25].

- Solvent and pH Dependence: Absorption spectra can be significantly influenced by the solvent polarity and the pH of the solution, which can shift λmax and ε values [31] [25].

- Deviation from Beer-Lambert Law: At high concentrations (>0.01 M), electrostatic interactions between molecules can cause non-linear deviations from the Beer-Lambert law. Instrumental factors like stray light can also lead to deviations, especially at high absorbances [25].

Figure 2: A generalized workflow for a quantitative analysis experiment using UV-Vis spectroscopy, highlighting the key steps from sample preparation to data analysis.

UV-Visible spectroscopy remains an indispensable tool in the scientific arsenal, primarily due to its robust quantitative capabilities, operational simplicity, and broad applicability. Its fundamental principle—tracking electronic transitions by measuring the absorption of light—provides a direct window into the electronic structure of chromophores. While techniques like Mass Spectrometry and Nuclear Magnetic Resonance offer more detailed structural elucidation, and Infrared Spectroscopy provides finer vibrational fingerprints, UV-Vis excels in rapid, cost-effective quantification and kinetic studies. Understanding its operating principles, instrumental components, and methodological best practices, as outlined in this guide, enables researchers and drug development professionals to leverage this technique effectively. Its continued evolution, particularly in miniaturization and high-throughput automation, ensures its relevance for addressing contemporary analytical challenges across chemistry, biology, and materials science.

This technical guide provides an in-depth comparison of Near-Infrared (NIR) and Infrared (IR) spectroscopy, with a focused examination of their fundamental principles grounded in molecular vibrations. Within the broader context of evaluating spectroscopic methods, it delineates the theoretical underpinnings, instrumental requirements, and experimental protocols for both techniques. It further presents a critical analysis of their respective advantages and limitations, supported by contemporary applications in pharmaceutical development and industrial process control. The objective is to equip researchers and scientists with the necessary knowledge to select the appropriate spectroscopic method based on specific analytical challenges.

Vibrational spectroscopy encompasses analytical techniques that probe the vibrational states of molecules. When molecules interact with infrared light, they can absorb energy, leading to transitions in their vibrational energy levels. This interaction forms the basis for both Near-Infrared (NIR) and mid-Infrared (IR or mid-IR) spectroscopy. Although both techniques belong to the broader category of vibrational spectroscopy, they differ significantly in the energy of the photons involved, the types of vibrational transitions they induce, and the resulting analytical applications. The global infrared spectroscopy market, valued at approximately $1.3 billion in 2023 and projected to grow to $2 billion by 2032, underscores the critical importance of these techniques, particularly in the pharmaceutical sector which commands about 42% of the molecular spectroscopy market [32] [33]. This guide delves into the specifics of NIR and IR spectroscopy, with a concentrated focus on their relationship to molecular vibrations.

Fundamental Principles and Molecular Vibrations

The Electromagnetic Spectrum and Vibrational Energy

The NIR region occupies the segment of the electromagnetic spectrum from approximately 780 to 2500 nanometers (nm), situated between the visible and the mid-IR regions [34] [35] [36]. The mid-IR region spans from about 2500 to 25,000 nm [37]. A fundamental distinction lies in the energy of the photons: NIR radiation is higher in energy compared to mid-IR radiation [35]. The energy of a vibrational transition is quantized, meaning molecules can only vibrate at specific frequencies. According to quantum mechanics, these vibrational energy levels are described by the equation \( E = \left(v + \tfrac{1}{2}\right) h\nu \), where \( v \) is the vibrational quantum number, \( h \) is Planck's constant, and \( \nu \) is the vibrational frequency [38].
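The region boundaries and the energy ordering quoted above can be checked with a few lines of arithmetic. The sketch below converts wavelength to wavenumber and photon energy, and evaluates the level formula E = (v + 1/2)hν. The function names are illustrative, and the constants are the standard SI values.

```python
PLANCK = 6.62607015e-34      # J s
LIGHT_SPEED = 2.99792458e8   # m / s
AVOGADRO = 6.02214076e23     # 1 / mol

def wavenumber_cm1(wavelength_nm):
    """Convert a wavelength in nm to a wavenumber in cm^-1."""
    return 1e7 / wavelength_nm

def photon_energy_kj_per_mol(wavelength_nm):
    """Photon energy E = h * c / lambda, expressed per mole of photons."""
    energy_joule = PLANCK * LIGHT_SPEED / (wavelength_nm * 1e-9)
    return energy_joule * AVOGADRO / 1000.0

def vibrational_level_energy(v, frequency_hz):
    """Harmonic-oscillator level energy E = (v + 1/2) * h * nu, in joules."""
    return (v + 0.5) * PLANCK * frequency_hz

# Region boundaries quoted in the text: NIR 780-2500 nm, mid-IR 2500-25,000 nm.
for wavelength in (780.0, 2500.0, 25000.0):
    print(
        f"{wavelength:>8.0f} nm = {wavenumber_cm1(wavelength):>8.0f} cm^-1, "
        f"{photon_energy_kj_per_mol(wavelength):6.1f} kJ/mol"
    )
```

Shorter wavelengths map to larger wavenumbers and higher photon energies, consistent with the statement that NIR photons carry more energy than mid-IR photons.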

Types of Molecular Vibrations and Selection Rules

Molecular vibrations are primarily categorized as stretching (changes in bond length) and bending (changes in bond angle) [38]. For a vibration to be observed in an IR or NIR spectrum, it must cause a change in the dipole moment of the molecule [38]. This is the primary selection rule for vibrational spectroscopy.

The core difference between NIR and IR spectroscopy lies in the types of vibrational transitions they probe:

- IR Spectroscopy: Fundamental Vibrations. Mid-IR spectroscopy measures the absorption of light that promotes molecules from the ground vibrational state (v=0) to the first excited vibrational state (v=1). These are known as fundamental vibrations and are highly intense and specific to functional groups [35] [38].

- NIR Spectroscopy: Overtones and Combinations. NIR spectroscopy involves transitions from the ground state (v=0) to higher energy states (v=2, 3, ...), known as overtones, or the simultaneous excitation of two or more different vibrations, called combination bands [34] [35] [36]. These transitions have a lower probability of occurring than fundamental vibrations, resulting in absorption bands that are typically 10 to 1000 times weaker than those in the mid-IR region [35]. In the simple harmonic oscillator model such transitions are forbidden; they become weakly allowed because real bonds are anharmonic, which is why an anharmonic oscillator model is used to describe them [38].
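To make the overtone picture concrete, the sketch below uses the standard anharmonic term-value expression G(v) = ωe(v + 1/2) − ωexe(v + 1/2)², from which the 0 → v transition lies at v·ωe − v(v + 1)·ωexe. The harmonic wavenumber and anharmonicity constant are hypothetical round numbers in the range typical of a C-H stretch, not values taken from the cited references.

```python
def transition_wavenumber(v, omega_e, omega_e_x_e):
    """Wavenumber (cm^-1) of the 0 -> v transition of an anharmonic oscillator.

    Derived from G(v) = omega_e*(v + 1/2) - omega_e_x_e*(v + 1/2)**2,
    so G(v) - G(0) = v*omega_e - v*(v + 1)*omega_e_x_e.
    """
    return v * omega_e - v * (v + 1) * omega_e_x_e

def to_nanometers(wavenumber_cm1):
    """Convert a wavenumber in cm^-1 to a wavelength in nm."""
    return 1e7 / wavenumber_cm1

OMEGA_E = 3000.0     # cm^-1, hypothetical harmonic wavenumber
OMEGA_E_X_E = 60.0   # cm^-1, hypothetical anharmonicity constant

for v, label in ((1, "fundamental"), (2, "first overtone"), (3, "second overtone")):
    position = transition_wavenumber(v, OMEGA_E, OMEGA_E_X_E)
    print(f"{label:>15}: {position:7.0f} cm^-1 = {to_nanometers(position):6.0f} nm")
```

With these assumed constants the fundamental falls in the mid-IR while both overtones fall inside the 780 to 2500 nm NIR window, and each overtone sits slightly below the corresponding integer multiple of the fundamental because of the anharmonic correction.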

Table 1: Comparative Overview of Vibrational Transitions in NIR and IR Spectroscopy

| Feature | Mid-Infrared (IR) Spectroscopy | Near-Infrared (NIR) Spectroscopy |
|---|---|---|
| Spectral Range | 2500 – 25,000 nm (4000 – 400 cm⁻¹) [37] | 780 – 2500 nm [34] |
| Primary Transitions | Fundamental vibrations (v=0 → v=1) [35] | Overtones & combination bands (v=0 → v=2,3,...) [34] [35] |
| Absorption Intensity | Strong [35] | Weak (10 to 1000x weaker than IR) [35] |
| Information Depth | Surface characterization (with ATR) [35] | Bulk composition analysis [35] |
| Typical Applications | Functional group identification, qualitative analysis [35] [37] | Quantification of chemical & physical parameters [35] |

Experimental Protocols and Methodologies

Sample Presentation and Interaction with Light

The choice of sampling technique is critical and depends on the sample's physical state and optical properties.

- Transmission: Incident light passes through the sample, and the transmitted light is measured. It is suitable for transparent liquids and gases [39]. The Beer-Lambert law often governs the quantitative relationship between absorption and concentration.

- Diffuse Reflectance: Incident light is scattered in various directions upon interacting with a solid, particulate sample. This method is ideal for heterogeneous, opaque materials like grains or powders without extensive preparation [39].

- Transflectance: A combination of transmission and reflectance where light penetrates the sample and is reflected from a backing surface. This is valuable for semi-transparent samples [39].

- Attenuated Total Reflectance (ATR): A dominant technique in mid-IR for solids and liquids. The sample is placed in contact with a high-refractive-index crystal. IR light undergoes total internal reflection, and an evanescent wave penetrates the sample, absorbing energy at characteristic frequencies. This method requires minimal sample preparation and is highly surface-sensitive [35] [37].
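The surface sensitivity of ATR can be estimated from the commonly used expression for the evanescent-wave penetration depth, d_p = λ / (2π·n₁·√(sin²θ − (n₂/n₁)²)). This formula is not given in the text above and is introduced here as an assumption. The refractive indices (about 2.4 for diamond, about 1.5 for an organic sample) and the 45° angle of incidence are typical illustrative values.

```python
import math

def penetration_depth_um(wavenumber_cm1, n_crystal, n_sample, angle_deg):
    """Evanescent-wave penetration depth (micrometers) for an ATR measurement."""
    wavelength_um = 1e4 / wavenumber_cm1
    sin_squared = math.sin(math.radians(angle_deg)) ** 2
    index_ratio_squared = (n_sample / n_crystal) ** 2
    if sin_squared <= index_ratio_squared:
        # Below the critical angle there is no total internal reflection.
        raise ValueError("angle of incidence is below the critical angle")
    return wavelength_um / (
        2 * math.pi * n_crystal * math.sqrt(sin_squared - index_ratio_squared)
    )

N_DIAMOND = 2.4   # assumed crystal refractive index
N_SAMPLE = 1.5    # assumed sample refractive index
ANGLE_DEG = 45.0  # assumed angle of incidence

for wavenumber in (4000.0, 1700.0, 1000.0):
    depth = penetration_depth_um(wavenumber, N_DIAMOND, N_SAMPLE, ANGLE_DEG)
    print(f"{wavenumber:6.0f} cm^-1 -> d_p = {depth:.2f} um")
```

Under these assumptions the sampled depth is on the order of a micrometer and grows toward lower wavenumbers, which is consistent with ATR being described as a surface-sensitive technique.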

Detailed Experimental Protocol: Pharmaceutical Tablet Analysis by NIR

Aim: To identify and quantify the Active Pharmaceutical Ingredient (API) in a solid dosage form using a handheld NIR spectrometer in diffuse reflectance mode.

Materials:

- Handheld NIR spectrometer with a diffuse reflectance probe.

- Set of calibration standards with known API content (e.g., 50 mg, 75 mg, 100 mg).

- Validation set of tablets with known API concentrations.

- Unknown tablet samples.

Procedure:

- Calibration Model Development:

  - Acquire NIR spectra of all calibration standard tablets. For each tablet, collect multiple scans and average them to improve the signal-to-noise ratio.

  - Using a reference method (e.g., HPLC), determine the exact API concentration for each calibration standard [36] [40].

  - Apply chemometric techniques, such as Partial Least Squares Regression (PLSR), to develop a mathematical model that correlates the spectral data (X-matrix) with the reference concentration data (Y-matrix) [36] [39].

- Model Validation:

  - Scan the validation set of tablets and use the developed PLSR model to predict their API concentrations.

  - Compare the predicted values to the known reference values. Calculate statistical metrics like the Root Mean Square Error of Prediction (RMSEP) and the coefficient of determination (R²) to evaluate the model's accuracy and robustness [36].

- Analysis of Unknown Samples:

  - Scan the unknown tablet with the NIR spectrometer using the same instrumental parameters as during calibration.

  - Input the unknown spectrum into the validated PLSR model to obtain a prediction of the API concentration.
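The calibration and validation steps above can be sketched with a minimal single-response PLS (NIPALS) implementation in NumPy. Everything in this example is an assumption made for illustration: the spectra are synthetic (one Gaussian API band, one excipient band, random noise), the function names are hypothetical, and two latent variables are chosen because the simulated data contain two sources of variation. It is not the procedure of any particular instrument vendor.

```python
import numpy as np

def pls1_fit(X, y, n_components):
    """Fit a single-response PLS model with the NIPALS algorithm."""
    x_mean, y_mean = X.mean(axis=0), y.mean()
    Xk, yk = X - x_mean, y - y_mean          # mean-center spectra and reference values
    weights, loadings, y_loadings = [], [], []
    for _ in range(n_components):
        w = Xk.T @ yk
        w /= np.linalg.norm(w)               # weight vector (unit length)
        t = Xk @ w                           # scores for this latent variable
        tt = t @ t
        p = Xk.T @ t / tt                    # spectral loading
        c = (yk @ t) / tt                    # regression of y on the scores
        Xk = Xk - np.outer(t, p)             # deflate X and y
        yk = yk - c * t
        weights.append(w)
        loadings.append(p)
        y_loadings.append(c)
    W, P, q = np.array(weights).T, np.array(loadings).T, np.array(y_loadings)
    coef = W @ np.linalg.solve(P.T @ W, q)   # regression vector in spectral space
    return coef, x_mean, y_mean

def pls1_predict(X, model):
    coef, x_mean, y_mean = model
    return (X - x_mean) @ coef + y_mean

def rmsep(y_true, y_pred):
    """Root Mean Square Error of Prediction."""
    return float(np.sqrt(np.mean((y_true - y_pred) ** 2)))

def r_squared(y_true, y_pred):
    """Coefficient of determination."""
    ss_res = np.sum((y_true - y_pred) ** 2)
    ss_tot = np.sum((y_true - y_true.mean()) ** 2)
    return float(1.0 - ss_res / ss_tot)

def simulate_spectra(api_mg, rng):
    """Synthetic spectra: API band scaled by content, variable excipient band, noise."""
    axis = np.linspace(0.0, 1.0, 100)        # arbitrary wavelength axis
    api_band = np.exp(-0.5 * ((axis - 0.3) / 0.05) ** 2)
    excipient_band = np.exp(-0.5 * ((axis - 0.6) / 0.10) ** 2)
    excipient = rng.uniform(0.8, 1.2, size=api_mg.size)
    noise = rng.normal(0.0, 0.001, size=(api_mg.size, axis.size))
    return 0.01 * api_mg[:, None] * api_band + excipient[:, None] * excipient_band + noise

rng = np.random.default_rng(0)
cal_mg = np.linspace(50.0, 100.0, 20)        # calibration set (reference values)
val_mg = rng.uniform(50.0, 100.0, 10)        # independent validation set

model = pls1_fit(simulate_spectra(cal_mg, rng), cal_mg, n_components=2)
val_pred = pls1_predict(simulate_spectra(val_mg, rng), model)
val_rmsep, val_r2 = rmsep(val_mg, val_pred), r_squared(val_mg, val_pred)
print(f"RMSEP = {val_rmsep:.3f} mg, R^2 = {val_r2:.4f}")
```

In practice an established implementation such as `PLSRegression` in scikit-learn's `sklearn.cross_decomposition` module would normally be preferred, and the number of latent variables would be chosen by cross-validation rather than fixed in advance.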

Detailed Experimental Protocol: Polymer Functional Group Identification by IR-ATR

Aim: To identify the functional groups present in an unknown polymer film using FT-IR spectroscopy with an ATR accessory.

Materials:

- FT-IR spectrometer equipped with an ATR accessory (e.g., diamond crystal).

- Unknown polymer film sample.

- Solvent (e.g., ethanol) for cleaning the ATR crystal.

Procedure:

- Background Collection:

  - Clean the ATR crystal thoroughly with solvent and allow it to dry.

  - Collect a background spectrum (or single-beam spectrum) with no sample in contact with the crystal. This records the instrument and environment response.

- Sample Measurement:

  - Place the polymer film directly onto the ATR crystal, ensuring good optical contact. Apply consistent pressure using the spectrometer's pressure clamp.

  - Collect the sample single-beam spectrum.

- Data Processing:

  - The instrument software automatically generates a transmittance or absorbance spectrum by ratioing the sample single-beam spectrum against the background spectrum.

  - Apply standard processing functions such as baseline correction and atmospheric suppression (if necessary).

- Spectral Interpretation:

  - Identify the key absorption bands in the spectrum and correlate them to known functional group frequencies using a correlation chart or spectral library.

  - For example, a strong band at ~1700 cm⁻¹ indicates a carbonyl (C=O) stretch, common in polyesters or polycarbonates. Aliphatic C-H stretches appear between 2850-2950 cm⁻¹ [38] [37].
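The data-processing and interpretation steps above can be sketched in a few lines: ratio the sample single-beam spectrum against the background, convert to absorbance, locate band maxima, and look them up in a correlation table. The spectrum below is synthetic, the peak-picking rule is deliberately naive, and the two-entry band table is a hypothetical stand-in for a real correlation chart. Its C-H range comes from the text, while the carbonyl window around 1700 cm⁻¹ is an assumed width.

```python
import math

# Illustrative correlation table (cm^-1); a real chart has many more entries.
BAND_TABLE = [
    ((1650.0, 1750.0), "C=O stretch (carbonyl)"),   # window width is an assumption
    ((2850.0, 2950.0), "aliphatic C-H stretch"),
]

def absorbance(sample_single_beam, background_single_beam):
    """A = -log10(I_sample / I_background), point by point."""
    return [-math.log10(s / b) for s, b in zip(sample_single_beam, background_single_beam)]

def find_peaks(wavenumbers, absorbances, min_height):
    """Return wavenumbers of local maxima whose absorbance exceeds min_height."""
    peaks = []
    for i in range(1, len(absorbances) - 1):
        is_maximum = absorbances[i] > absorbances[i - 1] and absorbances[i] >= absorbances[i + 1]
        if is_maximum and absorbances[i] >= min_height:
            peaks.append(wavenumbers[i])
    return peaks

def assign_band(peak_cm1):
    """Look up a peak position in the illustrative correlation table."""
    for (low, high), label in BAND_TABLE:
        if low <= peak_cm1 <= high:
            return label
    return "unassigned"

# Synthetic single-beam data: flat background, sample with two Gaussian bands.
wavenumbers = list(range(400, 4001, 10))
background = [100.0] * len(wavenumbers)
true_absorbance = [
    0.8 * math.exp(-(((w - 1720) / 15.0) ** 2)) + 0.4 * math.exp(-(((w - 2920) / 20.0) ** 2))
    for w in wavenumbers
]
sample = [b * 10 ** (-a) for b, a in zip(background, true_absorbance)]

spectrum = absorbance(sample, background)
peaks = find_peaks(wavenumbers, spectrum, min_height=0.1)
for peak in peaks:
    print(f"{peak} cm^-1: {assign_band(peak)}")
```

Real spectra would additionally need baseline correction and noise-tolerant peak detection, and a library search is generally more reliable than a two-entry lookup table.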

Critical Comparison: Advantages and Limitations

Advantages of NIR Spectroscopy

- Minimal Sample Preparation: NIR spectroscopy requires little to no sample preparation. Solids can be analyzed directly in vials, and liquids in disposable glass vials, unlike IR, which may require KBr pellets or careful application of the sample to an ATR crystal [35].

- Bulk Analysis and Penetration Depth: The higher energy NIR light penetrates deeper into a sample, providing information about the bulk material rather than just surface characteristics, as is often the case with IR-ATR [35].

- Quantitative Proficiency: While IR is often used for identification, NIR's complex spectra are highly amenable to multivariate calibration, making it a powerful tool for quantification [35].

- Fiber Optics and Process Compatibility: NIR radiation can be transmitted over long distances using fiber optic cables, enabling remote analysis and direct implementation in process environments. This is generally not practical with mid-IR radiation, which standard silica fibers absorb strongly [35].

Limitations of NIR Spectroscopy

- Indirect Technique and Model Dependency: NIR is generally not a direct analysis technique. It requires building calibration models using reference data, which can be time-consuming and require significant expertise [36] [39].

- High Detection Limit: The technique is not suitable for trace analysis due to its relatively high detection limit [36].

- Spectral Complexity: NIR spectra consist of broad, overlapping overtones and combination bands, making them difficult to interpret directly without chemometrics [36].

Advantages of IR Spectroscopy

- Direct Structural Elucidation: IR spectra provide direct, interpretable information about functional groups present in a molecule, serving as a molecular "fingerprint" [32] [38].

- High Sensitivity for Fundamentals: The fundamental vibrations measured in IR are intense and specific, allowing for the detection of subtle structural differences.

Limitations of IR Spectroscopy

- Sample Preparation: Traditional transmission IR can require laborious sample preparation (e.g., KBr pellets). While ATR has simplified this, it remains more involved than typical NIR analysis [35].

- Surface Sensitivity (with ATR): ATR-IR primarily characterizes the surface of a sample in direct contact with the crystal, which may not be representative of the bulk [35].

Table 2: Summary of Pros, Cons, and Primary Applications

| Aspect | Mid-Infrared (IR) Spectroscopy | Near-Infrared (NIR) Spectroscopy |
|---|---|---|
| Primary Advantages | Direct functional group identification; High specificity; Mature technique [35] [38] | Non-destructive; Minimal sample prep; High penetration; Quantitative; Portable & process-capable [34] [35] |
| Key Limitations | Can require sample prep; Surface-sensitive (ATR); Not ideal for quantification [35] [36] | Indirect method (requires calibration); Weak absorption bands; Not for trace analysis; Complex spectra [36] |
| Dominant Applications | Qualitative identification of unknowns; Structural elucidation; Forensic analysis [35] [37] | Quantitative analysis (moisture, API); Raw material identification; Process Analytical Technology (PAT) [35] [36] |

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Materials and Reagents for Spectroscopic Analysis

| Item | Function/Brief Explanation |
|---|---|
| Potassium Bromide (KBr) | A transparent, non-absorbing material used to prepare pellets for transmission IR analysis of solid samples [37]. |
| ATR Crystals (Diamond, ZnSe) | High-refractive-index crystals used in ATR accessories. Diamond is durable for hard materials, while ZnSe offers a broader spectral range for softer samples [35] [37]. |
| NIR Calibration Standards | A set of samples with known chemical composition or physical properties, used to build the chemometric model for quantitative analysis [36] [39]. |
| Chemometric Software | Software packages for multivariate data analysis (e.g., PCA, PLS). Essential for extracting meaningful information from complex NIR spectra [36] [41] [39]. |