Scientific Validation of Subjective Forensic Feature-Comparison Methods: Establishing Foundational Validity and Reliability

Abstract

This article provides a comprehensive framework for the scientific validation of subjective forensic feature-comparison methods, addressing a critical need in the wake of landmark reports from the National Academy of Sciences and PCAST that highlighted the lack of empirical foundation in many forensic disciplines. Targeting researchers, scientists, and drug development professionals, we explore the theoretical underpinnings of validation, methodological approaches including the likelihood-ratio framework and blind testing, strategies for overcoming operational and cognitive challenges, and comparative evaluation of validation techniques. By synthesizing current research, international standards like ISO 21043, and emerging best practices, this work aims to equip professionals with practical strategies for establishing the foundational validity of forensic comparison methods and enhancing their reliability in both legal and research contexts.

The Scientific Imperative: Why Validating Forensic Feature-Comparison Methods Matters

Application Note: Quantifying the Validation Crisis in Forensic Feature-Comparison Methods

The 2009 National Academy of Sciences (NAS) report and the 2016 President's Council of Advisors on Science and Technology (PCAST) report revealed fundamental deficiencies in many forensic feature-comparison disciplines, creating a validation crisis that challenges their scientific foundation and legal admissibility. These landmark reports demonstrated that much forensic evidence—including bite marks, firearm and toolmark identification, and others—was introduced in criminal trials without meaningful scientific validation, determination of error rates, or reliability testing [1] [2]. This application note synthesizes the current landscape of forensic validation research, providing structured data and experimental protocols to address these critical deficiencies through scientifically rigorous methods.

Current Status of Key Forensic Disciplines Post-NAS/PCAST

Table 1: Validation Status and Error Rates of Forensic Feature-Comparison Methods

| Discipline | PCAST Foundational Validity Assessment | Estimated Error Rates | Current Judicial Treatment | Key Limitations |
|---|---|---|---|---|
| Bitemark Analysis | Lacks foundational validity [3] | Not established empirically | Increasingly excluded; subject to Daubert/Frye hearings [3] | Highly subjective; no scientific basis for uniqueness claims |
| Firearms/Toolmarks (FTM) | Fell short of foundational validity in 2016 [3] | False positive rate of 1 in 66 (upper 95% confidence bound: 1 in 46) in black-box studies [3] | Admitted with limitations on testimony scope [3] | Subjective nature; insufficient black-box studies |
| Latent Fingerprints | Foundationally valid [3] | False positives as high as 1 in 18 [3] | Generally admitted with error rate disclosures | Contextual biases and human judgment limitations |
| DNA (Single-source/Simple mixture) | Foundationally valid [3] | Established through validation studies | Routinely admitted | Well-established methodology |
| DNA (Complex mixtures) | Questionable foundational validity [3] | Varies by software and contributors | Admitted with limitations; ongoing challenges [3] | Subjective probabilistic genotyping |
| Footwear Analysis | Lacks foundational validity for individualization [4] | Not established empirically | Limited to class characteristics | No scientific basis for source identification |

Quantitative Framework for Validation Metrics

Table 2: Core Validation Metrics and Measurement Standards

| Validation Metric | Experimental Requirement | Statistical Framework | Reporting Standard |
|---|---|---|---|
| Foundational Validity | Black-box studies with appropriate design [3] | Error rates with confidence intervals [3] | PCAST criteria for empirical validation |
| Reliability | Multiple examiners, multiple samples [5] | Intra-class correlation coefficients | ISO 21043 standards for repeatability [6] |
| Measurement Accuracy | Reference standards and controls | Sensitivity, specificity, likelihood ratios [5] | Empirical calibration under casework conditions [6] |
| Reproducibility | Inter-laboratory comparisons | Concordance statistics | Transparent and reproducible methods [6] |
| Cognitive Bias Resistance | Sequential unmasking protocols | Differential decision analysis | Error rate documentation by laboratory [7] |

Experimental Protocols for Forensic Method Validation

Protocol 1: Black-Box Study Design for Error Rate Estimation

Purpose and Scope

This protocol provides a standardized methodology for conducting black-box studies to estimate error rates of forensic feature-comparison methods, addressing the PCAST requirement for "appropriately designed" empirical validation [3].

Materials and Equipment

- Sample sets with known ground truth (minimum 300 pairs: 150 matching, 150 non-matching)

- Multiple participating examiners (minimum 20 from different laboratories)

- Standardized casework documentation forms

- Blind testing administration system

- Data collection software for recording conclusions and confidence measures

Procedure

- Sample Preparation: Curate representative sample sets reflecting real-case complexity and quality variations. Document all known characteristics and establish ground truth through independent means.

- Examiner Recruitment: Engage participating examiners across multiple laboratories with varied experience levels. Provide standardized training on reporting scales.

- Blind Administration: Present samples to examiners in random order without contextual case information. Implement sequential unmasking to prevent cognitive biases [7].

- Data Collection: Record all examiner conclusions using standardized scales (identification, exclusion, inconclusive). Capture decision time and confidence measures.

- Statistical Analysis: Calculate false positive and false negative rates with 95% confidence intervals using appropriate binomial proportion methods.
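
The blind administration step can be sketched in code. The following is a minimal illustration, not part of the protocol itself: it gives each examiner an independently shuffled presentation order, with a fixed seed so the assignment can be audited later. The function name, the identifier formats, and the seed value are assumptions made for this example.

```python
import random

def assign_presentation_orders(pair_ids, examiner_ids, seed=0):
    """Return {examiner_id: shuffled list of pair_ids}, reproducible for a given seed."""
    rng = random.Random(seed)  # dedicated generator; does not touch global random state
    orders = {}
    for examiner in examiner_ids:
        order = list(pair_ids)  # copy so the master list stays untouched
        rng.shuffle(order)
        orders[examiner] = order
    return orders

# Hypothetical identifiers: 300 comparison pairs and 20 examiners.
pairs = [f"P{i:03d}" for i in range(1, 301)]
examiners = [f"E{i:02d}" for i in range(1, 21)]
orders = assign_presentation_orders(pairs, examiners, seed=2024)
```

Recording the seed alongside the study data lets an auditor regenerate the exact orders without storing them separately.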

Data Analysis

- Compute observed error rates with confidence bounds

- Conduct subgroup analyses by examiner experience and sample quality

- Perform reliability assessments using inter-rater agreement statistics

- Model results using logistic regression to identify influential factors
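
As one way to compute the confidence bounds mentioned above, the sketch below derives a one-sided exact (Clopper-Pearson) upper bound on an observed error rate by bisection on the binomial distribution. The choice of a one-sided bound and the function names are assumptions for illustration; a study may equally report two-sided intervals or use another binomial method.

```python
from math import comb

def binom_cdf(k, n, p):
    """P(X <= k) for X ~ Binomial(n, p)."""
    return sum(comb(n, i) * p**i * (1 - p)**(n - i) for i in range(k + 1))

def upper_bound(errors, n, alpha=0.05):
    """One-sided exact upper confidence bound on the true error rate.

    Finds the largest p for which observing `errors` or fewer errors in
    `n` trials still has probability of at least `alpha`.
    """
    if errors >= n:
        return 1.0
    lo, hi = 0.0, 1.0
    for _ in range(100):  # bisection: the CDF decreases as p increases
        mid = (lo + hi) / 2
        if binom_cdf(errors, n, mid) > alpha:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2

# Hypothetical counts: 5 false positives in 500 different-source comparisons.
observed_rate = 5 / 500
bound = upper_bound(5, 500)
```

The direct summation is adequate for small error counts; for large counts a statistics library would be a more robust choice.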

Protocol 2: Quantitative Fracture Surface Topography Analysis

Purpose and Scope

This protocol establishes an objective, quantitative method for fracture matching using surface topography and statistical learning, addressing NAS concerns about subjective pattern recognition [2].

Materials and Equipment

- 3D optical microscope or profilometer (vertical resolution of 50 nm or finer)

- Metrology-grade reference standards

- Fracture surface samples with known matching status

- Statistical computing environment (R with the MixMatrix package, or Python) [2]

- Sample mounting and alignment fixtures

Procedure

- Sample Preparation: Mount fracture surfaces to ensure stability during imaging. Clean surfaces appropriately for material type.

- Topography Mapping: Acquire 3D surface topography data using predetermined imaging scale (>10× self-affine transition scale, typically >500 μm field of view) [2].

- Feature Extraction: Calculate height-height correlation functions to identify transition scales where surface uniqueness manifests (typically 50–70 μm for metallic materials).

- Spectral Analysis: Perform multivariate statistical analysis of surface topography across multiple frequency bands.

- Statistical Classification: Apply statistical learning tools (discriminant analysis, machine learning) to classify matches and non-matches.

- Validation: Conduct cross-validation to estimate misclassification probabilities and compute likelihood ratios.
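
The feature extraction step relies on a height-height correlation function. A minimal sketch for a single 1D height profile follows, assuming the common definition in which the function at a given lag is the root-mean-square height difference between points separated by that lag. The function name and the use of sample-index lags (rather than physical distances) are assumptions for this example; multiply each lag by the pixel spacing to convert to micrometres.

```python
from math import sqrt

def height_height_correlation(profile, max_lag):
    """Return [dh(1), ..., dh(max_lag)] for a 1D height profile.

    dh(lag) = sqrt(mean((h[i + lag] - h[i]) ** 2)) over all valid i.
    Requires max_lag < len(profile).
    """
    result = []
    for lag in range(1, max_lag + 1):
        sq_diffs = [(profile[i + lag] - profile[i]) ** 2
                    for i in range(len(profile) - lag)]
        result.append(sqrt(sum(sq_diffs) / len(sq_diffs)))
    return result

# Toy profile: a perfectly linear ramp, so dh grows linearly with lag.
ramp = [0.0, 1.0, 2.0, 3.0, 4.0]
dh = height_height_correlation(ramp, max_lag=2)
```

On real fracture surfaces the curve typically rises and then levels off; the lag at which it levels off is a candidate transition scale.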

Data Analysis

- Generate likelihood ratios for match determinations

- Establish decision thresholds based on validation study results

- Compute confidence metrics for classification outcomes

- Document all parameters for reproducibility

Protocol 3: Probabilistic Genotyping Validation for Complex DNA Mixtures

Purpose and Scope

This protocol validates probabilistic genotyping software for complex DNA mixtures (3+ contributors), addressing PCAST concerns about foundational validity for DNA analysis of complex mixtures [3].

Materials and Equipment

- Reference DNA samples with known profiles

- Mixed DNA samples with controlled contributor ratios

- Standard DNA extraction and amplification kits

- Probabilistic genotyping software (STRmix, TrueAllele)

- Computational resources for likelihood ratio calculations

- Validation samples spanning expected casework conditions

Procedure

- Sample Preparation: Create mixed DNA samples with varying contributor numbers (3-5), different ratios (1:1:1 to 1:10:100), and degradation levels.

- DNA Analysis: Process samples using standard capillary electrophoresis protocols with appropriate controls.

- Software Configuration: Set up probabilistic genotyping software with validated parameters and models.

- Likelihood Ratio Calculation: Compute likelihood ratios for known matching and non-matching references across mixture variations.

- Performance Assessment: Evaluate calibration and discrimination performance using representative test sets.

- Error Rate Estimation: Determine reliability under different mixture conditions and template quantities.

Data Analysis

- Assess quantitative calibration of likelihood ratios

- Determine empirical probabilities for reported ranges

- Compute confidence intervals for reliability estimates

- Establish minimum template thresholds for reliable interpretation
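
One widely used summary of likelihood-ratio calibration and discrimination is the log-likelihood-ratio cost (Cllr). The sketch below computes it alongside simple rates of misleading evidence. It is an illustration only: the protocol does not prescribe Cllr, and the function and variable names are assumptions.

```python
from math import log2

def cllr(lrs_hp_true, lrs_hd_true):
    """Log-likelihood-ratio cost.

    lrs_hp_true: LRs computed for known contributors (must be > 0).
    lrs_hd_true: LRs computed for known non-contributors (must be >= 0).
    Lower is better; a system that always reports LR = 1 scores exactly 1.
    """
    penalty_hp = sum(log2(1 + 1 / lr) for lr in lrs_hp_true) / len(lrs_hp_true)
    penalty_hd = sum(log2(1 + lr) for lr in lrs_hd_true) / len(lrs_hd_true)
    return 0.5 * (penalty_hp + penalty_hd)

def misleading_rates(lrs_hp_true, lrs_hd_true):
    """Fractions of LRs that point the wrong way for each ground truth."""
    wrong_hp = sum(lr < 1 for lr in lrs_hp_true) / len(lrs_hp_true)
    wrong_hd = sum(lr > 1 for lr in lrs_hd_true) / len(lrs_hd_true)
    return wrong_hp, wrong_hd

# Hypothetical validation output for a handful of mixtures.
contributors = [100.0, 0.5]
non_contributors = [0.01, 2.0, 0.1, 0.2]
cost = cllr(contributors, non_contributors)
rates = misleading_rates(contributors, non_contributors)
```

Computing these separately for each mixture condition (contributor number, ratio, template quantity) shows where reliability degrades.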

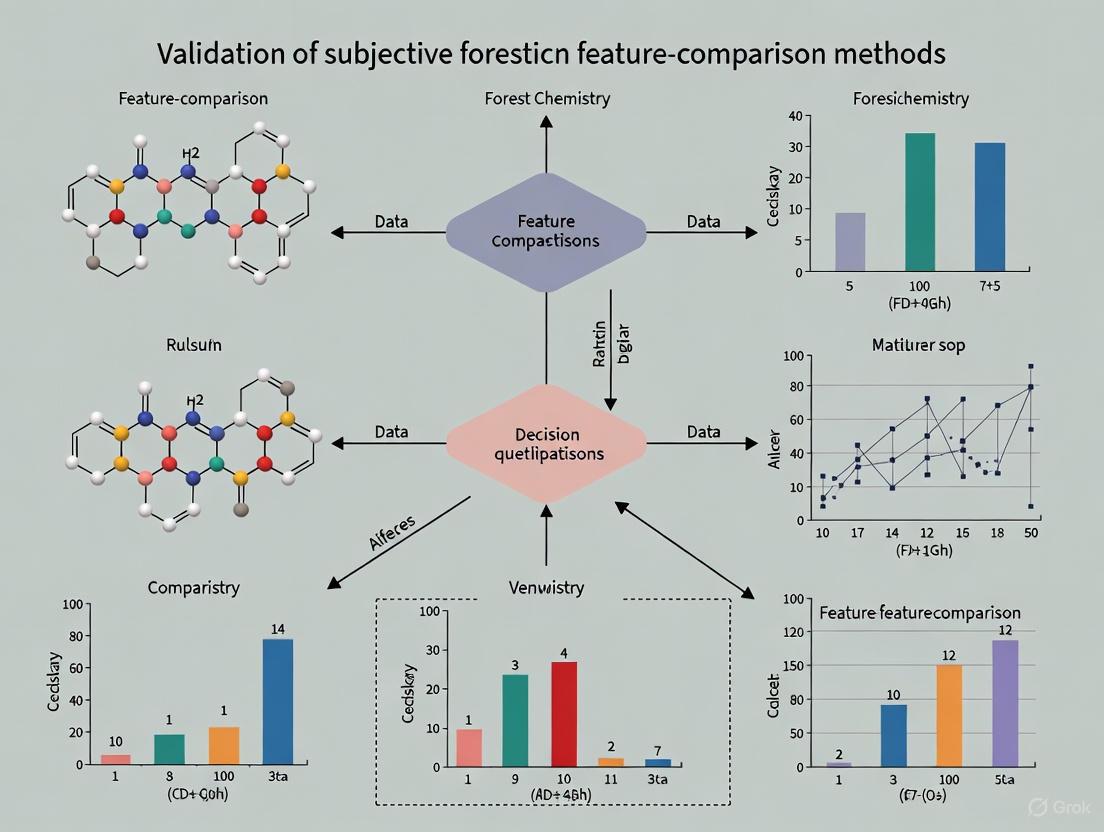

Visualization of Forensic Validation Workflows

Diagram: Forensic method validation pathway.

Diagram: Statistical learning framework for forensic matching.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Research Materials for Forensic Validation Studies

| Item/Category | Function/Purpose | Examples/Specifications | Validation Role |
|---|---|---|---|
| Reference Sample Sets | Ground truth establishment for validation studies | Curated sets with known matching status; minimum 300 pairs | Essential for empirical error rate estimation [3] |
| 3D Surface Metrology | Quantitative topography measurement | Optical profilometers, confocal microscopes (50 nm resolution) | Objective fracture surface characterization [2] |
| Probabilistic Genotyping Software | Complex DNA mixture interpretation | STRmix, TrueAllele with validated parameters | Addressing PCAST concerns for DNA foundational validity [3] |
| Statistical Computing Environment | Data analysis and likelihood ratio computation | R with MixMatrix package, Python with scikit-learn [2] | Implementation of transparent, reproducible methods [5] |
| Black-Box Study Platforms | Blind testing administration | Custom software for unbiased data collection | Measuring real-world performance under casework conditions [3] |
| ISO 21043 Standards | Quality assurance framework | International standards for forensic processes [6] | Ensuring methodological rigor and conformity [6] |
| Cognitive Bias Controls | Minimizing contextual influences | Sequential unmasking protocols, linear testimony | Reducing extraneous influence on decision-making [7] |

The validation of subjective forensic feature-comparison methods is paramount for the integrity of the criminal justice system. These methods—including fingerprint analysis, firearms identification, and bite mark analysis—have historically faced scrutiny regarding their scientific foundation [8] [1]. A core challenge lies in the fact that many forensic disciplines "have few roots in basic science and lack sound theories to justify their predicted actions or empirical tests to prove their effectiveness" [9]. This application note establishes core scientific principles—plausibility, construct validity, and error rate measurement—as essential pillars for rigorous forensic method validation, providing researchers and practitioners with structured protocols for their implementation.

Core Principle 1: Plausibility

Definition and Rationale

Plausibility serves as the foundational checkpoint for any forensic method. It is the principle that there must be a scientifically sound theory or a potential mechanism to explain how the method achieves its intended effect [9]. Before investing resources in complex validation studies, the underlying premise of the method must be logically coherent and consistent with established scientific knowledge. Intuitive appeal or long-standing use is insufficient; the theory and methods must be scientifically plausible [9].

For example, the theory underpinning a method must not rely on assumptions that contradict what is known about human cognitive capabilities. One critique highlights the implausibility of the Association of Firearm and Tool Mark Examiners (AFTE) theory, which assumes examiners can mentally compare evidence marks to "libraries" of marks from different tools, a task that may exceed human memory and analytical limits [9].

Application Protocol: Assessing Plausibility

Objective: To systematically evaluate the scientific plausibility of a forensic feature-comparison method. Materials: All available literature on the method's theoretical basis, documentation of its procedures, and access to subject matter experts.

| Step | Action | Key Consideration |
|---|---|---|
| 1. Theory Articulation | Clearly state the theoretical basis for the method. What mechanism allows examiners to distinguish between sources? | The theory should be specific, not merely a general claim of "uniqueness." |
| 2. Mechanism Mapping | Identify the proposed causal pathway from evidence observation to final conclusion. | Ensure the pathway is logically coherent and does not contain unsupported leaps. |
| 3. Consistency Check | Compare the method's theory and mechanisms against established knowledge in relevant fields (e.g., cognitive psychology, materials science, physics). | Identify any contradictions with known scientific principles. |
| 4. Peer Consultation | Engage with experts in the foundational sciences (not just the forensic discipline) to review the plausibility assessment. | External review mitigates institutional bias and introduces critical, independent perspectives. |

Core Principle 2: Construct Validity

Theoretical Framework

Construct validity is "the extent to which a test measures what it is supposed to measure" [9] [10] [11]. In forensic science, the "construct" is the abstract characteristic being assessed, such as the ability to determine whether two fingerprints originated from the same source. A method with high construct validity accurately captures this underlying reality. It is not merely about reliable outputs, but about ensuring that those outputs truly represent the intended phenomenon [10]. As noted in research on physical activity, poor construct validity can lead to self-reports showing associations with demographic variables that are the opposite of those observed with objective measures [12].

This is especially critical in cross-cultural and cross-contextual research, where a tool validated in one population may not measure the same construct in another [11]. While often discussed in social sciences, construct validity is equally vital for forensic science, where the stakes involve justice and liberty.

Key Criteria for Establishing Construct Validity

The following criteria are essential for building evidence of construct validity [10]:

- Reliability: The measure must be consistent. A method that produces wildly different results for the same evidence under identical conditions has low test-retest reliability and cannot be valid. Reliability is necessary but not sufficient for validity. [10]

- Face Validity: The method should subjectively appear to measure the intended construct. While not empirical proof, a complete lack of face validity (e.g., using a color perception test to measure stress) indicates a fundamental misalignment. [10]

- Convergent Validity: The method's results should correlate with other established methods designed to measure the same or similar constructs. If multiple tests for the same ability yield conflicting results, it calls the construct validity of one or all into question. [10]

- Criterion Validity: The method should predict other concrete, relevant outcomes. For instance, a forensic method's conclusions should be consistent with other strong evidence in a case. [10]

Experimental Protocol: Establishing Construct Validity

Objective: To design and execute a study that provides empirical evidence for the construct validity of a forensic feature-comparison method. Materials: A set of evidence samples with known ground truth (e.g., from a validated database), multiple relevant comparison tests (if available), and a cohort of trained examiners.

Diagram 1: Construct validity assessment workflow.

Procedure:

- Define the Construct: Precisely define the latent construct the method purports to measure (e.g., "source individuality based on friction ridge features").

- Hypothesize Relationships: Formulate specific hypotheses about how the method's results should relate to other variables if it is valid. For example, "Scores on this method will strongly correlate with results from [Independent Method Y]."

- Execute Multimethod Assessment: Conduct the study using the following design, and compile results into a structured table for analysis.

| Evidence Sample ID | Ground Truth | Test Method Result | Independent Method Y Result | Examiner Confidence (1-5) | Retest Result (if applicable) |
|---|---|---|---|---|---|
| Sample 1 | Match | Identification | Identification | 5 | Identification |
| Sample 2 | Non-Match | Exclusion | Exclusion | 4 | Exclusion |
| Sample 3 | Match | Inconclusive | Identification | 2 | Inconclusive |
| Sample 4 | Non-Match | Inconclusive | Exclusion | 3 | Exclusion |
| ... | ... | ... | ... | ... | ... |

- Data Analysis:

- Reliability: Calculate test-retest reliability (e.g., Cohen's Kappa) by comparing the first and second rounds of testing for the same examiners.

- Convergent Validity: Calculate correlation coefficients (e.g., Phi coefficient) between the results of the test method and the independent method(s).

- Criterion Validity: Assess the method's ability to predict the known ground truth, calculating metrics like sensitivity and specificity.
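
The test-retest reliability calculation can be illustrated with Cohen's kappa, which corrects raw agreement for the agreement expected by chance. The sketch below is a minimal stdlib implementation applied to the four example rows of the table above; the function name is an assumption, and four samples are far too few for a meaningful estimate.

```python
from collections import Counter

def cohens_kappa(first, second):
    """Cohen's kappa for two equal-length sequences of categorical decisions."""
    n = len(first)
    observed = sum(a == b for a, b in zip(first, second)) / n
    counts_1, counts_2 = Counter(first), Counter(second)
    labels = set(counts_1) | set(counts_2)
    # Chance agreement from the product of the marginal proportions.
    expected = sum(counts_1[label] * counts_2[label] for label in labels) / (n * n)
    if expected == 1:
        return 1.0  # both rounds used a single identical label throughout
    return (observed - expected) / (1 - expected)

# Test and retest results for Samples 1-4 in the example table.
test_round = ["Identification", "Exclusion", "Inconclusive", "Inconclusive"]
retest_round = ["Identification", "Exclusion", "Inconclusive", "Exclusion"]
kappa = cohens_kappa(test_round, retest_round)
```

Raw agreement here is 3 of 4, but kappa is lower because some of that agreement would be expected by chance given how often each label was used.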

Core Principle 3: Error Rate Measurement

The Imperative of Empirical Error Rates

The "known or potential rate of error" is a cornerstone of scientific evidence and a key factor for judicial admissibility under the Daubert standard [13] [1]. Error rates provide a quantifiable measure of a method's reliability and accuracy. Without them, "the appropriate weight of the evidence cannot be known" [13]. Claims of zero error rates are "not scientifically plausible" [13], and studies have shown that flawed forensic testimony has been a factor in a significant number of wrongful convictions [8].

A critical flaw in many existing error rate studies is the improper handling of inconclusive decisions [13]. Simply excluding inconclusives from calculations or always counting them as correct decisions artificially deflates reported error rates and undermines their credibility.

Experimental Protocol: Calculating Realistic Error Rates

Objective: To design a robust error rate study that properly accounts for all decision types, including inconclusive results, and provides meaningful accuracy metrics. Materials: A representative set of evidence samples with known ground truth, specifically designed to include challenging samples prone to error. A group of examiners representative of the practicing community.

Diagram 2: Error rate calculation logic.

Procedure:

Study Design:

- Evidence Set: Curate a set of evidence samples where the ground truth (same-source or different-source) is known. The set must include a range of quality and clarity, including samples that are inherently ambiguous and likely to generate inconclusive decisions.

- Examiner Pool: Select a representative sample of examiners.

- Blinding: Examiners must be blinded to the purpose of the study and the ground truth of the samples to prevent bias.

Data Collection: Present each evidence sample to each examiner and record their definitive decision (Identification or Exclusion) or an Inconclusive decision.

Data Analysis and Error Classification: Tally decisions against ground truth using the following framework. This corrects the common flaw of automatically counting all inconclusives as correct [13].

| Decision | Ground Truth | Classification | Explanation |
|---|---|---|---|
| Identification | Same-Source | Correct | True Positive |
| Identification | Different-Source | Error | False Positive |
| Exclusion | Different-Source | Correct | True Negative |
| Exclusion | Same-Source | Error | False Negative |
| Inconclusive | (Any) | Context-Dependent | Must be evaluated based on sample quality. |
| Inconclusive | (Sufficient Quality Info) | Error | False Inconclusive (Failure to make a definitive correct decision) [13] |
| Inconclusive | (Insufficient Quality Info) | Correct | True Inconclusive (Appropriate meta-cognitive judgment) [13] |

Error Rate Calculation: Calculate multiple error rates to provide a comprehensive view:

- False Positive Rate: (False Identifications) / (All Different-Source Samples)

- False Negative Rate: (False Exclusions) / (All Same-Source Samples)

- Total Definitive Error Rate: (False Positives + False Negatives) / (All Definitive Decisions)

- Overall Error Rate: (False Positives + False Negatives + False Inconclusives) / (All Decisions)
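
The four rates defined above can be computed directly from a list of recorded decisions. The following sketch assumes each record carries the decision, the ground truth, and a flag saying whether the sample held enough information for a definitive decision; that flag, the string labels, and the function name are illustrative assumptions, and how sufficiency is judged is a study design question the code does not settle.

```python
def error_rates(records):
    """Compute error rates from (decision, ground_truth, sufficient_quality) tuples.

    decision: "identification", "exclusion", or "inconclusive"
    ground_truth: "same" or "different"
    sufficient_quality: True if a definitive decision was supportable
    """
    records = list(records)
    fp = sum(d == "identification" and g == "different" for d, g, _ in records)
    fn = sum(d == "exclusion" and g == "same" for d, g, _ in records)
    # An inconclusive on a sample with sufficient information counts as an error.
    false_inc = sum(d == "inconclusive" and q for d, _, q in records)
    n_diff = sum(g == "different" for _, g, _ in records)
    n_same = sum(g == "same" for _, g, _ in records)
    n_definitive = sum(d != "inconclusive" for d, _, _ in records)
    return {
        "false_positive_rate": fp / n_diff,
        "false_negative_rate": fn / n_same,
        "definitive_error_rate": (fp + fn) / n_definitive,
        "overall_error_rate": (fp + fn + false_inc) / len(records),
    }

# Hypothetical results: ten decisions from a small pilot.
records = (
    [("identification", "same", True)] * 3
    + [("identification", "different", True)]
    + [("exclusion", "different", True)] * 3
    + [("exclusion", "same", True)]
    + [("inconclusive", "same", True)]        # false inconclusive
    + [("inconclusive", "different", False)]  # true inconclusive
)
rates = error_rates(records)
```

Reporting all four values side by side makes visible how much the headline figure depends on the treatment of inconclusive decisions.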

The Scientist's Toolkit: Research Reagent Solutions

The following table details key materials and concepts essential for conducting validation research in forensic feature-comparison.

| Item / Concept | Function / Definition | Application Note |
|---|---|---|
| Known Ground Truth Database | A collection of evidence samples with verified source information. | Serves as the reference standard for criterion validity and error rate studies. Ecological validity is critical [13]. |
| Directed Acyclic Graph (DAG) | A visual tool for mapping assumed causal relationships between variables. | Used to formalize causal frameworks in research design, clarifying confounding and causal paths [11]. |
| Multitrait-Multimethod Matrix (MTMM) | A matrix for evaluating construct validity by correlating multiple traits measured with multiple methods. | Helps disentangle the method used from the trait being measured, providing evidence for convergent and discriminant validity [11]. |
| Blinded Study Design | A research design where examiners are unaware of the study's hypotheses or sample ground truth. | Mitigates confirmation bias and ensures that results reflect the method's accuracy rather than examiner expectations [13]. |
| Inconclusive Decision Framework | A protocol for classifying inconclusive results as correct or erroneous. | Prevents the artificial inflation of accuracy metrics and is essential for realistic error rate calculation [13]. |

The Daubert Standard is a legal framework established by the 1993 U.S. Supreme Court case Daubert v. Merrell Dow Pharmaceuticals, Inc. that provides trial court judges with a systematic process for assessing the reliability and relevance of expert witness testimony before presenting it to a jury [14]. This ruling fundamentally transformed the legal landscape by assigning judges a "gatekeeping" role to scrutinize not only an expert's conclusions but, more importantly, the underlying scientific methodology and principles [14] [15]. The standard aims to prevent "junk science" from influencing judicial proceedings by ensuring expert testimony rests on a reliable foundation [16] [15].

The Daubert Standard supplanted the earlier Frye Standard (Frye v. United States, 1923), which focused primarily on whether scientific evidence had gained "general acceptance" in the relevant scientific community [14] [17]. The adoption of the Federal Rules of Evidence in 1975, particularly Rule 702, paved the way for this evolution by emphasizing reliability and relevance over mere general acceptance [16] [18]. While Daubert governs federal courts and most states, some jurisdictions (including California, New York, and Illinois) continue to adhere to the Frye Standard or "Frye-plus" variations [16].

The Daubert framework was further refined through two subsequent Supreme Court rulings; together with Daubert itself, they are known as the "Daubert Trilogy":

- General Electric Co. v. Joiner (1997): Established that appellate courts review trial court decisions on expert testimony under an "abuse of discretion" standard and emphasized that there must be a valid connection between an expert's data and their proffered opinion [14] [15].

- Kumho Tire Co. v. Carmichael (1999): Extended the Daubert Standard's application to non-scientific expert testimony, including technical and other specialized knowledge [14] [15].

The Five Daubert Factors: A Framework for Scrutiny

Under Daubert, trial courts evaluate the reliability of expert methodology through five key factors [14] [15] [18]. These factors provide a flexible framework for assessing scientific validity, though not all factors may apply equally in every case.

Table 1: The Five Daubert Factors for Evaluating Expert Testimony

| Daubert Factor | Judicial Inquiry Focus | Research Validation Objective |
|---|---|---|
| Testability | Whether the technique or theory can be and has been tested [14] [18]. | Implement protocols for hypothesis testing and falsification. |
| Peer Review | Whether the method has been subjected to publication and peer review [14] [15]. | Submit study designs and results to independent scholarly critique. |
| Error Rate | The known or potential error rate of the technique [14] [15]. | Establish quantitative error rates through validation studies. |
| Standards | The existence and maintenance of standards controlling the technique's operation [14] [18]. | Develop and document standardized operating procedures. |
| General Acceptance | Whether the technique has attracted widespread acceptance in a relevant scientific community [14] [15]. | Demonstrate methodological consensus through literature and practice. |

Contemporary Application and Burden of Proof

The 2023 amendments to Federal Rule of Evidence 702 clarified and emphasized that the proponent of expert testimony must demonstrate its admissibility by a preponderance of the evidence [19]. The rule states that an expert witness may testify only if the proponent demonstrates to the court that it is more likely than not that: (a) the expert's specialized knowledge will help the trier of fact; (b) the testimony is based on sufficient facts or data; (c) the testimony is the product of reliable principles and methods; and (d) the expert's opinion reflects a reliable application of these principles and methods to the facts of the case [19].

This amended language reinforces the judge's gatekeeping role and establishes that questions about the sufficiency of an expert's basis and the application of their methodology are threshold admissibility requirements, not merely matters of "weight" for the jury to consider [19].

Daubert's Implications for Subjective Forensic Feature-Comparison Methods

Forensic feature-comparison methods—including fingerprint analysis, toolmark examination, and other pattern-recognition disciplines—face particular challenges under Daubert scrutiny due to their reliance on human interpretation and subjective judgment [9].

The Subjectivity Challenge in Forensic Science

A growing body of research demonstrates that pure scientific objectivity is a myth in forensic science [20]. Forensic data and conclusions are inherently "theory-laden," meaning they are influenced by the examiner's background, experiences, beliefs, and the contextual information they receive [20]. Studies across multiple forensic disciplines have documented various sources of bias:

- Individualized, experience-based biases: Expectations formed through previous casework may influence current interpretations [20].

- Theories and methods bias: Preferences for certain analytical approaches based on training or mentorship rather than empirical evidence of accuracy [20].

- Social environment bias: Uninterrogated implicit biases that may develop from working primarily within law enforcement contexts [20].

These biasing effects are particularly pronounced when evidence quality is poor, methods rely heavily on subjective interpretation, or data are ambiguous [20]. The recognition of these limitations has prompted calls for a paradigm shift in forensic science toward methods based on relevant data, quantitative measurements, and statistical models [21].

Scientific Guidelines for Validating Forensic Methods

Recent scholarship has proposed scientific guidelines for evaluating the validity of forensic feature-comparison methods, emphasizing that courts should employ ordinary standards of applied science when considering questions of measurement, association, and causality [9]. These guidelines include:

- Plausibility: The theoretical foundation for a method must be scientifically plausible based on established knowledge [9].

- Sound Research Design: Studies must demonstrate both construct validity (measuring what they claim to measure) and external validity (generalizability to real-world conditions) [9].

- Intersubjective Testability: Methods and findings must be replicable and reproducible by different researchers across various testing paradigms [9].

- Valid Group-to-Individual Reasoning: There must be a scientifically sound methodology for reasoning from group-level data to statements about individual cases [9].

These guidelines highlight that forensic claims of individualization are inherently problematic because applied science is fundamentally probabilistic and often lacks the robust empirical support needed for definitive source attribution [9].

Experimental Protocols for Daubert-Compliant Validation

Protocol 1: Establishing Error Rates for Feature-Comparison Methods

Objective: Quantify the known or potential error rate of a forensic feature-comparison method to satisfy Daubert's third factor [14] [15].

Materials:

- Representative sample set of known origin

- Blind proficiency test materials with ground truth

- Standardized data collection equipment

- Multiple qualified examiners

Procedure:

- Design Phase: Create a balanced set of comparison pairs (matching and non-matching) that reflect real-world casework conditions and complexities.

- Blinding: Ensure examiners are blinded to the expected outcomes and work independently without collaboration.

- Administration: Present samples to examiners in a controlled environment using standardized reporting forms that include "inconclusive" as a response option.

- Data Collection: Record all responses, including correct identifications, false identifications, correct exclusions, false exclusions, and inconclusive determinations.

- Analysis: Calculate false positive rate (false identifications/non-matching pairs), false negative rate (false exclusions/matching pairs), and overall accuracy. Report confidence intervals where appropriate.

Validation Metrics:

- Discriminatory Power: Measure of the method's ability to distinguish between sources.

- Repeatability & Reproducibility: Consistency of results when the test is repeated by the same examiner or different examiners.

- Robustness: Method performance across varying sample quality and conditions.
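
One way to put a number on repeatability and reproducibility is a chance-corrected agreement statistic. The sketch below uses Cohen's kappa, which is a choice made here rather than a metric named in the protocol. The same function covers both cases: pass two rounds from one examiner for repeatability, or two examiners' decisions on the same items for reproducibility. The decision codes are hypothetical.

```python
from collections import Counter

def cohens_kappa(ratings_a, ratings_b):
    """Chance-corrected agreement between two sets of categorical decisions."""
    if len(ratings_a) != len(ratings_b) or not ratings_a:
        raise ValueError("rating lists must be non-empty and of equal length")
    n = len(ratings_a)
    # Observed proportion of items on which the two sets agree.
    observed = sum(a == b for a, b in zip(ratings_a, ratings_b)) / n
    # Agreement expected by chance from the marginal category frequencies.
    freq_a, freq_b = Counter(ratings_a), Counter(ratings_b)
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / n ** 2
    if expected == 1.0:
        return 1.0  # both sets used a single identical category throughout
    return (observed - expected) / (1 - expected)

# Hypothetical decisions: ID = identification, EX = exclusion, INC = inconclusive.
examiner_1 = ["ID", "ID", "EX", "EX", "INC", "ID", "EX", "EX"]
examiner_2 = ["ID", "ID", "EX", "INC", "INC", "ID", "EX", "ID"]
print(round(cohens_kappa(examiner_1, examiner_2), 3))
```

A kappa near 1 indicates agreement well beyond chance, while a value near 0 indicates agreement no better than the marginal frequencies alone would produce.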

Protocol 2: Assessing Method Reliability and Standards Compliance

Objective: Establish the existence and maintenance of standards controlling the technique's operation, addressing Daubert's fourth factor [14] [18].

Materials:

- Documented standard operating procedures (SOPs)

- Quality control materials and protocols

- Data recording and management systems

- Training and competency assessment materials

Procedure:

- Procedure Documentation: Develop comprehensive SOPs detailing each step of the analytical process, including sample handling, analysis, interpretation, and reporting.

- Quality Control Implementation: Establish routine quality control measures, including equipment calibration, reagent testing, and periodic review of analytical outputs.

- Proficiency Testing: Implement regular internal and external proficiency testing to monitor ongoing performance.

- Training and Certification: Document training requirements, establish competency thresholds, and maintain records of examiner qualifications.

- Technical Review: Institute independent technical review of casework conclusions to identify potential deviations from established protocols.

Validation Outputs:

- Documented standard operating procedures

- Quality assurance manual

- Proficiency testing results and trends

- Training and competency records


Protocol 3: Facilitating Peer Review and Publication

Objective: Subject the forensic methodology to peer review and publication, addressing Daubert's second factor [14] [15].

Materials:

- Complete research documentation

- Statistical analysis software and outputs

- Draft manuscripts suitable for scholarly publication

- Data sharing infrastructure (where applicable)

Procedure:

- Study Design: Develop a research protocol that addresses potential methodological criticisms and employs appropriate controls.

- Transparent Reporting: Document all methodological details, including sample characteristics, analytical conditions, and decision criteria.

- Manuscript Preparation: Prepare comprehensive manuscripts describing the methodology, validation studies, results, and limitations.

- Submission to Peer-Reviewed Journals: Select appropriate journals based on methodological focus and submit for independent peer review.

- Revision and Response: Address reviewer comments substantively and document all changes to the research approach or interpretation.

- Data Sharing: Where feasible, make anonymized data available to facilitate independent verification and replication.

Validation Outputs:

- Published peer-reviewed articles

- Conference presentations and proceedings

- Independent replication studies

- Methodological citations in scholarly literature

The Scientist's Toolkit: Essential Research Reagents for Daubert Compliance

Table 2: Essential Methodological Components for Daubert-Compliant Validation

| Research Component | Function in Daubert Compliance | Implementation Examples |

|---|---|---|

| Blinded Proficiency Testing | Quantifies error rates and assesses examiner reliability [14] [15]. | Designed tests with ground truth; independent administration; statistical analysis of results. |

| Standard Operating Procedures (SOPs) | Documents existence of standards controlling operations [14] [18]. | Step-by-step protocols; quality control measures; training documentation. |

| Statistical Analysis Framework | Provides quantitative foundation for conclusions and error estimation [21] [9]. | Probability models; confidence intervals; validity measures; data visualization. |

| Peer-Review Publication | Demonstrates methodological scrutiny by scientific community [14] [15]. | Journal submissions; conference presentations; pre-print archives; response to critique. |

| Open Science Practices | Enables intersubjective testability and replication [9]. | Data sharing; methodological transparency; code availability; replication initiatives. |

| Cognitive Bias Mitigation | Addresses challenges to objectivity in subjective methods [20]. | Linear sequential unmasking; context management; blind verification; decision documentation. |

Navigating Daubert requirements demands rigorous scientific validation, particularly for subjective forensic feature-comparison methods. By implementing structured experimental protocols, documenting standards and error rates, and engaging with the broader scientific community through peer review, researchers can develop robust evidence that satisfies Daubert's exacting standards. The paradigm shift toward transparent, quantitative, and empirically validated methods represents both a legal necessity and a scientific opportunity to strengthen forensic science's foundation and credibility.

The ISO 21043 Forensic Sciences standard series represents a groundbreaking, internationally recognized framework designed to ensure the quality and reliability of the entire forensic process [22]. Developed by ISO Technical Committee 272, this standard responds to long-standing calls for improvement in forensic science by providing a structured, scientifically robust foundation for forensic activities [23]. For researchers and scientists focused on validating subjective forensic feature-comparison methods, ISO 21043 offers a critical framework that emphasizes transparency, reproducibility, and empirical validation [6]. The standard works in tandem with the established ISO/IEC 17025 for testing and calibration laboratories but provides essential supplementary requirements specific to forensic science, particularly covering interpretation and reporting phases that extend beyond mere analytical measurements [22].

The standard's development involved a global effort with 27 participating and 21 observing national standards organizations, ensuring international consensus and applicability across diverse legal systems and forensic disciplines [23]. This international harmonization is crucial for facilitating the exchange of forensic services and ensuring consistent quality standards worldwide [23] [22]. For research focused on method validation, understanding this framework is essential, as it anchors scientific progress through common terminology and structured processes while allowing necessary flexibility for different forensic disciplines [23].

ISO 21043 Structure and Core Components

The ISO 21043 standard is organized into five distinct parts that collectively cover the complete forensic process. The table below summarizes the scope and focus of each component:

Table 1: Components of the ISO 21043 Forensic Sciences Standard Series

| Part Number | Title | Focus and Scope | Research Relevance |

|---|---|---|---|

| Part 1 | Vocabulary [23] | Defines standardized terminology for forensic sciences | Provides common language essential for research reproducibility and interdisciplinary collaboration |

| Part 2 | Recognition, Recording, Collecting, Transport and Storage of Items [23] | Requirements for early forensic process including crime scene work | Ensures integrity of evidence from recovery through chain of custody |

| Part 3 | Analysis [23] | Applies to all forensic analysis, referencing ISO 17025 where appropriate | Emphasizes forensic-specific analytical requirements |

| Part 4 | Interpretation [23] | Centers on linking observations to case questions using opinions | Core component for validating subjective feature-comparison methods |

| Part 5 | Reporting [23] | Covers communication of outcomes in reports and testimony | Ensures transparent communication of conclusions and limitations |

The forensic process flow governed by ISO 21043 moves sequentially through these components, beginning with a request that leads to item recovery, followed by analysis that generates observations, which are then interpreted to form opinions, and finally reported to the justice system [23]. This end-to-end standardization is particularly valuable for validation research as it provides a consistent framework across the entire evidence lifecycle.

The Interpretation Standard (ISO 21043-4) and Feature-Comparison Methods

ISO 21043-4 Interpretation represents a pivotal advancement for validating subjective forensic feature-comparison methods [23]. This section of the standard centers on the questions in a case and the answers provided through formalized opinions, requiring transparent reasoning and logical frameworks for evidence interpretation [6]. The standard incorporates the likelihood-ratio framework as the logically correct approach for evidence interpretation, providing a mathematically sound basis for expressing the strength of forensic evidence [6]. This framework is essential for moving subjective feature-comparison methods toward more empirically grounded, quantitative foundations.

For research on feature-comparison validation, the interpretation standard introduces crucial requirements for empirical calibration and validation under casework conditions [6]. This directly addresses historical deficiencies in many forensic disciplines identified by critical reports, including the lack of sound theories to justify predicted actions and insufficient empirical testing to prove effectiveness [9]. The standard promotes methods that are intrinsically resistant to cognitive bias through transparent and reproducible processes, a fundamental requirement for improving the validity of subjective examinations [6].

Experimental Protocols for Method Validation

Core Validation Parameters and Assessment Protocols

Validation of forensic feature-comparison methods requires rigorous experimental protocols to demonstrate that methods are fit for purpose. The following table outlines key validation parameters derived from ISO standards and supporting documents:

Table 2: Core Validation Parameters for Forensic Feature-Comparison Methods

| Validation Parameter | Experimental Protocol | Acceptance Criteria Documentation |

|---|---|---|

| Accuracy | Comparison of method results to known reference standards or consensus results | Predefined numerical limits, e.g., mean difference ≤ 5 mmHg and SD ≤ 8 mmHg in blood-pressure (BP) device validation [24]; comparable metrics must be defined for forensic features |

| Precision | Repeated measurements of same sample under defined conditions | Intra-day, inter-day, and inter-operator variability metrics [25] |

| Specificity | Ability to distinguish between similar features from different sources | Demonstration of clustering by tool rather than angle/direction in toolmark study [26] |

| Reproducibility | Testing across multiple laboratories, operators, and instruments | Intersubjective testability through multiple researchers using varied testing paradigms [9] |

| Error Rate Estimation | Blind testing with known match and known non-match samples | Cross-validated sensitivity of 98% and specificity of 96% in toolmark algorithm [26] |

Protocol for Validating Objective Feature-Comparison Algorithms

For developing objective computational approaches to replace subjective feature-comparison methods, the following detailed protocol is derived from published research on toolmark analysis:

Protocol Title: Empirical Validation of Forensic Feature-Comparison Algorithms Using Statistical Classification and Likelihood Ratios

1. Sample Preparation and Dataset Generation

- Select consecutively manufactured tools or items to maximize initial similarity while maintaining individual characteristics [26]

- Generate 3D toolmarks or feature representations from various angles and directions to account for operational variability

- Ensure balanced representation of known matches and known non-matches in the dataset

2. Feature Extraction and Pattern Analysis

- Apply clustering algorithms (e.g., PAM clustering) to determine natural groupings in the data

- Verify that clustering occurs by tool identity rather than by angle or direction of mark generation [26]

- Extract quantitative features that demonstrate discriminative power between sources
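
The check that clusters follow tool identity rather than angle can be expressed numerically once cluster assignments are available, for example from a PAM run in any statistics package. The sketch below uses cluster purity, a simple measure chosen here for illustration and not necessarily the one used in [26]. The tool names, angles, and cluster assignments are hypothetical.

```python
from collections import Counter, defaultdict

def purity(cluster_ids, labels):
    """Fraction of items that carry the majority label of their cluster."""
    if len(cluster_ids) != len(labels) or not labels:
        raise ValueError("inputs must be non-empty and of equal length")
    members = defaultdict(list)
    for cluster, label in zip(cluster_ids, labels):
        members[cluster].append(label)
    majority_total = sum(
        Counter(group).most_common(1)[0][1] for group in members.values()
    )
    return majority_total / len(labels)

# Hypothetical marks: two tools, each applied at three angles.
clusters = [0, 0, 0, 1, 1, 1]           # output of a clustering run
tools = ["A", "A", "A", "B", "B", "B"]   # known tool identity
angles = [60, 70, 80, 60, 70, 80]        # angle of mark generation

print("purity by tool: ", purity(clusters, tools))
print("purity by angle:", purity(clusters, angles))
```

High purity with respect to tool identity together with low purity with respect to angle is the pattern the protocol asks for. The reverse pattern would suggest the features capture how the mark was made rather than which tool made it.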

3. Statistical Model Development

- Calculate Known Match and Known Non-Match densities from the feature data

- Fit appropriate probability distributions (e.g., Beta distributions) to the match and non-match densities [26]

- Establish classification thresholds based on the overlap between match and non-match distributions

4. Likelihood Ratio Derivation and Validation

- Derive likelihood ratios for new feature pairs using the fitted distributions

- Implement cross-validation to assess model performance without overfitting

- Determine sensitivity and specificity through blinded testing with independent datasets

- Target performance metrics demonstrated in published studies (e.g., 98% sensitivity, 96% specificity) [26]
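
Steps 3 and 4 can be illustrated with a small standard-library sketch. It assumes similarity scores lie strictly between 0 and 1, fits each Beta distribution by the method of moments (an assumption made here for brevity; [26] may use a different estimator), and takes the ratio of the two fitted densities as the likelihood ratio. All scores shown are invented.

```python
import math
from statistics import mean, variance

def fit_beta(scores):
    """Method-of-moments estimates (alpha, beta) for scores in (0, 1)."""
    m, v = mean(scores), variance(scores)
    if not 0 < m < 1 or v <= 0 or v >= m * (1 - m):
        raise ValueError("scores are not compatible with a Beta distribution")
    k = m * (1 - m) / v - 1
    return m * k, (1 - m) * k

def beta_pdf(x, alpha, beta):
    """Beta density evaluated in log space; x must lie strictly in (0, 1)."""
    log_norm = math.lgamma(alpha) + math.lgamma(beta) - math.lgamma(alpha + beta)
    log_pdf = (alpha - 1) * math.log(x) + (beta - 1) * math.log(1 - x) - log_norm
    return math.exp(log_pdf)

def likelihood_ratio(score, km_params, knm_params):
    """Density under the known-match model over density under known-non-match."""
    return beta_pdf(score, *km_params) / beta_pdf(score, *knm_params)

# Invented similarity scores, for illustration only.
km_scores = [0.85, 0.90, 0.80, 0.95, 0.88, 0.92, 0.78, 0.90]
knm_scores = [0.20, 0.30, 0.15, 0.25, 0.35, 0.10, 0.28, 0.22]

km_params = fit_beta(km_scores)
knm_params = fit_beta(knm_scores)
for s in (0.2, 0.5, 0.9):
    print(s, likelihood_ratio(s, km_params, knm_params))
```

In a cross-validated study the two fits would be made on the training folds only, and sensitivity and specificity would be computed from likelihood ratios for held-out pairs, so that the reported performance is not inflated by overfitting.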

5. Implementation Framework

- Develop open-source solutions to promote transparency and adoption [26]

- Create standardized output formats that integrate with existing forensic workflows

- Document all parameters and decision thresholds for forensic accountability

Experimental Workflow for Validation Studies

The following diagram illustrates the complete experimental workflow for validating forensic feature-comparison methods according to ISO 21043 principles:

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful validation of forensic feature-comparison methods requires specific materials and computational resources. The following table details essential components of the research toolkit:

Table 3: Essential Research Materials for Forensic Method Validation

| Tool/Reagent | Function in Validation | Specification Requirements |

|---|---|---|

| Reference Materials | Provide ground truth for accuracy assessment | Consecutively manufactured tools [26]; Certified reference materials with known properties |

| 3D Measurement Systems | Capture quantitative feature data | High-resolution surface topography capability; Sub-micrometer precision |

| Statistical Software Platforms | Implement clustering and classification algorithms | Support for PAM clustering, density estimation, probability distribution fitting [26] |

| Likelihood Ratio Framework | Quantify evidence strength for interpretation | Compatible with ISO 21043-4 requirements for transparent evidence evaluation [6] [23] |

| Validation Protocol Templates | Ensure comprehensive study design | Pre-defined acceptance criteria; Experimental design specifications [25] |

| Blinded Testing Datasets | Assess real-world performance | Known match and known non-match pairs; Casework-representative samples |

Implementation Framework and Compliance

Integration with Existing Quality Management

For forensic service providers and research institutions, implementing ISO 21043 requires integration with existing quality management systems. The standard is designed to work in tandem with ISO/IEC 17025 for testing and calibration laboratories, adding forensic-specific requirements particularly for interpretation and reporting [22]. This complementary relationship means that laboratories already accredited to ISO/IEC 17025 have a foundation for implementing ISO 21043, but must address the additional forensic-specific requirements covering the complete process from crime scene to courtroom [23].

The standard uses precise language to distinguish between mandatory requirements and recommendations: "shall" indicates a hard requirement that must be complied with unless impossible; "should" indicates a recommendation that requires justification if not followed; while "may" indicates permission and "can" refers to capability [23]. This precise language is essential for both implementation and validation research, as it clearly distinguishes between mandatory and discretionary elements.

Addressing Historical Limitations in Forensic Feature-Comparison

The ISO 21043 framework directly addresses several historical limitations in forensic feature-comparison methods identified in critical reports [9]. By requiring transparent and reproducible methods [6], the standard helps overcome challenges related to subjective human judgment, which has traditionally led to inconsistencies in fields like toolmark analysis [26]. The emphasis on empirical calibration and validation under casework conditions addresses the documented lack of empirical testing in many forensic disciplines [6] [9].

For the specific challenge of reasoning from group data to individual cases (the "G2i" problem), the ISO 21043 framework provides structured approaches for appropriately qualifying conclusions and acknowledging limitations [9]. This is particularly relevant for research on subjective feature-comparison methods, where the standard encourages explicit acknowledgment of uncertainty rather than definitive claims of individualization that lack robust empirical support [9].

The ISO 21043 standard series represents a transformative framework for quality assurance in forensic science, providing a comprehensive structure for validating and implementing forensic feature-comparison methods. For researchers and scientists, the standard offers clearly defined requirements for methodological validation, statistical interpretation using likelihood ratios, and transparent reporting. By establishing international consensus on forensic science processes and terminology, ISO 21043 enables more rigorous validation studies, facilitates cross-jurisdictional collaboration, and ultimately enhances the reliability of forensic evidence in judicial systems worldwide. Implementation of this framework addresses long-standing criticisms of forensic feature-comparison methods while providing the flexibility needed for continuous scientific improvement across diverse forensic disciplines.

The field of forensic science is undergoing a fundamental transformation, moving away from expert opinion-based subjective judgments toward a paradigm rooted in transparent, reproducible, and empirically validated scientific measurement. This shift is formally embodied in the new international standard, ISO 21043, which provides a structured framework covering the entire forensic process: vocabulary; recovery, transport, and storage of items; analysis; interpretation; and reporting [6]. The modern forensic-data-science paradigm emphasizes methods that are intrinsically resistant to cognitive bias, employ the logically correct likelihood-ratio framework for evidence interpretation, and are rigorously calibrated and validated under casework conditions [6] [27].

This paradigm shift addresses long-standing criticisms regarding the lack of validation in traditional forensic approaches, particularly in disciplines such as forensic text comparison, where analyses based primarily on an expert linguist's opinion have been criticized for lacking empirical validation [27]. The core elements of this scientific approach include: (1) the use of quantitative measurements, (2) the use of statistical models, (3) the use of the likelihood-ratio framework, and (4) empirical validation of the method/system [27]. These elements collectively contribute to developing approaches that are transparent, reproducible, and scientifically defensible.

Core Principles of the Scientific Framework

The Likelihood-Ratio Framework for Evidence Interpretation

The likelihood-ratio (LR) framework represents the logically and legally correct approach for evaluating forensic evidence and has received growing support from relevant scientific and professional associations [27]. In the United Kingdom, for instance, the LR framework will need to be deployed in all main forensic science disciplines by October 2026 [27]. An LR is a quantitative statement of the strength of evidence, expressed as:

LR = p(E|Hp) / p(E|Hd)

Here the LR equals the probability (p) of the given evidence (E) assuming the prosecution hypothesis (Hp) is true, divided by the probability of the same evidence assuming the defense hypothesis (Hd) is true [27]. The numerator and denominator can be interpreted, respectively, as similarity (how similar the samples are) and typicality (how distinctive this similarity is). The LR logically updates the prior beliefs of the trier-of-fact through Bayes' Theorem:

Prior Odds × LR = Posterior Odds

This framework prevents forensic scientists from commenting on the ultimate issue of guilt, as they are not positioned to know the trier-of-fact's prior beliefs [27]. Instead, they provide the LR as a measure of evidential strength, allowing the court to update their beliefs appropriately.
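
A short numerical sketch shows why the expert reports only the LR. The same likelihood ratio leads to very different posterior probabilities depending on the prior odds, which belong to the trier-of-fact. The prior odds and the LR below are arbitrary illustrative values, and the function names are introduced here.

```python
def posterior_odds(prior_odds, likelihood_ratio):
    """Odds form of Bayes' theorem: prior odds x LR = posterior odds."""
    return prior_odds * likelihood_ratio

def odds_to_probability(odds):
    """Convert odds in favour of Hp into a probability of Hp."""
    return odds / (1 + odds)

lr = 10_000  # strength of evidence reported by the forensic scientist
for prior in (1 / 1_000_000, 1 / 1_000, 1.0):
    post = posterior_odds(prior, lr)
    print(f"prior odds {prior:.6g} -> posterior probability {odds_to_probability(post):.4f}")
```

With an LR of 10,000, prior odds of one in a million still leave Hp improbable, whereas prior odds of one in a thousand make it probable. The evidence is identical in both cases; only the starting point differs.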

ISO 21043 Standard Requirements

The ISO 21043 standard establishes comprehensive requirements for forensic processes. Its five-part structure ensures quality throughout the entire forensic workflow [6]:

- Part 1: Vocabulary - Standardizes terminology to ensure consistent communication

- Part 2: Recovery, Transport, and Storage - Establishes protocols for maintaining evidence integrity

- Part 3: Analysis - Provides guidelines for analytical methodologies

- Part 4: Interpretation - Mandates the use of scientifically sound interpretation frameworks

- Part 5: Reporting - Standardizes reporting formats to ensure clarity and transparency

Implementation of this standard requires forensic-service providers to adopt methods consistent with the forensic-data-science paradigm while maintaining conformance with international requirements [6].

Application Notes: Implementing Validated Methods

Quantitative Measurement Protocols

The transition from subjective judgment to scientific measurement requires implementing robust quantitative measurement protocols across various forensic disciplines. The following experimental workflows illustrate standardized approaches for different forensic applications:

Figure 1: Standardized Experimental Workflows for Key Forensic Disciplines

Validation Requirements for Forensic Methods

Empirical validation must replicate the conditions of casework investigations using relevant data. Two critical requirements for proper validation include:

- Requirement 1: Reflecting the specific conditions of the case under investigation

- Requirement 2: Using data relevant to the case [27]

Failure to meet these requirements may mislead the trier-of-fact in their final decision. For instance, in forensic text comparison, validations must account for potential mismatches in topics between source-questioned and source-known documents, as topic mismatch significantly impacts authorship analysis reliability [27].

Table 1: Quantitative Standards for Forensic Method Validation

| Validation Parameter | Minimum Standard | Optimal Target | Measurement Metric |

|---|---|---|---|

| Method Reliability | >80% | >95% | Case closure rate, correct identification rate [28] |

| Color Contrast Ratio | 4.5:1 (small text) | 7:1 (AAA) | WCAG 2.0 guidelines [29] [30] |

| Likelihood Ratio Calibration | Log-likelihood-ratio cost | Empirical calibration | Tippett plots, TPR/FPR [27] |

| Data Relevance | Casework-condition replication | Full situational matching | Topic, genre, style alignment [27] |
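
The color contrast thresholds in the table can be checked programmatically when preparing comparison visualizations. The sketch below implements the WCAG 2.0 relative-luminance and contrast-ratio definitions for 8-bit sRGB colors; the function names are chosen here for illustration.

```python
def _linearize(channel_8bit):
    """Convert an 8-bit sRGB channel to linear light per WCAG 2.0."""
    c = channel_8bit / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4

def relative_luminance(rgb):
    """Relative luminance of an (R, G, B) tuple with 0-255 channels."""
    r, g, b = (_linearize(v) for v in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b

def contrast_ratio(foreground, background):
    """WCAG contrast ratio, from 1 (identical colors) to 21 (black on white)."""
    lighter, darker = sorted(
        (relative_luminance(foreground), relative_luminance(background)),
        reverse=True,
    )
    return (lighter + 0.05) / (darker + 0.05)

ratio = contrast_ratio((118, 118, 118), (255, 255, 255))  # mid-gray on white
print(round(ratio, 2), "meets 4.5:1" if ratio >= 4.5 else "fails 4.5:1")
```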

Experimental Protocols

Protocol 1: Forensic Text Comparison with LR Framework

Purpose: To quantitatively evaluate authorship of questioned documents using statistically validated likelihood ratios.

Materials:

- Source-known and source-questioned text samples

- Computational linguistic analysis software

- Dirichlet-multinomial model implementation

- Logistic regression calibration toolkit

- Validation corpus with known authorship

Procedure:

- Text Pre-processing: Clean and normalize text samples, removing metadata while preserving linguistic features.

- Feature Extraction: Quantitatively measure syntactic, lexical, and character-level features across documents.

- Model Training: Implement Dirichlet-multinomial model on reference corpus with known authorship.

- LR Calculation: Compute likelihood ratios as LR = p(E|Hp) / p(E|Hd), assessing both similarity and typicality.

- Calibration: Apply logistic regression calibration to derived LRs to ensure accurate probability statements.

- Validation: Assess LR performance using log-likelihood-ratio cost and visualize with Tippett plots [27].
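
The Calibration step can be sketched as follows. This is a minimal standard-library illustration under stated assumptions: uncalibrated comparison scores are mapped to log-odds by a one-variable logistic regression fitted with plain gradient descent, and the training-set prior log-odds are subtracted to obtain a log likelihood ratio. The learning rate, iteration count, and scores are made up; a production system would use an established optimizer.

```python
import math

def fit_logistic(scores, labels, learning_rate=0.1, epochs=5000):
    """Fit log-odds = a * score + b by gradient descent on the mean log-loss.

    labels: 1 for same-author pairs, 0 for different-author pairs.
    """
    a, b = 0.0, 0.0
    n = len(scores)
    for _ in range(epochs):
        grad_a = grad_b = 0.0
        for s, y in zip(scores, labels):
            p = 1 / (1 + math.exp(-(a * s + b)))
            grad_a += (p - y) * s
            grad_b += p - y
        a -= learning_rate * grad_a / n
        b -= learning_rate * grad_b / n
    return a, b

def calibrated_log10_lr(score, a, b, n_same, n_diff):
    """Remove the training-set prior log-odds so the output is a log10 LR."""
    log_posterior_odds = a * score + b
    log_prior_odds = math.log(n_same / n_diff)
    return (log_posterior_odds - log_prior_odds) / math.log(10)

# Made-up uncalibrated scores from a validation corpus.
same = [2.1, 1.5, 0.3, 2.8, 1.0, -0.2]
diff = [-1.9, -0.5, 0.4, -2.2, -1.1, 0.8]
a, b = fit_logistic(same + diff, [1] * len(same) + [0] * len(diff))
for s in (-2.0, 0.0, 2.0):
    print(s, round(calibrated_log10_lr(s, a, b, len(same), len(diff)), 3))
```

The calibration parameters should be estimated on data separate from the pairs used to report performance, otherwise the validation results will look better than the system deserves.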

Validation Considerations:

- Account for topic mismatch between compared documents

- Ensure data relevance to specific case conditions

- Test model performance under cross-domain conditions

- Establish empirical validation under realistic casework conditions [27]
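
The log-likelihood-ratio cost (Cllr) used in the Validation step averages a logarithmic penalty over same-source and different-source comparisons. The sketch below follows the standard definition; the function name and the example LR values are illustrative.

```python
import math

def cllr(same_source_lrs, different_source_lrs):
    """Log-likelihood-ratio cost.

    Same-source LRs are penalized for being small, different-source LRs for
    being large. A system that always outputs LR = 1 scores exactly 1.0.
    """
    if not same_source_lrs or not different_source_lrs:
        raise ValueError("both LR lists must be non-empty")
    penalty_same = sum(math.log2(1 + 1 / lr) for lr in same_source_lrs)
    penalty_diff = sum(math.log2(1 + lr) for lr in different_source_lrs)
    return 0.5 * (
        penalty_same / len(same_source_lrs)
        + penalty_diff / len(different_source_lrs)
    )

# Illustrative LRs from a validation run with known authorship.
print(cllr([100, 1000, 20], [0.01, 0.001, 0.2]))  # informative system, well below 1
print(cllr([1, 1, 1], [1, 1, 1]))                 # uninformative system, equals 1.0
```

Values well below 1 indicate that the LRs are both discriminating and reasonably calibrated. Values above 1 indicate a system that would mislead more than one that reports LR = 1 for every comparison.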

Protocol 2: Bloodstain Age Estimation via Spectroscopic Analysis

Purpose: To estimate the age of bloodstains found at crime scenes through spectroscopic measurement of hemoglobin derivatives.

Materials:

- UV-Vis spectrophotometer with wavelength range 200-700 nm

- Standardized blood sample collection kits

- Temperature and humidity control chamber

- Reference spectra for hemoglobin derivatives

- Statistical analysis software for age modeling

Procedure:

- Sample Collection: Collect bloodstains from crime scene using standardized procedures to prevent contamination.

- Spectroscopic Setup: Calibrate spectrophotometer using reference standards and control samples.

- Spectral Measurement: Record absorption spectra across full wavelength range, noting key peak positions.

- Peak Identification: Identify and measure Soret band position (approximately 425 nm for fresh blood) and monitor shift toward 400 nm with aging.

- Hemoglobin Derivative Quantification: Measure oxyhemoglobin (542 nm, 577 nm) and methemoglobin (510 nm, 631.8 nm) peak intensities.

- Age Modeling: Apply mathematical models comparing measured spectra to literature values for age estimation [31].

- Uncertainty Reporting: Calculate and report confidence intervals for age estimates based on statistical models.

Quality Control:

- Document environmental conditions (temperature, humidity, surface properties)

- Include control samples of known age for method validation

- Perform replicate measurements to assess reproducibility

- Report limitations and confidence intervals for all estimates
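
The Peak Identification step amounts to locating the absorbance maximum inside a search window around the Soret band. The helper below is a minimal sketch: the 390-440 nm window is an assumption chosen to bracket the positions mentioned in the procedure, and the spectrum is a synthetic Gaussian used only to exercise the code, not measured data.

```python
import math

def peak_wavelength(wavelengths_nm, absorbances, window=(390.0, 440.0)):
    """Return the wavelength of maximum absorbance inside the search window."""
    in_window = [
        (a, w)
        for w, a in zip(wavelengths_nm, absorbances)
        if window[0] <= w <= window[1]
    ]
    if not in_window:
        raise ValueError("no data points inside the search window")
    return max(in_window)[1]

# Synthetic band centred at 415 nm on a 1 nm grid (illustration only).
wavelengths = [float(w) for w in range(380, 451)]
spectrum = [math.exp(-((w - 415.0) / 12.0) ** 2) for w in wavelengths]
print(peak_wavelength(wavelengths, spectrum))
```

Tracking this value across replicate measurements and control samples of known age gives the peak-shift series that an age model would be fitted to.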

Protocol 3: Firearm Evidence Analysis Using Advanced Visualization

Purpose: To objectively analyze ballistic evidence using algorithmic pattern matching and statistical comparison.

Materials:

- Forensic Bullet Comparison Visualizer (FBCV) or Integrated Ballistic Identification System (IBIS)

- 3D imaging microscopy system

- Advanced comparison algorithms

- Statistical analysis software

- Reference firearm databases

Procedure:

- Evidence Imaging: Acquire high-resolution 3D images of bullets and cartridge cases using standardized lighting conditions.

- Surface Topography Mapping: Generate detailed topographic maps of tool marks and impressions.

- Algorithmic Comparison: Implement advanced algorithms to compare patterns between questioned and known samples.

- Statistical Scoring: Generate objective statistical scores indicating degree of similarity.

- Visualization: Present comparison results through interactive visualizations for forensic expert evaluation.

- Database Search: Compare evidence against reference databases for potential linkages [28].

Validation Metrics:

- Establish false positive and false negative rates

- Determine statistical confidence levels for matches

- Verify reproducibility across multiple examiners

- Validate against known ground truth samples

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 2: Essential Materials for Validated Forensic Analysis

| Item | Specifications | Application & Function |

|---|---|---|

| Next Generation Sequencing (NGS) Platform | Whole genome sequencing capability, high precision for damaged/small samples | DNA analysis beyond traditional markers, identifies suspects from challenging samples [28] |

| Advanced Spectrophotometer | UV-Vis range 200-700nm, high resolution (≤1nm) | Bloodstain age estimation through hemoglobin derivative quantification [31] |

| Dirichlet-Multinomial Model Software | LR framework implementation, calibration capabilities | Forensic text comparison, authorship verification [27] |

| Forensic Bullet Comparison Visualizer (FBCV) | Advanced algorithms, interactive visualization, statistical support | Objective bullet analysis, firearm identification [28] |

| Integrated Ballistic Identification System (IBIS) | 3D imaging, advanced comparison algorithms, network capability | Firearm and tool mark identification, database sharing [28] |

| Standard Color Coding System | Methuen Handbook of Color reference, 30 double pages with 48 colors each | Paint color measurement and communication standardization [32] |

| Contrast Verification Tools | WCAG 2.0 compliance, APCA algorithm implementation | Ensuring sufficient color contrast in visualizations [29] [30] |

| Omics Techniques Platform | Genomics, transcriptomics, proteomics, metabolomics capabilities | Comprehensive biological sample analysis, species identification [28] |

Data Interpretation and Reporting Standards

Statistical Interpretation Framework

The likelihood-ratio framework provides the statistical foundation for interpreting forensic evidence. Proper implementation requires:

- Transparent Calculation: Clearly document all statistical models and assumptions used in LR derivation

- Empirical Calibration: Ensure LRs are calibrated using relevant population data and casework-like conditions

- Uncertainty Quantification: Report confidence intervals or measures of uncertainty for all conclusions

- Context Appropriateness: Validate methods under conditions that reflect case-specific factors [27]

For forensic text comparison, this means accounting for linguistic variables such as topic, genre, and register that may influence writing style [27]. For bloodstain analysis, it requires consideration of environmental factors that affect the rate of hemoglobin degradation [31].
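
Calibration and validity are often inspected with a Tippett plot, which shows, for each threshold, the proportion of same-source and different-source comparisons whose log10 LR is at or above that threshold. The sketch below computes the curve points and the rates of misleading evidence under that convention, which is one of several in use. Plotting is left to any charting library, and the LR values are invented.

```python
import math

def tippett_curve(lrs, thresholds):
    """Proportion of LRs whose log10 value is at or above each threshold."""
    logs = [math.log10(lr) for lr in lrs]
    return [sum(v >= t for v in logs) / len(logs) for t in thresholds]

def misleading_rates(same_source_lrs, different_source_lrs):
    """Share of same-source LRs below 1 and different-source LRs above 1."""
    misleading_same = sum(lr < 1 for lr in same_source_lrs) / len(same_source_lrs)
    misleading_diff = sum(lr > 1 for lr in different_source_lrs) / len(different_source_lrs)
    return misleading_same, misleading_diff

# Invented validation LRs, for illustration only.
same_lrs = [100, 10, 0.5, 1000]
diff_lrs = [0.01, 0.1, 2.0, 0.001]
thresholds = [-1.0, 0.0, 1.5, 2.5]

print(tippett_curve(same_lrs, thresholds))
print(tippett_curve(diff_lrs, thresholds))
print(misleading_rates(same_lrs, diff_lrs))
```

Well-separated curves with low rates of misleading evidence on casework-representative data support the claim that the reported LRs can be relied upon under the validated conditions.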

Standardized Reporting Protocols

ISO 21043 mandates standardized reporting that includes:

- Clear statement of hypotheses being tested

- Complete description of methodologies employed

- Transparent presentation of results and calculations

- Acknowledgment of limitations and uncertainties

- Logical connection between evidence and conclusions [6]

The following diagram illustrates the logical progression from evidence analysis to interpretation and reporting:

Figure 2: Logical Framework for Evidence Interpretation and Reporting

The paradigm shift from subjective judgment to scientific measurement in forensic science represents a fundamental transformation in how evidence is analyzed, interpreted, and reported. Through the implementation of ISO 21043 standards, adoption of the likelihood-ratio framework, and rigorous empirical validation under casework conditions, forensic science is establishing itself as a truly quantitative and objective discipline. The protocols and application notes detailed herein provide researchers and practitioners with standardized methodologies for implementing this new paradigm across various forensic disciplines, ensuring that forensic conclusions are scientifically defensible, transparent, and reliable.

Implementing Robust Validation Frameworks: From Theory to Practice

The forensic science community has increasingly sought quantitative methods for conveying the weight of evidence, with experts from many forensic laboratories now summarizing their findings in terms of a likelihood ratio (LR) [33]. Proponents of this approach often argue that Bayesian reasoning establishes it as the normative framework for evidence evaluation—the logically correct approach [33]. This application note examines the theoretical foundations, practical applications, and implementation protocols of the likelihood-ratio framework within validation studies for subjective forensic feature-comparison methods.

The LR framework provides a structured approach for evaluating forensic evidence by comparing the probability of the evidence under two competing propositions: one representing the prosecution's view and the other the defense's view [33]. For researchers and scientists engaged in validation studies, understanding and properly implementing this framework is crucial for establishing the scientific validity and reliability of forensic methods.

Theoretical Foundations

Bayesian Framework for Evidence Evaluation

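The likelihood-ratio framework operates within a Bayesian reasoning structure that separates the role of the forensic expert from that of the fact-finder. The odds form of Bayes' rule illustrates this relationship [33]:

$$\frac{P(H_p \mid E)}{P(H_d \mid E)} = \frac{P(E \mid H_p)}{P(E \mid H_d)} \times \frac{P(H_p)}{P(H_d)}$$

That is, posterior odds = likelihood ratio × prior odds. The theoretical foundation holds that: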

- Prior odds represent the fact-finder's belief about the propositions before considering the forensic evidence

- The likelihood ratio represents the strength of the forensic evidence

- Posterior odds represent the updated belief after considering the evidence

This separation allows forensic experts to present evidence strength without encroaching on the domain of the fact-finder [33].
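The division of labour described above can be illustrated with a minimal Python sketch. The function names and the numbers are hypothetical and chosen only for illustration: the expert supplies the LR, the fact-finder supplies the prior odds, and the same LR leads to very different posterior beliefs depending on the prior.

```python
def posterior_odds(prior_odds: float, likelihood_ratio: float) -> float:
    """Odds form of Bayes' rule: posterior odds = LR * prior odds."""
    if prior_odds < 0 or likelihood_ratio < 0:
        raise ValueError("odds and likelihood ratios must be non-negative")
    return likelihood_ratio * prior_odds


def odds_to_probability(odds: float) -> float:
    """Convert odds in favour of a proposition to a probability."""
    return odds / (1.0 + odds)


# Hypothetical values: one LR from the expert, two different fact-finder priors.
lr = 100.0
for prior in (1 / 1000, 1.0):
    post = posterior_odds(prior, lr)
    print(f"prior odds {prior:g} -> posterior odds {post:g} "
          f"(probability {odds_to_probability(post):.3f})")
```

The sketch keeps the prior outside the expert's calculation, which mirrors the separation of roles that the framework is intended to enforce.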

The Likelihood Ratio Formula

The likelihood ratio is calculated as [33]:

$$\mathrm{LR} = \frac{P(E \mid H_p)}{P(E \mid H_d)}$$

Where:

- P(E|Hp) is the probability of observing the evidence (E) given the prosecution's proposition (Hp)

- P(E|Hd) is the probability of observing the evidence (E) given the defense's proposition (Hd)

Support for the Framework

According to the Neyman-Pearson lemma, the likelihood-ratio test is the most powerful test at a given significance level for choosing between two simple (fully specified) hypotheses, making it statistically optimal in that setting [34] [35]. This theoretical advantage makes it particularly valuable for forensic evidence evaluation, where the consequences of errors are substantial.

Quantitative Data and Interpretation

Table 1: Likelihood Ratio Values and Their Interpretative Meaning

| LR Value | Verbal Equivalent | Strength of Evidence |
|---|---|---|
| >10,000 | Extremely strong | Extremely strong support for Hp over Hd |
| 1,000-10,000 | Strong | Strong support for Hp over Hd |
| 100-1,000 | Moderately strong | Moderately strong support for Hp over Hd |
| 10-100 | Moderate | Moderate support for Hp over Hd |
| 1-10 | Limited | Limited support for Hp over Hd |
| 1 | No discrimination | Evidence does not distinguish between Hp and Hd |
| 0.1-1.0 | Limited | Limited support for Hd over Hp |
| 0.01-0.1 | Moderate | Moderate support for Hd over Hp |
| 0.001-0.01 | Moderately strong | Moderately strong support for Hd over Hp |
| <0.001 | Strong | Strong support for Hd over Hp |
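A lookup of this kind is straightforward to automate. The following Python sketch maps a numerical LR to the verbal equivalents listed in Table 1. The function name is hypothetical, and because the table does not say which category a boundary value such as exactly 1,000 belongs to, the sketch assumes that boundary values fall into the weaker category.

```python
from typing import Tuple


def verbal_equivalent(lr: float) -> Tuple[str, str]:
    """Return (verbal equivalent, favoured proposition) following Table 1.

    Assumption: a value exactly on a boundary (e.g. LR = 1,000) is assigned
    to the weaker of the two adjacent categories.
    """
    if lr <= 0:
        raise ValueError("a likelihood ratio must be positive")
    if lr == 1:
        return ("No discrimination", "neither")
    if lr > 1:
        for lower, label in ((10_000, "Extremely strong"), (1_000, "Strong"),
                             (100, "Moderately strong"), (10, "Moderate")):
            if lr > lower:
                return (label, "Hp over Hd")
        return ("Limited", "Hp over Hd")
    # LR < 1: the evidence favours Hd; Table 1 lists categories down to <0.001.
    for upper, label in ((0.001, "Strong"), (0.01, "Moderately strong"),
                         (0.1, "Moderate")):
        if lr < upper:
            return (label, "Hd over Hp")
    return ("Limited", "Hd over Hp")


for value in (20_000, 5_000, 50, 1, 0.05, 0.0005):
    print(value, verbal_equivalent(value))
```

Returning the numerical LR alongside the verbal label, rather than the label alone, helps avoid the loss of quantitative meaning that verbal scales can introduce.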

Table 2: Comparative Performance of Statistical Tests for 2×2 Tables

| Test | Application Context | Key Advantage | Limitation |
|---|---|---|---|
| Likelihood Ratio Test (LRT) | Testing whether binomial proportions are equal [35] | Highest power according to Neyman-Pearson lemma [35] | Requires nested models [34] |
| Pearson's χ² Test | Checking whether data match a particular hypothesis too closely; testing variance [35] | Simplicity of calculation | Misused for testing proportions; requires expected values >5 [35] |
| Z-test | Approximate test for proportions | Computational simplicity | Approximation may be poor with small samples |
| Fisher's Exact Test | Small sample sizes | Exact p-values | Computationally intensive for large samples |

Table 3: Context Tree Models and Predictive Performance

| Context Tree Model | Entropy Value | Periodic Structure | Predicted Learning Difficulty |
|---|---|---|---|
| (τ₁ᵏ, p₁ᵏ) | 0.65 | No | Medium |
| (τ₂ᵏ, p₂ᵏ) | 0.81 | No | High |
| (τ₃ᵏ, p₃ᵏ) | 0.54 | Yes | Low |
| (τ₄ᵏ, p₄ᵏ) | 0.56 | No | Medium |

Experimental Protocols

Protocol 1: Calculating Likelihood Ratios for Simple Hypotheses

Purpose: To determine the likelihood ratio for fully specified models under simple hypotheses.

Materials:

- Observed evidence data

- Two competing probability models (Hp and Hd)

Procedure:

- Define two competing hypotheses: Hp and Hd

- Calculate the probability of observing the evidence under Hp

- Calculate the probability of observing the evidence under Hd

- Compute LR = P(E|Hp) / P(E|Hd)

- Report the LR value with appropriate uncertainty measures

Example Application: In genetic analysis of elephant tusks to determine subspecies origin, the LR compares probabilities of observed DNA markers under two models: Hp (savannah elephant) and Hd (forest elephant) [36]. For each marker j, calculate:

$$\mathrm{LR}_j = \frac{P(E_j \mid H_p)}{P(E_j \mid H_d)}$$

where E_j is the observed data at marker j. The overall LR is the product across all independent markers [36]:

$$\mathrm{LR} = \prod_j \mathrm{LR}_j$$
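The per-marker calculation and the product across markers can be sketched in Python as follows. The marker probabilities are hypothetical and are not taken from [36]. The function name is also an assumption. Summing logarithms rather than multiplying raw ratios is a common numerical precaution when many markers are combined.

```python
import math
from typing import Sequence


def combined_log10_lr(p_given_hp: Sequence[float],
                      p_given_hd: Sequence[float]) -> float:
    """Sum per-marker log10 LRs; valid only if markers are independent."""
    if len(p_given_hp) != len(p_given_hd):
        raise ValueError("need one probability per marker under each model")
    total = 0.0
    for p_hp, p_hd in zip(p_given_hp, p_given_hd):
        if p_hp <= 0 or p_hd <= 0:
            raise ValueError("marker probabilities must be positive")
        total += math.log10(p_hp) - math.log10(p_hd)
    return total


# Hypothetical probabilities of the observed data at three markers.
p_hp = [0.30, 0.12, 0.45]   # under Hp
p_hd = [0.05, 0.10, 0.15]   # under Hd

log10_lr = combined_log10_lr(p_hp, p_hd)
print(f"log10(LR) = {log10_lr:.3f}, LR = {10 ** log10_lr:.1f}")
```

If the markers are not independent, the simple product overstates or understates the evidence, so the independence assumption should be documented with the result.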

Protocol 2: Likelihood Ratio Test for Contingency Tables

Purpose: To test whether binomial proportions are equal in a 2×2 contingency table using the likelihood ratio test.

Materials:

- Observed 2×2 contingency table data

- Statistical software or computational tools

Procedure:

- Arrange data in a 2×2 contingency table format

- Calculate expected values for each cell under the null hypothesis of equal proportions

- Compute the log-likelihood ratio statistic $G = 2 \sum_{i,j} O_{ij} \ln(O_{ij} / E_{ij})$, where O_ij are observed counts and E_ij are expected counts [35]

- Compare the test statistic to the χ² distribution with (r − 1)(c − 1) degrees of freedom, which is 1 for a 2×2 table

- Draw conclusions regarding the equality of proportions

Note: The LRT is preferred over Pearson's χ² test for testing proportions as it has better theoretical grounding and performance with small expected numbers [35].
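The procedure in Protocol 2 can be sketched with the Python standard library alone. The counts are hypothetical and the function name is an assumption. The p-value uses the fact that, for one degree of freedom, the upper tail of the χ² distribution equals erfc(√(G/2)), so the sketch applies only to 2×2 tables.

```python
import math
from typing import Sequence, Tuple


def g_test_2x2(table: Sequence[Sequence[int]]) -> Tuple[float, float]:
    """Likelihood-ratio (G) test of equal proportions for a 2x2 table.

    Returns (G, p_value); the p-value assumes 1 degree of freedom.
    """
    rows = [sum(r) for r in table]
    cols = [table[0][j] + table[1][j] for j in range(2)]
    n = sum(rows)
    if 0 in rows or 0 in cols:
        raise ValueError("every row and column needs at least one observation")
    g = 0.0
    for i in range(2):
        for j in range(2):
            observed = table[i][j]
            expected = rows[i] * cols[j] / n
            if observed > 0:          # a zero cell contributes 0 to the sum
                g += observed * math.log(observed / expected)
    g = max(2.0 * g, 0.0)             # guard against tiny negative rounding
    p_value = math.erfc(math.sqrt(g / 2.0))
    return g, p_value


# Hypothetical counts: rows are two examiner groups, columns correct/incorrect.
g, p = g_test_2x2([[45, 5], [30, 20]])
print(f"G = {g:.3f}, p = {p:.5f}")
```

For routine work, `scipy.stats.chi2_contingency` with `lambda_="log-likelihood"` and `correction=False` should give the same statistic and also handles larger tables.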

Protocol 3: Uncertainty Assessment for Forensic LRs

Purpose: To evaluate the uncertainty in likelihood ratio evaluations in forensic science.

Materials:

- Forensic evidence data

- Relevant background data for model development

- Computational resources for sensitivity analysis

Procedure:

- Develop an assumptions lattice specifying all modeling choices

- Construct an uncertainty pyramid examining different levels of assumptions

- Calculate likelihood ratios under varying modeling assumptions

- Assess the range of LR values obtained under different reasonable models

- Report the LR with appropriate uncertainty characterization

Critical Consideration: The LR provided by a forensic expert (LRExpert) may differ from the personal LR of the decision-maker (LRDM) due to subjective elements in its assessment [33]. Uncertainty analysis helps bridge this gap.
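A minimal sensitivity analysis in the spirit of Protocol 3 might look like the Python sketch below. Everything in it is hypothetical: the evidence is reduced to a single similarity score, and each candidate model is a normal distribution whose parameters stand in for one set of modeling assumptions. It is not the assumptions-lattice procedure of [33], only an illustration of how the LR can vary across reasonable models.

```python
from itertools import product
from statistics import NormalDist
from typing import Dict, Tuple

# Hypothetical candidate models for the score distribution under each proposition.
hp_models = {"hp_narrow": NormalDist(0.80, 0.08), "hp_wide": NormalDist(0.78, 0.12)}
hd_models = {"hd_narrow": NormalDist(0.40, 0.10), "hd_wide": NormalDist(0.45, 0.15)}


def lr_by_model(score: float,
                hp: Dict[str, NormalDist],
                hd: Dict[str, NormalDist]) -> Dict[Tuple[str, str], float]:
    """Compute the LR for every pairing of an Hp model with an Hd model."""
    return {
        (hp_name, hd_name): hp_dist.pdf(score) / hd_dist.pdf(score)
        for (hp_name, hp_dist), (hd_name, hd_dist) in product(hp.items(), hd.items())
    }


score = 0.72  # hypothetical observed similarity score
results = lr_by_model(score, hp_models, hd_models)
for pairing, lr in sorted(results.items(), key=lambda kv: kv[1]):
    print(pairing, f"LR = {lr:.1f}")
print(f"range: {min(results.values()):.1f} to {max(results.values()):.1f}")
```

With these illustrative parameters the LR spans more than an order of magnitude depending on the model chosen for Hd, which is the kind of spread that a single reported value would conceal.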

Visualizations

Figure: Workflow for Forensic Evidence Evaluation

Figure: Uncertainty Pyramid for LR Assessment

Figure: Relationship Between Bayesian Framework and LR

The Scientist's Toolkit

Table 4: Essential Tools for LR Framework Implementation

| Tool Category | Specific Tool/Test | Function | Application Context |
|---|---|---|---|
| Statistical Tests | Likelihood Ratio Test (LRT) | Tests whether binomial proportions are equal [35] | 2×2 tables, contingency tables |
| Statistical Tests | Pearson's χ² Test | Tests whether variance differs from expected [35] | Goodness-of-fit testing |
| Statistical Tests | Z-test | Approximate test for proportions | Large sample situations |
| Model Selection | Context Tree Models | Represents dependencies in sequential data [37] | Probabilistic sequence prediction |
| Uncertainty Framework | Assumptions Lattice | Structures modeling assumptions and choices [33] | LR uncertainty assessment |
| Uncertainty Framework | Uncertainty Pyramid | Examines different assumption levels [33] | Sensitivity analysis for LRs |
| Computational Tools | R Statistical Software | Implements LRT and other statistical tests | General statistical analysis |
| Computational Tools | Python SciPy Library | Provides statistical functions | General statistical analysis |

Implementation Considerations

Limitations and Criticisms

While the likelihood-ratio framework offers a logically coherent approach to evidence evaluation, several limitations merit consideration:

- Subjectivity Concerns: The LR computed by a forensic expert is inherently subjective, as it requires personal choices in model specification and probability assessments [33]

- Uncertainty Characterization: Many practitioners fail to adequately characterize uncertainty in LR evaluations, potentially misleading decision-makers [33]

- Model Dependency: LR values can be highly dependent on modeling choices, requiring thorough sensitivity analysis [33]

- Communication Challenges: Converting numerical LR values to verbal descriptions may obscure their quantitative meaning [33]

Best Practices for Validation Studies

For researchers conducting validation studies for forensic feature-comparison methods:

- Explicit Assumption Documentation: Maintain detailed records of all modeling assumptions and choices

- Comprehensive Sensitivity Analysis: Explore how LR values change under different reasonable modeling approaches

- Uncertainty Quantification: Report ranges of plausible LR values rather than single point estimates

- Empirical Validation: Conduct black-box studies where ground truth is known to estimate error rates [33]

- Transparent Reporting: Clearly communicate limitations and assumptions alongside LR values
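For the empirical validation step, an error rate estimated from a black-box study is more informative when reported with an interval. The Python sketch below uses the Wilson score interval, which is one common choice and is an assumption here rather than a method prescribed by the sources cited. The counts are hypothetical.

```python
import math
from typing import Tuple


def wilson_interval(errors: int, trials: int, z: float = 1.959964) -> Tuple[float, float]:
    """Wilson score interval for a binomial proportion (default: about 95%)."""
    if trials <= 0 or not 0 <= errors <= trials:
        raise ValueError("need 0 <= errors <= trials and trials > 0")
    p_hat = errors / trials
    denom = 1.0 + z * z / trials
    centre = (p_hat + z * z / (2.0 * trials)) / denom
    half_width = z * math.sqrt(p_hat * (1.0 - p_hat) / trials
                               + z * z / (4.0 * trials * trials)) / denom
    return max(0.0, centre - half_width), min(1.0, centre + half_width)


# Hypothetical black-box result: 6 false positives in 1,000 known
# different-source comparisons.
errors, trials = 6, 1000
low, high = wilson_interval(errors, trials)
print(f"false positive rate = {errors / trials:.4f} "
      f"(interval {low:.4f} to {high:.4f})")
```

Reporting the upper end of the interval alongside the point estimate makes clear how much larger the true error rate could plausibly be, particularly when few errors are observed.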

The likelihood-ratio framework provides a logically coherent approach for evaluating forensic evidence within a Bayesian framework. When properly implemented with appropriate uncertainty characterization, it offers forensic researchers and practitioners a powerful tool for communicating the strength of evidence. Validation studies for subjective forensic feature-comparison methods should incorporate comprehensive sensitivity analyses using assumptions lattices and uncertainty pyramids to ensure the reliability and scientific validity of LR-based evaluations. The protocols and guidelines presented in this application note provide a foundation for rigorous implementation of the LR framework in forensic science research and practice.

Blind proficiency testing represents a cornerstone methodology for validating subjective forensic feature-comparison methods. Unlike declared (open) proficiency tests where analysts know they are being tested, blind proficiency tests involve samples submitted through normal analysis pipelines as if they were real cases [38]. This approach is critical because research demonstrates that examiners may behave differently during declared testing, potentially dedicating additional time and scrutiny to analyses compared to routine casework [38]. Within the context of validating subjective forensic methods, blind testing provides unique insights into actual laboratory performance under real-world conditions, avoiding the changes in behavior that occur when examiners know they are being evaluated.

The theoretical foundation for blind proficiency testing rests on its ability to detect various categories of nonconforming work that might otherwise go undetected. While declared tests can identify innocent clerical mistakes and deficiencies resulting from inadequate training (malpractice), blind testing remains one of the few methods capable of detecting deliberate misconduct, as examiners taking steps to conceal nonconforming work cannot prepare special measures for tests they cannot identify [38]. Furthermore, properly designed blind tests must resemble actual cases closely enough to convince analysts of their authenticity, thereby ensuring greater ecological validity compared to commercial proficiency tests, which have been shown in some disciplines to differ substantially from casework in both tasks and difficulty [38].

Designing Effective Blind Proficiency Studies

Core Principles and Theoretical Framework

Effective blind proficiency testing programs for forensic feature-comparison methods should adhere to four key principles derived from validation frameworks for applied sciences. First, scientific plausibility requires that the theoretical basis for the forensic method must be credible and grounded in established scientific principles [39] [9]. Second, sound research design must encompass both construct validity (whether the test measures what it intends to measure) and external validity (whether results generalize to real-world casework) [9]. Third, intersubjective testability ensures that findings can be replicated by different researchers using varied testing paradigms, overcoming subjective errors and biases [9]. Fourth, a valid methodology must exist to reason from group-level data to statements about individual cases, acknowledging the probabilistic nature of forensic science [9].

These principles align with broader scientific guidelines for evaluating forensic feature-comparison methods, which emphasize that applied sciences generally develop along a path from basic scientific discovery to theory formation, instrument development, specification of predictions, and finally empirical validation [39]. The National Commission on Forensic Science has accordingly recommended that forensic science service providers "seek proficiency testing programs that provide sufficiently rigorous samples that are representative of the challenges of forensic casework" [38].

Implementation Models for Blind Proficiency Testing