Scientific Rigor in the Courtroom: A Guide to Forensic Method Validation Under FRE 702 for Biomedical Researchers

Abstract

This article provides a comprehensive guide for researchers, scientists, and drug development professionals on navigating the heightened admissibility standards for expert testimony under the amended Federal Rule of Evidence 702. It explores the foundational legal framework established by Daubert and the crucial 2023 amendment, which demands a more explicit demonstration of reliability from judges. The content details practical methodological approaches for building a validated scientific foundation, identifies common pitfalls in forensic evidence, and offers strategies for troubleshooting and optimizing analytical methods. By synthesizing legal requirements with scientific best practices, this guide aims to equip professionals with the knowledge to ensure their expert evidence meets the rigorous standards of judicial gatekeeping, thereby strengthening the integrity of scientific evidence in legal proceedings.

The New Legal Landscape: Understanding FRE 702's Gatekeeping Mandate and Its Impact on Science

The admissibility of expert testimony in U.S. courts has evolved significantly over the past century, moving from a simplistic "general acceptance" test to a more nuanced judicial gatekeeping function. This evolution reflects an ongoing tension between the need for reliable scientific evidence and the practical realities of courtroom proceedings. For researchers, scientists, and drug development professionals, understanding these legal standards is crucial when preparing to present scientific evidence in litigation or regulatory proceedings. The current framework governing expert evidence is Federal Rule of Evidence 702, which was most recently amended in December 2023 to clarify and reinforce judges' responsibilities in evaluating expert testimony [1] [2].

The journey from Frye to Daubert to the 2023 amendment of Rule 702 represents the legal system's continuing effort to balance several competing interests: allowing juries access to relevant specialized knowledge while preventing unreliable or unscientific testimony from influencing outcomes; providing judges with clear standards while maintaining flexibility to evaluate diverse types of expertise; and encouraging innovation in scientific fields while maintaining sufficient safeguards against unproven methods. For forensic researchers and drug development professionals, this legal landscape directly affects how scientific evidence must be validated and presented to withstand judicial scrutiny.

The Frye Era: The "General Acceptance" Standard

Origins and Application

The Frye standard originated from the 1923 District of Columbia Court of Appeals case Frye v. United States, which addressed the admissibility of systolic blood pressure deception tests, a precursor to the polygraph [1] [3]. The court established what became known as the "general acceptance" test, stating that scientific evidence must be "deduced from a well-recognized scientific principle or discovery" that has "gained general acceptance in the particular field in which it belongs" [1]. This standard effectively delegated to scientific communities the responsibility for determining which methods were sufficiently reliable for courtroom use.

For much of the 20th century, Frye served as the predominant standard for expert testimony admissibility in federal and state courts. The standard provided a straightforward test that avoided requiring judges to make independent assessments of scientific validity. Under Frye, courts focused exclusively on whether the methodology underlying an expert's opinion was generally accepted by relevant scientific communities, without evaluating the validity of the methodology itself or whether it was properly applied in a specific case [2].

Limitations in Practice

Despite its longevity, the Frye standard faced significant criticism over time. By deferring completely to scientific communities, Frye created several problems:

- Conservatism Bias: Novel but valid scientific techniques could be excluded for years until they achieved "general acceptance" [2]

- Methodological Rigidity: The test focused exclusively on methodology without considering whether it was reliably applied in specific cases [2]

- Circularity: Courts often interpreted "relevant scientific community" narrowly, allowing subgroups to validate their own questionable methods [4]

The Frye standard's fundamental limitation was its failure to provide judges with tools to evaluate whether "generally accepted" methods actually produced reliable results in specific cases. As one court noted, under Frye, even when "an accepted methodology produces 'bad science,' the testimony will likely be admitted" [2].

The Daubert Revolution: Judicial Gatekeeping and Scientific Reliability

The Supreme Court's Transformative Decision

The landscape of expert evidence admissibility changed dramatically in 1993 with the Supreme Court's decision in Daubert v. Merrell Dow Pharmaceuticals, Inc. [1] [5] [4]. The case involved whether Bendectin, a prescription anti-nausea medication, caused birth defects. The Court held that the Frye standard had been superseded by the Federal Rules of Evidence, which contained no mention of a "general acceptance" requirement [1]. In doing so, the Court articulated a new role for trial judges as "gatekeepers" responsible for ensuring the reliability and relevance of expert testimony [1] [5].

The Daubert Court emphasized that Rule 702's "overarching subject" is the "scientific validity" of the principles underlying proposed testimony [1]. The focus must be on "the principles and methodology the expert uses, not on the conclusions the expert reaches" [1]. The Court also addressed concerns that this gatekeeping role would be "stifling and repressive" to a jury's search for truth, concluding that it was necessary to exclude "conjectures that are probably wrong" to ensure judicial efficiency and sound legal judgment [1].

The Daubert Factors

The Supreme Court provided a non-exclusive checklist of factors for trial courts to consider when assessing scientific validity:

- Testability: Whether the expert's technique or theory can be or has been tested [5] [4]

- Peer Review: Whether the technique or theory has been subjected to peer review and publication [5] [4]

- Error Rate: The known or potential rate of error of the technique or theory when applied [5] [4]

- Standards: The existence and maintenance of standards controlling the technique's operation [5] [4]

- General Acceptance: The degree of acceptance within the relevant scientific community [5] [4]

The Court emphasized that these factors were flexible and not intended as a "definitive checklist or test" [5]. Subsequent decisions, particularly Kumho Tire Co. v. Carmichael (1999), clarified that the Daubert gatekeeping function applies to all expert testimony, not just scientific testimony [5].

Impact on Forensic Sciences

Daubert's requirement that judges examine the empirical foundation for proffered expert testimony had profound implications for forensic sciences [4]. As courts began asking about the methods, principles, and data supporting various forensic disciplines, it became apparent that "little actual scientific work had been done on evidence that had long been routinely admitted" [4]. Despite this, many courts continued to admit forensic evidence with minimal scrutiny, particularly in criminal cases [4] [6].

Table 1: Evolution of Expert Evidence Standards

| Standard | Originating Case | Key Test | Judicial Role | Primary Focus |
|---|---|---|---|---|
| Frye | Frye v. United States (1923) | General acceptance in relevant scientific community | Minimal; defers to scientific consensus | Methodology only |
| Daubert | Daubert v. Merrell Dow (1993) | Flexible factors focusing on scientific reliability | Active gatekeeper | Methodology and principles |
| Rule 702 (2023) | Judicial Conference Amendments | Preponderance of evidence showing reliability | Reinforced gatekeeper with explicit burdens | Methodology, application, and conclusions |

The 2000 and 2011 Amendments: Codifying Daubert

The 2000 Amendment

In 2000, Rule 702 was amended to codify the Daubert standard and the Supreme Court's decision in Kumho Tire [1] [5]. The amendment affirmed the trial court's role as gatekeeper and provided explicit standards for assessing the reliability and helpfulness of proffered expert testimony [5]. The amended rule stated that an expert may testify if "(1) the testimony is based upon sufficient facts or data, (2) the testimony is the product of reliable principles and methods, and (3) the witness has applied the principles and methods reliably to the facts of the case" [5].

The amendment was intended to address courts that had not properly applied Daubert, although the Committee Notes also observed that, in the case law following Daubert, "the rejection of expert testimony is the exception rather than the rule" [5]. Even after this clarification, courts continued to apply Daubert inconsistently, with some circuits effectively abdicating their gatekeeping role by treating challenges to an expert's basis as going to "weight rather than admissibility" [1] [7].

The 2011 Amendment

The 2011 amendment to Rule 702 was part of the general restyling of the Federal Rules of Evidence and was intended to be stylistic only; it did not change the admissibility standard. The requirement that the proponent establish admissibility by a preponderance of the evidence under Rule 104(a) therefore carried over unchanged [1] [5]. The Committee's later criticism that "many courts have held that the critical questions of the sufficiency of an expert's basis, and the application of the expert's methodology, are questions of weight and not admissibility," which it called "an incorrect application of Rules 702 and 104(a)," appears in the note accompanying the 2023 amendment discussed below [1].

The 2023 Amendment: Clarifying and Reinforcing the Gatekeeping Role

Key Changes and Intent

On December 1, 2023, the latest amendments to Rule 702 took effect [1] [2] [7]. While characterized as a clarification rather than a substantive change, the amendment made two critical modifications to the rule text:

- Explicit Preponderance Standard: The amendment added language requiring that "the proponent demonstrates to the court that it is more likely than not that" the testimony satisfies Rule 702's requirements [1] [2]

- Reliable Application Focus: The amendment changed subsection (d) from requiring that "the expert has reliably applied the principles and methods" to "the expert's opinion reflects a reliable application of the principles and methods" [1] [2]

The Advisory Committee explained that these changes were necessary because many courts had incorrectly applied Rule 702 by treating challenges to the sufficiency of an expert's basis or application of methodology as questions of "weight and not admissibility" [1] [7]. The Committee emphasized that "once the court has found it more likely than not that the admissibility requirement has been met, any attack by the opponent will go only to the weight of the evidence" [2].

Emphasizing Judicial Gatekeeping

The 2023 amendments reinforce that judges must critically evaluate whether expert opinions "stay within the bounds of what can be concluded from a reliable application of the expert's basis and methodology" [2]. The Committee Notes explain that "judicial gatekeeping is essential" because jurors may lack the specialized knowledge to evaluate the reliability of scientific methods or determine whether conclusions go beyond what the expert's methodology can support [1] [2].

This clarification addresses concerns that some courts had been admitting expert testimony where there was an "analytical gap" between the data and the opinion proffered, making the rule consistent with the Supreme Court's decision in General Electric v. Joiner (1997) [2].

Early Judicial Response

Early cases following the 2023 amendments suggest that courts may be slow to change their approach to Rule 702 [7]. Some circuits that had previously been criticized for misapplying the preponderance standard have continued to cite pre-amendment precedent without acknowledging the impact of the amendments [7]. For example, the First Circuit has continued to quote its pre-amendment assertion that "[w]hen the factual underpinning of an expert's opinion is weak, it is a matter affecting the weight and credibility of the testimony," despite this approach being inconsistent with the amended rule's requirements [7].

Table 2: Key Changes in the 2023 Amendment to FRE 702

| Aspect | Pre-2023 Rule | 2023 Amendment | Practical Significance |
|---|---|---|---|
| Burden of Proof | Implicit preponderance standard | Explicit statement that "proponent demonstrates... it is more likely than not" | Clarifies that proponents must affirmatively establish admissibility |
| Application of Methods | "The expert has reliably applied the principles and methods" | "The expert's opinion reflects a reliable application of the principles and methods" | Shifts focus from the expert's process to the objective reliability of the opinion |
| Judicial Gatekeeping | Interpreted inconsistently by courts | Reinforced as essential for protecting jurors from unreliable conclusions | Empowers judges to exclude opinions that extrapolate beyond reliable methodology |

Comparative Analysis: Frye, Daubert, and the 2023 Framework

Fundamental Differences in Approach

The evolution from Frye to Daubert to the 2023 Amendment reflects a fundamental shift from deference to scientific consensus to active judicial assessment of reliability:

- Frye represents a procedural approach that outsources validity determinations to scientific communities

- Daubert and subsequent amendments represent a pragmatic approach that requires judges to evaluate scientific validity using flexible criteria

- The 2023 Amendment represents a clarifying approach that reinforces judicial authority while providing clearer guidance on the proper standard of proof

As one court noted, the difference in outcomes under these standards can be significant: "if a 'reliable, but not yet generally accepted, methodology' produces 'good science,' the Daubert standard will let it in. If an 'accepted methodology' produces 'bad science,' the Daubert standard will keep it out. In contrast, under the Frye standard, even if a new methodology produces 'good science,' the testimony will usually be excluded... [and] even if an accepted methodology produces 'bad science,' the testimony will likely be admitted" [2].

State Court Variations

While federal courts uniformly apply the Daubert standard as codified in Rule 702, state courts exhibit significant variation:

- 32 states have adopted some version of the Daubert standard since the 2000 amendment to Rule 702 [1]

- Pennsylvania continues to use the Frye "general acceptance" standard [2]

- Some states have developed hybrid approaches incorporating elements of both Frye and Daubert

This variation creates challenges for expert witnesses and litigators who practice in both federal and state courts, requiring careful attention to the specific jurisdiction's standard for admissibility.

Implications for Forensic Method Validation

Scientific Guidelines for Validation

In response to Daubert's requirements for scientific validity, researchers have developed guidelines specifically for evaluating forensic feature-comparison methods. Inspired by the "Bradford Hill Guidelines" in epidemiology, these include:

- Plausibility: The theoretical soundness of the proposed method [4]

- Research Design: The soundness of research design and methods (construct and external validity) [4]

- Intersubjective Testability: The ability to replicate and reproduce results [4]

- Individualization Methodology: The availability of a valid methodology to reason from group data to statements about individual cases [4]

These guidelines address the unique challenges of forensic comparison methods, which "routinely involve a trained human examiner visually comparing a patterned impression left at a crime scene... to a known exemplar and making a subjective judgment about whether the patterns are sufficiently similar to conclude that they share a common source" [4].
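
One way to put a number on intersubjective testability for subjective comparisons is an inter-examiner agreement statistic. The sketch below computes Cohen's kappa for two examiners who independently classify the same items. It is an illustration only: the function name, the category labels, and the example data are hypothetical, and the cited guidelines do not prescribe this particular statistic.

```python
from collections import Counter


def cohens_kappa(rater_a: list[str], rater_b: list[str]) -> float:
    """Chance-corrected agreement between two examiners on the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both examiners must rate the same non-empty set of items")
    n = len(rater_a)
    # Proportion of items on which the two examiners reached the same conclusion.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Agreement expected by chance, from each examiner's category frequencies.
    count_a, count_b = Counter(rater_a), Counter(rater_b)
    categories = count_a.keys() | count_b.keys()
    expected = sum(count_a[c] * count_b[c] for c in categories) / n**2
    if expected == 1.0:
        return 1.0  # both examiners used one identical category throughout
    return (observed - expected) / (1 - expected)


# Hypothetical conclusions from two examiners on six comparisons.
examiner_1 = ["match", "match", "exclude", "inconclusive", "match", "exclude"]
examiner_2 = ["match", "exclude", "exclude", "inconclusive", "match", "exclude"]
print(f"kappa = {cohens_kappa(examiner_1, examiner_2):.3f}")
```

A kappa near 1 indicates agreement well beyond chance, while a value near 0 indicates agreement no better than chance. Agreement alone does not establish accuracy, so a statistic like this complements, and does not replace, error-rate data from known-answer tests.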

Ongoing Scientific Scrutiny

Multiple scientific organizations have raised significant concerns about the empirical foundations of many traditional forensic disciplines:

- A 2009 National Research Council (NRC) report found that "with the exception of nuclear DNA analysis... no forensic method has been rigorously shown to have the capacity to consistently, and with a high degree of certainty, demonstrate a connection between evidence and a specific individual or source" [4] [6]

- A 2016 President's Council of Advisors on Science and Technology (PCAST) report reached similar conclusions, emphasizing that empirical evidence is the only basis for establishing scientific validity [4] [6]

- A 2017 American Association for the Advancement of Science (AAAS) report confirmed foundational validity for fingerprint analysis but noted higher error rates than previously recognized [6]

These reports highlight the ongoing tension between legal precedent admitting long-used forensic methods and scientific standards requiring rigorous empirical validation.

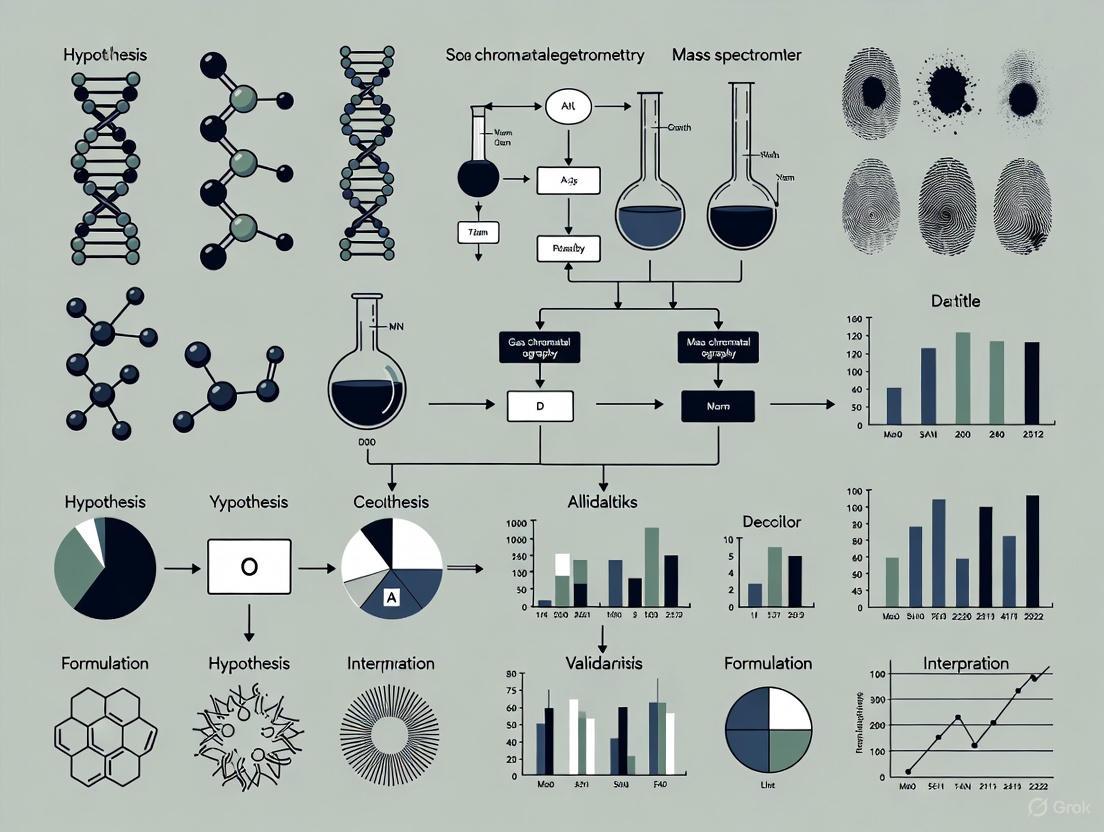

Diagram 1: Evolution of Expert Evidence Standards

Practical Application: The Scientist's Toolkit for Expert Testimony

Essential Methodological Components

For researchers and scientists preparing to offer expert testimony, the following components are essential for withstanding Daubert/Rule 702 challenges:

- Validation Studies: Empirical testing demonstrating the method's reliability and error rates [4] [6]

- Standard Operating Procedures (SOPs): Documented protocols that are followed whenever possible, with thorough explanations for any necessary deviations [8]

- Peer Review: Independent evaluation by qualified experts in the field [5] [4]

- Blind Testing: Procedures to minimize contextual bias in forensic examinations [6]

- Error Rate Data: Transparent assessment and reporting of method and practitioner error rates [5] [4] [6]
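
The error-rate component above can be sketched in code. The example estimates a false positive rate from a known-answer validation study and reports a 95% Wilson score interval, so that the uncertainty in the estimate is disclosed along with the point value. The study counts are hypothetical, and the Wilson interval is one common choice among several; nothing in the cited sources mandates it.

```python
import math


def wilson_interval(errors: int, trials: int, z: float = 1.96) -> tuple[float, float]:
    """Two-sided Wilson score interval for a binomial error proportion.

    z = 1.96 corresponds to roughly 95% confidence.
    """
    if trials <= 0 or not 0 <= errors <= trials:
        raise ValueError("need trials > 0 and 0 <= errors <= trials")
    p_hat = errors / trials
    denom = 1 + z**2 / trials
    centre = (p_hat + z**2 / (2 * trials)) / denom
    half_width = z * math.sqrt(
        p_hat * (1 - p_hat) / trials + z**2 / (4 * trials**2)
    ) / denom
    return max(0.0, centre - half_width), min(1.0, centre + half_width)


# Hypothetical study: 6 false positives in 1,000 known non-matching comparisons.
low, high = wilson_interval(errors=6, trials=1000)
print(f"observed rate = {6 / 1000:.2%}, 95% interval = [{low:.2%}, {high:.2%}]")
```

Reporting the upper end of the interval, and not only the observed rate, gives a court a more conservative picture of how often the method may err.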

Documentation and Reporting Standards

Comprehensive documentation is critical for establishing reliability under Rule 702:

- Methodology Documentation: Detailed records of principles, methods, and analytical processes [8] [9]

- Data Sufficiency Analysis: Documentation showing the connection between available data and expert conclusions [1] [2]

- Application Justification: Explanation of how principles and methods were reliably applied to case facts [1] [2]

- Alternative Explanations: Consideration and ruling out of obvious alternative explanations [5]
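
The four documentation items above can be tracked as a simple structured record, which makes gaps visible before a report is finalized. The class and field names below are hypothetical and chosen only to mirror the list; this is a sketch of one possible bookkeeping approach, not a legal compliance tool.

```python
from dataclasses import dataclass, field


@dataclass
class ReliabilityRecord:
    """Tracks whether each documentation item exists for one expert opinion."""

    methodology_documented: bool = False
    data_sufficiency_documented: bool = False
    application_justified: bool = False
    alternatives_addressed: bool = False
    notes: dict[str, str] = field(default_factory=dict)

    def gaps(self) -> list[str]:
        """Return the human-readable names of items still missing."""
        labels = {
            "methodology_documented": "Methodology Documentation",
            "data_sufficiency_documented": "Data Sufficiency Analysis",
            "application_justified": "Application Justification",
            "alternatives_addressed": "Alternative Explanations",
        }
        return [label for attr, label in labels.items() if not getattr(self, attr)]


# Hypothetical record for an opinion that is only partly documented.
record = ReliabilityRecord(methodology_documented=True, application_justified=True)
print("Missing:", record.gaps())
```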

Table 3: Research Reagent Solutions for Forensic Method Validation

| Reagent/Resource | Function in Validation | Application in Expert Testimony |
|---|---|---|
| Validation Studies | Establish foundational validity of methods | Demonstrate compliance with Rule 702(c) requirement for reliable principles and methods |
| Error Rate Data | Quantify reliability and limitations of methods | Address Daubert factor regarding known or potential error rate |
| Standard Operating Procedures | Ensure consistent application of methods | Demonstrate reliable application of principles to case facts under Rule 702(d) |
| Blind Testing Protocols | Minimize contextual bias in examinations | Support testimony objectivity and methodological rigor |
| Peer-Reviewed Publications | Provide independent verification of methods | Satisfy Daubert factor regarding peer review and general acceptance |

Diagram 2: Rule 702 Reliability Framework

The journey from Frye to Daubert to the 2023 Amendment reflects the legal system's ongoing effort to balance the need for relevant expert testimony with protections against unreliable or unscientific evidence. For researchers, scientists, and drug development professionals, understanding this evolving landscape is essential for presenting scientific evidence that withstands judicial scrutiny.

The 2023 Amendment represents not a radical change but a clarification of what has always been required under Daubert and Rule 702: that proponents must demonstrate by a preponderance of the evidence that their expert's testimony rests on reliable foundations and stays within the bounds of what those foundations can support. As courts continue to apply the amended rule, the hope is that more consistent application of these standards will enhance the reliability of expert evidence in legal proceedings.

For the scientific community, these legal standards underscore the importance of rigorous methodology, transparent validation, and appropriate limitations in expert opinions. By aligning scientific practice with these legal requirements, researchers can ensure their work contributes meaningfully to legal proceedings while maintaining scientific integrity.

The 2023 amendment to Federal Rule of Evidence 702 represents the most significant clarification to expert testimony standards in nearly a quarter-century. For researchers, scientists, and drug development professionals, these changes have profound implications for how scientific evidence is evaluated in legal proceedings, particularly regarding forensic method validation. The amendment specifically addresses two critical areas: clarifying the burden of proof for admitting expert testimony and tightening the connection between an expert's methodology and their proffered opinions. This refinement aims to ensure that judges fulfill their gatekeeping responsibilities with greater consistency and scientific rigor, directly impacting how forensic sciences are presented and evaluated in the judicial system [10] [11] [12].

Historical Context of Rule 702

Federal Rule of Evidence 702 governs the admissibility of expert testimony in federal courts. The rule has evolved significantly from its original 1975 text through a series of landmark court decisions and amendments.

Table: Evolution of Federal Rule of Evidence 702

| Year | Development | Key Standard | Judicial Role |
|---|---|---|---|
| 1923 | Frye v. United States | "General acceptance" in the relevant scientific community [7] | Limited gatekeeping |
| 1975 | Original FRE 702 enacted | Expert must be qualified and testimony "assist the trier of fact" [7] | Moderate gatekeeping |
| 1993 | Daubert v. Merrell Dow | Trial judge as gatekeeper; flexible reliability factors [5] [7] | Active gatekeeping |
| 2000 | 2000 amendment to FRE 702 | Codified Daubert; added three specific reliability requirements [5] [7] | Strengthened gatekeeping |
| 2023 | 2023 amendment to FRE 702 | Clarified preponderance standard and reliable application requirement [11] [12] | Explicit, rigorous gatekeeping |

The trajectory of these changes reflects an ongoing effort to balance the admission of valuable specialized knowledge with the need to protect juries from unreliable or misleading expert testimony. The 2023 amendments specifically respond to decades of inconsistent application by courts, with studies revealing that approximately 65% of federal trial court opinions failed to properly cite the preponderance of the evidence standard prior to the amendment [10].

The 2023 Amendment: A Detailed Analysis

Core Changes to the Rule Text

The amended rule, effective December 1, 2023, contains two critical textual modifications (additions in bold, deletions struck through):

Rule 702. Testimony by Expert Witnesses

A witness who is qualified as an expert by knowledge, skill, experience, training, or education may testify in the form of an opinion or otherwise if **the proponent demonstrates to the court that it is more likely than not that**:

(a) the expert's scientific, technical, or other specialized knowledge will help the trier of fact to understand the evidence or to determine a fact in issue;

(b) the testimony is based on sufficient facts or data;

(c) the testimony is the product of reliable principles and methods; and

(d) the ~~expert has reliably applied~~ **expert's opinion reflects a reliable application of** the principles and methods to the facts of the case [11] [12].

Practical Implications of the Amendments

The first change explicitly confirms that the proponent of expert testimony bears the burden of establishing admissibility by a preponderance of the evidence ("more likely than not"). This standard applies to all four prerequisites in Rule 702(a)-(d), not just the expert's qualifications [10] [7]. Prior to this amendment, many courts erroneously treated questions about the sufficiency of an expert's basis or the application of their methodology as matters of "weight" for the jury rather than "admissibility" for the judge [10].

The second change modifies subsection (d) to emphasize that the expert's opinion itself—not merely the application of the methodology—must reliably follow from the principles and methods applied. This aims to prevent experts from overstating their conclusions, particularly in fields relying on subjective judgment [10] [11]. For forensic practitioners, this means avoiding "assertions of absolute or one hundred percent certainty" when the underlying methodology is subjective and potentially subject to error [10].
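
A short calculation illustrates why claims of absolute certainty are hard to support empirically. Even if a validation study observes zero errors, the data remain consistent with a non-zero true error rate. The sketch below computes the largest error rate that cannot be ruled out at a chosen confidence level after a run of error-free trials; the function name and the trial count are hypothetical.

```python
def upper_bound_zero_errors(trials: int, confidence: float = 0.95) -> float:
    """Largest true error rate still consistent with zero errors in `trials`.

    Solves (1 - p) ** trials = 1 - confidence for p.
    """
    if trials <= 0:
        raise ValueError("trials must be positive")
    if not 0 < confidence < 1:
        raise ValueError("confidence must be strictly between 0 and 1")
    return 1 - (1 - confidence) ** (1 / trials)


# Hypothetical study: 100 known-answer comparisons, no errors observed.
bound = upper_bound_zero_errors(trials=100)
print(f"95% upper bound on the error rate: {bound:.2%}")
```

For moderately large studies the bound is close to 3 divided by the number of trials (the "rule of three"), so a flawless 100-trial study still leaves an error rate of roughly 3% on the table.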

Application to Forensic Method Validation

The Scientific Validity Challenge in Forensics

The amended Rule 702 presents particular significance for forensic sciences, where many traditional disciplines face ongoing scrutiny regarding their scientific validity. Landmark reports from the National Research Council (2009) and the President's Council of Advisors on Science and Technology (2016) found that with the exception of nuclear DNA analysis, most forensic feature-comparison methods lacked rigorous validation of their ability to consistently and accurately identify specific individuals or sources [4] [6].

Table: Scientific Validation Status of Select Forensic Disciplines

| Forensic Discipline | Level of Scientific Validation | Key Limitations Noted |
|---|---|---|
| DNA Analysis (single-source) | Extensive validation through thousands of studies [6] | Considered the gold standard |
| Latent Fingerprint Analysis | Limited validation (approx. 12 studies); foundational validity with recognized error rates [6] | Subjective comparisons; potential for contextual bias |
| Firearms & Toolmark Analysis | Limited validation; some emerging empirical studies [4] [6] | Subjective comparisons; lack of objective standards |
| Bitemark Analysis | No empirical evidence of validity [6] | No scientific basis for claiming unique matches |

A Guidelines Approach for Forensic Validation

In response to these challenges, scientists have proposed validation frameworks specifically for forensic comparison methods. Inspired by the Bradford Hill Guidelines for causal inference in epidemiology, researchers have suggested four key guidelines for evaluating forensic feature-comparison methods:

- Plausibility: The scientific plausibility of the method's underlying principles

- Validity of Research Design: The soundness of research design and methods (construct and external validity)

- Intersubjective Testability: The ability to replicate and reproduce results

- Individualization Methodology: The availability of a valid methodology to reason from group data to statements about individual cases [4]

These guidelines emphasize that forensic science, like other applied sciences, should progress from basic scientific discovery to theory formation, invention development, specification of predictions, and finally empirical validation [4].

Rule 702 in the Broader Landscape of Evidence Standards

Comparison with Regulatory Evidence Standards

The "preponderance of the evidence" standard under Rule 702 differs significantly from the evidence thresholds required in regulatory and research contexts:

Table: Comparative Evidence Standards Across Domains

| Domain | Governing Body/Context | Evidence Standard | Key Requirements |
|---|---|---|---|
| Legal Proceedings | Federal Courts (FRE 702) | Preponderance of the evidence ("more likely than not") [11] | Reliable principles/methods; reliable application to facts [5] |
| Drug Approval | Food & Drug Administration (FDA) | "Substantial evidence" from adequate, well-controlled investigations [13] | Typically requires two independent studies; validated surrogate endpoints acceptable [13] |
| Research Ethics | Institutional Review Boards (IRBs) | "Clear and convincing evidence" for studies with significant risks [14] | Empirical evidence preferred; risk-benefit assessment [14] |

For drug development professionals, these distinctions are crucial. The FDA's "substantial evidence" standard typically requires replication in more than one adequate and well-controlled clinical investigation, with limited exceptions for cases where a single trial with confirmatory evidence may suffice [13]. This contrasts with the legal standard which focuses on the reliability of methodology rather than replicated findings.

The Researcher's Toolkit for Rule 702 Compliance

For scientific and technical professionals whose work may be presented in legal proceedings, several key practices enhance compliance with Rule 702's standards:

- Comprehensive Documentation: Maintain detailed records of methodologies, data sources, and analytical choices

- Error Rate Assessment: Quantify and document known or potential error rates of methodologies

- Peer Review Participation: Seek peer review through publication or scientific evaluation

- Blinded Procedures: Implement protocols that minimize contextual bias in forensic analyses [6]

- Appropriate Qualification Statements: Ensure expert opinions accurately reflect the limitations of the methodology used
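
The blinded-procedures practice above can be sketched as a small data-handling step: strip task-irrelevant context from each case before it reaches the examiner, and mix known-answer controls into the work queue in random order. The field names and helper functions are hypothetical, and a real laboratory would define its own list of task-relevant information.

```python
import random
from typing import Optional

# Hypothetical field names for the information an examiner actually needs.
TASK_RELEVANT_FIELDS = {"item_id", "questioned_image", "known_image"}


def blind_case(case: dict) -> dict:
    """Keep only the fields needed for the comparison itself."""
    return {key: value for key, value in case.items() if key in TASK_RELEVANT_FIELDS}


def build_queue(casework: list[dict], controls: list[dict],
                seed: Optional[int] = None) -> list[dict]:
    """Blind every item and shuffle controls in with real casework."""
    queue = [blind_case(item) for item in casework + controls]
    random.Random(seed).shuffle(queue)
    return queue


# Hypothetical case record carrying context the examiner should not see.
case = {
    "item_id": "Q-17",
    "questioned_image": "q17.png",
    "known_image": "k17.png",
    "suspect_confessed": True,
}
print(blind_case(case))
```

Because controls are indistinguishable from casework in the queue, the examiner's results on them can feed directly into practitioner error-rate records.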

The 2023 amendments to Federal Rule of Evidence 702 represent a significant step toward more rigorous and consistent evaluation of expert testimony. By clarifying the preponderance of the evidence standard and requiring that an expert's opinion reflect a reliable application of the methodology, the amended rule addresses longstanding concerns about the admission of insufficiently validated forensic evidence. For researchers, scientists, and drug development professionals, these changes underscore the critical importance of methodological transparency, empirical validation, and appropriate qualification of conclusions. As courts continue to apply the amended rule, the scientific community's engagement with these evidence standards will be essential to ensuring that reliable science informs legal decision-making.

Federal Rule of Evidence 702 imposes a critical gatekeeping duty on judges to ensure that all expert testimony admitted in court is not only relevant but reliable. This mandate, solidified by the Supreme Court's landmark decision in Daubert v. Merrell Dow Pharmaceuticals, Inc., requires judges to perform a preliminary assessment of whether an expert's testimony reflects "scientific knowledge" derived from a scientifically valid methodology. The rule specifically demands that judges scrutinize whether testimony is based on sufficient facts or data, is the product of reliable principles and methods, and reliably applies those principles to the case facts [5]. In forensic science, this judicial function is paramount, as it forms the primary barrier preventing unreliable or unvalidated forensic methods from reaching the jury, thus protecting the integrity of legal outcomes.

The 2023 amendment to Rule 702 significantly reinforced this gatekeeping role by clarifying that the proponent of the expert testimony must demonstrate its admissibility by a preponderance of the evidence [15]. This amendment sought to correct widespread misapplication by courts that had incorrectly treated the sufficiency of an expert's basis and the application of their methodology as mere "weight of the evidence" issues for the jury, rather than questions of admissibility for the judge. Recent circuit court decisions have embraced this changed standard, emphasizing that judges must now more critically analyze an expert's data and methodology at the admissibility stage [15].

The Evolution of Judicial Scrutiny: From Daubert to the 2023 Amendment

The legal standard for admitting expert evidence has evolved substantially. The foundational case of Daubert v. Merrell Dow Pharmaceuticals, Inc. established the trial judge's role as a gatekeeper and provided a non-exclusive checklist of factors for assessing the reliability of scientific testimony, including testing, peer review, error rates, and general acceptance in the relevant scientific community [5]. This gatekeeping role was later extended to all expert testimony in Kumho Tire Co. v. Carmichael [5].

For years following Daubert, many courts, including the Fifth and Eighth Circuits, operated under a "liberal admission" standard, often declaring that the factual basis of an expert's opinion went to the credibility of the testimony, not its admissibility [15]. The 2023 amendment to Rule 702, along with its accompanying Committee Note, explicitly rejected this approach, stating that such rulings were "an incorrect application" of the rules [15]. This correction has reshaped circuit court law in 2025, with courts like the Fifth Circuit now explicitly breaking with their prior precedent and declaring that an insufficient factual basis is a valid ground for exclusion [15].

Table: Evolution of Judicial Scrutiny of Expert Testimony

| Period | Leading Case/Event | Standard for Scrutinizing Basis & Methodology |
|---|---|---|
| Pre-1993 | Frye v. United States | "General acceptance" in the relevant scientific community. |
| 1993-1999 | Daubert v. Merrell Dow | Judge as gatekeeper; flexible factors focused on scientific validity and reliability. |
| 1999-2023 | Kumho Tire v. Carmichael | Daubert gatekeeping applies to all expert testimony, not just "scientific" knowledge. |
| Post-2023 Amendment | EcoFactor v. Google LLC (2025) | Proponent must show admissibility by a preponderance; basis and application are admissibility questions. |

The Crucial Distinction: Scrutinizing Basis and Methodology Versus Weight

A core responsibility of the judge as gatekeeper is to distinguish between the admissibility of expert evidence and the weight it should be accorded by the fact-finder. The 2023 amendment decisively resolved a long-standing conflict in the case law by clarifying that the critical questions of the sufficiency of an expert's basis and the application of the expert's methodology are questions of admissibility to be decided by the court under Rule 104(a), not questions of weight for the jury [15].

This means a judge must exclude expert testimony if the proponent fails to show that the opinion is grounded in sufficient facts or data, even if the underlying methodology is otherwise sound. For example, in the 2025 case EcoFactor, Inc. v. Google LLC, the Federal Circuit held that a damages expert's testimony was inadmissible because his opinion that certain licenses reflected an established per-unit royalty rate was directly contradicted by the plain language of the licenses themselves, which stated the lump-sum payments were "not based upon sales" [16]. The expert's reliance on an executive's unsupported assertion about the licenses' basis, without any underlying sales data, meant the testimony failed the "sufficient facts or data" requirement of Rule 702(b) [16]. The judge's role is to make this admissibility determination before the testimony ever reaches the jury.

Analytical Framework: The Judge's Toolkit for Scrutinizing Forensic Evidence

Judges employ a multi-faceted analytical framework when scrutinizing the basis and methodology of proffered expert testimony. This framework integrates the specific subsections of Rule 702 with factors developed in case law.

The Daubert Factors and Beyond

The non-exclusive Daubert factors remain a foundational toolkit [5]:

- Testing: Can and has the expert's theory or technique been tested?

- Peer Review: Has the method been subjected to peer review and publication?

- Error Rates: What is the known or potential rate of error?

- Standards: Are there standards controlling the technique's operation?

- General Acceptance: Is the method generally accepted in the relevant scientific community?

Other practical considerations include whether the expert developed their opinion independently of the litigation, unjustifiably extrapolated from an accepted premise, or adequately accounted for alternative explanations [5].

The Question of Sufficient Facts or Data

Judges must examine the quantitative and qualitative adequacy of the information an expert relies upon. An opinion is not admissible if it is based on assumed facts that are not supported by the record. The EcoFactor decision is a paradigm case where the court engaged in detailed contract interpretation to find that the actual evidence contradicted, rather than supported, the expert's critical factual premise [16]. An assertion without evidentiary support cannot provide a sufficient basis.

Reliable Application of Principles and Methods

It is not enough for an expert to use a reliable methodology in the abstract; they must also reliably apply it to the facts of the case. Rule 702(d) requires this, and a failure can lead to exclusion. The Advisory Committee Note cites General Elec. Co. v. Joiner, noting that a court may conclude "there is simply too great an analytical gap between the data and the opinion proffered" [5]. The judge must look for a clear, logical connection between the data, the methodology, and the conclusion reached.

Diagram: Judicial Gatekeeping Pathway under Federal Rule of Evidence 702. This flowchart outlines the sequential questions a judge must answer when determining the admissibility of expert testimony. The proponent must satisfy each requirement by a preponderance of the evidence.
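The sequential pathway described in the caption above can be sketched as a simple checklist. This is a minimal illustration, not a statement of how any court actually records its findings: the class name `Rule702Findings`, its field names, and the function `first_unmet_requirement` are hypothetical, and each boolean stands in for the judge's Rule 104(a) finding that the proponent has shown the element to be more likely than not satisfied.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Rule702Findings:
    """Hypothetical record of a court's findings on each Rule 702 element.

    True means the proponent carried its burden (more likely than not).
    """
    qualified_and_helpful: bool          # qualification and Rule 702(a)
    sufficient_facts_or_data: bool       # Rule 702(b)
    reliable_principles_methods: bool    # Rule 702(c)
    reliable_application: bool           # Rule 702(d)


def first_unmet_requirement(findings: Rule702Findings) -> Optional[str]:
    """Return the first element the proponent failed to establish, or None."""
    checks = [
        ("qualification and helpfulness (Rule 702(a))", findings.qualified_and_helpful),
        ("sufficient facts or data (Rule 702(b))", findings.sufficient_facts_or_data),
        ("reliable principles and methods (Rule 702(c))", findings.reliable_principles_methods),
        ("reliable application to the facts (Rule 702(d))", findings.reliable_application),
    ]
    for label, satisfied in checks:
        if not satisfied:
            return label
    return None  # every element satisfied: testimony may be admitted


# Illustrative input loosely modeled on the EcoFactor fact pattern:
# the methodology was not the problem, the factual basis was.
example = Rule702Findings(True, False, True, True)
print(first_unmet_requirement(example))
```

The point of the sketch is that every element is a separate admissibility question: a sound method does not rescue an opinion that fails the factual-basis element.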

Comparative Analysis: Method Validation in Forensic Science vs. Judicial Scrutiny

For forensic scientists, the process of method validation provides the foundational reliability that judges then scrutinize. There is a direct, parallel relationship between the scientific rigor of validation and the legal standards of admissibility.

Comparative Experimental Protocols

The following table contrasts the key stages of formal method validation in forensic science with the corresponding judicial scrutiny under Rule 702.

Table: Comparison of Scientific Validation and Judicial Scrutiny Protocols

| Validation Phase (Scientific) | Key Procedures & Metrics | Judicial Scrutiny (Legal) | Key Inquiries & Standards |
|---|---|---|---|
| Method Comparison | Compare test method to reference method using 40+ patient specimens covering working range; assess specificity with 100-200 specimens [17]. | Sufficient Facts/Data | Did the expert use an adequate sample size? Was the data representative? Were obvious alternative explanations considered? [5] |
| Accuracy & Precision | Estimate systematic error via linear regression (slope, y-intercept); calculate standard deviation of differences; use difference plots [17]. | Reliable Principles/Methods | Has the method been tested? What is its error rate? Is it subject to standards and controls? Is it generally accepted? [5] |
| Data Analysis | Graph data via difference/comparison plots; calculate correlation coefficient (r); use regression statistics (Yc = a + bXc) to estimate systematic error at decision points [17]. | Reliable Application | Is there an "analytical gap" between the data and the opinion? Did the expert use the same intellectual rigor as in their professional work? [5] |
| Verification | Laboratory demonstrates it can properly perform a validated method (implementation verification and item verification) [18]. | Qualifications & Fit | Is the expert qualified by knowledge, skill, experience, training, or education? Will the testimony assist the trier of fact? [5] |
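The regression statistics named in the table (slope, y-intercept, standard deviation of differences, and Yc = a + bXc at a decision point) can be illustrated with a short calculation. This is a sketch under stated assumptions: the function name `comparison_stats` and the four data pairs are hypothetical toy values chosen for readability, whereas the table calls for 40 or more specimens in a real comparison study.

```python
from statistics import mean, stdev


def comparison_stats(reference, test, decision_level):
    """Ordinary least-squares comparison of a test method against a reference.

    Returns slope b, intercept a, mean difference (bias), SD of differences,
    and the systematic error at decision level Xc, i.e. (a + b*Xc) - Xc.
    """
    x_bar, y_bar = mean(reference), mean(test)
    sxx = sum((x - x_bar) ** 2 for x in reference)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(reference, test))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar

    # Paired differences (test minus reference) underlie a difference plot.
    diffs = [y - x for x, y in zip(reference, test)]

    y_c = intercept + slope * decision_level  # Yc = a + b*Xc
    return {
        "slope": slope,
        "intercept": intercept,
        "bias": mean(diffs),
        "sd_diff": stdev(diffs),
        "systematic_error_at_xc": y_c - decision_level,
    }


# Hypothetical paired results (same specimens measured by both methods).
reference_values = [50.0, 100.0, 150.0, 200.0]
test_values = [53.5, 106.0, 158.5, 211.0]

for name, value in comparison_stats(reference_values, test_values, 120.0).items():
    print(f"{name}: {value:.3f}")
```

Reporting the systematic error at the decision level, rather than only a correlation coefficient, is what lets a reader judge whether the disagreement between methods matters at the concentration where conclusions are actually drawn.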

Case Study: The EcoFactor Application

The Federal Circuit's 2025 en banc decision in EcoFactor, Inc. v. Google LLC serves as a prime example of rigorous judicial scrutiny. The court held that a damages expert's testimony was inadmissible because it was not "based on sufficient facts or data" as required by Rule 702(b) [16] [15]. The expert claimed that certain lump-sum license agreements were based on a per-unit royalty rate. However, the court found this critical fact was contradicted by the plain language of the licenses themselves, which stated the sums were "not based upon sales" [16]. The expert's additional reliance on an executive's unsupported testimony about the licenses' basis, without any underlying sales data or documentation, further failed to provide a sufficient factual foundation. The district court's failure to exclude this testimony was a failure of its gatekeeping function, necessitating a new trial on damages [16].

For researchers and forensic science professionals, demonstrating the reliability of a method requires a suite of standardized reagents, materials, and conceptual frameworks. The following toolkit is essential for constructing a validation that will withstand judicial scrutiny.

Table: Essential Research Reagent Solutions for Forensic Method Validation

| Tool Category | Specific Examples | Function in Validation & Scrutiny |
|---|---|---|
| Reference Materials & Controls | Certified Reference Materials (CRMs), Positive/Negative Controls, Internal Standards | Establishes accuracy and calibrates instruments; provides a benchmark for comparison, addressing the Daubert factor of standards and controls [17] [18]. |
| Standardized Protocols | ISO 16140 (Microbiology), SWGDAM Guidelines, ASTM Standards | Provides a community-accepted framework for validation design, ensuring the method has been evaluated via reliable principles and is generally accepted [19] [18]. |
| Data Analysis Software | Statistical Packages (R, SPSS), Linear Regression Tools, Difference Plot Generators | Enables quantitative estimation of systematic error, precision, and uncertainty; allows for the graphical presentation of data to identify outliers and trends [17]. |
| Quality Assurance Documentation | Quality Assurance Standards (QAS), Audit Checklists, Proficiency Test Data | Demonstrates ongoing compliance with operational standards and provides evidence of the laboratory's commitment to reliable results, reinforcing the foundation for admissibility [19]. |
| Reference Method | A well-characterized method whose correctness is documented, used for comparison in validation studies [17]. | Serves as a benchmark in a comparison of methods experiment; any differences are assigned to the test method, directly testing its accuracy. |

Diagram: Forensic Method Validation and Verification Workflow. This chart visualizes the staged process for validating a new forensic method and verifying its competent performance in a laboratory, as outlined in the ISO 16140 series [18].

The responsibilities of a judge as a gatekeeper and the responsibilities of a forensic scientist are converging on the same fundamental principle: the imperative of demonstrable reliability. For judges, the 2023 amendment to Rule 702 has cemented a more demanding standard, requiring proactive and critical scrutiny of the factual basis and methodological application of every expert opinion. For scientists, this legal landscape makes robust, transparent, and standardized method validation more critical than ever. A validation process that aligns with standards like the ISO 16140 series or SWGDAM guidelines directly provides the evidence judges need to find a methodology reliable under Daubert and Rule 702. In the end, the effective interplay between rigorous scientific validation and rigorous judicial gatekeeping is the bedrock upon which reliable forensic science and just legal outcomes are built.

Forensic evidence has long played a critical role in the justice system by providing scientific proof and professional expertise to support legal proceedings. However, the credibility of forensic evidence has come under intense scrutiny, particularly in cases where flawed scientific testimony has contributed to wrongful convictions. This growing skepticism culminated in two landmark investigations that would fundamentally reshape the discourse around forensic science validity: the 2009 National Research Council's report "Strengthening Forensic Science in the United States: A Path Forward" (NRC Report) and the 2016 President's Council of Advisors on Science and Technology's report "Forensic Science in Criminal Courts: Ensuring Scientific Validity of Feature-Comparison Methods" (PCAST Report). These reports revealed significant flaws in widely accepted forensic techniques and called for stricter scientific validation, creating a new framework for evaluating forensic evidence under legal standards like Federal Rule of Evidence 702 [20].

The convergence of these scientific critiques with the judiciary's gatekeeping responsibilities has created a complex landscape for legal professionals and researchers alike. This guide provides a comprehensive comparison of these foundational reports, their differential impacts on forensic disciplines, and their practical implications for evidence admissibility. By examining the experimental protocols, empirical assessments, and legal implementation of these critiques, researchers and legal practitioners can better navigate the evolving standards of forensic evidence validation.

Report Comparative Analysis: NRC vs. PCAST

Origins, Scope, and Methodological Approaches

The NRC and PCAST reports emerged from distinct vantage points with complementary but differing methodologies. The 2009 NRC report provided a comprehensive examination of the entire forensic science system, highlighting systemic issues across disciplines and operational environments. It addressed problems ranging from laboratory practices to standardization needs, offering a broad critique of the field's scientific foundations [20]. In contrast, the 2016 PCAST report applied a more focused analytical framework specifically on "feature-comparison methods," introducing rigorous guidelines for assessing "foundational validity" and applying those guidelines to specific disciplines including DNA, latent fingerprints, firearms/toolmarks, footwear, bitemarks, and hair microscopy [21].

A fundamental distinction lies in their methodological approaches to validity assessment. The NRC report emphasized the general lack of scientific rigor across many forensic disciplines, noting that many methods had not undergone proper empirical validation. Meanwhile, PCAST established specific technical criteria for foundational validity, requiring that methods be shown to be reproducible with known and acceptable error rates through empirical studies, typically from appropriately designed black-box studies [21] [20]. This methodological difference would significantly influence their reception and implementation within the legal community.

Key Findings and Recommendations Comparison

Table 1: Comparative Analysis of NRC and PCAST Reports

| Aspect | NRC Report (2009) | PCAST Report (2016) |
|---|---|---|
| Primary Focus | Systemic review of entire forensic science system | Specific analysis of feature-comparison methods |
| Definition of Validity | General scientific rigor and reliability | Foundational validity with specific empirical criteria |
| Key Recommendations | Create independent federal entity, standardize practices, improve research | Adopt rigorous empirical validation, establish error rates, enhance testimony limitations |
| DNA Analysis Assessment | Generally supportive with noted limitations | Distinguished between single-source, simple mixtures, and complex mixtures |
| Pattern Evidence View | Expressed significant concerns about subjective methods | Provided specific validity assessments by discipline |
| Impact on Legal Community | Raised general awareness of forensic limitations | Provided specific framework for admissibility challenges |

The PCAST Report specifically defined and established guidelines for what it termed "foundational validity" and applied those guidelines to specific forensic disciplines. It concluded that only certain DNA analyses (single-source and two-person mixtures meeting specific criteria) and latent fingerprint analysis had established foundational validity based on empirical evidence. Other disciplines like bitemark analysis, firearms/toolmarks, and hair microscopy were judged to lack sufficient foundational validity [21]. This specific, discipline-by-discipline approach differed from the NRC's broader critique and provided more targeted guidance for legal challenges.

Experimental Protocols & Empirical Validation Standards

PCAST's Framework for Foundational Validity

The PCAST report introduced a rigorous methodological framework for assessing foundational validity, emphasizing that scientific validity requires empirical evidence from appropriately designed studies. For feature-comparison methods, PCAST specified that foundational validity is established by evidence demonstrating that a method can, in practice, reproducibly yield a low false-positive rate with appropriate estimates of uncertainty [21]. The report emphasized that black-box studies - which measure the performance of the entire forensic analysis process, including human examiners - provide the most direct and applicable evidence for determining validity [21].
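To make "a low false-positive rate with appropriate estimates of uncertainty" concrete, the sketch below computes an observed false-positive rate from black-box study counts together with a one-sided upper confidence bound. The exact (Clopper-Pearson) bound is used here as one common choice of interval, which is an assumption of this example and not a method the text above prescribes; the function names and the study counts are hypothetical.

```python
import math


def binom_cdf(k: int, n: int, p: float) -> float:
    """P(X <= k) for X ~ Binomial(n, p), with each term computed in log space."""
    if p <= 0.0:
        return 1.0
    if p >= 1.0:
        return 1.0 if k >= n else 0.0
    total = 0.0
    for i in range(k + 1):
        log_term = (
            math.lgamma(n + 1) - math.lgamma(i + 1) - math.lgamma(n - i + 1)
            + i * math.log(p) + (n - i) * math.log1p(-p)
        )
        total += math.exp(log_term)
    return min(total, 1.0)


def fpr_upper_bound(false_positives: int, comparisons: int,
                    confidence: float = 0.95) -> float:
    """One-sided exact upper bound on the false-positive rate.

    Finds, by bisection, the rate p at which observing this few (or fewer)
    false positives would have probability 1 - confidence.
    """
    if false_positives >= comparisons:
        return 1.0
    alpha = 1.0 - confidence
    lo, hi = false_positives / comparisons, 1.0
    for _ in range(100):
        mid = (lo + hi) / 2.0
        if binom_cdf(false_positives, comparisons, mid) > alpha:
            lo = mid  # data still plausible at this rate; bound lies higher
        else:
            hi = mid
    return hi


# Hypothetical black-box study: 5 false positives in 1,000 non-matching pairs.
observed = 5 / 1000
upper = fpr_upper_bound(5, 1000)
print(f"observed rate: {observed:.4f}, 95% upper bound: {upper:.4f}")
```

The upper bound, not the observed rate alone, reflects how much a study of a given size can actually rule out: a study with zero observed errors but few comparisons still leaves a substantial error rate statistically possible.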

For DNA analysis, PCAST established specific performance thresholds for complex mixture interpretations. The report determined that probabilistic genotyping methodology is reliable for samples with up to three contributors where the minor contributor constitutes at least 20% of the intact DNA and where the sample is above the required minimum amount for testing [21]. This precise specification created measurable standards for admissibility challenges, particularly for complex DNA mixtures analyzed by software programs like TrueAllele and STRmix [21].
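The three conditions in the paragraph above can be written as a simple screening check. This is a hedged sketch: the class `MixtureSample`, the function `within_pcast_range`, the nanogram unit, and the `min_amount_ng` parameter are all hypothetical, since the text does not state the minimum amount and it will depend on the laboratory's own validated protocol. Treating the minimum as inclusive is also an assumption.

```python
from dataclasses import dataclass


@dataclass
class MixtureSample:
    """Hypothetical summary of a DNA mixture sample."""
    contributors: int        # estimated number of contributors
    minor_fraction: float    # minor contributor's share of intact DNA, 0.0 to 1.0
    dna_amount_ng: float     # quantity of DNA available (unit is an assumption)


def within_pcast_range(sample: MixtureSample, min_amount_ng: float) -> bool:
    """True if the sample falls inside the range described in the text:
    up to three contributors, minor contributor at least 20%, and enough DNA.

    min_amount_ng is a placeholder for the laboratory's validated minimum.
    """
    return (
        sample.contributors <= 3
        and sample.minor_fraction >= 0.20
        and sample.dna_amount_ng >= min_amount_ng
    )


# Illustrative samples with a made-up minimum of 0.5 ng.
print(within_pcast_range(MixtureSample(3, 0.25, 1.0), min_amount_ng=0.5))
print(within_pcast_range(MixtureSample(4, 0.25, 1.0), min_amount_ng=0.5))
```

A sample that fails this check is not automatically unreliable; it simply falls outside the range for which the report found validity established, which is where an admissibility challenge is most likely to focus.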

Response Studies and Methodological Refinements

The forensic science community has conducted numerous response studies to address PCAST's validity criteria. For instance, in response to PCAST's concerns about complex DNA mixture interpretation, the co-founder of STRmix conducted a "PCAST Response Study" claiming that when used correctly, STRmix's reliability remains high with a low margin of error at up to four contributors to a DNA sample [21]. Similarly, for firearms and toolmark analysis, proponents have cited more recently published black-box studies conducted after 2016 as evidence of the method's increasing reliability [21].

These response studies reflect an ongoing methodological evolution in forensic science toward more rigorous empirical validation. The National Institute of Justice's Forensic Science Strategic Research Plan, 2022-2026 explicitly prioritizes "foundational validity and reliability of forensic methods" and calls for "measurement of the accuracy and reliability of forensic examinations (e.g., black box studies)" [22], demonstrating how PCAST's experimental framework has influenced national research priorities.

Legal Integration & Admissibility Impact

Differential Impact on Forensic Disciplines

The integration of NRC and PCAST critiques into legal practice has yielded dramatically different outcomes across forensic disciplines, reflecting varying levels of scientific validity and methodological robustness. The following table illustrates this differential impact on admissibility determinations:

Table 2: Post-PCAST Admissibility Outcomes by Forensic Discipline

| Discipline | PCAST Assessment | Typical Court Response | Case Examples |
|---|---|---|---|
| Bitemark Analysis | Lacks foundational validity | Increasingly excluded or limited; subject to admissibility hearings | Commonwealth v. Ross (2019); State v. Fortin (2020) [21] |
| DNA (Complex Mixtures) | Conditionally valid based on specific criteria | Generally admitted with limitations on testimony scope | U.S. v. Lewis (2020) - Courts reviewed PCAST Response Study [21] |
| Firearms/Toolmarks | Lacked foundational validity in 2016 | Mixed jurisdictionally; often admitted with testimony limitations | Gardner v. U.S. (2016); U.S. v. Hunt (2023) cited newer studies [21] |
| Latent Fingerprints | Foundational validity established | Generally admitted without limitation | [21] |
| Footwear Analysis | Lacked foundational validity | Often subject to limitations and rigorous cross-examination | [21] |

The database of post-PCAST court decisions maintained by the National Center on Forensics reveals that courts have attempted to address validity concerns by limiting the scope of expert testimony rather than excluding evidence entirely. For example, in firearms and toolmark analysis, experts "may not give an unqualified opinion, or testify with absolute or 100% certainty" about matching results [21]. This judicial approach acknowledges methodological concerns while preserving potentially valuable evidence for triers of fact.

Implementation in Federal Rule of Evidence 702 Framework

The NRC and PCAST reports have significantly influenced judicial application of Federal Rule of Evidence 702, which governs expert testimony. Rule 702 requires that expert testimony be based on sufficient facts or data, be the product of reliable principles and methods, and reflect a reliable application of those methods to the case [5]. The PCAST report in particular has provided judges with a specific framework for assessing the "reliable principles and methods" component of Rule 702, shifting focus from the expert's qualifications to the underlying validity of the methodology [20].

This enhanced scrutiny is evident in the database of post-PCAST decisions, which categorizes case outcomes as "Admit," "Admit with government's proposed limits," "Limit," "Exclude," and other designations [21]. The data reveals that outright exclusion of forensic evidence based on PCAST critiques remains relatively rare, with courts more frequently imposing limitations on testimony scope or terminology. For example, in DNA cases involving complex mixtures, courts have limited how statistical weight is described to jurors [21]. This reflects a pragmatic judicial approach that balances scientific concerns with practical adjudication needs.

Research Implementation & Standards Development

Organizational Response and Standards Advancement

The forensic science community has responded to NRC and PCAST critiques through significant standardization efforts, primarily coordinated through the Organization of Scientific Area Committees (OSAC) for Forensic Science. As of February 2025, the OSAC Registry contains 225 standards (152 published and 73 OSAC Proposed) representing over 20 forensic science disciplines [23]. These standards address many methodological concerns raised in both reports, providing validated protocols and best practices for forensic analysis.

Recent standards additions reflect ongoing efforts to address specific validity concerns. In January 2025, nine new standards were added to the OSAC Registry, including standards for "DNA-based Taxonomic Identification in Forensic Entomology," "Examination and Comparison of Toolmarks for Source Attribution," and "Best Practice Recommendations for the Resolution of Conflicts in Toolmark Value Determinations and Source Conclusions" [9]. These developments demonstrate how the critiques have stimulated methodological refinement and standardization across multiple forensic disciplines.

Strategic Research Priorities

The National Institute of Justice's Forensic Science Strategic Research Plan, 2022-2026 explicitly incorporates priorities aligned with NRC and PCAST recommendations [22]. The plan emphasizes:

- Foundational Validity and Reliability: Supporting research to "assess the fundamental scientific basis of forensic analysis" and "quantification of measurement uncertainty" [22]

- Decision Analysis: Funding "black-box studies" to "measure the accuracy and reliability of forensic examinations" and "identification of sources of error" through white-box studies [22]

- Automated Tools: Developing "objective methods to support interpretations and conclusions" and "evaluation of algorithms for quantitative pattern evidence comparisons" [22]

These strategic priorities reflect a direct institutional response to the methodological gaps identified in both reports, channeling research funding toward addressing fundamental validity questions across forensic disciplines.

Table 3: Essential Research Resources for Forensic Method Validation

| Resource | Function | Access Point |
|---|---|---|
| OSAC Registry | Central repository of validated forensic science standards | NIST website [23] |
| NIJ Forensic Science Strategic Plan | Guides research priorities and funding opportunities | NIJ website [22] |
| Post-PCAST Court Decisions Database | Tracks judicial treatment of forensic evidence post-PCAST | National Center on Forensics [21] |
| Federal Rule of Evidence 702 | Legal standard for expert testimony admissibility | U.S. Courts or Cornell LII [5] |
| Scientific Validation Studies | Empirical evidence for method validity | Peer-reviewed journals |

Visualizing the Legal-Scientific Integration Pathway

Diagram: Forensic Evidence Admissibility Decision Pathway.

The integration of NRC and PCAST critiques into legal practice represents an ongoing paradigm shift in how forensic evidence is evaluated in U.S. courtrooms. Where courts previously relied primarily on the experience and qualifications of forensic experts, there is now heightened focus on the scientific validity of the underlying methods [20]. This shift advocates for "trusting the scientific method" over the traditional "trusting the examiner" approach [20].

The differential impact across disciplines highlights the complex interplay between scientific progress and legal standards. While some pattern evidence disciplines like bitemark analysis face increasing admissibility challenges, others like firearms/toolmarks have undergone methodological refinement in response to validity critiques [21]. DNA analysis remains the benchmark for forensic validity, though even here PCAST has prompted more nuanced evaluation of complex mixture interpretation protocols [21].

Future developments will likely be shaped by continued research on foundational validity, refinement of standards through organizations like OSAC, and evolving judicial application of Rule 702. The ultimate integration of these scientific critiques into legal practice requires ongoing collaboration between scientific and legal communities to ensure forensic evidence meets appropriate standards of reliability while serving the needs of justice.

In the intricate landscape of biomedical litigation, from pharmaceutical liability to medical malpractice, the admission and interpretation of forensic evidence carry profound consequences. The judicial system relies on Federal Rule of Evidence 702, which assigns judges the critical "gatekeeping" role of ensuring that proffered expert testimony is both reliable and relevant [7]. The 2023 amendment to this rule explicitly clarifies that the proponent must demonstrate to the court that it is "more likely than not" that the expert's testimony meets all admissibility requirements, placing a heightened burden on those presenting scientific evidence [15] [7]. This legal framework is designed to filter out unsupported science, but its application to forensic disciplines—many of which have recently faced significant scrutiny regarding their scientific foundations—presents a formidable challenge.

The consequences of this challenge are not merely theoretical. Flawed forensic evidence has been directly linked to wrongful convictions and unjust civil outcomes. Research analyzing exoneration cases has identified specific forensic disciplines as disproportionately contributing to erroneous verdicts [24]. In the biomedical context, where matters of health and liberty intersect, the stakes are exceptionally high. This guide objectively compares the performance and reliability of various forensic methodologies as applied in litigation, providing researchers and drug development professionals with the empirical data and analytical frameworks necessary to navigate this complex evidentiary terrain.

Legal Standards Framework: Rule 702 and Forensic Admissibility

The Evolution of the Judicial Gatekeeping Role

The standard for admitting expert testimony has evolved significantly over the past century. The Frye standard, established in 1923, required scientific evidence to be "generally accepted" in its relevant field [25]. This was superseded in federal courts and many states by the Supreme Court's 1993 decision in Daubert v. Merrell Dow Pharmaceuticals, Inc., which assigned trial judges the responsibility of being evidentiary "gatekeepers" [4] [25]. Daubert provided a non-exclusive checklist for judges to assess scientific reliability, including: testability, peer review, error rates, operational standards, and general acceptance [5].

The most recent iteration of this evolution is the 2023 amendment to Federal Rule of Evidence 702, which sought to correct widespread misapplications of the standard. As the Advisory Committee noted, many courts had incorrectly held that the "sufficiency of an expert's basis, and the application of the expert's methodology, are questions of weight and not admissibility" [15]. The amended rule now explicitly requires the proponent to demonstrate to the court that it is more likely than not that:

- The testimony is based on sufficient facts or data

- The testimony is the product of reliable principles and methods

- The expert's opinion reflects a reliable application of the principles and methods to the facts of the case [15] [5]

Circuit courts have begun embracing this changed standard. The Federal Circuit's en banc ruling in EcoFactor, Inc. v. Google LLC (2025) emphasized that trial courts must take notice of the 2023 amendment, confirming that an adequate factual basis is "an essential prerequisite" for admissibility [15]. Similarly, the Eighth Circuit in Sprafka v. Medical Device Bus. Svcs. (2025) moved away from its prior "liberal admission" stance, declaring that opinions "lack reliability" and should be excluded if they lack an adequate factual basis [15].

Application to Forensic Science Disciplines

Despite this legal framework, courts have struggled with forensic evidence, particularly what the President's Council of Advisors on Science and Technology (PCAST) termed "feature-comparison methods" [4]. These subjective pattern-matching disciplines—including fingerprints, firearms, toolmarks, and bitemarks—have historically been admitted based largely on their longstanding use rather than rigorous empirical validation [6].

The 2009 National Research Council report starkly concluded: "With the exception of nuclear DNA analysis… no forensic method has been rigorously shown to have the capacity to consistently, and with a high degree of certainty, demonstrate a connection between evidence and a specific individual or source" [4]. A 2016 PCAST report echoed these concerns, finding that many forensic methods lacked sufficient empirical evidence of validity [4] [6].

The following diagram illustrates the judicial application of Rule 702 to forensic evidence:

Quantitative Analysis of Forensic Error Impacts

Wrongful Convictions and Forensic Errors

Empirical research provides stark quantification of how flawed forensic evidence contributes to miscarriages of justice. A comprehensive study analyzed 732 wrongful conviction cases from the National Registry of Exonerations, examining 1,391 forensic examinations across 34 disciplines [24]. The findings revealed that 635 cases (approximately 87%) had errors related to forensic evidence, with 891 forensic examinations (64%) containing at least one error [24].
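The two percentages quoted above follow directly from the reported counts, as this short check shows. Only the four counts come from the text; the helper name `pct` is illustrative.

```python
def pct(part: int, whole: int) -> float:
    """Return part/whole expressed as a percentage."""
    return 100.0 * part / whole


# Counts as reported in the study summarized above.
cases_total, cases_with_error = 732, 635
exams_total, exams_with_error = 1391, 891

print(f"cases with forensic error: {pct(cases_with_error, cases_total):.1f}%")
print(f"examinations with error:   {pct(exams_with_error, exams_total):.1f}%")
```

Note that the two figures use different denominators (cases versus individual examinations), so they should not be compared directly: a single case can involve several examinations, only some of which contain an error.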

Table 1: Forensic Discipline Error Rates in Wrongful Convictions

| Discipline | Number of Examinations | % Examinations with Case Error | % with Individualization/Classification Errors |
|---|---|---|---|
| Seized drug analysis | 130 | 100% | 100% |
| Bitemark | 44 | 77% | 73% |
| Shoe/foot impression | 32 | 66% | 41% |
| Fire debris investigation | 45 | 78% | 38% |
| Forensic medicine (pediatric sexual abuse) | 64 | 72% | 34% |
| Serology | 204 | 68% | 26% |
| Firearms identification | 66 | 39% | 26% |
| Hair comparison | 143 | 59% | 20% |
| Latent fingerprint | 87 | 46% | 18% |
| DNA | 64 | 64% | 14% |
| Forensic pathology (cause and manner) | 136 | 46% | 13% |

Source: Adapted from Morgan (2023) analysis of National Registry of Exonerations data [24]

The data reveal critical patterns: bitemark analysis, a discipline with a weak scientific foundation, and seized drug analysis show alarmingly high error rates, while even more established fields such as latent fingerprints and firearms identification contribute significantly to wrongful convictions [24]. Notably, the high error rate for seized drug analysis stemmed primarily from errors with drug testing kits used in the field rather than from laboratory errors [24].

Forensic Error Typology and Frequency

Dr. John Morgan's forensic error typology, developed through analysis of wrongful conviction cases, categorizes the nature and frequency of forensic failures [24]. This systematic classification enables targeted reforms by identifying the most common failure points in forensic practice.

Table 2: Forensic Error Typology and Manifestations

| Error Type | Description | Common Examples | Frequency in Study |
|---|---|---|---|
| Type 1: Forensic Science Reports | Misstatement of scientific basis in reports | Lab error, poor communication, resource constraints | Prevalent in serology, toxicology |
| Type 2: Individualization/Classification | Incorrect individualization or classification | Interpretation error, fraudulent interpretation | 100% in seized drug analysis; 73% in bitemark |
| Type 3: Testimony | Erroneous testimony at trial | Mischaracterized statistical weight or probability | Widespread across disciplines |
| Type 4: Officer of the Court | Legal professional errors with forensic evidence | Excluded evidence, faulty testimony accepted | Common in combination with other error types |
| Type 5: Evidence Handling/Reporting | Failures in evidence collection, examination, or reporting | Chain of custody breaches, lost evidence, police misconduct | Found across all disciplines |

Source: Adapted from Morgan's forensic error typology [24]

The typology analysis indicates that most errors related to forensic evidence were not simply identification or classification errors by forensic scientists. They often involved broader systemic failures, including incompetent or fraudulent examiners, disciplines with inadequate scientific foundations, and organizational deficiencies in training, management, governance, or resources [24].

Experimental Protocols for Forensic Method Validation

Scientific Validation Guidelines for Forensic Feature-Comparison Methods

Inspired by the Bradford Hill Guidelines for causal inference in epidemiology, leading scientists have proposed a framework of four guidelines to evaluate the validity of forensic feature-comparison methods [4]. This approach provides a structured methodology for assessing whether forensic disciplines meet the standards for admission under Rule 702.

Table 3: Experimental Validation Framework for Forensic Methods

| Validation Guideline | Experimental Protocol | Application Example: Firearms & Toolmarks |
|---|---|---|
| Plausibility | Assess theoretical foundation and mechanistic reasoning | Examine whether manufacturing processes produce unique, reproducible marks |
| Sound Research Design | Evaluate construct and external validity through controlled studies | Conduct blind testing of examiners with known and unknown samples |
| Intersubjective Testability | Implement replication studies across independent laboratories | Multiple research groups test same bullet pairs using same protocols |
| Individualization Methodology | Validate reasoning from group data to specific source claims | Establish statistical models for probability of random match |

Source: Adapted from "Scientific guidelines for evaluating the validity of forensic evidence" [4]

The Plausibility guideline requires examining whether the fundamental principles underlying a forensic discipline are scientifically sound. For example, the theory behind firearms and toolmark identification posits that manufacturing processes impart unique, reproducible marks on surfaces [4]. Experimental protocols must test this foundational assumption before evaluating performance claims.

The Sound Research Design guideline emphasizes that studies must have both construct validity (testing what they purport to test) and external validity (generalizability to real-world conditions). This typically involves creating known sample sets with ground truth established, then having examiners—blind to the expected outcomes—evaluate these samples using standard protocols [4].
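A ground-truth study of this kind yields an observed error rate, which is more informative when reported with an uncertainty interval. The sketch below is one possible way to do that, using a Wilson score interval; the choice of interval is an assumption made here for illustration, and the counts are hypothetical, not drawn from any cited study.

```python
import math


def wilson_interval(errors, trials, z=1.96):
    """Approximate 95% Wilson score interval for an error proportion.

    errors: number of erroneous conclusions observed
    trials: number of ground-truth-known comparisons examined
    z:      normal quantile (1.96 corresponds to roughly 95% coverage)
    """
    if trials <= 0:
        raise ValueError("trials must be positive")
    p = errors / trials
    denom = 1 + z**2 / trials
    center = (p + z**2 / (2 * trials)) / denom
    half_width = z * math.sqrt(p * (1 - p) / trials + z**2 / (4 * trials**2)) / denom
    return max(0.0, center - half_width), min(1.0, center + half_width)


# Hypothetical study: 4 false positives in 400 different-source comparisons
false_positives, comparisons = 4, 400
observed_rate = false_positives / comparisons
lower, upper = wilson_interval(false_positives, comparisons)
print(f"Observed false positive rate: {observed_rate:.3%}")
print(f"Approximate 95% interval: {lower:.3%} to {upper:.3%}")
```

Reporting the upper bound alongside the point estimate makes clear how much a small study can understate the plausible error rate.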

Intersubjective Testability requires that findings be replicable across different research teams, a cornerstone of the scientific method. This guideline addresses concerns that many forensic techniques have been developed within law enforcement communities rather than academic settings, with limited independent verification [4].

The Individualization Methodology guideline is particularly crucial for forensic sciences that claim the ability to identify a specific source to the exclusion of all others. The protocol requires developing valid statistical methods to bridge the "analytical gap" between general scientific principles and specific source attributions [4].
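One common statistical bridge between group-level data and a source-specific claim is the likelihood ratio, which is combined with prior odds through Bayes' rule. The sketch below illustrates that general idea only; it is not the specific method of the cited guidelines, and all probabilities and function names are hypothetical.

```python
def likelihood_ratio(p_evidence_if_same_source, p_evidence_if_different_source):
    """Ratio of the probability of the observed correspondence under the
    same-source hypothesis to its probability under the different-source
    hypothesis (the latter is the random match probability)."""
    if p_evidence_if_different_source <= 0:
        raise ValueError("different-source probability must be positive")
    return p_evidence_if_same_source / p_evidence_if_different_source


def posterior_probability(prior_probability, lr):
    """Update a prior probability of common source using a likelihood ratio."""
    if not 0 < prior_probability < 1:
        raise ValueError("prior must be strictly between 0 and 1")
    prior_odds = prior_probability / (1 - prior_probability)
    posterior_odds = prior_odds * lr
    return posterior_odds / (1 + posterior_odds)


# Hypothetical inputs: the method reports a correspondence for 99% of true
# same-source pairs and for 0.1% of different-source pairs.
lr = likelihood_ratio(0.99, 0.001)
posterior = posterior_probability(prior_probability=0.01, lr=lr)
print(f"Likelihood ratio: {lr:.0f}")
print(f"Posterior probability of common source: {posterior:.3f}")
```

Even with a likelihood ratio near 1,000, a low prior leaves the posterior short of certainty, which is why a claim of identification "to the exclusion of all others" needs explicit statistical support.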

Cognitive Bias Testing Protocols

A critical experimental protocol in forensic validation involves testing for cognitive bias—the risk that contextual information unrelated to the forensic analysis may influence an examiner's conclusions. The experimental design involves:

- Sample Preparation: Creating sets of comparison samples with known ground truth

- Context Manipulation: Providing different contextual information to examiner groups (e.g., suggestive case information vs. context-blind)

- Blinded Administration: Ensuring examiners are unaware they are participating in a study

- Result Comparison: Statistically analyzing differences in conclusions between groups
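The result-comparison step above can be sketched with a two-sided Fisher exact test on a 2x2 table of conclusions by context condition. The test choice, the function name, and the counts below are assumptions made for illustration; a real study would pre-specify its analysis plan.

```python
from math import comb


def fisher_exact_two_sided(a, b, c, d):
    """Two-sided Fisher exact p-value for the 2x2 table [[a, b], [c, d]].

    Rows are examiner groups; columns are conclusion categories.
    Sums the probabilities of all tables (with the same margins) that are
    no more likely than the observed table.
    """
    row1, row2, col1 = a + b, c + d, a + c
    n = row1 + row2

    def prob(x):
        # Hypergeometric probability of x in the top-left cell
        return comb(row1, x) * comb(row2, col1 - x) / comb(n, col1)

    p_observed = prob(a)
    x_min, x_max = max(0, col1 - row2), min(row1, col1)
    return sum(
        prob(x) for x in range(x_min, x_max + 1)
        if prob(x) <= p_observed * (1 + 1e-9)  # tolerance for float ties
    )


# Hypothetical data: 20 examiners per group comparing the same
# different-source pair.
# Suggestive-context group: 12 reported "identification", 8 did not.
# Context-blind group:       4 reported "identification", 16 did not.
p_value = fisher_exact_two_sided(12, 8, 4, 16)
print(f"Two-sided Fisher exact p-value: {p_value:.4f}")
```

A small p-value in such a design would suggest that the contextual information, rather than the physical evidence, shifted examiners' conclusions.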

Research has found that the disciplines most susceptible to cognitive bias (bitemark comparison, fire debris investigation, forensic medicine, forensic pathology) are also those that require scientists to consider contextual information, which creates tension between analytical objectivity and real-world assessment needs [24].

Case Studies: Forensic Failures in Biomedical Contexts

Toxicology Laboratory Failures

Toxicology, often perceived as objective due to its foundation in analytical chemistry, has demonstrated significant vulnerabilities with profound implications for biomedical litigation. A comprehensive review of toxicology errors identified multiple categories of failure across jurisdictions [26]:

Traceability Errors: The Alaska Department of Public Safety manufactured dry gas reference material with an inverted barometric pressure formula, affecting approximately 2,500 breath alcohol tests [26]. Similarly, the District of Columbia Metropolitan Police Department incorrectly calibrated breath alcohol analyzers 20-40% too high for 14 years before detection [26].

Calibration Errors: The Maryland Department of State Police Forensic Sciences Division used single-point calibration curves for blood alcohol analysis from 2011 to 2021, even though this method is scientifically inappropriate because it does not span the entire concentration range of interest [26]. The laboratory passed accreditation visits in 2015 and 2019 despite this fundamental methodological flaw.
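To see why a single calibration point can mislead, consider a simulated instrument whose response has a small non-zero intercept. The sketch below is purely illustrative: the slope, intercept, and concentrations are invented values, not data from the Maryland laboratory, and the single-point model is assumed here to be a line forced through the origin.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit; returns (slope, intercept)."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


# Simulated (hypothetical) instrument: response = 0.05 + 10 * concentration
TRUE_SLOPE, TRUE_INTERCEPT = 10.0, 0.05
calibrators = [0.02, 0.05, 0.10, 0.20, 0.30]  # g/dL, hypothetical levels
responses = [TRUE_INTERCEPT + TRUE_SLOPE * c for c in calibrators]

# Single-point calibration: one calibrator at 0.10 g/dL, line through origin
single_point_factor = responses[2] / calibrators[2]

# Multi-point calibration: least-squares line across the working range
slope, intercept = fit_line(calibrators, responses)

# Unknown sample whose true concentration is 0.30 g/dL
unknown_response = TRUE_INTERCEPT + TRUE_SLOPE * 0.30
single_point_estimate = unknown_response / single_point_factor
multi_point_estimate = (unknown_response - intercept) / slope

print(f"Single-point estimate: {single_point_estimate:.4f} g/dL")
print(f"Multi-point estimate:  {multi_point_estimate:.4f} g/dL")
```

In this simulation the single-point estimate is accurate only at the calibrator concentration and drifts away from the true value elsewhere, while the multi-point fit recovers it across the range.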

Discovery Violations: Systematic withholding of exculpatory evidence and institutional resistance to disclosure have been documented across multiple jurisdictions. In Massachusetts, laboratory scandals compromised thousands of convictions: Annie Dookhan engaged in "dry labbing" (reporting results without performing the analysis), and Sonja Farak consumed the laboratory's drug control standards while working on drug cases [26].

Cannabis DUI Laboratory Misconduct

The University of Illinois Chicago forensic toxicology laboratory scandal exemplifies how methodological flaws and misleading testimony can impact numerous cases. Between 2016 and 2024, the laboratory tested bodily fluids for DUI-cannabis investigations using scientifically discredited methods and faulty machinery [27]. Key failures included:

- Testing urine for cannabis metabolites despite scientific consensus that these metabolites remain detectable for days or weeks after use, making them useless for determining impairment while driving [27]

- Inability to differentiate between legal and illegal types of THC in bodily fluids

- Continued testing and reporting despite internal knowledge of methodology problems since at least 2021 [27]

- Misleading testimony that characterized cannabis metabolites as equivalent to psychoactive THC

The laboratory conducted more than 2,200 tests for THC in bodily fluids before its eventual closure, with multiple wrongful convictions resulting from its work [27]. Internal emails revealed that university officials were focused on the lab's financial performance rather than scientific quality, and that the decision to terminate human testing was driven by insufficient revenue rather than quality concerns [27].

Research Reagent Solutions for Forensic Validation

Implementing rigorous forensic validation requires specific methodological tools and approaches. The following table details essential "research reagents"—conceptual frameworks and practical tools—for conducting and evaluating forensic validation studies.

Table 4: Research Reagent Solutions for Forensic Method Validation

| Research Reagent | Function | Application Example |
|---|---|---|
| Error Rate Studies | Quantifies method reliability through controlled testing | Blind proficiency testing of examiners with known samples |
| Context Management Protocols | Controls for cognitive bias in forensic analysis | Sequential unmasking techniques that reveal contextual information only after initial analysis |
| Digital Data Retention Systems | Preserves raw analytical data for independent verification | Mandatory retention of instrument output files with audit trails |
| Statistical Foundation Models | Provides mathematical framework for evidence interpretation | Bayesian analysis calculating likelihood ratios for evidence |
| Independent Accreditation Standards | Establishes minimum competency requirements | ISO/IEC 17025 accreditation with forensic-specific supplements |
| Transparency Databases | Enables systematic error detection through data aggregation | Online discovery portals with standardized error reporting |

| Transparency Databases | Enables systematic error detection through data aggregation | Online discovery portals with standardized error reporting |

Source: Synthesized from multiple sources on forensic reform [24] [4] [26]

These research reagents represent essential methodological tools for improving forensic validation. Error rate studies, for instance, address a key Daubert factor that many traditional forensic disciplines have historically failed to quantify [4] [6]. Context management protocols respond to research demonstrating that forensic examiners are vulnerable to cognitive bias when aware of contextual case information [24].
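The "Digital Data Retention Systems" entry in Table 4 refers to retaining instrument output files with audit trails. One way such a trail could be made tamper-evident is a hash-chained log, sketched below with Python's standard `hashlib`. This is a minimal illustration under stated assumptions: the file names, log structure, and function names are hypothetical and do not describe any particular laboratory information system.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _entry_hash(filename, file_sha256, prev_hash):
    """Hash the fields of one log entry in a stable (sorted-key) encoding."""
    payload = json.dumps(
        {"filename": filename, "file_sha256": file_sha256, "prev_hash": prev_hash},
        sort_keys=True,
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_entry(log, filename, data):
    """Record a file's SHA-256 digest, chained to the previous log entry."""
    prev_hash = log[-1]["entry_hash"] if log else GENESIS_HASH
    file_sha256 = hashlib.sha256(data).hexdigest()
    log.append({
        "filename": filename,
        "file_sha256": file_sha256,
        "prev_hash": prev_hash,
        "entry_hash": _entry_hash(filename, file_sha256, prev_hash),
    })


def verify_log(log):
    """Return True if no entry has been altered, removed, or reordered."""
    prev_hash = GENESIS_HASH
    for entry in log:
        expected = _entry_hash(entry["filename"], entry["file_sha256"], entry["prev_hash"])
        if entry["prev_hash"] != prev_hash or entry["entry_hash"] != expected:
            return False
        prev_hash = entry["entry_hash"]
    return True


# Hypothetical instrument output, represented as in-memory bytes
audit_log = []
append_entry(audit_log, "run_001.raw", b"chromatogram data, batch 1")
append_entry(audit_log, "run_002.raw", b"chromatogram data, batch 2")
log_is_intact = verify_log(audit_log)
print(f"Audit log intact: {log_is_intact}")
```

An independent reviewer can recompute the digest of a retained file and compare it with the logged value. Because each entry incorporates the hash of the previous one, altering an earlier record invalidates the chain from that point on.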

[Figure: Relationship between validation methodologies and legal standards]

The consequences of flawed forensic evidence in biomedical litigation extend beyond individual case outcomes to undermine the integrity of the judicial system itself. The 2023 amendment to Rule 702 represents a significant step toward heightened scrutiny of expert evidence, but its effectiveness depends on consistent application by courts and rigorous challenge by legal and scientific professionals.

For researchers and drug development professionals, understanding these forensic validation principles is essential not only when interacting with the legal system but also in maintaining scientific integrity across all domains. The experimental protocols and research reagents outlined provide a framework for critically evaluating forensic evidence, while the quantitative data on error rates offer a sobering perspective on the real-world impacts of methodological flaws.

As Dr. John Morgan's research concluded, in approximately half of wrongful convictions analyzed, "improved technology, testimony standards, or practice standards may have prevented a wrongful conviction at the time of trial" [24]. This statistic highlights both the profound consequences of forensic failures and the tangible benefits of implementing the validation methodologies described in this guide.

Building a Defensible Method: From Principles and Practices to Courtroom Application