Quantifying Discriminatory Power with Shannon Entropy: A Comprehensive Guide for Biomedical Research and Drug Development

Abstract

This article provides a comprehensive exploration of Shannon entropy as a powerful, information-theoretic metric for quantifying discriminatory power in biomedical and pharmaceutical research. Tailored for researchers, scientists, and drug development professionals, it covers the foundational theory of Shannon entropy, its practical application in methodologies from feature selection to diagnostic tool evaluation, strategies for troubleshooting and optimizing entropy-based models, and frameworks for the validation and comparative assessment of instruments and algorithms. By synthesizing insights from recent literature, this guide serves as a vital resource for enhancing the precision and interpretability of data-driven decisions in clinical and research settings.

Shannon Entropy Fundamentals: From Information Theory to Measuring Uncertainty

Within the framework of information theory, the concepts of self-information, surprisal, and average uncertainty provide the fundamental vocabulary for quantifying information. This technical guide details these core principles and their role in research, particularly in quantifying the discriminatory power of measurement instruments and analytical methods. Shannon entropy serves as a critical tool for evaluating how well diagnostic systems, health assessments, and classification models can distinguish between different states or groups, moving beyond qualitative assessments to provide robust, mathematically grounded evidence for research validity and instrument selection.

Core Conceptual Definitions

Self-Information (Surprisal)

Self-information, also commonly termed surprisal or Shannon information, is a measure of the information content associated with the outcome of a random event [1]. Formally, the self-information of a particular outcome \( x \) of a discrete random variable \( X \) is defined as:

\[ I(x) = -\log_b p(x) \]

where \( p(x) \) is the probability of the outcome \( x \), and \( b \) is the base of the logarithm, which determines the unit of information [2] [1]. When \( b = 2 \), the unit is the bit; when \( b = e \), the unit is the nat; and when \( b = 10 \), the unit is the hartley [2].

Table: Units of Self-Information

| Logarithm Base | Unit | Application Context |
|---|---|---|
| \( b = 2 \) | bit | Digital communications, computer science |
| \( b = e \) | nat | Mathematical physics, theoretical derivations |
| \( b = 10 \) | hartley | Historical applications, engineering |

This function exhibits three key properties that align with the intuitive understanding of information [2] [1]:

- Decreasing Function of Probability: The less probable an event \( E \) is, the more surprising it is and the more information it conveys. Formally, \( I(E) \) is a decreasing function of \( p(E) \).

- Certain Events Convey No Information: If an outcome \( E \) is certain to occur (\( p(E) = 1 \)), then it conveys no information: \( I(E) = 0 \).

- Additivity for Independent Events: The information conveyed by two independent events \( E \) and \( F \) is equal to the sum of the information of the individual events: \( I(E \cap F) = I(E) + I(F) \).

Example: Consider being told that a single card randomly drawn from a well-shuffled standard 52-card deck is the 10 of spades. The self-information of this event is \( I(x) = -\log_2 (1/52) \approx 5.70044 \) bits [2].
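The surprisal formula and the card example can be checked numerically. The short Python sketch below is illustrative only; the function name `self_information` is hypothetical, and it simply evaluates \( -\log_b p \) in each of the three bases from the units table, then confirms the "certain event" and additivity properties.

```python
import math

def self_information(p: float, base: float = 2.0) -> float:
    """Surprisal I(x) = -log_b p(x) = log_b(1/p) for an outcome of probability p."""
    if not 0.0 < p <= 1.0:
        raise ValueError("p must lie in (0, 1]")
    return math.log(1.0 / p, base)

p_card = 1 / 52  # the 10 of spades from a well-shuffled 52-card deck
print(f"{self_information(p_card):.5f} bits")          # 5.70044 bits
print(f"{self_information(p_card, math.e):.5f} nats")  # 3.95124 nats
print(f"{self_information(p_card, 10):.5f} hartleys")  # 1.71600 hartleys

# A certain event carries no information.
assert self_information(1.0) == 0.0
# Additivity: the card plus an independent fair coin flip.
assert math.isclose(self_information(p_card * 0.5),
                    self_information(p_card) + self_information(0.5))
```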

Entropy (Average Uncertainty)

Entropy, or Shannon entropy, quantifies the average uncertainty or the expected amount of information inherent in a random variable's possible outcomes [3]. For a discrete random variable \( X \) that takes on values \( x_1, x_2, \ldots, x_M \) with probabilities \( p_1, p_2, \ldots, p_M \), the entropy \( H(X) \) is defined as the expected value of the self-information [2] [3]:

\[ H(X) = E[I(X)] = -\sum_{i=1}^{M} p_i \log_b p_i \]

Entropy is a measure of the unpredictability of a state. A fundamental interpretation is that entropy represents the average number of bits (or other units) needed to encode the outcomes of the random variable \( X \) under an optimal encoding scheme [3].

Example: The entropy of a fair coin toss (\( p_{\text{heads}} = p_{\text{tails}} = 0.5 \)) is:

\[ H(X) = -[0.5 \cdot \log_2(0.5) + 0.5 \cdot \log_2(0.5)] = -[0.5 \cdot (-1) + 0.5 \cdot (-1)] = 1 \text{ bit} \]

This is the maximum entropy for a binary variable, the state of maximum uncertainty. If the coin is unfair (e.g., \( p_{\text{heads}} = 0.9 \)), the entropy decreases because the outcome becomes more predictable [3].

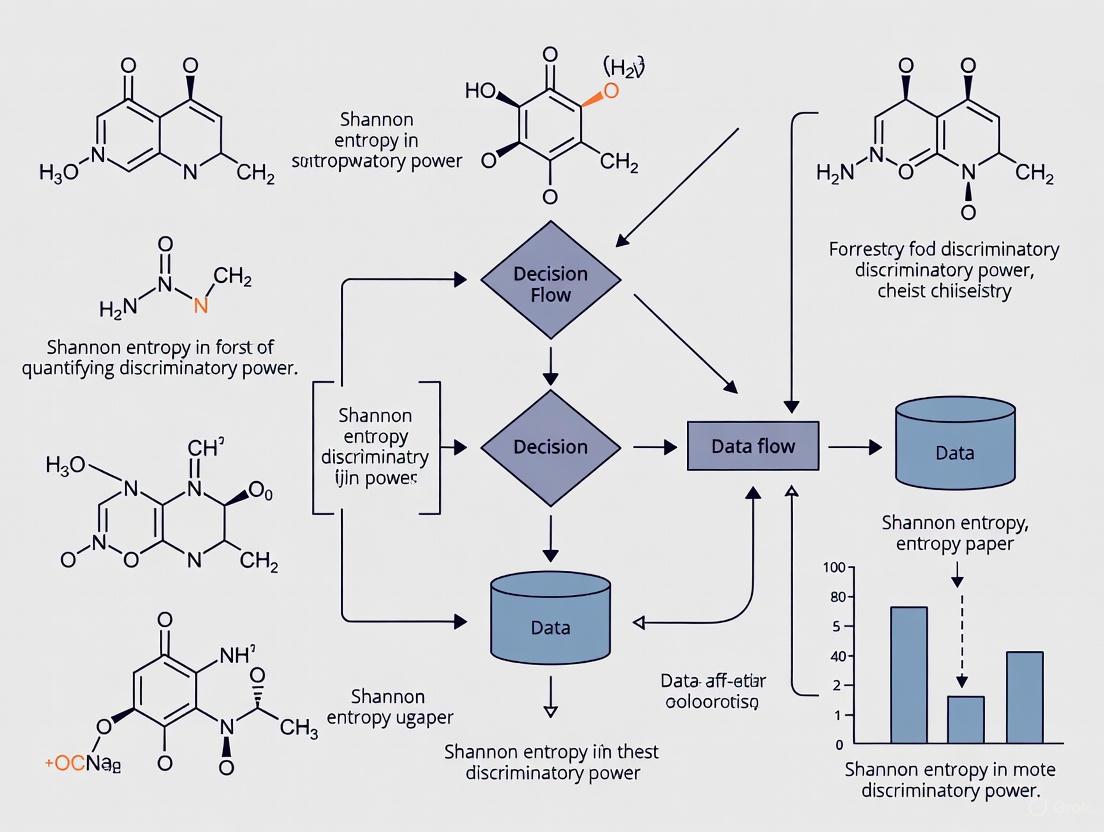

Figure 1: The logical relationship between a probability distribution, self-information, and entropy. Entropy is the expectation of self-information over all possible outcomes and represents both the average uncertainty and the average information of the variable.
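The coin example generalizes to any discrete distribution. The following Python sketch (the function name is hypothetical) implements \( H(X) = -\sum_i p_i \log_b p_i \) with the usual convention \( 0 \log 0 = 0 \), and shows that the biased coin with \( p_{\text{heads}} = 0.9 \) carries about 0.469 bits.

```python
import math
from typing import Iterable

def shannon_entropy(probs: Iterable[float], base: float = 2.0) -> float:
    """H(X) = -sum p_i log_b p_i, using the convention 0 * log 0 = 0."""
    probs = list(probs)
    if any(p < 0 for p in probs) or not math.isclose(sum(probs), 1.0, abs_tol=1e-9):
        raise ValueError("probabilities must be non-negative and sum to 1")
    # p * log(1/p) equals -p * log(p) and avoids printing -0.0 for certain outcomes.
    return sum(p * math.log(1.0 / p, base) for p in probs if p > 0)

print(round(shannon_entropy([0.5, 0.5]), 3))  # 1.0   (fair coin)
print(round(shannon_entropy([0.9, 0.1]), 3))  # 0.469 (biased coin)
print(round(shannon_entropy([1.0, 0.0]), 3))  # 0.0   (certain outcome)
```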

Reconciling "Average Information" and "Uncertainty"

The dual interpretation of entropy as both "average information" and "uncertainty" can seem paradoxical, but the two readings are, in fact, two sides of the same coin [4].

- Uncertainty Perspective: Before a random variable is measured, \( H(X) \) quantifies the average uncertainty about its outcome. A higher entropy means greater unpredictability.

- Information Perspective: After the variable is measured and the outcome is known, the amount of information gained is, on average, \( H(X) \). Learning the outcome of a high-entropy (unpredictable) variable provides more information than learning the outcome of a low-entropy (predictable) variable [4].

Thus, high uncertainty directly corresponds to high expected information gain upon measurement [4].

Relationships and Extended Concepts

Conditional Self-Information and Conditional Entropy

The conditional self-information of an event \( x \) given that another event \( y \) has occurred is defined as [2]:

\[ I(x \mid y) = -\log p(x \mid y) \]

It represents the surprisal of observing \( x \) after already knowing \( y \).

Conditional entropy \( H(X \mid Y) \) measures the average uncertainty remaining about random variable \( X \) after observing random variable \( Y \). It is defined as the expected value of the conditional self-information [3]:

\[ H(X \mid Y) = \sum_{y} p(y) \left[ -\sum_{x} p(x \mid y) \log p(x \mid y) \right] = E[I(X \mid Y)] \]
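Conditional entropy can be computed directly from a joint probability table by weighting the entropy of each conditional distribution \( p(x \mid y) \) by \( p(y) \). In this minimal sketch the joint table, indexed as `joint[x][y]`, is a hypothetical example (for instance, disease status \( X \) versus a binary test result \( Y \)); the function name is likewise illustrative.

```python
import math

def conditional_entropy(joint, base=2.0):
    """H(X|Y) = sum_y p(y) * [-sum_x p(x|y) log p(x|y)] for joint[x][y] = p(x, y)."""
    n_x, n_y = len(joint), len(joint[0])
    h = 0.0
    for y in range(n_y):
        p_y = sum(joint[x][y] for x in range(n_x))  # marginal p(y)
        if p_y == 0:
            continue  # values of Y that never occur contribute nothing
        for x in range(n_x):
            p_x_given_y = joint[x][y] / p_y
            if p_x_given_y > 0:  # convention: 0 log 0 = 0
                h += p_y * p_x_given_y * math.log(1.0 / p_x_given_y, base)
    return h

# Hypothetical joint distribution p(x, y): rows are X = 0/1, columns are Y = 0/1.
joint = [[0.4, 0.1],
         [0.1, 0.4]]
print(round(conditional_entropy(joint), 4))  # 0.7219 bits left about X after seeing Y
```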

Mutual Information

Mutual information quantifies the amount of information that one random variable provides about another [2]. For two events \( x \) and \( y \), the (pointwise) mutual information is defined as:

\[ I(x; y) = \log \frac{p(x, y)}{p(x)p(y)} \]

For random variables \( X \) and \( Y \), the average mutual information \( I(X; Y) \) is the expected value of the pointwise mutual information over all possible event pairs, weighted by \( p(x, y) \) [2]. It can be expressed in terms of entropy:

\[ I(X; Y) = H(X) - H(X \mid Y) = H(Y) - H(Y \mid X) \]

This relationship shows that mutual information is the reduction in uncertainty about \( X \) due to knowledge of \( Y \) (or vice versa) [2].

Figure 2: The relationship between the entropy of two variables (H(X), H(Y)), their conditional entropies (H(X|Y), H(Y|X)), and their mutual information (I(X;Y)). The mutual information is the intersection of the information in X and Y.
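The identity \( I(X;Y) = H(X) - H(X \mid Y) \) can be checked numerically. This sketch averages the pointwise mutual information over a hypothetical joint table and compares the result with the entropy form, obtaining \( H(X \mid Y) \) through the standard chain rule \( H(X \mid Y) = H(X, Y) - H(Y) \). Function names are illustrative.

```python
import math

def entropy(probs, base=2.0):
    """H in the given base, with 0 log 0 = 0."""
    return sum(p * math.log(1.0 / p, base) for p in probs if p > 0)

def mutual_information(joint, base=2.0):
    """I(X;Y) = sum_{x,y} p(x,y) log[p(x,y) / (p(x) p(y))], the average pointwise MI."""
    p_x = [sum(row) for row in joint]
    p_y = [sum(col) for col in zip(*joint)]
    mi = 0.0
    for i, row in enumerate(joint):
        for j, p_xy in enumerate(row):
            if p_xy > 0:
                mi += p_xy * math.log(p_xy / (p_x[i] * p_y[j]), base)
    return mi

joint = [[0.4, 0.1],
         [0.1, 0.4]]
mi = mutual_information(joint)
h_x = entropy(sum(row) for row in joint)
h_y = entropy(sum(col) for col in zip(*joint))
h_xy = entropy(p for row in joint for p in row)
assert math.isclose(mi, h_x - (h_xy - h_y), abs_tol=1e-12)  # I = H(X) - H(X|Y)
print(round(mi, 4))  # 0.2781
```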

Table: Summary of Key Information-Theoretic Measures

| Concept | Notation | Formula | Interpretation |
|---|---|---|---|
| Self-Information | \( I(x) \) | \( -\log p(x) \) | Surprise or information from a single outcome \( x \). |
| Entropy | \( H(X) \) | \( E[I(X)] \) | Average uncertainty or information of variable \( X \). |
| Conditional Entropy | \( H(X \mid Y) \) | \( E[I(X \mid Y)] \) | Average uncertainty in \( X \) remaining after knowing \( Y \). |
| Mutual Information | \( I(X; Y) \) | \( H(X) - H(X \mid Y) \) | Average amount of information \( Y \) provides about \( X \). |

Application in Research: Quantifying Discriminatory Power

The core concepts of self-information and entropy are directly applicable to evaluating the discriminatory power of research instruments, particularly in healthcare and psychology.

Shannon's Indices in Health-Related Quality of Life (HRQL) Assessment

A pivotal application involves using Shannon's index \( H' \) and Shannon's Evenness index \( J' \) to quantitatively compare multi-attribute utility instruments (MAUIs) like the EQ-5D, HUI2, and HUI3 [5].

- Shannon's Index (Absolute Informativity): \( H' = -\sum_{i=1}^{L} p_i \log p_i \), where \( L \) is the number of levels in a dimension and \( p_i \) is the proportion of observations in the \( i \)-th level. A higher \( H' \) indicates a greater ability to discriminate among different health states (higher absolute informativity) [5].

- Shannon's Evenness Index (Relative Informativity): \( J' = H' / H'_{\text{max}} \), where \( H'_{\text{max}} = \log L \). This measures how evenly the responses are distributed across all available levels, with a higher \( J' \) indicating that the instrument better utilizes its full classification system [5].
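Both indices follow directly from the response counts for a single dimension. The Python sketch below is a minimal illustration, not the cited study's code; the function name and the response data are hypothetical. Note that \( L \) must be the number of levels the instrument offers, including levels nobody selected, and that the logarithm base cancels in \( J' \).

```python
import math
from collections import Counter

def shannon_indices(responses, n_levels, base=2.0):
    """Return (H', J') for one dimension of a multi-level instrument.

    responses: observed level codes for that dimension (one per respondent)
    n_levels:  L, the number of levels the dimension offers (must be >= 2)
    """
    counts = Counter(responses)
    total = sum(counts.values())
    # H' = -sum p_i log p_i over observed levels; unobserved levels add 0.
    h = sum((c / total) * math.log(total / c, base) for c in counts.values())
    h_max = math.log(n_levels, base)  # H'_max = log L
    return h, h / h_max

# Hypothetical 3-level dimension: 60 respondents at level 1, 30 at level 2, 10 at level 3.
responses = [1] * 60 + [2] * 30 + [3] * 10
h, j = shannon_indices(responses, n_levels=3)
print(f"H' = {h:.3f} bits, J' = {j:.3f}")  # H' = 1.295 bits, J' = 0.817
```

Applying the function to each dimension of each instrument yields the per-dimension values that are then compared across instruments.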

Key Findings: A study comparing EQ-5D, HUI2, and HUI3 in a general US adult population (N=3,691) found that HUI3 had the highest absolute informativity, while EQ-5D had the highest relative informativity [5]. This indicates that while HUI3 discriminates best among health states in an absolute sense, the EQ-5D uses its simpler classification system (5 dimensions with 3 levels each) more efficiently.

Sample Entropy in Neuroimaging

Sample entropy (SampEn), an entropy measure derived from approximate entropy, quantifies the complexity and irregularity of physiological signals such as fMRI data [6]. It is the negative logarithm of the conditional probability that two sequences similar for \( m \) points remain similar at the next point, excluding self-matches.
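The definition translates into a direct, if quadratic-time, count of template matches. The following is a minimal pure-Python sketch for illustration, not the cited study's implementation: it uses the Chebyshev (maximum) distance, excludes self-matches, uses the same \( N - m \) templates for lengths \( m \) and \( m + 1 \) (the convention of Richman and Moorman), and sets \( r \) as a fraction of the population standard deviation. The defaults \( m = 2 \) and \( r = 0.30 \times \text{SD} \) mirror the parameters of the fMRI protocol; the function name is hypothetical.

```python
import math
import random
import statistics

def sample_entropy(x, m=2, r_factor=0.30):
    """SampEn(m, r) = -ln(A / B) = ln(B / A).

    B: pairs of length-m templates within tolerance r (self-matches excluded)
    A: pairs of length-(m+1) templates within tolerance r
    r = r_factor * population standard deviation of x
    """
    n = len(x)
    r = r_factor * statistics.pstdev(x)

    def count_matches(length):
        # Use the first n - m templates for both lengths so B and A are comparable.
        templates = [x[i:i + length] for i in range(n - m)]
        count = 0
        for i in range(len(templates)):
            for j in range(i + 1, len(templates)):  # j > i excludes self-matches
                if max(abs(a - b) for a, b in zip(templates[i], templates[j])) <= r:
                    count += 1
        return count

    b = count_matches(m)
    a = count_matches(m + 1)
    if b == 0:
        return math.nan  # undefined: no length-m matches at all
    if a == 0:
        return math.inf  # no length-(m+1) matches: unbounded irregularity
    return math.log(b / a)

regular = [0.0, 1.0] * 50  # perfectly predictable alternation
rng = random.Random(0)
noise = [rng.gauss(0.0, 1.0) for _ in range(300)]
print(sample_entropy(regular))          # 0.0
print(round(sample_entropy(noise), 2))  # white noise: much higher
```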

Research Protocol: Discriminating Age Groups with fMRI

- Objective: To determine if Sample Entropy can discriminate between young and elderly adults using short fMRI datasets [6].

- Data: Resting-state fMRI data from the International Consortium for Brain Mapping (ICBM) dataset, including 43 younger and 43 elderly adults.

- Methodology:

  - Preprocessing: Standard fMRI preprocessing, including discarding the first 3-4 volumes, which are acquired before the signal reaches a steady state.

  - Parameter Selection: Pattern length \( m = 2 \), tolerance \( r = 0.30 \times \text{standard deviation of the data} \).

  - Data Length Analysis: Investigated data lengths \( N \) (number of volumes) from 85 to 128.

  - Analysis: Whole-brain and regional Sample Entropy calculated for each subject. Groups compared using statistical tests (e.g., t-tests) with significance level \( p < 0.05 \) and false discovery rate (FDR) correction.

- Key Result: Sample Entropy effectively discriminated between young and elderly adults, with an accuracy of 85% at \( N = 85 \), supporting the hypothesis of a "loss of entropy" (reduced brain signal complexity) with ageing [6].
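The group comparison with FDR control can be sketched as follows. To stay dependency-free, this sketch swaps in a two-sided permutation test on the difference in group means in place of the t-tests mentioned in the protocol, and applies the Benjamini-Hochberg procedure, the most common FDR correction (the protocol does not state which FDR method was used). The function names and all values are hypothetical.

```python
import random
import statistics

def permutation_pvalue(a, b, n_perm=5000, seed=0):
    """Two-sided permutation test for a difference in group means (e.g. regional SampEn)."""
    rng = random.Random(seed)
    observed = abs(statistics.fmean(a) - statistics.fmean(b))
    pooled = list(a) + list(b)
    n_a = len(a)
    extreme = 0
    for _ in range(n_perm):
        rng.shuffle(pooled)  # random relabelling of subjects into two groups
        diff = abs(statistics.fmean(pooled[:n_a]) - statistics.fmean(pooled[n_a:]))
        if diff >= observed:
            extreme += 1
    return (extreme + 1) / (n_perm + 1)  # add-one correction keeps p > 0

def bh_adjust(pvals):
    """Benjamini-Hochberg adjusted p-values, returned in the input order."""
    m = len(pvals)
    order = sorted(range(m), key=lambda i: pvals[i])
    adjusted = [0.0] * m
    running_min = 1.0
    for rank in range(m, 0, -1):  # from the largest p-value down to the smallest
        i = order[rank - 1]
        running_min = min(running_min, pvals[i] * m / rank)
        adjusted[i] = running_min
    return adjusted

# Hypothetical whole-brain SampEn values (one per subject) for two groups.
young = [1.72, 1.65, 1.70, 1.68, 1.74, 1.69]
elderly = [1.58, 1.61, 1.55, 1.63, 1.60, 1.57]
p_whole_brain = permutation_pvalue(young, elderly)
# FDR-adjust p-values from several regions (hypothetical values).
print(bh_adjust([0.01, 0.04, 0.03, 0.20]))  # approx. [0.04, 0.0533, 0.0533, 0.2]
```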

Table: The Scientist's Toolkit - Key Reagents & Materials for Entropy-Based Discrimination Studies

| Item / Reagent | Function / Role in Analysis |
|---|---|
| Multi-Attribute Utility Instrument (MAUI) | A standardized health state classification system (e.g., EQ-5D, HUI2/3) used to collect response data across multiple dimensions and levels. |
| fMRI Scanner | Equipment used to acquire blood-oxygen-level-dependent (BOLD) time series data, which serves as the input for calculating signal entropy (e.g., Sample Entropy). |
| Preprocessing Pipeline Software | Software (e.g., FSL, SPM) used to clean and prepare raw data by removing artifacts, correcting for head motion, and discarding initial non-steady-state volumes. |
| Optimal Parameter Set (\( m, r, N \)) | The critical parameters for entropy calculation: pattern length \( m \), tolerance \( r \), and data length \( N \). Their robust selection is crucial for valid and consistent results. |
| Statistical Analysis Suite | Software (e.g., R, Python with SciPy) used to perform significance testing and multiple comparison corrections to validate the discriminatory power of entropy measures. |

Network Entropy in Complex System Analysis

In archaeological and social network studies, entropy measures are adapted to analyze complex system dynamics. The PANARCH framework uses multiple entropy types—degree, eigenvector, community, and betweenness entropy—to identify and quantify phases in adaptive cycles [7]. This application demonstrates how the core concept of average uncertainty can be extended to quantify structural diversity and predictability within networks, providing a mathematical signature for different system states and phase transitions [7].
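The specific entropy definitions used by PANARCH are not given here, so the sketch below rests on an assumption: it uses one common formulation of degree entropy, the Shannon entropy of a network's degree distribution \( p(k) \). Low values indicate structurally homogeneous, predictable networks; higher values indicate more diverse connectivity. The function name and toy graphs are hypothetical, and isolated nodes (absent from the edge list) are ignored.

```python
import math
from collections import Counter

def degree_entropy(edges, base=2.0):
    """Shannon entropy of the degree distribution p(k) of an undirected edge list."""
    degree = Counter()
    for u, v in edges:
        degree[u] += 1
        degree[v] += 1
    n_nodes = len(degree)
    nodes_per_degree = Counter(degree.values())  # k -> number of nodes with degree k
    return sum((c / n_nodes) * math.log(n_nodes / c, base)
               for c in nodes_per_degree.values())

star = [(0, 1), (0, 2), (0, 3), (0, 4)]          # one hub, four leaves
ring = [(0, 1), (1, 2), (2, 3), (3, 4), (4, 0)]  # every node has degree 2
print(round(degree_entropy(star), 3))  # 0.722
print(degree_entropy(ring))            # 0.0
```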

Practical Calculation and Implementation

Workflow for Instrument Discriminatory Power Analysis

The workflow in Figure 3 outlines the steps for applying Shannon's indices to assess the discriminatory power of a multi-category instrument.

Figure 3: A practical workflow for calculating Shannon's indices to evaluate the discriminatory power of research instruments, such as health state classification systems.

Key Considerations for Robust Analysis

- Data Requirements: Shannon's indices require a representative sample size to ensure stable probability estimates \( p_i \) [5]. For Sample Entropy in fMRI, a data length \( N > 100 \) is recommended for reliable results, though discrimination is possible with shorter lengths (~85 volumes) [6].

- Parameter Sensitivity: Entropy measures can be sensitive to parameter choices, notably \( m \) and \( r \) for Sample Entropy; sensitivity analysis is recommended [6]. For Shannon's indices, the logarithm base rescales \( H' \) by a constant factor but leaves \( J' \) unchanged, so the base should be reported and kept consistent across comparisons.

- Interpretation: No single measure provides a complete picture. A holistic assessment of discriminatory power should consider both absolute (\( H' \)) and relative (\( J' \)) informativity, as they can lead to different conclusions about instrument performance [5].

This technical guide provides an in-depth examination of the Shannon entropy formula, H(X) = -Σ p(x) log p(x), and its role in quantifying discriminatory power in scientific research, particularly in drug discovery and development. Shannon entropy serves as a fundamental measure of uncertainty, information content, and system variability, enabling researchers to discriminate between complex biological states, identify critical molecular targets, and prioritize experimental resources. We explore the mathematical foundations of entropy, present detailed experimental protocols for its application in gene expression analysis and molecular property prediction, and visualize key workflows and relationships. By synthesizing current methodologies and applications, this guide aims to equip researchers with the theoretical understanding and practical tools necessary to leverage entropy-based metrics for enhanced discriminatory power in scientific investigations.

Shannon entropy, introduced by Claude Shannon in his seminal 1948 paper "A Mathematical Theory of Communication," quantifies the average level of uncertainty or information inherent in a random variable's possible outcomes [3]. The entropy H(X) of a discrete random variable X measures the expected amount of information needed to describe the state of the variable, considering the probability distribution across all potential states [3]. In research contexts, this translates directly to discriminatory power – the ability to distinguish between system states, identify meaningful patterns amidst noise, and prioritize variables based on their information content rather than mere magnitude.

The core intuition behind Shannon's formulation is that the informational value of a message depends on its surprisingness: highly probable events carry little information, while unlikely events communicate substantial information when they occur [3]. This principle enables entropy to serve as a powerful filter for identifying biologically significant elements in complex datasets, where the mere presence of change is less important than the pattern and context of that change across multiple states or conditions.

Mathematical Foundations

Core Formula and Components

The Shannon entropy H(X) for a discrete random variable X is defined as:

H(X) = -Σ p(x) log p(x)

Where:

- X is a discrete random variable with possible outcomes in set 𝒳

- p(x) is the probability mass function, representing Pr(X = x)

- The summation is taken over all possible outcomes x ∈ 𝒳

- The logarithm base (typically 2, e, or 10) determines the entropy units (bits, nats, or hartleys, respectively) [3] [8]

This formulation can be equivalently expressed as an expected value: H(X) = E[-log p(X)], representing the average surprisal or self-information of the variable X [3] [8]. The self-information of an individual outcome x is defined as I(x) = -log p(x), representing the information gained by observing that specific outcome [3].

Key Properties and Interpretations

Shannon entropy satisfies several fundamental properties that make it particularly valuable for research applications:

- Non-negativity: H(X) ≥ 0 for all probability distributions, with equality only when one outcome has probability 1 and all others have probability 0 [3] [8].

- Maximum entropy: For a finite set of n possible outcomes, entropy is maximized when all outcomes are equally likely (uniform distribution): H(X) ≤ log(n) [8].

- Additivity: The joint entropy of independent random variables equals the sum of their individual entropies: H(X,Y) = H(X) + H(Y) for independent X and Y [8].

- Continuity and symmetry: H(X) depends continuously on the probability distribution and is symmetric with respect to permutations of the probability values [8].
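These properties are easy to confirm numerically. The sketch below draws random distributions over six outcomes to check non-negativity and the log(n) upper bound, then verifies additivity for a pair of independent variables. The distributions are arbitrary illustrations and the helper name is hypothetical.

```python
import math
import random

def entropy(probs, base=2.0):
    """H(X) in the given base, with 0 log 0 = 0."""
    return sum(p * math.log(1.0 / p, base) for p in probs if p > 0)

rng = random.Random(42)
n = 6
for _ in range(1000):
    weights = [rng.random() for _ in range(n)]
    total = sum(weights)
    probs = [w / total for w in weights]
    h = entropy(probs)
    assert 0.0 <= h <= math.log2(n) + 1e-12  # non-negativity and H(X) <= log(n)

# The uniform distribution attains the maximum log2(6).
print(round(entropy([1 / n] * n), 3), round(math.log2(n), 3))  # 2.585 2.585

# Additivity: for independent X and Y, p(x, y) = p(x) p(y) and H(X,Y) = H(X) + H(Y).
p_x = [0.5, 0.3, 0.2]
p_y = [0.9, 0.1]
joint = [a * b for a in p_x for b in p_y]
assert math.isclose(entropy(joint), entropy(p_x) + entropy(p_y))
```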

Table 1: Key Properties of Shannon Entropy and Their Research Implications

| Property | Mathematical Expression | Research Implication |
|---|---|---|
| Non-negativity | H(X) ≥ 0 | Provides consistent, interpretable baseline for comparisons |
| Maximum Value | H(X) ≤ log(n) | Enables normalization for cross-study comparisons |
| Additivity | H(X,Y) = H(X) + H(Y) for independent variables | Supports analysis of independent biological processes |
| Continuity | Small probability changes → small entropy changes | Ensures robustness to minor measurement variations |

Shannon Entropy in Research: Quantifying Discriminatory Power

Theoretical Framework for Discriminatory Power

In research contexts, discriminatory power refers to the ability to distinguish between relevant categories, states, or conditions based on available data. Entropy-based measures quantify this power as the reduction in uncertainty about the category achieved by observing a variable. A variable's own entropy bounds how much it can reveal, since I(X;Y) ≤ H(X); variables with high entropy across conditions therefore have greater potential for discrimination, although high entropy alone does not guarantee that the variation tracks the conditions of interest.

The theoretical foundation lies in information theory's core principle: entropy measures the uncertainty about a system's state before measurement, while conditional entropy measures the remaining uncertainty after observing related variables [3]. The mutual information between variables – quantifying their shared information – directly measures the discriminatory power one variable provides about another [9].
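In practice this discriminatory power is often estimated as the information gain of a discrete feature about a class label, I(Y; X) = H(Y) - H(Y|X), computed from paired samples. The following minimal sketch uses plug-in (frequency) estimates, which tend to overstate information in small samples; the function names and toy data are hypothetical. Both toy features have the same entropy (1 bit), but only one carries information about the class.

```python
import math
from collections import Counter, defaultdict

def label_entropy(labels, base=2.0):
    """Plug-in Shannon entropy of a list of discrete labels."""
    counts = Counter(labels)
    n = len(labels)
    return sum((c / n) * math.log(n / c, base) for c in counts.values())

def information_gain(feature, labels, base=2.0):
    """I(Y; X) = H(Y) - H(Y|X) for a discrete feature X and class labels Y."""
    groups = defaultdict(list)
    for x, y in zip(feature, labels):
        groups[x].append(y)  # class labels observed at each feature value
    n = len(labels)
    h_y_given_x = sum(len(g) / n * label_entropy(g, base) for g in groups.values())
    return label_entropy(labels, base) - h_y_given_x

labels = ["case"] * 4 + ["control"] * 4
separates = ["+", "+", "+", "+", "-", "-", "-", "-"]  # tracks the class perfectly
unrelated = ["hi", "lo", "hi", "lo", "hi", "lo", "hi", "lo"]
print(information_gain(separates, labels))  # 1.0
print(information_gain(unrelated, labels))  # 0.0
```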

Application Domains

Drug Target Identification

In functional genomics, Shannon entropy can be used to identify putative drug targets by analyzing temporal gene expression patterns [10] [11]. Genes whose expression patterns have high entropy across time points or conditions carry more information about biological processes and disease progression, making them stronger candidates for therapeutic intervention [11]. This approach narrows thousands of genes to a manageable subset with the greatest physiological relevance, which can substantially improve drug discovery efficiency [11].

Molecular Property Prediction

In cheminformatics, entropy-based descriptors derived from molecular string representations (SMILES, SMARTS, InChIKey) enhance machine learning models for predicting physicochemical properties [12]. These descriptors capture structural complexity and information content, improving prediction accuracy for properties critical to drug efficacy and safety [12] [13]. The approach provides a compact numerical representation that is sensitive to stereochemistry and structural changes, enabling more discriminative models.

Efficiency Assessment in Healthcare Systems

Entropy-weighted data envelopment analysis (DEA) applies Shannon entropy to derive objective, data-driven weight constraints in efficiency models [14]. This method limits weight flexibility without relying on subjective expert judgment, producing more robust efficiency scores that better discriminate between high-performing and low-performing healthcare systems based on their resource utilization and outcomes [14].
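The cited work's exact formulation is not reproduced here. As an assumption about how entropy can yield objective weights, the sketch below implements the widely used entropy weight method: indicators that vary little across decision-making units have normalized entropy near 1 and receive little weight, while more dispersed indicators receive more. Such weights could then inform the bounds of assurance-region (AR) constraints in DEA; that link, the function name, and the data are hypothetical.

```python
import math

def entropy_weights(matrix):
    """Entropy weight method for a units x indicators matrix of positive values.

    Rows are decision-making units (e.g. health systems), columns are indicators;
    at least two rows are required so that ln(n) > 0.
    """
    n = len(matrix)
    k = 1.0 / math.log(n)  # scales each indicator's entropy into [0, 1]
    diversification = []
    for j in range(len(matrix[0])):
        column = [row[j] for row in matrix]
        total = sum(column)
        shares = [v / total for v in column]
        e_j = k * sum(p * math.log(1.0 / p) for p in shares if p > 0)
        diversification.append(max(0.0, 1.0 - e_j))  # clamp tiny negative rounding error
    s = sum(diversification)
    if s == 0:
        raise ValueError("all indicators are uniform; entropy weights are undefined")
    return [d / s for d in diversification]

# Hypothetical data: indicator 0 is identical across units, indicator 1 varies.
units = [[5.0, 1.0],
         [5.0, 2.0],
         [5.0, 9.0]]
print([round(w, 3) for w in entropy_weights(units)])  # [0.0, 1.0]
```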

Experimental Protocols and Methodologies

Protocol 1: Identifying Putative Drug Targets from Gene Expression Data

This protocol applies Shannon entropy to rank genes by their potential as drug targets based on temporal expression patterns [11].

Materials and Reagents

- Biological Sample: Tissue or cell lines representing the disease model across multiple time points

- Gene Expression Assay: DNA microarrays, RNA-seq, or robotic RT-PCR systems

- Triplicate Samples: For each time point to ensure statistical reliability

- Control Reference: Plasmid-derived RNA for RT-PCR normalization

- Analysis Software: Python, R, or specialized bioinformatics platforms

Procedure

- Sample Collection: Collect biological samples at multiple time points during disease progression or treatment response. Include triplicate samples for each time point.

- Expression Quantification: Assay mRNA levels using DNA microarrays, RNA-seq, or RT-PCR. For RT-PCR, include control plasmid-derived RNA in each reaction for normalization.

- Data Normalization: Calculate relative expression levels at each time point compared to controls. For triplicate samples, use average expression values.

- Discretization: Convert continuous expression values to discrete levels. One method is ternary discretization:

  - "High" expression: Value > mean + 0.5 × standard deviation

  - "Low" expression: Value < mean - 0.5 × standard deviation

  - "Medium" expression: All other values

- Probability Calculation: For each gene, compute the probability of each expression level across all time points: p = (count of time points with level) / (total time points)

- Entropy Calculation: Compute Shannon entropy for each gene:

  - H(gene) = -Σ [p(level) × log₂ p(level)] across all expression levels

  - Use 0 · log 0 = 0 for any expression level not observed

- Target Prioritization: Rank genes by descending entropy values. The highest-entropy genes are prioritized as the strongest drug target candidates for further validation.
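The procedure above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the cited authors' code: the mean and standard deviation used for the ternary cut-offs are computed per gene across its own time points (the protocol does not specify this), the input is assumed to be already normalized and averaged over triplicates, and the gene names and values are hypothetical. With three levels, the maximum possible entropy is log₂ 3 ≈ 1.585 bits.

```python
import math
import statistics
from collections import Counter

def discretize_ternary(values):
    """Label each time point 'high', 'low' or 'medium' using this gene's mean +/- 0.5 SD."""
    mu = statistics.fmean(values)
    sd = statistics.pstdev(values)
    levels = []
    for v in values:
        if v > mu + 0.5 * sd:
            levels.append("high")
        elif v < mu - 0.5 * sd:
            levels.append("low")
        else:
            levels.append("medium")
    return levels

def profile_entropy(levels):
    """Shannon entropy in bits; unobserved levels contribute 0 (0 log 0 = 0)."""
    counts = Counter(levels)
    n = len(levels)
    return sum((c / n) * math.log2(n / c) for c in counts.values())

def rank_genes(expression):
    """expression: {gene: [normalized expression per time point]} -> [(gene, H)] by descending H."""
    scores = {gene: profile_entropy(discretize_ternary(v)) for gene, v in expression.items()}
    return sorted(scores.items(), key=lambda kv: kv[1], reverse=True)

# Hypothetical relative expression at six time points.
expression = {
    "geneA": [1.0, 1.0, 1.0, 1.0, 1.0, 1.0],  # flat profile
    "geneB": [1.0, 1.0, 1.0, 1.0, 1.0, 5.0],  # a single dramatic change
    "geneC": [0.2, 1.0, 3.0, 0.5, 2.0, 1.2],  # varied temporal pattern
}
for gene, h in rank_genes(expression):
    print(f"{gene}: {h:.3f} bits")
# prints geneC: 1.585 bits, then geneB: 0.650 bits, then geneA: 0.000 bits
```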

Validation

- Confirm known relevant functional categories are over-represented among high-entropy genes

- Validate top candidates through pathway analysis and literature review

- Perform experimental validation for selected high-priority targets

Figure 1: Workflow for identifying putative drug targets using Shannon entropy analysis of gene expression data.

Protocol 2: Molecular Property Prediction Using Entropy Descriptors

This protocol employs Shannon entropy descriptors to predict physicochemical properties of compounds for drug development [12] [13].

Materials and Reagents

- Compound Dataset: Libraries of molecules with known physicochemical properties

- Molecular Representation: Canonical SMILES, SMARTS, or InChIKey strings

- Computational Resources: Python with RDKit, ChemPy, or similar cheminformatics libraries

- Validation Set: Compounds with experimentally determined properties for model validation

Procedure

- Dataset Preparation: Compile a dataset of molecules with known values for the target property (e.g., boiling point, molar refractivity, inhibitory concentration).

- String Representation: Generate canonical SMILES strings for all molecules in the dataset.

- Tokenization: Split SMILES strings into tokens based on standard chemical vocabulary (atoms, bonds, ring indicators, branching symbols).

- Frequency Analysis: For each molecule, calculate the frequency of each token type: f(token) = (count of token) / (total tokens)

- Descriptor Calculation:

  - Total Entropy: H_total = -Σ [f(token) × log₂ f(token)] across all token types

  - Fractional Atom Entropy: For each atom type, calculate H_atom = (atom_count / total_atoms) × H_total

  - Bond Entropy: Calculate based on bond type frequencies

- Model Development:

  - Split data into training and test sets (typically 80/20)

  - Train multiple regression models (linear, Ridge, Lasso, support vector regression) using entropy descriptors as features

  - Optimize hyperparameters via cross-validation

- Model Evaluation: Assess performance using coefficient of determination (R²), mean absolute error (MAE), and root mean squared error (RMSE)
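The tokenization, frequency-analysis, and entropy-descriptor steps above can be sketched with the Python standard library alone. The regular expression below is a simplified, hypothetical tokenizer (bracket atoms, two-letter halogens, organic-subset and aromatic atoms, bonds, branches, and ring-closure digits) rather than the cited work's vocabulary, and canonicalization is assumed to have been done upstream, for example with RDKit's `Chem.MolToSmiles`. Treating every alphabetic or bracketed token as an atom is also an assumption.

```python
import math
import re
from collections import Counter

# Hypothetical simplified SMILES tokenizer; two-letter halogens are tried before single letters.
SMILES_TOKEN = re.compile(
    r"(\[[^\]]+\]|Br|Cl|[BCNOSPFI]|[bcnops]|\(|\)|\.|=|#|-|\+|\\|/|:|~|@|\?|>|\*|\$|%\d{2}|\d)"
)

def tokenize(smiles):
    tokens = SMILES_TOKEN.findall(smiles)
    if "".join(tokens) != smiles:
        raise ValueError(f"untokenizable characters in {smiles!r}")
    return tokens

def is_atom(token):
    return token.startswith("[") or token.isalpha()

def smiles_entropy(smiles):
    """H_total = -sum f(token) log2 f(token) over the token types of one SMILES string."""
    counts = Counter(tokenize(smiles))
    n = sum(counts.values())
    return sum((c / n) * math.log2(n / c) for c in counts.values())

def fractional_atom_entropy(smiles):
    """H_atom = (atom_count / total_atoms) * H_total for each atom type."""
    atoms = Counter(t for t in tokenize(smiles) if is_atom(t))
    total_atoms = sum(atoms.values())
    h_total = smiles_entropy(smiles)
    return {atom: (count / total_atoms) * h_total for atom, count in atoms.items()}

print(round(smiles_entropy("CCO"), 3))  # 0.918
print({a: round(h, 3) for a, h in fractional_atom_entropy("CCO").items()})  # {'C': 0.612, 'O': 0.306}
```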

Validation

- Compare performance against traditional descriptors (Morgan fingerprints)

- Test predictive accuracy on external validation sets

- Apply to novel compounds and correlate predictions with experimental results

Table 2: Research Reagent Solutions for Entropy-Based Experiments

| Reagent/Resource | Function | Application Context |
|---|---|---|
| DNA Microarrays | Parallel quantification of thousands of gene transcripts | Genome-wide entropy analysis for drug target identification |
| RT-PCR Systems | Precise measurement of specific gene expression levels | Targeted entropy validation studies |
| Canonical SMILES | Standardized string representation of molecular structure | Calculation of molecular entropy descriptors |
| Morgan Fingerprints | Circular topological fingerprints of molecular structure | Benchmark comparison for entropy-based descriptors |
| PubChem Database | Repository of chemical structures and properties | Source of molecular data and validation properties |

Data Analysis and Visualization

Quantitative Comparison of Entropy Applications

Table 3: Performance Comparison of Entropy-Based Methods Across Applications

| Application Domain | Baseline Method | Entropy Method | Performance Improvement |
|---|---|---|---|
| Drug Target Identification | Single change in expression | Temporal pattern entropy | Focus on ~10% of genome with highest physiological relevance [11] |
| Molecular Property Prediction | Morgan fingerprints | SMILES Shannon entropy + fractional entropy | 25.5% improvement in MAPE for IC50 prediction [12] |
| Binding Efficiency Prediction | Molecular weight only | Hybrid entropy descriptors | 64% improvement in MAPE, 62% in MAE for BEI prediction [12] |
| Healthcare Efficiency Assessment | Traditional DEA | Entropy-weighted AR DEA | More robust efficiency scores, reduced artificial overestimation [14] |

Interpretation of Results

The quantitative improvements observed across domains demonstrate entropy's enhanced discriminatory power compared to traditional approaches. In drug target identification, entropy efficiently prioritizes candidates by focusing on genes with diverse expression patterns across multiple conditions rather than those showing only single dramatic changes [11]. This temporal or contextual discrimination identifies genes that are active participants in biological processes rather than passive responders.

In molecular property prediction, entropy descriptors capture complex structural information that traditional fingerprints may miss, leading to significant improvements in prediction accuracy [12]. The superiority of hybrid approaches combining multiple entropy types suggests that different entropy formulations capture complementary aspects of molecular complexity, together providing more discriminative power for property prediction.

Figure 2: Relationship between Shannon entropy analysis and enhanced discriminatory power across research applications.

Shannon entropy provides a powerful mathematical framework for quantifying discriminatory power across diverse research domains, particularly in drug discovery and development. By measuring information content and uncertainty, entropy-based approaches enable researchers to distinguish meaningful signals from noise, prioritize resources efficiently, and gain deeper insights into complex biological and chemical systems.

The experimental protocols and case studies presented demonstrate that going beyond simple magnitude-based metrics to pattern-based entropy analysis yields substantial improvements in target identification, property prediction, and efficiency assessment. As research continues to generate increasingly complex datasets, Shannon entropy and its derivatives will remain essential tools for extracting meaningful information and enhancing discriminatory power in scientific investigations.

Future directions include integrating entropy metrics with deep learning architectures, developing domain-specific entropy formulations, and applying entropy-based discrimination to emerging areas such as single-cell analysis and personalized medicine. The continued refinement and application of these information-theoretic approaches are likely to contribute to more efficient and effective research methodologies across the biological and chemical sciences.

Shannon entropy, introduced by Claude Shannon in 1948, provides a fundamental framework for quantifying uncertainty and information content in data systems [3]. In research domains, particularly drug development and biomedical sciences, entropy serves as a powerful tool for measuring the discriminatory power of experiments and analyses. This mathematical formulation quantifies the average level of "surprise" or information expected from a random variable's possible outcomes, enabling researchers to objectively compare variability across different datasets and experimental conditions [15] [16].

The core value of entropy in research lies in its ability to turn qualitative observations about data variability into precise quantitative measurements. For drug development professionals, this translates to concrete metrics for evaluating sequence diversity in pathogens, assessing variability in physiological signals, and determining the information content of diagnostic features [16]. Combined with appropriate statistical tests, entropy measures allow researchers to compare populations and assess changes in complexity related to disease states or therapeutic interventions [6].

Theoretical Foundations of Entropy

Mathematical Definition

Shannon entropy quantifies the uncertainty associated with a discrete random variable X. The formal definition is expressed as:

H(X) = -Σᵢ p(xᵢ) log_b p(xᵢ)

where p(xᵢ) represents the probability of outcome xᵢ, and the logarithm base b determines the measurement unit [3] [15]. When probabilities are evenly distributed across all possible outcomes, entropy reaches its maximum value, representing the greatest uncertainty. Conversely, when one outcome is certain, entropy equals zero, indicating perfect predictability [15].

The choice of logarithm base establishes the measurement units: base 2 yields "bits" (binary digits), base e (natural logarithm) gives "nats," and base 10 produces "dits" or "hartleys" [15]. Most information theory applications utilize base 2 due to its natural connection with binary systems and computer science. The relationships between units are straightforward: 1 nat ≈ 1.44 bits, and 1 dit ≈ 3.32 bits [15].
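Because log_b(x) = log_a(x) · log_b(a), converting between units only requires multiplying by a constant. The short sketch below (function name hypothetical) reproduces the conversion factors quoted above.

```python
import math

def convert_entropy(value, from_base, to_base):
    """Re-express an entropy measured with log base `from_base` in log base `to_base`.

    H_to = H_from * log_to(from_base), since log_b(x) = log_a(x) * log_b(a).
    """
    return value * math.log(from_base, to_base)

print(round(convert_entropy(1.0, math.e, 2), 2))  # 1.44 bits per nat
print(round(convert_entropy(1.0, 10, 2), 2))      # 3.32 bits per dit
# A fair six-sided die: ln 6 nats equals log2 6 bits.
assert math.isclose(convert_entropy(math.log(6), math.e, 2), math.log2(6))
```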

Conceptual Framework

Entropy fundamentally measures uncertainty or randomness in a system [15]. Variables with high entropy are unpredictable and contain more information when observed, while variables with low entropy are predictable and provide less new information when measured [15]. This relationship between uncertainty and information content creates the foundation for information theory – when an outcome is highly uncertain, observing it provides more information than observing a predictable outcome [3].

The "surprisal" or self-information of an individual event E is defined as I(E) = -log(p(E)), where p(E) is the probability of event E [3]. Entropy then represents the expected value of these surprisal measurements across all possible outcomes [3]. This statistical concept of entropy differs from physical entropy, which measures disorder in thermodynamic systems, though the mathematical formulations share similarities [15].
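The identity between entropy and expected surprisal can be checked directly. This is a sketch with hypothetical helper names (`surprisal`, `entropy_as_expected_surprisal`), using base-2 logarithms so that all results are in bits:

```python
import math


def surprisal(p, base=2.0):
    """Self-information I(E) = -log_b p(E) for an event with probability p > 0."""
    return -math.log(p, base)


def entropy_as_expected_surprisal(probs, base=2.0):
    """Entropy as the probability-weighted average of the outcomes' surprisals."""
    return sum(p * surprisal(p, base) for p in probs if p > 0)


# Rarer events carry more surprisal: 1, 2 and about 6.64 bits
for p in (0.5, 0.25, 0.01):
    print(f"p = {p:<4} -> I = {surprisal(p):.3f} bits")

# Biased coin: heads (p = 0.75) carries about 0.415 bits, tails (p = 0.25) 2 bits;
# weighting each by its probability gives the entropy, about 0.811 bits
print(f"H = {entropy_as_expected_surprisal([0.75, 0.25]):.3f} bits")
```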

Interpretation of Entropy Values

Quantitative Interpretation Framework

Interpreting entropy values requires understanding the spectrum from perfect predictability to maximum uncertainty. The table below summarizes key entropy values and their interpretations:

Table 1: Interpretation Guide for Entropy Values

| Entropy Value | Interpretation | Example System | Information Content |
|---|---|---|---|
| 0 bits | Perfect predictability | Biased coin with P(heads)=1 | None - outcome is certain |
| 0.811 bits | Moderate predictability | Biased coin with P(heads)=0.75 | Low - outcome can often be guessed |
| 1 bit | Maximum uncertainty for binary system | Fair coin (50/50) | 1 bit per observation |
| 2.58 bits | High uncertainty | Fair six-sided die | Moderate - 2.58 bits per observation |
| 4.70 bits | Very high uncertainty | Random letter (26 equally likely) | High - 4.7 bits per observation |

For a variable with n possible outcomes, the theoretical maximum entropy is log₂(n) bits, achieved when all outcomes are equally probable [15]. This represents the scenario of maximum uncertainty where no outcome is more predictable than any other.
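The values in Table 1 and the log₂(n) bound can be reproduced with a few lines of Python. This is an illustrative sketch: `entropy_bits` is a hypothetical helper, and zero-probability outcomes are skipped under the usual convention 0 · log 0 = 0.

```python
import math


def entropy_bits(probs):
    """Shannon entropy in bits; zero-probability outcomes contribute nothing."""
    return sum(p * math.log2(1 / p) for p in probs if p > 0)


# Example systems from Table 1
systems = {
    "coin with P(heads) = 1": [1.0, 0.0],
    "coin with P(heads) = 0.75": [0.75, 0.25],
    "fair coin": [0.5, 0.5],
    "fair six-sided die": [1 / 6] * 6,
    "random letter (26 equally likely)": [1 / 26] * 26,
}

for name, probs in systems.items():
    h = entropy_bits(probs)
    h_max = math.log2(len(probs))  # bound reached only by the uniform distribution
    print(f"{name:<34} H = {h:.3f} bits, max = {h_max:.3f} bits")
```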

Contextual Factors in Interpretation

Several important considerations affect how entropy values should be interpreted:

Data Type Differences: Discrete entropy applies to categorical variables with distinct, countable outcomes, while continuous variables require differential entropy, which can produce negative values [15]. These two types of entropy are not directly comparable, as continuous entropy measures information content relative to a unit of measurement [15].

Relationship to Variance: Entropy and variance both measure variability but capture different aspects. Variance measures how spread out numerical values are, while entropy measures how unpredictable categorical outcomes are [15]. A variable can have high variance but low entropy (widely spread but predictable values) or low variance but high entropy (clustered but unpredictable categories) [15].
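A small sketch makes the variance/entropy contrast concrete. It assumes two hypothetical discrete variables whose values are treated as category labels when computing entropy. The first is almost always 0 but occasionally 1000 (variance 9900, entropy about 0.08 bits); the second takes 100 equally likely values packed into a tiny range (variance below 0.001, entropy log₂ 100 ≈ 6.64 bits).

```python
import math


def entropy_bits(probs):
    """Entropy in bits; depends only on the probabilities, not on the values."""
    return sum(p * math.log2(1 / p) for p in probs if p > 0)


def variance(values, probs):
    """Variance of a discrete random variable with the given values/probabilities."""
    mean = sum(v * p for v, p in zip(values, probs))
    return sum(p * (v - mean) ** 2 for v, p in zip(values, probs))


# Widely spread but predictable: 0 with probability 0.99, 1000 with probability 0.01
spread_values, spread_probs = [0, 1000], [0.99, 0.01]

# Clustered but unpredictable: 100 equally likely values between 0 and 0.099
tight_values = [i / 1000 for i in range(100)]
tight_probs = [1 / 100] * 100

print(f"spread: variance = {variance(spread_values, spread_probs):.1f}, "
      f"H = {entropy_bits(spread_probs):.3f} bits")
print(f"tight:  variance = {variance(tight_values, tight_probs):.6f}, "
      f"H = {entropy_bits(tight_probs):.3f} bits")
```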

Practical Significance: Higher entropy doesn't always indicate "better" data – the optimal entropy level depends on analytical goals [15]. Sometimes predictable patterns (low entropy) are exactly what researchers want to identify, such as conserved regions in genetic sequences or stable physiological parameters [16].

Entropy in Research Applications

Measuring Discriminatory Power

In research settings, entropy provides a quantitative foundation for assessing discriminatory power – the ability to distinguish between different populations or conditions. The HIV Sequence Database demonstrates this application effectively, where Shannon entropy measures sequence variability across different viral populations [16]. By comparing entropy profiles between drug-resistant and susceptible HIV strains, researchers can identify positions where increased variability (higher entropy) correlates with drug resistance [16].

This approach enables the identification of sites that are "certain" in susceptible populations (low entropy) but uncertain in resistant populations (significantly higher entropy) [16]. Even when consensus sequences appear identical, entropy analysis can reveal positions with differential variability patterns that might indicate adaptive evolution or selective pressure [16].

Table 2: Research Applications of Entropy Measurements

| Application Domain | Entropy Type | Discriminatory Power Measurement | Research Utility |
|---|---|---|---|
| HIV sequence analysis | Shannon entropy | Variability in amino acid positions | Identifying drug resistance sites [16] |
| fMRI brain imaging | Sample entropy | Signal complexity in neural data | Differentiating age groups [6] |
| Medical deep learning | Feature entropy | Model bias across populations | Ensuring equitable healthcare applications [17] |
| Data compression | Shannon entropy | Pattern redundancy in datasets | Optimizing storage and transmission [15] |
| Feature selection | Information entropy | Predictive value of variables | Guiding machine learning pipeline design [15] |

Experimental Protocols for Entropy Analysis

Sequence Variability Analysis (HIV Example)

This protocol outlines the methodology for using Shannon entropy to compare sequence variability between populations, as implemented in the HIV Sequence Database [16]:

Sequence Alignment: Prepare multiple sequence alignments for each population (e.g., drug-resistant and drug-susceptible HIV strains).

Positional Frequency Calculation: For each position in the alignment, calculate the frequencies of each amino acid or nucleotide: fₐ = nₐ/N, where nₐ is the count of amino acid a, and N is the total number of sequences.

Entropy Calculation: Compute Shannon entropy for each position: H = -Σ fₐ × log₂(fₐ), where the sum is taken over all amino acids present at that position.

Entropy Difference Calculation: For each position, calculate the entropy difference between the two populations: ΔH = H_pop1 - H_pop2.

Statistical Validation:

- Use Monte Carlo randomization to assess statistical significance

- Combine sequences from both populations

- Randomly resample to create new datasets matching original sizes

- Repeat entropy difference calculation for randomized datasets

- Compare observed entropy differences to randomization distribution

- Apply multiple testing correction (e.g., Bonferroni) for the number of positions tested

Biological Interpretation: Identify positions with statistically significant entropy differences for further biological investigation [16].
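The positional entropy and Monte Carlo steps above can be sketched in Python as follows. This is a minimal illustration, not the HIV Sequence Database's implementation. It assumes pre-aligned, equal-length sequences, treats gaps as ordinary symbols, uses a two-sided permutation test with an add-one p-value, and applies a Bonferroni correction across positions. The toy alignments are hypothetical.

```python
import math
import random
from collections import Counter


def column_entropy(residues):
    """Entropy (bits) of one alignment column: H = -sum_a f_a log2 f_a, f_a = n_a / N."""
    n = len(residues)
    return sum((c / n) * math.log2(n / c) for c in Counter(residues).values())


def entropy_difference_test(pop1, pop2, position, n_perm=1000, seed=0):
    """Observed dH = H_pop1 - H_pop2 at one position, plus a two-sided Monte Carlo p-value.

    pop1, pop2: lists of aligned, equal-length sequences (strings).
    """
    col1 = [s[position] for s in pop1]
    col2 = [s[position] for s in pop2]
    observed = column_entropy(col1) - column_entropy(col2)

    pooled = col1 + col2                 # combine both populations
    rng = random.Random(seed)
    extreme = 0
    for _ in range(n_perm):
        rng.shuffle(pooled)              # random split matching the original sizes
        diff = column_entropy(pooled[:len(col1)]) - column_entropy(pooled[len(col1):])
        if abs(diff) >= abs(observed):
            extreme += 1
    p_value = (extreme + 1) / (n_perm + 1)   # add-one keeps the estimate above 0
    return observed, p_value


# Hypothetical toy alignments: position 2 varies only in the "resistant" set
resistant = ["MKVLA", "MKILA", "MKTLA", "MKALA", "MKVLA", "MKMLA"] * 5
susceptible = ["MKVLA"] * 30

n_positions = len(resistant[0])
alpha = 0.05 / n_positions               # Bonferroni correction over positions
for pos in range(n_positions):
    dh, p = entropy_difference_test(resistant, susceptible, pos)
    print(pos, round(dh, 3), round(p, 4), "significant" if p < alpha else "")
```

Only position 2 is flagged here; positions that are invariant in both groups give ΔH = 0 and a p-value of 1.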

Sample Entropy Analysis for fMRI Data

This protocol describes the methodology for using sample entropy to discriminate between patient groups using functional magnetic resonance imaging (fMRI) data [6]:

Data Preprocessing:

- Remove first 3-4 volumes to allow for magnetic field stabilization

- Apply standard preprocessing (motion correction, normalization, filtering)

- Extract time series from regions of interest

Parameter Selection:

- Pattern length (m): Typically m=2 for detailed reconstruction of joint probabilistic dynamics

- Tolerance (r): Commonly r=0.30-0.46 times standard deviation of data

- Data length (N): Can be effective with N=85-128 volumes despite traditional recommendations

Sample Entropy Calculation:

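- Form template vectors of length m: xₘ(1), xₘ(2), ..., xₘ(N-m+1), where xₘ(i) = (x(i), x(i+1), ..., x(i+m-1))

- Calculate the Chebyshev distance (the largest absolute coordinate difference) between each pair of vectors

- Count similar vectors: Bₘ(r) = number of vector pairs within distance r, excluding self-matches

- Repeat with vectors of length m+1: Aₘ(r) = number of length-(m+1) vector pairs within distance r, excluding self-matches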

- Compute Sample Entropy: SampEn(m, r, N) = -ln[Aₘ(r)/Bₘ(r)]

Group Comparison:

- Calculate sample entropy for each subject and region

- Perform statistical tests (t-tests, ANOVA) between groups

- Assess classification accuracy using discriminant analysis [6]
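A compact sample entropy implementation following the calculation steps above is sketched below. Assumptions to note: the tolerance is r times the population standard deviation, self-matches are excluded, and the first N - m templates are used for both the length-m and length-(m+1) counts (a common convention that gives every length-m template a length-(m+1) counterpart). It is an O(N²) illustration, not an optimized or validated fMRI pipeline.

```python
import math
import random


def sample_entropy(x, m=2, r=0.2):
    """SampEn(m, r, N) = -ln(A / B) for a 1-D series x.

    B: template pairs of length m within tolerance; A: the same for length m + 1.
    The tolerance is r * (population) standard deviation of x. Self-matches are
    excluded. Returns math.inf when no matching pairs are found.
    """
    n = len(x)
    mean = sum(x) / n
    tol = r * math.sqrt(sum((v - mean) ** 2 for v in x) / n)

    def count_pairs(length):
        # First N - m templates for both lengths, so each has an (m + 1)-extension
        templates = [x[i:i + length] for i in range(n - m)]
        count = 0
        for i in range(len(templates)):
            for j in range(i + 1, len(templates)):   # j > i excludes self-matches
                # Chebyshev distance: largest absolute coordinate difference
                if max(abs(a - b) for a, b in zip(templates[i], templates[j])) <= tol:
                    count += 1
        return count

    b = count_pairs(m)
    a = count_pairs(m + 1)
    return math.inf if a == 0 or b == 0 else -math.log(a / b)


rng = random.Random(1)
regular = [math.sin(0.5 * t) for t in range(120)]   # predictable oscillation
noisy = [rng.gauss(0.0, 1.0) for _ in range(120)]   # white noise
print(f"regular: {sample_entropy(regular):.3f}  noisy: {sample_entropy(noisy):.3f}")
```

The regular signal yields a lower SampEn than white noise; in a group comparison, per-subject, per-region values like these would feed the t-tests, ANOVA, or discriminant analysis listed above.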

Experimental Visualization

Entropy Calculation Workflow

The following diagram illustrates the complete workflow for calculating and interpreting entropy in research contexts:

Entropy-Based Decision Framework

This diagram presents the logical framework for interpreting entropy values and making research decisions based on entropy measurements:

Research Reagent Solutions

Table 3: Essential Research Tools for Entropy Analysis

| Research Tool | Function/Purpose | Application Context |
|---|---|---|
| MIMIC-III Database | Provides clinical dataset for healthcare ML research | Benchmarking bias mitigation algorithms [17] |
| ICBM Resting State Dataset | fMRI data for neuroinformatics research | Studying age-related entropy changes [6] |
| HIV Sequence Database | Repository of viral sequences with entropy tools | Studying sequence variability and drug resistance [16] |
| Monte Carlo Randomization | Statistical validation of entropy differences | Establishing significance in comparative studies [16] |
| Sample Entropy Algorithm | Measures complexity in physiological signals | Discriminating clinical groups in fMRI/EEG studies [6] |
| Gerchberg-Saxton Algorithm | Frequency domain bias reduction technique | Improving equity in deep learning medical applications [17] |

Shannon entropy provides researchers with a powerful quantitative framework for measuring uncertainty, information content, and discriminatory power across diverse scientific domains. Proper interpretation of entropy values enables meaningful comparisons between experimental conditions and populations, from identifying drug resistance sites in viral sequences to discriminating age groups based on neural signal complexity. The experimental protocols and analytical frameworks presented here offer practical guidance for implementing entropy analysis in research settings, while the visualization tools help conceptualize the relationship between entropy values and their research implications. As biomedical research increasingly relies on quantitative measures of variability and information, entropy continues to serve as a fundamental metric for advancing scientific discovery and diagnostic innovation.

In scientific research and data analysis, discriminatory power refers to the capacity of a model or metric to effectively separate distinct groups, classes, or states within a dataset. The quest to quantify this power reliably is paramount across diverse fields, from drug discovery to operational benchmarking. Shannon entropy, a foundational concept from information theory, provides a powerful mathematical framework for directly quantifying this discriminatory capability. Originally developed by Claude Shannon to measure uncertainty in communication systems, entropy has transcended its origins to become a versatile tool for analyzing probability distributions across scientific disciplines [18]. At its core, Shannon entropy measures the average uncertainty or information content in a probability distribution, making it exceptionally suited for evaluating how effectively variables or models can distinguish between different states or categories.

The fundamental formula for Shannon entropy, H, of a discrete probability distribution P = {p₁, p₂, ..., pₙ} is:

\[ H(P) = -\sum_{i=1}^{n} p_i \log_2 p_i \]

This equation quantifies the expected value of the information content, where pᵢ represents the probability of the i-th outcome [18]. A higher entropy value indicates greater uncertainty or diversity within the system, while lower entropy signifies order and predictability. This property enables researchers to leverage entropy for enhancing discriminatory power by optimizing variable selection, refining model architectures, and improving feature discrimination in complex datasets. The following sections explore the theoretical foundations and practical applications of entropy across multiple domains, with particular emphasis on its transformative role in molecular property prediction and decision-making efficiency.

Theoretical Foundations of Shannon Entropy

The Shannon-Khinchin Axiomatic Basis

Shannon entropy derives its mathematical rigor from the Shannon-Khinchin axioms, which provide a set of fundamental properties that any information-theoretic entropy measure should satisfy [18]. These axioms establish entropy as a unique functional form under specific conditions:

- SK1 Continuity: The entropy H(p₁, ..., pₙ) depends continuously on all probability values for each possible number of outcomes n.

- SK2 Maximality: For any n, the entropy H(p₁, ..., pₙ) is maximized when all probabilities are equal (uniform distribution).

- SK3 Expansibility: Adding an outcome with zero probability does not change the entropy value.

- SK4 Strong Additivity: The joint entropy of two systems equals the entropy of one plus the expected conditional entropy of the other given the first.

A positive functional H that satisfies these four axioms necessarily takes the form of the Boltzmann-Gibbs-Shannon entropy: H(p₁, ..., pₙ) = -k Σᵢ pᵢ log pᵢ, where k is a positive constant [18]. This mathematical foundation ensures that entropy provides a consistent and reliable measure of uncertainty across diverse applications.
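The axioms can be checked numerically on a small example. The sketch below uses a hypothetical joint distribution over X ∈ {0, 1} and Y ∈ {0, 1, 2} and verifies SK4 (the chain rule H(X, Y) = H(X) + H(Y | X)), SK3 (a zero-probability outcome changes nothing), and one instance of SK2, with k = 1 and base-2 logarithms.

```python
import math


def H(probs):
    """Shannon entropy in bits of an iterable of probabilities."""
    return sum(p * math.log2(1 / p) for p in probs if p > 0)


# Hypothetical joint distribution P(X = x, Y = y)
joint = {(0, 0): 0.20, (0, 1): 0.10, (0, 2): 0.10,
         (1, 0): 0.05, (1, 1): 0.25, (1, 2): 0.30}
xs, ys = (0, 1), (0, 1, 2)

p_x = {x: sum(joint[(x, y)] for y in ys) for x in xs}            # marginal of X
h_y_given_x = sum(p_x[x] * H(joint[(x, y)] / p_x[x] for y in ys)  # E_x[H(Y | X = x)]
                  for x in xs)

# SK4 (strong additivity / chain rule): H(X, Y) = H(X) + H(Y | X)
print(H(joint.values()), H(p_x.values()) + h_y_given_x)

# SK3 (expansibility): adding a zero-probability outcome leaves H unchanged
print(H([0.5, 0.3, 0.2]) == H([0.5, 0.3, 0.2, 0.0]))              # True

# SK2 (maximality): the uniform distribution maximizes H for n = 3
print(H([1 / 3] * 3) >= H([0.5, 0.3, 0.2]))                       # True
```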

Entropy as a Measure of Discrimination

The connection between entropy and discriminatory power emerges from entropy's ability to quantify the distributional characteristics of data. When evaluating classification models or feature sets, entropy directly measures how well separated different classes or states appear within the probability distribution:

- Low entropy in the class distribution conditional on a feature value (or in a model's predicted class probabilities) indicates concentrated probabilities with minimal uncertainty, corresponding to clear separation between classes and high discriminatory power.

- High entropy in these conditional distributions reflects more uniform probabilities with greater uncertainty, indicating overlapping classes and reduced discriminatory power.

In practical applications, researchers can leverage this relationship by constructing probability distributions from model outputs or feature importance scores, then using entropy measurements to optimize the system's discriminatory capacity. This approach has proven particularly valuable in scenarios requiring variable selection from large candidate sets, where entropy provides an objective criterion for identifying the most discriminative feature combinations.
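One common way to turn this relationship into a selection criterion is to compare each feature's conditional class entropy H(class | feature) and the corresponding information gain H(class) - H(class | feature). The sketch below uses hypothetical marker names and toy labels with plug-in (empirical frequency) estimates; it illustrates the idea rather than any specific published pipeline.

```python
import math
from collections import Counter, defaultdict


def entropy_bits(labels):
    """Plug-in Shannon entropy (bits) of a list of class labels."""
    n = len(labels)
    return sum((c / n) * math.log2(n / c) for c in Counter(labels).values())


def conditional_entropy(feature, labels):
    """H(class | feature): class entropy within each feature value, weighted by frequency."""
    groups = defaultdict(list)
    for f, y in zip(feature, labels):
        groups[f].append(y)
    n = len(labels)
    return sum(len(g) / n * entropy_bits(g) for g in groups.values())


# Hypothetical toy data: 4 cases and 4 controls, two candidate markers
labels = ["case"] * 4 + ["ctrl"] * 4
sharp_marker = ["high"] * 4 + ["low"] * 4      # perfectly separates the classes
weak_marker = ["high", "low"] * 4              # carries no class information

for name, feature in (("sharp_marker", sharp_marker), ("weak_marker", weak_marker)):
    h_cond = conditional_entropy(feature, labels)
    gain = entropy_bits(labels) - h_cond       # information gain (mutual information)
    print(f"{name}: H(class | feature) = {h_cond:.3f} bits, gain = {gain:.3f} bits")
```

The perfectly separating marker leaves 0 bits of class uncertainty (1 bit of gain), while the uninformative marker leaves the full 1 bit.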

Entropy-Driven Discriminatory Power in Molecular Science

Enhancing Molecular Property Prediction

In cheminformatics and drug discovery, accurately predicting molecular properties is essential for screening potential drug candidates and functional materials. Traditional approaches often rely on property-specific molecular descriptors that require extensive customization and offer limited prediction accuracy. Recent research demonstrates that Shannon entropy-based descriptors derived directly from molecular string representations (such as SMILES, SMARTS, or InChIKey) can significantly enhance the predictive accuracy of machine learning models for molecular properties [19].

The methodology employs a framework analogous to partial pressures in gas mixtures, using atom-wise fractional Shannon entropy combined with total Shannon entropy from respective tokens of the string representation to model molecules efficiently [19]. This approach captures essential structural information in a computationally efficient manner, competing favorably with standard descriptors like Morgan fingerprints and SHED in regression models. The resulting entropy descriptors provide enhanced discriminatory power for distinguishing molecules with different properties and activities.

Table 1: Shannon Entropy Descriptors for Molecular Properties

| Descriptor Type | Calculation Method | Key Advantage | Performance Comparison |
|---|---|---|---|
| SMILES-based Entropy | Derived directly from SMILES string tokens | No need for property-specific customization | Competitive with Morgan fingerprints |
| Atom-wise Fractional Entropy | Analogous to partial pressures in mixtures | Captures atomic contribution to complexity | Improved prediction accuracy |
| Hybrid Descriptor Sets | Combines entropy descriptors with standard descriptors | Synergistic effect on model performance | Enhanced accuracy in ensemble models |
| Total Molecular Entropy | Composite of token-level entropies | Holistic complexity representation | Effective for QSAR modeling |

Experimental Protocol: Molecular Property Prediction

The general workflow for implementing entropy-enhanced molecular property prediction involves several key stages:

Molecular Representation: Convert molecular structures into string representations (SMILES, SMARTS, or InChIKeys) that encode structural information.

Entropy Calculation: Compute Shannon entropy descriptors using the following steps:

- Tokenize the string representation into discrete elements

- Calculate probability distributions of tokens

- Apply Shannon entropy formula: H = -Σpᵢlogpᵢ

- Derive both total and fractional entropy components

Model Integration: Incorporate entropy descriptors into machine learning architectures, either as:

- Standalone feature sets for traditional regression models

- Hybrid descriptors combined with conventional molecular fingerprints

- Input features for ensemble models combining multilayer perceptrons (MLPs) and graph neural networks (GNNs)

Performance Validation: Evaluate predictive accuracy using cross-validation and benchmark against established descriptor sets across diverse molecular databases [19].
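The entropy-calculation step can be sketched for a single SMILES string as below. The regular-expression tokenizer is a simplified assumption (bracket atoms, Cl/Br, two-digit ring labels such as %10, otherwise single characters) and not the tokenization used in [19]. Likewise, the per-token shares -pᵢ log₂ pᵢ are only one hypothetical reading of a "fractional" entropy component, chosen here because they sum to the total.

```python
import math
import re
from collections import Counter

# Simplified SMILES tokenizer (assumption): bracket atoms, two-letter halogens,
# %NN ring-bond labels, then any single character (atoms, bonds, branches, digits)
TOKEN_RE = re.compile(r"\[[^\]]+\]|Br|Cl|%\d{2}|.")


def tokenize(smiles):
    return TOKEN_RE.findall(smiles)


def token_entropy_descriptors(smiles):
    """Total token entropy (bits) and each token's share -p*log2(p) of it."""
    tokens = tokenize(smiles)
    n = len(tokens)
    counts = Counter(tokens)
    shares = {t: (c / n) * math.log2(n / c) for t, c in counts.items()}
    return sum(shares.values()), shares


total, shares = token_entropy_descriptors("CC(=O)Oc1ccccc1C(=O)O")  # aspirin
print(f"total = {total:.3f} bits")
for token, h in sorted(shares.items(), key=lambda kv: -kv[1]):
    print(f"  {token!r}: {h:.3f}")
```

In a real pipeline, descriptors like these would be computed for every molecule and passed, alone or alongside fingerprints, to the models listed under Model Integration.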

This methodology has demonstrated particular utility in quantitative structure-activity relationship (QSAR) modeling and virtual screening applications, where enhanced discriminatory power directly translates to more efficient identification of promising drug candidates.

Entropy in Data Envelopment Analysis and Decision-Making

Improving Discrimination in Efficiency Analysis

Data Envelopment Analysis (DEA) constitutes a non-parametric method for evaluating the relative efficiency of decision-making units (DMUs) with multiple inputs and outputs. A fundamental challenge in traditional DEA applications is poor discrimination power, particularly when dealing with datasets containing numerous variables relative to the number of DMUs [20]. This limitation often results in multiple DMUs being classified as efficient, reducing the practical utility of the analysis for benchmarking and decision-making.

Shannon entropy addresses this limitation through a comprehensive efficiency score (CES) methodology that aggregates results across all possible variable subsets [20]. Rather than relying on a single DEA model with all variables, the entropy-enhanced approach:

- Computes efficiency scores for all possible variable subsets

- Applies Shannon's entropy to determine the importance weight of each subset

- Combines the efficiency scores using entropy-derived weights

- Generates a comprehensive ranking with enhanced discriminatory power

This method relaxes the conventional "one-third rule" guideline in DEA (which suggests that the number of variables should be less than one-third of the number of DMUs), enabling effective analysis even with variable-rich datasets [20].

Table 2: Entropy-Enhanced DEA Methodology

| Processing Stage | Key Operation | Discriminatory Power Impact |
|---|---|---|
| Variable Subset Generation | Identify all possible input/output combinations | Ensures comprehensive model space exploration |
| Efficiency Calculation | Compute DEA efficiencies for each subset | Generates base efficiency scores |
| Entropy Weighting | Apply Shannon entropy to subset importance | Quantifies information value of each model |
| Comprehensive Score Generation | Weighted combination of efficiencies | Produces complete DMU ranking |
| Decision Support | Benchmark inefficient DMUs | Identifies improvement targets |

Experimental Protocol: Entropy-Enhanced DEA

The implementation of Shannon entropy to improve DEA discrimination follows a systematic procedure:

Variable Subset Identification: For m inputs and s outputs, identify all K = (2ᵐ - 1) × (2ˢ - 1) possible variable combinations that include at least one input and one output.

Efficiency Calculation: For each DMUⱼ (j = 1, ..., n) and each variable subset Mₖ (k = 1, ..., K), compute the efficiency score Eₖⱼ using the standard CCR DEA model:

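\[
\begin{aligned}
\min\quad & \theta - \varepsilon\left(\sum_{i=1}^{m} s_i^{-} + \sum_{r=1}^{s} s_r^{+}\right) \\
\text{s.t.}\quad & \sum_{j=1}^{n} \lambda_j x_{ij} + s_i^{-} = \theta x_{id}, \quad i = 1, \dots, m \\
& \sum_{j=1}^{n} \lambda_j y_{rj} - s_r^{+} = y_{rd}, \quad r = 1, \dots, s \\
& \lambda_j,\ s_i^{-},\ s_r^{+} \ge 0
\end{aligned}
\]

where d indexes the DMU under evaluation, ε is a non-Archimedean infinitesimal, only the inputs and outputs belonging to Mₖ enter the constraints, and Eₖⱼ is the optimal θ obtained when d = j.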

Entropy Weight Calculation: For each variable subset Mₖ, compute the importance degree using Shannon entropy:

First, normalize the efficiency scores across DMUs: pₖⱼ = Eₖⱼ / ΣⱼEₖⱼ

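Then calculate the normalized entropy value: eₖ = -(1/ln n) Σⱼ pₖⱼ ln(pₖⱼ), where n is the number of DMUs; the factor 1/ln n scales eₖ to lie between 0 and 1, so that 1 - eₖ is never negative.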

Finally, determine the weight: wₖ = (1 - eₖ) / Σₖ(1 - eₖ)

Comprehensive Efficiency Scoring: For each DMUⱼ, compute the comprehensive efficiency score (CES) as the weighted sum: CESⱼ = ΣₖwₖEₖⱼ

Ranking and Analysis: Use the CES values to generate a complete ranking of all DMUs, enabling more effective benchmarking and identification of improvement targets for inefficient units [20].
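The subset enumeration and the entropy-weighting steps can be sketched in plain Python. The sketch assumes that the CCR efficiencies Eₖⱼ have already been computed (for example with any linear-programming solver) and are strictly positive. Function names are hypothetical, and the efficiency matrix in the usage example is made up for illustration.

```python
import math
from itertools import combinations


def nonempty_subsets(items):
    return [c for k in range(1, len(items) + 1) for c in combinations(items, k)]


def variable_subsets(inputs, outputs):
    """All K = (2^m - 1)(2^s - 1) models with at least one input and one output."""
    return [(i, o) for i in nonempty_subsets(inputs) for o in nonempty_subsets(outputs)]


def entropy_weights(E):
    """Entropy weights w_k for a K x n efficiency matrix E (rows: models, cols: DMUs)."""
    n = len(E[0])
    divergences = []
    for row in E:
        total = sum(row)
        p = [v / total for v in row]                                   # p_kj
        e_k = -sum(pj * math.log(pj) for pj in p if pj > 0) / math.log(n)
        divergences.append(1 - e_k)                                    # d_k = 1 - e_k
    s = sum(divergences)
    if s == 0:                    # every model gives all DMUs the same score
        return [1 / len(E)] * len(E)
    return [d / s for d in divergences]


def comprehensive_scores(E):
    """CES_j = sum_k w_k * E_kj for each DMU j."""
    w = entropy_weights(E)
    return [sum(w[k] * E[k][j] for k in range(len(E))) for j in range(len(E[0]))]


# Two inputs and one output give K = (2^2 - 1) * (2^1 - 1) = 3 models
print(variable_subsets(["x1", "x2"], ["y1"]))

# Hypothetical efficiencies of 4 DMUs under those 3 models (illustrative only)
E = [
    [1.00, 0.80, 0.60, 1.00],
    [0.90, 1.00, 0.50, 0.70],
    [1.00, 1.00, 0.95, 1.00],
]
print([round(w, 3) for w in entropy_weights(E)])
print([round(c, 3) for c in comprehensive_scores(E)])
```

In this toy matrix the third model assigns nearly identical scores to every DMU, so its entropy is close to 1 and it receives almost no weight; the more dispersed models dominate the comprehensive score.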

This methodology has been successfully applied to diverse evaluation contexts, including university department performance, hotel efficiency, solid waste disposal alternatives, and ecological efficiency of cities, consistently demonstrating enhanced discriminatory power compared to traditional DEA approaches.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagents and Computational Tools for Entropy Analysis

| Reagent/Tool | Function/Purpose | Application Context |
|---|---|---|
| Molecular String Representations (SMILES/SMARTS/InChIKeys) | Standardized encoding of molecular structure | Provides input for entropy-based molecular descriptors |
| Shannon Entropy Calculator | Computational implementation of H = -Σpᵢlogpᵢ | Core entropy computation for various data types |
| DEA Software with Custom Scripting | Data Envelopment Analysis model implementation | Efficiency score calculation for decision-making units |
| Machine Learning Frameworks (Python/R) | Integration of entropy descriptors into predictive models | Molecular property prediction and classification |
| Graph Neural Networks (GNNs) | Advanced architecture for structured data | Enhanced molecular modeling with entropy features |
| Multilayer Perceptrons (MLPs) | Standard neural network architecture | Baseline models for entropy-enhanced prediction |
| Ensemble Modeling Framework | Combination of multiple model architectures | Leverages hybrid entropy descriptors for improved accuracy |
| Cross-Validation Pipelines | Robust model evaluation and validation | Performance assessment of entropy-enhanced methods |

Shannon entropy provides a versatile and mathematically rigorous framework for quantifying and enhancing discriminatory power across diverse scientific domains. From molecular property prediction in drug discovery to efficiency analysis in operational research, entropy-based methods consistently deliver improved discrimination, enhanced model performance, and more reliable decision-making support. The fundamental capacity of entropy to measure uncertainty and information content in probability distributions enables researchers to optimize feature selection, refine model architectures, and extract more meaningful insights from complex datasets. As scientific challenges continue to increase in complexity, the strategic application of Shannon entropy will remain an essential component of the analytical toolkit for researchers seeking to maximize the discriminatory power of their models and methodologies.

Within research domains requiring precise measurement and classification—such as drug development and health outcomes assessment—the discriminatory power of a model is paramount. It represents the model's ability to distinguish meaningfully between different states, entities, or outcomes. A core challenge in enhancing this power lies in managing the fundamental properties of probability theory upon which these models are built. This technical guide examines two such key properties—the additivity of independent events and the methodologies for handling zero probabilities—and frames them within an innovative approach that leverages Shannon's entropy to quantify and improve discriminatory power. We will explore the mathematical foundations, practical challenges and solutions in computational statistics, and demonstrate how entropy-based measures provide a unified framework for evaluating and enhancing the sensitivity of research models.

Mathematical Foundations: Additivity and Independence

Defining Independent Events

In probability theory, two events, A and B, are considered independent if the occurrence of one does not affect the probability of the other occurring. Formally, this is defined as:

P(A ∩ B) = P(A) * P(B) [21]

This definition has an equivalent formulation in terms of conditional probability. If P(B) > 0, the conditional probability of A given B is P(A|B) = P(A ∩ B) / P(B). If A and B are independent, this simplifies to P(A|B) = P(A), confirming that knowledge of B's occurrence provides no information about A's likelihood [21] [22].
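A quick exact check with two fair dice illustrates both forms of the definition. The events are chosen purely for illustration: A is "the first die shows an even number" and B is "the dice sum to 7". Exact fractions avoid any floating-point doubt.

```python
from fractions import Fraction
from itertools import product

outcomes = list(product(range(1, 7), repeat=2))   # 36 equally likely ordered rolls


def prob(event):
    """Exact probability of an event (a predicate on outcomes) under equal weights."""
    return Fraction(sum(1 for o in outcomes if event(o)), len(outcomes))


def a(o):            # A: the first die shows an even number
    return o[0] % 2 == 0


def b(o):            # B: the two dice sum to 7
    return o[0] + o[1] == 7


def a_and_b(o):
    return a(o) and b(o)


print(prob(a), prob(b), prob(a_and_b))      # 1/2 1/6 1/12
print(prob(a_and_b) == prob(a) * prob(b))   # True: P(A ∩ B) = P(A) P(B)
print(prob(a_and_b) / prob(b) == prob(a))   # True: P(A | B) = P(A)
```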

The Additive Property

Additivity is a fundamental axiom of probability. For any two mutually exclusive events (events that cannot occur simultaneously), the probability of their union equals the sum of their individual probabilities:

P(A ∪ B) = P(A) + P(B) if A ∩ B = ∅ [23]

For independent random variables, additivity takes a different form: some distribution families are closed under summation. A prime example is the Poisson distribution, which possesses a strong additive property: the sum of independent Poisson random variables is itself a Poisson random variable whose rate parameter is the sum of the individual rates [23].
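The Poisson additive property can be verified numerically by convolution, which itself relies on independence: the joint probability of X₁ = i and X₂ = k - i factorizes into a product. The rates below are arbitrary illustrative values.

```python
import math


def poisson_pmf(k, lam):
    """P(X = k) for X ~ Poisson(lam)."""
    return math.exp(-lam) * lam ** k / math.factorial(k)


def pmf_of_sum(k, lam1, lam2):
    """P(X1 + X2 = k) for independent Poisson variables, by direct convolution.

    Independence lets P(X1 = i, X2 = k - i) factor into P(X1 = i) * P(X2 = k - i).
    """
    return sum(poisson_pmf(i, lam1) * poisson_pmf(k - i, lam2) for i in range(k + 1))


lam1, lam2 = 1.5, 2.5
for k in range(6):
    # The two columns agree: the sum is Poisson(lam1 + lam2)
    print(k, round(pmf_of_sum(k, lam1, lam2), 6), round(poisson_pmf(k, lam1 + lam2), 6))
```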

Table 1: Key Properties of Independent and Additive Events

| Property | Mathematical Formulation | Interpretation |
|---|---|---|
| Independence | P(A ∩ B) = P(A) * P(B) | The events do not influence each other. |
| Additivity (Mutually Exclusive) | P(A ∪ B) = P(A) + P(B) | The chance of either event is the sum of their individual chances. |
| Additive Property of Poisson | Poisson(λ₁) + Poisson(λ₂) = Poisson(λ₁+λ₂) | The sum of independent Poisson variables is Poisson. |

The Zero Probability Problem

Origins and Implications

A zero probability can signify either true impossibility or a limitation of the model. In finite sample spaces, an outcome assigned P=0 is typically impossible [24]. However, in continuous or infinite sample spaces, possible events can have a probability of zero.

For instance, when a point is drawn uniformly at random from the continuous interval [0, 1], the probability of drawing any single, specific number (e.g., exactly 0.3875) is zero, even though that number is a possible outcome [24]. This happens because, under the uniform distribution on [0, 1], the probability of a set equals its length, and a single point has length zero; only intervals of positive length receive positive probability [24].

Challenges in Modeling and Simulation

Zero probabilities pose significant practical challenges. In language modeling, if a word sequence unseen in the training data is assigned zero probability, any text containing that sequence also receives zero probability (an infinite surprisal), so the model cannot generalize to novel input [25]. Similarly, in simulation studies, distributions such as the Geometric or Negative Binomial are not well-defined when the success probability p is exactly zero, because a success would never occur. Software such as SAS returns errors or missing values in such cases [26].

Methodological Solutions for Zero Probabilities

Laplace Smoothing

Laplace Smoothing (or Additive Smoothing) is a fundamental technique for handling zero probabilities in discrete distributions. It works by adding a small constant to the count of every possible event, including those with zero observations.

If x_i is the count of event i, N is the total number of observations, and d is the number of possible event types, the unsmoothed probability is P(i) = x_i / N. With Laplace smoothing, it becomes:

P_Laplace(i) = (x_i + α) / (N + α * d)

where α is the smoothing parameter (often 1) [25]. This ensures no probability is ever zero, allowing models to generalize to unseen data.
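A minimal sketch of additive smoothing, assuming a hypothetical four-letter nucleotide alphabet and a toy sample in which "T" never occurs. With α = 1, d = 4, and N = 6, the unseen category receives (0 + 1) / (6 + 4) = 0.1 instead of 0, so its surprisal stays finite.

```python
import math
from collections import Counter


def additive_smoothing(observations, categories, alpha=1.0):
    """P(i) = (x_i + alpha) / (N + alpha * d); alpha = 1 is Laplace smoothing."""
    counts = Counter(observations)
    n, d = len(observations), len(categories)
    return {c: (counts[c] + alpha) / (n + alpha * d) for c in categories}


categories = ["A", "C", "G", "T"]
observed = ["A", "A", "C", "A", "G", "A"]          # "T" never observed (N = 6)

counts = Counter(observed)
raw = {c: counts[c] / len(observed) for c in categories}
smoothed = additive_smoothing(observed, categories)

print(raw["T"], smoothed["T"])                    # 0.0 0.1
# -log2(0) would be infinite; after smoothing the surprisal of "T" is finite
print(f"{-math.log2(smoothed['T']):.3f} bits")   # 3.322
```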

Computational Workarounds

In simulation and software implementation, defensive programming techniques are required to handle zero probabilities. The core strategy is to use conditional logic to trap invalid parameters before they are passed to a function.

Table 2: Handling Zero Probabilities in Statistical Distributions

| Distribution | Effect of p=0 | Recommended Handling |
|---|---|---|
| Bernoulli/Binomial | Well-defined; result is always 0 (no successes). | No special handling needed. |
| Geometric | Undefined; number of trials until a success becomes infinite. | Use IF-THEN logic to assign a missing value or large number if p is below a cutoff (e.g., 1e-16) [26]. |
| Negative Binomial | Undefined; number of failures before k successes becomes infinite. | Same as Geometric; use a conditional check to avoid passing p=0 to the function [26]. |
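The IF-THEN guard in Table 2 translates directly to other languages. The sketch below is a Python analogue of that pattern, not the SAS code from [26]: a hypothetical `rand_geometric` helper draws by inverse-CDF sampling and returns `None` (standing in for a missing value) when p falls below the 1e-16 cutoff.

```python
import math
import random

CUTOFF = 1e-16   # treat smaller p as zero, following the cutoff in Table 2


def rand_geometric(p, rng=random):
    """Trials up to and including the first success of Bernoulli(p) draws.

    Returns None (a stand-in for a missing value) when p is below CUTOFF,
    where the distribution is undefined; raises ValueError for p outside [0, 1].
    """
    if not 0.0 <= p <= 1.0:
        raise ValueError(f"p must lie in [0, 1], got {p}")
    if p < CUTOFF:
        return None
    if p == 1.0:
        return 1
    u = 1.0 - rng.random()                       # uniform on (0, 1]
    # Inverse-CDF draw: P(X > k) = (1 - p) ** k for k = 0, 1, 2, ...
    return math.floor(math.log(u) / math.log1p(-p)) + 1


rng = random.Random(42)
print([rand_geometric(0.3, rng) for _ in range(5)])
print(rand_geometric(0.0))    # None instead of an error or a meaningless draw
```

Downstream code can then filter or flag `None` values explicitly, mirroring how a missing value propagates in a SAS DATA step.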

The following workflow diagram illustrates a robust simulation protocol that implements these checks:

Shannon's Entropy as a Measure of Discriminatory Power

Theoretical Framework

Shannon's Entropy, derived from information theory, is a measure of uncertainty or information content. For a discrete random variable X with probability mass function P(x), entropy H(X) is defined as:

H(X) = - Σ P(x) * log P(x) [20] [5]

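In the context of instrument evaluation, the relevant distribution is the distribution of observations across an instrument's categories (for example, respondents across the response levels of a health classification system). There, a higher entropy indicates that observations are spread more evenly across categories, so the instrument separates respondents into more distinguishable groups and has greater discriminatory power. Conversely, a low entropy indicates that probability is concentrated in a few categories (as with ceiling or floor effects), implying poor discriminatory power. This is the opposite reading from class-conditional distributions, where low entropy signals clear separation between classes.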

Application in Health Research and Performance Evaluation

Shannon's entropy provides a formal metric to evaluate the discriminatory power of multi-attribute instruments. For example, a study compared the EQ-5D, HUI2, and HUI3 health classification systems using Shannon's indices [5]. The indices were calculated per dimension and for the instruments as a whole, assessing both absolute informativity (raw discriminatory power) and relative informativity (efficiency of level utilization) [5]. The study found HUI3 had the highest absolute informativity, while EQ-5D had the highest relative informativity, offering nuanced insights beyond simple ceiling/floor effect analyses [5].
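The two indices can be sketched as follows. The sketch assumes (as is common in this literature, though the cited study's exact formulas should be checked) that absolute informativity is Shannon's index H′ and relative informativity is Shannon's evenness index J′ = H′ / log₂ L for a dimension with L levels. The response vectors are hypothetical, not data from [5].

```python
import math
from collections import Counter


def shannon_indices(responses, n_levels):
    """Return (H', J') for one dimension with n_levels possible levels.

    H' = -sum p_i log2 p_i over observed levels (absolute informativity);
    J' = H' / log2(n_levels), the evenness index (relative informativity).
    """
    n = len(responses)
    h = sum((c / n) * math.log2(n / c) for c in Counter(responses).values())
    return h, h / math.log2(n_levels)


# Hypothetical response distributions (levels coded 1..L), not study data
three_level = [1] * 60 + [2] * 30 + [3] * 10
six_level = [1] * 70 + [2] * 15 + [3] * 7 + [4] * 4 + [5] * 2 + [6] * 2

for name, responses, levels in (("3-level", three_level, 3), ("6-level", six_level, 6)):
    h, j = shannon_indices(responses, levels)
    print(f"{name}: H' = {h:.3f} bits, J' = {j:.3f}")
```

With these toy data, the 6-level dimension has the higher H′ but the lower J′, the same kind of trade-off the study reports between HUI3 and EQ-5D.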

In operations research, Shannon's entropy has been integrated with Data Envelopment Analysis (DEA) to improve discrimination among decision-making units (DMUs). The method involves:

- Calculating DEA efficiencies for all possible variable subsets.

- Using Shannon's entropy to compute the degree of importance of each variable subset based on the distribution of efficiency scores.

- Combining the efficiencies and importance weights to generate a Comprehensive Efficiency Score (CES) for each DMU [20] [27].

This entropy-based approach creates a more complete ranking without arbitrarily discarding variable information, thereby significantly enhancing discriminatory power [20].

Experimental Protocols and Research Toolkit

Protocol for an Entropy-Enhanced DEA Study

- Define DMUs and Variables: Identify the set of Decision-Making Units (e.g., hospitals, research programs) and the full suite of input and output variables.

- Generate Variable Subsets: Create all possible combinations of variables that form valid DEA models (at least one input and one output). This results in K = (2^m - 1) * (2^s - 1) models, where m and s are the numbers of inputs and outputs [20].

- Compute Base Efficiencies: For each DMU j and each variable subset k, compute the efficiency score E_kj using a standard DEA model (e.g., CCR) [20].

- Calculate Entropy Weights:

  - For each variable subset k, normalize its efficiencies across the n DMUs: p_kj = E_kj / Σ_j E_kj.

  - Compute the entropy of each subset's efficiency distribution: e_k = -(1 / ln n) * Σ_j p_kj * ln(p_kj).

  - Calculate the degree of divergence: d_k = 1 - e_k.

  - Normalize the divergences to obtain the importance weight of each variable subset k: w_k = d_k / Σ_k d_k [20].

- Compute Comprehensive Scores: For each DMU, calculate the final Comprehensive Efficiency Score (CES) as a weighted average of its efficiencies across all models, using the entropy-derived weights: CES_j = Σ_k (w_k * E_kj) [20].

- Rank and Analyze: Rank all DMUs based on their CES to obtain a full, discriminatory ranking.

The Researcher's Toolkit

Table 3: Essential Reagents and Solutions for Entropy-Discrimination Research

| Research Component | Function | Example Implementation |
|---|---|---|
| Probability Distributions | Model stochastic processes and event occurrences. | Bernoulli, Binomial, Geometric, Poisson, and Multinomial (Table) distributions [26]. |
| Smoothing Parameters (α) | Prevent zero probabilities to maintain model generalizability. | A small positive value (e.g., 1) used in Laplace Smoothing [25]. |
| Statistical Software | Perform simulations and probability calculations. | SAS (RAND function), R, Python (SciPy) with defensive coding for invalid parameters [26]. |
| DEA Model Solver | Calculate baseline efficiency scores for DMUs. | Software capable of solving linear programming problems (e.g., R deaR, Python PyDEA) [20]. |
| Entropy Calculation Module | Compute Shannon's index and importance weights. | A custom script in R or Python to process efficiency scores and calculate entropy measures [20] [5]. |
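The DEA solver row can be illustrated without a dedicated DEA package. The sketch below swaps in SciPy's general-purpose linprog (not deaR or PyDEA) to solve the input-oriented CCR model in envelopment form for a single DMU. The function name ccr_input_efficiency is hypothetical, and strictly positive input and output data are assumed.

```python
import numpy as np
from scipy.optimize import linprog


def ccr_input_efficiency(X, Y, o):
    """Input-oriented CCR efficiency of DMU o (envelopment form).

    X is (m inputs x n DMUs), Y is (s outputs x n DMUs). Solves
        min theta  s.t.  X @ lam <= theta * X[:, o],  Y @ lam >= Y[:, o],  lam >= 0.
    """
    X = np.asarray(X, dtype=float)
    Y = np.asarray(Y, dtype=float)
    m, n = X.shape
    s = Y.shape[0]
    c = np.zeros(n + 1)                          # decision vector z = [theta, lam_1, ..., lam_n]
    c[0] = 1.0                                   # minimize theta
    a_in = np.hstack([-X[:, [o]], X])            # sum_j lam_j x_ij - theta * x_io <= 0
    a_out = np.hstack([np.zeros((s, 1)), -Y])    # -sum_j lam_j y_rj <= -y_ro
    res = linprog(c,
                  A_ub=np.vstack([a_in, a_out]),
                  b_ub=np.concatenate([np.zeros(m), -Y[:, o]]),
                  bounds=[(0, None)] * (n + 1),
                  method="highs")
    if not res.success:
        raise RuntimeError(res.message)
    return float(res.x[0])


# Two DMUs with one input and one output: DMU 1 uses twice the input for the same output.
X = [[2.0, 4.0]]
Y = [[2.0, 2.0]]
print([round(ccr_input_efficiency(X, Y, o), 3) for o in range(2)])  # [1.0, 0.5]
```

Looping this function over every DMU and every variable subset (restricting X and Y to the rows in that subset) would yield the E_kj matrix used in the entropy-enhanced DEA protocol.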

The logical relationship between these components and the core concepts is visualized below:

The interplay between the additivity of independent events and the challenges of handling zero probabilities forms a critical foundation for building robust statistical models. By integrating Shannon's entropy into this framework, researchers gain a powerful, theoretically grounded method to quantify and enhance the discriminatory power of their analyses. The protocols and methodologies outlined, from smoothing techniques and defensive programming to entropy-weighted scoring, provide an actionable pathway for scientists and drug development professionals to achieve more nuanced differentiation and ranking in complex research environments. This entropy-driven approach helps ensure that models are not only mathematically sound but also maximally informative.
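The zero-probability problem is easy to see in code: a category that never appears in the sample gets probability 0 and therefore infinite surprisal. The following is a minimal sketch of additive smoothing (Laplace smoothing when alpha = 1); the function name and the toy observations are illustrative assumptions.

```python
import math
from collections import Counter


def smoothed_probabilities(observations, categories, alpha=1.0):
    """Additive smoothing: p(c) = (count(c) + alpha) / (N + alpha * K)."""
    counts = Counter(observations)
    denom = len(observations) + alpha * len(categories)
    return {c: (counts[c] + alpha) / denom for c in categories}


observations = ["A", "A", "B", "A"]   # category "C" is never observed
categories = ["A", "B", "C"]

raw = {c: Counter(observations)[c] / len(observations) for c in categories}
smoothed = smoothed_probabilities(observations, categories)

print(raw["C"])                   # 0.0, so the surprisal of "C" would be infinite
print(smoothed)                   # probabilities 4/7, 2/7, 1/7
print(-math.log2(smoothed["C"]))  # finite: log2(7), about 2.81 bits
```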

Applied Methodologies: Leveraging Entropy for Enhanced Discrimination in Research

In the realm of data science and machine learning, feature selection serves as a critical preprocessing technique for reducing dimensionality and improving model performance. Among the various approaches available, methods grounded in information theory, particularly Shannon entropy, provide a mathematically rigorous framework for quantifying the discriminatory power of potential predictors. These techniques measure the inherent uncertainty in random variables and the mutual dependence between them, allowing researchers to identify features that maximize information gain about a target outcome. Within the context of drug development and biomedical research, this translates to the ability to pinpoint clinical variables, genetic markers, or biomolecular measurements that are most informative for predicting disease progression, treatment response, or patient outcomes.

The application of Shannon entropy enables quantification of how much information a feature provides about a target variable, forming the theoretical foundation for feature selection techniques that are both computationally efficient and effective in high-dimensional spaces. Unlike methods that assume linear relationships, entropy-based approaches can capture complex nonlinear dependencies, making them particularly valuable for analyzing biological and clinical data where relationships are often nonlinear and multifaceted. As research in personalized medicine advances, the role of entropy in identifying key predictors from vast arrays of candidate variables continues to grow in importance, enabling more interpretable and accurate predictive models.

Theoretical Foundations

Shannon Entropy and Information Gain

Shannon Entropy, introduced by Claude Shannon in 1948, serves as a fundamental measure of uncertainty or randomness in a random variable. For a discrete random variable (X) with probability mass function (p(x)), the entropy (H(X)) is defined as:

[ H(X) = -\sum_{x \in X} p(x) \log_2 p(x) ]
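The definition translates directly into code. This is a minimal sketch using base-2 logarithms (bits), assuming the input is a complete probability vector; terms with p(x) = 0 are skipped, following the convention 0 log 0 = 0. The function name is illustrative.

```python
import math


def shannon_entropy(probs):
    """H(X) = -sum_x p(x) log2 p(x) in bits; zero-probability terms contribute nothing."""
    if not math.isclose(sum(probs), 1.0, abs_tol=1e-9):
        raise ValueError("probabilities must sum to 1")
    return sum(-p * math.log2(p) for p in probs if p > 0)


print(shannon_entropy([0.5, 0.5]))   # 1.0: a fair coin carries one bit
print(shannon_entropy([0.25] * 4))   # 2.0: four equally likely outcomes
print(shannon_entropy([0.9, 0.1]))   # below 1 bit: the outcome is fairly predictable
```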

In practical terms, entropy quantifies the average amount of information needed to describe the random variable. A key application in feature selection is Information Gain (IG), which measures the reduction in entropy of a target variable (Y) after observing a feature (X). The information gain of (Y) given (X) is defined as:

[ IG(Y, X) = H(Y) - H(Y|X) ]

Where (H(Y|X)) is the conditional entropy of (Y) given (X). Features with higher information gain are more useful for predicting the target variable as they reduce uncertainty more significantly. Information Gain forms the basis for building decision trees like ID3 and C4.5, where features are selected at each node based on their IG values [28].
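Information gain for discrete samples can be sketched as follows, estimating probabilities by relative frequencies (an assumption; other estimators exist). The toy labels are invented for illustration: a feature that splits the classes perfectly recovers all of H(Y), while a feature whose groups share the same class mix gains nothing.

```python
import math
from collections import Counter


def entropy_of_labels(labels):
    """Empirical Shannon entropy (bits) of a list of discrete values."""
    n = len(labels)
    return sum(-(c / n) * math.log2(c / n) for c in Counter(labels).values())


def information_gain(feature, target):
    """IG(Y, X) = H(Y) - H(Y|X), with H(Y|X) = sum_x p(x) H(Y | X = x)."""
    n = len(target)
    h_y_given_x = 0.0
    for value, count in Counter(feature).items():
        subset = [y for x, y in zip(feature, target) if x == value]
        h_y_given_x += (count / n) * entropy_of_labels(subset)
    return entropy_of_labels(target) - h_y_given_x


y = ["sick", "sick", "healthy", "healthy"]
perfect = ["hi", "hi", "lo", "lo"]    # separates the classes exactly
useless = ["a", "b", "a", "b"]        # same class mix in every group
print(information_gain(perfect, y))   # 1.0
print(information_gain(useless, y))   # 0.0
```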

Mutual Information

Mutual Information (MI) generalizes the concept of information gain by measuring the mutual dependence between two random variables. Unlike correlation, which primarily captures linear relationships, MI can detect any form of statistical dependency, including nonlinear relationships. For two continuous random variables (X) and (Y), mutual information is defined as:

[ I(X; Y) = \iint p(x, y) \log \frac{p(x, y)}{p(x)p(y)} dx dy ]

Where (p(x, y)) is the joint probability density function, and (p(x)) and (p(y)) are the marginal density functions. Mutual information can also be expressed in terms of entropy:

[ I(X; Y) = H(X) - H(X|Y) = H(Y) - H(Y|X) ]

This symmetric measure is non-negative, with zero indicating complete independence between the variables. Higher values indicate stronger dependency [29] [28]. In feature selection, MI estimates the amount of information that a feature contains about the target variable, making it invaluable for identifying key predictors.
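For discrete variables the integral becomes a sum over a joint probability table. The sketch below (NumPy, bits; helper names hypothetical) computes I(X;Y) directly from such a table and checks it against the entropy form, using the identity H(Y|X) = H(X,Y) - H(X). The three toy tables span the range from independence (zero) to a feature that fully determines a binary target (one bit), the strongest possible discriminatory power in that setting.

```python
import numpy as np


def entropy_bits(p):
    """Shannon entropy in bits of any probability array (flattened); zeros are skipped."""
    p = np.asarray(p, dtype=float).ravel()
    p = p[p > 0]
    return float(-np.sum(p * np.log2(p)))


def mutual_information_bits(joint):
    """I(X;Y) = sum_{x,y} p(x,y) log2[p(x,y) / (p(x) p(y))] for a table joint[x, y]."""
    joint = np.asarray(joint, dtype=float)
    px = joint.sum(axis=1, keepdims=True)
    py = joint.sum(axis=0, keepdims=True)
    nz = joint > 0
    return float(np.sum(joint[nz] * np.log2(joint[nz] / (px @ py)[nz])))


def mi_via_entropies(joint):
    """H(Y) - H(Y|X), using H(Y|X) = H(X,Y) - H(X)."""
    joint = np.asarray(joint, dtype=float)
    h_x = entropy_bits(joint.sum(axis=1))
    h_y = entropy_bits(joint.sum(axis=0))
    return h_y - (entropy_bits(joint) - h_x)


independent = np.outer([0.3, 0.7], [0.6, 0.4])      # p(x, y) = p(x) p(y)
noisy = np.array([[0.4, 0.1], [0.1, 0.4]])           # imperfect association
deterministic = np.array([[0.5, 0.0], [0.0, 0.5]])   # X fully determines Y

for name, table in [("independent", independent), ("noisy", noisy),
                    ("deterministic", deterministic)]:
    print(name, round(mutual_information_bits(table), 4), round(mi_via_entropies(table), 4))
```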

Relationship Between Information Theory and Discriminatory Power

The discriminatory power of a feature refers to its ability to distinguish between different classes or outcomes of the target variable. Shannon entropy quantifies this capability through information gain and mutual information. When a feature has high mutual information with a target variable, it means that knowing the feature's value significantly reduces uncertainty about the target's value, thereby exhibiting strong discriminatory power.

This theoretical framework is particularly valuable in research contexts where understanding the fundamental relationships between variables is as important as prediction accuracy. For example, in drug development, researchers need to identify which clinical measurements or genetic markers truly contribute to understanding disease mechanisms, not just those that improve model performance. Entropy-based measures provide this insight by directly quantifying how much information each variable contributes to the outcome of interest [30].

Table 1: Key Information-Theoretic Measures for Feature Selection

| Measure | Formula | Interpretation | Application Context |
|---|---|---|---|
| Shannon Entropy | (H(X) = -\sum p(x) \log_2 p(x)) | Measures uncertainty in a variable | Fundamental concept for all information-based feature selection |
| Information Gain | (IG(Y,X) = H(Y) - H(Y \mid X)) | Measures reduction in target uncertainty after observing a feature | Decision tree algorithms (ID3, C4.5) |
| Mutual Information | (I(X;Y) = H(X) - H(X \mid Y)) | Measures mutual dependence between two variables | Filter-based feature selection for classification and regression |

Methodological Approaches

Mutual Information for Classification and Regression

The implementation of mutual information for feature selection varies depending on whether the target variable is categorical (classification) or continuous (regression). Scikit-learn provides specialized functions for each case:

- mutual_info_classif: Used when the target variable is discrete/categorical [31]

- mutual_info_regression: Used when the target variable is continuous [29]

Both functions rely on nonparametric methods based on entropy estimation from k-nearest neighbors distances, as described by Kraskov et al. (2004) and Ross (2014) [31]. The parameter n_neighbors (default=3) controls the trade-off between bias and variance in the estimation, with higher values reducing variance but potentially introducing bias [31].
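A brief usage sketch of both estimators follows, on synthetic data chosen for illustration (an assumption, not a dataset from the cited work). Both targets depend on the first column only, and nonlinearly: (|x| > 1) for classification and x² for regression, relationships that a Pearson correlation would largely miss. scikit-learn reports the estimates in nats, and random_state fixes the small noise the estimator adds to continuous variables.

```python
import numpy as np
from sklearn.feature_selection import mutual_info_classif, mutual_info_regression

rng = np.random.default_rng(0)
n = 500
signal = rng.normal(size=n)   # informative feature
noise = rng.normal(size=n)    # irrelevant feature
X = np.column_stack([signal, noise])

y_class = (np.abs(signal) > 1.0).astype(int)      # nonlinear class boundary
y_reg = signal ** 2 + 0.1 * rng.normal(size=n)    # nonlinear regression target

mi_class = mutual_info_classif(X, y_class, n_neighbors=3, random_state=0)
mi_reg = mutual_info_regression(X, y_reg, n_neighbors=3, random_state=0)

# Pearson correlations for comparison: both should be near zero despite strong dependence.
print(np.corrcoef(signal, y_class)[0, 1], np.corrcoef(signal, y_reg)[0, 1])
print(mi_class, mi_reg)   # the first entry (signal) should dominate in both arrays
```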

Feature Selection Algorithms Based on Mutual Information

Several algorithmic approaches utilize mutual information for feature selection:

Univariate Filter Methods: These methods evaluate each feature independently based on its mutual information with the target and select the top-k features. The SelectKBest method in scikit-learn can be used with mutual_info_classif or mutual_info_regression as the scoring function [29].

Multivariate Filter Methods: These approaches consider feature dependencies by evaluating subsets of features. The Decomposed Mutual Information Maximization (DMIM) method is a recent advancement that applies maximization separately to inter-feature and class-relevant redundancies, overcoming the complementarity penalization found in earlier methods [32].

Copula Entropy (CE): CE is a multivariate measure of statistical independence, defined through copula theory, that has been proved equivalent to negative mutual information. It has several advantages over traditional association measures: it is symmetric, non-positive (0 if and only if the variables are independent), invariant to monotonic transformations, and equivalent to the correlation coefficient in Gaussian cases [33].
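Because CE is the negative of mutual information, a rough two-variable estimate can be sketched by replacing each margin with its normalized ranks (the empirical copula) and applying scikit-learn's k-nearest-neighbour MI estimator. This is an illustrative assumption, not the estimator used in [33], and the function name is hypothetical. Since ranks are unchanged by strictly increasing transformations, the estimate is identical for the transformed data, mirroring the invariance property described above.

```python
import numpy as np
from scipy.stats import rankdata
from sklearn.feature_selection import mutual_info_regression


def copula_entropy_2d(x, y, n_neighbors=3, random_state=0):
    """Rough CE estimate (nats) for two variables: minus the kNN MI of their ranks."""
    n = len(x)
    u = rankdata(x) / n   # empirical copula margin for x
    v = rankdata(y) / n   # empirical copula margin for y
    mi = mutual_info_regression(u.reshape(-1, 1), v,
                                n_neighbors=n_neighbors, random_state=random_state)[0]
    return -mi            # CE = -MI, so the estimate is never positive


rng = np.random.default_rng(1)
x = rng.normal(size=400)
y = x + 0.5 * rng.normal(size=400)

ce = copula_entropy_2d(x, y)
ce_transformed = copula_entropy_2d(np.exp(x), y ** 3)   # strictly increasing transforms
print(ce, ce_transformed)   # identical values, both negative
```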

Table 2: Mutual Information-Based Feature Selection Methods

| Method | Type | Key Characteristics | Advantages | Limitations |
|---|---|---|---|---|
| Univariate Filter | Filter | Selects top-k features based on MI scores | Computationally efficient, works well with high-dimensional data | Ignores feature interactions |
| DMIM | Filter | Applies maximization separately to redundancies | Accounts for complementarity, better classification performance | More computationally intensive |
| Copula Entropy | Filter | Uses copula theory to estimate MI | Model-free, tuning-free, works with any distribution | Complex implementation |

Advanced Variations and Extensions

Several advanced entropy-based methods have been developed for specialized applications:

Approximate Conditional Entropy based on Fuzzy Information Granule: This approach is particularly useful for gene expression data analysis, where it measures the uncertainty of knowledge from both information and algebra perspectives [30].

Entropy-Weighted Assurance Region DEA: Integrates entropy weighting with data envelopment analysis (DEA) and assurance region constraints, providing a more objective, data-driven way to limit weight flexibility without relying on additional information or expert judgment [14].

Information Gain Ratio: Normalizes information gain to reduce bias toward attributes with many values, addressing a limitation of standard information gain in decision tree algorithms [28].
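The normalization can be sketched as follows, assuming discrete features and relative-frequency probabilities: the gain ratio divides IG by the split information, that is, the entropy of the feature's own value distribution. In the invented toy data, a unique patient identifier and a genuine binary marker have the same IG, but the identifier's larger split information halves its ratio. C4.5 itself adds further safeguards in attribute selection that are omitted here.

```python
import math
from collections import Counter


def entropy_bits(values):
    """Empirical Shannon entropy (bits) of a list of discrete values."""
    n = len(values)
    return sum(-(c / n) * math.log2(c / n) for c in Counter(values).values())


def information_gain(feature, target):
    n = len(target)
    h_cond = sum((count / n) * entropy_bits([y for x, y in zip(feature, target) if x == value])
                 for value, count in Counter(feature).items())
    return entropy_bits(target) - h_cond


def gain_ratio(feature, target):
    split_info = entropy_bits(feature)   # entropy of the feature's own values
    return 0.0 if split_info == 0 else information_gain(feature, target) / split_info


y = ["case", "case", "control", "control"]
patient_id = ["p1", "p2", "p3", "p4"]    # unique per row: many values
marker = ["pos", "pos", "neg", "neg"]    # one binary split

print(information_gain(patient_id, y), information_gain(marker, y))  # 1.0 1.0
print(gain_ratio(patient_id, y), gain_ratio(marker, y))              # 0.5 1.0
```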

Experimental Protocols and Implementation

Standard Protocol for Mutual Information-Based Feature Selection

The following protocol provides a step-by-step methodology for implementing mutual information-based feature selection:

Data Preparation:

- Split dataset into training and testing sets

- Handle missing values (e.g., using fillna(0) as in [29])

- Normalize or standardize continuous features if necessary

Mutual Information Calculation:

- For classification: Use mutual_info_classif(X_train, y_train)

- For regression: Use mutual_info_regression(X_train, y_train)

- Set appropriate parameters: discrete_features ('auto', bool, or array-like) and n_neighbors (default=3)

Feature Ranking and Selection:

- Create a Series of mutual information scores with feature names as index

- Sort features in descending order of MI scores

- Visualize results using bar plots for interpretation [29]

Subset Selection:

- Use SelectKBest or SelectPercentile from scikit-learn

- For SelectKBest, specify k (number of top features to select)

- For SelectPercentile, specify percentile (percentage of top features to select)

Model Training and Validation:

- Transform training and test sets using selected features

- Train model on transformed training set

- Evaluate model performance on transformed test set
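The protocol can be strung together with scikit-learn and pandas. This end-to-end sketch rests on several assumptions that are not part of the protocol itself: synthetic data in which only the first three columns carry class information, logistic regression as the downstream model, and k = 3. The MI scorer is wrapped with functools.partial only to make its random_state reproducible.

```python
from functools import partial

import numpy as np
import pandas as pd
from sklearn.feature_selection import SelectKBest, mutual_info_classif
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import train_test_split

# Synthetic data: columns f0-f2 shift with the class label, f3-f9 are pure noise.
rng = np.random.default_rng(0)
n = 600
y = rng.integers(0, 2, size=n)
informative = 1.5 * y[:, None] + rng.normal(size=(n, 3))
noise = rng.normal(size=(n, 7))
X = pd.DataFrame(np.hstack([informative, noise]), columns=[f"f{i}" for i in range(10)])

# Data preparation: split first, so feature scores never see the test set.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, stratify=y, random_state=0)
X_train, X_test = X_train.fillna(0), X_test.fillna(0)  # no missing values here; shown for completeness

# Mutual information calculation and ranking on the training set only.
mi_scores = pd.Series(mutual_info_classif(X_train, y_train, n_neighbors=3, random_state=0),
                      index=X_train.columns).sort_values(ascending=False)

# Subset selection: keep the k highest-scoring features.
selector = SelectKBest(partial(mutual_info_classif, random_state=0), k=3)
selector.fit(X_train, y_train)
selected = list(X_train.columns[selector.get_support()])

# Model training on the reduced training set, validation on the reduced test set.
model = LogisticRegression(max_iter=1000).fit(selector.transform(X_train), y_train)
accuracy = model.score(selector.transform(X_test), y_test)

print(mi_scores.round(3))
print(selected, round(accuracy, 3))
```

For the visualization step, mi_scores.plot.barh() would draw the ranked scores as a bar plot, assuming matplotlib is installed.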

Case Study: Feature Selection for Heart Disease Diagnosis

An application of copula entropy for variable selection in heart disease diagnosis demonstrates the practical utility of entropy-based methods. Using the UCI heart disease dataset containing 76 raw attributes, the CE method was compared against traditional methods including AIC, BIC, LASSO, and other independence measures (dCor and HSIC) [33].

The experimental results showed that the CE-based method achieved the highest prediction accuracy (84.76%) and selected 11 out of 13 clinically recommended variables, outperforming all other methods in both predictability and interpretability [33]. This demonstrates how entropy-based feature selection can simultaneously optimize model performance and align with domain knowledge.

Case Study: Gene Expression Data Analysis