Probabilistic Genotyping Software: A Comprehensive Guide to Interpreting Complex DNA Mixtures for Forensic Research

Abstract

This article provides a detailed exploration of probabilistic genotyping (PG) software, an essential tool for interpreting complex DNA mixtures that traditional methods cannot resolve. Aimed at researchers, scientists, and forensic development professionals, it covers the foundational principles of PG, including the shift from binary to continuous models that utilize peak height information and calculate Likelihood Ratios (LRs) for statistical evidence weighting. The content delves into methodological workflows, from data evaluation and hypothesis formulation to Markov Chain Monte Carlo (MCMC) analysis. It further addresses critical troubleshooting aspects, such as stutter modeling and managing low-template DNA, and outlines rigorous validation protocols as per SWGDAM guidelines. Finally, the article offers a comparative analysis of leading PG software like STRmix™, EuroForMix, and TrueAllele™, highlighting their performance in sensitivity, specificity, and reproducibility to guide informed tool selection and application.

The Evolution of DNA Mixture Interpretation: From Binary to Probabilistic Models

The evolution of forensic DNA analysis has been marked by a paradoxical trend: as technological advancements have increased the sensitivity of DNA profiling, allowing scientists to generate profiles from merely a few skin cells, the complexity of the evidence encountered in casework has grown substantially [1] [2]. Complex DNA mixtures—samples originating from three or more individuals, containing low-template DNA (LT-DNA), or exhibiting degradation—present unique interpretational challenges that surpass those of single-source samples or simple two-person mixtures [1] [3]. These challenges include distinguishing individual contributors within the mixture, accurately estimating the number of contributors, determining the relevance of the DNA to the case versus potential contamination, and interpreting trace amounts of suspect or victim DNA [2]. When not properly addressed and communicated, these complexities can lead to significant misunderstandings regarding the strength and relevance of DNA evidence in legal proceedings [2].

The fundamental shift in forensic practice is evidenced by the changing nature of casework samples. Whereas single-source profiles were once the norm, laboratories are now frequently asked to evaluate complex mixtures from challenging sources such as touched objects, making the interpretation of complex DNA mixtures a central and critical task in modern forensic genetics [1]. This document, framed within broader research on probabilistic genotyping software, outlines the standardized protocols and application notes essential for addressing these fundamental challenges.

Methodological Approaches to DNA Mixture Interpretation

The biostatistical interpretation of DNA mixtures has evolved through three primary methodological approaches, which differ in complexity and in the type of data they use [3].

Binary Models

The binary model was the first interpretative approach adopted by the forensic community. This method relies solely on the qualitative presence or absence of alleles and does not account for stochastic effects (such as drop-in and drop-out) or the quantitative peak height information of the detected alleles [3]. While simple, its limitations in handling low-template and complex mixtures have led to its gradual replacement by more sophisticated models.

Semi-Continuous (Qualitative) Models

Semi-continuous models represent a significant advancement by incorporating the possibility of stochastic effects like allele drop-out and drop-in [3]. These models use probabilistic frameworks to compute a Likelihood Ratio (LR) but still do not utilize the quantitative information from allele peak heights. Their relative simplicity and more straightforward computation have led to widespread use, with available open-source software including LRmix Studio and Lab Retriever [3]. The algorithms are generally more comprehensible, which can be advantageous when presenting results in courtroom proceedings [3].

Fully-Continuous (Quantitative) Models

Fully-continuous models constitute the current gold standard for interpreting complex DNA mixtures [3]. These quantitative approaches utilize all available information, including both the qualitative presence of alleles and their quantitative peak heights [3] [4]. This allows for more powerful deconvolution of mixtures by modeling key parameters such as DNA quantity, degradation, and PCR artefacts like stutter peaks [3] [4]. The ability to model stutter—both back stutter (the more common artefact resulting from a deletion of one or more repeat units) and forward stutter (resulting from an addition of repeat units)—is a critical feature that helps distinguish these artefacts from true alleles of minor contributors [4]. Prominent software implementations include STRmix, EuroForMix, and DNA•VIEW [3].

Table 1: Comparison of DNA Mixture Interpretation Models

| Model Type | Data Utilized | Handles Stochastic Effects? | Key Software Examples | Best Application Context |
|---|---|---|---|---|
| Binary | Qualitative (allele presence/absence) | No | N/A | Largely superseded by more advanced models |
| Semi-Continuous | Qualitative | Yes | LRmix Studio, Lab Retriever | Moderate complexity mixtures; labs transitioning from binary |
| Fully-Continuous | Qualitative & Quantitative (peak heights) | Yes | STRmix, EuroForMix, DNA•VIEW | Complex mixtures (≥3 contributors), LT-DNA, degraded samples |

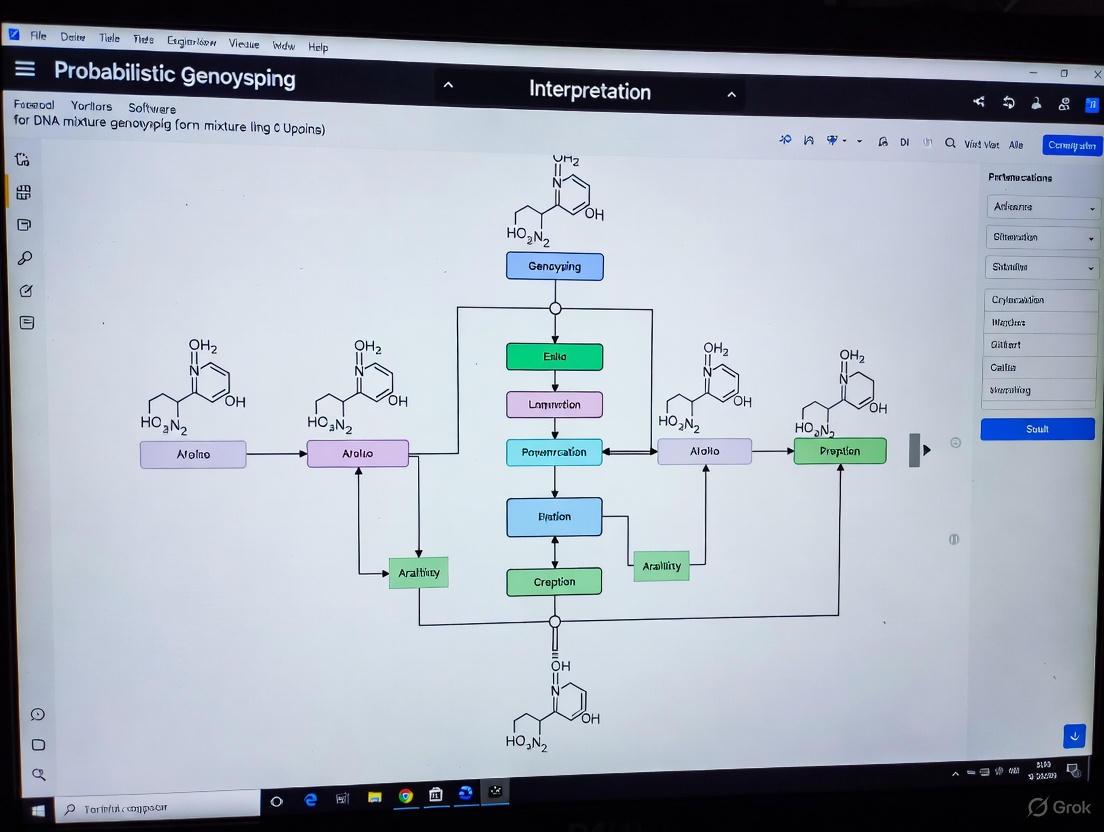

Diagram: Decision-making workflow for selecting and applying these interpretation methods within a validated framework.

Internal Validation of Probabilistic Genotyping Systems

The proper utilization of any probabilistic genotyping software (PGS) requires comprehensive internal validation specific to each laboratory's environment and population context [5]. Such validation must be performed according to established scientific guidelines, such as those from the Scientific Working Group on DNA Analysis Methods (SWGDAM) [5]. A recent internal validation of STRmix using Japanese individuals and GlobalFiler profiles exemplifies this process, focusing on the software's sensitivity, specificity, precision, and the effects of adding a known contributor or incorrectly assuming the number of contributors [5].

The findings confirmed that STRmix with laboratory-specific parameters was suitable for interpreting mixed DNA profiles in their environment [5]. However, the validation also revealed rare edge cases (e.g., those with extreme heterozygote imbalance or significant differences in mixture ratios between loci due to PCR stochastic effects) where the software incorrectly excluded true contributors (LR = 0) [5]. These findings underscore the critical importance of conducting population-specific validation studies to understand the limitations and performance boundaries of any probabilistic genotyping system before implementation in casework.

Comparative Performance of Probabilistic Genotyping Software

Multi-Software Performance Comparison

A proof-of-concept study compared the performance of probabilistic genotyping software using known two-person and three-person mixtures amplified with different DNA kits [3]. The research employed two semi-continuous (LRmix Studio, Lab Retriever) and three fully-continuous (STRmix, EuroForMix, DNA•VIEW) software tools to analyze the same samples, allowing for direct comparison of their performance and outputs [3].

Table 2: Key Reagent Solutions for DNA Mixture Analysis

| Research Reagent | Function in Analysis | Application Context |
|---|---|---|
| GlobalFiler PCR Amplification Kit | 24-locus STR multiplex kit for DNA profiling | Standardized amplification for mixture deconvolution [3] [4] |
| NIST SRM 2391c | Certified reference DNA material for standardization | Quality assurance and validation studies [3] |
| Standard Allele Frequency Datasets | Population-specific genetic frequency data (e.g., NIST, ALFRED) | Statistical calculation of match probabilities [4] |
| Analytical Thresholds | Minimum RFU value for calling true alleles (e.g., 100 RFU) | Differentiation of true alleles from background noise [4] |

The study found that while semi-continuous and fully-continuous models generally produced coherent results, their performance diverged in more challenging conditions [3]. For simpler mixtures with balanced contributions, different software and kits generally produced consistent LR values. However, as mixture complexity increased—with more contributors, highly unbalanced mixture ratios, or decreasing DNA template—the differences between software performances became more pronounced [3].

Impact of Software Version and Stutter Modeling

A critical aspect of software performance involves updates to underlying models, particularly for handling PCR artefacts. A 2025 study compared two versions of EuroForMix (v1.9.3 and v3.4.0) to evaluate the impact of different stutter modeling approaches on the same input data from 156 real casework samples [4]. The key difference was the stutter modeling capability: v1.9.3 only modeled back stutters, while v3.4.0 modeled both back and forward stutters [4].

Most LR values differed by less than one order of magnitude across versions. However, significant exceptions occurred in more complex samples—those with more contributors, unbalanced contributions, or greater degradation [4]. This demonstrates that even different versions of the same software, with updated stutter modeling capabilities, can produce meaningfully different results for challenging samples, emphasizing the need for rigorous re-validation when updating software versions.
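The order-of-magnitude comparison described above can be sketched in a few lines of Python. This is a minimal illustration, not the analysis code used in the cited study: the function name, the sample identifiers, and the LR values are all hypothetical, and the one-order-of-magnitude cutoff is taken from the text as a default.

```python
import math


def flag_version_differences(lrs_old, lrs_new, max_log10_diff=1.0):
    """Return samples whose LRs differ by more than max_log10_diff orders of magnitude.

    Both arguments map a sample identifier to a positive LR. An LR of exactly 0
    (an exclusion) has no logarithm and would need separate handling.
    """
    flagged = {}
    for sample_id, lr_old in lrs_old.items():
        lr_new = lrs_new[sample_id]
        diff = abs(math.log10(lr_new) - math.log10(lr_old))
        if diff > max_log10_diff:
            flagged[sample_id] = round(diff, 2)
    return flagged


# Hypothetical LRs for the same three samples under two software versions.
lrs_v1 = {"S01": 1.2e6, "S02": 3.4e3, "S03": 5.0e8}
lrs_v2 = {"S01": 2.0e6, "S02": 1.1e5, "S03": 4.1e8}
flagged = flag_version_differences(lrs_v1, lrs_v2)
print(flagged)
```

Working on the log10 scale keeps the comparison symmetric: a tenfold increase and a tenfold decrease both count as one order of magnitude.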

Diagram: Experimental workflow for a comparative software performance study.

Statistical Framework and Interpretation Protocols

The Likelihood Ratio Framework

Probabilistic genotyping software quantifies the strength of DNA evidence through the Likelihood Ratio (LR), a fundamental statistical measure that compares the probability of observing the evidence under two competing hypotheses [4]. In standard identification cases, these hypotheses are:

- H1: The person of interest (PoI) is a contributor to the mixture.

- H2: The PoI is not a contributor and is not genetically related to any contributor [4].

The LR framework provides a coherent method for evaluating evidence while considering various parameters, including population allele frequencies, co-ancestry coefficients (Fst), drop-in and drop-out rates, and stutter models [4]. When multiple persons of interest are involved, the interpretation becomes more complex, requiring a systematic approach that considers all relevant hypotheses and their likelihoods before computing LRs for individual persons of interest [6].

Combined Probability of Inclusion/Exclusion (CPI/CPE)

Despite the advantages of probabilistic genotyping, the Combined Probability of Inclusion/Exclusion (CPI/CPE) remains the most commonly used statistical method for DNA mixture evaluation in many parts of the world, including the United States [1] [7]. The CPI represents the proportion of a given population that would be expected to be included as a potential contributor to the observed DNA mixture [1].

A standardized protocol for CPI application involves three critical steps:

- Assessment of the Profile: Evaluating peak heights to determine if contributors can be distinguished and whether allele drop-out is likely.

- Comparison with Reference Profiles: Performing inclusion/exclusion determinations.

- Calculation of the Statistic: Computing the CPI while disqualifying any locus where allele drop-out is possible [1].

The CPI approach is considered simpler than LR-based methods as it does not strictly require assumptions about the number of contributors for the calculation itself [1]. However, this perceived simplicity has sometimes led to incorrect applications, particularly with complex, low-template mixtures where stochastic effects are prominent [1]. Laboratories using CPI must ensure it is applied correctly, with trained professionals exercising judgment to disqualify loci where allele drop-out is possible [1] [7].
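The calculation step can be illustrated with a short Python sketch. It uses the standard form of the statistic, in which the probability of inclusion at a locus is the squared sum of the frequencies of the alleles observed there, and the CPI is the product across qualifying loci. The dictionary keys, the locus data, and the allele frequencies are hypothetical values chosen for illustration, and the decision to disqualify a locus is represented as a simple flag set by the analyst.

```python
def combined_probability_of_inclusion(loci):
    """Multiply per-locus inclusion probabilities, skipping disqualified loci.

    Each locus is a dict with hypothetical keys:
      name             - locus label
      allele_freqs     - population frequencies of the alleles observed in the mixture
      dropout_possible - analyst's judgment that allele drop-out cannot be ruled out
    """
    cpi = 1.0
    loci_used = []
    for locus in loci:
        if locus["dropout_possible"]:
            continue  # disqualified: not used in the statistic
        # Probability that a random person carries only alleles seen in the mixture.
        cpi *= sum(locus["allele_freqs"]) ** 2
        loci_used.append(locus["name"])
    return cpi, loci_used


# Illustrative values only; not real casework data or real allele frequencies.
mixture = [
    {"name": "D8S1179", "allele_freqs": [0.10, 0.20, 0.15], "dropout_possible": False},
    {"name": "TH01", "allele_freqs": [0.20, 0.30], "dropout_possible": False},
    {"name": "FGA", "allele_freqs": [0.05, 0.10], "dropout_possible": True},
]
cpi, loci_used = combined_probability_of_inclusion(mixture)
cpe = 1.0 - cpi
print(f"CPI = {cpi:.6f}, CPE = {cpe:.6f}, loci used: {loci_used}")
```

Note that the number of contributors never appears in the calculation, which is the simplicity the text refers to. The judgment about drop-out is where the method is most easily misapplied.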

Consensus Approach for Complex Mixture Interpretation

Given the variability in software performance and modeling approaches, some laboratories have adopted a "statistic consensus approach" for interpreting complex LT-DNA mixtures [3]. This methodology involves:

- Comparing LR results provided by different probabilistic software.

- Reporting only the most conservative LR value if coherence exists among the tested models.

- Reporting inconclusive results when significant incoherence appears among software outputs [3].

This approach provides a safeguard against over-reliance on any single software's specific modeling assumptions, particularly important for the most challenging casework samples where different algorithms may diverge in their interpretations.
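The decision rule above can be sketched as follows. The source does not define "coherence" numerically, so this sketch makes two loudly labeled assumptions: results are treated as coherent when every LR supports the same proposition and the values span no more than a chosen number of orders of magnitude, and the "most conservative" LR is taken to be the one closest to 1. The software labels and LR values are hypothetical.

```python
import math


def consensus_lr(lrs_by_software, max_log10_spread=2.0):
    """Apply a consensus rule to LRs computed by several programs.

    ASSUMPTION: coherence means all LRs fall on the same side of 1 and span at
    most max_log10_spread orders of magnitude. A laboratory would set its own
    validated criterion. All LRs must be positive.
    """
    logs = {name: math.log10(lr) for name, lr in lrs_by_software.items()}
    same_direction = (all(v > 0 for v in logs.values())
                      or all(v < 0 for v in logs.values()))
    spread = max(logs.values()) - min(logs.values())
    if not same_direction or spread > max_log10_spread:
        return "inconclusive", None
    # ASSUMPTION: most conservative = weakest evidence, i.e. LR closest to 1.
    chosen = min(logs, key=lambda name: abs(logs[name]))
    return chosen, lrs_by_software[chosen]


# Hypothetical outputs from three programs for the same mixture and propositions.
coherent = {"SoftwareA": 2.1e7, "SoftwareB": 8.5e6, "SoftwareC": 4.0e7}
incoherent = {"SoftwareA": 1.0e4, "SoftwareB": 0.5}
print(consensus_lr(coherent))
print(consensus_lr(incoherent))
```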

The interpretation of complex multi-person DNA mixtures remains a fundamental challenge in forensic genetics, requiring sophisticated probabilistic genotyping software, rigorous validation protocols, and standardized statistical approaches. The field has evolved from simple binary models to fully-continuous systems that leverage quantitative peak height information to deconvolve complex mixtures. Internal validation studies, performance comparisons across software platforms, and careful attention to statistical frameworks are all essential components of a robust forensic DNA analysis program. As the sensitivity of DNA profiling continues to increase, the development and refinement of these methodologies will remain critical for ensuring the accurate and reliable interpretation of complex DNA mixture evidence in the judicial system.

The Likelihood Ratio (LR) has emerged as the fundamental and most powerful statistical framework for evaluating the weight of forensic DNA evidence, particularly in the complex analysis of mixed samples [8] [9]. It provides a scientifically robust method to quantify the strength of evidence supporting one proposition over another, moving beyond simplistic inclusions or exclusions to a continuous measure of evidentiary strength [10]. The widespread adoption of probabilistic genotyping software (PGS) such as STRmix, EuroForMix, and DNAStatistX has made the accurate calculation of LRs for complex DNA mixtures feasible for forensic laboratories worldwide [8] [5].

The LR framework is mathematically rooted in Bayes' Theorem, allowing for the logical updating of prior beliefs in light of new evidence [9]. In forensic DNA interpretation, this translates to evaluating how much the observed evidence (the DNA profile) should change our belief about the propositions put forward by prosecution and defense. The LR forms the core of modern forensic genetics because it properly accounts for the complexities of DNA mixtures, including stochastic effects, stutter, allelic drop-in, and drop-out, which are particularly challenging in low-template and complex multi-contributor samples [8] [10].

Statistical Foundation of the Likelihood Ratio

Mathematical Formulation

The Likelihood Ratio is fundamentally a ratio of two conditional probabilities [9]. Formally, it is expressed as:

LR = Pr(E|H₁,I) / Pr(E|H₂,I)

Where:

- E represents the observed evidence (DNA profile data)

- H₁ and H₂ represent two competing propositions

- I represents relevant background information about the case

In forensic DNA practice, the LR evaluates the probability of observing the DNA evidence given the prosecution proposition (typically that a person of interest is a contributor to the sample) relative to the probability of the same evidence given the defense proposition (typically that the person of interest is not a contributor) [8] [9]. The LR framework naturally accommodates the evaluation of multiple propositions and can be extended to complex case scenarios involving multiple persons of interest [9].

Interpretation of LR Values

The value of the LR provides a direct measure of the evidence strength [11]:

- LR > 1: The evidence supports the first proposition (H₁)

- LR < 1: The evidence supports the second proposition (H₂)

- LR = 1: The evidence is inconclusive; it does not discriminate between the propositions

The further the LR value is from 1 in either direction, the stronger the evidence. For example, an LR of 10,000 indicates that the evidence is 10,000 times more likely under H₁ than under H₂, while an LR of 0.001 indicates the evidence is 1,000 times more likely under H₂ [9] [11].
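A small helper makes the reading of an LR explicit. This is an illustrative sketch only; the function name and return format are assumptions rather than part of any PG software, and it reports only the direction and size of support, not a verbal scale.

```python
import math


def describe_lr(lr):
    """Return (supported proposition, factor of support, log10 of the LR)."""
    if lr < 0:
        raise ValueError("An LR cannot be negative")
    if lr == 0:
        # Evidence impossible under H1: an exclusion.
        return "H2", math.inf, -math.inf
    if lr == 1:
        return "neither", 1.0, 0.0
    supported = "H1" if lr > 1 else "H2"
    factor = lr if lr > 1 else 1.0 / lr
    return supported, factor, math.log10(lr)


print(describe_lr(10_000))
print(describe_lr(0.001))
```

The two calls reproduce the examples in the text: an LR of 10,000 favors H₁ by a factor of 10,000, and an LR of 0.001 favors H₂ by a factor of 1,000.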

Relationship to Bayes' Theorem

The LR serves as the bridge between prior odds and posterior odds in Bayes' Theorem [9]:

Posterior Odds = LR × Prior Odds

Where:

- Prior Odds represent the relative plausibility of the propositions before considering the DNA evidence

- Posterior Odds represent the relative plausibility after considering the DNA evidence

This relationship highlights that while the LR quantitatively assesses the evidence, the ultimate interpretation also depends on the context of the case and other non-DNA evidence [11]. The forensic scientist's role is typically limited to providing the LR, while the court considers the prior odds based on other case information.
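The arithmetic of the odds form of Bayes' Theorem is shown below as a minimal sketch. The prior odds used here are purely hypothetical. In practice they are a matter for the court and not something the forensic scientist supplies.

```python
def posterior_odds(lr, prior_odds):
    """Odds form of Bayes' Theorem: posterior odds = LR x prior odds."""
    return lr * prior_odds


def odds_to_probability(odds):
    """Convert odds in favor of a proposition to a probability."""
    return odds / (1.0 + odds)


# Hypothetical numbers: an LR of 10,000 combined with prior odds of 1 to 1,000.
lr = 10_000
prior = 1 / 1_000
post = posterior_odds(lr, prior)
print(f"posterior odds = {post:.1f}, posterior probability = {odds_to_probability(post):.3f}")
```

The same LR combined with different prior odds gives different posterior odds, which is why the LR alone does not state the probability that a proposition is true.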

LR Framework in DNA Mixture Interpretation

Proposition Setting in Forensic DNA Analysis

The appropriate formulation of propositions is critical for meaningful LR calculation. Proposition setting follows a hierarchy from sub-source to activity level, with most DNA mixture interpretation occurring at the sub-source level [9]. The table below outlines the three main types of proposition pairs used in forensic DNA analysis.

Table 1: Types of Proposition Pairs in DNA Mixture Interpretation

| Proposition Type | Definition | Example for 2-Person Mixture | Use Case |
|---|---|---|---|
| Simple | One Person of Interest (POI) in Hₚ replaced with one unknown in Hₐ [9] | Hₚ: POI + unknown; Hₐ: two unknowns | Standard single POI evaluation |
| Compound | Multiple POIs in Hₚ replaced with unknowns in Hₐ [9] | Hₚ: POI₁ + POI₂; Hₐ: two unknowns | Evaluating multiple POIs together |
| Conditional | All POIs in Hₚ and all but one POI in Hₐ [9] | Hₚ: POI₁ + POI₂; Hₐ: POI₁ + unknown | Isolating evidence for each POI when multiple known contributors exist |

Research has demonstrated that conditional propositions have superior performance in differentiating true from false donors compared to simple propositions, while compound propositions can potentially misstate the weight of evidence when contributors have markedly different levels of support [9].
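The three proposition types in the table can be expressed as a small data-structure sketch that lists the assumed contributors under each proposition. The function name, the output layout, and the "unknown" placeholder label are hypothetical and chosen for illustration. The sketch only enumerates contributor sets and does not compute any LR.

```python
def build_propositions(pois, n_contributors):
    """Enumerate (Hp, Ha) contributor lists for simple, compound, and conditional pairs."""
    k = len(pois)
    if k == 0 or k > n_contributors:
        raise ValueError("Need between 1 and n_contributors persons of interest")

    def unknowns(n):
        return ["unknown"] * n

    # Simple: one POI under Hp, replaced by an unknown under Ha.
    simple = {
        poi: ([poi] + unknowns(n_contributors - 1), unknowns(n_contributors))
        for poi in pois
    }
    # Compound: all POIs under Hp, all replaced by unknowns under Ha.
    all_pois_hp = list(pois) + unknowns(n_contributors - k)
    compound = (all_pois_hp, unknowns(n_contributors))
    # Conditional: all POIs under Hp, all but the POI in question under Ha.
    conditional = {
        poi: (all_pois_hp,
              [p for p in pois if p != poi] + unknowns(n_contributors - k + 1))
        for poi in pois
    }
    return {"simple": simple, "compound": compound, "conditional": conditional}


props = build_propositions(["POI1", "POI2"], n_contributors=2)
for kind, value in props.items():
    print(kind, value)
```

For a two-person mixture with two POIs, the output reproduces the example column of the table: the conditional pair for POI₂ keeps POI₁ under both propositions, so the resulting LR isolates the evidence for POI₂.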

The Evolution of Statistical Methods for DNA Mixtures

The interpretation of DNA mixtures has evolved significantly through three generations of statistical methods [8]:

Table 2: Evolution of Statistical Methods for DNA Mixture Interpretation

| Method Type | Key Characteristics | Limitations | Representative Approaches |
|---|---|---|---|
| Binary Models | Yes/no decisions about genotype inclusion; does not account for drop-out or drop-in [8] | Cannot handle low-level or complex mixtures | Clayton Rules [8] |
| Semi-Continuous (Qualitative) Models | Considers probabilities of drop-out/drop-in; uses peak heights indirectly [8] | Does not fully utilize quantitative peak data | LikeLTD [8] |
| Continuous (Quantitative) Models | Fully utilizes peak height information; models PCR stochastic effects [8] | Computationally intensive; requires validation | STRmix, EuroForMix [8] |

The progression toward continuous models represents a significant advancement in forensic genetics, as these systems more completely account for the behavior of DNA profiles through realistic models of DNA amount, degradation, and other real-world factors [8].

Experimental Protocols for LR Validation

Internal Validation of Probabilistic Genotyping Systems

Before implementing any probabilistic genotyping software in casework, laboratories must conduct comprehensive internal validation following established guidelines such as those from the Scientific Working Group on DNA Analysis Methods (SWGDAM) [5]. The protocol below outlines the key components of this validation.

Protocol 1: Internal Validation of Probabilistic Genotyping Software

Purpose: To verify that probabilistic genotyping software performs as expected within a laboratory's specific operational environment and with relevant population samples.

Materials and Equipment:

- Probabilistic genotyping software (e.g., STRmix, EuroForMix)

- DNA profiles from known contributors

- Artificial mixture samples with known composition

- Computing infrastructure meeting software specifications

- Laboratory-specific analytical thresholds and interpretation guidelines

Procedure:

- Sensitivity and Specificity Assessment:
  - Prepare mixture samples with varying template amounts (0.01-0.5 ng total DNA)
  - Include two-, three-, and four-person mixtures with different contributor ratios
  - Process samples using standard laboratory protocols and capillary electrophoresis
  - Interpret results in the probabilistic genotyping software
  - Calculate rates of true positives, false positives, true negatives, and false negatives

- Precision and Reproducibility Testing:
  - Analyze replicate samples of the same mixture across different analytical batches
  - Evaluate consistency of LR outputs for the same ground truth scenarios
  - Assess impact of stochastic effects on LR stability

- Known Contributor Effects:
  - Evaluate how inclusion of known contributor profiles affects mixture deconvolution
  - Test scenarios with correctly and incorrectly specified known contributors
  - Assess software performance when known contributors are omitted

- Number of Contributors Assessment:
  - Analyze mixtures with correctly and incorrectly specified numbers of contributors
  - Document the impact of over- and under-estimation of contributors on LR results

- Population Studies:
  - Validate software performance with relevant population datasets
  - Ensure LRs for non-contributors are appropriately low across different ethnic groups

Validation Criteria: The software is considered validated for casework when it demonstrates [5]:

- High sensitivity and specificity across expected casework types

- Stable and reproducible LRs for replicate analyses

- Appropriate performance with laboratory-specific protocols and populations

- Robustness to minor deviations in user inputs
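The rate calculation in the sensitivity and specificity step of the procedure can be sketched as below. The sketch assumes that an LR above 1 is counted as support for inclusion. That threshold is an illustrative assumption, not a criterion from the SWGDAM guidelines or the cited validation, and the ground-truth results are invented.

```python
def classification_rates(results, lr_threshold=1.0):
    """Compute sensitivity and specificity from (is_true_contributor, lr) pairs.

    ASSUMPTION: an LR above lr_threshold is treated as supporting inclusion.
    """
    tp = fp = tn = fn = 0
    for is_true_contributor, lr in results:
        supports_inclusion = lr > lr_threshold
        if is_true_contributor and supports_inclusion:
            tp += 1
        elif is_true_contributor:
            fn += 1  # true contributor not supported, e.g. LR = 0
        elif supports_inclusion:
            fp += 1  # non-contributor with LR favoring inclusion
        else:
            tn += 1
    sensitivity = tp / (tp + fn) if (tp + fn) else float("nan")
    specificity = tn / (tn + fp) if (tn + fp) else float("nan")
    return {"tp": tp, "fp": fp, "tn": tn, "fn": fn,
            "sensitivity": sensitivity, "specificity": specificity}


# Hypothetical ground-truth comparisons from artificial mixtures.
results = [
    (True, 1.0e6), (True, 50.0), (True, 0.2),
    (False, 1.0e-4), (False, 0.0), (False, 3.0),
]
rates = classification_rates(results)
print(rates)
```

Reporting the four raw counts alongside the two rates makes it easier to trace individual false exclusions and false inclusions back to specific samples.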

Protocol for Assessing Inter-Laboratory Variability

Understanding variability in DNA mixture interpretation across different laboratories is essential for establishing reliability standards and best practices.

Protocol 2: Quantifying Intra- and Inter-Laboratory Variability in DNA Mixture Interpretation

Purpose: To objectively assess and quantify the variation in forensic DNA mixture interpretation both within and between laboratories.

Experimental Design:

- Sample Preparation:
  - Create mixture samples comprising two and three DNA sources with differing ratios
  - Include mixtures with and without reference samples
  - Ensure coverage of template amounts typically encountered in casework

- Data Distribution:
  - Generate DNA sample profiles from each mixture
  - Distribute uninterpreted raw data files to participating laboratories
  - Provide standardized threshold parameters for analysis
  - Include detailed instructions for data interpretation and reporting

- Data Collection:
  - Collect completed questionnaires and worksheets from participating laboratories
  - Focus analysis on laboratories with sufficient numbers of participating examiners

- Metric Calculation:
  - Calculate Genotype Interpretation and Allelic Truth metrics
  - Compute metrics at multiple levels: per locus, per contributor, and per mixture
  - Aggregate results by laboratory and by jurisdiction type

Key Findings from Implementation: A study implementing this protocol with 55 laboratories and 189 examiners found that [12]:

- Significant intra- and inter-laboratory interpretation variation exists

- Inclusion of a known reference DNA profile markedly improves interpretability

- Two-person DNA mixtures are generally interpretable by most laboratories

- Three-person mixtures are generally beyond the scope of protocol limits for most examiners

- Accurate interpretation of challenging three-person mixtures is possible in some laboratories, emphasizing the need for ongoing training and dissemination of best practices

Research Reagent Solutions for LR Studies

Table 3: Essential Research Reagents and Materials for LR Validation Studies

| Item | Function/Application | Examples/Specifications |
|---|---|---|
| Commercial STR Kits | Multiplex amplification of forensic STR markers | GlobalFiler, PowerPlex ESX/ESI systems, AmpFlSTR NGM [10] |
| Genetic Analyzers | Capillary electrophoresis for DNA separation | 3500 Genetic Analyser with standardized injection parameters [9] |
| Quantification Systems | Precise DNA quantification for mixture preparation | Plexor HY system for human and male DNA quantification [10] |
| Probabilistic Genotyping Software | LR calculation and mixture deconvolution | STRmix, EuroForMix, DNAStatistX [8] |
| Reference DNA Samples | Controlled samples for mixture creation | Commercially available DNA standards or characterized donor samples [12] |
| Quality Control Materials | Monitoring analytical processes and thresholds | Internal size standards, allelic ladders, positive controls [12] |

Workflow and Conceptual Diagrams

LR Calculation Workflow in Probabilistic Genotyping

The following diagram illustrates the generalized workflow for likelihood ratio calculation in probabilistic genotyping systems:

Diagram 1: LR Calculation Workflow in Probabilistic Genotyping

Proposition Hierarchy in DNA Evidence Evaluation

The conceptual relationships between different proposition types in DNA evidence evaluation can be visualized as follows:

Diagram 2: Proposition Hierarchy in DNA Evidence Evaluation

Current Challenges and Research Directions

Despite significant advances, several challenges remain in the implementation and standardization of the LR framework for DNA mixture interpretation:

Interpretation Variability

Recent studies have quantified substantial variability in DNA mixture interpretation both within and between laboratories [12]. This variability stems from differences in:

- Laboratory protocols and analytical thresholds

- Training and experience of DNA analysts

- Software systems and their parameterization

- Proposition setting practices

The development of standardized metrics such as the Genotype Interpretation and Allelic Truth metrics provides objective tools to quantify this variability and work toward improved consistency [12].

Proposition Setting Complexity

Research continues to refine approaches to proposition setting, particularly for complex mixtures with multiple persons of interest [9]. Key findings indicate that:

- Conditional propositions generally provide better discrimination between true and false donors than simple propositions

- Compound propositions can potentially misstate the weight of evidence when applied indiscriminately

- The hierarchy of propositions framework helps ensure that propositions address the appropriate issues in a case

Validation and Standardization

As probabilistic genotyping becomes more widespread, ensuring consistent validation and implementation across laboratories remains challenging [8] [5]. Current efforts focus on:

- Developing consensus standards for software validation

- Establishing proficiency testing programs

- Creating guidelines for presenting LR evidence in court

- Harmonizing terminology and reporting practices

The LR framework continues to evolve as the statistical cornerstone of forensic DNA evidence evaluation, with ongoing research refining its application, addressing limitations, and expanding its capabilities for justice system applications.

The interpretation of complex DNA mixtures, especially those involving multiple contributors or low-template DNA (LT-DNA), represents one of the most significant challenges in forensic genetics. The evolution of interpretation methodologies has progressed through three distinct phases: binary, semi-continuous (qualitative), and fully continuous (quantitative) models [3] [8]. This paradigm shift has fundamentally transformed how forensic scientists extract information from electrophoretic data, moving from simple presence/absence determinations to sophisticated probabilistic frameworks that leverage peak height information and model stochastic effects [8]. Binary models, which formed the early foundation of mixture interpretation, treated alleles in a binary fashion—either present or absent—without considering peak heights, stochastic effects like drop-out and drop-in, or stutter artifacts [3] [8]. The semi-continuous models that followed incorporated probabilities for drop-out and drop-in but still did not fully utilize quantitative peak height data [8]. The most advanced fully continuous models now leverage all available information, including peak heights, through statistical models that describe expected peak behavior using parameters aligned with real-world properties such as DNA quantity, degradation, and PCR artifacts [3] [8] [13].

This transition has been driven by both technological advancements and operational necessities. As DNA analysis sensitivity has improved, allowing profiles to be generated from merely a few skin cells, forensic laboratories increasingly encounter complex mixtures that traditional methods cannot interpret with sufficient statistical confidence [3] [2]. Continuous models have demonstrated superior performance for complex DNA mixtures involving multiple contributors and LT-DNA, providing greater ability to distinguish true donors from non-donors [3] [13]. The implementation of these advanced systems requires careful validation, appropriate parameterization, and thorough understanding of their underlying statistical frameworks to ensure reliable and scientifically defensible results in forensic casework [5] [8].

Comparative Analysis of Interpretation Models

Theoretical Foundations and Methodological Differences

The core distinction between interpretation models lies in their treatment of electropherogram data and their approach to calculating the Likelihood Ratio (LR), which expresses the weight of evidence by comparing probabilities under competing propositions (typically prosecution and defense hypotheses) [8] [13]. Table 1 summarizes the fundamental characteristics of the three primary model types used in forensic DNA mixture interpretation.

Table 1: Comparison of DNA Mixture Interpretation Models

| Feature | Binary Models | Semi-Continuous Models | Fully Continuous Models |
|---|---|---|---|
| Data Utilization | Allele presence/absence only | Allele presence/absence with drop-out/drop-in probabilities | Peak heights, areas, and qualitative data |
| Stochastic Effects | Not modeled | Modeled via drop-out/drop-in probabilities | Modeled via statistical distributions of peak behavior |
| Peak Height Information | Not used | Not used directly; may inform drop-out parameters | Integral to model calculations |
| Statistical Framework | Unconstrained or constrained combinatorial | Probabilistic with qualitative weights | Fully probabilistic with quantitative weights |
| LR Calculation | Based on possible/included genotypes | Sum over genotype combinations considering drop-out/drop-in | Integration over all possible genotype combinations and model parameters |
| Complex Mixture Capability | Limited | Moderate | High |
| LT-DNA Performance | Poor | Moderate | Superior |
| Example Software | Early Clayton guidelines | LRmix Studio, Lab Retriever | STRmix, EuroForMix, DNA•VIEW |

Binary models, the earliest approach, assign weights of 0 or 1 to genotype sets based solely on whether they account for observed peaks, without considering stochastic effects [8]. Semi-continuous models advance beyond binary approaches by calculating weights as combinations of drop-out and drop-in probabilities, though they still do not directly model peak heights [3] [8]. Fully continuous models represent the most sophisticated approach, using statistical distributions to model peak height expectations and incorporating all available quantitative information into the LR calculation [8] [13].
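To make the contrast between binary and semi-continuous weights concrete, the following minimal sketch evaluates one locus for a single contributor. The function names, the drop-out probability `d`, the drop-in probability `c`, the `d**2` treatment of homozygote drop-out, and the allele frequencies are all illustrative assumptions; this is not the implementation used by any of the software packages named above.

```python
from itertools import combinations_with_replacement


def genotype_prior(genotype, freqs):
    """Hardy-Weinberg genotype probability (no coancestry correction)."""
    a, b = genotype
    return freqs[a] ** 2 if a == b else 2 * freqs[a] * freqs[b]


def binary_weight(genotype, observed, freqs):
    """Binary model: 1 if the genotype explains exactly the observed alleles, else 0."""
    return 1.0 if set(genotype) == set(observed) else 0.0


def semi_continuous_weight(genotype, observed, freqs, d=0.2, c=0.05):
    """Semi-continuous model: product of drop-out and drop-in terms.

    d and c are assumed (hypothetical) drop-out and drop-in probabilities.
    Homozygote drop-out is approximated as d**2, a common simplification.
    """
    observed = set(observed)
    a, b = genotype
    if a == b:
        d_hom = d ** 2
        w = (1 - d_hom) if a in observed else d_hom
    else:
        w = 1.0
        for allele in (a, b):
            w *= (1 - d) if allele in observed else d
    extra = observed - {a, b}  # observed alleles the genotype cannot explain
    if extra:
        for allele in extra:
            w *= c * freqs[allele]  # each unexplained allele is a drop-in event
    else:
        w *= 1 - c  # no drop-in occurred
    return w


def likelihood_ratio(poi, observed, freqs, weight):
    """LR = P(E | POI is the donor) / P(E | an unknown person is the donor)."""
    numerator = weight(poi, observed, freqs)
    denominator = sum(
        weight(g, observed, freqs) * genotype_prior(g, freqs)
        for g in combinations_with_replacement(sorted(freqs), 2)
    )
    return numerator / denominator


# Hypothetical three-allele locus and a heterozygous person of interest.
freqs = {12: 0.1, 13: 0.2, 14: 0.7}
poi = (12, 14)

lr_binary_full = likelihood_ratio(poi, {12, 14}, freqs, binary_weight)
lr_binary_partial = likelihood_ratio(poi, {12}, freqs, binary_weight)
lr_semi_partial = likelihood_ratio(poi, {12}, freqs, semi_continuous_weight)
```

When allele 14 is missing from the evidence, the binary weight excludes the person of interest (LR = 0), whereas the semi-continuous weight treats the missing allele as a possible drop-out and still returns an LR above 1 under these assumed parameters.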

Performance Comparison Across Model Types

Comparative studies have demonstrated significant performance differences between interpretation models, particularly with complex mixtures and low-template DNA. A proof-of-concept multi-software comparison evaluated two semi-continuous (Lab Retriever, LRmix Studio) and three fully-continuous (STRmix, EuroForMix, DNA•VIEW) software packages on two-person and three-person mixtures with varying contributor ratios and template amounts [3]. The findings revealed that fully continuous software generally provided stronger support for true contributors (higher LRs) and better discrimination between true and non-contributors, especially with unbalanced mixtures and low-template samples [3].

The performance advantages of continuous models are particularly evident in challenging forensic scenarios. Table 2 summarizes, in qualitative terms, how the three model types have performed in validation studies across different mixture complexities and DNA template amounts.

Table 2: Performance Comparison Across Interpretation Models for Different Mixture Scenarios

| Mixture Scenario | Binary Model Performance | Semi-Continuous Model Performance | Fully Continuous Model Performance |
|---|---|---|---|
| Single Source | Reliable | Reliable | Reliable |
| 2-Person, Balanced | Moderately reliable | Reliable with minor limitations | Highly reliable |
| 2-Person, Unbalanced (1:19) | Unreliable | Limited reliability | Moderately to highly reliable |
| 3-Person, Balanced | Unreliable | Moderately reliable | Reliable |
| 3-Person, Unbalanced | Unreliable | Limited reliability | Moderately reliable |
| Low-Template DNA (≤0.1 ng) | Unreliable | Variable reliability | Most reliable option |
| Degraded Samples | Unreliable | Limited reliability | Good reliability with proper modeling |

Fully continuous models demonstrate particular advantages in challenging conditions such as low-template DNA (as low as 0.1 ng total) and mixtures with unbalanced contributor ratios (e.g., 1:19), where stochastic effects significantly impact profile quality [3] [13]. Intra-model variability in LR calculations increases with both the number of contributors and decreased template mass, but this variability is more pronounced in binary and semi-continuous models [13]. Continuous models maintain more stable performance across these challenging conditions due to their more complete utilization of peak height information and better modeling of stochastic effects [3] [13].

Implementation of Continuous Models: Protocols and Procedures

Laboratory Validation Framework for Continuous Systems

The implementation of continuous probabilistic genotyping systems requires comprehensive internal validation following established scientific guidelines. The Scientific Working Group on DNA Analysis Methods (SWGDAM) validation guidelines provide a standardized framework for this process [5]. The validation should assess sensitivity, specificity, precision, and robustness under conditions reflecting actual casework, including varying contributor numbers, mixture ratios, and DNA template amounts [5] [2].

A typical validation protocol for continuous probabilistic genotyping software involves multiple experimental phases:

Single Source Samples: Analysis of single source profiles across a range of DNA quantities (from 2.0 ng to 0.1 ng or lower) to establish baseline characteristics and model parameters for the laboratory-specific environment [5] [13].

Simple Mixtures: Two-person mixtures with varying ratios (e.g., 1:1, 1:4, 1:9, 1:19) to evaluate software performance with unbalanced contributions [5] [3].

Complex Mixtures: Three- and four-person mixtures with different proportions to assess performance degradation with increasing contributor numbers [3].

Stochastic Effects Evaluation: Testing with low-template DNA (typically <0.1 ng total) to characterize drop-out, drop-in, and stutter modeling under extreme conditions [3] [14].

Model Parameterization: Establishing laboratory-specific parameters for stutter ratios, drop-in rates, and other model components based on experimental data [5] [14].

Sensitivity Analysis: Testing the impact of incorrect assumptions, particularly regarding the number of contributors and the addition of known contributors [5].
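When planning the mixture phases above, it helps to translate each ratio and total template amount into the mass contributed by the minor donor. The sketch below does this arithmetic; the function names and the chosen grid of totals are hypothetical and serve only as an illustration.

```python
def contributor_masses(total_ng, ratio):
    """Split a total template mass (ng) among contributors according to a mixture ratio."""
    parts = sum(ratio)
    return [total_ng * r / parts for r in ratio]


def validation_grid(totals_ng, ratios):
    """List every (total ng, ratio, minor-contributor mass in pg) combination."""
    grid = []
    for total in totals_ng:
        for ratio in ratios:
            minor_pg = min(contributor_masses(total, ratio)) * 1000  # ng -> pg
            grid.append((total, ratio, round(minor_pg, 2)))
    return grid


# Hypothetical design: three total amounts crossed with the ratios listed above.
grid = validation_grid([2.0, 0.5, 0.1], [(1, 1), (1, 4), (1, 9), (1, 19)])
for total, ratio, minor_pg in grid:
    print(f"{total} ng at {ratio[0]}:{ratio[1]} -> minor contributor {minor_pg} pg")
```

At 0.1 ng total and a 1:19 ratio the minor contributor supplies about 5 pg, which is less than the roughly 6.6 pg of DNA in a single diploid human cell, so pronounced stochastic effects are expected for that donor.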

The following workflow diagram illustrates the key stages in implementing and validating continuous probabilistic genotyping systems:

Analytical Protocol for Continuous Model Implementation

The transition to continuous models requires standardized analytical protocols to ensure consistent application and reliable results. The following step-by-step protocol outlines the procedure for implementing continuous probabilistic genotyping in forensic casework:

Protocol: Implementation of Continuous Probabilistic Genotyping for DNA Mixture Interpretation

Materials and Equipment:

- Validated continuous probabilistic genotyping software (e.g., STRmix, EuroForMix)

- Electropherogram data in standardized format

- Laboratory-specific model parameters (stutter ratios, drop-in rate, etc.)

- Allele frequency database appropriate for the population

- Computational resources meeting software specifications

Procedure:

Data Quality Assessment

- Review electropherogram quality metrics (baseline noise, peak morphology, signal intensity)

- Verify analytical and stochastic thresholds established through validation

- Identify potential artifacts (stutter, pull-up, baseline noise) for model consideration

Profile Interpretation Pre-processing

- Review allele calls and peak height data across all loci

- Identify potential drop-out events based on peak height patterns

- Document any technical anomalies that may affect interpretation

Software Parameterization

- Input laboratory-specific parameters (stutter models, drop-in rate, degradation parameters)

- Select appropriate allele frequency database for the relevant population

- Configure model settings based on validation studies (e.g., number of MCMC iterations)

Proposition Setting

- Define prosecution hypothesis (Hp) based on case circumstances

- Define defense hypothesis (Hd) considering reasonable alternatives

- Specify known contributors (victim, suspect) where appropriate

LR Calculation and Analysis

- Execute software analysis with specified parameters and propositions

- Review model convergence and diagnostic statistics

- Assess sensitivity to key assumptions (number of contributors, proposition wording)

Results Interpretation and Reporting

- Interpret LR value within the context of case circumstances

- Apply verbal equivalence scale if laboratory policy requires

- Document all parameters, assumptions, and software settings used

Quality Assurance

- Peer review of interpretation process and results

- Verify software version and database used

- Archive case file with complete documentation of analysis
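The verbal-equivalent step in the procedure above can be sketched as a simple lookup. The band boundaries and labels below are hypothetical placeholders, not values taken from this article or from any specific guideline; a laboratory would substitute the scale defined in its own policy.

```python
# Hypothetical bands: (lower bound on LR magnitude, label). Replace with laboratory policy.
EXAMPLE_BANDS = [
    (1e6, "very strong support"),
    (1e4, "strong support"),
    (1e2, "moderate support"),
    (2.0, "limited support"),
]


def verbal_equivalent(lr, bands=EXAMPLE_BANDS):
    """Map an LR to a verbal label; LRs below 1 are inverted and reported as support for Hd."""
    if lr < 0:
        raise ValueError("LR cannot be negative")
    if lr == 0:
        return "exclusion (LR = 0)"
    favoured = "Hp" if lr >= 1 else "Hd"
    magnitude = lr if lr >= 1 else 1 / lr
    for lower, label in bands:  # bands are ordered from strongest to weakest
        if magnitude >= lower:
            return f"{label} for {favoured}"
    return "uninformative"


print(verbal_equivalent(5e7))
print(verbal_equivalent(1 / 500))
print(verbal_equivalent(1.5))
```

Keeping the bands as a parameter rather than hard-coding them makes the mapping easy to document alongside the other settings recorded for the case file.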

Troubleshooting Notes:

- If model convergence issues occur, increase MCMC iterations or adjust parameter settings

- If LRs show unexpected values, re-examine contributor number assumptions and proposition setting

- For highly complex mixtures, consider multiple software approaches or a "statistic consensus approach" [3]
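One generic way to check convergence across independent MCMC chains is the Gelman-Rubin statistic, which compares between-chain and within-chain variance. The sketch below is a textbook version of that diagnostic applied to made-up chains; it is not a description of how any particular PG software computes or reports its diagnostics, and the 1.1 cut-off mentioned in the comment is a conventional rule of thumb rather than a validated threshold.

```python
from statistics import mean, variance


def gelman_rubin(chains):
    """Potential scale reduction factor for equal-length chains of one parameter."""
    m = len(chains)
    n = len(chains[0])
    if m < 2 or any(len(chain) != n for chain in chains):
        raise ValueError("need at least two chains of equal length")
    chain_means = [mean(chain) for chain in chains]
    within = mean([variance(chain) for chain in chains])  # average within-chain variance
    between = n * variance(chain_means)                   # between-chain variance
    pooled = (n - 1) / n * within + between / n           # pooled variance estimate
    return (pooled / within) ** 0.5


# Deterministic toy chains: two that agree, and one shifted away from the others.
chain_a = [float(i % 5) for i in range(100)]
chain_b = [float((i + 2) % 5) for i in range(100)]
chain_shifted = [x + 10 for x in chain_a]

r_good = gelman_rubin([chain_a, chain_b])       # close to 1: chains agree
r_bad = gelman_rubin([chain_a, chain_shifted])  # well above 1.1: chains disagree
```

Values near 1 suggest the chains are sampling the same distribution; values well above 1 suggest that more iterations or different settings are needed, in line with the first troubleshooting note.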

Experimental Data and Validation Studies

Quantitative Performance Metrics Across Platforms

Validation studies across multiple laboratories and software platforms have generated substantial quantitative data on the performance of continuous probabilistic genotyping systems. The internal validation of STRmix using Japanese individuals and GlobalFiler profiles demonstrated the software's suitability for interpreting mixed DNA profiles in that population context, while noting rare exclusion errors (LR = 0) for true contributors under conditions of extreme heterozygote imbalance or significant mixture ratio differences between loci due to PCR stochastic effects [5].

A comprehensive multi-software comparison study examined two-person and three-person mixtures with different contributor ratios and amplification kits (GlobalFiler and Fusion 6C), providing direct performance comparisons between semi-continuous and fully-continuous approaches [3]. The study found that while semi-continuous models (LRmix Studio, Lab Retriever) generally produced lower LRs for true contributors compared to fully continuous systems, they showed less variability between different DNA amplification kits [3]. Fully continuous software (STRmix, EuroForMix, DNA•VIEW) demonstrated higher discriminatory power but showed greater variability in LR magnitudes across different kits, particularly with low-template and highly unbalanced mixtures [3].

Table 3 presents quantitative results from software comparison studies, showing typical LR ranges obtained for true contributors under different mixture conditions.

Table 3: Likelihood Ratio Ranges Across Software Platforms for True Contributors

| Mixture Type | Semi-Continuous Models | Fully Continuous Models | Key Observations |
|---|---|---|---|
| 2-Person, 1:1 Ratio | 10^6 - 10^9 | 10^8 - 10^15 | Fully continuous models generally produce higher LRs for balanced mixtures |
| 2-Person, 1:19 Ratio | 10^0 - 10^3 | 10^2 - 10^7 | Semi-continuous models show more false exclusions with highly unbalanced mixtures |
| 3-Person, Balanced | 10^3 - 10^6 | 10^5 - 10^10 | Performance gap widens with increasing contributor number |
| 3-Person, Unbalanced | 10^0 - 10^4 | 10^2 - 10^8 | Continuous models maintain better sensitivity with minor contributors |
| Low-Template (<0.1 ng) | 10^0 - 10^2 | 10^1 - 10^5 | Continuous models show superior performance with limited DNA |

Model Variability and Sensitivity Analysis

Understanding variability within and between continuous models is essential for proper implementation and courtroom testimony. A study examining four variants of a continuous interpretation method tested each model five times on 101 experimental samples with known contributors, including one-, two-, and three-person mixtures [13]. The results demonstrated that intra-model variability increased with both the number of contributors and decreased template mass [13]. More significantly, inter-model variability in the associated verbal expression of the LR was observed in 32 of the 195 LRs compared, with 11 profiles showing a change from LR > 1 to LR < 1 depending on the model variant used [13].
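Replicate variability of this kind is usually summarized on the log10 scale. The following sketch reports the spread of log10(LR) across repeated runs and flags the case in which replicates fall on both sides of LR = 1. The function name and the example values are hypothetical and are not data from the cited study.

```python
import math


def replicate_summary(lrs):
    """Summarize replicate LRs (all > 0) on the log10 scale."""
    logs = [math.log10(lr) for lr in lrs]
    return {
        "min_log10": min(logs),
        "max_log10": max(logs),
        "spread_log10": max(logs) - min(logs),
        # True when some replicates favour Hp and others favour Hd.
        "straddles_one": min(lrs) < 1 < max(lrs),
    }


# Hypothetical replicate runs for two profiles.
stable = replicate_summary([1e3, 5e3, 1e4, 2e3, 4e3])
unstable = replicate_summary([0.5, 3.0, 1.2, 0.8, 2.0])
print(stable["spread_log10"], stable["straddles_one"])
print(unstable["spread_log10"], unstable["straddles_one"])
```

A profile whose replicates straddle LR = 1 is exactly the situation described above, where the reported direction of support depends on the run or model variant.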

This variability highlights the importance of thorough validation and sensitivity analysis when implementing continuous systems. The impact of different stutter models was specifically investigated in a casework-driven assessment of EuroForMix versions 1.9.3 and 3.4.0, which differ in their stutter modeling capabilities (version 1.9.3 models only back stutter inputted by the expert, while version 3.4.0 models both back and forward stutter) [14]. Analysis of 156 real casework samples revealed that while most LR values differed by less than one order of magnitude across versions, exceptions occurred in more complex samples with increased contributors, unbalanced contributions, or greater degradation [14].

The following diagram illustrates the relationship between mixture complexity, DNA quantity, and model performance across different interpretation approaches:

Successful implementation of continuous probabilistic genotyping requires specific computational tools, laboratory resources, and methodological frameworks. The following table details essential components of the modern forensic geneticist's toolkit for continuous model implementation.

Table 4: Essential Research Reagent Solutions for Continuous Probabilistic Genotyping

| Tool Category | Specific Tools/Resources | Function/Purpose | Implementation Considerations |
|---|---|---|---|
| Probabilistic Genotyping Software | STRmix, EuroForMix, DNA•VIEW | Continuous model implementation for LR calculation | Commercial vs. open-source; computational requirements; validation status |
| Semi-Continuous Software | LRmix Studio, Lab Retriever | Comparison tool; transitional option; consensus approach | Useful for method comparison; less computationally intensive |
| Profile Analysis Tools | GeneMapper ID-X, GeneMapper Software | Electropherogram analysis; allele calling; peak height data extraction | Must provide compatible output format for PG software |
| Database Systems | Laboratory information management systems (LIMS) | Reference sample management; case data tracking; quality control | Integration with PG software improves workflow efficiency |
| Statistical Packages | R, Python with specialized libraries | Custom analyses; validation data processing; visualization | Useful for advanced sensitivity analyses and validation studies |
| Validation Materials | NIST Standard Reference Material 2391c | Validation standards; interlaboratory comparisons | Provides standardized materials for validation studies [3] |
| Amplification Kits | GlobalFiler, Fusion 6C | DNA profile generation; multiplex STR amplification | Different kits may affect model performance and parameters [5] [3] |

The selection of appropriate tools depends on multiple factors, including laboratory resources, casework complexity, and jurisdictional requirements. Open-source solutions like EuroForMix provide accessibility but may require greater technical expertise for implementation and troubleshooting [3] [8]. Commercial systems like STRmix typically offer greater support infrastructure but at significant financial cost [5] [8]. Many laboratories implement multiple systems to enable comparative analyses and consensus approaches, particularly for complex mixtures and low-template DNA where model variability may be more pronounced [3].

The "statistic consensus approach" has emerged as a valuable methodology for handling complex DNA mixtures, particularly with low-template samples [3]. This approach compares LR results from different probabilistic software and reports only the most conservative LR value if coherence among models is observed, with inconclusive decisions when results show significant discrepancies [3]. This conservative approach helps mitigate limitations of individual models while leveraging the strengths of multiple systems.
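The decision logic of such a consensus approach can be sketched as follows. The source does not define "coherence" numerically, so the rule used here (all LRs on the same side of 1 and within an assumed spread of two orders of magnitude) is a hypothetical criterion, as are the function name and the software labels.

```python
import math


def consensus_lr(lrs_by_software, max_spread_log10=2.0):
    """Return (software, LR) for the most conservative result, or None if inconclusive.

    Coherence rule (an assumption for illustration): every LR lies on the same
    side of 1 and the log10 values differ by at most max_spread_log10.
    """
    logs = {name: math.log10(lr) for name, lr in lrs_by_software.items()}
    values = list(logs.values())
    same_side = all(v > 0 for v in values) or all(v < 0 for v in values)
    coherent = same_side and (max(values) - min(values)) <= max_spread_log10
    if not coherent:
        return None  # report as inconclusive
    # The most conservative LR is the one closest to 1, i.e. smallest |log10 LR|.
    name = min(logs, key=lambda key: abs(logs[key]))
    return name, lrs_by_software[name]


coherent_case = consensus_lr({"software_a": 1e6, "software_b": 1e5, "software_c": 3e5})
discordant_case = consensus_lr({"software_a": 1e3, "software_b": 0.5})
print(coherent_case)
print(discordant_case)
```

Taking the value closest to 1 means the reported LR never overstates the support given by any of the compared models, whichever proposition they favour.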

The paradigm shift from binary and qualitative to continuous quantitative models represents fundamental progress in forensic DNA mixture interpretation. Continuous models provide superior statistical resolution, enhanced capabilities for complex mixtures, and more robust performance with low-template DNA compared to earlier methodologies [3] [13]. This advancement comes with implementation challenges, including computational demands, comprehensive validation requirements, and the need for advanced technical expertise [8] [2].

Successful implementation requires careful attention to laboratory-specific parameterization, sensitivity analysis of key assumptions, and understanding of model limitations [5] [13]. The forensic community continues to develop standards and best practices for continuous model implementation, with ongoing research addressing areas such as stutter modeling, validation frameworks, and consensus approaches for complex casework [3] [14]. As these methodologies evolve and mature, they provide increasingly powerful tools for forensic genetics while demanding rigorous scientific understanding and methodological care from practitioners.

The interpretation of complex DNA mixtures represents a significant challenge in modern forensic genetics, particularly with the increased sensitivity of DNA testing methods that allow profiles to be generated from just a few skin cells. This advancement has extended the usefulness of DNA analysis but also introduces complex mixtures often encountered in casework. The accurate interpretation of these mixtures hinges on the effective modeling of core nuisance parameters—stutter, drop-in, drop-out, and degradation—which introduce uncertainty and complexity into forensic analysis. This article details the protocols and application notes for modeling these parameters within the framework of probabilistic genotyping, providing researchers and forensic scientists with standardized methodologies to enhance the reliability and accuracy of DNA mixture interpretation in legal proceedings.

Forensic DNA analysis has evolved significantly since its inception in 1985, with contemporary investigations utilizing a variety of tools to analyze mixed DNA samples in criminal cases. DNA mixtures contain genetic material from two or more contributors, compounding analysis by combining major contributor DNA with small amounts from potentially numerous minor contributors. These samples are characterized by a high probability of drop-out (failure to detect alleles) or drop-in (contamination), elevated stutter artifacts, and potential degradation, significantly increasing analytical complexity [10].

The evolution of probabilistic genotyping software (PGS) has revolutionized mixture interpretation by employing statistical frameworks to account for multiple levels of uncertainty in allelic contributions from different individuals. These methods are particularly crucial for samples containing few DNA molecules, where stochastic effects are pronounced [15]. The International Society for Forensic Genetics (ISFG) has established guidelines for examining DNA mixtures and low copy number reporting, creating standardized step-by-step analysis procedures now employed globally [10].

Within this framework, accurate modeling of nuisance parameters is not merely optional but fundamental to generating reliable, defensible results. This article provides detailed protocols for identifying, quantifying, and computationally modeling these critical parameters to support advanced research in forensic genetics and drug development.

Defining Core Nuisance Parameters

Stutter

- Definition and Formation Mechanism: Stutter peaks are artifacts originating during the PCR extension phase through slipped-strand mispairing. This occurs when one strand loops and aligns in a position different from its supposed location during re-annealing of template and extending strands [4].

- Types and Characteristics:

- Back Stutter: Results from a loop in the template strand, causing deletion of one or more repeat units in the new strand. It typically accounts for 5–10% of the parent allelic peak height [4].

- Forward Stutter: Occurs when looping happens in the new strand, leading to addition of repeat unit(s). It accounts for a smaller fraction (0.5–2%) of the parent allelic height and is less common [4].

- Impact on Analysis: Stutter artifacts challenge the distinction between true alleles and artifacts, particularly for minor donors in mixed-source samples. This can lead to inaccurate estimation of the number of contributors and potential misinterpretation of evidence [4].

Drop-out and Drop-in

- Allele Drop-out: The failure to detect one or more alleles of a true donor during PCR amplification, primarily occurring in low-template DNA (LTDNA) samples where stochastic effects are pronounced. Drop-out invalidates conventional rules for analyzing heterozygous balance and other DNA characteristics, complicating mixture deconvolution [10] [16].

- Allele Drop-in: The presence of amplified DNA not originating from the sample, typically occurring as sporadic contamination. Sources include investigating officers, laboratory technicians, and laboratory plasticware. These contaminations are often difficult to identify and distinguish from true contributor DNA [10].

Degradation

- Definition and Causes: Degradation refers to the breakdown of DNA molecules into smaller fragments over time due to environmental factors such as heat, moisture, UV exposure, and microbial activity. This results in a reduction of high-molecular-weight DNA available for amplification [16].

- Analytical Consequences: Degraded samples exhibit a characteristic downward slope in peak heights across increasing fragment sizes in electropherograms. This non-uniform profile reflects reduced PCR amplification efficiency, particularly for larger loci, leading to potential allelic drop-out and imbalanced peak heights [16].

Table 1: Core Nuisance Parameters and Their Characteristics in Forensic DNA Analysis

| Parameter | Formation Cause | Key Characteristics | Impact on Analysis |
|---|---|---|---|
| Stutter | PCR slippage (slipped-strand mispairing) | Back stutter (5-10%), Forward stutter (0.5-2%) | Obscures minor contributor alleles; complicates contributor counting |
| Drop-out | Stochastic effects in low-template DNA | Allele missing despite contributor inclusion; more common with <200 pg DNA | Invalidates heterozygous balance rules; causes missing data |
| Drop-in | Contamination during collection/processing | Sporadic, low-level alien alleles | Introduces foreign alleles potentially misinterpreted as contributor alleles |
| Degradation | Environmental exposure (heat, moisture, UV) | Slope in peak heights; larger loci affected more | Causes allelic imbalance; mimics low-template effects |

Experimental Protocols for Parameter Modeling

Stutter Modeling and Analysis Protocol

Purpose: To empirically determine stutter ratios and incorporate them into probabilistic genotyping models for improved mixture interpretation.

Materials and Reagents:

- GlobalFiler or PowerPlex STR amplification kits

- High-quality single-source DNA reference standards

- Thermal cycler for PCR amplification

- Capillary electrophoresis system

- Probabilistic genotyping software (EuroForMix, STRmix)

Experimental Procedure:

- Sample Preparation: Prepare a series of single-source DNA samples at optimal concentrations (0.5-1.0 ng/μL) using reference standards with known genotypes.

- PCR Amplification: Amplify samples using standardized cycling conditions with the selected STR multiplex kit. Include appropriate positive and negative controls.

- Capillary Electrophoresis: Inject amplified products using standard parameters (e.g., 1.5 kV for 10 seconds) and collect raw data.

- Data Analysis:

- Identify stutter peaks as peaks typically one repeat unit smaller (back stutter) or larger (forward stutter) than true allelic peaks.

- Calculate stutter ratio for each allele:

Stutter Ratio = (Peak Height of Stutter Artifact) / (Peak Height of Parent Allele)

- Compile locus-specific stutter percentages by averaging ratios across multiple samples and alleles for each marker.

- Software Implementation: Input empirical stutter ratios into probabilistic genotyping software parameters. For EuroForMix v3.4.0+, enable both back and forward stutter modeling options.
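The data analysis step above can be sketched in a few lines. The input layout (tuples of locus, stutter peak height, parent peak height) and the peak heights themselves are hypothetical; only the ratio formula and the per-locus averaging come from the procedure.

```python
from collections import defaultdict
from statistics import mean


def stutter_ratio(stutter_height, parent_height):
    """Stutter ratio = stutter peak height / parent allele peak height."""
    if parent_height <= 0:
        raise ValueError("parent peak height must be positive")
    return stutter_height / parent_height


def locus_stutter_ratios(observations):
    """Average stutter ratio per locus.

    observations: iterable of (locus, stutter_height_rfu, parent_height_rfu).
    """
    by_locus = defaultdict(list)
    for locus, stutter_height, parent_height in observations:
        by_locus[locus].append(stutter_ratio(stutter_height, parent_height))
    return {locus: mean(ratios) for locus, ratios in by_locus.items()}


# Hypothetical single-source observations (heights in RFU).
observations = [
    ("D3S1358", 80, 1000),
    ("D3S1358", 120, 1500),
    ("vWA", 60, 1000),
]
ratios = locus_stutter_ratios(observations)
for locus, ratio in ratios.items():
    print(f"{locus}: {ratio:.1%}")
```

In practice back and forward stutter would be tabulated separately, since the text gives them different typical ranges.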

Validation: Compare Likelihood Ratio outputs between software versions with different stutter modeling capabilities (e.g., EuroForMix v1.9.3 with only back stutter modeling versus v3.4.0 with both back and forward stutter modeling) using identical sample sets [4].

Drop-out and Drop-in Modeling Protocol

Purpose: To establish stochastic thresholds and drop-in rates for low-template DNA analysis.

Materials and Reagents:

- Quantifiler HP or Plexor HY DNA Quantification System

- Serially diluted DNA standards (ranging from 1000 pg to 10 pg)

- Cleanroom facilities and UV-irradiated plasticware to minimize contamination

- Stochastic threshold calculation tools

Experimental Procedure:

- Sample Dilution Series: Create a dilution series of known DNA standards from 1000 pg down to 10 pg to model low-template conditions.

- Quantification and Amplification: Quantify each dilution in triplicate and amplify using standard STR protocols with increased PCR cycles (e.g., 28-34 cycles) for low-template samples.

- Peak Height Analysis:

- Measure peak heights for all heterozygous alleles across the dilution series.

- Identify the point at which heterozygous balance falls below 50% (peak height ratio <0.5).

- Stochastic Threshold Determination:

- Calculate the peak height value below which drop-out becomes probable.

- Establish laboratory-specific stochastic threshold, typically corresponding to 150-200 RFU based on validation data [16].

- Drop-in Rate Estimation:

- Analyze negative controls across multiple batches to determine baseline drop-in frequency.

- Calculate drop-in rate as the number of drop-in events per PCR, typically modeled as a Poisson random variable with mean λ = 0.05 or less in clean laboratory conditions [10].
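The peak height ratio and drop-in calculations in this procedure reduce to simple arithmetic, sketched below. The negative-control counts are invented for illustration, and the helper names are hypothetical; the Poisson form follows the description in the last step.

```python
import math


def peak_height_ratio(height_1, height_2):
    """Heterozygote balance: smaller peak height divided by larger peak height."""
    return min(height_1, height_2) / max(height_1, height_2)


def dropin_rate(events_per_control):
    """Mean number of drop-in events per negative-control PCR (Poisson mean, lambda)."""
    return sum(events_per_control) / len(events_per_control)


def poisson_pmf(k, lam):
    """Probability of exactly k drop-in events in one PCR under a Poisson model."""
    return math.exp(-lam) * lam ** k / math.factorial(k)


# Hypothetical data: 100 negative controls, 4 drop-in events in total.
controls = [0] * 97 + [1, 1, 2]
lam = dropin_rate(controls)
p_none = poisson_pmf(0, lam)
p_two_or_more = 1 - poisson_pmf(0, lam) - poisson_pmf(1, lam)

print(f"lambda = {lam:.2f}")
print(f"P(no drop-in) = {p_none:.4f}")
print(f"P(two or more drop-ins) = {p_two_or_more:.5f}")
print(f"PHR for 400 and 1000 RFU = {peak_height_ratio(400, 1000):.2f}")
```

With a rate this low, two or more drop-in events in a single amplification are very unlikely under the Poisson model, which is why multiple unexplained alleles are more plausibly attributed to an additional contributor.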

Interpretation Guidelines: For CPI/CPE calculations, disqualify any locus from statistical evaluation where allele drop-out is possible based on peak height observations [16].

Degradation Modeling Protocol

Purpose: To quantify degradation levels and incorporate degradation parameters into mixture interpretation.

Materials and Reagents:

- Degraded DNA samples from casework or artificially degraded standards

- DNA quantification system with size distribution measurement capability

- Degradation index calculation software

Experimental Procedure:

- Sample Selection and Quantification:

- Select casework samples showing decreasing peak heights with increasing fragment size.

- Use quantification systems that provide degradation indices or similar metrics.

- Slope Calculation:

- Plot peak heights against fragment sizes for all loci.

- Calculate degradation slope using linear regression analysis.

- Typically, values range from 1.0 (no degradation) to <0.60 (highly degraded) [4].

- Software Implementation:

- Input degradation parameters into probabilistic genotyping software.

- In EuroForMix, set the degradation slope parameter under the "Model Options" with a default starting value of 1.0 (no degradation).

- Model Validation:

- Compare model performance with and without degradation parameters using positive controls with known degradation levels.

- Assess improvement in LR values and mixture deconvolution accuracy.
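The slope calculation can be sketched with an ordinary least-squares fit. To obtain a value on a scale where 1.0 means no degradation, this sketch assumes peak height decays exponentially with fragment length and fits log(height) against size, returning the multiplicative change per 100 bp. That model choice, the function name, and the synthetic data are assumptions for illustration and are not claimed to match the parameterization of any specific software.

```python
import math


def degradation_factor_per_100bp(sizes_bp, heights_rfu):
    """Fit log(height) = a + b * size by least squares and return exp(100 * b).

    A result of 1.0 corresponds to a flat profile; smaller values indicate
    stronger loss of signal at larger fragment sizes.
    """
    logs = [math.log(height) for height in heights_rfu]
    n = len(sizes_bp)
    mean_x = sum(sizes_bp) / n
    mean_y = sum(logs) / n
    sxx = sum((x - mean_x) ** 2 for x in sizes_bp)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(sizes_bp, logs))
    slope = sxy / sxx  # change in log(height) per base pair
    return math.exp(100 * slope)


# Synthetic profile: heights fall by a factor of 0.8 for every 100 bp.
sizes = [100, 150, 200, 250, 300, 350, 400]
heights = [1000 * 0.8 ** (size / 100) for size in sizes]
factor = degradation_factor_per_100bp(sizes, heights)

flat_factor = degradation_factor_per_100bp(sizes, [1000.0] * len(sizes))
print(round(factor, 3), round(flat_factor, 3))
```

Comparing the fitted factor for control samples with known degradation levels gives a quick sanity check before the corresponding parameter is reviewed in the PG software.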

Table 2: Quantitative Parameters for Nuisance Factor Modeling

| Parameter | Measurement Technique | Typical Range | Software Implementation |
|---|---|---|---|
| Back Stutter Ratio | (Stutter peak height / Parent allele height) × 100% | 5–10% per locus | Locus-specific stutter percentages input in PGS |
| Forward Stutter Ratio | (Stutter peak height / Parent allele height) × 100% | 0.5–2% per locus | Enabled in advanced PGS (e.g., EuroForMix v3.4.0+) |
| Stochastic Threshold | Peak height at which heterozygote balance <50% occurs | 150–200 RFU | Analytical threshold setting in PGS |
| Drop-in Rate | Number of drop-in events in negative controls per PCR | λ ≤ 0.05 | Poisson rate parameter (mean) in PGS |
| Degradation Slope | Linear regression of peak heights vs. base pairs | 1.0 (none) to <0.6 (severe) | Degradation slope parameter in quantitative PGS |

Visualization of Computational Workflows

Probabilistic Genotyping Logic and Nuisance Parameter Integration

Diagram 1: Probabilistic genotyping logic framework with nuisance parameter integration, illustrating how core nuisance parameters are incorporated into the statistical evaluation of DNA evidence.

Laboratory Workflow for Nuisance Parameter Analysis

Diagram 2: Laboratory workflow for DNA analysis with integrated nuisance parameter considerations, showing key control points for managing stutter, drop-in, drop-out, and degradation throughout the analytical process.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Reagents and Software for Nuisance Parameter Modeling

| Tool/Reagent | Manufacturer/Developer | Primary Function | Application in Nuisance Modeling |
|---|---|---|---|
| GlobalFiler PCR Amplification Kit | Applied Biosystems, Thermo Fisher Scientific | Multiplex STR amplification | Provides 24-locus STR data for comprehensive stutter and drop-out analysis [4] |
| Plexor HY DNA Quantification System | Promega Corporation | Simultaneous quantification of total human and male DNA | Critical for determining DNA quantity and quality before amplification, informing drop-out potential [10] |
| EuroForMix | Øyvind Bleka et al. | Open-source quantitative probabilistic genotyping | Models stutter (back & forward), drop-in, drop-out, and degradation; allows parameter customization [4] |
| STRmix | ESR (New Zealand) & Forensic Science SA (Australia) | Commercial probabilistic genotyping software | Incorporates empirical stutter ratios and models all nuisance parameters; widely validated [5] |
| NIST STRBase Population Databases | National Institute of Standards and Technology | Population allele frequency data | Essential for calculating likelihood ratios with correct population baselines [2] |

| NIST STRBase Population Databases | National Institute of Standards and Technology | Population allele frequency data | Essential for calculating likelihood ratios with correct population baselines [2] |

Discussion and Research Implications

The accurate modeling of core nuisance parameters is fundamental to reliable DNA mixture interpretation. Recent studies demonstrate that even incremental improvements in stutter modeling—such as the addition of forward stutter modeling in EuroForMix v3.4.0—can significantly impact likelihood ratio calculations, particularly in complex mixtures with more contributors, unbalanced contributions, or greater degradation [4]. The implementation of these models must be guided by empirical data and thorough validation to ensure statistical robustness.

Research indicates that the accuracy of DNA mixture analysis varies across human populations, with groups exhibiting lower genetic diversity showing higher false inclusion rates [15]. This highlights the critical importance of population-specific allele frequency databases and appropriate coancestry coefficients in probabilistic models. Furthermore, studies comparing different software versions reveal that LR values differ by less than one order of magnitude in most cases, with greater discrepancies observed in complex samples [4], emphasizing the need for standardized implementation of nuisance parameter models.
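As an illustration of how a coancestry coefficient enters the calculation, the sketch below implements the widely used Balding-Nichols correction (the form given in NRC II recommendation 4.10) for the probability that an unknown person shares a given genotype. The function names, allele frequencies, and theta values are illustrative assumptions; the article does not state which correction a given software applies.

```python
def homozygote_match_probability(p, theta=0.0):
    """P(unknown is AA | person of interest is AA) with coancestry coefficient theta."""
    numerator = (2 * theta + (1 - theta) * p) * (3 * theta + (1 - theta) * p)
    return numerator / ((1 + theta) * (1 + 2 * theta))


def heterozygote_match_probability(p_a, p_b, theta=0.0):
    """P(unknown is AB | person of interest is AB) with coancestry coefficient theta."""
    numerator = 2 * (theta + (1 - theta) * p_a) * (theta + (1 - theta) * p_b)
    return numerator / ((1 + theta) * (1 + 2 * theta))


# Hypothetical allele frequencies; theta = 0 reduces to Hardy-Weinberg proportions.
for theta in (0.0, 0.01, 0.03):
    hom = homozygote_match_probability(0.1, theta)
    het = heterozygote_match_probability(0.1, 0.2, theta)
    print(f"theta={theta}: homozygote {hom:.5f}, heterozygote {het:.5f}")
```

Larger theta values raise the match probability and therefore lower the LR, which is the conservative direction for populations with greater shared ancestry.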

The move toward probabilistic genotyping using likelihood ratios represents the current state-of-the-art, offering greater flexibility than combined probability of inclusion/exclusion (CPI/CPE) methods to coherently incorporate potential allele drop-out in complex mixtures [16]. However, all methods require careful consideration of nuisance parameters and their interactions. As forensic genetics continues to advance, with technologies like massively parallel sequencing enabling the analysis of microhaplotypes and additional markers, the fundamental need to accurately model stutter, drop-in, drop-out, and degradation will remain paramount to ensuring the reliability and relevance of DNA evidence in legal proceedings [2].

Implementing Probabilistic Genotyping: Workflows, Algorithms, and Practical Applications

The interpretation of DNA mixtures, comprising genetic material from two or more individuals, remains one of the most significant challenges in forensic DNA analysis. Advances in DNA extraction techniques, STR chemistry, and capillary electrophoresis have dramatically increased the sensitivity of forensic testing, enabling the recovery of usable DNA from increasingly minute samples [17]. This heightened sensitivity, while forensically valuable, often results in more complex mixture profiles that necessitate sophisticated interpretation methods. Probabilistic genotyping (PG) has emerged as the scientific standard for interpreting these complex mixtures, providing a statistical framework that accounts for biological processes such as stutter, drop-in, and drop-out, while delivering quantitative weight of evidence through likelihood ratios (LR).

This section outlines a standardized step-by-step workflow for probabilistic genotyping software analysis, from initial data evaluation through final reporting. The protocols described herein are framed within the broader context of ongoing research into the reliability, validity, and limitations of DNA mixture interpretation methods. A recent scientific foundation review by the National Institute of Standards and Technology (NIST) has underscored the need for rigorous methodology in this domain, evaluating the scientific basis for the mixture interpretation methods employed by forensic laboratories [18]. Furthermore, studies have indicated that analytical accuracy can vary across populations with different genetic diversity, emphasizing the necessity of robust and standardized protocols [15]. The workflow detailed here provides a framework for implementing PG software in a manner that promotes transparency, reproducibility, and scientific rigor in forensic genetic research and casework.

Probabilistic genotyping software employs mathematical models to calculate the probability of observing a mixed DNA profile given different propositions about who contributed to the mixture. Unlike traditional binary methods, PG software uses a fully continuous model that considers both qualitative (allelic) and quantitative (peak height) information, enabling more precise and reproducible mixture deconvolution [17]. Several PG software solutions are available, each with specific strengths and applications.

Commonly Utilized Probabilistic Genotyping Systems:

- STRmix: A widely adopted continuous system used for deconvoluting complex DNA mixtures and calculating likelihood ratios. It is referenced in the protocols of major forensic laboratories, including the NYC Office of Chief Medical Examiner [19].

- EuroForMix: An open-source probabilistic genotyping system that can be used for mixture deconvolution and is integrated into software solutions like CaseSolver for database searching [20].

- DNAStatistX: A probabilistic genotyping system that forms the basis for the ProbRank database search method, which has been integrated into automated identification pipelines [20].

- GeneMapper PG Software: Extends the functionality of GeneMapper ID-X Software with a suite of tools for mixture analysis, including data-driven number of contributor estimations and likelihood ratio calculations [17].

These systems provide the computational foundation for the workflow described in the following sections, enabling researchers to move from raw electrophoretic data to a statistically robust assessment of evidential weight.

Step-by-Step PG Workflow

The following workflow describes a generalized, step-by-step process for the interpretation of forensic DNA mixtures using probabilistic genotyping software. This process ensures a systematic approach from the initial evaluation of analytical data to the final generation of a report.

Workflow Visualization

[Figure: logical sequence and decision points in the probabilistic genotyping workflow.]

Detailed Workflow Description

STR Data Evaluation and Quality Assessment

The process begins with the evaluation of STR data generated by capillary electrophoresis. This raw data must undergo quality checks to ensure it is suitable for interpretation. This includes verifying that positive and negative controls perform as expected, assessing baseline noise, and checking for spectral pull-up or other artifacts. The data is then analyzed using profile analysis software (e.g., GeneMarker HID) to generate allele calls and peak height information. The analyst must review these calls for anomalies such as off-ladder alleles, high stutter, or extreme peak height imbalance [19].

Profile Suitability Assessment

Not all DNA profiles are suitable for fully automated probabilistic genotyping analysis. Laboratories must establish and validate specific suitability criteria. These criteria may include thresholds for peak height, heterozygote balance, the presence of a major contributor, and the successful estimation of the number of contributors. If a profile does not meet the predefined criteria, it is flagged for manual review by a DNA expert before proceeding [20]. This step is critical for maintaining the reliability of the automated workflow.

Estimate Number of Contributors (NOC)

An accurate estimation of the number of individuals who contributed to the mixture is a critical input for most probabilistic genotyping software. This estimation can be performed using a combination of methods, including:

- Examining the Maximum Allele Count (MAC) per locus.

- Calculating the Total Allele Count (TAC) across all loci.

- Utilizing machine learning tools integrated into expert systems. For instance, some systems use a random forest classifier trained on various profile features to predict the NOC [20]. Using multiple models for data-driven NOC estimation strengthens the robustness of this initial assumption [17].
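The two count-based methods above can be sketched in a few lines. This is a minimal illustration, assuming a profile is represented as a mapping from locus name to the list of called alleles (a hypothetical structure, not any vendor's export format). It relies on the fact that a diploid contributor adds at most two alleles per locus, so the MAC only yields a lower bound on the NOC. Stutter filtering, allele sharing, and drop-out are not handled here.

```python
from math import ceil

def min_contributors_from_mac(profile):
    """Return (MAC, minimum NOC) for a profile given as {locus: [alleles]}.

    Each diploid contributor adds at most two alleles per locus, so
    ceil(MAC / 2) is a lower bound on the number of contributors.
    """
    mac = max(len(set(alleles)) for alleles in profile.values())
    return mac, ceil(mac / 2)

def total_allele_count(profile):
    """Return the TAC: distinct alleles summed over all loci."""
    return sum(len(set(alleles)) for alleles in profile.values())

# Hypothetical four-locus mixture profile, for illustration only.
profile = {
    "D3S1358": ["14", "15", "16", "17"],
    "vWA": ["16", "17", "18"],
    "FGA": ["20", "21", "22", "23", "24"],
    "TH01": ["6", "9.3"],
}

mac, noc_min = min_contributors_from_mac(profile)
tac = total_allele_count(profile)
print(f"MAC={mac}, minimum NOC={noc_min}, TAC={tac}")
```

Because allele sharing between contributors hides alleles, the true NOC can exceed this bound, which is one reason the count-based methods are combined with data-driven models.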

Define Propositions (Hp and Hd)

The core of the likelihood ratio calculation is the formulation of two competing propositions: a prosecution hypothesis (Hp) and a defense hypothesis (Hd). For example:

- Hp: The DNA profile originated from the Person of Interest (POI) and N unknown individuals.

- Hd: The DNA profile originated from N+1 unknown individuals.

The propositions must be clearly defined before the probabilistic calculation, as they frame the context of the comparison.
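In symbols, the two propositions enter the likelihood ratio as shown below. The notation is introduced here for illustration: E denotes the observed mixture profile and I the relevant background information.

```latex
\[
\mathrm{LR} \;=\; \frac{\Pr(E \mid H_p, I)}{\Pr(E \mid H_d, I)}
\]
```

An LR greater than 1 supports Hp over Hd, an LR less than 1 supports Hd over Hp, and an LR close to 1 is uninformative. In the example pair above, both propositions assume the same total number of contributors (N+1), so the comparison isolates the question of whether the POI is one of them.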

Set Model Parameters

The analyst configures the probabilistic genotyping software with validated model parameters that reflect the behavior of the laboratory's specific DNA analysis process. These parameters include:

- Stutter ratios: Modeled per locus and allele.

- Drop-in rate: The probability of a spurious allele appearing.

- Drop-out probability: Modeled based on peak height and template DNA amount.

- Allele frequencies: Based on relevant population databases.

These parameters are typically established during laboratory validation and are crucial for accurate model performance.
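One way to keep such a parameter set explicit and checkable is a small typed container with sanity checks. The sketch below is hypothetical: the class name, field names, and numeric values are placeholders chosen for illustration and do not correspond to the configuration schema of any PG product. Stutter is simplified to one ratio per locus, whereas validated models typically vary it by allele as well.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class PGModelParameters:
    """Hypothetical container for laboratory-validated model parameters."""
    drop_in_rate: float            # probability of a spurious allele
    stutter_ratio_by_locus: dict   # {locus: expected stutter ratio}
    allele_frequencies: dict       # {locus: {allele: frequency}}
    theta: float = 0.01            # coancestry coefficient (placeholder value)

    def validate(self):
        """Return a list of human-readable problems (empty if none found)."""
        problems = []
        if not 0.0 <= self.drop_in_rate <= 1.0:
            problems.append("drop_in_rate must lie in [0, 1]")
        if not 0.0 <= self.theta < 1.0:
            problems.append("theta must lie in [0, 1)")
        for locus, ratio in self.stutter_ratio_by_locus.items():
            if not 0.0 <= ratio < 1.0:
                problems.append(f"stutter ratio out of range at {locus}")
        for locus, freqs in self.allele_frequencies.items():
            if abs(sum(freqs.values()) - 1.0) > 1e-6:
                problems.append(f"allele frequencies at {locus} do not sum to 1")
        return problems

# Placeholder values, not validated laboratory parameters.
params = PGModelParameters(
    drop_in_rate=0.001,
    stutter_ratio_by_locus={"TH01": 0.03, "FGA": 0.09},
    allele_frequencies={"TH01": {"6": 0.25, "7": 0.15, "9.3": 0.60}},
)
print(params.validate() or "parameter set passed basic checks")
```

Freezing the dataclass prevents fields from being reassigned after construction, which suits parameters that are meant to be fixed during validation rather than adjusted per case.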

Perform Likelihood Ratio Calculation

The PG software computes the likelihood ratio using a fully continuous model that considers the peak height information and the defined parameters. The LR is calculated as the probability of the evidence given Hp divided by the probability of the evidence given Hd. Software such as GeneMapper PG provides transparency in this calculation, allowing the analyst to track the logic and compare models [17].
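To make the structure of this calculation concrete, the following toy sketch computes a single-locus LR from genotype weights. It is a deliberately reduced illustration, not the algorithm of any specific product. It assumes one contributor under both propositions, Hardy-Weinberg genotype frequencies with no coancestry correction, and a set of weights expressing how well each candidate genotype explains the peak heights. In continuous software such weights come out of the statistical model (for example via MCMC); here they are simply made-up inputs.

```python
def genotype_frequency(genotype, freqs):
    """Hardy-Weinberg frequency of an unordered genotype (a, b)."""
    a, b = genotype
    return freqs[a] ** 2 if a == b else 2 * freqs[a] * freqs[b]

def single_locus_lr(weights, poi_genotype, freqs):
    """LR = sum_j w_j * Pr(S_j | Hp) / sum_j w_j * Pr(S_j | Hd).

    Under Hp the contributor is the POI, so Pr(S_j | Hp) is 1 for the
    POI's genotype and 0 otherwise. Under Hd the contributor is an
    unknown person, so Pr(S_j | Hd) is the population genotype frequency.
    """
    poi = tuple(sorted(poi_genotype))
    numerator = sum(w for g, w in weights.items() if tuple(sorted(g)) == poi)
    denominator = sum(w * genotype_frequency(g, freqs) for g, w in weights.items())
    return numerator / denominator

# Hypothetical inputs: weights from a deconvolution and allele frequencies.
weights = {("12", "14"): 0.90, ("12", "12"): 0.05, ("14", "14"): 0.05}
freqs = {"12": 0.10, "14": 0.20}

lr = single_locus_lr(weights, ("12", "14"), freqs)
print(f"single-locus LR = {lr:.2f}")
```

When all of the weight sits on the POI's genotype, the expression reduces to the familiar single-source result 1/(2pq) for a heterozygote. Assuming independence between loci, per-locus LRs are multiplied to give the profile-wide LR.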

Robustness Analysis and Sensitivity Testing

Following the initial LR calculation, it is good practice to test the robustness of the result. This involves varying key assumptions, such as the number of contributors or model parameters, within reasonable bounds to see whether the LR conclusion (e.g., strong support for Hp) remains stable. Some software includes functionality to simulate profiles and test the strength of the LR for a person of interest [17].
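A simple way to summarize such a sensitivity run is to compare log10(LR) across the scenarios tested. The sketch below assumes the LRs have already been obtained by re-running the PG software under each alternative assumption. The scenario names, LR values, and the one-order-of-magnitude tolerance are illustrative assumptions, not a validated acceptance criterion.

```python
import math

def robustness_summary(lr_by_scenario, max_log10_spread=1.0):
    """Summarize how stable an LR is across alternative modeling assumptions.

    The result is called stable when every scenario supports the same
    proposition (all LR > 1 or all LR < 1) and the spread of log10(LR)
    stays within max_log10_spread.
    """
    logs = {name: math.log10(lr) for name, lr in lr_by_scenario.items()}
    spread = max(logs.values()) - min(logs.values())
    same_direction = (all(v > 0 for v in logs.values())
                      or all(v < 0 for v in logs.values()))
    return {
        "log10_lr": logs,
        "spread": spread,
        "stable": same_direction and spread <= max_log10_spread,
    }

# Hypothetical LRs from re-running the same profile under varied assumptions.
scenarios = {
    "NOC=3 (baseline)": 2.0e6,
    "NOC=4": 8.5e5,
    "NOC=3, drop-in rate doubled": 1.6e6,
}

summary = robustness_summary(scenarios)
print(f"spread = {summary['spread']:.2f} log10 units, stable = {summary['stable']}")
```

Working on the log10 scale keeps the comparison symmetric around LR = 1 and matches the way LR differences are usually reported, in orders of magnitude.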

Interpret LR Results and Generate Report

The final step is the interpretation of the LR within the context of the case. The laboratory's reporting guidelines will dictate how the LR is communicated (e.g., as a numerical value or a verbal equivalent). The report should clearly state the propositions, the calculated LR, and any limitations or caveats. In automated systems like the Fast DNA Identification Line, reports can be generated automatically, but they are always followed by a confirmation check and a more comprehensive expert report [20].
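Where a verbal equivalent is reported, the mapping from LR to wording is a simple lookup. The sketch below uses placeholder thresholds and labels loosely patterned on published verbal scales. They are assumptions for illustration only, and a laboratory's own reporting guidelines take precedence. For LR values below 1, the reciprocal is used and the support is stated for Hd.

```python
import math

# Placeholder scale: (exclusive upper bound on magnitude, label).
DEFAULT_SCALE = [
    (2, "uninformative"),
    (100, "limited support"),
    (10_000, "moderate support"),
    (1_000_000, "strong support"),
    (math.inf, "very strong support"),
]

def verbal_equivalent(lr, scale=DEFAULT_SCALE):
    """Translate a numerical LR into a verbal statement of support."""
    if lr <= 0:
        raise ValueError("LR must be positive")
    supported = "Hp" if lr >= 1 else "Hd"
    magnitude = lr if lr >= 1 else 1 / lr
    for upper, label in scale:
        if magnitude < upper:
            if label == "uninformative":
                return label
            return f"{label} for {supported}"

for value in (3.2e7, 450.0, 1.0, 0.001):
    print(f"LR = {value:g}: {verbal_equivalent(value)}")
```

Keeping the scale as data rather than hard-coded branches makes it straightforward to substitute the wording and cut-offs that a given laboratory has adopted.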

Experimental Protocols for PG Validation

The implementation of probabilistic genotyping in a laboratory requires rigorous validation to demonstrate that the software and methods are fit for purpose. The following protocols outline key experiments for validating a PG workflow.

Preparation of Validation Samples

A comprehensive validation study requires a set of mixture samples that represent the variability observed in forensic casework. The design proposed by the SWGDAM Next-Generation Sequencing Committee provides an excellent template [21].

Table 1: Example Plate Layout for PG Validation Mixtures

| Well Position | Sample Type | Contributor Ratios | Input DNA (ng) | Degradation State | Replicates |
|---|---|---|---|---|---|
| A1, A5, A9 | 3-person mixture | 98:1:1 | 4.0, 1.0, 0.25 | Non-degraded | Triplicate |
| B1, B5, B9 | 3-person mixture | 94:3:3 | 4.0, 1.0, 0.25 | Non-degraded | Triplicate |
| C3 | 3-person mixture | Varies | 1.0 | Major contributor degraded | Single |
| C4 | 3-person mixture | Varies | 1.0 | All contributors degraded | Single |
| D2 | 4-person mixture | Varies | 1.0 | Non-degraded | Single |
| E2 | 5-person mixture | Varies | 1.0 | Non-degraded | Single |
| G10, G11, G12 | Single-source | N/A | 0.5 to 0.0156 | Non-degraded | Dilution Series |

Adapted from the SWGDAM mixture study design [21].

Protocol:

- Source DNA Selection: Select single-source DNA samples from a diverse set of donors. Quantify samples accurately using digital PCR (dPCR) or other precise methods [21].

- Allelic Overlap Analysis: Calculate the Allele Sharing Ratio (ASR) and the number of unique alleles per locus for potential mixture combinations to ensure a range of complexities [21].

- Mixture Preparation: Combine quantified single-source samples in predetermined ratios to create mixtures. Use serial dilutions to achieve the desired input amounts for sensitivity testing.

- Degradation Protocol (Optional): To simulate degraded casework samples, subject DNA to controlled sonication. For example, sonicate a 130 µL sample for 15 minutes using a Covaris S2 sonicator (duty cycle=10%, intensity=10, cycles/burst=100) at ≈6°C. Verify the degree of fragmentation using a TapeStation system [21].

- Quality Control: Genotype the prepared mixtures using standard CE STR kits (e.g., PowerPlex Fusion 6C) and analyze them with PG software to confirm that the estimated contributor proportions match the intended mixture ratios.
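When planning the mixtures, it helps to translate each ratio and total input into the template mass contributed by each donor. The sketch below does this arithmetic for the 98:1:1 design in Table 1. The conversion to approximate cell equivalents assumes roughly 6.6 pg of DNA per diploid human cell, a commonly quoted approximation that is not taken from the cited study design.

```python
def contributor_masses_ng(ratio, total_input_ng):
    """Split a total DNA input (ng) among contributors according to a ratio."""
    total_parts = sum(ratio)
    return [total_input_ng * part / total_parts for part in ratio]

def approx_diploid_cells(mass_ng, pg_per_cell=6.6):
    """Convert a DNA mass to approximate diploid cell equivalents.

    pg_per_cell is an assumed approximation of the DNA content per cell.
    """
    return mass_ng * 1000 / pg_per_cell

ratio = (98, 1, 1)
for total_ng in (4.0, 1.0, 0.25):
    masses = contributor_masses_ng(ratio, total_ng)
    minor_pg = masses[-1] * 1000
    cells = approx_diploid_cells(masses[-1])
    print(f"{total_ng} ng total: minor contributor = {minor_pg:.1f} pg "
          f"(about {cells:.1f} cell equivalents)")
```

At the lowest input, each 1% contributor falls below a single cell equivalent of template, so extensive allele drop-out is expected for those donors. This is what makes such samples a useful stress test for the drop-out model.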

Software Performance and Sensitivity Testing

This protocol tests the accuracy and limits of the PG software.

Table 2: PG Software Performance Metrics

| Test Category | Specific Metric | Target Performance Threshold |
|---|---|---|
| Sensitivity | Lowest minor component % detected and deconvoluted | ≤1% in a 3-person mixture |
| Reproducibility | LR variance across replicate injections | LR log10 standard deviation < 0.5 |
| Accuracy | False Inclusion Rate (FIR) | FIR < 1e-5 for major contributors [15] |
| Accuracy | False Exclusion Rate (FER) | FER < 1% |
| Specificity | Adventitious Match Rate | Consistent with population frequency |

Protocol:

- Amplification and Electrophoresis: Amplify the validation samples from Table 1 using standard commercial STR kits. Process the amplified products on a capillary electrophoresis instrument (e.g., 3500xL Genetic Analyzer) according to the manufacturer's protocols [19].

- Data Analysis and Profile Export: Analyze the raw data using STR analysis software (e.g., GeneMarker) according to established laboratory protocols and export the DNA profiles for PG software import [19].

- PG Software Processing: Process the exported profiles through the PG workflow (see Step-by-Step PG Workflow). For known mixtures, calculate the LRs for true contributors and non-contributors.

- Data Collection: Record the LRs for true contributors (to assess sensitivity and FER) and for non-contributors (to assess FIR and specificity). Note the success rate of profile deconvolution and any software warnings or errors.

- Analysis: Plot the LR results against variables such as template amount, mixture ratio, and degradation state. Determine the operational limits of the software for your laboratory's use.
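The rate and reproducibility metrics in Table 2 can be computed directly from the recorded LRs. The sketch below adopts one simple convention as an assumption: a false exclusion is a true contributor with LR < 1, and a false inclusion is a non-contributor with LR > 1. Laboratories may define these events with different LR cut-offs. All LR values shown are made up for illustration.

```python
import math
import statistics

def false_exclusion_rate(true_contributor_lrs):
    """Fraction of true contributors whose LR falls below 1."""
    return sum(lr < 1 for lr in true_contributor_lrs) / len(true_contributor_lrs)

def false_inclusion_rate(non_contributor_lrs):
    """Fraction of non-contributors whose LR exceeds 1."""
    return sum(lr > 1 for lr in non_contributor_lrs) / len(non_contributor_lrs)

def log10_lr_sd(replicate_lrs):
    """Sample standard deviation of log10(LR) across replicate analyses."""
    return statistics.stdev(math.log10(lr) for lr in replicate_lrs)

# Hypothetical validation results.
true_lrs = [1.0e6, 3.0e4, 0.5, 2.0e8]
non_contributor_lrs = [1.0e-4, 0.02, 3.0, 1.0e-9, 0.5]
replicate_lrs = [1.0e6, 2.0e6, 1.5e6]

print(f"FER = {false_exclusion_rate(true_lrs):.2%}")
print(f"FIR = {false_inclusion_rate(non_contributor_lrs):.2%}")
print(f"SD of log10(LR) across replicates = {log10_lr_sd(replicate_lrs):.3f}")
```

Demonstrating a false inclusion rate as low as the 1e-5 target in Table 2 requires a correspondingly large number of non-contributor comparisons, since a rate cannot be resolved below roughly one event per number of tests performed.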

The Scientist's Toolkit: Research Reagent Solutions

The following table details essential software, reagents, and instruments required for implementing a probabilistic genotyping workflow in a research or casework setting.

Table 3: Essential Research Reagents and Software Solutions

| Item Name | Type | Function/Brief Explanation |
|---|---|---|
| GeneMapper PG Software | Software | Provides a suite for mixture interpretation with transparent logic, multiple NOC models, and LR calculation tools [17]. |
| STRmix | Software | A continuous probabilistic genotyping system used for deconvoluting complex DNA mixtures and calculating LRs [19]. |
| EuroForMix | Software | An open-source probabilistic genotyping program that can be used for mixture interpretation and is integrated into tools like CaseSolver [20]. |
| PowerPlex Fusion 6C | STR Kit | A 27-locus multiplex PCR assay for capillary electrophoresis that co-amplifies 23 autosomal STR loci, 3 Y-STR loci, and Amelogenin. Used to generate the DNA profile data for interpretation [21]. |
| 3500xL Genetic Analyzer | Instrument | A capillary electrophoresis instrument used for the separation, detection, and analysis of fluorescently labeled STR fragments [19]. |