Overcoming Publication Bias in Environmental Research: A Practical Guide for Scientists and Clinicians

Abstract

This article addresses the critical challenge of publication bias, which skews the scientific record by favoring positive results and threatens the integrity of environmental and biomedical research. It explores the root causes and far-reaching consequences of this bias, from distorted meta-analyses to misguided policy. A practical framework is provided, covering methods for detecting bias, strategies for prevention, and validation techniques to ensure a more complete and reliable evidence base. Tailored for researchers, scientists, and drug development professionals, this guide aims to empower the scientific community to foster transparency and enhance the credibility of research for informed decision-making.

The Unseen Threat: How Publication Bias Distorts Environmental Science

What is Publication Bias and Why Does It Matter?

Publication bias occurs when the publication of research results depends not just on the quality of the research but also on the hypothesis tested, and the significance and direction of effects detected [1]. This means that studies with statistically significant positive results are more likely to be published than those with null or negative findings [2] [3].

This bias is sometimes called the "file-drawer problem" because negative results often remain in researchers' file drawers rather than being published [1]. The term was coined by psychologist Robert Rosenthal in 1979 to describe this systematic suppression of non-significant findings [1].

Why Publication Bias Severely Impacts Environmental Research

In environmental degradation research, publication bias creates dangerous knowledge gaps. When studies showing minimal environmental impact or failed conservation interventions remain unpublished, we get an overly optimistic view of ecosystem health and intervention effectiveness [4]. This bias can lead to:

- Incomplete risk assessments of environmental threats

- Repeated failures in conservation strategies

- Misallocation of limited conservation resources

- False confidence in environmental management approaches

How to Detect Publication Bias: Technical Protocols

Visual Detection Methods

Funnel Plot Analysis

Protocol Implementation:

- Plot effect sizes against precision measures (usually 1/standard error) [5]

- In absence of bias, studies scatter symmetrically in an inverted funnel pattern

- Asymmetry suggests missing studies, often with smaller effects and lower precision [2]

- Limitation: Visual assessment can be subjective; requires statistical confirmation [1]
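As a minimal sketch of the protocol above, the following Python computes the coordinates a funnel plot would display and flags studies that fall outside pseudo 95% confidence limits. The effect sizes and standard errors are hypothetical, the fixed-effect pooled mean is assumed as the funnel's centre line, and the function names are illustrative rather than taken from any package.

```python
def fixed_effect_mean(effects, std_errors):
    """Inverse-variance weighted mean of the study effect sizes."""
    weights = [1.0 / se ** 2 for se in std_errors]
    return sum(w * y for w, y in zip(weights, effects)) / sum(weights)


def funnel_points(effects, std_errors):
    """(effect size, precision) pairs, with precision defined as 1/SE."""
    return [(y, 1.0 / se) for y, se in zip(effects, std_errors)]


def funnel_limits(pooled, se, z=1.96):
    """Pseudo 95% confidence limits of the funnel at a given standard error."""
    return pooled - z * se, pooled + z * se


# Hypothetical data, for illustration only.
effects = [0.10, 0.25, 0.30, 0.45, 0.60]
std_errors = [0.05, 0.10, 0.15, 0.20, 0.25]

pooled = fixed_effect_mean(effects, std_errors)
points = funnel_points(effects, std_errors)

# Studies lying outside the funnel deserve a closer look.
outside = []
for y, se in zip(effects, std_errors):
    low, high = funnel_limits(pooled, se)
    if not (low <= y <= high):
        outside.append((y, se))
```

The `points` list can be passed to any plotting library. Note that in this hypothetical data set the effect grows as precision falls, which is the pattern that would appear as asymmetry in the plot even though every study lies inside the limits.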

Statistical Detection Methods

Egger's Regression Test

Experimental Protocol:

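- Calculate standardized effect sizes: For each study, compute (effect size)/(standard error)

- Compute precision: Calculate (1/standard error) for each study

- Perform the regression: Fit standardized effect = α + β × precision by ordinary least squares, which is equivalent to regressing effect size on standard error with inverse-variance weights [5]

- Test significance: A statistically significant intercept (α) indicates funnel plot asymmetry (small-study effects), which is consistent with publication bias but does not prove it

- Interpret results: p < 0.05 suggests asymmetry that warrants further investigation; because the test has low power, some analysts use a threshold of p < 0.10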
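The regression steps above can be sketched in plain Python. This is an illustrative implementation under stated assumptions, not the code of any particular package: the function name `egger_test` is hypothetical, and the p-value is left to the reader because it requires a t distribution with n − 2 degrees of freedom, which statistical packages provide.

```python
import math


def egger_test(effects, std_errors):
    """Ordinary least squares of standardized effect (y/SE) on precision (1/SE).

    Returns (intercept, slope, se_intercept, t_stat, df). The standard
    errors must not all be equal, otherwise the slope is undefined.
    """
    z = [y / se for y, se in zip(effects, std_errors)]
    x = [1.0 / se for se in std_errors]
    n = len(z)
    if n < 3:
        raise ValueError("need at least three studies")
    x_bar = sum(x) / n
    z_bar = sum(z) / n
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    sxz = sum((xi - x_bar) * (zi - z_bar) for xi, zi in zip(x, z))
    slope = sxz / sxx
    intercept = z_bar - slope * x_bar
    residuals = [zi - (intercept + slope * xi) for xi, zi in zip(x, z)]
    sigma2 = sum(r ** 2 for r in residuals) / (n - 2)
    se_intercept = math.sqrt(sigma2 * (1.0 / n + x_bar ** 2 / sxx))
    # Guard against a perfect fit, where the standard error is zero.
    t_stat = intercept / se_intercept if se_intercept > 0 else float("nan")
    return intercept, slope, se_intercept, t_stat, n - 2


# Hypothetical data in which smaller studies report larger effects.
std_errors = [0.1, 0.2, 0.3, 0.4, 0.5]
effects = [0.2 + 1.5 * se for se in std_errors]
intercept, slope, se_intercept, t_stat, df = egger_test(effects, std_errors)
```

In this constructed example the intercept recovers the built-in small-study effect (1.5) and the slope recovers the underlying effect (0.2). Real data will be noisier, and the intercept should be judged against its standard error.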

Table 1: Statistical Tests for Publication Bias Detection

| Method | Basis of Operation | When to Use | Interpretation Guidelines |

|---|---|---|---|

| Egger's Regression Test [5] | Linear regression of standardized effect on precision | Initial screening; continuous outcome data | Significant intercept (p < 0.05) indicates bias |

| Begg's Rank Test [5] | Correlation between effect sizes and their variances | Small sample sizes; non-parametric alternative | Significant correlation (p < 0.05) suggests bias |

| Skewness Test [5] | Asymmetry of standardized deviates' distribution | Alternative to Egger's test; newer method | Significant skewness indicates bias |

| Trim and Fill Method [5] | Iterative trimming and filling of funnel plot | Both detection and adjustment for bias | Estimates number of missing studies |
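Of the methods in Table 1, Begg's rank test is compact enough to sketch directly. The version below assumes the usual formulation: each effect is centred on the fixed-effect pooled estimate, standardized, and then rank-correlated with its variance using Kendall's tau. It uses a normal approximation without tie correction, the function name is hypothetical, and the data are invented for illustration.

```python
import math


def begg_rank_test(effects, variances):
    """Kendall rank correlation between standardized effects and variances.

    Returns (tau, z, two_sided_p). Ties are ignored and the p-value
    comes from a normal approximation.
    """
    n = len(effects)
    inv_var_sum = sum(1.0 / v for v in variances)
    pooled = sum(y / v for y, v in zip(effects, variances)) / inv_var_sum
    # Variance of (y_i - pooled) is v_i - 1 / sum(1 / v_j).
    deviates = [
        (y - pooled) / math.sqrt(v - 1.0 / inv_var_sum)
        for y, v in zip(effects, variances)
    ]
    concordant = discordant = 0
    for i in range(n):
        for j in range(i + 1, n):
            sign = (deviates[i] - deviates[j]) * (variances[i] - variances[j])
            if sign > 0:
                concordant += 1
            elif sign < 0:
                discordant += 1
    s = concordant - discordant
    tau = s / (n * (n - 1) / 2)
    z = s / math.sqrt(n * (n - 1) * (2 * n + 5) / 18)
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return tau, z, p_value


# Hypothetical data: effects rise with variance (less precise, larger effect).
effects = [0.1, 0.2, 0.3, 0.4, 0.5]
variances = [0.01, 0.02, 0.03, 0.04, 0.05]
tau, z, p_value = begg_rank_test(effects, variances)
```

With only a handful of studies the normal approximation is rough, so results like this are best reported alongside the other checks in the table.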

Troubleshooting Guide: Publication Bias Detection

FAQ: Common Technical Challenges

Q: Our funnel plot shows asymmetry, but Egger's test isn't significant. Which result should we trust? A: This discrepancy often occurs with heterogeneous studies or a small number of studies. Neither result is decisive on its own: visual assessment is subjective, Egger's test has low power when few studies are available, and heterogeneity can distort both. Conduct sensitivity analyses using multiple detection methods and report all results transparently [5] [6].

Q: How many studies are needed to reliably detect publication bias? A: Most statistical tests require at least 10-15 studies for reasonable power. With fewer studies, focus on study registration searches and grey literature inclusion rather than statistical tests. The Cochrane Handbook recommends acknowledging the limitation of small numbers rather than relying on underpowered bias assessments [6].

Q: In environmental research, high heterogeneity is common. How does this affect bias detection? A: High heterogeneity (I² > 75%) can create funnel plot asymmetry unrelated to publication bias. Use random-effects versions of statistical tests when substantial heterogeneity is present. Consider subgroup analyses or meta-regression to account for heterogeneity sources before attributing asymmetry to publication bias [7].

Q: What if we cannot find unpublished studies for our meta-analysis? A: Implement selection model approaches that statistically adjust for potential missing studies. The trim and fill method can impute theoretically missing studies, though this should be framed as sensitivity analysis rather than definitive correction [5] [8].
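The I² threshold mentioned in the heterogeneity question above can be computed from Cochran's Q. The sketch below also returns the DerSimonian-Laird estimate of between-study variance (τ²), one common basis for a random-effects analysis; it is an assumption here that this estimator suits your data, and the function name is illustrative.

```python
def heterogeneity(effects, variances):
    """Cochran's Q, I-squared (percent), and DerSimonian-Laird tau-squared."""
    weights = [1.0 / v for v in variances]
    w_sum = sum(weights)
    pooled = sum(w * y for w, y in zip(weights, effects)) / w_sum
    q = sum(w * (y - pooled) ** 2 for w, y in zip(weights, effects))
    df = len(effects) - 1
    # I-squared: share of total variation attributable to heterogeneity.
    i_squared = max(0.0, (q - df) / q) * 100.0 if q > 0 else 0.0
    c = w_sum - sum(w ** 2 for w in weights) / w_sum
    tau_squared = max(0.0, (q - df) / c) if c > 0 else 0.0
    return q, i_squared, tau_squared


# Hypothetical example: two equally precise studies that disagree strongly.
q, i_squared, tau_squared = heterogeneity([0.0, 1.0], [0.01, 0.01])
```

An I² above 75% in output like this would be a signal to explore heterogeneity sources before reading funnel plot asymmetry as publication bias.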

Environmental Research Case Studies

Place-Based Bias in Ecological Research

Recent research reveals that negative human histories (e.g., communities with histories of environmental injustice, racialized policies, or forced removals) create what scholars term "social-ecological landscapes of fear" [4]. This bias constrains where ecological research is conducted, systematically excluding areas with complex social histories.

Table 2: Documented Biases in Environmental Research

| Bias Type | Impact on Environmental Science | Corrective Strategies |

|---|---|---|

| Place-Based Bias [4] | Research concentrated in "safe" or prestigious locations; gaps in marginalized communities | Community-engaged research; historical context inclusion |

| Climate Change Reporting Bias [9] | Storms and wildfires over-reported; heatwaves under-reported despite health impacts | Balanced hazard coverage; climate attribution reporting |

| Negative Footprint Illusion [10] | Overestimation of "eco-friendly" items' benefits; averaging bias in impact assessment | Training in quantitative reasoning; life-cycle assessment emphasis |

| Conservation Success Bias | Predominantly published success stories; unpublished failed interventions | Conservation failure repositories; null result journals |

Experimental Protocol: Addressing Place-Based Bias

- Historical context analysis: Research the social history of your study region

- Community engagement: Include local knowledge in research design

- Diverse site selection: Intentionally include underrepresented areas

- Methodological transparency: Document how site selection may influence findings

Negative Footprint Illusion in Environmental Assessment

Cognitive research demonstrates a systematic bias where people believe adding "eco-friendly" items to conventional items reduces the total environmental footprint, when the footprint actually increases [10]. This averaging bias leads to overoptimistic environmental assessments.

Detection Protocol:

- Use direct footprint calculation alongside subjective assessments

- Implement cognitive reflection tests to identify susceptible individuals

- Provide clear summation frameworks rather than relative assessments
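A short worked example shows why a summation framework matters. The item names and footprint values below are hypothetical (think of them as arbitrary units of CO2-equivalent); the point is only that adding an "eco-friendly" item lowers the average while raising the total.

```python
def total_footprint(items):
    """Sum of the individual footprints (the quantity that actually matters)."""
    return sum(items.values())


def mean_footprint(items):
    """Average footprint per item (what intuitive judgments tend to track)."""
    return total_footprint(items) / len(items)


# Hypothetical values in arbitrary units.
conventional = {"conventional item A": 100.0, "conventional item B": 100.0}
with_eco = {**conventional, "eco-friendly item": 40.0}

total_before = total_footprint(conventional)
total_after = total_footprint(with_eco)
mean_before = mean_footprint(conventional)
mean_after = mean_footprint(with_eco)
```

Reporting `total_after` next to `total_before` makes the increase explicit, whereas a relative or per-item rating would suggest an improvement.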

Research Reagent Solutions: Bias Detection Tools

Table 3: Essential Materials for Publication Bias Assessment

| Tool/Resource | Function | Application Notes |

|---|---|---|

| PRISMA Checklist [2] | Standardized reporting for systematic reviews | PRISMA-P item 16 addresses meta-bias assessment; PRISMA 2020 item 14 covers reporting bias assessment |

| ROSES Reporting Standards | Environmental systematic review protocols | Environment-specific reporting guidelines |

| ClinicalTrials.gov | Registry for clinical trials; model for environmental registry development | Template for environmental intervention registration |

| Open Science Framework | Study pre-registration platform | Mitigates publication bias through study registration |

| R package: metafor | Comprehensive meta-analysis with bias detection | Implements Egger's test, Begg's test, trim and fill |

| EM-DAT Database (CRED) [9] | International disaster database | Identifies reporting biases in environmental hazards |

Mitigation Protocols for Environmental Researchers

Pre-Registration Solution

Experimental Protocol: Study Pre-Registration

- Write detailed protocol before data collection

- Register with environmental study registries (developing) or Open Science Framework

- Specify primary outcomes and analysis plans

- Commit to publishing regardless of results

Registered Reports Implementation

Environmental Research Adaptation:

- Journal selection: Target journals accepting Registered Reports

- Protocol development: Emphasize environmental specificity and context

- Outcome selection: Pre-specify primary environmental endpoints

- Analysis transparency: Document all analytical decisions

Grey Literature Integration Protocol

- Search strategy: Include theses, government reports, conference abstracts

- Language inclusion: Non-English literature searching

- Database selection: Environment-specific databases (EM-DAT, environmental agency publications)

- Quality assessment: Adapt quality appraisal for non-peer reviewed sources

Advanced Multivariate Methods

For complex environmental data with multiple outcomes or dependent effect sizes, recent methodological advances offer multivariate selection models [8]. These approaches extend publication bias correction to more realistic research scenarios.

Experimental Protocol: Multivariate Selection Models

- Model dependence structure between effect sizes

- Apply selection functions that account for multiple outcomes

- Use strict selection criteria: Different publication probabilities for studies with all significant outcomes vs. at least one significant outcome

- Implement sensitivity analyses comparing different selection assumptions
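The selection-criteria and sensitivity-analysis steps above can be illustrated with a simple step function. This is only a sketch of the selection function, not a fitted multivariate selection model: the probabilities, scenario names, and p-values are all hypothetical assumptions chosen for illustration.

```python
def publication_probability(p_values, alpha=0.05,
                            prob_all=1.0, prob_some=0.6, prob_none=0.2):
    """Assumed probability that a multi-outcome study gets published.

    prob_all applies when every outcome is significant, prob_some when at
    least one is, and prob_none when no outcome is significant.
    """
    if not p_values:
        raise ValueError("a study needs at least one outcome")
    significant = [p < alpha for p in p_values]
    if all(significant):
        return prob_all
    if any(significant):
        return prob_some
    return prob_none


# Hypothetical studies, each reporting p-values for two outcomes.
studies = [[0.01, 0.03], [0.04, 0.40], [0.20, 0.65], [0.001, 0.02]]

# Sensitivity analysis: compare two sets of assumed selection probabilities.
scenarios = {
    "mild selection": dict(prob_all=1.0, prob_some=0.8, prob_none=0.6),
    "strict selection": dict(prob_all=1.0, prob_some=0.4, prob_none=0.1),
}
expected_published = {
    name: sum(publication_probability(p, **probs) for p in studies)
    for name, probs in scenarios.items()
}
```

Comparing `expected_published` across scenarios shows how strongly conclusions depend on the assumed selection process, which is why such assumptions should be varied rather than fixed.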

This technical support framework provides environmental researchers with comprehensive tools to detect, understand, and mitigate publication bias, ultimately strengthening the evidence base for addressing environmental degradation.

Troubleshooting Guides & FAQs

Frequently Asked Questions

Q: I have a null result from my environmental study. Is it even worth writing up?

- A: Yes. While there is a well-documented bias against null results, their publication is crucial for an accurate evidence base. Unpublished null studies waste resources, slow scientific progress, and distort meta-analyses, leading to flawed policy interventions [11] [12]. Publishing null findings is an ethical responsibility to research participants and the scientific community.

Q: My study shows a positive priming effect but a net gain in soil carbon. Is this a "positive" or "negative" finding?

- A: This highlights the nuance often lost due to bias. Your finding is scientifically critical. A focus on only the positive priming effect, while ignoring the net C balance, perpetuates the misleading narrative that priming invariably leads to carbon loss, which is often not the case [13]. The full context of the carbon budget is essential.

Q: A journal reviewer rejected my paper, stating my null result is "not novel." How should I respond?

- A: This is a common manifestation of publication bias. In your response, you can politely clarify the importance of null results for research integrity. Cite literature on the harms of publication bias, including how it leads to exaggerated effect sizes and research waste [11] [14]. You can also seek out journals or preprint servers with explicit policies welcoming null results.

Q: How can I check for publication bias in my own meta-analysis?

- A: The most common method is a funnel plot, which is a scatterplot of effect size against a measure of study precision (e.g., standard error) [13] [15]. Asymmetry in the plot can indicate publication bias. Statistical tests like Egger's regression often accompany the visual inspection. For prevalence studies, ensure the analysis uses log-transformed prevalence to create a proper funnel plot range [15].

Troubleshooting Guide: Diagnosing and Correcting for Publication Bias

| Problem | Diagnostic Checks | Corrective Actions & Solutions |

|---|---|---|

| Suspected selective publication in literature. | Create a funnel plot and look for asymmetry [13] [15]; use statistical tests (e.g., Egger's test); conduct a trim-and-fill analysis to estimate missing studies [13]. | Search clinical trial registries and preprint servers for unpublished data; contact leading researchers in the field for unpublished datasets; interpret the pooled effect size from meta-analysis with caution, noting potential overestimation. |

| Planning a study with a high risk of being perceived as "null". | Evaluate if the research question is important regardless of the outcome; check if the study has power to detect a meaningful effect. | Preregister your study's hypotheses, methods, and analysis plan before beginning, ideally as a Registered Report [11] [14]. In-principle acceptance of a Registered Report commits the journal to publishing the work based on the importance of the question and rigor of the method, not the outcome. |

| Difficulty publishing a null or negative result. | Desk rejection or reviewer comments focusing on a lack of "impact." | Target journals that explicitly welcome null results (e.g., PLOS ONE, null journals) or use Registered Reports [11] [12]; submit to preprint servers (e.g., bioRxiv) with dedicated sections for contradictory results [11] [12]. |

Quantitative Evidence of Publication Bias

The following tables summarize documented evidence of publication bias across various scientific fields.

Table 4: Documented Prevalence and Impact of Publication Bias

| Field / Discipline | Documented Evidence of Bias | Key Quantitative Findings | Impact on Literature |

|---|---|---|---|

| Soil Science (Priming Effects) | Overrepresentation of positive priming (C loss) in literature [13]. | A corrected meta-analysis showed a real priming effect of 10.7%, far lower than often-cited inflated figures (e.g., 125%) [13]. | Creates a distorted narrative that priming invariably leads to net soil carbon loss, despite evidence that C inputs often exceed losses [13]. |

| Biomedical Research (Neuroscience) | Under-publication of null findings in specific subfields [11] [12]. | Fewer than 2 in 100 articles on animal models of stroke report null findings [11] [12]. | Leads to a false impression of biomarker reliability and wastes resources on dead-end research paths. |

| Clinical Trials | Non-publication of trials with null or negative results [16]. | Between 25% and 50% of clinical trials are never published or are published years after completion [16]. | Poses risks to patient care, as treatment decisions are based on an incomplete and overly optimistic evidence base. |

| Psychology | Bias against null results in standard reports [11]. | The adoption of the Registered Report format substantially increased the proportion of null findings published [11] [12]. | Demonstrates that the bias is systemic to publication models, not a lack of null studies being conducted. |

Table 5: Common Cognitive and Systemic Biases Driving Publication Bias

| Type of Bias | Description | Effect on Publication of Null Results |

|---|---|---|

| Availability Heuristic | The tendency to overestimate the prevalence of what is easily recalled [13]. | "Catchy" studies showing large effects become "top of mind," overshadowing more common null results and skewing perceived norms [13]. |

| Confirmation Bias | The tendency to search for, interpret, and recall information that confirms pre-existing beliefs [13]. | Researchers and reviewers may subconsciously dismiss null results that contradict dominant theories while accepting less rigorous positive results that confirm them [13]. |

| Hindsight Bias | The tendency to see past events as being predictable [13]. | After a positive result is published, it seems inevitable, making null results appear to be due to researcher error rather than a valid outcome [13]. |

| Systemic/Peer Pressure | Institutional incentives that prioritize high-impact publications [13] [11]. | Tenure and promotion systems that favor journal impact factors over methodological rigor actively discourage researchers from spending time on null results [13] [11] [12]. |

Experimental Protocols for Detecting and Measuring Bias

Protocol 1: Conducting a Funnel Plot Analysis for a Meta-Analysis

Purpose: To visually and statistically assess the potential for publication bias in a body of literature.

Materials: Statistical software (e.g., R, Stata), dataset of effect sizes and standard errors/variance from included studies.

Workflow:

- Data Extraction: For each study in your meta-analysis, extract the effect size (e.g., mean difference, odds ratio, correlation coefficient) and its measure of precision (standard error or variance).

- Generate Scatterplot: Create a scatterplot (the funnel plot) where the X-axis is the effect size and the Y-axis is the standard error, inverted so that the most precise studies sit at the top (or a related measure such as 1/SE).

- Assess Symmetry: In the absence of bias, the plot should resemble an inverted funnel, with smaller, less precise studies scattering more widely at the bottom and larger, more precise studies clustering tightly at the top around the true effect. Asymmetry, such as a gap in the bottom-left or bottom-right corner, suggests missing studies, often null ones [13] [15].

- Statistical Testing: Perform a regression-based test (e.g., Egger's test) to statistically evaluate the relationship between effect size and its precision. A significant result indicates funnel plot asymmetry.

- Adjustment (Optional): Use methods like "trim-and-fill" to impute potentially missing studies and provide an adjusted effect size estimate [13].

Protocol 2: Implementing a Registered Report for a New Study

Purpose: To ensure a study is published based on the importance of the research question and rigor of the methodology, regardless of the outcome.

Materials: Journal offering the Registered Report format, detailed study protocol.

Workflow:

- Stage 1: Pre-Study Submission

  - Develop Protocol: Design your study, including introduction, hypotheses, detailed methods, experimental procedures, and the statistical analysis plan.

  - Submit for Review: Submit this Stage 1 manuscript to a journal offering Registered Reports.

  - Peer Review: Journal reviewers assess the study's conceptual rationale and methodological soundness. If successful, the journal provides an in-principle acceptance (IPA).

- Stage 2: Post-Study Submission

  - Conduct the Study: Perform the experiment exactly as described in the Stage 1 protocol.

  - Write the Report: Complete the manuscript with results and discussion, adhering to the pre-approved analysis plan.

  - Submit Final Manuscript: The journal reviews the final manuscript to verify adherence to the protocol. The outcome of the study (positive, null, or negative) does not influence the publication decision [11] [12].

The Scientist's Toolkit: Research Reagent Solutions

Key Materials for Investigating Environmental Degradation

| Item / Solution | Function in Research | Example Application in Environmental Studies |

|---|---|---|

| Stable Isotope Probes (e.g., ¹³C) | To trace the fate of carbon inputs in soil/ecosystem studies [13]. | Quantifying the portion of added substrate vs. native soil organic matter that is mineralized by microbes, allowing precise measurement of priming effects [13]. |

| Environmental Sensor Networks | To collect high-resolution, real-time data on environmental parameters. | Monitoring carbon fluxes, temperature, humidity, and soil moisture at scale to link microbial processes to ecosystem-level C balances [13]. |

| VOSviewer Software | A software tool for constructing and visualizing bibliometric networks [17]. | Conducting bibliometric analysis to map research trends, collaborations, and identify over- or under-studied factors in environmental degradation literature [17]. |

| Quantitative Genotypic Tools | To characterize microbial community structure and functional potential. | Comparing the microbial traits and genotypes associated with positive vs. negative priming in soil incubation studies [13]. |

| Registered Report Format | An article type that peer-reviews methods before results are known [11] [12]. | Ensuring that well-designed studies on the drivers of environmental degradation (e.g., urbanization, resource use) are published regardless of their findings, combating file-drawer bias [11] [12]. |

Frequently Asked Questions (FAQs)

1. What is publication bias and why is it a problem in environmental research? Publication bias occurs when studies with statistically significant or "positive" results are more likely to be published than those with null or negative results [18] [16]. In environmental research, this creates a distorted evidence base [19] [20]. For example, if multiple studies showing no significant effect of a chemical are left unpublished, regulations might be based only on the few studies that showed a harmful effect, leading to misguided policies, wasted resources, and a flawed understanding of environmental risks [21] [22].

2. Our institution rewards publications in high-impact journals. How can I justify spending time on publishing a null result? The academic reward system is a known driver of publication bias [12]. However, the landscape is changing. You can justify this work by:

- Highlighting Ethical Compliance: Many funders now mandate that all results be shared as a condition of funding [12]. Publishing null results fulfills this ethical contract with research participants and funders [16].

- Emphasizing Scientific Rigor: Publishing a well-designed study with null results demonstrates intellectual honesty and contributes to research integrity, preventing other scientists from wasting resources on the same futile quests [18] [22].

- Using New Avenues: Cite the growing number of prestigious journals that offer Registered Reports or dedicated sections for null results [12]. You can also use preprint servers and data repositories to ensure your work is citable and accessible [12].

3. A journal rejected our paper because the results were "not novel enough." What are our options? Journal preference for novel, positive findings is a key cause of publication bias [18] [12]. Your options include:

- Seek journals that welcome null results: An increasing number of journals explicitly state they consider results of methodologically sound research, regardless of outcome [12]. The NINDS analysis found 14 neuroscience journals that accept null studies without extra conditions [12].

- Submit to a preprint server: Platforms like bioRxiv (which has a 'Contradictory Results' section), OSF Preprints, or arXiv allow you to make your findings public immediately [12].

- Consider alternative formats: Explore modular publications or micropublications, which are designed for concise, single-result papers [12].

- Deposit in a repository: Ensure your work is accessible by depositing the full manuscript or a detailed report in an institutional repository or on platforms like Zenodo or Figshare [12].

Troubleshooting Guides

Guide 1: Diagnosing and Mitigating Systemic Biases in Your Research Ecosystem

Systemic biases can skew research before an experiment even begins. Use this guide to identify and address them.

Table: Common Systemic Biases and Their Effects in Environmental Research

| Type of Bias | Description | Potential Effect on Environmental Research |

|---|---|---|

| Funding Bias [19] [20] | Research agendas and outcomes are influenced by the funder's interests. | Studies funded by industry may downplay environmental harms, while those from advocacy groups may overstate them [20]. |

| Institutional Bias [19] | Research is directed towards objectives that perpetuate an institution's own power and narrow goals. | Academic "publish or perish" culture prioritizes positive results for career advancement, disincentivizing null studies [19] [18]. |

| Socio-Cultural Bias [19] | The dominant cultural worldview prioritizes certain types of knowledge and solutions. | Western scientific approaches may be favored over indigenous or local knowledge in designing environmental solutions [19]. |

| Methodological Bias [20] | The choice of models and methods introduces systematic errors. | Climate models that simplify cloud processes can lead to inaccurate regional projections [20]. |

Diagnostic Questions:

- To identify Funding Bias: Are our research questions limited to topics that are likely to receive funding? Do we feel pressure to interpret data in a way that aligns with our funder's interests? [19] [20]

- To identify Institutional Bias: Does our promotion and tenure system exclusively value high-impact publications and grant money, rather than reproducible and rigorous science, including null results? [12]

- To identify Methodological Bias: Have we critically examined the inherent assumptions and limitations of our chosen experimental models or statistical analyses? [20]

Corrective Protocols:

- For Funding Bias: Actively seek diverse funding sources, including public and non-profit grants. Maintain transparency by publicly disclosing all funding sources and potential conflicts of interest [20] [22].

- For Institutional Bias: Advocate for reforms in academic evaluation. Promote holistic review that values data sharing, replication studies, and publication in null-result friendly venues [12].

- For Methodological Bias: Employ open-source models and code where possible. Use sensitivity analyses to test how different assumptions affect your results. Engage in interdisciplinary collaboration to challenge methodological norms [20].

Guide 2: Implementing a Pre-Registration and Data-Sharing Protocol

Pre-registration is one of the most effective tools for combating publication bias and other questionable research practices.

Step-by-Step Pre-registration Protocol:

- Develop Your Research Question and Hypothesis: Formulate a clear, focused primary question.

- Finalize Your Experimental Design: Before collecting any data, detail your:

  - Population/Sample: Source, size, inclusion/exclusion criteria.

  - Variables: Independent, dependent, and controlled variables.

  - Procedures: Step-by-step experimental methodology.

  - Statistical Analysis Plan: Precisely define the statistical tests you will use to test your primary hypothesis. Specify how you will handle outliers and missing data.

- Submit to a Registry:

  - Platforms: Use a public, time-stamped registry like the Open Science Framework (OSF) or ClinicalTrials.gov.

  - Level of Detail: The protocol should be detailed enough for another researcher to replicate your study.

- Conduct the Experiment: Adhere strictly to the pre-registered protocol. Document any unavoidable deviations.

- Analyze the Data: First, conduct the pre-registered confirmatory analysis. You may then perform exploratory analyses, but they must be clearly labeled as such in any resulting publication.

- Publish the Results: Submit the full manuscript for publication, highlighting that the study was pre-registered. The journal's decision should be based on the methodological rigor, not the nature of the results [12].
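One way to keep the protocol above honest before submission is to hold the plan in a structured record and fingerprint it, so that later deviations are easy to detect. The sketch below is a local illustration only: the `Preregistration` class and its fields are hypothetical, it does not call any registry's API, and a SHA-256 digest is no substitute for the public time stamp a registry provides.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, replace


@dataclass(frozen=True)
class Preregistration:
    """Hypothetical record mirroring the protocol fields listed above."""
    research_question: str
    hypothesis: str
    sample: str
    variables: dict
    procedures: str
    analysis_plan: str

    def fingerprint(self):
        """SHA-256 digest of the record, stable across key ordering."""
        payload = json.dumps(asdict(self), sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()


# Hypothetical study plan, for illustration only.
plan = Preregistration(
    research_question="Does riparian replanting change stream nitrate levels?",
    hypothesis="Replanted reaches show lower nitrate than control reaches.",
    sample="20 replanted and 20 control reaches; sites with point sources excluded",
    variables={"independent": "replanting", "dependent": "nitrate (mg/L)"},
    procedures="Monthly grab samples for 12 months",
    analysis_plan="Two-sided t-test at alpha = 0.05; outliers retained",
)
original_digest = plan.fingerprint()

# Any later change to the plan produces a different digest.
amended = replace(plan, analysis_plan="One-sided t-test at alpha = 0.05")
amended_digest = amended.fingerprint()
```

Recording the digest alongside the registered protocol gives reviewers a simple check that the confirmatory analysis matches what was planned.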

Guide 3: Navigating the Publication Process for Null and Negative Results

Publishing null findings requires a specific strategy. This protocol maximizes your chances of success.

Step-by-Step Publication Protocol:

Confirm a "True Null" Result:

- Power Analysis: Ensure your study was adequately powered to detect a meaningful effect. A common reason for rejection is the suspicion that a null result is simply a "false negative" from an underpowered experiment [18].

- Methodological Rigor: Double-check your data quality, controls, and adherence to your protocol. Be prepared to demonstrate this rigor in your manuscript.
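The power check above can be approximated with the Python standard library. This sketch assumes a two-sided comparison of two equal-sized groups, a standardized effect size (Cohen's d), and a normal approximation; exact t-based calculations in dedicated software give slightly larger sample sizes. The function names are illustrative.

```python
import math
from statistics import NormalDist


def n_per_group(effect_size_d, alpha=0.05, power=0.80):
    """Approximate sample size per group for a two-sample comparison."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    return math.ceil(2 * ((z_alpha + z_beta) / effect_size_d) ** 2)


def achieved_power(effect_size_d, n, alpha=0.05):
    """Approximate power of a two-sample comparison with n per group."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    return NormalDist().cdf(effect_size_d * math.sqrt(n / 2) - z_alpha)


# Hypothetical planning values: medium effect, conventional alpha and power.
required_n = n_per_group(0.5)
power_at_required_n = achieved_power(0.5, required_n)
power_at_20 = achieved_power(0.5, 20)
```

Reporting the achieved power for the smallest effect you consider meaningful helps reviewers distinguish an informative null from an underpowered one.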

Select the Right Publication Venue:

- Target Null-Friendly Journals: Seek out journals that explicitly welcome null results. The PLOS family, Scientific Reports, and many field-specific journals have such policies. Look for journals that offer the Registered Report format, where peer review happens before results are known, guaranteeing publication of high-quality science regardless of outcome [12].

- Consider Alternative Platforms: If traditional journals are not an option, publish a preprint on bioRxiv or arXiv. You can also write a concise "micropublication" or deposit a complete manuscript in a data repository like Zenodo to make it citable [12].

Structure Your Manuscript for Success:

- Title and Abstract: Clearly state the study tested a hypothesis that was not supported. Use phrases like "No evidence for..." or "The null effect of...".

- Introduction: Justify why testing this hypothesis was important and what the expected effect would have been.

- Methods: Emphasize the rigorous design, including the pre-registration (if applicable) and a priori power analysis.

- Results and Discussion: Present the null findings clearly. Discuss the implications of your null result for the field and why it is valuable, perhaps by challenging a dominant paradigm or preventing future research waste.

The Researcher's Toolkit: Essential Reagents for Combating Bias

Table: Key Solutions and Resources for Unbiased Research

| Tool / Reagent | Function / Purpose | Example Platforms & Resources |

|---|---|---|

| Pre-registration | Deters HARKing (Hypothesizing After the Results are Known) and p-hacking by locking in the hypothesis and analysis plan. | Open Science Framework (OSF), ClinicalTrials.gov, AsPredicted |

| Registered Reports | A publishing format where peer review occurs before data collection, guaranteeing publication based on methodological soundness, not results. | Journals from PLOS, Elsevier, Springer Nature, and many society journals [12]. |

| Preprint Servers | Provides immediate, open dissemination of results, bypassing journal biases against null findings. | bioRxiv, arXiv, OSF Preprints [12]. |

| Data Repositories | Ensures data and code are accessible, enabling verification and reuse, and fulfilling funder mandates. | Zenodo, Figshare, Dryad [12]. |

| Systematic Reviews | Synthesizes all available evidence on a topic, actively seeking to include unpublished and null results to minimize bias. | Cochrane Collaboration, Campbell Collaboration. |

Quantifying the Problem: Data on Publication Bias

The following table summarizes key quantitative findings that highlight the prevalence and impact of publication bias.

Table: Documented Evidence of Publication Bias Across Disciplines

| Field / Context | Finding | Source / Reference |

|---|---|---|

| Biomedical Research (General) | Frequency of papers declaring significant statistical support for their hypotheses increased by 22% between 1990 and 2007. Psychology and psychiatry are among the disciplines with the highest increase. | Ioannidis, 2012 [18] |

| Autism-Spectrum Disorder (ASD) Research | In 4 emerging fields of ASD research, over 89% of 437 studies reported a significant association, with 100% of 115 studies on oxidative stress reporting positive results. | Ioannidis, 2012 [18] |

| Clinical Trials | Between 25% and 50% of clinical trials are never published or are published many years after completion. | Scoping Review, 2024 [16] |

| Neuroscience Journals | An analysis found that 180 out of 215 neuroscience journals do not explicitly welcome null studies, while only 14 accepted them without additional conditions. | Curry et al., 2025 [12] |

| Antidepressant Efficacy | Meta-analyses using unpublished data obtained via Freedom of Information requests showed the therapeutic value of antidepressants was significantly overestimated in the published literature. | Ioannidis, 2012 [18] |

Frequently Asked Questions (FAQs)

FAQ 1: What are the core cognitive biases affecting scientific literature? The two most impactful biases are the availability heuristic and confirmation bias.

- Availability Heuristic: Researchers may judge the likelihood of a phenomenon or the strength of evidence based on how easily examples come to mind. Dramatic, recent, or heavily media-covered findings are perceived as more representative than they are, skewing research focus and interpretation [23] [24].

- Confirmation Bias: This is the tendency to search for, interpret, favor, and recall information that confirms one's pre-existing beliefs or hypotheses [25] [26]. In research, it can lead to preferentially citing supportive literature and discounting contradictory evidence.

FAQ 2: How do these biases specifically contribute to publication bias? Publication bias occurs when the publication of research findings is influenced by the nature and direction of the results [18]. Availability heuristic and confirmation bias fuel this by creating an environment where:

- Positive results are more "available" and memorable, making them more likely to be submitted and published [18].

- Authors, reviewers, and editors may unconsciously favor results that confirm prevailing theories or hypotheses, leading to a systematic exclusion of null or negative findings from the scientific record [18] [27].

FAQ 3: What is the impact of this skewed literature on environmental degradation research? A literature skewed by these biases presents a distorted picture of reality, with severe consequences for environmental research:

- Misguided Policy: Policies may be based on an over-optimistic or incomplete understanding of interventions, leading to ineffective conservation efforts [28].

- Wasted Resources: Precious research funding and time are drained in futile quests based on false leads from biased literature [18].

- Impaired Scientific Self-Correction: When negative results are not published, the scientific community loses the ability to correctly identify and abandon false hypotheses, undermining the cumulative nature of science [18].

FAQ 4: How can I, as a researcher, mitigate these biases in my own work?

- Pre-register studies: Publicly commit to your hypothesis, methods, and analysis plan before conducting the research, so that patterns found after the fact cannot be presented as confirmation of a hypothesis you never specified [27].

- Actively seek disconfirming evidence: Deliberately look for and engage with literature and data that challenge your initial hypothesis [26].

- Practice blind data analysis: Where possible, analyze data without knowing which group is the control and which is the experimental group, to prevent expectations from shaping the interpretation.

FAQ 5: What systemic changes can help overcome these biases?

- Journals accepting Registered Reports: This format peer-reviews study proposals before data collection, committing to publication based on the methodological rigor, not the outcome [27].

- Mandatory registration and reporting of all trials: Registries like ClinicalTrials.gov for clinical research should be mirrored in environmental sciences to ensure all initiated studies, and their results, are accounted for [27].

- Platforms for publishing null results: Supporting journals and repositories dedicated to publishing well-conducted studies with null or negative findings makes these results "available" and restores balance to the literature [18].

Troubleshooting Guides

Issue: Suspecting a Skewed Literature Base in Your Field

Symptoms:

- Inability to find high-quality studies with null results on a popular topic.

- A published meta-analysis that relies only on positive findings.

- A feeling that the evidence for an established theory is fragile or non-replicable.

Diagnostic Steps:

- Check for Grey Literature: Search clinical trial registries (e.g., ClinicalTrials.gov), institutional repositories, and pre-print servers for studies that were completed but never published in a traditional journal [27].

- Conduct a Systematic Review: Instead of a narrative review, use systematic methods to locate all studies on a topic, reducing the risk of only selecting those that are easily available or confirm your view.

- Test for Publication Bias: Use statistical methods like funnel plots or p-curve analysis to detect gaps in the literature that suggest missing null results [28].

Solutions:

- Include Unpublished Data: Where possible and ethical, contact authors for raw data or include results from grey literature in your analyses.

- Publish Persistently: Advocate within your team and institution for the submission of all research outcomes, regardless of the result direction.

Issue: Combating Bias in Peer Review

Symptoms:

- Reviewer comments that dismiss robust null results as "uninteresting."

- Requests to remove citations to contradictory literature.

- A pattern of papers in a journal that only support a single, dominant narrative.

Corrective Actions:

- For Authors: In your manuscript, explicitly discuss and cite literature that contradicts your findings and provide a reasoned argument for your interpretation. This demonstrates intellectual honesty and pre-empts reviewer concerns.

- For Reviewers and Editors: Champion the value of methodological rigor over result direction. Ask specifically: "Is the method sound?" rather than "Is the result exciting?" [18].

Quantitative Data on Cognitive Biases and Publication

Table 1: Impact of Cognitive Biases on Decision-Making in Various Professional Fields [25]

| Professional Field | Most Prevalent Bias | Key Impact on Decision-Making |

|---|---|---|

| Management | Overconfidence | Impacts strategic decisions (e.g., mergers, acquisitions) leading to excessive risk-taking. |

| Finance | Overconfidence | Results in excessive trading and the disposition effect (selling winners too early, holding losers too long). |

| Medicine | Relative Risk Bias, Confirmation Bias | Influences diagnosis and treatment choices based on how risk information is framed and prior beliefs. |

| Law | Framing Effect, Hindsight Bias | Affects settlement decisions and judgments of negligence based on how information is presented. |

Table 2: Consequences of Publication and Dissemination Bias in Clinical Research [18] [27]

| Problem | Manifestation | Consequence |

|---|---|---|

| Non-Publication | ~50% of studies never published; negative results disproportionately filed away. | Distorted meta-analyses, overestimation of treatment effects, harm to patients. |

| Delayed Publication | Mean delay of over 2 years for presenting results at conferences and >5 years for full publication. | Critical public health information is withheld, impacting policy and care during crises. |

| Outcome Reporting Bias | Selective publication of only some outcomes from a trial (e.g., only positive secondary endpoints). | Misrepresentation of a drug's true efficacy and safety profile. |

Experimental Protocols for Bias Mitigation

Protocol: Pre-registration of a Study

Objective: To prevent confirmation bias and data dredging (p-hacking) by specifying the research plan in advance.

Materials: Online pre-registration platform (e.g., OSF, AsPredicted, ClinicalTrials.gov).

Methodology:

- Hypothesis: Precisely state the primary research question and hypothesis.

- Variables: Define all independent, dependent, and control variables.

- Study Design: Detail the experimental design, including randomization and blinding procedures.

- Sample Size: Justify the sample size with an a priori power analysis.

- Analysis Plan: Specify the exact statistical tests and models that will be used to test the primary hypothesis. Define any criteria for excluding data.

- Timeline: Outline the projected timeline for data collection and analysis.
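The sample-size step in the protocol above can be made concrete with a short calculation. The following is a minimal Python sketch, assuming a two-sided comparison of two group means and the standard normal approximation. The function name `n_per_group` and the default values for `alpha` and `power` are illustrative assumptions, not part of any pre-registration platform. An exact t-based calculation from a dedicated power-analysis tool will typically give a slightly larger number.

```python
from math import ceil
from statistics import NormalDist


def n_per_group(effect_size_d, alpha=0.05, power=0.80):
    """Approximate sample size per group for a two-sided, two-group comparison.

    effect_size_d is the standardized mean difference (Cohen's d) that the
    study is designed to detect. Uses the normal approximation
    n = 2 * ((z_{1-alpha/2} + z_{power}) / d)^2.
    """
    if effect_size_d <= 0:
        raise ValueError("effect_size_d must be positive")
    if not (0 < alpha < 1 and 0 < power < 1):
        raise ValueError("alpha and power must lie strictly between 0 and 1")
    std_normal = NormalDist()
    z_alpha = std_normal.inv_cdf(1 - alpha / 2)  # critical value for the test
    z_power = std_normal.inv_cdf(power)          # quantile for the target power
    return ceil(2 * ((z_alpha + z_power) / effect_size_d) ** 2)


# Hypothetical planning values: a medium effect (d = 0.5), alpha = 0.05, power = 0.80
planned_n = n_per_group(0.5)
print(f"Participants or plots needed per group: {planned_n}")
```

Recording the assumed effect size, alpha, and power next to the resulting sample size in the pre-registration lets reviewers check the justification later.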

Protocol: Conducting a Blind Analysis

Objective: To eliminate the influence of expectations on data analysis and interpretation.

Materials: A data analyst, a study coordinator, and anonymized datasets.

Methodology:

- Data Cleaning: The analyst performs initial data cleaning and processing based on a pre-defined script, without knowledge of group assignments.

- Data Anonymization: The study coordinator replaces group labels (e.g., "Control," "Treatment A") with arbitrary, non-informative codes (e.g., "Group 1," "Group 2").

- Analysis: The analyst runs the pre-registered statistical analysis on the anonymized dataset.

- Unblinding: Once the final results and figures are prepared, the study coordinator reveals the meaning of the group codes to the analyst for interpretation and manuscript writing.
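The anonymization and unblinding steps above can be sketched in a few lines of Python. This is an illustration only: the function name `blind_labels`, the seed, and the example labels are assumptions, and in practice the coordinator would keep the key somewhere the analyst cannot access until the analysis is final.

```python
import random


def blind_labels(group_labels, seed=None):
    """Replace real group labels with arbitrary codes.

    Returns the blinded labels (for the analyst) and the key that maps each
    real label to its code (kept by the study coordinator).
    """
    rng = random.Random(seed)
    unique_labels = sorted(set(group_labels))
    codes = [f"Group {i + 1}" for i in range(len(unique_labels))]
    rng.shuffle(codes)  # random assignment so codes carry no information
    key = dict(zip(unique_labels, codes))
    blinded = [key[label] for label in group_labels]
    return blinded, key


# Hypothetical group assignments for six experimental units
labels = ["Control", "Treatment A", "Control", "Treatment A", "Control", "Treatment A"]
blinded, key = blind_labels(labels, seed=2024)

# The analyst only ever sees `blinded`; the coordinator unblinds at the end.
reverse_key = {code: label for label, code in key.items()}
unblinded = [reverse_key[code] for code in blinded]
```

Keeping the key with someone other than the analyst is the essential design choice here; the random shuffle only ensures that the code numbers do not hint at which group is the control.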

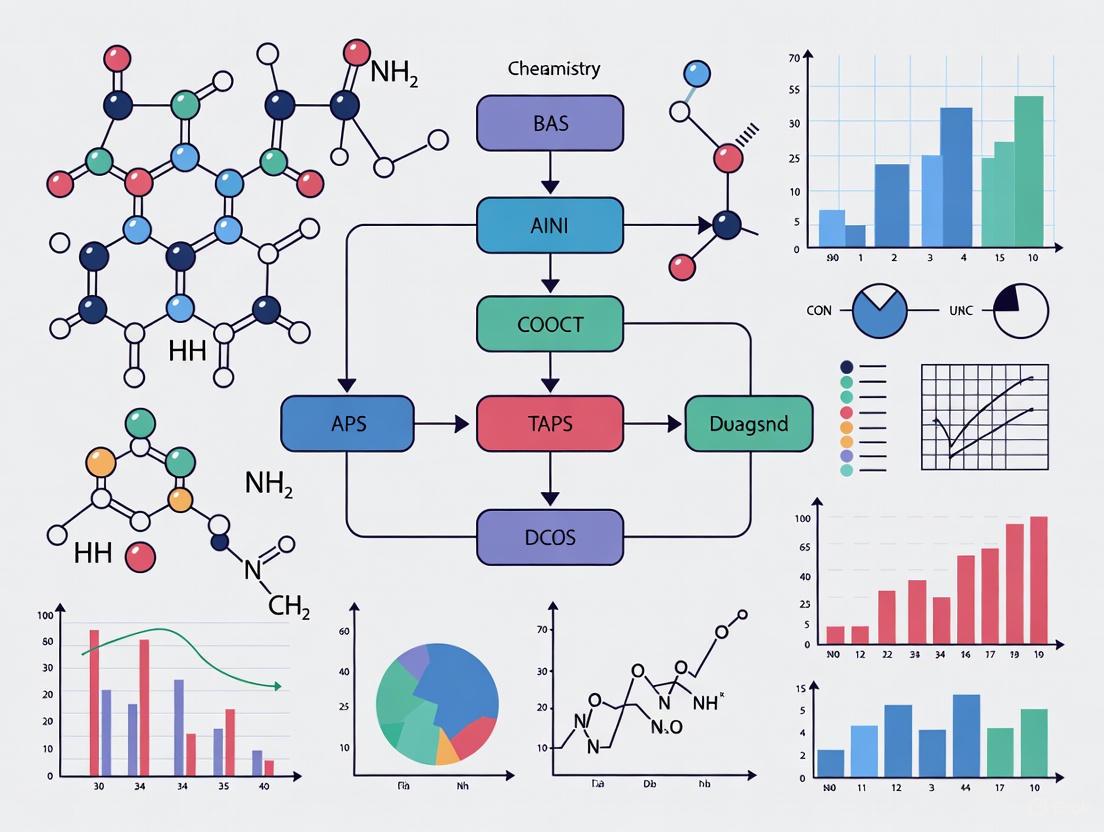

Visualizing Bias Mechanisms and Workflows

Diagram 1: How Biases Skew Literature

Diagram 2: Bias-Resistant Research Workflow

The Scientist's Toolkit: Key Reagents for Unbiased Research

Table 3: Essential Resources for Mitigating Bias in Research

| Tool / Resource | Function | Example Platforms / Uses |

|---|---|---|

| Pre-registration Platforms | Locks in research plans to prevent HARKing (Hypothesizing After Results are Known) and p-hacking. | AsPredicted, OSF Registries, ClinicalTrials.gov. |

| Data & Code Repositories | Ensures transparency and reproducibility by sharing raw data and analysis code. | Zenodo, Figshare, GitHub. |

| Blind Analysis Protocols | A methodology to prevent confirmation bias during data analysis by hiding group identities from the analyst. | Used internally by research teams following pre-defined scripts. |

| Null Result Journals / Sections | Provides a venue for publishing well-conducted studies with negative findings, combating the file drawer problem. | Journals like PLOS ONE (which accepts based on method, not result), dedicated sections in field-specific journals. |

| Systematic Review Software | Supports a comprehensive and unbiased synthesis of all existing literature on a topic. | Rayyan, Covidence, SRDR+. |

Real-World Consequences for Environmental Policy and Public Health

Technical Support Center: FAQs on Publication Bias & Environmental Research

This technical support center provides scientists and researchers with practical guidance for identifying, troubleshooting, and overcoming publication bias in environmental and public health research.

Frequently Asked Questions

FAQ 1: Our meta-analysis on soil carbon priming shows extreme heterogeneity (I² > 75%). How do we determine if this is due to true biological variation or publication bias?

- Answer: High heterogeneity is a known signal of potential publication bias, where studies with large, positive effects are over-represented [13]. Begin by constructing a funnel plot of your effect sizes (e.g., response ratios) against their standard errors. Asymmetry in the plot, with a gap in non-significant or negative results, indicates likely publication bias. Statistical methods like trim-and-fill can be used to estimate the number and effect size of missing studies to adjust your overall estimate [13]. A corrected, more moderate effect size (e.g., ~10.7% instead of 125%) is often a more reliable conclusion [13].
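As a starting point, the heterogeneity statistic itself can be reproduced from the effect sizes and their standard errors. The Python sketch below assumes inverse-variance weights under a fixed-effect model and the common definition I² = max(0, (Q - df) / Q) × 100. The function name and the example numbers are hypothetical, not values from the priming literature.

```python
def heterogeneity(effects, std_errors):
    """Return the inverse-variance pooled effect, Cochran's Q, and I² (percent)."""
    if len(effects) != len(std_errors) or len(effects) < 2:
        raise ValueError("need at least two studies with matching standard errors")
    weights = [1.0 / se ** 2 for se in std_errors]
    pooled = sum(w * y for w, y in zip(weights, effects)) / sum(weights)
    # Cochran's Q: weighted squared deviations from the pooled effect
    q = sum(w * (y - pooled) ** 2 for w, y in zip(weights, effects))
    df = len(effects) - 1
    i_squared = max(0.0, (q - df) / q) * 100 if q > 0 else 0.0
    return pooled, q, i_squared


# Hypothetical log response ratios and standard errors from five studies
effects = [0.85, 0.60, 0.10, 0.05, 0.40]
std_errors = [0.30, 0.25, 0.08, 0.06, 0.20]
pooled, q, i_squared = heterogeneity(effects, std_errors)
print(f"pooled = {pooled:.3f}, Q = {q:.2f}, I2 = {i_squared:.1f}%")
```

A high I² on its own does not separate biological variation from selective publication, which is why the funnel plot and trim-and-fill steps described above are still needed.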

FAQ 2: We have compelling null results from a long-term field experiment on conservation practices. Which journals are most receptive to such findings?

- Answer: The publication landscape for null results is improving. First, target journals that explicitly state their commitment to reducing publication bias, often indicated by their support for initiatives like the San Francisco Declaration on Research Assessment (DORA). Second, consider journals that assess submissions on methodological soundness rather than on the direction of the result, such as PLOS ONE or BMC Research Notes. When submitting, frame the importance of your null result within the context of correcting the scientific record and preventing other researchers from wasting resources, as emphasized in recent critiques of priming effect literature [13].

FAQ 3: What is the minimum reporting standard for a study to be included in a future meta-analysis on environmental degradation, even if the results are null?

- Answer: To ensure future discoverability and utility, your study record must include, at a minimum: 1) Sample Size and Power Calculation, 2) Full Experimental Protocol, 3) Pre-specified Primary Outcome Variable, and 4) Complete Description of All Measured Variables. The most effective way to meet this standard is through study pre-registration on a platform like the Open Science Framework (OSF). Pre-registration makes all planned studies discoverable, combating the "file-drawer effect" [14].

FAQ 4: Our lab study on a new chemical's toxicity failed to replicate an earlier, high-impact study. How should we present this finding to avoid being dismissed?

- Answer: Directly address the replication crisis in your manuscript's introduction. Frame your work not as a simple "failure to replicate" but as a necessary investigation into the robustness of an existing claim. Provide a detailed, side-by-side comparison of your methodology and the original study's, highlighting any potential sources of discrepancy. Emphasize that the replication of findings is a cornerstone of the scientific method and that reporting these results is an ethical obligation to the research community [14].

Troubleshooting Guides for Common Experimental Issues

Problem: Net Carbon Balance Calculations Appear Inconclusive

- Symptoms: Positive priming of soil organic matter is observed, but the overall carbon budget does not show a net loss.

- Diagnosis: This is a common issue where the focus on a significant positive priming effect overshadows the more important metric: the net carbon balance. In many experiments, the quantity of new carbon inputs (e.g., from root exudates or crop residues) far exceeds the carbon lost via primed respiration [13].

- Solution: Always calculate and report the full carbon budget. The experimental C inputs must be quantified and compared directly to the C outputs from both basal and primed respiration. A net balance in favor of sequestration is frequently observed and should be the central conclusion, avoiding the misleading narrative that positive priming invariably leads to carbon loss [13].

Problem: Inability to Distinguish Between General and Rhizosphere Priming Effects

- Symptoms: Experimental results on soil organic matter mineralization are difficult to interpret or scale to ecosystem levels.

- Diagnosis: Priming effects (PE) driven by bulk litter and rhizosphere priming effects (RPE) driven by root exudates are often conflated. They operate at different spatial and temporal scales and have different driving factors [13].

- Solution: Employ methodologies tailored to the specific effect.

  - For General PE: Use soil incubation studies with added litter or synthetic root exudates.

  - For RPE: Use plant-soil systems, often with isotopic labeling (¹³C or ¹⁴C), to trace root-derived carbon.

Clearly state in your publication which effect your study measures and avoid over-extrapolating conclusions beyond your experimental scale [13].

Problem: Ecological Analysis Reveals Weaker-than-Expected Correlations

- Symptoms: When linking aggregate data from separate surveys (e.g., air pollution data from one source and public health outcomes from another), the observed correlation is significantly attenuated.

- Diagnosis: This is likely sampling fraction bias, a methodological bias that occurs when combining aggregate measures from multiple sample datasets. The bias is proportional to the sampling fractions of the respective surveys [29].

- Solution: Apply a statistical adjustment to the correlation coefficient. The bias can be corrected using the formula:

$$r_{\text{adjusted}} = \frac{r_{\text{observed}}}{\sqrt{sf_x \cdot sf_y}}$$

where $sf_x$ and $sf_y$ are the sampling fractions for the surveys collecting variables x and y, respectively. Using measurement error models is another robust adjustment method [29].

Table 1: Documented Consequences of Environmental Policy Shifts (2025)

| Policy Area | Specific Action | Quantitative Impact | Data Source |

|---|---|---|---|

| International Climate Leadership | Withdrawal from UNFCCC & Paris Agreement [30] | Projected global temperature rise of 2.5°C to 2.9°C (vs. 4°C pre-Paris) now at risk [30] | Center for American Progress |

| U.S. Power Sector | Repeal of 2024 Carbon Pollution Standards [31] | Affects sector responsible for ~25% of U.S. GHG emissions [31] | EPA Data |

| U.S. Transportation | Reconsideration of Vehicle GHG Standards [31] | Affects sector responsible for ~29% of U.S. GHG emissions [31] | EPA Data |

| Public Health | Deaths from air pollution in Africa (2017) [32] | 258,000 deaths (increased from 164,000 in 1990) [32] | UNICEF |

| Biodiversity | Decline in wildlife population sizes (1970-2016) [32] | Average decline of 68% across mammals, birds, fish, reptiles, and amphibians [32] | WWF Report |

Table 2: Cognitive Biases Driving Publication Bias in Environmental Science [13]

| Bias | Description | Impact on Priming Literature |

|---|---|---|

| Availability Heuristic | Overestimating the prevalence of a phenomenon based on easily recalled, "catchy" examples. | A few highly cited studies claiming dramatic C-loss from priming overshadow more common studies showing minimal effects. |

| Confirmation Bias | Interpreting data in a way that confirms pre-existing beliefs or the prevailing narrative. | Researchers may focus on data supporting the view that priming causes major C-loss while dismissing contradictory evidence. |

| Hindsight Bias | Believing an outcome was predictable after it has occurred. | After a positive priming effect is reported, researchers may claim they "knew it all along," reinforcing the narrative. |

| Inattentional Blindness | Failing to notice critical factors when focused on a specific outcome. | A narrow focus on the priming effect can cause researchers to ignore the net C balance, leading to incomplete conclusions. |

Experimental Protocols

Protocol 1: Assessing Net Carbon Balance in Soil Priming Studies

Objective: To accurately determine the net change in soil carbon stock following fresh carbon input, moving beyond the mere measurement of the priming effect.

Materials:

- Soil cores from relevant ecosystem

- ¹³C-labeled substrate (e.g., glucose, plant litter)

- Sealed incubation jars with septum

- Gas chromatograph or infrared gas analyzer (IRGA)

- Elemental analyzer coupled with an isotope ratio mass spectrometer (EA-IRMS)

Methodology:

- Soil Preparation: Sieve soil and adjust to a standardized water-holding capacity. Pre-incubate to stabilize microbial activity.

- Experimental Setup: Divide soil into treatment groups (n ≥ 5): a) Control (no addition), b) ¹³C-Labeled Substrate Addition.

- Incubation: Place soils in sealed jars and incubate at constant temperature. Periodically flush jars with CO₂-free air.

- Gas Sampling & Analysis: Sample headspace gas at regular intervals through the septum. Use IRGA to measure CO₂ concentration. Use IRMS to determine the δ¹³C of the evolved CO₂.

- Calculation:

- Total C respired = CO₂-C from control + CO₂-C from treatment

- Primed C = (Total CO₂-C from treatment) - (CO₂-C from control) - (Mineralized ¹³C-substrate)

- Net C Balance = (Amount of ¹³C-substrate added) - (Primed C + Mineralized ¹³C-substrate)

- Endpoint Analysis: Terminate incubation and analyze soil for total C and ¹³C content using EA-IRMS to directly measure C sequestration [13].
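The calculation step of this protocol can be expressed as a small function. The Python sketch below applies the primed-C and net-balance formulas exactly as written above; the function name, argument names, and the example quantities (taken to be in the same units, for instance µg C per g soil) are hypothetical and chosen only for illustration.

```python
def net_carbon_balance(substrate_added, total_co2_treatment,
                       co2_control, substrate_mineralized):
    """Return (primed C, net C balance) from incubation measurements.

    All arguments must be in the same carbon units:
    - substrate_added:        13C-labeled substrate C added to the soil
    - total_co2_treatment:    total CO2-C respired from the treated soil
    - co2_control:            CO2-C respired from the unamended control
    - substrate_mineralized:  substrate-derived CO2-C (from the 13C signal)
    """
    # Primed C = treatment CO2-C - control CO2-C - mineralized substrate C
    primed_c = total_co2_treatment - co2_control - substrate_mineralized
    # Net C balance = substrate C added - (primed C + mineralized substrate C)
    net_balance = substrate_added - (primed_c + substrate_mineralized)
    return primed_c, net_balance


# Hypothetical incubation values
primed_c, net_balance = net_carbon_balance(
    substrate_added=1000.0,
    total_co2_treatment=700.0,
    co2_control=300.0,
    substrate_mineralized=350.0,
)
print(f"Primed C: {primed_c}, net C balance: {net_balance}")
```

In this made-up example the priming effect is positive, yet the net balance is also positive, which is the situation the troubleshooting guidance on net carbon balance describes.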

Protocol 2: Correcting for Sampling Fraction Bias in Ecological Analysis

Objective: To adjust correlation coefficients when using aggregate data from two independent sample surveys.

Materials:

- Aggregate-level data (e.g., means, proportions) for variables X and Y from two separate surveys.

- Population size for each aggregate group ($N_c$).

- Sample sizes for each aggregate group from both surveys ($n_{xc}$, $n_{yc}$).

Methodology:

- Calculate Sampling Fractions: For each group $c$, calculate the sampling fraction for each dataset: $sf_x = n_{xc} / N_c$ and $sf_y = n_{yc} / N_c$.

- Compute Observed Correlation: Calculate the correlation coefficient ($r_{\text{observed}}$) between the aggregate measures of X and Y across all groups.

- Apply Bias Adjustment: Calculate the adjusted correlation coefficient ($r_{\text{adjusted}}$) that estimates the true individual-level correlation using the formula derived from formal mathematical analysis [29]: $r_{\text{adjusted}} = r_{\text{observed}} / \sqrt{sf_x \cdot sf_y}$

- Validation: For more complex sampling designs, employ a measurement error model as an alternative adjustment method to validate the results [29].
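The three computational steps of this protocol can be sketched in Python as follows. The sketch assumes that each survey uses the same sampling fraction in every group, so that a single `sf_x` and a single `sf_y` can be plugged into the adjustment formula; the protocol does not say how to combine fractions that differ between groups, and this code does not attempt to. All function names and numbers are hypothetical.

```python
from math import sqrt


def sampling_fractions(sample_sizes, population_sizes):
    """Per-group sampling fraction n_c / N_c."""
    return [n / big_n for n, big_n in zip(sample_sizes, population_sizes)]


def pearson_r(x, y):
    """Pearson correlation between two equally long sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)


def adjusted_correlation(r_observed, sf_x, sf_y):
    """Apply r_adjusted = r_observed / sqrt(sf_x * sf_y)."""
    if not (0 < sf_x <= 1 and 0 < sf_y <= 1):
        raise ValueError("sampling fractions must lie in (0, 1]")
    return r_observed / sqrt(sf_x * sf_y)


# Hypothetical example: three groups, constant sampling fraction per survey
pop = [1000, 2000, 4000]
sf_x_groups = sampling_fractions([250, 500, 1000], pop)   # 0.25 in each group
sf_y_groups = sampling_fractions([640, 1280, 2560], pop)  # 0.64 in each group
r_adj = adjusted_correlation(0.2, sf_x_groups[0], sf_y_groups[0])
print(f"Adjusted correlation: {r_adj:.2f}")
```

An adjusted value outside the range -1 to 1 is not a valid correlation and would suggest that the assumptions behind the adjustment need to be revisited, for example with the measurement error model mentioned in the validation step.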

Research Workflow and Signaling Pathways

Research Bias and Mitigation Pathway

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for Research on Publication Bias and Environmental Science

| Item | Function | Application Example |

|---|---|---|

| ¹³C or ¹⁴C Isotopic Label | Allows tracing of specific carbon pathways through ecosystems. | Critical for distinguishing primed soil carbon (old) from newly added substrate carbon (labeled) in net carbon balance studies [13]. |

| Open Science Framework (OSF) | A free, open-source platform for supporting research and enabling collaboration. | Used for pre-registering study hypotheses and methods, making all research efforts discoverable regardless of outcome [14]. |

| Measurement Error Models | Statistical models that account for errors in the measurement of independent variables. | Used to adjust for sampling fraction bias in ecological analyses when combining data from multiple surveys [29]. |

| Trim-and-Fill Statistical Method | A meta-analytic method to identify and correct for funnel plot asymmetry caused by publication bias. | Used to estimate the number and effect size of missing studies in a meta-analysis, providing a corrected overall effect estimate [13]. |

| Funnel Plot | A scatterplot of effect size against a measure of its precision (e.g., standard error). | A primary diagnostic tool for visually detecting publication bias in a body of literature; asymmetry suggests missing studies [13]. |

A Researcher's Toolkit: Practical Methods to Detect and Correct for Bias

In environmental research, robust synthetic findings are crucial for accurately diagnosing the scope and severity of degradation. However, publication bias—the preferential publication of statistically significant, "positive" results—threatens the validity of these conclusions. This technical guide details the implementation of funnel plots and Egger's regression test, key methodological tools for detecting and correcting for such bias in meta-analyses of environmental studies.

Frequently Asked Questions (FAQs)

1. What is a funnel plot and how does it detect publication bias? A funnel plot is a scatterplot designed to check for the existence of publication bias in a meta-analysis [33]. In the absence of bias, the plot resembles an inverted funnel: studies with high precision (e.g., lower standard error) cluster near the average effect size at the top, while studies with lower precision spread out evenly on both sides of the average at the bottom [33] [34]. Asymmetry in this plot, often with a missing "chunk" from the bottom-left or bottom-right quadrant, can indicate publication bias, where smaller studies showing no significant effect (or effects in an undesired direction) are missing from the literature [34].

2. What is Egger's regression test and how does it relate to the funnel plot? Egger's regression test is a statistical method that formally tests for funnel plot asymmetry [33] [35]. It uses a weighted linear regression to assess the association between a study's effect size and its precision (typically the standard error) [35]. A statistically significant result from Egger's test suggests the presence of small-study effects, which are often caused by publication bias [35] [36].

3. My funnel plot is asymmetric. Does this always mean there is publication bias? No. While asymmetry is commonly equated with publication bias, it can also arise from other factors, known collectively as "small-study effects" [34]. These include:

- Poor methodological quality in smaller studies [34].

- Data fabrication or inadequate analysis [34].

- Chance, especially if the meta-analysis includes only a small number of studies [34].

- True heterogeneity, where the intervention effect differs based on study size or population [33] [34].

Therefore, an asymmetric funnel plot should be interpreted as an indicator to investigate potential bias, not as definitive proof [33].

4. For binary outcomes (e.g., species presence/absence), are standard tests still valid? Caution is needed. For effect sizes like the odds ratio, a mathematical association with the standard error can exist even without publication bias, potentially inflating the false-positive rate of tests like Begg's or Egger's [35]. For binary outcomes, it is recommended to use tests designed specifically for them, such as Peters', Macaskill's, or Deeks' tests [35].

5. Which publication bias test is the best? No single test is universally best. A large-scale empirical comparison of seven tests found that Egger's regression test detected publication bias more frequently than others, but the agreement between different tests was often only weak to moderate [35]. The study concluded that "meta-analysts should not rely on a single test and may apply multiple tests with various assumptions" [35].

Table 1: Empirical Comparison of Common Publication Bias Tests [35]

| Test | Designed For | Core Methodology | Detection Rate in Cochrane Meta-Analyses (Binary Outcomes) |

|---|---|---|---|

| Egger's Regression Test | All outcomes | Weighted linear regression of effect size on its standard error | 15.7% |

| Macaskill's Regression Test | Binary outcomes | Weighted linear regression of effect size on total sample size | 14.1% |

| Peters' Regression Test | Binary outcomes | Weighted linear regression of effect size on inverse sample size | 11.8% |

| Deeks' Regression Test | Binary outcomes | Weighted linear regression of effect size on inverse effective sample size | 11.5% |

| Trim-and-Fill Method | All outcomes | Iteratively imputes missing studies to create symmetry | 10.1% |

| Tang's Regression Test | All outcomes | Weighted linear regression of effect size on inverse root sample size | 11.4% |

| Begg's Rank Test | All outcomes | Rank correlation between standardized effect and its variance | 8.2% |
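To make the core methodology of Egger's test in Table 1 concrete, the following Python sketch fits a weighted linear regression of effect size on standard error, with weights 1/SE². In this parametrization the slope on the standard error is the asymmetry coefficient: a value near zero is consistent with a symmetric funnel. The function name and the data are hypothetical, and the sketch stops at the coefficient and its standard error; the p-value comes from comparing slope / slope_se with a t distribution on k - 2 degrees of freedom, which is best left to a statistics package.

```python
from math import sqrt


def egger_regression(effects, std_errors):
    """Weighted least squares of effect size on standard error (weights 1/SE^2).

    Returns (intercept, slope, slope_se). The slope is the asymmetry
    coefficient; the intercept is the effect predicted for SE -> 0.
    """
    k = len(effects)
    if k < 3 or k != len(std_errors):
        raise ValueError("need at least three studies with matching standard errors")
    if len(set(std_errors)) < 2:
        raise ValueError("standard errors must not all be identical")
    w = [1.0 / se ** 2 for se in std_errors]
    sw = sum(w)
    swx = sum(wi * se for wi, se in zip(w, std_errors))
    swy = sum(wi * y for wi, y in zip(w, effects))
    swxx = sum(wi * se ** 2 for wi, se in zip(w, std_errors))
    swxy = sum(wi * se * y for wi, se, y in zip(w, std_errors, effects))
    det = sw * swxx - swx ** 2
    slope = (sw * swxy - swx * swy) / det
    intercept = (swy - slope * swx) / sw
    # Weighted residual sum of squares and the slope's standard error
    rss = sum(wi * (y - intercept - slope * se) ** 2
              for wi, se, y in zip(w, std_errors, effects))
    slope_se = sqrt(rss / (k - 2) * sw / det)
    return intercept, slope, slope_se


# Hypothetical data in which less precise studies report larger effects
effects = [0.80, 0.55, 0.42, 0.30, 0.25]
std_errors = [0.40, 0.30, 0.20, 0.10, 0.05]
intercept, slope, slope_se = egger_regression(effects, std_errors)
print(f"asymmetry coefficient = {slope:.2f} (SE {slope_se:.2f})")
```

Because the agreement between tests in Table 1 is only weak to moderate, a result from this regression is best read alongside at least one other test rather than on its own.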

Troubleshooting Guides

Issue 1: Interpreting an Asymmetric Funnel Plot

Problem: Your funnel plot shows clear asymmetry, but you are unsure of the cause and the implications for your meta-analysis on, for instance, the efficacy of different conservation interventions.

Solution:

- Do not rely on visual inspection alone. Researchers have a poor ability to visually identify publication bias from funnel plots [33] [34]. Always complement the plot with statistical tests.

- Conduct multiple statistical tests. As shown in Table 1, run a suite of tests appropriate for your data (e.g., Egger's test and the trim-and-fill method). Consistent results across tests strengthen the evidence for bias.

- Investigate sources of heterogeneity. Explore whether methodological quality, population differences, or intervention intensity are correlated with study size and effect size. This can be done via subgroup analysis or meta-regression.

- Apply bias-correction methods. Use methods like the trim-and-fill analysis, which imputes hypothetical missing studies to create a symmetric funnel and then recalculates the pooled effect [35] [36]. Report both the original and adjusted estimates.

- Acknowledge the uncertainty. In your report, explicitly state the presence of funnel plot asymmetry, the potential for publication bias, and how it may have influenced your summary effect.

Issue 2: Low Power of Statistical Tests for Publication Bias

Problem: Your meta-analysis includes a limited number of studies, and Egger's test is non-significant, yet you suspect publication bias.

Solution:

- Recognize the limitation. Tests like Egger's have low statistical power, particularly when the number of studies is small (e.g., < 20) [34] [36]. A non-significant result does not rule out publication bias.

- Supplement with non-statistical methods. Proactively search for unpublished evidence:

- Search clinical trials registries (e.g., ClinicalTrials.gov) and records of regulatory agencies.

- Examine scientific conference proceedings for presented-but-unpublished studies.

- Contact experts in the field for known ongoing or unpublished studies [35].

- Consider the impact. Estimate how many null studies would need to be in "file drawers" to render your statistically significant result non-significant. This is often called the "fail-safe N" approach.
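The fail-safe N idea can be illustrated with Rosenthal's formulation, N = (ΣZ)² / z_α² - k, where Z are the per-study standard normal deviates, k is the number of included studies, and z_α is the one-tailed critical value. The Python sketch below is a minimal illustration under that assumption; the function name and the example z-scores are hypothetical.

```python
from statistics import NormalDist


def fail_safe_n(z_scores, alpha=0.05):
    """Rosenthal's fail-safe N.

    Estimates how many unpublished studies averaging a null result (Z = 0)
    would be needed to bring the combined one-tailed p-value above alpha.
    """
    k = len(z_scores)
    if k == 0:
        raise ValueError("at least one study is required")
    z_crit = NormalDist().inv_cdf(1 - alpha)  # about 1.645 for alpha = 0.05
    n = (sum(z_scores) ** 2) / z_crit ** 2 - k
    return max(0.0, n)  # a negative value means no hidden studies are needed


# Hypothetical z-scores from five published studies
z_scores = [2.0, 2.0, 2.0, 2.0, 2.0]
n_hidden = fail_safe_n(z_scores)
print(f"Null studies needed in file drawers: {n_hidden:.1f}")
```

A small fail-safe N relative to the number of published studies suggests that a modest file drawer could overturn the conclusion, which is a reason to intensify the search for unpublished evidence described above.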

Issue 3: Implementing Analyses in Statistical Software (R)

Problem: You want to create a funnel plot and perform Egger's test using the metafor package in R but are unsure of the basic syntax and how to customize the plot.

Solution: Below is a fundamental experimental protocol for a random-effects meta-analysis and subsequent publication bias assessment.

Experimental Protocol: Publication Bias Analysis

- Software: R

- Primary Package: metafor

Table 2: Research Reagent Solutions: Key Software & Functions

| Item | Function/Description | Application in Analysis |

|---|---|---|

| R Statistical Environment | An open-source software environment for statistical computing. | The foundational platform for conducting the meta-analysis and bias diagnostics. |

| metafor Package | A comprehensive R package for conducting meta-analyses. | Provides the rma(), funnel(), and regtest() functions for model fitting, plotting, and testing. |

| rma() function | Fits meta-analytic fixed, random, and mixed-effects models. | Calculates the pooled effect estimate and its confidence interval, forming the basis for the funnel plot. |

| funnel() function | Creates a funnel plot from a meta-analysis model object. | Visualizes the distribution of study effects against their precision to allow for asymmetry checks. |

| regtest() function | Performs a regression test for funnel plot asymmetry (Egger's test). | Provides a statistical p-value to objectively assess the presence of small-study effects. |

Workflow Diagram

FAQs on Publication Bias and Correction

What is publication bias, and why is it a problem in environmental research? Publication bias occurs when studies with statistically significant results are more likely to be published than those with non-significant or null findings [37]. In environmental research, this can lead to overestimating the effectiveness of policies or the severity of a pollutant's health impact, misdirecting regulatory efforts and resources [37].

How can I visually check for publication bias in my meta-analysis? The most common visual method is the funnel plot [38] [37]. It plots each study's effect size (e.g., a risk ratio) against a measure of its precision (e.g., standard error). In the absence of bias, the plot resembles an inverted, symmetrical funnel. Asymmetry, often with a gap in one of the bottom corners of the plot, suggests potential publication bias, where small studies showing no effect are missing [38] [37].

What is the Trim-and-Fill method? Trim-and-Fill is a statistical method used to correct for funnel plot asymmetry [37]. It first "trims" the smaller studies from the asymmetric side of the funnel, estimates the true center of the studies, and then "fills" (imputes) hypothetical missing studies by mirroring the trimmed ones. This provides an adjusted, "corrected" overall effect size [38] [37].

Are there alternatives to the Trim-and-Fill method? Yes. Advanced methods such as selection models and PET-PEESE model the publication selection process or the small-study effect and return a bias-adjusted estimate, but they can be complex to implement [38] [37]. Egger's regression test complements these methods rather than replacing them: it quantifies funnel plot asymmetry but does not itself adjust the effect size [39] [37].

My meta-analysis shows signs of publication bias. What should I do? The next crucial step is to conduct sensitivity analyses [37] [40]. Run your analysis using multiple correction methods (e.g., Trim-and-Fill, PET-PEESE, selection models) and compare the adjusted effect sizes to your original finding. This tests how robust your conclusions are to different assumptions about the bias [37].

Troubleshooting Guide: Dealing with Suspected Publication Bias

Problem: Your funnel plot is asymmetrical, or you suspect that your meta-analysis on an environmental topic (e.g., the impact of a regulation) is skewed because studies with null results were never published.

Step 1: Identify and Quantify the Problem

- Action: Generate a funnel plot and perform a statistical test for asymmetry, such as Egger's regression test [39] [37].

- Protocol:

- Using your meta-analysis software (e.g., R, Stata, JASP), input the effect size and its standard error for each included study.

- Plot the funnel graph. Look for visual asymmetry.

- Run Egger's test. A statistically significant intercept (typically p < 0.05) indicates significant funnel plot asymmetry [37].

- Data Interpretation: The table below summarizes the key outputs and their meanings.

Table: Interpreting Initial Bias Detection Tests

| Method | What to Look For | Indication of Potential Bias |

|---|---|---|

| Funnel Plot | Asymmetrical shape, gap in one lower corner (the side where null or opposite-direction results would fall) | Visual suggestion of "missing" studies [37] |

| Egger's Test | Significant p-value (p < 0.05) for the intercept | Statistical evidence of small-study effects [37] |
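The regression behind Egger's test in Step 1 can be sketched in a few lines. This is a minimal illustration using only the Python standard library, not the implementation used by R, Stata, or JASP: it regresses each study's standardized effect (effect / SE) on its precision (1 / SE) and reports the intercept with its t-statistic. The function name `egger_test` and the example numbers are hypothetical. No p-value is computed here; the t-statistic would be compared against a t distribution with n - 2 degrees of freedom in a statistics package.

```python
import math


def egger_test(effects, std_errors):
    """Egger's regression: ordinary least squares of (effect / SE) on (1 / SE).

    Returns (intercept, se_intercept, t_statistic, degrees_of_freedom).
    An intercept far from zero points to funnel plot asymmetry.
    Assumes the standard errors are not all identical.
    """
    n = len(effects)
    if n < 3 or n != len(std_errors):
        raise ValueError("need matching lists with at least three studies")

    z = [y / s for y, s in zip(effects, std_errors)]  # standardized effects
    x = [1.0 / s for s in std_errors]                 # precisions

    mean_x = sum(x) / n
    mean_z = sum(z) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxz = sum((xi - mean_x) * (zi - mean_z) for xi, zi in zip(x, z))

    slope = sxz / sxx
    intercept = mean_z - slope * mean_x

    # Residual variance and the standard error of the intercept.
    resid_ss = sum((zi - intercept - slope * xi) ** 2 for xi, zi in zip(x, z))
    sigma2 = resid_ss / (n - 2)
    se_intercept = math.sqrt(sigma2 * (1.0 / n + mean_x ** 2 / sxx))

    return intercept, se_intercept, intercept / se_intercept, n - 2


# Hypothetical effect sizes and standard errors, for illustration only.
example_effects = [0.31, 0.38, 0.425, 0.75]
example_ses = [0.10, 0.20, 0.25, 0.50]
intercept, se_intercept, t_stat, df = egger_test(example_effects, example_ses)
print(f"intercept={intercept:.3f}, t={t_stat:.2f}, df={df}")
```

In this made-up data set the less precise studies report larger effects, so the intercept is positive, which is the pattern the test is designed to flag.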

Step 2: Apply Corrective Methods

- Action: Use the Trim-and-Fill method to estimate an adjusted effect size.

- Protocol for Trim-and-Fill:

- The algorithm iteratively removes (trims) the most extreme small studies from the asymmetric side.

- It calculates a pooled effect estimate from the remaining symmetrical set.

- The trimmed studies are then replaced, and their missing "mirror" counterparts are added (filled) to the data.

- A final adjusted effect size is computed using both the original and the imputed studies [37].

- Note: Be aware that the Trim-and-Fill method is not robust when there is large between-study heterogeneity and that it "corrects" the analysis by adding imputed data points [38] [37].
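The four protocol steps can be made concrete with a simplified sketch. The version below is an assumption-laden illustration, not the routine shipped in any meta-analysis package: it uses a fixed-effect pooled estimate, the L0 estimator for the number of missing studies, assumes the missing studies lie on the left (low-effect) side of the funnel, and breaks rank ties arbitrarily. The function names and the example data are hypothetical.

```python
def pooled_fixed(effects, std_errors):
    """Inverse-variance (fixed-effect) pooled estimate."""
    weights = [1.0 / s ** 2 for s in std_errors]
    return sum(w * y for w, y in zip(weights, effects)) / sum(weights)


def trim_and_fill(effects, std_errors, max_iter=50):
    """Simplified trim-and-fill; returns (k0, adjusted_estimate).

    Assumes studies are missing on the LEFT side of the funnel.
    """
    n = len(effects)
    order = sorted(range(n), key=lambda i: effects[i])  # smallest effect first

    def center_after_trimming(k):
        kept = order[: n - k]  # drop the k largest effects
        return pooled_fixed([effects[i] for i in kept],
                            [std_errors[i] for i in kept])

    k0 = 0
    for _ in range(max_iter):
        center = center_after_trimming(k0)
        deviations = [y - center for y in effects]
        # Rank absolute deviations (1 = smallest).
        by_abs = sorted(range(n), key=lambda i: abs(deviations[i]))
        rank = {i: r + 1 for r, i in enumerate(by_abs)}
        # Rank sum of studies lying to the right of the center.
        t_n = sum(rank[i] for i in range(n) if deviations[i] > 0)
        l0 = (4 * t_n - n * (n + 1)) / (2 * n - 1)
        new_k0 = max(0, min(n - 2, round(l0)))
        if new_k0 == k0:
            break
        k0 = new_k0

    # Fill: mirror the trimmed studies around the final center.
    center = center_after_trimming(k0)
    trimmed = order[n - k0:]
    filled_effects = list(effects) + [2 * center - effects[i] for i in trimmed]
    filled_ses = list(std_errors) + [std_errors[i] for i in trimmed]
    return k0, pooled_fixed(filled_effects, filled_ses)


# Hypothetical data: the imprecise studies report the largest effects.
example_effects = [0.10, 0.15, 0.20, 0.30, 0.55, 0.80]
example_ses = [0.05, 0.08, 0.10, 0.15, 0.25, 0.35]
original = pooled_fixed(example_effects, example_ses)
k0, adjusted = trim_and_fill(example_effects, example_ses)
print(f"original={original:.3f}, imputed studies={k0}, adjusted={adjusted:.3f}")
```

Because the adjusted estimate rests on imputed data points, it is best read as a sensitivity check rather than as a corrected "true" effect, in line with the note above.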

Step 3: Perform Sensitivity Analysis

- Action: Assess the robustness of your findings by comparing results from different models and correction techniques [40].

- Protocol:

- Record the original pooled effect size from your random- or fixed-effects model.

- Record the adjusted effect size from the Trim-and-Fill procedure.

- If possible, compute effect sizes using other methods like selection models or meta-regression.

- Compare the range of effect sizes. If your conclusion (e.g., "Policy A has a significant positive effect") changes after correction, this indicates that your initial result is not robust and may be heavily influenced by bias [37].

Table 2: Example Sensitivity Analysis from an Environmental Meta-Analysis

| Analytical Model | Pooled Effect Size (Correlation) | 95% Confidence Interval | Interpretation |

|---|---|---|---|

| Original Random-Effects | 0.28 | (0.14, 0.41) | Significant positive relationship |

| Trim-and-Fill Adjusted | 0.25 | (0.10, 0.39) | Significant, but slightly weaker relationship |

| Conclusion | The finding of a significant relationship appears robust to potential publication bias. | | |
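The comparison in Step 3 can be scripted so that the robustness check is applied the same way every time. The sketch below is one possible approach under stated assumptions: it pools with inverse-variance weights, estimates between-study variance with the DerSimonian-Laird method, and treats a conclusion as robust when every model's 95% confidence interval excludes zero on the same side. The function names are hypothetical; the two intervals passed to `conclusion_is_robust` are the ones from the example table above.

```python
import math


def pool(effects, std_errors, tau2=0.0):
    """Inverse-variance pooled estimate and standard error.

    tau2 = 0 gives the fixed-effect model; tau2 > 0 gives random effects.
    """
    weights = [1.0 / (s ** 2 + tau2) for s in std_errors]
    total = sum(weights)
    estimate = sum(w * y for w, y in zip(weights, effects)) / total
    return estimate, math.sqrt(1.0 / total)


def dersimonian_laird_tau2(effects, std_errors):
    """DerSimonian-Laird estimate of between-study variance (needs >= 2 studies)."""
    weights = [1.0 / s ** 2 for s in std_errors]
    total = sum(weights)
    fixed, _ = pool(effects, std_errors)
    q = sum(w * (y - fixed) ** 2 for w, y in zip(weights, effects))
    c = total - sum(w ** 2 for w in weights) / total
    return max(0.0, (q - (len(effects) - 1)) / c)


def ci95(estimate, se):
    """Normal-approximation 95% confidence interval."""
    return estimate - 1.96 * se, estimate + 1.96 * se


def conclusion_is_robust(intervals):
    """True if every interval excludes zero on the same side."""
    lowers = [low for low, _ in intervals.values()]
    uppers = [high for _, high in intervals.values()]
    return all(low > 0 for low in lowers) or all(high < 0 for high in uppers)


# Confidence intervals taken from the example sensitivity table.
table_intervals = {
    "Original random-effects": (0.14, 0.41),
    "Trim-and-fill adjusted": (0.10, 0.39),
}
print(conclusion_is_robust(table_intervals))
```

Requiring agreement in sign and significance is a deliberately simple rule; a fuller analysis would also compare the magnitude of the adjusted estimates.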

Experimental Protocols for a Robust Meta-Analysis

Protocol 1: Comprehensive Literature Search to Minimize Bias

- Objective: To identify all relevant studies, including unpublished or "gray" literature, to reduce the risk of publication bias from the outset [40].

- Methodology:

- Search Multiple Databases: Systematically search major bibliographic databases (e.g., PubMed, Embase, Web of Science, Scopus) and specialized environmental science databases [40].

- Gray Literature: Search for trial registrations, dissertations, and government reports.

- No Language Restrictions: Avoid excluding studies based on language to prevent language bias [40].

- Register Your Protocol: Preregister your systematic review protocol on PROSPERO to enhance transparency [40].

Protocol 2: Statistical Analysis and Bias Assessment Workflow

The following diagram visualizes the key stages of the statistical workflow for assessing and correcting publication bias.

The Scientist's Toolkit: Essential Software for Meta-Analysis

The following table details key software tools that can be used to perform the analyses described in this guide.

Table: Key Software Tools for Corrective Meta-Analyses

| Tool Name | Primary Function | Key Feature for Bias Correction | Cost & Accessibility |

|---|---|---|---|

| R (with packages like metafor) | Statistical computing and graphics. | Highly flexible; allows implementation of funnel plots, Egger's test, Trim-and-Fill, and advanced selection models [41] [40]. | Free and open-source [41]. |

| Stata | General statistical software. | Has user-written commands (e.g., metan) for comprehensive meta-analysis and bias diagnostics [40]. | Commercial, high cost [41]. |

| JASP | User-friendly statistical software with GUI. | Provides point-and-click access to funnel plots and the Trim-and-Fill method, as used in published research [42]. | Free and open-source [41]. |

| OpenMetaAnalyst | Stand-alone meta-analysis software. | Designed specifically for meta-analysis, includes tools for assessing publication bias [40]. | Free and open-source. |

Within the critical field of environmental degradation research, the soil priming effect (PE)—the phenomenon where fresh carbon inputs to soil alter the decomposition rate of existing soil organic matter (SOM)—is a pivotal but challenging concept. Accurate quantification of PE is essential for predicting soil carbon stocks and climate feedbacks. However, this research area is not immune to the broader crisis of reproducibility in science, often fueled by publication bias—the preferential publication of statistically significant, positive, or dramatic results.

This publication bias can create a distorted literature where inflated priming effect estimates are over-represented, while null or negative results remain in the file drawer. This technical support center provides troubleshooting guides and FAQs to help researchers identify and correct sources of error and bias in their PE experiments, thereby enhancing the reliability and reproducibility of soil carbon science.

Troubleshooting Guides & FAQs

FAQ 1: Why might my soil carbon measurements be unreliable, and how does this affect priming effect estimates?

Answer: Inconsistent soil sample processing is a major, often overlooked, source of large measurement errors that can directly lead to inflated or unreliable priming effect estimates. A 2025 study comparing eight laboratories found that processing protocols introduced significant variability. If your baseline soil organic carbon (SOC) measurements are inaccurate, any calculated priming effect based on changes in SOC will be inherently flawed [43].

Troubleshooting Guide: Common Soil Processing Errors and Solutions

| Error Source | Impact on Measurement | Corrective Action |

|---|---|---|

| Using a mechanical grinder for sieving | Fails to effectively remove coarse roots/rocks; results in higher variability and significantly different C measurements [43]. | Sieve to < 2 mm using a mortar and pestle or rolling pin to gently break aggregates and remove coarse materials [43]. |

| Inadequate fine grinding (> 250 µm) | Leads to a higher coefficient of variation due to poor sample homogenization [43]. | Fine-grind soils to < 125 µm or < 250 µm prior to elemental analysis to improve homogeneity and precision [43]. |

| Omission of oven-drying (or moisture correction) | On average, results in a 3.5% lower TC and 5% lower SOC measurement due to residual moisture inflating soil mass [43]. | Oven-dry soils at 105°C prior to elemental analysis to adequately remove moisture [43]. |
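Where oven-drying the analyzed sample is not possible, the moisture correction mentioned in the last row can be applied arithmetically. The sketch below assumes a separate subsample is weighed before and after oven-drying, and rescales the carbon concentration from an as-analyzed mass basis to an oven-dry mass basis. The function name and the example numbers are hypothetical.

```python
def to_oven_dry_basis(c_percent_as_analyzed, moist_mass_g, oven_dry_mass_g):
    """Convert a carbon concentration (% of as-analyzed mass) to % of oven-dry mass.

    The carbon mass is unchanged by drying, so the concentration scales
    with the ratio of moist mass to oven-dry mass.
    """
    if not 0 < oven_dry_mass_g <= moist_mass_g:
        raise ValueError("oven-dry mass must be positive and not exceed moist mass")
    return c_percent_as_analyzed * moist_mass_g / oven_dry_mass_g


# Hypothetical example: a 10.00 g air-dry subsample weighs 9.65 g after oven-drying,
# and the elemental analyzer reported 2.00 % C on the air-dry sample.
corrected = to_oven_dry_basis(2.00, 10.00, 9.65)
print(f"{corrected:.3f} % C on an oven-dry basis")
```

The corrected value is always at least as large as the as-analyzed value, which matches the direction of the bias described in the table: residual moisture inflates the soil mass and lowers the apparent carbon concentration.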

FAQ 2: What experimental design biases most commonly lead to overestimated effects?

Answer: The two most prevalent experimental design flaws that introduce bias are a lack of blinding and inadequate randomization. These are forms of confirmation bias (or observer bias), where researchers' unconscious expectations influence the collection or interpretation of data [44].

Troubleshooting Guide: Mitigating Cognitive Biases in Experimental Design

| Bias Type | Risk | Control Measure |

|---|---|---|

| Lack of Blinding | Overestimation of the effects under study when the researcher is aware of the hypothesis or treatment condition of a sample [44]. | Implement blinding procedures wherever possible. For lab incubations, this could involve having a technician who is unaware of the experimental hypotheses process samples or analyze data [44]. |

| Inadequate Randomization | Overestimation of effects due to the non-random, subjective selection of experimental units (e.g., soil samples, pots, field plots) [44]. | Perform a true random choice of experimental units using a random number generator, rather than a haphazard (convenience) selection [44]. |

| Selective Reporting | Publication bias, where only statistically significant priming effects are published, skewing the scientific record [44]. | Report all results, not only statistically significant ones, and pre-register experimental designs to commit to a plan of analysis [44]. |
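The randomization and blinding measures in the table can be implemented with a seeded random number generator, so the allocation is both unbiased and reproducible. This is a minimal sketch with hypothetical function names and unit labels: it allocates experimental units to treatments in equal-sized groups and issues opaque sample codes that a technician can work from without knowing the treatment.

```python
import random


def assign_treatments(unit_ids, treatments, seed):
    """Randomly allocate units to treatments in equal-sized groups."""
    if len(unit_ids) % len(treatments) != 0:
        raise ValueError("number of units must be a multiple of the number of treatments")
    rng = random.Random(seed)  # record the seed so the allocation can be reproduced
    shuffled = list(unit_ids)
    rng.shuffle(shuffled)
    per_group = len(unit_ids) // len(treatments)
    allocation = {}
    for g, treatment in enumerate(treatments):
        for unit in shuffled[g * per_group:(g + 1) * per_group]:
            allocation[unit] = treatment
    return allocation


def blind_codes(unit_ids, seed):
    """Give each unit a unique opaque code that reveals nothing about its treatment."""
    rng = random.Random(seed)
    numbers = rng.sample(range(1000, 10000), len(unit_ids))
    return {unit: f"S{number}" for unit, number in zip(unit_ids, numbers)}


# Hypothetical incubation with 12 soil jars and three treatments.
jars = [f"jar_{i:02d}" for i in range(1, 13)]
allocation = assign_treatments(jars, ["control", "glucose", "glucose_plus_N"], seed=2024)
codes = blind_codes(jars, seed=99)
for jar in jars[:3]:
    print(codes[jar], "->", allocation[jar])
```

Keeping the key that links codes to treatments with someone other than the person processing the samples is what makes the blinding effective; the code only generates the labels.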

FAQ 3: My priming effects are highly variable. What are the key drivers I should be measuring and controlling for?

Answer: Priming effects are inherently variable, but this variability is not random. The stability of the native soil organic matter (SOM) is a dominant driver, often more important than soil, plant, or even microbial properties. A large-scale geographic study found that SOM stability explained 38.6% of the variance in priming intensity, far more than other factors [45].

Troubleshooting Guide: Key Drivers of Priming Effects

| Factor Category | Specific Variable | Relationship with Priming Effect | How to Measure/Control |

|---|---|---|---|

| SOM Stability | Chemical Recalcitrance | Positive correlation with recalcitrant pools (e.g., polymers of lipid and lignin). Negative correlation with labile pools (e.g., non-cellulosic polysaccharides) [45]. | Acid hydrolysis; biomarker analysis; two-pool C decomposition model [45]. |

| SOM Stability | Physico-chemical Protection | Negative correlation with mineral-organic associations (Fe/Al oxides, exchangeable Ca) and C in microaggregates/silt+clay [45]. | Aggregate fractionation; sequential extraction for minerals; analysis of Fe, Al, Ca oxides [45]. |

| Stoichiometry | Substrate N/C Ratio | Priming magnitude declines as N availability increases. Low N/C ratio substrates induce significant positive priming [46] [47]. | Use substrates with defined C/N ratios; consider adding N with C to test stoichiometric constraints [47]. |

| Microbial Community | r vs. K-strategists | Shifts in microbial community composition (e.g., increased Proteobacteria) can regulate PE [48]. | DNA-SIP; high-throughput qPCR; microbial biomass assays [48]. |

The following diagram summarizes the relationship between methodological errors and inflated priming effect estimates, and the pathway to corrective actions:

The Scientist's Toolkit: Key Research Reagent Solutions

The following table details essential materials and methods used in modern, rigorous priming effect research.

Table: Essential Reagents and Methods for Priming Effect Studies

| Reagent / Method | Function in Priming Research | Technical Notes |

|---|---|---|

| 13C-Labeled Glucose | A standard labile C source used to induce priming [48] [45]. | The 13C label allows researchers to distinguish CO₂ derived from the added substrate vs. native SOM, enabling precise PE calculation [48] [45]. |

| Microdialysis Probes | A novel method to continuously release substrates into the soil, providing a more realistic simulation of root exudation compared to single-pulse additions [46]. | This method can yield higher substrate respiration and a different carbon use efficiency (CUE) [46]. |
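The way a 13C label separates substrate-derived from SOM-derived CO₂ can be shown with a two-source isotope mixing model. The sketch below is an illustration under stated assumptions, not a protocol from the cited studies: it uses atom% 13C values, takes CO₂ from the unamended control soil as the SOM end-member, and defines the priming effect as SOM-derived CO₂ in the amended soil minus CO₂ from the control. The function names and all numbers are hypothetical.

```python
def substrate_fraction(atom_pct_total, atom_pct_soil, atom_pct_substrate):
    """Fraction of respired CO2 derived from the labeled substrate.

    Two-source mixing model: total = f * substrate + (1 - f) * soil.
    """
    span = atom_pct_substrate - atom_pct_soil
    if span == 0:
        raise ValueError("substrate and soil must differ in 13C abundance")
    fraction = (atom_pct_total - atom_pct_soil) / span
    if not 0.0 <= fraction <= 1.0:
        raise ValueError("mixing fraction outside [0, 1]; check the end-members")
    return fraction


def priming_effect(co2_amended, co2_control, fraction_from_substrate):
    """Priming effect = SOM-derived CO2 (amended soil) minus CO2 (control soil)."""
    som_derived = co2_amended * (1.0 - fraction_from_substrate)
    return som_derived - co2_control


# Hypothetical incubation values (CO2 in ug C per g soil, 13C in atom%).
f_substrate = substrate_fraction(atom_pct_total=2.06,
                                 atom_pct_soil=1.08,
                                 atom_pct_substrate=5.00)
pe = priming_effect(co2_amended=50.0, co2_control=30.0,
                    fraction_from_substrate=f_substrate)
print(f"substrate fraction={f_substrate:.2f}, priming effect={pe:.1f} ug C per g soil")
```

A positive result indicates accelerated SOM decomposition and a negative result indicates retarded decomposition. Reporting both signs, including values near zero, is part of avoiding the selective reporting discussed earlier.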