Optimizing Performance Characteristics of Learning Systems for Drug Development: A 2025 Guide for Researchers

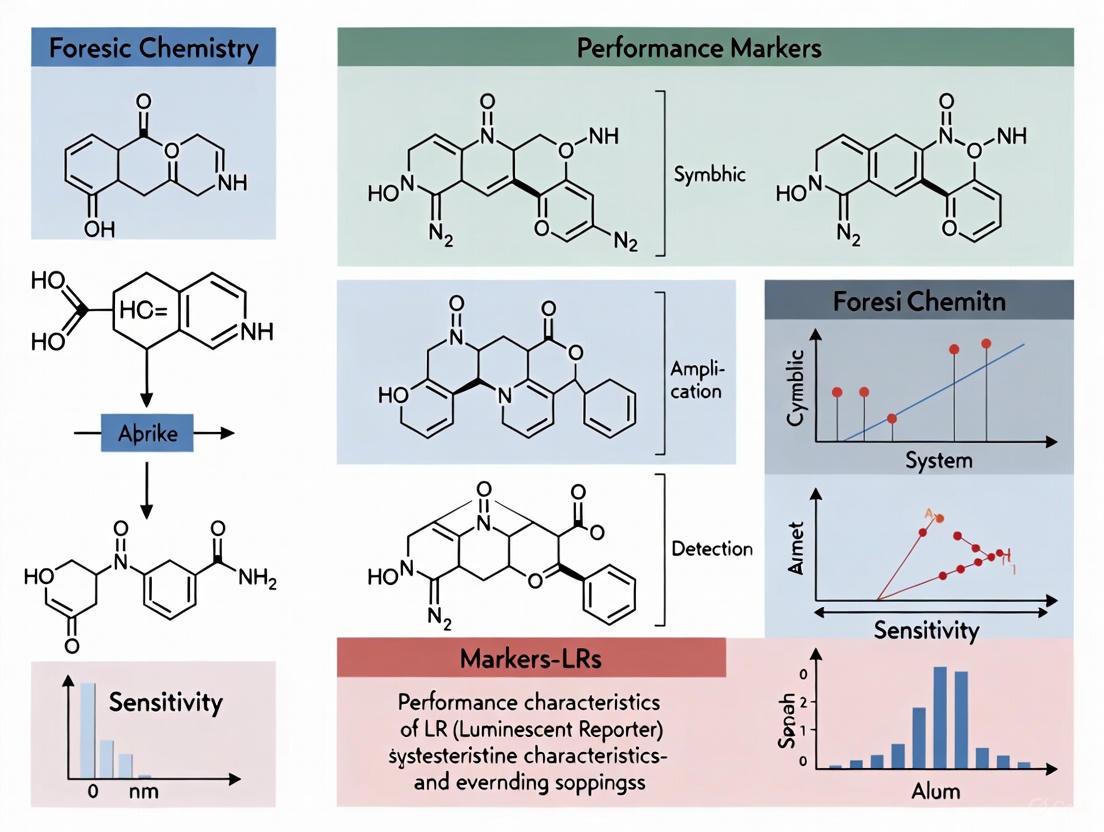

Abstract

This article provides a comprehensive framework for researchers, scientists, and drug development professionals to understand, apply, and optimize machine learning (LR) systems. It covers foundational optimization algorithms, methodological applications in clinical and biomedical contexts, practical troubleshooting for performance bottlenecks, and robust validation techniques for reliable model comparison. The guide synthesizes current trends to enhance R&D efficiency, improve predictive accuracy, and accelerate the translation of data into therapeutic insights.

Core Principles and Modern Algorithms Powering Learning Systems in Research

Optimization algorithms form the computational backbone of modern scientific research, from training machine learning models to automating drug discovery pipelines. These methods can be broadly categorized into two distinct paradigms: gradient-based methods, which leverage derivative information to efficiently navigate the loss landscape, and population-based methods, which maintain and evolve multiple candidate solutions simultaneously. Within the context of performance characteristics in laboratory research systems, understanding the trade-offs between these approaches is critical for selecting the appropriate tool for a given scientific problem. This guide provides an objective comparison of these families of algorithms, detailing their operational principles, experimental performance, and optimal application domains to inform researchers, scientists, and drug development professionals.

Theoretical Foundations and Algorithmic Taxonomy

The fundamental divergence between gradient-based and population-based optimization methods stems from their underlying search mechanisms and information requirements.

Gradient-Based Methods are first-order iterative algorithms that utilize the gradient (first derivative) of an objective function to determine the direction of steepest descent for parameter updates [1]. The core update rule for standard Gradient Descent is $x_{t+1} = x_t - \gamma_t \nabla f(x_t)$, where $\gamma_t$ is the learning rate and $\nabla f(x_t)$ is the gradient of the objective function at the current point $x_t$ [1]. These methods assume the optimization landscape is a smooth manifold where gradient information provides a reliable direction toward local minima [2]. Common variants include Stochastic Gradient Descent (SGD), which uses a single data point to compute the gradient, and Mini-Batch Gradient Descent, which strikes a balance between variance and computational efficiency [1]. Modern enhancements like Momentum incorporate information from previous updates to accelerate convergence and navigate regions of high curvature more effectively [1].
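
The update rule above can be sketched in a few lines of Python. This is a minimal illustration rather than a reference implementation: it assumes a constant learning rate `lr` in place of $\gamma_t$, uses a heavy-ball form of momentum, and the quadratic objective and the names `gradient_descent` and `quadratic_grad` are hypothetical.

```python
def gradient_descent(grad, x0, lr=0.1, momentum=0.0, steps=100):
    """Iterate x_{t+1} = x_t - lr * grad(x_t), optionally with heavy-ball momentum."""
    x = list(x0)
    velocity = [0.0] * len(x)
    for _ in range(steps):
        g = grad(x)
        # The velocity is a decaying sum of past gradients; momentum=0 gives plain descent.
        velocity = [momentum * v + gi for v, gi in zip(velocity, g)]
        x = [xi - lr * v for xi, v in zip(x, velocity)]
    return x


def quadratic_grad(x):
    """Gradient of the assumed toy objective f(x) = sum(x_i^2)."""
    return [2.0 * xi for xi in x]


x_plain = gradient_descent(quadratic_grad, [3.0, -2.0])
x_mom = gradient_descent(quadratic_grad, [3.0, -2.0], momentum=0.5)
print(x_plain, x_mom)  # both end very close to the minimizer at the origin
```

On this smooth convex toy problem both variants converge; the differences between them matter more on ill-conditioned or noisy objectives.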

Population-Based Methods, predominantly Evolutionary Algorithms (EAs), operate on fundamentally different principles inspired by natural selection [1] [3]. These algorithms maintain a population of candidate solutions that undergo iterative evolution through selection, crossover (recombination), and mutation operations [1] [3]. Unlike gradient-based methods, EAs do not require gradient information and can optimize directly on black-box functions or over complex, discrete structures where derivatives are unavailable or undefined [4] [2]. Key components include a fitness function that evaluates solution quality, selection mechanisms that prioritize fitter individuals for reproduction, and genetic operators that introduce diversity to explore the search space [1]. Genetic Algorithms (GAs) and Differential Evolution (DE) are prominent examples, with the latter creating new candidate solutions through vector addition and mixing operations [1].
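
To make the "vector addition and mixing" description concrete, here is a hedged sketch of one common Differential Evolution variant (often called DE/rand/1/bin). The control parameters `F` and `CR`, the population size, and the sphere test function are illustrative assumptions, not values taken from the cited sources.

```python
import random


def differential_evolution(f, bounds, pop_size=20, F=0.8, CR=0.9,
                           generations=200, seed=0):
    """Minimize f over box bounds using only function values (no gradients)."""
    rng = random.Random(seed)
    dim = len(bounds)
    pop = [[rng.uniform(lo, hi) for lo, hi in bounds] for _ in range(pop_size)]
    fitness = [f(ind) for ind in pop]
    for _ in range(generations):
        for i in range(pop_size):
            # Choose three distinct individuals other than the current one.
            a, b, c = rng.sample([j for j in range(pop_size) if j != i], 3)
            j_rand = rng.randrange(dim)  # guarantees at least one mutated coordinate
            trial = []
            for j in range(dim):
                if rng.random() < CR or j == j_rand:
                    # Mutation by vector addition: a + F * (b - c), clipped to bounds.
                    value = pop[a][j] + F * (pop[b][j] - pop[c][j])
                    lo, hi = bounds[j]
                    value = min(max(value, lo), hi)
                else:
                    # Mixing (crossover): keep the parent's coordinate.
                    value = pop[i][j]
                trial.append(value)
            f_trial = f(trial)
            # Selection: the trial replaces the parent only if it is no worse.
            if f_trial <= fitness[i]:
                pop[i], fitness[i] = trial, f_trial
    best = min(range(pop_size), key=fitness.__getitem__)
    return pop[best], fitness[best]


def sphere(x):
    return sum(xi * xi for xi in x)


best_x, best_f = differential_evolution(sphere, [(-5.0, 5.0)] * 3)
print(best_f)
```

Note that the fitness function is treated as a black box: nothing in the loop requires `f` to be differentiable or even continuous.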

Table 1: Fundamental Characteristics of Optimization Paradigms

| Characteristic | Gradient-Based Methods | Population-Based Methods |
|---|---|---|
| Core Principle | Follows gradient direction | Simulates natural evolution |
| Information Used | First/second derivatives | Objective function values only |
| Solution Representation | Single point in parameter space | Population of candidate solutions |
| Search Mechanism | Local, deterministic direction | Global, stochastic exploration |
| Theoretical Guarantees | Strong local convergence | Often heuristic with few guarantees |
| Handling Non-Smooth Spaces | Poor performance | Effective on complex/discrete spaces |

Experimental Performance and Benchmark Analysis

Empirical evaluations across various problem domains reveal distinct performance profiles for gradient-based and population-based optimization methods, with hybrid approaches increasingly demonstrating complementary advantages.

Convergence Behavior and Sample Efficiency

Gradient-based methods typically exhibit superior sample efficiency on smooth, continuous optimization problems where accurate gradients are computable. The Population-based Variance-Reduced Evolution (PVRE) algorithm, which combines evolutionary strategies with variance reduction techniques, achieves a function evaluation complexity of $\mathscr{O}(n\epsilon^{-3})$ for finding an $\epsilon$-accurate first-order optimal solution [4]. This matches the best-known complexity bounds for zeroth-order stochastic optimization, indicating that carefully designed population methods can approach the theoretical efficiency of gradient-based approaches [4].

In reinforcement learning domains, the hybrid Evolutionary Policy Optimization (EPO) algorithm demonstrates how combining evolutionary exploration with policy gradients can overcome limitations of purely gradient-based approaches. EPO maintains a population of agents conditioned on latent variables while sharing actor-critic network parameters, enabling it to "aggregate diverse experiences into a master agent" [5]. This architecture outperforms state-of-the-art baselines in sample efficiency, asymptotic performance, and scalability across dexterous manipulation, legged locomotion, and classic control tasks [5].

Scalability and Parallelization

Population-based methods exhibit superior scaling properties with increasing computational resources, as noted in the analysis of Evolutionary Policy Optimization: "Evolutionary Algorithms (EAs) scale naturally and encourage exploration via randomized population-based search" [5]. This scalability stems from the inherent parallelism of population-based approaches, where each candidate solution can be evaluated independently across distributed computing resources [2].

Conversely, purely on-policy gradient methods struggle with scalability: "policy-gradient algorithms do not scale well with larger batch sizes: because data are collected from the current policy, adding more parallel environments does not guarantee greater diversity" [5]. The data distribution quickly converges when sampling from a single policy, causing diminishing returns with additional parallel environments.

Table 2: Experimental Performance Comparison Across Domains

| Problem Domain | Gradient-Based Performance | Population-Based Performance | Key Findings |
|---|---|---|---|
| Continuous Control RL | High asymptotic performance but limited diversity | Superior scalability and exploration | EPO hybrid outperforms both in sample efficiency and final performance [5] |
| Black-Box Stochastic Optimization | Limited without gradients | Effective with variance reduction | PVRE achieves $\mathscr{O}(n\epsilon^{-3})$ complexity [4] |
| Biomedical Pipeline Optimization | Requires differentiable pipeline | Effective for non-differentiable spaces | TPOT uses GP to optimize complete ML pipelines [3] |
| High-Dimensional Multimodal Problems | Prone to local minima | Better global exploration capability | GAs outperform Bayesian optimization in some media mix modeling [2] |
| Multiobjective Optimization | Single solution per run | Natural Pareto front approximation | NSGA-II in TPOT finds multiple trade-off solutions [3] |

Methodological Protocols for Experimental Optimization

To ensure reproducible comparisons between optimization approaches, researchers should adhere to standardized experimental protocols encompassing problem formulation, algorithm configuration, and evaluation metrics.

Zeroth-Order Stochastic Optimization Protocol

The Population-based Variance-Reduced Evolution (PVRE) method provides a rigorous protocol for black-box stochastic optimization problems of the form $\min_{x \in \mathbb{R}^n} f(x) = \mathbb{E}_{\xi \sim \mathscr{D}}[F(x;\xi)]$, where only function values $F(x;\xi)$ are accessible rather than gradients [4].

Experimental Workflow:

- Gaussian Smoothing: Create a smooth approximation of the objective function: $f_\eta(x) = \mathbb{E}_{v \sim \mathscr{N}(0,I)}[f(x + \eta v)]$, where $\eta$ is the smoothing radius [4]

- Gradient Estimation: Compute the gradient estimate using finite differences: $g = \frac{f(x + \eta v) - f(x - \eta v)}{2\eta} v$, where $v \sim \mathscr{N}(0,I)$ [4]

- Normalized Momentum: Apply a STORM-like momentum mechanism: $d_t = (1-a_{t-1}) d_{t-1} + a_{t-1} g_t + (1-a_{t-1})(g_t - g_{t-1})$ to reduce variance [4]

- Population-Based Refinement: Evolve multiple solutions simultaneously to further reduce noise in the solution space [4]
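
The first three workflow steps can be sketched as follows. This is not the PVRE algorithm from [4]: the population-based refinement is omitted, the momentum weight `a` is held constant, the step size decays as `lr / sqrt(t + 1)`, and the objective is a noiseless toy function. Reusing the same random direction `v` at the previous iterate to form $g_{t-1}$ is also an assumption made for this illustration, and the names `zo_gradient` and `zo_storm` are hypothetical.

```python
import math
import random


def zo_gradient(F, x, v, eta):
    """Two-point estimate g = (F(x + eta*v) - F(x - eta*v)) / (2*eta) * v."""
    plus = F([xi + eta * vi for xi, vi in zip(x, v)])
    minus = F([xi - eta * vi for xi, vi in zip(x, v)])
    scale = (plus - minus) / (2.0 * eta)
    return [scale * vi for vi in v]


def zo_storm(F, x0, steps=2000, lr=0.2, a=0.1, eta=1e-3, seed=0):
    """Zeroth-order descent with a STORM-like momentum and normalized steps."""
    rng = random.Random(seed)
    x = list(x0)
    x_prev = list(x0)
    d = None
    for t in range(steps):
        v = [rng.gauss(0.0, 1.0) for _ in x]
        g_t = zo_gradient(F, x, v, eta)
        if d is None:
            d = g_t
        else:
            # Assumption: g_{t-1} is re-estimated at the previous iterate with the same v.
            g_prev = zo_gradient(F, x_prev, v, eta)
            d = [(1 - a) * dp + a * gt + (1 - a) * (gt - gp)
                 for dp, gt, gp in zip(d, g_t, g_prev)]
        norm = math.sqrt(sum(di * di for di in d)) or 1.0
        step = lr / math.sqrt(t + 1)  # assumed decaying step size
        x_prev = x
        x = [xi - step * di / norm for xi, di in zip(x, d)]
    return x


def sphere(x):
    return sum(xi * xi for xi in x)


x_final = zo_storm(sphere, [2.0, -1.0])
print(sphere([2.0, -1.0]), sphere(x_final))  # objective before and after
```

Only function values of `F` are queried, which is what makes the scheme applicable to black-box objectives.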

Evaluation Metrics: Function evaluation complexity, convergence rate to an $\epsilon$-accurate solution, and wall-clock time for practical convergence [4].

Evolutionary Reinforcement Learning Protocol

The Evolutionary Policy Optimization (EPO) framework combines evolutionary diversity with policy gradient updates, providing a protocol for reinforcement learning tasks [5].

Experimental Workflow:

- Population Initialization: Create a population of agents with shared network weights but unique latent variables ("genes") for diversity [5]

- Parallel Experience Collection: All agents interact with their environments simultaneously to gather diverse trajectories [5]

- Fitness Evaluation: Assess each agent's performance using the cumulative reward objective [5]

- Darwinian Selection: Remove low-performing agents and retain elites for reproduction [5]

- Genetic Operations: Apply crossover and mutation to elite agents to create offspring while maintaining behavioral diversity [5]

- Policy Gradient Updates: Update all agents using proximal policy optimization (PPO) with shared reward signals [5]

- Experience Aggregation: Use Split-and-Aggregate Policy Gradient (SAPG) to fold follower data into the master agent's updates via importance sampling [5]

Evaluation Metrics: Sample efficiency (performance vs. environment interactions), asymptotic performance (final reward), scalability (performance with increasing parallel workers), and behavioral diversity [5].

Research Reagent Solutions: Optimization Toolkit

Implementing rigorous optimization experiments requires both software tools and methodological components. The following table catalogs essential "research reagents" for computational optimization research.

Table 3: Essential Research Reagents for Optimization Experiments

| Research Reagent | Function | Example Implementations |
|---|---|---|
| Gradient Estimators | Approximate derivatives when unavailable | Gaussian smoothing with finite differences [4] |
| Variance Reduction Modules | Reduce stochastic noise in updates | STORM momentum with recursive error correction [4] |
| Population Managers | Maintain and evolve candidate solutions | Genetic Algorithm with selection, crossover, mutation [1] |
| Fitness Evaluators | Assess solution quality | Objective function with multi-criteria support [3] |
| Hyperparameter Optimizers | Tune algorithm parameters | Bayesian Optimization with Tree Parzen Estimator [6] |
| Pareto Front Calculators | Identify non-dominated solutions in multiobjective optimization | Non-dominated Sorting Genetic Algorithm II (NSGA-II) [3] |
| Convergence Diagnostics | Detect algorithm termination points | Gradient norm thresholds or performance plateau detection [4] |
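
As an illustration of the "Pareto Front Calculators" row, the sketch below filters a set of candidates down to its non-dominated members. It covers only the dominance test, not the full NSGA-II procedure, and the candidate values (prediction error, pipeline complexity) are hypothetical.

```python
def dominates(a, b):
    """True if a is no worse than b on every objective and strictly better on one.

    All objectives are assumed to be minimized.
    """
    return (all(x <= y for x, y in zip(a, b))
            and any(x < y for x, y in zip(a, b)))


def pareto_front(points):
    """Return the points that no other point dominates."""
    return [p for p in points if not any(dominates(q, p) for q in points)]


# Hypothetical (prediction error, pipeline complexity) pairs; both are minimized.
candidates = [(0.10, 9), (0.12, 5), (0.20, 2), (0.15, 6), (0.25, 3)]
front = pareto_front(candidates)
print(front)  # [(0.1, 9), (0.12, 5), (0.2, 2)]
```

Here `(0.15, 6)` is dropped because `(0.12, 5)` is better on both objectives, and `(0.25, 3)` is dropped because `(0.20, 2)` is.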

The taxonomy of modern optimization methods reveals a sophisticated landscape where gradient-based and population-based approaches offer complementary strengths rather than competing solutions. Gradient-based methods provide theoretical soundness and sample efficiency for smooth, continuous problems where derivative information is available, while population-based approaches excel in scalability, global exploration, and handling of non-differentiable or discrete spaces. The emerging class of hybrid algorithms, such as PVRE and EPO, demonstrates that combining theoretical guarantees with evolutionary diversity can achieve superior performance across challenging domains including reinforcement learning, biomedical pipeline optimization, and complex control tasks. For researchers and drug development professionals, selection criteria should include problem differentiability, available parallel resources, solution quality requirements, and the need for multiobjective optimization. As optimization demands grow in complexity and scale, the continued synthesis of these paradigms will likely yield increasingly powerful tools for scientific discovery.

Adaptive optimization algorithms are a key pillar behind the rise of machine learning, enabling efficient training of complex models across diverse domains from drug discovery to AI development [7]. These algorithms automatically adjust model parameters to minimize a loss function, and different families of optimizers, from gradient-based methods like AdamW and AdamP to evolutionary strategies like CMA-ES, excel in distinct problem domains [8]. Understanding their performance characteristics is crucial for researchers and scientists seeking to optimize computational experiments in fields like drug development, where efficient resource allocation can significantly accelerate research timelines.

This guide provides an objective comparison of adaptive algorithm performance, presenting structured experimental data and detailed methodologies to inform selection decisions for specific research applications within the broader context of performance characteristics in large-scale systems research.

Performance Comparison of Adaptive Algorithms

The table below summarizes the key performance characteristics, strengths, and limitations of major adaptive algorithm families:

| Algorithm | Type | Key Mechanism | Best Performing Domains | Key Limitations |
|---|---|---|---|---|
| AdamW [8] | Gradient-based | Adaptive learning rates with decoupled weight decay | Computer Vision (CNNs), NLP tasks | Can converge to suboptimal solutions on some convex problems [7] |
| AdamP [8] | Gradient-based | Adaptive learning rates with parameter-wise scaling | Computer Vision, handling scale-invariant weights | Limited explicit convergence guarantees |
| CMA-ES [9] | Evolutionary Strategy | Covariance matrix adaptation of search distribution | Non-linear, non-convex black-box optimization; rugged search landscapes [9] | Slower on purely convex-quadratic functions vs. gradient-based methods [9] |
| AMSGrad [7] | Gradient-based | Adaptive learning rates with guaranteed convergence | Non-convex stochastic optimization [7] | Requires increasing mini-batch sizes for optimal convergence [7] |
| TAO [10] | Test-time Adaptive | Reinforcement learning with test-time compute | LLM tuning on enterprise tasks without labeled data [10] | Requires thousands of example inputs and accurate scoring method [10] |
| DE-SG [11] | Evolutionary Strategy | Differential Evolution with separated groups & migration | Multi-dimensional optimization, rotated problems [11] | Performance significantly depends on the problem [11] |

Experimental Analysis and Benchmarking

Comparative Performance on Standard Benchmarks

Experimental results on rotated benchmark problems reveal significant performance variations between algorithm classes. In comprehensive testing, CMA-ES and AMALGAM were identified as top performers due to their nearly 100% success rate and rapid convergence characteristics [11]. The Differential Evolution with Separated Groups (DE-SG) algorithm also demonstrated competitive performance, particularly on problems with rotation transformations that challenge many evolutionary approaches [11].

For large language model tuning, TAO has demonstrated an ability to outperform traditional fine-tuning approaches that require thousands of labeled examples. In enterprise tasks including document question answering and SQL generation, TAO brought efficient open-source models like Llama 8B and 70B to similar quality levels as expensive proprietary models like GPT-4o without requiring labeled data [10].

Neural Network Training Performance

In neural network training for non-convex problems, adaptive algorithms with momentum terms have shown significant improvements. Novel adaptive algorithms with additional momentum steps and shifted updates have demonstrated strong theoretical convergence properties and empirical performance in stochastic non-convex optimization settings [7]. These approaches maintain connections to both accelerated gradient methods and AMSGrad-type momentum techniques, providing robust performance across various network architectures.

Experimental Protocols and Methodologies

Benchmarking Protocol for Continuous Optimization

The experimental methodology for evaluating evolutionary strategies like CMA-ES and DE-SG typically involves:

- Test Functions: Utilizing standardized benchmark suites including 19 rotated 10-to-50-dimensional test problems that challenge algorithm robustness [11]. Functions include sphere, Rastrigin, and other multimodal landscapes that test exploratory capabilities [12].

- Performance Metrics: Measuring success rates, convergence speed (number of function evaluations to reach target), and solution accuracy across multiple independent runs [11].

- Parameter Settings: Applying default or recommended parameter values across all compared algorithms to ensure fair comparison. For CMA-ES, this includes using the default population size unless employing restart strategies with increasing populations [9].
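
A small harness along these lines shows how success rate and evaluations-to-target can be measured over independent runs. It is a sketch under stated assumptions: the functions are unrotated 5-dimensional versions, the target, budget, and run count are arbitrary, and the optimizer is a simple (1+1) evolution strategy with a 1/5-style step-size rule standing in for CMA-ES or DE-SG, which are not implemented here.

```python
import math
import random


def sphere(x):
    return sum(xi * xi for xi in x)


def rastrigin(x):
    return 10 * len(x) + sum(xi * xi - 10 * math.cos(2 * math.pi * xi) for xi in x)


def one_plus_one_es(f, dim, target, max_evals, rng, sigma=0.5):
    """Stand-in optimizer: returns (reached_target, evaluations_used)."""
    x = [rng.uniform(-5.0, 5.0) for _ in range(dim)]
    fx, evals = f(x), 1
    while evals < max_evals and fx > target:
        y = [xi + sigma * rng.gauss(0.0, 1.0) for xi in x]
        fy = f(y)
        evals += 1
        if fy <= fx:
            x, fx = y, fy
            sigma *= 1.5            # widen the search after a success
        else:
            sigma *= 1.5 ** -0.25   # narrow it after a failure
    return fx <= target, evals


def benchmark(f, dim=5, target=1e-6, max_evals=10000, runs=20):
    """Success rate and mean evaluations-to-target over independent seeded runs."""
    results = [one_plus_one_es(f, dim, target, max_evals, random.Random(seed))
               for seed in range(runs)]
    successes = [evals for ok, evals in results if ok]
    rate = len(successes) / runs
    mean_evals = sum(successes) / len(successes) if successes else None
    return rate, mean_evals


sphere_rate, sphere_evals = benchmark(sphere)
rastrigin_rate, rastrigin_evals = benchmark(rastrigin)
print(sphere_rate, sphere_evals, rastrigin_rate, rastrigin_evals)
```

Fixing the seeds per run keeps the comparison reproducible; the same seeds and budgets should be used for every algorithm under comparison.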

LLM Tuning Protocol with TAO

The TAO methodology employs a four-stage pipeline for model improvement without labeled data [10]:

- Response Generation: Collect example input prompts and generate diverse candidate responses using various generation strategies from chain-of-thought to sophisticated reasoning techniques.

- Response Scoring: Evaluate generated responses using reward modeling, preference-based scoring, or task-specific verification with LLM judges or custom rules.

- Reinforcement Learning Training: Apply RL-based approaches to update the LLM, guiding it to produce outputs aligned with high-scoring responses.

- Continuous Improvement: Leverage naturally collected LLM usage data from deployed applications to enable ongoing model refinement.

Algorithm Relationships and Workflows

[Diagram not reproduced: conceptual relationships between major adaptive algorithm families and their typical application workflows.]

The table below lists resources that support work with these algorithm families:

| Resource | Function | Application Context |
|---|---|---|
| Ax Platform [13] | Adaptive experimentation platform | Bayesian optimization for complex parameter tuning |
| CMA-ES Implementation [9] | Evolutionary algorithm implementation | Continuous optimization for non-linear, non-convex problems |
| DBRM [10] | Enterprise-focused reward model | Scoring signal for TAO method across diverse tasks |
| Benchmark Functions [11] | Standardized test problems | Algorithm performance evaluation and validation |
| Simulation Environments [13] | Hardware/software testing | AR/VR hardware design and infrastructure optimization |

The adaptive algorithm landscape offers diverse solutions tailored to distinct optimization challenges. Gradient-based methods like AdamW and AMSGrad excel in deep learning applications where gradients are readily available, while evolutionary approaches like CMA-ES dominate black-box optimization problems with rugged landscapes. The emerging class of test-time adaptive methods like TAO demonstrates promising performance for specialized enterprise tasks, particularly in scenarios with limited labeled data.

Selection decisions should be guided by problem characteristics including gradient availability, landscape convexity, dimensionality, and computational constraints. As adaptive algorithms continue evolving, researchers can leverage the structured comparisons and experimental protocols presented here to inform algorithm selection for specific research applications in drug development and scientific computing.

In the realm of machine learning and statistical modeling, three interconnected challenges persistently shape research trajectories and practical implementations: high-dimensionality, non-convex landscapes, and dynamic constraints. High-dimensional problems involve parameter spaces where the number of features or variables dramatically exceeds available observations, creating optimization environments that scale exponentially with dimensionality [14]. Non-convex landscapes introduce complex optimization surfaces riddled with multiple local minima, saddle points, and regions of flat curvature that complicate convergence to meaningful solutions [15] [16]. Dynamic constraints further compound these difficulties by imposing evolving limitations on resources, model architectures, or operational parameters during the optimization process [17] [14].

These challenges manifest with particular acuity in learning-enabled systems (LR systems), where they collectively impact model training, feature selection, and hyperparameter optimization. Research indicates that high-dimensional optimization problems exponentially increase computational costs while degrading generalization stability and increasing the risk of convergence to suboptimal local minima [14]. Meanwhile, the non-convex nature of modern deep learning loss functions creates landscapes where saddle points—positions with zero gradient but mixed curvature—can trap optimization algorithms for extended periods [15] [16]. Dynamic constraints, such as budget limitations in data collection or evolving resource allocations, introduce additional complexity that static optimization approaches cannot adequately address [17].

This guide systematically compares methodological approaches for addressing these core challenges, providing experimental protocols and analytical frameworks relevant to researchers, scientists, and drug development professionals working at the intersection of machine learning and computational science.

Theoretical Foundations and Comparative Analysis

High-Dimensional Optimization Characteristics

High-dimensional optimization spaces exhibit distinct properties that complicate traditional optimization approaches. As dimensionality increases, the volume of the parameter space grows exponentially, while available data often remains sparse—a phenomenon known as the "curse of dimensionality" [14]. This sparsity undermines statistical stability and increases the risk of overfitting, particularly in models like logistic regression where separation issues can drive coefficients toward extreme values [18].

The geometry of high-dimensional spaces also creates unexpected optimization dynamics. Research reveals that in very high dimensions, critical points (where gradients vanish) become increasingly prevalent, with most being saddle points rather than true local minima [19]. This topological characteristic means that optimization algorithms must navigate increasingly complex networks of flat regions and deceptive descent directions as dimensionality grows.

Table 1: High-Dimensional Optimization Challenges and Mitigation Strategies

| Challenge | Impact on Optimization | Representative Mitigation Approaches |
|---|---|---|
| Feature Sparsity | Degraded generalization stability; increased overfitting risk | Regularization (L1/L2); dropout; dimensionality reduction |
| Abundant Saddle Points | Optimization stagnation; slow convergence | Stochastic gradient descent with noise; curvature information utilization |
| Exponential Search Space Growth | Computational intractability; slow convergence | Feature selection; stochastic optimization; adaptive learning methods |
| Critical Point Proliferation | Convergence to suboptimal solutions | Second-order methods; strict saddle point avoidance techniques |

Non-Convex Landscape Navigation

Non-convex optimization landscapes present fundamental challenges for convergence guarantees that are well-established in convex settings. These landscapes contain multiple local minima, saddle points, and regions of varying curvature that collectively complicate optimization dynamics [15]. The presence of saddle points—positions with zero gradient but indefinite Hessian matrices—is particularly problematic as they can trap first-order optimization methods for extended periods [16].
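
A two-variable toy function makes the saddle-point behavior concrete. The sketch assumes $f(x, y) = x^2 - y^2$, whose gradient vanishes at the origin while its Hessian $\mathrm{diag}(2, -2)$ is indefinite. The function is unbounded below, so "escaping" here only means leaving the saddle, not reaching a minimum; the noise scale and step counts are arbitrary.

```python
import random


def grad(point):
    """Gradient of the assumed toy function f(x, y) = x^2 - y^2."""
    x, y = point
    return 2.0 * x, -2.0 * y


def descend(point, steps=200, lr=0.1, noise=0.0, seed=0):
    """Gradient descent with optional Gaussian noise added to each gradient."""
    rng = random.Random(seed)
    x, y = point
    for _ in range(steps):
        gx, gy = grad((x, y))
        x -= lr * (gx + noise * rng.gauss(0.0, 1.0))
        y -= lr * (gy + noise * rng.gauss(0.0, 1.0))
    return x, y


# Starting exactly on the x-axis, noiseless descent slides into the saddle and stays.
stuck = descend((1.0, 0.0))
# A small amount of gradient noise pushes y off zero, and the negative curvature amplifies it.
escaped = descend((1.0, 0.0), steps=50, noise=0.01)
print(stuck, escaped)
```

The noiseless run never leaves the line $y = 0$ because the $y$-component of the gradient is exactly zero there, which is the mechanism by which first-order methods stall near saddles.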

Statistical physics approaches to analyzing high-dimensional non-convex landscapes have revealed that the topological structure of sub-level sets significantly influences optimization navigability [19]. The sequence of sub-level sets $\mathsf{Sub}(u) = \{\bm x: f(\bm x) \leq u\}$ determines which regions are accessible to descent-based optimization methods without encountering topological obstructions. When these sets become disconnected or develop complex topological features, optimization paths must navigate increasingly convoluted routes to reach global minima [19].

The counting of critical points by index ($\mathsf{Crt}_k(f, u)$) provides a quantitative framework for assessing landscape complexity. Landscapes with numerous high-index critical points (many descent directions) typically prove more navigable than those dominated by low-index critical points (few descent directions), as optimization algorithms have more opportunities to escape suboptimal regions [19].

Dynamic Constraint Integration

Dynamic constraints reflect practical limitations that evolve throughout the optimization process, such as budget constraints in data collection, computational resource limitations, or changing operational requirements. Unlike static constraints, these dynamic limitations require adaptive optimization strategies that can respond to evolving feasibility boundaries [17].

In cost-constrained regression problems, budget limitations create NP-hard optimization problems with non-convex feasible regions [17]. Traditional approaches that treat constraints via soft penalty terms often prove inadequate for hard budget constraints, necessitating specialized optimization techniques. Similar challenges arise in real-world applications ranging from medical diagnostic testing—where different biomarkers incur different costs—to sensor placement problems with strict resource limitations [17].

Table 2: Dynamic Constraint Typology and Solution Approaches

| Constraint Type | Definition | Solution Methods |
|---|---|---|
| Budget Constraints | Cumulative cost of selected features/variables must not exceed specified budget | Discrete first-order optimization; 0-1 knapsack algorithms; cost-constrained regression |
| Resource Limitations | Computational resources (memory, processing time) that vary during optimization | Adaptive batch sizing; dynamic learning rate adjustment; model compression techniques |
| Evolving Feasibility | Solution feasibility criteria that change during optimization process | Constraint-aware optimization; dynamic penalty methods; multi-stage optimization |
| Performance Requirements | Minimum performance thresholds that increase during training | Curriculum learning; self-paced learning; progressive difficulty scaling |

Methodological Comparisons

Gradient-Based Optimization Methods

Gradient-based methods form the cornerstone of modern optimization in high-dimensional, non-convex spaces. These approaches leverage derivative information to navigate complex landscapes efficiently, with stochastic gradient descent (SGD) serving as the fundamental algorithm for large-scale problems [16]. SGD's inherent noise from mini-batch sampling provides serendipitous benefits in non-convex landscapes by helping algorithms escape shallow local minima and saddle points [16].

Adaptive learning rate methods represent significant advances over basic SGD. Algorithms like Adam (Adaptive Moment Estimation) combine momentum-based navigation with per-parameter learning rate adjustment, demonstrating particular effectiveness for problems with noisy or sparse gradients [16] [14]. Recent variants address specific limitations: AdamW decouples weight decay from gradient-based updates to improve generalization; AdamP incorporates projected gradient normalization to handle parameters where direction matters more than magnitude; and AMSGrad modifies the adaptive learning rate mechanism to preserve convergence guarantees [14].
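
The difference between these variants is easiest to see in a single update step. The sketch below is a simplified scalar-list version written for illustration; it is not the API of any particular library, the hyperparameter defaults are assumptions, and AdamP's projection step is not shown. The `amsgrad` flag keeps a running maximum of the second-moment estimate, which is the modification AMSGrad introduces.

```python
import math


def init_state(n):
    return {"t": 0, "m": [0.0] * n, "v": [0.0] * n, "v_max": [0.0] * n}


def adamw_step(w, g, state, lr=1e-3, beta1=0.9, beta2=0.999, eps=1e-8,
               weight_decay=1e-2, amsgrad=False):
    """One AdamW-style update on a list of weights w with gradients g."""
    state["t"] += 1
    t = state["t"]
    new_w = []
    for i, (wi, gi) in enumerate(zip(w, g)):
        # Exponential moving averages of the gradient and its square.
        state["m"][i] = beta1 * state["m"][i] + (1 - beta1) * gi
        state["v"][i] = beta2 * state["v"][i] + (1 - beta2) * gi * gi
        v_used = state["v"][i]
        if amsgrad:
            # AMSGrad: never let the second-moment estimate decrease.
            state["v_max"][i] = max(state["v_max"][i], v_used)
            v_used = state["v_max"][i]
        m_hat = state["m"][i] / (1 - beta1 ** t)   # bias correction
        v_hat = v_used / (1 - beta2 ** t)
        # Decoupled weight decay: applied to the weight directly, not via the gradient.
        wi = wi - lr * weight_decay * wi
        wi = wi - lr * m_hat / (math.sqrt(v_hat) + eps)
        new_w.append(wi)
    return new_w


# With a zero gradient, only the decoupled decay moves the weight.
decayed = adamw_step([1.0], [0.0], init_state(1), lr=0.1, weight_decay=0.5)
# With no decay, the first bias-corrected step has magnitude close to lr.
stepped = adamw_step([1.0], [1.0], init_state(1), lr=0.1, weight_decay=0.0)
print(decayed, stepped)
```

Because the decay term bypasses the adaptive scaling, its strength does not depend on the gradient history of each parameter.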

For non-convex landscapes with abundant saddle points, methods that explicitly incorporate curvature information can significantly outperform first-order approaches. Second-order methods like Hessian-Free Optimization approximate Newton-direction steps without explicitly forming the computationally prohibitive Hessian matrix, enabling more effective navigation of regions with negative curvature [16]. Trust region methods dynamically adjust step sizes based on local landscape approximations, balancing between aggressive movement in well-behaved regions and caution in areas of uncertain curvature [16].

Alternative Optimization Paradigms

Population-based approaches offer complementary strengths for problems where gradient information is unavailable, unreliable, or insufficient. These methods employ stochastic search strategies inspired by natural systems, maintaining multiple candidate solutions simultaneously [14]. The Covariance Matrix Adaptation Evolution Strategy (CMA-ES) represents a state-of-the-art approach in this category, dynamically adjusting search distributions based on successful candidate solutions [14]. Other biologically inspired algorithms include the Harris Hawks Optimization (HHO) mimicking cooperative hunting behaviors and the African Vultures Optimization Algorithm (AVOA) based on foraging patterns [14].

Smooth parametrization techniques address non-convexity by transforming optimization domains to reveal more tractable landscape structures [20]. This approach either simplifies algorithm implementation by creating smoother surfaces or reveals hidden convexity that makes global optimization more feasible. Applications include low-rank matrix and tensor factorization, semidefinite programming via the Burer-Monteiro approach, and neural network training through carefully designed parameterizations [20]. These methods can eliminate problematic landscape features while preserving global optimality, though the parametrization must be carefully chosen to avoid introducing new spurious critical points.

Discrete-first-order methods bridge continuous optimization techniques with discrete constraint satisfaction, particularly for budget-constrained problems. These approaches solve sequences of 0-1 knapsack problems to generate convergent series of estimates for regression coefficients under cost constraints [17]. Theoretical guarantees establish convergence to first-order stationary points that can be globally optimal under specific conditions, providing a principled approach to NP-hard budget-constrained optimization [17].
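
The 0-1 knapsack subproblem at the heart of this approach can be solved exactly by dynamic programming when costs are integers. The sketch below shows only that subproblem, not the full discrete first-order iteration from [17]. The per-feature `values` are hypothetical scores (for instance, some measure of each feature's contribution), and apart from the $5 and $200 endpoints the costs are invented for illustration.

```python
def knapsack_select(values, costs, budget):
    """Choose the subset with maximum total value whose integer costs fit the budget."""
    n = len(values)
    # best[i][b] = best value achievable with the first i items and budget b.
    best = [[0.0] * (budget + 1) for _ in range(n + 1)]
    for i in range(1, n + 1):
        value, cost = values[i - 1], costs[i - 1]
        for b in range(budget + 1):
            best[i][b] = best[i - 1][b]
            if cost <= b and best[i - 1][b - cost] + value > best[i][b]:
                best[i][b] = best[i - 1][b - cost] + value
    # Walk back through the table to recover which items were taken.
    chosen, b = [], budget
    for i in range(n, 0, -1):
        if best[i][b] != best[i - 1][b]:
            chosen.append(i - 1)
            b -= costs[i - 1]
    return sorted(chosen), best[n][budget]


# Hypothetical scores and costs (in dollars) for four candidate biomarkers.
values = [0.9, 0.6, 0.5, 0.3]
costs = [200, 50, 60, 5]
selected, total_value = knapsack_select(values, costs, budget=120)
print(selected, total_value)  # the most valuable feature is excluded because it exceeds the budget
```

The table has `(n + 1) * (budget + 1)` entries, so this exact approach is practical only when the budget, expressed in cost units, is moderately sized.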

Experimental Protocols and Benchmarking

Diabetes Biomarker Selection Case Study

Experimental Context: A phase III diabetes study examining twenty biomarkers for predicting treatment response illustrates the interplay of high-dimensionality, non-convex landscapes, and budget constraints [17]. Biomarkers exhibit significant cost variation—from $5 for diabetes duration to $200 for blood lipid panels—creating a natural budget optimization problem.

Methodology: The cost-constrained regression approach formulates biomarker selection as a high-dimensional optimization problem with a hard budget constraint [17]. The experimental protocol involves:

- Problem Formulation: Define the constrained optimization problem as finding the regression model with smallest expected prediction error among all models satisfying the budget constraint

- Algorithm Implementation: Apply a discrete first-order method that solves a sequence of 0-1 knapsack problems

- Convergence Verification: Monitor algorithm progress toward first-order stationary points with potential global optimality under specific conditions

- Validation: Compare selected biomarkers against unconstrained models and traditional selection methods

Key Metrics: Prediction error versus cost expenditure; selection stability across budget levels; computational efficiency compared to exhaustive search methods.
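One plausible reading of the protocol above is sketched here: each iteration takes a gradient step on the least-squares loss and then keeps the subset of coefficients that maximizes retained squared magnitude within the budget, which is a 0-1 knapsack problem. This is an assumption about how the method in [17] might be structured, not a reproduction of it. The synthetic data, the integer costs, and the function names are hypothetical.

```python
import numpy as np

def knapsack_select(values, costs, budget):
    """Exact 0-1 knapsack by dynamic programming over integer costs."""
    n = len(values)
    best = np.zeros((n + 1, budget + 1))
    for i in range(1, n + 1):
        for b in range(budget + 1):
            best[i, b] = best[i - 1, b]
            if costs[i - 1] <= b:
                cand = best[i - 1, b - costs[i - 1]] + values[i - 1]
                best[i, b] = max(best[i, b], cand)
    # Backtrack to recover which items were chosen.
    chosen, b = [], budget
    for i in range(n, 0, -1):
        if best[i, b] != best[i - 1, b]:
            chosen.append(i - 1)
            b -= costs[i - 1]
    return sorted(chosen)

def cost_constrained_regression(X, y, costs, budget, iterations=200):
    """Gradient step followed by a knapsack projection onto the budget set."""
    n, p = X.shape
    step = 1.0 / np.linalg.eigvalsh(X.T @ X / n).max()  # 1 / Lipschitz constant
    beta = np.zeros(p)
    for _ in range(iterations):
        grad = X.T @ (X @ beta - y) / n
        proposal = beta - step * grad
        keep = knapsack_select(proposal ** 2, costs, budget)
        beta = np.zeros(p)
        beta[keep] = proposal[keep]
    return beta

# Synthetic stand-in for biomarker data: only features 0 and 3 matter.
rng = np.random.default_rng(0)
X = rng.standard_normal((200, 4))
y = 2.0 * X[:, 0] + 1.5 * X[:, 3] + 0.1 * rng.standard_normal(200)
costs = [5, 200, 50, 20]  # hypothetical per-biomarker costs in dollars
budget = 60
beta = cost_constrained_regression(X, y, costs, budget)
selected = [i for i in range(len(costs)) if beta[i] != 0.0]
print(selected, beta.round(2))
```

The dynamic program is exact but scales with the budget value, so real applications with large or non-integer costs would need cost rescaling or a dedicated solver.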

Non-Convex Landscape Analysis Protocol

Experimental Framework: Analyzing optimization landscape complexity requires specialized methodologies to assess navigability and critical point distribution [19]. The experimental protocol includes:

- Sub-level Set Topology Mapping: Characterize the structure of $\mathsf{Sub}(u) = \{\bm x: f(\bm x) \leq u\}$ across energy levels

- Critical Point Enumeration: Compute $\mathsf{Crt}_k(f, u)$ counts of critical points by index and value threshold

- Homology Computation: Calculate topological invariants including Betti numbers to quantify "holes" at different dimensions

- Euler Characteristic Calculation: Derive $\chi(\mathsf{Sub}(u)) = \sum_k (-1)^k \mathsf{Crt}_k(f, u)$ for Morse functions

- Dynamics Correlation: Relate landscape topology to optimization algorithm performance across initial conditions

Implementation Considerations: For high-dimensional problems, complete enumeration of critical points becomes computationally prohibitive, necessitating sampling-based approximations or analytical random function models [19].
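As a small worked instance of the Euler characteristic step, consider the illustrative function f(x, y) = (x^2 - 1)^2 + y^2, chosen here as an assumption because its critical points can be found by hand: two minima at (±1, 0) with value 0 (index 0) and one saddle at (0, 0) with value 1 (index 1). The sketch below counts critical points by index up to a threshold u and forms the alternating sum.

```python
# Critical points of f(x, y) = (x^2 - 1)^2 + y^2, derived analytically.
critical_points = [
    {"point": (-1.0, 0.0), "index": 0, "value": 0.0},  # minimum
    {"point": (1.0, 0.0), "index": 0, "value": 0.0},   # minimum
    {"point": (0.0, 0.0), "index": 1, "value": 1.0},   # saddle
]

def crt(k, u):
    """Number of critical points with index k and value at most u."""
    return sum(1 for c in critical_points
               if c["index"] == k and c["value"] <= u)

def euler_characteristic(u, max_index=2):
    """Alternating sum of critical point counts up to level u."""
    return sum((-1) ** k * crt(k, u) for k in range(max_index + 1))

print(euler_characteristic(0.5))  # two separate basins -> 2
print(euler_characteristic(1.5))  # basins merged through the saddle -> 1
```

Below the saddle value the sub-level set consists of two disjoint disk-like regions, and above it the two basins join into a single contractible region, which matches the counts of 2 and 1.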

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Optimization Research

| Tool Category | Representative Examples | Primary Function | Application Context |

|---|---|---|---|

| Deep Learning Frameworks | TensorFlow 2.10, PyTorch 2.1.0 | Automatic differentiation; distributed training support | Model training; gradient-based optimization |

| Gradient-Based Optimizers | Adam, AdamW, AMSGrad, NAdam | Adaptive learning rate optimization | Non-convex landscape navigation; high-dimensional parameter tuning |

| Population-Based Algorithms | CMA-ES, LM-MA, HHO, AVOA | Derivative-free global optimization | Problems with unavailable gradients; multi-modal landscapes |

| Constrained Optimization Tools | Discrete first-order methods; 0-1 knapsack solvers | Budget-constrained variable selection | Cost-constrained regression; resource-limited feature selection |

| Landscape Analysis Libraries | Custom topology computation tools | Critical point identification; sub-level set topology mapping | Landscape complexity assessment; algorithm behavior prediction |

Performance Comparison Data

Optimization Method Efficiency Metrics

Table 4: Relative Performance Across Optimization Challenge Domains

| Optimization Method | High-Dimensional Scaling | Non-Convex Navigation | Constraint Handling | Theoretical Guarantees |

|---|---|---|---|---|

| Stochastic Gradient Descent | Moderate (O(1/√T) convergence) | Limited (saddle point issues) | Limited (primarily unconstrained) | Strong (convex cases) |

| Adaptive Methods (Adam) | Strong (per-parameter adaptation) | Moderate (saddle escape issues) | Limited (soft constraints only) | Moderate (stationary points) |

| Cost-Constrained Regression | Strong (knapsack sequencing) | Strong (convergence to stationary points) | Strong (hard budget constraints) | Strong (first-order guarantees) |

| Population-Based Approaches | Weak (curse of dimensionality) | Strong (global exploration) | Moderate (constraint incorporation) | Limited (empirical validation) |

| Smooth Parametrization | Variable (depends on parametrization) | Strong (hidden convexity revelation) | Moderate (reformulation-dependent) | Strong (under specific conditions) |

The interdisciplinary challenges of high-dimensionality, non-convex landscapes, and dynamic constraints continue to shape optimization research across machine learning and scientific computing. Our analysis reveals that while gradient-based methods—particularly adaptive variants like AdamW and AdamP—deliver strong performance across many high-dimensional scenarios, no single approach dominates all challenge domains. Cost-constrained regression methods offer principled solutions for hard budget limitations but require specialized optimization techniques. Population-based algorithms provide valuable alternatives for problems with pathological landscape features or unavailable gradient information.

Future research directions include developing more effective saddle point escape mechanisms, creating theoretical frameworks for dynamic constraint incorporation, and improving scalability to ultra-high-dimensional problems. The integration of biological inspiration with mathematical rigor—exemplified by both population-based algorithms and smooth parametrization techniques—promises continued advances in addressing these fundamental optimization challenges.

The Role of Optimization in Model Training, Feature Selection, and Hyperparameter Tuning

In the field of computational drug development, the optimization of machine learning (ML) models is not merely a technical enhancement but a fundamental requirement for generating clinically relevant and interpretable predictions. This guide examines the critical role of optimization techniques within the specific context of drug response prediction (DRP), a cornerstone of personalized medicine. For researchers and scientists, the careful balancing of model complexity, interpretability, and predictive power directly influences the translational potential of in silico models. We provide a structured comparison of contemporary methodologies, supported by experimental data and detailed protocols, to inform the selection of optimization strategies in learning systems research.

The Critical Role of Feature Selection in Drug Response Prediction

Feature selection is a primary optimization step that addresses the high-dimensionality of molecular data, such as gene expression profiles, which often contain measurements for over 20,000 genes from a limited set of cell lines or tumor samples. Effective feature reduction mitigates overfitting, reduces computational complexity, and, most importantly, enhances the biological interpretability of the resulting models—a non-negotiable aspect in therapeutic design.

Comparative Evaluation of Feature Reduction Methods

Recent systematic studies have evaluated numerous feature reduction strategies, categorizing them into knowledge-based and data-driven approaches [21]. The performance of these methods varies significantly across different drugs and cancer types.

Table 1: Comparison of Feature Reduction Methods for Drug Response Prediction [21]

| Feature Reduction Method | Type | Average Number of Features | Key Strengths | Best-Performing ML Model |

|---|---|---|---|---|

| Transcription Factor (TF) Activities | Knowledge-based | ~1,200 | High biological interpretability; best overall performer on tumor data | Ridge Regression |

| Pathway Activities | Knowledge-based | 14 | Extremely low-dimensional; good interpretability | Ridge Regression |

| Drug Pathway Genes | Knowledge-based | ~3,700 | Leverages known drug mechanism-of-action | Ridge Regression |

| Landmark Genes (L1000) | Knowledge-based | 978 | Captures majority of transcriptome information | Ridge Regression |

| Autoencoder (AE) Embedding | Data-driven | Varies | Captures non-linear patterns in data | Multilayer Perceptron |

| Principal Components (PCs) | Data-driven | Varies | Maximizes variance captured | Ridge Regression |

A landmark 2024 study in Scientific Reports conducted over 6,000 experimental runs to compare nine feature reduction methods followed by six ML models [21]. The findings indicate that for the critical task of generalizing from cell line data to clinical tumor data, knowledge-based methods consistently outperformed data-driven approaches. Specifically, Transcription Factor (TF) Activities—scores quantifying the activity of TFs based on their regulated genes—proved most effective, successfully distinguishing sensitive and resistant tumors for seven out of twenty drugs evaluated [21].
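The idea of scoring a transcription factor from its regulated genes can be illustrated with a deliberately simplified stand-in: standardize each gene across samples, then average the signed z-scores of the TF's target genes. The scoring method actually used in [21] is not described in this text, so the function `tf_activity_scores`, the regulon format, and the toy expression values are all assumptions.

```python
import numpy as np

def tf_activity_scores(expression, gene_names, regulons):
    """Signed mean of target-gene z-scores per TF.

    expression: array of shape (samples, genes)
    regulons:   {tf_name: {gene_name: +1 (activated) or -1 (repressed)}}
    """
    z = (expression - expression.mean(axis=0)) / (expression.std(axis=0) + 1e-12)
    column = {gene: j for j, gene in enumerate(gene_names)}
    scores = {}
    for tf, targets in regulons.items():
        present = [g for g in targets if g in column]
        cols = [column[g] for g in present]
        signs = np.array([targets[g] for g in present], dtype=float)
        scores[tf] = (z[:, cols] * signs).mean(axis=1)
    return scores

# Toy data: 3 samples x 3 genes; TF_A activates g1 and represses g2.
expression = np.array([[1.0, 10.0, 5.0],
                       [2.0, 8.0, 5.5],
                       [3.0, 6.0, 6.0]])
genes = ["g1", "g2", "g3"]
regulons = {"TF_A": {"g1": 1, "g2": -1}}
activity = tf_activity_scores(expression, genes, regulons)
print(activity["TF_A"])
```

In this toy case the score rises from the first to the third sample because the activated target increases while the repressed target decreases, compressing three gene columns into one interpretable feature.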

Experimental Protocol for Evaluating Feature Selection Strategies

The following workflow, derived from established methodologies, provides a robust framework for benchmarking feature selection techniques in DRP [22] [21].

Diagram 1: Experimental workflow for feature selection evaluation.

Detailed Methodology:

- Data Acquisition: Obtain drug sensitivity data (e.g., Area Under the dose-response Curve - AUC) and corresponding molecular profiles (e.g., gene expression) from public repositories such as the PRISM, GDSC, or CCLE datasets [22] [21].

- Feature Reduction Application: Apply the various feature selection methods to the input gene expression matrix (e.g., ~21,000 genes) to generate reduced feature sets.

- Model Training & Validation:

  - Cross-Validation: Perform repeated random-subsampling cross-validation (e.g., 100 splits of 80%/20%) on cell line data to tune hyperparameters via nested cross-validation and assess baseline performance [21].

  - Clinical Validation: Train the model on the entire cell line dataset and evaluate its predictive power on an independent set of clinical tumor data. This is the gold standard for assessing translational utility [21].

- Performance Metrics: Use Pearson’s Correlation Coefficient (PCC) between predicted and observed drug responses. Relative Root Mean Squared Error (RelRMSE) is also recommended over raw RMSE, as it accounts for varying drug response variances and provides a more reliable comparison across different compounds [22].
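The evaluation loop described above can be sketched with NumPy as follows. The data are synthetic, ridge regression is fit in closed form with a fixed regularization strength (the nested tuning step is omitted for brevity), and RelRMSE is taken here to mean RMSE divided by the standard deviation of the observed responses. That normalization is an assumption, since the exact definition used in [22] is not given in this text.

```python
import numpy as np

def pearson_cc(y_true, y_pred):
    return float(np.corrcoef(y_true, y_pred)[0, 1])

def rel_rmse(y_true, y_pred):
    # Assumed definition: RMSE scaled by the spread of the observed response.
    rmse = np.sqrt(np.mean((y_true - y_pred) ** 2))
    return float(rmse / np.std(y_true))

def ridge_fit(X, y, alpha):
    p = X.shape[1]
    return np.linalg.solve(X.T @ X + alpha * np.eye(p), X.T @ y)

def repeated_subsampling(X, y, alpha=1.0, n_splits=100, test_frac=0.2, seed=0):
    """Repeated random 80/20 splits; returns mean PCC and mean RelRMSE."""
    rng = np.random.default_rng(seed)
    n = len(y)
    n_test = int(round(test_frac * n))
    pccs, errors = [], []
    for _ in range(n_splits):
        perm = rng.permutation(n)
        test, train = perm[:n_test], perm[n_test:]
        # Center with training statistics only, to avoid test-set leakage.
        mu_x, mu_y = X[train].mean(axis=0), y[train].mean()
        w = ridge_fit(X[train] - mu_x, y[train] - mu_y, alpha)
        pred = (X[test] - mu_x) @ w + mu_y
        pccs.append(pearson_cc(y[test], pred))
        errors.append(rel_rmse(y[test], pred))
    return float(np.mean(pccs)), float(np.mean(errors))

# Synthetic stand-in for (reduced features, drug response) pairs.
rng = np.random.default_rng(1)
X = rng.standard_normal((100, 10))
y = X @ np.linspace(-1.0, 1.0, 10) + 0.5 * rng.standard_normal(100)
mean_pcc, mean_rel_rmse = repeated_subsampling(X, y)
print(mean_pcc, mean_rel_rmse)
```

A RelRMSE below 1 under this definition means the model predicts better than always guessing the mean response, which makes values comparable across drugs with different response variances.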

Hyperparameter Tuning for Robust Predictive Modeling

Hyperparameter tuning is the process of optimizing the configuration settings that govern the ML training process itself. In DRP, where datasets are often noisy and limited, effective tuning is critical for building generalizable models.

Advanced Tuning Techniques and Platforms

While traditional methods like grid and random search are common, more sophisticated approaches have demonstrated superior efficiency.

Table 2: Hyperparameter Optimization Methods and Applications

| Method | Principle | Advantages | Common Use-Cases in DRP |

|---|---|---|---|

| Bayesian Optimization | Builds a probabilistic surrogate model to guide the search for optimal parameters [13]. | Highly sample-efficient; suitable for expensive-to-evaluate functions [13]. | Tuning SVM parameters (C, γ) and neural network hyperparameters [23] [13]. |

| Integrated Schemes (GA-CG) | Combines Genetic Algorithm (GA) for feature selection with Conjugate Gradient (CG) for parameter optimization [24]. | Solves feature selection and parameter tuning simultaneously, acknowledging their interdependence [24]. | Developing optimal SVM models for ADMET property prediction [24]. |

| Automated Frameworks (e.g., Ax, Optuna) | Provides a platform for adaptive experimentation, implementing state-of-the-art algorithms like Bayesian Optimization [13]. | Manages complex experiments with multiple objectives and constraints; provides analysis tools for deeper insight [13]. | Large-scale hyperparameter optimization and architecture search for AI models in drug discovery [13]. |

A key finding from prior research is that feature selection and model parameter setting are deeply intertwined [24]. An integrated approach that addresses both simultaneously can yield more predictive and robust models. For instance, a study on predicting ADMET properties showed that a GA-CG-SVM scheme, which jointly optimizes feature subsets and SVM parameters, produced models with higher accuracy and fewer features [24].

Protocol for Data-Driven Hyperparameter Tuning

The sample complexity of tuning hyperparameters, particularly for deep neural networks, is a formally studied challenge [25]. The following protocol outlines a practical tuning workflow.

Diagram 2: Bayesian optimization loop for hyperparameter tuning.

Detailed Methodology:

- Problem Formulation: Define the objective function (e.g., maximize PCC on a validation set) and the search space for all hyperparameters (e.g., learning rate, regularization strength, number of layers) [25] [13].

- Bayesian Optimization Loop:

  - Build a Surrogate Model: A probabilistic model, typically a Gaussian Process (GP), is used to approximate the complex, unknown relationship between hyperparameters and model performance [13].

  - Optimize an Acquisition Function: A utility function, such as Expected Improvement (EI), uses the surrogate's predictions to propose the most promising hyperparameter configuration to evaluate next. This balances exploration (testing uncertain regions) and exploitation (refining known good configurations) [13].

  - Evaluate and Update: The proposed configuration is evaluated by training the model, and the result is used to update the surrogate model, refining its accuracy [13].

- Convergence Check: The loop continues until a predefined computational budget is exhausted or performance converges. Platforms like Ax automate this entire process and provide diagnostic tools to understand the influence of each hyperparameter [13].
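The loop above can be sketched in a few lines of NumPy for a single hyperparameter scaled to [0, 1]. This is a from-scratch illustration, not the Ax implementation: the RBF kernel with fixed length scale, the zero-mean unit-variance GP prior, the candidate grid, and the toy objective (a stand-in for a validation score that peaks at 0.3) are all assumptions.

```python
import math
import numpy as np

def rbf(a, b, length=0.2):
    d = a[:, None] - b[None, :]
    return np.exp(-0.5 * (d / length) ** 2)

def gp_posterior(x_obs, y_obs, x_new, jitter=1e-6):
    """Posterior mean and std of a zero-mean, unit-variance GP."""
    K = rbf(x_obs, x_obs) + jitter * np.eye(len(x_obs))
    Ks = rbf(x_obs, x_new)
    solved = np.linalg.solve(K, Ks)
    mean = solved.T @ y_obs
    var = 1.0 - np.sum(Ks * solved, axis=0)
    return mean, np.sqrt(np.clip(var, 1e-12, None))

def expected_improvement(mean, std, best):
    """EI for maximization."""
    z = (mean - best) / std
    cdf = 0.5 * (1.0 + np.vectorize(math.erf)(z / math.sqrt(2.0)))
    pdf = np.exp(-0.5 * z ** 2) / math.sqrt(2.0 * math.pi)
    return (mean - best) * cdf + std * pdf

def bayes_opt(objective, n_init=3, n_iter=12, seed=0):
    rng = np.random.default_rng(seed)
    grid = np.linspace(0.0, 1.0, 201)            # candidate configurations
    x_obs = rng.uniform(0.0, 1.0, n_init)        # initial random evaluations
    y_obs = np.array([objective(x) for x in x_obs])
    for _ in range(n_iter):                      # fixed evaluation budget
        mean, std = gp_posterior(x_obs, y_obs, grid)       # surrogate
        ei = expected_improvement(mean, std, y_obs.max())  # acquisition
        x_next = grid[np.argmax(ei)]
        x_obs = np.append(x_obs, x_next)                   # evaluate, update
        y_obs = np.append(y_obs, objective(x_next))
    i = int(np.argmax(y_obs))
    return float(x_obs[i]), float(y_obs[i])

def toy_validation_score(x):
    """Hypothetical objective; in practice this would train a model."""
    return -(x - 0.3) ** 2

best_x, best_y = bayes_opt(toy_validation_score)
print(best_x, best_y)
```

In a real tuning run each call to the objective is a full training job, so the value of the surrogate is that it chooses the next configuration using all previous results instead of sampling blindly.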

The Scientist's Toolkit: Essential Reagents for Optimization Research

This section catalogs key computational tools and data resources essential for conducting rigorous optimization experiments in DRP.

Table 3: Key Research Reagent Solutions for Optimization in DRP

| Item / Resource | Type | Function in Research | Example |

|---|---|---|---|

| Drug Sensitivity Databases | Dataset | Provides ground-truth data for training and validating models. | GDSC [22], CCLE [21], PRISM [21] |

| Molecular Profiles | Dataset | Provides the high-dimensional input features (e.g., gene expression) for models. | CCLE transcriptomics [21], Tumor sequencing data |

| Pathway & TF Databases | Knowledge Base | Enables knowledge-based feature selection by providing gene sets. | Reactome [21], OncoKB [21], TF regulons |

| Optimization Platforms | Software Tool | Automates and manages complex hyperparameter tuning experiments. | Ax [13], Optuna [23] |

| ML Frameworks | Software Library | Provides implementations of ML algorithms and feature selection methods. | Scikit-learn, PyTorch, TensorFlow [26] |

| Benchmarking Suites | Software/Metric | Standardizes performance evaluation and comparison across studies. | MLPerf [26], custom cross-validation pipelines [21] |

The systematic optimization of model training, feature selection, and hyperparameter tuning is indispensable for advancing drug response prediction research. Empirical evidence strongly suggests that knowledge-based feature selection methods, particularly those leveraging transcription factor activities, offer a superior balance of predictive performance and biological interpretability—a crucial combination for generating testable hypotheses in therapy design. Furthermore, the adoption of advanced, integrated optimization schemes that concurrently handle features and parameters, often facilitated by modern platforms like Ax, can yield significant performance gains. As the field progresses towards more complex models and heterogeneous data, the principles of rigorous, data-driven optimization detailed in this guide will remain foundational to building trustworthy and impactful predictive models in computational drug development.

Implementing Learning Systems in Clinical Development and Biomedical Innovation

Applied AI for Predicting Firm-Level Innovation Outcomes from Survey Data

The ability to accurately predict firm-level innovation outcomes is a cornerstone of economic growth and competitive strategy, particularly in research-intensive sectors. Traditional methods, which often rely on lagging indicators such as patent filings or R&D expenditure, are rapidly being supplemented by advanced Artificial Intelligence (AI) techniques that can extract predictive signals from unstructured data. Among these data sources, surveys—ranging from customer feedback and expert panels to internal employee assessments—represent a rich, yet notoriously challenging, vein of information. This guide explores how applied AI, particularly in the realm of Natural Language Processing (NLP) and Large Language Models (LLMs), is revolutionizing the prediction of innovation outcomes from survey data. We frame this exploration within the broader thesis of performance characteristics in language recognition (LR) systems research, examining the capabilities, limitations, and practical applications of current AI technologies in transforming qualitative text into quantifiable, actionable forecasts for researchers, scientists, and drug development professionals. The core value proposition lies in AI's capacity to overcome human limitations in processing volume, speed, and bias, thereby unlocking a more dynamic and precise understanding of a firm's innovative potential [27] [28].

The AI Toolkit for Survey Analysis

The integration of AI into survey analysis for innovation prediction relies on a suite of sophisticated tools and techniques. These methods move beyond simple keyword counting to a deeper, context-aware understanding of language.

Core Natural Language Processing (NLP) Techniques

At the foundation of this analysis are established NLP techniques that enable computers to deconstruct and understand human language. These include [28]:

- Tokenization: Breaking down text into individual words or tokens for initial processing.

- Part-of-Speech Tagging: Identifying the grammatical role of each word (e.g., noun, verb, adjective) to understand sentence structure.

- Named Entity Recognition (NER): Identifying and classifying specific entities such as company names, drug compounds, technologies, or personnel within the text.

- Syntax Parsing: Analyzing the grammatical structure of sentences to understand the relationships between words.

- Semantic Analysis: Extracting the underlying meaning and intent behind sentences, which is crucial for accurately gauging sentiment and thematic content.

From Word Embeddings to Large Language Models

A significant breakthrough in NLP was the development of numerical representations of words, such as Google's Word2Vec model. These "word embeddings" convert words into vectors of numbers, enabling algorithms to capture linguistic relationships, for instance that "king" is to "queen" as "man" is to "woman" [29]. This principle has been vastly extended by modern pre-trained language models like GPT, Claude, and Llama. These LLMs are first trained on immense corpora of text from the internet and scientific literature, allowing them to learn a deep, contextual understanding of language, including technical jargon specific to domains like biotech and pharmaceuticals. They can then be fine-tuned on specific tasks, such as analyzing survey responses from R&D teams or patient focus groups, making them powerful tools for domain-specific analysis [30] [29].
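The analogy property of word embeddings can be shown with vector arithmetic and cosine similarity. The three-dimensional vectors below are hand-made toy values (loosely "royalty", "male", "female"), not real Word2Vec output, and the helper names are hypothetical.

```python
import math

def cosine(u, v):
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

# Hypothetical toy embeddings: [royalty, male, female].
embeddings = {
    "king": [1.0, 1.0, 0.0],
    "queen": [1.0, 0.0, 1.0],
    "man": [0.0, 1.0, 0.0],
    "woman": [0.0, 0.0, 1.0],
    "prince": [0.8, 1.0, 0.1],
}

def analogy(a, b, c):
    """Solve a : b :: c : ? by finding the word nearest to b - a + c."""
    target = [vb - va + vc for va, vb, vc in
              zip(embeddings[a], embeddings[b], embeddings[c])]
    candidates = [w for w in embeddings if w not in (a, b, c)]
    return max(candidates, key=lambda w: cosine(embeddings[w], target))

answer = analogy("man", "king", "woman")
print(answer)  # queen
```

Real embeddings have hundreds of dimensions learned from text, so the analogy holds only approximately there, but the arithmetic is the same.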

Key Analytical Applications

When applied to survey data, these technologies power several critical applications:

- Topic Modeling: An advanced NLP technique that uses algorithms to automatically identify the main themes or topics from a large collection of text responses. Methods like Latent Dirichlet Allocation (LDA) can discover hidden semantic structures without a researcher's pre-conceived notions, which helps eliminate bias and uncover unrecognized trends relevant to innovation [27] [29].

- Sentiment Analysis: Also known as opinion mining, this tool classifies the emotional tone of text (positive, negative, or neutral). For innovation surveys, this helps researchers understand not just what is being discussed, but the level of optimism, concern, or satisfaction associated with different projects or processes [28].

- TF-IDF (Term Frequency-Inverse Document Frequency): A statistical measure used to evaluate how important a word is to a document in a collection. It identifies words that are uniquely important to specific segments of respondents, which can highlight emerging niche technologies or specialized concerns that might be drowned out in a broader analysis [27].
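As a concrete illustration of TF-IDF, the sketch below uses one common textbook variant: term frequency as a within-document proportion and inverse document frequency as log(N / df). Library implementations often add smoothing, so their numbers will differ. The three survey-style responses are invented for illustration.

```python
import math
from collections import Counter

def tf_idf(documents):
    """Return one {term: score} dict per document."""
    tokenized = [doc.lower().split() for doc in documents]
    n_docs = len(tokenized)
    # Document frequency: in how many documents each term appears.
    doc_freq = Counter(term for doc in tokenized for term in set(doc))
    scores = []
    for doc in tokenized:
        counts = Counter(doc)
        scores.append({
            term: (count / len(doc)) * math.log(n_docs / doc_freq[term])
            for term, count in counts.items()
        })
    return scores

responses = [
    "yield problems in synthesis step",
    "synthesis scale-up delays",
    "regulatory delays in synthesis",
]
scores = tf_idf(responses)
print(scores[0])
```

Here "synthesis" appears in every response and therefore scores exactly zero, while "yield", which is unique to the first response, receives the highest weight in that response.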

Comparative Analysis of Leading AI Models

The landscape of AI models suitable for this task is diverse, ranging from proprietary, closed-source systems to powerful open-weight models. The following table provides a structured comparison of leading LLMs as of late 2024 to mid-2025, highlighting their relevance for analyzing innovation-focused survey data.

Table 1: Comparison of Leading Large Language Models for Innovation Analysis

| Model/Provider | Key Characteristics | Licensing & Cost | Strengths for Innovation Survey Analysis |

|---|---|---|---|

| OpenAI GPT-5 [30] | State-of-the-art performance; multimodal; dedicated "reasoning" model for complex problems. | Proprietary; requires commercial license or subscription. | Excels in multi-step reasoning on complex, open-ended responses; strong in coding and mathematical tasks. |

| DeepSeek V3.1 / R1 [30] | Open-source; hybrid "thinking"/"non-thinking" mode; efficient Mixture of Experts (MoE) architecture. | MIT license (free commercial use). | Cost-effective for large-volume analysis; R1 series specialized for complex reasoning in finance and science. |

| Qwen3 Series [30] | Hybrid MoE models; meets or beats GPT-4o on many benchmarks; highly flexible dense models. | Apache 2.0 license (open-source). | Strong performance with less compute; specialized models (e.g., Qwen3-Coder) for technical domains. |

| Claude 4 Family [30] | "Extended thinking mode" for deliberate, self-reflective reasoning; versatile model family. | Proprietary. | Ideal for complex, multi-step problem-solving; strong accuracy in long-document analysis. |

| Llama 4 Series [30] | Open-source; natively multimodal (text, images, video); massive context window (Llama 4 Scout). | Open-source. | Flexibility for fine-tuning on private data; strong community support; excellent for long, complex documents. |

Performance and Benchmarking Considerations

Evaluating these models requires a rigorous look at their performance on standardized benchmarks. However, the field faces challenges such as data contamination, where models are exposed to evaluation data during training, leading to inflated scores [31]. Furthermore, over-reliance on single metrics like accuracy can fail to capture a model's full capabilities and limitations in real-world, dynamic environments [31]. For innovation surveys, domain-specific benchmarks that test for scientific reasoning, understanding of technical jargon, and ability to infer causal relationships are more informative than general knowledge tests. Models are demonstrating rapid progress, with performance on demanding benchmarks like MMLU (Massive Multitask Language Understanding) and GPQA (Graduate-Level Google-Proof Q&A) seeing sharp increases, narrowing the performance gap between open and closed models to just 1.7% on some benchmarks in a single year [32].

AI in Action: Predicting Innovation in Pharma and Biotech

The pharmaceutical and biotechnology industry, where innovation is both exceptionally valuable and costly, provides a compelling case study for the application of AI to survey data. AI is projected to generate between $350 billion and $410 billion annually for the pharmaceutical sector by 2025, largely by improving the efficiency and success rate of drug development [33].

Quantitative Impact of AI on Drug Innovation

The traditional drug development process is notoriously long and expensive, taking an average of 14.6 years and costing around $2.6 billion to bring a new drug to market [34]. AI is fundamentally altering this calculus, as shown by the following data on its impact across the development pipeline.

Table 2: Quantitative Impact of AI on Drug Discovery and Development

| Metric | Traditional Process | AI-Accelerated Process | Data Source & Context |

|---|---|---|---|

| Discovery Timeline | 5 years | 12-18 months | AI-driven platforms like Exscientia's Centaur Chemist [33]. |

| Cost to Preclinical Stage | N/A | Savings of 30-40% | Efficiency in target identification and compound screening [33]. |

| Probability of Clinical Success | ~10% | Increased likelihood | AI analysis improves candidate selection [33]. |

| Lead Generation Timelines | N/A | Reduced by up to 28% | AI efficiency in early-stage discovery [35]. |

| Virtual Screening Costs | N/A | Reduced by up to 40% | AI-driven predictive modeling [35]. |

Experimental Protocols for AI-Driven Analysis

To translate survey data into predictive insights, specific experimental protocols are employed. Below is a detailed methodology for a typical analysis workflow, which can be adapted for various survey types, such as those measuring researcher sentiment on project viability or customer feedback on prototype technologies.

Protocol: Predictive Topic and Sentiment Modeling from Open-Ended Survey Responses

- Objective: To identify key themes and associated sentiments from a large corpus of open-ended survey responses and correlate these themes with future innovation outcomes (e.g., project continuation, clinical trial success).

- Input Data: A minimum of 1,000 open-ended text responses from a targeted survey (e.g., "Please describe the most significant technical hurdle for this project.") [27].

- Pre-processing:

  - Data Cleaning: Remove irrelevant characters, correct typos, and standardize text to lowercase.

  - Tokenization: Break down all responses into individual words or sub-word tokens [28].

  - Noise Removal: Filter out common but uninformative "stop words" (e.g., "the," "and") and words that appear with extremely high or low frequency [28].

- Feature Engineering:

  - Vectorization: Convert the pre-processed text into a numerical format. This can be done using TF-IDF to highlight important words or, more effectively, using sentence embeddings from a pre-trained LLM to capture semantic meaning [29].

- Modeling and Analysis:

  - Topic Modeling (Unsupervised): Apply the LDA algorithm to the vectorized data to probabilistically cluster responses into a pre-specified number of topics (e.g., 5-10). Each topic is defined by a cluster of words (e.g., "synthesis," "yield," "reaction" for a chemistry-related topic) [27] [29].

  - Sentiment Analysis (Supervised): Use a pre-trained sentiment model (e.g., from Google Cloud Natural Language API or IBM Watson NLP) to classify each response, or segments of responses, as positive, negative, or neutral [28].

  - Correlation with Outcomes: Statistically correlate the prevalence and sentiment of identified topics with subsequent, real-world innovation outcomes. For example, a retrospective study could determine if a high frequency of negative-sentiment comments about "scale-up" was predictive of a project's eventual failure to move to manufacturing.

This workflow can be visualized in the following diagram, which outlines the logical progression from raw data to actionable insight.

Diagram 1: AI Analysis Workflow for Survey Data. This chart illustrates the sequential process of transforming raw text into predictive insights.
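A heavily simplified sketch of the pre-processing and outcome-correlation steps is given below using only the standard library. The keyword list standing in for a pre-trained sentiment model, the five responses, and the outcome labels are all invented for illustration; topic modeling is omitted, and LDA in practice is usually fit on term counts rather than on embeddings.

```python
import math
import re
from collections import Counter

STOP_WORDS = {"the", "and", "is", "a", "of", "to", "in", "for"}
NEGATIVE_CUES = {"failing", "poor", "badly", "fouling"}  # toy sentiment lexicon

def preprocess(responses, min_df=1, max_df_ratio=0.9):
    """Lowercase, strip punctuation, tokenize, drop stop words and rare/common terms."""
    tokenized = []
    for text in responses:
        text = re.sub(r"[^a-z\s-]", " ", text.lower())
        tokenized.append([t for t in text.split() if t not in STOP_WORDS])
    doc_freq = Counter(t for doc in tokenized for t in set(doc))
    n = len(responses)
    keep = {t for t, c in doc_freq.items()
            if c >= min_df and c / n <= max_df_ratio}
    return [[t for t in doc if t in keep] for doc in tokenized]

def pearson(xs, ys):
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)

# One invented response per project, with an invented later outcome.
responses = [
    "Scale-up is failing: the yield drops badly.",
    "Synthesis route is robust and the yield is high.",
    "Poor results in scale-up; reactor fouling.",
    "The assay works well.",
    "Scale-up went well.",
]
project_failed = [1, 0, 1, 1, 0]

cleaned = preprocess(responses)
# Flag responses that mention scale-up together with a negative cue.
negative_scale_up = [
    int("scale-up" in doc and any(t in NEGATIVE_CUES for t in doc))
    for doc in cleaned
]
r = pearson(negative_scale_up, project_failed)
print(cleaned[0], negative_scale_up, round(r, 3))
```

With real data the flags would come from a topic model and a sentiment classifier, and a correlation on a handful of projects would not be statistically meaningful; the point is only to show how text-derived indicators line up against outcomes.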

The Scientist's Toolkit: Essential Reagents for AI-Powered Innovation Analysis

Implementing the described experimental protocols requires a set of core "research reagents" – the software tools, models, and data resources that form the foundation of any AI-driven innovation analysis project.

Table 3: Essential Research Reagent Solutions for AI-Driven Survey Analysis

| Reagent / Tool Name | Type | Primary Function in Analysis | Relevance to Innovation Prediction |

|---|---|---|---|

| Pre-trained LLM (e.g., DeepSeek V3.1, Llama 4) [30] | AI Model | Provides a foundational understanding of language and reasoning; can be fine-tuned for specific domains. | Core engine for interpreting technical survey responses and identifying complex relationships. |

| LDA Algorithm [27] [29] | Computational Algorithm | Performs probabilistic topic modeling on a corpus of text to uncover latent themes. | Discovers emerging research trends or unstated project challenges from internal or expert surveys. |

| Word2Vec / Sentence Embeddings [29] | Numerical Representation | Converts words and sentences into vectors, capturing semantic meaning for machine learning. | Enables clustering of similar ideas and concepts across different respondent vocabularies. |

| Trusted Research Environment (TRE) [34] | Data Security Platform | Provides a secure, controlled computing environment for analyzing sensitive data. | Essential for handling proprietary R&D survey data and patient feedback without compromising privacy. |

| Federated Learning Framework [34] | AI Training Paradigm | Allows model training across decentralized data sources without sharing raw data. | Enables collaborative analysis across different departments or partner companies while protecting IP. |

| Sentiment Analysis API (e.g., Google Cloud NLP) [28] | Cloud Service | Classifies the emotional tone (positive, negative, neutral) of text. | Gauges researcher morale, customer excitement, or expert skepticism from open-ended feedback. |

Advanced Applications: From Prediction to Autonomous Action

The predictive insights gleaned from surveys are increasingly fueling more advanced AI applications, most notably autonomous agents. These are AI-powered systems that can perform complex tasks without constant human intervention. Business executives forecast that autonomous agents will dominate the AI agenda, with the potential to handle tasks from scheduling meetings to conducting initial literature reviews and even managing aspects of customer support [36]. In the context of innovation, an AI agent could continuously monitor internal project management surveys and external scientific literature, automatically flagging projects that exhibit sentiment and topic patterns historically associated with failure, or re-allocating resources to those showing signals of breakthrough potential. This represents a shift from passive prediction to active management of the innovation pipeline.

The integration of AI into clinical trials showcases this advanced application. AI optimizes trial design, patient recruitment, and data analysis, leading to significant time and cost savings. The following diagram details this specific application.

Diagram 2: AI-Driven Clinical Trial Optimization. This chart shows how AI uses various data inputs to streamline key phases of clinical development.

The application of AI for predicting firm-level innovation outcomes from survey data marks a paradigm shift in how organizations measure and manage their most valuable asset: their innovative capacity. By leveraging sophisticated NLP techniques and powerful LLMs, researchers and drug development professionals can transition from retrospective analysis to proactive forecasting. The experimental data and comparative model analysis presented in this guide demonstrate that while challenges like data contamination and benchmarking fairness remain [31], the potential is immense. As the technology continues to evolve, becoming more efficient and accessible [32], its integration into the innovation lifecycle will deepen. The future of innovation intelligence lies in a synergistic partnership between human expertise and AI's unparalleled ability to decode the complex narratives hidden within our data, ultimately accelerating the pace of scientific discovery and technological progress.

Leveraging Ensemble Methods and Boosting Algorithms for Enhanced Predictive Performance

Ensemble methods represent a powerful paradigm in machine learning, designed to improve predictive performance by combining multiple models. These techniques are particularly valuable in research domains where predictive accuracy is paramount, such as in the development of quantitative structure-activity relationship (QSAR) models within drug discovery. By aggregating the predictions of several base learners, ensemble methods often achieve superior performance compared to any single constituent model, effectively reducing variance, minimizing bias, and enhancing generalization on unseen data [37] [38]. The core principle rests on the idea that a collective of models can compensate for individual shortcomings, leading to more robust and accurate predictions.

This guide focuses on three primary ensemble strategies: Bagging, Boosting, and Stacking. Bagging operates by training multiple models in parallel on different data subsets, Boosting builds models sequentially with each new model correcting its predecessors, and Stacking uses a meta-learner to optimally combine predictions from diverse base models [39] [40]. Within the context of performance characteristics for learning system research, understanding the trade-offs, operational mechanisms, and optimal application scenarios for these ensembles is critical for researchers and drug development professionals aiming to build state-of-the-art predictive systems.

Core Ensemble Methodologies: A Comparative Framework

Bagging (Bootstrap Aggregating)

Bagging is a parallel ensemble method designed primarily to reduce variance and prevent overfitting in high-variance models like deep decision trees [41] [38]. Its operational workflow begins with bootstrap sampling, where multiple subsets are created by randomly sampling the original training data with replacement. This results in different, albeit overlapping, datasets for training each base learner. A key characteristic is that each model is trained independently of the others. The final prediction is formed by aggregating the outputs of all models, typically through majority voting for classification or averaging for regression tasks [39] [42].

- Primary Goal: Variance reduction [38] [42].

- Model Training: Independent and parallel [42].

- Data Handling: Utilizes bootstrap sampling with replacement; the data points omitted from a given subset (its out-of-bag samples) can then be used to compute an out-of-bag error estimate for the model trained on that subset [39] [38].

- Advantages: Highly parallelizable, effective at smoothing out model instability and overfitting, performs well with strong, high-variance base learners like fully-grown decision trees [39] [38].

- Disadvantages: Less effective at reducing model bias if the base learners are too simple [41].

- Exemplar Algorithm: Random Forest, which extends bagging by not only sampling data instances but also randomly selecting a subset of features at each split, further decorrelating the individual trees [39] [38].
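The bootstrap and aggregation steps described above can be sketched in plain Python. This is a minimal illustration, not a production implementation: the helper names `bootstrap_indices`, `majority_vote`, and `mean_oob_fraction` are hypothetical and introduced here only for the example. It shows that each bootstrap sample leaves out a share of the data (about 1/e ≈ 0.368 for large samples), which is what makes out-of-bag evaluation possible.

```python
import random
from collections import Counter


def bootstrap_indices(n, rng):
    """Draw n indices with replacement; return (in_bag, out_of_bag)."""
    in_bag = [rng.randrange(n) for _ in range(n)]
    out_of_bag = sorted(set(range(n)) - set(in_bag))
    return in_bag, out_of_bag


def majority_vote(labels):
    """Aggregate class predictions from several base learners."""
    return Counter(labels).most_common(1)[0][0]


def mean_oob_fraction(n=1000, n_models=25, seed=0):
    """Average share of points left out of each bootstrap sample."""
    rng = random.Random(seed)
    fractions = []
    for _ in range(n_models):
        _, oob = bootstrap_indices(n, rng)
        fractions.append(len(oob) / n)
    return sum(fractions) / len(fractions)


# Each base learner would be trained on its in-bag rows and
# evaluated on its out-of-bag rows (training itself is omitted here).
oob_share = mean_oob_fraction()
vote = majority_vote(["active", "inactive", "active"])
```

For regression, the aggregation step would average the base learners' outputs instead of voting.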

Boosting

Boosting is a sequential ensemble technique focused on reducing bias and variance by converting weak learners into a strong learner [41] [38]. Unlike Bagging, models are built sequentially, with each new model focusing on the errors made by the previous ones. This is achieved by adaptively adjusting the weights of training instances, increasing the emphasis on those that were previously misclassified, or by directly fitting new models to the residuals of the current ensemble [39] [38]. The final combination of models is typically done through a weighted majority vote or a weighted sum.

- Primary Goal: Bias (and variance) reduction [38] [42].

- Model Training: Sequential and adaptive [42].

- Data Handling: Uses the entire dataset but re-weights instances or fits to residuals in each iteration, forcing subsequent models to concentrate on harder-to-predict examples [41] [38].

- Advantages: Often achieves higher predictive accuracy than bagging, particularly on structured data; effectively improves upon weak learners like shallow decision trees [39] [38].

- Disadvantages: Prone to overfitting if not properly regularized, sensitive to noisy data and outliers, and computationally more intensive and less easily parallelized than bagging [41] [38].

- Exemplar Algorithms:

  - AdaBoost (Adaptive Boosting): One of the first successful boosting algorithms, it re-weights data points, putting more weight on misclassified instances at each step [39] [38].

  - Gradient Boosting: A generalization that frames the problem as a numerical optimization, where each new model is trained to predict the negative gradient (residuals) of the loss function. Modern implementations like XGBoost, LightGBM, and CatBoost offer enhanced efficiency, regularization, and handling of data types [39] [38] [43].
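To make the "fit each new model to the residuals" idea concrete, the sketch below implements gradient boosting for squared-error loss on a single feature, using regression stumps as weak learners. It is a teaching example under simplifying assumptions (one feature, at least two distinct feature values, a fixed learning rate); the function names are hypothetical and it is not how XGBoost, LightGBM, or CatBoost are implemented internally.

```python
import math


def fit_stump(x, r):
    """Least-squares stump on a 1-D feature: (threshold, left_value, right_value).

    Assumes x contains at least two distinct values.
    """
    values = sorted(set(x))
    best = None
    for lo, hi in zip(values, values[1:]):
        t = (lo + hi) / 2
        left = [ri for xi, ri in zip(x, r) if xi <= t]
        right = [ri for xi, ri in zip(x, r) if xi > t]
        lv, rv = sum(left) / len(left), sum(right) / len(right)
        sse = sum((ri - lv) ** 2 for ri in left) + sum((ri - rv) ** 2 for ri in right)
        if best is None or sse < best[0]:
            best = (sse, t, lv, rv)
    return best[1:]


def predict_stump(stump, xi):
    t, lv, rv = stump
    return lv if xi <= t else rv


def gradient_boost(x, y, n_rounds=50, learning_rate=0.1):
    """Sequentially fit stumps to the residuals of the current ensemble."""
    base = sum(y) / len(y)          # initial constant prediction
    pred = [base] * len(y)
    stumps, history = [], []
    for _ in range(n_rounds):
        # For the loss 0.5 * (y - f)^2 the negative gradient is the residual.
        residuals = [yi - pi for yi, pi in zip(y, pred)]
        stump = fit_stump(x, residuals)
        stumps.append(stump)
        pred = [pi + learning_rate * predict_stump(stump, xi) for xi, pi in zip(x, pred)]
        history.append(sum((yi - pi) ** 2 for yi, pi in zip(y, pred)) / len(y))
    return base, stumps, history


def predict(base, stumps, xi, learning_rate=0.1):
    """Weighted sum of the base value and all stump outputs."""
    return base + learning_rate * sum(predict_stump(s, xi) for s in stumps)


x = [i / 10 for i in range(40)]
y = [math.sin(xi) for xi in x]
base, stumps, history = gradient_boost(x, y)   # history holds training MSE per round
```

The training error in `history` falls round by round because each stump corrects part of what the previous ensemble still got wrong; on real data a validation set is needed to decide when to stop.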

Stacking (Stacked Generalization)

Stacking is a more advanced, heterogeneous ensemble method that aims to leverage the strengths of diverse algorithms. It introduces a hierarchical structure: multiple different base models (e.g., a Random Forest, a Gradient Boosting model, and an SVM) are trained on the original data in the first level. Their predictions are then used as input features for a second-level model, known as the meta-learner, which learns how to best combine these predictions to make the final output [39] [37].

- Primary Goal: Leverage model diversity for superior performance [39].

- Model Training: Two-level training process for base learners and a meta-learner [37].

- Data Handling: To prevent information leakage and overfitting, the predictions for the meta-learner's training set are typically generated via cross-validation or by using a hold-out set not seen by the base learners [37].

- Advantages: Highly flexible, can capture different patterns in the data through model diversity, and has the potential to outperform any single base model type [39].

- Disadvantages: Complex to train and tune, computationally expensive, and can be less interpretable [39] [44].
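A two-level stack of this kind can be sketched with scikit-learn's `StackingClassifier`. The example assumes a synthetic dataset from `make_classification` and arbitrary, untuned hyperparameters; the choice of base learners mirrors the ones named above and is illustrative only. The `cv=5` argument makes the meta-learner train on cross-validated base-model predictions, which addresses the leakage concern noted in the data-handling point.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import (
    GradientBoostingClassifier,
    RandomForestClassifier,
    StackingClassifier,
)
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

# Synthetic stand-in for a real dataset (assumption for illustration).
X, y = make_classification(n_samples=400, n_features=10, n_informative=5, random_state=0)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, stratify=y, random_state=0
)

# Level 0: heterogeneous base learners.
base_learners = [
    ("rf", RandomForestClassifier(n_estimators=50, random_state=0)),
    ("gb", GradientBoostingClassifier(n_estimators=50, random_state=0)),
    ("svm", make_pipeline(StandardScaler(), SVC())),
]

# Level 1: a simple meta-learner fitted on out-of-fold base predictions.
stack = StackingClassifier(
    estimators=base_learners,
    final_estimator=LogisticRegression(),
    cv=5,
)
stack.fit(X_train, y_train)
stack_accuracy = stack.score(X_test, y_test)
```

A linear meta-learner is a common starting point because it keeps the combination step easy to inspect; whether the stack beats its best base model has to be checked empirically.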

Table 1: Comparative Summary of Ensemble Learning Techniques

| Feature | Bagging | Boosting | Stacking |
|---|---|---|---|
| Core Objective | Reduce variance | Reduce bias & variance | Leverage model diversity |
| Training Approach | Parallel | Sequential | Hierarchical / Meta-learning |
| Base Learner Type | Often homogeneous, strong (high-variance) | Homogeneous, weak to start (e.g., shallow trees) | Heterogeneous (different algorithms) |
| Data Sampling | Bootstrap samples with replacement | Full dataset with re-weighting/fitting to residuals | Original dataset, with hold-out for meta-learner |
| Prediction Aggregation | Averaging / Majority Vote | Weighted Averaging / Vote | Meta-model (e.g., linear model) learns combination |
| Overfitting Tendency | Low, reduces overfitting | Higher, requires careful regularization | Can be high, requires cross-validation |
| Parallelizability | High | Low | Moderate (base learners can be parallel) |
| Example Algorithms | Random Forest, Bagged Decision Trees | AdaBoost, Gradient Boosting, XGBoost, LightGBM | Custom stacks of diverse classifiers/regressors |

Diagram 1: Workflow comparison of Bagging, Boosting, and Stacking.

Experimental Performance and Benchmarking

Quantitative Performance Comparisons

Empirical evidence from recent studies across various domains consistently demonstrates the performance advantages of ensemble methods. A 2025 comparative analysis on public datasets like MNIST and CIFAR highlighted key performance and computational trade-offs. As ensemble complexity (number of base learners) increased from 20 to 200, Boosting's accuracy on MNIST improved from 0.930 to 0.961 before showing signs of overfitting, while Bagging's performance improved more modestly from 0.932 to 0.933 before plateauing. This performance gain for Boosting came at a significant computational cost, requiring approximately 14 times more computational time than Bagging for an ensemble of 200 learners [45].

In a 2025 educational study predicting student performance, a LightGBM model (a boosting variant) emerged as the best-performing base model with an Area Under the Curve (AUC) of 0.953 and an F1-score of 0.950, outperforming a Random Forest model. However, the stacking ensemble implemented in that study (AUC = 0.835) scored below the best base model rather than improving on it, underscoring that stacking's success depends on careful model selection and tuning [44]. Similarly, a study on energy consumption prediction found that a clustering-based ensemble framework using CatBoost and LightGBM outperformed traditional non-clustered machine learning approaches with statistical significance (p < 0.05 or p < 0.01) [46].

Table 2: Experimental Performance Metrics Across Domains (2025 Studies)

| Study / Domain | Algorithms Compared | Key Performance Metric | Reported Results | Key Finding |
|---|---|---|---|---|
| Algorithmic Comparison [45] | Bagging vs. Boosting | Accuracy / Computational Time | Boosting: 0.961 Accuracy, ~14x Bagging's compute time. Bagging: 0.933 Accuracy. | Boosting achieves higher peak performance but with substantially higher computational cost. |
| Higher Education [44] | LightGBM vs. Random Forest vs. Stacking | AUC (Area Under the Curve) | LightGBM: 0.953, Random Forest: High, Stacking: 0.835 | Boosting (LightGBM) can outperform both Bagging (RF) and Stacking in some contexts. |
| Energy Consumption [46] | Clustering + ML Ensembles (CatBoost, LightGBM) vs. Traditional ML | Statistical Significance (p-value) | p < 0.05 or p < 0.01 | The proposed ensemble framework significantly outperformed traditional non-clustered approaches. |
| Construction Materials [43] | XGBoost vs. RF vs. AdaBoost vs. CatBoost | Rank Analysis (Multiple Metrics) | XGBoost outperformed RF, AdaBoost, and CatBoost. | Advanced boosting algorithms (XGBoost) can show superior predictive performance in engineering tasks. |

Detailed Experimental Protocol

To ensure the validity and reproducibility of ensemble model comparisons, researchers should adhere to a structured experimental protocol. The following methodology, synthesized from recent literature, provides a robust framework for benchmarking.

1. Data Preprocessing and Feature Engineering

- Data Cleaning: Handle missing values, timestamp gaps, and outliers through audit and imputation (e.g., two-stage imputation: cross-household averaging for long gaps, linear interpolation for short gaps) [46].

- Feature Standardization: Normalize or standardize features (e.g., using `StandardScaler` for mean zero and unit variance), particularly for algorithms sensitive to feature scales [46].

- Class Imbalance Handling: For classification tasks with imbalanced classes, apply techniques like SMOTE (Synthetic Minority Oversampling Technique) to create balanced datasets and mitigate model bias [44].

2. Model Training and Validation Framework

- Training/Test Split: Split the dataset into training and held-out test sets, typically using a 70/30 or 80/20 ratio. For time-series data, use a chronological split to avoid data leakage [39] [46].

- Hyperparameter Tuning: Perform a systematic search (e.g., Grid Search or Random Search) for optimal hyperparameters for each model using cross-validation on the training set only [46].

- Cross-Validation: Use k-fold cross-validation (e.g., 5-fold or 10-fold) to assess model performance robustly. For sequential models or time-series data, employ rolling-origin (forward-chaining) validation [46] [44].

- Performance Metrics: Select metrics aligned with the research objective. The classification studies summarized above report accuracy, AUC, and F1-score.
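The validation framework above can be sketched with scikit-learn as follows. The dataset is synthetic, the hyperparameter grid is deliberately tiny, and the model choice is an assumption made for illustration. Placing `StandardScaler` inside the `Pipeline` means scaling is re-fitted on each training fold, so tuning never sees the held-out test set.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import GradientBoostingClassifier
from sklearn.metrics import f1_score, roc_auc_score
from sklearn.model_selection import GridSearchCV, TimeSeriesSplit, train_test_split
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic stand-in for a real dataset (assumption for illustration).
X, y = make_classification(n_samples=300, n_features=8, random_state=0)

# 80/20 hold-out split; the test set is touched only once, at the end.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, stratify=y, random_state=0
)

pipe = Pipeline([
    ("scale", StandardScaler()),
    ("model", GradientBoostingClassifier(random_state=0)),
])
param_grid = {"model__n_estimators": [25, 50], "model__max_depth": [2, 3]}

# 5-fold cross-validated grid search on the training set only.
search = GridSearchCV(pipe, param_grid, cv=5, scoring="roc_auc")
search.fit(X_train, y_train)

test_auc = roc_auc_score(y_test, search.predict_proba(X_test)[:, 1])
test_f1 = f1_score(y_test, search.predict(X_test))

# For time-ordered data, rolling-origin folds keep every training index
# strictly before every validation index.
fold_bounds = [
    (train_idx.max(), val_idx.min())
    for train_idx, val_idx in TimeSeriesSplit(n_splits=4).split(X)
]
```

For time-series problems, `TimeSeriesSplit(n_splits=4)` could be passed as the `cv` argument of `GridSearchCV` in place of the integer, together with a chronological rather than random hold-out split.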

3. Ensemble-Specific Considerations

- Bagging: For algorithms like Random Forest, report the Out-of-Bag (OOB) score as an estimate of generalization accuracy [38].

- Boosting: Implement early stopping based on a validation set to determine the optimal number of estimators and prevent overfitting [38].

- Stacking: Use cross-validated predictions from the base learners to train the meta-learner. This ensures that the meta-learner is trained on predictions that the base learners did not see during their own training, preventing overfitting [37].
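The three ensemble-specific checks can be sketched with scikit-learn. The data is synthetic and the settings (number of trees, patience of 10 rounds, 20% validation fraction) are arbitrary assumptions rather than recommended values.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import GradientBoostingClassifier, RandomForestClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import cross_val_predict

# Synthetic stand-in for a real training set (assumption for illustration).
X, y = make_classification(n_samples=500, n_features=10, n_informative=5, random_state=0)

# Bagging: out-of-bag accuracy as a built-in generalization estimate.
rf = RandomForestClassifier(n_estimators=100, oob_score=True, random_state=0).fit(X, y)
oob_accuracy = rf.oob_score_

# Boosting: stop adding trees once the internal validation score
# has not improved for 10 consecutive rounds.
gb = GradientBoostingClassifier(
    n_estimators=500,
    validation_fraction=0.2,
    n_iter_no_change=10,
    random_state=0,
).fit(X, y)
n_trees_used = gb.n_estimators_

# Stacking: out-of-fold probabilities from a base learner become the
# meta-learner's training feature, so no row is predicted by a model
# that saw it during fitting.
meta_feature = cross_val_predict(
    RandomForestClassifier(n_estimators=50, random_state=0),
    X, y, cv=5, method="predict_proba",
)[:, 1]
meta_learner = LogisticRegression().fit(meta_feature.reshape(-1, 1), y)
```

Comparing `n_trees_used` with the requested 500 shows whether early stopping was triggered on a given dataset.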

Building and benchmarking advanced ensemble models requires a suite of robust software libraries and computational tools. The following table details key "research reagents" for practitioners in this field.

Table 3: Essential Computational Tools for Ensemble Learning Research

| Tool / Resource | Type | Primary Function in Research | Key Advantages |
|---|---|---|---|
| scikit-learn [39] [37] | Python Library | Provides implementations of Bagging (`BaggingClassifier`), Random Forest, AdaBoost, GradientBoosting, and Stacking (`StackingClassifier`). | Unified API, excellent documentation, extensive preprocessing and model evaluation tools. Foundation for many ML workflows. |
| XGBoost [38] [43] | Boosting Library | An optimized gradient boosting library. | High speed, performance, and regularization to prevent overfitting. Dominant in competitive data science. |
| LightGBM [46] [44] | Boosting Library | A gradient boosting framework by Microsoft. | Faster training speed and lower memory consumption than XGBoost via histogram-based algorithms. |
| CatBoost [46] [43] | Boosting Library | A gradient boosting algorithm by Yandex. | Native handling of categorical features without extensive preprocessing, robust to hyperparameter settings. |