Optimizing Compound AI Systems: Topology and Parameter Tuning for Accelerated Drug Discovery


Abstract

This article provides a comprehensive guide for researchers and drug development professionals on optimizing compound AI systems for biomedical applications. It explores the foundational principles of compound AI architectures, details methodological approaches for designing and applying these systems to specific drug discovery tasks like target identification and molecular design, outlines advanced troubleshooting and parameter optimization techniques to enhance performance and cost-efficiency, and establishes a framework for rigorous validation and comparative analysis using domain-specific metrics. The content synthesizes the latest research and industry trends to equip scientists with the knowledge to build more efficient, reliable, and impactful AI-driven research tools.

The Architecture of Intelligence: Deconstructing Compound AI Systems for Biomedical Research

Technical Support Center

Troubleshooting Guides

Troubleshooting Guide 1: Resolving Performance Degradation in Multi-Agent AI Systems

- Problem: A compound AI system for literature review and hypothesis generation, which integrates a retrieval agent, a summarization agent, and a reasoning agent, is experiencing slow overall task completion and a drop in the quality of its final output reports.

- Background: This system is used by researchers to rapidly analyze new scientific publications. The system's topology involves a sequential workflow where the output of the retrieval agent is passed to the summarization agent, whose output is then passed to the reasoning agent.

- Diagnosis Steps:

- Isolate the Component: Run diagnostic inputs separately through each agent (retrieval, summarization, reasoning) to identify if a single component is the bottleneck [1].

- Check Communication Overhead: Monitor the latency introduced by the communication protocols between agents. High overhead can significantly slow down a sequential workflow [1].

- Analyze Resource Allocation: Check if computational resources (CPU/GPU) are being dynamically allocated based on agent demand. A resource-intensive agent might be starving others if allocation is static [1].

- Review Agent Prompts: Examine the textual parameters (prompts) of each agent. Vague prompts can lead to poor output quality, which compounds through the workflow [2].

- Solution:

- If a single agent is slow: Optimize the prompts of the slow agent or consider replacing it with a more efficient, specialized model [3].

- If communication overhead is high: Use a more compact data exchange format, or switch to a parallel execution topology for steps that do not depend on each other's outputs [1].

- If resource allocation is poor: Implement an orchestration platform that can dynamically manage resources, scaling them based on real-time demand [4] [1].

- For prompt issues: Implement an automated prompt optimization technique, such as using a separate LLM to provide textual feedback for prompt updates [2].
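Here is a minimal sketch of the "Isolate the Component" diagnosis. It assumes each agent can be called as a plain Python function, and it times every stage of the sequential retrieval, summarization, and reasoning chain so the slowest agent stands out before you tune prompts or resources. The agent functions are hypothetical stand-ins that sleep instead of calling real models.

```python
import time
from typing import Any, Callable, Dict, List, Tuple

# Hypothetical stand-ins for the three agents; each sleeps to simulate model latency.
def retrieval_agent(query: str) -> List[str]:
    time.sleep(0.01)
    return [f"abstract {i} on {query}" for i in range(3)]

def summarization_agent(docs: List[str]) -> str:
    time.sleep(0.02)
    return " | ".join(doc[:25] for doc in docs)

def reasoning_agent(summary: str) -> str:
    time.sleep(0.005)
    return f"Hypothesis based on: {summary}"

def run_profiled(query: str, stages: List[Tuple[str, Callable[[Any], Any]]]) -> Tuple[Any, Dict[str, float]]:
    """Run the sequential pipeline, recording wall-clock seconds spent in each stage."""
    timings: Dict[str, float] = {}
    data: Any = query
    for name, stage in stages:
        start = time.perf_counter()
        data = stage(data)  # the output of one agent becomes the input of the next
        timings[name] = time.perf_counter() - start
    return data, timings

pipeline = [("retrieval", retrieval_agent),
            ("summarization", summarization_agent),
            ("reasoning", reasoning_agent)]
report, timings = run_profiled("KRAS inhibitors", pipeline)
bottleneck = max(timings, key=lambda name: timings[name])
print(bottleneck, {name: round(t, 3) for name, t in timings.items()})
```

To separate agent time from communication overhead, you can wrap serialization and network calls in their own timed stages using the same pattern.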

Troubleshooting Guide 2: Addressing Coordination Failures in Decentralized AI Agent Swarms

- Problem: A decentralized system of AI agents, designed for collaborative drug target identification, is producing conflicting results. The agents (e.g., a genomics data analyzer, a literature mining agent, a pathway modeling agent) are not effectively synthesizing their findings into a coherent recommendation.

- Background: In this decentralized setup, agents operate with autonomy and interact peer-to-peer. The lack of a central coordinator is leading to context collapse and accountability issues [5].

- Diagnosis Steps:

- Audit Communication Logs: Examine the messages exchanged between agents to identify misunderstandings or contradictions in the data being shared [1].

- Check Shared Memory Consistency: Verify that the shared knowledge base or memory that agents use to maintain context is being updated correctly and consistently [1].

- Evaluate Decision Logic: Analyze the local decision-making rules of each agent to ensure they are not based on conflicting assumptions or goals.

- Solution:

- Implement a Hierarchical Orchestrator: Introduce a lightweight supervisory agent (a hierarchical approach) to manage the interactions and synthesize final decisions from the specialized agents, reducing conflict [1].

- Standardize Communication: Enforce stricter agent communication protocols (ACPs) with domain-specific message schemas so that all agents share a common vocabulary and data format [1].

- Introduce a Reflection Mechanism: Build in a feedback loop where agents can critique each other's preliminary outputs before a final decision is made, allowing for self-correction [1].
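The sketch below shows one way a lightweight supervisor could synthesize findings and surface conflicts. It assumes a hypothetical convention in which each specialist agent scores every candidate target in [-1, 1], where positive values support the target and negative values argue against it. The mean-score ranking and the spread threshold used to flag conflicts are illustrative choices, not a method taken from the cited sources. Flagged targets are natural inputs for the reflection step or for human review.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple

@dataclass
class Finding:
    agent: str
    scores: Dict[str, float]  # hypothetical convention: score in [-1, 1] per candidate target

def synthesize(findings: List[Finding], conflict_gap: float = 1.0) -> Tuple[List[Tuple[str, float]], List[str]]:
    """Supervisor step: rank targets by mean score and flag those the agents disagree on."""
    per_target: Dict[str, List[float]] = {}
    for finding in findings:
        for target, score in finding.scores.items():
            per_target.setdefault(target, []).append(score)
    ranking: List[Tuple[str, float]] = []
    conflicts: List[str] = []
    for target, scores in per_target.items():
        ranking.append((target, round(sum(scores) / len(scores), 3)))
        # A wide gap between the most and least supportive agent signals a conflict.
        if max(scores) - min(scores) >= conflict_gap:
            conflicts.append(target)
    ranking.sort(key=lambda item: item[1], reverse=True)
    return ranking, sorted(conflicts)

findings = [
    Finding("genomics", {"TARGET_A": 0.8, "TARGET_B": 0.4}),
    Finding("literature", {"TARGET_A": 0.6, "TARGET_B": -0.7}),
    Finding("pathway", {"TARGET_A": 0.7, "TARGET_C": 0.3}),
]
ranking, conflicts = synthesize(findings)
print(ranking)    # [('TARGET_A', 0.7), ('TARGET_C', 0.3), ('TARGET_B', -0.15)]
print(conflicts)  # ['TARGET_B']
```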

Frequently Asked Questions (FAQs)

FAQ 1: What is the fundamental difference between a monolithic AI model and an orchestrated intelligence system?

A monolithic AI model is a single, large model (e.g., a general-purpose LLM) that handles all aspects of a task, from data processing to final output. While simple to deploy, it can be expensive, brittle, and hard to debug for complex tasks [3]. In contrast, an orchestrated intelligence system (or Compound AI System) coordinates multiple specialized components—such as models, tools, and data sources—to solve a problem [2] [6]. Think of it as moving from a solo musician to a full orchestra, where a conductor (the orchestrator) ensures each specialist plays its part in harmony. This leads to greater efficiency, scalability, and better performance on sophisticated tasks [4] [1].

FAQ 2: Our research team wants to build a compound AI system for optimizing clinical trial design. What is the first step in designing the system's topology?

The first step is to formally decompose your high-level goal into smaller, manageable subtasks [1]. For clinical trial design, this could involve:

- Task 1: A data retrieval agent to fetch relevant historical trial data, medical literature, and regulatory guidelines.

- Task 2: A patient cohort modeling agent to simulate patient populations and predict enrollment.

- Task 3: A protocol authoring agent to help draft the trial protocol based on the synthesized information.

Once the tasks are defined, you can structure them into a computational graph, which defines the flow of data and control between these specialized components [2]. Frameworks like LangGraph or AutoGen can help you model this execution as a directed acyclic graph (DAG) or a stateful workflow [3].
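You can express the three-task decomposition above as a small computational graph and execute it in dependency order. This sketch uses Python's standard-library graphlib (Python 3.9+) rather than LangGraph or AutoGen. The node functions and their outputs are hypothetical placeholders.

```python
from graphlib import TopologicalSorter
from typing import Any, Callable, Dict, Set

Node = Callable[[Dict[str, Any]], Any]

# Hypothetical agents; each receives a dict holding its upstream nodes' outputs.
def data_retrieval(upstream: Dict[str, Any]) -> Dict[str, Any]:
    return {"historical_trials": 12, "guidelines": ["guideline-placeholder"]}

def cohort_modeling(upstream: Dict[str, Any]) -> Dict[str, Any]:
    n = upstream["data_retrieval"]["historical_trials"]
    return {"predicted_enrollment_months": 6 + n // 4}

def protocol_authoring(upstream: Dict[str, Any]) -> str:
    months = upstream["cohort_modeling"]["predicted_enrollment_months"]
    return f"Draft protocol: enrollment window {months} months"

NODES: Dict[str, Node] = {
    "data_retrieval": data_retrieval,
    "cohort_modeling": cohort_modeling,
    "protocol_authoring": protocol_authoring,
}
# Edges as {node: set of predecessors}; protocol authoring also reads retrieval output directly.
EDGES: Dict[str, Set[str]] = {
    "cohort_modeling": {"data_retrieval"},
    "protocol_authoring": {"cohort_modeling", "data_retrieval"},
}

def run_graph(nodes: Dict[str, Node], edges: Dict[str, Set[str]]) -> Dict[str, Any]:
    """Execute each node once all of its predecessors have produced output."""
    outputs: Dict[str, Any] = {}
    for name in TopologicalSorter(edges).static_order():
        upstream = {pred: outputs[pred] for pred in edges.get(name, set())}
        outputs[name] = nodes[name](upstream)
    return outputs

results = run_graph(NODES, EDGES)
print(results["protocol_authoring"])  # Draft protocol: enrollment window 9 months
```

If the edges contain a cycle, TopologicalSorter raises graphlib.CycleError. That makes it a cheap guard when the topology is meant to be a DAG.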

FAQ 3: We have a working topology for our multi-agent system, but the final output is often inaccurate. How can we optimize the system without changing its core structure?

This is a classic problem of optimizing node parameters within a fixed structure [2]. You can focus on:

- Prompt Tuning: The textual parameters (prompts) for each agent are critical. Systematically refine these prompts using heuristic bootstrap-based methods or automated techniques where an auxiliary LLM provides feedback on prompt updates [2].

- Introducing Verification Nodes: Add a new, specialized "verifier" or "critic" agent to your existing topology. This agent's role is to check the outputs of other agents for accuracy, consistency, or compliance before the final result is produced, creating a quality control layer [6].
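A verifier node can be as simple as a function that runs after the report generator and rejects unsupported claims. In this sketch, a regular expression pulls claims out of the report, and each claim is checked against a small set of verified drug-target pairs. Both the claim pattern and the placeholder identifiers are assumptions. A production critic would more likely use an LLM or a curated database for extraction and lookup.

```python
import re
from typing import Dict, List, Set, Tuple

# Toy knowledge base of verified (drug, target) pairs; placeholder identifiers, not real data.
VERIFIED_PAIRS: Set[Tuple[str, str]] = {("DRUG_1", "TARGET_X"), ("DRUG_2", "TARGET_Y")}

# Hypothetical claim format used by the upstream report generator.
CLAIM_PATTERN = re.compile(r"(DRUG_\d+) inhibits (TARGET_[A-Z])")

def verifier_node(report: str) -> Dict[str, object]:
    """Critic node: extract drug-target claims and check each one against the knowledge base."""
    claims: List[Tuple[str, str]] = CLAIM_PATTERN.findall(report)
    unsupported = [claim for claim in claims if claim not in VERIFIED_PAIRS]
    # A report with no checkable claims also fails, so it is routed for review.
    return {"passed": bool(claims) and not unsupported, "unsupported": unsupported}

draft = "DRUG_1 inhibits TARGET_X. DRUG_2 inhibits TARGET_Z."
print(verifier_node(draft))  # {'passed': False, 'unsupported': [('DRUG_2', 'TARGET_Z')]}
```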

FAQ 4: How can we ensure our orchestrated AI system remains compliant with regulatory standards (e.g., FDA, HIPAA) in drug development?

AI orchestration platforms provide centralized governance features that are essential for compliance [4] [7]. You can:

- Implement Governance Guardrails: Apply policies across all models and tools for data access, security, and ethical use [7].

- Ensure Auditability: Use the orchestration layer's logging and monitoring capabilities to maintain a complete, immutable record of the system's processes, data flow, and decisions. This provides the transparency required for regulatory audits [4] [7].

- Incorporate Human-in-the-Loop Oversight: Design workflows that automatically escalate high-risk decisions or anomalous outputs to human researchers for review [7].
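You can prototype auditability and human-in-the-loop escalation together: every decision is written to an append-only log, and decisions above a risk threshold are routed to a reviewer. The sketch below chains SHA-256 hashes so that later edits to any entry become detectable. Note that this gives tamper evidence rather than true immutability, which would still require write-once storage. The risk scores and the 0.7 threshold are hypothetical.

```python
import hashlib
import json
from typing import Any, Dict, List

GENESIS = "0" * 64

def _digest(body: Dict[str, Any]) -> str:
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()

class AuditLog:
    """Append-only log; each entry stores the hash of the previous entry."""

    def __init__(self) -> None:
        self.entries: List[Dict[str, Any]] = []

    def append(self, step: str, payload: Dict[str, Any]) -> None:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS
        body = {"step": step, "payload": payload, "prev_hash": prev_hash}
        self.entries.append({**body, "hash": _digest(body)})

    def verify(self) -> bool:
        """Recompute every hash; any edited or reordered entry breaks the chain."""
        prev_hash = GENESIS
        for e in self.entries:
            body = {"step": e["step"], "payload": e["payload"], "prev_hash": e["prev_hash"]}
            if e["prev_hash"] != prev_hash or e["hash"] != _digest(body):
                return False
            prev_hash = e["hash"]
        return True

RISK_THRESHOLD = 0.7  # hypothetical policy value

def route_decision(log: AuditLog, decision: str, risk: float) -> str:
    """Log every decision; escalate high-risk ones to a human reviewer."""
    status = "escalated_to_human" if risk >= RISK_THRESHOLD else "auto_approved"
    log.append("decision", {"decision": decision, "risk": risk, "status": status})
    return status

log = AuditLog()
s1 = route_decision(log, "exclude cohort subgroup", risk=0.85)
s2 = route_decision(log, "reformat summary table", risk=0.10)
intact = log.verify()
log.entries[0]["payload"]["risk"] = 0.2  # simulate after-the-fact tampering
tampered_ok = log.verify()
print(s1, s2, intact, tampered_ok)  # escalated_to_human auto_approved True False
```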

Experimental Data & Protocols

Table 1: Comparison of Compound AI System Optimization Methods

| Method Category | Key Principle | Ideal Use Case | Example Framework/Tool |
|---|---|---|---|
| Fixed-Structure Optimization [2] | Optimizes node parameters (e.g., prompts, weights) without changing the system's graph topology. | Systems with a validated, effective workflow that need fine-tuning for accuracy or efficiency. | LangChain [4], prompt optimization via auxiliary LLM feedback [2] |
| Structure-Evolving Optimization [2] | Modifies the system's computational graph itself, including adding/removing nodes or edges. | Exploring novel system architectures or adapting a system to entirely new tasks or data types. | AutoGen [3], CrewAI [3] |
| Numerical Feedback Learning | Uses quantitative metrics (e.g., accuracy, latency) as signals for optimization, often via reinforcement learning. | Optimizing for well-defined, quantifiable objectives like task success rate or response time. | Reinforcement Learning (RL) [2] [1] |
| Language-Based Feedback Learning [2] | Uses natural language critiques (from humans or AI) as signals to guide system improvement. | Optimizing complex tasks where success is easier to describe qualitatively than to define with a single metric. | LLM-generated textual feedback [2] |

Table 2: Research Reagent Solutions for Compound AI Systems

| Reagent Solution | Function in AI Research | Relevance to Drug Development |
|---|---|---|
| Orchestration Platform (e.g., IBM watsonx Orchestrate, UiPath Maestro) [4] [7] | Provides the foundational layer for deploying, integrating, and managing multi-component AI systems at scale. | Manages end-to-end AI-driven workflows in drug discovery, ensuring governance and compliance across models and data sources. |
| Agent Framework (e.g., LangGraph, AutoGen, CrewAI) [2] [3] | A toolkit for building and experimenting with multi-agent systems, defining roles, communication, and workflows. | Enables the creation of specialized AI agents for tasks like literature review, genomic analysis, and clinical trial simulation. |
| Vector Database [7] | Stores vector embeddings of unstructured data (e.g., scientific papers, molecular data) so AI agents can retrieve relevant items efficiently by similarity search. | Powers retrieval-augmented generation (RAG) systems that provide AI models with access to the latest research and proprietary lab data. |
| Decentralized Knowledge Graph (e.g., OriginTrail) [8] | Provides a verifiable and auditable trail for data provenance, crucial for trust and reproducibility. | Secures and tracks the origin and integrity of training data and model outputs, which is critical for regulatory submissions. |

Experimental Protocol: Optimizing a Fixed-Topology Compound AI System

Objective: To improve the performance metric $\mu$ (e.g., accuracy of generated drug synergy reports) of a fixed-topology compound AI system $\Phi = (G, \mathcal{F})$ by optimizing its textual parameters $\theta_{i,T}$ (prompts) [2].
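Written out, the objective is to choose the prompts that maximize the average metric over the validation set. This is a sketch of the standard formulation. It assumes each query $q_j$ comes paired with a reference answer or metadata $m_j$, and it indexes queries by $j$ to avoid a clash with the node index $i$.

```latex
\theta_T^{*} = \arg\max_{\theta_T} \frac{1}{|\mathcal{D}|} \sum_{(q_j, m_j) \in \mathcal{D}} \mu\big(\Phi_{\theta_T}(q_j),\, m_j\big),
\qquad \theta_T = \{\theta_{i,T}\}_{i \in V}
```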

Methodology:

- System Definition: Define your system $\Phi$ as a graph $G=(V,E)$, where the nodes $V$ represent agents (e.g., Data_Retriever, Analysis_Agent, Report_Generator) and the edges $E$ represent the data flow.

- Baseline Establishment: Run the system on a curated validation set $\mathcal{D}$ and measure the baseline performance using metric $\mu$.

- Optimization Loop: For a fixed number of iterations:
  a. Generate Variants: Create new candidate prompts $\theta'_{i,T}$ for one or more nodes $i$. This can be done manually or automatically (e.g., using an LLM to generate prompt variations).
  b. Evaluate: Run the system with the new parameters on $\mathcal{D}$ and compute $\mu(\Phi(q_i), m_i)$ for each query $q_i$ and its reference $m_i$.
  c. Select: Compare the average performance against the current best (initially the baseline). If it improves, adopt $\theta'_{i,T}$ as the new current best.

- Validation: Apply the optimized parameters to a held-out test set to confirm performance improvement.
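The methodology above amounts to a greedy search over prompts with the graph held fixed. The sketch below assumes the whole system can be called as system(prompts, query). The names toy_system, propose, and INSTRUCTIONS are hypothetical stand-ins; in practice propose would typically ask an auxiliary LLM for prompt rewrites. Exact match plays the role of $\mu$.

```python
from typing import Callable, Dict, List, Tuple

Prompts = Dict[str, str]          # textual parameters theta_{i,T}, keyed by node name
Dataset = List[Tuple[str, str]]   # pairs (q_i, m_i)
System = Callable[[Prompts, str], str]
Metric = Callable[[str, str], float]

def average_score(system: System, prompts: Prompts, data: Dataset, metric: Metric) -> float:
    """Mean of mu(Phi(q_i), m_i) over a dataset."""
    return sum(metric(system(prompts, q), m) for q, m in data) / len(data)

def optimize_prompts(system: System, prompts: Prompts, propose: Callable[[Prompts, int], Prompts],
                     val: Dataset, metric: Metric, iterations: int = 10) -> Tuple[Prompts, float]:
    """Greedy search over prompts; the topology (which nodes run, in what order) never changes."""
    best, best_score = dict(prompts), average_score(system, prompts, val, metric)
    for step in range(iterations):
        candidate = propose(best, step)                        # a. generate a variant
        score = average_score(system, candidate, val, metric)  # b. evaluate on D
        if score > best_score:                                 # c. keep only if it beats the current best
            best, best_score = candidate, score
    return best, best_score

# Toy usage (hypothetical): reports are "correct" only if the generator prompt asks for the synergy score.
INSTRUCTIONS = ["Be concise.", "Cite the source table.", "Report the synergy score."]

def toy_system(prompts: Prompts, query: str) -> str:
    ok = "synergy score" in prompts["Report_Generator"]
    return f"answer:{query}" if ok else "answer:unknown"

def propose(prompts: Prompts, step: int) -> Prompts:
    # Stand-in for an LLM that rewrites prompts: append one instruction per iteration.
    new = dict(prompts)
    new["Report_Generator"] += " " + INSTRUCTIONS[step % len(INSTRUCTIONS)]
    return new

def exact_match(pred: str, ref: str) -> float:
    return float(pred == ref)

val = [(q, f"answer:{q}") for q in ("pairA", "pairB", "pairC")]
test_set = [(q, f"answer:{q}") for q in ("pairD", "pairE")]
best, val_score = optimize_prompts(toy_system, {"Report_Generator": "Write a report."},
                                   propose, val, exact_match)
test_score = average_score(toy_system, best, test_set, exact_match)  # held-out check
print(best["Report_Generator"], val_score, test_score)
# Write a report. Report the synergy score. 1.0 1.0
```

Candidates are accepted only when they beat the current best on the validation set, so the held-out test score is the honest estimate of improvement. A large gap between the two scores suggests the prompts have overfit $\mathcal{D}$.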

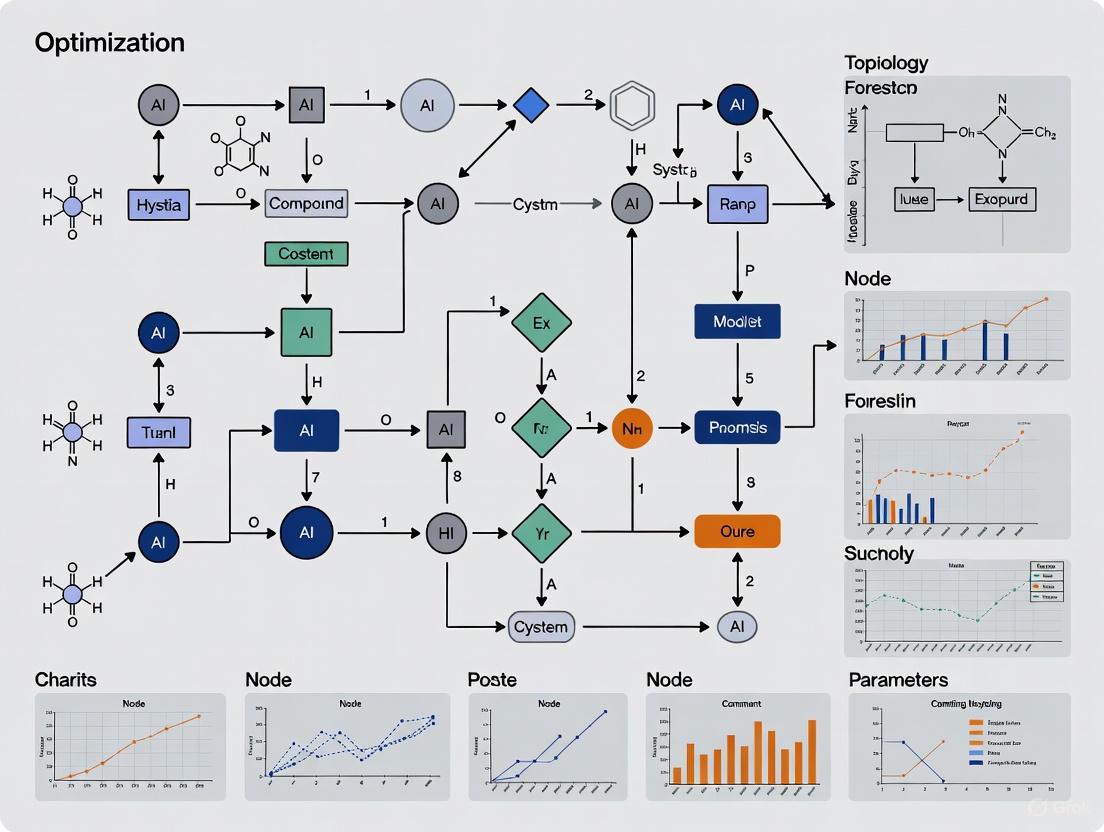

System Visualization: Architecture and Optimization

Compound AI System Topology

Parameter Optimization Workflow

Frequently Asked Questions (FAQs)

Q1: What is the fundamental difference between an AI Model and a Compound AI System? An AI Model is a single statistical model, like a Transformer that predicts the next token in text. In contrast, a Compound AI System is a configuration that tackles AI tasks by combining multiple interacting components, such as multiple calls to models, retrievers, or external tools [9]. The key difference is that compound systems leverage the strengths of various specialized components to solve problems more effectively than a single model can [10].

Q2: What are the primary architectural choices when designing a Multi-Agent System? The two primary network architectures for Multi-Agent Systems are [11]:

- Centralized Networks: A central unit contains global knowledge, connects agents, and oversees their information. This allows for easy communication but creates a single point of failure.

- Decentralized Networks: Agents share information only with their neighbors without a global knowledge base. This architecture is more robust and modular but requires more complex coordination.

Q3: Why would a researcher choose to build a Compound AI System over using a single, more powerful LLM? There are several strategic reasons [12] [9]:

- Maximizing Performance: For high-value applications, system design (e.g., sampling multiple solutions) can often improve results more than simply using a larger model.

- Dynamic Knowledge: Systems can incorporate timely data through retrieval, overcoming the fixed knowledge of a statically trained model.

- Improved Control & Trust: Systems can include components to filter outputs, verify facts, or provide citations, reducing hallucinations and increasing reliability.

- Cost-Quality Flexibility: Systems allow developers to tailor the cost and quality of outputs by combining different models and tools, rather than being locked to a single model's performance and cost.
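A model cascade illustrates the cost-quality point. A cheap model answers first, and the query is escalated to a larger model only when the cheap model's confidence is low. The tiers, costs, and confidence functions below are hypothetical toys. In a real system, confidence might come from a calibrated score, from self-consistency across samples, or from a verifier.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple

@dataclass
class ModelTier:
    name: str
    cost_per_call: float                        # hypothetical relative cost units
    answer: Callable[[str], Tuple[str, float]]  # returns (answer, confidence in [0, 1])

def cascade(query: str, tiers: List[ModelTier], min_confidence: float = 0.8) -> Tuple[str, str, float]:
    """Try models from cheapest to most capable; stop at the first confident answer."""
    if not tiers:
        raise ValueError("need at least one model tier")
    spent = 0.0
    for tier in tiers:
        ans, conf = tier.answer(query)
        spent += tier.cost_per_call
        if conf >= min_confidence:
            return ans, tier.name, spent
    # No tier was confident enough: fall back to the last (most capable) model's answer.
    return ans, tier.name, spent

# Toy tiers: the small model is only "confident" on short questions.
small = ModelTier("small", 1.0, lambda q: ("short answer", 0.9 if len(q) < 40 else 0.4))
large = ModelTier("large", 20.0, lambda q: ("detailed answer", 0.95))

print(cascade("What is the target of drug X?", [small, large]))
# ('short answer', 'small', 1.0)
print(cascade("Compare resistance mechanisms across three kinase inhibitor classes", [small, large]))
# ('detailed answer', 'large', 21.0)
```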

Q4: In the context of drug development, what is a concrete example of an AI agent's function? A prominent example is the use of AI to create "digital twins" in clinical trials. An AI agent can generate a model that predicts an individual patient's disease progression over time. This digital twin serves as a control, allowing researchers to compare the actual effects of an experimental therapy against the predicted outcome, thereby reducing the number of participants needed in a trial without compromising its statistical integrity [13].

Q5: What is "Tool Use" in Agentic AI and why is it critical? Tool Use refers to an AI agent's ability to call external services and APIs by itself. This allows agents to interact with databases, search engines, code execution environments, and other software systems. It is a key capability that amplifies an agent's functionality far beyond its built-in knowledge, turning it into a versatile tool that can perform a wider scope of tasks [14].

Troubleshooting Guides

Challenge: Unpredictable or Conflicting Agent Behavior in a Multi-Agent System

This occurs when agents in a decentralized network act autonomously in ways that conflict or lead to undesirable system-wide outcomes.

Diagnosis Steps:

- Map Agent Dependencies: Identify the goals, resources, and communication pathways of all agents involved.

- Analyze Communication Logs: Check if agents are successfully sharing information, goals, and learned policies. Look for misunderstandings or failures in the communication protocol [11].

- Check for Conflicting Goals: Determine if individual agents have objectives that are inherently in conflict, leading to competition rather than cooperation [11].

Resolution Steps:

- Implement Robust Communication Protocols: Use standardized agent communication languages such as the Knowledge Query and Manipulation Language (KQML) or the FIPA Agent Communication Language (FIPA-ACL) to ensure clear communication [15].

- Adopt a Coalition or Team Structure: Temporarily unite agents (coalition) or organize them into a hierarchical structure (team) to align their efforts towards a common superordinate goal [11].

- Introduce a Mediation Mechanism: Design a lightweight overseer agent or a set of rules to arbitrate resource conflicts and negotiate between agents.
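Standardized messages are easiest to enforce when every message is validated against a shared schema before delivery. This sketch loosely follows the FIPA-ACL message structure (a performative plus sender, receiver, content, ontology, and a conversation identifier), but it is not a compliant implementation. The performative subset, agent names, and the drug-target-v1 schema are assumptions for illustration.

```python
from dataclasses import dataclass
from typing import Any, Dict, List, Set

# A small subset of FIPA-ACL performatives; the full specification defines more.
PERFORMATIVES = {"inform", "request", "query-ref", "propose", "agree", "refuse"}

@dataclass
class ACLMessage:
    performative: str
    sender: str
    receiver: str
    content: Dict[str, Any]
    ontology: str          # name of the shared vocabulary/schema (hypothetical)
    conversation_id: str

def validate(msg: ACLMessage, known_agents: Set[str], schemas: Dict[str, Set[str]]) -> List[str]:
    """Return a list of problems; an empty list means the message is well-formed."""
    errors: List[str] = []
    if msg.performative not in PERFORMATIVES:
        errors.append(f"unknown performative: {msg.performative}")
    for agent in (msg.sender, msg.receiver):
        if agent not in known_agents:
            errors.append(f"unknown agent: {agent}")
    if msg.ontology not in schemas:
        errors.append(f"unknown ontology: {msg.ontology}")
    else:
        missing = schemas[msg.ontology] - set(msg.content)
        if missing:
            errors.append(f"missing content keys: {sorted(missing)}")
    return errors

SCHEMAS = {"drug-target-v1": {"target", "evidence_ids", "confidence"}}
AGENTS = {"genomics_agent", "literature_agent", "supervisor"}

ok = ACLMessage("inform", "genomics_agent", "supervisor",
                {"target": "TARGET_A", "evidence_ids": ["E1"], "confidence": 0.8},
                "drug-target-v1", "conv-001")
bad = ACLMessage("shout", "genomics_agent", "pathway_agent",
                 {"target": "TARGET_A"}, "drug-target-v1", "conv-001")
print(validate(ok, AGENTS, SCHEMAS))   # []
print(validate(bad, AGENTS, SCHEMAS))
```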

Challenge: Poor End-to-End Performance in a Compound AI System

The overall quality of a compound system (e.g., a RAG pipeline) is unsatisfactory, and it's unclear which component is the bottleneck.

Diagnosis Steps:

- Isolate and Evaluate Components: Independently test the performance of each system component. For a RAG system, this means evaluating the retriever's accuracy and the LLM's generation quality separately [12].

- Check for Component Mismatch: Ensure that the components are co-optimized to work together. For example, an LLM might be generating search queries that are not optimal for a specific retriever's design [9].

- Profile Resource Allocation: Analyze the latency and cost budget allocated to each component. An imbalance (e.g., spending 80% of the latency budget on a retriever) can severely limit the system's performance [9].
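For a RAG pipeline, isolating the retriever usually starts with recall@k: the share of relevant documents that appear in the top k results. The sketch assumes you have relevance judgments per query (qrels), and the document ids are placeholders. If recall@k is low, no amount of prompt tuning on the generator will recover the missing evidence. If recall@k is high while end-to-end quality is poor, the bottleneck is more likely in generation.

```python
from typing import Dict, List, Set

def recall_at_k(retrieved: List[str], relevant: Set[str], k: int) -> float:
    """Fraction of the relevant documents that appear among the top-k retrieved ones."""
    if not relevant:
        raise ValueError("recall@k is undefined when there are no relevant documents")
    return len(set(retrieved[:k]) & relevant) / len(relevant)

def mean_recall_at_k(runs: Dict[str, List[str]], qrels: Dict[str, Set[str]], k: int) -> float:
    """Average recall@k over all judged queries (qrels maps query id -> relevant doc ids)."""
    return sum(recall_at_k(runs[q], qrels[q], k) for q in qrels) / len(qrels)

# Toy retriever output and relevance judgments (hypothetical document ids)
runs = {"q1": ["d3", "d7", "d1", "d9"], "q2": ["d2", "d5", "d8", "d4"]}
qrels = {"q1": {"d1", "d3"}, "q2": {"d4", "d6"}}
print(mean_recall_at_k(runs, qrels, k=3))  # 0.5
print(mean_recall_at_k(runs, qrels, k=4))  # 0.75
```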

Resolution Steps:

- Develop a Strong Evaluation System: Implement a robust metrics and logging framework to track the performance of individual components and the system as a whole. Tools like MLflow can be used for this purpose [12].

- Iterate on System Design: Experiment with different component combinations and architectures. Use a modular framework that allows you to easily swap out models, retrievers, or tools [12] [9].

- Apply End-to-End Optimization: Use frameworks like DSPy, which can optimize the prompts and weights of multiple components in a pipeline to work better together, even with non-differentiable components like search engines [9].

Challenge: Agentic AI System Demonstrates Unreliable or Non-Factual Outputs

The AI agent successfully uses tools and executes tasks, but its final outputs or decisions are factually incorrect or inconsistent.

Diagnosis Steps:

- Trace the Reasoning Chain: Review the agent's step-by-step reasoning process (if available) to identify where the factual error or logical misstep was introduced.

- Audit Tool Inputs/Outputs: Verify that the data returned by external tools (e.g., databases, APIs) is accurate and current.

- Check Context Management: Assess whether the agent is being provided with the correct, up-to-date context for its decision-making, a process known as Context Engineering [14].

Resolution Steps:

- Implement a Verification Step: Add a final step in the agent's workflow where another agent or a simpler model verifies the output against source documents or knowledge bases.

- Improve Context Engineering: Carefully curate the information provided to the agent to maximize relevance and reliability. This goes beyond simple prompt engineering [14].

- Enforce Tool Use for Fact-Checking: Program the agent to use a web search or database lookup tool specifically to verify critical facts before presenting them as final.
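Context engineering often comes down to deciding which snippets go into a limited context window. The greedy heuristic below prefers verified sources first and then higher relevance, within a word budget. It is an illustrative assumption rather than an established method. The word count stands in for a real tokenizer, and the snippet contents are placeholders.

```python
from dataclasses import dataclass
from typing import List

@dataclass
class Snippet:
    source: str
    text: str
    relevance: float  # e.g. a precomputed retriever score (assumption)
    verified: bool    # e.g. True if it comes from a curated knowledge base (assumption)

def build_context(snippets: List[Snippet], budget_words: int) -> List[Snippet]:
    """Greedy selection: verified snippets first, then by relevance, while the budget allows."""
    ranked = sorted(snippets, key=lambda s: (s.verified, s.relevance), reverse=True)
    chosen: List[Snippet] = []
    used = 0
    for s in ranked:
        n = len(s.text.split())  # crude word count standing in for tokens
        if used + n <= budget_words:
            chosen.append(s)
            used += n
    return chosen

pool = [
    Snippet("kb", "DRUG_1 is a selective inhibitor of TARGET_X.", 0.7, True),
    Snippet("web", "Forum post speculating DRUG_1 also hits TARGET_Y in some patients.", 0.9, False),
    Snippet("kb", "TARGET_X is overexpressed in the studied tumor model.", 0.6, True),
]
context = build_context(pool, budget_words=20)
print([s.source for s in context])  # ['kb', 'kb']
```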

Comparative Analysis: System Architectures

Table 1: Key Concepts and Their Characteristics in Agentic AI.

| Concept | Core Definition | Key Characteristics | Common Frameworks |
|---|---|---|---|
| Agentic AI | A branch of AI focused on agents that can make decisions, plan, and execute tasks autonomously to achieve goals [14]. | Autonomy, Goal-Orientation, Perception, Reasoning, Action [14] [16]. | LangChain, AgentFlow [14]. |
| Compound AI System | A system that uses multiple components (models, retrievers, tools) to solve an AI task more effectively than a single model [10] [9]. | Multi-component, Specialization, Dynamic Knowledge, Improved Control [10] [9]. | Custom-built architectures, often utilizing frameworks for orchestration like DSPy [9]. |
| Multi-Agent System (MAS) | A computerized system composed of multiple interacting intelligent agents that work collectively [11] [15]. | Collaboration, Coordination, Distributed Problem-Solving, Flexibility, Scalability [11]. | JADE, CAMEL [15]. |

Table 2: Troubleshooting Common Scenarios in AI Systems.

| Scenario | Likely Cause | Recommended Action |
|---|---|---|
| Repetitive agent behavior or deadlock | Lack of effective coordination mechanisms; conflicting goals. | Implement flocking or swarming behaviors (separation, alignment, cohesion) or form agent teams/coalitions [11]. |
| Compound system is too slow or expensive | Poor resource allocation between components; using a large LLM for all sub-tasks. | Profile component cost/latency; delegate specific tasks to smaller, specialized models or tools [10] [9]. |
| System outputs are factually incorrect (hallucinations) | Over-reliance on the model's internal knowledge; lack of grounding. | Integrate a retrieval (RAG) component to provide external, verifiable data sources [10] [9]. |

Experimental Protocols & Methodologies

Protocol 1: Evaluating Multi-Agent Coordination in a Simulated Environment

This protocol is designed to test the efficiency of different coordination strategies in a MAS.

- Objective: To measure the task completion time and success rate of a MAS under different organizational structures (Hierarchical vs. Coalition).

- Materials: A multi-agent simulation framework (e.g., CAMEL [15]), a defined task environment (e.g., a supply chain logistics simulator [11]).

- Procedure:

- Configure Agent Team A with a hierarchical structure, where a manager agent delegates tasks to worker agents.

- Configure Agent Team B with a coalition structure, where agents temporarily group based on task requirements.

- Deploy both teams in the simulation environment with an identical complex task (e.g., "optimize package delivery routes under a new constraint").

- Record the time to complete the task and the overall success rate.

- Repeat the experiment multiple times (e.g., with different random seeds) so that differences between the two team structures can be tested for statistical significance.

- Metrics: Average Task Completion Time, Task Success Rate, Resource Utilization Efficiency.
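You can compute the Protocol 1 metrics from repeated runs with the standard library. The run values below are illustrative placeholders, not experimental results. The sketch uses Welch's t statistic because the two team structures need not have equal variance in completion time.

```python
import math
import statistics
from typing import Dict, List, Tuple

Run = Tuple[float, bool]  # (completion time in seconds, task succeeded?)

def summarize(runs: List[Run]) -> Dict[str, float]:
    """Mean/SD of completion time and the fraction of successful runs."""
    times = [t for t, _ in runs]
    return {
        "mean_time": statistics.mean(times),
        "sd_time": statistics.stdev(times),
        "success_rate": sum(ok for _, ok in runs) / len(runs),
    }

def welch_t(a: List[float], b: List[float]) -> float:
    """Welch's t statistic for a difference in means with unequal variances."""
    se2_a = statistics.variance(a) / len(a)
    se2_b = statistics.variance(b) / len(b)
    return (statistics.mean(a) - statistics.mean(b)) / math.sqrt(se2_a + se2_b)

# Placeholder runs for illustration only
team_a = [(120.0, True), (135.0, True), (128.0, False), (122.0, True)]  # hierarchical
team_b = [(140.0, True), (150.0, True), (138.0, True), (146.0, False)]  # coalition
print(summarize(team_a))
print(summarize(team_b))
t = welch_t([x for x, _ in team_a], [x for x, _ in team_b])
print(round(t, 2))
```

For a p-value, scipy.stats.ttest_ind(a, b, equal_var=False) performs the same Welch test. With only a handful of runs per arm, expect wide confidence intervals.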

Protocol 2: Co-Optimization of a Compound RAG System for Scientific Q&A

This protocol outlines how to systematically improve a RAG system designed for answering domain-specific questions, such as in drug discovery.

- Objective: To maximize the answer accuracy of a RAG pipeline by co-optimizing the retriever and the LLM components.

- Materials: A curated dataset of scientific questions and ground-truth answers, a vector database for document retrieval, multiple candidate LLMs (large and small), an evaluation framework like MLflow [12].

- Procedure:

- Baseline: Establish a baseline by running the dataset with a standard RAG setup (e.g., using a generic embedding model and a large LLM).

- Component Isolation: Evaluate the retriever's performance separately by measuring its recall@k for relevant documents.

- Component Swapping: Experiment with different embedding models and LLMs. For example, try a domain-specific embedding model and a smaller, fine-tuned LLM.

- Pipeline Optimization: Use an optimizer like DSPy [9] to automatically tune the prompts and interactions between the retriever and the LLM.

- End-to-End Evaluation: Measure the final answer accuracy of each configuration against the ground-truth dataset.

- Metrics: Recall@k (for retriever), Exact Match (EM) and F1 Score (for end-to-end Q&A accuracy).
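Exact Match and token-level F1 are conventionally computed after SQuAD-style answer normalization: lowercasing, then stripping punctuation, the articles a/an/the, and extra whitespace. The sketch below follows that convention. Check whether it suits your ground-truth answers, since case can matter for some identifiers such as gene symbols.

```python
import re
import string
from collections import Counter

PUNCTUATION = set(string.punctuation)

def normalize(text: str) -> str:
    """SQuAD-style normalization: lowercase, drop punctuation and articles, collapse whitespace."""
    text = "".join(ch for ch in text.lower() if ch not in PUNCTUATION)
    text = re.sub(r"\b(a|an|the)\b", " ", text)
    return " ".join(text.split())

def exact_match(prediction: str, gold: str) -> float:
    return float(normalize(prediction) == normalize(gold))

def token_f1(prediction: str, gold: str) -> float:
    """Harmonic mean of token precision and recall after normalization."""
    pred_tokens, gold_tokens = normalize(prediction).split(), normalize(gold).split()
    if not pred_tokens or not gold_tokens:
        return float(pred_tokens == gold_tokens)  # both empty counts as a match
    overlap = sum((Counter(pred_tokens) & Counter(gold_tokens)).values())
    if overlap == 0:
        return 0.0
    precision, recall = overlap / len(pred_tokens), overlap / len(gold_tokens)
    return 2 * precision * recall / (precision + recall)

print(exact_match("The EGFR gene.", "egfr gene"))  # 1.0
print(token_f1("EGFR and HER2", "EGFR"))            # 0.5
```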

System Topology Visualizations

Diagram 1: Compound AI system topology.

Diagram 2: Multi-agent system workflow.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Components for Building Advanced AI Systems.

| Item | Function in Research | Example Use Case |
|---|---|---|
| LangChain Framework | An open-source framework for building LLM-powered applications. It supports chaining prompts, external tool use, memory, and building AI agents [14]. | Creating an automated workflow that takes a scientific query, searches a database, and summarizes the findings. |
| Model Context Protocol (MCP) | An open protocol that standardizes how LLM applications and agents connect to external tools, data sources, and other components, making these interactions more robust and transparent [14]. | Enabling different agents in a drug discovery pipeline (e.g., a genomics agent and a chemistry agent) to exchange data seamlessly. |
| Digital Twin Generator | An AI-driven model that creates a simulated version of a real-world process or entity (e.g., a patient's disease progression). Used for prediction and analysis [13]. | Generating a control arm for a clinical trial to reduce the number of required human participants and accelerate the trial timeline [13]. |
| Retrieval-Augmented Generation (RAG) | A compound AI technique that combines an LLM with a retrieval system. The retriever fetches relevant, up-to-date information from external sources to ground the LLM's responses [10] [12]. | Building a Q&A system for researchers that answers specific questions by retrieving data from the latest scientific literature and internal lab reports. |
| Orchestration Engine (e.g., watsonx Orchestrate) | A platform designed to manage, coordinate, and monitor the execution of multiple AI agents and workflows within a compound system [10] [11]. | Managing a complex multi-agent system where different agents handle tasks from patient data analysis to clinical trial optimization in a coordinated manner. |

In the modern drug discovery pipeline, Large Language Models (LLMs) offer transformative potential but face significant limitations including hallucinations, information incompleteness, and dissemination of misinformation [17]. These challenges are particularly critical in healthcare contexts where accuracy directly impacts patient outcomes [17]. This technical support center provides structured methodologies for researchers to overcome these limitations through optimized compound AI system topology and node parameter configuration.

Compound AI systems, defined as systems that tackle AI tasks using multiple interacting components, require new optimization approaches. Many of their components (e.g., retrievers, external tools, or LLMs accessed through APIs) are non-differentiable, so the system as a whole cannot be trained end to end with gradient descent [2]. By implementing the structured troubleshooting guides and experimental protocols below, research teams can improve the reliability and performance of LLM-integrated drug discovery workflows.

Frequently Asked Questions

Q1: Our LLM frequently generates plausible but incorrect drug-target interactions. How can we improve factual accuracy? A1: This indicates model hallucination, a known limitation where LLMs generate fluent but factually incorrect content [17] [18]. Implement a knowledge-grounded framework like DrugGPT, which incorporates three cooperative models:

- IA-LLM (Inquiry Analysis LLM) analyzes inquiries to determine required knowledge

- KA-LLM (Knowledge Acquisition LLM) extracts relevant information from verified knowledge bases

- EG-LLM (Evidence Generation LLM) generates answers based on identified evidence [18]
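To make the division of labor concrete, the three roles can be chained as plain functions. This is not DrugGPT's implementation: each LLM is replaced by a hypothetical stub, and the knowledge base contains placeholder entries rather than real drug data. What the sketch illustrates is that the generation step only sees evidence that the acquisition step retrieved, and it abstains when there is none.

```python
from typing import Dict, List

# Toy verified knowledge base; placeholder content, not medical information.
KNOWLEDGE_BASE: Dict[str, Dict[str, str]] = {
    "DRUG_1": {"mechanism": "inhibits TARGET_X", "source": "kb:entry-001"},
    "DRUG_2": {"mechanism": "agonist of TARGET_Y", "source": "kb:entry-002"},
}

def inquiry_analysis(question: str) -> List[str]:
    """IA stub: decide which knowledge entries the question needs."""
    return [name for name in KNOWLEDGE_BASE if name in question]

def knowledge_acquisition(entities: List[str]) -> List[Dict[str, str]]:
    """KA stub: pull evidence only from the verified knowledge base."""
    return [{"entity": e, **KNOWLEDGE_BASE[e]} for e in entities]

def evidence_generation(question: str, evidence: List[Dict[str, str]]) -> str:
    """EG stub: answer strictly from the evidence, citing it, or abstain."""
    if not evidence:
        return "Insufficient evidence in the knowledge base to answer."
    return "; ".join(f"{ev['entity']} {ev['mechanism']} [{ev['source']}]" for ev in evidence)

def answer(question: str) -> str:
    return evidence_generation(question, knowledge_acquisition(inquiry_analysis(question)))

print(answer("What is the mechanism of DRUG_1?"))  # DRUG_1 inhibits TARGET_X [kb:entry-001]
print(answer("What is the mechanism of DRUG_9?"))  # Insufficient evidence in the knowledge base to answer.
```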

Q2: Our drug response predictions lack consistency across similar queries. What structural changes can help? A2: Inconsistent outputs suggest information completeness issues [17]. Optimize your system topology by:

- Implementing a Fixed Structure approach, with a predefined computational graph G=(V,E) whose node parameters are optimized [2]

- Adding retrieval-augmented generation (RAG) modules to ground predictions in established biomedical knowledge bases [2]

- Applying knowledge-consistency prompting and evidence-traceable prompting strategies to improve output credibility [18]

Q3: How can we adapt general-purpose LLMs for specialized drug discovery tasks without full retraining? A3: Utilize Parameter-Efficient Fine-Tuning (PEFT) methods:

- LoRA (Low-Rank Adaptation) adds small trainable matrices to model layers while freezing original weights

- QLoRA (Quantized LoRA) quantizes the frozen base model to 4 bits and trains LoRA adapters on top, enabling fine-tuning of models as large as 65B parameters on a single 48 GB GPU [19]

These approaches substantially reduce compute requirements while enabling domain adaptation for specialized tasks like target-disease linkage analysis [19].
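The core LoRA idea fits in a few lines of NumPy. The pretrained weight $W$ stays frozen, and a low-rank update $BA$, scaled by alpha/r, is added to its output. The sketch follows the original LoRA initialization: $A$ is random and $B$ is zero, so training starts from the base model. The layer size and rank here are arbitrary. For real models, libraries such as Hugging Face PEFT provide this through LoraConfig and get_peft_model, and QLoRA additionally stores the frozen weights in 4-bit form.

```python
import numpy as np

class LoRALinear:
    """Computes W x + (alpha / r) * B A x, with W frozen and only A and B trainable."""

    def __init__(self, W: np.ndarray, r: int = 8, alpha: float = 16.0, seed: int = 0) -> None:
        d_out, d_in = W.shape
        rng = np.random.default_rng(seed)
        self.W = W                                      # frozen pretrained weight
        self.A = rng.normal(0.0, 0.01, size=(r, d_in))  # trainable down-projection
        self.B = np.zeros((d_out, r))                   # trainable up-projection, zero at start
        self.scale = alpha / r

    def __call__(self, x: np.ndarray) -> np.ndarray:
        return self.W @ x + self.scale * (self.B @ (self.A @ x))

    def trainable_params(self) -> int:
        return self.A.size + self.B.size

d = 1024
W = np.random.default_rng(1).normal(size=(d, d))
layer = LoRALinear(W, r=8)
x = np.ones(d)
assert np.allclose(layer(x), W @ x)      # B = 0, so the adapted layer starts identical to the base
print(layer.trainable_params(), W.size)  # 16384 1048576 (about 1.6% of the full matrix)
```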

Q4: Our multi-component AI system suffers from integration bottlenecks. How can we optimize component interactions? A4: This requires compound AI system optimization. Formalize your system as Φ=(G,ℱ) where G is a directed graph and ℱ is a set of operations [2]. Then apply:

- Structural Flexibility analysis to determine whether to modify system topology or optimize existing node parameters [2]

- Graph-based formalization to map dependencies between components and identify optimization pathways [2]

Troubleshooting Guides

Problem 1: Hallucinations in Drug-Target Recommendation

Symptoms: Generated content appears reasonable but contains factually incorrect drug mechanisms or target interactions.

Diagnosis: Lack of grounding in verified pharmacological knowledge bases.

Solution: Implement the knowledge-grounded collaborative framework.

Experimental Protocol:

- Knowledge Base Integration
  - Incorporate Drugs.com, NHS, and PubMed databases
  - Construct a Disease-Symptom-Drug Graph (DSDG) modeling relationships between entities

- Collaborative Mechanism Setup
  - Configure IA-LLM with chain-of-thought (CoT) and few-shot prompting for inquiry analysis
  - Train KA-LLM using knowledge-based instruction prompt tuning for evidence extraction
  - Implement EG-LLM with knowledge-consistency prompting for answer generation

- Validation Framework
  - Test on MedQA-USMLE, MedMCQA, and MMLU-Medicine datasets
  - Evaluate using accuracy, precision, recall, and F1 scores
  - Compare against baseline LLMs (GPT-4, ChatGPT, Med-PaLM-2)
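At its simplest, a DSDG is a graph linking diseases to symptoms and to drugs. The sketch below shows one possible in-memory representation, with a Jaccard-overlap lookup from observed symptoms to candidate drugs. It is not the construction used in [18], and all diseases, symptoms, and drugs are placeholders.

```python
from collections import defaultdict
from typing import Dict, List, Set, Tuple

class DSDG:
    """Toy Disease-Symptom-Drug Graph: disease nodes link to symptom nodes and drug nodes."""

    def __init__(self) -> None:
        self.symptoms: Dict[str, Set[str]] = defaultdict(set)  # disease -> symptoms
        self.drugs: Dict[str, Set[str]] = defaultdict(set)     # disease -> drugs

    def add_symptom(self, disease: str, symptom: str) -> None:
        self.symptoms[disease].add(symptom)

    def add_treatment(self, disease: str, drug: str) -> None:
        self.drugs[disease].add(drug)

    def candidate_drugs(self, observed: Set[str]) -> List[Tuple[str, str, float]]:
        """Score diseases by Jaccard overlap with observed symptoms; return (drug, disease, score)."""
        results: List[Tuple[str, str, float]] = []
        for disease, known in self.symptoms.items():
            score = len(known & observed) / len(known | observed)
            if score > 0:
                results.extend((drug, disease, round(score, 2)) for drug in sorted(self.drugs[disease]))
        return sorted(results, key=lambda item: item[2], reverse=True)

graph = DSDG()
for s in ("fever", "cough", "fatigue"):
    graph.add_symptom("DISEASE_A", s)
for s in ("rash", "fever"):
    graph.add_symptom("DISEASE_B", s)
graph.add_treatment("DISEASE_A", "DRUG_1")
graph.add_treatment("DISEASE_B", "DRUG_2")
print(graph.candidate_drugs({"fever", "cough"}))
# [('DRUG_1', 'DISEASE_A', 0.67), ('DRUG_2', 'DISEASE_B', 0.33)]
```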

Table: Performance Comparison of DrugGPT vs. Baseline Models on Medical QA Tasks

| Model | MedQA-USMLE Accuracy | MedMCQA Accuracy | ADE-Corpus-v2 Performance | Parameters |
|---|---|---|---|---|
| DrugGPT | 87.3% | 84.7% | 89.1% | ~7B |
| GPT-4 | 76.2% | 72.8% | 74.5% | ~1.7T |
| ChatGPT | 70.1% | 68.3% | 71.2% | ~175B |
| Med-PaLM-2 | 81.5% | 79.2% | 83.7% | ~340B |

Source: Adapted from DrugGPT evaluation metrics [18]. The developers of GPT-4, ChatGPT, and Med-PaLM-2 have not officially disclosed their parameter counts, so the values shown for these models are unofficial estimates.

Problem 2: Incomplete Drug-Drug Interaction Predictions

Symptoms: System identifies basic interactions but misses complex pharmacokinetic/pharmacodynamic relationships.

Diagnosis: Limited proficiency with complex, information-rich inputs [17].

Solution: Enhance system topology with specialized DDI components.

Experimental Protocol:

- Data Preparation
  - Use the DDI-Corpus, which contains 5,028 manually annotated drug-drug interactions
  - Create a balanced test set with 500 positive and 500 negative samples
  - Incorporate ADE-Corpus-v2 for adverse drug event relationships

- System Architecture Optimization
  - Implement a dedicated DDI analysis node with pharmacological knowledge grounding
  - Add a cross-checking node that validates outputs against DrugBank and clinical databases
  - Configure iterative refinement loops for complex query processing

- Evaluation Metrics
  - Measure accuracy on DDI identification tasks
  - Assess response completeness using 3-point Likert scales
  - Benchmark against domain-specific models and clinical experts
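A seeded sampler can draw the balanced 500/500 test set reproducibly. The pair lists below are placeholders standing in for DDI-Corpus annotations. Balancing matters for interpretation: on this set, a classifier that always predicts "interaction" scores exactly 50% accuracy, which gives a clear floor for comparing real models.

```python
import random
from typing import Callable, List, Tuple

Pair = Tuple[str, str]
Labeled = List[Tuple[Pair, int]]

def balanced_split(positives: List[Pair], negatives: List[Pair],
                   n_per_class: int = 500, seed: int = 42) -> Labeled:
    """Sample n_per_class interacting (label 1) and non-interacting (label 0) pairs, then shuffle."""
    if len(positives) < n_per_class or len(negatives) < n_per_class:
        raise ValueError("not enough examples for a balanced set")
    rng = random.Random(seed)
    sample = [(p, 1) for p in rng.sample(positives, n_per_class)]
    sample += [(p, 0) for p in rng.sample(negatives, n_per_class)]
    rng.shuffle(sample)
    return sample

def accuracy(predict: Callable[[Pair], int], labeled: Labeled) -> float:
    return sum(int(predict(pair) == y) for pair, y in labeled) / len(labeled)

# Placeholder pairs standing in for annotated interacting / non-interacting drug pairs
pos = [(f"DRUG_{i}", f"DRUG_{i + 1}") for i in range(0, 1200, 2)]
neg = [(f"DRUG_{i}", f"DRUG_{i + 3}") for i in range(1, 1200, 2)]
test_set = balanced_split(pos, neg)
print(len(test_set), accuracy(lambda pair: 1, test_set))  # 1000 0.5
```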

Problem 3: Inefficient Fine-Tuning for Domain Adaptation

Symptoms: Model adaptation requires excessive computational resources or fails to capture domain-specific nuances.

Diagnosis: Suboptimal fine-tuning strategy selection for specialized drug discovery tasks.

Solution: Implement structured fine-tuning protocol based on model size and task complexity.

Experimental Protocol:

- Task Analysis

- Categorize target task: drug recommendation, dosage optimization, or adverse reaction prediction

- Estimate data availability: low (<1k samples), medium (1k-10k), or high (>10k)

- Assess computational constraints: single GPU vs. multi-node cluster

Fine-Tuning Method Selection

- For parameter-efficient adaptation: Implement LoRA or QLoRA

- For high-resource scenarios: Consider full fine-tuning with progressive layer unfreezing

- For multi-task requirements: Deploy adapter-based approaches for task switching

Validation Framework

- Domain-specific benchmarks: DrugBank-QA, MIMIC-DrugQA, COVID-Moderna

- Generalization assessment: Cross-database performance evaluation

- Clinical relevance: Expert evaluation of output usefulness
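The method-selection rules can be encoded as a small decision helper. This is a hypothetical sketch: only the data-availability thresholds (<1k, 1k-10k, >10k samples) come from the task analysis step, while the priority order of the rules (multi-task needs first, then memory limits, then data volume) is an assumption layered on the bullets above, not a validated policy.

```python
from enum import Enum


class Method(str, Enum):
    FULL = "full fine-tuning with progressive layer unfreezing"
    LORA = "LoRA"
    QLORA = "QLoRA"
    ADAPTER = "adapter-based"


def data_tier(n_samples: int) -> str:
    """Bucket data availability with the thresholds from the task analysis step."""
    if n_samples < 1_000:
        return "low"
    if n_samples <= 10_000:
        return "medium"
    return "high"


def select_method(n_samples: int, multi_node: bool,
                  memory_constrained: bool, multi_task: bool) -> Method:
    """Hypothetical rule ordering that mirrors the method-selection bullets."""
    if multi_task:
        return Method.ADAPTER  # adapters can be swapped per task on one shared base model
    if memory_constrained:
        return Method.QLORA    # quantized 4-bit base weights reduce GPU memory
    if data_tier(n_samples) == "high" and multi_node:
        return Method.FULL     # enough data and compute to update all weights
    return Method.LORA         # default parameter-efficient choice


print(select_method(50_000, multi_node=True, memory_constrained=False, multi_task=False).value)
print(select_method(800, multi_node=False, memory_constrained=True, multi_task=False).value)
```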

Table: Fine-Tuning Method Comparison for Drug Discovery Applications

| Method | Best For | Compute Requirements | Parameter Efficiency | Typical Performance Gain |
|---|---|---|---|---|
| Full Fine-Tuning | High-resource domains with >10k samples | Very High | Low | 15-25% |
| LoRA | Limited data scenarios with moderate compute | Medium | High | 12-20% |
| QLoRA | Memory-constrained environments | Low | Very High | 10-18% |
| Adapter-Based | Multi-task learning and rapid switching | Medium-High | Medium | 8-15% |

Source: Adapted from fine-tuning landscape analysis [19]
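The "High" parameter efficiency of LoRA follows from how the method works: a pretrained weight matrix W of shape d × k is frozen, and only a low-rank update ΔW = BA is trained, with B of shape d × r and A of shape r × k. Each adapted matrix therefore needs r(d + k) trainable parameters instead of d·k. The arithmetic below uses an illustrative 4096 × 4096 projection; the matrix size and ranks are assumptions, not values from the cited analysis.

```python
def full_params(d: int, k: int) -> int:
    """Trainable parameters when a d x k weight matrix is updated directly."""
    return d * k


def lora_params(d: int, k: int, r: int) -> int:
    """Trainable parameters for LoRA: W stays frozen, delta W = B @ A with B (d x r), A (r x k)."""
    return r * (d + k)


d = k = 4096  # illustrative projection size (assumption)
for r in (4, 8, 16):
    ratio = lora_params(d, k, r) / full_params(d, k)
    print(f"r={r:>2}: {lora_params(d, k, r):,} trainable vs {full_params(d, k):,} ({ratio:.2%})")
```

At rank 8 the update trains under 0.4% of the parameters of the full matrix, which is why LoRA and QLoRA fit on a single GPU where full fine-tuning may not.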

The Scientist's Toolkit

Table: Essential Research Reagents for LLM-Enhanced Drug Discovery

| Reagent / Tool | Function | Application Example |
|---|---|---|
| Drugs.com Database | Comprehensive drug information source | Grounding drug mechanism predictions in verified data [18] |
| Disease-Symptom-Drug Graph (DSDG) | Knowledge graph modeling medical relationships | Enabling evidence-based drug recommendation [18] |
| LoRA (Low-Rank Adaptation) | Parameter-efficient fine-tuning method | Adapting base LLMs to specialized pharmacology tasks [19] |
| DDI-Corpus | Manually annotated drug-drug interactions | Training and validating interaction prediction models [18] |
| MedQA-USMLE Dataset | Professional medical examination questions | Benchmarking model performance on clinical reasoning [18] |
| Compound AI System Framework | Formalized approach for multi-component systems | Optimizing topology and parameters of complex AI workflows [2] |

Experimental Protocols

Protocol 1: Compound AI System Optimization for Target Identification

Objective: Optimize multi-component AI system for novel drug target identification.

Workflow:

System Formalization

- Define the computational graph $G=(V,E)$ with nodes for target validation, literature analysis, and pathway mapping

- Specify the node operations $\mathcal{F}$, including LLM inference, database lookup, and similarity scoring

Parameter Optimization

- Optimize the textual parameters $\theta_{i,T}$ of each node $i$ (prompt engineering)

- Tune the numerical parameters $\theta_{i,N}$, such as model weights and temperature settings

- Utilize gradient-based or heuristic methods depending on differentiability

Performance Evaluation

- Measure target-disease linkage accuracy against known biological pathways

- Assess novelty of predictions through literature validation

- Benchmark against standalone LLM performance
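The system formalization can be made concrete as a small directed acyclic graph in which each node carries an operation, a textual parameter (its prompt), and numerical parameters (such as temperature). In this hypothetical sketch the node names mirror the protocol, and `stand_in` replaces real LLM calls, database lookups, and similarity scoring; `graphlib` is part of the Python standard library from version 3.9.

```python
from dataclasses import dataclass, field
from graphlib import TopologicalSorter
from typing import Callable, Dict, List


@dataclass
class Node:
    name: str
    op: Callable[[str, Dict[str, float], List[str]], str]    # node operation f_i
    prompt: str                                               # textual parameter theta_{i,T}
    numeric: Dict[str, float] = field(default_factory=dict)  # numerical parameters theta_{i,N}


def run_graph(nodes: Dict[str, Node], edges: Dict[str, List[str]], query: str) -> Dict[str, str]:
    """Execute nodes in topological order; edges maps each node to its predecessor nodes."""
    outputs: Dict[str, str] = {}
    for name in TopologicalSorter(edges).static_order():
        preds = edges.get(name, [])
        inputs = [outputs[p] for p in preds] or [query]  # source nodes receive the user query
        node = nodes[name]
        outputs[name] = node.op(node.prompt, node.numeric, inputs)
    return outputs


def stand_in(prompt: str, params: Dict[str, float], inputs: List[str]) -> str:
    # Placeholder for an LLM call, database lookup, or similarity score.
    return f"{prompt}[T={params.get('temperature', 0.0)}]<-" + "|".join(inputs)


nodes = {
    "literature": Node("literature", stand_in, "summarize evidence", {"temperature": 0.2}),
    "pathway": Node("pathway", stand_in, "map pathways", {"temperature": 0.0}),
    "validation": Node("validation", stand_in, "rank targets", {"temperature": 0.7}),
}
edges = {"validation": ["literature", "pathway"]}  # validation consumes both upstream outputs
out = run_graph(nodes, edges, "idiopathic pulmonary fibrosis")
print(out["validation"])
```

Because `run_graph` treats every node as a black box, the same harness can serve as the objective for parameter optimization: prompts can be varied by prompt-optimization methods, and numerical parameters by gradient-based or heuristic search where the operation is not differentiable.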

Protocol 2: Hallucination Reduction in Pharmacology QA

Objective: Minimize factual errors in pharmacology question answering.

Workflow:

Baseline Assessment

- Evaluate GPT-4, ChatGPT, and Med-PaLM-2 on MedQA-USMLE and PubMedQA datasets

- Quantify hallucination rate through expert annotation

- Identify common error patterns in drug mechanism explanations

Intervention Implementation

- Deploy three-component DrugGPT architecture with cooperative models

- Implement knowledge-consistency prompting to ensure faithfulness

- Apply evidence-traceable prompting for source transparency

Validation Metrics

- Accuracy on standardized medical examinations

- Hallucination rate reduction compared to baselines

- Expert evaluation of response quality and evidence quality

Table: Hallucination Reduction Performance Across Model Architectures

| Model Architecture | MedQA-USMLE Accuracy | Hallucination Rate | Evidence Quality Score |
|---|---|---|---|
| Standard GPT-4 | 76.2% | 18.7% | 2.1/5.0 |
| + Knowledge Grounding | 81.5% | 12.3% | 3.4/5.0 |
| + Evidence Tracing | 84.2% | 8.9% | 4.2/5.0 |
| DrugGPT (Full) | 87.3% | 4.1% | 4.7/5.0 |

Source: Adapted from DrugGPT evaluation results [18]
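The hallucination-rate reduction metric can be expressed as a relative drop against the baseline. A short sketch: `hallucination_rate` assumes one boolean expert flag per annotated response (a hypothetical annotation format), and the rates plugged in are the ones reported in the table above.

```python
from typing import List


def hallucination_rate(expert_flags: List[bool]) -> float:
    """Fraction of responses an expert annotator flagged as containing a hallucination."""
    return sum(expert_flags) / len(expert_flags)


def relative_reduction(baseline: float, candidate: float) -> float:
    """Relative drop in hallucination rate versus the baseline."""
    return (baseline - candidate) / baseline


# Hallucination rates (%) from the table above.
rates = {
    "Standard GPT-4": 18.7,
    "+ Knowledge Grounding": 12.3,
    "+ Evidence Tracing": 8.9,
    "DrugGPT (Full)": 4.1,
}
baseline = rates["Standard GPT-4"]
for name, rate in rates.items():
    print(f"{name:<22} {relative_reduction(baseline, rate):6.1%}")
```

With these inputs, the full DrugGPT configuration corresponds to roughly a 78% relative reduction in hallucination rate compared with standard GPT-4.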

Compound AI systems are advanced frameworks designed to tackle complex tasks by orchestrating multiple, interacting components such as models, retrievers, and tools, rather than relying on a single monolithic model [12]. This architectural shift recognizes that many challenging problems in artificial intelligence, particularly in scientific and research domains, require a division of labor where specialized components handle specific sub-tasks like retrieval, planning, problem-solving, and verification [20].

For researchers in fields like drug development, compound systems offer significant advantages over single-model approaches. They provide better control and trustworthiness by supplying the models with accurate information from external sources and by using tools to enforce output constraints [12]. They are also more dynamic: they can integrate outside resources such as scientific databases, code interpreters, and permissions systems, which makes them more flexible and adaptable to evolving research needs [12]. Finally, they open up a wider range of cost-quality trade-offs, allowing research teams to achieve higher performance or reduce costs by carefully selecting and combining components [12].

Core Components and Their Functions

The Retriever

Function: The retriever component is responsible for sourcing and providing relevant, external information to the system from knowledge bases, scientific databases, or document repositories. It acts as the system's foundational knowledge access module [21] [12].

Technical Implementation:

- Query Understanding and Reformulation: Transforms natural language queries into structured queries suitable for database systems. This includes techniques like self-querying, where the retriever uses an LLM chain to write structured queries for its underlying VectorStore [21].

- Query Expansion: Generates multiple query variations to improve retrieval coverage and effectiveness [21].

- Information Grounding: Provides factual foundation for subsequent reasoning steps, crucial for maintaining scientific accuracy in drug discovery applications.
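Query expansion and multi-query retrieval can be illustrated without any external services. In this minimal sketch the hand-written synonym map, the three-document corpus, and the keyword-overlap retriever are all hypothetical stand-ins for LLM-generated query variants and a vector store. The per-variant rankings are merged with reciprocal rank fusion, one common fusion rule rather than something the cited work prescribes.

```python
from collections import defaultdict
from typing import Dict, List

# Hypothetical synonym map; in practice an LLM or a thesaurus would propose variants.
SYNONYMS: Dict[str, List[str]] = {
    "heart attack": ["myocardial infarction"],
    "blood thinner": ["anticoagulant"],
}

CORPUS = {  # toy document store: doc_id -> text
    "d1": "anticoagulant therapy after myocardial infarction",
    "d2": "statin use and heart attack risk",
    "d3": "anticoagulant dosing in renal impairment",
}


def expand_query(query: str) -> List[str]:
    """Return the original query plus variants with known synonyms substituted."""
    variants = [query]
    for term, alts in SYNONYMS.items():
        if term in query:
            variants += [query.replace(term, alt) for alt in alts]
    return variants


def keyword_search(query: str, k: int = 3) -> List[str]:
    """Rank documents by how many query words they contain (stand-in retriever)."""
    words = set(query.lower().split())
    scored = [(len(words & set(text.split())), doc_id) for doc_id, text in CORPUS.items()]
    return [doc_id for score, doc_id in sorted(scored, reverse=True) if score > 0][:k]


def multi_query_retrieve(query: str, k: int = 3, rrf_k: int = 60) -> List[str]:
    """Retrieve for every query variant and fuse the rankings with reciprocal rank fusion."""
    fused: Dict[str, float] = defaultdict(float)
    for variant in expand_query(query):
        for rank, doc_id in enumerate(keyword_search(variant, k), start=1):
            fused[doc_id] += 1.0 / (rrf_k + rank)
    return sorted(fused, key=fused.get, reverse=True)[:k]


query = "blood thinner after heart attack"
print(keyword_search(query))        # original query only
print(multi_query_retrieve(query))  # expanded and fused
```

Here the original query alone ranks `d2` first because it matches "heart attack" literally; the expanded variants surface `d1`, which uses the clinical terms, and fusion moves it to the top.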

The Planner

Function: The planner performs decision-making to form sub-goals and build a path from the current state to a desired future state. It breaks down complex research problems into manageable sequential steps [21].

Technical Implementation:

- Task Decomposition: Adopts a divide-and-conquer approach, decomposing complicated multi-step tasks into several sub-tasks and sequentially planning for each [21].

- Multi-Plan Selection: Generates various alternative plans for a task, then employs task-related search algorithms to select the optimal plan for execution [21].

- Memory-Augmented Planning: Enhances planning with a memory module storing valuable information like domain-specific knowledge, past experiences, and commonsense knowledge, which is retrieved during planning as auxiliary signals [21].
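Multi-plan selection with a memory signal can be sketched as scoring each candidate decomposition and keeping the best. The scoring function is a hypothetical heuristic (coverage of required sub-tasks, a per-step cost, and a bonus for steps that succeeded in earlier plans); a real planner might instead score plans with an LLM or a search procedure, and the step names are illustrative.

```python
from typing import Dict, List, Sequence, Set

Plan = List[str]  # ordered sub-tasks produced by task decomposition


def score_plan(plan: Plan, required: Set[str], memory: Dict[str, float],
               step_penalty: float = 0.1) -> float:
    """Hypothetical score: coverage of required sub-tasks, minus a cost per step,
    plus the average past-success bonus of the plan's steps (memory-augmented planning)."""
    coverage = len(required & set(plan)) / len(required)
    memory_bonus = sum(memory.get(step, 0.0) for step in plan) / len(plan)
    return coverage - step_penalty * len(plan) + memory_bonus


def select_plan(candidates: Sequence[Plan], required: Set[str],
                memory: Dict[str, float]) -> Plan:
    """Multi-plan selection: evaluate every candidate and keep the best-scoring one."""
    return max(candidates, key=lambda p: score_plan(p, required, memory))


required = {"retrieve_literature", "map_pathways", "rank_targets"}
memory = {"map_pathways": 0.2}  # stored success signal from previous runs
candidates = [
    ["retrieve_literature", "rank_targets"],                  # misses a required step
    ["retrieve_literature", "map_pathways", "rank_targets"],  # complete and short
    ["retrieve_literature", "summarize", "summarize", "map_pathways", "rank_targets"],
]
print(select_plan(candidates, required, memory))
```

The step penalty is what discourages the third candidate: it covers every required sub-task but pays for two redundant steps.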

The Solver

Function: The solver executes the specific computational or reasoning tasks identified by the planner. It generates solutions, hypotheses, or content based on the retrieved information and defined plan [20].

Technical Implementation:

- Reasoning Application: Employs logical methods to solve problems by making observations, generating hypotheses, and validating them against data [21].

- Thought Generation: Creates coherent cause-effect relationships to connect information and derive conclusions [21].

- Tool Utilization: Interacts with specialized external tools and environments (e.g., molecular simulators, data analysis packages) to perform actions in pursuit of research goals [21].

The Verifier

Function: The verifier assesses the quality, accuracy, and validity of the solver's outputs. It implements quality control through reflection and refinement cycles [21].

Technical Implementation:

- Output Validation: Checks solutions for factual consistency, logical soundness, and compliance with domain constraints.

- Self-Critique and Refinement: Enables the system to reflect on failures and refine outputs through iterative improvement loops [21].

- Constraint Enforcement: Ensures outputs adhere to required formats, scientific principles, and predefined research parameters.

Component Interaction Workflow
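The interaction among the retriever, planner, solver, and verifier can be sketched as a sequential workflow with a verify-and-refine loop. All four component functions below are hypothetical stubs (the verifier enforces a single toy rule that answers must cite evidence), and the `max_rounds` cap on refinement is an assumption.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Verdict:
    ok: bool
    feedback: str = ""


def retrieve(query: str) -> List[str]:
    # Stub retriever: a real one would query a vector store or scientific database.
    return [f"evidence about {query}"]


def plan(query: str, evidence: List[str]) -> List[str]:
    # Stub planner: decomposes the task into ordered sub-goals.
    return [f"summarize {e}" for e in evidence] + [f"answer: {query}"]


def solve(steps: List[str], feedback: str = "") -> str:
    # Stub solver: a real one would call an LLM or tool per step; feedback triggers a revision.
    draft = " -> ".join(steps)
    return f"{draft} [cites evidence]" if feedback else draft


def verify(answer: str) -> Verdict:
    # Stub verifier enforcing one toy domain rule: answers must cite retrieved evidence.
    if "[cites evidence]" in answer:
        return Verdict(ok=True)
    return Verdict(ok=False, feedback="cite the retrieved evidence")


def run_pipeline(query: str, max_rounds: int = 3) -> Tuple[str, bool]:
    """Retriever -> planner -> solver -> verifier, refining until verification passes."""
    evidence = retrieve(query)
    steps = plan(query, evidence)
    answer = solve(steps)
    for _ in range(max_rounds):
        verdict = verify(answer)
        if verdict.ok:
            return answer, True
        answer = solve(steps, verdict.feedback)  # critique is fed back to the solver
    return answer, verify(answer).ok  # final check after the last refinement


print(run_pipeline("CDK4/6 inhibitors"))
```

Returning the verification flag alongside the answer lets a caller distinguish a verified result from one that exhausted its refinement budget, which is the checkpoint that stops cascading errors.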

System Architecture and Design Patterns

Compound AI systems can be architected following different design patterns, each with distinct advantages for research applications. The two primary patterns are workflow-based systems and agentic systems.

Workflow-Based Systems utilize pre-defined, manually declared plans that solve problems in a predictable, repeatable manner. This approach offers higher reliability through programmatic control flow while benefiting from LLM expressiveness for specific tasks [21].

Agentic Systems employ modules that autonomously decide what steps to take using capabilities like reasoning, planning, and tool usage. This offers greater flexibility in interpreting and acting on complex inputs, though with potential trade-offs in reliability [21].

Multi-Agent Collaborative Systems

In complex research domains like drug development, multi-agent systems enable collaborative problem-solving in which different modules assume specialized roles and build on each other's outputs [21]. This pattern is particularly valuable for tackling multifaceted research problems requiring diverse expertise.

Experimental Protocols and Evaluation Framework

Component-Level Evaluation Protocol

Objective: Systematically assess individual component performance to identify optimization opportunities.

Methodology:

Retriever Evaluation:

- Prepare benchmark queries relevant to your research domain (e.g., compound-target interactions, clinical trial criteria)

- Establish ground truth relevance judgments for retrieved documents

- Calculate standard metrics: Precision@K, Recall@K, Mean Reciprocal Rank (MRR)

- Conduct ablation studies to determine optimal retrieval parameters
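The three retriever metrics can be computed directly from ranked result lists and relevance judgments. A minimal sketch; the document IDs and judgments are hypothetical:

```python
from typing import List, Sequence, Set


def precision_at_k(ranked: Sequence[str], relevant: Set[str], k: int) -> float:
    """Fraction of the top-k retrieved documents that are relevant."""
    return sum(doc in relevant for doc in ranked[:k]) / k


def recall_at_k(ranked: Sequence[str], relevant: Set[str], k: int) -> float:
    """Fraction of all relevant documents that appear in the top k."""
    return sum(doc in relevant for doc in ranked[:k]) / len(relevant)


def mean_reciprocal_rank(runs: List[Sequence[str]], judgments: List[Set[str]]) -> float:
    """Average over queries of 1/rank of the first relevant document (0 if none is retrieved)."""
    total = 0.0
    for ranked, relevant in zip(runs, judgments):
        total += next((1.0 / rank for rank, doc in enumerate(ranked, start=1)
                       if doc in relevant), 0.0)
    return total / len(runs)


# Hypothetical ranked results and ground-truth judgments for two benchmark queries.
runs = [["d3", "d1", "d7", "d2", "d9"], ["d4", "d5", "d6", "d8", "d2"]]
judgments = [{"d1", "d2"}, {"d6"}]
print(precision_at_k(runs[0], judgments[0], 5))  # 2 of the top 5 are relevant
print(recall_at_k(runs[0], judgments[0], 5))
print(mean_reciprocal_rank(runs, judgments))
```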

Planner Evaluation:

- Define complex multi-step tasks representative of research workflows

- Assess plan quality using expert evaluation rubrics measuring:

  - Logical coherence of step sequence

  - Appropriateness for task completion

  - Efficiency in resource utilization

- Measure planning success rate across task categories

Solver Evaluation:

- Develop task-specific performance metrics aligned with research objectives:

  - For hypothesis generation: novelty, feasibility, scientific soundness

  - For data analysis: accuracy, completeness, interpretability

- Implement A/B testing frameworks comparing different solver configurations
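For the A/B testing step, one common choice when each task is scored pass/fail is a two-proportion z-test on the success rates of two solver configurations. A minimal sketch under that assumption (independent tasks, samples large enough for the normal approximation); the counts are hypothetical:

```python
import math
from typing import Tuple


def two_proportion_z_test(success_a: int, n_a: int,
                          success_b: int, n_b: int) -> Tuple[float, float]:
    """Two-sided z-test for a difference in task success rate between configs A and B."""
    p_a, p_b = success_a / n_a, success_b / n_b
    pooled = (success_a + success_b) / (n_a + n_b)  # common success rate under H0: p_a == p_b
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("all tasks passed or all failed; the test is undefined")
    z = (p_b - p_a) / se
    p_value = math.erfc(abs(z) / math.sqrt(2))  # equals 2 * (1 - Phi(|z|))
    return z, p_value


# Hypothetical counts: tasks solved correctly out of 200 per solver configuration.
z, p = two_proportion_z_test(success_a=140, n_a=200, success_b=162, n_b=200)
print(f"z = {z:.2f}, p = {p:.4f}")
```

A positive z means configuration B outperformed A; the p-value indicates whether that gap is larger than sampling noise would explain.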

Verifier Evaluation:

- Create test sets containing both valid and invalid solutions

- Measure verification accuracy, precision, and recall

- Assess false positive/negative rates across error types

- Evaluate refinement effectiveness through solution improvement metrics
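Verification accuracy, precision, recall, and the false positive/negative rates all come from one confusion matrix over the labeled test set. A minimal sketch, treating "solution is invalid" as the positive class so that recall matches the error detection rate in the metrics table; the labels are hypothetical:

```python
from typing import Dict, Sequence


def verifier_metrics(is_invalid: Sequence[bool], flagged: Sequence[bool]) -> Dict[str, float]:
    """Score a verifier against gold labels; the positive class is 'solution is invalid'."""
    pairs = list(zip(is_invalid, flagged))
    tp = sum(1 for gold, pred in pairs if gold and pred)          # invalid and caught
    fn = sum(1 for gold, pred in pairs if gold and not pred)      # invalid but passed
    fp = sum(1 for gold, pred in pairs if not gold and pred)      # valid but rejected
    tn = sum(1 for gold, pred in pairs if not gold and not pred)  # valid and passed

    def ratio(num: int, den: int) -> float:
        return num / den if den else 0.0

    return {
        "accuracy": ratio(tp + tn, len(pairs)),
        "precision": ratio(tp, tp + fp),
        "recall": ratio(tp, tp + fn),               # error detection rate
        "false_positive_rate": ratio(fp, fp + tn),  # valid solutions wrongly flagged
        "false_negative_rate": ratio(fn, fn + tp),  # errors that slipped through
    }


# Hypothetical test set: 4 invalid and 6 valid solutions.
gold = [True] * 4 + [False] * 6
pred = [True, True, True, False, True] + [False] * 5
print(verifier_metrics(gold, pred))
```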

End-to-End System Evaluation Protocol

Objective: Measure overall system performance on complete research tasks.

Methodology:

Task Design:

- Develop comprehensive benchmark tasks reflecting real research challenges

- Include tasks of varying complexity levels

- Ensure tasks require integrated use of all system components

Evaluation Framework:

- Employ both automated metrics and expert human evaluation

- Establish scoring rubrics assessing:

  - Factual accuracy and scientific validity

  - Completeness of solution

  - Efficiency in task completion

  - Novelty and creativity of approach

- Compare performance against baseline methods and expert performance

Iterative Optimization:

- Use evaluation results to identify system bottlenecks

- Implement targeted component improvements

- Re-evaluate to measure improvement impact

- Maintain detailed experiment logs for reproducibility

Quantitative Evaluation Metrics Table

| Component | Primary Metrics | Target Benchmarks | Measurement Frequency |
|---|---|---|---|
| Retriever | Precision@5: >0.85; Recall@10: >0.90; MRR: >0.80 | Domain-specific knowledge base coverage | Per 100 queries and monthly comprehensive review |
| Planner | Task completion rate: >85%; Step efficiency ratio: >0.75; Human approval rate: >80% | Expert-defined optimal workflows and protocols | Per 50 complex tasks and quarterly expert review |
| Solver | Solution accuracy: >90%; Hallucination rate: <5%; Response coherence: >85% | Domain expert performance on standardized tests | Continuous monitoring with weekly aggregate reporting |
| Verifier | Error detection rate: >95%; False positive rate: <8%; Refinement efficacy: >70% | Human expert validation as gold standard | Per verification cycle and monthly calibration |

Troubleshooting Guide: Common Issues and Solutions

Retrieval Performance Issues

Problem: The retriever consistently returns irrelevant or incomplete information for research queries.

Symptoms:

- Generated solutions lack domain-specific knowledge

- High factual error rate in solver outputs

- Poor performance on tasks requiring specialized knowledge

Diagnostic Steps:

- Check query understanding module performance using test queries

- Evaluate embedding effectiveness for domain-specific terminology

- Assess knowledge base coverage for relevant research areas

- Analyze retrieval failure patterns across query types

Solutions:

- Implement query expansion techniques (synonym generation, hyponym inclusion) [21]

- Fine-tune embeddings on domain-specific corpora

- Enhance knowledge base with specialized research sources

- Implement multi-query retrieval strategies [21]

Planning Inefficiencies

Problem: The planner creates suboptimal task decompositions or inefficient workflows.

Symptoms:

- Excessive steps for straightforward tasks

- Illogical step sequences

- Missing critical process steps

- Resource-intensive planning with minimal benefit

Diagnostic Steps:

- Analyze planning trajectories for similar tasks

- Evaluate plan optimality against expert-defined workflows

- Assess planning consistency across task variations

- Measure planning time versus execution time ratios

Solutions:

- Implement multi-plan selection with optimal plan identification [21]

- Augment planning with memory of successful previous plans [21]

- Incorporate domain-specific planning constraints and heuristics

- Establish planning templates for common research workflows

Solver Quality Problems

Problem: The solver generates inaccurate, nonsensical, or hallucinated content.

Symptoms:

- Factual inconsistencies in generated solutions

- Logical fallacies in reasoning chains

- Poor alignment with scientific principles

- Low expert approval rates

Diagnostic Steps:

- Conduct ablation studies to isolate solver vs. retrieval issues

- Evaluate solver performance with perfect retrieval inputs

- Analyze error patterns across problem types

- Assess reasoning chain coherence and validity

Solutions:

- Implement chain-of-thought reasoning with explicit validation steps [21]

- Enhance solver prompts with domain-specific constraints and examples

- Incorporate external tool usage for specialized computations [21]

- Establish solution verification checkpoints throughout generation process

Verification System Failures

Problem: The verifier misses critical errors or incorrectly flags valid solutions.

Symptoms:

- Invalid solutions passing verification

- Valid solutions rejected unnecessarily

- Inconsistent verification standards

- Limited refinement effectiveness

Diagnostic Steps:

- Analyze verification decision patterns on known-valid and known-invalid solutions

- Assess verification consistency across similar solutions

- Evaluate refinement impact on solution quality

- Measure verification module calibration

Solutions:

- Implement multi-stage verification with increasing scrutiny [21]

- Enhance verification criteria with domain-specific validation rules

- Incorporate external validation tools and resources

- Establish verification confidence scoring with appropriate thresholds

Troubleshooting Workflow Diagram
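The diagnostic flow can also be expressed as a check of measured metrics against the targets in the Quantitative Evaluation Metrics Table above. A hypothetical sketch: the metric keys are illustrative names, percentages are written as fractions, and the rule of starting with the earliest failing pipeline stage is an assumption motivated by the cascading-error failure mode, not a prescribed procedure.

```python
from typing import Dict, List, Optional, Tuple

# Targets from the metrics table as (metric, direction, threshold); percentages as fractions.
TARGETS: Dict[str, List[Tuple[str, str, float]]] = {
    "Retriever": [("precision@5", ">", 0.85), ("recall@10", ">", 0.90), ("mrr", ">", 0.80)],
    "Planner": [("task_completion_rate", ">", 0.85), ("step_efficiency_ratio", ">", 0.75),
                ("human_approval_rate", ">", 0.80)],
    "Solver": [("solution_accuracy", ">", 0.90), ("hallucination_rate", "<", 0.05),
               ("response_coherence", ">", 0.85)],
    "Verifier": [("error_detection_rate", ">", 0.95), ("false_positive_rate", "<", 0.08),
                 ("refinement_efficacy", ">", 0.70)],
}
PIPELINE_ORDER = ["Retriever", "Planner", "Solver", "Verifier"]


def failing_checks(measured: Dict[str, Dict[str, float]]) -> Dict[str, List[str]]:
    """Return, per component, the metrics that miss their target (unmeasured ones are skipped)."""
    failures: Dict[str, List[str]] = {}
    for component, checks in TARGETS.items():
        values = measured.get(component, {})
        for metric, direction, threshold in checks:
            if metric not in values:
                continue
            value = values[metric]
            ok = value > threshold if direction == ">" else value < threshold
            if not ok:
                failures.setdefault(component, []).append(metric)
    return failures


def first_component_to_fix(measured: Dict[str, Dict[str, float]]) -> Optional[str]:
    """Start troubleshooting at the earliest failing stage, since upstream errors cascade."""
    failures = failing_checks(measured)
    return next((c for c in PIPELINE_ORDER if c in failures), None)


measured = {"Retriever": {"precision@5": 0.78, "mrr": 0.83}, "Solver": {"hallucination_rate": 0.09}}
print(failing_checks(measured))
print(first_component_to_fix(measured))
```

In this example the solver's hallucination rate also misses its target, but the retriever is checked first, consistent with the diagnostic step of isolating solver issues from retrieval issues.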

Frequently Asked Questions (FAQs)

Q1: How do we determine the optimal complexity for a compound AI system versus using a single model?

A1: The decision should be based on task complexity, reliability requirements, and available resources. Single models are sufficient for straightforward tasks with well-defined outputs. Compound systems become beneficial when tasks require: (1) integration of external or proprietary knowledge, (2) multi-step reasoning with verification, (3) specialized tools or computations, or (4) higher reliability than a single model can provide. Start with the simplest viable architecture and incrementally add components only when they address specific performance gaps [12].

Q2: What strategies are most effective for optimizing component integration in compound systems?

A2: Effective integration strategies include:

- Modular Design: Create well-defined interfaces between components to enable independent testing and optimization [12]

- Structured Communication: Establish clear data formats and protocols for component interactions

- Joint Optimization: Rather than optimizing components in isolation, assess and refine them in the context of their interactions within the full system [12]

- Iterative Refinement: Use evaluation results to identify integration bottlenecks and address them systematically

Q3: How can we effectively evaluate and benchmark compound AI systems for research applications?

A3: Implement a multi-faceted evaluation framework including:

- Component-level metrics to assess individual module performance

- End-to-end task success rates measuring overall system effectiveness

- Human expert evaluation for quality assessment of outputs

- Efficiency metrics tracking computational resource utilization

- A/B testing capabilities to compare different system configurations [12]

Focus evaluation on tasks representative of real research workflows rather than artificial benchmarks.

Q4: What are the most common failure modes in compound AI systems and how can we mitigate them?

A4: Common failure modes include:

- Cascading errors: Where one component's error propagates through the system - mitigated through verification checkpoints

- Integration inconsistencies: When components use conflicting assumptions or data formats - addressed with clear interface specifications

- Reasoning chain breakdowns: Where multi-step reasoning fails at intermediate steps - improved through better planning and verification

- Knowledge retrieval gaps: When retrievers miss critical information - enhanced through better query understanding and knowledge base coverage

Implement robust error handling, validation at each processing stage, and comprehensive logging for failure analysis.

Q5: How do we manage the increased computational costs and latency of compound systems?

A5: Cost and latency management strategies include:

- Selective component invocation: Only use computationally expensive components when necessary

- Caching strategies: Store and reuse frequent retrieval results or intermediate computations

- Asynchronous processing: Execute independent components in parallel where possible

- Component efficiency optimization: Focus on optimizing the most resource-intensive components first

- Intelligent routing: Direct simpler queries to less expensive processing paths

Establish clear performance budgets and monitor resource utilization continuously.
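Caching and intelligent routing can be combined in a few lines. A minimal sketch, assuming a cheap single-model path and an expensive full compound pipeline (both stubbed out here); the keyword-and-length routing rule is a hypothetical placeholder for a learned or LLM-based router, and `functools.lru_cache` only reuses results for identical query strings.

```python
from functools import lru_cache

CALLS = {"cheap": 0, "expensive": 0}  # counts invocations to show the savings


def cheap_model(query: str) -> str:
    CALLS["cheap"] += 1
    return f"cheap answer to: {query}"


def expensive_pipeline(query: str) -> str:
    CALLS["expensive"] += 1
    return f"full pipeline answer to: {query}"


def is_simple(query: str, max_words: int = 8) -> bool:
    # Hypothetical routing rule: short lookups without multi-step cues take the cheap path.
    cues = ("compare", "why", "mechanism", "design")
    return len(query.split()) <= max_words and not any(c in query.lower() for c in cues)


@lru_cache(maxsize=1024)  # repeated queries are answered without recomputation
def answer(query: str) -> str:
    return cheap_model(query) if is_simple(query) else expensive_pipeline(query)


print(answer("half-life of warfarin"))
print(answer("half-life of warfarin"))  # served from the cache
print(answer("compare the mechanism of apixaban and warfarin in renal impairment"))
print(CALLS, answer.cache_info())
```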

The Scientist's Toolkit: Research Reagents and Solutions

Essential Components for Compound AI System Research

| Research Reagent | Function | Implementation Examples | Considerations for Drug Development |
|---|---|---|---|
| Vector Database | Stores and retrieves embeddings for semantic search | Pinecone, Weaviate, Chroma, PGVector | Must handle domain-specific terminology and structured scientific data |
| Reasoning Engine | Executes logical reasoning and problem-solving tasks | LLMs (GPT-4, Claude, domain-specific models), symbolic reasoning systems | Requires fine-tuning on scientific literature and domain knowledge |
| Tool Integration Framework | Enables interaction with external tools and APIs | LangChain, LlamaIndex, custom API integrations | Critical for connecting to specialized research tools and databases |
| Evaluation Framework | Measures system performance across multiple dimensions | MLflow, TruEra, custom metrics pipelines | Must incorporate domain-specific success metrics and expert validation |
| Orchestration Platform | Manages component interactions and workflows | AutoGen, CrewAI, LangGraph, Prefect | Requires flexibility to adapt to evolving research workflows and protocols |
| Knowledge Bases | Provide domain-specific information to the system | PubMed, DrugBank, ClinicalTrials.gov, proprietary research data | Quality and coverage directly impact system reliability and usefulness |

The Economic and Scientific Imperative for Optimization in Pharma R&D

Technical Support Center

Troubleshooting Guides

Issue 1: Poor Generalization of Machine Learning Models in Virtual Screening

Problem Description: Machine learning models perform well on internal validation sets but show a significant drop in performance when screening novel chemical structures or against new protein target families. Predictions become unreliable for real-world drug discovery applications [22].

Diagnostic Steps

- Performance Gap Analysis: Compare model performance on the standard test set versus a hold-out test set composed of entirely novel protein superfamilies not represented in the training data [22].

- Structural Shortcut Inspection: Analyze whether the model is relying on spurious correlations or memorizing specific structural motifs in the training data, rather than learning the underlying principles of molecular binding [22].

Resolution Protocol

- Implement a Targeted Model Architecture: Shift from a general-purpose model to a specialized architecture that is constrained to learn only from the representation of the protein-ligand interaction space. This forces the model to focus on the distance-dependent physicochemical interactions between atom pairs, which are more transferable across diverse protein families [22].

- Adopt Rigorous Benchmarking: During model validation, simulate real-world scenarios by leaving out entire protein superfamilies and all associated chemical data from the training process. This provides a more realistic assessment of the model's utility for novel target discovery [22].

- Utilize Generalizable Datasets: Train models on large, diverse, and publicly available datasets that encompass a wide variety of protein families and chemical spaces to improve inherent model robustness [22].

Issue 2: Inefficient and Unreliable AI Infrastructure for Large-Scale Training

Problem Description: AI infrastructure cannot handle the computational demands of training deep learning models on massive compound libraries, leading to long training times, system instability, and an inability to scale [23] [24].

Diagnostic Steps

- Resource Utilization Check: Use monitoring tools (e.g., Prometheus, Grafana) to track GPU/CPU utilization, memory usage, and storage I/O during model training to identify bottlenecks [25].

- Workload Assessment: Determine if the workload is primarily data-intensive (processing petabytes of data) or compute-intensive (training complex neural networks), as the solutions differ [24].

Resolution Protocol

- Select Appropriate Hardware Accelerators: For compute-intensive model training, utilize GPUs (e.g., NVIDIA A100) or TPUs for their parallel processing capabilities. For specific, efficient inference tasks, consider FPGAs [24] [25].

- Implement Container Orchestration: Use Kubernetes to automate the deployment, scaling, and management of containerized AI workloads. This ensures high availability and efficient resource use [25].

- Design for Horizontal Scaling: Architect systems to scale out by adding more machines rather than scaling up a single machine. Leverage distributed computing frameworks like TensorFlow or PyTorch to parallelize training tasks across multiple nodes [24].

- Implement Infrastructure-as-Code (IaC): Use tools like Terraform or Ansible to define and provision infrastructure in a consistent, reproducible manner, reducing configuration errors and saving time [25].

Issue 3: Inaccurate Prediction of Compound Physicochemical and ADMET Properties

Problem Description: Quantitative Structure-Activity Relationship (QSAR) models fail to accurately predict complex biological properties like efficacy, metabolic stability, or toxicity, leading to late-stage attrition of drug candidates [23].

Diagnostic Steps

- Data Quality Audit: Check for issues in the training data, such as small dataset size, high experimental error, or lack of diversity in the chemical space [23].

- Model Technique Evaluation: Determine if traditional QSAR models are being used for tasks that require more advanced deep learning approaches capable of handling big data [23].

Resolution Protocol

- Transition to Deep Learning Models: Employ Deep Neural Networks (DNNs) for ADMET prediction, as they have shown superior predictivity compared to traditional ML methods on large, complex datasets [23].

- Leverage Specialized Predictor Tools: Utilize industry-tested AI-based predictors (e.g., ADMET Predictor, ALGOPS) that are trained on extensive, high-quality data to forecast critical properties like lipophilicity and solubility [23].

- Incorporate Diverse Molecular Descriptors: Use advanced molecular representations, such as Coulomb matrices, molecular fingerprint recognition, and 3D atomic coordinates, as input features for the models to improve prediction accuracy [23].

Frequently Asked Questions (FAQs)

FAQ 1: What are the core architectural principles for building a scalable and reliable AI system for drug discovery?

A robust AI system should be designed around four key principles [24]:

- Scalability: The system must handle growing datasets and computational demands, typically achieved through horizontal scaling (adding more machines) and distributed computing [24].

- Reliability: Implement redundancy, fault tolerance, and automated recovery mechanisms to ensure consistent performance, which is critical for applications like medical diagnostics [24].

- Availability: Design systems with high uptime (e.g., 99.9%) using strategies like load balancing and failover mechanisms, essential for real-time applications like fraud detection or clinical trial monitoring [24].

- Maintainability: Use modular designs (e.g., separating data ingestion, preprocessing, training, and inference) and clear documentation to make systems easy to update and debug [24].
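An availability target translates directly into a yearly downtime budget: allowed downtime = (1 - availability) × hours per year. A small calculation, assuming a 365-day year:

```python
HOURS_PER_YEAR = 365 * 24  # 8,760 hours, ignoring leap years


def allowed_downtime_hours(availability: float) -> float:
    """Maximum yearly downtime compatible with an availability target."""
    if not 0.0 <= availability <= 1.0:
        raise ValueError("availability must be a fraction between 0 and 1")
    return (1.0 - availability) * HOURS_PER_YEAR


for target in (0.99, 0.999, 0.9999):
    print(f"{target:.2%} uptime -> {allowed_downtime_hours(target):.2f} h of downtime per year")
```

The 99.9% target therefore leaves a little under nine hours per year for outages and maintenance combined, which is why redundancy and automated failover matter more than manual recovery at that level.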

FAQ 2: How can I improve the accuracy of binding affinity predictions for novel protein targets?

Focus on improving model generalizability. A proven method is to use a task-specific model architecture that learns from the protein-ligand interaction space rather than the raw 3D structures of the protein and ligand. This approach captures the transferable principles of molecular binding, reducing the model's reliance on structural shortcuts that fail with novel targets. Rigorous benchmarking that holds out entire protein superfamilies during training is essential to validate this capability [22].

FAQ 3: Our AI models are computationally expensive. How can we manage infrastructure costs without sacrificing performance?

Optimize costs through several strategies [25]:

- Monitor Utilization: Implement cost-monitoring tools to track GPU and storage usage, identifying and eliminating underused resources.

- Use Hybrid Deployment: Leverage spot instances (cloud) or a hybrid cloud/on-premises model to optimize spending for different workload stages.

- Container Orchestration: Use Kubernetes to auto-scale resources up and down based on demand, ensuring you only pay for what you use.

- Evaluate Total Cost of Ownership (TCO): Consider operational and data transfer expenses, not just upfront costs, when choosing between cloud and on-premises solutions.

FAQ 4: What are the most impactful applications of AI in accelerating the early drug discovery pipeline?

AI impacts several key areas [23] [26] [27]:

- Virtual Screening (VS): AI algorithms can rapidly screen millions of compounds in silico, predicting bioactivity and toxicity, thus prioritizing the most promising candidates for synthesis and testing [23].

- De Novo Drug Design: Generative AI and deep learning models can design novel molecular structures that satisfy specific criteria for potency, selectivity, and ADMET properties [23] [26].

- Lead Optimization: AI can significantly shorten the design-make-test-analyze cycle, with some platforms reporting design cycles that are ~70% faster and require 10x fewer synthesized compounds than industry norms [26].

- Drug Repurposing: AI analyzes vast datasets of biological and clinical information to identify new therapeutic uses for existing drugs [27].

Experimental Protocols & Data

Protocol 1: Evaluating ML Model Generalizability for Novel Protein Targets

Objective: To rigorously assess a machine learning model's ability to accurately predict protein-ligand binding affinity for novel protein families not seen during training [22].

Methodology

- Data Curation: Assemble a large, diverse dataset of protein-ligand complexes with experimentally determined binding affinities (e.g., from PDBBind).

- Data Splitting for Generalization: Partition the dataset at the level of protein superfamilies. All complexes associated with one or more entire superfamilies are completely excluded from the training and validation sets to form the final test set.

- Model Training:

- Train the model on the remaining training set.

- Use a validation set from the training superfamilies for hyperparameter tuning.

- Model Evaluation: The model's performance is exclusively evaluated on the held-out test set of novel protein superfamilies. Key metrics include Pearson's R (for affinity prediction) and AUC-ROC (for classification tasks).

Interpretation: A significant performance drop on the held-out superfamily test set compared to the standard validation set indicates poor generalizability and limited utility for de novo target discovery. A small performance gap indicates a robust model [22].
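The superfamily-level split and the affinity metric can be sketched as follows. This assumes each complex already carries a superfamily label (for example from a classification such as SCOP or CATH) and a measured affinity; the toy records are hypothetical, and Pearson's R is computed by hand to keep the example dependency-free.

```python
import math
import random
import statistics
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Complex:
    pdb_id: str
    superfamily: str  # superfamily label, assumed to be precomputed
    affinity: float   # experimental binding affinity, e.g. pKd


def superfamily_split(complexes: List[Complex], n_test_families: int,
                      seed: int = 0) -> Tuple[List[Complex], List[Complex]]:
    """Hold out whole superfamilies so no test complex shares a superfamily with training."""
    families = sorted({c.superfamily for c in complexes})
    test_families = set(random.Random(seed).sample(families, n_test_families))
    train = [c for c in complexes if c.superfamily not in test_families]
    test = [c for c in complexes if c.superfamily in test_families]
    return train, test


def pearson_r(y_true: List[float], y_pred: List[float]) -> float:
    """Pearson correlation between measured and predicted affinities."""
    mx, my = statistics.fmean(y_true), statistics.fmean(y_pred)
    cov = sum((a - mx) * (b - my) for a, b in zip(y_true, y_pred))
    sx = math.sqrt(sum((a - mx) ** 2 for a in y_true))
    sy = math.sqrt(sum((b - my) ** 2 for b in y_pred))
    return cov / (sx * sy)


# Hypothetical toy data: 50 complexes spread evenly over 5 superfamilies.
toy = [Complex(f"c{i:02d}", f"SF{i % 5}", 5.0 + (i % 7) * 0.5) for i in range(50)]
train, test = superfamily_split(toy, n_test_families=1)
print(len(train), len(test))
```

The validation set for hyperparameter tuning would be drawn from `train` only; the generalization gap is then the Pearson's R on that validation set minus the Pearson's R on `test`.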

Protocol 2: AI-Driven Virtual Screening Workflow

Objective: To rapidly and efficiently identify high-quality "hit" compounds from a virtual chemical library using a multi-step AI screening process [23].

Methodology

- Library Preparation: Compile a virtual compound library from databases like PubChem, ChemBank, or ZINC. Standardize structures and generate relevant molecular descriptors.

- Physicochemical Property Filtering: Use AI-based QSPR models to filter out compounds with poor drug-like properties (e.g., undesirable logP, low solubility, high molecular weight).

- Pharmacophore or Structure-Based Screening:

- Ligand-Based: If known active compounds are available, use similarity search or pharmacophore models to find structurally similar compounds.

- Structure-Based: If a 3D protein structure is available, use molecular docking with an AI-powered scoring function (see Protocol 1) to rank compounds by predicted binding affinity.

- ADMET Prediction: Subject the top-ranking compounds to deep learning-based ADMET prediction models to flag potential toxicity, poor metabolic stability, or low bioavailability.

- Visual Inspection & Selection: A final, short list of compounds is selected by medicinal chemists for purchase or synthesis based on the AI outputs, structural novelty, and synthetic feasibility.

Interpretation: This workflow prioritizes compounds with a high probability of being potent, selective, and drug-like, thereby reducing the number of compounds that require costly and time-consuming experimental testing [23].
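The physicochemical filtering step can begin with a simple rule-based pass before any learned QSPR model is applied. The sketch below swaps in Lipinski's rule of five (molecular weight ≤ 500 Da, cLogP ≤ 5, at most 5 hydrogen-bond donors and 10 acceptors, commonly applied with at most one violation tolerated) as one widely used stand-in. It assumes descriptors were already computed by a cheminformatics toolkit, and the compound records are hypothetical; solubility is not covered by the rule of five and would come from a separate predictor.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Descriptors:
    name: str
    mol_weight: float  # Da
    clogp: float       # calculated octanol-water partition coefficient
    h_donors: int
    h_acceptors: int


def ro5_violations(d: Descriptors) -> int:
    """Count Lipinski rule-of-five violations."""
    return sum([
        d.mol_weight > 500,
        d.clogp > 5,
        d.h_donors > 5,
        d.h_acceptors > 10,
    ])


def drug_like(library: List[Descriptors], max_violations: int = 1) -> List[Descriptors]:
    """Keep compounds with at most `max_violations` rule-of-five violations."""
    return [d for d in library if ro5_violations(d) <= max_violations]


library = [
    Descriptors("cmpd-1", 342.4, 2.1, 2, 5),
    Descriptors("cmpd-2", 612.7, 6.3, 3, 9),  # two violations: filtered out
    Descriptors("cmpd-3", 518.0, 4.2, 1, 7),  # one violation: kept
]
print([d.name for d in drug_like(library)])
```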

Table 1: Performance of AI Methods in Drug Discovery Applications

| Application Area | AI Method | Reported Performance | Benchmark / Context |
|---|---|---|---|
| Binding Affinity Prediction | Generalizable DL Framework (Interaction-Space Focus) | Modest gains, but highly reliable | Outperforms conventional scoring functions on novel protein families; establishes a dependable baseline [22]. |
| ADMET Prediction | Deep Learning (DL) | Significant predictivity | Outperformed traditional ML on 15 ADMET datasets in a Merck-sponsored challenge [23]. |
| De Novo Drug Design | Generative AI (Exscientia) | ~70% faster design cycles | Requires 10x fewer synthesized compounds than industry standards [26]. |
| Intestinal Absorption Prediction | Artificial Neural Network (ANN) | 16% error rate | Considered acceptable given a diverse structural dataset [28]. |
| IVIVC for Inhalers | ANN | R² ≈ 0.80 | Successful correlation of in vitro data with in vivo outcomes [28]. |

Table 2: Key Research Reagent Solutions for AI-Driven Drug Discovery

| Reagent / Resource | Function / Application | Example / Source |
|---|---|---|
| Curated Protein-Ligand Affinity Datasets | Training and benchmarking structure-based AI models for binding affinity prediction. | PDBBind [22] |
| Virtual Chemical Libraries | Source of small molecules for virtual screening and de novo design inspiration. | PubChem, ChemBank, ZINC, DrugBank [23] |
| AI-Based ADMET Prediction Tools | In silico prediction of absorption, distribution, metabolism, excretion, and toxicity properties. | ADMET Predictor, ALOGPS program [23] |
| Generative Chemistry Platforms | AI-driven design of novel, synthetically accessible molecular structures. | Exscientia's Centaur Chemist, Insilico Medicine's Generative Tensorial Reinforcement Learning [26] |
| High-Performance Computing (HPC) Hardware | Accelerating the training of complex deep learning models on large datasets. | NVIDIA GPUs (e.g., A100), Google TPUs [24] [25] |

System Topology and Workflow Visualizations

Figure: AI System Topology for Pharma R&D

Figure: Virtual Screening Workflow

Figure: Protein-Ligand Interaction Model

Building for the Benchside: Designing and Applying AI Topologies in Drug Development

Orchestrating Specialized Agents for Core Drug Discovery Workflows

This technical support center addresses common challenges and questions researchers face when implementing and optimizing compound AI systems for drug discovery. The following troubleshooting guides and FAQs are framed within ongoing research into optimizing compound AI system topology and node parameters.

### Frequently Asked Questions (FAQs)

1. What is a compound AI system in drug discovery, and how does it differ from a single model?

A compound AI system is one that tackles complex tasks using multiple, interacting components, as opposed to a single, monolithic AI model [2]. In drug discovery, this typically involves orchestrating specialized agents—such as a planning agent, a data retrieval agent, and a synthesis agent—that work together to navigate the multi-stage drug discovery pipeline [29]. The system can be formally defined as a directed graph Φ=(G,ℱ), where G=(V,E) represents the topology (nodes and edges) and ℱ is the set of operations (e.g., an LLM forward pass, a RAG step) attached to each node [2].
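To make the formal definition concrete, here is a minimal sketch of Φ=(G,ℱ) as a Python data structure: `nodes` plays the role of V, `edges` of E, and `ops` of ℱ. The class name, the stub agents, and the rule that source nodes receive the raw task are illustrative assumptions, not the representation used in [2]; each operation is a plain callable standing in for an LLM forward pass or RAG step. It uses the standard-library `graphlib` module (Python 3.9+) to execute nodes in topological order.

```python
from dataclasses import dataclass
from graphlib import TopologicalSorter
from typing import Callable, Dict, List, Set, Tuple


@dataclass
class CompoundAISystem:
    nodes: Set[str]                              # V: agent/component names
    edges: Set[Tuple[str, str]]                  # E: (source, target) pairs
    ops: Dict[str, Callable[[List[str]], str]]   # F: node -> operation on upstream outputs

    def run(self, task: str) -> Dict[str, str]:
        # graphlib expects a mapping from each node to its predecessors.
        preds = {v: {u for (u, w) in self.edges if w == v} for v in self.nodes}
        outputs: Dict[str, str] = {}
        for v in TopologicalSorter(preds).static_order():
            # Source nodes receive the task itself; others receive upstream outputs.
            inputs = [outputs[u] for u in sorted(preds[v])] or [task]
            outputs[v] = self.ops[v](inputs)
        return outputs


# Hypothetical three-agent pipeline with string-building stubs in place of model calls.
system = CompoundAISystem(
    nodes={"planner", "retriever", "synthesizer"},
    edges={("planner", "retriever"), ("retriever", "synthesizer")},
    ops={
        "planner": lambda xs: f"plan({xs[0]})",
        "retriever": lambda xs: f"docs({xs[0]})",
        "synthesizer": lambda xs: f"report({'; '.join(xs)})",
    },
)
out = system.run("EGFR inhibitors")
# out["synthesizer"] == "report(docs(plan(EGFR inhibitors)))"
```

Separating `edges` from `ops` mirrors the distinction drawn in the FAQs that follow: changing a callable's prompt or settings is a node-parameter change, while changing `nodes` or `edges` is a topology change.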

2. When should I use agentic AI versus a single fine-tuned model for my project?

The choice depends on the task's complexity and on whether it requires specialized tools.

- Use Agentic AI when your workflow requires autonomy, multi-step reasoning, and interaction with multiple, distinct data sources or tools (e.g., searching PubMed, querying ChEMBL, and generating a report) [30] [29].

- Use a Single Fine-Tuned Model for well-defined, single tasks that require deep domain specialization but not external tool use, such as analyzing a specific type of medical record or classifying chemical sentiment [31]. Parameter-efficient fine-tuning (PEFT) methods like LoRA are often ideal for creating such specialized models efficiently [32] [33].

3. A key agent in my workflow is underperforming. Should I optimize the node parameters or the system's topology?

This is a core research question in optimizing compound AI systems. The right approach depends on the nature of the performance issue [2]:

- Optimize Node Parameters (Fixed Structure): If the system's overall workflow is sound but a specific component is generating poor outputs, focus on parameter optimization. This involves tuning the node's numerical parameters (e.g., LLM weights, temperature) or, more commonly, its textual parameters (e.g., the prompt templates) without changing how agents are connected [2].

- Optimize System Topology (Structural Flexibility): If the failure is due to poor information routing or coordination between agents (for instance, a data retrieval agent is not passing the correct information to the analysis agent), then you need to modify the system's topology. This means redefining the graph's edges (E) or potentially adding or removing nodes (V) [2].
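One way to operationalize this distinction, offered as a hypothetical heuristic rather than a method from [2], is to score each agent twice: once on curated ("gold") inputs and once inside the running system. A node that fails even on gold inputs points to its own parameters; a node that passes on gold inputs but fails in the live system points to what it is being fed, i.e., the topology. The score names and the 0.7 threshold below are assumptions.

```python
def localize_failure(node_scores_gold, node_scores_live, threshold=0.7):
    """Hypothetical triage: suggest parameter tuning or topology revision per node.

    node_scores_gold: node -> quality score when the node receives curated inputs.
    node_scores_live: node -> quality score inside the running compound system.
    Scores are assumed to lie in [0, 1], higher is better.
    """
    diagnosis = {}
    for node, gold in node_scores_gold.items():
        live = node_scores_live[node]
        if gold < threshold:
            diagnosis[node] = "tune node parameters"  # fails even with good inputs
        elif live < threshold:
            diagnosis[node] = "revise topology"       # fine alone, fails on what it receives
        else:
            diagnosis[node] = "ok"
    return diagnosis
```

Because a low live score can be inherited from an upstream node, it is usually worth fixing nodes flagged for parameter tuning first and re-running the triage.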

4. My multi-agent system produces verbose or irrelevant information in its final report. How can I fix this?

This is often a topology issue related to the synthesis or orchestration agent. Implement a dedicated synthesis agent whose sole function is to integrate findings from multiple sources into a concise, comprehensive report [29]. Configure the orchestrator agent to route information specifically to this synthesis node and to filter out redundant data before the final output is generated. Instruction tuning the synthesis agent's base model can also improve its ability to follow formatting and brevity instructions [33].
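The filtering step the orchestrator performs before handing data to the synthesis node can be sketched as follows. This is a minimal sketch assuming each finding arrives as a `(source, text, relevance)` tuple with a relevance score assigned upstream; it only removes restatements that are identical after normalizing case and punctuation. Catching paraphrases would need something stronger, such as embedding similarity.

```python
import re


def normalize(text):
    """Lowercase and collapse punctuation/whitespace so trivial restatements compare equal."""
    return re.sub(r"[^a-z0-9]+", " ", text.lower()).strip()


def route_to_synthesis(findings, max_items=5):
    """Drop duplicate findings and cap the volume passed to the synthesis agent.

    findings: list of (source, text, relevance) tuples; higher relevance is kept first.
    """
    seen = set()
    kept = []
    for source, text, relevance in sorted(findings, key=lambda f: f[2], reverse=True):
        key = normalize(text)
        if key in seen:
            continue  # same claim already forwarded from a more relevant source
        seen.add(key)
        kept.append((source, text))
        if len(kept) == max_items:
            break
    return kept
```

Capping the number of forwarded items is a blunt but effective brevity control; the cap value is a tunable node parameter rather than a fixed rule.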

### Troubleshooting Guides

Problem: Cascading Failures in a Multi-Agent Workflow

- Scenario: The failure of one specialized agent (e.g., a PubMed query agent) causes the entire workflow to halt or produce incorrect results.

- Diagnosis: This indicates a fragile system topology with insufficient error handling and feedback loops.

- Solution: Implement a robust orchestration layer with fault tolerance.

- Protocol:

- Define Fallback Protocols: The orchestrator agent should have conditional logic (encoded in the edge matrix [cᵢⱼ] [2]) to reroute tasks if a primary agent fails or times out.

- Implement Validation Nodes: Introduce lightweight agent nodes dedicated to validating the output of critical steps before they are passed to the next agent.

- Establish Retry Mechanisms: Configure the orchestrator to retry a failed agent operation a predefined number of times before initiating the fallback protocol.

The following diagram illustrates a robust topology designed to handle such failures.

Figure: Orchestrator Handling Agent Failure
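The retry, validation, and fallback steps of this protocol can be combined in a few lines. A minimal sketch under stated assumptions: agents are plain callables ordered by priority (primary first, fallbacks after), a `validate` callable plays the role of the validation node, and a timeout is represented by the agent raising `TimeoutError` (enforcing real deadlines would need something like `concurrent.futures`). `AgentFailure` and `call_with_fallback` are hypothetical names.

```python
from typing import Callable, Optional, Sequence


class AgentFailure(Exception):
    """Raised by an agent when an operation cannot be completed."""


def call_with_fallback(
    task: str,
    agents: Sequence[Callable[[str], str]],
    validate: Callable[[str], bool],
    max_retries: int = 2,
) -> Optional[str]:
    """Try each agent in priority order, retrying each up to `max_retries` extra times.

    An output counts as success only if the validation node accepts it.
    Returns None when every agent is exhausted, so the caller can escalate
    instead of passing bad data downstream.
    """
    for agent in agents:
        for _attempt in range(1 + max_retries):
            try:
                output = agent(task)
            except (AgentFailure, TimeoutError):
                continue  # retry the same agent
            if validate(output):
                return output
        # Retries exhausted: fall through to the next (fallback) agent.
    return None
```

Returning `None` rather than raising keeps the escalation decision with the orchestrator, which can then log the failure, alert a user, or halt only the affected branch of the workflow.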

Problem: The AI System Generates Factually Inaccurate or Hallucinated Scientific Content

- Scenario: The system's output contains plausible but incorrect information about drug targets or compound properties.

- Diagnosis: This can stem from over-reliance on a single knowledge source or from a foundation model that has not been specialized for the scientific domain.

- Solution: Augment agents with specialized data and implement rigorous fact-checking.

- Protocol:

- Integrate Multiple Data Tools: Use Model Context Protocol (MCP) servers to connect agents to authoritative, domain-specific databases like ChEMBL (for bioactive molecules), PubMed (for biomedical literature), and ClinicalTrials.gov [29]. This provides a ground-truth foundation.

- Fine-Tune Base Models: Employ continued pre-training or instruction tuning on a high-quality corpus of biomedical research, clinical notes, and proprietary data to adapt general-purpose models to the niche scientific domain [33] [34].

- Implement Cross-Verification: Design the workflow so that key facts generated by one agent (e.g., a data retrieval agent) are cross-verified by a separate, independent agent or tool.

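The cross-verification step can be sketched as an agreement check across independent sources. This is a minimal sketch, assuming numeric claims keyed by `(compound, property)` and verifier callables that stand in for lookups against tools such as the ChEMBL or PubMed connections described above; the 10% relative tolerance and the two-source agreement rule are assumptions to adjust per property.

```python
def cross_verify(claims, verifiers, rel_tol=0.1, min_agree=2):
    """Accept a numeric claim only if enough independent verifiers confirm it.

    claims: dict mapping a key such as (compound, property) to the value asserted by the generating agent.
    verifiers: callables returning the value from an independent source, or None if the source has no record.
    A verifier confirms a claim when its value lies within `rel_tol` relative tolerance.
    """
    verified, flagged = {}, {}
    for key, value in claims.items():
        agree = 0
        for lookup in verifiers:
            ref = lookup(key)
            if ref is not None and abs(ref - value) <= rel_tol * abs(ref):
                agree += 1
        if agree >= min_agree:
            verified[key] = value
        else:
            flagged[key] = value  # route to a human or a re-query instead of the final report
    return verified, flagged
```

Claims that no source can confirm end up in `flagged` rather than being silently dropped, so a reviewer can see what the generating agent asserted.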
Problem: Inefficient Resource Utilization Leading to High Costs and Slowdowns

- Scenario: Running the compound AI system requires significant computational resources, making it expensive and slow for iterative research.

- Diagnosis: The system may be using inappropriately large models for all tasks or suffering from inefficient orchestration.

- Solution: Strategically deploy models of varying sizes and leverage parameter-efficient fine-tuning.

- Protocol:

- Adopt a Small Language Model (SLM) Strategy: Use smaller, fine-tuned models (e.g., Phi-3, SmolLM) for specific, well-defined tasks like data classification or formatting. SLMs are optimized for specific tasks, run on consumer hardware, and offer 2–10x faster inference at 90% lower cost than large model APIs [32].

- Use PEFT for Specialization: Instead of full fine-tuning, use Parameter-Efficient Fine-Tuning (PEFT) methods like LoRA (Low-Rank Adaptation) or QLoRA to adapt models to new tasks. This approach updates only 0.1–1% of parameters, drastically reducing computational demands [32] [33].

- Benchmark Training Efficiency: Use training stacks that support multi-GPU training with near-linear speedup, and benchmark your setup against published results, such as the 35.7x speed-up over PEFT alone reported in [33].

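The "0.1–1% of parameters" figure for LoRA follows from simple arithmetic: an adapter on a weight matrix W of shape d_out × d_in learns B (d_out × r) and A (r × d_in), adding r·(d_in + d_out) trainable parameters while W stays frozen. The sketch below checks this for a hypothetical 7B-class decoder with hidden size 4096 and 32 layers, with rank-16 adapters on the four attention projections; the model size and adapter placement are assumptions, not a recommended configuration. QLoRA additionally stores the frozen weights in 4-bit, which cuts memory but does not change the trainable count.

```python
def lora_trainable_params(shapes, rank):
    """Trainable parameters added by LoRA adapters of a given rank.

    shapes: (d_out, d_in) for each adapted weight matrix W; W itself stays frozen.
    Each adapter learns B (d_out x r) and A (r x d_in): r * (d_in + d_out) parameters.
    """
    return sum(rank * (d_in + d_out) for d_out, d_in in shapes)


def trainable_fraction(trainable, total):
    return trainable / total


# Hypothetical 7B-class decoder: 32 layers, hidden size 4096,
# adapters on the q, k, v, o attention projections at rank 16.
hidden, layers, rank = 4096, 32, 16
shapes = [(hidden, hidden)] * 4 * layers
trainable = lora_trainable_params(shapes, rank)
total = 7_000_000_000  # rounded model size, an assumption
print(f"{trainable:,} trainable ({100 * trainable_fraction(trainable, total):.3f}% of {total:,})")
# prints: 16,777,216 trainable (0.240% of 7,000,000,000)
```

Raising the rank or adapting the MLP projections as well moves the fraction toward the upper end of the quoted range.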
### Experimental Protocols for System Optimization

The field of compound AI system optimization can be classified based on two key dimensions: Structural Flexibility (whether the method can change the system topology) and the Nature of Learning Signals (numerical vs. natural language) [2]. The following table summarizes this taxonomy and provides a methodological overview.

Table 1: Taxonomy of Compound AI System Optimization Methods [2]

| Structural Flexibility | Learning Signal | Method Class | Key Methodology | Example Application in Drug Discovery |
|---|---|---|---|---|
| Fixed Structure | Numerical | Gradient-Based | Use of proxy gradients or evolutionary strategies to optimize prompts/weights. | Fine-tuning a molecule generation agent's output for better binding affinity scores. |
| Fixed Structure | Natural Language | Language-Based Feedback | An auxiliary LLM provides textual feedback to refine prompts or actions. | Improving a literature review agent's query formulation based on summary quality critiques. |
| Variable Structure | Numerical | Architecture Search | Reinforcement learning or Monte Carlo Tree Search to alter the agent graph. | Discovering a new workflow that adds a toxicity-prediction agent to the pipeline. |
| Variable Structure | Natural Language | Language-Based Planning | An LLM planner suggests modifications to the system topology or agent roles. | Using a planner to incorporate a new clinical trial data source into the research workflow. |
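For the fixed-structure, numerical-signal row, the simplest evolutionary variant is a (1+1) evolutionary algorithm: mutate one parameter of one node, keep the change if the system-level score does not get worse, and never touch the edges. A minimal sketch, assuming a black-box `score` function (for example, mean downstream metric on a development set) and a hypothetical discrete search space of node parameters:

```python
import random


def evolve_node_params(score, search_space, init, generations=50, seed=0):
    """(1+1) evolutionary search over one node's discrete parameters; topology held fixed.

    score: black-box objective, higher is better.
    search_space: parameter name -> list of allowed values.
    init: starting configuration (a dict with the same keys).
    """
    rng = random.Random(seed)
    best, best_score = dict(init), score(init)
    for _ in range(generations):
        child = dict(best)
        name = rng.choice(list(search_space))
        child[name] = rng.choice(search_space[name])  # mutate a single parameter
        child_score = score(child)
        if child_score >= best_score:  # keep the child if it is at least as good
            best, best_score = child, child_score
    return best, best_score
```

With real LLM nodes the score is noisy, so in practice each candidate would be evaluated on several samples, and accepting ties (as here) lets the search drift across equally good settings.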

Protocol 1: Fine-Tuning a Specialist Agent using PEFT

This protocol is for creating a specialized agent when the system topology is fixed.

- Objective: Adapt a base language model to perform a specific, narrow task (e.g., identifying non-medical factors in patient records) with high accuracy and efficiency [31].

- Method: Parameter-Efficient Fine-Tuning (PEFT) via QLoRA.

- Dataset Preparation: Curate a minimum of 500–1,000 high-quality, task-specific examples. Focus on data quality and diversity over quantity [32]. Format data into instruction-response pairs and split into training/validation sets.

- Model Initialization: Select a suitable base SLM (e.g., Phi-3-mini for its balance of performance and efficiency) [32]. Load it with 4-bit quantization, as in QLoRA, and attach trainable LoRA adapters.

- Training Configuration: Set hyperparameters: a learning rate of 1e-4 to 5e-4, batch size as large as GPU memory allows, and 3-5 epochs. Use the AdamW optimizer [32] [33].

- Training & Monitoring: Train the model, tracking training and validation loss for signs of overfitting. The process should be fast and cost-effective, often achievable on a single consumer GPU [32].

- Integration: The fine-tuned model is now a specialized agent node that can be integrated into the larger compound system.
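The dataset-preparation and monitoring steps of this protocol can be sketched with the standard library. This is a minimal sketch under assumptions: raw data arrives as `(text, label)` records, the `instruction`/`input`/`output` field names follow a common instruction-tuning layout rather than anything the protocol prescribes, and the overfitting rule (validation loss rising for `patience` consecutive epochs while training loss keeps falling) is one simple heuristic among many.

```python
import json
import random


def to_instruction_pairs(records, instruction):
    """Format raw (text, label) records as instruction-response pairs."""
    return [{"instruction": instruction, "input": text, "output": label} for text, label in records]


def train_val_split(examples, val_fraction=0.1, seed=42):
    """Shuffle deterministically, then hold out a validation split."""
    rng = random.Random(seed)
    shuffled = examples[:]
    rng.shuffle(shuffled)
    n_val = max(1, int(len(shuffled) * val_fraction))
    return shuffled[n_val:], shuffled[:n_val]


def to_jsonl(examples):
    """Serialize examples as JSON Lines, a format most fine-tuning tools accept."""
    return "\n".join(json.dumps(e) for e in examples)


def is_overfitting(train_losses, val_losses, patience=2):
    """Flag overfitting when validation loss rises for `patience` epochs while training loss falls."""
    if len(val_losses) <= patience:
        return False
    recent_val = val_losses[-(patience + 1):]
    recent_train = train_losses[-(patience + 1):]
    val_rising = all(b > a for a, b in zip(recent_val, recent_val[1:]))
    train_falling = all(b < a for a, b in zip(recent_train, recent_train[1:]))
    return val_rising and train_falling
```

Fixing the shuffle seed keeps the validation split stable across runs, so loss curves from different hyperparameter settings remain comparable.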

Protocol 2: Optimizing System Topology with Language-Based Feedback

This protocol is for improving how agents are connected and coordinated.

- Objective: Improve the overall performance of a multi-agent research assistant by refining the interaction logic between specialized agents (e.g., planner, retriever, synthesizer) [29].

- Method: Language-based feedback for variable structure optimization [2].