Optimizing C-Index with Gradient Boosting: A Comprehensive Guide for Clinical Survival Analysis

Abstract

This article provides a comprehensive framework for researchers and drug development professionals seeking to leverage gradient boosting techniques to optimize the concordance index (C-index) in survival analysis. We cover foundational concepts of gradient boosting and C-index, methodological implementation for survival data, advanced optimization strategies to address common challenges, and rigorous validation approaches for model comparison. By integrating theoretical explanations with practical applications from recent biomedical literature, this guide enables the development of robust predictive models for time-to-event data in clinical and pharmaceutical research.

Understanding Survival Analysis and Gradient Boosting Fundamentals

The Critical Role of C-Index in Biomedical Survival Analysis

In biomedical research, accurately predicting the time until critical clinical events—such as disease recurrence or mortality—is fundamental to improving patient care. Survival analysis models developed for this purpose must be rigorously evaluated, and the Concordance Index (C-Index) has emerged as the predominant metric for assessing a model's discrimination ability. The C-Index quantifies how well a model ranks patients by risk, answering a critical question: given two random patients, will the model assign higher risk to the one who experiences the event earlier? [1] [2] Its robustness to censored data—where the event of interest has not occurred for all patients during the study period—makes it particularly valuable for clinical studies with limited follow-up [1].

With the integration of machine learning into biomedical research, gradient boosting techniques have shown significant promise for optimizing predictive models. Using gradient boosting to optimize the C-index is a promising route to more accurate risk prediction models in drug development and clinical prognosis [3].

Quantitative Interpretation of C-Index Values

The C-Index measures the concordance between predicted risk scores and actual observed survival times. It is calculated as the proportion of comparable patient pairs in which the predictions and outcomes are concordant [1] [4]. The value ranges from 0.0 to 1.0, with specific thresholds indicating model performance.

Table 1: Interpretation Guidelines for the C-Index

| C-Index Value | Interpretation | Clinical Implication |

|---|---|---|

| 0.5 | No discrimination | Model predictions are equivalent to random chance |

| 0.5 - 0.7 | Poor to moderate discrimination | Limited clinical utility |

| > 0.7 | Good discrimination | Potentially useful for risk stratification |

| > 0.8 | Strong discrimination | Valuable for individual patient decision-making |

| 1.0 | Perfect discrimination | All patient pairs are correctly ordered; rarely achieved in practice |

For biomedical applications, a C-Index value above 0.7 is generally considered acceptable, while values above 0.8 indicate a strong model [2]. However, these thresholds should be interpreted within the specific clinical context and disease area.

Key C-Index Estimators and Their Properties

Several C-Index estimators have been developed to address challenges in survival data, particularly regarding censoring and truncation. Understanding their properties is essential for appropriate metric selection.

Table 2: Comparison of Primary C-Index Estimators in Survival Analysis

| Estimator | Data Handling | Key Assumptions | Limiting Value | Advantages | Limitations |

|---|---|---|---|---|---|

| Harrell's C-Index [1] [5] | Right-censored | Independent censoring | Depends on study-specific censoring distribution [6] [5] | Intuitive; widely implemented | Potentially optimistic with heavy censoring |

| Uno's C-Index [6] [5] | Right-censored | Independent censoring; requires pre-specified τ | Free of censoring distribution when truncated at τ [6] [5] | Robust to censoring patterns; recommended for heavy censoring | Requires choice of τ; less intuitive |

| IPW C-Index [5] | Left-truncated and right-censored | Independent truncation and censoring | Free of truncation distribution [5] | Handles both left-truncation and right-censoring | Complex computation; requires estimation of weights |

Experimental Protocols for C-Index Evaluation

Protocol: Calculating Harrell's C-Index

Purpose: To evaluate model discrimination using Harrell's C-Index for right-censored survival data.

Materials and Software:

- Dataset with observed times, event indicators, and predicted risk scores

- Statistical software (R, Python, or SAS)

- Survival analysis package (e.g., sksurv in Python or survival in R)

Procedure:

- Sort Data: Arrange all patients by their observed ground truth time in ascending order [1]

- Identify Comparable Pairs: For each patient pair (i, j), determine if they are comparable:

- Pairs are comparable if the patient with earlier observed time experienced the event (uncensored)

- Skip pairs where the earlier patient was censored [1]

- Assess Concordance: For each comparable pair:

- Check if the predicted risk score is higher for the patient with earlier event time

- Mark as concordant if risk_score_earlier > risk_score_later

- Calculate C-Index:

- Numerator: Count of concordant pairs

- Denominator: Total number of comparable pairs

- C-Index = Concordant Pairs / Comparable Pairs [1]

Example Calculation: Consider three patients:

- Patient A: Time=1.35 years, Event=0 (censored), Risk=1.48

- Patient B: Time=11.89 years, Event=1 (uncensored), Risk=3.52

- Patient C: Time=19.17 years, Event=0 (censored), Risk=5.52

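Sorted by time: [A, B, C]

Comparable pairs: (B, C) only. B experienced the event and B's time is earlier than C's; both pairs involving A are skipped because A was censored first.

Concordance check: is Risk(B) = 3.52 greater than Risk(C) = 5.52? No. The model assigns the higher risk to patient C, who was still event-free at 19.17 years, so the pair is discordant [1].

C-Index = 0/1 = 0.0

If the three scores were predicted survival times rather than risk scores, the ordering criterion would flip (a shorter predicted time for the earlier event), and the same pair would count as concordant, giving a C-Index of 1.0.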
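The pair-counting procedure above can be sketched in a few lines of plain Python. This is a minimal illustration, not a library implementation: the function name `harrell_c_index`, the half-credit rule for tied risk scores, and the four-patient cohort are all assumptions made for the example. The explicit sort step is replaced by a direct comparison of every pair, which gives the same counts.

```python
def harrell_c_index(times, events, risks):
    """Harrell's C-index for right-censored data via direct pair counting."""
    concordant = 0.0
    comparable = 0
    n = len(times)
    for i in range(n):
        if not events[i]:
            continue  # a censored patient cannot be the earlier member of a pair
        for j in range(n):
            if times[i] < times[j]:  # i had the event before j's observed time
                comparable += 1
                if risks[i] > risks[j]:
                    concordant += 1.0
                elif risks[i] == risks[j]:
                    concordant += 0.5  # assumption: tied scores get half credit
    if comparable == 0:
        raise ValueError("no comparable pairs")
    return concordant / comparable


# Hypothetical cohort: patient 1 is censored at t=4, the others have events.
times = [2.0, 4.0, 6.0, 8.0]
events = [1, 0, 1, 1]
risks = [0.9, 0.2, 0.4, 0.7]
c_index = harrell_c_index(times, events, risks)
print(c_index)
```

Here four pairs are comparable and three of them are concordant; the pair formed by the patient censored at t=4 and the patient with an event at t=6 is skipped because the earlier observation is censored.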

Protocol: Implementing Uno's C-Index with Inverse Probability Censoring Weighting (IPCW)

Purpose: To calculate a C-Index that is robust to the censoring distribution.

Materials and Software:

- Dataset with observed times, event indicators, and predicted risk scores

- Statistical software with IPCW capability

- Kaplan-Meier estimator for censoring distribution

Procedure:

- Estimate Censoring Distribution: Calculate Kaplan-Meier estimate for the censoring survival function, denoted as Ĝ(t) [6]

- Select Time Point τ: Choose a clinically relevant time point τ such that pr(D > τ) > 0, where D is the censoring time [6]

- Calculate Weights: For each patient i with observed time Ti, compute weight wi = 1/Ĝ(Ti)² for Ti < τ [6]

- Compute Weighted Concordance:

- Numerator: Sum of weights for concordant pairs where Ti < Tj, Ti < τ, and riski > riskj

- Denominator: Sum of weights for all comparable pairs where Ti < Tj and Ti < τ [6]

- C-Index Calculation: Uno's C-Index = Weighted Concordant Pairs / Weighted Comparable Pairs

Implementation Code (Python):
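A minimal pure-Python sketch of the procedure above, under stated assumptions: the helper names `censoring_km` and `uno_c_index` are hypothetical, the censoring distribution is estimated on the same data it is applied to, Ĝ is evaluated at Ti itself (some implementations use the left limit), and tied risk scores earn no credit. For production work a maintained library routine is preferable.

```python
def censoring_km(times, events):
    """Kaplan-Meier estimate of the censoring survival function G(t).

    Censoring plays the role of the "event" here. Returns t -> G_hat(t).
    """
    order = sorted(range(len(times)), key=lambda i: times[i])
    steps = []  # (time, value of G_hat from that time onward)
    g = 1.0
    at_risk = len(times)
    k = 0
    while k < len(order):
        t = times[order[k]]
        tied = [i for i in order[k:] if times[i] == t]
        n_censored = sum(1 for i in tied if not events[i])
        g *= 1.0 - n_censored / at_risk
        steps.append((t, g))
        at_risk -= len(tied)
        k += len(tied)

    def g_hat(t):
        value = 1.0
        for step_time, step_value in steps:
            if step_time > t:
                break
            value = step_value
        return value

    return g_hat


def uno_c_index(times, events, risks, tau):
    """IPCW-weighted concordance truncated at tau (sketch of Uno's estimator)."""
    g_hat = censoring_km(times, events)
    numerator = 0.0
    denominator = 0.0
    n = len(times)
    for i in range(n):
        if not events[i] or times[i] >= tau:
            continue  # only events before tau anchor a comparable pair
        weight = 1.0 / g_hat(times[i]) ** 2
        for j in range(n):
            if times[i] < times[j]:
                denominator += weight
                if risks[i] > risks[j]:
                    numerator += weight
    if denominator == 0.0:
        raise ValueError("no comparable pairs before tau")
    return numerator / denominator


# Hypothetical cohort: one patient censored at t=4.
times = [2.0, 4.0, 6.0, 8.0]
events = [1, 0, 1, 1]
risks = [0.9, 0.2, 0.4, 0.7]
uno_c = uno_c_index(times, events, risks, tau=7.0)
print(round(uno_c, 4))
```

In this toy cohort the event at t=6 occurs after one censoring, so its pairs receive a weight of 1/(2/3)² = 2.25. The single discordant pair is therefore up-weighted relative to an unweighted count.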

Protocol: Handling Left-Truncated and Right-Censored Data

Purpose: To evaluate C-Index when patients enter the study at different times (left-truncation) and may be lost to follow-up (right-censoring).

Materials and Software:

- Dataset with entry times, event times, and risk scores

- Statistical software with IPW capability

Procedure:

- Estimate Truncation Weights: Calculate the probability of being observed given the truncation time [5]

- Compute IPW C-Index:

- Use inverse probability weights to account for both truncation and censoring

- Apply similar weighting approach as Uno's method but with additional truncation adjustment [5]

- Validate Results: Compare with naive C-Index to assess bias from truncation

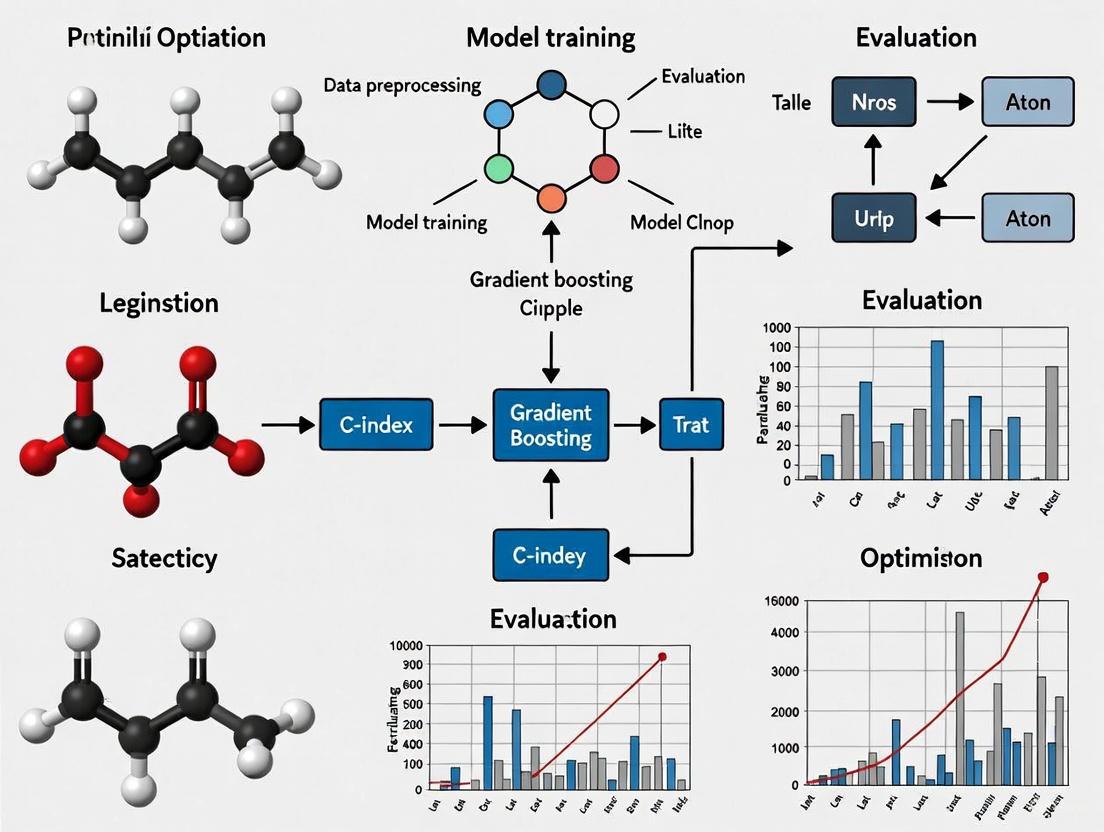

Visualization of C-Index Computational Workflows

Figures (not reproduced): C-Index Calculation Logic; Gradient-Based Tree Optimization for C-Index

The Scientist's Toolkit: Essential Research Reagents and Software

Table 3: Key Research Reagent Solutions for C-Index Optimization Studies

| Tool/Reagent | Function | Application Context | Implementation Considerations |

|---|---|---|---|

| scikit-survival | Python library for survival analysis | Calculating Harrell's and Uno's C-Index | Provides concordance_index_censored function [1] |

| Survival Package (R) | Comprehensive survival analysis in R | Various C-Index implementations | Includes coxph and concordance functions (the older survConcordance is deprecated) |

| Gradient Boosting Machines | Machine learning for risk prediction | Optimizing models for C-Index performance | Implemented in XGBoost, LightGBM [3] |

| Inverse Probability Weights | Statistical adjustment method | Handling truncation and censoring | Essential for Uno's and IPW C-Index [6] [5] |

| Kaplan-Meier Estimator | Non-parametric survival function | Estimating censoring distribution | Required for Uno's C-Index calculation [6] |

| Time-Dependent ROC Tools | Evaluation of time-dependent discrimination | Assessing performance at specific time points | Complements C-Index analysis [1] |

The C-Index remains a cornerstone metric for evaluating risk prediction models in biomedical survival analysis. While Harrell's C-Index provides an intuitive starting point, modern research should prioritize Uno's C-Index or IPW-adjusted versions when dealing with substantial censoring or truncation. The integration of gradient-based optimization techniques represents a promising frontier for enhancing model discrimination in clinical prediction models. By following the standardized protocols outlined in this article and selecting appropriate C-Index variants based on dataset characteristics, researchers can ensure robust evaluation of predictive models critical to drug development and clinical decision-making.

Core Algorithmic Mechanics

Sequential Learning Process

Gradient boosting constructs an ensemble model through a sequential, additive process where each new weak model is trained to correct the errors of the existing ensemble [7]. The algorithm begins with an initial naive model (often simply the mean of the target values for regression) and iteratively adds new models that focus on the residual errors made by the current ensemble [8]. This sequential error-correcting approach distinguishes boosting from bagging methods like Random Forests, which build models independently and average their predictions [9].

The fundamental sequential process follows this procedure:

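- Initialization: Create an initial base model $F_0(x)$, typically a constant such as the mean of the targets

- Iterative Optimization: For each iteration $m = 1$ to $M$, compute the negative gradient of the loss at the current predictions (the pseudo-residuals), fit a weak learner $h_m(x)$ to them, choose a step size $\gamma_m$, and add the scaled learner to the ensemble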

This framework allows gradient boosting to optimize any differentiable loss function, making it highly adaptable to various problem types including regression, classification, and survival analysis [9].

Residual Fitting via Gradient Descent

The "gradient" in gradient boosting refers to the algorithm's use of gradient descent in function space to minimize the chosen loss function [9]. Rather than directly fitting to residuals, the method fits new base learners to the negative gradient of the loss function, which for commonly used loss functions (like mean squared error) corresponds to the residual errors [8].

For regression tasks using the squared-error loss $L = \tfrac{1}{2}(y - F)^2$, the negative gradient equals the residuals:

$$r_{im} = y_i - F_{m-1}(x_i)$$

This connection between gradients and residuals makes the algorithm particularly intuitive for regression problems, where each new tree explicitly predicts the errors of the current ensemble [8].

Shrinkage and Regularization

Shrinkage is a crucial regularization technique in gradient boosting where the contribution of each tree is scaled by a learning rate $\eta$ (typically between 0.01 and 0.3) [7]. The update rule becomes:

$$F_m(x) = F_{m-1}(x) + \eta \cdot \gamma_m h_m(x)$$

Smaller learning rates provide better generalization but require more trees to achieve the same training error, creating a trade-off between learning rate and number of estimators [7]. Modern implementations like XGBoost incorporate additional regularization through L1 and L2 regularization on leaf weights and tree complexity constraints [10].
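To make the sequential residual fitting and the shrinkage update concrete, the following is a toy sketch using one-split regression stumps on a single feature. The names `fit_stump` and `gradient_boost` and the data are illustrative assumptions. For squared error the leaf means already give the optimal step, so the step size $\gamma_m$ is absorbed into the stump's predictions here.

```python
def fit_stump(x, residuals):
    """Least-squares regression stump (one split) on a single feature."""
    best = None
    xs = sorted(set(x))
    for lo, hi in zip(xs, xs[1:]):
        threshold = (lo + hi) / 2.0
        left = [r for xi, r in zip(x, residuals) if xi <= threshold]
        right = [r for xi, r in zip(x, residuals) if xi > threshold]
        left_mean = sum(left) / len(left)
        right_mean = sum(right) / len(right)
        sse = (sum((r - left_mean) ** 2 for r in left)
               + sum((r - right_mean) ** 2 for r in right))
        if best is None or sse < best[0]:
            best = (sse, threshold, left_mean, right_mean)
    _, threshold, left_mean, right_mean = best
    return lambda xi: left_mean if xi <= threshold else right_mean


def gradient_boost(x, y, n_trees=50, learning_rate=0.1):
    """Boosting for squared error: each stump fits the current residuals."""
    f0 = sum(y) / len(y)  # F_0: constant model
    preds = [f0] * len(y)
    stumps, history = [], []
    for _ in range(n_trees):
        # Negative gradient of 1/2 * (y - F)^2 is the plain residual.
        residuals = [yi - pi for yi, pi in zip(y, preds)]
        stump = fit_stump(x, residuals)
        stumps.append(stump)
        # Shrinkage: only a fraction of each stump's correction is applied.
        preds = [pi + learning_rate * stump(xi) for xi, pi in zip(x, preds)]
        history.append(sum((yi - pi) ** 2 for yi, pi in zip(y, preds)) / len(y))

    def predict(xi):
        return f0 + learning_rate * sum(s(xi) for s in stumps)

    return predict, history


x = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0]
y = [1.2, 0.9, 1.1, 3.0, 3.2, 2.9, 5.1, 4.8]
predict, mse_history = gradient_boost(x, y)
print(mse_history[0], mse_history[-1])
```

Rerunning with a smaller `learning_rate` and the same `n_trees` leaves a higher training error, which is the trade-off between learning rate and number of estimators described above.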

Gradient Boosting for Survival Analysis and C-index Optimization

Survival Analysis Adaptation

In survival analysis with censored data, gradient boosting can be adapted through specialized loss functions that handle time-to-event data [11]. The Gradient Boosting Survival Analysis (GrBSA) method uses the Cox partial likelihood loss to model hazard functions while maintaining the proportional hazards assumption [11]. For more complex scenarios with non-proportional hazards, alternative loss functions can be implemented that directly optimize concordance indices or other survival metrics.

The key challenge in survival analysis is properly handling censored observations, which requires specialized loss functions that account for incomplete follow-up time. Gradient boosting frameworks can incorporate these specialized loss functions while maintaining the core sequential residual-fitting mechanics [11].
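As an illustration of how a censoring-aware loss plugs into the residual-fitting loop, the sketch below computes the negative gradient of the negative Cox partial log-likelihood with respect to each patient's current score. Each boosting round would fit the next tree to these pseudo-residuals. The function name `cox_negative_gradient` is hypothetical, ties are handled in the Breslow style, and the cubic-time loops favor clarity over speed.

```python
import math


def cox_negative_gradient(times, events, scores):
    """Pseudo-residuals for the negative Cox partial log-likelihood.

    g_i = delta_i - exp(f_i) * sum over events j with T_j <= T_i of 1 / S_j,
    where S_j sums exp(f_k) over the risk set {k : T_k >= T_j}.
    """
    n = len(times)
    exp_scores = [math.exp(s) for s in scores]
    gradients = []
    for i in range(n):
        cumulative = 0.0
        for j in range(n):
            if events[j] and times[j] <= times[i]:
                risk_set_sum = sum(
                    exp_scores[k] for k in range(n) if times[k] >= times[j]
                )
                cumulative += 1.0 / risk_set_sum
        gradients.append(events[i] - exp_scores[i] * cumulative)
    return gradients


# Hypothetical cohort with all scores at zero (the state before any tree is fit).
times = [2.0, 4.0, 6.0, 8.0]
events = [1, 0, 1, 1]
pseudo_residuals = cox_negative_gradient(times, events, [0.0, 0.0, 0.0, 0.0])
print(pseudo_residuals)
```

Censored patients contribute through the risk sets only: the patient censored at t=4 receives a negative pseudo-residual because they outlived an event without having one themselves. The pseudo-residuals always sum to zero.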

C-index Optimization Strategies

The Concordance Index (C-index) is the primary evaluation metric for survival models, measuring the model's ability to correctly rank survival times [11]. Harrell's C-index evaluates a single, time-independent risk ranking, which is natural under proportional hazards. For non-proportional hazards scenarios where risk rankings may change over time, Antolini's C-index provides a more appropriate evaluation metric [11].

Table 1: C-index Evaluation Metrics for Survival Analysis

| Metric | Applicability | Key Characteristics | Interpretation |

|---|---|---|---|

| Harrell's C-index | Proportional Hazards | Assumes fixed risk ranking over time | Proportion of correctly ordered pairs |

| Antolini's C-index | Non-Proportional Hazards | Accounts for time-dependent risk rankings | Generalized concordance for non-PH scenarios |

Gradient boosting can be tailored to optimize C-index performance through:

- Loss Function Selection: Implementing survival loss functions that directly optimize ranking performance

- Hyperparameter Tuning: Adjusting tree complexity, learning rate, and number of estimators to improve concordance

- Ensemble Design: Combining multiple survival boosting models to enhance predictive accuracy

Recent research indicates that proper evaluation requires combining Antolini's C-index with calibration metrics like Brier score to fully assess model performance, as high C-index values can sometimes mask poor calibration [11].

Experimental Protocols and Implementation

Basic Regression Protocol

For standard regression tasks using scikit-learn, the following protocol implements gradient boosting with residual fitting:
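A minimal sketch of such a protocol, assuming scikit-learn and NumPy are installed. The synthetic data, the hyperparameter values, and the mean-prediction baseline are illustrative choices, not settings taken from the cited work.

```python
import numpy as np
from sklearn.ensemble import GradientBoostingRegressor
from sklearn.metrics import mean_squared_error
from sklearn.model_selection import train_test_split

# Synthetic regression data (assumption: stands in for a real dataset).
rng = np.random.default_rng(0)
X = rng.uniform(-3, 3, size=(400, 2))
y = np.sin(X[:, 0]) + 0.5 * X[:, 1] ** 2 + rng.normal(scale=0.1, size=400)

X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=0
)

# Each of the 200 trees is fit to the residuals of the current ensemble;
# learning_rate is the shrinkage factor, subsample < 1 adds row subsampling.
model = GradientBoostingRegressor(
    n_estimators=200,
    learning_rate=0.1,
    max_depth=3,
    subsample=0.8,
    random_state=0,
)
model.fit(X_train, y_train)

test_mse = mean_squared_error(y_test, model.predict(X_test))
baseline_mse = mean_squared_error(y_test, np.full_like(y_test, y_train.mean()))
print(f"boosted MSE: {test_mse:.3f}  mean-only MSE: {baseline_mse:.3f}")
```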

This protocol follows the standard gradient boosting workflow with built-in sequential learning and residual fitting through the GradientBoostingRegressor class [7].

Survival Analysis Protocol

For survival data with censoring, the protocol adapts to use survival-specific implementations:

This protocol utilizes the scikit-survival implementation of gradient boosting for survival data, which optimizes the Cox partial likelihood loss function [11].

Performance Benchmarking and Hyperparameter Optimization

Implementation Comparison

Recent large-scale benchmarking studies comparing gradient boosting implementations provide critical insights for selection decisions:

Table 2: Gradient Boosting Implementation Comparison for Scientific Applications

| Implementation | Key Strengths | Optimal Use Cases | Performance Notes |

|---|---|---|---|

| XGBoost | Best predictive performance, robust regularization | Small to medium datasets, extensive hyperparameter tuning | Superior accuracy in QSAR modeling [10] |

| LightGBM | Fastest training time, efficient memory usage | Large datasets, high-dimensional features | Optimal for high-throughput screening data [10] |

| CatBoost | Reduced overfitting, handling categorical features | Small datasets with categorical variables | Excellent performance with default parameters [10] |

| Scikit-learn GBM | Simple API, good baseline | Prototyping, educational use | Lacks advanced optimization of specialized libraries [10] |

Critical Hyperparameters

The performance of gradient boosting models heavily depends on proper hyperparameter tuning:

Table 3: Essential Hyperparameters for Gradient Boosting Optimization

| Hyperparameter | Impact on Performance | Typical Range | Optimization Priority |

|---|---|---|---|

| n_estimators | Number of sequential trees; too few underfits, too many overfits | 100-500 | High - controls ensemble complexity |

| learning_rate | Shrinkage factor; lower values require more trees but generalize better | 0.01-0.3 | High - crucial for regularization |

| max_depth | Tree complexity; deeper trees capture more interactions but may overfit | 3-8 | Medium - affects feature interaction capture |

| max_features | Features considered per split; lower values reduce overfitting | 0.5-1.0 | Medium - promotes diversity in trees |

| subsample | Fraction of samples used per tree; lower values reduce overfitting | 0.7-1.0 | Medium - introduces randomness |

Comprehensive hyperparameter optimization is essential for maximizing model performance, with studies showing that tuning all major parameters simultaneously yields significantly better results than selective tuning [10].

Visualization of Core Mechanics

Figures (not reproduced): Sequential Ensemble Building Process; Gradient Boosting Optimization in Function Space

Research Reagent Solutions

Table 4: Essential Computational Tools for Gradient Boosting Research

| Tool Category | Specific Solutions | Primary Function | Research Application |

|---|---|---|---|

| Core Algorithms | XGBoost, LightGBM, CatBoost, scikit-learn GBM | Implement gradient boosting with specialized optimizations | Model development and benchmarking [10] |

| Survival Analysis | scikit-survival, PyCox, Auton Survival | Adapt gradient boosting for censored time-to-event data | C-index optimization in clinical datasets [11] |

| Hyperparameter Optimization | Optuna, Hyperopt, scikit-learn GridSearch | Automated tuning of critical model parameters | Performance maximization and robust model selection [10] |

| Model Interpretation | SHAP, ELI5, partial dependence plots | Explain model predictions and feature importance | Mechanistic insights and biomarker discovery [12] |

| Evaluation Metrics | Antolini's C-index, Harrell's C-index, Brier score | Assess model performance and calibration | Comprehensive model validation [11] |

These research reagents provide the essential toolkit for developing, optimizing, and validating gradient boosting models in scientific applications, particularly for C-index optimization in survival analysis and drug development contexts.

Core Characteristics and Performance

Gradient boosting algorithms constitute a powerful machine learning ensemble technique that builds models sequentially, with each new model correcting errors made by previous ones [13]. Among the most prominent variants are XGBoost, LightGBM, CatBoost, and scikit-learn's HistGradientBoosting, each offering distinct advantages for research applications, particularly in the context of C-index optimization for survival analysis in drug development.

Table 1: Fundamental Characteristics of Gradient Boosting Variants

| Characteristic | XGBoost | LightGBM | CatBoost | HistGradientBoosting |

|---|---|---|---|---|

| Core Innovation | Regularized boosting, parallel processing [14] | Leaf-wise growth, histogram-based learning [15] [16] | Ordered boosting, categorical handling [17] [18] | Histogram-based learning, inspired by LightGBM [19] |

| Tree Growth Strategy | Level-wise (depth-wise) [17] | Leaf-wise (loss-guided) [17] [15] | Symmetric (balanced) [17] | Leaf-wise (by default, similar to LightGBM) |

| Handling Categorical Features | Requires preprocessing (e.g., one-hot encoding) [17] | Optimal binning with manual column specification [17] | Native handling without preprocessing [17] [18] | Native support via categorical_features parameter [19] |

| Missing Value Handling | Built-in routine learns split direction [14] | Native support via histogram binning | Native support via feature statistics | Native support; learns split direction during training [19] |

| Primary Advantage | Predictive power, extensive tuning [17] [14] | Speed & memory efficiency on large data [17] [15] | Accuracy with categorical data, minimal tuning [17] [18] | Speed for big datasets, scikit-learn integration [19] |

Table 2: Performance and Scalability Profile

| Metric | XGBoost | LightGBM | CatBoost | HistGradientBoosting |

|---|---|---|---|---|

| Training Speed | Fast, but slower than LightGBM on large data [17] | Fastest, especially on large datasets [17] [16] | Fast for mixed data types [17] | Much faster than GradientBoostingClassifier for n_samples ≥ 10,000 [19] |

| Memory Usage | High [17] | Low [17] [16] | Moderate [17] | Optimized via histogram binning [19] |

| Overfitting Control | L1/L2 regularization, shrinkage, column subsampling [17] [14] | L1/L2, feature fraction, early stopping [17] | Ordered boosting, bagging [17] | L2 regularization, early stopping [19] |

| Interpretability | Gain-based feature importance, SHAP support [17] | Feature importance scores, SHAP integration [17] [15] | Built-in SHAP values, visualization tools [17] | Standard scikit-learn model inspection |

| Hyperparameter Tuning | Extensive but complex [17] | Requires careful tuning [17] | Minimal tuning needed [17] | Standardized scikit-learn interface |

Research Application Selection Guidelines

Choosing the appropriate algorithm for C-index optimization research depends on dataset characteristics and research goals [17]:

- XGBoost: Ideal for structured/tabular data where extensive hyperparameter tuning is feasible and balanced tree growth is beneficial for interpretability [17] [14].

- LightGBM: Superior for very large datasets (millions of samples) requiring fast training times and minimal memory usage, particularly with numerical features [17] [15].

- CatBoost: Optimal for datasets rich in categorical features, where minimal preprocessing is desired, and robust performance with default parameters is valuable [17] [18].

- HistGradientBoosting: Excellent for large-scale scikit-learn workflows where integration with the scikit-learn ecosystem is prioritized and performance on datasets exceeding 10,000 samples is required [19].

Experimental Protocols for C-index Optimization

Core Experimental Workflow

Parameter Configuration Guidelines

Table 3: Critical Parameter Specifications for C-index Optimization

| Algorithm | Core Parameters | Recommended Values | C-index Specific Notes |

|---|---|---|---|

| XGBoost [14] [20] | objective; learning_rate (eta); max_depth; subsample; colsample_bytree; alpha, lambda | survival:cox; 0.01-0.2; 3-10; 0.5-1.0; 0.5-1.0; 0, 1 | Use survival:cox objective for right-censored data. Lower learning rates often benefit C-index but require more trees. |

| LightGBM [15] | objective; learning_rate; num_leaves; min_data_in_leaf; feature_fraction; bagging_fraction | Custom objective; 0.01-0.2; 31-127; 20-100; 0.5-1.0; 0.5-1.0 | Requires custom objective for survival analysis. Higher num_leaves increases complexity but risks overfitting. |

| CatBoost [18] | loss_function; learning_rate; depth; l2_leaf_reg; random_strength | Custom loss; 0.01-0.2; 3-10; 1-10; 1 | Configure custom loss function for concordance optimization. random_strength provides additional regularization. |

| HistGradientBoosting [19] | loss; learning_rate; max_iter; max_leaf_nodes; min_samples_leaf; l2_regularization | Custom loss; 0.01-0.2; 100-500; 31-127; 20-100; 0-10 | Implement custom loss function; note that scikit-learn documents only built-in loss names for this estimator, so a custom concordance loss relies on non-public internals. max_leaf_nodes controls model complexity effectively. |

Advanced Optimization Methodology

For robust C-index optimization in drug development research, implement nested cross-validation:

- Outer Loop: 5-fold or 10-fold cross-validation for performance estimation

- Inner Loop: 3-fold or 5-fold cross-validation for hyperparameter tuning

- Evaluation Metric: Harrell's C-index for time-to-event data

- Stratification: Ensure proportional representation of event types across folds
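The nested scheme above can be sketched with the standard library alone. The helpers `stratified_folds` and `nested_cv_splits` are hypothetical names. Stratification here is by event indicator only, which is an assumption; a study with competing event types would stratify on the event type instead.

```python
import random


def stratified_folds(events, n_splits, seed=0):
    """Assign indices to folds so each fold has a similar event rate."""
    rng = random.Random(seed)
    folds = [[] for _ in range(n_splits)]
    for status in (0, 1):  # deal censored and event cases out separately
        idx = [i for i, e in enumerate(events) if int(e) == status]
        rng.shuffle(idx)
        for position, i in enumerate(idx):
            folds[position % n_splits].append(i)
    return folds


def nested_cv_splits(events, outer_splits=5, inner_splits=3, seed=0):
    """Yield (outer_train, outer_test, inner_pairs) index lists.

    inner_pairs holds (fit, validation) splits of outer_train for tuning;
    outer_test is never seen while hyperparameters are selected.
    """
    outer = stratified_folds(events, outer_splits, seed)
    for k, test_idx in enumerate(outer):
        train_idx = [i for f, fold in enumerate(outer) if f != k for i in fold]
        inner = stratified_folds(
            [events[i] for i in train_idx], inner_splits, seed + k + 1
        )
        inner_pairs = []
        for v, val_positions in enumerate(inner):
            val_idx = [train_idx[p] for p in val_positions]
            fit_idx = [
                train_idx[p]
                for f, fold in enumerate(inner) if f != v
                for p in fold
            ]
            inner_pairs.append((fit_idx, val_idx))
        yield train_idx, test_idx, inner_pairs


# Hypothetical cohort of 30 patients with a 50% event rate.
events = [1, 0] * 15
splits = list(nested_cv_splits(events))
for train_idx, test_idx, inner_pairs in splits:
    print(len(train_idx), len(test_idx), sum(events[i] for i in test_idx))
```

In use, each candidate hyperparameter setting would be scored by its mean C-index over `inner_pairs`, the winner refit on `train_idx`, and the reported C-index computed on `test_idx` only.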

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Computational Reagents for Gradient Boosting Research

| Research Reagent | Function | Implementation Examples |

|---|---|---|

| Nested Cross-Validation Framework | Provides unbiased performance estimation while preventing data leakage [14] | scikit-learn ParameterGrid with StratifiedKFold |

| C-index Optimization Objective | Custom loss functions for survival analysis concordance | XGBoost: survival:cox; custom objectives for LightGBM/CatBoost |

| Feature Importance Analyzer | Identifies predictive biomarkers for mechanistic interpretation [17] [15] | SHAP (SHapley Additive exPlanations), permutation importance |

| Algorithmic Fairness Audit | Detects bias across patient subgroups (race, gender, age) [21] | Fairness metrics (demographic parity, equalized odds) |

| Hyperparameter Optimization | Systematic search for optimal C-index performance [15] | Bayesian optimization, grid search, random search |

| Missing Data Handler | Manages incomplete clinical variables without bias introduction [14] [19] | Native algorithm handling or multiple imputation |

The concordance index (C-index) serves as a predominant metric for evaluating prognostic models in survival analysis, particularly in clinical and biomedical research. While its intuitive interpretation as a rank-based measure has led to widespread adoption, this application note examines critical limitations and biases inherent in standard C-index implementations, with a specific focus on challenges posed by right-censored data and time-range constraints. Within the broader context of gradient boosting techniques for C-index optimization research, we dissect how censoring mechanisms introduce distributional dependencies and explore methodological frameworks for robust estimation. We present structured protocols for implementing censoring-adjusted concordance metrics, experimental designs for bias evaluation, and gradient boosting approaches that directly optimize discriminatory power. By integrating theoretical insights with practical implementations, this work provides researchers with a comprehensive toolkit for navigating the complexities of survival model evaluation, ultimately advocating for a more nuanced approach that moves beyond overreliance on the C-index as a solitary performance measure.

Survival analysis models, which predict the time until events of interest such as death, disease recurrence, or treatment failure, require specialized evaluation metrics that account for unique data characteristics like right-censoring. The concordance index (C-index) has emerged as one of the most widely adopted performance measures in this domain, designed to quantify a model's ability to correctly rank patients by their risk of experiencing an event [22]. Originally developed for binary outcomes and later extended to survival data by Harrell et al., the C-index estimates the probability that, for two randomly selected patients, the model correctly predicts which will experience the event first [23].

In clinical practice and biomedical research, the C-index has become a standard validation tool for prognostic models, with arbitrary thresholds above 0.7 often considered indicative of adequate discriminatory power [23]. Its popularity stems from its intuitive interpretation as a generalized version of the area under the receiver operating characteristic curve (AUC) for time-to-event data. However, this very popularity has led to critical oversight of its methodological limitations, particularly when applied to censored survival outcomes.

The core computation of the C-index involves comparing pairs of subjects and determining whether the predicted risk scores align with the observed survival times. Formally, for a prediction rule that produces a risk score η, the C-index is defined as:

$$C = P(\eta_j > \eta_i \mid T_j < T_i)$$

where $T_i$ and $T_j$ are the survival times for patients $i$ and $j$ [24]. This probabilistic interpretation belies a complex underlying structure that becomes particularly problematic when dealing with incomplete observations due to censoring.

Critical Analysis of C-Index Limitations

Censoring-Induced Biases and Distributional Dependencies

The presence of right-censored observations – where a patient's event time is only known to exceed a certain value – fundamentally compromises the estimation of the standard C-index. Harrell's C-statistic converges not to the true concordance probability but to a biased quantity that depends on the censoring distribution:

$$\hat{C}_{\text{Harrell}} \rightarrow B_{TX} = \mathrm{pr}(X_1 > X_2 \mid T_1 < T_2,\ T_1 \leq D_1 \wedge D_2)$$

where $D_1$ and $D_2$ represent the censoring times and $\wedge$ denotes the minimum [25]. This distributional dependency means that the same predictive model applied to populations with different censoring patterns will yield different C-index values, even if its true discriminatory performance remains unchanged.

The diagram below illustrates how censoring mechanisms affect C-index estimation:

Figure 1: Censoring effects on C-index estimation

The Comparability Problem in Survival Settings

The C-index for survival outcomes exclusively considers "comparable pairs" – pairs where the earlier observed time is uncensored. This comparability definition creates a fundamental asymmetry in how different risk groups contribute to the metric. Unlike the binary outcome setting where pairs with substantially different risk profiles are more likely to be compared, the survival C-index frequently compares patients with similar risk profiles simply because they form comparable pairs [23].

This comparability problem has significant clinical implications. In low-risk populations, physicians may find little utility in a model that successfully discriminates between patients with 30-year versus 31-year survival, yet such comparisons contribute substantially to the C-index [22]. The metric's focus on rank accuracy rather than absolute accuracy means models can achieve high concordance while producing systematically biased survival time predictions [22].

Time-Range Constraints and Truncation Issues

The standard C-index evaluates discriminatory performance across the entire observed time range, which can be problematic when clinical interest focuses on specific time horizons (e.g., 5-year survival). The truncated C-index has been proposed to address this limitation:

$$C_{\text{tr}} = \mathbb{P}(\eta_j > \eta_i \mid T_j < T_i,\ T_j \leq \tau)$$

where $\tau$ represents the truncation time point [24]. This modification focuses evaluation on clinically relevant timeframes but introduces new challenges in selecting appropriate truncation points and handling increased variance near the truncation boundary.

Table 1: C-Index Variants and Their Properties

| Metric | Formula | Handling of Censoring | Time Focus | Key Limitations |

|---|---|---|---|---|

| Harrell's C | $\frac{\sum_{i \neq j} \delta_i I(T_i < T_j) I(\eta_i > \eta_j)}{\sum_{i \neq j} \delta_i I(T_i < T_j)}$ | Excludes non-comparable pairs | Entire observation period | Depends on censoring distribution |

| Uno's C | $\frac{\sum_{i,j} \frac{\delta_j}{\hat{G}(T_j)^2} I(T_j < T_i) I(\eta_j > \eta_i)}{\sum_{i,j} \frac{\delta_j}{\hat{G}(T_j)^2} I(T_j < T_i)}$ | Inverse probability of censoring weighting | Entire observation period | Requires correct censoring model specification |

| Truncated C | $\mathbb{P}(\eta_j > \eta_i \mid T_j < T_i,\ T_j \leq \tau)$ | Varies by implementation | Restricted to [0, τ] | Sensitive to τ choice, increased variance |

Methodological Frameworks for Bias Mitigation

C-Index Decomposition for Diagnostic Insight

Recent research has proposed decomposing the C-index into components that provide finer-grained diagnostic insights. With pair-count weights, the overall C-index can be expressed as a weighted average of two distinct concordance measures:

[ CI = \alpha \cdot CI_{ee} + (1 - \alpha) \cdot CI_{ec} ]

where (CI_{ee}) represents concordance for event-event pairs, (CI_{ec}) represents concordance for event-censored pairs, and (\alpha) is the share of comparable pairs that are event-event pairs [26]. This decomposition enables researchers to identify whether a model's weaknesses stem from difficulties in ranking events against other events or events against censored cases.

The decomposition framework reveals why different model classes exhibit varying performance patterns under different censoring regimes. Deep learning models, for instance, tend to maintain more stable C-index values across censoring levels by effectively utilizing observed events, whereas classical machine learning models often deteriorate when censoring decreases due to limitations in ranking events against other events [26].

Gradient Boosting Approaches for C-Index Optimization

Gradient boosting machines (GBMs) adapted for survival analysis present a powerful framework for directly optimizing concordance. The GBMCI algorithm (Gradient Boosting Machine for Concordance Index) implements a smoothed approximation of the C-index as its objective function, enabling non-parametric modeling of survival relationships without explicit hazard function assumptions [27].

The fundamental optimization problem can be formulated as:

[ \max_{f} \widehat{C}(T, f(X)) ]

where (f) represents the ensemble of regression trees and (\widehat{C}) denotes the empirical C-index [27]. By directly targeting discriminatory performance, these approaches often outperform proportional hazards models, particularly when the underlying hazard assumptions are violated.

Table 2: Gradient Boosting Implementation Comparison

| Algorithm | Optimization Target | Censoring Handling | Variable Selection | Key Advantages |

|---|---|---|---|---|

| GBMCI | Smoothed C-index approximation | Integrated into loss function | Not inherent | Direct concordance optimization, no parametric assumptions |

| C-index Boosting | Uno's C-index or truncated C-index | Inverse probability weighting | Combined with stability selection | Focus on discriminatory power, robust to PH violations |

| Cox-based Gradient Boosting | Partial likelihood | Through risk set definition | Built-in via regularization | Familiar Cox framework, handles standard survival data |

The workflow below illustrates the integrated gradient boosting approach with stability selection for enhanced variable selection:

Figure 2: Stability selection workflow for C-index boosting

Experimental Protocols

Protocol 1: Implementing Censoring-Adjusted Concordance Metrics

Purpose: To compute survival model performance using C-index variants that adjust for censoring distribution.

Materials and Reagents:

- Computing Environment: R Statistical Software (v4.2.0+) or Python (v3.8+)

- Required R Packages: survival, pec, survAUC

- Required Python Packages: lifelines, scikit-survival, numpy

Procedure:

- Data Preparation: Load time-to-event dataset with features ((X)), observed times ((T)), and event indicators ((\delta)).

- Censoring Distribution Estimation: Compute Kaplan-Meier estimator for censoring distribution: (\hat{G}(t) = \prod_{s \leq t} \left(1 - \frac{\Delta N_c(s)}{Y(s)}\right)) where (\Delta N_c(s)) counts censoring events at time (s) and (Y(s)) is the number at risk.

- Uno's C-index Computation: Calculate weighted concordance: [ \widehat{C}_{\text{Uno}} = \frac{\sum_{i \neq j} \frac{\delta_i}{\hat{G}(T_i)^2} I(T_i < T_j) I(\eta_i > \eta_j)}{\sum_{i \neq j} \frac{\delta_i}{\hat{G}(T_i)^2} I(T_i < T_j)} ]

- Truncated C-index Calculation: For clinically relevant time horizon (\tau), compute: [ \widehat{C}_{\text{tr}} = \frac{\sum_{i \neq j} \delta_i \cdot I(T_i < T_j, T_i \leq \tau) \cdot I(\eta_i > \eta_j)}{\sum_{i \neq j} \delta_i \cdot I(T_i < T_j, T_i \leq \tau)} ]

- Bootstrap Validation: Estimate confidence intervals using 1000 bootstrap resamples.

Interpretation Guidelines: Compare Uno's C-index with Harrell's C-index. Differences >0.05 suggest significant censoring dependency. Truncated C-index values should be interpreted within their specific time horizons.
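The censoring-distribution and Uno steps of this protocol can be sketched in plain Python as follows. This is a minimal illustration under stated assumptions: the helper names `censoring_km` and `uno_c` are hypothetical, the toy data are invented, and tied event and censoring times are assumed not to occur. For real analyses, the packages listed under Materials provide tested implementations.

```python
def censoring_km(times, events):
    """Kaplan-Meier estimate of the censoring survival function G(t).

    Censored observations (event == 0) play the role of 'events' here.
    Assumes no ties between event times and censoring times.
    """
    steps = []  # (time s, value of G at s)
    g = 1.0
    for s in sorted(set(times)):
        at_risk = sum(1 for t in times if t >= s)
        n_cens = sum(1 for t, e in zip(times, events) if t == s and not e)
        g *= 1.0 - n_cens / at_risk
        steps.append((s, g))

    def G(t):
        value = 1.0
        for s, g_s in steps:
            if s > t:
                break
            value = g_s
        return value

    return G


def uno_c(times, events, scores, tau=None):
    """IPCW-weighted concordance with weights delta_i / G(T_i)^2."""
    G = censoring_km(times, events)
    num = den = 0.0
    n = len(times)
    for i in range(n):
        if not events[i] or (tau is not None and times[i] > tau):
            continue
        w = 1.0 / G(times[i]) ** 2
        for j in range(n):
            if times[i] < times[j]:
                den += w
                if scores[i] > scores[j]:
                    num += w
    return num / den if den else float("nan")


# Toy data (hypothetical): two censored subjects at times 5 and 9.
times = [2, 4, 5, 7, 9, 12]
events = [1, 1, 0, 1, 0, 1]
scores = [0.9, 0.4, 0.6, 0.7, 0.2, 0.1]

c_uno = uno_c(times, events, scores)
print(round(c_uno, 4))
```

On this toy data the event at time 7 is up-weighted because a quarter of the subjects at risk had already been censored, which is why the weighted value differs from the unweighted Harrell estimate of 9/11.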

Protocol 2: C-Index Decomposition Analysis

Purpose: To decompose overall concordance into event-event and event-censored components for model diagnostics.

Procedure:

- Pair Identification: Identify all comparable pairs ((i,j)) where (T_i < T_j) and (\delta_i = 1).

- Categorization: Separate pairs into:

- Event-Event (EE): (\delta_j = 1)

- Event-Censored (EC): (\delta_j = 0)

- Component Calculation: [ CI_{ee} = \frac{\sum_{(i,j) \in EE} I(\eta_i > \eta_j)}{|EE|}, \quad CI_{ec} = \frac{\sum_{(i,j) \in EC} I(\eta_i > \eta_j)}{|EC|} ]

- Weight Computation: Calculate proportion (\alpha = |EE| / (|EE| + |EC|))

- Recombination: Verify decomposition: (CI = \alpha \cdot CI_{ee} + (1-\alpha) \cdot CI_{ec})

Interpretation Guidelines: Models with low (CI_{ee}) struggle to discriminate among patients who experienced events, while low (CI_{ec}) indicates poor identification of high-risk patients among censored cases.
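The procedure above can be checked numerically with a few lines of Python. The function name `decompose_c_index` and the toy data are assumptions made for illustration; ties in the scores are simply counted as non-concordant here.

```python
def decompose_c_index(times, events, scores):
    """Split Harrell's C into event-event (EE) and event-censored (EC) parts."""
    ee = ec = 0              # number of comparable pairs of each type
    ee_conc = ec_conc = 0    # concordant pairs of each type
    n = len(times)
    for i in range(n):
        if not events[i]:
            continue         # the earlier subject must have an observed event
        for j in range(n):
            if times[i] < times[j]:
                concordant = int(scores[i] > scores[j])
                if events[j]:
                    ee += 1
                    ee_conc += concordant
                else:
                    ec += 1
                    ec_conc += concordant
    if ee == 0 or ec == 0:
        raise ValueError("need at least one pair of each type")
    alpha = ee / (ee + ec)
    return {
        "ci_ee": ee_conc / ee,
        "ci_ec": ec_conc / ec,
        "alpha": alpha,
        "ci": (ee_conc + ec_conc) / (ee + ec),
    }


# Toy data (hypothetical).
times = [2, 4, 5, 7, 9, 12]
events = [1, 1, 0, 1, 0, 1]
scores = [0.9, 0.4, 0.6, 0.7, 0.2, 0.1]

parts = decompose_c_index(times, events, scores)
recombined = parts["alpha"] * parts["ci_ee"] + (1 - parts["alpha"]) * parts["ci_ec"]
print(parts, recombined)
```

With pair-count weights the recombination is exact by construction, so a mismatch between `recombined` and the directly computed `ci` would point to a bookkeeping error in the pair categorization.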

Protocol 3: Gradient Boosting with C-Index Optimization

Purpose: To implement gradient boosting that directly optimizes concordance for enhanced discriminatory power.

Materials and Reagents:

- Computing Environment: R with gbm package or Python with xgboost, lightgbm

- Specialized Software: GBMCI implementation from https://github.com/uci-cbcl/GBMCI

Procedure:

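- Smooth Approximation: Implement differentiable approximation of C-index using sigmoid function: [ \widetilde{CI} = \frac{\sum_{i \neq j} \delta_i \cdot I(T_i < T_j) \cdot \sigma(\eta_i - \eta_j)}{\sum_{i \neq j} \delta_i \cdot I(T_i < T_j)} ] where (\sigma(x) = 1/(1+e^{-x/\tau})) with temperature parameter (\tau) (not to be confused with the truncation time used earlier).

- Gradient Computation: Calculate gradient of smoothed C-index with respect to predictions. Each prediction (\eta_k) appears both as the earlier and as the later member of comparable pairs, so the gradient has two terms: [ \frac{\partial \widetilde{CI}}{\partial \eta_k} = \frac{1}{W} \left[ \sum_{j \neq k} \delta_k \cdot I(T_k < T_j) \cdot \sigma'(\eta_k - \eta_j) - \sum_{i \neq k} \delta_i \cdot I(T_i < T_k) \cdot \sigma'(\eta_i - \eta_k) \right], \quad W = \sum_{i \neq j} \delta_i \cdot I(T_i < T_j) ]

- Boosting Iteration: For each iteration (m=1) to (M):

- Compute pseudo-residuals (r_i = \frac{\partial \widetilde{CI}}{\partial \eta_i}), i.e., the negative gradient of the loss (-\widetilde{CI}), since the smoothed C-index is being maximized

- Fit regression tree (h_m(x)) to pseudo-residuals

- Update model: (f_m(x) = f_{m-1}(x) + \nu \cdot h_m(x)) with shrinkage parameter (\nu)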

- Stability Selection Integration: For enhanced variable selection:

- Generate 100 subsamples without replacement

- Apply C-index boosting to each subsample

- Compute selection frequency for each variable

- Retain variables with frequency > PFER-controlled threshold

Interpretation Guidelines: Monitor C-index on validation set to ensure improvement. Compare selected variables with those from Cox models to identify potential non-linear effects.
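The smoothing and gradient steps of this protocol can be sketched as below. To keep the example short, the update is applied directly to the prediction vector instead of fitting a regression tree to the pseudo-residuals, so this is plain gradient ascent and not the GBMCI algorithm itself. The function names, the toy data, and the choices `temp = 0.1` and `step = 0.1` are illustrative assumptions.

```python
import math


def sigmoid(x, temp):
    """Numerically stable logistic function of x / temp."""
    z = x / temp
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    ez = math.exp(z)
    return ez / (1.0 + ez)


def comparable_pairs(times, events):
    """All (i, j) with delta_i = 1 and T_i < T_j."""
    n = len(times)
    return [(i, j) for i in range(n) if events[i]
            for j in range(n) if times[i] < times[j]]


def smoothed_c(pairs, eta, temp):
    return sum(sigmoid(eta[i] - eta[j], temp) for i, j in pairs) / len(pairs)


def smoothed_c_grad(pairs, eta, temp):
    """Gradient of the smoothed C-index with respect to each prediction."""
    grad = [0.0] * len(eta)
    for i, j in pairs:
        s = sigmoid(eta[i] - eta[j], temp)
        d = s * (1.0 - s) / temp   # derivative of the sigmoid at eta_i - eta_j
        grad[i] += d               # raising the earlier subject's score helps
        grad[j] -= d               # raising the later subject's score hurts
    return [g / len(pairs) for g in grad]


# Toy data (hypothetical).
times = [2, 4, 5, 7, 9, 12]
events = [1, 1, 0, 1, 0, 1]
pairs = comparable_pairs(times, events)

temp, step = 0.1, 0.1
eta = [0.0] * len(times)               # constant initial prediction
c_start = smoothed_c(pairs, eta, temp)
for _ in range(200):
    residuals = smoothed_c_grad(pairs, eta, temp)   # ascent direction
    eta = [e + step * r for e, r in zip(eta, residuals)]
c_end = smoothed_c(pairs, eta, temp)
print(c_start, c_end)
```

In an actual boosting run, `residuals` would be the regression target for the next tree, and the update would add the shrunken tree prediction instead of the raw gradient. Comparing the analytic gradient against a finite-difference estimate is a cheap way to catch sign errors before plugging it into a boosting library.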

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for C-index Studies

| Reagent/Resource | Function/Purpose | Implementation Notes | Key References |

|---|---|---|---|

| Uno's C-index Estimator | Censoring-adjusted concordance | Requires correct specification of censoring model | Uno et al. (2011) [25] |

| Truncated C-index | Time-restricted discrimination | Clinically relevant for specific prognosis windows | Schmid & Potapov (2012) [24] |

| C-index Decomposition | Diagnostic analysis of concordance | Identifies specific ranking weaknesses | Sanyal et al. (2024) [26] |

| GBMCI Algorithm | Direct C-index optimization | Non-parametric approach, no PH assumptions | Chen et al. (2013) [27] |

| Stability Selection | Enhanced variable selection | Controls per-family error rate in high dimensions | Hofner et al. (2016) [24] |

The concordance index remains a valuable but fundamentally limited metric for survival model evaluation. Its censoring dependency, comparability constraints, and rank-based nature necessitate careful implementation and interpretation, particularly in clinical applications where absolute risk accuracy often matters more than relative rankings. The methodological frameworks presented in this application note – including censoring-adjusted estimators, decomposition approaches, and gradient boosting optimization – provide researchers with sophisticated tools to navigate these challenges.

Moving forward, the survival analysis field must embrace multi-metric evaluation frameworks that complement C-index with calibration measures, absolute error metrics, and clinical utility assessments. The integration of gradient boosting with concordance optimization represents a particularly promising direction, enabling flexible, non-parametric modeling while directly targeting discriminatory performance. By adopting these more nuanced evaluation paradigms, researchers can develop prognostic models that not only achieve statistical excellence but also deliver meaningful clinical insights.

Survival analysis, the statistical methodology for analyzing time-to-event data, plays a pivotal role in medical research, drug development, and reliability engineering. Traditional models like the Cox proportional hazards (CPH) model have dominated this field for decades but impose restrictive assumptions that may limit their predictive accuracy in complex biomedical scenarios. The integration of gradient boosting with survival analysis represents a paradigm shift, enabling researchers to capture nonlinear relationships and complex interactions without strong parametric assumptions while optimizing performance metrics directly relevant to clinical decision-making.

The concordance index (C-index) has emerged as a critical evaluation metric in survival modeling, measuring a model's ability to correctly rank survival times rather than accurately predict absolute event times. This focus on discriminatory power aligns closely with clinical needs where risk stratification often takes precedence over absolute risk prediction. This framework explores the theoretical foundations, methodological approaches, and practical implementations of gradient boosting techniques specifically designed for C-index optimization in survival analysis, providing researchers with a comprehensive toolkit for advancing prognostic model development.

Conceptual Foundations

Survival Analysis Fundamentals

Survival analysis characterizes the time until an event of interest occurs, handling the censored observations inherent in time-to-event data. The survival function ( S(t) = Pr(T > t) ) represents the probability of surviving beyond time ( t ), while the hazard function ( \lambda(t) = \lim_{\Delta t\to 0}(Pr(t < T < t + \Delta t | T > t))/\Delta t ) captures the instantaneous event rate at time ( t ) given survival up to that time. A critical challenge in survival modeling involves appropriately handling right-censored data, where the exact event time is unknown but known to exceed some observed value [27] [28].

The Cox proportional hazards model, introduced in 1972, revolutionized survival analysis by enabling covariate effect estimation without specifying the baseline hazard function: ( \lambda(t \mid x, \theta) = \lambda_0(t)\exp(x^\top\theta) ). This semi-parametric approach estimates parameters by maximizing the partial likelihood ( L_p(\theta) = \prod_{i \in E} \frac{\exp(\theta^\top x_i)}{\sum_{j: t_j \geq t_i}\exp(\theta^\top x_j)} ), where ( E ) represents the set of observed events [29] [27]. While widely adopted, the CPH model relies on the proportional hazards assumption that may not hold in complex biomedical settings.
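To make the partial likelihood concrete, the following sketch evaluates its negative logarithm for a given coefficient vector. The function name and the toy data are assumptions for illustration, and tied event times are not handled (no tie correction such as Breslow or Efron is applied).

```python
import math


def neg_log_partial_likelihood(times, events, X, theta):
    """Negative log Cox partial likelihood (no correction for tied times)."""
    # Linear predictor theta^T x_i for every subject.
    lp = [sum(t * x for t, x in zip(theta, row)) for row in X]
    nll = 0.0
    for i, (t_i, e_i) in enumerate(zip(times, events)):
        if not e_i:
            continue  # censored subjects only contribute through risk sets
        risk_sum = sum(math.exp(lp[j]) for j, t_j in enumerate(times) if t_j >= t_i)
        nll -= lp[i] - math.log(risk_sum)
    return nll


# Toy data (hypothetical): one covariate that is larger for earlier events.
times = [2, 4, 5, 7, 9, 12]
events = [1, 1, 0, 1, 0, 1]
X = [[2.0], [1.5], [0.5], [1.0], [0.2], [0.1]]

nll_null = neg_log_partial_likelihood(times, events, X, [0.0])
nll_one = neg_log_partial_likelihood(times, events, X, [1.0])
print(nll_null, nll_one)
```

At (\theta = 0) every subject in a risk set is equally likely to fail, so the value reduces to the sum of the log risk-set sizes; a coefficient that matches the direction of the covariate effect lowers it.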

Gradient Boosting Machinery

Gradient boosting is an ensemble learning method that constructs a powerful predictive model through additive expansion of sequentially fitted weak learners. The general framework minimizes a specified loss function ( L(y, F(x)) ) through iterative addition of base learners ( h_m(x) ), typically decision trees. At each iteration ( m ), the algorithm computes negative gradients ( -\partial L(y_i, F_{m-1}(x_i))/\partial F_{m-1}(x_i) ) and fits a base learner to these pseudo-residuals. The model update follows ( F_m(x) = F_{m-1}(x) + \nu \cdot \rho_m h_m(x) ), where ( \nu ) represents the learning rate and ( \rho_m ) the step size [30] [24].
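The generic update can be illustrated with a deliberately small example: squared-error loss, a single feature, and one-split regression stumps as base learners. These choices, the function names, and the toy data are assumptions for the sketch. For squared error with a least-squares stump the optimal line-search step is 1, so the step size is fixed at 1 here and only the learning rate is applied.

```python
def fit_stump(x, r):
    """Least-squares regression stump (one split) on a single feature."""
    best = None
    xs = sorted(set(x))
    for lo, hi in zip(xs, xs[1:]):
        thr = (lo + hi) / 2
        left = [ri for xi, ri in zip(x, r) if xi <= thr]
        right = [ri for xi, ri in zip(x, r) if xi > thr]
        ml, mr = sum(left) / len(left), sum(right) / len(right)
        sse = (sum((ri - ml) ** 2 for ri in left)
               + sum((ri - mr) ** 2 for ri in right))
        if best is None or sse < best[0]:
            best = (sse, thr, ml, mr)
    _, thr, ml, mr = best
    return lambda v: ml if v <= thr else mr


def boost(x, y, n_iter=50, nu=0.1):
    """Gradient boosting for L = 0.5 * (y - F)^2 with stump base learners."""
    f0 = sum(y) / len(y)                 # constant initial model
    learners = []
    pred = [f0] * len(y)
    for _ in range(n_iter):
        # Negative gradient of the squared-error loss is the ordinary residual.
        residuals = [yi - pi for yi, pi in zip(y, pred)]
        h = fit_stump(x, residuals)
        learners.append(h)
        pred = [pi + nu * h(xi) for pi, xi in zip(pred, x)]
    return lambda v: f0 + nu * sum(h(v) for h in learners)


# Toy data (hypothetical): a step function of x.
x = [1, 2, 3, 4, 5, 6, 7, 8]
y = [1, 1, 1, 1, 3, 3, 3, 3]
model = boost(x, y)
print(model(2), model(7))
```

Replacing the residual computation with the gradient of a survival-specific loss (for example the partial likelihood or a smoothed C-index) is what turns this template into a survival boosting algorithm.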

This functional gradient descent approach provides exceptional flexibility, allowing the boosting framework to accommodate various data types and problem domains through appropriate specification of the loss function. For survival analysis, specialized loss functions incorporate the unique characteristics of time-to-event data, including censoring mechanisms and time-varying effects.

The Concordance Index (C-Index) as an Optimization Target

The concordance index measures the discriminatory power of a survival model by evaluating the probability that the model correctly ranks pairs of observations by their survival times. Formally, ( C := \mathbb{P}(\eta_j > \eta_i \mid T_j < T_i) ), where ( \eta ) represents the predictor and ( T ) the survival time [24]. A C-index of 1 indicates perfect discrimination, while 0.5 represents random ordering.

Uno et al. proposed an asymptotically unbiased estimator incorporating inverse probability of censoring weighting: ( \widehat{C}_{\text{Uno}}(T, \eta) = \frac{\sum_{j,i} \frac{\Delta_j}{\hat{G}(\tilde{T}_j)^2} \, I(\tilde{T}_j < \tilde{T}_i) \, I(\hat{\eta}_j > \hat{\eta}_i)}{\sum_{j,i} \frac{\Delta_j}{\hat{G}(\tilde{T}_j)^2} \, I(\tilde{T}_j < \tilde{T}_i)} ), where ( \tilde{T}_j ) is the observed (possibly censored) time, ( \Delta_j ) is the censoring indicator (( \Delta_j = 1 ) if the event was observed, ( 0 ) if censored), and ( \hat{G}(\cdot) ) denotes the Kaplan-Meier estimator of the censoring time survival function [24].

Direct optimization of the C-index is challenging due to its non-differentiable nature, which relies on indicator functions. However, smooth approximations enable gradient-based optimization techniques, creating a powerful framework for developing survival models with maximized discriminatory power.
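A quick numerical look at one common smoothing choice, the logistic sigmoid, shows how a temperature parameter controls the trade-off between fidelity to the indicator and usable gradients. The function name and the values of `temp` below are illustrative assumptions.

```python
import math


def smooth_indicator(diff, temp):
    """Sigmoid surrogate for the indicator I(diff > 0)."""
    return 1.0 / (1.0 + math.exp(-diff / temp))


diff = 0.05   # small positive difference between two predicted risk scores
values = {temp: smooth_indicator(diff, temp) for temp in (1.0, 0.1, 0.01)}
for temp, value in values.items():
    print(f"temp={temp}: {value:.4f}")
```

A small temperature tracks the indicator closely but yields gradients that vanish for all but nearly tied pairs, while a large temperature gives informative gradients at the cost of a looser approximation.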

Methodological Approaches

Survival-Specific Gradient Boosting Frameworks

Table 1: Survival Gradient Boosting Approaches and Their Characteristics

| Method | Optimization Target | Key Features | Implementation Examples |

|---|---|---|---|

| Cox Partial Likelihood Boosting | Negative log partial likelihood | Proportional hazards assumption; Linear or nonlinear effects | sksurv.ensemble.GradientBoostingSurvivalAnalysis [30] |

| C-Index Boosting | Smoothed concordance index | Direct discrimination optimization; No distributional assumptions | GBMCI [27] [28] [24] |

| Accelerated Failure Time (AFT) Boosting | Weighted least squares | Parametric baseline distribution; Linear acceleration factors | sksurv.ensemble.GradientBoostingSurvivalAnalysis with loss="ipcwls" [30] |

| Fully Parametric Boosting (FPBoost) | Full survival likelihood | Parametric hazard components; Universal hazard approximator | FPBoost [31] |

| Landmarking Gradient Boosting (LGBM) | Landmark-specific partial likelihood | Dynamic prediction; Incorporates longitudinal biomarkers | LGBM [32] |

C-Index Boosting with Stability Selection

The combination of C-index boosting with stability selection addresses the challenge of variable selection in high-dimensional settings. Stability selection involves fitting the model to multiple subsamples of the original data and selecting variables with high selection frequency across subsamples. This approach controls the per-family error rate (PFER) and enhances the interpretability of the resulting model [24].

The C-index boosting algorithm with stability selection follows this workflow:

- For multiple subsamples of the training data, apply C-index boosting with a fixed number of iterations

- Record the selection frequency for each variable across all subsamples

- Select variables with frequencies exceeding a predefined threshold ( \pi_{\text{thr}} )

- Refit the model using only the stable variables

This approach is particularly valuable in biomarker discovery and high-dimensional genomic applications where identifying the most influential predictors is essential for biological interpretation and clinical translation.
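The subsampling and frequency-counting part of this workflow can be sketched as follows. To stay short and self-contained, the base selector is a stand-in that ranks features by how far their univariate C-index lies from 0.5; it is not C-index boosting. All function names, the synthetic data, and the settings `q = 2`, `n_sub = 100`, and `pi_thr = 0.8` are assumptions for illustration.

```python
import math
import random


def harrell_c(times, events, scores):
    """Harrell's C-index; higher score means higher predicted risk."""
    concordant, comparable = 0.0, 0
    n = len(times)
    for i in range(n):
        if not events[i]:
            continue
        for j in range(n):
            if times[i] < times[j]:
                comparable += 1
                if scores[i] > scores[j]:
                    concordant += 1.0
                elif scores[i] == scores[j]:
                    concordant += 0.5
    return concordant / comparable if comparable else 0.5


def select_top_q(X, times, events, q):
    """Stand-in selector (NOT C-index boosting): the q features whose
    univariate C-index is furthest from 0.5."""
    p = len(X[0])
    strength = [abs(harrell_c(times, events, [row[k] for row in X]) - 0.5)
                for k in range(p)]
    return sorted(range(p), key=lambda k: -strength[k])[:q]


def stability_selection(X, times, events, q=2, n_sub=100, pi_thr=0.8, seed=0):
    rng = random.Random(seed)
    n, p = len(X), len(X[0])
    counts = [0] * p
    for _ in range(n_sub):
        idx = rng.sample(range(n), n // 2)   # subsample without replacement
        chosen = select_top_q([X[i] for i in idx],
                              [times[i] for i in idx],
                              [events[i] for i in idx], q)
        for k in chosen:
            counts[k] += 1
    freq = [c / n_sub for c in counts]
    stable = [k for k, f in enumerate(freq) if f >= pi_thr]
    return freq, stable


# Synthetic data (hypothetical): only feature 0 influences the hazard.
rng = random.Random(1)
n, p = 80, 6
X = [[rng.gauss(0, 1) for _ in range(p)] for _ in range(n)]
event_time = [rng.expovariate(math.exp(2.0 * row[0])) for row in X]
cens_time = [rng.expovariate(0.5) for _ in range(n)]
times = [min(t, c) for t, c in zip(event_time, cens_time)]
events = [int(t <= c) for t, c in zip(event_time, cens_time)]

freq, stable = stability_selection(X, times, events)
print(freq, stable)
```

The final refit on the stable variables is omitted; in practice `select_top_q` would be replaced by a boosting run whose selected base learners define the chosen set for each subsample.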

Experimental Protocols

Protocol 1: C-Index Boosting for Biomarker Discovery

Objective: Identify stable biomarkers associated with survival outcomes using C-index boosting with stability selection.

Materials and Data Requirements:

- RNA-seq or gene expression data with appropriate normalization

- Clinical data including survival times and censoring indicators

- Computational environment with R or Python and necessary packages

Procedure:

- Data Preprocessing: Perform quality control, normalization, and batch effect correction on gene expression data. Log-transform expression values if necessary.

- Training-Test Split: Randomly split data into training (70%) and test (30%) sets, preserving event frequency across splits.

- Stability Selection Setup: Generate 100 subsamples from the training data (drawn without replacement, as in the stability selection workflow above). Set PFER threshold according to desired error control (typically PFER ≤ 1).

- C-Index Boosting: For each subsample:

- Initialize model with constant prediction

- For m = 1 to M iterations (M = 100-500):

- Compute gradients of smooth C-index approximation

- Fit weak learner (regression tree with limited depth) to gradients

- Update model with shrinkage parameter ν (typically 0.01-0.1)

- Record variables selected across iterations

- Variable Selection: Calculate selection frequencies for all variables across the resampled datasets. Select variables exceeding frequency threshold ( \pi_{\text{thr}} ) (typically 0.6-0.9).

- Model Validation: Fit final model with selected variables on full training set. Evaluate performance on test set using Uno's C-index.

Expected Outcomes: A sparse prognostic model with optimized discriminatory power and a controlled per-family error rate (expected number of falsely selected biomarkers).

Protocol 2: Dynamic Prediction with Landmarking Gradient Boosting

Objective: Develop a dynamic survival prediction model that incorporates longitudinal biomarker measurements.

Materials and Data Requirements:

- Longitudinal biomarker measurements at multiple time points

- Baseline clinical covariates

- Survival outcomes with appropriate follow-up

Procedure:

- Landmark Time Selection: Define landmark times s = {s₁, s₂, ..., sₖ} covering the clinical follow-up period of interest.

- Dataset Creation: For each landmark time s:

- Include subjects at risk at time s

- Extract most recent biomarker measurements prior to s

- Define new outcome: event occurrence in window (s, s+w], where w is the prediction horizon

- Administratively censor patients at s+w

- Model Training: For each landmark dataset, train a gradient boosting model using Cox partial likelihood loss:

- Use regression trees as base learners with maximum depth 2-3

- Apply early stopping based on validation set performance

- Regularize using dropout rate (0.1) or subsampling (0.5-0.8)

- Dynamic Prediction: For a new patient at time s, extract current biomarker values and apply the corresponding landmark model to obtain survival probabilities up to time s+w.

- Model Updating: Update predictions as new biomarker measurements become available by applying the appropriate landmark model.

Expected Outcomes: A dynamic prediction system that provides updated survival risk estimates as new longitudinal data accumulates, with potentially superior performance in settings with nonlinear relationships between biomarkers and survival.
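The dataset-creation step of this protocol can be sketched with plain Python dictionaries. The record layout (`id`, `time`, `event`, `biomarker` as a list of (measurement time, value) pairs), the function name, and the choice to measure follow-up from the landmark time are assumptions made for this illustration.

```python
def landmark_dataset(subjects, s, w):
    """Build the analysis rows for landmark time s and prediction horizon w."""
    rows = []
    for subj in subjects:
        if subj["time"] <= s:
            continue                      # no longer at risk at the landmark
        past = [(t, v) for t, v in subj["biomarker"] if t <= s]
        if not past:
            continue                      # no measurement available by time s
        last_value = max(past, key=lambda tv: tv[0])[1]
        if subj["time"] <= s + w:
            time, event = subj["time"] - s, subj["event"]
        else:
            time, event = w, 0            # administrative censoring at s + w
        rows.append({"id": subj["id"], "biomarker": last_value,
                     "time": time, "event": event})
    return rows


# Toy cohort (hypothetical).
subjects = [
    {"id": "A", "time": 10, "event": 1, "biomarker": [(0, 1.0), (3, 1.4), (6, 2.0)]},
    {"id": "B", "time": 2, "event": 1, "biomarker": [(0, 0.8)]},
    {"id": "C", "time": 5, "event": 0, "biomarker": [(0, 0.5), (3, 0.6)]},
    {"id": "D", "time": 6, "event": 1, "biomarker": [(1, 0.9)]},
]

rows = landmark_dataset(subjects, s=4, w=3)
for row in rows:
    print(row)
```

Subject B is dropped because the event occurred before the landmark, subject A is administratively censored at the end of the window, and the measurement taken at time 6 is ignored because it lies after the landmark.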

Implementation and Visualization

Workflow Diagram

Gradient Boosting Survival Analysis Workflow

Dynamic Prediction Architecture

Dynamic Prediction with Landmarking

The Scientist's Toolkit

Table 2: Essential Research Reagents and Computational Tools

| Category | Item | Specifications | Application Notes |

|---|---|---|---|

| Software Libraries | scikit-survival (Python) | Version 0.25.0+ | Implements GradientBoostingSurvivalAnalysis with Cox and AFT losses [30] |

| Software Libraries | GBMCI (R) | | Direct C-index optimization for survival data [27] [28] |

| Software Libraries | XGBoost | survival:cox objective | Scalable tree boosting with survival objectives [29] |

| Data Structures | Structured Survival Array | (X, y) where y is a structured array with an event indicator field and a time field | Required for scikit-survival implementation [30] |

| Data Structures | Longitudinal Data Format | Person-period format with time-varying covariates | Necessary for landmarking analysis [32] |

| Validation Metrics | Uno's C-index | With inverse probability of censoring weights | Robust performance evaluation with censored data [24] |

| Validation Metrics | Integrated Brier Score | Time-dependent assessment | Overall model performance including calibration [33] |

| Validation Metrics | Time-dependent AUC | ROC curves at specific time points | Discriminatory power at clinically relevant times [24] |

| Regularization Techniques | Stability Selection | PFER control (typically ≤1) | Enhanced variable selection for C-index boosting [24] |

| Regularization Techniques | Dropout | dropout_rate=0.1 | Improves generalization in gradient boosting [30] |

| Regularization Techniques | Subsampling | subsample=0.5-0.8 | Stochastic gradient boosting for better performance [30] |
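The structured survival array listed in the table can be built with NumPy alone, as in the sketch below. The field names `event` and `time` are arbitrary choices for this example; the assumption, which should be checked against the scikit-survival documentation for the installed version, is that the library reads the first field as the boolean event indicator and the second as the observed time.

```python
import numpy as np

# Toy outcome data (hypothetical).
time = np.array([2.0, 4.0, 5.0, 7.0])
event = np.array([True, True, False, True])

# Structured array: boolean event indicator first, observed time second.
y = np.empty(len(time), dtype=[("event", bool), ("time", float)])
y["event"] = event
y["time"] = time

print(y.dtype.names, y[2])
```

Keeping the outcome in one array means that row-wise operations such as train-test splitting or subsampling keep each time paired with its event indicator.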

Performance Considerations and Validation

Quantitative Performance Benchmarks

Table 3: Comparative Performance of Survival Gradient Boosting Methods

| Method | C-Index Range | Strengths | Limitations | Optimal Use Cases |

|---|---|---|---|---|

| Cox Boosting | 0.72-0.83 [33] | Handles nonlinear effects; Familiar Cox framework | Computationally intensive; Hyperparameter sensitive | Moderate-dimensional data with complex effects |

| C-Index Boosting | Improved over Cox in non-PH settings [24] | Direct discrimination optimization; Robust to overfitting | Less sensitive to variable selection; Requires smoothing | Biomarker discovery; Risk stratification |

| AFT Boosting | ~0.72 [30] | Parametric interpretation; Direct time prediction | Distributional assumption; Weighting sensitivity | When absolute survival time prediction is needed |

| Landmarking GB | Superior with longitudinal data [32] | Incorporates time-varying covariates; Dynamic prediction | Multiple model training; Complex implementation | Studies with repeated biomarker measurements |

| FPBoost | Robust across distributions [31] | Full likelihood utilization; Flexible hazard shapes | Computational complexity; Parametric components | When hazard shape estimation is important |

Regulatory and Reporting Considerations

For research intended for regulatory submission or clinical implementation, comprehensive reporting following established guidelines is essential. The TRIPOD+AI (Transparent Reporting of a Multivariable Prediction Model for Individual Prognosis or Diagnosis) and CREMLS (Consolidated Reporting Guidelines for Prognostic and Diagnostic Machine Learning Modeling Studies) guidelines provide structured frameworks for documenting model development and validation [12].

Recent assessments indicate significant reporting gaps in machine learning applications in oncology, particularly in sample size justification (98% of studies deficient), data quality reporting (69% deficient), and outlier handling strategies (100% deficient) [12]. Adherence to these guidelines enhances reproducibility, facilitates independent validation, and strengthens the evidence base for clinical implementation of survival gradient boosting models.

The integration of survival analysis with gradient boosting represents a powerful methodological advancement for prognostic modeling in biomedical research. By directly optimizing the concordance index, researchers can develop models with superior discriminatory power for clinical risk stratification. The framework presented here encompasses multiple approaches—from traditional Cox-based boosting to innovative C-index optimization and dynamic prediction methods—providing researchers with a comprehensive toolkit for addressing diverse survival analysis challenges.

Future directions in this field include the development of more efficient algorithms for high-dimensional data, enhanced interpretability methods for complex ensemble models, and standardized validation frameworks for clinical implementation. As these methodologies continue to mature, they hold significant promise for advancing personalized medicine through more accurate and dynamic risk prediction tools.

Implementing Gradient Boosting for Survival Prediction and C-Index Optimization

Survival analysis, also known as time-to-event analysis, is a statistical approach used to analyze the time until an event of interest occurs [34]. In medical research, this typically involves events such as death, disease recurrence, or hospitalization. The unique characteristic of survival data is the presence of censored observations—cases where the event of interest has not occurred during the study period [35]. Understanding and properly handling censoring is fundamental to accurate survival modeling, as standard techniques that ignore censoring or use ad hoc methods can produce biased and poorly calibrated predictions [36].

Formally, survival data consists of triplets (X, Y, δ), where X represents a vector of features, Y represents the observed time, and δ is the event indicator (δ = 1 if the event occurred, δ = 0 if censored) [37]. The observed time is Y = min(T, C), where T is the true event time and C is the censoring time [38]. Right-censoring, the most common form, occurs when a subject leaves the study before experiencing the event or the study ends before the event occurs [35] [39]. A related mechanism is left-truncation, which arises when subjects enter the study at different times (delayed entry) [35].
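The relationship between latent and observed quantities can be illustrated with a small simulation. The exponential event times, uniform censoring times, sample size, and seed are arbitrary assumptions chosen only to generate an example.

```python
import random

rng = random.Random(42)
n = 1000

T = [rng.expovariate(0.1) for _ in range(n)]    # latent event times
C = [rng.uniform(0, 30) for _ in range(n)]      # latent censoring times

Y = [min(t, c) for t, c in zip(T, C)]           # observed time
delta = [int(t <= c) for t, c in zip(T, C)]     # 1 = event observed, 0 = censored

censoring_rate = 1 - sum(delta) / n
print(f"censored fraction: {censoring_rate:.2f}")
```

In real data only Y and δ are available; T is unknown for censored subjects, which is why methods must work with the pair (Y, δ) instead of the latent event time.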

Understanding Censoring Mechanisms

Types of Censoring

Table 1: Types of Censoring in Survival Analysis

| Censoring Type | Description | Common Causes | Impact on Analysis |

|---|---|---|---|

| Right Censoring | Event not observed during study period | Study conclusion; Loss to follow-up [35] | Most common; well-handled by standard methods |

| Administrative Censoring | Fixed study end date | Predefined study timeline [35] | Often independent; less problematic |

| Loss to Follow-up | Participant withdraws | Moving, dropping out, non-response [35] | Potentially informative; requires careful handling |

| Competing Risks | Other events prevent target event | Death from unrelated causes [35] | Requires specialized techniques |

The independent censoring assumption is crucial for most survival methods—it assumes that the censoring mechanism is independent of the event process [35]. When this assumption is violated (informative censoring), results may be biased. In practice, censoring mechanisms must be carefully considered during study design and analysis planning.

Challenges in Highly Censored Data

High censoring rates present significant analytical challenges [40]. As medical treatments improve, survival times increase, leading to higher censoring rates in fixed-duration studies [40]. With small sample sizes and high censoring rates, models may fail to converge or produce unstable predictions [40]. For gradient boosting models optimizing the C-index, high censoring can reduce the number of comparable pairs in the objective function, potentially degrading performance.
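The loss of comparable pairs is easy to quantify on a toy example. The ten-subject dataset and the two censoring patterns below are invented for illustration.

```python
def n_comparable_pairs(times, events):
    """Count pairs (i, j) with an observed event at i and times[i] < times[j]."""
    n = len(times)
    return sum(1 for i in range(n) if events[i]
               for j in range(n) if times[i] < times[j])


times = list(range(1, 11))                   # ten subjects, distinct times

no_censoring = n_comparable_pairs(times, [1] * 10)
late_censored = n_comparable_pairs(times, [1] * 5 + [0] * 5)
early_censored = n_comparable_pairs(times, [0] * 5 + [1] * 5)

print(no_censoring, late_censored, early_censored)   # 45 35 10
```

Both censoring patterns remove half of the events, yet censoring the early observations removes far more comparable pairs than censoring the late ones, because a pair is only usable when its earlier member is an observed event.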

Data Preprocessing Strategies for Censored Data

Structured Data Preparation

The initial step involves organizing survival data into an appropriate structure. Two essential variables must be created: (1) a follow-up time variable indicating duration from study entry to event or censoring, and (2) an event indicator variable specifying whether the event occurred (1) or was censored (0) [39]. Most survival analysis software requires this structured format [38] [41].

For studies with delayed entry, entry times must be specified to account for left-truncation [35]. Time-varying covariates require special handling, typically through a counting process format with separate intervals for each period of constant covariates [35].
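A counting-process layout can be sketched as a function that splits one subject's follow-up into (start, stop] intervals with a constant covariate value. The function name, the tuple layout, and the example values are assumptions for illustration; the `entry` argument shows how delayed entry shifts the first interval.

```python
def to_counting_process(subject_id, entry, exit_time, event, changes):
    """Split follow-up into intervals with a constant covariate value.

    changes: list of (time, value) sorted by time; each value applies from
    its time until the next change. Returns rows of
    (id, start, stop, event_in_interval, value).
    """
    rows = []
    for k, (start, value) in enumerate(changes):
        stop = changes[k + 1][0] if k + 1 < len(changes) else exit_time
        start = max(start, entry)          # nothing is observed before entry
        stop = min(stop, exit_time)        # nothing is observed after exit
        if start >= stop:
            continue
        # The event, if any, belongs to the interval that ends at exit_time.
        rows.append((subject_id, start, stop,
                     int(bool(event) and stop == exit_time), value))
    return rows


changes = [(0, 1.2), (4, 1.8), (7, 2.5)]
full = to_counting_process("p1", entry=0, exit_time=10, event=1, changes=changes)
delayed = to_counting_process("p1", entry=5, exit_time=10, event=1, changes=changes)
print(full)
print(delayed)
```

With delayed entry at time 5, the first interval disappears and the second starts at the entry time, so the subject only contributes to risk sets from the moment of entry onward.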

Handling Censored Observations

Table 2: Methods for Handling Censored Data in Survival Analysis

| Method | Approach | Advantages | Limitations |

|---|---|---|---|

| Complete Case Analysis | Discard censored observations [36] | Simple implementation | Biased estimates; loss of information [36] |

| Censor-as-Event | Treat censored as events [36] | Uses all data | Underestimates survival; substantial bias [36] |

| Inverse Probability of Censoring Weighting (IPCW) | Weight observations by inverse of censoring probability [36] | Reduces bias; general applicability [36] | Requires correct censoring model |

| Data Augmentation | Generate synthetic data for censored cases [40] | Addresses small samples | Complex implementation; model-dependent |

Inverse Probability of Censoring Weighting (IPCW) has emerged as a powerful general-purpose technique that can be incorporated into various machine learning algorithms [36]. The IPCW approach assigns weights to observations with known event status to account for similar subjects who were censored. Subjects with longer event times receive higher weights because they are more likely to be censored before experiencing the event [36]. The weighted data can then be analyzed using any method that supports observation weights.
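A minimal sketch of the weight computation is given below, using a Kaplan-Meier estimate of the censoring distribution. The function name and toy data are assumptions, and tied observation times are assumed not to occur.

```python
def ipcw_weights(times, events):
    """w_i = delta_i / G_hat(Y_i), where G_hat is the Kaplan-Meier estimate
    of the censoring survival function. Assumes no tied observation times."""
    order = sorted(range(len(times)), key=lambda i: times[i])
    weights = [0.0] * len(times)
    g = 1.0
    at_risk = len(times)
    for i in order:
        if events[i]:
            weights[i] = 1.0 / g            # G_hat does not change at event times
        else:
            g *= 1.0 - 1.0 / at_risk        # each censoring lowers G_hat
        at_risk -= 1
    return weights


# Toy data (hypothetical): censored subjects at times 5 and 9.
times = [2, 4, 5, 7, 9, 12]
events = [1, 1, 0, 1, 0, 1]

weights = ipcw_weights(times, events)
print([round(w, 3) for w in weights])
```

Censored subjects receive weight zero, and their weight is redistributed to subjects with later observed events; in this example the weights still sum to the sample size.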

For highly censored datasets with small sample sizes, data augmentation strategies such as PSDATA and nPSDATA have shown promise [40]. These approaches generate synthetic survival data from parametric models or by perturbing existing observations to create more robust training sets.

Feature Engineering for Survival Data

Domain-Specific Feature Creation

Feature engineering for survival analysis should incorporate clinical domain knowledge to create informative predictors. This may include:

- Temporal features: Time-varying covariates, landmark measurements

- Composite scores: Combining multiple biomarkers into prognostic indices

- Interaction terms: Clinically relevant interactions between treatment and patient characteristics

- Nonlinear transformations: Splines or polynomial terms for non-linear relationships

Feature engineering should consider the proportional hazards assumption—if using Cox-based models, features that violate this assumption may require special handling through stratification or time-dependent coefficients.

Feature Selection for Sparse Survival Models

In high-dimensional settings (e.g., genomics), feature selection becomes crucial. Stability selection combined with gradient boosting has demonstrated excellent performance for identifying robust biomarkers while controlling the number of false selections [24]. This approach involves:

- Fitting the model to multiple subsamples of the data

- Recording selection frequencies for each feature

- Retaining features with frequencies exceeding a predefined threshold

- Controlling the per-family error rate (PFER) to maintain inferential validity [24]
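The link between the selection threshold and the PFER can be made concrete with the upper bound from the original stability selection paper by Meinshausen and Bühlmann (2010), PFER ≤ q² / ((2π_thr − 1) p), where q is the number of variables selected per subsample and p the total number of candidates. The bound holds under that paper's assumptions and requires π_thr > 0.5; the function names and example numbers below are illustrative.

```python
def pfer_bound(q, p, pi_thr):
    """Upper bound on the per-family error rate for stability selection."""
    if not 0.5 < pi_thr <= 1.0:
        raise ValueError("pi_thr must lie in (0.5, 1]")
    return q ** 2 / ((2 * pi_thr - 1) * p)


def threshold_for_pfer(q, p, pfer):
    """Smallest threshold for which the bound does not exceed pfer."""
    return 0.5 * (q ** 2 / (pfer * p) + 1)


# Example: 1000 candidate features, 20 selected per subsample.
bound = pfer_bound(q=20, p=1000, pi_thr=0.9)
needed = threshold_for_pfer(q=20, p=1000, pfer=1.0)
print(bound, needed)
```

Fixing any two of q, π_thr, and the PFER level determines the third, which is why the protocols specify a PFER level together with a threshold range.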

Experimental Protocols for Preprocessing Evaluation

Protocol 1: IPCW Implementation for Gradient Boosting

Objective: Implement IPCW to handle censoring in gradient boosting for C-index optimization.

Materials: Survival dataset with features X, observed time Y, and event indicator δ.

Procedure:

- Compute the Kaplan-Meier estimator for the censoring distribution: Ĝ(t)

- Calculate weights for each observation: w_i = δ_i / Ĝ(Y_i)

- For subjects with Y_i > τ (if considering truncated C-index), additional weighting is needed

- Apply gradient boosting to the weighted data using the C-index as optimization criterion

- Validate using appropriate cross-validation techniques that preserve the censoring structure

Validation: Compare calibration and discrimination (C-index) against naive methods (complete-case, censor-as-event).

Protocol 2: Stability Selection for Feature Selection

Objective: Implement stability selection with C-index boosting to identify stable biomarkers.

Materials: High-dimensional survival dataset; gradient boosting algorithm with component-wise base learners.

Procedure:

- Specify the PFER control level (e.g., PFER ≤ 1)

- Generate multiple subsamples of the data (typically 100-500)

- For each subsample, apply C-index boosting with early stopping

- Record the features selected on each subsample

- Compute selection frequencies for all features

- Select features with frequency exceeding threshold π_thr (typically 0.6-0.9)

- Refit the model with selected features only

Validation: Assess the stability of the selected features across multiple runs and compare predictive performance against models fitted without feature selection.
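The resampling loop of this protocol can be sketched as follows. The sketch makes several assumptions that are not in the protocol itself: subsamples are half the data drawn without replacement, `select_fn` is a hypothetical callable standing in for a C-index boosting fit with early stopping, the toy selector ranks features by absolute correlation with log time and ignores censoring, and the PFER bound shown is the standard Meinshausen-Bühlmann bound q² / ((2π_thr − 1) p) for q selected features per subsample out of p candidates.

```python
import math
import random


def pfer_upper_bound(q, p, pi_thr):
    """Upper bound on the expected number of falsely selected features."""
    if not 0.5 < pi_thr <= 1.0:
        raise ValueError("pi_thr must lie in (0.5, 1]")
    return q ** 2 / ((2.0 * pi_thr - 1.0) * p)


def stability_selection(rows, select_fn, n_subsamples=100, pi_thr=0.8, seed=0):
    """Return (stable_features, selection_frequencies).

    rows      : list of observations in whatever form select_fn expects
    select_fn : callable(list_of_rows) -> iterable of selected feature indices
    """
    rng = random.Random(seed)
    half = len(rows) // 2
    counts = {}
    for _ in range(n_subsamples):
        subsample = rng.sample(rows, half)  # drawn without replacement
        for k in set(select_fn(subsample)):
            counts[k] = counts.get(k, 0) + 1
    freqs = {k: c / n_subsamples for k, c in counts.items()}
    stable = sorted(k for k, f in freqs.items() if f >= pi_thr)
    return stable, freqs


def top_q_by_correlation(rows, q=1):
    """Toy selector: keep the q features most correlated with log time."""
    p = len(rows[0][0])
    log_t = [math.log(t) for _, t, _ in rows]
    mean_t = sum(log_t) / len(log_t)
    var_t = sum((b - mean_t) ** 2 for b in log_t)
    scores = []
    for k in range(p):
        x = [features[k] for features, _, _ in rows]
        mean_x = sum(x) / len(x)
        cov = sum((a - mean_x) * (b - mean_t) for a, b in zip(x, log_t))
        var_x = sum((a - mean_x) ** 2 for a in x)
        scores.append(abs(cov) / math.sqrt(var_x * var_t))
    return sorted(range(p), key=lambda k: -scores[k])[:q]


# Toy data (hypothetical): only feature 0 drives the event time.
rng = random.Random(1)
rows = []
for _ in range(200):
    x = [rng.gauss(0, 1) for _ in range(5)]
    t = math.exp(-x[0] + 0.3 * rng.gauss(0, 1))
    rows.append((x, t, 1))  # (features, time, event indicator)

stable, freqs = stability_selection(rows, top_q_by_correlation, pi_thr=0.8)
bound = pfer_upper_bound(q=1, p=5, pi_thr=0.8)
```

In practice `select_fn` would wrap the boosting fit from step 3, and q and π_thr would be chosen so that the bound meets the PFER level specified in step 1.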

Workflow Visualization

The Scientist's Toolkit

Table 3: Essential Tools for Survival Data Preprocessing

| Tool/Resource | Type | Function | Implementation Notes |

|---|---|---|---|

| scikit-survival | Python library | Survival analysis with scikit-learn compatibility [38] | Supports IPCW, feature selection, and model validation |

| survival R package | R library | Core survival analysis functions [41] | Standard for Kaplan-Meier, Cox models, and basic preprocessing |

| Stability Selection | Algorithm | Controlled feature selection [24] | Can be implemented with gradient boosting frameworks |

| IPC Weights | Method | Censoring bias correction [36] | Requires Kaplan-Meier estimator for censoring distribution |

| Data Augmentation (PSDATA) | Algorithm | Address small sample sizes [40] | Parametric survival data generation |

| Stata stset command | Software function | Declare survival data structure [39] | Essential for proper analysis in Stata |

Proper data preprocessing is foundational for developing robust survival models using gradient boosting techniques. The integration of IPCW for censoring handling and stability selection for feature identification creates a powerful framework for optimizing the C-index in survival prediction. These methods directly address two key challenges in survival data: bias introduced by censoring and high-dimensional feature spaces. By implementing the structured protocols and workflows outlined in this document, researchers can enhance the discriminative performance and interpretability of their survival models, ultimately advancing predictive analytics in drug development and clinical research.

Survival analysis encompasses statistical methods for modeling time-to-event data, where the outcome of interest is the time until an event occurs. A fundamental challenge in this field is selecting and implementing appropriate loss functions to train predictive models, particularly when dealing with censored data where the exact event time is unknown for some subjects. Within the broader context of gradient boosting techniques for C-index optimization research, this article provides detailed application notes and protocols for two primary classes of loss functions: partial likelihood-based objectives (derived from the Cox proportional hazards model) and ranking-based objectives (focused on optimizing the concordance index).

The performance and interpretation of survival models are profoundly influenced by the choice of loss function. Traditional approaches often maximize the Cox partial likelihood, which estimates hazard ratios without specifying the baseline hazard. Alternatively, direct optimization of the concordance index (C-index) focuses on improving the model's ability to correctly rank survival times rather than estimating exact hazard proportions. Understanding the implementation nuances of these loss functions is crucial for researchers and drug development professionals building prognostic models for time-to-event outcomes.

Loss Functions in Survival Analysis: Theoretical Foundation

Cox Partial Likelihood

The Cox proportional hazards model is a semi-parametric approach that models the hazard function for an individual with covariates x as h(t|x) = h₀(t)exp(xᵀβ), where h₀(t) is an unspecified baseline hazard function. The model estimates the parameter β by maximizing the partial likelihood, which does not require specification of h₀(t).

The partial likelihood for uncensored data is defined as:

{{< katex >}} L_p(\beta) = \prod_{i=1}^{n} \frac{\exp(\beta^T x_i)}{\sum_{j \in R(t_i)} \exp(\beta^T x_j)} {{< /katex >}}

where R(tᵢ) is the set of individuals at risk at time tᵢ. For data with censoring, the formulation incorporates the censoring indicator δᵢ (1 for events, 0 for censored observations) [42] [27].

The negative log-partial likelihood, used as a loss function for minimization, is derived as:

{{< katex >}} \ell(\beta) = -\sum_{i=1}^{n} \delta_i \left[ \beta^T x_i - \log\left( \sum_{j \in R(t_i)} \exp(\beta^T x_j) \right) \right] {{< /katex >}}

This loss function is convex in β, ensuring a unique minimum under appropriate conditions [42].
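As a small worked example, the negative log-partial likelihood can be evaluated directly from the linear predictors η_i = βᵀx_i. The sketch below is illustrative only: the function name and the three-subject data are assumptions, the risk set is taken as R(t_i) = {j : t_j ≥ t_i}, and tied event times are handled Breslow-style.

```python
import math


def cox_neg_log_partial_likelihood(eta, times, events):
    """Negative log-partial likelihood for linear predictors eta_i = beta^T x_i."""
    loss = 0.0
    for i, (t_i, d_i) in enumerate(zip(times, events)):
        if d_i == 0:
            continue  # censored subjects contribute only through the risk sets
        risk_sum = sum(math.exp(eta[j]) for j, t_j in enumerate(times) if t_j >= t_i)
        loss -= eta[i] - math.log(risk_sum)
    return loss


# Toy data (hypothetical): two events followed by one censored subject.
times = [1.0, 2.0, 3.0]
events = [1, 1, 0]

# With equal predictors the loss is log(3) + log(2) = log(6).
baseline = cox_neg_log_partial_likelihood([0.0, 0.0, 0.0], times, events)

# Assigning higher risk to subjects who fail earlier lowers the loss.
ordered = cox_neg_log_partial_likelihood([2.0, 1.0, 0.0], times, events)
```

The loss depends on the predictors only through their differences within each risk set, which is why the baseline hazard never needs to be specified.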

Concordance Index (C-index) Optimization

The concordance index (C-index) evaluates a model's ability to produce a ranking of survival times that matches the observed order of events. It represents the probability that, for a random pair of comparable subjects, the subject with higher predicted risk experiences the event first [24] [27].

Formally, the C-index is defined as:

{{< katex >}} C = P(\eta_j > \eta_i \mid T_j < T_i) {{< /katex >}}

where ηᵢ and ηⱼ are the predictors for two observations, and Tᵢ and Tⱼ are their survival times [24].

For censored data, Uno et al. proposed an asymptotically unbiased estimator incorporating inverse probability of censoring weighting:

{{< katex >}} \widehat{C}_{Uno}(T, \eta) = \frac{\sum_{j,i} \frac{\Delta_j}{\hat{G}(\tilde{T}_j)^2} I(\tilde{T}_j < \tilde{T}_i) I(\hat{\eta}_j > \hat{\eta}_i)}{\sum_{j,i} \frac{\Delta_j}{\hat{G}(\tilde{T}_j)^2} I(\tilde{T}_j < \tilde{T}_i)} {{< /katex >}}

where Δⱼ is the censoring indicator, T̃ are observed survival times subject to censoring, and Ĝ(·) is the Kaplan-Meier estimator of the censoring time survival function [24].

Comparative Analysis of Loss Functions

Table 1: Comparison of Key Survival Analysis Loss Functions

| Loss Function | Objective | Handling of Censoring | Assumptions | Implementation Considerations |

|---|---|---|---|---|

| Cox Partial Likelihood | Estimate hazard ratios | Uses risk sets at event times | Proportional hazards | Efficient for linear predictors; convex optimization |

| C-index Optimization | Maximize ranking accuracy | Inverse probability of censoring weights | No specific hazard form assumption | Non-convex optimization; smoothing often required |

| Accelerated Failure Time (AFT) | Predict survival times directly | Inverse censoring probability weights | Linear relationship with log-time | Weighted least squares formulation |

| Integrated Brier Score | Calibrate survival probability predictions | Inverse probability of censoring weights | None; model-agnostic | Requires estimation of censoring distribution |

Implementation Protocols

Gradient Boosting with Cox Partial Likelihood

Gradient boosting can be implemented to minimize the negative log-partial likelihood of the Cox model. The scikit-survival package provides implementations for both tree-based and component-wise least squares base learners [30].

Protocol 1: Implementing Cox Partial Likelihood in Python

Data Preparation: Load and preprocess survival data. Ensure the data includes:

- Event time (possibly with noise added to break ties)

- Event indicator (1 for event, 0 for censoring)

- Covariates standardized for numerical stability

Model Initialization: Choose appropriate base learners:

- Regression trees for capturing non-linear relationships

- Component-wise least squares for sparse linear models

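Gradient Computation: Implement the gradient of the negative log-partial likelihood with respect to the model predictions; its negative serves as the pseudo-residual to which each base learner is fitted.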

Optimization: Use a gradient-based optimization algorithm (e.g., Newton-CG) to find parameters that minimize the objective function.

Regularization: Control model complexity through:

- Number of base learners (n_estimators)

- Learning rate (shrinkage)

- Dropout rate (fraction of base learners dropped during training)

- Subsampling (stochastic gradient boosting: a random subset of the data is used in each iteration)
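For the gradient computation step of this protocol, the gradient of the negative log-partial likelihood with respect to the predictions η can be written in a few lines. This is a sketch under assumptions: the function names and toy data are invented for illustration, the risk set is R(t_i) = {j : t_j ≥ t_i}, and ties are handled Breslow-style. It does not reproduce the internals of any particular library.

```python
import math


def cox_nll(eta, times, events):
    """Negative log-partial likelihood as a function of the predictions eta."""
    loss = 0.0
    for i in range(len(eta)):
        if events[i] == 1:
            denom = sum(math.exp(eta[j]) for j in range(len(eta)) if times[j] >= times[i])
            loss -= eta[i] - math.log(denom)
    return loss


def cox_nll_gradient(eta, times, events):
    """d loss / d eta_k = -delta_k + sum over events i with k in R(t_i) of exp(eta_k) / denom_i."""
    n = len(eta)
    grad = [0.0] * n
    for i in range(n):
        if events[i] == 0:
            continue
        risk = [j for j in range(n) if times[j] >= times[i]]
        denom = sum(math.exp(eta[j]) for j in risk)
        grad[i] -= 1.0
        for j in risk:
            grad[j] += math.exp(eta[j]) / denom
    return grad


# Toy data (hypothetical): two events followed by one censored subject.
times = [1.0, 2.0, 3.0]
events = [1, 1, 0]
eta0 = [0.0, 0.0, 0.0]

grad = cox_nll_gradient(eta0, times, events)
# Pseudo-residuals: the targets a base learner would be fitted to.
pseudo_residuals = [-g for g in grad]
```

At the constant starting prediction the pseudo-residuals are positive for the earliest event and negative for the censored subject with the longest follow-up, so the first base learner is pushed to assign higher risk to subjects who fail early.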

Table 2: Key Hyperparameters for Gradient Boosting Survival Models

| Hyperparameter | Description | Recommended Settings | Impact on Model |

|---|---|---|---|

| n_estimators | Number of boosting iterations | 100-500 (monitor validation performance) | Controls model complexity; too high leads to overfitting |

| learning_rate | Shrinkage factor for each base learner | 0.01-0.1 | Smaller values require more iterations but often generalize better |

| max_depth | Maximum depth of tree base learners | 1-3 | Deeper trees capture more complex interactions |

| subsample | Fraction of samples used for each iteration | 0.5-0.8 | Introduces randomness to prevent overfitting |

| dropout_rate | Fraction of base learners to drop during training | 0-0.2 | Similar to neural network dropout; regularizes ensemble |
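The advice to monitor validation performance when choosing n_estimators can be made concrete with a simple patience rule. The sketch below is one possible approach, not a prescribed one: `val_scores` is a hypothetical list holding the validation C-index after each boosting iteration, and the `patience` and `min_delta` parameters are assumptions introduced for the example.

```python
def best_iteration(val_scores, patience=10, min_delta=0.0):
    """Return (iteration, score) for the best validation score seen.

    Scanning stops once `patience` consecutive iterations have failed to
    improve on the best score by more than `min_delta`. Iterations are 1-based.
    """
    best_score = float("-inf")
    best_iter = 0
    for it, score in enumerate(val_scores, start=1):
        if score > best_score + min_delta:
            best_score, best_iter = score, it
        elif it - best_iter >= patience:
            break
    return best_iter, best_score


# Hypothetical validation C-index after each of eight boosting iterations.
val_scores = [0.60, 0.65, 0.70, 0.72, 0.71, 0.715, 0.70, 0.69]
n_best, c_best = best_iteration(val_scores, patience=3)
```

Because smaller learning rates need more iterations to reach the same fit, the selected iteration count is only meaningful for the learning rate it was tuned with.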

C-index Optimization via Gradient Boosting

Direct optimization of the C-index is challenging because it is non-differentiable. Solutions include using a smoothed approximation of the C-index or employing alternative optimization strategies [27].

Protocol 2: Smooth C-index Optimization

Smoothing Approach: Replace the indicator function in the C-index calculation with a differentiable surrogate, such as the logistic function:

{{< katex >}} I(\hat{\eta}_j > \hat{\eta}_i) \approx \frac{1}{1 + \exp(-(\hat{\eta}_j - \hat{\eta}_i)/\sigma)} {{< /katex >}}

where σ is a smoothing parameter.

Gradient Boosting Implementation:

- Initialize the model with a constant prediction

- For each iteration:

  - Compute the gradient of the loss (the negative smoothed C-index) with respect to the current predictions

  - Fit a base learner to the negative gradient

  - Update the model with a step size controlled by the learning rate

Stability Selection: Combine with stability selection to enhance variable selection:

- Fit the model to multiple subsamples of the data

- Select variables with high selection frequency across subsamples

- Control the per-family error rate for more reliable variable selection [24]
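The smoothing and boosting steps of this protocol can be sketched together in plain Python. The sketch rests on several simplifying assumptions: all pair weights are set to 1 (the IPCW weights of Uno's estimator would multiply each pair term), the base learners are component-wise linear least-squares fits without intercept, and the function names, toy data, and default settings are invented for illustration.

```python
import math
import random


def comparable_pairs(times, events):
    """Pairs (j, i): subject j has an observed event and a shorter time than i."""
    n = len(times)
    return [(j, i) for j in range(n) if events[j] == 1
            for i in range(n) if times[j] < times[i]]


def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def smooth_c_index(eta, pairs, sigma):
    """Smoothed C-index: the indicator I(eta_j > eta_i) becomes a sigmoid."""
    return sum(sigmoid((eta[j] - eta[i]) / sigma) for j, i in pairs) / len(pairs)


def smooth_c_gradient(eta, pairs, sigma):
    """Gradient of the smoothed C-index with respect to each prediction."""
    grad = [0.0] * len(eta)
    for j, i in pairs:
        s = sigmoid((eta[j] - eta[i]) / sigma)
        d = s * (1.0 - s) / (sigma * len(pairs))
        grad[j] += d
        grad[i] -= d
    return grad


def boost_smooth_c(X, times, events, n_iter=100, learning_rate=0.1, sigma=0.1):
    """Component-wise linear boosting that ascends the smoothed C-index."""
    n, p = len(X), len(X[0])
    pairs = comparable_pairs(times, events)
    coef = [0.0] * p
    eta = [0.0] * n  # constant initial prediction
    for _ in range(n_iter):
        # The negative gradient of the loss (-C_smooth) is the gradient of C_smooth.
        u = smooth_c_gradient(eta, pairs, sigma)
        best = None
        for k in range(p):
            sxx = sum(row[k] ** 2 for row in X)
            b = sum(row[k] * g for row, g in zip(X, u)) / sxx
            rss = sum((g - b * row[k]) ** 2 for row, g in zip(X, u))
            if best is None or rss < best[0]:
                best = (rss, k, b)
        _, k, b = best  # best-fitting single feature for this iteration
        coef[k] += learning_rate * b
        eta = [e + learning_rate * b * row[k] for e, row in zip(eta, X)]
    return coef, eta


# Toy data (hypothetical): feature 0 raises risk, features 1 and 2 are noise.
rng = random.Random(0)
X, times, events = [], [], []
for _ in range(60):
    x = [rng.gauss(0, 1) for _ in range(3)]
    X.append(x)
    times.append(math.exp(-x[0] + 0.3 * rng.gauss(0, 1)))
    events.append(1 if rng.random() < 0.8 else 0)

pairs = comparable_pairs(times, events)
c_before = smooth_c_index([0.0] * len(X), pairs, sigma=0.1)
coef, eta = boost_smooth_c(X, times, events)
c_after = smooth_c_index(eta, pairs, sigma=0.1)
```

Because only one coefficient is updated per iteration, features that are never selected keep a coefficient of exactly zero, which is what makes this style of base learner a natural partner for stability selection.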

Alternative Loss Functions

Accelerated Failure Time (AFT) Model: The AFT model can be implemented as a weighted least squares problem:

{{< katex >}} \arg \min_{f} \frac{1}{n} \sum_{i=1}^n \omega_i (\log y_i - f(\mathbf{x}_i))^2 {{< /katex >}}

where the weight ωᵢ = δᵢ/Ĝ(yᵢ) is the inverse probability of being censored after time yᵢ, and Ĝ(·) is an estimator of the censoring survival function [30].
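A brief sketch of this weighted least squares objective and its negative gradient, which is the quantity a boosting iteration would fit, is given below. The function names and toy values are assumptions for illustration, and the weights are taken as precomputed IPCW weights rather than estimated inside the example.

```python
import math


def aft_ipcw_loss(f, times, weights):
    """IPCW-weighted squared error between log time and the prediction f(x_i)."""
    n = len(times)
    return sum(w * (math.log(y) - f_i) ** 2
               for w, y, f_i in zip(weights, times, f)) / n


def aft_negative_gradient(f, times, weights):
    """Negative gradient of the loss with respect to each prediction."""
    n = len(times)
    return [2.0 * w * (math.log(y) - f_i) / n
            for w, y, f_i in zip(weights, times, f)]


# Toy data (hypothetical): observed times with precomputed IPCW weights.
# Censored subjects carry weight 0 and therefore do not enter the loss.
times = [2.0, 3.0, 5.0, 7.0, 8.0]
weights = [1.0, 0.0, 4.0 / 3.0, 0.0, 8.0 / 3.0]

# The best constant prediction is the weighted mean of log time.
f0 = sum(w * math.log(y) for w, y in zip(weights, times)) / sum(weights)
residuals = aft_negative_gradient([f0] * len(times), times, weights)
```

Starting from the weighted mean, the negative gradients sum to zero, and censored subjects contribute nothing to either the loss or the update.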

Scoring Rule Optimization: Recent approaches propose using proper scoring rules as loss functions, which provide a generic framework for training survival models without relying on likelihood-based estimation [43].

Visualization of Methodologies

Gradient Boosting Survival Analysis Workflow


Loss Function Comparison and Selection

Loss Function Selection Guide

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Software Tools for Survival Analysis Implementation

| Tool/Resource | Type | Primary Function | Implementation Considerations |

|---|---|---|---|

| scikit-survival | Python library | Gradient boosting survival analysis | Provides GradientBoostingSurvivalAnalysis and ComponentwiseGradientBoostingSurvivalAnalysis classes |

| GBMCI | R package | Gradient boosting for C-index optimization | Directly optimizes concordance index; non-parametric approach |

| CoxPH | Statistical model | Partial likelihood optimization | Available in most statistical software; baseline for comparison |

| Stability Selection | Method | Variable selection for boosting | Controls per-family error rate; enhances interpretability |

| Uno's C-index | Evaluation metric | Censoring-adjusted discrimination | Uses inverse probability of censoring weights; implemented in various packages |

This article has presented detailed application notes and protocols for implementing partial likelihood and ranking objectives in survival analysis, with particular emphasis on gradient boosting frameworks for C-index optimization. The Cox partial likelihood offers a well-established approach with convex optimization properties, while direct C-index optimization aligns model training with discriminatory performance metrics frequently used in model evaluation.

For researchers and drug development professionals, the choice between these loss functions should be guided by the specific analytical goals, the underlying assumptions of each method, and practical implementation considerations. Gradient boosting provides a flexible framework for implementing both approaches, with regularization techniques essential to prevent overfitting and enhance model interpretability. As survival analysis continues to evolve in biomedical research, understanding these fundamental implementation details remains crucial for developing robust prognostic and predictive models.

Gradient Boosting Architecture for Time-to-Event Prediction