Non-Destructive Chemical Analysis: Cutting-Edge Techniques to Preserve Evidence Integrity in Research and Forensics

This article provides a comprehensive overview of modern non-destructive testing (NDT) and evaluation techniques for chemical analysis, tailored for researchers, scientists, and drug development professionals.


Abstract

This article provides a comprehensive overview of modern non-destructive testing (NDT) and evaluation techniques for chemical analysis, tailored for researchers, scientists, and drug development professionals. It explores the foundational principles of preserving sample integrity across diverse fields, from forensic drug analysis to cultural heritage and industrial quality control. The scope ranges from established spectroscopic methods to emerging ambient mass spectrometry, offering insights into troubleshooting, method optimization, and comparative validation frameworks. By synthesizing the latest trends, this review serves as a guide for selecting and implementing non-destructive strategies that maximize information yield while maintaining the evidential value of irreplaceable samples.

Preserving Integrity: The Core Principles and Critical Need for Non-Destructive Analysis

Defining Non-Destructive, Non-Invasive, and Micro-Destructive Analysis

In chemical analysis research, particularly in fields where sample integrity is paramount, the choice of analytical technique is critical. The terms non-destructive, non-invasive, and micro-destructive represent a hierarchy of methodological approaches that balance analytical precision with the preservation of evidentiary integrity. For researchers in drug development and forensic science, understanding these distinctions is essential for designing ethically and scientifically sound methodologies. Non-destructive testing (NDT) and evaluation (NDE) encompass techniques that allow for the inspection, testing, or evaluation of materials without destroying or permanently altering their functionality or structural integrity [1] [2]. These approaches enable repeated testing of the same specimen and are invaluable for longitudinal studies, precious samples, and in-situ analysis.

Definitions and Distinctions

Core Terminology

Non-Destructive Analysis: Analytical techniques that do not permanently alter or damage the sample being tested, allowing it to be reused or returned to service after analysis. These methods typically involve probing a material with various forms of energy and analyzing the response to determine properties or detect flaws [1]. While generally preserving sample functionality, some methods may involve minor surface preparation or contact that doesn't compromise structural integrity.

Non-Invasive Analysis: A stricter subset of non-destructive methods that involve no physical contact with the sample and no alteration of its physical or chemical state. These techniques require no sample preparation and no direct contact that could contaminate or otherwise affect even the most sensitive surfaces [3]. The term implies a higher assurance that the sample is left unaltered.

Micro-Destructive Analysis: Techniques that require the removal of minute sample quantities (typically microscopic) or cause highly localized damage that is negligible relative to the overall sample. While "destructive" in the strictest sense, the damage is often invisible to the naked eye or confined to an insignificant area, making these methods "minimally destructive" for practical purposes [3]. Some researchers classify X-ray fluorescence spectroscopy as micro-destructive due to potential chemical alterations from X-ray exposure on sensitive materials [3].

Comparative Analysis

Table 1: Comparative Characteristics of Analytical Approaches

| Characteristic | Non-Invasive | Non-Destructive | Micro-Destructive |
|---|---|---|---|
| Sample Contact | No physical contact | Possible physical contact | Minimal physical contact/sampling |
| Sample Alteration | No alteration of physical or chemical state | No significant alteration of functionality | Highly localized/minor alteration |
| Sample Preparation | None required | Minimal or none required | Minimal preparation possible |
| Analytical Capabilities | Surface/elemental analysis | Surface/subsurface analysis | Bulk composition analysis |
| Sample Reusability | Fully reusable | Fully reusable | Essentially reusable for most purposes |
| Typical Techniques | Raman spectroscopy, Visual inspection, IR thermography | XRF, Ultrasonic testing, Ground-penetrating radar | Micro-sampling for chromatography, Laboratory-XRF with preparation |

Technical Approaches and Instrumentation

Non-Invasive Methodologies

Non-invasive techniques are particularly valuable for analyzing irreplaceable samples where any alteration is unacceptable. These methods typically rely on photons, electromagnetic waves, or visual inspection without physical contact.

Visual Inspection represents the most fundamental non-invasive approach, enhanced through digital microscopy, borescopes, and remote visual inspection (RVI) equipment that can document sample condition without contact [4].

Raman Spectroscopy enables molecular identification through inelastic scattering of monochromatic light, typically from a laser source. The technique provides vibrational information about molecular bonds without contact or sample preparation, making it ideal for pharmaceutical polymorph identification and counterfeit drug detection [3].

Ground-Penetrating Radar (GPR) utilizes high-frequency electromagnetic waves (20 MHz to 2.5 GHz) to image subsurface structures in non-metallic materials. The transmitting antenna emits pulses into the material, while the receiving antenna captures reflections from internal interfaces or embedded objects, generating detailed cross-sectional images without physical intrusion [5].

Non-Destructive Methodologies

Non-destructive techniques may involve physical contact or energy exposure that doesn't compromise the sample's future utility.

Ultrasonic Testing (UT) employs high-frequency sound waves (typically in the MHz range) to detect internal flaws or characterize material properties. The technique measures the time-of-flight and amplitude of ultrasonic pulses that travel through the material, with variations indicating discontinuities or property changes [6] [1]. Advanced methods like Phased Array Ultrasonic Testing (PAUT) use multiple transducer elements for enhanced imaging capabilities [7].
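Both GPR and pulse-echo ultrasonic testing locate a reflector by converting a two-way travel time into a depth. The sketch below illustrates that conversion under simplifying assumptions: a single homogeneous layer, a known wave speed, and a reflector directly beneath the probe. The function names and the example values are illustrative and do not come from the cited sources.

```python
import math

# Speed of light in vacuum, expressed in metres per nanosecond.
C_M_PER_NS = 0.299792458


def gpr_depth_m(two_way_time_ns: float, relative_permittivity: float) -> float:
    """Reflector depth (m) for a GPR echo in a low-loss, non-magnetic medium."""
    velocity = C_M_PER_NS / math.sqrt(relative_permittivity)  # m/ns
    # The pulse travels down and back, so only half the time counts as depth.
    return velocity * two_way_time_ns / 2.0


def ut_depth_mm(two_way_time_us: float, velocity_m_per_s: float) -> float:
    """Reflector depth (mm) for a pulse-echo ultrasonic measurement."""
    # m/s * microseconds = 1e-6 m = 1e-3 mm
    return velocity_m_per_s * two_way_time_us * 1e-3 / 2.0


if __name__ == "__main__":
    # Assumed example inputs: permittivity 9, sound speed 5900 m/s.
    print(gpr_depth_m(10.0, 9.0))
    print(ut_depth_mm(10.0, 5900.0))
```

In practice the wave speed is itself uncertain, so depth estimates of this kind are usually calibrated against a reflector at a known depth.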

X-Ray Fluorescence (XRF) Spectroscopy enables elemental analysis by exciting atoms in the sample with primary X-rays, then detecting the characteristic secondary X-rays emitted as electrons transition between energy levels. Portable XRF systems allow in-situ analysis of solid samples with minimal preparation, though laboratory systems may require grinding or pelletizing for optimal quantification [3].

Electrical Resistivity (ER) measures a material's resistance to electrical current flow, which correlates with properties like porosity, permeability, and hydration in construction materials and pharmaceutical compacts [6].

Micro-Destructive Methodologies

Micro-destructive techniques provide more detailed compositional information through minimal sampling.

Micro-sampling for Chromatography involves removing minute quantities (typically micrograms) for analysis via Gas Chromatography-Mass Spectrometry (GC-MS) or Liquid Chromatography-Mass Spectrometry (LC-MS). While requiring physical sampling, the amount is negligible for most practical purposes, especially when collected from non-visible areas [3].

Laboratory-based XRF Systems may require surface preparation such as polishing, grinding, or pelletizing to optimize analytical precision. These procedures alter a negligible portion of the sample while enabling more accurate quantitative analysis compared to portable systems [3].

Instrumented Indentation Testing creates localized plastic deformation using a precision stylus that engages the material with controlled force, then measures the response during frictional sliding. This approach provides mechanical property data (hardness, yield strength) from a highly localized test area [8].

Experimental Protocols

Protocol 1: Non-Invasive Analysis Using Raman Spectroscopy

Table 2: Research Reagent Solutions for Raman Spectroscopy

| Item | Function | Specifications |
|---|---|---|
| Raman Spectrometer | Molecular identification via inelastic light scattering | 785 nm laser, CCD detector, spectral range 200-2000 cm⁻¹ |
| Spectral Calibration Standard | Instrument verification | Neon or polystyrene reference standards |
| Positioning Stage | Precise sample alignment | Motorized XYZ stage with rotational capability |
| Microscope Objectives | Laser focusing and signal collection | 10x, 20x, 50x magnification options |

Workflow Description: Raman spectroscopy operates by focusing a monochromatic laser source onto the sample, where photons interact with molecular vibrations, resulting in energy shifts in the scattered light. These shifts provide a characteristic molecular fingerprint that can identify compounds, polymorphs, and mixtures without contact or sample preparation [3].
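The energy shift described above is conventionally reported as a Raman shift in wavenumbers (cm⁻¹). The following is a minimal sketch of the conversion between wavelength and Raman shift, assuming the 785 nm excitation listed in Table 2 and Stokes scattering; the function names are illustrative and not part of any instrument API.

```python
def raman_shift_cm1(laser_nm: float, scattered_nm: float) -> float:
    """Raman shift (cm^-1) from excitation and scattered wavelengths in nm."""
    # 1 nm^-1 equals 1e7 cm^-1, so shift = 1e7 * (1/lambda_0 - 1/lambda_s).
    return 1e7 * (1.0 / laser_nm - 1.0 / scattered_nm)


def scattered_wavelength_nm(laser_nm: float, shift_cm1: float) -> float:
    """Stokes-scattered wavelength (nm) corresponding to a given Raman shift."""
    return 1.0 / (1.0 / laser_nm - shift_cm1 / 1e7)


if __name__ == "__main__":
    # Wavelength window the detector must cover for the 200-2000 cm^-1 range.
    for shift in (200.0, 2000.0):
        print(shift, scattered_wavelength_nm(785.0, shift))
```

For 785 nm excitation, the 200-2000 cm⁻¹ range corresponds to scattered light between roughly 798 nm and 931 nm.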

Step-by-Step Procedure:

- Instrument Calibration: Verify spectrometer performance using a neon emission source or polystyrene reference standard to ensure accurate wavelength registration.

- Sample Positioning: Place the sample on the stage without any preparation. For powdered pharmaceuticals, ensure a consistent, flat surface when possible.

- Laser Alignment: Focus the laser beam on the area of interest using the microscope objective, starting at the lowest practical power (e.g., 0.5 mW) and increasing only as needed, to limit the risk of photodegradation.

- Spectral Acquisition: Collect spectra with integration times of 1-10 seconds, accumulating multiple scans (typically 16-64) to improve signal-to-noise ratio.

- Data Analysis: Compare acquired spectra against reference libraries for compound identification, noting characteristic peak positions and relative intensities.
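The acquisition and data analysis steps can be sketched in a few lines. Averaging N repeated scans reduces uncorrelated noise by roughly a factor of √N, which is why 16-64 accumulations are typical. Library comparison is illustrated here with cosine similarity, which is an assumption made for this sketch; commercial search software uses its own hit-quality metrics. The function names are hypothetical, and the spectra are assumed to be non-zero and sampled on the same wavenumber axis.

```python
import math
from typing import Sequence


def average_scans(scans: Sequence[Sequence[float]]) -> list[float]:
    """Point-by-point mean of repeated scans of the same spot."""
    n = len(scans)
    return [sum(channel) / n for channel in zip(*scans)]


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Shape similarity of two spectra; 1.0 means identical up to scaling."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm


def best_match(spectrum: Sequence[float],
               library: dict[str, Sequence[float]]) -> tuple[str, float]:
    """Return the library entry with the highest similarity and its score."""
    scores = {name: cosine_similarity(spectrum, ref) for name, ref in library.items()}
    name = max(scores, key=scores.get)
    return name, scores[name]
```

A similarity score alone does not confirm an identification; peak positions and relative intensities still need to be checked against the reference.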

Protocol 2: Non-Destructive Analysis Using X-Ray Fluorescence

Table 3: Research Reagent Solutions for XRF Analysis

| Item | Function | Specifications |
|---|---|---|
| XRF Spectrometer | Elemental composition analysis | Rhodium or tungsten X-ray tube, silicon drift detector |
| Certified Reference Materials | Quantitative calibration | NIST-traceable standards matching sample matrix |
| Helium Purge System | Enhanced light element detection | Reduces air absorption for elements Na-Mg |
| Sample Cups | Standardized presentation | Polypropylene with XRF film windows |

Workflow Description: XRF spectroscopy functions by exciting atoms in the sample with high-energy X-rays from a tube, causing ejection of inner-shell electrons. As outer-shell electrons transition to fill these vacancies, they emit characteristic X-ray fluorescence photons whose energies identify elements present and whose intensities correlate with concentration [3].

Step-by-Step Procedure:

- Instrument Setup: Power up the XRF spectrometer and allow the X-ray tube to stabilize for 30-60 minutes to ensure consistent output.

- Method Selection: Choose analytical conditions based on target elements - higher kV settings (40-50 kV) for heavy elements, lower kV (15-20 kV) for light elements.

- Sample Presentation: Place the sample in the measurement chamber ensuring a flat surface for consistent geometry. For loose powders, use standardized sample cups with polypropylene film windows.

- Analysis Cycle: Initiate measurement with parameters optimized for the sample type. Typical measurement times range from 10-300 seconds per spot depending on required precision.

- Data Processing: Use fundamental parameters or empirical calibration models to convert X-ray intensities to elemental concentrations, verified with certified reference materials.
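The empirical calibration mentioned in the data processing step can be illustrated with a single-element linear fit. This is a simplified sketch that assumes a linear intensity-concentration relationship and ignores matrix effects; it does not represent the fundamental parameters approach. The function names are hypothetical, and the inputs would be the measured intensities and certified concentrations of the reference materials.

```python
from typing import Sequence


def fit_calibration(intensities: Sequence[float],
                    concentrations: Sequence[float]) -> tuple[float, float]:
    """Least-squares line: concentration = slope * intensity + intercept."""
    n = len(intensities)
    mean_i = sum(intensities) / n
    mean_c = sum(concentrations) / n
    sxx = sum((i - mean_i) ** 2 for i in intensities)
    sxy = sum((i - mean_i) * (c - mean_c)
              for i, c in zip(intensities, concentrations))
    slope = sxy / sxx
    intercept = mean_c - slope * mean_i
    return slope, intercept


def predict_concentration(intensity: float, slope: float, intercept: float) -> float:
    """Convert a measured net intensity to a concentration estimate."""
    return slope * intensity + intercept
```

A certified reference material that was not used in the fit can then be measured as an unknown to verify the calibration.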

Protocol 3: Micro-Destructive Analysis Using Chromatographic Micro-Sampling

Table 4: Research Reagent Solutions for Chromatographic Micro-Sampling

| Item | Function | Specifications |
|---|---|---|
| Micro-sampling Tools | Minute sample collection | Stainless steel scalpel, micro-drill, or capillary tubes |
| HPLC-MS System | Separation and identification | C18 column, electrospray ionization (ESI), triple quadrupole mass analyzer |
| Solid Phase Extraction | Sample clean-up | C18 or mixed-mode cartridges (1-10 mg capacity) |
| Solvent Systems | Compound extraction | HPLC-grade methanol, acetonitrile, and buffers |

Workflow Description: Micro-destructive sampling for chromatographic analysis involves removing minuscule material amounts (typically 10-100 micrograms) from non-critical sample areas, followed by extraction, separation, and mass spectrometric detection. This approach provides comprehensive molecular information while preserving the bulk sample integrity [3] [9].

Step-by-Step Procedure:

- Sample Selection: Identify an appropriate sampling location that is minimally visible or structurally insignificant. Document the sampling area with photography.

- Micro-sampling: Using a sterile scalpel or micro-drill, collect 10-100 micrograms of material, taking care to confine removal to the selected area.

- Sample Preparation: Transfer the micro-sample to a vial and add appropriate extraction solvent (typically 100-500 µL of methanol or methanol-water mixture). Sonicate for 10-15 minutes to enhance extraction.

- Chromatographic Analysis: Inject an aliquot (typically 1-10 µL) into the HPLC-MS system. Employ gradient elution with a C18 column and mobile phases of water and acetonitrile, both modified with 0.1% formic acid.

- Data Interpretation: Identify compounds through retention time matching with standards and mass spectral fragmentation patterns compared against databases.
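The quantities in the procedure above determine how much material actually reaches the column. The short sketch below works through that arithmetic, assuming the whole micro-sample dissolves in the extraction solvent, so the results are upper bounds. The function names and example values are illustrative.

```python
def extract_concentration_ug_per_ml(sample_ug: float, solvent_ul: float) -> float:
    """Upper-bound extract concentration if the whole sample dissolves."""
    return sample_ug / (solvent_ul / 1000.0)


def on_column_mass_ng(concentration_ug_per_ml: float, injection_ul: float) -> float:
    """Mass injected onto the column; 1 ug/mL is the same as 1 ng/uL."""
    return concentration_ug_per_ml * injection_ul


if __name__ == "__main__":
    # Example: a 50 ug sample extracted into 250 uL, with a 5 uL injection.
    conc = extract_concentration_ug_per_ml(50.0, 250.0)
    print(conc, on_column_mass_ng(conc, 5.0))
```

With these example values, at most about 1 µg of material is injected, of which the target analyte may be only a small fraction.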

Applications in Chemical Evidence Analysis

The hierarchical application of these analytical approaches is particularly valuable in pharmaceutical research and forensic chemistry, where maintaining evidence integrity is crucial.

In pharmaceutical development, non-invasive Raman spectroscopy can identify polymorphic forms in final products without compromising packaging or product stability [3]. Non-destructive XRF rapidly screens raw materials for elemental contaminants, while micro-destructive LC-MS/MS analyzes formulation homogeneity with minimal product consumption.

For forensic evidence, the analytical sequence typically begins with non-invasive visual documentation and Raman screening for drug identification, progresses to non-destructive XRF for gunshot residue analysis, and reserves micro-destructive GC-MS for confirmatory testing when required [1]. This approach preserves evidence for re-examination by defense experts and maintains chain-of-custody integrity.

Cultural heritage analysis is the most stringent application of these principles, because techniques must extract maximum information from irreplaceable objects. Studies on historical pigments utilize non-invasive Raman spectroscopy for initial identification, followed by non-destructive XRF for elemental mapping, with micro-sampling permitted only for ultramarine blue verification through chromatographic techniques [3].

Emerging Trends and Future Directions

The field of non-destructive analysis is evolving rapidly through technological integration. NDE 4.0 represents the digital transformation of non-destructive evaluation, incorporating artificial intelligence, digital twins, and the industrial metaverse to enable real-time diagnostics and predictive maintenance [1] [4]. These advancements are shifting inspection paradigms from periodic evaluations to continuous, intelligent asset management.

Multi-modal approaches combine complementary techniques to overcome individual limitations. For instance, integrating ultrasonic testing with electrical resistivity provides comprehensive data on both mechanical and hydration properties of materials [6]. Similarly, combining spectroscopic methods with imaging technologies enables both chemical and structural characterization in a single analytical platform [9].

The miniaturization of analytical instrumentation has enabled in-situ analysis through portable XRF, handheld Raman spectrometers, and mobile GC-MS systems. These field-deployable tools bring laboratory-grade capabilities to the sample location, eliminating transportation risks and enabling rapid decision-making [3] [4].

Artificial intelligence and machine learning are revolutionizing data interpretation from non-destructive techniques. AI-driven image recognition enhances defect detection in ultrasonic testing, while machine learning models predict material performance from spectral data patterns, reducing reliance on expert interpretation and increasing analytical throughput [7] [1].

The Imperative of Evidence Preservation in Forensic Science and Cultural Heritage

Application Note: The Role of Non-Destructive Techniques in Evidence Integrity

In the interconnected fields of forensic science and cultural heritage, the integrity of the original sample is paramount. The application of non-destructive testing (NDT) and non-destructive evaluation (NDE) methods provides a powerful suite of analytical tools that allow for the examination of materials, components, and systems for discontinuities or differences in characteristics without causing damage to the part being inspected [10]. This capability is critical for everything from failure analysis and criminal investigations to ensuring the long-term preservation of invaluable cultural artifacts. The core principle is the reliance on different forms of energy—including sound waves, light, magnetism, and radiation—to interrogate a material, providing measurable signals about its condition without physical compromise [10].

The technical foundation of NDT is essential for both quality assurance in forensic methodologies and the preservation ethics inherent to cultural heritage. For forensic researchers and drug development professionals, this means the preservation of valuable or rare samples, and the ability to conduct repeated tests on a single specimen to track changes over time, a capability not possible with destructive methods [10]. The following sections detail the standardized protocols and advanced techniques that make this possible, ensuring evidence remains unaltered for future analysis or legal proceedings.

The selection of an appropriate non-destructive method depends on the analytical question, the nature of the evidence, and the required depth of information. The table below summarizes the primary NDT techniques, their operating principles, and their representative applications in forensic and cultural heritage contexts.

Table 1: Comparison of Key Non-Destructive Testing Methods for Evidence Preservation

| Method | Governing Principle | Primary Applications | Limitations |
|---|---|---|---|
| Visual Testing (VT) [10] | Use of naked eye or optical enhancement to examine surface conditions. | Identification of surface flaws like cracks, corrosion, or misalignments; initial artifact assessment. | Limited to surface features; requires trained professional. |
| Liquid Penetrant Testing (LPT) [10] | Capillary action draws a liquid penetrant into surface-breaking discontinuities. | Detecting surface cracks, porosity, and leaks in non-porous materials (e.g., metal artifacts, toolmarks). | Limited to surface flaws open to the surface; cannot detect subsurface defects. |
| Ultrasonic Testing (UT) [10] | High-frequency sound waves are transmitted into a material; echoes from internal flaws are measured. | Detecting internal voids, inclusions, and laminations; thickness measurement for corrosion monitoring. | Effectiveness can be reduced in coarse-grained or highly attenuative materials; requires skilled operator. |
| Radiographic Testing (RT) [10] | Penetrating radiation (X/Gamma rays) passes through material, creating an image based on density variations. | Detecting internal voids, porosity, and inclusions in complex assemblies; examining internal structures of artifacts. | Involves ionizing radiation safety protocols; can be a slow process; equipment can be costly. |
| Eddy Current Testing (ECT) [10] | Electromagnetic induction induces eddy currents in conductive materials; flaws disrupt current flow. | Detecting surface and near-surface cracks in metals; material sorting and heat damage detection. | Limited to conductive materials; not suitable for deep flaws. |
| Magnetic Particle Testing (MPT) [10] | A magnetized ferromagnetic material will have leakage fields at surface flaws, attracting magnetic particles. | Detecting surface and near-surface discontinuities in ferromagnetic materials (iron, cobalt, nickel). | Limited to ferromagnetic materials; not for deep flaws or non-magnetic alloys. |

Standardized Protocols for Evidence Preservation

Protocol: Liquid Penetrant Testing for Surface Flaw Detection

1. Scope: This protocol provides a standardized method for detecting surface-breaking discontinuities (e.g., cracks, porosity) in non-porous materials commonly encountered in forensic toolmark analysis or metallic cultural artifacts [10].

2. Reagents and Materials:

- Cleaner/Remover

- Penetrant (Visible or Fluorescent Dye)

- Developer (Non-Aqueous, Water-Soluble, or Dry Powder)

3. Procedure:

   1. Surface Preparation: Thoroughly clean the test surface to remove all contaminants (dirt, grease, rust, paint) that could block penetrant entry. Allow the surface to dry completely [10].

   2. Penetrant Application: Apply the penetrant uniformly across the surface by spraying, brushing, or dipping. Allow a sufficient dwell time (as specified by the penetrant manufacturer) for the liquid to seep into flaws via capillary action [10].

   3. Excess Penetrant Removal: Carefully remove the excess penetrant from the surface using a clean cloth, followed by a solvent-dampened cloth for the final cleaning. Avoid over-cleaning that removes penetrant from the flaws [10].

   4. Developer Application: Apply a thin, uniform layer of developer over the entire tested surface. The developer acts as a blotter, drawing the trapped penetrant out of the discontinuity [10].

   5. Inspection and Evaluation: After a specified development time, inspect the surface. For visible dye penetrants, inspect under adequate white light. For fluorescent penetrants, inspect in a darkened area under ultraviolet (UV-A) light (e.g., 365 nm wavelength). Mark and document all relevant indications [10].

Protocol: Digital Evidence Preservation via Chain of Custody and Hashing

1. Scope: This protocol outlines the critical steps for preserving the integrity of digital evidence—such as data from infotainment systems, videos, and device logs—from collection through analysis, ensuring its admissibility in legal proceedings [11].

2. Reagents and Materials:

- Forensic Write-Blocker Hardware

- Forensic Imaging Software

- Access to a Digital Evidence Management System (DEMS)

3. Procedure:

   1. Drive Imaging: Before any analysis, create a bit-for-bit duplicate (forensic image) of the original digital evidence file or storage medium. All analysis must be performed on this image, never on the original evidence [11].

   2. Chain of Custody Logging: From the moment of collection, maintain a continuous and unbroken chain of custody. Log every access, detailing who accessed the evidence, when, for what purpose, and what actions were performed [11].

   3. Integrity Verification: The imaging process should generate a cryptographic hash value (e.g., SHA-256; MD5 still appears in legacy workflows but is vulnerable to collisions) for the original evidence and its image. Any alteration to the data will change this hash. Verify the hash before and after any analysis to demonstrate that the evidence is unaltered [11].

   4. Secure Storage: Store original evidence and forensic images in a secure repository with strong access controls, including multi-factor authentication (MFA) and granular user permissions. All stored data should be encrypted (e.g., AES-256) both at rest and in transit [11].
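The integrity verification step can be sketched with Python's standard `hashlib` module. This is a minimal illustration, not a substitute for validated forensic imaging software. The function names and file paths are hypothetical, and the sketch assumes the original is read through a write-blocker.

```python
import hashlib


def sha256_of_file(path: str, chunk_size: int = 1 << 20) -> str:
    """Return the SHA-256 hex digest of a file, read in fixed-size chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        # Read in chunks so that large disk images do not have to fit in memory.
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_image(original_path: str, image_path: str) -> bool:
    """True when the forensic image hashes to the same value as the original."""
    return sha256_of_file(original_path) == sha256_of_file(image_path)
```

Recording the digest in the chain-of-custody log at acquisition, and recomputing it before and after each analysis session, gives a simple check that the working image has not changed.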

Workflow for Method Selection in Evidence Preservation

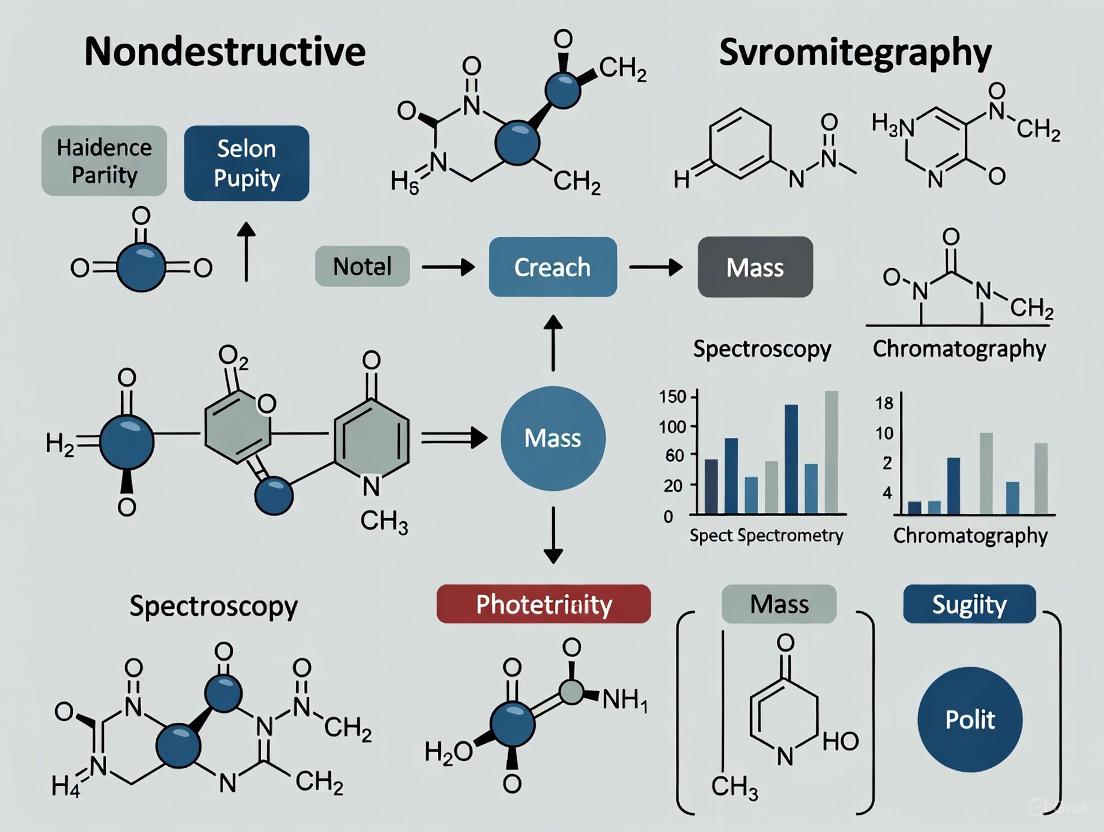

The following diagram illustrates the logical decision-making process for selecting the most appropriate non-destructive method based on the analytical goal and sample properties.

Diagram 1: NDT Method Selection Workflow

Research Reagent Solutions and Essential Materials

The following table details key materials and reagents essential for executing the non-destructive testing and evidence preservation protocols described in this document.

Table 2: Essential Research Reagents and Materials for Evidence Preservation

| Item | Function/Application |
|---|---|
| Forensic Write-Blocker | A hardware device that allows read-access to digital storage media while preventing any writes or modifications, preserving the original evidence [11]. |
| Cryptographic Hashing Algorithm | A software tool (e.g., for generating SHA-256) that creates a unique digital fingerprint of a file or drive, used to verify evidence integrity [11]. |
| Liquid Penetrant Kit | A complete set including cleaner, penetrant (visible or fluorescent), and developer for detecting surface-breaking flaws via capillary action [10]. |
| Ultrasonic Transducer | A device that generates high-frequency sound waves for internal material inspection and thickness measurement [10]. |
| Digital Evidence Management System (DEMS) | A secure software platform (often cloud-based) for storing, managing, and sharing digital evidence with robust chain-of-custody logging, encryption, and access controls [11]. |
| UV-A Light Source | A lamp emitting long-wave ultraviolet light (365 nm) for the inspection of fluorescent dye penetrants in liquid penetrant testing [10]. |

Non-destructive testing (NDT) methods represent a paradigm shift in chemical and materials research by enabling comprehensive analysis while preserving specimen integrity. These techniques allow investigators to maintain evidentiary continuity from initial in-situ measurement through subsequent re-examination cycles. The fundamental advantage lies in the ability to perform repeated, multi-point assessments on the same specimen, eliminating the sampling bias inherent in destructive methods and providing a more complete understanding of material heterogeneity and property distribution.

Within pharmaceutical development and analytical research, this capability ensures that valuable reference standards, clinical trial materials, and research specimens remain available for future verification, additional testing, or regulatory review. The application of NDT creates a continuous analytical pathway where data collected at different times can be directly correlated because the source material remains physically intact and available for further investigation.

Core Advantages of Non-Destructive Approaches

Preservation of Evidentiary Integrity

Non-destructive methods maintain the physical and chemical integrity of research specimens, which is critical for analytical continuity. Unlike destructive techniques that consume or alter samples, NDT allows the same specimen to undergo multiple analytical procedures over time. This capability is particularly valuable in regulated environments like pharmaceutical development, where material traceability and re-testing capability are often required for regulatory compliance and quality assurance [12]. The preserved specimens serve as permanent reference materials, enabling direct comparison of results across different analytical campaigns and instrumentation.

Comprehensive Spatial Documentation

Destructive testing methods, such as core sampling, evaluate only discrete points within a material, potentially missing critical variations and anomalies [13]. In contrast, non-destructive techniques enable comprehensive spatial mapping of properties across entire specimens or structures. This capability provides researchers with a complete understanding of material heterogeneity, gradient formation, and defect distribution. For concrete documentation in reuse scenarios, NDT methods have demonstrated the ability to reliably document properties uniformly across entire structural elements, addressing a critical limitation of point-based destructive methods [13].

Enhanced Data Reliability Through Multi-Method Correlation

Combining multiple non-destructive techniques creates a synergistic analytical approach where the limitations of one method are compensated by the strengths of another. Research on concrete documentation has shown that combining ultrasonic pulse velocity (UPV), rebound hammer (RH), and electrical resistivity (ER) methods improves the accuracy of property estimation beyond what any single method can achieve independently [13]. This multi-modal approach enhances measurement reliability and provides a more robust foundation for critical decisions in research and development.

Temporal Monitoring Capabilities

The non-destructive nature of these methods enables researchers to monitor dynamic processes and property evolution over time on the same specimen. This temporal dimension is invaluable for studying degradation pathways, reaction kinetics, and material aging under various environmental conditions. The ability to collect longitudinal data from identical locations eliminates inter-specimen variability, providing clearer insights into time-dependent phenomena that would be impossible to reconstruct from destructive testing alone.

Application Notes and Experimental Protocols

Protocol 1: Multi-Modal Documentation for Material Property Assessment

This protocol outlines a systematic approach for comprehensive material characterization using complementary NDT methods, adapted from research on concrete documentation for reuse applications [13].

Objective: To reliably document mechanical and durability properties of solid-phase materials through correlated non-destructive measurements while maintaining specimen integrity for future re-examination.

Materials and Equipment:

- Ultrasonic pulse velocity tester with transducers and coupling agent

- Rebound hammer (Schmidt hammer) with certified calibration

- Electrical resistivity meter with four-point Wenner array probe

- Environmental monitoring equipment (temperature, relative humidity)

- Specimen positioning fixture for measurement consistency

- Data recording system with spatial referencing capability

Procedure:

Specimen Preparation and Conditioning:

- Record initial specimen dimensions, mass, and visual characteristics

- Condition specimens to standardized moisture content if required for comparative analysis

- Establish coordinate system for spatial referencing of all measurements

Ultrasonic Pulse Velocity Measurement:

- Apply acoustic coupling agent to transducer contact surfaces

- Position transducers on opposite parallel faces of specimen for direct transmission measurement

- Record wave transit time with nanosecond precision

- Calculate UPV from the measured path length: UPV = Path Length / Transit Time

- Repeat at minimum five locations distributed across specimen surface
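The UPV calculation above is a direct ratio of path length to transit time. The sketch below shows it with explicit unit conversion and averages the result over the measurement locations. The function names and the choice of millimetres and microseconds as input units are assumptions made for illustration.

```python
from typing import Sequence


def pulse_velocity_m_per_s(path_length_mm: float, transit_time_us: float) -> float:
    """Ultrasonic pulse velocity (m/s) for direct transmission."""
    return (path_length_mm / 1000.0) / (transit_time_us / 1e6)


def mean_pulse_velocity(path_length_mm: float,
                        transit_times_us: Sequence[float]) -> float:
    """Average UPV over several locations sharing the same path length."""
    if len(transit_times_us) < 5:
        raise ValueError("the protocol calls for at least five locations")
    velocities = [pulse_velocity_m_per_s(path_length_mm, t) for t in transit_times_us]
    return sum(velocities) / len(velocities)
```

For example, a 150 mm path with a 37.5 µs transit time corresponds to a velocity of 4000 m/s.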

Rebound Hammer Assessment:

- Position specimen in stable configuration against solid background

- Press hammer perpendicular to specimen surface at predetermined test locations

- Record rebound number from hammer scale after impact

- Take a minimum of ten readings per specimen, excluding outliers that deviate by more than 20% from the mean

- Calculate mean rebound index after statistical outlier removal
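The outlier rule in the rebound hammer steps can be sketched as a single-pass filter: compute the raw mean, drop readings more than 20% away from it, and average what remains. This is one interpretation of the protocol text; applicable testing standards may define different rejection criteria, and the function name is hypothetical.

```python
from typing import Sequence


def mean_rebound_index(readings: Sequence[float],
                       tolerance: float = 0.20) -> tuple[float, list[float]]:
    """Return (mean of retained readings, retained readings)."""
    if len(readings) < 10:
        raise ValueError("the protocol calls for at least ten readings")
    raw_mean = sum(readings) / len(readings)
    # Keep readings within +/- tolerance (as a fraction) of the raw mean.
    kept = [r for r in readings if abs(r - raw_mean) <= tolerance * raw_mean]
    return sum(kept) / len(kept), kept
```

If many readings are rejected, that usually signals a problem with the test surface or the instrument rather than true outliers, and the set should be repeated.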

Electrical Resistivity Measurement:

- Arrange four-point probe in linear Wenner configuration with equal electrode spacing

- Ensure good electrode-specimen contact with conductive gel if necessary

- Apply alternating current to outer electrodes, measure potential difference between inner electrodes

- Calculate apparent resistivity: ρ = 2πaV/I, where a = electrode spacing

- Conduct measurements at multiple orientations to assess anisotropy
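The Wenner formula above can be evaluated as follows. The sketch uses SI units internally and converts to kΩ·cm for comparison with typical reported ranges; the function name and the example values are illustrative assumptions. The expression applies to a semi-infinite medium, so small specimens may need a geometry correction that is not shown here.

```python
import math


def wenner_resistivity(spacing_m: float, voltage_v: float, current_a: float) -> float:
    """Apparent resistivity in ohm*m for a Wenner array: rho = 2*pi*a*V/I."""
    if current_a == 0:
        raise ValueError("current must be non-zero")
    return 2 * math.pi * spacing_m * voltage_v / current_a


# Hypothetical measurement: 50 mm electrode spacing, 0.5 V across the inner
# electrodes, 1 mA driven through the outer electrodes.
rho_ohm_m = wenner_resistivity(spacing_m=0.05, voltage_v=0.5, current_a=0.001)
rho_kohm_cm = rho_ohm_m * 100 / 1000  # ohm*m -> ohm*cm -> kOhm*cm
print(f"Apparent resistivity: {rho_kohm_cm:.1f} kOhm*cm")
```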

Data Correlation and Analysis:

- Correlate UPV and rebound hammer data using SonReb method for mechanical property estimation

- Establish relationship between electrical resistivity and durability parameters (e.g., chloride migration coefficient)

- Create spatial property maps by integrating all measurement datasets

- Document measurement locations for future re-examination
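The SonReb correlation step can be sketched as a log-linear regression. The power-law form strength = a · UPV^b · RH^c is a commonly used SonReb model and is assumed here; the source does not specify which functional form was fitted. The calibration data are synthetic, generated from known coefficients so the fit can be checked, and the function names are hypothetical.

```python
import numpy as np


def fit_sonreb(upv, rebound, strength):
    """Fit strength = a * upv**b * rebound**c by least squares in log space."""
    upv, rebound, strength = (np.asarray(x, dtype=float)
                              for x in (upv, rebound, strength))
    # ln(strength) = ln(a) + b*ln(upv) + c*ln(rebound)
    design = np.column_stack([np.ones_like(upv), np.log(upv), np.log(rebound)])
    coeffs, *_ = np.linalg.lstsq(design, np.log(strength), rcond=None)
    ln_a, b, c = coeffs
    return float(np.exp(ln_a)), float(b), float(c)


def predict_strength(a, b, c, upv, rebound):
    """Evaluate the fitted power law for new UPV and rebound readings."""
    return a * np.asarray(upv, dtype=float) ** b * np.asarray(rebound, dtype=float) ** c


# Synthetic calibration set built from a known power law (not measured data).
upv_km_s = np.array([3.6, 3.9, 4.1, 4.3, 4.5, 4.7])
rebound_no = np.array([24.0, 30.0, 27.0, 36.0, 33.0, 41.0])
strength_mpa = 0.02 * upv_km_s ** 2.5 * rebound_no ** 1.3

a, b, c = fit_sonreb(upv_km_s, rebound_no, strength_mpa)
print(f"a = {a:.4f}, b = {b:.2f}, c = {c:.2f}")
```

In practice the coefficients should be calibrated against destructive tests on companion specimens of the same material, since they are not transferable between mixtures.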

Quality Assurance:

- Validate instrument calibration using certified reference materials before testing

- Monitor and record environmental conditions throughout testing protocol

- Implement control measurements on reference specimens to detect instrument drift

- Perform statistical analysis on replicate measurements to determine precision

Protocol 2: Historical Data Review for Analytical Continuity

This protocol implements systematic historical data comparison to enhance analytical accuracy and detect methodological inconsistencies, adapted from quality assurance practices in analytical laboratories [12].

Objective: To maintain data integrity across multiple analytical sessions by leveraging historical data trends to identify anomalies and ensure measurement consistency.

Materials and Equipment:

- Historical dataset with minimum 4-5 previous analytical results for each specimen/sampling location

- Statistical analysis software capable of time-series analysis and control chart generation

- Documentation system for tracking specimen history and analytical parameters

- Instrument performance verification standards

Procedure:

Historical Data Compilation:

- Collect minimum 4-5 previous analytical results for each specimen or sampling location

- Document all relevant analytical parameters (instrumentation, methods, operators, conditions)

- Establish baseline variability and expected ranges for each analyte

Blinded Data Review:

- Conduct initial historical comparison without knowledge of current results to prevent bias

- Perform both tabular review (direct numerical comparison) and graphical time-series analysis

- Establish upper and lower control limits based on historical variability

Anomaly Identification:

- Flag results showing significant deviation from historical trends (>2 standard deviations from mean)

- Identify potential contamination through unexpected analyte detection or concentration spikes

- Detect possible sample switches through correlated analyte pattern mismatches
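The control-limit and flagging steps can be illustrated with a short sketch. It assumes limits set at the historical mean plus or minus two sample standard deviations, matching the 2-standard-deviation criterion above; the function names and the analyte history are hypothetical.

```python
from statistics import mean, stdev


def control_limits(history, n_sigma=2.0):
    """Return (lower, upper) limits from historical results."""
    if len(history) < 4:
        raise ValueError("at least four historical results are needed")
    center = mean(history)
    spread = stdev(history)  # sample standard deviation
    return center - n_sigma * spread, center + n_sigma * spread


def is_anomalous(history, new_result, n_sigma=2.0):
    """Flag a new result that falls outside the historical control limits."""
    lower, upper = control_limits(history, n_sigma)
    return not (lower <= new_result <= upper)


# Hypothetical history of one analyte at one sampling location.
history = [12.1, 11.8, 12.4, 12.0, 11.9]
lower, upper = control_limits(history)
print(f"Control limits: {lower:.2f} to {upper:.2f}")
print("12.2 flagged:", is_anomalous(history, 12.2))
print("14.5 flagged:", is_anomalous(history, 14.5))
```

With only four or five historical points the standard deviation is itself uncertain, so a flag should prompt the root cause review rather than automatic rejection.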

Root Cause Investigation:

- Review laboratory data package for analytical errors or quality control failures

- Evaluate seasonal trends and environmental factors that may explain variations

- Examine field data (pH, ORP, specific conductance) for sampling condition changes

- Assess sampling personnel notes for unusual events or conditions

Corrective Action and Verification:

- Consult laboratory to review reported data and analytical documentation

- Request sample reanalysis if insufficient evidence supports anomalous results

- Implement formal corrective actions for systematic errors

- Document all investigative steps and resolution for analytical continuity

Quality Assurance:

- Maintain specimen identity and chain of custody throughout analytical history

- Standardize analytical methods across timepoints to ensure data comparability

- Implement statistical process control for ongoing monitoring of analytical performance

- Document all methodological changes that may affect data comparability

Quantitative Data Comparison of NDT Methods

Table 1: Performance Characteristics of Non-Destructive Testing Methods

| Method | Measured Parameter | Typical Range | Target Properties | Accuracy Considerations | Primary Applications |

|---|---|---|---|---|---|

| Ultrasonic Pulse Velocity (UPV) | Pulse velocity (from wave transit time) | 3.0-5.0 km/s [13] | Compressive strength, homogeneity, internal defects | Tends to underestimate strength due to internal defect sensitivity [13] | Detection of internal voids, homogeneity assessment, strength estimation |

| Rebound Hammer (RH) | Surface hardness | 15-45 rebound number [13] | Surface hardness, compressive strength | Often overestimates strength due to surface carbonation [13] | Near-surface strength assessment, uniformity evaluation |

| Electrical Resistivity (ER) | Electrical resistivity | 10-500 kΩ·cm [13] | Chloride ingress resistance, permeability | Accuracy affected by moisture variability and internal inconsistencies [13] | Durability assessment, corrosion risk evaluation, permeability estimation |

| SonReb Combined Method | UPV + RH correlation | Varies with mixture | Compressive strength | Improved accuracy by compensating individual method limitations [13] | Reliable strength estimation, especially with unknown aggregate/moisture conditions |

Table 2: Documented Relationships Between NDT Measurements and Material Properties

| Material System | Testing Method | Correlation Equation | Coefficient of Determination (R²) | Experimental Conditions |

|---|---|---|---|---|

| Concrete mixtures (w/c=0.45-0.84) [13] | UPV vs. Strength | Exponential relationship | 0.67-0.89 (varies with aggregate) | 15 mixtures, 3 aggregate types, systematic evaluation |

| Concrete mixtures (w/c=0.45-0.84) [13] | RH vs. Strength | Power-law relationship | 0.72-0.85 (varies with aggregate) | 15 mixtures, 3 aggregate types, systematic evaluation |

| Concrete mixtures (w/c=0.45-0.84) [13] | ER vs. Chloride Migration | Inverse relationship | 0.61-0.79 (varies with saturation) | Controlled laboratory environment, varying saturation levels |

| Combined Method [13] | SonReb vs. Strength | Multivariable regression | >0.90 (improved accuracy) | Compensation for individual method limitations |

Research Reagent Solutions and Essential Materials

Table 3: Essential Research Materials for Non-Destructive Testing

| Material/Reagent | Function | Application Specifics | Quality Considerations |

|---|---|---|---|

| Acoustic Coupling Gel | Ensures efficient ultrasonic wave transmission between transducer and specimen | UPV measurements | High acoustic impedance matching, non-reactive with test materials, consistent viscosity |

| Surface Preparation Kit | Standardizes specimen surface conditions for reliable measurements | RH and ER testing | Controlled abrasion protocols, dust removal, surface flatness verification |

| Conductive Electrolyte Gel | Facilitates electrical contact between resistivity probe and specimen surface | ER measurements | Stable ionic concentration, non-corrosive, appropriate viscosity for vertical surfaces |

| Reference Calibration Standards | Verifies instrument calibration and measurement reliability | All NDT methods | Certified reference materials with traceable properties, regular recalibration schedule |

| Environmental Monitoring Sensors | Records temperature and humidity during testing | All NDT methods | NIST-traceable calibration, appropriate measurement range and precision |

| Spatial Referencing System | Documents measurement locations for future re-examination | All NDT methods | Precise coordinate measurement, compatibility with data management systems |

Visualization of Experimental Workflows

Non-Destructive Analysis and Re-examination Workflow

Historical Data Review Process for Analytical Continuity

Non-Destructive Testing for Material Integrity in Chemical Analysis Research

Non-destructive testing (NDT) comprises a group of analysis techniques used to evaluate material properties, component integrity, and structural health without causing damage to the test object. These methods are critically valuable in chemical analysis research where preserving evidence integrity is paramount. NDT enables repeated measurements on the same specimen, allows monitoring of progressive changes, and maintains the evidential chain of custody by avoiding alteration of source materials. This application note examines the implementation of major NDT methodologies—including ultrasonic testing, radiographic testing, thermography, and visual testing—for metals, polymers, composites, and biological samples within chemical research contexts, providing detailed protocols and performance comparisons to guide researchers in method selection.

Non-destructive testing (NDT) encompasses a wide group of analysis techniques used in science and technology to evaluate material properties without causing damage [14]. The terms non-destructive examination (NDE), non-destructive inspection (NDI), and non-destructive evaluation (NDE) are also commonly used to describe this technology [14]. In chemical analysis research, maintaining evidence integrity is fundamental, and NDT provides the methodological foundation for this principle by enabling thorough material characterization while preserving specimen integrity for subsequent analyses or archival purposes.

The fundamental value proposition of NDT in research settings includes: (1) enabling longitudinal studies on the same specimen through non-invasiveness, (2) preserving evidentiary integrity for forensic chemical analysis, (3) allowing complementary analytical techniques to be performed on pristine samples, and (4) providing real-time monitoring capabilities for dynamic processes. These advantages make NDT indispensable for research in material science, pharmaceutical development, biomedical engineering, and forensic chemistry where sample integrity cannot be compromised.

Fundamental NDT Methodologies

Core Principles and Physical Foundations

NDT methods leverage various physical principles to probe material interiors and surfaces without causing damage. Electromagnetic radiation, sound waves, and other signal conversions form the basis of these techniques [14]. The selection of appropriate NDT methods depends on material properties, defect types of interest, and specific research requirements [15]. Each technique exhibits unique strengths and limitations for different material classes and detection capabilities.

Comparative Method Performance

Table 1: NDT Method Capabilities Across Material Classes

| Method | Metals | Polymers | Composites | Biological | Defect Types Detected | Penetration Depth | Resolution |

|---|---|---|---|---|---|---|---|

| Ultrasonic Testing (UT) | Excellent | Good | Excellent [15] | Limited | Internal voids, delamination, cracks | High (cm range) | Sub-millimeter |

| Radiographic Testing (RT) | Excellent | Good | Good [15] | Good (with low dose) | Internal defects, density variations | High | Sub-millimeter |

| Visual Testing (VT) | Good (surface only) | Good (surface only) | Good (surface only) | Good (surface only) | Surface cracks, corrosion, morphology | Surface only | 10-100 μm |

| Eddy Current Testing (ET) | Excellent (conductive) | Not applicable | Limited (CFRP only) [15] | Not applicable | Surface/subsurface cracks, conductivity changes | Shallow (mm) | Millimeter |

| Thermography (TR/IRT) | Good | Good | Excellent [15] | Fair | Disbonds, delamination, subsurface defects | Shallow to moderate | Millimeter |

| Acoustic Emission (AE) | Good | Good | Excellent [15] | Limited | Active crack growth, fiber breakage | Entire structure | Centimeter |

| Penetrant Testing (PT) | Excellent | Good (non-porous) | Good (non-porous) | Limited | Surface-breaking defects | Surface only | 10-100 μm |

Table 2: Quantitative Performance Metrics for NDT Methods

| Method | Detection Sensitivity | Inspection Speed | Equipment Cost | Operator Skill Requirement | Safety Considerations |

|---|---|---|---|---|---|

| UT | 50-500 μm flaws | Moderate | Medium-high | High | Minimal |

| RT | 1-2% density variation | Slow | High | High | Radiation hazards |

| VT | 10-100 μm surface | Fast | Low | Low-medium | Minimal |

| ET | 10-100 μm surface | Fast | Medium | Medium-high | Minimal |

| TR/IRT | Millimeter-scale defects | Fast | Medium-high | Medium | Minimal |

| AE | Active defect growth | Continuous monitoring | Medium | High | Minimal |

| PT | 10-50 μm surface | Moderate | Low | Low-medium | Chemical handling |

Material-Specific Applications and Protocols

Metals Analysis

Metals present unique challenges and opportunities for NDT in chemical research. Ultrasonic Testing (UT) has long been the preferred choice for metal parts and assemblies owing to the effective penetration and propagation of ultrasonic waves through metallic materials [15]. This makes UT ideal for detecting internal defects like cracks, voids, inclusions, and corrosion that can compromise structural integrity [15].

Protocol 3.1.1: Ultrasonic Testing for Metal Corrosion Assessment

- Sample Preparation: Clean the metal surface to remove scale, corrosion products, or coatings that might interfere with coupling. For quantitative thickness measurements, slight surface smoothing may be necessary.

- Couplant Application: Apply an appropriate couplant (gel or water) to ensure efficient sound energy transmission between the transducer and test material. Use chemically inert couplants for research specimens to prevent reactions.

- Transducer Selection: Choose transducer frequency based on required resolution and penetration: 2.25 MHz for general purpose, 5 MHz for higher resolution in thinner materials, or 10 MHz for fine-grained metals.

- Calibration: Calibrate using reference standards of known thickness matching the test material. For anisotropic materials, use velocity correction based on material certificate data.

- Data Acquisition: Scan systematically across the surface maintaining consistent pressure and coupling. For manual scanning, use overlapping passes at 25-50% of transducer width.

- Data Interpretation: Analyze echo patterns for backwall attenuation (indicating generalized corrosion) or discrete intermediate echoes (indicating pitting or inclusions).

- Documentation: Record A-scan data with position references, B-scan cross-sections, or C-scan plan views for comprehensive documentation.
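For pulse-echo thickness gauging, the remaining wall thickness follows from the round-trip time of the backwall echo. The sketch below assumes a nominal longitudinal velocity of 5900 m/s for steel; in practice the velocity comes from the calibration step on a reference block of the same material. Function names and the echo time are hypothetical.

```python
def thickness_from_echo(velocity_m_s: float, round_trip_time_s: float) -> float:
    """Thickness in metres from a pulse-echo backwall reflection.

    The pulse crosses the wall twice, hence the division by two.
    """
    if velocity_m_s <= 0 or round_trip_time_s < 0:
        raise ValueError("velocity must be positive and time non-negative")
    return velocity_m_s * round_trip_time_s / 2.0


def wall_loss_percent(nominal_mm: float, measured_mm: float) -> float:
    """Relative wall loss compared with the nominal thickness."""
    return 100.0 * (nominal_mm - measured_mm) / nominal_mm


STEEL_VELOCITY_M_S = 5900.0  # assumed nominal value; calibrate per material

thickness_mm = thickness_from_echo(STEEL_VELOCITY_M_S, 3.2e-6) * 1000.0
loss = wall_loss_percent(nominal_mm=10.0, measured_mm=thickness_mm)
print(f"Measured thickness: {thickness_mm:.2f} mm, wall loss: {loss:.1f}%")
```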

Protocol 3.1.2: Eddy Current Testing for Metallic Sample Integrity

Eddy Current Testing (ET) is particularly valuable for detecting surface and near-surface defects in conductive materials [16]. The technique induces circular electric currents in the material and detects flaws through impedance changes in the test coil [16].

- Probe Selection: Select probe type based on application: surface probes for flat surfaces, encircling coils for rods/tubes, or slot probes for specific geometries.

- Frequency Optimization: Adjust test frequency based on desired penetration depth: higher frequencies (100 kHz-10 MHz) for surface defects, lower frequencies (100 Hz-10 kHz) for subsurface detection.

- Reference Standards: Use specimens with known artificial defects (EDM notches, drilled holes) to establish sensitivity settings and phase rotation for defect discrimination.

- Lift-off Compensation: Adjust for varying probe-to-surface distance using lift-off compensation techniques to distinguish between real defects and spacing variations.

- Scanning Procedure: Maintain consistent lift-off and scanning speed while monitoring impedance plane display for indications.

- Data Analysis: Differentiate between defect signals and material property variations through phase analysis.
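The link between test frequency and penetration in the frequency optimization step is usually expressed through the standard depth of penetration, δ = 1/√(π f μ σ). The sketch below evaluates it for an illustrative non-magnetic conductor; the conductivity of 3.5 × 10⁷ S/m is an assumed round figure for an aluminium-like material, not a value from the source.

```python
import math

MU_0 = 4 * math.pi * 1e-7  # vacuum permeability in H/m


def standard_depth(frequency_hz: float, conductivity_s_per_m: float,
                   relative_permeability: float = 1.0) -> float:
    """Standard depth of penetration in metres: 1/sqrt(pi*f*mu*sigma)."""
    if frequency_hz <= 0 or conductivity_s_per_m <= 0:
        raise ValueError("frequency and conductivity must be positive")
    mu = MU_0 * relative_permeability
    return 1.0 / math.sqrt(math.pi * frequency_hz * mu * conductivity_s_per_m)


SIGMA = 3.5e7  # S/m, assumed illustrative conductivity

for f in (1e3, 1e4, 1e5, 1e6):
    depth_mm = standard_depth(f, SIGMA) * 1000.0
    print(f"{f:>9.0f} Hz -> standard depth {depth_mm:.3f} mm")
```

Because depth scales with the inverse square root of frequency, a hundredfold increase in frequency reduces the standard depth by a factor of ten, which is why high frequencies suit surface flaws and low frequencies suit subsurface ones.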

Polymer and Composite Materials

Composite materials have transformed many industries thanks to advantages such as superior strength-to-weight ratios [15]. The reliability and structural integrity of fiber-reinforced polymer (FRP) composite materials are paramount in critical applications [15]. Successful NDT of composites requires addressing their anisotropic nature and complex damage modes.

Protocol 3.2.1: Ultrasonic Testing for Composite Delamination

The UT and Phased Array Ultrasonic Testing (PAUT) of FRP materials present unique challenges due to the anisotropic nature of FRP composites [15]. This anisotropy affects ultrasonic wave propagation, with speed of sound, attenuation, and reflection characteristics differing significantly depending on fiber direction [15].

- Anisotropy Compensation: Account for direction-dependent sound velocity by establishing velocity profiles along different material axes using reference samples.

- Frequency Selection: Use lower frequencies (1-2.25 MHz) for thick, highly attenuative composites; higher frequencies (5-10 MHz) for thinner sections or higher resolution requirements.

- Scanning Methodology: Implement full-waveform capture for post-processing and analysis. Use through-transmission for highly attenuative or complex geometries.

- Data Analysis: Identify delamination indications through characteristic backwall echo reduction or intermediate echoes with polarity reversal.

- Phased Array Advantages: Utilize sectorial scanning to inspect at multiple angles from a single probe position, improving detection of off-axis defects.

Protocol 3.2.2: Thermographic Testing for Composite Integrity

Thermography (TR), including Infrared Thermography (IRT), has proven effective for identifying defects in composite structures [15]. These methods detect thermal anomalies associated with subsurface defects.

- Excitation Method Selection: Choose appropriate thermal stimulation: pulsed thermography for rapid inspection, lock-in thermography for better depth resolution, or vibrothermography for active defect detection.

- Excitation Parameters: Optimize heating duration and power based on material thermal properties and defect depth of interest.

- Infrared Camera Setup: Select appropriate spectral band (MWIR: 3-5 μm or LWIR: 8-12 μm) and ensure proper focus and spatial calibration.

- Data Acquisition: Capture thermal image sequence during heating and cooling phases with frame rates sufficient to capture thermal transients.

- Signal Processing: Apply thermal signal reconstruction, pulsed phase thermography, or principal component analysis to enhance defect contrast.

- Defect Characterization: Correlate thermal contrast and time constants with defect depth and size using calibration on samples with known defects.
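For lock-in thermography, the depth probed at a given modulation frequency is commonly estimated with the thermal diffusion length, μ = √(α/(π f)). The sketch below uses an assumed through-thickness diffusivity of 4 × 10⁻⁷ m²/s as an illustrative value for a polymer composite; actual values should be measured or taken from material data.

```python
import math


def thermal_diffusion_length(diffusivity_m2_s: float, frequency_hz: float) -> float:
    """Thermal diffusion length in metres: sqrt(alpha / (pi * f))."""
    if diffusivity_m2_s <= 0 or frequency_hz <= 0:
        raise ValueError("diffusivity and frequency must be positive")
    return math.sqrt(diffusivity_m2_s / (math.pi * frequency_hz))


ALPHA = 4e-7  # m^2/s, assumed illustrative through-thickness diffusivity

for f in (1.0, 0.1, 0.01):
    mu_mm = thermal_diffusion_length(ALPHA, f) * 1000.0
    print(f"{f:>5.2f} Hz -> diffusion length {mu_mm:.2f} mm")
```

Lower modulation frequencies reach deeper defects at the cost of longer acquisition times, which is one reason excitation parameters are tuned to the defect depth of interest.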

Biological Samples

NDT of biological materials requires special considerations to prevent damage to delicate structures and maintain biological integrity. Methods must often be adapted to accommodate hydration requirements, temperature sensitivity, and structural complexity.

Protocol 3.3.1: Visual Testing for Biological Specimen Integrity

Visual Testing (VT) is the most basic NDT method, involving direct examination of components with the naked eye or optical aids [16]. This method can be enhanced with tools like magnifying glasses, borescopes, or video inspection cameras [16].

- Illumination Optimization: Use multiple lighting angles (brightfield, darkfield, oblique) to enhance surface feature visibility. Consider polarized light to reduce glare.

- Magnification Selection: Choose appropriate magnification based on feature size: low magnification (2-10X) for overall assessment, higher magnification (20-100X) for detailed inspection.

- Documentation Standards: Capture reference images with scale markers and color standards for longitudinal comparisons.

- Sterility Maintenance: Implement aseptic techniques when handling living specimens or samples for subsequent biological analysis.

- Feature Annotation: Systematically document observations using standardized terminology and reference to coordinate systems.

Protocol 3.3.2: Low-Dose Radiographic Testing for Biological Samples

Radiographic Testing (RT) using X-rays produces images of internal structures [16]. For biological specimens, dose minimization is critical while maintaining sufficient contrast.

- Exposure Parameters: Optimize kVp and exposure time to achieve sufficient contrast while minimizing radiation dose. Use lower kVp for better soft tissue contrast.

- Digital Detector Selection: Choose appropriate digital detectors (flat panels, CMOS sensors) with high quantum efficiency for dose reduction.

- Contrast Enhancement: Utilize phase-contrast techniques when available to enhance visibility of low-contrast features in biological materials.

- Sample Stabilization: Immobilize specimens to prevent motion artifacts during exposure, using low-impact support materials.

- Dose Monitoring: Quantify and record radiation dose for each specimen to ensure compatibility with subsequent analyses.

Advanced and Emerging NDT Technologies

3D and 4D Characterization Methods

X-ray computed tomography (XCT) is an emerging NDT technique for composite materials [15]. This method provides three-dimensional volumetric data that can be essential for understanding complex internal structures.

Four-dimensional (4D) printing represents a transformative advancement in additive manufacturing, integrating time-responsive behavior into traditionally static three-dimensional (3D) printed structures [17]. This technology leverages stimuli-responsive materials such as shape memory polymers, hydrogels, liquid crystal elastomers, and smart composites that undergo controlled transformations when exposed to external triggers [17].

Protocol 4.1.1: X-ray Computed Tomography for Material Structure Analysis

- Resolution Requirements: Determine necessary voxel size based on smallest features of interest, balancing with field of view requirements.

- Scan Parameters: Optimize voltage, current, filter selection, and exposure time based on material composition and density.

- Reconstruction Settings: Select appropriate reconstruction algorithm (Feldkamp-Davis-Kress for cone-beam CT) and apply necessary corrections (beam hardening, ring artifacts).

- Segmentation and Analysis: Apply threshold-based or machine learning segmentation to identify features of interest. Perform quantitative analysis of porosity, fiber orientation, or defect distribution.

- Data Validation: Correlate with destructive sectioning or other NDT methods when possible to verify interpretation.
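The threshold-based segmentation and porosity analysis can be illustrated on a synthetic volume. This is a minimal sketch assuming a single global grey-value threshold; real reconstructions typically need artifact correction and a threshold chosen from the histogram. The function name, grey values, and voxel size are hypothetical.

```python
import numpy as np


def porosity_fraction(volume, threshold) -> float:
    """Fraction of voxels whose grey value is below the threshold (pores)."""
    volume = np.asarray(volume)
    return float(np.count_nonzero(volume < threshold)) / volume.size


# Synthetic 20 x 20 x 20 volume: solid at grey value 200, one 4 x 4 x 4 void at 20.
volume = np.full((20, 20, 20), 200, dtype=np.uint8)
volume[5:9, 5:9, 5:9] = 20

phi = porosity_fraction(volume, threshold=110)

voxel_size_mm = 0.01  # assumed isotropic voxel edge length
pore_volume_mm3 = np.count_nonzero(volume < 110) * voxel_size_mm ** 3
print(f"Porosity: {phi:.3%}, pore volume: {pore_volume_mm3:.2e} mm^3")
```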

Integrated and Automated NDT Approaches

Future trends in NDT include adopting multimodal NDT systems, integrating digital twin and Industry 4.0 technologies, utilizing embedded and wireless structural health monitoring, and applying artificial intelligence for automated defect interpretation [15]. These advances promise to transform NDT into an intelligent, predictive, and integrated quality assurance system [15].

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Research Reagents and Materials for NDT Applications

| Item | Function | Application Notes | Material Compatibility |

|---|---|---|---|

| Ultrasonic Couplants | Enables efficient sound energy transfer between transducer and test material | Use water-based gels for general applications; specialized high-temperature or chemical-resistant couplants for extreme conditions | All materials; select based on chemical compatibility |

| Penetrant Materials | Reveals surface-breaking defects through capillary action | Three-component systems (penetrant, emulsifier, developer); fluorescent or visible dye options | Non-porous materials; metals, plastics, ceramics |

| Magnetic Particles | Detects surface and near-surface defects in ferromagnetic materials | Dry particles for rough surfaces; wet suspensions for finer defects; fluorescent particles for enhanced sensitivity | Ferromagnetic materials only |

| Eddy Current Probes | Induces electromagnetic fields in conductive materials | Absolute, differential, or reflection probes based on application; frequency range determines penetration depth | Electrically conductive materials |

| Reference Standards | Calibrates equipment and validates inspection procedures | Manufactured with known artificial defects (holes, notches, cracks); material and geometry matched to test specimens | All materials; specific to each NDT method |

| Infrared Cameras | Detects thermal patterns and anomalies | MWIR (3-5 μm) or LWIR (8-12 μm) detectors; resolution and sensitivity determine detection capability | All materials; emissivity correction required |

| Radiographic Sources | Generates penetrating radiation for internal inspection | X-ray tubes (variable energy) or gamma sources (fixed energy); energy selection based on material density and thickness | All materials; safety protocols essential |

| Acoustic Emission Sensors | Detects high-frequency sounds from active defects | Piezoelectric sensors with specific frequency response; array configuration for source location | All materials; requires stress application |

Non-destructive testing methods provide powerful capabilities for material characterization while preserving evidence integrity in chemical analysis research. The appropriate selection and implementation of NDT techniques depends on material properties, defects of interest, and research objectives. As NDT technologies continue advancing—with trends toward multimodal systems, digital twin integration, and AI-assisted analysis—their value in research contexts will further increase. By adopting the protocols and methodologies outlined in this application note, researchers can effectively implement NDT approaches that maintain sample integrity while extracting comprehensive material property data.

A Toolkit of Techniques: Spectroscopic, Mass Spectrometry, and Other Non-Destructive Methods

In the realm of modern analytical science, the imperative to analyze valuable samples without altering or destroying them is paramount. Non-destructive techniques preserve evidence integrity, allow for repeated measurements, and are essential for studying irreplaceable materials, from unique archaeological artifacts to clinical samples. Among these techniques, X-ray Fluorescence (XRF), Raman spectroscopy, and Fourier-Transform Infrared (FTIR) spectroscopy have emerged as foundational "workhorses" for elemental and molecular fingerprinting [18] [19] [20]. These methods provide complementary insights: XRF reveals elemental composition, while Raman and FTIR probe molecular bonds and structures, offering a comprehensive view of a material's chemical identity.

This application note details the principles, applications, and standardized protocols for these techniques, framed within the critical context of non-destructive analysis for research and drug development.

Fundamental Principles and Comparative Analysis

Core Physical Interactions

The fundamental interactions behind each technique dictate its applications and strengths.

X-Ray Fluorescence (XRF): This technique functions on the principle of atomic excitation. When a sample is exposed to high-energy X-rays, inner-shell electrons are ejected from atoms. As outer-shell electrons fall to fill these vacancies, they emit characteristic fluorescent X-rays. The energy of these emitted X-rays identifies the element, while their intensity quantifies its concentration [21]. It is a purely elemental analysis technique.

Fourier-Transform Infrared (FTIR) Spectroscopy: FTIR is based on molecular bond absorption. A broadband infrared source is directed at the sample, and molecular bonds (e.g., C=O, N-H, O-H) absorb specific IR frequencies that match their vibrational modes. The instrument uses an interferometer to measure all frequencies simultaneously, and a Fourier transform converts this data into an absorption spectrum, providing a molecular "fingerprint" [18]. The selection rule for FTIR requires a change in the dipole moment of the bond.

Raman Spectroscopy: Raman relies on inelastic scattering of light. A monochromatic laser interacts with the sample, and a tiny fraction of the scattered light shifts in energy due to interactions with molecular vibrations. This shift, measured in wavenumbers (cm⁻¹), provides vibrational information complementary to FTIR [18] [22]. The selection rule depends on a change in the bond's polarizability. A key advantage is that water is a weak Raman scatterer, making it suitable for aqueous samples.
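The Raman shift described above is the difference between the wavenumbers of the excitation and scattered light. The following sketch converts between wavelengths in nanometres and shifts in cm⁻¹; the function names are illustrative, and the 785 nm excitation line is simply a common example.

```python
def raman_shift_cm1(excitation_nm: float, scattered_nm: float) -> float:
    """Raman shift in cm^-1 from excitation and scattered wavelengths in nm.

    1e7 / wavelength_nm converts a wavelength to a wavenumber in cm^-1.
    """
    return 1e7 / excitation_nm - 1e7 / scattered_nm


def scattered_wavelength_nm(excitation_nm: float, shift_cm1: float) -> float:
    """Wavelength (nm) at which a given Stokes shift appears."""
    return 1e7 / (1e7 / excitation_nm - shift_cm1)


# Where does a 1000 cm^-1 Stokes band appear with 785 nm excitation?
stokes_nm = scattered_wavelength_nm(785.0, 1000.0)
print(f"1000 cm^-1 band at {stokes_nm:.1f} nm")
print(f"Round trip: {raman_shift_cm1(785.0, stokes_nm):.1f} cm^-1")
```

Because the shift is relative to the laser line, the same vibrational band appears at the same cm⁻¹ value regardless of excitation wavelength, which is what makes spectra from different instruments comparable.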

Technical Comparison

The table below summarizes the core characteristics and comparative advantages of these three techniques.

Table 1: Comparative Analysis of XRF, FTIR, and Raman Spectroscopy

| Feature | XRF | FTIR | Raman |

|---|---|---|---|

| Primary Information | Elemental composition (from Na to U) | Molecular functional groups & bonds | Molecular vibrations, crystal lattice structure |

| Interaction Measured | Emission of characteristic X-rays | Absorption of infrared radiation | Inelastic scattering of visible/NIR light |

| Typical Excitation Source | X-ray Tube | Broadband IR source (Globar) | Monochromatic laser (NIR, visible, UV) |

| Detection Limit | ppm to % (e.g., Pb LOD: 0.06 ppm [23]) | ~1% | ~0.1 - 1% |

| Sample Preparation | Minimal (often none) | Required for solids (ATR, KBr pellets) | Minimal (can analyze through glass) |

| Key Strength | Quantitative elemental analysis; bulk & mapping | Strong sensitivity to polar bonds (e.g., C=O, O-H) | Excellent for non-polar bonds (C-C, C=C); low water interference |

| Primary Limitation | Cannot detect light elements (below Na) | Strong water absorption interferes with aqueous samples | Fluorescence from impurities can swamp signal |

Application Protocols

Protocol 1: Non-Destructive Classification of Arboviral Infections via FTIR

This protocol, adapted from a clinical study, outlines the use of FTIR for rapidly classifying dengue and chikungunya infections from human serum. The method is reported to be faster than traditional ELISA and RT-PCR and to avoid their cross-reactivity problems [20].

- Application: Rapid, label-free diagnostic classification of viral infections from human serum.

- Principle: Viral infections alter the host's biomolecular profile (e.g., protein secondary structure), which is detected as specific shifts in the FTIR spectrum [20].

- Materials & Reagents:

- Serum samples from confirmed dengue, chikungunya, and healthy controls.

- FTIR spectrometer with Attenuated Total Reflectance (ATR) accessory (e.g., diamond crystal).

- Standard glass slides or low-e slides for transmission mode.

- Software for multivariate analysis (e.g., Python with scikit-learn, MATLAB, or commercial packages).

Procedure:

- Sample Preparation: Thaw frozen serum samples and vortex gently to ensure homogeneity. For ATR-FTIR, place a small droplet (2-5 µL) directly onto the crystal and allow it to air-dry to form a thin film.

- Data Acquisition:

- Acquire background spectrum with a clean ATR crystal.

- Place sample on crystal and ensure good contact.

- Collect spectra in the mid-IR range (e.g., 4000-600 cm⁻¹) with a resolution of 4 cm⁻¹ and 64-128 scans to ensure a high signal-to-noise ratio.

- Data Preprocessing: Perform atmospheric compensation (for H₂O and CO₂), vector normalization, and baseline correction. Use second-derivative transformation to resolve overlapping bands (e.g., in the Amide I region ~1700-1600 cm⁻¹).

- Machine Learning Classification:

- Input preprocessed spectral data (key regions: Amide I, Amide III).

- Train a Support Vector Machine (SVM), Neural Network (NN), or Random Forest (RF) model using labeled data from confirmed cases.

- Validate model performance using a separate test set or cross-validation.

Expected Results: The study achieved near-perfect classification (AUC = 1.000) with distinct spectral features, including a marked increase in β-sheet content and loss of α-helical structures in dengue-infected sera [20].
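Two of the preprocessing steps, vector normalization and second-derivative transformation, can be sketched with NumPy alone. This is an illustration on a synthetic Gaussian band, not the pipeline used in the cited study; production workflows usually add smoothing (for example a Savitzky-Golay filter) before differentiation. Function names and the synthetic spectrum are assumptions.

```python
import numpy as np


def vector_normalize(spectrum):
    """Scale a spectrum to unit Euclidean (L2) norm."""
    spectrum = np.asarray(spectrum, dtype=float)
    norm = np.linalg.norm(spectrum)
    return spectrum / norm if norm > 0 else spectrum


def second_derivative(wavenumbers, spectrum):
    """Numerical second derivative with respect to wavenumber."""
    first = np.gradient(spectrum, wavenumbers)
    return np.gradient(first, wavenumbers)


# Synthetic Amide I-like band centred at 1650 cm^-1 on a constant offset.
wavenumbers = np.arange(1600.0, 1701.0, 2.0)
spectrum = np.exp(-((wavenumbers - 1650.0) / 12.0) ** 2) + 0.5

normalized = vector_normalize(spectrum)
d2 = second_derivative(wavenumbers, normalized)

# Absorption maxima appear as minima in the second-derivative spectrum.
band_center = wavenumbers[np.argmin(d2)]
print(f"Band centre located at {band_center:.0f} cm^-1")
```

The constant offset vanishes on differentiation, which is why second-derivative spectra are less sensitive to baseline shifts than raw absorbance.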

Protocol 2: In-line Monitoring of Lithium Recycling Using Raman and FTIR

This protocol describes the integration of spectroscopy as a Process Analytical Technology (PAT) for real-time monitoring and control of a hydrometallurgical lithium recycling process, leading to significant cost and environmental impact savings [24].

- Application: Real-time, in-line monitoring of extractant concentration and metal-complex formation in a liquid-liquid extraction process for lithium purification.

- Principle: FTIR and Raman spectroscopy detect specific vibrational modes of process reagents (e.g., TTA, TOPO) and the Li(TTA)(TOPO)₂ complex, enabling quantitative monitoring [24].

- Materials & Reagents:

- Process streams (organic and aqueous phases).

- In-line flow cell compatible with FTIR or Raman probes, resistant to organic solvents.

- FTIR or Raman spectrometer with fiber-optic probe for process integration.

- Chemometric software for Partial Least Squares (PLS) regression modeling.

Procedure:

- Calibration Model Development:

- Prepare a series of standard solutions covering the expected operating range for extractants (TTA, TOPO) and the lithium complex.

- Collect FTIR and Raman spectra for each standard solution under controlled conditions.

- Use reference methods (e.g., ICP-MS) to determine the actual concentration of components in the standards.

- Develop a PLS regression model to correlate spectral features with reference concentrations.

- In-line Process Integration:

- Install the spectroscopic probe directly into the process stream (e.g., in the organic phase post-extraction).

- Continuously collect spectra at defined intervals (e.g., every 30 seconds).

- Real-Time Prediction and Control:

- Process the incoming spectra in real-time using the pre-trained PLS model.

- Output the predicted concentrations of key components to the process control system.

- Use these data for feedback control to maintain optimal process conditions (e.g., pH, flow rates).

Expected Results: The cited study reported PLS models with a minimum R² of 0.95, enabling an estimated 15% reduction in chemical costs and a 20% reduction in global warming potential for a lithium purification plant [24].

Protocol 3: Cancer Exosome Classification via Raman Spectroscopy

This protocol utilizes Raman spectroscopy combined with machine learning to classify exosomes derived from different cancer cell lines, demonstrating the potential for non-invasive liquid biopsies [22].

- Application: Label-free classification of cancer types based on exosomal lipid composition.

- Principle: Different cancer cell lines produce exosomes with unique biochemical compositions, particularly in their lipid membranes, which generate distinct Raman spectral fingerprints [22].

- Materials & Reagents:

- Purified exosomes from cell culture supernatant or patient biofluids.

- Aluminum or gold-coated slides for sample deposition.

- Raman microscope system (e.g., 785 nm laser excitation to minimize fluorescence).

- Software for Principal Component Analysis (PCA) and Linear Discriminant Analysis (LDA).

Procedure:

- Sample Preparation: Isolate exosomes from biofluids (e.g., blood plasma) using ultracentrifugation or kit-based methods. Deposit a concentrated exosome solution onto a substrate and allow it to dry.

- Data Acquisition:

- Focus the laser on the sample deposit.

- Acquire Raman spectra (e.g., 500-3200 cm⁻¹ range) with high sensitivity detectors.

- Use low laser power and longer integration times to obtain high signal-to-noise ratios without damaging the sample.

- Data Analysis:

- Preprocess spectra with cosmic ray removal, baseline correction, and normalization.

- Perform PCA on the preprocessed spectra to reduce dimensionality and identify the most significant wavenumber regions contributing to variance (e.g., 700-900 cm⁻¹, 2800-3000 cm⁻¹ for lipids).

- Use the principal components as input for an LDA classifier to distinguish between different cancer types.

Expected Results: The cited study achieved 93.3% overall classification accuracy for colon, skin, and prostate cancer exosomes, identifying unique lipid profiles such as high omega-3 25:5 in prostate and skin cancers and glycerophospholipids in colon cancer [22].
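The PCA-LDA analysis steps can be sketched as a single scikit-learn pipeline. The three class templates below are invented for illustration (they differ only in the relative heights of two lipid-associated bands), as are the noise level and the number of principal components; they do not reproduce the cited study's data or its accuracy.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.discriminant_analysis import LinearDiscriminantAnalysis
from sklearn.model_selection import cross_val_score
from sklearn.pipeline import make_pipeline

rng = np.random.default_rng(2)
shift = np.linspace(500.0, 3200.0, 300)  # Raman shift axis, cm^-1

def band(center, width):
    return np.exp(-0.5 * ((shift - center) / width) ** 2)

# Hypothetical class templates: relative band heights are assumptions.
templates = {
    "colon": 1.0 * band(800.0, 40.0) + 1.0 * band(2900.0, 60.0),
    "skin": 1.3 * band(800.0, 40.0) + 0.8 * band(2900.0, 60.0),
    "prostate": 0.7 * band(800.0, 40.0) + 1.2 * band(2900.0, 60.0),
}

n_per_class = 30
X = np.vstack([
    template + rng.normal(0.0, 0.05, size=(n_per_class, shift.size))
    for template in templates.values()
])
y = np.repeat(list(templates.keys()), n_per_class)

# PCA reduces dimensionality; LDA classifies on the retained components.
model = make_pipeline(PCA(n_components=5), LinearDiscriminantAnalysis())
accuracy = cross_val_score(model, X, y, cv=5)
print(f"Mean cross-validated accuracy: {accuracy.mean():.3f}")

# Inspect the first loading vector to see which region drives the variance.
model.fit(X, y)
loadings = model.named_steps["pca"].components_[0]
top_shift = shift[np.argmax(np.abs(loadings))]
print(f"Largest PC1 loading near {top_shift:.0f} cm^-1")
```

Inspecting the loadings is what links the statistical model back to chemistry: large absolute loadings indicate the Raman shift regions that contribute most to the variance captured by that component.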

Workflow and Decision Pathways

Selecting the appropriate spectroscopic technique depends on the sample type, state, and analytical question. The following decision workflow provides a logical pathway for method selection.

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful implementation of these spectroscopic methods relies on key reagents and accessories. The following table details essential items for the featured experiments.

Table 2: Key Research Reagent Solutions and Materials

| Item | Function / Application | Example Experiment |
|---|---|---|
| ATR Crystal (Diamond) | Enables FTIR analysis of solids and liquids with minimal preparation by measuring the interaction of IR light with a sample in close contact with the crystal. | FTIR classification of serum samples [20]. |
| Certified Reference Materials (CRMs) | Used for calibration and validation of quantitative models, ensuring accuracy and traceability. | Optimizing XRF algorithms for toxic elements in food [23]. |
| SERS Substrates | Nanostructured metallic surfaces that enhance Raman signals by orders of magnitude, enabling trace-level detection. | Improving sensitivity for clinical exosome detection [22]. |
| Process Flow Cell | A sealed cell that allows for the safe and continuous analysis of process streams by FTIR or Raman probes. | In-line monitoring of lithium extraction [24]. |
| Chemometric Software | Software packages for multivariate data analysis, including preprocessing, PCA, PLS, and machine learning classification. | All protocols involving complex spectral data analysis [20] [24] [22]. |
| Fiber-Optic Probe | Allows for remote sampling, enabling analysis of hazardous materials or integration into process lines and microscopes. | Raman analysis of exosomes; In-line process monitoring [24] [22]. |

XRF, FTIR, and Raman spectroscopy provide a powerful, complementary toolkit for non-destructive chemical analysis. The choice of technique is not a matter of which is "best," but which is most appropriate for the specific analytical challenge, as guided by the sample properties and information required. The integration of these techniques with advanced chemometrics and machine learning is pushing the boundaries of diagnostic and process control capabilities. By adhering to standardized protocols and understanding the fundamental principles outlined in this note, researchers and drug development professionals can effectively leverage these spectroscopic workhorses to maintain evidence integrity while extracting rich chemical information.

The advent of ambient ionization mass spectrometry (ambient MS) in the mid-2000s marked a paradigm shift in analytical chemistry, opening the field to a whole new range of applications where samples can be analyzed in their native state with minimal or no preparation [25]. The pioneering techniques of Desorption Electrospray Ionization (DESI) and Direct Analysis in Real Time (DART) have emerged as the most established methods in this field, revolutionizing how researchers approach chemical analysis while maintaining evidence integrity [25] [26]. These techniques enable direct analysis of samples at atmospheric pressure, in the open air, outside the mass spectrometer, preserving the original state of valuable evidentiary materials [25].

The fundamental advantage of ambient MS techniques lies in their nondestructive character, allowing for the analysis of compounds directly from various surfaces without compromising the sample's integrity [27]. This minimally invasive approach is particularly valuable in fields where sample preservation is paramount, including forensic investigations, cultural heritage analysis, and pharmaceutical development [25] [26]. By eliminating extensive sample preparation and enabling rapid, in-situ analysis, DESI and DART have transformed traditional mass spectrometry into a more efficient, versatile, and environmentally friendly analytical tool that aligns with green chemistry principles through reduced solvent usage and waste generation [28].

Fundamental Principles and Instrumentation

DESI (Desorption Electrospray Ionization)

DESI is a spray-based liquid extraction technique in which a charged solvent spray is directed at the sample surface [25]. Primary microdroplets impact the surface and form a thin solvent film, into which analyte molecules are taken up by solid-liquid extraction. Subsequent microdroplets splash into this film and eject secondary microdroplets that carry the dissolved analytes toward the mass spectrometer inlet for ionization and detection [25] [27].

DART (Direct Analysis in Real Time)

DART employs a plasma-based desorption mechanism where a carrier gas, typically helium, is exposed to a corona discharge needle, creating excited gas atoms or metastable species that stream out of the source to ionize molecules from the sample placed between the source and the mass spectrometer inlet [25]. The reactive species responsible for DART ionization are metastable atoms or molecules of inert gas generated by electrical discharge, which subsequently react in the gas phase with ambient oxygen and water to produce reactant ions that interact with analytes through processes similar to atmospheric pressure chemical ionization (APCI) [29] [27].

Figure 1: Ionization Mechanisms of DESI and DART Techniques

Technical Comparison and Performance Characteristics

Table 1: Comparative Analysis of DESI and DART Techniques

| Characteristic | DESI | DART |
|---|---|---|
| Ionization Mechanism | Liquid extraction using charged solvent spray [25] | Plasma-based desorption using metastable gas species [25] |
| Suitable Samples | Thermally-sensitive materials (textiles, paper) [25] | Objects sensitive to solvent exposure [25] |
| Sample Size and Geometry | Larger, customizable stage for bigger objects [25] | Limited by small sample gap; better for fragments/small objects [25] |
| Analysis Speed | Rapid (seconds per sample) [27] | Rapid (seconds per sample) [27] |
| Key Applications | Forensic analysis, tissue imaging, pharmaceuticals [27] [26] | Explosives, drugs of abuse, ink analysis [29] [27] |
| Background Interference | Environmental contaminants, personal hygiene volatiles [25] | Reduced background in closed-source configurations [29] |

Experimental Protocols and Methodologies

Protocol 1: Analysis of Explosive Traces on Fabrics Using DESI and DART

Application Context: This protocol is designed for forensic analysis of explosive residues collected from surfaces using fabric wipes, enabling rapid screening for security applications and crime scene investigations [27].

Materials and Reagents:

- Fabric wipes (cotton, polyester, or starched cotton)

- RDX standard solutions (1 mg/mL in 1:1 methanol-acetonitrile)

- HPLC-grade methanol and water

- Ammonium chloride (Suprapur) for adduct formation

- Glass slides and double-sided adhesive tape

Sample Preparation:

- Prepare standard solutions of RDX in 1:1 water-methanol at concentrations ranging from 0.1 mg/L to 900 mg/L.

- For enhanced sensitivity, add ammonium chloride to achieve a final chloride concentration of 1 mM to promote chloride adduct formation [27].

- Deposit 1 μL aliquots onto fabric surfaces (4 × 7 cm pieces), allowing spots to dry completely.

- Alternative physical transfer method: Deposit target quantities on glass slides, allow to dry, then wipe with fabric to simulate real-world evidence collection.
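The quantities implied by these sample preparation steps follow from simple unit arithmetic, sketched below. The function names, the 10 mL batch volume, and the 1000 µL dilution volume are assumptions for the example; the NH₄Cl molar mass (about 53.49 g/mol) is a standard value.

```python
SPOT_VOLUME_UL = 1.0           # aliquot deposited per spot, from the protocol
NH4CL_MOLAR_MASS_G_MOL = 53.49  # standard molar mass of ammonium chloride

def deposited_mass_ng(conc_mg_per_l, volume_ul=SPOT_VOLUME_UL):
    """Mass of analyte left on the fabric; 1 mg/L equals 1 ng/uL."""
    return conc_mg_per_l * volume_ul

def stock_volume_ul(stock_mg_per_l, target_mg_per_l, final_volume_ul):
    """Volume of stock needed for a dilution, from C1 * V1 = C2 * V2."""
    return target_mg_per_l * final_volume_ul / stock_mg_per_l

def nh4cl_mass_mg(chloride_mmol_per_l, volume_ml):
    """Mass of NH4Cl giving the requested chloride concentration."""
    moles = chloride_mmol_per_l * 1e-3 * volume_ml * 1e-3
    return moles * NH4CL_MOLAR_MASS_G_MOL * 1e3

# Endpoints of the concentration range given in the protocol.
low_ng = deposited_mass_ng(0.1)
high_ng = deposited_mass_ng(900.0)
print(f"Deposited RDX per spot: {low_ng:g} ng to {high_ng:g} ng")

# Diluting a 1 mg/mL (1000 mg/L) stock to 900 mg/L in an assumed 1000 uL.
print(f"Stock needed: {stock_volume_ul(1000.0, 900.0, 1000.0):g} uL")

# NH4Cl for 1 mM chloride in an assumed 10 mL batch.
print(f"NH4Cl needed: {nh4cl_mass_mg(1.0, 10.0):.3f} mg")
```

With 1 µL spots, the stated concentration range of 0.1 to 900 mg/L corresponds to 0.1 to 900 ng of RDX deposited per spot, which is a convenient way to report detection limits in absolute mass.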

DESI-MS Parameters and Analysis:

- Attach fabric samples to glass slides using double-sided adhesive tape.

- Set nitrogen pressure to 120 psi and solvent flow rate to 5 μL/min using 1:1 methanol-water.

- Adjust sprayer-to-surface distance to 2 mm and sprayer-to-MS inlet distance to 6 mm.

- Set incident angle to 54° and collection angle to 10° for optimal signal recovery.

- Operate mass spectrometer in negative ion mode with capillary temperature at 250°C.

- Monitor mass range 150-500 Th in total ion current mode with resolving power ≥30,000 to distinguish isobaric interferences [27].

DART-MS Parameters and Analysis:

- Utilize transmission mode (TM-DART) for improved reproducibility over reflection mode.

- Set helium gas pressure to 80 psi and gas temperature to 350°C (below fabric degradation threshold).

- Position fabric samples perpendicular to the gas beam at a distance of 0.7 cm.

- Operate in negative ion mode with the same MS parameters as for DESI to allow direct comparison.

- Allow approximately 20 seconds of analysis time per sample spot.

Data Interpretation:

- Identify RDX via chloride adduct anion [M+Cl]⁻ at m/z 257.00428 (C₃H₆O₆N₆³⁵Cl)

- Ensure sufficient resolving power (≥30,000 FWHM) to distinguish this ion from nominally isobaric species such as deprotonated TATB ([M−H]⁻, m/z 257.02761).
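The two m/z values above, and the resolving power needed to separate them, can be checked with a short calculation. The sketch below uses standard monoisotopic masses and the simple criterion R = m/Δm; the helper names are illustrative, and real peak shapes and intensity ratios usually call for a margin above this minimum.

```python
# Standard monoisotopic masses (u) and the electron mass (u).
MONOISOTOPIC = {
    "C": 12.0,
    "H": 1.00782503,
    "N": 14.00307400,
    "O": 15.99491462,
    "Cl": 34.96885268,  # 35Cl
}
ELECTRON_MASS = 0.00054858

def singly_charged_anion_mz(composition):
    """m/z of a 1- ion: summed atomic masses plus one extra electron."""
    neutral = sum(MONOISOTOPIC[el] * count for el, count in composition.items())
    return neutral + ELECTRON_MASS

def ppm_error(measured_mz, theoretical_mz):
    """Mass error in parts per million."""
    return (measured_mz - theoretical_mz) / theoretical_mz * 1e6

# [RDX + 35Cl]-: C3H6N6O6 plus one chlorine atom.
rdx_chloride = {"C": 3, "H": 6, "N": 6, "O": 6, "Cl": 1}
# [TATB - H]-: C6H6N6O6 minus one hydrogen atom.
tatb_deprotonated = {"C": 6, "H": 5, "N": 6, "O": 6}

mz_rdx = singly_charged_anion_mz(rdx_chloride)
mz_tatb = singly_charged_anion_mz(tatb_deprotonated)
delta = mz_tatb - mz_rdx
required_resolving_power = mz_rdx / delta

print(f"[RDX+Cl]-  m/z {mz_rdx:.5f}")
print(f"[TATB-H]-  m/z {mz_tatb:.5f}")
print(f"Mass difference: {delta:.5f}")
print(f"Minimum resolving power (m/dm): {required_resolving_power:.0f}")
```

The two ions differ by about 0.023 m/z units, so a resolving power on the order of 11,000 is the bare minimum for separation; the ≥30,000 specified in the protocol leaves a comfortable margin. A function like `ppm_error` can then be used to confirm that a measured peak matches the theoretical value within the instrument's mass accuracy.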

Protocol 2: Analysis of Writing Inks for Questioned Documents

Application Context: Forensic examination of questioned documents to determine ink composition for investigating forged checks, contracts, or determining document authenticity [29].

Materials and Reagents:

- Questioned document samples or paper strips with ink strokes

- DSA-MS mesh holder screens (for sample introduction)

- Standard ink samples for comparison (ballpoint, gel pens, fountain pens)

- FC-43 calibration solution for mass spectrometer calibration

Sample Preparation:

- Prepare samples by creating single stroke lines of ink (1-7 mm length) on standard printer paper.