Non-Destructive Analysis in Forensics: Preserving Evidence Integrity with Advanced Methodologies

Abstract

This article provides a comprehensive examination of non-destructive analysis methods revolutionizing forensic evidence preservation. Targeting forensic researchers, scientists, and development professionals, it explores the foundational principles, cutting-edge applications, optimization strategies, and validation frameworks for techniques that maintain evidence integrity. Covering spectroscopic methods, 3D reconstruction, nanomaterials, and established NDT approaches, the content addresses operational challenges while emphasizing methodological rigor required for admissibility in legal contexts. The synthesis offers practical insights for implementing these preservation-focused methodologies across diverse forensic disciplines while outlining future directions integrating AI, advanced sensors, and standardized protocols.

The Science of Preservation: Core Principles of Non-Destructive Forensic Analysis

Non-destructive analysis (NDA) represents a paradigm shift in forensic science, enabling the examination of physical and digital evidence without alteration or destruction. These techniques preserve the integrity of evidence for subsequent analyses, courtroom presentation, and archival storage, while providing reliable, court-admissible data. The fundamental principle of NDA is the application of analytical techniques that leave evidence intact and unmodified, maintaining the chain of custody and evidentiary integrity throughout the investigative process [1] [2]. This approach stands in stark contrast to traditional destructive methods that consume or permanently alter evidence samples during analysis.

In forensic contexts, non-destructive techniques span multiple disciplines, including chemical analysis, materials characterization, and digital evidence preservation. The adoption of NDA has grown significantly due to technological advancements and increasing demands for evidence preservation and the ability to perform repeated analyses by multiple experts [1]. This document provides comprehensive application notes and experimental protocols for implementing non-destructive analysis within forensic frameworks, with particular emphasis on practical implementation for researchers and forensic professionals.

Fundamental Principles of Non-Destructive Analysis

Definition and Core Concepts

Non-destructive analysis (NDA) encompasses a wide array of analytical techniques used to evaluate the properties of materials, components, or systems without causing damage or alteration. In forensic science, this principle extends to maintaining evidence in its original state while extracting maximum informational value [3]. The core advantage of NDA lies in its ability to preserve evidence for future re-examination, defense verification, and archival purposes, which is particularly crucial in legal proceedings where evidence may need to be presented multiple times over extended periods [1] [2].

The conceptual framework of non-destructive analysis in forensic applications rests on three foundational pillars:

- Evidence Preservation: Maintaining the original state and properties of all evidence

- Analytical Reliability: Providing scientifically valid and reproducible results

- Legal Admissibility: Ensuring methodologies meet judicial standards for evidence handling

Comparison with Destructive Methods

Destructive testing methods, while valuable for determining exact failure points or material composition, result in irreversible damage to specimens [4]. These methods include tensile testing, crush testing, fracture testing, and various forms of chemical extraction that alter or consume the sample [4]. In forensic contexts, such destruction poses significant challenges for evidence preservation, chain of custody maintenance, and future re-analysis by defense experts.

Table 1: Comparative Analysis of Destructive vs. Non-Destructive Methods in Forensic Science

| Parameter | Destructive Methods | Non-Destructive Methods |
|---|---|---|
| Evidence Integrity | Permanently altered or destroyed | Fully preserved in original state |
| Re-analysis Potential | Limited or impossible | Multiple re-analyses possible |
| Analytical Focus | Bulk properties, failure points | Surface and internal structure, chemical composition |
| Sample Preparation | Often extensive, altering sample | Minimal or none required |
| Forensic Applications | Limited to cases where consumption is acceptable | Broad applicability across evidence types |
| Resource Impact | High material waste, replacement costs | Minimal waste, cost-effective over time |

Analytical Techniques and Instrumentation

Spectroscopic Methods

Fourier Transform Infrared (FTIR) Spectroscopy

FTIR spectroscopy has emerged as a cornerstone technique for non-destructive forensic analysis, providing both visual and chemical information from microscopic samples [1]. FTIR microspectroscopy combines optical microscopy with integrated FTIR, enabling rapid, non-destructive investigation of samples as small as 10 microns [1]. This technique is particularly valuable for analyzing illicit pills, hair, fibers, inks, and paints while preserving evidence integrity.

The Thermo Scientific Nicolet iN10 Infrared Microscope exemplifies modern FTIR applications in forensics, offering capabilities for visual inspection and chemical characterization without liquid nitrogen requirements, allowing laboratories to quickly evaluate evidence in any location [1]. The integrated OMNIC Picta Software simplifies microscopy operations with wizards for reflection, transmission, and ATR analysis, making the technology accessible even to inexperienced users [1].

Spectrophotometry

Spectrophotometry provides objective measurement of color by recording how much light a sample absorbs or reflects at each wavelength, serving as a non-destructive alternative to traditional destructive procedures in crime evidence examination [2]. Comparing these absorption or reflectance profiles lets examiners differentiate chemical composition and material type, and in some cases identify the brand of an item of evidence [2]. UV-visible spectroscopy is particularly valuable for fiber and ink analysis, while infrared spectroscopy examines organic materials such as hair, paint, and gunshot residue.

Modern spectrophotometers typically require little or no sample preparation before analysis, which helps preserve evidence integrity [2]. The technique is widely used in forensic analysis, including by the FBI and its Hazardous Materials Response Unit, because of its reliability and non-destructive character [2].

Terahertz Time-Domain Spectroscopy (THz-TDS)

Terahertz spectroscopy represents an advanced approach for non-destructive identification of substances through packaging materials. Attenuated total reflection terahertz time domain spectroscopy (ATR THz-TDS) enables sample identification without opening containers by utilizing evanescent waves that penetrate packaging materials [5]. This method is particularly valuable for analyzing pharmaceuticals and illicit drugs sealed in plastic packaging, as the penetration depth of evanescent waves (typically tens of micrometers) exceeds the thickness of most plastic packaging in the sub-terahertz frequency region [5].
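The claim that evanescent waves reach through thin packaging can be checked with the standard ATR penetration-depth expression, d_p = λ / (2π √(n₁² sin²θ − n₂²)), the depth at which the evanescent field amplitude falls to 1/e. The sketch below is a rough estimate under assumed values that the source does not give: a high-resistivity silicon prism with n₁ ≈ 3.42, a packaging or sample index n₂ ≈ 1.5, and a 45° angle of incidence. It treats packaging and sample as one homogeneous medium, which a real layered measurement is not.

```python
import math

C_M_PER_S = 299_792_458.0  # speed of light in vacuum


def penetration_depth_um(freq_thz: float, n_prism: float, n_sample: float, theta_deg: float) -> float:
    """Evanescent-field penetration depth d_p = lambda / (2*pi*sqrt(n1^2 sin^2(theta) - n2^2)), in micrometres."""
    wavelength_um = C_M_PER_S / (freq_thz * 1e12) * 1e6
    s = (n_prism * math.sin(math.radians(theta_deg))) ** 2 - n_sample**2
    if s <= 0:
        # Below the critical angle there is no total internal reflection, hence no evanescent wave.
        raise ValueError("angle of incidence is below the critical angle")
    return wavelength_um / (2 * math.pi * math.sqrt(s))


# Assumed values: silicon prism n ~ 3.42, sample/packaging n ~ 1.5, 45 degree incidence.
d = penetration_depth_um(0.53, 3.42, 1.5, 45.0)
print(f"{d:.0f} um")  # prints: 47 um
```

Under these assumptions the estimate at 0.53 THz is roughly 47 µm, consistent with the "tens of micrometers" quoted above; because d_p scales with wavelength, it shrinks at higher frequencies.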

The ATR THz-TDS approach offers significant advantages for forensic applications, including the ability to measure thick samples, highly absorbing materials, and samples in powdered form without special preparation requirements [5]. This technique has demonstrated successful identification of saccharides like lactose through plastic packaging based on spectral fingerprints at 0.53 THz [5].

Digital Forensic Preservation Methods

Digital evidence requires specialized non-destructive approaches to preserve data integrity and maintain legal admissibility. Modern digital forensic techniques include disk imaging (creating bit-for-bit copies of storage devices), reverse steganography, also known as steganalysis (detecting and extracting information hidden within ordinary files), and mobile device forensics (recovering data from smartphones and tablets) [6]. These methods keep the original evidence untouched while allowing comprehensive analysis.
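Bit-for-bit disk imaging is usually verified by hashing the source and the image and comparing the digests. The sketch below assumes the write-blocked source can be read as an ordinary file path (on many systems a block device path behaves this way, given sufficient permissions) and uses SHA-256; the file names are hypothetical, and dedicated imaging tools normally compute these hashes during acquisition rather than in a separate pass.

```python
import hashlib
import tempfile
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in fixed-size chunks so multi-gigabyte images never need to fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_image(source: Path, image: Path) -> bool:
    """Return True when the image has the same SHA-256 digest as the source, i.e. a bit-for-bit copy."""
    return sha256_of(source) == sha256_of(image)


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as workdir:
        source = Path(workdir, "source.bin")  # stand-in for the write-blocked device
        source.write_bytes(bytes(range(256)) * 4096)
        image = Path(workdir, "image.dd")  # hypothetical image file name
        image.write_bytes(source.read_bytes())
        print(verify_image(source, image))  # prints: True
```

Changing even a single byte in the image produces a different digest, which is why the comparison is repeated whenever the image is copied or moved.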

The proliferation of security features in modern devices presents new challenges for digital evidence preservation. Features such as location-based security, automatic reboots, USB restrictions, and temporary data expiration can cause evidence degradation if not addressed promptly [7]. Contemporary digital forensic practice therefore calls for near-immediate acquisition to preserve as much data as possible, because the traditional approach of isolating devices for later analysis increasingly risks losing evidence [7].

Table 2: Technical Specifications of Major Non-Destructive Analytical Techniques

| Technique | Spatial Resolution | Detection Capabilities | Primary Forensic Applications |
|---|---|---|---|
| FTIR Microscopy | ~10 microns | Chemical functional groups, molecular structure | Fibers, paints, drugs, inks, trace evidence |
| UV-Vis Spectrophotometry | Macroscopic | Color measurement, electronic transitions | Ink comparison, fiber analysis, blood detection |
| Terahertz Spectroscopy | Sub-millimeter | Molecular vibrations, crystal lattice modes | Drugs through packaging, counterfeit documents |
| Raman Spectroscopy | ~1 micron | Molecular vibrations, crystal structure | Explosives, narcotics, ink analysis |
| Forensic Disk Imaging | Bit-level | Data patterns, file structures | Computer forensics, mobile device analysis |

Application Notes: Forensic Evidence Types

Pharmaceutical and Illicit Drug Analysis

Non-destructive analysis has revolutionized the examination of pharmaceutical products and illicit drugs, enabling qualitative and quantitative assessment without consuming evidence. FTIR microscopy provides rapid analytical approaches for determining chemical composition and distribution of active components in illicit drug tablets [1]. The Nicolet iN10 MX Imaging Infrared Microscope can perform chemical imaging of prescription drugs across a 5 × 5 mm area in approximately five minutes, identifying both active ingredients and excipients without sample dissolution [1].

The OMNIC Picta Software incorporates automatic collection and analysis wizards, including a random mixture wizard that can examine and identify multiple components with a single click [1]. For forensic chemists, this enables semiquantitative distribution data and component identification through spectral library matching, providing both chemical information and insights into illegal production processes [1].

ATR THz-TDS has demonstrated particular value for identifying drugs in plastic packaging without opening containers, addressing a critical need in law enforcement and border control [5]. This approach can detect spectral fingerprints of substances like lactose at 0.53 THz through polyethylene packaging, with measurements taking approximately 30 seconds and requiring no sample preparation [5].

Trace Evidence Examination

Fiber and Hair Analysis

FTIR microscopy combines visible microscopic examination with chemical information for forensic analysis of hairs and fibers [1]. This approach can detect residual hair styling agents, conditioners, and protein structural alterations caused by chemical treatments such as bleaching [1]. The oxidation of the amino acid cystine to cysteic acid in bleached hair increases the S=O stretching absorbance at 1040 cm⁻¹ and 1175 cm⁻¹, providing measurable indicators of treatment history [1].

For synthetic fibers, FTIR microscopy rapidly determines chemical subclass non-destructively with minimal sample preparation [1]. This capability is particularly valuable for analyzing security fibers in banknotes, where ATR microspectroscopy can identify specific polymer compositions (e.g., nylon) while providing high spectral quality with minimal cellulose contribution from the paper substrate [1].

Ink and Document Analysis

The non-destructive nature of infrared imaging and ATR FTIR microscopy provides significant benefits for assessing questioned documents [1]. FTIR microscopy enables rapid chemical imaging of both ink and paper materials, yielding unambiguous data that can be directly compared to authentic documents [1]. Chemical imaging highlights pigment distribution while ATR analysis provides detailed spectral information of the ink composition.

Modern printing technology has made visual discrimination between printing processes increasingly challenging, but FTIR analysis can distinguish between ink types and application methods [1]. The technique overcomes the strong infrared absorbance of cellulose between 1200 and 950 cm⁻¹, which previously limited infrared spectroscopy for ink analysis [1].

Fingerprint and Residue Analysis

FTIR microspectroscopic examination can reveal chemical information left behind by fingerprints beyond the friction ridge pattern [1]. This chemical information can trace a suspect's activities before committing a crime, as fingerprints contain natural sebum oil from skin (triglyceride esters) and may include contaminants from handling other materials [1]. Chemical imaging instantly determines the unique fingerprint pattern while exposing essential trace chemical information, such as fibrous wood particles or other environmental contaminants [1].

Paint and Coating Analysis

Automotive paint evidence typically consists of multiple layers of chemically diverse materials, including binders, primers, pigments, and protective resins [1]. Traditional chemical identification of paint layers requires dissolution and chemical extraction, but FTIR microscopy enables immediate chemical identification of each layer through fast mapping [1]. This approach can distinguish between the exterior protective polyurethane coating, base coat and polypropylene polymer, and paint binder layer in a single analysis [1].

Digital Evidence Preservation

Digital evidence preservation requires specialized non-destructive techniques to maintain data integrity while extracting forensically relevant information. The digital forensic investigation process follows a structured approach: identification of potential digital evidence sources, collection of devices from crime scenes, preservation through forensic imaging, analysis of evidence, and reporting of findings [6].

Contemporary challenges include modern smartphone security features that can cause evidence degradation: Apple's Stolen Device Protection, which requires biometric authentication and imposes security delays for sensitive actions when a device is away from familiar locations; automatic reboots that purge temporary data; USB restrictions that block data connections; and self-destruct applications that wipe devices if they are not unlocked within specific timeframes [7]. These developments favor immediate acquisition over traditional preservation protocols, in which devices were isolated in Faraday bags for later analysis [7].

Experimental Protocols

Protocol 1: FTIR Analysis of Synthetic Fibers

Scope and Application

This protocol describes the procedure for analyzing synthetic fibers using Fourier Transform Infrared (FTIR) microscopy to determine polymer subclass and chemical treatment history while preserving evidence integrity.

Equipment and Materials

- FTIR microscope with ATR capability

- Analytical balance

- Forensic tweezers

- Reference spectral libraries

- Evidence packaging materials

Procedure

Sample Preparation:

- Using clean forensic tweezers, place the fiber specimen on the microscope stage.

- Ensure the fiber is straight and securely positioned for analysis.

- No chemical preparation or coating is required.

Visual Examination:

- Using the optical microscope, examine the fiber at appropriate magnifications.

- Document physical characteristics including color, diameter, and surface features.

- Capture digital images for documentation.

Spectral Acquisition:

- Engage the ATR crystal onto the fiber specimen with consistent pressure.

- Collect infrared spectrum in the range of 4000-650 cm⁻¹.

- Set resolution to 4 cm⁻¹ with 32 scans per spectrum.

- Collect background spectrum periodically to ensure data quality.

Data Analysis:

- Compare obtained spectrum against polymer reference libraries.

- Identify characteristic absorption bands for polymer identification.

- Document any anomalies indicating chemical treatments or degradation.
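The library comparison step can be illustrated with a simple correlation search: the query spectrum is scored against each reference on a shared wavenumber grid and the references are ranked. This is a minimal sketch, not the algorithm of any particular commercial package; the Pearson-correlation score, the synthetic Gaussian "spectra", and the library names are all assumptions for illustration. Real searches would add baseline correction, region selection, and interpolation onto a common grid.

```python
import numpy as np


def correlation_score(query: np.ndarray, reference: np.ndarray) -> float:
    """Pearson correlation of two spectra sampled on the same wavenumber grid (1.0 = identical shape)."""
    q = query - query.mean()
    r = reference - reference.mean()
    return float(np.dot(q, r) / (np.linalg.norm(q) * np.linalg.norm(r)))


def rank_library(query: np.ndarray, library: dict) -> list:
    """Score the query against every library entry and return (name, score) pairs, best match first."""
    scores = [(name, correlation_score(query, ref)) for name, ref in library.items()]
    return sorted(scores, key=lambda item: item[1], reverse=True)


# Synthetic demo: simplified Gaussian bands, not real library spectra.
wavenumbers = np.linspace(4000.0, 650.0, 1676)  # cm^-1, 2 cm^-1 spacing


def synthetic_spectrum(band_centres, width=15.0):
    return sum(np.exp(-(((wavenumbers - c) / width) ** 2)) for c in band_centres)


library = {
    "polyamide (synthetic)": synthetic_spectrum([3300, 1640, 1540]),
    "polyester (synthetic)": synthetic_spectrum([1715, 1240, 1100]),
}
rng = np.random.default_rng(0)
query = library["polyamide (synthetic)"] + rng.normal(0.0, 0.02, wavenumbers.size)
print(rank_library(query, library)[0][0])  # prints: polyamide (synthetic)
```

Correlation ignores overall intensity, so thin and thick fibers of the same polymer score similarly; the top-ranked hit still needs confirmation by an examiner against the characteristic bands.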

Post-Analysis Handling:

- Carefully remove the fiber from the stage using clean tweezers.

- Return the fiber to appropriate evidence packaging.

- Document chain of custody continuity.

Quality Control

- Analyze known reference standards with each batch of samples

- Verify instrument performance using polystyrene calibration standards

- Maintain environmental controls to minimize atmospheric interference
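Instrument wavenumber accuracy is commonly checked against a polystyrene film by confirming that a few well-known bands appear where expected. The sketch below assumes approximate band positions near 2849.5, 1601.2, and 1028.3 cm⁻¹ and a ±1 cm⁻¹ tolerance; both should be taken from the certificate of the standard actually in use. It locates each band as the grid point of maximum absorbance, which is coarser than the peak-interpolation methods laboratory software typically uses.

```python
import numpy as np

# Approximate, commonly cited polystyrene band positions in cm^-1 (assumption):
# replace with the certified values and tolerance for the standard actually in use.
REFERENCE_PEAKS_CM1 = (2849.5, 1601.2, 1028.3)


def locate_peak(wn: np.ndarray, absorbance: np.ndarray, target: float, window: float = 10.0) -> float:
    """Wavenumber of the absorbance maximum within +/- window of the target position."""
    in_window = np.abs(wn - target) <= window
    return float(wn[in_window][np.argmax(absorbance[in_window])])


def wavenumber_check(wn: np.ndarray, absorbance: np.ndarray, tolerance: float = 1.0) -> dict:
    """Map each reference band to (measured position, within tolerance?)."""
    report = {}
    for ref in REFERENCE_PEAKS_CM1:
        found = locate_peak(wn, absorbance, ref)
        report[ref] = (found, abs(found - ref) <= tolerance)
    return report


# Synthetic demo: an idealised polystyrene-like spectrum on a 0.5 cm^-1 grid.
wn = np.arange(4000.0, 649.5, -0.5)
spectrum = sum(np.exp(-(((wn - p) / 4.0) ** 2)) for p in REFERENCE_PEAKS_CM1)
print(all(ok for _, ok in wavenumber_check(wn, spectrum).values()))  # prints: True
```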

Protocol 2: Non-Destructive Drug Identification Through Packaging

Scope and Application

This protocol outlines the procedure for identifying pharmaceutical substances and illicit drugs through plastic packaging using Attenuated Total Reflection Terahertz Time-Domain Spectroscopy.

Equipment and Materials

- ATR THz-TDS system with silicon prism

- Reference drug spectral database

- Plastic-packaged suspect materials

- Calibration standards

Procedure

System Preparation:

- Power on the ATR THz-TDS system and allow stabilization.

- Verify system performance using reference materials.

- Clean the silicon prism surface with appropriate solvents.

Sample Placement:

- Place the packaged material directly on the silicon prism.

- Ensure full contact between packaging and prism surface.

- Apply minimal pressure to maintain contact without damaging packaging.

Spectral Acquisition:

- Acquire time-domain THz pulse without sample as reference.

- Measure THz pulse with sample in place.

- Repeat measurement five times and average for signal-to-noise enhancement.

- Each measurement typically requires 30 seconds acquisition time.

Data Processing:

- Transform time-domain signals to frequency domain using Fourier transformation.

- Compute amplitude reflectance and phase difference.

- Calculate complex refractive index using ATR equations.

- Compare obtained spectrum to reference database.
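The averaging and Fourier steps of the data-processing stage can be sketched with NumPy: average the repeated traces, transform reference and sample traces to the frequency domain, and take their complex ratio, whose magnitude is the amplitude reflectance and whose angle is the phase difference. This is a minimal sketch under stated assumptions: both traces share one uniform time axis in picoseconds, the demonstration pulses are synthetic, and the final inversion of the ATR (Fresnel) equations for the complex refractive index is not shown. With NumPy's FFT sign convention, a delayed sample pulse appears as a negative phase.

```python
import numpy as np


def average_pulses(pulses) -> np.ndarray:
    """Average repeated time-domain traces (one per row) to improve signal-to-noise."""
    return np.asarray(pulses, dtype=float).mean(axis=0)


def reflectance_spectrum(t_ps, e_ref, e_sam, rel_floor=1e-3):
    """Return frequency (THz), amplitude reflectance |r| and unwrapped phase of r = E_sam / E_ref.

    Frequencies where the reference spectrum falls below rel_floor * its maximum are
    dropped so that the ratio is not dominated by noise.
    """
    dt_ps = t_ps[1] - t_ps[0]
    freq_thz = np.fft.rfftfreq(t_ps.size, d=dt_ps)  # 1/ps is THz
    ref_f = np.fft.rfft(e_ref)
    sam_f = np.fft.rfft(e_sam)
    keep = np.abs(ref_f) > rel_floor * np.abs(ref_f).max()
    r = sam_f[keep] / ref_f[keep]
    return freq_thz[keep], np.abs(r), np.unwrap(np.angle(r))


# Synthetic demo (assumed values): Gaussian-derivative pulses on a 0.01 ps grid.
t = np.linspace(0.0, 20.0, 2000, endpoint=False)  # ps


def pulse(t0, width=0.1):
    return -(t - t0) / width**2 * np.exp(-((t - t0) ** 2) / (2 * width**2))


rng = np.random.default_rng(1)
# Five noisy repeats each; the "sample" trace is 0.8x weaker and 0.1 ps later.
ref = average_pulses(pulse(5.0) + rng.normal(0.0, 1e-4, (5, t.size)))
sam = average_pulses(0.8 * pulse(5.1) + rng.normal(0.0, 1e-4, (5, t.size)))
f, amp, phase = reflectance_spectrum(t, ref, sam)
i = int(np.argmin(np.abs(f - 0.5)))
print(f"{f[i]:.2f} THz: |r| = {amp[i]:.3f}, phase = {phase[i]:.3f} rad")
# prints: 0.50 THz: |r| = 0.800, phase = -0.314 rad
```

Averaging N independent repeats lowers uncorrelated noise by about √N, so five repeats give roughly a 2.2-fold signal-to-noise gain.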

Interpretation:

- Identify characteristic absorption features of target compounds.

- Document presence of specific spectral fingerprints.

- Note any packaging interference in the spectral profile.

Quality Assurance

- Maintain consistent contact pressure between package and prism

- Monitor signal-to-noise ratio for quality assessment

- Validate method with known standards through identical packaging

Protocol 3: Digital Evidence Preservation from Mobile Devices

Scope and Application

This protocol provides guidelines for preserving digital evidence from mobile devices while maintaining data integrity and overcoming modern security features.

Equipment and Materials

- Forensic write-blocking hardware

- Faraday bags or signal-blocking containers

- Forensic imaging software

- Certified storage media

- Documentation materials

Procedure

Device Identification:

- Document device make, model, and physical condition.

- Photograph device from multiple angles.

- Record serial numbers and other identifiers.

Signal Isolation:

- Immediately place device in Faraday bag to prevent remote wiping.

- Maintain device in powered-on state if already on.

- Do not power on devices that are off.

Immediate Acquisition:

- Connect device to forensic workstation using appropriate cables.

- Bypass USB restrictions using specialized forensic tools.

- Create forensic image using approved mobile forensics software.

- Generate hash verification of acquired data.

Data Extraction:

- Extract logical data including call logs, messages, and applications.

- Attempt physical extraction for complete data recovery.

- Document all extraction methods and success rates.

Preservation:

- Store original device in secure evidence locker.

- Create redundant copies of forensic images.

- Maintain detailed chain of custody documentation.

Quality Control

- Verify data integrity through hash verification at multiple stages

- Document all actions taken with timestamps

- Maintain specialized training on evolving mobile security features
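Hash verification at multiple stages and timestamped documentation can be combined in one record per exhibit. The class below is a hypothetical sketch, not a feature of any forensic product: each logged action stores a UTC timestamp, the examiner, and the SHA-256 digest of the image at that moment, and a mismatch between any two digests signals that the image changed. For full-size images the digest would be computed by reading the file in chunks rather than holding it in memory.

```python
import hashlib
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class CustodyLog:
    """Hypothetical per-exhibit log: one timestamped entry per action, each with the image hash at that stage."""

    exhibit_id: str
    entries: list = field(default_factory=list)

    def record(self, action: str, examiner: str, image_bytes: bytes) -> str:
        digest = hashlib.sha256(image_bytes).hexdigest()
        self.entries.append({
            "time_utc": datetime.now(timezone.utc).isoformat(),
            "action": action,
            "examiner": examiner,
            "sha256": digest,
        })
        return digest

    def hashes_consistent(self) -> bool:
        """True when every recorded stage observed the same image hash."""
        return len({entry["sha256"] for entry in self.entries}) <= 1


log = CustodyLog("EXHIBIT-001")  # hypothetical exhibit identifier
image = bytes(4096)  # stand-in for a forensic image (all-zero bytes)
log.record("acquisition", "examiner A", image)
log.record("copy to evidence storage", "examiner A", image)
log.record("analysis start", "examiner B", image)
print(log.hashes_consistent())  # prints: True
```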

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Materials for Non-Destructive Forensic Analysis

| Item | Specification | Function in Analysis |
|---|---|---|
| ATR Crystals | Diamond, Germanium, or Silicon | Surface contact for internal reflection measurements |
| Reference Spectral Libraries | Certified commercial databases | Chemical identification and comparison |
| Forensic Tweezers | Anti-static, non-magnetic | Evidence handling without contamination |
| Faraday Bags | Multiple layer signal blocking | Prevention of remote data wiping during digital evidence collection |
| Silicon Prisms | High resistivity, low THz absorption | Total internal reflection for THz-TDS measurements |
| Certified Reference Materials | Traceable to national standards | Method validation and quality control |
| Forensic Imaging Software | Court-accepted applications | Bit-level data preservation and analysis |

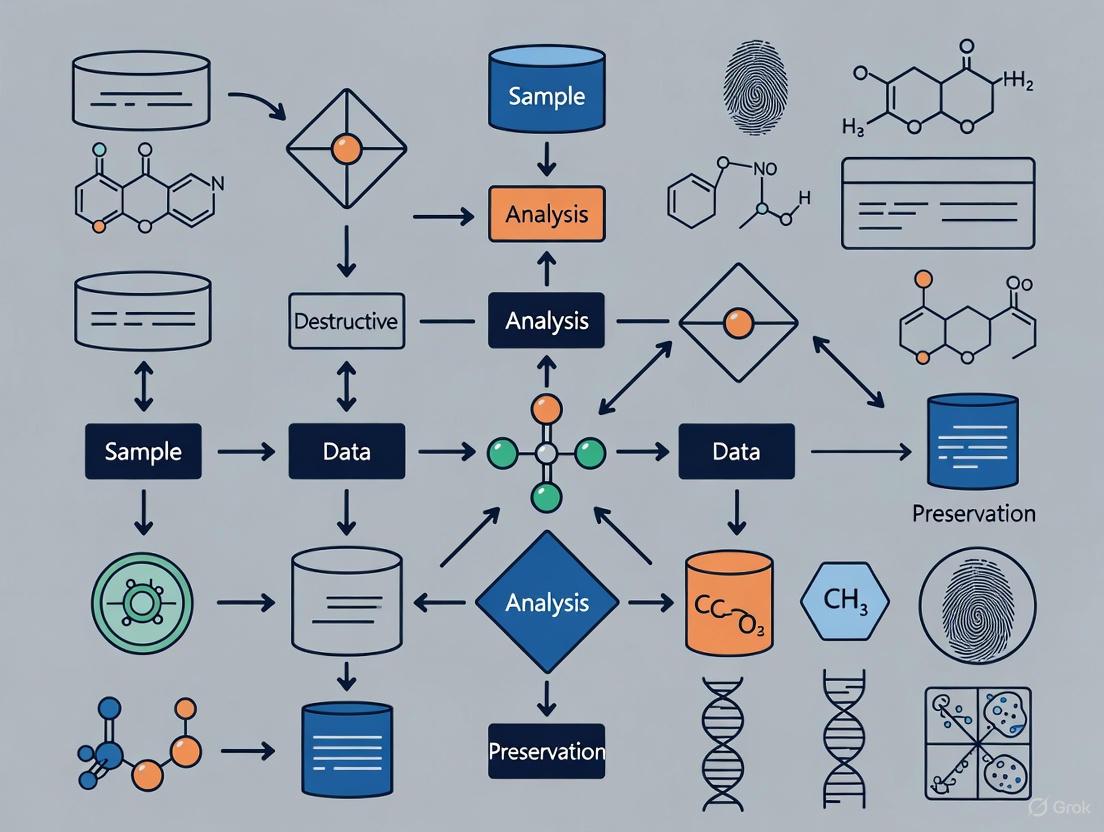

Conclusion

Non-destructive analysis represents the future of forensic science, balancing the competing demands of comprehensive evidence examination and preservation of materials for judicial proceedings. The techniques outlined in this document—spanning spectroscopic methods, digital preservation protocols, and specialized analytical approaches—provide forensic practitioners with powerful tools for evidence characterization while maintaining integrity for future analyses.

The continued evolution of non-destructive methods will likely focus on increasing sensitivity, reducing analysis time, and expanding capabilities for through-barrier detection. As these technologies mature, their integration into standard forensic practice will further enhance the scientific rigor and reliability of forensic investigations while preserving the fundamental principle of evidence integrity throughout the judicial process.

Locard's Exchange Principle, a cornerstone of forensic science, dictates that "every contact leaves a trace" [8] [9]. Formulated by Dr. Edmond Locard in the early 20th century, this principle states that whenever two objects come into contact, there is a mutual exchange of trace material between them [8]. This foundational concept has traditionally guided criminal investigations, where microscopic evidence such as hair, fibers, or dust serves as a silent witness to events [10]. In contemporary scientific research, this principle provides a powerful theoretical framework for understanding how materials interact with their environment and with analytical instruments during non-destructive testing (NDT). The integration of Locard's principle with modern NDT methodologies creates a robust paradigm for preserving irreplaceable materials—from archaeological bones to composite materials in aerospace—while extracting critical data about their composition, history, and integrity.

The convergence of these fields addresses a critical need in evidence-based research: the necessity to derive maximum information from unique or fragile specimens without altering or destroying them. This is particularly vital in fields such as cultural heritage preservation, archaeology, and materials science, where the subject's preservation is paramount. Non-destructive evaluation (NDE) techniques enable researchers to act as forensic experts of history and material science, investigating the "crime scene" of degradation or material change without contaminating the evidence [11] [12]. This approach ensures that materials remain available for future analysis with potentially more advanced technologies, thereby extending their research lifespan and value.

Theoretical Foundations: Locard's Principle in Context

Core Concept and Historical Development

Edmond Locard (1877-1966), often called the "Sherlock Holmes of France," established the first forensic laboratory in Lyon, France, in 1910 [8]. Although the succinct phrase "every contact leaves a trace" is the common formulation, Locard himself wrote: "It is impossible for a criminal to act, especially considering the intensity of a crime, without leaving traces of this presence" [9]. This insight revolutionized forensic science by providing a theoretical basis for the systematic examination of trace evidence. Locard was inspired by multiple sources, including Sir Arthur Conan Doyle's Sherlock Holmes stories, the biometric work of Alphonse Bertillon, and the criminalistics foundations laid by Hans Gross [8].

Locard demonstrated his principle through practical investigation. In one famous 1912 case involving the murder of Marie Latelle, Locard examined skin cells from under suspect Emile Gourbin's fingernails and discovered a distinctive pink dust that was matched to custom-made face powder used by the victim [9]. This trace evidence proved crucial in securing a confession and conviction, powerfully illustrating how microscopic transfers could establish connections between people, objects, and locations.

The Principle in Modern Scientific Context

In contemporary preservation science, Locard's principle has expanded beyond its forensic origins to encompass several key theoretical concepts:

Mutual Alteration Concept: Every interaction between an object and its environment, or between an object and a measurement device, results in bidirectional transfer or alteration, however minimal [8]. This understanding necessitates careful consideration of how analysis itself might affect specimens.

Trace Evidence Persistence: Trace materials—whether physical particles or digital artifacts—persist over time and can be detected with appropriate methodologies [13]. This persistence enables researchers to reconstruct past events or conditions from present evidence.

Hierarchy of Detection: As analytical technologies advance, the scale of detectable evidence continues to decrease, with nanotechnology and molecular-level analysis now enabling detection of previously invisible traces [8].

The application of Locard's principle has naturally extended to digital forensics, where cybercrimes leave data traces such as log files, metadata, and network artifacts [13] [10]. Similarly, in preservation science, the principle guides the detection of subtle material changes, environmental interactions, and degradation patterns that inform conservation strategies.

Non-Destructive Testing Methods for Preservation Science

Non-destructive testing (NDT) comprises a broad group of analysis techniques used to evaluate material properties without causing damage [3]. Also referred to as non-destructive examination or non-destructive evaluation (both abbreviated NDE), these methods are indispensable for investigating precious or irreplaceable materials where preservation is essential [11] [12]. The following sections detail prominent NDT methods relevant to preservation science across various disciplines.

Spectroscopic Techniques

Spectroscopic and related radiation-based methods analyze the interaction between matter and electromagnetic radiation, from near-infrared light to thermal emission and X-rays, to determine material composition and properties.

Table 1: Spectroscopic NDT Methods for Material Analysis

| Method | Physical Principle | Typical Applications | Penetration Depth | Key Advantages |
|---|---|---|---|---|
| Near-Infrared (NIR) Spectroscopy | Measures molecular overtone and combination vibrations | Bone collagen quantification [12], material identification | Millimeters [12] | Rapid analysis (seconds), non-contact capability, field-portable instruments |
| Infrared Thermography (IRT) | Detects infrared energy emission variations | Building diagnostics [11], delamination detection in composites [14] | Surface to subsurface | Wide area coverage, real-time imaging, non-contact |
| X-ray Computed Tomography (XCT) | Measures X-ray attenuation through multiple projections | 3D void characterization in composites [14], internal structure visualization | Varies with material density and energy | Detailed 3D visualization, quantitative analysis |

Near-Infrared (NIR) Spectroscopy has emerged as particularly valuable for archaeological and cultural heritage applications. A 2019 study demonstrated that portable NIR spectroscopy could accurately quantify collagen content in ancient bone specimens ranging from 500 to 45,000 years old [12]. This method successfully classified specimens into preservation categories with over 90% accuracy when identifying bones with sufficient collagen (>1%) for radiocarbon dating or stable isotope analysis, all without destructive sampling [12].

Wave-Based Imaging Techniques

Wave-based methods utilize various forms of energy propagation to visualize internal structures and detect anomalies.

Table 2: Wave-Based NDT Methods for Structural Evaluation

| Method | Physical Principle | Typical Applications | Spatial Resolution | Limitations |
|---|---|---|---|---|
| Ultrasonic Testing (UT) | High-frequency sound wave propagation and reflection | Internal flaw detection [14], thickness measurement [3] | Millimeter to sub-millimeter | Requires couplant, sensitive to microstructure |
| Ground Penetrating Radar (GPR) | Electromagnetic wave reflection | Subsurface feature mapping [11], rebar localization in concrete [15] | Centimeter scale | Limited depth in conductive materials |
| Impact-Echo Testing | Analysis of stress wave reflections | Thickness measurement, delamination detection in concrete [15] | Centimeter scale | Point measurement, requires surface access |

Ultrasonic Testing (UT) presents unique challenges for anisotropic materials like fiber-reinforced polymer (FRP) composites, where wave propagation characteristics vary significantly with fiber orientation [14]. Advanced ultrasonic techniques such as Phased-Array Ultrasonic Testing (PAUT) have been developed to address these challenges through controlled beam steering and focusing, enabling more accurate defect characterization in complex composite structures [14].
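Beam steering in phased-array ultrasonics comes from firing the elements of a linear array with a linear delay law, Δt_n = n·p·sin θ / v, where p is the element pitch, θ the steering angle, and v the wave velocity. The sketch below assumes a flat contact array on a homogeneous, isotropic material with a single velocity; in anisotropic FRP the velocity varies with direction, which is the complication described above, so real composite inspection needs direction-dependent velocities and focusing laws. The array parameters in the example are assumed values.

```python
import math


def steering_delays_ns(n_elements: int, pitch_mm: float, angle_deg: float, velocity_m_s: float) -> list:
    """Firing delay (ns) for each element of a linear array so the combined wavefront leaves at angle_deg.

    Assumes a flat contact array on a homogeneous, isotropic material with a single wave velocity.
    """
    v_mm_per_ns = velocity_m_s * 1e-6  # 1 m/s = 1e-6 mm/ns
    step = pitch_mm * math.sin(math.radians(angle_deg)) / v_mm_per_ns
    raw = [n * step for n in range(n_elements)]
    offset = min(raw)  # shift so the earliest element fires at t = 0 (handles negative angles)
    return [d - offset for d in raw]


# Assumed example values: 16 elements, 0.6 mm pitch, 30 degree steer, 3000 m/s.
delays = steering_delays_ns(16, 0.6, 30.0, 3000.0)
print([round(d, 1) for d in delays[:4]])  # prints: [0.0, 100.0, 200.0, 300.0]
```

Reversing the sign of the angle reverses the firing order, which is how a single array sweeps the beam across a sector without being moved.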

Visual and Optical Methods

Visual and optical techniques enhance or extend human vision for detailed surface analysis.

Visual Testing (VT): The most fundamental NDT method, VT involves direct observation of surfaces using tools such as borescopes, magnifiers, and digital microscopes to identify visible defects, corrosion, or misalignments [3]. Fiber Optic Microscopy (FOM) has proven particularly valuable for cultural heritage applications, enabling detailed examination of architectural surfaces without physical contact [11].

Digital Image Processing (DIP): This technique enhances and analyzes digital images of surfaces to quantify decay patterns, map weathering effects, and monitor changes over time. In cultural heritage preservation, DIP has been successfully used to objectively assess cleaning interventions on historic marble surfaces [11].

Experimental Protocols for Preservation Research

Protocol 1: NIR Spectroscopy for Bone Collagen Quantification

Principle: Locard's Exchange Principle manifests in the preservation of molecular signatures in archaeological bone. The non-destructive analysis detects the persistent "traces" of original collagen through its NIR spectral signature [12].

Materials and Equipment:

- Portable NIR spectrometer with wavelength range 1000-2500 nm

- Spectralon reference standard for calibration

- Sample positioning fixture

- Computer with multivariate analysis software

Procedure:

- Allow spectrometer to warm up according to manufacturer specifications.

- Acquire reference spectrum using Spectralon standard.

- Position bone specimen securely in the sample fixture, ensuring reproducible geometry.

- Collect spectra from multiple positions on the specimen to account for heterogeneity.

- Process spectra using standard normal variate (SNV) or multiplicative scatter correction (MSC) to minimize light scattering effects.

- Apply pre-developed partial least squares (PLS) regression model to predict collagen content from spectral data.

- Classify specimens into preservation categories based on predicted collagen content (>1% suitable for radiocarbon dating; >3% for comprehensive analysis).
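
The preprocessing, prediction, and classification steps above can be sketched in Python. This is a minimal illustration, not the validated model from [12]. Once a PLS regression is fitted, its prediction reduces to an intercept plus a weighted sum of the preprocessed spectrum, so the sketch applies hypothetical `intercept` and `coefficients` values standing in for a pre-developed model. The function names and the label used for specimens at or below 1% are illustrative assumptions; only the 1% and 3% thresholds come from the protocol.

```python
"""Sketch: SNV preprocessing, linear (PLS-derived) prediction, and
preservation-category classification for NIR bone spectra.

Assumptions: spectra are lists of values on one common wavelength grid;
`intercept` and `coefficients` are HYPOTHETICAL placeholders for a fitted model.
"""
from statistics import mean, stdev


def snv(spectrum):
    """Standard normal variate: centre each spectrum on its own mean and
    scale by its own standard deviation to suppress scatter effects."""
    mu = mean(spectrum)
    sd = stdev(spectrum)
    return [(x - mu) / sd for x in spectrum]


def predict_collagen(spectrum, intercept, coefficients):
    """Apply a fitted PLS model in linear form: y = b0 + sum(b_i * x_i)."""
    corrected = snv(spectrum)
    if len(corrected) != len(coefficients):
        raise ValueError("spectrum and model use different wavelength grids")
    return intercept + sum(b * x for b, x in zip(coefficients, corrected))


def classify(collagen_pct):
    """Preservation categories using the thresholds stated in the protocol."""
    if collagen_pct > 3.0:
        return "comprehensive analysis"
    if collagen_pct > 1.0:
        return "radiocarbon dating"
    return "below dating threshold"  # hypothetical label for <= 1 %


def assess_specimen(spectra, intercept, coefficients):
    """Average predictions over spectra collected at several positions."""
    predictions = [predict_collagen(s, intercept, coefficients) for s in spectra]
    average = mean(predictions)
    return average, classify(average)
```

Averaging over positions mirrors the protocol step that accounts for specimen heterogeneity.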

Validation: The method demonstrated excellent predictive power (R² = 0.91-0.97) in validation studies with bone specimens of known collagen content, with root mean square error of prediction of 1.18-1.97% collagen [12].
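
The two validation statistics quoted above can be computed from paired reference and predicted collagen values. The sketch assumes R² is the coefficient of determination (1 - SS_res/SS_tot), one common definition; some chemometrics software instead reports the squared correlation between reference and predicted values, so the convention used in [12] should be checked before comparing numbers. Function names are illustrative.

```python
"""Sketch: RMSEP and R^2 for an external validation set."""
from math import sqrt


def rmsep(reference, predicted):
    """Root mean square error of prediction, in the units of the reference."""
    n = len(reference)
    return sqrt(sum((p - r) ** 2 for r, p in zip(reference, predicted)) / n)


def r_squared(reference, predicted):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_ref = sum(reference) / len(reference)
    ss_res = sum((r - p) ** 2 for r, p in zip(reference, predicted))
    ss_tot = sum((r - mean_ref) ** 2 for r in reference)
    return 1 - ss_res / ss_tot
```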

Protocol 2: Integrated NDT Assessment for Historic Structures

Principle: Building materials continuously exchange traces with their environment through weathering processes. Multiple NDT techniques detect and characterize these alterations without contributing to the decay [11].

Materials and Equipment:

- Infrared thermal camera

- Ground penetrating radar system with appropriate frequency antennas

- Ultrasonic pulse velocity tester

- Digital camera for high-resolution imaging

- Fiber optic microscope

Procedure:

- Macro-scale Mapping: Conduct systematic visual inspection documented with digital photography. Create detailed condition mapping using standardized decay terminology.

- Thermographic Survey: Perform active or passive thermography under appropriate environmental conditions. Identify subsurface anomalies through thermal contrast patterns.

- GPR Assessment: Systematically scan structural elements using grid methodology. Process data to identify subsurface features, moisture distribution, and structural heterogeneities.

- Ultrasonic Testing: Measure pulse velocity through structural elements following established paths. Calculate velocity variations indicative of material quality or deterioration.

- Data Integration: Correlate findings from all techniques using geographic information systems (GIS) or building information modeling (BIM) platforms. Identify convergence of evidence from multiple NDT methods.
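
The ultrasonic and data-integration steps can be illustrated with a short sketch. Pulse velocity is path length divided by transit time; the sketch converts metres and microseconds to km/s, flags grid cells whose velocity falls below a fraction of the survey median, and reports cells flagged by at least two methods as convergent evidence. The 0.85 fraction, the two-method rule, and the string cell IDs are hypothetical choices for illustration, not thresholds from [11]; in practice the grid would live in the GIS or BIM platform.

```python
"""Sketch: ultrasonic pulse velocity (UPV) and multi-method convergence.

Hypothetical choices: string grid-cell IDs, the 0.85 x median flag,
and the two-method convergence rule.
"""
from statistics import median


def pulse_velocity_km_s(path_length_m, transit_time_us):
    """V = L / t, with L in metres and t in microseconds, returned in km/s."""
    if transit_time_us <= 0:
        raise ValueError("transit time must be positive")
    velocity_m_s = path_length_m / (transit_time_us * 1e-6)
    return velocity_m_s / 1000.0


def low_velocity_cells(readings_km_s, fraction=0.85):
    """Flag cells whose velocity is below `fraction` of the survey median."""
    reference = median(readings_km_s.values())
    return {cell for cell, v in readings_km_s.items() if v < fraction * reference}


def convergent_anomalies(flags_by_method, min_methods=2):
    """Return cells flagged by at least `min_methods` independent NDT methods."""
    counts = {}
    for flagged_cells in flags_by_method.values():
        for cell in flagged_cells:
            counts[cell] = counts.get(cell, 0) + 1
    return {cell for cell, n in counts.items() if n >= min_methods}
```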

Quality Control: Perform repeated measurements on reference areas to establish precision. Validate findings with minimal destructive sampling when absolutely necessary and ethically justified.
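
Precision from repeated measurements on a reference area is commonly summarized as the mean, sample standard deviation, and coefficient of variation. A minimal sketch, assuming the readings are replicate values of a single quantity (for example pulse velocities at one reference location); the function name is illustrative.

```python
"""Sketch: repeatability statistics for replicate reference measurements."""
from statistics import mean, stdev


def repeatability(measurements):
    """Return (mean, sample standard deviation, coefficient of variation in %)."""
    if len(measurements) < 2:
        raise ValueError("need at least two replicate measurements")
    m = mean(measurements)
    s = stdev(measurements)
    return m, s, 100.0 * s / m
```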

Visualization of Methodologies and Workflows

Logical Workflow for NDT in Preservation Science

Locard's Principle in Material Analysis Context

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Essential Materials for Non-Destructive Preservation Research

| Tool/Reagent | Function | Application Examples |
|---|---|---|
| Portable NIR Spectrometer | Quantitative molecular analysis via overtone vibrations | Bone collagen prescreening [12], material identification |
| Infrared Thermal Camera | Surface temperature mapping and variation detection | Building thermography [11], composite delamination detection [14] |
| Ultrasonic Pulse Velocity Tester | Internal structure assessment through sound wave propagation | Concrete integrity testing [15], composite flaw detection [14] |
| Ground Penetrating Radar | Subsurface imaging using electromagnetic wave reflection | Structural element mapping [11], rebar localization [15] |
| Fiber Optic Microscope | High-resolution visual examination without contact | Surface degradation mapping [11], material characterization |
| Multivariate Analysis Software | Spectral data processing and predictive modeling | Collagen content prediction [12], material classification |

The integration of Locard's Exchange Principle with modern non-destructive testing methodologies creates a powerful theoretical and practical framework for preservation science. This synergy enables researchers to extract maximum information from valuable specimens while maintaining their integrity for future study. The continuing advancement of NDT technologies—including the integration of artificial intelligence, digital twin technology, and multimodal inspection systems—promises to further enhance our ability to detect increasingly subtle traces of interaction and alteration [14]. As these technologies evolve, they will expand our capacity to investigate and preserve our material cultural heritage, historical artifacts, and advanced composite materials, ensuring that these valuable resources remain available for future generations of scientists and researchers. The theoretical foundation presented here establishes a basis for ethical, evidence-based preservation practice that honors both the imperative for knowledge advancement and the responsibility of material conservation.

Evidence preservation is a critical facet of the criminal justice system and the foundation on which the integrity of forensic science rests. At every stage, handlers of evidence must ensure that it has not been compromised, contaminated, or degraded and that its chain of custody is meticulously tracked [16]. The National Institute of Justice (NIJ), the principal federal agency supporting forensic science research and development, plays a pivotal role in advancing this field. Its research priorities address the growing complexity of managing large inventories of property and evidence, particularly as the justice system relies increasingly on forensic evidence in casework [16] [17]. This document frames these priorities within a broader thesis on non-destructive analysis methods, which evaluate evidence properties without causing damage, thereby preserving materials for subsequent analyses and maintaining their legal integrity [18]. For researchers and scientists, understanding these priorities helps direct investigative effort toward the most pressing challenges in forensic science.

NIJ's Strategic Research Framework for Forensic Science

The NIJ's research, development, testing, and evaluation (RDT&E) process is engineered to align its portfolio with the expressed needs of the forensic science community [17]. The mission of the NIJ's Office of Investigative and Forensic Sciences (OIFS) is to "improve the quality and practice of forensic science through innovative solutions" [17]. Its research and development goals are threefold and directly inform evidence preservation strategies, as outlined in the table below.

Table 1: Strategic Goals of NIJ's Office of Investigative and Forensic Sciences

| Goal Number | Strategic Goal | Implication for Evidence Preservation |
|---|---|---|
| 1 | Expand the information that can be extracted from forensic evidence and quantify its evidentiary value. | Promotes development of non-destructive and sequential analysis methods to maximize data yield from a single sample. |
| 2 | Develop reliable and widely applicable tools that allow faster, cheaper, and less labor-intensive identification, collection, preservation, and analysis of evidence. | Directly drives research into automation, triage tools, and efficient preservation techniques to reduce backlogs. |
| 3 | Strengthen the scientific basis of the forensic science disciplines. | Encourages foundational research into the stability and degradation of materials, underpinning effective preservation protocols. |

A key mechanism for identifying specific research needs is the use of Technology Working Groups (TWGs) [17]. These groups, composed of forensic science practitioners, generate a detailed list of operational and technology needs affecting day-to-day work. For fiscal year 2025, NIJ has released a list of anticipated research interests that highlights social science research, evaluative studies of forensic science systems, and projects that identify best practices and communicate them to the forensic community [19]. This aligns with the broader goal of strengthening the entire ecosystem of evidence management, from the crime scene to the courtroom.

Application Note: Non-Destructive Fluorescence for Fiber Evidence Preservation

Principle and Rationale

The analysis of textile fibers is a common form of trace evidence examination in forensic investigations. A primary challenge is the need to compare a questioned fiber to a known sample without consuming or altering the evidence, thus preserving it for confirmatory testing or re-examination by defense experts. Non-destructive testing (NDT) methods are "highly valuable technique[s] that can save both money and time in product evaluation, troubleshooting, and research" [18]. Fluorescence spectroscopy has emerged as a powerful NDT method for this purpose. The technique capitalizes on the fact that many dyes and intrinsic impurities in fibers fluoresce when exposed to specific wavelengths of light. By measuring the unique excitation-emission matrix (EEM) of a single fiber, a detailed fluorescent profile can be obtained without destroying the sample [20].

Experimental Protocol for Non-Destructive Fiber Analysis

This protocol provides a step-by-step methodology for the non-destructive characterization of single textile fibers using fluorescence spectroscopy, based on research supported by the National Institute of Justice [20].

Table 2: Key Research Reagent Solutions for Fluorescence Analysis of Fibers

| Item Name | Function / Explanation |
|---|---|
| Fluorescence Spectrophotometer | Instrument capable of collecting excitation-emission matrices (EEMs). Must have a xenon lamp and be capable of scanning emission wavelengths from 250-800 nm. |
| Microspectrophotometer Attachment | Essential for focusing the excitation beam and collecting emitted light from a single, microscopic fiber. |
| Non-Fluorescent Microscope Slides & Coverslips | To mount the single fiber for analysis without introducing background fluorescence. |
| Immersion Oil (Non-Fluorescent) | To secure the fiber and improve optical clarity under the microscope objective. |
| Standard Reference Materials | Such as Standard Reference Material 1597a (polycyclic aromatic hydrocarbons), for instrument calibration and validation [20]. |

Procedure:

- Sample Mounting: Isolate a single questioned fiber and a known single fiber. Using clean forceps, mount each fiber separately on a non-fluorescent microscope slide. Secure the fiber with a coverslip, using a minimal amount of non-fluorescent immersion oil if necessary.

- Instrument Calibration: Power on the fluorescence spectrophotometer and allow the lamp to stabilize. Calibrate the instrument's wavelength accuracy using the appropriate standard reference materials according to the manufacturer's protocol.

- Data Acquisition:
  a. Place the mounted questioned fiber on the microscope stage of the spectrophotometer.
  b. Set the initial excitation wavelength (e.g., 250 nm). The excitation wavelength should be selected based on the dye class, but a full spectral range is recommended for untargeted analysis.
  c. Scan the emission wavelength from a value slightly above the excitation wavelength up to 800 nm, recording the fluorescence intensity at each wavelength.
  d. Increment the excitation wavelength by a fixed step (e.g., 5-10 nm) and repeat the emission scan.
  e. Continue this process to generate a complete three-dimensional EEM, which is a plot of fluorescence intensity as a function of both excitation and emission wavelengths.

- Data Analysis: Subject the collected EEM data to multi-way statistical analysis, such as Parallel Factor (PARAFAC) analysis, to decompose the complex signal into the contributing fluorescent components [20]. This allows for the differentiation of fibers with visually similar colors but different chemical compositions.

- Sample Recovery: After analysis, carefully remove the fiber from the slide. The fiber remains intact and unaltered, available for further analysis with other techniques (e.g., microspectrophotometry in the visible range, Fourier-Transform Infrared spectroscopy).
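
The acquisition loop in the Data Acquisition step can be expressed as a scan plan. This is an assumption-laden illustration, not an instrument control script: the excitation stop (500 nm), the emission offset above excitation (10 nm), and the emission step (5 nm) are hypothetical defaults, since the protocol specifies only the 250 nm start, the 5-10 nm excitation step, and the 800 nm emission limit. It also includes a helper for locating second-order Rayleigh scatter, which appears near twice the excitation wavelength on grating instruments and is commonly masked before PARAFAC modelling.

```python
"""Sketch of an excitation-emission matrix (EEM) scan plan.

Hypothetical defaults: excitation stop 500 nm, emission offset 10 nm,
emission step 5 nm. The 250 nm start, 5-10 nm excitation step, and
800 nm emission limit follow the protocol text.
"""


def emission_wavelengths(ex_nm, em_offset_nm=10, em_step_nm=5, em_max_nm=800):
    """Emission scan for one excitation: from slightly above ex_nm to em_max_nm."""
    return list(range(ex_nm + em_offset_nm, em_max_nm + 1, em_step_nm))


def eem_scan_plan(ex_start_nm=250, ex_stop_nm=500, ex_step_nm=10,
                  em_offset_nm=10, em_step_nm=5, em_max_nm=800):
    """Map each excitation wavelength to the emission wavelengths to record."""
    return {
        ex: emission_wavelengths(ex, em_offset_nm, em_step_nm, em_max_nm)
        for ex in range(ex_start_nm, ex_stop_nm + 1, ex_step_nm)
    }


def is_second_order_scatter(ex_nm, em_nm, half_width_nm=10):
    """True where second-order Rayleigh scatter (em near 2 x ex) is expected;
    such points are usually masked before PARAFAC decomposition."""
    return abs(em_nm - 2 * ex_nm) <= half_width_nm
```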

Diagram 1: Non-Destructive Fiber Analysis Workflow.

Quantitative Data and Research Priorities in Evidence Management

Recent surveys and reports commissioned by the NIJ provide a quantitative backbone for understanding the current state and needs of evidence management. The NIST/NIJ Evidence Management Steering Committee conducted a national survey of evidence handlers in 2021, with the final reports published in 2025 [16]. While the full quantitative data is housed separately, the key findings highlight systemic challenges that directly inform research priorities [16] [21].

Table 3: Key Evidence Management Challenges and Research Implications

| Documented Challenge | Quantitative / Qualitative Data | Related NIJ Research Priority |
|---|---|---|
| Volume of Digital Evidence | "Considerable quantity of evidence" creates "overwhelming volume of work" and "large backlogs" for examiners [21]. | Develop tools for faster, cheaper analysis; Triage tools for detectives [19] [21]. |
| Training Gaps | Potential difficulties with prosecutors, judges, and defense attorneys not understanding digital evidence [21]. Inexperience of patrol officers in preserving evidence [21]. | Social science research on forensic systems; Education for courtroom personnel [19] [21]. |
| Resource Limitations | Small agencies lack resources for effective analysis; challenges in obtaining funding and staffing [21]. | Develop regional analysis models; Foundational/applied R&D in forensics [19] [21]. |

The NIJ's focus on digital evidence preservation is particularly salient. Digital evidence is "much more fragile" than physical evidence and can be "easily altered, deleted, or corrupted" [22]. The core principles for its preservation, which align with non-destructive ideals, include forensic soundness, a verifiable chain of custody, evidence integrity (verified via hash algorithms), and minimal handling (often using write blockers) [23] [22]. The international standard ISO/IEC 27037 provides guidelines for the identification, collection, acquisition, and preservation of digital evidence, emphasizing the need to maintain data integrity without alteration [23].
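
Integrity verification with a hash algorithm amounts to recomputing a digest of the acquired image and comparing it with the value recorded at acquisition. A minimal sketch using Python's standard `hashlib` with SHA-256 (one common choice; the section does not name a specific algorithm). Reading the file in fixed-size chunks keeps memory use bounded for large disk images; the function names and chunk size are illustrative.

```python
"""Sketch: SHA-256 integrity check for a forensic image."""
import hashlib
from typing import Iterable

CHUNK_SIZE = 1024 * 1024  # read 1 MiB at a time (illustrative choice)


def sha256_of_chunks(chunks: Iterable[bytes]) -> str:
    """Feed byte chunks into one SHA-256 digest; result is independent of chunking."""
    digest = hashlib.sha256()
    for chunk in chunks:
        digest.update(chunk)
    return digest.hexdigest()


def sha256_of_file(path: str) -> str:
    """Hash a file (e.g., a write-blocked acquisition image) in chunks."""
    with open(path, "rb") as fh:
        return sha256_of_chunks(iter(lambda: fh.read(CHUNK_SIZE), b""))


def matches_record(recorded_hex: str, computed_hex: str) -> bool:
    """Compare the hash recorded at acquisition with a freshly computed one."""
    return recorded_hex.strip().lower() == computed_hex.lower()
```

Re-running `sha256_of_file` on a working copy before each analysis, and logging the result in the chain-of-custody record, documents that the data examined are bit-for-bit identical to the original acquisition.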

The strategic research priorities of the NIJ underscore a commitment to enhancing the integrity, efficiency, and scientific rigor of evidence preservation. Continued investment in the development and validation of non-destructive analysis methods is therefore paramount. Techniques such as fluorescence spectroscopy for fibers, along with other NDT methods like eddy-current and ultrasonic testing [18], are at the forefront of this effort. They allow maximal extraction of information from precious and often minute evidence samples while preserving the sample for the judicial process. For the research community, this is a clear call to action: by aligning experimental designs with the NIJ's stated goals of expanding informational yield, developing efficient tools, and strengthening scientific foundations, scientists can contribute directly to a more robust and reliable criminal justice system. The future of evidence preservation lies in innovative, non-destructive technologies that uphold the highest standards of forensic science.

Within forensic evidence preservation research, non-destructive analysis methods are paramount. These techniques allow for the initial examination of evidence without consuming or altering the original material, thereby preserving its integrity for future testing. The core pillars supporting this paradigm are a rigorously maintained chain of evidence, the capacity for evidence re-analysis, and the establishment of legal admissibility. This document outlines detailed application notes and protocols to achieve these critical objectives, providing researchers and drug development professionals with a framework to ensure their forensic workflows yield scientifically sound and legally defensible results.

The Critical Role of Chain of Evidence

The chain of evidence (CoC), also known as the chain of custody, is the chronological and documented process that records the handling, collection, transfer, storage, analysis, and presentation of physical or digital evidence [24]. It acts as the legal backbone for maintaining the integrity and admissibility of evidence in investigative and judicial processes [25] [24]. The core principle is that every piece of evidence must be accounted for at all times, from the moment it is discovered to its final presentation in court [24].

A well-maintained CoC serves several vital purposes:

- Preserving Integrity: It demonstrates that the evidence presented in court is the same as the evidence originally collected and has not been tampered with, altered, or contaminated [24].

- Establishing Authenticity: It provides a verifiable record that is essential for authenticating evidence under legal standards, such as Section 65B of the Indian Evidence Act, 1872 (inserted by the Information Technology Act, 2000), which governs the admissibility of electronic records [25].

- Creating Accountability: It assigns responsibility to every individual who handled the evidence, creating a clear trail of custody [24].

Quantitative Metrics for Evidence Integrity

The following table summarizes key quantitative data and standards related to evidence integrity and legal admissibility, providing a quick reference for researchers.

Table 1: Standards and Metrics for Evidence Integrity and Analysis

| Category | Metric/Standard | Description | Legal/Scientific Basis |
|---|---|---|---|
| Digital Evidence Admissibility | Section 65B Certificate | A mandatory certificate for the admissibility of electronic evidence in Indian courts, affirming the integrity of the electronic record [25]. | Indian Evidence Act, 1872, s. 65B (inserted by the Information Technology Act, 2000); Arjun Panditrao Khotkar v. Kailash Kushanrao Gorantyal (2020) [25]. |
| Statistical Significance | p-value | The probability of obtaining results at least as extreme as the observed results, assuming the null hypothesis is true. A p-value < 0.05 is a common threshold for statistical significance in forensic analysis. | Standard scientific practice for hypothesis testing. |
| Digital Contrast (Minimum) | 4.5:1 (Text) / 3:1 (Large Text) | The minimum contrast ratio between text and its background required for WCAG Level AA compliance, ensuring legibility and reducing misinterpretation of data [26]. | WCAG Success Criterion 1.4.3 Contrast (Minimum) [26]. |
| Sample Contamination Rate | Laboratory-specific benchmark | The acceptable percentage of samples that are compromised during handling or analysis. Maintaining a low, documented rate is critical for defending the validity of results. | Laboratory accreditation standards (e.g., ISO/IEC 17025). |
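
The contrast-ratio metric in the table follows a fixed formula: each sRGB channel is linearized, the channels are combined into a relative luminance, and the ratio (L1 + 0.05) / (L2 + 0.05) is taken with L1 the lighter color. A minimal sketch of that calculation and the Level AA check, assuming 8-bit RGB tuples as input; function names are illustrative.

```python
"""Sketch: WCAG 2.x contrast ratio and the Level AA check (SC 1.4.3).

Assumes colors are 8-bit sRGB tuples such as (118, 118, 118).
"""


def _linearize(channel):
    """Convert one 8-bit sRGB channel to linear light (WCAG definition)."""
    c = channel / 255.0
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(rgb):
    """Weighted sum of the linearized R, G, B channels."""
    r, g, b = (_linearize(v) for v in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(fg, bg):
    """(L1 + 0.05) / (L2 + 0.05), with L1 the lighter of the two colors."""
    lighter, darker = sorted((relative_luminance(fg), relative_luminance(bg)), reverse=True)
    return (lighter + 0.05) / (darker + 0.05)


def meets_level_aa(fg, bg, large_text=False):
    """4.5:1 for normal text, 3:1 for large text."""
    return contrast_ratio(fg, bg) >= (3.0 if large_text else 4.5)
```

For example, mid-gray #767676 on white just passes the 4.5:1 threshold, while #777777 narrowly fails it.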

Experimental Protocols for Evidence Handling

Protocol: Chain of Custody Documentation

Objective: To create an unbroken, documented trail for every item of evidence from collection to disposal.

Materials: Evidence tags, tamper-evident bags/containers, chain of custody forms (physical or digital), permanent ink pens, secure storage facility.

Methodology:

- Collection: Upon discovery, evidence is assigned a unique identifier. The collector records the date, time, location, case number, description of the item, and their name and signature on the evidence tag and CoC form [24].

- Packaging: Evidence is placed in a tamper-evident container. The container is sealed, and the seal is initialed and dated [24].

- Transfer: Every time evidence changes hands, the CoC form must be updated. The recipient documents the date, time, their name, signature, and the condition of the evidence and its seal upon receipt [24].

- Storage: Evidence is stored in a secure, access-controlled environment with conditions (e.g., temperature, humidity) appropriate to prevent degradation [24].

- Analysis: The analyst documents the date, time, and tests performed. For non-destructive analysis, the evidence is returned to its sealed container after examination; for destructive tests, a specific request and authorization must be documented [24].
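
The documentation requirements in the steps above can be checked mechanically once custody records are digital. The sketch below is a hypothetical data model (class and field names are illustrative, not a standard or LIMS schema) that flags three kinds of break: a transfer released by someone other than the current custodian, an entry timestamped earlier than the previous one, and a seal reported as not intact on receipt.

```python
"""Sketch: detecting gaps in a digital chain-of-custody record.

Class and field names are HYPOTHETICAL, loosely following the
Collection and Transfer steps of the protocol.
"""
from dataclasses import dataclass, field
from datetime import datetime
from typing import List


@dataclass
class CustodyEvent:
    """One hand-off recorded on the CoC form."""
    timestamp: datetime
    released_by: str
    received_by: str
    seal_intact_on_receipt: bool
    purpose: str  # e.g. "transport", "storage", "non-destructive analysis"


@dataclass
class EvidenceItem:
    """Collection record for one uniquely identified item."""
    item_id: str
    case_number: str
    description: str
    location: str
    collected_at: datetime
    collector: str
    events: List[CustodyEvent] = field(default_factory=list)


def chain_problems(item: EvidenceItem) -> List[str]:
    """Return human-readable problems; an empty list means no gap was detected."""
    problems = []
    holder, last_time = item.collector, item.collected_at
    for i, ev in enumerate(item.events):
        if ev.released_by != holder:
            problems.append(f"event {i}: released by {ev.released_by!r}, custodian was {holder!r}")
        if ev.timestamp < last_time:
            problems.append(f"event {i}: timestamp earlier than previous entry")
        if not ev.seal_intact_on_receipt:
            problems.append(f"event {i}: seal not intact on receipt")
        # The recipient becomes the custodian for the next transfer.
        holder, last_time = ev.received_by, ev.timestamp
    return problems
```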

Protocol: Non-Destructive Analysis of Trace Evidence

Objective: To analyze trace evidence (e.g., fibers, paint chips) without consuming or altering the sample, enabling future re-analysis.

Materials: Sterile tweezers, microscope slides, stereomicroscope, Fourier-Transform Infrared (FTIR) spectrometer, Raman spectrometer, sealed evidence containers.

Methodology:

- Visual Examination: Under a stereomicroscope, document the physical characteristics (color, shape, size) of the trace evidence.

- Chemical Composition Analysis:

  - FTIR Spectroscopy: Place the sample in the FTIR spectrometer, for example on an attenuated total reflectance (ATR) accessory or under an IR microscope. This technique identifies organic components by measuring the absorption of infrared light; in these sampling modes it requires little or no sample preparation and is non-destructive [24].

  - Raman Spectroscopy: Focus a laser on the sample, at a power low enough to avoid heating or burning it, and measure the scattered light. This provides molecular information complementary to FTIR and is also non-destructive [24].

- Re-packaging: After analysis, the evidence is immediately re-sealed in its original container, and the CoC form is updated to reflect the analysis performed.

Visualization of Workflows

Evidence Lifecycle Management

Data Analysis and Re-analysis Pathway

The Scientist's Toolkit: Research Reagent Solutions

This section details essential materials and solutions used in forensic evidence preservation and analysis.

Table 2: Essential Materials for Forensic Evidence Preservation

| Item | Function | Application in Non-Destructive Analysis |
|---|---|---|
| Tamper-Evident Bags | To securely package evidence and provide visual proof if the container has been opened [24]. | Used for storing all physical evidence after initial collection and examination. |
| FTIR Spectroscopy | To identify organic and some inorganic materials by producing an infrared absorption spectrum without damaging the sample [24]. | Analysis of polymers, drugs, paints, and fibers. |
| Raman Spectroscopy | To provide a molecular fingerprint for identifying substances; complementary to FTIR and also non-destructive [24]. | Analysis of pigments, inks, and minerals. |
| Digital Forensic Write-Blockers | Hardware devices that allow data to be read from a storage device (e.g., hard drive) while blocking write commands, so the source data are not altered. | Essential for creating a forensically sound image of digital evidence for analysis and re-analysis. |
| Secure, Barcoded Evidence Containers | To provide physical protection and allow for integrated tracking within a Laboratory Information Management System (LIMS). | Storage of all physical evidence, linking the physical item to its digital CoC record. |

Future Directions and Innovations

The field of forensic evidence preservation is evolving rapidly. Key innovations include:

- Blockchain for Chain of Custody: Implementing blockchain technology to create an immutable, transparent, and decentralized log of every transaction and handling event in the evidence lifecycle, making the CoC virtually tamper-proof [24].

- Artificial Intelligence (AI) in Pattern Recognition: Using AI and machine learning to analyze vast datasets, such as DNA mixtures or digital evidence, identifying patterns that may be missed by human analysts and providing statistical weight to findings [24].

- Portable Rapid Forensic Devices: Handheld devices for on-site DNA analysis (Rapid DNA) and drug testing allow preliminary screening at the scene that consumes only a small portion of the sample, guiding the investigation while preserving the bulk of the sample for laboratory analysis [24].
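
The tamper-evidence idea behind blockchain-based custody logs can be sketched without any blockchain platform: each entry stores the SHA-256 hash of its own content combined with the previous entry's hash, so editing an earlier record invalidates every hash after it. This minimal sketch omits the distribution and consensus that a real blockchain adds, and its record fields are hypothetical.

```python
"""Sketch: a hash-chained custody log (tamper-evident, single node).

Record fields are HYPOTHETICAL; there is no distribution or consensus layer.
"""
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def entry_hash(prev_hash, record):
    """SHA-256 over the previous hash plus a canonical JSON form of the record."""
    payload = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append_entry(log, record):
    """Append a record, linking it to the hash of the last entry."""
    prev = log[-1]["hash"] if log else GENESIS_HASH
    log.append({"record": record, "prev": prev, "hash": entry_hash(prev, record)})


def verify_log(log):
    """Recompute every link. Edits, reordering, or deletion of a non-final entry
    break the chain; detecting truncation of the tail requires storing the
    latest hash separately."""
    prev = GENESIS_HASH
    for entry in log:
        if entry["prev"] != prev or entry["hash"] != entry_hash(prev, entry["record"]):
            return False
        prev = entry["hash"]
    return True
```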

The analysis of biological and physical evidence is a cornerstone of forensic investigations, yet the limited quantity of such evidence often forces investigators to draw on suboptimal sources. Archived microscope slides from sexual assault evidence collection kits, autopsies, or hospital visits represent a critical reservoir of potential evidence, containing hair, cells, fibers, and other materials trapped beneath coverslipping media. Traditional methods for accessing this slide-bound evidence have relied on hazardous solvents such as xylene or on freezing with liquid nitrogen, which risk compromising the sample's integrity through chemical alteration or physical destruction. This protocol outlines a simple, nondestructive, and safe method for accessing and processing material on coverslipped slides, thereby preserving material integrity for downstream forensic analysis.

Quantitative Comparison of Coverslip Removal Methods

The following table summarizes the key characteristics of the novel nondestructive method against traditional approaches, highlighting its advantages in preserving material integrity.

Table 1: Quantitative Comparison of Coverslip Removal Methods

| Method Characteristic | Traditional Solvent Methods | Cryogenic Methods | Novel Humidification Method |
|---|---|---|---|
| Primary Mechanism | Chemical dissolution of mounting media [27] | Thermal shock via liquid nitrogen [27] | Humid environment softens media [27] |
| Sample Integrity Risk | High (Chemical alteration) [27] | High (Physical cracking) [27] | None (Nondestructive) [27] |
| User Safety Hazard | High (Use of toxic xylene) [27] | Moderate (Extreme cold handling) | Low (Uses water vapor, clear nail polish) [27] |
| Success Rate | Variable, not explicitly stated [27] | Variable, not explicitly stated [27] | 100% (across slides aged 6+ years) [27] |
| Key Advantage | Well-established protocol | Rapid action | Preserves sample for sensitive downstream analysis [27] |

Detailed Experimental Protocol for Nondestructive Coverslip Removal

Principle

This method leverages a humid environment to gradually plasticize and loosen the coverslipping mounting media, allowing for its gentle separation from the glass slide. Subsequent reinforcement of the coverslip with clear nail polish prevents cracking during removal, providing full access to the underlying sample without chemical or physical alteration [27].

Materials and Reagents

The following "Research Reagent Solutions" and materials are required for the execution of this protocol.

Table 2: Essential Materials and Reagents

| Item Name | Function / Application Note |
|---|---|
| Humid Chamber | Creates a controlled environment with high humidity to soften the mounting media without liquid water contact. A sealed container with a rack placed over distilled water suffices. [27] |
| Distilled Water | Source of vapor within the humid chamber; prevents mineral deposits on the slide. |
| Clear Nail Polish | Forms a flexible, reinforcing film over the coverslip. This layer provides structural integrity, preventing cracks and fragmentation during the lifting process. [27] |
| Fine-Tip Forceps | Precision tool for gently lifting the reinforced coverslip from the slide surface once the media has loosened. |
| Microscope Slides | The source of the archival evidence, specifically coverslipped slides with various mounting media, aged 6 years or more. [27] |

Step-by-Step Procedure

- Preparation of Humid Chamber: Place a small volume of distilled water in the base of a sealable container. Ensure a raised platform or rack is present to hold the slides above the water level, preventing direct contact.

- Humidification: Position the target coverslipped slide on the rack within the humid chamber. Seal the container and incubate at room temperature. The required time may vary with the age and type of mounting media but typically ranges from several minutes to an hour. The process is complete when the coverslip moves slightly when gently nudged with forceps.

- Coverslip Reinforcement: Carefully remove the slide from the humid chamber. Using the nail polish applicator brush, apply a thin, uniform layer of clear nail polish across the entire surface of the coverslip. Allow the polish to dry completely, forming a continuous, transparent film.

- Coverslip Removal: Using fine-tip forceps, gently lift one edge of the reinforced coverslip. Slowly and carefully peel the entire coverslip away from the slide. The nail polish film will bind to the coverslip, holding it together and preventing shattering.

- Sample Access: The underlying sample material (e.g., cells, hair, fibers) is now exposed on the slide surface and can be directly processed for subsequent analysis, such as DNA extraction or microscopic examination.

Workflow Visualization of the Nondestructive Protocol

The following diagram illustrates the logical sequence and key decision points in the nondestructive coverslip removal workflow.

Nondestructive Coverslip Removal Workflow

The outlined protocol provides a robust, safe, and effective method for accessing delicate evidence from archived microscope slides. By eliminating hazardous solvents and minimizing physical stress on the sample, this nondestructive approach supports the core thesis of material integrity preservation in forensic evidence research. The reported 100% success rate on slides aged six years or more indicates that valuable archived evidence can be subjected to modern, sensitive analytical techniques without alteration, unlocking past evidence for future justice.

Advanced Non-Destructive Techniques: From Crime Scene to Laboratory Applications

Vibrational spectroscopy and optical emission techniques represent cornerstone methodologies for non-destructive chemical analysis within forensic science and pharmaceutical development. This article details the application notes and experimental protocols for three principal techniques: Raman spectroscopy, Fourier-Transform Infrared (FT-IR) spectroscopy, and Laser-Induced Breakdown Spectroscopy (LIBS). The drive toward non-destructive analysis is paramount in forensic contexts, where evidence preservation for subsequent re-examination and courtroom testimony is critical [28] [29]. Similarly, in pharmaceutical research, the ability to analyze materials without altering their chemical structure supports robust quality control and the fight against counterfeit drugs [29]. These techniques provide molecular-level information that enables researchers and scientists to identify unknown substances, characterize materials, and detect trace evidence with a high degree of specificity while maintaining evidence integrity.

Technique Fundamentals & Comparative Analysis

Principles of Operation

Raman Spectroscopy: This technique relies on inelastic light scattering. When monochromatic laser light interacts with a molecule, the energy shift of the scattered light corresponds to the vibrational energies of molecular bonds, providing a unique molecular fingerprint. The process measures relative frequencies at which a sample scatters radiation and depends on a change in the polarizability of a molecule [28] [30]. It is particularly sensitive to homo-nuclear molecular bonds (e.g., C-C, C=C, C≡C) [30].
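
The energy shift described above is usually reported as a Raman shift in wavenumbers, computed from the laser and scattered wavelengths. A minimal sketch, assuming wavelengths in nanometres (the factor 1e7 converts 1/nm to 1/cm); positive values correspond to Stokes scattering. Function names are illustrative.

```python
"""Sketch: Raman shift from laser and scattered wavelengths (nm -> cm^-1)."""

NM_TO_CM1 = 1e7  # 1 / (1 nm) = 1e7 cm^-1


def raman_shift_cm1(laser_nm, scattered_nm):
    """Raman shift in cm^-1; positive for Stokes (red-shifted) scattering."""
    return NM_TO_CM1 / laser_nm - NM_TO_CM1 / scattered_nm


def scattered_wavelength_nm(laser_nm, shift_cm1):
    """Inverse: wavelength (nm) at which a band with the given shift appears."""
    return NM_TO_CM1 / (NM_TO_CM1 / laser_nm - shift_cm1)
```

For instance, with a 785 nm laser, light scattered at 850 nm corresponds to a shift of roughly 974 cm⁻¹.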

Fourier-Transform Infrared (FT-IR) Spectroscopy: FT-IR operates on the principle of infrared light absorption. It measures the absolute frequencies at which a sample absorbs IR radiation, which corresponds to the vibrational frequencies of molecular bonds. This absorption requires a change in the dipole moment of the molecule and is highly sensitive to hetero-nuclear functional group vibrations and polar bonds, such as O-H stretching in water [28] [30].
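
FT-IR spectra are commonly displayed either as percent transmittance or as absorbance, related by A = -log10(T), and the wavenumber axis converts to wavelength in micrometres as 1e4 divided by the wavenumber in cm⁻¹. A minimal sketch of both conversions, with illustrative function names:

```python
"""Sketch: common FT-IR unit conversions."""
from math import log10


def absorbance_from_percent_transmittance(percent_t):
    """A = -log10(T), with T given as a percentage (0 < T <= 100)."""
    if not 0 < percent_t <= 100:
        raise ValueError("percent transmittance must be in (0, 100]")
    return -log10(percent_t / 100.0)


def wavenumber_to_micrometres(wavenumber_cm1):
    """Wavelength in micrometres = 1e4 / wavenumber in cm^-1."""
    return 1e4 / wavenumber_cm1
```

For example, 10% transmittance corresponds to an absorbance of 1, and 1000 cm⁻¹ corresponds to 10 µm.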

Laser-Induced Breakdown Spectroscopy (LIBS): LIBS is an atomic emission technique. It involves using a high-power, pulsed laser to ablate a micro-scale amount of material, generating a transient plasma. As the plasma cools, the excited atoms and ions emit characteristic wavelengths of light. The detection of these elemental emission lines provides a quantitative and qualitative analysis of the sample's elemental composition [31] [32].
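
Qualitative LIBS analysis often starts by matching observed emission peaks against tabulated atomic lines. The sketch assumes a peak list has already been extracted from the spectrum. The small line table is an illustrative subset of well-known lines (the Na I D lines, Ca II H and K, and a few others) and should be replaced with values from a reference source such as the NIST Atomic Spectra Database. Requiring every listed line of an element to be present is a simple, hypothetical guard against chance coincidences, and the 0.1 nm tolerance is an assumption that depends on spectrometer resolution.

```python
"""Sketch: assigning LIBS emission peaks to elements by line matching.

REFERENCE_LINES_NM is an ILLUSTRATIVE subset; use a full reference line
list in practice. Tolerance and the all-lines rule are assumptions.
"""

REFERENCE_LINES_NM = {
    "Na I": [588.995, 589.592],
    "Ca II": [393.366, 396.847],
    "K I": [766.490, 769.896],
    "Cu I": [324.754, 327.396],
    "Pb I": [405.781],
}


def match_peaks(peaks_nm, tolerance_nm=0.1, lines=REFERENCE_LINES_NM):
    """Return {species: [matched reference lines]} for peaks within tolerance."""
    hits = {}
    for species, wavelengths in lines.items():
        matched = [w for w in wavelengths
                   if any(abs(p - w) <= tolerance_nm for p in peaks_nm)]
        if matched:
            hits[species] = matched
    return hits


def confirmed_species(hits, lines=REFERENCE_LINES_NM):
    """Species whose listed lines were all observed (simple coincidence guard)."""
    return sorted(s for s, matched in hits.items() if len(matched) == len(lines[s]))
```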

Comparative Technique Analysis

Table 1: Comparative analysis of Raman, FT-IR, and LIBS spectroscopic techniques.

| Parameter | Raman Spectroscopy | FT-IR Spectroscopy | Laser-Induced Breakdown Spectroscopy (LIBS) |
|---|---|---|---|
| Fundamental Principle | Inelastic light scattering [30] | Infrared light absorption [30] | Atomic optical emission from laser-induced plasma [31] |
| Probed Information | Molecular vibrations (phonons); chemical structure & phases [29] | Molecular vibrations; chemical bonds & functional groups [28] | Elemental composition (bulk & trace) [31] |
| Sensitivity | Sensitive to homo-nuclear bonds (C-C, C=C) [30] | Sensitive to hetero-nuclear, polar bonds (O-H, C=O) [28] [30] | High sensitivity for trace elements (ppb) [31] |
| Sample Preparation | Minimal to none; non-destructive [28] [29] | Can be extensive (e.g., KBr pellets); often destructive [28] | Minimal; micro-destructive [31] |
| Key Advantage | Little sample prep; insensitive to water; specific C-C bond ID [28] | Strong absorption for many functional groups; well-established libraries [33] | Rapid elemental analysis; depth profiling; field-portable [31] [34] |
| Primary Limitation | Fluorescence interference can mask signal [28] [30] | Strong water absorption; sample thickness constraints [28] | Primarily elemental, not molecular, information; matrix effects [32] |

Application Notes in Forensic & Pharmaceutical Contexts

Forensic Science Applications

The minimal sample preparation of Raman spectroscopy (non-destructive) and LIBS (micro-destructive, consuming only a microscopic amount of material) makes them exceptionally valuable for forensic evidence analysis, where preserving original evidence is paramount for legal proceedings [28] [29].

Controlled Substance Analysis: Raman spectroscopy is extensively used for the identification of illicit drugs like cocaine and novel psychoactive substances (NPS). It can detect not only the primary drug but also cutting agents and adulterants, providing intelligence on trafficking patterns [35] [29]. FT-IR serves as a complementary technique for verifying functional groups and identifying organic adulterants [28] [36].

Trace Evidence Examination:

- Gunshot Residue (GSR) & Paint: LIBS excels in the analysis of inorganic GSR particles and the depth profiling of multi-layer paint chips from hit-and-run incidents, capable of identifying all layers in a sample [31] [34].

- Inks, Toners, and Questioned Documents: Raman spectroscopy can differentiate between various ink and toner formulations on documents with up to 90% accuracy, aiding in the detection of forgeries without destroying the document [36].

- Fibers, Hairs, and Bodily Fluids: Raman can differentiate between fiber types and has even been reported to distinguish an individual's race from a dried bloodstain [35]. LIBS can be applied to elemental analysis of hair and nails, which is useful in toxicology and nutritional studies [32].

Pharmaceutical Development & Analysis

In the pharmaceutical industry, these techniques are critical for ensuring product quality, safety, and efficacy from development to manufacturing.

Active Pharmaceutical Ingredient (API) Analysis: Both Raman and FT-IR are employed for the identification and quantification of APIs in drug formulations. Their ability to provide a chemical fingerprint makes them ideal for verifying the identity of raw materials and final products against reference standards [33] [29].

Counterfeit Drug Detection: Raman spectroscopy is a powerful tool for identifying economically motivated adulteration in pharmaceuticals. It can rapidly detect the presence, absence, or wrong proportion of APIs, as well as the presence of toxic adulterants [29].

Process Analytical Technology (PAT): The speed and non-destructive nature of Raman spectroscopy allow for its use in real-time monitoring of chemical reactions and processes during drug manufacturing, such as monitoring polymerization reactions or powder blending homogeneity [33] [29].
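The blending case can be made concrete with a moving-block relative standard deviation (RSD), a criterion commonly applied in spectroscopic blend monitoring: once the spread of a tracked band intensity over a sliding window becomes small, the blend is treated as homogeneous. This is a minimal sketch; the window size, the 2% limit, and the readings are hypothetical, and real endpoint criteria come from validated process specifications.

```python
from statistics import mean, stdev


def moving_block_rsd(values, window):
    """Percent relative standard deviation of each consecutive block of `window` values.

    `window` must be at least 2, because the sample standard deviation needs two points.
    """
    return [
        100.0 * stdev(values[i:i + window]) / mean(values[i:i + window])
        for i in range(len(values) - window + 1)
    ]


def blend_endpoint(values, window=5, rsd_limit=2.0):
    """Index of the last sample in the first block whose RSD falls below rsd_limit.

    Returns None if the blend never meets the (hypothetical) acceptance limit.
    """
    for start, rsd in enumerate(moving_block_rsd(values, window)):
        if rsd < rsd_limit:
            return start + window - 1
    return None


if __name__ == "__main__":
    # Hypothetical intensities of an API Raman band, one reading per blender revolution
    readings = [100, 60, 140, 80, 120, 95, 105, 98, 102, 100, 101, 99, 100, 100, 101]
    print(blend_endpoint(readings))  # -> 11
```

A production rule would typically also require the RSD to stay below the limit for several consecutive blocks, so that one quiet window does not trigger a premature endpoint.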

Advanced & Emerging Applications

Portable and On-Site Analysis: The development of compact, portable LIBS [34] and Raman [35] sensors is revolutionizing crime scene investigations by enabling on-the-spot analysis of evidence, thus delivering actionable intelligence rapidly and reducing laboratory backlogs.

Microplastics and Environmental Analysis: FT-IR microscopy is a leading technique for identifying and characterizing microplastic particles in environmental and biological samples, a growing area of public health concern [33].

Biomedical and Tissue Analysis: LIBS is emerging as a valuable tool in biomedical research for mapping the elemental distribution in tissues, with applications in disease diagnosis (e.g., cancer, Alzheimer's) and studying the distribution of therapeutic metals [32].

Experimental Protocols

Protocol: Raman Spectroscopy for Forensic Drug Analysis

This protocol outlines the steps for identifying an unknown white powder using a Raman spectrometer, simulating a common forensic scenario [28].

Research Reagent Solutions & Materials:

Table 2: Essential materials for Raman spectroscopy drug analysis.

| Item | Function |
|---|---|
| PeakSeeker Raman Spectrometer (785 nm laser) | Instrument for spectral acquisition [28] |
| Glass vials | Sample holder for solid powders [28] |
| Reference standards (e.g., Cocaine, Caffeine) | Known materials for library matching and validation [28] |
| Raman Spectral Library Database | Software database for automated chemical identification [28] |

Procedure:

- Sample Preparation: Transfer the unknown white powder into a clean glass vial until it is approximately 3/4 full to ensure sufficient material for the laser to analyze [28].

- Instrument Setup: Place the vial securely into the sample compartment of the Raman spectrometer. Ensure the instrument is powered on and the software is initialized, with the laser still switched off.

- Data Acquisition: Switch the laser to the "on" position to begin data collection. Acquire the Raman spectrum, typically over a Raman shift range of 200-2000 cm⁻¹ [28].

- Data Analysis:

- Library Search: Compare the acquired spectrum against the instrument's Raman spectral library database to generate a list of potential matches [28].

- Peak Assignment: Manually compare the peak positions and relative intensities in the sample spectrum with literature values and reference tables for the suspected compound (e.g., cocaine). Pay particular attention to key functional groups, such as the C-N stretch in cocaine, which can differentiate it from other similar powders [28].

- Validation: Confirm the identity by comparing the results with a known reference standard analyzed under the same conditions, if available.
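The library search and validation steps can be prototyped with a correlation-based hit quality index (HQI), one common way of scoring spectral similarity. Commercial packages implement their own variants, so this is not any vendor's algorithm. The sketch assumes spectra that are already baseline-corrected and interpolated onto a common Raman shift axis, and it uses synthetic Gaussian bands as hypothetical placeholder references, not real spectra of cocaine, caffeine, or any other compound.

```python
import numpy as np


def hit_quality_index(unknown, reference):
    """Squared correlation of mean-centered spectra: 1.0 is a perfect match, near 0 is unrelated."""
    u = unknown - unknown.mean()
    r = reference - reference.mean()
    return float(np.dot(u, r) ** 2 / (np.dot(u, u) * np.dot(r, r)))


def library_search(unknown, library, top_n=3):
    """Rank library entries by HQI, best first. `library` maps name -> spectrum array."""
    scores = [(name, hit_quality_index(unknown, ref)) for name, ref in library.items()]
    return sorted(scores, key=lambda item: item[1], reverse=True)[:top_n]


def gaussian_bands(axis, centers, width=8.0):
    """Synthetic spectrum built from unit-height Gaussian bands (illustration only)."""
    return sum(np.exp(-0.5 * ((axis - c) / width) ** 2) for c in centers)


if __name__ == "__main__":
    shift_axis = np.linspace(200, 2000, 901)  # Raman shift in cm^-1, 2 cm^-1 spacing
    # Hypothetical placeholder references, NOT real drug spectra
    library = {
        "reference_A": gaussian_bands(shift_axis, [870, 1000, 1600, 1715]),
        "reference_B": gaussian_bands(shift_axis, [555, 1330, 1700]),
        "reference_C": gaussian_bands(shift_axis, [640, 1100, 1450]),
    }
    rng = np.random.default_rng(0)
    unknown = 0.8 * library["reference_A"] + rng.normal(0.0, 0.01, shift_axis.size)
    for name, hqi in library_search(unknown, library):
        print(f"{name}: HQI = {hqi:.3f}")
```

Mean-centering makes the score insensitive to overall intensity and constant offsets, which matters because the absolute signal depends on laser power and focus. A high HQI only shortlists candidates; manual peak assignment and comparison with a reference standard remain the confirmatory steps.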

Protocol: FT-IR Spectroscopy with KBr Pellet Method

This protocol describes the traditional KBr pellet method for analyzing solid samples via FT-IR transmission spectroscopy, which is a standard technique for definitive identification in pharmaceuticals [28] [33].

Research Reagent Solutions & Materials:

Table 3: Essential materials for FT-IR spectroscopy with KBr pellets.

| Item | Function |
|---|---|
| FT-IR Spectrometer (e.g., Nicolet) | Instrument for IR absorption measurement [28] |
| Potassium Bromide (KBr) | Inert, IR-transparent matrix for sample dilution [28] |
| Hydraulic Press | Equipment to compress powder into a transparent pellet [28] |
| Mortar and Pestle | For grinding and homogenizing the sample-KBr mixture [28] |
| Aluminum foil & block | Support for pellet formation [28] |

Procedure:

- Weighing: Precisely weigh out 1.000 gram of dry potassium bromide (KBr) and 0.010 grams of the solid sample to be analyzed, achieving a 100:1 dilution ratio [28].

- Grinding and Mixing: Transfer both the KBr and the sample into a mortar. Grind the mixture thoroughly with a pestle to create a fine, homogeneous powder and ensure even distribution of the sample within the KBr matrix [28].

- Pellet Formation:

- Place a piece of aluminum foil on an aluminum block and use a hole punch to create a cavity.

- Transfer half of the ground mixture into the cavity and level the surface.

- Place a second aluminum block on top and insert the entire assembly into a hydraulic press.

- Apply a pressure of 18,000 psi for approximately 30 seconds to form a transparent pellet [28].

- Data Acquisition: Carefully remove the KBr pellet from the press and place it into a holder in the FT-IR spectrometer. Collect the infrared absorption spectrum.

- Data Analysis: Analyze the resulting spectrum by identifying key absorption bands corresponding to functional groups (e.g., O-H stretch, C=O stretch). Cross-reference the peak positions with literature values or a reference standard spectrum for identification, as commercial library databases may not always be available [28].
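The band-identification step can be prototyped in two parts: converting measured single-beam spectra to absorbance, and looking up each band position in a correlation table. FT-IR software ratios the sample single-beam spectrum against a background single-beam spectrum to obtain the transmittance T, and the absorbance is A = -log10(T). This is a minimal sketch; the ranges are approximate textbook values for a small illustrative subset of functional groups, and the function and table names are hypothetical.

```python
import numpy as np

# Approximate textbook correlation ranges (cm^-1); illustrative subset only.
# Real assignments must be checked against literature values or a reference spectrum.
CORRELATION_TABLE = {
    "O-H stretch": (3200.0, 3550.0),
    "N-H stretch": (3300.0, 3500.0),
    "C-H stretch (sp3)": (2850.0, 3000.0),
    "C=O stretch": (1650.0, 1780.0),
    "aromatic C=C stretch": (1450.0, 1600.0),
}


def absorbance_from_single_beams(sample, background):
    """Ratio sample to background single-beam spectra, then apply A = -log10(T)."""
    transmittance = np.asarray(sample, dtype=float) / np.asarray(background, dtype=float)
    return -np.log10(transmittance)


def assign_bands(peak_wavenumbers, table=CORRELATION_TABLE):
    """Map each observed band position to every functional group whose range contains it."""
    return {
        peak: [group for group, (low, high) in table.items() if low <= peak <= high]
        for peak in peak_wavenumbers
    }


if __name__ == "__main__":
    print(absorbance_from_single_beams([10.0, 50.0], [100.0, 100.0]))  # T = 0.1 and 0.5
    print(assign_bands([3400.0, 2920.0, 1715.0, 1000.0]))
```

Overlapping ranges (O-H and N-H near 3400 cm⁻¹ in this table) are returned together on purpose: a correlation table narrows the possibilities, and the final identification still rests on comparison with literature values or a reference standard spectrum.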

Protocol: LIBS for Elemental Analysis of Trace Evidence

This protocol describes the general use of LIBS for the elemental analysis of various trace evidence types, such as paint chips, glass, and gunshot residue, leveraging its minimal preparation requirements [31] [34].

Research Reagent Solutions & Materials:

Table 4: Essential materials for LIBS analysis of trace evidence.

| Item | Function |
|---|---|
| LIBS Spectrometer (Portable or Benchtop) | Instrument for plasma generation & spectral detection [34] |
| Sample Substrate (e.g., Glass Slide, Tape) | Platform for mounting small or particulate evidence [31] |
| Standard Reference Materials | For instrument calibration and quantitative analysis [32] |

Procedure:

- Sample Presentation: For solid samples like a paint chip or a fragment of glass, mount the evidence securely on a sample stage or adhesive tape. Ensure the analysis surface is accessible to the laser. For loose residues like GSR, tap the substrate onto a double-sided conductive tape on a microscope slide [31].

- Instrument Setup:

- Position the sensor head of the LIBS instrument at the working distance specified by the manufacturer, so that the laser is focused on the sample surface.

- For a portable device, use the graphical user interface (GUI) to select the analysis mode and parameters [34].

- Data Acquisition:

- Fire a series of high-power laser pulses at the sample surface. Each pulse ablates a micro-volume of material, creating a transient plasma.

- Collect the light emitted by the cooling plasma using the built-in spectrometer.

- For layered materials (e.g., car paint), successive laser shots can be used for depth profiling, revealing the elemental composition of each layer [34].

- Data Analysis: The spectrometer software will display the emission spectrum, which consists of sharp peaks at wavelengths characteristic of the elements present. Identify elements by matching these peak positions to known elemental emission lines (e.g., Pb for gunshot residue, or various metals in paint pigments) [31] [34].

- Data Interpretation: Use the elemental fingerprint to discriminate between different sample sources (e.g., linking a paint chip from a crime scene to a specific vehicle model) [31].
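The identification and interpretation steps can be sketched as matching detected peak centers to a table of known emission lines within a wavelength tolerance, followed by a simple screening rule. This minimal Python sketch assumes peak centers have already been extracted from the spectrum. The line list is a small illustrative subset of air wavelengths that should be checked against the NIST Atomic Spectra Database. The 0.1 nm tolerance and the screening rule, which borrows the Pb, Ba, and Sb combination classically regarded as characteristic of GSR, are hypothetical simplifications rather than a validated criterion.

```python
# A few strong emission lines (nm, air wavelengths) often used in GSR and paint work.
# Illustrative subset only; verify against the NIST Atomic Spectra Database.
REFERENCE_LINES_NM = {
    "Pb": [368.35, 405.78],
    "Ba": [455.40, 493.41],
    "Sb": [259.81],
    "Ti": [334.94, 498.17],
    "Cu": [324.75, 327.40],
    "Ca": [393.37, 396.85],
}


def match_lines(peaks_nm, reference=REFERENCE_LINES_NM, tolerance_nm=0.1):
    """Return ({element: [matched peaks]}, [unassigned peaks])."""
    matches = {}
    unassigned = []
    for peak in peaks_nm:
        hits = [
            element
            for element, lines in reference.items()
            if any(abs(peak - line) <= tolerance_nm for line in lines)
        ]
        for element in hits:
            matches.setdefault(element, []).append(peak)
        if not hits:
            unassigned.append(peak)
    return matches, unassigned


def looks_like_gsr(matches):
    """Hypothetical screening rule: Pb, Ba, and Sb all detected."""
    return all(element in matches for element in ("Pb", "Ba", "Sb"))


if __name__ == "__main__":
    peaks = [405.80, 455.38, 259.79, 600.00]  # hypothetical detected peak centers
    found, leftover = match_lines(peaks)
    print(found, leftover, looks_like_gsr(found))
```

In practice the tolerance follows from the spectrometer's resolution and wavelength calibration, and a confident assignment usually rests on several lines of the same element rather than a single match.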

In the field of forensic evidence preservation, non-destructive analysis methods are paramount for maintaining the integrity of evidence for legal proceedings. Among these methods, three-dimensional (3D) reconstruction technologies have emerged as powerful tools for accurately documenting and preserving crime scenes and physical evidence without causing alteration or damage [18]. This document provides detailed application notes and protocols for two principal 3D reconstruction technologies—laser scanning and photogrammetry—framed within the context of non-destructive forensic analysis. These techniques enable investigators to create precise digital representations of scenes, objects, and structures, facilitating detailed subsequent analysis, virtual re-examination, and reliable presentation in court [37] [38].

Fundamental Principles

Photogrammetry is a technique that uses photographs to measure and interpret physical objects or environmental features. By analyzing multiple images taken from different angles, photogrammetry reconstructs a 3D model of an object or scene. The fundamental principle is triangulation: the position of each point on an object is determined from the intersection of the lines of sight to that point from multiple images [39]. The process involves capturing overlapping photos, using software to identify common points across them, and generating dense point clouds that can be converted into textured 3D meshes [39] [38].
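The triangulation principle can be illustrated for a single point with the standard linear (DLT) method from multi-view geometry. This minimal sketch assumes calibrated cameras with known 3x4 projection matrices and a correctly matched point in each image; the camera intrinsics and placement are hypothetical. Production photogrammetry software additionally estimates the camera poses themselves and refines everything with bundle adjustment.

```python
import numpy as np


def project(P, X):
    """Project a 3D point X with a 3x4 camera matrix P; return pixel coordinates (x, y)."""
    x = P @ np.append(X, 1.0)
    return x[:2] / x[2]


def triangulate_dlt(projections, pixels):
    """Linear (DLT) triangulation of one point seen in two or more images.

    Each view contributes two rows, x*P[2] - P[0] and y*P[2] - P[1]. The 3D point
    is the right singular vector with the smallest singular value, converted
    back from homogeneous coordinates.
    """
    rows = []
    for P, (x, y) in zip(projections, pixels):
        rows.append(x * P[2] - P[0])
        rows.append(y * P[2] - P[1])
    _, _, vt = np.linalg.svd(np.array(rows))
    X_h = vt[-1]
    return X_h[:3] / X_h[3]


if __name__ == "__main__":
    # Hypothetical intrinsics: 1000 px focal length, principal point (640, 480)
    K = np.array([[1000.0, 0.0, 640.0], [0.0, 1000.0, 480.0], [0.0, 0.0, 1.0]])
    P1 = K @ np.hstack([np.eye(3), np.zeros((3, 1))])                  # camera at the origin
    P2 = K @ np.hstack([np.eye(3), np.array([[-1.0], [0.0], [0.0]])])  # camera one unit along +x
    true_point = np.array([0.5, 0.2, 5.0])
    pixels = [project(P1, true_point), project(P2, true_point)]
    print(triangulate_dlt([P1, P2], pixels))
```

With noise-free synthetic pixels the point is recovered exactly. With real, noisy matches the lines of sight do not intersect perfectly and the DLT returns a least-squares compromise, which is why more views and well-spread camera positions generally improve accuracy.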

Laser Scanning (also known as LiDAR - Light Detection and Ranging) is a technology that measures surface distances by illuminating targets with lasers and analyzing the reflected light. The core principle is time-of-flight measurement, where the time taken for a laser beam to return to the sensor is used to calculate the distance to the surface [39] [40]. Laser scanners rapidly emit a series of laser beams in a sweeping pattern, capturing millions of distance measurements per second to generate dense point clouds representing the surface geometry of scanned objects or environments [39].
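The time-of-flight relation and the conversion from beam angles to point-cloud coordinates are short enough to write out. This is a minimal sketch assuming a pulsed scanner that reports a round-trip time plus azimuth and elevation angles for each measurement; the angle convention is an assumption (instruments differ), and real scanners also apply their own calibration corrections.

```python
import math

SPEED_OF_LIGHT_M_S = 299_792_458.0  # speed of light in vacuum


def tof_range_m(round_trip_s: float, refractive_index: float = 1.0) -> float:
    """Distance to the surface; the pulse travels out and back, hence the factor of 2.

    Pass refractive_index of about 1.0003 to approximate propagation in air.
    """
    return SPEED_OF_LIGHT_M_S * round_trip_s / (2.0 * refractive_index)


def to_cartesian(range_m, azimuth_deg, elevation_deg):
    """Convert one measurement (range plus beam angles) to x, y, z in the scanner frame."""
    az = math.radians(azimuth_deg)
    el = math.radians(elevation_deg)
    horizontal = range_m * math.cos(el)
    return (horizontal * math.cos(az), horizontal * math.sin(az), range_m * math.sin(el))


if __name__ == "__main__":
    round_trip = 66.7e-9  # hypothetical round-trip time in seconds
    r = tof_range_m(round_trip)
    print(f"range = {r:.3f} m", to_cartesian(r, 45.0, 10.0))
```

The factor of 2 also shows why timing precision matters: a 1 ns timing error corresponds to about 15 cm of range.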

Comparative Technical Analysis

The table below summarizes the key differences between photogrammetry and laser scanning to guide appropriate technology selection for forensic applications:

Table 1: Technical comparison between photogrammetry and laser scanning

| Parameter | Photogrammetry | Laser Scanning |
|---|---|---|
| Data Acquisition Method | Photographs from digital cameras, drones, or specialized photogrammetric cameras [39] | Laser beams emitted in sweeping pattern [39] |