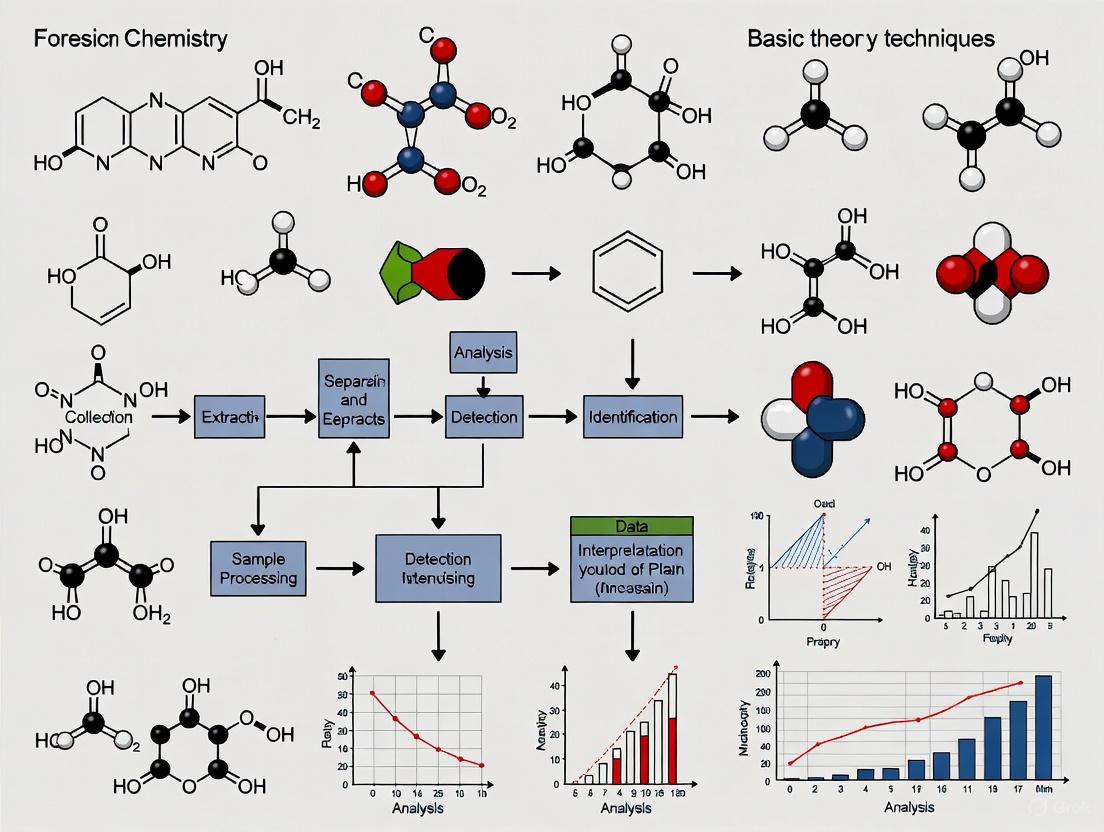

New Frontiers in Forensic Chemistry: Foundational Theories, Advanced Techniques, and Validation for Modern Evidence Analysis

This article provides a comprehensive overview of the latest foundational theories, methodological applications, and validation frameworks shaping modern forensic chemistry.


Abstract

This article provides a comprehensive overview of the latest foundational theories, methodological applications, and validation frameworks shaping modern forensic chemistry. Tailored for researchers, scientists, and drug development professionals, it explores cutting-edge analytical techniques like DART-HRMS and GC×GC–MS, detailing their principles for characterizing complex seized drugs and other evidence. The content addresses critical challenges in method optimization, troubleshooting, and data interpretation, while firmly grounding the discussion in the rigorous standards required for scientific and legal admissibility. By synthesizing current research priorities and technological advances, this review serves as a vital resource for professionals navigating the evolving landscape of forensic analytical science.

Core Principles and Emerging Analytical Theories in Modern Forensic Chemistry

The Strategic Shift from Targeted to Untargeted and Non-Destructive Analysis

The field of analytical science is undergoing a fundamental transformation, moving from narrowly focused targeted methods toward comprehensive untargeted and non-destructive approaches. This paradigm shift represents a significant advancement in how scientists investigate complex chemical mixtures, particularly in forensic chemistry and drug development. Where targeted analysis traditionally focuses on identifying and quantifying a predetermined set of known compounds using validated methods, non-targeted analysis (NTA) aims to capture a broader spectrum of chemicals present in a sample without preconceptions [1]. In the literature, this approach is variously referred to as 'non-target screening' or 'untargeted screening', and the term 'suspect screening' is sometimes used alongside them [1], although suspect screening is better treated as a distinct, intermediate strategy.

The strategic value of this shift lies in its capacity to reveal previously unknown or unexpected compounds, providing a more holistic understanding of sample composition. In forensic contexts, this enables the detection of novel psychoactive substances (NPS) that would evade traditional targeted methods [2]. Meanwhile, non-destructive techniques preserve evidence integrity—a critical requirement in forensic investigations and valuable sample analysis. The integration of high-resolution mass spectrometry (HRMS) and advanced computational tools has been instrumental in driving this transition, allowing researchers to process complex data sets and identify compounds without relying solely on reference standards [1] [3].

Fundamental Principles and Definitions

Core Analytical Approaches

- Targeted Analysis: A hypothesis-driven approach that detects and quantifies specific predefined analytes using reference standards. It offers high sensitivity and precision for known compounds but cannot identify unexpected substances [1] [4].

- Suspect Screening: An intermediate approach where analysts screen samples against lists of suspected compounds based on prior knowledge, using available mass information for identification and confirmation [1].

- Non-Targeted Analysis (NTA): A hypothesis-generating approach that aims to detect all measurable analytes in a sample without predetermined targets. NTA is particularly valuable for discovering unknown compounds and comprehensive sample characterization [1] [3].

- Non-Destructive Analysis: Techniques that preserve sample integrity throughout the analytical process, enabling subsequent analyses or maintaining evidence continuity. Examples include spectroscopic methods and specialized mass spectrometry approaches that minimize sample consumption [5] [6].

Comparative Framework

Table 1: Comparison of Targeted and Untargeted Analytical Approaches

| Aspect | Targeted Analysis | Non-Targeted Analysis |
|---|---|---|
| Scope | Limited to predefined compounds | Comprehensive, aiming for all detectable analytes |
| Hypothesis | Confirmatory (hypothesis-driven) | Exploratory (hypothesis-generating) |
| Reference Standards | Required for identification and quantification | Not required for initial detection |
| Identification Confidence | High for targeted compounds | Varies; requires confidence levels and confirmation |
| Quantification | Absolute quantification possible | Typically relative quantification among samples |
| Forensic Utility | Excellent for known substances | Essential for novel compounds and unknown mixtures |
| Data Complexity | Manageable, focused data sets | Highly complex, requires advanced bioinformatics |

Technological Drivers of the Analytical Shift

Mass Spectrometry Advancements

The evolution of high-resolution mass spectrometry (HRMS) has been the cornerstone enabling the shift to non-targeted approaches. Modern HRMS instruments achieve mass resolutions exceeding 20,000, allowing precise mass determination with errors typically below 5 ppm, compared to nominal mass measurements (±1 Da) provided by low-resolution systems (LRMS) [4]. This precision is critical for distinguishing isobaric compounds—different molecules with the same nominal mass but different exact masses—which frequently cause false positives in LRMS methods [4].
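To make the resolution and mass-accuracy figures above more concrete, the following minimal Python sketch converts a resolving power into an approximate peak width and a ppm tolerance into an absolute m/z window. It assumes the common definition R = m/Δm with Δm taken at full width at half maximum (FWHM); the function names and the example m/z value are illustrative and do not come from the cited work.

```python
def peak_width_fwhm(mz: float, resolving_power: float) -> float:
    """Approximate peak width (FWHM, in m/z units), using R = m / delta_m."""
    if resolving_power <= 0:
        raise ValueError("resolving_power must be positive")
    return mz / resolving_power


def ppm_window(mz: float, tolerance_ppm: float) -> tuple:
    """Lower and upper m/z bounds of a symmetric ppm tolerance window."""
    half_width = mz * tolerance_ppm / 1e6
    return (mz - half_width, mz + half_width)


example_mz = 400.0  # arbitrary illustrative value, not taken from the article
print(peak_width_fwhm(example_mz, 20_000))  # peak width at R = 20,000
print(ppm_window(example_mz, 5.0))          # window for a 5 ppm tolerance
```

Under these assumptions, a resolving power of 20,000 at m/z 400 corresponds to a peak roughly 0.02 m/z wide, and a 5 ppm tolerance spans about ±0.002 m/z, compared with the ±1 Da uncertainty of a nominal-mass measurement.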

The coupling of HRMS with soft ionization techniques and high-performance separation methods like liquid chromatography (LC) has created powerful platforms for untargeted analysis. These systems enable sensitive detection while preserving molecular information, with tandem HRMS (HR-MS/MS) providing structural elucidation capabilities through fragmentation patterns [1]. Instrumentation including time-of-flight (TOF) and orbital ion trap mass analyzers, often combined with ion mobility separation, offers multidimensional data acquisition that deconvolutes complex mixtures [3].

Non-Destructive and Minimal-Sampling Techniques

Parallel advancements in non-destructive techniques have expanded analytical possibilities, particularly for precious or irreplaceable samples. Non-proximate desorption photoionization mass spectrometry (NPDPI-MS) represents one innovative approach, allowing direct analysis of museum objects without physical contact [5]. This technique uses heated nitrogen to desorb analytes from swabbed samples or intact objects, with subsequent photoionization and high-resolution mass analysis enabling comprehensive characterization without damage [5].

Vibrational spectroscopic methods including Raman spectroscopy, Fourier-transform infrared (FT-IR) spectroscopy, and near-infrared (NIR) spectroscopy provide molecular fingerprinting capabilities with minimal or no sample preparation [6] [7]. These techniques are particularly valuable for forensic applications where evidence preservation is paramount, such as determining the age of bloodstains using ATR FT-IR spectroscopy with chemometrics [6].

Table 2: Non-Destructive Analytical Techniques and Their Forensic Applications

| Technique | Principle | Forensic Application | Example Use Case |
|---|---|---|---|
| NPDPI-MS | Thermal desorption with photoionization | Surface analysis of evidence | Characterizing plasticizer exudates on historical PVC objects [5] |
| Raman Spectroscopy | Inelastic light scattering | Drug identification, trace evidence | Mobile systems for on-scene analysis [6] |
| ATR FT-IR | Infrared absorption with attenuated total reflection | Bloodstain age determination | Estimating time since deposition of bloodstains [6] |
| LIBS | Laser-induced plasma emission | Elemental analysis of materials | Portable sensor for crime scene investigations [6] |
| Handheld XRF | X-ray fluorescence | Elemental composition analysis | Distinguishing tobacco brands through ash analysis [6] |
| NIR/UV-vis Spectroscopy | Absorption of specific wavelengths | Bloodstain characterization | Determining time since deposition of bloodstains [6] |

Experimental Protocols in Untargeted Analysis

Non-Destructive Swab Sampling with NPDPI-MS Analysis

Application Context: This protocol was developed for analyzing surface exudates on heritage poly(vinyl chloride) objects but demonstrates principles applicable to forensic evidence where sample preservation is essential [5].

Materials and Equipment:

- Texwipe TX714A swabs (knitted lint-free polyester)

- Acid-free paper stencil (5 × 5 cm)

- 50 mL centrifuge tubes with cardboard holders

- NPDPI-MS system with Orbitrap mass analyzer

- Nitrogen gas supply

- Krypton vacuum ultraviolet source

Procedure:

Sample Collection:

- Position the stencil to define the swabbing area.

- Using a dry swab, wipe across the surface left to right and back four times while moving downward.

- Flip the swab and repeat the wiping motion perpendicular to the first direction.

- Store the swab in a centrifuge tube suspended with a cardboard holder to prevent surface contact.

NPDPI-MS Analysis:

- Position the swab head less than 1 mm below the stationary gas jet probe.

- Expose the swab to nitrogen at 200°C flowing at 1.0 L/min for 2.0 seconds.

- Transfer desorbed analytes through a 2 m long, 195°C transfer tube.

- Mix analytes with room air at 4.8 L/min and gas-phase anisole from an in-line permeation tube.

- Ionize using a krypton vacuum ultraviolet source.

- Analyze with Orbitrap Elite mass spectrometer scanning m/z 120-600 at 30k resolving power.

Data Acquisition:

- Perform MS^n experiments with different collision-induced dissociation (CID) voltages for structural information.

- Use full-scan acquisition for comprehensive detection.

Validation: The method was validated against direct object analysis by NPDPI-MS and demonstrated correlation with targeted GC-MS analysis of extracted swabs [5].

LC-HRMS Untargeted Screening for Novel Psychoactive Substances

Application Context: This protocol is adapted from forensic toxicology applications for detecting new psychoactive substances and their metabolites in biological samples [2].

Materials and Equipment:

- High-resolution mass spectrometer (QTOF or Orbitrap)

- Liquid chromatography system with biphenyl column (100 × 2.1 mm, 2.7 μm)

- Quaternary solvent delivery system

- Refrigerated autosampler

- QuEChERS extraction salts (4 g MgSO₄/1 g NaCl/1 g sodium citrate dihydrate/0.5 g sodium citrate sesquihydrate)

- Ice-cold acetonitrile (-20°C)

- Ammonium formate and formic acid for mobile phase preparation

Procedure:

Sample Preparation:

- Add 200 μL of ice-cold acetonitrile to 100 μL of whole blood for deproteinization.

- Add 40 mg of QuEChERS salts for liquid-liquid extraction.

- Vortex mix and centrifuge to separate phases.

- Transfer 30 μL of the upper phase to 90 μL of aqueous mobile phase.

- Inject 5 μL into the LC-HRMS system.

Chromatographic Separation:

- Use gradient elution with (A) ammonium formate 2 mM/0.002% formic acid and (B) methanol with ammonium formate 2 mM/0.002% formic acid.

- Employ the following gradient at 0.3 mL/min (increased to 0.6 mL/min from 11-16.2 min):

- 0.00-1.00 min: 5% B

- 1.00-2.00 min: 5-40% B

- 2.00-10.50 min: 40-100% B

- 10.50-11.00 min: 100% B

- 11.01-13.00 min: 100% B

- 13.00-13.01 min: 100-5% B

- 13.51-18.00 min: 5% B

- Maintain column temperature at 40°C.

HRMS Data Acquisition:

- Use data-dependent acquisition (DDA) or data-independent acquisition (DIA) modes.

- For DDA: Full scan MS1 at resolution ≥30,000 followed by MS2 on top N precursors.

- For DIA: Isolate wide m/z windows (e.g., 20-50 Da) for fragmentation.

- Include quality control samples pooled from all specimens.

Data Processing:

- Perform peak picking, alignment, and componentization.

- Use molecular feature extraction to group related ions.

- Screen against suspect lists (e.g., NORMAN Suspect List Exchange, SWGDRUG).

- Apply statistical analysis for biomarker discovery in case-control studies.
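The suspect-screening step in the data processing list can be sketched as a simple mass match within a ppm tolerance. The Python example below is a minimal illustration, not the workflow of the cited protocol: the `Suspect` class, the `screen_features` function, and the 5 ppm default are assumptions. The alfuzosin value is the expected m/z quoted in the drug-facilitated crime case study later in this article, the 2C-B value is calculated here for [M+H]⁺ of C10H14BrNO2 (⁷⁹Br), and the second and third feature masses are made up.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Suspect:
    name: str
    mz: float  # theoretical [M+H]+ m/z


def screen_features(feature_mzs, suspects, tolerance_ppm=5.0):
    """Return (feature_mz, suspect_name, ppm_error) for each match within tolerance."""
    hits = []
    for mz in feature_mzs:
        for suspect in suspects:
            error_ppm = (mz - suspect.mz) / suspect.mz * 1e6
            if abs(error_ppm) <= tolerance_ppm:
                hits.append((mz, suspect.name, round(error_ppm, 2)))
    return hits


# Hypothetical two-entry suspect list; real lists hold thousands of entries.
SUSPECTS = [
    Suspect("alfuzosin", 390.2136),  # expected m/z quoted in this article
    Suspect("2C-B", 260.0281),       # calculated for C10H14BrNO2, [M+H]+ (assumption)
]

# First value is the measured m/z from the case study; the others are invented.
features = [390.21291, 260.1650, 195.0877]
hits = screen_features(features, SUSPECTS)
print(hits)
```

A mass match alone supports only a tentative assignment; retention time, isotope pattern, and MS2 fragments would still be needed before reporting an identification.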

Non-Targeted Analysis Workflow: This diagram illustrates the integrated stages of modern untargeted analysis, from sample preparation to data interpretation.

Forensic Chemistry Applications and Case Studies

Drug-Facilitated Crime Investigations

The strategic value of untargeted approaches is particularly evident in drug-facilitated sexual assault (DFSA) cases, where victims may be unable to identify the substances administered. Traditional targeted screens may miss uncommon pharmaceuticals or novel psychoactive substances. In one case study, LRMS initially detected alfuzosin (an alpha-blocker) in a female victim's blood, a finding inconsistent with the context [4]. HRMS confirmation validated the presence through exact mass measurement (390.21291 m/z vs. expected 390.2136 m/z, Δm < 2 ppm) and fragment matching (Δm < 5 ppm for all fragments) [4]. This demonstrates how untargeted methods with orthogonal verification can detect unexpected substances that might be dismissed as false positives in targeted approaches.
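The mass error quoted for alfuzosin can be reproduced with the standard ppm formula, (measured − expected) / expected × 10⁶. The short Python sketch below uses the two m/z values from the case above; the function name is illustrative.

```python
def ppm_error(measured_mz: float, expected_mz: float) -> float:
    """Signed mass error in parts per million."""
    return (measured_mz - expected_mz) / expected_mz * 1e6


error = ppm_error(390.21291, 390.2136)
print(f"{error:.2f} ppm")  # prints "-1.77 ppm"
```

The absolute error of about 1.8 ppm is consistent with the reported Δm < 2 ppm.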

New Psychoactive Substance Discovery

The rapid proliferation of new psychoactive substances (NPS) presents significant challenges for forensic laboratories. Targeted methods require reference standards that are unavailable for newly emerging compounds. Untargeted metabolomics approaches have been successfully employed to identify novel biomarkers of NPS consumption. For instance, untargeted analysis of urine samples following gamma-hydroxybutyric acid (GHB) administration revealed previously unknown metabolites including GHB carnitine, GHB glycine, and GHB glutamate, extending the detection window beyond the parent compound's short half-life [2].

Driving Under the Influence Investigations

In a driving under the influence of drugs (DUID) case, LRMS screening suggested the presence of 2C-B (a substituted phenethylamine) based on nominal mass and retention time matches [4]. However, HRMS analysis revealed significant mass errors (>500 ppm) for both the precursor and fragment ions, correctly excluding 2C-B and preventing a false positive identification [4]. The case highlights how isobaric compounds with similar fragmentation patterns in LRMS can be distinguished through exact mass measurements, demonstrating the confirmatory power of HRMS in forensic toxicology.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Materials for Untargeted and Non-Destructive Analysis

| Item | Function | Application Notes |
|---|---|---|
| High-Resolution Mass Spectrometer | Precise mass measurement for compound identification | Resolution ≥20,000; mass accuracy <5 ppm required for confident identification [4] |
| Biphenyl LC Column | Chromatographic separation of diverse compounds | Provides different selectivity compared to C18 columns; improved for aromatic compounds [4] |
| QuEChERS Salts | Efficient extraction of broad analyte classes | Minimizes matrix effects; enables high-throughput sample preparation [4] |
| Polyester Swabs | Non-destructive sample collection | Low organic content residue; no adhesives; compatible with direct MS analysis [5] |
| Quality Control Reference Materials | Monitoring instrumental performance and data quality | Should represent study samples; used for reproducibility assessment [2] |
| Chemical Databases | Compound identification and suspect screening | NORMAN Suspect List Exchange, US-EPA CompTox Chemicals Dashboard, SWGDRUG [3] |
| Ion Mobility Spectrometry | Additional separation dimension | Resolves isobaric compounds; provides collisional cross-section data [3] |
| Vacuum Ultraviolet Source | Soft ionization for complex mixtures | Enables non-proximate desorption photoionization; minimizes fragmentation [5] |

Data Analysis and Computational Strategies

Prioritization Frameworks for Complex Data

The vast number of features detected in untargeted analyses (tens of thousands in environmental samples) necessitates effective prioritization strategies [3]. These can be categorized as online prioritization (real-time during acquisition) and offline prioritization (post-acquisition) [3].

Online prioritization techniques include:

- Inclusion lists: Target specific m/z values based on suspect screening

- Mass defect filtering: Focus on characteristic mass defects of compound classes

- Isotopic pattern triggers: Prioritize compounds with distinctive isotopic signatures

- Retention time prediction: Narrow acquisition windows based on expected elution

Offline prioritization strategies include:

- Structure-based prioritization: Highlight compounds with concerning functional groups

- Property-based filtering: Focus on compounds with shared properties

- Regulatory list alignment: Flag compounds subject to restrictions

- Abundance-based ranking: Prioritize high-intensity signals

- Toxicity prediction: Use computational models to identify potentially hazardous compounds

Data Prioritization Framework: This diagram outlines strategies for managing complex data from untargeted analyses, highlighting the most relevant features for further investigation.
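Two of the strategies listed above, mass defect filtering and abundance-based ranking, can be combined in a few lines. The Python sketch below applies both after acquisition for simplicity, although the text lists mass defect filtering as an online technique. It approximates the mass defect as the difference between the measured m/z and its nearest integer, which is a simplification of the formal definition; the feature values, the defect range, and the function names are all hypothetical.

```python
def mass_defect(mz: float) -> float:
    """Approximate mass defect: m/z minus its nearest integer (simplification)."""
    return mz - round(mz)


def prioritize(features, defect_range, top_n=3):
    """Keep features inside the mass defect range, ranked by descending intensity."""
    low, high = defect_range
    kept = [f for f in features if low <= mass_defect(f["mz"]) <= high]
    return sorted(kept, key=lambda f: f["intensity"], reverse=True)[:top_n]


# Made-up feature table for illustration only.
features = [
    {"mz": 260.0281, "intensity": 1.0e5},
    {"mz": 390.2136, "intensity": 5.0e5},
    {"mz": 301.9500, "intensity": 9.0e5},  # negative defect, filtered out below
]

ranked = prioritize(features, defect_range=(0.0, 0.25))
print([f["mz"] for f in ranked])  # [390.2136, 260.0281]
```

In practice the defect range would be chosen from the compound class of interest, and the ranking would usually be combined with blank subtraction so that intense background ions are not prioritized.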

Confidence Assessment and Identification Levels

Confident compound identification remains a significant challenge in non-targeted analysis. A four-level identification framework has been widely adopted:

- Level 1: Confirmed structure - Matched to reference standard with multiple parameters

- Level 2: Probable structure - Library spectrum match or diagnostic evidence

- Level 3: Tentative candidate - Consistent with spectral data but ambiguous

- Level 4: Unknown feature - Detected but uncharacterized

This structured approach helps communicate identification confidence and guides appropriate follow-up actions, which is particularly important in forensic contexts where results may have legal implications [3].
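One way to apply the four levels consistently in a reporting pipeline is to encode them explicitly. The Python sketch below is a simplified illustration: the `Confidence` enum, the `assign_level` function, and the three evidence flags are assumptions, and the decision rules are reduced to the minimum described in the list above.

```python
from enum import IntEnum


class Confidence(IntEnum):
    CONFIRMED = 1   # matched to a reference standard
    PROBABLE = 2    # library spectrum match or diagnostic evidence
    TENTATIVE = 3   # consistent with the data but ambiguous
    UNKNOWN = 4     # detected but uncharacterized


def assign_level(reference_standard_match: bool,
                 library_match: bool,
                 candidate_structures: int) -> Confidence:
    """Map the available evidence to the strongest justified confidence level."""
    if reference_standard_match:
        return Confidence.CONFIRMED
    if library_match:
        return Confidence.PROBABLE
    if candidate_structures > 0:
        return Confidence.TENTATIVE
    return Confidence.UNKNOWN


print(assign_level(False, True, 3).name)   # PROBABLE
print(assign_level(False, False, 0).name)  # UNKNOWN
```

Storing the level with each reported feature makes it harder for a tentative candidate to be presented downstream as a confirmed identification.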

Challenges and Future Directions

Current Limitations

Despite its transformative potential, the implementation of untargeted and non-destructive analysis faces several significant challenges:

- Standardization: Lack of standardized protocols, reference materials, and reporting standards complicates method validation and comparison across laboratories [1].

- Data Complexity: The vast amount of data generated requires sophisticated bioinformatics tools and specialized expertise for proper interpretation [1] [7].

- Quantification: While excellent for compound discovery, untargeted methods typically provide only relative quantification between samples, unlike the absolute quantification possible with targeted approaches [1].

- Sensitivity: Non-destructive techniques may have higher detection limits compared to destructive methods that concentrate analytes [5].

- Computational Demands: Processing high-resolution MS data requires significant computational resources and specialized software [3].

Quality Assurance and Validation

Ensuring data quality in untargeted analysis requires specific quality assurance measures:

- Quality Control Samples: Pooled quality control (QC) samples should be analyzed at regular intervals throughout the analytical sequence to monitor instrument stability [2].

- Blank Samples: Process and instrument blanks identify contamination sources.

- Reference Materials: When available, certified reference materials validate analytical performance.

- Reproducibility Assessment: Technical replicates evaluate method precision.

- Matrix Effects: Evaluation of ionization suppression/enhancement in complex samples.
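The pooled QC and reproducibility checks above are often summarized as a relative standard deviation (RSD) per feature across repeated QC injections. The Python sketch below illustrates that calculation with the standard library; the 30% acceptance threshold, the feature names, and the intensity values are assumptions chosen for the example, not values from the cited studies.

```python
from statistics import mean, stdev


def rsd_percent(values):
    """Relative standard deviation (sample SD / mean) as a percentage."""
    average = mean(values)
    if average == 0:
        raise ValueError("mean intensity is zero; RSD is undefined")
    return stdev(values) / average * 100


def stable_features(qc_intensities, max_rsd=30.0):
    """Names of features whose RSD across QC injections is within the limit."""
    return [name for name, values in qc_intensities.items()
            if rsd_percent(values) <= max_rsd]


# Hypothetical intensities of two features in four pooled-QC injections.
qc_intensities = {
    "feature_A": [100, 105, 95, 102],
    "feature_B": [100, 10, 250, 40],
}

print(stable_features(qc_intensities))  # ['feature_A']
```

Features that fail the check are usually excluded from statistical comparison rather than deleted, so that the raw data remain available for review.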

Emerging Trends

The field continues to evolve with several promising developments:

- Artificial Intelligence Integration: Machine learning and deep neural networks are being applied to improve compound identification and prioritize features of interest [8] [7].

- Miniaturized Sensors: Portable spectroscopic devices enable on-site analysis for rapid screening at crime scenes [6].

- Data Fusion: Combining multiple analytical techniques provides complementary information for comprehensive sample characterization [7].

- Advanced Separation: Coupling ion mobility with LC-HRMS adds a further separation dimension, so that each feature is described by retention time, mobility, m/z, and intensity [3].

The strategic shift from targeted to untargeted and non-destructive analysis represents a fundamental transformation in analytical chemistry, particularly impactful in forensic science. This paradigm enables a more comprehensive understanding of complex samples, discovery of novel compounds, and preservation of valuable evidence. As technology continues to advance, these approaches will increasingly become integrated into standard analytical workflows, enhancing capabilities for forensic investigation and chemical risk assessment.

Fundamental Theory of Direct Analysis in Real-Time High-Resolution Mass Spectrometry (DART-HRMS)

Direct Analysis in Real-Time High-Resolution Mass Spectrometry (DART-HRMS) represents a transformative advancement in analytical chemistry, particularly within the field of forensic science. As an ambient ionization technique, DART-HRMS enables the rapid analysis of a wide variety of samples in their native state with minimal or no sample preparation [9] [10]. This capability makes it exceptionally valuable for forensic applications where preserving evidence integrity is paramount. The technique operates at atmospheric pressure and in an open laboratory environment, allowing for the direct examination of solids, liquids, and gases [9].

The fundamental innovation of DART-HRMS lies in its combination of a gentle ionization process with the high mass accuracy and resolution of modern mass analyzers. The DART ion source produces electronically or vibronically excited-state species from gases such as helium, argon, or nitrogen that initiate a cascade of ionization reactions [9]. When coupled with high-resolution mass analyzers like Orbitrap or time-of-flight (TOF) instruments, this technique provides exact mass measurements that are crucial for confident compound identification [11] [12]. For forensic chemistry, DART-HRMS has become an indispensable tool for analyzing drugs of abuse, explosives, inks, and other forensic evidence directly from complex surfaces including banknotes, clothing, and biological tissues [10] [13].

Fundamental Principles and Ionization Mechanisms

Formation of Metastable Species

The DART ionization process begins with the creation of long-lived excited-state neutral atoms or molecules known as metastable species [9]. As the inert gas (typically helium, nitrogen, or argon) flows into the DART source, an electric potential in the range of +1 to +5 kV generates a glow discharge plasma containing electrons, ions, and other energetic species [9]. The ion/electron recombination in the flowing afterglow region produces these metastable species (represented as M*), which possess substantial internal energy but are electrically neutral.

The governing reaction for this process is:

M + energy → M* [9]

The stream of gaseous metastable species then passes through a critical component, a porous exit electrode, which can be biased to either a positive or a negative potential (typically 0 to 530 V) depending on the desired ionization mode [9]. When positively biased, this electrode removes electrons and negative ions formed by Penning ionization, thereby preventing ion/electron recombination. An optional heating element can raise the gas temperature from ambient to 550°C to facilitate desorption of analyte molecules from the sample surface [9].

Positive Ion Formation Mechanisms

In positive ion mode, the metastable carrier gas atoms (M*) initiate a complex series of gas-phase reactions that ultimately lead to analyte ionization through Penning ionization and subsequent chemical ionization processes [9]. The primary mechanism involves ionization of atmospheric components rather than direct analyte ionization.

The initial step involves Penning ionization of atmospheric nitrogen and water:

M* + N₂ → M + N₂⁺• + e⁻ [9]

M* + H₂O → M + H₂O⁺• + e⁻ [9]

The ionized nitrogen molecules can form dimer ions:

N₂⁺• + 2N₂ → N₄⁺• + N₂ [9]

These primary ions then transfer charge to water molecules, and the resulting water radical cations react further to form protonated water clusters:

N₄⁺• + H₂O → 2N₂ + H₂O⁺•

H₂O⁺• + H₂O → H₃O⁺ + OH• [9]

H₃O⁺ + (n−1)H₂O → [(H₂O)ₙH]⁺ [9]

These protonated water clusters act as secondary ionizing species that generate analyte ions through proton transfer reactions [9]:

S + [(H₂O)ₙH]⁺ → [S+H]⁺ + nH₂O

Alternative ionization pathways include charge transfer reactions:

N₄⁺• + S → 2N₂ + S⁺• [9]

O₂⁺• + S → O₂ + S⁺• [9]

It is noteworthy that when using argon as the DART gas, the metastable atoms lack sufficient energy to directly ionize water, necessitating the use of a dopant to facilitate ionization [9].
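The argon limitation follows from a simple energy comparison: Penning ionization is only possible when the internal energy of the metastable species exceeds the ionization energy of the target molecule. The Python sketch below illustrates this check. The metastable energies are those quoted in the method optimization discussion of this article; the ionization energies are approximate literature values added here as an assumption, and the function name is illustrative.

```python
# Internal energies of metastable species (eV), as quoted in this article.
METASTABLE_ENERGY_EV = {"He": 19.8, "Ar": 11.5, "N2": 8.4}

# Approximate first ionization energies (eV); literature values, not from the article.
IONIZATION_ENERGY_EV = {"H2O": 12.62, "N2": 15.58, "O2": 12.07}


def can_penning_ionize(gas: str, target: str) -> bool:
    """True if the metastable energy of `gas` exceeds the ionization energy of `target`."""
    return METASTABLE_ENERGY_EV[gas] > IONIZATION_ENERGY_EV[target]


for gas in METASTABLE_ENERGY_EV:
    feasible = [t for t in IONIZATION_ENERGY_EV if can_penning_ionize(gas, t)]
    print(gas, "->", feasible)
```

With these values helium metastables can ionize water, nitrogen, and oxygen, whereas argon metastables fall short of the ionization energy of water, which is why a dopant with a lower ionization energy is needed.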

Negative Ion Formation Mechanisms

In negative ion mode, the exit grid electrode is set to negative potentials, enabling the generation of electrons through surface Penning ionization [9]. These electrons then undergo electron capture with atmospheric oxygen to produce superoxide anions:

O₂ + e⁻ → O₂⁻• [9]

The resulting superoxide anions can initiate several different analyte ionization pathways depending on the chemical properties of the sample molecules:

O₂⁻• + S → S⁻• + O₂ (Electron transfer) [9]

S + e⁻ → S⁻• (Electron capture) [9]

SX + e⁻ → S⁻ + X• (Dissociative electron capture) [9]

SH → [S-H]⁻ + H⁺ (Deprotonation) [9]

The efficiency of negative ion formation exhibits a strong dependence on the internal energy of the metastable species, with the sensitivity following the order: nitrogen < neon < helium [9].

Instrumentation and Interface

The DART source requires careful integration with the mass spectrometer through a specialized atmospheric pressure interface that bridges the ambient pressure ionization region with the high vacuum necessary for mass analysis [9]. In a typical configuration, ions are guided into the mass spectrometer through a series of skimmer orifices with applied potential differences (e.g., 20 V and 5 V for the outer and inner skimmers, respectively) [9].

The alignment of these orifices is strategically staggered to trap neutral contaminants and prevent them from entering the high-vacuum region, thereby protecting the instrument and maintaining analytical performance [9]. DART analysis can be conducted in two primary operational modes: surface desorption mode, where the sample is positioned to allow the reactive DART gas stream to flow across the surface, and transmission mode DART (tm-DART), which employs custom sample holders with fixed geometries for improved reproducibility [9].

Figure 1: DART-HRMS Ionization Pathway and Instrumental Workflow. This diagram illustrates the sequential processes from gas introduction through mass spectral detection.

Experimental Protocols and Methodologies

DART-HRMS accommodates diverse sample introduction methods tailored to different sample types and analytical requirements. For solid samples, the simplest approach involves direct analysis by positioning the sample material in the gap between the DART source exit and the mass spectrometer inlet using specialized tweezers or a sample holder [9]. This approach has been successfully applied to tablets, plant materials, banknotes, and other solid substrates.

Liquid samples are typically analyzed by dipping an inert object such as a glass rod or melting point capillary into the liquid and then presenting it to the DART gas stream [9]. Alternatively, automated sample introduction systems can be employed for high-throughput analysis of liquid extracts. For gaseous samples, vapors can be introduced directly into the DART gas stream, enabling real-time monitoring of volatile compounds [9].

In forensic applications involving complex matrices, a simple solid-liquid extraction procedure is often employed. For example, in saffron authenticity testing, 50 mg of powdered sample is extracted with 5 mL of ethanol/water (70/30, v/v) by shaking at 250 rpm for 1 hour at room temperature, followed by centrifugation at 13,416× g for 5 minutes [12]. The supernatant is then collected for DART-HRMS analysis, providing a comprehensive metabolic fingerprint for discrimination between authentic and adulterated samples [12].

Coupling with Separation Techniques

Although DART-HRMS is primarily used for direct analysis, it can be effectively coupled with various separation techniques to enhance analytical performance for complex mixtures. Thin-layer chromatography (TLC) plates can be analyzed directly by positioning developed plates in the DART gas stream, enabling rapid compound identification without the need for extraction [9].

Gas chromatography can be interfaced with DART-MS by coupling the GC column effluent directly into the DART gas stream through a heated interface, providing complementary ionization to traditional electron ionization [9]. Similarly, eluate from high-performance liquid chromatography (HPLC) can be introduced into the DART ionization region, though this requires careful flow rate optimization [9]. DART has also been successfully coupled with capillary electrophoresis (CE), with the CE eluate guided to the mass spectrometer through the DART ion source [9].

Method Optimization Parameters

Optimal DART-HRMS performance requires careful optimization of several critical parameters that influence ionization efficiency and analytical sensitivity. The gas temperature represents one of the most important parameters, as it controls the desorption efficiency of analytes from the sample surface or introduction device. Typical operating temperatures range from room temperature to 550°C, with higher temperatures generally improving the detection of less volatile compounds but potentially causing thermal degradation of labile analytes [9].

The choice of ionization gas significantly impacts the available internal energy for ionization processes. Helium provides the highest internal energy (19.8 eV for He*) and is therefore most commonly employed, particularly for negative ion mode where it demonstrates superior sensitivity [9]. Nitrogen and argon offer lower internal energies (8.4 eV and 11.5 eV respectively) and may be selected for specific applications requiring softer ionization [9].

The grid electrode voltage (typically 0 to 530 V in magnitude) must be optimized for the desired ionization mode, with positive potentials used for positive ion mode and negative potentials for negative ion mode [9]. Additionally, the geometric alignment between the DART source outlet, sample position, and mass spectrometer inlet must be carefully optimized to maximize ion transmission and analytical sensitivity [9] [12].

Table 1: Key Experimental Parameters for DART-HRMS Method Development

| Parameter | Typical Range | Impact on Analysis | Optimization Consideration |
|---|---|---|---|
| Gas Temperature | RT to 550°C | Controls desorption efficiency; higher temperatures improve volatility but may cause degradation | Balance between signal intensity and analyte stability |
| Ionization Gas | He, N₂, Ar | Determines internal energy available for ionization (He: 19.8 eV > Ar: 11.5 eV > N₂: 8.4 eV) | Select based on analyte ionization energy and desired fragmentation |
| Grid Electrode Voltage | ±(0-530 V) | Determines ionization mode (positive/negative) and ion transmission efficiency | Set positive for positive ion mode, negative for negative ion mode |
| Source-to-Sample Distance | 5-25 mm | Affects interaction between metastable species and sample | Optimize for maximum signal intensity for target analytes |
| Sample-to-Inlet Distance | 0-10 mm | Impacts transmission of ions into mass spectrometer | Minimize while preventing contamination of inlet |
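The typical ranges in the table can be encoded as a simple pre-run check on a proposed method. The Python sketch below is a hypothetical helper, not part of any vendor software: the `DartSettings` class and its field names are assumptions, and room temperature is taken as 20°C for the lower temperature bound.

```python
from dataclasses import dataclass

ALLOWED_GASES = {"He", "N2", "Ar"}


@dataclass
class DartSettings:
    gas: str
    gas_temperature_c: float
    grid_voltage_v: float       # sign selects the ion mode
    source_to_sample_mm: float
    sample_to_inlet_mm: float

    @property
    def ion_mode(self) -> str:
        """Ion mode implied by the sign of the grid electrode voltage."""
        if self.grid_voltage_v > 0:
            return "positive"
        if self.grid_voltage_v < 0:
            return "negative"
        return "undefined"

    def problems(self) -> list:
        """List every setting that falls outside the typical ranges in the table."""
        issues = []
        if self.gas not in ALLOWED_GASES:
            issues.append(f"unsupported gas: {self.gas}")
        if not 20 <= self.gas_temperature_c <= 550:  # 20 C assumed for room temperature
            issues.append("gas temperature outside RT to 550 C")
        if abs(self.grid_voltage_v) > 530:
            issues.append("grid voltage magnitude above 530 V")
        if not 5 <= self.source_to_sample_mm <= 25:
            issues.append("source-to-sample distance outside 5-25 mm")
        if not 0 <= self.sample_to_inlet_mm <= 10:
            issues.append("sample-to-inlet distance outside 0-10 mm")
        return issues


settings = DartSettings("He", 350, 250, 10, 5)
print(settings.ion_mode, settings.problems())  # positive []
```

A check of this kind only flags values outside the typical ranges; the optimum within those ranges still has to be found experimentally for each analyte and matrix.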

Mass Spectrometry Conditions

When coupling DART with high-resolution mass analyzers, several instrument-specific parameters require optimization. For Orbitrap-based systems, resolution settings typically range from 15,000 to 100,000 or higher, with higher resolutions providing improved mass accuracy and isotopic distribution fidelity at the cost of acquisition speed [11] [12]. Time-of-flight (TOF) instruments should be operated at their maximum resolution setting to ensure accurate mass measurement capability [12].

Mass calibration must be performed regularly using appropriate calibration standards compatible with DART ionization. Commonly used calibrants include polytyrosine, polyethylene glycol, or other compounds that produce well-characterized ions under DART conditions [12]. The mass accuracy should be maintained at ≤5 ppm for confident elemental composition assignment, particularly for unknown identification in forensic applications [11].

Data acquisition in profile mode is recommended for untargeted analyses to preserve isotopic distribution information, which is crucial for confirming elemental composition assignments [11]. For targeted analyses, centroid mode may be employed to reduce file sizes and simplify data processing.

Analytical Performance and Data Interpretation

Mass Spectral Characteristics

DART-HRMS typically produces relatively simple mass spectra characterized by predominant molecular ion species with minimal fragmentation, consistent with its classification as a soft ionization technique [9]. In positive ion mode, the most common ions observed are protonated molecules [M+H]⁺, while negative ion mode predominantly yields deprotonated molecules [M-H]⁻ [9].

Depending on the analyte properties and experimental conditions, other ion types may be observed including molecular radical cations M⁺•, adduct ions (e.g., [M+NH₄]⁺, [M+Na]⁺), and occasionally cluster ions representing non-covalent associations [9]. The relative simplicity of DART mass spectra significantly simplifies data interpretation compared to conventional electron ionization spectra, though it may reduce structural information available from fragmentation patterns.
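The ion types listed above map onto simple mass shifts relative to the neutral monoisotopic mass. The sketch below computes the expected m/z of singly charged ions for a neutral formula. The function names are hypothetical, the atomic and particle masses are standard rounded values, and caffeine (C8H10N4O2) is used only as an illustrative analyte that does not come from the cited studies.

```python
# Monoisotopic atomic masses (Da), rounded standard values.
MONOISOTOPIC = {"C": 12.0, "H": 1.00782503, "N": 14.0030740, "O": 15.9949146}
ELECTRON = 0.00054858   # electron mass (Da)
PROTON = 1.00727647     # proton mass (Da)
SODIUM = 22.98976928    # monoisotopic mass of neutral 23Na (Da)


def monoisotopic_mass(formula):
    """Neutral monoisotopic mass for a formula given as {element: count}."""
    return sum(MONOISOTOPIC[element] * count for element, count in formula.items())


def singly_charged_ions(neutral_mass):
    """Expected m/z values for common singly charged DART ion types."""
    ammonium = MONOISOTOPIC["N"] + 4 * MONOISOTOPIC["H"] - ELECTRON
    return {
        "[M+H]+": neutral_mass + PROTON,
        "[M-H]-": neutral_mass - PROTON,
        "M+.": neutral_mass - ELECTRON,
        "[M+NH4]+": neutral_mass + ammonium,
        "[M+Na]+": neutral_mass + SODIUM - ELECTRON,
    }


caffeine = {"C": 8, "H": 10, "N": 4, "O": 2}  # illustrative analyte (assumption)
for ion, mz in singly_charged_ions(monoisotopic_mass(caffeine)).items():
    print(f"{ion:10s} {mz:.4f}")
```

Comparing a measured peak against each of these values is one simple way to decide which ion type was formed before attempting a formula assignment.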

High-Resolution Mass Measurement

The coupling of DART ionization with high-resolution mass analyzers provides exact mass measurements that enable confident compound identification through elemental composition assignment [11]. High-resolution instruments separate isotopic peaks, allowing recognition of characteristic isotopic distributions such as the chlorine or bromine patterns that facilitate molecular formula determination [11].
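The characteristic chlorine and bromine patterns mentioned above follow from a binomial distribution over the light and heavy isotopes. The sketch below is a simplified illustration that considers only the halogen atoms and ignores contributions from carbon-13 and other elements. The abundance values are approximate natural abundances, and the function name is hypothetical.

```python
from math import comb

# Approximate natural abundance of the heavy isotope (fraction of atoms).
CL37_FRACTION = 0.2424
BR81_FRACTION = 0.4931


def halogen_pattern(n_atoms, heavy_fraction):
    """Relative intensities (base peak = 100) of the M, M+2, M+4, ... peaks."""
    light_fraction = 1.0 - heavy_fraction
    probabilities = [
        comb(n_atoms, k) * heavy_fraction**k * light_fraction ** (n_atoms - k)
        for k in range(n_atoms + 1)
    ]
    base = max(probabilities)
    return [round(100.0 * p / base, 1) for p in probabilities]


print("1 Cl:", halogen_pattern(1, CL37_FRACTION))  # roughly 3:1
print("2 Cl:", halogen_pattern(2, CL37_FRACTION))
print("1 Br:", halogen_pattern(1, BR81_FRACTION))  # roughly 1:1
```

A measured M to M+2 ratio close to 3:1 suggests one chlorine atom, while a ratio close to 1:1 suggests one bromine atom, which narrows the list of candidate formulas.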

The monoisotopic mass (the mass of a molecule calculated from the most abundant isotope of each constituent element) serves as the reference point for elemental composition calculation [11]. Mass accuracy, typically expressed in parts per million (ppm) or millidaltons (mDa), quantifies the agreement between measured and theoretical masses, with values ≤5 ppm generally considered sufficient for confident formula assignment [11].
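The mass accuracy criterion can be written as a short calculation. The sketch below computes the error in ppm and mDa and applies the 5 ppm threshold from the text. The function names and the m/z values are hypothetical and serve only to illustrate the arithmetic.

```python
def ppm_error(measured_mz, theoretical_mz):
    """Signed mass error in parts per million."""
    return (measured_mz - theoretical_mz) / theoretical_mz * 1e6


def mda_error(measured_mz, theoretical_mz):
    """Signed mass error in millidaltons."""
    return (measured_mz - theoretical_mz) * 1000.0


def within_tolerance(measured_mz, theoretical_mz, tolerance_ppm=5.0):
    """True if the absolute ppm error does not exceed the tolerance."""
    return abs(ppm_error(measured_mz, theoretical_mz)) <= tolerance_ppm


theoretical = 304.1543  # hypothetical theoretical m/z
for measured in (304.1550, 304.1570):  # hypothetical measurements
    print(
        f"measured {measured:.4f}: "
        f"{ppm_error(measured, theoretical):+.1f} ppm, "
        f"{mda_error(measured, theoretical):+.1f} mDa, "
        f"accepted={within_tolerance(measured, theoretical)}"
    )
```

Because ppm is a relative measure, the same 5 ppm window corresponds to a wider absolute window in mDa at higher m/z.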

Table 2: Performance Characteristics of HRMS Analyzers for DART Applications

| Mass Analyzer | Typical Resolution | Mass Accuracy (ppm) | Advantages for DART Applications |

|---|---|---|---|

| Orbitrap | 15,000-100,000 | 1-5 ppm | High resolution and mass accuracy; excellent for metabolomic studies |

| Time-of-Flight (TOF) | 20,000-50,000 | 2-5 ppm | Fast acquisition speed; well-suited for high-throughput screening |

| FT-ICR | >100,000 | <1 ppm | Ultrahigh resolution and mass accuracy; capable of complex mixture analysis |

| Quadrupole-TOF (QqTOF) | 20,000-50,000 | 2-5 ppm | MS/MS capability for structural elucidation |

Multivariate Data Analysis

The rich metabolic fingerprinting data generated by DART-HRMS often necessitates advanced chemometric tools for effective pattern recognition and sample classification. Unsupervised methods such as principal component analysis (PCA) and hierarchical cluster analysis (HCA) serve as initial approaches for exploring natural groupings within datasets and identifying potential outliers [12].

Supervised pattern recognition techniques including partial least squares discriminant analysis (PLS-DA) are then employed to build predictive models that discriminate between sample classes based on their metabolic profiles [14] [12]. These models can identify discriminating ions that serve as potential markers for specific sample characteristics, such as adulteration in forensic samples or geographical origin in authentic products [12].
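The unsupervised exploration step can be illustrated with a minimal principal component analysis. The sketch below uses only the Python standard library and extracts the first principal component by power iteration on the covariance matrix. It is not the workflow of the cited studies, it does not implement PLS-DA, and the intensity table (six samples by three ions) is invented for illustration.

```python
def first_principal_component(rows, iterations=200):
    """Return (loading vector, sample scores) for PC1 via power iteration."""
    n, p = len(rows), len(rows[0])
    means = [sum(row[j] for row in rows) / n for j in range(p)]
    centered = [[row[j] - means[j] for j in range(p)] for row in rows]
    # Sample covariance matrix (p x p) of the mean-centered data.
    cov = [
        [sum(c[i] * c[j] for c in centered) / (n - 1) for j in range(p)]
        for i in range(p)
    ]
    # Asymmetric start vector to avoid starting orthogonal to PC1.
    vec = [1.0 / (j + 1) for j in range(p)]
    for _ in range(iterations):
        new = [sum(cov[i][j] * vec[j] for j in range(p)) for i in range(p)]
        norm = sum(v * v for v in new) ** 0.5
        vec = [v / norm for v in new]
    scores = [sum(c[j] * vec[j] for j in range(p)) for c in centered]
    return vec, scores


# Hypothetical ion intensities: rows are samples, columns are three ions.
intensities = [
    [10.0, 2.0, 5.0], [11.0, 1.5, 5.2], [9.5, 2.2, 4.9],  # class A
    [3.0, 8.0, 5.1], [2.5, 9.0, 5.0], [3.2, 8.5, 4.8],    # class B
]
loadings, scores = first_principal_component(intensities)
print("loadings:", [round(v, 2) for v in loadings])
print("scores:  ", [round(s, 2) for s in scores])
```

In this toy table the two classes fall on opposite sides of zero along PC1, and the ions with the largest absolute loadings are the ones driving the separation. Real DART-HRMS data would normally be scaled and analyzed with an established chemometrics package.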

Figure 2: DART-HRMS Data Analysis Workflow for Forensic Applications. This diagram outlines the sequential steps from data acquisition through final interpretation.

Essential Research Reagent Solutions

Successful implementation of DART-HRMS methodologies requires careful selection of reagents and consumables that maintain analytical performance while minimizing background interference.

Table 3: Essential Research Reagents and Materials for DART-HRMS

| Reagent/Material | Function/Purpose | Application Example | Considerations |

|---|---|---|---|

| High-purity helium (≥99.999%) | Primary ionization gas; produces metastable He* with 19.8 eV internal energy | General DART analysis; negative ion mode | Highest purity minimizes background ions and source contamination |

| Nitrogen or argon gas | Alternative ionization gases with lower internal energy | Specialized applications requiring softer ionization | Argon metastables (11.5 eV) fall below the ionization energy of water (about 12.6 eV), so a dopant is typically needed for efficient ionization |

| HPLC-grade solvents (methanol, ethanol, acetonitrile) | Sample extraction and preparation | Metabolite extraction from complex matrices | Low UV absorbance grade minimizes chemical noise |

| Ammonium acetate/formate | Volatile additives for adduct formation | Enhancing ionization of specific compound classes | Concentrations typically 1-10 mM in extraction solvent |

| Calibration standards (polytyrosine, PEG) | Mass scale calibration for accurate mass measurement | Routine instrument calibration and performance verification | Select compounds compatible with DART ionization characteristics |

| Inert sample holders (glass capillaries, ceramic tweezers) | Sample introduction without interference | Solid sample analysis | Non-conductive materials prevent electrical discharge |

Forensic Applications and Case Studies

Drug Identification and Toxicological Analysis

DART-HRMS has emerged as a powerful technique for the rapid identification of drugs of abuse and pharmaceutical compounds in forensic investigations. The direct analysis capability allows for the screening of seized drug samples without extensive sample preparation, providing results within seconds rather than hours [13] [15]. This rapid turnaround is particularly valuable in operational forensic settings where timely intelligence can guide investigative directions.

The high mass accuracy provided by HRMS enables discrimination of isobaric compounds that would be indistinguishable using lower resolution techniques, a critical capability for novel psychoactive substances that often differ by minimal structural modifications [15]. Furthermore, the minimal sample preparation reduces the risk of sample contamination or degradation, preserving evidence integrity for subsequent confirmatory analyses.
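The discrimination of nominally isobaric compounds can be estimated from the resolving power R = m/Δm needed to separate two exact masses. The sketch below applies this to two illustrative formulas that share nominal mass 149. The formulas are assumptions chosen for the arithmetic, not compounds reported in the cited work, and the criterion is only a first approximation because real peak shapes and intensity ratios also matter.

```python
# Monoisotopic atomic masses (Da), rounded standard values.
MONOISOTOPIC = {"C": 12.0, "H": 1.00782503, "N": 14.0030740, "O": 15.9949146}


def monoisotopic_mass(formula):
    """Neutral monoisotopic mass for a formula given as {element: count}."""
    return sum(MONOISOTOPIC[element] * count for element, count in formula.items())


def required_resolving_power(mass_a, mass_b):
    """Approximate resolving power (m / delta m) needed to separate two masses."""
    mean_mass = (mass_a + mass_b) / 2.0
    return mean_mass / abs(mass_a - mass_b)


formula_a = {"C": 10, "H": 15, "N": 1}         # illustrative formula (assumption)
formula_b = {"C": 9, "H": 11, "N": 1, "O": 1}  # illustrative formula (assumption)

mass_a = monoisotopic_mass(formula_a)
mass_b = monoisotopic_mass(formula_b)
print(f"mass A = {mass_a:.4f} Da, mass B = {mass_b:.4f} Da")
print(f"required resolving power ~ {required_resolving_power(mass_a, mass_b):.0f}")
```

For this pair the requirement is a few thousand, well within the range of the analyzers in Table 2, whereas a unit-resolution instrument would report both species at the same nominal m/z.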

Food and Agricultural Product Authenticity

The untargeted metabolic profiling capabilities of DART-HRMS have been successfully applied to the detection of food adulteration, as demonstrated in saffron authenticity testing [12]. This approach discriminated pure saffron from samples adulterated with safflower or turmeric at concentrations as low as 5% (w/w), a detection level unattainable using standard ISO methods that cannot reliably identify adulteration below 20% [12].

The metabolic fingerprints obtained in both positive and negative ionization modes enabled the identification of characteristic markers for each adulterant, providing both classification capability and mechanistic understanding of the compositional differences [12]. Similar approaches have been applied to other high-value agricultural products vulnerable to economically motivated adulteration, including olive oil, honey, and milk products [14] [12].

Explosives and Chemical Threat Detection

The ambient sampling capability of DART-HRMS makes it ideally suited for the detection of explosives and chemical warfare agents on various surfaces including clothing, luggage, and currency [9] [10]. The direct analysis of suspect materials without solvent extraction or other preparatory steps minimizes analyst exposure to hazardous substances while preserving evidence for subsequent courtroom proceedings.

The combination of rapid analysis (typically 10-30 seconds per sample) and high specificity provided by exact mass measurement enables comprehensive screening for both known and unknown threat compounds through non-targeted analysis approaches [10]. This capability is particularly valuable in security applications where the rapid identification of potential threats is essential for effective response.

DART-HRMS represents a significant advancement in analytical technology for forensic chemistry, combining the rapid, direct analysis capabilities of ambient ionization with the exceptional specificity of high-resolution mass spectrometry. The fundamental ionization mechanisms—based on energy transfer from metastable species to atmospheric components and subsequently to analyte molecules—provide a versatile platform for analyzing diverse compounds across the forensic science spectrum.

The minimal sample preparation requirements, rapid analysis times, and capability for direct analysis of complex surfaces make DART-HRMS particularly valuable for forensic applications where evidence preservation and analytical efficiency are paramount. When integrated with sophisticated multivariate statistical tools, the metabolic fingerprinting data generated by DART-HRMS enables not only compound identification but also pattern recognition for sample classification and origin determination.

As forensic science continues to evolve toward more rapid, information-rich analytical techniques, DART-HRMS is positioned to play an increasingly important role in providing scientifically defensible evidence for the criminal justice system. The ongoing development of portable DART-MS systems further expands the potential for on-site forensic analysis, potentially transforming traditional evidence collection and analysis paradigms.

Principles of Comprehensive Two-Dimensional Gas Chromatography (GC×GC) for Complex Mixtures

Comprehensive Two-Dimensional Gas Chromatography (GC×GC) represents a significant advancement in separation science, offering unprecedented resolution for complex mixtures that challenge conventional analytical methods. This technical guide explores the fundamental principles of GC×GC, detailing its operational mechanisms, advantages over traditional gas chromatography, and specific applications within forensic chemistry research. By implementing two distinct separation phases coupled with a modulation interface, GC×GC achieves enhanced peak capacity and sensitivity, enabling the detection of trace-level compounds in intricate matrices such as sexual lubricants, automotive paints, and pyrolyzed tire rubber. This whitepaper provides detailed experimental protocols and technical specifications to support researchers and scientists in deploying GC×GC for advanced analytical challenges, particularly in developing novel forensic techniques for criminal investigation and evidence analysis.

Comprehensive Two-Dimensional Gas Chromatography (GC×GC) stands as a powerful evolution in analytical separation technology, specifically designed to address the limitations of conventional gas chromatography when confronting highly complex samples [16]. Where traditional one-dimensional GC may struggle with coelution and limited peak capacity, GC×GC employs two separate chromatographic columns with distinct stationary phases connected in series through a specialized modulator. This configuration creates a truly orthogonal separation system where compounds are subjected to two independent partitioning processes based on different chemical properties [16].

The fundamental advancement of GC×GC lies in its comprehensive nature—every component from the first dimension separation is subjected to analysis in the second dimension, unlike heart-cutting techniques (GC-GC) which transfer only selected fractions [16]. This approach generates a two-dimensional chromatogram with significantly enhanced resolution, often described as a "fingerprint" that reveals both major and minor components in complex mixtures [16]. For forensic researchers, this capability proves invaluable when analyzing trace evidence containing hundreds of chemical constituents, such as sexual lubricants in assault cases or pyrolyzed materials from hit-and-run accidents [16].

When coupled with mass spectrometry (GC×GC–MS), the technique provides both superior separation and definitive identification capabilities, making it particularly suitable for forensic applications where evidentiary standards demand high confidence in analytical results [16]. The following sections explore the technical foundations of this methodology and its practical implementation in forensic science research.

Fundamental Principles and Technical Basis

Core Separation Mechanism

The GC×GC system operates on the principle of orthogonal separation, where two independent separation mechanisms are applied sequentially to the same sample. The process begins with a conventional first-dimension separation typically using a non-polar column (e.g., 20-30m length) where separation occurs primarily based on analyte volatility [17] [16]. As compounds elute from this first column, they enter a critical component known as the modulator, which collects and focuses narrow bands of effluent before reinjecting them as sharp pulses into the second dimension column [16].

The second dimension generally employs a shorter, more polar column (e.g., 1-2m length) where separation occurs based on polarity differences [16]. This secondary separation occurs rapidly, typically within 2-8 seconds, before the next modulation cycle begins [16]. The modulator serves as the heart of the GC×GC system, with the most common commercial types being thermal modulation (TM), Deans switch (DS), and differential flow modulation (DFM) [16]. The modulation process ensures that the separation achieved in the first dimension is preserved while adding a complementary separation dimension, dramatically increasing the total peak capacity of the system.

The separation orthogonality arises from the different chemical properties governing partitioning in each dimension. For example, in the analysis of sexual lubricants, the first dimension might separate compounds by molecular size/volatility, while the second dimension separates based on polarity, allowing resolution of isoparaffins (lower arc) from aldehydes (upper arc) within the same retention window [16]. This orthogonal approach can increase peak capacity to the product of the peak capacities of the two individual dimensions, significantly surpassing conventional GC capabilities.
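The product rule for peak capacity is easy to check numerically. The sketch below gives the ideal upper bound for a pair of hypothetical peak capacities. The values 400 and 10 are assumptions for illustration, and practical peak capacities are lower because of undersampling of the first dimension and incomplete orthogonality.

```python
def ideal_2d_peak_capacity(first_dim_capacity, second_dim_capacity):
    """Ideal GCxGC peak capacity: product of the two one-dimensional capacities."""
    return first_dim_capacity * second_dim_capacity


first_dim = 400   # hypothetical first-dimension peak capacity
second_dim = 10   # hypothetical second-dimension peak capacity
print(ideal_2d_peak_capacity(first_dim, second_dim))  # 4000
```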

Comparison with Traditional GC and GC-MS

Table 1: Technical Comparison of GC Methodologies

| Parameter | Conventional GC | GC-MS | GC×GC-MS |

|---|---|---|---|

| Peak Capacity | Limited (typically 100-400) | Similar to GC | High (typically 1000-5000) |

| Separation Mechanism | Single dimension based primarily on volatility | Single dimension with MS identification | Two orthogonal dimensions (volatility + polarity) |

| Resolution of Coelutions | Limited, requires method optimization | Limited, relies on spectral deconvolution | Excellent, physical separation in 2D space |

| Sensitivity | Standard | Standard | Enhanced due to modulator focusing |

| Data Representation | Linear chromatogram (retention time vs. response) | Linear chromatogram with mass spectra | 2D contour plot (1tR vs. 2tR) with color intensity |

| Detection of Minor Components | Often obscured by major components | Similar to GC, with spectral identification | Improved due to separation expansion |

| Forensic Discrimination Power | Moderate | Good | Excellent for complex mixtures |

The fundamental advantage of GC×GC becomes evident when analyzing samples with high complexity, where conventional GC often fails to resolve all components. For instance, in the analysis of an oil-based lubricant with six labeled ingredients, traditional GC-MS showed substantial coelution between retention times of 7 and 20 minutes, whereas GC×GC-MS revealed more than 25 different components with clear separation of previously coeluted compounds [16]. This enhanced resolution is particularly valuable in forensic applications where accurate identification of minor components can provide crucial investigative leads.

Advantages of GC×GC in Forensic Analysis

Enhanced Separation and Sensitivity

The two-dimensional separation in GC×GC provides two primary advantages critical to forensic analysis: increased peak capacity and enhanced sensitivity. Peak capacity refers to the maximum number of peaks that can be separated with unit resolution in a chromatographic separation, and in GC×GC, this approaches the product of the peak capacities of the two dimensions [16]. This expanded separation space dramatically reduces peak overlap, allowing for more confident compound identification in complex mixtures such as sexual lubricants, automotive paints, and tire rubber [16].

The modulation process between dimensions provides a significant sensitivity boost through band compression. As analytes are focused into narrow bands before entering the second dimension, they produce sharper peaks with higher signal-to-noise ratios [16]. This focusing effect lowers detection limits, enabling the identification of trace-level components that might be obscured by major constituents in conventional GC analysis. In forensic contexts, this enhanced sensitivity can reveal minor additives or impurities that serve as chemical fingerprints for sample source attribution.

Structured Chromatographic Patterns

Beyond simple component separation, GC×GC generates structured chromatograms where chemically related compounds form ordered patterns in the two-dimensional separation space [16]. For instance, in lubricant analysis, isoparaffinic compounds typically occupy the lower arc of the early chromatographic region while aldehydes appear above them [16]. Similarly, homologous series often align along predictable trajectories, allowing for tentative identification of compound classes even without pure standards.

These structured patterns provide valuable information for forensic intelligence, enabling researchers to identify sample composition trends and detect anomalous components that may indicate specific manufacturing processes or contamination sources. The visual "fingerprint" produced by GC×GC facilitates both comparative analysis between evidence samples and intelligence-led screening for characteristic chemical profiles associated with specific materials or products encountered in criminal investigations.

Forensic Applications and Experimental Protocols

Sexual Lubricant Analysis in Sexual Assault Cases

Background and Forensic Significance: With approximately 30% of sexual assault kits lacking probative DNA profiles, the analysis of sexual lubricants provides an alternative investigative link between perpetrators and victims [16]. Lubricants present complex chemical mixtures, particularly oil-based varieties containing multiple organic butters, waxes, and plant extracts that challenge conventional GC-MS due to extensive coelution [16].

Experimental Protocol:

- Sample Preparation: Conduct hexane solvent extraction of lubricant residues from swabs or condom fragments [16].

- GC×GC-MS Conditions:

- System: 7890B Gas Chromatograph coupled to 5977 Quadrupole Mass Spectrometer (Agilent) [16].

- First Dimension Column: Non-polar stationary phase (e.g., DB-5), 30m × 0.25mm ID × 0.25μm film [16].

- Second Dimension Column: Polar stationary phase (e.g., DB-17), 2m × 0.15mm ID × 0.15μm film [16].

- Modulator: Differential Flow Modulation (DFM) [16].

- Temperature Program: Initial 50°C (hold 2min), ramp to 280°C at 5°C/min [16].

- Carrier Gas: Helium, constant flow mode [16].

- Mass Spectrometer: Electron impact ionization (70eV), scan range m/z 40-550 [16].

- Data Analysis: Generate two-dimensional contour plots with first dimension retention time (¹tR) on x-axis and second dimension retention time (²tR) on y-axis. Identify component patterns characteristic of specific lubricant formulations [16].
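The contour plot in the data analysis step is built by cutting the continuous detector trace into segments of one modulation period and stacking them. The sketch below shows that folding step on an invented signal. The function names, sampling rate, and modulation period are hypothetical, and the sketch ignores modulation phase offsets and wrap-around that vendor software would handle.

```python
def fold_into_2d(signal, sampling_rate_hz, modulation_period_s):
    """Split a 1D detector trace into rows, one row per modulation cycle."""
    points_per_cycle = round(sampling_rate_hz * modulation_period_s)
    n_cycles = len(signal) // points_per_cycle
    return [
        signal[i * points_per_cycle:(i + 1) * points_per_cycle]
        for i in range(n_cycles)
    ]


def apex_retention_times(plane, sampling_rate_hz, modulation_period_s):
    """Return (first-dimension tR, second-dimension tR) in seconds for the apex."""
    row, col = max(
        ((r, c) for r in range(len(plane)) for c in range(len(plane[r]))),
        key=lambda rc: plane[rc[0]][rc[1]],
    )
    return row * modulation_period_s, col / sampling_rate_hz


# Hypothetical trace: 12 points at 2 Hz with a 2 s modulation period.
trace = [0, 1, 0, 0, 0, 5, 1, 0, 0, 2, 0, 0]
plane = fold_into_2d(trace, sampling_rate_hz=2.0, modulation_period_s=2.0)
print(plane)  # [[0, 1, 0, 0], [0, 5, 1, 0], [0, 2, 0, 0]]
print(apex_retention_times(plane, 2.0, 2.0))  # (2.0, 0.5)
```

Each row index corresponds to a first-dimension retention time and each column index to a second-dimension retention time, so plotting the matrix as a color map gives the familiar contour view.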

Expected Results: GC×GC-MS analysis of an oil-based lubricant with six labeled ingredients reveals more than 25 different components, with clear separation of previously coeluted compounds between first dimension retention times of 10-15 minutes [16]. The structured chromatogram shows isoparaffinic compounds forming a lower arc and aldehydes positioned above, creating a characteristic fingerprint for comparison with reference samples [16].

Automotive Paint Analysis via Pyrolysis-GC×GC-MS

Background and Forensic Significance: Automotive paint evidence is frequently encountered in hit-and-run accidents and burglaries. The multilayer paint system contains complex chemical formulations including pigments, additives, binders, and solvents that vary between manufacturers and models [16]. While pyrolysis-GC-MS offers greater discrimination than microscopy or IR spectroscopy, it still suffers from coelution issues that limit differentiation of similar clear coats [16].

Experimental Protocol:

- Sample Preparation: Collect microscopic paint chips and separate clear coat layer using scalpel under microscope [16].

- Pyrolysis Conditions: Use Pyroprobe 4000 (CDS Analytical LLC) with flash pyrolysis profile: initial 50°C for 2s, ramp to 750°C at 50°C/s, hold for 2s [16].

- GC×GC-MS Conditions:

- System: Same as lubricant analysis with optimized column selection [16].

- First Dimension Column: Mid-polarity stationary phase suitable for pyrolysates.

- Second Dimension Column: Highly polar stationary phase for orthogonal separation.

- Modulation: Thermal modulator with 4-6s modulation period.

- Temperature Program: Optimized for clear coat pyrolysates (e.g., 40°C to 300°C).

- Data Analysis: Compare two-dimensional chromatographic patterns of unknown and reference samples, focusing on resolution of previously coeluted compounds like α-methylstyrene and n-butyl methacrylate around 11.6 minutes first dimension retention time [16].

Expected Results: Py-GC×GC-MS demonstrates improved separation of clear coat components, particularly distinguishing α-methylstyrene (¹tR 11.776min) from n-butyl methacrylate (¹tR 11.600min) which typically coelute in conventional Py-GC-MS [16]. The enhanced resolution facilitates more precise differentiation between visually similar paint samples.

Tire Rubber Analysis in Hit-and-Run Investigations

Background and Forensic Significance: Tire rubber traces recovered from accident scenes can provide crucial evidence for vehicle identification in hit-and-run cases. The extreme chemical complexity of tire rubber—containing over 200 components including natural/synthetic rubbers, oils, plasticizers, antioxidants, and vulcanizing agents—often leads to coelution in conventional Py-GC-MS, potentially preventing correct matches [16].

Experimental Protocol:

- Sample Preparation: Recover trace tire particulates from road surfaces or victim clothing. Use ~50μg samples for pyrolysis [16].

- Pyrolysis Conditions: Identical to paint analysis (50°C to 750°C at 50°C/s) [16].

- GC×GC-MS Conditions: Similar configuration to lubricant and paint analyses with possible method adjustments to optimize for tire pyrolysates [16].

- Data Analysis: Examine comprehensive two-dimensional chromatograms for characteristic patterns of tire components, noting the presence of specific antioxidants, vulcanization agents, and synthetic rubber markers that may be manufacturer-specific.

Expected Results: GC×GC-MS provides multidimensional separation of tire pyrolysates, resolving coeluted components that complicate conventional GC-MS analysis. The resulting "fingerprint" facilitates more confident comparison between tire evidence and suspected source vehicles, potentially increasing the evidentiary value of trace rubber transfers in criminal investigations [16].

Essential Research Reagents and Materials

Table 2: Essential Research Reagents and Materials for GC×GC Forensic Analysis

| Category | Specific Items | Function and Forensic Application |

|---|---|---|

| Chromatography Columns | Non-polar (e.g., DB-5, 30m × 0.25mm ID × 0.25μm) | First dimension separation based primarily on volatility [16] |

| | Polar (e.g., DB-17, 2m × 0.15mm ID × 0.15μm) | Second dimension separation based on polarity [16] |

| Modulation Systems | Thermal Modulator (TM) | Effluent focusing and reinjection between dimensions [16] |

| | Deans Switch (DS) | Heart-cutting or comprehensive flow switching [16] |

| | Differential Flow Modulation (DFM) | Commercial modulation approach for forensic applications [16] |

| Reference Standards | Homologous series (alkanes, alkenes, aldehydes) | Retention index calibration and compound identification [18] |

| | Specific target compounds (e.g., antioxidants, plasticizers) | Qualitative confirmation of case-relevant compounds [16] |

| Sample Preparation | Hexane, dichloromethane, other organic solvents | Solvent extraction of lubricants, accelerants, or other organic evidence [16] |

| | Solid-phase microextraction (SPME) fibers | Headspace sampling of volatile compounds from evidence [19] |

| Calibration Materials | Alkane series (C₈-C₄₀) | Retention index markers for both dimensions [18] |

| | Internal standards (e.g., deuterated analogs) | Quantitative accuracy via internal standardization [18] [20] |

Implementation Considerations for Forensic Laboratories

Method Development and Optimization

Implementing GC×GC in forensic analysis requires careful method development to maximize separation orthogonality for specific evidence types. Column selection represents a critical first step, with preferred combinations including non-polar × polar phases for comprehensive coverage of compound classes encountered in forensic evidence [16]. The choice of modulator type should align with analytical requirements—thermal modulators generally offer higher peak capacity while flow modulators provide robustness and easier implementation [16].

Temperature programming must be optimized to balance analysis time with resolution, typically employing slower heating rates in the first dimension (e.g., 1-5°C/min) to maintain separation integrity while allowing rapid second dimension cycles (2-8 seconds) [16]. Method development should include validation using representative casework samples to establish discrimination power and reproducibility under casework conditions.
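The trade-off between run time and the number of second-dimension separations can be estimated from the oven program and the modulation period. The sketch below uses the lubricant program quoted earlier (50°C held for 2 min, then 5°C/min to 280°C). The 4 s modulation period and the absence of a final hold are assumptions, and the function names are hypothetical.

```python
def run_time_min(initial_c, final_c, ramp_c_per_min,
                 initial_hold_min=0.0, final_hold_min=0.0):
    """Total oven program time in minutes for a single linear ramp."""
    ramp_time = (final_c - initial_c) / ramp_c_per_min
    return initial_hold_min + ramp_time + final_hold_min


def modulation_cycles(total_run_min, modulation_period_s):
    """Number of complete modulation cycles that fit in the run."""
    return int(total_run_min * 60 // modulation_period_s)


total = run_time_min(50, 280, 5, initial_hold_min=2.0)  # no final hold assumed
print(total)                          # 48.0 minutes
print(modulation_cycles(total, 4.0))  # 720 cycles with an assumed 4 s period
```

Halving the ramp rate roughly doubles both the run time and the number of second-dimension chromatograms, which is the cost of the extra first-dimension resolution.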

Data Analysis and Interpretation

The complex three-dimensional data generated by GC×GC (¹tR × ²tR × intensity) requires specialized software for visualization and interpretation [16]. Contour plots with color-coded intensity provide the most intuitive visualization, with structured patterns of chemically related compounds enabling class-based identification [16]. Statistical comparison algorithms can facilitate objective comparison between evidentiary samples, though forensic practitioners must maintain familiarity with the underlying chromatographic patterns to effectively testify to results in legal proceedings.

Quantitation in GC×GC follows similar principles to conventional GC, with peak volume in the 2D space proportional to analyte amount [18] [20]. However, comprehensive quantification across complex mixtures may require specialized software capable of integrating peaks in both dimensions and managing possible partial coelution. The use of internal standards remains critical for quantitative accuracy, with deuterated analogs of target analytes providing the most reliable correction for analytical variability [18] [20].
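Internal standard quantitation from peak volumes can be sketched as follows. A peak volume is taken here as the sum of the areas of all modulated slices belonging to one compound. The slice areas, the internal standard amount, and the relative response factor are invented for illustration, and the function names are hypothetical.

```python
def peak_volume(slice_areas):
    """Sum the areas of all second-dimension slices belonging to one peak."""
    return sum(slice_areas)


def quantify(analyte_slices, istd_slices, istd_amount_ng, relative_response=1.0):
    """Analyte amount (ng) from the analyte/internal-standard volume ratio."""
    ratio = peak_volume(analyte_slices) / peak_volume(istd_slices)
    return ratio * istd_amount_ng / relative_response


analyte = [120.0, 450.0, 130.0]  # hypothetical slice areas across three modulations
istd = [200.0, 600.0, 200.0]     # hypothetical slices of a deuterated internal standard
amount = quantify(analyte, istd, istd_amount_ng=50.0)
print(f"{amount:.1f} ng")  # 35.0 ng
```

In practice the relative response factor would be determined from calibration standards rather than assumed to be 1.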

Comprehensive Two-Dimensional Gas Chromatography represents a transformative analytical methodology for forensic chemistry, offering unprecedented resolution for complex evidence materials that defy conventional analysis. Through orthogonal separation mechanisms and modulation-based focusing, GC×GC provides enhanced peak capacity, improved sensitivity, and structured chromatographic patterns that facilitate compound identification and sample comparison. The applications in sexual lubricant analysis, automotive paint characterization, and tire rubber examination demonstrate the technique's potential to extract valuable investigative information from challenging evidence types. As forensic science continues to evolve toward more sophisticated analytical approaches, GC×GC stands poised to become an essential tool for forensic researchers and practitioners confronting increasingly complex evidentiary materials in criminal investigations.

The Growing Role of Artificial Intelligence and Machine Learning in Data Interpretation

The integration of Artificial Intelligence (AI) and Machine Learning (ML) is fundamentally transforming data interpretation across scientific domains. This technical guide examines the application of these technologies in two specialized fields: forensic chemistry and drug discovery. It details how ML models, particularly deep learning, are engineered to extract meaningful patterns from complex, high-dimensional datasets such as chromatographic signals and molecular structures. The document provides an in-depth analysis of experimental protocols, performance benchmarks, and the requisite computational tools. By framing this within the context of basic theory and new observational research in forensic chemistry, this review serves as a resource for researchers and scientists seeking to leverage AI for enhanced analytical precision and accelerated innovation.

Scientific discovery, particularly in fields reliant on complex instrumental analysis, is undergoing a profound transformation driven by AI and ML. Traditional analytical methods often struggle with the volume, variety, and veracity of data generated by modern instruments. Machine learning, a subset of AI, provides a suite of tools that can parse this data, learn from it, and make determinations or predictions about future states [21]. This is especially true for deep learning, a modern reincarnation of artificial neural networks, which uses sophisticated, multi-level deep neural networks (DNNs) to perform feature detection from massive amounts of training data [21].

In forensic chemistry, this shift is moving analysis away from labor-intensive, subjective tasks toward automated, data-driven systems. For example, the comparison of complex samples like diesel oils, known as "oil fingerprinting," is a prime candidate for ML augmentation due to its subjective and time-consuming nature [22]. Similarly, in drug discovery and development, ML approaches are being deployed to improve decision-making across a pipeline that is notoriously long, costly, and prone to failure, with an overall success rate from phase I clinical trials to drug approvals as low as 6.2% [21]. The core strength of ML lies in its ability to generalize from training data to new, unseen data, enabling it to tackle problems where a large amount of data and numerous variables exist, but a definitive model relating them is unknown [21].

Core Machine Learning Architectures for Scientific Interpretation

The predictive power of any ML system is contingent upon its architecture and the quality of the data it processes. Below are key architectures relevant to forensic and pharmaceutical analysis.

Convolutional Neural Networks (CNNs): These architectures feature hidden layers that are only locally connected to the next layer, allowing them to hierarchically compose simple local features into complex models. They excel in processing structured data like images, spectra, and chromatograms [21]. A specific application is in forensic source attribution using raw chromatographic signals [22].
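The local connectivity described above can be illustrated with a single one-dimensional convolution over a chromatogram-like signal. In the sketch below, each output value depends only on a three-point window of the input. The kernel is set by hand to respond to sharp peaks, whereas a trained CNN would learn its kernels from data, and the signal is invented for illustration. As in most deep-learning frameworks, the operation is implemented as a sliding dot product without flipping the kernel.

```python
def conv1d(signal, kernel):
    """Valid-mode sliding dot product of a kernel over a 1D signal."""
    k = len(kernel)
    return [
        sum(signal[i + j] * kernel[j] for j in range(k))
        for i in range(len(signal) - k + 1)
    ]


def relu(values):
    """Rectified linear activation: negative responses are set to zero."""
    return [max(0.0, v) for v in values]


chromatogram = [0.0, 0.0, 1.0, 4.0, 1.0, 0.0, 0.0]  # hypothetical signal with one peak
peak_kernel = [-1.0, 2.0, -1.0]                      # hand-set kernel (assumption)

feature_map = relu(conv1d(chromatogram, peak_kernel))
print(feature_map)  # [0.0, 0.0, 6.0, 0.0, 0.0]
```

Stacking several such layers lets later kernels combine the simple local responses into larger patterns, which is the hierarchical composition the paragraph refers to.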

Generative Adversarial Networks (GANs): GANs consist of two networks—a generator and a discriminator—that are trained simultaneously through adversarial processes. The generator creates new data instances, while the discriminator evaluates them for authenticity. This architecture is particularly powerful for de novo molecular design in drug discovery, generating novel chemical structures with desired properties [21] [23].

Deep Autoencoder Neural Networks (DAENs): This unsupervised learning algorithm uses backpropagation to reconstruct its input at its output by way of a compressed intermediate representation. Its primary purpose is dimensionality reduction: preserving the essential variables in the data while discarding non-essential parts, which is crucial for analyzing high-dimensional 'omics' data [21].
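The local connectivity described for CNNs above can be made concrete with a one-dimensional convolution over a chromatogram-like signal. The following is a minimal sketch in plain Python, not the implementation used in any cited study: the signal values and the hand-written `peak_detector` kernel are illustrative assumptions, whereas a real CNN learns its kernels from training data.

```python
from typing import List, Sequence


def conv1d(signal: Sequence[float], kernel: Sequence[float]) -> List[float]:
    """Valid-mode 1D cross-correlation: each output depends only on a local window."""
    width = len(kernel)
    return [
        sum(signal[i + j] * kernel[j] for j in range(width))
        for i in range(len(signal) - width + 1)
    ]


def relu(values: Sequence[float]) -> List[float]:
    """Rectified linear unit: keep positive responses, zero out the rest."""
    return [max(0.0, v) for v in values]


# Toy intensity trace with two peaks (illustrative values only).
signal = [0.0, 1.0, 4.0, 1.0, 0.0, 0.0, 2.0, 0.0]

# Hand-picked kernel that responds to a local maximum (assumption for illustration).
peak_detector = [-1.0, 2.0, -1.0]

feature_map = relu(conv1d(signal, peak_detector))
print(feature_map)  # [0.0, 6.0, 0.0, 0.0, 0.0, 4.0]
```

The two non-zero responses sit at the windows centered on the two peaks. Stacking several such layers is what lets a CNN compose simple local features into more complex ones.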

Table 1: Key Machine Learning Architectures for Scientific Data Interpretation

| Architecture | Primary Learning Type | Key Strength | Exemplary Application in Scientific Research |
|---|---|---|---|
| Convolutional Neural Network (CNN) | Supervised, Unsupervised | Feature detection from structured, grid-like data | Interpreting raw gas chromatographic data for forensic oil attribution [22]. |
| Generative Adversarial Network (GAN) | Unsupervised | Generating novel, realistic data instances | De novo design of drug-like molecules and chemical structures [21] [24]. |
| Deep Autoencoder (DAEN) | Unsupervised | Dimensionality reduction and feature learning | Extracting meaningful features from high-dimensional genomic or proteomic data [21]. |
| Recurrent Neural Network (RNN) | Supervised | Modeling sequential and time-series data | Analyzing dynamic biological processes and text-based data from scientific literature. |
| Graph Convolutional Network (GCN) | Supervised | Learning from graph-structured data | Predicting drug-target interactions and polypharmacy effects within biological networks [21] [24]. |

AI in Forensic Chemistry: A Case Study on Source Attribution

Experimental Protocol: ML for Chromatographic Data

The following protocol is derived from a study that compared an ML approach with traditional methods for the forensic source attribution of diesel oil samples using gas chromatography-mass spectrometry (GC/MS) data [22].

1. Problem Formulation & Hypotheses:

- Objective: To determine if a questioned sample (e.g., from a crime scene) and a reference sample originate from the same source.

- Competing Hypotheses:

- H1: The samples originate from the same source (e.g., a shared container or tank).

- H2: The samples originate from different sources [22].
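Within the likelihood ratio (LR) framework used later in this protocol, the two hypotheses are weighed by comparing how probable the observed evidence is under each. A standard way to write this is shown below; the symbol E for the observed chromatographic evidence is notation introduced here for illustration, not taken from the study.

```latex
\mathrm{LR} = \frac{p(E \mid H_1)}{p(E \mid H_2)}
```

An LR above 1 supports H1, an LR below 1 supports H2, and an LR of exactly 1 means the evidence does not discriminate between the two.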

2. Data Collection & Chemical Analysis:

- Samples: 136 diesel oil samples were obtained from Swedish gas stations and refineries.

- Sample Preparation: Each oil sample was diluted with approximately 7 mL of dichloromethane and transferred to a GC vial.

- Instrumentation: Analysis was performed using an Agilent 7890A GC coupled with an Agilent 5975C mass spectrometric detector.

- Chromatogram Generation: The total ion chromatogram (TIC) was used for subsequent analysis, resulting in a one-dimensional vector of intensity measurements per sample [22].

3. Data Preprocessing for ML:

- Alignment: The start and end of the chromatographic run were defined, and the baseline was corrected.

- Normalization: Each chromatogram was normalized to a total signal of 1 to mitigate the influence of the total amount of analyte.

- Feature Extraction (for benchmark models): For traditional statistical models, ten peak height ratios were selected by a forensic expert to represent the chemical profile [22].
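The preprocessing steps above can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions, not the study's code: the baseline correction is a crude minimum subtraction, the intensity values are invented, and the peak indices passed to `peak_height_ratio` are hypothetical stand-ins for expert-selected peaks.

```python
from typing import List, Sequence


def subtract_baseline(signal: Sequence[float]) -> List[float]:
    """Crude baseline correction (assumption): shift so the minimum intensity is zero."""
    floor = min(signal)
    return [x - floor for x in signal]


def normalize_total(signal: Sequence[float]) -> List[float]:
    """Scale a chromatogram so that its intensities sum to 1."""
    total = sum(signal)
    if total <= 0:
        raise ValueError("chromatogram has no positive signal to normalize")
    return [x / total for x in signal]


def peak_height_ratio(signal: Sequence[float], i: int, j: int) -> float:
    """Ratio of the intensities at two peak positions (indices are hypothetical)."""
    return signal[i] / signal[j]


# Toy total ion chromatogram as a one-dimensional intensity vector (invented values).
tic = [12.0, 10.0, 50.0, 14.0, 30.0, 10.0]

corrected = subtract_baseline(tic)
normalized = normalize_total(corrected)
ratio = peak_height_ratio(normalized, 2, 4)

print(round(sum(normalized), 12))  # 1.0
print(round(ratio, 12))            # 2.0
```

Because a peak height ratio divides one intensity by another, it is unchanged by the total-signal normalization; the normalization matters mainly for models that consume the whole signal, such as the CNN.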

4. Model Training & Evaluation Framework:

- Models Evaluated:

- Model A (Experimental CNN model): A score-based model using feature vectors extracted from a CNN trained on the raw chromatographic signal.

- Model B (Benchmark model): A score-based statistical model using similarity scores from ten selected peak height ratios.

- Model C (Benchmark model): A feature-based statistical model operating in a three-dimensional space of three peak height ratios [22].

- Evaluation Metric: The Likelihood Ratio (LR) framework was employed to quantitatively assess the strength of evidence for H1 versus H2. The validity and performance of the LR systems were evaluated using metrics like the log-likelihood ratio cost (Cllr) and Tippett plots [22].
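As a hedged illustration of the Cllr metric mentioned above, the sketch below uses the standard definition of the log-likelihood ratio cost: the average of two penalty terms, one for same-source comparisons and one for different-source comparisons. The function name `cllr` and the example LR values are assumptions made for illustration; they are not results from the study.

```python
import math
from typing import Sequence


def cllr(lrs_same: Sequence[float], lrs_diff: Sequence[float]) -> float:
    """Log-likelihood ratio cost.

    lrs_same: LRs from comparisons where H1 (same source) is known to be true.
    lrs_diff: LRs from comparisons where H2 (different sources) is known to be true.
    """
    if not lrs_same or not lrs_diff:
        raise ValueError("both sets of likelihood ratios must be non-empty")
    # Same-source pairs are penalized when their LR is small.
    penalty_same = sum(math.log2(1 + 1 / lr) for lr in lrs_same) / len(lrs_same)
    # Different-source pairs are penalized when their LR is large.
    penalty_diff = sum(math.log2(1 + lr) for lr in lrs_diff) / len(lrs_diff)
    return 0.5 * (penalty_same + penalty_diff)


# A system that always reports LR = 1 carries no information: Cllr is exactly 1.
print(cllr([1.0, 1.0], [1.0, 1.0]))  # 1.0

# Invented LRs that point strongly in the correct direction give a Cllr near 0.
print(cllr([1000.0, 500.0], [0.001, 0.01]) < 0.05)  # True
```

Lower values are better, and a value above 1 indicates a system that performs worse than reporting no evidence at all, which usually signals poor calibration.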

Performance Benchmarking

The performance of the three models was quantitatively assessed, yielding the following results [22]:

Table 2: Performance Comparison of LR Models for Diesel Oil Attribution

| Model | Model Type | Data Representation | Median LR for H1 (Same Source) | Key Performance Finding |
|---|---|---|---|---|
| Model A (CNN) | Score-based ML | Raw chromatographic signal | ~1800 | Showed potential but was the least forensically valid under the tested metrics. |
| Model B (Peak Ratio) | Score-based Statistical | 10 expert-selected peak ratios | ~180 | Provided relatively well-calibrated LRs but lower evidential strength. |
| Model C (Peak Ratio) | Feature-based Statistical | 3 expert-selected peak ratios | ~3200 | Produced the strongest evidence for same-source samples and was the most forensically valid. |

The study concluded that while the CNN model demonstrated promise, the feature-based statistical model (C) outperformed it in validity on this specific dataset. This highlights a critical point in applied ML: a simpler, well-understood model can sometimes outperform a more complex one, especially when data is limited. The authors noted that the CNN's performance could potentially be improved with a larger training dataset [22].

Workflow Visualization: AI-Assisted Forensic Source Attribution

The following diagram illustrates the logical workflow and data progression for a machine learning-based forensic source attribution analysis.

AI in Drug Discovery and Development: From Target to Candidate

Experimental Protocol: ML for Molecular Property Prediction

A critical task in drug discovery is predicting the properties of a molecule, such as its bioactivity, toxicity, and solubility, thereby prioritizing the most promising candidates for synthesis and testing.

1. Problem Formulation:

- Objective: To accurately predict the properties of a novel chemical compound from its structure.

- Input: A representation of a molecule (e.g., SMILES string, molecular graph).

- Output: A predicted value for a specific property (e.g., IC50, solubility logS) or a classification (e.g., toxic/non-toxic) [24].

2. Data Curation:

- Data Sources: Large-scale chemical databases (e.g., ChEMBL, PubChem) and proprietary assay data from pharmaceutical companies.

- Data Characteristics: Data processing and cleaning are estimated to account for at least 80% of the practical work of ML. Data must be accurate, curated, and as complete as possible to maximize predictive performance [21].

- Featurization: Molecules are converted into a machine-readable format, such as the SMILES strings or molecular graphs used as model input.
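As one illustration of featurization, a molecule can be encoded as a graph in which atoms are nodes and bonds are edges. The sketch below writes out the heavy-atom graph of ethanol (SMILES "CCO") by hand rather than parsing it with a cheminformatics library. The use of the atomic number as the only node feature and the sum-based `aggregate` step are simplifying assumptions, loosely modeled on how graph neural networks combine neighbor information.

```python
from typing import Dict, List, Sequence, Tuple

# Heavy-atom graph of ethanol (SMILES "CCO"), written out by hand.
atoms: List[str] = ["C", "C", "O"]
bonds: List[Tuple[int, int]] = [(0, 1), (1, 2)]
ATOMIC_NUMBER: Dict[str, int] = {"C": 6, "O": 8}


def build_adjacency(n_atoms: int, bonds: Sequence[Tuple[int, int]]) -> List[List[int]]:
    """Undirected adjacency list: neighbors[i] holds the atoms bonded to atom i."""
    neighbors: List[List[int]] = [[] for _ in range(n_atoms)]
    for a, b in bonds:
        neighbors[a].append(b)
        neighbors[b].append(a)
    return neighbors


def aggregate(features: Sequence[float], neighbors: Sequence[Sequence[int]]) -> List[float]:
    """One simplified message-passing step: own feature plus the sum of neighbor features."""
    return [
        features[i] + sum(features[j] for j in neighbors[i])
        for i in range(len(features))
    ]


neighbors = build_adjacency(len(atoms), bonds)
features = [float(ATOMIC_NUMBER[symbol]) for symbol in atoms]
updated = aggregate(features, neighbors)

print(neighbors)  # [[1], [0, 2], [1]]
print(updated)    # [12.0, 20.0, 14.0]
```

After one step, the two carbon atoms no longer share the same value, because the update reflects each atom's chemical neighborhood and not just its element.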

3. Model Selection and Training:

- Algorithms:

- Deep Representation Learning: Graph neural networks automatically learn informative representations (embeddings) of molecules that capture their structural and functional characteristics [24].

- Transformers: Models originally developed for natural language processing have been adapted to process SMILES strings, treating them as a "chemical language" to predict molecular interactions [24].

- Training Loop: The model is trained on a dataset of molecules with known properties. The weights of the model are iteratively adjusted to minimize the difference between the predicted and actual property values.

- Validation: To avoid overfitting, techniques like k-fold cross-validation are used, where the data is partitioned into training and validation sets multiple times to ensure the model generalizes well [21].
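The k-fold partitioning described in the validation step can be sketched with the standard library alone. The helper name `k_fold_indices` and its `seed` parameter are assumptions made for this illustration; in practice an established library routine would normally be used instead.

```python
import random
from typing import Iterator, List, Tuple


def k_fold_indices(
    n_samples: int, k: int, seed: int = 0
) -> Iterator[Tuple[List[int], List[int]]]:
    """Yield (train, validation) index lists for k-fold cross-validation."""
    if not 2 <= k <= n_samples:
        raise ValueError("k must be between 2 and the number of samples")
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)  # fixed seed keeps the split reproducible
    folds = [indices[i::k] for i in range(k)]  # k roughly equal, disjoint folds
    for held_out in range(k):
        validation = folds[held_out]
        train = [idx for f, fold in enumerate(folds) if f != held_out for idx in fold]
        yield train, validation


splits = list(k_fold_indices(n_samples=10, k=5))
for train, validation in splits:
    # A model would be fitted on `train` and scored on `validation` here.
    print(len(train), len(validation))  # 8 2 on each of the five folds
```

Each sample is held out exactly once, so the averaged validation score uses every data point without ever scoring a model on data it was trained on.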

4. Model Deployment and Active Learning:

- Virtual Screening: The trained model is used to screen vast virtual libraries of molecules (containing millions to billions of compounds) to identify those with the highest predicted activity and favorable properties.

- Iterative Learning: The top-ranked compounds are synthesized and tested experimentally. This new data is then fed back into the model to refine its predictions in an active learning cycle [25] [24].
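The screen, test, and retrain cycle above can be sketched as a short loop. Everything here is a toy stand-in introduced for illustration: each compound is reduced to a single numeric descriptor, `predict` is a nearest-neighbor surrogate in place of a trained model, and `assay` is a made-up function in place of laboratory measurement.

```python
from typing import Callable, Dict, List, Sequence


def predict(labeled: Dict[float, float], x: float) -> float:
    """Toy surrogate model: return the measured value of the nearest labeled compound."""
    nearest = min(labeled, key=lambda known: abs(known - x))
    return labeled[nearest]


def active_learning(
    pool: Sequence[float],
    labeled: Dict[float, float],
    assay: Callable[[float], float],
    rounds: int,
    batch_size: int,
) -> Dict[float, float]:
    """Repeatedly pick the top-ranked candidates, 'test' them, and add the results."""
    pool = list(pool)
    labeled = dict(labeled)
    for _ in range(rounds):
        if not pool:
            break
        # Virtual screening: rank untested candidates by predicted activity.
        ranked = sorted(pool, key=lambda x: predict(labeled, x), reverse=True)
        for x in ranked[:batch_size]:
            labeled[x] = assay(x)  # experimental result feeds back into the model
            pool.remove(x)
    return labeled


def assay(x: float) -> float:
    """Hypothetical activity landscape with its optimum at a descriptor value of 0.7."""
    return -((x - 0.7) ** 2)


pool = [i / 10 for i in range(1, 10)]        # untested candidates 0.1 .. 0.9
start = {0.0: assay(0.0), 1.0: assay(1.0)}   # two compounds already measured
result = active_learning(pool, start, assay, rounds=3, batch_size=2)
print(len(result))  # 8
```

A purely greedy selection like this one can get stuck near early hits, so practical active learning schemes usually also account for model uncertainty when choosing what to test next.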

Performance and Market Landscape

The impact of AI on drug discovery is reflected in both its technical achievements and its growing economic footprint.

Table 3: AI in Drug Discovery: Selected Use Cases and Funding (2024-2025)

| AI Application Area | Specific Task | Exemplary Tool/Company | Key Outcome/Investment |
|---|---|---|---|
| Target Identification | Mining genomic/proteomic data to identify new drug targets. | Various AI platforms | Speeds up target identification and validation through simulation of biological interactions [23]. |
| Molecular Design | De novo design of novel drug candidates. | DeepMind's AlphaFold, Generate:Biomedicines | Generation of chemical structures with desired efficacy and safety profiles [23] [25]. |
| Virtual Screening | Analyzing millions of compounds in silico. | AI software platforms | Reduces reliance on physical high-throughput screening, saving time and resources [23] [25]. |
| Protein Structure Prediction | Predicting 3D protein structures from amino acid sequences. | AlphaFold (Isomorphic Labs) | Revolutionized the field; creators secured a $600M Series A for Isomorphic Labs in 2025 [25]. |
| Clinical Trial Recruitment | Identifying qualified patients and trial sites. | FormationBio, HUMA, Deep6 AI | Optimizes patient recruitment and site selection to accelerate clinical research [25]. |

Venture capital funding for AI-driven drug discovery grew 27% in 2024, reaching $3.3 billion, signaling strong investor confidence. However, a key challenge remains clinical efficacy; while several AI-discovered drugs are in trials, most have faced disappointments in Phase II, underscoring that AI-based discovery is still maturing and must prove it can yield therapeutics that succeed in late-stage trials [25].

Workflow Visualization: AI-Driven Drug Discovery Pipeline

The following diagram outlines the key stages and iterative feedback loops of a modern, AI-enhanced drug discovery pipeline.

The Scientist's Toolkit: Essential Research Reagents and Materials

The implementation of AI-driven research relies on a foundation of both computational and laboratory-based resources. The following table details key solutions and materials used in the experiments and fields cited.

Table 4: Essential Research Reagent Solutions and Computational Tools

| Item Name | Type | Function / Application | Relevant Field |
|---|---|---|---|
| Dichloromethane | Chemical Solvent | Used for diluting diesel oil samples prior to GC/MS analysis to prepare them for instrumental measurement. | Forensic Chemistry [22] |
| Gas Chromatograph-Mass Spectrometer (GC/MS) | Analytical Instrument | Separates complex mixtures (GC) and identifies individual components based on their mass-to-charge ratio (MS). Generates the primary data for analysis. | Forensic Chemistry [22] |
| TensorFlow / PyTorch | Programmatic Framework | Open-source libraries used for building, training, and deploying deep learning models (e.g., CNNs, GANs). | General AI / Drug Discovery [21] |
| Therapeutics Data Commons (TDC) | Data Resource | A curated collection of datasets for a wide range of drug discovery tasks, facilitating benchmarking and model development. | Drug Discovery [24] |
| Molecular Graphs | Data Structure | A representation of a molecule where atoms are nodes and bonds are edges; the input structure for graph neural networks. | Drug Discovery [21] [24] |
| Graph Convolutional Network (GCN) | Algorithm | A type of neural network designed to operate directly on graph structures, ideal for learning from molecular graphs. | Drug Discovery [21] |
| Generative Adversarial Network (GAN) | Algorithm | A framework for training generative models to create novel molecular structures with optimized properties. | Drug Discovery [21] [24] |

The integration of AI and ML into data interpretation marks a definitive shift toward a more predictive and data-driven scientific paradigm. In forensic chemistry, these tools offer a path to more objective, efficient, and quantifiable analyses of complex evidence, as demonstrated by the rigorous LR-based evaluation of chromatographic data. In drug discovery, they provide a powerful means to navigate the vast complexity of biological systems and chemical space, potentially de-risking and accelerating the development of new therapies. The successful application of these technologies, however, hinges on a synergistic relationship between machine intelligence and human expertise. Challenges such as model interpretability, data quality, algorithmic bias, and the need for robust validation frameworks must be actively addressed. As these fields continue to evolve, collaboration between domain scientists and AI researchers will be paramount in unlocking the full potential of machine learning, not only to interpret data but also to generate novel scientific insights.