NCFS Validation Guidelines: Advancing Scientific Rigor in Forensic Method Development


Abstract

This article examines the enduring impact of the National Commission on Forensic Science (NCFS) validation framework on scientific practice. It explores the foundational principles of forensic method validation, current implementation methodologies through OSAC standards, strategies for addressing reliability challenges, and comparative analysis with international standards like ISO 21043. Designed for researchers and development professionals, this resource provides practical guidance for integrating rigorous validation protocols that meet evolving legal and scientific expectations for reliability and error rate assessment.

The NCFS Legacy: Building a Foundation of Scientific Rigor in Forensic Science

The 2009 National Academy of Sciences (NAS) report, formally titled "Strengthening Forensic Science in the United States: A Path Forward," represented a watershed moment for forensic science, delivering a critical assessment of the field's scientific foundations and triggering significant structural reforms [1] [2]. The report identified fundamental deficiencies in many forensic disciplines and recommended establishing a national scientific body to lead reform efforts, ultimately leading to the creation of the National Commission on Forensic Science (NCFS) in 2013 [1] [2]. This document examines the historical context of the NAS Report and the NCFS, detailing their roles in advancing validation guidelines and the subsequent evolution of forensic science standards.

The NAS Report emerged from growing concerns about the ability of the criminal justice system to analyze evidence efficiently and fairly [3]. Prior studies had highlighted issues with forensic science infrastructure and delivery, but the 2009 report provided the most comprehensive analysis, concluding that "with the exception of nuclear DNA analysis ... no forensic method has been rigorously shown to have the capacity to consistently, and with a high degree of certainty, demonstrate a connection between evidence and a specific individual or source" [2]. This stark finding underscored the urgent need for scientific validation across forensic disciplines.

The 2009 NAS Report: A Critical Assessment

Key Findings and Recommendations

The NAS Report provided a systematic evaluation of forensic science disciplines, highlighting significant variations in their scientific foundations. The report identified that many traditional forensic methods—including fingerprint analysis, bite-mark comparisons, and toolmark examination—had been developed primarily within law enforcement communities rather than through scientific research [2]. This historical development path meant these methods matured largely outside mainstream scientific culture, lacking proper validation, error rate characterization, and established reliability measures.

The report's authors noted a particular concern regarding the institutional relationships within forensic science, observing that "almost all publicly funded [forensic] laboratories, whether federal, state, or local, are associated with law enforcement. At the very least, this creates a potential conflict-of-interest" [2]. This close association potentially compromised the independence and objectivity of forensic analysis, necessitating structural reforms.

Table: Key Recommendations from the 2009 NAS Report

| Recommendation Area | Specific Action Proposed | Intended Outcome |
|---|---|---|
| Research Foundation | Establish a national research program focused on forensic science | Develop empirical bases for forensic methods |
| Standardization | Develop standardized terminology and reporting procedures | Improve consistency across laboratories and experts |
| Independence | Create an independent federal entity to oversee forensic science | Separate science from law enforcement influences |
| Accreditation | Implement mandatory laboratory accreditation and practitioner certification | Ensure quality standards across the profession |
| Education | Enhance educational programs in forensic science disciplines | Improve scientific rigor among future practitioners |

Impact on Forensic Science Community

The NAS Report fundamentally challenged the status quo in forensic science, forcing the community to confront methodological limitations that had previously been overlooked. The report catalyzed a reevaluation of long-accepted practices, particularly for pattern-matching disciplines that relied on subjective interpretation rather than quantitative, statistically validated approaches [2]. This reckoning was partly driven by the demonstrated reliability of DNA analysis, which had overturned numerous convictions based on less precise methods and highlighted the need for rigorous scientific validation across all forensic disciplines [2].

The report's impact extended beyond technical considerations to address broader systemic issues, noting that "forensic science research is [overall] not well supported" and that funding opportunities were "extremely limited" compared to other scientific fields [3]. This inadequate research infrastructure hampered efforts to improve methodological validity and reliability.

Establishment of the National Commission on Forensic Science (NCFS)

Formation and Mandate

In response to the NAS Report's recommendations, the U.S. Department of Justice (DOJ) and the National Institute of Standards and Technology (NIST) announced the formation of the National Commission on Forensic Science (NCFS) in February 2013 [1]. The commission brought together approximately 30 stakeholders from diverse backgrounds, including forensic science service providers, academic researchers, prosecutors, defense attorneys, judges, and other community leaders selected by the Attorney General [1].

The NCFS was charged with multiple responsibilities aimed at strengthening forensic science:

- Recommending priorities for standards development to enhance quality and reliability

- Reviewing guidance identified or developed by subject-matter experts across forensic disciplines

- Developing policy recommendations on critical issues such as minimum requirements for training, proficiency testing, and accreditation [1]

The commission's placement under the joint administration of DOJ and NIST represented a compromise, as the NAS Report had originally recommended establishing a fully independent entity outside DOJ to avoid potential conflicts of interest between law enforcement needs and scientific requirements [2] [3].

Operations and Achievements

During its operational period from 2013 to 2017, the NCFS served as a critical forum for dialogue between the forensic science community and the broader scientific research community. The commission worked to increase communication among academic scientists, judges, prosecutors, defense attorneys, and forensic practitioners [2]. This collaborative approach helped bridge traditional divides and fostered mutual understanding of the challenges facing forensic science.

The NCFS made progress in developing recommendations for standardizing practices and improving the scientific underpinnings of various forensic disciplines. Commissioners recognized that addressing methodological questions could potentially improve both forensic techniques and the overall justice system [2]. The commission's work helped establish a framework for evaluating forensic methods based on scientific principles rather than historical precedent or legal acceptance alone.

Termination of NCFS and Transition to OSAC

Demise of the Commission

In 2017, the DOJ decided not to renew the NCFS charter, effectively terminating the commission [2]. This decision occurred following the 2016 presidential election and represented a significant shift in the federal government's approach to forensic science reform. The termination drew criticism from scientific members of the commission, who viewed the NCFS as making meaningful progress in addressing the deficiencies identified in the NAS Report [2].

In a 2018 editorial published in the Proceedings of the National Academy of Sciences, former NCFS members from institutions including Johns Hopkins University, West Virginia University, Cornell University, and other research institutions decried the lack of scientific validation in many forensic methods [2]. They noted that "many of the forensic techniques used today to put people in jail have no scientific backing" and expressed concern that the termination of NCFS would hinder ongoing reform efforts [2].

Legacy and Continued Standardization Efforts

Despite its termination, the NCFS established an important foundation for ongoing forensic science improvement efforts. The commission's work highlighted the necessity of empirical testing for all forensic methods admissible in court and reinforced the need for characterizing uncertainties in forensic conclusions [2]. The NCFS also helped foster relationships between the traditional forensic science community and academic researchers, creating networks that would continue to collaborate after the commission's dissolution.

Following the NCFS termination, the Organization of Scientific Area Committees (OSAC) for Forensic Science, which NIST had established in 2014 and continues to administer, became the primary entity driving standardization efforts in forensic science [4] [5]. OSAC maintains a public registry of approved standards and works to develop new standards through a consensus-based process involving hundreds of forensic science experts [4]. As of early 2025, the OSAC Registry contained 225 standards (152 published and 73 OSAC Proposed) representing over 20 forensic science disciplines [4].

Table: Current OSAC Registry Statistics (February 2025)

| Category | Count | Examples |
|---|---|---|
| Total Registry Standards | 225 | |
| Published Standards | 152 | ANSI/ASB Standard 017, Standard for Metrological Traceability in Forensic Toxicology |
| OSAC Proposed Standards | 73 | OSAC 2022-S-0032, Best Practice Recommendation for Chemical Processing of Footwear and Tire Impression Evidence |
| Disciplines Represented | 20+ | Digital Evidence, Forensic Toxicology, Anthropology, Seized Drugs, Trace Materials |

Current State of Forensic Science Standards and Validation

Active Standardization Initiatives

The forensic science standardization landscape remains dynamic, with numerous standards currently under development through Standards Development Organizations (SDOs), including ASTM International and the Academy Standards Board (ASB) [4] [5]. As of February 2025, there were 16 forensic science standards open for public comment across various disciplines, including medicolegal death investigation, forensic document examination, firearms and toolmarks, forensic toxicology, ignitable liquids, explosives, gunshot residue, and trace materials [4].

Recent publications demonstrate the continuing evolution of forensic science standards, with new and revised documents addressing emerging needs and methodological improvements. Examples include ANSI/ASB Standard 056, Standard for Evaluation of Measurement Uncertainty in Forensic Toxicology (1st Edition, 2025), and ANSI/ASB Best Practice Recommendation 007, Postmortem Impression Submission Strategy for Comprehensive Searches of Essential Automated Fingerprint Identification System Databases (2nd Edition, 2024) [4] [5].

Implementation Challenges and Research Needs

Despite progress in standards development, implementation challenges persist. The forensic science community continues to face resource constraints and variations in practices across different jurisdictions [3]. Stable and adequate funding for forensic science research remains elusive, with the National Institute of Justice (NIJ) continuing to serve as the primary but limited federal source for forensic science research funding [3].

Recent efforts have focused on improving implementation tracking through the OSAC Registry Implementation Survey, which had garnered contributions from 226 forensic science service providers as of February 2025 [4]. These data collection initiatives aim to better understand how standards are being adopted and identify areas where additional support or guidance may be needed. The ongoing development of standards for emerging areas, such as digital evidence (e.g., SWGDE Recommendations for Cell Site Analysis) and forensic genetics (e.g., Standard for DNA-based Taxonomic Identification in Forensic Entomology), demonstrates the field's continuing evolution [5].

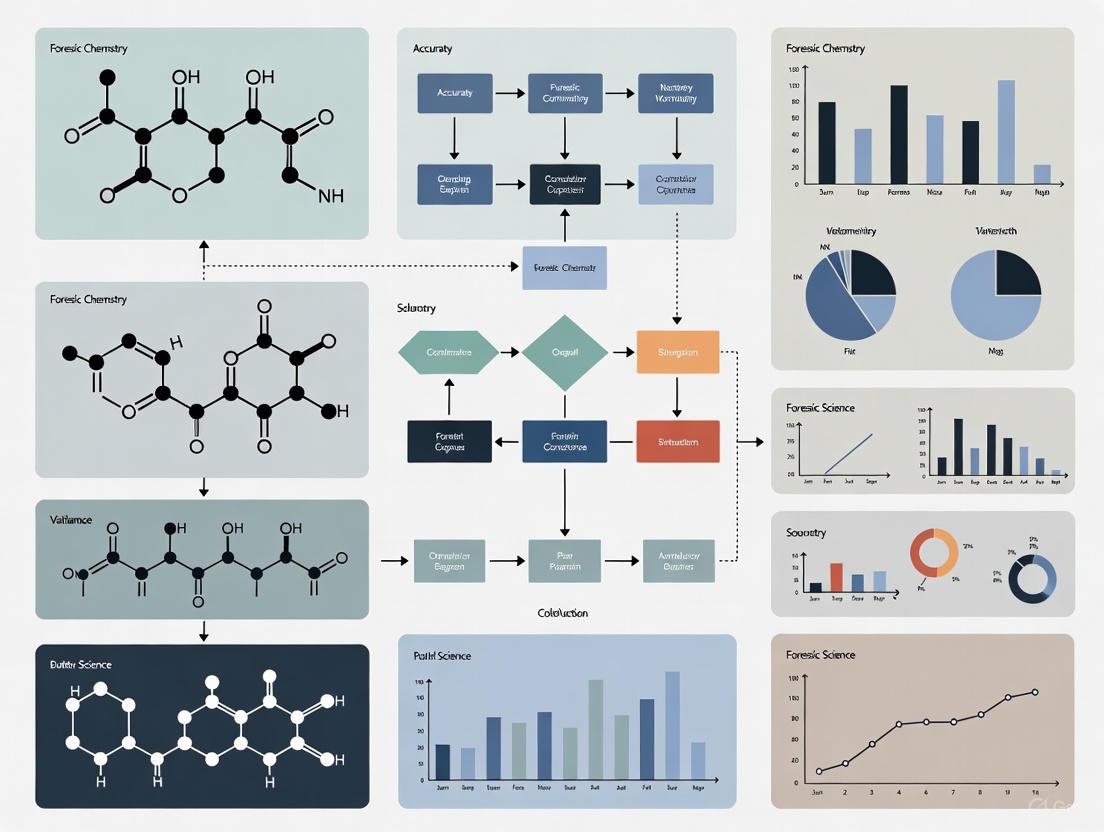

Diagram: The historical progression of forensic science reform shows key milestones from the 2009 NAS Report through the NCFS era to current OSAC-led standardization efforts.

Research Reagents and Materials

Table: Essential Resources for Forensic Science Validation Research

| Resource Category | Specific Examples | Research Application |
|---|---|---|
| Reference Materials | Certified DNA standards, Controlled substance reference materials, Trace evidence reference sets | Method validation, Proficiency testing, Instrument calibration |
| Technical Standards | OSAC Registry standards, ASTM forensic standards, ASB best practice recommendations | Protocol standardization, Quality assurance, Method verification |
| Data Resources | GenBank for taxonomic assignment, Digital evidence reference datasets, Population frequency databases | Comparative analysis, Statistical validation, Error rate determination |
| Quality Control Tools | Uncertainty measurement protocols, Validation frameworks, Accreditation requirements | Quality management, Technical oversight, Continuous improvement |

The historical trajectory from the 2009 NAS Report through the establishment and termination of the NCFS represents a pivotal period in forensic science reform. While the NAS Report provided a comprehensive critique of forensic methodologies and recommended substantial structural changes, the NCFS represented an initial attempt to implement those recommendations through a collaborative, multi-stakeholder approach. The termination of NCFS in 2017 disrupted this formal reform process but did not eliminate the ongoing need for scientific validation and standardization in forensic practice.

Current efforts led by OSAC and various SDOs continue to advance the standardization agenda, with significant progress evident in the growing number of registry standards and increased participation from forensic science service providers in implementation surveys. However, fundamental challenges remain, including the need for stable research funding, continued methodological validation, and the mitigation of cognitive and contextual biases in forensic practice. The scientific community's engagement remains essential to ensuring that forensic science methods meet appropriate standards of validity and reliability, thereby supporting the administration of justice.

The National Commission on Forensic Science (NCFS) was established to advance the field of forensic science and make recommendations to the Attorney General on ensuring reliable and scientifically valid evidence in criminal investigations [6]. This federal advisory committee brought together forensic science practitioners, academic researchers, prosecutors, defense attorneys, and other stakeholders to address critical issues in forensic science. The Commission's work has been instrumental in shaping policies that emphasize scientific validity, robust accreditation, and empirical validation of forensic methods.

The NCFS emerged in response to growing concerns about the reliability of various forensic disciplines, most prominently those documented in the 2009 National Academy of Sciences (NAS) report; later assessments by the President's Council of Advisors on Science and Technology (PCAST, 2016) and the American Association for the Advancement of Science (AAAS, 2017) reinforced these concerns [7]. These reports consistently found that many widely used forensic disciplines lacked sufficient scientific validation, with some methods having no empirical basis for their foundational claims [7]. Within this context, the NCFS developed guidelines focusing on two cornerstone principles: mandatory accreditation of forensic laboratories and rigorous empirical validation of forensic methods.

Accreditation Requirements for Forensic Laboratories

Department of Justice Accreditation Policies

The U.S. Department of Justice (DOJ) implemented policies requiring all department-run forensic laboratories to obtain and maintain accreditation from recognized accrediting bodies [6]. This policy emerged directly from NCFS recommendations and represents a significant step toward standardizing quality practices across federal forensic operations. The DOJ mandate established a five-year timeline for full implementation, requiring that by 2020 all department forensic labs at agencies including the ATF, DEA, and FBI maintain accreditation [6]. These agencies were already accredited at the time of the policy announcement, but the new requirement ensured ongoing compliance with accreditation standards.

The DOJ also instituted a complementary policy requiring department prosecutors to use accredited forensic laboratories for evidence processing "when practicable" [6]. This requirement extends the quality assurance principles of accreditation to the entire investigative process and creates market-based incentives for non-federal laboratories to seek accreditation. The Executive Office for U.S. Attorneys was tasked with developing implementation guidance to ensure consistent application of this policy across all federal jurisdictions [6].

Grant Funding Incentives for Accreditation

To encourage broader adoption of accreditation standards beyond federal laboratories, the DOJ implemented strategic changes to its grant funding mechanisms [6]. These changes create both direct and indirect incentives for state and local forensic laboratories to pursue accreditation:

Explicit Allowance for Accreditation Costs: Solicitations for Edward Byrne Memorial Justice Assistance Grant funding and Paul Coverdell Forensic Science Improvement Grant funding were revised to explicitly state that applicants could use these funds to seek accreditation [6]. This clarification addressed previous uncertainties about permissible uses of grant funds.

Preferential Treatment for Accreditation-Seeking Labs: Discretionary grant programs administered by the Office of Justice Programs were modified to give competitive preference to laboratories using grant money to obtain accreditation [6]. These applicants receive a "plus factor" that increases their likelihood of receiving funding.

Components of Forensic Laboratory Accreditation

Accreditation serves as an external validation of a forensic laboratory's competence and adherence to established standards. The process involves comprehensive assessment of multiple laboratory components [6]:

Table: Key Components of Forensic Laboratory Accreditation

| Component | Assessment Focus | Standards Reference |
|---|---|---|
| Staff Competence | Education, training, certification, proficiency testing | ISO/IEC 17025:2017 |
| Method Validation | Scientific validity, reliability, error rate determination | FBI Quality Assurance Standards |
| Test Methods | Appropriateness, standardization, documentation | ATF Forensic Science Policy |
| Equipment Management | Calibration, maintenance, documentation | DEA Laboratory Division Procedures |
| Testing Environment | Contamination prevention, environmental controls | ISO/IEC 17025:2017 (facilities and environmental conditions) |
| Quality Assurance | Data review, corrective actions, continuous improvement | NIST Forensic Science Standards |

Empirical Validation of Forensic Methods

The Scientific Foundation Requirement

The NCFS principles emphasize that empirical evidence forms the only reliable foundation for establishing the scientific validity of forensic methods [7]. This requirement addresses fundamental questions about whether forensic disciplines are based on scientifically valid principles and whether they can produce reliable results when applied in casework. The 2016 PCAST report particularly emphasized the necessity of "well-designed empirical studies" to demonstrate validity, especially for methods relying on subjective examiner judgments [7].

The push for empirical validation represents a significant shift from traditional approaches that often relied on practical experience, training, and professional judgment as primary indicators of reliability [7]. While these factors remain important for competent practice, they are now considered insufficient without supporting empirical evidence of methodological validity. This evolution reflects the increasing scientific rigor expected in forensic practice and aligns with standards long established in other scientific fields.

Current State of Empirical Validation by Discipline

Different forensic disciplines vary considerably in their level of empirical validation. Research studies provide substantially different levels of support for various forensic methods:

Table: Empirical Validation Status of Forensic Disciplines

| Forensic Discipline | Level of Empirical Support | Key Studies | Error Rate Data |
|---|---|---|---|
| DNA Analysis of Single-Source Samples | Strong (Thousands of studies) | NAS Report, PCAST Report | Well-characterized |
| Latent Fingerprint Analysis | Moderate (Approximately 12 studies) | AAAS Report, PCAST Report | Emerging data showing higher than previously acknowledged rates |
| Firearms Toolmark Analysis | Limited | NAS Report, PCAST Report | Preliminary data from recent studies |
| Bitemark Analysis | None | NAS Report, AAAS Report | No empirical evidence |

Methodological Framework for Validation Studies

The NCFS framework emphasizes specific methodological requirements for validation studies to ensure they produce scientifically defensible results:

Study Design Protocols

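Blind Testing Procedures: Implemented to minimize contextual bias, in which examiners may be influenced by extraneous case information [7]. Proper blinding requires that examiners analyze evidence without exposure to task-irrelevant case details or expected outcomes; in comparison disciplines, sequential unmasking procedures also have examiners document the questioned evidence before viewing the reference samples.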

Sample Selection and Size: Requires appropriate sample sizes with statistical power to detect meaningful effects. Samples must represent the range of materials encountered in casework, including challenging specimens that test method limitations.
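
The link between sample size and the error rates a study can support can be made concrete. A minimal sketch, assuming independent trials of comparable difficulty: if a study observes zero errors in n trials, the exact binomial condition (1 - p)^n <= alpha gives the smallest n needed to claim, at confidence 1 - alpha, that the true error rate is below p. The function name is illustrative and not taken from any forensic standard.

```python
import math

def min_trials_zero_errors(max_error_rate: float, confidence: float = 0.95) -> int:
    """Smallest n such that 0 errors in n independent trials rules out
    error rates >= max_error_rate at the given confidence.

    Solves (1 - p)^n <= 1 - confidence for n (exact binomial, zero failures).
    Assumes independent trials of similar difficulty.
    """
    if not (0 < max_error_rate < 1 and 0 < confidence < 1):
        raise ValueError("rates must lie strictly between 0 and 1")
    alpha = 1.0 - confidence
    return math.ceil(math.log(alpha) / math.log(1.0 - max_error_rate))

print(min_trials_zero_errors(0.01))  # 299
print(min_trials_zero_errors(0.05))  # 59
```

Under these assumptions, roughly 300 error-free trials are needed to support a claim that the error rate is below 1% at 95% confidence, which matches the familiar "rule of three" (upper bound of about 3/n). Studies that observe some errors, or that mix easy and hard items, need larger samples and a full interval estimate.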

Error Rate Characterization: Studies must be specifically designed to measure both false positive and false negative rates under casework conditions [7]. This includes establishing criteria for inconclusive results and their impact on error rate calculations.
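
Because the treatment of inconclusives changes the reported rate, it helps to compute the rate under each convention and report all of them. The sketch below assumes three conventions discussed in the validation literature (dropping inconclusives from the denominator, counting them as non-errors, or counting them as errors); the policy names and the counts are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class Outcomes:
    """Examiner conclusions on comparisons with known ground truth."""
    correct: int       # e.g., exclusions on known non-matches
    errors: int        # e.g., identifications on known non-matches
    inconclusive: int

def error_rate(o: Outcomes, policy: str) -> float:
    """Error rate under a stated (hypothetical) inconclusive-handling policy."""
    if policy == "exclude":       # drop inconclusives from the denominator
        num, denom = o.errors, o.correct + o.errors
    elif policy == "as_correct":  # keep inconclusives, count them as non-errors
        num, denom = o.errors, o.correct + o.errors + o.inconclusive
    elif policy == "as_error":    # worst case: count inconclusives as errors
        num, denom = o.errors + o.inconclusive, o.correct + o.errors + o.inconclusive
    else:
        raise ValueError(f"unknown policy: {policy}")
    return num / denom if denom else float("nan")

# Hypothetical results on 1,000 known non-match comparisons
nonmatch = Outcomes(correct=880, errors=20, inconclusive=100)
for policy in ("exclude", "as_correct", "as_error"):
    print(policy, round(error_rate(nonmatch, policy), 4))
# exclude 0.0222
# as_correct 0.02
# as_error 0.12
```

The same 20 errors yield rates from 2% to 12% depending on the convention, which is why a validation report should state which one it uses.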

Data Analysis and Interpretation

Statistical Rigor: Application of appropriate statistical methods to quantify the strength of evidence and measure uncertainty. This includes using likelihood ratios rather than categorical statements when possible.
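
A likelihood ratio compares how probable an observed result is under two competing propositions: LR = p(E | same source) / p(E | different source). As a minimal sketch, assume a method outputs a similarity score and that validation scores for known same-source and known different-source pairs are each well described by a normal distribution. The means and standard deviations below are hypothetical placeholders, not values from any real method.

```python
from statistics import NormalDist

# Hypothetical score models, assumed Gaussian, fitted on validation data
same_source = NormalDist(mu=0.80, sigma=0.08)
different_source = NormalDist(mu=0.40, sigma=0.12)

def likelihood_ratio(score: float) -> float:
    """LR = density of the score under same-source / under different-source."""
    return same_source.pdf(score) / different_source.pdf(score)

for s in (0.45, 0.60, 0.75):
    print(s, round(likelihood_ratio(s), 3))
```

In practice the score models would be fitted to validation data and the resulting LRs checked for calibration. With unequal spreads, as here, the ratio is not monotonic far into the tails, which is one reason simple parametric fits need care.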

Cross-Laboratory Reproducibility: Validation through independent replication across multiple laboratories to establish generalizability of findings.

Contextual Bias Assessment: Specific testing to measure the impact of contextual information on examiner conclusions [7].
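
One simple way to quantify contextual bias is to give comparable comparison sets to two independent groups of examiners, one with and one without task-irrelevant case information, and test whether the rate of a given conclusion differs between conditions. The sketch below uses a two-proportion z-test built on the Python standard library; the counts are hypothetical.

```python
from math import sqrt
from statistics import NormalDist

def two_proportion_z_test(x1: int, n1: int, x2: int, n2: int) -> tuple[float, float]:
    """Two-sided z-test for p1 - p2 using the pooled standard error."""
    p1, p2 = x1 / n1, x2 / n2
    pooled = (x1 + x2) / (n1 + n2)
    se = sqrt(pooled * (1 - pooled) * (1 / n1 + 1 / n2))
    if se == 0:
        raise ValueError("pooled proportion is 0 or 1; test is undefined")
    z = (p1 - p2) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value

# Hypothetical counts of "identification" conclusions on the same difficult
# comparison set: group 1 saw a suggestive case narrative, group 2 did not.
z, p = two_proportion_z_test(x1=46, n1=100, x2=30, n2=100)
print(f"z = {z:.2f}, p = {p:.3f}")
```

If the same examiners judge the same items under both conditions, the observations are paired and a paired test such as McNemar's is the better fit.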

Implementation Framework and Visual Guide

Forensic Method Validation Workflow

The following diagram illustrates the comprehensive workflow for validating forensic methods according to NCFS principles:

Essential Research Reagents and Materials

Implementation of NCFS validation guidelines requires specific research materials and methodological tools:

Table: Essential Research Reagents and Methodological Tools

| Item | Function in Validation Studies | Application Examples |
|---|---|---|
| Standard Reference Materials | Provide ground truth for method validation | Known source samples for proficiency testing |
| Blind Proficiency Test Sets | Measure examiner performance without bias | Fabricated evidence samples with known sources |
| Statistical Analysis Software | Quantify error rates and confidence intervals | R, SPSS, or specialized forensic statistics packages |
| Context Management Protocols | Control access to potentially biasing information | Case information sequestration procedures |
| Digital Documentation Systems | Maintain chain of custody and data integrity | LIMS (Laboratory Information Management Systems) |

Technical Protocols for Empirical Validation

Blind Proficiency Testing Protocol

The following technical protocol provides a detailed methodology for implementing blind proficiency testing, a cornerstone of empirical validation under NCFS guidelines:

Objective: To assess the performance of forensic examiners and methods under conditions that approximate real casework while maintaining scientific controls for measuring accuracy and error rates [7].

Sample Preparation: Create test samples that represent the range of materials and difficulty levels encountered in casework. For pattern recognition disciplines (e.g., fingerprints, toolmarks), include known matches, known non-matches, and samples with varying quality and complexity.

Blinding Procedures: Incorporate test samples into the regular workflow without examiner awareness. This requires collaboration with evidence submission systems to create realistic case contexts without providing potentially biasing information [7].
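
The record-keeping side of blinding can be sketched in a few lines. This hypothetical helper shuffles proficiency test items in with routine submissions, gives every item an opaque label, and returns a separate key to be held by someone outside the examination chain (for example, a quality assurance manager). The item IDs and labeling scheme are illustrative assumptions; a real program would also need realistic packaging, paperwork, and cooperation from submitting agencies.

```python
import random
import secrets

def interleave_blind_tests(casework_ids, test_ids):
    """Mix blind test items into a casework queue behind opaque labels.

    Returns (queue, key): the examiner-facing list of opaque labels and a
    sequestered mapping label -> (original ID, is_test).
    """
    items = [(cid, False) for cid in casework_ids] + [(tid, True) for tid in test_ids]
    random.SystemRandom().shuffle(items)  # OS entropy: order is not reproducible from a seed
    queue, key = [], {}
    for original_id, is_test in items:
        label = secrets.token_hex(4)
        while label in key:  # regenerate on the unlikely event of a collision
            label = secrets.token_hex(4)
        key[label] = (original_id, is_test)
        queue.append(label)
    return queue, key

# Hypothetical IDs: three routine submissions and one blind proficiency item
queue, key = interleave_blind_tests(["C-101", "C-102", "C-103"], ["PT-07"])
print(queue)  # four opaque labels; nothing in the queue marks the test item
```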

Data Collection: Record all examiner conclusions including matches, exclusions, inconclusive determinations, and confidence statements. Document the time taken for analysis and any contextual factors that might influence results.

Statistical Analysis: Calculate false positive rates, false negative rates, and inconclusive rates with confidence intervals. Use appropriate statistical models to account for sample size and difficulty effects.
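
A minimal sketch of the interval calculation, using the Wilson score interval (one common choice for binomial proportions; exact Clopper-Pearson intervals are a more conservative alternative). The tallies are hypothetical, and the denominators assume false positive rates are computed over known non-matches and false negative rates over known matches.

```python
from math import sqrt
from statistics import NormalDist

def wilson_interval(k: int, n: int, confidence: float = 0.95) -> tuple[float, float]:
    """Wilson score interval for a binomial proportion k / n."""
    if n <= 0 or not 0 <= k <= n:
        raise ValueError("need 0 <= k <= n and n > 0")
    z = NormalDist().inv_cdf(0.5 + confidence / 2)  # about 1.96 for 95%
    p = k / n
    denom = 1 + z**2 / n
    centre = (p + z**2 / (2 * n)) / denom
    half_width = z * sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return max(0.0, centre - half_width), min(1.0, centre + half_width)

# Hypothetical blind-test tallies: (count, relevant denominator)
tallies = {
    "false positive rate (known non-matches)": (6, 400),
    "false negative rate (known matches)": (18, 600),
    "inconclusive rate (all comparisons)": (85, 1000),
}
for label, (k, n) in tallies.items():
    low, high = wilson_interval(k, n)
    print(f"{label}: {k / n:.3f}, 95% CI [{low:.3f}, {high:.3f}]")
```

Unlike the simple normal approximation, the Wilson interval stays within [0, 1] and gives a non-zero upper bound when no errors are observed.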

Method Validation Protocol for Subjective Disciplines

For forensic disciplines relying on subjective examiner judgment, the NCFS framework requires specific validation approaches:

Inter-Rater Reliability Assessment: Multiple examiners independently analyze the same set of samples to measure consistency in conclusions. Calculate agreement statistics such as Cohen's kappa (for pairs of examiners) or Fleiss' kappa (for more than two examiners) to quantify reliability beyond chance agreement.
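
For a pair of examiners, Cohen's kappa compares the observed agreement p_o with the agreement expected by chance p_e, computed from each examiner's own category frequencies: kappa = (p_o - p_e) / (1 - p_e). A minimal sketch; the conclusion labels (ID, EXC, INC for identification, exclusion, inconclusive) and the ten paired conclusions are hypothetical.

```python
from collections import Counter

def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters assigning categorical labels to the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("need two non-empty label lists of equal length")
    n = len(rater_a)
    p_o = sum(a == b for a, b in zip(rater_a, rater_b)) / n  # observed agreement
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    # chance agreement from each rater's marginal category frequencies
    p_e = sum(freq_a[c] * freq_b[c] for c in freq_a.keys() | freq_b.keys()) / n**2
    if p_e == 1:
        return float("nan")  # undefined: both raters used one identical category
    return (p_o - p_e) / (1 - p_e)

examiner_1 = ["ID", "ID", "EXC", "INC", "ID", "EXC", "EXC", "INC", "ID", "EXC"]
examiner_2 = ["ID", "INC", "EXC", "INC", "ID", "EXC", "ID", "INC", "ID", "EXC"]
print(round(cohens_kappa(examiner_1, examiner_2), 3))  # 0.697
```

Here the examiners agree on 8 of 10 items (p_o = 0.80), but chance alone would produce p_e = 0.34, so kappa is about 0.70 rather than 0.80.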

Intra-Rater Reliability Assessment: The same examiners re-analyze the same samples after a sufficient time interval to measure within-examiner consistency.

Source of Difficulty Analysis: Identify specific sample characteristics that contribute to examiner disagreement or errors. Use this information to refine methods and training protocols.
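
A first pass at source-of-difficulty analysis is to stratify validation results by a recorded sample characteristic and compare error rates across strata. The field names (`quality`, `error`) and the records below are hypothetical; with real data, each stratum's rate should carry a confidence interval, since small strata can produce misleading differences.

```python
from collections import defaultdict

def error_rate_by(records, factor):
    """Return {level: error rate} for each level of a sample characteristic."""
    totals = defaultdict(int)
    errors = defaultdict(int)
    for rec in records:
        level = rec[factor]
        totals[level] += 1
        errors[level] += int(rec["error"])
    return {level: errors[level] / totals[level] for level in totals}

# Hypothetical validation records: one row per examiner decision
records = (
    [{"quality": "high", "error": False}] * 18
    + [{"quality": "high", "error": True}] * 2
    + [{"quality": "low", "error": False}] * 14
    + [{"quality": "low", "error": True}] * 6
)
print(error_rate_by(records, "quality"))  # {'high': 0.1, 'low': 0.3}
```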

Decision Threshold Calibration: Establish quantitative or qualitative criteria for decision boundaries between match, non-match, and inconclusive determinations.
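
When a method produces a numeric similarity score, one way to set decision boundaries is to read them off validation data with known ground truth. The sketch below is a hypothetical scheme, not a procedure taken from any standard: the identification threshold is the smallest observed score that keeps the empirical false positive rate on known non-matches at or below a target, the exclusion threshold is the largest observed score that keeps the empirical false negative rate on known matches at or below a target, and scores in between are reported as inconclusive. The scores are made up.

```python
def calibrate_thresholds(nonmatch_scores, match_scores, max_fpr=0.01, max_fnr=0.01):
    """Return (t_id, t_ex) chosen from observed scores (hypothetical scheme)."""
    candidates = sorted(set(nonmatch_scores) | set(match_scores))

    def fpr(t):  # share of known non-matches that would be called identifications
        return sum(s >= t for s in nonmatch_scores) / len(nonmatch_scores)

    def fnr(t):  # share of known matches that would be called exclusions
        return sum(s <= t for s in match_scores) / len(match_scores)

    t_id = next((t for t in candidates if fpr(t) <= max_fpr), float("inf"))
    t_ex = next((t for t in reversed(candidates) if fnr(t) <= max_fnr), float("-inf"))
    if t_ex >= t_id:
        raise ValueError("exclusion and identification zones overlap at these targets")
    return t_id, t_ex

def classify(score, t_id, t_ex):
    if score >= t_id:
        return "identification"
    if score <= t_ex:
        return "exclusion"
    return "inconclusive"

nonmatch = [0.10, 0.20, 0.25, 0.30, 0.35, 0.40, 0.55]
match = [0.50, 0.65, 0.70, 0.80, 0.85, 0.90, 0.95]
t_id, t_ex = calibrate_thresholds(nonmatch, match, max_fpr=0.0, max_fnr=0.0)
print(t_id, t_ex)  # 0.65 0.4
print([classify(s, t_id, t_ex) for s in (0.30, 0.60, 0.70)])
# ['exclusion', 'inconclusive', 'identification']
```

With only seven scores per class these thresholds are purely illustrative; zero observed errors on so few items says little about the true rates, and real calibration needs far larger validation sets.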

The NCFS principles of accreditation and empirical validation represent a fundamental shift toward more scientifically rigorous forensic practice. The implementation of these principles through DOJ policies has created a framework for continuous improvement in forensic science [6]. However, full implementation across all forensic disciplines remains a work in progress, with significant variation in the current state of validation across different methods [7].

The ongoing development of empirical evidence for forensic methods requires sustained collaboration between forensic practitioners, academic researchers, and funding agencies. The establishment of the interagency working group on medico-legal death investigation exemplifies this collaborative approach [6]. As empirical studies continue to emerge, the scientific foundation of forensic science will strengthen, enhancing the reliability and validity of forensic evidence in the justice system.

For researchers and practitioners, the NCFS framework provides a roadmap for developing, validating, and implementing forensic methods that meet contemporary scientific standards. By adhering to these principles, the forensic science community can address historical limitations while building a more robust scientific foundation for future practice.

The establishment of the Organization of Scientific Area Committees (OSAC) for Forensic Science in 2014 under the National Institute of Standards and Technology (NIST) administration marked a pivotal transition in the United States' approach to forensic science standardization [8]. This transition addressed a critical lack of discipline-specific forensic science standards through a transparent, consensus-based process involving over 800 volunteer members and affiliates with expertise across 19 forensic disciplines [8]. OSAC represents the operational realization of the standardization mission, strengthening the nation's use of forensic science by facilitating the development and promoting the implementation of high-quality, technically sound standards that define minimum requirements, best practices, and standard protocols to ensure reliable and reproducible forensic analysis [8].

The OSAC Framework and Process

Architectural Structure and Workflow

OSAC follows a structured, multi-stage process designed to ensure rigorous standards development. The process begins with identification of standards needs and gaps, proceeds through drafting and technical review, and culminates in placement on the OSAC Registry following a transparent consensus-based process that actively encourages feedback from forensic science practitioners, research scientists, human factors experts, statisticians, legal experts, and the public [9]. Placement on the Registry requires a consensus (as evidenced by 2/3 vote or more) of both the OSAC subcommittee that proposed the inclusion of the standard and the Forensic Science Standards Board [9].
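
The dual two-thirds requirement can be expressed as a simple check. This sketch assumes the fraction is computed over votes cast, which the paragraph above does not specify; integer arithmetic avoids rounding problems at exactly two-thirds. The function names are hypothetical.

```python
def meets_two_thirds(yes: int, cast: int) -> bool:
    """True if yes votes are at least two-thirds of votes cast (exact integer test)."""
    if cast <= 0 or not 0 <= yes <= cast:
        raise ValueError("need 0 <= yes <= cast and cast > 0")
    return 3 * yes >= 2 * cast

def eligible_for_registry(subcommittee_votes: tuple[int, int],
                          fssb_votes: tuple[int, int]) -> bool:
    """Both the proposing subcommittee and the FSSB must reach the two-thirds mark."""
    return meets_two_thirds(*subcommittee_votes) and meets_two_thirds(*fssb_votes)

print(eligible_for_registry((10, 15), (12, 18)))  # True: exactly 2/3 in both bodies
print(eligible_for_registry((9, 15), (17, 18)))   # False: 9/15 = 60% in the subcommittee
```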

The following workflow diagram illustrates the comprehensive process standards undergo from development to implementation:

Registry Composition and Standard Types

The OSAC Registry maintains two distinct categories of standards, each serving a specific purpose in the ecosystem of forensic science standardization. SDO-published standards have completed the formal consensus process of an external standards developing organization (SDO) and have been approved by OSAC for placement on the Registry [9]. OSAC Proposed Standards represent drafted standards that have undergone OSAC's technical and quality review process but are still undergoing development and publication through an SDO [9]. These proposed standards help fill critical gaps while the SDO completes its often lengthy development process.

Table: Current OSAC Registry Composition (February 2025 Data)

| Standard Type | Count | Percentage | Purpose |
|---|---|---|---|
| SDO-Published Standards | 152 | 67.6% | Completed consensus process through external SDOs |
| OSAC Proposed Standards | 73 | 32.4% | Fill gaps during SDO development process |
| Total Registry Standards | 225 | 100% | Covering 20+ forensic disciplines |

Source: OSAC Standards Bulletin, February 2025 [4]
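
The percentages in the table follow directly from the counts, as a quick check confirms:

```python
# Registry counts from the February 2025 bulletin cited in the table
registry = {"SDO-published": 152, "OSAC Proposed": 73}
total = sum(registry.values())
for kind, count in registry.items():
    print(f"{kind}: {count}/{total} = {100 * count / total:.1f}%")
# SDO-published: 152/225 = 67.6%
# OSAC Proposed: 73/225 = 32.4%
```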

Quantitative Analysis of OSAC Registry Growth

Temporal Development and Implementation Impact

The OSAC Registry has grown substantially since its inception. Recent bulletins show continued expansion, with the Registry growing from 216 standards in January 2025 to 225 standards by February 2025, an increase of nine standards in one month that reflects active standardization work across diverse forensic disciplines [5] [4]. Implementation tracking has also expanded: 224 Forensic Science Service Providers (FSSPs) had contributed implementation survey data by the end of 2024, including 72 new contributions during that calendar year [5].

Table: Recent Additions to OSAC Registry (January 2025)

| Standard Number | Title | Subcommittee | Type |
|---|---|---|---|
| ANSI/ASB Standard 180 | Standard for the Use of GenBank for Taxonomic Assignment of Wildlife | Wildlife Forensic Biology | SDO-Published |
| 17-F-001-2.0 SWGDE | Recommendations for Cell Site Analysis | Digital/Multimedia | SDO-Published |
| OSAC 2022-S-0032 | Best Practice Recommendation for the Chemical Processing of Footwear and Tire Impression Evidence | Crime Scene Investigation | OSAC Proposed |
| OSAC 2022-S-0037 | Standard for DNA-based Taxonomic Identification in Forensic Entomology | Wildlife Forensic Biology | OSAC Proposed |
| OSAC 2024-S-0012 | Standard Practice for the Forensic Analysis of Geological Materials by SEM/EDX | Trace Materials | OSAC Proposed |

Source: OSAC Standards Bulletin, January 2025 [5]

Methodological Framework for Standard Development

Technical Review and Validation Protocols

The OSAC standard development process incorporates rigorous methodological frameworks to ensure technical validity and practical applicability. Each standard undergoes comprehensive evaluation through a multi-layered review process that examines scientific foundation, measurable requirements, and implementability across diverse operational environments. The technical review protocol actively identifies and addresses potential limitations, including concerns about "vacuous standards" that may set requirements too low to ensure scientifically valid results [10].

The validation methodology encompasses both pre- and post-registry phases. Pre-registry assessment includes evaluation of intra- and inter-laboratory validation studies, uncertainty quantification methodologies, and defined acceptance criteria. Post-registry implementation tracking utilizes structured surveys to monitor adoption rates, practical challenges, and methodological effectiveness across 224 participating forensic science service providers [5]. This continuous feedback mechanism enables iterative refinement and ensures standards remain current with technological advances and evolving best practices.

Interdisciplinary Collaboration Mechanisms

OSAC's effectiveness stems from its structured interdisciplinary collaboration framework that engages diverse stakeholders throughout the standard development lifecycle. The process incorporates specialized input from statistical experts on experimental design and data interpretation, human factors professionals on cognitive biases and procedural safeguards, legal scholars on admissibility considerations, and research scientists on technical validity [9]. This collaborative model ensures standards are scientifically rigorous, legally defensible, and practically implementable.

Public commentary periods represent a critical component of this collaborative framework, providing opportunities for broader community engagement and critique. For example, the February 2025 OSAC bulletin documented 16 forensic science standards open for public comment across multiple SDOs, including disciplines such as medicolegal death investigation, forensic document examination, firearms and toolmarks, and forensic toxicology [4]. This transparent review process helps identify potential limitations and strengthens the technical foundation of proposed standards.

Research and Implementation Toolkit

Successful implementation of OSAC standards requires access to specialized resources and methodological tools. The following table details critical components of the research and implementation toolkit for forensic science standardization:

Table: Essential Resources for Standards Implementation

| Resource Category | Specific Examples | Function in Standards Implementation |
|---|---|---|
| Reference Materials | ASTM E3307-24 Collection Materials | Provide validated materials for implementing standard practices for organic gunshot residue collection [9] |
| Analytical Tools | WebAIM Color Contrast Checker | Enable verification of compliance with accessibility standards for visual presentation of data [11] |
| Documentation Frameworks | ANSI/ASB Standard 127-22 | Guide proper preservation and examination procedures for charred documents [9] |
| Taxonomic Databases | GenBank Database | Support standardized taxonomic assignment of wildlife through reference sequences [5] |
| Quality Assurance Protocols | ISO/IEC 17025:2017 | Establish foundational requirements for laboratory competence across forensic disciplines [5] |

Implementation Assessment Methodologies

The implementation impact of OSAC standards is quantified through systematic assessment methodologies deployed across forensic science service providers. These methodologies employ standardized survey instruments that measure both adoption rates and operational effectiveness. Key metrics include the number of laboratories implementing specific standards, modifications required for implementation, observed improvements in reliability and reproducibility, and identified operational challenges [4].

The implementation data also reveal patterns in standard utility and barriers to adoption. For example, ANSI/ASTM E2917-19a, a previously well-implemented standard, illustrates how implementation tracking can flag when an updated version of a standard requires renewed implementation effort [4]. This feedback helps refine standards and develop implementation guidance that addresses practical operational constraints.

The transition to OSAC represents a significant evolution in forensic science standardization, establishing a robust framework for developing, reviewing, and implementing technically sound standards across diverse disciplines. The Registry's growth to 225 standards and the implementation data contributed by 224 forensic science service providers demonstrate the progress achieved through this systematic approach [4] [5]. As OSAC continues to address standards gaps and refine existing standards through its transparent, consensus-based process, it strengthens the scientific foundation of forensic practice and the reliability and reproducibility of forensic analysis. This work directly supports the validity and admissibility of forensic evidence in court, which is the central concern of the validation guidelines discussed in this article.

Within the framework of the National Commission on Forensic Science (NCFS) validation guidelines, rigorous assessment of forensic methods is essential to a credible and scientifically sound criminal justice system. This guide details three interdependent core concepts that form the basis of this assessment: foundational validity, applied reliability, and error rates. Foundational validity establishes whether a method is, in principle, capable of providing reliable results. Applied reliability examines whether the method is executed consistently and accurately in practice by forensic practitioners and laboratories. Error rates provide the empirical, quantitative measure of a method's performance, informing the scope and limitations of the conclusions that can be drawn from its results. Understanding these concepts and their interrelationships is essential for researchers, forensic scientists, and legal professionals dedicated to ensuring the scientific integrity of forensic evidence [12] [7].

Foundational Validity

Core Definition

Foundational validity is defined as the property of a forensic science method that has been empirically shown to be repeatable, reproducible, and accurate under conditions representative of its intended use [13] [14]. It is a prerequisite for a method's use in casework, answering the question: "Has this method been scientifically tested and shown to work?" [12].

The President’s Council of Advisors on Science and Technology (PCAST) emphasized that foundational validity is established through well-designed empirical studies, which are an "absolute requirement" [12]. It is a property of the specific method itself, not merely of the performance outcomes. A discipline can lack foundational validity even when examiners achieve accurate results if that success cannot be attributed to a clearly defined and consistently applied method that can be independently replicated [13].

Key Components and Evaluation Criteria

The evaluation of foundational validity rests on three pillars, derived from the PCAST and other scientific reports [12] [13] [14].

- Repeatability: The ability of the same examiner to obtain consistent results when repeatedly applying the method to the same evidence under the same conditions.

- Reproducibility: The ability of different examiners in different laboratories to obtain consistent results when applying the method to the same evidence.

- Accuracy: The ability of the method to produce correct results, as determined by comparison to a known ground truth, under conditions that reflect real-world casework.

The primary mechanism for establishing these components is empirical testing, particularly black-box studies. These studies treat the examiner's decision process as a "black box": they measure only the conclusions examiners reach on test samples of known origin, ideally under conditions that mimic real casework and, in the strongest designs, without participants knowing which items are part of a study [13].

Table: Criteria for Foundational Validity Based on PCAST Guidelines

| Component | Definition | Method of Evaluation |
|---|---|---|
| Repeatability | Consistency of results from the same examiner. | Intra-examiner reliability studies. |
| Reproducibility | Consistency of results across different examiners. | Inter-examiner reliability studies. |
| Accuracy | The correctness of the conclusions. | Black-box studies with known ground truth. |
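The three components can be estimated from study data with simple agreement statistics. The sketch below is a minimal illustration, assuming each record is a hypothetical (examiner, sample, trial, conclusion) tuple and using raw percent agreement. Published studies may instead use chance-corrected measures such as Cohen's kappa, and may treat inconclusive conclusions differently; here any conclusion that differs from ground truth, including "inconclusive", counts as not correct.

```python
from collections import defaultdict
from itertools import combinations


def accuracy(records, truth):
    """Share of conclusions that match ground truth (inconclusives count as not correct)."""
    correct = sum(1 for _examiner, sample, _trial, concl in records if concl == truth[sample])
    return correct / len(records)


def _pairwise_agreement(groups):
    """Fraction of agreeing pairs over all within-group pairs of conclusions."""
    pairs = [a == b for concls in groups for a, b in combinations(concls, 2)]
    return sum(pairs) / len(pairs) if pairs else float("nan")


def repeatability(records):
    """Intra-examiner agreement: same examiner, same sample, repeated trials."""
    groups = defaultdict(list)
    for examiner, sample, _trial, concl in records:
        groups[(examiner, sample)].append(concl)
    return _pairwise_agreement(groups.values())


def reproducibility(records):
    """Inter-examiner agreement: different examiners, same sample (first trial only)."""
    first = {}
    for examiner, sample, _trial, concl in sorted(records, key=lambda r: r[2]):
        first.setdefault((examiner, sample), concl)
    groups = defaultdict(list)
    for (_examiner, sample), concl in first.items():
        groups[sample].append(concl)
    return _pairwise_agreement(groups.values())


# Hypothetical data: (examiner, sample, trial, conclusion); ID = identification,
# EXCL = exclusion, INC = inconclusive.
truth = {"S1": "ID", "S2": "EXCL"}
records = [
    ("A", "S1", 1, "ID"), ("A", "S1", 2, "ID"),
    ("A", "S2", 1, "EXCL"), ("A", "S2", 2, "INC"),
    ("B", "S1", 1, "ID"), ("B", "S2", 1, "EXCL"),
    ("C", "S1", 1, "INC"), ("C", "S2", 1, "EXCL"),
]
print(accuracy(records, truth), repeatability(records), round(reproducibility(records), 3))
# 0.75 0.5 0.667
```

Reproducibility uses only each examiner's first trial so that repeated trials by one examiner do not inflate the between-examiner comparison.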

Applied Reliability

Core Definition

Applied reliability (often addressed in legal contexts as "reliability as applied") concerns whether a foundationally valid method has been reliably executed in a specific instance or by a specific laboratory [12] [7]. While foundational validity asks "Does the method work?", applied reliability asks "Was the method followed correctly in this case?" This concept aligns with Federal Rule of Evidence 702(d), which before its December 2023 amendment required that "the expert has reliably applied the principles and methods to the facts of the case" [12]; the amended rule requires that "the expert's opinion reflects a reliable application of the principles and methods to the facts of the case."

Factors Influencing Applied Reliability

A method that is foundationally valid can still be applied unreliably in practice. Key factors that influence applied reliability include [12] [13] [7]:

- Adherence to Standard Operating Procedures (SOPs): The consistency with which a laboratory or examiner follows a defined, validated protocol.

- Practitioner Training and Proficiency: The ongoing training, qualification, and competency testing of individual forensic examiners.

- Context Management and Bias Mitigation: The implementation of procedures to minimize contextual bias, such as using linear sequential unmasking or ensuring that examiners are not exposed to extraneous information about the case that could influence their judgment.

- Quality Assurance and Control: The presence of robust laboratory protocols, including technical review, equipment calibration, and proficiency testing.
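Part of linear sequential unmasking can be enforced in case-management software. The class below is a hypothetical sketch of one LSU rule, assuming a case record in which the examiner must document the questioned evidence before reference material is released, and in which later revisions are logged separately instead of overwriting the initial analysis. It is not modelled on any specific laboratory system.

```python
class SequentialUnmaskingRecord:
    """Hypothetical case record enforcing 'document the evidence before the reference'."""

    def __init__(self, case_id: str):
        self.case_id = case_id
        self.initial_analysis = None  # str once the questioned item is documented
        self.reference_released = False
        self.audit_log = []  # list of (event, notes) tuples

    def document_evidence(self, notes: str) -> None:
        if not self.reference_released:
            # Before exposure: record (or refine) the analysis of the questioned item.
            self.initial_analysis = notes
            self.audit_log.append(("analysis before reference", notes))
        else:
            # After exposure: keep the initial analysis intact and log the revision separately.
            self.audit_log.append(("revision after reference", notes))

    def release_reference(self) -> None:
        if self.initial_analysis is None:
            raise PermissionError("Document the questioned evidence before viewing reference material.")
        self.reference_released = True
        self.audit_log.append(("reference released", ""))


record = SequentialUnmaskingRecord("case-001")
record.document_evidence("12 minutiae marked in the latent print")
record.release_reference()
record.document_evidence("one additional minutia noted after comparison")
print(record.initial_analysis)
print([event for event, _ in record.audit_log])
```

Keeping post-exposure revisions as separate log entries preserves a record of what the examiner concluded before seeing the reference, which is what a later technical reviewer would need to assess possible bias.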

Error Rates

Core Definition and Importance

An error rate is an empirical estimate of how often a forensic method or practitioner produces an incorrect result. The Daubert ruling explicitly identified "the known or potential rate of error" as a key factor for judges to consider when evaluating the admissibility of expert testimony [12]. Error rates provide a quantitative measure of uncertainty, which is critical for understanding the weight to be given to forensic evidence. They are essential for establishing both foundational validity (through method-level error rates) and applied reliability (through laboratory- or examiner-specific proficiency rates) [12] [7].

Error rates are not a single, universal number. They vary by discipline, the specific method used, and the conditions of the test. The most scientifically rigorous estimates come from black-box studies that reflect real-world conditions [13] [7].
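Because error-rate studies involve a finite number of comparisons, the observed rate is usually reported together with a confidence bound; the PCAST report, for instance, emphasized reporting the upper 95% confidence bound. The sketch below computes a one-sided exact (Clopper-Pearson) upper bound using only the standard library. The example counts are hypothetical.

```python
from math import exp, lgamma, log, log1p


def binom_cdf(k: int, n: int, p: float) -> float:
    """P(X <= k) for X ~ Binomial(n, p), summed in log space to avoid overflow."""
    if p <= 0.0:
        return 1.0
    if p >= 1.0:
        return 1.0 if k >= n else 0.0
    log_norm = lgamma(n + 1)
    total = 0.0
    for i in range(k + 1):
        log_pmf = log_norm - lgamma(i + 1) - lgamma(n - i + 1) + i * log(p) + (n - i) * log1p(-p)
        total += exp(log_pmf)
    return min(total, 1.0)


def clopper_pearson_upper(errors: int, trials: int, confidence: float = 0.95) -> float:
    """One-sided exact (Clopper-Pearson) upper confidence bound on an error rate."""
    if errors >= trials:
        return 1.0
    alpha = 1.0 - confidence
    lo, hi = 0.0, 1.0
    # The CDF decreases as p grows; bisect for the p where P(X <= errors) equals alpha.
    for _ in range(100):
        mid = (lo + hi) / 2
        if binom_cdf(errors, trials, mid) > alpha:
            lo = mid
        else:
            hi = mid
    return hi


# Hypothetical study: 6 false positives in 4,000 different-source comparisons.
errors, trials = 6, 4000
print(f"point estimate: {errors / trials:.4%}")
print(f"95% upper bound: {clopper_pearson_upper(errors, trials):.4%}")
```

With zero observed errors the bound reduces to 1 - alpha^(1/n), roughly 3/n (the "rule of three"), which is why a study with no errors still cannot claim an error rate of zero.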

Table: Forensic Science Error Rates and Foundational Validity Status (Post-PCAST)

| Forensic Discipline | Status of Foundational Validity (PCAST) | Key Empirical Studies & Estimated Error Rates |
|---|---|---|
| Single-Source DNA | Established | Considered valid; thousands of studies support its reliability [7]. |
| Latent Fingerprints | Established (with limitations) | Based on a handful of black-box studies. False positive rates are low but non-zero (e.g., 0.1% in one major study) [13]. |
| Firearms/Toolmarks | Lacking (as of 2016) | PCAST found insufficient black-box studies. Subsequent research is ongoing, with courts acknowledging newer studies [14] [7]. |
| Bitemark Analysis | Lacking | PCAST and NIST reviews found that the method lacks a sufficient scientific foundation; it has been implicated in wrongful convictions [14] [15]. |
| Complex DNA Mixtures | Established for limited contributors | Validity is accepted for mixtures of up to 3-4 contributors, with error rates provided by specific probabilistic genotyping software [14]. |

Interrelationship of Concepts: A Conceptual Workflow

The concepts of foundational validity, applied reliability, and error rates are hierarchically connected. The following diagram illustrates the logical pathway and dependencies between them in the validation and application of a forensic method.

Experimental Protocols for Validation

The Black-Box Study Design

The "gold standard" for estimating the accuracy and error rates of a forensic feature-comparison method is the black-box study [13] [7]. This design tests the performance of practicing forensic examiners on samples of known origin under conditions that closely mimic real casework; in the strongest designs, examiners do not know which items are part of the study.

- Objective: To obtain an empirical estimate of the accuracy and error rates of a forensic discipline as it is practiced in the field.

- Methodology:

  - Participant Recruitment: Practicing, active forensic examiners are recruited to participate.

  - Stimulus Creation: A set of test samples is created with known ground truth (e.g., known matches and non-matches). These samples should span a range of difficulty and be representative of authentic casework materials.

  - Blinded Administration: Ideally, test samples are inserted into the examiners' normal workflow so that examiners do not know which items are tests (a blind proficiency design); where that is impractical, the samples are administered as a declared test designed to mimic casework. In either case, examiners must be shielded from contextual information that could bias their judgment.

  - Data Collection: Examiners submit their conclusions (e.g., identification, exclusion, inconclusive) for each sample.

  - Data Analysis: Results are compared to the ground truth to calculate measures of accuracy, including false positive rates (declaring an identification for samples from different sources) and false negative rates (declaring an exclusion for samples from the same source). How inconclusive conclusions are counted should be reported explicitly, because it affects both rates.
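The data-analysis step can be sketched as a simple tabulation. This is a minimal illustration, assuming each result is a (same_source, conclusion) pair with the hypothetical labels "identification", "exclusion", and "inconclusive". It keeps inconclusives in the denominators and reports their frequency separately, which is one of several conventions in the literature and should be stated explicitly in any study report.

```python
from collections import Counter


def black_box_rates(results):
    """Tabulate black-box outcomes.

    results: iterable of (same_source, conclusion) pairs, where same_source is the
    ground truth and conclusion is 'identification', 'exclusion', or 'inconclusive'.
    """
    results = list(results)  # allow generators; the data are iterated twice
    mated = Counter(c for same, c in results if same)
    nonmated = Counter(c for same, c in results if not same)
    n_mated, n_nonmated = sum(mated.values()), sum(nonmated.values())
    if n_mated == 0 or n_nonmated == 0:
        raise ValueError("need both same-source and different-source comparisons")
    return {
        # False positive: identification declared on a different-source pair.
        "false_positive_rate": nonmated["identification"] / n_nonmated,
        # False negative: exclusion declared on a same-source pair.
        "false_negative_rate": mated["exclusion"] / n_mated,
        # Inconclusives stay in the denominators and are reported separately.
        "inconclusive_rate_mated": mated["inconclusive"] / n_mated,
        "inconclusive_rate_nonmated": nonmated["inconclusive"] / n_nonmated,
    }


# Hypothetical results: 10 same-source and 20 different-source comparisons.
example = (
    [(True, "identification")] * 8 + [(True, "exclusion")] + [(True, "inconclusive")]
    + [(False, "exclusion")] * 17 + [(False, "identification")] + [(False, "inconclusive")] * 2
)
print(black_box_rates(example))
```

Excluding inconclusives from the denominators instead would raise both error rates in this example, which is why the convention matters when comparing studies.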

Human Factors and Performance Testing

The National Institute of Standards and Technology (NIST) Organization of Scientific Area Committees (OSAC) provides detailed advice on designing human factors into validation and performance testing [16]. Key considerations include:

- Task Design: Ensuring the experimental tasks accurately reflect the cognitive and perceptual demands of actual casework.

- Context Management: Implementing protocols to control for contextual and confirmation bias, which are major threats to applied reliability.

- Representative Samples: Using a sufficient number and variety of samples to ensure results are generalizable to real-world conditions.

Table: Essential Resources for Forensic Science Validation Research

| Resource Category | Specific Example / Agency | Function & Purpose |
|---|---|---|
| Authoritative Reports | NAS (2009) & PCAST (2016) Reports | Provide critical evaluations of the scientific foundations of forensic disciplines and define key concepts like foundational validity [12] [13]. |
| Scientific Foundation Reviews | NIST Scientific Foundation Reviews (e.g., on bitemarks, DNA mixtures, firearms) | Independent, comprehensive studies that evaluate the validity, reliability, and error rates of specific forensic methods [15]. |
| Human Factors Guidance | OSAC Technical Series Publication on Human Factors | Provides a framework for designing, conducting, and reporting empirical studies on the accuracy of forensic examinations [16]. |
| Legal Database | Post-PCAST Court Decisions Database (NIJ) | Compiles court decisions assessing the admissibility of forensic evidence, illustrating how courts apply concepts of validity and reliability [14]. |
| Black-Box Studies | Peer-reviewed studies (e.g., Ulery et al., 2011; Hicklin et al., 2025) | Serve as the primary source of empirical data for estimating error rates and establishing foundational validity [13]. |

The admissibility of forensic evidence in United States courts has undergone a profound transformation over the past century, driven primarily by evolving legal standards and increasing scientific scrutiny. The landmark 1993 Supreme Court case Daubert v. Merrell Dow Pharmaceuticals established a new framework for evaluating expert testimony that has fundamentally reshaped how courts assess forensic evidence [17] [18]. Two other developments shaped this landscape: the advent of DNA profiling, which provided a scientifically rigorous identification method while revealing weaknesses in other forensic disciplines, and the influential 2009 National Research Council (NRC) report, "Strengthening Forensic Science in the United States: A Path Forward," which questioned the scientific validity of many traditional forensic methods [19] [20]. Together, these developments have created a complex interface between law and science in which judicial gatekeeping, scientific validation, and forensic practice continuously interact.

Within this context, the National Commission on Forensic Science validation guidelines represent a concerted effort to address identified deficiencies and establish rigorous scientific standards for forensic practice. The Daubert standard is the principal legal mechanism through which courts test whether forensic methods meet such standards. This technical guide examines the legal frameworks governing forensic evidence admissibility, detailed experimental protocols for forensic validation, quantitative measures of forensic reliability, and essential resources for researchers and practitioners working at the intersection of forensic science and judicial scrutiny.

Legal Evolution: From Frye to Daubert and Beyond

Historical Foundations and the Dawn of DNA

The legal standard for admitting scientific evidence originally centered on the 1923 Frye v. United States decision, which established that expert testimony must be based on principles that had "gained general acceptance in the particular field in which it belongs" [17] [20]. For decades, this standard governed the admissibility of forensic evidence, often resulting in courts deferring to the opinions of forensic practitioners themselves when determining general acceptance [19]. However, the advent of DNA evidence in the late 1980s fundamentally altered this landscape. DNA profiling differed from other forensic disciplines in that it was developed in academic settings rather than crime laboratories, employed rigorous statistical validation, and could demonstrate a high probability of either inclusion or exclusion [20].

The exonerations facilitated by the Innocence Project, many of which involved overturning convictions based on other forms of forensic evidence, demonstrated that traditional forensic methods could produce erroneous results [20] [21]. According to the National Registry of Exonerations, there have been over 3,000 documented wrongful convictions in the United States, with approximately half of the DNA exoneration cases involving unvalidated or improper forensic science [21]. This revelation shattered the previously unquestioned confidence in many forensic disciplines and created impetus for legal reform.

The Daubert Trilogy and Federal Rules of Evidence

The 1993 Daubert decision marked a paradigm shift in the admissibility standards for expert testimony. The Supreme Court ruled that the Federal Rules of Evidence, particularly Rule 702, had superseded the Frye standard [17] [18]. The Court assigned trial judges a "gatekeeping" responsibility to ensure that all expert testimony is not only relevant but also reliable [17]. The decision articulated five factors for evaluating scientific validity:

- Testability: Whether the theory or technique can be (and has been) tested

- Peer Review: Whether the method has been subjected to peer review and publication

- Error Rates: The known or potential error rate of the technique

- Standards: The existence and maintenance of standards controlling operation

- General Acceptance: The degree of acceptance within the relevant scientific community [17] [18]

The "Daubert trilogy" was completed by two subsequent cases: General Electric Co. v. Joiner (1997), which established abuse of discretion as the standard for appellate review of admissibility rulings and held that a court may exclude an opinion when there is "too great an analytical gap between the data and the opinion proffered," and Kumho Tire Co. v. Carmichael (1999), which extended Daubert's gatekeeping requirements to all expert testimony, including technical and other specialized knowledge that is not strictly scientific [17] [18].

Table 1: Evolution of Legal Standards for Forensic Evidence

| Legal Standard | Year Established | Key Principle | Primary Focus |
|---|---|---|---|
| Frye Standard | 1923 | "General acceptance" in the relevant scientific community | Consensus among practitioners |
| Federal Rules of Evidence | 1975 | Expert testimony must assist trier of fact | Relevance and helpfulness |
| Daubert Standard | 1993 | Scientific validity and reliability | Methodological rigor |
| Joiner Decision | 1997 | Analytical gap between evidence and conclusions | Reasoning process |
| Kumho Tire Decision | 1999 | Gatekeeping applies to all expert testimony | Non-scientific expertise |

In 2000, Rule 702 of the Federal Rules of Evidence was amended to codify the Daubert principles, explicitly requiring that expert testimony be based on sufficient facts and data, reliable principles and methods, and reliable application of those methods to the case [17]. As of 2025, the Daubert standard governs forensic evidence admissibility in federal courts and the majority of states, though some jurisdictions (including California, Illinois, and Pennsylvania) continue to adhere to the Frye standard or modified versions thereof [17].

The Impact of National Research Council and PCAST Reports

The 2009 NRC report delivered a comprehensive critique of forensic science, stating that "with the exception of nuclear DNA analysis, no forensic method has been rigorously shown to have the capacity to consistently, and with a high degree of certainty, demonstrate a connection between evidence and a specific individual or source" [19] [22]. The report identified significant deficiencies across multiple forensic disciplines, including inadequate validation studies, insufficient quantitation of uncertainty, and lack of established reliability measures [19].

The 2016 President's Council of Advisors on Science and Technology (PCAST) report expanded on these findings, emphasizing alarmingly high error rates in certain forensic sciences and calling for more rigorous validation based on empirical studies [19] [20]. Together, these reports provided scientific support for defense attorneys challenging forensic evidence and increased pressure on courts to apply Daubert's reliability factors more stringently [19].

Contemporary Forensic Methodologies: Validation and Quantitative Frameworks

DNA Analysis and Probabilistic Genotyping

Forensic DNA analysis has evolved from simple visual comparisons to sophisticated probabilistic genotyping methods that compute likelihood ratios (LR) to quantify the strength of evidence [23]. These methods overcome the complexities of interpreting capillary electrophoresis results from forensic mixture samples, which may contain DNA from multiple contributors.
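To make the likelihood-ratio idea concrete, the sketch below computes an LR for the simplest case: a single-source, full DNA profile that matches the person of interest. Under Hp (the person of interest is the source) the probability of the evidence is 1; under Hd (an unrelated person is the source) it is the random match probability, obtained here from Hardy-Weinberg genotype frequencies multiplied across loci (the product rule). This is not probabilistic genotyping: it ignores mixtures, allele drop-out, peak heights, and population-structure corrections, and the allele frequencies are hypothetical.

```python
from math import log10, prod


def genotype_frequency(a: str, b: str, freqs: dict[str, float]) -> float:
    """Hardy-Weinberg genotype frequency: p^2 for a homozygote, 2pq for a heterozygote."""
    return freqs[a] ** 2 if a == b else 2 * freqs[a] * freqs[b]


def single_source_lr(profile: dict[str, tuple[str, str]],
                     allele_freqs: dict[str, dict[str, float]]) -> float:
    """LR = P(E | Hp) / P(E | Hd) = 1 / random match probability (product rule across loci)."""
    rmp = prod(genotype_frequency(a, b, allele_freqs[locus]) for locus, (a, b) in profile.items())
    return 1.0 / rmp


# Hypothetical allele frequencies and a two-locus profile, for illustration only.
freqs = {"D8S1179": {"13": 0.30, "14": 0.20}, "TH01": {"9.3": 0.30}}
profile = {"D8S1179": ("13", "14"), "TH01": ("9.3", "9.3")}
lr = single_source_lr(profile, freqs)
print(f"LR = {lr:.1f} (log10 LR = {log10(lr):.2f})")  # LR = 92.6 (log10 LR = 1.97)
```

Probabilistic genotyping software replaces the single genotype probability under each hypothesis with a model that sums over possible contributor genotypes, which is why mixture LRs depend so strongly on the model, as the comparison below shows.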

Table 2: Comparison of Probabilistic Genotyping Software Approaches

| Software | Model Type | Data Utilized | Key Characteristics | Relative LR Values |
|---|---|---|---|---|
| LRmix Studio (v.2.1.3) | Qualitative | Allele designations only | Considers detected alleles without quantitative data | Generally lower LRs than quantitative models |
| STRmix (v.2.7) | Quantitative | Allele designations and peak heights | Incorporates quantitative peak information | Generally the highest LRs among the tools studied |
| EuroForMix (v.3.4.0) | Quantitative | Allele designations and peak heights | Open-source alternative with comprehensive modeling | Higher LRs than the qualitative model, typically lower than STRmix |

A 2022 comparative study analyzed 156 sample pairs using these three software approaches, demonstrating that quantitative tools (STRmix and EuroForMix) generally produced higher likelihood ratios than the qualitative software (LRmix Studio) [23]. The study also confirmed that mixtures with three estimated contributors typically yielded lower LR values than two-contributor mixtures, reflecting the increased complexity of interpretation [23]. Understanding these methodological differences is crucial for forensic experts who must explain and defend their conclusions in court.

Fracture Surface Topography Analysis

Emerging quantitative approaches for forensic fracture matching employ three-dimensional microscopy and statistical learning to overcome the subjectivity of traditional pattern recognition methods [22]. The methodology leverages the unique topographic features of fracture surfaces resulting from material microstructure and crack propagation.

Experimental Protocol: Fracture Surface Analysis

Sample Preparation: Generate fracture surfaces under controlled conditions. For metallic materials, this typically involves notched specimens subjected to standard fracture toughness tests (e.g., ASTM E1820).

Surface Imaging: Capture 3D topographic maps using confocal or white-light interferometry microscopy at appropriate scales. The imaging field of view should exceed 10 times the self-affine transition scale (typically 50-70μm for metals) to ensure capture of unique surface characteristics [22].

Topographic Analysis: Compute height-height correlation functions to identify the transition from self-affine to non-self-affine behavior, which occurs at approximately 2-3 times the average grain size for cleavage fractures [22].

Feature Extraction: Apply spectral analysis to characterize surface topography across multiple frequency bands, focusing on distinctive attributes at length scales beyond the self-affine transition.

Statistical Classification: Utilize multivariate statistical learning tools (e.g., linear discriminant analysis, logistic regression) to classify matching and non-matching surfaces based on extracted features.

Validation: Establish error rates through blind testing with known matching and non-matching pairs, calculating false positive and false negative rates across multiple materials and fracture modes.

This quantitative framework has been reported to achieve near-perfect separation of matching and non-matching surfaces across various fractured materials and toolmarks, providing the kind of statistical foundation called for in the NRC and PCAST reports [22].
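The topographic-analysis step can be illustrated on a one-dimensional height profile. The sketch below assumes a NumPy array of heights sampled at uniform spacing dx; it computes the height-height correlation H(delta) = sqrt(mean((h(x + delta) - h(x))^2)) and fits the exponent zeta expected in the self-affine regime, where H grows as delta^zeta. Locating the transition scale itself (where the log-log curve departs from a straight line) and extending the calculation to 2D surfaces are left out, and the function names are hypothetical.

```python
import numpy as np


def height_height_correlation(h, dx: float, max_lag: int):
    """Return (deltas, H) with H(delta) = sqrt(mean((h[i + k] - h[i])**2)), delta = k * dx."""
    h = np.asarray(h, dtype=float)
    if not 1 <= max_lag < h.size:
        raise ValueError("max_lag must be between 1 and len(h) - 1")
    lags = np.arange(1, max_lag + 1)
    H = np.array([np.sqrt(np.mean((h[k:] - h[:-k]) ** 2)) for k in lags])
    return lags * dx, H


def roughness_exponent(deltas, H) -> float:
    """Slope of log H versus log delta: the exponent zeta in H ~ delta**zeta."""
    slope, _intercept = np.polyfit(np.log(deltas), np.log(H), 1)
    return float(slope)


# Synthetic example: a random-walk profile is self-affine with zeta close to 0.5.
rng = np.random.default_rng(0)
profile = np.cumsum(rng.normal(scale=0.1, size=20_000))  # heights (arbitrary units)
deltas, H = height_height_correlation(profile, dx=0.5, max_lag=50)
print(f"estimated roughness exponent: {roughness_exponent(deltas, H):.2f}")
```

On a real fracture surface, fitting the exponent over the full lag range would mix the self-affine and non-self-affine regimes; in practice one would fit only the small-lag region and look for the scale at which H(delta) stops following the power law.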

Fingerprint Comparison and Difficulty Prediction

Research has identified quantitative image measures that can predict the difficulty of fingerprint comparisons and estimate potential error rates [24]. Key predictors include:

- Image Quality Metrics: Intensity and contrast information

- Information Quantity: Total fingerprint area available for comparison

- Configural Features: Presence and clarity of global features (loops, whorls, deltas) and ridge details

Regression models incorporating these predictors have demonstrated reasonable success in predicting objective difficulty for print pairs, enabling proactive identification of challenging comparisons that may require additional scrutiny or have higher potential for error [24].
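A regression of the kind described above can be sketched with NumPy least squares. The feature definitions below (mean intensity, RMS contrast, and the fraction of dark pixels as a crude proxy for ridge area) are simplified, hypothetical stand-ins for the published image measures; configural features are not modelled, and in practice the difficulty scores would come from examiner performance data rather than the synthetic values used here.

```python
import numpy as np


def image_features(img, ridge_threshold: float = 0.5) -> np.ndarray:
    """Hypothetical stand-ins for the predictors above, for a grayscale image in [0, 1]."""
    img = np.asarray(img, dtype=float)
    intensity = img.mean()                 # overall brightness
    contrast = img.std()                   # RMS contrast
    area = np.mean(img < ridge_threshold)  # fraction of dark, ridge-like pixels
    return np.array([intensity, contrast, area])


def fit_difficulty_model(X, y) -> np.ndarray:
    """Ordinary least squares for difficulty ~ b0 + b1*intensity + b2*contrast + b3*area."""
    A = np.column_stack([np.ones(len(X)), X])
    coef, *_ = np.linalg.lstsq(A, y, rcond=None)
    return coef


def predict_difficulty(coef, X) -> np.ndarray:
    """Apply fitted coefficients (intercept first) to a feature matrix."""
    A = np.column_stack([np.ones(len(X)), X])
    return A @ coef


# Synthetic demonstration: 40 random "images" with varied tone curves and made-up scores.
rng = np.random.default_rng(1)
images = rng.uniform(size=(40, 32, 32)) ** rng.uniform(0.5, 2.0, size=(40, 1, 1))
X = np.array([image_features(im) for im in images])
y = 0.2 + X @ np.array([1.0, -2.0, 0.5]) + rng.normal(scale=0.01, size=len(X))
coef = fit_difficulty_model(X, y)
print("fitted coefficients:", np.round(coef, 2))
```

Because simple image statistics such as brightness and dark-pixel fraction are strongly correlated, individual coefficients can be unstable; a validated model would need a larger, more varied set of print pairs and an out-of-sample check of its predictions.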

Implementation Challenges and Legal Realities

The Gap Between Legal Standards and Forensic Practice

Despite the clear directives of Daubert and the critiques of the NRC and PCAST reports, implementation of rigorous scientific standards in forensic practice and courtrooms has been inconsistent [19] [20]. Studies reveal that judicial scrutiny of forensic evidence varies significantly, with some courts rigorously applying Daubert factors while others continue to admit questionably reliable evidence [19].

Several structural challenges contribute to this implementation gap:

- Judicial Scientific Literacy: Many judges lack scientific training, making it difficult to evaluate complex methodological and statistical arguments about forensic validity [17].

- Procedural Inertia: Courts often rely on precedent rather than re-evaluating forensic methodologies in light of new scientific critiques [19].

- Resource Limitations: Forensic laboratories frequently face underfunding, staffing deficiencies, and inadequate training resources [19].

- Adversarial System Limitations: The traditional adversarial process may be ill-suited for evaluating complex scientific issues, particularly when one party lacks resources to challenge opposing experts [19].

These challenges are compounded by the fact that forensic evidence is often critical in criminal prosecutions, creating institutional resistance to excluding evidence that may be perceived as probative despite reliability concerns.

Error Rates and Wrongful Convictions

Empirical research on forensic errors reveals concerning patterns across disciplines. A comprehensive analysis of 732 wrongful conviction cases identified 1,391 forensic examinations, of which 891 contained errors related to forensic evidence [21]. The study developed a forensic error typology with five categories:

Table 3: Forensic Evidence Error Typology in Wrongful Convictions

| Error Type | Description | Examples | High-Incidence Disciplines |
|---|---|---|---|
| Type 1: Forensic Science Reports | Misstatement of scientific basis in reports | Lab error, poor communication, resource constraints | Seized drug analysis (100%), serology (68%) |
| Type 2: Individualization/Classification | Incorrect individualization or classification | Interpretation error, fraudulent interpretation | Bitemark (73%), shoe impression (41%) |
| Type 3: Testimony | Erroneous testimony presentation | Mischaracterized statistical weight or probability | Multiple disciplines |
| Type 4: Officer of the Court | Errors by legal professionals | Excluded evidence, accepted faulty testimony | Case-specific |
| Type 5: Evidence Handling | Failure to collect, examine, or report evidence | Chain of custody issues, lost evidence | Police investigations |

Certain disciplines appear disproportionately in wrongful conviction cases. Bitemark analysis, for instance, was associated with errors in 77% of examined cases, with 73% involving incorrect individualizations [21]. Similarly, seized drug analysis showed errors in 100% of examined cases, though most resulted from field testing kits rather than laboratory analysis [21]. These findings highlight the critical need for rigorous validation, standardized procedures, and robust quality control across forensic disciplines.

Research Reagent Solutions: Essential Methodological Tools

Table 4: Essential Research Reagents and Materials for Forensic Validation Studies

| Research Reagent | Function/Application | Key Characteristics | Representative Examples |
|---|---|---|---|
| Probabilistic Genotyping Software | Quantifies strength of DNA evidence through likelihood ratios | Qualitative vs. quantitative models; mixture deconvolution | STRmix, EuroForMix, LRmix Studio [23] |
| 3D Surface Topography Systems | Captures microscopic fracture surface details | High-resolution (μm-scale); non-contact measurement | Confocal microscopy, white-light interferometry [22] |
| Statistical Classification Tools | Distinguishes matches from non-matches using quantitative data | Multivariate analysis; error rate estimation | R packages (MixMatrix), linear discriminant analysis [22] |
| Image Quality Metrics | Predicts difficulty and potential errors in pattern recognition | Quantifies contrast, clarity, information content | Fingerprint image analysis algorithms [24] |
| Height-Height Correlation Algorithms | Characterizes surface roughness and self-affine properties | Identifies transition to unique topographic features | Custom MATLAB/Python implementations [22] |
| Likelihood Ratio Frameworks | Provides quantitative measure of evidence strength | Bayesian approach; compares prosecution and defense hypotheses | Standardized software implementations [23] |
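The likelihood ratio entries in Table 4 rest on the odds form of Bayes' theorem: the LR compares the probability of the evidence E under the prosecution hypothesis Hp with its probability under the defense hypothesis Hd, and multiplying prior odds by the LR gives posterior odds. The sketch below illustrates only that arithmetic. The probabilities are hypothetical placeholders, not outputs of STRmix, EuroForMix, or any other package, whose models are far more involved. In evaluative reporting the examiner reports the LR, while the prior odds are a matter for the fact-finder.

```python
def likelihood_ratio(p_e_given_hp: float, p_e_given_hd: float) -> float:
    """LR = P(E | Hp) / P(E | Hd)."""
    if not (0.0 <= p_e_given_hp <= 1.0 and 0.0 < p_e_given_hd <= 1.0):
        raise ValueError("probabilities must lie in [0, 1], with P(E | Hd) > 0")
    return p_e_given_hp / p_e_given_hd


def posterior_odds(prior_odds: float, lr: float) -> float:
    """Odds form of Bayes' theorem: posterior odds = LR x prior odds."""
    return lr * prior_odds


def odds_to_probability(odds: float) -> float:
    """Convert odds in favour of Hp into a probability for Hp."""
    return odds / (1.0 + odds)


# Hypothetical inputs chosen only to illustrate the arithmetic.
lr = likelihood_ratio(p_e_given_hp=0.95, p_e_given_hd=0.001)
post = posterior_odds(prior_odds=1 / 1000, lr=lr)
print(f"LR = {lr:.0f}, posterior odds = {post:.2f}, P(Hp | E) = {odds_to_probability(post):.3f}")
```

Even a large LR can leave the posterior probability below one half when the prior odds are small, which is why the two quantities are kept separate.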

Visualizing Forensic Evidence Admissibility Workflows

Figure: Daubert Evidence Admissibility Decision Pathway

Figure: Quantitative Forensic Matching Methodology
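Table 4 lists height-height correlation among the tools used for quantitative fracture-surface matching [22]. One common definition, assumed here, is the root-mean-square height difference between points separated by a lag d along a profile h(x): H(d) = sqrt(mean[(h(x + d) - h(x))^2]). For a self-affine surface, H grows roughly as d^zeta at small lags before leveling off, and the table describes that transition as identifying where unique topographic features emerge. The pure-Python sketch below computes H for a 1D profile sampled at uniform spacing and estimates zeta from a log-log fit. The function names are hypothetical, the demo profile is synthetic, and real analyses work on 2D topography maps from instruments such as confocal microscopes.

```python
import math
import random
from typing import Sequence


def height_height_correlation(h: Sequence[float], max_lag: int) -> list[float]:
    """RMS height difference H(k) for lags k = 1..max_lag (in sample units)."""
    n = len(h)
    if not 1 <= max_lag < n:
        raise ValueError("max_lag must satisfy 1 <= max_lag < len(h)")
    values = []
    for k in range(1, max_lag + 1):
        # Squared height differences for every pair of points k samples apart.
        diffs = [(h[i + k] - h[i]) ** 2 for i in range(n - k)]
        values.append(math.sqrt(sum(diffs) / len(diffs)))
    return values


def roughness_exponent(h: Sequence[float], max_lag: int) -> float:
    """Least-squares slope of log H(k) versus log k, an estimate of zeta."""
    if max_lag < 2:
        raise ValueError("need at least two lags to fit a slope")
    hh = height_height_correlation(h, max_lag)
    if any(v <= 0.0 for v in hh):
        raise ValueError("H(k) must be positive; is the profile flat?")
    xs = [math.log(k) for k in range(1, max_lag + 1)]
    ys = [math.log(v) for v in hh]
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    num = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    den = sum((x - mx) ** 2 for x in xs)
    return num / den


# Synthetic random-walk profile (zeta = 0.5 in theory), not real fracture data.
rng = random.Random(0)
profile, z = [], 0.0
for _ in range(20000):
    z += rng.gauss(0.0, 1.0)
    profile.append(z)
print(f"estimated zeta = {roughness_exponent(profile, 20):.2f}")
```

Restricting the fit to small lags matters: once H levels off, including larger lags would pull the slope toward zero.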

The Daubert standard and subsequent developments have fundamentally transformed the legal landscape for forensic evidence, establishing rigorous criteria for scientific validity and reliability. Integrating the validation guidelines of the National Commission on Forensic Science (NCFS) is a critical step toward ensuring that forensic methodologies meet appropriate scientific standards. However, significant challenges remain in implementing these standards consistently across diverse forensic disciplines and courtroom settings.

Future progress will depend on continued research to establish empirical foundations for forensic methods, development of quantitative frameworks with measurable error rates, and enhanced scientific literacy among legal professionals. The ongoing collaboration between scientific organizations, forensic practitioners, and legal stakeholders offers promise for further strengthening the scientific rigor of forensic evidence and its appropriate application in the justice system. As forensic science continues to evolve, the Daubert standard provides a flexible framework for ensuring that legal proceedings incorporate scientifically valid evidence while excluding unreliable or unvalidated techniques.
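Daubert lists a technique's known or potential rate of error among the factors courts may consider. In practice, a measurable error rate is an observed proportion from a validation or black-box study together with its statistical uncertainty. The sketch below computes a Wilson score interval for such a proportion. The counts are hypothetical, and the Wilson interval is one reasonable choice among several; the exact Clopper-Pearson interval is also widely used.

```python
import math


def wilson_interval(errors: int, trials: int, z: float = 1.959963984540054) -> tuple[float, float]:
    """Wilson score interval for a binomial proportion (default z gives ~95% two-sided)."""
    if trials <= 0 or not 0 <= errors <= trials:
        raise ValueError("require trials > 0 and 0 <= errors <= trials")
    p = errors / trials
    denom = 1.0 + z * z / trials
    center = (p + z * z / (2 * trials)) / denom
    half = z * math.sqrt(p * (1 - p) / trials + z * z / (4 * trials * trials)) / denom
    # Clamp tiny floating-point excursions outside [0, 1].
    return max(0.0, center - half), min(1.0, center + half)


# Hypothetical black-box study: 6 false positives in 3,000 different-source comparisons.
low, high = wilson_interval(errors=6, trials=3000)
print(f"observed FPR = {6 / 3000:.2%}, 95% CI = ({low:.2%}, {high:.2%})")
```

Reporting the upper bound alongside the point estimate conveys how large the error rate could plausibly be given the study size, which a bare observed rate (especially zero errors in a small study) does not.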

Implementing Validation Frameworks: OSAC Standards and Practical Protocols

The phrase "DOJ accreditation" can refer to two distinct regulatory spheres: the accreditation of forensic science laboratories that analyze evidence for the justice system, and the accreditation of non-attorney representatives who practice immigration law before the Department of Justice (DOJ). This section addresses the former, focusing on the policies and requirements for forensic science service providers. The DOJ has affirmed that accreditation is a cornerstone of reliable, scientifically rigorous forensic practice: it provides independent, external oversight confirming that a laboratory follows its required procedures, which improves the quality of work and reduces the likelihood of errors [6]. This landscape is closely linked to the validation guidelines advocated by the National Commission on Forensic Science (NCFS), which emphasized scientific validity and reliability across all forensic disciplines.

Current DOJ Forensic Science Accreditation Policies

The foundational DOJ policy for forensic science accreditation was announced in 2015, establishing a multi-year framework for implementation [6].

Core Policy Directives

The policy consists of three key directives aimed at department-run labs, department prosecutors, and the broader forensic community through grant incentives [6]:

- Mandatory Accreditation for DOJ Labs: All department-run forensic laboratories (e.g., those at the ATF, DEA, and FBI) are required to obtain and maintain accreditation. Although these labs were already accredited at the time of the policy announcement, the directive mandates the ongoing maintenance of that accredited status [6].

- Prosecutorial Use of Accredited Labs: Department prosecutors are required to use accredited forensic laboratories for the processing of forensic evidence when practicable. The Executive Office for U.S. Attorneys (EOUSA) was tasked with developing guidance for implementation in the field [6].

- Grant Funding as an Incentive: The DOJ leverages its grant funding mechanisms to encourage state and local labs to pursue accreditation. This is achieved through two primary means:
  - Clarifying that Edward Byrne Memorial Justice Assistance Grant and Paul Coverdell Forensic Science Improvement Grant funds can be used to seek accreditation.
  - Giving a "plus factor" or preference to applicants for relevant discretionary grants who intend to use the funding to obtain accreditation [6].

Table: Summary of Key DOJ Forensic Laboratory Accreditation Policies

| Policy Element | Applicable Entities | Core Requirement | Timeline/Status |
|---|---|---|---|
| Lab Accreditation | DOJ-run forensic labs (ATF, DEA, FBI) | Obtain and maintain accreditation | Required by 2020; maintenance ongoing |
| Prosecutor Use | All DOJ prosecutors | Use accredited labs for evidence processing | Required "when practicable" |
| Grant Incentives | State and local forensic labs | Preference for labs seeking accreditation | Ongoing |

The original policy exempted digital forensic laboratories from these immediate requirements. The Deputy Attorney General instead asked the NCFS to develop separate, tailored recommendations for accrediting labs that conduct digital forensic work [6].

The Role of the National Commission on Forensic Science (NCFS) and OSAC

The current regulatory infrastructure for forensic science is deeply influenced by the work of the NCFS and is sustained by the Organization of Scientific Area Committees (OSAC) for Forensic Science.

From NCFS Recommendations to OSAC Implementation

The DOJ's accreditation policies were a direct result of recommendations made by the NCFS, which was established to advance the field and ensure the use of reliable and scientifically valid evidence [6]. Although the NCFS is no longer active (its charter expired in 2017), its standards-focused work continues through OSAC, which is administered by the National Institute of Standards and Technology (NIST). OSAC's primary function is to identify and develop scientifically sound standards and to promote their adoption within the forensic science community [4] [5].

The OSAC Registry and Standards Development

A central component of this ecosystem is the OSAC Registry, a curated list of technically sound standards for forensic science. Widespread adoption of these standards is critical for ensuring consistency and validity across accredited laboratories.

Figure: Workflow by which a standard is developed and placed on the OSAC Registry, ensuring scientific rigor and consensus.

The registry is dynamic, with standards regularly added, revised, and sometimes extended. As of early 2025, the OSAC Registry contained 225 standards (152 published and 73 proposed) spanning over 20 forensic disciplines [4] [5]. The process relies heavily on Standards Development Organizations (SDOs), with the Academy Standards Board (ASB) being a predominant contributor. The ASB has published over 130 standards, best practice recommendations, and technical reports to date [25].

Table: Recent Additions to the OSAC Registry (as of January 2025) [5]

| Standard ID | Standard Name | Discipline | Type |
|---|---|---|---|
| ANSI/ASB Std 180 | Standard for the Use of GenBank for Taxonomic Assignment of Wildlife | Biology | SDO Published |
| OSAC 2022-S-0032 | Best Practice Recommendation for the Chemical Processing of Footwear and Tire Impression Evidence | Footwear/Tire | OSAC Proposed |
| OSAC 2024-S-0012 | Standard Practice for the Forensic Analysis of Geological Materials by SEM/EDX | Trace Materials | OSAC Proposed |
| SWGDE 17-F-001-2.0 | Recommendations for Cell Site Analysis | Digital Evidence | SDO Published |

Accreditation Requirements and Implementation Metrics

Achieving and maintaining accreditation requires laboratories to demonstrate adherence to a complex framework of quality standards.

Core Requirements for Forensic Laboratories

Accreditation assesses a lab's capacity to generate and interpret results reliably within specific forensic disciplines. Independent accrediting bodies examine several critical factors [6]:

- Staff Competence: Formal standards for training and proficiency, such as the new ASB Standards 078, 079, 080, 081, and 091 for training in various aspects of forensic DNA analysis [25].

- Method Validation: Requirements for validating technical procedures to ensure they are fit for purpose.

- Quality Assurance: Ongoing processes, including the use of controls and audits, to ensure data integrity.

- Equipment and Environment: Appropriate calibration, maintenance of equipment, and control of the testing environment.
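Method validation typically quantifies performance characteristics such as bias (trueness) and precision against a known reference value. The sketch below computes percent bias and percent coefficient of variation (CV) from replicate measurements. The function names and the default acceptance limits of ±20% are hypothetical placeholders; actual criteria come from the laboratory's validation plan and the applicable discipline standard.

```python
import statistics
from typing import Sequence


def validation_metrics(replicates: Sequence[float], reference: float) -> dict[str, float]:
    """Percent bias and percent CV of replicate results versus a known reference value."""
    if len(replicates) < 2:
        raise ValueError("need at least two replicates to estimate precision")
    if reference == 0:
        raise ValueError("reference value must be non-zero")
    mean = statistics.mean(replicates)
    if mean == 0:
        raise ValueError("CV is undefined for a zero mean")
    sd = statistics.stdev(replicates)  # sample standard deviation (n - 1 denominator)
    return {
        "mean": mean,
        "bias_pct": 100.0 * (mean - reference) / reference,
        "cv_pct": 100.0 * sd / mean,
    }


def meets_criteria(metrics: dict[str, float], max_abs_bias_pct: float = 20.0,
                   max_cv_pct: float = 20.0) -> bool:
    # Hypothetical acceptance limits; substitute those in the validation plan.
    return abs(metrics["bias_pct"]) <= max_abs_bias_pct and metrics["cv_pct"] <= max_cv_pct


m = validation_metrics([9.8, 10.0, 10.2], reference=10.0)
print(m, meets_criteria(m))
```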

Quantitative Implementation and Impact

The implementation of OSAC Registry standards is a key metric for assessing the integration of scientific rigor into forensic practice. Data collected through the OSAC Registry Implementation Survey provides insight into adoption rates.

As of early 2025, 226 forensic science service providers (FSSPs) had contributed implementation data, with over 185 making their achievements public [4]. The year 2024 saw a significant increase, with 72 new FSSPs contributing to the survey [5]. This growth indicates a strengthening commitment to standardized practices across the community. The data also reveals the dynamic nature of standards implementation; as new versions of standards are published, FSSPs must update their practices and surveys to reflect their current status accurately [4].

The Scientist's Toolkit: Key Standards and Reagents

For researchers and professionals developing and validating methods in a regulated forensic environment, adherence to published standards is non-negotiable. The following table details key standards and documents that function as essential "research reagents" for building a scientifically sound protocol.

Table: Essential Standards and Resources for Forensic Science Research and Validation

| Item Name / ID | Category / Discipline | Function in Research & Validation |
|---|---|---|
| ANSI/ASB Std 018 (under revision) | Biology/DNA | Provides the methodology for the validation of probabilistic genotyping systems, which are critical for complex DNA mixture interpretation [25]. |
| ANSI/ASB Std 056 | Toxicology | Establishes the standard for evaluating measurement uncertainty in forensic toxicology, a fundamental requirement for reporting analytically sound results [4] [5]. |
| ASB Std 207 (open for comment) | Document Examination | Sets requirements for the collection and preservation of document evidence, dictating proper handling to avoid contamination or degradation prior to analysis [25]. |
| ISO/IEC 17025:2017 | Interdisciplinary (Quality) | Defines the general requirements for the competence of testing and calibration laboratories; it is the foundational quality standard for most forensic lab accreditation [5]. |
| OSAC Registry | Interdisciplinary | Serves as a centralized repository of vetted standards, allowing researchers to identify and apply technically sound methods for method development and validation [4] [5]. |
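ANSI/ASB Std 056 concerns measurement uncertainty in forensic toxicology. The sketch below shows only the generic GUM-style arithmetic that such evaluations build on: uncorrelated relative standard uncertainty components are combined in quadrature, and the result is multiplied by a coverage factor k (k = 2 corresponds to roughly 95% coverage for an approximately normal distribution). It does not reproduce the procedure in ASB Std 056; the function names, component values, and result are hypothetical.

```python
import math
from typing import Sequence


def combined_relative_uncertainty(components: Sequence[float]) -> float:
    """Root-sum-of-squares of uncorrelated relative standard uncertainties."""
    if any(u < 0 for u in components):
        raise ValueError("standard uncertainties must be non-negative")
    return math.sqrt(sum(u * u for u in components))


def report_interval(result: float, components: Sequence[float],
                    k: float = 2.0) -> tuple[float, float]:
    """Return result -/+ expanded uncertainty U = k * u_c * result."""
    expanded = k * combined_relative_uncertainty(components) * result
    return result - expanded, result + expanded


# Hypothetical components: calibration 3%, repeatability 4% (relative);
# hypothetical measured result 0.080 (units omitted).
u_c = combined_relative_uncertainty([0.03, 0.04])
low, high = report_interval(0.080, [0.03, 0.04])
print(f"u_c = {u_c:.1%}, reported interval = {low:.3f} to {high:.3f}")
```

Combining in quadrature assumes the components are independent; correlated components would require covariance terms.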

Future Directions and Evolving Standards

The regulatory landscape for forensic science is not static. Ongoing efforts focus on expanding the scope and depth of standardization into new disciplines and refining existing practices.

A significant future direction involves the establishment of an interagency working group on medico-legal death investigation (MDI), convened with the White House's Office of Science and Technology Policy. This initiative, born from an NCFS recommendation, aims to bring higher levels of scientific rigor and reliability to the MDI field [6]. Furthermore, continuous development is evident in the pipeline of standards. For example, as of early 2025, new projects were initiated for standards governing the ethical treatment of human remains for research (ASB Std 217) and the collection of entomological evidence (ASB Std 218) [4] [5]. The process also includes the regular recirculation of drafts for public comment, ensuring that the community of researchers and practitioners can contribute to the refinement of standards before they are finalized [25]. This iterative, consensus-based process is fundamental to maintaining the scientific integrity of the forensic sciences in line with the principles championed by the NCFS.

The Organization of Scientific Area Committees (OSAC) for Forensic Science was established in 2014 through a collaboration between the National Institute of Standards and Technology (NIST) and the U.S. Department of Justice (DOJ) [26]. This initiative was a direct response to the landmark 2009 National Research Council (NRC) report, Strengthening Forensic Science in the United States: A Path Forward, which identified a critical lack of standardization and scientifically rigorous practices across forensic disciplines [26] [27]. OSAC's primary mission is to strengthen the nation's use of forensic science by facilitating the development of high-quality, technically sound standards and promoting their widespread adoption throughout the forensic community [8].