Navigating Uncertainty: A Practical Guide to the Assumptions Lattice and Uncertainty Pyramid Framework in Drug Development

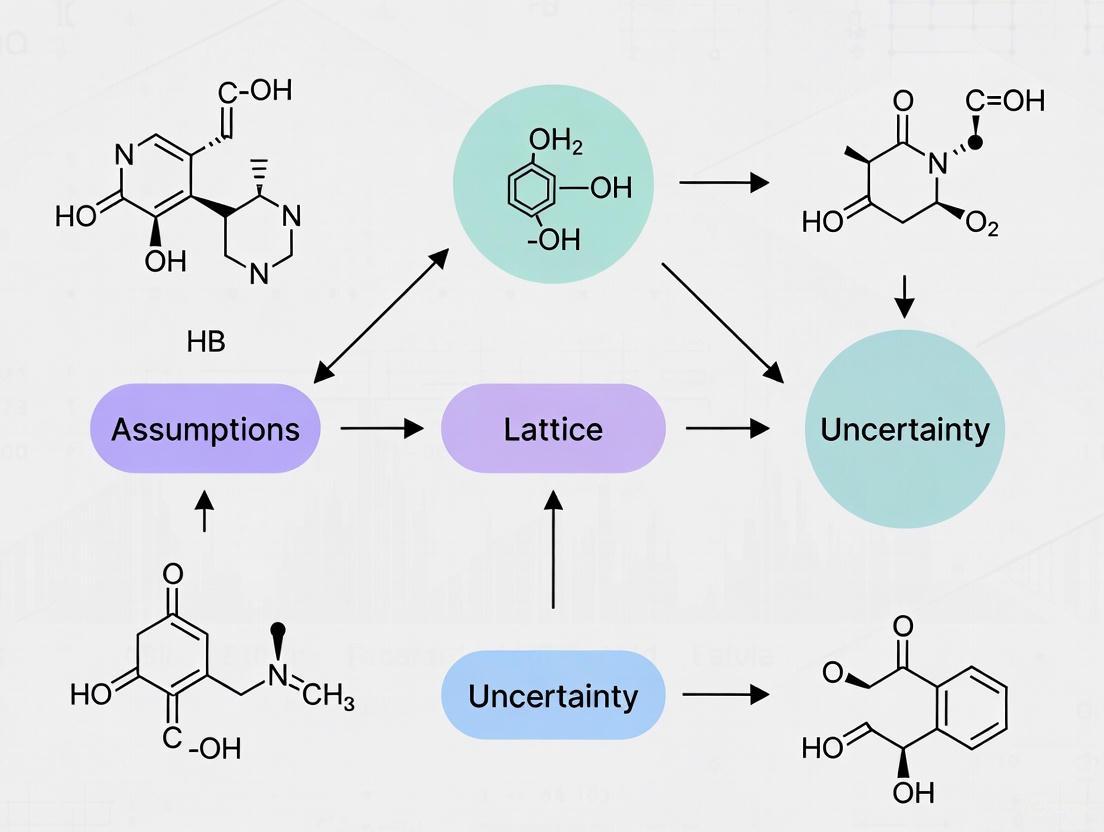

Abstract

This article provides a comprehensive guide to the Assumptions Lattice and Uncertainty Pyramid framework, a structured approach for quantifying and communicating uncertainty in pharmaceutical research and development. Written for researchers, scientists, and drug development professionals, it explains the foundational principles of the framework, details its methodological application from preclinical translation to clinical decision-making, addresses common troubleshooting and optimization challenges, and compares it with other uncertainty quantification methods. Together, these parts aim to equip practitioners with the tools to improve risk assessment, enhance regulatory communication, and ultimately build more resilient drug development pipelines.

Deconstructing Uncertainty: Understanding the Assumptions Lattice and Uncertainty Pyramid Framework

The Translation Challenge: Navigating the 'Valley of Death'

The transition from promising preclinical results to successful clinical applications is one of the most significant challenges in drug development. This gap, often termed the "valley of death," is where the majority of potential therapeutic candidates fail to cross from bench to bedside [1] [2].

The Scale of the Problem

Table: Attrition Rates in Drug Development

| Development Phase | Failure Rate | Primary Causes of Failure |

|---|---|---|

| Preclinical Research | 80-90% of projects fail before human testing [1] | Poor hypothesis, irreproducible data, ambiguous preclinical models |

| Phase I Clinical Trials | 9 out of 10 drug candidates fail [2] | Safety issues, pharmacokinetic problems |

| Phase II Clinical Trials | High failure rates [3] | Lack of effectiveness, unexpected toxicity |

| Phase III Clinical Trials | Approximately 50% fail [1] | Lack of effectiveness, poor safety profiles not predicted in preclinical studies |

| Overall Approval | Only 0.1% of candidates reach approval [1] | Cumulative effects of above factors |

This crisis in translation stems from multiple factors, including poor hypothesis generation, irreproducible data, ambiguous preclinical models, statistical errors, and insufficient characterization of uncertainty in experimental systems [3] [1]. The fundamental issue often lies in the lack of "robustness" in preclinical science, defined as stability and reproducibility in the face of the challenges that arise when moving to human trials [3].

The Uncertainty Pyramid Framework: A Systematic Approach

The assumptions lattice and uncertainty pyramid framework provides a structured method for assessing uncertainty in translational research. This approach requires researchers to explicitly consider and document the range of assumptions underlying their experimental models and resulting data interpretations.

Figure: Uncertainty Pyramid: Cumulative Impact of Assumptions on Decision Risk

Each level of the pyramid represents a category of assumptions that must be tested and validated. The framework explores the range of results attainable by models that satisfy stated criteria for reasonableness, enabling researchers to better understand relationships among interpretation, data, and assumptions [4].

Troubleshooting Common Experimental Scenarios

Assay Development and Validation Issues

Problem: No assay window in TR-FRET assays

- Potential Cause: Incorrect instrument setup or improper emission filter selection [5].

- Solution: Verify instrument configuration using compatibility portals and test reader setup with existing reagents before beginning assays. Ensure exact recommended emission filters are used, as filter choice can critically impact assay performance [5].

Problem: Differences in EC₅₀/IC₅₀ values between laboratories

- Potential Cause: Variability in stock solution preparation, typically at 1 mM concentrations [5].

- Solution: Standardize compound preparation protocols across collaborating laboratories and verify compound purity and concentration through quality control measures.

Problem: Inconsistent results in cell-based kinase assays

- Potential Causes: Compound inability to cross cell membranes, cellular efflux mechanisms, or targeting of inactive kinase forms [5].

- Solution: Use binding assays (e.g., LanthaScreen Eu Kinase Binding Assay) for studying inactive kinase forms and verify compound permeability through complementary assays.

Preclinical Model Validation

Problem: Poor translational predictability of animal models

- Potential Causes: Narrow experimental conditions that don't reflect human genetic diversity, age variations, or disease complexity [3] [2].

- Solution: Implement systematic heterogenization by varying genetic backgrounds, environmental conditions, and using multiple models to triangulate evidence [6].

Problem: Inflated effect sizes in exploratory studies

- Potential Cause: Low sample sizes leading to the "winner's curse" phenomenon where only large effect sizes achieve statistical significance [6].

- Solution: Conduct within-lab replications with refined experimental designs and increased sample sizes to decrease outcome uncertainty before proceeding to confirmatory studies [6].
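
The winner's curse described above can be illustrated with a small simulation. This is a sketch under stated assumptions, not a reanalysis of any cited study: the true effect size, group size, number of simulated studies, and the simple z-test with known unit variance are all hypothetical choices made for the example.

```python
import random
import statistics
from statistics import NormalDist


def simulate_winners_curse(true_effect=0.3, n_per_group=10, n_studies=5000,
                           alpha=0.05, seed=1):
    """Simulate many small two-group studies with unit variance.

    Returns (mean estimate over all studies,
             mean estimate over 'statistically significant' studies only).
    """
    rng = random.Random(seed)
    z_crit = NormalDist().inv_cdf(1 - alpha / 2)
    # Standard error of a difference in means when both groups have sd = 1.
    se = (2 / n_per_group) ** 0.5
    all_estimates, significant = [], []
    for _ in range(n_studies):
        control = [rng.gauss(0.0, 1.0) for _ in range(n_per_group)]
        treated = [rng.gauss(true_effect, 1.0) for _ in range(n_per_group)]
        estimate = statistics.fmean(treated) - statistics.fmean(control)
        all_estimates.append(estimate)
        if abs(estimate) / se > z_crit:  # two-sided z-test
            significant.append(estimate)
    return statistics.fmean(all_estimates), statistics.fmean(significant)


if __name__ == "__main__":
    overall, among_significant = simulate_winners_curse()
    print(f"mean estimate, all studies:         {overall:.2f}")
    print(f"mean estimate, significant studies: {among_significant:.2f}")
```

Averaged over all simulated studies the estimate sits near the true effect, while the subset that reaches significance overstates it considerably. Larger replication samples shrink that gap.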

Data Analysis and Interpretation

Problem: Determining appropriate sample sizes for preclinical studies

- Potential Cause: Underpowered experiments that increase false positive rates and effect size inflation [6].

- Solution: Define smallest effect size of interest reflecting biological or clinical relevance through discussion with clinicians and biostatisticians. Use this to inform sample size planning [6].
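
Once a smallest effect size of interest has been agreed on, it can be turned into a sample size. The sketch below uses the textbook normal approximation for a two-sided, two-sample comparison of means. The function name, the default `alpha` and `power` values, and the use of a standardized effect size (Cohen's d) are assumptions for illustration. An exact t-based calculation gives slightly larger numbers.

```python
import math
from statistics import NormalDist


def n_per_group(sesoi_d, alpha=0.05, power=0.8):
    """Approximate per-group sample size for a two-sample comparison of means.

    Uses n = 2 * (z_{1 - alpha/2} + z_{power})^2 / d^2, where d is the
    smallest effect size of interest expressed as a standardized difference.
    """
    if sesoi_d <= 0:
        raise ValueError("smallest effect size of interest must be positive")
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_power = NormalDist().inv_cdf(power)
    return math.ceil(2 * (z_alpha + z_power) ** 2 / sesoi_d ** 2)


if __name__ == "__main__":
    for d in (1.0, 0.5, 0.3):
        print(f"d = {d}: about {n_per_group(d)} animals per group")
```

Halving the effect size of interest roughly quadruples the required group size, which is why the choice of that effect size deserves explicit discussion with clinicians and biostatisticians.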

Problem: Assessing assay performance robustness

- Potential Cause: Over-reliance on assay window size without considering variability [5].

- Solution: Calculate the Z'-factor, which incorporates both assay window size and data variability. Assays with a Z'-factor > 0.5 are considered suitable for screening [5].

Table: Z'-Factor Interpretation Guide

| Z'-Factor Value | Assay Quality Assessment |

|---|---|

| > 0.5 | Excellent assay suitable for screening |

| 0 - 0.5 | Marginal assay that may require optimization |

| < 0 | Assay not suitable for screening |
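
The Z'-factor can be computed directly from control wells. The sketch below uses the commonly quoted definition, Z' = 1 - 3(σ_pos + σ_neg) / |μ_pos - μ_neg|. The function names and the example readings are hypothetical, and the classification simply mirrors the interpretation table above.

```python
import statistics


def z_prime(positive_controls, negative_controls):
    """Z'-factor from replicate positive- and negative-control readings."""
    mu_p = statistics.fmean(positive_controls)
    mu_n = statistics.fmean(negative_controls)
    sd_p = statistics.stdev(positive_controls)
    sd_n = statistics.stdev(negative_controls)
    window = abs(mu_p - mu_n)  # the assay window
    if window == 0:
        raise ValueError("controls have identical means; no assay window")
    return 1 - 3 * (sd_p + sd_n) / window


def classify_z_prime(z):
    """Map a Z'-factor onto the categories of the interpretation table."""
    if z > 0.5:
        return "excellent"
    if z >= 0:
        return "marginal"
    return "not suitable"


if __name__ == "__main__":
    pos = [100, 102, 98, 101, 99]  # hypothetical signal readings
    neg = [10, 11, 9, 10, 10]      # hypothetical background readings
    z = z_prime(pos, neg)
    print(f"Z' = {z:.2f} ({classify_z_prime(z)})")
```

A large window with noisy controls can still score poorly, which is the point of using the Z'-factor instead of window size alone.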

Experimental Protocols for Enhanced Robustness

Protocol: Preclinical Confirmatory Study Design

Purpose: To generate robust preclinical evidence supporting clinical translation decisions [6].

Methodology:

- Define Minimum Validity Criteria: Establish thresholds for internal validity (randomization, blinding), external validity (sources of variation), and translational validity (clinical relevance) [6].

- Implement Multicenter Design: Conduct studies across independent sites using shared protocols to identify site-specific effects and increase generalizability [6].

- Systematic Heterogenization: Introduce controlled variation in genetic backgrounds, environmental conditions, and technical procedures to test robustness across conditions [6].

- Triangulation Approach: Combine different methods and approaches to support the same claim, increasing validity at potential cost of added complexity [6].

- Sample Size Justification: Base sample sizes on smallest effect size of interest rather than arbitrary power calculations alone [6].

Protocol: ELISA Assay Qualification and Troubleshooting

Purpose: To ensure reliable performance of immunoassays for critical biomarkers and impurity testing [7].

Methodology:

- Control Preparation: Create 2-3 controls (low, medium, high) using your source of analyte in your sample matrices, with low control at 2-4 times the assay Limit of Quantitation (LOQ) [7].

- Bulk Preparation: Prepare controls in bulk, aliquot for single use, and store at -80°C until stability is established [7].

- Quality Monitoring: Use laboratory-specific controls rather than curve-fit parameters (R², slope, etc.) for quality control, as the latter lack the sensitivity and specificity to detect assay problems [7].

- Replicate Analysis: Run samples in duplicate when assay precision is good (%CV < 5%). Repeat any sample whose duplicates show a %CV > 20% instead of editing out one replicate as an outlier [7].
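
The replicate rule in the last step can be applied mechanically. This is a minimal sketch: the function names, the sample identifiers, and the readings are hypothetical, and %CV is taken as the sample standard deviation divided by the mean.

```python
import statistics


def percent_cv(replicates):
    """Coefficient of variation (%) of replicate measurements."""
    mean = statistics.fmean(replicates)
    if mean == 0:
        raise ValueError("mean of replicates is zero; %CV is undefined")
    return 100 * statistics.stdev(replicates) / mean


def flag_for_repeat(samples, cv_limit=20.0):
    """Return the names of samples whose replicate %CV exceeds cv_limit."""
    return [name for name, reps in samples.items()
            if percent_cv(reps) > cv_limit]


if __name__ == "__main__":
    duplicates = {
        "sample_01": [1.00, 1.02],  # hypothetical concentrations
        "sample_02": [1.00, 1.50],
    }
    for name, reps in duplicates.items():
        print(f"{name}: %CV = {percent_cv(reps):.1f}")
    print("repeat:", flag_for_repeat(duplicates))
```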

Figure: Preclinical Confirmation Workflow: From Exploration to Clinical Decision

Research Reagent Solutions for Robust Experimentation

Table: Essential Research Reagents and Their Functions

| Reagent/Assay Type | Primary Function | Key Applications |

|---|---|---|

| TR-FRET Assays (e.g., LanthaScreen) | Distance-dependent detection of molecular interactions | Kinase activity studies, protein-protein interactions [5] |

| Z'-LYTE Assay Systems | Enzyme activity measurement through ratio-metric detection | Kinase inhibition profiling, enzyme characterization [5] |

| Cell-Based Assay Systems | Evaluation of compound activity in cellular context | Target validation, compound screening [5] |

| ELISA Kits (e.g., Cygnus Technologies) | Quantification of protein impurities and biomarkers | Host cell protein detection, process impurity monitoring [7] |

| 3D Organoid Systems | Better representation of human tissue architecture | Compound screening, disease modeling [2] |

| Patient-Derived Xenograft Models | Preservation of tumor heterogeneity and clinical relevance | Oncology drug development, personalized medicine approaches [6] |

Frequently Asked Questions

Q1: What are the minimum criteria that should be met before proceeding to a confirmatory preclinical study?

Before engaging in confirmatory studies, research should meet minimum thresholds for both reliability and validity. For reliability, ensure adequate sample sizes to avoid effect size inflation and false positives. For validity, address three domains: internal validity (through randomization, blinding, validated methods), external validity (through systematic heterogenization), and translational validity (through clinical relevance of models and endpoints) [6].

Q2: How can we improve the translational predictivity of animal models?

Improve translational predictivity by: (1) Using multiple models to triangulate evidence rather than relying on a single model system; (2) Implementing systematic heterogenization to introduce genetic and environmental variation; (3) Ensuring model systems reflect the human disease pathophysiology and patient population characteristics (e.g., using aged animals for age-related diseases); (4) Incorporating human tissue models where possible to bridge species gaps [6] [2].

Q3: What strategies can reduce the high failure rates in Phase III clinical trials?

Key strategies include: (1) Implementing more robust preclinical experimentation that tests interventions under diverse conditions resembling human population variability; (2) Conducting preclinical confirmatory multicenter trials to weed out false positives; (3) Using the assumptions lattice framework to explicitly characterize uncertainty; (4) Improving target validation through human tissue studies and multi-omics approaches; (5) Establishing clear Go/No-Go decision criteria early in development [3] [6].

Q4: How should we handle modifications to established assay protocols?

When modifying established protocols (e.g., ELISA methods), carefully qualify that changes achieve acceptable accuracy, specificity, and precision. Modifications to sample volume, incubation times, or sequential schemes can significantly alter sensitivity and specificity. Always perform thorough validation when implementing protocol changes, and contact technical support for guidance on optimal modifications for specific analytical needs [7].

Q5: What is the role of computational approaches in improving translational success?

Computational methods including artificial intelligence and machine learning can: (1) Predict how novel compounds will behave in different biological environments; (2) Identify potential off-target effects; (3) Accelerate drug repurposing efforts; (4) Support clinical trial design through better patient stratification. However, these approaches require high-quality input data to generate reliable predictions [2].

This technical support center provides guidance for researchers applying the Assumptions Lattice and Uncertainty Pyramid frameworks in scientific experiments, particularly in drug development. These frameworks help structure your hypotheses and systematically quantify the uncertainty in your computational models and experimental results.

The Assumptions Lattice provides a structured way to organize and evaluate the foundational assumptions in your research. Formally, a lattice is a partially ordered set in which every two elements have a unique supremum (least upper bound or join) and a unique infimum (greatest lower bound or meet) [8]. In practical terms, this allows you to map the relationships between your different experimental assumptions, understanding how they support or conflict with one another.

The Uncertainty Pyramid is a Bayesian deep learning framework for quantifying uncertainty in complex models, such as those used for semantic segmentation in autonomous driving or predictive modeling in drug discovery [9]. It helps you distinguish between different types of uncertainty in your results, which is crucial for making reliable inferences and decisions.

Frequently Asked Questions (FAQs)

Q1: My Bayesian SegNet model is running too slowly during uncertainty evaluation. What optimization strategies can I implement?

A: This is a common issue when working with Monte Carlo (MC) Dropout sampling. Based on research by Gal et al. [9], we recommend these specific troubleshooting steps:

- Reduce MC-Dropout Layers: Simplify your network by implementing MC-Dropout only in the deeper layers of the network rather than every layer. This maintains uncertainty capture while significantly reducing computational overhead.

- Implement Pyramid Pooling: Introduce a pyramid pooling module to improve sampling efficiency, which can reduce the total number of sampling iterations required.

- Balanced Sampling: Find the optimal balance between the number of forward propagation samples and model performance. Start with 20-30 samples and adjust based on your specific accuracy requirements.

Q2: How can I formally validate that my set of experimental assumptions forms a proper lattice structure?

A: To validate your Assumptions Lattice structure, you must verify these mathematical properties [8]:

- Partial Order: Demonstrate that a "support" or "implies" relationship between your assumptions is reflexive, antisymmetric, and transitive, so that it defines a consistent hierarchy.

- Join Existence: For any two assumptions, identify their most specific common consequence (join).

- Meet Existence: For any two assumptions, identify their most general common premise (meet).

To check these properties in practice, use directed acyclic graphs (DAGs) to visualize the relationships, and employ formal concept analysis to verify the lattice properties mathematically.
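
For a small set of assumptions, the properties above can also be checked by brute force. The sketch below is illustrative only: the four assumption names and the `IMPLIES` relation are hypothetical, and the exhaustive search suits lattices of a few dozen elements at most.

```python
from itertools import product


def is_partial_order(elements, leq):
    """Check reflexivity, antisymmetry, and transitivity of leq."""
    reflexive = all(leq(a, a) for a in elements)
    antisymmetric = all(a == b or not (leq(a, b) and leq(b, a))
                        for a, b in product(elements, repeat=2))
    transitive = all(leq(a, c) or not (leq(a, b) and leq(b, c))
                     for a, b, c in product(elements, repeat=3))
    return reflexive and antisymmetric and transitive


def join(elements, leq, a, b):
    """Least upper bound of a and b, or None if it does not exist."""
    uppers = [u for u in elements if leq(a, u) and leq(b, u)]
    least = [u for u in uppers if all(leq(u, v) for v in uppers)]
    return least[0] if least else None


def meet(elements, leq, a, b):
    """Greatest lower bound of a and b, or None if it does not exist."""
    lowers = [x for x in elements if leq(x, a) and leq(x, b)]
    greatest = [x for x in lowers if all(leq(v, x) for v in lowers)]
    return greatest[0] if greatest else None


def is_lattice(elements, leq):
    """True if leq is a partial order and every pair has a join and a meet."""
    if not is_partial_order(elements, leq):
        return False
    return all(join(elements, leq, a, b) is not None
               and meet(elements, leq, a, b) is not None
               for a, b in product(elements, repeat=2))


# Hypothetical assumptions ordered by "implies": (x, y) means x implies y.
ASSUMPTIONS = ["A_and_B", "A", "B", "A_or_B"]
IMPLIES = {(x, x) for x in ASSUMPTIONS} | {
    ("A_and_B", "A"), ("A_and_B", "B"), ("A_and_B", "A_or_B"),
    ("A", "A_or_B"), ("B", "A_or_B"),
}


def implies(a, b):
    return (a, b) in IMPLIES


if __name__ == "__main__":
    print("lattice:", is_lattice(ASSUMPTIONS, implies))
    print("join(A, B):", join(ASSUMPTIONS, implies, "A", "B"))
    print("meet(A, B):", meet(ASSUMPTIONS, implies, "A", "B"))
```

Dropping `A_or_B` from the list leaves `A` and `B` without a common consequence, so `is_lattice` then returns `False`. That is the kind of gap this check is meant to expose.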

Q3: What are the minimum contrast requirements for visualization elements in research diagrams and publications?

A: For accessibility and clarity, ensure your diagrams meet these enhanced contrast ratios [10] [11]:

Table: Minimum Color Contrast Requirements for Visual Elements

| Element Type | Minimum Contrast Ratio | Examples & Notes |

|---|---|---|

| Standard Text | 7:1 | Body text, axis labels, legends |

| Large-Scale Text | 4.5:1 | 18 pt or larger, or 14 pt or larger if bold |

| Data Points | 4.5:1 | Chart markers, graph symbols |

| Diagram Elements | 4.5:1 | Arrows, shapes, connectors |
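
Contrast ratios can be checked programmatically before a figure is submitted. The sketch below assumes the thresholds in the table refer to the WCAG contrast ratio and uses the WCAG relative-luminance formula for sRGB colors. The function names and example colors are illustrative.

```python
def _linearize(channel_8bit):
    """Convert an 8-bit sRGB channel to its linear-light value."""
    c = channel_8bit / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(rgb):
    """Relative luminance of an (R, G, B) color with 0-255 channels."""
    r, g, b = (_linearize(v) for v in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(rgb_a, rgb_b):
    """Contrast ratio between two colors, from 1:1 up to 21:1."""
    lighter, darker = sorted(
        (relative_luminance(rgb_a), relative_luminance(rgb_b)), reverse=True)
    return (lighter + 0.05) / (darker + 0.05)


if __name__ == "__main__":
    white = (255, 255, 255)
    for name, color in {"black": (0, 0, 0), "mid grey": (118, 118, 118)}.items():
        ratio = contrast_ratio(color, white)
        print(f"{name} on white: {ratio:.2f}:1, meets 7:1: {ratio >= 7}")
```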

Q4: How do I distinguish between aleatoric and epistemic uncertainty in my Pyramid Bayesian model outputs?

A: The Uncertainty Pyramid framework differentiates these uncertainty types as follows [9]:

- Aleatoric Uncertainty: This is inherent randomness in your data. It's measured by training the model to predict an uncertainty value alongside each output using a special loss function.

- Epistemic Uncertainty: This stems from model limitations. It's quantified using MC-Dropout during inference by running multiple forward passes and measuring the variation in predictions.

To evaluate your uncertainty calibration quantitatively, use metrics such as mPAvPU (mean Patch Accuracy vs. Patch Uncertainty), particularly for image-based data in drug discovery assays.
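
Given the outputs of several stochastic forward passes, the two uncertainty types can be separated with simple arithmetic. The sketch below assumes each pass returns a predicted mean and a predicted variance for a single output. Averaging the predicted variances (aleatoric) and taking the variance of the predicted means (epistemic) is a widely used convention, not something the source spells out. The function name and the example numbers are hypothetical.

```python
import statistics


def decompose_uncertainty(mc_outputs):
    """Split predictive uncertainty from T stochastic forward passes.

    mc_outputs: list of (predicted_mean, predicted_variance) tuples, one per
    MC-Dropout pass. Returns (prediction, aleatoric, epistemic).
    """
    means = [m for m, _ in mc_outputs]
    variances = [v for _, v in mc_outputs]
    prediction = statistics.fmean(means)
    aleatoric = statistics.fmean(variances)   # average predicted data noise
    epistemic = statistics.pvariance(means)   # spread of the predictions
    return prediction, aleatoric, epistemic


if __name__ == "__main__":
    passes = [(1.0, 0.2), (1.2, 0.3), (0.8, 0.1)]  # hypothetical outputs
    pred, alea, epi = decompose_uncertainty(passes)
    print(f"prediction={pred:.2f} aleatoric={alea:.3f} epistemic={epi:.3f}")
```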

Experimental Protocols & Methodologies

Protocol: Implementing Pyramid Bayesian Uncertainty Estimation

This protocol adapts the Bayesian SegNet framework for general scientific use [9]:

Materials Required:

- Dataset with labeled training examples

- Computational environment with GPU acceleration

- Python with PyTorch/TensorFlow and Bayesian deep learning libraries

Procedure:

- Network Modification: Integrate MC-Dropout layers into the decoder section of your segmentation/classification network rather than throughout the entire architecture.

- Pyramid Pooling Integration: Add a pyramid pooling module after the final encoder layer to capture multi-scale contextual information.

- Training: Train the network using a combined loss function that includes both standard segmentation/classification loss and uncertainty estimation terms.

- Inference with MC Sampling: Perform 20-50 forward passes with dropout activated during prediction to generate multiple output samples.

- Uncertainty Quantification: Calculate the mean of samples as your prediction and the variance as your uncertainty measure.

- Validation: Evaluate using mIoU (mean Intersection over Union) for accuracy and mPAvPU for uncertainty calibration.

Troubleshooting Tips:

- If uncertainty estimates appear noisy, increase the number of MC samples (step 4)

- If training fails to converge, reduce the number of MC-Dropout layers initially

- For memory issues during inference, reduce batch size rather than MC samples
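
The sampling in steps 4 and 5 can be shown without a deep learning framework. The toy network below is not Bayesian SegNet: its weights, its single hidden layer, and the function names are hypothetical and serve only to show dropout left active at prediction time, followed by the mean and variance over samples. A real implementation would use the dropout layers of PyTorch or TensorFlow.

```python
import random
import statistics

# Hypothetical fixed weights of a tiny one-input, four-hidden-unit network.
HIDDEN_WEIGHTS = [0.5, -0.3, 0.8, 0.1]
OUTPUT_WEIGHTS = [1.0, 0.5, -0.7, 0.2]


def forward(x, rng, drop_prob):
    """One stochastic forward pass with dropout on the hidden units."""
    output = 0.0
    for w_hidden, w_out in zip(HIDDEN_WEIGHTS, OUTPUT_WEIGHTS):
        hidden = max(0.0, w_hidden * x)       # ReLU activation
        if rng.random() >= drop_prob:          # keep this unit
            output += w_out * hidden / (1 - drop_prob)  # inverted dropout
    return output


def mc_dropout_predict(x, n_samples=30, drop_prob=0.5, seed=0):
    """Mean and variance of repeated dropout-enabled forward passes."""
    rng = random.Random(seed)
    samples = [forward(x, rng, drop_prob) for _ in range(n_samples)]
    return statistics.fmean(samples), statistics.pvariance(samples)


if __name__ == "__main__":
    mean, variance = mc_dropout_predict(2.0)
    print(f"prediction={mean:.3f} uncertainty (variance)={variance:.3f}")
```

Setting `drop_prob=0.0` makes every pass identical, so the variance collapses to zero. That is a quick sanity check that dropout is actually active during inference.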

Protocol: Constructing and Validating an Assumptions Lattice

Procedure:

- Assumption Enumeration: List all explicit and implicit assumptions in your experimental design.

- Relationship Mapping: For each assumption pair, determine if one supports (implies) the other, if they conflict, or are independent.

- Lattice Construction: Organize assumptions hierarchically with more fundamental assumptions at the base and derived assumptions higher up.

- Join/Meet Calculation: For each assumption pair, identify their most specific common consequence (join) and most general common premise (meet).

- Validation: Verify that your structure satisfies the lattice properties: reflexivity, antisymmetry, transitivity, and the existence of all pairwise joins/meets.

- Sensitivity Analysis: Test how violation of assumptions at different lattice levels affects your conclusions.

Research Reagent Solutions

Table: Essential Computational Tools for Lattice and Pyramid Frameworks

| Tool/Reagent | Function | Application Notes |

|---|---|---|

| MC-Dropout Layers | Approximate Bayesian inference | Place in deeper network layers only [9] |

| Pyramid Pooling Module | Multi-scale context aggregation | Improves sampling efficiency in Uncertainty Pyramid [9] |

| Directed Acyclic Graphs (DAGs) | Visualize assumption relationships | Essential for lattice structure validation [8] |

| Markov Chain Monte Carlo (MCMC) | Alternative to MC-Dropout | More accurate but computationally intensive [9] |

| Semantic Segmentation Networks | Pixel-level classification | Base architecture for Bayesian SegNet [9] |

| Formal Concept Analysis | Mathematical lattice validation | Verifies join/meet existence for all element pairs [8] |

Diagnostic Diagrams & Workflows

Figure: Uncertainty Pyramid Framework

Figure: Assumptions Lattice Structure

Figure: Experimental Workflow Integration

Troubleshooting Guides

Guide 1: Diagnosing and Addressing Poor Model Performance

Problem: Your scientific model shows poor predictive performance when applied to new data or in real-world conditions.

| Step | Action | Expected Outcome | Underlying Issue |

|---|---|---|---|

| 1 | Check for Variability in input data: Calculate variance, standard deviation, and range of key input parameters. [12] | Identification of inherent heterogeneity in your data population. | High variability in inputs (e.g., patient body weight, environmental conditions) is being treated as error, leading to an oversimplified model. |

| 2 | Quantify Uncertainty in parameter estimates: Perform sensitivity analysis or calculate confidence intervals for model parameters. [13] [14] | Understanding of the confidence in your model's fitted parameters (e.g., a dose-response slope). | High parameter uncertainty suggests a lack of knowledge, often due to insufficient or low-quality data. |

| 3 | Validate Model Structure: Compare predictions from alternative model structures or use a design of experiments (DOE) approach. [13] [15] | Insight into whether the model's fundamental equations are appropriate. | Structural uncertainty is present; the model may be oversimplified or miss key relationships. |

| 4 | Implement a Refined Approach: Use probabilistic techniques (e.g., Monte Carlo analysis) to propagate both variability and uncertainty. [12] [14] | A distribution of outcomes that honestly represents the total potential error in predictions. | Variability and uncertainty were conflated, giving a false sense of precision. |
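
Step 4 can be sketched as a two-dimensional (nested) Monte Carlo, in which an outer loop samples what is uncertain and an inner loop samples what is variable. Everything specific here is a hypothetical illustration: the exposure model (dose divided by clearance), the normal distribution for the imprecisely known typical clearance, and the lognormal between-subject variability.

```python
import math
import random
import statistics


def two_dimensional_mc(dose=100.0, n_outer=200, n_inner=500, seed=0):
    """Nested Monte Carlo keeping uncertainty and variability separate.

    Outer loop: uncertainty about the typical clearance (lack of knowledge).
    Inner loop: between-subject variability around that typical value.
    Returns the median estimate of the 95th-percentile exposure and a 95%
    uncertainty interval for it.
    """
    rng = random.Random(seed)
    p95_values = []
    for _ in range(n_outer):
        # Uncertain parameter; the floor only guards against non-positive draws.
        typical_cl = max(rng.gauss(10.0, 1.0), 0.1)
        exposures = sorted(
            dose / rng.lognormvariate(math.log(typical_cl), 0.3)
            for _ in range(n_inner))
        p95_values.append(exposures[int(0.95 * n_inner)])
    p95_values.sort()
    lower = p95_values[int(0.025 * n_outer)]
    upper = p95_values[int(0.975 * n_outer)]
    return statistics.median(p95_values), (lower, upper)


if __name__ == "__main__":
    median_p95, (lower, upper) = two_dimensional_mc()
    print(f"95th-percentile exposure: {median_p95:.1f} "
          f"(95% uncertainty interval {lower:.1f} to {upper:.1f})")
```

The inner percentile describes the population (variability), while the interval around it describes how well that percentile is known (uncertainty). Reporting both keeps the two from being conflated.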

Guide 2: Selecting and Validating a Model for Clinical Precision Dosing

Problem: Choosing an inappropriate population pharmacokinetic (PopPK) model for Model-Informed Precision Dosing (MIPD) leads to inaccurate dosing recommendations. [16]

| Consideration | Diagnostic Question | Implication of a "No" Answer |

|---|---|---|

| Target Population [16] | Was the model developed in a patient population with similar demographics (age, ethnicity), health status, and clinical care environment? | The model may not be generalizable, introducing structural and parametric uncertainty. |

| Dosing Scenario [16] | Does the model account for the same drug, dosing regimen, and route of administration you intend to use? | Introduces scenario uncertainty, as the model's predictive power is untested for your specific use case. |

| Sampling & Assays [16] | Were the model's underlying data obtained from a high-quality sampling strategy and assays replicable at your institution? | Underlying data may have high measurement error, increasing overall uncertainty. |

| Model Validation [16] | Have you validated the model's performance using example patient data from your own institution? | Failure to conduct prospective validation leaves model uncertainty uncharacterized, risking patient safety. |

Frequently Asked Questions (FAQs)

FAQ 1: What is the fundamental difference between uncertainty and variability?

- Variability refers to the inherent heterogeneity or diversity in a system or population. It is a property of the real world that cannot be reduced with more data, only better characterized. [12] Examples include the variation in body weight among a study population or differences in breathing rates. [12]

- Uncertainty describes a lack of knowledge or incomplete information about the system. It can arise from measurement errors, use of surrogate data, or an incomplete understanding of the model structure. [12] [17] Unlike variability, uncertainty can often be reduced by collecting more or better data. [12]

FAQ 2: Why is it critical to distinguish between them in drug development?

Distinguishing between the two is essential because they have different implications for decision-making and risk assessment. [17]

- Variability informs you about the diversity of responses you can expect in your target population. This is crucial for understanding the range of effective and safe doses.

- Uncertainty informs you about your confidence in the model's predictions. High uncertainty means you cannot be sure if the model is accurate, which is a major risk for regulatory approval and patient safety. [16] Conflating the two can lead to overstated conclusions and poor clinical decisions. [13]

FAQ 3: How can I visually conceptualize the relationship between uncertainty, variability, and model assumptions?

The relationship can be framed within the Assumptions Lattice and Uncertainty Pyramid framework. This framework posits that a model is built on a lattice of interconnected assumptions. The pyramid structure represents the propagation and amplification of different sources of uncertainty and variability from the base (fundamental assumptions) to the apex (final model prediction).

FAQ 4: What are common methods for quantifying uncertainty and variability?

The following table summarizes key techniques for addressing uncertainty and variability. [12] [14]

| Method | Best Used For | Brief Description |

|---|---|---|

| Monte Carlo Simulation [12] [14] | Forward propagation of uncertainty and variability. | Repeated random sampling from input distributions to compute a distribution of possible outcomes. |

| Sensitivity Analysis [12] [14] | Identifying which uncertain inputs contribute most to output uncertainty. | Systematically varying model inputs to determine their effect on the output. |

| Bayesian Estimation [16] [14] | Reducing parameter uncertainty by incorporating new data. | Updates prior knowledge (a model) with new observed data to produce a posterior estimate with reduced uncertainty. |

| Disaggregating Data [12] | Characterizing variability in a population. | Separating data into categories (e.g., by age, sex) to better understand the sources of heterogeneity. |
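
The Bayesian Estimation row can be made concrete with the simplest conjugate case: a normal prior on a parameter, updated with normally distributed observations of known variance. The function name and the numbers are hypothetical. Real PopPK applications use far richer models, but the way the posterior variance shrinks is the same idea.

```python
def normal_update(prior_mean, prior_var, observations, obs_var):
    """Posterior mean and variance for a normal prior and normal likelihood.

    Assumes the observation variance obs_var is known.
    """
    prior_precision = 1 / prior_var
    data_precision = len(observations) / obs_var
    post_var = 1 / (prior_precision + data_precision)
    post_mean = post_var * (prior_mean * prior_precision
                            + sum(observations) / obs_var)
    return post_mean, post_var


if __name__ == "__main__":
    # Hypothetical prior for a clearance parameter and four new measurements.
    mean, var = normal_update(prior_mean=10.0, prior_var=4.0,
                              observations=[12.0, 12.0, 12.0, 12.0],
                              obs_var=4.0)
    print(f"posterior mean={mean:.2f}, posterior variance={var:.2f}")
```

The posterior variance is always smaller than the prior variance, which is the sense in which new data reduce parameter uncertainty.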

The Scientist's Toolkit: Research Reagent Solutions

This table details key methodological "reagents" for experiments focused on quantifying uncertainty and variability. [12] [16] [14]

| Tool / Solution | Function in Analysis |

|---|---|

| Probabilistic Programming Languages (e.g., Stan, PyMC) | Facilitates Bayesian analysis, allowing for the formal integration of prior knowledge with new data to update parameter estimates and quantify their uncertainty. |

| Monte Carlo Simulation Software | Enables the propagation of input variability and parameter uncertainty through complex models to generate a full probability distribution of outcomes. |

| Sensitivity Analysis Packages (e.g., Sobol, Morris) | Systematically tests how the variation in a model's output can be apportioned to different sources of variation in its inputs. |

| Population PK/PD Modeling Software (e.g., NONMEM) | Specifically designed to quantify between-subject variability (BSV) and residual unexplained variability (RUV) in pharmacokinetic and pharmacodynamic models. [16] |

| Design of Experiments (DOE) Software | Helps plan efficient experiments to map a process's "design space," characterizing how input variables affect Critical Quality Attributes (CQAs) while managing uncertainty. [15] |

Experimental Protocol: Systematic Audit of Model-Related Uncertainty

Aim: To conduct a systematic audit of uncertainty quantification and reporting in a set of scientific publications, based on the methodology from an interdisciplinary audit. [13]

Materials

- A curated library of scientific papers from your field of interest (e.g., from two representative journals over a specific time period).

- Data extraction sheet (digital or physical).

- The "Sources of Uncertainty" framework (Response, Explanatory, Parameters, Model Structure). [13]

Method

- Paper Selection: Define your field and time period of interest. Identify and gather all original research papers from two representative journals for that period. [13]

- Data Extraction: For each paper, determine if it uses a statistical or mathematical model. For papers that do, audit them against the four sources of uncertainty: [13]

- Response Variable: Did the study quantify measurement or observation error in the primary outcome being measured?

- Explanatory Variable: Did the study account for potential error or noise in the variables used to explain the response?

- Parameter Estimates: Were the uncertainties in the model's parameter estimates reported (e.g., standard errors, confidence intervals)?

- Model Structure: Did the study compare, contrast, or average the results of alternative model structures to test for structural uncertainty?

- Synthesis: Tabulate the frequency with which each source of uncertainty is quantified across the audited papers. This provides a snapshot of the current state of practice in your field. [13]
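
The synthesis step amounts to a frequency table over the extraction sheet. In the sketch below, the record layout (one dictionary per paper with a `uses_model` flag and one boolean per uncertainty source) and the key names are assumptions made for the example.

```python
from collections import Counter

SOURCES = ("response", "explanatory", "parameters", "model_structure")


def tabulate_audit(records):
    """Fraction of model-based papers that quantify each uncertainty source.

    records: one dict per audited paper with a boolean 'uses_model' and,
    for model-based papers, one boolean per entry in SOURCES. A missing
    key counts as 'not quantified'.
    """
    modelling_papers = [r for r in records if r.get("uses_model", False)]
    counts = Counter()
    for record in modelling_papers:
        for source in SOURCES:
            if record.get(source, False):
                counts[source] += 1
    n = len(modelling_papers)
    return {source: (counts[source] / n if n else 0.0) for source in SOURCES}


if __name__ == "__main__":
    sheet = [  # hypothetical extraction results
        {"uses_model": True, "response": True, "explanatory": False,
         "parameters": True, "model_structure": False},
        {"uses_model": True, "response": False, "parameters": True},
        {"uses_model": False},
    ]
    for source, fraction in tabulate_audit(sheet).items():
        print(f"{source:16s} {fraction:.0%}")
```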

Expected Results and Interpretation

This audit will likely reveal that no field fully considers all possible sources of uncertainty. [13] The area of explanatory variable uncertainty is most frequently overlooked. [13] The results can be used to identify specific gaps in common practice and to formulate guidelines for more complete uncertainty reporting in your domain.

In scientific research and drug development, uncertainty is not a single entity but a spectrum with distinct characteristics. The two primary categories are epistemic uncertainty (arising from a lack of knowledge and theoretically reducible) and aleatoric uncertainty (stemming from inherent randomness and essentially irreducible) [18] [19]. Understanding this distinction is critical for making robust inferences, designing effective experiments, and communicating findings accurately. This guide provides troubleshooting advice and methodologies to help you identify, quantify, and manage these different uncertainties within your research, framed within the advanced context of the assumptions lattice and uncertainty pyramid framework [20] [21] [4].

Core Concepts FAQ

What is the fundamental difference between epistemic and aleatoric uncertainty?

- Epistemic Uncertainty (Reducible): This is uncertainty due to incomplete knowledge about the system or phenomenon. It can be reduced by collecting more data, improving models, or refining measurements [18] [19]. For example, uncertainty about a model parameter or the true functional form of a relationship is epistemic.

- Aleatoric Uncertainty (Irreducible): This is uncertainty due to the inherent randomness or stochasticity of a process. It cannot be reduced by gathering more data, only better characterized [18] [14]. The natural variability in experimental measurements or the randomness in a biological outcome are classic examples.

Is the distinction between epistemic and aleatoric uncertainty always clear-cut?

No, the distinction can be context-dependent and is sometimes debated [22] [19]. Some argue that all uncertainty ultimately stems from incomplete information. However, from a practical modeling perspective, the distinction is highly useful. It helps decide where to allocate resources: seeking more data to reduce epistemic uncertainty, or accepting and quantifying the inherent noise of aleatoric uncertainty.

How does this distinction relate to the 'assumptions lattice' and 'uncertainty pyramid' framework?

The assumptions lattice is a framework that maps the hierarchy of assumptions made during an analysis, from very conservative to more speculative [20] [21] [4]. The uncertainty pyramid conceptualizes how uncertainty propagates and potentially expands as one moves up this lattice of increasingly strong assumptions. In this context:

- Aleatoric uncertainty is the base-level variability that exists even under the most conservative assumptions.

- Epistemic uncertainty is reflected in the range of results obtained across different levels of the assumptions lattice, as our knowledge and modeling choices change.

Why is it critical to characterize both types of uncertainty when reporting a Likelihood Ratio (LR) or similar statistic?

A single Likelihood Ratio value depends on a specific set of modeling assumptions. Without characterizing the uncertainty in the LR itself, its meaning is limited [20] [21] [4]. A proper uncertainty analysis explores how the LR changes across the assumptions lattice, revealing its stability (low epistemic uncertainty) or sensitivity (high epistemic uncertainty) to modeling choices. This provides a "fitness for purpose" assessment of the reported value [4].

Troubleshooting Guide: Identifying and Managing Uncertainty

Problem: My model fits the training data well but generalizes poorly to new data.

| Potential Cause | Type of Uncertainty | Diagnostic Check | Mitigation Strategy |

|---|---|---|---|

| Overfitting | Epistemic (Model Uncertainty) | Performance gap between training and validation sets; large parameter values or an overly complex model. | Apply regularization (L1/L2); simplify the model structure; increase training data. |

| Insufficient Data | Epistemic (Parameter Uncertainty) | Wide confidence intervals on parameter estimates; high sensitivity to data resampling (e.g., bootstrapping). | Collect more data, if possible; use Bayesian methods to quantify parameter uncertainty. |

| Incorrect Model Structure | Epistemic (Structural Uncertainty) | Residuals show clear patterns (not random); model fails to capture known physics/biology. | Incorporate domain knowledge into the model; test alternative model architectures. |

Problem: My experimental results are inconsistent, with high variability between replicates.

| Potential Cause | Type of Uncertainty | Diagnostic Check | Mitigation Strategy |

|---|---|---|---|

| Inherent Randomness | Aleatoric (Sampling Uncertainty) | Variability is consistent and cannot be eliminated; replicates form a stable distribution. | Quantify the variability (e.g., estimate variance); increase sample size to better estimate the population distribution. |

| Uncontrolled Experimental Variables | Epistemic (Measurement Uncertainty) | Variability changes with experimental conditions or operators; trends in data over time. | Standardize experimental protocols; identify, control, or measure key confounding variables. |

| Measurement Instrument Noise | Aleatoric (Measurement Uncertainty) | Noise level is constant and documented in instrument specs; observed in control experiments with known standards. | Use more precise instrumentation; apply signal processing or filtering techniques. |

Problem: I am unsure if my computational model accurately represents the real-world system.

| Potential Cause | Type of Uncertainty | Diagnostic Check | Mitigation Strategy |

|---|---|---|---|

| Model Discrepancy/Inadequacy | Epistemic (Structural Uncertainty) | Systematic bias between model predictions and validation data, even after parameter tuning. | Perform bias correction or model calibration using experimental data [14]; enhance the model to include missing physics/biology. |

| Numerical Approximation Errors | Epistemic (Algorithmic Uncertainty) | Results change with solver type, step size, or mesh density. | Perform convergence studies; use higher-fidelity numerical methods (if computationally feasible). |

| Uncertain Input Parameters | A mix of Aleatoric & Epistemic (Parameter Uncertainty) | Input parameters are not known precisely (e.g., drawn from a distribution). | Propagate input uncertainty using Monte Carlo simulation or polynomial chaos expansion [14]. |

Experimental Protocols for Uncertainty Quantification

Protocol: Quantifying Aleatoric and Epistemic Uncertainty in a Regression Model

This protocol uses a Bayesian neural network to separately estimate both types of uncertainty [19].

Workflow Diagram: Uncertainty Quantification in Regression

Methodology:

- Model Definition: Define a neural network where the weights are represented as probability distributions rather than fixed values.

- Training: Train the model using a method like Variational Inference, which learns the parameters of these weight distributions.

- Prediction & Sampling: For a new input x, perform multiple stochastic forward passes (e.g., 100-1000 times), each time sampling a new set of weights from their posterior distributions. This generates a distribution of outputs {ŷ₁, ŷ₂, ..., ŷ_T}.

- Uncertainty Decomposition:

- Epistemic Uncertainty: Calculate the variance of the T predicted means. This reflects the model's uncertainty about its parameters.

- Aleatoric Uncertainty: Calculate the mean of the T predicted variances (the model also learns to predict data noise). This reflects the inherent noise in the data.
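The decomposition step can be sketched in a few lines of plain Python. This is a minimal illustration, not the protocol's reference implementation: the function name `decompose_uncertainty` is hypothetical, and the simulated means and variances merely stand in for the outputs of T stochastic forward passes through a trained Bayesian neural network.

```python
import random
import statistics


def decompose_uncertainty(pred_means, pred_variances):
    """Split predictive variance into epistemic and aleatoric parts.

    pred_means[t] and pred_variances[t] are the mean and the data-noise
    variance predicted on forward pass t (one sampled weight set per pass).
    """
    if len(pred_means) != len(pred_variances) or len(pred_means) < 2:
        raise ValueError("need at least two passes and equal-length inputs")
    epistemic = statistics.pvariance(pred_means)   # variance of the T means
    aleatoric = statistics.fmean(pred_variances)   # mean of the T variances
    return epistemic, aleatoric, epistemic + aleatoric


# Hypothetical stand-in for T forward passes at a single input x.
rng = random.Random(0)
T = 500
means = [rng.gauss(2.0, 0.3) for _ in range(T)]        # disagreement between weight samples
variances = [rng.uniform(0.8, 1.2) for _ in range(T)]  # predicted noise variance per pass

epistemic, aleatoric, total = decompose_uncertainty(means, variances)
print(f"epistemic={epistemic:.3f} aleatoric={aleatoric:.3f} total={total:.3f}")
```

In practice, `means` and `variances` would come from the probabilistic model itself; only the last two arithmetic steps carry over unchanged.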

Key Research Reagent Solutions:

| Reagent / Tool | Function in Protocol |

|---|---|

| Probabilistic Programming Framework (e.g., Pyro, TensorFlow Probability) | Provides the infrastructure to define and train models with probabilistic weights. |

| Variational Distribution (e.g., Mean-Field Gaussian) | An approximation to the true, intractable posterior distribution of the model weights. |

| Evidence Lower Bound (ELBO) | The objective function optimized during training to fit the variational distribution. |

Protocol: Applying the Assumptions Lattice to a Likelihood Ratio Calculation

This protocol provides a framework for assessing the robustness of a forensic likelihood ratio (LR) but is applicable to any model-based comparison [20] [21] [4].

Workflow Diagram: The Assumptions Lattice & Uncertainty Pyramid

Methodology:

- Define the Lattice: Explicitly list the key assumptions made in your analysis. Structure them into a hierarchy (lattice) from most conservative/safe (Level 1) to most specific/optimistic (Level N). Example assumptions include:

- Choice of prior distribution in a Bayesian analysis.

- The functional form of the statistical model.

- The set of variables included in the model.

- Compute Across the Lattice: Calculate your statistic of interest (e.g., the Likelihood Ratio) at each level of the assumptions lattice.

- Construct the Uncertainty Pyramid: Analyze the range of results obtained. A wide range (a broad pyramid) indicates high sensitivity to assumptions (high epistemic uncertainty). A narrow range indicates robustness.
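As a rough illustration of the three steps above, the sketch below computes a likelihood ratio for one observation under three assumption levels and reports the spread on a log10 scale. Everything specific here is an assumption made for the example: the observation, the two hypothesis means, the choice of Laplace and Gaussian models, and the level labels `L1` to `L3`.

```python
import math
from statistics import NormalDist


def laplace_pdf(x, loc, scale):
    """Density of a Laplace (double-exponential) distribution."""
    return math.exp(-abs(x - loc) / scale) / (2.0 * scale)


def normal_lr(x, mu1, mu2, sigma):
    """LR of H1 (mean mu1) against H2 (mean mu2) under a Gaussian model."""
    return NormalDist(mu1, sigma).pdf(x) / NormalDist(mu2, sigma).pdf(x)


def laplace_lr(x, mu1, mu2, scale):
    """Same comparison under a heavier-tailed Laplace model."""
    return laplace_pdf(x, mu1, scale) / laplace_pdf(x, mu2, scale)


# Hypothetical observation and hypothesis means.
x_obs, mu_h1, mu_h2 = 1.2, 1.0, 0.0

# Each level adds a stronger assumption about the measurement model.
lattice = {
    "L1": laplace_lr(x_obs, mu_h1, mu_h2, 1.0),  # heavy tails, wide scale
    "L2": normal_lr(x_obs, mu_h1, mu_h2, 1.0),   # Gaussian, sigma = 1.0
    "L3": normal_lr(x_obs, mu_h1, mu_h2, 0.5),   # Gaussian, sigma = 0.5
}

log10_lrs = {level: math.log10(lr) for level, lr in lattice.items()}
spread = max(log10_lrs.values()) - min(log10_lrs.values())

for level, value in log10_lrs.items():
    print(f"{level}: log10(LR) = {value:.2f}")
print(f"spread across lattice = {spread:.2f} log10 units")
```

A large spread relative to the decision threshold signals that the reported LR is sensitive to modeling choices; a small one suggests robustness.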

Key Research Reagent Solutions:

| Reagent / Tool | Function in Protocol |

|---|---|

| Statistical Modeling Software (e.g., R, Stan) | Allows for flexible re-calculation of models under different assumptions and priors. |

| Sensitivity Analysis Package (e.g., sensitivity in R) | Automates the process of varying model inputs/assumptions and tracking outputs. |

| Benchmark Dataset (with known ground truth) | Used to validate and compare the performance of models based on different assumptions. |

The Assumptions Lattice and Uncertainty Pyramid form a structured framework for assessing how different assumptions and modeling choices affect scientific conclusions, particularly when evaluating the strength of evidence via metrics like Likelihood Ratios (LRs) [4].

- Assumptions Lattice: This is a systematic map of the analytical choices made during an evaluation. Imagine a tree structure where each node represents a specific assumption. Moving from the base to the tip of the tree involves making progressively more specific choices about data processing, statistical models, and parameter sets. The lattice framework explicitly acknowledges that multiple reasonable analytical paths exist, and the goal is to explore the range of results these different paths produce [4].

- Uncertainty Pyramid: This concept visualizes the framework's exploration process. The base of the pyramid represents a broad set of plausible models and assumptions. As one applies stricter criteria or "reasonableness" filters to select models (moving up the pyramid), the range of potential results (e.g., LR values) typically narrows. Analyzing this pyramid helps experts and decision-makers understand the sensitivity of a conclusion to the underlying assumptions and assess its robustness [4].

Frequently Asked Questions (FAQs)

Q1: Why is it necessary to use this framework instead of reporting a single, best-estimate result? Reporting a single value can mask the underlying uncertainty and subjectivity involved in its calculation. This framework is necessary because it provides a transparent method to demonstrate how conclusions depend on personal choices made during assessment. It shifts the focus from a single, potentially misleading number to a comprehensive understanding of the result's stability and reliability, which is critical for evaluating its fitness for purpose [4].

Q2: In what specific research areas is this framework most applicable? This framework is highly valuable in any field that relies on complex model-based inference where expert findings inform critical decisions.

- Forensic Science: For transparently conveying the weight of evidence through Likelihood Ratios, accounting for variability in model selection [4].

- Drug Discovery and Development: For analyzing Quantitative Structure-Activity Relationship (QSAR) models, where different molecular descriptors and statistical methods can lead to varying predictions [23].

- Materials Science: For predicting the equivalent properties of complex structures like honeycombs, where different homogenization techniques and theoretical models yield a range of answers [24].

Q3: What is the practical output of conducting an analysis using this framework? The primary output is not a single number, but a range of plausible results (e.g., a distribution of LR values) and a clear documentation of the assumption paths that lead to them. This provides decision-makers with a realistic picture of the evidence's strength and the confidence they can place in it [4].

Q4: How does this framework relate to traditional sensitivity analysis? While traditional sensitivity analysis might test variations around a single "best" model, the assumptions lattice and uncertainty pyramid advocate for a broader and more systematic exploration. It encourages the evaluation of fundamentally different, yet still reasonable, models and assumptions, going beyond minor parameter adjustments to reveal larger potential uncertainties [4].

Troubleshooting Common Experimental & Computational Issues

Problem: Computational results are highly sensitive to the initial choice of molecular descriptors.

- Solution: Do not rely on a single set of descriptors. Follow a structured feature selection process:

- Filtering: Start by removing descriptors with low variance or high correlation to others to reduce redundancy [23].

- Systematic Selection: Use automated feature selection routines (e.g., forward selection, backward elimination) or expert intuition to identify the most prominent descriptors [23].

- Lattice Exploration: Construct an assumptions lattice where each branch represents a different, valid set of descriptors. Run your model for each major branch to see how the final conclusion changes [4].

Problem: The model performs well on training data but fails to predict new experimental data accurately.

- Solution: This indicates overfitting. The framework mandates an uncertainty assessment.

- Validate Across the Lattice: Use hold-out validation or cross-validation not just on one model, but on multiple models defined by different assumption paths in your lattice [23] [4].

- Report Performance Ranges: The "true" performance of your modeling approach should be reported as a range observed across the various validated models, giving a more honest account of predictive uncertainty [4].

Problem: Inconsistent conclusions are drawn from the same dataset by different researchers.

- Solution: This is a classic case highlighting the need for the framework.

- Map the Composite Lattice: Document the different analytical choices made by each researcher (e.g., data pre-processing methods, statistical algorithms, parameter priors).

- Build an Uncertainty Pyramid: Consolidate these choices into a unified lattice. The collective results from all paths will form the base of your uncertainty pyramid. Applying consensus fitness criteria will help narrow down the most reliable conclusions and identify the most contentious assumptions [4].

Key Experimental Protocols & Data Presentation

Protocol: Developing a QSAR Model with Uncertainty Assessment

This protocol is adapted from ligand-based drug design methodologies for use within the assumptions lattice framework [23].

Descriptor Generation:

- Generate a comprehensive set of molecular descriptors for all compounds under investigation. This can include 1D-descriptors (e.g., molecular weight), 2D-descriptors (e.g., molecular connectivity indices, structural fingerprints), and 3D-descriptors (e.g., molecular volume, solvent-accessible surface area) [23].

- Framework Integration: The choice of descriptor type (1D, 2D, 3D) represents a major branch point in the assumptions lattice.

Feature Selection:

- Reduce the descriptor set to avoid overfitting. Use a combination of automated methods (e.g., variance filtering, correlation analysis) and expert knowledge to select the most relevant features [23].

- Framework Integration: Different feature selection methods create sub-branches within the main descriptor-type branches of the lattice.

Model Building and Validation:

- Choose a statistical or machine learning method (e.g., linear regression, partial least squares, neural networks) to correlate descriptors with biological activity.

- Validate the model using robust techniques like k-fold cross-validation or leave-one-out validation. The critical step is to repeat this process for each significant path in the assumptions lattice [23].

- Framework Integration: The choice of algorithm creates another dimension in the lattice. The final output is a distribution of model performances (e.g., R², prediction error) across the lattice, forming the base of the uncertainty pyramid.
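One way to make the lattice concrete is to enumerate every path as a combination of the three choices described above. The sketch below is illustrative only: the option lists are examples drawn from this protocol, and `evaluate_path` is a hypothetical callable that you would supply, for instance a routine returning a cross-validated R² for the model built along that path.

```python
from itertools import product

# Branch points taken from the protocol; extend or trim for your own project.
descriptor_types = ["1D", "2D", "3D"]
feature_selectors = ["variance filter", "correlation filter", "expert selection"]
algorithms = ["linear regression", "partial least squares", "neural network"]

# Every combination of choices is one path through the assumptions lattice.
paths = [
    {"descriptors": d, "selection": s, "algorithm": a}
    for d, s, a in product(descriptor_types, feature_selectors, algorithms)
]


def evaluate_lattice(paths, evaluate_path):
    """Score every path with a user-supplied validation routine.

    Returns the lowest score, the highest score, and the per-path results,
    so performance can be reported as a range rather than a single value.
    """
    results = [(path, evaluate_path(path)) for path in paths]
    scores = [score for _, score in results]
    return min(scores), max(scores), results


print(f"{len(paths)} paths in the lattice")
```

The number of paths grows multiplicatively with each added branch point, so in practice it may be necessary to restrict the evaluation to the paths judged reasonable in advance.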

The following tables summarize key data types and reagent solutions used in related fields, illustrating the framework's utility.

Table 1: Categories of Molecular Descriptors for QSAR Modeling [23]

| Descriptor Dimensionality | Example Descriptors | Information Captured | Computational Cost |

|---|---|---|---|

| 1D-Descriptors | Molecular Weight, Atom Count | Constitutive, bulk properties | Very Low |

| 2D-Descriptors | Molecular Connectivity Index (χ), Wiener Index (W) | Size, branching, shape, flexibility | Low |

| 3D-Descriptors | Molecular Volume, Polar Surface Area, GRID/K CoMFA Fields | 3D shape, surface properties, interaction energies | High to Very High |

Table 2: Research Reagent Solutions for Material & Computational Analysis

| Reagent / Solution | Function / Application | Key Considerations |

|---|---|---|

| Finite Element Analysis (FEA) Software | Predicts equivalent linear elastic properties (e.g., stiffness, modulus) of complex structures like honeycombs by approximating them as homogeneous materials [24]. | Model complexity (computational load) vs. accuracy of the equivalent properties. |

| Physics-Guided Neural Networks (PGNN) | Machine learning models used for predicting nonlinear equivalent performance of structures under large deformations [24]. | Integrates physical laws to improve model reliability and reduce purely data-driven errors. |

| Sequential & Categorical Color Palettes | Used in data visualization to ensure accessibility and avoid false data associations in charts and graphs [25]. | Must meet WCAG 2.1 contrast ratios (≥ 3:1); colors alone should not convey meaning. |

Framework Visualization with Graphviz

Assumptions Lattice Structure

Diagram: Assumptions Lattice Map

Uncertainty Pyramid Workflow

Diagram: Uncertainty Pyramid Workflow

From Theory to Practice: Implementing the Uncertainty Framework in Pharmaceutical R&D

In drug development, an Assumptions Lattice is a structured framework that maps and prioritizes the critical uncertainties and hypotheses at each stage of the process. This section works as a technical support guide for constructing and validating your own lattice, with a focus on solid-form selection for an Active Pharmaceutical Ingredient (API). The framework builds on the Uncertainty Pyramid, which conceptualizes the layered nature of risk, from fundamental molecular-level assumptions to high-level product performance predictions. Properly implemented, this approach de-risks development by forcing you to test your most critical assumptions explicitly through targeted experiments and computational tools [26] [27].

Frequently Asked Questions (FAQs)

Q1: What is the single most critical assumption in early-stage solid form selection? The most critical assumption is often the identification of the most stable polymorph of your API. A late-appearing, more stable polymorph can drastically alter the drug's solubility, bioavailability, and stability, jeopardizing the entire development program and even causing market recalls. Your lattice must explicitly document the assumption that the currently known polymorph is the most stable one and outline a plan to test it [27].

Q2: Our computational models predict a lattice energy that doesn't match our experimental observations. What should we troubleshoot? This discrepancy can arise from several sources. Follow this troubleshooting guide:

- Check Conformational Flexibility: Does your molecule have multiple rotatable bonds? Standard QSPR models trained on rigid molecules may be inaccurate for flexible, drug-like compounds. Verify that the computational method is validated for your chemical space [26].

- Inspect the Input Structure: For crystal structure prediction (CSP), the accuracy of the generated crystal packings is highly sensitive to the input molecular conformation. Ensure your starting geometry is correct [27].

- Review the Experimental Data: How was the experimental sublimation enthalpy (ΔHsub) measured? High temperatures can cause decomposition. Consider using calculated lattice energies from a known crystal structure as a more reliable benchmark for your model [26].

Q3: How can we be confident that our crystal structure prediction (CSP) method isn't missing a risky, unknown polymorph? This is a fundamental uncertainty. To manage it:

- Demand Large-Scale Validation: Use or develop CSP methods that have been validated on a large and diverse set of molecules (dozens to hundreds), not just a few proof-of-concept cases. This tests the method's ability to handle diverse functional groups and flexibility [27].

- Perform Hierarchical Ranking: Employ a method that combines fast force fields with more accurate machine learning force fields and periodic DFT calculations. This ensures robust energy rankings of predicted polymorphs [27].

- Conduct a Clustering Analysis: Many predicted structures are trivial duplicates. Cluster similar structures (e.g., using RMSD) to avoid over-prediction and focus on genuinely novel, low-energy polymorphs that pose a real risk [27].

Q4: The electron density map for our protein-ligand complex is ambiguous. How should we interpret the binding mode for our lattice? This is a common pitfall. Never overinterpret unclear data.

- Critically Assess the Density: Use validation tools to check if the reported ligand has sufficient continuous electron density to support its presence and location. A significant number of PDB deposits have poorly supported ligands [28].

- Document the Uncertainty: Your assumption lattice must clearly state that the proposed binding mode is a hypothesis based on a model with limited resolution or clarity. Plan follow-up experiments (e.g., mutagenesis, biophysical assays) to test this specific hypothesis, rather than treating the model as ground truth [28].

Experimental Protocols & Methodologies

Protocol: Validating a Lattice Energy Predictive Model

Purpose: To build and validate a Quantitative Structure-Property Relationship (QSPR) model for predicting the lattice energy of drug-like molecules using only their 2D structure.

Workflow:

- Data Curation: Compile a dataset of known crystal structures for in-house drug molecules. Calculate their lattice energies using established atom-atom summation methods [26].

- Descriptor Calculation: From the 2D molecular structure, calculate relevant molecular descriptors (e.g., molecular weight, topological polar surface area, hydrogen bond acceptors/donors, conformational flexibility) [26].

- Model Training: Use a machine learning algorithm to train a model that correlates the molecular descriptors with the calculated lattice energies. Split data into training and test sets [26].

- Proof-of-Concept: Build a parallel model using experimental sublimation enthalpies to demonstrate interchangeability with the calculated lattice energy model [26].

- Validation: Test the model's accuracy on a blind set of molecules not used in training. The model is successful if it predicts lattice energies with acceptable accuracy for your chemical space [26].

Protocol: Computational Polymorph Screening (CSP) to De-Risk Solid Form Selection

Purpose: To identify all low-energy polymorphs of an API computationally, highlighting potential risks from undiscovered forms.

Workflow:

- Systematic Packing Search: Use a crystal packing search algorithm to generate thousands of potential crystal structures across common space groups. This explores the "Z' = 1" search space (one molecule in the asymmetric unit) [27].

- Hierarchical Energy Ranking:

- Stage 1 (FF): Use a classical force field for initial optimization and ranking.

- Stage 2 (MLFF): Re-optimize and re-rank the top candidates using a machine learning force field for higher accuracy.

- Stage 3 (DFT): Perform final ranking of the shortlist using periodic Density Functional Theory (e.g., r2SCAN-D3 functional) [27].

- Clustering and Analysis: Cluster predicted structures based on similarity (e.g., RMSD < 1.2 Å) to remove duplicates. The known experimental polymorph should be ranked among the top candidates. Any new, low-energy predicted polymorphs represent a potential development risk [27].
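The ranking funnel and the duplicate-removal step can be outlined generically. The sketch below is a simplified illustration under stated assumptions: `funnel` and `cluster_duplicates` are hypothetical helper names, the energies are made-up numbers, and the one-dimensional `fingerprint` distance is only a stand-in for a real RMSD comparison between overlaid crystal packings.

```python
def funnel(candidates, stages):
    """Re-rank candidates through successive (energy_fn, keep_n) stages.

    Each stage sorts the surviving pool by its own energy function
    (lower is better) and keeps only the top keep_n structures.
    """
    pool = list(candidates)
    for energy_fn, keep_n in stages:
        pool = sorted(pool, key=energy_fn)[:keep_n]
    return pool


def cluster_duplicates(structures, distance, threshold):
    """Greedy clustering: keep the lowest-energy member of each cluster.

    Structures are visited from lowest energy upward; one is kept only if
    it is at least `threshold` away from every representative kept so far.
    """
    representatives = []
    for s in sorted(structures, key=lambda s: s["energy"]):
        if all(distance(s, r) >= threshold for r in representatives):
            representatives.append(s)
    return representatives


# Hypothetical toy data: energies in kJ/mol, fingerprint as a distance proxy.
structures = [
    {"id": "A", "energy": -120.0, "fingerprint": 0.10},
    {"id": "B", "energy": -119.5, "fingerprint": 0.15},  # near-duplicate of A
    {"id": "C", "energy": -118.0, "fingerprint": 2.40},
    {"id": "D", "energy": -110.0, "fingerprint": 5.00},
]

unique = cluster_duplicates(
    structures,
    distance=lambda a, b: abs(a["fingerprint"] - b["fingerprint"]),
    threshold=1.2,
)
shortlist = funnel(unique, [(lambda s: s["energy"], 2)])
print([s["id"] for s in unique], [s["id"] for s in shortlist])
```

In a real screen each stage of `funnel` would call a different energy model (force field, then machine learning force field, then periodic DFT), with `keep_n` shrinking as the cost per structure rises.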

The diagram below illustrates this hierarchical workflow.

Data Presentation: Key Properties Affecting Lattice Energy

The following table summarizes the core physical properties you must quantify and the computational tools used to predict them. These form the quantitative foundation of your assumptions lattice.

Table 1: Key Properties and Computational Methods for the Assumptions Lattice

| Property | Impact on Development | Recommended Computational Method | Quantitative Benchmark for De-risking |

|---|---|---|---|

| Lattice Energy | Determines intrinsic solubility, physical stability, and processability [26]. | Bespoke QSPR model (for early stage) [26], Periodic DFT (for accurate ranking) [27]. | Model predicts lattice energy within acceptable accuracy (e.g., ± a few kJ/mol) for your chemical space [26]. |

| Polymorph Landscape | Identifies risk of late-appearing, more stable forms that can alter product properties [27]. | Crystal Structure Prediction (CSP) with hierarchical ranking (FF -> MLFF -> DFT) [27]. | All known experimental polymorphs are reproduced and ranked in the top 10 predicted structures [27]. |

| Crystal Structure | Provides atomic-level understanding of intermolecular interactions and packing energy [26]. | X-ray Crystallography (experimental), Crystal Structure Prediction (computational) [26] [27]. | Calculated lattice energy matches value derived from experimental crystal structure [26]. |

The Scientist's Toolkit: Essential Research Reagents & Materials

This table lists critical materials and tools required for building and validating the solid-form segment of your assumptions lattice.

Table 2: Essential Research Reagents and Computational Tools

| Item / Reagent | Function / Explanation | Technical Specification / Purpose |

|---|---|---|

| High-Purity API | The subject of the solid-form screen. Essential for all experimental work. | Purity >99% to ensure crystallization experiments are not biased by impurities. |

| Crystallization Solvent Kit | To explore diverse crystallization conditions for polymorph screening. | A diverse library of > 50 solvents (polar, non-polar, protic, aprotic) and solvent mixtures. |

| X-ray Diffractometer | To determine the crystal structure of single crystals obtained from screening. | Provides experimental electron density maps to derive atomic coordinates and calculate lattice energy [26]. |

| Validated CSP Software | To computationally predict the crystal structure and polymorph landscape. | Software must be validated on a large, diverse set of drug-like molecules [27]. |

| Machine Learning Force Field | For accurate energy ranking of predicted crystal structures. | A pre-trained model (e.g., QRNN) that includes long-range electrostatic and dispersion interactions [27]. |

| Periodic DFT Code | For the highest-accuracy final ranking of predicted polymorphs. | Code with functionals like r2SCAN-D3 for robust treatment of van der Waals forces in molecular crystals [27]. |

The Integrated Assumptions Lattice Workflow

The following diagram synthesizes the core concepts, methodologies, and decision points into a single, integrated Assumptions Lattice workflow. This provides a visual map for navigating the de-risking process.

FAQs: Uncertainty in Preclinical Pharmacokinetic Prediction

Uncertainty in predicting human PK parameters arises from multiple sources during the translation from preclinical models. Key areas include:

- Parameter Uncertainty: This represents limited knowledge of the true parameter values (e.g., clearance, volume of distribution) in the mathematical model used for prediction. It is not a system property and can be reduced as more information is gathered [29].

- Model Structure Uncertainty: The choice of the mathematical model itself (e.g., allometric scaling vs. physiologically-based pharmacokinetic (PBPK) modeling) can introduce uncertainty, as different models may make different assumptions about the underlying biological processes [29].

- Interspecies Differences: Fundamental physiological and metabolic differences between animal species and humans are a major source of uncertainty, particularly for parameters like bioavailability that are influenced by gut physiology and enzymatic activity [29].

- Variability vs. Uncertainty: It is crucial to distinguish variability (a property of the system, such as genetic or environmental variations in a population) from uncertainty (limited knowledge about the system). The main concern in the scaling process is typically the inherent uncertainty in the methodology itself [29].

What are the typical uncertainty ranges for key human PK parameters like Clearance and Volume of Distribution (Vss) based on current prediction methods?

The performance of prediction methods is often evaluated by the percentage of compounds for which the predicted parameter falls within a certain fold of the true human value. The table below summarizes reported uncertainties for two critical parameters:

Table 1: Typical Uncertainty Ranges for Human PK Parameter Predictions

| PK Parameter | Common Prediction Methods | Reported Prediction Performance | Suggested Uncertainty Range |

|---|---|---|---|

| Clearance (CL) | Allometric scaling, In vitro-in vivo extrapolation (IVIVE) | Best allometric methods: ~60% of compounds within 2-fold of human value [29]; IVIVE methods: 20–90% of compounds within 2-fold, varying with experimental setup [29]. | A factor of 3 (approximated by a lognormal distribution where there is a 95% chance the true value falls within 3-fold of the prediction) [29]. |

| Volume of Distribution at Steady State (Vss) | Allometry, Oie-Tozer method | Little consensus on the best method; predictive power is compound-dependent [29] [30]. | Within a factor of 3 of the true value [29]. |

What methodologies are available to quantify and integrate these uncertainties for dose prediction?

Several methodologies can be used to quantify and propagate uncertainty from individual parameters into a final dose prediction.

- Monte Carlo Simulation: This is a powerful probabilistic method that quantifies overall uncertainty by simultaneously integrating all sources of input uncertainty. It runs thousands of simulations, each time sampling parameter values from their defined probability distributions. The main output is a distribution of the predicted dose, which captures the combined effect of all uncertainties [29].

- Generalized Polynomial Chaos (gPC): This is a more advanced, non-sampling method for uncertainty quantification. It represents random variables (uncertain parameters) and the system's solution (e.g., drug concentration over time) as expansions of orthogonal polynomials in the random space. This method can be more computationally efficient than Monte Carlo simulation and can describe the random variation of the system state [31].

- Sensitivity Plots and Tables: These are simpler methods that communicate uncertainty in one or two parameters at a time at discrete points. While easy to generate, they risk information overload if all aspects are covered or a lack of information if only selected instances are shown [29].

Troubleshooting Guide: Addressing Common Challenges in Uncertainty Quantification

Problem: Poor Predictive Accuracy When Applying a Model to a New Patient Population or Dosing Regimen

Potential Cause: The model's predictive accuracy is often context-specific. A model developed for one population (e.g., healthy volunteers) or a specific dosing regimen may not generalize well to another (e.g., critically ill patients) due to unaccounted-for physiological or pathophysiological differences [32].

Solutions:

- Internal-External Validation: Develop the model on one dataset (e.g., from one hospital) and rigorously evaluate it on an external dataset from a different center using a different dosing regimen. This tests the model's generalizability [32].

- Incorporate Machine Learning (ML) with Uncertainty Quantification: Consider using interpretable ML models (e.g., CatBoost) that can find complex patterns from data. Enhance these models with distribution-based uncertainty quantification methods, such as a Quantile Ensemble, to provide individualized uncertainty ranges for each prediction, alerting the user to potentially less reliable outputs in new contexts [32].

- Use a Lattice of Assumptions (Uncertainty Pyramid): Frame your uncertainty analysis within a "lattice of assumptions," where each level of the pyramid represents a different set of assumptions about the model structure and parameters. This helps in assessing which assumptions contribute most to the overall uncertainty [33].

Problem: How to Determine the Appropriate Loading Dose for a Drug with High Tissue Distribution

Potential Cause: The loading dose is directly proportional to the volume of distribution (Vd). A drug with a high Vd has a greater propensity to redistribute from the plasma into tissues, meaning a higher initial dose is required to achieve the target plasma concentration [30].

Solution:

- Use Steady-State Volume of Distribution (Vss): The most clinically relevant volume for calculating a loading dose is the volume of distribution at steady-state (Vss), as it best represents the drug's distribution after the initial redistribution phase [30].

- Calculation: The loading dose can be calculated using the formula: Loading dose (mg) = [Desired Plasma Concentration (mg/L) × Vss (L)] / Bioavailability (F) [30]. Note: For intravenous administration, bioavailability (F) is 1.
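The loading-dose formula translates directly into a small helper. This is a minimal sketch: the function name `loading_dose_mg` and the example numbers (a 10 mg/L target, a 50 L Vss, and an oral bioavailability of 0.8) are hypothetical and chosen only to show the arithmetic.

```python
def loading_dose_mg(target_conc_mg_per_l, vss_l, bioavailability=1.0):
    """Loading dose (mg) = target concentration (mg/L) x Vss (L) / F."""
    if target_conc_mg_per_l <= 0 or vss_l <= 0:
        raise ValueError("concentration and Vss must be positive")
    if not 0.0 < bioavailability <= 1.0:
        raise ValueError("bioavailability F must lie in (0, 1]")
    return target_conc_mg_per_l * vss_l / bioavailability


iv_dose = loading_dose_mg(10.0, 50.0)          # intravenous, so F = 1
oral_dose = loading_dose_mg(10.0, 50.0, 0.8)   # hypothetical oral F = 0.8
print(f"IV: {iv_dose:.0f} mg, oral: {oral_dose:.0f} mg")
```

Because the dose scales linearly with Vss, any uncertainty in the predicted Vss carries over proportionally to the loading dose.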

Experimental Protocols for Key Uncertainty Quantification Methods

Protocol: Quantifying Dose Prediction Uncertainty Using Monte Carlo Simulation

This protocol outlines the steps for propagating parameter uncertainty to human dose prediction [29].

1. Define the Pharmacokinetic Model and Dose Equation:

- Select a structural PK model (e.g., a one-compartment model with first-order absorption).

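- Define the equation for the human dose, which is typically a function of key PK parameters such as clearance (CL), target exposure (AUC), and bioavailability (F). Volume of distribution (Vss) enters when the dose is driven by a target concentration, as in a loading dose, rather than by AUC. For example: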

Dose = (Target AUC × CL) / F.

2. Characterize Input Parameter Distributions:

- For each uncertain input in the dose equation (e.g., CL and F, plus Vss where the dose equation includes it), define a probability distribution that represents its uncertainty.

- These distributions (e.g., log-normal for CL and Vss) should be based on literature evaluations, as shown in Table 1. For instance, clearance might be defined as CL ~ LogNormal(Mean, SD), where the standard deviation is set so that the 95% interval spans a 3-fold range around the point prediction.

3. Execute the Monte Carlo Simulation:

- Perform a large number of iterations (e.g., 10,000). In each iteration:

- Randomly sample a value for each input parameter from its defined distribution.

- Calculate the resulting dose using the dose equation.

- Collect all the calculated dose values from all iterations.

4. Analyze and Communicate the Output:

- The collection of simulated doses forms a predictive distribution.

- Plot this distribution as a histogram or a cumulative distribution function.

- Communicate the results using percentiles (e.g., the 5th, 50th, and 95th percentiles) to inform decision-makers about the range of plausible doses, highlighting the median prediction and the uncertainty around it [29].
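
The four steps above can be sketched with the Python standard library alone. This is a minimal illustration, not the implementation from [29]: the function names, the input values, and the 2-fold uncertainty on F are assumptions, and "3-fold" is interpreted here as a 95% interval running from one third of the median to three times the median.

```python
import math
import random
import statistics

Z_975 = 1.959964  # 97.5th percentile of the standard normal distribution


def sigma_from_fold(fold: float) -> float:
    """Log-scale SD so that 95% of draws lie within [median / fold, median * fold]."""
    return math.log(fold) / Z_975


def simulate_doses(n_iter, target_auc, cl_median, cl_fold, f_median, f_fold, seed=0):
    """Propagate CL and F uncertainty through Dose = (Target AUC x CL) / F."""
    rng = random.Random(seed)  # fixed seed so the run is reproducible
    sigma_cl = sigma_from_fold(cl_fold)
    sigma_f = sigma_from_fold(f_fold)
    doses = []
    for _ in range(n_iter):
        cl = rng.lognormvariate(math.log(cl_median), sigma_cl)
        # Bioavailability is a fraction, so sampled values are capped at 1.
        f = min(rng.lognormvariate(math.log(f_median), sigma_f), 1.0)
        doses.append(target_auc * cl / f)
    return doses


# Hypothetical inputs: target AUC 20 mg*h/L, CL 10 L/h (3-fold), F 0.5 (2-fold).
doses = simulate_doses(10_000, target_auc=20.0, cl_median=10.0, cl_fold=3.0,
                       f_median=0.5, f_fold=2.0)

# statistics.quantiles with n=100 returns the 99 percentile cut points.
percentiles = statistics.quantiles(doses, n=100)
p5, p50, p95 = percentiles[4], percentiles[49], percentiles[94]
print(f"Dose (mg): 5th={p5:.0f}, median={p50:.0f}, 95th={p95:.0f}")
```

The inputs are sampled independently here; if CL and F (or other inputs) are known to be correlated, the sampling step would need to reflect that.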

Protocol: Implementing a Quantile Ensemble for Machine Learning-Based Predictions

This protocol describes a method for adding distribution-based uncertainty quantification to any machine learning model that can be trained with a quantile loss function [32].

1. Model Training:

- Select a regression model, such as CatBoost or XGBoost.

- Instead of training a single model to predict the mean, train multiple instances of the model to predict different quantiles (e.g., the 10th, 50th, and 90th quantiles) of the target variable (e.g., drug concentration). This is done by using a quantile loss function during training.

2. Forming the Predictive Distribution:

- For a new input, each of the trained quantile models makes a prediction.

- These predicted quantiles (q10, q50, q90) are then used to construct an approximate cumulative distribution function (CDF) for that individual prediction.

3. Evaluation of Uncertainty Calibration:

- Use proposed metrics like the Distribution Coverage Error (DCE) and Absolute Distribution Coverage Error (ADCE) to evaluate the calibration of the uncertainty estimates [32].

- The DCE indicates whether the predictive distribution is too narrow (undercovered) or too wide (overcovered), while the ADCE provides an overall measure of miscalibration. The goal is a well-calibrated (DCE near 0) and sharp (narrow) predictive distribution.
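
To make steps 2 and 3 concrete, the sketch below shows the quantile (pinball) loss used in training, a piecewise-linear CDF built from three predicted quantiles, and a simple empirical coverage check. It does not train any model, and the coverage check is a generic stand-in, not the DCE or ADCE definitions from [32]. All function names and numbers are hypothetical.

```python
from bisect import bisect_right


def pinball_loss(y_true: float, y_pred: float, q: float) -> float:
    """Quantile (pinball) loss for one observation at quantile level q."""
    diff = y_true - y_pred
    return max(q * diff, (q - 1.0) * diff)


def approx_cdf(value, levels, quantile_preds):
    """Piecewise-linear CDF through the predicted quantiles.

    Outside the outermost quantiles the result is clamped to the outer
    levels, so the tails of the distribution are not modeled.
    """
    preds = sorted(quantile_preds)  # guards against quantile crossing
    if value <= preds[0]:
        return levels[0]
    if value >= preds[-1]:
        return levels[-1]
    i = bisect_right(preds, value) - 1
    lo, hi = preds[i], preds[i + 1]
    frac = (value - lo) / (hi - lo)
    return levels[i] + frac * (levels[i + 1] - levels[i])


def interval_coverage(observed, lower, upper):
    """Fraction of observations that fall inside their predicted interval."""
    inside = sum(lo <= y <= hi for y, lo, hi in zip(observed, lower, upper))
    return inside / len(observed)


levels = [0.1, 0.5, 0.9]
preds = [2.0, 4.0, 8.0]  # hypothetical q10, q50, q90 for one patient (mg/L)
cdf_at_3 = approx_cdf(3.0, levels, preds)  # halfway between q10 and q50
cdf_at_6 = approx_cdf(6.0, levels, preds)  # halfway between q50 and q90

# Hypothetical observations, all sharing the same q10-q90 interval.
observed = [3.0, 9.0, 5.0, 1.0]
coverage = interval_coverage(observed, [2.0] * 4, [8.0] * 4)
nominal = levels[-1] - levels[0]
coverage_gap = coverage - nominal  # negative: intervals too narrow
print(round(cdf_at_3, 2), round(cdf_at_6, 2), coverage, round(coverage_gap, 2))
```

A negative gap suggests the predicted intervals are too narrow for the data, and a positive gap suggests they are too wide.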

Method Visualization and Workflows

Uncertainty Quantification Method Selection Workflow

This diagram illustrates a decision pathway for selecting an appropriate uncertainty quantification method based on the research context and available data.

The Lattice of Assumptions in an Uncertainty Pyramid Framework

This diagram conceptualizes how different levels of assumptions contribute to the overall uncertainty in a translational PK/PD prediction, forming an "uncertainty pyramid" [33].

Research Reagent Solutions for PK/PD Modeling

Table 2: Key Materials and Tools for PK/PD Uncertainty Quantification

| Item / Reagent | Function in Experiment / Analysis |

|---|---|

| Preclinical in vivo PK Data | Provides the foundational data (e.g., concentration-time profiles) for estimating PK parameters and their variability in animal models [29]. |

| Human Hepatocytes / Liver Microsomes | Critical for in vitro-in vivo extrapolation (IVIVE) methods to predict human hepatic metabolic clearance and its uncertainty [29]. |

| Monte Carlo Simulation Software (e.g., R, NONMEM, Matlab) | The computational engine for performing probabilistic simulations to propagate input uncertainty to model outputs [29]. |

| Machine Learning Libraries (e.g., CatBoost, Scikit-learn) | Provide algorithms for building predictive models from complex data and for implementing advanced uncertainty quantification techniques like quantile regression [32]. |

| Generalized Polynomial Chaos (gPC) Solver | Specialized software or code for implementing the gPC methodology, an efficient alternative to Monte Carlo for solving systems with random parameters [31]. |

FAQs: Uncertainty in Human Dose Prediction

FAQ 1: What are the primary sources of uncertainty in predicting human dose from preclinical data? Uncertainty enters human dose prediction from several key areas. Pharmacokinetic (PK) uncertainty arises from predicting parameters like human clearance and volume of distribution; for instance, even high-performance methods may have an uncertainty factor of three (a 95% chance the true value falls within a threefold range of the prediction) [29]. Pharmacodynamic (PD) uncertainty stems from species differences in biology and the translatability of preclinical efficacy models, which can vary significantly between drug projects [29]. Model structure uncertainty concerns the choice of the mathematical model itself (e.g., allometry vs. physiologically-based pharmacokinetic modeling) [29]. Furthermore, uncertainty in absorption and bioavailability is common, especially for compounds with low solubility or permeability [29].
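
One way to turn an "uncertainty factor of three" into a distribution parameter is shown below. It assumes the parameter is log-normally distributed around the point prediction and that the 95% interval is symmetric on the log scale. Here θ is the true parameter value, θ̂ the prediction, and k the fold uncertainty; these symbols are introduced for illustration and do not appear in [29].

```latex
\[
\ln\theta \sim \mathcal{N}\!\left(\ln\hat{\theta},\ \sigma^{2}\right),
\qquad
P\!\left(\hat{\theta}/k \le \theta \le k\,\hat{\theta}\right) = 0.95
\;\Longrightarrow\;
\sigma = \frac{\ln k}{1.96}
\]
\[
k = 3:\qquad \sigma = \frac{\ln 3}{1.96} \approx 0.56
\]
```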

FAQ 2: How does the assumptions lattice and uncertainty pyramid framework apply to dose prediction? The assumptions lattice is a framework that maps the hierarchy of choices made during model development, from fundamental assumptions to specific modeling decisions [4] [21]. In dose prediction, these choices could range from selecting a scaling method (e.g., allometry vs. in vitro-in vivo extrapolation) to selecting specific correction factors. The uncertainty pyramid concept illustrates how these cascading assumptions contribute to the total uncertainty in the final quantity of interest: the Likelihood Ratio (LR) in the framework's original formulation or, in this context, the predicted dose [4] [21]. Using this framework forces a systematic evaluation of how sensitive the final dose prediction is to changes at various levels of the assumptions lattice.
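
A heavily simplified way to picture this is to recompute the dose under every combination of alternative assumptions and compare the spread. The sketch below does that for two hypothetical lattice levels. The level names, the predicted values attached to each assumption, and the target AUC are illustrative assumptions, not results from the cited sources, and a real lattice would usually have more levels and a partial ordering between assumption sets.

```python
from itertools import product

TARGET_AUC = 20.0  # mg*h/L (hypothetical)

# Each level lists alternative assumptions and the value each one implies.
lattice_levels = {
    "CL scaling method": {"allometry": 12.0, "IVIVE": 8.0},        # predicted CL, L/h
    "bioavailability": {"complete absorption": 1.0, "PBPK": 0.5},  # predicted F
}


def dose_mg(cl: float, f: float, target_auc: float = TARGET_AUC) -> float:
    """Dose = (Target AUC x CL) / F."""
    return target_auc * cl / f


cl_options = lattice_levels["CL scaling method"]
f_options = lattice_levels["bioavailability"]

# Dose under every combination of assumptions.
doses = {
    (cl_name, f_name): dose_mg(cl, f)
    for (cl_name, cl), (f_name, f) in product(cl_options.items(), f_options.items())
}
overall_fold = max(doses.values()) / min(doses.values())

# Because the dose equation is multiplicative, the fold change attributable
# to one level (with the other held fixed) is the ratio of its option values.
cl_fold = max(cl_options.values()) / min(cl_options.values())
f_fold = max(f_options.values()) / min(f_options.values())

for combo, dose in doses.items():
    print(combo, dose)
print(f"overall {overall_fold}-fold; CL level {cl_fold}-fold; F level {f_fold}-fold")
```

In this toy case the bioavailability assumption moves the dose more than the choice of clearance scaling method, which is the kind of ranking the framework is meant to surface.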

FAQ 3: Why is a Monte Carlo simulation preferred over a single point estimate for dose prediction? A traditional forecast produces a single, fixed value, which fails to communicate the range of possible outcomes inherent in drug development [34]. A Monte Carlo simulation, in contrast, uses input ranges and probability distributions for key parameters to run thousands of computational experiments [29] [34]. The output is a probability distribution of the predicted human dose, which provides a much more realistic and informative view of risk. It enables decision-makers to understand not just a single estimate, but the likelihood of achieving a target dose, helping to set rational action standards for project progression [34].

FAQ 4: My Monte Carlo simulation shows a very wide dose distribution. What does this indicate and how can I proceed? A wide dose distribution is a direct reflection of high uncertainty in your input parameters [34]. This should not be seen as a failure of the model, but as a valuable diagnostic tool. To proceed, you should:

- Identify Key Drivers: Perform a sensitivity analysis to determine which input parameters (e.g., predicted clearance, bioavailability, or efficacy target) contribute most to the variance in the final dose.

- Refine Critical Assumptions: Use the assumptions lattice to pinpoint which high-level assumptions have the greatest impact. Focus research efforts on refining the data and models for these key drivers, perhaps by running additional experiments.

- Make Risk-Informed Decisions: With a clear understanding of the uncertainty, you can make more strategic decisions. For example, you might proceed with development only if there is a high probability (e.g., >90%) that the dose is below a pre-defined feasibility threshold [34].
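
The first and third steps can be sketched as follows. For a multiplicative dose equation with independent log-normal inputs, the variance of the log dose is approximately the sum of the input log-variances, so each input's share of that sum is a simple sensitivity measure. The input medians, fold uncertainties, and the 1000 mg feasibility limit are hypothetical, and this variance-share calculation is one possible approach rather than the method prescribed in [34].

```python
import math
import random
import statistics

Z_975 = 1.959964               # 97.5th percentile of the standard normal
N_ITER = 10_000
FEASIBILITY_LIMIT_MG = 1000.0  # hypothetical maximum feasible dose

# name: (median, fold uncertainty); all values are illustrative assumptions.
inputs = {"target_auc": (20.0, 2.0), "cl": (10.0, 3.0), "f": (0.5, 1.5)}

rng = random.Random(42)
samples = {
    name: [rng.lognormvariate(math.log(median), math.log(fold) / Z_975)
           for _ in range(N_ITER)]
    for name, (median, fold) in inputs.items()
}
samples["f"] = [min(f, 1.0) for f in samples["f"]]  # F is a fraction

doses = [auc * cl / f for auc, cl, f in
         zip(samples["target_auc"], samples["cl"], samples["f"])]

# Sensitivity: each input's share of the summed log-scale variance.
log_var = {name: statistics.variance([math.log(v) for v in values])
           for name, values in samples.items()}
total = sum(log_var.values())
shares = {name: v / total for name, v in log_var.items()}

# Risk-informed decision metric: probability the dose is below the limit.
prob_feasible = sum(d < FEASIBILITY_LIMIT_MG for d in doses) / N_ITER

for name, share in sorted(shares.items(), key=lambda kv: -kv[1]):
    print(f"{name}: {share:.0%} of log-dose variance")
print(f"P(dose < {FEASIBILITY_LIMIT_MG:.0f} mg) = {prob_feasible:.2f}")
```

With these inputs, clearance dominates the variance, so refining the clearance prediction would narrow the dose distribution more than refining either of the other two inputs.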

Troubleshooting Guides

Problem 1: Poor Convergence of Monte Carlo Simulation Results

| Step | Action | Principle |

|---|---|---|

| 1 | Verify the number of simulation runs. | A small number of runs (e.g., 1,000) may not fully represent the parameter space. Increase to 10,000 or more for stability [34]. |

| 2 | Check the specified input distributions. | Incorrectly specified distributions (e.g., using a Normal distribution for a parameter that is log-normal) can skew results. Review the underlying data for each parameter [29]. |

| 3 | Analyze parameter correlations. | Ignoring strong correlations between input parameters (e.g., between clearance and volume of distribution) can produce invalid output. Incorporate correlation matrices if needed. |
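
Steps 1 and 3 can be checked empirically. The sketch below repeats a simulation with several seeds to see how much a tail percentile moves between runs, and samples clearance and volume of distribution as correlated log-normal variables. Half-life (ln 2 × Vss / CL) is used as the output only because it depends on both parameters. The medians, the 3-fold uncertainty, and the correlation of 0.8 are hypothetical.

```python
import math
import random
import statistics

Z_975 = 1.959964  # 97.5th percentile of the standard normal


def sample_half_lives(n, rho, seed, cl_median=10.0, vss_median=100.0, fold=3.0):
    """Half-life (h) from log-normal CL (L/h) and Vss (L) with log-scale correlation rho."""
    rng = random.Random(seed)
    sigma = math.log(fold) / Z_975
    half_lives = []
    for _ in range(n):
        z_cl = rng.gauss(0.0, 1.0)
        # Build a second standard normal with correlation rho to the first.
        z_vss = rho * z_cl + math.sqrt(1.0 - rho ** 2) * rng.gauss(0.0, 1.0)
        cl = cl_median * math.exp(sigma * z_cl)
        vss = vss_median * math.exp(sigma * z_vss)
        half_lives.append(math.log(2.0) * vss / cl)
    return half_lives


def p95(values):
    """95th percentile via the 99 percentile cut points."""
    return statistics.quantiles(values, n=100)[94]


def relative_spread(n, rho, seeds=range(5)):
    """Range of the 95th percentile across seeds, relative to its mean."""
    estimates = [p95(sample_half_lives(n, rho, seed)) for seed in seeds]
    return (max(estimates) - min(estimates)) / statistics.mean(estimates)


# Convergence: the tail percentile is less stable with few iterations.
spread_small = relative_spread(100, rho=0.0)
spread_large = relative_spread(10_000, rho=0.0)

# Correlation: ignoring a positive CL-Vss correlation overstates the spread.
sd_independent = statistics.stdev(
    [math.log(t) for t in sample_half_lives(10_000, rho=0.0, seed=1)])
sd_correlated = statistics.stdev(
    [math.log(t) for t in sample_half_lives(10_000, rho=0.8, seed=1)])

print(f"relative spread of P95: n=100 {spread_small:.2f}, n=10000 {spread_large:.2f}")
print(f"SD of log half-life: rho=0 {sd_independent:.2f}, rho=0.8 {sd_correlated:.2f}")
```

If the spread across seeds is still large relative to the decision threshold, the number of iterations should be increased before interpreting the output.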

Problem 2: Translational Failure Due to Incorrect PK/PD Assumptions

| Step | Action | Principle |

|---|---|---|

| 1 | Revisit the preclinical PK/PD model. | Ensure the exposure-response relationship is well-established in a pharmacologically relevant animal model. Weakness here is a major source of clinical failure [35]. |

| 2 | Interrogate key assumptions in the lattice. | Systematically test alternative assumptions for scaling PK and PD. For example, compare allometric scaling to IVIVE methods for clearance [29]. |

| 3 | Incorporate validated biomarkers. | Using translatable PD biomarkers measured in accessible tissues (e.g., blood) greatly reduces uncertainty in predicting the pharmacologically active dose in humans [35]. |

Problem 3: Handling Sparse or Low-Quality Preclinical Input Data

| Step | Action | Principle |

|---|---|---|

| 1 | Choose appropriate input distributions. | For parameters with high uncertainty and poor data quality, use a Uniform distribution with conservative (wide) min-max values to avoid false precision [34]. |

| 2 | Leverage prior knowledge and literature. | Use published uncertainty estimates for common prediction methods (see Table 1) to define input ranges when project-specific data is limited [29]. |

| 3 | Clearly communicate data limitations. | The uncertainty pyramid framework mandates transparency. The output distribution should be interpreted with caution, and the underlying data limitations must be part of the decision-making process [4]. |

Quantitative Data for Parameter Uncertainty

Table 1: Typical Uncertainty Ranges for Human PK Parameter Predictions [29]

| Parameter | Prediction Method | Typical Uncertainty (Fold) | Notes / Rationale |

|---|---|---|---|

| Clearance (CL) | Allometry (Monkey) | ~3-fold | Best-performing allometric method predicts ~60% of compounds within 2-fold [29]. |

| Clearance (CL) | In vitro-in vivo extrapolation (IVIVE) | 2-3 fold | Success rates vary widely (20-90% within 2-fold) based on experimental setup and corrections [29]. |

| Volume of Distribution (Vss) | Allometry / Oie-Tozer | ~3-fold | Little consensus on best method; physicochemical properties must conform to model assumptions [29]. |

| Bioavailability (F) | BCS-based / PBPK | Highly variable | High uncertainty for low solubility/permeability compounds (BCS II-IV); often under-predicted by PBPK models [29]. |

Table 2: Key Inputs and Distributions for a Monte Carlo Dose Prediction Model

| Model Input | Description | Suggested Distribution Type | Justification |

|---|---|---|---|

| Predicted Human CL | Point estimate from scaling method (e.g., 1 mL/min/kg). | Lognormal | Accounts for multiplicative error and ensures values remain positive [29]. |

| Uncertainty Factor for CL | The fold-error around the point estimate (e.g., 3-fold). | Constant or Distribution | Can be fixed based on literature (Table 1) or itself given a distribution if its uncertainty is known. |

| Target Trough Concentration (Cmin) | The PD-driven target exposure. | Lognormal or Uniform | Use lognormal if variability is known; use uniform if the therapeutic window is poorly defined. |

| Dosing Interval (τ) | Fixed value (e.g., 24 hours). | Constant | Typically a fixed design parameter. |

| Competitor Launch Date | Discrete event impacting market share. | Discrete (Binary/Probability) | Modeled as a scenario with an assigned probability of occurrence [34]. |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Methods for Uncertainty Analysis

| Item / Reagent | Function in Dose Prediction & Uncertainty Analysis |

|---|---|

| Preclinical In Vivo PK Data | Provides the foundational data for allometric scaling or model fitting to estimate primary PK parameters [29]. |

| Human Liver Microsomes / Hepatocytes | Critical in vitro systems used in IVIVE methods to predict human metabolic clearance and potential drug-drug interactions [29]. |

| Validated Pharmacodynamic Biomarker Assay | Quantifies the drug's effect; a translatable biomarker is crucial for reducing PD uncertainty and establishing the target exposure in humans [35]. |

| Monte Carlo Simulation Software | The computational engine that propagates input uncertainties through the dose prediction model to generate a probability distribution of outcomes [34]. |