Navigating the Legal-Regulatory Communication Gap: 2025 Strategies for Drug Development Professionals



Abstract

This article explores the critical challenges in communicating complex drug development data to courts, regulatory bodies, and juries. Tailored for researchers, scientists, and drug development professionals, it provides a comprehensive framework covering the evolving communication landscape, practical methodologies for message testing and preparation, strategies to overcome common pitfalls, and validation techniques to ensure scientific integrity and persuasive impact in high-stakes legal and regulatory proceedings.

The Evolving Legal-Regulatory Landscape: Why Communication is Now a Core Scientific Challenge

In the high-stakes realms of pharmaceutical development and litigation, effective communication is not merely an administrative function but a critical determinant of success. Miscommunication within drug development teams can trigger regulatory delays, costly resubmission requirements, and even application rejection by health authorities [1]. Parallel communication failures in legal contexts can lead to spoliation sanctions, adverse inferences, and devastating litigation outcomes [2]. This article examines these interconnected risks through a technical lens, providing researchers and drug development professionals with practical frameworks to navigate these complex challenges.

Miscommunication in the Drug Approval Pathway

The Regulatory Submission Lifecycle: Critical Handoff Points

The regulatory submission process constitutes a complex sequence of interdependent stages where communication bottlenecks frequently develop. As detailed by Santosh Shevade, this lifecycle spans from initial data collection through final agency submission, requiring seamless collaboration across clinical, regulatory, medical writing, and biostatistical domains [1].

Table: Communication Pain Points in Regulatory Submissions

| Stage | Communication Challenge | Potential Impact |
|---|---|---|
| Data Collection | Fragmented data from global trial sites, real-world evidence sources, and laboratory studies | Inconsistent data formats, missing datasets, reconciliation delays |
| Content Generation | Multiple authors and reviewers working on complex documents without centralized version control | Version conflicts, content inconsistencies, contradictory statements |
| Cross-functional Review | Misalignment between clinical, regulatory, and statistical teams on data interpretation | Regulatory queries, challenges establishing cohesive efficacy narrative |
| Final Submission | Last-minute changes not communicated to all stakeholders | Submission package inconsistencies, formatting violations |

The regulatory landscape itself introduces additional complexity, with varying requirements across jurisdictions like the FDA, EMA, and other regulatory bodies [1]. Without clear communication channels to track these evolving standards, companies risk submitting non-compliant applications.

Current Regulatory Challenges and FDA Transformation

The year 2025 has introduced unprecedented uncertainty into the U.S. regulatory landscape following significant workforce reductions at the FDA. Despite exemptions for drug reviewers, cuts to support staff and policy offices have created operational disarray that directly impacts sponsor-agency communication [3].

According to industry reports, meeting wait times with FDA regulators have stretched from 3 months to as long as 6 months, creating critical bottlenecks for cash-constrained biotech firms [3]. Perhaps more significantly, the reduction in policy office expertise has created ambiguity around developing regulatory guidelines, particularly concerning proposed shifts away from animal testing toward novel alternatives [3].

This institutional knowledge loss poses particular challenges for ongoing development programs, as continuity in regulatory dialogue is essential for complex drug applications. As one retired biotech executive noted, "The principal reviewer knows all the backgrounds, knows the decisions which were made, and knows the product the best" [3]. The departure of such experienced staff disrupts this critical communication thread.

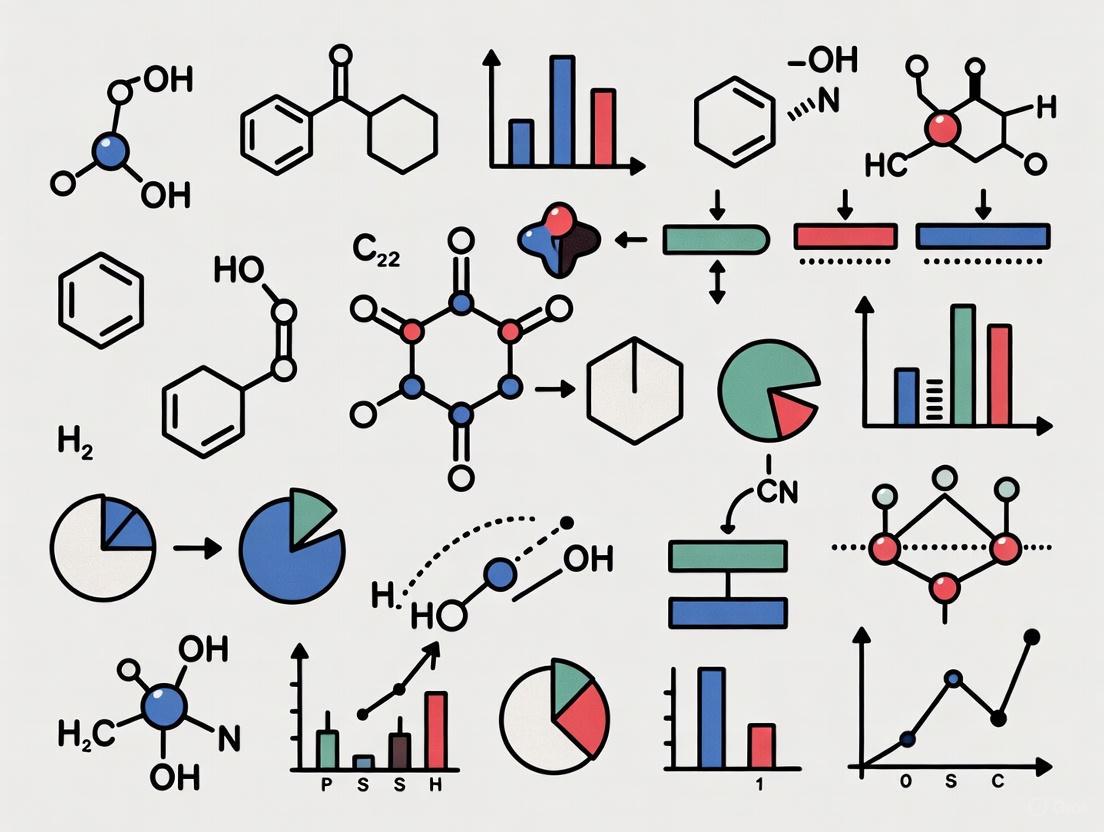

Diagram: Communication Breakdowns in the Regulatory Pathway. This workflow illustrates how miscommunication at each stage creates compounding delays, particularly under current FDA transformation challenges [1] [3].

Emerging Approval Pathways: Communication Implications

The FDA is currently exploring a potential conditional approval pathway that would represent a fundamental shift in the evidentiary standards for drug approval [4]. While still theoretical, such a pathway would likely require even more rigorous post-market surveillance communication and transparent safety reporting.

Unlike the current accelerated approval pathway - which employs the same statutory standard as traditional approval but uses different endpoints - conditional approval would potentially establish a lower evidentiary threshold for initial market entry [4]. This paradigm shift would necessitate exceptionally clear communication about:

- Evidence limitations to physicians and patients

- Post-market study requirements and timelines

- Risk-management protocols and adverse event reporting

- Coverage and reimbursement communications with payers

As noted in Morgan Lewis's analysis, "Payors have expressed concern over potential approval revocation of conditionally approved drugs," with some indicating they may postpone coverage reviews for six to twelve months post-approval [4]. These coverage uncertainties would require strategic communication planning to ensure patient access.

Miscommunication Risks in Litigation Contexts

Spoliation: The Evidence Preservation Imperative

In litigation, perhaps no area demonstrates the consequences of communication failure more starkly than spoliation - the destruction or suppression of evidence. As explained by the Center for Legal & Court Technology, "Spoliation may result from negligent oversight, miscommunication between an attorney and their client, or simply a failure to foresee the course of potential litigation" [2].

The challenges of proving spoliation are significant, as it often "requires you to prove that a document that has been destroyed did, in fact, exist—often through circumstantial inference" [2]. Modern electronic data complexities have led courts to apply stricter rules in assessing a party's "reasonable steps" in avoiding spoliation, with less leniency for simple mistakes that could have been avoided through proper communication [2].

Legal-Technical Collaboration Gaps

A critical vulnerability exists at the intersection of legal and technical teams. CLCT staff note that "the law of spoliation is not well known by most non-litigating lawyers, and although they lack data, they believe that most cyber technologists are unaware of it" [2]. This knowledge gap creates profound communication barriers that can jeopardize case outcomes.

The solution, according to conference participants, involves implementing a "C.I.A. focus" in data management - preserving Confidentiality, Integrity, and Availability of data [2]. However, speakers emphasized that "such principles will only be adequately executed if the company's legal and technological teams work together" [2], highlighting the interdependence of technical safeguards and clear communication protocols.

Integrated Framework: Mitigating Communication Risks

Experimental Protocols for Communication Optimization

Table: Research Reagent Solutions for Communication Integrity

| Solution Category | Specific Tool/Methodology | Function in Maintaining Communication Integrity |
|---|---|---|
| Document Management | Version-controlled submission platforms | Tracks document iterations, maintains audit trails, prevents conflicting versions |
| Data Governance | Standardized data collection templates | Ensures consistency across trial sites, facilitates data aggregation |
| Stakeholder Alignment | Cross-functional review protocols | Formalizes feedback incorporation, documents decision rationales |
| Regulatory Intelligence | Requirements tracking databases | Centralizes evolving agency expectations, maintains compliance |
| Evidence Preservation | Legal hold notification systems | Automates preservation duties, documents compliance efforts |

Protocol 1: Cross-Functional Document Development

- Establish a centralized submission portal with role-based access controls

- Implement structured review cycles with clearly defined comment resolution procedures

- Maintain a living "assumptions and decisions" log tracking key scientific and regulatory choices

- Conduct pre-submission alignment meetings to resolve interpretational differences

- Utilize standardized templates that auto-populate with approved language and data
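The living "assumptions and decisions" log in this protocol can be pictured as an append-only record in which earlier entries are superseded rather than edited. The following is a minimal sketch under that assumption; the class names, fields, and example entries are hypothetical and are not taken from any cited source or submission platform.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Optional


@dataclass
class DecisionEntry:
    """One row in a hypothetical 'assumptions and decisions' log."""
    entry_id: int
    decided_on: date
    topic: str
    decision: str
    rationale: str
    owners: list
    superseded_by: Optional[int] = None  # id of a later entry that replaces this one


@dataclass
class DecisionLog:
    entries: list = field(default_factory=list)

    def record(self, decided_on, topic, decision, rationale, owners, supersedes=None):
        """Append a new entry; earlier entries are never edited, only superseded."""
        entry = DecisionEntry(len(self.entries) + 1, decided_on, topic,
                              decision, rationale, list(owners))
        if supersedes is not None:
            self.entries[supersedes - 1].superseded_by = entry.entry_id
        self.entries.append(entry)
        return entry

    def current(self, topic):
        """Return the entries for a topic that have not been superseded."""
        return [e for e in self.entries
                if e.topic == topic and e.superseded_by is None]


# Illustrative entries only; the decisions below are invented for the example.
log = DecisionLog()
first = log.record(date(2025, 3, 1), "primary endpoint",
                   "Use change from baseline at week 12",
                   "Matches the statistical analysis plan", ["biostatistics"])
log.record(date(2025, 4, 15), "primary endpoint",
           "Use change from baseline at week 16",
           "Hypothetical feedback requested longer follow-up",
           ["biostatistics", "regulatory affairs"], supersedes=first.entry_id)

for entry in log.current("primary endpoint"):
    print(entry.entry_id, entry.decision)
```

Keeping superseded entries in place, instead of overwriting them, preserves the history of why a position changed, which is the audit trail the protocol is meant to provide.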

Protocol 2: Legal-Technical Collaboration for Evidence Preservation

- Initiate early case assessment meetings between legal counsel and IT/data specialists

- Map data sources and custodians potentially relevant to anticipated litigation

- Implement automated legal holds with acknowledgment tracking

- Establish chain of custody documentation for key experimental data

- Conduct periodic compliance audits to ensure preservation protocols are functioning
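Acknowledgment tracking for legal holds can be sketched as a small record per custodian plus a query for overdue acknowledgments. This is an illustrative sketch only: the class names, the seven-day grace period, and the example custodians are assumptions, not features of any particular legal hold product or requirements stated in [2].

```python
from dataclasses import dataclass, field
from datetime import date, timedelta
from typing import Optional


@dataclass
class HoldNotice:
    custodian: str
    data_sources: list
    sent_on: date
    acknowledged_on: Optional[date] = None


@dataclass
class LegalHold:
    matter: str
    notices: dict = field(default_factory=dict)

    def notify(self, custodian, data_sources, sent_on):
        """Record that a preservation notice was sent to a custodian."""
        self.notices[custodian] = HoldNotice(custodian, list(data_sources), sent_on)

    def acknowledge(self, custodian, on):
        """Record the date the custodian confirmed the notice."""
        self.notices[custodian].acknowledged_on = on

    def overdue(self, today, grace_days=7):
        """Custodians with no acknowledgment after the (assumed) grace period."""
        cutoff = timedelta(days=grace_days)
        return sorted(n.custodian for n in self.notices.values()
                      if n.acknowledged_on is None and today - n.sent_on > cutoff)


# Hypothetical matter and custodians, for illustration.
hold = LegalHold("Matter A")
hold.notify("lab_lead", ["ELN", "instrument exports"], date(2025, 1, 6))
hold.notify("it_admin", ["email archive"], date(2025, 1, 6))
hold.acknowledge("it_admin", date(2025, 1, 8))

print(hold.overdue(today=date(2025, 1, 20)))
```

The list of overdue custodians is the kind of output a periodic compliance audit could review and escalate.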

Visualization: Integrated Communication Workflow

Diagram: Integrated Communication Risk Mitigation. This workflow demonstrates how centralized information management supports both regulatory success and litigation preparedness [1] [2].

Technical FAQs: Addressing Researcher Questions

Q: What specific communication strategies are most effective for managing regulatory submissions in the current uncertain FDA environment? A: In the current climate of FDA transformation, several strategies prove critical: First, document all interactions with the agency meticulously, including informal communications. Second, implement redundant verification for all regulatory requirements, as policy guidance may be inconsistent. Third, build contingency timelines into development plans that account for extended review cycles and meeting delays. Fourth, diversify regulatory expertise beyond single points of contact within the organization to mitigate knowledge loss from FDA turnover [3].

Q: How can research organizations practically improve collaboration between scientific and legal teams to prevent spoliation? A: Effective legal-technical collaboration requires both structural and cultural interventions: Establish quarterly cross-training sessions where legal counsel educates researchers on preservation duties while technical staff explains data systems and limitations. Implement unified preservation protocols that automatically trigger when research enters certain phases (e.g., before publication of controversial findings). Create a joint task force with representatives from both functions to regularly update data retention policies. Most importantly, foster pre-litigation relationships so teams aren't meeting for the first time during crisis [2].

Q: What are the most common points of communication failure in regulatory submission teams, and how can they be addressed? A: Analysis of submission challenges reveals several consistent failure points: (1) Incomplete handoffs between clinical operations and regulatory affairs, addressed through standardized transition checklists; (2) Unresolved interpretation differences between biostatistics and medical writing teams, mitigated by structured resolution meetings with documented rationales; (3) Version control breakdowns in complex submission documents, remedied by implementing single-source publishing platforms with permission controls; and (4) Inconsistent messaging about post-submission changes, corrected through formal change control procedures with designated decision authorities [1].

Q: How might emerging conditional approval pathways change communication requirements between sponsors and regulators? A: While still theoretical, conditional approval would fundamentally reshape sponsor-regulator communication in several ways: It would require more nuanced benefit-risk discussions throughout development rather than just at submission; necessitate clearer post-approval study protocols with predefined success criteria; demand transparent safety monitoring plans with explicit thresholds for regulatory action; and likely involve more ongoing dialogue about emerging evidence compared to traditional binary approval decisions. Sponsors should prepare by documenting how their development programs could generate the mechanistic plausibility evidence that might support such pathways [4].

In both drug approval and litigation contexts, communication excellence serves as both risk mitigation strategy and competitive advantage. The technical protocols and frameworks outlined provide researchers and development professionals with practical tools to navigate these complex interdisciplinary interfaces. As regulatory standards evolve and litigation risks multiply, organizations that institutionalize these communication competencies will achieve not only faster approvals and stronger legal defenses but, ultimately, greater success in delivering innovative therapies to patients.

Frequently Asked Questions (FAQs)

Q1: What is "priming" in the context of a juror's perception? A1: Priming is a psychological process where a juror's decision-making is influenced by information they were exposed to before the trial, often through media. This can cause them to unconsciously weigh certain facts or evidence more heavily than others during deliberations. For instance, repeated media narratives can prime jurors to view certain parties in a case as more credible or culpable before any evidence is formally presented [5] [6].

Q2: How does "confirmation bias" pose a challenge to an impartial jury? A2: Confirmation bias is the natural human tendency to seek out and favor information that confirms one's existing beliefs. Social media algorithms, which curate content to match a user's views, can supercharge this bias. A juror exposed to such filtered information may have difficulty considering trial evidence objectively, as they may unconsciously dismiss facts that contradict their pre-formed opinions [5] [6].

Q3: What specific behaviors are jurors instructed to avoid? A3: Courts provide explicit instructions to jurors, prohibiting them from:

- Conducting their own research or Google searches on the case.

- Using social media (Facebook, X, TikTok, etc.) to post, read, or learn about the trial.

- Discussing the case with anyone, including other jurors, until deliberations begin.

These rules are crucial to ensure the verdict is based solely on evidence admitted in court [5] [7].

Q4: Are judges also affected by social media? A4: Yes, judges must navigate social media with extreme caution. Ethics rules apply to their online activity, and they can face disciplinary action for missteps such as endorsing businesses or political figures, engaging in fundraising, or posting comments that could create an appearance of bias [7].

Q5: What is "scientific jury analysis" and how can it help? A5: Scientific jury analysis is a process that helps legal teams understand the potential biases of the jury pool. It involves studying demographics, attitudes, and beliefs, often through pre-trial data analysis and supplemental juror questionnaires. This helps lawyers develop strategies to mitigate the impact of media bias during jury selection and the trial itself [6].

Troubleshooting Guide: Identifying and Mitigating External Influences on Legal Research

This guide provides a systematic methodology for researchers to identify, measure, and counteract the effects of media on public perception and legal outcomes.

Phase 1: Understanding the Problem

Objective: Diagnose the extent and nature of media influence on a specific case or legal topic.

Ask Focused Questions:

- What is the volume and sentiment of media coverage (traditional and social) surrounding the case?

- What are the dominant narratives or frames being used?

- Which demographic groups are most exposed to this coverage?

Gather Quantitative Data: Collect data to benchmark media influence. The table below summarizes key metrics from research.

| Metric | Finding | Source |
|---|---|---|
| Jurors who would search for case info online pre-trial | 46% | [5] |
| Key psychological effect | Confirmation Bias | [5] [6] |
| Key psychological effect | Priming | [5] [6] |
| Key psychological effect | Group Think | [5] |
| Direct mail response rate for clinical trials | 40-60% | [8] |

Reproduce the Media Landscape: Use media monitoring tools to create a comprehensive dataset of news articles, social media posts, and influencer commentary related to the case. Analyze this data for recurring themes, factual inaccuracies, and emotional language.
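Once a coverage dataset exists, a first pass at identifying recurring themes can be as simple as counting how many items touch each narrative frame. The sketch below assumes keyword matching against a hand-built codebook; the frame names and keyword lists are hypothetical placeholders, and a real study would likely validate them against human coding.

```python
from collections import Counter

# Hypothetical frames and keywords; a real codebook would be developed per case.
FRAMES = {
    "corporate_greed": ("profit", "greed", "cover-up"),
    "patient_harm": ("injury", "side effect", "victim"),
    "scientific_uncertainty": ("inconclusive", "disputed", "preliminary"),
}


def tag_frames(text):
    """Return the set of frames whose keywords appear in the text."""
    lowered = text.lower()
    return {frame for frame, keywords in FRAMES.items()
            if any(keyword in lowered for keyword in keywords)}


def frame_counts(items):
    """Count how many items mention each frame (an item counts once per frame)."""
    counts = Counter()
    for text in items:
        counts.update(tag_frames(text))
    return counts


# Invented headlines, for illustration only.
coverage = [
    "Company put profit ahead of safety",
    "Victim describes injury after treatment",
    "Study findings remain disputed and preliminary",
    "Alleged cover-up left one victim without answers",
]
print(frame_counts(coverage))
```

Keyword counts measure volume per frame, not sentiment or accuracy; those would need separate coding.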

Phase 2: Isolating the Issue

Objective: Pinpoint the root cause and mechanism of media influence.

Remove Complexity: Break down the media influence into core components:

- Source: Is the influence from algorithmic social media feeds, traditional news, or "armchair experts"? [5]

- Psychological Mechanism: Is the primary effect priming, confirmation bias, stereotype activation, or emotional manipulation? [6]

- Audience: Which segments of the population (e.g., heavy news consumers, digital natives) are most susceptible? [5] [6]

Change One Variable at a Time: Design experiments that test the impact of a single media variable. For example, expose different mock jury groups to positive, negative, or neutral media clips about a defendant, while keeping all other case facts constant, to isolate the media's effect on the verdict.

Compare to a Baseline: Compare the perceptions of a research group heavily exposed to case media against a control group with minimal exposure. This helps establish the baseline level of bias introduced by external information.
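Changing one variable at a time depends on participants being assigned to media conditions at random, so that group differences can be attributed to the clips and not to who chose to watch them. The following is a minimal sketch of balanced random assignment; the function name, condition labels, and seed parameter are illustrative assumptions.

```python
import random


def assign_conditions(participant_ids, conditions, seed=None):
    """Shuffle participants, then deal them round-robin into conditions.

    Group sizes differ by at most one. A fixed seed makes the allocation
    reproducible for audit purposes.
    """
    rng = random.Random(seed)
    shuffled = list(participant_ids)
    rng.shuffle(shuffled)
    return {pid: conditions[index % len(conditions)]
            for index, pid in enumerate(shuffled)}


# 30 hypothetical mock jurors split across three media conditions.
allocation = assign_conditions(range(30), ["positive", "negative", "neutral"], seed=1)
group_sizes = {c: list(allocation.values()).count(c)
               for c in ["positive", "negative", "neutral"]}
print(group_sizes)
```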

Phase 3: Developing a Fix or Workaround

Objective: Formulate evidence-based strategies to counteract media influence.

Test Proposed Solutions:

- Enhanced Voir Dire: Develop supplemental juror questionnaires to directly probe media consumption habits and pre-existing case knowledge [5] [6].

- Counter-Framing: Craft a clear, compelling trial narrative that preemptively addresses and neutralizes common media-driven biases [6].

- Expert Testimony: Use expert witnesses to explain to the jury how media and algorithms can shape perceptions and create implicit biases [5] [6].

Fix for Future Research: Document successful mitigation strategies and contribute to the development of updated model jury instructions, which now explicitly warn jurors about the risks of social media and disinformation [5].

Experimental Protocols

Protocol 1: Measuring Priming Effects in Mock Juries

- Recruitment: Recruit a diverse pool of participants representative of a jury pool.

- Stimulus: Randomly assign participants to groups. Expose the experimental group to a series of news articles with a specific narrative (e.g., emphasizing corporate greed). The control group reads neutral articles.

- Task: Both groups review the same set of case materials from a civil lawsuit.

- Measurement: Have participants deliberate and reach a verdict. Use pre- and post-deliberation questionnaires to measure their perception of key facts, the defendant's credibility, and liability.

- Analysis: Statistically compare verdict outcomes and fact weighting between the two groups to quantify the priming effect.
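The analysis step does not name a specific test. One common choice for comparing the share of "liable" findings between two groups is a two-proportion z-test, sketched below with only the standard library. This is an assumption for illustration: it treats each participant's individual verdict preference as independent, which would not hold for post-deliberation verdicts within the same mock jury, and the counts in the example are invented.

```python
import math


def two_proportion_z_test(liable_a, n_a, liable_b, n_b):
    """Compare two proportions using a pooled standard error.

    Returns (difference in proportions, z statistic, two-sided p-value).
    """
    p_a = liable_a / n_a
    p_b = liable_b / n_b
    pooled = (liable_a + liable_b) / (n_a + n_b)
    standard_error = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_a - p_b) / standard_error
    # Two-sided p-value under the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return p_a - p_b, z, p_value


# Invented counts: 34 of 50 primed participants vs 22 of 50 controls found liability.
difference, z, p_value = two_proportion_z_test(34, 50, 22, 50)
print(f"difference={difference:.2f}, z={z:.2f}, p={p_value:.3f}")
```

For small groups or jury-level outcomes, an exact test or a model that accounts for clustering within juries would be more appropriate than this approximation.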

Protocol 2: Quantifying Confirmation Bias Through Information Seeking

- Pre-Screening: Survey participants to establish their initial leaning on a relevant topic (e.g., corporate regulation).

- Simulated Research Task: Provide participants with a curated digital library of information about a case, containing a mix of pro-plaintiff and pro-defense documents.

- Data Collection: Use tracking software to log which documents participants open, how much time they spend on each, and in what order they access them.

- Analysis: Analyze the data to determine if participants selectively consume information that aligns with their pre-existing leanings, demonstrating confirmation bias.
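One simple way to summarize the tracking logs is the share of reading time a participant spends on documents that match their pre-screened leaning. The sketch below assumes each log entry is a (document side, seconds) pair; the function name, the labels "plaintiff" and "defense", and the example log are hypothetical.

```python
def congruent_share(leaning, access_log):
    """Fraction of total reading time spent on leaning-congruent documents.

    access_log is a list of (document_side, seconds) pairs. Returns None
    when the participant opened nothing, to avoid dividing by zero.
    """
    total = sum(seconds for _, seconds in access_log)
    if total == 0:
        return None
    congruent = sum(seconds for side, seconds in access_log if side == leaning)
    return congruent / total


# Invented log for one participant who leaned pro-plaintiff at pre-screening.
log_entries = [("plaintiff", 120), ("defense", 40), ("plaintiff", 40)]
share = congruent_share("plaintiff", log_entries)
print(share)  # 0.8
```

A share near the proportion of congruent documents in the library suggests balanced reading; a share well above it is consistent with selective exposure, though the comparison still needs a statistical test across participants.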

Visualizing the Psychological Pathways of Media Influence

The following diagram illustrates the logical relationship between media exposure and its psychological impacts on juror decision-making.

This table details essential methodological solutions for researching and addressing the impact of media on legal proceedings.

| Research Reagent Solution | Function |
|---|---|
| Scientific Jury Analysis | Studies demographics, attitudes, and beliefs of a jury pool to predict and navigate biases stemming from media exposure [6]. |
| Supplemental Juror Questionnaires (SJQs) | Tailored written questionnaires used during jury selection to identify potential jurors with strong biases resulting from media coverage [5] [6]. |
| Mock Trials & Focus Groups | A simulated trial used to test case narratives, arguments, and evidence on a representative sample, evaluating the impact of media frames and refining counter-strategies [5] [6]. |
| Model Jury Instructions | Updated, explicit court instructions that warn jurors about the specific risks of social media, algorithms, and disinformation, and prohibit their use during the trial [5]. |
| Media Monitoring & Analysis | Systematic tracking and quantitative/qualitative analysis of traditional and social media coverage to understand the narrative landscape surrounding a case [5]. |

Technical Support Center: FAQs for Researchers and Drug Development Professionals

This technical support center is designed to help researchers, scientists, and drug development professionals navigate the complex intersection of rigorous scientific data and its interpretation in legal and public domains. The following FAQs and troubleshooting guides address common challenges you might encounter during your experiments and development processes.

Frequently Asked Questions (FAQs)

Q1: What are the most critical considerations for an initial regulatory submission to support clinical trials?

A: The foundation of a successful regulatory submission lies in demonstrating a clear and scientifically justified path from your non-clinical data to the proposed clinical trial. Your submission must include [9] [10]:

- Robust Non-Clinical Data: This includes comprehensive pharmacology and toxicology studies to establish a preliminary safety profile and support the choice of the initial human dose.

- Detailed Clinical Protocol: The protocol must be feasible and prioritize subject safety, with special attention to dose selection, escalation plans, and safety monitoring. It should align with relevant technical guidance principles, such as those for clinical pharmacology and maximum recommended starting doses [9].

- Complete CMC Information: The application must include detailed information on the chemistry, manufacturing, and controls to ensure the drug's quality, characterization, and consistency.

Q2: Our research involves processing patient data. What is the legal basis for handling this information for scientific purposes?

A: In many jurisdictions, the legal framework allows for processing personal data for scientific research, but with strict boundaries. Key legal bases and limits include [11]:

- Public Interest and Specific Laws: Processing may be permitted as necessary for tasks performed in the public interest, subject to appropriate safeguards laid down in law.

- Proportionality and Data Minimization: The scope of collected personal information must be limited to the "minimum necessary" to achieve the specific research purpose.

- Anonymization: Applying anonymization techniques, which render data incapable of identifying a specific natural person and non-reversible, is a crucial safeguard.

The ultimate legal boundary is that the processing must not cause harm or damage to the data subjects' rights and freedoms [11].
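The data minimization and generalization ideas above can be illustrated with a short sketch that drops fields the research purpose does not need and coarsens age into bands. The field names, the identifier list, and the ten-year band width are assumptions for illustration. Steps like these reduce identifiability but do not by themselves establish that a dataset is anonymized in the legal sense.

```python
# Assumed names of directly identifying fields in a hypothetical record layout.
DIRECT_IDENTIFIERS = {"name", "national_id", "phone"}


def minimize_record(record, needed_fields):
    """Keep only fields needed for the research purpose, never direct identifiers."""
    return {key: value for key, value in record.items()
            if key in needed_fields and key not in DIRECT_IDENTIFIERS}


def generalize_age(age, band=10):
    """Replace an exact age with a band such as '40-49'."""
    low = (age // band) * band
    return f"{low}-{low + band - 1}"


# Invented patient record, for illustration only.
raw = {"name": "A. Example", "national_id": "X123", "age": 47,
       "diagnosis": "hypertension", "phone": "555-0100", "postcode": "12345"}

reduced = minimize_record(raw, needed_fields={"age", "diagnosis", "name"})
reduced["age"] = generalize_age(reduced["age"])
print(reduced)  # {'age': '40-49', 'diagnosis': 'hypertension'}
```

Note that `name` is excluded even though it was requested, because the identifier list takes precedence over the caller's field list.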

Q3: How is "Important Data" defined from a regulatory perspective, and why does it matter for our research datasets?

A: Important Data is a key concept in data security laws, defined as data that, if tampered with, destroyed, leaked, or illegally obtained/used, could harm national security, public interests, or the legitimate rights of individuals/organizations [12] [13]. For researchers:

- It's a National Security Classification: This classification primarily aims to identify and protect non-state secret data that nonetheless impacts national security [13].

- Mandatory Protection: Data classified as "important" is subject to stricter protection requirements than general data, including more stringent management systems and legal obligations for the data processor [13].

- Sector-Specific Directories: Regulatory bodies are tasked with creating specific catalogs of important data for their respective sectors and regions, which researchers must consult [12].

Q4: When can data from overseas clinical trials be used to support a domestic application?

A: Using foreign clinical data requires a thorough assessment to bridge potential ethnic differences. Key factors regulators consider include [9]:

- Assessment of Ethnic Sensitivity: You must evaluate whether the drug's pharmacokinetics (PK), pharmacodynamics (PD), dose-response relationships, and metabolic pathways are sensitive to ethnic factors, following guidelines like ICH E5(R1).

- Therapeutic Window: Drugs with a wider therapeutic window are generally more amenable to extrapolation.

- Clinical Practice Consistency: Differences in medical practice, accepted concomitant medications, and diagnostic criteria between regions can impact the applicability of foreign data.

Q5: What is a CAPA plan and when is it required in the drug development process?

A: A Corrective and Preventive Action (CAPA) plan is a quality system process designed to address compliance issues and prevent their recurrence. It is crucial for ensuring trial subject safety and data integrity [14].

- Corrective Action: This is reactive, addressing the root cause of an existing problem to prevent its recurrence.

- Preventive Action: This is proactive, identifying and eliminating the cause of a potential problem to prevent its first occurrence.

A CAPA plan is typically required following a serious deviation from Good Clinical Practice (GCP) or the study protocol. It must include a detailed description of the problem, an investigation summary, the root cause, and a list of corrective and preventive actions [14].

Troubleshooting Guides

Issue 1: Regulatory Feedback Indicates Inadequate Justification for Clinical Trial Design

Symptoms: A regulatory agency questions your proposed starting dose, dose escalation scheme, or the feasibility of your Phase I trial protocol.

Resolution:

- Revisit Non-Clinical Data: Ensure your starting dose is justified using methods outlined in relevant guides, such as the Healthy Adult Volunteer First Clinical Trial Drug Maximum Recommended Starting Dose Estimation Guide [9].

- Strengthen Dose Rationale: Your dose escalation plan and the definition of a Dose-Limiting Toxicity (DLT) must be explicitly linked to your drug's mechanism and pharmacokinetic profile (e.g., half-life). For drugs with long half-lives, the observation period must be sufficiently long to capture safety signals [9].

- Benchmark Against Guidelines: Cross-reference your protocol with key guidelines like ICH E8(R1): General Considerations for Clinical Studies and other region-specific clinical pharmacology guidances to ensure all necessary elements are included and justified [9].

Issue 2: Uncertainty in Categorizing Research Data for Compliance

Symptoms: Difficulty determining the protection level required for research datasets, leading to risks of non-compliance with data security laws.

Resolution:

- Apply the "Consequence" Path for Grading: Analyze the impact on security attributes (confidentiality, integrity, availability) if the data is compromised. The grading should be based on the potential harm to national security, public interest, or individual/organizational rights [12] [13].

- Consult "Top-Down" Frameworks: Do not rely solely on an internal "bottom-up" classification. Refer to national or sector-specific important data protection catalogs. The classification is fundamentally a state-driven ("top-down") activity to manage national security risks [12].

- Implement Enhanced Safeguards: For data classified as "important," you must implement stronger security measures and stricter management protocols than for general data, as required by law [13].
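The "consequence" path can be sketched as a worst-case harm assessment across the three security attributes, used to decide whether a dataset should be checked against the official catalogs. The 0 to 3 scoring scale, the threshold, and all names below are placeholders invented for illustration; they are not legal criteria, and the actual classification must follow the applicable national or sector catalogs [12].

```python
ATTRIBUTES = ("confidentiality", "integrity", "availability")
HARM_OBJECTS = ("national_security", "public_interest", "individual_or_org_rights")


def worst_case_harm(assessment):
    """Collapse per-attribute scores into the worst score per harm object.

    assessment maps (attribute, harm_object) to a score on an assumed
    0 (none) to 3 (severe) scale.
    """
    worst = {harm_object: 0 for harm_object in HARM_OBJECTS}
    for (attribute, harm_object), score in assessment.items():
        if attribute not in ATTRIBUTES or harm_object not in HARM_OBJECTS:
            raise ValueError(f"unknown key: {(attribute, harm_object)}")
        worst[harm_object] = max(worst[harm_object], score)
    return worst


def needs_catalog_review(worst, threshold=2):
    """Flag the dataset for a check against official catalogs (assumed threshold)."""
    return any(score >= threshold for score in worst.values())


# Invented assessment of a hypothetical multi-site genomic dataset.
assessment = {
    ("confidentiality", "individual_or_org_rights"): 3,
    ("confidentiality", "public_interest"): 1,
    ("integrity", "public_interest"): 2,
}
worst = worst_case_harm(assessment)
print(worst, needs_catalog_review(worst))
```

The output is a prompt for review, not a classification: the source stresses that the determination is a top-down activity, so an internal score should only route datasets to the right catalog check.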

Issue 3: Challenges in Leveraging Existing Data for a New Indication

Symptoms: A desire to bypass a new Phase II exploratory trial for a new disease indication based on existing efficacy data.

Resolution:

- Justify the Scientific Bridge: It is usually necessary to conduct new Phase II trials for a new indication. Different diseases or patient populations can significantly alter a drug's PK/PD profile, making the original dose and regimen potentially ineffective or unsafe [9].

- Avoid Direct Extrapolation: Do not assume the effective dose from one indication is directly applicable to another. For example, a potent anti-platelet drug's dose for acute coronary syndrome cannot be directly extrapolated for use in stroke patients without dedicated studies [9].

- Engage Regulators Early: If you believe there is a strong scientific rationale to bypass Phase II (e.g., identical disease mechanism and drug target), you must proactively seek a communication meeting with the regulatory agency to discuss your evidence and strategy [9].

Experimental Protocols & Methodologies

Protocol 1: Designing a First-in-Human (FIH) Clinical Trial

Objective: To assess the safety, tolerability, and pharmacokinetics of a new investigational drug in humans for the first time.

Methodology:

- Subject Selection: Determine if the trial will be in healthy volunteers or a specific patient population (e.g., oncology drugs in cancer patients). Justify this choice based on the drug's mechanism and toxicity profile [9].

- Dosing Strategy:

  - Starting Dose: Calculate based on non-clinical toxicology data (e.g., No Observed Adverse Effect Level - NOAEL from animal studies) using established algorithms [9].

  - Dose Escalation: Employ a pre-defined dose escalation scheme (e.g., modified Fibonacci). Specify clear stopping rules for dose-limiting toxicities (DLTs).

  - Maximum Dose: Pre-define the maximum administered dose based on non-clinical data or achieving a predefined pharmacodynamic target.

- Safety and PK/PD Assessments: Establish a schedule for safety monitoring (vital signs, labs, ECGs, adverse events) and intensive PK/PD sampling to characterize the drug's exposure and effect profile [9].
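The dosing strategy above does not specify which algorithm is used. As a worked illustration, the sketch below assumes the body-surface-area approach described in FDA's 2005 guidance on maximum safe starting doses: convert the animal NOAEL to a human equivalent dose (HED) with Km factors, then divide by a default safety factor of 10. The escalation helper uses the commonly cited modified Fibonacci increments of 100%, 67%, 50%, 40%, and roughly 33% thereafter. Function names and example values are hypothetical, and a real program would also weigh pharmacologically active dose, species relevance, and other factors.

```python
# Km factors (body weight / body surface area) as tabulated in the FDA 2005
# guidance; using this guidance here is an assumption, not the source's method.
KM = {"mouse": 3, "rat": 6, "dog": 20, "human": 37}

# Commonly cited modified Fibonacci increments; later steps use the tail value.
MODIFIED_FIBONACCI_INCREMENTS = (1.00, 0.67, 0.50, 0.40)


def human_equivalent_dose(noael_mg_per_kg, species):
    """Convert an animal NOAEL (mg/kg) to a human equivalent dose (mg/kg)."""
    return noael_mg_per_kg * KM[species] / KM["human"]


def max_recommended_starting_dose(noael_mg_per_kg, species, safety_factor=10):
    """Divide the HED by a safety factor (10 is the usual default)."""
    return human_equivalent_dose(noael_mg_per_kg, species) / safety_factor


def escalation_levels(starting_dose, n_levels, tail_increment=0.33):
    """Planned dose levels under a modified Fibonacci scheme."""
    doses = [starting_dose]
    for step in range(n_levels - 1):
        if step < len(MODIFIED_FIBONACCI_INCREMENTS):
            increment = MODIFIED_FIBONACCI_INCREMENTS[step]
        else:
            increment = tail_increment
        doses.append(doses[-1] * (1 + increment))
    return doses


# Invented example: rat NOAEL of 50 mg/kg.
mrsd = max_recommended_starting_dose(50, "rat")
print(f"MRSD is about {mrsd:.2f} mg/kg")
print([round(dose, 3) for dose in escalation_levels(1.0, 5)])
```

For the invented rat NOAEL of 50 mg/kg, the HED is 50 × 6 / 37, about 8.1 mg/kg, giving a starting dose of about 0.81 mg/kg after the tenfold safety factor.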

Protocol 2: Implementing a CAPA Plan for a Protocol Deviation

Objective: To systematically address a significant protocol violation and prevent its recurrence.

Methodology [14]:

- Identify and Document the Problem: Clearly describe the non-compliance event. Use techniques like the "Five Whys" to drill down to the root cause.

- Contain the Issue: Implement immediate corrective or containment actions to mitigate the current problem's impact.

- Root Cause Analysis: Formally investigate and document the fundamental reason(s) the deviation occurred.

- Develop Action Plan: Create a list of corrective actions (to fix the root cause) and preventive actions (to stop it from happening again).

- Implement and Verify: Execute the actions, document all steps, and after a suitable period, verify that the actions have been effective and the problem has been resolved.
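
The five steps above form an ordered workflow in which every stage must be documented before the next begins. A minimal sketch of how a tracking record could enforce that ordering follows. The class name `CapaRecord`, the stage labels, and the example deviation are hypothetical and do not come from reference [14] or from any particular CAPA product.

```python
from dataclasses import dataclass, field
from datetime import date

# Stage labels mirror the methodology steps; the exact names are illustrative.
STAGES = [
    "identified",
    "contained",
    "root_cause_documented",
    "action_plan_approved",
    "implemented",
    "verified",
]


@dataclass
class CapaRecord:
    deviation: str
    opened: date
    stage_index: int = 0
    log: list = field(default_factory=list)

    def __post_init__(self):
        # The description of the deviation is the first documented entry.
        self.log.append((STAGES[0], self.opened, self.deviation))

    @property
    def stage(self):
        return STAGES[self.stage_index]

    def advance(self, on, note):
        """Move to the next stage; refuse undocumented or out-of-range moves."""
        if not note.strip():
            raise ValueError("each stage needs a documented note")
        if self.stage_index == len(STAGES) - 1:
            raise ValueError("CAPA is already verified and closed")
        self.stage_index += 1
        self.log.append((self.stage, on, note))
        return self.stage


if __name__ == "__main__":
    rec = CapaRecord("Dose administered outside visit window", date(2025, 1, 6))
    rec.advance(date(2025, 1, 6), "Site notified; affected visits quarantined")
    rec.advance(date(2025, 1, 9), "Five Whys: scheduling tool lacked window alerts")
    print(rec.stage)
```

Because stages cannot be skipped, the log doubles as the audit trail that an inspector would expect to find in the trial master file.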

Data Presentation Tables

Table 1: Comparison of Key Regulatory Submission Pathways for Initial Clinical Trials

| Feature | Investigational New Drug (IND) - USA | Clinical Trial Application (CTA) - EU |

|---|---|---|

| Governing Regulation | FDA regulations | EU Clinical Trials Regulation (CTR) |

| Review Timeline | 30-day review period [10] | Average of 60 days at the national level [10] |

| Review Outcome | Study may proceed if no FDA hold ("pass") [10] | Formal approval required [10] |

| Application Scope | Single IND can cover multiple studies [10] | Each interventional clinical study requires its own CTA [10] |

| Core Documentation | Forms, non-clinical reports, CMC, protocol, Investigator's Brochure (IB) [10] | Protocol, informed consent, IB, Investigational Medicinal Product Dossier (IMPD) with CMC data [10] |

Table 2: Research Reagent Solutions for Data Compliance and Security

| Item / Solution | Function / Explanation |

|---|---|

| Data Anonymization Tools | Software solutions that apply techniques like masking, generalization, and perturbation to permanently remove personal identifiers from datasets, facilitating research use under privacy laws [11]. |

| Data Classification Engines | Technology that uses natural language processing and pattern matching to automatically scan and tag data according to predefined policies (e.g., identifying "Important Data" based on content) [13]. |

| CAPA Management Software | Digital systems that help track quality events, manage the root cause analysis process, and document the implementation and verification of corrective and preventive actions [14]. |

| Electronic Trial Master File (eTMF) | A secure, centralized digital repository for all essential trial documents, ensuring version control, audit readiness, and facilitating the storage of CAPA plans and related communications [14]. |
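
The three anonymization techniques named in the table (masking, generalization, and perturbation) can be sketched in a few lines. This is a toy illustration under stated assumptions: the field names, the ten-year age bands, and the ±0.5 kg noise range are invented for the example, and real anonymization tools apply far more rigorous re-identification risk controls.

```python
import random


def mask(value, visible=0):
    """Masking: replace characters with '*', optionally keeping a suffix."""
    visible = max(0, min(visible, len(value)))
    hidden = len(value) - visible
    return "*" * hidden + value[hidden:]


def generalize_age(age, width=10):
    """Generalization: report an age band instead of the exact age."""
    low = (age // width) * width
    return f"{low}-{low + width - 1}"


def perturb(value, scale, rng):
    """Perturbation: add bounded random noise to a numeric value."""
    return value + rng.uniform(-scale, scale)


def anonymize_record(record, rng):
    """Return a new record with direct and quasi-identifiers transformed."""
    return {
        "name": mask(record["name"]),
        "age_band": generalize_age(record["age"]),
        "weight_kg": round(perturb(record["weight_kg"], 0.5, rng), 1),
    }


if __name__ == "__main__":
    rng = random.Random(42)  # seeded so the example is reproducible
    # Entirely fictional participant record.
    print(anonymize_record({"name": "Jane Doe", "age": 47, "weight_kg": 70.0}, rng))
```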

Workflow and Relationship Visualizations

Diagram 1: Simplified Drug Development Regulatory Pathway

Diagram 2: Corrective and Preventive Action (CAPA) Workflow

Diagram 3: Data Classification Hierarchy Based on Potential Harm

Troubleshooting Guide: Navigating New Regulatory Landscapes

Q: Our company is preparing a New Drug Application (NDA). A recent FDA pre-NDA meeting revealed concerns about a secondary endpoint, with the agency recommending an additional clinical trial. Our leadership is unsure what needs to be disclosed to investors. What are the risks of incomplete disclosure?

A: Failure to adequately communicate material regulatory information can lead to severe consequences, including civil actions from the Securities and Exchange Commission (SEC), criminal actions from the Department of Justice, and private lawsuits from stockholders [15]. The key is determining the "materiality" of the information. According to legal analysis of past cases, information is considered material if there is a substantial likelihood that a reasonable shareholder would consider it important to an investment decision [15]. If the regulatory communication (like an FDA recommendation for another trial) concerns a lead product on which the company's success depends, it is likely material and should be disclosed. Merely listing general risk factors in SEC filings is often insufficient if specific, material communications are omitted [15].

Q: The FDA has started publishing Complete Response Letters (CRLs). What should we do if our confidential commercial information is inadvertently disclosed in a published CRL?

A: The FDA has published more than 200 CRLs issued between 2020 and 2024 to increase transparency [16]. The agency notes that these letters were redacted to remove trade secrets and confidential commercial information [16]. Even so, sponsors should not assume the redactions are complete: if you are a product sponsor, proactively review the published CRLs in the FDA's database to confirm that your confidential information has not been inadvertently disclosed. If you find a problem, contact the FDA immediately. To prevent issues in future submissions, clearly mark all submitted materials that contain trade secrets or confidential commercial information [16].

Q: Our institution's IRB is reviewing a study that will be conducted at an external site. Are we permitted to do this, and what steps must we follow?

A: Yes, a hospital or institutional IRB may review a study conducted outside of its main facility. FDA regulations do not require an IRB to be local to the research site [17]. Your IRB's written procedures should authorize the review of external studies. During review, the IRB meeting minutes must clearly show that members are aware the study is being conducted at an external site and that the IRB possesses appropriate knowledge about that study site to make an informed judgment [17].

Q: The FDA is encouraging more Remote Regulatory Assessments (RRAs). What should we do if the FDA requests a voluntary RRA?

A: In June 2025, the FDA issued final guidance on RRAs to help industry understand both voluntary and mandatory assessments [18]. If the FDA requests a voluntary RRA, you should review the final guidance, "Conducting Remote Regulatory Assessments--Questions and Answers," which describes the Agency's current thinking and processes. This guidance is intended to facilitate the RRA process for FDA-regulated products. While RRAs can be voluntary, the FDA also has the authority to mandate them in certain situations, so understanding the guidance is crucial for compliance [18].

Key 2025 Regulatory Updates and Data

Table 1: Recent FDA Policy Shifts (July 2025)

| Policy Change | Description | Potential Impact on Industry |

|---|---|---|

| Publication of CRLs [16] | FDA published >200 complete response letters for NDAs/BLAs from 2020-2024. | Competitors may gain insights into regulatory strategies; sponsors must be vigilant about confidential information. |

| Recall Communication Goals [16] | FDA outlined short & long-term goals to improve public awareness of recalls, especially for baby food and infant formula. | Industry may face pressure for voluntary cooperation; potential for more streamlined but transparent recall processes. |

| Commissioner's National Priority Voucher (CNPV) [16] | Pilot program may grant faster drug reviews for products advancing national health priorities; pricing may be a factor. | Represents a potential shift as FDA traditionally avoids pricing discussions; could influence drug development priorities. |

Table 2: Global Regulatory Updates on Clinical Trials (September 2025) [19]

| Health Authority | Update Type | Guideline/Topic | Key Change |

|---|---|---|---|

| FDA (U.S.) | Final | ICH E6(R3) Good Clinical Practice | Introduces flexible, risk-based approaches and modernizes trial design/conduct. |

| FDA (CBER) | Draft | Expedited Programs for Regenerative Medicine Therapies | Details use of expedited pathways (e.g., RMAT) for serious conditions. |

| EMA (EU) | Draft | Patient Experience Data | Encourages inclusion of patient perspectives throughout medicine lifecycle. |

| NMPA (China) | Final | Revised Clinical Trial Policies | Aims to accelerate drug development and shorten trial approval timelines by ~30%. |

| Health Canada | Draft | Biosimilar Biologic Drugs | Proposes removing routine requirement for Phase III comparative efficacy trials. |

The Scientist's Toolkit: Essential Research and Compliance Materials

Table 3: Key Research Reagent Solutions for Regulatory Compliance

| Item/Tool | Function in Regulatory Context |

|---|---|

| USP Public Standards | Universally recognized standards for drug substances and products that support regulatory compliance and help ensure quality and safety [20]. |

| FDA Guidance Documents | Represent the FDA's current thinking on a subject; essential for designing studies that meet regulatory expectations [21]. |

| ICH E6(R3) GCP Guideline | The updated international ethical and scientific quality standard for designing, conducting, and recording clinical trials [19]. |

| Remote Assessment Tools | Digital platforms and protocols required for participating in FDA Remote Regulatory Assessments (RRAs) [18]. |

| Estimand Framework (ICH E9(R1)) | A structured framework to precisely define the treatment effect of interest in a clinical trial, addressing how intercurrent events are handled, which improves clarity for regulatory review [19]. |

Experimental Protocol: Implementing a Risk-Based Monitoring Strategy as per ICH E6(R3)

Objective: To implement a monitoring approach for a clinical trial that aligns with the modernized, risk-based principles of the ICH E6(R3) guideline, ensuring participant protection and data quality while optimizing resources [19].

Methodology:

- Centralized Monitoring Activities:

  - Utilize statistical and analytical methods on accumulated data (e.g., electronic case report form data) to identify sites with potential data quality or integrity issues, protocol deviations, or trends in safety signals.

  - Perform a cross-site comparison of key efficacy and safety variables to identify outliers or inconsistent data patterns.

- Targeted On-Site Monitoring:

  - Based on the risk assessment and triggers identified from centralized monitoring, conduct targeted on-site visits.

  - Focus on critical data and processes, such as verification of informed consent, primary endpoint data, and investigational product accountability, rather than 100% source data verification.

- Risk Communication:

  - Establish a clear pathway for communicating identified risks and monitoring findings to the sponsor's management and the IRB, as required, ensuring ongoing oversight.

This targeted approach is more efficient and effective than a purely on-site, high-frequency model and is endorsed by the updated international standard [19].
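
The cross-site comparison in the centralized monitoring step can be sketched as a simple outlier screen. The example below is one possible approach, not a method prescribed by ICH E6(R3) or reference [19]: it assumes a robust z-score based on the median and the median absolute deviation (MAD), with the conventional 0.6745 scaling constant and a cut-off of 3.5. The site identifiers and adverse-event rates are invented for illustration.

```python
from statistics import median


def flag_outlier_sites(site_values, threshold=3.5):
    """Return site IDs whose value is a robust outlier versus other sites.

    Uses the modified z-score 0.6745 * (x - median) / MAD. Both the
    constant and the 3.5 cut-off are conventional choices assumed here.
    """
    values = list(site_values.values())
    med = median(values)
    mad = median([abs(v - med) for v in values])
    if mad == 0:
        # No spread across sites: the score is undefined, so flag nothing.
        return []
    flagged = []
    for site, value in site_values.items():
        robust_z = 0.6745 * (value - med) / mad
        if abs(robust_z) > threshold:
            flagged.append(site)
    return sorted(flagged)


if __name__ == "__main__":
    # Hypothetical adverse-event reporting rates per site.
    ae_rates = {"S01": 0.10, "S02": 0.12, "S03": 0.11, "S04": 0.09, "S05": 0.45}
    print(flag_outlier_sites(ae_rates))
```

A flagged site is a trigger for the targeted on-site visit described above, not a finding in itself. The median-based score is used so that a single extreme site does not distort the baseline it is compared against.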

Logical Workflow for Communicating Material Regulatory Information

The following diagram outlines a structured process for evaluating and communicating significant regulatory feedback, such as from the FDA, to stakeholders, while considering legal and materiality requirements. This process helps mitigate the risk of misleading investors or omitting critical information [15].

Building Your Communication Toolkit: Practical Methods for Effective Data Presentation

Frequently Asked Questions (FAQs)

What is the core function of AI in simulating jury reactions? AI uses natural language processing to analyze case data and simulate how different jury demographics might react to specific arguments, themes, and terminology. This helps in refining the most persuasive narrative before trial [22].

Can AI replace traditional mock trials? No. While AI can provide many of the insights of a mock exercise and is excellent for early-stage testing and refinement, it does not fully replicate the complex group dynamics of real jury deliberations. The inherent value of a traditional mock trial, including getting counsel to practice their delivery, remains [22].

What are the major risks of using consumer-grade AI tools for legal research? Public AI tools, trained on unvetted internet content, carry a high risk of "hallucinations," including fabricating case citations or providing inaccurate legal precedents. Their accuracy rates in legal research can be as low as 60-70%, which fails to meet professional legal standards [23].

How can I ensure the AI tools I use are reliable? Use professional-grade AI solutions that are built on curated, authoritative legal databases (like Westlaw or Practical Law) and provide transparent sourcing for verification. These tools can achieve over 95% accuracy and are designed to meet the profession's rigorous standards of accountability [23].

Are there specific courtroom rules for using AI-generated visuals? Yes. Some courts are beginning to implement rules that mandate the disclosure of AI-generated visual aids, such as diagrams or reconstructions. The goal is to preserve transparency and allow for scrutiny of the tool's methodology and the accuracy of its outputs [24].

Troubleshooting Guides

Problem: AI-Generated Case Citations Are Fabricated or Inaccurate

Description A user discovers that case citations or legal precedent generated by a public AI tool (e.g., ChatGPT) are invented or inaccurate, a phenomenon known as "hallucination" [23].

Solution Follow this verification protocol to ensure research integrity:

- Switch to a Professional-Grade Tool: Immediately cease using public AI for legal research. Transition to a professional-grade AI that is integrally built with and draws answers exclusively from validated legal databases [23].

- Verify Every Citation: Manually check every provided citation using a trusted legal research service and its integrated citator, such as Westlaw's KeyCite, to confirm the case exists, is still good law, and supports the purported proposition [23].

- Maintain Professional Responsibility: Remember that ethical obligations require attorneys to personally verify the accuracy of all AI-generated work product before it is relied upon or submitted to the court [23].

Problem: Difficulty Developing a Persuasive, Jury-Friendly Case Narrative

Description A research team struggles to distill complex technical information into a simple, compelling story that will resonate with a non-expert jury.

Solution Apply cognitive science principles to structure your narrative:

- Apply the "Chunking" Technique: Break the complex story into manageable, logical segments or "chunks." For example, structure the explanation of a technical process into three distinct phases: Input, Processing, and Output [25].

- Employ a Consistent Narrative Framework: Use a single, simple metaphor throughout the case to ground abstract technical concepts. For instance, frame an AI's learning process as "an art student studying masterworks in a museum" to make the process intuitive [25].

- Coordinate Visuals and Testimony: Design trial graphics that reinforce, rather than compete with, the spoken narrative. Use a maximum of three elements per visual and ensure the imagery supports your core metaphor, so that jurors do not experience the "split-attention effect" [25].

Problem: Jurors Anthropomorphize AI Systems

Description Jurors begin to incorrectly attribute human-like consciousness, intent, or judgment to an AI system, which can skew their evaluation of legal standards like "intent" or "knowledge" [25].

Solution Implement a clear educational strategy to explain the AI's fundamental nature:

- Preempt the Issue in Opening Statements: Clearly state that the AI is a sophisticated pattern-matching tool, no more conscious than a calculator. Introduce the "art student" or similar metaphor early on [25].

- Use Precise Language in Testimony: Train expert witnesses to consistently use non-human terminology. Describe the AI as "processing data," "adjusting numerical weights," and "statistically generating outputs" rather than "thinking" or "deciding" [25].

- Contrast Human vs. AI Cognition: Explicitly explain the difference. For example: "Where a human perceives a unified whole, the AI system analyzes millions of discrete statistical data points without comprehension." This prevents jurors from applying human moral standards to a non-human tool [25].

Experimental Protocols & Workflows

Protocol: Simulating Jury Reactions with AI

Objective: To identify the case themes and terminology that will most resonate with a target jury demographic.

Data Input Phase:

- Action: Feed the AI system voluminous case materials, including pleadings, key deposition transcripts, and expert reports.

- Tool Setup: Configure the AI platform with parameters reflecting the relevant jurisdictional and demographic profiles.

Analysis & Theme Generation Phase:

- Action: Use the AI's natural language processing capabilities to analyze the documents.

- Output: The AI will suggest recurring language, emotional tones, factual patterns, and potential narrative arcs based on the ingested data [22].

Simulation & Refinement Phase:

- Action: Test the identified themes and specific arguments against the AI's simulated jury models.

- Iteration: Refine the messaging based on the AI's feedback regarding resonance and comprehension. Use these insights to focus traditional mock trial exercises more efficiently [22].

The workflow for this protocol is as follows:

Protocol: Creating Court-Ready AI-Generated Visuals

Objective: To create accurate, admissible visual aids that simplify complex evidence while complying with emerging court regulations.

Data Processing & Visualization:

- Action: Use AI-powered tools to automatically process case files, medical records, or financial data.

- Output: Generate precise, data-backed visuals such as timelines, graphs, and 3D accident reconstructions [26].

Disclosure and Transparency Check:

- Action: Adhere to any local court rules requiring the disclosure of AI-generated exhibits. Be prepared to explain the tool's methodology and data sources [24].

Verification and Validation:

The workflow for this protocol is as follows:

Data Presentation

Table 1: Comparison of Public vs. Professional-Grade AI Tools for Legal Work

| Feature | Public AI Tools (e.g., ChatGPT) | Professional-Grade Legal AI |

|---|---|---|

| Training Data | Unvetted, web-scraped internet content [23] | Curated, authoritative legal databases (e.g., Westlaw) [23] |

| Accuracy in Legal Research | 60-70% [23] | 95%+ [23] |

| Source Verification | Difficult or impossible; high risk of hallucinated citations [23] | Direct traceability to authoritative sources with citator support [23] |

| Editorial Oversight | None [23] | Maintained by legal experts and attorney editors [23] |

| Suitability for Legal Research | Not recommended; high ethical and professional risk [23] | Recommended; built for professional standards and accountability [23] |
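
A short calculation shows why the accuracy gap in the table matters more than it first appears. The sketch below rests on two assumptions that reference [23] does not state: that the reported accuracy applies to each citation individually, and that errors are independent. Under those assumptions, the chance that a brief with several citations contains no error at all falls quickly.

```python
def prob_all_correct(per_citation_accuracy, n_citations):
    """Probability that every citation is correct, assuming independence."""
    return per_citation_accuracy ** n_citations


def expected_errors(per_citation_accuracy, n_citations):
    """Expected number of incorrect citations among n_citations."""
    return n_citations * (1 - per_citation_accuracy)


if __name__ == "__main__":
    n = 10  # hypothetical brief with ten citations
    for label, accuracy in [("public, 70%", 0.70), ("professional, 95%", 0.95)]:
        print(f"{label}: P(all correct) = {prob_all_correct(accuracy, n):.3f}, "
              f"expected errors = {expected_errors(accuracy, n):.1f}")
```

Even at 95% per-citation accuracy, a ten-citation brief has roughly a 40% chance of containing at least one error under these assumptions, which is why manual verification of every citation remains necessary with professional-grade tools.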

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential AI and Support Tools for Jury Research and Communication

| Item | Function |

|---|---|

| Professional-Grade Legal AI | An AI platform integrated with validated legal databases. Its function is to provide high-accuracy legal research and avoid the risks of fabricated citations [23]. |

| Narrative Analysis AI | A tool that uses natural language processing to analyze case documents and suggest resonant themes and story arcs based on language patterns and emotional tone [22]. |

| Jury Simulation Software | Software that models demographic regions and simulates how different jury pools might react to arguments, helping to refine case themes before trial [27] [22]. |

| AI-Powered Visualization Tool | Software that automates the creation of data-driven visuals, timelines, and 3D reconstructions from case evidence, enhancing juror comprehension [26]. |

| Cognitive Load Management Framework | A communication strategy (not a software tool) involving "chunking" and metaphorical narratives. Its function is to structure complex information for optimal juror understanding and retention [25]. |

Troubleshooting Guides

Guide 1: Troubleshooting Jury Comprehension of Complex Clinical Data

| Problem Area | Specific Issue | Potential Solution | Key Considerations |

|---|---|---|---|

| Data Overload | Presenting too many data points or statistics at once, overwhelming the jury. | Use data visualization to highlight only the most critical 2-3 findings. Rely on charts and graphs instead of dense tables [28]. | Jurors view evidence as more reliable when they can see visualizations of the presented data [29]. |

| Technical Jargon | Use of complex scientific or medical terminology that is unfamiliar to laypersons. | Replace acronyms and technical codes with plain English explanations. Use anatomical illustrations to show injuries or procedures [28]. | A well-placed visual can anchor your case theory in the juror’s mind far more effectively than words alone [28]. |

| Chronology | Difficulty in conveying the sequence of events, such as a delayed diagnosis or treatment. | Create a clean, visual timeline that highlights key events, missed assessments, and escalation points [28]. | Jurors need to see how events unfolded over time; visual timelines help them understand the story [28]. |

| Causation | Challenges in demonstrating a direct link between an action (or inaction) and a clinical outcome. | Use before-and-after comparisons (e.g., imaging scans) and graphs of lab results to highlight deterioration or missed red flags [28]. | Partner with medical-legal consultants to ensure visuals are clinically accurate and litigation-ready [28]. |
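
Before a timeline exhibit is drawn, the underlying events need to be ordered and the intervals between them measured. The following sketch shows one way to prepare that data: it sorts timestamped events and flags any gap longer than a chosen limit. The four-hour limit and the sample events are invented for illustration, and the choice of threshold is a clinical and legal judgment that the code cannot make.

```python
from datetime import datetime


def build_timeline(events, max_gap_hours):
    """Sort (ISO timestamp, label) pairs and flag long gaps between events."""
    ordered = sorted((datetime.fromisoformat(ts), label) for ts, label in events)
    rows = []
    previous = None
    for when, label in ordered:
        gap = None if previous is None else (when - previous).total_seconds() / 3600
        rows.append({
            "when": when,
            "label": label,
            "gap_hours": gap,
            "flag": gap is not None and gap > max_gap_hours,
        })
        previous = when
    return rows


if __name__ == "__main__":
    # Fictional chart entries, deliberately listed out of order.
    events = [
        ("2024-03-01T17:45:00", "Imaging ordered"),
        ("2024-03-01T08:00:00", "Patient reports chest pain"),
        ("2024-03-01T09:30:00", "Triage assessment"),
    ]
    for row in build_timeline(events, max_gap_hours=4):
        marker = "  <-- gap exceeds limit" if row["flag"] else ""
        print(f'{row["when"]:%H:%M}  {row["label"]}{marker}')
```

The flagged rows are candidates for the escalation points and missed assessments that the visual timeline should highlight. A medical-legal consultant should confirm each one against the source records.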

Guide 2: Troubleshooting Language and Cultural Barriers in Global Clinical Trials

| Problem Area | Specific Issue | Potential Solution | Key Considerations |

|---|---|---|---|

| Patient Recruitment | Over 80% of clinical trials are delayed due to recruitment problems, often stemming from language barriers [30]. | Localize all patient-facing materials. This goes beyond translation to align content with local customs, values, and legal requirements [30]. | Working with diverse patient groups is essential for generalizable results and higher data quality [30]. |

| Informed Consent | Potential participants cannot understand complex informed consent documents, leading to low enrollment [30]. | Provide professional interpretation services and translate consent forms into the patient's native language [30]. | Patients feel included and are more motivated to participate when documents are in their language, increasing trial success rates [30]. |

| Data Quality & Regulation | Risk of lost context or inaccurate data from poorly translated materials, violating regulatory standards. | Implement back translation for quality assurance and work with certified translators who have subject matter expertise [30]. | Regulatory bodies like the FDA require accurate translation. Using certified experts ensures documents hold the same legal value as the originals [30]. |
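
The back-translation check in the last row can be supported by a simple triage script that compares each source segment with its back-translated counterpart and queues low-similarity pairs for human review. This is a rough sketch: it assumes character-level similarity from Python's standard `difflib.SequenceMatcher` and an arbitrary 0.8 threshold, neither of which comes from reference [30]. Lexical similarity cannot judge meaning, so the script only prioritizes reviewer attention and does not replace a certified translator.

```python
from difflib import SequenceMatcher


def normalise(text):
    """Lower-case and collapse whitespace so trivial differences are ignored."""
    return " ".join(text.lower().split())


def review_queue(source_segments, back_translated_segments, threshold=0.8):
    """Return (index, similarity) for segment pairs below the threshold."""
    if len(source_segments) != len(back_translated_segments):
        raise ValueError("segment lists must be aligned one-to-one")
    queue = []
    for i, (src, back) in enumerate(zip(source_segments, back_translated_segments)):
        score = SequenceMatcher(None, normalise(src), normalise(back)).ratio()
        if score < threshold:
            queue.append((i, round(score, 2)))
    return queue


if __name__ == "__main__":
    # Invented consent-form sentences for illustration.
    source = [
        "Take one tablet twice daily with food.",
        "You may withdraw from the study at any time.",
    ]
    back = [
        "Take one tablet twice daily with food.",
        "You must stay in the study until the end.",
    ]
    for index, score in review_queue(source, back):
        print(f"Segment {index} needs review (similarity {score})")
```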

Frequently Asked Questions (FAQs)

Q: Why are visuals like timelines and charts so critical in a medical malpractice trial? Medical records are dense with jargon and long narratives. Visuals translate this complex data into a story that helps jurors see what happened, when it happened, and why it matters. Jurors view visualized evidence as more reliable and find it easier to follow than dry legal terminology [29] [28].

Q: What is the most common mistake when creating medical visuals for court? A common mistake is overloading a graphic with too much detail, which can confuse jurors instead of clarifying the point. Other errors include using medical jargon and failing to have the visuals reviewed by a medical professional for clinical accuracy [28].

Q: Our clinical trial is global. Are we legally required to provide translated materials? In the United States, yes: there is a legal foundation for language access. Title VI of the Civil Rights Act of 1964 prohibits discrimination based on national origin in federally funded programs, and this has been interpreted to include language access. Beyond the legal requirement, providing translated materials is crucial for both ethical recruitment and data quality in global trials [31] [30].

Q: What is "back translation" and why is it important? Back translation is a quality control process where a third-party translator who has not seen the original document translates the translated version back into the original language. This helps check for disparities and ensures the translated content accurately reflects the original, which is vital for regulatory compliance and patient safety [30].

Q: How can we quickly improve our data visuals for a mediation or deposition? Focus on creating one key visual per central issue. Ensure each graphic is clear, accurate, relevant, and concise. Use a visual timeline to show a sequence of care or an anatomical illustration to show an injury. Even outside a trial, these visuals can simplify complex arguments and challenge inconsistent testimony [28].

Data Presentation: Best Practices for Legal Settings

The table below summarizes key principles for presenting clinical data to judges and juries, synthesized from litigation experts.

| Principle | Application in Legal Context | Rationale |

|---|---|---|

| Simplify to Clarify | Convert complex records into clean, jury-friendly visuals focused on one key point per graphic [28]. | Prevents information overload and helps laypersons grasp the core medical facts of the case. |

| Tell a Story | Use visual timelines to illustrate the chronology of care, highlighting delays, errors, or escalation points [28]. | Jurors' decisions are shaped by emotion as well as evidence, and storytelling is among the most effective ways to engage them [29]. |

| Ensure Accuracy | All visuals must be clinically and factually correct, backed by documentation and expert analysis [28]. | Inaccurate visuals can be challenged and excluded, damaging the credibility of your entire case. |

| Use Intuitive Colors | When choosing colors for data visualization, consider their cultural meaning (e.g., red for attention, green for good). Use light colors for low values and dark colors for high values in gradients [32]. | Intuitive color choices help readers correctly interpret the data without needing extensive explanation. |

Experimental Protocol: The Visual Translation Workflow

The following diagram outlines a recommended workflow for transforming raw clinical data into a court-ready visual exhibit.

| Tool or Resource | Function in Legal Translation | Example/Application |

|---|---|---|

| Medical-Legal Consultant | Bridges the gap between raw medical records and visually presentable information; ensures clinical accuracy [28]. | A legal nurse consultant (LNC) can prepare customized timelines and flag where the standard of care was breached. |

| Certified Medical Interpreter | Provides accurate oral interpretation in healthcare settings, a legal requirement for meaningful access [31]. | Used for patient interviews, witness preparation, and explaining complex medical terms in depositions. |

| Certified Translation Service | Provides accurate translation of written documents, ensuring they hold the same legal value as the original [30]. | Essential for translating clinical trial protocols, informed consents, and non-English medical records. |

| Data Visualization Software | Creates compelling charts, graphs, and timelines that make complex information digestible for juries [29] [28]. | Used to generate visuals for timelines of care, lab result trends, and anatomical illustrations. |

| Color Palette Tool | Ensures chosen colors for data visuals have sufficient contrast and are intuitive for the audience [32]. | Applying a palette like Google's (#4285F4, #EA4335, #FBBC05, #34A853) while checking contrast ratios. |
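
The contrast check mentioned in the last row can be made concrete. The sketch below computes the contrast ratio as defined in the WCAG 2.x guidelines (relative luminance of each color, then (L_lighter + 0.05) / (L_darker + 0.05)) and applies it to the palette colors listed above against a white background. Using WCAG as the benchmark is an assumption of this example, since reference [32] does not specify a contrast standard.

```python
def _linear_channel(c8):
    """Convert an 8-bit sRGB channel to linear light (WCAG 2.x formula)."""
    c = c8 / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_color):
    """Relative luminance of a '#RRGGBB' color."""
    h = hex_color.lstrip("#")
    r, g, b = (int(h[i:i + 2], 16) for i in (0, 2, 4))
    return (0.2126 * _linear_channel(r)
            + 0.7152 * _linear_channel(g)
            + 0.0722 * _linear_channel(b))


def contrast_ratio(color_a, color_b):
    """WCAG contrast ratio between two colors, from 1.0 up to 21.0."""
    la, lb = relative_luminance(color_a), relative_luminance(color_b)
    lighter, darker = max(la, lb), min(la, lb)
    return (lighter + 0.05) / (darker + 0.05)


if __name__ == "__main__":
    background = "#FFFFFF"
    for color in ["#4285F4", "#EA4335", "#FBBC05", "#34A853"]:
        print(f"{color} on white: {contrast_ratio(color, background):.2f}:1")
```

WCAG asks for at least 4.5:1 for normal-size text and 3:1 for large text and graphical elements. Running the palette through this check shows that a color that works as a bar fill may still be unsuitable for thin lines or labels on a white exhibit.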

Technical Support Center

This support center provides troubleshooting guides and FAQs for researchers and legal professionals using the Nordstrom Method for AI-assisted witness preparation. This methodology is framed within the broader research on the challenges of communicating complex evidence, like Likelihood Ratios (LR), to juries, a domain where studies show jurors often struggle to interpret quantitative testimony as experts intend [33].
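
To make the comprehension problem concrete, the sketch below shows the arithmetic a juror is implicitly asked to perform when an expert reports a likelihood ratio: posterior odds equal prior odds multiplied by the LR. It also converts a random match probability into a plain "1 in N" frequency statement. The function names and the numeric inputs are illustrative assumptions and are not drawn from reference [33].

```python
def posterior_probability(prior_probability, likelihood_ratio):
    """Update a prior probability with a likelihood ratio (Bayes, odds form)."""
    if not 0 < prior_probability < 1:
        raise ValueError("prior_probability must be strictly between 0 and 1")
    prior_odds = prior_probability / (1 - prior_probability)
    posterior_odds = prior_odds * likelihood_ratio
    return posterior_odds / (1 + posterior_odds)


def direction_of_evidence(likelihood_ratio):
    """State in words which proposition the evidence supports."""
    if likelihood_ratio > 1:
        return "supports the first proposition over the second"
    if likelihood_ratio < 1:
        return "supports the second proposition over the first"
    return "does not discriminate between the propositions"


def frequency_statement(random_match_probability):
    """Express a random match probability as a '1 in N' statement."""
    one_in = round(1 / random_match_probability)
    return f"about 1 in {one_in:,} unrelated people would be expected to match by chance"


if __name__ == "__main__":
    # Hypothetical values: a 1% prior and an LR of 1,000.
    print(f"{posterior_probability(0.01, 1000):.3f}")
    print(direction_of_evidence(1000))
    print(frequency_statement(0.0001))
```

The example illustrates why the same LR can lead to very different conclusions depending on the prior. This is one reason an expert should state the direction and strength of the evidence explicitly rather than leave the computation to jurors.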

Frequently Asked Questions (FAQs)

Q: What is the core principle of the Nordstrom Method for witness preparation? A: The Nordstrom Method is a three-stage process that uses voice-to-text technology and AI analysis to refine witness statements. It is designed to improve the quality of testimony for all witnesses, ensuring it is accurate, credible, and truthful, while adhering to ethical boundaries [34].

Q: My witness is anxious about the testimony process. How can this method help? A: A key goal of witness preparation is to educate witnesses about the testimony process and enhance their communication skills. By using realistic practice sessions and providing feedback on non-verbal cues, the method helps witnesses manage anxiety, build confidence, and remain composed under pressure [34] [35].

Q: The research mentions jurors often misunderstand statistical evidence. How can better witness preparation address this? A: Studies indicate that laypeople frequently confound statistical measures like Random Match Probability (RMP) and struggle with the necessary mathematical computations [33]. A well-prepared expert witness, trained through this method, can use clearer language, explain the direction of the evidence explicitly, and employ visual aids or simplified frequency statements to improve juror comprehension [33].

Q: I'm concerned about the ethical line between preparation and coaching. How does this method ensure compliance? A: The method is grounded in the duty to provide competent representation as defined by bodies like the American Bar Association. It focuses on fostering truthful testimony by having witnesses provide their own initial statements without attorney interference. The subsequent AI analysis and attorney feedback aim to clarify and enhance the communication of this truthful account, not to script or influence its substance [34].

Q: What are the most common technical issues when recording the initial witness statement? A: Common issues and their solutions are summarized in the table below.

| Problem | Possible Cause | Solution |

|---|---|---|

| Software/App won't run or record. | Compatibility issues, corrupted files, or missing dependencies [36]. | Check software compatibility with your operating system, restart the application, reinstall the program, or update to the latest version [36]. |

| Poor audio quality. | Low-quality microphone, background noise, or incorrect device settings. | Use a high-quality, dedicated recording device (e.g., PLAUD recorder or smartphone). Test equipment in the actual environment beforehand and ensure the selected microphone is the input device in your software settings [34]. |

| Voice-to-text transcription is inaccurate. | Unclear speech, background noise, or poor software performance. | Ensure the witness speaks clearly and at a moderate pace. Use an external microphone in a quiet, distraction-free environment. Consider using specialized, high-accuracy transcription software [34]. |

| Computer is running slowly during other prep tasks. | Too many programs running, low disk space, or malware [36]. | Close unnecessary applications and browser tabs. Free up disk space by deleting temporary files. Run an antivirus or anti-malware scan [36]. |

Experimental Protocols & Workflows

Protocol 1: Initial Witness Statement Recording

Objective: To capture a clear, foundational statement from the witness in their own words.

Materials: Voice-to-text device (e.g., iPhone 16 Pro, PLAUD recorder), list of key questions, distraction-free conference room [34].

Methodology:

- Direct the witness to a distraction-free environment.

- The attorney provides a series of key questions addressing the fundamental aspects of the case (who, what, why, when, where) and other case-specific topics.

- The witness records their responses using the voice-to-text device. The statement should thoroughly address all relevant topics, including:

  - Date, time, and environmental conditions.

  - Description of physical evidence.

  - Recollection of conversations and actions.

  - Ongoing implications (medical, emotional, financial).

  - Impact on personal relationships [34].

- The witness is instructed to provide clear, truthful, and factual statements without exaggeration. This session typically lasts less than an hour [34].

Protocol 2: AI Analysis of Recorded Statement

Objective: To use AI tools to identify areas for improvement in the witness's initial statement.

Materials: Transcript of the witness's recorded statement, AI analysis software [34].

Methodology:

- The recorded statement is processed by AI algorithms.

- The AI performs several key functions:

  - Keyword Analysis: Generates a word cloud to visualize key concepts and word frequency [34].

  - Emotional Tone Analysis: Uses sentiment analysis to evaluate the emotional tone of responses (e.g., hesitation, frustration) [34].

  - Content Optimization: Suggests ways to clarify or enhance responses for conciseness and impact [34].
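The keyword analysis step can be approximated with a simple word-frequency count, which is the data a word cloud is drawn from. The following is a minimal sketch, not the method used by any particular AI analysis product: the stop-word list, the function name `keyword_frequencies`, and the sample transcript are all illustrative assumptions.

```python
import re
from collections import Counter

# Hypothetical, deliberately short stop-word list; a real analysis would use a fuller one.
STOP_WORDS = {"the", "a", "an", "and", "or", "of", "to", "in", "on", "at",
              "i", "it", "was", "is", "that", "my", "we"}


def keyword_frequencies(transcript: str, top_n: int = 5) -> list[tuple[str, int]]:
    """Return the top_n most frequent non-stop-words in a transcript."""
    # Lowercase the text and keep only alphabetic tokens (apostrophes allowed).
    words = re.findall(r"[a-z']+", transcript.lower())
    counts = Counter(w for w in words if w not in STOP_WORDS)
    return counts.most_common(top_n)


# Invented sample text, for illustration only.
sample = ("The dose was changed on Monday. I reported the dose change, "
          "and the dose log was updated.")
print(keyword_frequencies(sample, top_n=3))
```

A frequency table like this only shows which terms dominate the statement. Whether a dominant term helps or hurts the testimony remains a judgment for the attorney and the witness.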

Research Reagent Solutions

The following table details key tools and materials used in the Nordstrom Method.

| Item | Function |
|---|---|
| Voice-to-Text Device (e.g., iPhone 16 Pro, PLAUD recorder) | Captures the witness's initial statement accurately and converts it into a text transcript for subsequent analysis [34]. |
| AI Analysis Software | Provides objective, data-driven insights into the witness's statement by analyzing word choice, emotional tone, and content structure [34]. |
| Litigation Management Software (e.g., CARET Legal) | Centralizes case materials (depositions, pleadings, exhibits), schedules preparation sessions, and supports coordinated team strategy, ensuring nothing is overlooked [35]. |
| Video Recording Equipment | Records mock examination sessions for later review with the witness to identify and correct unhelpful non-verbal communication habits [35]. |

Workflow Visualization

Figure: Witness Preparation 2.0 Workflow. Logical workflow of the Nordstrom Method for AI-assisted witness preparation.

Figure: Bridging the Testimony Comprehension Gap. The communication challenge between expert testimony and juror comprehension, and how technology-aided preparation can bridge the gap.

Modern juror research has been transformed by Legal AI tools, which use machine learning and natural language processing to provide data-driven insights for case strategy [37]. These tools help legal teams analyze large volumes of case data, predict juror behavior, and forecast case outcomes, moving beyond traditional reliance on intuition alone [37]. This guide details the protocols for using these technologies ethically and effectively, ensuring your research is both insightful and compliant with professional standards.

Troubleshooting Guides

Issue 1: Inconclusive or Contradictory Juror Profile Data

- Problem: The AI juror profiling tool returns profiles that are weak, contradictory, or lack clear predictive value for case strategy.

- Diagnosis: This often results from low-quality or insufficient input data, or a misalignment between the tool's analysis and your specific case themes.

- Resolution:

  - Verify Input Data: Ensure juror questionnaires and social media data feeds are complete and correctly formatted.

  - Refine Case Parameters: Re-calibrate the AI tool by re-inputting your core case story and key themes with greater specificity.

  - Run a Simulation: Use the platform's Jury Simulator feature with the updated profiles to test for stronger correlations between juror backgrounds and case outcome predictions [37].

  - Consult an Expert: If results remain inconclusive, engage an in-trial consultant provided by the platform for deeper analysis [37].

Issue 2: Potential Bias in AI-Generated Voir Dire Questions

- Problem: The AI tool suggests voir dire questions that could be perceived as discriminatory or that risk violating legal standards against bias based on race, sex, or religion.

- Diagnosis: The algorithm may be basing its suggestions on correlative data points that align with protected characteristics.

- Resolution:

  - Conduct Due Diligence: Critically evaluate every AI-generated question. Understand the factors the tool used to generate its recommendations [37].

  - Apply the "Unlawful Bias" Filter: Manually review all questions to ensure they do not perpetuate unlawful biases, in line with ABA guidelines [37].

  - Document Rationale: Keep a clear record of the neutral, case-related reason for asking each approved question to demonstrate adherence to ethical standards [37].
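The review-and-document steps above can be supported by a small screening aid. The following Python sketch is illustrative only: the term list, the `QuestionReview` record, and the `screen_question` and `approve` helpers are hypothetical names, not part of any Legal AI platform, and a keyword match is only a prompt for attorney review, not a legal determination.

```python
import re
from dataclasses import dataclass

# Illustrative, non-exhaustive term list (an assumption, not a legal standard).
PROTECTED_TERMS = {"race", "ethnicity", "sex", "gender", "religion", "national origin"}


@dataclass
class QuestionReview:
    question: str
    flagged_terms: list[str]
    rationale: str = ""     # neutral, case-related reason supplied by the attorney
    approved: bool = False


def screen_question(question: str) -> QuestionReview:
    """Flag whole-word mentions of protected characteristics for manual review."""
    lowered = question.lower()
    hits = sorted(
        term for term in PROTECTED_TERMS
        if re.search(rf"\b{re.escape(term)}\b", lowered)
    )
    return QuestionReview(question=question, flagged_terms=hits)


def approve(review: QuestionReview, rationale: str) -> QuestionReview:
    """Record approval only when a written rationale accompanies it."""
    if not rationale.strip():
        raise ValueError("A neutral, case-related rationale is required.")
    review.rationale = rationale
    review.approved = True
    return review


# Invented example questions, for illustration only.
flagged = screen_question("What is your religion?")
print(flagged.flagged_terms)

cleared = approve(
    screen_question("Have you ever taken a drug that was later recalled?"),
    "Probes personal experience with product recalls relevant to the case theme.",
)
print(cleared.approved, cleared.rationale)
```

Requiring a rationale before approval keeps the documentation step from being skipped. A question with no flagged terms can still be problematic, so the screen narrows attention and does not replace the manual review.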

Issue 3: Difficulty Integrating AI Insights with Traditional Case Strategy

- Problem: The data-driven insights from the AI platform conflict with the legal team's experience-based strategy, causing confusion.

- Diagnosis: A disconnect between quantitative data and qualitative legal expertise.

- Resolution:

  - Use AI as a Complement: Frame AI tools as a source of data-backed insights that complement, not replace, legal expertise [37].

  - Seek Synergy: Use virtual focus groups to test both the traditional strategy and the AI-suggested strategy, comparing the outcomes to find the most effective hybrid approach [37].

  - Facilitate a Team Discussion: Use the conflicting data points to drive a strategic conversation, exploring why the AI might be suggesting a different path and whether it reveals a blind spot in the initial strategy.

Frequently Asked Questions (FAQs)

What are the core capabilities of Legal AI in juror research?

Legal AI tools enhance jury selection and case preparation through several key features [37]:

- Predictive Analytics: Forecasts case outcomes based on historical data and trends from similar cases.

- Juror Profiling: Builds data-driven profiles using demographics, behaviors, and potential biases.

- Bias Detection: Identifies both explicit and implicit biases in potential jurors by analyzing responses and behavioral patterns.

- Virtual Focus Groups & Simulations: Simulates jury reactions to case arguments, enabling strategy refinement before trial.

How can we ensure our use of juror profiling AI is ethical?

Adhering to ethical guidelines is critical. Follow these best practices [37]:

- Understand the Tool: Conduct sufficient due diligence to acquire a general understanding of the methodology used by the AI program.

- Maintain Human Oversight: Never accept AI recommendations at face value. An attorney must critically evaluate all suggestions.

- Avoid Unlawful Bias: Ensure that peremptory challenges are not based on protected characteristics. AI should not be used to perpetuate unlawful discrimination.

- Invest in Training: Enhance your team's understanding of AI technologies to use them effectively and recognize their limitations.

- Document Decisions: Keep detailed records of AI-influenced decisions to demonstrate adherence to ethical guidelines.

Our firm is new to this technology. What is the best way to start?

For firms new to the technology, particularly smaller ones, a structured approach is key to successful integration [37]:

- Start with a Pilot Project: Choose a single, well-defined case to test the AI tools.

- Select an Appropriate Service Tier: Many providers offer basic consulting tiers tailored for smaller firms or budgets, which can include consultation and voir dire preparation without a full-scale commitment [37].

- Focus on a Single Feature: Begin by using one core feature, such as Juror Scoring, which combines demographic and psychographic data into a single dashboard for quick insights [37].

- Review and Iterate: After the case, evaluate the tool's impact on your strategy and outcomes to decide on future use.

Experimental Protocols & Data Presentation

Table 1: Legal AI Tool Feature Comparison for Experimental Design

| AI Tool Feature | Experimental Input | Measurable Output / Data Point | Primary Function in Research |
|---|---|---|---|
| Jury Simulator | Case facts, arguments, juror profiles. | Simulated trial outcomes (e.g., 70% verdict for plaintiff). | Models how different juror compositions react to case elements [37]. |
| Juror Scoring | Demographic data, questionnaire responses, social media activity. | Numerical bias score (e.g., 0-100 scale). | Quantifies juror predispositions for systematic comparison [37]. |
| Virtual Focus Groups | Presentation materials, witness statements. | Qualitative feedback, poll results on key issues. | Tests argument resonance and identifies potential weaknesses [37]. |
| Bias Detection Algorithm | Voir dire question responses, language use. | Flagged implicit/explicit biases (e.g., "high skepticism toward corporate defendants"). | Identifies non-obvious biases that may influence juror decision-making [37]. |

Table 2: Essential Research Reagent Solutions for Digital Juror Analysis

| Research Reagent / Tool | Function & Explanation in the Juror Research Process |
|---|---|
| Machine Learning Platform | The core engine that processes vast datasets to identify patterns and predict behaviors [37]. |
| Venue-Specific Historical Data | A curated database of past juror behavior and case outcomes in a specific legal venue, used to train AI models for greater local accuracy [37]. |
| Juror Questionnaire Data | Structured input data (demographics, attitudes) that serves as the primary feedstock for generating initial juror profiles. |
| Social Media Analysis Tool | A software component that scans juror digital footprints to uncover biases, affiliations, and lifestyle factors not revealed in court [37]. |

Workflow: Ethical AI-Assisted Juror Research Protocol

Process: Strategic Response to AI-Generated Insights

Overcoming Common Pitfalls: Navigating Ethical Boundaries and Technical Hurdles

Social Media and Juror Research FAQs

Is it ethical to review jurors’ social media accounts during jury selection?

Yes, provided the review is passive and limited to publicly available content. The American Bar Association (ABA) has issued guidance stating that this practice is ethical as long as attorneys and their teams do not communicate with or "connect" with potential jurors through friend requests, follows, or messages [38] [39]. Passive review of this kind is considered a standard part of a lawyer's duty to engage in zealous advocacy for their client.

What are the primary ethical risks and how can they be avoided?

The main ethical risks involve improper communication with jurors and the use of discriminatory reasoning for juror strikes.

- No Contact Rule: You must never attempt to connect with or "friend" a potential juror to access non-public information [38] [39]. Research must be strictly observational.

- Non-Discrimination in Strikes: Even with social media information, you cannot use peremptory challenges to strike jurors based on race, sex, religion, national origin, or other protected classes. Violating this can lead to sanctions under rules of professional conduct, such as Maryland's Rule 19-308.4(e) [40]. You must always be prepared to articulate a non-discriminatory, case-related reason for the strike [40].

What should I do if I find a post that reveals juror bias, but it is later deleted?

Social media content is dynamic and can be deleted or edited. If you find relevant information, you must preserve it immediately [39]. Reliable tools for this should:

- Capture content in real-time with a timestamp.

- Generate a forensic hash to verify the integrity of the evidence.

- Save the data in an exportable format suitable for court [39].

Without proper preservation, you may be unable to prove the post existed, making it unusable to support a challenge for cause.
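The three preservation requirements listed above (timestamp, integrity hash, exportable format) can be illustrated with Python's standard library. This is a minimal sketch under stated assumptions: the function names, the record fields, and the `example.com` URL are hypothetical, and a dedicated forensic capture tool would likely record more context (full page rendering, metadata, chain of custody) than is shown here.

```python
import hashlib
import json
from datetime import datetime, timezone


def preserve_capture(content: bytes, source_url: str) -> dict:
    """Build a preservation record: UTC timestamp plus SHA-256 hash of the captured bytes."""
    return {
        "source_url": source_url,
        "captured_at_utc": datetime.now(timezone.utc).isoformat(),
        "sha256": hashlib.sha256(content).hexdigest(),
        "size_bytes": len(content),
    }


def verify_capture(content: bytes, record: dict) -> bool:
    """Re-hash the stored bytes and compare with the hash recorded at capture time."""
    return hashlib.sha256(content).hexdigest() == record["sha256"]


# Invented post content and placeholder URL, for illustration only.
captured = "I already know how I'd vote in any drug company case.".encode("utf-8")
record = preserve_capture(captured, "https://example.com/post/123")

# JSON is one exportable format; the record can be stored alongside the capture file.
print(json.dumps(record, indent=2))
print(verify_capture(captured, record))         # unchanged bytes match the record
print(verify_capture(captured + b"!", record))  # any alteration changes the hash
```

The hash shows only that the stored bytes have not changed since the record was made. It does not by itself establish who captured the content or when, which is why the timestamp and the capture process also need to be documented.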

Can social media findings be used to strike a juror for cause?

Yes. If social media research reveals clear bias or a failure to be candid during voir dire, it can form the basis for a strike for cause [39]. For example, if a juror states they have never been involved in litigation but their social media history reveals a previous lawsuit they filed, this discrepancy can be grounds for removal [39].

Experimental Protocol: A Methodological Workflow for Social Media Juror Research

The following diagram illustrates a systematic workflow for conducting ethical and effective social media research on potential jurors. This protocol helps ensure compliance with professional standards while maximizing the value of the data collected.

Detailed Methodology for Social Media Vetting

- Begin Upon Receiving the Juror List: Start research as early as possible. Early initiation provides more time to validate identities, analyze content, and integrate findings into your overall voir dire strategy [39].

- Conduct Publicly Available Research Only: Limit all investigations to content that is publicly viewable. Do not send friend requests, follow accounts, or use any other method to access private information [39]. This is a critical ethical boundary.

- Validate Juror Identity: Before relying on any information, confirm the social media profile belongs to the correct individual. Cross-reference details like photos, location, employment history, or mutual connections to avoid misidentification, which can lead to flawed decisions [39].

- Preserve Evidence Promptly: As soon as you find a relevant post, comment, or image, capture it using a tool that generates a timestamp and a forensic hash. This preserves the evidence for potential use in court, even if the original post is later deleted [39].

- Analyze for Bias and Inconsistency: Systematically review the preserved data for:

  - Strongly Held Beliefs: Political views, opinions on the justice system, or attitudes related to case themes.

  - Prior Legal Experience: Undisclosed history as a party in a lawsuit or connection to law enforcement.

  - Inconsistencies: Conflicts between their online presence and their answers in the jury questionnaire or during oral voir dire [38] [39].

- Use Findings Strategically in Voir Dire: The goal is to inform your jury selection strategy. Use the insights to:

  - Formulate targeted voir dire questions to probe specific attitudes.

  - Assess the honesty of a juror's questionnaire responses.

  - Build a foundation for strikes for cause.

  - Guide the more strategic use of peremptory challenges [39].