Navigating Budget Constraints in Forensic Technology: Strategic Implementation and TRL Scaling for Researchers

Abstract

This article addresses the critical challenge of implementing advanced forensic technologies amid significant budget constraints, providing researchers and forensic professionals with strategic frameworks for Technology Readiness Level (TRL) scaling. Drawing on current market analysis and sustainable forensic frameworks, we explore cost-effective implementation methodologies, ways to troubleshoot common financial barriers, and validation approaches that maintain scientific rigor while optimizing resources. With the global forensic technology market projected to reach $18.025 billion by 2030 yet facing systemic funding crises in many jurisdictions, this guide offers practical solutions for maximizing technological impact within limited budgets, focusing in particular on digital forensics, rapid DNA analysis, and AI integration.

Understanding the Forensic Technology Funding Landscape and Budgetary Pressures

Forensic science is navigating a critical juncture, caught between groundbreaking technological potential and a severe, systemic funding shortfall. This resource crisis directly impacts the capacity of research institutions and forensic laboratories to adopt new technologies, validate methods, and scale innovations from basic research to practical implementation. An analysis of data from UK Research and Innovation (UKRI), the United Kingdom's premier public funding body for science, quantifies this deficit with stark clarity. Between 2009 and 2018, forensic science research secured only £56.1 million from UKRI research councils, representing a mere 0.01% of the total UKRI budget allocated over that decade [1] [2] [3]. This technical support center addresses the specific, practical challenges that researchers and scientists face in this constrained environment, providing troubleshooting guides for experiments hampered by limited resources and offering strategies for navigating the "valley of death" between research and development (R&D) and operational deployment.

Quantitative Analysis of UKRI Forensic Science Funding

The following tables break down the UKRI funding data to reveal the strategic priorities and significant gaps in research investment.

Table 1: Overall UKRI Forensic Science Research Funding (2009-2018)

| Metric | Value |
|---|---|
| Total Number of Projects | 150 |
| Cumulative Project Value | £56.1 million |
| Percentage of Total UKRI Budget | 0.01% |
| Projects with Dedicated Forensic Science Aims | 69 (46.0% of projects) |
| Value of Dedicated Forensic Science Projects | £17.2 million |

Table 2: Funding Distribution by Research Type and Evidence Focus

| Category | Funding Amount | Percentage of Total Forensic Funding | Number of Projects |
|---|---|---|---|
| By Research Type | | | |
| Technological Development | £37.2 million | 69.5% | 91 |
| Foundational Research | £10.7 million | 19.2% | 27 |
| By Evidence Type | | | |
| Digital & Cyber | £14.4 million | 25.7% | 33 |
| DNA & Genetics | £2.9 million | 5.1% | 13 |
| Fingerprints | £0.7 million | 1.3% | 2 |

The data reveals a pronounced imbalance, with a heavy focus on short-term technological outputs over the foundational research required to ensure their robustness and long-term validity [1] [4]. Furthermore, traditional forensic evidence types like fingerprints and DNA have been significantly underfunded compared to emerging areas like digital forensics [2] [3].
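The scale of the evidence-type gap can be checked with a few lines of arithmetic. The sketch below is illustrative only: it uses the rounded amounts from Tables 1 and 2, so recomputed shares may differ slightly from the published percentages, and the function names are hypothetical.

```python
# Amounts in GBP millions, copied from Tables 1 and 2 (rounded as published).
TOTAL_FORENSIC_FUNDING = 56.1

evidence_funding = {
    "Digital & Cyber": 14.4,
    "DNA & Genetics": 2.9,
    "Fingerprints": 0.7,
}


def share_of_total(amount, total=TOTAL_FORENSIC_FUNDING):
    """Return an amount as a percentage of total forensic funding."""
    return 100.0 * amount / total


def funding_ratio(numerator, denominator):
    """Return how many times larger one evidence type's funding is than another's."""
    return evidence_funding[numerator] / evidence_funding[denominator]


for name, amount in evidence_funding.items():
    print(f"{name}: {share_of_total(amount):.1f}% of total forensic funding")

print(f"Digital vs DNA: {funding_ratio('Digital & Cyber', 'DNA & Genetics'):.1f}x")
print(f"Digital vs fingerprints: {funding_ratio('Digital & Cyber', 'Fingerprints'):.1f}x")
```

On these figures, digital and cyber projects received roughly five times the funding of DNA projects and about twenty times that of fingerprint projects.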

Troubleshooting Guides and FAQs for Researchers

This section addresses common operational problems exacerbated by budget constraints and the lack of scalable funding pathways.

FAQ 1: Our research demonstrates a promising new DNA collection method, but we cannot secure funding for the large-scale validation studies required for market adoption. What options exist for bridging this "valley of death"?

Answer: The gap between successful research and commercially viable, court-ready technology is a well-documented consequence of systemic underfunding. Consider these approaches:

- Strategic Stakeholder Engagement: Proactively build a network of forensic practitioners, commercial suppliers, and legal representatives. As demonstrated by the SCAnDi project, hosting workshops and regular meetings ensures technical development remains aligned with end-user needs and can open pathways to alternative funding or pilot study opportunities [5].

- Targeted Funding Calls: Diligently monitor calls from innovation-focused bodies like Innovate UK, which may have specific programs for scaling near-market technologies, unlike pure research councils [6].

- Phased Validation: Design your research project with a clear, modular pathway. Initial proof-of-concept studies can be conducted with research grants, with subsequent phases explicitly designed to gather the data required for commercial and regulatory approval, making the project more attractive to later-stage funders.

FAQ 2: How can we improve the success rate of grant applications for foundational forensic science research, which seems to be chronically undervalued?

Answer: The data confirms that foundational research received less than a fifth (19.2%) of total forensic science funding [1]. To improve success rates:

- Articulate Systemic Value: Frame your proposal to demonstrate how the foundational science addresses a root cause of a known crisis in forensic science (e.g., the reproducibility of evidence), rather than just a symptomatic technological fix. Emphasize the long-term value and cost-saving potential for the entire criminal justice system [4].

- Interdisciplinary Alignment: While advocating for forensic science as a coherent discipline, leverage its interdisciplinary nature. Partner with established departments (e.g., chemistry, biology, computer science) to submit proposals through funding streams with larger budgets, while ensuring the core forensic science question remains central.

- Pilot Data is Key: Even for foundational work, use low-cost preliminary studies or computational modeling to generate pilot data that de-risks the proposal for reviewers.

FAQ 3: Budget cuts are preventing our lab from updating to the latest equipment. How can we maintain research productivity with outdated instrumentation?

Answer: This is a pervasive issue, with laboratories often unable to purchase new equipment due to funding cuts or pauses [7].

- Collaborative Resource Sharing: Establish formal or informal consortia with neighboring university departments or research institutes to access their core facilities and high-end equipment.

- Focus on Data Analysis: Invest in computational skills and infrastructure. Often, more value can be extracted from existing datasets through advanced bioinformatics or AI-driven re-analysis than from generating new data with old equipment [8].

- Open-Source and Modular Tools: Explore the use of open-source hardware and software solutions for specific tasks, which can be more affordable and customizable than commercial black-box systems.

Featured Experimental Protocol: Single-Cell DNA Analysis for Complex Mixtures

This protocol, inspired by the UKRI-funded SCAnDi project, details a methodology for deconvoluting complex DNA mixtures, a common challenge in forensic casework that can be hindered by backlogs and resource limitations [9] [5].

Objective: To isolate and generate DNA profiles from individual cells within a mixed biological sample to attribute DNA to specific donors.

Principle: Combining single-cell isolation techniques with established DNA profiling methods to overcome the limitations of bulk analysis, which loses cell-of-origin information.

Materials and Reagents:

- Laser Capture Microdissection (LCM) system or Imaging Cell Sorter: For precise isolation of individual cells based on morphological characteristics.

- Microfluidic platforms: For automated processing of single cells.

- Lysis Buffer: A specialized buffer to break open individual cells without degrading DNA.

- Whole Genome Amplification (WGA) Kit: For amplifying the minute quantity of DNA from a single cell to a workable amount for profiling.

- STR Profiling Kit or Next-Generation Sequencing (NGS) Library Prep Kit: Depending on the desired downstream analysis (traditional databases or advanced sequencing).

- Artificial Mixture Cells: Cultured cells from known donors to validate the protocol.

Procedure:

- Sample Preparation: Create an artificial mixture of epithelial cells from two or more donors. Suspend in an appropriate buffer.

- Single-Cell Isolation:

  - Option A (LCM): Smear the cell mixture on a specialized membrane slide. Use the LCM system to visually identify and isolate single cells by cutting and capturing them into a microfuge tube cap.

  - Option B (Imaging Cell Sorter): Use a cell sorter that incorporates imaging to select and deposit single cells into a multi-well plate based on size, shape, or fluorescent markers.

- Cell Lysis and DNA Release: Add a small volume of lysis buffer to each isolated cell. Incubate to release genomic DNA.

- Whole Genome Amplification: Transfer the lysate to a microfluidic device or tube for WGA. Perform amplification according to the kit protocol to generate sufficient DNA for analysis.

- DNA Profiling:

  - STR Pathway: Use a portion of the WGA product for PCR with a standard STR multiplex kit. Analyze the fragments on a capillary electrophoresis instrument.

  - NGS Pathway: Prepare a sequencing library from the WGA product. Sequence on a suitable NGS platform and analyze the data for STRs and single nucleotide polymorphisms (SNPs).

- Data Analysis: Compare the single-cell DNA profiles to reference samples from the donors. Successful deconvolution is indicated by single-source profiles obtained from individual cells within the mixture.
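The comparison in the final step can be sketched as a simple set-inclusion check. This is a deliberately simplified illustration, not the SCAnDi project's method: the profile layout, function names, and allele values are hypothetical, and real casework would normally use validated probabilistic interpretation rather than exact matching. The check tolerates missing alleles, since allelic dropout is expected in single-cell data.

```python
from typing import Dict, Optional, Set

Profile = Dict[str, Set[str]]  # locus name -> alleles observed at that locus


def is_consistent(cell: Profile, donor: Profile) -> bool:
    """True if every allele seen in the cell is present in the donor reference.

    Loci or alleles missing from the cell are tolerated (possible dropout).
    """
    return all(alleles <= donor.get(locus, set()) for locus, alleles in cell.items())


def attribute_cell(cell: Profile, donors: Dict[str, Profile]) -> Optional[str]:
    """Return the only donor consistent with the cell, or None if zero or several fit."""
    matches = [name for name, ref in donors.items() if is_consistent(cell, ref)]
    return matches[0] if len(matches) == 1 else None


# Hypothetical reference profiles and single-cell results (illustrative values only).
donors = {
    "donor_A": {"D3S1358": {"15", "16"}, "vWA": {"17", "18"}, "FGA": {"21", "24"}},
    "donor_B": {"D3S1358": {"14", "15"}, "vWA": {"16", "17"}, "FGA": {"22", "23"}},
}
cells = {
    "cell_1": {"D3S1358": {"15", "16"}, "vWA": {"17"}, "FGA": {"21", "24"}},  # dropout at vWA
    "cell_2": {"D3S1358": {"14", "15"}, "vWA": {"16", "17"}, "FGA": {"22", "23"}},
    "cell_3": {"D3S1358": {"15"}, "vWA": {"17"}},  # shared alleles only: ambiguous
    "cell_4": {"D3S1358": {"14", "16"}, "vWA": {"17", "18"}},  # fits neither donor
}

for cell_id, profile in cells.items():
    print(cell_id, "->", attribute_cell(profile, donors))
```

Returning `None` for both ambiguous and unexplained cells keeps the sketch conservative: such cells would be flagged for review (for example as a possible doublet or contamination) rather than forced onto a donor.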

Troubleshooting:

- Low DNA Yield after WGA: Optimize lysis conditions and ensure the WGA reaction is performed on a clean, concentrated single cell. Include positive and negative controls.

- Allelic Dropout (STRs): A common issue in single-cell analysis. Consider using a dedicated, validated single-cell STR assay or switch to an NGS approach, which can be more tolerant of imbalanced amplification.

- Contamination: Implement rigorous cleaning protocols and use UV irradiation in workstations to destroy ambient DNA. Include negative controls at the single-cell isolation step.

Workflow and Systemic Analysis Diagrams

The following diagrams visualize the experimental protocol and the broader systemic challenges.

Diagram 1: Single-Cell DNA Analysis Workflow

Diagram 2: Systemic Impact of Forensic Science Underfunding

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Advanced Forensic DNA Analysis

| Reagent / Solution | Function | Key Considerations for Budget Constraints |
|---|---|---|
| Magnetic Bead-Based DNA Extraction Kits | Selective binding and purification of DNA from complex samples. | Prefer automated systems to reduce hands-on time and improve throughput, mitigating staff shortages [8]. |
| Whole Genome Amplification (WGA) Kits | Amplifying genome-wide DNA from single or low-copy number templates. | Essential for single-cell and low-template DNA workflows. Critical for maximizing data from scarce evidence. |
| STR Multiplex PCR Kits | Simultaneous amplification of multiple Short Tandem Repeat loci for database-compatible profiling. | The standard for most labs. Ensure any novel method (e.g., single-cell) maintains compatibility with these core kits [5]. |
| Next-Generation Sequencing (NGS) Library Prep Kits | Preparing DNA for massively parallel sequencing to access SNP/STR data and more. | Offers more information from degraded mixtures but at a higher cost. Requires significant investment in bioinformatics. |
| Lysis Buffers for Single Cells | Breaking open individual cells while preserving DNA integrity. | Formulations are critical for success in single-cell genomics. In-house optimization can reduce costs. |
| Microfluidic Chips/Cartridges | Automating and miniaturizing reactions (e.g., DNA extraction, PCR) for portability and efficiency. | Represents a high initial investment but can reduce long-term reagent consumption and improve reproducibility [8]. |

Technical Support Center

This support center provides resources for researchers and scientists navigating the challenges of implementing and validating forensic technologies in an environment of significant budget constraints.

Frequently Asked Questions (FAQs)

Q1: Our laboratory faces budget cuts while the market for new forensic technologies grows. How can we justify investment in new instruments?

Answer: Justification requires a focus on long-term operational efficiency and demonstrable return on investment. Emphasize how new technologies can reduce analysis time, automate manual processes, and improve throughput, thereby offsetting initial costs over time. Frame proposals around specific, high-priority needs, such as addressing backlogs in digital forensics or improving the sensitivity of DNA analysis to solve more cases with less sample. Highlight how modern equipment can reduce the risk of errors and subsequent costly legal challenges [10].
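A simple payback estimate is one way to put the return-on-investment argument into numbers. The sketch below is a minimal illustration under stated assumptions: the function name and all monetary figures are hypothetical, and it ignores discounting, depreciation, and downtime.

```python
def payback_period_years(purchase_cost, annual_savings, annual_running_cost=0.0):
    """Years until cumulative net savings cover the purchase cost.

    Returns None when net annual savings are zero or negative,
    i.e. the instrument never pays for itself on these assumptions.
    """
    net_annual = annual_savings - annual_running_cost
    if net_annual <= 0:
        return None
    return purchase_cost / net_annual


# Hypothetical figures for illustration only.
years = payback_period_years(
    purchase_cost=250_000,       # instrument price
    annual_savings=90_000,       # e.g. reduced outsourcing and analyst hours
    annual_running_cost=15_000,  # service contract and consumables
)
print(f"Simple payback period: {years:.1f} years")
```

A proposal can then state the payback period alongside the assumptions behind each input, which makes the estimate easy for a budget holder to challenge or adjust.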

Q2: What are the key legal standards a new forensic method must meet before it can be used in casework?

Answer: Before implementation, a new method must meet the legal standards for admissibility as evidence. In the United States, federal courts and many states apply the Daubert Standard, which asks whether the technique has been tested, has been subjected to peer review, has a known error rate, and is generally accepted in the relevant scientific community; some states instead apply the Frye Standard, which turns on general acceptance. In Canada, the Mohan Criteria govern admissibility based on relevance, necessity, the absence of exclusionary rules, and a properly qualified expert [10]. Always consult with your legal department to ensure compliance with local jurisdiction requirements.

Q3: We are considering implementing a new technique like Comprehensive Two-Dimensional Gas Chromatography (GC×GC). What is its current readiness level for routine casework?

Answer: GC×GC is a powerful research tool with high peak capacity for complex mixtures like illicit drugs, toxicological evidence, and ignitable liquid residues. However, its Technology Readiness Level (TRL) for routine forensic casework is still developing. Key barriers to routine implementation include the need for extensive intra- and inter-laboratory validation studies, the establishment of standardized methods, and the determination of known error rates to meet legal admissibility standards like the Daubert Standard [10]. It is currently more suited to advanced research applications than to routine evidence processing.

Q4: How can we manage the increasing volume of digital evidence with limited resources and staff?

Answer: The surge in digital evidence is a major market driver [11]. To manage this, prioritize investments in forensic software solutions that incorporate automation, artificial intelligence (AI), and machine learning. These tools can help process large datasets more quickly and accurately, reducing the manual burden on limited staff. Furthermore, leveraging cloud-based solutions and exploring partnerships with private forensic service providers can help manage workflow peaks without the need for immediate capital expenditure on new hardware and additional full-time staff [11] [12].

Q5: A key piece of our instrumentation has failed, and we lack the budget for a like-for-like replacement. What are our options?

Answer: First, explore service contracts and manufacturer support for repair. If replacement is unavoidable, consider:

- Refurbished Instruments: Purchasing certified refurbished equipment from reputable vendors can offer significant cost savings.

- Collaborative Partnerships: Partner with nearby university laboratories or other government agencies to share access to essential equipment.

- Reagent & Consumable Management: Audit current reagent and consumable usage to identify potential cost savings, which can be reallocated [13].

- Grants and Funding Opportunities: Actively monitor for new grant announcements from federal and state agencies, as funding priorities can shift [14].

Troubleshooting Guides

Guide: Troubleshooting Instrument Validation Under Budget Constraints

Problem: Difficulty conducting full validation studies for new methods or instruments due to limited funding for overtime, reference materials, and dedicated instrument time.

Background: Method validation is a non-negotiable requirement for forensics, but budget cuts can make this process challenging [7].

Solution:

- Phased Validation Approach: Break the validation into smaller, manageable phases funded across multiple budget cycles. Begin with critical parameters like specificity and precision.

- Leverage Vendor Data: Request comprehensive validation data from instrument and reagent manufacturers. While not a substitute for in-house verification, this can reduce the scope of testing required.

- Collaborative Inter-Laboratory Studies: Partner with other laboratories to share the workload and cost of validation studies. This also strengthens the data by incorporating multiple data points.

- Utilize Free Proficiency Tests: Use proficiency testing samples from quality assurance programs, like those offered by the Center for Forensic Sciences at RTI International, for additional method performance data at low cost [15].

Guide: Troubleshooting Staff Shortages in a Specialized Field

Problem: Inability to hire or retain skilled forensic professionals, leading to backlogs and increased pressure on existing staff [11] [13].

Background: A conspicuous shortage of skilled forensic experts is a major market challenge, exacerbated by budget limitations that restrict competitive salaries [11].

Solution:

- Cross-Training: Implement cross-training programs to create a more versatile workforce where staff can support multiple disciplines during shortages.

- Automation Investment: Prioritize investments in laboratory automation for repetitive, high-volume tasks (e.g., liquid handling, sample preparation) to free up expert time for complex analysis and interpretation [11].

- University Partnerships: Establish internship and fellowship programs with local universities to build a pipeline of new talent and access specialized academic expertise for complex casework [15].

The following tables summarize the key quantitative data highlighting the conflict between projected market growth and the reality of budget reductions.

Table 1: Forensic Technology Market Growth Projections

| Metric | Value | Source & Time Period |
|---|---|---|
| Market Size (2024) | USD 10,017 Million | MarkSpeak Solutions (2025-2030) [11] |
| Projected Market Size (2030) | USD 18,025 Million | MarkSpeak Solutions (2025-2030) [11] |
| Compound Annual Growth Rate (CAGR) | 8.6% | MarkSpeak Solutions (2025-2030) [11] |
| Alternative CAGR | 13.3% | Technavio (2024-2029) [12] |
| North America Market Share (2024) | 45.33% | MarkSpeak Solutions [11] |
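When comparing growth figures from different vendors, it helps to recompute the compound annual growth rate from the endpoints. The sketch below is illustrative: treating 2024 to 2030 as six compounding periods is an assumption, and the implied rate depends on that choice, so it need not reproduce the 8.6% reported for the 2025-2030 period.

```python
def cagr(start_value, end_value, periods):
    """Compound annual growth rate over the given number of yearly periods."""
    if start_value <= 0 or periods <= 0:
        raise ValueError("start_value and periods must be positive")
    return (end_value / start_value) ** (1.0 / periods) - 1.0


# Endpoints in USD millions from the table above.
# Six periods (2024 -> 2030) is an assumption about the compounding window.
implied = cagr(10_017, 18_025, periods=6)
print(f"Implied CAGR over six periods: {implied:.1%}")
```

Stating the base year and number of periods next to any quoted CAGR avoids apparent contradictions between sources that use different forecast windows.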

Table 2: Documented Budget Reductions and Constraints

| Metric | Value | Context & Source |
|---|---|---|
| DOJ Grant Terminations (Apr 2025) | 373 grants | Terminated for not effectuating new departmental priorities [14] |
| Rescinded Funding Value | ~ USD 500 Million | Estimated remaining balances of terminated grants [14] |
| Community Violence Intervention Cuts | ~ USD 145 Million | Cuts to the Community Violence Intervention and Prevention Initiative [14] |
| Key Challenge | Funding constraints | Limiting acquisition of new equipment per AAFS 2025 report [7] |

Experimental Protocols

Detailed Methodology: Drug Analysis via Gas Chromatography-Mass Spectrometry (GC-MS)

This protocol details the definitive confirmatory test for controlled substances, considered the "gold standard" in forensic laboratories [16].

1. Principle: A sample is vaporized and separated by a gas chromatograph (GC) based on the volatility and affinity of its components for the column's stationary phase. The separated components are then ionized and identified by a mass spectrometer (MS) based on their mass-to-charge ratio, providing a unique molecular fingerprint.

2. Materials and Reagents:

- Suspected controlled substance sample

- Appropriate organic solvent (e.g., methanol)

- Internal standard solution

- GC-MS system with autosampler

- Helium or nitrogen carrier gas

- GC column (e.g., 5% phenyl polysiloxane)

- Reference standards of suspected drugs

3. Procedure:

- Sample Preparation: Accurately weigh a small amount of the sample (~1-2 mg) and dissolve it in 1 mL of solvent. Add a known amount of internal standard. Mix thoroughly and centrifuge if necessary to separate particulates.

- Instrument Calibration: Calibrate the MS using a manufacturer-recommended calibration standard. System suitability should be verified by running a known reference standard and ensuring it produces the correct retention time and mass spectrum.

- GC Parameters:

  - Injection Port Temperature: 250-280°C

  - Carrier Gas Flow Rate: ~1.0 mL/min (optimize for column)

  - Oven Temperature Program: Ramp from an initial low temperature (e.g., 80°C) to a high temperature (e.g., 300°C) at a defined rate to achieve optimal separation.

  - Injection Volume: 1 µL (split or splitless mode)

- MS Parameters:

  - Ionization Mode: Electron Ionization (EI)

  - Ion Source Temperature: 230°C

  - Scan Range: e.g., 40-550 m/z

- Data Analysis:

  - Compare the retention time and the full mass spectrum of the analyte in the sample to those of a certified reference standard analyzed under identical conditions.

  - A positive identification is confirmed when the sample's mass spectrum and retention time match the reference standard within accepted tolerances.
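The two-part match described in the data analysis step can be sketched as follows. This is an illustration under stated assumptions rather than a validated identification procedure: the function names, the 2% retention-time window, the 0.9 similarity threshold, and the spectra are all hypothetical placeholders, and a laboratory would use the tolerances set in its own validated procedures. Cosine similarity stands in here for whatever spectral comparison the laboratory's software applies.

```python
import math


def retention_time_ok(sample_rt, reference_rt, rel_tol=0.02):
    """True if the sample retention time lies within a relative window of the reference."""
    return abs(sample_rt - reference_rt) <= rel_tol * reference_rt


def cosine_similarity(spec_a, spec_b):
    """Cosine similarity between two spectra given as {m/z: intensity} dicts."""
    mzs = set(spec_a) | set(spec_b)
    dot = sum(spec_a.get(mz, 0.0) * spec_b.get(mz, 0.0) for mz in mzs)
    norm_a = math.sqrt(sum(v * v for v in spec_a.values()))
    norm_b = math.sqrt(sum(v * v for v in spec_b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def is_match(sample_rt, sample_spec, ref_rt, ref_spec, rel_tol=0.02, min_similarity=0.9):
    """Require BOTH retention time and spectrum to agree with the reference standard."""
    return (retention_time_ok(sample_rt, ref_rt, rel_tol)
            and cosine_similarity(sample_spec, ref_spec) >= min_similarity)


# Made-up spectra (m/z -> relative intensity); not reference data for any real compound.
reference = {50: 100.0, 75: 60.0, 120: 30.0}
sample = {50: 100.0, 75: 55.0, 120: 33.0, 200: 4.0}

print(is_match(6.42, sample, 6.40, reference))  # retention times in minutes
```

Requiring both criteria reflects the protocol's wording: a good spectral match at the wrong retention time, or the reverse, is not treated as an identification.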

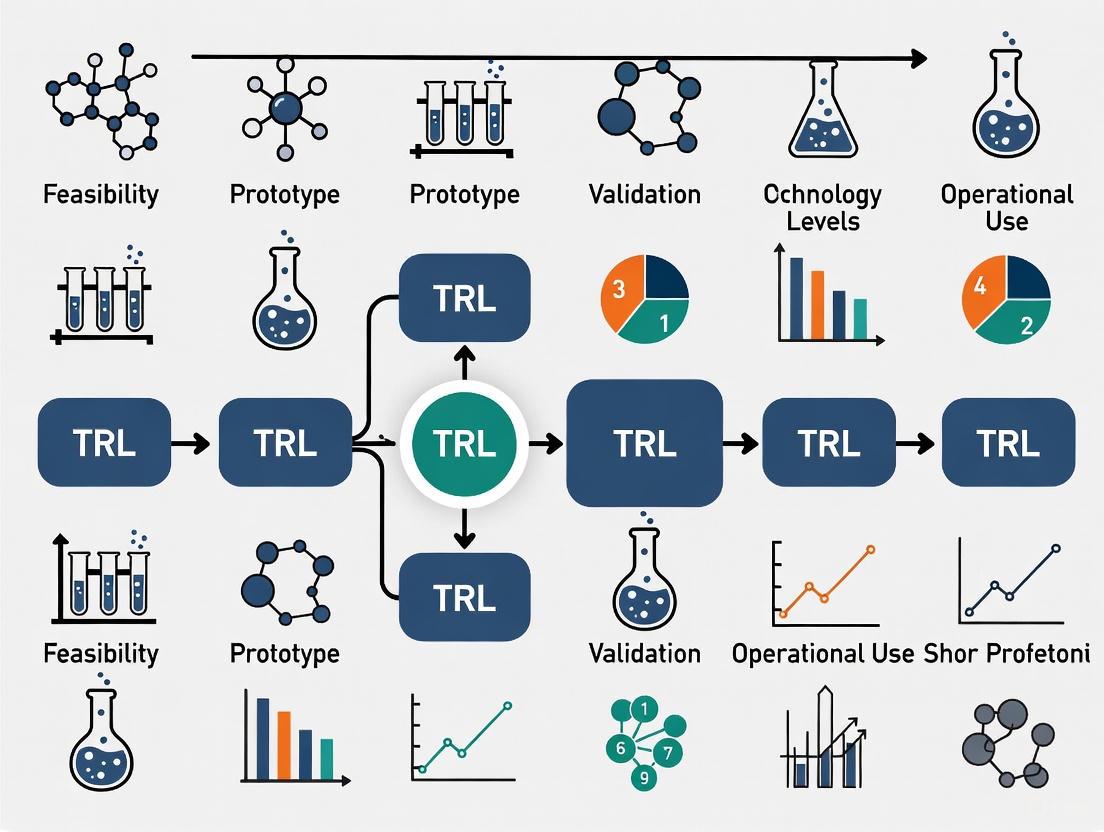

Workflow: Scaling a Technology from Research to Courtroom

This diagram illustrates the pathway and challenges, including budget constraints, for implementing a new forensic technology like GC×GC in a forensics laboratory.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Forensic Drug Chemistry Analysis

| Item | Function | Example / Note |
|---|---|---|
| Presumptive Test Kits | Provides initial, non-definitive indication of a drug's class (e.g., Marquis test for opioids/amphetamines). Prone to false positives. [16] | Commercial kits from suppliers like Sirchie. |
| GC-MS Reference Standards | Certified pure compounds used to calibrate instruments and confirm the identity of an unknown sample by matching retention time and mass spectrum. [16] | Available from chemical suppliers; essential for court-admissible results. |
| Internal Standards (IS) | A known compound added to a sample at a known concentration; used in quantitative GC-MS to correct for losses during sample preparation and instrument variability. | Often a deuterated analog of the target analyte. |
| LC-MS/MS Solvents & Buffers | High-purity solvents and mobile phase additives are critical for reliable results in Liquid Chromatography-Tandem Mass Spectrometry, used for difficult-to-vaporize or thermally labile compounds. [17] | Acetonitrile, methanol, ammonium formate. |
| Solid Phase Extraction (SPE) Cartridges | Used for sample clean-up and concentration of target analytes from complex biological matrices like urine or blood, reducing ion suppression in MS. [17] | C18, mixed-mode cation exchange phases. |
| Proficiency Test Samples | Blind samples provided by external quality assurance programs to objectively assess a laboratory's analytical performance and ensure continued competency. [15] | Sourced from providers like RTI's Center for Forensic Sciences. |

Technical Support Center

Troubleshooting Guides

Guide 1: Troubleshooting Technology Validation Stalls

Problem: My technology prototype works perfectly in the lab but fails during field testing in realistic environments. The performance metrics have dropped significantly, and I can't progress beyond TRL 5.

Diagnosis: This indicates a classic "relevant environment gap" where controlled laboratory conditions don't adequately simulate real-world operational stresses [18].

Solution: Implement an Environmental Stress Testing Protocol:

- Deconstruct Environment Variables: Break down the target operational environment into specific, testable parameters (temperature ranges, vibration profiles, humidity cycles, user interaction patterns)

- Create Stepped Validation: Develop a testing protocol that gradually introduces environmental stresses rather than immediate full exposure

- Instrumentation for Diagnostics: Add additional sensors and data collection specifically for understanding failure modes during testing

- Iterative Redesign Cycles: Plan for multiple rapid redesign iterations based on field test findings

Guide 2: Troubleshooting Budget Exhaustion Before Validation

Problem: My project funding is running out before we can complete the critical transition from laboratory demonstration to operational environment testing.

Diagnosis: This represents the budget manifestation of the "Valley of Death" where costs increase dramatically at higher TRLs [18].

Solution: Implement a Strategic Funding Bridge Strategy:

- Phased Budget Allocation: Reserve 60-70% of total budget specifically for TRL 5-7 transition activities

- Parallel Funding Pursuit: Simultaneously pursue multiple funding sources (grants, partnerships, internal funds) during early TRL stages

- Minimum Viable Demonstration: Identify the absolute minimum scope required to prove operational viability

- Incremental Milestone Funding: Structure funding releases against specific, measurable TRL advancement milestones
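The phased allocation and milestone-release ideas above can be sketched as a small planning helper. Everything here is illustrative: the function names, the total budget, and the milestone weights are hypothetical, and the 65% default simply sits inside the 60-70% range suggested above.

```python
def allocate_budget(total, transition_share=0.65):
    """Split a project budget into early-stage work and a TRL 5-7 reserve."""
    if not 0.0 <= transition_share <= 1.0:
        raise ValueError("transition_share must be between 0 and 1")
    reserve = total * transition_share
    return {"early_trl": total - reserve, "trl_5_7_reserve": reserve}


def milestone_releases(reserve, weights):
    """Divide the reserve across milestones in proportion to their weights."""
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("milestone weights must sum to a positive value")
    return {name: reserve * w / total_weight for name, w in weights.items()}


# Hypothetical budget and milestone weights, for illustration only.
plan = allocate_budget(400_000, transition_share=0.65)
releases = milestone_releases(plan["trl_5_7_reserve"], {"TRL 5": 2, "TRL 6": 3, "TRL 7": 5})

print(plan)
for milestone, amount in releases.items():
    print(f"{milestone}: {amount:,.0f}")
```

Weighting later milestones more heavily mirrors the point made in the diagnosis: costs rise sharply at higher TRLs, so the reserve should not be released evenly.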

Frequently Asked Questions (FAQs)

We're struggling with legal admissibility standards for our new forensic analysis method. What validation is required?

Answer: Legal systems require rigorous validation before admitting new forensic technologies as evidence. In the United States, methods must meet either Frye ("general acceptance") or Daubert standards (testing, peer review, error rates, acceptance) [10]. For admissibility:

- Documented Error Rates: Establish known error rates through controlled validation studies

- Peer Review: Publish method details and validation results in peer-reviewed literature

- Standardization: Develop standard operating procedures and quality controls

- Inter-laboratory Validation: Conduct validation across multiple independent laboratories
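A documented error rate is more persuasive when it is reported with an uncertainty interval, because validation studies are finite. The sketch below uses the Wilson score interval as one common choice; this is an assumption for illustration, not a method prescribed by the admissibility standards, and the study figures are hypothetical.

```python
import math


def wilson_interval(errors, trials, z=1.96):
    """Wilson score interval for an observed error proportion (z=1.96 is about 95%)."""
    if trials <= 0 or not 0 <= errors <= trials:
        raise ValueError("need trials > 0 and 0 <= errors <= trials")
    p = errors / trials
    denom = 1.0 + z * z / trials
    centre = (p + z * z / (2 * trials)) / denom
    half = z * math.sqrt(p * (1 - p) / trials + z * z / (4 * trials * trials)) / denom
    return max(0.0, centre - half), min(1.0, centre + half)


# Hypothetical validation study: 3 erroneous calls in 400 blind comparisons.
low, high = wilson_interval(3, 400)
print(f"Observed error rate: {3 / 400:.2%}")
print(f"Approximate 95% interval: {low:.2%} to {high:.2%}")
```

The interval also makes the cost of small studies visible: with zero errors in 100 trials the observed rate is 0%, but the upper bound is still several percent, which is a useful argument for pooling data across laboratories.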

Our technology demonstrates excellent analytical performance, but forensic laboratories won't adopt it. What are we missing?

Answer: You're likely facing implementation readiness gaps beyond pure technical performance. Focus on:

- Workflow Integration: Ensure compatibility with existing laboratory workflows and staffing patterns

- Cost-Benefit Justification: Document clear operational efficiencies or improved outcomes

- Training Requirements: Develop comprehensive training programs for laboratory personnel

- Regulatory Compliance: Address data integrity, chain of custody, and reporting requirements

- Support Infrastructure: Establish technical support and maintenance capabilities

How do we quantify our technology's current maturity level to secure additional funding?

Answer: Use the standardized Technology Readiness Level (TRL) scale with specific, evidence-based assessments [18]:

| TRL Level | Description | Key Evidence Required |
|---|---|---|
| TRL 3 | Proof of Concept | Laboratory experiments validating core principles [18] |
| TRL 4 | Component Validation | Integrated breadboard testing in laboratory environment [18] |
| TRL 5 | Relevant Environment Validation | Prototype testing in simulated relevant environment [18] |
| TRL 6 | Prototype Demonstration | System/subsystem model demonstration in relevant environment [18] |
| TRL 7 | Operational Prototype | Prototype demonstration in operational environment [18] |
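An evidence-based assessment against this table can be sketched as a checklist that stops at the first undocumented level. This is a minimal illustration, not a formal TRL assessment tool: the data structure and function name are hypothetical, and the evidence strings simply restate the table rows.

```python
# Evidence descriptions restate the table above; the checklist structure is hypothetical.
TRL_EVIDENCE = {
    3: "Laboratory experiments validating core principles",
    4: "Integrated breadboard testing in laboratory environment",
    5: "Prototype testing in simulated relevant environment",
    6: "System/subsystem model demonstration in relevant environment",
    7: "Prototype demonstration in operational environment",
}


def assess_trl(evidence_on_file):
    """Return the highest TRL for which this and every lower listed level is documented.

    evidence_on_file maps TRL level -> bool. Returns None if the lowest
    listed level (TRL 3) is not yet documented.
    """
    achieved = None
    for level in sorted(TRL_EVIDENCE):
        if not evidence_on_file.get(level, False):
            break
        achieved = level
    return achieved


# Hypothetical project: operational demo claimed, but TRL 6 evidence is missing.
status = {3: True, 4: True, 5: True, 6: False, 7: True}
level = assess_trl(status)
print(f"Defensible TRL: {level}")
print(f"Next evidence needed: {TRL_EVIDENCE[level + 1]}")
```

Stopping at the first gap keeps the claimed maturity defensible to funders: evidence at a higher level does not compensate for a missing step below it.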

What specific evidence do we need to advance from TRL 6 to TRL 7 for a forensic technology?

Answer: The TRL 6 to 7 transition requires moving from simulated to actual operational environments [18]:

- Operational Context Testing: Demonstration in real forensic casework or equivalent operational setting

- End-User Validation: Testing by intended users (forensic examiners, not developers)

- Real-World Performance Metrics: Documented performance under casework conditions (throughput, reliability, error rates)

- Robustness Documentation: Evidence of performance across expected operational variations

- Comparative Effectiveness: Data showing advantages over existing methods or complementary capabilities

The Researcher's Toolkit: Forensic Technology Implementation

Research Reagent Solutions

| Item | Function in TRL Scaling | Implementation Purpose |
|---|---|---|
| Standard Reference Materials | Validation benchmarking across TRL levels | Provides consistent baseline for performance comparison during technology maturation [10] |
| Proficiency Test Panels | Inter-laboratory validation and error rate determination | Establishes reproducibility and reliability metrics required for legal admissibility [10] |
| Quality Control Materials | Daily performance monitoring and standardization | Ensures consistent operation across technology transition from lab to field deployment |
| Sample Processing Kits | Workflow integration and compatibility testing | Validates practical implementation in existing forensic laboratory workflows |
| Data Standards Framework | Result interpretation and reporting consistency | Enables cross-platform compatibility and expert testimony reliability [10] |

Budget Planning Framework for TRL Scaling

| TRL Range | Primary Cost Drivers | Mitigation Strategies |
|---|---|---|
| TRL 1-3 | Research personnel, basic laboratory supplies | Grant funding, internal R&D investment, proof-of-concept awards [18] |
| TRL 4-5 | Prototype development, component integration, initial validation | Strategic partnerships, shared resources, phased development approach [18] |
| TRL 6-7 ("Valley of Death") | Environmental testing, operational demonstration, certification | Dedicated technology demonstration programs, public-private partnerships, strategic funding reserves [18] |
| TRL 8-9 | Manufacturing scale-up, quality systems, deployment support | Implementation grants, commercial partnerships, operational budgets [18] |

The forensic science landscape is defined by a significant divergence in resource allocation and funding priorities, creating a palpable tension between the established field of traditional crime scene investigation (CSI) and the emerging domain of digital forensics. This disparity is driven by distinct growth projections, market forces, and societal technological shifts. Traditional forensic labs, often operating within governmental structures, face chronic funding constraints and backlogs, struggling to keep pace with caseloads while relying on outdated equipment [7] [19]. Conversely, the digital forensics sector is experiencing rapid market expansion, fueled by the escalating volume of cybercrime and technological adoption across society [20] [21]. This article analyzes these disparities through quantitative data, provides methodologies for researching their impact, and offers guidance for professionals navigating this fragmented resource environment.

Quantitative Analysis of Disparities

The divergence between the two fields can be quantitatively measured through growth projections, salary data, and market size.

Table 1: Career Growth and Financial Allocation Comparison

| Aspect | Digital Forensics | Traditional CSI |

|---|---|---|

| Projected Job Growth (2024-2034) | 35% [22] | 13% [22] |

| Entry-Level Salary Range | $55,000 - $80,000 [22] | $40,000 - $50,000 [22] |

| Global Market Value | Projected to reach $18.2 billion by 2030 [20] | Not reported in the cited sources; described as constrained [7] [19] |

| Key Growth Driver | Market forces and private sector investment [21] | Primarily governmental budgets [23] |

Table 2: Funding Environment and Resource Challenges

| Aspect | Digital Forensics | Traditional CSI |

|---|---|---|

| Primary Funding Source | Corporate cybersecurity budgets, private investment, federal grants [21] | Governmental budgets (state, local), fixed tax revenues [23] |

| Key Resource Constraints | Shortage of court-certified examiners; encryption complicating data acquisition [21] | Inability to purchase new equipment; backlog of cases awaiting analysis [7] [23] |

| Defining Operational Issue | Adapting to rapid technological change (Cloud, AI, IoT) [20] | Managing case backlogs and processing physical evidence with limited capacity [23] |

Experimental Protocols for Impact Assessment

Researchers and lab directors can employ the following methodologies to empirically evaluate the impact of resource constraints and build cases for funding.

Protocol 1: Cost-Benefit Analysis of Backlog Reduction

This protocol uses a model based on "Project Resolution," a successful initiative by the Acadiana Criminalistics Laboratory [23].

- Objective: To quantify the net benefit of allocating additional resources to reduce a specific backlog (e.g., DNA evidence from no-suspect sexual assaults).

- Materials: Historical case files, cost data for analysis (internal & outsourcing), access to CODIS/NDIS, recidivism data.

- Methodology:

  - Case Selection and Costing: Identify a cohort of backlogged cases (e.g., 605 no-suspect sexual assaults). Calculate the total cost to analyze the entire cohort, including personnel, reagents, and/or vendor costs [23].

  - Evidence Analysis and Database Entry: Process the evidence to generate DNA profiles. Enter all eligible profiles into the national DNA database (CODIS/NDIS) [23].

  - Outcome Tracking: Track the number of CODIS hits (matches to offenders or other cases) over a defined period (e.g., 10 years) [23].

  - Benefit Calculation: Calculate the benefit by factoring in:

    - Hit Rate: Percentage of developed DNA profiles that produce a CODIS hit (Project Resolution reported a 58% hit rate at its 10-year follow-up) [23].

    - Recidivism Prevention: Assign a cost-avoidance value based on statistical data for crimes prevented by incarcerating a serial offender.

    - Investigative Efficiency: Quantify savings from closing cold cases and reallocating investigative resources.

- Output: A net benefit figure (Total Benefits - Total Costs) that objectively supports requests for resource allocation to reduce backlogs.
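The net-benefit arithmetic in the Output step can be sketched in a few lines of Python. The $186,000 cost and the 164 CODIS hits are the Project Resolution figures reported in [23]; the `BacklogProject` class and both per-hit dollar values are hypothetical placeholders chosen only for illustration, and a real analysis would substitute jurisdiction-specific estimates.

```python
from dataclasses import dataclass


@dataclass
class BacklogProject:
    total_cost: float                     # personnel + reagents + vendor costs
    codis_hits: int                       # hits observed over the tracking period
    recidivism_value_per_hit: float       # ASSUMED cost avoided per hit
    investigative_savings_per_hit: float  # ASSUMED investigative savings per hit

    def total_benefit(self) -> float:
        per_hit = self.recidivism_value_per_hit + self.investigative_savings_per_hit
        return self.codis_hits * per_hit

    def net_benefit(self) -> float:
        # Net benefit = Total Benefits - Total Costs
        return self.total_benefit() - self.total_cost


project = BacklogProject(
    total_cost=186_000,                   # reported in [23]
    codis_hits=164,                       # reported in [23]
    recidivism_value_per_hit=5_000,       # hypothetical placeholder
    investigative_savings_per_hit=1_000,  # hypothetical placeholder
)
print(f"Net benefit: ${project.net_benefit():,.0f}")
```

Re-running the calculation with low, central, and high per-hit values is a simple way to show budget officials how sensitive the conclusion is to those assumptions.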

Protocol 2: Technology Readiness Level (TRL) Scaling for Forensic Tools

This protocol assesses the maturity and scalability of new tools within a resource-constrained environment.

- Objective: To evaluate the implementation feasibility of a new forensic technology (e.g., an AI-based evidence triage tool) from prototype to integrated lab system.

- Materials: The new technology, a defined set of test cases, existing laboratory instrumentation/software, performance metrics.

- Methodology:

  - Baseline TRL Assessment: Define the starting TRL (1-9) for the new tool. Most novel forensic tools start at TRL 3-4 (analytical and experimental proof of concept) [24].

  - Pilot Validation (TRL 5-6): Run the tool in a lab environment with a small, controlled set of case data. Compare its accuracy, speed, and cost against the current "gold standard" method.

  - System Integration (TRL 7): Integrate the tool into a single, representative operational workflow. This tests data transfer, chain-of-custody logging, and analyst usability.

  - Operational Deployment & ROI Calculation (TRL 8-9): Deploy the tool for full operational use. Calculate the Return on Investment (ROI) by measuring the change in throughput (cases per analyst), reduction in processing time, and change in operational costs.

- Output: A TRL progression report with validated performance and cost data, providing a clear roadmap and justification for full-scale funding.
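The ROI step of this protocol reduces to a few ratios. The sketch below is one possible way to compute them; the function names and every number are hypothetical pilot values, not data from the cited sources.

```python
def relative_change(before: float, after: float) -> float:
    """Fractional change from a baseline, e.g. 0.25 for a 25% increase."""
    return (after - before) / before


def roi(total_gain: float, total_cost: float) -> float:
    """Return on investment expressed as a fraction of the cost."""
    return (total_gain - total_cost) / total_cost


# All numbers below are HYPOTHETICAL pilot results.
throughput = relative_change(before=40, after=50)       # cases per analyst
turnaround = relative_change(before=30.0, after=24.0)   # processing days per case
tool_roi = roi(total_gain=150_000, total_cost=100_000)  # annual savings vs. tool cost

print(f"throughput {throughput:+.0%}, turnaround {turnaround:+.0%}, ROI {tool_roi:.0%}")
```

Reporting throughput and turnaround alongside ROI keeps the operational effect visible even when the dollar figures are uncertain.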

The Scientist's Toolkit: Research Reagent Solutions

This table details key materials and tools essential for research and experimentation in the modern forensic science landscape.

Table 3: Essential Research and Operational Tools

| Tool / Solution | Function | Field of Application |

|---|---|---|

| Cellebrite UFED / XRY | Extracts and analyzes data from mobile devices, bypassing security where possible. | Digital Forensics (Mobile) [22] [21] |

| EnCase / FTK (Forensic Toolkit) | Creates forensic images of computer hard drives and facilitates analysis of recovered data. | Digital Forensics (Computer) [22] |

| KinTest Software | Uses STR frequency data to calculate Likelihood Ratios (LR) for potential familial relationships from DNA. | Traditional CSI (DNA Analysis) [23] |

| Automated Fingerprint ID System (AFIS) | Database system for storing and comparing fingerprint patterns, enabling rapid suspect identification. | Traditional CSI (Fingerprint Analysis) [22] |

| Cloud-Native Acquisition APIs | Programmatic interfaces that allow for the forensic acquisition of data from cloud platforms like AWS, Azure, and GCP. | Digital Forensics (Cloud) [21] |

| AI-Powered Triage Suites | Use machine learning to automatically analyze large datasets (e.g., logs, files) to identify patterns and prioritize evidence. | Digital Forensics (Cross-Domain) [20] [25] |

Technical Support Center: FAQs and Troubleshooting Guides

FAQ 1: How can we justify increased funding for traditional forensic lab equipment when digital forensics is receiving more market investment?

- Issue: Securing capital for new traditional lab equipment (e.g., updated DNA sequencers, GC-MS) is challenging.

- Solution:

- Conduct a Cost-Benefit Analysis: Use Protocol 1 to demonstrate the long-term cost savings and public safety benefits of modern equipment. Highlight how faster processing reduces backlog, prevents recidivism, and saves investigative resources [23].

- Frame it as Foundational: Argue that traditional forensic evidence (DNA, fingerprints) remains the bedrock of many prosecutions and that reliable, timely analysis is a core government function that cannot be allowed to degrade [19].

- Pilot a Shared Service Model: Propose a regional equipment-sharing initiative with neighboring jurisdictions to maximize utilization and dilute costs.

FAQ 2: Our digital forensics unit is struggling with encrypted devices and a shortage of certified examiners. What are the practical steps to overcome this?

- Issue: Encryption and talent shortages are crippling digital evidence processing.

- Solution:

- For Encryption: Acknowledge that hardware-based encryption reduces success rates. Develop a multi-pronged strategy: 1) Invest in premium decryption utilities; 2) Shift focus to cloud-based evidence and backups that can be legally acquired; 3) Ensure legal teams are prepared to leverage court orders for device access [21].

- For Talent Shortages: 1) Advocate for funding for examiner certification (e.g., CDFE, GCFE). 2) Explore partnerships with local universities to create a talent pipeline. 3) Consider leveraging "Forensics-as-a-Service" providers for specific, high-volume tasks to free up internal experts for complex cases [22] [21].

FAQ 3: We are a small lab with limited budget. How do we prioritize between investing in traditional vs. digital forensics capabilities?

- Issue: Strategic resource allocation with constrained funds.

- Solution:

- Perform a Workload Analysis: Audit your incoming caseload for the past 2-3 years to quantify the proportion of cases requiring digital vs. traditional analysis. Let data drive the decision.

- Start with Cross-Training: Invest in cross-training existing traditional forensic staff in fundamental digital evidence handling to create a hybrid workforce [22].

- Targeted Digital Investment: Instead of building a full digital lab, initially invest in a single capability with the highest demand, such as mobile device extraction, and outsource more complex digital needs [21].

- Seek Grant Funding: Actively apply for federal and state grants aimed at modernizing forensic capabilities, which often have streams for both traditional and digital forensics [26].
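The workload analysis described above amounts to counting case types in historical intake records. A minimal sketch follows, assuming each case has already been labeled as `digital`, `traditional`, or `both`; the labels and the sample caseload are hypothetical.

```python
from collections import Counter


def workload_shares(case_types: list[str]) -> dict[str, float]:
    """Return the fraction of the caseload that falls into each category."""
    counts = Counter(case_types)
    total = sum(counts.values())
    return {kind: n / total for kind, n in counts.items()}


# HYPOTHETICAL intake log covering the audit period.
caseload = ["digital"] * 30 + ["traditional"] * 60 + ["both"] * 10
shares = workload_shares(caseload)

for kind, share in sorted(shares.items(), key=lambda item: -item[1]):
    print(f"{kind:<12} {share:.0%}")
```

Running the same count per year, rather than over the whole period, would also show whether the digital share is growing.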

Workflow Visualization

The following diagram illustrates the logical relationship and resource flow between the key challenges and potential solutions discussed in this article.

Technical Support Center: Troubleshooting Guides and FAQs

Frequently Asked Questions (FAQs)

FAQ 1: What are the immediate signs that commoditization is affecting my R&D budget?

The most immediate signs are margin compression and a reduction in revenue growth, which directly pressure R&D budgets. Management often responds by allocating less capital to R&D initiatives, implying that opportunities for product differentiation are declining. You will also observe a shift in competition towards pricing rather than features, making it harder to justify R&D for innovation [27].

FAQ 2: Our core technology is becoming a commodity. Should we stop investing in it entirely?

Not necessarily. A "no-frills" core product can be part of a profitable strategy, but it requires a different operational model. For example, Dow Corning created a separate brand, Xiameter, to sell its commoditized silicone products online at competitive prices to volume customers. This allows the company to profit from its commodity line while freeing up resources to develop more differentiated, value-added offerings [28].

FAQ 3: How can we demonstrate the return on investment (ROI) for R&D when budgets are tight?

Focus your R&D on developing Relational Capital—the mutual trust, respect, and friendship in customer relationships. Research shows that relational capital positively moderates the link between R&D services and profitability. When customers trust you, they are more likely to value and pay for your complex R&D services. Frame your R&D proposals around solving specific, high-value customer problems, as SKF did by moving from selling bearings to offering guaranteed performance in reducing machinery downtime [29] [28].

FAQ 4: What is a viable R&D strategy in a highly commoditized market?

The optimal strategy depends on where value is shifting in your market. Use the following table to diagnose your situation [30]:

| Market Environment | Dominant Advantage | Optimal R&D Strategy | Real-World Example |

|---|---|---|---|

| Premium Player | Meaningful differentiation | Protect/enhance differentiation via innovation, brand, patents. | Specialty pharmaceuticals, luxury goods. |

| Producer | Low-cost structure | R&D focused on cost efficiency: product design, process innovation. | Oil production, mining industries. |

| Arbitrager | Exploiting market imperfections | R&D for agility, data analysis to spot supply/demand mismatches. | Fixed-income and foreign-exchange trading. |

| Exit | Neither structural nor dynamic advantage | Redeploy R&D resources to more attractive markets. | IBM exiting the PC business. |

FAQ 5: How can we speed up R&D to keep up with rapidly commoditizing technology markets?

In markets moving quickly toward feature parity, the features arms race is often a losing battle. Instead of exhaustive in-house development, adapt your procurement. For commoditized components, shift from lengthy evaluations to fast-tracked purchasing of standard solutions. This saves person-years of effort, allowing your R&D team to focus on higher-value, integrative innovation that creates unique systems for customers [31].

Troubleshooting Guide: R&D Under Budget Constraints

Problem: Inability to justify R&D budget for foundational forensic research due to its perceived low commercial return.

Solution:

- Action: Link foundational research to specific, funded national initiatives.

- Methodology: Align proposals with the strategic R&D and standards priorities outlined by bodies like the National Institute of Standards and Technology (NIST) [32]. For example, pursue research that addresses the "validity of forensic methods" or develops "measures of uncertainty," as these are stated needs [33]. This frames your R&D as low-risk and policy-supported, making it more likely to secure public or private grants.

Problem: High-cost technology (e.g., gunshot detection systems) fails to deliver expected operational value, leading to budget cuts.

Solution:

- Action: Conduct a pre-implementation "Total Cost of Ownership (TCO) and Efficacy" analysis.

- Methodology: Before procurement, model not just the purchase price but all infrastructure, storage, and specialized personnel costs. Crucially, pilot the technology in a controlled setting to measure its impact on key outcomes (e.g., response time, arrest rates) versus existing methods. Research shows that while gunshot detection works technically, its operational value is often limited, making it a poor investment without prior validation [34].
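A total cost of ownership model of the kind described here can start as a simple sum of the purchase price and recurring costs over the planning horizon. The sketch below assumes constant annual costs and no discounting; the cost categories follow the text, while the function name and all amounts are hypothetical.

```python
def total_cost_of_ownership(purchase_price: float,
                            annual_costs: dict[str, float],
                            years: int) -> float:
    """Purchase price plus recurring costs over the planning horizon.

    Simplifying ASSUMPTIONS: annual costs are constant and undiscounted.
    """
    return purchase_price + years * sum(annual_costs.values())


# HYPOTHETICAL figures for a five-year horizon.
purchase_price = 250_000
annual_costs = {
    "infrastructure": 20_000,
    "data_storage": 10_000,
    "specialized_personnel": 70_000,
}
tco = total_cost_of_ownership(purchase_price, annual_costs, years=5)
purchase_share = purchase_price / tco

print(f"TCO: ${tco:,.0f}; purchase price is {purchase_share:.0%} of the total")
```

Even in this toy example the purchase price is a minority of the lifetime cost, which is the point the methodology makes about modeling more than the sticker price.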

Problem: Need to achieve Technology Readiness Level (TRL) scaling with limited funding.

Solution:

- Action: Adopt a "Solution Innovation" strategy.

- Methodology: Instead of developing a standalone product, use R&D to bundle your technology with high-value services into a customized solution. BASF did this with its Integrated Paint Shop, where it gets paid per painted car body that passes inspection, not for the volume of paint used. This funds further R&D by creating a new, high-margin revenue stream and demonstrates tangible value to customers [28].

Experimental Protocols & Methodologies

Protocol: Quantifying the Impact of Relational Capital on R&D Profitability

This protocol outlines a methodology to empirically test the hypothesis that relational capital enhances the profitability of R&D services, based on causal modeling techniques [29].

1. Hypothesis: Relational capital (e.g., trust, respect) positively moderates the relationship between a supplier's R&D service intensity and its profit performance within a specific customer relationship.

2. Data Collection:

- Unit of Analysis: Individual supplier-customer relationships.

- Sample: A minimum of 91 relationships to ensure statistical power for causal modeling [29].

- Variables and Measurement:

- Dependent Variable: Supplier Profitability. Measured as the profit margin (percentage) attributed to the specific customer relationship.

- Independent Variable: R&D Service Intensity. A Likert-scale survey metric assessing the extent of R&D services (e.g., feasibility studies, prototype design, product tailoring) provided to the customer.

- Moderating Variable: Relational Capital. A composite index based on survey items measuring mutual trust, respect, and the quality of personal friendships between the supplier and customer organizations [29].

3. Analysis:

- Use structural equation modeling (SEM) or hierarchical regression analysis.

- Test the main effect of R&D services on profitability.

- Test the interaction effect between R&D services and relational capital. A statistically significant positive interaction term confirms the moderating role of relational capital.
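The interaction test in step 3 can be illustrated with an ordinary least squares fit that includes a product term. The sketch below uses only the standard library and synthetic, noise-free data generated from a known model, so it shows the mechanics of estimating the interaction coefficient, not a result from [29]. The helper `ols` and the variable names are hypothetical. A real study would use a statistics package to obtain standard errors and significance tests, and would typically mean-center the predictors first.

```python
def ols(X: list[list[float]], y: list[float]) -> list[float]:
    """Least-squares coefficients via the normal equations (X'X) b = X'y,
    solved by Gauss-Jordan elimination with partial pivoting."""
    k = len(X[0])
    # Augmented matrix [X'X | X'y]
    A = [[sum(row[i] * row[j] for row in X) for j in range(k)]
         + [sum(row[i] * yi for row, yi in zip(X, y))]
         for i in range(k)]
    for col in range(k):
        pivot = max(range(col, k), key=lambda r: abs(A[r][col]))
        A[col], A[pivot] = A[pivot], A[col]
        for r in range(k):
            if r != col:
                factor = A[r][col] / A[col][col]
                A[r] = [a - factor * b for a, b in zip(A[r], A[col])]
    return [A[i][k] / A[i][i] for i in range(k)]


# SYNTHETIC data from a known model, so the fit can be checked:
# margin = 5 + 1.0*rd + 2.0*rc + 0.5*rd*rc
pairs = [(rd, rc) for rd in range(1, 6) for rc in range(1, 6)]
X = [[1.0, rd, rc, rd * rc] for rd, rc in pairs]  # intercept, R&D, relational capital, product
y = [5 + 1.0 * rd + 2.0 * rc + 0.5 * rd * rc for rd, rc in pairs]

intercept, b_rd, b_rc, b_interaction = ols(X, y)
print(f"interaction coefficient: {b_interaction:.3f}")
```

A positive coefficient on the product term is what the moderation hypothesis predicts; whether it is statistically significant is a separate question that this sketch does not answer.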

Protocol: Evaluating Operational Value of Commoditized Hardware

This methodology assesses whether a commoditized technology (e.g., gunshot detection) delivers sufficient operational value to justify its cost and further R&D investment [34].

1. Hypothesis: Implementation of Technology X will significantly improve operational outcome Y (e.g., response time, case clearance) compared to existing methods, after controlling for cost.

2. Experimental Design:

- Design: A quasi-experimental design comparing pre- and post-implementation data, with a control group (similar jurisdictions without the technology).

- Participants: Law enforcement agencies or forensic labs implementing the technology.

3. Metrics and Data Collection:

- Primary Metric: Operational Effectiveness. e.g., Reduction in average response time to incidents, increase in successful evidence collection rates.

- Secondary Metric: Total Cost of Ownership (TCO). Include initial acquisition, installation, infrastructure, data storage, maintenance, and specialized training costs.

- Data Sources: Internal agency records, 911 call logs, and cost accounting data collected over a 12-month period.

4. Analysis:

- Conduct a cost-benefit analysis, comparing the quantified improvement in operational effectiveness against the TCO.

- Perform a t-test or ANOVA to determine if the differences in outcomes between the experimental and control groups are statistically significant.
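For the group comparison in step 4, one option is Welch's unequal-variance t-test applied to each agency's pre-to-post change, which respects the pre/post-with-control design. The sketch below computes only the test statistic and the Welch-Satterthwaite degrees of freedom with the standard library; the choice of Welch's variant, the function name, and the data are assumptions made for illustration. Obtaining a p-value would require a t-distribution routine, for example `scipy.stats.ttest_ind(..., equal_var=False)`.

```python
from math import sqrt
from statistics import mean, variance


def welch_t(sample_a: list[float], sample_b: list[float]) -> tuple[float, float]:
    """Welch's t statistic and Welch-Satterthwaite degrees of freedom."""
    va = variance(sample_a) / len(sample_a)
    vb = variance(sample_b) / len(sample_b)
    t = (mean(sample_a) - mean(sample_b)) / sqrt(va + vb)
    df = (va + vb) ** 2 / (va ** 2 / (len(sample_a) - 1) + vb ** 2 / (len(sample_b) - 1))
    return t, df


# HYPOTHETICAL change in mean response time (minutes, post minus pre) per agency.
with_technology = [-2.0, -1.5, -2.5, -1.0, -3.0]
control_group = [-0.5, 0.0, -1.0, 0.5, -0.5]

t_stat, dof = welch_t(with_technology, control_group)
print(f"t = {t_stat:.2f}, df = {dof:.1f}")
```

Comparing changes rather than raw post-implementation values helps separate the effect of the technology from trends that affect every jurisdiction.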

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Strategic "Reagents" for R&D in Commoditized Markets

| Research Reagent | Function & Explanation | Application Example |

|---|---|---|

| Relational Capital | The "catalyst" for R&D profitability. Builds trust and respect, allowing customers to perceive higher value in your R&D services and making them willing to pay a premium [29]. | A supplier uses deep customer relationships to co-develop a custom R&D service, securing a long-term, profitable contract. |

| Value Space Matrix | A "diagnostic assay" to identify strategic pathways for R&D. It plots Segmentation/Customization against Bundling to reveal four strategic quadrants (Core, Targeted, System, Solution) [28]. | A company stuck in the "Core" quadrant uses the matrix to plot a path toward "Solution Innovation," guiding its R&D portfolio decisions. |

| Commoditization Navigator | A "classification tool" to identify the optimal R&D strategy based on market dynamics. It assesses structural (cost/differentiation) and dynamic (market imperfections) advantage [30]. | A firm in a cost-advantaged "Producer" market focuses R&D on process efficiency, while an "Arbitrager" invests in data analytics for market timing. |

| De-bundled Core Product | A "purified compound" that profitably serves the price-sensitive segment of a commoditized market. Allows R&D resources to be focused on more innovative, bundled offerings [28]. | Dow Corning's Xiameter brand sells basic silicones online at low cost, while the main brand focuses on high-value, service-backed solutions. |

| NIST OSAC Standards | The "buffer solution" providing stability and reliability. Using established standards ensures forensic R&D is valid, reliable, and admissible, preventing wasted investment on non-compliant methods [33]. | A lab developing a new DNA analysis technique aligns its validation protocol with OSAC standards to ensure widespread adoption and credibility. |

Strategic Pathways for R&D Investment

The following diagram illustrates the logical decision process for aligning R&D strategy with market commoditization, based on the Commoditization Navigator framework [30].

Cost-Effective Implementation Strategies and Frugal Forensic Frameworks

Frugal forensics is an emerging paradigm that advocates for the sustainable provision of transparent, high-quality forensic services tailored to meet specific jurisdictional needs and limitations [35]. This approach addresses the stark disadvantages faced by many Global South jurisdictions in resourcing and technological capabilities, despite forensic science's growing importance as a global practice supporting peace, prosperity, and justice [35] [36]. The concept aligns with the United Nations Sustainable Development Goals and aims to narrow inequalities between jurisdictions by developing frameworks that prioritize cost-efficiency, resource optimization, and simplicity without compromising quality [35] [37]. This technical support center provides practical guidance for implementing frugal forensic principles within budget-constrained environments.

Core Principles of Frugal Forensics

- Sustainable Resource Allocation: Focus on maximizing output from limited resources through careful prioritization and strategic investment in areas with the highest impact on justice outcomes [35] [23].

- Context-Appropriate Technology: Select and implement forensic technologies based on jurisdictional needs, infrastructure limitations, and life-cycle costs rather than simply adopting the most advanced available systems [35].

- Supply Chain Resilience: Develop robust local supply chains and reagent management systems to minimize dependencies on international suppliers and reduce costs associated with transportation and importation [35].

- Quality Assurance Frameworks: Implement scalable quality assurance measures that ensure reliable results while remaining feasible within existing operational constraints [35].

- Data-Driven Decision Making: Employ cost-benefit analysis to objectively compare competing options for resource deployment, focusing on timeliness and investigative value [23].

Frequently Asked Questions (FAQs)

Q1: How can forensic laboratories demonstrate the value of additional resources to budget officials?

A: Conduct a formal cost-benefit analysis using historical data to quantify the impact of forensic resources on case resolution. In the Project Resolution case study, an investment of $186,000 to process 605 cold cases yielded 164 CODIS matches by the 10-year follow-up (a 58% hit rate, which corresponds to the 285 DNA profiles developed rather than to all 605 cases), identifying serial offenders and solving previously unsolvable crimes [23]. Presenting such quantitative data on outcomes, including recidivism prevention and serial crime identification, provides objective evidence for resource allocation decisions [23].

Q2: What is the most effective approach to reducing case backlogs with limited personnel?

A: Focus resources on eliminating the backlog of cases awaiting analysis rather than just managing cases in-analysis. Studies suggest that ideal response time is achieved when case analysis commences immediately upon submission [23]. Implement triage protocols that prioritize cases with the greatest potential for investigative leads, such as no-suspect sexual assaults that are highly dependent on forensic databases for resolution [23].
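A triage protocol of this kind can be expressed as a scoring function over the backlog. The sketch below is one hypothetical scheme: the `BacklogCase` fields and the weights are assumptions chosen to reflect the priorities named in the answer (no-suspect cases and database dependence), not values from [23].

```python
from dataclasses import dataclass


@dataclass
class BacklogCase:
    case_id: str
    no_suspect: bool          # no suspect identified by other means
    database_eligible: bool   # a profile could be searched in a forensic database
    days_waiting: int


def triage_score(case: BacklogCase) -> float:
    """Higher score = analyze sooner. Weights are ILLUSTRATIVE assumptions."""
    score = 0.0
    if case.no_suspect:
        score += 2.0
    if case.database_eligible:
        score += 1.0
    score += min(case.days_waiting / 365, 1.0)  # waiting time, capped at one year
    return score


backlog = [
    BacklogCase("B", no_suspect=False, database_eligible=True, days_waiting=30),
    BacklogCase("A", no_suspect=True, database_eligible=True, days_waiting=400),
    BacklogCase("C", no_suspect=True, database_eligible=False, days_waiting=100),
]
queue = sorted(backlog, key=triage_score, reverse=True)
print([case.case_id for case in queue])
```

Keeping the weights in one small function makes the prioritization policy explicit and easy to review with stakeholders.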

Q3: How can laboratories maintain quality while implementing cost-saving measures?

A: Develop context-appropriate quality assurance frameworks that focus on essential validation procedures and transparent documentation [35]. The frugal forensics approach emphasizes maintaining high-quality standards through method selection based on robust principles rather than expensive equipment, ensuring reliability without unnecessary complexity [35] [38].

Q4: What strategies can help overcome technological dependency in Global South jurisdictions?

A: Apply frugal principles that emphasize simplicity, local supply chain development, and appropriate technology levels [37] [38]. This includes building local technical capacity, adapting methods to use readily available reagents, and developing maintenance expertise within the region rather than relying on international vendors for all technical support [35].

Experimental Protocols & Methodologies

Cost-Benefit Analysis Protocol for Forensic Resource Allocation

Objective: To objectively evaluate the return on investment for forensic laboratory resources by analyzing historical case data [23].

Materials: Historical case records, laboratory information management system (LIMS) data, CODIS hit reports, cost accounting records.

Methodology:

- Case Selection: Identify a representative sample of cases, such as no-suspect sexual assaults that are highly dependent on forensic analysis for resolution [23].

- Cost Calculation: Document all direct costs associated with processing the selected cases, including personnel time, reagents, equipment usage, and external vendor costs if applicable [23].

- Outcome Measurement: Track CODIS hits, case resolutions, identifications of serial offenders, and linkages to other cases over an extended period to capture the full value [23].

- Benefit Quantification: Assign quantitative values to outcomes where possible, including saved investigative resources, prevention of future crimes through incapacitation of identified offenders, and justice provided to victims [23].

- Analysis: Calculate cost-benefit ratios and return on investment metrics to compare different resource allocation scenarios [23].

Latent Fingermark Detection Using Frugal Principles

Objective: To develop reliable latent fingermark detection methods appropriate for jurisdictions with limited resources and challenging environmental conditions [35].

Materials: Basic fingerprint powders, alternative light sources, digital imaging equipment, locally-sourced chemicals.

Methodology:

- Substrate Assessment: Categorize evidence by surface type (porous, non-porous, semi-porous) to determine appropriate processing sequences [35].

- Sequential Processing: Implement methodical progression from least to most destructive techniques to preserve evidence integrity [35].

- Local Reagent Development: Adapt formulations using locally available chemicals to reduce costs and supply chain dependencies [35].

- Quality Control: Implement standardized photography and documentation protocols to ensure reproducible results despite equipment limitations [35].

Workflow Diagrams

Frugal Forensics Implementation Workflow

Forensic Backlog Reduction Strategy

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Materials for Frugal Forensic Research

| Item | Function | Frugal Application |

|---|---|---|

| Alternative Light Sources | Enhances visibility of latent evidence including fingerprints, bodily fluids, and fibers [35] | Select energy-efficient models with minimal maintenance requirements; consider multi-wavelength LED systems for versatility [35] |

| Basic Fingerprint Powders | Develops latent fingerprints on non-porous surfaces [35] | Focus on core color types (black, white, magnetic); ensure proper storage to extend shelf life [35] |

| Local Chemical Substitutes | Replaces imported reagents for various chemical development techniques [35] | Develop formulations using locally available laboratory-grade chemicals; validate against standard methods [35] |

| Digital Documentation System | Captures and preserves evidence through imaging [24] | Implement standardized protocols using available digital cameras; ensure proper color calibration and scale placement [24] |

| Statistical Analysis Software | Provides quantitative assessment of evidence significance using likelihood ratio framework [24] | Utilize open-source platforms for statistical analysis and evidence interpretation to reduce licensing costs [24] |

Table: Project Resolution Cost-Benefit Analysis Outcomes [23]

| Metric | Result | Significance |

|---|---|---|

| Total Investment | $186,000 | Special legislative allocation for cold case sexual assault evidence testing |

| Cases Processed | 605 | Unsolved sexual assault cases with retained serological cuttings from 1985 onward |

| Semen-Positive Cases | 317 (52.4%) | Demonstrates value of retaining biological evidence long-term |

| Foreign Male Profiles Developed | 285 (90% of positive cases) | Successful DNA recovery from historical evidence |

| Initial CODIS Hits | 134 hits matching 119 offenders (47% hit rate) | Immediate investigative leads generated |

| 10-Year Follow-up Hits | 164 total matches (58% hit rate) | Demonstrates increasing value as DNA database expands |

| Serial Offender Identification | Multiple serial rapists identified | Crime pattern recognition through DNA connectivity |
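The percentages in the table can be re-derived from its counts, which also clarifies their denominators. The short check below uses only figures from the table; the variable names are illustrative. The reported hit rates (47% and 58%) reproduce only when hits are divided by the 285 profiles developed, not by the 605 cases processed, so that denominator is an inference from the numbers rather than something the table states.

```python
# Counts and investment as listed in the Project Resolution table [23].
investment = 186_000
cases_processed = 605
semen_positive = 317
profiles_developed = 285
initial_hits = 134
ten_year_hits = 164

derived = {
    "semen-positive share of cases": semen_positive / cases_processed,
    "profiles per semen-positive case": profiles_developed / semen_positive,
    "initial hit rate (per profile)": initial_hits / profiles_developed,
    "10-year hit rate (per profile)": ten_year_hits / profiles_developed,
    "10-year hits per case processed": ten_year_hits / cases_processed,
}
for label, value in derived.items():
    print(f"{label:<34} {value:.1%}")

cost_per_case = investment / cases_processed
cost_per_hit = investment / ten_year_hits
print(f"cost per case processed: ${cost_per_case:,.2f}")
print(f"cost per 10-year CODIS hit: ${cost_per_hit:,.2f}")
```

Cost per case and cost per hit are often the figures budget officials ask for first, so it helps to state them alongside the hit rates.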

Table: Frugal Forensics Implementation Framework

| Principle | Traditional Approach | Frugal Approach |

|---|---|---|

| Technology Adoption | Latest available technology regardless of context | Appropriate technology matched to jurisdictional needs and limitations [35] |

| Supply Chain | Dependent on international suppliers | Local supply chain development and strategic reagent management [35] |

| Quality Assurance | Comprehensive systems potentially exceeding resources | Context-appropriate QA frameworks focused on essential validation [35] |

| Resource Allocation | Based on tradition or equipment availability | Data-driven using cost-benefit analysis and demonstrated impact [23] |

| Method Selection | Standardized protocols regardless of cost-benefit | Frugal principles emphasizing simplicity and robustness [37] [38] |

For researchers and drug development professionals, the integration of new forensic technologies presents a unique challenge: how to strategically allocate limited R&D resources between established traditional methods and emerging digital capabilities. The framework of Technology Readiness Levels (TRL) provides a critical methodology for assessing the maturity of these technologies, from basic principles (TRL 1) to full deployment (TRL 9) [39] [40]. This technical support center offers guides and protocols to navigate this complex landscape, helping your team make data-driven decisions on technology implementation amidst budget constraints.

FAQs: Technology Prioritization and TRL Scaling

1. What is the TRL scale and why is it critical for forensic technology investment? The Technology Readiness Level (TRL) scale is a formal metric system used to assess the maturity of a specific technology. It ranges from TRL 1 (basic principles observed) to TRL 9 (actual system proven in operational environment) [39]. For forensic and drug development research, using the TRL scale helps identify immediate technical gaps, structure discussions on project status, and estimate the effort required to advance a technology toward deployment [39]. It is a vital tool for performing rough portfolio analysis based on technology maturity, ensuring that R&D budgets are allocated to projects with a viable path to implementation.

2. How does the volume and complexity of digital evidence impact research priorities? The sheer quantity of digital evidence from smartphones, cloud storage, and Internet of Things (IoT) devices risks overwhelming traditional analysis systems [41] [42]. Modern criminal investigations increasingly involve evidence that is both digital and volatile; features on mobile devices like auto-reboot and USB restricted mode can permanently erase data if not processed immediately [43]. This reality necessitates a strategic shift in research priorities towards developing automated, scalable digital forensics case management systems and tiered analytical approaches to manage this data deluge effectively [42] [43].

3. What are the key differences between traditional and modern digital forensics that affect resource allocation? Traditional digital forensics primarily focused on analyzing data from standalone computers and local hard drives, often involving a physical replica of the data source for offline analysis [41]. Modern digital forensics has evolved to encompass specialized domains like mobile forensics, cloud forensics, blockchain forensics, and analysis of data from drones, video surveillance, and vehicle systems [41]. This expansion requires a flexible and scalable approach, as data volume is now measured in terabytes rather than gigabytes, making traditional methods increasingly time-consuming and inefficient [41]. Budget allocation must therefore account for these new domains and the specialized tools and training they require.

4. How can a tiered approach to digital forensics optimize resource use in a research or operational setting? A tiered approach delineates roles to maximize efficiency. It involves employing Digital Evidence Technicians (DETs) to handle initial device intake, imaging, and triage to identify immediate leads. This frees up highly trained Digital Forensics Examiners (DFEs) to focus on deep-dive analysis, complex data reconstruction, and courtroom testimony [43]. This model prevents highly skilled personnel from being bogged down by routine tasks and allows an organization to build its digital forensics capacity in a staged, strategic way that aligns with budget realities and case complexity.

5. What is a common methodological pitfall when transitioning a technology from a low to a high TRL? A common pitfall is moving to testing in an operational environment before the technology's components have been properly validated in a laboratory setting. Per the TRL scale, a technology must first have its components validated in a laboratory environment (TRL 4) and its integrated components demonstrated in a laboratory environment (TRL 5) before a prototype can be tested in a relevant environment (TRL 6) [39]. Skipping these steps can lead to failures when the technology encounters real-world conditions because fundamental compatibility and performance issues were not resolved in a controlled setting.

► Troubleshooting Guides

Issue 1: Overwhelming Backlog of Digital Evidence

- Problem: A massive and ever-increasing backlog of devices and data is causing significant delays in investigations and research.

- Diagnosis: This is typically caused by the sheer volume of evidence, combined with inefficient, linear workflows and a lack of automated processes [42] [43].

- Solution:

  - Implement a Digital Forensics Case Management System: Adopt a centralized platform to streamline workflows, track requests, and automate routine tasks [42].

  - Adopt a Tiered Analytical Model: Utilize Digital Evidence Technicians for triage and initial data extraction to free up expert examiners for complex analysis [43].

  - Explore Partnerships: Form consortia or regional partnerships with other institutions to share resources and tools and to reduce the financial burden on any single entity [43].
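The tiered model above can be sketched as a small queue-planning routine. This is a minimal illustration, not a feature of any particular case management product: the `EvidenceItem` fields, the `plan_queue` function, and the item IDs are hypothetical names. It assumes that volatile devices are acquired first and that a technician's triage flag decides whether follow-up work goes to a Digital Evidence Technician (DET) or a Digital Forensics Examiner (DFE).

```python
from dataclasses import dataclass


@dataclass
class EvidenceItem:
    item_id: str        # hypothetical identifier format
    device_type: str
    volatile: bool      # e.g. a phone subject to auto-reboot or USB restricted mode
    needs_expert: bool  # flag set by the technician during triage


def plan_queue(items):
    """Return (item_id, tier) pairs in processing order.

    Volatile items come first. sorted() is stable, so intake order is
    preserved within the volatile and non-volatile groups.
    """
    ordered = sorted(items, key=lambda it: 0 if it.volatile else 1)
    return [(it.item_id, "DFE" if it.needs_expert else "DET") for it in ordered]


backlog = [
    EvidenceItem("E-001", "laptop", volatile=False, needs_expert=False),
    EvidenceItem("E-002", "smartphone", volatile=True, needs_expert=False),
    EvidenceItem("E-003", "cloud export", volatile=False, needs_expert=True),
    EvidenceItem("E-004", "smartphone", volatile=True, needs_expert=True),
]
queue = plan_queue(backlog)
print(queue)
```

In practice the ordering key would likely include more factors (case priority, legal deadlines); the two-level key here only shows the principle of separating acquisition urgency from analyst tier.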

Issue 2: Inadmissible or Unreliable Digital Evidence

- Problem: Evidence collected is being challenged in legal proceedings or is found to be incomplete.

- Diagnosis: This can stem from a "button-pushing" approach where technicians use tools without a deep understanding of their function, improper evidence handling that breaks the chain of custody, or a failure to account for the volatile nature of modern data [43].

- Solution:

  - Ensure Immediate Acquisition: Process mobile devices immediately to counter security features that can erase or lock data [43].

  - Invest in Continuous Training: Move beyond basic tool proficiency to ensure personnel understand the underlying processes and legal standards [43].

  - Maintain Chain of Custody: Use a case management system that automatically tracks the evidence chain of custody to ensure integrity [42].
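One way to make a custody record tamper-evident is to chain entries together with cryptographic hashes, so that altering any earlier entry invalidates every later one. The sketch below illustrates that idea with Python's standard `hashlib` and `json` modules. It is an assumption about how such tracking could work, not a description of the systems cited above, and the function names, field names, and sample entries are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(record):
    # Serialize with sorted keys so the same record always hashes identically.
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def add_entry(log, item_id, actor, action, timestamp):
    """Append a custody event that embeds the hash of the previous event."""
    record = {
        "item_id": item_id,
        "actor": actor,
        "action": action,
        "timestamp": timestamp,
        "prev_hash": log[-1]["hash"] if log else GENESIS,
    }
    record["hash"] = _digest(record)  # hash covers every field except itself
    log.append(record)
    return record


def verify(log):
    """Return True only if every entry is unmodified and correctly linked."""
    prev_hash = GENESIS
    for record in log:
        body = {k: v for k, v in record.items() if k != "hash"}
        if record["prev_hash"] != prev_hash or _digest(body) != record["hash"]:
            return False
        prev_hash = record["hash"]
    return True


log = []
add_entry(log, "E-002", "technician_a", "received", "2024-01-01T09:00:00Z")
add_entry(log, "E-002", "technician_a", "imaged", "2024-01-01T09:20:00Z")
add_entry(log, "E-002", "examiner_b", "analysis started", "2024-01-02T10:00:00Z")

intact = verify(log)               # log as written
log[1]["actor"] = "someone_else"   # simulate an after-the-fact edit
tamper_detected = not verify(log)
print(intact, tamper_detected)
```

A hash chain only shows that a record was changed; it does not replace access controls, signed timestamps, or the procedural requirements a court may impose.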

Issue 3: Failure to Advance a Technology's TRL

- Problem: A research project remains at a low TRL and cannot progress to validation or operational testing.

- Diagnosis: The project may lack a clear integration plan, well-documented end-user requirements, or the component compatibility may not have been demonstrated in a laboratory environment (TRL 4) [39].

- Solution:

  - Document End-User Requirements: Clearly define and document what the end-user needs from the technology [39].

  - Develop a Plausible Integration Plan: Create a draft plan for integrating components and demonstrate their compatibility in a fully controlled lab environment [39].

  - Validate Individual Components: Before full integration, successfully test individual components in a laboratory setting [39].

► Experimental Protocols & Methodologies

Protocol 1: Technology Maturity Assessment Using the TRL Scale

Objective: To systematically evaluate and determine the Technology Readiness Level of a specific forensic or pharmaceutical technology.

Workflow:

- Define the Technology: Precisely delineate the technology product or process to be assessed.

- Gather Documentation: Collect all available data on the technology, including research papers, test reports, and performance metrics.

- TRL Questionnaire: Assess the technology against the official TRL scale requirements [39]. Key questions include:

  - Have basic scientific principles been observed and documented? (TRL 1)

  - Has a proof-of-concept been validated through experiment or simulation? (TRL 3)

  - Have integrated components been demonstrated in a laboratory environment? (TRL 5)

  - Has a prototype been demonstrated in an operational environment? (TRL 7)

- Determine Maturity Level: The technology's TRL is the highest level for which it meets all requirements of that level and of every level below it.

- Identify Gaps: Document the specific tests or developments required to advance to the next TRL.

Technology Readiness Level Assessment Workflow
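The assessment workflow can be expressed as a short routine that walks the scale from TRL 1 upward and stops at the first unmet level. This is a minimal sketch: the one-line criteria are paraphrased from Table 1, while the `assess_trl` function and the shape of the `checklist` dictionary are illustrative assumptions rather than part of any official assessment tool.

```python
# One-line criteria per level, paraphrased from Table 1 of this guide.
TRL_CRITERIA = {
    1: "Basic principles observed and documented",
    2: "Application formulated",
    3: "Feasibility validated via experiment or modeling",
    4: "Components validated in a laboratory environment",
    5: "Integrated components demonstrated in a laboratory environment",
    6: "Prototype tested in a relevant environment",
    7: "Prototype demonstrated in an operational environment",
    8: "System proven in an operational environment",
    9: "Technology deployed and operational",
}


def assess_trl(checklist):
    """Return (achieved_trl, next_gap).

    checklist maps a TRL number to True/False. The achieved TRL is the
    highest level reached without skipping any lower level, so evidence
    for TRL 7 does not count if TRL 5 is still unmet.
    """
    achieved = 0
    for level in range(1, 10):
        if not checklist.get(level, False):
            break
        achieved = level
    next_gap = TRL_CRITERIA[achieved + 1] if achieved < 9 else None
    return achieved, next_gap


# Hypothetical project: field-tested early, but lab integration never shown.
checklist = {1: True, 2: True, 3: True, 4: True, 5: False, 6: False, 7: True}
trl, gap = assess_trl(checklist)
print(trl, "-", gap)
```

The example project reports TRL 4 despite an operational demonstration, which mirrors the pitfall of testing in the field before laboratory integration has been shown.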

Protocol 2: Spectroscopic Bloodstain Age Estimation

Objective: To estimate the age of a forensic bloodstain by analyzing age-related color changes in hemoglobin derivatives using spectroscopy.

Workflow:

- Sample Collection: Collect the bloodstain sample from the crime scene, or prepare a controlled sample in an experimental setup.

- Spectroscopic Analysis: Subject the sample to spectroscopic analysis. This technique records the intensity distribution of light (spectra) as a function of wavelength [44].

- Spectral Band Identification: Identify key spectral bands, particularly the Soret band (~425 nm in young stains) and peaks for oxyhemoglobin (542 nm, 577 nm) and methemoglobin (510 nm, 631.8 nm) [44].

- Monitor Spectral Shift: Note that as blood ages, the Soret peak shifts toward ~400 nm, and the oxyhemoglobin peaks diminish in favor of methemoglobin peaks [44].

- Mathematical Comparison: Compare the obtained spectral bands with established literature values to estimate the approximate age of the bloodstain [44].
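The band-identification and shift-monitoring steps can be illustrated numerically. The sketch below uses only the Python standard library and a synthetic spectrum built from Gaussian bands at the wavelengths listed above. The band widths, band heights, and function names are assumptions chosen for illustration and are not measured data. The code deliberately stops short of returning an age, because that step requires calibration against literature or laboratory reference values.

```python
import math


def gaussian(x, center, width, height):
    return height * math.exp(-((x - center) ** 2) / (2 * width ** 2))


def synthetic_spectrum(soret_center, oxy_height, met_height):
    """Build an illustrative absorbance spectrum on a 1 nm grid (380-679 nm)."""
    wavelengths = [380 + i for i in range(300)]
    absorbance = [
        gaussian(w, soret_center, 12, 1.0)    # Soret band
        + gaussian(w, 542, 8, oxy_height)     # oxyhemoglobin
        + gaussian(w, 577, 8, oxy_height)     # oxyhemoglobin
        + gaussian(w, 631.8, 10, met_height)  # methemoglobin
        for w in wavelengths
    ]
    return wavelengths, absorbance


def soret_peak(wavelengths, absorbance, lo=390, hi=440):
    """Wavelength of maximum absorbance inside the Soret search window."""
    window = [(a, w) for w, a in zip(wavelengths, absorbance) if lo <= w <= hi]
    return max(window)[1]


def value_near(wavelengths, absorbance, target):
    """Absorbance at the grid point closest to the target wavelength."""
    idx = min(range(len(wavelengths)), key=lambda i: abs(wavelengths[i] - target))
    return absorbance[idx]


def oxy_to_met_ratio(wavelengths, absorbance):
    return value_near(wavelengths, absorbance, 577) / value_near(wavelengths, absorbance, 631.8)


# Synthetic "young" and "aged" stains; all parameters are assumed values.
young = synthetic_spectrum(soret_center=425, oxy_height=0.30, met_height=0.05)
aged = synthetic_spectrum(soret_center=405, oxy_height=0.05, met_height=0.30)

young_peak, aged_peak = soret_peak(*young), soret_peak(*aged)
young_ratio, aged_ratio = oxy_to_met_ratio(*young), oxy_to_met_ratio(*aged)
print(young_peak, aged_peak, round(young_ratio, 2), round(aged_ratio, 2))
```

Real spectra are noisy and baseline-shifted, so a working pipeline would normally smooth the signal and subtract a baseline before locating peaks.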

Table 1: Technology Readiness Level (TRL) Definitions and Requirements

| TRL | Category | Description | Key Requirements |
|---|---|---|---|
| 1-3 | Basic Research | Basic principles observed and formulated; proof-of-concept established. | Basic principles documented; application formulated; feasibility validated via experiment/modeling [39]. |
| 4-5 | Applied Research | Components validated and integrated in a lab environment. | End-user requirements documented; components validated (TRL 4); integration demonstrated in lab (TRL 5) [39]. |
| 6-7 | Development | Prototype demonstrated in relevant and then operational environments. | Prototype tested in realistic environment (TRL 6); demonstrated in operational environment (TRL 7) [39]. |
| 8-9 | Implementation | Technology proven and deployed in its operational environment. | System proven in operational environment (TRL 8); technology deployed and operational (TRL 9) [39]. |

Table 2: Traditional vs. Modern Digital Forensics Domains

| Aspect | Traditional Digital Forensics | Modern Digital Forensics |
|---|---|---|
| Primary Focus | Standalone computers, local hard drives [41]. | Mobile devices, cloud platforms, blockchain, IoT, drones [41]. |
| Data Scale | Gigabytes (GB) [41]. | Terabytes (TB) [41]. |
| Key Challenges | Physical access to devices; creating data replicas [41]. | Data volatility; encryption; vast data volume; need for specialized domains (cloud, mobile) [41] [43]. |
| Investigation Scale | Single device, localized analysis [41]. | Distributed, multi-source, cross-platform analysis [41]. |

► The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Forensic Technology Research

| Item | Function |
|---|---|
| Spectrometer | A biophysical instrument used to record the interaction of electromagnetic radiation with matter, producing spectra for substance identification and analysis (e.g., bloodstain age estimation) [44]. |
| Mobile Forensics Tool | Advanced software and hardware used to extract and analyze data from smartphones, tablets, and wearables, often dealing with encrypted or locked devices [41]. |
| Cloud Forensics Platform | Specialized software for retrieving, analyzing, and preserving data from remote cloud environments, navigating complex, shared infrastructures [41]. |
| Digital Forensics Case Management System | A centralized software platform that streamlines workflows, tracks evidence chain-of-custody, automates tasks, and facilitates collaboration for managing complex digital evidence [42]. |
| TRL Scale | A formal assessment framework used as a guide to structure discussions and evaluate the maturity and readiness of a technology for deployment [39]. |

► Strategic Implementation Framework

Strategic Technology Implementation Framework

FAQs on TRLs and Budget-Led Implementation

1. What are Technology Readiness Levels (TRLs), and why are they important for managing budget constraints?

The Technology Readiness Level (TRL) is a systematic metric for assessing the maturity of a particular technology. It divides the product creation process into 9 distinct stages, providing a common language for researchers, funders, and stakeholders to evaluate progress and risk [45]. Originally developed by NASA, the scale is now widely used in other governmental departments and R&I programmes like the EU's Horizon Europe [46].

For projects operating under budget constraints, the TRL framework is indispensable for phasing investments according to risk. It helps prevent the common pitfall of over-investing in unproven concepts by ensuring that funding is released progressively as a technology delivers validated evidence of its feasibility and effectiveness. This methodical, stage-gated approach allows for rational allocation of often-limited public research funds, helping to bridge the "valley of death" between basic research and industrial application [46].

2. How can I map my drug development project to the TRL scale?

For medical product development, including drugs and biologics, more tailored TRL scales have been created. The following table aligns general TRL definitions with specific criteria for medical countermeasures, providing a concrete roadmap for your project [47].

| TRL | General Definition [45] [46] | Specific Milestones for Medical Products [47] | Typical Budget Focus |
|---|---|---|---|
| TRL 1-2 | Basic principles observed; practical applications formulated. | Review of scientific knowledge base; generation of hypotheses and experimental designs. | Minimal funding for foundational research. |
| TRL 3-4 | Active R&D; experimental proof-of-concept; first laboratory prototype. | Target identification; non-GLP in vivo proof-of-concept; candidate optimization. | Focused funding for de-risking core hypotheses. |
| TRL 5-6 | Validation in relevant environment; prototyping in a lab environment. | Initiation of GMP process development; GLP non-clinical studies; IND submission; Phase 1 clinical trial. | Major increase for process development & early regulatory steps. |
| TRL 7-8 | System prototype demonstration in operational environment; technology completed and qualified. | Scale-up and validation of GMP process; Phase 2 & 3 clinical trials; NDA/BLA submission and FDA approval. | Peak funding for pivotal trials and manufacturing. |
| TRL 9 | Actual system proven in operational environment. | Post-approval activities (Phase 4 studies, safety surveillance). | Budget for lifecycle management. |
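For planning purposes, the table can be encoded as a lookup that places a project in the band of its most advanced completed milestone. The sketch below is illustrative only: the milestone strings are shortened paraphrases of the table rows, and `MEDICAL_TRL_BANDS` and `highest_band` are hypothetical names. The function does not check that all earlier milestones were completed, so a formal assessment would need to do so.

```python
# Bands, milestones, and budget focus paraphrased from the table above.
MEDICAL_TRL_BANDS = {
    "TRL 1-2": {
        "milestones": ["review of scientific knowledge base",
                       "hypotheses and experimental designs"],
        "budget_focus": "Minimal funding for foundational research",
    },
    "TRL 3-4": {
        "milestones": ["target identification",
                       "non-GLP in vivo proof-of-concept",
                       "candidate optimization"],
        "budget_focus": "Focused funding for de-risking core hypotheses",
    },
    "TRL 5-6": {
        "milestones": ["GMP process development initiated",
                       "GLP non-clinical studies",
                       "IND submission",
                       "Phase 1 clinical trial"],
        "budget_focus": "Major increase for process development and early regulatory steps",
    },
    "TRL 7-8": {
        "milestones": ["GMP process scale-up and validation",
                       "Phase 2 clinical trial",
                       "Phase 3 clinical trial",
                       "NDA/BLA submission",
                       "FDA approval"],
        "budget_focus": "Peak funding for pivotal trials and manufacturing",
    },
    "TRL 9": {
        "milestones": ["post-approval activities"],
        "budget_focus": "Budget for lifecycle management",
    },
}


def highest_band(completed):
    """Return the most advanced band containing a completed milestone, or None."""
    done = {m.lower() for m in completed}
    result = None
    # Dicts preserve insertion order, so later matches are more advanced bands.
    for band, info in MEDICAL_TRL_BANDS.items():
        if any(m.lower() in done for m in info["milestones"]):
            result = band
    return result


band = highest_band(["Target identification", "IND submission"])
focus = MEDICAL_TRL_BANDS[band]["budget_focus"]
print(band, "->", focus)
```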

3. What are the most common budget-related failures during the transition from mid to high TRLs?

The most common failure is underestimating the cost and complexity of scaling and validation, leading to a funding gap that halts promising technologies.

- Transition from TRL 4 to TRL 5-6: A proof-of-concept prototype (TRL 4) is often developed with research-grade materials and processes. The jump to TRL 5-6 requires initiating GMP process development and conducting GLP non-clinical studies to support an IND application [47]. This shift from research to regulated development entails a significant, non-linear increase in costs for specialized expertise, quality systems, and compliant documentation.

- Transition from TRL 6 to TRL 7-8: Success in early-phase clinical trials (TRL 6) must be followed by even more costly Phase 2/3 trials and GMP manufacturing scale-up (TRL 7-8) [47]. Budget forecasts often fail to account for the sheer expense of running large-scale, multi-center trials and validating commercial-scale manufacturing processes. A lack of proactive budget planning for these stages is a primary reason projects stall.

4. Beyond TRL, what other "readiness levels" should I consider for comprehensive planning?

While TRL assesses core technological maturity, a successful launch depends on other critical factors. Several complementary frameworks exist to provide a more holistic assessment [48].

- Manufacturing Readiness Level (MRL): Measures how close a technology is to being manufactured at scale. A high-TRL prototype may have a low MRL if it relies on non-scalable processes or materials [48].

- Commercial Readiness Level (CRL): Assesses market demand, competitive advantage, and business model viability. A "technically perfect" product will fail without a validated market [48].

- Integration Readiness Level (IRL): Evaluates the maturity of interfaces and compatibility between subsystems. This is crucial for complex technologies involving hardware, software, and data integration [48].
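A simple way to use these frameworks together is to look for the dimension that lags furthest behind. The sketch below applies a weakest-link heuristic, which is an assumption introduced here for illustration and is not taken from the cited frameworks. Because different organisations score these levels on different scales, each value is supplied together with the maximum of its own scale; the maxima and project scores shown are hypothetical.

```python
def limiting_dimension(levels):
    """Return (name, fraction) for the least mature readiness dimension.

    levels maps a dimension name to (current_level, scale_maximum).
    Each level is normalised to a 0-1 fraction of its own scale so that
    dimensions scored on different scales can be compared.
    """
    fractions = {name: current / top for name, (current, top) in levels.items()}
    name = min(fractions, key=fractions.get)
    return name, fractions[name]


# Hypothetical project scores; the scale maxima are assumptions supplied
# by the assessor, not values fixed by this guide.
project = {
    "TRL": (7, 9),
    "MRL": (3, 10),
    "CRL": (4, 9),
    "IRL": (5, 9),
}

bottleneck, fraction = limiting_dimension(project)
print(f"Limiting dimension: {bottleneck} ({fraction:.0%} of its scale)")
```

In this example the prototype is technically advanced, but manufacturing readiness is the constraint, which suggests that the next budget increment should go toward process scale-up rather than further technical refinement.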