Multivariate Statistical Chemometric Tools for Forensic Evidence Interpretation: Enhancing Objectivity and Accuracy

Abstract

This article provides a comprehensive overview of the application of multivariate statistical chemometric tools in forensic evidence interpretation. Aimed at researchers, scientists, and drug development professionals, it explores the foundational principles of chemometrics, detailing key methodologies like Principal Component Analysis (PCA), Linear Discriminant Analysis (LDA), and Partial Least Squares Discriminant Analysis (PLS-DA). The content covers practical applications across forensic disciplines—from drug profiling and explosive residue analysis to toxicology and trace evidence. It further addresses critical challenges in method optimization, troubleshooting common pitfalls, and outlines rigorous validation frameworks to ensure reliability and courtroom admissibility. By synthesizing the latest advancements, this review serves as a guide for integrating objective, data-driven chemometric approaches to strengthen forensic conclusions.

Chemometrics in Forensic Science: Foundational Principles and Core Concepts

Chemometrics is the application of mathematical and statistical methods to the analysis of chemical data, enabling the extraction of meaningful information from complex multivariate datasets [1] [2]. Originally developed in the 1970s primarily for process monitoring and spectroscopic calibrations, this discipline has found ever-widening applications across chemical process environments, pharmaceutical quality control, food and flavor analysis, and notably, forensic science [1] [3] [4]. The core strength of chemometrics lies in its ability to form mathematical/statistical models based on historical process data, allowing new data to be compared against models of normal operation to detect changes, classify samples, or identify faults in systems [1].

In recent years, chemometrics has emerged as a transformative approach in forensic science, offering objective and statistically validated methods to interpret evidence while mitigating human bias [3]. This application is particularly valuable as forensic science often relies on physical evidence to reconstruct events and establish links between people, places, and objects. Traditional methods of evidence interpretation, often based on visual comparisons and expert judgment, are increasingly viewed as vulnerable to bias and subjective errors [3]. Chemometrics addresses these challenges by allowing forensic examiners to move beyond subjective visual analysis and make data-driven interpretations using statistical models [3].

Fundamental Chemometric Methods

Core Multivariate Techniques

Chemometrics encompasses a suite of multivariate statistical methods designed to handle complex datasets where multiple variables are measured simultaneously. These techniques can be broadly categorized into unsupervised and supervised pattern recognition methods [2].

Table 1: Fundamental Chemometric Techniques and Their Applications

| Technique | Type | Primary Function | Common Forensic Applications |
|---|---|---|---|
| Principal Component Analysis (PCA) | Unsupervised | Exploratory data analysis, dimensionality reduction, outlier detection | Initial exploration of spectral data, identifying natural clustering patterns in evidence [5] [2] |
| Linear Discriminant Analysis (LDA) | Supervised | Classification and discrimination of predefined groups | Differentiating between authentic and counterfeit pharmaceuticals, classifying textile fibers [3] [2] |
| Partial Least Squares (PLS) | Supervised | Regression modeling, predicting response variables | Quantitative analysis of API in pharmaceuticals, predicting material properties [1] [6] |
| Partial Least Squares-Discriminant Analysis (PLS-DA) | Supervised | Classification using a regression framework | Identifying body fluid traces, differentiating lipstick varieties [3] [5] |
| Soft Independent Modeling of Class Analogy (SIMCA) | Supervised | Class modeling using PCA principles | Verification of sample authenticity, pharmaceutical tablet identification [2] [6] |
| Support Vector Machines (SVM) | Supervised | Classification and regression using kernel methods | Complex pattern recognition in spectral data, gunshot residue analysis [3] [2] |

Unsupervised methods like Principal Component Analysis (PCA) are used for exploratory data analysis without prior knowledge of sample categories. PCA is a projection method that looks for directions in the multivariate space progressively providing the best fit of the data distribution, effectively reducing data dimensionality while minimizing information loss [5]. The mathematical foundation of PCA involves the bilinear decomposition of a data matrix X according to the equation: X = TP^T + E, where T represents the scores matrix (coordinates of samples in the reduced space), P denotes the loadings matrix (directions of maximum variance), and E contains the residuals [5].
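The bilinear decomposition can be made concrete with a few lines of NumPy. The following is a minimal sketch, assuming X is mean-centred before decomposition and using random numbers as a stand-in for spectra; the function name `pca_decompose` and the data dimensions are illustrative and do not come from the cited sources.

```python
import numpy as np

def pca_decompose(X, n_components):
    """Return scores T, loadings P, and residuals E with Xc = T @ P.T + E."""
    Xc = X - X.mean(axis=0)                       # mean-centre each variable (column)
    U, s, Vt = np.linalg.svd(Xc, full_matrices=False)
    T = U[:, :n_components] * s[:n_components]    # scores: sample coordinates
    P = Vt[:n_components].T                       # loadings: orthonormal directions
    E = Xc - T @ P.T                              # residuals: what the model leaves out
    return T, P, E

# Hypothetical data: 20 "spectra" measured at 100 "wavenumbers"
rng = np.random.default_rng(0)
X = rng.normal(size=(20, 100))
Xc = X - X.mean(axis=0)

T, P, E = pca_decompose(X, n_components=3)
print("reconstruction holds:", np.allclose(Xc, T @ P.T + E))
print("loadings orthonormal:", np.allclose(P.T @ P, np.eye(3)))
```

The singular value decomposition is used here because it is numerically stable when the number of variables exceeds the number of samples, which is typical for spectral data.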

Supervised methods such as Linear Discriminant Analysis (LDA) and Partial Least Squares-Discriminant Analysis (PLS-DA) are employed when sample categories are known in advance, building models to classify new unknown samples [3] [2]. These techniques focus on finding combinations of variables that best separate predefined classes, making them particularly valuable for forensic discrimination tasks where the question is whether two samples could originate from the same source.

Advanced Modeling Approaches

More sophisticated chemometric methods continue to emerge, including Support Vector Machines (SVM) and Artificial Neural Networks (ANNs), which offer powerful tools for handling complex nonlinear relationships in data [3] [2]. These advanced modeling approaches are particularly useful for tackling challenging forensic problems such as interpreting mixed DNA samples, analyzing low-quality or degraded evidence, and dealing with complex mixture analysis where traditional methods may fall short.

The use of these multivariate methods in forensic analysis is growing rapidly because they support the identification, differentiation, and classification of exhibits [2]. A literature survey covering 2007 to 2018 found that PCA (36.23%) and discriminant analysis (33.33%) were the most frequently used chemometric methods in forensic science, while k-nearest neighbors (kNN) and other methods were the least used [2].

Chemometrics in Process Monitoring

Process Analytical Technology (PAT)

In industrial settings, the combination of process chemometrics with analytical techniques is now commonly referred to as Process Analytical Technology (PAT) [7]. Some of the most profitable uses of chemometrics technologies to date have been in the process environment, where these approaches are employed for chemical process monitoring and fault detection [1] [7]. PAT applications involve unique considerations including regulatory compliance, on-line model deployment logistics, and model performance monitoring, requiring specialized approaches beyond standard chemometric methods [7].

The typical PAT project timeline encompasses multiple phases: process and/or product development through design of experiments (DOE); scale-up; and manufacturing support involving sampling issues, calibration protocols, and handling "messy" data [7]. Throughout these phases, chemometric tools including exploratory analysis methods (PCA, MCR) and model building methods are systematically applied to optimize processes and ensure product quality [7].

Pharmaceutical Quality Control

Chemometrics plays a particularly important role in pharmaceutical quality control, where spectroscopic techniques such as Near-Infrared (NIR) spectroscopy combined with chemometric tools have been proposed for pharmaceutical quality checks [5]. These methodologies offer significant benefits due to their non-destructive nature, rapid analysis capabilities, and applicability both off-line and in-/at-/on-line [5].

In pharmaceutical applications, chemometrics enables both qualitative identification of active pharmaceutical ingredients (APIs) and quantitative determination of API concentration [5]. This dual capability makes it invaluable for detecting substandard and counterfeit medicines, which may contain no API, a different API from the one declared, or a different (lower) API strength [5]. The combination of spectroscopy with exploratory data analysis, classification, and regression methods can lead to effective, high-performing, fast, non-destructive, and sometimes online methods for checking the quality of pharmaceuticals and their compliance to production and/or pharmacopeia standards [5].

Chemometrics in Forensic Evidence Analysis

Protocols for Forensic Evidence Interpretation

The application of chemometrics in forensic science requires carefully designed protocols to ensure scientific rigor and legal admissibility. According to researchers from Curtin University, chemometrics brings a new level of objectivity and rigor to forensic investigations by offering statistically validated methods to interpret evidence [3]. Proper forensic protocols dictate that each piece of evidence be carefully documented and maintained under strict chain-of-custody rules so that it can be analyzed appropriately and used later in legal proceedings [8].

Forensic principles such as beneficence (doing the best for the evidence), non-maleficence (avoiding harm to the evidence), and justice are borrowed and adapted from medical bioethics to forensic bioethics, guiding forensic scientists to prioritize maximizing the probative value of evidence while ensuring its preservation for potential retesting [8]. Analytical processes are conducted in a layered manner, from non-destructive to more consumptive tests, so that evidence remains available for defense examinations or further analysis if necessary [8].

Table 2: Forensic Evidence Types and Appropriate Chemometric Approaches

| Evidence Type | Analytical Techniques | Recommended Chemometric Methods | Application Examples |
|---|---|---|---|
| Pharmaceuticals & Drugs | NIR, Raman spectroscopy, Chromatography | PCA, SIMCA, PLS-DA | Detection of counterfeit medicines, identification of illicit drugs [5] [2] |
| Trace Evidence (fibers, paints, glass) | FT-IR, Raman spectroscopy, SEM-EDS | PCA, LDA, SVM | Source identification, comparative analysis [3] [2] |
| Body Fluids | ATR-FTIR, Raman spectroscopy | PCA, PLS-DA, LDA | Identification of semen, vaginal fluid, urine, blood in stained evidence [2] |
| Explosives & Fire Debris | GC-MS, FT-IR | PCA, PLS-DA | Identification of explosive residues, accelerant classification [3] [2] |
| Gunshot Residue | SEM-EDS, ICP-MS | PCA, Regularized Discriminant Analysis | Ammunition brand differentiation [2] |
| Toxicological Samples | LC-MS, GC-MS | PCA, PLS, SVM | Metabolite profiling, substance identification [3] |

Implementation Workflow

The successful application of chemometrics in forensic analysis follows a systematic workflow that ensures results meet the stringent requirements for legal proceedings. The generalized protocol involves evidence collection and preservation, analytical measurement, data preprocessing, chemometric analysis, and statistical interpretation and reporting.

Experimental Protocols for Forensic Chemometrics

Protocol 1: Chemometric Analysis of Suspected Counterfeit Pharmaceuticals

Objective: To identify and classify suspected counterfeit pharmaceutical tablets using Raman spectroscopy combined with chemometric pattern recognition techniques.

Materials and Equipment:

- Raman spectrometer with microscope attachment

- Reference standards of authentic pharmaceutical products

- Suspected counterfeit tablets

- Chemometric software (e.g., Mnova Advanced Chemometrics, PLS_Toolbox with MATLAB, or CAT - Chemometric Agile Tool) [9] [6]

Procedure:

Sample Preparation:

- Prepare a representative subset of each authentic and suspected counterfeit tablet.

- For each sample, create a uniform surface for analysis when necessary.

Spectral Acquisition:

- Acquire Raman spectra from multiple locations on each tablet (minimum 5 spectra per sample) to account for heterogeneity.

- Use consistent instrumental parameters: laser power, exposure time, and spectral resolution.

- Collect spectra across an appropriate wavenumber range (e.g., 200-2000 cm⁻¹).

Data Preprocessing:

- Apply necessary preprocessing techniques to minimize confounding variances:

- Perform baseline correction to remove fluorescence background

- Apply vector normalization to account for intensity variations

- Use Savitzky-Golay smoothing if needed to improve signal-to-noise ratio
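The preprocessing steps listed above could be sketched as follows. This is an illustration under stated assumptions, not a prescribed method: the baseline is estimated with a simple iterative polynomial fit (one of several possible baseline algorithms), smoothing is applied before normalization so the final spectra keep unit length, and the function names, window size, polynomial degrees, and synthetic spectra are all hypothetical.

```python
import numpy as np
from scipy.signal import savgol_filter

def polynomial_baseline(y, degree=3, n_iter=20):
    """Estimate a smooth baseline by repeatedly fitting a polynomial and
    clipping the spectrum to the fit, so peaks pull the fit up less each pass."""
    x = np.linspace(-1.0, 1.0, y.size)   # rescaled axis keeps the fit well conditioned
    work = y.astype(float).copy()
    for _ in range(n_iter):
        fit = np.polyval(np.polyfit(x, work, degree), x)
        work = np.minimum(work, fit)
    return fit

def preprocess(spectra, window=11, polyorder=3):
    """Baseline-correct, smooth, and vector-normalise each row of `spectra`."""
    out = np.empty_like(spectra, dtype=float)
    for i, y in enumerate(spectra):
        corrected = y - polynomial_baseline(y)
        smoothed = savgol_filter(corrected, window_length=window, polyorder=polyorder)
        out[i] = smoothed / np.linalg.norm(smoothed)   # unit Euclidean length
    return out

# Synthetic stand-in for five replicate spectra of one tablet (not real data)
rng = np.random.default_rng(0)
x = np.linspace(-1.0, 1.0, 600)
peak = np.exp(-0.5 * ((np.arange(600) - 300) / 10.0) ** 2)
background = 0.5 + 0.3 * x + 0.2 * x**2     # fluorescence-like curvature
raw = np.array([s * peak + background + rng.normal(0, 0.01, 600)
                for s in (0.8, 0.9, 1.0, 1.1, 1.2)])

clean = preprocess(raw)
print(np.linalg.norm(clean, axis=1))   # each spectrum now has unit length
```

Whatever parameters are chosen, the same settings should be applied to reference and questioned samples, since the later models compare spectra on the preprocessed scale.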

Exploratory Data Analysis:

- Perform PCA on the preprocessed spectral data to visualize natural clustering patterns.

- Examine scores plots to identify potential outliers and observe separation between authentic and suspected counterfeit samples.

- Inspect loadings plots to identify spectral regions contributing most to variance.

Classification Modeling:

- Develop a SIMCA model using authentic reference samples as the training set.

- Define acceptance thresholds based on critical distance measures (Q-residuals and Hotelling's T²).

- Apply the model to classify suspected counterfeit samples.

- Validate model performance using cross-validation and external validation sets when available.
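A one-class model of this kind can be sketched with a PCA model of the authentic class and the two distance measures named above. The sketch below assumes empirical 95th-percentile limits taken from the training samples, which is a simplification: SIMCA implementations usually derive limits from distributional approximations. All names and the synthetic spectra are hypothetical.

```python
import numpy as np

def fit_class_model(X, n_components):
    """PCA model of one class: mean, loadings, and score variances."""
    mean = X.mean(axis=0)
    U, s, Vt = np.linalg.svd(X - mean, full_matrices=False)
    P = Vt[:n_components].T
    score_var = s[:n_components] ** 2 / (X.shape[0] - 1)
    return mean, P, score_var

def distances(X, model):
    """Hotelling's T^2 (distance inside the model) and Q (squared residual)."""
    mean, P, score_var = model
    Xc = np.atleast_2d(X) - mean
    T = Xc @ P
    t2 = np.sum(T**2 / score_var, axis=1)
    q = np.sum((Xc - T @ P.T) ** 2, axis=1)
    return t2, q

# Synthetic data: authentic tablets share one band, the suspect shows another
rng = np.random.default_rng(1)
axis = np.linspace(0, 1, 200)
authentic_profile = np.exp(-((axis - 0.3) / 0.05) ** 2)
suspect_profile = np.exp(-((axis - 0.7) / 0.05) ** 2)
X_auth = (rng.normal(1.0, 0.1, size=(40, 1)) * authentic_profile
          + rng.normal(0, 0.01, size=(40, 200)))
x_suspect = suspect_profile + rng.normal(0, 0.01, size=200)

model = fit_class_model(X_auth, n_components=2)
t2_train, q_train = distances(X_auth, model)
# Assumption: empirical 95th-percentile limits from the training class
t2_limit, q_limit = np.percentile(t2_train, 95), np.percentile(q_train, 95)

def is_accepted(X):
    t2, q = distances(X, model)
    return (t2 <= t2_limit) & (q <= q_limit)

print("fraction of authentic accepted:", is_accepted(X_auth).mean())
print("suspect accepted:", bool(is_accepted(x_suspect)[0]))
```

Limits estimated on the training samples themselves are optimistic, which is one reason the protocol calls for cross-validation and an external validation set.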

Reporting:

- Document all model parameters, including number of principal components used, classification results, and statistical confidence measures.

- Report the chemical basis for any classification decisions based on loadings interpretation.

Protocol 2: Chemometric Discrimination of Trace Evidence

Objective: To discriminate between trace evidence samples (e.g., fibers, paints, glass) recovered from crime scenes and known reference materials using spectroscopic techniques and chemometric classification.

Materials and Equipment:

- FT-IR or Raman spectrometer

- Microscope for sample examination

- Reference materials from potential sources

- Chemometric software with classification capabilities

Procedure:

Sample Collection and Preparation:

- Collect trace evidence following proper chain of custody protocols.

- Prepare representative subsamples for spectroscopic analysis.

- Mount samples appropriately for transmission or reflectance measurements.

Spectral Collection:

- Acquire infrared or Raman spectra from all samples using consistent parameters.

- Ensure sufficient spectral resolution and signal-to-noise ratio for discrimination.

- Collect multiple spectra from different areas of heterogeneous samples.

Data Preprocessing:

- Apply appropriate preprocessing: baseline correction, normalization, and derivatives if needed.

- Consider standard normal variate (SNV) or multiplicative scatter correction (MSC) for reflectance spectra.

- Segment spectra into relevant regions if full-spectrum analysis is not optimal.
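For the scatter corrections mentioned above, a minimal NumPy sketch is given below. It assumes spectra are stored as rows and, for MSC, uses the mean spectrum of the set as the reference unless another is supplied; function names and the synthetic data are illustrative only.

```python
import numpy as np

def snv(spectra):
    """Standard normal variate: centre and scale each spectrum (row) individually."""
    mean = spectra.mean(axis=1, keepdims=True)
    std = spectra.std(axis=1, ddof=1, keepdims=True)
    return (spectra - mean) / std

def msc(spectra, reference=None):
    """Multiplicative scatter correction against a reference spectrum."""
    ref = spectra.mean(axis=0) if reference is None else reference
    corrected = np.empty_like(spectra, dtype=float)
    for i, row in enumerate(spectra):
        # Fit row = intercept + slope * ref, then undo offset and gain
        slope, intercept = np.polyfit(ref, row, deg=1)
        corrected[i] = (row - intercept) / slope
    return corrected

# Synthetic example: one underlying spectrum seen with different offsets and gains
base = np.sin(np.linspace(0, 3, 50)) + 1.0
offsets = np.array([0.0, 0.2, -0.1, 0.3])[:, None]
gains = np.array([1.0, 0.8, 1.3, 1.1])[:, None]
X = offsets + gains * base

X_snv = snv(X)
X_msc = msc(X)
print(np.ptp(X_snv, axis=0).max())   # spread across samples after SNV
print(np.ptp(X_msc, axis=0).max())   # spread across samples after MSC
```

SNV needs no reference and treats each spectrum independently, whereas MSC depends on the chosen reference, so the reference used for model building has to be stored and reused for questioned samples.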

Pattern Recognition:

- Perform initial PCA to explore natural grouping tendencies.

- Apply LDA or PLS-DA to maximize separation between predefined classes.

- Use cross-validation to optimize model parameters and avoid overfitting.

- For complex datasets, employ SVM with appropriate kernel functions.

Model Validation:

- Validate classification models using external test sets not used in model building.

- Calculate performance metrics: sensitivity, specificity, and overall accuracy.

- Establish likelihood ratios when possible to convey evidential strength.
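The performance metrics above follow directly from the counts of correct and incorrect classifications on the external test set. The sketch below uses hypothetical labels; it also reports the positive likelihood ratio, sensitivity / (1 - specificity), as one simple summary. This is an assumption made for illustration: likelihood ratios reported in casework are typically built from score distributions under competing propositions, which this sketch does not attempt.

```python
import numpy as np

def classification_metrics(y_true, y_pred, positive=1):
    """Sensitivity, specificity, accuracy, and positive likelihood ratio."""
    y_true, y_pred = np.asarray(y_true), np.asarray(y_pred)
    tp = np.sum((y_true == positive) & (y_pred == positive))
    fn = np.sum((y_true == positive) & (y_pred != positive))
    tn = np.sum((y_true != positive) & (y_pred != positive))
    fp = np.sum((y_true != positive) & (y_pred == positive))
    sensitivity = tp / (tp + fn)
    specificity = tn / (tn + fp)
    accuracy = (tp + tn) / y_true.size
    # Undefined when no false positives occur; reported as infinity here
    lr_positive = sensitivity / (1 - specificity) if fp > 0 else np.inf
    return {"sensitivity": sensitivity, "specificity": specificity,
            "accuracy": accuracy, "lr_positive": lr_positive}

# Hypothetical external test set: 1 = same class as reference, 0 = different
y_true = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
y_pred = [1, 1, 1, 0, 0, 0, 0, 0, 0, 1]
metrics = classification_metrics(y_true, y_pred)
print(metrics)
```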

Interpretation and Reporting:

- Interpret results in the context of the forensic question.

- Report statistical confidence measures for classification decisions.

- Document all procedures for potential courtroom testimony.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Research Reagents and Materials for Chemometric Analysis

| Item | Function | Application Examples |
|---|---|---|
| Reference Standards | Provide authenticated materials for model development and validation | Pharmaceutical API standards, authenticated fiber samples, known explosive compounds [5] [2] |
| Spectroscopic Grade Solvents | Sample preparation and extraction without introducing spectral interference | HPLC-grade solvents for extraction prior to spectroscopic analysis [4] [2] |
| Chemometric Software | Implementation of multivariate algorithms for data analysis | Commercial packages (Mnova, PLS_Toolbox) or freeware (CAT) for PCA, PLS, SIMCA [9] [6] |
| Validated Spectral Databases | Reference collections for comparison and identification | Databases of authentic pharmaceutical products, fiber spectra, or paint layers [3] [2] |
| Quality Control Samples | Monitor analytical system performance and model stability | Stable reference materials for periodic system suitability testing [10] [7] |

Method Validation and Quality Assurance

Validation Protocols for Chemometric Methods

For chemometric methods to be admissible in legal proceedings, they must undergo rigorous validation following established scientific standards. According to the Curtin University research team, one key issue is the validation of chemometric methods against known "ground-truth" samples [3]. Before these techniques can be used routinely in forensic laboratories, their accuracy, error rates, and reliability need to be thoroughly documented and tested [3].

The validation process should assess multiple method characteristics including:

- Accuracy: The ability of the model to correctly classify samples or predict properties

- Precision: Repeatability and reproducibility of model results

- Sensitivity and Specificity: Rates of true positive and true negative classifications

- Robustness: Model performance under variations in analytical conditions

- Limits of Detection/Classification: Minimum sample requirements for reliable results

For forensic DNA analysis specifically, standards such as ANSI/ASB Standard 040 provide requirements for laboratory DNA interpretation and comparison protocols, which can be adapted for chemometric applications [10]. These protocols should encompass all variables permitted in the technical protocols that may have an impact on the data generated and the variety and range of test data anticipated in casework [10].

Continuous Model Performance Monitoring

In both process monitoring and forensic applications, continuous monitoring of chemometric model performance is essential. Drift in analytical instrumentation or changes in sample characteristics can degrade model performance over time, requiring model updating or recalibration [7]. The decision between model augmentation versus replacement depends on the extent of the changes and the availability of new reference data [7].

Implementation of statistical process control charts for model metrics such as Q-residuals and Hotelling's T² can provide early warning of model degradation [1] [7]. This proactive approach to model maintenance ensures the long-term reliability of chemometric methods in both industrial and forensic settings.
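A control chart of this kind can be as simple as an upper limit derived from a baseline period. The sketch below assumes a Shewhart-style limit of the baseline mean plus three standard deviations applied to Q-residuals of a quality control sample; the values are made up, and because Q-residuals are not normally distributed, a laboratory may prefer a limit based on a more suitable distribution.

```python
import numpy as np

def upper_control_limit(baseline, n_sigma=3.0):
    """Shewhart-style upper limit: baseline mean plus n_sigma standard deviations."""
    baseline = np.asarray(baseline, dtype=float)
    return baseline.mean() + n_sigma * baseline.std(ddof=1)

# Hypothetical Q-residuals of a QC sample during a period of known-good operation
baseline_q = [1.0, 1.2, 0.9, 1.1, 1.0, 0.8, 1.05, 0.95]
# Hypothetical Q-residuals from later routine checks
new_q = np.array([1.0, 1.1, 2.5])

ucl = upper_control_limit(baseline_q)
alarms = new_q > ucl
print("upper control limit:", ucl)
print("alarms:", alarms)
```

An alarm does not say what changed. It is a prompt to check the instrument and the sample before deciding whether the model needs updating.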

Chemometrics represents a powerful bridge between complex analytical data and meaningful interpretations across both process monitoring and forensic evidence analysis. The multivariate statistical tools at the heart of chemometrics—including PCA, PLS, SIMCA, and various discriminant analysis methods—provide objective, statistically grounded approaches to extract maximum information from chemical data [1] [3] [2].

As forensic science moves toward greater objectivity and quantitative rigor, chemometrics plays an increasingly crucial role in transforming how evidence is interpreted and presented in legal contexts [3]. Similarly, in process environments, chemometrics enables more efficient monitoring, fault detection, and quality control through the PAT framework [7]. The ongoing development of chemometric tools, coupled with appropriate validation protocols and quality assurance measures, promises to further advance both fields in the coming years.

Despite challenges in validation and adoption, the trajectory is clear: chemometric methods are on the verge of becoming mainstream in both industrial and forensic applications, offering enhanced accuracy, reduced bias, and statistically defensible conclusions that stand up to scientific and legal scrutiny [3] [2].

Multivariate analysis techniques are powerful tools for interpreting complex chemical data, offering objective and statistically validated methods for forensic evidence analysis [3]. In forensic science, the interpretation of evidence from materials such as homemade explosives (HMEs), fibers, paints, and pharmaceuticals often relies on analytical techniques like vibrational spectroscopy and chromatography, which generate large, multidimensional datasets [3] [11]. The visual inspection of this data is often insufficient for reliable conclusions, necessitating the use of multivariate statistical methods to uncover subtle patterns and differences [12]. Techniques such as Principal Component Analysis (PCA), Linear Discriminant Analysis (LDA), and Partial Least Squares-Discriminant Analysis (PLS-DA) have therefore become fundamental in transforming complex instrumental readings into actionable forensic intelligence [12] [3] [11]. This document provides detailed application notes and protocols for these core techniques, framed within the context of forensic evidence interpretation research.

Technique Definitions and Objectives

Principal Component Analysis (PCA) is an unsupervised technique primarily used for exploratory data analysis and dimensionality reduction. It transforms the original variables of a dataset into a new set of uncorrelated variables, called Principal Components (PCs), which successively capture the maximum variance in the data. The scores plot helps visualize sample similarities, while the loadings plot identifies the original variables (e.g., wavenumbers in spectroscopy) responsible for the observed clustering [12].

Linear Discriminant Analysis (LDA) is a supervised classification method designed to find a linear combination of features that best separates two or more classes. Its goal is to maximize the variance between classes (inter-class variance) while minimizing the variance within each class (intra-class variance). This projects the data into a new space where classes are as distinct as possible [13]. A key limitation is that it cannot be applied directly when the number of variables exceeds the number of samples, which is common in spectroscopic data. This limitation is often overcome by using PCA scores as input for LDA, creating a PCA-LDA pipeline [12].

Partial Least Squares-Discriminant Analysis (PLS-DA) is another supervised technique that combines the principles of Partial Least Squares (PLS) regression with discriminant analysis. It aims to find latent variables (LVs) that not only capture the variance in the predictor variables (X, e.g., spectral data) but are also maximally correlated with the response variable (Y, e.g., class membership). It is particularly effective for handling datasets with a large number of correlated variables [12] [14].

Table 1: Comparative overview of PCA, LDA, and PLS-DA characteristics.

| Feature | PCA | LDA | PLS-DA |
|---|---|---|---|
| Analysis Type | Unsupervised | Supervised | Supervised |
| Primary Goal | Dimensionality reduction, exploratory analysis, and visualization | Classification and feature projection for optimal class separation | Classification and modeling the relationship between X and Y |
| Key Criterion | Maximizes variance in the entire dataset | Maximizes inter-class variance and minimizes intra-class variance | Maximizes covariance between X and the class label Y |
| Output | Principal Components (PCs), scores, and loadings | Discriminant functions and group centroids | Latent Variables (LVs), scores, loadings, and regression coefficients |
| Handling High-Dimensional Data | Directly applicable; reduces dimensionality | Requires prior dimensionality reduction (e.g., via PCA) | Directly applicable |

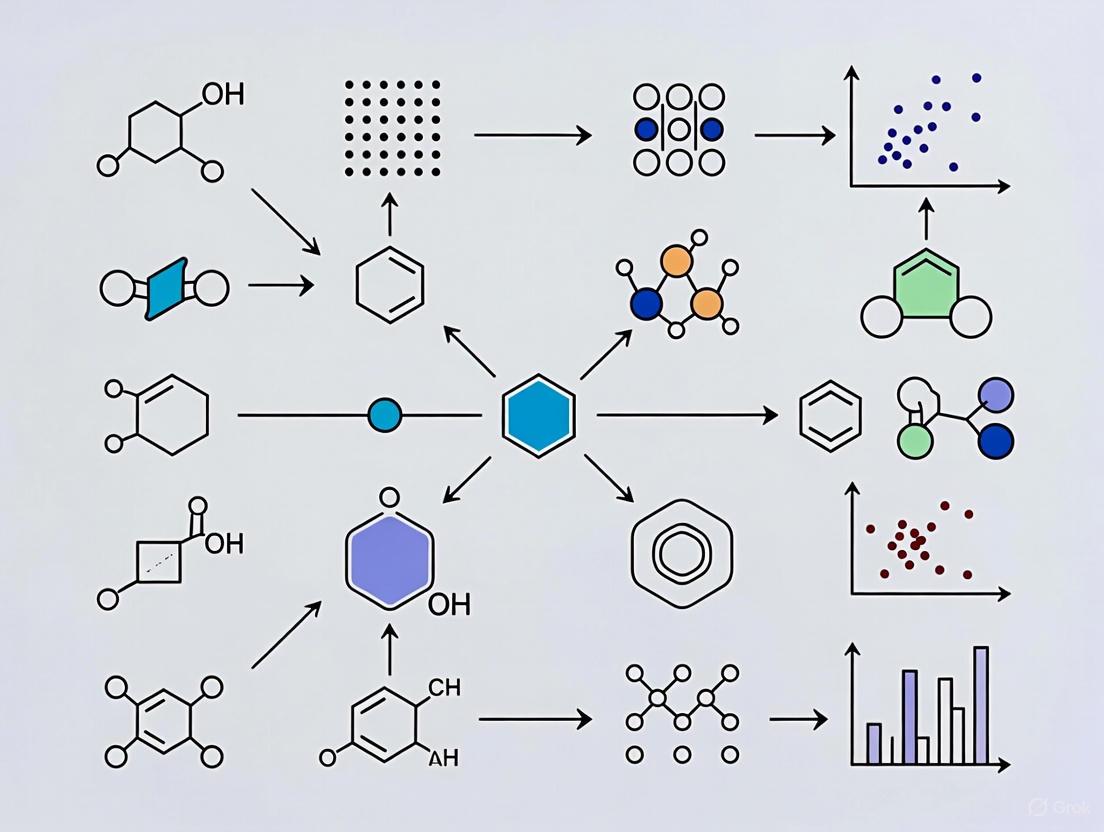

Figure 1: A decision workflow for selecting and applying PCA, LDA, and PLS-DA in data analysis.

Experimental Protocols

Protocol for Principal Component Analysis (PCA)

Objective: To explore a multivariate dataset, reduce its dimensionality, and identify inherent patterns, clusters, or outliers without using prior class information [12].

Materials and Software:

- Multivariate dataset (e.g., spectral intensities across wavenumbers)

- Software with PCA capability (e.g., MATLAB, Python with scikit-learn)

Procedure:

- Data Preprocessing: Arrange data into matrix X (n samples × m variables). Common preprocessing includes mean-centering and scaling (e.g., unit variance) to ensure all variables contribute equally.

- Covariance Matrix Computation: Calculate the covariance matrix of the preprocessed data to understand how variables relate to each other.

- Eigenvalue Decomposition: Perform decomposition of the covariance matrix to obtain eigenvalues and eigenvectors. The eigenvectors represent the directions (Principal Components, PCs) of maximum variance, and eigenvalues represent the magnitude of variance along each PC.

- Projection: Project the original data onto the new PC axes to obtain the scores matrix (T). Each row in T contains the coordinates of a sample in the new PC space.

- Interpretation:

- Variance Explained: Examine the percentage of total variance explained by each PC to decide how many to retain.

- Scores Plot: Plot scores of PC1 vs. PC2 (and further PCs) to visualize sample clustering and identify potential outliers.

- Loadings Plot: Plot the loadings (the weights of the original variables in each PC) to identify which variables are most influential in defining the sample patterns observed in the scores plot.
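The procedure above maps onto a short NumPy routine. The following sketch follows the covariance and eigendecomposition route exactly as listed, with autoscaling as the preprocessing choice; the function name and the random test matrix are hypothetical. For spectra with far more variables than samples, a singular value decomposition of the preprocessed matrix gives the same components at lower cost.

```python
import numpy as np

def pca_eig(X):
    """PCA via eigendecomposition of the covariance matrix of autoscaled data."""
    # Step 1: preprocessing (mean-centre and scale to unit variance)
    Xs = (X - X.mean(axis=0)) / X.std(axis=0, ddof=1)
    # Step 2: covariance matrix between variables (m x m)
    C = np.cov(Xs, rowvar=False)
    # Step 3: eigendecomposition; eigh returns ascending order, so reverse it
    eigvals, eigvecs = np.linalg.eigh(C)
    order = np.argsort(eigvals)[::-1]
    eigvals, loadings = eigvals[order], eigvecs[:, order]
    # Step 4: projection of samples onto the PCs gives the scores matrix T
    scores = Xs @ loadings
    # Step 5: fraction of total variance explained by each PC
    explained = eigvals / eigvals.sum()
    return scores, loadings, eigvals, explained

# Hypothetical dataset: 30 samples x 8 variables with some built-in correlation
rng = np.random.default_rng(0)
X = rng.normal(size=(30, 8))
X[:, 1] += 0.8 * X[:, 0]
X[:, 2] -= 0.5 * X[:, 0]

scores, loadings, eigvals, explained = pca_eig(X)
print("variance explained per PC:", np.round(explained, 3))
print("cumulative:", np.round(np.cumsum(explained), 3))
```

Plotting `scores[:, 0]` against `scores[:, 1]` gives the scores plot, and the columns of `loadings` give the variable weights for the loadings plot.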

Protocol for PCA-Linear Discriminant Analysis (PCA-LDA)

Objective: To build a classification model that discriminates between pre-defined classes in a high-dimensional dataset [12] [11].

Materials and Software:

- Multivariate dataset with known class labels

- Software with PCA and LDA capability

Procedure:

- Data Splitting: Split the dataset into a calibration (training) set and a validation (test) set.

- PCA on Calibration Set: Perform PCA on the calibration data following the PCA protocol above. Retain enough PCs to capture the majority of the relevant variance.

- LDA Model Building: Use the PCA scores from the calibration set as the new input variables for LDA.

- LDA calculates discriminant functions that maximize the ratio of between-class variance to within-class variance.

- The result is a set of decision boundaries that separate the classes in the reduced PCA space.

- Model Validation: Apply the built PCA-LDA model (using both the PCA and LDA parameters) to the test set.

- Project test set samples into the PCA space defined by the calibration set.

- Use the LDA decision functions to predict the class of each test sample.

- Performance Assessment: Calculate classification accuracy, sensitivity, and specificity by comparing predictions against the true class labels of the test set.
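The PCA-LDA steps can be chained with scikit-learn so that the PCA fitted on the calibration set is reused unchanged on the test set. This is a minimal sketch on synthetic two-class data; the number of retained PCs, the split ratio, and the data themselves are assumptions chosen for illustration.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.discriminant_analysis import LinearDiscriminantAnalysis
from sklearn.metrics import confusion_matrix
from sklearn.model_selection import train_test_split
from sklearn.pipeline import make_pipeline

# Synthetic data: 80 samples x 50 variables, class 1 shifted in five variables
rng = np.random.default_rng(0)
n_per_class, n_vars = 40, 50
X = rng.normal(size=(2 * n_per_class, n_vars))
y = np.repeat([0, 1], n_per_class)
X[y == 1, 10:15] += 3.0

# Step 1: split into calibration and test sets, keeping class proportions
X_cal, X_test, y_cal, y_test = train_test_split(
    X, y, test_size=0.25, stratify=y, random_state=0)

# Steps 2-3: PCA on the calibration set, then LDA on the PCA scores
model = make_pipeline(PCA(n_components=5), LinearDiscriminantAnalysis())
model.fit(X_cal, y_cal)

# Step 4: test samples are projected with the calibration PCA, then classified
y_pred = model.predict(X_test)

# Step 5: performance on the held-out test set
tn, fp, fn, tp = confusion_matrix(y_test, y_pred, labels=[0, 1]).ravel()
accuracy = (tp + tn) / y_test.size
sensitivity = tp / (tp + fn)
specificity = tn / (tn + fp)
print(accuracy, sensitivity, specificity)
```

Wrapping both steps in one pipeline guards against a common leak, which is fitting the PCA on all samples before splitting.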

Protocol for Partial Least Squares-Discriminant Analysis (PLS-DA)

Objective: To build a supervised classification model that directly relates the predictor variables (X) to class membership (Y) by maximizing their covariance [12] [14].

Materials and Software:

- Predictor matrix X (e.g., spectral data)

- Response matrix Y (class membership, coded as dummy variables, e.g., -1 and +1)

- Software with PLS-DA capability (e.g., MATLAB)

Procedure:

- Data Preparation: Define the X matrix and create a dummy Y matrix that encodes the class membership for each sample.

- Model Training:

- The PLS-DA algorithm iteratively extracts Latent Variables (LVs) from X that have maximal covariance with Y.

- The model is defined by the equation: Y = XB + E, where B is the matrix of regression coefficients and E is the residuals matrix.

- Model Interpretation:

- Scores Plot: Plot the sample scores for LV1 vs. LV2 to visualize class separation.

- Loadings Plot: Analyze the loadings to understand which X-variables are most influential for the discrimination.

- Variable Importance in Projection (VIP): Use VIP scores to identify which variables contribute most to the classification model; values above 1 are conventionally treated as influential. The regression coefficients in B offer a complementary view: variables with a large absolute coefficient have a strong influence on the prediction [12].

- Prediction and Validation: Use the calibrated model to predict the class of new, unknown samples. Validate the model using a separate test set or cross-validation and report performance metrics.

Applications in Forensic Evidence Interpretation

Case Study: Classification of Homemade Explosives (HMEs)

The analysis of HMEs is a significant forensic challenge due to their chemical variability and complex sample matrices. ATR-FTIR spectroscopy combined with chemometrics has proven effective for their classification [11].

Table 2: Application of PCA-LDA and PLS-DA in forensic analysis of ammonium nitrate (AN) products [11].

| Analysis Aspect | PCA-LDA Workflow | PLS-DA Workflow |
|---|---|---|
| Analytical Technique | ATR-FTIR spectroscopy and ICP-MS | ATR-FTIR spectroscopy |
| Data Preprocessing | Spectral collection, potential normalization | Spectral collection, potential normalization |
| Chemometric Step 1 | PCA for dimensionality reduction and exploratory analysis | Direct modeling of X (spectra) and Y (class: pure vs. homemade AN) |
| Chemometric Step 2 | LDA on PCA scores to maximize class separation | Extraction of Latent Variables (LVs) maximizing X-Y covariance |
| Key Discriminators | Sulphate peaks from ATR-FTIR; trace elements from ICP-MS | Spectral features correlated with class identity |
| Reported Performance | 92.5% classification accuracy | Comparable high accuracy (specific value not listed) |

Case Study: Pharmaceutical Drug Degradation Prediction

In pharmaceutical forensics and development, predicting drug stability is crucial. An in-silico study on amlodipine besylate used LDA to distinguish between degradation patterns in the presence of different co-medicated antihypertensive drugs [15]. Drug-specific degradation products identified via software (Zeneth) were used as predictors. The LDA successfully differentiated the degradation profiles, with the group centroid distance order revealing that amlodipine degrades differently when combined with ACE inhibitors or beta-blockers compared to being alone or with diuretics [15]. This application demonstrates the use of LDA for classifying and interpreting complex chemical reaction pathways.

Performance Metrics from Vibrational Spectroscopy

The utility of these techniques is further confirmed in biomedical diagnostics. A study classifying vibrational spectra of breast cells achieved high performance using both PCA-LDA and PLS-DA [12]. The built classification models distinguished different spectral types with:

- Accuracy: between 93% and 100%

- Sensitivity: between 86% and 100%

- Specificity: between 90% and 100% [12]

This underscores the considerable potential of combining vibrational spectroscopy with multivariate analysis for reliable diagnostic and forensic models.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key reagents, software, and analytical techniques used in chemometric analysis for forensic science.

| Item Name | Function/Application |

|---|---|

| Fourier-Transform Infrared (FTIR) Spectrometer | Provides molecular fingerprint data of samples through infrared absorption; fundamental for generating multivariate data for analysis [12] [11]. |

| Raman Spectrometer | Provides complementary molecular information to FTIR via inelastic scattering of light; used for analyzing biological materials and trace evidence [12] [3]. |

| Gas Chromatography-Mass Spectrometry (GC-MS) | Separates and identifies complex mixtures of volatile compounds; generates data suitable for chemometric profiling of substances like explosives and drugs [11]. |

| Zeneth Software (Lhasa Limited) | A commercially available in-silico tool for predicting chemical degradation pathways and products of drug substances; generates data for subsequent discriminant analysis [15]. |

| MATLAB | A widely used computing platform for implementing multivariate analysis protocols, including PCA, PLS-DA, and PLS regression (PLSR), with detailed step-by-step guides available [14]. |

| ATR-FTIR Accessory | Enables minimal sample preparation for FTIR analysis, providing high surface sensitivity for solid-phase forensic evidence like explosives and pharmaceuticals [11]. |

Figure 2: Logical relationships between data, chemometric methods, and the insights they generate.

Forensic science is a cornerstone of modern criminal justice, relied upon to reconstruct events and establish critical links between people, places, and objects. However, traditional forensic methods, often based on visual comparisons and expert judgment, are increasingly recognized as vulnerable to human cognitive bias and subjective error [3]. The 2009 National Academy of Sciences (NAS) report ignited a significant transformation within the forensic community, highlighting a "dearth of peer-reviewed published studies" and the susceptibility of pattern-matching disciplines to cognitive bias [16]. These biases, which are unconscious decision-making shortcuts, can systematically influence how forensic experts collect, perceive, and interpret information [16] [17]. In response, the field is undergoing a paradigm shift toward greater objectivity and statistical rigor. This application note explores the integration of chemometrics (multivariate statistical tools for chemical data interpretation) as a robust framework for mitigating human bias, thereby enhancing the reliability and credibility of forensic evidence interpretation within a research context [3] [18].

The Challenge of Cognitive Bias in Forensic Science

Cognitive bias is a universal human phenomenon, not a reflection of incompetence or unethical behavior [16] [17]. In forensic science, where decisions can have profound legal consequences, these biases present a significant risk. Experts are vulnerable to a range of biases, such as confirmation bias, where pre-existing beliefs or expectations (e.g., from knowing a suspect's confession) lead them to seek out or overweight information that confirms their initial hypothesis while disregarding contradictory evidence [16].

Research has identified several fallacies that can prevent experts from acknowledging their vulnerability to bias, as detailed in Table 1 [16] [17].

Table 1: Common Expert Fallacies About Cognitive Bias

| Fallacy | Description | Reality |

|---|---|---|

| The Ethical Fallacy | Only unethical or "bad" people are biased. | Cognitive bias is a normal, unconscious process unrelated to character [17]. |

| The Bad Apples Fallacy | Only incompetent practitioners are biased. | Bias affects even highly skilled experts; technical competence does not confer immunity [16] [17]. |

| Expert Immunity | Extensive experience and expertise shield against bias. | Expertise may increase reliance on automatic, "fast-thinking" mental shortcuts, potentially enhancing vulnerability [16] [17]. |

| Technological Protection | Advanced instruments, AI, or actuarial tools eliminate bias. | Technology is built, operated, and interpreted by humans, so bias can persist in system design and data interpretation [16] [17]. |

| Bias Blind Spot | "I know bias exists, but I am not susceptible to it." | People are consistently better at recognizing bias in others than in themselves [17]. |

| Illusion of Control | Willpower and awareness alone can prevent bias. | Bias occurs automatically; conscious awareness is insufficient for mitigation [16]. |

The cognitive process of a forensic examiner, from evidence inspection to conclusion, is susceptible to multiple biasing sources. The following workflow diagram visualizes these vulnerabilities and potential mitigation points, adapting Dror's cognitive framework to a general forensic context [17].

Chemometrics as a Framework for Objective Evidence Analysis

Chemometrics provides a powerful statistical toolkit designed to extract meaningful information from complex chemical data. It is defined as the chemical discipline that uses mathematical and statistical methods to design optimal experiments and provide maximum chemical information by analyzing chemical data [18]. In forensic science, chemometrics addresses the core issue of subjectivity by replacing or supplementing human judgment with data-driven, statistically validated models [3].

The application of chemometrics is particularly suited to the multivariate data generated by modern analytical techniques such as Fourier-transform infrared (FT-IR) spectroscopy, Raman spectroscopy, and gas chromatography-mass spectrometry (GC-MS) [3] [11]. By analyzing all variables simultaneously, chemometric models can identify hidden patterns and sample relationships that might be missed through univariate analysis or visual inspection, thus reducing the analyst's cognitive load and exposure to biasing information [3].

Table 2: Key Chemometric Techniques and Their Forensic Applications

| Chemometric Method | Primary Function | Typical Forensic Application |

|---|---|---|

| Principal Component Analysis (PCA) | Unsupervised pattern recognition; reduces data dimensionality and identifies natural clustering. | Exploratory analysis of drug profiles, explosive residues, or ink samples to identify intrinsic groupings [3] [11]. |

| Linear Discriminant Analysis (LDA) | Supervised classification; finds linear combinations of features that best separate predefined groups. | Differentiating between authentic and counterfeit pharmaceuticals or classifying soil samples by geographic origin [3] [11]. |

| Partial Least Squares-Discriminant Analysis (PLS-DA) | Supervised classification; models the relationship between spectral data and class membership. | Quantifying the similarity between glass fragments from a crime scene and those associated with a suspect [3]. |

| Support Vector Machines (SVM) | Non-linear classification and regression. | Handling complex, non-linear spectral data for the identification of body fluids or explosive types [3]. |

| Artificial Neural Networks (ANNs) | Non-linear modeling inspired by biological neural networks. | Complex pattern recognition tasks, such as fingerprinting illicit drug manufacturing methods [3]. |

| Hierarchical Cluster Analysis (HCA) | Unsupervised clustering; builds a hierarchy of sample similarities. | Comparing post-blast explosive residues to a database of known materials [11]. |

Experimental Protocols for Bias-Mitigated Forensic Analysis

Protocol 1: Chemometric Analysis of Illicit Drug Profiling Using GC-MS

This protocol outlines a standardized approach for classifying illicit drug samples based on their impurity profiles, minimizing subjectivity in comparison.

- Objective: To classify seized drug samples into distinct groups based on their chromatographic impurity profiles using PCA and LDA for intelligence-led policing.

- Research Reagent Solutions & Materials:

- GC-MS System: Equipped with a non-polar capillary column (e.g., DB-5MS). Functions to separate and detect chemical components.

- Certified Reference Standards: Pure drug standards for method calibration and identification.

- Organic Solvents (e.g., Methanol, Chloroform): HPLC or GC-MS grade, for sample preparation.

- Internal Standard Solution (e.g., Tetracosane): For correcting injection volume inconsistencies.

- Chemometric Software (e.g., R, PLS_Toolbox, SIMCA, in-house tools like ChemoRe): For multivariate data analysis [18].

- Procedure:

- Sample Preparation: Precisely weigh 1 mg of each homogenized drug sample. Dissolve in 1 mL of solvent containing a known concentration of internal standard. Filter through a 0.45 µm PTFE syringe filter.

- GC-MS Analysis: Inject 1 µL of each sample in randomized order to prevent batch-based bias. Use a standardized temperature gradient and helium carrier gas.

- Data Pre-processing: Export the peak areas of target impurities and the internal standard. Normalize data to the internal standard and optionally apply autoscaling (mean-centering and division by the standard deviation of each variable) to give all peaks equal weight [18].

- Exploratory Analysis (PCA): Input the pre-processed data into a PCA model. Inspect the scores plot to identify natural clusters, trends, and potential outliers among the samples.

- Supervised Classification (LDA): Using the groups suggested by PCA or by investigative intelligence, develop an LDA model. Employ cross-validation (e.g., leave-one-out) to estimate the model's predictive accuracy and to guard against overfitting.

- Reporting: Document the model's classification accuracy, cross-validation results, and the key discriminatory variables (impurity peaks) responsible for sample grouping.
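
The data-analysis steps of this protocol can be sketched in a few lines. The example below is illustrative only: it assumes Python with NumPy and scikit-learn (one of several possible software choices), uses synthetic peak areas rather than casework data, and the group labels and peak counts are hypothetical. Note that scikit-learn's `StandardScaler` divides by the population standard deviation.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.discriminant_analysis import LinearDiscriminantAnalysis
from sklearn.model_selection import LeaveOneOut, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)

# Synthetic stand-in data: 3 hypothetical groups x 10 samples x 6 impurity peaks.
n_per_group, n_peaks = 10, 6
group_means = np.array([[5, 1, 3, 2, 4, 1],
                        [1, 5, 2, 4, 1, 3],
                        [3, 3, 5, 1, 2, 5]], dtype=float)
ratios = np.vstack([m + rng.normal(0, 0.2, (n_per_group, n_peaks))
                    for m in group_means])
labels = np.repeat(["group_1", "group_2", "group_3"], n_per_group)

# Simulated injection variability: every peak scales with the internal standard.
is_area = rng.uniform(0.8, 1.2, size=(labels.size, 1)) * 1000.0
raw_areas = ratios * is_area

# Step 1: normalise each impurity peak area to the internal standard area.
X = raw_areas / is_area

# Step 2: autoscale, then PCA for an exploratory scores plot.
X_auto = StandardScaler().fit_transform(X)
pca = PCA(n_components=2).fit(X_auto)
scores = pca.transform(X_auto)

# Step 3: LDA with leave-one-out cross-validation. Scaling sits inside the
# pipeline so that it is re-estimated from the training samples of each fold.
model = make_pipeline(StandardScaler(), LinearDiscriminantAnalysis())
loo_accuracy = cross_val_score(model, X, labels, cv=LeaveOneOut()).mean()

print("Explained variance (PC1, PC2):", pca.explained_variance_ratio_)
print("Leave-one-out accuracy:", loo_accuracy)
```

Keeping the scaling step inside the cross-validated pipeline avoids leaking information from the left-out sample into the model, which would otherwise make the reported accuracy optimistic.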

Protocol 2: Objective Identification of Explosive Residues Using FT-IR Spectroscopy and Chemometrics

This protocol is designed for the objective discrimination of different explosive types, which is critical for post-blast investigations and security screening.

- Objective: To discriminate between different types of homemade explosives (HMEs) and commercial explosives based on their IR spectral fingerprints using PLS-DA.

- Research Reagent Solutions & Materials:

- FT-IR Spectrometer: Preferably with an ATR (Attenuated Total Reflectance) accessory to minimize sample preparation.

- Spectral Libraries: Databases of known explosive spectra for model training.

- Chemometric Software: Capable of performing PLS-DA and other multivariate classifications.

- Procedure:

- Sample Collection & Preparation: Collect residue samples using solvent-moistened swabs. Allow solvent to evaporate and press the residue onto the ATR crystal for analysis. For solid samples, ensure firm contact with the crystal.

- Spectral Acquisition: Collect spectra in the range of 4000-600 cm⁻¹ at a resolution of 4 cm⁻¹. Co-add 32 scans per spectrum to ensure a high signal-to-noise ratio. Analyze all samples in a randomized sequence.

- Spectral Pre-processing: Apply standard pre-processing techniques to minimize the effects of baseline drift and scattering: Savitzky-Golay derivative (2nd order, 15 points), Standard Normal Variate (SNV), or Multiplicative Scatter Correction (MSC).

- Model Development (PLS-DA): Construct a PLS-DA model using a training set of spectra from known explosive types (e.g., TATP, ANFO, RDX). The model will learn the spectral features characteristic of each class.

- Model Validation: Test the model's performance on a separate, independent validation set of samples not used in training. Calculate metrics such as sensitivity, specificity, and classification accuracy.

- Application to Unknowns: Input the pre-processed spectrum of an unknown residue into the validated PLS-DA model. The model will output a probability or class assignment for the unknown, providing a statistically grounded identification [11].

The following diagram illustrates the integrated workflow of this objective analysis, from sample to conclusion.

Implementation Strategy and Concluding Remarks

Integrating chemometrics into the standard forensic workflow requires more than just software; it demands a cultural shift toward objective, data-driven decision-making. Successful implementation involves:

- Training and Accessibility: Forensic chemists often find chemometrics demanding [18]. Initiatives like the EU's STEFA-G02 project, which developed the ChemoRe software tool, aim to provide user-friendly interfaces and guidelines to lower the barrier to entry [18] [19].

- Systematic Mitigation: Chemometrics works best as part of a comprehensive bias mitigation strategy. This includes Linear Sequential Unmasking-Expanded (LSU-E), where examiners are exposed to case information sequentially only as needed, and blind verification of results [16] [17].

- Validation and Legal Admissibility: For chemometric results to be admissible in court, the methods must be thoroughly validated. This includes establishing error rates, robustness, and reliability under varying conditions, ensuring they meet the stringent standards of the scientific and legal communities [3].

In conclusion, the pursuit of objectivity in forensic science is not merely a technical upgrade but an ethical imperative. Chemometrics provides a robust, statistical foundation for interpreting complex chemical data, directly mitigating the unconscious cognitive biases that can undermine traditional methods. By adopting these multivariate tools and integrating them into structured protocols that limit contextual bias, forensic researchers and practitioners can significantly enhance the accuracy, reliability, and credibility of their work, thereby strengthening the very foundation of the criminal justice system.

Integrating Chemometrics into the Standard Forensic Workflow

Forensic science is undergoing a significant transformation, moving from traditional subjective comparisons toward objective, data-driven evidence interpretation. The integration of chemometric tools is central to this shift, bringing statistical rigor to the analysis of complex chemical data generated by modern analytical instruments [3]. Chemometrics applies multivariate statistical methods to chemical data, enabling forensic scientists to extract meaningful patterns, classify evidence, and quantify uncertainty with a level of precision previously unattainable [11] [3]. This protocol outlines detailed procedures for incorporating these powerful tools into standard forensic workflows, with a focus on practical application for researchers and scientists.

The core challenge in modern forensic chemistry lies in interpreting the vast, complex datasets produced by techniques like spectroscopy and chromatography. Chemometrics addresses this by providing a structured framework for data exploration and modeling [20]. These statistically validated methods not only enhance analytical accuracy but also mitigate human cognitive biases, thereby strengthening the scientific foundation and legal admissibility of forensic conclusions [3]. This document provides specific application notes and experimental protocols to facilitate this integration across various forensic disciplines.

Core Chemometric Techniques and Their Forensic Applications

Chemometric methods are highly versatile, finding utility across a broad spectrum of forensic evidence types. Their application turns complex, multivariate data into actionable forensic intelligence. The table below summarizes the primary techniques and their specific uses.

Table 1: Key Chemometric Techniques and Their Forensic Applications

| Technique | Primary Function | Typical Forensic Application | Key Strengths |

|---|---|---|---|

| Principal Component Analysis (PCA) | Exploratory data analysis, dimensionality reduction | Identifying natural groupings in evidence (e.g., soil, glass, paint chips); identifying outliers [11] [3]. | Unsupervised; provides visual overview of data structure. |

| Linear Discriminant Analysis (LDA) | Classification and dimensionality reduction | Differentiating between sources of evidence (e.g., industrial vs. homemade explosives) [11] [3]. | Maximizes separation between pre-defined classes. |

| Partial Least Squares-Discriminant Analysis (PLS-DA) | Classification | Identifying the origin of explosive precursors; discriminating between body fluids [11] [3]. | Powerful for correlated variables and noisy data. |

| Support Vector Machines (SVM) | Classification and regression | Building non-linear models for complex evidence profiling [3]. | Effective in high-dimensional spaces; robust. |

| Artificial Neural Networks (ANNs) | Modeling complex non-linear relationships | Advanced pattern recognition in spectral data for identification purposes [3]. | Can model highly complex, non-linear relationships. |

| Hierarchical Cluster Analysis (HCA) | Exploratory data analysis, clustering | Classifying explosive residues based on spectroscopic data without prior class definitions [11]. | Creates a hierarchy of clusters; results are visually intuitive. |
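
As an illustration of the unsupervised end of this table, the sketch below runs a hierarchical cluster analysis with SciPy on synthetic four-variable data. The choice of Ward linkage, Euclidean distance, and a three-cluster cut are assumptions made for the example, not settings taken from the cited work.

```python
import numpy as np
from scipy.cluster.hierarchy import fcluster, linkage

rng = np.random.default_rng(6)

# Synthetic stand-in data: three hypothetical sources, eight samples each.
centres = np.array([[0, 0, 0, 0],
                    [4, 4, 0, 0],
                    [0, 0, 4, 4]], dtype=float)
X = np.vstack([c + rng.normal(0, 0.5, (8, 4)) for c in centres])

# Agglomerative clustering: Ward linkage on Euclidean distances.
Z = linkage(X, method="ward")

# Cut the tree so that at most three clusters remain; no class labels are used.
cluster_ids = fcluster(Z, t=3, criterion="maxclust")
print(cluster_ids)
```

The linkage matrix `Z` can also be passed to `scipy.cluster.hierarchy.dendrogram` to draw the hierarchy that makes HCA results visually intuitive.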

Detailed Experimental Protocols

Protocol 1: Analysis of Homemade Explosive (HME) Residues Using IR Spectroscopy and Chemometrics

This protocol details the use of Attenuated Total Reflectance Fourier-Transform Infrared (ATR-FTIR) spectroscopy coupled with chemometrics for the classification of ammonium nitrate (AN)-based explosives [11].

3.1.1 Research Reagent Solutions & Essential Materials

Table 2: Essential Materials for HME Residue Analysis

| Item | Function/Explanation |

|---|---|

| ATR-FTIR Spectrometer | Provides molecular fingerprint data via surface-sensitive infrared spectroscopy with minimal sample preparation [11]. |

| Inductively Coupled Plasma Mass Spectrometry (ICP-MS) | Provides complementary trace elemental analysis for enhanced source discrimination [11]. |

| Pure AN Samples | Reference materials for baseline spectral characteristics. |

| Homemade AN Formulations | Casework samples, typically mixed with fuel oils or other precursors [11]. |

| Chemometric Software | Platform (e.g., CAT, MATLAB, R) with capabilities for PCA, LDA, and PLS-DA. |

3.1.2 Step-by-Step Workflow

- Sample Preparation: Homogenize and dry solid residues to remove moisture. For liquid suspensions, filter to isolate solid components. Ensure consistent sample mass and particle size for ATR-FTIR analysis [11].

- Spectral Acquisition: Collect ATR-FTIR spectra for all reference and casework samples across a defined wavenumber range (e.g., 4000-400 cm⁻¹). A minimum of 32 scans per spectrum at a resolution of 4 cm⁻¹ is recommended to achieve an adequate signal-to-noise ratio [11].

- Data Preprocessing: Preprocess raw spectral data to remove artifacts. Common techniques include:

- Baseline Correction: To remove baseline offsets and drift.

- Standard Normal Variate (SNV): To minimize scattering and path-length effects.

- Savitzky-Golay Smoothing: To reduce high-frequency noise [20].

- Exploratory Analysis (PCA): Perform PCA on the preprocessed spectral dataset. This unsupervised step helps identify natural clusters, detect outliers, and visualize the overall variance within the sample set without using class labels.

- Classification Model (LDA/PLS-DA): Develop a supervised classification model.

- Use known class labels (e.g., "Pure AN," "HME Type A").

- The model utilizes key discriminatory features identified by PCA or prior knowledge (e.g., sulphate peaks from ATR-FTIR, elemental data from ICP-MS) [11].

- Validate the model using a separate test set or cross-validation to determine classification accuracy.

- Interpretation & Reporting: Report the model's classification accuracy and key discriminatory variables. The output provides a statistically supported conclusion on the sample's classification.
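
The exploratory and classification steps of this workflow can be sketched as a PCA-LDA pipeline. The example assumes Python with scikit-learn, uses synthetic spectra in which the two classes differ by one additional band, and fixes three principal components arbitrarily; none of these choices comes from the cited study [11].

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.discriminant_analysis import LinearDiscriminantAnalysis
from sklearn.model_selection import StratifiedKFold, cross_val_predict
from sklearn.pipeline import make_pipeline

rng = np.random.default_rng(2)
n_points = 200
axis = np.linspace(0.0, 1.0, n_points)  # arbitrary spectral axis


def peak(pos, width=0.02):
    return np.exp(-0.5 * ((axis - pos) / width) ** 2)


# Hypothetical profiles: both classes share one band, one class has an extra band.
pure = peak(0.4)
homemade = peak(0.4) + 0.3 * peak(0.7)
X = np.vstack([p + rng.normal(0, 0.02, (20, n_points)) for p in (pure, homemade)])
y = np.repeat(["Pure AN", "HME Type A"], 20)

# Exploratory PCA: unsupervised, class labels are not used.
pca = PCA(n_components=3).fit(X)
explained = pca.explained_variance_ratio_

# PCA scores feed LDA; the pipeline re-fits PCA within each cross-validation fold.
clf = make_pipeline(PCA(n_components=3), LinearDiscriminantAnalysis())
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
y_cv = cross_val_predict(clf, X, y, cv=cv)
cv_accuracy = float(np.mean(y_cv == y))

print("Explained variance ratio:", explained)
print("Cross-validated accuracy:", cv_accuracy)
```

Inspecting `pca.components_` (the loadings) shows which spectral regions drive the separation, which is how key discriminatory variables would be identified for the report.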

The following workflow diagram illustrates this integrated analytical process:

Protocol 2: Fingerprint Age Estimation Using GC×GC–TOF-MS and Chemometric Modeling

This protocol leverages comprehensive two-dimensional gas chromatography with time-of-flight mass spectrometry (GC×GC–TOF-MS) to estimate the age of latent fingerprints based on time-dependent chemical changes [21].

3.2.1 Research Reagent Solutions & Essential Materials

Table 3: Essential Materials for Fingerprint Aging Analysis

| Item | Function/Explanation |

|---|---|

| GC×GC–TOF-MS System | Provides superior separation power and sensitivity for complex fingerprint chemistries compared to 1D-GC-MS [21]. |

| Solvents (e.g., Dichloromethane) | High-purity solvents for extracting chemical constituents from fingerprint residues. |

| Internal Standards | Deuterated or other non-native compounds added to correct for analytical variability. |

| Chemometric Software | Platform capable of handling high-dimensional data and machine learning algorithms. |

3.2.2 Step-by-Step Workflow

- Sample Collection & Storage: Collect latent fingerprints on a standardized substrate. Store samples under controlled conditions (temperature, humidity, light) and age them for predetermined time intervals (e.g., 0, 1, 3, 7 days) [21].

- Sample Extraction: At each time point, chemically extract the fingerprint residue using a suitable solvent (e.g., dichloromethane) spiked with an internal standard. This step must be highly consistent to ensure data reproducibility [21].

- Instrumental Analysis: Analyze the extracts using GC×GC–TOF-MS. The orthogonal separation of GC×GC significantly enhances peak capacity, resolving thousands of chemical compounds, including trace-level degradation products [21].

- Data Processing and Feature Selection: Process the raw chromatographic data to align peaks and perform peak deconvolution. The resulting data matrix consists of samples (rows) versus normalized peak areas or compound ratios (columns). Prefer compound ratios (e.g., ratios involving squalene degradation products) because they minimize the impact of variable sample quantity [21].

- Chemometric Modeling for Age Prediction: Use the processed data to build a predictive model.

- Input Variables: Ratios of key compounds (e.g., fatty acids, squalene oxides) that change predictably over time.

- Model Training: Employ machine learning algorithms (e.g., PLS regression, SVM) to correlate chemical profiles with known sample age.

- Model Validation: Validate the model's predictive accuracy using a blind test set of samples not included in the model training.

- Timeline Estimation & Reporting: Apply the validated model to casework samples of unknown age. The model outputs an estimated age with an associated confidence interval, providing investigators with a temporal context for the fingerprint deposition.

The logical relationship for the chemical changes driving the model is as follows:

Implementation in a Standard Forensic Workflow

For successful integration, chemometrics must be embedded within the laboratory's quality management system. This requires a focus on method validation and standardized procedures to ensure legal defensibility [3].

- Data Quality and Preprocessing: The accuracy of any chemometric model is contingent on the quality of the input data. Consistent and documented sample preparation and data preprocessing routines are non-negotiable [21] [20].

- Model Validation: Before deployment, any chemometric model must be rigorously validated. This includes establishing figures of merit such as accuracy, precision, sensitivity, specificity, and estimation of error rates. The model must be tested on independent sample sets that were not used during its development [3].

- Training and Expertise: Forensic practitioners require training in both the analytical techniques and the principles of multivariate statistics to correctly apply, interpret, and testify about chemometric findings [3].

The integration of chemometrics into the standard forensic workflow represents a paradigm shift toward more objective, reliable, and statistically robust evidence analysis. The protocols outlined herein for analyzing explosive residues and estimating fingerprint age demonstrate the transformative potential of these tools. By adopting these data-driven approaches, forensic science service providers can enhance the scientific validity of their conclusions, thereby increasing confidence in the justice system. Future advancements will be driven by the broader adoption of advanced machine learning and a continued emphasis on standardization and validation.

Applied Chemometric Methods: From Spectroscopy to Forensic Classification

The integration of Fourier-Transform Infrared (FT-IR) and Raman spectroscopy with chemometrics represents a transformative advancement for forensic evidence interpretation. These vibrational spectroscopy techniques are characterized by their non-destructive nature, minimal sample preparation requirements, and high chemical specificity [22] [23]. Chemometrics applies multivariate statistical methods to complex chemical data, enabling objective interpretation of spectroscopic information and mitigating human bias in forensic analysis [3] [18]. This powerful combination provides forensic scientists with robust tools for discriminating between sample sources, classifying unknown materials, and presenting statistically validated conclusions in judicial contexts [24] [3]. The application of these methodologies is particularly valuable in forensic chemistry and biology, where evidentiary materials often consist of complex mixtures requiring sophisticated analytical approaches for meaningful interpretation [18] [25].

Key Applications in Forensic Science

Paint and Physical Evidence Analysis

Forensic paint analysis demonstrates the robust capabilities of combined vibrational spectroscopy and chemometrics. A study of 34 red paint samples achieved effective discrimination through FT-IR and Raman spectroscopy coupled with principal component analysis (PCA) and hierarchical cluster analysis (HCA) [24]. For FT-IR spectra, applying Standard Normal Variate (SNV) preprocessing to selected wavenumber ranges (650-1830 cm⁻¹ and 2730-3600 cm⁻¹) optimized results: the first four principal components explained 83% of the total variance, primarily corresponding to binder types and calcium carbonate presence [24]. Raman spectroscopy provided complementary separation, with the first two PCs (37% and 20% of the variance, respectively) revealing six distinct clusters corresponding to different pigment compositions [24]. This objective methodology significantly enhances the discrimination of chemically similar paint samples encountered in vandalism and vehicle collision investigations.

Forensic Biological Evidence

Vibrational spectroscopy combined with chemometrics shows emerging potential for analyzing biological materials, including body fluids, hair, soft tissues, and bones [25] [23]. These techniques provide rapid, non-destructive characterization of forensic biological samples, offering valuable contextual information about crimes before destructive DNA analysis [25]. For fibromyalgia diagnosis, researchers developed a portable FT-IR method using bloodspot samples that achieved high classification accuracy (sensitivity and specificity >0.93) through orthogonal partial least squares discriminant analysis (OPLS-DA) [26]. The identified spectral biomarkers included peptide backbones and aromatic amino acids, demonstrating the methodology's capability to distinguish between complex physiological conditions [26]. Combined FT-IR/Raman classification models show exceptional performance for body fluid identification and cause of death determination, though these applications remain primarily in the research domain [23].

Pharmaceutical and Illicit Drug Analysis

The complementary nature of FT-IR and Raman spectroscopy proves particularly advantageous for pharmaceutical analysis and drug profiling [26] [18]. A satellite laboratory toolkit incorporating handheld Raman and portable FT-IR spectrometers successfully identified over 650 active pharmaceutical ingredients in 926 products with high reliability [26]. When at least two devices confirmed API identification, results were comparable to full-service laboratory analyses, demonstrating the field-deployment potential of these techniques [26]. In forensic chemistry, chemometrics enables the processing of large datasets for strategic intelligence, revealing connections between illicit drug seizures and trafficking networks [18]. The non-destructive character of vibrational spectroscopy preserves evidence for subsequent legal proceedings, while chemometric analysis provides statistical validation of evidentiary conclusions.

Table 1: Forensic Applications of FT-IR and Raman Spectroscopy with Chemometrics

| Application Area | Analytical Question | Chemometric Methods | Key Findings |

|---|---|---|---|

| Paint Analysis | Discrimination of paint samples based on brand/type [24] | PCA, HCA with SNV preprocessing [24] | FT-IR: 83% variance explained by first 4 PCs; Raman: 6 pigment clusters identified [24] |

| Biological Fluids | Diagnosis of fibromyalgia and related disorders [26] | OPLS-DA [26] | High sensitivity and specificity (Rcv > 0.93) using bloodspot samples [26] |

| Pharmaceuticals | Screening for declared/undeclared APIs [26] | Multivariate classification [26] | 650+ APIs identified with reliability comparable to full-service labs [26] |

| Illicit Drugs | Profiling and intelligence-led policing [18] | PCA, pattern recognition [18] | Enhanced connections between seizures and trafficking networks [18] |

Experimental Protocols

Multivariate Classification Workflow for Forensic Evidence

The following protocol outlines the standard workflow for multivariate classification of forensic samples using FT-IR and Raman spectroscopy, incorporating critical validation steps to ensure forensic reliability [22].

Sample Preparation and Spectral Acquisition

Materials and Equipment:

- FT-IR spectrometer with ATR accessory (diamond crystal recommended)

- Raman spectrometer (portable/handheld versions suitable for field screening)

- Sample substrates (glass slides, aluminum stubs, or specialized sampling cards)

- Reference materials for instrument calibration

- Personal protective equipment for handling forensic evidence

Procedure:

- Sample Collection: Transfer minute quantities of evidence material to appropriate substrate. For paints, create thin films on glass slides; for powders, ensure homogeneous distribution; for biological stains, use specialized sampling cards [24] [26].

- FT-IR Analysis:

- Raman Analysis:

- Quality Control:

- Collect replicate spectra from different sample areas

- Include reference standards for instrument performance verification

- Document all instrument parameters for forensic chain of custody

Data Preprocessing and Model Development

Software Requirements:

- Multivariate analysis software (Python/R with chemometrics packages, commercial software)

- Spectral processing capabilities (baseline correction, normalization, derivatives)

- Statistical validation tools (cross-validation, permutation testing)

Preprocessing Workflow:

- Data Assessment: Visually inspect all spectra for anomalies, artifacts, or outliers [22].

- Spectral Preprocessing:

- Apply Standard Normal Variate (SNV) or multiplicative scatter correction to minimize scattering effects [24]

- Implement Savitzky-Golay derivatives (1st or 2nd) to enhance spectral features

- Use baseline correction methods to remove fluorescence contributions (Raman)

- Data Selection:

- Select informative spectral regions (e.g., 650-1830 cm⁻¹ and 2730-3600 cm⁻¹ for FT-IR of paints) [24]

- Remove noisy or non-informative variables to improve model performance

- Model Development:

- Exploratory Analysis: Perform PCA to identify natural clustering and outliers [24] [22]

- Supervised Classification: Develop PLS-DA, LDA, or SVM models using training datasets with known classifications [3] [22]

- Model Validation: Implement k-fold cross-validation and external validation with independent test sets [22]
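
The validation tools mentioned in this section (k-fold cross-validation and permutation testing) are available in scikit-learn. The following sketch, which assumes Python and synthetic two-class data with five variables, estimates the cross-validated accuracy of an LDA model and compares it with the accuracies obtained after randomly shuffling the class labels.

```python
import numpy as np
from sklearn.discriminant_analysis import LinearDiscriminantAnalysis
from sklearn.model_selection import StratifiedKFold, permutation_test_score

rng = np.random.default_rng(4)

# Synthetic stand-in data: two classes, 25 samples each, five variables.
X = np.vstack([rng.normal(0.0, 1.0, (25, 5)),
               rng.normal(1.5, 1.0, (25, 5))])
y = np.array([0] * 25 + [1] * 25)

# Stratified 5-fold cross-validation keeps class proportions equal across folds.
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)

# The permutation test refits the model on label-shuffled data to build a
# null distribution of cross-validated accuracies.
score, perm_scores, p_value = permutation_test_score(
    LinearDiscriminantAnalysis(), X, y,
    cv=cv, n_permutations=200, scoring="accuracy", random_state=0)

print("Cross-validated accuracy:", score)
print("Mean accuracy with permuted labels:", perm_scores.mean())
print("Permutation p-value:", p_value)
```

A small p-value indicates that the observed accuracy is unlikely to arise from chance structure in the data. It does not replace external validation on an independent test set.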

Table 2: Data Preprocessing Techniques for Forensic Spectral Analysis

| Processing Step | Technique Options | Forensic Application Considerations |
|---|---|---|
| Quality Control | Spectral visualization, outlier detection [22] | Identify compromised samples or measurement artifacts that could invalidate conclusions |
| Scatter Correction | Standard Normal Variate (SNV), Multiplicative Scatter Correction [24] | Particularly important for heterogeneous forensic samples (paints, powders, biological stains) |
| Spectral Derivatives | Savitzky-Golay 1st or 2nd derivative [22] | Enhance resolution of overlapping bands; 2nd derivative useful for identifying peak positions |
| Baseline Correction | Asymmetric least squares, polynomial fitting [22] | Essential for Raman spectra with fluorescence background |
| Data Reduction | Wavelength selection, PCA [24] [22] | Focus on chemically informative regions (fingerprint region: 1800-900 cm⁻¹ for biological materials) [22] |
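As an illustration of the baseline correction row, the following is a minimal sketch of asymmetric least squares (ALS) baseline estimation in the commonly used Eilers-Boelens form. The smoothness parameter `lam`, the asymmetry `p`, the iteration count and the synthetic noise-free "Raman" spectrum are all assumptions for demonstration; real spectra need these parameters tuned and the result inspected visually.

```python
import numpy as np


def als_baseline(y, lam=1e4, p=0.01, n_iter=10):
    """Estimate a smooth baseline lying under the peaks of y.

    lam penalises curvature of the baseline; p is the weight given to
    points above the current baseline (peaks), 1 - p to points below it.
    A dense solver is used here for brevity; sparse matrices are the
    usual choice for long spectra.
    """
    n = y.size
    D = np.diff(np.eye(n), n=2, axis=0)        # second-difference operator
    penalty = lam * (D.T @ D)
    w = np.ones(n)
    for _ in range(n_iter):
        z = np.linalg.solve(np.diag(w) + penalty, w * y)
        w = np.where(y > z, p, 1.0 - p)        # down-weight points above baseline
    return z


# Synthetic spectrum: two narrow bands on a slowly decaying background.
x = np.arange(300)
background = 2.0 + 1.5 * np.exp(-x / 200.0)
peaks = (3.0 * np.exp(-0.5 * ((x - 100) / 3.0) ** 2)
         + 2.0 * np.exp(-0.5 * ((x - 200) / 3.0) ** 2))
spectrum = background + peaks

baseline = als_baseline(spectrum)
corrected = spectrum - baseline
```

Larger `lam` gives a stiffer baseline, while smaller `p` makes the estimate hug the lower envelope of the signal more closely.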

Advanced Chemometric Techniques

Seeding Multivariate Algorithms

Advanced chemometric approaches include "seeding" spectral datasets by augmenting the data matrix with known spectral profiles to bias multivariate analysis toward solutions of interest [28]. This approach enhances the ability of algorithms like PCA to differentiate between distinct sample subsets, as demonstrated with Raman spectroscopic data of human lung adenocarcinoma cells exposed to cisplatin [28]. Seeding Multivariate Curve Resolution-Alternating Least Squares (MCR-ALS) with pure components improves both model performance and component accuracy for concentration-dependent data [28]. For forensic applications, seeding could potentially enhance the detection of trace components in complex mixtures such as illicit drug formulations or explosive residues.
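The idea of seeding can be illustrated with a small numerical sketch. Here a data matrix dominated by an interfering band is augmented with copies of a known pure profile before PCA, and the alignment of the first loading with that profile is compared before and after. The number of seed copies, their weight and the synthetic band shapes are assumptions made for illustration; the cited study's exact procedure is not reproduced here.

```python
import numpy as np


def first_loading(X):
    """First principal-component loading of the column-centred matrix."""
    Xc = X - X.mean(axis=0)
    _, _, Vt = np.linalg.svd(Xc, full_matrices=False)
    return Vt[0]


def seed_matrix(X, seed_profile, n_copies=10, weight=5.0):
    """Append n_copies of the (weighted) known profile as extra rows."""
    seeds = np.tile(weight * seed_profile, (n_copies, 1))
    return np.vstack([X, seeds])


def cosine(a, b):
    """Absolute cosine similarity (loadings have arbitrary sign)."""
    return abs(a @ b) / (np.linalg.norm(a) * np.linalg.norm(b))


# Synthetic mixture data: a weak component of interest and a strong interferent.
rng = np.random.default_rng(1)
axis = np.linspace(0.0, 1.0, 200)
target = np.exp(-0.5 * ((axis - 0.3) / 0.02) ** 2)      # known pure profile
interferent = np.exp(-0.5 * ((axis - 0.7) / 0.05) ** 2)
X = (np.outer(rng.uniform(0.0, 0.2, 30), target)
     + np.outer(rng.uniform(0.0, 1.0, 30), interferent)
     + rng.normal(0.0, 0.005, (30, 200)))

unseeded = cosine(first_loading(X), target)
seeded = cosine(first_loading(seed_matrix(X, target)), target)
```

In this toy case `unseeded` is close to zero because the first component follows the interferent, whereas `seeded` is close to one. Because seeding deliberately biases the decomposition, the seed rows should be reported and excluded when scores are interpreted.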

Multimodal Integration of FT-IR and Raman

The combined use of FT-IR and Raman spectroscopy in a single analytical platform provides complementary molecular information that significantly enhances forensic discrimination capabilities [27]. FT-IR excels in detecting polar bonds and functional groups, while Raman is more sensitive to nonpolar bonds and symmetric vibrations [27]. This complementarity is particularly valuable for complex forensic samples containing both organic and inorganic components [27]. Integrated instrumentation allows analysis of the exact sample location without repositioning, improving correlation between techniques and analytical accuracy [27].

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for Forensic Spectral Analysis

| Item | Function/Application | Forensic Considerations |
|---|---|---|
| ATR-FTIR Spectrometer | Acquisition of infrared absorption spectra for molecular characterization [24] [26] | Diamond ATR crystal suitable for heterogeneous forensic samples; portable versions enable field screening [26] |
| Raman Spectrometer | Measurement of inelastic scattering providing molecular vibrational information [24] [27] | Varying laser wavelengths help overcome fluorescence; portable devices useful for crime scene investigation [27] |
| Chemometric Software | Multivariate statistical analysis of spectral data (PCA, PLS-DA, etc.) [22] [18] | Must provide validation protocols and maintain chain of custody for forensic admissibility [3] [18] |
| Reference Spectral Libraries | Comparison and identification of unknown materials [24] [18] | Forensic-specific libraries enhance identification capabilities; should be regularly updated and validated [18] |
| Standardized Sampling Kits | Collection and preservation of trace evidence for spectroscopic analysis [26] [25] | Maintain sample integrity, prevent contamination, and preserve chain of custody [25] |

Forensic chemistry increasingly relies on advanced analytical techniques coupled with chemometric tools to interpret complex chemical data from evidence such as illicit drugs and fire debris. Chemometrics, defined as the chemical discipline that uses mathematical and statistical methods to design optimal measurement procedures and extract maximum chemical information from data, has become indispensable in modern forensic laboratories [18] [3]. This application note details standardized protocols and case studies demonstrating the implementation of chemometric approaches for drug profiling and arson debris analysis, supporting a broader thesis on multivariate statistical tools for forensic evidence interpretation.

The integration of chemometrics addresses critical challenges in forensic science, including the need for objective, statistically validated methods to mitigate human bias and enhance courtroom confidence in forensic conclusions [3]. These approaches are particularly valuable for classification (grouping) and profiling (batch comparison) tasks common in both drug intelligence and fire investigation contexts [18].

Application Note: Drug Profiling Using Chromatographic Techniques and Multivariate Analysis

Background and Principle

Drug profiling involves the comprehensive chemical analysis of illicit substances to identify their composition, active ingredients, cutting agents, and impurity patterns. This information enables comparative analysis between seized samples, supporting law enforcement in linking drug batches to common sources or distribution networks [29]. The approach combines separation techniques like gas chromatography-mass spectrometry (GC-MS) with pattern recognition algorithms to extract meaningful intelligence from complex chemical data.

Advanced profiling examines not only major components but also trace impurities and alkaloid content that can serve as chemical fingerprints for manufacturing processes [29]. This intelligence-led approach has been successfully applied to profile cocaine, heroin, amphetamine-type stimulants, and emerging new psychoactive substances (NPS) in strategic police work across Europe [18].

Experimental Protocol: Rapid GC-MS Screening of Seized Drugs

Table 1: Optimized Parameters for Rapid GC-MS Screening of Seized Drugs

| Parameter | Conventional GC-MS | Rapid GC-MS Method |
|---|---|---|
| Column | DB-1 (30 m × 0.25 mm × 0.25 μm) | DB-5 ms (30 m × 0.25 mm × 0.25 μm) |
| Carrier Gas Flow | 1 mL/min helium | 2 mL/min helium |
| Temperature Program (total run time) | ~30 minutes | 10 minutes |
| Limit of Detection (Cocaine) | 2.5 μg/mL | 1 μg/mL |
| Relative Standard Deviation | <1% | <0.25% |
| Match Quality Scores | >85% | >90% |

Materials and Equipment

- Agilent 7890B Gas Chromatograph coupled with 5977A Single Quadrupole Mass Spectrometer

- DB-5 ms capillary column (30 m × 0.25 mm × 0.25 μm film thickness)

- Helium carrier gas (99.999% purity)

- Reference standards: Tramadol, Cocaine, Codeine, Diazepam, Δ9-THC, Heroin, Alprazolam, Buprenorphine, γ-Butyrolactone (GBL), MDMB-INACA, MDMB-BUTINACA, Methamphetamine, MDMA, Ketamine, LSD

- Extraction solvents: HPLC-grade methanol (99.9%)

- Sample vials: 2 mL GC-MS capped vials

Sample Preparation Procedure

Solid Samples:

- Grind tablets and capsules into fine powder using mortar and pestle

- Weigh approximately 0.1 g of powdered material into a test tube

- Add 1 mL methanol and sonicate for 5 minutes

- Centrifuge to separate phases

- Transfer clear supernatant to 2 mL GC-MS vial for analysis

Trace Samples:

- Use swabs pre-moistened with methanol to collect residues from surfaces

- Apply single-direction swabbing technique with controlled pressure

- Immerse swab tips in 1 mL methanol and vortex vigorously

- Transfer methanol extract to 2 mL GC-MS vial for analysis [30]

Instrumental Analysis

GC-MS Parameters:

- Injector Temperature: 250°C

- Injection Volume: 1 μL (split mode, 10:1 ratio)

- Oven Temperature Program: Optimized for 10-minute total run time

- Ion Source Temperature: 230°C

- Quadrupole Temperature: 150°C

- Mass Range: 40-550 m/z

Data Acquisition:

- Use Agilent MassHunter software (version 10.2.489)

- Perform library searches against Wiley Spectral Library (2021) and Cayman Spectral Library (2024)

- Extract retention times at chromatographic peak apex [30]

Chemometric Data Processing

Diagram 1: Drug Profiling Chemometric Workflow

Data Pre-processing:

- Perform peak alignment and retention time correction

- Normalize peak areas to internal standards

- Apply mean-centering and, where needed, variable scaling (e.g., Pareto scaling)
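Two of these pre-processing steps, internal-standard normalization and Pareto scaling, can be sketched as follows. The peak-area table is invented for illustration, and the assumption that the internal standard occupies column 0 is hypothetical; peak alignment is taken to have been done upstream.

```python
import numpy as np


def normalize_to_internal_standard(peak_areas, is_index):
    """Divide each sample's peak areas by its internal-standard area, then drop that column."""
    ratios = peak_areas / peak_areas[:, [is_index]]
    return np.delete(ratios, is_index, axis=1)


def pareto_scale(X):
    """Mean-centre each variable and divide by the square root of its standard deviation."""
    centred = X - X.mean(axis=0)
    return centred / np.sqrt(X.std(axis=0, ddof=1))


# Rows = samples, columns = aligned peaks; column 0 is the internal standard.
# Sample 2 is sample 1 injected at twice the amount (all areas doubled).
areas = np.array([
    [1000.0,  500.0, 200.0,  50.0],
    [2000.0, 1000.0, 400.0, 100.0],
    [1000.0,  300.0, 400.0,  20.0],
    [1500.0,  900.0, 150.0,  90.0],
])

normalized = normalize_to_internal_standard(areas, is_index=0)
scaled = pareto_scale(normalized)
```

After normalization the first two rows are identical, which is the intended effect: differences in injected amount no longer masquerade as differences in composition. Pareto scaling then reduces the dominance of large peaks without inflating noise as much as full unit-variance scaling.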

Pattern Recognition:

Statistical Validation:

- Use cross-validation methods (leave-one-out, k-fold) to assess model robustness

- Calculate classification error rates and confidence intervals

- Apply permutation testing to validate model significance
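The three validation steps can be sketched together. The example below uses a simple nearest-centroid classifier as a stand-in for whichever model is actually being validated (an assumption, chosen to keep the code short), synthetic two-class data, unstratified k-fold splitting, a Wilson score interval for the accuracy, and a label-permutation test.

```python
import math
import numpy as np


def nearest_centroid_predict(X_train, y_train, X_test):
    """Assign each test sample to the class with the closest training centroid."""
    classes = np.unique(y_train)
    centroids = np.array([X_train[y_train == c].mean(axis=0) for c in classes])
    dists = np.linalg.norm(X_test[:, None, :] - centroids[None, :, :], axis=2)
    return classes[np.argmin(dists, axis=1)]


def kfold_accuracy(X, y, k=5, seed=0):
    """Overall accuracy from k-fold cross-validation (folds are not stratified)."""
    order = np.random.default_rng(seed).permutation(len(y))
    folds = np.array_split(order, k)
    correct = 0
    for i in range(k):
        train = np.concatenate([folds[j] for j in range(k) if j != i])
        pred = nearest_centroid_predict(X[train], y[train], X[folds[i]])
        correct += int(np.sum(pred == y[folds[i]]))
    return correct / len(y)


def wilson_interval(successes, n, z=1.96):
    """Wilson score interval (approx. 95% for z = 1.96) for a proportion."""
    p = successes / n
    denom = 1.0 + z**2 / n
    centre = (p + z**2 / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return centre - half, centre + half


def permutation_p_value(X, y, observed, n_permutations=200, k=5, seed=0):
    """Fraction of label shufflings whose CV accuracy reaches the observed one."""
    rng = np.random.default_rng(seed)
    hits = sum(kfold_accuracy(X, rng.permutation(y), k=k, seed=seed) >= observed
               for _ in range(n_permutations))
    return (hits + 1) / (n_permutations + 1)


# Synthetic two-class data: 20 samples per class, 5 variables.
rng = np.random.default_rng(42)
X = np.vstack([rng.normal(0.0, 1.0, (20, 5)),
               rng.normal(0.0, 1.0, (20, 5)) + np.array([3.0, 3.0, 0.0, 0.0, 0.0])])
y = np.array([0] * 20 + [1] * 20)

accuracy = kfold_accuracy(X, y)
ci_low, ci_high = wilson_interval(round(accuracy * len(y)), len(y))
p_value = permutation_p_value(X, y, accuracy)
```

A small `p_value` indicates that the cross-validated accuracy is unlikely to arise from chance label assignments. The error rate is `1 - accuracy`, with its interval obtained by reflecting `ci_low` and `ci_high`.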

Case Study: Implementation and Results

Validation studies conducted with Dubai Police Forensic Laboratories demonstrated the rapid GC-MS method's effectiveness in analyzing 20 real case samples containing diverse drug classes, including synthetic opioids and stimulants. The method achieved match quality scores exceeding 90% across tested concentrations while reducing analysis time from 30 minutes to 10 minutes per sample [30].

The systematic application of PCA to chromatographic impurity profiles enabled successful clustering of amphetamine samples according to their synthetic route, providing valuable intelligence on manufacturing sources [18]. Similarly, PLS-DA models applied to infrared spectroscopic data have shown excellent discrimination between cocaine and adulterants, with classification accuracy exceeding 95% in controlled studies [18].

Application Note: Arson Debris Analysis via Advanced Separation and Chemometric Pattern Recognition

Background and Principle

Fire debris analysis focuses on detecting and identifying ignitable liquid residues (ILRs) in samples collected from fire scenes to determine whether a fire was intentionally set. The complex nature of fire debris, which contains pyrolysis products from substrate materials alongside any potential accelerants, makes chemometric tools particularly valuable for distinguishing relevant patterns from background interference [31] [32].

Standard methods classify ignitable liquids into categories defined in ASTM E1618 (e.g., gasoline, petroleum distillates, isoparaffinic products), but visual comparison of chromatograms can be subjective and time-consuming [31] [33]. Chemometric approaches provide objective classification and enhance detection limits for trace ILRs, especially in weathered samples or those with substantial substrate interference.

Experimental Protocol: Fire Debris Analysis Using Rapid GC-MS and Multivariate Classification

Table 2: Analytical Figures of Merit for Rapid GC-MS Analysis of Ignitable Liquids

| Parameter | Value/Range |
|---|---|
| Analysis Time | ~1 minute |
| Limit of Detection | 0.012 - 0.018 mg/mL |
| Target Compounds | p-xylene, n-nonane, 1,2,4-trimethylbenzene, n-decane, 1,2,4,5-tetramethylbenzene, 2-methylnaphthalene, n-tridecane |
| Column | DB-1ht QuickProbe GC column (2 m × 0.25 mm × 0.10 μm) |
| Carrier Gas | Helium (99.999%) at 1 mL/min |
| Temperature Program | Optimized for volatile compounds |

Materials and Equipment

- Agilent 8971 QuickProbe GC-MS System with 8890 Gas Chromatograph and 5977B Mass Spectrometer

- DB-1ht QuickProbe GC column (2 m length × 0.25 mm inner diameter × 0.10 μm film thickness)

- Charcoal strips for passive headspace concentration (ASTM E1412)

- Carbon disulfide (analytical grade) for extraction

- Reference ignitable liquids: Gasoline, diesel fuel, lighter fluids

- Test mixtures: p-xylene, n-nonane, 1,2,4-trimethylbenzene, n-decane, 1,2,4,5-tetramethylbenzene, 2-methylnaphthalene, n-tridecane

Sample Preparation Procedure

Passive Headspace Concentration (ASTM E1412):

Alternative Rapid Screening:

- For direct analysis, use thermal desorption techniques

- Apply minimal sample preparation for high-throughput screening

Instrumental Analysis

Rapid GC-MS Parameters:

- Injector Temperature: 250°C

- Oven Temperature: 280°C (isothermal to prevent recondensation)

- Analysis Time: Approximately 1 minute

- Mass Range: 35-300 m/z

- Solvent Delay: Not used (analysis faster than detector response)

Data Collection:

- Acquire total ion chromatograms and extracted ion profiles

- Apply deconvolution algorithms for co-eluting peaks

- Compare against reference database of ignitable liquids [31]

Chemometric Data Processing

Diagram 2: Fire Debris Analysis Workflow

Feature Extraction:

- Generate extracted ion profiles for characteristic ions

- Create relative abundance patterns of target compounds

- Compile peak ratio measurements within and between chemical classes
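The feature-extraction steps can be sketched on a scan-by-m/z intensity matrix. The ion groups below (m/z 43, 57, 71, 85 for alkanes and 91, 105, 119 for alkylbenzenes) are commonly used illustrative choices and an assumption here, as are the array layout and the synthetic two-peak chromatogram; export of the raw data from the instrument software is not shown.

```python
import numpy as np

# Illustrative ion groups (assumed, not taken from the protocol above).
ION_GROUPS = {"alkane": (43, 57, 71, 85), "aromatic": (91, 105, 119)}


def extracted_ion_profile(intensities, mz_axis, ions):
    """Sum the intensity of the selected m/z channels at every scan."""
    return intensities[:, np.isin(mz_axis, ions)].sum(axis=1)


def relative_abundance(areas):
    """Convert a dict of summed areas into fractions of their total."""
    total = sum(areas.values())
    return {name: area / total for name, area in areas.items()}


# Synthetic data: 60 scans, nominal masses 35-300 (the acquisition range above).
mz_axis = np.arange(35, 301)
scans = np.arange(60)
intensities = np.zeros((scans.size, mz_axis.size))


def add_peak(mz, scan_centre, height, width=2.0):
    """Add a Gaussian chromatographic peak to one m/z channel."""
    profile = height * np.exp(-0.5 * ((scans - scan_centre) / width) ** 2)
    intensities[:, mz - mz_axis[0]] += profile


add_peak(57, 20, 1000.0)   # alkane-like compound eluting at scan 20
add_peak(71, 20, 500.0)
add_peak(91, 40, 800.0)    # aromatic-like compound eluting at scan 40
add_peak(105, 40, 400.0)

tic = intensities.sum(axis=1)                                  # total ion chromatogram
eips = {name: extracted_ion_profile(intensities, mz_axis, ions)
        for name, ions in ION_GROUPS.items()}
areas = {name: float(profile.sum()) for name, profile in eips.items()}
abundances = relative_abundance(areas)
aromatic_to_alkane = areas["aromatic"] / areas["alkane"]       # between-class ratio
```

The resulting abundances and ratios form one feature vector per sample, which is the input expected by the classifiers in the next step.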

Multivariate Classification:

- Implement Support Vector Machines (SVM) for binary classification (ILR present/absent)

- Apply Linear Discriminant Analysis (LDA) and Quadratic Discriminant Analysis (QDA) for category prediction

- Utilize k-Nearest Neighbors (kNN) for pattern recognition based on similarity measures [34]
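Of the classifiers listed, kNN is the simplest to write out in full. The sketch below uses Euclidean distance and a majority vote; the feature vectors (relative abundances of three compound classes) and the generic labels `class_A` and `class_B` are invented for illustration and do not correspond to measured ignitable liquids.

```python
from collections import Counter

import numpy as np


def knn_predict(X_train, y_train, X_query, k=3):
    """Label each query by majority vote among its k nearest training samples."""
    predictions = []
    for query in X_query:
        distances = np.linalg.norm(X_train - query, axis=1)   # Euclidean distance
        nearest = np.argsort(distances)[:k]
        votes = Counter(y_train[i] for i in nearest)
        predictions.append(votes.most_common(1)[0][0])
    return predictions


# Hypothetical training set: [aromatic, alkane, other] relative abundances.
X_train = np.array([
    [0.70, 0.20, 0.10],
    [0.65, 0.25, 0.10],
    [0.75, 0.15, 0.10],
    [0.20, 0.70, 0.10],
    [0.25, 0.65, 0.10],
    [0.15, 0.75, 0.10],
])
y_train = ["class_A", "class_A", "class_A", "class_B", "class_B", "class_B"]

X_query = np.array([[0.68, 0.22, 0.10],
                    [0.22, 0.66, 0.12]])
predicted = knn_predict(X_train, y_train, X_query, k=3)
```

An odd `k` avoids ties in the two-class case; with more classes or unequal class sizes, distance weighting and cross-validated selection of `k` are usually worth considering.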

Likelihood Ratio Approach:

- Calculate probabilities of class membership using computationally mixed training data

- Determine likelihood ratios from class membership probabilities

- Assess evidentiary value using Bayesian statistical framework [34]
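One common way to relate a class membership probability to a likelihood ratio is to divide the posterior odds by the prior odds implied by the training data. The sketch below assumes that relationship and a balanced training set (prior of 0.5) by default; whether the cited work [34] uses exactly this form is not stated above, so treat it as an illustration of the Bayesian bookkeeping rather than the published procedure.

```python
import math


def odds(probability):
    """Convert a probability strictly between 0 and 1 into odds."""
    if not 0.0 < probability < 1.0:
        raise ValueError("probability must lie strictly between 0 and 1")
    return probability / (1.0 - probability)


def likelihood_ratio(posterior_prob, prior_prob=0.5):
    """LR = posterior odds / prior odds (prior reflects the training-set class balance)."""
    return odds(posterior_prob) / odds(prior_prob)


def updated_probability(lr, case_prior_prob):
    """Combine an LR with a case-specific prior: posterior odds = LR x prior odds."""
    posterior_odds = lr * odds(case_prior_prob)
    return posterior_odds / (1.0 + posterior_odds)


# A classifier trained on balanced data reports P(ILR present) = 0.90.
lr = likelihood_ratio(0.90, prior_prob=0.5)
log10_lr = math.log10(lr)

# The same evidence weighed against a more sceptical case prior of 0.10.
posterior = updated_probability(lr, case_prior_prob=0.10)
```

Here the LR is 9, and combined with a prior of 0.10 it yields a posterior probability of 0.5. Reporting the LR (or its logarithm) keeps the strength of the analytical evidence separate from case-specific prior information.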

Case Study: Implementation and Results