Measuring Uncertainty: A Critical Analysis of Error Rates in Forensic Feature-Comparison Methods


Abstract

This article provides a comprehensive analysis of error rates across forensic feature-comparison methods, addressing a critical knowledge gap identified by major scientific reviews. We explore the foundational concepts of forensic error, examining the concerning asymmetry in how false positives and false negatives are reported and perceived by practitioners. The content delves into methodological advancements, particularly the emergence of quantitative statistical frameworks and machine learning approaches that promise greater objectivity and transparency. We troubleshoot persistent challenges including contextual bias, the lack of empirical validation for subjective judgments, and difficulties in implementing meaningful error rate calculations. Through comparative analysis of traditional pattern matching fields versus emerging molecular methods, this resource equips researchers, scientists, and drug development professionals with the critical framework needed to evaluate forensic evidence reliability and integrate more robust error analysis into biomedical research and clinical applications.

The Hidden Landscape of Forensic Error: Understanding Asymmetries and Current Perceptions

In forensic feature-comparison methods, the conclusions drawn by analysts primarily fall into three categories: identification, exclusion, or inconclusive. Each of these decisions carries an inherent risk of two fundamental types of error. A false positive occurs when an examiner incorrectly associates two samples from different sources (a wrong inclusion), while a false negative occurs when an examiner incorrectly dissociates two samples from the same source (a wrong exclusion) [1]. The prevailing focus on reducing false positives, driven by the legal principle that it is better to let the guilty go free than to convict the innocent, has often overshadowed the significant risks posed by false negatives [2] [1]. This imbalance is particularly critical in disciplines such as latent prints, firearms analysis, and bitemark comparison, where the scientific foundation for exclusionary conclusions may lack rigorous empirical validation. This guide provides a comparative analysis of error rates across forensic disciplines, detailing experimental protocols and data to equip researchers and practitioners with the tools necessary for a more nuanced understanding of forensic method reliability.

Comparative Error Rates Across Forensic Disciplines

Quantitative data from empirical studies, particularly black-box studies, provide the most reliable metrics for comparing the accuracy of forensic feature-comparison methods. The tables below summarize key performance indicators across several disciplines, highlighting both identification and exclusion errors.

Table 1: False Positive and False Negative Rates in Latent Print Examinations

| Study | Discipline | False Positive Rate | False Negative Rate | Number of Examiners | Number of Comparisons |
|---|---|---|---|---|---|
| Ulery et al. (2011) [3] | Latent Prints | 0.1% | 7.5% | 169 | 744 pairs |
| LPE Black Box Study (2022) [4] | Latent Prints | 0.2% | 4.2% | 156 | 14,224 responses |

Table 2: Case Error Rates Across Multiple Forensic Disciplines (Morgan, 2023) [5]

| Discipline | Percentage of Examinations Containing Individualization or Classification (Type 2) Errors |
|---|---|
| Seized drug analysis (field testing) | 100% |
| Bitemark | 73% |
| Shoe/foot impression | 41% |
| Fire debris investigation | 38% |
| Forensic medicine (pediatric sexual abuse) | 34% |
| Blood spatter (crime scene) | 27% |
| Serology | 26% |
| Firearms identification | 26% |
| Hair comparison | 20% |
| Latent fingerprint | 18% |
| Fiber/trace evidence | 14% |
| DNA | 14% |
| Forensic pathology (cause and manner) | 13% |

The data reveal significant variation across disciplines, but the two tables measure different things. Table 1 reports error rates from controlled black-box studies: latent print analysis, often considered a gold standard, shows very low false positive rates but notably higher false negative rates, indicating a conservative approach that favors missing an identification over making a wrongful one [3] [4]. Table 2 reports the share of examinations within wrongful-conviction cases that contained a Type 2 error, so its percentages describe a sample already selected for false or misleading forensic evidence rather than error rates in routine casework [5]. Within that sample, bitemark analysis and field testing for seized drugs show alarmingly high proportions of individualization and classification errors, underscoring concerns about their foundational validity [5]. The high figure for seized drug analysis is primarily attributed to the use of presumptive field tests that are not confirmed in a laboratory setting [5].

Experimental Protocols for Measuring Forensic Error Rates

The Black-Box Study Design for Latent Prints

The foundational 2011 study by Ulery et al. and the subsequent 2022 study exemplify the rigorous protocol for assessing error rates in latent print examination [3] [4].

- Objective: To measure the accuracy, reproducibility, and factors influencing latent print examiners' decisions under conditions mimicking operational casework.

- Participant Cohort: The studies engaged a large number of practicing, certified latent print examiners (169 in 2011; 156 in 2022) with a median experience of 10 years to ensure professional relevance [3] [4].

- Materials and Stimuli: Researchers compiled a set of latent and exemplar fingerprint images selected by subject matter experts to represent a challenging range of attributes and quality encountered in real casework. The 2011 study used 744 distinct image pairs (520 mated, 224 nonmated) [3]. The 2022 study used 300 image pairs, with a higher proportion of nonmated comparisons (80 nonmated vs. 20 mated per participant) to better reflect the results of automated database searches [4].

- Procedure: Each examiner was randomly assigned approximately 100 image pairs via custom software. They were instructed to use their professional expertise to compare each pair and report one of four decisions: Individualization (ID), Exclusion, Inconclusive, or No Value. The software recorded decisions, and examiners were given several weeks to complete the task, mimicking real-world time constraints [3].

- Data Analysis: Examiner decisions were compared against ground truth (known mated and nonmated status) to calculate false positive rates (individualization of nonmated pairs) and false negative rates (exclusion of mated pairs). Reproducibility was assessed by analyzing how different examiners treated the same image pair [3] [4].
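The scoring step in the protocol above can be sketched in Python. This is a minimal illustration, not the analysis code used in the cited studies: the function name `error_rates`, the label strings, and the choice to drop "No Value" responses from the denominators are assumptions made here, and the response counts are invented.

```python
from collections import Counter

def error_rates(responses, excluded_decisions=("No Value",)):
    """Score examiner decisions against ground truth.

    responses: iterable of (ground_truth, decision) pairs, where ground_truth
    is "mated" or "nonmated" and decision is "ID", "Exclusion",
    "Inconclusive", or "No Value".
    Assumption: decisions listed in excluded_decisions are left out of the
    denominators; a real study may define its denominators differently.
    """
    counts = Counter(r for r in responses if r[1] not in excluded_decisions)
    nonmated = sum(n for (truth, _), n in counts.items() if truth == "nonmated")
    mated = sum(n for (truth, _), n in counts.items() if truth == "mated")
    # False positive: individualization of a nonmated pair.
    fpr = counts[("nonmated", "ID")] / nonmated if nonmated else float("nan")
    # False negative: exclusion of a mated pair.
    fnr = counts[("mated", "Exclusion")] / mated if mated else float("nan")
    return fpr, fnr

# Invented responses, for illustration only.
sample = (
    [("nonmated", "ID")] * 1
    + [("nonmated", "Exclusion")] * 90
    + [("nonmated", "Inconclusive")] * 9
    + [("mated", "ID")] * 60
    + [("mated", "Exclusion")] * 5
    + [("mated", "Inconclusive")] * 35
    + [("mated", "No Value")] * 10
)
fpr, fnr = error_rates(sample)
print(f"FPR = {fpr:.1%}, FNR = {fnr:.1%}")
```

Because the denominator changes the result, any reported rate should state which decisions were counted.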

Forensic Testimony Archaeology Typology

Dr. John Morgan's study for the National Institute of Justice developed an error typology through a retrospective analysis of wrongful convictions [5].

- Objective: To identify and categorize the root causes of errors associated with forensic evidence in exonerated cases.

- Data Source: The study analyzed 732 cases and 1,391 forensic examinations from the National Registry of Exonerations that involved "false or misleading forensic evidence" [5].

- Coding Framework: A detailed typology was developed to categorize errors [5]:

  - Type 1 - Forensic Science Reports: Misstatements in the scientific basis of a report.

  - Type 2 - Individualization or Classification: Incorrect association or dissociation of evidence.

  - Type 3 - Testimony: Misrepresentation of results during trial testimony.

  - Type 4 - Officer of the Court: Errors by legal professionals related to forensic evidence.

  - Type 5 - Evidence Handling and Reporting: Failures in collecting, examining, or reporting evidence.

- Analysis: Each case was systematically reviewed to identify the forensic disciplines involved and the specific type of error that occurred, allowing for quantitative analysis of which disciplines were most prone to which kinds of errors [5].
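As a rough sketch of how such a coding framework could be tabulated, the Python below represents the five error types as an enumeration and computes, per discipline, the share of examinations coded with a Type 2 error. The names `ErrorType` and `type2_share_by_discipline` and the coded examinations are hypothetical; they are not taken from the study's data or tooling.

```python
from collections import Counter
from enum import Enum

class ErrorType(Enum):
    """The five categories of the typology described above."""
    FORENSIC_SCIENCE_REPORTS = 1
    INDIVIDUALIZATION_OR_CLASSIFICATION = 2
    TESTIMONY = 3
    OFFICER_OF_THE_COURT = 4
    EVIDENCE_HANDLING_AND_REPORTING = 5

def type2_share_by_discipline(examinations):
    """examinations: iterable of (discipline, set of ErrorType) pairs.

    Returns {discipline: fraction of its examinations with a Type 2 error}.
    """
    totals, with_type2 = Counter(), Counter()
    for discipline, errors in examinations:
        totals[discipline] += 1
        if ErrorType.INDIVIDUALIZATION_OR_CLASSIFICATION in errors:
            with_type2[discipline] += 1
    return {d: with_type2[d] / totals[d] for d in totals}

# Hypothetical coded examinations, for illustration only.
coded = [
    ("Bitemark", {ErrorType.INDIVIDUALIZATION_OR_CLASSIFICATION}),
    ("Bitemark", {ErrorType.TESTIMONY}),
    ("DNA", {ErrorType.EVIDENCE_HANDLING_AND_REPORTING}),
    ("DNA", {ErrorType.INDIVIDUALIZATION_OR_CLASSIFICATION, ErrorType.TESTIMONY}),
    ("DNA", {ErrorType.TESTIMONY}),
    ("DNA", {ErrorType.OFFICER_OF_THE_COURT}),
]
print(type2_share_by_discipline(coded))
```

An examination can carry several error types at once, which is why each record holds a set rather than a single label.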

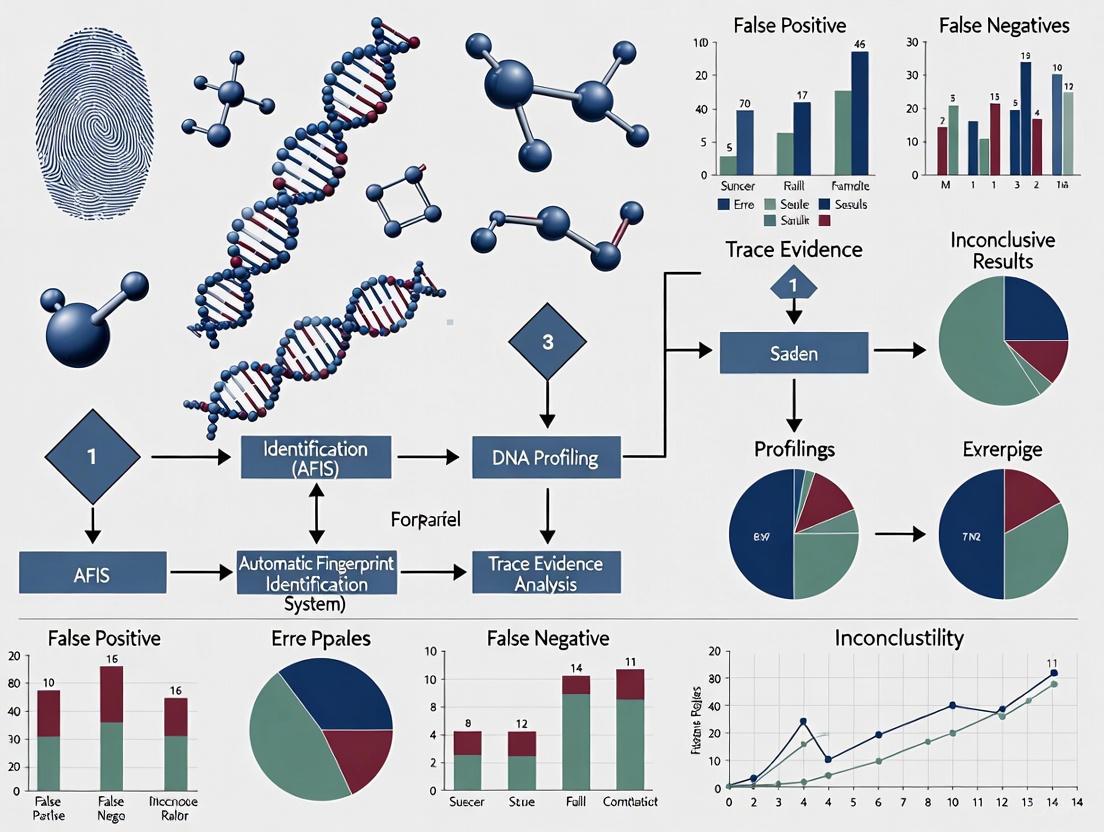

Visualizing the Forensic Decision Pathway and Error Points

The following diagram illustrates the standard decision-making process in forensic comparisons, such as the ACE-V (Analysis, Comparison, Evaluation, Verification) method used in latent print analysis, and maps the points where false positives and false negatives can occur.

Diagram 1: Forensic comparison decision pathway with error points.

This workflow shows that a false positive error is a specific, high-consequence outcome where an examiner concludes "Identification" for a non-mated pair. A false negative occurs when an examiner concludes "Exclusion" for a mated pair. The "Inconclusive" path is a legitimate outcome that avoids a definitive error but provides no associative information [6].

The Scientist's Toolkit: Research Reagent Solutions for Error Rate Studies

Table 3: Essential Materials and Methods for Forensic Error Rate Research

| Tool/Method | Function in Research | Application Example |
|---|---|---|
| Black-Box Study Design | Evaluates examiner accuracy without attempting to dictate their decision-making process. Provides empirical data on real-world performance. | Large-scale studies of latent print examiners to establish baseline false positive and false negative rates [3] [4]. |
| Forensic Error Typology | Provides a standardized coding framework to systematically categorize and analyze the root causes of errors in past cases. | Morgan's typology used to analyze wrongful convictions, identifying that most forensic errors are not simple classification mistakes but involve testimony, reporting, or evidence handling [5]. |
| Ground-Truthed Sample Sets | Collections of evidence samples (e.g., fingerprints, bullets) with known source relationships (mated and nonmated). Essential for validating methods and measuring error. | Creating a pool of 744 latent-exemplar fingerprint pairs with known ground truth to test examiners against [3]. |
| Blinded Verification | An independent re-examination of evidence by a second examiner who is unaware of the first examiner's conclusion. A key procedural safeguard. | In the 2011 latent print study, independent examination of the same comparisons by other participants (analogous to blind verification) detected all false positive errors and most false negative errors [3]. |
| Context Management Protocols | Procedures to shield examiners from extraneous case information that could introduce contextual bias and influence their decision. | Limiting the information provided to examiners in a black-box study to only the necessary images, mimicking an unbiased environment. |

Discussion: The Critical Balance and Path Forward

The empirical data indicate that focusing solely on minimizing false positives is scientifically incomplete. Avoiding false positives remains legally and ethically paramount, but an exclusive focus on them can lead to unacceptably high false negative rates or an over-reliance on "intuitive" exclusions that lack empirical validation [2] [1]. For example, exclusions based on class characteristics (e.g., hair color, general dental pattern) may seem like common sense but can be dangerously misleading without known error rates for those specific classifications [1]. This is especially critical in "closed-pool" scenarios, where an exclusion can function as a de facto identification of another suspect, thereby compounding the error [2].

Moving forward, the field must adopt more nuanced approaches to communicating reliability. Relying on simple error rates is insufficient, especially given the high frequency of inconclusive decisions in casework. The recommendation from NIST researchers to shift towards providing empirical validation data and method conformance information specific to the evidence at hand offers a more robust framework [6]. This allows stakeholders to understand not just how a technique performs on average, but how reliably it was applied to the specific evidence in question. For researchers and practitioners, this means prioritizing studies that measure both false positive and false negative rates, validating exclusionary conclusions with the same rigor as identifications, and developing standardized methods for reporting the probative value of all possible conclusions, including "inconclusive" [2] [1] [6].

Forensic science provides critical evidence within the justice system, yet its methods are not infallible. Legal standards for the admissibility of scientific evidence, such as those established in Daubert v. Merrell Dow Pharmaceuticals, Inc. (1993) and Kumho Tire Co. v. Carmichael (1999), guide trial courts to consider known error rates of the techniques presented [7] [8]. However, recent authoritative reviews of the field have concluded that error rates for some common forensic techniques are neither well-documented nor properly established [7] [8]. Complicating this issue is a historical tendency among some forensic analysts to deny the very presence of error in their work [7]. This article surveys the current landscape of error rate understanding, contrasting analyst perceptions with empirical data and comparing error rates across different forensic feature-comparison methods.

Analyst Perceptions of Error Rates: A Survey of the Field

A pivotal 2019 survey of 183 practicing forensic analysts provides crucial insight into how the profession perceives error in its own disciplines [7] [8]. The findings reveal several consistent themes in analyst perception:

- Overall Rarity of Errors: Analysts perceive all types of errors to be rare occurrences in practice [7] [8].

- Asymmetry in Error Perception: False positive errors (incorrectly matching an item to a source) are perceived as even more rare than false negative errors (failing to match two items from the same source) [7] [8].

- Preference for Error Minimization: Analysts typically reported a preference for minimizing the risk of false positives over false negatives, reflecting the serious consequences of erroneous incriminations [7].

- Unrealistically Low Estimates: The estimates provided by analysts for error rates in their fields were widely divergent, with some estimates being "unrealistically low" [7] [8].

- Lack of Documentation Awareness: Perhaps most significantly, most analysts could not specify where error rates for their discipline were documented or published, indicating a gap between the requirement for known error rates and practitioners' awareness of empirical data [7] [8].

Table 1: Summary of Forensic Analyst Perceptions on Error Rates

| Perception Aspect | Finding | Implication |
|---|---|---|
| Overall Error Frequency | Perceived as rare | Potential underestimation of error likelihood |
| False Positives vs False Negatives | False positives seen as more rare | Alignment with conservative approach to incriminating evidence |
| Error Minimization Preference | Preference for minimizing false positives | Reflects serious consequences of erroneous incriminations |
| Quantitative Estimates | Widely divergent, some "unrealistically low" | Lack of consensus and potential overconfidence |
| Awareness of Documentation | Most could not cite error rate documentation | Gap between legal expectations and practitioner knowledge |

Comparative Error Rates Across Forensic Disciplines

While analyst perceptions provide one perspective, empirical studies offer a more objective assessment of error rates across forensic disciplines. Recent research has quantified error rates in several feature-comparison methods, revealing variation across disciplines and methodologies.

Firearms Evidence Identification

The Congruent Matching Cells (CMC) method represents an innovative approach to firearm evidence identification that enables statistical error rate estimation [9]. This method divides compared topography images into correlation cells and derives three sets of identification parameters to quantify both topography similarity and pattern congruency [9]. Initial testing on breech face impressions from consecutively manufactured pistol slides showed wide separation between the distributions of CMCs observed for known matching and known non-matching image pairs [9]. This separation enables the development of statistical models for probability mass functions of comparison scores, providing a framework for estimating cumulative false positive and false negative error rates [9].
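To illustrate how probability mass functions of a comparison score translate into cumulative error rates, the sketch below assumes that the CMC count for an image pair follows a binomial distribution. That distributional choice, the function names, and every parameter value are assumptions made for illustration; the text above states only that statistical models of the score distributions are used, not which ones.

```python
from math import comb

def binomial_pmf(k, n, p):
    """Probability of exactly k successes in n independent trials."""
    return comb(n, k) * p**k * (1 - p) ** (n - k)

def cumulative_error_rates(n_cells, p_nonmatch, p_match, threshold):
    """Cumulative error rates for the rule 'declare a match if CMC >= threshold'.

    n_cells: number of correlation cells per comparison (hypothetical).
    p_nonmatch: per-cell chance of being counted as congruent for a known
        non-matching pair (hypothetical).
    p_match: the same per-cell chance for a known matching pair (hypothetical).
    """
    # False positive: a non-matching pair reaches the threshold.
    fp = sum(binomial_pmf(k, n_cells, p_nonmatch)
             for k in range(threshold, n_cells + 1))
    # False negative: a matching pair falls short of the threshold.
    fn = sum(binomial_pmf(k, n_cells, p_match) for k in range(threshold))
    return fp, fn

# Hypothetical parameters, for illustration only.
for threshold in (4, 6, 8):
    fp, fn = cumulative_error_rates(36, 0.02, 0.6, threshold)
    print(f"threshold {threshold}: FP = {fp:.2e}, FN = {fn:.2e}")
```

Raising the threshold lowers the false positive rate at the cost of a higher false negative rate, which is why wide separation between the two score distributions matters.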

Toolmark and Striated Evidence Analysis

Research on striated toolmark evidence has revealed false discovery rates (FDRs) ranging from 0.0045 to 0.072 across multiple studies, with a pooled error rate of approximately 0.02 (2%) when weighted by sample size [10]. These studies examined the comparison of striation marks used to connect evidence to its source [10]. The 2024 analysis of wire cut comparisons highlighted how the multiple comparison problem inherent in such examinations can substantially increase the family-wise false discovery rate [10]. As the number of comparisons increases, so does the probability of encountering a coincidental match, with the family-wise error rate (Eₙ) calculated as 1 - [1 - e]ⁿ, where e is the single-comparison FDR and n is the number of comparisons [10].

Table 2: Empirical Error Rates from Striated Evidence Studies

| Study | False Discovery Rate (e) | Family-Wise Error After 10 Comparisons (E₁₀) | Family-Wise Error After 100 Comparisons (E₁₀₀) | Max Comparisons for Eₙ < 10% |
|---|---|---|---|---|
| Mattijssen (2010) | 7.24% | 52.8% | 99.9% | 1 |
| Pooled Error | 2.00% | 18.3% | 86.7% | 5 |
| Bajic (2019) | 0.70% | 6.8% | 50.7% | 14 |
| Best (2020) | 0.45% | 4.5% | 36.6% | 23 |
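The family-wise calculation behind this table, Eₙ = 1 - (1 - e)ⁿ, can be reproduced with a few lines of Python. The function names are chosen here for illustration, and the calculation assumes the comparisons are independent. Because the tabulated single-comparison rates are rounded, recomputed percentages for some rows may differ from the table in the last digit.

```python
def family_wise_error(e, n):
    """Probability of at least one false discovery in n independent comparisons."""
    return 1.0 - (1.0 - e) ** n

def max_comparisons(e, limit=0.10):
    """Largest n for which the family-wise error stays strictly below limit."""
    n = 0
    while family_wise_error(e, n + 1) < limit:
        n += 1
    return n

pooled = 0.02  # pooled single-comparison rate from the table
print(f"{family_wise_error(pooled, 10):.1%}")   # 18.3%
print(f"{family_wise_error(pooled, 100):.1%}")  # 86.7%
print(max_comparisons(pooled))                  # 5
```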

The Multiple Comparisons Problem in Forensic Science

A critical methodological issue affecting error rates across multiple forensic disciplines is the multiple comparisons problem [10]. This occurs when a single conclusion relies on many implicit or explicit comparisons, greatly increasing the probability of false discoveries [10]. In wire cut analysis, for example, examiners must compare multiple surfaces and search for optimal alignment between striation patterns, potentially involving thousands of implicit comparisons [10]. Similar issues arise in database searches, where larger database sizes increase the probability of finding "unusually" close non-matches, as exemplified by the wrongful accusation of Brandon Mayfield in the 2004 Madrid train bombing case [10].

Diagram: Multiple comparison problem in wire analysis.

Methodological Considerations in Error Rate Estimation

Accurately determining error rates in forensic science requires careful consideration of methodological frameworks and experimental design.

The Filler-Control Method for Error Rate Estimation

The filler-control method represents an innovative approach designed to address contextual bias while estimating error rates and calibrating analyst reports [11]. This method utilizes actual case data and incorporates control items ("fillers") not related to the case, serving multiple purposes: estimating error rates, calibrating analysts, protecting against contextual biases, and identifying unreliable analysts and methods [11]. By implementing this approach, forensic testing aims to achieve greater rigor and credibility, particularly for match judgments associated with various forensic techniques [11].

Treatment of Inconclusive Decisions

The interpretation of inconclusive decisions presents a significant challenge in calculating and understanding error rates in forensic science [12]. Recent scholarship suggests that reliability determination requires consideration of both method conformance (whether analysts adhere to defined procedures) and method performance (the capacity to discriminate between different propositions) [12]. Within this framework, inconclusive decisions are neither "correct" nor "incorrect" but can be evaluated as either "appropriate" or "inappropriate" depending on the context [12]. This nuanced understanding highlights the limitation of simple error rates alone for adequately characterizing method performance for non-binary conclusion scales [12].
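The sensitivity of a reported error rate to the handling of inconclusives can be shown with a short Python sketch. The three treatments below are common conventions rather than rules taken from [12], and the function name and counts are hypothetical.

```python
def false_positive_rate(ids, exclusions, inconclusives, treatment):
    """False positive rate on different-source comparisons.

    ids: erroneous identifications; exclusions: correct exclusions;
    inconclusives: inconclusive decisions. treatment is one of:
      "exclude" - drop inconclusives from the denominator
      "correct" - keep them in the denominator but not as errors
      "error"   - count them as errors
    """
    total = ids + exclusions + inconclusives
    if treatment == "exclude":
        return ids / (ids + exclusions)
    if treatment == "correct":
        return ids / total
    if treatment == "error":
        return (ids + inconclusives) / total
    raise ValueError(f"unknown treatment: {treatment}")

# Hypothetical counts, for illustration only.
for treatment in ("exclude", "correct", "error"):
    rate = false_positive_rate(2, 78, 20, treatment)
    print(f"{treatment}: {rate:.1%}")
```

With these invented counts the same data yield 2.5%, 2.0%, or 22.0%, which illustrates why a single error rate cannot characterize a method with a non-binary conclusion scale.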

Experimental Protocols for Error Rate Studies

Well-designed experimental protocols are essential for generating valid error rate estimates in forensic science:

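- Black-Box Studies: These studies treat the examiner as a black box. Analysts receive evidence samples with known ground truth and without contextual case information that might introduce bias, and researchers score only the reported conclusions, which measures the accuracy of examiner decisions rather than the reasoning behind them [12].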

- Open-Set Designs: Unlike closed-set experiments where all samples necessarily come from known sources represented in the set, open-set designs include specimens that may not have matching counterparts in the reference collection, more closely mimicking real-world forensic practice [10].

- Multiple Examiner Designs: These protocols involve having multiple analysts examine the same evidence independently, allowing researchers to measure both individual and consensus-based performance metrics [7].

- Cross-Correlation and Similarity Quantification: For toolmark and impression evidence, protocols often involve calculating cross-correlation functions or other similarity measures across multiple alignments to determine optimal matches [10].
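The last protocol, searching across alignments for the highest similarity, can be sketched for one-dimensional striation profiles. This is a simplified illustration under stated assumptions: the profiles are plain lists of surface heights, similarity is the Pearson correlation of the overlapping region, and the names `pearson` and `best_alignment` and the sample profiles are hypothetical. Operational systems work on measured surface topography and use more elaborate registration.

```python
from math import sqrt

def pearson(a, b):
    """Pearson correlation of two equal-length sequences (0.0 if either is flat)."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    if var_a == 0 or var_b == 0:
        return 0.0
    return cov / sqrt(var_a * var_b)

def best_alignment(profile_a, profile_b, max_lag, min_overlap=3):
    """Try every shift in [-max_lag, max_lag]; return (lag, correlation, tried)."""
    best_lag, best_r, tried = None, -2.0, 0
    for lag in range(-max_lag, max_lag + 1):
        # A positive lag skips the start of profile_a, a negative one of profile_b.
        a = profile_a[lag:] if lag >= 0 else profile_a
        b = profile_b if lag >= 0 else profile_b[-lag:]
        n = min(len(a), len(b))
        if n < min_overlap:
            continue
        tried += 1
        r = pearson(a[:n], b[:n])
        if r > best_r:
            best_lag, best_r = lag, r
    return best_lag, best_r, tried

# Hypothetical profiles: mark_b is mark_a with its first two samples cut off.
mark_a = [0, 0, 1, 3, 1, 0, 2, 5, 2, 0]
mark_b = mark_a[2:]
lag, r, tried = best_alignment(mark_a, mark_b, max_lag=3)
print(lag, round(r, 3), tried)
```

The returned count of alignments tried makes the implicit multiple comparisons explicit: every shift is another chance for a coincidentally high score.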

Table 3: Key Methodological Approaches in Error Rate Studies

| Methodological Approach | Key Characteristics | Primary Applications |
|---|---|---|
| Black-Box Studies | Controls for contextual bias by withholding domain-irrelevant information | Validation of core analytical methods across disciplines |
| Filler-Control Method | Incorporates unrelated control items to estimate bias and error rates | Calibrating analysts, identifying unreliable methods |
| Congruent Matching Cells (CMC) | Divides images into correlation cells for quantitative similarity assessment | Firearm evidence identification, toolmark analysis |
| Open-Set Designs | Includes specimens without known matches in reference collection | Simulating real-world forensic practice |
| Multiple Comparison Control | Accounts for family-wise error rate inflation in alignment searches | Database searches, striation pattern matching |

Research Reagent Solutions: Essential Materials for Forensic Error Rate Studies

Table 4: Essential Research Materials for Forensic Error Rate Studies

| Item/Technique | Function in Error Rate Research | Application Examples |
|---|---|---|
| Comparison Microscopes | Visual alignment and comparison of microscopic features | Toolmark analysis, firearm evidence, fiber comparison |
| Cross-Correlation Algorithms | Computational quantification of pattern similarity | Striation mark alignment, optimal match identification |
| Congruent Matching Cells (CMC) Method | Statistical framework for objective feature comparison | Firearm evidence identification, error rate estimation |
| Black-Box Study Protocols | Experimental designs controlling for contextual bias | Validation of forensic methods across disciplines |
| Statistical Models for Probability Mass Functions | Mathematical frameworks for estimating error probabilities | Calculating cumulative false positive/negative rates |
| Filler-Control Materials | Control items unrelated to case evidence | Estimating and controlling for contextual bias |

The landscape of error rate understanding in forensic science reveals a complex interplay between practitioner perceptions and empirical reality. While forensic analysts generally perceive errors as rare—particularly false positives—and express confidence in their disciplines, empirical studies demonstrate measurable error rates that vary significantly across forensic methods [7] [10] [8]. The multiple comparisons problem inherent in many forensic examinations substantially increases the risk of false discoveries, particularly in disciplines involving database searches or pattern alignments [10]. Methodological innovations such as the filler-control method [11], Congruent Matching Cells approach [9], and more nuanced treatment of inconclusive decisions [12] offer promising pathways toward more rigorous error rate estimation. As forensic science continues to evolve, bridging the gap between analyst perceptions and empirical data remains crucial for strengthening the scientific foundation of justice system evidence.

Forensic feature-comparison methods constitute a cornerstone of modern judicial systems, providing scientific evidence that can determine the outcome of criminal investigations and trials. Within this domain, a critical yet often neglected area of research concerns the comparative analysis of error rates, specifically the risk of false negatives in forensic eliminations. A false negative occurs when an examiner incorrectly excludes a true source—for instance, concluding that a bullet did not come from a specific firearm when it actually did. Conversely, a false positive involves an incorrect identification. Recent reforms in forensic science have predominantly focused on quantifying and reducing false positives, creating a significant asymmetry in the understanding of forensic method validity [2].

This comparative guide objectively examines the performance of various forensic disciplines regarding these error rates, with a particular emphasis on the under-scrutinized false negative. We demonstrate that while professional guidelines and major government reports from the National Academy of Sciences (NAS) and the President's Council of Advisors on Science and Technology (PCAST) have echoed this focus on false positives, the integrity of forensic conclusions is equally dependent on a rigorous assessment of false negatives [2]. This is especially critical in closed-pool scenarios, where an elimination can function as a de facto identification of another suspect, thereby introducing a serious, unmeasured risk of error into the justice system [2].

Quantitative Comparison of Forensic Error Rates

Empirical data on error rates across forensic disciplines reveals wide variation and highlights the pressing need for more comprehensive reporting that includes both false positives and false negatives.

Reported Error Rates from Black-Box Studies

The table below summarizes false positive and false negative rates from published studies across several forensic feature-comparison methods. These data illustrate the performance variations and the critical balance between the two error types.

Table 1: Comparative Error Rates Across Forensic Disciplines

| Discipline | False Positive Rate | False Negative Rate | Study Details |
|---|---|---|---|
| Latent Fingerprints | 0.1% | 7.5% | Open-set study data [13] |
| Bitemark Analysis | 64.0% | 22.0% | Comparative analysis [13] |
| Striated Toolmarks (Pooled) | ~2.0% | Not Reported | Weighted average from multiple studies [10] |
| Firearm Comparisons | Focus of Reforms | Overlooked | AFTE guidelines emphasize false positives [2] |

The Impact of Multiple Comparisons on Error Rates

Forensic comparisons, particularly of toolmarks, inherently involve multiple comparisons. For example, matching a cut wire to a tool requires comparing multiple surfaces and alignments. This process dramatically increases the family-wise false discovery rate (FDR). The table below shows how the probability of at least one false discovery escalates with the number of independent comparisons, starting from a single-comparison FDR of 0.7% [10].

Table 2: Inflation of Family-Wise False Discovery Rate with Multiple Comparisons

| Number of Comparisons (N) | Family-Wise False Discovery Rate |
|---|---|
| 1 | 0.70% |
| 10 | 6.8% |
| 14 | ~9.6% |
| 100 | 50.7% |
| 1000 | 99.9% |

This mathematical reality underscores that even a technique with a low single-comparison error rate can produce highly unreliable results when multiple comparisons are part of the standard examination process, a factor that must be accounted for in method validation [10].
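For validation planning, the same relationship can be inverted: given a target family-wise rate and an expected number of comparisons, what single-comparison rate would a method need? The Python sketch below solves 1 - (1 - e)ⁿ = E for e. It assumes independent comparisons, and the function name and target values are illustrative only.

```python
def required_single_rate(target_family_rate, n):
    """Single-comparison rate e such that 1 - (1 - e)**n equals the target."""
    return 1.0 - (1.0 - target_family_rate) ** (1.0 / n)

# Illustrative target: keep the family-wise rate at 10% or less.
for n in (1, 10, 100, 1000):
    e = required_single_rate(0.10, n)
    print(f"n = {n}: single-comparison rate must not exceed {e:.4%}")
```

Under these assumptions, an examination involving 100 comparisons needs a single-comparison rate of roughly 0.1% to hold the family-wise rate at 10%.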

Experimental Protocols for Validating Error Rates

To ensure the validity of forensic feature-comparison methods, rigorous experimental designs are required to quantify both false positive and false negative rates accurately.

Black-Box Proficiency Studies

Objective: To measure the practical accuracy of forensic examiners in a blind testing environment that mimics casework conditions [13].

Methodology:

- Sample Selection: A set of evidence items (e.g., latent prints, toolmarks, bullets) is prepared, where the ground truth (true sources) is known to the researchers but not the participating examiners.

- Participant Recruitment: Certified practicing forensic analysts from relevant disciplines are recruited to participate.

- Blinded Testing: Examiners are presented with pairs or sets of samples and asked to render conclusions (e.g., identification, exclusion, or inconclusive) based on their standard protocols.

- Data Analysis: Examiner conclusions are compared against the known ground truth. The rates of false positives (incorrect identifications) and false negatives (incorrect exclusions) are calculated from the data.

Key Measurements: The primary outcomes are the false positive rate (FPR) and false negative rate (FNR) across the participant pool. These studies are considered the gold standard for estimating real-world error rates [13].
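A point estimate of an error rate says little without its sampling uncertainty. One common way to express that uncertainty is a Wilson score interval, sketched below. This is an addition for illustration rather than a step prescribed by the cited protocol; it treats every comparison as an independent trial, which is only an approximation when the same examiners contribute many responses, and the counts are hypothetical.

```python
from math import sqrt

def wilson_interval(errors, trials, z=1.96):
    """Wilson score interval for a proportion (z = 1.96 gives about 95%)."""
    if trials <= 0:
        raise ValueError("trials must be positive")
    p = errors / trials
    denom = 1 + z * z / trials
    center = (p + z * z / (2 * trials)) / denom
    half = z * sqrt(p * (1 - p) / trials + z * z / (4 * trials * trials)) / denom
    return center - half, center + half

# Hypothetical counts: 5 false negatives among 100 mated comparisons.
low, high = wilson_interval(5, 100)
print(f"FNR = 5.0%, approximate 95% interval {low:.1%} to {high:.1%}")

# Zero observed errors does not imply a zero error rate.
low0, high0 = wilson_interval(0, 100)
print(f"0 errors in 100 trials: upper bound {high0:.1%}")
```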

The Hageman-Arrindell (HA) Statistical Approach

Objective: To provide a more conservative and accurate method for assessing individual reliable change, reducing false positive rates compared to the more commonly used Jacobson-Truax Reliable Change Index (RCI) [14] [15].

Methodology:

- Data Collection: Pre-test and post-test measurements are collected (e.g., before and after a clinical intervention, or from known matching and non-matching forensic samples).

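- Model Specification: The HA statistic is calculated using a formula that incorporates the reliability of the pre-post differences ($R_{DD}$):

$$HA = \frac{(Y_i - X_i)\,R_{DD} + (M_Y - M_X)(1 - R_{DD})}{\sqrt{2\,R_{DD}\,\left(S_X\sqrt{1 - R_{XX}}\right)^2}}$$

where $X_i$ and $Y_i$ are the pre-test and post-test scores of individual $i$, $M_X$ and $M_Y$ are the pre-test and post-test group means, $S_X$ is the standard deviation of the pre-test scores, and $R_{XX}$ is the reliability of the measure.

- Comparison with RCI: The performance of the HA method is compared against the traditional RCI by evaluating their respective false positive and false negative rates in simulated and empirical datasets.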

Key Measurements: The core performance metrics are the false positive rate and false negative rate of each method. Simulation studies have shown that while both methods can produce high false positive rates, the HA statistic systematically offers a lower and more acceptable false positive rate than the RCI statistic, making it a more conservative option [14] [15].
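The two statistics can be placed side by side in a short Python sketch. The HA function follows the formula given in the methodology above; the RCI function uses the standard Jacobson-Truax form, the observed change divided by the standard error of the difference. The function names and input values are hypothetical, and the reliability of the differences is taken as a given input rather than estimated.

```python
from math import sqrt

def rci(x, y, s_x, r_xx):
    """Jacobson-Truax Reliable Change Index for one individual."""
    se = s_x * sqrt(1 - r_xx)  # standard error of measurement
    return (y - x) / sqrt(2 * se**2)

def ha(x, y, m_x, m_y, r_dd, s_x, r_xx):
    """Hageman-Arrindell statistic, following the formula in the text above.

    x, y: pre-test and post-test scores; m_x, m_y: group means;
    r_dd: reliability of the pre-post differences;
    s_x: pre-test standard deviation; r_xx: reliability of the measure.
    """
    se = s_x * sqrt(1 - r_xx)
    numerator = (y - x) * r_dd + (m_y - m_x) * (1 - r_dd)
    return numerator / sqrt(2 * r_dd * se**2)

# Hypothetical values, for illustration only.
args = dict(x=30, y=20, s_x=7, r_xx=0.9)
print(round(rci(**args), 3))
print(round(ha(m_x=28, m_y=22, r_dd=0.8, **args), 3))
```

When the reliability of the differences equals 1, the group-mean term vanishes and the HA statistic reduces to the RCI, which is a useful sanity check on any implementation.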

Analytical Frameworks and Visualizations

Forensic Comparison Decision Pathway

The following diagram illustrates the decision pathway in a forensic comparison, highlighting the points where false negatives and false positives can occur.

Diagram 1: Forensic Decision Pathway

This workflow shows that an exclusion can be based solely on non-matching class characteristics, which may be performed intuitively and without the same empirical scrutiny as an identification, creating a pathway for false negatives [2].

The Multiple Comparisons Problem in Toolmark Analysis

The process of matching a wire to a cutting tool involves numerous implicit comparisons, which inflates the family-wise error rate.

Diagram 2: Multiple Comparisons in Toolmark Analysis

This diagram conceptualizes the hidden multiple comparisons in a single forensic examination. For a wire-cutting tool, an examiner may implicitly compare several blade surfaces against multiple wire surfaces, testing thousands of possible alignments to find the best match. Each of these comparisons represents an opportunity for a coincidental match, thereby increasing the overall probability of a false discovery beyond the rate estimated for a single comparison [10].

The Scientist's Toolkit: Research Reagent Solutions

The following table details key methodological components and their functions in the experimental protocols used for validating forensic feature-comparison methods.

Table 3: Essential Methodological Components for Error Rate Studies

| Research Component | Function in Experimental Protocol |

|---|---|

| Black-Box Study Design | Mimics real-world conditions by blinding examiners to ground truth, preventing contextual bias and providing ecologically valid error rate estimates [13]. |

| Proficiency Test Samples | Certified reference materials with known ground truth used to measure analyst competency and method reliability in controlled settings [13]. |

| Cross-Correlation Function Algorithm | A quantitative measure used in pattern-matching algorithms to compute similarity between two patterns (e.g., striations) across numerous alignments [10]. |

| Hageman-Arrindell (HA) Statistic | A conservative statistical method for assessing reliable change that incorporates the reliability of pre-post differences, helping to control false positive rates [14] [15]. |

| Collaborative Testing Services (CTS) | Independent providers of forensic proficiency tests that allow laboratories to benchmark their performance against peer institutions [13]. |

The comparative analysis presented in this guide leads to an inescapable conclusion: the systematic overlooking of false negative rates in forensic eliminations represents a significant vulnerability in the scientific foundation of feature-comparison methods. The quantitative data show that false negatives are not only prevalent but, in some disciplines such as latent print analysis, can occur at rates far exceeding those of false positives [13]. The multiple comparisons problem further compounds this issue, mathematically inflating error rates in ways that are often hidden from view [10].

To mitigate these risks, the following policy and practice reforms are recommended based on the cited research:

- Balanced Validation: Validity studies for all forensic methods must report both false positive and false negative rates to provide a complete picture of method accuracy [2].

- Empirical Grounding: The use of "common sense" or intuitive eliminations must be replaced by decisions grounded in empirically validated protocols [2].

- Context Management: Examiners should be shielded from contextual information about investigative constraints (e.g., a closed suspect pool) that could bias elimination decisions [2].

- Error Rate Transparency: Legal proceedings should require transparent disclosure of both false positive and false negative rates for any forensic method presented as evidence.

Without these reforms, eliminations will continue to escape the scrutiny required of scientific evidence, perpetuating unmeasured error and potentially undermining the integrity of criminal investigations and prosecutions. A balanced focus on both types of error is not just a scientific imperative but a foundational element of a just legal system.

The 2009 report by the National Academy of Sciences (NAS) and the 2016 report by the President's Council of Advisors on Science and Technology (PCAST) represent pivotal moments in forensic science, establishing a scientific framework for evaluating forensic feature-comparison methods. These reports emerged in response to growing concerns about the scientific validity of many forensic disciplines and their application within the criminal justice system. The NAS report, "Strengthening Forensic Science: A Path Forward," provided a comprehensive critique of various forensic disciplines, highlighting the need for more rigorous scientific validation [16]. Building upon this foundation, the PCAST report specifically addressed the "foundational validity" of feature-comparison methods, establishing explicit guidelines for empirical testing and error rate estimation [17] [16].

Central to both reports is the principle that for any forensic method to be considered scientifically valid, it must demonstrate reliability through empirical testing that provides valid estimates of its accuracy and error rates [16]. The PCAST report defined foundational validity as requiring that "a method has been subjected to empirical testing by multiple groups, under conditions appropriate to its intended use," with studies that "(a) demonstrate that the method is repeatable and reproducible and (b) provide valid estimates of the method's accuracy" [16]. This framework demands that forensic methods be subjected to black-box studies—which measure the performance of examiners on representative samples of known source—to establish realistic error rates before their results are presented in criminal courts [17] [18].

Comparative Analysis of Discipline-Specific Recommendations and Outcomes

PCAST Assessment of Major Forensic Disciplines

Table 1: PCAST Recommendations and Current Status of Forensic Feature-Comparison Methods

| Discipline | PCAST Foundational Validity Assessment | Recommended Limitations | Post-PCAST Judicial Treatment | Key Methodological Concerns |

|---|---|---|---|---|

| DNA Analysis | Established for single-source & two-person mixtures; limited for complex mixtures [17] [16] | Limit testimony on complex mixtures with >3 contributors or minor contributor <20% [17] | Generally admitted with limitations on probabilistic genotyping software testimony [17] | Subjective interpretation of complex mixtures; variable performance of probabilistic genotyping software [17] |

| Latent Fingerprints | Foundational validity established [17] [16] | Full disclosure of false positive rates (~1 in 306); awareness of cognitive bias [16] | Generally admitted with recognition of non-zero error rate [17] | Substantial false positive rate; subjective assessment; need for more rigorous proficiency testing [16] |

| Firearms/Toolmarks | Insufficient evidence for foundational validity in 2016 [17] [19] | Testimony limitations; avoid "absolute certainty" claims; disclosure of error rates [17] [19] | Mixed admissibility; often limited rather than excluded; increased scrutiny post-2016 [17] [19] | Subjective nature; lack of black-box studies; circular "sufficient agreement" standard [19] |

| Bitemark Analysis | Lacks foundational validity; low prospects for development [17] [16] | Advised against significant resource investment; generally should be excluded [17] [16] | Increasingly excluded or limited; successful post-conviction challenges difficult [17] | High error rates; lack of scientific basis for uniqueness claims; subjective interpretation [17] |

| Footwear Analysis | No foundational validity for specific identifying marks [16] | Exclusion of association testimony unsupported by accuracy estimates [16] | Increasing scrutiny; often excluded or limited based on PCAST findings [17] | Lack of meaningful evidence or accuracy estimates for associations [16] |

Experimental Protocols for Error Rate Validation

Black-Box Study Methodology

Black-box studies represent the gold standard for establishing foundational validity according to PCAST guidelines. These studies are designed to measure the real-world performance of forensic examiners by presenting them with evidence samples of known origin without revealing this critical information to the participants. The fundamental protocol involves: (1) selecting representative samples that reflect casework complexity, including both mated pairs (samples from the same source) and non-mated pairs (samples from different sources); (2) administering these samples to practicing forensic examiners under controlled conditions that mimic realistic casework; (3) collecting decisions using the standard conclusion scales of the discipline (typically identification, exclusion, or inconclusive); and (4) calculating error rates based on the responses [17] [18].

The statistical analysis of black-box studies must carefully distinguish between false positive rates (incorrect identifications from different sources) and false negative rates (incorrect exclusions from same sources). PCAST emphasized that for a method to be foundationally valid, it must demonstrate reproducibility through multiple studies by different research groups and provide valid accuracy estimates that justify its use in casework [16]. For disciplines using non-binary conclusion scales that include "inconclusive" decisions, the interpretation of error rates becomes more complex, as inconclusive responses do not neatly fit into traditional correct/incorrect frameworks but significantly impact the method's practical utility [18].

Proficiency Testing and Collaborative Exercises

Proficiency testing (PT) and collaborative exercises (CE) provide complementary approaches to black-box studies for monitoring ongoing performance and estimating error rates. These methodologies involve administering standardized tests to practicing forensic analysts to assess their competency and the consistency of results across different laboratories and examiners [20]. Optimal PT/CE design requires: (1) representative test materials that reflect the complexity and challenges of actual casework; (2) blind administration that prevents participants from knowing they are being tested; (3) regular implementation to monitor performance over time; and (4) systematic analysis of results to identify potential training needs or methodological concerns [20].

Critical to the interpretation of PT/CE results is understanding that measured accuracy depends heavily on test design and its alignment with realistic casework scenarios. These exercises are particularly valuable for distinguishing between "1-to-1" comparisons (where one questioned sample is compared to one known sample) and "1-to-n" scenarios (where one questioned sample is compared against multiple known samples, such as database searches), as error rates may differ significantly between these contexts [20]. The conceptual relationship between different validation approaches can be visualized as follows:

The Impact of Rule 702 Amendments on Forensic Evidence Admissibility

Recent amendments to Federal Rule of Evidence 702 have significant implications for the admissibility of forensic science evidence in light of the NAS and PCAST recommendations. These amendments, which took effect in December 2023, clarify that: (1) the proponent of expert testimony must establish its admissibility by a preponderance of the evidence; and (2) an expert's opinion must reflect a reliable application of trustworthy methods to the facts of the case [19]. The Advisory Committee notes specifically highlight that these revisions are "especially pertinent" to forensic evidence and require that opinions "must be limited to those inferences that can reasonably be drawn from a reliable application of the principles and methods" [19].

These rule changes directly address concerns raised in both the NAS and PCAST reports regarding the overstatement of forensic conclusions. Courts are increasingly expected to exclude testimony that makes claims of "absolute certainty," "to the exclusion of all other firearms," or "practical impossibility" [19] [16]. For firearms and toolmark evidence specifically, this has resulted in more frequent limitations on expert testimony, with courts typically prohibiting assertions of "100% certainty" while still admitting more qualified statements about matching [19]. The amendments create a stronger framework for judges to implement PCAST's recommendation that courts should never permit "scientifically indefensible claims" about error rates [16].

Conceptual Framework for Interpreting Error Rates in Forensic Science

Distinguishing Method Performance from Method Conformance

A critical advancement in error rate interpretation is the distinction between method performance and method conformance. Method performance refers to the capacity of a forensic method to distinguish between different propositions of interest (e.g., same-source versus different-source) and encompasses both discriminability and reproducibility of outcomes [18] [21]. Method conformance relates to whether an examiner has properly adhered to the defined procedures and protocols of the method in question [18] [21]. This distinction is essential because a method may demonstrate excellent performance in validation studies yet be misapplied by an individual examiner, or conversely, an examiner may perfectly conform to a method with poor inherent discriminability.

The interpretation of error rates must account for this distinction, as black-box studies typically measure the combined effect of both method performance and examiner conformance. For this reason, PCAST recommended that error rate estimates should be based on the performance of the entire system (method plus examiner) under realistic conditions [16]. The relationship between these concepts and their impact on evidence reliability can be visualized through the following conceptual framework:

The Inconclusive Decision Challenge in Error Rate Calculation

The proper treatment of inconclusive decisions represents a significant challenge in calculating and interpreting error rates in forensic science. Most feature-comparison disciplines use ternary conclusion scales (identification, inconclusive, exclusion) rather than binary scales, complicating traditional error rate calculations [18]. As illustrated in Table 2, different approaches to handling inconclusive decisions can lead to dramatically different interpretations of method performance.

Table 2: Impact of Inconclusive Decision Treatment on Error Rate Interpretation

| Treatment Method | False Positive Rate Calculation | False Negative Rate Calculation | Advantages | Limitations |

|---|---|---|---|---|

| PCAST Approach (Exclude inconclusives) | FP / (FP + TN) | FN / (FN + TP) | Focuses on conclusive decisions; avoids dilution of error rates | May overstate practical accuracy; ignores examiners' tendency toward inconclusives |

| Conservative Approach (Count as errors) | (FP + Inc) / Total | (FN + Inc) / Total | Maximizes accountability; captures avoidance of definitive answers | Overstates error rates; penalizes appropriate conservatism |

| Binary Conversion | FP / Total | FN / Total | Simple calculation; consistent denominator | Difficult to compare across studies with different inconclusive rates |

| Likelihood Ratio Framework | Models probability of evidence under different propositions | Models probability of evidence under different propositions | Most statistically rigorous; preserves all information | Complex implementation; unfamiliar to most legal stakeholders |

The fundamental challenge is that inconclusive decisions are neither "correct" nor "incorrect" in the traditional sense, but rather can be either "appropriate" or "inappropriate" depending on the specific case context and the methodological criteria for declaring an inconclusive result [18]. Recent research suggests that the utility of a forensic method is better characterized by how successfully its output distinguishes between mated and non-mated comparisons rather than by simple error rates alone [18] [21].
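To make the first three rows of Table 2 concrete, the sketch below applies each treatment to the same set of counts. It assumes that "Total" means all comparisons sharing one ground truth (for example, all non-mated pairs when computing the false positive rate). The class name, dictionary keys, and counts are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ComparisonCounts:
    """Examiner decisions for comparisons that share one ground truth."""
    correct: int       # e.g. exclusions on non-mated pairs (TN)
    erroneous: int     # e.g. identifications on non-mated pairs (FP)
    inconclusive: int  # inconclusive decisions (Inc)


def error_rates(counts: ComparisonCounts) -> dict:
    """Error rate under three treatments of inconclusive decisions."""
    conclusive = counts.correct + counts.erroneous
    total = conclusive + counts.inconclusive
    return {
        # Inconclusives dropped from numerator and denominator.
        "pcast_exclude_inconclusives": counts.erroneous / conclusive,
        # Inconclusives counted as errors.
        "conservative_count_as_errors": (counts.erroneous + counts.inconclusive) / total,
        # Inconclusives kept in the denominator only.
        "binary_conversion": counts.erroneous / total,
    }


# Hypothetical non-mated comparisons: 900 exclusions, 10 false
# identifications, 90 inconclusives.
non_mated = ComparisonCounts(correct=900, erroneous=10, inconclusive=90)
for name, rate in error_rates(non_mated).items():
    print(f"{name}: {rate:.4f}")
```

With these invented counts the reported false positive rate ranges from about 1% to 10% depending only on how the 90 inconclusives are handled, which is the sensitivity the table describes.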

Essential Research Toolkit for Forensic Error Rate Studies

Table 3: Research Reagent Solutions for Forensic Error Rate Studies

| Tool/Resource | Function | Application Context | Implementation Considerations |

|---|---|---|---|

| Standardized Reference Samples | Provides known source materials for validation studies | Black-box studies; proficiency testing; method validation | Must represent casework complexity; require careful documentation of sources and characteristics |

| Probabilistic Genotyping Software (STRmix, TrueAllele) | Interprets complex DNA mixtures using statistical models | DNA analysis of multi-contributor samples | Validation required for specific case types; sensitivity to contributor ratios and DNA quantity [17] |

| Black-Box Study Designs | Measures performance of examiners on samples of known source | Establishing foundational validity per PCAST | Requires representative samples; blind administration; appropriate sample sizes [17] [18] |

| Proficiency Test Programs | Monitors ongoing performance of individual examiners | Quality assurance; monitoring method conformance | Should be blind, regular, and casework-representative [20] [18] |

| Statistical Analysis Frameworks | Calculates error rates with confidence intervals | Interpreting validation study results | Must account for inconclusive decisions; provide measures of uncertainty [18] [21] |

| Data Sharing Platforms | Enables collaborative exercises and meta-analyses | Multi-laboratory validation studies | Standardized formats; privacy protections; structured metadata |

The NAS and PCAST reports have fundamentally transformed the landscape of forensic science by establishing rigorous scientific standards for evaluating feature-comparison methods. Their emphasis on empirical validation through black-box studies and transparent error rate documentation has driven significant improvements across multiple forensic disciplines. While implementation challenges remain—particularly regarding the treatment of inconclusive decisions and the distinction between method performance and method conformance—the framework established by these reports provides a solid foundation for ongoing scientific progress.

The recent amendments to Federal Rule of Evidence 702 have created stronger legal mechanisms for enforcing these scientific standards in court proceedings. As research continues, the focus should remain on developing statistically robust approaches to error rate estimation that account for the complexities of forensic practice while providing transparent information to legal decision-makers. The integration of rigorous error rate documentation into both forensic practice and legal admissibility decisions represents the most promising path forward for strengthening the scientific foundations of the criminal justice system.

In forensic firearm comparisons, examiners typically reach one of three conclusions: identification, elimination, or inconclusive [22]. While recent reforms have justifiably focused on reducing false positive errors (incorrectly matching evidence to an innocent source), this has created a significant blind spot regarding false negative errors (incorrectly excluding the true source) [22]. This imbalance is particularly problematic in closed-pool scenarios, where the elimination of all other potential sources functions as a de facto identification of the remaining source [22] [2].

In such closed-pool contexts, where the investigation itself defines a limited set of suspects, eliminations made without sufficient empirical scrutiny can lead to serious miscarriages of justice [22]. This article examines how this systematic oversight permeates forensic practice, validity studies, and major reform efforts, and proposes essential corrections to current methodologies and reporting standards.

The Problem of Eliminations in Constrained Contexts

The Current Asymmetry in Error Rate Focus

The forensic science community displays a myopic focus on false positive rates while neglecting false negative error measurement [22]. This asymmetry stems partly from legal tradition's emphasis on protecting the innocent, encapsulated by Blackstone's ratio: "It is better that ten guilty persons escape than that one innocent suffer" [22]. While this normative foundation is commendable, its blind application to forensic science creates significant limitations.

Forensic examiners can effectively set false positive rates to zero by concluding "elimination" for all comparisons, but this would render the method useless [22]: every same-source pair would then be wrongly eliminated, giving a false negative rate of 100%. A comprehensive validity assessment requires both false positive rates (FPR) and false negative rates (FNR), or equivalently, both sensitivity and specificity [22].

The Closed-Pool Logic Problem

In closed-pool scenarios with a limited number of potential sources, the logical implications of eliminations change fundamentally:

Diagram 1: Logical implications of eliminations in different investigative contexts.

This dynamic creates particular dangers when eliminations are based on class characteristics alone or intuitive "common sense" judgments without empirical validation [22]. The forensic examiner may believe they are merely excluding a source, while investigators and jurors understand the conclusion as identifying the remaining source in the constrained pool.

Quantitative Evidence of the Reporting Imbalance

Review of Validity Studies

A systematic review of existing validity studies in forensic firearms comparisons reveals substantial gaps in error rate reporting [22]. Among 28 examined studies:

Table 1: Error Rate Reporting in Firearms Comparison Validity Studies

| Reporting Category | Percentage of Studies | Implied Number of Studies (percentage × 28) |

|---|---|---|

| Report both FPR and FNR | 45% | 12.6 |

| Do not split errors into FPR/FNR | 20% | 5.6 |

| Report no errors or have no errors | 35% | 9.8 |

Note: Adapted from analysis of 28 validity studies; fractional values represent proportional estimates from percentage data [22].

This evidence demonstrates that over half of validity studies fail to provide complete information about method accuracy, with more than a third having inadequate designs that report no errors whatsoever [22].

Professional Guidelines and Major Reports

This reporting imbalance is reinforced at institutional levels. Both the AFTE Theory of Identification and major government reports like the 2009 NAS report and 2016 PCAST report allow eliminations to be made with less supportive evidence than identifications [22]. The PCAST report, while acknowledging that "false-negative results can contribute to wrongful convictions as well," predominantly focuses on false positive errors in its analysis [22].

Similar asymmetries appear in other pattern-matching disciplines. For fingerprint comparisons, level 1 detail (ridge flow) is deemed "only sufficient for eliminations, not for declaring identifications" [22]. In bite mark analysis, the NAS stated that "it is reasonable to assume that the process can sometimes reliably exclude suspects" despite acknowledging the discipline's "inherent weaknesses" [22].

Experimental Approaches for Measuring Error Balances

Black-Box Study Design

Comprehensive error rate validation requires properly designed black-box studies that incorporate both same-source and different-source comparisons across representative difficulty levels [22]. The fundamental metrics for assessing method performance include:

$$
\text{Sensitivity} = \frac{TP}{TP + FN} = 1 - FNR
$$

$$
\text{Specificity} = \frac{TN}{TN + FP} = 1 - FPR
$$

Where TP = True Positive, TN = True Negative, FP = False Positive, and FN = False Negative [22].

Essential Methodological Components

Robust experimental protocols must include:

- Blinded designs that prevent examiners from knowing which comparisons are ground-truth knowns versus experimental probes

- Case-representative materials that reflect the full spectrum of evidence quality encountered in practice

- Context manipulation controls to measure the effects of contextual bias on both elimination and identification decisions

- Statistical power sufficient to detect meaningfully small error rates with reasonable confidence intervals

Studies should report both point estimates and confidence intervals for both false positive and false negative rates, enabling proper risk assessment for both types of errors [22].
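One way to report an interval alongside a point estimate is the Wilson score interval for a binomial proportion, sketched below. It is a common general-purpose choice and is used here as an assumption; the cited work does not prescribe a specific interval method, and the counts are hypothetical.

```python
import math


def wilson_interval(errors: int, trials: int, z: float = 1.96) -> tuple:
    """Two-sided Wilson score interval for an error rate (z = 1.96 for ~95%)."""
    if trials <= 0 or not 0 <= errors <= trials:
        raise ValueError("need 0 <= errors <= trials and trials > 0")
    p = errors / trials
    denom = 1.0 + z * z / trials
    center = (p + z * z / (2.0 * trials)) / denom
    half_width = z * math.sqrt(p * (1.0 - p) / trials
                               + z * z / (4.0 * trials * trials)) / denom
    return max(0.0, center - half_width), min(1.0, center + half_width)


# Hypothetical study results: (errors, comparisons of that ground truth).
for label, errors, trials in [("FPR", 0, 100), ("FNR", 5, 500)]:
    low, high = wilson_interval(errors, trials)
    print(f"{label}: point={errors / trials:.4f}, interval=({low:.4f}, {high:.4f})")
```

A study that observes zero errors in 100 comparisons still yields an upper bound well above zero, which is why "no errors observed" should not be read as "error rate of zero."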

Policy Recommendations for Reform

Based on the documented asymmetries and their implications for justice, five key reforms are necessary:

Table 2: Policy Recommendations for Improving Forensic Eliminations

| Recommendation | Key Actions | Expected Impact |

|---|---|---|

| Balanced Error Reporting | Require both FPR and FNR in validity studies; report confidence intervals | Complete accuracy assessment; informed weight of evidence |

| Empirical Validation of Eliminations | Establish minimum criteria for elimination decisions; validate against known ground truth | Prevent "common sense" eliminations without empirical support |

| Context Management Protocols | Implement blinding procedures; limit task-irrelevant information | Reduce contextual bias in elimination decisions |

| Clear Legal Communications | Explain limitations of eliminations in closed-pool scenarios; use clear verbal scales | Prevent factfinders from treating eliminations as identifications |

| Sequential Unmasking | Reveal case information gradually; document decision pathway | Control confirmation bias; maintain forensic integrity |

These reforms collectively address the scientific, operational, and legal dimensions of the elimination problem, promoting more rigorous and transparent forensic practice [22].

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Forensic Validation Studies

| Item | Function | Application Notes |

|---|---|---|

| Ground-truth known samples | Establish ground truth for method validation | Should represent range of quality and quantity encountered in casework |

| Proficiency test materials | Assess examiner performance under controlled conditions | Must be blind and incorporate appropriate controls |

| Statistical analysis software | Calculate error rates with confidence intervals | Should accommodate hierarchical and random effects structures |

| Casework-representative materials | Bridge between validation studies and actual casework | Must reflect real-world challenges and evidence types |

| Context management protocols | Control potentially biasing information | Sequential unmasking procedures; information filters |

The current asymmetrical focus on false positives to the exclusion of false negatives creates significant justice risks, particularly in closed-pool scenarios where eliminations function as de facto identifications [22] [2]. Addressing this imbalance requires both scientific reforms—including balanced error rate reporting and empirical validation of elimination thresholds—and operational changes to manage contextual biases and improve legal communications [22].

The visual below summarizes the necessary shift from current practice to a more balanced approach:

Diagram 2: Necessary shift from asymmetrical to balanced error rate evaluation.

Without these reforms, eliminations will continue to escape appropriate scrutiny, perpetuating unmeasured error and potentially undermining the integrity of forensic conclusions [22]. Proper validation of both elimination and identification decisions is essential for delivering justice—whether that means convicting the guilty or exonerating the innocent.

Advancing Forensic Science: Quantitative Frameworks and Machine Learning Applications

The Likelihood Ratio (LR) framework is increasingly recognized as the logically correct method for the interpretation of forensic evidence, a stance advocated by key international organizations and embodied in the new ISO 21043 standard for forensic science [23] [24]. This framework represents a fundamental shift away from traditional categorical conclusion scales, such as the Association of Firearm and Tool Mark Examiners (AFTE) Range of Conclusions ("Identification," "Inconclusive," "Elimination"), toward a quantitative approach that expresses evidential strength directly [25]. The LR approach is also transparent, reproducible, and intrinsically resistant to cognitive bias [23] [24]. This comparative guide examines the implementation of the LR framework against traditional methods within the context of forensic feature-comparison methods research, with particular focus on quantitative performance data and comparative error rates.

Theoretical Foundation: Traditional Methods vs. The LR Framework

Traditional forensic practice relies on predefined verbal scales that force examiners to translate complex observational data into simplistic categories. This approach suffers from five major deficiencies:

- Decision Dependency: Traditional scales require the examiner to reach a decision, and a sound decision depends on the prior probability that the pair is mated, which creates potential for misinterpretation [25]

- Utility Ignorance: Examiners rarely have access to case details needed to weigh consequences of erroneous identifications versus eliminations [25]

- Layperson Misinterpretation: Research shows 71% of laypersons believe "Identification" means exclusion of all others, which does not reflect how forensic examiners typically interpret the term [25]

- Contradiction with Error Rates: The concept of "greater than closest nonmatch" is contradicted by nonzero error rates in black box studies [25]

- Lack of Calibration: Traditional articulation language has not been calibrated against actual evidence strength except indirectly through error rate studies [25]

The Logical Structure of the Likelihood Ratio Framework

The LR framework avoids these pitfalls by quantifying evidence strength as the ratio of the probability of the observations under two competing hypotheses:

Figure 1: The Bayesian logical structure of evidence interpretation using the Likelihood Ratio framework

This approach separates the examiner's role (providing the LR) from the fact-finder's role (assessing prior odds to determine posterior odds), eliminating the need for examiners to make decisions about source propositions without knowledge of case context [25]. The framework properly situates forensic evidence within Bayesian belief updating, allowing new information to be combined with existing information in a logically coherent manner [25].
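The division of labor described above follows the odds form of Bayes' rule: posterior odds = LR × prior odds. The short sketch below shows the arithmetic. The prior odds and LR values are hypothetical, and the function names are introduced only for illustration.

```python
def posterior_odds(prior_odds: float, likelihood_ratio: float) -> float:
    """Odds form of Bayes' rule: the fact-finder's prior odds times the examiner's LR."""
    return prior_odds * likelihood_ratio


def odds_to_probability(odds: float) -> float:
    """Convert odds in favor of a proposition to a probability."""
    return odds / (1.0 + odds)


# Hypothetical prior odds of 1 to 1000 for the same-source proposition,
# combined with a modest LR and a very large LR.
prior = 1.0 / 1000.0
for lr in (10.0, 10_000.0):
    post = posterior_odds(prior, lr)
    print(f"LR={lr:g}: posterior odds={post:g}, "
          f"probability={odds_to_probability(post):.4f}")
```

The same prior leads to very different posterior probabilities depending on the LR, which is why a mismatch between implied and empirical evidence strength matters.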

Quantitative Comparison: Experimental Data and Performance Metrics

Firearms Evidence Case Study

Recent research has applied the LR framework to firearms evidence through reanalysis of error rate studies, generating quantitative measures of evidence strength for each comparison. The ordered probit model summarizes the distribution of examiner responses along a latent axis representing support for the same-source proposition, then aggregates data across all comparisons to produce likelihood ratios [25].

Table 1: Comparison of Traditional Conclusions vs. Likelihood Ratios in Firearms Evidence

| Traditional Conclusion | Implied Strength by Language | Actual LR from Ordered Probit Model | Magnitude of Overstatement |

|---|---|---|---|

| Identification | ~10,000 or greater | Often <10 | Up to 3 orders of magnitude |

| Inconclusive A | Vague/Unquantified | Variable near 1 | Cannot be determined |

| Inconclusive B | Vague/Unquantified | Variable near 1 | Cannot be determined |

| Inconclusive C | Vague/Unquantified | Variable near 1 | Cannot be determined |

| Elimination | ~10,000 or greater | Often <10 | Up to 3 orders of magnitude |

Data derived from ordered probit model analysis of firearms and cartridge case comparisons [25]

The data reveal a critical finding: examiners using traditional categorical language consistently overstate evidence strength by several orders of magnitude compared to empirically-derived LRs [25]. This miscalibration has significant implications for how forensic evidence is weighted in legal proceedings.

Methodological Protocols for LR Implementation

Ordered Probit Model Protocol

The ordered probit model implementation follows this experimental workflow:

Figure 2: Experimental workflow for implementing the Ordered Probit Model to calculate Likelihood Ratios

The model assumes examiner responses arise from an underlying continuous latent variable representing the strength of support for the same-source proposition, with the distribution of this variable approximated by a normal distribution for each compared pair [25]. The proportion of examiners selecting each categorical conclusion is determined by the area under this normal distribution between estimated decision thresholds [25].
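The "area between thresholds" idea can be sketched with the standard library. The code computes the share of responses expected in each category for a normal latent variable and, as a further illustrative step, forms a ratio of category probabilities under two hypothetical latent distributions. The thresholds, means, three-category scale, and the final ratio are assumptions made for illustration. They are not the fitted model or the aggregation procedure of the cited analysis [25].

```python
from statistics import NormalDist

# Categories ordered from least to most support for the same-source proposition.
CATEGORIES = ["Elimination", "Inconclusive", "Identification"]


def category_probabilities(mu: float, sigma: float, thresholds: list) -> list:
    """Area under Normal(mu, sigma) between consecutive decision thresholds."""
    dist = NormalDist(mu, sigma)
    cuts = [0.0] + [dist.cdf(t) for t in sorted(thresholds)] + [1.0]
    return [upper - lower for lower, upper in zip(cuts, cuts[1:])]


# Hypothetical thresholds and latent means (not estimated from any data).
thresholds = [-1.0, 1.0]
p_same = category_probabilities(mu=1.5, sigma=1.0, thresholds=thresholds)
p_diff = category_probabilities(mu=-1.5, sigma=1.0, thresholds=thresholds)

for name, ps, pd in zip(CATEGORIES, p_same, p_diff):
    print(f"{name}: P(same)={ps:.3f}, P(diff)={pd:.3f}, ratio={ps / pd:.2f}")
```

Moving the two latent means closer together, as would be expected for harder comparisons, pulls every ratio toward 1.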

Dirichlet Prior Method (Warren et al. Protocol)

An alternative method directly calculates Bayes factors using:

- Data Input: Raw count data for each response category pooled across examiners and test trials

- Statistical Model: Dirichlet priors for multinomial probability distributions

- Calculation: Direct computation of LR = P(Response|Same Source) / P(Response|Different Sources)

- Implementation: Simple substitution of categorical conclusions with corresponding LR values [23]
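The steps listed above can be sketched as follows. This is a minimal illustration under stated assumptions, not the cited authors' implementation: it uses a symmetric Dirichlet prior with a hypothetical concentration `alpha`, takes the posterior predictive probability of each category as the estimate of P(Response|Source), and runs on invented counts.

```python
def dirichlet_likelihood_ratios(same_counts, diff_counts, alpha=1.0):
    """LR per response category from pooled counts.

    With a symmetric Dirichlet(alpha) prior on the multinomial probabilities,
    the posterior predictive probability of category i is
    (n_i + alpha) / (N + K * alpha), where K is the number of categories.
    """
    k = len(same_counts)
    n_same = sum(same_counts.values())
    n_diff = sum(diff_counts.values())
    ratios = {}
    for category in same_counts:
        p_same = (same_counts[category] + alpha) / (n_same + k * alpha)
        p_diff = (diff_counts[category] + alpha) / (n_diff + k * alpha)
        ratios[category] = p_same / p_diff
    return ratios


# Invented counts pooled across examiners and test trials (illustration only).
same_source = {"Identification": 850, "Inconclusive": 130, "Elimination": 20}
different_source = {"Identification": 10, "Inconclusive": 290, "Elimination": 700}

for category, lr in dirichlet_likelihood_ratios(same_source, different_source).items():
    print(f"{category:15s} LR = {lr:.3g}")
```

The prior keeps the ratio finite when a category has a zero count under one proposition, which is one practical reason to prefer it over raw count ratios.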

Performance Across Forensic Disciplines

Table 2: LR Framework Application Across Forensic Disciplines

| Forensic Discipline | Implementation Method | Calibration Performance | Key Findings |
|---|---|---|---|
| Firearms Examination | Ordered Probit Model | Cllr values vary by dataset | Different challenge levels affect performance [25] |
| Friction Ridge Analysis | Ordered Probit Model | Not fully reported | Demonstrates feasibility for fingerprint data [23] |
| Bloodstain Pattern Analysis | Dirichlet Method | Not fully reported | Method directly applicable to multiple fields [23] |
| Footwear Evidence | Dirichlet Method | Not fully reported | Enables quantitative evidence assessment [23] |
| Handwriting Analysis | Dirichlet Method | Not fully reported | Provides statistical support for conclusions [23] |
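Table 2 reports calibration in terms of Cllr, the log-likelihood-ratio cost. As a rough illustration of how that metric is usually computed from LRs with known ground truth, the sketch below applies the standard definition to invented LR values; the function name and the data are assumptions for illustration and are not drawn from the cited studies.

```python
import math


def cllr(same_source_lrs, different_source_lrs):
    """Log-likelihood-ratio cost.

    Penalizes small LRs on same-source comparisons and large LRs on
    different-source comparisons. A system that always reports LR = 1
    scores exactly 1; lower values indicate better performance.
    """
    same_penalty = sum(math.log2(1 + 1 / lr) for lr in same_source_lrs)
    diff_penalty = sum(math.log2(1 + lr) for lr in different_source_lrs)
    return 0.5 * (same_penalty / len(same_source_lrs)
                  + diff_penalty / len(different_source_lrs))


# Invented LR values for illustration only.
well_separated = cllr([50.0, 20.0, 8.0], [0.02, 0.1, 0.3])
uninformative = cllr([1.0, 1.0], [1.0, 1.0])
print(f"well separated: {well_separated:.3f}, uninformative: {uninformative:.3f}")
```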

Implementation Challenges and Methodological Considerations

Critical Requirements for Meaningful LR Calculation

For the LR framework to provide meaningful values in casework context, two essential requirements must be met:

- Examiner-Specific Performance Data: The statistical model must be trained on data representative of the particular examiner performing the analysis, as performance varies substantially between practitioners [23]

- Condition-Specific Calibration: Response data must reflect conditions of the specific case, as more challenging conditions naturally produce LRs closer to 1 [23]

Current methods using pooled data across examiners and conditions cannot provide appropriate LRs for individual cases [23]. Morrison's Bayesian method addresses this by using population data to establish informed priors that are updated with individual examiner data as it becomes available, creating a practical pathway for implementation [23].
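The idea of an informed prior that is updated with examiner data can be pictured with a generic Dirichlet-multinomial update. The sketch below is not Morrison's published procedure: the function, the `prior_strength` parameter (how many pseudo-observations the pooled data are worth), and all counts are hypothetical and serve only to show the direction of the update.

```python
def examiner_category_probs(pooled_counts, examiner_counts, prior_strength=20.0):
    """Blend pooled response proportions with one examiner's own counts.

    The pooled proportions act as a Dirichlet prior worth `prior_strength`
    pseudo-observations; the examiner's counts are then added to it.
    """
    pooled_total = sum(pooled_counts.values())
    examiner_total = sum(examiner_counts.get(c, 0) for c in pooled_counts)
    posterior = {}
    for category, n in pooled_counts.items():
        pseudo = prior_strength * n / pooled_total
        posterior[category] = (
            (pseudo + examiner_counts.get(category, 0))
            / (prior_strength + examiner_total)
        )
    return posterior


# Invented same-source response counts (illustration only).
pooled = {"Identification": 850, "Inconclusive": 130, "Elimination": 20}
new_examiner = {"Identification": 3, "Inconclusive": 2, "Elimination": 0}
experienced = {"Identification": 300, "Inconclusive": 200, "Elimination": 0}

print(examiner_category_probs(pooled, new_examiner))   # stays close to pooled
print(examiner_category_probs(pooled, experienced))    # dominated by own data
```

With little examiner data the estimate stays near the pooled proportions. As the examiner's own record grows, it dominates.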

The Research Toolkit: Essential Materials and Methods

Table 3: Research Reagent Solutions for LR Framework Implementation

| Component | Function | Implementation Example |
|---|---|---|
| Ordered Probit Model | Translates categorical responses to continuous latent variable | Fitted using MCMC procedures to determine credible parameters [25] |
| Dirichlet Priors | Provides Bayesian framework for multinomial data | Enables direct calculation of Bayes factors from count data [23] |
| Black-Box Studies | Generates performance data for model training | Presents examiners with known ground truth comparisons [23] |
| MCMC Algorithms | Estimates model parameters from response data | Determines posterior distributions for ordered probit parameters [25] |
| Tippett Plots | Visualizes system calibration and performance | Shows distribution of LRs for same-source vs. different-source comparisons [23] |
| Logistic Regression | Evaluates system calibration | Measures relationship between evidence strength and ground truth [23] |

The evidence from comparative studies strongly supports the LR framework as a superior approach to traditional categorical conclusions. The implementation of this framework, particularly through methods like the ordered probit model and Dirichlet-based Bayes factors, provides:

- Logical Correctness: Proper handling of uncertainty and separation of examiner role from fact-finder role [25]

- Quantitative Calibration: Empirical demonstration that traditional language overstates evidence strength [25]

- Transparent Methodology: Reproducible, data-driven approaches resistant to cognitive bias [23]

- Cross-Disciplinary Applicability: Successful implementation across multiple forensic disciplines [23]

The integration of the LR framework into international standards (ISO 21043) and its alignment with the forensic-data-science paradigm signal an irreversible shift toward empirically validated, logically coherent forensic science practice [24]. Future research should focus on developing examiner-specific and condition-specific models to enable meaningful implementation in casework, ultimately providing more accurate and appropriately weighted forensic evidence in legal proceedings.

Forensic science is undergoing a fundamental transformation from a discipline reliant on subjective categorical conclusions to one increasingly grounded in statistical, quantitative values. This paradigm shift, often referred to as the forensic-data-science paradigm, emphasizes methods that are transparent, reproducible, resistant to cognitive bias, and built on the logically correct framework for evidence interpretation: the likelihood ratio [24]. The movement responds to longstanding criticisms regarding the scientific validity of traditional forensic feature-comparison methods, particularly those relying on examiner declarations of "discernible uniqueness" [26]. Where experts once testified to categorical conclusions like "match" or "identification" with claimed absolute certainty, the field now recognizes that forensic inference requires consideration of probabilities and empirical measurement of reliability [12] [26]. This transition represents perhaps the most significant evolution in forensic science practice over the past century, with profound implications for criminal justice outcomes and the prevention of wrongful convictions.

The Statistical Framework: Likelihood Ratios and Error Rates

The Logic of Forensic Inference

The logical foundation for converting categorical conclusions to quantitative values rests on an inductive framework that considers probabilities under competing propositions. The examiner must evaluate two key probabilities: (1) the probability of observing the evidence patterns if the impressions have the same source, and (2) the probability of observing those same patterns if the impressions have different sources [26]. The ratio between these probabilities provides a quantitative index of the evidence's probative value, formally known as the likelihood ratio [24] [26]. This framework represents a complete departure from the traditional "discernible uniqueness" theory, which asserted that pattern-matching could yield definitive source attributions [26]. The likelihood ratio approach acknowledges that most forensic evidence provides support for a proposition rather than definitive proof, requiring experts to weigh probabilities rather than claim certainty.
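A small worked example may help show what the ratio does in practice. The sketch below computes a likelihood ratio from the two probabilities named above and then applies the odds form of Bayes' rule to show how a fact-finder's prior would be updated. The probabilities and the prior are invented for illustration, and the function names are hypothetical.

```python
def likelihood_ratio(p_evidence_same, p_evidence_diff):
    """Ratio of the probability of the evidence under each proposition."""
    return p_evidence_same / p_evidence_diff


def posterior_probability(prior_probability, lr):
    """Odds form of Bayes' rule: posterior odds = prior odds * LR."""
    prior_odds = prior_probability / (1 - prior_probability)
    posterior_odds = prior_odds * lr
    return posterior_odds / (1 + posterior_odds)


# Invented values: the observed pattern is 9 times more probable
# under the same-source proposition than under different sources.
lr = likelihood_ratio(0.45, 0.05)

for prior in (0.01, 0.5):
    print(f"prior {prior:.2f} -> posterior {posterior_probability(prior, lr):.3f}")
```

The same LR moves a low prior far less than an even one, which is why the framework leaves the prior to the fact-finder and asks the examiner to report only the ratio.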

Method Conformance Versus Method Performance

A critical distinction in evaluating forensic methods lies between method conformance and method performance [12]. Method conformance relates to whether an analytical outcome results from proper adherence to defined procedures, while method performance reflects a method's capacity to discriminate between different propositions of interest [12]. This distinction is particularly important when considering inconclusive decisions, which should be evaluated not as "correct" or "incorrect" but as "appropriate" or "inappropriate" based on whether the examiner followed prescribed procedures [12]. Studies characterizing method performance are only relevant when method conformance can be demonstrated, highlighting the need for both quality assurance and empirical validation in forensic practice [12].

Table 1: Key Statistical Concepts in Forensic Interpretation

| Concept | Traditional Approach | Quantitative Approach | Significance |
|---|---|---|---|
| Evidence Weight | Subjective "match" declaration | Likelihood ratio calculation | Provides continuous measure of evidentiary strength |
| Error Rates | Often claimed to be zero | Empirically measured via black-box studies | Enables proper assessment of reliability |
| Inconclusive Decisions | Treated as examination failure | Distinguished as appropriate/inappropriate | Separates methodological from performance issues |
| Uncertainty | Rarely quantified | Explicitly measured and reported | Promotes transparency about limitations |

Quantitative Error Rates in Practice: Experimental Data

Error Rates in Pattern Evidence Disciplines

Empirical studies have revealed substantial variation in error rates across forensic feature-comparison disciplines, challenging previous claims of infallibility. The President's Council of Advisors on Science and Technology (PCAST) reviewed available evidence and found that latent print examination, one of the most established pattern-matching disciplines, has a false-positive rate that is "substantial and is likely to be higher than expected by many jurors" [26]. The reported false-positive rates ranged from approximately 1 in 306 cases to as high as 1 in 18 cases across different studies [26]. These findings fundamentally undermine the historical claim of zero error rates in fingerprint identification and highlight the necessity of empirical testing rather than reliance on untested assumptions.

The Multiple Comparisons Problem

Forensic evaluations often involve implicit multiple comparisons that substantially increase the expected false discovery rate. Research on toolmark examinations demonstrates this problem effectively: when comparing cut wires to potential tools, examiners must search across multiple blade surfaces and alignments, performing numerous comparisons [10]. The minimal number of comparisons in a simple wire examination is approximately 15, while computationally-assisted comparisons can involve up to 40,000 implicit comparisons [10]. This "multiple comparison problem" dramatically increases the family-wise error rate, with published false discovery rates for striated toolmarks ranging from 0.45% to 7.24% in black-box studies [10]. As the number of comparisons increases, so does the probability of encountering coincidental matches, necessitating statistical correction methods similar to those used in other scientific fields conducting multiple hypothesis tests.
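The scale of the effect can be sketched with the textbook family-wise error formula, 1 - (1 - p)^n. Two simplifying assumptions are made here and neither comes from the cited study: the comparisons are treated as independent, and the published rates are used as if they were per-comparison false-positive probabilities. Real comparisons across adjacent blade positions are likely correlated, so these figures are an illustration of the trend, not an estimate for casework.

```python
def family_wise_error_rate(per_comparison_rate, n_comparisons):
    """Probability of at least one false positive in n independent comparisons."""
    return 1 - (1 - per_comparison_rate) ** n_comparisons


# Rates and comparison counts quoted in the text above.
rates = (0.0045, 0.0724)
comparison_counts = (1, 15, 40_000)

for rate in rates:
    for n in comparison_counts:
        fwer = family_wise_error_rate(rate, n)
        print(f"rate {rate:.2%}, {n:>6} comparisons -> FWER {fwer:.4f}")
```

Even at the lower published rate, the chance of at least one coincidental match grows quickly with the number of implicit comparisons, which is the motivation for the correction methods mentioned above.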

Table 2: Published Error Rates in Forensic Feature-Comparison Disciplines

| Discipline | Study Type | False Positive Rate | False Negative Rate | Key Limitations |
|---|---|---|---|---|
| Latent Prints | Black-box studies | 0.33% - 5.5% [26] | Substantial (exact rates vary) [26] | Limited number of available studies |
| Striated Toolmarks | Open-set studies | 0.45% - 7.24% [10] | Not consistently reported | Subjective evaluation rules |
| Firearms Analysis | PCAST review | Insufficient data [26] | Insufficient data [26] | Limited foundational validity research |
| Paint Evidence | Interlaboratory exercise | ~7% disagreement with consensus [27] | ~7% disagreement with consensus [27] | Varies with scenario difficulty |

Interlaboratory Studies and Consensus Building

Interlaboratory exercises provide valuable data on variability in forensic interpretation across different laboratories and practitioners. A recent study involving 85 participants evaluating paint evidence found that approximately 93% of responses were consistent between participants and within the consensus or next best category, while 73% agreed exactly with the subject matter expert panel consensus considered as ground truth [27]. Disagreements were most pronounced in "worst-case scenarios" created with intended higher difficulty and complex circumstances [27]. Interestingly, the exercise revealed that participant experience did not significantly impact reported conclusions, though more experienced participants achieved greater consensus for a given exercise [27]. Such studies highlight both the progress toward standardization and the ongoing challenges in achieving consistent interpretation across the forensic science community.

Standardization Initiatives: ISO 21043 and Research Agendas

The ISO 21043 Framework

The development of ISO 21043 as a new international standard for forensic science represents a significant milestone in the transition to quantitative methods. This standard provides requirements and recommendations designed to ensure the quality of the entire forensic process, organized into five parts: (1) vocabulary, (2) recovery, transport, and storage of items, (3) analysis, (4) interpretation, and (5) reporting [24]. The standard supports implementation of the forensic-data-science paradigm, emphasizing methods that are transparent, reproducible, intrinsically resistant to cognitive bias, and empirically calibrated and validated under casework conditions [24]. By establishing standardized terminology and procedures, ISO 21043 addresses the critical need for consistent implementation of quantitative approaches across laboratories and jurisdictions.

The NIJ Forensic Science Strategic Research Plan

The National Institute of Justice's Forensic Science Strategic Research Plan for 2022-2026 outlines prioritized objectives that explicitly support the transition to quantitative forensic methods [28]. The plan emphasizes "foundational validity and reliability of forensic methods" and calls for "quantification of measurement uncertainty in forensic analytical methods" [28]. Specific priority areas include objective methods to support interpretations and conclusions, evaluation of algorithms for quantitative pattern evidence comparisons, and assessment of causes and meaning of artifacts in a forensic context [28]. This coordinated research agenda represents a significant investment in establishing the scientific foundation for quantitative approaches and addressing known limitations in traditional forensic feature-comparison methods.

Implementation Challenges and Methodological Considerations

Cognitive and Human Factors