Measuring Error Rates in Forensic Science: Protocols, Validation, and Best Practices for Reliable Results


Abstract

This article provides a comprehensive framework for understanding and implementing error rate measurement protocols in forensic method validation. Aimed at researchers, scientists, and development professionals, it explores the foundational definitions of forensic error, outlines methodological approaches for calculating error rates, addresses common challenges in troubleshooting and optimization, and establishes criteria for rigorous validation against international standards. By synthesizing current research and standards like ISO 21043, this guide aims to equip practitioners with the knowledge to enhance the scientific reliability, legal admissibility, and quality assurance of forensic methodologies across disciplines.

Defining Forensic Error: Understanding the Foundations of Reliability and Measurement

In forensic method validation, "error" is not a single, universally agreed-upon quantity. Its definition depends on the stakeholder and varies with each party's role, requirements, and expectations. For the court, error might relate to the reliability of an expert's opinion [1]. For a forensic unit pursuing accreditation, error is defined by the objective evidence that a method is fit for purpose as per international standards such as ISO/IEC 17025 [1]. For the researcher developing a novel method, error is intrinsically linked to the measurement uncertainty and the limitations of the instruments and processes used [2]. This document outlines application notes and protocols to systematically identify, measure, and reconcile these divergent definitions of error within forensic method validation research.

Stakeholder Analysis & Divergent Error Definitions

A critical first step is to map how core error concepts are interpreted by different stakeholders involved in or impacted by a forensic method. The following table synthesizes these divergent perspectives.

Table 1: Divergent Definitions of Error Across Key Stakeholders

| Error Concept | Researcher / Method Developer | Forensic Unit (Accreditation Focus) | The Court / Legal Practitioner |
|---|---|---|---|
| Measurement Uncertainty | The statistical fluctuation (random error) and reproducible inaccuracies (systematic error) inherent in all measurements [2]. Quantified as the standard uncertainty of a single measurement or the standard error in repeated measurements. | Objective evidence that the method's performance characteristics, including precision, fall within predefined acceptance criteria [1]. | A factor affecting the weight of evidence; may question the "true value" of the evidence presented and the expert's confidence in their findings [1]. |
| Accuracy vs. Precision | Accuracy: Closeness to a true/accepted value (presence of systematic error). Precision: Degree of consistency and reproducibility of a result (influence of random error) [2]. | Demonstration of fitness for purpose, which encompasses both accuracy and precision as defined in the method's end-user requirements and specification [1]. | Often conflated. The primary concern is whether the result is "reliable" and "correct" enough for the matters at hand, with less distinction between the error types [1]. |
| Method Failure / Limitation | Identified through stress-testing the method with challenging data sets to find its breaking point or boundaries [1]. | A risk that must be controlled via quality assurance stages, checks, and understood limitations documented in the validation report [1]. | An issue of transparency; the expectation is that any known limitations affecting the reliability of the results are disclosed and explained by the expert [1]. |

Experimental Protocols for Quantifying Subjective Errors

To operationalize the concepts in Table 1, the following protocols provide a methodology for generating the quantitative and qualitative data needed to understand error subjectivity.

Protocol 1: Establishing End-User Requirements and Acceptance Criteria

1. Objective: To formally define what constitutes an "error" for a specific method by capturing the needs of all end-users, thereby creating the benchmark against which all subsequent error measurements are compared [1].

2. Detailed Methodology:

- Stakeholder Identification: Assemble a panel including method developers, forensic examiners, quality managers, and a representative of the end-user (e.g., a prosecutor or defense attorney).

- Requirements Elicitation: Conduct structured interviews or workshops to define the functional requirements. Key questions include:

- What is the specific task this method must accomplish?

- What are the critical outputs, and what level of quality is required?

- What are the potential risks if the method fails?

- Specification Drafting: Translate the requirements into a testable specification document. For example: "The method must correctly classify a known reference sample with 100% accuracy (n=20)," or "The measurement uncertainty for concentration values must be ≤15% relative uncertainty."

- Acceptance Criteria Setting: Define the pass/fail criteria for the validation study based on the specifications. These criteria are the formal, agreed-upon definition of an "unacceptable error" for the method's implementation [1].
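A criterion such as "100% accuracy (n=20)" is worth reading alongside the statistical uncertainty that the sample size leaves open. The following Python sketch is illustrative only: the function names are hypothetical and not part of any cited standard. It computes the exact one-sided upper confidence bound on the true error rate when zero errors are observed, together with the common "rule of three" approximation.

```python
def zero_failure_upper_bound(n_trials: int, confidence: float = 0.95) -> float:
    """Exact one-sided upper confidence bound on the error rate
    when zero errors were observed in n_trials independent trials."""
    if n_trials <= 0:
        raise ValueError("n_trials must be a positive integer")
    if not 0.0 < confidence < 1.0:
        raise ValueError("confidence must lie strictly between 0 and 1")
    alpha = 1.0 - confidence
    # With zero errors, the bound p solves (1 - p) ** n_trials = alpha.
    return 1.0 - alpha ** (1.0 / n_trials)


def rule_of_three(n_trials: int) -> float:
    """Common approximation to the 95% bound above: roughly 3 / n."""
    if n_trials <= 0:
        raise ValueError("n_trials must be a positive integer")
    return min(1.0, 3.0 / n_trials)


# Illustrative input: the n=20 example used in the specification text.
n = 20
print(f"Exact 95% upper bound:  {zero_failure_upper_bound(n):.3f}")
print(f"Rule-of-three estimate: {rule_of_three(n):.3f}")
```

Under these assumptions, 20 correct results out of 20 remain compatible with a true error rate of roughly 14%, which is one reason to state the sample size alongside any pass/fail criterion.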

Protocol 2: Systematic Error Analysis via Repeated Measurements and Challenge Data

1. Objective: To empirically characterize both random and systematic errors, providing the objective evidence required for validation and for informing the court on the method's limitations [1] [2].

2. Detailed Methodology:

- Precision (Random Error) Assessment:

- Accuracy (Systematic Error) Assessment:

- Use a certified reference material (CRM) with a known value for the target analyte.

- Analyze the CRM using the method and calculate the relative error:

Relative error (%) = (Measured Value - Known Value) / Known Value × 100 [2].

- Stress-Testing (Method Limitation Exploration):

- Design a challenge data set that includes edge cases, low-level samples, and potentially interfering substances [1].

- Process the challenge set and document the conditions under which the method fails to meet the acceptance criteria defined in Protocol 1.
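As a minimal sketch of the quantities named in this protocol, the Python below summarizes repeated measurements (mean, sample standard deviation, standard error, relative standard deviation) and applies the relative error formula to a CRM result. The replicate values and the certified value of 10.0 are made-up illustrative numbers, and the function names are hypothetical.

```python
import math
import statistics


def precision_summary(measurements):
    """Random-error summary for repeated measurements of one sample."""
    n = len(measurements)
    if n < 2:
        raise ValueError("at least two replicate measurements are required")
    mean = statistics.fmean(measurements)
    sd = statistics.stdev(measurements)  # sample SD (n - 1 denominator)
    return {
        "n": n,
        "mean": mean,
        "sd": sd,
        "standard_error": sd / math.sqrt(n),
        # Relative SD is undefined when the mean is zero.
        "rsd_percent": 100.0 * sd / abs(mean) if mean != 0 else None,
    }


def relative_error_percent(measured: float, known: float) -> float:
    """Systematic-error estimate against a certified reference value."""
    if known == 0:
        raise ValueError("known value must be non-zero")
    return (measured - known) / known * 100.0


# Made-up replicate results for a CRM certified at 10.0 (arbitrary units).
replicates = [10.2, 10.4, 10.3, 10.5, 10.1]
summary = precision_summary(replicates)
bias = relative_error_percent(summary["mean"], 10.0)
print(summary)
print(f"Relative error vs. CRM: {bias:.2f}%")
```

The relative standard deviation and the relative error can then be compared directly with acceptance criteria of the kind drafted in Protocol 1.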

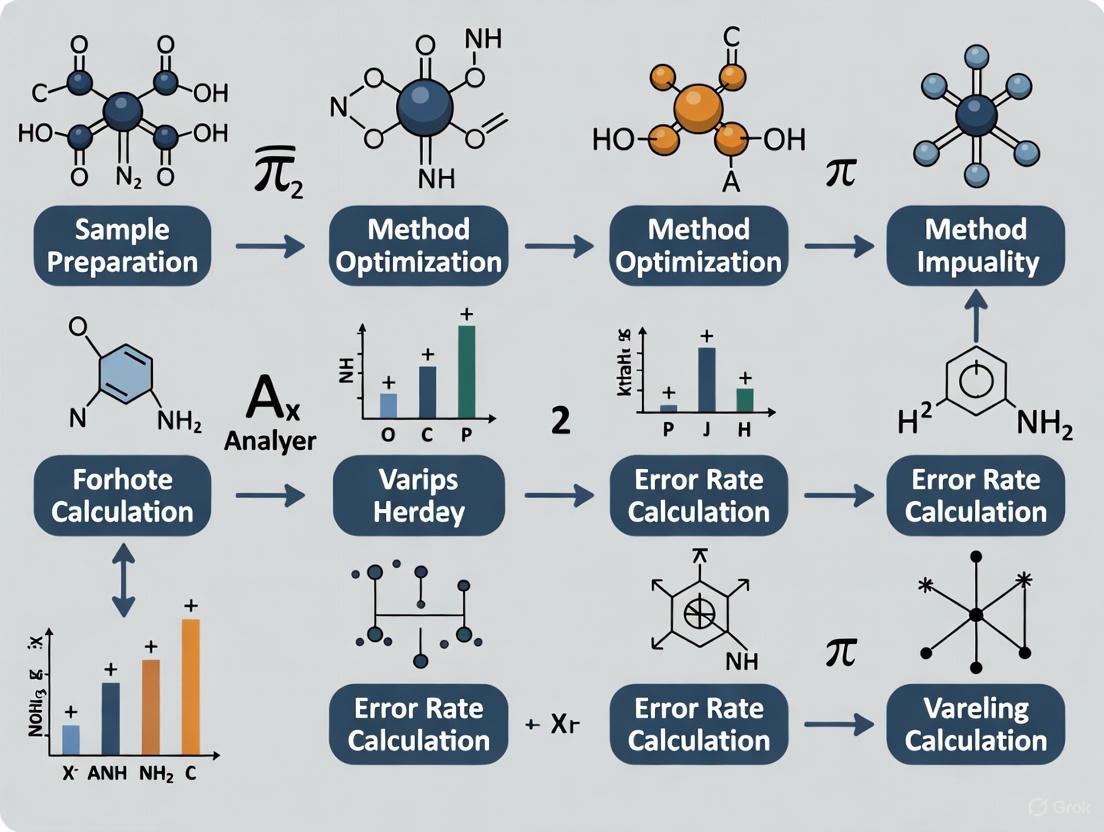

Visualization of the Stakeholder Ecosystem and Validation Workflow

The following diagrams illustrate the relationships between stakeholders and the core validation process.

[Diagram: Stakeholder Error Focus]

[Diagram: Method Validation Process]

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Error Rate Measurement and Method Validation

| Item / Solution | Function / Rationale |
|---|---|
| Certified Reference Materials (CRMs) | Provides a ground-truth sample with a known, certified value. Essential for quantifying systematic error (accuracy) and calibrating instruments [2]. |
| In-House Quality Control (QC) Material | A stable, well-characterized material used in every batch to monitor precision (random error) and ensure the method remains in a state of control over time. |
| Challenge Data Sets | Purposely designed data that includes edge cases, known interferences, and low-abundance targets. Used to stress-test the method and empirically define its limitations [1]. |
| Statistical Software (e.g., R, Python, Excel) | Used to calculate descriptive statistics (mean, standard deviation), inferential statistics, and uncertainty estimates, transforming raw data into objective evidence of fitness for purpose [3]. |
| End-User Requirement Specification Document | The foundational document that defines "error" for the specific context. It aligns stakeholder expectations and provides the acceptance criteria for the validation [1]. |

In forensic method validation research, a precise understanding of error is fundamental to establishing reliability and validity. The landscape of error is not monolithic but is instead composed of distinct, interrelated categories. A seminal framework classifies errors into three broad categories: (1) human error, encompassing intentional, negligent, and competency-based mistakes; (2) instrumentation and technology errors involving failures of analytical devices; and (3) fundamental methodological errors, including those arising from the inherent limitations of a technique or cognitive biases [4]. This tripartite model provides a critical structure for developing targeted error rate measurement protocols, ensuring that validation efforts address the full spectrum of potential failures within a forensic system. Acknowledging that error is unavoidable in all complex systems is the first step toward implementing a robust culture of continuous improvement and quality control [4].

Quantitative Data on Error and Measurement Variability

Quantitative data is essential for benchmarking performance and identifying areas for improvement. The following tables synthesize empirical findings on error perceptions and measurement variability, highlighting the context-dependent nature of error rates.

Table 1: Forensic Analyst Perceptions and Estimates of Error Rates

| Discipline or Error Type | Perceived or Measured Rate | Context / Notes | Source |
|---|---|---|---|
| Proficiency Testing (Australia) | Varies | Results from proficiency testing programs; calculation of error rates from these tests is deemed inappropriate by providers like CTS. | [4] |
| Forensic Bloodstain Pattern Analysis | Measured in accuracy studies | Study measured accuracy and reproducibility of conclusions among analysts. | [4] |
| Forensic Firearm Examination | Measured for validity & reliability | Study assessed the validity and reliability of examiners' conclusions. | [4] |
| Forensic DNA Analysis | Defined numbers & impact | Paper focuses on defining, quantifying, and communicating error rates in DNA analysis. | [4] |
| Survey of Forensic Analysts | Varies by discipline | Survey gathered perceptions and personal estimates of error rates from practicing analysts. | [4] |

Table 2: Variability in Cardiothoracic Ratio (CTR) Measurement Methods and Results

| Measurement Method Number | Cardiac Diameter Measurement Approach | Thoracic Diameter Measurement Approach | Average Deviation from CT Reference | Statistical Significance vs. CT |
|---|---|---|---|---|
| Method 1 | Maximum transverse diameter (single line) | Widest internal diameter | Significant underestimation | Significant (P = 0.004) |
| Method 4 | Midline reference for left/right borders | Defined anatomical landmarks | 3.64% | Not Significant (P > 0.999) |
| Method 6 | Midline reference for left/right borders | Defined anatomical landmarks | 4.42% | Not Significant (P > 0.999) |

A systematic review identified eight distinct methods for measuring the Cardiothoracic Ratio (CTR) in chest X-rays [5]. This methodological variability leads to significant differences in results, underscoring the profound impact of protocol definition. As shown in Table 2, methods that used a midline reference for the cardiac diameter and clear anatomical landmarks for the thoracic width showed the smallest deviation from the gold standard (CT measurements) and no statistically significant difference [5]. This serves as a powerful example of how methodological error can be introduced through a lack of standardized definitions.

Experimental Protocols for Error Rate Measurement

Protocol for a Black-Box Proficiency Study

Objective: To estimate practitioner-level error rates by testing the ability of forensic analysts to reach correct conclusions from casework-like materials without exposing the internal decision-making process.

Materials:

- Certified reference materials or previously adjudicated case samples with known ground truth.

- Standard laboratory equipment and analytical instruments.

- Data recording sheets (electronic or physical).

Procedure:

- Sample Preparation: Prepare a set of test samples that represent a range of typical casework scenarios, including known matches, non-matches, and potentially ambiguous specimens. The ground truth must be established and documented by an independent party.

- Blinding: Assign a unique, anonymized identifier to each sample. The analysts participating in the study must have no information about the ground truth or the expected outcomes.

- Analysis: Analysts process and examine the samples according to the standard operating procedures (SOPs) of the laboratory. They document their final conclusions (e.g., identification, exclusion, inconclusive).

- Data Collection: Collect all analytical conclusions along with the corresponding ground truth data.

- Data Analysis:

- Calculate the false positive rate: (Number of false positives / Total number of true non-matches) * 100.

- Calculate the false negative rate: (Number of false negatives / Total number of true matches) * 100.

- Calculate the total accuracy: (Number of correct conclusions / Total number of conclusions) * 100.
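The three calculations above can be sketched in Python as follows. This is an illustrative outline rather than a prescribed implementation: the label strings, the function names, and the choice of a Wilson score interval are assumptions. Inconclusive results are kept in the denominators but counted neither as errors nor as correct conclusions, which is one of several possible conventions and should be stated explicitly in any report.

```python
import math
from collections import Counter


def wilson_interval(events: int, n: int, z: float = 1.96):
    """Wilson score interval for a proportion (z = 1.96 gives about 95%)."""
    if n <= 0:
        raise ValueError("n must be positive")
    p = events / n
    denom = 1.0 + z * z / n
    centre = (p + z * z / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z * z / (4 * n * n)) / denom
    return max(0.0, centre - half), min(1.0, centre + half)


def black_box_rates(results):
    """results: iterable of (ground_truth, conclusion) pairs, where
    ground_truth is "match" or "non-match" and conclusion is
    "identification", "exclusion", or "inconclusive" (assumed labels)."""
    counts = Counter(results)
    n_match = sum(c for (truth, _), c in counts.items() if truth == "match")
    n_nonmatch = sum(c for (truth, _), c in counts.items() if truth == "non-match")
    if n_match == 0 or n_nonmatch == 0:
        raise ValueError("need at least one true match and one true non-match")
    fp = counts[("non-match", "identification")]
    fn = counts[("match", "exclusion")]
    correct = counts[("match", "identification")] + counts[("non-match", "exclusion")]
    inconclusive = counts[("match", "inconclusive")] + counts[("non-match", "inconclusive")]
    total = n_match + n_nonmatch
    return {
        "false_positive_rate_pct": 100.0 * fp / n_nonmatch,
        "false_positive_ci": wilson_interval(fp, n_nonmatch),
        "false_negative_rate_pct": 100.0 * fn / n_match,
        "false_negative_ci": wilson_interval(fn, n_match),
        "accuracy_pct": 100.0 * correct / total,
        "inconclusive_pct": 100.0 * inconclusive / total,
    }


# Made-up study outcome: 100 true matches and 100 true non-matches.
example = (
    [("match", "identification")] * 92
    + [("match", "exclusion")] * 3
    + [("match", "inconclusive")] * 5
    + [("non-match", "exclusion")] * 96
    + [("non-match", "identification")] * 1
    + [("non-match", "inconclusive")] * 3
)
for name, value in black_box_rates(example).items():
    print(name, value)
```

Reporting the interval next to each point estimate makes clear how much a rate based on a few hundred comparisons can still vary.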

Protocol for a White-Box Method Validation Study

Objective: To identify and quantify specific sources of human, instrumental, and methodological error by observing and testing the individual components of a forensic method.

Materials:

- Control samples with known properties.

- All standard laboratory equipment and instrumentation.

- Maintenance logs and calibration records for instruments.

- Video recording equipment (optional, for observing human factors).

Procedure:

- Human Factors Assessment:

- Contextual Bias Testing: Present the same evidence to analysts with different contextual information (e.g., suggestive versus neutral case notes) and compare the rates of differing conclusions.

- Competency Monitoring: Analyze the correlation between procedural adherence (as measured by following SOPs) and the rate of erroneous results.

- Instrumentation Performance Verification:

- Calibration Drift: Periodically run certified control samples and plot the results over time to detect and quantify instrumental drift.

- Limit of Detection (LOD)/Limit of Quantitation (LOQ): Determine the lowest quantity of an analyte that can be reliably detected and quantified by the instrument, establishing the boundary for methodological error.

- Method Robustness Testing:

- Environmental Stressors: Deliberately introduce minor variations in approved protocol parameters (e.g., temperature, humidity, reagent concentration) to assess the method's susceptibility to these changes.

- Data Analysis: For each tested component, compute error rates specific to that component. The overall method robustness can be expressed as the range of conditions within which the error rate remains below a pre-defined acceptable threshold.
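Two of the instrumentation checks above lend themselves to a short numerical sketch. The Python below estimates calibration drift as the least-squares slope of control results against run number, and estimates LOD and LOQ from the standard deviation of blank responses and the calibration slope. The 3.3σ/S and 10σ/S factors follow a widely used convention from analytical method validation guidance; the source does not specify an estimator, so treat that choice, the function names, and the sample data as assumptions.

```python
import statistics


def drift_slope(run_numbers, control_results):
    """Least-squares slope of control result vs. run number (units per run)."""
    if len(run_numbers) != len(control_results) or len(run_numbers) < 2:
        raise ValueError("need two or more paired observations")
    mean_x = statistics.fmean(run_numbers)
    mean_y = statistics.fmean(control_results)
    sxx = sum((x - mean_x) ** 2 for x in run_numbers)
    if sxx == 0:
        raise ValueError("run numbers must not all be identical")
    sxy = sum((x - mean_x) * (y - mean_y)
              for x, y in zip(run_numbers, control_results))
    return sxy / sxx


def lod_loq(blank_responses, calibration_slope):
    """LOD = 3.3 * sigma / S and LOQ = 10 * sigma / S (assumed convention),
    where sigma is the SD of blank responses and S the calibration slope."""
    if calibration_slope <= 0:
        raise ValueError("calibration slope must be positive")
    sigma = statistics.stdev(blank_responses)
    return 3.3 * sigma / calibration_slope, 10.0 * sigma / calibration_slope


# Made-up control results over four runs and five blank readings.
print("Drift per run:", drift_slope([0, 1, 2, 3], [100.0, 100.5, 101.0, 101.5]))
lod, loq = lod_loq([0.9, 1.1, 1.0, 1.2, 0.8], calibration_slope=2.0)
print(f"LOD = {lod:.3f}, LOQ = {loq:.3f}")
```

A drift slope that stays within a pre-defined tolerance, and casework concentrations that sit above the LOQ, are examples of component-level criteria that can feed the overall robustness statement.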

Visualization of Error Categorization and Management

The following diagram, generated using Graphviz, illustrates the hierarchical relationship between the three primary error categories and their sub-types, providing a logical map for systematic error investigation.

Figure 1: A hierarchical map of error categories in forensic science.

The workflow for managing these errors in a validation study is a cyclical process of execution, analysis, and refinement, as shown below.

Figure 2: A workflow for error management and protocol refinement.

The Scientist's Toolkit: Essential Research Reagents and Materials

The following table details key reagents, materials, and tools essential for conducting rigorous error rate measurement studies in a forensic research context.

Table 3: Essential Research Reagents and Materials for Error Rate Studies

| Item Name | Function / Application | Critical Protocol Consideration |
|---|---|---|
| Certified Reference Materials (CRMs) | Provide samples with known, traceable properties to establish ground truth for proficiency testing and instrument calibration. | Verify certification and stability. Use to spike samples for recovery studies and LOD/LOQ determination. |
| Proficiency Test Samples | Allow for the assessment of analyst competency and methodological performance in a controlled, blinded manner. | Source from accredited providers. Ensure test design reflects realistic casework conditions without introducing undue bias. |
| Internal Standards | Used in analytical chemistry (e.g., GC-MS, LC-MS) to correct for instrumental variation and sample preparation inconsistencies, mitigating instrumentation error. | Select an isotopically labeled analog of the target analyte that does not occur naturally in samples. |
| Calibration Standards | Series of solutions with known analyte concentrations used to establish the quantitative response curve of an instrument. | Prepare fresh for each analytical run and cover the entire expected concentration range, including a blank. |
| Quality Control (QC) Samples | Independent samples with known concentrations run alongside casework samples to monitor the ongoing accuracy and precision of the analytical process. | Establish acceptance criteria for QC results. Any batch where QC fails must trigger an investigation into the source of error. |
| Data Recording System (Electronic Lab Notebook) | Ensures complete, tamper-proof, and auditable documentation of all procedures, results, and observations, critical for tracking potential human error. | Implement a system with user authentication, audit trails, and data integrity protection compliant with 21 CFR Part 11 or equivalent. |

Forensic science, despite its rigorous application of scientific principles to matters of law, is not immune to error. All scientific techniques feature some degree of error, and recent reviews conclude that error rates for many common forensic methods remain poorly documented or have not been established [6]. A 2019 survey of 183 practicing forensic analysts revealed that while analysts perceive all error types as rare, their estimates of error rates in their fields were widely divergent, with some being unrealistically low [6]. This document outlines the critical protocols for error rate measurement and method validation essential for maintaining the scientific integrity and reliability of forensic science within the criminal justice system.

The foundational principle of this analysis is that error is an inherent characteristic of all complex systems, including forensic methodologies. The admissibility of scientific evidence in legal proceedings, guided by standards such as those from Daubert v. Merrell Dow Pharmaceuticals, Inc. (1993), requires trial courts to consider "known error rates" [6]. Validation is the process of providing objective evidence that a method is fit for its specific intended purpose, meaning it is "good enough to do the job it is intended to do" as defined by specifications developed from end-user requirements, so that investigating officers and courts can rely on the results [1]. This process is a central feature of international standards (ISO/IEC 17025) and the Forensic Science Regulator's Codes of Practice and Conduct.

Quantitative Data on Forensic Error Perceptions

The following tables summarize empirical data on forensic analyst perceptions and estimates regarding error rates in their disciplines, highlighting the challenges in establishing unified, realistic error rates.

Table 1: Forensic Analyst Perceptions of Error Rarity and Preferences (n=183) [6]

| Perception Category | Analyst Consensus |
|---|---|
| Overall Error Frequency | All types of errors are perceived as rare. |
| False Positive vs. False Negative | False positive errors are perceived as even more rare than false negatives. |
| Error Risk Minimization | A typical preference to minimize the risk of false positives over false negatives. |
| Error Rate Documentation | Most analysts could not specify where error rates for their discipline were documented or published. |

Table 2: Nature of Analyst Error Rate Estimates [6]

| Characteristic of Estimates | Description |
|---|---|
| Divergence | Estimates provided by analysts were widely divergent across the field. |
| Unrealistic Assessments | A portion of the error rate estimates were deemed to be unrealistically low. |

Experimental Protocols for Method Validation

This section provides a detailed methodology for validating forensic methods to ensure they are fit for purpose and that their inherent error rates are understood. The process is critical for both novel and adopted/adapted methods.

Determination of End-User Requirements and Specification

- Purpose: To capture what the different users of the method's output require, defining what the method must reliably do [1].

- Procedure:

- Identify End Users: Define all parties who will use the information (e.g., forensic unit, investigating officers, courts).

- Define Critical Findings: Specify the aspects of the method the expert will rely on for critical findings in reports or statements.

- Draft Requirements Document: Create a list of testable functional requirements derived from the end-user needs. For software tools, focus on features that affect reliable results, not every available function [1].

Risk Assessment and Acceptance Criteria

- Purpose: To identify potential points of failure within the method and set objective pass/fail criteria for the validation [1].

- Procedure:

- Map Method Stages: Break down the method into its logical sequence of procedures and operations.

- Identify Quality Assurance Checkpoints: Document existing checks, reality checks, or controls that manage risk for specific parts or the entire method [1].

- Set Acceptance Criteria: For each requirement defined under "Determination of End-User Requirements and Specification" above, establish measurable acceptance criteria that will determine the success of the validation exercise.

Validation Planning and Test Data Design

- Purpose: To create a plan for generating objective evidence that the method meets the acceptance criteria [1].

- Procedure:

- Create a Validation Plan: Outline the experiments and tests to be performed.

- Select Test Material/Data: Data must be representative of real-life casework. The dataset should be complex enough to indicate real-world performance but not so complex as to be impractical. Include data challenges that "stress test" the method to probe its limitations [1].

- Review Pre-Existing Data: For methods adopted from elsewhere, critically review the design of any prior validation studies and the test material used to ensure it robustly tests the method against the current end-user requirements [1].

Execution, Analysis, and Reporting

- Purpose: To execute the plan, evaluate the outcomes, and formally document the validation.

- Procedure:

- Perform Validation Exercise: Execute the tests outlined in the validation plan, meticulously recording all outcomes and data.

- Assess Acceptance Criteria Compliance: Evaluate the test data against the pre-defined acceptance criteria.

- Compile Validation Report: Produce a report that includes the validation plan, test data, analysis of results against acceptance criteria, and a clear statement on the method's fitness for purpose, including any understood limitations [1].
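The compliance assessment step can be sketched as a simple comparison of observed results against pre-defined limits. The Python below is a hypothetical outline: the `Criterion` structure, the requirement names, and the numeric limits are illustrative assumptions, not values taken from the Codes of Practice.

```python
import operator
from dataclasses import dataclass


@dataclass(frozen=True)
class Criterion:
    requirement: str   # name of the tested requirement
    comparison: str    # "<=" or ">="
    limit: float       # pre-defined acceptance limit


_COMPARATORS = {"<=": operator.le, ">=": operator.ge}


def assess(criteria, observed):
    """Return one (requirement, observed value, comparison, limit, passed)
    row per criterion. A missing observation counts as a failure."""
    rows = []
    for c in criteria:
        value = observed.get(c.requirement)
        passed = value is not None and _COMPARATORS[c.comparison](value, c.limit)
        rows.append((c.requirement, value, c.comparison, c.limit, passed))
    return rows


def fit_for_purpose(rows) -> bool:
    """The method passes only if every criterion is met."""
    return all(row[-1] for row in rows)


# Illustrative criteria and results (made-up numbers).
criteria = [
    Criterion("false_positive_rate_pct", "<=", 1.0),
    Criterion("relative_uncertainty_pct", "<=", 15.0),
    Criterion("accuracy_pct", ">=", 95.0),
]
observed = {
    "false_positive_rate_pct": 0.5,
    "relative_uncertainty_pct": 18.0,
    "accuracy_pct": 97.2,
}
rows = assess(criteria, observed)
for row in rows:
    print(row)
print("Fit for purpose:", fit_for_purpose(rows))
```

Keeping the criteria in a fixed structure that is written before testing begins makes it harder to adjust limits after the results are known, and the resulting rows can be copied directly into the validation report.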

Visualization of Forensic Method Validation Workflow

The following diagram illustrates the logical sequence and iterative nature of the method validation process as defined by the Forensic Science Regulator's Codes of Practice [1].

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Research Reagent Solutions for Forensic Validation Studies

| Item / Reagent | Function in Validation |
|---|---|
| Representative Test Datasets | Serves as the foundational input for testing; must mirror real-case data complexity and include edge cases to stress-test the method and uncover potential error conditions [1]. |
| Validated Reference Materials | Provides a ground-truth benchmark for comparing the outputs of the method under validation, essential for calculating false positive/negative rates and establishing accuracy. |
| Standard Operating Procedure (SOP) Draft | The operating manual for the method being validated; the validation is performed on the final method as documented in the SOP, ensuring consistency and reproducibility [1]. |
| Quality Control (QC) Samples | Used to monitor the performance and stability of the method throughout the validation exercise and during subsequent routine use. |
| Statistical Analysis Plan (SAP) | Pre-defines the statistical methods and tests that will be used to evaluate the validation data, preventing bias and ensuring the analytical approach is fit for purpose [7]. |
| Blinded Samples | Introduces objectivity into testing by preventing analyst bias, thereby providing more robust objective evidence of the method's performance [1]. |

Visualization of Error Classification in Forensic Analysis

This diagram categorizes key error types and their relationships within a forensic analysis system, which is crucial for designing targeted validation studies.

The inherent risk of error in complex forensic systems is not a mark of failure but a scientific reality that must be systematically managed. The protocols and structured validation workflows outlined in this document provide a framework for researchers and forensic units to objectively demonstrate the reliability of their methods, understand their limitations, and establish realistic error rates. By rigorously applying these principles, the forensic science community can strengthen the scientific foundation of its contributions to the criminal justice system, ensuring that findings presented in court are both robust and transparent regarding their inherent uncertainties.

The admissibility of expert testimony in U.S. courts hinges primarily on two evidentiary standards: the Frye standard and the Daubert standard. For researchers and forensic scientists conducting method validation, understanding the role of error rate analysis within these legal frameworks is imperative. The Frye standard, originating from Frye v. United States (1923), requires that expert testimony be based on principles "generally accepted" by the relevant scientific community [8] [9]. In contrast, the Daubert standard, established in Daubert v. Merrell Dow Pharmaceuticals, Inc. (1993), provides a multi-factor test for assessing the reliability and relevance of expert testimony, with explicit consideration of a technique's known or potential error rate [10] [8]. This article details the distinct requirements for error rate measurement under each standard and provides specialized protocols for forensic method validation research.

Comparative Analysis of Legal Standards

Key Characteristics and Error Rate Requirements

Table 1: Comparative Analysis of Frye and Daubert Standards

| Feature | Frye Standard | Daubert Standard |
|---|---|---|
| Originating Case | Frye v. United States (1923) [8] | Daubert v. Merrell Dow Pharmaceuticals, Inc. (1993) [10] |
| Primary Focus | General acceptance within relevant scientific community [9] [11] | Reliability and relevance of methodology [10] [12] |
| Judicial Role | Limited gatekeeping; defers to scientific consensus [9] | Active gatekeeper; evaluates scientific validity [12] |
| Error Rate Consideration | Indirect (through general acceptance) [13] | Explicit factor (known or potential rate of error) [10] |
| Treatment of Novel Science | Excludes emerging techniques until widely accepted [11] | Potentially admits if methodologically reliable [11] |
| Error Rate Evidence Required | Documentation of community standards and practices [13] | Quantitative error rates with confidence intervals [10] [13] |

Jurisdictional Application

The choice between Frye and Daubert standards depends on jurisdiction. Federal courts and a supermajority of states follow Daubert, while several states including California, Illinois, and New York continue to apply Frye or hybrid approaches [14] [11]. This jurisdictional variation necessitates that validation researchers understand both standards, particularly for evidence presented in multiple potential venues.

Error Rate Measurement Protocols

Daubert-Compliant Error Rate Quantification

Table 2: Daubert-Compliant Error Rate Measurement Protocol

| Protocol Component | Technical Specification | Validation Parameters |
|---|---|---|
| Study Design | Prospective, multi-center with independent replication [13] | Power analysis (power = 1 − β ≥ 0.8), blinding, controlled conditions |
| Sample Characteristics | Representative population (demographics, substrate types) [13] | Minimum n=200 per group, effect size calculation |
| Testing Conditions | Real-world simulation with casework-like materials [13] | Environmental controls, protocol standardization |
| Error Rate Calculation | False positive rate, false negative rate, overall accuracy [10] | 95% confidence intervals, statistical significance (p<0.05) |
| Uncertainty Quantification | Bayesian probability models, likelihood ratios [13] | Posterior probability distributions, calibration data |
| Comparative Analysis | Benchmarking against established methods [13] | Method comparison studies, equivalence testing |
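To make the uncertainty quantification row of the protocol table concrete, the sketch below shows how a likelihood ratio for a reported identification can be derived from measured rates and combined with prior odds. The function names and the numeric inputs are illustrative assumptions. In practice the prior is a matter for the trier of fact, not the analyst, so the posterior calculation is shown only to illustrate the arithmetic.

```python
def likelihood_ratio(sensitivity: float, false_positive_rate: float) -> float:
    """LR for a reported identification:
    P(identification | true match) / P(identification | true non-match)."""
    if not 0.0 <= sensitivity <= 1.0:
        raise ValueError("sensitivity must be between 0 and 1")
    if not 0.0 < false_positive_rate <= 1.0:
        raise ValueError("false_positive_rate must be in (0, 1]")
    return sensitivity / false_positive_rate


def posterior_probability(prior_probability: float, lr: float) -> float:
    """Bayes' rule in odds form: posterior odds = prior odds * LR."""
    if not 0.0 < prior_probability < 1.0:
        raise ValueError("prior_probability must be strictly between 0 and 1")
    prior_odds = prior_probability / (1.0 - prior_probability)
    posterior_odds = prior_odds * lr
    return posterior_odds / (1.0 + posterior_odds)


# Made-up validation results: 95% sensitivity, 1% false positive rate.
lr = likelihood_ratio(0.95, 0.01)
print(f"Likelihood ratio: {lr:.1f}")
for prior in (0.01, 0.5):
    print(f"prior={prior:.2f} -> posterior={posterior_probability(prior, lr):.3f}")
```

Because the likelihood ratio depends directly on the measured false positive rate, the confidence interval around that rate carries through to the strength of evidence that can reasonably be reported.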

Frye-Compliant Community Acceptance Documentation

For Frye standard compliance, researchers must demonstrate that error rate characterization follows generally accepted practices within the specific forensic discipline. The protocol includes:

- Systematic Literature Review: Comprehensive analysis of published error rates in peer-reviewed literature, documenting methodological consensus [13]

- Professional Society Surveys: Quantitative assessment of method acceptance through professionally administered surveys of relevant scientific communities [15]

- Proficiency Testing Analysis: Multi-laboratory studies demonstrating consistent performance across qualified laboratories [13]

- Standard Operating Procedure Documentation: Evidence that error rate determination follows established standards (e.g., ISO/IEC 17025) [13]

Experimental Workflow for Error Rate Validation

The following diagram illustrates the complete experimental workflow for forensic method validation that satisfies both Daubert and Frye standards:

Research Reagent Solutions for Validation Studies

Table 3: Essential Research Materials for Error Rate Validation

| Reagent/Material | Technical Specification | Application in Validation |
|---|---|---|
| Reference Standard Materials | NIST-traceable certified references | Method calibration and accuracy determination |
| Proficiency Test Panels | Blind-coded sample sets with known ground truth | False positive/negative rate calculation |
| Statistical Analysis Software | R, Python with scikit-learn, JMP | Error rate calculation with confidence intervals |
| Data Management System | LIMS with audit trail and version control | Chain of custody and data integrity maintenance |
| Quality Control Materials | Internal controls with established performance | Intra- and inter-laboratory reproducibility |
| Blinded Sample Sets | Casework-like materials with varying complexity | Real-world performance assessment |

Case Studies in Error Rate Application

fMRI-Based Lie Detection (Daubert Application)

In United States v. Semrau (2012), the court excluded fMRI-based lie detection evidence, noting the absence of known error rates outside laboratory settings and lack of uniform testing standards [13]. The failure to demonstrate reliability under real-world conditions highlights the necessity for ecological validity in error rate studies. Researchers must design studies that simulate forensic operational conditions rather than optimal laboratory environments.

Handwriting Analysis (Frye Application)

In Pettus v. United States (2012), the court admitted handwriting analysis testimony, finding general acceptance despite methodological criticisms [13]. This demonstrates that under Frye, the absence of precise error rates may not be fatal if the technique maintains broad community acceptance. Documentation should emphasize historical application and professional consensus rather than strict statistical validation.

Error rate analysis occupies fundamentally different positions in the Daubert and Frye standards. Daubert requires direct quantification of error rates through rigorous empirical testing, while Frye emphasizes community acceptance of error characterization practices. Forensic validation researchers should implement the comprehensive protocols outlined herein, including multi-center studies, appropriate statistical analysis, and thorough documentation of methodological consensus. This dual-path approach ensures forensic methods meet admissibility standards across jurisdictional boundaries, maintaining scientific rigor while satisfying legal imperatives.

Implementing Error Rate Protocols: From Theory to Practical Application

Forensic science validation is a critical process for ensuring that analytical methods are technically sound and produce robust, defensible results. The core principles of reproducibility, transparency, and error rate awareness form the foundation of scientifically valid forensic practice. These principles are mandated for laboratories accredited under international standards such as ISO/IEC 17025, though the standard does not prescribe specific validation frameworks, creating a need for consistent approaches across disciplines [16]. The integration of these principles ensures that forensic methods are fit for purpose and that their limitations are properly understood and communicated.

Recent shifts in forensic science emphasize a data-driven paradigm that prioritizes methods which are transparent, reproducible, intrinsically resistant to cognitive bias, and use the logically correct framework for evidence interpretation [17]. This paradigm aligns with the requirements of the new international standard ISO 21043, which provides requirements and recommendations designed to ensure the quality of the entire forensic process [17]. Within this context, proper validation becomes essential for demonstrating that methods meet the rigorous standards expected in modern forensic practice.

Core Principles and Their Theoretical Foundations

The Triad of Fundamental Principles

The validation of forensic methods rests on three interconnected principles that together ensure scientific reliability and legal admissibility. These principles provide the theoretical foundation for developing, implementing, and evaluating forensic methodologies across disciplines.

Reproducibility: This principle requires that forensic methods yield consistent results when the analysis is repeated. Reproducibility demonstrates that findings are not random or analyst-dependent but are inherent properties of the method itself. It encompasses both repeatability (same conditions, same analyst) and reproducibility in the narrower sense (different conditions, different analysts). The forensic-data-science paradigm specifically emphasizes the use of methods that are transparent and reproducible as a core requirement for scientific validity [17].

Transparency: Transparent methodologies ensure that all procedures, data, and decision-making processes are documented and open to scrutiny. The scientific and forensic communities widely endorse transparency as a core principle and fundamental obligation of forensic science reporting [18]. According to Elliott's taxonomy, transparency involves disclosing information about the scientist's authority, compliance, basis, justification, validity, disagreements, and context, providing multiple dimensions of openness for various stakeholders in the justice system [18].

Error Rate Awareness: Understanding and quantifying the limitations and potential error sources of a method is essential for proper interpretation of forensic results. Determination of reliability requires consideration of both method conformance and method performance [19]. Error rates alone do not adequately characterize method performance for non-binary conclusion scales, requiring more nuanced approaches to understanding and communicating methodological limitations [19].

Interrelationship of Principles

These three principles function as an interdependent system rather than isolated requirements. Transparency enables proper assessment of reproducibility by making methodologies and data available for independent verification. Error rate awareness depends on both reproducibility and transparency, as reliable error estimation requires multiple transparently reported trials. Together, they create a foundation for scientifically defensible forensic validation that meets legal and scientific standards [20] [16].

Table 1: Core Principles and Their Functions in Forensic Validation

| Principle | Primary Function | Key Components |

|---|---|---|

| Reproducibility | Ensures consistency and reliability of results | Repeatability, Replicability, Method robustness |

| Transparency | Enables scrutiny and verification of processes | Documentation openness, Procedure disclosure, Decision pathway clarity |

| Error Rate Awareness | Quantifies and communicates methodological limitations | Empirical validation, Performance characterization, Limitations communication |

Experimental Protocols for Validation Studies

Protocol for Method Reproducibility Assessment

Establishing reproducibility requires rigorous experimental design and statistical analysis. The following protocol provides a framework for assessing the reproducibility of forensic methods across multiple laboratories and conditions.

Objective: To determine whether a forensic method produces consistent results when performed by different analysts, using different instruments, across different laboratories, and over time.

Materials and Equipment: Standardized reference materials, calibrated instrumentation, controlled environmental conditions, documented procedures, data recording systems.

Procedure:

- Select a minimum of three independent analysts with appropriate qualifications and training.

- Prepare identical sets of test samples covering the method's intended scope, including known positives, known negatives, and borderline cases.

- Each analyst performs the method independently following the standardized procedure.

- Record all raw data, observations, and intermediate results.

- Repeat the analysis on multiple days to assess temporal variation.

- If applicable, conduct testing across multiple laboratory sites.

Data Analysis:

- Calculate concordance rates between analysts using percentage agreement or Cohen's kappa for categorical data.

- For continuous data, compute intra-class correlation coefficients (ICC) to assess consistency.

- Use analysis of variance (ANOVA) to partition sources of variation (between-analyst, between-day, between-site).

- Establish reproducibility criteria based on method requirements and stakeholder needs.

Interpretation: Qualitative methods demonstrating ≥90% agreement between independent analysts, and quantitative methods demonstrating ICC ≥0.9, are generally considered to have acceptable reproducibility. Quantitative methods may require more stringent criteria based on intended application.
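As an illustration of the concordance step, the following minimal sketch computes percentage agreement and Cohen's kappa for two analysts' categorical conclusions. The conclusion labels and the ratings are hypothetical. Cohen's kappa is defined for a pair of raters, so with three or more analysts it would have to be applied pairwise or replaced by a multi-rater statistic such as Fleiss' kappa.

```python
from collections import Counter


def percent_agreement(a, b):
    """Fraction of samples on which two analysts reached the same conclusion."""
    if len(a) != len(b) or not a:
        raise ValueError("rating lists must be non-empty and of equal length")
    return sum(x == y for x, y in zip(a, b)) / len(a)


def cohens_kappa(a, b):
    """Chance-corrected agreement between two analysts (categorical data)."""
    n = len(a)
    p_observed = percent_agreement(a, b)
    count_a, count_b = Counter(a), Counter(b)
    # Agreement expected by chance if each analyst assigned labels
    # independently, at their own observed category frequencies.
    p_expected = sum(count_a[c] * count_b[c] for c in set(a) | set(b)) / (n * n)
    if p_expected == 1.0:
        return 1.0  # both analysts used one identical category throughout
    return (p_observed - p_expected) / (1 - p_expected)


# Hypothetical conclusions on ten shared samples
# (ID = identification, EX = exclusion, INC = inconclusive).
analyst_1 = ["ID", "ID", "EX", "EX", "INC", "ID", "EX", "ID", "EX", "EX"]
analyst_2 = ["ID", "ID", "EX", "EX", "ID", "ID", "EX", "ID", "INC", "EX"]

print(f"agreement = {percent_agreement(analyst_1, analyst_2):.2f}")
print(f"kappa     = {cohens_kappa(analyst_1, analyst_2):.3f}")
```

In this made-up example the raw agreement is 80% while kappa is noticeably lower, which shows why a chance-corrected statistic is usually reported alongside percentage agreement.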

Protocol for Transparency Documentation

Transparency in forensic validation requires systematic documentation of all methodological details and decision processes. This protocol ensures comprehensive transparency throughout the validation process.

Objective: To create complete documentation that enables independent evaluation and verification of forensic methods and results.

Materials and Equipment: Document control system, version control protocol, standardized templates, data management platform.

Procedure:

- Document method development history, including rationale for procedural choices.

- Record complete methodological parameters with justifications for each selection.

- Maintain raw data in accessible formats with appropriate metadata.

- Document all quality control measures and acceptance criteria.

- Record any deviations from protocols with explanations and impact assessments.

- Create comprehensive validation reports including all experimental data.

Data Analysis:

- Implement the Elliott transparency taxonomy, addressing authority, compliance, basis, justification, validity, disagreements, and context [18].

- Use transparency assessment checklists to ensure all required elements are documented.

- Solicit external review to identify documentation gaps.

Interpretation: Documentation should enable competent professionals to understand, evaluate, and replicate the method without additional information. Transparency is achieved when all relevant information is accessible to appropriate stakeholders.

Protocol for Error Rate Characterization

Error rate characterization requires careful experimental design that reflects real-world forensic scenarios. This protocol addresses both traditional error rate calculation and modern approaches to understanding method performance.

Objective: To empirically characterize the performance and limitations of a forensic method, including its error rates, inconclusive rates, and factors affecting reliability.

Materials and Equipment: Representative sample sets, blinded testing materials, data collection forms, statistical analysis software.

Procedure:

- Develop test samples that represent the range of evidence encountered in casework, including challenging samples.

- Implement a blinded testing protocol to prevent analyst bias.

- Use a sufficiently large sample size to provide statistical power for error rate estimation.

- Include known ground truth samples to distinguish correct from incorrect results.

- Record all results, including inconclusive decisions, with detailed observations.

- Vary sample quality and characteristics to assess performance under different conditions.
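To give a feel for what "sufficiently large" can mean, the sketch below uses the standard binomial result for a study in which no errors are observed: with zero errors in n independent trials, the one-sided upper confidence bound on the true error rate is 1 - α^(1/n). The independence assumption, the 95% confidence level, and the function names are illustrative choices, not requirements taken from the protocol.

```python
import math


def upper_bound_zero_errors(n, confidence=0.95):
    """One-sided upper bound on the error rate when 0 errors occur in n trials."""
    alpha = 1 - confidence
    return 1 - alpha ** (1 / n)


def trials_needed(target_rate, confidence=0.95):
    """Smallest n for which 0 observed errors bounds the rate at or below target_rate."""
    alpha = 1 - confidence
    return math.ceil(math.log(alpha) / math.log(1 - target_rate))


# With 100 error-free comparisons the bound is close to 3/100 (the "rule of three").
print(f"{upper_bound_zero_errors(100):.4f}")

# Number of error-free trials needed to support a claim of "at most 1%".
print(trials_needed(0.01))
```

Under these assumptions, an error-free study of a few dozen samples cannot support a claim that the error rate is below one percent, which is one reason small validation sets should be reported with their bounds.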

Data Analysis:

- Calculate traditional error rates (false positive, false negative) where appropriate.

- For methods with non-binary conclusions, use more complete performance summaries that include rates of different conclusion types across sample types [19] [21].

- Analyze the relationship between sample characteristics and method performance.

- Evaluate both method performance (discriminatory capacity) and method conformance (adherence to procedures) as distinct concepts [19].

Interpretation: Report error rates with confidence intervals and contextual factors affecting performance. For methods producing inconclusive results, distinguish between appropriate inconclusives (due to evidence limitations) and inappropriate ones (due to method or analyst failure) [21]. Focus on providing comprehensive performance data rather than simplified error rates.
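One common way to attach a confidence interval to an observed error rate is the Wilson score interval, sketched below. The protocol does not prescribe a particular interval, so the choice of Wilson, the z value of 1.96 (roughly 95% confidence), and the example counts are assumptions for illustration only.

```python
import math


def wilson_interval(errors, trials, z=1.96):
    """Wilson score interval for a binomial proportion (errors / trials)."""
    if trials <= 0 or not 0 <= errors <= trials:
        raise ValueError("need trials > 0 and 0 <= errors <= trials")
    p = errors / trials
    denom = 1 + z**2 / trials
    centre = (p + z**2 / (2 * trials)) / denom
    half_width = (
        z * math.sqrt(p * (1 - p) / trials + z**2 / (4 * trials**2)) / denom
    )
    # Clamp to [0, 1] to absorb floating-point rounding at the extremes.
    return max(0.0, centre - half_width), min(1.0, centre + half_width)


# Hypothetical study: 3 false positives among 200 ground-truth negative samples.
low, high = wilson_interval(3, 200)
print(f"observed 1.5%, interval {low:.2%} to {high:.2%}")
```

Unlike the simple normal approximation, this interval remains usable when the observed count is very small or zero, which is often the case in forensic validation studies.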

Visualization of Forensic Validation Workflows

Validation Principle Interrelationship Diagram

Method Validation Decision Pathway

Quantitative Framework for Validation Metrics

A robust quantitative framework is essential for objectively assessing forensic validation parameters. The following tables provide standardized metrics for evaluating the core principles across different forensic disciplines.

Table 2: Reproducibility Assessment Metrics Across Forensic Disciplines

| Discipline | Sample Size Minimum | Acceptable Concordance Rate | Statistical Measure | Key Considerations |

|---|---|---|---|---|

| DNA Analysis | 30 replicates | ≥95% | Cohen's Kappa ≥0.8 | Contamination controls, stochastic effects |

| Toxicology | 20 replicates | ≥90% | ICC ≥0.9 | Matrix effects, calibration curves |

| Digital Forensics | 15 replicates | 100% | Percentage agreement | Tool verification, hash validation |

| Pattern Evidence | 25 blinded pairs | ≥85% | Cohen's Kappa ≥0.7 | Representative samples, difficulty variation |

| Chemical Analysis | 18 replicates | ≥90% | ICC ≥0.85 | Reference materials, instrument calibration |

Table 3: Error Rate Characterization Framework

| Performance Metric | Calculation | Interpretation | Casework Relevance |

|---|---|---|---|

| False Positive Rate | FP / (FP + TN) | Proportion of true negatives incorrectly identified as positives | Informs about wrongful inclusion risk |

| False Negative Rate | FN / (FN + TP) | Proportion of true positives incorrectly identified as negatives | Informs about missed detection risk |

| Inconclusive Rate | I / Total Cases | Proportion of cases where no definitive conclusion was reached | Distinguish appropriate vs. inappropriate inconclusives [21] |

| Method Conformance Rate | Conforming analyses / Total analyses | Measures adherence to defined procedures | Assesses implementation quality [19] |

| Discriminatory Power | Separation between known groups | Ability to distinguish between relevant conditions | Fundamental method performance measure |
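For methods with a three-level conclusion scale, a fuller performance summary can be obtained by tabulating every conclusion type separately for each ground-truth class. The sketch below does this for hypothetical counts. The labels and data are invented, and each rate's denominator is all comparisons of that ground-truth class, inconclusives included, which is one possible convention and differs from the FP / (FP + TN) form in the table.

```python
from collections import Counter, defaultdict


def conclusion_rates(records):
    """Rate of each conclusion type within each ground-truth class.

    records is an iterable of (ground_truth, conclusion) pairs.
    """
    counts = defaultdict(Counter)
    for truth, conclusion in records:
        counts[truth][conclusion] += 1
    return {
        truth: {c: k / sum(tally.values()) for c, k in tally.items()}
        for truth, tally in counts.items()
    }


# Hypothetical study results: 50 same-source and 50 different-source pairs.
records = (
    [("same_source", "identification")] * 40
    + [("same_source", "inconclusive")] * 6
    + [("same_source", "exclusion")] * 4
    + [("different_source", "identification")] * 1
    + [("different_source", "inconclusive")] * 9
    + [("different_source", "exclusion")] * 40
)

rates = conclusion_rates(records)
for truth, by_conclusion in rates.items():
    summary = ", ".join(f"{c}: {r:.0%}" for c, r in by_conclusion.items())
    print(f"{truth}: {summary}")
```

Reporting the full table keeps inconclusive decisions visible instead of folding them into a single error figure.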

The Researcher's Toolkit: Essential Materials and Reagents

Implementation of forensic validation protocols requires specific materials, reagents, and reference standards. The following toolkit details essential components for conducting validation studies across forensic disciplines.

Table 4: Essential Research Reagent Solutions for Forensic Validation

| Item | Function | Application Examples | Validation Role |

|---|---|---|---|

| Certified Reference Materials | Provides ground truth for method calibration | Drug standards, DNA quantitation standards, controlled substances | Establishes accuracy and precision benchmarks |

| Quality Control Samples | Monitors method performance over time | Internal quality control materials, proficiency test materials | Assesses long-term reproducibility |

| Blinded Test Sets | Enables objective performance assessment | Mock case samples, known and unknown samples | Eliminates bias in error rate studies |

| Documentation Templates | Standardizes validation documentation | Validation protocols, data recording forms, report templates | Ensures transparency and completeness |

| Statistical Analysis Software | Performs quantitative validation calculations | R, Python, specialized forensic software | Enables rigorous data analysis and error rate calculation |

| Instrument Calibration Standards | Verifies instrument performance | Mass spec calibration standards, optical standards | Ensures reproducible instrument responses |

Implementation Considerations and Challenges

Implementing robust validation protocols presents several practical challenges that require strategic approaches. Understanding these challenges is essential for developing effective validation frameworks that meet both scientific and legal requirements.

Inconclusive Decision Management: Inconclusive results present particular challenges for error rate calculation and interpretation. Rather than being simply "correct" or "incorrect," inconclusive decisions can be either appropriate or inappropriate given the evidence quality and methodological limitations [21]. Validation studies must therefore distinguish inconclusives that result from legitimate evidence limitations from those that result from methodological failures, which requires nuanced performance assessment frameworks.

Method Conformance vs. Performance: A critical distinction in modern forensic validation separates method conformance (whether analysts adhere to defined procedures) from method performance (the inherent discriminatory capacity of the method) [19]. Both dimensions are necessary for determining reliability, yet they address different aspects of validation. Method conformance relates to implementation quality, while method performance reflects fundamental capability, requiring separate but complementary validation approaches.

Transparency Implementation: While transparency is widely endorsed as a core principle, its implementation remains challenging due to ambiguous definitions and multiple stakeholder needs. Effective transparency requires disclosing information across seven dimensions: authority, compliance, basis, justification, validity, disagreements, and context [18]. This multidimensional challenge requires balancing competing demands through standardized templates coupled with ongoing collaboration among forensic scientists, legal stakeholders, and institutional bodies.

Data Quality Requirements: Validation of increasingly sophisticated forensic methods, particularly those incorporating artificial intelligence, requires large volumes of high-quality, representative data [22]. Such data collection can be expensive and labor-intensive, creating resource challenges. Additionally, privacy concerns with sensitive forensic data may limit data availability, requiring careful consideration of data governance and protection strategies during validation.

Application Notes on Error Rate Measurement

Error rate measurement is a critical process for quantifying the accuracy and reliability of a system or method. The following table summarizes the core concepts, contexts, and measurement approaches for error rates across different fields, illustrating the universal principle of comparing observed errors against total events [23] [24].

Table 1: Error Rate Measurement Frameworks Across Disciplines

| Component | Definition & Context | Measurement Methodology & Formula |

|---|---|---|

| Network Error Rate | Quantifies the frequency of errors in data transmission across a computer network [24]. | Calculated by comparing erroneous data packets to the total number transmitted over a specific period [24]. Formula: (Number of Erroneous Packets / Total Number of Packets Transmitted) × 100% [24]. |

| Payment Error Rate (PERM) | Measures the national improper payment rate for U.S. Medicaid and CHIP programs, as legally required [23]. | Uses a stratified random sampling of payments from each state. The improper payment rate is estimated by reviewing the samples and extrapolating the findings to the entire program [23]. |

| General Tool/Method Error Rate | The frequency of incorrect outcomes or decisions produced by a tool, method, or analytical procedure. | The fundamental calculation involves dividing the number of incorrect results by the total number of tests or analyses performed. Conceptual Formula: (Number of Incorrect Results / Total Number of Tests) × 100%. |

Experimental Protocol for Method Error Rate Determination

This protocol provides a detailed methodology for empirically determining the error rate of an analytical method, which is essential for forensic method validation.

Objective: To quantify the false positive and false negative rates of a defined analytical method under controlled conditions.

Materials and Reagents:

- Reference Standards: Certified reference materials (CRMs) or known positive/negative controls with validated purity.

- Calibrants: Solutions of known concentration for instrument calibration.

- Blanks: Appropriate matrix blanks to identify background interference or contamination.

- Sample Set: A blinded panel of samples with known ground truth, including true positives, true negatives, and challenging samples near the method's limit of detection.

Procedure:

- Method Definition and Training: Precisely document all steps of the standard operating procedure (SOP). Ensure all personnel are trained and competent in executing the method.

- Instrument Calibration: Calibrate all instruments and equipment according to manufacturer specifications and SOPs using the provided calibrants.

- Sample Preparation: Process the blinded sample panel in a randomized order to minimize systematic bias. Include reference standards and blanks within each batch.

- Data Acquisition: Run the prepared samples through the analytical instrument or process as defined by the method.

- Data Analysis and Interpretation: Analyze the raw data according to the established criteria. Record all results and interpretations without unblinding the sample identities.

- Result Comparison and Tabulation: Unblind the sample panel. Compare the method's results against the known ground truth for each sample.

- Error Rate Calculation:

  - False Positive Rate (FPR): Calculate as (Number of False Positives / Total Number of Ground-Truth Negative Samples) × 100%, where the denominator is false positives plus true negatives.

  - False Negative Rate (FNR): Calculate as (Number of False Negatives / Total Number of Ground-Truth Positive Samples) × 100%, where the denominator is false negatives plus true positives.

  - Overall Error Rate: Calculate as ((False Positives + False Negatives) / Total Number of Samples) × 100%.
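The tabulation and calculation steps of this protocol can be sketched as follows for a binary method. The function name and the sample panel are hypothetical, and the sketch assumes every sample receives a definite positive or negative call, so inconclusive results would need separate handling.

```python
def error_rates(truth, result):
    """Return FPR, FNR and overall error rate, each in percent.

    truth and result are equal-length sequences of booleans, where True
    means "positive" (ground truth and method call, respectively).
    """
    if len(truth) != len(result) or len(truth) == 0:
        raise ValueError("truth and result must be non-empty and equal in length")
    pairs = list(zip(truth, result))
    tp = sum(1 for t, r in pairs if t and r)
    tn = sum(1 for t, r in pairs if not t and not r)
    fp = sum(1 for t, r in pairs if not t and r)
    fn = sum(1 for t, r in pairs if t and not r)
    if fp + tn == 0 or fn + tp == 0:
        raise ValueError("panel needs both ground-truth positive and negative samples")
    return {
        "FPR": 100 * fp / (fp + tn),        # denominator: all ground-truth negatives
        "FNR": 100 * fn / (fn + tp),        # denominator: all ground-truth positives
        "overall": 100 * (fp + fn) / len(pairs),
    }


# Hypothetical unblinded panel: 20 ground-truth positives, 30 ground-truth negatives.
truth = [True] * 20 + [False] * 30
result = [True] * 18 + [False] * 2 + [True] * 1 + [False] * 29

rates = error_rates(truth, result)
print({name: round(value, 2) for name, value in rates.items()})
```

Because the overall rate depends on the mix of positives and negatives in the panel, it is usually more informative to report the false positive and false negative rates separately.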

Visualization of the Validation Workflow

The following diagram outlines the logical workflow for a comprehensive tool, method, and analysis validation process, from initial definition to final reporting.

Research Reagent Solutions for Validation Studies

The following table details key reagents and materials essential for conducting robust and reliable validation experiments.

Table 2: Essential Research Reagents for Method Validation

| Reagent / Material | Primary Function in Validation |

|---|---|

| Certified Reference Materials (CRMs) | Serves as the gold standard with traceable and certified properties to establish method accuracy, calibrate instruments, and act as positive controls [25]. |

| Matrix-Matched Calibrants | Calibration standards prepared in the same sample matrix (e.g., blood, soil) as the test samples to correct for matrix effects and improve quantitative accuracy. |

| Internal Standards (IS) | A known compound added in a constant amount to all samples and standards to correct for variability in sample preparation and instrument analysis. |

| Positive & Negative Controls | Used in every experimental run to monitor performance, detect contamination (negative control), and confirm the method is functioning correctly (positive control). |

Validating forensic methods requires robust frameworks for measuring performance and quantifying error. Two foundational approaches for this are proficiency testing and black-box studies. Proficiency testing evaluates the performance of individual practitioners or laboratories by having them analyze known samples, providing direct insight into operational competency and the reliable application of methods [26]. In contrast, black-box studies assess the validity of the forensic methods themselves by measuring the accuracy and reliability of examiner conclusions without revealing the internal decision-making processes, thus providing essential data on foundational method performance [27]. Together, these approaches form a critical evidence-based foundation for understanding and managing error within forensic science, helping to exclude the innocent from investigation and prevent wrongful convictions [27].

The recent Forensic Science Strategic Research Plan from the National Institute of Justice explicitly prioritizes "Measurement of the accuracy and reliability of forensic examinations (e.g., black box studies)" as a core objective for foundational research [27]. This highlights the growing recognition that systematic error rate measurement is not optional but essential for demonstrating the scientific validity of forensic disciplines. Furthermore, understanding 'error' is increasingly viewed not merely as a negative outcome to be avoided, but as a potent tool for continuous improvement and accountability, ultimately enhancing the reliability of forensic sciences and public trust [28].

Proficiency Testing: Concepts and Applications

Proficiency Testing (PT) is a quality assurance process that allows laboratories to monitor their analytical performance by comparing their results against established standards or peer groups. These programs are designed to be clinically relevant and are continuously reviewed by expert scientific committees to ensure they reflect real-world operational challenges [26]. Effective PT programs provide both individual evaluations and participant summary reports that deliver actionable insights, enabling laboratories to verify their analytical accuracy, assess staff competency, and demonstrate compliance with accreditation requirements [26].

The College of American Pathologists (CAP), for instance, offers a wide-ranging PT/External Quality Assessment (EQA) portfolio that incorporates both routine and esoteric programs across clinical and anatomic pathology. These programs allow laboratories to compare performance with some of the industry's largest peer groups, reinforcing confidence in results and providing educational discussions that enhance staff knowledge and competence [26]. For disciplines where no formal PT exists, alternative approaches such as Sample Exchange Registries connect laboratories to facilitate mutual performance assessment [26].

Strategic Importance in Research Priorities

The NIJ's Forensic Science Strategic Research Plan emphasizes proficiency testing as a strategic priority, specifically calling for "research regarding proficiency tests that reflect complexity and workflows" of actual casework [27]. This reflects an understanding that effective PT must mirror the complexity of real evidence rather than utilizing idealized samples. Research objectives include optimizing analytical workflows, evaluating the effectiveness of communicating reports and testimony, and implementing new technologies with appropriate cost-benefit analyses [27]. These priorities acknowledge that procedural aspects of forensic science—how methods are implemented and results communicated—are as critical as the analytical validity of the methods themselves.

Black-Box Studies: Design and Implementation

Black-box studies are designed to measure the accuracy and reliability of forensic examinations by presenting practitioners with evidence samples of known origin and recording their conclusions without observing their internal decision processes [27]. The term "black-box" here refers to the treatment of the examiner's cognitive and analytical processes as opaque—the focus is squarely on input-output relationships: what conclusions are reached from specific evidence samples. This methodology allows researchers to quantify performance metrics including true positive rates, false positive rates, true negative rates, and false negative rates across a representative sample of practitioners and case scenarios.

A critical insight from recent literature is that the false negative rate has been systematically overlooked in many forensic disciplines [29]. While recent reforms have focused predominantly on reducing false positives, the risk of false negatives—where a true source is incorrectly excluded—receives little empirical scrutiny despite its potential to undermine forensic integrity [29]. This is particularly problematic in cases involving a closed pool of suspects, where eliminations can function as de facto identifications, introducing serious risk of error with potentially severe consequences [29].

The Multiple Comparisons Problem

A significant challenge in interpreting black-box study results emerges from the multiple comparisons problem. In many forensic evaluations, a single conclusion relies on numerous comparisons, either implicitly or explicitly [30]. This problem is particularly acute in disciplines like toolmark examination, where matching a cut wire to a wire-cutting tool requires comparing multiple surfaces and alignments.

Research demonstrates that the family-wise false discovery rate increases dramatically with the number of comparisons performed. As shown in Table 1, even with a seemingly low single-comparison false discovery rate (FDR) of 0.70%, the family-wise rate stays at or below 10% only for up to 14 comparisons and reaches roughly 50% at 100 comparisons [30]. This has profound implications for forensic practice, particularly with growing database sizes and automated comparison algorithms that perform thousands of implicit comparisons.

Table 1: Impact of Multiple Comparisons on Family-Wise False Discovery Rate

| Single-Comparison FDR | 10 Comparisons | 100 Comparisons | 1000 Comparisons | Max Comparisons for ≤10% Family-Wise FDR |

|---|---|---|---|---|

| 7.24% [27] | 52.8% | 99.9% | 100.0% | 1 |

| 2.00% (Pooled) | 18.3% | 86.7% | 100.0% | 5 |

| 0.70% [31] | 6.8% | 50.7% | 99.9% | 14 |

| 0.45% [28] | 4.5% | 36.6% | 98.9% | 23 |

| 0.10% | 1.0% | 9.5% | 63.2% | 105 |

| 0.01% | 0.1% | 1.0% | 9.5% | 1053 |
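The growth pattern in the table is consistent with the usual formula for independent comparisons, 1 - (1 - p)^n, where p is the single-comparison rate and n the number of comparisons. The sketch below applies that formula. Independence and a constant per-comparison rate are simplifying assumptions, and the function names are illustrative and not taken from the cited study.

```python
import math


def family_wise_rate(p, n):
    """Probability of at least one false discovery in n independent comparisons."""
    return 1 - (1 - p) ** n


def max_comparisons(p, limit=0.10):
    """Largest n for which the family-wise rate stays at or below limit."""
    return math.floor(math.log(1 - limit) / math.log(1 - p))


for p in (0.02, 0.007, 0.001, 0.0001):
    print(
        f"p = {p:.2%}: "
        f"10 -> {family_wise_rate(p, 10):.1%}, "
        f"1000 -> {family_wise_rate(p, 1000):.1%}, "
        f"max n for <=10%: {max_comparisons(p)}"
    )
```

Under these assumptions the calculation gives 14 as the largest number of comparisons for a 0.70% single-comparison rate and 5 for the 2.00% pooled rate, matching the final column of the table.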

Experimental Protocols for Error Rate Studies

Protocol 1: Proficiency Testing for Forensic Laboratories

Objective: To assess laboratory performance in analyzing specific evidence types and ensure compliance with quality standards.

Materials:

- Proficiency test samples with predetermined characteristics

- Standardized reporting forms

- Reference materials for calibration

- Normal laboratory equipment and reagents

Procedure:

- Program Enrollment: Select PT programs that mirror the laboratory's casework complexity and evidence types [26].

- Sample Reception and Tracking: Document condition of PT samples upon receipt and incorporate into laboratory workflow using unique identifiers to maintain blinding.

- Analysis: Process samples following standard operating procedures identical to those used for casework.

- Reporting: Complete all result submissions by specified deadlines using standardized reporting formats.

- Performance Assessment: Compare laboratory results with reference values and peer group performance.

- Corrective Action: Implement root cause analysis for any identified deficiencies and document corrective actions.

Validation Parameters: Analytical accuracy, timeliness of reporting, adherence to procedures, and peer group comparison.

Protocol 2: Black-Box Study for Method Validation

Objective: To measure the accuracy and reliability of a forensic comparison method and estimate error rates.

Materials:

- Test sets with known ground truth (known source pairs and non-matching pairs)

- Randomized presentation platform

- Data collection instruments

- Multiple participating examiners representing varied experience levels

Procedure:

- Study Design: Create an open-set test design containing both matching and non-matching specimens reflective of casework complexity.

- Participant Recruitment: Engage examiners across multiple laboratories, ensuring appropriate representation of expertise levels.

- Blinding: Implement complete blinding to prevent participants from knowing they are in a study or having access to reference data.

- Task Administration: Present evidence comparisons in randomized order to minimize contextual bias.

- Data Collection: Record conclusions using standardized scales that include identification, exclusion, and inconclusive options.

- Data Analysis: Calculate false positive, false negative, and overall error rates with confidence intervals.

Validation Parameters: Sensitivity, specificity, reliability, and robustness across different evidence types and examiner experience levels.

Protocol 3: Validation of Algorithmic Forensic Tools

Objective: To validate automated comparison algorithms and quantify their performance relative to human examiners.

Materials:

- Reference database of known specimens

- Automated comparison software

- High-performance computing resources

- Standardized evaluation metrics

Procedure:

- Dataset Curation: Compile representative datasets with verified ground truth.

- Algorithm Configuration: Implement algorithm with predetermined similarity thresholds and comparison parameters.

- Testing: Execute pairwise comparisons across the dataset, recording similarity scores and proposed conclusions.

- Multiple Comparisons Adjustment: Account for implicit multiple comparisons in similarity search algorithms [30].

- Performance Benchmarking: Compare algorithm performance against human examiner performance from black-box studies.

- Validation Reporting: Document accuracy metrics, computational efficiency, and limitations.

Validation Parameters: Discrimination accuracy, computational efficiency, scalability, and resistance to database size effects.
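To make the Multiple Comparisons Adjustment step concrete, the following sketch shows how a small per-comparison false positive rate compounds across a database search. It assumes the comparisons are statistically independent, which is a simplification, and it uses the Šidák correction as one standard adjustment; it is not necessarily the approach taken in [30]. The rates and database size are hypothetical.

```python
def familywise_false_positive_probability(per_comparison_fpr: float, n_comparisons: int) -> float:
    """Probability of at least one false positive in n independent comparisons."""
    return 1.0 - (1.0 - per_comparison_fpr) ** n_comparisons


def sidak_per_comparison_rate(target_familywise: float, n_comparisons: int) -> float:
    """Per-comparison rate that keeps the family-wise rate at the target (Sidak)."""
    return 1.0 - (1.0 - target_familywise) ** (1.0 / n_comparisons)


# Hypothetical figures: a 0.1% per-comparison rate and a 1,000-item search.
fpr, n = 0.001, 1000
print(f"Family-wise probability: {familywise_false_positive_probability(fpr, n):.3f}")
print(f"Per-comparison rate for a 5% family-wise target: {sidak_per_comparison_rate(0.05, n):.6f}")
```

The point of the sketch is the direction of the effect: an error rate that looks negligible for a single comparison can dominate once the same threshold is applied across a large reference database.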

Visualization of Method Validation Pathways

Figure: Method Validation Pathway

The Multiple Comparisons Effect in Forensic Practice

Figure: Multiple Comparisons Effect

Research Reagent Solutions for Forensic Validation

Table 2: Essential Materials for Proficiency Testing and Black-Box Studies

| Item | Function | Application Examples |

|---|---|---|

| Reference Materials | Provide ground truth for validation studies | Certified reference standards for controlled substances; Known-source toolmarks and firearms [27] |

| Proficiency Test Samples | Assess laboratory performance under controlled conditions | Synthetic biological fluid mixtures; Fabricated toolmark specimens with known source [26] |

| Standardized Reporting Forms | Ensure consistent data collection across participants | Structured conclusion scales; Digital result submission platforms [26] |

| Blinded Test Sets | Prevent bias in black-box studies | Curated image sets for pattern evidence; Physical evidence subsets with verified origins [27] [29] |

| Statistical Analysis Tools | Calculate error rates and confidence intervals | Software for ROC analysis; Multiple comparisons correction algorithms [30] |

| Database Systems | Support reference collections and evidence tracking | Laboratory Information Management Systems (LIMS); Searchable forensic reference databases [27] |

Policy Implications and Future Directions

The findings from proficiency testing and black-box studies have profound implications for forensic science policy and practice. Current professional guidelines and major government reports have largely focused on false positives while neglecting the empirical validation of eliminations and false negatives [29]. This asymmetric approach to error creates significant gaps in forensic reliability that must be addressed through five key policy reforms:

First, balanced error rate reporting that includes both false positive and false negative rates must become standard practice in forensic validation studies [29]. Second, intuitive judgments used for eliminations must be subjected to the same empirical validation as identifications. Third, clear warnings should be developed against using eliminations to infer guilt in closed-pool scenarios where they function as de facto identifications. Fourth, multiple comparison effects must be quantified and controlled in both manual examinations and algorithmic searches [30]. Finally, post-market surveillance models, similar to those proposed for black-box medical algorithms, should be implemented for forensic methods to enable continuous validation and improvement [31].

The future of forensic validation lies in integrated approaches that combine rigorous black-box studies of foundational validity with ongoing proficiency testing that reflects real-world complexity. As forensic science continues to integrate automated algorithms and artificial intelligence, the multiple comparisons problem will intensify, necessitating sophisticated statistical controls and transparent error reporting [30]. Only through this comprehensive, evidence-based approach to performance measurement can forensic science fulfill its critical role in the justice system.

The 2008 investigation into the death of Caylee Anthony and the subsequent trial of her mother, Casey Anthony, represent a watershed moment for digital forensics. The case underscored the discipline's potential to reconstruct events from digital artifacts while simultaneously exposing a critical vulnerability: the lack of standardized validation and error rate measurement for digital forensic tools and methods. A central pillar of the prosecution's case was evidence of Google searches for "chloroform" recovered from a family computer, intended to demonstrate premeditation [32]. The integrity of this evidence was severely challenged because different forensic tools produced significantly different output when analyzing the same data [32]. This case study analyzes the validation failure that occurred and provides researchers and practitioners with a framework of protocols to quantify error rates and ensure the reliability of digital forensic methods, a necessity for any evidence presented in a legal or scientific context.

Case Background: Digital Evidence in Florida v. Anthony

Digital evidence featured prominently in the State's theory of premeditation. During a keyword search of the Anthony family computer, a forensic examiner discovered a database file from the Mozilla Firefox browser (a "Mork" database) in unallocated space, which contained a record of a visit to a website about "chloroform" [32].

- Initial Analysis: The initial examination used a tool (NetAnalysis v1.37) which recovered the history record and indicated a single visit to the chloroform-related webpage [32].

- Secondary Analysis & The Discrepancy: A second forensic tool was later used. Initially, this tool failed to parse the Mork database. After the developer made unspecified corrections, the tool recovered the data but presented a drastically different interpretation: it associated the "chloroform" record with a different URL (MySpace.com) and reported a visit count of 84 [32]. This conflation suggested a pattern of extensive research, which the defense argued misled the jury [32].

The court proceedings were also governed by legal frameworks that impacted the presentation of digital evidence. Florida's liberal discovery rules ensured the defense had access to all forensic images and reports [33]. Furthermore, the "Rule of Sequestration" (Florida Statute 90.616) was invoked, preventing witnesses from discussing the case or each other's testimony outside the courtroom. This rule limited the ability of the digital forensic team to collaborate or address the evolving testimony in real-time [33].

Analysis of a Validation Failure

The core of the validation failure lies in the inconsistent processing of the non-standard Mork database format.

- The Mork Database Challenge: The Mork format, used by early versions of Firefox, was a plain-text database known to be difficult to parse correctly. Its inefficient and complex structure made forensic interpretation prone to error without a thoroughly validated tool [32].

- Tool Discrepancy Explained: The critical discrepancy in the "visit count" stemmed from how each tool handled the database's internal pointers. The record for the chloroform-related webpage did not contain a specific "VisitCount" field. According to the Mork structure, the absence of this field implies a default value of 1. The first tool correctly interpreted this. The second tool, however, incorrectly associated the "VisitCount" of 84 from a separate MySpace.com record with the chloroform record, creating a false and highly prejudicial pattern of behavior [32].
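The default-value rule described above can be illustrated with a short sketch. It does not parse real Mork syntax and is not a reconstruction of either tool's logic: the records are hypothetical, already-decoded dictionaries, and the URLs are placeholders. It only shows the principle that a visit count should come from the record's own fields, with a fallback of 1 when the field is absent.

```python
DEFAULT_VISIT_COUNT = 1  # per the text above: a missing VisitCount field means one visit


def visit_count(record: dict) -> int:
    """Return the visit count from the record's own fields only."""
    raw = record.get("VisitCount")
    return DEFAULT_VISIT_COUNT if raw is None else int(raw)


# Hypothetical, already-decoded records (not real Mork syntax).
records = [
    {"URL": "http://example.test/chloroform-page"},             # no VisitCount field
    {"URL": "http://example.test/social", "VisitCount": "84"},  # explicit count
]

for record in records:
    print(record["URL"], visit_count(record))
```

A validation test corpus would ideally include records both with and without the optional field, so that a tool borrowing a value from a neighbouring record is caught before casework.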

Table 1: Summary of Digital Evidence Discrepancies in the Casey Anthony Trial

| Forensic Aspect | Tool A (NetAnalysis) | Tool B (Unnamed) | Nature of Discrepancy |

|---|---|---|---|

| Chloroform Record Visit Count | Single Visit (1) | 84 visits | Tool B incorrectly associated a visit count from a different record (MySpace). |

| Chloroform Record Association | Correctly linked to its own URL | Incorrectly linked to a MySpace URL | Tool B misattributed metadata between different database entries. |

| Total Records Recovered | 8,877 | 8,557 | Tool B failed to recover 320 records present in the dataset [32]. |

| Mork Format Parsing | Successful initial parsing | Required developer intervention post-discovery | Tool B lacked robust, pre-validated support for the complex Mork format. |

Proposed Experimental Protocols for Error Rate Measurement

Inspired by scientific guidelines for evaluating forensic validity [34], the following protocols provide a framework for the empirical measurement of digital forensic tool performance.

Protocol 1: Black Box Tool Validation for File Format Parsing

This protocol is designed to measure the accuracy and error rates of forensic tools when processing specific digital file formats.

- Objective: To determine the false positive and false negative rates of digital forensic tools in parsing known, complex file formats (e.g., Mork, SQLite, custom databases).

- Materials:

  - Test Corpus: A ground-truthed dataset containing a known number of records across a variety of file formats.

  - Toolset: The digital forensic tools to be validated.

  - Analysis Workstation: A clean, standardized computer system for tool installation.

- Procedure:

  - Step 1: Generate a controlled test corpus with a verified number of data records (e.g., 10,000 browser history entries with known URLs and visit counts).

  - Step 2: Process the test corpus using each tool in the toolset.

  - Step 3: Compare the tool's output against the ground-truthed dataset.

  - Step 4: Classify and count discrepancies:

    - False Negative: A record present in the ground truth that the tool failed to recover.

    - False Positive: A record reported by the tool that does not exist in the ground truth.

    - Misattribution Error: A record that was recovered but with incorrect associated metadata (e.g., wrong visit count or URL).

- Data Analysis:

  - Calculate error rates using the formulas in Table 2 below.

Table 2: Error Rate Calculations for Digital Forensic Tool Validation

| Error Metric | Calculation Formula | Application in the Casey Anthony Case |

|---|---|---|

| False Negative Rate | (Number of False Negatives / Total Actual Positives) * 100 | Tool B's failure to recover 320 records resulted in a false negative rate of approximately 3.6% for the overall dataset [32]. |

| False Positive Rate | (Number of False Positives / Total Reported Positives) * 100 | The creation of the "84 visits" to a chloroform site would be classified as a misattribution error, a specific type of false positive. |

| Misattribution Rate | (Number of Misattributed Records / Total Correctly Recovered Records) * 100 | The central failure was the misattribution of the visit count, highlighting the need for this specific metric. |
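The formulas in Table 2 can be applied with a short script. The sketch below is illustrative and makes several assumptions: each record is identified by a hypothetical key, metadata is compared as a whole tuple, and "Total Correctly Recovered Records" is interpreted as the set of recovered records that also exist in the ground truth. The small dataset is invented; only the 320 and 8,877 figures come from Table 1, with Tool A's record count taken as the reference total.

```python
def percent(numerator: int, denominator: int) -> float:
    """Percentage, or NaN when the denominator is zero."""
    return 100.0 * numerator / denominator if denominator else float("nan")


def tool_error_rates(ground_truth: dict, tool_output: dict) -> dict:
    """Compare tool output to ground truth; both map record key -> metadata tuple."""
    truth_keys, tool_keys = set(ground_truth), set(tool_output)
    recovered = truth_keys & tool_keys          # records the tool found that really exist
    false_negatives = truth_keys - tool_keys    # real records the tool missed
    false_positives = tool_keys - truth_keys    # reported records that do not exist
    misattributed = {k for k in recovered if tool_output[k] != ground_truth[k]}
    return {
        "false_negative_rate": percent(len(false_negatives), len(truth_keys)),
        "false_positive_rate": percent(len(false_positives), len(tool_keys)),
        "misattribution_rate": percent(len(misattributed), len(recovered)),
    }


# Invented four-record corpus: (url, visit_count) per record key.
truth = {"r1": ("a", 1), "r2": ("b", 84), "r3": ("c", 2), "r4": ("d", 1)}
tool = {"r1": ("a", 84), "r2": ("b", 84), "r3": ("c", 2), "r5": ("e", 1)}
print(tool_error_rates(truth, tool))

# Figures from Table 1: 320 unrecovered records out of 8,877.
print(f"{percent(320, 8877):.1f}%")
```

Note that the false positive rate in Table 2 is expressed relative to the records the tool reported, since a record-recovery task has no natural count of true negatives.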

Protocol 2: Inter-Tool Reliability Testing

This protocol assesses the consistency of results across different tools and versions, directly addressing the scenario encountered in the case study.

- Objective: To measure the consensus and divergence in output between multiple forensic tools analyzing the same evidence dataset.

- Procedure:

  - Step 1: Select a diverse set of forensic tools designed for the same purpose (e.g., browser history parsing).

  - Step 2: Run each tool against a standardized, complex evidence image.

  - Step 3: Extract key data points from each tool's output (e.g., list of URLs, timestamps, visit counts).

  - Step 4: Perform a differential analysis to identify all points of disagreement in the recovered data.

- Data Analysis: Report the percentage of records where all tools agree, and catalog all instances of disagreement for root-cause analysis.
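The differential analysis and agreement percentage can be sketched as follows. This is a minimal illustration under stated assumptions: each tool's output has already been normalized into a dictionary keyed by a hypothetical record identifier, values are tuples such as (URL, visit count), and a record missing from one tool counts as a disagreement. The tool names and data are invented.

```python
def inter_tool_agreement(outputs: dict) -> tuple:
    """Return (percent of records on which all tools agree, disagreements by record)."""
    all_keys = set().union(*(out.keys() for out in outputs.values()))
    if not all_keys:
        raise ValueError("no records to compare")
    disagreements = {}
    for key in sorted(all_keys):
        # A record a tool did not report appears as None for that tool.
        values = {tool: out.get(key) for tool, out in outputs.items()}
        if len(set(values.values())) > 1:
            disagreements[key] = values
    agreement = 100.0 * (len(all_keys) - len(disagreements)) / len(all_keys)
    return agreement, disagreements


# Invented outputs from two hypothetical tools: record id -> (url, visit_count).
outputs = {
    "tool_a": {"rec1": ("http://example.test/page", 1), "rec2": ("http://example.test/social", 84)},
    "tool_b": {"rec1": ("http://example.test/page", 84), "rec2": ("http://example.test/social", 84)},
}

agreement, disagreements = inter_tool_agreement(outputs)
print(f"All tools agree on {agreement:.1f}% of records")
for key, values in disagreements.items():
    print(key, values)  # catalog each disagreement for root-cause analysis
```

Agreement between tools is not evidence of correctness on its own, since tools can share a defect; the catalog of disagreements is the more useful output for root-cause analysis.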

The workflow for implementing these validation protocols is outlined in the diagram below.

Figure: Digital Forensic Tool Validation Workflow

The Scientist's Toolkit: Research Reagent Solutions

For researchers developing or validating digital forensic methods, the following "reagents" are essential.

Table 3: Essential Materials for Digital Forensic Validation Research

| Research Reagent / Material | Function in Validation |

|---|---|

| Ground-Truthed Test Corpora | Provides the known data standard against which tool output is compared to calculate false positive/negative rates. |

| Forensic Tool Suites (Commercial & Open-Source) | The instruments under test; a diverse set is needed for inter-tool reliability studies. |

| Reference Data Sets (e.g., CFReDS) | Standardized, pre-built digital evidence images for controlled testing and tool comparison. |

| Scripting Frameworks (Python, PowerShell) | Automates the processing of large test corpora and the comparison of tool outputs against ground truth. |

| Statistical Analysis Software (R, Python libraries) | Calculates error rates and confidence intervals, and performs other statistical analyses on validation data. |

The Casey Anthony trial exemplifies that the output of a forensic tool is not synonymous with ground truth. Without rigorous, empirical validation and known error rates, digital evidence can be more misleading than informative. The proposed protocols for black-box testing and inter-tool reliability provide a pathway to the quantifiable error rates demanded by scientific standards and legal frameworks like Daubert [34].

Moving forward, the digital forensics community must adopt a culture of transparency and continuous validation. This includes:

- Mandatory Pre-Trial Validation: Tools must be validated against the specific file formats and data structures present in a case before evidence is presented in court.

- Epistemic Modesty: Expert witnesses must clearly communicate the limitations of their tools and the potential for error, much like the standards called for in other forensic disciplines [35].