Mastering Likelihood Ratio Calculation with Dirichlet-Multinomial Models: A Comprehensive Guide for Biomedical Researchers

Abstract

This comprehensive guide explores likelihood ratio calculation within Dirichlet-multinomial (DM) models, addressing critical challenges in analyzing overdispersed multivariate count data prevalent in biomedical research. Covering foundational theory to advanced applications, we detail how DM models extend multinomial frameworks to handle extra-multinomial variation in datasets like microbiome compositions, mutational signatures, and transcriptomics. The article provides practical methodologies for stable likelihood computation, Bayesian estimation techniques including Hamiltonian Monte Carlo and spike-and-slab variable selection, and validation approaches through simulation studies. Researchers will gain actionable insights for implementing these techniques to improve diagnostic test characterization, biomarker discovery, and clinical decision-making in drug development and precision medicine.

Understanding Dirichlet-Multinomial Foundations: From Multinomial Limitations to Overdispersed Data Solutions

The Challenge of Overdispersed Multivariate Count Data in Biomedical Research

In biomedical research, the accurate analysis of multivariate count data—where multiple count outcomes are recorded for each sample or subject—is crucial in areas such as genomics, microbiome studies, and mutational signature analysis. These data are characterized by their compositionality, where the total count per sample is fixed or constrained, and the scientific interest lies in the relative abundances across categories [1] [2]. A recurring statistical challenge with such data is overdispersion, where observed variability exceeds what standard probability models can explain. This phenomenon arises from various sources, including biological heterogeneity, technical artifacts, clumped sampling, or correlation between individual responses [3]. When unaccounted for, overdispersion leads to underestimated standard errors, inflated type I errors, and compromised reproducibility of scientific findings [3] [4].

The Dirichlet-multinomial (DM) model has emerged as a fundamental tool for addressing overdispersion in multivariate count data. As a compound distribution, it extends the standard multinomial by allowing its probability parameters to follow a Dirichlet distribution, thereby incorporating extra-multinomial variation [3] [2]. This application note details the implementation of DM-based models within a likelihood framework, providing experimental protocols and analytical workflows to help researchers overcome the challenges posed by overdispersed multivariate count data in biomedical studies.

Quantitative Comparison of Statistical Models for Overdispersed Count Data

The table below summarizes the characteristics and performance of common statistical models used for multivariate count data, highlighting their capacity to handle different correlation structures and overdispersion.

Table 1: Comparison of Regression Models for Multivariate Count Data

| Model | Correlation Structure | Handles Overdispersion? | Relative Performance (Type I Error Control) | Relative Performance (Power) |
|---|---|---|---|---|
| Multinomial (MN) | Negative only | No | Poor (Seriously inflated error) [2] | Good [2] |
| Dirichlet-Multinomial (DM) | Negative only | Yes | Moderate (Can be conservative) [2] | Good [2] |
| Negative Multinomial (NM) | Positive only | Yes | Poor (Inflated error) [2] | Good [2] |
| Generalized Dirichlet-Multinomial (GDM) | General (Positive & Negative) | Yes | Good (Well controlled) [2] | Good [2] |
| Poisson-Beta (PB) | Flexible | Yes | Superior power & narrower CIs reported [5] | Superior power reported [5] |

The limitations of the standard multinomial model are particularly evident in high-dimensional settings. Its restrictive mean-variance structure and inherent assumption of purely negative correlations between counts are often violated in real-world data [2]. For instance, in RNA-seq data analysis, using a multinomial-logit model for exon set counts can lead to seriously inflated type I errors when testing null predictors [2]. More flexible models like the Dirichlet-multinomial and its extensions, including the Generalized Dirichlet-multinomial (GDM) and Poisson-Beta (PB) models, provide robust alternatives that can adapt to various correlation patterns and effectively account for overdispersion [5] [2].

Experimental Protocol for DM Model Application in Mutational Signature Analysis

Background and Objective

Mutational signature analysis characterizes the imprints of diverse mutational processes active during tumour evolution. The data consists of multivariate counts of mutations classified into different signature categories, with exposures (abundances) measured per sample [1]. The objective is to determine differential abundance of mutational signatures between conditions (e.g., clonal vs. subclonal mutations) while accounting for within-patient correlations and between-signature dependencies [1].

Materials and Reagent Solutions

Table 2: Essential Research Reagents and Computational Tools

| Item Name | Function/Application |
|---|---|
| Whole-Genome Sequencing (WGS) Data | Primary data source for identifying mutations and their clonal status. |
| COSMIC Mutational Signatures Database | Reference database of known mutational signatures (e.g., SBS signatures) for annotation [1]. |
| Clonal Architecture Inference Tool | Software (e.g., consensus from multiple methods) to classify mutations as clonal or subclonal [1]. |
| Quadratic Programming Solver | Computational method for estimating signature exposures from observed mutation counts [1]. |
| R Statistical Software with CompSign Package | Implementation of the Dirichlet-multinomial mixed model for differential abundance testing [1]. |

Step-by-Step Procedure

Sample Preparation and Data Generation:

- Perform whole-genome sequencing on tumour samples according to established protocols.

- Identify somatic mutations (e.g., single-base substitutions) using a standardized bioinformatics pipeline.

Clonal Classification of Mutations:

- Classify each mutation as clonal or subclonal using a clonal architecture inference tool (e.g., a consensus from multiple methods) [1].

Mutational Signature Exposure Estimation:

- For each sample and mutation group (clonal and subclonal), categorize mutations into the 96 trinucleotide contexts.

- Using a quadratic programming approach, decompose the observed mutational profile into a linear combination of COSMIC signatures [1].

- Extract the exposure matrix, where each element represents the count of mutations attributable to a specific signature in a given sample and group [1].

Statistical Modeling with Dirichlet-Multinomial Mixed Model:

- Model Specification: Implement a Dirichlet-multinomial mixed model with multivariate random effects to account for within-patient correlations and correlations between signatures. Include a group-specific dispersion parameter to accommodate different variability in clonal and subclonal groups [1].

- Parameter Estimation: Use the Laplace analytical approximation (LA) to evaluate the high-dimensional integrals induced by the random effect structure, ensuring computational feasibility [1].

- Hypothesis Testing: Test for fixed effects corresponding to the group (clonal vs. subclonal) to identify signatures with statistically significant differential abundance.

Interpretation and Validation:

- Identify mutational processes that contribute preferentially to clonal or subclonal mutations.

- Report signatures with significantly higher dispersion in the subclonal group, indicating higher inter-patient variability in later stages of tumour evolution [1].

- Biologically validate findings through literature review and experimental follow-up where feasible.

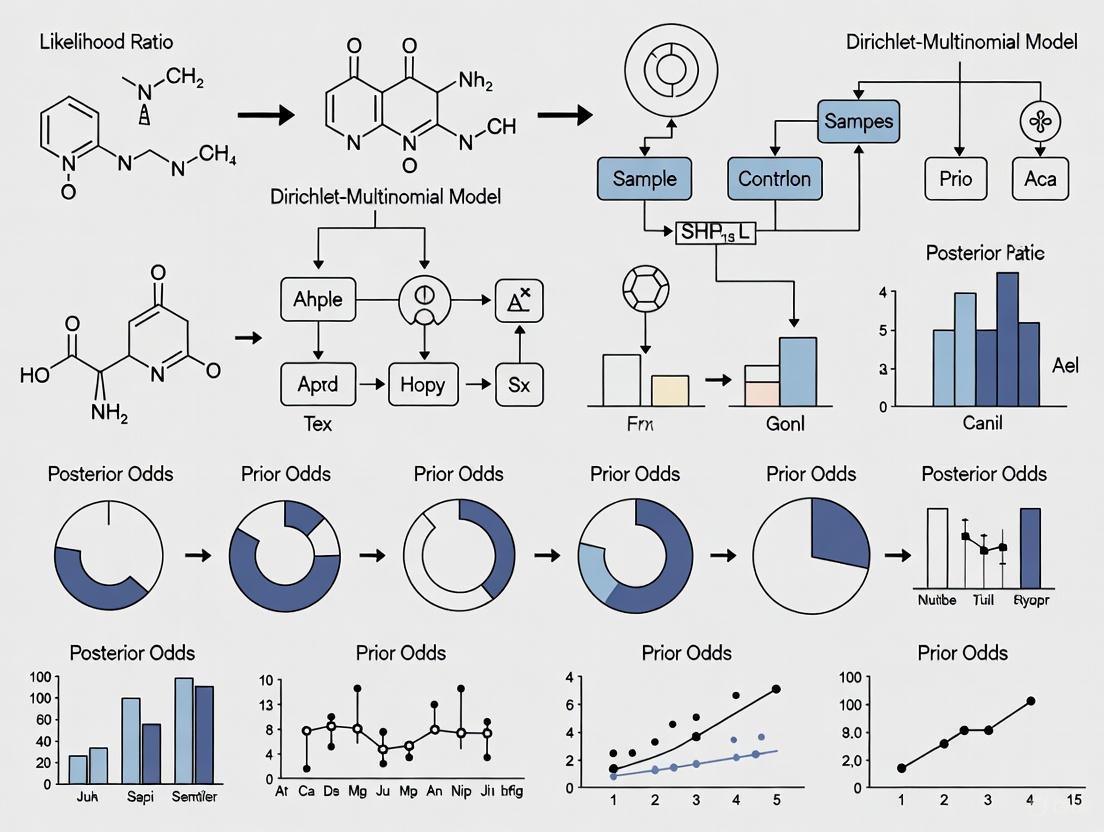

Figure 1: Experimental workflow for mutational signature differential abundance analysis using a Dirichlet-multinomial model.

Advanced Modeling Frameworks and Extensions

The Dirichlet-Multinomial Mixture and Extended Models

To address the limitation of the standard DM model, which imposes negative correlations only, several extended frameworks have been developed:

Extended Flexible Dirichlet-Multinomial (EFDM) Model: This structured DM mixture generalizes the standard DM by allowing for both positive and negative correlations between taxa [6]. Its mixture structure naturally accommodates excessive zeros common in microbiome data and provides explicit expressions for inter- and intraclass correlations, enhancing interpretability of complex microbial interactions [6].

Deep Dirichlet-Multinomial (DDM) Model: This approach uses a restricted mixture of DM distributions with an architecture resembling a deep learning hidden layer, providing enhanced flexibility to capture complex overdispersion patterns in high-dimensional settings [3].

Poisson-Beta (PB) Regression: As an alternative to negative binomial and zero-inflated models, the PB model offers superior flexibility for count data. It is a Poisson mixture where the underlying rate parameter follows a scaled Beta distribution [5]. While computationally challenging, recent advances expressing its density in terms of Kummer's function have enabled its implementation in multivariate regression frameworks, yielding narrower confidence intervals and superior statistical power compared to common alternatives [5].

Model Selection Workflow

The following diagram illustrates a logical pathway for selecting an appropriate model based on data characteristics and research objectives.

Figure 2: Model selection framework for overdispersed multivariate count data.

Implementation Considerations

Successful implementation of DM models and their extensions requires careful attention to several practical aspects:

Computational Complexity: The likelihood evaluation for advanced models like the Poisson-Beta distribution can be computationally intensive, requiring specialized algorithms such as recurrence relationships for Kummer's function to ensure feasible computation times [5].

Software Implementation: The GAMLSS (Generalized Additive Models for Location, Scale, and Shape) package in R provides a flexible platform for regression modeling with over 100 response distribution choices, including many suitable for count data [5]. This framework allows both mean and dispersion parameters to be modeled as functions of covariates.

Study Design: In high-dimensional settings, careful study design is crucial. This includes randomization of biospecimens to assay batches to avoid confounding, adequate sample size considerations that account for multiplicity, and clear distinction between biological and technical replicates [4].

Handling Missing Data: In longitudinal studies like Micro-Randomized Trials, missing data can introduce bias in parameter estimates. Preplanned strategies for minimizing and addressing missing data are essential for maintaining study validity [7].

The Dirichlet-multinomial model and its extended frameworks provide powerful statistical tools for addressing the pervasive challenge of overdispersion in multivariate count data from biomedical research. By moving beyond the restrictive assumptions of standard multinomial models, these approaches enable more accurate inference, better error control, and more reproducible findings in genomics, microbiome research, and mutational signature analysis. The experimental protocols and model selection framework presented here offer researchers practical guidance for implementing these methods in their investigations, ultimately supporting more robust and reliable scientific discoveries in precision medicine and public health.

Multinomial Distribution Limitations and Extra-Multinomial Variation

The multinomial distribution serves as a fundamental probability model for multivariate count data where observations are classified into multiple mutually exclusive categories. It generalizes the binomial distribution to more than two outcomes, modeling the probability of counts for each side of a k-sided die rolled n times [8]. For \(n\) independent trials, each leading to a success for exactly one of \(k\) categories with fixed probabilities \(p_1, p_2, \ldots, p_k\) (where \(\sum_{i=1}^k p_i = 1\)), the multinomial distribution gives the probability of any particular combination of numbers of successes for the various categories [8]. The probability mass function (PMF) is defined as: \[ f(x_1, \ldots, x_k; n, p_1, \ldots, p_k) = \frac{n!}{x_1! \cdots x_k!} p_1^{x_1} \cdots p_k^{x_k} \] for non-negative integers \(x_1, \ldots, x_k\) satisfying \(\sum_{i=1}^k x_i = n\) [8].
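
As a minimal sketch of the PMF above, the following Python snippet evaluates it on the log scale with `math.lgamma` so that factorials of large counts do not overflow. The function names `multinomial_log_pmf` and `multinomial_pmf` are illustrative choices, not taken from any package cited here.

```python
import math

def multinomial_log_pmf(counts, probs):
    """Log PMF of a multinomial with n = sum(counts) trials."""
    n = sum(counts)
    # log of the multinomial coefficient n! / (x_1! ... x_k!)
    log_coef = math.lgamma(n + 1) - sum(math.lgamma(x + 1) for x in counts)
    log_kernel = 0.0
    for x, p in zip(counts, probs):
        if x > 0:
            if p <= 0.0:
                # a positive count in a zero-probability category is impossible
                return float("-inf")
            log_kernel += x * math.log(p)
    return log_coef + log_kernel

def multinomial_pmf(counts, probs):
    return math.exp(multinomial_log_pmf(counts, probs))

print(multinomial_pmf([1, 1], [0.5, 0.5]))          # two trials, one success in each category
print(multinomial_pmf([2, 1, 0], [0.5, 0.3, 0.2]))  # three trials across three categories
```

Working on the log scale is the usual design choice here, because sequencing-scale counts make the raw factorials far too large for floating-point arithmetic.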

Despite its widespread application, the multinomial distribution possesses significant limitations that restrict its utility for modeling real-world data, particularly in biomedical research. The most notable constraints include its inflexible variance structure, inherent negative correlations between categories, and inability to account for overdispersion—the situation where observed variance exceeds nominal variance [6]. These limitations become critically problematic when analyzing modern high-dimensional biological data such as microbiome sequencing counts and mutational signature exposures, where variability between samples often far exceeds what the standard multinomial model can capture [6] [1].

Key Limitations of the Standard Multinomial Model

Rigid Variance-Covariance Structure

The multinomial distribution imposes a fixed relationship between the mean and variance, with no separate parameter to control dispersion. This rigidity makes it unsuitable for real-world data exhibiting overdispersion.

Table 1: Key Properties and Limitations of the Multinomial Distribution

| Property | Mathematical Expression | Practical Implication |
|---|---|---|
| Mean | \(\operatorname{E}(X_i) = n p_i\) | Expected counts scale linearly with \(n\) and probabilities \(p_i\) |
| Variance | \(\operatorname{Var}(X_i) = n p_i (1 - p_i)\) | Variance is always less than the mean; no overdispersion allowed |
| Covariance | \(\operatorname{Cov}(X_i, X_j) = -n p_i p_j\) for \(i \neq j\) | Inherent negative correlations between categories |
| Correlation | \(\rho(X_i, X_j) = -\sqrt{\frac{p_i p_j}{(1 - p_i)(1 - p_j)}}\) | Correlation structure completely determined by probabilities |

As shown in Table 1, the variance and covariance structure of the multinomial distribution is completely determined by the number of trials \(n\) and the category probabilities \(p_i\) [8]. This means that for given parameters, the variability in the data is fixed, an assumption frequently violated in practice. For instance, in microbiome data, the variability between biological replicates often exceeds what the multinomial variance would predict, even after accounting for technical variability [6].
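
To make the fixed moment structure concrete, here is a small sketch that builds the multinomial mean vector and covariance matrix from \(n\) and the probabilities alone, then checks the correlation against its closed form. The helper names are hypothetical and the input values are arbitrary.

```python
import math

def multinomial_moments(n, probs):
    """Mean vector and covariance matrix implied by n and the probabilities."""
    k = len(probs)
    mean = [n * p for p in probs]
    cov = [
        [
            n * probs[i] * (1 - probs[i]) if i == j else -n * probs[i] * probs[j]
            for j in range(k)
        ]
        for i in range(k)
    ]
    return mean, cov

def correlation(cov, i, j):
    """Correlation between categories i and j from a covariance matrix."""
    return cov[i][j] / math.sqrt(cov[i][i] * cov[j][j])

probs = [0.5, 0.3, 0.2]
mean, cov = multinomial_moments(10, probs)
closed_form = -math.sqrt(probs[0] * probs[1] / ((1 - probs[0]) * (1 - probs[1])))
print(mean, cov[0][0], cov[0][1])
print(correlation(cov, 0, 1), closed_form)  # both give the same negative value
```

Note that \(n\) cancels in the correlation, so no choice of sample size can make any pair of categories positively correlated under this model.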

Inherent Negative Correlations Between Categories

The multinomial distribution imposes negative correlations between all pairs of categories, as evidenced by the negative sign in the covariance formula [8]. This constraint arises because an increase in one category must be compensated by decreases in others due to the fixed sum constraint (\(n\)). In many real-world applications, this assumption is unrealistic. For example, in microbial ecology, certain taxa often co-occur (exhibiting positive correlations) due to shared environmental preferences or synergistic metabolic relationships [6]. The standard multinomial model cannot capture such positive associations, potentially leading to flawed ecological inferences.

Inability to Accommodate Extra-Multinomial Variation

The multinomial distribution assumes that the probability parameters \(p_i\) remain fixed across all trials and that all observations come from the same underlying population. In practice, however, heterogeneity often exists between samples or populations, leading to "extra-multinomial variation" or overdispersion [6] [9]. When this occurs, the variability in the observed counts exceeds what the multinomial model predicts, resulting in underestimated standard errors and potentially inflated Type I error rates in statistical tests. This limitation is particularly problematic in high-dimensional biological data analysis, where unmeasured covariates and biological heterogeneity contribute substantial additional variability [6] [1].

The Dirichlet-Multinomial Model: Addressing Multinomial Limitations

Model Formulation and Theoretical Foundation

The Dirichlet-multinomial (DM) model represents a fundamental extension to the standard multinomial that effectively addresses its overdispersion limitation. As a compound distribution, it arises by allowing the multinomial probability parameters to follow a Dirichlet distribution. Specifically, if \[ (Y_1, \ldots, Y_D) \mid \boldsymbol{\pi} \sim \text{Multinomial}(n, \pi_1, \ldots, \pi_D) \] and \[ \boldsymbol{\pi} \sim \text{Dirichlet}(\alpha_1, \ldots, \alpha_D) \] then the marginal distribution of the counts after integrating out \(\boldsymbol{\pi}\) is Dirichlet-multinomial [6] [1].

This hierarchical formulation provides a mechanism to account for population-level heterogeneity in the probability parameters. Whereas the standard multinomial assumes fixed \(p_i\), the DM model allows these probabilities to vary between samples according to the Dirichlet distribution, thereby accommodating the extra-multinomial variation commonly observed in real datasets [6].
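
The two-stage construction can be simulated directly. The sketch below draws a Dirichlet vector by normalizing independent gamma variates, then draws multinomial counts given that vector, and compares the spread of the first category with a plain multinomial using the same mean proportions. The values of `n`, `alpha`, the seed, and the number of replicates are arbitrary illustration choices.

```python
import random

def sample_dirichlet(alpha, rng):
    # A Dirichlet draw is a vector of independent Gamma(alpha_k, 1) draws, normalized
    draws = [rng.gammavariate(a, 1.0) for a in alpha]
    total = sum(draws)
    return [d / total for d in draws]

def sample_multinomial(n, probs, rng):
    counts = [0] * len(probs)
    for idx in rng.choices(range(len(probs)), weights=probs, k=n):
        counts[idx] += 1
    return counts

def sample_dm(n, alpha, rng):
    # Stage 1: sample-specific proportions; stage 2: counts given those proportions
    return sample_multinomial(n, sample_dirichlet(alpha, rng), rng)

def sample_variance(values):
    m = sum(values) / len(values)
    return sum((v - m) ** 2 for v in values) / (len(values) - 1)

rng = random.Random(0)
n, alpha = 50, [1.0, 1.0, 1.0]
probs = [a / sum(alpha) for a in alpha]
dm_first = [sample_dm(n, alpha, rng)[0] for _ in range(2000)]
mn_first = [sample_multinomial(n, probs, rng)[0] for _ in range(2000)]
print(sample_variance(dm_first), sample_variance(mn_first))
```

With these settings the DM variance of the first category should come out an order of magnitude larger than the multinomial one, which is the extra-multinomial variation the text describes.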

Advantages Over the Standard Multinomial

The DM model provides several critical advantages that make it particularly suitable for analyzing complex biological data:

Explicit Overdispersion Parameter: The concentration parameters \(\alpha_i\) of the Dirichlet distribution control the degree of overdispersion through their sum \(\alpha_0 = \sum_i \alpha_i\), with smaller values corresponding to greater heterogeneity [6] [1].

Correlation Structure: Like the standard multinomial, the standard DM model still imposes negative correlations between categories. Modeling both negative and positive correlations between taxa, and therefore more realistic co-occurrence patterns in microbial communities, requires extensions such as the EFDM [6].

Natural Handling of Compositional Data: The DM model appropriately handles compositional data (where counts represent relative abundances) by modeling the underlying proportions as random variables rather than fixed parameters [1].

Theoretical Properties: The DM distribution possesses well-characterized theoretical properties, including explicit expressions for inter- and intraclass correlations, providing a powerful tool for understanding complex interactions in high-dimensional data [6].

Table 2: Comparison of Multinomial and Dirichlet-Multinomial Properties

| Property | Multinomial | Dirichlet-Multinomial |
|---|---|---|
| Dispersion | Fixed mean-variance relationship (too narrow for overdispersed data) | Accommodates overdispersion |
| Variance | \(\operatorname{Var}(X_i) = n p_i (1 - p_i)\) | \(\operatorname{Var}(X_i) = n p_i (1 - p_i) \times \frac{n + \alpha_0}{1 + \alpha_0}\), where \(p_i = \alpha_i / \alpha_0\) and \(\alpha_0 = \sum_i \alpha_i\) |
| Correlations | Always negative between categories | Also negative in the standard DM; extensions such as the EFDM allow positive correlations [6] |
| Data Types | Homogeneous populations | Heterogeneous populations with extra variation |
| Application Fit | Often poor for biological data | Improved fit for sparse, overdispersed data |

Experimental Protocols for Model Evaluation

Protocol 1: Assessing Model Fit with Dirichlet-Multinomial Regression

Purpose: To evaluate whether the Dirichlet-multinomial model provides a significantly better fit to overdispersed count data compared to the standard multinomial model.

Workflow:

Data Preparation: Compile multivariate count data with sample-specific totals. For microbiome data, this typically represents OTU/ASV counts across multiple samples [6].

Model Specification:

Parameter Estimation:

- For Bayesian implementations: Use Hamiltonian Monte Carlo (HMC) methods for posterior sampling [6].

- For frequentist implementations: Use maximum likelihood estimation with appropriate numerical optimization techniques.

Model Comparison:

- Calculate goodness-of-fit statistics (e.g., AIC, BIC) for both models.

- Perform likelihood ratio tests where appropriate.

- Assess residual patterns to identify systematic lack of fit.

Interpretation: Determine whether the DM model's improved fit (if any) justifies its additional complexity. Evaluate parameter estimates for biological significance [6] [1].
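
The model comparison step can be sketched as follows, assuming the maximized log-likelihoods of both fits are already available. One caveat that is an assumption of this sketch rather than something stated in the protocol: the multinomial is the DM with its overdispersion parameter on the boundary of the parameter space, so a commonly used correction halves the one-degree-of-freedom χ² p-value. All numbers in the example are hypothetical.

```python
import math

def aic(loglik, n_params):
    return 2 * n_params - 2 * loglik

def bic(loglik, n_params, n_obs):
    return n_params * math.log(n_obs) - 2 * loglik

def lrt_statistic(loglik_null, loglik_alt):
    return 2.0 * (loglik_alt - loglik_null)

def chi2_sf_df1(stat):
    """Upper tail probability of a chi-square with 1 degree of freedom."""
    return math.erfc(math.sqrt(stat / 2.0)) if stat > 0 else 1.0

def boundary_lrt_pvalue(stat):
    # Assumed 50:50 mixture of a point mass at zero and a chi-square(1),
    # used because the null value of the dispersion lies on the boundary.
    return 0.5 * chi2_sf_df1(stat) if stat > 0 else 1.0

# Hypothetical maximized log-likelihoods: multinomial (4 parameters) vs DM (5 parameters)
ll_mn, ll_dm = -512.3, -498.7
stat = lrt_statistic(ll_mn, ll_dm)
print(aic(ll_mn, 4), aic(ll_dm, 5))
print(bic(ll_mn, 4, 40), bic(ll_dm, 5, 40))
print(stat, boundary_lrt_pvalue(stat))
```

Lower AIC or BIC favours the corresponding model; the likelihood ratio p-value addresses the narrower question of whether the extra dispersion parameter is needed at all.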

Protocol 2: Evaluating Likelihood Ratio Calculation Performance

Purpose: To assess the accuracy and stability of likelihood ratio calculations under both multinomial and Dirichlet-multinomial frameworks, particularly in the presence of extra-multinomial variation.

Workflow:

Simulation Design:

- Generate synthetic count data under both multinomial and DM data-generating processes.

- Systematically vary the degree of overdispersion and sample size.

- Incorporate known effect sizes for covariate relationships [6].

Model Fitting:

- Fit both standard multinomial and DM models to the simulated data.

- Calculate likelihood functions and likelihood ratios for predefined hypotheses.

Performance Metrics:

- Compute calibration measures (how well calculated LRs match true odds).

- Assess discrimination ability (separation between true and false hypotheses).

- Evaluate computational stability across parameter space.

Sensitivity Analysis:

- Determine how LR performance degrades with increasing model misspecification.

- Identify boundary conditions where each model maintains adequate performance.

Validation: Apply optimized LR procedures to empirical datasets with known ground truth where possible [6] [1].
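
A minimal sketch of the core calculation in this protocol is shown below: the log likelihood ratio between two fixed hypotheses about the category proportions, evaluated once under the multinomial and once under a DM whose concentration sum `alpha0` is treated as known. The hypotheses, counts, and `alpha0` value are hypothetical. The multinomial coefficient is omitted because it cancels in the ratio.

```python
import math

def mn_log_kernel(counts, probs):
    return sum(x * math.log(p) for x, p in zip(counts, probs) if x > 0)

def dm_log_kernel(counts, probs, alpha0):
    # Dirichlet parameters are alpha_k = alpha0 * p_k
    n = sum(counts)
    out = math.lgamma(alpha0) - math.lgamma(n + alpha0)
    for x, p in zip(counts, probs):
        a = alpha0 * p
        out += math.lgamma(x + a) - math.lgamma(a)
    return out

def log_lr(counts, probs_h1, probs_h2, alpha0=None):
    """Log likelihood ratio of H1 vs H2; multinomial when alpha0 is None, else DM."""
    if alpha0 is None:
        return mn_log_kernel(counts, probs_h1) - mn_log_kernel(counts, probs_h2)
    return dm_log_kernel(counts, probs_h1, alpha0) - dm_log_kernel(counts, probs_h2, alpha0)

counts = [30, 10, 10]
h1 = [0.6, 0.2, 0.2]
h2 = [1 / 3, 1 / 3, 1 / 3]
print(log_lr(counts, h1, h2))               # multinomial
print(log_lr(counts, h1, h2, alpha0=10.0))  # DM with strong overdispersion
print(log_lr(counts, h1, h2, alpha0=1e7))   # DM close to the multinomial limit
```

In this example the DM log likelihood ratio is much closer to zero than the multinomial one, illustrating how ignoring overdispersion can overstate the evidence carried by a single sample.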

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Dirichlet-Multinomial Modeling

| Tool/Resource | Function | Application Context |
|---|---|---|
| Dirichlet-multinomial likelihood calculator | Computes probability masses and likelihood ratios | Model fitting and hypothesis testing |
| Hamiltonian Monte Carlo (HMC) sampler | Bayesian posterior sampling for hierarchical models | Parameter estimation for DM regression |
| Spike-and-slab variable selection | Identifies relevant covariates in high-dimensional settings | Feature selection in DM regression models |
| Isometric log-ratio (ilr) transformation | Maps compositional data to Euclidean space | Covariate creation and data normalization |
| CompSign R package | Implements DM mixed models for compositional data | Differential abundance analysis for mutational signatures |
| Bernstein polynomial estimators | Nonparametric density estimation on simplex | Flexible modeling of probability distributions |

Advanced Extensions: The EFDM Model and Future Directions

For datasets exhibiting particularly complex correlation structures, the Extended Flexible Dirichlet-Multinomial (EFDM) model provides additional flexibility beyond the standard DM formulation. The EFDM can be viewed as a structured DM mixture that maintains interpretability while accommodating diverse dependence patterns [6]. This model is particularly valuable for:

- Microbiome Data Analysis: Where both co-occurrence and mutual exclusion patterns exist between taxa [6].

- Mutational Signature Analysis: Where exposures to different mutational processes may exhibit complex correlation patterns across samples [1].

- Drug Development Applications: Where multivariate count outcomes with extra variation are common in high-throughput screening and toxicological assessment.

The EFDM framework specifically addresses the limitation of negative correlations in the standard DM by allowing for more general covariance structures, including positive correlations between features [6]. This enhanced flexibility comes with computational challenges that often require specialized estimation approaches such as tailored Hamiltonian Monte Carlo methods combined with spike-and-slab variable selection procedures [6].

The standard multinomial distribution's limitations—particularly its rigid variance-covariance structure, inherent negative correlations, and inability to accommodate extra-multinomial variation—render it inadequate for many modern research applications, especially in biomedical contexts like microbiome analysis and mutational signature studies. The Dirichlet-multinomial model and its extensions directly address these limitations by introducing parameters that explicitly model overdispersion and accommodate more complex correlation structures. For researchers calculating likelihood ratios in the presence of extra-multinomial variation, transitioning from standard multinomial to Dirichlet-multinomial frameworks provides more accurate, reliable, and interpretable results, ultimately enhancing scientific inference in drug development and biological research.

The Dirichlet-multinomial (DMN, abbreviated DM in the preceding sections) distribution represents a fundamental advancement in modeling multivariate count data exhibiting overdispersion. As a compound probability distribution, it arises when the multinomial probabilities themselves follow a Dirichlet distribution. This gives it the flexibility needed for genomic and biomolecular data, where biological variability violates traditional multinomial assumptions. For likelihood ratio calculation with Dirichlet-multinomial models, understanding this compound nature is essential for robust statistical inference in drug development and biomedical research.

Theoretical Foundation

Compound Distribution Formulation

The Dirichlet-multinomial distribution is formally defined as a compound distribution where a probability vector p is first drawn from a Dirichlet distribution with parameter vector α, and then count data x is generated from a multinomial distribution using this random probability vector [10]. The generative process can be specified as:

p ∼ Dirichlet(α) where α = (α₁, α₂, ..., αₖ) with αₖ > 0, and α₀ = ∑αₖ

x ∼ Multinomial(n, p)

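The resulting probability mass function for the Dirichlet-multinomial distribution integrates over the Dirichlet prior [10]:

\[ P(\mathbf{x} \mid n, \boldsymbol{\alpha}) = \frac{n!\,\Gamma(\alpha_0)}{\Gamma(n + \alpha_0)} \prod_{k} \frac{\Gamma(x_k + \alpha_k)}{x_k!\,\Gamma(\alpha_k)} \]

where Γ(·) represents the gamma function, n is the total number of trials, xₖ are the observed counts for each category, and αₖ are the Dirichlet parameters.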
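
As a sketch, the Dirichlet-multinomial PMF, n! Γ(α₀)/Γ(n+α₀) multiplied by the product over k of Γ(xₖ+αₖ)/(xₖ! Γ(αₖ)), can be evaluated on the log scale with `math.lgamma`. The function name `dm_log_pmf` is an illustrative choice.

```python
import math

def dm_log_pmf(counts, alpha):
    """Log PMF of the Dirichlet-multinomial for one count vector."""
    n = sum(counts)
    alpha0 = sum(alpha)
    # log[ n! * Gamma(alpha0) / Gamma(n + alpha0) ]
    log_p = math.lgamma(n + 1) + math.lgamma(alpha0) - math.lgamma(n + alpha0)
    for x, a in zip(counts, alpha):
        # log[ Gamma(x_k + alpha_k) / (x_k! * Gamma(alpha_k)) ]
        log_p += math.lgamma(x + a) - math.lgamma(x + 1) - math.lgamma(a)
    return log_p

# With two categories and alpha = (1, 1) every split of n trials is equally likely
print(math.exp(dm_log_pmf([1, 1], [1.0, 1.0])))
print(math.exp(dm_log_pmf([0, 2], [1.0, 1.0])))
```

A quick sanity check for any implementation is that the probabilities of all count vectors with the same total sum to one.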

Key Distributional Properties

The Dirichlet-multinomial distribution provides moment structures that account for overdispersion compared to the standard multinomial [10]:

Table 1: Moment Properties of the Dirichlet-Multinomial Distribution

| Property | Formula |
|---|---|
| Mean | E(Xᵢ) = n · (αᵢ/α₀) |
| Variance | Var(Xᵢ) = n · (αᵢ/α₀)(1 - αᵢ/α₀) · [(n + α₀)/(1 + α₀)] |
| Covariance | Cov(Xᵢ, Xⱼ) = -n · (αᵢαⱼ/α₀²) · [(n + α₀)/(1 + α₀)] for i ≠ j |

The covariance structure reveals a key characteristic: all pairwise covariances between different categories are negative, reflecting the compositional constraint that increases in one component necessitate decreases in others [10]. The term (n + α₀)/(1 + α₀) represents the overdispersion factor; as α₀ increases, this factor approaches 1 and the distribution converges to the standard multinomial.
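
The behaviour of the overdispersion factor can be checked numerically. This sketch computes the mean, variance, and factor (n + α₀)/(1 + α₀) from the formulas above and shows the factor shrinking towards 1 as α₀ grows; the function names and input values are illustrative.

```python
def dm_overdispersion_factor(n, alpha0):
    return (n + alpha0) / (1.0 + alpha0)

def dm_moments(n, alpha):
    """Mean vector, variance vector, and overdispersion factor of a DM."""
    alpha0 = sum(alpha)
    props = [a / alpha0 for a in alpha]
    factor = dm_overdispersion_factor(n, alpha0)
    mean = [n * p for p in props]
    var = [n * p * (1.0 - p) * factor for p in props]
    return mean, var, factor

def dm_covariance(n, alpha, i, j):
    """Covariance between two different categories i and j."""
    alpha0 = sum(alpha)
    return -n * alpha[i] * alpha[j] / alpha0 ** 2 * dm_overdispersion_factor(n, alpha0)

mean, var, factor = dm_moments(10, [1.0, 1.0])
print(mean, var, factor)
for alpha0 in (1.0, 10.0, 1000.0, 1e9):
    print(alpha0, dm_overdispersion_factor(10, alpha0))
```

For α = (1, 1) and n = 10 the count in the first category is uniform on 0 to 10, whose variance is 10, four times the binomial variance of 2.5.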

Experimental Protocols and Applications

Protocol 1: Differential Transcript Usage Analysis in RNA-Seq

Application Context: Identifying changes in alternative splicing between biological conditions using RNA sequencing data [11].

Table 2: Research Reagent Solutions for Transcriptomic Analysis

| Reagent/Resource | Function | Implementation |
|---|---|---|
| DRIMSeq R Package | Statistical framework for DTU analysis | Bioconductor package implementing DMN model |
| Transcript Quantification | Isoform-level abundance estimation | kallisto, Salmon, or RSEM |
| Annotation Database | Transcript structure information | Ensembl, GENCODE, or RefSeq |
| High-Performance Computing | Likelihood optimization | Cluster computing resources |

Methodology:

Data Preparation: Obtain transcript-level count estimates from RNA-seq alignment using quantification tools (e.g., kallisto, Salmon, or RSEM) [11]. Aggregate counts by gene to create a multivariate count vector for each sample.

Model Specification: For gene g with K isoforms, let \(Y_s = (Y_{s1}, \ldots, Y_{sK})\) represent isoform counts for sample s, with total counts \(n_s = \sum_i Y_{si}\). Assume \(Y_s \sim \text{DMN}(n_s, \alpha_s)\), where \(\alpha_s = (\alpha_{s1}, \ldots, \alpha_{sK})\) are Dirichlet parameters.

Dispersion Estimation: Apply empirical Bayes shrinkage to share information across genes for robust dispersion parameter estimation [11]. This addresses the challenge of limited replicates through weighted likelihood moderation.

Hypothesis Testing: Construct likelihood ratio tests comparing models with and without condition effects on isoform proportions. For a two-condition comparison, the test statistic asymptotically follows a χ² distribution with (K-1) degrees of freedom.

Multiple Testing Correction: Apply false discovery rate control (e.g., Benjamini-Hochberg procedure) to account for testing across thousands of genes.
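
The multiple testing step can be sketched with a plain implementation of the Benjamini-Hochberg adjustment. This is a generic illustration, not the code used by any package named above, and the p-values are made up.

```python
def benjamini_hochberg(pvalues):
    """Return BH-adjusted p-values in the same order as the input."""
    m = len(pvalues)
    order = sorted(range(m), key=lambda i: pvalues[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down, enforcing monotone adjusted values
    for rank in range(m, 0, -1):
        idx = order[rank - 1]
        running_min = min(running_min, pvalues[idx] * m / rank)
        adjusted[idx] = running_min
    return adjusted

gene_pvalues = [0.01, 0.04, 0.03, 0.005]  # hypothetical per-gene LRT p-values
print(benjamini_hochberg(gene_pvalues))
```

Genes whose adjusted value falls below the chosen false discovery rate threshold would then be reported as showing differential transcript usage.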

Figure 1: Experimental workflow for differential transcript usage analysis using the Dirichlet-multinomial model.

Protocol 2: Mutational Signature Differential Abundance Analysis

Application Context: Comparing relative abundances of mutational signatures between clonal and subclonal mutations in cancer genomics [1].

Methodology:

Signature Extraction: Decompose mutational catalogues into COSMIC signature exposures using quadratic programming or Bayesian methods. Let Yₚₜ represent the exposure of signature t in patient p.

Data Structure: For each patient, create paired compositional vectors for clonal and subclonal mutations, representing relative signature activities.

Mixed Model Specification: Implement a Dirichlet-multinomial mixed model to account for within-patient correlation:

where Xₚ contains fixed effects (e.g., clonal/subclonal status), Zₚ is the random effect design matrix, and bₚ represents patient-specific random effects [1].

Parameter Estimation: Use Laplace approximation to evaluate high-dimensional integrals induced by the random effect structure [1].

Differential Abundance Testing: Test fixed effect coefficients using Wald statistics or likelihood ratio tests to identify signatures with significant abundance differences between groups.

Protocol 3: Sparse Covariate Selection in Microbiome Studies

Application Context: Identifying nutrients associated with gut microbiome composition changes [12].

Methodology:

Data Collection: Obtain bacterial taxa counts from 16S rRNA sequencing and covariate data (e.g., nutrient intake measurements).

DMN Regression Model: Let Yᵢ ∼ DMN(nᵢ, αᵢ) for sample i, with log-link function:

\[ \log \alpha_{ij} = X_i^\top \beta_j \]

where Xᵢ is the covariate vector for sample i, and βⱼ are the regression coefficients for taxon j [12].

Sparse Regularization: Apply sparse group ℓ₁ penalty to select relevant covariates and their associated taxa:

where βₖ represents the group of coefficients for covariate k across all taxa [12].

Algorithm Optimization: Implement block-coordinate descent algorithm to solve the optimization problem efficiently.

Inference: Refit the model with selected covariates to obtain unbiased parameter estimates and calculate likelihood ratio statistics for significance testing.
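
The objective that such a procedure minimizes can be sketched as a negative DM log-likelihood plus a sparse group penalty. The exact form below is an assumption for illustration: a log link with a per-taxon intercept, a group term given by the Euclidean norm of each covariate's coefficients across taxa, and an elementwise ℓ₁ term, with hypothetical tuning parameters `lam_group` and `lam_l1`. It is not taken from [12].

```python
import math

def dm_log_kernel(counts, alpha):
    # DM log-likelihood without the multinomial coefficient (constant in beta)
    n = sum(counts)
    alpha0 = sum(alpha)
    out = math.lgamma(alpha0) - math.lgamma(n + alpha0)
    for x, a in zip(counts, alpha):
        out += math.lgamma(x + a) - math.lgamma(a)
    return out

def penalized_neg_loglik(Y, X, intercepts, beta, lam_group, lam_l1):
    """Y: samples x taxa counts; X: samples x covariates;
    beta[k][j]: effect of covariate k on taxon j (assumed layout)."""
    nll = 0.0
    for y_i, x_i in zip(Y, X):
        alpha_i = [
            math.exp(intercepts[j] + sum(x_i[k] * beta[k][j] for k in range(len(x_i))))
            for j in range(len(y_i))
        ]
        nll -= dm_log_kernel(y_i, alpha_i)
    # Group term: one Euclidean norm per covariate, taken across all taxa
    group_term = sum(math.sqrt(sum(b * b for b in row)) for row in beta)
    # Elementwise term: encourages sparsity within a selected covariate
    l1_term = sum(abs(b) for row in beta for b in row)
    return nll + lam_group * group_term + lam_l1 * l1_term

Y = [[1, 1]]                 # one sample, two taxa
X = [[0.0]]                  # one covariate
value = penalized_neg_loglik(Y, X, [0.0, 0.0], [[3.0, 4.0]], 0.1, 0.2)
print(value)
```

A block-coordinate descent solver would repeatedly update one covariate's coefficient group at a time against this objective; that optimization loop is deliberately left out of the sketch.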

Computational Considerations

Likelihood Function Instability

The Dirichlet-multinomial log-likelihood function faces computational instability near ψ=0 (where ψ=1/α₀ is the overdispersion parameter), where the distribution approaches the standard multinomial [13]. Conventional computation methods yield numerical instability in this region, returning NaN values in statistical software.

Efficient Computation Algorithm

A novel computational approach using truncated series of Bernoulli polynomials provides stable calculation of the log-likelihood without excessive runtime [13]. This method enables practical application to high-throughput sequencing data with large counts by:

- Reformulating the log-likelihood using a novel parameterization

- Implementing a mesh algorithm to extend applicability

- Achieving orders-of-magnitude improvement in runtime and accuracy

Table 3: Likelihood Formulations for Dirichlet-Multinomial Distribution

| Formulation | Expression | Advantages | Limitations |

|---|---|---|---|

| Standard | ℓ(α) = ∑[log Γ(xₖ+αₖ) - log Γ(αₖ)] + log Γ(α₀) - log Γ(n+α₀) | Direct interpretation | Unstable as ψ→0 |

| Alternative | ℓ(π, ψ) = ∑ₖ ∑[j=1 to xₖ] log(πₖ + (j-1)ψ) - ∑[j=1 to n] log(1 + (j-1)ψ), with πₖ = αₖ/α₀ and ψ = 1/α₀ | Stable near ψ=0 | Runtime O(n) |

| Novel Approximation | ℓ(ψ) = ∑B₂ₘ/(2m(2m-1)) · [ζ(2m-1,n+πψ) - ζ(2m-1,πψ)] | Stable and fast | Complex implementation |
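To make the contrast between formulations concrete, here is a minimal sketch comparing the Gamma-function form with an equivalent product form obtained by expanding each Gamma ratio as a finite product, using πₖ = αₖ/α₀ and ψ = 1/α₀. Both functions omit the multinomial coefficient, which does not depend on the parameters. The function names are illustrative, and this is not the Bernoulli-polynomial method of [13].

```python
import numpy as np
from scipy.special import gammaln

def loglik_gamma(x, pi, psi):
    """Gamma-function form with alpha = pi / psi; requires psi > 0."""
    x = np.asarray(x, dtype=float)
    alpha = np.asarray(pi, dtype=float) / psi
    a0, n = alpha.sum(), x.sum()
    return (gammaln(x + alpha) - gammaln(alpha)).sum() + gammaln(a0) - gammaln(n + a0)

def loglik_product(x, pi, psi):
    """Product form; well defined at psi = 0, at a cost of O(n) log evaluations."""
    x = np.asarray(x, dtype=int)
    n = int(x.sum())
    total = -np.log1p(psi * np.arange(n)).sum()          # -sum_j log(1 + (j-1) psi)
    for xk, pk in zip(x, pi):
        total += np.log(pk + psi * np.arange(xk)).sum()  # sum_j log(pi_k + (j-1) psi)
    return total

x, pi = [3, 0, 7], [0.2, 0.3, 0.5]
moderate_gamma = loglik_gamma(x, pi, 0.1)
moderate_product = loglik_product(x, pi, 0.1)
at_zero = loglik_product(x, pi, 0.0)   # equals sum_k x_k * log(pi_k)
```

For moderate ψ the two forms agree to floating-point precision. At ψ = 0 the Gamma-function form is undefined (it divides by zero), whereas the product form returns the multinomial kernel directly.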

Extensions Beyond Basic DMN Model

Extended Flexible Dirichlet-Multinomial (EFDM)

The standard DMN model imposes negative correlations between categories, which limits applicability to microbiome data where positive correlations exist between taxa [6]. The EFDM model addresses this through:

- Structured Mixture: EFDM is a structured mixture of DMN distributions with linked parameters

- Flexible Dependence: Accommodates both positive and negative correlations

- Interpretability: Provides explicit expressions for inter- and intraclass correlations

- Zero-Inflation: Naturally accommodates excess zeros common in microbiome data

Bayesian Approaches for Ratio Estimation

For estimating ratios of multinomial parameters, Bayesian approaches with Dirichlet priors provide posterior distributions for πᵢ/πⱼ. With observed counts n and a Dirichlet(a) prior, the posterior is Dirichlet(n + a), and the posterior mean of the ratio is:

E[πᵢ/πⱼ ∣ n] = (nᵢ + aᵢ)/(nⱼ + aⱼ − 1), for nⱼ + aⱼ > 1

which differs from the ratio of posterior means (nᵢ + aᵢ)/(nⱼ + aⱼ), demonstrating that the ratio of posterior means does not equal the posterior mean of the ratio [14].
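The distinction can be checked numerically. The sketch below uses the standard Dirichlet identity E[πᵢ/πⱼ] = cᵢ/(cⱼ − 1) for a Dirichlet(c) distribution with cⱼ > 1, applied to the posterior parameters c = n + a, and compares it with a Monte Carlo estimate. The counts and the uniform prior are hypothetical values chosen for illustration.

```python
import numpy as np

def posterior_mean_of_ratio(counts, prior, i, j):
    """Closed-form posterior mean of pi_i / pi_j under a Dirichlet posterior."""
    post = np.asarray(counts, dtype=float) + np.asarray(prior, dtype=float)
    if post[j] <= 1.0:
        raise ValueError("the mean of the ratio is finite only if n_j + a_j > 1")
    return post[i] / (post[j] - 1.0)

def ratio_of_posterior_means(counts, prior, i, j):
    """Ratio of the two posterior means, shown for comparison."""
    post = np.asarray(counts, dtype=float) + np.asarray(prior, dtype=float)
    return post[i] / post[j]

counts, prior = [12, 5, 3], [1.0, 1.0, 1.0]     # hypothetical data and prior
closed_form = posterior_mean_of_ratio(counts, prior, 0, 1)
naive = ratio_of_posterior_means(counts, prior, 0, 1)

# Monte Carlo check using draws from the Dirichlet posterior.
rng = np.random.default_rng(0)
draws = rng.dirichlet(np.add(counts, prior), size=200_000)
monte_carlo = np.mean(draws[:, 0] / draws[:, 1])
```

With these inputs the closed form is 13/5 while the ratio of posterior means is 13/6, and the simulated average tracks the former.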

Figure 2: Generative process for the Dirichlet-multinomial compound distribution.

The Dirichlet-multinomial distribution provides a mathematically elegant and practically useful framework for modeling overdispersed multivariate count data. Its compound nature as a multinomial with Dirichlet-distributed probabilities offers both theoretical coherence and computational tractability. Within likelihood ratio testing frameworks, proper implementation of DMN models requires careful attention to dispersion estimation, computational stability, and appropriate regularization. The protocols outlined here for transcriptomic, mutational signature, and microbiome applications demonstrate the versatility of this distribution for addressing complex questions in biomedical research and drug development.

The Dirichlet-multinomial (DM) model is a fundamental extension of the multinomial distribution that accounts for overdispersion in multivariate categorical count data. This model plays a crucial role in likelihood ratio calculation across diverse fields including bioinformatics, clinical trial analysis, and ecology. Understanding its three key parameters (concentration, overdispersion, and expected fractions) is essential for proper model specification, interpretation, and application in research settings. This application note provides a technical overview of these parameters, their mathematical relationships, and practical protocols for their estimation, with particular attention to likelihood ratio testing.

Theoretical Foundation

Dirichlet-Multinomial Model Specification

The Dirichlet-multinomial distribution arises as a compound probability distribution where a probability vector p is first drawn from a Dirichlet distribution with parameter vector α, and then categorical count data x are drawn from a multinomial distribution with parameter p [10]. This two-stage generative process can be summarized as:

p ~ Dirichlet(α)

x ~ Multinomial(n, p)

The resulting probability mass function for the DM distribution with K categories is given by:

Pr(x ∣ n, α) = [Γ(α₀)Γ(n+1)]/[Γ(n+α₀)] × ∏[k=1 to K] [Γ(xₖ + αₖ)]/[Γ(αₖ)Γ(xₖ+1)]

where α₀ = ∑[k=1 to K] αₖ represents the concentration parameter, Γ(·) is the gamma function, n is the total number of trials, and xₖ are the observed counts for each category [10].
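A minimal sketch of this probability mass function on the log scale follows, using `scipy.special.gammaln` to avoid overflow in the Gamma terms. The function name `dm_logpmf` is an assumption for this example. Summing the probabilities over every count vector with a fixed total is a quick sanity check that the expression is a proper distribution.

```python
import numpy as np
from scipy.special import gammaln

def dm_logpmf(x, alpha):
    """Log of Pr(x | n, alpha) for the Dirichlet-multinomial, with n = sum(x)."""
    x = np.asarray(x, dtype=float)
    alpha = np.asarray(alpha, dtype=float)
    n, a0 = x.sum(), alpha.sum()
    return (gammaln(a0) + gammaln(n + 1.0) - gammaln(n + a0)
            + np.sum(gammaln(x + alpha) - gammaln(alpha) - gammaln(x + 1.0)))

# Enumerate all count vectors with K = 3 categories and n = 4 trials.
alpha = [0.5, 1.5, 3.0]
n = 4
support = [(a, b, n - a - b) for a in range(n + 1) for b in range(n + 1 - a)]
total_probability = sum(np.exp(dm_logpmf(x, alpha)) for x in support)
```

A second check uses a known special case: with K = 2 and α = (1, 1), the distribution is uniform on the n + 1 possible outcomes.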

Parameter Reparameterization

For practical interpretation and computational stability, a useful reparameterization expresses the DM parameters as:

α = conc × frac

where:

- conc: concentration parameter (scalar > 0)

- frac: expected fraction vector (K-dimensional probability vector summing to 1) [15]

This parameterization separates the location (frac) and dispersion (conc) aspects of the distribution, facilitating more intuitive model specification and interpretation. The relationship between these parameters and the overdispersion parameter ψ is discussed under "Overdispersion Parameter (ψ)" below.
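The reparameterization maps directly onto the two-stage generative process. The following sketch builds α from hypothetical values of `conc` and `frac`, draws probability vectors from the Dirichlet, and then draws counts from the multinomial. The helper name `sample_dm` is an assumption for illustration.

```python
import numpy as np

def sample_dm(n, conc, frac, size, rng):
    """Draw `size` Dirichlet-multinomial count vectors with n trials each."""
    alpha = conc * np.asarray(frac, dtype=float)      # alpha = conc x frac
    p = rng.dirichlet(alpha, size=size)               # stage 1: one p per sample
    return np.array([rng.multinomial(n, p_i) for p_i in p])  # stage 2: counts

rng = np.random.default_rng(42)
frac = np.array([0.5, 0.3, 0.2])
samples = sample_dm(n=50, conc=20.0, frac=frac, size=20_000, rng=rng)
empirical_mean = samples.mean(axis=0)                 # should be close to n * frac
```

Changing `conc` while holding `frac` fixed leaves the average counts unchanged and only alters how widely individual samples scatter around them.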

The following diagram illustrates the hierarchical structure and relationships between key parameters in the Dirichlet-multinomial model:

Figure 1: Parameter Relationships in Dirichlet-Multinomial Model. This diagram illustrates the hierarchical structure and relationships between concentration, expected fractions, the alpha vector, and overdispersion in the Dirichlet-multinomial model.

Key Parameters

Expected Fractions (frac)

The expected fractions parameter, denoted as frac, represents the central tendency or mean direction of the distribution across categories. Mathematically, it defines the expected proportion of counts for each category in the multinomial distribution [15].

Mathematical Definition: For a K-category DM model with parameters α₁, α₂, ..., α_K, the expected fraction for category k is:

fracₖ = E[pₖ] = αₖ/α₀

where α₀ = ∑[i=1 to K] αᵢ is the concentration parameter.

Properties:

- frac is a K-dimensional vector on the simplex (∑[k=1 to K] fracₖ = 1)

- It represents the long-run expected proportions of each category

- In Bayesian terms, it can be interpreted as the prior belief about category proportions before observing data

- The expected value of the multinomial probability vector p equals frac: E[p] = frac

Research Implications: In likelihood ratio testing, the expected fractions parameter defines the null hypothesis values against which observed data are compared. Accurate specification of frac is crucial for power calculations and appropriate interpretation of test results.

Concentration Parameter (conc)

The concentration parameter, denoted as conc or α₀, controls how tightly the distribution is concentrated around the expected fractions. It is a scalar positive value that determines the degree of similarity between the Dirichlet-multinomial and standard multinomial distributions [15] [10].

Mathematical Definition: conc = α₀ = ∑[k=1 to K] αₖ

Properties and Effects:

- Large conc values (conc → ∞): The DM distribution approaches the standard multinomial distribution with fixed probability vector frac. Variability between samples decreases, and the data exhibit minimal overdispersion.

- Small conc values (conc → 0): The DM distribution exhibits substantial overdispersion. Individual samples show high variability in their probability vectors pᵢ, with most probability mass concentrated on a few categories (sparsity).

- Intermediate values: Balance between the extremes, allowing for moderate between-sample variability.

Research Applications: In clinical trial settings with multiple co-primary endpoints, the concentration parameter captures the correlation structure among endpoints, which is crucial for accurate power calculations and sample size determination [16].

Overdispersion Parameter (ψ)

Overdispersion in count data occurs when the observed variance exceeds the nominal variance predicted by the multinomial distribution. The overdispersion parameter ψ quantifies this extra-multinomial variation [13].

Mathematical Relationships: The overdispersion parameter ψ can be defined in relation to the concentration parameter:

ψ = 1/(1 + conc) = 1/(1 + α₀)

Alternatively, some parameterizations use:

ψ = 1/α₀

Properties:

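- Under ψ = 1/(1 + α₀), ψ lies strictly between 0 and 1; under the alternative ψ = 1/α₀, ψ can take any positive value

- ψ → 0 (conc → ∞) corresponds to no overdispersion (DM reduces to multinomial)

- ψ → 1 under ψ = 1/(1 + α₀) (conc → 0) indicates maximal overdispersion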

- The relationship between ψ and conc is inverse: as conc increases, ψ decreases

Variance Inflation: The DM distribution exhibits variance inflation compared to the standard multinomial:

Var(Xₖ) = n × fracₖ × (1 - fracₖ) × [(n + α₀)/(1 + α₀)]

The factor (n + α₀)/(1 + α₀) > 1 (for n > 1) represents the variance inflation due to overdispersion [10].

Quantitative Parameter Relationships

Table 1: Effects of Concentration and Overdispersion Parameters on Distribution Properties

| Parameter Value | Distribution Behavior | Variance Relationship | Approximation to Multinomial |

|---|---|---|---|

| conc → 0 (ψ → 1) | High between-sample variability; sparse distributions | Substantial variance inflation | Poor |

| conc = 1 (ψ = 0.5) | Moderate overdispersion | Moderate variance inflation | Fair |

| conc → ∞ (ψ → 0) | Minimal between-sample variability | Approaches multinomial variance | Excellent |

| intermediate conc | Balanced variability | Controlled variance inflation | Good with overdispersion |

Table 2: Moment Properties of Dirichlet-Multinomial Distribution

| Moment | Formula | Dependencies |

|---|---|---|

| Mean | E[Xₖ] = n × fracₖ | n, fracₖ |

| Variance | Var(Xₖ) = n × fracₖ × (1 - fracₖ) × [(n + conc)/(1 + conc)] | n, fracₖ, conc |

| Covariance | Cov(Xₖ, Xⱼ) = -n × fracₖ × fracⱼ × [(n + conc)/(1 + conc)] | n, fracₖ, fracⱼ, conc |
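The moment formulas in Table 2 can be collected into one small helper. This is a sketch under the document's parameterization (n, frac, conc); the function name `dm_moments` is an assumption.

```python
import numpy as np

def dm_moments(n, frac, conc):
    """Mean vector and covariance matrix of a Dirichlet-multinomial."""
    frac = np.asarray(frac, dtype=float)
    inflation = (n + conc) / (1.0 + conc)             # variance inflation factor
    mean = n * frac
    # Diagonal: n * frac_k * (1 - frac_k) * inflation
    # Off-diagonal: -n * frac_k * frac_j * inflation
    cov = n * inflation * (np.diag(frac) - np.outer(frac, frac))
    return mean, cov

mean, cov = dm_moments(n=10, frac=[0.5, 0.5], conc=1.0)
_, cov_large_conc = dm_moments(n=10, frac=[0.5, 0.5], conc=1e9)
```

For n = 10, frac = (0.5, 0.5), and conc = 1, the inflation factor is 11/2, so each variance is 10 × 0.25 × 5.5 = 13.75. With a very large conc the result approaches the multinomial variance of 2.5.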

Experimental Protocols

Parameter Estimation via Maximum Likelihood

Purpose: To estimate the concentration parameter and expected fractions from observed count data.

Materials:

- Multivariate count data arranged in a samples × categories matrix

- Computational environment with appropriate statistical software (R, Python, etc.)

- Specialized libraries: dirmult (R), PyMC (Python), VGAM (R)

Procedure:

- Data Preparation: Compile count data such that rows represent independent samples and columns represent categories. Ensure all counts are non-negative integers and row sums represent total trials per sample.

- Likelihood Function Specification: Implement the DM log-likelihood function using stable computational methods [13]. For N samples with totals nᵢ, and up to an additive constant that does not depend on α: ℓ(α ∣ X) = ∑[i=1 to N] [ln Γ(α₀) - ln Γ(nᵢ + α₀) + ∑[k=1 to K] [ln Γ(xᵢₖ + αₖ) - ln Γ(αₖ)]]

- Optimization: Maximize the log-likelihood function with respect to α using constrained optimization methods, ensuring αₖ > 0 for all k.

- Parameter Extraction: Compute:

- conc = α₀ = ∑[k=1 to K] αₖ

- fracₖ = αₖ/α₀ for each category k

- ψ = 1/(1 + α₀) (overdispersion parameter)

- Uncertainty Quantification: Compute standard errors from the observed Fisher information matrix.

Troubleshooting:

- For numerical instability near ψ = 0, use the Bernoulli polynomial-based approximation [13]

- For sparse data with many zeros, consider zero-inflated extensions of the DM model [6]
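The procedure above can be sketched as follows. This example optimizes over log α so that the positivity constraint holds automatically, and uses the plain Gamma-function likelihood rather than the stabilized method of [13]. The function names, the starting value (pooled proportions scaled to conc = 10), and the simulated data are all assumptions for illustration.

```python
import numpy as np
from scipy.optimize import minimize
from scipy.special import gammaln

def dm_negloglik(log_alpha, X):
    """Negative DM log-likelihood (parameter-free constant omitted)."""
    alpha = np.exp(log_alpha)
    a0 = alpha.sum()
    n_i = X.sum(axis=1)
    ll = (np.sum(gammaln(a0) - gammaln(n_i + a0))
          + np.sum(gammaln(X + alpha) - gammaln(alpha)))
    return -ll

def fit_dm(X):
    """Maximum likelihood fit; returns alpha, conc, frac, psi and the log-likelihood."""
    X = np.asarray(X, dtype=float)
    # Arbitrary starting point: smoothed pooled proportions with conc = 10.
    frac0 = (X.sum(axis=0) + 0.5) / (X.sum() + 0.5 * X.shape[1])
    res = minimize(dm_negloglik, np.log(10.0 * frac0), args=(X,), method="L-BFGS-B")
    alpha = np.exp(res.x)
    conc = alpha.sum()
    return {"alpha": alpha, "conc": conc, "frac": alpha / conc,
            "psi": 1.0 / (1.0 + conc), "loglik": -res.fun}

# Simulated example: 300 samples of 100 counts each from a known alpha.
rng = np.random.default_rng(1)
true_alpha = np.array([2.0, 5.0, 3.0])
X = np.array([rng.multinomial(100, p) for p in rng.dirichlet(true_alpha, size=300)])
fit = fit_dm(X)
```

Standard errors are not computed here; one option is to invert a numerical Hessian of `dm_negloglik` at the optimum and transform back from the log scale.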

Bayesian Estimation with Hyperpriors

Purpose: To estimate DM parameters within a hierarchical Bayesian framework, incorporating prior knowledge and quantifying posterior uncertainty.

Materials:

- Bayesian modeling software (PyMC, Stan, JAGS)

- Specification of prior distributions based on domain knowledge

Procedure:

- Model Specification: Define the hierarchical structure:

- Hyperpriors: γₖ ~ Gamma(shape, rate) or via reparameterization [17]: β ~ Dirichlet(1), λ ~ Exponential(·), α = λ × β

- Posterior Sampling: Use Markov Chain Monte Carlo (MCMC) methods to sample from the posterior distribution: p(α ∣ X) ∝ p(X ∣ α) × p(α)

- Posterior Summary: Compute posterior means, medians, and credible intervals for:

- conc = ∑ αₖ

- fracₖ = αₖ/conc

- ψ = 1/(1 + conc)

Applications: This approach is particularly valuable in microbiome studies where prior knowledge about taxonomic abundances can be incorporated through informed hyperpriors [6].
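For readers who want to see the mechanics without a probabilistic programming library, the sketch below runs a random-walk Metropolis sampler on u = log α. It assumes independent Gamma(shape, rate) priors placed directly on each αₖ, which is one simple choice and not necessarily the prior structure of [17]. The step size, iteration counts, and hyperparameter values are arbitrary illustrative settings; in practice PyMC or Stan with HMC would usually be preferable.

```python
import numpy as np
from scipy.special import gammaln

def log_posterior(u, X, shape, rate):
    """Unnormalized log posterior in u = log(alpha)."""
    alpha = np.exp(u)
    a0 = alpha.sum()
    n_i = X.sum(axis=1)
    loglik = (np.sum(gammaln(a0) - gammaln(n_i + a0))
              + np.sum(gammaln(X + alpha) - gammaln(alpha)))
    # Gamma kernel (shape - 1) * u - rate * alpha, plus log-Jacobian u of alpha = exp(u).
    logprior = np.sum(shape * u - rate * alpha)
    return loglik + logprior

def metropolis_dm(X, n_iter=4000, burn=1000, step=0.1, shape=1.0, rate=0.1, seed=0):
    """Random-walk Metropolis; returns post-burn-in draws of alpha."""
    rng = np.random.default_rng(seed)
    X = np.asarray(X, dtype=float)
    u = np.zeros(X.shape[1])
    lp = log_posterior(u, X, shape, rate)
    draws = []
    for t in range(n_iter):
        proposal = u + step * rng.standard_normal(u.size)
        lp_prop = log_posterior(proposal, X, shape, rate)
        if np.log(rng.uniform()) < lp_prop - lp:      # accept or keep current state
            u, lp = proposal, lp_prop
        if t >= burn:
            draws.append(np.exp(u))
    return np.array(draws)

def summarize(draws):
    """Posterior summaries for conc, frac and psi = 1 / (1 + conc)."""
    conc = draws.sum(axis=1)
    return {"conc_mean": conc.mean(),
            "conc_interval": np.percentile(conc, [2.5, 97.5]),
            "frac_mean": (draws / conc[:, None]).mean(axis=0),
            "psi_mean": (1.0 / (1.0 + conc)).mean()}
```

A typical call would be `summarize(metropolis_dm(X))` for a samples × categories count matrix `X`. Trace plots and multiple chains remain necessary to judge convergence, since a single short chain like this one gives only a rough picture.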

Likelihood Ratio Testing for Model Comparison

Purpose: To compare nested DM models using likelihood ratio tests, evaluating specific hypotheses about parameters.

Materials:

- Fitted DM models under null and alternative hypotheses

- Access to likelihood ratio test critical values or permutation testing framework

Procedure:

- Model Formulation: Specify null (H₀) and alternative (H₁) hypotheses about parameters. For example:

- H₀: conc = conc₀ (specific value)

- H₁: conc ≠ conc₀

- Likelihood Calculation: Compute maximum likelihood estimates and corresponding log-likelihood values for both models: ℓ₀ = max ℓ(θ ∣ H₀) and ℓ₁ = max ℓ(θ ∣ H₁)

- Test Statistic Computation: Calculate likelihood ratio test statistic: Λ = -2 × (ℓ₀ - ℓ₁)

- Significance Assessment: Compare Λ to χ² distribution with degrees of freedom equal to the difference in parameters between H₁ and H₀.

- Effect Size Calculation: Compute practical significance measures such as:

- Estimated difference in concentration parameters

- Change in overdispersion

- Proportional difference in expected fractions

Interpretation: In clinical trial applications, this approach can test whether multiple co-primary endpoints share a common correlation structure, impacting trial design and analysis strategies [16].
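Once the two maximized log-likelihoods are available, the test statistic and its asymptotic p-value take only a few lines. The sketch below assumes nested models and the usual χ² reference distribution; the function name and the example log-likelihood values are hypothetical.

```python
from scipy.stats import chi2

def likelihood_ratio_test(loglik_null, loglik_alt, df):
    """Return (statistic, p-value) for a likelihood ratio test of nested models."""
    statistic = -2.0 * (loglik_null - loglik_alt)
    # Tiny negative values can arise from optimizer tolerance; clip them at zero.
    statistic = max(statistic, 0.0)
    p_value = chi2.sf(statistic, df)
    return statistic, p_value

# Hypothetical fitted values: the alternative improves the log-likelihood by 2 units
# at the cost of one extra free parameter.
statistic, p_value = likelihood_ratio_test(loglik_null=-100.0, loglik_alt=-98.0, df=1)
```

Here the statistic is 4.0 on one degree of freedom. When the null value lies on the boundary of the parameter space, the χ² approximation may not hold and a simulation-based reference distribution is a safer choice.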

Research Reagent Solutions

Table 3: Computational Tools for Dirichlet-Multinomial Modeling

| Tool/Software | Primary Function | Key Features | Application Context |

|---|---|---|---|

| PyMC [15] | Bayesian modeling | Flexible specification of DM models with various priors | General Bayesian analysis, hierarchical modeling |

| dirmult (R) [13] | DM parameter estimation | Maximum likelihood estimation for DM models | Bioinformatics, ecology applications |

| VGAM (R) [13] | Vector generalized models | DM regression modeling | Covariate-adjusted analyses |

| DMNet [18] | Clustering of count data | DM model with network fusion penalty | Microbiome, text data clustering |

| Custom algorithms [13] | Stable likelihood computation | Bernoulli polynomial approximation | High-count sequencing data |

Advanced Applications

Clinical Trial Design with Multiple Co-Primary Endpoints

The DM model provides a framework for designing clinical trials with multiple co-primary endpoints, where success requires all endpoints to meet efficacy criteria simultaneously. The concentration parameter directly influences the correlation structure among endpoints, which must be accounted for in power calculations and sample size determination [16].

Implementation Workflow:

Figure 2: DM Model in Clinical Trial Design with Co-Primary Endpoints

Microbiome Data Analysis

In microbiome research, DM models address the inherent overdispersion in taxonomic count data while accommodating the compositional nature of microbial communities. The concentration parameter captures heterogeneity across samples, while expected fractions represent mean taxonomic abundances [6].

Extended Applications:

- EFDM Models: Extended Flexible Dirichlet-Multinomial models accommodate both positive and negative correlations between taxa, overcoming limitations of standard DM models [6]

- Zero-Inflated Extensions: Address excess zeros common in microbiome data through mixture formulations

- Regression Frameworks: Model relationships between microbial abundances and clinical covariates

The concentration parameter, overdispersion parameter, and expected fractions form the foundational triad of Dirichlet-multinomial modeling. Understanding their precise definitions, mathematical relationships, and appropriate estimation methods is crucial for applying DM models to real-world research problems. The protocols outlined in this document provide researchers with practical guidance for implementing these methods across various application domains, with special relevance to likelihood ratio calculation in hypothesis testing contexts. As DM models continue to evolve with extensions such as the EFDM and network-based clustering approaches, proper parameter interpretation remains essential for valid scientific conclusions.

Likelihood functions represent a fundamental component of statistical inference, serving as the basis for both parameter estimation and hypothesis testing across diverse scientific domains. In computational biology and bioinformatics, where researchers frequently analyze multivariate categorical and count data, the Dirichlet-multinomial (DMN) model has emerged as a particularly valuable statistical framework. This model extends the standard multinomial distribution to account for overdispersion—a common phenomenon in real-world datasets where variability exceeds what would be expected under simple parametric models [13]. The DMN distribution has demonstrated significant utility in analyzing high-throughput sequencing data, including applications in metagenomics, transcriptomics, and alternative splicing analysis [13].

The calculation and application of likelihood ratio tests within the DMN framework provide a powerful approach for testing hypotheses about microbial communities, taxonomic abundances, and other multivariate biological systems. Unlike non-parametric methods that may require reducing complex data to pairwise distances or performing univariate "one-taxa-at-a-time" analyses, the DMN model enables fully multivariate parametric testing while retaining more information contained in the original data [19]. This article explores the theoretical foundations, computational considerations, and practical applications of likelihood functions with a specific focus on the Dirichlet-multinomial model and its role in hypothesis testing and parameter estimation for bioinformatics and pharmaceutical research.

Theoretical Foundations of the Dirichlet-Multinomial Model

Distributional Properties

The Dirichlet-multinomial distribution arises as a compound probability distribution where a probability vector p follows a Dirichlet prior distribution, which is then used as the parameter for a multinomial distribution. This hierarchical structure provides the mathematical flexibility to model overdispersed count data commonly encountered in bioinformatics applications [10]. The probability mass function for the DMN distribution with parameters α = (α₁, α₂, ..., α_K) and total count n is given by:

$$ P(\mathbf{x} \mid n, \boldsymbol{\alpha}) = \frac{\Gamma(\alpha_0)\Gamma(n+1)}{\Gamma(n+\alpha_0)} \prod_{k=1}^K \frac{\Gamma(x_k+\alpha_k)}{\Gamma(\alpha_k)\Gamma(x_k+1)} $$

where Γ(·) represents the gamma function, α₀ = ∑αₖ denotes the sum of the Dirichlet parameters, xₖ represents the count for category k, and n = ∑xₖ is the total count across all categories [10]. The DMN distribution reduces to the standard multinomial distribution when the dispersion parameter approaches zero, providing a natural extension that incorporates additional variance.

Mean, Variance, and Covariance Structure

The moments of the DMN distribution reveal its capacity to handle overdispersed data. For a random vector X following a DMN distribution, the expected value, variance, and covariance are given by:

- Mean: $E(X_i) = n\frac{\alpha_i}{\alpha_0}$

- Variance: $\text{Var}(X_i) = n\frac{\alpha_i}{\alpha_0}\left(1-\frac{\alpha_i}{\alpha_0}\right)\left(\frac{n+\alpha_0}{1+\alpha_0}\right)$

- Covariance: $\text{Cov}(X_i, X_j) = -n\frac{\alpha_i}{\alpha_0}\frac{\alpha_j}{\alpha_0}\left(\frac{n+\alpha_0}{1+\alpha_0}\right)$ for i ≠ j [10]

The variance formula clearly demonstrates the overdispersion property, as the variance exceeds the corresponding multinomial variance by a factor of (n+α₀)/(1+α₀). This dispersion factor approaches 1 as α₀ → ∞ (indicating no overdispersion) and increases as α₀ decreases, thus accommodating the extra-multinomial variation commonly observed in microbiome sequencing data and other biological count data [19].
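As a worked illustration with hypothetical values n = 100, α₀ = 9, and αᵢ/α₀ = 0.2, the multinomial variance, the dispersion factor, and the resulting DMN variance are:

$$ \text{Var}_{\text{mult}}(X_i) = 100 \times 0.2 \times 0.8 = 16, \qquad \frac{n+\alpha_0}{1+\alpha_0} = \frac{109}{10} = 10.9, \qquad \text{Var}_{\text{DMN}}(X_i) = 16 \times 10.9 = 174.4 $$

Raising α₀ to 999 with the same n shrinks the factor to 1099/1000 ≈ 1.1, so the DMN variance is then only about 10% larger than the multinomial variance.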

Computational Considerations for Likelihood Evaluation

Numerical Stability Challenges

The direct computation of the DMN log-likelihood function using conventional methods presents significant numerical challenges, particularly when the overdispersion parameter ψ approaches zero [13]. As ψ → 0, the individual terms in the log-likelihood function become exceedingly large, but their differences become relatively small. Due to the limited precision of floating-point arithmetic, the large terms cancel each other, leaving substantial rounding errors that compromise calculation accuracy [13]. This instability in the neighborhood of ψ = 0 poses serious problems for statistical inference, as it precisely represents the transition from the overdispersed DMN distribution to the standard multinomial distribution.

Table 1: Comparison of DMN Log-Likelihood Computation Methods

| Method | Stability near ψ=0 | Computational Efficiency | Implementation Complexity |

|---|---|---|---|

| Conventional Formula | Unstable | Moderate | Low |

| Series Expansion | Stable | High for large counts | Moderate |

| Alternative Parameterization | Stable | Low for large samples | Low |

| Novel Bernoulli Approximation | Stable | High | High |

Efficient Computation Strategies

To address these computational challenges, researchers have developed novel evaluation methods based on truncated series consisting of Bernoulli polynomials [13]. This approach enables accurate computation without incurring excessive runtime, making it particularly suitable for high-count data situations common in deep sequencing applications. The method involves a mesh algorithm that extends the applicability of the mathematical formulation across different parameter ranges, ensuring both stability and computational efficiency [13].

Numerical experiments demonstrate that this innovative computation method improves both the accuracy of log-likelihood evaluation and runtime by several orders of magnitude compared to existing approaches [13]. When applied to real metagenomic data, the method achieves manyfold runtime improvement, making feasible the application of DMN distributions to large-scale bioinformatics problems that would otherwise be computationally prohibitive.

Likelihood Ratio Testing with Dirichlet-Multinomial Models

Hypothesis Testing Framework

Likelihood ratio tests (LRTs) within the DMN framework provide a powerful approach for testing hypotheses about differences in multivariate compositional data. The general structure of a likelihood ratio test compares the maximized likelihood values under two nested models:

$$ \Lambda = -2 \ln \left( \frac{\max L(\theta \in \Theta_0)}{\max L(\theta \in \Theta)} \right) $$

where Θ₀ represents the parameter space under the null hypothesis, Θ represents the full parameter space, and L(·) denotes the likelihood function [19]. Under the null hypothesis, the test statistic Λ follows approximately a chi-squared distribution with degrees of freedom equal to the difference in dimensionality between Θ and Θ₀.

In the context of DMN models, likelihood ratio tests can be formulated to examine differences in microbial community composition between experimental groups, treatments, or conditions. The parametric nature of this approach offers advantages over non-parametric methods like permutation tests, which often require reducing the data to pairwise distances and may exhibit inflated Type I error when the dispersion variability differs between groups [19].

Workflow for Dirichlet-Multinomial Hypothesis Testing

[Figure: complete workflow for hypothesis testing using the Dirichlet-multinomial model.]

Experimental Protocols for Dirichlet-Multinomial Analysis

Parameter Estimation Protocol

Objective: Estimate the parameters of the Dirichlet-multinomial distribution from observed count data.

Materials and Software:

- Multivariate count data (e.g., taxonomic abundance table)

- Statistical software with DMN fitting capabilities (R package "HMP" or "dirmult")

Procedure:

- Data Preparation: Format the data as a matrix with samples as rows and taxonomic features as columns. Include appropriate sample identifiers and group labels if applicable.

- Initialization: Select starting values for the α parameters. Common approaches use method-of-moments estimates or values derived from relative frequencies.

- Likelihood Maximization: Implement an optimization algorithm (e.g., Newton-Raphson, Expectation-Maximization) to find the parameter values that maximize the log-likelihood function.

- Dispersion Estimation: Calculate the overdispersion parameter ψ based on the estimated α parameters using the relationship ψ = 1/(1+α₀).

- Convergence Assessment: Verify algorithm convergence and examine the stability of parameter estimates.

Troubleshooting Tips:

- For numerical instability issues near ψ=0, employ the Bernoulli polynomial approximation method [13].

- When dealing with sparse data (many zero counts), consider adding a small pseudocount (e.g., 0.5) to all observations [20].

- For large-dimensional problems, use regularization techniques to stabilize estimates.

Hypothesis Testing Protocol for Group Comparisons

Objective: Test whether two or more groups exhibit significantly different taxonomic compositions using the Dirichlet-multinomial likelihood ratio test.

Materials and Software:

- Grouped multivariate count data

- R package "HMP" or custom implementation of DMN LRT

- Multiple testing correction procedures

Procedure:

- Model Formulation:

- Fit a separate DMN model for each group (full model)

- Fit a pooled DMN model combining all groups (reduced model)

- Likelihood Calculation:

- Compute the maximized log-likelihood for each model: Lfull and Lreduced

- Test Statistic Computation:

- Calculate Λ = -2(Lreduced - Lfull)

- Significance Assessment:

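- Compare Λ to chi-squared distribution with degrees of freedom equal to the difference in free parameters between the two models: (G-1)×K when each group has its own α vector (K parameters per group), where G is the number of groups and K is the number of taxa; (G-1)×(K-1) applies only if the groups share a common concentration parameter and differ in expected fractions alone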

- Multiple Testing Correction:

- Apply appropriate correction (e.g., Holm-Bonferroni, Benjamini-Hochberg) when testing multiple hypotheses [20]

Interpretation Guidelines:

- A significant result indicates that at least one group differs in compositional profile from the others

- Follow-up analyses can identify which specific taxa drive the differences

- Consider effect size measures (e.g., fold-changes) in addition to statistical significance
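The group-comparison protocol can be sketched end to end as below. This is a custom illustration, not the interface of the "HMP" package. It fits one α vector per group for the full model and one pooled α vector for the reduced model, so it counts K free parameters per group and uses (G-1)×K degrees of freedom; if the groups were constrained to share a concentration parameter, (G-1)×(K-1) would apply instead. Function names and the starting values are assumptions.

```python
import numpy as np
from scipy.optimize import minimize
from scipy.special import gammaln
from scipy.stats import chi2

def max_loglik(X):
    """Maximized DM log-likelihood for one count matrix (constant term omitted)."""
    X = np.asarray(X, dtype=float)
    n_i = X.sum(axis=1)

    def negloglik(u):
        alpha = np.exp(u)                     # optimize on the log scale
        a0 = alpha.sum()
        return -(np.sum(gammaln(a0) - gammaln(n_i + a0))
                 + np.sum(gammaln(X + alpha) - gammaln(alpha)))

    frac0 = (X.sum(axis=0) + 0.5) / (X.sum() + 0.5 * X.shape[1])
    return -minimize(negloglik, np.log(10.0 * frac0), method="L-BFGS-B").fun

def group_lrt(groups):
    """LRT of 'one alpha per group' (full) against 'one pooled alpha' (reduced)."""
    n_taxa = np.asarray(groups[0]).shape[1]
    loglik_full = sum(max_loglik(g) for g in groups)
    loglik_reduced = max_loglik(np.vstack(groups))
    statistic = max(-2.0 * (loglik_reduced - loglik_full), 0.0)
    df = (len(groups) - 1) * n_taxa
    return statistic, df, chi2.sf(statistic, df)
```

The omitted multinomial coefficient is identical in both models and cancels in the ratio. Calling `group_lrt([X_a, X_b])` with two samples × taxa matrices returns the statistic, the degrees of freedom, and the asymptotic p-value.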

Bayesian Hypothesis Testing with Spike-and-Slab Priors

Objective: Implement Bayesian variable selection to identify significant associations between taxa abundances and clinical covariates.

Materials and Software:

- Taxonomic abundance data

- Covariate matrix (clinical, environmental, or genetic variables)

- Bayesian computation software (Stan, JAGS, or custom R code)

Procedure:

- Model Specification:

- Define the DMN regression model: ζⱼ = αⱼ + ∑ₚ βₚⱼ xₚ

- Implement spike-and-slab priors for variable selection on β coefficients

- Posterior Sampling:

- Run Markov Chain Monte Carlo (MCMC) algorithm to obtain posterior distributions

- Assess convergence using trace plots and diagnostic statistics

- Variable Selection:

- Calculate posterior inclusion probabilities for each covariate-taxon association

- Apply threshold based on controlling false discovery rate

- Result Interpretation:

- Identify significant associations based on posterior probabilities

- Examine direction and magnitude of effects through posterior means and credible intervals

Advantages: This approach naturally incorporates uncertainty in variable selection and provides direct probability statements about associations [21].

Application Notes for Bioinformatics and Pharmaceutical Research

Power and Sample Size Calculations

The DMN framework enables rigorous power analysis and sample size determination for experimental design in microbiome studies. By leveraging the parametric form of the distribution, researchers can estimate the number of samples needed to detect effect sizes of biological relevance [19]. The power calculation procedure involves:

- Parameter Estimation from Pilot Data: Use preliminary data to estimate the DMN parameters and dispersion characteristics

- Effect Size Specification: Define the minimum biologically meaningful difference to detect

- Simulation-Based Power Assessment: Generate synthetic data under alternative hypotheses and evaluate rejection rates

- Sample Size Curve Generation: Create power curves across a range of sample sizes to inform experimental design

Table 2: Key Parameters for Power Calculations in DMN Models

| Parameter | Description | Impact on Power |

|---|---|---|

| Dispersion (ψ) | Degree of overdispersion in data | Higher dispersion requires larger sample sizes |

| Effect Size (δ) | Magnitude of difference between groups | Larger effects require smaller sample sizes |

| Baseline Abundance (α/α₀) | Expected proportion of each taxon | Rare taxa require larger sample sizes |

| Number of Taxa (K) | Dimensionality of the composition | Higher dimensionality may require more samples |

Bayesian Dirichlet-Multinomial Regression for Covariate Integration

The integration of covariate information represents an important advancement in DMN modeling, particularly through Bayesian Dirichlet-multinomial regression approaches [21]. This framework enables researchers to:

- Model taxonomic abundances as a function of clinical, genetic, or environmental covariates

- Simultaneously select relevant predictors from potentially high-dimensional covariate spaces

- Quantify uncertainty in both parameter estimation and variable selection

The model specification takes the form:

$$ \begin{aligned} \mathbf{y}_i \mid \boldsymbol{\phi}_i &\sim \text{Multinomial}(y_{i+}, \boldsymbol{\phi}_i) \\ \boldsymbol{\phi} &\sim \text{Dirichlet}(\boldsymbol{\gamma}) \\ \zeta_j &= \log(\gamma_j) = \alpha_j + \sum_{p=1}^P \beta_{pj} x_p \end{aligned} $$

where spike-and-slab priors on the β coefficients facilitate variable selection [21]. This approach has demonstrated superior performance in simulation studies, with increased accuracy and reduced false positive rates compared to alternative methods.
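To show how the log-linear link and the variable-selection indicators interact, the sketch below computes the Dirichlet parameters γ from covariates and illustrates how a spike-and-slab structure zeroes out excluded covariate-taxon pairs. It covers only the deterministic part of the model, not the posterior sampler of [21]; the array layouts, function names, and numeric values are assumptions for illustration.

```python
import numpy as np

def dirichlet_params(X, intercepts, beta):
    """gamma[i, j] = exp(alpha_j + sum_p beta[p, j] * X[i, p]).

    X: (N samples x P covariates), intercepts: (K taxa,), beta: (P x K).
    """
    zeta = intercepts + X @ beta
    return np.exp(zeta)                       # exp link keeps every gamma positive

def expected_composition(gamma):
    """Mean of the Dirichlet for each sample: gamma normalized across taxa."""
    return gamma / gamma.sum(axis=1, keepdims=True)

# Hypothetical inputs: 2 samples, 2 covariates, 3 taxa.
X = np.array([[0.0, 1.0], [2.0, -1.0]])
intercepts = np.log(np.array([1.0, 2.0, 3.0]))
slab = np.array([[0.5, -0.5, 0.0], [1.0, 1.0, 1.0]])   # effect sizes if included

# Spike-and-slab structure: beta = inclusion indicator x slab value.
inclusion = np.zeros((2, 3))
gamma_none = dirichlet_params(X, intercepts, inclusion * slab)

inclusion[0, 0] = 1.0                         # include covariate 0 for taxon 0 only
gamma_one = dirichlet_params(X, intercepts, inclusion * slab)
composition = expected_composition(gamma_one)
```

With every indicator set to zero, all samples share the same γ regardless of their covariates. Switching on a single indicator changes γ only for the corresponding taxon, and only in samples where that covariate is nonzero.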

Research Reagent Solutions for Dirichlet-Multinomial Analysis

Table 3: Essential Computational Tools for DMN Likelihood Analysis

| Tool/Software | Primary Function | Application Context |

|---|---|---|

| R Package 'HMP' | Hypothesis testing and power calculations | Taxonomic-based microbiome data analysis |

| R Package 'dirmult' | Parameter estimation for DMN models | General categorical overdispersed data |

| scikit-bio 'dirmult_ttest' | Differential abundance testing | Python-based microbiome analysis |

| Bayesian DMN Regression Code | Covariate association analysis | Integrative analysis with clinical variables |

| VGAM Package | Alternative DMN parameterization | General statistical modeling |

Advanced Implementation: Visualization of Dirichlet-Multinomial Testing Framework

[Figure: relationship between the components of the Dirichlet-multinomial hypothesis testing framework, showing how likelihood functions connect the analytical stages.]

Likelihood functions form the cornerstone of statistical inference for Dirichlet-multinomial models, enabling both parameter estimation and hypothesis testing in the analysis of overdispersed multivariate count data. The computational innovations addressing numerical instability, coupled with robust testing protocols and Bayesian extensions for covariate integration, have established the DMN framework as an indispensable tool for bioinformatics and pharmaceutical research. As high-throughput sequencing technologies continue to generate increasingly complex datasets, the likelihood-based approaches described in these application notes will remain essential for extracting biologically meaningful insights from multivariate compositional data.

Researchers implementing these methods should prioritize computational stability through appropriate algorithms, carefully design studies with power considerations in mind, and consider Bayesian approaches when integrating multiple sources of covariate information. The continued development of efficient computational resources will further enhance the accessibility and application of Dirichlet-multinomial models across diverse scientific domains.

Application Note: Gut Microbiome Metagenomics in Clinical Practice

Current Clinical Applications and Quantitative Landscape

Gut microbiome metagenomics is emerging as a cornerstone of precision medicine, offering exceptional opportunities for improved diagnostics, risk stratification, and therapeutic development. Advances in high-throughput sequencing have uncovered robust microbial signatures linked to infectious, inflammatory, metabolic, and neoplastic diseases [22]. Clinical applications now include pathogen detection, antimicrobial resistance profiling, microbiota-based therapies, and enterotype-guided patient stratification [22].

Table 1: Human Microbiome Market Overview and Projected Growth (2024-2030)

| Product Category | 2024 Revenue (USD) | 2030 Projected Revenue (USD) | CAGR (%) | Key Drivers |
|---|---|---|---|---|
| Live Biotherapeutic Products (LBPs) | 425 million | 2.39 billion | ~31% | Regulatory milestones, controlled composition, indication expansion beyond GI |
| Fecal Microbiota Transplantation (FMT) | 175 million | 815 million | ~31% | Gold standard for rCDI with >80% cure rates, despite donor variability challenges |
| Diagnostics & Biomarkers | 140 million | 764 million | ~31% | Sequencing cost decline, AI integration for personalized recommendations |
| Nutrition-Based Interventions | 99 million | 510 million | ~31% | Consumer demand for gut-health solutions, next-generation probiotics |
| Personal Care & Topicals | 75 million | 280 million | 24.6% | Lower regulatory barriers, live biotherapeutics for skin and oral health |
| Total Market | ~990 million | >5.1 billion | ~31% | Multiple first-in-class approvals, clinical diversification, regional expansion |

The global human microbiome market continues to exhibit remarkable growth potential, driven by recent regulatory approvals and expanding clinical applications [23]. Beyond recurrent Clostridioides difficile infection (rCDI), developers are now targeting inflammatory bowel disease (IBD), metabolic disorders, autoimmune diseases, cancer, and neurological conditions, with approximately 243 candidates in development across more than 100 companies [23].

Multi-Omics Integration for Precision Diagnostics

Integrated multi-omics approaches are significantly enhancing the diagnostic and prognostic power of microbiome analysis. Large-scale multi-omics integration encompassing metagenomes and metabolomes has identified consistent alterations in underreported microbial species and significant metabolite shifts in inflammatory bowel disease [22]. Diagnostic models built on these multi-omics signatures have achieved exceptional accuracy (AUROC 0.92–0.98) in distinguishing IBD from controls [22].

Similarly, high-resolution serum metabolomics applied to type 2 diabetes (T2D) has identified 111 gut microbiota-derived metabolites significantly associated with disease progression, particularly those linked to branched-chain amino acid metabolism, aromatic amino acids, and lipid pathways [22]. The diagnostic panel generated from these microbial-derived metabolites achieved an AUROC exceeding 0.80, reinforcing the potential of microbiota-informed early intervention strategies [22].

Figure 1: Multi-Omics Integration Workflow for Microbiome Analysis

Application Note: Mutational Signature Analysis in Oncology

Analytical Frameworks for Mutational Signature Dynamics

Mutational processes of diverse origin leave their imprints in the genome during tumour evolution. Each resulting signature has an exposure, or abundance, per sample that indicates how much the corresponding process has contributed to the overall genomic change [1]. Mutational signature data therefore typically consist of multivariate mutation counts, with potential correlations between signatures and, when multiple observations per patient are available, within-patient correlations [1].

The Dirichlet-multinomial mixed model framework addresses key challenges in analyzing mutational signature exposures as compositional data, where the crucial information is the relative allocation of mutations across signature categories rather than absolute counts [1]. This approach incorporates:

- Within-patient correlations through random effects

- Signature correlations via multivariate random effects

- Group-specific dispersion parameters to handle variability differences between conditions

- Flexible fixed-effects structure for multi-group comparisons and regression settings [1]
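The components listed above can be written compactly. The block below is a generic sketch of a Dirichlet-multinomial likelihood with an additive log-ratio link and a group-specific precision; the symbols ($\lambda_{g}$, $\boldsymbol{\beta}_k$, $u_{ik}$, $\boldsymbol{\Sigma}$) are illustrative assumptions and not necessarily the exact notation or parameterization used in [1].

```latex
% Counts y_i = (y_{i1}, ..., y_{iK}) for observation i, with n_i = \sum_k y_{ik}
% and \alpha_{i0} = \sum_k \alpha_{ik}.
\begin{align}
P(\mathbf{y}_i \mid \boldsymbol{\alpha}_i)
  &= \frac{n_i!\,\Gamma(\alpha_{i0})}{\Gamma(n_i + \alpha_{i0})}
     \prod_{k=1}^{K} \frac{\Gamma(y_{ik} + \alpha_{ik})}{y_{ik}!\,\Gamma(\alpha_{ik})}, \\
\alpha_{ik} &= \lambda_{g(i)}\,\pi_{ik},
  \qquad \sum_{k=1}^{K} \pi_{ik} = 1, \\
\log\frac{\pi_{ik}}{\pi_{iK}}
  &= \mathbf{x}_i^{\top}\boldsymbol{\beta}_k + u_{p(i),k},
  \qquad k = 1, \dots, K-1, \\
\mathbf{u}_{p} &\sim \mathcal{N}(\mathbf{0}, \boldsymbol{\Sigma}).
\end{align}
```

Here $g(i)$ is the group and $p(i)$ the patient of observation $i$. A smaller precision $\lambda_{g}$ means more overdispersion in group $g$, the fixed effects $\boldsymbol{\beta}_k$ carry the group comparison, and the off-diagonal entries of $\boldsymbol{\Sigma}$ capture correlations between signatures within a patient.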

Table 2: Selected Microbiome Therapeutics in Clinical Development (as of September 2025)

| Company / Product | Indication(s) | Modality & Mechanism | Development Stage |
|---|---|---|---|
| Seres Therapeutics – Vowst (SER-109) | rCDI; exploring ulcerative colitis | Oral live biotherapeutic; purified Firmicutes spores that recolonize gut | Approved |
| Vedanta Biosciences – VE303 | rCDI | Defined eight-strain bacterial consortium; promotes colonization resistance | Phase III |
| 4D Pharma – MRx0518 | Oncology (solid tumors) | Single-strain Enterococcus gallinarum live biotherapeutic intended to activate anti-tumor immunity | Phase I/II |
| Synlogic – SYNB1934 | Phenylketonuria (PKU) | Engineered E. coli Nissle expressing phenylalanine ammonia lyase | Phase II |
| Eligo Bioscience – Eligobiotics | Carbapenem-resistant infections | CRISPR-guided bacteriophages to eliminate antibiotic-resistant bacteria | Phase I |
| Enterome – EO2401 | Glioblastoma & adrenal tumors | Oncology "onco-mimics"; microbiome peptides mimicking tumor antigens | Phase I/II |
| Akkermansia Therapeutics – Ak02 | Metabolic disorders | Pasteurized Akkermansia muciniphila improving insulin sensitivity | Phase I/II |

Advancements in InDel Signature Analysis

Recent research has revealed limitations in the prevailing InDel classification schema (COSMIC-83), which aggregates 1 bp InDels at homopolymers >5 bp into single channels, reducing the separative capacity for signature extraction [24]. This conflation of discriminatory signals within longer homopolymers hampers the ability to distinguish mismatch repair deficiency (MMRd) signatures from signatures of normal replication errors [24].

A redefined InDel taxonomy that considers flanking sequences and informative motifs (e.g., longer homopolymers) enables unambiguous InDel classification into 89 subtypes [24]. This enhanced classification system has uncovered 37 InDel signatures in seven tumor types from the 100,000 Genomes Project, 27 of which are new [24]. The refined taxonomy provides improved biological insights and has led to the development of PRRDetect—a highly specific classifier of postreplicative repair deficiency status in tumors with potential implications for immunotherapies [24].

Figure 2: Mutational Signature Analysis with Dirichlet-Multinomial Framework

Experimental Protocols

Protocol 1: Metagenomic Sequencing for Pathogen Detection and AMR Profiling

Principle: Shotgun metagenomic sequencing enables culture-independent, sensitive, and specific pathogen detection, particularly in complex or culture-negative infections where traditional methods fail [22]. This protocol details a rapid workflow for bacterial pathogen identification and antimicrobial resistance gene detection.

Procedure:

Sample Preparation and Host DNA Depletion

- Collect 200-500 mg stool sample in DNA/RNA shield buffer

- Perform mechanical lysis using bead beating (0.1 mm glass beads, 6.5 m/s for 45 seconds)

- Implement host DNA depletion using either selective lysis of host cells before microbial lysis or capture of CpG-methylated host DNA after extraction (NEBNext Microbiome DNA Enrichment Kit)

- Quantify DNA using fluorometric methods (Qubit dsDNA HS Assay)

Library Preparation and Sequencing

- Fragment 1-10 ng DNA to 300-500 bp (Covaris M220 focused-ultrasonicator)

- Prepare sequencing libraries using Illumina DNA Prep kit with 5-minute bead-based normalization

- For rapid turnaround: Utilize nanopore library kits (the ligation kit SQK-LSK114, or a rapid barcoding kit from the SQK-RBK114 series when preparation time matters most)

- Sequence on Illumina NovaSeq (2×150 bp) or Oxford Nanopore PromethION P2 Solo

Bioinformatic Analysis

- Perform quality control (FastQC v0.12.0), adapter trimming (Trimmomatic v0.39), and host read removal (Bowtie2 v2.5.1 against hg38)

- Conduct taxonomic profiling (Kraken2 v2.1.2 with Standard-8 database) and abundance estimation (Bracken v2.8)

- Align reads to a comprehensive AMR database (CARD v3.2.5 using BLASTn v2.13.0)

- Generate clinical report with pathogen identification and resistance profile

Validation: Achieves 96.6% sensitivity compared to culture, with 100% confirmation by qPCR for targeted pathogens [22]. Enables real-time identification of AMR genes within 6 hours of sample receipt [22].

Protocol 2: Dirichlet-Multinomial Analysis of Mutational Signature Exposures

Principle: The Dirichlet-multinomial mixed model framework enables robust differential abundance testing of mutational signature exposures while accounting for within-patient correlations and signature co-dependencies [1]. This protocol applies to the comparison of clonal and subclonal mutations or other paired/multiple sample designs.

Procedure:

Data Preparation and Preprocessing

- Obtain mutational signature exposures using quadratic programming against COSMIC v3.4 signatures

- Remove signatures with a mean exposure below 1% across the cohort

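- Choose a baseline signature for the additive log-ratio (ALR) parameterization that addresses compositionality; the Dirichlet-multinomial likelihood itself is fit to exposure counts, not to transformed values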

- Create design matrix specifying group memberships (e.g., clonal vs. subclonal)
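The filtering, log-ratio, and design-matrix steps above can be sketched as follows. This is a minimal illustration with NumPy, not the CompSign workflow: the function names, the 0.5 pseudocount, the choice of the last retained signature as ALR baseline, and the toy counts are all assumptions made for the example.

```python
import numpy as np

def filter_and_alr(counts, min_mean_prop=0.01, pseudocount=0.5):
    """Drop rare signatures, then return kept column indices and ALR coordinates.

    counts: (n_samples, n_signatures) array of mutation counts per signature.
    The last retained signature serves as the ALR baseline (arbitrary choice).
    """
    counts = np.asarray(counts, dtype=float)
    props = counts / counts.sum(axis=1, keepdims=True)
    keep = np.flatnonzero(props.mean(axis=0) >= min_mean_prop)
    kept = counts[:, keep] + pseudocount          # pseudocount avoids log(0)
    alr = np.log(kept[:, :-1]) - np.log(kept[:, [-1]])
    return keep, alr

def design_matrix(groups, reference):
    """Intercept column plus a 0/1 indicator for non-reference membership."""
    indicator = np.array([0.0 if g == reference else 1.0 for g in groups])
    return np.column_stack([np.ones(len(groups)), indicator])

# Hypothetical exposures: 4 observations x 4 signatures.
counts = np.array([[120, 30, 1, 49],
                   [200, 10, 0, 90],
                   [80, 60, 2, 58],
                   [150, 20, 1, 129]])
groups = ["clonal", "subclonal", "clonal", "subclonal"]

keep, alr = filter_and_alr(counts)
X = design_matrix(groups, reference="clonal")
print("retained signature columns:", keep.tolist())
print("ALR shape:", alr.shape, "design matrix shape:", X.shape)
```

The ALR coordinates are convenient for exploratory plots and for interpreting fixed effects, while the retained count columns are what a Dirichlet-multinomial likelihood would consume directly.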

Model Specification and Fitting

- Implement the Dirichlet-multinomial mixed model using the CompSign R package

- Specify multivariate random effects structure to model within-patient correlations

- Include group-specific dispersion parameters to handle heterogeneity

- Set fixed effects for group comparisons

- Fit the model using the Laplace approximation to the high-dimensional integrals over the random effects

Differential Abundance Testing and Interpretation

- Extract coefficient estimates and confidence intervals for fixed effects

- Compute likelihood ratio tests for group differences

- Apply false discovery rate correction for multiple testing (Benjamini-Hochberg, α=0.05)

- Visualize results using compositional biplots and exposure difference plots
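To make the testing steps concrete, the sketch below computes a likelihood ratio test between two groups under a plain fixed-effects Dirichlet-multinomial model and then applies a Benjamini-Hochberg adjustment. It is a deliberate simplification: it has no random effects, does not use the CompSign package, and relies on the asymptotic chi-square reference distribution. The function names, optimizer bounds, starting values, and simulated counts are assumptions for illustration.

```python
import numpy as np
from scipy.optimize import minimize
from scipy.special import gammaln
from scipy.stats import chi2

def dm_loglik(log_alpha, counts):
    """Dirichlet-multinomial log-likelihood summed over samples.

    The multinomial coefficient is omitted because it does not depend on
    alpha and therefore cancels in any likelihood ratio.
    """
    alpha = np.exp(log_alpha)
    n = counts.sum(axis=1)
    a0 = alpha.sum()
    per_sample = (gammaln(a0) - gammaln(n + a0)
                  + (gammaln(counts + alpha) - gammaln(alpha)).sum(axis=1))
    return per_sample.sum()

def fit_dm(counts):
    """Maximize over log(alpha) and return the maximized log-likelihood."""
    counts = np.asarray(counts, dtype=float)
    k = counts.shape[1]
    props = (counts.sum(axis=0) + 0.5) / (counts.sum() + 0.5 * k)
    start = np.log(10.0 * props)                  # moderate starting precision
    res = minimize(lambda p: -dm_loglik(p, counts), start,
                   method="L-BFGS-B", bounds=[(-10.0, 10.0)] * k)
    return -res.fun

def dm_lrt(group_a, group_b):
    """LRT of one shared alpha vector (null) vs. one alpha vector per group."""
    ll_null = fit_dm(np.vstack([group_a, group_b]))
    ll_alt = fit_dm(group_a) + fit_dm(group_b)
    stat = max(2.0 * (ll_alt - ll_null), 0.0)     # guard against optimizer noise
    df = np.asarray(group_a).shape[1]             # K extra parameters under the alternative
    return stat, chi2.sf(stat, df)

def benjamini_hochberg(pvals):
    """Return BH-adjusted p-values in the original order."""
    p = np.asarray(pvals, dtype=float)
    m = p.size
    order = np.argsort(p)
    scaled = p[order] * m / np.arange(1, m + 1)
    adjusted = np.minimum.accumulate(scaled[::-1])[::-1]   # enforce monotonicity
    out = np.empty(m)
    out[order] = np.minimum(adjusted, 1.0)
    return out

def simulate_dm(rng, alpha, n_samples, depth):
    """Draw Dirichlet-multinomial counts (toy data generator)."""
    probs = rng.dirichlet(alpha, size=n_samples)
    return np.array([rng.multinomial(depth, p) for p in probs])

rng = np.random.default_rng(0)
clonal = simulate_dm(rng, [8, 4, 2, 1], n_samples=30, depth=200)
subclonal = simulate_dm(rng, [2, 4, 8, 1], n_samples=30, depth=200)
stat, pval = dm_lrt(clonal, subclonal)
print(f"LRT statistic = {stat:.1f}, p-value = {pval:.3g}")
```

In a multi-cohort analysis, one such p-value per cancer type would be collected and passed to `benjamini_hochberg` before thresholding at 0.05. Because the alternative here frees the whole parameter vector, the test detects differences in dispersion as well as in mean composition.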

Validation: Applied to 23 cancer types from the PCAWG cohort, the framework identified widespread differential abundance between clonal and subclonal signatures across cancer types [1]. It also revealed higher dispersion of signatures in subclonal groups, indicating greater variability between patients at the subclonal level [1].

Protocol 3: Integrated Microbiome-Metabolome Correlation Network Analysis

Principle: Construction of microbiome-metabolome correlation networks illuminates perturbed microbial pathways and functions tied to disease states, enabling development of high-accuracy diagnostic models [22].

Procedure:

Multi-Omic Data Generation

- Perform shotgun metagenomic sequencing (as in Protocol 1)

- Conduct untargeted metabolomics on matched samples (LC-MS with Q-TOF mass spectrometer)

- Process metabolomics data: peak picking and alignment (XCMS), followed by isotope and adduct annotation (CAMERA) to support compound identification

Integrated Correlation Network Analysis

- Compute sparse correlations between microbial taxa and metabolites (SparCC algorithm)

- Construct bipartite network with microbial taxa and metabolites as nodes

- Identify network modules using greedy modularity optimization

- Annotate modules with KEGG pathway enrichment (clusterProfiler v4.0)
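The correlation and network-construction steps can be sketched as below. Note the substitution: instead of SparCC, this example uses a centered log-ratio (CLR) transform followed by Spearman correlation as a simple stand-in, and it stops at the bipartite edge list rather than performing module detection. The names, the 0.8 threshold, and the toy data are assumptions.

```python
import numpy as np
from scipy.stats import rankdata

def clr(counts, pseudocount=0.5):
    """Centered log-ratio transform of a (samples x taxa) count matrix."""
    logged = np.log(np.asarray(counts, dtype=float) + pseudocount)
    return logged - logged.mean(axis=1, keepdims=True)

def cross_spearman(a, b):
    """Spearman correlation between every column of a and every column of b."""
    def standardized_ranks(x):
        r = rankdata(x, axis=0)
        return (r - r.mean(axis=0)) / r.std(axis=0)
    return standardized_ranks(a).T @ standardized_ranks(b) / a.shape[0]

def bipartite_edges(rho, taxa, metabolites, threshold=0.8):
    """Keep taxon-metabolite pairs whose |rho| reaches the threshold."""
    return [(taxa[i], metabolites[j], float(rho[i, j]))
            for i in range(rho.shape[0])
            for j in range(rho.shape[1])
            if abs(rho[i, j]) >= threshold]

# Hypothetical matched samples: 6 samples, 3 taxa, 2 metabolites.
taxa_counts = np.array([[10, 100, 100], [20, 100, 100], [40, 100, 100],
                        [80, 100, 100], [160, 100, 100], [320, 100, 100]])
metabolite_levels = np.array([[1.0, 3.0], [2.0, 1.0], [3.0, 2.0],
                              [4.0, 6.0], [5.0, 5.0], [6.0, 4.0]])

rho = cross_spearman(clr(taxa_counts), metabolite_levels)
edges = bipartite_edges(rho, ["taxon_A", "taxon_B", "taxon_C"], ["met_1", "met_2"])
for taxon, metabolite, value in edges:
    print(f"{taxon} -- {metabolite}: rho = {value:+.2f}")
```

In the toy data only taxon_A changes in absolute terms, yet taxon_B and taxon_C also pick up strong negative correlations after the CLR transform. This is the compositional artifact that motivates sparse, composition-aware estimators such as SparCC. The edge list could then be loaded into a graph library for module detection, for example with the `greedy_modularity_communities` function in NetworkX.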

Diagnostic Model Building

- Integrate multi-omics features into machine learning framework (random forest or XGBoost)

- Perform nested cross-validation with feature selection in inner loop

- Assess model performance using AUROC with 95% confidence intervals

- Validate model on independent cohort if available
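For the performance-assessment step, one common way to attach a 95% confidence interval to an AUROC is a percentile bootstrap over samples. The sketch below uses only the Python standard library; it assumes binary 0/1 labels and held-out prediction scores (for instance from the outer folds of the nested cross-validation). The function names and toy scores are hypothetical.

```python
import random

def auroc(labels, scores):
    """Probability that a random positive outscores a random negative (ties count 0.5)."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative sample")
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

def bootstrap_ci(labels, scores, n_boot=2000, level=0.95, seed=0):
    """Percentile bootstrap interval for the AUROC."""
    rng = random.Random(seed)
    indices = range(len(labels))
    stats = []
    while len(stats) < n_boot:
        sample = [rng.choice(indices) for _ in indices]
        ys = [labels[i] for i in sample]
        if 0 < sum(ys) < len(ys):                 # skip single-class resamples
            stats.append(auroc(ys, [scores[i] for i in sample]))
    stats.sort()
    lower = stats[int((1 - level) / 2 * n_boot)]
    upper = stats[int((1 + level) / 2 * n_boot) - 1]
    return lower, upper

# Hypothetical held-out predictions: 4 controls (0) and 4 cases (1).
labels = [0, 0, 0, 0, 1, 1, 1, 1]
scores = [0.10, 0.30, 0.35, 0.60, 0.40, 0.70, 0.80, 0.90]

point = auroc(labels, scores)
low, high = bootstrap_ci(labels, scores)
print(f"AUROC = {point:.4f}, 95% CI = ({low:.3f}, {high:.3f})")
```

With so few samples the interval is wide, which is the practical reason to report it alongside the point estimate. The pairwise AUROC computation is quadratic in sample size, so a rank-based implementation would be preferable for large cohorts.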

Validation: Diagnostic models for inflammatory bowel disease achieved AUROC of 0.92-0.98 in distinguishing IBD from controls [22]. For type 2 diabetes, microbiota-derived metabolite panels achieved AUROC exceeding 0.80 for disease prediction [22].

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Bioinformatics Tools and Databases for Microbiome and Mutational Signature Analysis

| Tool/Database | Type | Function | Application Context |

|---|---|---|---|

| COSMIC Mutational Signatures v3.4 | Database | 86 curated single-base substitution signatures | Reference for mutational signature extraction and annotation [1] |

| CARD (Comprehensive Antibiotic Resistance Database) | Database | Curated AMR genes and variants | Metagenomic antimicrobial resistance profiling [22] |

| RESOLVE (Robust EStimation Of mutationaL signatures Via rEgularization) | Algorithm | Mutational signature extraction and assignment | Stratification of cancer genomes by active mutational processes [25] |

| CompSign R Package | Software | Dirichlet-multinomial mixed model implementation | Differential abundance testing of mutational signature exposures [1] |