Likelihood Ratio vs. Random Match Probability: A Statistical Guide for Biomedical Research

This article provides a comprehensive comparison of Likelihood Ratios (LR) and Random Match Probabilities (RMP) for researchers, scientists, and drug development professionals.

Abstract

This article provides a comprehensive comparison of Likelihood Ratios (LR) and Random Match Probabilities (RMP) for researchers, scientists, and drug development professionals. It explores the foundational concepts of both statistical measures, detailing their methodologies and applications in areas such as diagnostic test evaluation, forensic evidence interpretation, and drug-target interaction prediction. The content addresses common challenges in calculation and implementation, offers strategies for optimizing their use, and presents a direct comparison of their strengths and limitations. By synthesizing key insights from diverse fields, this guide aims to enhance the rigorous application of these powerful statistical tools in biomedical and clinical research.

Core Concepts: Demystifying Likelihood Ratios and Random Match Probability

The likelihood ratio (LR) serves as a fundamental statistical measure for quantifying the strength of scientific evidence under competing hypotheses. This technical guide examines the LR's mathematical foundation, its application across diverse fields from medical diagnostics to forensic science, and its position in the ongoing methodological discourse contrasting likelihood ratios with random match probabilities. We detail the core principles governing LR calculation and interpretation, provide structured protocols for its implementation, and visualize the underlying logical relationships. Within a broader research context, the LR provides a coherent framework for evaluating diagnostic test efficacy and evidentiary strength, enabling scientists to precisely articulate how observed data updates prior beliefs about specific hypotheses.

The likelihood ratio (LR) is a core statistical tool for interpreting diagnostic test results and scientific evidence. Formally defined, it is the likelihood that a given test result would occur in a patient with the target condition compared to the likelihood that the same result would occur in a patient without the condition [1]. This ratio provides a direct measure of diagnostic accuracy that is more robust than predictive values, as it remains independent of disease prevalence, enabling broader application across different clinical and research settings [2].

Mathematically, the LR is derived from conditional probabilities comparing two mutually exclusive hypotheses. In diagnostic medicine, these are typically the presence (D+) or absence (D-) of a disease, given a specific test result (T). The LR for a positive test result (LR+) and a negative test result (LR-) are calculated using the test's sensitivity and specificity [3]:

- Positive Likelihood Ratio (LR+):

LR+ = Sensitivity / (1 - Specificity)

- Negative Likelihood Ratio (LR-):

LR- = (1 - Sensitivity) / Specificity

These formulae transform the inherent characteristics of a diagnostic test (sensitivity and specificity) into a metric that directly quantifies how a test result shifts the probability of disease [4]. The LR's power stems from its foundation in Bayes' Theorem, which describes how prior probability (pre-test probability) is updated with new evidence (test results) to yield a posterior probability (post-test probability) [5]. The mathematical relationship is most efficiently calculated using odds:

Post-test Odds = Pre-test Odds × LR

This calculation requires conversion between probability and odds, where odds = probability / (1 - probability) and probability = odds / (1 + odds) [1]. This Bayesian framework establishes the LR as a coherent mechanism for updating belief in light of new evidence, a process fundamental to scientific inference across multiple disciplines.
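For readers who want the intermediate step, the odds form can be obtained by writing Bayes' Theorem once for each hypothesis and dividing, so that the shared term P(T) cancels. The sketch below uses the D+, D- and T notation introduced above; the grouping labels are added here for illustration only.

```latex
\begin{align}
P(D^{+}\mid T) &= \frac{P(T\mid D^{+})\,P(D^{+})}{P(T)}, \qquad
P(D^{-}\mid T) = \frac{P(T\mid D^{-})\,P(D^{-})}{P(T)} \\[4pt]
\underbrace{\frac{P(D^{+}\mid T)}{P(D^{-}\mid T)}}_{\text{post-test odds}}
&= \underbrace{\frac{P(T\mid D^{+})}{P(T\mid D^{-})}}_{\text{LR}}
\times
\underbrace{\frac{P(D^{+})}{P(D^{-})}}_{\text{pre-test odds}}
\end{align}
```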

LR Application Frameworks: Diagnostics versus Forensics

The application and interpretation of likelihood ratios differ meaningfully between medical diagnostics and forensic science, reflecting the distinct questions each field seeks to answer.

Medical Diagnostic Testing

In clinical medicine, LRs are primarily used to quantify how a diagnostic test result changes the probability of a disease. The pre-test probability is typically estimated based on clinical experience, population prevalence, and the patient's presenting symptoms and risk factors [4]. Once a test is performed, the LR specific to the result is applied to update this probability.

The strength of evidence provided by different LR values follows established guidelines, as shown in the table below, which summarizes how LRs of varying magnitudes alter the pre-test probability of disease [3].

Table 1: Interpretation of Likelihood Ratios in Diagnostic Medicine

| Likelihood Ratio Value | Approximate Change in Probability* | Effect on Probability | Interpretation |
|---|---|---|---|
| > 10 | +45% | Large increase | Significant evidence to rule-in disease |
| 5 - 10 | +30% | Moderate increase | |
| 2 - 5 | +15% | Slight increase | |
| 1 | 0% | No change | Test result provides no useful information |
| 0.5 - 0.9 | -15% | Slight decrease | |
| 0.2 - 0.5 | -30% | Moderate decrease | |
| < 0.1 | -45% | Large decrease | Significant evidence to rule-out disease |

*Accurate to within 10% for pre-test probabilities between 10% and 90%.

For example, a clinician evaluating a patient for obstructive airway disease might assign a pre-test probability of 10% based on presenting features. If the patient has a smoking history of >40 pack-years—a finding with an LR+ of 20.4—the post-test probability rises to 69%, a substantial shift that may alter management [2]. This process underscores the LR's utility in moving from an initial clinical impression to a more data-informed diagnosis.

Forensic Science and DNA Evidence

In forensic science, particularly DNA analysis, the LR is used to evaluate the strength of evidence linking a suspect to a crime scene. The two competing hypotheses are typically [6]:

- H1 (Prosecution Hypothesis): The DNA profile from the crime scene (E) originated from the suspect (S).

- H0 (Defense Hypothesis): The DNA profile originated from a random, unrelated individual in the population.

The LR is calculated as LR = P(E|H1) / P(E|H0). If the profiles match and potential testing errors are disregarded, the numerator P(E|H1) is essentially 1. The denominator P(E|H0) is the random match probability—the probability that a randomly selected person from the population would have the same DNA profile [6] [7]. Therefore, for a single-source DNA sample, LR = 1 / Random Match Probability [7].

Forensic scientists often use verbal equivalents to communicate the strength of evidence, though these are only guides [7].

Table 2: Verbal Equivalents for Likelihood Ratios in Forensic Science

| Likelihood Ratio (LR) Value | Verbal Equivalent |
|---|---|
| 1 - 10 | Limited evidence to support |
| 10 - 100 | Moderate evidence to support |
| 100 - 1,000 | Moderately strong evidence to support |
| 1,000 - 10,000 | Strong evidence to support |
| > 10,000 | Very strong evidence to support |

This framework allows forensic analysts to present the significance of a DNA match in a logically sound manner, stating, for example, that the evidence is "1000 times more likely if the suspect is the source than if an unrelated random individual is the source" [8].
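As a rough illustration, the verbal scale in Table 2 can be encoded as a lookup. The function name `verbal_equivalent` is hypothetical, and the handling of shared boundaries (for example, whether an LR of exactly 10 is "limited" or "moderate") is an assumption, since the table does not specify it.

```python
def verbal_equivalent(lr: float) -> str:
    """Map a likelihood ratio (LR >= 1) to the verbal scale of Table 2.

    Assumption: a value on a shared boundary (e.g. exactly 10) is
    assigned to the higher band; the table itself does not say.
    """
    if lr < 1:
        raise ValueError("Table 2 only covers LR >= 1")
    # (lower bound of band, label), checked from the strongest band down
    bands = [
        (10_000, "Very strong evidence to support"),
        (1_000, "Strong evidence to support"),
        (100, "Moderately strong evidence to support"),
        (10, "Moderate evidence to support"),
        (1, "Limited evidence to support"),
    ]
    for lower_bound, label in bands:
        if lr >= lower_bound:
            return label
    raise AssertionError("unreachable: lr >= 1 always matches a band")


print(verbal_equivalent(1_000))
```

Because the source describes these verbal equivalents as guides only, a lookup like this is best treated as a reporting aid rather than a decision rule.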

Experimental Protocols and Methodologies

The accurate calculation and application of LRs require rigorous methodological approaches. Below are detailed protocols for key scenarios.

Protocol for a Diagnostic Test with Dichotomous Outcomes

This protocol calculates LR+ and LR- for a test with simple positive/negative results, using a 2x2 contingency table.

Data Collection: Collect data from a cohort of patients with known disease status (confirmed by a gold-standard test) who have undergone the index diagnostic test.

- a: Disease Positive and Test Positive (True Positive)

- b: Disease Negative and Test Positive (False Positive)

- c: Disease Positive and Test Negative (False Negative)

- d: Disease Negative and Test Negative (True Negative)

Calculate Sensitivity and Specificity:

Sensitivity (Sens) = a / (a + c)

Specificity (Spec) = d / (b + d)

Calculate Likelihood Ratios:

Positive Likelihood Ratio (LR+) = Sens / (1 - Spec)

Negative Likelihood Ratio (LR-) = (1 - Sens) / Spec

Example Calculation: A study evaluates vaginal self-sampling for HPV mRNA [9].

- Data: 114 (a), 18 (b), 22 (c), 51 (d)

- Sensitivity: 114 / (114 + 22) = 0.84

- Specificity: 51 / (18 + 51) = 0.74

- LR+: 0.84 / (1 - 0.74) = 3.23

- LR-: (1 - 0.84) / 0.74 = 0.22

This LR+ of 3.23 falls in the 2 - 5 band of Table 1, indicating a slight increase in the probability of disease given a positive test.
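The protocol above can be sketched in a few lines of Python. The function name `diagnostic_lrs` and its keyword names are illustrative choices, not part of the cited study; the counts are the a, b, c, d values from the example. The sketch assumes specificity is neither 0 nor 1, since either value would cause a division by zero.

```python
def diagnostic_lrs(tp: int, fp: int, fn: int, tn: int) -> dict:
    """Sensitivity, specificity, LR+ and LR- from 2x2 counts (a, b, c, d)."""
    sensitivity = tp / (tp + fn)   # a / (a + c)
    specificity = tn / (fp + tn)   # d / (b + d)
    return {
        "sensitivity": sensitivity,
        "specificity": specificity,
        "lr_pos": sensitivity / (1 - specificity),
        "lr_neg": (1 - sensitivity) / specificity,
    }


# Counts from the HPV mRNA example: a=114, b=18, c=22, d=51
result = diagnostic_lrs(tp=114, fp=18, fn=22, tn=51)
for name, value in result.items():
    print(f"{name}: {value:.3f}")
```

Working from the raw counts gives an LR+ of about 3.21 rather than 3.23. The small difference arises because the worked example rounds sensitivity and specificity to two decimals before dividing.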

Protocol for a Test with Multi-Level or Continuous Outcomes

For tests with more than two outcome levels, interval-specific LRs provide greater power by using more information [10] [5].

Categorize Results: Group test results into multiple ordered categories (e.g., low, intermediate, high) or intervals.

Calculate Interval-Specific LR: For each result interval i:

LR_i = (Proportion of Diseased patients in interval i) / (Proportion of Non-diseased patients in interval i)

Example Calculation: A study on smoking history and obstructive airway disease used four categories [2]. For the highest exposure group (≥40 pack-years):

- Proportion of diseased patients in this category: 42/148 ≈ 28.4%

- Proportion of non-diseased patients in this category: 2/144 ≈ 1.4%

- LR: 28.4 / 1.4 ≈ 20.3 (20.4 when the unrounded proportions are used)

This high LR strongly increases the probability of disease, while LRs for lower smoking categories provided less diagnostic power.
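A minimal sketch of the interval-specific calculation follows. It reads 148 and 144 as the total numbers of diseased and non-diseased patients, as the LR_i definition implies; the function name `interval_lr` is hypothetical.

```python
def interval_lr(diseased_in_interval: int, diseased_total: int,
                nondiseased_in_interval: int, nondiseased_total: int) -> float:
    """Interval-specific LR: share of diseased patients falling in the
    interval divided by the share of non-diseased patients in it."""
    share_diseased = diseased_in_interval / diseased_total
    share_nondiseased = nondiseased_in_interval / nondiseased_total
    return share_diseased / share_nondiseased


# Highest smoking-exposure category from the example above
lr_high = interval_lr(42, 148, 2, 144)
print(round(lr_high, 1))  # 20.4
```

An interval with no non-diseased patients would produce a division by zero, so sparse categories may need to be merged before this calculation is applied.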

Protocol for Applying LR to Calculate Post-Test Probability

This protocol allows clinicians to update disease probability after obtaining a test result.

Estimate Pre-test Probability (P_pre): Based on prevalence, clinical experience, and risk factors.

Convert Pre-test Probability to Pre-test Odds:

Pre-test Odds = P_pre / (1 - P_pre)

Calculate Post-test Odds:

Post-test Odds = Pre-test Odds × LR

Convert Post-test Odds to Post-test Probability (P_post):

P_post = Post-test Odds / (1 + Post-test Odds)

Example Calculation: Using the prior example with a pre-test probability of 0.1 and an LR of 20.3 [2]:

- Pre-test Odds = 0.1 / (1 - 0.1) ≈ 0.111

- Post-test Odds = 0.111 × 20.3 ≈ 2.25

- Post-test Probability = 2.25 / (1 + 2.25) ≈ 0.69

A pre-test probability of 10% is thus updated to a post-test probability of 69% following a highly positive test result.
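The four steps above translate directly into code. This is a minimal sketch; the function name `post_test_probability` is an illustrative choice, and the input check simply guards against pre-test probabilities of exactly 0 or 1, for which odds are undefined or uninformative.

```python
def post_test_probability(pre_test_probability: float, lr: float) -> float:
    """Update a pre-test probability with a likelihood ratio via odds."""
    if not 0 < pre_test_probability < 1:
        raise ValueError("pre-test probability must lie strictly between 0 and 1")
    pre_test_odds = pre_test_probability / (1 - pre_test_probability)
    post_test_odds = pre_test_odds * lr
    return post_test_odds / (1 + post_test_odds)


# Pre-test probability of 10% and an LR of 20.3, as in the example
print(f"{post_test_probability(0.10, 20.3):.2f}")  # 0.69
```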

Visualization of Logical Relationships

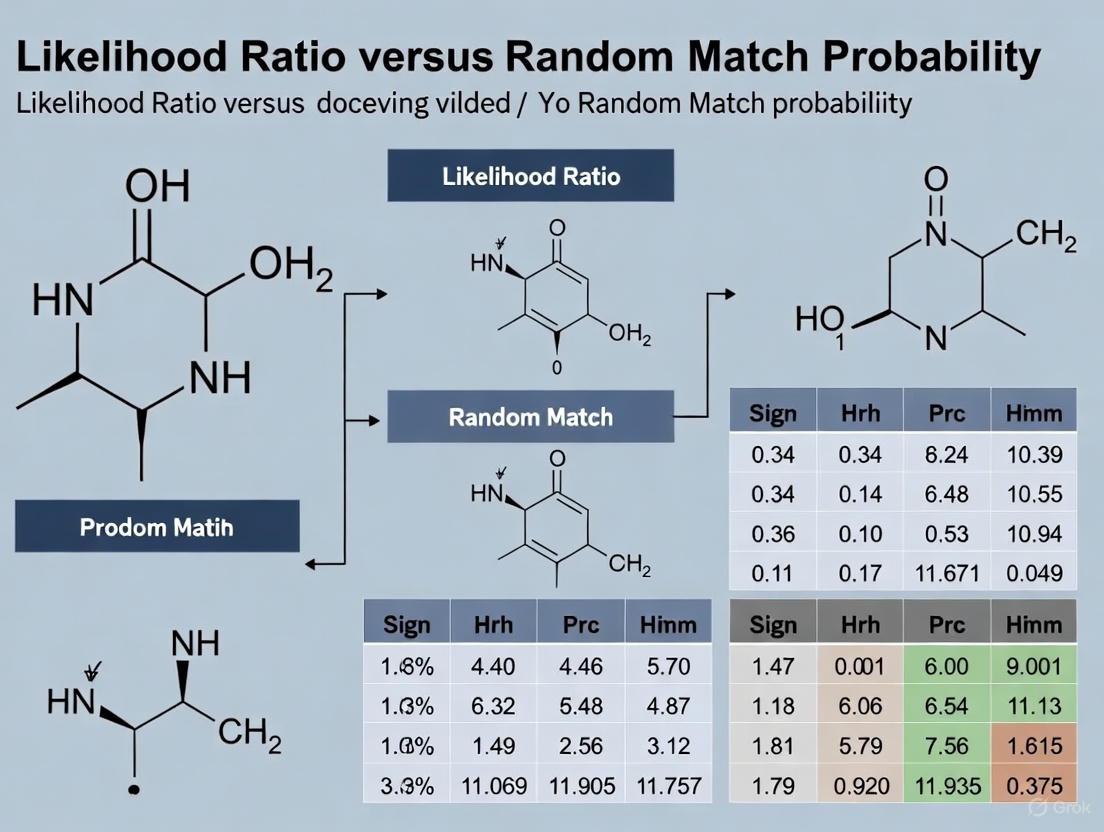

The following diagram illustrates the core logical workflow for applying the Likelihood Ratio, from hypothesis formation through the updating of belief based on the strength of the evidence. This process is foundational to evidence-based evaluation in both medicine and forensics.

The relationship between a test's characteristics and its resulting Likelihood Ratio can be graphically represented using the Receiver Operating Characteristic (ROC) curve. The slope of specific segments of this curve corresponds to different types of LRs, providing a visual intuition of test performance.
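For a dichotomous test, the ROC curve has a single operating point at (1 - specificity, sensitivity). The sketch below shows numerically that the slope of the segment from (0, 0) to that point equals LR+, and the slope of the segment from that point to (1, 1) equals LR-. The function name is hypothetical, and the inputs reuse the counts from the HPV mRNA example purely for illustration.

```python
def roc_segment_slopes(sensitivity: float, specificity: float) -> tuple[float, float]:
    """Slopes of the two ROC segments defined by one cut-off."""
    fpr = 1 - specificity          # x-coordinate of the operating point
    tpr = sensitivity              # y-coordinate of the operating point
    slope_from_origin = tpr / fpr                  # segment (0,0) -> point
    slope_to_top_right = (1 - tpr) / (1 - fpr)     # segment point -> (1,1)
    return slope_from_origin, slope_to_top_right


sens = 114 / 136
spec = 51 / 69
lr_pos_slope, lr_neg_slope = roc_segment_slopes(sens, spec)
print(f"{lr_pos_slope:.2f} {lr_neg_slope:.2f}")
```

For a multi-level test, the same reasoning applies segment by segment: each interval between adjacent cut-offs has a slope equal to that interval's LR.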

Successfully implementing likelihood ratio analysis requires both conceptual understanding and specific analytical tools. The following table details key "research reagents" and their functions in this process.

Table 3: Essential Reagents for Likelihood Ratio Analysis

| Tool/Reagent | Function & Purpose |
|---|---|
| 2x2 Contingency Table | Foundational data structure for organizing counts of true/false positives and negatives against a gold standard reference [1]. |
| Sensitivity & Specificity | Core test characteristics used as inputs for calculating dichotomous LRs; sensitivity reflects the true positive rate, while specificity reflects the true negative rate [3]. |
| Bayesian Calculation Framework | The mathematical engine for converting pre-test probability to post-test probability using odds and the LR. It formalizes the process of updating belief with new evidence [1] [5]. |
| Fagan's Nomogram | A graphical tool that avoids manual arithmetic by allowing a user to draw a line from the pre-test probability through the LR to directly read the post-test probability [2]. |
| ROC Curve Analysis | A graphical plot that visualizes the trade-off between sensitivity and specificity for all possible test cut-offs. The slope of line segments on this curve represents different LRs [5]. |
| Stratified Data Tables (2xk) | Data structure for tests with multi-level (ordinal) results, enabling the calculation of more powerful, interval-specific likelihood ratios [10]. |
| Population Genetic Databases | Essential forensic reagents containing genotype frequencies for calculating the random match probability (P(E\|H0)), which forms the denominator of the LR in DNA evidence evaluation [6]. |

Discussion: LR versus Random Match Probability in Scientific Research

The distinction between the likelihood ratio and the random match probability is a critical concept in the interpretation of scientific evidence, particularly in forensic science. While mathematically related, they represent different frameworks for expressing evidential strength.

The random match probability (RMP) is a statement about the evidence itself, answering the question: "What is the probability that a randomly selected, unrelated individual from the population would match the evidentiary profile?" [6]. It is a single probability that is often converted into a statement of rarity, such as "1 in a quadrillion."

In contrast, the likelihood ratio (LR) is a statement about the hypotheses concerning the evidence. It answers the question: "How many times more likely is the evidence if the suspect is the source than if a randomly selected, unrelated individual is the source?" [6] [7]. For a single-source DNA sample where the profiles match, the LR is the reciprocal of the RMP (LR = 1 / RMP) [7].

The LR framework is widely considered the more scientifically rigorous and logically coherent approach for two primary reasons. First, it explicitly compares the probability of the evidence under two competing propositions, which is the core task of forensic evaluation. The RMP, on the other hand, only addresses one proposition (that an unknown person is the source) and can be vulnerable to the "prosecutor's fallacy," where the probability of a match given innocence is mistakenly transposed into the probability of innocence given a match [6]. Second, the LR framework is inherently more flexible. It can be extended to complex situations, such as interpreting DNA mixtures from multiple contributors or cases where the suspect is identified through a database search, where a simple RMP is insufficient or can be misleading [6].
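The gap between "probability of a match given innocence" and "probability of innocence given a match" can be made concrete with a small calculation. The sketch below assumes a hypothetical closed pool in which the suspect and each of `n_alternatives` unrelated people are equally likely a priori to be the source, and it ignores testing error. The numbers are illustrative and not drawn from any case.

```python
def posterior_source_probability(rmp: float, n_alternatives: int) -> float:
    """P(suspect is the source | profiles match) under a uniform prior.

    Hypothetical setup: one suspect plus n_alternatives unrelated
    possible sources, each equally likely beforehand; no testing error.
    """
    lr = 1 / rmp                        # single-source case: LR = 1 / RMP
    prior_odds = 1 / n_alternatives     # suspect versus everyone else
    posterior_odds = prior_odds * lr
    return posterior_odds / (1 + posterior_odds)


rmp = 1e-6
posterior = posterior_source_probability(rmp, n_alternatives=1_000_000)
print(f"RMP = {rmp:.0e}, posterior probability of source = {posterior:.2f}")
```

With an RMP of one in a million and a million alternative sources, the posterior probability that the suspect is the source is about 0.5, not 0.999999. Reading the RMP as the probability of innocence is exactly the transposition the prosecutor's fallacy describes.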

Therefore, within the broader thesis of evidentiary analysis, the likelihood ratio provides a unified and logically sound methodology for quantifying the strength of scientific evidence, directly addressing the core question of how observed data should influence belief between competing scientific hypotheses.

Random Match Probability (RMP) is a fundamental statistical measure in forensic science, particularly in DNA analysis, used to estimate the frequency of a specific profile within a reference population [11] [12]. It answers a specific question: what is the probability that a randomly selected, unrelated individual from a population would match the forensic evidence profile by chance? [12]. This concept exists within a broader scientific discourse on the most statistically sound and logically correct way to present forensic evidence in legal settings, most notably in the ongoing research and debate comparing it to the Likelihood Ratio (LR) [13] [12] [14].

The relationship between RMP and LR is a central theme in modern forensic science. RMP is commonly used as the probability of the evidence under the defense's hypothesis in a classic LR framework. A key criticism has emerged, however, that treating RMP as a direct proxy for the defense hypothesis (Hd) constitutes a statistical fallacy, because it may ignore other potential factors and interpretations of the evidence [12]. This technical guide explores RMP's calculation, application, and its position within this larger methodological debate for a scientific audience.

Core Conceptual and Mathematical Foundations

Definition and Probabilistic Framework

At its core, RMP is a statement about frequency and probability. In a DNA context, if a specific genetic profile occurs in 1 in 1 million individuals in a population, its RMP is 1/1,000,000 [12]. This means that if a random person is selected from that population, the probability their profile matches the evidence by pure chance is 0.000001.

This concept is built upon the foundation of discrete probability distributions. A probability distribution describes the likelihood of each possible outcome of a random variable [15] [16]. For a discrete random variable \(X\), its probability distribution lists each possible value \(x\) and its corresponding probability \(P(x)\), which must satisfy two conditions:

- Each probability \(P(x)\) must be between 0 and 1: \(0 \leq P(x) \leq 1\)

- The sum of all probabilities must be 1: \(\sum P(x) = 1\) [16]

Table: Example Probability Distribution for a Discrete Random Variable

| Value \(x\) | -1 | 0 | 1 | 4 |
|---|---|---|---|---|
| \(P(x)\) | 0.2 | 0.5 | 0.2 | 0.1 |

The expected value (or mean) of a discrete random variable, denoted \(\mu\) or \(E(X)\), represents the average value assumed over numerous trials and is calculated as \(\mu = E(X) = \sum xP(x)\) [15] [16]. The variance \(\sigma^2\) and standard deviation \(\sigma\) measure the variability of the values and are calculated as \(\sigma^2 = \sum (x-\mu)^2P(x)\) and \(\sigma = \sqrt{\sigma^2}\) [16].
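As a quick check of these formulas, the example distribution above can be evaluated directly. This is a minimal sketch using only the standard library; the variable names are illustrative.

```python
import math

values = [-1, 0, 1, 4]
probs = [0.2, 0.5, 0.2, 0.1]

# The two conditions a discrete probability distribution must satisfy
assert all(0 <= p <= 1 for p in probs)
assert math.isclose(sum(probs), 1.0)

mean = sum(x * p for x, p in zip(values, probs))                    # sum of x * P(x)
variance = sum((x - mean) ** 2 * p for x, p in zip(values, probs))  # sum of (x - mu)^2 * P(x)
std_dev = math.sqrt(variance)

print(f"mean={mean:.2f} variance={variance:.2f} sd={std_dev:.3f}")
```

For this distribution the mean is 0.4, the variance is 1.84, and the standard deviation is about 1.356.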

RMP vs. Likelihood Ratio: A Critical Distinction

The distinction between RMP and Likelihood Ratio is a critical and nuanced one in forensic statistics [14].

- Random Match Probability (RMP) provides a frequency estimate of a profile in a population. It is a straightforward statement about how common or rare a particular characteristic is [11] [12].

- Likelihood Ratio (LR) is a ratio of two probabilities and is used to weigh evidence under two competing propositions [12]. In a forensic context, these are typically the prosecution's hypothesis (Hp) and the defense's hypothesis (Hd). The LR is expressed as:

\[ LR = \frac{P(\text{Evidence} \mid H_p)}{P(\text{Evidence} \mid H_d)} \]

Where \(P(\text{Evidence} \mid H_p)\) is the probability of observing the evidence if the prosecution's hypothesis is true, and \(P(\text{Evidence} \mid H_d)\) is the probability of observing the evidence if the defense's hypothesis is true [12].

A significant point of debate, as highlighted in recent research, is the potential misuse of RMP within the LR framework. It has been argued that it is fallacious to simplistically equate the probability of the evidence given the defense's hypothesis (P(E|Hd)) with the random match probability, as the defense's hypothesis could encompass scenarios beyond a simple "random match" [12]. This misinterpretation can lead to what is known as the "prosecutor's fallacy," potentially misrepresenting the strength of evidence presented to courts [12].

The following diagram illustrates the logical relationship between RMP, LR, and the competing hypotheses in a forensic evaluation.

Experimental and Methodological Approaches

Case Study: RMP Methodology in Forensic Footwear Analysis

The application of RMP extends beyond DNA to other pattern evidence disciplines. A 2023 study on acquired characteristics in forensic footwear databases provides a robust methodological framework for estimating what the authors term Random Match Frequency (RMF) [11]. This distinction in terminology highlights that their calculation is an observed frequency within a specific dataset rather than a predicted probability for a broader population.

Experimental Protocol and Workflow:

- Database Construction: The research utilized the West Virginia University (WVU) footwear database, comprising 1,300 outsoles cataloged by make, model, size, and wear [11].

- Data Acquisition: High-resolution scans (600 PPI) and Handiprint exemplars using fingerprint powder were produced for each outsole. Using oblique illumination and 4X magnification, examiners identified and marked all Randomly Acquired Characteristics (RACs) – unique damage patterns like cuts, scratches, and holes – on the digital images [11].

- Spatial Normalization: To compare RACs across different shoe sizes and models, a spatial normalization procedure was implemented. Each RAC's centroid was mapped to a polar coordinate triple (\(r\), \(r_{norm}\), \(\theta\)). The normalized radius \(r_{norm}\) was calculated by dividing the radius \(r\) by the distance from the origin to the shoe's perimeter at angle \(\theta\). This allowed every RAC to be mapped to one of 987 spatial cells on a reference Men's size 10 Reebok walking shoe, ensuring comparisons were made for RACs in the same relative position [11].

- RAC Categorization: Each of the 80,668 identified RACs was categorized by shape as either linear, compact, or variable [11].

- Comparison and Matching: The "hold-one-out" method was used to estimate the RMF. Each RAC was sequentially compared to RACs with positional similarity on all other outsoles in the database. Similarity was assessed using a combination of quantitative metrics (like percent area overlap) followed by visual assessment of the most mathematically similar pairs [11].
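The spatial normalization step can be illustrated with a short sketch. This is not the study's implementation: the outsole outline is replaced by a hypothetical axis-aligned ellipse so that the perimeter distance at angle θ has a closed form, and all function names and dimensions are assumptions made for illustration. The mapping to the 987 reference cells is not reproduced here.

```python
import math


def ellipse_perimeter_distance(theta: float, semi_x: float, semi_y: float) -> float:
    """Distance from the origin to an axis-aligned ellipse at angle theta.

    Hypothetical stand-in for a real outsole perimeter.
    """
    return (semi_x * semi_y) / math.hypot(
        semi_y * math.cos(theta), semi_x * math.sin(theta)
    )


def normalize_centroid(x: float, y: float, perimeter_distance) -> tuple[float, float, float]:
    """Return the polar triple (r, r_norm, theta) for a RAC centroid."""
    r = math.hypot(x, y)
    theta = math.atan2(y, x)
    r_norm = r / perimeter_distance(theta)   # radius as a fraction of the outline
    return r, r_norm, theta


# Hypothetical outsole: 50 mm half-width, 150 mm half-length
outline = lambda theta: ellipse_perimeter_distance(theta, semi_x=50.0, semi_y=150.0)
r, r_norm, theta = normalize_centroid(0.0, 75.0, outline)
print(f"r={r:.1f} mm, r_norm={r_norm:.2f}, theta={math.degrees(theta):.0f} deg")
```

Because `r_norm` is a fraction of the distance to the outline, a centroid halfway between the origin and the edge maps to 0.5 regardless of shoe size, which is the property that makes positions comparable across outsoles.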

The workflow for this experimental protocol is detailed below.

Key Research Reagents and Materials

The following table details essential materials and methodological components from the featured footwear RMF study, which could be considered analogous to "research reagents" in a biological context.

Table: Essential Methodological Components for RMF Experimentation

| Component / Solution | Function in the Experimental Protocol |
|---|---|
| Reference Footwear Database (e.g., WVU Database) | Provides a large, characterized sample set (n=1,300) of outsoles with known class characteristics and wear levels for empirical frequency analysis [11]. |
| High-Resolution Imaging System (600 PPI) | Captures minute detail of randomly acquired characteristics (RACs) for subsequent digital analysis and mapping [11]. |
| Spatial Normalization Algorithm | Standardizes the position of RACs from different shoe sizes and designs onto a common coordinate system, enabling valid cross-comparisons based on relative location [11]. |
| Similarity Score Metric (e.g., Percent Area Overlap) | Provides a quantitative, mathematical foundation for initial ranking of RAC similarity, improving efficiency over purely visual comparison [11]. |
| Visual Assessment Protocol | Serves as the final arbiter for determining whether mathematically similar RAC pairs are truly indistinguishable to a human examiner, confirming a "match" [11]. |

Data Presentation and Quantitative Analysis

The large-scale footwear study yielded empirical data on the frequency of indistinguishable RACs. The research reported median probabilities of chance association for different RAC categories, each expressed as "1 in X"; the implied RMF is the corresponding decimal value 1/X [11].

Table: Estimated Chance Association Probabilities for RAC Types [11]

| RAC Category | Median Probability of Chance Association | Implied Random Match Frequency (1/X) |
|---|---|---|
| Linear | 1 in 444,126 | ≈ 2.25 × 10⁻⁶ |
| Compact | 1 in 291,111 | ≈ 3.44 × 10⁻⁶ |
| Variable | 1 in 880,774 | ≈ 1.14 × 10⁻⁶ |

These results demonstrate that while the repetition of indistinguishable RACs does occur, the frequencies are extremely low, particularly for complex ("variable") characteristics. This quantitative data provides a scientific basis for examiners to assess the rarity of observed characteristics in casework [11].
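The conversion between the "1 in X" figures and the decimal frequencies in the table is simple arithmetic, sketched below. The dictionary holds the reported medians; the helper name `one_in_to_frequency` is hypothetical.

```python
def one_in_to_frequency(x: float) -> float:
    """Convert a '1 in X' statement to a decimal frequency."""
    return 1 / x


median_one_in = {"Linear": 444_126, "Compact": 291_111, "Variable": 880_774}

for category, x in median_one_in.items():
    print(f"{category}: 1 in {x:,} = {one_in_to_frequency(x):.2e}")
```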

Random Match Probability remains a cornerstone of statistical interpretation in forensic science, providing a clear, intuitive measure of the rarity of evidence. Its calculation, as demonstrated in the footwear database study, relies on rigorous methodologies involving large reference datasets, standardized feature mapping, and combined quantitative-qualitative comparison protocols [11].

However, its relationship with the Likelihood Ratio framework is complex and critical. The ongoing research and debate underscore that while RMP is a powerful tool for expressing frequency, its integration into a balanced evaluation of evidence under competing propositions requires careful statistical reasoning to avoid fallacious conclusions [12] [14]. For researchers and practitioners, understanding both the computational methodology of RMP and its proper contextual role relative to the Likelihood Ratio is essential for the scientifically valid presentation of forensic evidence.

This technical guide examines the fundamental mathematical relationship between the Likelihood Ratio (LR) and Random Match Probability (RMP), two pivotal statistical measures in forensic science. Within the ongoing research comparing these methodologies, we demonstrate that for single-source DNA profiles under conditions of an unambiguous match and the assumption that the suspect is unrelated to the true source, the LR is the reciprocal of the RMP (LR = 1/RMP). This paper delineates this core relationship through formal mathematical definitions, provides structured comparisons of their applications across various forensic scenarios, and details experimental protocols for their calculation. The guidance is intended to equip researchers, scientists, and drug development professionals with the analytical framework necessary to apply, interpret, and critically evaluate these statistics in scientific and legal contexts.

In forensic science, particularly in the evaluation of DNA evidence, statistical weight must be assigned to a match between a recovered crime scene sample and a known reference sample from a suspect. The Likelihood Ratio (LR) and Random Match Probability (RMP) are the two dominant statistical frameworks for this purpose, and their relationship forms a cornerstone of forensic interpretation [17]. While they approach the evidence from different philosophical angles, they are mathematically intertwined.

The RMP is defined as the probability that a randomly selected, unrelated individual from a population would coincidentally share the same DNA profile as the one found in the evidence [6] [17]. It is a direct statement about the rarity of the evidence profile. A very small RMP (e.g., one in a billion) indicates that the profile is extremely uncommon, thus strengthening the case that the suspect is the source.

Conversely, the LR is a measure of the strength of the evidence in the context of competing propositions. It compares the probability of observing the evidence under two contrasting hypotheses: the prosecution's hypothesis (H1, typically that the suspect is the source of the evidence) and the defense hypothesis (H0, typically that an unrelated random individual from the population is the source) [7] [6]. An LR greater than 1 supports the prosecution's hypothesis, while an LR less than 1 supports the defense hypothesis.

The Fundamental LR-RMP Relationship

Mathematical Derivation

The core mathematical relationship between the LR and RMP can be formally derived for the simplest case of a single-source DNA profile. The LR is defined as:

LR = P(E | H1) / P(E | H0)

Where:

- P(E | H1) is the probability of the evidence (E) given the prosecution's hypothesis (H1: "the suspect is the source").

- P(E | H0) is the probability of the evidence (E) given the defense's hypothesis (H0: "a random person is the source") [7].

If the suspect is truly the source and the DNA profile has been determined without error, then P(E | H1) = 1. The probability of the evidence under H0 is the probability that a random person would have this profile, which is by definition the Random Match Probability (RMP). Therefore, the equation simplifies to:

LR = 1 / RMP

Table 1: Interpretation of Likelihood Ratios and Corresponding RMPs

| Likelihood Ratio (LR) | Verbal Equivalent | Random Match Probability (RMP) | Support for Proposition H1 |
|---|---|---|---|
| 1 to 10 | Limited evidence | 1 to 0.1 | Limited |
| 10 to 100 | Moderate evidence | 0.1 to 0.01 | Moderate |
| 100 to 1,000 | Moderately strong | 0.01 to 0.001 | Moderately strong |
| 1,000 to 10,000 | Strong evidence | 0.001 to 0.0001 | Strong |
| > 10,000 | Very strong evidence | < 0.0001 | Very strong |

Adapted from verbal equivalents in [7]

Conceptual Workflow and Relationship

The following diagram illustrates the logical relationship and procedural workflow connecting the evaluation of DNA evidence to the calculation of the RMP and LR.

Diagram 1: Logical pathway from DNA match to LR-RMP relationship.

Application in Different Forensic Scenarios

The simple inverse relationship LR = 1/RMP holds primarily for single-source DNA samples. However, forensic evidence is often more complex, and the choice between using an LR or RMP depends on the nature of the evidence and the questions being asked.

Table 2: Comparison of Statistical Approaches Across Forensic Scenarios

| Scenario | Preferred Method | Rationale and Application Notes |
|---|---|---|
| Single-Source DNA | RMP or LR | Both are functionally equivalent due to the reciprocal relationship (LR=1/RMP). RMP directly states profile rarity, while LR explicitly compares hypotheses [7] [8]. |
| Mixtures (2+ contributors) | Likelihood Ratio (LR) | LR is more powerful as it can incorporate quantitative data (e.g., peak heights/areas) and known contributor profiles (e.g., the victim) to evaluate the probability of a suspect's contribution against an unknown alternative [6] [18]. |
| Mixtures (Undetermined contributors) | Combined Probability of Inclusion (CPI) / RMNE | Used when the number of contributors cannot be determined or alleles cannot be separated. CPI calculates the probability that a random person would be included as a possible contributor, but it is less discriminating than LR [17]. |
| Complex Evidence (Shoeprints, etc.) | Likelihood Ratio (LR) | For evidence where a simple "match" is not binary, the LR framework is extended to consider probabilities of feature similarity scores under competing propositions [19]. |

Experimental Protocols for Calculation

Protocol for Calculating Random Match Probability (RMP)

Objective: To determine the rarity of a single-source DNA profile in a relevant population.

- Genotype Determination: Determine the genotype of the evidence sample at each locus analyzed [17].

- Allele Frequency Calculation: Using a relevant population database, establish the frequency of each observed allele at its respective locus [6].

- Genotype Frequency Calculation: Apply the product rule to calculate the frequency of the observed genotype at each locus.

- For a heterozygous genotype (Aa), the frequency is calculated as 2 * p * q, where p and q are the frequencies of alleles A and a, assuming Hardy-Weinberg Equilibrium [6].

- For a homozygous genotype (AA), the frequency is calculated as p².

- Overall RMP Calculation: Multiply the genotype frequencies across all independent loci to obtain the overall RMP for the complete DNA profile [17].
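The protocol above can be sketched as follows. The locus names and allele frequencies are hypothetical placeholders, not values from any real population database. The sketch assumes Hardy-Weinberg Equilibrium and independence between loci, as the protocol does, and applies no population-substructure correction.

```python
from math import prod


def genotype_frequency(allele_1: str, allele_2: str, freqs: dict) -> float:
    """Hardy-Weinberg genotype frequency: p^2 if homozygous, 2pq otherwise."""
    p, q = freqs[allele_1], freqs[allele_2]
    return p * p if allele_1 == allele_2 else 2 * p * q


def random_match_probability(profile: dict, allele_freqs: dict) -> float:
    """Product rule: multiply per-locus genotype frequencies."""
    return prod(
        genotype_frequency(a1, a2, allele_freqs[locus])
        for locus, (a1, a2) in profile.items()
    )


# Hypothetical three-locus profile and allele frequencies
allele_freqs = {
    "locus_1": {"12": 0.10, "14": 0.20},
    "locus_2": {"9": 0.30},
    "locus_3": {"15": 0.25, "17": 0.10},
}
profile = {
    "locus_1": ("12", "14"),   # heterozygous: 2 * 0.10 * 0.20
    "locus_2": ("9", "9"),     # homozygous:   0.30 ** 2
    "locus_3": ("15", "17"),   # heterozygous: 2 * 0.25 * 0.10
}

rmp = random_match_probability(profile, allele_freqs)
print(f"RMP = {rmp:.2e}, LR = 1/RMP = {1 / rmp:,.0f}")
```

With only three loci the RMP here is about 1.8 × 10⁻⁴. Real profiles use many more loci, which is how the product rule reaches the very small values quoted in casework.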

Protocol for Calculating a Likelihood Ratio (LR)

Objective: To evaluate the strength of the evidence by comparing two competing propositions.

- Define Propositions: Formulate two mutually exclusive hypotheses.

- Prosecution Proposition (H1): The DNA originated from the suspect and the victim.

- Defense Proposition (H0): The DNA originated from the victim and an unknown, unrelated random individual [17].

- Calculate Probability under H1: Calculate the probability of observing the evidence DNA profile given that H1 is true. In many straightforward comparisons, this probability is 1 [7] [6].

- Calculate Probability under H0: Calculate the probability of observing the evidence DNA profile given that H0 is true. This involves considering all possible genotype combinations for the unknown contributor that are consistent with the mixed profile and summing their probabilities based on population allele frequencies [17].

- Form the Ratio: Divide the probability under H1 by the probability under H0 to obtain the Likelihood Ratio: LR = P(E | H1) / P(E | H0) [7].
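As a rough illustration of the steps above, the following sketch computes an LR at a single locus for a two-person mixture. It makes strong simplifying assumptions that the protocol itself does not state: alleles are treated as simply present or absent (no peak heights, drop-out, or drop-in), genotype probabilities follow Hardy-Weinberg Equilibrium, and the allele labels and frequencies are hypothetical. Probabilistic genotyping software uses far richer models.

```python
from itertools import combinations_with_replacement

def hwe_frequency(a, b, freqs):
    """Hardy-Weinberg genotype frequency: p^2 or 2pq."""
    return freqs[a] ** 2 if a == b else 2 * freqs[a] * freqs[b]

def mixture_lr(evidence, victim, suspect, freqs):
    """Single-locus LR for H1 (victim + suspect) vs. H0 (victim + unknown)."""
    evidence = set(evidence)
    # P(E | H1): 1 if victim and suspect together explain exactly the observed alleles
    p_h1 = 1.0 if set(victim) | set(suspect) == evidence else 0.0
    # P(E | H0): sum over every unknown genotype that, with the victim,
    # explains exactly the observed alleles
    p_h0 = sum(
        hwe_frequency(a, b, freqs)
        for a, b in combinations_with_replacement(sorted(evidence), 2)
        if set(victim) | {a, b} == evidence
    )
    if p_h0 == 0:
        raise ValueError("Evidence cannot be explained under H0 with this simple model")
    return p_h1 / p_h0

# Hypothetical allele frequencies, for illustration only
freqs = {"A": 0.10, "B": 0.20, "C": 0.05}
lr = mixture_lr(evidence={"A", "B", "C"}, victim=("A", "B"), suspect=("B", "C"), freqs=freqs)
print(f"LR = {lr:.1f}")
```

Here the unknown contributor must carry allele C, so the admissible genotypes under H0 are AC, BC, and CC. Per-locus LRs computed this way would be multiplied across independent loci.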

The Scientist's Toolkit: Essential Analytical Components

Table 3: Key Reagents and Resources for Forensic DNA Statistics

| Item / Resource | Function in Analysis |

|---|---|

| Population DNA Databases | Curated sets of genotype data from specific populations (e.g., U.S. Caucasians, Blacks, Hispanics) used to estimate the frequency of alleles and genotypes in the calculation of both RMP and the denominator of the LR [6]. |

| Statistical Software (e.g., STRmix) | Computer programs that implement complex probabilistic models to deconvolute mixed DNA profiles and calculate Likelihood Ratios, incorporating quantitative peak data and accounting for stochastic effects [18] [17]. |

| Allelic Ladders | Standardized mixtures of DNA fragments of known sizes that serve as a reference for determining the alleles present in an evidence or reference sample, which is the foundational step before any statistical analysis [17]. |

| Product Rule Formula | The mathematical principle used to combine genotype frequencies across multiple, independent genetic loci to compute a multi-locus RMP or components of an LR [6] [17]. |

| Verbal Equivalence Scale | A standardized table used to translate a numerical LR value into a qualitative statement (e.g., "moderate support," "very strong support") to aid communication to legal decision-makers [7]. |

The relationship LR = 1 / RMP is a critical concept that bridges two seemingly different approaches to forensic evidence evaluation. This guide has established that while this precise mathematical relationship holds for uncomplicated, single-source DNA profiles, the interpretive landscape is nuanced.

Ongoing research and debate in the field reveal a clear trajectory. The Likelihood Ratio framework is increasingly regarded as the more powerful and logically coherent method, particularly for complex evidence such as DNA mixtures [18] [19]. Its strength lies in its ability to incorporate more of the available data, such as peak height information, and to explicitly address the propositions posed by both the prosecution and the defense. In contrast, the RMP, and especially the Combined Probability of Inclusion (CPI), can "waste information" by not fully utilizing all quantitative aspects of the evidence [18].

Therefore, for researchers and scientists operating at the intersection of statistics and forensic science, understanding the fundamental connection between LR and RMP is essential. However, it is equally important to recognize their distinct domains of optimal application. The choice between them is not merely a mathematical preference but a decision that impacts the clarity, accuracy, and probative value of scientific evidence presented in legal and research settings. The field continues to evolve towards the wider adoption of the LR framework due to its flexibility, robustness, and firm grounding in the logic of evidence interpretation.

This technical guide elucidates the core applications of the likelihood ratio (LR) across two distinct scientific domains: medical diagnostic test assessment and forensic DNA evidence interpretation. Framed within a broader thesis contrasting likelihood ratios with random match probability (RMP), this work delineates the theoretical underpinnings, computational methodologies, and practical implementations of the LR paradigm. For researchers and drug development professionals, we provide a structured analysis of how LRs offer a statistically rigorous framework for quantifying the strength of evidence, overcoming critical limitations inherent in more traditional statistics like RMP, particularly in complex evidential scenarios.

The likelihood ratio (LR) is a fundamental statistical metric for quantifying the strength of evidence in the face of uncertainty. Its power derives from its ability to compare two competing hypotheses directly. In both medicine and forensics, the LR answers a critical question: How many times more likely is the observed evidence under one hypothesis compared to an alternative? [1]

The universal formulation of the LR is:

LR = P(E | H₁) / P(E | H₂)

Where:

- E represents the observed evidence (e.g., a test result, a DNA profile).

- P(E | H₁) is the probability of observing the evidence if hypothesis H₁ is true.

- P(E | H₂) is the probability of observing the evidence if hypothesis H₂ is true.

An LR greater than 1 supports H₁, while an LR less than 1 supports H₂. The further the LR is from 1, the stronger the evidence [3]. This guide explores the application of this core principle in two fields, highlighting its superiority over alternative statistics like Random Match Probability in handling complex, real-world data.

Likelihood Ratios in Medical Diagnostic Testing

In medical diagnostics, LRs provide a direct and intuitive measure of a diagnostic test's ability to revise the pre-test probability of a disease [4] [2]. The two primary metrics are the Positive Likelihood Ratio (LR+) and the Negative Likelihood Ratio (LR-).

Calculation and Interpretation

The formulas for LRs are derived from the test's sensitivity and specificity [4] [3] [1]:

- LR+ = Sensitivity / (1 - Specificity)

- LR- = (1 - Sensitivity) / Specificity

Table 1: Interpretation of Likelihood Ratios in Diagnostics

| LR Value | Approximate Change in Probability* | Interpretation |

|---|---|---|

| > 10 | +45% | Large increase in disease probability |

| 5 - 10 | +30% | Moderate increase |

| 2 - 5 | +15% | Slight increase |

| 1 | 0% | No diagnostic value |

| 0.5 - 1.0 | -15% | Slight decrease |

| 0.1 - 0.5 | -30% | Moderate decrease |

| < 0.1 | -45% | Large decrease in disease probability |

*Accurate for pre-test probabilities between 10% and 90% [3].

Application via Bayes' Theorem

The clinical utility of LRs is realized through their application in Bayes' Theorem to update disease probability [4] [2]. This process involves converting pre-test probability to odds, multiplying by the LR, and converting the resulting post-test odds back to a probability.

- Pre-test Odds = Pre-test Probability / (1 – Pre-test Probability)

- Post-test Odds = Pre-test Odds × LR

- Post-test Probability = Post-test Odds / (1 + Post-test Odds)
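The three conversion steps above translate directly into code. The sketch below is a minimal example; the function name and the input values (a 20% pre-test probability and an LR of 10) are chosen purely for illustration.

```python
def post_test_probability(pre_test_probability, likelihood_ratio):
    """Update a disease probability with a likelihood ratio via the odds form of Bayes' theorem."""
    pre_test_odds = pre_test_probability / (1 - pre_test_probability)
    post_test_odds = pre_test_odds * likelihood_ratio
    return post_test_odds / (1 + post_test_odds)

# Illustrative values: 20% pre-test probability, positive test with LR+ = 10
p = post_test_probability(0.20, 10)
print(f"Post-test probability = {p:.3f}")   # odds 0.25 -> 2.5 -> probability 0.714
```

An LR of 1 leaves the probability unchanged, which matches the "no diagnostic value" row of Table 1.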

This workflow can be visualized as a sequential reasoning process, which is also commonly applied using a Fagan nomogram to bypass calculations [4] [2].

Experimental Protocol for Diagnostic Test Validation

The following protocol outlines the key steps for establishing LRs for a new diagnostic test.

Table 2: Key Reagents for Diagnostic Test Validation

| Research Reagent/Material | Function |

|---|---|

| Biobanked Patient Sera/Samples | Well-characterized samples used as the ground truth for calculating sensitivity and specificity. |

| Reference Standard (Gold Standard) | The definitive method for diagnosing the condition (e.g., PCR, biopsy). Serves as the comparator. |

| Calibrators and Controls | Ensure the analytical precision and accuracy of the test instrument across multiple runs. |

| Statistical Analysis Software (e.g., R, SAS) | Used for all calculations, including sensitivity, specificity, LRs, and confidence intervals. |

Protocol:

- Subject Selection: Recruit a cohort of patients representative of the intended-use population, including both affected and non-affected individuals. The prevalence in the study cohort should not affect the LRs, because they are derived from sensitivity and specificity [2].

- Blinded Testing: Perform the index diagnostic test on all subjects without knowledge of their true disease status (as determined by the gold standard).

- Data Collection: Record all test results. For tests with continuous outcomes (e.g., ferritin levels), categorize results into multiple strata to calculate stratum-specific LRs, which provide more granular information than a single dichotomous LR [2].

- Calculation of Test Properties: Construct a 2x2 contingency table comparing index test results against the gold standard. Calculate sensitivity, specificity, and subsequently, LR+ and LR- [1].

- Validation: Assess the confidence intervals for the LRs and validate the findings in a separate, independent cohort to ensure generalizability.
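The protocol does not specify how the confidence intervals should be obtained. One commonly used option, shown here as an assumption rather than as the method prescribed by the cited sources, is the log method for a ratio of two proportions: the standard error is computed on the log scale and the interval is exponentiated back. The counts are hypothetical, and the formula requires all four cells of the 2x2 table to be non-zero.

```python
from math import exp, log, sqrt

def log_method_ci(lr, se_log_lr, z=1.96):
    """Confidence interval for an LR, built on the log scale."""
    return exp(log(lr) - z * se_log_lr), exp(log(lr) + z * se_log_lr)

def diagnostic_lrs_with_ci(tp, fp, fn, tn, z=1.96):
    """LR+ and LR- with approximate 95% intervals (all cell counts must be > 0)."""
    n_diseased = tp + fn
    n_healthy = fp + tn
    lr_pos = (tp / n_diseased) / (fp / n_healthy)   # sensitivity / (1 - specificity)
    lr_neg = (fn / n_diseased) / (tn / n_healthy)   # (1 - sensitivity) / specificity
    se_pos = sqrt(1 / tp - 1 / n_diseased + 1 / fp - 1 / n_healthy)
    se_neg = sqrt(1 / fn - 1 / n_diseased + 1 / tn - 1 / n_healthy)
    return {
        "LR+": (lr_pos, *log_method_ci(lr_pos, se_pos, z)),
        "LR-": (lr_neg, *log_method_ci(lr_neg, se_neg, z)),
    }

# Hypothetical validation cohort: 100 affected, 100 unaffected
results = diagnostic_lrs_with_ci(tp=90, fp=20, fn=10, tn=80)
for name, (estimate, low, high) in results.items():
    print(f"{name}: {estimate:.3f} (95% CI {low:.3f} to {high:.3f})")
```

An interval for LR+ that excludes 1 indicates that a positive result shifts the disease probability by more than chance variation alone would explain.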

Likelihood Ratios in Forensic DNA Evidence

In forensic genetics, the LR is the standard framework for evaluating the weight of DNA evidence, comparing prosecution and defense hypotheses [6] [20] [17].

Hypothesis Formulation and Calculation

The core formulation involves two competing hypotheses [6] [7]:

- Hp (Prosecution's hypothesis): The DNA profile from the crime scene (E) originated from the suspect (S).

- Hd (Defense's hypothesis): The DNA profile originated from an unrelated random individual in the population.

The LR is calculated as: LR = P(E | Hp) / P(E | Hd)

In a simple single-source DNA match where the profiles match perfectly, the numerator is 1. The denominator is the probability that a random person would have that profile, which is the Random Match Probability (RMP). Thus, the equation simplifies to [6] [7]: LR = 1 / RMP

Advantages of LR over RMP

While mathematically related, the LR framework is fundamentally more powerful and flexible than reporting the RMP alone.

- Scope: The RMP is primarily suited for simple, single-source DNA profiles [17]. The LR, however, can be applied to complex mixed DNA samples containing genetic material from multiple contributors, where deducing a single profile for RMP calculation is not feasible [6] [21].

- Hypothesis Flexibility: The LR allows for the evaluation of any pair of mutually exclusive hypotheses, not just those involving a "random man" [20]. For example, hypotheses could consider relatives of the suspect as alternative contributors.

- Clear Interpretation: The LR directly addresses the question of interest to the court: "How much does this evidence support the prosecution's claim versus the defense's claim?" [20]. An LR of 10,000 means the evidence is 10,000 times more likely if the suspect is the source than if an unrelated random person is the source [7].

Table 3: Comparison of Forensic Statistical Measures

| Feature | Likelihood Ratio (LR) | Random Match Probability (RMP) | Combined Probability of Inclusion (CPI) |

|---|---|---|---|

| Definition | Ratio of the probability of the evidence under two hypotheses. | Probability a random person has a specific DNA profile. | Probability a random person would be included as a possible contributor. |

| Application | Simple and complex DNA profiles, including mixtures. | Primarily simple, single-source profiles. | DNA mixtures where contributors cannot be separated. |

| Hypothesis Testing | Explicitly compares Hp vs. Hd. | Does not directly test hypotheses; a stand-alone statistic. | Not based on specific hypotheses about a suspect. |

| Interpretation | "The evidence is X times more likely under Hp than under Hd." | "1 in X random people would be expected to match this profile." | "X% of the population cannot be excluded as contributors." |

| Power | Highly discriminating when hypotheses are well-defined. | Highly discriminating for single sources. | Less discriminating than LR or RMP [17]. |

Experimental Protocol for Forensic DNA Interpretation

The following workflow details the standard operating procedure for interpreting a DNA sample and calculating an LR.

Table 4: Essential Materials for Forensic DNA Analysis

| Research Reagent/Kit | Function |

|---|---|

| STR Multiplex PCR Kit | Amplifies multiple Short Tandem Repeat (STR) loci simultaneously from minute quantities of DNA for analysis. |

| Genetic Analyzer & Capillary Electrophoresis | Separates amplified DNA fragments by size to generate an electropherogram (DNA profile). |

| Population Database | Allele frequency databases for relevant populations (e.g., US Caucasian, Black, Hispanic) used to calculate genotype probabilities for the denominator of the LR [6]. |

| Probabilistic Genotyping Software | Advanced software used to deconvolute complex DNA mixtures and calculate LRs by considering all possible genotype combinations [21] [17]. |

Protocol:

- DNA Analysis: Extract DNA from the forensic sample and analyze it using a standardized STR profiling system to generate an electropherogram.

- Profile Assessment: Determine if the profile is from a single source or a mixture. For mixtures, estimate the number of contributors based on the number of alleles per locus and peak height information.

- Hypothesis Formulation: Define the prosecution (Hp) and defense (Hd) hypotheses in the specific context of the case. For a two-person mixture, Hp might be "The sample contains DNA from the victim and the suspect," while Hd might be "The sample contains DNA from the victim and an unknown, unrelated person."

- LR Calculation:

- For a single-source profile: The LR is 1/RMP. The RMP is calculated by multiplying the genotype frequencies across all loci, typically applying a correction factor (θ) to account for population substructure [6].

- For a mixed profile: Use probabilistic genotyping software. The software evaluates the probability of the observed peak heights and areas under both Hp and Hd, considering all possible genotype combinations for the unknown contributors. The ratio of these probabilities is the LR [17].

- Reporting: Report the LR with a clear statement of the hypotheses used in the calculation. Translate the numerical value into a verbal equivalent (e.g., "strong support") using a standardized scale for communication to the trier of fact [7].
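The single-source branch of the LR calculation step mentions a θ correction without giving its form. One widely cited form is the pair of Balding-Nichols conditional match probabilities; using it here is an assumption, since the text does not say which correction is intended. The value θ = 0.01 and the allele frequencies are illustrative only.

```python
from math import prod

def theta_corrected_frequency(p, q=None, theta=0.01):
    """Conditional genotype match probability with a substructure correction.

    Pass only p for a homozygote; pass p and q for a heterozygote.
    With theta = 0 this reduces to the Hardy-Weinberg values p^2 and 2pq.
    """
    denominator = (1 + theta) * (1 + 2 * theta)
    if q is None:
        return (2 * theta + (1 - theta) * p) * (3 * theta + (1 - theta) * p) / denominator
    return 2 * (theta + (1 - theta) * p) * (theta + (1 - theta) * q) / denominator

# Hypothetical two-locus profile: heterozygote (0.10, 0.20) and homozygote (0.30)
per_locus = [
    theta_corrected_frequency(0.10, 0.20),
    theta_corrected_frequency(0.30),
]
rmp = prod(per_locus)
lr = 1 / rmp                      # single-source case: LR = 1 / RMP
print(f"RMP = {rmp:.5f}, LR = {lr:.0f}")
```

For these inputs the correction raises both genotype probabilities relative to the uncorrected values, so the reported LR is more conservative.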

The entire process, from evidence to interpretation, follows a structured path.

Discussion: LR vs. RMP within the Broader Thesis

The core distinction in the LR versus RMP debate centers on the type of question being asked. The RMP answers a specific but limited question: "How common is this DNA profile?" [17] The LR answers a more forensically relevant and general question: "How much does this evidence support one proposition over another?" [20]

The superiority of the LR paradigm is most evident in complex scenarios:

- DNA Mixtures: The RMP is often unusable for mixtures, whereas the LR, especially when computed with probabilistic genotyping software, provides a logically sound and quantifiable measure of evidential weight [6] [17].

- Alternative Hypotheses: The LR framework can accommodate a wide range of propositions beyond a "random man," such as the possibility that a relative of the suspect is the contributor. The RMP cannot easily account for this.

- Communication: While both statistics can be challenging for juries, the LR's direct comparison of the two case-specific hypotheses provides a more structured and transparent basis for expert testimony [21].

Serial application of LRs carries an important caveat. In medicine, the post-test probability from one test can become the pre-test probability for the next. In forensic science, it may seem equally intuitive to combine LRs from different pieces of evidence (e.g., DNA and fingerprints) by multiplication. However, this practice is not formally validated, and it is justified only when the underlying pieces of evidence are independent, which must be examined carefully rather than assumed [4].

The likelihood ratio is a versatile and powerful statistical tool that provides a unified framework for interpreting evidence across medical diagnostics and forensic science. Its ability to directly compare competing hypotheses makes it intrinsically more informative and flexible than the Random Match Probability. For researchers and drug development professionals, a deep understanding of the LR paradigm is essential for designing robust diagnostic studies, interpreting complex biological data, and critically evaluating scientific evidence. As both fields advance, particularly with the rise of complex biomarkers and probabilistic genotyping, the LR will continue to be the cornerstone of rational, evidence-based decision-making.

In scientific disciplines, from forensic genetics to drug development, quantifying the strength of evidence is fundamental for robust decision-making. Two predominant statistical frameworks for this purpose are the Random Match Probability (RMP) and the Likelihood Ratio (LR). The RMP estimates the probability that a random, unrelated individual would match the evidence profile by chance, thus expressing the rarity of a characteristic [22]. In contrast, the LR is a more recent framework that quantifies the support for one proposition versus another by comparing the probability of the evidence under these competing hypotheses [23]. While RMP has been a long-standing standard, particularly in forensic DNA analysis, the LR framework is increasingly adopted for its ability to handle complex evidence and its alignment with Bayesian reasoning. This guide explores the core concepts, interpretation, and practical applications of LR and RMP values, with a specific focus on the critical challenge of translating numerical results into understandable verbal equivalents for broader scientific communication.

Core Concepts and Definitions

Random Match Probability (RMP)

Random Match Probability (RMP) is a measure of the rarity of a particular DNA profile or other identifying characteristic within a specific population. It answers the question: "What is the probability that a randomly selected, unrelated individual from a given population would match the evidence profile by chance?" [22]. For example, an RMP of 1 in 1 billion for a DNA profile means that it is expected, purely by chance, to occur in one out of every billion unrelated individuals in that population. The power of DNA evidence stems from the multiplicative nature of independent genetic markers, allowing for RMP values that can be exceedingly rare (e.g., 1 in trillions or rarer), thereby providing strong statistical support for an association between evidence and a suspect [22].

Likelihood Ratio (LR)

The Likelihood Ratio (LR) provides a balanced measure of the strength of evidence by comparing two competing propositions, typically the prosecution's hypothesis (Hp) and the defense's hypothesis (Hd) in a forensic context, or more broadly, any pair of mutually exclusive hypotheses. The LR is calculated as:

LR = Probability of Evidence given Hp / Probability of Evidence given Hd

An LR greater than 1 supports the first proposition (Hp), with higher values indicating stronger support. An LR less than 1 supports the alternative proposition (Hd). An LR equal to 1 indicates the evidence is equally probable under both hypotheses and is therefore uninformative [23]. The LR framework's key strength is its formal handling of the probability of the evidence under two explicit, competing scenarios, which helps avoid the pitfalls of associating a random match with guilt.

Key Conceptual Differences

The following table summarizes the fundamental differences between the RMP and LR approaches.

Table 1: Fundamental Differences Between RMP and LR

| Feature | Random Match Probability (RMP) | Likelihood Ratio (LR) |

|---|---|---|

| Core Question | How rare is this profile/characteristic? | How much does the evidence support one proposition over another? |

| Interpretation | Standalone measure of rarity. | Comparative measure of evidential strength. |

| Handling of Uncertainty | Limited in simple presentations; best for single-source, unambiguous profiles. | Can be extended to handle complex evidence, such as mixtures, via probabilistic genotyping. |

| Theoretical Foundation | Frequentist probability. | Bayesian inference. |

| Typical Output | A very small probability (e.g., 1 in a quadrillion). | A ratio (e.g., 10,000, meaning the evidence is 10,000 times more likely under Hp than Hd). |

Experimental and Computational Methodologies

The application of LR frameworks, especially in modern genomics, relies on sophisticated experimental and computational protocols. The workflow below outlines the general process for conducting an LR-based kinship analysis, such as in Forensic Genetic Genealogy (FGG).

Diagram 1: General workflow for an LR-based kinship analysis using SNP data.

Detailed LR Calculation Workflow for Kinship Analysis

A 2025 study provides a novel methodology for integrating LR calculations into Forensic Genetic Genealogy (FGG) and SNP-testing workflows, termed KinSNP-LR [24]. This method is unique for its dynamic selection of highly informative Single Nucleotide Polymorphisms (SNPs) tailored to each case, moving beyond fixed, pre-selected markers. The detailed protocol is as follows:

- Data Foundation: Begin with a large, preselected panel of SNPs from a population database like gnomAD v4. The panel used in the validation study contained 222,366 SNPs that had undergone quality control and were filtered to be outside of "all difficult regions" [24].

- Dynamic SNP Selection:

- Apply Minor Allele Frequency (MAF) Threshold: Filter SNPs to retain those with a high MAF (e.g., > 0.4). SNPs with high MAF have greater discrimination power for relationship inference and are less affected by population substructure [24].

- Ensure Genetic Independence: To comply with the product rule (multiplying LRs across SNPs), selected SNPs must be unlinked. The method selects the first SNP on a chromosome meeting the MAF criterion, then the next SNP that is at least a specified genetic distance away (e.g., 30-50 centimorgans), and continues this process genome-wide [24].

- LR Calculation: For each selected SNP, calculate the likelihood of the observed genotypes under two proposed kinship relationships (e.g., parent-child vs. unrelated). The LR for each SNP is the ratio of these two likelihoods. The cumulative LR is the product of the LRs for all individually selected SNPs [24].

- Validation: The method was validated using data from the 1000 Genomes Project and simulated pedigrees. Using a subset of 126 highly independent SNPs (MAF > 0.4, minimum 30 cM distance), the method achieved 96.8% accuracy and a weighted F1 score of 0.975 across 2,244 tested relationship pairs, demonstrating high reliability for identifying relationships up to the second degree [24].
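The dynamic selection and multiplication steps described above can be sketched as a greedy filter followed by a sum of log LRs. This is an illustrative reconstruction of the described procedure, not the KinSNP-LR implementation; the function names, the tuple layout, and the example SNPs and per-SNP LR values are all hypothetical.

```python
from math import log10

def select_snps(snps, maf_threshold=0.4, min_distance_cm=30.0):
    """Greedily keep high-MAF SNPs spaced at least min_distance_cm apart.

    snps: iterable of (chromosome, position_cM, maf) tuples.
    """
    selected = []
    last_position = {}            # last selected position per chromosome
    for chrom, position, maf in sorted(snps):
        if maf <= maf_threshold:
            continue              # fails the MAF criterion
        previous = last_position.get(chrom)
        if previous is not None and position - previous < min_distance_cm:
            continue              # too close to the previously selected SNP
        selected.append((chrom, position, maf))
        last_position[chrom] = position
    return selected

def cumulative_log10_lr(per_snp_lrs):
    """Product rule on the log scale: sum log10(LR) over independent SNPs."""
    return sum(log10(lr) for lr in per_snp_lrs)

# Hypothetical SNPs: (chromosome, genetic position in cM, minor allele frequency)
snps = [(1, 0.0, 0.45), (1, 10.0, 0.48), (1, 35.0, 0.41), (1, 50.0, 0.30), (2, 5.0, 0.50)]
chosen = select_snps(snps)
print(chosen)

# Hypothetical per-SNP LRs for the chosen markers
total = cumulative_log10_lr([2.0, 0.5, 4.0])
print(f"log10(LR) = {total:.3f}")
```

Summing logarithms rather than multiplying raw LRs avoids numerical underflow or overflow when many SNPs are combined.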

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Research Tools for LR-Based Genomic Analysis

| Tool / Resource | Function / Description | Application in Experiment |

|---|---|---|

| Whole Genome Sequencing (WGS) | A comprehensive method for analyzing entire genomes. Provides the raw data for SNP discovery and genotyping. | Generates the dense, genome-wide SNP data required for kinship inference in FGG [24]. |

| Population Allele Frequency Databases (e.g., gnomAD) | Public repositories of genetic variation across diverse populations. | Serves as the source for SNP panels and population-specific allele frequencies, which are critical for accurate LR calculations [24]. |

| KinSNP-LR (v1.1) | A specialized software tool for dynamic SNP selection and LR calculation from WGS data. | Implements the core methodology for relationship inference, automating SNP filtering and LR computation [24]. |

| Ped-sim | A software tool for simulating pedigrees and phased genotypes. | Used to generate synthetic family data with known relationships to validate the accuracy and performance of the LR framework [24]. |

| IBIS | A tool for detecting Identity-By-Descent (IBD) segments. | Used in validation studies to confirm unrelated relationships among founder individuals in simulated data [24]. |

Presenting and Communicating Statistical Results

The Challenge of Verbal Equivalents

A significant challenge in applying RMP and LR values is effectively communicating their meaning to non-specialists, such as legal decision-makers or professionals in other scientific fields. Research indicates that the existing literature does not conclusively answer the question of the best way to present LRs to maximize understandability [13] [25]. Studies have typically investigated the understanding of the "strength of evidence" in general, rather than focusing specifically on LRs, and notably, "none of the studies that we reviewed tested comprehension of verbal likelihood ratios" [13]. This highlights a critical gap in knowledge. The primary risk is that verbal expressions can be interpreted inconsistently, potentially leading to the over- or under-weighting of scientific evidence.

Review of Empirical Research on Comprehension

Empirical studies have evaluated how laypeople understand different presentation formats. A 2015 experiment examined how people responded to forensic evidence when an expert explained the strength of that evidence using three different formats, including LRs and RMPs [23]. When reviewing this and similar literature, researchers often use indicators of comprehension such as sensitivity (the ability to distinguish between strong and weak evidence), orthodoxy (alignment with normative statistical reasoning), and coherence (consistency in interpretation) [13] [25].

A key and recurrent finding is the "weak evidence effect," where the observed change in a decision-maker's belief after being presented with statistical evidence is often considerably smaller than what a Bayesian calculation would predict [23]. This suggests that numerical LRs or RMPs, especially very large or very small numbers, may not have their full intuitive impact.

Recommendations for Effective Communication

Based on the reviewed research, the following table summarizes the advantages and disadvantages of different presentation formats.

Table 3: Comparison of Statistical Evidence Presentation Formats

| Format | Advantages | Disadvantages | Reported Comprehension Issues |

|---|---|---|---|

| Numerical RMP/LR | Precise and transparent. Allows for detailed calculations. | Can be difficult to grasp, especially very large/small numbers. Prone to being misunderstood (e.g., prosecutor's fallacy). | Jurors may undervalue match evidence or not be sensitive to the probability of a false positive [23]. |

| Verbal Statements (e.g., "strong support") | Intuitive and accessible. Avoids complex numbers. | Highly subjective and open to interpretation. Lacks granularity. | No empirical studies specifically on verbal LRs, but verbal scales for other evidence show high variability in interpretation [13] [25]. |

| Combined Approach (Numerical + Verbal) | Provides both precision and an accessible summary. | The verbal label may anchor and distort the interpretation of the number. | Research is inconclusive on whether this mitigates or compounds misinterpretation [13]. |

Given the current state of research, there is no universally accepted scale for verbal equivalents of LR values. Future research is needed to establish empirically validated verbal expressions that correspond to specific numerical LR ranges. For now, practitioners should:

- Justify Their Scale: If using a verbal scale, clearly define it and explain its basis.

- Use with Caution: Acknowledge that verbal equivalents are interpretive aids, not replacements for the numerical LR.

- Provide Context: Explain what the LR means in simple terms—for example, "An LR of 10,000 means the observed evidence is 10,000 times more likely if hypothesis A is true than if hypothesis B is true."

The interpretation of RMP and LR values is a cornerstone of robust evidence evaluation in scientific research. While the RMP provides a clear measure of the rarity of a characteristic, the LR framework offers a more powerful and logically sound structure for comparing competing hypotheses, especially with complex data like DNA mixtures or kinship analysis. The experimental protocol for KinSNP-LR demonstrates the sophisticated methodologies being developed to apply LR frameworks to cutting-edge genomic data. However, a significant challenge remains in the effective communication of these statistical results. Current empirical literature confirms that no single presentation format—numerical, verbal, or combined—is a perfect solution, and a deep understanding of the potential for misinterpretation is crucial. Future work must focus on developing and validating communication standards, including reliable verbal equivalents, to ensure that the weight of statistical evidence is accurately understood across the scientific and research community.

From Theory to Practice: Calculation and Application in Research

Likelihood ratios (LRs) provide a powerful statistical framework for interpreting diagnostic test results by quantifying how much a given test result will raise or lower the probability of a target disorder. Unlike predictive values, LRs are independent of disease prevalence, making them particularly valuable for applying diagnostic tests across different populations. This technical guide examines LR calculation methodologies, their mathematical relationship to sensitivity and specificity, and their application across medical diagnostics and forensic science, with particular emphasis on the conceptual distinction between likelihood ratios and random match probability in evidentiary interpretation.

Diagnostic tests are routinely utilized in healthcare and forensic settings to determine treatment methods and identify associations; however, many of these tools are subject to error [26]. The validity of a diagnostic test—its ability to measure what it is intended to—is primarily determined by its sensitivity and specificity [26]. These fundamental metrics describe the inherent accuracy of a test regardless of the population being tested.

Sensitivity represents the proportion of true positives out of all patients with a condition, measuring a test's ability to correctly identify those who have the disease [26] [27]. Specificity represents the proportion of true negatives out of all subjects who do not have a disease, measuring the test's ability to correctly identify those who are disease-free [26] [27]. There is typically an inverse relationship between sensitivity and specificity; as sensitivity increases, specificity tends to decrease, and vice versa [26]. Highly sensitive tests are optimal for ruling out disease (high negative predictive value), while highly specific tests are better for ruling in disease (high positive predictive value) [26].

The calculation of these metrics begins with a 2×2 contingency table that cross-classifies test results with true disease status, as shown in Table 1.

Table 1: Diagnostic Test 2×2 Contingency Table Framework

| | Disease Present | Disease Absent | Total |

|---|---|---|---|

| Test Positive | True Positive (TP) | False Positive (FP) | TP + FP |

| Test Negative | False Negative (FN) | True Negative (TN) | FN + TN |

| Total | TP + FN | FP + TN | N |

From this table, sensitivity and specificity are calculated as follows [26]:

- Sensitivity = TP / (TP + FN)

- Specificity = TN / (TN + FP)
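As a quick check of these two formulas, the sketch below computes both metrics from the four cells of the contingency table. The counts are hypothetical and the function name is chosen for illustration.

```python
def sensitivity_specificity(tp, fp, fn, tn):
    """Return (sensitivity, specificity) from 2x2 contingency table counts."""
    sensitivity = tp / (tp + fn)   # true positives among all with the disease
    specificity = tn / (tn + fp)   # true negatives among all without the disease
    return sensitivity, specificity

# Hypothetical study: 100 subjects with the disease, 100 without
sens, spec = sensitivity_specificity(tp=90, fp=20, fn=10, tn=80)
print(f"Sensitivity = {sens:.2f}, Specificity = {spec:.2f}")   # 0.90 and 0.80
```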

Understanding Likelihood Ratios (LRs)

Conceptual Foundation

A likelihood ratio (LR) is the probability of a specific test result in patients with the target disorder divided by the probability of that same result in patients without the disorder [1] [28]. In essence, LRs compare two likelihoods—the frequency of a test result in those with the target disorder compared to the frequency of the same test result in those without the disease [28]. This provides a direct measure of how much a test result will change the probability that a patient has the disease.

LRs offer significant advantages over sensitivity and specificity alone because they [1]:

- Are less likely to change with the prevalence of the disorder

- Can be calculated for several levels of a symptom, sign, or test

- Can be used to combine the results of multiple diagnostic tests

- Can be used to calculate post-test probability for a target disorder

Mathematical Formulations

There are two primary types of LRs, each providing different diagnostic information:

Positive Likelihood Ratio (LR+) indicates how much the odds of the disease increase when a test is positive [26] [4]. It is calculated as:

- LR+ = Sensitivity / (1 - Specificity)

Negative Likelihood Ratio (LR-) indicates how much the odds of the disease decrease when a test is negative [26] [4]. It is calculated as:

- LR- = (1 - Sensitivity) / Specificity

The following diagram illustrates the conceptual relationship between sensitivity, specificity, and likelihood ratios:

Figure 1: Relationship between sensitivity, specificity, and likelihood ratios

Interpretation of LR Values

The value of an LR provides direct insight into the diagnostic usefulness of a test result [1]:

- LR > 1: The test result is associated with the presence of the disease

- LR < 1: The test result is associated with the absence of the disease

- LR = 1: The test result does not change the probability of disease

The further the LR is from 1 (in either direction), the more powerful it is in changing the pre-test to post-test probability of disease. As a general guideline [1]:

- LR+ > 10: Large, often conclusive increase in probability of disease

- LR+ 5-10: Moderate increase in probability of disease

- LR+ 2-5: Small increase in probability of disease

- LR+ 1-2: Minimal increase in probability of disease

- LR- 0.5-1.0: Minimal decrease in probability of disease

- LR- 0.2-0.5: Small decrease in probability of disease

- LR- 0.1-0.2: Moderate decrease in probability of disease

- LR- < 0.1: Large, often conclusive decrease in probability of disease
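The guideline bands above can be encoded as a small lookup, sketched here under one assumption: the list does not say which band a boundary value such as exactly 5 or 0.2 belongs to, so the handling of boundaries below is an arbitrary choice. The function name `describe_lr` is hypothetical.

```python
def describe_lr(lr: float) -> str:
    """Map a likelihood ratio to the qualitative bands listed above.

    Boundary values (e.g. exactly 5 or exactly 0.2) are assigned to the
    stronger band; that choice is an assumption, not part of the guideline.
    """
    if lr < 0:
        raise ValueError("a likelihood ratio cannot be negative")
    if lr > 10:
        return "large increase"
    if lr >= 5:
        return "moderate increase"
    if lr >= 2:
        return "small increase"
    if lr > 1:
        return "minimal increase"
    if lr == 1:
        return "no change"
    if lr > 0.5:
        return "minimal decrease"
    if lr > 0.2:
        return "small decrease"
    if lr >= 0.1:
        return "moderate decrease"
    return "large decrease"


for value in (12.0, 6.0, 1.0, 0.3, 0.05):
    print(value, "->", describe_lr(value))
```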

LR Calculation Methodologies

Basic Calculation from Sensitivity and Specificity

The most straightforward method for calculating LRs involves direct computation from known sensitivity and specificity values. The formulas for these calculations are [26] [4]:

- LR+ = Sensitivity / (1 - Specificity)

- LR- = (1 - Sensitivity) / Specificity

Table 2: LR Calculation from Sensitivity and Specificity

| Metric | Formula | Interpretation |
|---|---|---|
| LR+ | Sensitivity / (1 - Specificity) | How much the odds of disease increase with a positive test |
| LR- | (1 - Sensitivity) / Specificity | How much the odds of disease decrease with a negative test |

Worked Example

Consider a diagnostic test with the following performance characteristics [26]:

- Total patients: 1,000

- True positives: 369

- False negatives: 15

- True negatives: 558

- False positives: 58

First, we calculate the fundamental metrics:

- Sensitivity = 369 / (369 + 15) = 369/384 = 0.961 (96.1%)

- Specificity = 558 / (558 + 58) = 558/616 = 0.906 (90.6%)

Then, we calculate the likelihood ratios:

- LR+ = 0.961 / (1 - 0.906) = 0.961 / 0.094 = 10.22

- LR- = (1 - 0.961) / 0.906 = 0.039 / 0.906 = 0.043

This means a positive test result is approximately 10 times more likely in someone with the disease than in someone without it, while a negative test result is only about 0.043 times as likely (roughly 1/23) in someone with the disease as in someone without it [26].
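The worked example can be reproduced in a few lines. This is a sketch rather than a reference implementation, and the function name `likelihood_ratios` is hypothetical. It carries full precision from the raw counts, so LR+ comes out at about 10.21 rather than 10.22; the small difference comes from rounding sensitivity and specificity to three decimals before dividing.

```python
def likelihood_ratios(sensitivity: float, specificity: float) -> tuple:
    """Return (LR+, LR-) from sensitivity and specificity."""
    if specificity >= 1.0:
        lr_pos = float("inf")  # no false positives: LR+ is unbounded
    else:
        lr_pos = sensitivity / (1.0 - specificity)
    if specificity <= 0.0:
        lr_neg = float("inf")  # no true negatives: LR- is unbounded
    else:
        lr_neg = (1.0 - sensitivity) / specificity
    return lr_pos, lr_neg


# Counts from the worked example
tp, fn, tn, fp = 369, 15, 558, 58
sens = tp / (tp + fn)
spec = tn / (tn + fp)
lr_pos, lr_neg = likelihood_ratios(sens, spec)

print(f"sensitivity = {sens:.3f}, specificity = {spec:.3f}")
print(f"LR+ = {lr_pos:.2f}, LR- = {lr_neg:.3f}")
```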

The following diagram illustrates the complete workflow for calculating and applying likelihood ratios in diagnostic decision-making:

Figure 2: Diagnostic decision-making workflow using likelihood ratios

Advanced Calculation Methods

For diagnostic tests with multiple ordered categories or continuous results, LRs can be calculated for each specific range or category of results. This approach provides more granular information than dichotomous (positive/negative) classification [28]. The general formula for multi-category LRs is:

- LR for a specific category = (Proportion of patients with the disease whose result falls in that category) / (Proportion of patients without the disease whose result falls in the same category)
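A minimal sketch of the category-specific calculation follows. The category labels and counts are hypothetical, and the function name `category_lrs` is an illustration rather than an established API.

```python
def category_lrs(diseased_counts: dict, non_diseased_counts: dict) -> dict:
    """Return one likelihood ratio per result category.

    Each argument maps a category label to the number of subjects
    (diseased or non-diseased) whose result fell in that category.
    """
    total_diseased = sum(diseased_counts.values())
    total_non_diseased = sum(non_diseased_counts.values())
    lrs = {}
    for category, n_diseased in diseased_counts.items():
        p_diseased = n_diseased / total_diseased
        p_non_diseased = non_diseased_counts[category] / total_non_diseased
        # An empty non-diseased cell gives an unbounded LR
        lrs[category] = (
            p_diseased / p_non_diseased if p_non_diseased > 0 else float("inf")
        )
    return lrs


# Hypothetical counts for a biomarker split into three ordered ranges
diseased = {"low": 60, "intermediate": 30, "high": 10}
non_diseased = {"low": 10, "intermediate": 40, "high": 150}

for category, lr in category_lrs(diseased, non_diseased).items():
    print(f"{category}: LR = {lr:.2f}")
```

With these made-up counts, a result in the "low" range carries an LR of 12, while a result in the "high" range carries an LR of about 0.13, information that a single positive/negative cut-point would discard.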

This approach is particularly valuable for laboratory biomarkers measured on continuous scales, where selecting an optimal cut-point is essential for clinical utility [29]. Methods for determining optimal cut-points include:

- Youden index

- Euclidean index

- Diagnostic odds ratio (DOR)

- Maximum product of sensitivity and specificity
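As an illustration of the first method in the list, the Youden index J = sensitivity + specificity - 1 can be maximized over candidate thresholds. The sketch below assumes that higher biomarker values indicate disease and that a result is positive when it is at or above the threshold; the measurements are hypothetical.

```python
def youden_cut_point(values_diseased: list, values_disease_free: list) -> tuple:
    """Return (threshold, J) maximizing J = sensitivity + specificity - 1.

    A result counts as positive when value >= threshold. Every observed
    value is tried as a candidate threshold.
    """
    candidates = sorted(set(values_diseased) | set(values_disease_free))
    best_threshold, best_j = None, float("-inf")
    for threshold in candidates:
        sens = sum(v >= threshold for v in values_diseased) / len(values_diseased)
        spec = sum(v < threshold for v in values_disease_free) / len(values_disease_free)
        j = sens + spec - 1
        if j > best_j:  # on ties, the lowest threshold is kept
            best_threshold, best_j = threshold, j
    return best_threshold, best_j


# Hypothetical biomarker measurements
diseased = [5.1, 6.3, 7.0, 7.8, 8.4]
disease_free = [2.0, 3.1, 4.2, 5.5, 6.0]

threshold, j = youden_cut_point(diseased, disease_free)
print(f"optimal cut-point = {threshold}, Youden J = {j:.2f}")
```

The Youden index weights sensitivity and specificity equally; when false negatives and false positives carry different clinical costs, one of the other listed criteria may be more appropriate.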

Likelihood Ratio Versus Random Match Probability

Conceptual Framework

In forensic science, the relationship between likelihood ratios and random match probability represents a fundamental paradigm for evaluating evidence, particularly in DNA analysis [6] [20]. While these concepts are mathematically related, they represent different approaches to interpreting evidentiary value.

The random match probability is the probability that a person other than the suspect, randomly selected from the population, will have the same profile [6]. In contrast, the likelihood ratio is a measure of the strength of evidence regarding the hypothesis that two profiles came from the same source [6].

Mathematical Relationship

In the simplest case of a DNA match, the likelihood ratio is the reciprocal of the random match probability [6]. If the population frequency of a profile is P(x), then:

- LR = 1 / P(x)

This relationship holds when [6]:

- The suspect matches the evidence sample

- No errors occurred in the DNA typing

- The persons who contributed evidence and suspect samples, if different, are unrelated

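The following formula demonstrates this relationship in standard forensic notation [6]. Under Hp the two profiles are certain to match, so P(E|Hp) = 1; under Hd a match arises only by coincidence, so P(E|Hd) = P(x):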

- LR = P(E|Hp) / P(E|Hd) = 1 / P(x)

Where:

- E is the evidence (matching DNA profiles)

- Hp is the prosecution hypothesis (same source)

- Hd is the defense hypothesis (different, unrelated sources)

- P(x) is the random match probability

Application in Forensic Genetics

In forensic DNA practice, when a trace and suspect DNA profile match at every locus tested, the likelihood ratio simplifies to the inverse of the random match probability [20]. The forensic expert would typically report this probability and leave it to the court to evaluate whether the suspect left the trace.

Table 3: Comparison of Likelihood Ratio and Random Match Probability

| Characteristic | Likelihood Ratio (LR) | Random Match Probability (RMP) |
|---|---|---|
| Definition | Ratio of probability of evidence under two competing hypotheses | Probability of a random person having matching profile |
| Interpretation | Measure of evidential strength for same source | Probability of coincidental match |
| Calculation | LR = P(E \| Hp) / P(E \| Hd) | Frequency of profile in reference population |
| Relationship | LR = 1 / RMP (in simple DNA match cases) | RMP = 1 / LR (in simple DNA match cases) |
| Application | Weighting prosecution vs. defense hypotheses | Estimating probability of coincidental match |

Application in Clinical Decision-Making

Bayesian Interpretation

LRs are fundamentally connected to Bayesian probability theory, providing a mathematical framework for updating disease probability based on test results [4] [1]. The Bayesian approach recognizes that context is critically important in diagnostic interpretation, as the same test result may have different implications depending on the pre-test probability [4].

The mathematical relationship is expressed as:

- Pre-test odds × LR = Post-test odds

Where:

- Pre-test odds = Pre-test probability / (1 - Pre-test probability)

- Post-test probability = Post-test odds / (Post-test odds + 1)

Practical Application Steps

The application of LRs in clinical practice involves a systematic process [4] [28]:

- Estimate pre-test probability based on prevalence, clinical findings, and risk factors

- Convert pre-test probability to pre-test odds

- Multiply pre-test odds by the appropriate LR (LR+ for positive tests, LR- for negative tests)

- Convert post-test odds to post-test probability

For example, if a patient's pre-test probability of iron deficiency anemia is 50% (pre-test odds of 1:1), and the serum ferritin test has an LR+ of 6 [1]:

- Post-test odds = Pre-test odds × LR = 1 × 6 = 6

- Post-test probability = 6 / (6 + 1) = 86%
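The four steps can be sketched as a single function. The name `post_test_probability` is hypothetical; the inputs reproduce the iron deficiency example above.

```python
def post_test_probability(pre_test_probability: float, lr: float) -> float:
    """Update a pre-test probability with a likelihood ratio via odds."""
    if not 0.0 < pre_test_probability < 1.0:
        raise ValueError("pre-test probability must be strictly between 0 and 1")
    pre_test_odds = pre_test_probability / (1.0 - pre_test_probability)
    post_test_odds = pre_test_odds * lr
    return post_test_odds / (post_test_odds + 1.0)


# Pre-test probability 50% and LR+ = 6, as in the example above
p = post_test_probability(0.50, 6.0)
print(f"post-test probability = {p:.0%}")
```

Because the update is multiplicative on the odds scale, the same LR shifts the probability much less when the pre-test probability is already very low or very high.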

Research Reagent Solutions for Diagnostic Test Development

Table 4: Essential Research Reagents for Diagnostic Test Validation

| Reagent/Resource | Function in LR Determination | Application Context |
|---|---|---|
| Reference Standard Materials | Serve as gold standard for determining true disease status | Essential for calculating sensitivity and specificity in validation studies |
| Biomarker Assay Kits | Quantify continuous biomarker levels for ROC analysis | Enable determination of optimal cut-points for diagnostic tests |
| Statistical Software Packages | Perform logistic regression and ROC curve analysis | Facilitate calculation of LRs for multiple test thresholds |
| DNA Profiling Systems | Generate genotype data for match probability calculations | Used in forensic applications to determine random match probabilities |
| Clinical Data Management Systems | Store and organize patient test results and outcomes | Enable construction of 2×2 tables for sensitivity/specificity calculation |

Experimental Protocols for LR Determination

Diagnostic Test Validation Protocol

To establish reliable LRs for a diagnostic test, researchers should follow a standardized validation protocol:

Study Population Selection

- Recruit representative sample of patients with and without the target condition

- Ensure appropriate spectrum of disease severity

- Sample size should provide precise estimates (typically hundreds of participants)

Reference Standard Application

- Apply gold standard diagnostic method to all participants

- Ensure blinded interpretation of both index test and reference standard

- Document all true positives, false positives, true negatives, and false negatives

Statistical Analysis

- Construct 2×2 contingency table

- Calculate sensitivity and specificity with confidence intervals

- Compute LR+ and LR- using standard formulas

- Perform ROC analysis for tests with continuous measures
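The protocol asks for confidence intervals but does not name a method. The sketch below assumes two common choices: the Wilson score interval for sensitivity and specificity, and the log-transformation method for LR+. The function names are hypothetical, and the counts are those of the earlier worked example.

```python
from math import exp, log, sqrt

Z_95 = 1.96  # normal quantile for a two-sided 95% interval


def wilson_interval(successes: int, n: int, z: float = Z_95) -> tuple:
    """Wilson score interval for a binomial proportion."""
    p = successes / n
    denom = 1 + z**2 / n
    centre = (p + z**2 / (2 * n)) / denom
    half_width = z * sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return centre - half_width, centre + half_width


def lr_positive_interval(tp: int, fn: int, tn: int, fp: int, z: float = Z_95) -> tuple:
    """Return (LR+, lower, upper) using the log method.

    Assumes all four cells are non-zero; zero cells need a continuity
    correction that is not handled here.
    """
    lr = (tp / (tp + fn)) / (fp / (fp + tn))
    se_log = sqrt(1 / tp - 1 / (tp + fn) + 1 / fp - 1 / (fp + tn))
    return lr, exp(log(lr) - z * se_log), exp(log(lr) + z * se_log)


tp, fn, tn, fp = 369, 15, 558, 58
sens_lo, sens_hi = wilson_interval(tp, tp + fn)
spec_lo, spec_hi = wilson_interval(tn, tn + fp)
lr, lr_lo, lr_hi = lr_positive_interval(tp, fn, tn, fp)

print(f"sensitivity 95% CI: {sens_lo:.3f} to {sens_hi:.3f}")
print(f"specificity 95% CI: {spec_lo:.3f} to {spec_hi:.3f}")
print(f"LR+ = {lr:.2f}, 95% CI: {lr_lo:.2f} to {lr_hi:.2f}")
```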

Forensic DNA Match Calculation Protocol

In forensic genetics, the protocol for determining LRs for DNA evidence includes:

DNA Profiling

- Perform STR analysis on evidence and reference samples

- Compare profiles at all loci tested

- Confirm matching profiles

Population Frequency Estimation

- Use appropriate reference database for relevant population

- Calculate genotype frequency using product rule

- Apply necessary adjustments for population structure

LR Calculation

- Compute LR as reciprocal of random match probability

- Report LR with appropriate confidence statements
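The population frequency and LR steps can be sketched as follows. Two assumptions are made here that the protocol does not spell out: genotype frequencies follow Hardy-Weinberg proportions (p² for homozygotes, 2pq for heterozygotes), and the population structure adjustment is the Balding-Nichols theta correction. The allele frequencies and function names are hypothetical.

```python
from math import prod
from typing import Optional


def genotype_frequency(p: float, q: Optional[float] = None, theta: float = 0.0) -> float:
    """Match probability at one locus.

    p, q  : allele frequencies; q=None denotes a homozygote.
    theta : coancestry coefficient; theta=0 reduces to p^2 or 2pq.
    """
    denom = (1 + theta) * (1 + 2 * theta)
    if q is None:
        return (2 * theta + (1 - theta) * p) * (3 * theta + (1 - theta) * p) / denom
    return 2 * (theta + (1 - theta) * p) * (theta + (1 - theta) * q) / denom


def random_match_probability(loci: list, theta: float = 0.0) -> float:
    """Product rule: multiply per-locus match probabilities across loci."""
    return prod(genotype_frequency(p, q, theta) for p, q in loci)


# Hypothetical three-locus profile: (p, q) for heterozygotes, (p, None) for homozygotes
profile = [(0.10, 0.20), (0.05, None), (0.15, 0.25)]

rmp = random_match_probability(profile)
rmp_adjusted = random_match_probability(profile, theta=0.01)

print(f"RMP (theta = 0):    {rmp:.3e}  ->  LR = {1 / rmp:,.0f}")
print(f"RMP (theta = 0.01): {rmp_adjusted:.3e}  ->  LR = {1 / rmp_adjusted:,.0f}")
```

The theta adjustment raises the match probability and therefore lowers the LR, which is the conservative direction for evidence offered against a suspect. The reciprocal relationship applies only under the simple-match conditions listed earlier.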

Likelihood ratios represent a fundamental metric for quantifying the diagnostic value of test results, providing a mathematically rigorous connection between pre-test and post-test probability. The calculation of LRs from sensitivity and specificity offers a straightforward yet powerful method for evaluating diagnostic tests across medical and forensic contexts. The relationship between likelihood ratios and random match probability highlights the conceptual distinction between these approaches to evidence interpretation, with LRs offering a more direct measure of evidential strength. As diagnostic technologies advance, proper understanding and application of LRs will remain essential for researchers, clinicians, and forensic scientists seeking to optimize test interpretation and evidence evaluation.

Random Match Probability (RMP) serves as a fundamental statistical measure in forensic genetics, quantifying the expected frequency of a specific DNA profile within a population. This technical guide examines the calculation of RMP within the broader research context comparing likelihood ratio (LR) versus RMP methodologies. We detail the theoretical foundations accounting for population genetic principles, provide step-by-step computational protocols, and analyze the statistical adjustments required for robust forensic interpretation. The discussion situates RMP within contemporary forensic statistics, addressing its relationship to alternative frameworks like LR and Combined Probability of Inclusion (CPI), while considering limitations and appropriate applications across various evidence scenarios.

Forensic DNA typing represents the gold standard for human identification in criminal investigations, paternity testing, and mass disaster victim identification [30]. The technique leverages DNA polymorphisms—natural variations in the DNA sequence that differ among individuals—to create unique genetic profiles capable of distinguishing one person from another with extremely high certainty [30]. The statistical interpretation of DNA evidence requires sophisticated population genetic models to quantify the significance of a match between two DNA profiles—typically between evidence from a crime scene and a reference sample from a suspect [6].