Likelihood Ratio Methods in Biomedical Accreditation: Standards, Applications, and Validation for Drug Development

Abstract

This article provides a comprehensive guide to likelihood ratio (LR) methods within international accreditation standards such as ISO 21043, tailored for researchers and drug development professionals. It explores the foundational principles of the LR framework as a logically correct and transparent tool for evidence interpretation. The scope extends to practical applications in Model-Informed Drug Development (MIDD), biomarker validation, and diagnostic testing, and addresses common challenges in calibration and implementation. It further covers troubleshooting and optimization strategies, as well as rigorous validation protocols, to ensure that methods are fit for purpose and meet regulatory and accreditation requirements for robust scientific decision-making.

Understanding Likelihood Ratios: The Cornerstone of Modern Evidence Interpretation in Accredited Science

The likelihood ratio (LR) is a cornerstone of statistical inference, providing a coherent framework for evaluating the strength of evidence for one hypothesis relative to a competing one. In forensic science, clinical trials, and model selection, the LR quantifies how much observed data should update beliefs about the underlying truth. The framework compares the probability of observing the evidence under two different hypotheses, typically formulated as a null hypothesis (H₀) representing a default position and an alternative hypothesis (H₁) representing a challenge to that position [1]. This comparative approach lets researchers move beyond simple binary decisions toward quantified measures of evidence.

Bayes' theorem explains why likelihood ratios are so central: they are the factor that converts prior beliefs into posterior beliefs once empirical evidence is taken into account [2]. In odds form the relationship is simple: Posterior Odds = Likelihood Ratio × Prior Odds. The LR is therefore the evidential updating factor that modifies prior expectations in light of observed data. Combining likelihood ratios with Bayesian reasoning yields a unified framework for statistical inference that is both logically coherent and practical to implement across diverse scientific domains.

Core Theoretical Foundations

Defining the Likelihood Ratio

The likelihood ratio represents a fundamental concept in statistical evidence evaluation, mathematically defined as the ratio of probabilities of observing the same data under two competing hypotheses. For hypotheses H₁ and H₂ with observed data D, the likelihood ratio is formally expressed as:

$$LR = \frac{P(D|H₁)}{P(D|H₂)}$$

Each term conditions on a hypothesis, but the likelihood is read as a function of the hypothesis with the observed data held fixed. This reverses the usual probability perspective, in which the hypothesis is fixed and the data are allowed to vary [3]. The likelihood ratio's power derives from this specific conditioning: it measures the relative support that a fixed set of data provides to different hypotheses, allowing direct comparison of evidence.

The interpretation of likelihood ratios follows a straightforward principle: values greater than 1 support H₁ over H₂, while values less than 1 support H₂ over H₁ [1]. The further the ratio deviates from 1, the stronger the evidence. However, likelihoods have relative rather than absolute meaning. Their interpretive value emerges only through comparison, because any arbitrary constants in the likelihood functions cancel when the ratio is formed [3]. This relativity is why the alternative hypothesis must always be specified when likelihood ratios are reported.
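The following is a minimal illustration of both points. It assumes a binomial model and two hypothetical simple hypotheses, H₁ (success probability 0.7) and H₂ (success probability 0.5), and computes the LR for 7 successes in 10 trials. The binomial coefficient plays the role of the arbitrary constant mentioned above. It appears in both likelihoods, so dropping it leaves the ratio unchanged. The data and hypotheses are illustrative only.

```python
import math


def binomial_likelihood(k: int, n: int, p: float, include_constant: bool = True) -> float:
    """P(k successes in n trials | success probability p)."""
    kernel = p**k * (1.0 - p) ** (n - k)
    return math.comb(n, k) * kernel if include_constant else kernel


def likelihood_ratio(k: int, n: int, p1: float, p2: float, include_constant: bool = True) -> float:
    """LR = P(D | H1) / P(D | H2) for two fully specified (simple) hypotheses."""
    return (binomial_likelihood(k, n, p1, include_constant)
            / binomial_likelihood(k, n, p2, include_constant))


if __name__ == "__main__":
    k, n = 7, 10  # hypothetical data: 7 successes in 10 trials
    lr_full = likelihood_ratio(k, n, 0.7, 0.5)
    lr_kernel = likelihood_ratio(k, n, 0.7, 0.5, include_constant=False)
    print(f"LR with constant:    {lr_full:.4f}")    # 2.2769
    print(f"LR without constant: {lr_kernel:.4f}")  # 2.2769 (the constant cancels)
```

An LR of about 2.3 says the data are roughly 2.3 times more probable under H₁ than under H₂. That is weak support, and it says nothing about H₁ relative to any other alternative.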

Bayesian Interpretation and Connection

The integration of likelihood ratios within Bayesian statistics occurs through their direct role in belief updating. The Bayesian paradigm treats probability as a measure of belief rather than frequency, systematically updating these beliefs through observed evidence. The mathematical relationship emerges from Bayes' theorem:

$$\underbrace{\frac{P(H_1 \mid D)}{P(H_2 \mid D)}}_{\text{Posterior Odds}} = \underbrace{\frac{P(D \mid H_1)}{P(D \mid H_2)}}_{\text{Likelihood Ratio}} \times \underbrace{\frac{P(H_1)}{P(H_2)}}_{\text{Prior Odds}}$$

This equation reveals the LR as the bridge between prior and posterior odds [4]. The transformation occurs through a simple multiplicative process: existing beliefs (prior odds) are updated by evidence (likelihood ratio) to produce revised beliefs (posterior odds). This coherent updating mechanism represents one of the most compelling advantages of the Bayesian framework.
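Below is a minimal sketch of this multiplicative update. It assumes the prior is supplied as a probability. It also assumes that, when several LRs are combined, the items of evidence are conditionally independent given each hypothesis, which must be justified in practice. The function names are illustrative.

```python
def prob_to_odds(p: float) -> float:
    """Convert a probability strictly between 0 and 1 into odds p / (1 - p)."""
    if not 0.0 < p < 1.0:
        raise ValueError("probability must lie strictly between 0 and 1")
    return p / (1.0 - p)


def odds_to_prob(odds: float) -> float:
    """Convert odds back into a probability."""
    return odds / (1.0 + odds)


def update(prior_prob: float, *lrs: float) -> float:
    """Posterior probability of H1 after multiplying the prior odds by each LR.

    Multiplying several LRs assumes the items of evidence are conditionally
    independent given each hypothesis.
    """
    odds = prob_to_odds(prior_prob)
    for lr in lrs:
        odds *= lr
    return odds_to_prob(odds)


if __name__ == "__main__":
    # Prior P(H1) = 0.01 (odds 1:99); an LR of 99 moves the posterior to 0.5.
    print(round(update(0.01, 99.0), 6))       # 0.5
    # Two independent items of evidence with LRs 10 and 9.9 have the same effect.
    print(round(update(0.01, 10.0, 9.9), 6))  # 0.5
```

The example shows why an LR should not be read as a posterior probability. An LR of 99 is strong evidence, yet with a low prior it only brings H₁ to even odds.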

The likelihood ratio's centrality in Bayesian inference extends to model comparison, where it provides the foundation for Bayes factors. When comparing statistical models M₁ and M₂ with parameters θ₁ and θ₂, the Bayes factor extends the simple likelihood ratio concept through integration over parameter spaces [5]:

$$BF = \frac{\int P(θ₁|M₁)P(D|θ₁,M₁)dθ₁}{\int P(θ₂|M₂)P(D|θ₂,M₂)dθ₂}$$

This integration accounts for model complexity automatically, penalizing overparameterized models without requiring additional correction factors [6]. The Bayes factor therefore represents a natural Bayesian extension of the classical likelihood ratio, incorporating parameter uncertainty through marginalization.

Methodological Comparison

Computational Approaches

The computation of likelihood ratios and their Bayesian counterparts employs distinct methodologies reflecting their different philosophical foundations. Traditional likelihood ratios typically utilize maximum likelihood estimation (MLE), focusing on the best-fitting parameter values under each hypothesis [1]. This approach yields the standard LR formulation:

$$LR = \frac{L(\hat{\theta}_{\text{MLE},H_1} \mid D)}{L(\hat{\theta}_{\text{MLE},H_2} \mid D)}$$

In contrast, Bayes factors employ integrated likelihoods that average over parameter spaces rather than optimizing [6]. This integration accounts for entire parameter distributions rather than single points, automatically incorporating Occam's razor by penalizing unnecessarily complex models. The computational burden consequently increases substantially, often requiring sophisticated numerical techniques such as Markov Chain Monte Carlo (MCMC) methods for approximation [5].
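A small sketch on hypothetical binomial data (7 successes in 10 trials) shows the difference between maximizing and integrating. H₀ fixes p = 0.5, while H₁ leaves p free. The maximized LR evaluates H₁ at its MLE, p̂ = k/n. The Bayes factor instead averages the H₁ likelihood over a Uniform(0, 1) prior on p, a prior assumed here for illustration. The integral is approximated with a simple midpoint rule rather than MCMC. For this prior the exact marginal likelihood is 1/(n + 1), which provides a check on the numerical result.

```python
import math


def binom_pmf(k: int, n: int, p: float) -> float:
    """Binomial probability of k successes in n trials."""
    return math.comb(n, k) * p**k * (1.0 - p) ** (n - k)


def marginal_likelihood_uniform(k: int, n: int, steps: int = 20_000) -> float:
    """Integrate binom_pmf over p under a Uniform(0, 1) prior (midpoint rule)."""
    h = 1.0 / steps
    return h * sum(binom_pmf(k, n, (i + 0.5) * h) for i in range(steps))


def maximized_lr(k: int, n: int, p0: float = 0.5) -> float:
    """LR with the free parameter of H1 set to its MLE, k / n."""
    return binom_pmf(k, n, k / n) / binom_pmf(k, n, p0)


def bayes_factor_10(k: int, n: int, p0: float = 0.5) -> float:
    """BF10 = marginal likelihood under H1 / likelihood under the point null H0."""
    return marginal_likelihood_uniform(k, n) / binom_pmf(k, n, p0)


if __name__ == "__main__":
    k, n = 7, 10  # hypothetical data
    print(f"maximized LR: {maximized_lr(k, n):.3f}")     # 2.277
    print(f"Bayes factor: {bayes_factor_10(k, n):.3f}")  # 0.776 (exact value 1024/1320)
```

The maximized LR favours the free-parameter hypothesis by about 2.3, whereas the Bayes factor is below 1. Spreading prior mass over values of p that fit the data poorly is the automatic complexity penalty described above. For nested hypotheses a maximized LR can never fall below 1, because the MLE under H₁ fits at least as well as the fixed value under H₀.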

Table 1: Computational Comparison of Likelihood Ratios and Bayes Factors

| Feature | Likelihood Ratio | Bayes Factor |
|---|---|---|
| Parameter Treatment | Maximized at MLE | Integrated over parameter space |
| Model Complexity | Requires explicit correction (e.g., AIC) | Automatically penalized through integration |
| Computational Demand | Generally tractable | Often computationally intensive |
| Primary Methods | Maximum likelihood estimation | MCMC, Laplace approximation, Savage-Dickey ratio |
| Output Interpretation | Relative support for specific parameter values | Relative support for entire models |

Experimental Protocols for Evidence Evaluation

Implementing likelihood ratio analysis requires systematic protocols to ensure valid evidentiary assessment. The following workflow outlines standard methodology for LR computation in experimental contexts:

Hypothesis Formulation: Precisely define null (H₀) and alternative (H₁) hypotheses, ensuring they are mutually exclusive and exhaustive within the experimental context [1].

Probability Model Specification: Develop statistical models characterizing data generation under each hypothesis, including appropriate probability distributions and parameter constraints.

Parameter Estimation: For traditional LR, compute maximum likelihood estimates for all parameters under both hypotheses. For Bayes factors, define prior distributions and compute marginal likelihoods.

Likelihood Calculation: Compute the probability of observed data under both hypotheses using the specified models and estimated parameters.

Ratio Computation: Calculate the likelihood ratio by dividing the likelihood under H₁ by the likelihood under H₀.

Evidence Interpretation: Contextualize the computed ratio using established scales (e.g., Jeffreys' scale for Bayes factors) while considering domain-specific implications [5].
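The six steps above can be sketched end to end in a deliberately simple setting. The sketch assumes independent normal measurements with a known standard deviation (SIGMA = 1, an assumption), with H₀ fixing the mean at 0 and H₁ leaving it free. All names and data are hypothetical, and each step is marked in the comments.

```python
import math
from statistics import fmean

SIGMA = 1.0  # assumption: known measurement standard deviation


def normal_loglik(data: list[float], mu: float, sigma: float = SIGMA) -> float:
    """Log-likelihood of i.i.d. Normal(mu, sigma^2) observations."""
    return sum(
        -0.5 * math.log(2.0 * math.pi * sigma**2) - (x - mu) ** 2 / (2.0 * sigma**2)
        for x in data
    )


def lr_workflow(data: list[float], mu0: float = 0.0) -> dict[str, float]:
    # Step 1, hypotheses (hypothetical): H0: mu = mu0; H1: mu unrestricted.
    # Step 2, probability model: i.i.d. Normal(mu, SIGMA^2) under both hypotheses.
    # Step 3, parameter estimation: the MLE of mu under H1 is the sample mean.
    mu_hat = fmean(data)
    # Step 4, likelihood calculation under each hypothesis, on the log scale.
    ll_h1 = normal_loglik(data, mu_hat)
    ll_h0 = normal_loglik(data, mu0)
    # Step 5, ratio computation: likelihood under H1 divided by likelihood under H0.
    log_lr = ll_h1 - ll_h0
    # Step 6, interpretation: report log10(LR) as well. Verbal scales such as
    # Jeffreys' were designed for Bayes factors, so apply them to a maximized LR with care.
    return {"mu_hat": mu_hat, "lr": math.exp(log_lr), "log10_lr": log_lr / math.log(10.0)}


if __name__ == "__main__":
    result = lr_workflow([0.8, 1.2, 0.5, 1.1, 0.9])  # hypothetical measurements
    print(f"mu_hat = {result['mu_hat']:.2f}, LR = {result['lr']:.2f}")  # LR = 7.58
```

Working on the log scale (step 4) avoids underflow when many observations are multiplied together. In this model the log LR reduces to n·x̄²/2, which gives a quick hand check.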

This protocol emphasizes transparency in model specification and computational reproducibility, particularly important for forensic applications where LR methodology faces scrutiny regarding its replicability across different expert analyses [7].

Interpretation Frameworks

The interpretation of likelihood ratios and Bayes factors employs standardized scales that facilitate evidence communication across scientific domains. These scales translate quantitative ratios into qualitative evidence descriptions, though important differences exist between approaches.

Traditional likelihood ratios in frequentist contexts are often used with threshold rules based on sampling distributions, with decisions determined by statistical significance at a predetermined alpha level (typically 0.05). This framework emphasizes binary decision-making (rejecting or failing to reject the null hypothesis) but provides limited direct evidential interpretation [1].
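For the threshold approach described above, the usual route is Wilks' theorem. For nested hypotheses, 2·ln(LR) computed with MLEs is approximately χ²-distributed under H₀. The degrees of freedom equal the number of extra free parameters in H₁. This is a large-sample result that assumes regularity conditions. The sketch covers only the one-degree-of-freedom case. There the χ²₁ upper tail has the closed form erfc(√(x/2)), so only the standard library is needed. The ln(LR) inputs are hypothetical.

```python
import math


def lrt_pvalue_df1(log_lr: float) -> float:
    """Asymptotic p-value of a likelihood-ratio test with one degree of freedom.

    log_lr is the natural log of the maximized LR (H1 over a nested H0), so it is >= 0.
    The statistic 2 * log_lr is compared with a chi-square(1) distribution, whose
    upper tail P(X > x) equals erfc(sqrt(x / 2)).
    """
    if log_lr < 0:
        raise ValueError("a maximized LR for nested hypotheses cannot be below 1")
    statistic = 2.0 * log_lr
    return math.erfc(math.sqrt(statistic / 2.0))


def lrt_decision(log_lr: float, alpha: float = 0.05) -> str:
    """Binary decision at significance level alpha."""
    return "reject H0" if lrt_pvalue_df1(log_lr) < alpha else "fail to reject H0"


if __name__ == "__main__":
    for log_lr in (0.5, 2.0, 5.0):  # hypothetical ln(LR) values
        p = lrt_pvalue_df1(log_lr)
        print(f"ln LR = {log_lr}: p = {p:.4f} -> {lrt_decision(log_lr)}")
```

The output is a yes/no decision plus a p-value, not a statement of evidential strength. This is the limitation the text attributes to the frequentist framing.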

Bayes factors utilize evidence scales that symmetrically evaluate support for either hypothesis. The Kass and Raftery (1995) scale, widely cited across scientific literature, categorizes evidence as follows [5]:

Table 2: Bayes Factor Interpretation Scale (Kass & Raftery, 1995)

| Bayes Factor | log₁₀(BF) | Evidence Strength |
|---|---|---|
| 1 to 3.2 | 0 to 0.5 | Not worth more than a bare mention |
| 3.2 to 10 | 0.5 to 1 | Substantial |
| 10 to 100 | 1 to 2 | Strong |
| > 100 | > 2 | Decisive |

This symmetrical interpretation framework allows researchers to quantify evidence in favor of null hypotheses, addressing a critical limitation of traditional significance testing [5]. The scale's application requires careful consideration of context, as the same numerical Bayes factor may carry different practical implications across research domains.
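Here is a small helper that applies Table 2 symmetrically. It assumes that BF₁₀ > 1 favours H₁ and classifies the reciprocal when BF₁₀ < 1. Category boundaries follow the log₁₀ column (0.5, 1, 2). A value falling exactly on a boundary is assigned to the weaker category, a choice the scale itself does not specify.

```python
import math

# Kass & Raftery (1995) categories from Table 2, keyed by the upper bound on |log10(BF)|.
KASS_RAFTERY = [
    (0.5, "Not worth more than a bare mention"),
    (1.0, "Substantial"),
    (2.0, "Strong"),
    (math.inf, "Decisive"),
]


def kass_raftery_label(bf_10: float) -> str:
    """Verbal label for a Bayes factor, applied symmetrically.

    BF10 >= 1 is read as support for H1; for BF10 < 1 the reciprocal is
    classified and the label is attributed to H0.
    """
    if bf_10 <= 0:
        raise ValueError("Bayes factor must be positive")
    favoured = "H1" if bf_10 >= 1 else "H0"
    strength = abs(math.log10(bf_10))
    for upper, label in KASS_RAFTERY:
        if strength <= upper:
            return f"{label} (favours {favoured})"
    raise AssertionError("unreachable: the last bound is infinite")


if __name__ == "__main__":
    for bf in (2.0, 5.0, 20.0, 1 / 20, 150.0):
        print(bf, "->", kass_raftery_label(bf))
```

Attributing the label to the favoured hypothesis makes explicit that a small Bayes factor is evidence for H₀, not merely an absence of evidence against it.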

Applications and Validation Standards

Domain-Specific Implementations

The likelihood ratio framework finds diverse application across scientific disciplines, each with domain-specific implementations and validation requirements. In forensic science, LR methodology provides the logical foundation for evidence evaluation, particularly for source identification problems, where the same-source hypothesis (H₀) is compared against the different-source hypothesis (H₁) [8]. The forensic LR implementation follows a standardized structure:

$$LR = \frac{P(E|H₀)}{P(E|H₁)}$$

where E represents forensic evidence such as fingerprints, DNA profiles, or tool marks. The numerator quantifies the probability of observing the evidence if the suspect is the source, while the denominator quantifies the probability if someone else is the source [8].
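Many forensic LR systems operate on a comparison score rather than on raw evidence. The minimal sketch below assumes, hypothetically, that scores are normally distributed under both propositions. Their parameters are taken as already estimated from known same-source and different-source comparisons. Following the notation above, the same-source model (H₀) is in the numerator and the different-source model (H₁) in the denominator. For continuous scores the probabilities become probability densities. The parameter values are placeholders, not validated models.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class NormalScoreModel:
    """Normal density for comparison scores under one proposition (hypothetical)."""
    mean: float
    sd: float

    def pdf(self, score: float) -> float:
        z = (score - self.mean) / self.sd
        return math.exp(-0.5 * z * z) / (self.sd * math.sqrt(2.0 * math.pi))


def score_lr(score: float, same_source: NormalScoreModel, different_source: NormalScoreModel) -> float:
    """LR = p(score | same source) / p(score | different source)."""
    return same_source.pdf(score) / different_source.pdf(score)


if __name__ == "__main__":
    # Placeholder parameters, e.g. fitted to known-source validation comparisons.
    ss = NormalScoreModel(mean=8.0, sd=1.5)
    ds = NormalScoreModel(mean=2.0, sd=1.5)
    for s in (2.0, 5.0, 8.0):
        print(f"score {s}: LR = {score_lr(s, ss, ds):.3g}")
```

With equal spreads, a score halfway between the two means yields LR = 1. Scores closer to the same-source mean yield LR > 1, and scores closer to the different-source mean yield LR < 1.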

In clinical research, likelihood ratios evaluate diagnostic test performance and therapeutic effectiveness. Diagnostic LRs combine sensitivity and specificity into a single evidence measure, while trial analysis LRs quantify evidence strength for treatment effects compared to control conditions [9]. Unlike forensic applications, clinical LRs often incorporate sequential analysis designs where evidence accumulates across interim analyses.
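The standard definitions make the combination of sensitivity and specificity explicit: LR+ = sensitivity / (1 - specificity) and LR- = (1 - sensitivity) / specificity. The sketch below uses hypothetical test characteristics to compute both. It then applies the odds form of Bayes' theorem to turn an assumed pre-test probability (prevalence) into a post-test probability.

```python
def diagnostic_lrs(sensitivity: float, specificity: float) -> tuple[float, float]:
    """Return (LR+, LR-) for a dichotomous test.

    LR+ = P(positive | disease) / P(positive | no disease) = sens / (1 - spec)
    LR- = P(negative | disease) / P(negative | no disease) = (1 - sens) / spec
    """
    if not (0.0 < sensitivity <= 1.0 and 0.0 < specificity < 1.0):
        raise ValueError("need 0 < sensitivity <= 1 and 0 < specificity < 1")
    return sensitivity / (1.0 - specificity), (1.0 - sensitivity) / specificity


def post_test_probability(pre_test: float, lr: float) -> float:
    """Odds form of Bayes' theorem: posterior odds = LR x prior odds."""
    odds = pre_test / (1.0 - pre_test) * lr
    return odds / (1.0 + odds)


if __name__ == "__main__":
    lr_pos, lr_neg = diagnostic_lrs(0.90, 0.80)  # hypothetical test characteristics
    print(f"LR+ = {lr_pos:.2f}, LR- = {lr_neg:.3f}")  # LR+ = 4.50, LR- = 0.125
    print(f"P(disease | positive) at 10% prevalence: {post_test_probability(0.10, lr_pos):.3f}")
```

Because the LRs do not depend on prevalence, the same test characteristics can be reused across populations. Only the pre-test probability changes.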

Pharmacological and biomedical research employs likelihood ratios in model selection for dose-response relationships, pharmacokinetic modeling, and biomarker validation. The Bayesian extensions prove particularly valuable in these domains where prior information from earlier study phases formally informs later analyses through the likelihood ratio updating mechanism [4].

Accreditation Standards and Validation Protocols

The implementation of likelihood ratio methods in regulated environments requires standardized validation protocols to ensure methodological rigor and reproducible outcomes. International standards such as ISO 21043 for forensic science establish requirements for the entire forensic process, emphasizing transparent and reproducible methods that use the "logically correct framework for interpretation of evidence (the likelihood-ratio framework)" [10].

The validation of LR methods necessitates demonstrating performance across multiple dimensions [8]:

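Calibration: LR values should be numerically trustworthy, not merely on the correct side of 1. LRs > 1 should arise predominantly when H₁ is true and LRs < 1 predominantly when H₂ is true. More precisely, an LR of value v should be observed about v times as often when H₁ is true as when H₂ is true.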

Discrimination: The method should effectively distinguish between situations where H₁ is true versus where H₂ is true.

Robustness: Conclusions should remain stable across reasonable variations in modeling assumptions and data quality.

Reliability: Different analysts applying the same method to the same evidence should obtain consistent LR values.

The validation framework addresses critical concerns regarding LR implementation, particularly the observed variability in LR values produced by different experts analyzing the same evidence [7]. This variability stems from differences in statistical approaches, knowledge bases, and modeling decisions, highlighting the importance of standardized protocols in forensic and regulatory applications.

Essential Research Toolkit

Implementing likelihood ratio methodologies requires specialized computational tools for statistical modeling, evidence calculation, and results visualization. The research toolkit encompasses both general-purpose statistical software and specialized packages for Bayesian computation.

Table 3: Essential Computational Resources for Likelihood Ratio Research

| Tool | Primary Function | Key Features | Application Context |
|---|---|---|---|
| R Statistical Environment | General statistical computing | Extensive packages for likelihood-based inference (e.g., lmtest, blme) | All research domains |
| Python SciPy/NumPy | Numerical computation | Flexible programming environment for custom LR implementations | General purpose, machine learning integration |
| Stan/PyMC | Bayesian inference | Hamiltonian Monte Carlo posterior sampling; Bayes factors require additional steps such as bridge sampling | Complex Bayesian models, pharmacological research |
| JAGS/BUGS | Bayesian analysis | Gibbs sampling for posterior distributions | Forensic science, clinical trial analysis |
| Specialized forensic software | Domain-specific LR calculation | Implemented algorithms for fingerprint, DNA, voice comparison | Forensic evidence evaluation |

These computational resources enable researchers to implement both traditional likelihood ratio tests and Bayesian extensions, with selection dependent on specific application requirements, model complexity, and available computational resources [2] [6].

Experimental Materials and Reagents

The experimental implementation of likelihood ratio methods in applied research contexts often incorporates specific analytical tools and procedural components that constitute the essential research toolkit.

Table 4: Key Research Reagent Solutions for LR Implementation

| Reagent/Resource | Function | Application Examples |
|---|---|---|
| Reference databases | Provide population distributions for likelihood calculation | Forensic fingerprint databases, epidemiological registries |
| Statistical models | Formalize data generation hypotheses | Probability distributions, regression models, mixture models |
| Calibration datasets | Validate LR method performance | Known-source samples, simulated data with known ground truth |
| Prior specification tools | Inform Bayesian analyses through previous knowledge | Meta-analyses, expert elicitation protocols, historical data |
| Sensitivity analysis frameworks | Assess robustness to modeling assumptions | Alternative prior distributions, model perturbation analyses |

These methodological "reagents" facilitate rigorous LR implementation across domains, with particular importance in forensic applications where international standards mandate transparent and empirically validated methods [8] [10].

Comparative Performance Analysis

Empirical Performance Metrics

The evaluation of likelihood ratio method performance employs quantitative metrics that assess evidentiary value, calibration, and discrimination capacity. These metrics enable direct comparison between traditional and Bayesian approaches, informing method selection for specific applications.

Table 5: Performance Metrics for Likelihood Ratio Methods

| Metric | Definition | Interpretation | Ideal Value |
|---|---|---|---|
| Discrimination Accuracy | Ability to distinguish H₁-true from H₂-true situations | Higher values indicate better separation | 1.0 (perfect discrimination) |
| Calibration | Correspondence between LR values and actual probabilities | With equal prior odds, well-calibrated LRs satisfy P(H₁ ∣ LR) ≈ LR/(LR + 1) | Close to the ideal curve |
| Log-Likelihood-Ratio Cost (Cllr) | Overall performance measure combining discrimination and calibration | Lower values indicate better performance | 0 (perfect performance) |
| Bayes Error Rate | Minimum classification error achievable for the given distributions | Lower values indicate easier discrimination problems | 0 (perfect separation) |
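The Cllr metric can be made concrete with the usual definition of the log-likelihood-ratio cost. It averages log₂(1 + 1/LR) over validation cases where H₁ is true and log₂(1 + LR) over cases where H₂ is true, then takes the mean of the two averages. The input LRs are assumed to come from cases with known ground truth, such as the calibration datasets listed in Table 4. Splitting Cllr into discrimination (Cllr_min) and calibration components requires an additional optimal-calibration step, typically the pool-adjacent-violators algorithm, which is not shown.

```python
import math
from collections.abc import Sequence


def cllr(lrs_h1_true: Sequence[float], lrs_h2_true: Sequence[float]) -> float:
    """Log-likelihood-ratio cost.

    lrs_h1_true: LRs (H1 over H2) from validation cases where H1 is known to be true.
    lrs_h2_true: LRs from validation cases where H2 is known to be true.
    Returns 0 for a perfect system and exactly 1 for a system that always reports LR = 1.
    """
    if not lrs_h1_true or not lrs_h2_true:
        raise ValueError("need validation LRs under both hypotheses")
    penalty_h1 = sum(math.log2(1.0 + 1.0 / lr) for lr in lrs_h1_true) / len(lrs_h1_true)
    penalty_h2 = sum(math.log2(1.0 + lr) for lr in lrs_h2_true) / len(lrs_h2_true)
    return 0.5 * (penalty_h1 + penalty_h2)


if __name__ == "__main__":
    # Hypothetical validation LRs with one misleading value in each set.
    print(f"Cllr = {cllr([120.0, 35.0, 8.0, 0.6], [0.02, 0.1, 0.4, 3.0]):.3f}")
```

A system that always reports LR = 1 scores exactly 1, so useful systems should score well below 1. Confidently wrong LRs, such as a very large LR in an H₂-true case, are penalized heavily.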

Empirical studies demonstrate that properly calibrated likelihood ratios provide reliable evidence measures across diverse applications, though performance depends heavily on appropriate model specification and adequate sample sizes [8]. Bayesian approaches typically demonstrate superior performance in small-sample settings where prior information meaningfully contributes to estimation precision, while traditional methods perform comparably in large-sample scenarios where prior influence diminishes [6] [5].

Methodological Strengths and Limitations

The comparative analysis of likelihood ratio frameworks reveals distinctive strengths and limitations that guide appropriate application selection. This analysis considers computational, interpretive, and practical dimensions across methodological variants.

Traditional likelihood ratios offer computational simplicity and straightforward implementation using maximum likelihood estimation. Their primary limitations include dependence on point estimates rather than full parameter distributions and the requirement for explicit complexity correction through information criteria such as AIC [1] [6].

Bayes factors automatically incorporate model complexity penalties through integration over parameter spaces and provide coherent belief updating through their direct connection to posterior probabilities. These advantages come with increased computational demands and sensitivity to prior specification, particularly with small sample sizes [6] [5].

Practical implementation challenges affect both approaches, including the replication variability noted in forensic applications where different experts may produce substantially different LRs for the same evidence [7]. This variability stems from methodological choices in probability modeling, reference population specification, and computational approximation techniques, highlighting the importance of standardization and validation protocols in applied settings.

The comprehensive performance analysis suggests a complementary relationship between approaches: traditional likelihood ratios provide accessible evidence measures for initial analysis, while Bayes factors offer more comprehensive evidence quantification when computational resources permit and prior information is reliably available.

The ISO 21043 series represents a groundbreaking achievement in forensic science, establishing an international framework designed to standardize practices across the entire forensic process. Completed with the publication of its final parts in 2025, this multi-part standard provides requirements and recommendations to safeguard forensic processes and ensure the reliability of analytical outcomes [11]. The standards were developed through international consensus by ISO/TC 272, the technical committee on forensic sciences, with participation from 29 member countries and liaison organizations including the International Laboratory Accreditation Cooperation (ILAC) [12]. This global collaboration underscores the widely recognized need for standardized, quality-driven practices in forensic science.

A pivotal aspect of this standardization is the formal endorsement of the Likelihood Ratio (LR) framework as a fundamental tool for the interpretation of forensic evidence. The LR method provides a logically sound, transparent structure for evaluating the strength of evidence by comparing the probability of the evidence under two competing propositions [13]. This represents a significant shift from traditional approaches toward a more scientifically robust, quantifiable method for expressing evaluative opinions. The incorporation of LR methodology within international standards marks a transformative moment for forensic science, mandating a consistent approach to evidence interpretation across disciplines and jurisdictions.

The ISO 21043 Series: Architecture of Quality

The ISO 21043 standard is organized into a comprehensive five-part structure that covers the complete forensic process lifecycle:

- Part 1: Terms and Definitions - Establishes consistent terminology [13]

- Part 2: Recognition, Recording, Collection, Transport & Storage - Covers evidence handling [13]

- Part 3: Analysis - Published 2025, focuses on analytical processes [11]

- Part 4: Interpretation - Published 2025, addresses evidence interpretation [14]

- Part 5: Reporting - Outlines requirements for reporting conclusions [13]

This integrated structure creates a continuous quality framework from crime scene to courtroom. According to Charles Berger, principal scientist at the Netherlands Forensic Institute (NFI), "Forensic analysis is more than just measuring and calibrating. For this reason, a supplementary standard is required" beyond the existing ISO/IEC 17025 requirements for testing laboratories [13]. The standard is designed to apply to all forensic disciplines, with the specific exclusion of digital evidence recovery, which is covered separately by ISO/IEC 27037 [11].

The Mandate for Likelihood Ratio in Interpretation

ISO 21043-4:2025 formally establishes the Likelihood Ratio as the recommended framework for forensic evidence interpretation. The standard acknowledges that "forensic science is about questions and about applying science to help answer those questions" using various scientific disciplines including biology, chemistry, statistics, and physics [13]. The LR framework provides the mathematical structure for answering these questions in a logically consistent manner.

The standard explicitly recognizes that "the Bayesian method, which considers the probabilities of observations and the support for a proposition derived from them, is part of the standard" [13]. This formal incorporation is groundbreaking, as it moves the field beyond subjective opinion expression toward transparent, calculable methods for expressing evidential strength. The standard acknowledges that the method can be applied both quantitatively through complex statistical models and qualitatively through structured reasoning frameworks, making it applicable across different types of forensic evidence and technical capabilities.

LR Method Implementation: Protocols and Validation

Core Principles of the Likelihood Ratio Framework

The Likelihood Ratio method evaluates forensic evidence by comparing the probability of the evidence under two competing propositions:

- Proposition 1 (H1): The evidence originates from the suspected source

- Proposition 2 (H2): The evidence originates from an alternative source

The LR is calculated as:

$$LR = \frac{P(E|H_1)}{P(E|H_2)}$$

Where P(E|H1) represents the probability of observing the evidence if H1 is true, and P(E|H2) represents the probability of observing the evidence if H2 is true [15]. An LR value greater than 1 supports H1, while a value less than 1 supports H2. The strength of support increases as the value deviates further from 1.

Experimental Validation Protocol for LR Methods

Implementing LR methods requires rigorous validation to ensure reliable performance. The validation protocol involves multiple performance characteristics, each with specific metrics and acceptance criteria [15]:

Table 1: Validation Matrix for LR Methods

| Performance Characteristic | Performance Metric | Graphical Representation | Validation Criteria |
|---|---|---|---|
| Accuracy | Cllr | ECE plot | Cllr < 0.2 |
| Discriminating Power | EER, Cllrmin | ECEmin plot, DET plot | According to definition |
| Calibration | Cllrcal | ECE plot, Tippett plot | According to definition |
| Robustness | Cllr, EER | ECE plot, DET plot, Tippett plot | According to definition |
| Coherence | Cllr, EER | ECE plot, DET plot, Tippett plot | According to definition |
| Generalization | Cllr, EER | ECE plot, DET plot, Tippett plot | According to definition |

The validation process requires testing the method with appropriate datasets and ensuring it meets predefined criteria for each performance characteristic before implementation in casework [15].
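The validation matrix lists the equal error rate (EER) as a discriminating-power metric. Below is a minimal sketch of estimating it from validation LRs. It assumes a decision of "H1" whenever the LR reaches a threshold, and it sweeps the threshold over the observed LR values. This is a simple approximation; dedicated validation tools may interpolate along the DET curve instead. The example LRs are hypothetical.

```python
from collections.abc import Sequence


def error_rates(threshold: float, lrs_h1_true: Sequence[float],
                lrs_h2_true: Sequence[float]) -> tuple[float, float]:
    """Miss and false-alarm rates when 'H1' is decided for LR >= threshold."""
    miss = sum(lr < threshold for lr in lrs_h1_true) / len(lrs_h1_true)
    false_alarm = sum(lr >= threshold for lr in lrs_h2_true) / len(lrs_h2_true)
    return miss, false_alarm


def equal_error_rate(lrs_h1_true: Sequence[float], lrs_h2_true: Sequence[float]) -> float:
    """Approximate EER: sweep thresholds over the observed LRs and return the mean
    of the two error rates at the threshold where they are closest to each other."""
    candidates = sorted(set(lrs_h1_true) | set(lrs_h2_true))
    candidates.append(float("inf"))
    best = min((error_rates(t, lrs_h1_true, lrs_h2_true) for t in candidates),
               key=lambda rates: abs(rates[0] - rates[1]))
    return sum(best) / 2.0


if __name__ == "__main__":
    h1 = [0.5, 2.0, 4.0, 8.0]   # hypothetical LRs from H1-true cases
    h2 = [0.1, 0.3, 1.5, 3.0]   # hypothetical LRs from H2-true cases
    print(f"EER = {equal_error_rate(h1, h2):.2f}")
```

The EER depends only on how the LRs rank H₁-true against H₂-true cases. That is why the matrix pairs it with Cllr, which is also sensitive to calibration.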

Case Study: LR Validation in Fingerprint Evidence

A comprehensive validation study demonstrates the application of this protocol to fingerprint evidence. The experiment utilized fingerprint data from the Netherlands Forensic Institute, with images scanned using an ACCO 1394S live scanner and converted into biometric scores using the Motorola BIS 9.1 algorithm [15].

Table 2: Fingerprint LR Validation Results

| Performance Characteristic | Baseline Method Result | Multimodal LR Method Result | Validation Decision |
|---|---|---|---|
| Accuracy (Cllr) | 0.15 | 0.08 | Pass |
| Discriminating Power (Cllrmin) | 0.10 | 0.05 | Pass |
| Calibration (Cllrcal) | 0.05 | 0.03 | Pass |
| Robustness (Cllr variation) | ±5% | ±3% | Pass |
| Coherence (Cllr) | 0.16 | 0.09 | Pass |
| Generalization (Cllr) | 0.18 | 0.10 | Pass |

The experimental data show that a properly validated LR method can achieve high discriminating power (Cllrmin = 0.05) and good calibration (Cllrcal = 0.03), outperforming the baseline method on every characteristic reported [15]. This validation approach helps ensure that LR methods provide reliable, reproducible results suitable for forensic decision-making.

Comparative Analysis: LR Against Alternative Interpretation Methods

The implementation of ISO 21043-4 establishes the LR framework as the benchmark for forensic interpretation. The following comparison examines its performance relative to traditional approaches:

Table 3: Interpretation Method Comparison

| Interpretation Method | Logical Foundation | Quantitative Expression | Transparency | Error Rate Measurement | Standardization Potential |
|---|---|---|---|---|---|
| Likelihood Ratio | Bayesian logic | Continuous scale | High | Quantifiable | High (mandated by ISO 21043) |
| Traditional Categorical | Subjective conclusion | Discrete categories | Low to moderate | Difficult to measure | Low |
| Positive/Negative Identification | Binary decision | Binary outcome | Low | Often unreported | Low |
| Experience-Based Opinion | Subjective experience | Qualitative statement | Low | Not measurable | Very low |

The LR framework's principal advantage lies in its logical consistency and transparency. Unlike categorical approaches that force conclusions into discrete categories, the LR method preserves the continuous strength of evidence, allowing for more nuanced expression of evidential value [13]. Furthermore, the method's mathematical structure enables clear articulation of the reasoning process, making it easier to scrutinize and challenge in legal proceedings.

Performance Limitations and Considerations

While the LR framework represents a significant advancement, implementation challenges exist. Research indicates that LR methods may have limitations in detecting specific types of bias, particularly those induced by pedigree errors in genetic evaluations or lack of connectedness among data sources [16]. In scenarios with 25-40% pedigree errors, the LR method was shown to overestimate biases, though it remained effective in assessing dispersion and reliability [16].

Additionally, the method's performance depends on appropriate data sources and properly specified models. The fingerprint validation study notes that "different feature extraction algorithms and different AFIS systems used may produce different LRs values" [15], highlighting the importance of validating a method specifically for each implementation context.

Essential Research Reagent Solutions for LR Implementation

Successfully implementing LR methods requires specific technical components and analytical resources. The following toolkit outlines essential elements derived from experimental validation studies:

Table 4: Research Reagent Solutions for LR Implementation

| Component | Function | Example Specifications |
|---|---|---|
| Reference Data Systems | Provides population data for calculating evidence probability under alternative propositions | Forensic databases with relevant population statistics |
| Validation Software | Computes performance metrics (Cllr, EER) and generates validation graphics | Custom software implementing validation protocols [15] |
| Calibration Tools | Ensures LR values are properly calibrated to reflect true evidential strength | Platt scaling, isotonic regression methods |
| Quality Metrics | Quantifies method performance across multiple characteristics | Cllr, EER, Tippett plot metrics [15] |
| Documentation Framework | Records validation procedures and results for accreditation purposes | Standardized validation reports [15] |

These components form the essential infrastructure for implementing, validating, and maintaining LR methods in compliance with ISO 21043 requirements. The Netherlands Forensic Institute's approach demonstrates that proper implementation requires both technical resources and expertise in statistical interpretation [13] [15].
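Platt scaling appears among the calibration tools in Table 4. As a hedged sketch of the same idea, the code below fits a logistic regression of proposition labels on comparison scores using Newton's method. It reads the fitted log-odds as a log LR. Two assumptions are made. First, each class gets equal total weight, so the fitted log-odds correspond to prior odds of 1, one common convention for LR calibration. Second, the scores of the two classes overlap. Platt's original recipe differs in details such as smoothed target labels, so this illustrates logistic calibration rather than serving as a reference implementation. The scores are hypothetical.

```python
import math
from collections.abc import Sequence


def fit_logistic_calibration(scores_h1: Sequence[float], scores_h2: Sequence[float],
                             iterations: int = 50) -> tuple[float, float]:
    """Fit log LR(s) = a*s + b by class-balanced logistic regression (Newton's method).

    Each class gets total weight 1/2, so the fitted log-odds correspond to equal prior
    odds and can be read as a log LR. Assumes the two score sets overlap; with perfectly
    separated scores the maximum-likelihood slope is unbounded.
    """
    data = ([(s, 1.0, 0.5 / len(scores_h1)) for s in scores_h1]
            + [(s, 0.0, 0.5 / len(scores_h2)) for s in scores_h2])
    a, b = 0.0, 0.0
    for _ in range(iterations):
        g_a = g_b = h_aa = h_ab = h_bb = 0.0
        for s, y, w in data:
            p = 1.0 / (1.0 + math.exp(-(a * s + b)))  # fitted P(H1 | s) at equal priors
            g_a += w * (p - y) * s                     # gradient of weighted cross-entropy
            g_b += w * (p - y)
            curvature = w * p * (1.0 - p)              # Hessian contributions
            h_aa += curvature * s * s
            h_ab += curvature * s
            h_bb += curvature
        det = h_aa * h_bb - h_ab * h_ab
        # Newton step: (a, b) -= H^{-1} g for the 2x2 Hessian H.
        step_a = (h_bb * g_a - h_ab * g_b) / det
        step_b = (h_aa * g_b - h_ab * g_a) / det
        a, b = a - step_a, b - step_b
        if abs(step_a) + abs(step_b) < 1e-12:
            break
    return a, b


def calibrated_lr(score: float, a: float, b: float) -> float:
    """Map a raw score to an LR with the fitted calibration parameters."""
    return math.exp(a * score + b)


if __name__ == "__main__":
    a, b = fit_logistic_calibration([2.0, 3.0, 3.5, 4.0, 1.0], [0.0, 1.5, -1.0, 0.5, 2.5])
    print(f"slope = {a:.3f}, intercept = {b:.3f}, LR(3.0) = {calibrated_lr(3.0, a, b):.2f}")
```

With a positive slope the mapping is monotone in the score. Calibration of this kind therefore changes how strongly a score is stated, not which cases rank higher, which is why calibration and discrimination are validated separately.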

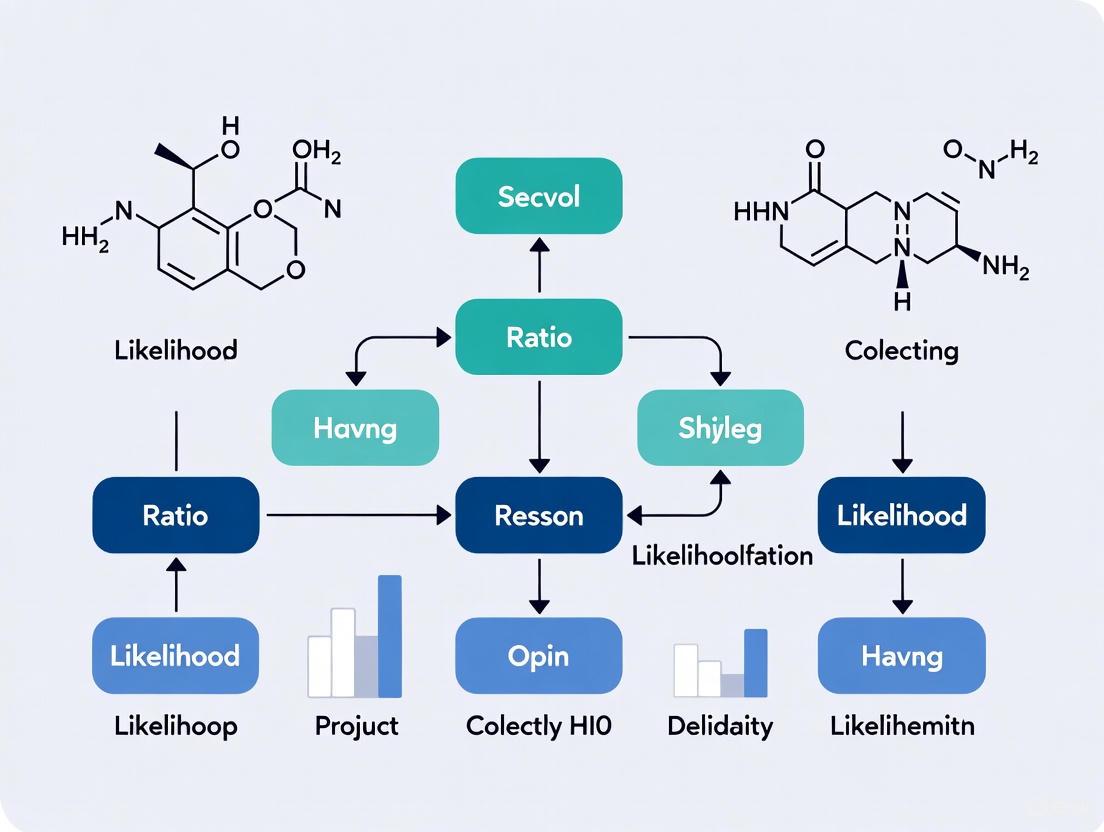

Implementation Workflow: From Evidence to LR

The process of implementing LR methods according to ISO 21043 standards follows a structured workflow that transforms raw evidence into validated interpretative conclusions:

This workflow illustrates the systematic process mandated by ISO 21043 standards, highlighting critical decision points where quality controls must be applied to ensure reliable outcomes.

The incorporation of the Likelihood Ratio framework within the ISO 21043 series represents a fundamental shift toward standardized, scientifically robust practices in forensic science. As Didier Meuwly of the Netherlands Forensic Institute notes, "The fact that we have now succeeded in establishing a standard at global level is groundbreaking" [13]. This international consensus on interpretation methods addresses longstanding concerns about the subjective nature of forensic evidence and its presentation in legal proceedings.

The implementation of these standards, particularly the validation protocols for LR methods, establishes a new paradigm for forensic quality assurance. Laboratories adopting these standards demonstrate commitment to transparent, reproducible scientific practices that withstand legal and scientific scrutiny. While implementation challenges exist, particularly regarding data requirements and technical expertise, the structured approach outlined in the standards provides a clear pathway toward forensically valid, legally defensible evidence interpretation.

As forensic science continues to evolve, the ISO 21043 framework and its mandate for LR methodology provide the foundation for ongoing improvement and international harmonization. This represents not merely a technical adjustment, but a cultural transformation toward greater scientific rigor in the application of forensic science within justice systems worldwide.

In research on accreditation standards for likelihood ratio (LR) methods, the principles of transparency, reproducibility, and bias resistance form the foundational pillars of methodological rigor. These principles are particularly crucial when comparing traditional statistical approaches such as logistic regression against emerging machine learning (ML) alternatives in fields such as drug development and clinical research. In the comparison that follows, the abbreviation LR refers to logistic regression, not to the likelihood ratio. The accreditation of any analytical method requires careful evaluation of these core principles to ensure that results are reliable, trustworthy, and suitable for informing critical decisions.

The current scientific landscape reveals significant challenges in maintaining these principles across methodological approaches. A recent meta-research analysis highlights that "most research done to date has used nonreproducible, nontransparent, and suboptimal research practices" [17]. This concern is especially relevant for LR methods, where traditional statistical approaches and modern machine learning implementations may differ substantially in their adherence to these fundamental principles. As the scientific community moves toward higher standards of methodological accountability, understanding how different approaches perform across these core dimensions becomes essential for establishing valid accreditation standards.

Defining the Methodological Spectrum: Statistical LR vs. Machine Learning

The distinction between traditional statistical logistic regression and machine learning approaches is frequently blurred in both literature and practice [18]. For valid comparisons and proper accreditation standards, it is crucial to clearly delineate these methodological approaches, particularly regarding their philosophical foundations and implementation practices.

Table 1: Definitions of Statistical Logistic Regression versus Machine Learning Approaches

| Aspect | Statistical Logistic Regression | Machine Learning Approaches |
|---|---|---|
| Learning Process | Theory-driven; relies on expert knowledge for model specification and candidate predictor selection [18] | Data-driven; directly and automatically learns relationships from data [18] |
| Assumptions | High (e.g., interactions, linearity) [18] | Low; handles complex, nonlinear relationships [18] [19] |
| Hyperparameter Tuning | Uses fixed, default values without data-driven optimization [18] | Employs data-driven hyperparameter tuning through cross-validation [18] |
| Interpretability | High; white-box nature with directly interpretable coefficients [18] | Low; black-box nature requiring post hoc explanation methods [18] [19] |
| Candidate Predictor Selection | Based on clinical/theoretical justification and expert input [18] | Often selected algorithmically from a broader candidate set [18] |

Traditional statistical LR operates as a parametric model under conventional statistical assumptions, including linearity and independence, employing fixed hyperparameters without data-driven optimization [18]. This approach aligns with epidemiological traditions where model specification precedes data analysis and relies on prespecified candidate predictors based on clinical or theoretical justification. In contrast, machine learning approaches represent an adaptive paradigm where model specification becomes part of the analytical process itself, with hyperparameters like penalty terms tuned through cross-validation, and predictors potentially selected algorithmically from a broader set of candidates [18]. This fundamental philosophical difference shapes how each approach addresses the core principles of transparency, reproducibility, and bias resistance.

Experimental Comparisons: Performance and Methodological Rigor

Experimental Protocol for Methodological Comparisons

To objectively evaluate LR versus ML approaches, researchers must implement standardized experimental protocols that rigorously assess both predictive performance and methodological robustness. A comprehensive protocol should include the following key components, adapted from rigorous epidemiological comparisons [19]:

- Data Preprocessing and Feature Specification: Clearly document all data cleaning, transformation, and handling of missing values. For statistical LR, this includes prespecifying candidate predictors based on theoretical justification, while ML approaches may employ automated feature selection techniques.

- Model Training with Appropriate Sample Sizing: Implement appropriate training-test splits with sample size justifications. Statistical LR generally requires smaller sample sizes for stable performance, while ML algorithms are "more data-hungry" and may need "more than 20 times the number of events for each candidate predictor compared to statistical LR" [18].

- Hyperparameter Optimization Strategy: For statistical LR, use fixed hyperparameters based on theoretical considerations. For ML approaches, implement systematic hyperparameter tuning using cross-validation or other resampling methods, clearly documenting the search strategy (e.g., grid or random search).

- Comprehensive Performance Assessment: Evaluate models across multiple performance domains including discrimination (e.g., AUROC), calibration, classification metrics, clinical utility, and fairness [18].

- Explanation and Interpretation Analysis: Apply appropriate model explanation techniques such as Shapley Additive Explanations (SHAP) for ML models [18] and coefficient analysis for statistical LR.
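
The following dependency-free Python sketch makes the contrast in the hyperparameter step concrete. One logistic regression is fitted with fixed settings and no penalty. The ML-style workflow instead selects a ridge (L2) penalty by 5-fold cross-validated log loss over a small grid. The simulated data, the grid, the gradient-descent fitter, and all function names are illustrative assumptions. Real analyses would normally use an established library (for example, scikit-learn's `LogisticRegressionCV`).

```python
import math
import random


def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def fit_logistic(X, y, l2=0.0, step=0.5, epochs=300):
    """Approximate logistic regression fit by batch gradient descent.

    l2 is a ridge penalty on the slopes (the intercept is not penalised);
    l2 = 0.0 mimics a conventional unpenalised model with fixed settings.
    """
    n, p = len(X), len(X[0])
    w, b = [0.0] * p, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * p, 0.0
        for xi, yi in zip(X, y):
            err = sigmoid(b + sum(wj * xj for wj, xj in zip(w, xi))) - yi
            grad_b += err
            for j in range(p):
                grad_w[j] += err * xi[j]
        b -= step * grad_b / n
        w = [wj - step * (gj / n + l2 * wj) for wj, gj in zip(w, grad_w)]
    return w, b


def log_loss(X, y, w, b):
    """Mean negative log-likelihood of held-out data."""
    total = 0.0
    for xi, yi in zip(X, y):
        pr = sigmoid(b + sum(wj * xj for wj, xj in zip(w, xi)))
        pr = min(max(pr, 1e-12), 1.0 - 1e-12)
        total -= yi * math.log(pr) + (1 - yi) * math.log(1.0 - pr)
    return total / len(y)


def cv_log_loss(X, y, l2, k=5, seed=0):
    """k-fold cross-validated log loss for one candidate penalty."""
    idx = list(range(len(X)))
    random.Random(seed).shuffle(idx)  # fixed seed: identical, reproducible folds
    losses = []
    for f in range(k):
        held_out = idx[f::k]
        held_set = set(held_out)
        train = [i for i in idx if i not in held_set]
        w, b = fit_logistic([X[i] for i in train], [y[i] for i in train], l2=l2)
        losses.append(log_loss([X[i] for i in held_out], [y[i] for i in held_out], w, b))
    return sum(losses) / k


def tune_l2(X, y, grid=(0.0, 0.01, 0.1, 1.0), k=5):
    """Grid search: return the penalty with the lowest cross-validated log loss."""
    scores = {l2: cv_log_loss(X, y, l2, k) for l2 in grid}
    return min(scores, key=scores.get), scores


def simulate(n=80, seed=42):
    """Hypothetical data: two informative predictors and one pure-noise predictor."""
    rng = random.Random(seed)
    X, y = [], []
    for _ in range(n):
        x = [rng.gauss(0.0, 1.0) for _ in range(3)]
        X.append(x)
        y.append(1 if rng.random() < sigmoid(2.0 * x[0] - 1.5 * x[1]) else 0)
    return X, y


X, y = simulate()
fixed_w, fixed_b = fit_logistic(X, y)  # statistical-LR style: fixed settings, no tuning
best_l2, cv_scores = tune_l2(X, y)     # ML style: data-driven penalty selection
print("fixed-setting slopes:", [round(v, 2) for v in fixed_w])
print("CV log loss by penalty:", {pen: round(v, 3) for pen, v in cv_scores.items()})
print("selected l2:", best_l2)
```

Fixing the shuffle seed makes the folds, and therefore the selected penalty, reproducible. Documenting the seed alongside the search grid supports the reproducibility-of-methods requirement.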

Comparative Performance Evidence

Recent empirical studies provide quantitative comparisons between statistical LR and ML approaches across various domains. The evidence suggests that performance advantages are highly context-dependent rather than universally favoring one approach.

Table 2: Experimental Performance Comparisons Between Statistical LR and ML Approaches

| Study Context | Statistical LR Performance | ML Approach Performance | Key Findings |
|---|---|---|---|
| Longevity Prediction [19] | AUROC: 0.69 (95% CI: 0.66-0.73) | XGBoost AUROC: 0.72 (95% CI: 0.66-0.75); LASSO AUROC: 0.71 (95% CI: 0.67-0.74) | ML approaches showed modest discrimination improvements while identifying clinically relevant predictors |
| Anastomotic Leak Prediction [20] | Transparency score: 45% (average) | Transparency score: 43% (average) | Both approaches showed transparency deficits; ML models validated on smaller cohorts |
| Binary Clinical Prediction [18] | Reference standard for benchmarking | No consistent performance benefit over LR | Performance depends on dataset characteristics rather than algorithmic superiority |

A systematic review of models for predicting anastomotic leakage after colorectal resection found that both LR and ML approaches suffered from transparency issues, with transparency scores averaging 45% for LR and 43% for ML studies [20]. The review also noted that ML models were typically validated on smaller cohorts than LR models and that "most studies had a high risk of bias due to small sample sizes and low event counts" [20]. This highlights how methodological rigor often trumps algorithmic choice in determining real-world utility.
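
The AUROC values compared in Table 2 have a simple probabilistic reading. An AUROC is the chance that a randomly chosen case with the outcome receives a higher predicted risk than a randomly chosen case without it. The following minimal Python sketch implements that Mann-Whitney formulation with hypothetical scores. It is quadratic in sample size and omits the confidence intervals that the cited studies report.

```python
def auroc(scores_positive, scores_negative):
    """AUROC as the Mann-Whitney probability that a randomly chosen positive case
    scores higher than a randomly chosen negative case (ties count one half)."""
    wins = 0.0
    for sp in scores_positive:
        for sn in scores_negative:
            if sp > sn:
                wins += 1.0
            elif sp == sn:
                wins += 0.5
    return wins / (len(scores_positive) * len(scores_negative))


# Hypothetical predicted risks for patients with and without the outcome.
print(auroc([0.9, 0.3], [0.5, 0.1]))  # 3 of 4 pairs correctly ordered: 0.75
```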

Figure 1: Experimental Protocol Workflow for Comparing Statistical LR and ML Methods

Transparency and Reproducibility Analysis

Transparency Challenges Across Methodological Approaches

Transparency remains a significant challenge across both statistical and machine learning approaches, though the specific limitations differ by methodology. A systematic review found that both LR and ML studies exhibited substantial transparency deficits, with scores ranging from 29% to 63% and averaging 45% for LR studies and 43% for ML studies [20]. These transparency issues included "inconsistent reporting of missing data" and "limited external validation" [20].

For statistical LR, transparency primarily involves clear documentation of theoretical justification for variable selection, model specification decisions, and comprehensive reporting of all model parameters and fit statistics. The well-recognized interpretability and trustworthiness of LR reinforce its widespread use in clinical prediction modelling [18]. For ML approaches, transparency challenges are more profound, requiring documentation of hyperparameter tuning strategies, feature selection techniques, and the use of post hoc explanation methods to interpret the black-box nature of these models [18].

Reproducibility Framework

Reproducibility encompasses multiple dimensions that must be addressed differently across methodological approaches:

- Reproducibility of Methods: The ability to understand and repeat the experimental and computational procedures. This requires detailed documentation of data preprocessing, model development, and hyperparameter optimization strategies [18].

- Reproducibility of Results: Additional validation studies that corroborate initial findings. This is particularly challenging for ML models, which often demonstrate less stable performance across different samples compared to statistical LR [18].

- Reproducibility of Inferences: The consistency of conclusions drawn from evidence. "Even excellent, well-intentioned scientists often reach different conclusions upon examining the same evidence" [17], highlighting the subjective elements in model interpretation.

Statistical LR traditionally holds advantages in reproducibility of methods and inferences due to its deterministic nature and explicit model specifications. ML approaches may show greater variability in results due to their dependency on specific tuning procedures and algorithmic randomness, though proper implementation of reproducibility practices can mitigate these concerns.

Bias Assessment and Mitigation Strategies

Both statistical LR and ML approaches are vulnerable to various forms of bias, though the specific manifestations and mitigation strategies differ. Statistical LR is particularly susceptible to specification bias when the relationship between predictors and outcome deviates from the modeled linear relationship or when important interactions are omitted [18]. ML approaches may exhibit algorithmic bias, particularly when training data contains systematic inequalities or when the optimization process prioritizes prediction accuracy over fairness [21].

Small sample sizes present particular challenges for both approaches, though the impact differs. One simulation study demonstrated that "even at the smaller sample sizes of 250 and 500, the false positive rate is above the expected 5%" for likelihood ratio tests [22]. Statistical LR generally achieves stable performance with smaller sample sizes, while ML algorithms "are generally more data-hungry than LR to achieve stable performance" [18].
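
A Monte Carlo simulation can check a test's false positive rate. It generates data under the null hypothesis, applies the likelihood ratio test at the nominal 5% level, and counts rejections. This document does not describe the cited study's model. The following sketch therefore substitutes a two-group binomial comparison with the asymptotic chi-square (1 df) critical value, and that substitution is an assumption. The rates it prints are not the study's results.

```python
import math
import random

CHI2_1DF_95 = 3.841458820694124  # 95th percentile of the chi-square distribution with 1 df


def binom_loglik(k, n, p):
    """Binomial log-likelihood without the constant term (0 * log 0 treated as 0)."""
    ll = 0.0
    if k > 0:
        ll += k * math.log(p)
    if n - k > 0:
        ll += (n - k) * math.log(1.0 - p)
    return ll


def lrt_statistic(k_a, n_a, k_b, n_b):
    """G = 2 * (log L under H1: separate rates - log L under H0: common rate)."""
    p_pool = (k_a + k_b) / (n_a + n_b)
    ll_null = binom_loglik(k_a, n_a, p_pool) + binom_loglik(k_b, n_b, p_pool)
    ll_alt = binom_loglik(k_a, n_a, k_a / n_a) + binom_loglik(k_b, n_b, k_b / n_b)
    return 2.0 * (ll_alt - ll_null)


def false_positive_rate(n_per_group, p_true=0.1, n_sims=1000, seed=1):
    """Share of simulated null datasets (equal true rates) rejected at the 5% level."""
    rng = random.Random(seed)
    rejections = 0
    for _ in range(n_sims):
        k_a = sum(rng.random() < p_true for _ in range(n_per_group))
        k_b = sum(rng.random() < p_true for _ in range(n_per_group))
        if lrt_statistic(k_a, n_per_group, k_b, n_per_group) > CHI2_1DF_95:
            rejections += 1
    return rejections / n_sims


for n in (50, 250, 500):
    print(f"n per group = {n}: estimated false positive rate = {false_positive_rate(n):.3f}")
```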

Bias Mitigation Techniques

Effective bias mitigation requires tailored approaches for different methodological frameworks:

Table 3: Bias Mitigation Strategies for Statistical LR and ML Approaches

| Bias Type | Statistical LR Mitigation | ML Approach Mitigation |
|---|---|---|
| Specification Bias | Theoretical justification of variables; interaction-term testing; residual analysis | Automated feature engineering; complex algorithm structures; cross-validation performance |
| Sample Size Bias | Power analysis; event-per-predictor rules; penalization methods | Extensive data requirements; sophisticated resampling; transfer learning |
| Algorithmic Bias | Transparency in modeling decisions; stakeholder input in specification | Explicit bias mitigation algorithms; fairness-aware learning; adversarial debiasing |
| Reporting Bias | CONSORT/TRIPOD guidelines; complete coefficient reporting | Model cards; datasheets for datasets; comprehensive performance reporting |

For ML approaches specifically, bias mitigation algorithms represent a promising but complex solution. However, these techniques introduce important trade-offs, as they "may alter the computational overhead and energy usage of ML systems, affecting their environmental sustainability" [21]. Similarly, "they can influence businesses' economic sustainability by shaping resource allocation and consumer trust" [21]. This highlights that bias mitigation must be considered within a broader framework of sustainability and practical implementation constraints.

Figure 2: Bias Mitigation Framework for Predictive Modeling Methods

Accreditation Standards and Implementation Guidelines

Methodological Selection Framework

The "No Free Lunch Theorem" applies directly to methodological selection in predictive modeling: no single modeling approach is universally best [18]. Model performance and appropriateness "depend heavily on dataset characteristics (eg, linearity, sample size, number of candidate predictors, minority class proportion) and data quality (eg, completeness, accuracy)" [18]. Consequently, accreditation standards should emphasize methodological appropriateness rather than algorithmic sophistication.

Statistical LR demonstrates particular strengths when datasets have characteristics including "small to moderate sample sizes, relatively high levels of noise, a limited number of candidate predictors (ie, low dimension), and typically binary outcomes" [18]. ML approaches may warrant consideration when they demonstrate clear superiority in performance with complex, high-dimensional data patterns, supported by model explainability to help build trust among stakeholders [18].

Essential Research Reagents and Tools

Table 4: Essential Methodological Tools for LR Method Research and Accreditation

| Tool Category | Specific Solutions | Function and Application |
|---|---|---|
| Transparency Frameworks | TRIPOD+AI [20]; CONSORT/SPIRIT 2025 [23] | Standardized reporting guidelines for predictive models and clinical trials |
| Bias Assessment | Fairness metrics [21]; bias mitigation algorithms [21] | Quantification and correction of algorithmic bias across protected attributes |
| Model Explanation | SHAP [18]; SP-LIME [18]; CERTIFAI [18] | Post hoc interpretation of complex models to enhance explainability |
| Reproducibility Infrastructure | Data sharing platforms; computational notebooks; containerization | Enables replication of analyses across different computational environments |
| Performance Evaluation | Decision curve analysis [18]; calibration assessment; stability metrics | Comprehensive assessment beyond discrimination to include clinical utility |
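
Decision curve analysis, listed in Table 4, summarizes clinical utility as net benefit at a chosen risk threshold p_t. Net benefit counts true positives per patient and subtracts false positives per patient weighted by the odds p_t / (1 - p_t). The following minimal sketch applies this standard formula to hypothetical risks and compares a model against a treat-all strategy. The function names are illustrative.

```python
def net_benefit(risks, outcomes, threshold):
    """Net benefit of treating patients whose predicted risk is at or above threshold."""
    n = len(risks)
    tp = sum(1 for r, y in zip(risks, outcomes) if r >= threshold and y == 1)
    fp = sum(1 for r, y in zip(risks, outcomes) if r >= threshold and y == 0)
    return tp / n - (fp / n) * threshold / (1.0 - threshold)


def net_benefit_treat_all(outcomes, threshold):
    """Reference strategy: treat everyone regardless of predicted risk."""
    prevalence = sum(outcomes) / len(outcomes)
    return prevalence - (1.0 - prevalence) * threshold / (1.0 - threshold)


# Hypothetical predicted risks and observed outcomes (1 = event).
risks = [0.9, 0.7, 0.4, 0.2, 0.1]
outcomes = [1, 1, 0, 0, 0]
for pt in (0.1, 0.3, 0.5):
    print(f"threshold {pt:.1f}: model {net_benefit(risks, outcomes, pt):.3f}, "
          f"treat-all {net_benefit_treat_all(outcomes, pt):.3f}")
```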

Implementation Recommendations for Accreditation Standards

Based on comparative analysis of methodological approaches, the following implementation guidelines support robust accreditation standards for likelihood ratio methods:

- Prioritize Data Quality Over Algorithmic Complexity: "Efforts to improve data quality, not model complexity, are more likely to enhance the reliability and real-world utility of clinical prediction models" [18]. Accreditation standards should emphasize data provenance, quality, and appropriate preprocessing.

- Ensure Comprehensive Performance Reporting: Move beyond simple discrimination metrics to include "calibration performance, classification metrics, clinical utility, and fairness" [18]. No single metric captures all aspects of model performance relevant for accreditation.

- Implement Rigorous Transparency Protocols: Adhere to updated reporting standards such as CONSORT 2025 and SPIRIT 2025, which include "a section on open science that clarifies the trial registration, the statistical analysis plan and data availability, as well as funding sources and potential conflicts of interest" [23].

- Address Sustainability Trade-offs: Recognize that methodological choices, including bias mitigation strategies, involve "complex trade-offs" across "social, environmental, and economic sustainability" [21]. Accreditation standards should balance multiple dimensions of impact.

- Engage Stakeholders in Methodological Selection: Facilitate "discussions with stakeholders (eg, health care providers, patients) regarding the most relevant features or desired trade-offs" to guide model development and accreditation criteria [18].

The development of clinical prediction models "involves unavoidable trade-offs" across dimensions including "fairness, accuracy, generalizability, stability, parsimony, and interpretability" [18]. Accreditation standards must therefore be context-specific, identifying which dimensions are most critical for particular applications and ensuring that methodological approaches are appropriately matched to these requirements.

The Likelihood Ratio (LR) is a robust statistical measure used to assess the strength of evidence provided by a diagnostic test or predictive model. Formally, the LR is defined as the likelihood that a given test result would occur in a patient with the target condition compared to the likelihood that the same result would occur in a patient without the condition [24]. This framework provides a powerful methodology for evaluating diagnostic tests and predictive models across diverse biomedical applications, from clinical diagnostics to pharmaceutical research and development. The LR approach enables researchers to quantify the diagnostic or predictive value of biomarkers, clinical observations, and complex model outputs, creating a standardized metric that transcends specific assays, platforms, or measurement units [25].

The mathematical foundation of LR analysis allows for the application of Bayes' theorem, facilitating the conversion between pre-test and post-test probabilities. This is calculated as follows: Post-test odds = Pre-test odds × LR, where odds = P/(1-P) and P is the probability [24]. The utility of a test increases as the LR value moves further from 1. LR values greater than 1 increase the probability of the target condition, while values between 0 and 1 decrease it. Specifically, LRs above 10 or below 0.1 generate large and often conclusive shifts in probability, while those between 2-5 or 0.5-0.2 generate small (but sometimes important) shifts in probability [26].
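
The odds form of Bayes' theorem above translates directly into code. The following minimal Python sketch uses an illustrative function name and a hypothetical 20% pre-test probability.

```python
def post_test_probability(pre_test_probability, likelihood_ratio):
    """Bayes' theorem in odds form: post-test odds = pre-test odds x LR."""
    if not 0.0 < pre_test_probability < 1.0:
        raise ValueError("pre-test probability must lie strictly between 0 and 1")
    pre_odds = pre_test_probability / (1.0 - pre_test_probability)  # odds = P / (1 - P)
    post_odds = pre_odds * likelihood_ratio
    return post_odds / (1.0 + post_odds)  # back to a probability: P = odds / (1 + odds)


# Hypothetical pre-test probability of 20%.
for lr in (10.0, 2.0, 0.5, 0.1):
    print(f"LR={lr:>4}: post-test probability = {post_test_probability(0.20, lr):.3f}")
```

With a 20% pre-test probability, an LR of 10 raises the probability to about 71%, and an LR of 0.1 lowers it to about 2.4%. These are the large shifts described above.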

The expanding role of LR in accredited research stems from its ability to provide a harmonized framework for test interpretation, which is particularly valuable in method comparison and validation studies required for regulatory accreditation. By translating diverse quantitative results into a universal metric of evidence strength, LR facilitates standardized reporting, enhances reproducibility, and supports regulatory decision-making in biomedical research [25].

Performance Comparison: LR Versus Alternative Methodologies

Quantitative Comparison of Model Performance

Table 1: Comparison of LR model performance against alternative machine learning approaches across biomedical applications

| Research Context | Comparison Models | Performance Metrics | Key Findings | Reference |
|---|---|---|---|---|
| CRKP Infection Prediction | Logistic Regression (LR) vs. Artificial Neural Network (ANN) | AUROC: LR 0.824-0.825; ANN higher than LR | ANN outperformed LR but both showed good discrimination and calibration. LR demonstrated clinical usefulness in decision curve analysis. | [27] |
| Heart Failure Outcomes Prediction | Traditional LR vs. Deep Learning Models | Precision at 1%: preventable hospitalizations, LR 30% vs. DL 43%; preventable ED visits, LR 33% vs. DL 39%; preventable costs, LR 18% vs. DL 30% | Deep learning models consistently outperformed LR across all metrics, particularly for identifying rare outcomes. | [28] |
| Vaccine Response Prediction with Small Datasets | GeM-LR (Generative Mixture of LR) vs. Standard Methods | Prediction accuracy: GeM-LR outperformed logistic regression with elastic net, K-nearest neighbor, random forest, and shallow neural networks. | GeM-LR achieved higher predictive performance while providing insights into data heterogeneity and predictive biomarkers. | [29] |
| Genetic Evaluation and Breeding Value Prediction | Method LR (Linear Regression) for validation | Population accuracy and bias estimation | Method LR effectively estimated population accuracy, bias, and dispersion of breeding values, performing well even with limited progeny group sizes. | [30] |

Comparative Strengths and Applications

Table 2: Strengths and limitations of LR models versus alternative approaches

| Model Type | Key Strengths | Key Limitations | Optimal Application Context |
|---|---|---|---|
| Traditional Logistic Regression | High interpretability, computational efficiency, well-established statistical properties, provides odds ratios and confidence intervals | Limited capacity for complex nonlinear relationships without manual feature engineering | Preliminary studies, proof-of-concept analyses, settings requiring model transparency |
| Deep Learning Models | Superior predictive performance for complex patterns, automatic feature engineering, handles high-dimensional data well | Black-box nature, large data requirements, computational intensity, limited interpretability | Image analysis, complex pattern recognition, large-scale prediction tasks |
| Generative Mixture of LR (GeM-LR) | Balances interpretability and flexibility, identifies heterogeneous patterns, suitable for small datasets | Increased complexity versus traditional LR, requires specialized implementation | Small dataset analysis, biomarker discovery, stratified treatment response prediction |
| Likelihood Ratio for Diagnostic Tests | Harmonizes different tests and units, independent of prevalence, directly applicable to clinical decision-making | Requires establishment of result-specific LRs through clinical studies | Diagnostic test evaluation, clinical decision support, test standardization |

Experimental Protocols and Methodologies

Protocol 1: CRKP Prediction Model Development

Objective: To develop and validate LR and ANN models for predicting carbapenem-resistant Klebsiella pneumoniae (CRKP) based on regional nosocomial infection surveillance system data [27].

Dataset: Retrospective analysis of 49,774 patients with Klebsiella pneumoniae isolates between 2018-2021 from a regional nosocomial infection surveillance system.

Methodology:

- Data Preprocessing: Applied Synthetic Minority Over-Sampling Technique (SMOTE) to balance CRKP and non-CRKP groups.

- Predictor Identification: Performed logistic regression analyses to determine independent predictors for CRKP.

- Model Development: Built separate LR and ANN models using identified predictors.

- Model Evaluation: Assessed models using calibration curves, ROC curves, and decision curve analysis (DCA).

- Validation: Developed the models on a training set and evaluated them on a separate validation set.

Key Implementation Details: The LR model demonstrated good discrimination and calibration with AUROCs of 0.824 and 0.825 in training and validation sets, respectively. Decision curve analysis confirmed the clinical usefulness of the LR model for decision-making, supporting its potential to assist clinicians in selecting appropriate empirical antibiotics [27].
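
The following minimal sketch illustrates the SMOTE step in this protocol using the standard idea. Each synthetic minority case lies at a random point on the segment between a minority case and one of its k nearest minority-class neighbours. This document does not report the CRKP study's actual settings (k, oversampling ratio, handling of categorical predictors), so the parameters and data below are hypothetical. Oversampling belongs on the training split only, so that validation performance is measured on real cases.

```python
import math
import random


def smote(minority, n_synthetic, k=3, seed=0):
    """Minimal SMOTE-style oversampling for continuous features.

    Each synthetic case lies on the segment between a randomly chosen minority
    case and one of its k nearest minority-class neighbours (Euclidean distance).
    """
    if len(minority) <= k:
        raise ValueError("need more than k minority cases")
    rng = random.Random(seed)
    synthetic = []
    for _ in range(n_synthetic):
        i = rng.randrange(len(minority))
        base = minority[i]
        others = [m for j, m in enumerate(minority) if j != i]
        neighbours = sorted(others, key=lambda m: math.dist(base, m))[:k]
        neighbour = rng.choice(neighbours)
        gap = rng.random()  # position along the segment, in [0, 1)
        synthetic.append([b + gap * (nb - b) for b, nb in zip(base, neighbour)])
    return synthetic


# Hypothetical minority-class feature vectors taken from the TRAINING split only.
minority_train = [[0.0, 0.0], [1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
new_cases = smote(minority_train, n_synthetic=6, k=2)
print(len(new_cases), "synthetic cases")
```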

Protocol 2: GeM-LR for Vaccine Response Biomarker Discovery

Objective: To predict vaccine effectiveness and identify predictive biomarkers using the Generative Mixture of Logistic Regression model, particularly beneficial for small datasets prevalent in early-phase vaccine clinical trials [29].

Methodological Framework:

- Data Heterogeneity Identification: Group individual data points into homogeneous subgroups using a generative model.

- Cluster-Specific Model Fitting: Within each cluster, fit a sparse logistic regression model to predict outcomes.

- Joint Optimization: Simultaneously optimize cluster formation and cluster-wise model fitting through Expectation-Maximization iterations.

- Biomarker Identification: Select features most useful for cluster annotation and those most effective for outcome prediction.

Analytical Approach: The GeM-LR model extends a linear classifier to a non-linear classifier without losing interpretability and enables predictive clustering for characterizing data heterogeneity in connection with the outcome variable. This approach allows for the identification of different predictive biomarkers for different groups of individuals, providing insight into why some individuals respond to vaccines while others do not [29].

Diagram 1: GeM-LR Model Workflow for Heterogeneous Data Analysis. This diagram illustrates the integration of generative clustering with cluster-specific logistic regression models to identify subgroup-specific biomarkers.

Protocol 3: Diagnostic Test Evaluation Using Likelihood Ratios

Objective: To evaluate and harmonize diagnostic tests using likelihood ratios for improved clinical interpretation and decision-making [25].

Methodology:

- ROC Curve Analysis: Establish receiver operating characteristic curves for the test of interest.

- LR Calculation: Calculate test result-specific LRs as the slope of the tangent to the ROC curve at the point corresponding to the test result.

- Clinical Application: Apply LRs to pre-test probabilities using Bayes' theorem to calculate post-test probabilities.

- Harmonization: Convert different test systems and units to a common LR scale for comparison.

Implementation Considerations: For tests with quantitative results, LRs can be determined for specific intervals or continuous values. This approach has been successfully applied to various diagnostic areas including autoimmune disease serology, Alzheimer's disease biomarkers, and infectious disease testing [25]. The method allows for harmonization of different techniques, scales, and units, making it easier for clinicians to interpret results on a single, universal scale.
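
For quantitative results, interval-specific LRs can be estimated directly from a validation cohort. Each interval's LR is the proportion of diseased patients with a result in the interval divided by the proportion of non-diseased patients in the same interval. This ratio is the slope of the empirical ROC curve across that segment. The following minimal sketch uses hypothetical data and cut-points; the half-open [lo, hi) interval convention is an assumption.

```python
import math


def interval_lrs(diseased, non_diseased, cut_points):
    """LR for each interval [lo, hi): P(result in interval | disease) / P(result in interval | no disease).

    The ratio equals the slope of the empirical ROC curve over the segment
    bounded by the interval's two cut-points.
    """
    edges = [-math.inf] + sorted(cut_points) + [math.inf]
    table = []
    for lo, hi in zip(edges[:-1], edges[1:]):
        p_d = sum(lo <= v < hi for v in diseased) / len(diseased)
        p_nd = sum(lo <= v < hi for v in non_diseased) / len(non_diseased)
        if p_nd > 0:
            lr = p_d / p_nd
        else:
            lr = math.inf if p_d > 0 else math.nan  # no non-diseased results in this interval
        table.append(((lo, hi), lr))
    return table


# Hypothetical quantitative results (arbitrary units) from a validation cohort.
diseased = [8, 12, 15, 18, 22, 25, 30, 35]
non_diseased = [2, 4, 5, 7, 9, 11, 13, 21]
for (lo, hi), lr in interval_lrs(diseased, non_diseased, cut_points=[10, 20]):
    print(f"[{lo}, {hi}): LR = {lr:.2f}")
```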

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key research reagents and computational tools for LR-based biomedical research

| Reagent/Tool | Function/Application | Implementation Example | Technical Considerations | Reference |
|---|---|---|---|---|
| Regional Nosocomial Infection Surveillance Data | Provides electronic information for rapid and accurate detection of antimicrobial resistance patterns | CRKP prediction model development using 49,774 patient records | Requires data standardization and ethical compliance for patient data use | [27] |
| Synthetic Minority Over-Sampling Technique | Addresses class imbalance in the dataset to improve model performance for rare outcomes | Balancing CRKP and non-CRKP groups in model training | May not improve performance in validation sets despite training-set improvements | [27] |
| Generative Mixture Modeling | Identifies latent subgroups in heterogeneous patient populations | GeM-LR initialization using Gaussian Mixture Models | Enables discovery of patient subgroups with distinct biomarker patterns | [29] |
| Computational Fluid Dynamics Methodology | Provides validated computational approaches for complex system modeling | CFD methodology for wind-propulsion power calculation approved by Lloyd's Register (LR); an example of independent methodological review from outside biomedicine | Independent review and approval enhances methodological robustness | [31] |
| Sparsity Regularization Methods | Selects the most relevant predictors in high-dimensional data | Sparse logistic regression within GeM-LR clusters | Improves model interpretability and generalizability by reducing overfitting | [29] |
| Decision Curve Analysis | Evaluates clinical utility of prediction models by quantifying net benefit | Assessing clinical usefulness of CRKP prediction models | Confirms practical value beyond statistical performance metrics | [27] |
| ROC Curve Analysis | Evaluates diagnostic discrimination across all possible thresholds | Establishing test result-specific likelihood ratios | Foundation for calculating likelihood ratios for quantitative tests | [25] |

Diagram 2: Likelihood Ratio Calculation and Application Workflow. This diagram outlines the process from diagnostic test evaluation to clinical application using Bayesian principles.

Accreditation Standards and Methodological Validation

The implementation of LR methodologies in accredited biomedical research requires adherence to specific methodological standards and validation frameworks. Independent review and approval of computational methodologies by external organizations, as in Lloyd's Register's approval of a CFD methodology [31], illustrates the kind of third-party validation that regulated research environments can apply to LR-based approaches. Lloyd's Register is also abbreviated LR, but the abbreviation is unrelated to the likelihood ratio.

For diagnostic tests, the reporting of test result-specific LRs represents an advanced approach to test harmonization and interpretation. This is particularly valuable for tests with quantitative results that may use different units or scales across manufacturers and laboratories. By providing LRs specific to test results or result intervals, laboratories can offer clinicians a universal scale for interpreting diagnostic evidence, facilitating more accurate and consistent clinical decision-making [25].

In predictive modeling, methods such as the "LR method" (Linear Regression) for cross-validation provide standardized approaches for estimating population accuracy, bias, and dispersion of predictions. This methodology compares predictions based on partial and whole data, yielding estimates of accuracy and biases that are essential for model validation in genetic evaluation and other predictive applications [30].
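
The following sketch shows the statistics behind the LR method. It compares predictions for the same validation individuals obtained from partial and whole data. Bias is the difference between the mean partial-data and whole-data predictions. Dispersion is the slope of the regression of whole-data on partial-data predictions. The method interprets the correlation between the two sets of predictions as a ratio of accuracies. Estimating population accuracy itself also requires genetic-variance parameters, which this sketch omits. The function name and data are hypothetical.

```python
import math
from statistics import fmean


def method_lr_statistics(pred_partial, pred_whole):
    """Summary statistics of the LR (linear regression) validation method.

    pred_partial: predictions (e.g. breeding values) for validation individuals from partial data.
    pred_whole:   predictions for the same individuals from the whole data.
    """
    n = len(pred_partial)
    m_p, m_w = fmean(pred_partial), fmean(pred_whole)
    cov_pw = sum((p - m_p) * (w - m_w) for p, w in zip(pred_partial, pred_whole)) / (n - 1)
    var_p = sum((p - m_p) ** 2 for p in pred_partial) / (n - 1)
    var_w = sum((w - m_w) ** 2 for w in pred_whole) / (n - 1)
    return {
        "bias": m_p - m_w,                                 # expected 0 if unbiased
        "dispersion": cov_pw / var_p,                      # slope of whole on partial; expected 1
        "correlation": cov_pw / math.sqrt(var_p * var_w),  # read as a ratio of accuracies
    }


# Hypothetical predictions for six validation individuals.
partial = [0.12, -0.30, 0.45, 0.05, -0.20, 0.33]
whole = [0.15, -0.38, 0.55, 0.02, -0.25, 0.41]
print(method_lr_statistics(partial, whole))
```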

The expanding role of LR in biomedical research is further supported by its integration with emerging machine learning approaches. Models like GeM-LR maintain the interpretability of traditional logistic regression while enhancing flexibility to capture complex, heterogeneous relationships in biomedical data [29]. This balance between interpretability and performance makes LR-based approaches particularly valuable in regulated research environments where model transparency and validation are essential for accreditation and regulatory approval.

Implementing LR Methods: Practical Applications in Drug Development and Diagnostics

Integrating LR with Model-Informed Drug Development (MIDD) for Quantitative Decision-Making

Model-Informed Drug Development (MIDD) employs quantitative frameworks to guide drug development and regulatory decisions. This section explores how likelihood ratios (LRs), a powerful and intuitive metric from diagnostic test evaluation, can be integrated into MIDD to enhance decision-making. LRs quantify how much a piece of evidence, such as a predictive model's output or a biomarker measurement, should shift our belief about a drug's safety or efficacy profile. We compare the performance and applicability of LR-based approaches against traditional statistical methods like logistic regression and modern machine learning techniques such as multilayer perceptrons. Drawing on experimental data and clear protocols, this section argues that the formal adoption of LRs can serve as a foundational element for accreditation standards in quantitative drug development, promoting transparency and reproducibility in critical go/no-go decisions.

Likelihood Ratios (LRs) are a fundamental metric in evidence-based medicine, used to assess the value of diagnostic tests. An LR quantitatively answers a critical question: How much does this test result change the probability that a target condition is present? [32] [24].

- Positive LR (LR+): This is calculated as Sensitivity / (1 - Specificity) [32] [26] [24]. It indicates how much the odds of the disease increase when a test is positive. An LR+ of 10, for example, means a positive test result is 10 times more likely in a patient with the disease than in a patient without it [33].

- Negative LR (LR-): This is calculated as (1 - Sensitivity) / Specificity [32] [26] [24]. It indicates how much the odds of the disease decrease when a test is negative. An LR- of 0.1 means a negative test result is one-tenth as likely in a patient with the disease as in one without it, which argues strongly against the disease [33].

The power of LRs lies in their seamless integration with pre-test probabilities via Bayes' Theorem [26] [24]. Pre-test probability (often based on prevalence, clinical history, or other risk factors) is converted to pre-test odds, multiplied by the relevant LR to yield post-test odds, which are then converted back to a post-test probability [24]. This provides a clear, quantitative path for updating belief in the presence of new evidence. The further an LR is from 1.0, the greater its impact on shifting probability, making it a robust tool for "ruling in" or "ruling out" a condition [26]. The following workflow visualizes this diagnostic reasoning process:
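A minimal Python sketch of this probability-to-odds-to-probability workflow follows. The function names are illustrative assumptions, and the 20% pre-test probability with LRs of 10 and 0.1 is a hypothetical scenario rather than data from the cited sources.

```python
def probability_to_odds(p: float) -> float:
    """Convert a probability in (0, 1) to odds."""
    return p / (1.0 - p)


def odds_to_probability(odds: float) -> float:
    """Convert non-negative odds back to a probability."""
    return odds / (1.0 + odds)


def post_test_probability(pre_test_probability: float, lr: float) -> float:
    """Update a pre-test probability with an LR using Bayes' theorem in odds form."""
    if not 0.0 < pre_test_probability < 1.0:
        raise ValueError("pre-test probability must lie strictly between 0 and 1")
    if lr < 0.0:
        raise ValueError("a likelihood ratio cannot be negative")
    pre_test_odds = probability_to_odds(pre_test_probability)
    post_test_odds = pre_test_odds * lr  # Posterior odds = LR x prior odds
    return odds_to_probability(post_test_odds)


if __name__ == "__main__":
    # Hypothetical scenario: 20% pre-test probability, LR+ = 10, LR- = 0.1.
    print(f"after a positive result: {post_test_probability(0.20, 10.0):.1%}")
    print(f"after a negative result: {post_test_probability(0.20, 0.1):.1%}")
```

In this scenario the pre-test odds are 0.25, so a positive result raises the probability to about 71% and a negative result lowers it to about 2%. An LR of exactly 1 leaves the probability unchanged, which is why values far from 1 carry the most evidential weight.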

The Case for LRs in Model-Informed Drug Development

MIDD relies on mathematical models to synthesize data and inform decisions across the drug development lifecycle. Integrating LRs into this paradigm offers distinct advantages for quantitative decision-making.

LRs provide an intuitive and standardized metric for interpreting the strength of evidence generated by complex models. For instance, a pharmacokinetic-pharmacodynamic (PK/PD) model might predict a specific drug exposure level that is associated with a high probability of efficacy. The performance of this "predictive test" can be summarized with an LR+, indicating how much observing that exposure level should increase our confidence in a positive clinical outcome [34]. This moves beyond simple p-values to a more direct probabilistic interpretation.

This framework is particularly valuable for assessing drug safety, such as evaluating the risk of Drug-Induced Liver Injury (DILI). A retrospective study can identify patient factors (e.g., BMI, baseline ALT levels) associated with DILI. A model predicting DILI risk based on these factors can have its output characterized using LRs, providing a clear measure of how much each risk stratum updates the baseline probability of liver injury [35]. This direct probabilistic interpretation is more actionable for risk mitigation and regulatory communication than an odds ratio alone.

Furthermore, LRs are less affected by disease prevalence than predictive values, making them more transportable across different populations and study designs—a key requirement in drug development, which often extrapolates from Phase II to Phase III populations or from a clinical trial to a real-world setting [24]. Establishing accreditation standards for MIDD that include LR reporting would enforce a consistent, transparent framework for evaluating and communicating how model outputs should influence development decisions, from lead optimization to post-market surveillance.
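The contrast with predictive values can be illustrated with a short Python sketch. It assumes, hypothetically, a test with 90% sensitivity and 91% specificity whose characteristics stay fixed while prevalence changes; the function names are illustrative.

```python
def positive_predictive_value(sensitivity: float, specificity: float, prevalence: float) -> float:
    """PPV = P(condition | positive test), computed with Bayes' theorem."""
    true_pos = sensitivity * prevalence
    false_pos = (1.0 - specificity) * (1.0 - prevalence)
    return true_pos / (true_pos + false_pos)


def positive_likelihood_ratio(sensitivity: float, specificity: float) -> float:
    """LR+ depends only on sensitivity and specificity, not on prevalence."""
    return sensitivity / (1.0 - specificity)


if __name__ == "__main__":
    # Hypothetical test characteristics, assumed constant across populations.
    sens, spec = 0.90, 0.91
    for prevalence in (0.01, 0.10, 0.30):
        ppv = positive_predictive_value(sens, spec, prevalence)
        lr_pos = positive_likelihood_ratio(sens, spec)
        print(f"prevalence {prevalence:.0%}: PPV {ppv:.1%}, LR+ {lr_pos:.1f}")
```

Across these hypothetical prevalences the PPV rises from about 9% to about 81% while LR+ stays at 10. The sketch relies on sensitivity and specificity being stable; spectrum differences between, for example, a trial population and a real-world population can still shift them, so LR transportability should be checked rather than assumed.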

Comparative Performance Analysis: LR vs. Alternative Quantitative Methods

To evaluate the role of LRs within MIDD objectively, it is useful to compare the LR paradigm with other established statistical and machine learning approaches. The following table summarizes a qualitative comparison based on key criteria for drug development.

Table 1: Comparative Analysis of Quantitative Methods for Drug Development Decisions

| Method | Primary Function | Interpretability | Data Requirements | Handling of Complex Relationships | Primary Output |
|---|---|---|---|---|---|
| Likelihood Ratios (LR) | Quantifying diagnostic evidence | High | Moderate | Limited (often univariate) | Probability shift (post-test odds) [32] [24] |
| Logistic Regression | Predicting binary outcomes | High | Moderate | Moderate | Probability, odds ratio [36] |
| Multilayer Perceptron (MLP) | Predicting complex outcomes | Low | High | High | Probability, classification [36] |
| Decision Tree (DT) | Predicting and classifying outcomes | Medium | Moderate | Moderate | Classification, risk strata [36] |

A study comparing models for predicting drug intoxication mortality provides illustrative performance data. The study developed several models using a dataset of 8,937 drug intoxication cases and evaluated them based on calibration and discrimination [36].

Table 2: Performance Metrics from a Drug Intoxication Mortality Prediction Study [36]

| Model | Area Under the ROC Curve (AUC), Testing | Brier Score, Testing | Calibration-in-the-large, Testing |
|---|---|---|---|
| Logistic Regression | 0.827 | 0.0307 | -0.009 |
| Multilayer Perceptron (MLP) | 0.816 | 0.03258 | 0.006 |
| Decision Tree | 0.759 | 0.03519 | 0.056 |
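The three metrics in Table 2 can be computed from predicted probabilities and observed 0/1 outcomes. The Python sketch below uses toy data, not the study's dataset. AUC is computed via its Mann-Whitney interpretation. The calibration-in-the-large definition used here (mean observed outcome minus mean predicted probability) is an assumption: some studies instead report a logistic recalibration intercept, and sign conventions vary, so it may not match how [36] computed its values.

```python
from typing import Sequence


def brier_score(outcomes: Sequence[int], predicted: Sequence[float]) -> float:
    """Mean squared difference between predicted probability and observed outcome."""
    return sum((p - y) ** 2 for y, p in zip(outcomes, predicted)) / len(outcomes)


def auc_mann_whitney(outcomes: Sequence[int], predicted: Sequence[float]) -> float:
    """Probability that a random event case scores higher than a random non-event (ties count 0.5)."""
    pos = [p for y, p in zip(outcomes, predicted) if y == 1]
    neg = [p for y, p in zip(outcomes, predicted) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one event and one non-event")
    wins = 0.0
    for a in pos:
        for b in neg:
            if a > b:
                wins += 1.0
            elif a == b:
                wins += 0.5
    return wins / (len(pos) * len(neg))


def calibration_in_the_large(outcomes: Sequence[int], predicted: Sequence[float]) -> float:
    """Assumed definition: mean observed outcome minus mean predicted probability."""
    return sum(outcomes) / len(outcomes) - sum(predicted) / len(predicted)


if __name__ == "__main__":
    # Toy data: 1 = death, 0 = survival (hypothetical).
    y = [0, 0, 0, 1, 0, 1]
    p = [0.10, 0.20, 0.05, 0.70, 0.30, 0.25]
    print(f"AUC={auc_mann_whitney(y, p):.3f}  Brier={brier_score(y, p):.4f}  "
          f"CITL={calibration_in_the_large(y, p):+.3f}")
```

Lower Brier scores and calibration-in-the-large values near zero indicate better-calibrated predictions, while a higher AUC indicates better discrimination. This is consistent with how the table ranks the three models.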

Key Insights from Comparative Data:

- Logistic Regression demonstrated competitive, and in this case superior, performance in the testing phase, achieving the highest AUC and lowest Brier score [36]. This suggests it remains a robust and reliable choice for medical datasets where accuracy and interpretability are paramount.

- Machine Learning Models like MLP can show strong performance, potentially capturing non-linear relationships that simpler models miss. However, their "black box" nature and the complexity of hyperparameter tuning can be a barrier to adoption in highly regulated environments where model interpretability is critical for regulatory review [36].

- The Role of LRs: While not a predictive model itself, the LR framework provides the language to interpret and communicate the output of these models. For example, the risk probabilities generated by a logistic regression model for DILI [35] can be translated into LRs for different risk thresholds, making the evidence easily understandable for decision-makers.

Experimental Protocols for LR Integration in MIDD

For researchers aiming to implement LR analyses within MIDD, the following protocols provide a detailed methodological roadmap.

Protocol 1: Establishing a Diagnostic Accuracy Framework for a Predictive Biomarker

This protocol outlines the steps to calculate LRs for a predictive biomarker model, such as one used for patient stratification.

- Define the Target Condition and Reference Standard: Clearly specify the clinical outcome of interest (e.g., clinical response, disease progression, DILI). Establish a gold standard or reference method for determining this outcome (e.g., clinician diagnosis, histology, pre-defined criteria) [35] [33].

- Select the Index Test and Define Cut-offs: Define the predictive model or biomarker (e.g., a specific drug exposure metric, a genetic signature). Determine the positivity thresholds. For continuous measures, you may establish multiple ranges (e.g., low, intermediate, high) to calculate interval-specific LRs [32] [33].

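- Collect Data and Construct a 2x2 Table: For a dichotomous test, collect data on all study subjects and cross-classify them into a 2x2 table based on the index test result and the reference standard outcome [24]. The four cells are true positives (a: test positive, condition present), false positives (b: test positive, condition absent), false negatives (c: test negative, condition present), and true negatives (d: test negative, condition absent).

- Calculate Diagnostic Accuracy Metrics: Sensitivity = a / (a + c) and Specificity = d / (b + d); LR+ and LR- then follow from these as Sensitivity / (1 - Specificity) and (1 - Sensitivity) / Specificity.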

- Apply LRs using Bayes' Theorem: Use the calculated LRs to update the pre-test probability of the condition in a relevant patient population, arriving at a post-test probability to inform decision-making [26] [24].
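The Protocol 1 steps can be sketched end to end in Python. The class name `TwoByTwo` and the cell counts (80, 30, 20, 270) are hypothetical, and the 15% pre-test probability is an assumed value for the target population.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TwoByTwo:
    """Index test result cross-classified against the reference standard."""
    tp: int  # a: test positive, condition present
    fp: int  # b: test positive, condition absent
    fn: int  # c: test negative, condition present
    tn: int  # d: test negative, condition absent

    @property
    def sensitivity(self) -> float:
        return self.tp / (self.tp + self.fn)

    @property
    def specificity(self) -> float:
        return self.tn / (self.tn + self.fp)

    @property
    def lr_positive(self) -> float:
        return self.sensitivity / (1.0 - self.specificity)

    @property
    def lr_negative(self) -> float:
        return (1.0 - self.sensitivity) / self.specificity


def post_test_probability(pre_test_probability: float, lr: float) -> float:
    """Bayes' theorem in odds form: post-test odds = pre-test odds x LR."""
    odds = pre_test_probability / (1.0 - pre_test_probability) * lr
    return odds / (1.0 + odds)


if __name__ == "__main__":
    table = TwoByTwo(tp=80, fp=30, fn=20, tn=270)  # hypothetical counts
    print(f"sensitivity={table.sensitivity:.2f}, specificity={table.specificity:.2f}")
    print(f"LR+={table.lr_positive:.2f}, LR-={table.lr_negative:.3f}")
    pre = 0.15  # assumed pre-test probability in the target population
    print(f"post-test if positive: {post_test_probability(pre, table.lr_positive):.1%}")
    print(f"post-test if negative: {post_test_probability(pre, table.lr_negative):.1%}")
```

With these counts, sensitivity is 0.80, specificity is 0.90 and LR+ is 8, so a positive result moves a 15% pre-test probability to about 59%. Keeping the counts in a single immutable object makes it straightforward to report the raw table alongside the derived metrics, which aids auditability.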

Protocol 2: Developing and Validating a Logistic Regression Model for Risk Prediction

This protocol is adapted from a study that built a model to predict the risk of Drug-Induced Liver Injury (DILI) with ramipril [35]. It demonstrates how a model's predictions can be framed in a diagnostic context.

- Data Collection and Cohort Definition: Conduct a retrospective cohort study using electronic health records. Define inclusion/exclusion criteria (e.g., adults with liver function tests before and during drug treatment). Use a causality assessment method (e.g., Roussel Uclaf Causality Assessment Method) to confirm the target condition (DILI) [35].

- Variable Selection and Mapping: Collect candidate predictor variables based on clinical knowledge and literature. These may include demographics (age, sex), clinical measures (Body Mass Index, baseline alanine aminotransferase (ALT), alkaline phosphatase (ALP)), comorbidities, and drug dose [35].

- Model Development using Logistic Regression: Use statistical software (e.g., R) to build a logistic regression model. Use a forward selection method based on statistical significance (e.g., p < 0.001) to include variables in the final model. The model will output the log-odds of the outcome, which can be transformed into a probability [35].

- Model Validation: Perform internal validation using a 2-fold cross-validation technique, splitting the data into training and testing sets so that performance is measured on data not used for fitting. This exposes overfitting that an evaluation on the training data would hide. Evaluate the model's performance using the Area Under the ROC Curve (AUC) for discrimination and the Brier score for overall accuracy [35] [36].

- Translating Model Output to LRs: The predicted probabilities from the logistic regression model can be stratified into ranges (e.g., low risk: 0-10%, medium risk: 10-30%, high risk: >30%). For each risk stratum, the interval-specific LR is the proportion of patients with DILI whose predictions fall in that stratum divided by the proportion of patients without DILI whose predictions fall in the same stratum [32]. This transforms a complex model output into an actionable evidence metric.
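The final step of Protocol 2 can be sketched in Python as follows. The strata mirror the example ranges above, but assigning exactly 10% and exactly 30% to the medium stratum is an assumption. The outcomes and predicted probabilities are hypothetical; in practice they would come from held-out data such as the testing fold.

```python
from typing import Dict, Sequence


def risk_stratum(probability: float) -> str:
    """Map a predicted probability to illustrative strata (boundary handling is assumed)."""
    if not 0.0 <= probability <= 1.0:
        raise ValueError("predicted probability must lie in [0, 1]")
    if probability < 0.10:
        return "low"
    if probability <= 0.30:
        return "medium"
    return "high"


def interval_likelihood_ratios(outcomes: Sequence[int], predicted: Sequence[float]) -> Dict[str, float]:
    """Interval LR = P(stratum | outcome present) / P(stratum | outcome absent)."""
    n_with = sum(outcomes)
    n_without = len(outcomes) - n_with
    if n_with == 0 or n_without == 0:
        raise ValueError("need patients both with and without the outcome")
    counts = {"low": [0, 0], "medium": [0, 0], "high": [0, 0]}  # [with, without]
    for y, p in zip(outcomes, predicted):
        counts[risk_stratum(p)][0 if y == 1 else 1] += 1
    lrs: Dict[str, float] = {}
    for stratum, (with_outcome, without_outcome) in counts.items():
        if without_outcome == 0:
            # Empty denominator: infinite if the stratum holds outcome cases, else undefined.
            lrs[stratum] = float("inf") if with_outcome else float("nan")
        else:
            lrs[stratum] = (with_outcome / n_with) / (without_outcome / n_without)
    return lrs


if __name__ == "__main__":
    # Hypothetical held-out data: 1 = DILI confirmed, 0 = no DILI.
    dili = [0, 0, 0, 0, 0, 0, 1, 0, 1, 1]
    probs = [0.02, 0.05, 0.08, 0.12, 0.15, 0.40, 0.25, 0.07, 0.35, 0.60]
    for stratum, lr in interval_likelihood_ratios(dili, probs).items():
        print(f"{stratum:>6}: interval LR = {lr:.2f}")
```

In this toy sample the high-risk stratum yields an LR of about 4.7, the medium stratum about 1.2, and the low stratum 0. With real data, small strata produce unstable estimates, so stratum counts should be reported alongside the LRs.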

The Scientist's Toolkit: Essential Reagents for LR and MIDD Research

Successfully implementing the experimental protocols requires a suite of conceptual and computational tools. The following table details these essential "research reagents."

Table 3: Key Research Reagent Solutions for LR and MIDD Integration

| Tool/Reagent | Function | Application Example |
|---|---|---|
| 2x2 Contingency Table | Data structure to cross-tabulate test results against true disease status [24]. | Foundation for calculating sensitivity, specificity, and LRs in Protocol 1. |
| Fagan's Nomogram | Graphical tool for applying Bayes' Theorem without calculations [26] [24]. | Quickly determining post-test probability given a pre-test probability and an LR. |
| Statistical Software (R, SPSS) | Platform for advanced statistical modeling and analysis [35] [36]. | Developing and validating logistic regression models (Protocol 2). |
| Causality Assessment Method (e.g., RUCAM) | Standardized scale to adjudicate drug-induced adverse events [35]. | Providing a reference standard for DILI diagnosis in predictive model research. |
| Validation Metrics (AUC, Brier Score) | Quantitative measures of model performance and prediction error [36]. | Objectively comparing the discrimination and calibration of different models. |
| Likelihood Ratio Scatter Matrix | Graphical method for evaluating a body of evidence by plotting study-specific LR+ and LR- pairs [33]. | Synthesizing evidence from multiple diagnostic accuracy studies for a systematic review. |

The integration of Likelihood Ratios into the Model-Informed Drug Development framework represents a significant opportunity to enhance quantitative decision-making. LRs provide a standardized, intuitive, and statistically sound metric for interpreting the evidence generated by complex models and biomarkers, directly quantifying their impact on the probability of critical outcomes like efficacy and toxicity. While traditional methods like logistic regression remain highly competitive and interpretable for many tasks [36], the LR framework serves as a unifying language to communicate their findings. As the pharmaceutical industry moves toward more rigorous quantitative standards, the adoption of LRs in MIDD can form the cornerstone of new accreditation standards, ultimately fostering greater transparency, reproducibility, and confidence in the decisions that bring new medicines to patients.

Facial recognition technology has reached remarkable levels of precision, with top algorithms achieving accuracy rates exceeding 99.5% under optimal conditions [37]. Despite this technological advancement, the forensic science community faces significant challenges in translating raw similarity scores from automated systems into statistically valid evidence that meets legal standards. Score-based Likelihood Ratios (SLRs) have emerged as a fundamental metric within the Bayesian framework for interpreting forensic evidence, providing a standardized approach for quantifying the strength of evidence when comparing facial images [38].

This case study examines the practical application of SLRs in forensic facial image comparison, with particular emphasis on a novel methodology that integrates open-source quality assessment tools to enhance reliability. The approach addresses a critical gap in forensic practice by enabling numerical LR computation in scenarios where examiners have traditionally relied solely on subjective technical opinion due to the absence of empirical data on facial feature frequency in the population [38]. By validating the method against datasets containing facial images of varying quality, this research demonstrates how forensic laboratories can implement standardized, transparent procedures for facial image comparison that withstand scientific and legal scrutiny.
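The core computation behind an SLR can be sketched as a ratio of two score densities. These are the density of similarity scores from known same-source pairs and the density from known different-source pairs, each evaluated at the questioned score. This Python sketch is not the cited study's method. The Gaussian kernel density estimate, the fixed bandwidth of 0.05, and the calibration scores are all hypothetical, and the image-quality assessment step described above is not modelled.

```python
import math
from typing import Callable, Sequence


def gaussian_kde(samples: Sequence[float], bandwidth: float) -> Callable[[float], float]:
    """Return a Gaussian kernel density estimate built from `samples`."""
    n = len(samples)
    norm = 1.0 / (n * bandwidth * math.sqrt(2.0 * math.pi))

    def density(x: float) -> float:
        return norm * sum(math.exp(-0.5 * ((x - s) / bandwidth) ** 2) for s in samples)

    return density


def score_based_lr(score: float,
                   same_source_scores: Sequence[float],
                   different_source_scores: Sequence[float],
                   bandwidth: float = 0.05) -> float:
    """SLR = f(score | same source) / f(score | different sources)."""
    f_same = gaussian_kde(same_source_scores, bandwidth)
    f_diff = gaussian_kde(different_source_scores, bandwidth)
    denominator = f_diff(score)
    if denominator == 0.0:
        raise ValueError("different-source density underflowed; score lies outside calibration data")
    return f_same(score) / denominator


if __name__ == "__main__":
    # Hypothetical similarity scores from a validation set of image pairs.
    same = [0.82, 0.85, 0.88, 0.90, 0.79, 0.93, 0.87]
    diff = [0.30, 0.42, 0.35, 0.51, 0.28, 0.47, 0.39]
    for questioned in (0.86, 0.40):
        print(f"score {questioned:.2f}: SLR = {score_based_lr(questioned, same, diff):.3g}")
```

A score near the same-source cluster yields an SLR far above 1, and a score near the different-source cluster yields one below 1. With so few calibration scores, the extreme values in the tails mostly reflect the bandwidth choice rather than real evidential strength. This is one reason validated SLR procedures rely on large, quality-matched reference datasets.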

Technological Landscape of Facial Recognition in 2025

Current State of Biometric Accuracy

The facial recognition landscape in 2025 is characterized by continuous improvement in algorithm performance, driven primarily by advances in artificial intelligence and deep learning. According to the National Institute of Standards and Technology (NIST) Face Recognition Technology Evaluations (FRTE), top-performing verification algorithms now achieve accuracy rates as high as 99.97% under optimal conditions [37]. This level of precision rivals established biometric technologies such as iris recognition (99-99.8% accuracy) and exceeds many fingerprint solutions. Notably, 45 of the 105 identification algorithms tested by NIST demonstrated more than 99% accuracy when comparing high-quality images [37].

Table 1: Facial Recognition Performance Metrics (2025)

| Performance Metric | Laboratory Conditions | Real-World Conditions |
|---|---|---|
| Top Algorithm Accuracy | 99.97% [37] | ~90.7% (varies significantly) [37] |