Leveraging the Dirichlet-Multinomial Model for Advanced Authorship Attribution in Biomedical Literature

Abstract

This article provides a comprehensive guide to the Dirichlet-Multinomial (DM) model and its application to authorship attribution, a critical task in validating scientific authorship and detecting academic fraud. Tailored for researchers and drug development professionals, we cover the model's foundational theory for analyzing multivariate count data, its methodological implementation for profiling writing style, strategies for overcoming real-world data challenges like overdispersion and zero-inflation, and rigorous validation techniques against competing models. By bridging robust statistical methodology with practical application, this resource empowers professionals to conduct more reliable and interpretable authorship analysis.

Foundations of the Dirichlet-Multinomial Model: From Theory to Authorship Analysis

The Fundamental Challenge: Overdispersion in Multivariate Count Data

Multivariate count data, where each observation is a vector of non-negative integers, is ubiquitous in many scientific fields. In genomics, this manifests as counts of RNA-seq fragments across different exon sets or transcripts of a gene [1]. In text analysis and authorship attribution, documents are represented as vectors of word counts across a vocabulary, a fundamental data structure for computational linguistics [2] [3]. The multinomial distribution is the foundational probability model for such data, representing the null model that assumes a fixed probability vector for all observations. However, this assumption of stability is often violated in real-world data, which frequently exhibit overdispersion—a phenomenon where the variance in the observed data significantly exceeds the variance predicted by the multinomial model [4] [3].

This overdispersion arises from unobserved heterogeneity. In the context of text, different documents or authors have inherent, latent variations in their word usage probabilities that are not captured by a single, fixed probability vector. Applying a standard multinomial model to such overdispersed data leads to a critical failure: an underestimation of the uncertainty, resulting in overly confident and misleading inferences [4]. Hypothesis tests, such as those for differential word usage, become severely anti-conservative, with inflated Type I error rates, while clustering algorithms can produce unstable and inaccurate groupings [1] [3].

Table 1: Comparative Performance of Models for Multivariate Count Data in Hypothesis Testing

| Model | Controlled Type I Error | High Power | Correlation Structure |
|---|---|---|---|
| Multinomial (MN) | No [1] | Yes [1] | Negative only [1] |
| Dirichlet-Multinomial (DM) | No [1] | Yes [1] | Negative [1] |
| Negative Multinomial (NM) | No [1] | Yes [1] | Positive [1] |
| Generalized Dirichlet-Multinomial (GDM) | Yes [1] | Yes [1] | General [1] |

The Dirichlet-Multinomial Model: A Robust Alternative

The Dirichlet-multinomial (DM) model is a natural and powerful extension of the multinomial that directly accounts for overdispersion. It is a compound probability distribution where the probability vector p for each observation is not fixed but is itself a random variable drawn from a Dirichlet distribution [5]. This hierarchical structure provides a mechanistic way to model extra-multinomial variation.

The generative process for a DM distribution is as follows:

- For each observation i, draw a probability vector p_i from a Dirichlet distribution with parameter vector α: p_i ~ Dirichlet(α).

- Given this observation-specific p_i, generate the count vector x_i from a Multinomial distribution: x_i ~ Multinomial(n, p_i) [5] [4].

By marginalizing over the latent p_i, we obtain the Dirichlet-multinomial distribution. Its key advantage is a more realistic mean-variance relationship. For the multinomial, the variance of component j is Var(X_j) = n * p_j * (1 - p_j); for the DM distribution it is Var(X_j) = n * p_j * (1 - p_j) * [(n + α_0) / (1 + α_0)], where p_j = α_j / α_0 and α_0 = Σα_k [5]. The extra dispersion factor (n + α_0) / (1 + α_0) exceeds 1 whenever n > 1, formally capturing the overdispersion present in the data. The concentration parameter α_0 controls the degree of overdispersion; smaller values indicate greater heterogeneity between observations [4].
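The variance inflation can be checked by simulation. The sketch below is illustrative only: the parameter values, document length, and sample size are arbitrary assumptions, not values taken from the cited studies. It draws counts from a multinomial with a fixed probability vector and from the two-stage Dirichlet-multinomial process, then compares the empirical variances with the formula above.

```python
import numpy as np

rng = np.random.default_rng(0)

alpha = np.array([2.0, 3.0, 5.0])   # illustrative Dirichlet parameters
n = 50                              # tokens per simulated document
n_docs = 20_000                     # number of simulated documents

alpha0 = alpha.sum()
p_mean = alpha / alpha0

# Multinomial: one fixed probability vector shared by every document.
x_mn = rng.multinomial(n, p_mean, size=n_docs)

# Dirichlet-multinomial: each document first draws its own probability vector.
p_docs = rng.dirichlet(alpha, size=n_docs)
x_dm = np.array([rng.multinomial(n, p) for p in p_docs])

var_mn_theory = n * p_mean * (1 - p_mean)
inflation = (n + alpha0) / (1 + alpha0)
var_dm_theory = var_mn_theory * inflation

print("inflation factor:                ", inflation)
print("multinomial variance (empirical):", x_mn.var(axis=0))
print("multinomial variance (formula):  ", var_mn_theory)
print("DM variance (empirical):         ", x_dm.var(axis=0))
print("DM variance (formula):           ", var_dm_theory)
```

Both models share the same mean counts, so any difference in the printed variances comes from the document-level variation in p_i.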

This model can also be understood through an urn model representation. Imagine an urn filled with balls of K colors, with initial counts given by the Dirichlet parameter α (fractional counts are allowed). Under the multinomial, each of the n draws is made with replacement from an urn whose composition never changes. In the DM process, each ball drawn is returned to the urn along with an additional ball of the same color. This "rich-get-richer" mechanism, repeated for n draws, introduces a positive correlation between successive draws and increases the variance, making it a natural model for word burstiness in text [5].
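A minimal sketch of the urn scheme follows, assuming the initial ball masses equal α and that exactly one extra ball is added after each draw. The function name `polya_urn_draw` and the parameter values are illustrative.

```python
import numpy as np

def polya_urn_draw(alpha, n, rng):
    """Draw one count vector of n tokens via the Polya urn scheme."""
    weights = np.asarray(alpha, dtype=float).copy()  # initial ball masses
    counts = np.zeros(len(weights), dtype=int)
    for _ in range(n):
        k = rng.choice(len(weights), p=weights / weights.sum())
        counts[k] += 1
        weights[k] += 1.0  # return the ball plus one more of the same color
    return counts

rng = np.random.default_rng(1)
alpha = [1.0, 1.0, 2.0]
draws = np.array([polya_urn_draw(alpha, 20, rng) for _ in range(3000)])

# The average count per color should be close to n * alpha / alpha_0.
print(draws.mean(axis=0))
```

Because a color that has already been drawn becomes more likely on later draws, individual count vectors are far more uneven than multinomial draws with the same mean.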

Experimental Protocol: Implementing DM-Based Analysis for Authorship Attribution

This protocol provides a step-by-step guide for applying a Dirichlet-multinomial model to cluster documents for authorship attribution, using the Dirichlet Multinomial Mixture (DMM) model.

Materials and Data Pre-processing

- Text Corpus: A collection of documents (e.g., essays, articles, social media posts) of unknown authorship.

- Software: R (with the DRIMSeq [6] [7] or MGLM [1] packages) or Python (with PyMC [4]).

- Pre-processing Pipeline:

- Tokenization: Split each document into individual words (tokens).

- Cleaning: Remove punctuation, numbers, and non-alphabetic characters.

- Normalization: Convert all text to lowercase.

- Stop-word Removal: Filter out common but uninformative words (e.g., "the", "and").

- Stemming/Lemmatization: Reduce words to their root form (e.g., "running" → "run").

- Vectorization: Create a document-term matrix (DTM) where rows are documents, columns are unique words (the vocabulary), and values are raw count frequencies.
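The pipeline above can be sketched with the Python standard library alone. This is a simplified illustration: the stop-word list is a tiny placeholder, stemming and lemmatization are omitted (they would typically come from an NLP library), and the helper names `tokenize` and `build_dtm` are hypothetical.

```python
import re
from collections import Counter

STOP_WORDS = {"the", "and", "of", "a", "in", "to", "is"}  # tiny illustrative list

def tokenize(text):
    """Lowercase, keep alphabetic tokens only, and drop stop words."""
    tokens = re.findall(r"[a-z]+", text.lower())
    return [t for t in tokens if t not in STOP_WORDS]

def build_dtm(documents):
    """Return (vocabulary, matrix) where matrix[i][j] counts word j in document i."""
    counted = [Counter(tokenize(doc)) for doc in documents]
    vocabulary = sorted(set().union(*counted))
    matrix = [[c[word] for word in vocabulary] for c in counted]
    return vocabulary, matrix

docs = [
    "The assay measured binding affinity, and the binding was strong.",
    "Dose escalation in the trial is described in the protocol.",
]
vocab, dtm = build_dtm(docs)
print(vocab)
print(dtm)
```

The matrix holds raw counts, which is the form the DM likelihood expects.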

Workflow for Dirichlet Multinomial Mixture (DMM) Clustering

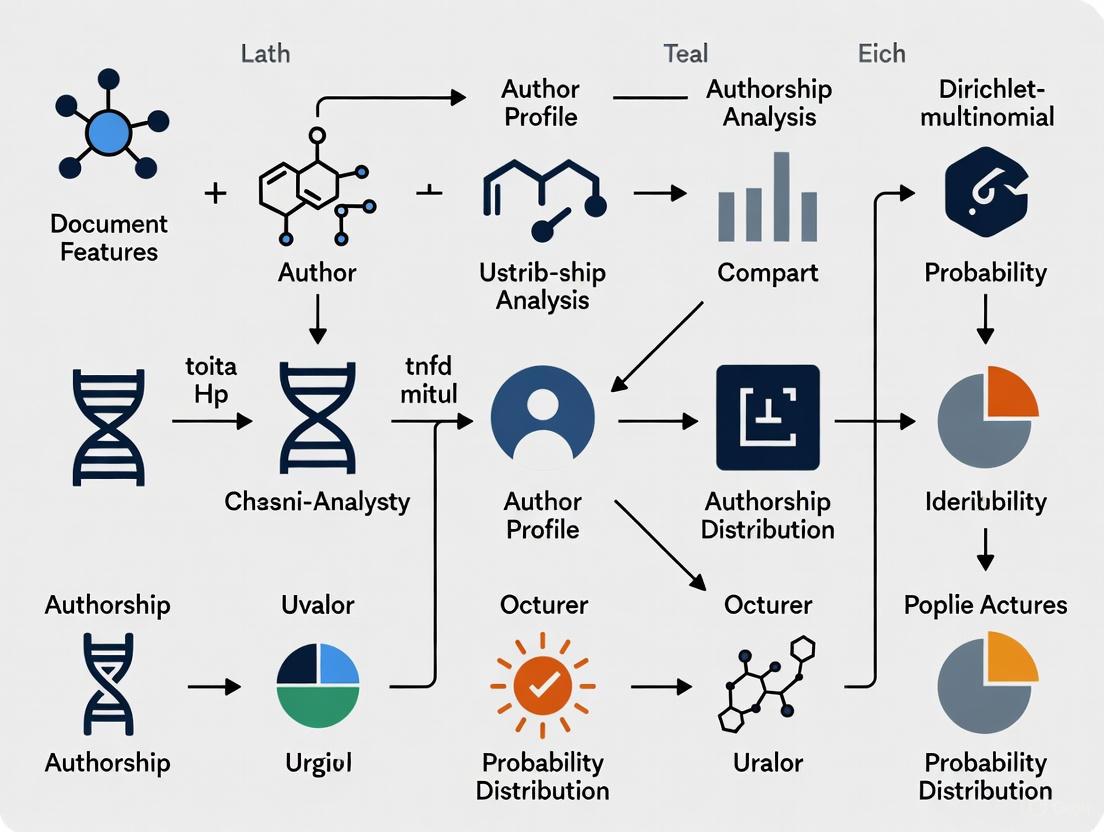

The following diagram illustrates the complete analytical workflow for model-based clustering of text documents using the Dirichlet Multinomial Mixture.

Step-by-Step Procedure

- Model Initialization: Specify the maximum number of potential authors (clusters), G. The model will infer the effective number of clusters from the data [2].

- Parameter Estimation via EM Algorithm:

  - E-Step: For each document i and cluster g, compute the posterior probability t_ig that document i belongs to cluster g, given the current parameter estimates.

  - M-Step: Update the Dirichlet parameters for each cluster g using the documents weighted by their membership probabilities t_ig [3].

- Iteration: Repeat the E and M steps until the log-likelihood converges (i.e., the change falls below a pre-specified tolerance, e.g., 1e-5).

- Cluster Assignment: Assign each document to the cluster for which it has the highest posterior probability t_ig.

- Validation: Evaluate clustering quality using internal metrics (e.g., held-out likelihood) or external metrics (e.g., purity, Normalized Mutual Information) if ground-truth labels are available [2].
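The procedure can be sketched in a few dozen lines, under several assumptions that are not taken from the cited implementations: clusters are seeded by a farthest-point rule on word proportions, the M-step performs a single weighted fixed-point update of each cluster's Dirichlet parameters (a generalized EM step), and unused clusters are left with negligible weight rather than pruned. The function names are illustrative.

```python
import numpy as np
from scipy.special import digamma, gammaln, logsumexp

def dm_logpmf(X, alpha):
    """Row-wise DM log-probability without the multinomial coefficient,
    which does not depend on alpha and cancels in the E-step."""
    a0, n = alpha.sum(), X.sum(axis=1)
    return (gammaln(a0) - gammaln(n + a0)
            + (gammaln(X + alpha) - gammaln(alpha)).sum(axis=1))

def fit_dmm(X, G, n_iter=200, tol=1e-5):
    """Fit a G-component Dirichlet multinomial mixture to count matrix X."""
    n = X.sum(axis=1)
    # Farthest-point seeding on word proportions (an illustrative choice).
    P = X / n[:, None]
    seeds = [0]
    while len(seeds) < G:
        dist = np.abs(P[:, None, :] - P[seeds][None, :, :]).sum(axis=2).min(axis=1)
        seeds.append(int(dist.argmax()))
    alphas = X[seeds].astype(float) + 1.0
    pi = np.full(G, 1.0 / G)
    prev = -np.inf
    for _ in range(n_iter):
        # E-step: posterior membership probabilities t_ig.
        log_joint = np.log(pi) + np.column_stack([dm_logpmf(X, a) for a in alphas])
        log_norm = logsumexp(log_joint, axis=1)
        T = np.exp(log_joint - log_norm[:, None])
        loglik = log_norm.sum()
        if abs(loglik - prev) < tol:
            break
        prev = loglik
        # M-step: mixing weights, then one weighted fixed-point update per cluster.
        pi = np.maximum(T.mean(axis=0), 1e-12)
        for g in range(G):
            a, w = alphas[g], T[:, g] + 1e-12
            num = w @ (digamma(X + a) - digamma(a))
            den = w @ (digamma(n + a.sum()) - digamma(a.sum()))
            alphas[g] = np.maximum(a * num / den, 1e-6)
    return T.argmax(axis=1), T, alphas, pi
```

Calling `fit_dmm(X, G)` on a document-term matrix `X` (documents in rows, every row with at least one token) returns hard cluster labels, the membership matrix `T`, the per-cluster Dirichlet parameters, and the mixing weights. Like any EM procedure it can converge to a local optimum, so several initializations are advisable in practice.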

Performance and Validation: Empirical Evidence

The superiority of the DM framework over the standard multinomial is demonstrated across multiple domains. In a simulation study of RNA-seq data, which shares the multivariate count structure of text data, the multinomial-logit model exhibited a Type I error rate of 0.97 for a null predictor, a severe inflation over the expected 0.05. In contrast, the Generalized Dirichlet-Multinomial (GDM) model, a more flexible relative of the DM, successfully controlled the Type I error at 0.07 while maintaining high power to detect true effects [1].

In text clustering, methods based on the Dirichlet Multinomial Mixture (DMM) have shown remarkable effectiveness, particularly for short texts. A hybrid approach combining DMM with a fuzzy matching algorithm demonstrated an 83% improvement in purity and a 67% enhancement in Normalized Mutual Information (NMI) across six benchmark datasets compared to other topic modeling methods [2]. This performance is attributed to the model's inherent ability to handle the sparsity and high dimensionality of short text data.

Table 2: Key Reagents and Computational Tools for DM Analysis

| Research Reagent / Tool | Function / Description | Application Context |
|---|---|---|
| Document-Term Matrix (DTM) | A numerical representation of text where rows are documents and columns are word counts. | The fundamental input data structure for all subsequent analysis [2] [3]. |
| Dirichlet Prior | A distribution over the simplex used to model variability in multinomial probabilities. | Accounts for overdispersion; its concentration parameter controls the degree of heterogeneity [5] [4]. |
| EM Algorithm | An iterative optimization method for finding maximum likelihood estimates in latent variable models. | The standard procedure for fitting Dirichlet Multinomial Mixture (DMM) models [3]. |
| Gibbs Sampling | A Markov Chain Monte Carlo (MCMC) algorithm for obtaining a sequence of observations from a complex distribution. | An alternative Bayesian method for fitting DM models, as implemented in PyMC [4]. |
| BIC / AIC | Bayesian/Akaike Information Criterion; metrics for model selection that balance fit and complexity. | Used to select the optimal number of clusters G in an unsupervised setting [8]. |

Comparative Model Framework and Pathway

The DM model exists within a broader ecosystem of generalized linear models for multivariate counts. The following diagram situates the DM model relative to its peers based on its underlying correlation assumptions and guiding principles, highlighting its specific niche for negatively correlated, overdispersed counts.

For authorship attribution and text analysis, where data is fundamentally multivariate counts plagued by overdispersion, the standard multinomial model is an insufficient and risky choice. The Dirichlet-multinomial framework provides a principled, robust, and empirically validated alternative that explicitly accounts for the heterogeneity between documents or authors. Its integration into mixture models and clustering algorithms offers a powerful toolkit for uncovering latent authorship patterns, providing a solid statistical foundation for research in this domain.

The Dirichlet-multinomial (DMN) distribution is a fundamental discrete multivariate probability distribution for categorical count data that exhibits overdispersion—a phenomenon where the observed variance in data exceeds the variance expected under a standard multinomial model [5] [9]. Also known as the Dirichlet compound multinomial distribution or multivariate Pólya distribution, it arises naturally as a mixture distribution where a probability vector p is first drawn from a Dirichlet distribution, and then count data is generated from a multinomial distribution using this random vector [5].

This distribution provides a robust framework for analyzing multivariate count data where observations are correlated or exhibit extra-multinomial variation, making it particularly valuable for authorship attribution research where word counts across documents often demonstrate such properties [9] [10]. The DMN distribution effectively models the inherent variability in language use across different authors and documents, addressing the limitation of the standard multinomial distribution which assumes a fixed probability vector for all observations [4].

Mathematical Foundations

Distribution Formulation

The Dirichlet-multinomial distribution is parameterized by the number of trials n and a concentration parameter vector α = (α₁, ..., αₖ), where all αᵢ > 0 and α₀ = ∑αᵢ [5]. The probability mass function for a random vector x = (x₁, ..., xₖ) is given by:

P(x | n, α) = [Γ(α₀) Γ(n + 1) / Γ(n + α₀)] × ∏ᵢ [Γ(xᵢ + αᵢ) / (Γ(αᵢ) Γ(xᵢ + 1))]

where Γ(·) represents the gamma function, and the support consists of non-negative integers xᵢ such that Σxᵢ = n [5].
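In practice the DMN probability mass function is evaluated on the log scale with log-gamma functions. The following is a minimal sketch using `scipy.special.gammaln`; the function name `dmn_logpmf` is illustrative, and n is taken to be the sum of the counts.

```python
import numpy as np
from scipy.special import gammaln

def dmn_logpmf(x, alpha):
    """Log-probability of count vector x under a Dirichlet-multinomial
    with n = sum(x) trials and concentration vector alpha."""
    x = np.asarray(x, dtype=float)
    alpha = np.asarray(alpha, dtype=float)
    n, a0 = x.sum(), alpha.sum()
    log_coef = gammaln(a0) + gammaln(n + 1) - gammaln(n + a0)
    log_terms = gammaln(x + alpha) - gammaln(alpha) - gammaln(x + 1)
    return log_coef + log_terms.sum()

# Sanity check: probabilities over all outcomes with n = 3 and K = 3 sum to one.
alpha = [0.7, 1.5, 2.0]
total = sum(
    np.exp(dmn_logpmf([i, j, 3 - i - j], alpha))
    for i in range(4)
    for j in range(4 - i)
)
print(total)
```

Summing over the full support is only feasible for tiny n and K, but it is a useful check that the normalizing terms are correct.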

This formulation can be understood through a hierarchical model:

- First, draw a probability vector p from a Dirichlet distribution: p ~ Dirichlet(α)

- Then, draw counts x from a multinomial distribution: x ~ Multinomial(n, p)

The resulting marginal distribution after integrating out p is the Dirichlet-multinomial distribution [5] [4].

Moment Properties

The moments of the DMN distribution provide insight into its behavior and applicability:

Table 1: Moment Properties of the Dirichlet-Multinomial Distribution

| Measure | Formula |
|---|---|
| Mean | E(Xᵢ) = n × (αᵢ/α₀) |
| Variance | Var(Xᵢ) = n × (αᵢ/α₀) × (1 - αᵢ/α₀) × [(n + α₀)/(1 + α₀)] |
| Covariance | Cov(Xᵢ, Xⱼ) = -n × (αᵢαⱼ/α₀²) × [(n + α₀)/(1 + α₀)] for i ≠ j |

These moments reveal two key characteristics: the means match those of the multinomial distribution, but the variances are inflated by a factor of (n + α₀)/(1 + α₀), confirming the distribution's capacity to model overdispersed data [5]. All covariances are negative, as an increase in one component necessitates decreases in others due to the fixed sum constraint [5].
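The moment formulas translate directly into a mean vector and covariance matrix. The helper below is a small sketch (the name `dmn_moments` is illustrative); it uses the fact that the variance and covariance formulas together equal n × factor × (diag(p) − p pᵀ) with p = α/α₀.

```python
import numpy as np

def dmn_moments(n, alpha):
    """Return the mean vector and covariance matrix of a Dirichlet-multinomial."""
    alpha = np.asarray(alpha, dtype=float)
    a0 = alpha.sum()
    p = alpha / a0
    factor = (n + a0) / (1 + a0)          # overdispersion factor
    mean = n * p
    cov = n * factor * (np.diag(p) - np.outer(p, p))
    return mean, cov

mean, cov = dmn_moments(n=100, alpha=[2.0, 3.0, 5.0])
print(mean)
print(cov)
```

Each row of the covariance matrix sums to zero, which reflects the fixed-sum constraint that forces the off-diagonal entries to be negative.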

Relationship to Other Distributions

The DMN distribution generalizes several important distributions:

- When n = 1, it reduces to the categorical distribution

- When K = 2, it becomes the beta-binomial distribution

- As α₀ → ∞ with αᵢ/α₀ fixed, it converges to the multinomial distribution with probabilities αᵢ/α₀, so the multinomial can be approximated arbitrarily well by choosing α₀ large enough [5]
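Two of these special cases can be verified numerically. The sketch below compares a hand-written DMN log-pmf (the illustrative helper `dmn_logpmf`) against `scipy.stats.betabinom` for K = 2 and against `scipy.stats.multinomial` for a very large α₀; the parameter values are arbitrary.

```python
import numpy as np
from scipy.special import gammaln
from scipy.stats import betabinom, multinomial

def dmn_logpmf(x, alpha):
    """Dirichlet-multinomial log-pmf with n = sum(x)."""
    x = np.asarray(x, dtype=float)
    alpha = np.asarray(alpha, dtype=float)
    n, a0 = x.sum(), alpha.sum()
    return (gammaln(a0) + gammaln(n + 1) - gammaln(n + a0)
            + (gammaln(x + alpha) - gammaln(alpha) - gammaln(x + 1)).sum())

# K = 2: the first count follows a beta-binomial with parameters (n, a, b).
n, a, b = 10, 1.5, 3.0
dmn_k2 = np.array([np.exp(dmn_logpmf([k, n - k], [a, b])) for k in range(n + 1)])
bb = betabinom.pmf(np.arange(n + 1), n, a, b)
print(np.max(np.abs(dmn_k2 - bb)))

# Large alpha_0 with fixed proportions: close to the multinomial.
p = np.array([0.2, 0.3, 0.5])
x = [2, 3, 5]
dmn_large = np.exp(dmn_logpmf(x, 1e6 * p))
mn = multinomial.pmf(x, n=10, p=p)
print(dmn_large, mn)
```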

Applications in Authorship Attribution Research

In authorship attribution, documents are typically represented as word count vectors, which are multivariate categorical data constrained to sum to the document length. The DMN distribution provides a natural framework for modeling such data, addressing key challenges:

Accounting for Overdispersion

Traditional multinomial models assume homogeneous word usage across documents by the same author, which rarely holds true in practice. The DMN distribution accommodates the extra variation (overdispersion) in word frequencies that arises from factors such as:

- Writing style variations within an author's works

- Different topics or genres influencing word choice

- Contextual factors affecting language use

This overdispersion is quantified by the concentration parameter α₀, with smaller values indicating greater overdispersion relative to the multinomial distribution [4] [10].

Modeling Author-Specific Characteristics

The DMN distribution can represent each author's writing style through their unique parameter vector αₐᵤₜₕₒᵣ. Documents by the same author share the same underlying Dirichlet prior, capturing their consistent stylistic patterns while accommodating document-specific variations.

Table 2: DMN Components in Authorship Attribution

| Component | Representation | Interpretation in Authorship |
|---|---|---|
| α vector | (α₁, α₂, ..., αₖ) | Author's stylistic signature (relative preference for different words) |
| α₀ | ∑αᵢ | Consistency of author's style (inverse of overdispersion) |
| p ~ Dir(α) | Document-specific word probabilities | Variation in word usage across documents by same author |
| x ~ Mult(n, p) | Observed word counts | Actual word frequencies in a specific document |

Experimental Protocols for Authorship Attribution

Data Preprocessing Protocol

Input: Raw text documents of known authorship

Output: Document-term matrix of word counts (raw counts for DMN modeling)

Text Cleaning

- Remove punctuation, numbers, and special characters

- Convert to lowercase

- Handle encoding issues

Tokenization

- Split text into individual words

- Consider n-grams for phrase detection (optional)

Vocabulary Selection

- Select the K most frequent words across corpus

- Alternatively, use domain-specific vocabulary

- Remove stop words if appropriate for the domain

Count Matrix Creation

- For each document, count occurrences of each vocabulary word

- Create document-term matrix D where Dᵢⱼ = count of word j in document i

Document Length Normalization

- Optionally normalize counts by document length if analyzing proportions

- For DMN modeling, use raw counts with appropriate n parameter

Model Fitting Procedure

Objective: Estimate DMN parameters for each author from training documents

Figure 1: DMN Parameter Estimation Workflow

Parameter Initialization

- For each author, initialize α vector based on word frequencies

- Set αᵢ = max(1, μᵢ × κ) where μᵢ is mean proportion of word i

- κ is a tuning parameter (typically 10-100)

Likelihood Computation

Maximum Likelihood Estimation

- Use optimization algorithms (Newton-Raphson, EM, or gradient-based)

- Apply constraints to ensure αᵢ > 0

- Monitor convergence via log-likelihood changes
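One way to carry out the estimation is to optimize over log α, which enforces αᵢ > 0 without explicit constraints. The sketch below assumes gradient-based optimization with `scipy.optimize.minimize` (L-BFGS-B) and an analytic gradient; the function names and the default κ = 10 are illustrative choices.

```python
import numpy as np
from scipy.optimize import minimize
from scipy.special import digamma, gammaln

def neg_loglik_and_grad(log_alpha, X):
    """Negative DMN log-likelihood and its gradient with respect to log(alpha).
    Multinomial coefficients are omitted because they do not depend on alpha."""
    alpha = np.exp(log_alpha)
    a0, n = alpha.sum(), X.sum(axis=1)
    ll = (gammaln(a0) - gammaln(n + a0)).sum()
    ll += (gammaln(X + alpha) - gammaln(alpha)).sum()
    grad_alpha = (digamma(a0) - digamma(n + a0)).sum()
    grad_alpha = grad_alpha + (digamma(X + alpha) - digamma(alpha)).sum(axis=0)
    return -ll, -grad_alpha * alpha  # chain rule for the log parameterization

def fit_dmn(X, kappa=10.0):
    """Estimate alpha for one author from an (n_docs x K) count matrix."""
    mu = (X / X.sum(axis=1, keepdims=True)).mean(axis=0)  # mean word proportions
    alpha_init = np.maximum(1.0, mu * kappa)               # initialization rule above
    res = minimize(neg_loglik_and_grad, np.log(alpha_init), args=(X,),
                   jac=True, method="L-BFGS-B")
    return np.exp(res.x), res
```

`fit_dmn(X)` returns the estimated α and the optimizer result, whose `fun` attribute (the negative log-likelihood at the optimum) can be used to monitor convergence or compare models.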

Model Validation

- Assess goodness-of-fit using held-out data

- Check residual dispersion

- Compare with alternative models (multinomial, other mixtures)

Authorship Classification Protocol

Objective: Attribute documents of unknown authorship to the most likely author

Feature Extraction

- Process unknown document through same preprocessing pipeline

- Extract word counts for the same vocabulary used in training

Likelihood Calculation

- For each candidate author, compute log-likelihood of document counts under their DMN model

- Use the DMN probability mass function with estimated parameters

Authorship Assignment

- Assign to author with highest log-likelihood

- Compute likelihood ratios for confidence assessment

- Apply thresholding for rejection option when no good match exists
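The attribution steps can be sketched as follows. The rejection rule used here, a minimum log-likelihood margin between the best and second-best author, is one possible interpretation of the thresholding step and is an assumption, as are the function names, the `min_margin` default, and the toy author parameters.

```python
import numpy as np
from scipy.special import gammaln

def dmn_loglik(x, alpha):
    """DMN log-likelihood of a count vector, up to a term that is the same
    for every author (the multinomial coefficient)."""
    x = np.asarray(x, dtype=float)
    alpha = np.asarray(alpha, dtype=float)
    a0, n = alpha.sum(), x.sum()
    return gammaln(a0) - gammaln(n + a0) + (gammaln(x + alpha) - gammaln(alpha)).sum()

def attribute(x, author_alphas, min_margin=2.0):
    """Return (decision, margin, scores); decision is None when the
    log-likelihood ratio between the top two authors is below min_margin."""
    scores = {name: dmn_loglik(x, a) for name, a in author_alphas.items()}
    ranked = sorted(scores, key=scores.get, reverse=True)
    best = ranked[0]
    margin = scores[best] - scores[ranked[1]] if len(ranked) > 1 else np.inf
    decision = best if margin >= min_margin else None
    return decision, margin, scores

# Toy example with two hypothetical authors over a three-word vocabulary.
authors = {"A": [8.0, 1.0, 1.0], "B": [1.0, 1.0, 8.0]}
print(attribute([15, 3, 2], authors))
print(attribute([7, 6, 7], authors))
```

The first document leans heavily on the first word and is assigned to author A; the second is equally compatible with both authors, so the margin is essentially zero and the decision is withheld.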

The Scientist's Toolkit

Research Reagent Solutions

Table 3: Essential Tools for DMN-Based Authorship Research

| Tool/Category | Specific Examples | Function/Purpose |
|---|---|---|
| Text Processing | NLTK, SpaCy, Stanford NLP | Tokenization, lemmatization, preprocessing |
| DMN Implementation | dirmult R package, PyMC Python, VGAM | Parameter estimation, model fitting |
| Computational Tools | scipy.special.gammaln, custom stable implementations | Accurate log-likelihood computation [10] |
| Visualization | matplotlib, seaborn, arviz | Model diagnostics, result presentation |
| Optimization | optim in R, scipy.optimize in Python | Maximum likelihood estimation |

Computational Considerations

Implementing the DMN distribution requires careful computational handling, particularly for the log-gamma calculations in the likelihood function. Standard implementations can suffer from numerical instability when the overdispersion parameter approaches zero (near-multinomial case) [10]. Recommended solutions include:

Stable Computation Methods

- Use log-gamma functions directly rather than computing gamma functions

- Implement specialized algorithms for accurate computation of differences of log-gamma functions [10]

- Consider asymptotic expansions for large arguments
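A simple illustration of the cancellation problem: for an integer count x, log Γ(α + x) − log Γ(α) equals the sum of log(α + k) for k = 0, ..., x − 1, which avoids subtracting two huge, nearly equal numbers. This is a basic alternative shown for intuition, not the specialized algorithm of [10], and its cost grows linearly with x. The function name `lgamma_diff` is illustrative.

```python
import numpy as np
from scipy.special import gammaln

def lgamma_diff(alpha, x):
    """log Gamma(alpha + x) - log Gamma(alpha) for a non-negative integer x,
    computed as a sum of logs to avoid catastrophic cancellation."""
    return float(np.sum(np.log(alpha + np.arange(x))))

# Near-multinomial regime: alpha is enormous relative to the count.
alpha, x = 1e15, 3
naive = gammaln(alpha + x) - gammaln(alpha)
stable = lgamma_diff(alpha, x)
print("naive :", naive)
print("stable:", stable)
print("x * log(alpha):", x * np.log(alpha))
```

At this scale both log-gamma values are of order 10¹⁶, so their floating-point difference can only be resolved coarsely, while the sum of logs stays close to x × log(α).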

Efficient Computation

- For large vocabularies, utilize sparse representations for word counts

- Implement vectorized operations for likelihood calculations

- Use approximation methods for very large datasets

Advanced Methodologies

Dirichlet Mixture Models for Multiple Authors

For analyzing corpora with multiple potential authors, Dirichlet Multinomial Mixtures (DMM) provide a powerful clustering approach:

Figure 2: Dirichlet Mixture Model for Author Discovery

This approach automatically groups documents with similar stylistic characteristics, potentially corresponding to different authors or author groups [11]. The number of clusters can be selected using the evidence framework or other model selection criteria [11].

Handling Sparse High-Dimensional Data

Authorship attribution often involves large vocabularies with sparse word distributions. The DMN distribution naturally handles sparsity through the Dirichlet prior, which effectively smooths probability estimates for rare words [12]. For extreme sparsity, consider:

Zero-Inflated Extensions

- Models that explicitly account for excess zeros

- Two-component mixtures for burstiness in word usage

Hierarchical Extensions

- Share statistical strength across words through hierarchical priors

- Model correlations between word usage patterns

Covariate Integration

To account for external factors influencing writing style (e.g., time period, genre, subject matter), DMN regression models can incorporate covariates:

where μᵢ is the expected count for word i, and X₁,...,Xₚ are document covariates [12] [13]. This enables separation of author effects from other influencing factors.

Interpretation Guidelines

Parameter Analysis

Interpreting fitted DMN models involves examining both the estimated α parameters and derived quantities:

Relative Word Importance

- Words with high αᵢ/α₀ values are characteristic of an author's style

- Compare relative ratios across authors for distinctive patterns

Style Consistency

- Large α₀ values indicate consistent word usage across documents

- Small α₀ values suggest high variability in word choice

Overdispersion Assessment

- Compare with multinomial model using likelihood ratio tests

- Assess whether overdispersion is adequately captured

Model Diagnostics

Essential diagnostic checks for DMN models in authorship attribution:

Goodness-of-Fit

- Posterior predictive checks comparing observed and simulated data

- Residual analysis for systematic patterns

Classification Performance

- Cross-validation accuracy on held-out documents

- Confusion matrix analysis for common misattributions

Robustness Analysis

- Sensitivity to vocabulary selection

- Stability across different preprocessing choices

The Dirichlet-multinomial distribution provides a principled, flexible framework for authorship attribution that properly accounts for the overdispersed nature of word count data. By moving beyond the limitations of the multinomial distribution, it enables more accurate author characterization and classification, particularly valuable when dealing with diverse documents written across different contexts or time periods.

The Dirichlet-multinomial (DM) model provides a robust probabilistic framework for analyzing multivariate count data, making it exceptionally suitable for authorship attribution research. In stylometry, an author's writing style can be quantified by representing documents as multivariate counts of linguistic features—including word frequencies, syntactic patterns, and character n-grams [12]. The DM model effectively captures the inherent overdispersion in such data, where the variance of observed feature counts exceeds what would be expected under a simple multinomial model [14]. This overdispersion arises naturally in writing style due to the complex interplay of consistent authorial habits and contextual variations within and between documents.

The DM distribution is constructed as a compound probability distribution, where the multinomial probability parameters themselves follow a Dirichlet distribution [5]. For a document represented as a vector of counts \(\mathbf{y} = (y_1, y_2, \dots, y_K)\) across \(K\) linguistic features with total count \(y_+ = \sum_{j=1}^K y_j\), the DM probability mass function is given by:

\[ P(\mathbf{y} \mid \boldsymbol{\alpha}) = \frac{\Gamma(y_+ + 1)\,\Gamma(\alpha_+)}{\Gamma(y_+ + \alpha_+)} \prod_{j=1}^K \frac{\Gamma(y_j + \alpha_j)}{\Gamma(\alpha_j)\,\Gamma(y_j + 1)} \]

where \(\boldsymbol{\alpha} = (\alpha_1, \alpha_2, \dots, \alpha_K)\) are the Dirichlet parameters, and \(\alpha_+ = \sum_{j=1}^K \alpha_j\) [5]. The flexibility of this model to account for extra-multinomial variation makes it particularly valuable for distinguishing between authors based on their characteristic writing patterns.

Stylistic Interpretation of DM Parameters in Writing Style Analysis

Intraclass and Interclass Correlation Structures

The parameters of the Dirichlet-multinomial model provide critical insights into the correlation structure of linguistic features, offering a stylistic interpretation of writing patterns. The intraclass correlation measures the similarity or clustering tendency of specific linguistic features within documents by the same author, while interclass correlations capture the relationships between different linguistic features across an author's body of work [12].

The DM model's mean and variance specifications reveal this correlation structure mathematically. The expected count for linguistic feature \(j\) is:

\[ E(Y_j) = y_+ \frac{\alpha_j}{\alpha_+} \]

with variance:

\[ \operatorname{Var}(Y_j) = y_+ \frac{\alpha_j}{\alpha_+} \left(1 - \frac{\alpha_j}{\alpha_+}\right) \left(\frac{y_+ + \alpha_+}{1 + \alpha_+}\right) \]

The covariance between different features \(i\) and \(j\) is:

\[ \operatorname{Cov}(Y_i, Y_j) = -y_+ \frac{\alpha_i \alpha_j}{\alpha_+^2} \left(\frac{y_+ + \alpha_+}{1 + \alpha_+}\right) \]

for \(i \neq j\) [5]. The negative covariance structure inherent in the standard DM model implies that linguistic features compete within a fixed compositional space: increased use of one feature necessarily reduces the available probability mass for others [5]. However, extended DM models can accommodate both positive and negative correlations, providing a more flexible framework for capturing the complex relationships between stylistic elements [12].

Interpretation of Correlation Patterns in Authorship

The correlation structures captured by DM parameters reflect fundamental aspects of authorial style. A high intraclass correlation for specific lexical features indicates an author's consistent preference for certain words or phrases across documents, representing their stylistic signature. Positive interclass correlations between certain syntactic constructions may reveal an author's characteristic sentence patterns, while negative correlations might reflect mutually exclusive stylistic choices [12].

For example, an author might demonstrate either complex, multi-clause sentences or concise, direct constructions, but rarely both in the same document. This pattern would manifest as negative correlations between features representing these contrasting styles. The DM model's ability to quantify these relationships provides a mathematical foundation for understanding an author's distinctive compositional habits beyond simple frequency counts.

Table 1: Interpretation of DM Correlation Structures in Writing Style Analysis

| Correlation Type | Mathematical Expression | Stylistic Interpretation | Authorship Significance |
|---|---|---|---|
| High Intraclass Correlation | \(\rho = \frac{1}{1+\alpha_+}\) [15] | Consistent use of specific words/phrases across documents | Strong authorial fingerprint; reliable for attribution |
| Positive Interclass Correlation | \(\operatorname{Cov}(Y_i, Y_j) > 0\) [12] | Co-occurrence of certain syntactic patterns | Characteristic style complexes; e.g., formal vocabulary with complex syntax |
| Negative Interclass Correlation | \(\operatorname{Cov}(Y_i, Y_j) < 0\) [5] | Mutual exclusion of certain constructions | Stylistic trade-offs; e.g., dialogue vs. description |

Experimental Protocols for DM-Based Authorship Analysis

Data Preparation and Feature Engineering

The first critical step in DM-based authorship analysis involves transforming raw texts into multivariate count data suitable for DM modeling. This process begins with text preprocessing, including tokenization, lowercasing, and removal of punctuation. Subsequently, feature selection identifies the most stylistically informative elements, which may include:

- Lexical features: Word unigrams, bigrams, or trigrams with sufficient frequency

- Character features: Character n-grams (typically 3-5 grams)

- Syntactic features: Part-of-speech tags, syntactic production rules

- Structural features: Paragraph length, sentence complexity measures

The selected features are then converted to frequency counts per document, creating a document-term matrix where rows represent documents and columns represent feature counts. To address the high-dimensionality of linguistic data, feature reduction techniques such as filtering by minimum frequency or maximum number of features are typically applied [14]. The resulting count data preserves the compositional nature of writing style while accommodating the constraints of the DM framework.
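As a small illustration of the feature-engineering step, the sketch below counts character trigrams and keeps only those that reach a minimum corpus frequency. The helper names, the whitespace normalization, and the `min_count` threshold are assumptions made for the example.

```python
from collections import Counter

def char_ngrams(text, n=3):
    """Count overlapping character n-grams after lowercasing and collapsing whitespace."""
    text = " ".join(text.lower().split())
    return Counter(text[i:i + n] for i in range(len(text) - n + 1))

def select_features(docs, n=3, min_count=2):
    """Keep n-grams whose total corpus count reaches min_count.
    Returns (features, matrix) with one row of counts per document."""
    per_doc = [char_ngrams(d, n) for d in docs]
    totals = Counter()
    for counts in per_doc:
        totals.update(counts)
    features = sorted(g for g, c in totals.items() if c >= min_count)
    matrix = [[counts[g] for g in features] for counts in per_doc]
    return features, matrix

features, matrix = select_features(["the cat", "the hat"])
print(features)
print(matrix)
```

Frequency filtering of this kind keeps the feature space manageable before any DM parameters are estimated.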

Parameter Estimation and Model Fitting

Once the count data is prepared, DM parameters are estimated using maximum likelihood estimation (MLE) or Bayesian methods. The likelihood function for the DM model is:

\[ \mathcal{L}(\boldsymbol{\alpha} \mid \mathbf{Y}) = \prod_{i=1}^n \left[ \frac{\Gamma(y_{i+} + 1)\,\Gamma(\alpha_+)}{\Gamma(y_{i+} + \alpha_+)} \prod_{j=1}^K \frac{\Gamma(y_{ij} + \alpha_j)}{\Gamma(\alpha_j)\,\Gamma(y_{ij} + 1)} \right] \]

where \(y_{ij}\) is the count of feature \(j\) in document \(i\), \(y_{i+}\) is the total count of features in document \(i\), and \(n\) is the number of documents [10]. Computational challenges in evaluating the log-likelihood function, particularly in the near-multinomial regime where the overdispersion approaches zero (that is, when \(\alpha_+\) is very large), can be addressed using specialized algorithms that provide numerical stability [10].

For authorship attribution tasks, a separate DM model is typically estimated for each candidate author using documents with known authorship. The estimated parameters \(\boldsymbol{\alpha}^{(a)}\) for author \(a\) capture the author-specific correlation structure of linguistic features. These author-specific models can then be used to calculate the probability of unseen documents under each model for attribution decisions.

Correlation Analysis and Interpretation

The estimated DM parameters provide the foundation for analyzing intraclass and interclass correlations in writing style. The intraclass correlation coefficient (ICC) for linguistic features under the DM model can be calculated as:

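\[ \mathrm{ICC} = \frac{1}{1 + \alpha_+} \]

which decreases as \(\alpha_+\) increases [15]. A higher ICC indicates greater homogeneity of feature usage within individual documents and therefore, for a given author, more variation from one document to the next; a lower ICC (larger \(\alpha_+\)) indicates usage that stays closer to the author's mean profile across documents.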

For interclass correlations, the covariance structure derived from the DM parameters reveals how different linguistic features co-vary in an author's style. Features with strong positive correlations represent stylistic elements that tend to co-occur, while negative correlations indicate mutually exclusive patterns. These relationships can be visualized through correlation networks, where nodes represent linguistic features and edges represent significant correlations, providing intuitive insight into an author's stylistic structure.

Diagram 1: Stylometric analysis workflow for DM correlation modeling shows the sequential process from data collection through stylistic interpretation.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools for DM-Based Stylometric Analysis

| Tool/Resource | Function | Application Context | Implementation Considerations |
|---|---|---|---|
| DM Likelihood Calculator | Stable computation of log-likelihood | Parameter estimation | Use algorithms addressing numerical instability near ψ=0 [10] |
| Spike-and-Slab Priors | Bayesian variable selection | Feature significance testing | Identifies stylistically informative features [12] |
| Sparse Group Penalization | Regularized regression | High-dimensional feature spaces | Selects relevant covariates and associated features [14] |
| Dirichlet-Multinomial Regression | Covariate effect testing | Author characteristic analysis | Links composition to covariates via log-linear model [14] |
| Network Fusion Methods | Incorporating prior structure | Document relationship modeling | Uses network information to improve clustering [3] |

Advanced Analytical Frameworks

Mixed-Effects Extensions for Multi-Level Stylistic Analysis

For complex authorship problems involving multiple documents per author across different genres or time periods, Dirichlet-multinomial mixed models provide enhanced analytical capabilities. These models incorporate random effects to account for within-author correlations while examining fixed effects of stylistic covariates [16]. The model structure can be represented as:

[ \log(E[\mathbf{Y}_{id}]) = \mathbf{X}_{id}\boldsymbol{\beta} + \mathbf{Z}_{id}\mathbf{u}_i + \boldsymbol{\epsilon}_{id} ]

where (\mathbf{Y}_{id}) is the vector of feature counts for document (d) by author (i), (\mathbf{X}_{id}) contains fixed effect covariates, (\boldsymbol{\beta}) represents fixed effects, (\mathbf{Z}_{id}) contains random effect covariates, and (\mathbf{u}_i) represents author-specific random effects [16].

This framework is particularly valuable for:

- Longitudinal stylometric analysis: Tracking style evolution over an author's career

- Genre-adaptive attribution: Accounting for systematic variation across genres

- Multi-author collaboration analysis: Disentangling individual contributions in joint works

The mixed-model approach naturally handles the hierarchical structure of stylistic data while providing appropriate uncertainty quantification for authorship conclusions.

Network-Based Stylistic Similarity Analysis

Recent methodological advances incorporate network information into DM-based clustering through Dirichlet-multinomial network fusion (DMNet) [3]. This approach combines count data modeling with known relationships between documents (e.g., chronological proximity, publication venue similarity) using a weighted group L1 fusion penalty:

[ \hat{\boldsymbol{\alpha}} = \arg\min_{\boldsymbol{\alpha}} \left\{ -\ell(\boldsymbol{\alpha};\mathbf{Y}) + \lambda \sum_{i < i'} w_{ii'} \|\boldsymbol{\alpha}_i - \boldsymbol{\alpha}_{i'}\|_2 \right\} ]

where (\ell(\boldsymbol{\alpha};\mathbf{Y})) is the DM log-likelihood, (w_{ii'}) measures the known similarity between documents (i) and (i'), and (\lambda) controls the degree of fusion [3].
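To make the penalty term concrete, here is a small Python sketch that evaluates only the fusion part of the objective, that is, lambda times the weighted sum of Euclidean distances between document-level parameter vectors. The function name `fusion_penalty` and the toy inputs are assumptions for illustration. It does not implement the DMNet optimizer itself.

```python
import math


def fusion_penalty(alphas, weights, lam):
    """Compute lam * sum_{i < i'} w[i][i'] * ||alpha_i - alpha_i'||_2."""
    total = 0.0
    n_docs = len(alphas)
    for i in range(n_docs):
        for j in range(i + 1, n_docs):
            # Euclidean distance between the two documents' parameter vectors
            dist = math.sqrt(sum((a - b) ** 2 for a, b in zip(alphas[i], alphas[j])))
            total += weights[i][j] * dist
    return lam * total


# Toy example: documents 0 and 2 share identical parameters, so they add no penalty
alphas = [[1.0, 2.0], [4.0, 6.0], [1.0, 2.0]]
weights = [[0, 1, 1], [1, 0, 1], [1, 1, 0]]  # hypothetical similarity weights
print(fusion_penalty(alphas, weights, lam=0.5))
```

Because the distance is not squared, the penalty can shrink some pairwise differences exactly to zero, which is what fuses documents into shared clusters.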

This network-enhanced approach enables:

- Incorporation of external evidence: Using metadata to inform stylistic clustering

- Robust author profiling: Identifying characteristic features stable across related documents

- Style community detection: Discovering groups of authors with similar stylistic patterns

The integration of network information creates a more comprehensive analytical framework that combines textual content with contextual relationships for improved authorship analysis.

The Dirichlet-multinomial model provides a powerful mathematical framework for quantifying and interpreting intraclass and interclass correlations in writing style. By moving beyond simple frequency counts to model the covariance structure of linguistic features, the DM approach captures the complex statistical patterns that constitute authorial style. The correlation parameters offer linguistically meaningful interpretations of stylistic consistency and feature relationships, providing deeper insight into the mechanisms of written expression.

The experimental protocols and analytical frameworks presented here establish a rigorous methodology for DM-based authorship analysis, from basic parameter estimation to advanced mixed-effects and network-based extensions. As stylometric research continues to evolve, these DM-based approaches will play an increasingly important role in the scientific study of writing style, enabling more nuanced attribution models and richer understanding of authorial characteristics.

Within authorship attribution research, the Dirichlet-Multinomial (DM) model provides a robust probabilistic framework for characterizing an author's unique stylistic fingerprint. This approach fundamentally operates on the principle that authors use high-frequency function words (e.g., conjunctions, prepositions, and articles) unconsciously and consistently, regardless of the text's topic [17]. The DM model treats the frequencies of these function words in a text as a sample from an underlying multinomial distribution, the parameters of which are specific to each author [17]. By clustering the parameters of these multinomial distributions, the DM model can group texts written by the same author, providing a powerful tool for resolving authorship disputes [17]. This document details the generative process, experimental protocols, and key reagents for implementing this methodology in scholarly research.

The Generative Process of the Dirichlet-Multinomial Model

The core of the DM model is a generative process, a probabilistic recipe that describes how a set of observed texts is assumed to have been produced. It posits that each document is generated by first drawing an author-specific topic distribution, and then generating words based on that distribution.

Underlying Bayesian Model and Workflow

The following diagram illustrates the complete generative process and analytical workflow for authorship attribution using the Dirichlet-Multinomial model.

Diagram Title: DM Model Generative Process

Mathematical Foundation

The generative process for a corpus of documents is formalized as follows [17]:

For each topic (writing style) k among K topics:

- Draw a distribution over the vocabulary: β_k ~ Dirichlet(λ_β)

- Here, β_k represents the probability of each function word within the distinctive style k.

For each document d in the corpus of D documents:

- Draw a document-specific distribution over topics (writing styles): θ_d ~ Dirichlet(α)

- The hyperparameter α influences the concentration of topics within a document.

For each of the N_d word positions in document d:

- (a) Select a topic: z_{d,n} ~ Multinomial(θ_d)

- (b) Generate the word: w_{d,n} ~ Multinomial(β_{z_{d,n}})

This process results in the observed words that constitute the document. The key for authorship attribution is that the parameters θ_d of the multinomial distribution are treated as latent variables that characterize an author's style [17]. A Dirichlet process prior can be placed on these parameters to form a Dirichlet Process Mixture Model (DPMM), which allows for a flexible number of clusters (i.e., authors) to be identified from the data [17].
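The generative steps above can be simulated in a few lines of Python. The sketch below uses only the standard library, drawing Dirichlet samples by normalizing Gamma draws. The function names, the symmetric hyperparameter values, and the tiny vocabulary are assumptions chosen for illustration, and the Dirichlet process extension is not included.

```python
import random


def sample_dirichlet(alpha, rng):
    """Draw one Dirichlet sample by normalizing independent Gamma(alpha_k, 1) draws."""
    draws = [rng.gammavariate(a, 1.0) for a in alpha]
    total = sum(draws)
    return [d / total for d in draws]


def generate_corpus(vocab, n_styles, doc_lengths, alpha=0.5, lam=0.5, seed=0):
    """Simulate documents from the generative process with symmetric priors."""
    rng = random.Random(seed)
    # For each style k: beta_k ~ Dirichlet(lambda_beta)
    betas = [sample_dirichlet([lam] * len(vocab), rng) for _ in range(n_styles)]
    docs = []
    for n_d in doc_lengths:
        # For each document d: theta_d ~ Dirichlet(alpha)
        theta = sample_dirichlet([alpha] * n_styles, rng)
        words = []
        for _ in range(n_d):
            z = rng.choices(range(n_styles), weights=theta)[0]  # (a) select a style
            w = rng.choices(vocab, weights=betas[z])[0]         # (b) generate the word
            words.append(w)
        docs.append(words)
    return betas, docs


betas, docs = generate_corpus(["the", "and", "of", "to"], n_styles=2, doc_lengths=[8, 12])
print(docs[0])
```

Inference runs this recipe in reverse: given only the words, it recovers plausible values of the latent style assignments and distributions.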

Experimental Protocols for Authorship Attribution

Protocol 1: Data Preparation and Function Word Selection

Objective: To prepare a standardized corpus of texts and select a set of discriminatory function words for analysis.

- Text Collection: Gather a corpus of texts, including documents with known authorship and any disputed documents.

- Pre-processing:

- Clean texts by removing punctuation, numbers, and converting all text to lowercase.

- Tokenize the texts into individual words.

- Function Word Selection:

- Compile an initial list of high-frequency, context-independent function words (e.g., "the", "and", "of", "in", "to", "a").

- The final selection of K function words is typically determined by a subject matter expert to ensure their suitability for discriminating between authors [17].

- Feature Extraction: For each document, count the frequency of each of the K selected function words. The data for each document is thus a vector of counts that sums to the total number of function words in that document.
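A minimal sketch of Protocol 1 in Python follows. It lowercases the text, keeps only alphabetic tokens (which drops punctuation and numbers), and counts a fixed list of function words. The six-word list is the illustrative one from the protocol, not an expert-curated set, and the function name is an assumption.

```python
import re
from collections import Counter

# Illustrative list only; a real study would use an expert-selected set of K words
FUNCTION_WORDS = ["the", "and", "of", "in", "to", "a"]


def function_word_counts(text, function_words=FUNCTION_WORDS):
    """Return the count vector of the selected function words for one document."""
    tokens = re.findall(r"[a-z]+", text.lower())  # lowercase, letters only
    wanted = set(function_words)
    counts = Counter(t for t in tokens if t in wanted)
    # Fixed ordering so that every document yields a comparable vector
    return [counts[w] for w in function_words]


sample = "The cat and the dog went to a park in the city of Rome."
print(function_word_counts(sample))
```

Each returned vector sums to the number of function-word tokens in the document, which is the total the DM model conditions on.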

Protocol 2: Model Fitting and Cluster Analysis

Objective: To fit the DM Mixture Model to the count data and determine the probabilistic clustering of texts by author.

- Model Specification: Define the DM mixture model with a Dirichlet process prior for clustering, as described in the generative process section above.

- Computational Algorithm: Use a Markov Chain Monte Carlo (MCMC) sampling algorithm, such as a collapsed Gibbs sampler, to generate samples from the posterior distribution of the model parameters [17]. This involves:

- Initializing model parameters and hyperparameters.

- Iteratively sampling the cluster assignments for each text and the associated multinomial parameters.

- Posterior Inference: After a sufficient number of MCMC iterations (discarding an initial burn-in period):

- Analyze the posterior distribution of cluster assignments to estimate the probability that any two texts were written by the same author.

- Summarize the output to obtain a final clustering of texts, acknowledging the quantifiable uncertainty in the resultant clusters [17].
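The posterior inference step can be illustrated without writing a full sampler. Assuming a list of post-burn-in cluster-assignment samples is already available (the samples below are made up for illustration), the pairwise same-author probability is simply the fraction of samples in which two texts share a cluster label.

```python
def coclustering_matrix(samples):
    """Estimate P(text i and text j share a cluster) from MCMC assignment samples."""
    n_samples = len(samples)
    n_texts = len(samples[0])
    counts = [[0] * n_texts for _ in range(n_texts)]
    for labels in samples:
        for i in range(n_texts):
            for j in range(n_texts):
                if labels[i] == labels[j]:
                    counts[i][j] += 1
    return [[c / n_samples for c in row] for row in counts]


# Hypothetical post-burn-in samples: one cluster label per text, per iteration
samples = [
    [0, 0, 1],
    [0, 0, 1],
    [1, 0, 0],
    [2, 2, 2],
]
for row in coclustering_matrix(samples):
    print(row)
```

Working with co-clustering frequencies rather than raw labels sidesteps label switching, since cluster labels carry no meaning from one iteration to the next.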

Application to the Federalist Papers

A classic application of this protocol is the analysis of the Federalist Papers [17].

- Knowns: Alexander Hamilton (51 papers), James Madison (14 papers), John Jay (5 papers).

- Disputed: 12 papers with contested authorship between Hamilton and Madison.

- Process: The DM model with a Dirichlet process prior was applied to function word frequencies from these texts. The model successfully clustered most known papers correctly and assigned the disputed papers to Madison with high probability, demonstrating the practical utility of the approach [17].

The Scientist's Toolkit: Key Research Reagents

The following table details the essential "research reagents" and computational tools required for implementing the DM model for authorship attribution.

Table 1: Essential Research Reagents and Tools for DM Model-Based Authorship Analysis

| Reagent/Tool | Type | Function in the Experiment |

|---|---|---|

| Corpus of Texts | Data | The primary input data. Includes texts of known authorship for model training and disputed texts for analysis [17]. |

| Function Word List | Data/Parameter | A set of K prepositions, conjunctions, and articles. Serves as the model's features, representing the author's unconscious stylistic "word prints" [17]. |

| Dirichlet Prior (α) | Model Parameter | A hyperparameter that controls the prior distribution over topic proportions and influences the concentration of writing styles within and across documents [17]. |

| Markov Chain Monte Carlo (MCMC) Sampler | Computational Algorithm | The engine for Bayesian inference. Used to draw samples from the complex posterior distribution of model parameters and cluster assignments [17]. |

| Collapsed Gibbs Sampler | Specific MCMC Algorithm | A computationally efficient sampling algorithm that marginalizes out some parameters (like θ_d and β_k) to improve mixing and convergence of the Markov chain [17]. |

Data Presentation and Interpretation

The quantitative outputs of the DM model analysis are typically presented in two key forms:

Table 2: Key Quantitative Outputs from a DM Model Analysis

| Output Type | Description | Interpretation in Authorship |

|---|---|---|

| Posterior Cluster Assignment Probabilities | A matrix showing the probability that each text belongs to each identified author cluster. | A disputed text assigned to "Cluster 1" with a probability of 0.95 provides strong evidence that it was written by the author characterizing that cluster. |

| Author-Specific Word Probabilities (β_k) | For each cluster, a vector of probabilities for each function word. | Reveals the author's unique stylistic signature. E.g., one author may use "upon" with a probability of 0.015, while another uses it at 0.002. |

The DM model's primary advantage over methods assuming multivariate normality is its inherent respect for the discrete, compositional nature of count data, avoiding the pitfalls of spurious correlations [17]. Furthermore, the Bayesian framework provides a natural and quantifiable measure of uncertainty for the authorship assignments, which is a significant advancement over deterministic clustering algorithms [17].

In authorship attribution research, a fundamental challenge is to statistically model the word counts or term frequencies extracted from documents. These data are inherently compositional, meaning the word counts from a single document are constrained to sum to a fixed total (the total number of words analyzed) and carry only relative information [16]. The standard multinomial distribution has traditionally been used to model such categorical count data, but it carries a critical limitation: it assumes all observations arise from a single, fixed probability vector of word usage [4]. In reality, writing style naturally varies between documents—even by the same author—due to changes in topic, genre, or temporal evolution of style. This real-world variability creates overdispersion, where the observed variance in word counts significantly exceeds what the multinomial model can account for [4].

The Dirichlet-multinomial (DM) model directly addresses this limitation by introducing a hierarchical structure that naturally accommodates overdispersed count data. Rather than assuming a fixed probability vector for all documents, the DM model treats each document as having its own unique probability vector drawn from a common Dirichlet distribution [5] [4]. This approach has been shown to "outperform alternatives for analysis of microbiome and other ecological count data" [18], and similar advantages extend to textual analysis. In literary style evolution tracking, for instance, DM models have successfully detected stylistic change points by accounting for this extra variance [19]. This technical note explores the quantitative advantages of DM models and provides detailed protocols for their application in authorship attribution research.

Quantitative Advantages of Dirichlet-Multinomial Models

Theoretical Foundation and Variance Structure

The Dirichlet-multinomial model is a compound distribution formed by combining the Dirichlet distribution with the multinomial distribution. In this hierarchical structure, the observed word counts for document i (X_i) are generated through a two-step process: first, a document-specific probability vector p_i is drawn from a Dirichlet distribution with parameter vector α; then, the word counts X_i are drawn from a multinomial distribution parameterized by p_i and the total word count n_i [5]. Mathematically, this is represented as:

p_i ~ Dirichlet(α)

X_i | p_i ~ Multinomial(n_i, p_i)

This structure creates a more flexible covariance framework that better reflects real-world variability in word usage. The following table summarizes the key differences in moment properties between the standard multinomial and Dirichlet-multinomial distributions:

Table 1: Comparative Properties of Multinomial and Dirichlet-Multinomial Distributions

| Property | Multinomial Distribution | Dirichlet-Multinomial Distribution |

|---|---|---|

| Mean | E(X_i) = n·π_i | E(X_i) = n·(α_i/α_0) |

| Variance | Var(X_i) = n·π_i·(1-π_i) | Var(X_i) = n·(α_i/α_0)(1-α_i/α_0)[(n+α_0)/(1+α_0)] |

| Covariance | Cov(X_i,X_j) = -n·π_i·π_j | Cov(X_i,X_j) = -n·(α_i·α_j)/(α_0^2)·[(n+α_0)/(1+α_0)] |

| Dispersion | Fixed relationship between mean and variance | Extra variance controlled by concentration parameter α_0 |

where α_0 = Σα_k represents the concentration parameter [5]. The key advantage emerges in the variance formula: the DM variance equals the multinomial variance multiplied by the factor [(n+α_0)/(1+α_0)], which is greater than 1 whenever n > 1 and α_0 is finite, and which approaches 1 (the multinomial case) as α_0 grows large [5]. This multiplicative factor quantitatively represents the model's ability to account for the extra variance observed in real-world word usage patterns.
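As a worked illustration of the variance formulas in the table, the short Python sketch below computes the multinomial variance and the DM variance for one feature. The function names and the numbers (n = 100, α = (2, 3, 5)) are assumptions chosen so the arithmetic is easy to follow.

```python
def multinomial_variance(n, p):
    """Variance of one multinomial count with total n and probability p."""
    return n * p * (1 - p)


def dm_variance(n, alpha, k):
    """Variance of feature k under a Dirichlet-multinomial with parameters alpha."""
    alpha_0 = sum(alpha)                    # concentration parameter
    p = alpha[k] / alpha_0                  # mean proportion of feature k
    factor = (n + alpha_0) / (1 + alpha_0)  # overdispersion multiplier
    return multinomial_variance(n, p) * factor


n, alpha = 100, [2.0, 3.0, 5.0]
# Here alpha_0 = 10 and p = 0.2, so the multinomial variance is about 16
# and the multiplier is 110 / 11 = 10, giving a DM variance of about 160.
print(multinomial_variance(n, 0.2), dm_variance(n, alpha, 0))
```

With the same mean proportion, the DM count is roughly ten times more variable than the multinomial count in this example, which is the overdispersion the model is meant to absorb.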

Empirical Performance Advantages

Research across multiple disciplines has demonstrated the superior performance of Dirichlet-multinomial models compared to standard multinomial approaches. In controlled simulations, DMM was "better able to detect shifts in relative abundances than analogous analytical tools, while identifying an acceptably low number of false positives" [18]. This enhanced sensitivity to meaningful patterns—while controlling false discoveries—is particularly valuable in authorship attribution, where correctly identifying subtle stylistic shifts can determine conclusions about authorship or stylistic evolution.

In a literary style analysis application, Dirichlet-multinomial change point regression successfully tracked the evolution of literary style by identifying periods of stylistic consistency interrupted by abrupt changes [19]. The model's ability to account for extra variance in word usage was crucial for distinguishing true stylistic evolution from random fluctuations. Similarly, in microbiome research—where data shares the compositional characteristics of textual data—DM models identified "several potentially pathogenic, bacterial taxa as more abundant" in specific patient groups, while "these differences went undetected with different statistical approaches" [18].

The following workflow diagram illustrates the analytical process of implementing a Dirichlet-multinomial model for authorship attribution:

Diagram 1: Dirichlet-Multinomial Analysis Workflow for Authorship Attribution. This workflow encompasses text processing, model specification with hierarchical structure, and parameter estimation options.

Experimental Protocols for Authorship Attribution

Data Preparation and Feature Selection

Protocol 1: Text Preprocessing and Feature Engineering

Text Acquisition and Cleaning: Obtain digital texts of known authorship. Clean the data by removing metadata, standardizing orthography, and handling special characters. Document this process for reproducibility.

Tokenization and Linguistic Processing:

- Split texts into word-level or character-level tokens using consistent rules

- Apply lemmatization or stemming to group word variants

- Remove extremely high-frequency and low-frequency words that carry little stylistic information

- Optionally, extract syntactic features (part-of-speech n-grams) or lexical richness measures

Document-Term Matrix Construction:

- Create a matrix where rows represent documents or text samples

- Columns represent the selected linguistic features (words, character n-grams, etc.)

- Cells contain frequency counts of each feature in each document

- Normalize by document length if analyzing fixed-length text samples

Table 2: Research Reagent Solutions for Textual Analysis

| Research Reagent | Function in Analysis | Implementation Examples |

|---|---|---|

| Text Corpus | Primary research material | Project Gutenberg, proprietary author collections, historical archives |

| Tokenization Engine | Text segmentation into analyzable units | NLTK, SpaCy, Stanford CoreNLP, custom rule-based systems |

| Feature Selection Algorithm | Identifies stylistically relevant features | χ² test, mutual information, frequency-based filtering, linguistic knowledge |

| Computational Framework | Statistical modeling and inference | PyMC (Python), Stan (R/Python), custom Gibbs sampling implementations |

Model Implementation and Estimation Techniques

Protocol 2: Dirichlet-Multinomial Model Specification and Estimation

Model Specification:

The Dirichlet-multinomial model for authorship attribution can be formally specified as:

α = conc × frac

p_i ~ Dirichlet(α) for each document i

X_i ~ Multinomial(n_i, p_i) for each document i

where frac represents the expected fraction of each word across the corpus, and conc (concentration parameter) controls the degree of overdispersion [4].
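Under this parameterization the document-level probability vector can be integrated out analytically, which gives the DM probability mass function in closed form. The Python sketch below evaluates its logarithm with `math.lgamma` for numerical stability. The function name `dm_log_pmf` and the example values are assumptions for illustration.

```python
from math import exp, lgamma


def dm_log_pmf(counts, frac, conc):
    """Log-probability of a count vector under a DM with alpha = conc * frac."""
    alpha = [conc * f for f in frac]
    n = sum(counts)
    alpha_0 = sum(alpha)
    # log[ n! * Gamma(alpha_0) / Gamma(n + alpha_0) ]
    log_p = lgamma(n + 1) + lgamma(alpha_0) - lgamma(n + alpha_0)
    # plus sum over features of log[ Gamma(x + alpha) / (x! * Gamma(alpha)) ]
    for x, a in zip(counts, alpha):
        log_p += lgamma(x + a) - lgamma(x + 1) - lgamma(a)
    return log_p


# With two features and alpha = (1, 1), every split of n tokens is equally likely,
# so the probability of any outcome with n = 5 is 1/6.
print(exp(dm_log_pmf([3, 2], frac=[0.5, 0.5], conc=2.0)))
```

Summing this log-probability over documents gives the log-likelihood that the estimation methods below either maximize or sample from.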

Estimation Method Selection:

- Hamiltonian Monte Carlo (HMC): Provides the most accurate estimates of parameters but can be computationally intensive for very large vocabularies [18]

- Variational Inference (VI): Offers greater computational efficiency suitable for rapid prototyping or very large datasets [18]

- Gibbs Sampling: A Markov Chain Monte Carlo method particularly well-suited to Dirichlet-multinomial models due to conjugate relationships

Implementation Steps:

- Initialize parameters with reasonable starting values (e.g., empirical word frequencies for frac)

- Run the chosen estimation algorithm with sufficient iterations for convergence

- Monitor convergence using trace plots and diagnostic statistics (e.g., Gelman-Rubin statistic)

- Validate model fit through posterior predictive checks comparing simulated data to observed data
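For the convergence monitoring step, the Gelman-Rubin statistic can be computed directly from several chains of a scalar parameter. The sketch below implements the classic (non-split) version using only the standard library. The function name and the toy chains are illustrative assumptions, and established packages provide more refined variants.

```python
from statistics import mean, variance


def gelman_rubin(chains):
    """Classic potential scale reduction factor for equal-length scalar chains."""
    n = len(chains[0])                          # draws per chain
    chain_means = [mean(c) for c in chains]
    w = mean([variance(c) for c in chains])     # average within-chain variance
    b = n * variance(chain_means)               # between-chain variance
    var_hat = (n - 1) / n * w + b / n           # pooled variance estimate
    return (var_hat / w) ** 0.5


# Toy chains for one parameter (for example, the concentration parameter)
agreeing = [[1.0, 2.0, 3.0, 4.0], [1.5, 2.5, 3.5, 2.0]]
disagreeing = [[0.0, 1.0, 2.0, 3.0], [10.0, 11.0, 12.0, 13.0]]
print(gelman_rubin(agreeing), gelman_rubin(disagreeing))
```

Values close to 1 suggest the chains are sampling the same distribution, while values well above 1, as in the second example, indicate that the chains have not mixed.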

The following diagram illustrates the hierarchical structure of the Dirichlet-multinomial model and its relationship to the observed data:

Diagram 2: Hierarchical Structure of the Dirichlet-Multinomial Model. The model accounts for extra variance through document-specific probability vectors drawn from a common Dirichlet distribution.

Model Validation and Interpretation

Protocol 3: Validation and Analysis of Results

Posterior Predictive Checks:

- Simulate new datasets from the fitted model parameters

- Compare the distribution of simulated data to observed data

- Assess whether the model adequately captures the variance and covariance structure of the actual word counts
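A simplified posterior predictive check can be sketched as follows. For brevity, the code plugs in a single point estimate of α instead of cycling through posterior draws, which is an assumption of this sketch; the observed counts and the α values are also made up. It compares the observed between-document variance of one feature with the variance in replicated datasets.

```python
import random
from statistics import variance


def sample_dm(n, alpha, rng):
    """Draw one count vector of total n from a Dirichlet-multinomial."""
    gammas = [rng.gammavariate(a, 1.0) for a in alpha]
    total = sum(gammas)
    probs = [g / total for g in gammas]  # document-specific probability vector
    counts = [0] * len(alpha)
    for k in rng.choices(range(len(alpha)), weights=probs, k=n):
        counts[k] += 1
    return counts


def ppc_variance(observed, alpha, feature, n_rep=200, seed=0):
    """Return the observed variance of one feature and a predictive p-value."""
    rng = random.Random(seed)
    obs_var = variance([doc[feature] for doc in observed])
    exceed = 0
    for _ in range(n_rep):
        # Replicate the corpus, keeping each document's observed length
        rep = [sample_dm(sum(doc), alpha, rng) for doc in observed]
        if variance([doc[feature] for doc in rep]) >= obs_var:
            exceed += 1
    return obs_var, exceed / n_rep


observed = [[30, 10, 10], [10, 30, 10], [20, 20, 10], [25, 15, 10]]
print(ppc_variance(observed, alpha=[4.0, 4.0, 2.0], feature=0))
```

A predictive p-value near 0 or 1 would suggest that the fitted model reproduces the observed spread poorly, whereas intermediate values are consistent with an adequate fit for that feature.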

Model Comparison Metrics:

- Calculate information criteria (WAIC, LOOCV) for model selection

- Compare log-likelihood on held-out test data

- Evaluate classification accuracy for authorship attribution tasks

Interpretation of Parameters:

- Examine the posterior distribution of frac to identify words most characteristic of different authors or styles

- Analyze the concentration parameter α_0 to quantify the degree of overdispersion in the corpus

- For change point models, identify locations where stylistic parameters shift significantly [19]

Sensitivity Analysis:

- Test model robustness to different prior specifications

- Evaluate stability with different feature sets

- Assess performance across different document lengths and sample sizes

Advanced Extensions and Applications

Addressing Model Limitations

While the standard Dirichlet-multinomial model represents a significant advancement over simple multinomial models, recent research has identified opportunities for further refinement. The traditional DM model imposes a "rigid covariance structure" that inherently produces negative correlations between features [12]. In authorship attribution, this limitation might underestimate the co-occurrence of certain words or stylistic features.

To address this, extended flexible Dirichlet-multinomial (EFDM) models have been developed that "accommodate both negative and positive dependence among taxa" [12]. In textual applications, this translates to better modeling of words that tend to co-occur within authors or stylistic traditions. These extended models maintain the interpretability of standard DM models while offering greater flexibility in capturing complex correlation structures in word usage patterns.

Additionally, zero-inflated Dirichlet-multinomial models have been proposed to address the "excessive presence of zeros" in sparse data [12], which commonly occurs in authorship attribution when dealing with large vocabularies and short documents.

Applications in Authorship Research

The Dirichlet-multinomial framework supports several advanced analytical approaches in authorship attribution:

Authorship Verification: Quantifying the probability that an unattributed text was written by a specific author based on stylistic consistency with known works

Stylistic Change Point Detection: Identifying locations within texts or across an author's career where writing style significantly shifts, potentially indicating collaborative writing, genre changes, or temporal evolution [19]

Influence Tracing: Modeling the relationship between authors by analyzing patterns of word usage similarity while accounting for natural variation

Genre Classification: Distinguishing between textual categories based on characteristic word usage patterns while accommodating within-genre variability

The Dirichlet-multinomial model's capacity to account for extra variance in word usage makes it particularly valuable for analyzing texts with inherent stylistic heterogeneity, such as collaborative works, multi-genre corpora, or texts written across an author's developing career.

Methodology in Action: Building an Authorship Attribution Framework with DM Models

The efficacy of authorship attribution (AA) research is fundamentally dependent on the strategic selection and engineering of discriminative features that capture an author's unique stylistic fingerprint. Within the advanced statistical framework of a Dirichlet-multinomial (DM) model, feature engineering transcends mere descriptor selection; it involves curating the multivariate count data that the model analyzes to infer authorship. Traditional DM distributions, while effective for modeling overdispersed count data like n-gram frequencies, impose a rigid covariance structure with inherent negative correlations between taxa, or in this context, between features [12]. This limitation can hinder the model's ability to capture the complex co-occurrence relationships present in an author's stylistic choices. The recently proposed Extended Flexible Dirichlet-Multinomial (EFDM) model overcomes this by generalizing the DM distribution, accommodating both negative and positive dependence among features and providing a more powerful and interpretable tool for understanding complex authorial patterns [12]. This document outlines detailed protocols for selecting and evaluating n-grams and stylometric features, framing them as the foundational input for such sophisticated mixture models, thereby enabling more accurate and reliable authorship attribution.

Core Stylometric Features and Their Quantitative Profiles

Stylometric features can be categorized based on the linguistic level they probe. The following table summarizes the primary feature types used in state-of-the-art authorship identification.

Table 1: Taxonomy of Core Stylometric Features for Authorship Attribution

| Feature Category | Sub-category | Description | Key Strengths | Considerations for DM/EFDM Models |

|---|---|---|---|---|

| N-grams [20] [21] | Character N-grams | Contiguous sequences of n characters. | Captures lexical, morphological, and structural patterns; language-agnostic [20]. | High-dimensional sparse counts; ideal for DM/EFDM. |

| | Word N-grams | Contiguous sequences of n words. | Captures lexical patterns, idioms, and common phrases. | High dimensionality; sensitive to topic vocabulary. |

| | POS N-grams | Sequences of n Part-of-Speech tags. | Topic-independent; captures syntactic patterns [21]. | Represents grammatical structure as count data. |

| Syntactic Features | Syntactic N-grams (SN-grams) | Paths in syntactic dependency trees [21] [22]. | Captures non-linear, hierarchical sentence structure. | Complex feature extraction; generates structured count data. |

| | Mixed SN-grams | Integrates words, POS, and dependency tags in one n-gram [22]. | Richer representation of syntactic-semantic structure. | Very high-dimensional; requires robust model like EFDM. |

| Lexical & Content Features | Function Words | Frequency of prepositions, conjunctions, pronouns, etc. | Unconscious use; highly discriminative and topic-agnostic [22]. | Low-dimensional, dense counts. |

| | Vocabulary Richness | Measures like Type-Token Ratio (TTR), hapax legomena. | Captures author's lexical diversity. | Can be derived from primary count data. |

| Structural Features | Punctuation Marks | Frequency of commas, periods, colons, etc. | Easy to extract; consistent across topics. | Simple count data. |

| | Sentence/Word Length | Average and distribution of lengths. | Simple yet effective stylistic marker. | Continuous data; requires different modeling approach. |

The performance of these features varies across tasks and datasets. The following table synthesizes quantitative findings from recent evaluations, providing a benchmark for researchers.

Table 2: Comparative Performance of Different N-gram Features in Authorship Tasks

| Feature Type | Sample Features | Reported Performance (Context) | Key Insights |

|---|---|---|---|

| Character N-grams | "ing", "the" (for n=4) | High performance in authorship attribution [20] [21]. | Robust and effective baseline; captures nuanced style aspects. |

| Syntactic N-grams (Dependency) | nsubj(likes, She), dobj(likes, coffee) | Competitive results in detecting writing style changes over time [21]. | Captures conscious syntactic choices; less thematic dependence. |

| Mixed SN-grams | `PRP\|nsubj\|likes\|VERB` (combining POS, dependency, word) | Outperformed homogeneous n-grams on PAN-CLEF 2012 dataset [22]. | Integrating multiple linguistic layers creates a more powerful style marker. |

| POS N-grams | PRON VERB ADP DET | Effective for topic-independent style analysis [21]. | Useful for controlling for thematic content in texts. |

Experimental Protocols for Feature Evaluation

Protocol: Evaluating Feature Efficacy for Style Change Detection

This protocol is designed to test the hypothesis that an author's style changes significantly over time, using different n-gram features as style markers [21].

1. Problem Definition & Corpus Preparation:

- Objective: To determine if the writing style of an author in their early works is distinguishable from their later works.

- Corpus Assembly: For a chosen author, compile a minimum of six full-length novels. Label and partition the data into two classes: "Initial" (three oldest novels) and "Final" (three most recent novels) [21].

2. Feature Extraction & Vectorization:

- Text Pre-processing: Apply consistent cleaning (e.g., lowercasing, removing extra whitespace). For syntactic features, process texts with a dependency parser (e.g., Stanford Parser, spaCy) [22].

- Feature Generation: Extract the following n-gram features from each document:

- Character N-grams: Generate n-grams of length 4-6.

- Word N-grams: Generate n-grams of length 1-3.

- POS N-grams: First tag the text, then generate n-grams of length 3-4 from the tag sequence.

- Syntactic N-grams: Extract sequences of dependency relations from the parsed trees [21].

- Vectorization: Represent each document as a vector of n-gram frequencies, raw or normalized (e.g., with scikit-learn's CountVectorizer or TfidfVectorizer in Python).
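As a rough sketch of the n-gram generation step, the functions below count character and word n-grams with the Python standard library only. The function names are assumptions for illustration. POS n-grams can be produced by passing a tag sequence to `word_ngrams` in place of the tokens, but the tagging itself requires an external NLP library and is not shown.

```python
from collections import Counter


def char_ngrams(text, n):
    """Count contiguous character sequences of length n."""
    return Counter(text[i:i + n] for i in range(len(text) - n + 1))


def word_ngrams(tokens, n):
    """Count contiguous token sequences of length n (tokens may be words or tags)."""
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


text = "she likes coffee and she likes tea"
print(char_ngrams(text, 4).most_common(3))
print(word_ngrams(text.split(), 2).most_common(2))
```

The resulting counters map directly onto rows of a document-feature count matrix once a shared feature vocabulary has been fixed across documents.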

3. Dimensionality Reduction (Optional):

- Apply techniques like Principal Component Analysis (PCA) or Latent Semantic Analysis (LSA) to project the high-dimensional feature space into a lower-dimensional one for analysis and visualization [21].

4. Model Training & Evaluation:

- Classifier: Employ a Logistic Regression classifier, known for its interpretability and efficiency [21].

- Training: Train the classifier on the vectorized features to distinguish between "Initial" and "Final" classes.

- Validation: Use a hold-out set or cross-validation. A classification accuracy significantly above the baseline (e.g., 50%) indicates a statistically significant style change detectable by the chosen features [21].

5. Interpretation:

- Analyze the model coefficients to identify the specific n-grams (e.g., certain character sequences or syntactic patterns) most predictive of the author's early or late period.

Protocol: Integrating Feature Counts into a Dirichlet-Multinomial Model

This protocol details the process of preparing stylometric feature data for authorship attribution using an EFDM regression model, which generalizes the standard DM model [12].

1. Problem Framing:

- Objective: Attribute an anonymous text `D_unknown` to one of `K` candidate authors.

- Data: For each candidate author, have a corpus of `M` known texts.

2. Feature Selection & Count Matrix Construction:

- Define Feature Set: From the entire corpus of known texts, select a discriminative feature set `F` (e.g., the top 1000 most frequent character 5-grams). This set defines the `D` dimensions (taxa) in the multinomial model [12].

- Create Count Vectors: For each text `j` (both known and unknown), count the occurrences of each feature in `F`. Let `n_j` be the total number of feature tokens in text `j`. The text is then represented by a count vector `Y_j = (y_j1, ..., y_jD)`, where `Σ y_jr = n_j` [12].

- Formulate Response Matrix: The data for the `K` authors is a matrix of these multivariate count vectors.

3. EFDM Model Specification:

- The EFDM model treats the multinomial parameter `Π` as a random variable following a structured mixture distribution, which allows for more flexible correlations than the standard Dirichlet [12].

- The model regresses the marginal mean of the multivariate count response `Y` (i.e., the expected feature frequencies) onto covariates, providing clear interpretability [12].

4. Model Inference via Bayesian Estimation:

- Estimation: Use a tailored Hamiltonian Monte Carlo (HMC) method for posterior inference [12].

- Variable Selection: Implement a spike-and-slab prior distribution to automatically select the most important features that discriminate between authors [12].

- Attribution: Compute the posterior probability of D_unknown belonging to the feature distribution of each candidate author. The text is attributed to the author with the highest posterior probability.

Visualizing Workflows and Model Relationships

Diagram: Stylometric Feature Engineering Pipeline

Diagram: Dirichlet-Multinomial Model Framework for Authorship

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools and Resources for Authorship Feature Engineering

| Tool/Resource | Type | Primary Function in Authorship Analysis | Example Applications |
|---|---|---|---|
| Stanford Parser [22] | Software Library | Syntactic parsing of text to generate constituency and dependency trees. | Extraction of syntactic n-grams and dependency relations for deep style analysis [22]. |
| spaCy / Stanza [22] | NLP Library | Industrial-strength natural language processing, including tokenization, POS tagging, and dependency parsing. | Fast and efficient pre-processing and feature extraction (POS n-grams, syntactic features) [22]. |
| Scikit-learn | Python Library | Machine learning toolkit for feature vectorization (Count/TfidfVectorizer), dimensionality reduction (PCA), and classification (SVM, Logistic Regression). | Prototyping and evaluating feature sets using traditional ML models [21]. |
| PAN-CLEF Datasets [22] | Benchmark Data | Standardized corpora for evaluating authorship verification, attribution, and style change detection. | Comparative evaluation of novel feature engineering methods against established baselines [22]. |
| Spike-and-Slab HMC [12] | Statistical Method | Bayesian variable selection procedure integrated with Hamiltonian Monte Carlo sampling. | Identifying the most discriminative subset of n-gram features within an EFDM regression model [12]. |

Authorship attribution (AA) seeks to identify the author of a given text, a task with significant applications in forensic linguistics, plagiarism detection, and intellectual property disputes. Traditional AA methods often struggle with trustworthiness and interpretability, particularly across different domains, languages, and stylistic variations, because they lack uncertainty quantification and adapt poorly to new settings. The Dirichlet-Multinomial (DM) model offers a robust probabilistic framework for this challenge. This application note details a comprehensive Bayesian workflow for DM-based authorship attribution, enabling researchers to move from prior specification to posterior inference with calibrated uncertainty, enhancing both reliability and interpretability for critical decision-making in research and development.

Theoretical Foundation: Dirichlet-Multinomial Model for AA

The Dirichlet-Multinomial (DM) model is a generative probabilistic framework ideal for modeling discrete frequency data, such as word or token counts in documents written by different authors.

Generative Process: The model assumes that each author is characterized by a probability vector over a vocabulary of words or features. This vector, specific to author ( k ), is denoted as ( \theta_k ). A Dirichlet prior is placed over this probability vector: ( \theta_k \sim \text{Dirichlet}(\alpha) ), where ( \alpha ) is the concentration parameter. Subsequently, for a document ( i ) attributed to author ( k ), the observed word counts ( x_i ) are assumed to be generated from a multinomial distribution: ( x_i \sim \text{Multinomial}(n_i, \theta_k) ), where ( n_i ) is the total number of words in the document [11].

Advantages for AA: This model naturally accounts for the discrete, sparse, and over-dispersed nature of text count data. The Dirichlet prior acts as a smooth regularizer, mitigating overfitting, especially with limited data. Furthermore, the Bayesian formulation inherently provides a distribution over the parameters, allowing for principled uncertainty quantification about both the author-specific word distributions and the predicted authorship of new documents [11].
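For reference, the standard closed-form results behind these claims are collected below, assuming a fixed concentration vector. The notation is introduced here for illustration: ( V ) is the vocabulary size, ( \alpha_v ) the component of ( \alpha ) for feature ( v ) (all equal in the symmetric case), ( \alpha_0 ) their sum, ( X_k ) the set of training documents by author ( k ), and ( n = \sum_v x_v ).

```latex
% Conjugate posterior for author k after observing the documents in X_k
\theta_k \mid \{x_i\}_{i \in X_k} \sim \text{Dirichlet}\Big(\alpha + \sum_{i \in X_k} x_i\Big)

% Marginal (Dirichlet-multinomial) probability of a count vector x, with \theta integrated out
P(x \mid \alpha) = \frac{n!}{\prod_{v=1}^{V} x_v!}\,
                   \frac{\Gamma(\alpha_0)}{\Gamma(n + \alpha_0)}
                   \prod_{v=1}^{V} \frac{\Gamma(x_v + \alpha_v)}{\Gamma(\alpha_v)},
\qquad \alpha_0 = \sum_{v=1}^{V} \alpha_v

% Variance of a single count, with p_v = \alpha_v / \alpha_0
\operatorname{Var}(x_v) = n\, p_v (1 - p_v)\, \frac{n + \alpha_0}{1 + \alpha_0}
```

The last factor exceeds 1 whenever ( n > 1 ), which is the sense in which the model accommodates over-dispersion relative to a plain multinomial; it shrinks toward 1 as ( \alpha_0 ) grows.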

Bayesian Workflow and Experimental Protocol

A rigorous Bayesian workflow is essential for robust inference. The following protocol outlines the key stages for applying the DM model to authorship attribution.

The diagram below illustrates the iterative, cyclical nature of a full Bayesian workflow for DM authorship attribution.

Detailed Experimental Protocol

Phase 1: Data Preprocessing and Feature Engineering

- Corpus Compilation: Assemble a corpus of documents with verified authorship. Split the corpus into training (for model fitting) and test (for evaluation) sets, ensuring a balanced representation of authors where possible.

- Feature Extraction: From the raw text, extract linguistic features. Common choices include:

- Lexical: Character or word ( n )-grams (e.g., most frequent 1000-5000 unigrams/bigrams).

- Syntactic: Part-of-speech (POS) tags, punctuation marks, sentence length distributions.

- Structural: Paragraph length, presence of specific formatting.

- Feature Representation: For each document, create a feature vector representing the counts of each selected feature. The entire dataset can then be represented as a frequency matrix, where rows correspond to documents and columns to features [11].

Phase 2: Prior Specification and Model Instantiation

- Prior Elicitation: The concentration parameter ( \alpha ) of the Dirichlet prior must be specified. A common practice is to use a weakly informative hyperprior, such as ( \alpha \sim \text{Gamma}(a, b) ), where ( a ) and ( b ) are small values (e.g., 1.0, 0.1) to allow the data to dominate the posterior. Prior predictive checks can be used to assess the reasonableness of the chosen prior [23].

- Model Instantiation: Formalize the generative model. For ( K ) authors and a vocabulary of size ( V ):

- For each author ( k \in [1, K] ): ( \theta_k \sim \text{Dirichlet}(\alpha) )

- For each document ( i ) by author ( k ): ( x_i \sim \text{Multinomial}(n_i, \theta_k) )
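The generative model can be simulated directly, which also serves as a simple prior predictive check. The sketch below assumes a symmetric Dirichlet and reads Gamma(a, b) as shape and rate (the text does not specify the parameterization); the function name and all sizes are illustrative.

```python
import numpy as np


def simulate_corpus(K=3, V=50, docs_per_author=4, doc_length=200,
                    a=1.0, b=0.1, seed=0):
    """Draw one synthetic corpus from the Dirichlet-multinomial generative model."""
    rng = np.random.default_rng(seed)
    # Hyperprior alpha ~ Gamma(shape=a, rate=b); NumPy takes scale = 1 / rate.
    alpha = rng.gamma(shape=a, scale=1.0 / b)
    # One probability vector theta_k per author, from a symmetric Dirichlet.
    theta = rng.dirichlet(np.full(V, alpha), size=K)            # shape (K, V)
    # Author label for each document, then multinomial word counts x_i.
    authors = np.repeat(np.arange(K), docs_per_author)
    counts = np.stack([rng.multinomial(doc_length, theta[k]) for k in authors])
    return alpha, theta, authors, counts


alpha, theta, authors, counts = simulate_corpus()
# A typical prior predictive summary: how sparse are the simulated documents?
zero_fraction = float((counts == 0).mean())
```

Comparing summaries such as `zero_fraction` across many simulated corpora against the same summary on real documents indicates whether the chosen hyperprior produces plausible data.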

Phase 3: Posterior Inference

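- Inference Algorithm: With a fixed ( \alpha ), the Dirichlet prior is conjugate to the multinomial likelihood and the posterior of each ( \theta_k ) is available in closed form. Once a hyperprior is placed on ( \alpha ), or in more complex hierarchical formulations, conjugacy is lost and approximate inference methods are often required.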

- Markov Chain Monte Carlo (MCMC): Algorithms like Gibbs sampling or Hamiltonian Monte Carlo (HMC) can be used to draw samples from the true posterior distribution of ( \theta ) and other parameters.

- Variational Inference (VI): A faster alternative that approximates the true posterior with a simpler distribution, optimizing for closeness (e.g., KL-divergence). This is suitable for very large datasets [24].

- Software Implementation: Utilize probabilistic programming languages (PPLs) such as Stan, PyMC, or TensorFlow Probability, which provide built-in support for these inference algorithms.

Phase 4: Model Evaluation and Validation

- Predictive Performance: Use the held-out test set. For each test document, compute the posterior predictive distribution over authors. The author with the highest posterior probability is the prediction. Report standard metrics like Accuracy, Precision, Recall, and F1-Score [25].

- Uncertainty Quantification: Assess the model's calibration. For documents where the model predicts an author with high posterior probability, it should be correct with high frequency. Analyze the distribution of the maximum posterior probabilities to gauge confidence.

- Model Checking: Use posterior predictive checks to see if data simulated from the fitted model resembles the actual observed data. Check for systematic discrepancies.
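For the predictive step, a closed-form sketch is possible in the simplest setting. The code below assumes a fixed symmetric concentration parameter and a uniform prior over authors, so that each author's posterior predictive is a Dirichlet-multinomial; it does not cover the hyperprior or hierarchical variants discussed above. The function names and the toy counts are illustrative.

```python
import math
import numpy as np


def dm_log_pmf(x, alpha):
    """Log Dirichlet-multinomial probability of counts x given parameters alpha."""
    n, a0 = sum(x), sum(alpha)
    out = math.lgamma(n + 1) + math.lgamma(a0) - math.lgamma(n + a0)
    for xv, av in zip(x, alpha):
        out += math.lgamma(xv + av) - math.lgamma(av) - math.lgamma(xv + 1)
    return out


def author_posterior(x_new, train_counts, train_authors, K, alpha=1.0):
    """Posterior probability of each of K authors for x_new (uniform author prior)."""
    train_counts = np.asarray(train_counts, dtype=float)
    train_authors = np.asarray(train_authors)
    log_post = np.empty(K)
    for k in range(K):
        # Conjugate update: prior concentration plus the author's summed counts.
        alpha_k = alpha + train_counts[train_authors == k].sum(axis=0)
        log_post[k] = dm_log_pmf(list(x_new), list(alpha_k))
    # Normalize in log space for numerical stability.
    log_post -= log_post.max()
    p = np.exp(log_post)
    return p / p.sum()


# Toy training data: two authors, three features.
train_counts = [[8, 1, 1], [7, 2, 1], [1, 1, 8], [2, 1, 7]]
train_authors = [0, 0, 1, 1]
probs = author_posterior([6, 1, 0], train_counts, train_authors, K=2)
predicted_author = int(probs.argmax())
```

Reporting the full vector `probs` instead of only `predicted_author` is what makes the calibration analysis in the Uncertainty Quantification step possible.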

Phase 5: Interpretation and Application

- Author Profiling: Examine the posterior distributions of the author-specific parameters ( \theta_k ) to identify the most discriminative words or features for each author, enabling interpretable insights into writing style [25].

- Authorship Prediction: Apply the fully trained and validated model to attribute authorship to anonymous documents, reporting both the most likely author and the associated uncertainty (e.g., posterior probabilities for the top candidates).
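One simple way to carry out the author profiling step is to rank features by how far each author's posterior mean departs from the average profile. The sketch below assumes a fixed symmetric ( \alpha ) and uses posterior means only, so it ignores posterior uncertainty; the function name, vocabulary, and counts are invented for illustration.

```python
import numpy as np


def top_discriminative_features(counts_by_author, vocab, alpha=1.0, top_n=2):
    """Rank features per author by log-ratio of posterior mean to the average profile."""
    counts = np.asarray(counts_by_author, dtype=float)      # (K, V) summed counts
    # Posterior mean of theta_k under Dirichlet(alpha + counts_k).
    post = counts + alpha
    post_mean = post / post.sum(axis=1, keepdims=True)
    # Log-ratio against the geometric mean across authors.
    log_mean = np.log(post_mean)
    log_ratio = log_mean - log_mean.mean(axis=0, keepdims=True)
    return {
        k: [vocab[v] for v in np.argsort(-log_ratio[k])[:top_n]]
        for k in range(counts.shape[0])
    }


vocab = ["whilst", "while", "upon", "on"]
counts_by_author = [[30, 2, 20, 10],   # author 0
                    [1, 25, 3, 40]]    # author 1
profile = top_discriminative_features(counts_by_author, vocab)
```

With full posterior samples of ( \theta_k ), the same log-ratio can be computed per draw to obtain credible intervals for each feature's discriminative strength.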

Performance Benchmarks and Comparative Analysis

The table below summarizes quantitative performance data from recent, relevant Bayesian and advanced authorship attribution models, providing a benchmark for expected outcomes.

Table 1: Performance Benchmarks of Authorship Attribution Models

| Model / Framework | Dataset(s) | Key Metric | Reported Performance | Key Feature |
|---|---|---|---|---|
| BEDAA (Bayesian DeBERTa) [25] | Multiple AA Tasks | F1-Score | Improvement up to 19.69% | Uncertainty-aware, interpretable |
| LLM (Llama-3-70B) + Bayesian [26] | IMDb, Blog (10 authors) | One-Shot Accuracy | 85% | Utilizes deep reasoning of LLMs |
| Dirichlet Multinomial Mixtures (DMM) [11] | Microbial Data (Conceptual) | Cluster Fit (Evidence) | Identifies distinct metacommunities | Clusters communities into 'metacommunities' |

The following table lists essential computational tools and resources for implementing the described Bayesian DM workflow for authorship attribution.

Table 2: Research Reagent Solutions for Bayesian DM Authorship Attribution

| Item / Resource | Type | Function / Application | Example / Note |
|---|---|---|---|
| Probabilistic Programming Language | Software | Specifying Bayesian models and performing inference. | Stan, PyMC, TensorFlow Probability |
| DMM Model Software | Software | Fitting Dirichlet Multinomial Mixture models. | microbedmm [11] |
| Feature Extraction Library | Software | Converting raw text into feature count vectors. | Scikit-learn, NLTK, spaCy |
| Pre-trained LLM | Model / Software | Providing baseline probability outputs or embeddings for comparison. | Llama-3-70B [26] |
| Bayesian Analysis Toolbox | Software | Supplementary Bayesian analysis and visualization. | VBA, TAPAS [23] |
| Curated Text Corpus | Data | Training and validating the authorship attribution model. | Blog posts, movie reviews, academic articles [26] |

Advanced Application: Uncertainty Decomposition and Decision Support

For high-stakes applications, such as pharmaceutical development where document provenance is critical, a deeper analysis of uncertainty is warranted. The BEDAA framework demonstrates the power of uncertainty decomposition: predictive uncertainty is broken down into an aleatoric part (noise inherent in the data) and an epistemic part (uncertainty about the model itself, which more data can reduce) [25]. This allows professionals to distinguish between cases that are inherently ambiguous and cases where the model lacks sufficient knowledge.
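A common entropy-based version of this decomposition can be sketched as follows. It is a generic formulation, not necessarily the one implemented in BEDAA [25]: it assumes a set of posterior draws of the author-probability vector for one document, for example obtained by recomputing attribution probabilities under each MCMC sample.

```python
import numpy as np


def decompose_uncertainty(prob_samples, eps=1e-12):
    """Split predictive entropy into aleatoric and epistemic parts.

    prob_samples: (S, K) array; each row is one posterior draw of the
    probabilities over K candidate authors for a single document.
    """
    p = np.asarray(prob_samples, dtype=float)
    mean_p = p.mean(axis=0)
    # Total uncertainty: entropy of the averaged prediction.
    total = -np.sum(mean_p * np.log(mean_p + eps))
    # Aleatoric part: average entropy of the individual draws.
    aleatoric = -np.mean(np.sum(p * np.log(p + eps), axis=1))
    # Epistemic part: what remains (the mutual information between
    # the prediction and the model parameters).
    epistemic = total - aleatoric
    return total, aleatoric, epistemic


# Every draw agrees the document is ambiguous: uncertainty is aleatoric.
ambiguous = decompose_uncertainty([[0.5, 0.5], [0.5, 0.5]])
# Draws are individually confident but disagree: uncertainty is epistemic.
disagreeing = decompose_uncertainty([[1.0, 0.0], [0.0, 1.0]])
```

In a decision-support setting, high epistemic uncertainty suggests collecting more verified text from the candidate authors, while high aleatoric uncertainty suggests the document itself cannot separate them.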

The logical flow for leveraging uncertainty in a decision-making context is outlined below.

This structured approach to Bayesian workflow with Dirichlet-Multinomial models provides a reliable, interpretable, and uncertainty-aware framework for authorship attribution, directly addressing the needs of researchers and professionals requiring evidential robustness in their analyses.

Implementing Spike-and-Slab Priors for Automated Feature Selection in High-Dimensional Vocabularies

High-dimensional vocabularies present significant challenges for authorship attribution research, where the number of potential word-based features dramatically exceeds the number of document samples. This curse of dimensionality leads to data sparsity, increased computational complexity, and high risk of model overfitting, where algorithms learn noise instead of genuine authorship patterns [27]. Feature selection emerges as a crucial preprocessing step to identify the most relevant vocabulary elements while discarding redundant ones, thereby improving model performance, reducing training time, and enhancing interpretability [27] [28].

Within this context, the Dirichlet-multinomial model provides a natural framework for modeling word count distributions across documents, while spike-and-slab priors offer a sophisticated Bayesian approach for automated feature selection. These two-component priors combine a "spike" component that concentrates mass near zero to exclude irrelevant features with a "slab" component that allows nonzero estimates for relevant features, effectively identifying the vocabulary elements most predictive of authorship [29]. This integration enables researchers to simultaneously perform feature selection and model estimation within a unified probabilistic framework, providing uncertainty quantification for feature importance—a significant advantage over traditional deterministic selection methods [30] [31].
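To make the two-component structure concrete, the sketch below draws coefficients from a continuous spike-and-slab prior in which both components are zero-centred normals. The inclusion probability and the two standard deviations are illustrative assumptions, not values taken from [29], and other formulations (for example a point mass at zero for the spike) are equally common.

```python
import numpy as np


def sample_spike_and_slab(n_features, inclusion_prob=0.1,
                          spike_sd=0.01, slab_sd=2.0, seed=0):
    """Draw feature coefficients from a normal-mixture spike-and-slab prior."""
    rng = np.random.default_rng(seed)
    # Latent indicator per feature: True means the feature is "in the slab".
    included = rng.random(n_features) < inclusion_prob
    # Slab features get a wide prior; spike features are pinned near zero.
    sd = np.where(included, slab_sd, spike_sd)
    beta = rng.normal(0.0, sd)
    return included, beta


included, beta = sample_spike_and_slab(1000)
```

During posterior inference the indicators are updated along with the coefficients, and the posterior mean of each indicator gives that feature's inclusion probability.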

Theoretical Foundations

Dirichlet-Multinomial Framework for Text Data