Implementing Technology Readiness Levels in Digital Forensic Readiness: A Framework for Courtroom-Admissible Innovation

This article provides a comprehensive framework for implementing Technology Readiness Levels (TRLs) in digital forensic readiness, addressing the critical gap between technological innovation and legal admissibility.

Abstract

This article provides a comprehensive framework for implementing Technology Readiness Levels (TRLs) in digital forensic readiness, addressing the critical gap between technological innovation and legal admissibility. Targeting forensic researchers, practitioners, and laboratory managers, we explore foundational TRL concepts adapted from NASA and other high-reliability fields, then present methodological approaches for applying these levels to digital forensic tools and processes. The content examines common implementation challenges including tool validation, courtroom compliance under Daubert/Frye standards, and cross-border jurisdictional issues, while providing optimization strategies for overcoming technical and legal barriers. Through comparative analysis of current forensic technologies and validation frameworks aligned with international standards like ISO/IEC 27037, this guide enables professionals to systematically advance digital forensic capabilities while ensuring evidence integrity and courtroom readiness.

Understanding Technology Readiness Levels: From NASA to Digital Forensics

Technology Readiness Levels (TRLs) are a systematic metric used to assess the maturity of a particular technology. Developed by the National Aeronautics and Space Administration (NASA) in the 1970s, the TRL scale provides a common framework for engineers, managers, and researchers to consistently evaluate and communicate the readiness of a technology for operational deployment [1] [2]. The scale ranges from TRL 1, representing basic principle observation, to TRL 9, indicating a system proven in successful mission operations [3].

This framework has evolved from its original seven-level NASA scale to the current nine-level system that has been widely adopted across multiple sectors including defense, energy, and European Union research programs [2] [4]. For digital forensic readiness research, the TRL framework offers a structured approach to quantify technological maturity in a field characterized by rapid evolution and increasing significance in criminal investigations [5] [6].

The Nine Technology Readiness Levels

The nine TRLs represent a progression from basic research to proven operational application. The following table summarizes the core definition and activities for each level according to the NASA framework.

Table 1: The Nine Technology Readiness Levels of the NASA Framework

| TRL | Description | Hardware Focus | Software Focus | Exit Criteria |
|---|---|---|---|---|
| TRL 1 | Basic principles observed and reported [7] | Scientific knowledge generation underpinning technology concepts [7] | Scientific knowledge generation underpinning software architecture and mathematical formulation [7] | Peer-reviewed publication of research underlying proposed concept [7] |
| TRL 2 | Technology concept and/or application formulated [7] | Invention begins; practical application identified but speculative [7] | Practical application identified; basic principles coded; experiments with synthetic data [7] | Documented description of application/concept addressing feasibility and benefit [7] |
| TRL 3 | Analytical and experimental critical function and/or characteristic proof of concept [7] | Analytical studies contextualize technology; laboratory demonstrations validate predictions [7] | Limited functionality development to validate critical properties using non-integrated components [7] | Documented analytical/experimental results validating predictions of key parameters [7] |
| TRL 4 | Component and/or breadboard validation in laboratory environment [7] | Low-fidelity breadboard built/operated to demonstrate basic functionality [7] | Key software components integrated/validated; begin architecture development [7] | Documented test performance demonstrating agreement with analytical predictions [7] |
| TRL 5 | Component and/or breadboard validation in relevant environment [7] | Medium-fidelity brassboard built/operated in simulated operational environment [7] | End-to-end software elements implemented/interfaced with existing systems [7] | Documented test performance demonstrating agreement with analytical predictions [7] |
| TRL 6 | System/subsystem model or prototype demonstration in a relevant environment [7] | High-fidelity prototype built/operated in relevant environment [7] | Prototype implementations demonstrated on full-scale realistic problems [7] | Documented test performance demonstrating agreement with analytical predictions [7] |
| TRL 7 | System prototype demonstration in an operational environment [7] | High-fidelity engineering unit built/operated in relevant environment [7] | Prototype software with all key functionality available; well-integrated with operational systems [7] | Documented test performance demonstrating agreement with analytical predictions [7] |
| TRL 8 | Actual system completed and "flight qualified" through test and demonstration [7] | Final product in final configuration demonstrated through test [7] | Software fully debugged/integrated; all documentation completed; V&V completed [7] | Documented test performance verifying analytical predictions [7] |
| TRL 9 | Actual system "flight proven" through successful mission operations [7] | Final product successfully operated in actual mission [7] | Software thoroughly debugged/integrated; all documentation completed; sustaining support in place [7] | Documented mission operational results [7] |

TRL Assessment Methodology and Protocols

Technology Readiness Assessment Process

A formal Technology Readiness Assessment (TRA) systematically evaluates a technology against the criteria defined for each TRL. The process requires documented evidence that a technology has achieved the required maturity before it progresses to the next level [2]. The assessment examines program concepts, technology requirements, and demonstrated capabilities through rigorous testing and validation protocols [2].

For digital forensic technologies, this assessment must address unique challenges including rapid technological evolution, diverse device ecosystems, and legal admissibility requirements [5] [8]. The methodology should incorporate quantitative evaluation approaches, such as Bayesian networks, to measure the plausibility of hypotheses based on digital evidence [9].
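The gating rule described above (documented evidence at each level before progression to the next) can be sketched in a few lines of Python. This is a minimal illustration, not a formal TRA procedure: the `TechnologyRecord` class, the `assessed_trl` function, and the sample evidence entries are all hypothetical names introduced here.

```python
from dataclasses import dataclass, field


@dataclass
class TechnologyRecord:
    """Hypothetical record of the evidence documented for each TRL (1-9)."""
    name: str
    evidence: dict[int, list[str]] = field(default_factory=dict)


def assessed_trl(record: TechnologyRecord) -> int:
    """Return the highest TRL reached without skipping an undocumented level."""
    level = 0
    for trl in range(1, 10):
        if not record.evidence.get(trl):
            break  # a level without documented evidence blocks progression
        level = trl
    return level


# Illustrative data only: evidence for TRL 4 is missing, so TRL 5 does not count.
tool = TechnologyRecord(
    name="example-carver",
    evidence={
        1: ["peer-reviewed paper on artifact structure"],
        2: ["concept note describing feasibility and benefit"],
        3: ["proof-of-concept results on synthetic data"],
        5: ["simulated-environment test report"],
    },
)
print(assessed_trl(tool))  # 3
```

Treating a missing level as a hard stop mirrors the requirement that maturity be demonstrated level by level; a real assessment would also review the quality of each evidence item, not just its presence.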

Experimental Protocols for TRL Advancement

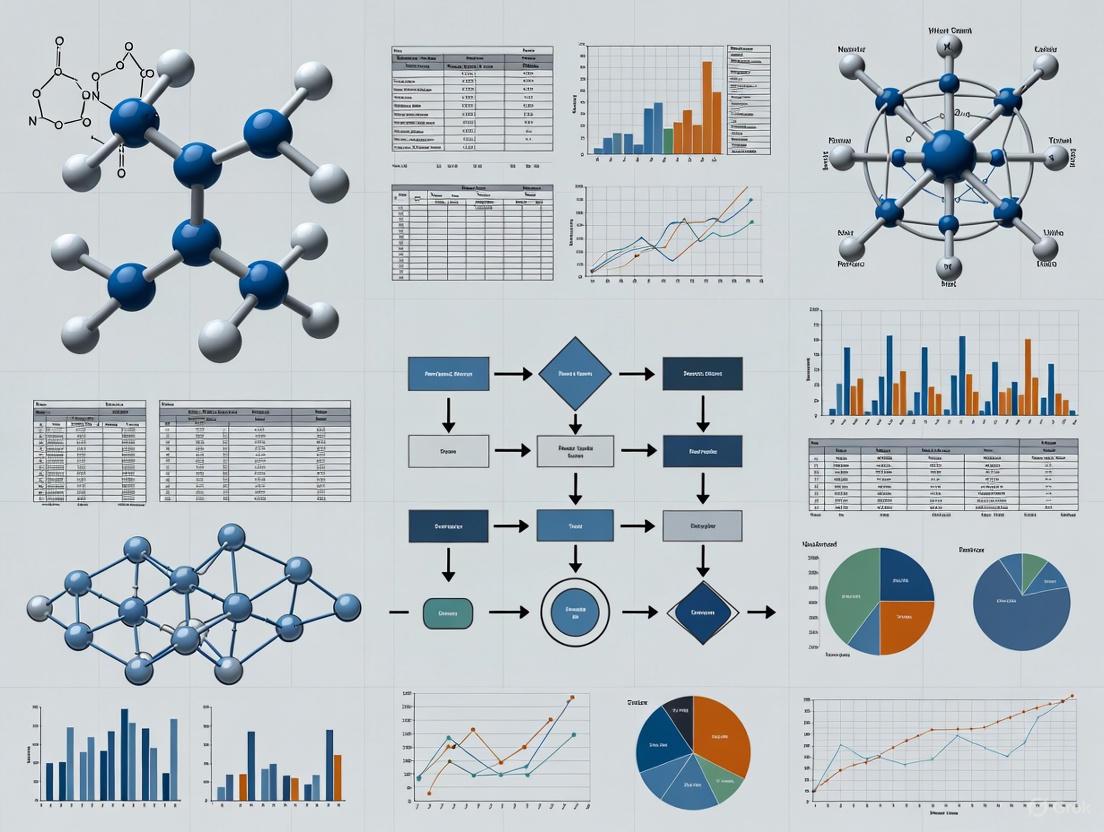

Advancing through the TRLs requires specific experimental protocols and validation methodologies. The following workflow illustrates the progressive validation requirements across the TRL spectrum:

Figure: TRL Progression Workflow

Key experimental protocols for critical transition points:

TRL 2 to TRL 3 Transition Protocol: Proof-of-Concept Validation

- Conduct analytical studies to place technology in appropriate context

- Develop laboratory demonstrations to validate analytical predictions

- Construct and operate limited functionality prototype

- For digital forensics: Validate core algorithms with synthetic datasets [7]

TRL 4 to TRL 5 Transition Protocol: Relevant Environment Testing

- Integrate basic technological components into representative system

- Test integrated model in simulated operational environment

- Establish performance benchmarks against requirements

- For digital forensics: Test with real device images in controlled forensic workstation environment [3]

TRL 6 to TRL 7 Transition Protocol: Operational Environment Demonstration

- Build high-fidelity engineering unit addressing scaling issues

- Demonstrate performance in actual operational environment

- Validate integration with collateral systems

- For digital forensics: Deploy in live investigative context with appropriate legal safeguards [7]

Application to Digital Forensic Readiness Research

Current State of Digital Forensic Technologies

Digital forensic research faces unique challenges in technology maturation. Current assessments indicate many digital forensic methods operate at intermediate TRLs (4-6), with limited standardization and quantitative validation [9]. A survey of legal practitioners indicates significant challenges in digital evidence processing, including backlogs, tool reliability, and interpretation complexities [6].

The emerging Internet of Things (IoT) forensics field operates at even lower TRLs (2-4), characterized by diverse proprietary platforms, volatile data storage, and limited forensic tool compatibility [8]. This creates a critical gap between technological innovation and judicial admissibility requirements.

Quantitative Assessment Framework

Advancing digital forensic technologies requires quantitative assessment methodologies. Bayesian networks provide a mathematical framework for evaluating hypothesis plausibility based on digital evidence [9]. The following equation formalizes this approach:

Bayesian Assessment for Digital Evidence

Bayes' Theorem for digital evidence evaluation:

$$ \frac{\Pr(H \mid E)}{\Pr(\bar{H} \mid E)} = \frac{\Pr(H)}{\Pr(\bar{H})} \cdot \frac{\Pr(E \mid H)}{\Pr(E \mid \bar{H})} $$

Here H is the hypothesis under evaluation, H-bar is its negation, and E is the observed digital evidence. The posterior odds (left-hand side) equal the prior odds multiplied by the likelihood ratio [9]. This approach enables quantitative assessment of the weight of digital evidence, supporting progression to higher TRLs through measurable reliability metrics.
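A small worked example may make the odds form of Bayes' theorem concrete. The sketch below assumes illustrative values only (a prior probability of 0.2 for the hypothesis, and evidence that is 0.9 likely under the hypothesis versus 0.05 under its negation); none of these numbers come from the cited work, and the function names are hypothetical.

```python
def posterior_odds(prior_odds: float, likelihood_ratio: float) -> float:
    """Odds form of Bayes' theorem: posterior odds = prior odds * likelihood ratio."""
    return prior_odds * likelihood_ratio


def odds_to_probability(odds: float) -> float:
    """Convert odds in favour of H into the probability of H."""
    return odds / (1.0 + odds)


# Assumed, illustrative inputs (not taken from any case or dataset).
prior_h = 0.2             # Pr(H)
pr_e_given_h = 0.9        # Pr(E | H)
pr_e_given_not_h = 0.05   # Pr(E | not H)

prior = prior_h / (1.0 - prior_h)                  # prior odds, 0.25
lr = pr_e_given_h / pr_e_given_not_h               # likelihood ratio, 18
post = posterior_odds(prior, lr)                   # posterior odds, 4.5

print(f"{post:.2f}")                       # 4.50
print(f"{odds_to_probability(post):.3f}")  # 0.818
```

With these inputs the evidence moves the hypothesis from a 20% prior to roughly an 82% posterior. Reporting the likelihood ratio separately from the prior keeps the examiner's contribution (how strongly the evidence discriminates) distinct from the court's prior assessment.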

Implementation Challenges in Digital Forensics

The "Valley of Death" between TRL 6 and TRL 7 presents particular challenges for digital forensic technologies [3]. This transition requires moving from laboratory validation to operational demonstration in real investigative contexts. Key barriers include:

- Legal and Ethical Constraints: Operational testing requires appropriate legal frameworks

- Dataset Availability: Limited access to realistic digital evidence corpora

- Tool Validation: Establishing error rates and reliability metrics for judicial acceptance

- Standardization: Developing consensus standards for forensic process validation

Research Reagent Solutions for Digital Forensic Readiness

Advancing TRLs in digital forensic research requires specialized "research reagents" - standardized tools, datasets, and methodologies that enable reproducible experimentation and validation.

Table 2: Essential Research Reagents for Digital Forensic TRL Advancement

| Research Reagent | Function | TRL Application Range | Implementation Example |
|---|---|---|---|
| Reference Digital Evidence Corpora | Provides standardized datasets for method validation and comparison | TRL 3-6 | NIST CFReDS (Computer Forensic Reference Data Sets) for controlled experimentation [9] |
| Forensic Process Automation Frameworks | Enables reproducible execution of forensic techniques across multiple trials | TRL 4-7 | Robot Framework implementations for digital forensic workflow automation |
| Bayesian Network Models | Quantifies evidentiary strength and hypothesis plausibility | TRL 4-8 | Custom Bayesian networks for specific digital evidence types (e.g., file system artifacts) [9] |
| Digital Forensic Tool Validation Suites | Measures tool reliability, error rates, and performance characteristics | TRL 5-8 | NIST Computer Forensic Tool Testing (CFTT) methodologies adapted for research contexts |
| Legal Admissibility Frameworks | Guides development of judicially acceptable evidence handling procedures | TRL 7-9 | Daubert standard compliance checklists for novel forensic techniques |

The NASA TRL framework provides a robust methodology for assessing and advancing technological maturity in digital forensic research. By implementing structured assessment protocols, quantitative evaluation methods, and standardized research reagents, the field can systematically address current limitations and bridge the gap between innovative research and operational deployment. The progression from theoretical concepts (TRL 1) to court-admissible methodologies (TRL 9) requires a disciplined approach to validation, demonstration, and operational proof, all of which are essential for integrating digital forensic advances into the criminal justice system.

The digital forensics field is in a state of rapid evolution, driven by technological advancements including cloud computing, artificial intelligence, and the proliferation of Internet of Things devices [10]. This acceleration creates a critical translation problem where research innovations struggle to mature into operationally ready tools that practitioners can reliably use in investigations and legal proceedings. The global digital forensics market reflects this urgency, projected to reach $18.2 billion by 2030 with a compound annual growth rate of 12.2% [10].

Technology Readiness Levels offer a proven framework for assessing technological maturity, originally developed by NASA in the 1970s for space exploration technologies [11]. The TRL scale provides a consistent metric for understanding technology evolution, ranging from basic principle observation to proven operational deployment [11]. This systematic approach to maturity assessment remains underutilized in digital forensics research, where a significant gap persists between academic prototypes and court-admissible tools.

The consequences of this research-practice gap are substantial. Law enforcement and judicial systems often struggle to adapt to technological changes, resulting in possible misinterpretations of digital evidence in criminal trials [5]. Operational, technical, and management constraints hinder the accurate processing of digital traces, creating a critical need for standardized forensic practices and rigorous validation procedures [5]. This application note establishes protocols for implementing TRLs specifically within digital forensics research contexts.

Technology Readiness Levels: A Standardized Framework

The TRL Scale and Definitions

Technology Readiness Levels comprise a nine-point scale that enables consistent comparison of technological maturity across different domains. The framework has been adapted by numerous organizations including the United States Department of Defense, the European Union, and various industrial sectors [11]. The standardized definitions and examples relevant to digital forensics are presented in Table 1.

Table 1: Technology Readiness Levels with Digital Forensics Examples

| TRL | Description | Digital Forensics Example |
|---|---|---|
| 1 | Basic principles observed and reported | Paper-based study of a novel artifact's properties in Windows registry or file system structures |
| 2 | Technology concept formulated | Speculative application of principles to envision new forensic analysis technique |
| 3 | Experimental proof of concept | Active R&D with laboratory measurements to validate analytical predictions about artifact behavior |
| 4 | Technology validated in lab | Component validation through designed investigation; analysis of technology parameter operating range |
| 5 | Technology validated in relevant environment | Validation of semi-integrated system/model in simulated forensic environment |
| 6 | Technology demonstrated in relevant environment | Prototype system verified and demonstrated in simulated operational environment |
| 7 | System model/prototype demonstration in operational environment | Prototype system verified in actual investigative environment with real data sources |
| 8 | System complete and qualified | Full system produced and qualified through testing in operational environments |
| 9 | Actual system proven in operational environment | System successfully deployed for multiple investigations and accepted as evidence in court proceedings |

The "Valley of Death" in Technology Development

A critical challenge in technology development occurs between TRL 4 and TRL 7, a range often termed the "Valley of Death," where promising technologies frequently stall for lack of coordinated support [11]. Universities and government funding sources typically focus on TRLs 1-4, while the private sector concentrates on TRLs 7-9, creating a funding and development gap that prevents many research innovations from reaching operational use [11]. This valley is particularly problematic in digital forensics, where the rapid pace of technological change demands efficient translation of research into practice.

Digital Forensics Challenges and TRL Alignment

Contemporary Digital Forensics Challenges

Digital forensics professionals face multifaceted challenges that underscore the need for a maturity assessment framework. These challenges directly impact the effective development and deployment of forensic technologies across the TRL spectrum:

Technical Challenges: Anti-forensic techniques including encryption, data hiding, steganography, and data wiping actively undermine forensic tools and methodologies [12]. According to a 2024 cybersecurity report, 68% of cybercriminals use encryption to hide evidence, creating significant decryption challenges for investigators [12].

Data Scale and Complexity: The proliferation of cloud storage and IoT devices has created investigative environments characterized by data fragmentation, jurisdictional complexity, and petabyte-scale unstructured data [10]. By 2025, over 60% of newly generated data will reside in the cloud, distributed across geographically dispersed servers [10].

Legal and Regulatory Constraints: Privacy laws and regulations differ worldwide, with regions like Europe (GDPR), the United States (CLOUD Act), and China (Cybersecurity Law) maintaining strict legal frameworks that complicate digital evidence collection and analysis [12].

Tool Development Limitations: Traditional forensic tools, designed for localized data, struggle with modern distributed environments and the increasing sophistication of anti-forensic techniques [12] [13].

TRL-Digital Forensics Challenge Mapping

Table 2: Mapping Digital Forensics Challenges to TRL Transition Points

| TRL Transition | Associated Digital Forensics Challenges | Risk Mitigation Strategy |
|---|---|---|
| TRL 3-4 (Lab to component validation) | Defining forensically relevant parameters and operating ranges | Establish baseline forensic artifact preservation metrics |
| TRL 4-5 (Lab to relevant environment) | Transitioning from controlled to simulated real-world conditions | Implement representative data sets from multiple sources (cloud, mobile, IoT) |
| TRL 6-7 (Relevant to operational environment) | Legal admissibility requirements, tool reliability testing | Engage legal experts early, conduct peer validation studies |
| TRL 8-9 (Qualified to proven system) | Court acceptance, standardization across jurisdictions | Publish validation studies, establish certification protocols |

TRL Assessment Protocols for Digital Forensics Research

TRL Assessment Workflow

The following experimental protocol provides a structured methodology for assessing Technology Readiness Levels in digital forensics research and development.

Phase 1: Foundation Protocol

Objective: Establish baseline requirements and success metrics for digital forensics technology development.

Materials:

- Forensic data sets (known ground truth)

- Standard reference materials (NIST CFReDS or similar)

- Documentation framework

Methodology:

- Use Case Definition: Clearly articulate the specific forensic problem being addressed (e.g., cloud data acquisition, IoT artifact extraction, encrypted data recovery).

- Core Artifact Identification: Identify the specific digital artifacts (registry entries, file system structures, memory patterns, network traces) relevant to the technology.

- Success Metric Establishment: Define quantitative and qualitative success metrics, including:

  - Artifact recovery rate (>95% for TRL 7+)

  - False positive/negative rates (<2% for TRL 8+)

  - Processing throughput (MB/sec, devices/hour)

  - Evidence integrity preservation (hash verification)

- Requirements Documentation: Document functional, performance, and legal requirements, including adherence to standards such as ISO/IEC 27037.

Deliverables: Requirements specification document, validation test plan, success criteria checklist.
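The success metrics listed in the methodology above can be computed against a data set with known ground truth. The following is a minimal sketch under stated assumptions: artifacts are identified by string labels, and because true negatives are not well defined for artifact recovery, the "false positive rate" is approximated here by the share of reported artifacts that are not in the ground truth (a false discovery rate). The function names and sample data are hypothetical.

```python
def recovery_metrics(ground_truth: set[str], reported: set[str]) -> dict[str, float]:
    """Compare artifacts reported by a tool with the known ground truth."""
    if not ground_truth:
        raise ValueError("ground truth must contain at least one artifact")
    true_pos = len(ground_truth & reported)
    false_pos = len(reported - ground_truth)
    false_neg = len(ground_truth - reported)
    return {
        "recovery_rate": true_pos / len(ground_truth),
        "false_negative_rate": false_neg / len(ground_truth),
        # Assumption: share of reported items that are spurious.
        "false_discovery_rate": false_pos / len(reported) if reported else 0.0,
    }


def meets_trl7_recovery(metrics: dict[str, float]) -> bool:
    """Recovery rate above 95%, the TRL 7+ figure quoted in the protocol."""
    return metrics["recovery_rate"] > 0.95


def meets_trl8_error(metrics: dict[str, float]) -> bool:
    """Both error measures below 2%, the TRL 8+ figure quoted in the protocol."""
    return (metrics["false_negative_rate"] < 0.02
            and metrics["false_discovery_rate"] < 0.02)


# Illustrative data: 100 planted artifacts, 97 recovered, 1 spurious report.
truth = {f"artifact-{i}" for i in range(100)}
found = {f"artifact-{i}" for i in range(97)} | {"spurious-1"}
metrics = recovery_metrics(truth, found)
print(meets_trl7_recovery(metrics))  # True
print(meets_trl8_error(metrics))     # False
```

In this example the tool clears the recovery threshold but misses 3% of the planted artifacts, so it would not yet satisfy the stricter error criterion.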

Phase 2: Validation Protocol

Objective: Validate technology performance in increasingly realistic environments.

Materials:

- Controlled test environment

- Simulated case data sets

- Reference tools for comparison

- Performance monitoring framework

Methodology:

- Component Testing (TRL 4-5):

  - Isolate individual technology components

  - Validate against standardized data sets

  - Establish parameter operating ranges

  - Document failure modes and limitations

- Integrated System Testing (TRL 5-6):

  - Deploy technology in simulated operational environment

  - Test with heterogeneous data sources (cloud, mobile, desktop)

  - Validate against anti-forensic techniques (encryption, data hiding)

  - Assess usability by trained examiners

- Operational Pilot (TRL 6-7):

  - Deploy in active investigative environment with supervision

  - Process real case data alongside established tools

  - Document evidence handling and chain of custody

  - Assess performance under realistic constraints

Deliverables: Validation report, performance benchmarks, limitation documentation, user feedback analysis.

Phase 3: Integration Protocol

Objective: Establish technology readiness for operational deployment and court acceptance.

Materials:

- Multi-jurisdictional test environments

- Legal review framework

- Certification documentation

- Training materials

Methodology:

- Multi-Jurisdictional Testing (TRL 7-8):

  - Validate technology across different legal frameworks

  - Test with international data privacy requirements (GDPR, CLOUD Act)

  - Verify performance with region-specific artifacts

- Legal Admissibility Review (TRL 8):

  - Document validation methodology for court presentation

  - Establish expert witness qualification requirements

  - Prepare challenge testing protocols (Daubert/Frye standards)

  - Document known limitations and error rates

- Court Validation (TRL 9):

  - Successful evidence admission in multiple cases

  - Peer recognition and adoption

  - Integration into standard operating procedures

  - Independent validation studies

Deliverables: Legal admissibility package, certification documentation, training curriculum, operational deployment guide.

The Digital Forensics Researcher's Toolkit

Essential Research Reagent Solutions

Table 3: Digital Forensics Research Toolkit: Essential Materials and Solutions

| Tool Category | Specific Examples | Research Function | TRL Application Range |
|---|---|---|---|
| Reference Data Sets | NIST CFReDS, Digital Corpora, M57-Patrol | Controlled validation environments for reproducible testing | TRL 3-7 |
| Forensic Processing Platforms | Belkasoft X, Autopsy, FTK, X-Ways | Integrated analysis environments for tool validation | TRL 4-8 |
| Specialized Acquisition Tools | Cellebrite UFED, Tableau TX1, Falcon Neo | Hardware and software for evidence acquisition from diverse sources | TRL 5-8 |
| Validation Frameworks | NIST OSF, DoD Cyber Crime Center CFReDS | Standardized testing protocols and metrics | TRL 4-8 |
| Anti-Forensic Challenge Sets | Encrypted containers, steganographic tools, data wiping utilities | Testing tool resilience against obfuscation techniques | TRL 5-8 |
| Legal Standards Documentation | ISO 27037, ASTM E2763, Daubert criteria | Ensuring legal compliance and admissibility requirements | TRL 7-9 |

Case Study: TRL Application in Digital Forensics Tool Development

Cloud Forensics Tool Maturation Pathway

The following case study illustrates a practical application of TRLs in digital forensics tool development, specifically addressing cloud data acquisition challenges.

Initial Research (TRL 1-3): Basic principles of cloud API interactions were observed and documented. A technology concept was formulated for using legitimate user credentials to access cloud data through simulated app clients, circumventing some jurisdictional challenges [13].

Laboratory Validation (TRL 4-5): Component validation established that the technique could successfully download and decrypt user data from social media platforms and cloud services using APIs. The technology was validated in a lab environment with controlled test accounts and data sets.

Relevant Environment Demonstration (TRL 6-7): A prototype system was demonstrated in a simulated but forensically relevant environment, processing data from multiple cloud services simultaneously. The tool successfully maintained evidence integrity while navigating service rate limits and authentication challenges.

Operational Deployment (TRL 8-9): The complete system was qualified in operational environments, addressing real case requirements. The technology was proven through successful deployment in multiple investigations, with evidence admitted in legal proceedings [13].

TRL Assessment Framework for AI-Based Analysis Tools

The integration of artificial intelligence in digital forensics presents particular TRL assessment challenges, especially regarding transparency and legal admissibility.

The systematic application of Technology Readiness Levels in digital forensics research provides a critical framework for bridging the persistent gap between academic innovation and operational deployment. As digital evidence becomes increasingly central to legal proceedings and criminal investigations, the need for rigorously validated, legally admissible tools has never been greater.

The TRL framework offers a standardized methodology for assessing technological maturity that can be adapted to the unique challenges of digital forensics, including rapid technological change, anti-forensic techniques, data scale and complexity, and legal admissibility requirements. By implementing the protocols and assessment methodologies outlined in this application note, digital forensics researchers can systematically advance their technologies from basic research to court-ready solutions.

Future directions for TRL development in digital forensics should include domain-specific adaptations for cloud forensics, IoT forensics, and AI-based analysis tools. Additionally, the integration of complementary frameworks such as Safe-by-Design principles can further enhance technology development by proactively addressing safety, security, and ethical considerations throughout the development lifecycle [14]. Through the consistent application of TRL assessment methodologies, the digital forensics community can accelerate the translation of research innovations into tools that effectively combat cybercrime and support the administration of justice.

This document provides a current state analysis of the Technology Readiness Level (TRL) landscape for digital forensic tools and methodologies. The analysis is framed within the context of implementing TRLs in digital forensic readiness research, offering researchers a structured framework to assess the maturity and operational deployment potential of emerging technologies. The field of digital forensics is undergoing rapid transformation, driven by technological advancements and increasingly sophisticated cyber threats [15]. The evolution from analyzing standalone computers to dealing with mobile devices, cloud environments, and the Internet of Things (IoT) has necessitated a more structured approach to technology assessment and adoption [16]. This document presents a detailed analysis of the current TRL landscape, standardized experimental protocols for validation, and visualizations of key workflows to aid researchers and development professionals in evaluating the maturity of digital forensic technologies.

Current TRL Analysis of Digital Forensic Domains

The maturity of digital forensic tools and techniques varies significantly across different sub-domains. The table below summarizes the assessed TRL for major areas as of 2025, providing a quantitative overview of the landscape.

Table 1: Technology Readiness Level (TRL) Analysis of Digital Forensic Domains (2025)

| Digital Forensic Domain | Assessed TRL (1-9) | Key Tools & Technologies | Primary Challenges & Limitations |
|---|---|---|---|
| Computer Forensics | 9 (Full Operational Deployment) | EnCase, FTK Imager, Autopsy, The Sleuth Kit [17] | High data volumes, SSD wear-leveling, full-disk encryption [16] [12] |
| Mobile Device Forensics | 8-9 (System Complete & Qualified) | Cellebrite, Magnet AXIOM, Belkasoft X [17] [13] | Hardware diversity, advanced encryption (iOS/Android), locked or secure bootloaders [18] [13] |
| Cloud Forensics | 6-7 (Technology Demonstrated & Prototyped) | API-based tools (e.g., for Facebook, Instagram), Magnet AXIOM [17] [13] | Jurisdictional issues, data fragmentation, multi-tenancy, provider cooperation, legal access complexities [18] [13] |
| AI/ML for Media Analysis | 6-7 (Technology Demonstrated & Prototyped) | BelkaGPT, DeepPatrol, Yahoo OpenNSFW [19] [13] | Algorithmic bias, training data requirements, computational resource demands, potential for false positives/negatives [18] [13] |
| Blockchain & Cryptocurrency Forensics | 5-6 (Technology Validated & Demonstrated) | Specialized blockchain explorers, transaction graph analysis tools [20] | Anonymity features (privacy coins, mixers), cross-chain transactions, volume of data, regulatory gaps [20] |
| IoT & Vehicle Forensics | 4-5 (Technology Validated in Lab) | Custom hardware interpreters, proprietary protocol analyzers [16] | Hardware heterogeneity, lack of standardization, proprietary protocols, physical access challenges [16] |

Experimental Protocols for Tool and Methodology Validation

To ensure the reliability and admissibility of digital evidence, rigorous validation of tools and methodologies is essential. The following protocols provide a framework for researchers to assess the performance of digital forensic technologies systematically.

Protocol for Validating AI-Based Evidence Analysis Tools

Objective: To quantitatively evaluate the accuracy, efficiency, and reliability of an AI-powered tool in analyzing large volumes of digital media, specifically for identifying illicit content.

Materials & Reagents:

- Test Machine: Workstation with High-Performance GPU (e.g., NVIDIA RTX A6000 or equivalent).

- Software Under Test: AI analysis tool (e.g., DeepPatrol or equivalent [19]).

- Reference Datasets: Curated dataset of known media files (videos and images) with verified content annotations.

- Control Software: Established, non-AI-based forensic media analyzer.

- Metrics Logging System: Software for recording processing times and results.

Procedure:

1. System Setup: Install the AI tool and control software on the test machine. Ensure all drivers and dependencies are current.

2. Dataset Preparation: Ingest the reference dataset. The dataset must include a known number of target files (e.g., containing specific objects or content) among a larger set of neutral files.

3. Baseline Establishment: Run the control software on the dataset. Record the total processing time, the number of true positives, false positives, true negatives, and false negatives.

4. Test Execution: Process the same dataset using the AI tool under test. Record the same metrics as in step 3.

5. Data Analysis:

   - Calculate precision and recall for both the control and AI tool.

   - Compare the total processing time and time per file.

   - Document any instances where the AI tool failed to process a file or produced an error.

6. Validation: The tool's performance is considered validated for this specific task if it meets or exceeds pre-defined thresholds for accuracy (e.g., >95% precision and recall) and demonstrates a significant efficiency gain over the control without introducing unacceptable error rates [19].
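The data analysis and validation steps of this protocol reduce to a few calculations on the recorded counts. The sketch below is illustrative only: the `RunResult` class, the `is_validated` function, the sample counts, and the 2x speedup used to stand in for a "significant efficiency gain" are assumptions introduced here, not values from the protocol or from any tool vendor.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RunResult:
    """Counts and timing recorded for one tool on the reference dataset."""
    true_positives: int
    false_positives: int
    true_negatives: int
    false_negatives: int
    seconds: float

    @property
    def precision(self) -> float:
        flagged = self.true_positives + self.false_positives
        return self.true_positives / flagged if flagged else 0.0

    @property
    def recall(self) -> float:
        targets = self.true_positives + self.false_negatives
        return self.true_positives / targets if targets else 0.0


def is_validated(ai: RunResult, control: RunResult,
                 min_score: float = 0.95, min_speedup: float = 2.0) -> bool:
    """Check accuracy thresholds and the (assumed) efficiency-gain criterion."""
    accurate = ai.precision > min_score and ai.recall > min_score
    faster = control.seconds / ai.seconds >= min_speedup
    return accurate and faster


# Illustrative counts for a 10,000-file dataset containing 200 target files.
control = RunResult(180, 10, 9790, 20, seconds=3600.0)
ai_tool = RunResult(196, 6, 9794, 4, seconds=900.0)

print(f"{ai_tool.precision:.3f} {ai_tool.recall:.3f}")  # 0.970 0.980
print(is_validated(ai_tool, control))                    # True
```

Precision and recall are reported separately because they fail in different ways: low precision wastes examiner time on false hits, while low recall means illicit content is missed.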

Protocol for Cloud Evidence Acquisition and Verification

Objective: To acquire digital evidence from a cloud service provider (CSP) in a forensically sound manner that preserves the integrity of the evidence and maintains a legally defensible chain of custody.

Materials & Reagents:

- Legal Authority: A valid search warrant, subpoena, or production order specific to the CSP and data in question.

- Acquisition Workstation: Computer with trusted forensic software installed.

- Forensic Software: Tool capable of cloud data acquisition via CSP APIs (e.g., Magnet AXIOM, Belkasoft X [17] [13]).

- Write-Blocking Hardware: To prevent alteration of any local evidence.

- Secure Storage: Encrypted, tamper-evident storage for acquired evidence files.

Procedure:

- Legal Compliance Check: Verify that the legal authority is valid and encompasses the data to be acquired from the specific CSP.

- Tool Configuration: Configure the forensic software with the appropriate legal credentials and API keys as permitted by the CSP and the legal authority.

- Evidence Acquisition:

- Initiate the acquisition process from the workstation.

- The tool will interact with the CSP's API to download user data. All data transfers must occur over encrypted channels (e.g., TLS).

- Upon completion, the tool should generate a comprehensive log of all actions and a list of downloaded files.

- Integrity Verification: The acquisition tool should automatically generate a cryptographic hash (e.g., SHA-256) of the acquired evidence container.

- Chain of Custody Documentation:

- Record the date, time, investigator, tool used, legal authority, and the hash value in the case file.

- Transfer the evidence container to secure storage, documenting all transfers.

- Verification: The acquisition is verified by confirming that the tool's log shows no critical errors and that the hash value can be reproduced at a later time, proving the evidence's integrity [18] [13].
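The Integrity Verification and Verification steps amount to computing a hash once at acquisition and recomputing it later. The sketch below shows one way this could be done with Python's standard `hashlib` module. The function names, record fields, and the file name `acquisition.zip` are assumptions made for illustration, not the output format of any forensic product mentioned above.

```python
import hashlib
import tempfile
from datetime import datetime, timezone
from pathlib import Path


def sha256_of_file(path: Path, chunk_size: int = 1024 * 1024) -> str:
    """Hash the file in chunks so large evidence containers do not need to fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def custody_record(path: Path, investigator: str, tool: str, legal_authority: str) -> dict:
    """Build a case-file entry with the fields listed under Chain of Custody Documentation."""
    return {
        "recorded_at_utc": datetime.now(timezone.utc).isoformat(),
        "investigator": investigator,
        "tool": tool,
        "legal_authority": legal_authority,
        "evidence_file": path.name,
        "sha256": sha256_of_file(path),
    }


def verify_integrity(path: Path, record: dict) -> bool:
    """Recompute the hash and compare it with the value recorded at acquisition."""
    return sha256_of_file(path) == record["sha256"]


# Demonstration with a stand-in container file (hypothetical content and names).
with tempfile.TemporaryDirectory() as tmp:
    container = Path(tmp) / "acquisition.zip"
    container.write_bytes(b"example evidence bytes")
    record = custody_record(container, "J. Doe", "example-acquisition-tool", "Warrant (example)")
    intact = verify_integrity(container, record)

    container.write_bytes(b"example evidence bytes (altered)")
    alteration_detected = not verify_integrity(container, record)

print("intact at first check:", intact)
print("alteration detected:", alteration_detected)
```

A matching hash shows only that the container is unchanged since the hash was recorded. It says nothing about whether the acquisition itself was complete, which is why the tool log is reviewed as well.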

Visualization of Digital Forensic Workflows

The following diagrams, generated using Graphviz DOT language, illustrate core logical relationships and workflows in digital forensic tool validation and evidence handling.

[Figure: TRL Assessment Workflow for Digital Forensic Tools]

[Figure: Digital Evidence Validation Protocol]

The Scientist's Toolkit: Key Research Reagent Solutions

The following table details essential tools, platforms, and software that constitute the core "research reagents" for modern digital forensic investigations.

Table 2: Essential Digital Forensic Research Reagents & Platforms

| Tool/Platform Name | Category/Type | Primary Function in Research & Investigation |

|---|---|---|

| Volatility 3 [17] | Memory Forensics Framework | Open-source tool for analyzing RAM dumps to identify malware, rootkits, and system activity. Critical for investigating live systems. |

| Wireshark [17] | Network Protocol Analyzer | Captures and inspects network traffic in real-time. Essential for network forensics and understanding communication patterns. |

| Cellebrite UFED [17] | Mobile Forensic Solution | Extracts, decodes, and analyzes data from smartphones and tablets. Supports physical, logical, and cloud extraction from mobile devices. |

| Magnet AXIOM [17] | Integrated Forensic Suite | Recovers and analyzes evidence from computers, smartphones, and cloud sources. Provides a unified interface for complex, multi-source cases. |

| Belkasoft X [13] | Digital Forensic Platform | Analyzes data from computers, mobile devices, and cloud storage. Features AI modules (BelkaGPT) for processing text-based evidence. |

| EnCase Forensic [17] | Digital Forensic Suite | Provides disk imaging, evidence analysis, and comprehensive reporting. Widely used in law enforcement and corporate investigations for its court-admissibility. |

| FileTSAR [19] | Large-Scale Network Forensics | Tool for capturing and analyzing network traffic to reassemble files and data in large-scale enterprise network investigations. |

| DeepPatrol [19] | AI-Based Media Analysis | Uses deep learning to automatically detect and classify content in videos and images, significantly reducing manual review time. |

| The Sleuth Kit (+Autopsy) [17] | File System Analysis | Open-source library and GUI for analyzing disk images and recovering files. A fundamental tool for file system-level forensics. |

For researchers and professionals in digital forensics and drug development, the scientific validity of a technique is only one part of the challenge. The other is ensuring that evidence or data derived from these methods will be deemed admissible in a court of law. The concept of "readiness" for court is formally defined by a set of legal criteria established in seminal cases: Daubert, Frye, and Mohan. These standards act as the legal gatekeepers, determining whether expert testimony based on novel scientific methods can be presented to a jury. Framing technology development within the context of these legal standards is crucial, as it aligns the scientific process with the judiciary's requirements for reliability and relevance. This document outlines the application of these legal foundations, integrating them with the structured assessment framework of Technology Readiness Levels (TRLs) to provide a comprehensive roadmap for achieving both technical and legal readiness.

Core Legal Standards Defining Scientific Admissibility

The Daubert Standard

Established in the 1993 U.S. Supreme Court case Daubert v. Merrell Dow Pharmaceuticals, Inc., this standard assigns the trial judge the role of a "gatekeeper" for scientific evidence [21]. Its purpose is to ensure that all expert testimony is not only relevant but also reliable. Under Daubert, the court's assessment is flexible, focusing on the methodology and reasoning underlying the expert's opinion rather than just the conclusion [21] [22].

The following table summarizes the five key factors judges consider under Daubert [21] [22]:

Table 1: The Five Factors of the Daubert Standard

| Factor | Description | Exemplary Question for Researchers |

|---|---|---|

| Testing & Falsifiability | Whether the theory or technique can be (and has been) tested. | Can the methodology be independently validated, and has it been? |

| Peer Review | Whether the method has been subjected to peer review and publication. | Have the principles and results been scrutinized by the broader scientific community? |

| Error Rate | The known or potential rate of error of the technique. | What is the established rate of false positives/negatives, and are there protocols to minimize error? |

| Standards & Controls | The existence and maintenance of standards controlling the technique's operation. | Are there documented, standardized protocols for applying the method? |

| General Acceptance | The extent to which the method is generally accepted within the relevant scientific community. | Is the technique widely regarded as reliable by other experts in the field? |

The Daubert standard was later clarified and expanded in two subsequent Supreme Court cases, General Electric Co. v. Joiner (1997) and Kumho Tire Co. v. Carmichael (1999), which together are known as the "Daubert Trilogy" [21] [22]. Kumho Tire specifically extended the judge's gatekeeping obligation to all expert testimony, including non-scientific technical and other specialized knowledge [21].

The Frye Standard

The Frye standard, originating from the 1923 case Frye v. United States, is the predecessor to Daubert [23]. This standard provides a more straightforward test, often called the "general acceptance" test. It holds that an expert opinion is admissible if the scientific technique on which it is based is "generally accepted" as reliable by the relevant scientific community [23] [24]. The focus is narrowly on the consensus within the field, without the explicit, multi-factor reliability assessment mandated by Daubert. While the federal courts and a majority of states have adopted Daubert, several key jurisdictions, including California, Illinois, New York, and Pennsylvania, continue to adhere to the Frye standard [23] [25].

The Mohan Standard

In Canada, the admissibility of expert evidence is governed by the standard set forth in R. v. Mohan [26] [27]. This test sets four threshold requirements for admissibility. The proposed expert evidence must be [26] [27]:

- Relevant to a material issue in the case.

- Necessary to assist the trier of fact (the judge or jury) in reaching a correct conclusion.

- Not excluded by any other exclusionary rule of evidence.

- Presented by a properly qualified expert.

The Supreme Court of Canada has further refined the application of Mohan into a two-stage process. First, the party proposing the evidence must meet the four threshold requirements. Second, the judge performs a cost-benefit analysis, or "gatekeeper" inquiry, to weigh the potential risks and benefits of admitting the evidence, considering factors such as its reliability and the potential for it to mislead the trier of fact [26].

Comparative Analysis of Admissibility Standards

The following table provides a direct comparison of the three primary standards to highlight their distinct focuses and applications.

Table 2: Comparative Analysis of Daubert, Frye, and Mohan Standards

| Feature | Daubert Standard | Frye Standard | Mohan Standard |

|---|---|---|---|

| Jurisdiction | U.S. Federal Courts; Majority of U.S. States [25] | A minority of U.S. States (e.g., CA, IL, NY, PA) [23] [25] | Canadian Courts [26] [27] |

| Core Question | Is the testimony based on reliable methodology and is it relevant? [21] | Is the scientific technique generally accepted in the relevant field? [23] | Is the evidence relevant, necessary, and presented by a qualified expert? [26] |

| Judge's Role | Active gatekeeper who assesses foundational reliability [22] | Gatekeeper who defers to the scientific community's consensus [23] | Gatekeeper who applies a threshold test and then a discretionary cost-benefit analysis [26] |

| Scope of Application | Applies to all expert testimony (scientific, technical, other specialized knowledge) [21] | Primarily applied to novel scientific evidence [23] | Applies to all expert opinion evidence [26] |

| Key Strengths | Flexible, case-specific analysis of reliability; allows for new, valid science [21] [25] | Bright-line rule; promotes uniformity and avoids "junk science" [23] | Balanced, principled approach that emphasizes necessity and prejudice [26] |

Integrating TRLs with Legal Admissibility: Application Notes for Researchers

For a technology to be "ready for court," its development must be pursued with both technical and legal admissibility in mind. Technology Readiness Levels (TRLs) provide a systematic framework for assessing maturity, from basic research (TRL 1) to full deployment (TRL 9) [1] [28]. The following diagram and table map the critical legal admissibility considerations onto this development lifecycle.

Table 3: TRL-Legal Integration Framework: Key Actions and Deliverables

| TRL Stage | Primary Legal Objective | Required Research Actions | Critical Documentation Deliverables |

|---|---|---|---|

| TRL 1-2: Basic Principles | Establish a foundation for future "general acceptance" (Frye) and testing (Daubert). | Conduct foundational research; identify relevant scientific community; formulate technology concept. | Literature reviews; published papers on basic principles; hypothesis statements. |

| TRL 3-4: Proof of Concept | Generate initial data on validity to satisfy Daubert's "testing" and "peer review" factors. | Develop and test proof-of-concept in lab; submit findings for peer-reviewed publication. | Lab study protocols; proof-of-concept model results; draft manuscripts; peer review reports. |

| TRL 5-7: Validation & Prototyping | Address Daubert's "error rate" and "standards" factors; demonstrate reliability in real-world conditions. | Test prototype in relevant environment; quantify and analyze error rates; develop standard operating procedures (SOPs). | Validation study reports; error rate calculations; draft SOPs; performance benchmarks. |

| TRL 8-9: System Complete | Solidify "general acceptance" and demonstrate that all Daubert/Mohan criteria are met. | Conduct final operational testing; obtain certifications; publish final results; train other labs on the method. | Finalized SOPs; certification documents; independent validation studies; training materials. |
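For teams that track readiness in software, the mapping in Table 3 can be held as a small lookup structure. The sketch below is one possible encoding, not a prescribed schema: the `LegalStage` type and the `stage_for` and `missing_deliverables` helpers are hypothetical names, and the stage contents are abbreviated from the table.

```python
from __future__ import annotations

from typing import NamedTuple


class LegalStage(NamedTuple):
    trls: range                   # TRL values covered by this stage
    label: str
    legal_focus: tuple[str, ...]  # admissibility factors addressed at this stage
    deliverables: tuple[str, ...]


STAGES = (
    LegalStage(range(1, 3), "Basic Principles",
               ("Frye: general acceptance", "Daubert: testing"),
               ("Literature reviews", "Published papers on basic principles",
                "Hypothesis statements")),
    LegalStage(range(3, 5), "Proof of Concept",
               ("Daubert: testing", "Daubert: peer review"),
               ("Lab study protocols", "Proof-of-concept model results",
                "Draft manuscripts", "Peer review reports")),
    LegalStage(range(5, 8), "Validation & Prototyping",
               ("Daubert: error rate", "Daubert: standards"),
               ("Validation study reports", "Error rate calculations",
                "Draft SOPs", "Performance benchmarks")),
    LegalStage(range(8, 10), "System Complete",
               ("Frye: general acceptance", "Daubert/Mohan: all criteria"),
               ("Finalized SOPs", "Certification documents",
                "Independent validation studies", "Training materials")),
)


def stage_for(trl: int) -> LegalStage:
    """Return the Table 3 stage that contains the given TRL (1 to 9)."""
    for stage in STAGES:
        if trl in stage.trls:
            return stage
    raise ValueError(f"TRL must be between 1 and 9, got {trl}")


def missing_deliverables(trl: int, on_file: set[str]) -> list[str]:
    """List deliverables from every stage up to the current TRL that are not yet on file."""
    return [d for stage in STAGES if stage.trls.start <= trl
            for d in stage.deliverables if d not in on_file]


print(stage_for(6).label, "->", ", ".join(stage_for(6).legal_focus))
print(missing_deliverables(2, {"Literature reviews"}))
```

Treating earlier-stage deliverables as cumulative is a design choice made here for illustration. It reflects the idea that documentation gaps left at low TRLs tend to resurface when admissibility is challenged.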

Experimental Protocols for Demonstrating Legal Readiness

To systematically build a case for the admissibility of a novel technique, researchers should implement the following experimental protocols, designed to generate the evidence required by the legal standards.

Protocol for Validation and Error Rate Assessment (Addressing Daubert)

Objective: To empirically determine the false positive and false negative rates of the methodology under controlled conditions that simulate its intended operational use.

Materials:

- A validated and agreed-upon reference dataset (e.g., known samples, ground-truthed digital images).

- The experimental apparatus or software tool being validated.

- Blinded samples for testing.

Methodology:

- Sample Preparation: Create a test set comprising both positive and negative controls, along with unknown samples, ensuring the ground truth is known only to the study coordinator.

- Blinded Analysis: Have one or more analysts apply the methodology to the test set without knowledge of the expected outcomes.

- Data Collection: Record all results, including any indeterminate outcomes.

Data Analysis:

- Calculate the rates of false positives, false negatives, and overall accuracy.

- Perform statistical analysis (e.g., confidence intervals) to express the uncertainty of the error rate estimates.

Deliverable: A formal validation report detailing the study design, raw data, calculated error rates, and statistical analysis, ready for disclosure in legal proceedings.
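The protocol asks for confidence intervals on the error rate estimates without naming a method. One common choice for proportions from modest sample sizes is the Wilson score interval, sketched below. The choice of Wilson, the 95% level (z = 1.96), and the sample counts are assumptions for illustration only.

```python
from __future__ import annotations

import math


def wilson_interval(errors: int, trials: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for an observed error proportion errors/trials."""
    if trials <= 0 or not 0 <= errors <= trials:
        raise ValueError("need trials > 0 and 0 <= errors <= trials")
    p = errors / trials
    z2 = z * z
    denom = 1 + z2 / trials
    centre = (p + z2 / (2 * trials)) / denom
    half = z * math.sqrt(p * (1 - p) / trials + z2 / (4 * trials * trials)) / denom
    return max(0.0, centre - half), min(1.0, centre + half)


# Hypothetical blinded study: 3 false positives in 200 negative controls,
# and 0 false negatives in 100 positive controls.
fp_low, fp_high = wilson_interval(errors=3, trials=200)
fn_low, fn_high = wilson_interval(errors=0, trials=100)

print(f"false positive rate: 1.5% (95% CI {fp_low:.2%} to {fp_high:.2%})")
print(f"false negative rate: 0.0% (95% CI {fn_low:.2%} to {fn_high:.2%})")
```

The second case illustrates why the interval matters in testimony: observing zero errors in 100 trials does not support a claim of a zero error rate, only an upper bound of a few percent.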

Protocol for Establishing "General Acceptance" (Addressing Frye)

Objective: To document the degree to which the underlying principles and methodology are accepted within the relevant scientific community.

Materials:

- Access to scientific literature databases (e.g., PubMed, IEEE Xplore, Google Scholar).

- Survey tools for polling expert communities (if necessary).

Methodology:

- Literature Review: Conduct a systematic review of peer-reviewed publications that utilize, validate, or critique the methodology. Document the number of publications, the prestige of the journals, and the conclusions drawn.

- Citation Analysis: Track the adoption and citation of key papers to demonstrate influence and acceptance.

- Standards and Guidelines: Identify any industry standards, guidelines from professional bodies (e.g., SWGDE, ASTM), or regulatory approvals that incorporate the method.

Deliverable: A "General Acceptance Dossier" containing the literature review summary, citation analysis, copies of key supportive publications, and references to relevant standards and guidelines.

Protocol for Digital Forensic Readiness Assessment (DFRA)

Objective: To evaluate an organization's operational preparedness to conduct a digital forensic investigation that yields legally admissible evidence.

Methodology:

- Scoping: Define the assessment's boundaries (e.g., specific systems, types of incidents).

- Information Gathering: Collect and review all relevant policies (incident response, data retention, evidence handling), procedures, and existing incident response plans.

- Gap Analysis: Interview key personnel and compare current capabilities against industry best practices (e.g., ISO/IEC 27037) and legal admissibility requirements.

- Reporting: Document findings and provide prioritized recommendations for improvement.

Key Assessment Components:

- Policies & Procedures: Existence and quality of evidence handling and preservation protocols.

- Tools & Technologies: Validation status and adequacy of forensic tools (per Daubert protocols).

- Skills & Expertise: Qualifications and training of the digital forensics team.

- Documentation & Reporting: Robustness of practices for maintaining chain of custody and generating final reports.

Deliverable: A DFRA report that provides a roadmap for enhancing the organization's technical and procedural readiness for court.

The Scientist's Toolkit: Essential Reagents for Legal Readiness

This toolkit comprises the non-physical "reagents" and materials required to build a legally defensible scientific method.

Table 4: Essential Toolkit for Achieving Legal Readiness

| Toolkit Component | Function in Achieving Legal Readiness | Primary Legal Standard Addressed |

|---|---|---|

| Standard Operating Procedures (SOPs) | Documents the exact, repeatable methodology, ensuring consistency and allowing for scrutiny. Essential for demonstrating reliable application. | Daubert (Standards), Mohan (Reliability) |

| Validation Study Report | Provides the empirical evidence for the method's accuracy, precision, and error rate. The core document for proving reliability. | Daubert (Testing, Error Rate) |

| Peer-Reviewed Publications | Serves as objective, third-party endorsement of the method's validity and contributes directly to establishing "general acceptance." | Daubert (Peer Review), Frye (General Acceptance) |

| Chain of Custody Documentation | A log that tracks the possession, handling, and transfer of physical or digital evidence. Critical for authenticating evidence and proving its integrity. | All (Foundation for Admissibility) |

| Qualified Expert CV | Establishes the witness's credentials, demonstrating they have the requisite "knowledge, skill, experience, training, or education" to provide an opinion. | Daubert, Frye, Mohan |

| General Acceptance Dossier | A curated collection of literature, standards, and survey data that argues for the method's widespread adoption in the field. | Frye, Daubert (General Acceptance Factor) |

| Code/Algorithm Repository | For digital methods, a version-controlled repository allows for transparency, peer review, and independent verification of the underlying code. | Daubert (Testing, Scrutiny) |

For researchers, scientists, and professionals in drug development and other regulated industries, digital evidence is increasingly critical for protecting intellectual property, validating research integrity, and complying with rigorous quality standards. The concept of Technology Readiness Levels (TRL), a systematic metric for measuring technological maturity, provides an ideal framework for structuring digital forensic readiness [28]. Originally developed by NASA for space missions, the TRL scale divides technology development into 9 distinct stages, from basic principles (TRL 1) to a proven operational system (TRL 9) [29] [30].

This document applies this structured approach to digital forensic readiness, transforming it from an ad-hoc reactive function into a strategically managed capability. Digital Forensic Readiness is defined as the preparation of an organization to efficiently collect, preserve, and analyze digital evidence when incidents occur, with the goals of minimizing business disruption, reducing investigation costs, and ensuring legal admissibility [31]. By adopting a TRL-gated framework, organizations can methodically progress from basic forensic principles to a fully operational, proactive digital forensics system integrated within their quality and compliance infrastructure.

Technology Readiness Levels: A Framework for Forensic Capability Development

The TRL framework provides a common language for assessing the maturity of forensic capabilities, enabling objective evaluation and targeted investment. For digital forensic readiness, the nine levels can be grouped into three primary phases: conceptual research (TRL 1-3), development and validation (TRL 4-6), and deployment and operation (TRL 7-9) [30].

Table 1: Technology Readiness Levels for Digital Forensic Readiness

| TRL | Stage Description | Forensic Readiness Activities | Outputs & Evidence |

|---|---|---|---|

| TRL 1 | Basic principles observed and reported | Fundamental research on forensic techniques; literature review of forensic standards (ISO 27037, 27041) [32]. | Scientific publications; research papers on forensic artifact behavior. |

| TRL 2 | Technology concept formulated | Hypothesize forensic readiness concept; identify potential applications for IP protection and data integrity monitoring. | Documented concept paper linking forensic capabilities to organizational risks [31]. |

| TRL 3 | Experimental proof of concept | Validate key forensic hypotheses in lab environment; test evidence collection from key systems. | Proof-of-concept report; validated hypotheses for evidence collection [30]. |

| TRL 4 | Technology validated in lab | Build lab-scale forensic prototype; test evidence collection from isolated R&D systems. | Functional prototype; validated basic evidence collection capabilities [30]. |

| TRL 5 | Validation in relevant environment | Refine forensic components; test integration with other security systems in a simulated environment. | Integrated component testing report; refined forensic nodes [30]. |

| TRL 6 | Technology demonstrated in relevant environment | Full prototype demonstrated in operational research environment with real data. | System prototype demonstration report; validated in relevant environment [29]. |

| TRL 7 | System prototype in operational environment | Forensic system prototype tested in live operational environment (e.g., specific research division). | Operational prototype report; successful testing in real environment [28]. |

| TRL 8 | System complete and qualified | Full forensic readiness system finalized and qualified through testing; integrated with compliance workflows. | Final system documentation; qualification/certification records [28]. |

| TRL 9 | Actual system proven in operational environment | Continuous forensic readiness operations; successful evidence use in actual incidents or audits. | Incident reports; audit findings; continuous improvement records [28]. |

A critical concept in technology maturation is the "Valley of Death" – the gap between early innovation (TRL 3-4) and operational deployment (TRL 7-8) where many promising technologies fail [28]. In digital forensics, this often manifests as promising research concepts that never translate into operational capabilities. The structured TRL approach helps organizations navigate this valley by forcing explicit consideration of non-technical risks including market uncertainty, regulatory requirements, operational integration, and business model viability [28].

From Reactive to Proactive: A Maturity Model

Traditional digital forensics is predominantly reactive, initiating evidence collection after an incident has been detected. This approach often results in lost evidence, prolonged investigation times, and higher costs [31]. In contrast, proactive digital forensics embeds evidence collection capabilities into the IT infrastructure before incidents occur, enabling faster, more effective investigations and stronger cyber resilience [31] [33].

The relationship between proactive capabilities and maturity levels can be visualized as a progression from completely reactive to fully proactive operations. The following diagram illustrates this maturity pathway and its alignment with the TRL framework:

The transition across this maturity model requires systematic implementation of proactive capabilities. Research indicates that organizations implementing proactive forensic readiness frameworks can achieve significant operational improvements, including a 37.5% reduction in investigation time (from 4.0 to 2.5 hours) and a 19-percentage-point improvement in log completeness (from 76% to 95%) [33].

Application Notes: Implementing a Proactive Forensic Readiness Framework

Core Principles and Strategic Alignment

Implementing a proactive digital forensic readiness framework requires first aligning forensic capabilities with organizational risks and business objectives. The process begins not with tool acquisition, but with risk management – identifying what requires protection and where potential evidence resides [31]. For research organizations, this particularly involves protecting intellectual property, clinical trial data, and critical research infrastructure.

The forensic readiness implementation process should follow a structured, phased approach that systematically links business risks to technical evidence sources. The following workflow outlines this evidence mapping process:

This risk-based approach ensures forensic capabilities directly address the most critical business protection needs. For each identified risk scenario, organizations should define specific evidence requirements including file types, retention periods, metadata preservation needs, and supporting forensic documentation such as chain of custody procedures [31].
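The risk-to-evidence mapping described above lends itself to a simple register that can be checked for gaps. The following sketch shows one possible shape for such a register. The class names, field names, retention figures, and example scenarios are all hypothetical and would need to be replaced with an organization's own risk inventory.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class EvidenceSource:
    name: str
    file_types: list[str]
    retention_days: int
    preserve_metadata: bool = True


@dataclass
class RiskScenario:
    risk: str
    business_service: str
    it_assets: list[str]
    evidence_sources: list[EvidenceSource] = field(default_factory=list)


def unmapped_risks(register: list[RiskScenario]) -> list[str]:
    """Risks that have no evidence source defined yet."""
    return [s.risk for s in register if not s.evidence_sources]


def short_retention(register: list[RiskScenario], min_days: int) -> list[tuple[str, str]]:
    """(risk, source) pairs whose retention period falls below the required minimum."""
    return [(s.risk, src.name) for s in register
            for src in s.evidence_sources if src.retention_days < min_days]


# Hypothetical register entries for a research organization.
register = [
    RiskScenario("IP theft from research file share", "Compound library",
                 ["file-server-01"],
                 [EvidenceSource("File access audit log", ["evtx"], retention_days=365),
                  EvidenceSource("VPN session log", ["log"], retention_days=30)]),
    RiskScenario("Tampering with clinical trial data", "Trial data capture system",
                 ["trial-db-01"]),
]

print("risks without evidence sources:", unmapped_risks(register))
print("sources retained under 90 days:", short_retention(register, min_days=90))
```

Keeping the register as structured data means the gap analysis can be rerun whenever the asset inventory or risk inventory changes.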

Experimental Protocol: Proactive Digital Forensics Implementation

The following detailed protocol provides a methodology for implementing and validating a Proactive Digital Forensics Standard Operating Procedure (P-DEFSOP), adapted from research demonstrating measurable improvements in forensic effectiveness [33].

Protocol Title: Implementation and Validation of a Proactive Digital Forensics Framework (P-DEFSOP)

Objective: To establish a proactive forensic capability that reduces investigation time, improves evidence completeness, and enables more effective incident response.

Materials & Requirements:

- IT infrastructure supporting critical business services

- Security Information and Event Management (SIEM) or similar log aggregation platform

- Forensic analysis workstations with appropriate tools

- Documented risk inventory and IT asset inventory

Procedure:

Forensic Readiness Assessment (Weeks 1-2)

- Conduct a gap analysis of current forensic capabilities against ISO 27037 guidelines for digital evidence handling [31].

- Map critical risks to business services, IT assets, and potential evidence sources using the evidence mapping workflow described under Core Principles and Strategic Alignment.

- Identify and document evidence sources for high-priority risks, including log files, endpoint telemetry, cloud audit logs, and backups [31].

Control Implementation & Configuration (Weeks 3-6)

- Implement or enhance logging configurations based on identified evidence requirements, focusing on completeness and integrity.

- Configure automated log collection to secure, centralized storage with appropriate access controls.

- Establish evidence handling policies addressing collection methods, preservation techniques, and chain of custody requirements [31].

- Define and document escalation criteria linking forensic monitoring to incident response procedures.

Testing & Validation (Weeks 7-10)

- Design red team/blue team simulation scenarios targeting high-risk areas identified in the risk assessment.

- Execute simulation exercises, capturing metrics for both the existing (reactive) process and the new P-DEFSOP framework.

- Measure and compare key performance indicators including:

- Log completeness rate (percentage of relevant events captured)

- Investigation time (time to reconstruct attack sequences and identify root cause)

- Evidence quality (ability to map events to adversarial tactics using frameworks like MITRE ATT&CK)

Training & Integration (Weeks 11-12)

- Conduct training for relevant staff on forensic preservation procedures and incident awareness.

- Integrate P-DEFSOP with existing security operations and incident response plans.

- Establish a continuous improvement process for refining forensic capabilities based on lessons learned from testing and actual incidents.

Validation Metrics: Successful implementation should demonstrate measurable improvements in forensic effectiveness. Comparative results from research implementations show the potential improvements achievable through this protocol:

Table 2: Quantitative Comparison of Reactive vs. Proactive Forensic Approaches

| Evaluation Metric | Reactive Model (Without P-DEFSOP) | Proactive Model (With P-DEFSOP) | Improvement |

|---|---|---|---|

| Log Completeness Rate | 76% | 95% | +19 percentage points |

| Missing/Corrupted Log Rate | 24% | 5% | -19 percentage points |

| Average Investigation Time | 4.0 hours | 2.5 hours | -37.5% |

| Evidence Mapping to ATT&CK | Fragmented, inconsistent | Systematic, comprehensive | Significant clarity improvement |
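The figures in the comparison table mix two kinds of change, so it helps to compute them explicitly. The sketch below shows the arithmetic. The function names and the 20-event simulation are assumptions for illustration, while the 76%, 95%, 4.0-hour, and 2.5-hour values are those reported in the table.

```python
def completeness_rate(captured: set, expected: set) -> float:
    """Percentage of expected (relevant) events that appear in the collected logs."""
    if not expected:
        raise ValueError("expected event set must not be empty")
    return 100.0 * len(captured & expected) / len(expected)


def percentage_point_change(before_pct: float, after_pct: float) -> float:
    """Absolute difference between two percentages."""
    return after_pct - before_pct


def relative_change_pct(before: float, after: float) -> float:
    """Change expressed as a percentage of the starting value."""
    return 100.0 * (after - before) / before


# Hypothetical simulation: 20 relevant events were generated, 19 were captured.
expected_events = {f"evt-{i}" for i in range(20)}
captured_events = expected_events - {"evt-7"}
print("completeness:", completeness_rate(captured_events, expected_events))

# Values reported in the table.
print("log completeness, percentage points:", percentage_point_change(76.0, 95.0))
print("log completeness, relative change %:", relative_change_pct(76.0, 95.0))
print("investigation time, relative change %:", relative_change_pct(4.0, 2.5))
```

The move from 76% to 95% completeness is a gain of 19 percentage points, which is a 25% increase relative to the baseline. The 37.5% figure for investigation time is a relative reduction. Reports should state which of the two is meant.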

The Scientist's Toolkit: Essential Digital Forensic Research Reagents

Building and maintaining proactive digital forensic capabilities requires specific technical resources and frameworks. The following table details essential "research reagents" for digital forensic readiness:

Table 3: Essential Research Reagents for Digital Forensic Readiness

| Tool/Category | Function & Purpose | Implementation Example |

|---|---|---|

| Configuration Management Database (CMDB) | Provides dynamic inventory of IT assets, enabling linkage between business services and evidence sources [31]. | ServiceNow CMDB; custom asset management system. |

| Security Information & Event Management (SIEM) | Centralizes log collection and storage; enables proactive monitoring and automated alerting. | Splunk Enterprise Security; Elastic Security; Microsoft Sentinel. |

| Digital Forensic Frameworks | Provides standardized methodologies for evidence handling, ensuring legal admissibility. | ISO 27037 (Evidence Handling) [31]; ACPO Principles [34]. |

| MITRE ATT&CK Framework | Knowledge base of adversary behaviors; enables mapping of forensic artifacts to attack techniques. | Mapping log events to specific ATT&CK tactics and techniques [33]. |

| Timeline Reconstruction Tools | Enables forensic examiners to infer past activities by analyzing digital artifacts chronologically [32]. | Log2timeline/Plaso; custom event correlation scripts. |

| Evidence Preservation Tools | Creates forensically sound copies of digital evidence while maintaining integrity and chain of custody. | Forensic disk imagers (FTK Imager, Guymager); write blockers. |

| Open-Source Knowledge Bases | Community-driven resources documenting forensic techniques, weaknesses, and mitigations. | SOLVE-IT Digital Forensics Knowledge Base [34]. |
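As a small illustration of the ATT&CK row in the table, the sketch below maps log events to candidate techniques and counts them. The mapping is deliberately tiny and is an assumption made for this example: it pairs a few Windows Security event IDs with ATT&CK technique IDs that such events are often examined under, and an analyst would still need to confirm each attribution from context.

```python
from collections import Counter

# Illustrative mapping only (not an official or complete ATT&CK data source):
# Windows Security event ID -> (candidate technique ID, technique name)
EVENT_TO_TECHNIQUE = {
    4625: ("T1110", "Brute Force"),                        # failed logon
    4624: ("T1078", "Valid Accounts"),                     # successful logon
    4688: ("T1059", "Command and Scripting Interpreter"),  # process creation
    1102: ("T1070.001", "Clear Windows Event Logs"),       # audit log cleared
}


def map_events(event_ids):
    """Count candidate techniques and collect event IDs that have no mapping."""
    counts = Counter()
    unmapped = []
    for event_id in event_ids:
        technique = EVENT_TO_TECHNIQUE.get(event_id)
        if technique is None:
            unmapped.append(event_id)
        else:
            counts[technique[0]] += 1
    return counts, unmapped


# Hypothetical sequence of event IDs pulled from a centralized log store.
observed = [4625, 4625, 4625, 4624, 4688, 1102, 5156]
technique_counts, unmapped = map_events(observed)

for technique_id, count in technique_counts.most_common():
    print(technique_id, count)
print("unmapped event IDs:", unmapped)
```

Tracking the unmapped list is as useful as the counts, since it shows which collected events currently contribute nothing to the evidence-quality metric.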

Digital forensic readiness is no longer a specialized IT function but a fundamental component of organizational resilience, particularly for research-driven industries where evidence integrity is paramount. By applying the structured, gated approach of Technology Readiness Levels, organizations can systematically evolve their capabilities from basic reactive measures to sophisticated proactive systems. This maturation process transforms digital forensics from an investigative tool used after incidents occur to an integrated business capability that reduces operational risk, supports regulatory compliance, and protects critical intellectual property. The quantitative improvements demonstrated in research, including a 37.5% reduction in investigation time and a rise in log completeness from 76% to 95%, provide compelling evidence for investing in this proactive approach [33].

A Step-by-Step Framework for Implementing TRLs in Digital Forensic Operations

The digital forensics field faces an unprecedented evolution, driven by the proliferation of digital devices, cloud computing, artificial intelligence (AI), and the Internet of Things (IoT). According to Grand View Research (2023), the global digital forensics market is projected to reach $18.2 billion by 2030, with a compound annual growth rate of 12.2% [10]. This rapid expansion necessitates a structured framework for assessing technological maturity from conceptualization to operational deployment. Technology Readiness Levels (TRLs), a measurement system originally developed by NASA in the 1970s, provide a standardized methodology for evaluating the maturity level of a particular technology [1] [28]. For digital forensics researchers and practitioners, the TRL framework offers a common language for tracking development progress, managing risk, and making strategic decisions about technology funding and deployment [35].

This application note establishes a comprehensive mapping of contemporary digital forensic technologies to the nine TRL stages, with particular emphasis on the critical "Valley of Death" (TRLs 4-7) where many innovations fail to mature [28]. By providing explicit experimental protocols and validation criteria for each stage, this framework supports the broader thesis that systematic technology readiness assessment is essential for advancing digital forensic readiness in an era of increasingly complex cyber threats.

Technology Readiness Levels comprise a nine-level scale that enables consistent comparison of technological maturity across different types of innovation. Table 1 summarizes the core definition and purpose of each TRL, adapted for the digital forensics context.

Table 1: Technology Readiness Levels (TRLs) - Definitions and Digital Forensics Context

| TRL | Definition | Focus in Digital Forensics | Typical Funding Sources |
|---|---|---|---|
| TRL 1 | Basic principles observed and reported [1] | Fundamental research on binary data analysis, data structure theory, cryptographic principles | Basic research grants, academic funding [35] |
| TRL 2 | Technology concept formulated [28] | Application of principles to forensic challenges; invention of novel acquisition/analysis concepts | Early-stage research grants [35] |
| TRL 3 | Experimental proof of concept [1] | Validation of feasibility through laboratory experiments; initial prototype development | SBIR/STTR Phase I, proof-of-concept grants [35] [28] |
| TRL 4 | Technology validated in lab environment [1] | Component integration and testing in controlled forensic laboratory conditions | SBIR/STTR Phase II, seed funding [35] |
| TRL 5 | Technology validated in relevant environment [1] | Testing in simulated forensic environment with real-world data sets | SBIR/STTR Phase II, venture capital seed rounds [35] |
| TRL 6 | Technology demonstrated in relevant environment [1] | Full prototype testing in operational forensic laboratory setting | Later-stage venture capital, strategic partnerships [35] |
| TRL 7 | System prototype demonstration in operational environment [1] | Field testing in active investigative contexts with real casework | Venture capital, corporate investment [35] [28] |
| TRL 8 | System complete and qualified [1] | Technology integrated into standard forensic workflows; compliance testing | Corporate venture arms, government procurement [35] |
| TRL 9 | Actual system proven in operational environment [1] | Routine deployment in forensic investigations; established evidentiary reliability | Commercial revenue, government procurement [35] |

The progression from TRL 1 to TRL 9 represents a pathway from basic scientific observation to proven operational capability. For digital forensics technologies, this pathway must address not only technical functionality but also legal admissibility, ethical implementation, and integration with established investigative workflows [36] [37].

Figure 1: Technology Readiness Pathway for Digital Forensics, highlighting the critical "Valley of Death" (TRLs 4-7) where many innovations fail to transition to operational use [28].

Digital Forensics Technology Landscape

The contemporary digital forensics field encompasses a diverse technological landscape addressing multiple evidence sources and analytical approaches. Table 2 maps current digital forensic technologies to their approximate TRL levels, demonstrating the varied maturity across different subdomains.

Table 2: Current Digital Forensics Technologies Mapped to TRL Levels

| Technology Category | Example Technologies | Current TRL | Key Challenges | Primary Applications |
|---|---|---|---|---|
| AI-Powered Evidence Triage | Machine learning for log analysis, NLP for communication review [13] | 7-8 | Algorithmic bias, "black box" models undermining court credibility [10] | Large-scale data analysis, pattern recognition [13] |
| Cloud Forensics | API-based data acquisition, cross-platform evidence correlation [13] | 6-7 | Data fragmentation, jurisdictional conflicts, encryption [10] [13] | Investigation of cloud-based evidence distributed across servers [10] |
| IoT Device Forensics | Vehicle infotainment analysis, smart home device data extraction [10] [13] | 5-7 | Proprietary protocols, volatile data, diverse architectures [10] | Collision reconstruction (e.g., Tesla EDR data), smart home investigations [10] |
| Blockchain Forensics | Cryptocurrency transaction tracking, wallet identification [38] | 7-8 | Privacy coins, mixing services, cross-chain transactions | Money laundering investigation, ransomware payment tracking [38] |
| Deepfake Detection | AI-driven media authentication, neural network analysis [39] | 6-7 | Rapidly evolving generation techniques, quality improvements [39] | Authentication of audio/video evidence, combating disinformation [39] |
| Anti-Forensic Detection | Metadata analysis for tampering detection, steganography detection [13] | 7-8 | Increasing sophistication of data hiding techniques [13] | Identification of evidence tampering, recovery of hidden data [13] |
| Mobile Forensics | Advanced logical/physical extraction, cloud data acquisition [13] | 9 | Device encryption, secure boot processes, hardware security [13] | Ubiquitous in criminal investigations, corporate investigations [13] |

The TRL distribution in Table 2 demonstrates that while some digital forensic technologies have reached operational maturity (TRL 9), others remain in development and validation phases, particularly those addressing emerging technologies and complex anti-forensic techniques.
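As an illustration of how the Table 2 mapping might be used for planning, the sketch below encodes the "Current TRL" column and flags every technology whose range overlaps the "Valley of Death" (TRLs 4-7). The `Technology` class and its method names are assumptions introduced for this example; only the category names and TRL ranges are taken from Table 2.

```python
from dataclasses import dataclass

# TRLs 4-7, the "Valley of Death" range used in this article.
VALLEY_OF_DEATH = range(4, 8)


@dataclass(frozen=True)
class Technology:
    """Hypothetical record for one row of Table 2."""
    name: str
    trl_low: int
    trl_high: int

    @classmethod
    def from_table_row(cls, name: str, trl: str) -> "Technology":
        """Parse a 'Current TRL' cell such as '6-7' or '9'."""
        parts = [int(p) for p in trl.split("-")]
        return cls(name, parts[0], parts[-1])

    def in_valley_of_death(self) -> bool:
        """True if any level in the TRL range falls within TRLs 4-7."""
        levels = range(self.trl_low, self.trl_high + 1)
        return any(level in VALLEY_OF_DEATH for level in levels)


# Category names and TRL ranges copied from Table 2.
TABLE_2 = [
    ("AI-Powered Evidence Triage", "7-8"),
    ("Cloud Forensics", "6-7"),
    ("IoT Device Forensics", "5-7"),
    ("Blockchain Forensics", "7-8"),
    ("Deepfake Detection", "6-7"),
    ("Anti-Forensic Detection", "7-8"),
    ("Mobile Forensics", "9"),
]

technologies = [Technology.from_table_row(n, t) for n, t in TABLE_2]
at_risk = [t.name for t in technologies if t.in_valley_of_death()]
operational = [t.name for t in technologies if t.trl_low == 9]

print("Overlapping TRLs 4-7:", at_risk)
print("Operationally mature (TRL 9):", operational)
```

Treating a range such as "7-8" as overlapping the Valley of Death is a deliberately conservative choice: a technology is counted as at risk until its lowest assessed level has cleared TRL 7.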

TRL-Specific Application Notes and Protocols

TRL 1-3: Basic Research to Proof of Concept

The initial TRL stages focus on establishing fundamental understanding and demonstrating feasibility through controlled experimentation.

TRL 1-2 Application Notes: At these stages, research focuses on observing fundamental principles and formulating technology concepts. Recent work has included studying the fundamental properties of generative adversarial networks (GANs) to understand deepfake generation patterns and conceptualizing detection methodologies [10]. Research into blockchain transaction patterns has enabled the formulation of concepts for tracking cryptocurrency movements across distributed ledgers [38].

TRL 3 Experimental Protocol: Deepfake Detection Proof of Concept

Objective: To validate the core hypothesis that AI-generated media contains detectable artifacts through experimental proof of concept.

Materials and Reagents:

- Dataset Curation: Collect 1,000 verified authentic videos and 1,000 AI-generated videos using open-source generation tools

- Computational Environment: High-performance workstation with GPU acceleration (minimum 8GB VRAM)

- Analysis Framework: Python with OpenCV, TensorFlow, and customized feature extraction libraries

Methodology:

1. Feature Extraction: Implement algorithms to extract potential artifact signatures from visual and audio streams, focusing on:

   - Facial micro-expressions and blink rate analysis

   - Blood flow patterns via subtle color variations

   - Audio-visual synchronization metrics

   - Compression artifact consistency

2. Model Development: Train basic machine learning classifiers (SVMs, Random Forests) on extracted features

3. Validation: Use k-fold cross-validation (k=5) to assess detection accuracy

Success Criteria: Detection accuracy exceeding 65% in distinguishing authentic from synthetic media, with the improvement over the 50% random-chance baseline of the balanced dataset being statistically significant (p<0.05).
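The two conditions in the success criteria, an accuracy threshold and a significance test, can be checked together. The following is a minimal sketch that assumes a balanced dataset (so random guessing yields 50% accuracy) and uses an exact one-sided binomial test; the function names and the example counts are hypothetical, and pooling predictions across the five cross-validation folds as if they were independent trials is a simplifying assumption.

```python
from math import comb


def binomial_p_value(correct: int, total: int) -> float:
    """Exact one-sided p-value P(X >= correct) when each prediction
    is a fair coin flip (50% chance baseline on a balanced dataset)."""
    tail = sum(comb(total, k) for k in range(correct, total + 1))
    # Integer arithmetic avoids float overflow for large sample sizes.
    return tail / 2**total


def meets_trl3_criteria(correct: int, total: int,
                        min_accuracy: float = 0.65,
                        alpha: float = 0.05) -> bool:
    """Check both conditions: accuracy above 65% and p < 0.05."""
    accuracy = correct / total
    return accuracy > min_accuracy and binomial_p_value(correct, total) < alpha


# Hypothetical result on the full 2,000-video dataset: 1,340 correct (67%).
print(meets_trl3_criteria(1340, 2000))

# Hypothetical small pilot: 14 of 20 correct (70%), too few samples
# for the result to be significant at the 0.05 level.
print(meets_trl3_criteria(14, 20), round(binomial_p_value(14, 20), 4))
```

The pilot case shows why the protocol pairs the accuracy threshold with a significance requirement: 70% accuracy on 20 videos clears the threshold but could plausibly arise by chance.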

TRL 4-5: Laboratory to Relevant Environment Validation

These intermediate stages bridge the gap between theoretical concepts and practical applications, and they mark the point where the "Valley of Death" typically begins.

TRL 4 Application Notes: Technologies are validated in laboratory environments that simulate real-world conditions. For example, cloud forensic tools are tested with localized private cloud deployments that replicate major cloud service architectures [13]. IoT forensic methodologies are validated using controlled smart home test environments with representative device combinations [10].

TRL 5 Experimental Protocol: Cloud Forensic Tool Validation

Objective: To validate cloud forensic acquisition tools in a relevant simulated environment.

Materials and Reagents:

- Test Environment: Private cloud deployment mirroring API structures of major providers (AWS, Azure, Google Cloud)

- Target Applications: Social media simulators with representative data structures

- Forensic Tools: Custom-developed API-based acquisition tools and commercial alternatives

Methodology:

- Evidence Seeding: Populate test environment with known data quantities (1TB structured and unstructured data)

- Acquisition Testing: Execute acquisition tools against simulated cloud environments with:

  - Varying network conditions (latency, packet loss)

  - Different authentication scenarios (OAuth, API keys)

  - Rate limiting and other API restrictions

- Data Verification: Compare acquired data to seeded ground truth using hash verification and content analysis

Success Criteria: Acquisition of >95% of seeded data without alteration, with comprehensive metadata preservation and proper error handling for network disruptions.
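The data verification step and the success criteria above can be sketched as a comparison of hash manifests. This example assumes that each seeded item has a stable identifier and that the 95% threshold is measured per item rather than per byte; `verify_acquisition`, the item names, and the in-memory test data are hypothetical and stand in for whatever manifest format a laboratory actually uses.

```python
import hashlib


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of a byte string as a hex string."""
    return hashlib.sha256(data).hexdigest()


def verify_acquisition(seeded: dict, acquired: dict,
                       min_recovery: float = 0.95) -> dict:
    """Compare acquired hashes to seeded ground-truth hashes.

    Both arguments map an item identifier to a hex digest.
    """
    if not seeded:
        raise ValueError("ground truth must contain at least one item")
    intact = sorted(k for k, h in seeded.items() if acquired.get(k) == h)
    altered = sorted(k for k, h in seeded.items()
                     if k in acquired and acquired[k] != h)
    missing = sorted(k for k in seeded if k not in acquired)
    recovery_rate = len(intact) / len(seeded)
    return {
        "recovery_rate": recovery_rate,
        "altered": altered,
        "missing": missing,
        # Pass only if >95% was recovered and nothing was altered.
        "passed": recovery_rate > min_recovery and not altered,
    }


# Hypothetical ground truth: 100 small seeded items held in memory.
seeded_items = {f"item-{i:03d}": f"seeded content {i}".encode()
                for i in range(100)}
seeded_hashes = {k: sha256_hex(v) for k, v in seeded_items.items()}

# Simulated acquisition that missed three items but altered none.
skipped = {"item-007", "item-042", "item-099"}
acquired_hashes = {k: h for k, h in seeded_hashes.items() if k not in skipped}
report = verify_acquisition(seeded_hashes, acquired_hashes)

# Same acquisition, but one item was modified along the way.
tampered_hashes = dict(acquired_hashes)
tampered_hashes["item-001"] = sha256_hex(b"modified content")
tampered_report = verify_acquisition(seeded_hashes, tampered_hashes)

print(report["recovery_rate"], report["passed"])
print(tampered_report["recovery_rate"], tampered_report["passed"])
```

Reporting altered and missing items separately reflects the wording of the criteria: an incomplete acquisition can still pass, whereas any alteration fails the test regardless of the recovery rate.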

TRL 6-7: Relevant to Operational Environment Demonstration

These stages involve testing fully functional prototypes in increasingly realistic environments, representing the latter portion of the "Valley of Death."

TRL 6 Application Notes: A fully functional prototype is demonstrated in a relevant environment. For example, a complete digital forensics workstation with integrated AI triage capabilities is tested in a representative laboratory using real (but anonymized) case data [13]. The prototype must demonstrate end-to-end functionality from acquisition to reporting.

TRL 7 Experimental Protocol: AI-Powered Evidence Triage Field Test

Objective: To demonstrate a system prototype in an operational environment with real investigators.

Materials and Reagents:

- Test Data: Anonymized historical case data from 10 previous investigations (5 criminal, 5 corporate)

- Hardware: Standard issue forensic workstations deployed to participating laboratories

- Software: AI triage system with natural language processing and pattern recognition capabilities

Methodology:

- Baseline Establishment: Have experienced investigators process test cases using existing tools, recording time and key findings

- Prototype Deployment: Install AI triage system in operational forensic laboratories

- Controlled Comparison: Have the same investigators process the same cases using the AI triage system

- Metrics Collection: Record:

  - Time to identify key evidence

  - Percentage of relevant evidence identified

  - False positive/negative rates

  - User satisfaction and workflow integration

Success Criteria: Statistically significant reduction in processing time (>25%) while maintaining or improving evidence identification rates compared to baseline methods.
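Because the same investigators process the same ten cases under both conditions, the timing data are paired, and the success criteria can be evaluated with a paired test. The sketch below uses an exact sign-flip permutation test, which is one reasonable choice for a small sample and is not prescribed by the protocol. All function names, the per-case hours, and the recall values are hypothetical illustrations.

```python
from itertools import product


def percent_reduction(baseline, prototype):
    """Percentage reduction in total processing time versus baseline."""
    return (sum(baseline) - sum(prototype)) / sum(baseline) * 100


def paired_permutation_p_value(baseline, prototype):
    """Exact one-sided sign-flip permutation test on paired differences.

    Under the null hypothesis, each per-case difference is equally likely
    to be positive or negative, so every sign pattern is enumerated.
    Feasible for small samples only (2**n patterns).
    """
    diffs = [b - p for b, p in zip(baseline, prototype)]
    observed = sum(diffs)
    outcomes = [sum(s * d for s, d in zip(signs, diffs))
                for signs in product((1, -1), repeat=len(diffs))]
    return sum(o >= observed for o in outcomes) / len(outcomes)


def meets_trl7_criteria(baseline_hours, prototype_hours,
                        baseline_recall, prototype_recall,
                        min_reduction=25.0, alpha=0.05):
    """Time reduction >25%, significant, with recall maintained or improved."""
    return (percent_reduction(baseline_hours, prototype_hours) > min_reduction
            and paired_permutation_p_value(baseline_hours, prototype_hours) < alpha
            and prototype_recall >= baseline_recall)


# Hypothetical hours per case for the 10 test cases (whole hours keep
# the permutation sums exact).
baseline_hours = [40, 35, 50, 28, 60, 45, 33, 52, 38, 47]
prototype_hours = [28, 27, 36, 22, 41, 33, 26, 37, 30, 34]

reduction = percent_reduction(baseline_hours, prototype_hours)
p_value = paired_permutation_p_value(baseline_hours, prototype_hours)
print(round(reduction, 2), p_value)
print(meets_trl7_criteria(baseline_hours, prototype_hours, 0.82, 0.85))
```

Here recall stands in for the "percentage of relevant evidence identified" metric; false positive and false negative rates could be added to the check in the same way.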

TRL 8-9: System Completion to Operational Deployment

The final TRL stages represent the transition from prototype to fully operational technology.

TRL 8 Application Notes: The technology is finalized and qualified through rigorous testing. For digital forensics, this includes not only technical testing but also validation against legal standards for evidence admissibility [36] [37]. Technologies at this stage have completed integration with established forensic workflows and have all necessary documentation for operational use.

TRL 9 Application Notes: The technology has been proven through successful operational deployment. Examples include established mobile forensics tools that are routinely used in criminal investigations [13] and forensic write-blocking hardware that has been validated through years of use in evidentiary contexts. Technologies at TRL 9 are included in standard operating procedures and have established training programs.

The Digital Forensics Researcher's Toolkit

Table 3 details essential research reagents, tools, and platforms that support development and validation across TRL stages.

Table 3: Essential Research Reagents and Tools for Digital Forensics Technology Development

| Tool/Reagent Category | Specific Examples | Primary Function | TRL Application Range |
|---|---|---|---|
| Reference Data Sets | NIST CFReDS, GovDocs1, M57-Patrol, DARPA TCE | Provide standardized data for development and validation | TRL 1-7 |
| Forensic Acquisition Tools | FTK Imager, Belkasoft Evidence Center, Cellebrite UFED | Create forensic images of digital evidence | TRL 3-9 |
| Analysis Platforms | Belkasoft X, Autopsy, Exterro FTK, Griffeye Analyze DI | Enable examination and interpretation of digital evidence | TRL 4-9 |
| Specialized Hardware | Tableau write blockers, forensic workstations, mobile device programmers | Maintain evidence integrity and enable device access | TRL 4-9 |