Implementing Blind Testing in Forensic Crime Laboratories: A Practical Guide to Overcoming Bias and Enhancing Scientific Integrity

Abstract

This article provides a comprehensive roadmap for implementing blind proficiency testing in forensic crime laboratories. Covering foundational principles, methodological frameworks, troubleshooting strategies, and validation protocols, it addresses critical challenges like contextual bias and systemic resistance. Drawing on real-world case studies from pioneering laboratories and current standards development, this guide equips forensic researchers, scientists, and laboratory managers with evidence-based strategies to enhance methodological rigor, ensure scientific independence, and build stakeholder confidence in forensic results through robust quality management systems.

Understanding Blind Testing: The Critical Foundation for Unbiased Forensic Science

Proficiency testing is a fundamental component of quality assurance in forensic laboratories, serving to monitor the performance of individual examiners and the reliability of laboratory systems. The determination of testing performance against pre-established criteria through interlaboratory comparisons provides essential data on forensic science reliability [1]. Within this framework, a critical distinction exists between open proficiency tests, where examiners are aware they are being tested, and blind proficiency tests, where examiners are unaware they are being tested and believe they are processing actual casework [2] [3].

The 2009 National Academy of Sciences (NAS) report highlighted significant concerns about forensic science practice, noting that traditional proficiency testing in many disciplines "is not sufficiently rigorous" and specifically calling for "routine, mandatory proficiency testing that emulates a realistic, representative cross-section of casework" [3]. This was echoed by the 2016 report by the President's Council of Advisors on Science and Technology, intensifying calls for blind proficiency testing implementation across forensic disciplines [3].

Table 1: Key Definitions in Proficiency Testing

| Term | Definition | Key Characteristics |
|---|---|---|
| Blind Proficiency Test | Determination of testing performance where examiners are unaware they are being tested [1] [3] | Mimics actual casework; tests entire laboratory pipeline; avoids test-taking behavior |
| Open Proficiency Test | Determination of testing performance where examiners are aware they are being tested [3] | Allows for test-taking behavior; may not represent routine casework; widely mandated for accreditation |
| Interlaboratory Comparison | Organization, performance, and evaluation of tests on similar items by multiple laboratories under predetermined conditions [1] | Assesses relative performance; can involve qualitative or quantitative data; useful when formal proficiency tests are unavailable |

Comparative Analysis: Blind vs. Open Proficiency Testing

Fundamental Differences and Advantages

Blind proficiency testing offers several distinct advantages over traditional open testing approaches. By definition, blind tests resemble actual cases and integrate seamlessly into normal workflow, thereby testing the entire laboratory pipeline from evidence submission to reporting of results [2] [3]. This approach avoids changes in behavior that occur when an examiner knows they are being tested—a phenomenon documented in early studies showing analysts behave differently during proficiency testing than during routine casework [3].

A significant advantage of blind testing is its capacity to detect misconduct, representing one of the only methods capable of identifying systematic issues that might otherwise remain undetected in traditional open testing frameworks [2]. The ecological validity of blind tests ensures they more accurately reflect real-world performance metrics and error rates, providing stakeholders with more realistic assessments of forensic laboratory capabilities [2].

Implementation Statistics and Current Adoption

Despite these advantages, adoption of blind proficiency testing remains limited across the forensic science landscape. A 2014 Bureau of Justice Statistics survey of publicly funded forensic crime laboratories found that while 97% of the country's 409 public forensic labs reported using some form of proficiency testing, only 10% reported using blind tests [4]. Adoption also varies by laboratory type: federal forensic facilities are more likely to implement blind testing than local or state laboratories [2] [4].

Table 2: Performance Outcomes from Blind Proficiency Testing Empirical Study

| Performance Metric | Result | Context |
|---|---|---|
| False Positive Errors | 0% | No false positive errors committed by examiners [3] |
| Sufficient Quality for AFIS Entry | 92.0% | 346 of 376 latent prints deemed sufficient for database search [3] |
| Successful Source Identification | 41.7% | Percentage of print searches resulting in candidate list containing true source when present in AFIS [3] |
| Correct Examiner Conclusions | 51.1% | Based on ground truth assessment of all submitted prints [3] |
| Average Print Quality | 53.4/100 | Using LQMetrics software (0-to-100 scale) [3] |
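A proportion such as the 92.0% sufficiency rate in Table 2 can be recomputed from its underlying counts and paired with an interval that conveys sampling uncertainty. The sketch below uses the Wilson score interval; that choice is an assumption made for illustration, since the source does not say which interval, if any, the study reported.

```python
import math

def wilson_interval(successes: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a binomial proportion (z = 1.96 gives about 95%)."""
    if n <= 0 or not 0 <= successes <= n:
        raise ValueError("need 0 <= successes <= n and n > 0")
    p = successes / n
    denom = 1 + z**2 / n
    centre = (p + z**2 / (2 * n)) / denom
    half_width = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return centre - half_width, centre + half_width

# Counts reported in Table 2: 346 of 376 latent prints sufficient for AFIS entry.
sufficient, total = 346, 376
rate = sufficient / total
low, high = wilson_interval(sufficient, total)
print(f"{rate:.1%} sufficient (95% Wilson interval {low:.1%} to {high:.1%})")
```

The same function applies to any count-based row of the table once its numerator and denominator are known.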

Experimental Protocols for Blind Proficiency Testing

Implementation Framework and Workflow

The Houston Forensic Science Center (HFSC) brought latent print examination into its blind quality control (BQC) program in November 2017, providing an exemplary model for systematic blind testing implementation [3]. The program is facilitated and maintained by HFSC's Quality Division, which is organizationally separate from the laboratory sections, ensuring that BQC cases are prepared and introduced by personnel without connection to the actual testing process.

The target submission rate for the HFSC program is 5% of the average number of cases completed per month during the previous year, translating to approximately 9-10 BQC cases per month administered across the entire latent print unit [3]. Cases are created to mimic real casework with the intent that analysts will be completely unaware the cases are not authentic, thereby ensuring no special treatment occurs during analysis.
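The submission-rate rule above is simple arithmetic, and a short sketch makes the calculation explicit. The function name and the annual case total are hypothetical; the total was picked only so that the result falls in the 9-10 range the source reports.

```python
def monthly_bqc_target(cases_completed_last_year: int, rate: float = 0.05) -> float:
    """Target blind cases per month: rate times the prior year's average monthly completions."""
    if cases_completed_last_year < 0 or not 0 <= rate <= 1:
        raise ValueError("case count must be non-negative and rate between 0 and 1")
    average_per_month = cases_completed_last_year / 12
    return rate * average_per_month

# Hypothetical annual total (not from the source), used only for illustration.
example_annual_total = 2280
target = monthly_bqc_target(example_annual_total)
print(f"Target: {target:.1f} blind cases per month")
```

Recomputing the target each year from the previous year's completions keeps the blind caseload proportional to real throughput.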

Latent Print Quality Assessment Protocol

A critical component of blind proficiency testing involves the objective assessment of latent print quality using standardized metrics. The HFSC study utilized the Latent Quality Metrics (LQMetrics) software within the FBI's Universal Latent Workstation (ULW) to examine relationships between objective print quality and case outcomes [3]. This global quality metric provides an overall score for quality and clarity of an entire latent print on a 0-to-100 scale.

The experimental protocol involved:

- Collection of 376 latent prints submitted as part of 144 blind cases over 2.5 years

- Categorization using the "Good, Bad, and Ugly" classification system based on quality metrics

- Statistical analysis of associations between quality metrics and examiner sufficiency determinations, conclusions, and accuracy

- Distribution analysis of prints across quality categories to assess representativeness

This objective assessment revealed that prints were evenly distributed across the Good, Bad, and Ugly categories, with an average quality score of 53.4, indicating significantly greater representativeness compared to open proficiency tests which typically contain prints of higher quality and lower complexity [3].
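The categorization and distribution steps described above might be sketched as follows. The cut-offs and the example scores are hypothetical, because the source states neither the thresholds used in the study nor the per-print scores; only the 0-to-100 scale and the three category names come from the text.

```python
from collections import Counter
from statistics import mean

# Hypothetical cut-offs on the 0-to-100 scale; the source does not give the real ones.
GOOD_MIN = 67
BAD_MIN = 34
CATEGORIES = ("Good", "Bad", "Ugly")

def categorize(score: float) -> str:
    """Map a global quality score to Good, Bad, or Ugly using the assumed cut-offs."""
    if not 0 <= score <= 100:
        raise ValueError("score must be between 0 and 100")
    if score >= GOOD_MIN:
        return "Good"
    if score >= BAD_MIN:
        return "Bad"
    return "Ugly"

def summarize(scores):
    """Return the mean score and the share of prints in each category."""
    counts = Counter(categorize(s) for s in scores)
    shares = {c: counts.get(c, 0) / len(scores) for c in CATEGORIES}
    return mean(scores), shares

# Made-up scores for illustration only; these are not study data.
illustrative_scores = [12, 25, 30, 40, 55, 61, 70, 88, 93]
average, shares = summarize(illustrative_scores)
print(f"mean score {average:.1f}; shares {shares}")
```

Comparing the category shares of blind cases against those of open proficiency tests is one way to check representativeness.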

Implementation Challenges and Strategic Recommendations

Obstacles to Widespread Adoption

Forensic laboratories face significant logistical and cultural obstacles in implementing blind proficiency testing programs. Meetings convened with directors and quality assurance managers of local and state laboratories revealed several consistent challenges [2] [4]:

- Resource constraints including staffing limitations and budgetary restrictions

- Case creation complexities requiring meticulous design to mimic actual casework

- Administrative burden on quality divisions responsible for program management

- Cultural resistance to testing methods that may reveal performance issues

- Legal and jurisdictional concerns regarding the use of test results in legal proceedings

Local and state laboratories face particularly significant barriers compared to federal facilities, with one study noting that representatives from seven forensic laboratory systems ranging in size from single laboratories with fewer than 50 employees to seven-laboratory systems with over 200 employees expressed significant interest in blind testing alongside numerous practical concerns [4].

Essential Research Reagent Solutions

Table 3: Essential Research Materials for Blind Proficiency Testing Programs

| Reagent/Material | Function/Application | Implementation Example |
|---|---|---|
| LQMetrics Software | Objective quality assessment of latent prints using algorithms incorporating feature count, ridge contrast, and clarity [3] | Integrated within FBI's Universal Latent Workstation; provides 0-100 quality score |
| Blind Case Specimens | Physical or digital test materials that mimic actual casework for seamless integration into workflow [2] [3] | Created by independent Quality Division; submitted at ~5% of monthly caseload |
| Quality Management System | Organizational framework for tracking case outcomes, print quality, and performance metrics [1] [3] | Maintained by separate Quality Division; monitors entire pipeline from submission to reporting |
| Statistical Analysis Tools | Quantitative assessment of relationships between quality metrics and examiner performance [3] | Identifies significant associations between print quality and examiner conclusions/accuracy |

Regulatory Framework and Future Directions

Legislative Developments and Standards

Recent legislative initiatives reflect growing recognition of the importance of blind proficiency testing in forensic science. New York State Bill 2025-A3969 and its Senate counterpart S1274 propose significant reforms to the state's Commission on Forensic Science, including updates to membership, powers, duties, and procedures that would modernize forensic oversight [5] [6]. These bills aim to "strengthen forensic science in criminal courts, improve public trust, and reduce wrongful convictions while preserving the right to a fair trial" through more robust testing and accountability mechanisms [6].

The regulatory landscape continues to evolve, with the Forensic Science Regulator's Codes of Practice and Conduct emphasizing that unexpected performances in proficiency testing and interlaboratory comparisons are classified as non-conformities requiring investigation and corrective action [1].

Strategic Implementation Framework

Successful implementation of blind proficiency testing programs requires a systematic approach, grounded in empirical research and practitioner experience, that addresses both technical and organizational challenges.

Future directions for blind proficiency testing include expanded implementation across forensic disciplines, development of standardized quality metrics for various evidence types, and integration of blind testing data into overall quality management systems. As the field continues to evolve, blind testing is positioned to become an increasingly essential component of forensic science reliability and validity assurance.

Forensic science aims to provide objective, reliable evidence within the criminal justice system. However, a significant body of research demonstrates that forensic decision-making is vulnerable to contextual biases, where extraneous information unrelated to the evidence itself can systematically skew analytical results. This form of confirmation bias occurs when examiners' judgments are influenced by their exposure to case context, domain-irrelevant information, or expectations [7].

The paradox of expertise suggests that while experience is valuable, it may also promote reliance on top-down cognitive processing, causing experts to utilize prior knowledge and expectations when making decisions rather than evaluating all available information objectively [7]. This effect is particularly pronounced when dealing with ambiguous evidence, where the strength of the evidence is low, providing less cognitive anchor for the decision-maker [7]. The implications are substantial, as studies have documented contextual bias influencing diverse forensic disciplines including fingerprint analysis, DNA interpretation, facial recognition, and more [8] [7].

Experimental Evidence: Quantifying Contextual Bias Effects

Key Experimental Findings on Bias Influence

Table 1: Summary of Experimental Findings on Contextual Bias in Forensic Decision-Making

| Study Focus | Experimental Design | Key Findings | Impact on Decision Metrics |
|---|---|---|---|
| Face Recognition Decisions [7] | 3 (Bias: positive/negative/control) × 2 (Evidence strength: weak/strong) × 2 (Target presence: absent/present) mixed-design; N=195 | Significant interaction between bias and target presence | Accuracy & Confidence: Increased with positive bias when target present; Decision Time: Decreased with positive bias when target present |
| Fingerprint Analysis [8] | Comparison of declared vs. blind proficiency testing | Examiners changed match decisions to non-match or "cannot decide" when biased away from match | Behavioral Change: Knowing a prior examiner's decision influenced subsequent analysis |
| DNA Analysis [8] | Presentation of contextual information biasing analysts | Forensic scientists susceptible to cognitive bias when analyzing ambiguous DNA samples | Error Rate: Increased with biasing contextual information |
| Drug Testing [8] | Comparison of blind vs. declared proficiency tests across 24 laboratories | False negatives higher in blind tests compared to declared tests | Error Disparity: Examiners missed more drug samples when unaware of testing |

Detailed Experimental Protocol: Contextual Bias in Forensic Face Recognition

Objective: To determine if and how forensically relevant face recognition decisions are influenced by biasing information, and whether face recognition ability mitigates such bias [7].

Materials and Research Reagent Solutions:

Table 2: Essential Research Materials and Their Functions

| Item/Reagent | Function/Application | Specifications |
|---|---|---|
| Cambridge Face Memory Test+ (CFMT+) | Measures baseline face recognition ability of participants | Standardized test routinely used in super-recognizer research |
| Closed Circuit Television (CCTV) Footage | Stimulus material emulating real-world forensic evidence | 36 videos showing a person walking down a corridor; varying quality |
| Biasing Statements | Experimental manipulation to induce contextual bias | Three conditions: positive bias (target matches video), negative bias (target doesn't match), control (no statement) |
| Target Face Images | Comparison stimuli for matching decisions | High-quality images presented after video exposure |
| Response Recording System | Data collection on decision parameters | Measures accuracy, confidence (Likert scale), and decision time |

Methodology:

Participant Screening & Recruitment:

- Recruit 195 participants with varied face recognition abilities

- Assess all participants using CFMT+ to establish baseline ability rather than relying on pre-classified groups

- Assign participants randomly to either strong or weak evidence conditions

Experimental Procedure:

- Present 36 videos emulating CCTV footage through a standardized interface

- For each video, display one of three statement types according to experimental condition:

  - Positive bias: "Target face matched the face in the video"

  - Negative bias: "Target face did not match the face in the video"

  - Control: No statement provided

- Following each video, present a target face image

- Prompt participant to decide if target matches the face seen in the video

- Record accuracy, confidence level, and decision time for each trial
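The recruitment and procedure steps above can be sketched as a small design generator. Treating evidence strength as the between-subjects factor follows the random assignment described in the text; treating bias and target presence as balanced within-subject factors across the 36 videos is an assumption, as are all function names.

```python
import itertools
import random

BIAS = ("positive", "negative", "control")
EVIDENCE = ("weak", "strong")
TARGET = ("absent", "present")

# Full factorial layout: 3 x 2 x 2 = 12 design cells.
design_cells = list(itertools.product(BIAS, EVIDENCE, TARGET))

def assign_evidence_strength(participant_ids, seed=0):
    """Randomly split participants into weak and strong evidence groups."""
    ids = list(participant_ids)
    random.Random(seed).shuffle(ids)
    half = len(ids) // 2
    return {pid: ("weak" if i < half else "strong") for i, pid in enumerate(ids)}

def build_trials(n_videos=36, seed=0):
    """Balanced schedule (assumed): each bias x target pairing appears equally often."""
    combos = list(itertools.product(BIAS, TARGET))
    if n_videos % len(combos):
        raise ValueError("n_videos must be a multiple of the number of pairings")
    trials = combos * (n_videos // len(combos))
    random.Random(seed).shuffle(trials)
    return [{"video": i + 1, "bias": b, "target": t} for i, (b, t) in enumerate(trials)]

groups = assign_evidence_strength(range(195))
schedule = build_trials()
print(len(design_cells), "cells;", len(schedule), "trials per participant")
```

Seeding the random generator keeps the assignment reproducible, which helps when the allocation itself has to be audited.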

Data Analysis:

- Employ mixed-design ANOVA to analyze effects of bias type, evidence strength, and target presence

- Examine interaction effects between bias conditions and decision parameters

- Use CFMT+ scores as a covariate to determine if face recognition ability attenuates bias effects

Blind Testing as a Mitigation Protocol

Implementation Framework for Forensic Laboratories

Fundamental Principles:

- Blind Proficiency Testing: Samples submitted through normal analysis pipeline without examiner awareness they are being tested [8]

- Declared (Open) Proficiency Testing: Tests provided labeled as tests, often addressing specific analytical components [8]

- Linear Sequential Unmasking (LSU): Examiner first documents unique features of forensic evidence without access to reference material, then accesses reference material and documents any changes to original analyses [7]
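The ordering that linear sequential unmasking imposes can be expressed as a small state machine: evidence features are documented first, the reference is unlocked second, and later changes are logged rather than overwritten. The class below is a hypothetical illustration of that ordering, not a description of any laboratory's software.

```python
from dataclasses import dataclass, field

@dataclass
class LSUExamination:
    """Hypothetical record that enforces the LSU order of work."""
    reference_material: str
    initial_features: list[str] = field(default_factory=list)
    revisions: list[tuple[str, str]] = field(default_factory=list)
    unmasked: bool = False

    def document_feature(self, feature: str) -> None:
        """Record a feature of the evidence before the reference has been seen."""
        if self.unmasked:
            raise RuntimeError("initial analysis is locked; record a revision instead")
        self.initial_features.append(feature)

    def unmask_reference(self) -> str:
        """Release the reference material only after the evidence is documented."""
        if not self.initial_features:
            raise RuntimeError("document the evidence before viewing the reference")
        self.unmasked = True
        return self.reference_material

    def revise(self, change: str, reason: str) -> None:
        """Log a post-unmasking change alongside the untouched initial analysis."""
        if not self.unmasked:
            raise RuntimeError("revisions only apply after unmasking")
        self.revisions.append((change, reason))

exam = LSUExamination(reference_material="reference print")
exam.document_feature("bifurcation near core")
exam.unmask_reference()
exam.revise("added ridge ending", "visible only after comparison")
print(exam.initial_features, exam.revisions)
```

Keeping the initial features and the revisions in separate lists preserves a record of what changed after exposure to the reference.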

Houston Forensic Science Center (HFSC) Implementation Model [8] [9]: The HFSC has established one of the most robust blind testing programs in a non-federal forensic laboratory, operational across multiple disciplines including toxicology, firearms, latent print comparison, latent print processing, biology, digital forensics, and forensic multimedia.

Table 3: Comparison of Proficiency Testing Approaches in Forensic Science

| Characteristic | Declared Proficiency Testing | Blind Proficiency Testing |
|---|---|---|
| Awareness | Examiner knows they are being tested | Examiner unaware of testing situation |
| Ecological Validity | May differ substantially from casework [8] | Must resemble actual cases to maintain deception [8] |
| Behavioral Impact | Examiners may dedicate extra time/attention [8] | Normal work patterns and pace |
| Scope of Testing | Often targets specific analytical components | Tests entire laboratory pipeline from evidence submission to reporting |
| Error Detection Capacity | Can detect mistakes and malpractice | Can detect mistakes, malpractice, AND misconduct [8] |
| Current Adoption | Majority of forensic laboratories [8] | ~10% of forensic labs (39% of federal labs) [8] |

Step-by-Step Implementation Protocol

Phase 1: Infrastructure Development

- Establish Case Management System: Implement case managers as buffers between test requestors and laboratory analysts to enable blind submission of proficiency samples [9]

- Design Authentic Test Materials: Develop mock evidence samples that closely resemble real casework in complexity and presentation [8]

- Create Documentation Protocol: Establish standardized procedures for test administration, data collection, and analysis

Phase 2: Pilot Implementation

- Select Initial Discipline: Choose one forensic discipline for initial implementation (HFSC began in 2015 with multiple disciplines) [8]

- Coordinate with Quality Division: Engage quality assurance personnel to maintain objectivity in test design and evaluation

- Implement Blind Samples: Introduce mock evidence through normal case intake procedures without alerting examiners
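The separation between what the quality division knows and what the examiner sees can be sketched as follows. All names here are hypothetical, and shuffling the whole queue is a simplification; a real intake process would preserve casework priorities.

```python
import random
from dataclasses import dataclass

@dataclass(frozen=True)
class Case:
    case_id: str
    discipline: str
    is_blind: bool  # known only to the quality division

def analyst_view(case: Case) -> dict:
    """Fields exposed to the examiner: identical for real and blind cases."""
    return {"case_id": case.case_id, "discipline": case.discipline}

def build_intake_queue(real_cases, blind_cases, seed=None):
    """Mix blind cases into real casework at random positions."""
    queue = list(real_cases) + list(blind_cases)
    random.Random(seed).shuffle(queue)
    return queue

def answer_key(queue):
    """Quality-division record of which case IDs are blind."""
    return {c.case_id for c in queue if c.is_blind}

real = [Case(f"C-{i:03d}", "latent prints", False) for i in range(1, 20)]
blind = [Case("C-020", "latent prints", True)]
queue = build_intake_queue(real, blind, seed=1)
print([analyst_view(c)["case_id"] for c in queue][:5], answer_key(queue))
```

Because the blind flag never appears in the examiner's view, nothing in the record itself can reveal a test case; case identifiers and packaging would need the same care.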

Phase 3: Full Integration

- Expand Across Disciplines: Extend program to additional forensic disciplines following successful pilot

- Develop Statistical Foundation: Collect performance data to calculate error rates for each discipline as practiced in the laboratory [9]

- Continuous Quality Improvement: Use results to identify process improvements across evidence handling, testing, and reporting
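Once blind results accumulate, an observed error rate per discipline is a simple ratio, but a run of zero errors still deserves an upper bound. The sketch below reports the exact one-sided 95% bound for the zero-error case, 1 - 0.05^(1/n), which is close to the familiar "rule of three" (3/n). The tallies are hypothetical and do not come from the source.

```python
def error_rate_summary(errors: int, trials: int) -> dict:
    """Observed error rate plus a 95% upper bound when no errors were seen."""
    if trials <= 0 or not 0 <= errors <= trials:
        raise ValueError("need trials > 0 and 0 <= errors <= trials")
    summary = {"errors": errors, "trials": trials, "rate": errors / trials}
    # With zero observed errors, solving (1 - p)^n = 0.05 for p gives the bound.
    summary["upper_95_if_zero"] = 1 - 0.05 ** (1 / trials) if errors == 0 else None
    return summary

# Hypothetical tallies for illustration only; these are not reported results.
tallies = {"discipline A": (0, 100), "discipline B": (2, 150)}
for name, (errors, trials) in tallies.items():
    print(name, error_rate_summary(errors, trials))
```

Reporting the bound alongside a zero count avoids implying that the true error rate is exactly zero.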

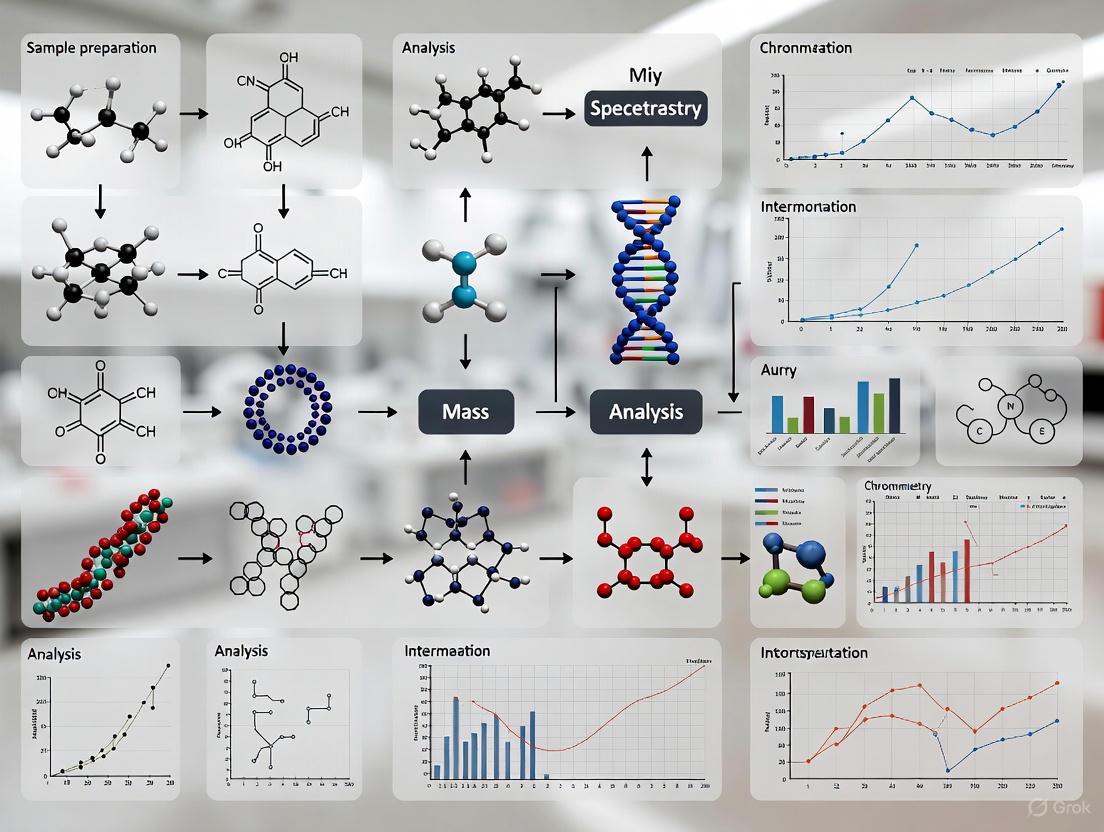

Visualizing Blind Testing Implementation

Workflow Diagram: Blind Proficiency Testing Protocol

Diagram 1: Blind testing workflow in forensic laboratories.

Contextual Bias Experimental Design

Diagram 2: Contextual bias experimental design for face recognition.

The implementation of blind proficiency testing represents a critical advancement in addressing contextual bias and establishing the statistical foundation necessary for forensic science to meet scientific and legal standards for reliability [9]. The experimental evidence demonstrates that contextual bias systematically influences forensic decision-making across multiple disciplines, particularly when evidence is ambiguous or examiners utilize top-down processing approaches.

The Houston Forensic Science Center model provides a practical template for laboratories seeking to implement blind testing protocols, demonstrating that robust programs can be established without substantial budget increases [9]. As forensic science continues to evolve, the integration of blind testing and linear sequential unmasking protocols offers the most promising pathway for quantifying error rates, improving analytical quality, and ensuring that forensic evidence presented in judicial proceedings meets the standards of scientific validity contemplated in Daubert [9].

The implementation of advanced technologies and standardized protocols in forensic crime laboratories is a critical component of modern criminal justice systems. This statistical overview examines the current adoption landscape, focusing on market trends, technological integration, and operational challenges within forensic laboratories. The data and analyses presented herein are framed within a broader research context exploring the implementation of blind testing methodologies, providing a baseline understanding of the infrastructure and capabilities that form the foundation for such rigorous scientific practices.

Forensic laboratories worldwide are navigating a complex convergence of biological evidence analysis and digital forensics, demanding rigorous standardization and specialized handling protocols [10]. This environment is characterized by rapid technological advancement alongside significant operational pressures, including growing evidence backlogs and resource constraints [11]. Understanding this landscape is essential for forensic researchers, scientists, and laboratory managers seeking to implement advanced quality control measures like blind testing, as the feasibility and design of such protocols are directly influenced by existing laboratory capacities, technological adoption rates, and funding environments.

Market Size and Growth Projections

The forensic technology market demonstrates consistent growth, driven by increasing demand for analytical capabilities in criminal investigations. The global DNA forensics market, a core segment of forensic laboratory technology, is projected to grow from $3.3 billion in 2025 to $4.7 billion by 2030, reflecting a compound annual growth rate (CAGR) of 7.7% [12] [13]. Alternative estimates suggest a slightly lower CAGR of 6.98%, projecting growth from $3.2 billion in 2025 to $5.87 billion by 2034 [14]. This growth trajectory underscores the expanding role of DNA analysis in both criminal and civil applications.

The broader forensic lab equipment market shows similar expansion, expected to increase from $1.53 billion in 2025 to $2.30 billion by 2030 at a CAGR of 8.5% [15]. Within the United States specifically, the forensic equipment and supplies market is anticipated to advance at an even more rapid pace (CAGR of 12.92%), growing from $9.69 billion in 2025 to $20.09 billion by 2033 [16]. These figures indicate significant investment in laboratory infrastructure, which creates opportunities for implementing advanced testing protocols.

Table 1: Global Market Size and Growth Projections for Forensic Technologies

| Market Segment | 2024/2025 Base Size | 2030/2034 Projected Size | CAGR | Source |
|---|---|---|---|---|
| DNA Forensics | $3.3 billion (2025) | $4.7 billion (2030) | 7.7% | [12] [13] |
| DNA Forensics | $3.2 billion (2025) | $5.87 billion (2034) | 6.98% | [14] |
| Forensic Lab Equipment | $1.53 billion (2025) | $2.30 billion (2030) | 8.5% | [15] |
| U.S. Forensic Equipment & Supplies | $9.69 billion (2025) | $20.09 billion (2033) | 12.92% | [16] |
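The growth rates in the table can be sanity-checked with the standard compound annual growth rate formula, CAGR = (end / start)^(1 / years) - 1. The sketch below computes the rate implied by each row's endpoints and prints it next to the reported figure. Where the two differ, the cause may be rounding or a different base year in the underlying report; the sketch only surfaces the gap and does not explain it.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate implied by two endpoint values."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1

# (segment, base $bn, base year, projected $bn, target year, reported CAGR) from the table.
projections = [
    ("DNA Forensics [12] [13]", 3.3, 2025, 4.7, 2030, 0.077),
    ("DNA Forensics [14]", 3.2, 2025, 5.87, 2034, 0.0698),
    ("Forensic Lab Equipment [15]", 1.53, 2025, 2.30, 2030, 0.085),
    ("U.S. Forensic Equipment & Supplies [16]", 9.69, 2025, 20.09, 2033, 0.1292),
]

for name, start, y0, end, y1, reported in projections:
    implied = cagr(start, end, y1 - y0)
    print(f"{name}: implied {implied:.2%}, reported {reported:.2%}")
```

Running the check shows which rows are internally consistent and which warrant a look at the original report's forecast window.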

Regional Adoption Patterns

North America currently dominates the forensic technology landscape, accounting for the largest market share (42%) in the DNA forensics segment in 2024 [14]. The U.S. DNA forensics market alone was valued at $879.06 million in 2024 and is predicted to reach approximately $1,757.80 million by 2034 [14]. This dominance is attributed to advanced infrastructure, robust regulatory frameworks, and substantial investments in forensic technologies [14].

The Asia-Pacific region is emerging as the fastest-growing market, fueled by rapid technological advancements, increasing forensic capabilities, and rising awareness about the importance of DNA analysis in criminal investigations [14]. Countries such as China, India, Japan, and South Korea are witnessing significant growth in the adoption of DNA forensics technologies, driven by expanding forensic facilities and growing investments in research and development [14].

Europe maintains a substantial market share supported by stringent quality standards and increasing R&D initiatives across member nations [16]. Meanwhile, Latin America and the Middle East & Africa are witnessing gradual market progression, backed by improving economic conditions and growing awareness of advanced forensic solutions [16].

Table 2: Regional Market Analysis and Growth Patterns

| Region | Market Share (2024) | Growth Trend | Key Growth Drivers |
|---|---|---|---|
| North America | 42% (DNA Forensics) | Steady growth (CAGR 7.18% for U.S.) | Advanced infrastructure, robust regulatory frameworks, substantial investment [14] |
| Asia-Pacific | Not specified | Fastest growing region | Rapid technological advancements, expanding forensic facilities, government initiatives [14] |
| Europe | Substantial share | Stable growth | Stringent quality standards, R&D initiatives, sustainability goals [16] |
| Latin America, Middle East & Africa | Not specified | Gradual market progression | Improving economic conditions, rising urbanization, growing awareness [16] |

Technology Segment Adoption

Product Type Segmentation

The DNA forensics market is segmented by product type, with kits and consumables dominating through the forecast period [12]. This segment's prominence reflects the ongoing, high-volume nature of DNA analysis in forensic laboratories. The analyzers and sequencers segment is also observing notable growth, driven by technological advancements and their crucial role in analyzing and sequencing DNA samples [14].

Equipment segmentation reveals strong adoption of DNA analyzers, liquid chromatography systems, gas chromatography systems, spectroscopy equipment, microscopes, and laboratory centrifuges [15]. The drug testing/toxicology segment is projected to witness notable market growth, fueled by rising drug abuse and overdose rates [15]. For instance, according to the 2023 United States National Survey on Drug Use and Health (NSDUH), approximately 48.5 million Americans had a substance use disorder, which drives rising demand for forensic equipment to measure drug traces [15].

Analytical Technique Implementation

Polymerase chain reaction (PCR) amplification currently dominates the methodology segment in DNA forensics [14]. Capillary electrophoresis (CE) is expected to show substantial growth during the forecast period due to its high resolution and sensitivity in separating DNA fragments based on size and charge [14].

Next-generation sequencing (NGS) represents a transformative technology driving market growth, enabling rapid and cost-effective analysis of DNA samples [14]. The integration of artificial intelligence (AI) and machine learning into forensic processes is also gaining traction, enabling improved analysis and automation [12]. The National Institute of Justice (NIJ) has identified innovative research on the use of AI within the criminal justice system as a key interest area for 2025 [17].

Laboratory Workflow Protocol for DNA Analysis

The following protocol outlines the standard workflow for forensic DNA analysis, incorporating technological implementations and quality control measures relevant for blind testing methodologies.

Evidence Intake and Triage

Procedure:

- Documentation: Record evidence following strict chain-of-custody procedures using Laboratory Information Management Systems (LIMS) for automated, immutable record-keeping [10].

- Assessment: Evaluate evidence for probative value and assign priority based on case type (violent crime vs. property crime).

- Triage Implementation: Utilize structured protocols including submission review processes involving both lab analysts and prosecutors [11].
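The record-keeping property described in the documentation step can be illustrated with a hash-chained log, in which each entry's hash covers the previous entry's hash so that any later alteration is detectable. This is a generic, tamper-evident sketch with hypothetical names; it does not describe how any particular LIMS product stores custody records.

```python
import hashlib
import json

def _digest(entry: dict, previous_hash: str) -> str:
    """SHA-256 over the entry contents plus the hash of the preceding record."""
    payload = json.dumps(entry, sort_keys=True) + previous_hash
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()

class CustodyLog:
    """Append-only custody log whose integrity can be re-verified at any time."""

    def __init__(self):
        self._records = []

    def append(self, evidence_id: str, action: str, actor: str) -> None:
        entry = {"evidence_id": evidence_id, "action": action, "actor": actor}
        previous = self._records[-1]["hash"] if self._records else ""
        self._records.append({"entry": entry, "hash": _digest(entry, previous)})

    def verify(self) -> bool:
        """Recompute every hash in order; False means some record was altered."""
        previous = ""
        for record in self._records:
            if record["hash"] != _digest(record["entry"], previous):
                return False
            previous = record["hash"]
        return True

log = CustodyLog()
log.append("E-001", "received at intake", "case manager")
log.append("E-001", "transferred to DNA unit", "evidence technician")
print(log.verify())
```

A chain like this makes edits detectable rather than impossible, so it complements access controls instead of replacing them.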

Technical Note: Laboratories implementing LEAN-inspired workflow redesign, such as Connecticut's facility, have reduced average DNA turnaround times to under 60 days [11].

DNA Extraction and Quantification

Procedure:

- Extraction Method Selection: Choose appropriate extraction method based on sample type (blood, bones, hair) and condition (degraded vs. intact).

- Automated Extraction: Implement automated systems where possible to increase throughput and reduce contamination risk.

- Quantification: Precisely measure DNA concentration using quantitative PCR (qPCR) or digital PCR methods.

Technical Note: The Michigan State Police validated low-input and degraded DNA extraction methods through a competitive CEBR grant, resulting in a 17% increase in interpretable DNA profiles from complex evidence within 12 months [11].

Amplification and STR Analysis

Procedure:

- PCR Amplification: Amplify target Short Tandem Repeat (STR) regions using commercial amplification kits.

- Capillary Electrophoresis: Separate amplified fragments using CE systems.

- Data Analysis: Interpret STR profiles using probabilistic genotyping software where appropriate.

Technical Note: Rapid DNA technology now allows a single sample to be analyzed in under 90 minutes, enabling identification directly in the field [12].

Quality Assurance and Data Reporting

Procedure:

- Control Samples: Process positive and negative controls alongside evidence samples.

- Technical Review: Conduct independent review of all data by second qualified analyst.

- Statistical Interpretation: Calculate match probabilities using population-specific databases.

- Report Generation: Issue findings with standardized terminology that conveys statistical probabilities associated with analytical results [10].

Diagram Title: Forensic DNA Analysis Workflow with Blind Testing Integration

Research Reagent Solutions and Essential Materials

Implementation of standardized protocols requires specific research reagents and laboratory equipment. The following table details key solutions essential for forensic DNA analysis procedures.

Table 3: Essential Research Reagent Solutions for Forensic DNA Analysis

| Item | Function | Application in Protocol |
|---|---|---|
| DNA Extraction Kits | Isolation of DNA from various biological sources | Initial sample processing; critical for low-input and degraded samples [11] |
| PCR Amplification Kits | Amplification of target STR regions | DNA profiling; enables analysis of minute quantities of DNA [14] |
| STR Analysis Kits | Multiplex PCR targeting forensic STR markers | Generating DNA profiles for comparison; compatible with CE systems [12] |
| Capillary Electrophoresis Systems | Separation of amplified DNA fragments by size | Fragment analysis; provides high resolution and sensitivity [14] |
| Quantitative PCR (qPCR) Reagents | Quantification of human DNA and assessment of quality | Determining optimal amplification input; detecting inhibitors [17] |
| Laboratory Information Management Systems (LIMS) | Automated tracking of evidence and results | Maintaining chain-of-custody; ensuring data integrity [10] |
| Rapid DNA Kits | Automated extraction, amplification, and analysis | Field deployment; processing samples in <90 minutes [12] |
| Probabilistic Genotyping Software | Statistical interpretation of complex DNA mixtures | Data analysis; objective assessment of evidentiary value [11] |

Laboratory Implementation Challenges and Innovations

Operational and Resource Constraints

Forensic laboratories face significant challenges in technology implementation. Between 2017 and 2023, turnaround times for DNA casework increased by 88%, despite technological advancements [11]. The 2019 NIJ Needs Assessment estimated a $640 million annual shortfall just to meet current demand, with another $270 million needed to address the opioid crisis [11].

Federal funding constraints exacerbate these challenges. The DOJ's proposed FY 2026 budget would slash the Paul Coverdell Forensic Science Improvement Grants by roughly 70%, from $35 million to just $10 million [11]. Similarly, the Capacity Enhancement for Backlog Reduction (CEBR) program remains funded at roughly $94-95 million in FY 2024, well below the $151 million level authorized by Congress [11].

Innovative Implementation Models

Despite constraints, laboratories are developing innovative implementation models:

Technical Innovation Grants: Laboratories like the Michigan State Police have used competitive CEBR grants to validate low-input and degraded DNA extraction methods, expanding capability to analyze difficult sexual assault kits and touch DNA cases [11].

Workflow Redesign: Connecticut's laboratory implemented a LEAN-inspired workflow redesign, reducing average DNA turnaround to under 60 days and achieving zero audit deficiencies for three consecutive years [11].

Regional Partnerships: Shelby County, Tennessee partnered with the Memphis City Council in 2025 to fund a $1.5 million regional crime lab integrating DNA, ballistics, and digital forensics to reduce reliance on overburdened state labs [11].

Efficiency Methodologies: The Louisiana State Police Crime Laboratory implemented Lean Six Sigma principles through an NIJ Efficiency Grant, reducing average turnaround time from 291 days to just 31 days while tripling case throughput [11].
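The percentage figures quoted in this section follow from simple arithmetic on the reported dollar amounts and turnaround times. The short check below is only a sketch that reuses the numbers stated above; the function and variable names are illustrative.

```python
def percent_change(old: float, new: float) -> float:
    """Signed percentage change from old to new."""
    return (new - old) / old * 100.0


# Coverdell grants: proposed cut from $35 million to $10 million.
coverdell_change = percent_change(35, 10)

# CEBR: funded at roughly $94-95 million against $151 million authorized.
cebr_gap_low, cebr_gap_high = 151 - 95, 151 - 94

# Louisiana State Police Crime Laboratory: 291 days down to 31 days.
louisiana_change = percent_change(291, 31)

print(f"Coverdell change: {coverdell_change:.1f}%")
print(f"CEBR gap: ${cebr_gap_low}-{cebr_gap_high} million")
print(f"Louisiana turnaround change: {louisiana_change:.1f}%")
```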

The current adoption landscape of forensic technologies in laboratory implementation reflects a dynamic interplay between technological advancement and operational challenges. The consistent market growth and regional expansion patterns demonstrate increasing reliance on forensic science capabilities across criminal justice systems. However, successful implementation of advanced methodologies, including blind testing protocols, must account for significant resource constraints and workflow variations across laboratories.

The statistical overview presented herein provides researchers with critical baseline data for designing studies that accommodate real-world laboratory conditions. Future implementation efforts should leverage innovative funding models, workflow efficiencies, and strategic partnerships to advance forensic science capabilities while maintaining the rigorous standards required for admissible scientific evidence.

Forensic science serves as a critical backbone of modern criminal investigations, yet its integrity faces fundamental challenges when laboratories operate under law enforcement control [18]. The concept of forensic independence refers to the structural separation of crime laboratories from direct law enforcement and prosecutorial oversight, creating conditions where scientific analysis can proceed free from institutional pressures [18] [19]. This separation addresses the pervasive risk of contextual bias, where forensic examiners' interpretations may be influenced—consciously or unconsciously—by knowledge of case details or pressure to support prosecutorial objectives [18]. A landmark 2009 National Academy of Sciences (NAS) report identified fragmentation, lack of standardization, and contextual bias as critical weaknesses in the United States forensic system, recommending structural independence as a fundamental solution [18].

The crisis of forensic independence represents more than an administrative challenge—it reflects a fundamental conflict between scientific and institutional loyalties [19]. When scientists challenge prosecutorial narratives or expose systemic problems, they frequently experience professional retaliation, forced resignations, or career marginalization, creating a chilling effect on scientific dissent [19]. These patterns demonstrate deeper cultural mechanisms that protect institutional authority by marginalizing those who threaten the myth of forensic objectivity [19]. This analysis examines the empirical evidence supporting structural independence, presents implementation protocols for blind testing methodologies, and provides practical frameworks for laboratories transitioning toward independent operation.

Quantitative Evidence: Error Rates and Performance Disparities

Substantial quantitative evidence demonstrates how structural relationships impact forensic outcomes. Comparative studies reveal significant disparities in error rates between different testing methodologies and organizational structures, highlighting the critical need for reform.

Table 1: Comparative Error Rates in Declared vs. Blind Proficiency Testing

| Testing Type | Study/Context | False Positive Rate | False Negative Rate | Key Findings |
|---|---|---|---|---|
| Declared Proficiency Tests | Drug Testing Labs (1970s) | Lower in declared tests | Lower in declared tests | Laboratories performed better when aware they were being tested [8] |
| Blind Proficiency Tests | Drug Testing Labs (1970s) | Varied by study | Higher in blind tests | Missed more drug samples when unaware of testing [8] |
| Declared Proficiency Tests | Blood Lead Testing (2001) | Lower | Lower | Error rates higher in blind tests; labs made special efforts for known tests [8] |
| Blind Proficiency Tests | Blood Lead Testing (2001) | Higher | Higher | Demonstrated more realistic performance assessment [8] |

The implementation rates of blind testing programs further reveal structural influences on forensic quality assurance. As of 2014, only 10% of forensic laboratories conducted blind proficiency tests, with federal labs implementing these measures at dramatically higher rates (39%) compared to state, county, and municipal labs (5-8%) [8]. This disparity suggests that structural and resource factors significantly impact a laboratory's capacity to implement robust quality assurance measures.

Table 2: Implementation Rates of Blind Proficiency Testing by Laboratory Type

| Laboratory Type | Blind Testing Implementation Rate (2002) | Blind Testing Implementation Rate (2014) | Change Over Time |
|---|---|---|---|
| Federal Laboratories | >20% | 39% | Significant increase |
| State Laboratories | >20% | 5-8% | Substantial decrease |
| County/Municipal Laboratories | >20% | 5-8% | Substantial decrease |
| All Laboratories Combined | >20% | 10% | Significant decrease |

Quantitative analysis of forensic genetic evidence further demonstrates how methodological choices impact results. A 2022 study analyzing 156 real casework samples found that probabilistic genotyping software produced significantly different likelihood ratios (LRs) depending on the analytical approach [20]. Quantitative tools (STRmix and EuroForMix) generally produced higher LRs than qualitative software (LRmix Studio), with differences also observed between the two quantitative tools [20]. These variations highlight how the choice of analytical methodology—not just the underlying evidence—can substantially impact the perceived strength of forensic evidence.

Experimental Protocols: Implementing Blind Quality Control

Blind Proficiency Testing Implementation Protocol

The Houston Forensic Science Center (HFSC) has developed and implemented one of the most comprehensive blind quality control programs in a non-federal forensic laboratory, providing a validated model for other institutions [21]. The following protocol details the implementation process:

Phase 1: Program Design and Planning

- Conduct workflow analysis: Map the complete evidence submission and analysis workflow for each forensic discipline, identifying all potential intervention points for blind sample introduction [21].

- Establish administrative structure: Create a dedicated Quality Division responsible for creating, tracking, and evaluating blind samples, separate from casework operations [21].

- Define scope and frequency: Determine which forensic disciplines will participate (HFSC includes toxicology, seized drugs, firearms, latent prints, forensic biology, and digital multimedia) and establish testing frequency based on case volume and risk assessment [21].

- Develop sample creation protocols: Design realistic blind samples that mirror actual casework in complexity and presentation, with known ground truth for evaluation [21].

Phase 2: Sample Implementation and Monitoring

- Submit blind samples through normal channels: Introduce blind samples into the regular casework flow without special identification to preserve the blind nature of testing [21].

- Document examiner interactions: Track how examiners process samples compared to regular casework, including time allocation and methodological approaches [8].

- Monitor discovery rates: Record instances where analysts identify samples as potential proficiency tests (HFSC data shows only 51 of 973 samples were detected) [21].
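The discovery rate reported for HFSC (51 of 973 samples) can be expressed as a proportion with an uncertainty range. The sketch below uses the Wilson score interval, which is one common choice for binomial proportions; the source does not state which method, if any, HFSC applies, so the interval method here is an assumption.

```python
from math import sqrt
from typing import Tuple


def wilson_interval(successes: int, n: int, z: float = 1.96) -> Tuple[float, float]:
    """Wilson score interval for a binomial proportion (z = 1.96 for about 95%)."""
    p = successes / n
    denom = 1 + z ** 2 / n
    center = (p + z ** 2 / (2 * n)) / denom
    half_width = z * sqrt(p * (1 - p) / n + z ** 2 / (4 * n ** 2)) / denom
    return center - half_width, center + half_width


detected, submitted = 51, 973  # figures reported for HFSC [21]
rate = detected / submitted
low, high = wilson_interval(detected, submitted)
print(f"Discovery rate: {rate:.1%} (95% interval {low:.1%} to {high:.1%})")
```

Tracking this rate over time gives the Quality Division a simple indicator of how well blind samples blend into routine casework.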

Phase 3: Analysis and Corrective Action

- Evaluate results against ground truth: Compare examiner conclusions with known sample characteristics to identify discrepancies [8].

- Categorize errors: Classify identified errors using standardized typologies (mistakes, malpractice, or misconduct) to guide appropriate corrective responses [8].

- Implement systematic improvements: Use findings to refine methodologies, enhance training, and address systemic issues identified through testing [21].

Bayesian Analysis Protocol for Digital Evidence Quantification

Digital forensics has traditionally lacked the quantitative rigor of other forensic disciplines, but Bayesian methods offer a solution for quantifying evidentiary strength [22]. The following protocol adapts Bayesian analysis for digital evidence evaluation:

Phase 1: Hypothesis Formulation

- Define prosecution hypothesis (Hₚ): Clearly articulate the proposition regarding how digital evidence came to exist, such as "The defendant intentionally uploaded the illicit file." [22]

- Define defense hypothesis (Hₑ): Establish a mutually exclusive alternative explanation, such as "The file was deposited via malware without the user's knowledge." [22]

- Ensure exhaustive alternatives: Confirm that Hₚ and Hₑ together cover all plausible explanations for the existence of the evidence [22].

Phase 2: Evidence Identification and Categorization

- Inventory digital artifacts: Identify all relevant digital evidence items including files, registry entries, network logs, and timestamps [22].

- Map evidence to hypotheses: Determine how each evidence item relates to both Hₚ and Hₑ, identifying which hypothesis better explains its existence [22].

- Establish dependencies: Document relationships between evidence items to inform Bayesian network structure [22].

Phase 3: Bayesian Network Construction

- Create node structure: Design network nodes representing hypotheses, evidence items, and intermediate conclusions [22].

- Assign conditional probabilities: Populate probability tables for each node based on expert elicitation, empirical data, or established databases [22].

- Validate network structure: Test network logic and probability assignments through peer review and sensitivity analysis [22].
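A minimal sketch of Phase 3 is shown below as a three-node chain: hypothesis, an intermediate node ("user active at upload time"), and one evidence item (an upload log entry). The node names and every probability are hypothetical placeholders chosen for illustration; the keys `Hp` and `He` correspond to Hₚ and Hₑ. The loop at the end shows one simple form of sensitivity analysis.

```python
# Conditional probability tables. Every number is a hypothetical placeholder;
# real values would come from expert elicitation or empirical data.
P_USER_ACTIVE = {"Hp": 0.95, "He": 0.30}  # P(user active at upload time | hypothesis)
P_LOG_ENTRY = {True: 0.90, False: 0.20}   # P(upload log entry | user active?)


def evidence_probability(p_user_active: float) -> float:
    """P(log entry), marginalizing over the intermediate 'user active' node."""
    return P_LOG_ENTRY[True] * p_user_active + P_LOG_ENTRY[False] * (1 - p_user_active)


def likelihood_ratio(p_active_hp: float, p_active_he: float) -> float:
    """Ratio of P(evidence | Hp) to P(evidence | He)."""
    return evidence_probability(p_active_hp) / evidence_probability(p_active_he)


baseline_lr = likelihood_ratio(P_USER_ACTIVE["Hp"], P_USER_ACTIVE["He"])
print(f"Baseline LR: {baseline_lr:.2f}")

# Sensitivity analysis: vary one uncertain input and observe how far the LR moves.
for p_he in (0.10, 0.30, 0.50):
    lr = likelihood_ratio(P_USER_ACTIVE["Hp"], p_he)
    print(f"P(active | He) = {p_he:.2f} -> LR = {lr:.2f}")
```

If the likelihood ratio swings widely across plausible input values, the corresponding probability table deserves closer scrutiny during peer review.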

Phase 4: Likelihood Ratio Calculation

- Compute prior odds: Establish initial probability ratio between Hₚ and Hₑ based on non-evidential considerations [22].

- Calculate likelihood ratio: Determine the ratio of probabilities of observing the evidence under Hₚ versus Hₑ [22].

- Derive posterior odds: Multiply prior odds by likelihood ratio to obtain final probability ratio incorporating the evidence [22].
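The three steps in Phase 4 reduce to a single multiplication in odds form. The sketch below uses hypothetical prior odds and a hypothetical likelihood ratio, and converts the result to a probability for readability.

```python
def posterior_odds(prior_odds: float, likelihood_ratio: float) -> float:
    """Bayes' rule in odds form: posterior odds = prior odds * likelihood ratio."""
    return prior_odds * likelihood_ratio


def odds_to_probability(odds: float) -> float:
    """Convert odds in favor of Hp into a probability of Hp."""
    return odds / (1.0 + odds)


prior = 1.0  # hypothetical: Hp and He judged equally likely before the evidence
lr = 50.0    # hypothetical: evidence is 50 times more probable under Hp
post = posterior_odds(prior, lr)
print(f"Posterior odds: {post:.0f} to 1")
print(f"Posterior probability of Hp: {odds_to_probability(post):.3f}")
```

When several evidence items are combined, their likelihood ratios can be multiplied only if the items are conditionally independent given each hypothesis; otherwise the dependencies documented in Phase 2 must be modeled in the network.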

The Scientist's Toolkit: Essential Research Reagents and Materials

Implementing robust forensic protocols requires specific methodological tools and analytical frameworks. The following table details essential "research reagents" for forensic independence and blind testing implementation.

Table 3: Essential Research Reagents for Forensic Independence and Blind Testing

| Tool/Reagent | Function/Application | Implementation Example |
|---|---|---|
| Blind Proficiency Samples | Testing the entire laboratory pipeline without examiner awareness | HFSC created 973 blind samples across multiple disciplines [21] |
| Probabilistic Genotyping Software | Quantifying genetic evidence through likelihood ratio calculation | STRmix and EuroForMix used for DNA mixture interpretation [20] |
| Bayesian Network Models | Quantifying the strength of digital evidence under alternative hypotheses | Applied to illicit file sharing cases with posterior probability calculations [22] |
| Context Management Protocols | Limiting exposure to potentially biasing case information | Implementing information firewall between investigators and examiners [18] |
| Standardized Error Typology | Categorizing and responding to identified discrepancies | Classifying errors as mistakes, malpractice, or misconduct [8] |
| Quantitative Complexity Models | Evaluating alternative explanations for digital evidence presence | Calculating odds against Trojan Horse Defense using operational complexity [22] |

Structural Independence Implementation Framework

Achieving genuine forensic independence requires systematic restructuring of laboratory governance, funding, and operational protocols. The following diagram illustrates the essential components of an independent forensic science system.

The structural independence framework requires specific implementation mechanisms:

Civilian Oversight Boards: Establishing independent governance bodies with representation from scientific communities, legal experts, and public stakeholders to set policies and review laboratory performance [18] [23].

Whistleblower Protection Protocols: Implementing robust employment safeguards for scientists who identify systemic problems or challenge prosecutorial narratives, preventing the professional retaliation documented in multiple cases [19].

Dedicated Funding Streams: Creating financial mechanisms separate from law enforcement budgets to eliminate resource dependencies that create institutional pressure [23].

Equal Access Requirements: Mandating that forensic services and raw data are equally available to prosecution and defense, preventing the information asymmetry that currently undermines the ability to challenge forensic findings [23].

Mandatory Blind Verification: Implementing systematic blind checks for consequential forensic analyses, creating structural safeguards against contextual bias [8] [21].

Structural independence represents a foundational requirement rather than an administrative preference for forensic science. The empirical evidence demonstrates that current structures within law enforcement hierarchies produce measurable biases that compromise scientific integrity [18] [8] [19]. The implementation of blind testing protocols, Bayesian quantitative frameworks, and independent governance models provides a practical pathway toward forensic science that prioritizes methodological rigor over institutional objectives.

As the 2009 NAS report recognized and subsequent research has confirmed, the structural integration of forensic science with law enforcement creates incompatible institutional missions [18]. The professional retaliation against scientists who challenge official narratives, the differential performance in blind versus declared testing, and the documented resistance to methodological transparency all indicate systemic rather than incidental problems [8] [19]. The protocols and frameworks presented here provide laboratory directors, researchers, and policy makers with evidence-based tools to advance forensic science toward genuine scientific independence, restoring public trust through methodological rigor rather than institutional authority.

Application Notes: The Critical Role of Blind Testing in Forensic Science

The National Academy of Sciences (NAS) Report and Its Legacy

The 2009 report from the National Academy of Sciences, "Strengthening Forensic Science in the United States: A Path Forward," served as a watershed moment for forensic science, critically evaluating the scientific foundations of many forensic disciplines. While the report did not issue a single, isolated recommendation on blind testing, its overarching critique implicitly advocated for practices that would reduce cognitive bias and improve validity, thereby creating a pivotal opening for the discussion of blind testing as a fundamental corrective measure [8].

Subsequent official bodies strengthened this call. The National Commission on Forensic Science (NCFS) in 2016 recommended that all Department of Justice Forensic Science Service Providers “seek proficiency testing programs that provide sufficiently rigorous samples that are representative of the challenges of forensic casework” [8]. The President’s Council of Advisors on Science and Technology (PCAST) in the same year delivered an even more forceful statement: “PCAST believes that test-blind proficiency testing of forensic examiners should be vigorously pursued, with the expectation that it should be in wide use, at least in large laboratories, within the next five years” [8]. These endorsements underscore that blind testing is not merely a technical best practice but a legal and ethical imperative for ensuring the reliability of forensic evidence presented in court.

Quantitative Landscape of Proficiency Testing in Forensic Laboratories

The implementation of blind testing in forensic laboratories remains limited and uneven. The following table summarizes key data on proficiency testing practices, highlighting the gap between federal and non-federal laboratories.

Table 1: Adoption Rates of Proficiency Testing in U.S. Forensic Laboratories

| Laboratory Type | Any Proficiency Testing (2014) | Blind Proficiency Testing (2014) | Blind Testing (2002) |
|---|---|---|---|
| All Forensic Labs | 98% | 10% | ~20% |
| Federal Labs | Not reported | 39% | Not reported |
| State, County, Municipal Labs | Not reported | 5-8% | Not reported |

Data adapted from studies cited in [8].

Beyond adoption rates, the ecological validity of tests is a major concern. Commercial declared tests often differ from real casework in task difficulty and sample quality. For instance, latent print tests have been shown to feature higher-quality prints than those encountered in actual cases, failing to assess examiner performance under realistic conditions [8]. Furthermore, examiner behavior changes when they know they are being tested, such as dedicating more time to the analysis, which invalidates the test as a true measure of routine operational accuracy [8].

Error Classification and the Unique Power of Blind Tests

Understanding the types of errors that occur in forensic analysis is crucial for appreciating the value of blind testing. The framework below categorizes nonconforming work and illustrates why blind tests are indispensable.

Diagram 1: A taxonomy of nonconforming work in forensic analysis, highlighting the unique capability of blind testing to uncover misconduct.

As shown, while mistakes and malpractice can be caught through standard quality assurance procedures, misconduct is uniquely resistant to detection. Blind testing is one of the few tools available that can reveal such deliberate deviations, as the examiner, unaware the sample is a test, has no reason to alter their behavior to avoid detection [8].

Experimental Protocols for Implementing Blind Proficiency Testing

General Workflow for a Forensic Blind Proficiency Test

A robust blind testing program requires meticulous planning and execution to be both effective and ethically sound. The following diagram and protocol outline the core workflow.

Diagram 2: End-to-end workflow for a blind proficiency test, showing the critical role of an independent test coordinator.

Protocol 1: General Framework for a Blind Proficiency Test

- Objective: To assess the accuracy and reliability of the forensic analysis pipeline under realistic, operational conditions, free from the bias introduced by declared testing.

- Principle: A test sample, for which the ground truth is known, is introduced into the standard casework flow without the knowledge of the examiners or personnel involved in its analysis.

Steps:

Test Design and Sample Preparation (Blinding):

- An Independent Test Coordinator (or QA manager) designs the test. This individual must be separate from the analytical team.

- Select or create a test sample that is forensically realistic and challenging, mirroring the complexity and quality of genuine casework [8].

- For disciplines like toxicology or drug analysis, carefully prepare test items. This includes proper labeling, ensuring sample stability during any required shipment, and correct storage conditions. Document relevant physicochemical properties (e.g., solubility, volatility) to prevent experimental failure [24].

- Record the known "ground truth" of the sample and securely store this information.

Submission and Documentation (Submission):

- The Independent Test Coordinator submits the blind test sample through the laboratory's standard evidence intake system, mimicking a real case submission.

- Document all steps to maintain the chain of custody.

Routine Analysis (Analysis):

- The sample is assigned to a forensic examiner or casework team through normal laboratory procedures.

- The examiner conducts the analysis using the laboratory's standard operating procedures, under the assumption that it is a real case.

Result Reporting (Reporting):

- The examiner completes their analysis and generates a final report, which is submitted through the standard channels.

Post-Test Evaluation (Evaluation):

- The Independent Test Coordinator receives the final report and compares the examiner's results against the known "ground truth."

- The evaluation should assess not only the final conclusion (e.g., identification, exclusion, inconclusive) but also the quality of the process and the conformity of the work.

Unblinding and Feedback (Debriefing):

- The examiner is informed that the case was a proficiency test.

- The results are shared and discussed in a constructive, non-punitive manner focused on education and systemic improvement. Unblinding is also critical for long-term method development, as it enriches model databases and supports mechanistic understanding [24].
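The evaluation step of Protocol 1 can be sketched as a comparison between each reported conclusion and the sealed ground truth. The record layout, case identifiers, and outcome labels below are hypothetical; a laboratory would substitute its own conclusion scale and error typology. Inconclusive results are tallied separately rather than scored as correct or incorrect, which is a design assumption of this sketch.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Optional


@dataclass
class BlindTest:
    case_id: str
    ground_truth: str               # "same_source" or "different_source"
    reported: Optional[str] = None  # "identification", "exclusion", "inconclusive"


def evaluate(test: BlindTest) -> str:
    """Classify one blind test outcome against its known ground truth."""
    if test.reported is None:
        return "pending"
    if test.reported == "inconclusive":
        return "inconclusive"
    expected = "identification" if test.ground_truth == "same_source" else "exclusion"
    if test.reported == expected:
        return "correct"
    return "false_positive" if test.reported == "identification" else "false_negative"


# Hypothetical records held by the Independent Test Coordinator.
tests = [
    BlindTest("BT-001", "same_source", "identification"),
    BlindTest("BT-002", "different_source", "identification"),
    BlindTest("BT-003", "same_source", "inconclusive"),
    BlindTest("BT-004", "different_source", "exclusion"),
    BlindTest("BT-005", "same_source"),  # report not yet issued
]
summary = Counter(evaluate(t) for t in tests)
print(dict(summary))
```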

Protocol for a Blind Test of an In Vitro Toxicology Assay

This protocol adapts the general blind testing principles to the context of evaluating New Approach Methodologies (NAMs), such as assays for respiratory sensitization.

Table 2: Key Research Reagent Solutions for a Blind In Vitro Toxicology Study

| Item/Tool | Function in Blind Testing Protocol |
|---|---|
| ALIsens Model (or equivalent) | A complex in vitro test system that mimics the human airway at the air-liquid interface, used for identifying respiratory sensitizers [24]. |
| Coded Test Items | The chemicals under investigation. They are blinded with a unique code to prevent recognition by the testing team. |
| Positive Control Items | Chemicals with known positive effects (respiratory sensitizers). Included to verify the test system is responsive. |
| Negative Control Items | Chemicals with known negative effects (non-sensitizers). Included to verify the test system's specificity. |
| Vehicle Control | The solvent (e.g., DMSO, culture medium) used to dissolve the test items. Serves as the baseline for measurement. |
| Sealed Safety Data Sheets (SDS) | Provided for emergency access only to ensure researcher safety while maintaining the blind for hazardous substances [24]. |

Protocol 2: Blind Testing of Respiratory Sensitizers Using an In Vitro Model

Objective: To objectively evaluate the performance of a complex in vitro test system (e.g., ALIsens) for correctly identifying respiratory sensitizers without bias.

Pre-Test Considerations and Preparations:

- Sponsor-Coordinator Partnership: An independent sponsor (e.g., a collaborating lab or internal QA unit) prepares and codes all test items, including relevant positive, negative, and vehicle controls.

- Chemical Eligibility and Safety: The sponsor must vet chemicals for safety. For substances of very high concern (SVHCs), sealed Safety Data Sheets are provided for emergency access [24].

- Sample Logistics: The sponsor is responsible for providing sufficient quantities of each test item, ensuring sample stability during shipment, and disclosing critical physicochemical information (e.g., volatility, reactivity) to the testing team to prevent experimental failure [24].

- Pre-defined Acceptance Criteria: Establish criteria for system validity (e.g., positive and negative controls must yield expected results) before the blind data is unsealed.

Experimental Steps:

- Receipt and Storage: The testing laboratory receives the coded test items. Personnel store them as per the provided instructions without knowledge of their identity or class.

- Randomization: The order of testing for the coded items is randomized to eliminate sequence-based artifacts.

- Exposure and Data Collection: The test items are applied to the in vitro model (e.g., at the air-liquid interface) according to the standardized protocol. All subsequent endpoint measurements (e.g., biomarker release, gene expression, cytopathology) are collected and linked only to the sample code.

- Data Analysis: Data analysts process the data and generate predictions (e.g., "sensitizer" or "non-sensitizer") using only the sample codes.

- Result Submission and Unblinding: The laboratory's final coded predictions are submitted to the independent sponsor. The sponsor unblinds the codes and compares the predictions to the known ground truth to calculate accuracy, sensitivity, and specificity.

- Knowledge Enrichment: The results and, where feasible, the underlying data are used to enrich the assay's database, supporting its continued development and regulatory acceptance [24].
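The coding, unblinding, and scoring steps of Protocol 2 can be sketched as follows. The sponsor holds the code key and the ground truth, while the testing laboratory sees only the codes. The chemical names, code format, and function names are hypothetical, and the scoring assumes the test set contains at least one sensitizer and one non-sensitizer.

```python
import random
from typing import Dict, Iterable


def assign_codes(item_names: Iterable[str], seed: int) -> Dict[str, str]:
    """Sponsor side: shuffle the items and map opaque codes to their names."""
    names = list(item_names)
    random.Random(seed).shuffle(names)
    return {f"S{i:03d}": name for i, name in enumerate(names, start=1)}


def score(key: Dict[str, str], truth: Dict[str, bool],
          predictions: Dict[str, bool]) -> Dict[str, float]:
    """Sponsor side: unblind coded predictions and compute performance metrics."""
    tp = fp = tn = fn = 0
    for code, predicted in predictions.items():
        actual = truth[key[code]]
        if predicted and actual:
            tp += 1
        elif predicted and not actual:
            fp += 1
        elif actual:
            fn += 1
        else:
            tn += 1
    return {
        "accuracy": (tp + tn) / (tp + fp + tn + fn),
        "sensitivity": tp / (tp + fn),
        "specificity": tn / (tn + fp),
    }


# Hypothetical ground truth: True means respiratory sensitizer.
truth = {"chem_A": True, "chem_B": True, "chem_C": False, "chem_D": False}
key = assign_codes(truth, seed=42)

# Simulated laboratory predictions, keyed by code only; chem_B is missed.
predictions = {code: truth[name] for code, name in key.items()}
predictions[next(c for c, n in key.items() if n == "chem_B")] = False

metrics = score(key, truth, predictions)
print(metrics)
```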

From Theory to Practice: Implementing Effective Blind Testing Protocols Across Forensic Disciplines

The scientific validity of forensic science disciplines has been subject to significant scrutiny since the 2009 National Academy of Sciences (NAS) report, which revealed that no forensic method other than nuclear DNA analysis has been rigorously shown to consistently and reliably support source conclusions [9]. This scientific challenge creates a legal dilemma, as courts following the Daubert standard are instructed to consider the "potential error rate" of scientific evidence, yet most forensic disciplines lack the empirical data to quantify these rates [9]. In response to this challenge, the Houston Forensic Science Center (HFSC) has pioneered the implementation of a blind quality control (blind QC) program in firearms examination, providing a model for developing the statistical foundation necessary to demonstrate forensic methodology reliability [25].

Blind proficiency testing represents a paradigm shift in quality assurance for forensic sciences. Unlike traditional "open" proficiency tests, where examiners know they are being tested, blind tests are submitted through normal casework pipelines without examiner knowledge, thereby capturing more realistic performance data and eliminating the "Hawthorne effect" where examiners may alter their behavior when aware of being evaluated [8] [26]. While only approximately 10% of forensic laboratories conducted blind proficiency tests as of 2014, HFSC has emerged as a leader in implementing this rigorous assessment approach across multiple disciplines, including firearms examination [8].

Organizational Context and Operational Structure

The Houston Forensic Science Center operates as an independent local government corporation that provides forensic services to the City of Houston's law enforcement agencies [27]. This operational independence from law enforcement represents a significant structural feature that supports the implementation of robust quality control measures. The firearms examination section within HFSC conducts analysis on firearms-related evidence, including microscopic examination and comparison of fired bullets and cartridge cases to determine whether evidence was fired from the same firearm [28] [25].

HFSC has established itself as a leader in ballistic imaging, having served as one of only six facilities in the country approved by the Bureau of Alcohol, Tobacco, Firearms and Explosives (ATF) to provide training for the National Integrated Ballistic Information Network (NIBIN) [29]. This nationwide system of ballistic imaging devices compares markings on fired cartridge cases to identify firearms used in multiple crimes. Since acquiring its first NIBIN unit in 1999, HFSC forensic scientists have linked more than 3,000 firearm crimes across multiple law enforcement jurisdictions [28] [29].

Standard Firearms Examination Methodology

The firearms examination process at HFSC follows rigorously defined procedures based on Association of Firearm and Tool Mark Examiners (AFTE) standards [25]. When a firearm is submitted for analysis, examiners first test its functionality and create a set of test fires: cartridge cases and bullets known to have been fired from that specific firearm. These known samples are then compared to submitted fired evidence (unknown samples) using comparison microscopes to examine markings made during the firing process [25].

The HFSC firearms section employs a defined range of conclusions for reporting results, as detailed in Table 1. This range includes Identification, Elimination, Inconclusive, Unsuitable, and Insufficient conclusions, with specific criteria governing each determination [25]. The conclusion framework acknowledges the practical limitations of firearms identification, noting that identifications are made "to the practical, not absolute, exclusion of all other firearms" [25].

Table 1: Firearms Examination Range of Conclusions

| Conclusion | Criteria |
|---|---|
| Identification | "A sufficient correspondence of individual characteristics will lead the examiner to the conclusion that both items originated from the same source." |
| Elimination | "A disagreement of class characteristics will lead the examiner to the conclusion that the items did not originate from the same source." |
| Inconclusive | "An insufficient correspondence of individual and/or class characteristics will lead the examiner to the conclusion that no identification or elimination could be made." |
| Unsuitable | "A lack of suitable microscopic characteristics will lead the examiner to the conclusion that the items are unsuitable for identification." |
| Insufficient | Item has discernible class characteristics but no individual characteristics, or its characteristics are of such poor quality that they preclude a definitive opinion. |

HFSC employs a verification process for all cases involving comparisons or suitability determinations. Each case is examined by a secondary examiner, and a third examiner conducts additional technical and administrative review before final reporting [25]. This multi-layered review process provides quality control checks throughout the examination workflow.

Blind Quality Control Program: Implementation and Protocol

Program Foundation and Governance

HFSC implemented its blind QC program in firearms examination in December 2015 as part of a broader organizational initiative to enhance quality assurance across multiple forensic disciplines [30] [25]. The program is facilitated and maintained by HFSC's Quality Division, which operates independently from the laboratory sections to ensure objectivity and prevent potential bias [25]. This organizational separation is critical to maintaining the integrity of the blind testing process, as quality personnel who are not associated with testing procedures prepare and introduce mock cases into the regular workflow.

The fundamental intent of the blind QC program is to supplement open proficiency tests required for accreditation, providing a more comprehensive assessment of the entire quality management system from evidence submission to reporting of results [30]. The program was designed to address specific limitations of traditional proficiency testing, including the lack of realism in test materials and the potential for altered examiner behavior when aware of being tested [8] [26].

Blind Case Creation and Submission Protocol

The blind QC case creation and submission process follows a meticulously designed protocol to ensure cases closely resemble routine casework:

- Case Conception: Firearms section management develops mock cases and determines ground truth and expected results prior to submission [30].

- Case Preparation: Quality Division personnel prepare blind cases to mimic real casework in all aspects, including packaging, paperwork, and distribution methods [25].

- Submission Integration: Blind cases are submitted into the normal workflow at a rate approximating 5% of the monthly firearms examination case output from the previous year, equating to approximately one blind submission per month [25].

- Submission Method: Cases are submitted by Quality Division members through standard channels, maintaining the appearance of routine case requests from stakeholders [30].

This comprehensive approach ensures that examiners remain unaware they are processing test cases, thereby capturing authentic performance data under normal working conditions.
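The submission-rate rule above can be sketched as a short calculation. This is a minimal illustration, not HFSC's actual tooling: the function name `monthly_blind_quota`, the floor of one case per month, and the example figure of 240 cases per year are all assumptions made for the example.

```python
def monthly_blind_quota(prev_year_cases: int, rate: float = 0.05) -> int:
    """Estimate how many blind cases to submit per month.

    prev_year_cases: total firearms cases completed in the previous year
                     (hypothetical input; the source does not give this figure).
    rate:            target share of monthly output, 5% per the protocol above.
    """
    if prev_year_cases < 0:
        raise ValueError("prev_year_cases must be non-negative")
    if not 0.0 <= rate <= 1.0:
        raise ValueError("rate must be between 0 and 1")

    # Average monthly case output over the previous year.
    monthly_output = prev_year_cases / 12

    # Assumption: always submit at least one blind case per month so the
    # program never lapses in a low-volume year.
    return max(1, round(monthly_output * rate))


# Illustrative input only: 240 cases/year is about 20 per month, and 5% of 20 is 1.
print(monthly_blind_quota(240))
```

With the illustrative input of 240 cases per year, the sketch yields one blind submission per month, which matches the order of magnitude described above.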

Case Evaluation and Assessment Methodology

Once a blind QC case is completed, firearms section management reviews the results against predetermined criteria to determine satisfactory completion. A satisfactory result may include either: (1) a result that conforms to the known ground truth, or (2) a result that does not necessarily conform to the known ground truth but is technically sound based on applicable standards in the field [30]. This assessment framework acknowledges that inconclusive conclusions may represent appropriate professional judgments when evidence quality is limited, rather than examination errors.
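The two-part satisfactory-result rule can be expressed as a small decision function. The sketch below is hypothetical: the `BlindResult` record, its field names, and the boolean `technically_sound` flag (standing in for the reviewer's judgment against applicable standards) are assumptions, not part of the HFSC protocol.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BlindResult:
    reported: str            # e.g. "Identification", "Elimination", "Inconclusive"
    ground_truth: str        # known answer fixed before submission
    technically_sound: bool  # reviewer's judgment (hypothetical field)


def is_satisfactory(result: BlindResult) -> bool:
    """Apply the two criteria described above.

    (1) The reported conclusion conforms to the known ground truth, or
    (2) it does not conform but is technically sound under applicable standards.
    """
    if result.reported == result.ground_truth:
        return True
    return result.technically_sound


# An inconclusive on damaged evidence that the reviewer judges technically sound.
case = BlindResult("Inconclusive", "Identification", technically_sound=True)
print(is_satisfactory(case))  # True
```

Keeping the reviewer's judgment as an explicit input makes clear that criterion (2) is a human determination; the code only records and applies it.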

Figure: Complete blind QC workflow at HFSC.

Quantitative Results and Performance Metrics

Blind Testing Outcomes

Between December 2015 and June 2021, HFSC's firearms blind QC program reported 51 blind cases resulting in 570 analysis and comparison determinations [30] [25]. The comprehensive results demonstrated a strong foundation for the reliability of firearms examination methodologies, with no false identifications or false eliminations reported across all determinations.

Table 2: Summary of Firearms Blind QC Program Results (Dec 2015 - Jun 2021)

| Metric | Result |
|---|---|
| Analysis Period | December 2015 - June 2021 |
| Total Blind Cases | 51 cases |
| Total Determinations | 570 analysis and comparison conclusions |
| False Identifications | 0 (no identifications declared for non-matching pairs) |
| False Eliminations | 0 (no eliminations declared for matching pairs) |
| Inconclusive Rate | 40.3% of comparisons where ground truth was identification or elimination |

The complete absence of erroneous conclusions (false identifications or eliminations) across all 570 determinations provides compelling evidence for the reliability of firearms examination procedures when followed correctly [30] [25]. This finding is particularly significant given that these results were obtained under blind conditions that more accurately reflect real-world performance than traditional proficiency testing.

Analysis of Inconclusive Determinations

A detailed analysis of the 40.3% inconclusive rate revealed important patterns in examiner performance and evidence characteristics. Notably, bullets were the primary contributors to inconclusive results, accounting for 61.8% of inconclusive determinations, compared to 21.5% for cartridge cases [30] [25]. This disparity highlights the inherent challenges in bullet comparison due to factors such as fragmentation, deformation, and quality of impressed markings.

Further analysis demonstrated that variables including assigned examiners, training programs, examiner experience levels, and intended case complexity did not significantly contribute to inconclusive results [30]. This consistency across examiner demographics and case types suggests that inconclusive determinations primarily reflect appropriate professional judgments based on evidence quality rather than examiner proficiency issues. The data showed markedly different inconclusive rates based on ground truth: 74% for cases with a ground truth of elimination versus 31% for cases with a ground truth of identification [25].
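A breakdown of inconclusive rates by ground truth, like the one reported above, can be tallied from comparison-level records. The sketch below assumes a hypothetical record format of `(ground_truth, reported_conclusion)` pairs and uses made-up data; it does not reproduce HFSC's dataset or its analysis code.

```python
from collections import Counter


def inconclusive_rate_by_ground_truth(records):
    """Return {ground_truth: share of comparisons reported as Inconclusive}.

    records: iterable of (ground_truth, reported) string pairs
             (hypothetical format chosen for this example).
    """
    totals = Counter()
    inconclusive = Counter()
    for ground_truth, reported in records:
        totals[ground_truth] += 1
        if reported == "Inconclusive":
            inconclusive[ground_truth] += 1
    return {gt: inconclusive[gt] / totals[gt] for gt in totals}


# Made-up records for illustration only.
sample = [
    ("Elimination", "Inconclusive"),
    ("Elimination", "Inconclusive"),
    ("Elimination", "Inconclusive"),
    ("Elimination", "Elimination"),
    ("Identification", "Identification"),
    ("Identification", "Identification"),
    ("Identification", "Identification"),
    ("Identification", "Inconclusive"),
]
print(inconclusive_rate_by_ground_truth(sample))
# {'Elimination': 0.75, 'Identification': 0.25}
```

The same tally can be keyed on evidence type (bullet versus cartridge case) or examiner instead of ground truth by changing the first element of each record.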

Implementation Framework and Research Toolkit

Essential Components for Blind Testing Implementation

Successful implementation of a blind proficiency testing program in firearms examination requires specific structural components and resources. Based on the HFSC model, the following research toolkit outlines essential elements:

Table 3: Research Toolkit for Blind Testing Implementation

| Component | Function | Implementation Example |
|---|---|---|
| Independent Quality Division | Facilitates blind case preparation and submission without examiner awareness; maintains objectivity | Organizational separation from laboratory sections [25] |
| Case Management System | Acts as buffer between test requestors and laboratory analysts; enables blind case integration | HFSC's system that manages case workflow and distribution [9] |
| Firearms Reference Collection | Provides sources for creating mock evidence with established ground truth | Controlled firearms used to generate test fires for blind cases [30] |
| Data Tracking Infrastructure | Collects and analyzes performance metrics across multiple cases and examiners | System for tracking 570+ determinations across 51+ cases [25] |
| Standardized Assessment Criteria | Provides consistent framework for evaluating examiner performance against ground truth | HFSC's satisfactory result criteria accounting for technically sound inconclusives [30] |

Operational Requirements and Resource Allocation

The HFSC model demonstrates that effective blind testing programs require specific operational parameters:

- Submission Rate: Blind cases should comprise approximately 5% of monthly case output to maintain adequate assessment frequency without overwhelming system capacity [25].

- Case Variety: Mock cases must represent the full spectrum of complexity encountered in routine casework, including challenging evidence conditions that may warrant inconclusive determinations [30].

- Documentation Standards: Comprehensive documentation protocols must capture all aspects of case creation, submission, examination, and assessment to ensure data integrity and facilitate analysis [30] [25].

Discussion: Implications for Forensic Science Practice

Validity and Error Rate Assessment

The HFSC blind testing program represents a significant advancement in addressing the Daubert dilemma for forensic sciences by generating the empirical data necessary to quantify method reliability and error rates [9]. The finding of zero erroneous conclusions across 570 determinations provides compelling evidence for the foundational validity of firearms examination methodology when properly conducted and reviewed [25]. This data-driven approach moves beyond anecdotal claims of reliability to establish statistical support for practice standards.
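To make the link between "zero observed errors" and a quantified error rate concrete, the following sketch computes the exact one-sided upper confidence bound on an error rate when no errors are seen in n trials. It rests on assumptions the source does not establish: that the determinations are independent, and that each one could in principle have produced an error. The source also does not report how many of the 570 determinations were comparisons with a definitive ground truth, so using n = 570 here is purely illustrative.

```python
def zero_error_upper_bound(n: int, confidence: float = 0.95) -> float:
    """Upper bound on the true error rate after observing 0 errors in n trials.

    Solves (1 - p) ** n = 1 - confidence for p, which is the exact
    (Clopper-Pearson) one-sided bound for the zero-error case.
    Assumes independent trials with a common error probability.
    """
    if n <= 0:
        raise ValueError("n must be positive")
    if not 0.0 < confidence < 1.0:
        raise ValueError("confidence must be strictly between 0 and 1")
    return 1.0 - (1.0 - confidence) ** (1.0 / n)


# Illustrative only: treats all 570 determinations as independent trials.
bound = zero_error_upper_bound(570)
print(f"{bound:.4%}")
```

Under these assumptions the bound is roughly half a percent, close to the familiar "rule of three" approximation of 3/n. The point of the sketch is that zero observed errors supports an upper limit on the error rate, not a rate of exactly zero.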

The systematic documentation of inconclusive rates under blind conditions provides valuable insights into the practical application of forensic methodology. Rather than representing examination failures, appropriate inconclusive determinations reflect professional judgment and adherence to methodological standards when evidence quality is insufficient for definitive conclusions [30] [25]. This nuanced understanding is essential for proper interpretation of forensic results in legal contexts.

Comparative Analysis with Traditional Proficiency Testing

The HFSC blind testing results highlight critical distinctions between blind and traditional open proficiency testing. While open tests may inadvertently encourage special practices such as increased verification or extended analysis time, blind testing captures typical performance under normal working conditions [8] [26]. This ecological validity makes blind testing particularly valuable for assessing actual laboratory performance rather than optimal performance under test conditions.

Research comparing blind and declared proficiency tests in other testing industries has demonstrated that error rates may differ significantly between the two approaches [8]. Studies in drug testing laboratories found higher false negative rates in blind tests, suggesting that laboratories may employ enhanced diligence when aware of testing [8]. These findings support the implementation of blind testing as a more accurate measure of routine performance.

Implementation Challenges and Cultural Considerations

Despite the demonstrated benefits, implementing blind proficiency testing presents significant challenges, including logistical complexities in case creation and submission, resource allocation requirements, and the cultural history of traditional proficiency testing in forensic laboratories [8] [26]. A survey of latent print examiners found generally ambivalent views toward blind testing, though examiners with direct experience in laboratories using blind testing held significantly more positive perceptions [26]. This suggests that increased exposure and education may help overcome initial resistance.

HFSC's experience demonstrates that successful implementation requires commitment from laboratory leadership and a systematic approach to addressing operational challenges [8] [30]. The organization's status as an independent entity separate from law enforcement may have facilitated the adoption of innovative quality assurance measures like blind testing [27] [9].

The Houston Forensic Science Center's firearms examination blind quality control program represents a pioneering approach to addressing fundamental questions of reliability and validity in forensic science. By implementing a rigorous system of blind testing that integrates seamlessly with normal casework, HFSC has generated valuable empirical data on actual performance under realistic conditions. The results demonstrate that properly conducted firearms examinations can achieve high levels of reliability, with no false identifications or false eliminations across 570 blind determinations.

The HFSC model provides a template for other forensic laboratories seeking to implement blind testing programs and develop statistical foundations for their disciplines. Future directions should include expanding blind testing to additional forensic disciplines, developing standardized protocols for interlaboratory comparisons, and establishing benchmarks for performance evaluation across different laboratory settings. As blind testing becomes more widespread, the forensic science community will be better positioned to provide the statistical data required by Daubert and to demonstrate the scientific rigor of forensic methodologies.

The successful implementation of blind testing at HFSC illustrates that despite logistical and cultural challenges, robust proficiency testing that accurately measures real-world performance is achievable within operational forensic laboratories. This approach represents a critical step toward strengthening the scientific foundation of forensic practice and enhancing the quality and reliability of evidence presented in legal proceedings.

Blind quality control (QC) samples represent a critical advancement in forensic science quality assurance, moving beyond traditional declared proficiency testing to provide a more authentic assessment of laboratory performance. When forensic analysts are aware they are being tested, their behavior often changes—a phenomenon known as the Hawthorne effect—potentially inflating accuracy rates and compromising the ecological validity of the assessment [8]. Blind QC samples, which are introduced into the normal workflow without analysts' knowledge, address this limitation by testing the entire forensic pipeline from evidence receipt to final reporting.