How to Choose a Spectroscopic Technique: A 2025 Guide for Scientists and Drug Developers

Abstract

Selecting the optimal spectroscopic technique is a critical decision that directly impacts the success of research and development projects. This guide provides a comprehensive framework for researchers, scientists, and drug development professionals to navigate the complex landscape of modern spectroscopy. It covers foundational principles of major techniques like UV-Vis, IR, Raman, Mass Spectrometry, and NMR, aligns methodological choices with specific applications in biomedicine, and offers practical troubleshooting and optimization strategies. By presenting direct comparisons and validation criteria, this article empowers professionals to make informed, confident decisions that enhance analytical accuracy, efficiency, and innovation in their work.

Understanding the Spectroscopy Landscape: Core Principles and What Each Technique Reveals

Spectroscopy is a class of analytical techniques that measures the interaction between electromagnetic radiation and matter to identify and quantify chemical compounds [1]. The fundamental principle rests on the fact that when light encounters a material, several specific interactions can occur: light can be absorbed, reflected, transmitted, or emitted [2]. The precise manner in which a substance absorbs or emits light creates a unique spectral pattern, often called a "chemical fingerprint," that can be used to identify the material and reveal details about its molecular structure [1].

This chemical fingerprint arises because the energy of light is quantized. Light can be described as a stream of particles called photons, each carrying a specific amount of energy that is inversely related to its wavelength [2]. When a molecule is exposed to a spectrum of light, it will only absorb those specific photons whose energy exactly matches the energy required to drive an internal change within the molecule, such as promoting an electron to a higher energy level or increasing the vibration of its atomic bonds [2] [1]. By analyzing which wavelengths are absorbed, scientists can deduce critical information about the sample's composition.

The Fundamental Principles of Spectroscopy

The Nature of Light

Light, or electromagnetic radiation, exhibits a dual nature, behaving as both a wave and a particle [2]. As a wave, it is characterized by its wavelength (the distance between successive peaks), which determines its color and place in the electromagnetic spectrum [2]. The full spectrum includes gamma rays, X-rays, ultraviolet (UV) light, visible light, infrared (IR) light, microwaves, and radio waves [2]. As a particle, light consists of photons, and each photon's energy is inversely proportional to its wavelength (E = hc/λ); a blue photon therefore carries more energy than a red photon [2].
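The relationship between wavelength and photon energy can be checked numerically. The following is a minimal sketch, assuming representative wavelengths of 450 nm for blue and 700 nm for red light; the function name and the chosen wavelengths are illustrative and not taken from the cited sources.

```python
# Physical constants (exact SI values)
PLANCK_H = 6.62607015e-34        # J*s
SPEED_OF_LIGHT = 2.99792458e8    # m/s
ELECTRON_VOLT = 1.602176634e-19  # J per eV


def photon_energy_ev(wavelength_nm: float) -> float:
    """Return the energy of a single photon in eV, using E = h*c / wavelength."""
    wavelength_m = wavelength_nm * 1e-9
    return PLANCK_H * SPEED_OF_LIGHT / wavelength_m / ELECTRON_VOLT


blue_ev = photon_energy_ev(450.0)  # assumed representative blue wavelength
red_ev = photon_energy_ev(700.0)   # assumed representative red wavelength
print(f"blue: {blue_ev:.2f} eV, red: {red_ev:.2f} eV")
```

The shorter the wavelength, the larger the energy per photon, which is why UV light can drive electronic transitions while IR light drives only vibrations.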

Molecular Energy Levels and Absorption

Matter is composed of atoms and molecules that can exist only in specific, quantized energy states. The three primary processes by which a molecule absorbs radiation are [3]:

- Rotational transitions: Absorption leads to a higher rotational energy level.

- Vibrational transitions: Absorption leads to an increased vibrational energy level.

- Electronic transitions: Electrons are raised to a higher energy orbital.

The energy required for these transitions varies by orders of magnitude: electronic transitions require the most energy (UV/visible light), vibrational transitions require less (infrared light), and rotational transitions require the least (microwave region) [3]. For absorption to occur, the energy of the incoming photon must match the energy difference between two allowed states in the molecule. For infrared absorption, the vibration or rotation must also change the molecule's dipole moment (its distribution of electrical charge) [3]. Homonuclear diatomic molecules such as O₂ and N₂, whose dipole moment remains zero as they vibrate, therefore cannot directly absorb IR radiation [3].

Table 1: Quantitative Overview of the Electromagnetic Spectrum in Spectroscopy

| Spectral Region | Wavelength Range | Primary Molecular Transition | Common Applications |
|---|---|---|---|
| Ultraviolet/Visible (UV-Vis) | 200–800 nm [4] | Electronic | Chemical, biological, and environmental analysis [4] |
| Near-Infrared (NIR) | 800–2500 nm [4] | Vibrational (Overtone) | Pharmaceuticals, agriculture, food quality [5] [4] |
| Mid-Infrared (MIR) | ~2.5–25 µm [1] | Vibrational (Fundamental) | Identification of chemical compounds and molecular structures [1] |
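IR spectra are usually reported in wavenumbers (cm⁻¹) rather than wavelengths, so it helps to convert between the two. The sketch below is a small illustration with hypothetical helper names; it relies only on the identity wavenumber (cm⁻¹) = 10,000 / wavelength (µm).

```python
def wavelength_um_to_wavenumber(wavelength_um: float) -> float:
    """Convert a wavelength in micrometres to a wavenumber in cm^-1."""
    return 1.0e4 / wavelength_um


def wavenumber_to_wavelength_um(wavenumber_cm: float) -> float:
    """Convert a wavenumber in cm^-1 to a wavelength in micrometres."""
    return 1.0e4 / wavenumber_cm


# Limits of the mid-infrared region listed in the table
mir_high = wavelength_um_to_wavenumber(2.5)   # short-wavelength edge
mir_low = wavelength_um_to_wavenumber(25.0)   # long-wavelength edge
print(f"MIR spans about {mir_high:.0f} to {mir_low:.0f} cm^-1")
```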

Infrared Spectroscopy: A Closer Look at Vibrational Modes

The "Chemical Fingerprint" Region

Infrared (IR) spectroscopy exploits vibrational transitions. Molecules absorb infrared radiation at frequencies that match the natural vibrational frequencies of their chemical bonds [1]. Because these frequencies are characteristic of specific bonds and molecular structures, the resulting absorption spectrum serves as a highly distinctive chemical fingerprint [1]. The mid-infrared region (approximately 2.5 to 25 µm, or 4000 to 400 cm⁻¹) is particularly useful because its energies coincide with the fundamental vibrations of molecular bonds [1].

Molecular Vibrations

When infrared radiation interacts with a molecule, the absorbed energy excites the natural vibrations of its chemical bonds. These vibrations are categorized into two main types [1]:

- Stretching: Changes in the interatomic distance along the bond axis. This can be symmetric or asymmetric.

- Bending: Changes in the bond angle between bonds, with subtypes including scissoring, rocking, twisting, and wagging.

For a diatomic molecule, this interaction can be modeled as two atoms connected by a spring, obeying the principles of Hooke's law, where the frequency of vibration depends on the masses of the atoms and the stiffness of the bond between them [3]. The specific wavelengths absorbed reveal the types of bonds present (e.g., C-H, O-H, C=O) and their molecular environment, allowing for definitive identification.
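The spring model can be made concrete with the harmonic-oscillator expression ν̃ = (1/2πc)·√(k/μ), where k is the bond force constant and μ the reduced mass. The sketch below is illustrative only: the force constant of about 516 N/m for HCl and the isotope masses are assumed textbook-style inputs, not values from the cited sources, and real bonds are somewhat anharmonic.

```python
import math

SPEED_OF_LIGHT_CM_S = 2.99792458e10  # cm/s
ATOMIC_MASS_KG = 1.66053907e-27      # kg per unified atomic mass unit


def harmonic_wavenumber(mass1_u, mass2_u, force_constant_n_per_m):
    """Harmonic-oscillator stretching wavenumber (cm^-1) of a diatomic molecule."""
    reduced_mass_kg = (mass1_u * mass2_u) / (mass1_u + mass2_u) * ATOMIC_MASS_KG
    angular_frequency = math.sqrt(force_constant_n_per_m / reduced_mass_kg)  # rad/s
    return angular_frequency / (2.0 * math.pi * SPEED_OF_LIGHT_CM_S)


# Assumed inputs: H-35Cl with a force constant of roughly 516 N/m
hcl = harmonic_wavenumber(1.00783, 34.96885, 516.0)
# Swapping H for D changes only the reduced mass, so the band moves to lower wavenumber
dcl = harmonic_wavenumber(2.01410, 34.96885, 516.0)
print(f"HCl: {hcl:.0f} cm^-1, DCl: {dcl:.0f} cm^-1")
```

Heavier atoms or weaker bonds lower the vibrational frequency, which is why C-H stretches appear near 3000 cm⁻¹ while stretches involving heavier atoms appear at lower wavenumbers.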

Instrumentation and Experimental Protocol

Key Components of an IR Spectrometer

The instrumentation for IR spectroscopy typically consists of four fundamental components, each playing a vital role in the analysis [1]:

- IR Light Source: Emits a broad spectrum of infrared light, often in the mid-infrared range (2.5–25 µm).

- Sample Holder: The location where the sample (gas, liquid, or solid) is placed and interacts with the infrared light. The holder must be suited to the sample's state.

- Detector: Captures the infrared radiation that passes through the sample and converts it into an electrical signal.

- Data Analysis Software: Processes the signal from the detector, generates a graph of absorption versus wavelength (the spectrum), and often includes libraries for automated compound identification.

Detailed Experimental Protocol for IR Identity Testing

Identity testing is a critical application of IR spectroscopy in regulated industries like pharmaceuticals to confirm the chemical composition of raw materials and final products [6]. The following protocol ensures accurate and reliable comparisons between a test sample and a reference material.

Step 1: Sample Preparation

- Selection of Technique: Choose a sample preparation technique appropriate for the sample's physical state (e.g., attenuated total reflection (ATR) for solids, liquid cells for solutions) [6]. Crucially, the exact same technique must be used for both the sample and the reference material. Different techniques can alter the spectral appearance, leading to incorrect conclusions (see Figure 4 in [6]).

- Handling: If the sampling technique is manually intensive (e.g., preparing KBr pellets), best practice is to have the same trained operator prepare both samples to minimize user-induced variability [6].

Step 2: Instrument Configuration

- Standardized Parameters: Set and meticulously document the instrumental scanning parameters. These must remain identical for both sample and reference runs [6]:

  - Resolution: Typically 4 cm⁻¹ or 8 cm⁻¹ for standard quality control. Higher resolution provides sharper peaks but requires longer scan times.

  - Number of Scans: 16, 32, or 64 scans are common. Averaging multiple scans improves the signal-to-noise ratio.

  - Apodization Function: A standard function (e.g., Happ-Genzel) should be selected and held constant.

- Instrument: For the most rigorous comparison, the sample and reference should be run on the same FT-IR instrument to avoid subtle instrument-specific artifacts [6].
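The benefit of co-adding scans can be illustrated with a small simulation. This sketch assumes purely random, uncorrelated noise with an arbitrary standard deviation (an idealization; real detectors also show drift and systematic artifacts), in which case the signal-to-noise ratio grows roughly with the square root of the number of scans.

```python
import numpy as np

rng = np.random.default_rng(0)  # fixed seed so the simulation is repeatable
TRUE_SIGNAL = 1.0               # arbitrary absorbance at one wavenumber
NOISE_SD = 0.5                  # assumed single-scan noise level


def snr_after_averaging(n_scans, n_trials=20000):
    """Estimate the signal-to-noise ratio after co-adding n_scans noisy scans."""
    scans = TRUE_SIGNAL + rng.normal(0.0, NOISE_SD, size=(n_trials, n_scans))
    averaged = scans.mean(axis=1)          # one averaged reading per trial
    return TRUE_SIGNAL / averaged.std()    # signal divided by residual noise


snr_by_scans = {n: snr_after_averaging(n) for n in (16, 32, 64)}
for n, snr in snr_by_scans.items():
    print(f"{n:>2} scans: SNR of about {snr:.1f}")
```

Under these assumptions, quadrupling the scan count from 16 to 64 only doubles the signal-to-noise ratio, which is why scan counts beyond 64 are rarely worth the extra acquisition time in routine identity testing.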

Step 3: Data Collection & Analysis

- Acquisition: Run the background spectrum (without the sample), followed by the sample spectrum and the reference spectrum.

- Comparison: Visually or digitally overlay the sample and reference spectra. For a positive identification, all significant absorption bands must match in both position (wavenumber, cm⁻¹) and relative intensity [6]. The software can also calculate a correlation between the two spectra to provide a numerical match score.
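A numerical match score of this kind can be approximated with a Pearson correlation between two spectra sampled on the same wavenumber grid. The sketch below uses synthetic Gaussian bands and a hypothetical `match_score` helper; commercial software may use different algorithms (for example, derivative or region-weighted correlations), so this is only an illustration of the idea.

```python
import numpy as np


def match_score(sample, reference):
    """Pearson correlation between two spectra on an identical wavenumber grid."""
    sample = np.asarray(sample, dtype=float)
    reference = np.asarray(reference, dtype=float)
    if sample.shape != reference.shape:
        raise ValueError("spectra must share the same wavenumber grid")
    return float(np.corrcoef(sample, reference)[0, 1])


wavenumbers = np.linspace(4000.0, 400.0, 901)  # 4 cm^-1 spacing


def band(center, width, height):
    """Synthetic Gaussian absorption band (illustrative, not measured data)."""
    return height * np.exp(-0.5 * ((wavenumbers - center) / width) ** 2)


reference = band(1700, 15, 1.0) + band(2950, 25, 0.6)
same_compound = 0.9 * reference + 0.01            # thinner sample plus a small offset
other_compound = band(1600, 15, 1.0) + band(3300, 40, 0.6)

score_same = match_score(same_compound, reference)
score_other = match_score(other_compound, reference)
print(f"same: {score_same:.3f}, other: {score_other:.3f}")
```

Because correlation ignores overall scaling and constant offsets, it tolerates differences in sample thickness but remains sensitive to shifted or missing bands.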

Table 2: Essential Research Reagent Solutions and Materials for Spectroscopic Analysis

| Item | Function / Application |
|---|---|
| FT-IR Spectrometer | The core instrument used to expose the sample to IR light and measure the absorption spectrum [1]. |
| ATR (Attenuated Total Reflection) Accessory | Allows for direct analysis of solids and liquids without extensive preparation, ideal for complex samples [1] [6]. |
| KBr (Potassium Bromide) | Used to create pellets for solid sample analysis, as it is transparent to IR light [6]. |
| Spectral Library/Database | A collection of known compound spectra stored in software; essential for automated identification of unknowns [1]. |
| Data Preprocessing Software | Applies mathematical functions (e.g., Min-Max Normalization, Standardization) to raw spectral data to reduce noise and enhance features for more accurate analysis [5]. |

Data Processing and Spectral Analysis

The Need for Data Preprocessing

Spectroscopic data are complex "big data" records, typically consisting of reflectance or absorbance values measured at numerous wavelengths (e.g., from 400 to 2500 nm in 1 nm increments) [5]. Raw data from spectrometers are often distorted by noise from optical interference or instrument electronics and can be affected by environmental factors such as temperature and electrical fluctuations [5]. Consequently, preprocessing is a crucial step to clean the data, remove artifacts, and enhance the relevant spectral features before any quantitative analysis or identification is performed [5].

Common Preprocessing Techniques

Preprocessing methods are mathematical transformations applied to spectral signatures and can be broadly grouped into functional, statistical, and geometric types [5]. Among the most effective and widely used are statistical techniques, which are easy to apply and adapt well to the data. Two prominent methods are [5]:

- Standard Normal Variate (SNV) / Z-Score Standardization: This transformation centers the data by subtracting the mean \( \mu \) and scales it by dividing by the standard deviation \( \sigma \) for each variable: \( Z_i = (X_i - \mu) / \sigma \). The result is a distribution with a mean of 0 and a variance of 1.

- Min-Max Normalization (MMN) / Affine Transformation: This function scales the data to a fixed range, typically [0, 1]. It is calculated as: \( f(x) = (x - r_{\text{min}}) / (r_{\text{max}} - r_{\text{min}}) \), where \( r_{\text{min}} \) and \( r_{\text{max}} \) are the minimum and maximum values in the data.

These transformations preserve the fundamental shape and features of the original distribution (including local maxima, minima, and trends) while accentuating peaks and valleys that might otherwise remain hidden, thereby improving the results of subsequent multivariate statistical and classification analyses [5].
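Both transformations take only a few lines of NumPy. The sketch below is a minimal illustration on made-up absorbance values; it assumes the statistics are computed per spectrum (row-wise), which is the usual SNV convention, whereas column-wise standardization across samples would use `axis=0` instead.

```python
import numpy as np


def snv(spectra):
    """Standardize each spectrum (row) to zero mean and unit standard deviation."""
    spectra = np.asarray(spectra, dtype=float)
    mean = spectra.mean(axis=1, keepdims=True)
    std = spectra.std(axis=1, keepdims=True)
    return (spectra - mean) / std


def min_max(spectra):
    """Rescale each spectrum (row) linearly onto the range [0, 1]."""
    spectra = np.asarray(spectra, dtype=float)
    low = spectra.min(axis=1, keepdims=True)
    high = spectra.max(axis=1, keepdims=True)
    return (spectra - low) / (high - low)


# Made-up data: the second spectrum is the first one scaled by 2 and offset by 0.9
raw = np.array([[0.10, 0.40, 0.90, 0.30],
                [1.10, 1.70, 2.70, 1.50]])

snv_result = snv(raw)
mmn_result = min_max(raw)
print(np.round(snv_result, 3))
print(np.round(mmn_result, 3))
```

In this example both rows become identical after either transformation, which shows how the methods remove multiplicative and offset differences while keeping the spectral shape.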

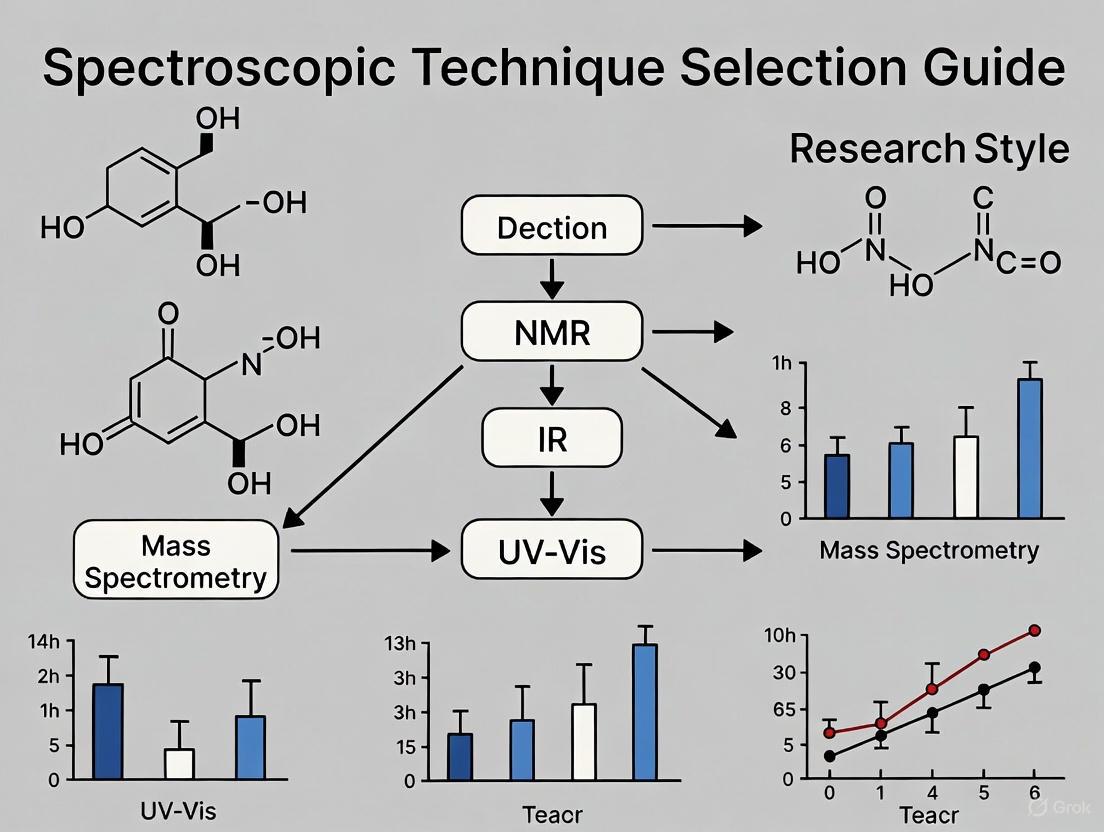

Selecting a Spectroscopic Technique

Choosing the right spectroscopic method is critical for research success. Several key factors must be balanced based on the analytical needs [4]:

- Sensitivity: The instrument's ability to detect low-intensity signals, crucial for trace element analysis or low-concentration compounds.

- Spectral Resolution: Determines how well the spectrometer can distinguish between closely spaced spectral lines, which is essential for analyzing complex samples with many peaks.

- Wavelength Range: Dictates the types of analyses possible. UV-Vis spectrometers (200-800 nm) are ideal for many chemical and biological analyses, while NIR spectrometers (800-2500 nm) are better suited for organic compounds in pharmaceuticals and agriculture [4].

- Speed: Important for high-throughput environments where rapid data acquisition is necessary.

- Practical Considerations: The physical size and portability of the instrument must be considered for fieldwork, and the price must be evaluated against both performance requirements and budget constraints [4].

The final choice often involves a careful balance between size, price, and performance to find the instrument that best meets the specific application requirements [4].

Ultraviolet-Visible (UV-Vis) spectroscopy is a foundational analytical technique in research and industrial laboratories for quantifying a wide array of substances. The technique measures the absorption of ultraviolet and visible light by a sample; the UV region nominally spans 10–400 nm and the visible region 400–700 nm [7], although benchtop instruments typically cover about 200–800 nm [4]. When the energy of this light matches the energy required to promote a molecular electron from a lower to a higher energy state, absorption occurs [8] [7]. The resulting absorption spectrum provides a fingerprint that is invaluable for identifying compounds, determining their concentration, and assessing their purity [9] [10]. For scientists selecting a spectroscopic method, UV-Vis spectroscopy offers a powerful, relatively inexpensive, and easily implemented tool for the analysis of any molecule that contains a chromophore, a light-absorbing group with conjugated electrons [9].

The core of the technique is the measurement of electronic transitions. The energy carried by photons in the UV-Vis range is sufficient to excite valence electrons, most commonly from the highest occupied molecular orbital (HOMO) to the lowest unoccupied molecular orbital (LUMO) [8] [11]. For organic molecules, the most relevant transitions are of the π→π* and n→π* types, which occur in molecules with conjugated pi-electron systems or non-bonding electrons [8] [12]. The specific wavelengths at which a compound absorbs, and the intensity of that absorption, are directly influenced by the nature of its chromophores and the extent of conjugation, making UV-Vis particularly sensitive to molecular structure [8].

Fundamental Principles and Electronic Transitions

The Beer-Lambert Law

The quantitative aspect of UV-Vis spectroscopy is governed by the Beer-Lambert Law. This law establishes a linear relationship between the absorbance of a solution and the concentration of the absorbing species, making it the cornerstone of concentration determination [9] [13]. The law is mathematically expressed as:

A = εlc

Where:

- A is the measured Absorbance (a unitless quantity) [10] [12].

- ε is the Molar Absorptivity (or molar extinction coefficient), with units of L mol⁻¹ cm⁻¹, which is a constant indicating how strongly a compound absorbs light at a specific wavelength [8] [13].

- l is the Path Length, the distance the light travels through the sample, typically 1 cm in standard cuvettes [13] [7].

- c is the Molar Concentration of the absorbing species in mol L⁻¹ [13].

The absorbance can also be defined in terms of light intensities, where I₀ is the intensity of incident light and I is the intensity of transmitted light: A = log₁₀(I₀/I) [12]. For optimal accuracy, it is recommended to maintain absorbance values within the 0.2 to 0.8 range, as deviations from the Beer-Lambert law can occur at very high concentrations due to factors such as saturation and stray light [9] [10].
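The two forms of the law can be combined in a short calculation. The sketch below is illustrative: the intensity readings and the molar absorptivity of 15,000 L mol⁻¹ cm⁻¹ are hypothetical values chosen for the example, and the function names are not from any instrument software.

```python
import math


def absorbance_from_intensities(incident, transmitted):
    """A = log10(I0 / I), with both intensities in the same arbitrary units."""
    return math.log10(incident / transmitted)


def concentration_molar(absorbance, molar_absorptivity, path_length_cm=1.0):
    """Rearranged Beer-Lambert law: c = A / (epsilon * l), in mol/L."""
    return absorbance / (molar_absorptivity * path_length_cm)


# Hypothetical reading: 31.6 % of the incident light is transmitted
absorbance = absorbance_from_intensities(100.0, 31.6)
# Hypothetical chromophore with epsilon = 15,000 L mol^-1 cm^-1 in a 1 cm cuvette
conc = concentration_molar(absorbance, molar_absorptivity=15000.0)
print(f"A = {absorbance:.3f}, c = {conc:.2e} mol/L")
```

An absorbance near 0.5 corresponds to roughly one third of the light reaching the detector, which sits inside the recommended working range.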

Types of Electronic Transitions

In molecules, the absorption of UV-Vis light causes electronic transitions between molecular orbitals. The probability and energy of these transitions depend on the electronic structure of the molecule. The table below summarizes the common electronic transitions in organic molecules.

Table 1: Common Electronic Transitions in UV-Vis Spectroscopy

| Transition Type | Orbitals Involved | Typical Energy & Wavelength (λ) | Molar Absorptivity (ε) | Example Chromophores |
|---|---|---|---|---|
| π → π* | Bonding π to Antibonding π* | Higher Energy / Shorter λ (e.g., ~180 nm for isolated C=C) [8] | High (>10,000) [8] | Alkenes, conjugated polyenes [8] |
| n → π* | Non-bonding to Antibonding π* | Lower Energy / Longer λ (e.g., ~290 nm for C=O) [8] | Low (10-100) [8] | Carbonyl compounds [8] |
| σ → σ* | Bonding σ to Antibonding σ* | Very High Energy / Very Short λ (<150 nm) [9] | - | C-C, C-H single bonds [9] |
| n → σ* | Non-bonding to Antibonding σ* | ~150-250 nm [9] | - | Alcohols, amines [9] |

A key structural feature that dramatically affects absorption is conjugation. Conjugation, the alternating pattern of single and double bonds, lowers the energy gap between the HOMO and LUMO orbitals. This results in a bathochromic shift, meaning the absorption maximum (λmax) moves to a longer wavelength, often from the UV into the visible region, causing the compound to appear colored [8]. For instance, while ethene absorbs at 171 nm, the conjugated diene 1,3-butadiene absorbs at 217 nm [8] [13].

Figure 1: Electronic transitions occur when a molecule absorbs light, promoting an electron from the ground state to a higher-energy excited state. The measurement of this absorption forms the basis of UV-Vis spectroscopy [8] [11].

Instrumentation and the Scientist's Toolkit

A UV-Vis spectrophotometer is designed to pass monochromatic light through a sample and precisely measure the intensity of light that is transmitted. The core components of a standard instrument are illustrated in the diagram below and detailed in the subsequent toolkit table.

Figure 2: A simplified schematic of a UV-Vis spectrophotometer's key components and the path of light through the system [10].

Table 2: Essential Research Reagent Solutions and Materials for UV-Vis Spectroscopy

| Item | Function & Importance | Technical Considerations |
|---|---|---|
| Spectrophotometer | The core instrument containing a light source, monochromator, and detector. | Dual-beam instruments improve stability by comparing sample and reference beams simultaneously [11]. The spectral bandwidth should be narrow for high resolution [9]. |
| Cuvettes | A container to hold the liquid sample during analysis. | Must be transparent to the wavelengths used. Quartz is essential for UV work (<330 nm); glass or plastic may be used for visible light only [10]. Standard path length is 1.00 cm [13]. |
| Solvents | A medium to dissolve the analyte. | Must be optically transparent in the spectral region of interest. Common choices include water, ethanol, and hexane. The solvent can affect the absorption spectrum (solvatochromism) [9]. |
| Reference/Blank | A solution containing all components except the analyte. | Used to zero the instrument, accounting for absorbance from the solvent and cuvette. This is critical for obtaining accurate analyte absorbance [10]. |
| Standard Solutions | Solutions of the analyte with accurately known concentrations. | Used to construct a calibration curve, which is the most reliable method for quantitative analysis and verifies the linearity of the Beer-Lambert law for the system [13] [7]. |

Experimental Protocols and Applications

Quantitative Analysis: Determining Concentration

The most common application of UV-Vis spectroscopy is the quantitative determination of an analyte's concentration. The following protocol outlines the best-practice methodology using a calibration curve.

Protocol: Concentration Determination via Calibration Curve

- Preparation of Standard Solutions: Prepare a series of solutions with known concentrations of the analyte. The concentrations should bracket the expected concentration of the unknown sample. Use precise volumetric glassware for accuracy [13] [7].

- Selection of Analytical Wavelength: Using one of the standard solutions, obtain a full absorption spectrum to identify λmax, the wavelength of maximum absorbance. This wavelength will be used for all quantitative measurements as it provides the greatest sensitivity and is less susceptible to errors from small instrumental wavelength shifts [9] [13].

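- Measurement of Absorbance: Zero the instrument at λmax using the reference/blank, then measure the absorbance of each standard solution under identical instrumental conditions [10].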

- Construction of Calibration Curve: Plot the measured absorbance (y-axis) against the known concentration (x-axis) for each standard solution. Use linear regression to fit a straight line (y = mx + b) through the data points. The plot should be linear, obeying the Beer-Lambert law (A = εlc), where the slope is equal to εl [13] [7].

- Analysis of Unknown Sample: Measure the absorbance of the unknown sample at the same λmax and under the same instrumental conditions. Use the equation of the calibration curve to calculate the unknown concentration: c_unknown = (A_unknown − b) / m [7].

Table 3: Example Data for a Calibration Curve for Protein Quantification at 280 nm

| Solution | Concentration (mg/mL) | Absorbance at 280 nm (AU) |
|---|---|---|
| Standard 1 | 0.2 | 0.09 |
| Standard 2 | 0.4 | 0.21 |
| Standard 3 | 0.6 | 0.32 |
| Standard 4 | 0.8 | 0.44 |
| Standard 5 | 1.0 | 0.58 |
| Unknown | To be determined | 0.35 |

Note: In this example, a least-squares fit of the five standards yields A = 0.605c − 0.035. Substituting the unknown's absorbance (0.35) gives a concentration of approximately 0.64 mg/mL. This method is routinely used to estimate protein concentration based on the absorption of aromatic amino acids like tryptophan and tyrosine [11].
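The fit for the Table 3 standards can be recomputed directly from the tabulated values. The sketch below assumes an unweighted least-squares line obtained with `numpy.polyfit`; the variable names are illustrative, and a validated method might instead use weighted regression or force the line through the origin.

```python
import numpy as np

# Standards from Table 3: concentration (mg/mL) and absorbance at 280 nm
concentration = np.array([0.2, 0.4, 0.6, 0.8, 1.0])
absorbance = np.array([0.09, 0.21, 0.32, 0.44, 0.58])

# Unweighted linear least-squares fit: absorbance = slope * concentration + intercept
slope, intercept = np.polyfit(concentration, absorbance, 1)

# Coefficient of determination as a simple linearity check
predicted = slope * concentration + intercept
ss_residual = np.sum((absorbance - predicted) ** 2)
ss_total = np.sum((absorbance - absorbance.mean()) ** 2)
r_squared = 1.0 - ss_residual / ss_total

# Invert the calibration line for the unknown sample
unknown_absorbance = 0.35
unknown_concentration = (unknown_absorbance - intercept) / slope

print(f"A = {slope:.3f}c + ({intercept:.3f}), R^2 = {r_squared:.4f}")
print(f"unknown: {unknown_concentration:.2f} mg/mL")
```

Reporting R² alongside the slope and intercept gives a quick indication of whether the standards obey the Beer-Lambert law over the chosen range.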

Qualitative Analysis and Purity Assessment

Beyond quantification, UV-Vis spectroscopy is a vital tool for qualitative analysis and purity checks.

- Identification of Compounds: The absorption spectrum, specifically the value of λmax and the molar absorptivity (ε), provides information about the presence of specific chromophores and the degree of conjugation in a molecule [13]. While not a definitive identification tool like NMR or mass spectrometry, it can offer strong supporting evidence and is useful for monitoring chemical reactions where chromophores change [12].

- Purity Checks: A classic application is checking the purity of nucleic acids (DNA/RNA). The ratio of absorbance at 260 nm (nucleic acid absorption) to 280 nm (protein absorption), known as the A260/A280 ratio, is a standard metric. A ratio of ~1.8 is generally accepted for pure DNA, while a lower ratio suggests protein contamination [10]. Similarly, the A260/A230 ratio can indicate contamination by salts or organic compounds [10].
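The purity ratios are simple quotients of blank-corrected absorbance readings. The sketch below uses hypothetical readings and a hypothetical acceptance cutoff of 1.7 for the A260/A280 ratio; laboratories should apply their own validated criteria.

```python
def purity_ratios(a260, a280, a230):
    """Return (A260/A280, A260/A230) from blank-corrected absorbance readings."""
    return a260 / a280, a260 / a230


def flag_protein_contamination(ratio_260_280, cutoff=1.7):
    """Flag a DNA sample whose A260/A280 falls below an assumed cutoff."""
    return ratio_260_280 < cutoff


# Hypothetical readings for two DNA preparations
clean_ratios = purity_ratios(a260=0.90, a280=0.50, a230=0.45)
suspect_ratios = purity_ratios(a260=0.90, a280=0.60, a230=0.45)

print("clean:", [round(r, 2) for r in clean_ratios],
      flag_protein_contamination(clean_ratios[0]))
print("suspect:", [round(r, 2) for r in suspect_ratios],
      flag_protein_contamination(suspect_ratios[0]))
```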

Strengths, Limitations, and Role in Technique Selection

When evaluating UV-Vis spectroscopy against other analytical techniques, its specific profile of strengths and limitations must be considered.

Strengths:

- High Sensitivity: For strongly absorbing chromophores, very low concentrations (down to 10⁻⁵ M or lower) can be measured [13].

- Ease of Use: The technique is straightforward to implement and requires minimal sample preparation [9].

- Cost-Effectiveness: Instruments are relatively inexpensive to purchase and maintain compared to techniques like NMR or MS [9].

- Non-Destructive: The sample can often be recovered after analysis [10].

- Excellent for Quantification: It is a premier technique for determining the concentration of absorbing species in solution [9] [13].

Limitations:

- Limited Structural Information: UV-Vis provides information about chromophores but gives little detail about the overall molecular structure, unlike NMR or IR spectroscopy [12].

- Requirement for a Chromophore: The analyte must absorb in the UV-Vis region. Colorless compounds with no suitable chromophores cannot be analyzed directly [9].

- Solution-Based: Measurements are typically performed in solution, which may not be suitable for all samples [9].

- Spectral Overlap: It can be difficult to analyze mixtures if the components have overlapping absorption bands [9].

Positioning in the Researcher's Toolkit: UV-Vis spectroscopy is an ideal first-line technique for routine quantification and purity assessment of compounds known to contain chromophores. Its role is complementary to other methods. For instance, while Nuclear Magnetic Resonance (NMR) spectroscopy excels at determining detailed molecular structure, and Mass Spectrometry (MS) provides molecular weight and fragmentation patterns, UV-Vis is unparalleled for fast, accurate concentration measurement in aqueous or organic solutions. In a drug development context, it is indispensable for tasks like monitoring protein concentration during purification, assessing nucleic acid purity, and tracking the progress of reactions involving conjugated molecules.

Infrared (IR) spectroscopy is a fundamental analytical technique that probes molecular vibrations to identify functional groups and characterize chemical structures. While traditional dispersive IR spectroscopy laid the groundwork, Fourier Transform Infrared (FT-IR) spectroscopy has revolutionized the field since the 1970s, offering superior speed, sensitivity, and precision [14]. This technical guide explores the core principles of IR and FT-IR spectroscopy, detailing their applications in modern research and providing a structured framework for scientists to select the appropriate technique for their analytical needs.

The broad applicability of FT-IR is enhanced by advanced data processing techniques, notably chemometric methods like principal components analysis (PCA), partial least squares (PLS) modeling, and discriminant analysis (DA). These techniques extract meaningful information from complex spectral data, allowing for accurate classification and quantitative analysis [15]. FT-IR's ability to provide rapid, non-destructive analysis is particularly advantageous in fields requiring high-throughput screening or real-time monitoring, such as pharmaceutical development and environmental science [15] [16].

Fundamental Principles: From IR to FT-IR

Dispersive IR Spectroscopy

Traditional dispersive IR spectroscopy, the original technique dating to the early 1900s, operates by separating infrared light into its constituent wavelengths before measuring sample absorption [14].

- Working Mechanism: A broad-spectrum IR beam passes through a diffraction grating that spatially disperses different wavelengths. A slit isolates specific wavelengths sequentially, directing monochromatic light through the sample to a detector [14].

- Technical Limitations: This sequential measurement process is inherently slow, as it checks each wavelength individually. The need for mechanical adjustment of the diffraction grating also introduces reproducibility challenges and limits signal-to-noise ratio compared to modern FT-IR systems [14].

FT-IR Spectroscopy: A Technological Revolution

FT-IR spectroscopy supersedes dispersive techniques through the use of an interferometer and mathematical transformation, enabling simultaneous measurement of all infrared frequencies [14].

- Core Components: A typical FT-IR spectrometer employs a Michelson interferometer consisting of a beam splitter, a fixed mirror, and a moving mirror. The beam splitter divides the beam from the IR source into two beams that travel different path lengths before recombining to produce an interference pattern [14].

- Interferogram and Fourier Transformation: The recombined beam passes through the sample to a detector, recording an interferogram—a complex pattern encoding absorption information for all frequencies. The Fourier Transform algorithm decodes this pattern, converting it into a conventional IR spectrum showing absorption versus wavenumber [14].

- Advantages Over Dispersive IR: FT-IR provides significantly faster acquisition, higher sensitivity, better wavenumber accuracy, and a greater signal-to-noise ratio, owing largely to Fellgett's (multiplex) advantage and Jacquinot's (throughput) advantage [14].
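The decoding step can be illustrated with a synthetic interferogram. This is a simplified sketch, assuming an idealized source with just two emission lines, a sampling step of 1 µm in optical path difference, and no apodization or phase correction; real instruments handle all of these in firmware or vendor software.

```python
import numpy as np

N_POINTS = 5000   # number of interferogram samples (assumed)
STEP_CM = 1e-4    # optical path difference per sample, in cm (assumed)

# Optical path difference axis; total scan length is 0.5 cm, giving 2 cm^-1 spacing
path_difference = np.arange(N_POINTS) * STEP_CM

# Idealized source: two spectral lines (wavenumber in cm^-1 -> relative intensity)
lines = {1000.0: 1.0, 2900.0: 0.5}

# Each wavenumber contributes a cosine; the detector records their sum at every position
interferogram = sum(
    intensity * np.cos(2.0 * np.pi * wavenumber * path_difference)
    for wavenumber, intensity in lines.items()
)

# The Fourier transform maps path difference (cm) back to wavenumber (cm^-1)
spectrum = np.abs(np.fft.rfft(interferogram))
wavenumber_axis = np.fft.rfftfreq(N_POINTS, d=STEP_CM)

# Recover the positions of the two strongest spectral components
recovered = np.sort(wavenumber_axis[np.argsort(spectrum)[-2:]])
print("recovered lines (cm^-1):", recovered)
```

All wavenumbers are measured in every sample of the interferogram, which is the origin of the multiplex advantage, and the scan length sets the spectral resolution.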

Figure 1: FT-IR Instrumentation and Data Flow. This workflow illustrates the path from IR source to final spectrum, highlighting the critical role of interferometry and Fourier Transform processing.

Sampling Techniques in FT-IR Analysis

Transmission vs. Attenuated Total Reflectance (ATR)

FT-IR encompasses multiple sampling techniques tailored to different sample types and analytical requirements. The two primary methods are transmission and Attenuated Total Reflectance (ATR), each with distinct advantages and limitations [17].

Transmission Spectroscopy, the traditional approach, involves passing IR light directly through a prepared sample. Solid samples typically require preparation as KBr pellets, where the analyte is dispersed in a potassium bromide matrix, while liquids are analyzed between NaCl or CaF₂ windows [17]. Although transmission can produce high-quality spectra compatible with extensive library databases, its sample preparation is often laborious and technique-sensitive. Challenges include the hygroscopic nature of KBr, potential window fogging from aqueous samples, and interference from air bubbles in liquid cells [17].

ATR Spectroscopy has gained prominence for its minimal sample preparation requirements. In ATR, IR light passes through an Internal Reflection Element (IRE) crystal with a high refractive index (e.g., diamond, ZnSe, or Ge). The beam interacts with the sample through an evanescent wave that penetrates 0.5-2 microns into the material in contact with the crystal [17]. ATR accessories apply pressure via a clamping arm to ensure optimal sample-crystal contact for solids, while liquids can be directly applied [17]. ATR spectra exhibit slight peak position and intensity variations compared to transmission due to optical effects from refractive index changes, but these differences are well-characterized [17].
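The quoted penetration range follows from the standard expression d_p = λ / (2π·n₁·√(sin²θ − (n₂/n₁)²)). The sketch below evaluates it for assumed typical values (a diamond crystal with n₁ ≈ 2.4, an organic sample with n₂ ≈ 1.5, and a 45° angle of incidence); none of these parameters come from the cited source.

```python
import math


def penetration_depth_um(wavenumber_cm, n_crystal, n_sample, angle_deg):
    """Evanescent-wave penetration depth (micrometres) for an ATR measurement."""
    wavelength_um = 1.0e4 / wavenumber_cm
    sin_squared = math.sin(math.radians(angle_deg)) ** 2
    index_ratio_squared = (n_sample / n_crystal) ** 2
    if sin_squared <= index_ratio_squared:
        raise ValueError("angle is below the critical angle; no total reflection")
    return wavelength_um / (
        2.0 * math.pi * n_crystal * math.sqrt(sin_squared - index_ratio_squared)
    )


# Assumed setup: diamond crystal (n = 2.4), organic sample (n = 1.5), 45 degrees
depth_at_4000 = penetration_depth_um(4000.0, 2.4, 1.5, 45.0)
depth_at_1000 = penetration_depth_um(1000.0, 2.4, 1.5, 45.0)
print(f"{depth_at_4000:.2f} um at 4000 cm^-1, {depth_at_1000:.2f} um at 1000 cm^-1")
```

Because the depth scales with wavelength, bands at low wavenumber appear relatively stronger in ATR spectra than in transmission spectra, which accounts for part of the intensity differences noted above.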

Comparative Analysis of FT-IR Sampling Techniques

Table 1: Key Comparison Between Transmission and ATR FT-IR Techniques

| Parameter | Transmission FT-IR | ATR-FT-IR |
|---|---|---|
| Sample Preparation | Requires extensive preparation (KBr pellets for solids, specific cells for liquids) | Minimal preparation; direct analysis of solids, liquids, pastes |
| Analysis Time | Longer due to preparation requirements | Rapid (seconds to minutes) |
| Sample Integrity | Often destructive; difficult sample recovery | Generally non-destructive; easy sample recovery |
| Reproducibility | Variable; depends on preparation skill | High reproducibility across sample types |
| Spectral Libraries | Extensive libraries available | Fewer libraries, but users can create custom databases |
| Ideal Applications | Qualitative analysis where high-quality spectra are paramount | High-throughput analysis, solids, powders, polymers, aqueous samples |

Emerging Sampling Technologies

Recent advancements in sampling techniques continue to expand FT-IR capabilities. Optical-Photothermal Infrared (O-PTIR) spectroscopy represents a breakthrough, enabling non-contact, sub-micron resolution analysis without the physical contact required by ATR [18]. O-PTIR uses a pulsed, tunable IR laser combined with a visible probe laser to detect photothermal effects, producing transmission-like spectra while maintaining sample integrity [18]. This technique is particularly valuable for analyzing heterogeneous samples, delicate materials, and applications requiring high spatial resolution beyond the diffraction limit of conventional IR spectroscopy [18].

Experimental Protocols for Functional Group Analysis

Standard Operating Procedure: ATR-FTIR Analysis of Solid Pharmaceutical Compounds

This protocol details the characterization of active pharmaceutical ingredients (APIs) using diamond ATR-FTIR, a common application in drug development [16].

- Instrument Calibration: Perform daily background and wavelength calibration using a polystyrene standard. Verify instrument performance against established peak positions and intensities before sample analysis.

- Sample Preparation: For solid APIs, ensure the compound is finely powdered using an agate mortar and pestle. Clean the ATR crystal with isopropanol and lint-free tissue, ensuring no residue remains. Apply the powder directly to the diamond crystal surface.

- Spectrum Acquisition: Apply consistent pressure to the sample using the ATR accessory's clamping mechanism. Collect spectra over the range of 4000-400 cm⁻¹ with 4 cm⁻¹ resolution. Accumulate 64 scans per spectrum to optimize signal-to-noise ratio while maintaining practical acquisition time.

- Data Analysis: Identify key functional group vibrations by comparing observed peaks to reference spectra. Critical regions include: 3500-3200 cm⁻¹ (O-H, N-H stretches), 3000-2850 cm⁻¹ (C-H stretches), 1800-1650 cm⁻¹ (C=O stretches), and 1650-1550 cm⁻¹ (N-H bends, C=C stretches). Use chemometric tools like PCA for complex mixture analysis [15].
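
The region lookup in the Data Analysis step can be sketched as a small helper. The wavenumber ranges are the ones listed in the protocol; the function name, the example peak list, and the "unassigned" label are hypothetical, and a real assignment would still be checked against reference spectra.

```python
# Diagnostic regions from the protocol text, as (low, high) wavenumbers in cm^-1.
REGIONS = [
    ((3200, 3500), "O-H / N-H stretch"),
    ((2850, 3000), "C-H stretch"),
    ((1650, 1800), "C=O stretch"),
    ((1550, 1650), "N-H bend / C=C stretch"),
]

def assign_peaks(peaks_cm1):
    """Return {peak: [labels]} listing every region that contains the peak."""
    assignments = {}
    for peak in peaks_cm1:
        labels = [label for (lo, hi), label in REGIONS if lo <= peak <= hi]
        # Peaks outside the listed regions need the fingerprint region or a library match.
        assignments[peak] = labels or ["unassigned"]
    return assignments

if __name__ == "__main__":
    # Hypothetical peak list for illustration only.
    for peak, labels in assign_peaks([3325, 2920, 1652, 1225]).items():
        print(peak, labels)
```

A peak at exactly 1650 cm⁻¹ sits on the shared boundary and is reported under both neighbouring regions, which mirrors the real ambiguity between carbonyl and N-H/C=C bands there.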

Protein Dynamics Monitoring via Hydrogen/Deuterium Exchange

FT-IR spectroscopy provides valuable insights into protein dynamics and conformational changes through amide hydrogen/deuterium (H/D) exchange experiments [15].

- Sample Preparation: Prepare protein solution in appropriate buffer (e.g., 20 mM phosphate, pH 7.4). Initiate H/D exchange by rapidly diluting the protein solution into D₂O buffer. Control temperature precisely throughout the experiment.

- Time-Resolved Spectral Acquisition: Collect FT-IR spectra at defined time intervals (seconds to hours) following deuterium exchange. Focus on the amide I (1600-1700 cm⁻¹) and amide II (1480-1580 cm⁻¹) regions, which are sensitive to protein secondary structure and H/D exchange kinetics.

- Data Interpretation: Monitor the decay of the amide II band (primarily N-H bending) at ~1550 cm⁻¹ and the concomitant rise of the amide II' band (N-D bending) at ~1450 cm⁻¹. Plot intensity changes versus time to derive H/D exchange rates, which reflect protein flexibility and solvent accessibility [15].

- Method Limitations: This approach is semi-quantitative and most effective for monitoring dynamics on timescales of minutes to hours. Factors including buffer composition, temperature stability, and protein concentration can affect accuracy [15].
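
The Data Interpretation step can be sketched as follows. This is a hedged illustration, not the cited method: it assumes the amide II intensity is normalised to the amide I intensity of the same spectrum (one way to compensate for concentration or pathlength drift) and then to the first time point, giving an approximate fraction of unexchanged amide protons. The function names and the linear interpolation of a half-exchange time are illustrative choices.

```python
def fraction_unexchanged(amide2, amide1):
    """Amide II / amide I ratio at each time point, relative to the first point."""
    if len(amide2) != len(amide1) or not amide2:
        raise ValueError("need equal-length, non-empty intensity series")
    ratios = [a2 / a1 for a2, a1 in zip(amide2, amide1)]
    return [r / ratios[0] for r in ratios]

def time_to_fraction(times, fractions, target=0.5):
    """Linearly interpolate the first time at which the fraction falls to target."""
    points = list(zip(times, fractions))
    for (t0, f0), (t1, f1) in zip(points, points[1:]):
        if f0 >= target >= f1 and f0 != f1:
            return t0 + (f0 - target) * (t1 - t0) / (f0 - f1)
    return None  # target not reached within the measured time window
```

Plotting the returned fractions against time gives the decay curve from which exchange rates are derived; the same semi-quantitative caveats listed under Method Limitations apply.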

Figure 2: ATR-FTIR Experimental Workflow. This diagram outlines the key steps in solid sample analysis, from preparation through spectral interpretation.

Technical Specifications and Quantitative Performance

Key Performance Metrics in FT-IR Spectroscopy

Table 2: Quantitative Performance Metrics for FT-IR Analysis

| Performance Parameter | Typical Range | Application Significance |

|---|---|---|

| Spectral Range | 4000-400 cm⁻¹ (Mid-IR) | Covers fundamental molecular vibrations for functional group identification |

| Spectral Resolution | 0.5-16 cm⁻¹ | Higher resolution (0.5-4 cm⁻¹) needed for gas analysis; 4-8 cm⁻¹ sufficient for most solids/liquids |

| Signal-to-Noise Ratio | 30,000:1 to 50,000:1 (peak-to-peak) | Critical for detecting minor components and accurate quantitative analysis |

| Absorption Linearity | R² > 0.999 over 0-3 AU | Essential for quantitative applications and concentration determinations |

| Measurement Time | Seconds to minutes (depending on technique) | ATR typically faster (seconds) than transmission methods |

| Spatial Resolution (Microscopy) | ~10 μm (conventional); <1 μm (O-PTIR) | Determines ability to analyze small sample features and heterogeneous materials [18] |
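
The signal-to-noise row in Table 2 and the 64-scan setting in the ATR protocol are linked by a simple rule of thumb: when noise is random and uncorrelated between scans, co-adding N scans improves the signal-to-noise ratio by roughly √N. The sketch below assumes that ideal behaviour; the function names and the single-scan figure in the example are hypothetical.

```python
import math

def snr_after_coadding(snr_single_scan, n_scans):
    """Idealised SNR after averaging n_scans scans with uncorrelated noise."""
    return snr_single_scan * math.sqrt(n_scans)

def scans_needed(snr_single_scan, target_snr):
    """Smallest number of co-added scans that reaches target_snr in the ideal case."""
    return math.ceil((target_snr / snr_single_scan) ** 2)

if __name__ == "__main__":
    # Hypothetical single-scan SNR of 100:1.
    print(snr_after_coadding(100, 64))   # eightfold gain for 64 scans
    print(scans_needed(100, 800))
```

Because the gain grows only with the square root, doubling the signal-to-noise ratio costs four times the acquisition time, which is why moderate scan counts are a common compromise.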

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Research Reagents and Materials for FT-IR Spectroscopy

| Item | Specification/Type | Function/Application |

|---|---|---|

| ATR Crystals | Diamond, ZnSe, Ge | Internal Reflection Elements for ATR measurements; diamond offers durability, ZnSe for general purpose, Ge for high refractive index needs [17] |

| Pellet Materials | Potassium Bromide (KBr) | Matrix for transmission analysis of solid samples; hygroscopic, requires careful handling and drying [17] |

| Window Materials | NaCl, CaF₂, KBr | Transmission cells for liquid and gas analysis; NaCl economical but water-sensitive, CaF₂ water-resistant [17] |

| Calibration Standards | Polystyrene films | Wavelength and intensity calibration verification; provides known reference peaks at specific wavenumbers |

| Liquid Cells | Fixed or variable pathlength (0.025-1 mm) | Controlled thickness for liquid sample analysis in transmission mode; pathlength selection depends on sample absorptivity [16] |

| Microscope Accessories | MCT detectors, FPA arrays | Enhanced detection for microspectroscopy and imaging applications; enables chemical mapping of heterogeneous samples [18] |

Application Case Studies in Pharmaceutical Research

Polymorph Characterization in API Development

Different crystalline forms (polymorphs) of pharmaceutical compounds significantly impact drug stability, solubility, and bioavailability. FT-IR spectroscopy serves as a powerful tool for polymorph identification and monitoring [16].

- Experimental Approach: Using the Golden Gate High Temperature ATR Accessory, researchers can monitor polymorphic transitions in real-time. For paracetamol, variable temperature ATR-FTIR clearly distinguishes Form I (monoclinic) from Form II (orthorhombic) through characteristic shifts in the N-H and C=O stretching regions [16].

- Technical Details: Spectral differences between polymorphs may manifest as peak splitting, frequency shifts of 5-20 cm⁻¹, or intensity variations in fingerprint regions (1500-500 cm⁻¹). These subtle changes provide fingerprints for specific crystal structures [16].

- Regulatory Impact: The sensitivity of FT-IR to polymorphic form supports Quality by Design (QbD) initiatives and Process Analytical Technology (PAT) frameworks in pharmaceutical manufacturing, enabling real-time monitoring of critical quality attributes [16].

Drug-Excipient Compatibility Screening

FT-IR spectroscopy rapidly identifies potential incompatibilities between APIs and formulation excipients during preformulation stages [16].

- Methodology: Prepare physical mixtures of API with individual excipients and monitor spectral changes after storage under accelerated conditions (e.g., 40°C/75% RH). Key indicators include shifts in API characteristic peaks, appearance of new peaks, or changes in peak widths [16].

- Case Example: ATR-FTIR revealed incompatibility between levodopa (Parkinson's medication) and common excipients like magnesium stearate, demonstrated by alterations in carboxylate and amine group vibrations [16].

- Advantages over Traditional Methods: FT-IR provides molecular-level insight into degradation pathways and interaction mechanisms, complementing traditional techniques such as DSC or HPLC, which may detect a change without identifying its chemical cause [16].

Strategic Technique Selection Framework

Choosing between IR, FT-IR, and other analytical techniques requires systematic evaluation of research objectives, sample characteristics, and analytical requirements.

- Sample Considerations: FT-IR with ATR accommodates virtually all sample types—solids, liquids, powders, pastes, and films—with minimal preparation. Transmission FT-IR remains valuable for quantitative analysis and when library matching is essential. For sub-micron features or delicate samples, O-PTIR provides superior spatial resolution without sample contact [17] [18].

- Information Requirements: FT-IR excels at functional group identification, quantitative analysis of specific compounds, polymer characterization, and monitoring chemical reactions in real-time. When molecular fingerprinting is the primary goal, FT-IR offers high chemical specificity [15] [16].

- Practical Constraints: For field applications or point-of-care testing, portable FT-IR devices offer analytical capabilities outside traditional laboratory settings. In quality control environments, FT-IR's speed, non-destructive nature, and minimal sample preparation enable high-throughput analysis [15] [16].

The continued evolution of FT-IR spectroscopy, including the development of portable devices and advanced chemometric tools, ensures its expanding role in pharmaceutical development, clinical diagnostics, environmental monitoring, and materials science [15]. By understanding the fundamental principles, sampling techniques, and applications detailed in this guide, researchers can strategically leverage FT-IR spectroscopy to address complex analytical challenges across scientific disciplines.

Raman spectroscopy is a powerful analytical technique used for the chemical identification, characterization, and quantification of substances by examining how light interacts with molecular bonds [19]. When light illuminates a substance, most of the scattered light retains the same energy (elastic Rayleigh scattering), but a tiny fraction (approximately 0.0000001%) undergoes inelastic scattering, emerging with a different energy—this is the Raman effect [19]. This energy shift corresponds directly to the vibrational frequencies of the molecular bonds in the sample, creating a unique "chemical fingerprint" that forms the basis for analysis [19]. The technique is named after C.V. Raman, who first observed this phenomenon in 1928, for which he was awarded the Nobel Prize in 1930 [19].

The core principle involves measuring the energy difference between the incident laser light and the Raman-scattered light, known as the Raman shift, which is measured in reciprocal centimeters (cm⁻¹) [19]. This shift is independent of the excitation laser's wavelength and provides specific information about the molecular structure and chemical composition of the sample. Since its discovery, technological advancements, particularly the development of lasers, sensitive detectors, and optical filters, have transformed Raman spectroscopy from a specialized research tool into a versatile analytical technique widely used across numerous scientific and industrial fields [20].
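
The Raman shift defined above is the difference between the wavenumbers of the incident and scattered light. A short sketch of the conversion between wavelengths in nm and shifts in cm⁻¹ follows; the function names are illustrative.

```python
def raman_shift_cm1(excitation_nm, scattered_nm):
    """Raman shift in cm^-1; positive for Stokes (scattered light at longer wavelength)."""
    return 1e7 / excitation_nm - 1e7 / scattered_nm

def scattered_wavelength_nm(excitation_nm, shift_cm1):
    """Wavelength at which a band with the given shift appears for a given laser."""
    return 1e7 / (1e7 / excitation_nm - shift_cm1)

if __name__ == "__main__":
    # The same 1000 cm^-1 band appears at different absolute wavelengths
    # depending on the excitation laser.
    for laser in (532, 785, 1064):
        print(laser, round(scattered_wavelength_nm(laser, 1000), 1))
```

The shift stays the same for every laser while the absolute wavelength of the scattered light moves, which is why spectra recorded with different excitation sources can be compared on a common cm⁻¹ axis.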

Fundamental Principles and Theory

The Raman Effect and Molecular Vibrations

The Raman effect originates from the interaction between light and the chemical bonds within a molecule. These bonds are in constant motion, vibrating at specific frequencies unique to each molecule and bond type [19]. When monochromatic laser light interacts with a molecule, the electric field of the light can temporarily distort the electron cloud around the bonds, inducing a transient dipole moment. How easily the electron cloud is distorted is described by a property known as the bond's polarizability [21].

For a vibrational mode to be "Raman-active," the vibration must cause a change in the polarizability of the molecule during the vibration [21]. When this condition is met, some photons from the incident laser light will undergo inelastic scattering. In this process, the molecule may gain or lose vibrational energy, resulting in the scattered photon having a lower (Stokes shift) or higher (Anti-Stokes shift) energy than the incident photon [19]. The resulting spectrum, which plots the intensity of the scattered light against the Raman shift, reveals the characteristic vibrational fingerprint of the material under investigation.

Visualizing the Raman Scattering Process

The following diagram illustrates the fundamental process of Raman scattering and the resulting energy transitions.

Advantages and Disadvantages in Technique Selection

Raman spectroscopy offers a distinct set of benefits and limitations that must be carefully considered when selecting a spectroscopic technique for a research problem. Its value becomes particularly evident when compared and contrasted with other methods, such as Infrared (IR) spectroscopy.

Key Advantages

The strengths of Raman spectroscopy that make it a preferred choice in many scenarios are shown in the table below.

Table 1: Key Advantages of Raman Spectroscopy

| Advantage | Description | Practical Implication |

|---|---|---|

| Minimal Sample Preparation [22] [23] | Solids, liquids, and gases can often be analyzed as-is. | Increases throughput, reduces artifact introduction, and preserves sample integrity. |

| Non-Destructive Analysis [22] [19] | The technique typically uses low-power lasers that do not damage the sample. | Ideal for valuable, rare, or irreplaceable samples (e.g., artworks, forensic evidence). |

| Compatibility with Aqueous Solutions [22] | Water is a weak Raman scatterer. | Enables direct study of biological systems and reactions in their native aqueous environments. |

| Container Flexibility [19] | Laser light can pass through transparent packaging like glass and polymers. | Allows for analysis through vials or plastic bags, preventing contamination and simplifying process control. |

| Spatial Resolution | Capable of collecting spectra from volumes less than 1 μm in diameter [23]. | Enables detailed mapping of component distribution in heterogeneous materials. |

| Remote Sensing [22] | Laser and scattered light can be transmitted via fiber optic cables. | Facilitates analysis in hazardous environments or hard-to-reach locations. |

Key Limitations and Challenges

Despite its advantages, the technique has several inherent limitations.

Table 2: Key Limitations of Raman Spectroscopy

| Limitation | Description | Common Mitigation Strategies |

|---|---|---|

| Weak Raman Signal [22] [19] | The Raman effect is inherently weak, leading to low sensitivity for trace analysis. | Use of high-power lasers, long acquisition times, or enhanced techniques like SERS [24]. |

| Fluorescence Interference [22] [19] | Sample fluorescence can swamp the much weaker Raman signal, obscuring the spectrum. | Use of longer wavelength lasers (e.g., 785 nm, 1064 nm) to avoid electronic excitation [19] [20]. |

| Unsuitability for Metals/Alloys [22] [23] | Free electrons in metals prevent the Raman effect. | Alternative techniques, such as X-ray diffraction or energy-dispersive X-ray spectroscopy, are required. |

| Laser-Induced Sample Damage [23] | Localized heating from the intense laser beam can degrade or alter the sample. | Use of lower laser power, defocusing the beam, or rotating the sample. |

Experimental Protocols in Pharmaceutical Development

Raman spectroscopy plays a crucial role in the pharmaceutical industry by addressing key needs such as ensuring drug purity, authenticity, and efficacy [25]. The following section details specific experimental protocols for two critical applications.

Protocol 1: Real-Time Reaction Monitoring

This protocol is used to monitor the synthesis of a new drug or intermediate in real-time, determining reaction kinetics, mechanisms, and endpoints [20].

1. Objective: To monitor the Fischer esterification of benzoic acid to produce methyl benzoate and determine the reaction rate constant and yield [20].

2. Materials and Equipment:

- Raman spectrometer (dispersive or FT-based) equipped with a 1064 nm laser to minimize fluorescence [20].

- Fiber-optic immersion probe rated for the reaction temperature and solvent.

- 3-neck round-bottom flask, reflux condenser, heating mantle with magnetic stirrer.

- Benzoic acid (reactant), methanol (solvent), concentrated sulfuric acid (catalyst).

3. Experimental Procedure:

- Setup: Assemble the reaction apparatus. Place the Raman immersion probe directly into one neck of the reaction flask, ensuring the probe window is fully immersed and away from the vortex caused by stirring.

- Initialization: Charge the flask with benzoic acid and methanol. Begin stirring and heating to the target temperature (e.g., 60°C). Collect a background spectrum of the heated solvent.

- Reaction Start: Add the catalyst (sulfuric acid) to initiate the reaction. This marks time zero.

- Data Acquisition: Configure the spectrometer to collect spectra automatically at fixed intervals (e.g., every 45 seconds for 70 minutes). Use acquisition parameters such as 500 mW laser power, and keep the integration time shorter than the collection interval so that consecutive spectra do not overlap while still achieving a high signal-to-noise ratio [20].

- Completion: After the reaction time is complete, cool the mixture and terminate data collection.

4. Data Analysis:

- Identify unique Raman peaks for the reactant (benzoic acid at 780 cm⁻¹) and the product (methyl benzoate at 817 cm⁻¹) [20].

- Plot the normalized intensity of these characteristic peaks versus time.

- Fit the data to an appropriate kinetic model (e.g., a first-order rate law) to determine the rate constant and final reaction yield.
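
The kinetic fit in the last step can be sketched with a log-linear least-squares fit. This is a minimal illustration under stated assumptions: the reactant peak intensity is taken as proportional to concentration, and the reaction is treated as pseudo-first-order because methanol is in large excess. The function names are hypothetical, and any data passed in should already be baseline-corrected and normalized.

```python
import math

def fit_first_order(times_min, reactant_intensity):
    """Fit ln(I/I0) = -k*t by least squares and return k in 1/min."""
    i0 = reactant_intensity[0]
    xs, ys = [], []
    for t, i in zip(times_min, reactant_intensity):
        if i > 0:                       # the logarithm is undefined for non-positive values
            xs.append(t)
            ys.append(math.log(i / i0))
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    return -sxy / sxx

def conversion(reactant_intensity):
    """Fractional conversion at each time point, relative to the first spectrum."""
    i0 = reactant_intensity[0]
    return [1 - i / i0 for i in reactant_intensity]

if __name__ == "__main__":
    # Synthetic data generated with k = 0.05 1/min, for illustration only.
    times = list(range(0, 71, 10))
    signal = [math.exp(-0.05 * t) for t in times]
    print(fit_first_order(times, signal))
```

Fischer esterification is an equilibrium reaction, so a simple first-order model is only an approximation; curvature in the ln(I/I₀) plot at long times is a sign that a reversible model is needed.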

Protocol 2: Polymorph and Crystallization Monitoring

This protocol is critical for identifying and controlling the specific polymorphic form of an Active Pharmaceutical Ingredient (API), as different forms can have varying properties like solubility and bioavailability [25].

1. Objective: To monitor the synthesis and subsequent crystallization of a proprietary API and identify the polymorphic form.

2. Materials and Equipment:

- FT-Raman spectrometer for superior wavenumber stability during long experiments [20].

- Laboratory reactor with temperature control.

- API solution or slurry.

3. Experimental Procedure:

- Initial Spectrum: Collect a reference spectrum of the reactant mixture in the reactor.

- Synthesis Monitoring: Begin the synthesis process, collecting spectra at regular intervals. Monitor the appearance of a unique product peak (e.g., at 1150 cm⁻¹) [20].

- Crystallization Initiation: Once synthesis is complete, induce crystallization, often by lowering the temperature of the reactor.

- Crystallization Monitoring: Continue collecting spectra, focusing on spectral shifts that indicate crystallization (e.g., a peak shift from 1234 cm⁻¹ to 1240 cm⁻¹) [20].

- Final Characterization: Collect a high-quality spectrum of the final crystalline product for polymorph identification.

4. Data Analysis:

- Univariate Analysis: Track the intensity of key peaks or the magnitude of spectral shifts over time to monitor the progression of synthesis and crystallization.

- Chemometric Modeling (Multivariate): Build a quantitative model that correlates the entire spectral dataset to the concentration of the product and the degree of crystallinity. This provides a more robust and accurate measurement of the process [20].
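
The univariate option can be sketched by tracking the position of one band over time. The example below estimates the band position as an intensity-weighted centroid inside a window and reports the first time the position has moved by a chosen threshold. The starting position of 1234 cm⁻¹ is taken from the protocol's example shift; the window, the 3 cm⁻¹ threshold, and the function names are hypothetical, and spectra are assumed to be baseline-corrected beforehand.

```python
def peak_centroid(wavenumbers, intensities, window):
    """Intensity-weighted mean wavenumber of the points inside window = (low, high)."""
    lo, hi = window
    pts = [(w, i) for w, i in zip(wavenumbers, intensities) if lo <= w <= hi and i > 0]
    total = sum(i for _, i in pts)
    if total == 0:
        raise ValueError("no positive intensity inside the window")
    return sum(w * i for w, i in pts) / total

def crystallization_onset(times, centroids, start_cm1=1234.0, threshold_cm1=3.0):
    """First time at which the band has shifted upward by at least threshold_cm1."""
    for t, c in zip(times, centroids):
        if c - start_cm1 >= threshold_cm1:
            return t
    return None  # no shift of that size observed
```

A centroid is less sensitive to detector pixel spacing than simply picking the maximum point, but it is still a single-band measure; the multivariate model described above uses the whole spectrum and is generally more robust.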

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful Raman experimentation relies on a set of key components and reagents.

Table 3: Essential Research Reagents and Materials for Raman Spectroscopy

| Item | Function / Role in Experimentation |

|---|---|

| Monochromator / Interferometer | The core component that separates the Raman scattered light by wavelength for detection [20]. |

| Diode Laser (e.g., 785 nm) | A common, power-efficient, and stable laser source that provides the monochromatic light for excitation, minimizing fluorescence for many samples [20]. |

| 1064 nm Laser (for FT-Raman) | A near-infrared laser used specifically to virtually eliminate fluorescence interference in challenging samples [20]. |

| Charge-Coupled Device (CCD) Detector | A highly sensitive, two-dimensional detector that allows for rapid spectral acquisition, crucial for kinetic studies and mapping [20]. |

| Fiber-Optic Immersion Probe | Enables the delivery of laser light and collection of scattered light directly inside a reaction vessel for in-situ monitoring [20]. |

| Notch / Edge Filters | Critical optical components that block the intense elastically scattered Rayleigh light while allowing the weak Raman signal to pass to the detector [20]. |

| Microscope Objective (for Microscopy) | Focuses the laser to a diffraction-limited spot (<1 µm) for high-spatial-resolution analysis and chemical imaging [19]. |

| SERS-Active Substrate (e.g., Au/Ag nanoparticles) | Used in Surface-Enhanced Raman Spectroscopy to amplify the weak Raman signal by several orders of magnitude for trace analysis [24]. |

Advanced Raman Techniques

To overcome inherent limitations like weak signal strength or poor spatial resolution, several advanced Raman techniques have been developed. The main techniques and their primary applications are summarized below.

- Surface-Enhanced Raman Spectroscopy (SERS): This technique uses nanostructured metallic surfaces (typically gold or silver) to dramatically enhance the Raman signal by several orders of magnitude, enabling detection down to the single-molecule level [24]. It is invaluable for detecting trace analytes, such as explosives or unmodified DNA [24].

- Tip-Enhanced Raman Spectroscopy (TERS): TERS combines Raman spectroscopy with atomic force microscopy (AFM), using a metallic AFM tip to provide massive signal enhancement at the nanoscale. It achieves spatial resolution beyond the optical diffraction limit, allowing for chemical mapping of individual carbon nanotubes and single strands of DNA [24].

- Coherent Anti-Stokes Raman Scattering (CARS): A nonlinear, coherent technique where two laser beams (pump and Stokes) interact with the sample to generate a strong, coherent signal at the anti-Stokes frequency. This provides much higher signals than spontaneous Raman and is naturally resistant to fluorescence interference, making it a powerful tool for high-speed, label-free bio-imaging [24].

- Stimulated Raman Scattering (SRS): Another coherent technique, SRS involves the stimulated excitation of molecular vibrations, resulting in a very strong signal. It has become a prominent tool for real-time imaging of biomolecules inside living cells and tissues with high chemical specificity [24].

Raman spectroscopy stands as a versatile and powerful member of the spectroscopic toolkit, offering unique capabilities for non-destructive, label-free chemical analysis. Its strengths—including minimal sample preparation, compatibility with water, and flexibility for in-situ and remote monitoring—make it indispensable in fields ranging from pharmaceuticals and materials science to biology and cultural heritage preservation. While challenges like weak signal intensity and fluorescence persist, ongoing technological innovations and the development of advanced techniques like SERS and TERS continue to expand its applications and sensitivity. When selecting an analytical technique, researchers must weigh these factors against their specific needs, but for gaining detailed molecular structural insights through light scattering, Raman spectroscopy remains a premier choice.

Atomic and molecular spectroscopy are foundational techniques in analytical chemistry, yet they operate on distinct principles and yield different types of information. The core difference lies in their subject of analysis: atomic spectroscopy probes free atoms, typically in their ground state, providing information about elemental identity and concentration, while molecular spectroscopy investigates molecules, yielding insights into molecular structure, bonding, and functional groups [26] [11].

When electromagnetic radiation interacts with matter, the resulting transitions create a spectrum that serves as a unique fingerprint. In atomic spectroscopy, this interaction causes valence electrons in atoms to transition to higher energy levels, producing sharp, discrete line spectra due to the fixed energy differences between atomic orbitals [26] [27]. In contrast, molecular spectroscopy involves more complex transitions because molecules possess additional degrees of freedom. Beyond electronic transitions, molecules can undergo vibrational and rotational transitions, resulting in band spectra characterized by groups of tightly packed, overlapping lines [26] [27]. This fundamental distinction in the nature of the spectra is a direct consequence of the more complex energy landscape in molecules.

Table 1: Core Differences Between Atomic and Molecular Spectroscopy

| Feature | Atomic Spectroscopy | Molecular Spectroscopy |

|---|---|---|

| Analytical Target | Elements (metals and metalloids) [26] | Molecules (organic and inorganic compounds) [26] |

| Spectrum Produced | Discrete line spectra [27] | Band spectra (closely packed lines) [27] |

| Transitions Observed | Electronic (valence electrons) [26] [11] | Electronic, vibrational, and rotational [26] [11] |

| Primary Information | Elemental identity and concentration [11] | Molecular identity, structure, and functional groups [11] |

| Typical Sample State | Often requires destruction and atomization [26] | Can often analyze solids, liquids, and gases directly [27] |

Key Techniques and Their Analytical Capabilities

Atomic Spectroscopy Techniques

Atomic spectroscopy encompasses several key techniques, primarily distinguished by their method of atomization and detection. Atomic Absorption Spectroscopy (AAS) is a workhorse technique for detecting specific elements in liquid or solid samples. Its principle is that ground-state atoms selectively absorb light at characteristic wavelengths, with the amount of absorption being proportional to the element's concentration [26]. AAS is renowned for its accuracy (errors typically in the range of 0.5-5%) and its sensitivity for metal analysis [26].

Inductively Coupled Plasma techniques represent a more advanced suite of methods. When coupled with Optical Emission Spectroscopy (ICP-OES) or Mass Spectrometry (ICP-MS), they offer exceptional sensitivity and the ability to perform simultaneous multi-element analysis. ICP-MS, in particular, is powerful for isotope ratio analysis and ultra-trace level detection, as demonstrated in the nuclear material characterization work of Benjamin T. Manard, the 2025 Emerging Leader in Atomic Spectroscopy [28]. These techniques have revolutionized practices in fields like medicine, pharmaceuticals, and environmental monitoring by enabling the detection of trace toxins and previously unknown elements in materials [26].

Molecular Spectroscopy Techniques

Molecular spectroscopy offers a diverse toolkit for compound analysis, with techniques spanning the electromagnetic spectrum. UV-Vis Spectroscopy operates in the 190-800 nm range and involves exciting valence electrons between molecular orbitals, such as from the Highest Occupied Molecular Orbital (HOMO) to the Lowest Unoccupied Molecular Orbital (LUMO) [11] [27]. It is widely used for quantitative analysis, such as determining protein concentration via the Beer-Lambert Law [11].

Infrared (IR) and Near-Infrared (NIR) Spectroscopy probe molecular vibrations. IR spectroscopy measures fundamental vibrations, providing detailed fingerprints for molecular identification and functional group analysis [29]. NIR spectroscopy, which examines overtones and combination bands, is ideal for analyzing complex organic materials like agricultural and pharmaceutical products, often with the aid of chemometrics [29].

Fluorescence Spectroscopy measures the light re-emitted by molecules after photon absorption, offering extreme sensitivity for trace analysis and bioimaging applications [11] [27]. Advanced forms like Fluorescence Lifetime Imaging (FLIM) can probe microenvironmental changes in tissues and cells, a specialty of Lingyan Shi, the 2025 Emerging Leader in Molecular Spectroscopy [30]. Raman Spectroscopy, which relies on inelastic light scattering, is complementary to IR and is particularly useful for aqueous samples and studying symmetric molecular vibrations [29].

Table 2: Common Molecular Spectroscopy Techniques and Applications

| Technique | Wavelength Range | Transitions Probed | Example Applications |

|---|---|---|---|

| UV-Vis Spectroscopy [29] [27] | 190–800 nm | Valence electrons (HOMO-LUMO) [11] | Protein quantification [11], drug purity in HPLC [29] |

| Infrared (IR) Spectroscopy [29] | ~2.5–25 µm (Mid-IR) | Fundamental molecular vibrations [11] [29] | Polymer identification, functional group analysis [29] |

| Near-Infrared (NIR) Spectroscopy [29] [27] | 760–2500 nm | Overtone and combination vibrations [29] [27] | Moisture content in agriculture, pharmaceutical QA [29] [27] |

| Fluorescence Spectroscopy [11] [27] | Varies (UV-Vis-NIR) | Electronic (emission from excited states) [11] | Biological imaging [11], sensor design [30] |

| Raman Spectroscopy [29] | Varies (often Vis-NIR) | Molecular vibrations (inelastic scattering) [29] | Aqueous sample analysis, material science [29] |

Experimental Protocols and Workflows

Protocol for Elemental Analysis via Atomic Absorption Spectroscopy

The quantification of a specific metal, such as lead in a water sample, using AAS follows a rigorous multi-step protocol. First, sample preparation is critical. The liquid sample may require acid digestion to break down complexes and release the metal ions into solution. A series of standard solutions with known concentrations of the target element are prepared for calibration [26].

The prepared sample is then nebulized and atomized. In flame AAS, the liquid sample is drawn up and converted into a fine aerosol via a nebulizer. This aerosol is mixed with fuel and oxidant gases and transported into a flame, where the heat (typically 2000-3000°C) breaks down the molecules, creating a cloud of free, ground-state atoms [26]. The measurement follows: light from a hollow cathode lamp, which emits the element-specific wavelength, is passed through the atom cloud. The atoms absorb a fraction of this light, and a monochromator isolates the specific wavelength before a detector measures its intensity [26]. Finally, data analysis is performed. The absorbance of the standard solutions is measured to create a calibration curve. The concentration of the unknown sample is then interpolated from this curve using its measured absorbance, following the principle that absorbance is proportional to concentration (Beer-Lambert Law) [26].
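
The calibration and interpolation steps can be sketched with an ordinary least-squares line. The function names are hypothetical, and the standards in the example are invented numbers for illustration, not measured data.

```python
def fit_calibration(concentrations, absorbances):
    """Least-squares line A = slope*c + intercept; returns (slope, intercept, r_squared)."""
    n = len(concentrations)
    mean_c = sum(concentrations) / n
    mean_a = sum(absorbances) / n
    scc = sum((c - mean_c) ** 2 for c in concentrations)
    saa = sum((a - mean_a) ** 2 for a in absorbances)
    sca = sum((c - mean_c) * (a - mean_a) for c, a in zip(concentrations, absorbances))
    slope = sca / scc
    intercept = mean_a - slope * mean_c
    return slope, intercept, sca ** 2 / (scc * saa)

def concentration_from_absorbance(absorbance, slope, intercept, c_min, c_max):
    """Invert the calibration line, refusing to extrapolate beyond the standards."""
    c = (absorbance - intercept) / slope
    if not c_min <= c <= c_max:
        raise ValueError("result lies outside the calibrated range")
    return c

if __name__ == "__main__":
    # Invented standards (mg/L) and absorbances, for illustration only.
    standards = [0.0, 5.0, 10.0, 20.0]
    readings = [0.002, 0.052, 0.102, 0.202]
    slope, intercept, r2 = fit_calibration(standards, readings)
    print(concentration_from_absorbance(0.077, slope, intercept, 0.0, 20.0))
```

Rejecting values outside the range of the standards is a deliberate choice: the linear relationship is only verified between the lowest and highest standard, so a sample above that range would normally be diluted and re-measured.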

Protocol for Compound Analysis via UV-Vis Spectroscopy

A common molecular spectroscopy protocol is the quantification of protein concentration using UV-Vis spectroscopy. The process begins with system setup and calibration. The UV-Vis spectrometer is initialized and a baseline correction (blanking) is performed using the solvent that contains the protein (e.g., a buffer solution) to account for any solvent absorption [11].

For the sample measurement, the protein solution is placed in a transparent cuvette, typically with a path length of 1 cm. The cuvette is inserted into the sample compartment, and the absorbance is measured at 280 nm. This wavelength is chosen because the aromatic amino acids tryptophan and tyrosine absorb strongly near 280 nm, while phenylalanine contributes only weakly at this wavelength [11]. The calculation of concentration relies on the Beer-Lambert Law: A = ε * c * l, where A is the measured absorbance, ε is the molar absorptivity of the protein (a constant for a given protein and wavelength), c is the concentration, and l is the path length. If the molar absorptivity is known, the concentration can be directly calculated [11]. This method is a staple in life sciences and pharmaceutical labs for monitoring protein purification.
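
Rearranging the Beer-Lambert Law for concentration gives c = A / (ε * l). A minimal sketch of that calculation follows; the function names, the dilution-factor argument, and the example protein values are hypothetical and chosen only to make the arithmetic easy to follow.

```python
def molar_concentration(absorbance, molar_absorptivity, path_cm=1.0):
    """c = A / (epsilon * l), in mol/L when epsilon is in L mol^-1 cm^-1."""
    return absorbance / (molar_absorptivity * path_cm)

def mass_concentration_mg_per_ml(absorbance, molar_absorptivity, molar_mass_g_mol,
                                 path_cm=1.0, dilution_factor=1.0):
    """Mass concentration of the undiluted sample; mol/L * g/mol = g/L = mg/mL."""
    c = molar_concentration(absorbance, molar_absorptivity, path_cm)
    return c * molar_mass_g_mol * dilution_factor

if __name__ == "__main__":
    # Hypothetical protein: epsilon = 40,000 L mol^-1 cm^-1, molar mass = 50,000 g/mol.
    print(mass_concentration_mg_per_ml(0.40, 40_000, 50_000))
```

With these assumed values an absorbance of 0.40 in a 1 cm cuvette corresponds to 1 × 10⁻⁵ mol/L, or 0.5 mg/mL. Readings far above about 1 AU are usually diluted and re-measured, which is what the dilution factor accounts for.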

Diagram 1: UV-Vis Analysis Workflow

The Scientist's Toolkit: Essential Reagents and Materials

The following table details key reagents and materials essential for conducting experiments in atomic and molecular spectroscopy.

Table 3: Essential Research Reagent Solutions and Materials

| Item Name | Function/Description | Application Context |

|---|---|---|

| Hollow Cathode Lamps [26] | Provide an element-specific, narrow-line light source for excitation. | Atomic Absorption Spectroscopy (AAS) |

| Certified Reference Materials (CRMs) [28] | Standard with known analyte concentration for instrument calibration and method validation. | Quantitative analysis in both AAS and ICP-MS |

| Deuterium (D2) and Halogen Lamps [27] | Combined light source providing continuous spectrum across UV and Visible regions. | UV-Vis Spectrophotometry |

| UV-Transparent Cuvettes [11] | Container (e.g., quartz) that holds liquid sample without absorbing UV light. | UV-Vis and Fluorescence Spectroscopy |

| Deuterium Oxide (D₂O) [30] | Used as a metabolic tracer; carbon-deuterium bonds act as vibrational labels. | SRS Microscopy for tracking biomolecule synthesis |

| Acids for Digestion (HNO₃, HCl) [26] | High-purity acids used to dissolve solid samples and create a uniform liquid matrix. | Sample preparation for ICP-MS and AAS |

| Fluorescent Probes (e.g., Fluorescein) [11] | Molecules that absorb light at one wavelength and emit at a longer wavelength. | Fluorescence spectroscopy and bioimaging |

| Solid-Phase Microextraction Cartridges [28] | Miniaturized columns with resin to isolate and pre-concentrate analytes. | Pre-concentration of trace elements (e.g., U, Pu) before ICP-MS |

Decision Framework: Selecting the Appropriate Technique

Choosing between atomic and molecular spectroscopy hinges on the specific analytical question. The following decision workflow provides a logical path for technique selection based on the nature of the sample and the information required.

Diagram 2: Technique Selection Framework

Guidance for Elemental Analysis

Choose atomic spectroscopy when the analytical problem requires knowing which elements are present and in what amounts [26] [11]. This is the definitive choice for:

- Trace Metal Analysis: Detecting and quantifying heavy metals (e.g., Pb, Cd, Hg) in environmental, biological, or food samples [26].

- Multi-element Screening: When the presence of multiple unknown elements is suspected, techniques like ICP-OES or ICP-MS are ideal for their simultaneous multi-element capability [28].

- Isotopic Information: For applications in nuclear forensics, geochronology, or metabolic tracing, ICP-MS is the preferred technique due to its ability to discriminate between isotopes [28].

Guidance for Molecular and Structural Analysis

Choose molecular spectroscopy when the problem involves identifying specific compounds, understanding molecular structure, or characterizing functional groups [26] [11]. This family of techniques is essential for:

- Compound Identification and Purity: UV-Vis spectroscopy is routinely used as an HPLC detector to verify drug compound identity and purity in pharmaceuticals [29].

- Functional Group and Structure Elucidation: IR and Raman spectroscopy are powerful for identifying functional groups (e.g., carbonyl, hydroxyl) and studying molecular bonding [29].

- Biomolecular and Metabolic Studies: Fluorescence spectroscopy and advanced techniques like Stimulated Raman Scattering (SRS) microscopy are indispensable for studying biomolecules, tracking metabolic activity with labels like deuterated compounds, and imaging tissues [11] [30].

Considering Practical Constraints

The final decision must also account for practical laboratory constraints:

- Destructive vs. Non-Destructive: AAS and ICP are destructive techniques, as the sample is consumed during atomization. In contrast, many molecular techniques like NIR and Raman can be non-destructive, allowing the sample to be recovered [26] [29].

- Sample Throughput and Automation: ICP-MS and modern UV-Vis systems can be highly automated, which is critical for high-throughput laboratories.

- Cost and Expertise: AAS and UV-Vis are generally more affordable and easier to operate than ICP-MS or high-field NMR, which require significant capital investment and specialized expertise [26] [4].
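The selection logic described in this framework can be expressed as a small lookup function. This is only a sketch of the guidance above, not a validated decision tool: the function name, the category keys, and the choice of AAS as the default for single-element work are illustrative assumptions.

```python
def suggest_technique(question, needs_isotopes=False, multi_element=False):
    """Map an analytical question to a candidate technique family.

    question: "elemental", "identity_purity", "functional_groups",
              or "biomolecular" (hypothetical category keys).
    """
    if question == "elemental":
        if needs_isotopes:
            return "ICP-MS"                      # isotope discrimination
        if multi_element:
            return "ICP-OES or ICP-MS"           # simultaneous multi-element screening
        return "AAS"                             # assumed default: lower cost, single element
    molecular = {
        "identity_purity": "UV-Vis (e.g., as an HPLC detector)",
        "functional_groups": "IR or Raman",
        "biomolecular": "Fluorescence or SRS microscopy",
    }
    if question in molecular:
        return molecular[question]
    raise ValueError(f"unknown analytical question: {question!r}")


print(suggest_technique("elemental", needs_isotopes=True))
print(suggest_technique("functional_groups"))
```

Practical constraints such as sample recovery, throughput, and budget would then narrow the suggestion further, for example by preferring Raman or NIR when the sample must not be consumed.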

Mass Spectrometry (MS) is a powerful analytical technique that identifies and quantifies molecules based on their mass-to-charge ratio (m/z). Unlike spectroscopic methods that rely on light absorption or emission, MS provides unparalleled precision in determining molecular weight and structure by measuring how molecules behave as charged particles in electric and magnetic fields [31] [32]. This capability makes it indispensable in modern research and drug development for analyzing a wide range of clinically relevant analytes, from small organic molecules to complex biological macromolecules like proteins [31].

The fundamental principle of MS is that it converts sample molecules into gas-phase ions, which are then separated according to their m/z and detected [32]. The resulting mass spectrum presents a plot of ion intensity against m/z, providing a unique fingerprint for substance identification and quantification [31] [33]. When coupled with chromatographic techniques like gas or liquid chromatography, mass spectrometers expand analytical capabilities across diverse clinical and research applications [31].

Fundamental Principles: The Mass-to-Charge Ratio

The mass-to-charge ratio (m/z) is the cornerstone physical quantity in mass spectrometry that determines ion trajectory within the mass analyzer [34]. This ratio represents the mass of an ion (m) divided by its number of charges (z), with classical electrodynamics establishing that two particles with identical m/z values will follow the same path in a vacuum when subjected to identical electric and magnetic fields [34].

For ions carrying a single charge (z=1), which is typical for small molecules, the m/z value is numerically equal to the ion's mass in daltons (Da) [31] [34]. However, larger molecules such as proteins and peptides typically carry multiple charges, meaning the m/z value represents only a fraction of the ion's actual mass [31]. For example, an ion with a mass of 100 Da carrying two charges (z=2) will be detected at m/z 50 [34].
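The arithmetic is simple enough to sketch directly. The first function reproduces the example above; the second is an added illustration that assumes positive-mode ions formed by attaching one proton per charge (as is common in electrospray), which is not stated in the cited sources. Function names are hypothetical.

```python
PROTON_MASS_DA = 1.007276  # mass of a proton in daltons


def mz_from_ion_mass(ion_mass_da, charge):
    """m/z of an ion whose total mass is already known."""
    if charge == 0:
        raise ValueError("a neutral species has no m/z")
    return ion_mass_da / abs(charge)


def mz_protonated(neutral_mass_da, charge):
    """m/z of [M + zH]^z+, assuming each charge comes from one added proton."""
    if charge <= 0:
        raise ValueError("charge must be a positive integer")
    return (neutral_mass_da + charge * PROTON_MASS_DA) / charge


print(mz_from_ion_mass(100.0, 2))      # the 100 Da, z=2 example from the text
print(mz_protonated(10_000.0, 10))     # hypothetical 10 kDa protein at z=10
```

The second case shows why a multiply charged protein appears at a far lower m/z than its mass would suggest.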

The motion of charged particles in a mass spectrometer is governed by fundamental physical laws. The Lorentz force law (F = Q(E + v × B)) describes the force applied to ions in electric and magnetic fields, while Newton's second law of motion (F = ma) determines their resulting acceleration [34]. These equations combine to show that (m/Q)a = E + v × B, demonstrating that the mass-to-charge ratio fundamentally controls ion motion in the instrument [34].
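Written out step by step, the combination of the two laws looks as follows. The symbols are those used above; the final remark that Q = z·e (with e the elementary charge) is an added note connecting the physical charge Q to the charge number z.

```latex
\begin{aligned}
\mathbf{F} &= Q\,(\mathbf{E} + \mathbf{v} \times \mathbf{B})
  && \text{(Lorentz force law)} \\
\mathbf{F} &= m\,\mathbf{a}
  && \text{(Newton's second law)} \\
m\,\mathbf{a} &= Q\,(\mathbf{E} + \mathbf{v} \times \mathbf{B})
  && \text{(equate the two forces)} \\
\frac{m}{Q}\,\mathbf{a} &= \mathbf{E} + \mathbf{v} \times \mathbf{B}
  && \text{(divide both sides by } Q\text{)}
\end{aligned}
% With Q = z e, the ratio m/Q is proportional to m/z, so ions with equal m/z
% experience the same acceleration in the same fields.
```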

Instrumentation and Workflow

A mass spectrometer consists of three essential components that work in sequence: an ionization source, a mass analyzer, and an ion detection system [32] [33]. The sophisticated coordination of these components enables precise molecular analysis.

Core Components

Table 1: Core Components of a Mass Spectrometer

| Component | Function | Common Techniques |
|---|---|---|
| Ionization Source | Converts sample molecules into gas-phase ions [32] | Electrospray Ionization (ESI), Matrix-Assisted Laser Desorption/Ionization (MALDI), Electron Ionization (EI) [31] [35] |
| Mass Analyzer | Separates ions based on mass-to-charge (m/z) ratios [32] | Time-of-Flight (TOF), Orbitrap, Quadrupole [35] [33] |
| Ion Detection System | Measures abundance of separated ions [32] | Electron Multiplier [31] |

The MS Process Workflow

Mass spectrometry analysis proceeds in sequence from sample introduction through ionization, mass analysis, and ion detection to data output.

Tandem Mass Spectrometry (MS/MS)

For advanced structural analysis, tandem mass spectrometry (MS/MS) employs multiple rounds of mass analysis [35]. In MS/MS, specific precursor ions from an initial MS1 scan are selectively isolated and fragmented using techniques like collision-induced dissociation (CID) [35]. The resulting fragment ions are then analyzed in a second mass analysis stage (MS2) to generate detailed fragmentation patterns [35].

Interpreting Mass Spectrometry Data

A mass spectrum presents m/z ratios on the x-axis and relative ion abundance on the y-axis [31] [33]. The most abundant ion is designated the base peak, set to 100% relative intensity, with all other peaks measured relative to this value [31].
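Base-peak normalization can be illustrated with a short sketch. The peak list is a made-up example (the m/z values and raw intensities are hypothetical, not a reference spectrum), and the function name is an assumption.

```python
def normalize_to_base_peak(peaks):
    """Scale intensities so the most abundant ion (the base peak) reads 100%.

    peaks: list of (mz, raw_intensity) tuples.
    Returns a list of (mz, relative_intensity_percent) tuples.
    """
    base_intensity = max(intensity for _, intensity in peaks)
    if base_intensity <= 0:
        raise ValueError("spectrum has no positive intensities")
    return [(mz, 100.0 * intensity / base_intensity) for mz, intensity in peaks]


# Hypothetical raw spectrum: (m/z, detector counts).
raw_peaks = [(29.0, 1200.0), (43.0, 4000.0), (57.0, 8000.0), (86.0, 1600.0)]

for mz, rel in normalize_to_base_peak(raw_peaks):
    print(f"m/z {mz:>5.1f}: {rel:5.1f}%")
```

Because every intensity is expressed relative to the base peak, spectra acquired at different absolute signal levels can be compared on the same scale.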

Key features in mass spectral interpretation include:

- Molecular Ion Peak (Parent Peak): Represents the intact, ionized molecule and corresponds to its molecular weight [31]. For example, hexane (C₆H₁₄) produces a molecular ion peak at m/z 86 [31].

- M+1 Peak: Results from the natural abundance of heavier isotopes (e.g., ¹³C instead of ¹²C), providing information about elemental composition [31].

- Fragmentation Pattern: Characteristic fragment ions reveal structural information as molecules break apart in predictable ways following ionization [31].
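The hexane example and the M+1 peak can both be checked numerically. The sketch below assumes nominal (integer) atomic masses and estimates the M+1 intensity from carbon alone, using a ¹³C/¹²C ratio of roughly 1.08%; contributions from ²H, ¹⁵N, and ¹⁷O are small and ignored here. The parser handles only simple formulas without parentheses, and all names are illustrative.

```python
import re

NOMINAL_MASS = {"C": 12, "H": 1, "N": 14, "O": 16}
C13_TO_C12_RATIO = 0.0108  # approximate natural-abundance ratio


def parse_formula(formula):
    """Parse a simple formula such as 'C6H14' into {'C': 6, 'H': 14}."""
    counts = {}
    for element, number in re.findall(r"([A-Z][a-z]?)(\d*)", formula):
        counts[element] = counts.get(element, 0) + (int(number) if number else 1)
    return counts


def nominal_mass(formula):
    """Nominal mass of the molecular ion (sum of integer atomic masses)."""
    return sum(NOMINAL_MASS[el] * n for el, n in parse_formula(formula).items())


def m_plus_1_percent(formula):
    """Approximate M+1 intensity relative to M (in %), carbon contribution only."""
    return 100.0 * C13_TO_C12_RATIO * parse_formula(formula).get("C", 0)


print(nominal_mass("C6H14"))                  # molecular ion of hexane
print(round(m_plus_1_percent("C6H14"), 1))    # estimated M+1 relative intensity
```

Running the estimate in reverse is a common rule of thumb: dividing an observed M+1 percentage by about 1.1 gives a rough carbon count.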

In proteomics applications, MS2 spectra are matched to theoretical fragmentation patterns of peptides using specialized algorithms, enabling protein identification [35]. The fragmentation of peptides occurs at specific bonds, producing predictable series of fragment ions (a, b, c and x, y, z ions) that can be computationally matched to identify the peptide sequence [35].
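To make the idea of theoretical fragmentation patterns concrete, the following sketch computes singly charged b and y ion m/z values for a short peptide. It assumes unmodified residues, monoisotopic masses, and a charge of +1; it is a simplified illustration, not the algorithm used by any particular search engine, and the peptide "GAK" is an arbitrary example.

```python
# Monoisotopic residue masses (Da) for the 20 standard amino acids.
RESIDUE_MASS = {
    "G": 57.02146, "A": 71.03711, "S": 87.03203, "P": 97.05276,
    "V": 99.06841, "T": 101.04768, "C": 103.00919, "L": 113.08406,
    "I": 113.08406, "N": 114.04293, "D": 115.02694, "Q": 128.05858,
    "K": 128.09496, "E": 129.04259, "M": 131.04049, "H": 137.05891,
    "F": 147.06841, "R": 156.10111, "Y": 163.06333, "W": 186.07931,
}
WATER_MASS = 18.010565
PROTON_MASS = 1.007276


def fragment_ions(peptide):
    """Return (b_ions, y_ions) as lists of singly charged m/z values.

    b_i contains the first i residues (N-terminal fragment);
    y_i contains the last i residues plus water (C-terminal fragment).
    """
    masses = [RESIDUE_MASS[aa] for aa in peptide]
    b_ions, y_ions = [], []
    for i in range(1, len(masses)):
        b_ions.append(sum(masses[:i]) + PROTON_MASS)
        y_ions.append(sum(masses[-i:]) + WATER_MASS + PROTON_MASS)
    return b_ions, y_ions


b_ions, y_ions = fragment_ions("GAK")
for i, (b, y) in enumerate(zip(b_ions, y_ions), start=1):
    print(f"b{i} = {b:.4f}   y{i} = {y:.4f}")
```

A search algorithm would compare lists like these against the peaks observed in an MS2 spectrum, within a mass tolerance, and score how many fragments match.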

Experimental Protocols

Sample Preparation Methodology

Proper sample preparation is crucial for successful mass spectrometry analysis, particularly when dealing with complex biological matrices [31]. Standard protocols include:

- Protein Precipitation: Followed by centrifugation or filtration to remove interfering proteins [31].

- Extraction Techniques: Solid-phase extraction or liquid-liquid extraction to concentrate analytes and remove matrix components [31].

- Chemical Derivatization: Addition of specific functional groups to improve volatility, thermal stability, chromatographic properties, or ionization efficiency [31].

- Proteolytic Digestion: For protein analysis, proteins are typically digested with a protease like trypsin to generate peptides suitable for MS analysis [35].

Chromatographic Coupling

Mass spectrometry is frequently coupled with separation techniques to reduce sample complexity:

- Gas Chromatography/MS (GC/MS): Ideal for volatile, thermally stable compounds. Electron ionization at 70 eV is commonly used, producing characteristic, reproducible fragmentation patterns [31].

- Liquid Chromatography/MS (LC/MS): Suitable for non-volatile or thermally labile compounds. Soft ionization techniques like electrospray ionization (ESI) leave molecular ions largely intact; when fragments are needed, they are generated afterwards inside the instrument, for example by collision-induced dissociation in MS/MS [31].

Research Reagent Solutions

Table 2: Essential Research Reagents and Materials for Mass Spectrometry

| Reagent/Material | Function |
|---|---|
| Trypsin | Protease that digests proteins into peptides for proteomic analysis [35] |
| Derivatization Reagents | Chemical modifiers that enhance analyte properties for MS detection [31] |
| Solid-Phase Extraction Cartridges | Concentrate analytes and remove interfering matrix components [31] |
| Chromatography Columns | Separate complex mixtures before MS analysis (LC or GC) [31] [35] |
| Calibration Standards | Compounds with known m/z for instrument mass calibration [33] |
| Matrix Compounds | For MALDI ionization to facilitate sample desorption and ionization [35] |

Advantages Over Other Analytical Techniques

Mass spectrometry offers distinct advantages that make it particularly valuable for research and drug development:

Table 3: Advantages of Mass Spectrometry

| Advantage | Description |
|---|---|
| High Sensitivity | Capable of detecting trace-level analytes down to the zeptomole scale [36] |
| Accurate Mass Measurement | Provides precise molecular weight information for confident compound identification [32] [36] |
| Structural Elucidation | MS/MS fragmentation provides detailed structural information beyond molecular weight [36] |
| Wide Applicability | Analyzes diverse sample types including organic, inorganic, and biological macromolecules [36] |
| Quantitative Capability | Enables highly accurate quantitative measurements when calibrated with standards [36] |
| Integration with Separation | Couples effectively with GC and LC for enhanced analysis of complex mixtures [31] [36] |

Compared to other analytical approaches like infrared spectroscopy and nuclear magnetic resonance, mass spectrometry excels in sensitivity, molecular weight determination, and overall versatility across diverse applications [36].

Challenges and Considerations

Despite its powerful capabilities, mass spectrometry presents several analytical challenges that researchers must address:

- Mass Calibration: Small drifts in mass calibration shift the measured m/z values, which is particularly problematic in high-resolution instruments and can require realigning spectra to common m/z bins [33].

- Ambiguous Peak Assignment: A single peak may represent multiple biomolecular ions due to isomers or insufficient mass resolution [33].

- Isotopic Distributions: Single molecules produce multiple peaks at different m/z values due to natural isotopic abundance, complicating spectral interpretation [33].

- Ion Suppression/Enhancement: Analytes with higher ionization efficiency produce disproportionately high intensities, preventing direct assessment of relative abundance without proper controls [33].

- Interference Factors: Improper sample handling or contaminants can alter molecular concentrations and produce inaccurate mass spectra [31].
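The calibration and peak-assignment challenges above are often discussed in terms of mass error in parts per million (ppm). The sketch below computes that error and lists every library entry that falls within a tolerance of an observed peak. The candidate names, m/z values, and the 5 ppm tolerance are hypothetical, chosen to show how one peak can match more than one candidate.

```python
def ppm_error(observed_mz, theoretical_mz):
    """Signed mass error in parts per million."""
    return (observed_mz - theoretical_mz) / theoretical_mz * 1e6


def candidates_within_tolerance(observed_mz, library, tolerance_ppm):
    """Return names of all library entries within +/- tolerance_ppm of the peak."""
    return [
        name
        for name, theoretical_mz in library.items()
        if abs(ppm_error(observed_mz, theoretical_mz)) <= tolerance_ppm
    ]


# Hypothetical library of theoretical m/z values.
library = {
    "candidate_A": 500.0000,
    "candidate_B": 500.0020,
    "candidate_C": 500.1000,
}

observed = 500.0010
matches = candidates_within_tolerance(observed, library, tolerance_ppm=5.0)
print(matches)  # more than one match means the assignment is ambiguous
```

When several candidates survive the tolerance filter, additional evidence such as MS/MS fragmentation, isotope patterns, or chromatographic retention time is needed to resolve the assignment.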