Hierarchy of Propositions and Activity Level Evaluation: A Framework for Credible AI in Drug Development


Abstract

This article provides a comprehensive guide for researchers, scientists, and drug development professionals on integrating the forensic science principles of the hierarchy of propositions and activity level evaluation into the assessment of AI models for regulatory decision-making. With the FDA's recent draft guidance proposing a risk-based credibility framework for AI, this article explores the foundational concepts, methodological applications, and optimization strategies for establishing model trust. It addresses common challenges, outlines validation techniques against emerging standards, and positions these evaluative frameworks as critical tools for accelerating the development of safe and effective drugs.

Understanding the Hierarchy of Propositions and Activity Level Evaluation in Biomedical Contexts

Within the hierarchy of propositions framework for evaluating scientific evidence, activity-level propositions represent a critical tier of interpretation beyond the source of a biological stain. This framework demands that forensic scientists move beyond merely identifying the biological material (e.g., "the DNA originates from Person X") and instead assess the findings in the context of competing propositions about how that material was transferred during an alleged activity (e.g., "the DNA was transferred via direct contact versus an indirect route"). The evaluation of forensic genetics findings given activity-level propositions is an emerging discipline that has gained critical importance due to increasing analytical sensitivity and advances in probabilistic genotyping. This progression places a growing demand on forensic biologists to assist the judiciary with activity-level inferences in a balanced, robust, and transparent manner [1]. This document outlines the core protocols and application notes for implementing this sophisticated level of forensic evaluation, with a specific focus on data quality, statistical frameworks, and their practical application in drug development research.

Core Concepts and Definitions

The Hierarchy of Propositions

The hierarchy of propositions is a fundamental concept in evidence interpretation, organizing explanations for scientific findings into different levels. Activity-level propositions sit between source-level and offense-level propositions, focusing on the actions that could have led to the evidence being found. For example, in a case where a suspect's DNA is found on a broken window, the source-level proposition might be "The DNA originated from the suspect," while the activity-level propositions could be "The suspect broke the window" versus "The suspect innocently touched the window earlier." The evaluation requires considering the probability of the evidence under each of these competing activity scenarios [1].

The Likelihood Ratio Framework

The quantitative assessment of activity-level propositions is formally conducted using the likelihood ratio (LR). The LR measures the strength of the evidence by comparing the probability of the evidence under the prosecution's proposition to the probability of the evidence under the defense's proposition. In mathematical terms:

LR = Pr(E | Hp) / Pr(E | Hd)

Where:

- E represents the scientific evidence

- Hp is the prosecution's proposition (e.g., direct contact occurred)

- Hd is the defense's proposition (e.g., indirect transfer occurred)

An LR greater than 1 supports the prosecution's proposition, while an LR less than 1 supports the defense's proposition. The magnitude indicates the strength of this support [1].

Quantitative Data Quality Assurance for Activity-Level Evaluation

Robust activity-level evaluation depends fundamentally on high-quality quantitative data. Quantitative data quality assurance is the systematic process and procedures used to ensure the accuracy, consistency, reliability, and integrity of data throughout the research process. Effective quality assurance helps identify and correct errors, reduce biases, and ensure the data meets the standards needed for analysis and reporting [2].

Data Cleaning and Validation Protocols

Prior to statistical analysis, data must undergo rigorous cleaning and validation:

- Checking for duplications: Identify and remove identical copies of data, particularly important in online data collection where respondents may complete questionnaires multiple times [2].

- Managing missing data: Establish percentage thresholds for inclusion/exclusion of incomplete data (e.g., 50% vs. 100% completeness). Use statistical tests like Little's Missing Completely at Random (MCAR) test to determine the pattern of missingness and inform appropriate imputation methods if needed [2].

- Identifying anomalies: Run descriptive statistics for all measures to detect values that deviate from expected patterns (e.g., Likert scale responses outside the valid scoring range) [2].
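The three cleaning steps above can be sketched in a few lines of plain Python. This is a minimal illustration, not a prescribed pipeline: the record layout (an `id` key plus one key per questionnaire item, with `None` for a missing answer), the function name `clean_responses`, and the sample data are all assumptions made for the example. Little's MCAR test is not implemented here and would need a dedicated statistics package.

```python
def clean_responses(rows, item_keys, valid_range=(1, 5), min_completeness=0.5):
    """Deduplicate, filter by completeness, and flag out-of-range values.

    rows: list of dicts with an 'id' key plus one key per questionnaire item;
    a missing answer is represented as None (hypothetical layout).
    """
    # 1. Remove exact duplicate records, keeping the first occurrence.
    seen, unique = set(), []
    for row in rows:
        key = tuple(row.get(k) for k in ["id", *item_keys])
        if key not in seen:
            seen.add(key)
            unique.append(row)

    # 2. Keep only records whose share of answered items meets the threshold.
    kept = []
    for row in unique:
        answered = sum(row.get(k) is not None for k in item_keys)
        if answered / len(item_keys) >= min_completeness:
            kept.append(row)

    # 3. Flag answered values that fall outside the valid scoring range.
    lo, hi = valid_range
    anomalies = [
        (row["id"], k, row[k])
        for row in kept
        for k in item_keys
        if row.get(k) is not None and not lo <= row[k] <= hi
    ]
    return kept, anomalies


# Illustrative data only: a duplicate, a 25%-complete record, and an invalid score.
rows = [
    {"id": "p1", "q1": 4, "q2": 5, "q3": 3, "q4": 2},
    {"id": "p1", "q1": 4, "q2": 5, "q3": 3, "q4": 2},
    {"id": "p2", "q1": 2, "q2": None, "q3": None, "q4": None},
    {"id": "p3", "q1": 7, "q2": 1, "q3": None, "q4": 3},
]
kept, anomalies = clean_responses(rows, ["q1", "q2", "q3", "q4"])
print([r["id"] for r in kept], anomalies)
```

Flagging anomalies rather than deleting them keeps the decision about how to treat an out-of-range value with the analyst.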

Data Analysis Foundations

Activity-level evaluation requires appropriate statistical analysis of cleaned data:

- Assessing normality: Test data distribution using kurtosis (peakedness or flatness of the distribution) and skewness (asymmetry of the distribution around the mean); values within ±2 on both measures are commonly taken to indicate approximate normality. Formal tests like Kolmogorov-Smirnov and Shapiro-Wilk provide additional evidence of distribution normality [2].

- Psychometric validation: Establish reliability and validity of standardized instruments. Report Cronbach's alpha scores (>0.7 considered acceptable) to demonstrate internal consistency of constructs being measured [2].
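As a rough sketch of the checks above, the following computes skewness, kurtosis, and Cronbach's alpha with the standard library only. Two conventions are assumed here and are not taken from the source: the moment-based (population) estimators are used, and kurtosis is reported as excess kurtosis, so that a normal distribution scores 0. The function names and sample data are illustrative.

```python
from statistics import mean, pvariance, variance


def skewness(xs):
    """Moment-based skewness: third central moment over variance^1.5."""
    m = mean(xs)
    s2 = pvariance(xs, mu=m)
    return (sum((x - m) ** 3 for x in xs) / len(xs)) / s2 ** 1.5


def excess_kurtosis(xs):
    """Moment-based kurtosis minus 3, so a normal distribution gives 0."""
    m = mean(xs)
    s2 = pvariance(xs, mu=m)
    return (sum((x - m) ** 4 for x in xs) / len(xs)) / s2 ** 2 - 3.0


def cronbach_alpha(items):
    """items: one list of scores per item, aligned by respondent."""
    k = len(items)
    totals = [sum(scores) for scores in zip(*items)]
    item_variance_sum = sum(variance(scores) for scores in items)
    return k / (k - 1) * (1.0 - item_variance_sum / variance(totals))


scores = [1, 2, 3, 4, 5]
print(skewness(scores), excess_kurtosis(scores))

# Three perfectly consistent items give the maximum alpha of 1.
print(cronbach_alpha([[1, 2, 3, 4], [1, 2, 3, 4], [1, 2, 3, 4]]))
```

Statistical packages differ in whether they report raw or excess kurtosis and in their small-sample corrections, so the ±2 rule should be applied to whichever convention the chosen software uses.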

Table 1: Key Data Quality Assurance Thresholds for Activity-Level Evaluation

| Quality Dimension | Measurement Approach | Acceptance Threshold | Statistical Test |
|---|---|---|---|
| Data Completeness | Percentage of missing data per participant/question | ≥50% for inclusion (adjustable) | Little's MCAR Test |
| Normality of Distribution | Kurtosis and Skewness | Values within ±2 range | Kolmogorov-Smirnov, Shapiro-Wilk |
| Instrument Reliability | Internal consistency | Cronbach's Alpha >0.7 | Cronbach's Alpha Test |
| Anomaly Detection | Descriptive statistics | All values within expected ranges | Frequency analysis, visual inspection |

Experimental Protocols for Activity-Level Evaluation

Case Assessment and Interpretation Protocol

Proper case assessment is prerequisite for meaningful activity-level evaluation:

- Case Context Review: Examine all available case information, including alleged activities, timing, and environmental factors.

- Proposition Formulation: Define competing activity-level propositions that are forensically relevant, mutually exclusive, and exhaustive.

- Relevant Data Identification: Determine what data (transfer probabilities, persistence times, background prevalence) are needed to inform probabilities for the likelihood ratio calculation.

- Bayesian Network Construction: Develop graphical models representing the relationship between activities, transfer, persistence, and recovery of biological material [1].

Bayesian Network Modeling for Activity Propositions

Bayesian networks provide a robust framework for evaluating complex activity scenarios:

Node Definition: Identify key variables (nodes) in the network, including:

- Activity nodes (e.g., "Direct contact," "Secondary transfer")

- Transfer nodes (e.g., "DNA transferred")

- Persistence nodes (e.g., "DNA persisted")

- Recovery nodes (e.g., "DNA detected")

Conditional Probability Assignment: Define probability distributions for each node conditional on its parent nodes, based on experimental data and case circumstances.

Evidence Propagation: Enter the findings as evidence in the network and calculate the likelihood ratio as the ratio of the probabilities of the findings given each of the competing propositions [1].

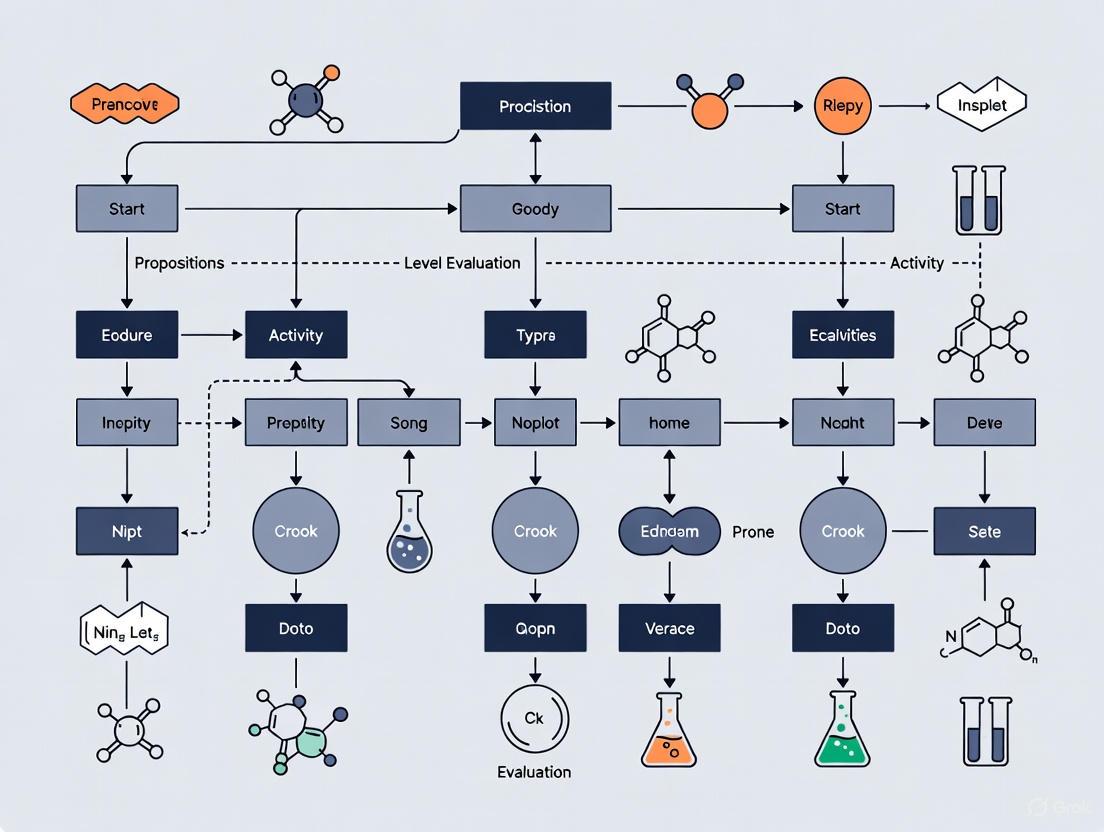

Diagram 1: Bayesian network for activity evaluation
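The node structure described above can be illustrated with a very small chain network (activity → transfer → persistence → detection) evaluated by brute-force enumeration. Every probability in this sketch is a made-up placeholder; in casework the values would come from transfer and persistence experiments and the case circumstances. The 0.01 chance of detection without persisting DNA stands in for background DNA and is also an assumption.

```python
from itertools import product

# Hypothetical conditional probability tables (illustrative values only).
P_TRANSFER = {"Hp": 0.80, "Hd": 0.10}  # Pr(transfer = 1 | proposition)
P_PERSIST = {1: 0.60, 0: 0.0}          # Pr(persist = 1 | transfer)
P_DETECT = {1: 0.90, 0: 0.01}          # Pr(detect = 1 | persist); 0.01 ~ background


def bernoulli(p, value):
    """Probability that a binary node takes `value` when Pr(node = 1) = p."""
    return p if value == 1 else 1.0 - p


def prob_detected(proposition):
    """Pr(DNA detected | proposition), summing over the hidden nodes."""
    total = 0.0
    for transfer, persist in product((0, 1), repeat=2):
        total += (
            bernoulli(P_TRANSFER[proposition], transfer)
            * bernoulli(P_PERSIST[transfer], persist)
            * P_DETECT[persist]
        )
    return total


lr = prob_detected("Hp") / prob_detected("Hd")
print(f"Pr(E|Hp)={prob_detected('Hp'):.4f}  Pr(E|Hd)={prob_detected('Hd'):.4f}  LR={lr:.2f}")
```

Enumeration is only practical for networks this small; dedicated Bayesian network software performs the same marginalization efficiently for larger models.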

Application in Drug Development Research

The principles of activity-level evaluation extend beyond traditional forensics into pharmaceutical research, particularly in clinical trial data interpretation and drug development pipelines.

Biomarker Data Interpretation in Alzheimer's Clinical Trials

In Alzheimer's disease drug development, biomarkers play a crucial role in establishing trial eligibility and serving as outcomes. The 2025 Alzheimer's disease drug development pipeline includes 182 trials with 138 drugs, where biomarkers are among the primary outcomes for 27% of active trials [3]. Activity-level reasoning helps distinguish between propositions such as "Biomarker change resulted from disease progression" versus "Biomarker change resulted from drug intervention."

Table 2: Alzheimer's Drug Development Pipeline (2025) - Biomarker Applications

| Therapeutic Category | Percentage of Pipeline | Biomarker Use in Eligibility | Biomarker Use as Outcome | Primary Activity-Level Propositions |
|---|---|---|---|---|
| Biological DTTs | 30% | Required for amyloid-targeting | Primary in 42% of trials | Drug engaged target vs. Non-specific effect |
| Small Molecule DTTs | 43% | Often required | Secondary in most trials | Target modulation vs. Off-target effect |
| Cognitive Enhancers | 14% | Seldom required | Rare | Symptom improvement vs. Practice effect |
| Neuropsychiatric Symptom Drugs | 11% | Not required | Not typically measured | Specific symptom reduction vs. Placebo effect |

Structure-Based Drug Design and Bayesian Frameworks

In structure-based drug design, advanced computational methods like MSCoD employ Bayesian updating frameworks with multi-scale information bottleneck (MSIB) and multi-head cooperative attention (MHCA) mechanisms. These approaches model complex protein-ligand interactions that are inherently multi-scale, hierarchical, and asymmetric [4]. The framework evaluates propositions about molecular binding through iterative compatibility assessment between generated ligand samples and protein binding sites.

Experimental Protocol: MSCoD Framework Implementation

Input Preparation:

- Protein structure input: $P = \{(x_P^{(i)}, v_P^{(i)})\}_{i=1}^{N_P}$, where $x_P$ represents 3D atomic coordinates and $v_P$ represents atomic features.

- Ligand initialization: $M = \{(x_M^{(i)}, v_M^{(i)})\}_{i=1}^{N_M}$, with similar coordinate and feature representations [4].

Multi-Scale Feature Extraction:

- Implement Multi-Scale Information Bottleneck (MSIB) for hierarchical feature extraction via semantic compression at multiple abstraction levels.

- Process both local atomic details and global molecular patterns.

Cooperative Attention Mechanism:

- Apply multi-head cooperative attention (MHCA) with asymmetric protein-to-ligand attention.

- Model diverse interaction types while managing the dimensionality gap between proteins and ligands.

Bayesian Updating Cycle:

- Generate ligand candidates using neural network $\Phi$ based on current parameters $\theta_{i-1}$ and protein context.

- Evaluate compatibility between generated ligands $\hat{m}$ and protein binding site.

- Update parameters using update function $P_U$: $\theta_{i-1} \xrightarrow{\Phi} \hat{m} \xrightarrow{P_U} \theta_i$ [4].

Diagram 2: MSCoD framework workflow for drug design

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Reagents and Computational Tools for Activity-Level Evaluation

| Tool/Reagent | Function/Purpose | Application Context | Implementation Notes |
|---|---|---|---|
| Probabilistic Genotyping Software | Interprets complex DNA mixtures using statistical models | Forensic DNA transfer studies | Required for activity-level evaluation of trace evidence |
| Bayesian Network Software | Graphical modeling of relationships between variables | Activity proposition evaluation | Enables transparent representation of competing hypotheses |
| Multi-Scale Information Bottleneck (MSIB) | Hierarchical feature extraction via semantic compression | Structure-based drug design | Captures protein-ligand interactions at multiple scales [4] |
| Multi-Head Cooperative Attention (MHCA) | Models asymmetric protein-ligand interactions | Computational drug discovery | Handles dimensionality gap between proteins and ligands [4] |
| Clinical Trial Biomarkers | Objective measures of biological processes | Alzheimer's drug development | 27% of AD trials use biomarkers as primary outcomes [3] |
| Data Quality Assurance Protocols | Ensures accuracy, consistency, reliability of data | All quantitative research | Includes duplication checks, missing data management, anomaly detection [2] |

Integrated Qual-Quant Data Collection Framework

Effective activity-level evaluation requires integration of quantitative measurements with qualitative context. A unified data collection system addresses limitations of purely quantitative approaches:

Unified Participant Identifiers: Implement consistent unique IDs that track all participant interactions across multiple data collection points, eliminating fragmentation and manual matching [5].

Simultaneous Qual-Quant Collection: Design workflows that capture structured metrics and open-ended input in the same process, enabling real-time connection between numerical patterns and explanatory narratives [5].

Real-Time Qualitative Processing: Analyze open-ended responses as they arrive using automated theme detection, allowing emerging patterns to inform ongoing analysis while intervention is still possible [5].

This integrated approach is particularly valuable for interpreting complex activity scenarios where statistical patterns require contextual explanation, such as distinguishing between transfer mechanisms in forensic evidence or understanding variable drug responses in clinical populations.

The evaluation of scientific findings given activity-level propositions represents a sophisticated framework that moves beyond simple source attribution to address the actions and mechanisms that produced the evidence. Implementation requires rigorous quantitative data quality assurance, appropriate statistical analysis using likelihood ratios, Bayesian network modeling for complex scenarios, and integrated data collection systems that combine quantitative measurements with qualitative context. These principles find application across diverse fields from forensic genetics to structure-based drug design, where the Alzheimer's drug development pipeline demonstrates the practical value of biomarker data in evaluating therapeutic mechanisms of action. The MSCoD framework exemplifies how Bayesian updating approaches with multi-scale feature extraction can advance molecular design by systematically evaluating propositions about protein-ligand interactions. As analytical sensitivity continues to increase across scientific disciplines, robust methodologies for activity-level evaluation will become increasingly essential for transparent and defensible interpretation of complex scientific evidence.

The Role of Likelihood Ratios in Quantitative Evidence Evaluation

Within the framework of the hierarchy of propositions for activity-level evaluation research, the quantification of evidential strength is paramount. The likelihood ratio (LR) has emerged as a fundamental metric for this purpose, providing a coherent and transparent method for weighing evidence across diverse scientific disciplines. The LR is a robust statistical measure that enables researchers and legal decision-makers to update their beliefs about competing propositions based on new data [6]. Its application spans forensic science, medical diagnostics, and pharmaceutical development, offering a unified approach to evidence evaluation. This article outlines the theoretical underpinnings of the LR, details protocols for its calculation in various contexts, and provides visual tools to aid in its interpretation and application, all situated within the advanced research context of activity-level propositions.

Theoretical Foundation of the Likelihood Ratio

The Likelihood Ratio is a measure of the strength of evidence for comparing two competing propositions. It is defined as the ratio of the probability of observing the evidence under one proposition (typically the prosecution's proposition, Hp) to the probability of observing the same evidence under an alternative proposition (typically the defense's proposition, Hd), given the background information I [6].

The fundamental formula for the LR is:

LR = Pr(E | Hp, I) / Pr(E | Hd, I)

The power of the LR is realized through its application in Bayes' Theorem, which provides a formal mechanism for updating prior beliefs in the face of new evidence. The odds form of Bayes' Theorem illustrates this relationship [6]:

Posterior Odds = Likelihood Ratio × Prior Odds

Where:

- Posterior Odds: The odds in favor of a proposition after considering the evidence E.

- Prior Odds: The odds in favor of the proposition before considering evidence E.

- Likelihood Ratio: The factor that converts the prior odds to the posterior odds.

This framework is not merely a theoretical construct but is essential for rational decision-making under uncertainty. Its application forces the explicit consideration of the propositions and the role of the evidence in distinguishing between them [7] [6]. The LR possesses several critical properties:

- Values greater than 1: Support the first proposition (Hp).

- Values less than 1: Support the alternative proposition (Hd).

- Value of 1: The evidence is neutral: it supports neither proposition and does not alter the prior odds [8].
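The odds form of Bayes' Theorem can be checked numerically with a short sketch. The function name and the example prior are illustrative assumptions; assigning the prior itself remains a matter for the decision-maker, not the scientist reporting the LR.

```python
def posterior_probability(prior_probability, likelihood_ratio):
    """Update a prior probability with an LR via the odds form of Bayes' Theorem."""
    prior_odds = prior_probability / (1.0 - prior_probability)
    posterior_odds = likelihood_ratio * prior_odds
    # Convert the posterior odds back to a probability.
    return posterior_odds / (1.0 + posterior_odds)


# A prior of 0.20 (odds 1:4) combined with an LR of 4 gives even odds.
print(posterior_probability(0.20, 4.0))
# An LR of 1 leaves the prior unchanged.
print(posterior_probability(0.20, 1.0))
```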

Table 1: Interpreting Likelihood Ratio Values

| LR Value | Interpretation of Evidence Support |
|---|---|
| > 10,000 | Extremely strong support for Hp |
| 1,000 - 10,000 | Very strong support for Hp |
| 100 - 1,000 | Strong support for Hp |
| 10 - 100 | Moderate support for Hp |
| 1 - 10 | Limited support for Hp |
| 1 | No support for either proposition |
| 0.1 - 1 | Limited support for Hd |
| 0.01 - 0.1 | Moderate support for Hd |
| 0.001 - 0.01 | Strong support for Hd |
| < 0.001 | Very strong support for Hd |
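The verbal scale in the table can be encoded as a simple lookup. The table's bands share their endpoints (for example, 100 appears in two rows), so this sketch has to assume how boundary values are assigned: a value on a shared boundary is placed in the band that has it as its lower bound. That choice, and the function name, are assumptions made for illustration.

```python
def verbal_scale(lr):
    """Map an LR to the verbal categories of the table above."""
    if lr <= 0:
        raise ValueError("A likelihood ratio must be positive.")
    if lr == 1:
        return "No support for either proposition"
    if lr > 1:
        if lr > 10_000:
            return "Extremely strong support for Hp"
        if lr >= 1_000:
            return "Very strong support for Hp"
        if lr >= 100:
            return "Strong support for Hp"
        if lr >= 10:
            return "Moderate support for Hp"
        return "Limited support for Hp"
    # From here on, 0 < lr < 1: the evidence favours Hd.
    if lr < 0.001:
        return "Very strong support for Hd"
    if lr < 0.01:
        return "Strong support for Hd"
    if lr < 0.1:
        return "Moderate support for Hd"
    return "Limited support for Hd"


for value in (25_000, 20, 1, 0.05):
    print(value, "->", verbal_scale(value))
```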

LR Applications Across Scientific Disciplines

Forensic Science and the Hierarchy of Propositions

In forensic science, the LR is the recommended method for evaluating evidence, particularly within the hierarchy of propositions, which ranges from source level to activity level. At the sub-source level (e.g., DNA mixtures), the LR is used to assess whether a person of interest (POI) is a contributor to a sample. Different proposition pairs can be formulated, each with specific strengths and applications [8]:

- Simple Propositions: Compare the probability of the evidence given the POI and an unknown contributor versus two unknown contributors. These are commonly reported.

- Conditional Propositions: Assume the contribution of multiple known individuals under Hp and all but one under Hd. These offer higher power to differentiate true from false donors.

- Compound Propositions: Consider multiple POIs together under Hp versus all unknown contributors under Hd. These can misstate the weight of evidence if not reported alongside simple LRs [8].

Research on DNA mixtures has demonstrated that conditional propositions have a much higher ability to differentiate true from false donors than simple propositions, making them particularly valuable for activity-level analysis where the presence of multiple individuals is a key case circumstance [8].

Medical Diagnostics and Clinical Trials

In medicine, LRs are used to assess the value of diagnostic tests. The positive likelihood ratio (LR+) and negative likelihood ratio (LR-) combine sensitivity and specificity into a single metric that indicates how much a test result shifts the probability of a disease [9] [10].

LR+ = Sensitivity / (1 - Specificity)

LR- = (1 - Sensitivity) / Specificity

These LRs are then used in Bayes' theorem to update the pre-test probability of a disease to a post-test probability. For quantitative tests, the LR for a specific result is equal to the slope of the tangent to the Receiver Operating Characteristic (ROC) curve at the point corresponding to that result [11]. Advanced techniques, such as fitting Bézier curves to ROC data, allow for the estimation of LRs for every possible test result without assuming an underlying distribution, thereby standardizing the reporting of quantitative diagnostic results [11].

In pharmaceutical development and database studies, the LR (often termed the Diagnostic Likelihood Ratio, DLR) is pivotal for connecting validation study results to the planning of new studies. It helps estimate the positive predictive value (PPV) in a planned database study based on disease prevalence and the performance of a phenotype algorithm, thus enabling the assessment of misclassification bias at the study design phase [12].
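To make the link between sensitivity, specificity, prevalence, and PPV concrete, the sketch below computes LR+ and LR- and then derives the expected PPV at several assumed prevalences. The sensitivity, specificity, and prevalence values are invented for illustration, and the function names are not taken from any cited tool.

```python
def likelihood_ratios(sensitivity, specificity):
    """Return (LR+, LR-); specificity must be strictly between 0 and 1."""
    lr_pos = sensitivity / (1.0 - specificity)
    lr_neg = (1.0 - sensitivity) / specificity
    return lr_pos, lr_neg


def ppv_from_dlr(prevalence, dlr_pos):
    """Expected PPV: prevalence odds multiplied by DLR+, converted to a probability."""
    pre_odds = prevalence / (1.0 - prevalence)
    post_odds = pre_odds * dlr_pos
    return post_odds / (1.0 + post_odds)


# Hypothetical algorithm performance from a validation study.
lr_pos, lr_neg = likelihood_ratios(sensitivity=0.90, specificity=0.95)
print(f"LR+ = {lr_pos:.1f}, LR- = {lr_neg:.3f}")

# The same algorithm yields very different PPVs as prevalence changes.
for prevalence in (0.01, 0.05, 0.20):
    print(f"prevalence {prevalence:.2f} -> PPV {ppv_from_dlr(prevalence, lr_pos):.3f}")
```

Because the DLR does not depend on prevalence, it can be carried over from a validation study to a planned study population in a way that the PPV itself cannot.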

Table 2: LR Impact on Post-Test Probability

| Likelihood Ratio | Approximate Change in Probability | Effect on Post-Test Probability |
|---|---|---|
| 0.1 | -45% | Large Decrease |
| 0.2 | -30% | Moderate Decrease |
| 0.5 | -15% | Slight Decrease |
| 1 | 0% | None |
| 2 | +15% | Slight Increase |
| 5 | +30% | Moderate Increase |
| 10 | +45% | Large Increase |

Note: These estimates are accurate to within 10% for pre-test probabilities between 10% and 90% [9].

Experimental Protocols and Calculation Methods

Protocol 1: Calculating LRs for Forensic DNA Mixtures

This protocol utilizes probabilistic genotyping software (e.g., STRmix) to compute LRs for complex DNA mixtures, a common scenario in activity-level evaluation.

Materials:

- Probabilistic Genotyping Software (e.g., STRmix): Essential for modeling DNA mixture profiles and calculating probability densities under different propositions [8].

- Electropherogram Data: Raw data from capillary electrophoresis of amplified STR markers.

- Biological Profiles: DNA profiles of persons of interest (POIs) and other known contributors.

- Population Allele Frequency Data: Used to estimate the probability of observing DNA profiles from unknown individuals.

Procedure:

- Profile Interpretation: Analyze the electropherogram to determine the number of potential contributors and their approximate mixture proportions. Set an analytical threshold (e.g., 100-125 RFU) to distinguish signal from noise [8].

- Define Proposition Pairs: Formulate mutually exclusive propositions at the sub-source level.

  - Example Simple Proposition Pair:

    - Hp: The DNA originated from POI and one unknown individual.

    - Hd: The DNA originated from two unknown individuals [8].

  - Example Conditional Proposition Pair (for a 4-person mixture with 4 POIs):

    - Hp: The DNA originated from POI1, POI2, POI3, and POI4.

    - Hd: The DNA originated from POI2, POI3, POI4, and one unknown individual [8].

- Software Deconvolution: Input the evidence profile, known profiles, and proposition pairs into the software. The software performs a Markov Chain Monte Carlo (MCMC) exploration to find plausible genotype combinations.

- LR Calculation: The software calculates the LR as LR = Pr(E | Hp, I) / Pr(E | Hd, I), where the probabilities are derived from the model fits to the evidence under each proposition.

- Validation and Reporting: Repeat the deconvolution if necessary (e.g., if LRs for true donors are 0). Report the calculated LR along with the specific propositions used.

Protocol 2: Deriving LRs from Quantitative Diagnostic Test Data

This protocol describes a distribution-free method using Bézier curves to estimate the LR for any specific quantitative test result based on ROC curve data [11].

Materials:

- ROC Curve Data: Empirical data points relating True Positive Rate (Sensitivity) and False Positive Rate (1-Specificity) across various test thresholds.

- Software for Curve Fitting: A platform capable of regression analysis and implementing the Bézier curve algorithm (e.g., R, Python, or Microsoft Excel with the LINEST function, named RGP in German-language versions) [11].

Procedure:

- Data Input: Compile the empirical ROC points, calculating the corresponding (1-Specificity, Sensitivity) coordinates.

- Parameterize the Curve: Introduce a parameter, t, which ranges from 0 to 1. A common initial estimate is t = (x + y)/2 for each empirical ROC point (x, y). Adjust the range proportionally if the data does not span the entire (0,1) interval [11].

- Fit Cubic Bézier Curves: Fit separate cubic Bernstein polynomials for the x-coordinate (1-Specificity) and y-coordinate (Sensitivity) as functions of t, each of the form B(t) = (1-t)³P₀ + 3(1-t)²tP₁ + 3(1-t)t²P₂ + t³P₃. The coefficients of these polynomials are determined using a least-squares regression against the empirical data.

- Calculate Control Points: From the polynomial coefficients (a, b, c, d), calculate the four control points (P₀, P₁, P₂, P₃) that define the Bézier curve for both x and y coordinates [11].

- Compute Slope (LR): The LR for a value of t is equal to the slope of the tangent to the Bézier curve at that point. This slope is calculated as the derivative of the y-polynomial with respect to the x-polynomial: LR(t) = (dy/dt) / (dx/dt) [11].

- Map Test Results to LRs: Establish a relationship between the actual quantitative test results and the parameter t (or directly to the calculated LRs) using a fitting function (e.g., least-squares approximation). This creates a continuous function from which an LR can be obtained for any test result.
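The slope computation in the procedure above can be sketched once the control points are known. This example covers only that step: it assumes the least-squares fit has already been done and uses made-up control points for the x (1-Specificity) and y (Sensitivity) curves. The derivative used is the standard closed form for a cubic Bézier curve.

```python
def bezier_derivative(p0, p1, p2, p3, t):
    """Derivative with respect to t of a cubic Bezier coordinate."""
    u = 1.0 - t
    return 3 * u**2 * (p1 - p0) + 6 * u * t * (p2 - p1) + 3 * t**2 * (p3 - p2)


def lr_at(t, x_ctrl, y_ctrl):
    """LR(t) = (dy/dt) / (dx/dt): slope of the tangent to the fitted ROC curve."""
    dx = bezier_derivative(*x_ctrl, t)
    dy = bezier_derivative(*y_ctrl, t)
    if dx == 0:
        # Vertical tangent, e.g. at the origin of a steep ROC curve.
        return float("inf") if dy > 0 else float("nan")
    return dy / dx


# Hypothetical control points (P0, P1, P2, P3) for each coordinate.
x_ctrl = (0.0, 0.0, 0.4, 1.0)  # 1 - Specificity
y_ctrl = (0.0, 0.6, 1.0, 1.0)  # Sensitivity

for t in (0.25, 0.5, 0.75):
    print(f"t = {t:.2f}  LR = {lr_at(t, x_ctrl, y_ctrl):.3f}")
```

With these control points the LR falls from above 1 at low t (strongly positive results) to below 1 at high t, which is the pattern expected of a concave ROC curve.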

Visualization and Decision-Support Tools

Workflow for LR Calculation in Forensic Evidence Evaluation

The following diagram illustrates the logical flow for evaluating forensic evidence using the LR, from the initial discovery of evidence to the final interpretation in the context of a case. This workflow emphasizes the critical role of the hierarchy of propositions.

Bayesian Updating with a Likelihood Ratio

This diagram visualizes the core mechanism of Bayes' Theorem, showing how the Likelihood Ratio acts upon the Prior Odds to yield the Posterior Odds.

Table 3: Key Research Reagent Solutions for LR Implementation

| Tool / Resource | Function / Application | Example / Note |
|---|---|---|
| Probabilistic Genotyping Software (PGS) | Calculates LRs for complex DNA mixtures by modeling all possible genotype combinations. | STRmix, EuroForMix; uses MCMC for deconvolution [8]. |
| SAILR Software | Provides a user-friendly GUI for calculating LRs in various forensic statistics applications. | Developed under the Netherlands Forensic Institute; implements hierarchical random effects models [6]. |
| Bézier Curve Fitting Algorithm | Enables distribution-free estimation of LRs for quantitative diagnostic test results from ROC data. | Can be implemented in R, Python, or Excel; provides LR as slope of tangent to ROC curve [11]. |
| Assumptions Lattice & Uncertainty Pyramid | Framework for assessing the impact of modeling choices and assumptions on the reported LR value. | Addresses criticism that LRs without uncertainty characterization may be misleading [7]. |
| Diagnostic Likelihood Ratio (DLR) | Summarizes performance of phenotype algorithms in database studies; connects sensitivity/specificity to PPV via prevalence. | DLR+ = Sensitivity / (1-Specificity); pivotal for planning pharmacoepidemiology studies [12]. |

The likelihood ratio stands as a cornerstone of quantitative evidence evaluation, providing a rigorous, transparent, and logically sound framework for updating beliefs in the face of uncertainty. Its application, from activity-level propositions in forensic science to diagnostic testing in medicine and risk assessment in drug development, demonstrates its remarkable versatility. The successful implementation of LRs requires careful attention to proposition formulation, appropriate statistical modeling, and a thorough understanding of associated uncertainties. The protocols and tools outlined in this article provide a foundation for researchers and professionals to robustly apply likelihood ratios, thereby strengthening the scientific basis of decision-making in their respective fields.

Parallels with FDA's Risk-Based Credibility Assessment Framework

The U.S. Food and Drug Administration (FDA) has proposed, in draft guidance, a risk-based credibility assessment framework to guide the use of artificial intelligence (AI) in drug development and regulatory decision-making [13]. This framework provides recommendations for sponsors using AI to produce data supporting regulatory decisions about the safety, effectiveness, or quality of drugs and biological products [14]. The approach is centered on ensuring model credibility, defined as trust in the performance of an AI model for a particular context of use (COU) [15].

The FDA's framework addresses several key challenges in AI implementation, including: dataset variability that may introduce bias, the need for methodological transparency in complex computational models, difficulties in quantifying uncertainty of accuracy, and the necessity of life-cycle maintenance to address data drift [16]. This framework represents the FDA's first comprehensive guidance on AI for drug and biological product development, reflecting the agency's experience with over 500 drug and biological product submissions containing AI components since 2016 [13].

The Seven-Step Credibility Assessment Process

The FDA's framework is structured around a systematic seven-step process for assessing AI model credibility [16] [17]. This process enables sponsors to evaluate AI models based on their specific context of use and potential risk factors.

Table 1: The Seven-Step FDA Credibility Assessment Framework for AI Models

| Step | Process Component | Key Activities and Considerations |
|---|---|---|
| 1 | Define Question of Interest | Formulate specific research or regulatory question addressed by AI model [16]. |
| 2 | Define Context of Use (COU) | Specify AI model role, scope, operating conditions, and evidentiary sources [16]. |
| 3 | Assess AI Model Risk | Evaluate model influence and decision consequence using risk matrix [16]. |
| 4 | Develop Credibility Assessment Plan | Create detailed plan for establishing output credibility, tailored to COU and risk level [17]. |
| 5 | Execute Plan | Implement credibility assessment activities per established plan [17]. |
| 6 | Document Results | Record assessment results in credibility assessment report, including deviations [17]. |
| 7 | Determine Model Adequacy | Decide if model is adequate for COU; adjust or mitigate risk if needed [17]. |

Detailed Protocol for Risk Assessment (Step 3)

The risk assessment protocol involves evaluating two primary dimensions: model influence and decision consequence [16].

Materials and Methods:

- Risk assessment matrix template

- Model documentation including architecture and training data

- Context of use specification

- Regulatory decision pathway mapping

Experimental Protocol:

- Characterize Model Influence: Assess the contribution of evidence derived from the AI model relative to other evidence sources. Assign a risk rating (low, medium, high) based on the model's relative contribution to the overall evidence package [16].

- Evaluate Decision Consequence: Describe the significance of adverse outcomes resulting from incorrect decisions based on the AI model output. Consider impact on patient safety, drug effectiveness, and product quality [16].

- Construct Risk Matrix: Plot model influence against decision consequence to determine overall model risk level for the specific COU.

- Document Rationale: Provide detailed justification for all risk assignments, including evidence sources and decision consequence analysis.
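One way to make the risk matrix explicit is a small lookup over the two ratings. The combination rule below (summing ordinal scores and banding the result) is a hypothetical choice made purely for illustration; the source does not specify how the two dimensions map to an overall level, and a sponsor would need to justify its own matrix.

```python
LEVELS = ("low", "medium", "high")


def model_risk(model_influence, decision_consequence):
    """Combine two ordinal ratings into an overall risk level.

    Hypothetical rule: add the ordinal scores (0-2 each) and band the sum.
    """
    score = LEVELS.index(model_influence) + LEVELS.index(decision_consequence)
    if score <= 1:
        return "low"
    if score == 2:
        return "medium"
    return "high"


# Print the full 3 x 3 matrix implied by the assumed rule.
for influence in LEVELS:
    row = [model_risk(influence, consequence) for consequence in LEVELS]
    print(f"influence={influence:<6} -> {row}")
```

Writing the matrix down in this form also gives the documented rationale a fixed reference point: each cell can be tied to a stated justification.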

Detailed Protocol for Credibility Assessment Plan Development (Step 4)

The credibility assessment plan establishes the activities needed to demonstrate that AI model outputs are credible for the specific COU [17].

Materials and Methods:

- Model architecture documentation

- Training and validation datasets

- Performance metric specifications

- Regulatory submission templates

Experimental Protocol:

- Describe Model Architecture: Document model inputs, outputs, features, feature selection process, and parameters. Provide rationale for choosing the specific modeling approach [16].

- Characterize Training Data: Detail training datasets, including data sources, preprocessing steps, and relevance/reliability assessments to support fitness for use [16].

- Document Development Process: Describe model training and evaluation methodology, including hyperparameter exploration, architecture variations, and validation approaches [16].

- Define Performance Metrics: Establish quantitative and qualitative metrics for evaluating model performance, aligned with the COU and commensurate with model risk.

- Plan External Validation: If required based on risk assessment, design independent validation procedures using appropriate external datasets.

Experimental Design and Methodological Considerations

Credibility Assessment Experimental Framework

The FDA's risk-based approach requires designing credibility assessment activities tailored to the specific context of use and model risk level [13]. The appropriate assessment methodology depends on the model's position within the risk matrix established in Step 3.

Table 2: Credibility Assessment Activities by Model Risk Level

| Risk Level | Data Quality Requirements | Validation Approach | Documentation Level | FDA Engagement |

|---|---|---|---|---|

| Low Risk | Standard fitness-for-use assessment | Internal validation with cross-validation | Summary documentation with key parameters | Optional early engagement |

| Medium Risk | Enhanced relevance and reliability assessment | External validation with comparable datasets | Comprehensive documentation with rationale | Recommended early engagement |

| High Risk | Extensive data provenance and quality metrics | Independent external validation with diverse datasets | Extensive documentation with audit trail | Required early and ongoing engagement |

Protocol for AI Model Lifecycle Maintenance

The FDA emphasizes the need for ongoing monitoring and maintenance of AI models throughout their deployment lifecycle to address concept drift, data drift, and performance degradation [16].

Materials and Methods:

- Performance monitoring dashboard

- Change management system

- Model version control framework

- Regulatory reporting templates

Experimental Protocol:

- Establish Performance Baselines: Document initial model performance metrics across relevant demographic, clinical, and operational subgroups.

- Implement Monitoring Framework: Deploy continuous monitoring of model inputs, outputs, and performance metrics using statistical process control methods.

- Define Trigger Thresholds: Establish predetermined thresholds for performance degradation that trigger model retraining or reassessment.

- Develop Change Management Protocol: Create standardized procedures for implementing model changes, including documentation, testing, and validation requirements.

- Plan for Periodic Reassessment: Schedule regular comprehensive model reassessments, with frequency based on model risk level and observed performance stability.
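As one way to picture the baseline, monitoring, and trigger steps above, the sketch below derives Shewhart-style control limits from baseline performance and flags later measurements that fall below the lower limit. The function names, the choice of mean minus three sample standard deviations as the trigger, and the example values are assumptions for illustration; an actual trigger threshold should be set in the credibility assessment plan.

```python
from statistics import mean, stdev


def control_limits(baseline: list, k: float = 3.0) -> tuple:
    """Return (lower, upper) limits as baseline mean -/+ k sample SDs."""
    center = mean(baseline)
    spread = stdev(baseline)
    return center - k * spread, center + k * spread


def flag_degradation(baseline: list, monitored: list, k: float = 3.0) -> list:
    """Return indices of monitored values below the lower control limit."""
    lower, _ = control_limits(baseline, k)
    return [i for i, value in enumerate(monitored) if value < lower]


# Illustrative values only: a per-period performance metric (e.g. AUC)
# recorded at baseline, then during deployment.
baseline_auc = [0.90, 0.91, 0.89, 0.92, 0.90, 0.91, 0.89, 0.90]
deployed_auc = [0.90, 0.88, 0.85, 0.91]

# Periods whose metric would trigger reassessment under this assumed rule.
triggered = flag_degradation(baseline_auc, deployed_auc)
print(triggered)
```

The same pattern can be repeated per subgroup so that degradation confined to one demographic or clinical stratum is not masked by the aggregate metric.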

Visualization of the Credibility Assessment Framework

FDA AI Assessment Workflow: This diagram illustrates the sequential seven-step process for AI model credibility assessment, highlighting decision points and lifecycle maintenance requirements.

Research Reagent Solutions for Credibility Assessment

Table 3: Essential Methodological Tools for AI Credibility Assessment

| Research Tool Category | Specific Methodologies | Function in Credibility Assessment |

|---|---|---|

| Data Quality Assessment | Relevance analysis, Reliability verification, Completeness assessment | Ensures training data is appropriate and representative for specific COU [16]. |

| Model Transparency | Architecture documentation, Feature selection rationale, Parameter justification | Provides methodological clarity for FDA evaluation of model development process [16]. |

| Performance Validation | Cross-validation, External validation, Sensitivity analysis | Quantifies model accuracy, robustness, and generalizability for regulatory decision-making [17]. |

| Bias Evaluation | Subgroup analysis, Fairness metrics, Demographic stratification | Identifies and mitigates potential biases in model outputs across patient populations [16]. |

| Uncertainty Quantification | Confidence intervals, Probability calibration, Error distribution analysis | Characterizes reliability and limitations of model predictions for risk assessment [16]. |

| Change Management | Version control, Performance monitoring, Drift detection | Maintains model credibility throughout deployment lifecycle and manages updates [16]. |

Application in Drug Development Stages

The FDA's risk-based credibility framework applies across multiple stages of drug development, from nonclinical research through post-marketing surveillance [15]. The framework's flexibility allows adaptation to different contexts while maintaining rigorous standards for model credibility.

Protocol for Clinical Development Application

Use Case: AI model for predicting adverse drug reactions in clinical trial populations [16]

Materials and Methods:

- Clinical trial data including demographic, laboratory, and adverse event records

- AI model platform for predictive analytics

- Validation framework with historical data

- Regulatory documentation templates

Experimental Protocol:

- Define COU Specification: Clearly delineate the model's intended use for identifying potential adverse reaction risks in specific patient subpopulations during clinical development.

- Establish Model Boundaries: Document limitations and constraints, including applicable patient populations, drug classes, and clinical contexts.

- Implement Multi-level Validation:

  - Internal validation using cross-validation techniques on development data

  - Temporal validation using data from different time periods

  - External validation using independent datasets from comparable clinical studies

- Conduct Subgroup Analysis: Assess model performance across relevant demographic and clinical subgroups to identify potential performance disparities.

- Document Decision Impact: Analyze potential consequences of false positive and false negative predictions on patient safety and development decisions.
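The temporal validation step in the protocol above amounts to holding out records from a later period than the one used for development. A minimal sketch follows, assuming each record carries a date field; the `temporal_split` helper, the `enrolled` key, and the example records are hypothetical.

```python
from datetime import date


def temporal_split(records: list, cutoff: date, date_key: str = "enrolled") -> tuple:
    """Split records into (development, temporal_validation) sets.

    Records dated before the cutoff are used for development; records on or
    after the cutoff are reserved for temporal validation.
    """
    development = [r for r in records if r[date_key] < cutoff]
    validation = [r for r in records if r[date_key] >= cutoff]
    return development, validation


# Illustrative records only.
trial_records = [
    {"subject": "S01", "enrolled": date(2021, 3, 1)},
    {"subject": "S02", "enrolled": date(2021, 11, 15)},
    {"subject": "S03", "enrolled": date(2022, 6, 30)},
    {"subject": "S04", "enrolled": date(2023, 1, 10)},
]

dev_set, temporal_set = temporal_split(trial_records, cutoff=date(2022, 1, 1))
print(len(dev_set), len(temporal_set))
```

Splitting on time rather than at random keeps later data out of model development, which is what makes the held-out period a meaningful test of stability.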

Protocol for Manufacturing Quality Application

Use Case: AI model for predicting drug product quality attributes during manufacturing [16]

Materials and Methods:

- Manufacturing process data

- Quality testing results

- Real-time monitoring systems

- Good Manufacturing Practice (GMP) documentation

Experimental Protocol:

- Align with Regulatory Requirements: Ensure model development and implementation comply with CGMP regulations, particularly quality unit responsibilities under 21 CFR 211.22 and 211.68 [16].

- Integrate Process Understanding: Incorporate domain knowledge about manufacturing processes and quality attributes into model design and validation.

- Establish Real-time Monitoring: Implement continuous performance tracking with alert thresholds for model performance degradation.

- Document Change Control: Create rigorous change management procedures for model updates, including impact assessment and validation requirements.

- Maintain Audit Trail: Preserve comprehensive documentation of model development, validation, and performance history for regulatory inspection.

The FDA's risk-based credibility assessment framework provides a structured yet flexible approach for integrating AI into drug development while maintaining rigorous standards for regulatory decision-making. By following the seven-step process and implementing appropriate credibility assessment activities commensurate with model risk, sponsors can leverage AI technologies to advance drug development while ensuring patient safety and product quality.

Establishing the 'Context of Use' as the Foundation for AI Model Evaluation

In the rapidly evolving field of artificial intelligence, particularly within drug development and healthcare, the evaluation of AI models has traditionally emphasized technical performance metrics such as accuracy, precision, and recall [18]. While these quantitative measures are necessary, they represent an incomplete picture—equivalent to evaluating evidence at the source level without considering the activity level propositions that define real-world application [19]. This document establishes a framework for anchoring AI model evaluation firmly within its specific Context of Use (COU), adopting principles from forensic science's hierarchy of propositions to create more robust, meaningful, and clinically relevant evaluation protocols.

The Context of Use explicitly defines the purpose, operating conditions, and intended audience for an AI model within a specific decision-making process [20]. By framing evaluation within this context, we shift from asking "Is this model accurate?" to the more pertinent question: "Can this model reliably support a specific decision or action within defined parameters?" This approach directly mirrors activity-level evaluation in forensic science, which assesses findings given specific activity propositions rather than source-level characteristics alone [19]. The following sections provide detailed protocols for implementing this framework through quantitative assessment, experimental validation, and comprehensive reporting.

Conceptual Foundation: The Hierarchy of Propositions Framework

The Hierarchy of Propositions in Forensic Science and AI

The hierarchy of propositions provides a logical framework for evaluating scientific findings across multiple levels of specificity and relevance [19]. Originally developed in forensic science, this hierarchy establishes that the value of scientific evidence increases when evaluated against propositions that are more closely aligned with the ultimate questions requiring resolution. This framework translates powerfully to AI model evaluation, particularly in high-stakes domains like drug development.

In forensic contexts, the hierarchy progresses from sub-source level (concerned with source identification) to activity level (concerned with actions and events) [19]. Similarly, in AI evaluation, we can conceptualize a parallel hierarchy that progresses from technical validation to clinical utility:

- Sub-Source Level: Focused on component performance (e.g., individual algorithm accuracy)

- Source Level: Concerned with overall model performance on benchmark datasets

- Activity Level: Addresses performance in supporting specific decisions or actions

- Offense Level: Pertains to ultimate impact on patient outcomes or regulatory decisions

The most significant analytical leverage comes from elevating evaluation to the activity level, where models are assessed against their capacity to reliably inform specific decisions within the defined Context of Use [19].

Activity-Level Evaluation in AI

Activity-level evaluation in AI requires a fundamental shift from assessing "what the model is" to "what the model does" in specific contexts. Rather than asking whether a model correctly predicts molecular binding, we evaluate whether it provides sufficient evidence to prioritize one compound over another in a specific phase of drug development. This approach acknowledges that the same model may have different utilities across different contexts.

This framework has solid logical foundations supported by Bayesian reasoning [19]. The evaluation considers the probability of obtaining the model's outputs given competing propositions about its utility for specific activities. The likelihood ratio formula provides a quantitative framework for this comparison:

$$LR = \frac{\Pr(E \mid H_p, I)}{\Pr(E \mid H_d, I)}$$

Where $E$ represents the model's performance evidence, $H_p$ and $H_d$ represent competing propositions about the model's utility for a specific activity, and $I$ represents the contextual information defining the use case [19]. This formulation allows for balanced, robust, and transparent assessment of AI models within their specific Context of Use.
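A short numerical sketch may make the ratio concrete. The probabilities below are invented for illustration and do not come from any study; the function names are likewise hypothetical. The second function applies the standard odds form of Bayes' theorem, in which posterior odds equal prior odds multiplied by the likelihood ratio.

```python
import math


def likelihood_ratio(p_e_given_hp: float, p_e_given_hd: float) -> float:
    """Return Pr(E | Hp, I) / Pr(E | Hd, I) for two competing propositions."""
    if not 0.0 <= p_e_given_hp <= 1.0:
        raise ValueError("Pr(E | Hp, I) must lie in [0, 1]")
    if not 0.0 < p_e_given_hd <= 1.0:
        raise ValueError("Pr(E | Hd, I) must lie in (0, 1]")
    return p_e_given_hp / p_e_given_hd


def posterior_odds(prior_odds: float, lr: float) -> float:
    """Update prior odds on Hp versus Hd with the likelihood ratio."""
    return prior_odds * lr


# Illustrative values: the observed validation evidence E is assumed to be
# four times more probable if the model is fit for the activity (Hp) than
# if it is not (Hd).
lr = likelihood_ratio(0.8, 0.2)
log10_lr = math.log10(lr)
updated = posterior_odds(prior_odds=1.0, lr=lr)
print(lr, round(log10_lr, 3), updated)
```

A ratio above 1 means the evidence supports Hp over Hd, a ratio below 1 supports Hd, and a ratio near 1 means the evidence does not discriminate between the two propositions.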

Quantitative Evaluation Framework: The APPRAISE-AI Tool

The APPRAISE-AI tool provides a validated, quantitative method for evaluating the methodological and reporting quality of AI prediction models for clinical decision support [20]. This tool enables standardized assessment across six critical domains, with a maximum overall score of 100 points. The domains and their weightings are summarized in Table 1.

Table 1: APPRAISE-AI Evaluation Domains and Scoring

| Domain | Points | Evaluation Focus | Key Components |

|---|---|---|---|

| Clinical Relevance | 10 | Clinical problem definition and appropriateness | Clinical need, outcome definition, clinical applicability |

| Data Quality | 13 | Representativeness and preprocessing | Data source, diversity, preprocessing, labeling accuracy |

| Methodological Conduct | 25 | Technical soundness of model development | Data splitting, sample size, reference comparison, bias assessment |

| Robustness of Results | 16 | Reliability and generalizability | Performance measures, calibration, error analysis, explainability |

| Reporting Quality | 21 | Transparency and completeness | Model specification, limitations, discussion, abstract |

| Reproducibility | 15 | Availability of materials for replication | Code, data, model availability with documentation |

Application Protocol for APPRAISE-AI

Protocol 1: Quantitative Evaluation Using APPRAISE-AI

Purpose: To systematically evaluate AI model quality and readiness for specific Context of Use.

Materials:

- AI model documentation and performance results

- Dataset characteristics and preprocessing details

- Validation strategy documentation

- Code and model availability information

Procedure:

- Domain Scoring: For each of the 24 items across 6 domains, assign points based on predefined criteria [20].

- Clinical Relevance Assessment: Evaluate whether the model addresses a clearly defined clinical need with appropriate outcome definitions (10 points).

- Data Quality Evaluation: Assess data sources, diversity, preprocessing, and labeling accuracy (13 points).

- Methodological Review: Score data splitting strategies, sample size adequacy, reference comparisons, and bias assessment (25 points).

- Robustness Analysis: Evaluate performance measures, calibration, error analysis, and explainability approaches (16 points).

- Reporting Quality Assessment: Review model specification, limitations, discussion, and abstract completeness (21 points).

- Reproducibility Check: Verify code, data, and model availability with sufficient documentation (15 points).

- Overall Scoring: Calculate total score (0-100) with interpretation:

  - 0-39: Low quality

  - 40-59: Moderate quality

  - 60-79: High quality

  - 80-100: Excellent quality

Interpretation: Higher scores indicate stronger methodological and reporting quality. Studies scoring below 40 require substantial improvement before clinical application. Scores should be interpreted within the specific Context of Use, as domain importance may vary across applications.
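The overall scoring step can be expressed as a small calculation. The domain maxima and interpretation bands below are copied from Table 1 and the procedure above; the dictionary keys, function names, and the example scores are hypothetical and serve only to illustrate the arithmetic.

```python
# Maximum points per domain, as listed in Table 1.
DOMAIN_MAX = {
    "clinical_relevance": 10,
    "data_quality": 13,
    "methodological_conduct": 25,
    "robustness_of_results": 16,
    "reporting_quality": 21,
    "reproducibility": 15,
}

# Lower bound of each interpretation band, highest first.
BANDS = [
    (80, "Excellent quality"),
    (60, "High quality"),
    (40, "Moderate quality"),
    (0, "Low quality"),
]


def total_score(domain_scores: dict) -> int:
    """Validate each domain score against its maximum and return the sum."""
    if set(domain_scores) != set(DOMAIN_MAX):
        raise ValueError("scores must cover exactly the six domains")
    for domain, score in domain_scores.items():
        if not 0 <= score <= DOMAIN_MAX[domain]:
            raise ValueError(f"{domain} score {score} is out of range")
    return sum(domain_scores.values())


def quality_band(total: int) -> str:
    """Map a 0-100 total onto the interpretation bands."""
    if not 0 <= total <= 100:
        raise ValueError("total must lie between 0 and 100")
    for lower_bound, label in BANDS:
        if total >= lower_bound:
            return label
    raise AssertionError("unreachable: the lowest band starts at 0")


# Illustrative scores for a single study.
example_scores = {
    "clinical_relevance": 8,
    "data_quality": 9,
    "methodological_conduct": 15,
    "robustness_of_results": 10,
    "reporting_quality": 14,
    "reproducibility": 5,
}
example_total = total_score(example_scores)
print(example_total, quality_band(example_total))
```

Reporting the per-domain scores alongside the total helps when domain importance differs across Contexts of Use, because two models with the same total can have very different weaknesses.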

Experimental Protocols for Context-Specific Validation

Protocol for Clinical Relevance Validation

Protocol 2: Clinical Context Validation Framework

Purpose: To validate that AI model outputs align with clinical decision requirements within the specified Context of Use.

Materials:

- Defined clinical workflow diagram

- Decision points requiring AI support

- Clinical expert panel (3-5 specialists)

- Validation cases (20-30 representative scenarios)

Procedure:

- Workflow Mapping: Document the clinical workflow, identifying specific decision points where the AI model will provide support.

- Output Requirements Definition: For each decision point, specify the required output format, precision, and timing constraints.

- Expert Panel Review: Convene clinical experts to review model outputs against clinical requirements using structured assessment forms.

- Scenario Testing: Present validation cases to both the model and clinical experts, comparing recommendations.

- Utility Assessment: Measure time-to-decision, confidence levels, and alignment with expert consensus.

- Integration Testing: Evaluate model integration into clinical workflows, assessing interface requirements and result presentation.

Success Criteria: Model outputs must achieve ≥90% clinical appropriateness rating from expert panel and reduce decision time by ≥30% without reducing accuracy.
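The success criteria above reduce to three checks that can be computed from the panel ratings and timing data. The sketch below is one possible encoding; the function name, argument names, and example numbers are assumptions, and "accuracy" here stands for whichever decision accuracy measure the protocol pre-specifies.

```python
def protocol2_success(
    appropriate: list,
    baseline_minutes: float,
    assisted_minutes: float,
    baseline_accuracy: float,
    assisted_accuracy: float,
) -> dict:
    """Evaluate the three success criteria and an overall verdict.

    appropriate: one boolean per rated model output, True when the expert
    panel judged the output clinically appropriate.
    """
    appropriateness = sum(appropriate) / len(appropriate)
    time_reduction = (baseline_minutes - assisted_minutes) / baseline_minutes
    checks = {
        "appropriateness_ge_90pct": appropriateness >= 0.90,
        "time_reduction_ge_30pct": time_reduction >= 0.30,
        "accuracy_not_reduced": assisted_accuracy >= baseline_accuracy,
    }
    checks["all_met"] = all(checks.values())
    return checks


# Illustrative panel results: 28 of 30 scenarios rated appropriate, mean
# decision time falling from 10 to 6 minutes, accuracy 0.85 to 0.86.
result = protocol2_success([True] * 28 + [False] * 2, 10.0, 6.0, 0.85, 0.86)
print(result)
```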

Protocol for Robustness and Error Analysis

Protocol 3: Contextual Robustness Assessment

Purpose: To evaluate model performance across expected variations within the Context of Use.

Materials:

- Primary validation dataset

- Challenge datasets representing edge cases and distribution shifts

- Performance monitoring infrastructure

- Statistical analysis software

Procedure:

- Contextual Variable Identification: Identify factors within the Context of Use that may affect model performance (e.g., demographic variables, technical variations, temporal changes).

- Stratified Performance Analysis: Evaluate model performance across subgroups defined by contextual variables.

- Challenge Testing: Assess performance on specifically curated datasets representing realistic challenges within the Context of Use.

- Stability Monitoring: Implement continuous performance monitoring with statistical process control methods.

- Error Pattern Analysis: Systematically categorize and analyze errors to identify failure modes specific to the Context of Use.

- Boundary Condition Mapping: Define the operational boundaries within which the model maintains acceptable performance.

Success Criteria: Model performance must remain within predefined acceptable ranges across all contextual variables and challenge conditions identified as relevant to the Context of Use.
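The stratified performance analysis in this protocol can be sketched as a per-subgroup metric followed by a comparison against the predefined acceptable range. Accuracy is used here only because it is simple to compute; the record layout, the `site_type` variable, and the 0.70 floor are hypothetical.

```python
from collections import defaultdict


def stratified_accuracy(records: list, group_key: str) -> dict:
    """Return accuracy per subgroup defined by a contextual variable."""
    hits = defaultdict(int)
    totals = defaultdict(int)
    for record in records:
        group = record[group_key]
        totals[group] += 1
        if record["label"] == record["prediction"]:
            hits[group] += 1
    return {group: hits[group] / totals[group] for group in totals}


def subgroups_below(accuracy_by_group: dict, minimum: float) -> list:
    """List subgroups whose accuracy falls below the acceptable minimum."""
    return sorted(g for g, acc in accuracy_by_group.items() if acc < minimum)


# Illustrative validation records.
validation_records = [
    {"site_type": "academic", "label": 1, "prediction": 1},
    {"site_type": "academic", "label": 0, "prediction": 0},
    {"site_type": "academic", "label": 1, "prediction": 1},
    {"site_type": "academic", "label": 0, "prediction": 1},
    {"site_type": "community", "label": 1, "prediction": 0},
    {"site_type": "community", "label": 0, "prediction": 0},
    {"site_type": "community", "label": 1, "prediction": 0},
    {"site_type": "community", "label": 0, "prediction": 0},
]

by_site = stratified_accuracy(validation_records, "site_type")
out_of_range = subgroups_below(by_site, minimum=0.70)
print(by_site, out_of_range)
```

Subgroups that fall outside the acceptable range are candidates for the boundary condition mapping step, either as documented exclusions or as targets for further data collection.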

Visualization Framework: Experimental Workflows and Logical Relationships

Figure: AI Model Evaluation Hierarchy

Figure: Context of Use Evaluation Workflow

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 2: AI Evaluation Research Reagents and Solutions

| Tool/Resource | Function | Application Context |

|---|---|---|

| APPRAISE-AI Tool | Quantitative quality assessment across 6 domains [20] | Standardized evaluation of AI study methodology and reporting |

| TRIPOD-AI Checklist | Reporting guideline for prediction model studies [20] | Ensuring transparent and complete reporting of AI model development |

| Scikit-learn | Machine learning metrics and validation techniques [18] | Technical performance evaluation and baseline comparisons |

| SHAP/LIME | Model interpretability and explanation generation [18] | Understanding model predictions and establishing trustworthiness |

| TensorFlow Model Analysis | Specialized evaluation for TensorFlow models [18] | Fairness assessment and bias detection in neural networks |

| MLflow | Experiment tracking and model performance logging [18] | Reproducible evaluation and version control across model iterations |

| WebAIM Contrast Checker | Color contrast verification for visualizations [21] [22] | Ensuring accessibility of model outputs and visual explanations |

Implementation Guidelines and Best Practices

Data Quality and Representation Requirements

High-quality, representative data forms the foundation of reliable AI evaluation within a specific Context of Use [18]. The evaluation must assess whether training and validation data adequately represent the target population and usage conditions. APPRAISE-AI evaluates data sources based on routinely captured proxies of diversity, including number of institutions, healthcare setting, and geographical location [20]. Particular emphasis should be placed on incorporating historically underrepresented groups, such as community-based, rural, or lower-income populations, to ensure equitable performance across the intended application spectrum.

Data preprocessing steps must be thoroughly documented and evaluated, including how data were abstracted, how missing data were handled, and how features were modified, transformed, or removed [20]. Methods to address class imbalance were previously emphasized, but recent evidence suggests they may worsen model calibration without clearly improving discrimination [20]. Data splitting strategies should be graded against established hierarchies of validation strategies, with external validation representing the highest level of evidence for generalizability [20].

Evaluation Metrics Selection Framework

Selecting appropriate evaluation metrics requires alignment with the specific Context of Use rather than defaulting to generic measures. While area under the receiver operating characteristic curve (AUC) is commonly reported, other measures may be more relevant depending on the clinical context [20]. For imaging applications, the Metrics Reloaded recommendations provide specialized guidance [20]. Beyond discrimination, evaluation must assess model calibration—the agreement between predictions and observed outcomes—particularly for probabilistic outputs.

Decision curve analysis provides a crucial evaluation dimension by quantifying whether an AI model provides more net benefit than alternative approaches [20]. This analysis enables determination of whether the model does more good than harm within the specific clinical context. Performance should be evaluated against appropriate reference standards, including clinician judgment, traditional regression approaches, and existing models [20]. Comprehensive error analysis should categorize mistakes by clinical significance rather than just quantitative frequency.
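For readers unfamiliar with decision curve analysis, the sketch below computes net benefit at a single threshold probability using the standard definition: true positives per patient minus false positives per patient, the latter weighted by the odds of the threshold. It also computes the "treat all" comparator; "treat none" has a net benefit of zero by definition. The function names and the ten-patient example are illustrative only.

```python
def net_benefit(y_true: list, y_prob: list, threshold: float) -> float:
    """Net benefit of acting on predictions at or above the threshold."""
    n = len(y_true)
    tp = sum(1 for y, p in zip(y_true, y_prob) if p >= threshold and y == 1)
    fp = sum(1 for y, p in zip(y_true, y_prob) if p >= threshold and y == 0)
    odds = threshold / (1.0 - threshold)
    return tp / n - (fp / n) * odds


def net_benefit_treat_all(y_true: list, threshold: float) -> float:
    """Net benefit of intervening on every patient regardless of the model."""
    prevalence = sum(y_true) / len(y_true)
    odds = threshold / (1.0 - threshold)
    return prevalence - (1.0 - prevalence) * odds


# Illustrative outcomes (1 = event) and predicted probabilities.
outcomes = [1, 1, 0, 0, 0, 1, 0, 0, 0, 0]
predicted = [0.9, 0.8, 0.7, 0.2, 0.1, 0.6, 0.3, 0.2, 0.1, 0.4]

nb_model = net_benefit(outcomes, predicted, threshold=0.5)
nb_all = net_benefit_treat_all(outcomes, threshold=0.5)
print(round(nb_model, 3), round(nb_all, 3))
```

A full decision curve repeats this calculation over the range of threshold probabilities that are clinically plausible for the Context of Use and compares the model against both default strategies.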

Reproducibility and Transparency Standards

The reproducibility crisis in AI research necessitates rigorous standards for transparency and replicability [20]. Evaluation should include assessment of code availability, data accessibility, and model sharing practices. The APPRAISE-AI tool allocates 15 points specifically for reproducibility, emphasizing the importance of making research materials publicly available to enable verification and replication [20].

Documentation should include detailed model specifications, training procedures, hyperparameter selections, and computational requirements. Limitations should be explicitly acknowledged, including potential biases, known failure modes, and boundary conditions for safe operation. Model cards or similar documentation should provide standardized summaries of performance characteristics across different population subgroups and conditions [20]. Version control and dependency documentation ensure that results can be replicated as software ecosystems evolve.

Establishing the Context of Use as the foundation for AI model evaluation represents a paradigm shift from technical validation to utility assessment. By adopting principles from forensic science's hierarchy of propositions, specifically activity-level evaluation, we create a more robust, relevant, and practical framework for assessing AI models in drug development and healthcare [19]. The integrated approach combining quantitative assessment using tools like APPRAISE-AI [20] with context-specific validation protocols ensures that models are evaluated against the decisions they intend to support rather than abstract performance metrics.

This framework emphasizes that model quality is relative to context—a model excellent for one application may be inadequate for another. By explicitly defining the Context of Use and evaluating models against activity-level propositions, researchers and drug development professionals can make more informed decisions about model deployment, ultimately accelerating the translation of AI innovations into clinical practice while maintaining rigorous safety and efficacy standards.

Implementing Proposition Hierarchies for AI Model Credibility and Regulatory Submissions

A Step-by-Step Guide to Defining the Context of Use (COU)

The Context of Use (COU) is a foundational concept in the regulatory landscape for artificial intelligence (AI) in drug development. It provides a precise description of how a specific AI model will be applied to address a particular problem or question within the drug development lifecycle. As outlined by the U.S. Food and Drug Administration (FDA) in its 2025 draft guidance, a clearly defined COU is the critical first step in a risk-based framework for establishing AI model credibility [23] [24]. Defining the COU is not merely an administrative exercise; it determines the scope of model validation, the necessary level of documentation, and the evidence required to support regulatory submissions for new drugs and biological products [23].

The importance of the COU stems from the multifaceted applications of AI in drug development. AI methods can predict patient outcomes, enhance understanding of disease progression, and analyze complex datasets from real-world evidence or digital health technologies [24]. Given this wide range of potential uses, the same AI model may require different levels of validation and evidence depending on its specific context. A well-articulated COU ensures that all stakeholders, including regulatory bodies, have a shared understanding of the model's intended purpose, its operational boundaries, and its role in decision-making processes [23] [25]. This guide provides a step-by-step protocol for researchers and drug development professionals to define the COU effectively, framed within the broader research on activity-level evaluation.

A Step-by-Step Protocol for Defining the Context of Use

The process of defining the COU is iterative and should be integrated into the early stages of AI model development. The following steps, aligned with the FDA's proposed framework, offer a detailed protocol for establishing a comprehensive COU [23].

Step 1: Precisely Articulate the Target Question

The process begins with a clear and unambiguous statement of the scientific or clinical problem the AI model is intended to address. This "Question of Interest" should be specific, focused, and framed in the context of drug development.

- Action: Draft a single-sentence question that defines the core objective.

- Protocol:

  - Identify the Decision Point: Determine the specific decision in the drug development process that the AI model will inform (e.g., patient stratification, safety monitoring, product quality control).

  - Define the Variables: Explicitly state the input variables (e.g., patient biomarkers, clinical measurements, image data) and the desired output (e.g., a prediction, a classification, a probability).

  - Document the Rationale: Justify why this question is important and how its resolution will support drug safety, efficacy, or quality.

Table 1: Examples of Target Questions in Drug Development

| Drug Development Phase | Example Target Question |

|---|---|

| Clinical Development | "Which subjects are at low enough risk of a serious adverse event to forgo post-dose inpatient monitoring?" [23] |

| Commercial Manufacturing | "Does this vial of Drug B meet the specified fill volume specification?" [23] |

| Target Discovery | "Does this small molecule compound interact with the intended protein target with high affinity?" |

Step 2: Specify the AI Model's Application Scenario

This step details the specific role and operational boundaries of the AI model in answering the target question. The application scenario describes how the model's output will be integrated with other evidence to inform the final decision.

- Action: Create a detailed narrative of the model's function and its interaction with other data sources.

- Protocol:

  - Describe the Model's Function: Specify whether the model will provide a definitive answer or serve as one piece of supporting evidence. For instance, will it be the sole determinant for a decision, or will its output be combined with results from clinical studies or other assays?

  - Define Operational Boundaries: Outline the conditions under which the model will be used. This includes the specific patient population, the type of medical product, the data sources (e.g., electronic health records, clinical trial data, real-world data), and any technical constraints.

  - Map the Integration Pathway: Explain how the model's output will be used in practice. Will it trigger an automated action, or will it be reviewed by a human expert as part of a larger body of evidence?

Step 3: Create a COU Definition Document

Consolidate the information from Steps 1 and 2 into a formal COU definition document. This document serves as the single source of truth for the model's intended use and is essential for internal alignment and regulatory communication.

- Action: Produce a structured document that will be referenced throughout the model's lifecycle.

- Protocol:

  - Use a Standardized Template: Adopt a consistent format for all COU definitions within your organization to ensure completeness and ease of review.

  - Incorporate Feedback: Circulate the draft COU document among relevant stakeholders, including biostatisticians, clinical scientists, and regulatory affairs professionals, to refine the definition.

  - Version Control: Maintain strict version control for the COU document. Any changes to the model's intended use must be reflected in an updated COU and may trigger re-validation.
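One way to make the standardized template and version control steps concrete is to hold the COU definition in a structured, immutable record. The sketch below is an assumption about how an organization might do this, not a format required by the FDA; the `ContextOfUse` fields mirror Steps 1 and 2, and every field value other than the question of interest (taken from Table 1) is hypothetical.

```python
from dataclasses import asdict, dataclass, replace


@dataclass(frozen=True)
class ContextOfUse:
    """Structured COU definition; frozen so that changes require a new version."""
    version: str
    question_of_interest: str
    decision_point: str
    input_variables: tuple
    desired_output: str
    model_function: str
    operational_boundaries: tuple
    integration_pathway: str


def revise(cou: ContextOfUse, new_version: str, **changes) -> ContextOfUse:
    """Return an updated COU; refuse to reuse the existing version identifier."""
    if new_version == cou.version:
        raise ValueError("a revised COU needs a new version identifier")
    return replace(cou, version=new_version, **changes)


def intended_use_changed(old: ContextOfUse, new: ContextOfUse) -> bool:
    """True when any field other than the version differs between two COUs."""
    old_fields, new_fields = asdict(old), asdict(new)
    old_fields.pop("version")
    new_fields.pop("version")
    return old_fields != new_fields


cou_v1 = ContextOfUse(
    version="1.0",
    question_of_interest=(
        "Which subjects are at low enough risk of a serious adverse event "
        "to forgo post-dose inpatient monitoring?"
    ),
    decision_point="safety monitoring",
    input_variables=("baseline laboratory values", "demographics"),
    desired_output="probability of a serious adverse event",
    model_function="supporting evidence reviewed alongside clinical data",
    operational_boundaries=("adult trial participants",),
    integration_pathway="reviewed by a human expert",
)

# Changing the integration pathway alters the intended use, so this revision
# would be a candidate for re-validation.
cou_v2 = revise(cou_v1, "2.0", integration_pathway="automated triage")
print(intended_use_changed(cou_v1, cou_v2))
```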

The following workflow diagram visualizes the key decision points in the COU definition process.

Integrating COU into the AI Credibility Assessment Framework

Defining the COU is the critical first phase of the broader AI credibility assessment, a multi-step, risk-based process [23]. The COU directly informs the subsequent evaluation of model risk and the design of the validation plan. The following diagram illustrates this integrated framework and the central role of the COU.

From COU to Risk Assessment

Once the COU is defined, the next step is to evaluate the associated model risk. The FDA guidance recommends a two-dimensional risk assessment based on Model Influence and Decision Consequence [23].

- Model Influence: This dimension evaluates how much the AI model's output contributes to the final decision. A model that is the sole decision factor has high influence, whereas a model that is one of several advisory inputs has lower influence.

- Decision Consequence: This dimension assesses the severity of harm to a patient or consumer that could result from an incorrect decision based on the model's output. Consequences can range from minor inconvenience to life-threatening events.

Table 2: AI Model Risk Assessment Parameters

| Risk Dimension | Description | Low-Risk Example | High-Risk Example |

|---|---|---|---|

| Model Influence | "The degree to which the AI model output contributes to the decision." | Model output is one of several pieces of evidence reviewed by an expert. | Model output is the sole, automated determinant for a critical decision (e.g., patient eligibility). |

| Decision Consequence | "The severity of patient harm from an incorrect model-based decision." | Incorrect output leads to a minor delay in a non-critical manufacturing process. | Incorrect output leads to a life-threatening adverse event going unmonitored in a clinical trial [23]. |

Leveraging the COU for Credibility Assessment Planning

The defined COU and the resulting risk level directly determine the rigor and extent of the activities required to establish model credibility. A high-risk model, such as one used to make pivotal safety decisions, will necessitate a more extensive and rigorous credibility assessment plan than a low-risk model used for internal hypothesis generation [23]. This plan typically covers:

- Model Design and Development: Justification of the chosen algorithm, data preprocessing steps, and feature selection.

- Data Quality and Management: Documentation of data sources, provenance, and handling of missing or biased data.

- Model Performance Testing: Establishment of performance acceptance criteria and testing against those criteria using appropriate validation datasets.

- Model Operational Monitoring: Plans for ongoing monitoring of model performance in its real-world context to detect drift or degradation.

Experimental Protocols for COU-Driven Model Validation

The experiments used to validate an AI model must be tailored to its specific COU. The following protocols provide a framework for designing these validation studies.

Protocol 1: Performance Benchmarking Against the COU

This protocol ensures the model meets pre-defined performance standards relevant to its intended use.

- Define Acceptance Criteria: Based on the COU, establish quantitative performance thresholds for metrics such as accuracy, precision, recall, area under the curve (AUC), or context-specific metrics.

- Curate Validation Datasets: Assemble datasets that are representative of the real-world population and conditions specified in the COU. Ensure these datasets are independent of the training data.

- Execute Validation Run: Run the model on the validation dataset and calculate the performance metrics.

- Compare and Report: Compare the results against the pre-defined acceptance criteria. Document any deviations and their justifications.
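The four steps of Protocol 1 can be sketched as follows. This is an illustrative example only: the thresholds in `ACCEPTANCE_CRITERIA`, the label vectors, and the function names are hypothetical, and the metric set would in practice be chosen from the COU.

```python
def classification_metrics(y_true, y_pred):
    """Return accuracy, precision, and recall for binary labels (1 = positive)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)

    def ratio(numerator, denominator):
        # Report 0.0 rather than failing when a class is absent.
        return numerator / denominator if denominator else 0.0

    return {
        "accuracy": ratio(tp + tn, len(pairs)),
        "precision": ratio(tp, tp + fp),
        "recall": ratio(tp, tp + fn),
    }


def compare_to_criteria(results, criteria):
    """Map each metric to (observed, threshold, passed)."""
    return {
        name: (results[name], threshold, results[name] >= threshold)
        for name, threshold in criteria.items()
    }


# Hypothetical thresholds and validation labels, for illustration only.
ACCEPTANCE_CRITERIA = {"accuracy": 0.75, "precision": 0.75, "recall": 0.90}
y_true = [1, 1, 1, 1, 0, 0, 0, 0, 1, 0]
y_pred = [1, 1, 1, 0, 0, 0, 0, 1, 1, 0]

report = compare_to_criteria(
    classification_metrics(y_true, y_pred), ACCEPTANCE_CRITERIA
)
for name, (observed, threshold, passed) in report.items():
    status = "pass" if passed else "DEVIATION"
    print(f"{name}: {observed:.2f} vs {threshold:.2f} -> {status}")
```

Fixing the criteria in a named constant before the validation run mirrors the requirement that thresholds be pre-defined rather than chosen after the results are seen.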

Protocol 2: Robustness and Sensitivity Analysis

This protocol tests the model's stability and reliability when faced with variations in input data, a critical consideration for activity-level evaluation.

- Identify Key Input Variables: From the COU, determine which input variables are most likely to vary or contain noise in a real-world setting.

- Perturb Input Data: Systematically introduce realistic variations or noise into the validation dataset (e.g., slight variations in image acquisition, missing data points, demographic shifts).

- Measure Performance Impact: Re-run the model on the perturbed datasets and measure the change in performance metrics.

- Establish Robustness Thresholds: Determine the level of variation at which model performance becomes unacceptably degraded, as per the COU requirements.
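A minimal sketch of Protocol 2 is shown below, assuming a single numeric input and Gaussian noise as the perturbation. `toy_model` is a hypothetical stand-in for the AI model under test, and the noise levels and the tolerated accuracy drop (`max_drop`) are placeholder values that would come from the COU in practice.

```python
import random


def toy_model(x: float) -> int:
    """Hypothetical stand-in for the AI model: a fixed threshold rule."""
    return 1 if x >= 0.5 else 0


def accuracy(model, inputs, labels):
    return sum(model(x) == y for x, y in zip(inputs, labels)) / len(labels)


def perturb(inputs, noise_sd, rng):
    """Add zero-mean Gaussian noise with standard deviation noise_sd."""
    return [x + rng.gauss(0.0, noise_sd) for x in inputs]


def robustness_profile(model, inputs, labels, noise_levels, seed=0, repeats=20):
    """Mean accuracy at each noise level, averaged over repeated perturbations."""
    rng = random.Random(seed)  # fixed seed so the run is reproducible
    profile = {}
    for sd in noise_levels:
        scores = [
            accuracy(model, perturb(inputs, sd, rng), labels)
            for _ in range(repeats)
        ]
        profile[sd] = sum(scores) / len(scores)
    return profile


def robustness_threshold(profile, baseline, max_drop):
    """Largest tested noise level reached before the first unacceptable drop."""
    passed = None
    for sd in sorted(profile):
        if baseline - profile[sd] > max_drop:
            break
        passed = sd
    return passed


inputs = [0.1, 0.2, 0.3, 0.4, 0.6, 0.7, 0.8, 0.9]
labels = [0, 0, 0, 0, 1, 1, 1, 1]
baseline = accuracy(toy_model, inputs, labels)
profile = robustness_profile(toy_model, inputs, labels, [0.0, 0.05, 0.1, 0.2, 0.4])
print(profile)
print("robust up to noise sd:", robustness_threshold(profile, baseline, max_drop=0.05))
```

Other perturbations named in the protocol, such as missing data points or demographic shifts, would need their own `perturb` variants; only the measurement loop carries over unchanged.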

The Scientist's Toolkit: Essential Research Reagents & Materials

Successfully defining the COU and conducting subsequent validation requires a set of methodological "reagents" and tools.

Table 3: Essential Reagents for COU Definition and AI Model Validation

| Tool / Reagent | Function in COU Process |
|---|---|
| Structured COU Template | A standardized document (e.g., based on FDA guidance) to ensure all critical elements of the COU are captured consistently [23]. |
| Risk Assessment Matrix | A tool (often a 2x2 or 3x3 grid) to visually plot and determine overall model risk based on Influence and Consequence scores [23]. |
| Credibility Assessment Plan Template | A pre-defined outline for designing validation activities, covering data management, model training, performance testing, and bias evaluation [23]. |
| Version Control System (e.g., Git) | Essential for tracking changes not only to the model code but also to the COU document and validation protocols, ensuring a clear audit trail. |
| Electronic Lab Notebook (ELN) | Provides a secure, timestamped environment for documenting the rationale behind the COU, stakeholder feedback, and validation results. |
| Regulatory Submission Gateway | Familiarity with FDA programs (e.g., Emerging Technology Program - ETP, Innovative Science & Technology Approaches - ISTAND) for early engagement on novel COUs [23]. |

Defining the Context of Use is a disciplined, strategic process that forms the bedrock of credible and regulatory-compliant AI in drug development. By meticulously articulating the target question and application scenario, research teams can accurately assess risk, design fit-for-purpose validation experiments, and build the evidence necessary to support regulatory submissions. As AI continues to evolve and the proposed AI2ET (AI-enabled Ecosystem for Therapeutics) framework gains traction, the principles of a well-defined COU will remain paramount for ensuring that AI-driven tools are deployed safely, effectively, and ethically to bring new therapies to patients [25]. Adherence to this step-by-step guide empowers scientists and regulators to navigate the complexity of AI with a shared, clear understanding of its intended purpose.

Structuring AI Model Development Around Competing Propositions

The hierarchy-of-propositions framework, adapted from forensic science, provides a robust methodological foundation for validating artificial intelligence (AI) models in drug development. This approach structures evidence evaluation around competing propositions or hypotheses, enabling rigorous assessment of an AI model's output given specific activity-level scenarios [26]. Within the hierarchy of propositions, activity-level evaluation addresses the question of how a specific set of data or evidence came to be generated through particular activities or processes. For AI systems in pharmaceutical research, this translates to evaluating model predictions against competing propositions about underlying biological mechanisms, drug-target interactions, or clinical outcomes. The Bayesian network methodology offers a mathematically formalized structure for this evaluative process, quantifying the strength of evidence for one proposition over another based on observed data [26].

This framework is particularly valuable for establishing regulatory compliance and model trustworthiness in high-stakes domains like drug development, where AI systems must provide auditable, evidence-based rationales for their predictions [27]. By implementing a nested model for AI design and validation, researchers can systematically address potential threats at each layer of the AI process—from regulatory requirements and domain specifications to data provenance, model architecture, and prediction integrity [27].

Table 1: Core Components of Proposition-Based AI Evaluation

| Component | Description | Application in Drug Development |
|---|---|---|
| Competing Propositions | Alternative explanations or hypotheses about the activity that generated the data | Competing mechanisms of action, differential diagnosis, or therapeutic efficacy scenarios |
| Bayesian Network Framework | Graphical model representing probabilistic relationships between variables | Quantifying evidence strength for pharmaceutical hypotheses given experimental data |
| Activity-Level Propositions | Specific statements about how evidence came to exist through particular activities | Evaluating how AI model predictions align with specific biological pathways or drug effects |
| Evidence Evaluation | Systematic assessment of data supporting one proposition over another | Validating AI predictions against preclinical and clinical evidence standards |

Theoretical Framework: Bayesian Networks for Competing Propositions

Foundational Principles

Bayesian Networks (BNs) provide a probabilistic graphical framework for evaluating competing propositions under uncertainty. These networks represent variables as nodes and conditional dependencies as directed edges, enabling transparent reasoning about complex evidentiary relationships [26]. The narrative BN construction methodology aligns AI model validation with established forensic science practices, creating an accessible structure for both experts and regulatory bodies to interpret [26]. For drug development professionals, this translates to a quantifiable method for evaluating how strongly experimental data supports one pharmacological proposition over another.

The mathematical foundation of this approach relies on Bayes' theorem, which updates prior beliefs about competing propositions based on new evidence. Formally, this is represented as:

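\[ P(\text{Proposition} \mid \text{Evidence}) = \frac{P(\text{Evidence} \mid \text{Proposition}) \times P(\text{Proposition})}{P(\text{Evidence})} \]

For two competing propositions, the strength of evidence for Proposition₁ against Proposition₂ is quantified by the likelihood ratio \( \frac{P(\text{Evidence} \mid \text{Proposition}_1)}{P(\text{Evidence} \mid \text{Proposition}_2)} \) [26]. Dividing Bayes' theorem for Proposition₁ by the same expression for Proposition₂ shows that this ratio multiplies the prior odds of the two propositions to give their posterior odds.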
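The odds form of Bayes' theorem can be illustrated with a short numerical sketch. The function names and the probabilities used here are hypothetical, and the posterior calculation assumes the two propositions are mutually exclusive and exhaustive.

```python
def likelihood_ratio(p_evidence_given_h1: float, p_evidence_given_h2: float) -> float:
    """LR = P(Evidence | Proposition 1) / P(Evidence | Proposition 2)."""
    if p_evidence_given_h2 <= 0.0:
        raise ValueError("P(Evidence | Proposition 2) must be positive")
    return p_evidence_given_h1 / p_evidence_given_h2


def posterior_probability(prior_h1: float, lr: float) -> float:
    """Posterior P(Proposition 1 | Evidence) via posterior odds = LR * prior odds.

    Assumes Proposition 1 and Proposition 2 are mutually exclusive and
    exhaustive, so P(Proposition 2) = 1 - P(Proposition 1).
    """
    if not 0.0 < prior_h1 < 1.0:
        raise ValueError("prior must lie strictly between 0 and 1")
    prior_odds = prior_h1 / (1.0 - prior_h1)
    posterior_odds = lr * prior_odds
    return posterior_odds / (1.0 + posterior_odds)


# Hypothetical values: the evidence is four times more probable under
# Proposition 1 than under Proposition 2, and the prior is even.
lr = likelihood_ratio(0.8, 0.2)
print("LR =", lr)
print("posterior =", posterior_probability(0.5, lr))
```

Separating the likelihood ratio from the prior keeps the evaluator's contribution (the strength of the evidence) distinct from the prior belief, which in the forensic tradition is left to the decision-maker.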

Hierarchical Proposition Framework

The hierarchy of propositions in AI model validation spans three levels, from source-level through activity-level to offense-level evaluations:

- Source-Level Propositions: Address the origin of data or evidence (e.g., "Does this genomic data originate from healthy or diseased tissue?").

- Activity-Level Propositions: Concern the specific processes that generated the data (e.g., "Was this transcriptomic signature produced by Drug A's specific mechanism of action or a general stress response?").

- Offense-Level Propositions: Relate to ultimate conclusions about drug efficacy or safety (e.g., "Does this combined evidence demonstrate that Drug B provides clinically meaningful improvement over standard care?").

Activity-level evaluation occupies a crucial middle ground, linking raw data characteristics to higher-order scientific conclusions about drug mechanisms and effects.

Protocol for Implementing Proposition-Based AI Validation

Phase 1: Proposition Definition and Network Structure

Objective: Define competing propositions and map their relational structure using Bayesian networks.

Methodology:

- Identify Competing Propositions: Formulate at least two mutually exclusive propositions regarding the activity that generated the observed data.

  - Example: "The observed gene expression pattern results from Compound X's specific target engagement" versus "The observed gene expression pattern results from non-specific cellular toxicity."

- Define Network Nodes: Identify key variables relevant to evaluating the propositions, including:

  - Experimental observations (e.g., biomarker measurements, imaging features)

  - Contextual factors (e.g., patient demographics, disease stage)

  - Mechanism-specific indicators (e.g., pathway activation signatures)

- Establish Conditional Dependencies: Map probabilistic relationships between nodes based on established biological knowledge and preliminary data.

- Specify Prior Probabilities: Assign initial probability estimates for root nodes based on literature review or historical data.

Deliverable: A structured Bayesian network diagram with clearly defined nodes, edges, and conditional probability tables.
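One possible way to record the Phase 1 deliverable in machine-checkable form is a plain dictionary of nodes, parents, and conditional probability tables (CPTs). The node names, states, and every probability below are hypothetical placeholders built around the Compound X example. The `validate` helper is an illustrative assumption, not part of any library, and it does not check that the graph is acyclic.

```python
from itertools import product

# Hypothetical three-node network; all probabilities are placeholders.
network = {
    "Mechanism": {  # root node holding the two competing propositions
        "states": ["target_engagement", "nonspecific_toxicity"],
        "parents": [],
        "cpt": {(): [0.5, 0.5]},  # prior probabilities
    },
    "PathwaySignature": {
        "states": ["present", "absent"],
        "parents": ["Mechanism"],
        "cpt": {
            ("target_engagement",): [0.85, 0.15],
            ("nonspecific_toxicity",): [0.20, 0.80],
        },
    },
    "StressMarkers": {
        "states": ["elevated", "normal"],
        "parents": ["Mechanism"],
        "cpt": {
            ("target_engagement",): [0.30, 0.70],
            ("nonspecific_toxicity",): [0.90, 0.10],
        },
    },
}


def validate(net):
    """Return a list of structural problems; an empty list means none found."""
    errors = []
    for name, node in net.items():
        missing = [p for p in node["parents"] if p not in net]
        if missing:
            errors.append(f"{name}: unknown parents {missing}")
            continue
        # One CPT row is required for every combination of parent states.
        parent_states = [net[p]["states"] for p in node["parents"]]
        if set(node["cpt"]) != set(product(*parent_states)):
            errors.append(f"{name}: CPT rows do not match parent states")
        for key, row in node["cpt"].items():
            if len(row) != len(node["states"]) or abs(sum(row) - 1.0) > 1e-9:
                errors.append(f"{name}: row {key} is not a valid distribution")
    return errors


print(validate(network) or "network structure is consistent")
```

Storing the network as data rather than only as a diagram lets it sit under version control alongside the COU document and validation protocols.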

Diagram 1: Bayesian Network for Drug Mechanism Propositions

Phase 2: Data Integration and Quantitative Analysis

Objective: Populate the Bayesian network with experimental data to calculate likelihood ratios for competing propositions.

Methodology:

- Experimental Data Collection: Gather quantitative data relevant to each observation node:

  - High-throughput screening results

  - 'Omics measurements (genomics, transcriptomics, proteomics)

  - Phenotypic screening data

  - Clinical laboratory values

- Conditional Probability Estimation: Determine probability distributions for child nodes given parent node states using:

  - Historical experimental data

  - Control group measurements

  - Literature-derived reference ranges

- Likelihood Ratio Calculation: Compute the ratio of probabilities for the observed data under each competing proposition:

  \[ LR = \frac{P(\text{Evidence} \mid \text{Proposition}_1)}{P(\text{Evidence} \mid \text{Proposition}_2)} \]

- Sensitivity Analysis: Assess how changes in probability estimates affect the likelihood ratio to identify critical assumptions or data gaps.
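The likelihood ratio calculation and sensitivity analysis steps can be sketched as below. The sketch assumes the observations are conditionally independent given the proposition, so that the combined likelihood ratio is the product of the per-observation ratios. A full Bayesian network would relax that assumption. All observation names and probabilities are hypothetical.

```python
from math import prod


def combined_lr(evidence):
    """Combined LR for observations assumed independent given the proposition.

    evidence maps an observation name to the pair
    (P(observation | Proposition 1), P(observation | Proposition 2)).
    """
    numerator = prod(p1 for p1, _ in evidence.values())
    denominator = prod(p2 for _, p2 in evidence.values())
    return numerator / denominator


def sensitivity(evidence, name, index, values):
    """Recompute the LR while varying one probability estimate.

    index 0 varies P(observation | Proposition 1), index 1 varies
    P(observation | Proposition 2); other estimates are held fixed.
    """
    results = []
    for value in values:
        varied = dict(evidence)
        pair = list(varied[name])
        pair[index] = value
        varied[name] = tuple(pair)
        results.append((value, combined_lr(varied)))
    return results


# Hypothetical estimates for two observed findings.
evidence = {
    "pathway_signature_present": (0.85, 0.20),
    "stress_markers_elevated": (0.30, 0.90),
}

print("LR =", round(combined_lr(evidence), 3))
for value, lr in sensitivity(evidence, "stress_markers_elevated", 0, [0.1, 0.3, 0.5]):
    print(f"P(stress | Proposition 1) = {value:.1f} -> LR = {lr:.3f}")
```

If the likelihood ratio crosses 1 within a plausible range for one estimate, as it may for the stress-marker probability here, that estimate is a critical assumption and a candidate for additional data collection.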

Table 2: Quantitative Data Analysis Methods for Proposition Evaluation

| Analysis Method | Application | Implementation Tools |
|---|---|---|
| Cross-Tabulation | Analyze relationships between categorical variables (e.g., target presence/absence vs. outcome) | SPSS, R, Python Pandas [28] |
| MaxDiff Analysis | Identify strongest differentiating evidence among multiple indicators | Specialized survey tools, statistical packages [28] |
| Gap Analysis | Compare actual vs. expected experimental results under each proposition | Excel, ChartExpo, custom scripts [28] |
| Text Analysis | Evaluate scientific literature support for competing propositions | Natural language processing tools, word clouds [28] |
| Correlation Analysis | Measure strength of relationship between evidence components | R, Python, correlation matrices [29] |
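As a small illustration of the cross-tabulation row, the sketch below counts target presence against outcome and turns the counts into conditional proportions of the kind that could feed a conditional probability table. It uses only the Python standard library rather than the tools listed in the table, and the records are invented for illustration.

```python
from collections import Counter


def cross_tab(rows, row_key, col_key):
    """Count records for each (row value, column value) combination."""
    return Counter((r[row_key], r[col_key]) for r in rows)


def conditional_proportion(table, row_value, col_value):
    """Estimate P(column value | row value) from the cross-tabulated counts."""
    row_total = sum(n for (r, _), n in table.items() if r == row_value)
    if row_total == 0:
        raise ValueError(f"no records with row value {row_value!r}")
    return table[(row_value, col_value)] / row_total


# Hypothetical records: target status versus observed outcome.
records = (
    [{"target": "present", "outcome": "response"}] * 8
    + [{"target": "present", "outcome": "no_response"}] * 2
    + [{"target": "absent", "outcome": "response"}] * 3
    + [{"target": "absent", "outcome": "no_response"}] * 7
)

table = cross_tab(records, "target", "outcome")
for target in ("present", "absent"):
    p = conditional_proportion(table, target, "response")
    print(f"P(response | target {target}) = {p:.2f}")
```

With real data, small cell counts would make these proportions unstable, so the raw counts should be reported alongside any probability derived from them.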

Phase 3: Validation and Regulatory Alignment

Objective: Ensure the proposition-based evaluation meets regulatory standards for AI in healthcare.

Methodology:

- Regulatory Requirement Mapping: Identify relevant regulations (e.g., FDA AI/ML guidelines, EU AI Act) and map their requirements to the validation framework [27].

- Ethical and Technical Requirement Categorization:

  - Ethical: Privacy, data governance, societal well-being, safety

  - Technical: Human agency, robustness, transparency, fairness [27]