Future-Proofing Digital Forensics: Robust Validation Strategies for Rapidly Evolving Tools in 2025


Abstract

This article provides a comprehensive framework for researchers and forensic professionals to develop and implement robust validation strategies for digital forensics tools, which are evolving at an unprecedented pace. It addresses the critical need to maintain scientific integrity and legal admissibility amidst the integration of AI, cloud computing, and disruptive technologies. Covering foundational principles, methodological applications, troubleshooting of common pitfalls, and comparative analysis techniques, the guide equips professionals to ensure their tool validation processes are as dynamic and resilient as the technologies they assess.

The Critical Need for Validation in a High-Velocity Digital Forensics Landscape

Frequently Asked Questions (FAQs)

What is forensic validation and why is it critical in digital forensics? Forensic validation is the fundamental process of testing and confirming that forensic techniques, tools, and methods yield accurate, reliable, and repeatable results [1]. It is a professional and ethical necessity because it ensures that forensic conclusions are supported by scientific integrity and are robust enough to stand in court [1]. In digital forensics, it is crucial for establishing scientific credibility and gaining legal acceptance under standards like Daubert [1]. Without it, findings can be severely undermined, leading to legal exclusion of evidence or miscarriages of justice [1].

What is the difference between tool, method, and analysis validation? Forensic validation encompasses three distinct but interconnected components [1]:

- Tool Validation: Confirms that the forensic software or hardware performs as intended, extracting and reporting data correctly without altering the original source.

- Method Validation: Confirms that the procedures and steps followed by a forensic analyst produce consistent outcomes across different cases, devices, and practitioners.

- Analysis Validation: Evaluates whether the interpreted data accurately reflects its true meaning and context, ensuring the software presents a valid representation of the underlying evidence.

How does the rapid evolution of technology impact forensic validation? The digital forensics field is evolving at an unprecedented pace due to advancements in cloud storage, AI, and mobile devices, with around 90% of all crimes now involving digital footprints [2] [3]. This demands continuous validation of tools and methods [1] [4]. Forensic tools are frequently updated, and without proper re-validation, they may introduce errors, omit critical data, or fail to handle new types of evidence from sources like IoT devices or encrypted applications [1] [2].

What are the core principles guiding forensic validation? The core principles are [1] [5]:

- Reproducibility: Results must be repeatable by other qualified professionals using the same method.

- Transparency: All procedures, software versions, logs, and chain-of-custody records must be thoroughly documented.

- Error Rate Awareness: The known error rates of forensic methods should be understood and disclosed.

- Peer Review: Validation processes should be reviewed by the broader forensic community to ensure scrutiny.

- Continuous Validation: Tools and methods must be frequently revalidated to keep pace with technological change.

Troubleshooting Guides

Issue 1: Inconsistent Results Between Forensic Tools

Problem: Two different forensic tools extracting data from the same source (e.g., a mobile phone) yield different results, casting doubt on the evidence's reliability [1].

Solution:

- Cross-Validation: Systematically compare the outputs across multiple, validated forensic tools to identify and investigate inconsistencies [1].

- Use Known Datasets: Test the tools against a "ground truth" dataset with known, verified content to check their parsing and extraction capabilities [1].

- Review Tool Logs: Scrutinize the logs and reports generated by each tool. Transparent and auditable logs are essential for understanding the tool's actions and identifying potential points of failure [1].

- Consult Testing Bodies: Refer to test findings from organizations like the National Institute of Standards and Technology (NIST) Computer Forensics Tool Testing (CFTT) program, which develops rigorous methodologies for tool testing [5].
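
The cross-validation and known-dataset steps above can be sketched as a set comparison. This is a minimal illustration, not any vendor's export format: the `Artifact` record, its fields, and the sample values are hypothetical, and it assumes both tools' outputs have already been normalized to the same fields.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Artifact:
    """One extracted item, reduced to fields both tools report (hypothetical schema)."""
    kind: str          # e.g. "sms", "image"
    timestamp: str     # ISO 8601 string, assumed normalized to UTC
    content_hash: str  # hash of the item's content


def cross_validate(tool_a, tool_b, ground_truth):
    """Compare two tools' outputs with each other and with the known content."""
    a, b, truth = set(tool_a), set(tool_b), set(ground_truth)
    return {
        "only_in_a": a - b,          # reported by tool A but not tool B
        "only_in_b": b - a,          # reported by tool B but not tool A
        "missed_by_a": truth - a,    # known items tool A failed to report
        "missed_by_b": truth - b,    # known items tool B failed to report
        "unexpected_in_a": a - truth,  # items tool A reported that are not in the dataset
        "unexpected_in_b": b - truth,  # items tool B reported that are not in the dataset
    }


# Illustrative ground truth and tool outputs (all values invented for the demo).
truth = [
    Artifact("sms", "2025-01-01T10:00:00Z", "aa11"),
    Artifact("sms", "2025-01-01T10:05:00Z", "bb22"),
    Artifact("image", "2025-01-01T10:07:00Z", "cc33"),
]
tool_a_output = truth[:2]                                              # misses the image
tool_b_output = truth + [Artifact("sms", "2025-01-01T10:05:00Z", "ffff")]  # one extra record

report = cross_validate(tool_a_output, tool_b_output, truth)
for name, items in report.items():
    print(f"{name}: {len(items)}")
```

Any non-empty bucket is a prompt for investigation rather than a verdict: a difference may come from a parsing error in one tool or from a normalization mismatch in the comparison itself.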

Issue 2: Validating Tools in the Age of Artificial Intelligence (AI)

Problem: AI and Large Language Models (LLMs) in forensic tools can produce "black box" results that are difficult for an expert to explain or validate, challenging the principle of transparency [1] [4].

Solution:

- Do Not Blindly Trust: Treat AI-generated findings as leads, not conclusive evidence. Experts must independently verify automated results before relying on them [1].

- Ground-Truth Verification: Use AI tools that ground their outputs in actual case artifacts. For example, an offline AI assistant like BelkaGPT processes only case-specific data, allowing an examiner to trace an AI-generated insight back to the original source evidence (e.g., a specific SMS or email) [4].

- Rigorous Interpretation: Validate and interpret AI-generated findings with the same rigor as traditional methods. This includes using the core principles of reproducibility and peer review to assess the AI's performance on your specific data [1].

Issue 3: Adhering to Standards and Ensuring Legal Admissibility

Problem: Ensuring that the validation process itself meets established legal and scientific standards to prevent evidence from being challenged or excluded in court.

Solution:

- Follow a Methodological Approach: Adopt a structured validation process that covers planning, execution, and documentation so that the work is complete and repeatable [5]. The process should include requirements analysis, unit testing, integration testing, system testing, and validation testing against legal standards [5].

- Leverage Established Frameworks: Utilize guidelines and best practices from authoritative bodies such as SWGDE (Scientific Working Group on Digital Evidence) and the NIST CFTT program [6] [5].

- Implement Comprehensive Documentation: Maintain detailed documentation of the entire validation process, including the test plan, test cases, procedures, and results. This provides transparency and facilitates auditing [5]. Reports should disclose fundamental principles, methodology, limitations, and areas of scientific controversy to meet calls for increased transparency [7].

Experimental Protocols and Workflows

Protocol 1: Core Digital Forensics Tool Validation

This methodology is based on the NIST CFTT framework and general forensic validation principles [5].

Objective: To verify that a digital forensics tool (e.g., Cellebrite UFED, Magnet AXIOM) accurately acquires, extracts, and reports data from a digital source.

Materials:

- Device or forensic image for testing (e.g., a smartphone with known data).

- The forensic tool to be validated.

- A second, previously validated tool for cross-comparison.

- Hashing utility (e.g., within ProDiscover or FTK Imager).

- Write-blocking hardware.

Procedure:

- Preparation: Create a controlled test environment. Using a write-blocker, create a forensic image (e.g., .dd or .E01 file) of the source device.

- Integrity Verification: Generate a cryptographic hash (e.g., SHA-256) of the source evidence and the acquired image. The hashes must match to prove data integrity [1] [8].

- Tool Execution: Process the forensic image using the tool under test. Execute its key functions, such as file system parsing, data carving, and keyword searching.

- Output Analysis: Document all findings from the tool. Pay close attention to any errors, omissions, or unexplained anomalies in the report.

- Cross-Validation: Process the same forensic image using a second, validated tool. Compare the outputs from both tools for consistency.

- Documentation: Record every step, tool version, configuration, and result. This log is critical for transparency and reproducibility [1].
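
The integrity-verification step in this procedure can be sketched with Python's standard `hashlib` module. The file names and the `verify_image` helper are illustrative assumptions; the demo uses small stand-in files rather than real evidence.

```python
import hashlib
import tempfile
from pathlib import Path


def sha256_of_file(path, chunk_size=1024 * 1024):
    """Hash a file in chunks so large images do not need to fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_image(source_path, image_path):
    """Return both hashes and whether they match (hypothetical helper)."""
    source_hash = sha256_of_file(source_path)
    image_hash = sha256_of_file(image_path)
    return {
        "source_sha256": source_hash,
        "image_sha256": image_hash,
        "match": source_hash == image_hash,
    }


# Demo with stand-in files; in practice the source is read through a write-blocker.
with tempfile.TemporaryDirectory() as tmp:
    source = Path(tmp) / "source.bin"
    image = Path(tmp) / "image.dd"
    altered = Path(tmp) / "altered.dd"
    source.write_bytes(b"known test data" * 1000)
    image.write_bytes(source.read_bytes())             # faithful copy
    altered.write_bytes(source.read_bytes() + b"\x00")  # one extra byte
    good = verify_image(source, image)
    bad = verify_image(source, altered)

print("faithful copy matches:", good["match"])
print("altered copy matches:", bad["match"])
```

This direct comparison applies to raw images such as .dd files. Container formats such as .E01 wrap the data with metadata, so the container file's own hash is not expected to equal the source hash; in that case the comparison is made against the hash of the data stream stored in the container.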

Protocol 2: Image and Video Authentication Examination

This protocol is derived from SWGDE's Best Practices for Image Authentication [6].

Objective: To determine if a questioned image or video is an accurate representation of the original data or if it has been manipulated.

Materials:

- The questioned image or video file.

- Forensic multimedia analysis tools (e.g., Amped Authenticate, Adobe Photoshop).

- Metadata extraction tools (e.g., ExifTool).

- Hashing algorithm utility.

Procedure:

- Evidence Integrity: Verify the integrity of the submitted file by comparing its hash value with any available original hash [8].

- Metadata Analysis: Extract and analyze all embedded metadata (EXIF, etc.) for inconsistencies, such as mismatched dates or editing software tags [8].

- Error Level Analysis (ELA): Perform ELA, which recompresses the image and highlights regions whose compression error level differs from their surroundings, a possible sign of alteration.

- Clone Detection: Use specialized algorithms to detect regions of an image that may have been copied and pasted (compositing) [6].

- Computer-Generated Imagery (CGI) Detection: Examine the image for tell-tale signs of CGI, such as unrealistic skin textures, inconsistencies in lighting and shadows, or anatomical inaccuracies [6].

- Format-Specific Artifacts: Look for artifacts specific to the file format and compression that may indicate tampering.

The Scientist's Toolkit: Essential Research Reagents & Materials

The following table details key resources and their functions in forensic validation research.

| Research Reagent / Material | Function in Forensic Validation |
|---|---|
| NIST CFTT Framework [5] | Provides standardized methodologies, test claims, and case categories for the objective testing of computer forensic tools. |
| SWGDE Guidelines [6] | Offers published best practices, standards, and technical notes for digital and multimedia forensics, such as image authentication. |
| Forensic Software Suites (e.g., Cellebrite, Magnet AXIOM, Belkasoft X) [1] [4] | The primary tools under test; used for data acquisition, parsing, and analysis from various digital sources. |
| Validated Hash Algorithms (e.g., SHA-256, MD5) [1] [8] | Creates a unique digital fingerprint for data to verify evidence integrity before and after examination. |
| Known Test Datasets & Images [1] [5] | Serves as "ground truth" evidence with verified content to test a tool's accuracy and performance. |
| Cross-Validation Tools [1] | A second, independently validated tool used to compare results and identify inconsistencies in the primary tool's output. |

The table below summarizes key quantitative requirements and metrics relevant to forensic validation and related digital evidence handling.

| Metric / Requirement | Standard / Threshold | Applicable Context |
|---|---|---|
| WCAG Text Contrast (Minimum) [9] | 4.5:1 (normal text), 3:1 (large text) | Accessibility of forensic software interfaces and generated reports. |
| WCAG Non-text Contrast (Minimum) [9] | 3:1 | Contrast for user interface components and graphical objects in software. |
| Average Data on a Smartphone [3] | >60,000 messages, >32,000 images, >1,000 videos | Illustrates the data volume and complexity faced in modern mobile forensics. |
| Forensic Result Reproducibility [5] | Must produce same results on same equipment (repeatable) and similar results on different systems (reproducible). | Core principle for scientific credibility and legal admissibility. |

Troubleshooting Guides

Guide 1: Troubleshooting Common Validation Gaps

Problem: A forensic tool update has altered how it parses a specific application's database, potentially creating inaccurate evidence.

| Symptom | Potential Cause | Diagnostic Action | Solution |
|---|---|---|---|
| Tool output differs from a known dataset. | Tool algorithm change; Data corruption. | Run tool against a control set of known data; Calculate hash values for integrity [1]. | Revert to a validated tool version; Use a different tool for cross-validation [1]. |
| An expert cannot explain the methodology behind a tool's output. | Over-reliance on "black box" automated tools, especially AI-based ones [10]. | Require the expert to document the tool's function and their own validation steps. | Ensure the expert's testimony reflects a reliable application of the methodology to the facts [11]. |
| Evidence is excluded due to unreliable application of method. | Failure to demonstrate the "good grounds" for the expert's opinion [12]. | Pre-trial Daubert hearing to review the expert's basis and application. | The proponent must show the testimony is based on sufficient facts/data per Rule 702 [11]. |

Guide 2: Troubleshooting Daubert and Rule 702 Challenges

Problem: A motion to exclude your digital forensic expert testimony has been filed under Daubert/Rule 702.

| Symptom | Potential Cause | Diagnostic Action | Solution |
|---|---|---|---|
| Court questions if the method is "product of reliable principles." | Use of a novel or non-peer-reviewed technique. | Identify published standards, peer-reviewed literature, or general acceptance for the method. | Cite the tool's forensic validation studies and its widespread use in the field [1]. |
| Opposing counsel argues the expert's opinion is incorrect. | Conflating the questions of admissibility and correctness [11]. | Distinguish the reliability of the method from the accuracy of the conclusion. | Argue that the "evidentiary requirement of reliability is lower than the merits standard of correctness" [11]. |
| Court failed to provide a rationale for admitting expert testimony. | Inadequate record for appellate review [12]. | Ensure all admissibility decisions and the reasoning behind them are documented. | Create a clear record showing the court fulfilled its gatekeeping role [12]. |

Frequently Asked Questions (FAQs)

On Validation and Error

Q1: What is the core purpose of forensic validation in a legal context? Forensic validation ensures that the tools and methods used to analyze evidence are accurate, reliable, and legally admissible. It acts as a fundamental safeguard against error and bias, helping to establish scientific credibility and gain acceptance under legal standards like Daubert [1].

Q2: What are the key components of a robust validation process? A robust validation process includes three key components [1]:

- Tool Validation: Confirming that the forensic software or hardware performs as intended without altering the source data.

- Method Validation: Verifying that the analytical procedures produce consistent outcomes across different cases and practitioners.

- Analysis Validation: Ensuring that the interpreted data accurately reflects its true meaning and context.

Q3: What is a real-world example of an operational error due to inadequate validation? In Florida v. Casey Anthony, the prosecution's digital forensic expert initially testified that 84 searches for "chloroform" were made on a computer. Through defense-led validation, it was shown the forensic software had grossly overstated this number; only a single search had occurred. This highlights how tool error can dramatically alter a case's narrative [1].

On Legal Admissibility

Q4: What was the significance of the 2023 amendment to Federal Rule of Evidence 702? The 2023 amendment clarified and emphasized two key points [11]:

- The proponent of expert testimony must prove its admissibility by a preponderance of the evidence (the "more likely than not" standard).

- The expert's opinion must reflect a reliable application of principles and methods to the case's facts. This was a textual change to reinforce that experts must "stay within the bounds" of what their basis and methodology can support.

Q5: How does the recent EcoFactor v. Google decision impact digital forensic experts? The May 2025 Federal Circuit decision in EcoFactor tightens the standard for expert testimony, particularly on the sufficiency of underlying data. The court ordered a new trial because the expert's opinion on royalty rates was contrary to the plain language of the license agreements he relied on. This signals that courts will more strictly exclude testimony not grounded in sufficient facts and data [12].

Q6: What is the difference between a question of admissibility and a question of weight? This is a critical distinction [11]:

- Admissibility: A question for the judge. Is the expert's testimony reliable enough to be presented to the jury? This is governed by Rule 702 and Daubert.

- Weight: A question for the jury. How much credibility should the jury give to the expert's testimony? Attacks on the expert's conclusions are typically matters of weight, but only after the testimony has been deemed admissible.

Experimental Protocols for Validation

Protocol 1: Validating a Digital Forensics Tool After an Update

Objective: To confirm that a forensic tool (e.g., Cellebrite UFED, Magnet AXIOM) accurately extracts and parses data after a software update.

Methodology:

- Create a Control Dataset: Using clean devices, generate a known set of artifacts (e.g., SMS messages, emails, app data) and document them thoroughly [1].

- Acquire Evidence: Use the updated tool to extract data from the control devices. Use hash values (e.g., SHA-256) to verify the integrity of the acquired image [1].

- Analyze and Compare: Run the tool's analysis on the extracted image. Compare the output against the known control dataset.

- Cross-Validate: Use a different, previously validated tool to analyze the same control dataset [1].

- Document Results: Record any discrepancies, tool version, and all steps taken. This documentation is crucial for transparency and court testimony [1].
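
The compare-and-document steps of this protocol can be sketched as a small regression check. This is an illustration under stated assumptions: the artifact IDs, field names, tool name, and version string are all hypothetical, and the tool's output is assumed to have been exported into plain dictionaries.

```python
import json
from datetime import datetime, timezone


def compare_to_control(control, extracted):
    """Both arguments map an artifact ID to a dict of its fields."""
    shared = set(control) & set(extracted)
    return {
        "missing": sorted(set(control) - set(extracted)),     # known items not extracted
        "unexpected": sorted(set(extracted) - set(control)),  # items not in the control set
        "altered": sorted(k for k in shared if control[k] != extracted[k]),
    }


def validation_record(tool_name, tool_version, diff):
    """Bundle the result with the tool version and a UTC timestamp for the audit trail."""
    return {
        "tool": tool_name,
        "version": tool_version,
        "checked_at": datetime.now(timezone.utc).isoformat(),
        "discrepancies": diff,
        "passed": not any(diff.values()),
    }


# Hypothetical control dataset, documented when the test device was populated.
control = {
    "sms-001": {"sender": "+15550100", "body": "test message one"},
    "sms-002": {"sender": "+15550101", "body": "test message two"},
    "mail-001": {"sender": "a@example.com", "body": "control email"},
}
# Hypothetical output of the updated tool: one item dropped, one body truncated.
extracted = {
    "sms-001": {"sender": "+15550100", "body": "test message one"},
    "sms-002": {"sender": "+15550101", "body": "test message tw"},
}

diff = compare_to_control(control, extracted)
record = validation_record("ExampleTool", "0.0.0-demo", diff)
print(json.dumps(record, indent=2))
```

Storing one such record per tool version gives a simple history showing when a parsing regression first appeared.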

Protocol 2: Establishing a Reliable Methodology for Expert Testimony

Objective: To build a methodology for expert analysis that meets the admissibility standards of Rule 702 and Daubert.

Methodology:

- Define the Scope: Clearly outline the boundaries of the examination based on the case facts.

- Select Tools and Methods: Choose tools and methods that are generally accepted in the field or have been peer-reviewed. Justify this selection.

- Apply the Method: Execute the analysis, ensuring every step is documented and reproducible by another qualified professional [1].

- Interpret Findings: Form an opinion based strictly on the output of the analysis, ensuring it "stays within the bounds" of what the methodology can support [11].

- Prepare for Testimony: Be ready to explain the "good grounds" for the opinion, including the tool's validation, the method's reliability, and the steps taken to ensure a reliable application to the facts [12].

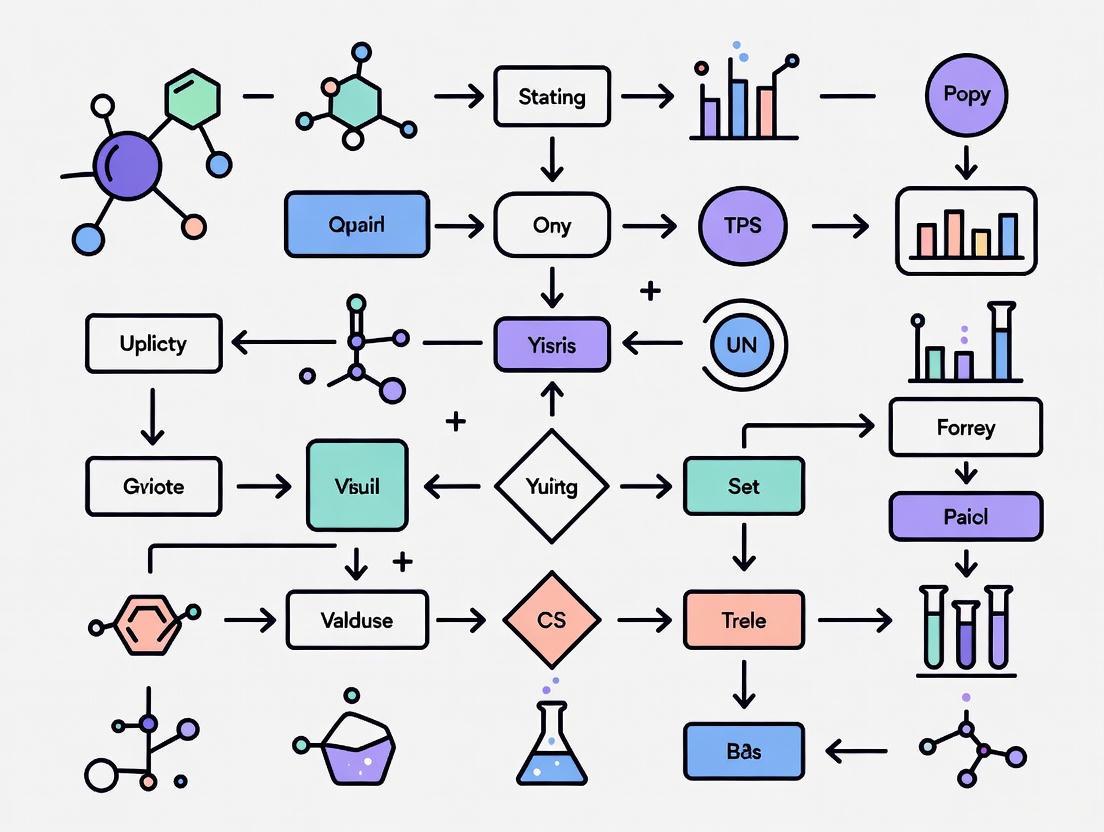

Workflow Visualization

Digital Forensics Admissibility Workflow

The Scientist's Toolkit: Essential Research Reagents for Digital Forensics

This table details key solutions and materials essential for conducting validated digital forensic research and analysis.

| Item | Function & Purpose |
|---|---|
| Validated Forensic Suites (e.g., Cellebrite, Magnet AXIOM, Belkasoft) | Core software for acquiring, analyzing, and reporting on digital evidence. Regular validation ensures their accuracy and reliability in court [1] [4]. |
| Hash Value Algorithms (e.g., SHA-256, MD5) | Cryptographic functions used to verify the integrity of digital evidence, proving it was not altered during the acquisition or analysis process [1]. |
| Control Datasets | Known sets of digital artifacts used to test and validate the output of forensic tools, helping to identify errors after tool updates [1]. |
| Cross-Validation Tools | A second, independent forensic tool used to verify the results of the primary tool, identifying potential tool-specific errors or omissions [1]. |
| AI-Assisted Analysis Tools (e.g., BelkaGPT) | Offline AI tools that help analyze massive volumes of text-based evidence (chats, emails) for patterns and topics, while maintaining evidence integrity and privacy [4]. |
| Comprehensive Logging Systems | Meticulous documentation of all procedures, tool versions, and analyst actions. This ensures transparency, reproducibility, and provides a clear audit trail [1]. |

Your Technical Support Center

This guide provides troubleshooting support for researchers and scientists validating digital forensics tools in a landscape being reshaped by AI, complex cloud environments, and advanced encryption.

Troubleshooting Guides

Issue 1: Inability to Access or Analyze Cloud Data for an Investigation

Researchers often face hurdles when forensic data resides in complex, distributed cloud environments [10] [13].

Troubleshooting Steps:

- Identify Data Jurisdiction: First, determine the cloud service provider (CSP) and the geographic location of the data servers. Cross-border data laws can severely restrict access [10] [13].

- Formalize Legal Request: Collaborate with your legal department to submit a formal data request to the CSP, ensuring compliance with relevant regulations like GDPR or the U.S. CLOUD Act [10] [13].

- Leverage Cloud APIs: Use forensic tools that can interact with cloud APIs (e.g., from AWS, Azure, GCP) to extract log and metadata, rather than relying on physical disk imaging [13].

- Preserve Ephemeral Data: Prioritize collecting volatile data from temporary resources like containers, as they can be destroyed quickly based on automated policies [13].

Validation Protocol: To validate a new cloud forensic tool, create a controlled test environment within a major cloud platform. Populate it with sample data fragments across different services (e.g., object storage, virtual machines) and run the tool. A valid tool should successfully identify and reassemble these data fragments from different locations via API calls, providing a coherent evidence timeline.
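
One way to score such a test run is to check that every planted fragment was recovered exactly once and that the reported timeline is in chronological order. The sketch below assumes the tool's findings have been exported as simple records; the fragment IDs, service names, and `check_timeline` helper are hypothetical.

```python
from datetime import datetime

# Fragments deliberately planted in the test environment (illustrative values).
planted = [
    {"id": "frag-1", "service": "object-storage", "time": "2025-03-01T09:00:00+00:00"},
    {"id": "frag-2", "service": "vm-disk", "time": "2025-03-01T09:05:00+00:00"},
    {"id": "frag-3", "service": "audit-log", "time": "2025-03-01T09:10:00+00:00"},
]

# Timeline reported by the tool under test (illustrative: frag-2 was not found).
reported = [
    {"id": "frag-1", "service": "object-storage", "time": "2025-03-01T09:00:00+00:00"},
    {"id": "frag-3", "service": "audit-log", "time": "2025-03-01T09:10:00+00:00"},
]


def check_timeline(planted, reported):
    """Check completeness, duplication, and ordering of a reported timeline."""
    planted_ids = {f["id"] for f in planted}
    reported_ids = [e["id"] for e in reported]
    times = [datetime.fromisoformat(e["time"]) for e in reported]
    return {
        "missing": sorted(planted_ids - set(reported_ids)),
        "duplicates": sorted({i for i in reported_ids if reported_ids.count(i) > 1}),
        "chronological": all(a <= b for a, b in zip(times, times[1:])),
    }


result = check_timeline(planted, reported)
print(result)
```

Parsing timestamps into timezone-aware `datetime` objects, rather than comparing strings, avoids ordering errors when services log in different time zones.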

Issue 2: AI Tool Fails to Properly Identify Deepfake Media in Clinical Trial Data

The proliferation of AI-generated synthetic media is a major challenge, and analysis tools must be constantly updated [10].

Troubleshooting Steps:

- Verify Training Data: Confirm that the AI model powering your tool was recently trained on a diverse and current dataset of deepfakes. Outdated models cannot detect new generation techniques [10].

- Check for Algorithm Transparency: Be aware that "black box" AI models can undermine the credibility of findings in a scientific or legal context. Seek tools that provide some level of explainability for their decisions [10].

- Cross-Reference with Metadata: Do not rely solely on the AI's output. Perform a manual check of the file's digital metadata (e.g., EXIF data) for inconsistencies in creation times and device signatures.

- Update or Supplement Tooling: If the tool consistently fails, it may be necessary to procure a more advanced tool or use specialized, validated services for deepfake detection, which have achieved accuracy rates up to 92% in controlled tests [10].

Validation Protocol: To test a deepfake detection tool, assemble a verified dataset containing both authentic and AI-generated images/videos. The dataset should include samples generated by the latest publicly available AI models. Run the tool against this dataset and measure its accuracy, precision, and recall. A robust tool must perform with high accuracy (e.g., >90%) to be considered valid for research integrity purposes [10].
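
The three metrics named in this protocol can be computed directly from the tool's verdicts on the labeled dataset. The sketch below uses invented labels and predictions purely to show the arithmetic; `True` is assumed to mean "synthetic".

```python
def detection_metrics(labels, predictions):
    """labels and predictions are equal-length sequences of booleans (True = synthetic)."""
    pairs = list(zip(labels, predictions))
    tp = sum(1 for y, p in pairs if y and p)          # synthetic, flagged
    tn = sum(1 for y, p in pairs if not y and not p)  # authentic, not flagged
    fp = sum(1 for y, p in pairs if not y and p)      # authentic, wrongly flagged
    fn = sum(1 for y, p in pairs if y and not p)      # synthetic, missed
    total = tp + tn + fp + fn
    return {
        "accuracy": (tp + tn) / total if total else 0.0,
        "precision": tp / (tp + fp) if (tp + fp) else 0.0,
        "recall": tp / (tp + fn) if (tp + fn) else 0.0,
    }


# Illustrative ground truth (4 synthetic, 6 authentic) and tool verdicts.
labels = [True, True, True, True, False, False, False, False, False, False]
predictions = [True, True, True, False, False, False, False, False, False, True]

metrics = detection_metrics(labels, predictions)
print(metrics)
```

Reporting precision and recall alongside accuracy matters because accuracy alone can look high on an imbalanced dataset even when most synthetic samples are missed.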

Issue 3: Encrypted Data Obstructs Critical Forensic Timeline

Strong encryption can render data unrecoverable without the keys, halting an investigation [14] [15] [16].

Troubleshooting Steps:

- Attempt Key Recovery: Before technical attacks, always investigate if the password or key is available from the user, involved parties, or associated password managers [15].

- Assess Encryption Type: Identify the encryption technology used. Note that techniques like Honey Encryption can deceive brute-force attacks by producing plausible-looking but incorrect data, confusing the investigator [14].

- Evaluate Computational Feasibility: For strong, modern encryption (e.g., AES-256), exhaustive key search is computationally infeasible regardless of the software used, so any realistic brute-force effort must target the password or passphrase rather than the key itself [15].

- Consider Alternative Evidence: If the data cannot be decrypted, pivot to analyzing unencrypted metadata, cloud access logs, or communication records from the same individual to build an alternative timeline [13].
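
The feasibility assessment above comes down to simple arithmetic. The sketch below is a rough estimate under loudly stated assumptions: both guess rates are invented for illustration and do not describe any real hardware or product.

```python
SECONDS_PER_DAY = 24 * 3600
SECONDS_PER_YEAR = 365.25 * SECONDS_PER_DAY


def expected_seconds(keyspace_size, guesses_per_second):
    """On average, an exhaustive search succeeds after trying half the keyspace."""
    return keyspace_size / 2 / guesses_per_second


# Assumption: an attacker testing 10^12 AES keys per second.
ASSUMED_KEY_RATE = 1e12
aes256_years = expected_seconds(2 ** 256, ASSUMED_KEY_RATE) / SECONDS_PER_YEAR

# Assumption: an 8-character lowercase password behind a slow key-derivation
# function that limits the attacker to 10^4 guesses per second.
ASSUMED_PASSWORD_RATE = 1e4
password_days = expected_seconds(26 ** 8, ASSUMED_PASSWORD_RATE) / SECONDS_PER_DAY

print(f"AES-256 key search: about {aes256_years:.1e} years")
print(f"8-char lowercase password: about {password_days:.0f} days")
```

Under these assumptions the key search is hopeless while the password search is merely slow, which is why recovering or guessing the passphrase comes first in the steps above.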

Validation Protocol: To validate a tool's capability against encrypted data, create encrypted containers or disks using different algorithms (e.g., AES-256, Blowfish) and key strengths. A tool's validity should not be measured solely on its ability to crack encryption (which is often impossible), but on its ability to correctly identify the encryption in use, safely mount encrypted drives for imaging when keys are available, and integrate with other investigative workflows.

Frequently Asked Questions (FAQs)

Q1: How can we trust the results from an AI-based forensics tool when the technology is changing so fast? Trust is built through continuous validation. AI models, especially "black box" systems, can lack transparency [10]. Establish a routine where you test new AI tools against a benchmark dataset with known outcomes before applying them to live research data. Focus on tools that provide details on their training data and algorithms.

Q2: Our data is spread across multiple cloud providers (multi-cloud). What is the biggest forensic challenge this creates? The primary challenge is fragmentation and complexity [17] [13]. Data is distributed across different platforms with varying security controls, logging formats, and data access APIs. This makes it difficult to get a unified view of evidence. Furthermore, legal jurisdictions for data stored in different geographic regions can complicate and delay evidence collection [10] [13].

Q3: With the rise of quantum computing, is our current encrypted data safe? There is a growing concern about the "harvest now, decrypt later" threat, where adversaries collect encrypted data today to decrypt it later when quantum computers become powerful enough [18]. This is driving the transition to post-quantum cryptography (PQC). NIST has released new PQC standards, and organizations are now beginning to inventory and plan upgrades for their cryptographic systems [18].

Q4: What is the most common misconception about digital evidence? A common misconception is that anything stored on a digital device can always be retrieved [15]. In reality, overwritten or physically damaged data can be permanently lost. Furthermore, opening files directly on a suspect device can change file metadata (like "last accessed" times), potentially tampering with evidence and rendering it inadmissible. Only trained investigators with proper tools should handle original evidence [15].

Quantitative Data on the Changing Landscape

Table 1: The State of AI Adoption and Impact (2025)

This table summarizes key data on how organizations are using AI, highlighting both its broadening use and the challenges in scaling it effectively [19].

| Metric | Value | Implication for Researchers |
|---|---|---|
| Organizations using AI | 88% | AI tools are becoming standard, making their validation critical. |
| Organizations scaling AI | ~33% | Most are still in early phases, so best practices are still emerging. |
| Experiencing EBIT impact | 39% | Measuring tangible value from AI remains a challenge for many. |
| AI-driven innovation | 64% | The primary benefit is often qualitative improvement in capabilities. |
| Expecting workforce decrease | 32% | AI is expected to impact staffing models, potentially automating some tasks. |

Table 2: Emerging Encryption Technologies & Trends

This table outlines advanced encryption methods that are redefining what is possible to secure and, therefore, to forensically examine [14] [16] [18].

| Technology | Core Principle | Research & Forensic Consideration |
|---|---|---|
| Homomorphic Encryption | Allows computation on encrypted data without decrypting it first [14]. | Could allow analysis of private genomic/patient data without violating privacy, but also prevents direct forensic inspection of the underlying data. |
| Honey Encryption | Deceives attackers by returning plausible-looking fake data when wrong keys are used [14]. | Could misdirect an investigation by providing false leads and wasting computational resources. |
| Multi-Party Computation (MPC) | Splits data into parts for separate servers; no single server has the complete dataset [14]. | Complicates evidence gathering as data is inherently fragmented and requires cooperation from multiple entities. |
| Post-Quantum Crypto (PQC) | Algorithms designed to be secure against attacks from both classical and quantum computers [18]. | Preparing for a future where current encryption standards may be broken, ensuring long-term data confidentiality. |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Digital Forensics "Reagents" for Tool Validation

This table details key materials and tools required for rigorous experimentation and validation of digital forensics methodologies.

| Item | Function in Validation |
|---|---|
| Forensic Disk Images | A pristine, bit-for-bit copy of a storage device. Used as a standardized, repeatable baseline to test and compare the data extraction and analysis capabilities of different tools. |
| Verified Data Set (e.g., for AI/Deepfakes) | A collection of digital files (images, video, documents) where the ground truth (e.g., authentic vs. synthetic) is known. Essential for benchmarking the accuracy and reliability of AI-based analysis tools. |
| Cloud API Simulator | A controlled environment that mimics the APIs of major cloud providers (AWS, Azure, GCP). Allows for safe, legal, and repeatable testing of cloud forensic tools without interacting with live, production systems. |
| Encrypted Test Vectors | A set of files and containers encrypted with known algorithms (AES, RSA, etc.) and passwords. Critical for validating a tool's ability to handle, identify, and support the analysis of encrypted data. |
| Log Generator | Software that produces standardized, synthetic log data simulating various application and security events. Used to test the performance and parsing accuracy of tools that perform timeline reconstruction and anomaly detection. |

Workflow: Tool Validation in a Changing Landscape

The following diagram maps the logical workflow for validating a digital forensics tool against the challenges posed by AI, cloud, and encryption. This process emphasizes adaptability and continuous testing.

In the rapidly evolving field of digital forensics, the validation of tools and methodologies is paramount for ensuring the reliability of evidence in criminal investigations and legal proceedings. This technical support center resource analyzes documented failures in digital evidence validation through a series of case studies, extracting critical troubleshooting guidance for researchers and forensic professionals. The content is structured to directly address common experimental and operational challenges, providing actionable protocols to strengthen validation frameworks against technological obsolescence and methodological flaws.

Case Analysis: Documented Failures in Digital Evidence

The following table summarizes key real-world instances where digital evidence validation failures compromised judicial outcomes.

| Case | Nature of Digital Evidence | Validation Failure | Consequence | Quantitative Impact |

|---|---|---|---|---|

| David Camm [20] | Phone call logs & email metadata | Flawed timeline analysis; misinterpreted digital timestamps | Wrongful conviction; two trials over a decade | Multiple erroneous timestamps led to wrongful incarceration for years [20] |

| Amanda Knox [20] | Phone records & internet browsing history | Forensic tools failed to correctly interpret phone records; data misread | Wrongful implication and conviction | |

| Casey Anthony [1] | Computer search history | Forensic software (tool validation error) grossly overstated search term frequency | Misleading evidence presented to jury | Initial claim of 84 searches for "chloroform"; validated result: 1 search [1] |

| FBI Audio Evidence [20] | Audio recording for voice analysis | Flawed forensic techniques; unreliable voice matching on poor-quality audio | Risk of wrongful conviction; suspect acquitted | Reliance on unvalidated audio analysis technique [20] |

| "Phantom" IP Address [20] | IP address logs for cybercrime location | Relying solely on IP logs without validating for spoofing | Wrongful arrest | IP address was spoofed, not from accused's device [20] |

Troubleshooting FAQs: Addressing Common Validation Challenges

1. Our forensic tool extracted data, but the output seems inconsistent with the device's activity log. How do we troubleshoot this?

This indicates a potential tool validation failure. The core issue is that the forensic tool may not have accurately parsed the data structure from the specific device or operating system version [1].

- Step 1: Verify Tool Version and Scope. Confirm that your tool version is certified for the evidence source (e.g., specific smartphone model and OS version). Check the vendor's release notes for known issues or parsing limitations [1].

- Step 2: Cross-Validate with a Secondary Tool. Use a different forensic tool or a custom script to extract and parse the same data set. Compare the outputs for discrepancies [1].

- Step 3: Examine Raw Hexadecimal Data. Use a hex editor to view the raw data from the evidence image. Manually verify the data structure and compare it against the tool's parsing results to identify where the misinterpretation occurred [1].

- Solution: Implement a rigorous tool validation protocol before use on evidence, testing tools against known datasets with verified answers [1].
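The manual check in Step 3 can be sketched in a few lines of Python. This is a minimal illustration, not a replacement for a dedicated hex editor: the helper names `hex_dump` and `looks_like_sqlite` are hypothetical, and the SQLite check is only one example of confirming a file signature before trusting a tool's parsed view of the same bytes.

```python
# Minimal sketch: render raw bytes as offset / hex / ASCII lines and confirm
# a file signature by hand. Helper names are illustrative assumptions.

SQLITE_MAGIC = b"SQLite format 3\x00"  # 16-byte header of an SQLite database file


def hex_dump(data: bytes, width: int = 16) -> list[str]:
    """Return one formatted line per `width` bytes of input."""
    lines = []
    for offset in range(0, len(data), width):
        chunk = data[offset:offset + width]
        hex_part = " ".join(f"{b:02x}" for b in chunk)
        ascii_part = "".join(chr(b) if 32 <= b < 127 else "." for b in chunk)
        lines.append(f"{offset:08x}  {hex_part:<{width * 3 - 1}}  {ascii_part}")
    return lines


def looks_like_sqlite(header: bytes) -> bool:
    """True when the first 16 bytes match the SQLite file signature."""
    return header[:16] == SQLITE_MAGIC


if __name__ == "__main__":
    sample = SQLITE_MAGIC + bytes([0x10, 0x00, 0x01, 0x01])
    for line in hex_dump(sample):
        print(line)
    print("SQLite signature present:", looks_like_sqlite(sample))
```

Always run such checks against a working copy of the evidence image, never the original.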

2. How can we definitively determine if a key audio file has been edited or tampered with before we base our analysis on it?

This is a core function of audio authentication services. The process involves a methodological analysis to detect anomalies.

- Step 1: Check File Metadata and Hash Value. Analyze the file's header, container structure, and creation metadata for inconsistencies. Calculate a hash value upon receipt to ensure integrity during your examination [20].

- Step 2: Waveform and Spectral Analysis. Visually inspect the audio waveform for sudden silences, unnatural clipping, or abrupt transitions. Use spectral analysis to look for gaps in the frequency background that suggest splices [20].

- Step 3: Electrical Network Frequency (ENF) Analysis. Compare the embedded power grid frequency in the recording with a database of historical ENF data. Inconsistent ENF patterns can indicate editing or composite recording [20].

- Solution: Establish a standard operating procedure for audio authentication that includes these technical checks to validate the integrity of audio evidence before deep-content analysis [20].

3. Our investigation hinges on a file's metadata (e.g., creation timestamp), but the defense is challenging its reliability. How do we defend our interpretation?

This challenge attacks the analysis validation component. Your defense must demonstrate that your interpretation is accurate and accounts for variables.

- Step 1: Acknowledge and Explain Inherent Variables. Proactively address factors that affect metadata: time zone configurations, clock drift of the source device, system updates that alter timestamps, and user-driven changes [21].

- Step 2: Corroborate with Multiple Data Sources. Do not rely on a single metadata point. Corroborate the timeline using network logs, application artifacts, and metadata from other related files to build a consistent narrative [21].

- Step 3: Document Your Validation Methodology. Detail the steps taken to validate the metadata, including the tools used and their known error rates, and your reasoning for dismissing alternative explanations [1] [22].

- Solution: Build a multi-faceted timeline of events. The strength of your conclusion comes from the convergence of multiple validated data points, not from a single piece of metadata [21].
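The convergence idea in the steps above can be illustrated with a short Python sketch. It assumes each source's UTC offset has already been established and documented by the examiner. The source names, timestamps, and tolerance are hypothetical values chosen for illustration.

```python
from datetime import datetime, timedelta, timezone


def to_utc(local_iso: str, utc_offset_hours: float) -> datetime:
    """Convert a naive local timestamp plus a documented UTC offset to UTC."""
    naive = datetime.fromisoformat(local_iso)
    zone = timezone(timedelta(hours=utc_offset_hours))
    return naive.replace(tzinfo=zone).astimezone(timezone.utc)


def sources_converge(times: dict[str, datetime], tolerance: timedelta) -> bool:
    """True when every source falls within `tolerance` of every other source."""
    values = list(times.values())
    return max(values) - min(values) <= tolerance


# Hypothetical observations of the same event from three independent sources.
observations = {
    "file_metadata": to_utc("2025-03-01T14:02:10", -5),  # device set to UTC-5
    "router_log": to_utc("2025-03-01T19:02:41", 0),      # logged in UTC
    "app_database": to_utc("2025-03-01T20:01:58", 1),    # server in UTC+1
}

if __name__ == "__main__":
    for name, moment in observations.items():
        print(f"{name:>14}: {moment.isoformat()}")
    print("Converge within 2 minutes:",
          sources_converge(observations, timedelta(minutes=2)))
```

The tolerance should reflect the clock drift you can actually justify for the devices involved, and that justification belongs in the report.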

Experimental Protocol: Implementing a Core Validation Strategy

This protocol provides a detailed methodology for cross-tool validation, a critical experiment to ensure result reliability.

Objective: To validate the accuracy and completeness of data extracted from a digital evidence source by comparing the outputs of multiple forensic tools.

Principle: Forensic findings must be reproducible and not an artifact of a single tool's functionality or bug [1].

Materials & Reagents:

- Evidence Source: A forensically sound image (e.g., a .dd or .E01 file) of the device under investigation.

- Primary Forensic Tool: Your standard tool (e.g., Cellebrite UFED, Magnet AXIOM, MSAB XRY).

- Secondary Forensic Tool(s): At least one alternative tool from a different vendor or an open-source alternative (e.g., Autopsy).

- Hardware: A dedicated, forensically sterile examination workstation.

- Hashing Utility: A tool such as md5deep or HashMyFiles to verify data integrity.

Step-by-Step Methodology:

Evidence Integrity Verification:

- Before any processing, calculate the hash value of the evidence image (SHA-256 where possible; MD5 or SHA-1 only when that is the algorithm recorded at acquisition) and verify it matches the hash taken at the time of acquisition. Document this match [1].
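A minimal Python sketch of this integrity check follows. It uses only the standard library. The function names are illustrative, and the chunked read is an assumption made so that large images do not need to fit in memory.

```python
import hashlib
import hmac


def hash_file(path: str, algorithm: str = "sha256", chunk_size: int = 1 << 20) -> str:
    """Hash a file in fixed-size chunks and return the hex digest."""
    digest = hashlib.new(algorithm)
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_acquisition_hash(path: str, recorded_hex: str,
                             algorithm: str = "sha256") -> bool:
    """Compare a freshly computed digest with the value recorded at acquisition."""
    computed = hash_file(path, algorithm)
    # Normalize case and whitespace from the acquisition record before comparing.
    return hmac.compare_digest(computed, recorded_hex.strip().lower())
```

Usage would look like `matches_acquisition_hash("evidence.E01", recorded_value)`, where both the file name and the recorded value are placeholders for your own case data. Record the computed digest, the algorithm, and the time of the check alongside the result.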

Tool Configuration and Execution:

- Primary Tool Analysis: Process the evidence image through your primary tool using a standard parsing profile. Export a detailed report of key artifacts (e.g., call logs, messages, specific files).

- Secondary Tool Analysis: Process the same evidence image through the secondary tool(s), using a comparable parsing profile. Export a similar report.

Data Comparison and Analysis:

- Artifact Count: Compare the number of artifacts recovered for each category (e.g., SMS, photos, emails) between the tools. Note any significant discrepancies.

- Content Fidelity: For a sample of critical artifacts, perform a deep comparison. Check for consistency in content, timestamps, and associated metadata.

- Error Logging: Document any parsing errors or warnings reported by each tool.
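The artifact-count comparison above can be sketched as follows, assuming each tool's report has already been reduced to a mapping of category name to count. The function name, category names, and counts are hypothetical.

```python
def compare_artifact_counts(primary: dict[str, int],
                            secondary: dict[str, int],
                            tolerance: int = 0) -> list[tuple[str, int, int]]:
    """Return (category, primary_count, secondary_count) for each discrepancy.

    A category missing from one tool's report is treated as a count of zero,
    so categories recovered by only one tool are always flagged.
    """
    discrepancies = []
    for category in sorted(set(primary) | set(secondary)):
        a = primary.get(category, 0)
        b = secondary.get(category, 0)
        if abs(a - b) > tolerance:
            discrepancies.append((category, a, b))
    return discrepancies


if __name__ == "__main__":
    tool_a = {"sms": 120, "photos": 340, "emails": 58}
    tool_b = {"sms": 120, "photos": 337}
    for category, a, b in compare_artifact_counts(tool_a, tool_b):
        print(f"{category}: primary={a}, secondary={b}")
```

Matching counts do not establish matching content, which is why the content fidelity check on sampled artifacts remains a separate step.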

Interpretation and Reporting:

- Consistency: If tool outputs are consistent, this strengthens the validity of the findings.

- Inconsistency: If discrepancies are found, do not default to the primary tool. Investigate the root cause by checking raw data or using a third tool. Document the investigation and justify the final conclusion based on all available data [1].

Workflow Visualization: Digital Evidence Validation Framework

The following diagram illustrates the logical workflow for a comprehensive digital evidence validation process, integrating technology, methodology, and analysis checks.

This table details key "research reagent solutions" and their functions in the context of digital forensics validation.

| Tool / Material | Primary Function | Role in Validation |

|---|---|---|

| Forensic Write Blockers | Hardware/software to prevent evidence alteration during acquisition. | Ensures the integrity of the source evidence, which is the foundation of all subsequent validation [23]. |

| Hashing Algorithms (MD5, SHA-256) | Generate unique digital fingerprints for data. | Core to verifying evidence integrity throughout the investigative process; any change alters the hash [1] [23]. |

| Cross-Validation Software Suite | Multiple forensic tools from different vendors (e.g., Cellebrite, Magnet AXIOM). | Allows for comparative analysis to identify tool-specific errors or omissions, a key validation practice [1]. |

| Forensic Image Files (.E01, .dd) | Bit-for-bit copies of digital storage media. | Serve as the standardized, pristine input for all tool testing and validation experiments [23]. |

| Known Test Datasets | Curated datasets with pre-identified artifacts and known answers. | The "control group" for testing and validating forensic tools and methods against expected results [1]. |

| Hex Editors | Software to view and manipulate raw hexadecimal data of a file. | Enables manual verification of tool output by inspecting the raw data structure, bypassing tool interpretation [24]. |

| Standard Operating Procedure (SOP) Documents | Detailed, step-by-step protocols for forensic processes. | Ensures method validation by enforcing consistency, reproducibility, and adherence to best practices [22] [23]. |

Building a Dynamic Validation Framework: From Tool Testing to AI Interpretation

In digital forensics, validation is the fundamental process of testing and confirming that forensic techniques and tools yield accurate, reliable, and repeatable results. For researchers and professionals, establishing a rigorous validation protocol is critical for ensuring the scientific integrity and legal admissibility of digital evidence, especially given the rapid evolution of digital technologies and tools [1].

A comprehensive validation framework encompasses three distinct but interconnected components:

- Tool Validation: Ensuring forensic software or hardware performs as intended without altering source data.

- Method Validation: Confirming investigative procedures produce consistent outcomes across different cases and practitioners.

- Analysis Validation: Evaluating whether interpreted data accurately reflects its true meaning and context [1].

The V3 Validation Framework: Verification, Analytical Validation, and Clinical Validation

The V3 Framework, developed by the Digital Medicine Society (DiMe) and adapted for forensic contexts, provides a structured approach to building evidence that supports the reliability and relevance of digital measures and tools [25]. This holistic framework is particularly valuable for validating novel tools incorporating artificial intelligence and machine learning.

Core Components of the V3 Framework

Table 1: The Three Components of the V3 Validation Framework

| Component | Definition | Primary Focus | Key Question |

|---|---|---|---|

| Verification | Ensures digital technologies accurately capture and store raw data [25]. | Technical performance of data acquisition systems. | Does the tool correctly record and preserve the raw source data? |

| Analytical Validation | Assesses the precision and accuracy of algorithms that transform raw data into meaningful metrics [25]. | Data processing algorithms and their outputs. | Does the algorithm correctly and reliably process raw data into a meaningful output? |

| Clinical Validation | Confirms that digital measures accurately reflect the relevant biological or functional states for their intended context of use [25]. | Biological/functional relevance and real-world applicability. | Does the output accurately represent the real-world phenomenon it claims to measure? |

Essential Research Reagents and Tools

Table 2: Digital Forensics Tools and Their Functions in Validation Research

| Tool Name | Primary Function | Role in Validation |

|---|---|---|

| Autopsy | Digital forensics platform and graphical interface for comprehensive device analysis [26]. | Validates method reproducibility through timeline analysis, hash filtering, and keyword search capabilities. |

| Cellebrite UFED | Extracts and analyzes data from mobile devices, cloud services, and computers [26]. | Serves as a reference tool for cross-validation and tool output comparison. |

| Magnet AXIOM | Collects, analyzes, and reports evidence from multiple digital sources [26]. | Enables validation of analytical workflows across different data types and sources. |

| Bulk Extractor | Scans files, directories, or disk images to extract specific information without parsing file systems [26]. | Provides independent verification of data extraction completeness and accuracy. |

| FTK Imager | Creates forensic images of digital media while preserving original evidence integrity [26]. | Establishes baseline for tool verification by ensuring evidence integrity before analysis. |

| ExifTool | Reads, writes, and edits metadata in various file types [26]. | Validates metadata extraction and interpretation across different file formats. |

| X-Ways Forensics | Analyzes file systems, individual files, and disk images with support for multiple file systems [26]. | Enables cross-tool validation through its support for diverse file systems and hashing functions. |

Troubleshooting Guides and FAQs

Tool Validation Issues

Q: How do I handle inconsistent results between different forensic tools analyzing the same evidence?

A: Inconsistent tool outputs indicate a potential tool validation failure. Follow this protocol:

- Establish a baseline: Use a forensic imager like FTK Imager to create a verified bit-for-bit copy of the evidence [26].

- Calculate hash values (MD5, SHA-256) for the evidence before and after imaging to confirm data integrity [1].

- Run controlled tests with known datasets on both tools to identify parsing discrepancies.

- Document all variations including software versions, operating environments, and configuration settings [1].

- Escalate to vendor support with detailed documentation of the inconsistencies for investigation.

Q: What should I do when a tool update breaks existing validation?

A: Tool updates require revalidation:

- Maintain previous versions of tools alongside new versions during transition periods.

- Perform comparative testing using standardized test cases with both versions.

- Document version-specific behaviors and update validation protocols accordingly.

- Implement continuous validation practices where tools are regularly tested against benchmark datasets [1].

Method Validation Challenges

Q: How can I ensure my analytical methods remain valid when dealing with encrypted applications?

A: Encryption challenges require method adaptation:

- Document method limitations explicitly when encryption prevents complete data access.

- Focus on accessible artifacts such as metadata, cache files, and behavioral data.

- Use multiple complementary tools to extract and correlate available evidence.

- Validate against known scenarios where ground truth is established to verify method effectiveness on accessible data points.

- Peer review methods to ensure they meet current professional standards despite limitations [1].

Q: What is the proper response when quality control checks fail during method validation?

A: Failed QC checks require immediate action:

- Stop all analytical work using the method until the issue is resolved.

- Investigate root causes including reagent integrity, instrument calibration, analyst competency, and environmental factors.

- Implement corrective actions based on root cause analysis.

- Revalidate the method after corrections to demonstrate restored performance.

- Document everything including the failure, investigation, corrective actions, and revalidation results.

Analysis Validation Problems

Q: How should I address potential AI algorithm "black box" issues in newer forensic tools?

A: Unexplainable AI outputs require rigorous validation:

- Demand transparency from vendors about training data, algorithms, and potential biases.

- Conduct ground truth testing where algorithm outputs are compared against manually verified results.

- Establish known error rates for the specific tool and context of use [1].

- Use alternative methods to verify AI-generated findings rather than relying solely on automated results [1].

- Document all verification steps to demonstrate due diligence in addressing black box limitations.
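Ground truth testing and error rate estimation can be sketched in Python as below. The labels are hypothetical. In practice the ground truth comes from a manually verified dataset, and the resulting rates apply only to that dataset and tool version.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ConfusionCounts:
    true_positive: int = 0
    false_positive: int = 0
    true_negative: int = 0
    false_negative: int = 0


def tally(ground_truth: list[bool], predictions: list[bool]) -> ConfusionCounts:
    """Count agreement and disagreement between tool flags and verified labels."""
    if len(ground_truth) != len(predictions):
        raise ValueError("ground truth and predictions must have equal length")
    counts = ConfusionCounts()
    for truth, predicted in zip(ground_truth, predictions):
        if truth and predicted:
            counts.true_positive += 1
        elif truth:
            counts.false_negative += 1
        elif predicted:
            counts.false_positive += 1
        else:
            counts.true_negative += 1
    return counts


def error_rates(counts: ConfusionCounts) -> dict[str, Optional[float]]:
    """Return false positive and false negative rates (None if undefined)."""
    negatives = counts.false_positive + counts.true_negative
    positives = counts.false_negative + counts.true_positive
    return {
        "false_positive_rate": counts.false_positive / negatives if negatives else None,
        "false_negative_rate": counts.false_negative / positives if positives else None,
    }
```

With only a handful of test items the rates are rough estimates, so report the sample size alongside them.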

Q: What steps are necessary when digital evidence authenticity is challenged due to deepfake potential?

A: Deepfake challenges require enhanced validation protocols:

- Implement deepfake detection tools that identify subtle inconsistencies in media files.

- Use cryptographic verification where possible (digital signatures, blockchain timestamps).

- Corroborate with multiple evidence sources to establish consistency across different data types.

- Document chain of custody meticulously to eliminate opportunities for evidence tampering.

- Consult specialized tools for media authentication and maintain expertise in emerging manipulation techniques [2].

Case Examples: Validation Failures and Solutions

Case Study 1: Florida vs. Casey Anthony (2011)

Problem: A digital forensics expert initially testified that 84 searches for "chloroform" had been conducted on the Anthony family computer, suggesting extensive planning. This number was later challenged through rigorous validation [1].

Validation Solution: Defense experts forensically validated the actual search data and discovered the forensic software had grossly overstated the number of searches. Their analysis confirmed only a single instance of the search term had occurred [1].

Lesson: Never trust tool outputs without independent validation. Always verify critical findings through multiple methods and tools.

Case Study 2: Massachusetts vs. Karen Read (2025)

Problem: Mobile device timestamps and data artifacts required careful interpretation as operating system logs could be misleading without proper context [1].

Validation Solution: Cellebrite Senior Digital Intelligence Expert Ian Whiffin conducted tests across multiple devices to ensure the accuracy of his conclusions, demonstrating the necessity of thorough validation processes in forensic analysis [1].

Lesson: Context is critical in digital forensics. Validate tool outputs against known device behaviors and environmental factors.

Core Principles of Forensic Validation

Regardless of the specific tools or methods being validated, all forensic validation protocols should adhere to these core principles [1]:

- Reproducibility: Results must be repeatable by other qualified professionals using the same method.

- Transparency: All procedures, software versions, logs, and chain-of-custody records must be thoroughly documented.

- Error Rate Awareness: Forensic methods should have known error rates that can be disclosed in reports and during testimony.

- Peer Review: Validation processes should be reviewed by the broader forensic community.

- Continuous Validation: Because technology evolves rapidly, tools and methods must be frequently revalidated.

Frequently Asked Questions (FAQs)

Q1: Why can't I just rely on the results from a single, reputable digital forensics tool? Digital forensics tools, while sophisticated, are not infallible. They can suffer from parsing errors, software bugs, or unsupported data formats [27]. Relying on a single tool introduces the risk of basing critical conclusions on inaccurate or misleading data. Using multiple tools to corroborate findings acts as a quality control measure, ensuring that the results are consistent and reliable, which is a cornerstone of scientific and legal integrity [27] [1].

Q2: A hash verification failed during my evidence acquisition. What does this mean and what should I do? A failed hash verification means that the digital fingerprint of your copy does not match the original evidence. The copy therefore differs from the source, whether because data was altered or because of read errors during acquisition, and its integrity is compromised [28] [29]. You must not proceed with analysis on this compromised copy.

- Action Plan:

- Stop and Document: Immediately halt the process and document the error.

- Check the Hardware: Investigate potential hardware issues, such as a faulty write-blocker or bad sectors on the source drive.

- Re-acquire the Evidence: Repeat the acquisition process using different hardware or a different tool if possible. Continue until you achieve a matching hash value.

Q3: How do I create a known-data set for testing my forensic tools? A known-data set is a curated collection of files with documented content and properties, used as a ground truth for validation.

- Methodology:

- Define Scope: Assemble a set of files that represent the data types you commonly encounter (e.g., documents, images, databases, application-specific files).

- Generate Baseline Hashes: Calculate and record the hash values (using SHA-256 or SHA-3) for every file in this set. This is your "known good" baseline [28] [29].

- Document Properties: Record other metadata, such as file sizes, timestamps, and the presence of specific keywords or data artifacts.

- Test Tool Output: Run your forensic tool against this known set. Compare the tool's reported hashes, recovered files, and parsed data against your baseline. Any discrepancy requires investigation [1].
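A baseline manifest of the kind described in this methodology might be generated as follows. This is a sketch under simple assumptions: the known-data set lives in one directory tree, files are small enough to read whole, and the function name `build_manifest` is illustrative.

```python
import hashlib
from pathlib import Path


def build_manifest(root: str) -> dict[str, dict]:
    """Map each file's relative path to its SHA-256 digest and size in bytes."""
    root_path = Path(root)
    manifest = {}
    for path in sorted(root_path.rglob("*")):
        if path.is_file():
            data = path.read_bytes()  # assumes test files fit in memory
            manifest[path.relative_to(root_path).as_posix()] = {
                "sha256": hashlib.sha256(data).hexdigest(),
                "size": len(data),
            }
    return manifest
```

Store the manifest separately from the data set (for example as a JSON file) so the baseline cannot be changed by the same process that modifies the test files.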

Q4: Two different tools are reporting different timestamps for the same system event. How can I determine which is correct? This is a classic scenario for cross-tool corroboration. Discrepancies often arise from how tools interpret underlying data structures or time zone settings [27].

- Troubleshooting Guide:

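- Consult a Third Tool: Introduce a third, forensically sound tool to analyze the same data. A result that appears in two out of three tools is more likely to be correct, but agreement is not proof, because tools can share parsing libraries or assumptions. Confirm it with the checks below.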

- Validate Against System Logs: Examine other system artifacts or logs that might record the same event to see which timestamp aligns.

- Check Time Zone Configurations: Ensure all tools are configured with the same time zone settings (e.g., UTC vs. local time) for the analysis [27].

- Research the Artifact: Investigate the technical documentation for the specific database or log file from which the timestamp was extracted to understand how the time value is stored [27].
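To illustrate why the storage format matters, the sketch below decodes raw bytes under two common encodings: a 64-bit Windows FILETIME (100-nanosecond intervals since 1601-01-01 UTC) and a 32-bit Unix epoch value (seconds since 1970-01-01 UTC). It assumes little-endian storage. Which encoding and byte order a given artifact actually uses must be confirmed from its documentation.

```python
import struct
from datetime import datetime, timedelta, timezone

FILETIME_EPOCH = datetime(1601, 1, 1, tzinfo=timezone.utc)
UNIX_EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)


def decode_filetime(raw: bytes) -> datetime:
    """Decode 8 little-endian bytes as a Windows FILETIME, returned in UTC."""
    (ticks,) = struct.unpack("<Q", raw)
    # One tick is 100 ns; integer division drops sub-microsecond precision.
    return FILETIME_EPOCH + timedelta(microseconds=ticks // 10)


def decode_unix_seconds(raw: bytes) -> datetime:
    """Decode 4 little-endian bytes as unsigned Unix epoch seconds, in UTC."""
    (seconds,) = struct.unpack("<I", raw)
    return UNIX_EPOCH + timedelta(seconds=seconds)


if __name__ == "__main__":
    raw = struct.pack("<Q", 116444736000000000)  # FILETIME value of the Unix epoch
    print(decode_filetime(raw).isoformat())
```

Both functions return UTC, so any difference between two tools' displayed values can be separated into a decoding difference and a time zone presentation difference.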

Technical Guides & Experimental Protocols

Guide: Implementing Cross-Tool Corroboration

Cross-tool corroboration is the practice of verifying digital evidence by analyzing it with multiple independent forensic tools and comparing the results [27] [1].

Detailed Methodology:

- Evidence Acquisition: Create a forensic image (e.g., an .E01 or .dd file) of the digital evidence. Verify the integrity of this image with a hash value (e.g., SHA-256) before proceeding [28].

- Tool Selection: Select at least two forensically recognized tools from different vendors (e.g., Magnet AXIOM, Autopsy, Cellebrite Physical Analyzer) for analysis [30].

- Parallel Processing: Load the forensic image into each selected tool. Process the data using similar parameters.

- Data Point Comparison: Systematically compare the outputs for key data points. The table below outlines critical artifacts to check.

Table: Key Artifacts for Cross-Tool Corroboration

| Artifact Category | Specific Data Points to Compare | Common Sources of Discrepancy |

|---|---|---|

| File System Metadata | File creation, modification, access timestamps; deleted file records [27]. | Different interpretations of $STANDARD_INFORMATION vs. $FILE_NAME attributes in NTFS. |

| Application Data | Parsed browser history, chat messages (WhatsApp, Signal), social media activity [30]. | Tools may have different parsers for evolving application database schemas. |

| Location Data | GPS coordinates, Wi-Fi access point locations, timestamps of location events [27]. | Misinterpretation of carved data (see diagram below) versus parsed data from known databases [27]. |

| System Events | User logins, application executions, shutdown times [27]. | Variances in decoding Windows Event Logs or system cache files. |

- Resolve Discrepancies: If inconsistencies are found, use the troubleshooting steps outlined in FAQ Q4. Document the resolution process thoroughly.

- Report Findings: The final report should transparently state which tools were used, note any discrepancies found, and explain how they were resolved, reinforcing the reliability of the final conclusions [1].

Diagram: Cross-Tool Corroboration Workflow

Guide: Hash Verification for Evidence Integrity

Hash verification uses cryptographic algorithms to generate a unique digital fingerprint (hash value) for a set of data. This ensures the data has not been altered from its original state [28] [29].

Detailed Methodology:

- Algorithm Selection: Prefer robust, modern algorithms like SHA-256 or SHA-3. Avoid deprecated algorithms like MD5 and SHA-1 for critical applications due to known vulnerabilities [28] [29].

- Initial Hash Generation: After creating a forensic image of the original evidence, use a trusted tool to calculate its hash value. This value must be documented and preserved.

- Verification Hash Generation: Each time the evidence is accessed or copied for analysis, calculate a new hash value of the copy.

- Comparison: Compare the verification hash with the initial hash. If the values are identical, the evidence is intact. The table below compares common hashing algorithms.

Table: Comparison of Common Hashing Algorithms

| Algorithm | Output Length (bits) | Security Status | Recommended Use |

|---|---|---|---|

| MD5 | 128 | Deprecated (vulnerable to collisions) | Not recommended for new systems [28]. |

| SHA-1 | 160 | Deprecated (vulnerable to collisions) | Inadequate for modern cryptography [28]. |

| SHA-256 | 256 | Secure (part of SHA-2 family) | Recommended for current applications; industry standard [28] [29]. |

| SHA-512 | 512 | Secure (part of SHA-2 family) | Recommended for heightened security needs [29]. |

| SHA-3 | 224, 256, 384, or 512 | Secure (newest standard) | Recommended for future-proofing applications [29]. |

Diagram: Hash Verification Process for Data Integrity

Guide: Known-Data Set Testing for Tool Validation

Known-data set testing, also referred to as validation data set testing in machine learning, involves using a curated set of data with a known "ground truth" to evaluate the performance and accuracy of a forensic tool or method [31] [1].

Detailed Methodology:

- Data Set Curation: Create a controlled data environment, such as a clean virtual machine or a storage device. Populate it with files of various types (documents, images, databases). Introduce specific, documented user activities (e.g., web browsing, file deletions, application use) and known data artifacts.

- Establish Ground Truth: Before using the tool under test, thoroughly document the entire known-data set. This includes:

- A complete file listing with paths.

- SHA-256 hashes of all files.

- A log of all performed activities with correct timestamps.

- A list of specific keywords and data artifacts known to be present.

- Tool Processing: Process the known-data set with the forensic tool you are validating. Use standard procedures to image the drive and analyze its contents.

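- Output Analysis: Compare the tool's output against your ground truth. Key aspects to validate are the items documented when establishing ground truth: the file listing, file hashes, activity timestamps, and the known keywords and artifacts.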

- Documentation and Re-validation: Document any false positives (data reported that isn't present) or false negatives (missing known data). This process is not one-time; it must be repeated when the tool is updated or when new data types need to be supported [1].
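The false positive and false negative bookkeeping described above reduces to set arithmetic once the ground truth and the tool output are expressed as sets of identifiers. The sketch below assumes SHA-256 digests or file paths serve as identifiers. The function name and sample values are hypothetical.

```python
def score_tool_output(ground_truth: set[str], reported: set[str]) -> dict[str, list[str]]:
    """Classify identifiers as matched, missed by the tool, or spuriously reported."""
    return {
        "matched": sorted(ground_truth & reported),
        "false_negatives": sorted(ground_truth - reported),  # known items the tool missed
        "false_positives": sorted(reported - ground_truth),  # reported items not in the set
    }


if __name__ == "__main__":
    known = {"docs/report.docx", "img/photo1.jpg", "db/chat.sqlite"}
    found = {"docs/report.docx", "img/photo1.jpg", "tmp/carved_0001.jpg"}
    for label, items in score_tool_output(known, found).items():
        print(f"{label}: {items}")
```

Keeping the scored result with the tool version makes it straightforward to rerun the same comparison after each update.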

Diagram: Known-Data Set Testing Workflow

The Scientist's Toolkit: Essential Research Reagents & Materials

Table: Key Digital Forensics Tools and Functions for Validation

| Tool Name | Primary Function | Role in Validation Protocols |

|---|---|---|

| Magnet AXIOM | Comprehensive digital forensics suite for computers, mobile devices, and cloud data [30]. | Used in cross-tool corroboration to verify artifacts recovered by other tools. Its AI-powered categorization can be tested with known-data sets. |

| Cellebrite Physical Analyzer | Advanced mobile forensics tool for data extraction and decoding from smartphones and tablets [30]. | Critical for validating mobile artifact parsing. Known-data sets on mobile devices test its recovery of deleted data from new OS versions. |

| Autopsy | Open-source digital forensics platform with a user-friendly interface [30]. | An accessible tool for researchers to perform cross-tool checks and validate findings from commercial tools using the same evidence image. |

| Volatility | Open-source framework for advanced memory (RAM) forensics analysis [30]. | Used to validate the presence of runtime artifacts and volatile system state against disk-based evidence. |

| FTK Imager | Forensic imaging and preview tool by Exterro [30]. | A core reagent for creating forensic images and verifying their integrity via hash values before any analysis begins. |

| Wireshark | Network protocol analyzer for deep packet inspection [30]. | Used to validate network-related artifacts found on an endpoint device by comparing them against actual network traffic captures. |

FAQs on AI and Machine Learning in Digital Forensics

Q1: Why is the "black box" nature of some AI models a problem for digital forensics? The "black box" problem refers to the inability to understand how a complex AI model arrives at a specific decision or prediction. In digital forensics, this is critical because courts require evidence to be reliable and its origins understandable. Forensic conclusions must withstand legal scrutiny under standards like the Daubert Standard, which evaluates the scientific validity and known error rates of methods. Using an unexplainable AI output can lead to evidence being excluded or miscarriages of justice [1].

Q2: What are the most common interpretability methods for machine learning models? The most common model-agnostic methods (applicable to any AI model) are:

- SHAP (SHapley Additive exPlanations): Based on game theory, it assigns each feature in a dataset an importance value for a particular prediction [33].

- LIME (Local Interpretable Model-agnostic Explanations): Approximates a complex "black box" model with a simpler, interpretable model (like a linear model) around a specific prediction to explain it [34] [33].

- Anchors: Provides explanations through high-precision, easy-to-understand "if-then" rules that "anchor" the prediction, meaning changes to other features won't affect the outcome [33].
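To make the game-theoretic idea behind SHAP concrete, the sketch below computes exact Shapley values for a toy scoring function by averaging each feature's marginal contribution over all feature orderings. It does not use the SHAP library, which relies on approximations to scale beyond a few features. The model `toy_score` and its three feature names are hypothetical.

```python
from itertools import permutations
from typing import Callable, Sequence


def shapley_values(model: Callable[[Sequence[float]], float],
                   instance: Sequence[float],
                   baseline: Sequence[float]) -> list[float]:
    """Exact Shapley values of `instance` relative to `baseline`.

    Cost grows factorially with the number of features, so this is only
    practical for very small illustrative models.
    """
    n = len(instance)
    totals = [0.0] * n
    orderings = list(permutations(range(n)))
    for order in orderings:
        current = list(baseline)
        previous = model(current)
        for i in order:
            current[i] = instance[i]  # switch feature i from baseline to actual
            value = model(current)
            totals[i] += value - previous
            previous = value
    return [total / len(orderings) for total in totals]


def toy_score(features: Sequence[float]) -> float:
    """Hypothetical 'synthetic image' score with one interaction term."""
    compression, noise, edge = features
    return 0.5 * compression + 0.3 * noise + 0.2 * compression * edge


if __name__ == "__main__":
    values = shapley_values(toy_score, instance=[1, 1, 1], baseline=[0, 0, 0])
    for name, value in zip(["compression", "noise", "edge"], values):
        print(f"{name}: {value:.3f}")
```

The contributions sum to the difference between the model's output for the instance and for the baseline. That additivity is what lets an examiner account for the whole of a score when explaining it.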

Q3: How can I validate the output of an AI-based forensic tool? Validation ensures tools are accurate, reliable, and legally admissible. The process should include [1]:

- Tool Validation: Confirming the software/hardware performs as intended without altering source data.

- Method Validation: Verifying that forensic procedures produce consistent outcomes across different cases and practitioners.

- Analysis Validation: Ensuring the interpreted data accurately reflects the true meaning and context of the evidence. Key practices involve using cryptographic hashing to confirm data integrity, comparing tool outputs against known datasets, and cross-validating results with multiple tools.

Q4: Our AI tool flagged an image as synthetic. What steps should we take? An automated flag is a starting point, not a conclusion. Your protocol should include:

- Human Review: A certified forensic specialist must manually validate the finding [35].

- Provenance Analysis: Check metadata for creation logs, device identifiers, or AI model parameters that indicate synthetic origin [35].

- Cross-Tool Validation: Use a different, independently validated tool to analyze the same image.

- Contextual Correlation: Correlate the finding with other digital artifacts (e.g., browser history, application logs) to build a holistic timeline [4].

Q5: What is the role of a human expert when using automated AI forensics? AI serves as a powerful assistant, but the human expert is indispensable. The expert is responsible for [35]:

- Oversight and Validation: Providing final judgment on AI-generated alerts and conclusions.

- Contextual Understanding: Interpreting findings within the broader context of the investigation.

- Legal Testimony: Explaining and defending the methodology and findings in court based on their "knowledge, skill, experience, training, or education."

Interpretability Methods at a Glance

The table below summarizes three key interpretability methods, helping you select the right approach for your validation needs.

| Method | Core Principle | Best For | Key Advantages | Key Limitations |
|---|---|---|---|---|
| SHAP (SHapley Additive exPlanations) | Assigns each feature a contribution value for a prediction based on game theory [33]. | Global & local explanation; understanding overall feature importance. | Solid theoretical foundation; provides contrastive explanations [33]. | Computationally expensive for non-tree models [33]. |
| LIME (Local Interpretable Model-agnostic Explanations) | Creates a local, interpretable model to approximate the black-box model's prediction for a single instance [34] [33]. | Understanding individual predictions. | Easy to use; provides a fidelity measure for explanation reliability [33]. | Explanations can be unstable for very similar data points [34] [33]. |
| Anchors | Generates high-precision "if-then" rules that anchor a prediction [33]. | Creating human-readable, rule-based explanations for specific cases. | Explanations are very easy to understand; highly efficient [33]. | Runtime depends on model performance; settings require configuration [33]. |
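To make the game-theoretic idea behind SHAP concrete, the sketch below computes exact Shapley values for a single prediction of a tiny scoring function. It is an illustration of the underlying principle, not the SHAP library's API: the function `toy_score`, the feature names, and the baseline values are all hypothetical, and "removing" a feature is modeled by substituting its baseline value, which is one common convention among several.

```python
from itertools import combinations
from math import factorial


def shapley_values(predict, instance, baseline):
    """Exact Shapley values for one prediction.

    A feature outside a coalition takes its baseline value. The cost grows
    as 2^n in the number of features, so this only suits tiny examples.
    """
    names = list(instance)
    n = len(names)
    values = {}
    for target in names:
        others = [f for f in names if f != target]
        total = 0.0
        for size in range(n):
            # Standard Shapley weight for a coalition of this size.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for coalition in combinations(others, size):
                without = {
                    f: (instance[f] if f in coalition else baseline[f])
                    for f in names
                }
                with_target = dict(without)
                with_target[target] = instance[target]
                total += weight * (predict(with_target) - predict(without))
        values[target] = total
    return values


def toy_score(x):
    """Hypothetical 'synthetic image' score with one interaction term."""
    return (
        0.5 * x["noise_residual"]
        + 0.3 * x["missing_exif"]
        + 0.2 * x["noise_residual"] * x["missing_exif"]
    )


if __name__ == "__main__":
    instance = {"noise_residual": 1.0, "missing_exif": 1.0, "file_size_mb": 4.0}
    baseline = {"noise_residual": 0.0, "missing_exif": 0.0, "file_size_mb": 2.0}
    for name, value in shapley_values(toy_score, instance, baseline).items():
        print(f"{name}: {value:+.3f}")
```

The contributions sum to the difference between the prediction for the instance and the prediction for the baseline, and a feature the scorer ignores (`file_size_mb` here) receives zero. The exponential loop over coalitions also shows why the table lists computational cost as a limitation for models without a specialized shortcut.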

Experimental Protocol for Validating AI Forensic Tools

This detailed protocol provides a methodological framework for researchers to validate the outputs of AI-driven forensic tools, ensuring scientific rigor and legal defensibility.

1. Hypothesis and Scope Definition

- Objective: Clearly state what the AI tool is supposed to detect (e.g., "This tool accurately identifies AI-generated deepfake videos.").

- Constraints: Define the tool's operational limits, such as supported file formats, required metadata, and expected error rates.

2. Creation of a Controlled Validation Dataset

- Curation: Assemble a dataset with known inputs and expected outputs. This must include:

- Positive Samples: Confirmed instances of the target (e.g., verified deepfake videos).

- Negative Samples: Confirmed authentic media.

- Ambiguous Samples: Challenging cases to test the tool's limits.

- Data Integrity: Use cryptographic hashes (e.g., SHA-256) to ensure the dataset remains unaltered throughout testing [1].
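One way to apply the data-integrity step is to record a SHA-256 manifest of the validation dataset before testing and compare it with a fresh manifest afterwards. The sketch below uses only the Python standard library; the function names and the directory layout in the demo are illustrative assumptions, not part of any cited tool.

```python
import hashlib
import tempfile
from pathlib import Path


def sha256_of_file(path, chunk_size=1 << 20):
    """Hash a file in chunks so large media files do not need to fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(dataset_dir):
    """Map each file's path (relative to the dataset root) to its SHA-256 hash."""
    root = Path(dataset_dir)
    return {
        path.relative_to(root).as_posix(): sha256_of_file(path)
        for path in sorted(root.rglob("*"))
        if path.is_file()
    }


def changed_files(before, after):
    """List files that were added, removed, or modified between two manifests."""
    names = set(before) | set(after)
    return sorted(name for name in names if before.get(name) != after.get(name))


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        root = Path(tmp)
        (root / "positive").mkdir()
        (root / "positive" / "clip_001.bin").write_bytes(b"known synthetic sample")
        (root / "negative").mkdir()
        (root / "negative" / "clip_002.bin").write_bytes(b"known authentic sample")

        before = build_manifest(root)
        # ... run the tool under test against the dataset here ...
        after = build_manifest(root)
        print("Changed files:", changed_files(before, after))
```

Storing the first manifest alongside the test report gives later reviewers a way to confirm they are working with the same dataset.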

3. Execution of Tool Testing

- Blinded Analysis: To prevent bias, the analyst should run the tool without knowing which samples are positive or negative.

- Process Documentation: Meticulously record the tool's name, version, and all settings and parameters used during analysis [1].
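Blinding and process documentation can both be supported with a small amount of scripting. The following sketch, under the assumption that samples are tracked by file name and ground-truth label, assigns neutral identifiers in random order, keeps the unblinding key separate, and builds a run record. The tool name, version, and parameter values in the demo are placeholders, not settings of any real product.

```python
import json
import random
from datetime import datetime, timezone


def blind_samples(labelled_samples, seed=None):
    """Assign neutral IDs in random order.

    Returns (blinded_ids, key). Only blinded_ids go to the analyst; the key,
    which maps each ID back to the original name and label, is held by
    someone else until the analysis is complete.
    """
    rng = random.Random(seed)
    items = list(labelled_samples.items())
    rng.shuffle(items)
    key = {
        f"sample_{index:04d}": {"original_name": name, "label": label}
        for index, (name, label) in enumerate(items, start=1)
    }
    return sorted(key), key


def unblind(results, key):
    """Join the tool's outputs back to the ground truth after analysis."""
    return [
        (key[sample_id]["original_name"], key[sample_id]["label"], output)
        for sample_id, output in sorted(results.items())
    ]


def run_record(tool_name, tool_version, parameters):
    """Capture the details the protocol asks to document for each run."""
    return {
        "tool": tool_name,
        "version": tool_version,
        "parameters": parameters,
        "started_utc": datetime.now(timezone.utc).isoformat(),
    }


if __name__ == "__main__":
    labelled = {"clip_a.mp4": "synthetic", "clip_b.mp4": "authentic"}
    ids, key = blind_samples(labelled, seed=2025)
    print("Analyst sees:", ids)
    # Placeholder tool details for illustration only.
    print(json.dumps(run_record("ExampleDetector", "1.0.0", {"threshold": 0.5}), indent=2))
```

Renaming the actual files to match the blinded identifiers is left out here; if done, it should be performed on working copies so the hashed originals stay untouched.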

4. Interpretation and Cross-Validation

- Primary Analysis: Record the tool's raw outputs (e.g., "85% probability of being synthetic").

- Cross-Validation: Process the same dataset with a different, independently validated tool or method to identify discrepancies [1].

- Interpretability Application: Use methods like SHAP or LIME on a subset of results to understand which features (e.g., pixel patterns, metadata) influenced the AI's decision [34] [33].
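For the cross-validation step, it can help to quantify how far two tools agree rather than only listing mismatches. The sketch below assumes each tool's output has already been reduced to one categorical label per sample; it reports the disagreeing samples, the raw agreement rate, and Cohen's kappa, which corrects agreement for chance. The sample IDs and labels are invented for the example.

```python
def compare_tools(primary, secondary):
    """Compare two tools' labels on the samples both of them processed."""
    shared = sorted(set(primary) & set(secondary))
    if not shared:
        raise ValueError("The two result sets have no samples in common.")

    disagreements = [s for s in shared if primary[s] != secondary[s]]
    total = len(shared)
    observed = 1 - len(disagreements) / total

    # Agreement expected by chance, from each tool's own label frequencies.
    labels = {primary[s] for s in shared} | {secondary[s] for s in shared}
    expected = sum(
        (sum(primary[s] == label for s in shared) / total)
        * (sum(secondary[s] == label for s in shared) / total)
        for label in labels
    )

    # Kappa is undefined when both tools always emit the same single label.
    kappa = None if expected == 1 else (observed - expected) / (1 - expected)
    return {
        "samples_compared": total,
        "disagreements": disagreements,
        "observed_agreement": observed,
        "cohens_kappa": kappa,
    }


if __name__ == "__main__":
    tool_a = {"s1": "synthetic", "s2": "synthetic", "s3": "authentic", "s4": "authentic"}
    tool_b = {"s1": "synthetic", "s2": "authentic", "s3": "authentic", "s4": "authentic"}
    print(compare_tools(tool_a, tool_b))
```

The list of disagreeing samples is the natural input for the manual review and interpretability work described in this step.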

5. Statistical and Holistic Review

- Performance Metrics: Calculate standard metrics (Accuracy, Precision, Recall, F1-Score) to quantify performance.

- Error Analysis: Manually investigate all false positives and false negatives to identify patterns or weaknesses in the AI model.

- Contextualization: Integrate findings with other evidence to assess the tool's practical utility in a real investigation [4].
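The performance metrics and the error-analysis lists in step 5 can be derived from the same comparison of tool output against ground truth. This is a minimal sketch for a binary task; the label strings and sample IDs are assumptions, and a ratio with a zero denominator is reported as 0.0 by convention.

```python
def classification_metrics(truth, predicted, positive="synthetic"):
    """Compute binary metrics and collect misclassified samples for review."""
    tp = tn = 0
    false_positives, false_negatives = [], []
    for sample, actual in sorted(truth.items()):
        guess = predicted[sample]
        if guess == positive and actual == positive:
            tp += 1
        elif guess == positive:
            false_positives.append(sample)
        elif actual == positive:
            false_negatives.append(sample)
        else:
            tn += 1

    def ratio(numerator, denominator):
        return numerator / denominator if denominator else 0.0

    fp, fn = len(false_positives), len(false_negatives)
    precision = ratio(tp, tp + fp)
    recall = ratio(tp, tp + fn)
    return {
        "accuracy": ratio(tp + tn, tp + tn + fp + fn),
        "precision": precision,
        "recall": recall,
        "f1": ratio(2 * precision * recall, precision + recall),
        "false_positives": false_positives,
        "false_negatives": false_negatives,
    }


if __name__ == "__main__":
    truth = {"s1": "synthetic", "s2": "synthetic", "s3": "authentic", "s4": "authentic"}
    predicted = {"s1": "synthetic", "s2": "authentic", "s3": "synthetic", "s4": "authentic"}
    for name, value in classification_metrics(truth, predicted).items():
        print(f"{name}: {value}")
```

Returning the false positives and false negatives by name keeps the numbers tied to specific samples, so each error can be examined manually as the protocol requires.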

The Digital Forensic Scientist's Toolkit

This table lists essential "research reagents" and their functions for conducting rigorous AI validation in a digital forensics context.

| Tool / Resource | Primary Function in Validation |
|---|---|
| Interpretability Libraries (SHAP, LIME) | Provides model-agnostic functions to "open" the black box and explain individual AI predictions [33]. |
| Validated Forensic Suites (e.g., Belkasoft X, Cellebrite) | Industry-standard tools for acquiring and analyzing digital evidence; serve as a benchmark for cross-validation [4] [1]. |
| Cryptographic Hashing Tools | Generate unique digital fingerprints (hashes) for data to ensure integrity and prove evidence has not been altered from collection through analysis [1]. |
| Controlled Datasets | Act as the "ground truth" for testing and calibrating AI tools, containing known positive, negative, and edge-case samples. |
| Legal Standards Framework (Daubert, FRE 901) | Provides the legal criteria for evaluating the admissibility of scientific evidence, guiding the entire validation methodology [35] [1]. |

Workflow for AI Output Validation

The diagram below outlines a logical, step-by-step workflow for validating a finding from an AI-based forensic tool, incorporating cross-validation and human expertise.

Troubleshooting Common Scenarios

Scenario 1: Inconsistent results between two different AI forensic tools.

- Investigation Steps:

- Verify Input Consistency: Ensure both tools analyzed the exact same evidence file (use hash verification) [1].

- Check Tool Versions: An outdated tool version may lack the latest detection models. Document all versions [1].

- Analyze with a Third Method: Use a fundamental, non-AI technique (e.g., manual metadata examination) to break the tie.

- Leverage Interpretability: Run SHAP or LIME against both tools' predictions to see whether they rely on different features, which may explain the discrepancy [33].

Scenario 2: An AI model's explanation (e.g., from LIME) is unstable.

- Problem: Slight changes in input data lead to vastly different explanations.

- Solutions:

- Use a More Robust Method: Consider switching to SHAP, which has a more solid theoretical foundation and may offer more stable explanations for your model type [33].

- Aggregate Explanations: Don't rely on a single explanation. Run the interpretability method multiple times with slight perturbations and look for consistently important features.

- Validate the Validator: Ensure the dataset used to generate explanations (for LIME) is representative and of high quality.
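The "aggregate explanations" advice can be sketched as a simple stability check. The code assumes each explanation run has already been exported as a mapping from feature name to weight (for instance, from repeated LIME runs with different random seeds); the feature names and thresholds are hypothetical, and no interpretability library is called here.

```python
from collections import Counter


def stable_features(explanations, top_k=3, min_share=0.8):
    """Return features that rank in the top_k of at least min_share of runs.

    Each explanation is a dict of feature name -> weight; features are
    ranked by absolute weight, so strong negative evidence counts too.
    """
    if not explanations:
        raise ValueError("At least one explanation run is required.")
    counts = Counter()
    for weights in explanations:
        ranked = sorted(weights, key=lambda name: abs(weights[name]), reverse=True)
        counts.update(ranked[:top_k])
    runs = len(explanations)
    return {
        name: count / runs
        for name, count in counts.items()
        if count / runs >= min_share
    }


if __name__ == "__main__":
    runs = [
        {"noise_residual": 0.9, "missing_exif": 0.5, "file_size_mb": 0.1},
        {"noise_residual": 0.8, "missing_exif": 0.1, "file_size_mb": 0.4},
        {"noise_residual": 0.7, "missing_exif": 0.6, "file_size_mb": 0.2},
    ]
    print(stable_features(runs, top_k=2, min_share=0.6))
```

Features that appear in only a minority of runs are candidates for closer scrutiny before being cited in a report, since their apparent importance may reflect sampling noise in the explainer rather than the model itself.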

Developing and Using Custom Scripts and Open-Source Tools for Independent Verification

Frequently Asked Questions (FAQs)

1. What are the primary benefits of using open-source tools for verification in digital forensics? Open-source tools offer significant advantages, including cost-effectiveness due to no licensing fees, high customizability to fit specific research needs, and transparency into their inner workings which is crucial for validation and peer review [36]. Furthermore, the collaborative nature of their development often leads to rapid problem-solving and innovation [36].

2. How can I verify the results from an AI-driven forensic tool, like an offline LLM? Independent verification of AI tools is critical. For LLMs like BelkaGPT, a key methodology is to ground all AI outputs in actual case artifacts [4]. You should cross-reference the AI's findings—such as detected topics or emotional tones in communications—with the original, raw data (e.g., SMS, emails) [4]. Establishing a baseline with known data and comparing the tool's output against manual analysis or other tools can further validate its accuracy.

3. Our investigation involves data from a cloud application. What is a common method for acquiring this data for verification? A prevalent technique is to use tools that simulate application clients via their official APIs [4]. Given valid user account credentials and the legal authority to use them, these tools can download user data from the servers of applications like Facebook or Telegram. Because the server treats this as a legitimate user request, the approach can help overcome certain jurisdictional and technical barriers to data acquisition [2] [4].

4. What are the best practices for ensuring the integrity of evidence when using custom scripts? Always work on a forensic copy of the original data. Your custom scripts should incorporate robust logging to document every action performed on the data. Furthermore, using checksums (e.g., SHA-256) at every stage of processing—before, during, and after analysis—provides a verifiable chain of integrity for the evidence [4].
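These practices can be combined in a small wrapper around any custom analysis. The sketch below is one possible arrangement, assuming the evidence is a single file and the analysis is a Python callable: it hashes the source, works only on a copy, logs each step, and re-hashes the source afterwards. The function names and the logger name are illustrative.

```python
import hashlib
import logging
import shutil
import tempfile
from pathlib import Path

log = logging.getLogger("verification")


def sha256_of_file(path, chunk_size=1 << 20):
    """Hash a file in chunks to keep memory use flat."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def run_on_working_copy(evidence_path, work_dir, analysis):
    """Run analysis(copy_path) on a copy and confirm the source is unchanged."""
    source = Path(evidence_path)
    before = sha256_of_file(source)
    log.info("Source %s SHA-256 before: %s", source.name, before)

    copy_path = Path(work_dir) / source.name
    shutil.copy2(source, copy_path)
    if sha256_of_file(copy_path) != before:
        raise RuntimeError("Working copy does not match the source.")
    log.info("Working copy verified: %s", copy_path)

    result = analysis(copy_path)
    log.info("Analysis finished on working copy.")

    after = sha256_of_file(source)
    log.info("Source %s SHA-256 after: %s", source.name, after)
    if after != before:
        raise RuntimeError("Source evidence changed during analysis.")
    return result, {"source_sha256_before": before, "source_sha256_after": after}


if __name__ == "__main__":
    logging.basicConfig(level=logging.INFO, format="%(asctime)s %(message)s")
    with tempfile.TemporaryDirectory() as tmp:
        evidence = Path(tmp) / "evidence.log"
        evidence.write_bytes(b"login ok\nlogin failed\n")
        work = Path(tmp) / "work"
        work.mkdir()
        lines, record = run_on_working_copy(
            evidence, work, lambda p: len(p.read_text().splitlines())
        )
        print(lines, record)
```

In practice the log output would be directed to a file that is itself preserved with the case record.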

5. We are encountering sophisticated anti-forensic techniques. What verification strategies can we employ? To counter anti-forensics, employ a layered verification approach. Use advanced file recovery tools to retrieve deleted data and perform deep metadata analysis to detect inconsistencies that indicate tampering [4]. For data hiding techniques like steganography, utilize specialized counter-steganography tools to uncover information concealed within image or other files [4].

6. How can we efficiently handle the verification of evidence from a large volume of IoT devices? Automation is essential. Implement analysis presets in your forensic tools tailored to different IoT device types to streamline repetitive tasks [4]. Establish standardized workflows for evidence extraction and processing to ensure consistency and reduce human error across the large dataset [4].

Troubleshooting Common Scenarios

Scenario 1: Inconsistent Output from an Open-Source Analysis Script

- Problem: A custom Python script for parsing log files yields different results on different machines, threatening the reproducibility of your experiment.

- Investigation & Solution:

- Environment Check: First, verify that all dependencies (e.g., Python version, library versions like pandas or lxml) are identical across environments. Use virtual environments and dependency files (e.g., requirements.txt) to lock the versions.

- Data Input Verification: Confirm that the input log files are exact binary copies. Recalculate their hash values (e.g., MD5, SHA-1, or SHA-256) and compare them to ensure no corruption or difference.

- Code Review: Check for paths or operations in the script that are not platform-agnostic (e.g., hard-coded Windows paths used on a Linux system).

- Preventive Protocol: Containerize the script and its environment using Docker to guarantee consistent execution across all research setups [36].
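As a lightweight complement to the environment check, a script can record a fingerprint of the environment it ran in, so that two machines' records can be compared directly. This sketch uses only the standard library; the package names passed in are examples, and a package that is not installed is recorded as `None` rather than raising an error.

```python
import json
import platform
from importlib import metadata


def environment_fingerprint(packages):
    """Record interpreter, platform, and installed versions of named packages."""
    versions = {}
    for name in packages:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = None  # not installed in this environment
    return {
        "python": platform.python_version(),
        "implementation": platform.python_implementation(),
        "platform": platform.platform(),
        "packages": versions,
    }


def environment_differences(first, second):
    """Return the fields and packages whose values differ between two records."""
    differences = {}
    for field in ("python", "implementation", "platform"):
        if first[field] != second[field]:
            differences[field] = (first[field], second[field])
    for name in sorted(set(first["packages"]) | set(second["packages"])):
        a = first["packages"].get(name)
        b = second["packages"].get(name)
        if a != b:
            differences[name] = (a, b)
    return differences


if __name__ == "__main__":
    # Save this JSON next to the script's output on each machine.
    print(json.dumps(environment_fingerprint(["pandas", "lxml"]), indent=2))
```

Comparing the saved records from two machines with `environment_differences` narrows down which interpreter or library version to investigate first.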

Scenario 2: Failed Acquisition from a Mobile Device with Advanced Encryption

- Problem: A modern smartphone resists standard data extraction methods, preventing access to critical evidence for verification.

- Investigation & Solution:

- Methodology Assessment: Do not rely on a single acquisition method. Explore alternative methods supported by your forensic platform, such as logical, file system, physical, and cloud extractions [4].

- Tool Capability Check: Ensure your forensic tools are updated to the latest version, as they frequently add support for new device models and security patches.

- Brute-Force Considerations: In a secure, controlled lab environment, some tools offer brute-force unlocking capabilities. These should only be attempted in a manner that minimizes the risk of data corruption and is legally compliant [4].

- Preventive Protocol: Maintain a toolkit with multiple forensic acquisition tools and stay informed on the latest bypass techniques through continuous training [4].

Scenario 3: Suspected Deepfake Media in Evidence

- Problem: A video file submitted as evidence is suspected of being a deepfake, potentially compromising the investigation's integrity.

- Investigation & Solution:

- Tool-Based Detection: Utilize specialized deepfake detection software that can identify subtle AI-generated artifacts. These tools analyze inconsistencies in video frames, audio frequencies, and pixel patterns that are invisible to the human eye [2].

- Metadata & Provenance Analysis: Scrutinize the file's metadata for signs of manipulation or editing software. Establish the file's provenance—its origin and chain of custody—to identify any gaps or anomalies.

- Multi-Tool Correlation: Do not rely on a single tool's output. Verify findings across multiple detection platforms and correlate them with other digital evidence from the case.

- Preventive Protocol: Develop a standard operating procedure (SOP) for the automatic screening of all image and video evidence through a trusted deepfake detection tool as part of the initial evidence intake process [2].

Experimental Protocols for Tool Verification

Protocol 1: Validation of an AI-Powered Evidence Triage Tool

- Objective: To independently verify the accuracy and reliability of an AI tool (e.g., BelkaGPT) in identifying relevant information from a large text corpus.

- Methodology:

- Create a Ground Truth Dataset: Curate a dataset of text artifacts (e.g., emails, chats) where the relevant information (e.g., specific keywords, names, transaction amounts) has been manually and meticulously identified and tagged.

- Execute Tool Analysis: Process the ground truth dataset with the AI tool, using its standard analysis functions (e.g., topic detection, entity extraction).

- Compare and Calculate Metrics: Compare the tool's output against the manual ground truth. Calculate standard metrics such as: